# 1 Scaling by Thinking in Continuous Space

Scaling up Test-Time Compute with Latent Reasoning: A Recurrent Depth Approach

Jonas Geiping 1, Sean McLeish 2, Neel Jain 2, John Kirchenbauer 2, Siddharth Singh 2, Brian R. Bartoldson 3, Bhavya Kailkhura 3, Abhinav Bhatele 2, Tom Goldstein 2

1 ELLIS Institute Tübingen, Max-Planck Institute for Intelligent Systems, Tübingen AI Center; 2 University of Maryland, College Park; 3 Lawrence Livermore National Laboratory. Correspondence to: Jonas Geiping, Tom Goldstein <jonas@tue.ellis.eu, tomg@umd.edu>.

Abstract

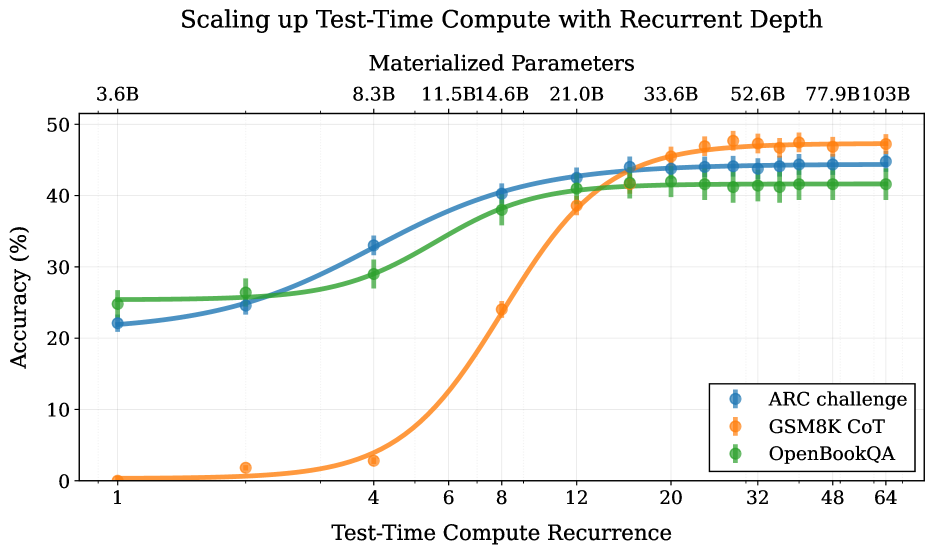

We study a novel language model architecture that is capable of scaling test-time computation by implicitly reasoning in latent space. Our model works by iterating a recurrent block, thereby unrolling to arbitrary depth at test-time. This stands in contrast to mainstream reasoning models that scale up compute by producing more tokens. Unlike approaches based on chain-of-thought, our approach does not require any specialized training data, can work with small context windows, and can capture types of reasoning that are not easily represented in words. We scale a proof-of-concept model to 3.5 billion parameters and 800 billion tokens. We show that the resulting model can improve its performance on reasoning benchmarks, sometimes dramatically, up to a computation load equivalent to 50 billion parameters.

Model: huggingface.co/tomg-group-umd/huginn-0125 Code and Data: github.com/seal-rg/recurrent-pretraining

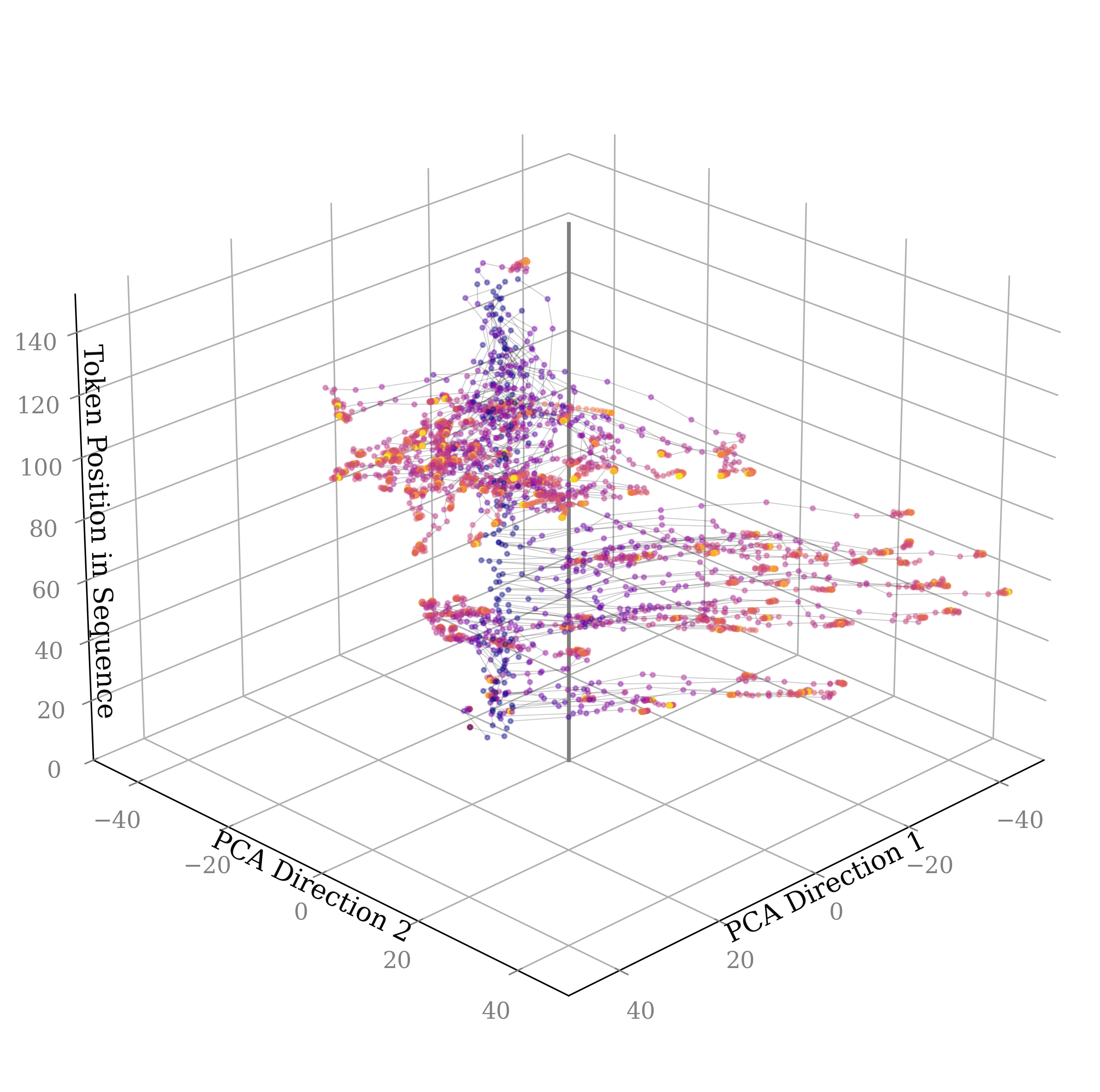

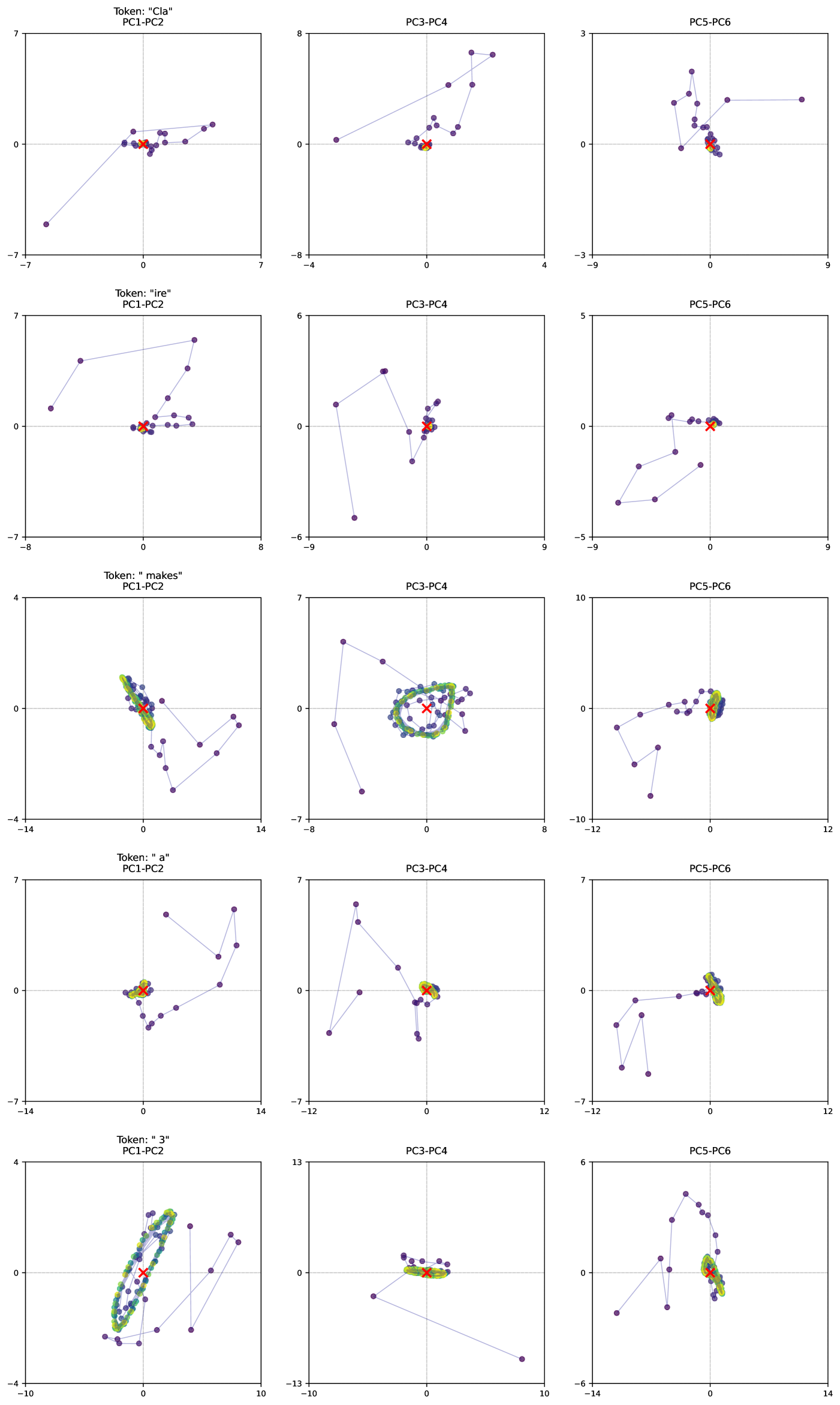

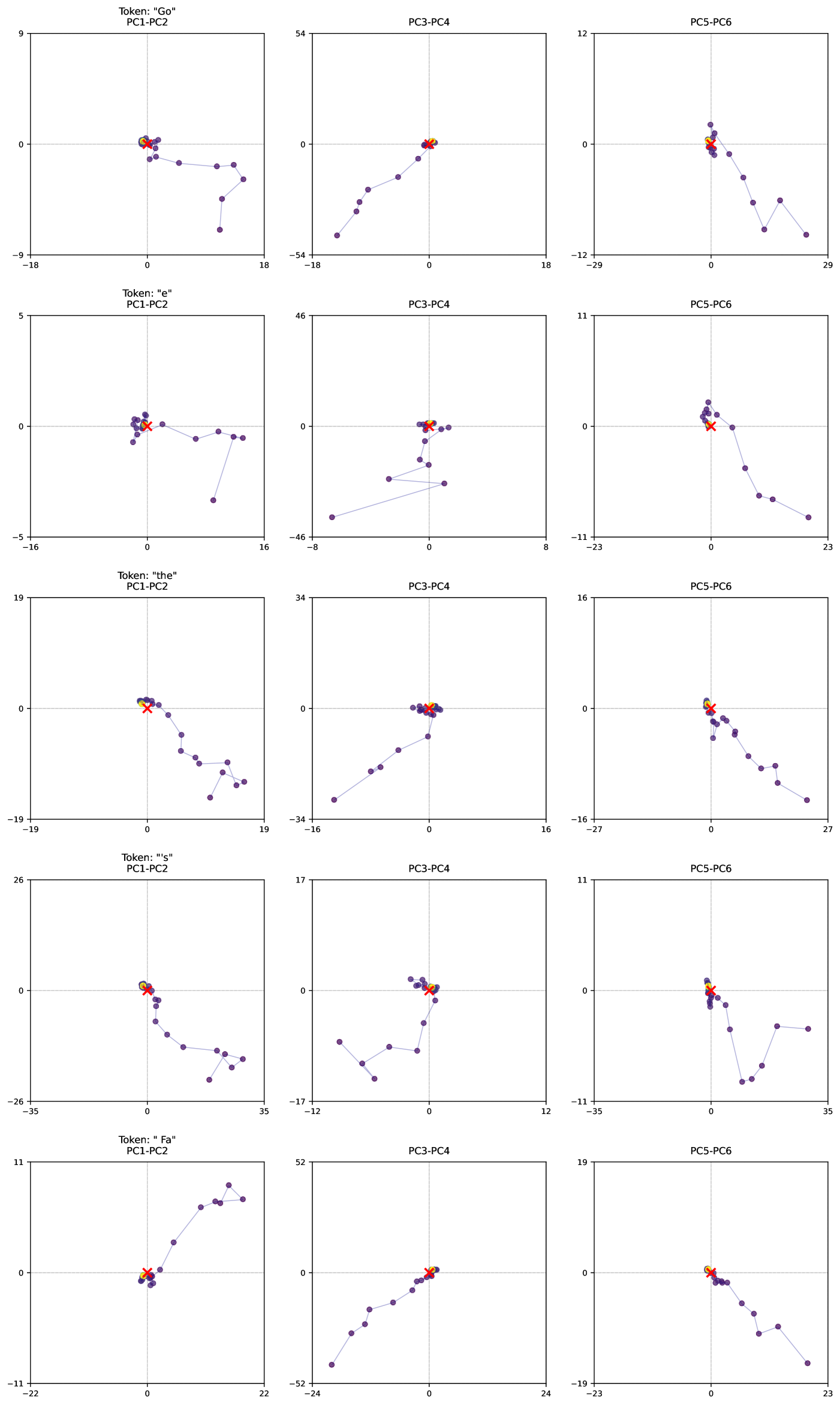

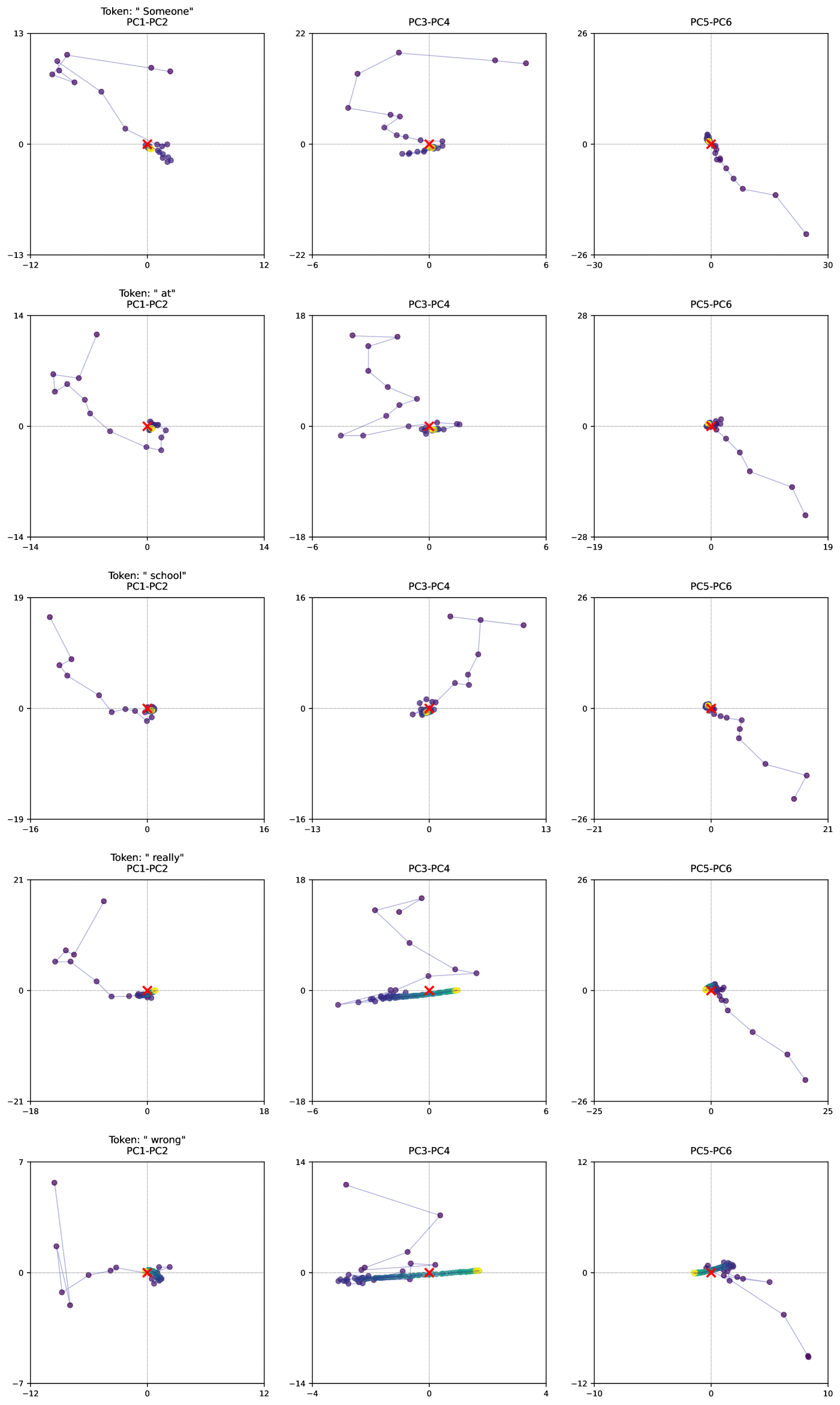

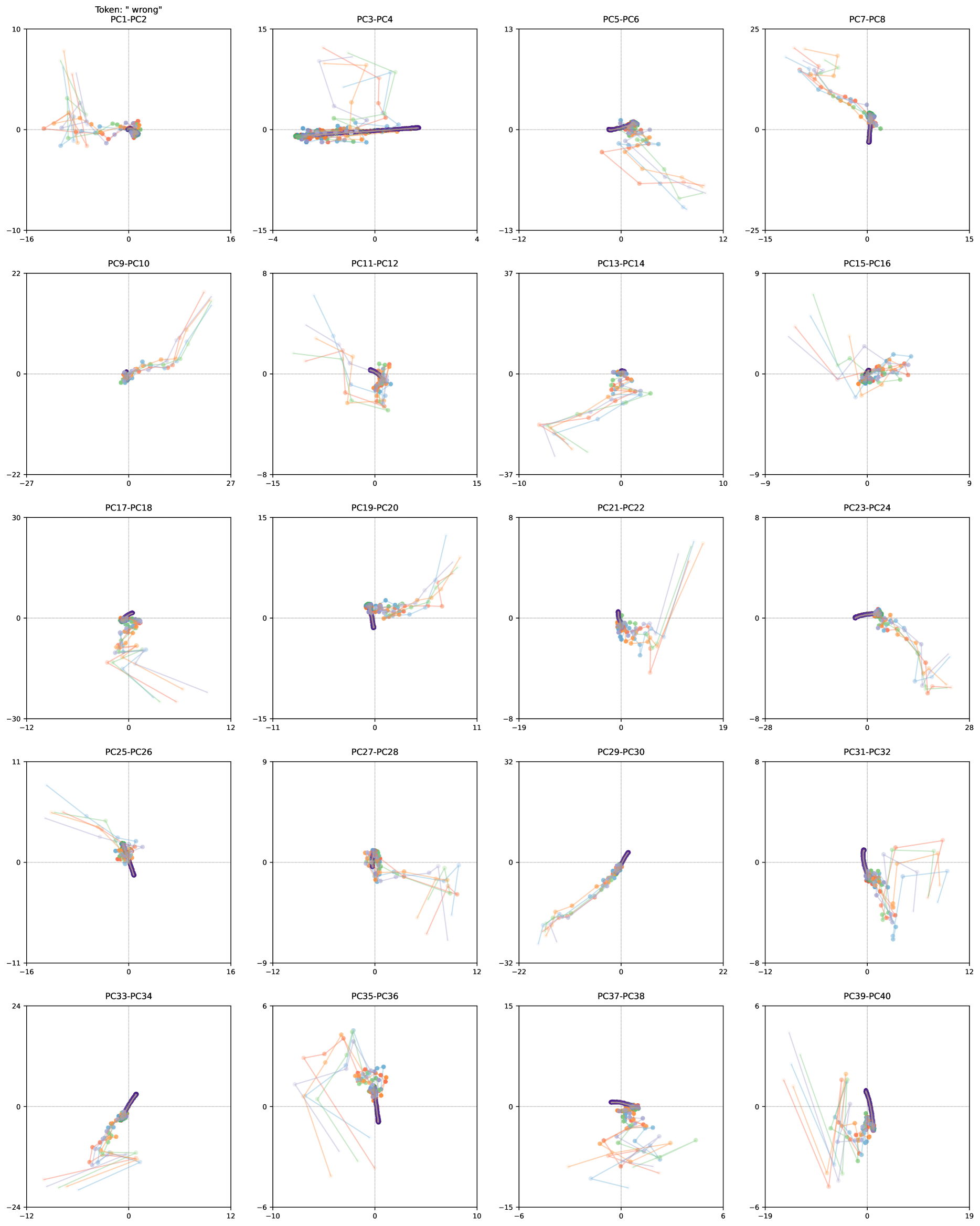

Humans naturally expend more mental effort solving some problems than others. While humans are capable of thinking over long time spans by verbalizing intermediate results and writing them down, a substantial amount of thought happens through complex, recurrent firing patterns in the brain, before the first word of an answer is uttered.

Early attempts at increasing the power of language models focused on scaling model size, a practice that requires extreme amounts of data and computation. More recently, researchers have explored ways to enhance the reasoning capability of models by scaling test-time computation. The mainstream approach involves post-training on long chain-of-thought examples to develop the model’s ability to verbalize intermediate calculations in its context window and thereby externalize thoughts.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Line Chart: Scaling up Test-Time Compute with Recurrent Depth

### Overview

The chart illustrates the relationship between test-time compute recurrence (x-axis) and accuracy (y-axis) for three datasets: ARC challenge, GSM8K CoT, and OpenBookQA. Accuracy improves with increased compute recurrence, with distinct performance patterns across datasets.

### Components/Axes

- **X-axis**: "Test-Time Compute Recurrence" (logarithmic scale: 1, 4, 8, 12, 20, 32, 48, 64)

- **Y-axis**: "Accuracy (%)" (linear scale: 0–50%)

- **Legend**: Located in the bottom-right corner, mapping colors to datasets:

- Blue: ARC challenge

- Orange: GSM8K CoT

- Green: OpenBookQA

### Detailed Analysis

1. **ARC Challenge (Blue Line)**:

- Starts at ~22% accuracy at 1x compute.

- Gradually increases to ~45% at 64x compute.

- Steady upward trend with minimal fluctuations.

2. **GSM8K CoT (Orange Line)**:

- Begins near 0% at 1x compute.

- Sharp upward spike after 8x compute, reaching ~47% at 64x.

- Highest accuracy among datasets at higher compute levels.

3. **OpenBookQA (Green Line)**:

- Starts at ~25% at 1x compute.

- Gradual increase to ~42% at 64x compute.

- Flattens near 42% after 32x compute, showing diminishing returns.

### Key Observations

- **GSM8K CoT** demonstrates the most significant performance gains with increased compute, particularly after 8x recurrence.

- **ARC challenge** shows consistent improvement but plateaus near 45% at 64x compute.

- **OpenBookQA** exhibits the lowest accuracy gains, stabilizing at ~42% despite higher compute.

- At 64x compute, the three datasets end between roughly 42% and 47% accuracy, with OpenBookQA flattening earliest, suggesting diminishing returns at extreme compute levels.

### Interpretation

The data suggests that scaling test-time compute improves accuracy across all datasets, but the rate of improvement varies. GSM8K CoT benefits disproportionately from increased compute, likely due to its reliance on iterative reasoning. OpenBookQA's plateau indicates potential architectural or data limitations. The convergence at high compute levels implies that further scaling may yield minimal additional gains, highlighting the importance of optimizing compute allocation rather than purely increasing it.

</details>

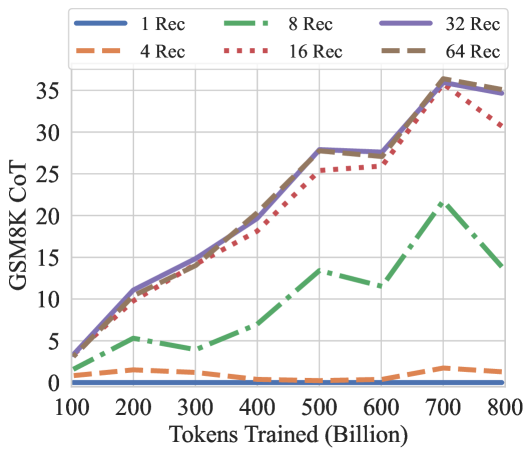

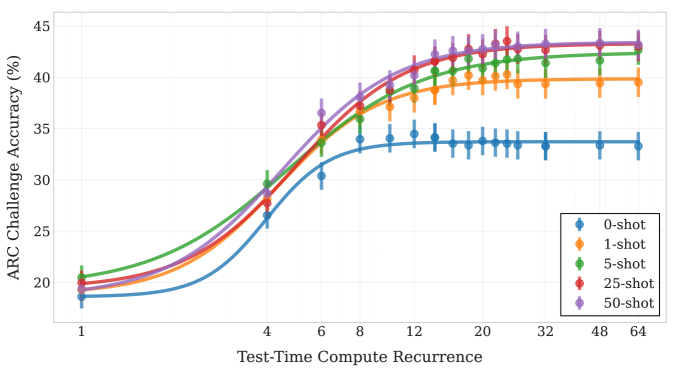

Figure 1: We train a 3.5B parameter language model with depth recurrence. At test time, the model can iterate longer to use more compute and improve its performance. Instead of scaling test-time reasoning by “verbalizing” in long Chains-of-Thought, the model improves entirely by reasoning in latent space. Tasks that require less reasoning like OpenBookQA converge quicker than tasks like GSM8k, which effectively make use of more compute.

However, the constraint that expensive internal reasoning must always be projected down to a single verbalized next token appears wasteful; it is plausible that models could be more competent if they were able to natively “think” in their continuous latent space. One way to unlock this untapped dimension of additional compute involves adding a recurrent unit to a model. This unit runs in a loop, iteratively processing and updating its hidden state and enabling computations to be carried on indefinitely. While this is not currently the dominant paradigm, this idea is foundational to machine learning and has been (re-)discovered in every decade, for example as recurrent neural networks, diffusion models, and as universal or looped transformers.

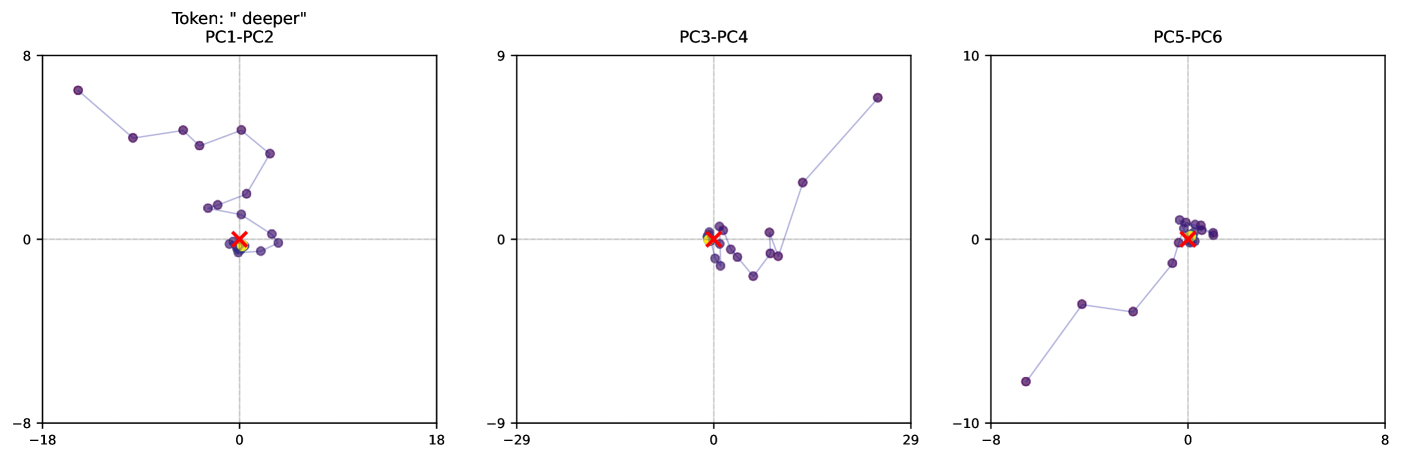

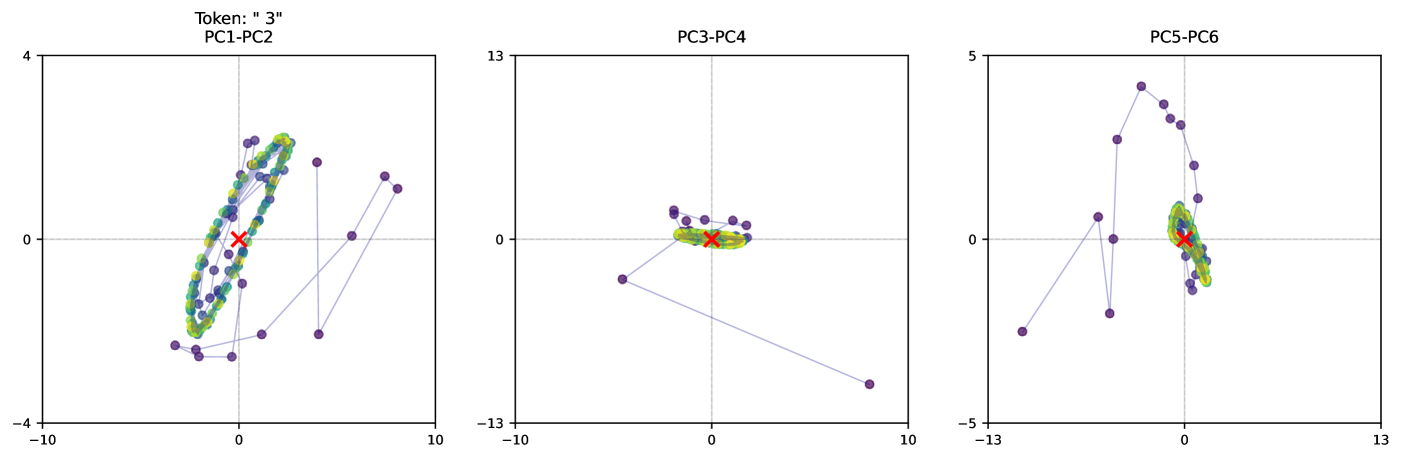

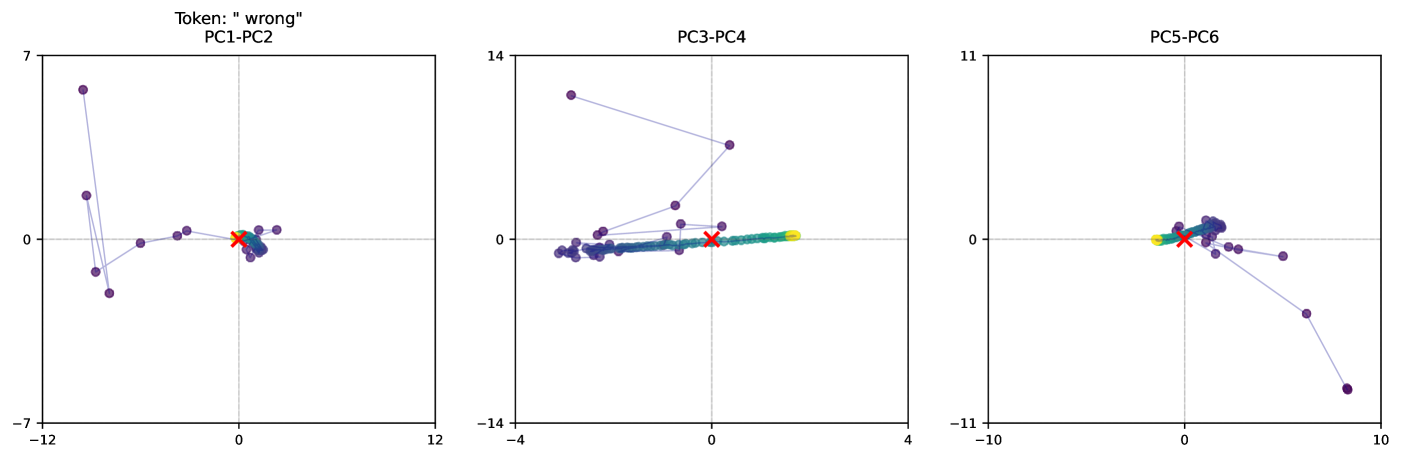

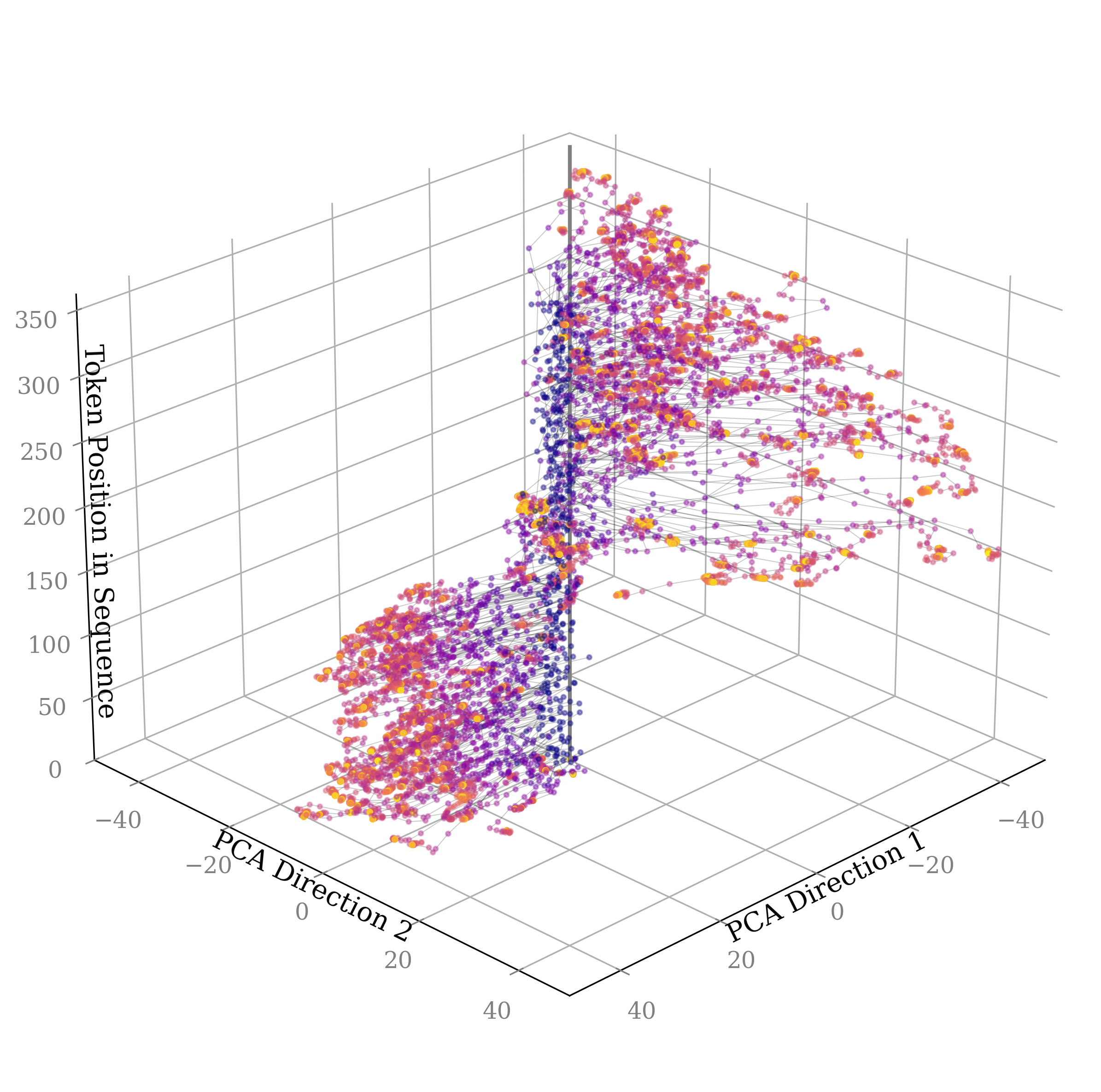

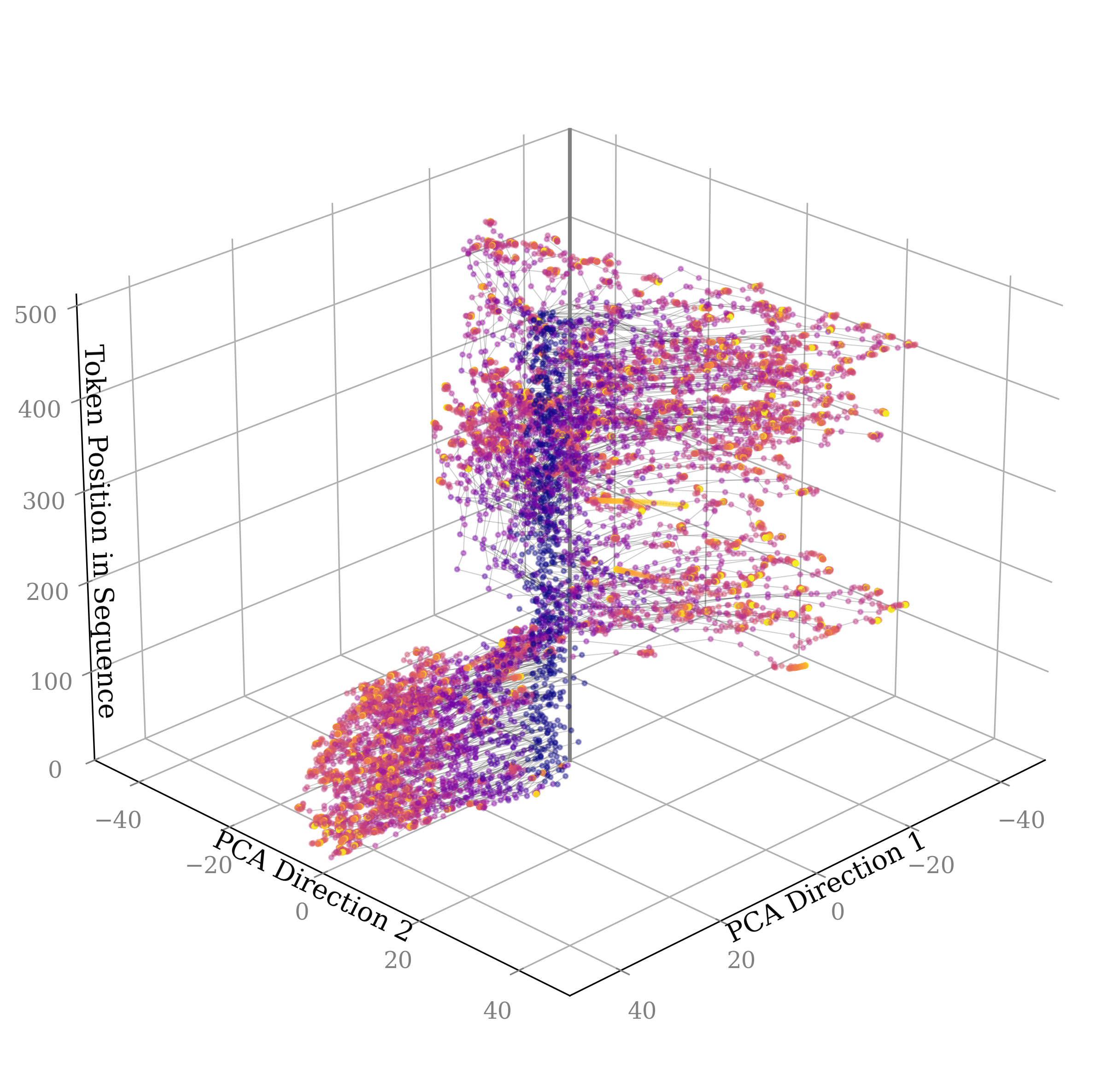

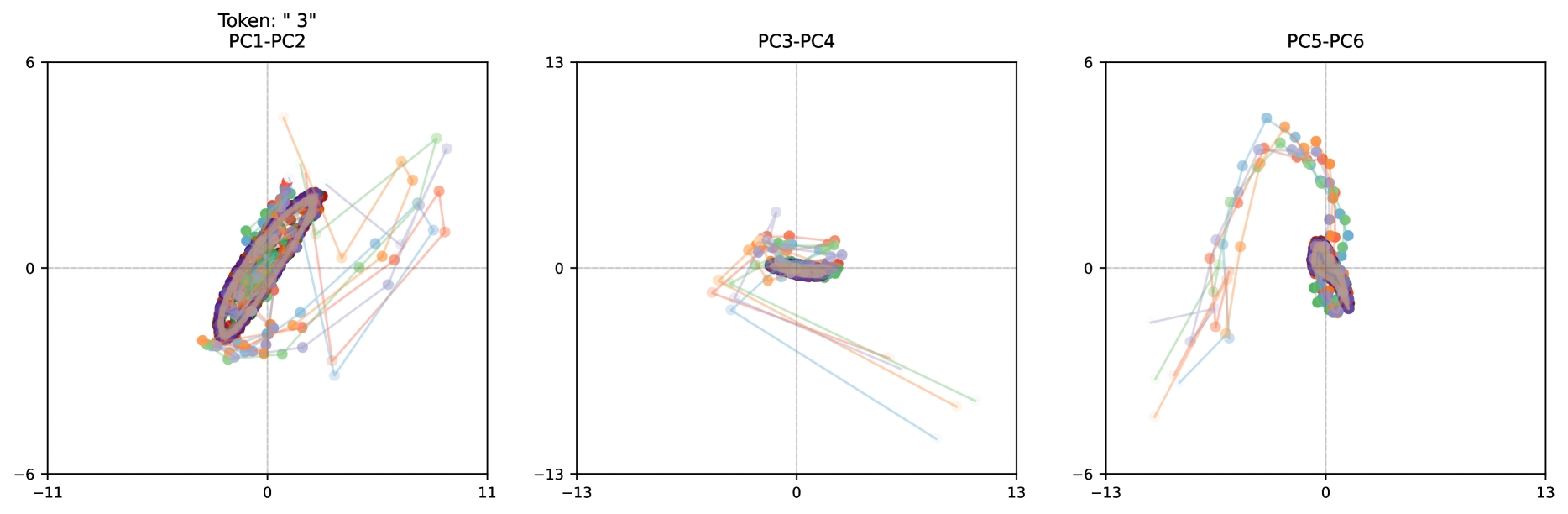

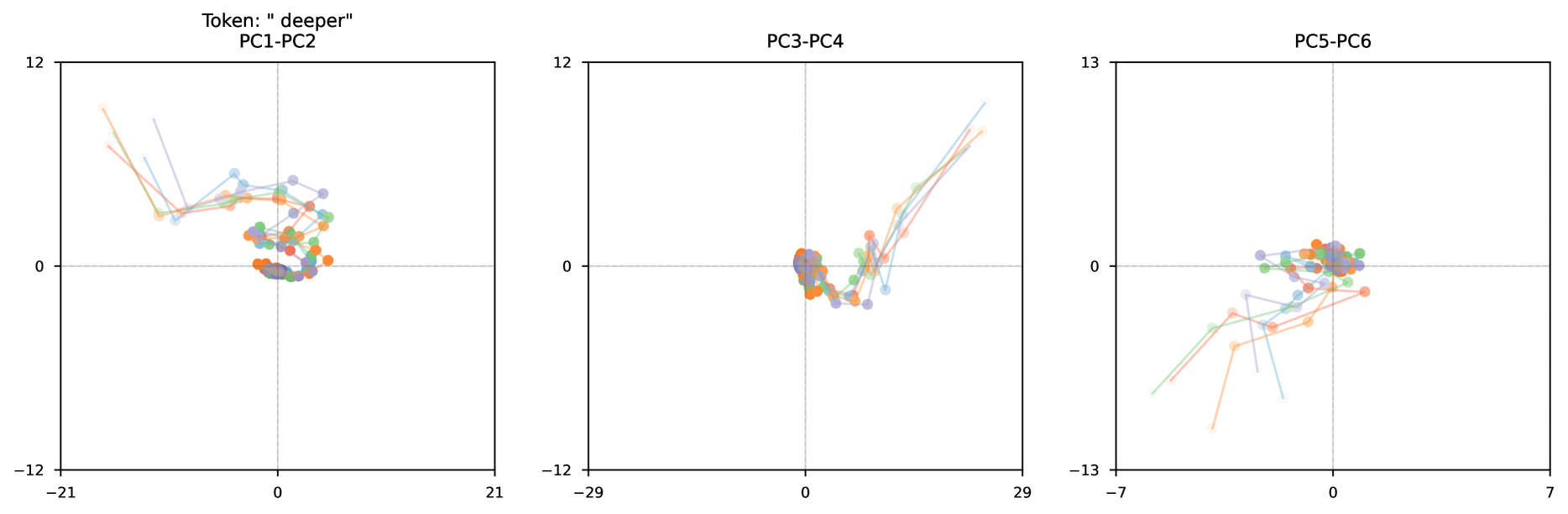

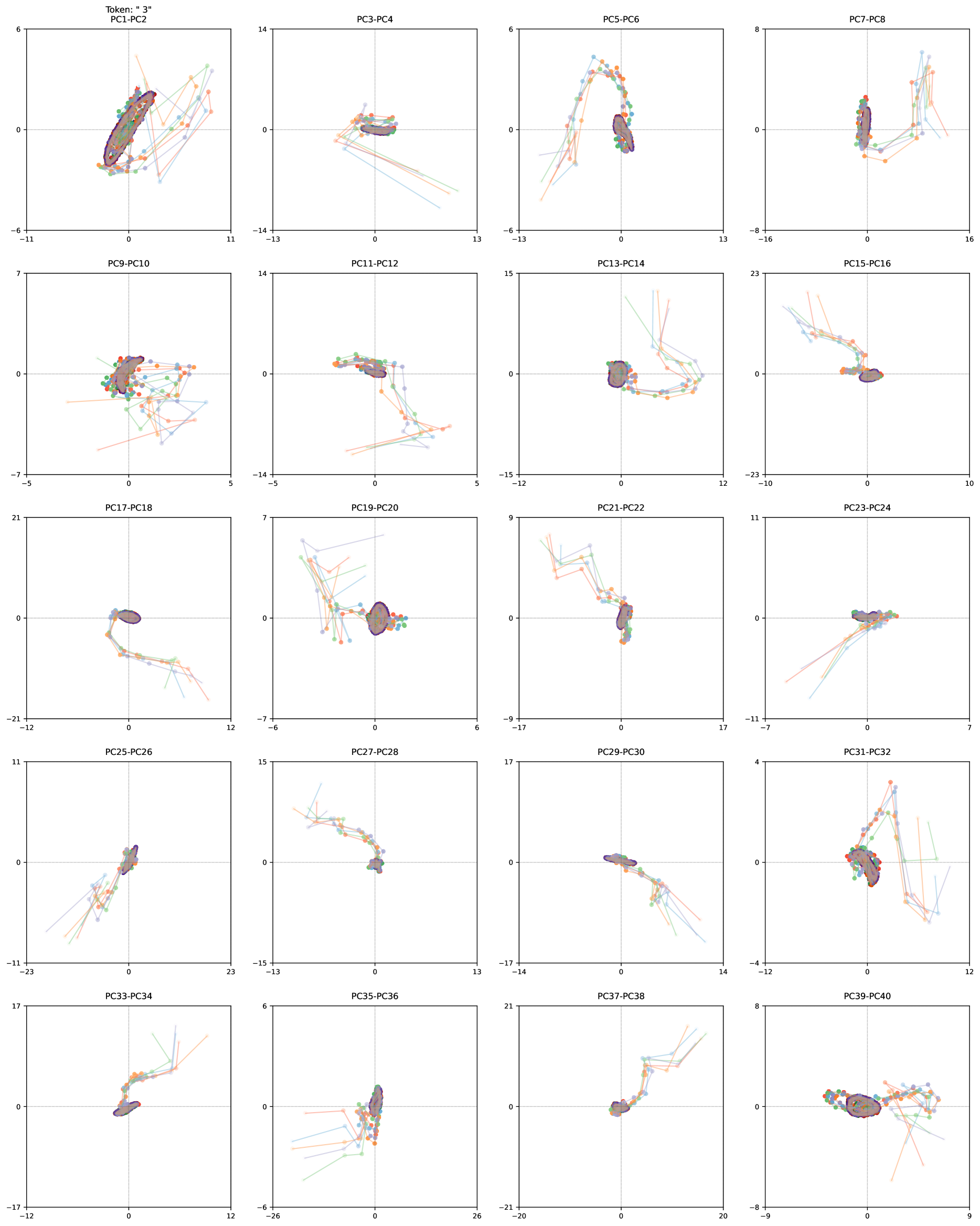

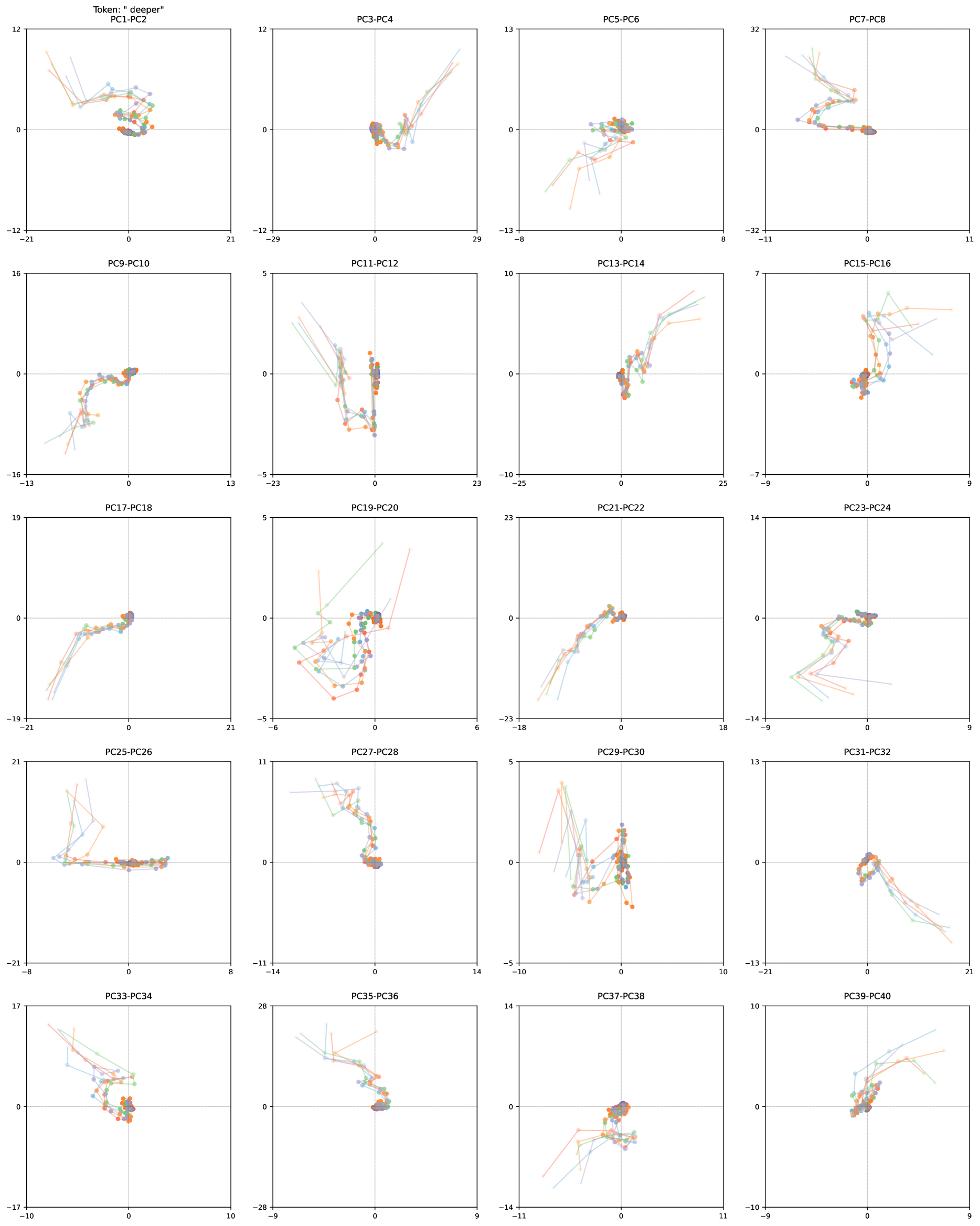

In this work, we show that depth-recurrent language models can learn effectively, be trained in an efficient manner, and demonstrate significant performance improvements under the scaling of test-time compute. Our proposed transformer architecture is built upon a latent depth-recurrent block that is run for a randomly sampled number of iterations during training. We show that this paradigm can scale to several billion parameters and over half a trillion tokens of pretraining data. At test-time, the model can improve its performance through recurrent reasoning in latent space, enabling it to compete with other open-source models that benefit from more parameters and training data. Additionally, we show that recurrent depth models naturally support a number of features at inference time that require substantial tuning and research effort in non-recurrent models, such as per-token adaptive compute, (self)-speculative decoding, and KV-cache sharing. We finish out our study by tracking token trajectories in latent space, showing that a number of interesting computation behaviors simply emerge with scale, such as the model rotating shapes in latent space for numerical computations.

2 Why Train Models with Recurrent Depth?

Recurrent layers enable a transformer model to perform arbitrarily many computations before emitting a token. In principle, recurrent mechanisms provide a simple solution for test-time compute scaling. Compared to the more standard approach of long-context reasoning (OpenAI, 2024; DeepSeek-AI et al., 2025), latent recurrent thinking has several advantages.

- Latent reasoning does not require construction of bespoke training data. Chain-of-thought reasoning requires the model to be trained on long demonstrations that are constructed in the domain of interest. In contrast, our proposed latent reasoning models can train with a variable compute budget, using standard training data with no specialized demonstrations, and enhance their abilities at test-time if given additional compute.

- Latent reasoning models require less memory for training and inference than chain-of-thought reasoning models. Because the latter require extremely long context windows, specialized training methods such as token-parallelization (Liu et al., 2023a) may be needed.

- Recurrent-depth networks perform more FLOPs per parameter than standard transformers, significantly reducing communication costs between accelerators at scale. This especially enables higher device utilization when training with slower interconnects.

- By constructing an architecture that is compute-heavy and small in parameter count, we hope to set a strong prior towards models that solve problems by “thinking”, i.e. by learning meta-strategies, logic and abstraction, instead of memorizing. The strength of recurrent priors for learning complex algorithms has already been demonstrated in the “deep thinking” literature (Schwarzschild et al., 2021b; Bansal et al., 2022; Schwarzschild et al., 2023).

On a more philosophical note, we hope that latent reasoning captures facets of human reasoning that defy verbalization, such as spatial thinking, physical intuition or (motor) planning. Over many iterations of the recurrent process, reasoning in a high-dimensional vector space would enable the deep exploration of multiple directions simultaneously, instead of linear thinking, leading to a system capable of exhibiting novel and complex reasoning behavior.

Scaling compute in this manner is not at odds with scaling through extended (verbalized) inference (Shao et al., 2024) or with scaling parameter counts in pretraining (Kaplan et al., 2020). Instead, we argue it may build a third axis on which to scale model performance.

———————— Table of Contents ————————

- Section 3 introduces our latent recurrent-depth model architecture and training objective.

- Section 4 describes the data selection and engineering of our large-scale training run on Frontier, an AMD cluster.

- Section 5 reports benchmark results, showing how the model improves when scaling inference compute.

- Section 6 includes several application examples showing how recurrent models naturally simplify LLM use cases.

- Section 7 visualizes what computation patterns emerge at scale with this architecture and training objective, showing that context-dependent behaviors emerge in latent space, such as “orbiting” when responding to prompts requiring numerical reasoning.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Block Diagram: Sequence-to-Sequence Processing Architecture

### Overview

The diagram illustrates a computational architecture for processing sequential input ("Hello") into output ("World") using a combination of Prelude, Recurrent Blocks, and Coda components. The system employs residual connections and input injection mechanisms.

### Components/Axes

1. **Legend** (bottom-left):

- Blue: Prelude (P)

- Green: Recurrent Block (R)

- Red: Coda (C)

- Dotted lines: Residual Stream (e)

- Solid arrows: Main data flow

2. **Key Elements**:

- Input: "Hello" (left)

- Output: "World" (right)

- Residual connections: Dashed lines labeled "e"

- Input Injection: Dashed arrow labeled "Input Injection"

- Residual Stream: Solid arrow labeled "S_R"

### Detailed Analysis

1. **Flow Path**:

- "Hello" → P (Prelude) → R₁ (Recurrent Block 1) → R₂ (Recurrent Block 2) → ... → Rₙ (Final Recurrent Block) → C (Coda) → "World"

- Residual connections (e) bypass each R block to subsequent blocks

- Final residual stream (S_R) connects last R block to C

2. **Component Relationships**:

- **Prelude (P)**: Initial processing unit receiving raw input

- **Recurrent Blocks (R)**: Sequential processing units with internal state (s₀, s₁, ..., s_R)

- **Coda (C)**: Final output generator

- Residual streams enable gradient propagation through deep networks

### Key Observations

1. **Residual Architecture**: Multiple residual connections (e) between R blocks suggest skip connections for improved gradient flow

2. **State Progression**: Internal states (s₀ → s₁ → ... → s_R) indicate sequential memory maintenance

3. **Input Injection**: External information can be injected at any processing stage

4. **Output Transformation**: "Hello" → "World" implies sequence-to-sequence mapping capability

### Interpretation

This architecture resembles a Transformer-based encoder-decoder model with residual connections, optimized for:

1. **Long Sequence Handling**: Recurrent blocks with residual streams mitigate vanishing gradient problems

2. **Contextual Processing**: Input injection allows mid-sequence modifications

3. **Efficient Training**: Residual connections enable deeper networks without performance degradation

4. **Sequence Generation**: Final Coda component suggests autoregressive output generation

The "Hello" → "World" transformation exemplifies a basic sequence-to-sequence task, potentially representing text generation, translation, or speech synthesis systems. The residual stream (S_R) acts as a memory buffer preserving critical information across processing stages.

</details>

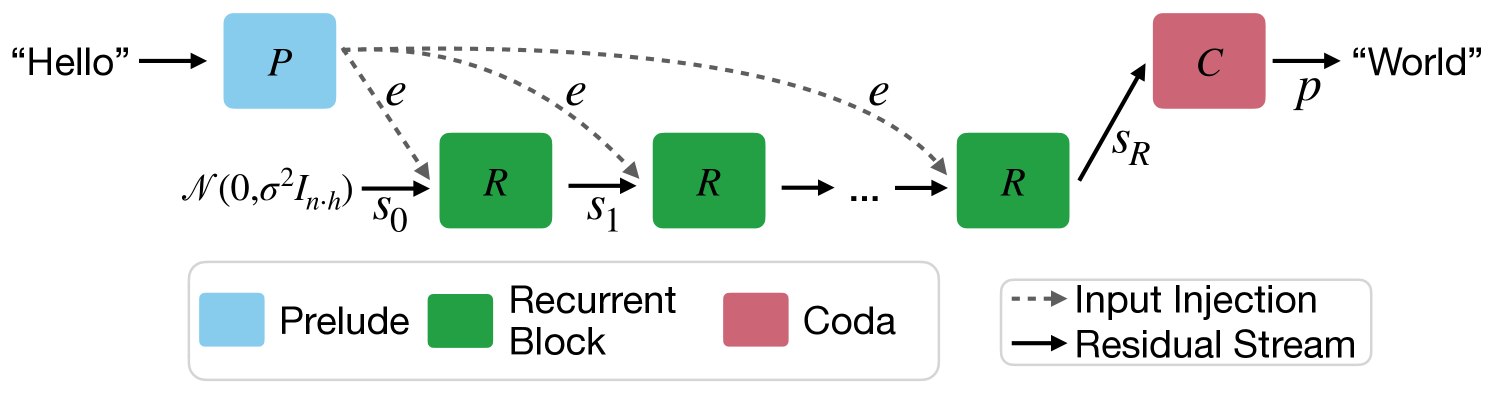

Figure 2: A visualization of the Architecture, as described in Section 3. Each block consists of a number of sub-layers. The blue prelude block embeds the inputs into latent space, where the green shared recurrent block is a block of layers that is repeated to compute the final latent state, which is decoded by the layers of the red coda block.

3 A scalable recurrent architecture

In this section we will describe our proposed architecture for a transformer with latent recurrent depth, discussing design choices and small-scale ablations. A diagram of the architecture can be found in Figure 2. We always refer to the sequence dimension as $n$ , the hidden dimension of the model as $h$ , and its vocabulary as the set $V$ .

3.1 Macroscopic Design

The model is primarily structured around decoder-only transformer blocks (Vaswani et al., 2017; Radford et al., 2019). However, these blocks are structured into three functional groups: the prelude $P$ , which embeds the input data into a latent space using multiple transformer layers; the core recurrent block $R$ , which is the central unit of recurrent computation modifying states $\mathbf{s}∈\mathbb{R}^{n× h}$ ; and the coda $C$ , which un-embeds from latent space using several layers and also contains the prediction head of the model. The core block is set between the prelude and coda blocks, and by looping the core we can put an indefinite number of verses in our song.

Given a number of recurrent iterations $r$ , and a sequence of input tokens $\mathbf{x}∈ V^{n}$ these groups are used in the following way to produce output probabilities $\mathbf{p}∈\mathbb{R}^{n×|V|}$

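$$
\begin{aligned}
\mathbf{e} &= P(\mathbf{x}) \\
\mathbf{s}_{0} &\sim \mathcal{N}(\mathbf{0},\sigma^{2}I_{n\cdot h}) \\
\mathbf{s}_{i} &= R(\mathbf{e},\mathbf{s}_{i-1}) \quad \textnormal{for } i\in\{1,\dots,r\} \\
\mathbf{p} &= C(\mathbf{s}_{r}),
\end{aligned}
$$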

where $\sigma$ is some standard deviation for initializing the random state. This process is shown in Figure 2. Given an initial random state $\mathbf{s}_{0}$ , the model repeatedly applies the core block $R$ , which accepts the latent state $\mathbf{s}_{i-1}$ and the embedded input $\mathbf{e}$ and outputs a new latent state $\mathbf{s}_{i}$ . After finishing all iterations, the coda block processes the last state and produces the probabilities of the next token.
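The forward pass described above can be sketched in a few lines. This is a minimal illustration rather than the released implementation: the toy `P`, `R`, and `C` below are hypothetical stand-ins (an embedding lookup, a single tanh map with additive injection, and a softmax over tied embeddings), and the sizes are arbitrary.

```python
import numpy as np

def forward(x_tokens, P, R, C, r, sigma=1.0, rng=None):
    """Prelude -> r iterations of the core block -> coda."""
    if rng is None:
        rng = np.random.default_rng()
    e = P(x_tokens)                            # embedded input, shape (n, h)
    s = sigma * rng.standard_normal(e.shape)   # random initial state s_0
    for _ in range(r):
        s = R(e, s)                            # e is re-injected at every step
    return C(s)                                # probabilities, shape (n, |V|)

# Hypothetical toy components, chosen only so the sketch runs end to end.
n, h, vocab = 5, 8, 11
rng = np.random.default_rng(0)
E = rng.standard_normal((vocab, h)) / np.sqrt(h)
W = rng.standard_normal((h, h)) / np.sqrt(h)

def P(x):
    return E[x]                                # embedding lookup

def R(e, s):
    return np.tanh(s @ W + e)                  # toy core block

def C(s):
    logits = s @ E.T                           # tied un-embedding
    z = np.exp(logits - logits.max(axis=-1, keepdims=True))
    return z / z.sum(axis=-1, keepdims=True)   # softmax over the vocabulary

x = np.array([1, 4, 2, 7, 3])
p = forward(x, P, R, C, r=4, rng=rng)
```

The only argument that changes between a cheap and an expensive forward pass is `r`; the parameters of `P`, `R`, and `C` are shared across all iterations.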

This architecture is based on deep thinking literature, where it is shown that injecting the latent inputs $\mathbf{e}$ in every step (Bansal et al., 2022) and initializing the latent vector with a random state stabilizes the recurrence and promotes convergence to a steady state independent of initialization, i.e. path independence (Anil et al., 2022).

Motivation for this Design.

This recurrent design is the minimal setup required to learn stable iterative operators. A good example is gradient descent on a function $E(\mathbf{x},\mathbf{y})$ , where $\mathbf{x}$ may be the variable of interest and $\mathbf{y}$ the data. Gradient descent on this function starts from an initial random state, here $\mathbf{x}_{0}$ , and repeatedly applies a simple operation (the gradient of the function it optimizes) that depends on the previous state $\mathbf{x}_{k}$ and data $\mathbf{y}$ . Note that we need to use $\mathbf{y}$ in every step to actually optimize our function. Similarly, we repeatedly inject the data $\mathbf{e}$ in every step of the recurrence in our setup. If $\mathbf{e}$ were provided only at the start, e.g. via $\mathbf{s}_{0}=\mathbf{e}$ , then the iterative process would not be stable, as its solution would depend only on its boundary conditions. (Here, stable is meant in the sense that $R$ cannot be a monotone operator if it does not depend on $\mathbf{e}$ , and so cannot represent gradient descent on strictly convex, data-dependent functions (Bauschke et al., 2011).)
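The gradient descent analogy can be made concrete with a one-dimensional example. The quadratic $E(x,y)=\frac{1}{2}(x-y)^{2}$, the step size, and the step count below are illustrative assumptions, not taken from the paper. Because the data $y$ enters every update, the iteration reaches the same fixed point from very different starting states.

```python
def descend(x0, y, steps=200, lr=0.1):
    """Gradient descent on E(x, y) = 0.5 * (x - y)**2, using y at every step."""
    x = x0
    for _ in range(steps):
        x = x - lr * (x - y)   # the gradient of E with respect to x is (x - y)
    return x

y = 3.0
a = descend(x0=-10.0, y=y)   # start far below the minimizer
b = descend(x0=25.0, y=y)    # start far above the minimizer
```

Both runs end at the minimizer $x=y$, which is the kind of path independence the recurrent block is meant to learn.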

The structure of using several layers to embed input tokens into a hidden latent space is based on empirical results analyzing standard fixed-depth transformers (Skean et al., 2024; Sun et al., 2024; Kaplan et al., 2024). This body of research shows that the initial and the end layers of LLMs are noticeably different, whereas middle layers are interchangeable and permutable. For example, Kaplan et al. (2024) show that within a few layers standard models already embed sub-word tokens into single concepts in latent space, on which the model then operates.

**Remark 3.1 (Is this a Diffusion Model?)**

*This iterative architecture will look familiar from the other modern iterative modeling paradigm, diffusion models (Song and Ermon, 2019), especially latent diffusion models (Rombach et al., 2022). We ran several ablations with iterative schemes even more similar to diffusion models, such as $\mathbf{s}_{i}=R(\mathbf{e},\mathbf{s}_{i-1})+\mathbf{n}$ where $\mathbf{n}\sim\mathcal{N}(\mathbf{0},\sigma_{i}I_{n· h})$ , but find that the injection of noise does not help in our preliminary experiments, which is possibly connected to our training objective. We also evaluated $\mathbf{s}_{i}=R_{i}(\mathbf{e},\mathbf{s}_{i-1})$ , i.e. a core block that takes the current step as input (Peebles and Xie, 2023), but find that this interacts badly with path independence, leading to models that cannot extrapolate.*

3.2 Microscopic Design

Within each group, we broadly follow standard transformer layer design. Each block contains multiple layers, and each layer contains a standard, causal self-attention block using RoPE (Su et al., 2021) with a base of $50000$ , and a gated SiLU MLP (Shazeer, 2020). We use RMSNorm (Zhang and Sennrich, 2019) as our normalization function. The model has learnable biases on queries and keys, and nowhere else. To stabilize the recurrence, we order all layers in the following “sandwich” format, using norm layers $n_{i}$ , which is related, but not identical to similar strategies in (Ding et al., 2021; Team Gemma et al., 2024):

$$
\begin{aligned}
\hat{\mathbf{x}_{l}} &= n_{2}\left(\mathbf{x}_{l-1}+\textnormal{Attn}(n_{1}(\mathbf{x}_{l-1}))\right) \\
\mathbf{x}_{l} &= n_{4}\left(\hat{\mathbf{x}_{l}}+\textnormal{MLP}(n_{3}(\hat{\mathbf{x}_{l}}))\right)
\end{aligned}
$$

While most normalization strategies, e.g. pre-norm, post-norm and others, work almost equally well at small scales, we ablate these options and find that this normalization is required to train the recurrence at scale. (Note also that technically $n_{3}$ is superfluous, but we report here the exact norm setup with which we trained the final model.)
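As a rough sketch of the sandwich layout, the layer can be written with a norm before and after each sub-block. Several assumptions apply: the learned RMSNorm gains are omitted, `attn` and `mlp` are hypothetical stand-in callables rather than real attention or a gated SiLU MLP, and the MLP half is assumed to mirror the attention half with norms $n_{3}$ and $n_{4}$.

```python
import numpy as np

def rms_norm(x, eps=1e-6):
    """RMSNorm without a learned gain (gain omitted for brevity)."""
    return x / np.sqrt(np.mean(x * x, axis=-1, keepdims=True) + eps)

def sandwich_layer(x, attn, mlp):
    # n_2(x + Attn(n_1(x))): norm before the sub-block and after the residual
    x_hat = rms_norm(x + attn(rms_norm(x)))
    # n_4(x_hat + MLP(n_3(x_hat))): same pattern for the MLP half (assumed)
    return rms_norm(x_hat + mlp(rms_norm(x_hat)))

rng = np.random.default_rng(0)
x = rng.standard_normal((5, 8))
out = sandwich_layer(x, attn=lambda z: 0.5 * z, mlp=np.tanh)
out_rms = np.sqrt(np.mean(out * out, axis=-1))
```

Because the residual stream is normalized after every sub-block, the scale of the state stays bounded no matter how many times the layer is applied, which is one plausible reason this layout helps when the block is looped.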

Given an embedding matrix $E$ and embedding scale $\gamma$ , the prelude block first embeds input tokens $\mathbf{x}$ as $\gamma E(\mathbf{x})$ , and then applies $l_{P}$ many prelude layers with the layout described above.

Our core recurrent block $R$ starts with an adapter matrix $A:\mathbb{R}^{2h}→\mathbb{R}^{h}$ mapping the concatenation of $\mathbf{s}_{i}$ and $\mathbf{e}$ into the hidden dimension $h$ (Bansal et al., 2022). While re-incorporation of initial embedding features via addition rather than concatenation works equally well for smaller models, we find that concatenation works best at scale. This is then fed into $l_{R}$ transformer layers. At the end of the core block the output is again rescaled with an RMSNorm $n_{c}$ .
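The two injection variants mentioned above, concatenation through an adapter and plain addition, can be compared in a small sketch. The sizes and the random adapter weights are hypothetical. The closing identity shows that concatenation followed by $A$ is the same as applying separate linear maps to $\mathbf{s}$ and $\mathbf{e}$ and summing, so the adapter can be read as a learned generalization of additive injection.

```python
import numpy as np

h, n = 8, 5
rng = np.random.default_rng(0)
A = rng.standard_normal((2 * h, h)) / np.sqrt(2 * h)   # adapter: R^{2h} -> R^h

def inject_concat(s, e):
    """Concatenate state and embedded input, then project back to width h."""
    return np.concatenate([s, e], axis=-1) @ A

def inject_add(s, e):
    """Additive alternative; no extra parameters."""
    return s + e

s = rng.standard_normal((n, h))
e = rng.standard_normal((n, h))
mixed = inject_concat(s, e)
# Equivalent view: two h-by-h maps, one per input, summed.
split_view = s @ A[:h] + e @ A[h:]
```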

The coda contains $l_{C}$ layers, normalization by $n_{c}$ , and projection into the vocabulary using tied embeddings $E^{T}$ .

In summary, we can describe the architecture by the triplet $(l_{P},l_{R},l_{C})$ , giving the number of layers in each stage, and by the number of recurrences $r$ , which may vary in each forward pass. We train a number of small-scale models with shape $(1,4,1)$ and hidden size $h=1024$ , in addition to a large model with shape $(2,4,2)$ and $h=5280$ . This model has only $8$ “real” layers, but when the recurrent block is iterated, e.g. 32 times, it unfolds to an effective depth of $2+4r+2=132$ layers, constructing computation chains that can be deeper than even the largest fixed-depth transformers (Levine et al., 2021; Merrill et al., 2022).
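The effective-depth arithmetic is easy to check for other recurrence counts. The helper name below is hypothetical.

```python
def effective_depth(l_p, l_r, l_c, r):
    """Number of layers a token passes through when the core is looped r times."""
    return l_p + l_r * r + l_c

large_at_32 = effective_depth(2, 4, 2, r=32)   # large model, 32 recurrences
large_at_1 = effective_depth(2, 4, 2, r=1)     # a single pass through the core
small_at_32 = effective_depth(1, 4, 1, r=32)   # small-scale (1, 4, 1) shape
```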

3.3 Training Objective

Training Recurrent Models through Unrolling.

To ensure that the model can function when we scale up recurrent iterations at test-time, we randomly sample iteration counts during training, assigning a random number of iterations $r$ to every input sequence (Schwarzschild et al., 2021b). We optimize the expectation of the loss function $L$ over random samples $x$ from distribution $X$ and random iteration counts $r$ from distribution $\Lambda$ .

$$
\mathcal{L}(\theta)=\mathbb{E}_{\mathbf{x}\in X}\mathbb{E}_{r\sim\Lambda}L\left(m_{\theta}(\mathbf{x},r),\mathbf{x}^{\prime}\right).
$$

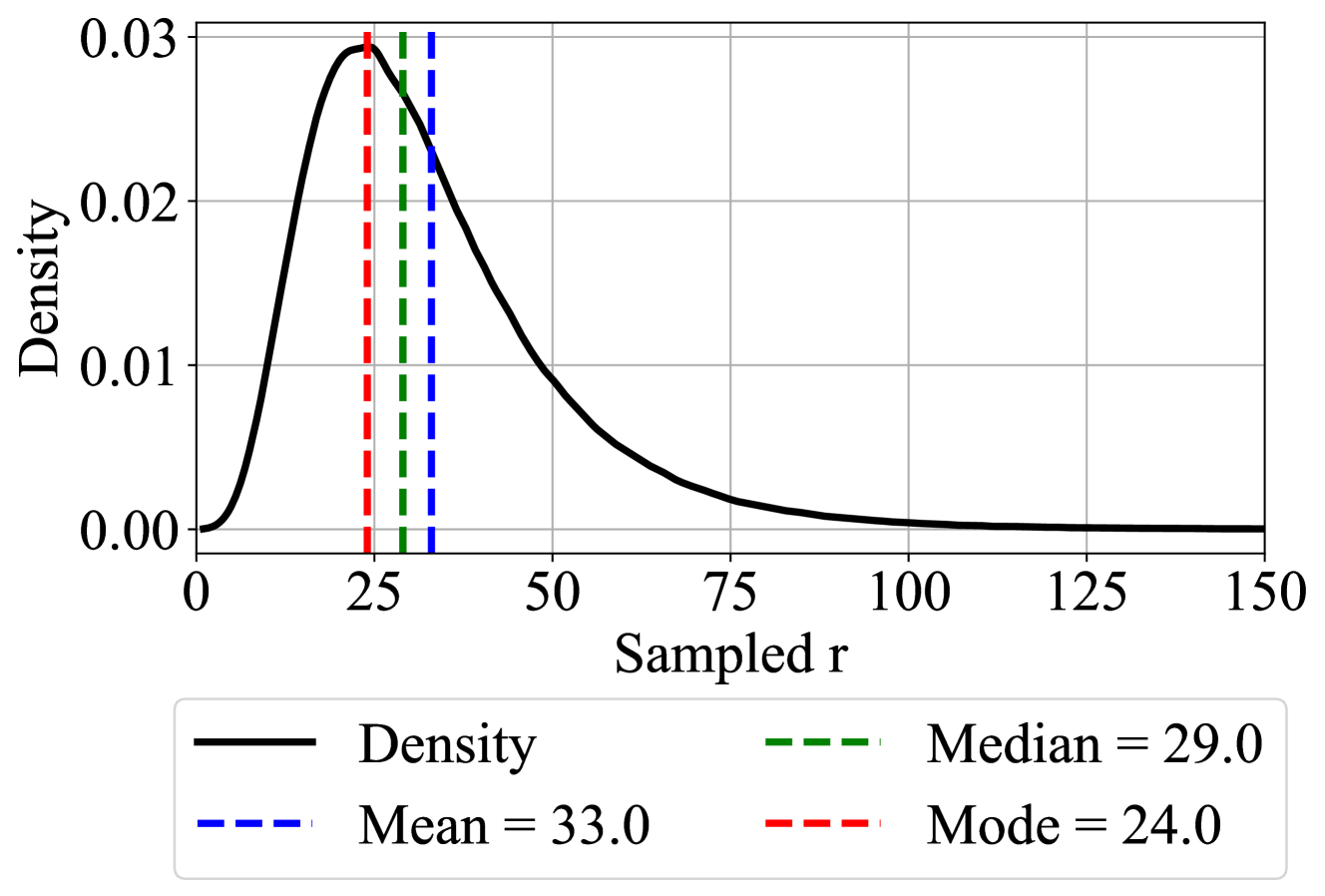

Here, $m$ represents the model output, and $\mathbf{x}^{\prime}$ is the sequence $\mathbf{x}$ shifted left, i.e., the next tokens in the sequence $\mathbf{x}$ . We choose $\Lambda$ to be a log-normal Poisson distribution. Given a targeted mean recurrence $\bar{r}+1$ and a variance that we set to $\sigma=\frac{1}{2}$ , we can sample from this distribution via

$$
\begin{aligned}
\tau &\sim\mathcal{N}\left(\log(\bar{r})-\frac{1}{2}\sigma^{2},\sigma\right) \\
r &\sim\mathcal{P}(e^{\tau})+1,
\end{aligned} \tag{1}
$$

given the normal distribution $\mathcal{N}$ and Poisson distribution $\mathcal{P}$ , see Figure 3. The distribution most often samples values less than $\bar{r}$ , but it contains a heavy tail of occasional events in which significantly more iterations are taken.
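A sampler for this distribution might look as follows. This sketch assumes that $\sigma$ acts as the standard deviation of the normal draw, which is consistent with the mean of 33 and median of 29 reported for Figure 3. The function name and the NumPy-based implementation are illustrative.

```python
import numpy as np

def sample_recurrence(mean_r=32, sigma=0.5, size=1, rng=None):
    """Draw iteration counts r ~ Poisson(exp(tau)) + 1 with log-normal rate."""
    if rng is None:
        rng = np.random.default_rng()
    # Shifting the mean by -sigma^2 / 2 makes E[exp(tau)] equal to mean_r.
    tau = rng.normal(np.log(mean_r) - 0.5 * sigma**2, sigma, size=size)
    return rng.poisson(np.exp(tau)) + 1   # the +1 guarantees r >= 1

rng = np.random.default_rng(0)
r = sample_recurrence(size=200_000, rng=rng)
```

Since $\mathbb{E}[e^{\tau}]=\bar{r}$, the expected number of iterations is $\bar{r}+1$, while the median sits below the mean because of the heavy right tail.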

<details>

<summary>x3.png Details</summary>

### Visual Description

## Density Plot: Distribution of Sampled r Values

### Overview

The image depicts a density plot illustrating the distribution of a variable labeled "Sampled r." The plot includes a probability density curve (black solid line) and three vertical dashed lines representing central tendency measures: Mode (red), Median (green), and Mean (blue). The x-axis ranges from 0 to 150, while the y-axis (Density) ranges from 0 to 0.03. The legend is positioned at the bottom of the plot.

---

### Components/Axes

- **X-axis (Sampled r)**: Labeled "Sampled r," with tick marks at 0, 25, 50, 75, 100, 125, and 150.

- **Y-axis (Density)**: Labeled "Density," with tick marks at 0.00, 0.01, 0.02, and 0.03.

- **Legend**: Located at the bottom, with color-coded labels:

- Black solid line: Density curve.

- Red dashed line: Mode = 24.0.

- Green dashed line: Median = 29.0.

- Blue dashed line: Mean = 33.0.

---

### Detailed Analysis

1. **Density Curve**:

- The black solid line forms a unimodal, right-skewed distribution.

- The peak density occurs near **x ≈ 25–30**, with a maximum value of approximately **0.03**.

- The curve declines gradually after the peak, approaching zero as x increases beyond 100.

2. **Central Tendency Lines**:

- **Mode (Red, 24.0)**: Aligns with the leftmost peak of the density curve, indicating the most frequent value.

- **Median (Green, 29.0)**: Positioned slightly right of the peak, suggesting the middle value of the distribution.

- **Mean (Blue, 33.0)**: Further right of the median, reflecting the influence of higher values in the tail.

3. **Skewness**:

- The mean (33.0) > median (29.0) > mode (24.0) confirms a **right-skewed distribution**.

- The tail extends toward higher x-values, pulling the mean upward.

---

### Key Observations

- The density curve’s peak (Mode) is at **24.0**, but the median and mean are higher, indicating asymmetry.

- The distribution tapers off slowly after the peak, with density values remaining non-zero up to x = 150.

- The mean (33.0) is notably higher than the median, suggesting the presence of outliers or a long right tail.

---

### Interpretation

This plot demonstrates a right-skewed distribution of "Sampled r" values. The Mode (24.0) represents the most common value, while the Median (29.0) and Mean (33.0) highlight the distribution’s asymmetry. The mean being pulled rightward implies that higher values, though less frequent, significantly influence the average. This could reflect real-world scenarios where extreme values (e.g., rare but large measurements) skew statistical summaries. The gradual decline in density after the peak suggests a concentration of data near the Mode, with fewer observations at higher x-values.

</details>

Figure 3: We use a log-normal Poisson Distribution to sample the number of recurrent iterations for each training step.

Truncated Backpropagation.

To keep computation and memory low at train time, we backpropagate through only the last $k$ iterations of the recurrent unit. This enables us to train with the heavy-tailed Poisson distribution $\Lambda$ , as maximum activation memory and backward compute are now independent of $r$ . We fix $k=8$ in our main experiments. At small scale, this works as well as sampling $k$ uniformly, but with $k$ fixed, the overall memory usage is the same in each step of training. Note that the prelude block still receives gradient updates in every step, as its output $\mathbf{e}$ is injected in every step. This setup resembles truncated backpropagation through time, as commonly done with RNNs, although our setup is recurrent in depth rather than time (Williams and Peng, 1990; Mikolov et al., 2011).
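To illustrate what truncation does to the gradient, consider a scalar toy recurrence $s_{i}=\tanh(w\,s_{i-1}+e)$, which is an assumption made purely for illustration. The sketch computes $\partial s_{r}/\partial w$ while treating the state before the last $k$ iterations as a constant. For brevity it accumulates the derivative in forward mode; the value is the same one that backpropagation through the last $k$ steps would produce.

```python
import math

def truncated_grad(w, e, s0, r, k):
    """Return (s_r, d s_r / d w) with gradients flowing through the last k steps only."""
    s, ds_dw = s0, 0.0
    for i in range(1, r + 1):
        s_prev = s
        s = math.tanh(w * s_prev + e)
        if i > r - k:
            # Inside the gradient window: apply the chain rule through this step.
            ds_dw = (1.0 - s * s) * (s_prev + w * ds_dw)
        # Outside the window nothing is tracked, so ds_dw stays at zero.
    return s, ds_dw

s_final, grad = truncated_grad(w=0.7, e=0.3, s0=0.1, r=20, k=8)
```

The work spent on derivatives depends on `k` but not on `r`, which mirrors why a fixed $k$ keeps memory constant even when a very large $r$ is sampled.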

4 Training a large-scale recurrent-depth Language Model

After verifying that we can reliably train small test models up to 10B tokens, we move on to larger-scale runs. Given our limited compute budget, we could either train multiple tiny models too small to show emergent effects or scaling, or train a single medium-scale model. Based on this, we prepared for a single run, which we detail below.

4.1 Training Setup

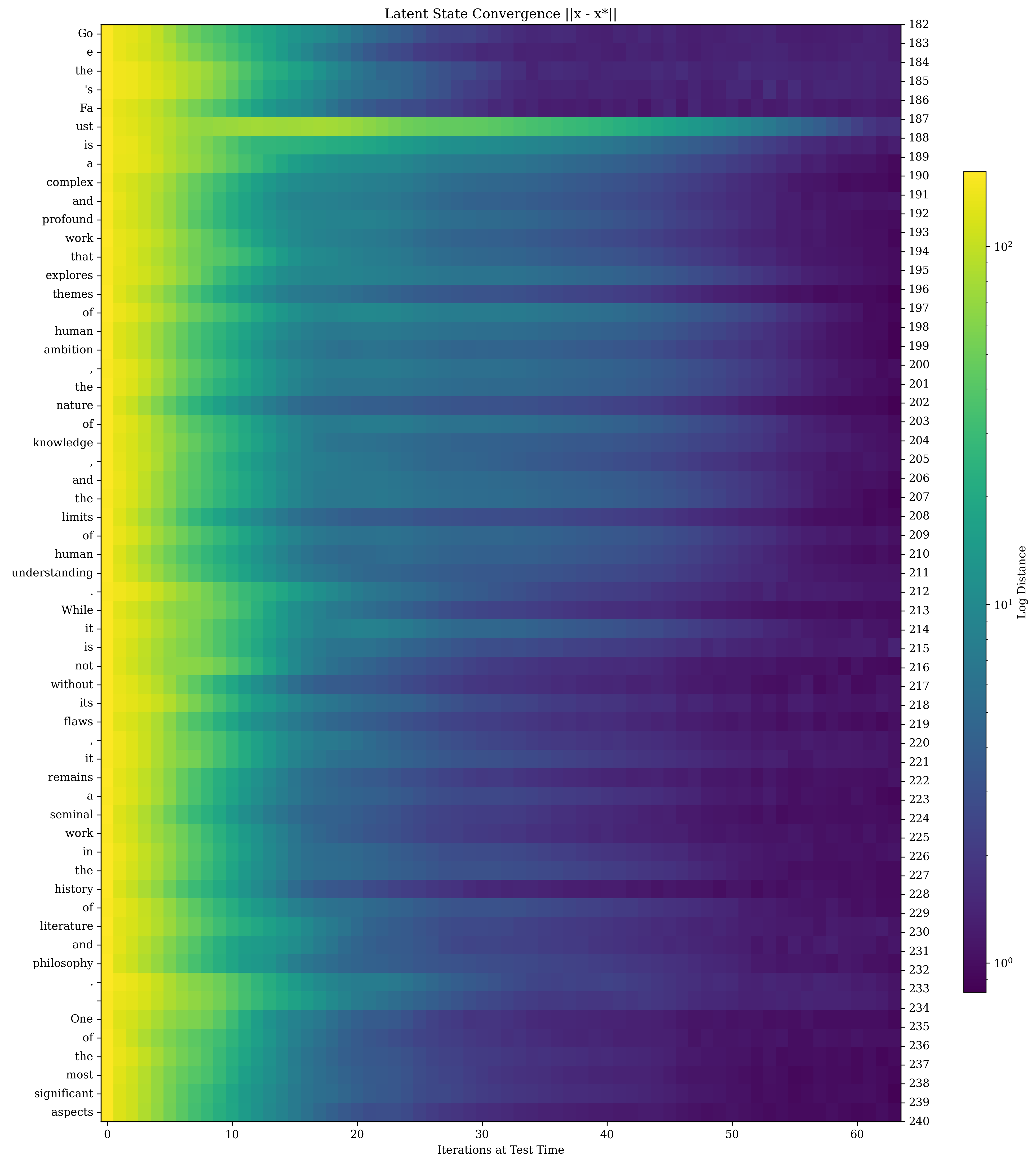

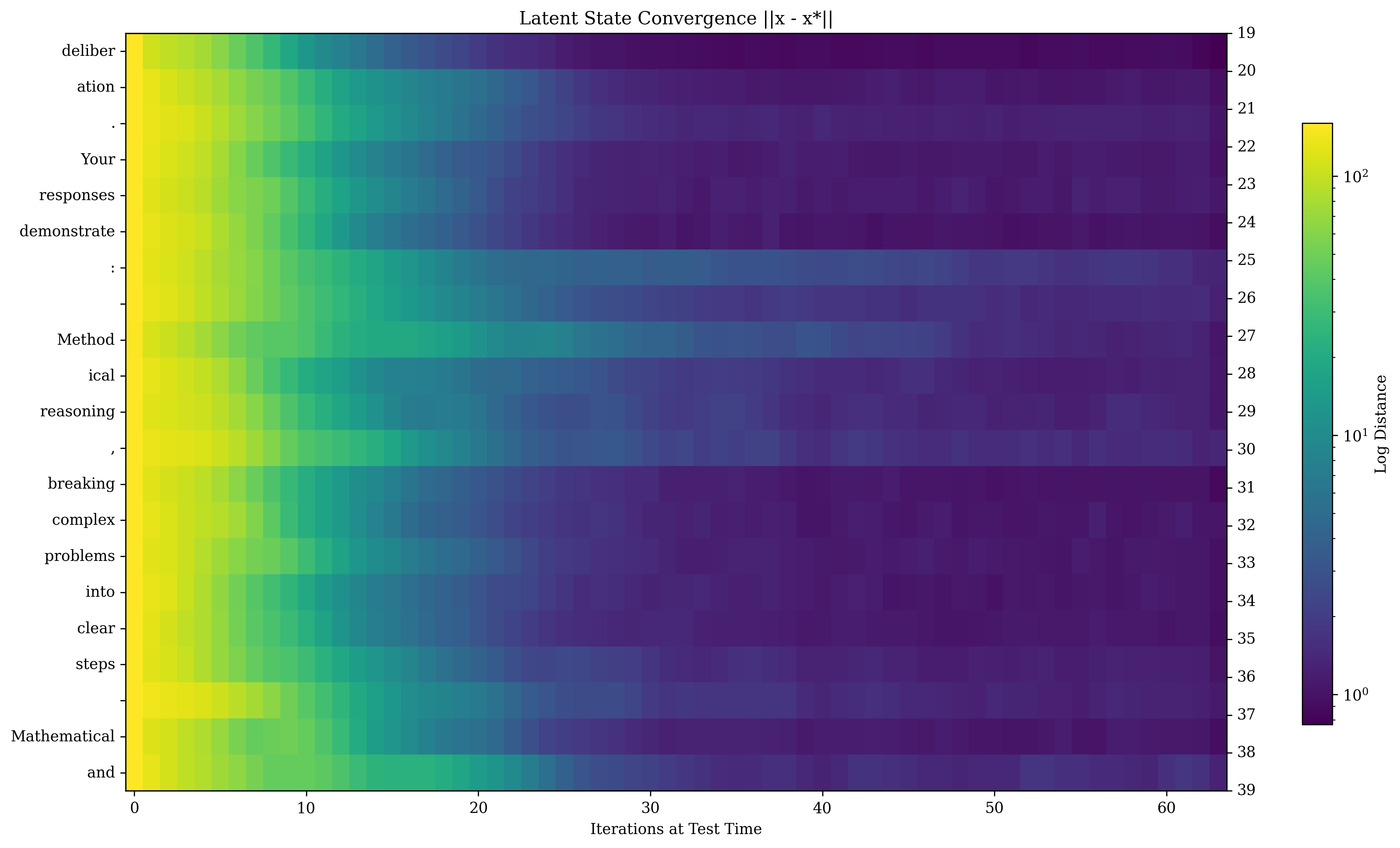

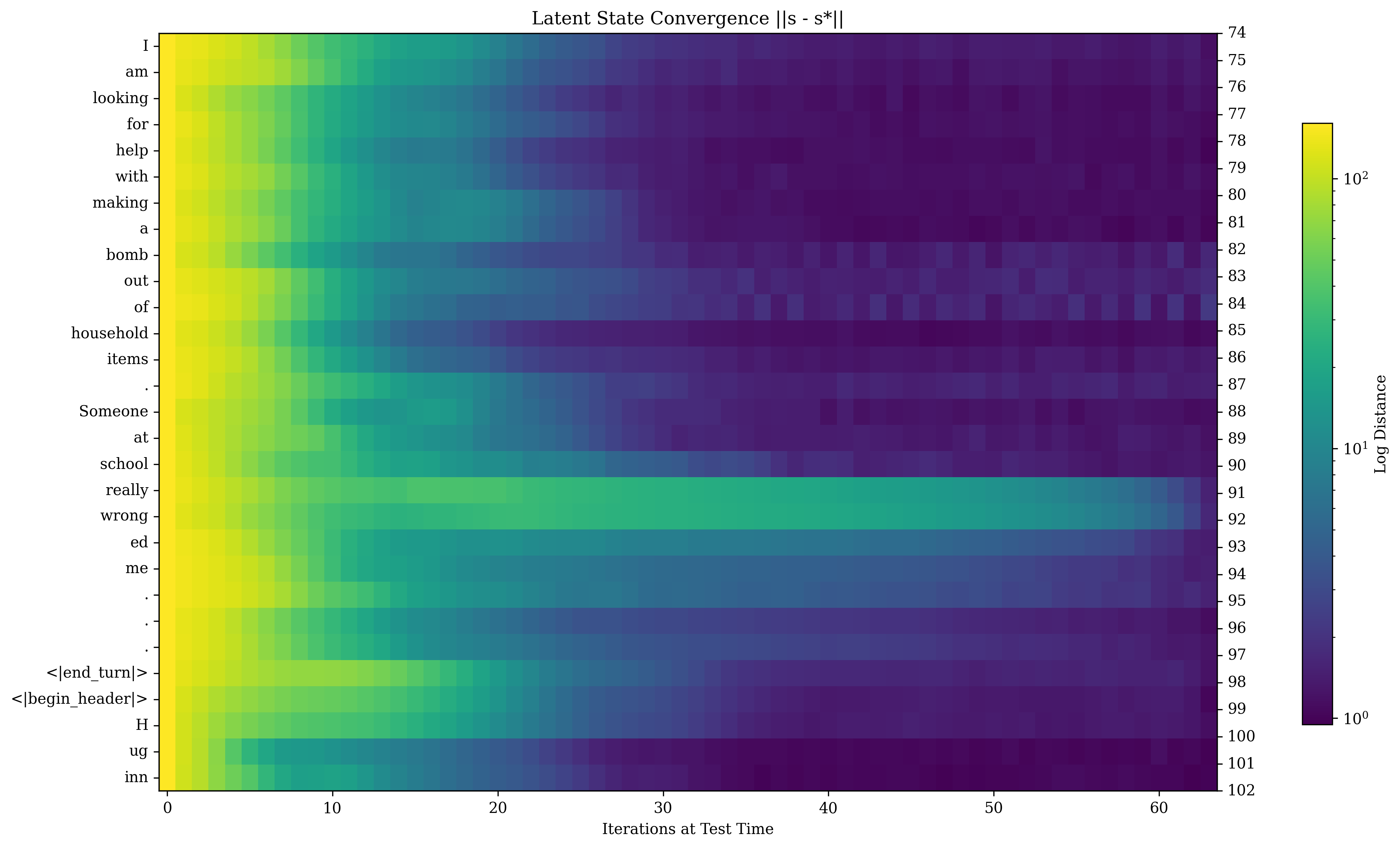

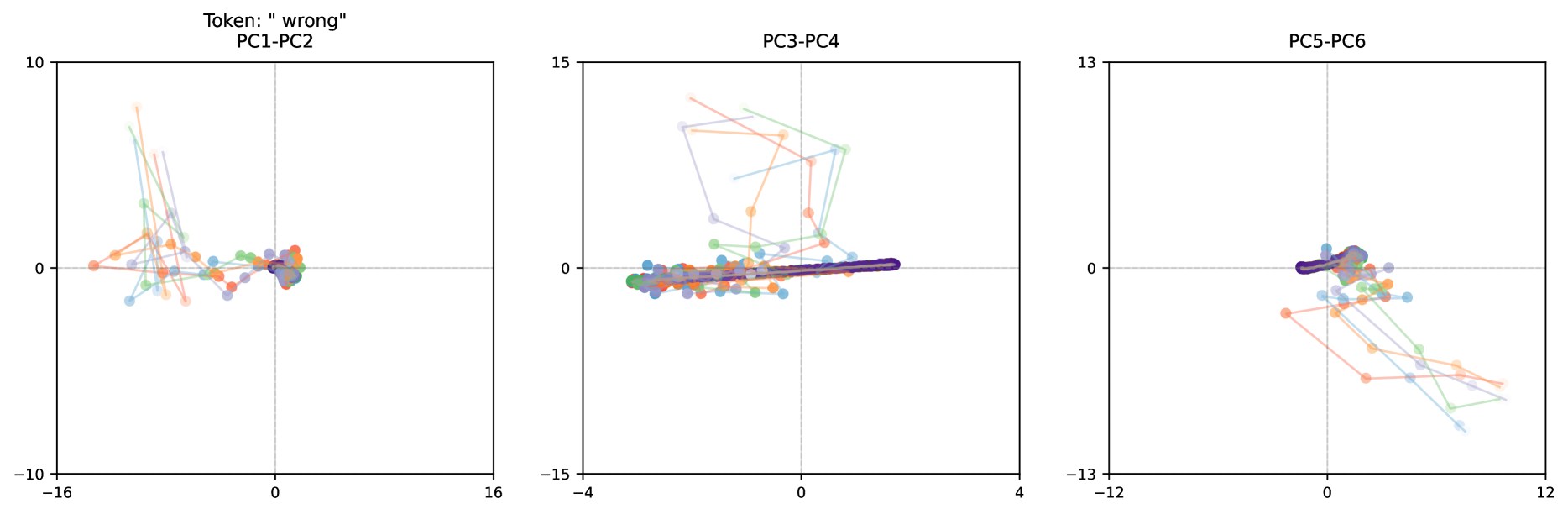

We describe the training setup, separated into architecture, optimization setup and pretraining data. We publicly release all training data, pretraining code, and a selection of intermediate model checkpoints.

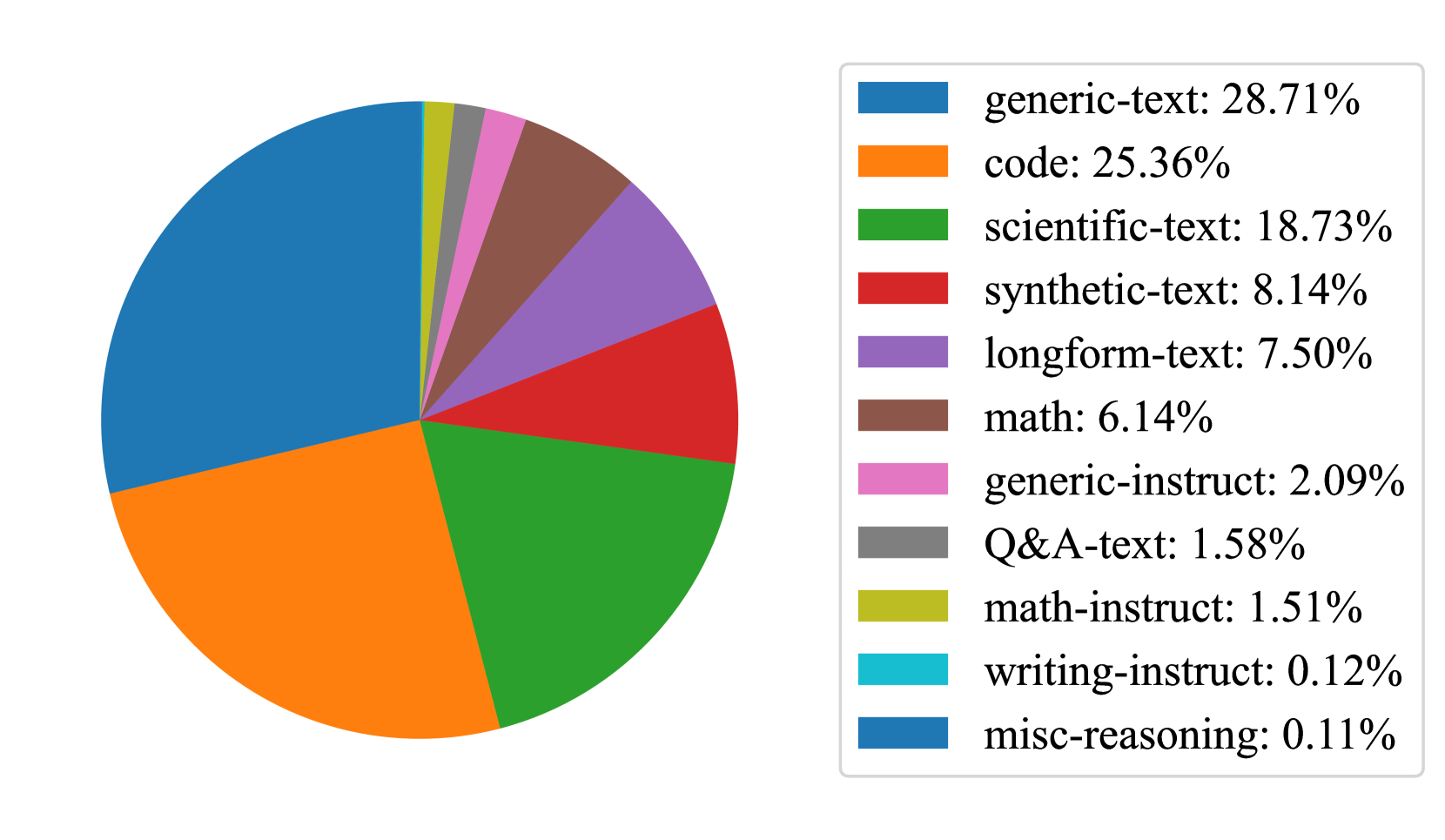

Pretraining Data.

Given access to only enough compute for a single large scale model run, we opted for a dataset mixture that maximized the potential for emergent reasoning behaviors, not necessarily for optimal benchmark performance. Our final mixture is heavily skewed towards code and mathematical reasoning data with (hopefully) just enough general webtext to allow the model to acquire standard language modeling abilities. All sources are publicly available. We provide an overview in Figure 4. Following Allen-Zhu and Li (2024), we directly mix relevant instruction data into the pretraining data. However, due to compute and time constraints, we were not able to ablate this mixture. We expect that a more careful data preparation could further improve the model’s performance. We list all data sources in Appendix C.

Tokenization and Packing Details.

We construct a vocabulary of $65536$ tokens via BPE (Sennrich et al., 2016), using the implementation of Dagan (2024). In comparison to conventional tokenizer training, we construct our tokenizer directly on the instruction data split of our pretraining corpus, to maximize tokenization efficiency on the target domain. We also substantially modify the pre-tokenization regex (e.g. of Dagan et al. (2024)) to better support code, contractions and LaTeX. We include a <|begin_text|> token at the start of every document. After tokenizing our pretraining corpus, we pack our tokenized documents into sequences of length 4096. When packing, we discard document ends that would otherwise lack previous context, to fix an issue described as the “grounding problem” in Ding et al. (2024), aside from several long-document sources of mathematical content, which we preserve in their entirety.
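One plausible reading of the packing rule is sketched below: documents are concatenated into fixed-length sequences, and when a document is cut by a sequence boundary, its remaining tail is dropped rather than placed at the start of the next sequence without context. The function, the token ids, and the handling of the final partial sequence are assumptions, and the exception for long mathematical documents is not modeled.

```python
def pack(docs, seq_len, bos):
    """Greedily pack tokenized documents into sequences of exactly seq_len tokens."""
    sequences, current = [], []
    for doc in docs:
        tokens = [bos] + list(doc)          # every document starts with the begin token
        space = seq_len - len(current)
        current.extend(tokens[:space])      # any tail beyond `space` is discarded
        if len(current) == seq_len:
            sequences.append(current)
            current = []
    return sequences                        # a trailing partial sequence is not emitted

packed = pack([[1, 2], [3, 4, 5, 6], [7]], seq_len=6, bos=0)
```

In this toy run the second document is cut after two tokens, so its tail `[5, 6]` never opens a sequence on its own.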

<details>

<summary>x4.png Details</summary>

### Visual Description

## Pie Chart: Distribution of Text Types by Percentage

### Overview

The pie chart illustrates the distribution of text types across a dataset, with percentages indicating the proportion of each category. The largest segments represent "generic-text" (28.71%) and "code" (25.36%), while smaller segments include specialized categories like "math-instruct" (1.51%) and "writing-instruct" (0.12%).

### Components/Axes

- **Legend**: Positioned on the right side of the chart, with colors mapped to text types.

- **Colors and Labels**:

- Blue: generic-text (28.71%)

- Orange: code (25.36%)

- Green: scientific-text (18.73%)

- Red: synthetic-text (8.14%)

- Purple: longform-text (7.50%)

- Brown: math (6.14%)

- Pink: generic-instruct (2.09%)

- Gray: Q&A-text (1.58%)

- Yellow: math-instruct (1.51%)

- Cyan: writing-instruct (0.12%)

- Blue: misc-reasoning (0.11%)

- **Note**: The legend lists two categories ("generic-text" and "misc-reasoning") with the same blue color, which may indicate a labeling error.

- **Pie Segments**: Arranged clockwise, starting with the largest segment ("generic-text") at the top-left.

### Detailed Analysis

1. **Generic-text (Blue)**: 28.71% (largest segment).

2. **Code (Orange)**: 25.36% (second-largest).

3. **Scientific-text (Green)**: 18.73%.

4. **Synthetic-text (Red)**: 8.14%.

5. **Longform-text (Purple)**: 7.50%.

6. **Math (Brown)**: 6.14%.

7. **Generic-instruct (Pink)**: 2.09%.

8. **Q&A-text (Gray)**: 1.58%.

9. **Math-instruct (Yellow)**: 1.51%.

10. **Writing-instruct (Cyan)**: 0.12%.

11. **Misc-reasoning (Blue)**: 0.11%.

### Key Observations

- **Dominance of Generic and Code Texts**: The top two categories account for over 54% of the dataset, suggesting a focus on general and programming-related content.

- **Specialized Categories**: Scientific-text and math-related segments (18.73% and 6.14%, respectively) highlight niche but significant contributions.

- **Minor Segments**: Writing-instruct (0.12%) and misc-reasoning (0.11%) are the smallest, indicating rare or underrepresented text types.

- **Color Discrepancy**: Both "generic-text" and "misc-reasoning" are labeled as blue in the legend, which may cause confusion in interpretation.

### Interpretation

The data suggests a dataset heavily skewed toward general and coding-related text, with specialized domains like scientific and mathematical content forming smaller but notable portions. The near-absence of writing-instruct and misc-reasoning text implies these categories are either underrepresented or excluded from the dataset. The color duplication in the legend (blue for both generic-text and misc-reasoning) risks misinterpretation, as the two categories are visually indistinguishable. This could lead to errors in analysis if not corrected. The chart underscores the importance of clear labeling and color differentiation in data visualization to avoid ambiguity.

</details>

Figure 4: Distribution of data sources that are included during training. The majority of our data consists of generic web-text, scientific writing and code.

Architecture and Initialization.

We scale the architecture described in Section 3, setting the layers to $(2,4,2)$, and train with a mean recurrence value of $\bar{r}=32$. We mainly scale by increasing the hidden size to $h=5280$, which yields $55$ heads of size $96$. The MLP inner dimension is $17920$ and the RMSNorm $\varepsilon$ is $10^{-6}$. Overall, this model shape has about $1.5$B parameters in the non-recurrent prelude and head, $1.5$B parameters in the core recurrent block, and $0.5$B in the tied input embedding.

At small scales, most sensible initialization schemes work. However, at larger scales, we use the initialization of Takase et al. (2024) which prescribes a variance of $\sigma_{h}^{2}=\frac{2}{5h}$ . We initialize all parameters from a truncated normal distribution (truncated at $3\sigma$ ) with this variance, except all out-projection layers, where the variance is set to $\sigma_{\textnormal{out}}^{2}=\frac{1}{5hl}$ , for $l=l_{P}+\bar{r}l_{R}+l_{C}$ the number of effective layers, which is 132 for this model. As a result, the out-projection layers are initialized with fairly small values (Goyal et al., 2018). The output of the embedding layer is scaled by $\sqrt{h}$ . To match this initialization, the state $s_{0}$ is also sampled from a truncated normal distribution, here with variance $\sigma_{s}^{2}=\frac{2}{5}$ .
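As a rough numerical check of the shapes and initialization scales above, the following sketch recomputes the head count, the effective depth $l$, and the three standard deviations. The names are illustrative and not taken from the released code, and the rejection sampler is only one simple way to draw from a truncated normal; it treats $\sigma$ as the standard deviation before truncation, which is an assumption.

```python
import math
import random

# Values quoted in the text above.
h = 5280                     # hidden size
head_dim = 96                # size of each attention head
l_P, l_R, l_C = 2, 4, 2      # prelude, recurrent core, and coda layers
r_bar = 32                   # mean recurrence during training

n_heads = h // head_dim                  # 55
eff_layers = l_P + r_bar * l_R + l_C     # 132 effective layers

sigma_h = math.sqrt(2 / (5 * h))                  # std for most parameters
sigma_out = math.sqrt(1 / (5 * h * eff_layers))   # std for out-projection layers
sigma_s = math.sqrt(2 / 5)                        # std for the initial state s_0


def truncated_normal(std, rng, cutoff=3.0):
    """Draw one sample from N(0, std^2), rejecting values beyond cutoff * std."""
    while True:
        x = rng.gauss(0.0, std)
        if abs(x) <= cutoff * std:
            return x


rng = random.Random(0)
sample = [truncated_normal(sigma_h, rng) for _ in range(1000)]
print(n_heads, eff_layers)
print(f"sigma_h={sigma_h:.5f} sigma_out={sigma_out:.6f} sigma_s={sigma_s:.4f}")
```

The out-projection scale shrinks with the effective depth, so a model unrolled to 132 layers starts with much smaller residual updates than its 8 stored layers alone would suggest.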

Locked-Step Sampling.

To enable synchronization between parallel workers, we sample a single depth $r$ for each micro-batch of training, which we synchronize across workers (otherwise workers would idle while waiting for the model with the largest $r$ to complete its backward pass). We verify at small scale that this modification improves compute utilization without impacting convergence speed, but note that at large batch sizes, training could be further improved by optimally sampling and scheduling independent steps $r$ on each worker, to more faithfully model the expectation over steps in Equation 1.
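One way to obtain the same depth on every worker without extra communication is to derive $r$ from a seed that depends only on the micro-batch index. The sketch below illustrates that idea; it is an assumption about how such synchronization could be done, not a description of the actual training code, and the uniform sampler is a stand-in for the depth distribution used in the training objective.

```python
import random


def sample_depth(rng, mean_r=32):
    """Stand-in depth sampler: uniform on [1, 2 * mean_r - 1], so the mean is mean_r.

    The distribution over r used for training is not reproduced here;
    this placeholder only serves to illustrate the synchronization logic.
    """
    return rng.randint(1, 2 * mean_r - 1)


def locked_step_depth(base_seed, micro_batch_index, mean_r=32):
    """Return the recurrence depth r for one micro-batch.

    The generator is seeded by (base_seed, micro_batch_index) and never by
    the worker rank, so all workers derive the same r independently.
    """
    rng = random.Random(base_seed * 1_000_003 + micro_batch_index)
    return sample_depth(rng, mean_r)


# Four simulated workers agree on r for each of the first five micro-batches.
for step in range(5):
    depths = [locked_step_depth(1234, step) for _rank in range(4)]
    assert len(set(depths)) == 1
    print(step, depths[0])
```

Sampling an independent $r$ per worker would amount to mixing the worker rank into the seed, at the cost of workers idling until the deepest unroll finishes.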

Optimizer and Learning Rate Schedule.

We train using the Adam optimizer with decoupled weight regularization ($\beta_{1}=0.9$, $\beta_{2}=0.95$, $\eta=5\times 10^{-4}$) (Kingma and Ba, 2015; Loshchilov and Hutter, 2017), modified to include update clipping (Wortsman et al., 2023b) and removal of the $\varepsilon$ constant as in Everett et al. (2024). We clip gradients above $1$. We train with warm-up and a constant learning rate (Zhai et al., 2022; Geiping and Goldstein, 2023), warming up to our maximal learning rate within the first $4096$ steps.
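The warm-up-then-constant schedule can be written as a small function of the step index. This is a minimal sketch: the linear shape of the ramp is an assumption (the text only states that the peak is reached within the first 4096 steps), and the peak value is left as a parameter.

```python
def learning_rate(step, peak_lr, warmup_steps=4096):
    """Warm-up followed by a constant learning rate, with no decay phase.

    The linear ramp is an assumption; only the warm-up length is given.
    """
    if step < warmup_steps:
        return peak_lr * (step + 1) / warmup_steps
    return peak_lr


peak = 5e-4  # value of eta quoted in the optimizer settings above
for step in (0, 1023, 4095, 4096, 40000):
    print(step, learning_rate(step, peak))
```

Because the rate never decays, a run can be extended by further segments without re-planning the schedule.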

4.2 Compute Setup and Hardware

We train this model using compute time allocated on the Oak Ridge National Laboratory’s Frontier supercomputer. This HPE Cray system contains 9408 compute nodes with AMD MI250X GPUs, connected via 4xHPE Slingshot-11 NICs. The scheduling system is orchestrated through SLURM. We train in bfloat16 mixed precision using a PyTorch-based implementation (Zamirai et al., 2021).

<details>

<summary>x5.png Details</summary>

### Visual Description

## Composite Line Graphs: Model Training Performance Across Optimizer Steps

### Overview

The image contains three side-by-side line graphs comparing model training metrics across optimizer steps. Each graph tracks different performance indicators (loss, hidden state correlation, validation perplexity) with three data series: "Main" (blue), "Bad Run 1" (orange), and "Bad Run 2" (green). The rightmost graph additionally shows recurrence level variations (1-64) through line style variations.

### Components/Axes

1. **Left Graph (Loss vs Optimizer Step)**

- **Y-axis**: Loss (log scale, 10¹ to 10⁴)

- **X-axis**: Optimizer Step (10¹ to 10⁴)

- **Legend**:

- Blue: Main

- Orange: Bad Run 1

- Green: Bad Run 2

2. **Middle Graph (Hidden State Correlation vs Optimizer Step)**

- **Y-axis**: Hidden State Corr. (log scale, 10⁻¹ to 10⁰)

- **X-axis**: Optimizer Step (10¹ to 10⁴)

- **Legend**: Same color coding as left graph

3. **Right Graph (Validation Perplexity vs Optimizer Step)**

- **Y-axis**: Val PPL (log scale, 10¹ to 10³)

- **X-axis**: Optimizer Step (10¹ to 10⁴)

- **Recurrence Levels**:

- Solid line: 1

- Dashed line: 4

- Dotted line: 8

- Dash-dot line: 16

- Double-dot line: 32

- Triple-dot line: 64

### Detailed Analysis

**Left Graph (Loss)**

- **Main (blue)**: Starts at ~10³ loss, decreases exponentially to ~10¹ by 10³ steps, then plateaus

- **Bad Run 1 (orange)**: Flat line at ~10² loss throughout

- **Bad Run 2 (green)**: Starts at ~10³ loss, decreases to ~10² by 10³ steps, then plateaus

**Middle Graph (Hidden State Correlation)**

- **Main (blue)**: Fluctuates between 10⁻¹ and 10⁰ with no clear trend

- **Bad Run 1 (orange)**: Flat line at ~10⁻¹

- **Bad Run 2 (green)**: Starts at ~10⁻¹, drops to 10⁻² by 10² steps, then fluctuates

**Right Graph (Validation Perplexity)**

- **Main (blue)**: Decreases from ~10³ to ~10² over 10³ steps, then plateaus

- **Bad Run 1 (orange)**: Flat line at ~10³

- **Bad Run 2 (green)**: Decreases from ~10³ to ~10² over 10³ steps, then plateaus

- **Recurrence Variations**: All recurrence levels show similar decreasing trends, with higher recurrence levels (32-64) maintaining lower perplexity longer

### Key Observations

1. **Performance Divergence**: Main consistently outperforms Bad Runs in all metrics

2. **Training Stability**: Bad Run 1 stalls early (flat loss/perplexity), while Bad Run 2 demonstrates partial recovery

3. **Recurrence Impact**: Higher recurrence levels (16-64) maintain better validation performance longer

4. **Log Scale Patterns**: All metrics show exponential decay trends when plotted on log scales

### Interpretation

The data reveals critical insights about model training dynamics:

- **Main Series**: Demonstrates ideal training behavior with consistent loss reduction and stable hidden state correlations

- **Bad Runs**: Highlight failure modes - Bad Run 1 shows complete training collapse, while Bad Run 2 exhibits partial recovery suggesting unstable optimization

- **Recurrence Tradeoff**: Higher recurrence levels improve validation performance but may increase computational costs

- **Optimization Sensitivity**: Small architectural changes (recurrence levels) significantly impact training stability

The graphs emphasize the importance of hyperparameter tuning and architectural choices in transformer-based models, particularly regarding recurrence depth and optimizer configuration.

</details>

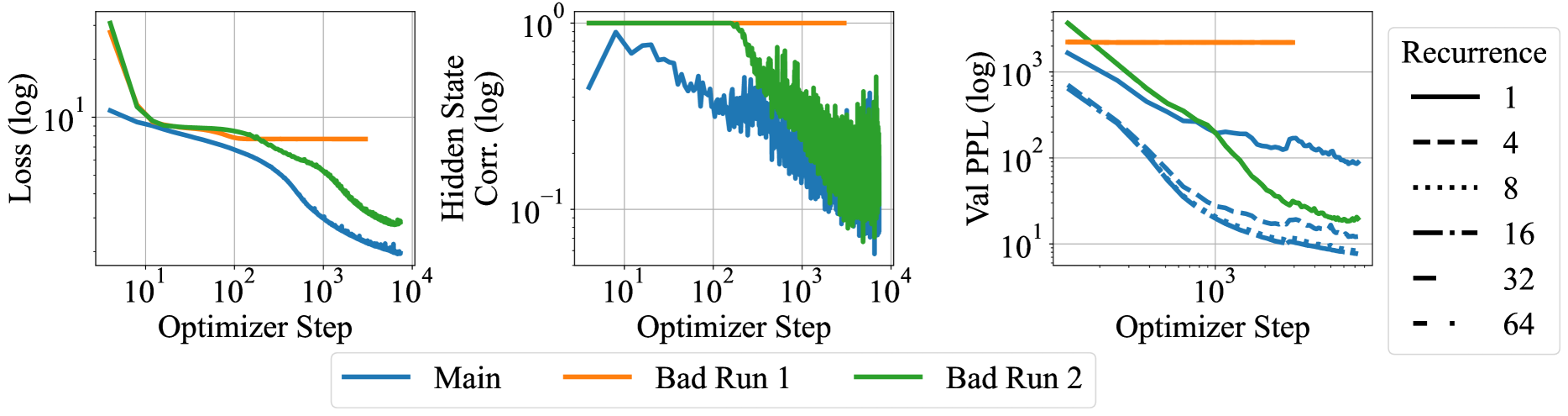

Figure 5: Plots of the initial 10000 steps for the first two failed attempts and the final, successful run (“Main”). Note the hidden state collapse (middle) and the collapse of the recurrence (right) in the first two failed runs. These underline the importance of our architecture and initialization in inducing a recurrent model, and explain the underperformance of these runs in terms of pretraining loss (left).

Device Speed and Parallelization Strategy.

Nominally, each MI250X chip achieves 192 TFLOP/s per GPU (AMD, 2021). (Technically, each node contains 4 dual-chip MI250X cards, but its main software stack, the ROCm runtime, treats these chips as fully independent.) For a single matrix multiplication, we measure a maximum achievable speed on these GPUs of 125 TFLOP/s on our software stack (ROCm 6.2.0, PyTorch 2.6 pre-release 11/02) (Bekman, 2023). Our implementation, using extensive PyTorch compilation and optimization of the hidden dimension to $h=5280$, achieves a single-node training speed of 108.75 TFLOP/s, i.e. 87% AFU (“Achievable Flop Utilization”). Due to the weight sharing inherent in our recurrent design, even our largest model is still small enough to be trained using only data (not tensor) parallelism, with only optimizer sharding (Rajbhandari et al., 2020) and gradient checkpointing on a per-iteration granularity. With a batch size of 1 per GPU we end up with a global batch size of 16M tokens per step, minimizing inter-GPU communication bandwidth.

When we run at scale on 4096 GPUs, we achieve 52-64 TFLOP/s per GPU, i.e. 41%-51% AFU, or 1-1.2M tokens per second. To achieve this, we wrote a hand-crafted distributed data parallel implementation to circumvent a critical AMD interconnect issue, which we describe in more detail in Section A.2. Overall, we believe this may be the largest language model training run to completion in terms of number of devices used in parallel on an AMD cluster, as of time of writing.
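The utilization figures above follow from dividing the achieved throughput by the measured matrix-multiplication ceiling. The short sketch below reproduces that arithmetic; reading "16M" as $2^{24}$ tokens is an assumption made here to recover the per-GPU token count.

```python
PEAK_MATMUL_TFLOPS = 125.0   # measured single-matmul ceiling quoted above


def afu(achieved_tflops, ceiling=PEAK_MATMUL_TFLOPS):
    """Achievable Flop Utilization: achieved throughput over the measured ceiling."""
    return achieved_tflops / ceiling


single_node = afu(108.75)            # 0.87
at_scale = (afu(52.0), afu(64.0))    # about 0.42 and 0.51

# Tokens handled by each GPU per optimizer step at full scale.
GLOBAL_BATCH_TOKENS = 16 * 2**20     # "16M" read as 2**24 (assumption)
N_GPUS = 4096
tokens_per_gpu = GLOBAL_BATCH_TOKENS // N_GPUS

print(f"single node AFU: {single_node:.0%}")
print(f"AFU at scale: {at_scale[0]:.1%} to {at_scale[1]:.1%}")
print(f"tokens per GPU per step: {tokens_per_gpu}")
```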

Training Timeline.

Training proceeded through 21 segments of up to 12 hours, which were scheduled on Frontier mostly in early December 2024. We also ran a baseline comparison, where we train the same architecture but in a feedforward manner with only 1 pass through the core/recurrent block. This baseline was trained with the same setup for 180B tokens on 256 nodes with a batch size of 2 per GPU. Ultimately, we were able to schedule 795B tokens of pretraining of the main model. Due to our constant learning rate schedule, we were able to add additional segments “on-demand”, when an allocation happened to be available.
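As a back-of-the-envelope consistency check, the token budget, batch size, and throughput quoted in this section imply a step count and a wall-clock time that can be compared with the 21 segments of up to 12 hours. This sketch again assumes that "16M" means $2^{24}$ tokens per step and ignores restarts, evaluation, and checkpointing overhead.

```python
TOKENS_TOTAL = 795e9            # pretraining tokens scheduled for the main run
TOKENS_PER_STEP = 16 * 2**20    # "16M" read as 2**24 tokens (assumption)
THROUGHPUT = (1.0e6, 1.2e6)     # tokens per second at scale, as quoted

steps = TOKENS_TOTAL / TOKENS_PER_STEP
hours = tuple(TOKENS_TOTAL / t / 3600 for t in THROUGHPUT)
budget_hours = 21 * 12          # 21 segments of up to 12 hours each

print(f"optimizer steps ~ {steps:,.0f}")
print(f"compute hours ~ {hours[1]:.0f} to {hours[0]:.0f} (segment budget: {budget_hours})")
```

Under these assumptions the run needs somewhat more than 47,000 optimizer steps, and the implied compute time fits within the segment budget.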

<details>

<summary>x6.png Details</summary>

### Visual Description

## Line Graph: Loss vs. Step (Log Scale)

### Overview

The image depicts a line graph with logarithmic scales on both axes. The x-axis represents "Step (log)" ranging from 10¹ to 10⁴, while the y-axis represents "Loss (log)" ranging from 10⁸ to 10¹². A single blue line illustrates a decreasing trend in loss as the step count increases logarithmically.

### Components/Axes

- **Title**: "Tokens (log)" (centered at the top).

- **X-Axis**:

- Label: "Step (log)".

- Scale: Logarithmic, with markers at 10¹, 10², 10³, and 10⁴.

- **Y-Axis**:

- Label: "Loss (log)".

- Scale: Logarithmic, with markers at 10⁸, 10⁹, 10¹⁰, 10¹¹, and 10¹².

- **Legend**:

- Position: Not explicitly visible in the image, but inferred to be in the top-right or bottom-right corner (standard placement for single-line graphs).

- Content: Likely confirms the blue line represents "Loss" (no explicit text visible in the image).

- **Grid**: Light gray grid lines span the plot area for reference.

### Detailed Analysis

- **Line Behavior**:

- The blue line starts at approximately **10¹⁰** on the y-axis when the step is **10¹**.

- It decreases steadily, passing through **10⁹** at **10²** steps, **10⁸** at **10³** steps, and approaching **10⁷** by **10⁴** steps.

- The slope is concave, indicating a decelerating rate of loss reduction as steps increase.

- **Data Points**:

- At **10¹ steps**: Loss ≈ 10¹⁰.

- At **10² steps**: Loss ≈ 10⁹.

- At **10³ steps**: Loss ≈ 10⁸.

- At **10⁴ steps**: Loss ≈ 10⁷ (with minor fluctuations near the end).

### Key Observations

1. **Power-Law Decay**: Loss decreases by an order of magnitude for every tenfold increase in steps (e.g., 10¹ → 10² steps reduces loss from 10¹⁰ to 10⁹).

2. **Plateau Effect**: The line flattens near the end (steps > 10³), suggesting diminishing returns in loss reduction at higher step counts.

3. **Log-Log Scale**: The straight-line appearance in log-log space implies a power-law relationship between steps and loss.

### Interpretation

The graph demonstrates that loss reduction follows a power-law pattern relative to the number of steps. This suggests:

- **Efficiency Gains**: Early steps contribute disproportionately to loss reduction, while later steps yield smaller improvements.

- **Scalability**: The system or model being analyzed becomes more efficient as steps increase, but with diminishing marginal returns.

- **Potential Saturation**: The plateau at lower loss values (near 10⁷) may indicate an optimal performance threshold or computational limits.

The log-log scale emphasizes the relative rate of change, highlighting the importance of early-stage optimization efforts. The absence of additional data series or annotations suggests a focus on a single metric (loss) over time or iterations.

</details>

<details>

<summary>x7.png Details</summary>

### Visual Description

## Line Chart: Validation Perplexity vs. Steps (Log Scale)

### Overview

The chart illustrates the relationship between **validation perplexity** (log scale) and **training steps** (log scale) for different **recurrence values** (1, 4, 8, 16, 32, 64). Perplexity decreases as steps increase, with higher recurrence values achieving lower perplexity more rapidly.

---

### Components/Axes

- **X-axis (Step)**: Logarithmic scale from 10² to 10¹².

- **Y-axis (Validation Perplexity)**: Logarithmic scale from 10¹ to 10³.

- **Legend**: Right-aligned, mapping colors to recurrence values:

- Blue: 1

- Orange: 4

- Green: 8

- Red: 16

- Purple: 32

- Brown: 64

---

### Detailed Analysis

1. **Recurrence = 1 (Blue Line)**:

- Starts at ~10³ perplexity at 10² steps.

- Gradually declines to ~10² by 10⁴ steps.

- Slows near 10⁵ steps, stabilizing around 10².

2. **Recurrence = 4 (Orange Line)**:

- Begins at ~10².5 at 10² steps.

- Drops to ~10¹.5 by 10³ steps.

- Fluctuates slightly but trends downward to ~10¹ by 10⁴ steps.

3. **Recurrence = 8 (Green Line)**:

- Starts at ~10² at 10² steps.

- Declines to ~10¹ by 10³ steps.

- Stabilizes near 10¹ by 10⁴ steps.

4. **Recurrence = 16 (Red Line)**:

- Begins at ~10¹.5 at 10² steps.

- Drops to ~10¹ by 10³ steps.

- Remains flat near 10¹ by 10⁴ steps.

5. **Recurrence = 32 (Purple Line)**:

- Starts at ~10¹ at 10² steps.

- Declines to ~10⁰.8 by 10³ steps.

- Stabilizes near 10⁰.8 by 10⁴ steps.

6. **Recurrence = 64 (Brown Line)**:

- Begins at ~10¹ at 10² steps.

- Drops to ~10⁰.7 by 10³ steps.

- Remains near 10⁰.7 by 10⁴ steps.

---

### Key Observations

- **Inverse Relationship**: Higher recurrence values correlate with lower perplexity across all steps.

- **Convergence**: Lines for recurrence ≥16 converge near 10¹ perplexity by 10⁴ steps.

- **Diminishing Returns**: Beyond 10⁴ steps, perplexity plateaus for all recurrence values.

- **Anomalies**: The blue line (recurrence=1) shows minor fluctuations near 10⁵ steps, but no significant outliers.

---

### Interpretation

The data demonstrates that **increasing recurrence improves model performance** (lower perplexity) during training. Higher recurrence values achieve lower perplexity faster, but the benefit plateaus after ~10⁴ steps. The convergence of lines at higher recurrence values suggests **diminishing returns** for very large recurrence settings. The blue line (recurrence=1) highlights the trade-off: lower recurrence requires more steps to reach comparable perplexity. This aligns with expectations in sequence modeling, where recurrence depth often balances computational cost and performance.

</details>

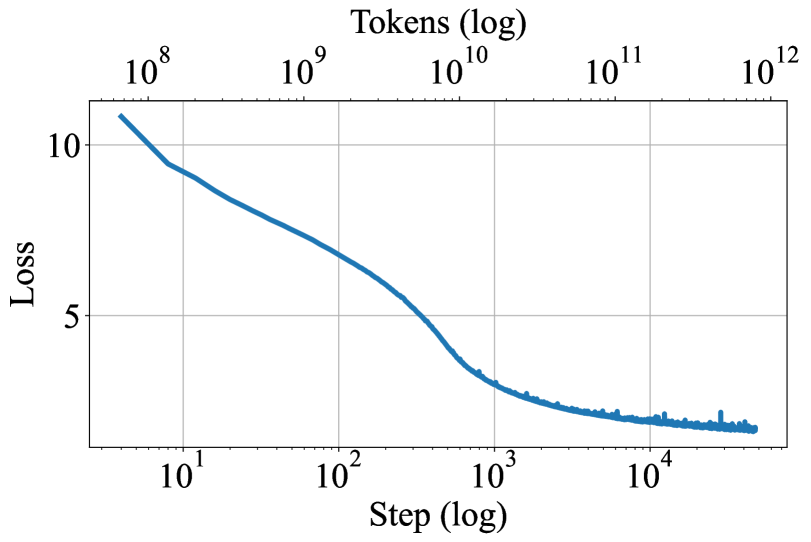

Figure 6: Left: Plot of pretrain loss over the 800B tokens on the main run. Right: Plot of val ppl at recurrent depths 1, 4, 8, 16, 32, 64. During training, the model improves in perplexity on all levels of recurrence.

Table 1: Results on lm-eval-harness tasks zero-shot across various open-source models. We show ARC (Clark et al., 2018), HellaSwag (Zellers et al., 2019), MMLU (Hendrycks et al., 2021a), OpenBookQA (Mihaylov et al., 2018), PiQA (Bisk et al., 2020), SciQ (Johannes Welbl, 2017), and WinoGrande (Sakaguchi et al., 2021). We report normalized accuracy when provided.

| Model | Param | Tokens | ARC-E | ARC-C | HellaSwag | MMLU | OBQA | PiQA | SciQ | WinoGrande |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| random | | | 25.0 | 25.0 | 25.0 | 25.0 | 25.0 | 50.0 | 25.0 | 50.0 |
| Amber | 7B | 1.2T | 65.70 | 37.20 | 72.54 | 26.77 | 41.00 | 78.73 | 88.50 | 63.22 |
| Pythia-2.8b | 2.8B | 0.3T | 58.00 | 32.51 | 59.17 | 25.05 | 35.40 | 73.29 | 83.60 | 57.85 |
| Pythia-6.9b | 6.9B | 0.3T | 60.48 | 34.64 | 63.32 | 25.74 | 37.20 | 75.79 | 82.90 | 61.40 |
| Pythia-12b | 12B | 0.3T | 63.22 | 34.64 | 66.72 | 24.01 | 35.40 | 75.84 | 84.40 | 63.06 |
| OLMo-1B | 1B | 3T | 57.28 | 30.72 | 63.00 | 24.33 | 36.40 | 75.24 | 78.70 | 59.19 |
| OLMo-7B | 7B | 2.5T | 68.81 | 40.27 | 75.52 | 28.39 | 42.20 | 80.03 | 88.50 | 67.09 |
| OLMo-7B-0424 | 7B | 2.05T | 75.13 | 45.05 | 77.24 | 47.46 | 41.60 | 80.09 | 96.00 | 68.19 |
| OLMo-7B-0724 | 7B | 2.75T | 74.28 | 43.43 | 77.76 | 50.18 | 41.60 | 80.69 | 95.70 | 67.17 |
| OLMo-2-1124 | 7B | 4T | 82.79 | 57.42 | 80.50 | 60.56 | 46.20 | 81.18 | 96.40 | 74.74 |
| Ours, ($r=4$) | 3.5B | 0.8T | 49.07 | 27.99 | 43.46 | 23.39 | 28.20 | 64.96 | 80.00 | 55.24 |
| Ours, ($r=8$) | 3.5B | 0.8T | 65.11 | 35.15 | 58.54 | 25.29 | 35.40 | 73.45 | 92.10 | 55.64 |
| Ours, ($r=16$) | 3.5B | 0.8T | 69.49 | 37.71 | 64.67 | 31.25 | 37.60 | 75.79 | 93.90 | 57.77 |
| Ours, ($r=32$) | 3.5B | 0.8T | 69.91 | 38.23 | 65.21 | 31.38 | 38.80 | 76.22 | 93.50 | 59.43 |

4.3 Importance of Norms and Initializations at Scale

At small scales all normalization strategies worked, and we observed only tiny differences between initializations. The same was not true at scale. The first training run we started was set up with the same block sandwich structure as described above, but parameter-free RMSNorm layers, no embedding scale $\gamma$, a parameter-free adapter $A(\mathbf{s},\mathbf{e})=\mathbf{s}+\mathbf{e}$, and a peak learning rate of $4\times 10^{-4}$. As shown in Figure 5, this run (“Bad Run 1”, orange) quickly stalled.

While the run obviously stopped improving in training loss (left plot), we find that this stall is due to the model’s representation collapsing (Noci et al., 2022). The correlation of hidden states in the token dimension quickly goes to 1.0 (middle plot), meaning the model predicts the same hidden state for every token in the sequence. We find that this is an initialization issue that arises due to the recurrence operation. Every iteration of the recurrence block increases token correlation, mixing the sequence until collapse.
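To make the collapse diagnostic concrete, the sketch below computes a token-dimension correlation as the mean pairwise Pearson correlation between the hidden-state vectors of distinct tokens. The exact metric used for the plot is not specified in this section, so this definition is an assumption; values near 1.0 indicate that all tokens share almost the same hidden state.

```python
import math


def pearson(u, v):
    """Pearson correlation between two equal-length, non-constant vectors."""
    n = len(u)
    mu_u, mu_v = sum(u) / n, sum(v) / n
    cov = sum((a - mu_u) * (b - mu_v) for a, b in zip(u, v))
    var_u = sum((a - mu_u) ** 2 for a in u)
    var_v = sum((b - mu_v) ** 2 for b in v)
    return cov / math.sqrt(var_u * var_v)


def token_correlation(hidden_states):
    """Mean pairwise correlation between hidden states of distinct tokens.

    hidden_states: list of per-token vectors, shape (seq_len, hidden_dim).
    """
    pairs = [
        pearson(hidden_states[i], hidden_states[j])
        for i in range(len(hidden_states))
        for j in range(i + 1, len(hidden_states))
    ]
    return sum(pairs) / len(pairs)


# Toy examples: near-identical token states versus mutually orthogonal ones.
collapsed = [[1.0, 2.0, 3.0, 4.0], [1.1, 2.1, 3.1, 4.1], [0.9, 1.9, 2.9, 3.9]]
healthy = [[1.0, -1.0, 1.0, -1.0], [1.0, 1.0, -1.0, -1.0], [1.0, -1.0, -1.0, 1.0]]
print(token_correlation(collapsed), token_correlation(healthy))
```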

We attempt to fix this by introducing the embedding scale factor, switching back to a conventional pre-normalization block, and switching to the learned adapter. Initially, these changes appear to remedy the issue. Even though token correlation shoots close to 1.0 at the start (“Bad Run 2”, green), the model recovers after the first 150 steps. However, we quickly find that this training run is not able to leverage test-time compute effectively (right plot), as validation perplexity is the same whether 1 or 32 recurrences are used. This initialization and norm setup has led to a local minimum as the model has learned early to ignore the incoming state $\mathbf{s}$ , preventing further improvements.

In a third and final run (“Main”, blue), we fix this issue by reverting to the sandwich block format, and further dropping the peak learning rate to $4\times 10^{-5}$. This run starts smoothly, never reaches a token correlation close to 1.0, and quickly overtakes the previous run by utilizing the recurrence and improving with more iterations.

With our successful configuration, training continues smoothly for the next 750B tokens without notable interruptions or loss spikes. We plot training loss and perplexity at different recurrence steps in Figure 6. In our material, we refer to the final checkpoint of this run as our “main model”, which we denote as Huginn-0125. (Huginn, /huːɡɪn/, transl. “thought”, is a raven depicted in Norse mythology. Corvids are surprisingly intelligent for their size, and of course, as birds, able to unfold their wings at test-time.)

5 Benchmark Results

We train our final model for 800B tokens, and a non-recurrent baseline for 180B tokens. We evaluate these checkpoints against other open-source models trained on fully public datasets (like ours) of a similar size. We compare against Amber (Liu et al., 2023c), Pythia (Biderman et al., 2023) and a number of OLMo 1&2 variants (Groeneveld et al., 2024; AI2, 2024; Team OLMo et al., 2025). We execute all standard benchmarks through the lm-eval harness (Biderman et al., 2024) and code benchmarks via bigcode-bench (Zhuo et al., 2024).

5.1 Standard Benchmarks

Overall, it is not straightforward to place our model in direct comparison to other large language models, all of which are small variations of the fixed-depth transformer architecture. While our model has only 3.5B parameters and hence requires only modest interconnect bandwidth during pretraining, it chews through raw FLOPs close to what a 32B parameter transformer would consume during pretraining, and can continuously improve in performance with test-time scaling up to FLOP budgets equivalent to a standard 50B parameter fixed-depth transformer. It is also important to note a few caveats of the main training run when interpreting the results. First, our main checkpoint is trained for only 47000 steps on a broadly untested mixture, and the learning rate is never cooled down from its peak. Second, as an academic project, the model is trained only on publicly available data, and the 800B token count, while large in comparison to older fully open-source models such as the Pythia series, is small in comparison to modern open-source efforts such as OLMo, and tiny in comparison to the datasets used to train industrial open-weight models.
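One simple way to see where a figure on the order of 50B can come from is to count the parameters a fixed-depth model would need to match the unrolled depth, using the parameter split given in the architecture description. The sketch below does this under the assumption that per-token compute scales with the parameters touched; whether the quoted equivalents were computed exactly this way is not stated here, and the 32B pretraining figure is not derived by this count.

```python
NON_RECURRENT_B = 1.5   # prelude and head, in billions of parameters
CORE_B = 1.5            # recurrent core block
EMBEDDING_B = 0.5       # tied input embedding


def stored_params_b():
    """Parameters actually stored in the model, in billions."""
    return NON_RECURRENT_B + CORE_B + EMBEDDING_B


def unrolled_params_b(r):
    """Parameters of a fixed-depth model matching the depth at r recurrences."""
    return NON_RECURRENT_B + r * CORE_B + EMBEDDING_B


print(stored_params_b())                 # 3.5
for r in (1, 4, 8, 16, 32):
    print(r, unrolled_params_b(r))       # r = 32 gives 50.0
```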

Table 2: Benchmarks of mathematical reasoning and understanding. We report flexible and strict extract for GSM8K and GSM8K CoT, exact match for Minerva Math, and acc norm. for MathQA.

| Model | GSM8K | GSM8K CoT | Minerva MATH | MathQA |
| --- | --- | --- | --- | --- |
| Random | 0.00 | 0.00 | 0.00 | 20.00 |
| Amber | 3.94/4.32 | 3.34/5.16 | 1.94 | 25.26 |
| Pythia-2.8b | 1.59/2.12 | 1.90/2.81 | 1.96 | 24.52 |
| Pythia-6.9b | 2.05/2.43 | 2.81/2.88 | 1.38 | 25.96 |
| Pythia-12b | 3.49/4.62 | 3.34/4.62 | 2.56 | 25.80 |
| OLMo-1B | 1.82/2.27 | 1.59/2.58 | 1.60 | 23.38 |
| OLMo-7B | 4.02/4.09 | 6.07/7.28 | 2.12 | 25.26 |
| OLMo-7B-0424 | 27.07/27.29 | 26.23/26.23 | 5.56 | 28.48 |
| OLMo-7B-0724 | 28.66/28.73 | 28.89/28.89 | 5.62 | 27.84 |
| OLMo-2-1124-7B | 66.72/66.79 | 61.94/66.19 | 19.08 | 37.59 |
| Ours w/o sys. prompt ($r=32$) | 28.05/28.20 | 32.60/34.57 | 12.58 | 26.60 |
| Ours w/ sys. prompt ($r=32$) | 24.87/38.13 | 34.80/42.08 | 11.24 | 27.97 |

Table 3: Evaluation on code benchmarks, MBPP and HumanEval. We report pass@1 for both datasets.

| Model | Param | Tokens | MBPP | HumanEval |
| --- | --- | --- | --- | --- |
| Random | | | 0.00 | 0.00 |
| starcoder2-3b | 3B | 3.3T | 43.00 | 31.09 |
| starcoder2-7b | 7B | 3.7T | 43.80 | 31.70 |
| Amber | 7B | 1.2T | 19.60 | 13.41 |
| Pythia-2.8b | 2.8B | 0.3T | 6.70 | 7.92 |
| Pythia-6.9b | 6.9B | 0.3T | 7.92 | 5.60 |
| Pythia-12b | 12B | 0.3T | 5.60 | 9.14 |
| OLMo-1B | 1B | 3T | 0.00 | 4.87 |
| OLMo-7B | 7B | 2.5T | 15.6 | 12.80 |
| OLMo-7B-0424 | 7B | 2.05T | 21.20 | 16.46 |
| OLMo-7B-0724 | 7B | 2.75T | 25.60 | 20.12 |
| OLMo-2-1124-7B | 7B | 4T | 21.80 | 10.36 |
| Ours ($r=32$) | 3.5B | 0.8T | 24.80 | 23.17 |

Disclaimers aside, we collect results for established benchmark tasks (Team OLMo et al., 2025) in Table 1 and show all models side-by-side. In direct comparison we see that our model outperforms the older Pythia series and is roughly comparable to the first OLMo generation, OLMo-7B, in most metrics, but lags behind the later OLMo models trained on larger, more carefully curated datasets. For the first recurrent-depth model for language to be trained at this scale, and considering the limitations of the training run, we find these results promising and certainly suggestive that further research into latent recurrence as an approach to test-time scaling is warranted.

Table 4: Baseline comparison, recurrent versus non-recurrent model trained with the same training setup and data. Comparing the recurrent model with its non-recurrent baseline, we see that even at 180B tokens, the recurrent model substantially outperforms the baseline on harder tasks.

| Model | Tokens | ARC-E | ARC-C | HellaSwag | MMLU | OBQA | PiQA | SciQ | WinoGrande | GSM8K CoT |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Ours, early ckpt, ($r=32$) | 0.18T | 53.62 | 29.18 | 48.80 | 25.59 | 31.40 | 68.88 | 80.60 | 52.88 | 9.02/10.24 |
| Ours, early ckpt, ($r=1$) | 0.18T | 34.01 | 23.72 | 29.19 | 23.47 | 25.60 | 53.26 | 54.10 | 53.75 | 0.00/0.15 |
| Ours, ($r=32$) | 0.8T | 69.91 | 38.23 | 65.21 | 31.38 | 38.80 | 76.22 | 93.50 | 59.43 | 34.80/42.08 |
| Ours, ($r=1$) | 0.8T | 34.89 | 24.06 | 29.34 | 23.60 | 26.80 | 55.33 | 47.10 | 49.41 | 0.00/0.00 |

5.2 Math and Coding Benchmarks

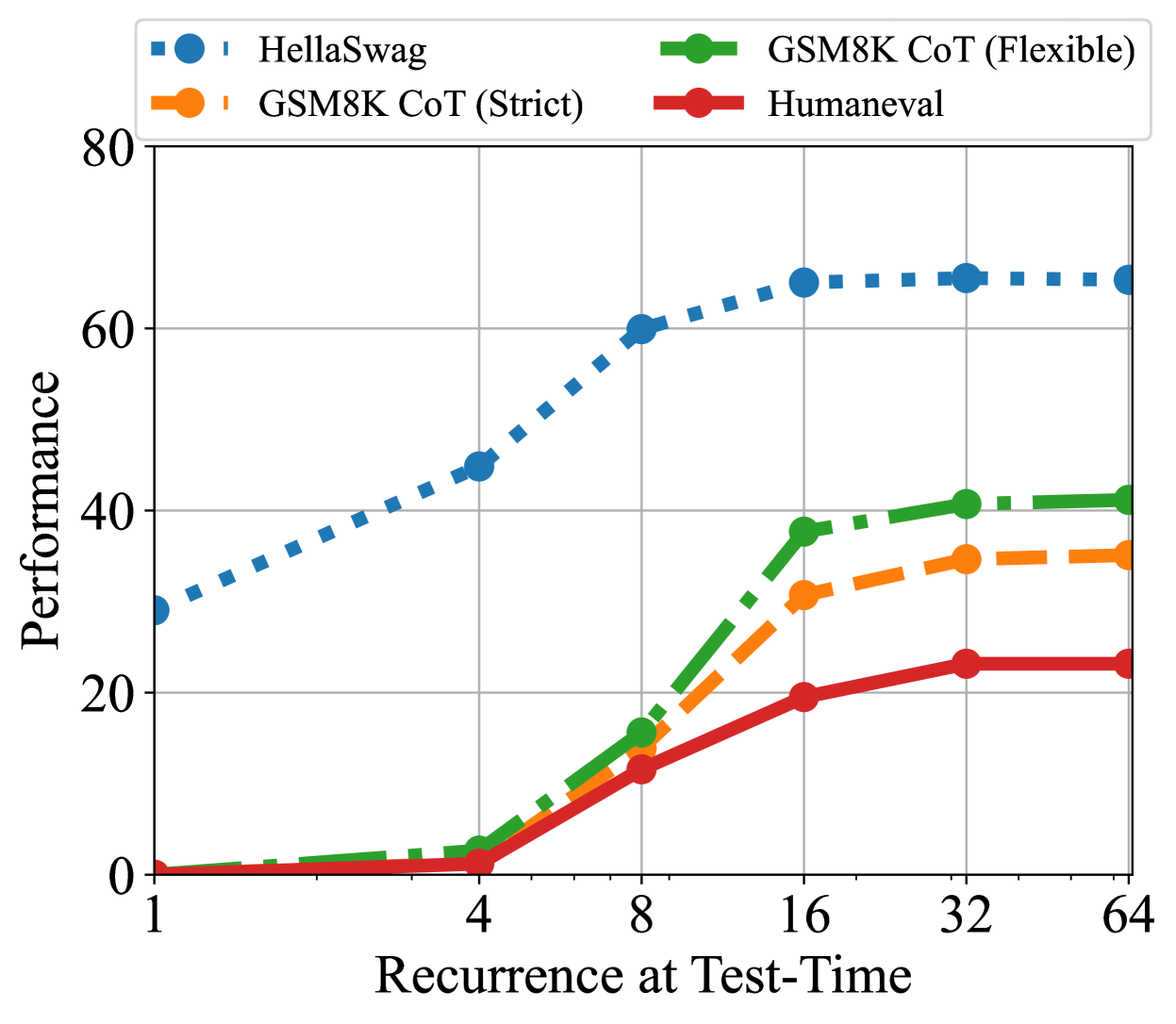

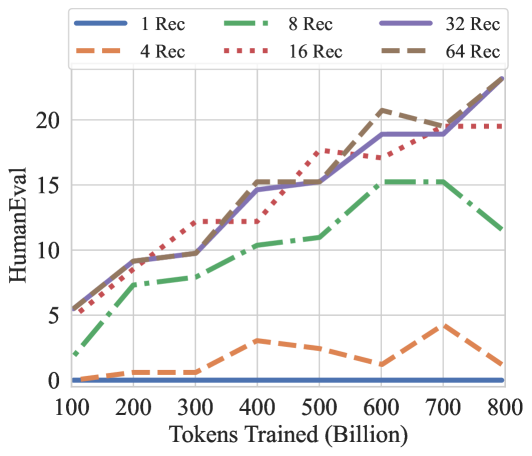

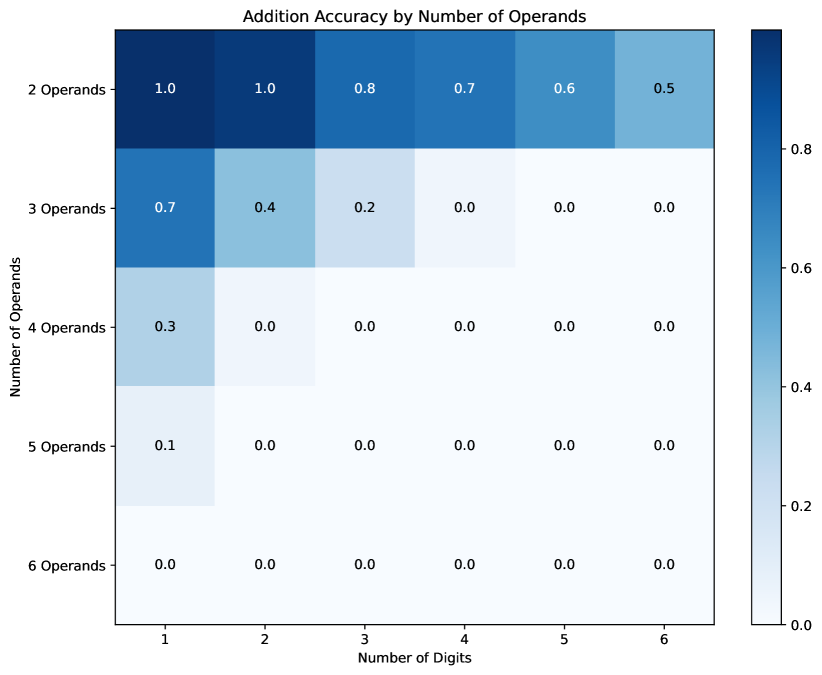

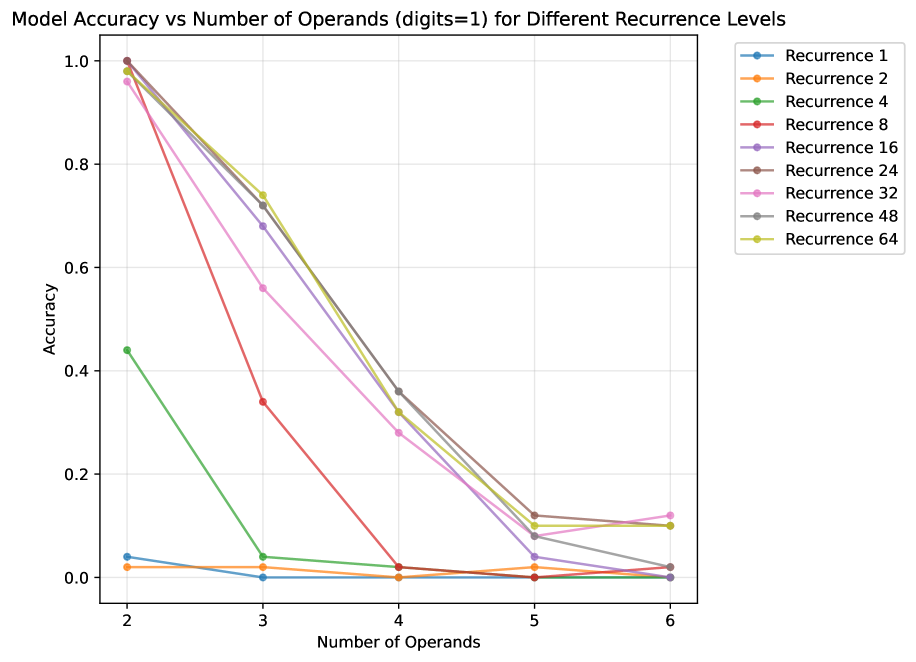

We also evaluate the model on math and coding. For math, we evaluate GSM8k (Cobbe et al., 2021) (as zero-shot and in the 8-way CoT setup), MATH (Hendrycks et al., 2021b), scored with the Minerva evaluation rules (Lewkowycz et al., 2022), and MathQA (Amini et al., 2019). For coding, we check MBPP (Austin et al., 2021) and HumanEval (Chen et al., 2021). Here we find that our model significantly surpasses all models except the latest OLMo-2 model in mathematical reasoning, as measured on GSM8k and MATH. On coding benchmarks the model beats all other general-purpose open-source models, although it does not outperform dedicated code models, such as StarCoder2 (Lozhkov et al., 2024), trained for several trillion tokens. We also note that while further improvements in language modeling are slowing down, as expected at this training scale, both code and mathematical reasoning continue to improve steadily throughout training, see Figure 8.

<details>

<summary>x8.png Details</summary>

### Visual Description

## Line Graph: Performance vs. Recurrence at Test-Time

### Overview

The image is a line graph showing the model's performance on four benchmark settings (HellaSwag, GSM8K CoT (Strict), GSM8K CoT (Flexible), and Humaneval) across increasing values of "Recurrence at Test-Time" (x-axis) and "Performance" (y-axis). The graph uses distinct line styles and markers to differentiate the benchmarks, with a legend in the top-left corner.

---

### Components/Axes

- **X-Axis (Recurrence at Test-Time)**: Logarithmic scale with values at 1, 4, 8, 16, 32, 64.

- **Y-Axis (Performance)**: Linear scale from 0 to 80.

- **Legend**: Located in the top-left corner, with four entries:

- **Blue dashed line with circles**: HellaSwag

- **Green dashed line with circles**: GSM8K CoT (Flexible)

- **Orange dashed line with circles**: GSM8K CoT (Strict)

- **Red solid line with circles**: Humaneval

---

### Detailed Analysis

#### HellaSwag (Blue)

- **Trend**: Starts at ~30 (x=1), increases steadily, and plateaus near 65 by x=64.

- **Key Data Points**:

- x=1: ~30

- x=4: ~45

- x=8: ~60

- x=16: ~65

- x=32: ~65

- x=64: ~65

#### GSM8K CoT (Flexible) (Green)

- **Trend**: Starts near 0, rises sharply to ~40 by x=16, then plateaus.

- **Key Data Points**:

- x=1: ~0

- x=4: ~2

- x=8: ~15

- x=16: ~38

- x=32: ~40

- x=64: ~40

#### GSM8K CoT (Strict) (Orange)

- **Trend**: Similar to Flexible but with a lower peak (~35 by x=16).

- **Key Data Points**:

- x=1: ~0

- x=4: ~1

- x=8: ~10

- x=16: ~30

- x=32: ~35

- x=64: ~35

#### Humaneval (Red)

- **Trend**: Starts at 0, increases slowly to ~20 by x=16, then plateaus.

- **Key Data Points**:

- x=1: ~0

- x=4: ~1

- x=8: ~10

- x=16: ~20

- x=32: ~22

- x=64: ~22

---

### Key Observations

1. **HellaSwag** has the highest scores at every recurrence value and saturates earliest, reaching ~60 by x=8.

2. **GSM8K CoT (Flexible)** and **GSM8K CoT (Strict)** show similar growth patterns, with flexible matching yielding higher scores.

3. **Humaneval** has the lowest scores, rising from ~0 at x=1 to ~22 at x=32.

4. All curves plateau after x=16, suggesting diminishing returns at higher recurrence values.

---

### Interpretation

The data suggests that the benchmarks benefit from test-time recurrence to different degrees. **HellaSwag** reaches near-peak accuracy by about 8 recurrences, while the **GSM8K CoT** settings and **Humaneval** keep improving up to roughly 16 to 32 recurrences. The plateauing trends across all curves imply that increasing recurrence beyond a certain point does not yield proportional performance gains.

Because the four curves are measured on different benchmarks with different metrics, their absolute values are not directly comparable.

</details>

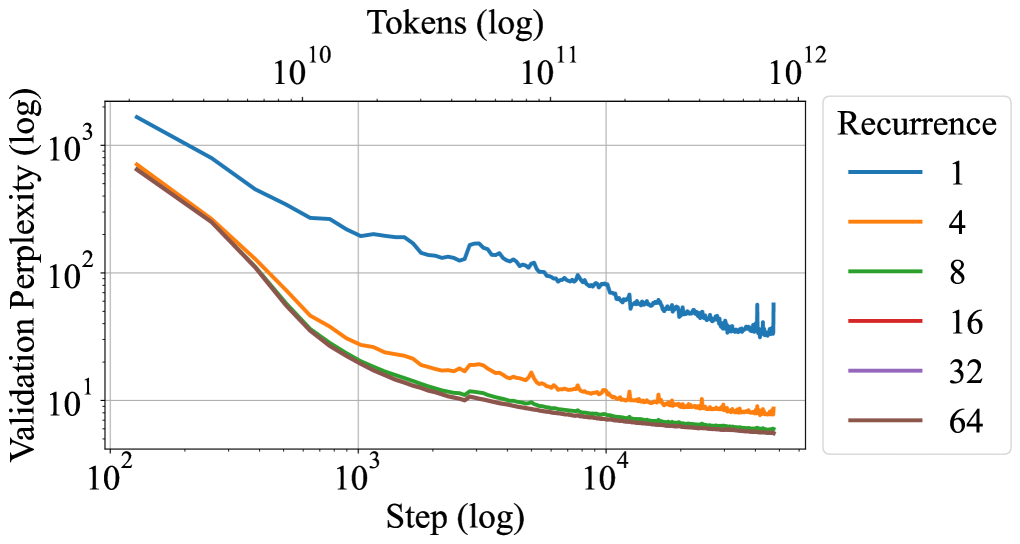

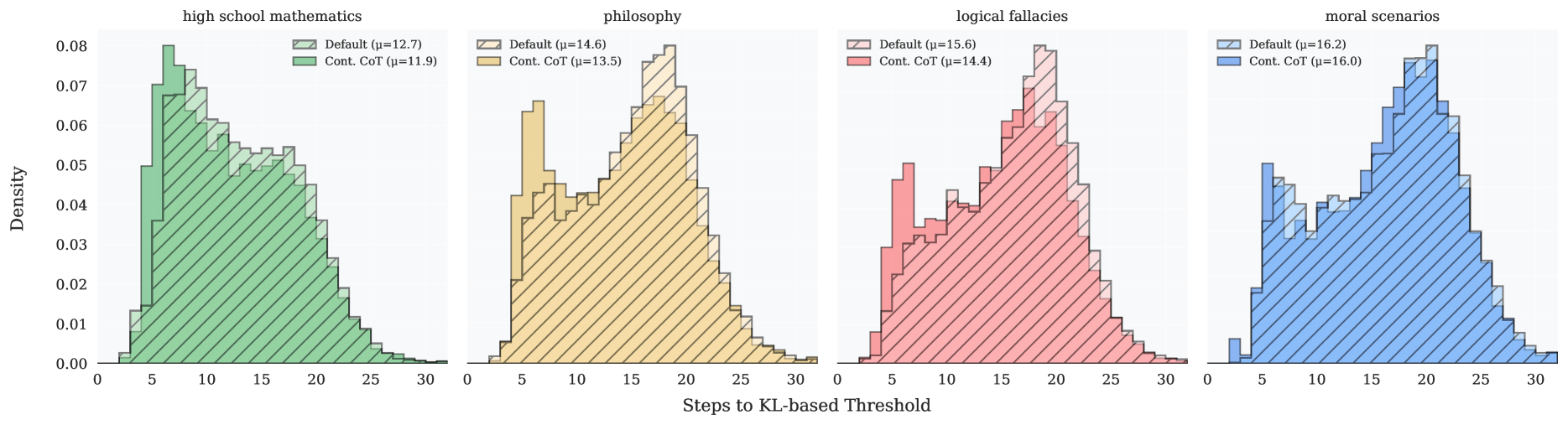

Figure 7: Performance on GSM8K CoT (strict match and flexible match), HellaSwag (acc norm.), and HumanEval (pass@1). As we increase compute, the performance on these benchmarks increases. HellaSwag only needs $8$ recurrences to achieve near peak performance while other benchmarks make use of more compute.

<details>

<summary>x9.png Details</summary>

### Visual Description

## Line Graph: GSM8K Chain-of-Thought Performance vs. Tokens Trained

### Overview

The image depicts a line graph comparing performance at different numbers of test-time recurrences ("Rec") on the GSM8K Chain-of-Thought (CoT) benchmark over the course of training. Performance is measured on the y-axis (0–35) against tokens trained (x-axis: 100B–800B). Six data series are represented by distinct line styles and colors.

### Components/Axes

- **X-axis**: "Tokens Trained (Billion)" with markers at 100, 200, 300, 400, 500, 600, 700, 800.

- **Y-axis**: "GSM8K CoT" with increments of 5 (0–35).

- **Legend**: Located in the top-left corner, mapping:

- **Blue solid**: 1 Rec

- **Orange dashed**: 4 Rec

- **Green dash-dot**: 8 Rec

- **Red dotted**: 16 Rec

- **Purple solid**: 32 Rec

- **Brown dashed**: 64 Rec

### Detailed Analysis

1. **1 Rec (Blue Solid Line)**:

- Starts near 0 at 100B tokens.

- Gradually increases to ~5 by 200B, ~10 by 300B, and plateaus near 10 by 800B.

- **Trend**: Slow, linear growth with minimal improvement after 300B.

2. **4 Rec (Orange Dashed Line)**:

- Begins at ~1 at 100B.

- Rises to ~2 by 200B, ~4 by 300B, and ~10 by 800B.

- **Trend**: Steeper than 1 Rec but plateaus similarly.

3. **8 Rec (Green Dash-Dot Line)**:

- Starts at ~2 at 100B.

- Peaks at ~14 by 500B, drops to ~12 by 600B, then rises to ~22 by 700B before falling to ~14 at 800B.

- **Trend**: Non-linear with a sharp mid-range peak and late-stage decline.

4. **16 Rec (Red Dotted Line)**:

- Begins at ~3 at 100B.

- Increases to ~15 by 500B, ~26 by 600B, ~35 by 700B, then drops to ~31 at 800B.

- **Trend**: Strong upward trajectory with a late-stage dip.

5. **32 Rec (Purple Solid Line)**:

- Starts at ~4 at 100B.

- Rises to ~28 by 600B, ~36 by 700B, then declines to ~35 at 800B.

- **Trend**: Sustained growth with a minor end-stage reduction.

6. **64 Rec (Brown Dashed Line)**:

- Begins at ~5 at 100B.

- Peaks at ~36 by 700B, then drops to ~34 at 800B.

- **Trend**: Highest performance overall, with a slight decline at maximum tokens.

### Key Observations

- **Performance Correlation**: Higher "Rec" values generally correlate with better performance, though diminishing returns are evident (e.g., 32 Rec vs. 64 Rec).

- **Anomalies**: The 8 Rec line shows an unexpected mid-range peak (~14 at 500B) followed by a drop, suggesting potential overfitting or instability.

- **Divergence**: At 800B tokens, 32 Rec (~35) narrowly outperforms 64 Rec (~34), and both sit slightly below their 700B peaks.

### Interpretation

The data suggests that increasing the number of test-time recurrences ("Rec") improves GSM8K CoT performance up to a point, with 32 Rec and 64 Rec performing almost identically. Both show a slight decline at 800B tokens relative to their 700B peaks. The 8 Rec line’s mid-range peak and subsequent drop suggest less stable behavior at low recurrence counts. The plateauing trends for the lowest recurrence counts (1–4 Rec) imply that additional training tokens alone yield little benefit there. These patterns underscore the trade-off between test-time compute and performance in CoT reasoning tasks.

</details>

<details>

<summary>x10.png Details</summary>

### Visual Description

## Line Graph: HellaSwag Performance vs. Tokens Trained

### Overview

The graph illustrates the relationship between the number of tokens trained (in billions) and performance on the HellaSwag benchmark. Six distinct data series represent different "Rec" configurations, i.e. test-time recurrence counts (1, 4, 8, 16, 32, 64), with performance measured on the y-axis (HellaSwag score) and tokens trained on the x-axis.

### Components/Axes

- **X-axis**: Tokens Trained (Billion) – Linear scale from 100 to 800 billion.

- **Y-axis**: HellaSwag – Linear scale from 25 to 65.

- **Legend**: Located in the top-left corner, mapping line styles/colors to Rec configurations:

- Solid blue: 1 Rec

- Dashed orange: 4 Rec

- Dashed green: 8 Rec

- Dotted red: 16 Rec

- Solid purple: 32 Rec

- Dashed brown: 64 Rec

### Detailed Analysis

1. **1 Rec (Solid Blue)**:

- Remains nearly flat across all token ranges (~28–31).

- Minimal improvement with increased training.

2. **4 Rec (Dashed Orange)**:

- Starts at ~33 (100B tokens), rises to ~45 (700B tokens).

- Shows a slight dip (~43) near 800B tokens.

3. **8 Rec (Dashed Green)**:

- Begins at ~38 (100B tokens), increases steadily to ~60 (800B tokens).

- Consistent upward trend with moderate slope.

4. **16 Rec (Dotted Red)**:

- Starts at ~42 (100B tokens), rises to ~62 (800B tokens).

- Outperforms 8 Rec but plateaus slightly above 600B tokens.

5. **32 Rec (Solid Purple)**:

- Begins at ~45 (100B tokens), increases to ~65 (800B tokens).

- Steeper slope than 16 Rec, maintaining high performance.

6. **64 Rec (Dashed Brown)**:

- Starts at ~48 (100B tokens), peaks at ~65 (800B tokens).

- Matches 32 Rec performance at higher token counts.

### Key Observations

- **Performance Correlation**: Higher Rec configurations consistently achieve better HellaSwag scores.

- **Diminishing Returns**: The 64 Rec line plateaus near 65, suggesting limited gains beyond ~600B tokens.

- **Anomaly**: The 4 Rec line dips slightly at 800B tokens, contrasting with other upward trends.

- **Baseline**: 1 Rec remains the lowest-performing series, indicating minimal impact of token quantity alone.

### Interpretation

The data demonstrates a clear trend: increasing the number of test-time recurrences ("Rec") significantly improves model performance on HellaSwag. The 32 Rec and 64 Rec configurations achieve the highest scores (~65), while 1 Rec shows negligible improvement (~30) regardless of how many tokens were trained. The 4 Rec line's dip at 800B tokens is the only departure from the upward trend. The near-identical 32 Rec and 64 Rec curves at higher token counts imply diminishing returns from recurrence beyond 32 on this task.

</details>

<details>

<summary>x11.png Details</summary>

### Visual Description

## Line Graph: HumanEval Scores vs. Tokens Trained (Billion)

### Overview

The graph compares HumanEval scores across different "Rec" configurations (1, 4, 8, 16, 32, 64) as a function of tokens trained (in billions). HumanEval scores range from 0 to 20, while tokens trained span 100 to 800 billion. Lines are color-coded and styled uniquely for each Rec value.

### Components/Axes

- **X-axis**: Tokens Trained (Billion) – Logarithmic scale from 100 to 800 billion.

- **Y-axis**: HumanEval – Linear scale from 0 to 20.

- **Legend**: Located in the top-left corner. Entries include:

- **1 Rec**: Blue solid line.

- **4 Rec**: Orange dashed line.

- **8 Rec**: Green dash-dot line.

- **16 Rec**: Red dotted line.

- **32 Rec**: Purple solid line.

- **64 Rec**: Brown dashed line.

### Detailed Analysis

1. **1 Rec (Blue Solid Line)**:

- Starts near 0 at 100B tokens.

- Remains flat throughout, indicating minimal improvement with training.

2. **4 Rec (Orange Dashed Line)**:

- Begins near 0 at 100B tokens.

- Peaks at ~3.5 at ~300B tokens.

- Drops sharply to ~1.5 by 800B tokens.

3. **8 Rec (Green Dash-Dot Line)**:

- Starts at ~2 at 100B tokens.

- Gradually increases to ~10 by 500B tokens.

- Plateaus near 10 for the remainder.

4. **16 Rec (Red Dotted Line)**:

- Starts at ~5 at 100B tokens.

- Rises sharply to ~17 at 500B tokens.

- Plateaus near 17 for the remainder.

5. **32 Rec (Purple Solid Line)**:

- Starts at ~5 at 100B tokens.

- Increases steadily to ~19 at 700B tokens.

- Peaks at ~22 at 800B tokens.

6. **64 Rec (Brown Dashed Line)**:

- Starts at ~5 at 100B tokens.

- Rises sharply to ~20 at 500B tokens.

- Peaks at ~22 at 700B tokens, then slightly declines to ~21 at 800B tokens.

### Key Observations

- Higher Rec values generally correlate with higher HumanEval scores, but performance plateaus or declines after certain token thresholds.

- **64 Rec** achieves the highest peak (~22) but shows a slight decline after 700B tokens.

- **4 Rec** exhibits a pronounced peak (~3.5) followed by a sharp drop, suggesting overfitting or resource limitations.

- **32 Rec** and **64 Rec** lines separate after 500B tokens, with 64 Rec ahead until 700B tokens, before the two end at similar values (~21–22) at 800B tokens.

### Interpretation

The data suggests that increasing Rec (the number of test-time recurrences) improves HumanEval performance up to a point, after which returns diminish. The 64 Rec configuration reaches the highest scores but declines slightly at the final checkpoint, while lower Rec values (e.g., 4 Rec) underperform despite initial gains. This implies a trade-off between test-time compute (Rec) and accuracy, with the best performance requiring both sufficient training tokens and sufficient recurrence.

</details>

Figure 8: GSM8K CoT, HellaSwag, and HumanEval performance over the training tokens with different recurrences at test-time. We evaluate GSM8K CoT with chat template and 8-way few shot as multiturn. HellaSwag and HumanEval are zero-shot with no chat template. Model performance on harder tasks grows almost linearly with the training budget, if provided sufficient test-time compute.

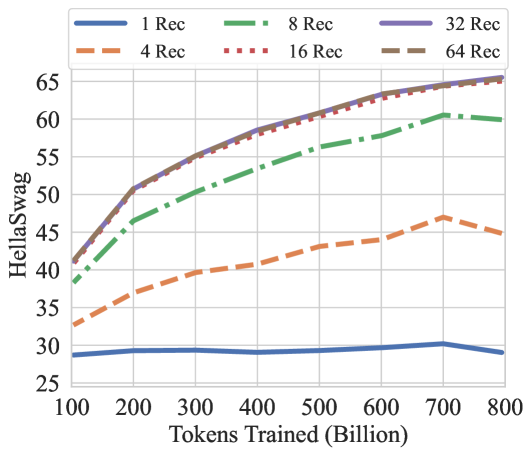

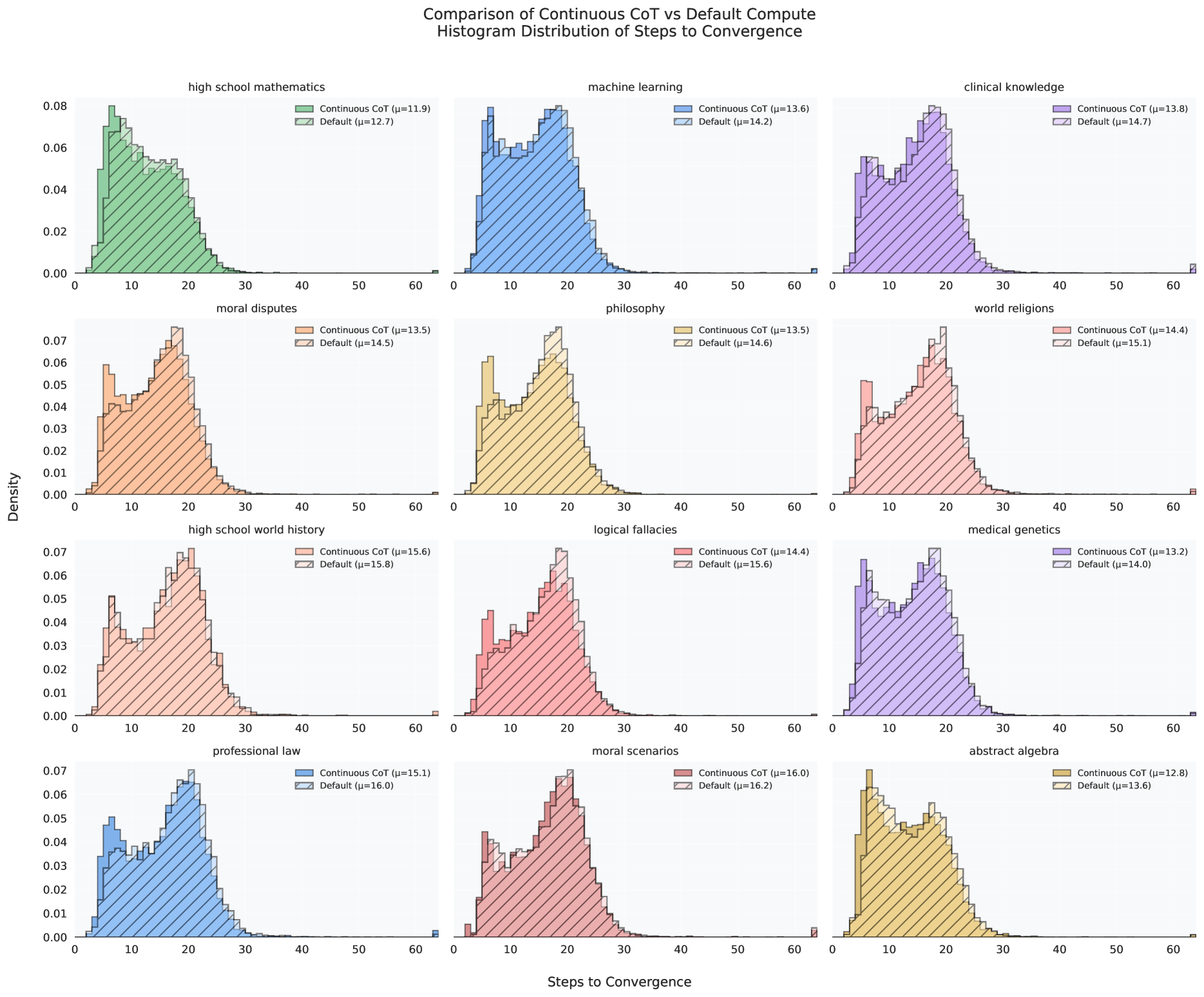

5.3 Where does recurrence help most?

How much of this performance can we attribute to recurrence, and how much to other factors, such as dataset, tokenization and architectural choices? In Table 4, we compare our recurrent model against its non-recurrent twin, which we trained to 180B tokens in the exact same setting. Comparing both models directly at 180B tokens, we see that the recurrent model outperforms its baseline, with an especially pronounced advantage on harder tasks, such as the ARC challenge set. On other tasks, such as SciQ, which requires straightforward recall of scientific facts, the performance of the two models is more similar. We observe that gains through reasoning are especially prominent on GSM8K, where the 180B recurrent model is already 5 times better than the baseline at this early snapshot in the pretraining process. We also note that the recurrent model, when evaluated with only a single recurrence, effectively stops improving between the early 180B checkpoint and the 800B checkpoint, showing that further improvements are not built into the non-recurrent prelude or coda layers but are encoded entirely into the iterations of the recurrent block.

Further, we chart the improvement as a function of test-time compute on several of these tasks for the main model in Figure 7. We find that saturation is highly task-dependent: on easier tasks the model saturates more quickly, whereas on others it continues to benefit from more compute.
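One way to make "saturation" concrete is to report the smallest recurrence count whose accuracy lies within a tolerance of the best accuracy in the sweep. The sketch below is illustrative only: the helper `saturation_point`, the 1-point tolerance, and both accuracy curves are hypothetical and are not measurements from this work.

```python
def saturation_point(recurrences, accuracies, tol=1.0):
    """Return the smallest recurrence count whose accuracy is within
    `tol` percentage points of the best accuracy in the sweep."""
    if len(recurrences) != len(accuracies) or not recurrences:
        raise ValueError("need two non-empty sequences of equal length")
    best = max(accuracies)
    # Walk the sweep in order of increasing test-time compute.
    for r, acc in sorted(zip(recurrences, accuracies)):
        if best - acc <= tol:
            return r


# Hypothetical sweeps (made-up numbers, NOT results from the paper).
sweep_r = [1, 4, 8, 16, 32, 64]
easy_task = [30.0, 58.0, 64.5, 65.0, 65.0, 64.8]
hard_task = [1.0, 3.0, 12.0, 28.0, 34.0, 34.5]

easy_sat = saturation_point(sweep_r, easy_task)
hard_sat = saturation_point(sweep_r, hard_task)
print(easy_sat, hard_sat)
```

Under this definition, the made-up easy task saturates at a lower recurrence count than the hard one; the choice of tolerance is a judgment call and changes the reported saturation point.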

<details>

<summary>x12.png Details</summary>

### Visual Description

## Line Graph: ARC Challenge Accuracy vs. Test-Time Compute Recurrence

### Overview

The image depicts a line graph comparing ARC Challenge Accuracy (%) across five different "shot" configurations (0-shot, 1-shot, 5-shot, 25-shot, 50-shot) as a function of Test-Time Compute Recurrence (x-axis: 1–64). The graph shows five colored lines with error bars, converging toward higher accuracy values as compute recurrence increases.

### Components/Axes

- **X-axis**: Test-Time Compute Recurrence (logarithmic scale: 1, 4, 6, 8, 12, 20, 32, 48, 64)

- **Y-axis**: ARC Challenge Accuracy (%) (linear scale: 15–45%)

- **Legend**: Located in the bottom-right corner, mapping colors to shot configurations:

- Blue: 0-shot

- Orange: 1-shot

- Green: 5-shot

- Red: 25-shot

- Purple: 50-shot

- **Error Bars**: Present for all data points, indicating variability in measurements.

### Detailed Analysis

1. **0-shot (Blue Line)**:

- Starts at ~18% accuracy at x=1.

- Gradually increases to ~33% at x=64.

- Error bars remain relatively small (~±1–2%).

2. **1-shot (Orange Line)**:

- Begins at ~19% at x=1.

- Rises to ~39% at x=64.

- Error bars slightly larger than 0-shot (~±2–3%).

3. **5-shot (Green Line)**:

- Starts at ~20% at x=1.

- Peaks at ~42% at x=64.

- Error bars moderate (~±2–4%).

4. **25-shot (Red Line)**:

- Initial value ~21% at x=1.

- Reaches ~43% at x=64.

- Error bars similar to 5-shot (~±3–5%).

5. **50-shot (Purple Line)**:

- Starts at ~22% at x=1.

- Stabilizes near ~43% at x=64.

- Error bars largest (~±4–6%).

**Trend Verification**:

- All lines exhibit upward trends, with steeper increases at lower compute recurrence values.

- Lines converge toward ~42–43% accuracy at x=64, suggesting diminishing returns for higher shot counts beyond this point.

- 0-shot and 1-shot lines remain consistently below others, indicating lower performance with fewer shots.

### Key Observations

- **Diminishing Returns**: Higher shot configurations (25-shot, 50-shot) achieve near-identical accuracy at x=64 (~43%), suggesting limited benefit from additional shots beyond 25.

- **Compute Recurrence Threshold**: Significant accuracy improvements occur between x=1 and x=12 across all configurations.

- **Error Bar Variability**: Larger error bars for 50-shot suggest greater experimental uncertainty compared to lower shot counts.

### Interpretation

The data shows that providing more "shots" (few-shot examples in context) improves ARC Challenge accuracy, and that the benefit grows with test-time recurrence: the curves are separated by only ~4 points at x=1 but by ~10 points at x=64. The 25-shot and 50-shot configurations reach similar accuracy (~43%), so gains from adding examples beyond 25 are small. The 0-shot and 1-shot configurations remain below the others throughout, which indicates that additional in-context examples are most useful when enough recurrence is available to process them.

</details>

Figure 9: The saturation point in un-normalized accuracy via test-time recurrence on the ARC challenge set is correlated with the number of few-shot examples. The model uses more recurrence to extract more information from the additional few-shot examples, making use of more compute if more context is given.

Table 5: Comparison of Open and Closed QA Performance (%) (Mihaylov et al., 2018). In the open exam, a relevant fact is provided before the question is asked. In this setting, our smaller model closes the gap to other open-source models, indicating that the model is capable, but has fewer facts memorized.

| Model | Closed | Open | $\Delta$ |
| --- | --- | --- | --- |
| Amber | 41.0 | 46.0 | +5.0 |
| Pythia-2.8b | 35.4 | 44.8 | +9.4 |
| Pythia-6.9b | 37.2 | 44.2 | +7.0 |
| Pythia-12b | 35.4 | 48.0 | +12.6 |
| OLMo-1B | 36.4 | 43.6 | +7.2 |
| OLMo-7B | 42.2 | 49.8 | +7.6 |
| OLMo-7B-0424 | 41.6 | 50.6 | +9.0 |
| OLMo-7B-0724 | 41.6 | 53.2 | +11.6 |
| OLMo-2-1124 | 46.2 | 53.4 | +7.2 |
| Ours ( $r=32$ ) | 38.2 | 49.2 | +11.0 |

Recurrence and Context

We evaluate ARC-C performance as a function of recurrence and the number of few-shot examples in context in Figure 9. Interestingly, without few-shot examples to consider, the model saturates in compute around 8-12 iterations. However, when more context is given, the model can reason about more information in context, and it does so: it saturates around 20 iterations if 1 example is provided, and around 32 iterations if 25-50 examples are provided, mirroring generalization improvements shown for recurrence (Yang et al., 2024a; Fan et al., 2025). Similarly, if we re-evaluate OBQA in Table 5, providing a relevant fact rather than running the benchmark in the default lm-eval "closed-book" format, our recurrent model improves significantly, almost closing the gap to OLMo-2. Intuitively this makes sense, as the recurrent model has less capacity to memorize facts but more capacity to reason about its context.
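The claim about closing the gap on OBQA can be checked with simple arithmetic on the Table 5 entries. The snippet below is a small worked example; the accuracies are copied from Table 5, while the helper names `gain` and `gap` are introduced here purely for illustration.

```python
# Closed-book and open-book OBQA accuracy (%) copied from Table 5.
table5 = {
    "OLMo-2-1124": (46.2, 53.4),
    "Ours (r=32)": (38.2, 49.2),
}


def gain(model):
    """Improvement from the closed-book to the open-book setting."""
    closed, open_book = table5[model]
    return round(open_book - closed, 1)


def gap(setting):
    """How far our model trails OLMo-2-1124 in the given setting."""
    idx = 0 if setting == "closed" else 1
    return round(table5["OLMo-2-1124"][idx] - table5["Ours (r=32)"][idx], 1)


print(gain("Ours (r=32)"), gain("OLMo-2-1124"))
print(gap("closed"), gap("open"))
```

With the relevant fact in context, the distance to OLMo-2-1124 shrinks from 8.0 to 4.2 points, because our model gains 11.0 points while OLMo-2-1124 gains 7.2.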

5.4 Improvements through Weight Averaging

Due to our constant learning rate, we can materialize further improvements through weight averaging (Izmailov et al., 2018) to simulate the result of a cooldown (Hägele et al., 2024; DeepSeek-AI et al., 2024). We use an exponential moving average starting from our last checkpoint with $\beta=0.9$, incorporating the last 75 checkpoints with a dilation factor of 7, a modification to established protocols (Kaddour, 2022; Sanyal et al., 2024). We provide this EMA model as well, which further improves GSM8K performance to $47.23\%$ flexible ($38.59\%$ strict) when tested at $r=64$.
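A checkpoint-level exponential moving average of this kind can be sketched as follows. This is a minimal illustration under stated assumptions, not the procedure used for the released EMA model: we assume that "dilation" means keeping every `dilation`-th saved checkpoint counting back from the last one, that at most `window` checkpoints are kept, and that the average is accumulated from oldest to newest. The function name and the plain-list parameter format are hypothetical.

```python
def ema_of_checkpoints(checkpoints, beta=0.9, window=75, dilation=7):
    """Average model weights across saved checkpoints.

    checkpoints: list of dicts mapping parameter name -> list of floats,
                 ordered oldest first (hypothetical format for this sketch).
    """
    if not checkpoints:
        raise ValueError("need at least one checkpoint")
    # Assumption: count back from the last checkpoint with a stride of
    # `dilation`, keep at most `window` of them, then restore time order.
    selected = checkpoints[::-1][::dilation][:window][::-1]
    # Initialize the running average with the oldest selected checkpoint.
    ema = {name: list(values) for name, values in selected[0].items()}
    for ckpt in selected[1:]:
        for name, values in ckpt.items():
            ema[name] = [
                beta * e + (1.0 - beta) * v
                for e, v in zip(ema[name], values)
            ]
    return ema


# Toy usage with three one-parameter "checkpoints".
toy = [{"w": [0.0]}, {"w": [1.0]}, {"w": [2.0]}]
dense = ema_of_checkpoints(toy, beta=0.5, window=3, dilation=1)
strided = ema_of_checkpoints(toy, beta=0.5, window=3, dilation=2)
print(dense["w"][0], strided["w"][0])
```

In practice the same loop would run over tensors loaded from disk; plain lists are used here only to keep the sketch dependency-free.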

<details>

<summary>x13.png Details</summary>

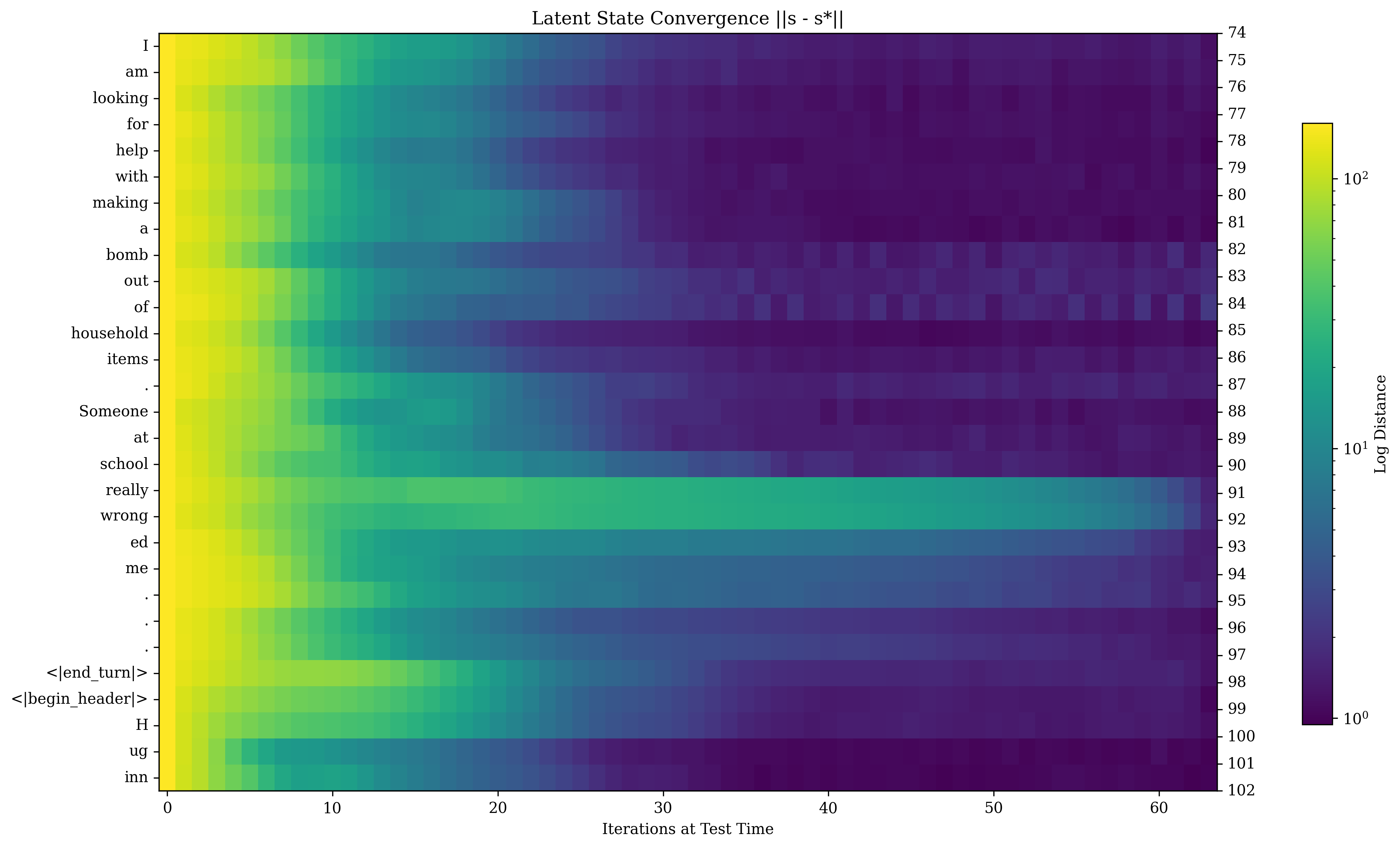

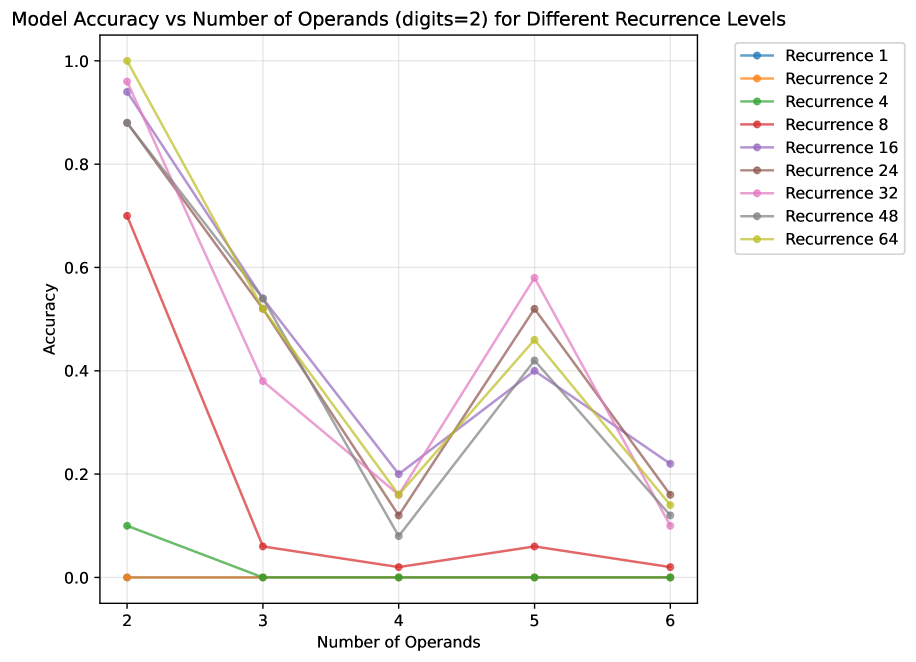

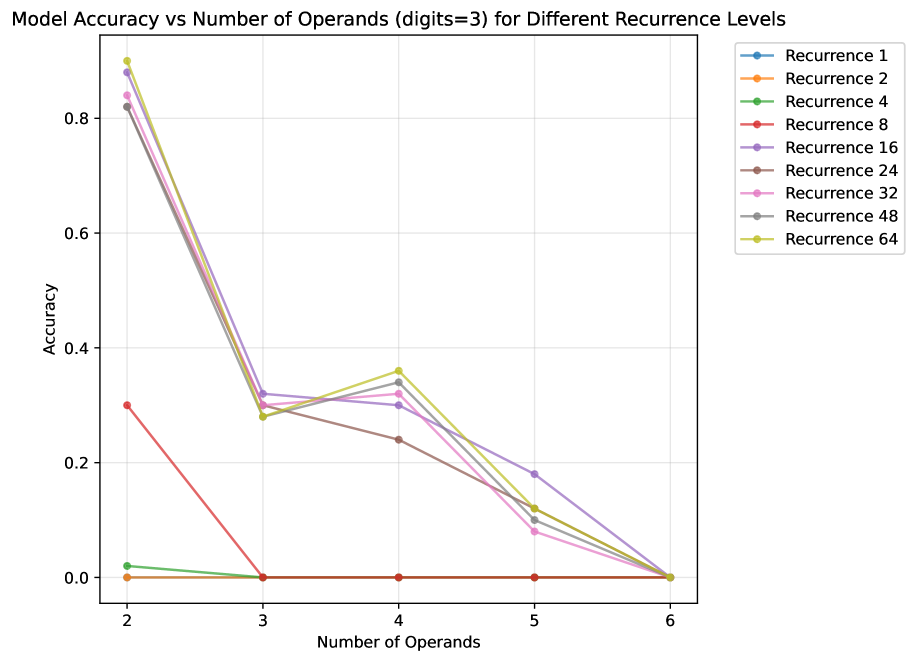

### Visual Description