# Proving the Coding Interview: A Benchmark for Formally Verified Code Generation

**Authors**: Quinn Dougherty, Ronak Mehta

> Beneficial AI Foundation

> Independent

conference_101719.bib

## Abstract

We introduce the Formally Verified Automated Programming Progress Standards, or FVAPPS, a benchmark of 4715 samples for writing programs and proving their correctness, the largest formal verification benchmark, including 1083 curated and quality controlled samples. Previously, APPS provided a benchmark and dataset for programming puzzles to be completed in Python and checked against unit tests, of the kind seen in technical assessments in the software engineering industry. Building upon recent approaches for benchmarks in interactive theorem proving, we generalize the unit tests to Lean 4 theorems given without proof (i.e., using Lean’s “sorry” keyword). On the 406 theorems of 100 randomly selected samples, Sonnet correctly proves 30% and Gemini correctly proves 18%. We challenge the machine learning and program synthesis communities to solve both each general purpose programming problem and its associated correctness specifications. The benchmark is available at https://huggingface.co/datasets/quinn-dougherty/fvapps.

Index Terms: theorem proving, machine learning, program synthesis, formal verification

## I Introduction

Large Language Models (LLMs) have developed incredible capabilities, spanning creative writing, tutoring and education, information retrieval and distillation, common sense reasoning, complex mathematical reasoning, and even code generation [chatbotarena, gpt4, llama3, deepseek]. Particular focus and attention has been given towards LLM-assisted software development, given its economic value and position between natural language and formal logic.

We have seen rapid advancements in code generation capabilities [Singh2023CodeFusionAP, jimenez2023swe, Hendrycks2021MeasuringCC]. With this has come strong concerns around the misuse and safety of code generated by these models, and the ability to prove or guarantee that the produced code is sound. An approach commonly employed today includes running LLM-generated code in restricted development environments, and ensuring tight feedback loops with software engineers who understand the unique failure modes of their particular complex software. This paired programming-style approach is common in LLM chatbot interfaces, as well as programming assistants more strongly integrated in the software engineering development cycle such as GitHub Copilot [chen2021evaluating] and Cursor (Anysphere Inc., 2023).

However, recent paradigm shifts in the ability to develop and enhance capabilities through supervised fine-tuning has led to fully autonomous software engineering, which includes code generation and execution in the development loop (e.g., Devin, Cognition AI, Inc. 2023, [wang2024openhandsopenplatformai]). Without a human evaluating code before it is run, strong assumptions or restrictions are necessary to ensure that generated code is safe and correct. Critically, as these tools become ubiquitous across more and more applications that touch the real world, it will be a requirement that we have guarantees of their safety and correctness. Code generated for managing medical devices, for managing patient records, for ensuring the security of private personal information, for controlling autonomous vehicles, for cybersecurity defense systems, and for military use will all require strong guarantees before users, organizations, and governments can make effective use of these tools.

While software quality assurance primarily relies on extensive testing, the emerging field of deductive verification offers a more rigorous alternative by mathematically proving code correctness against formal specifications. However, this approach requires deep system knowledge, explicit property definitions, and often demands rewriting existing code in specialized proof assistant languages—a significant challenge given the current state of tools. As Large Language Models (LLMs) advance in code generation capabilities, they may eventually enable automated creation of deductively verifiable code, allowing us to defer trust to theorem provers rather than testing alone. This could revolutionize mission-critical software development by providing formal guarantees against bugs and hallucinations, though current LLM capabilities fall short of this goal, and it remains unclear whether fine-tuning or prompt engineering can bridge this gap.

Contributions. We deliver a set of 4,715 Lean 4 files consisting of a solution function signature and theorem statements, all of which are passed on for future proving via the “sorry” keyword, allowing the lean executable to compile without actual proofs or implementations. We provide tags for samples that pass additional, rigorous quality assurance, defined by inline unit testing and property tests in Lean.

## II The FVAPPS Benchmark

<details>

<summary>extracted/6182580/figs/FVAPPS_Pipeline.png Details</summary>

### Visual Description

\n

## Diagram: Benchmark Generation Pipeline

### Overview

This diagram illustrates a pipeline for benchmark generation, specifically for the FVAPPS project. It depicts a series of processing steps, decision points, and outcomes related to creating and validating benchmarks. The pipeline begins with an original dataset and progresses through several stages of test generation and verification, ultimately resulting in either "Guarded and Plausible", "Guarded", or "Unguarded" benchmarks.

### Components/Axes

The diagram consists of rectangular process blocks, diamond-shaped decision points, and rounded rectangle output states. Arrows indicate the flow of the pipeline. The key components are:

* **Original APPS Dataset:** The starting point of the pipeline.

* **Preprocess Dataset:** A processing step.

* **Generate Python Property Tests:** A processing step.

* **Generate Lean Theorem Statements:** A processing step.

* **Generate Solutions and #guard_msgs Unit Tests:** A processing step.

* **Plausible?:** A decision point.

* **python Success?:** A decision point.

* **pytest Success?:** A decision point.

* **lean Success?:** A decision point.

* **lean Success?:** A decision point.

* **Guarded and Plausible:** An output state.

* **Guarded:** An output state.

* **Unguarded:** An output state.

* **FVAPPS:** A label in the top-right corner.

### Detailed Analysis or Content Details

The pipeline flow is as follows:

1. The process begins with the "Original APPS Dataset".

2. The dataset is fed into the "Preprocess Dataset" block.

3. From "Preprocess Dataset", the flow splits based on the "python Success?" decision point.

* If "Yes", the flow proceeds to "Generate Python Property Tests".

* If "No", the flow returns to "Preprocess Dataset".

4. From "Generate Python Property Tests", the flow splits based on the "pytest Success?" decision point.

* If "Yes", the flow proceeds to "Generate Lean Theorem Statements".

* If "No", the flow returns to "Preprocess Dataset".

5. From "Generate Lean Theorem Statements", the flow splits based on the "lean Success?" decision point.

* If "Yes", the flow proceeds to "Generate Solutions and #guard_msgs Unit Tests".

* If "No", the flow returns to "Preprocess Dataset".

6. From "Generate Solutions and #guard_msgs Unit Tests", the flow splits based on the "lean Success?" decision point.

* If "Yes", the flow proceeds to the "Plausible?" decision point.

* If "No", the flow returns to "Preprocess Dataset".

7. From "Plausible?", the flow splits:

* If "Yes", the flow proceeds to "Guarded and Plausible".

* If "No", the flow proceeds to a check for "10 Failures?".

8. If "10 Failures?" is true, the flow proceeds to "Unguarded".

9. If "10 Failures?" is false, the flow proceeds to "Guarded".

### Key Observations

The pipeline is cyclical, with multiple paths returning to the "Preprocess Dataset" block, indicating an iterative refinement process. The decision points ("python Success?", "pytest Success?", "lean Success?", "Plausible?") act as gatekeepers, determining whether the generated benchmarks meet certain criteria. The final output states ("Guarded and Plausible", "Guarded", "Unguarded") represent the quality and reliability of the generated benchmarks.

### Interpretation

This diagram represents a robust benchmark generation process designed to produce high-quality, reliable benchmarks for the FVAPPS project. The iterative nature of the pipeline suggests a focus on continuous improvement and validation. The multiple decision points and output states indicate a nuanced approach to benchmark assessment, considering factors such as Python test success, Lean theorem verification, and overall plausibility. The "Unguarded" state suggests that benchmarks failing certain criteria are still retained, potentially for debugging or further analysis. The pipeline aims to create benchmarks that are both "Guarded" (meaning they are protected against errors) and "Plausible" (meaning they are realistic and meaningful). The presence of "#guard_msgs" in the "Generate Solutions and #guard_msgs Unit Tests" block suggests that the generated unit tests include mechanisms for detecting and handling potential errors or vulnerabilities.

</details>

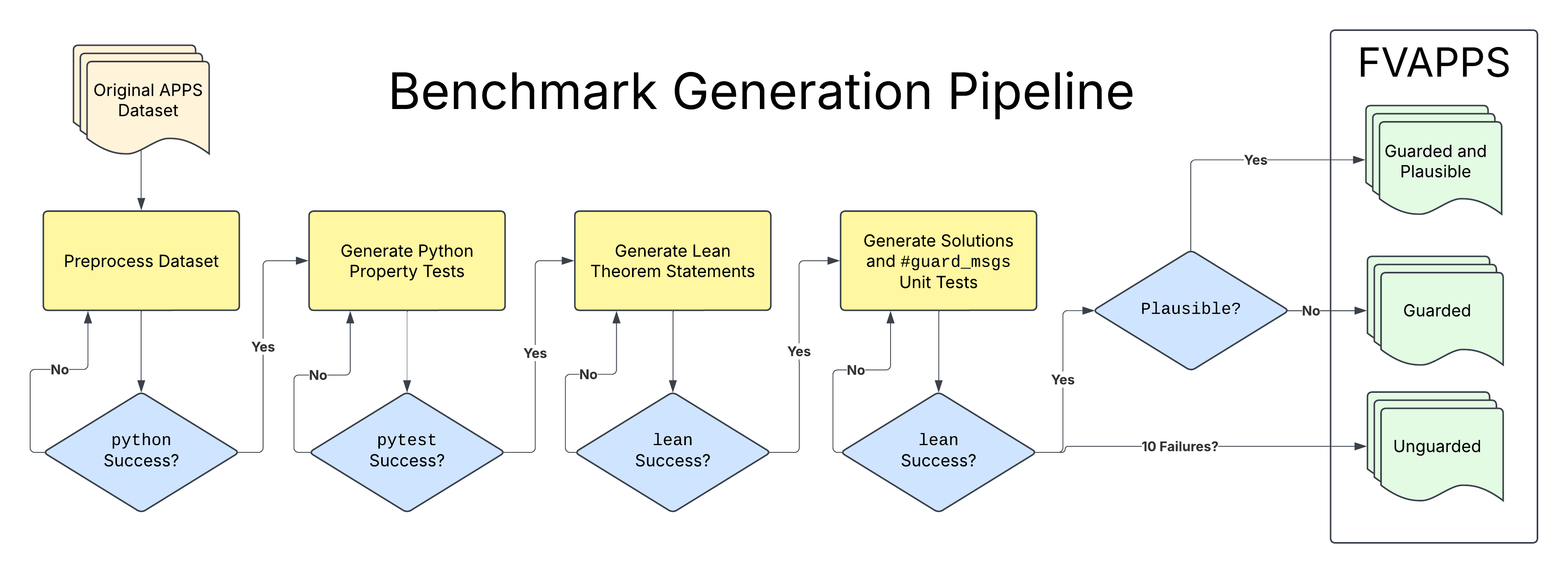

Figure 1: Benchmark generation pipeline for creating coding interview theorem statements in Lean from APPS questions and solutions.

A strong benchmark for evaluating model capabilities for theorem proving should have a number of desirable properties. With a particular focus on software engineering, algorithms and properties of those algorithms should well represent tasks and solutions that are used to evaluate skill in the labor force today. Coding interview questions fit this requirement naturally. With generic coding capabilities well-evaluated, we can build upon existing benchmarks of coding ability to generate our formally-verified one.

We begin with APPS, a benchmark and dataset for programming puzzle solving [Hendrycks2021MeasuringCC]. Developed largely by scraping the interview preparation platforms CodeForces and LeetCode, each sample consists of a natural language question, a ternary flag marking difficulty, a list of correct solutions in Python, and a few input-output test case pairs.

### II-A An Example Task

⬇

def solve_elections (n : Nat) (voters : List (Nat à Nat)) : Nat := sorry

theorem solve_elections_nonnegative (n : Nat) (voters : List (Nat à Nat)) : solve_elections n voters >= 0 := sorry

theorem solve_elections_upper_bound (n : Nat) (voters : List (Nat à Nat)) : solve_elections n voters <= List. foldl (λ acc (pair : Nat à Nat) => acc + pair.2) 0 voters := sorry

theorem solve_elections_zero_votes (n : Nat) (voters : List (Nat à Nat)) : (List. all voters (fun pair => pair.1 = 0)) -> solve_elections n voters = 0 := sorry

theorem solve_elections_single_zero_vote : solve_elections 1 [(0, 5)] = 0 := sorry

Figure 2: FVAPPS sample 23, derived from train sample 23 of APPS source. The def is where the solver implements the function, each theorem is a correctness specification.

As an example, consider the crooked election problem:

There are $n$ voters, and two ways to convince each of them to vote for you. The first way to convince the $i$ -th voter is to pay him $p_{i}$ coins. The second way is to make $m_{i}$ other voters vote for you, and the $i$ -th voter will vote for free. Moreover, the process of such voting takes place in several steps. For example, if there are five voters with $m_{1}=1$ , $m_{2}=2$ , $m_{3}=2$ , $m_{4}=4$ , $m_{5}=5$ , then you can buy the vote of the fifth voter, and eventually everyone will vote for you. Set of people voting for you will change as follows: ${5}\rightarrow{1,5}\rightarrow{1,2,3,5}\rightarrow{1,2,3,4,5}$ .

Calculate the minimum number of coins you have to spend so that everyone votes for you.

To get everyone to vote for you, you can either purchase votes for each voter’s declared price, or convert each voter through their declared groupthink threshold. This is the setup of sample 0023, derived from APPS problem train/23 https://huggingface.co/datasets/codeparrot/apps/viewer/all/train?row=23. Figure 2 is our pipeline’s output that we ship in our dataset. Crucially, it is one def and multiple theorem s, though many samples include extra definitions that are helper functions either for the solution or some theorem statement. The def is where the problem solution is implemented as a first-order requirement to proving theorems about its correctness.

While a number of theorem provers now exist, we choose Lean because of its explosive growth in mathematics https://leanprover-community.github.io/mathlib_stats.html and its fertile potential for general-purpose programming [christiansen2023fpinlean]. Lean is capable of expressing arbitrary programs, taking advantage of the identity monad and the partial keyword.

### II-B Generation Pipeline

Sonnet 3.5 read run $\lambda c.c+\texttt{stderr}$ exitcode write $p_{0}$ $c_{k}$ 0 1 $p_{k+1}$

Figure 3: Our generic scaffolding loop used at various stages of our pipeline. The run element is replaced with the python, pytest, lean, or lake build executable respectively.

We use Anthropic’s Claude Sonnet 3.5 Model version claude-3-5-sonnet-20241022, accessed October 25th 2024. to power five core parts of our benchmark creation pipeline. In stages one through three of our pipeline, whenever a non-deductive LLM call is used for processing, we allow for a maximum of 5 attempts before "failing" on that sample. Stage four allows for a maximum of ten, and stage five has no LLM call. These loops include simple instructions that pass relevant error messages back to the model and ask for an updated response. Given that subsets of our pipeline fall withing the scope of what is capable with current LLMs, we construct scaffolding loops for iterating on model-based solutions. Broadly, we use outputs from python, pytest, lean, and lake build to ensure the generated code is correct, and to collect standard error and outputs to send back to the model when incorrect (Figure 3).

We first preprocess the original APPS dataset, aggregating unit tests and solutions into one file with a particular function definition and architecture that better lends itself to automated unit testing. This step also selects a single solution from the set of solutions provided by the original APPS dataset.

Property-based tests are procedurally generated unit tests, up to boundary conditions given on input types [MacIver2019Hypothesis]. We use our scaffolding loop outlined above, specifically feeding pytest ’s standard error and standard out messages back into the next API call. When pytest returns an empty standard error and an exit code of zero, we write the final file of tests to disk and exit the loop.

The lean executable is used as our supervisor for the third step, again with standard error and standard output fed back to the model API until a valid lean file is constructed. The model is prompted to explicitly convert the property tests into unproven theorem statements (cleared via sorry). The lean executable Lean (version 4.12.0, x86_64-unknown-linux-gnu, commit dc2533473114, Release). is used to typecheck the theorem statements, ignoring the sorry s but ensuring statements are well-formed.

Step four of our process aims to add more strict quality assurance to our lean outputs. Using unit tests that have been generated by our preprocessing step as assertions in Python, we deductively parse these assertions to construct inline unit tests in Lean https://github.com/leanprover/lean4/blob/master/src/Lean/Elab/GuardMsgs.lean. In parallel, we translate the Python solutions into Lean 4 as def s, and require that the guard messages "pass". While theorem statements and property tests are broadly our primary goal and focus, these unit tests provide an easy way to filter for solutions that are not correct in some minimal form.

The last phase of our pipeline involves ensuring that the theorems we wish to provide are probably true, using Lean’s property-based testing framework Plausible https://reservoir.lean-lang.org/@leanprover-community/plausible. Any theorems that are not " plausible " at this stage, given our solutions that pass unit tests, are filtered.

All code and prompts used for the generation can be found at our open-source GitHub repository. https://github.com/quinn-dougherty/fvapps

### II-C Results

| 0 | 1963 | 1735 |

| --- | --- | --- |

| 1 | 1915 | 1450 |

| 2 | 584 | 842 |

| 3 | 143 | 394 |

| 4 | 75 | 183 |

| 5 | 35 | 111 |

TABLE I: Iterations needed for Pytest and Lean agents to converge, filtered to those that made it passed Stage 3 of the pipeline.

We provide three subsets of our benchmark according to the assurance level, depending on when the samples were chosen and filtered in our pipeline.

Unguarded

This largest set represents all examples that generated valid theorems, ending at Stage 3 of our full pipeline. Table I shows the number of execution-generation loops needed to pass our generation criterion in Stages 2 and 3. The Pytest agent took on average 0.846 iterations (with the 0th position as initialization prompt), and the Lean agent took on average 1.188 iterations.

<details>

<summary>extracted/6182580/figs/num_theorems_across_samples.png Details</summary>

### Visual Description

\n

## Histogram: Number of Definitions and Theorems Across Samples

### Overview

The image presents a histogram visualizing the distribution of the number of theorems across a sample of 4715 items. The x-axis represents the number of theorems, and the y-axis represents the number of samples. The data is presented as a series of bins, showing the frequency of each number of theorems.

### Components/Axes

* **Title:** "Number of defs and theorems across samples (N=4715)"

* **X-axis Label:** "Num theorems"

* **X-axis Scale:** Ranges from 0 to approximately 50, with increments of 10.

* **Y-axis Label:** "Num samples"

* **Y-axis Scale:** Ranges from 0 to approximately 1200, with increments of 200.

* **Data Series:** A single histogram series, filled with a blue color.

### Detailed Analysis

The histogram shows a strong right skew. The distribution is heavily concentrated towards lower numbers of theorems.

* **Peak:** The highest frequency occurs between 5 and 8 theorems, with a peak value of approximately 1250 samples.

* **Trend:** The number of samples decreases rapidly as the number of theorems increases.

* **Data Points (Approximate):**

* 0-1 theorems: ~200 samples

* 1-2 theorems: ~400 samples

* 2-3 theorems: ~600 samples

* 3-4 theorems: ~800 samples

* 4-5 theorems: ~1000 samples

* 5-6 theorems: ~1250 samples

* 6-7 theorems: ~1100 samples

* 7-8 theorems: ~900 samples

* 8-9 theorems: ~650 samples

* 9-10 theorems: ~400 samples

* 10-11 theorems: ~250 samples

* 11-12 theorems: ~150 samples

* 12-13 theorems: ~80 samples

* 13-14 theorems: ~40 samples

* 14-15 theorems: ~20 samples

* 15-20 theorems: ~50 samples (combined)

* 20-50 theorems: ~50 samples (combined)

### Key Observations

* The vast majority of samples have a small number of theorems (less than 10).

* There is a long tail extending to higher numbers of theorems, but the frequency is very low.

* The distribution is not symmetrical.

### Interpretation

The data suggests that most of the analyzed items (definitions and theorems) contain a relatively small number of theorems. This could indicate that the items are primarily focused on basic concepts or that the theorems are often implicit rather than explicitly stated. The long tail suggests that some items are significantly more complex and contain a large number of theorems, but these are relatively rare. The skewness indicates that the number of theorems is not uniformly distributed across the samples. This could be due to the nature of the data itself, or it could be a result of the sampling method. The sample size of 4715 is reasonably large, suggesting that the observed distribution is likely representative of the underlying population.

</details>

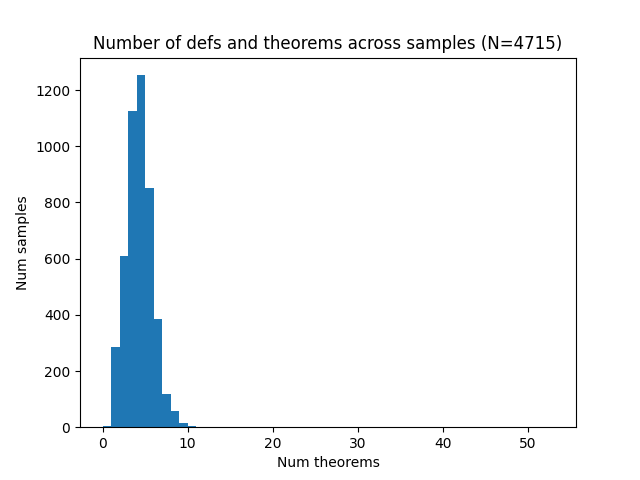

Figure 4: Total number of defs and theorems across FVAPPS samples.

Of the 4715 successful samples, the median number of theorems is four (Figure 4).

Guarded

Samples that passed Stage 4 of our pipeline include those samples where solutions were able to be identified that pass simple unit tests. While we don’t include these solutions in our set here, the definitions have been verified to admit a solution such that these basic unit tests pass. Importantly this means that claude-3-5-sonnet-20241022 was able to solve the problem with our scaffold. 2089 samples passed this level of assurance.

Guarded and Plausible

1083 samples passed through all five parts of our pipeline These problems are solvable and well-stated to the highest possible probability short of a full proof (which we leave to the LLMs and other program synthesis methods). Note that the definitions in each of the sets Guarded and Guarded and Plausible are somehow "easy" in the sense that current language models can provide definitions.

All 4715 samples are provided as a dataset on HuggingFace with appropriate tagging. https://huggingface.co/datasets/quinn-dougherty/fvapps

## III Baseline Experiments

Our baseline task is to implement and prove each invocation of the word "sorry" in the benchmark’s source specification. We provide preliminary evaluations here, using a loop similar to the generation pipeline. We attempt to solve 101 randomly sampled FVAPPS problems with both Claude 3.5 Sonnet (new) and Gemini 1.5 Pro Retrieved in early November 2024.. For a direct human comparison, we allocated ten hours for a baseliner to attempt one sample.

### III-A Model Baseline Details

We use Lean 4.12.0 throughout our benchmark generation and baseline, pinned with Mathlib available in the environment. Mathlib commit: 809c3fb3b5c8f5d7dace56e200b426187516535a We attempt to produce a partial baseline for a subset of FVAPPS using claude-3-5-sonnet-20241022 (the same model that generated the task).

A note on open source models. We spent some time attempting to solve benchmark samples with small and medium-sized open source models. Particularly, we attempted simple, single-shot autocompletion of theorem statements using DeepSeek’s DeepSeek-Prover-V1.5-RL https://huggingface.co/deepseek-ai/DeepSeek-Prover-V1.5-RL [xin2024deepseekproverv15harnessingproofassistant], but were unable to find a one-shot or loop-based scaffolding solution that made any sufficient progress. An important part of this task seems to be the ability to iterate, and while models fine-tuned to reduce loss on next token prediction in Lean may eventually prove to be useful, currently their limited capability in broader, less narrow goals is insufficient.

#### III-A 1 Prompt Details

Because Lean 4 is in active development and interfaces and functions change with reasonable frequency, we found it was critical to give the LLM sufficient context to avoid common pitfalls associated with biases it may have due to outdated training data. We found significantly higher success rates by following a number of key insights by Victor Taelin https://github.com/VictorTaelin/AI-scripts/, including 1) direct, explicit description of the problem formulation and goal, 2) details of the Lean 4 environment that may be significantly different from its pretrain dataset, and 3) multiple examples of theorem statements and solutions to theorem statements.

#### III-A 2 Scaffolding Loop (Agent)

We initially provide the model with a system prompt consisting of the details in the previous section, and prompt the model first with the original English-language description of the problem and the sorry ’d benchmark .lean file (Figure 2), with instructions to implement the sorry s. After the model responds, any code block provided is extracted, written to a Basic.lean file, and run in a custom managed environment with specifically pinned libraries using lake build. The full standard input and output alongside returncode are sent back to the model with the instruction to address the issues.

Initial attempts were unsuccessful in solving all of the theorem statements at once; to give the model a better chance at solving the problem we show and allow the model to solve one statement at a time, starting with the definitions and then appending each theorem independently, to be proven separately from the rest.

### III-B Human Baseline

A human attempted the function and one of the theorems from sample 0023. It took them 10 hours to accomplish the function shown in Figure 5, since they needed termination proofs for two recursors. In the time allotted, they did not make it through any of the proofs. A problem they ran into with the proofs was stack overflow trying to unfold the recursors. Given this, we don’t think a full human baseline is a feasible option.

⬇

def go (voters holdouts : List (Nat à Nat)) (expense conversions : Nat) : Nat :=

if conversions ⥠voters. length then

expense

else

let filtered := filter_cascade holdouts conversions

if filtered. isEmpty then

expense

else

let bestValue := filtered. argmax? utility |>. get!

let holdouts ’ := filtered. eraseP (. == bestValue)

go voters

holdouts ’

(expense + bestValue. snd)

(conversions + 1)

termination_by (voters. length - conversions)

decreasing_by omega

def solve_elections (n : Nat) (voters : List (Nat à Nat)) : Nat :=

go voters voters 0 0

Figure 5: Human baseline’s solution to the definition for sample 23 of FVAPPS (helper recursor omitted for brevity, available on GitHub)

### III-C Comparing Model Baseline and Human Baseline on One Sample

On sample 23 regarding the crooked election, both successfully implemented the definition of the function (Appendix -C) and succeeded on no nontrivial proofs. We let Sonnet attempt the second theorem for 100 loops, and while it made a valiant effort, it diverged. The human and Sonnet clearly took two very different approaches, but it remains to be seen which approach has better proof ergonomics.

### III-D Model performance on more samples

<details>

<summary>extracted/6182580/figs/qualitative_assessment_of_model_baseline_results.png Details</summary>

### Visual Description

\n

## Bar Chart: Qualitative assessments of model baseline results

### Overview

This bar chart compares the performance of two models, "Sonnet" (blue bars) and "Gemini" (orange bars), across seven different qualitative assessments. The y-axis represents a numerical score, while the x-axis lists the assessment categories. The chart visually displays the relative performance of each model in each category.

### Components/Axes

* **Title:** Qualitative assessments of model baseline results

* **X-axis Label:** Assessment Category

* Categories: "Easy Branch of If", "Effectively Unit Test", "Non-negativity of Nat.", "Too Trivial", "Actually Nontrivial", "Bug", "Model Declares Untrue"

* **Y-axis Label:** Score (Scale from 0 to 12, increments of 2)

* **Legend:**

* Sonnet (Blue)

* Gemini (Orange)

### Detailed Analysis

The chart consists of paired bars for each assessment category, representing the scores for Sonnet and Gemini.

* **Easy Branch of If:** Sonnet scores approximately 12, Gemini scores approximately 7.

* **Effectively Unit Test:** Sonnet scores approximately 10, Gemini scores approximately 6.

* **Non-negativity of Nat.:** Sonnet scores approximately 9.5, Gemini scores approximately 6.

* **Too Trivial:** Sonnet scores approximately 8.5, Gemini scores approximately 9.5.

* **Actually Nontrivial:** Sonnet scores approximately 9, Gemini scores approximately 8.5.

* **Bug:** Sonnet scores approximately 8, Gemini scores approximately 6.

* **Model Declares Untrue:** Sonnet scores approximately 6, Gemini scores approximately 2.

The Sonnet model generally outperforms Gemini in most categories. Gemini shows a slight advantage in the "Too Trivial" category.

### Key Observations

* Sonnet consistently scores higher than Gemini across most assessments.

* The largest performance difference is observed in the "Easy Branch of If" and "Model Declares Untrue" categories.

* The "Too Trivial" category is the only one where Gemini outperforms Sonnet.

* The scores for both models are relatively high, suggesting both models perform reasonably well overall.

### Interpretation

The data suggests that the Sonnet model is generally more robust and accurate than the Gemini model across a range of qualitative assessments. The "Too Trivial" category being an exception suggests Gemini may be better suited for very simple tasks, or that the assessment itself is flawed. The significant difference in "Easy Branch of If" and "Model Declares Untrue" indicates Sonnet is better at handling basic conditional logic and avoiding false positives. The chart provides a comparative performance overview, highlighting the strengths and weaknesses of each model. The assessments themselves are qualitative, so the numerical scores likely represent subjective evaluations. Further investigation into the specific criteria used for each assessment would be beneficial to understand the underlying reasons for the observed performance differences.

</details>

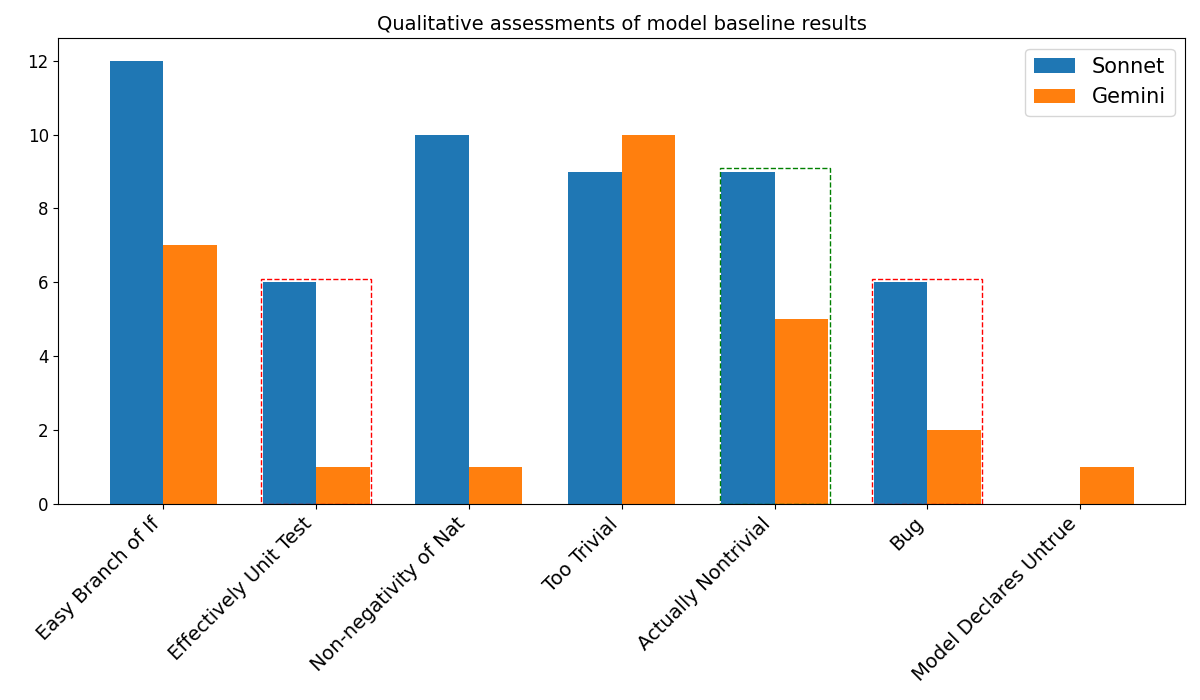

Figure 6: Qualitative categories of theorem solutions in the 100 samples, first two theorems each sample. The red box shows completely spurious results, either bugs or a substitution of a quantified variable with a single value. The green box shows the most nontrivial results. The other categories are neither spurious nor impressive, though they require some syntactic fluency that many language models would fail at.

Both Sonnet and Gemini ran for a maximum of 25 loops for the def s and a max of 50 loops for each theorem. Sonnet completed all but seven of the definitions and 43 samples had at least one theorem completed. Gemini completed only 71 of the definitions, and 23 samples had at least one theorem completed. Figure 6 shows the breakdown across our different buckets, assessed qualitatively. The green box surrounds the best solutions, nontrivial proofs that involve both intricate reasoning and correct syntax. The red box shows completely spurious results, either bugs or a substitution of a quantified variable with a single value. The other categories are neither spurious nor impressive, though they require some syntactic fluency that many language models would fail at. Importantly, Gemini declared the second theorem of sample 3078 incorrect/unsolvable in a comment, while Sonnet nontrivially solved it.

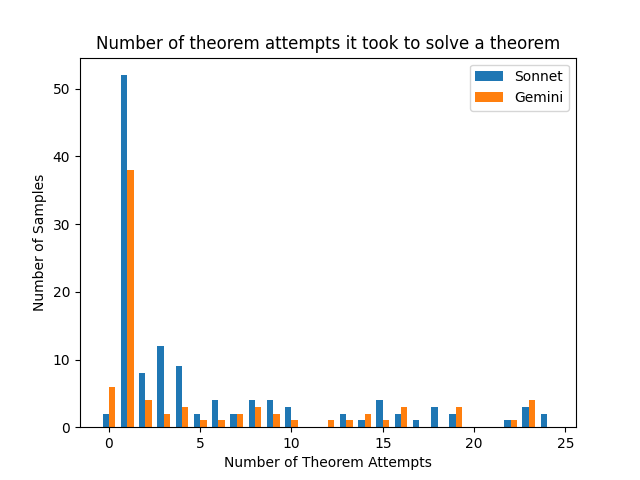

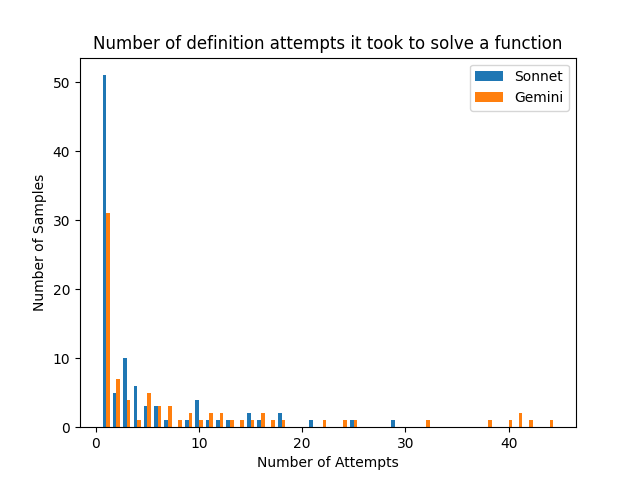

Figures 7 and 8 show how many samples took how many attempts. Of the theorems that got eventually completed, roughly 20% of each model’s were done in zero or one iteration of the loop.

<details>

<summary>extracted/6182580/figs/number_of_theorem_attempts_it_took_to_solve_a_theorem.png Details</summary>

### Visual Description

\n

## Bar Chart: Theorem Solving Attempts

### Overview

This bar chart compares the number of theorem attempts required to solve a theorem by two different models: "Sonnet" and "Gemini". The x-axis represents the number of theorem attempts, and the y-axis represents the number of samples (frequency). The chart displays the distribution of attempts for each model.

### Components/Axes

* **Title:** "Number of theorem attempts it took to solve a theorem" (centered at the top)

* **X-axis Label:** "Number of Theorem Attempts" (bottom-center)

* Scale: 0 to 25, with increments of 5.

* **Y-axis Label:** "Number of Samples" (left-center)

* Scale: 0 to 50, with increments of 10.

* **Legend:** Located in the top-right corner.

* "Sonnet" - represented by a blue color.

* "Gemini" - represented by an orange color.

### Detailed Analysis

The chart consists of two sets of bars, one for each model.

**Sonnet (Blue):**

The Sonnet model shows a peak in attempts around 1 attempt, with approximately 53 samples. The number of samples decreases rapidly as the number of attempts increases. There are noticeable bars at:

* 0 attempts: ~53 samples

* 1 attempt: ~9 samples

* 2 attempts: ~6 samples

* 3 attempts: ~5 samples

* 4 attempts: ~4 samples

* 5 attempts: ~3 samples

* 6 attempts: ~2 samples

* 7 attempts: ~2 samples

* 8 attempts: ~2 samples

* 9 attempts: ~2 samples

* 10 attempts: ~2 samples

* 11 attempts: ~1 sample

* 12 attempts: ~1 sample

* 13 attempts: ~1 sample

* 14 attempts: ~1 sample

* 15 attempts: ~1 sample

* 16 attempts: ~1 sample

* 17 attempts: ~1 sample

* 18 attempts: ~1 sample

* 19 attempts: ~1 sample

* 20 attempts: ~1 sample

* 21 attempts: ~1 sample

* 22 attempts: ~1 sample

* 23 attempts: ~1 sample

* 24 attempts: ~1 sample

* 25 attempts: ~1 sample

**Gemini (Orange):**

The Gemini model also peaks around 0-1 attempts, but has a higher frequency at 0 attempts than Sonnet. The distribution is more spread out than Sonnet's. There are noticeable bars at:

* 0 attempts: ~39 samples

* 1 attempt: ~7 samples

* 2 attempts: ~4 samples

* 3 attempts: ~3 samples

* 4 attempts: ~3 samples

* 5 attempts: ~2 samples

* 6 attempts: ~2 samples

* 7 attempts: ~2 samples

* 8 attempts: ~1 sample

* 9 attempts: ~1 sample

* 10 attempts: ~1 sample

* 11 attempts: ~1 sample

* 12 attempts: ~1 sample

* 13 attempts: ~1 sample

* 14 attempts: ~1 sample

* 15 attempts: ~1 sample

* 16 attempts: ~1 sample

* 17 attempts: ~1 sample

* 18 attempts: ~1 sample

* 19 attempts: ~1 sample

* 20 attempts: ~1 sample

* 21 attempts: ~1 sample

* 22 attempts: ~1 sample

* 23 attempts: ~1 sample

* 24 attempts: ~1 sample

* 25 attempts: ~1 sample

### Key Observations

* Both models show a strong preference for solving theorems with very few attempts (0-2).

* Sonnet has a higher peak at 1 attempt compared to Gemini.

* Gemini has a higher frequency of solving theorems with 0 attempts compared to Sonnet.

* The distributions for both models are right-skewed, indicating that most theorems are solved with a small number of attempts, but some require significantly more.

### Interpretation

The data suggests that both Sonnet and Gemini are relatively efficient at solving theorems, as the majority of solutions are found with a minimal number of attempts. The difference in the distributions suggests that Gemini might be slightly better at solving theorems on the first attempt, while Sonnet might be more consistent in finding solutions within a few attempts. The long tail of the distributions indicates that there are some theorems that are particularly challenging for both models, requiring a larger number of attempts to solve. This could be due to the complexity of the theorems or limitations in the models' reasoning capabilities. The chart provides a comparative performance analysis of the two models in the context of theorem solving.

</details>

Figure 7: Number of theorem attempts it took to solve a theorem, conditional on that theorem succeeding.

Of the 406 theorems attempted, Sonnet accomplished 121 ( $30\$ ) and Gemini accomplished 74 ( $18.5\$ ).

### III-E Discussion

We noticed that Sonnet solved many problems via imperative programming with the identity monad (Id.run do). However, this kind of solution fails to capture inductive structure that lends itself to proofs. This means future solutions to FVAPPS will take one of two directions: an unwieldy and uninterpretable proof involving the identity monad, or ensuring that solutions are factored in a way that can exploit inductive structure.

Table II shows the split over our quality assurance steps over the entire baseline set and those that were solved. While this sample is small, we roughly find that our higher-quality samples that are both guarded and plausible are somewhat more likely to be solvable by current models.

| Unguarded Guarded Guarded and Plausible | 69 18 14 | 41 12 7 | 28 11 4 |

| --- | --- | --- | --- |

| Total | 101 | 60 | 43 |

TABLE II: Baseline results split across quality assurance stages of our generation pipeline.

## IV Related Work

Research and development into stronger programming guarantees spans a number of large and growing communities in the software landscape. While interest in generally stronger-typed programming paradigms is growing (e.g., growing interest in converting the Linux kernel codebase to Rust, https://github.com/Rust-for-Linux/linux increasing availability of static typing in Python https://realpython.com/python312-typing/) there remains a smaller group of people and programming languages focused directly on formal verification. Coq [coq], Dafny [dafnybook], Isabelle [isabellebook], and Lean [demoura2015lean] all target theorem proving and formal verification as first class goals, albeit with varying visions and core foundations.

Automating programming with machine learning or program synthesis is a rich area [ellis2020dreamcodergrowinggeneralizableinterpretable, austin2021programsynthesislargelanguage]

### IV-A Datasets and Benchmarks

Informal Mathematical theorem proving datasets are the most extensive, with benchmarks ranging from 15,000 to over 100,000 proofs [coqgym, leandojo, jiang2021lisa, naturalproofs], plus the Archive of Formal Proofs containing around 1 million lines of code [afp]. Unverified program synthesis benchmarks are typically an order of magnitude smaller, containing thousands of programs, with APPS being the largest at 10,000 programs [Hendrycks2021MeasuringCC] (though LiveCodeBench continues to grow [livecodebench]). In stark contrast, formal software verification benchmarks have historically been much more limited in size, with both available datasets (Clover and dafny-synthesis) containing order of 100 programs each [clover, dafny-synthesis], with DafnyBench [loughridge2024dafnybench] up at 782.

### IV-B Models

Models like DeepSeek [xin2024deepseekproveradvancingtheoremproving, xin2024deepseekproverv15harnessingproofassistant] and AlphaProof [deepmind2024imo] have advanced the ability of AI to do math, both formally and informally. DeepSeek Prover has demonstrated significant capabilities in formal mathematics, achieving state-of-the-art performance on the Lean theorem proving benchmark. The model combines large-scale pretraining on mathematical texts with specialized fine-tuning on formal proof corpora, enabling it to generate valid formal proofs for complex mathematical statements.

AlphaProof [deepmind2024imo] represents another milestone in automated theorem proving, showing particular strength in creative mathematical problem-solving. Its success on IMO-level problems demonstrates the potential for AI systems to engage in sophisticated mathematical reasoning, though the gap between informal mathematical proofs and formal verification remains significant.

In the domain of program synthesis and verification, models have evolved along several tracks. Traditional program synthesis approaches using symbolic techniques and SMT solvers have been augmented by neural methods [wang2023legoprover]. Large language models fine-tuned on code, such as CodeLlama [Rozire2023CodeLO] and GPT-4 [gpt4], have shown promising results in generating functionally correct programs, but their capabilities in formal verification remain limited.

Recent work has begun to bridge this gap [Baif2024DafnyCopilot, yang2024autoverusautomatedproofgeneration] demonstrating the potential for AI assistance in formal program verification. However, these systems typically require significant human guidance and cannot yet autonomously generate both correct programs and their formal proofs. The challenge of simultaneously reasoning about program semantics and verification conditions remains an open problem in the field.

## V Discussion

Our current presentation has a few limitations, and clear directions for future work. While the samples in our final guarded and plausible set are quite high quality, it is nontheless possible that the theorem statements do not correspond to desirable properties nor that they, in union, cover the full range of properties one would ideally prefer. While manual spot-checking did not reveal any systematic failures post complete five-stage processing, it is nontheless possible that particular theorems are not applicable or vacuous in some form.

The FVAPPS benchmark evaluates AI systems on both program synthesis and formal verification by extending APPS with Lean 4 theorem specifications. While current language models can implement programming solutions https://www.anthropic.com/news/3-5-models-and-computer-use https://openai.com/index/introducing-swe-bench-verified/, they struggle with formal verification proofs, highlighting the challenge between imperative programming and proof-amenable structures. The benchmark’s difficulty is demonstrated by limited progress from both human baselines and models. FVAPPS opens research directions in simultaneous implementation and verification reasoning, automated proof-amenable solution structuring, and scaling formal verification, providing a framework to measure progress toward verified automated programming.

## Acknowledgment

We thank Buck Shlegeris, Jason Gross, and Rajashree Agarwal for valuable feedback and discussion during early versions of this work. We are also grateful to the Anthropic External Researcher program for providing API credits for benchmark generation, and to FAR.ai for providing operations and administrative support during the course of the project.

### -A Details of buckets in 6

Table III declares which samples in particular fall into Figure 6 ’s buckets. Of note is that 3078 is declared untrue in a code comment by Gemini, but solved completely by Sonnet. Otherwise, many samples fall into the same buckets across models.

TABLE III: Comparison of theorem classifications between Sonnet and Gemini

### -B Number of definition attempts it took to solve a function, conditional on it succeeding

Figure 8 is a companion to Figure 7 but refers to definitions instead of theorems. Of the def s that succeeded at all, 50% of Sonnet’s and 31% of Gemini’s succeeded in one shot.

<details>

<summary>extracted/6182580/figs/number_of_definition_attempts_it_took_to_solve_a_function.png Details</summary>

### Visual Description

\n

## Bar Chart: Definition Attempts to Solve a Function

### Overview

This bar chart visualizes the distribution of the number of attempts required for two models, "Sonnet" and "Gemini", to solve a function. The x-axis represents the number of attempts, and the y-axis represents the number of samples (frequency). The chart uses blue bars for Sonnet and orange bars for Gemini.

### Components/Axes

* **Title:** "Number of definition attempts it took to solve a function" (centered at the top)

* **X-axis Label:** "Number of Attempts" (centered at the bottom)

* **Y-axis Label:** "Number of Samples" (centered on the left)

* **X-axis Scale:** Ranges from 0 to approximately 45, with tick marks at intervals of 10.

* **Y-axis Scale:** Ranges from 0 to 50, with tick marks at intervals of 10.

* **Legend:** Located in the top-right corner.

* "Sonnet" - Blue

* "Gemini" - Orange

### Detailed Analysis

The chart displays histograms for both Sonnet and Gemini.

**Sonnet (Blue Bars):**

The Sonnet data shows a strong peak at 0 attempts, with a value of approximately 52 samples. The frequency decreases rapidly as the number of attempts increases. There are visible bars up to approximately 25 attempts, but their heights are significantly lower. The bars become sparse and short beyond 15 attempts.

* 0 Attempts: ~52 samples

* 1 Attempt: ~8 samples

* 2 Attempts: ~6 samples

* 3 Attempts: ~4 samples

* 4 Attempts: ~3 samples

* 5 Attempts: ~2 samples

* 6-9 Attempts: ~1-2 samples

* 10-25 Attempts: ~1 sample each

**Gemini (Orange Bars):**

The Gemini data also exhibits a peak at 0 attempts, with a value of approximately 8 samples. The distribution is broader than Sonnet's, with a more gradual decline in frequency as the number of attempts increases. There are visible bars up to approximately 45 attempts, though the heights are generally low.

* 0 Attempts: ~8 samples

* 1 Attempt: ~6 samples

* 2 Attempts: ~4 samples

* 3-5 Attempts: ~2-3 samples

* 6-10 Attempts: ~1-2 samples

* 11-45 Attempts: ~1 sample each

### Key Observations

* Both models perform best with 0 attempts, but Sonnet significantly outperforms Gemini in this category.

* Sonnet's distribution is more concentrated around lower attempt numbers, indicating it solves the function more quickly and consistently.

* Gemini has a wider distribution, suggesting it requires more attempts in some cases.

* The number of samples decreases rapidly for both models as the number of attempts increases.

### Interpretation

The data suggests that Sonnet is more efficient at solving the given function than Gemini. Sonnet requires fewer attempts to reach a solution in a larger proportion of cases. The difference in performance is most pronounced at 0 attempts, where Sonnet has a much higher frequency. Gemini, while still capable of solving the function, exhibits a broader range of attempt numbers, indicating greater variability in its performance. This could be due to differences in the underlying algorithms or training data used for each model. The chart provides a clear visual comparison of the efficiency of the two models in solving the function. The rapid decline in sample counts for higher attempt numbers for both models suggests that the function is relatively easy to solve, but that both models occasionally struggle.

</details>

Figure 8: Definition attempts needed to solve a function, conditional on success.

### -C Baseline solutions to problem 23

Gemini accomplished 10 in 11 attempts and sonnet failed 9 after 50 attempts.

⬇

def solve_elections (n : Nat) (voters : List (Nat à Nat)) : Nat := Id. run do

let range := List. range (n + 1)

let mut best := none

for k in range do

match try_subset ⨠n, voters, 0, k, []⩠with

| some cost =>

match best with

| none => best := some cost

| some b => if cost < b then best := some cost

| none => continue

best. getD 0

Figure 9: claude-3-5-sonnet-20241022’s attempt at the definition for sample 23 of FVAPPS (try_subset omitted for brevity, available on GitHub)

⬇

def solve_elections (n : Nat) (voters : List (Nat à Nat)) : Nat :=

let voters := voters. toArray. qsort (fun v â v â => (v â. fst < v â. fst) || (v â. fst == v â. fst && v â. snd > v â. snd)) |>. toList – Use boolean comparisons for sorting

let rec go (cost : Nat) (numVoters : Nat) (remainingVoters : List (Nat à Nat)) : Nat :=

if numVoters == n then cost

else

match remainingVoters with

| [] => cost

| (m, p):: rest =>

let buyCost := go (cost + p) (numVoters) rest

let freeCost := if m <= numVoters then go cost (min n (numVoters + 1)) rest else buyCost

min buyCost freeCost

go 0 0 voters

Figure 10: gemini-1.5-pro’s solution to the definition for sample 23 of FVAPPS