# Logits are All We Need to Adapt Closed Models

**Authors**: Gaurush Hiranandani, Haolun Wu, Subhojyoti Mukherjee, Sanmi Koyejo

Abstract

Many commercial Large Language Models (LLMs) are often closed-source, limiting developers to prompt tuning for aligning content generation with specific applications. While these models currently do not provide access to token logits, we argue that if such access were available, it would enable more powerful adaptation techniques beyond prompt engineering. In this paper, we propose a token-level probability reweighting framework that, given access to logits and a small amount of task-specific data, can effectively steer black-box LLMs toward application-specific content generation. Our approach views next-token prediction through the lens of supervised classification. We show that aligning black-box LLMs with task-specific data can be formulated as a label noise correction problem, leading to Plugin model – an autoregressive probability reweighting model that operates solely on logits. We provide theoretical justification for why reweighting logits alone is sufficient for task adaptation. Extensive experiments with multiple datasets, LLMs, and reweighting models demonstrate the effectiveness of our method, advocating for broader access to token logits in closed-source models. We provide our code at this https URL.

Distribution Shift, Black-box Model, Reweighing, Decoding, Large Language Models

1 Introduction

The rise of Large Language Models (LLMs) has revolutionized generative Artificial Intelligence, yet the most capable models are often closed-source or black-box (Achiam et al., 2023; Bai et al., 2022a). These models generate text based on input prompts but keep their internal weights and training data undisclosed, limiting transparency and customization. Despite these constraints, closed-source LLMs are widely adopted across applications ranging from travel itinerary generation to tax advice, with developers largely relying on prompt optimization to achieve domain-specific outputs.

However, this reliance on prompt engineering is insufficient for specialized tasks, e.g., those requiring brand-specific tone or style. Consider a content writer aiming to generate product descriptions that reflect a brand’s unique identity. Black-box LLMs, trained on broad datasets, often fail to meet such nuanced requirements. With access limited to generated tokens, developers resort to zero-shot (Kojima et al., 2022) or few-shot (Song et al., 2023) prompting techniques. However, if model weights were accessible, advanced techniques like Parameter-Efficient Fine-Tuning (PEFT) using LoRA (Hu et al., 2021), QLoRA (Dettmers et al., 2024), prefix tuning (Li & Liang, 2021), or adapters (Hu et al., 2023a) could be employed for fine-tuning. Yet, due to intellectual property concerns and the high costs of development, most commercial LLMs remain closed-source, and even with API-based fine-tuning options, concerns over data privacy discourage developers from sharing proprietary data.

<details>

<summary>extracted/6617062/icml2025/images/plugin_fig.png Details</summary>

### Visual Description

# Technical Document Extraction: Plugin Model Architecture

This document describes a technical diagram illustrating the architecture and data flow of a **Plugin Model** used for text generation or classification, specifically demonstrating how a reweighting mechanism influences the output of a black-box model.

## 1. Component Isolation

The diagram is structured into three primary horizontal regions:

* **Input (Left):** A document icon representing the initial text prompt.

* **Main Processing Block (Center):** A light purple rounded rectangle labeled "Plugin Model" containing the core logic.

* **Output & Feedback (Right):** The resulting text and a feedback loop for the next inference step.

---

## 2. Detailed Component Analysis

### A. Input Stage

* **Text Prompt:** A document icon contains the text: `"This shirt is ..."`

* **Flow:** An arrow leads from the input into the Plugin Model, where it splits into two parallel processing paths.

### B. Plugin Model (Internal Components)

The Plugin Model consists of two sub-models that process the input simultaneously:

1. **Black-box Model:**

* **Visual:** Represented by a dark grey rectangular block.

* **Output:** A probability distribution table (Table 1).

2. **Reweighting Model:**

* **Visual:** Represented by a white rectangular block with vertical bars on the sides.

* **Output:** A probability distribution table (Table 2).

### C. Data Tables (Probability Distributions)

The diagram uses three tables to show how values are combined. Each table contains a list of tokens and their associated numerical weights.

| Token | Table 1 (Black-box) | Table 2 (Reweighting) | Table 3 (Combined Output) |

| :--- | :--- | :--- | :--- |

| **nice** | 0.1 | 0.1 | 0.01 |

| **adidas** | 0.1 | 0.7 | **0.07** |

| **black** | 0.3 | 0.1 | 0.03 |

| **...** | ... | ... | ... |

* **Visual Coding:** In all tables, the row for "black" is highlighted in yellow, and the row for "..." is highlighted in orange.

---

## 3. Process Flow and Logic

### Step 1: Parallel Inference

The input prompt is fed into both the **Black-box Model** and the **Reweighting Model**.

* The Black-box Model predicts "black" as the most likely next token (0.3).

* The Reweighting Model strongly favors "adidas" (0.7).

### Step 2: Multiplication Operation

The outputs from both tables are combined using a mathematical operation represented by a **circled 'X' (multiplication symbol)**.

* **Trend Verification:** The final value is the product of the two previous values (e.g., $0.1 \times 0.7 = 0.07$). This operation shifts the highest probability from "black" to "adidas".

### Step 3: Final Output Generation

* The combined table (Table 3) identifies **adidas** (0.07) as the winning token (indicated by bold text).

* **Resulting Text:** A document icon on the right shows the completed sentence: `"This shirt is adidas ..."`

### Step 4: Iterative Feedback

* **Label:** "Next inference step"

* **Flow:** A dashed line with an arrow loops from the final output back to the initial input document. This indicates that the generated word "adidas" is appended to the prompt for the next cycle of generation.

---

## 4. Summary of Data Points

* **Primary Language:** English.

* **Key Entities:** Black-box Model, Reweighting Model, Plugin Model.

* **Numerical Values:** 0.1, 0.3, 0.7, 0.01, 0.07, 0.03.

* **Keywords:** nice, adidas, black.

</details>

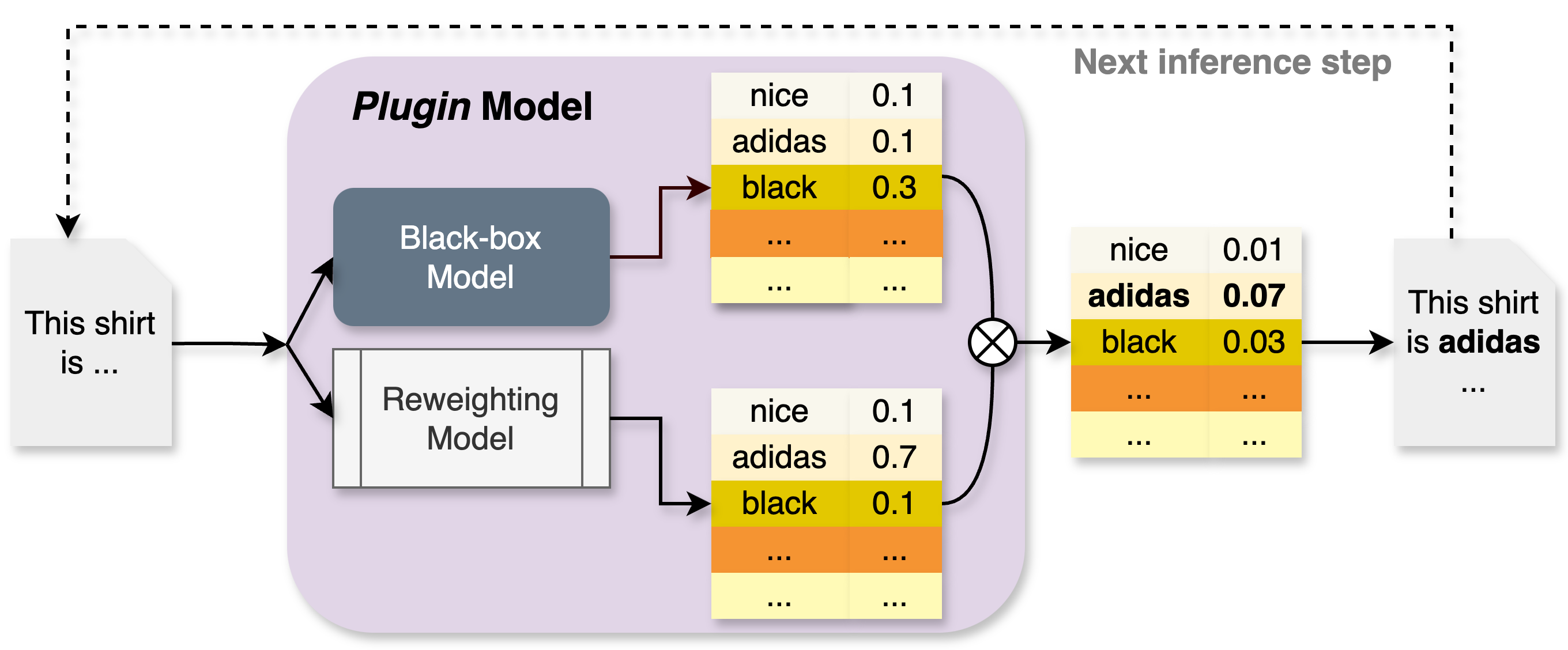

Figure 1: Inference phase of the Plugin model. The token probabilities are a product of the probabilities from the black-box model and a reweighting model that denotes label transitioning.

In this paper, we propose a middle ground between general-purpose LLM creators and developers seeking application-specific alignment. We argue that providing access to token logits, in addition to generated text, would enable more effective customization for downstream tasks. Viewing next-token prediction as a classification problem, we draw an analogy between LLMs and supervised classification models. Since decoder-only LLMs are trained to predict the next token given preceding tokens, aligning black-box LLMs to domain-specific data can be reframed as a label noise correction problem in supervised classification. In this analogy, the LLM’s broad training data serves as proxy labels, while application-specific data represents true labels. This can be interpreted as a distribution shift scenario. For example, in “label shift” (Lipton et al., 2018), certain tokens may appear more frequently in application-specific data than in the LLM’s original corpus. In “class-dependent or independent label noise” (Patrini et al., 2017), synonymous expressions or stylistic variations in application data may diverge from those seen during model training.

Inspired by the label noise correction method of Patrini et al. (2017), which estimates a transition matrix to correct class-dependent noise, we adapt this idea to black-box LLM alignment. Unlike prior work that modifies the loss and retrains the model, we lack access to the LLM’s training data and cannot retrain the model. Instead, we estimate an autoregressive transition matrix from application-specific data and use it to reweight token probabilities at inference.

This autoregressive extension is novel, as it accounts for dependencies on previously generated tokens when adjusting logits for the next token. By adapting label noise correction techniques to autoregressive language modeling, we present a practical method to align black-box LLMs using only logits—without requiring access to model weights or original training data.

Our contributions are summarized as follows:

1. We formulate the problem of adapting black-box LLMs for application-specific content generation as a loss correction approach, requiring only token logits at each generation step. This bridges label noise correction in supervised classification with autoregressive language modeling (Sections 2 and 3).

1. We propose an autoregressive probability reweighting framework, enabling token-level probability adjustment during inference. The resulting Plugin model dynamically reweights logits to align generation with task-specific data (Section 4).

1. We provide theoretical guarantees, showing that under mild assumptions, the Plugin model consistently aligns probability estimates with the target distribution given sufficient application-specific samples. To our knowledge, this is the first work to establish such consistency in an autoregressive label noise setting (Section 5).

1. We conduct extensive experiments across four language generation datasets and three black-box LLMs. Our results, supported by multiple ablations, demonstrate that the Plugin model outperforms baselines in adapting black-box LLMs for domain-specific content generation (Section 7). Based on our results, we advocate for publishing token logits alongside outputs in closed-source LLMs.

2 Preliminaries

We begin by establishing the notation. The index set is denoted as $[c]=\{1,...,c\}$ for any positive integer $c$ . Vectors are represented in boldface, for example, $\bm{v}$ , while matrices are denoted using uppercase letters, such as $V$ . The coordinates of a vector are indicated with subscripts, for instance, $v_{j}$ . The all-ones vector is denoted by $\mathbf{1}$ , with its size being clear from the context. The $c$ -dimensional simplex is represented as $\Delta^{c-1}⊂[0,1]^{c}$ . Finally, a sequence $(x_{t},x_{t-1},...,x_{1})$ of size $t$ is denoted by $x_{t:1}$ .

We assume access to language data for the target task, while the black-box LLM, trained on broad world knowledge, is treated as having learned from a noisy version of this data. We seek to adapt the black-box model to align with the task-specific distribution. To formalize this, we extend the label-noise framework from supervised classification (Patrini et al., 2017) to the decoder-only language modeling.

Decoder-only models are trained using a next-token prediction objective. At each step, this setup resembles a supervised classification problem with $|V|$ classes, where $V$ is the vocabulary of tokens. Formally, the label space at step $t$ is ${\cal X}_{t}=\{\bm{e}^{i}:i∈[|V|]\}$ , where $\bm{e}^{i}$ denotes the $i$ -th standard canonical vector in $\mathbb{R}^{|V|}$ , i.e., $\bm{e}^{i}∈\{0,1\}^{|V|},\mathbf{1}^{T}\bm{e}^{i}=1$ . The task at each step $t$ is to predict the next token $\bm{x}_{t}$ (denoted as one-hot vector) given a sequence of tokens $\bm{x}_{t-1:1}$ .

One observes examples $(\bm{x}_{t},\bm{x}_{t-1:1})$ drawn from an unknown distribution $p^{*}(\bm{x}_{t},\bm{x}_{t-1:1})=p^{*}(\bm{x}_{t}|\bm{x}_{t-1:1})p^{*}(\bm{x}_%

{t-1:1})$ over $V× V^{[t-1]}$ , with expectations denoted by $E^{*}_{\bm{x}_{t},\bm{x}_{t-1:1}}$ . Cross-entropy loss is typically used for training over the vocabulary tokens. Assuming access to token logits, and thus the softmax outputs, from the black-box LLM, we interpret the softmax output as a vector approximating the class-conditional probabilities $p^{*}(\bm{x}_{t}|\bm{x}_{t-1:1})$ , denoted as $b(\bm{x}_{t}|\bm{x}_{t-1:1})∈\Delta^{|V|-1}$ .

To quantify the discrepancy between the target label $\bm{x}_{t}=\bm{e}^{i}$ at step $t$ and the model’s predicted output, we define a loss function $\ell:|V|×\Delta^{|V|-1}→\mathbb{R}$ . A common choice in next-token prediction tasks is the cross-entropy loss:

$$

\displaystyle\ell(\bm{e}^{i},b(\bm{x}_{t}|{\bm{x}_{t-1:1}})) \displaystyle=-(\bm{e}^{i})^{T}\log b(\bm{x}_{t}|\bm{x}_{t-1:1}) \displaystyle=-\log b(\bm{x}_{t}=\bm{e}^{i}|\bm{x}_{t-1:1}). \tag{1}

$$

With some abuse of notation, the loss in vector form $\bm{\ell}:\Delta^{|V|-1}→\mathbb{R}^{|V|}$ , computed on every possible label is $\bm{\ell}(b(\bm{x}_{t}|\bm{x}_{t-1:1}))=\Big{(}\ell(\bm{e}^{1},b(\bm{x}_{t}|%

\bm{x}_{t-1:1})),...,\ell(\bm{e}^{|V|},b(\bm{x}_{t}|\bm{x}_{t-1:1}))\Big{)}^%

{T}.$

3 Loss Robustness

We extend label noise modeling to the autoregressive language setting, focusing on asymmetric or class-conditional noise. At each step $t$ , the label $\bm{x}_{t}$ in the black-box model’s training data is flipped to $\tilde{\bm{x}}_{t}∈ V$ with probability $p^{*}(\tilde{\bm{x}}_{t}|\bm{x}_{t})$ , while preceding tokens $(\bm{x}_{t-1:1})$ remain unchanged. As a result, the black-box model observes samples from a noisy distribution: $p^{*}(\tilde{\bm{x}}_{t},\bm{x}_{t-1:1})=\sum_{\bm{x}_{t}}p^{*}(\tilde{\bm{x}}%

_{t}|\bm{x}_{t})p^{*}(\bm{x}_{t}|\bm{x}_{t-1:1})p^{*}(\bm{x}_{t-1:1}).$

We define the noise transition matrix $T_{t}∈[0,1]^{|V|×|V|}$ at step $t$ , where each entry $T_{t_{ij}}=p^{*}(\tilde{\bm{x}}_{t}=\bm{e}^{j}|\bm{x}_{t}=\bm{e}^{i})$ represents the probability of label flipping. This matrix is row-stochastic but not necessarily symmetric.

To handle asymmetric label noise, we modify the loss $\bm{\ell}$ for robustness. Initially, assuming a known $T_{t}$ , we apply a loss correction inspired by (Patrini et al., 2017; Sukhbaatar et al., 2015). We then relax this assumption by estimating $T_{t}$ directly, forming the basis of our Plugin model approach.

We observe that a language model trained with no loss correction would result in a predictor for noisy labels $b(\tilde{\bm{x}}_{t}|\bm{x}_{t-1:1})$ . We can make explicit the dependence on $T_{t}$ . For example, with cross-entropy we have:

$$

\displaystyle\ell(\bm{e}^{i},b(\tilde{\bm{x}}_{t}|\bm{x}_{t-1:1}))=-\log b(%

\tilde{\bm{x}}_{t}=\bm{e}^{i}|\bm{x}_{t-1:1}) \displaystyle=-\log\sum_{j=1}^{|V|}p^{*}(\tilde{\bm{x}}_{t}=\bm{e}^{i}|\bm{x}_%

{t}=\bm{e}^{j})b(\bm{x}_{t}=\bm{e}^{j}|\bm{x}_{t-1:1}) \displaystyle=-\log\sum_{j=1}^{|V|}T_{t_{ji}}{b}(\bm{x}_{t}=\bm{e}^{j}|\bm{x}_%

{t-1:1}), \tag{2}

$$

or in matrix form

$$

\bm{\ell}(b(\tilde{\bm{x}}_{t}|\bm{x}_{t-1:1}))=-\log T_{t}^{\top}b(\bm{x}_{t}%

|\bm{x}_{t-1:1}). \tag{3}

$$

This loss compares the noisy label $\tilde{\bm{x}}_{t}$ to the noisy predictions averaged via the transition matrix $T_{t}$ at step $t$ . Cross-entropy loss, commonly used for next-token prediction, is a proper composite loss with the softmax function as its inverse link function (Patrini et al., 2017). Consequently, from Theorem 2 of Patrini et al. (2017), the minimizer of the forwardly-corrected loss in Equation (3) on noisy data aligns with the minimizer of the true loss on clean data, i.e.,

| | | $\displaystyle\operatorname*{argmin}_{w}E^{*}_{\tilde{\bm{x}}_{t},\bm{x}_{t-1:1%

}}\Big{[}\bm{\ell}(\bm{x}_{t},T_{t}^{→p}b(\bm{x}_{t}|\bm{x}_{t-1:1}))\Big{]}$ | |

| --- | --- | --- | --- |

where $w$ are the language model’s weights, implicitly embedded in the softmax output $b$ from the black-box model. This result suggests that if $T_{t}$ were known, we could transform the softmax output $b(\bm{x}_{t}\mid\bm{x}_{t-1:1})$ using $T_{t}^{T}$ , use the transformed predictions as final outputs, and retrain the model accordingly. However, since $T_{t}$ is unknown and training data is inaccessible, estimating $T_{t}$ from clean data is essential to our approach.

3.1 Estimation of Transition Matrix

We assume access to a small amount of target data for the task. Given that the black-box model is expressive enough to approximate $p^{*}(\tilde{\bm{x}}_{t}\mid\bm{x}_{t-1:1})$ (Assumption (2) in Theorem 3 of Patrini et al. (2017)), the transition matrix $T_{t}$ can be estimated from this target data. Considering the supervised classification setting at step $t$ , let $\mathcal{X}_{t}^{i}$ represent all target data samples where $\bm{x}_{t}=\bm{e}^{i}$ and the preceding tokens are $(\bm{x}_{t-1:1})$ . A naive estimate of the transition matrix is: $\hat{T}_{t_{ij}}=b(\tilde{\bm{x}}_{t}=\bm{e}^{j}|\bm{x}_{t}=\bm{e}^{i})=\frac{%

1}{|\mathcal{X}_{t}^{i}|}\sum_{x∈\mathcal{X}_{t}^{i}}b(\tilde{\bm{x}}_{t}=%

\bm{e}^{j}|\bm{x}_{t-1:1})$ . While this setup works for a single step $t$ , there are two key challenges in extending it across all steps in the token prediction task:

1. Limited sample availability: The number of samples where $\bm{x}_{t}=\bm{e}^{i}$ and the preceding tokens $(\bm{x}_{t-1},...,\bm{x}_{1})$ match exactly is limited in the clean data, especially with large vocabulary sizes (e.g., $|V|=O(100K)$ for LLaMA (Dubey et al., 2024)). This necessitates modeling the transition matrix as a function of features derived from $\bm{x}_{t-1:1}$ , akin to text-based autoregressive models.

1. Large parameter space: With a vocabulary size of $|V|=O(100K)$ , the transition matrix $T_{t}$ has approximately 10 billion parameters. This scale may exceed the size of the closed-source LLM and cannot be effectively learned from limited target data. Thus, structural restrictions must be imposed on $T_{t}$ to reduce its complexity.

To address these challenges, we impose the restriction that the transition matrix $T_{t}$ is diagonal. While various constraints could be applied to simplify the problem, assuming $T_{t}$ is diagonal offers two key advantages. First, it allows the transition matrix—effectively a vector in this case—to be modeled using standard autoregressive language models, such as a GPT-2 model with $k$ transformer blocks, a LLaMA model with $d$ -dimensional embeddings, or a fine-tuned GPT-2-small model. These architectures can be adjusted based on the size of the target data. Second, a diagonal transition matrix corresponds to a symmetric or class-independent label noise setup, where $\bm{x}_{t}=\bm{e}^{i}$ flips to any other class with equal probability in the training data. This assumption, while simplifying, remains realistic within the framework of label noise models.

Enforcing a diagonal structure ensures efficient estimation of the transition matrix while maintaining practical applicability within our framework. Next, we outline our approach for adapting closed-source language models to target data.

4 Proposed Method: The Plugin Approach

To estimate the autoregressive transition vector, we train an autoregressive language model on target data, which operates alongside the black-box model during inference. This model acts as an autoregressive reweighting mechanism, adjusting the token probabilities produced by the black-box model. The combined approach, integrating probabilities from the black-box and reweighting models, is referred to as the Plugin model. The term Plugin is inspired by classification literature, where plugin methods reweight probabilities to adapt to distribution shifts (Koyejo et al., 2014; Narasimhan et al., 2015; Hiranandani et al., 2021). We now detail the training and inference phases, summarized in Algorithm 1 (Appendix A) and illustrated in Figure 1.

4.1 Training the Plugin Model

During each training iteration, a sequence $s$ of $m$ tokens is passed through both the black-box model and the reweighting model to obtain token probabilities $\{\bm{b}_{1},\bm{b}_{2},...,\bm{b}_{m}\}$ and $\{\bm{r}_{1},\bm{r}_{2},...,\bm{r}_{m}\}$ , respectively, where each $\bm{b}_{i},\bm{r}_{i}∈\Delta^{|V|-1}$ . The final token probability from the Plugin model is computed by normalizing the element-wise product of these probabilities:

$$

{\bm{p}}_{i}=\frac{\bm{b}_{i}\odot\bm{r}_{i}}{\|\bm{b}_{i}\odot\bm{r}_{i}\|_{1%

}}. \tag{4}

$$

The sequence-level cross-entropy loss is given by:

$$

\ell_{s}=-\frac{1}{m}\sum_{i=1}^{m}\sum_{j=1}^{|V|}\log({\bm{p}}_{i})\odot\bm{%

e}_{j}, \tag{5}

$$

where the $j$ -th token appears at the $i$ -th position in the sequence $s$ . During backpropagation, only the reweighting model parameters are updated, while the black-box model remains frozen. This formulation extends naturally to batch training, refining $\bm{r}_{i}$ over iterations to approximate the transition vector governing label shifts in the target data.

4.2 Inference from the Plugin Model

Given a fully trained reweighting model and access to the black-box model, token generation proceeds autoregressively. At the first step, the black-box model produces token probabilities $\bm{b}_{1}$ , while the reweighting model outputs $\bm{r}_{1}$ . The Plugin model selects the first token as $\bm{x}_{1}=\operatorname*{argmax}_{V}(\bm{b}_{1}\odot\bm{r}_{1}).$ For subsequent steps, given the previously generated tokens $\bm{x}_{t-1:1}$ , we obtain probabilities $\bm{b}_{t}$ from the black-box model and $\bm{r}_{t}$ from the reweighting model. The Plugin model then predicts the next token as: $\bm{x}_{t}=\operatorname*{argmax}_{V}(\bm{b}_{t}\odot\bm{r}_{t})$ .

The process continues until a stopping criterion is met. Note that, this manuscript focuses on greedy decoding for inference. Other decoding strategies, such as temperature scaling, top- $p$ sampling, or beam search, can be incorporated by normalizing the element-wise product of probabilities and using them as the final token distribution, as in Equation (4).

5 Theoretical Analysis

We establish the convergence properties of Plugin, showing that after $t$ tokens, it accurately estimates the autoregressive noise transition matrix. Modeling the matrix as a function of an unknown parameter $\bm{\theta}_{*}$ , we prove that optimizing the autoregressive loss over token sequences enables consistent estimation of $\bm{\theta}_{*}$ with high probability. To our knowledge, this is the first finite-time convergence analysis for transition matrix estimation under autoregressive noisy loss.

Let $\mathcal{F}^{t-1}$ denote the history of selected tokens up to time $t-1$ . Let an unknown parameter $\bm{\theta}_{*}∈\bm{\Theta}⊂eq\mathbb{R}^{d}$ governs the transition dynamics of label flipping between token pairs. The transition matrix at time $t$ , denoted as $T_{t}(\bm{\theta}_{*}|\mathcal{F}^{t-1})$ , depends on $\bm{\theta}_{*}$ and all previously observed tokens. Before proving our main result, we first make a few assumptions.

**Assumption 5.1**

*Let $T_{t}(\bm{\theta}_{*};x_{i},x_{j},\mathcal{F}^{t-1})$ denote the $(i,j)$ -th component of the transition matrix, and let $f_{I_{t}}(\bm{\theta}_{*};x_{i},x_{j},\mathcal{F}^{t-1})$ be the transition function that determines the transition from $x_{i}$ to $x_{j}$ , where $I_{t}$ is the $x_{i}$ token selected at time $t$ . Let $x_{i},x_{j}\!∈\!\mathbb{R}^{d}$ . We assume that $∇ f_{I_{t}}(\bm{\theta}_{*};x_{i},x_{j},\mathcal{F}^{t-1})\!<\!\lambda_{0}$ and $∇^{2}f_{I_{t}}(\bm{\theta}_{*};x_{i},x_{j},\mathcal{F}^{t-1})\!<\!\lambda%

_{1}$ for some constant $\lambda_{0}\!>\!0$ , $\lambda_{1}>0$ and for all steps $t$ .*

5.1 assumes the transition matrix depends on the history-dependent function $f_{I_{t}}(·)$ with bounded gradient and Hessian, similar to assumptions in (Singh et al., 2023; Zhang et al., 2024) for other deep models.

**Assumption 5.2**

*We assume the cross-entropy loss (5) is clipped by $\epsilon>0$ and upper bounded as $\ell^{clipped}_{t}\!\!≤\!\!C|V|^{2}(Y_{t}-f_{I_{t}}(\bm{\theta}_{*};x_{i},x%

_{j},\mathcal{F}^{t-1}))^{2}$ for any time $t$ , where $Y_{t}$ is the predicted token class, $f_{I_{t}}$ determines the true class and satisfies 5.1, and $C\!>\!0$ is a constant.*

5.2 ensures that the clipped log loss is upper bounded by a smoother squared loss. For the remaining of this section we refer to this squared loss at time $t$ as $\ell_{t}(\bm{\theta})$ . Let the Plugin model minimize the loss $\ell_{1}(\bm{\theta}),\ell_{2}(\bm{\theta}),·s,\ell_{t}(\bm{\theta})$ over $t$ iterations. Let ${\widehat{\bm{\theta}}}_{t}=\operatorname*{argmin}_{\bm{\theta}∈\bm{\Theta}}%

\sum_{s=1}^{t}\ell_{s}(\bm{\theta})$ . At every iteration $t$ , the Plugin algorithm looks into the history $\mathcal{F}^{t-1}$ and samples a token $\bm{x}_{t}\sim\bm{p}_{\hat{\theta}_{t}}=\bm{b}_{t}\odot\bm{r}_{\hat{\theta}_{t}}$ .

Let $\widehat{\cal L}_{t}(\bm{\theta})=\frac{1}{t}\sum_{s=1}^{t}\ell_{s}(\bm{\theta})$ and its expectation ${\cal L}_{t}(\bm{\theta})=\frac{1}{t}\sum_{s=1}^{t}\mathbb{E}_{x_{s}\sim%

\mathbf{p}_{{\widehat{\bm{\theta}}}_{s-1}}}[\ell_{s}(\bm{\theta})|\mathcal{F}^%

{s-1}]$ . We impose regularity and smoothness assumptions on the loss function $\ell_{t}(\bm{\theta})$ as stated in B.1 (Appendix B). We are now ready to prove the main theoretical result of the paper.

**Theorem 1**

*Suppose $\ell_{1}(\bm{\theta}),·s,\ell_{t}(\bm{\theta}):\mathbb{R}^{|V|}\!\!%

→\!\!\mathbb{R}$ are loss functions from a distribution that satisfies Assumptions 5.1, 5.2, and B.1. Define ${\cal L}_{t}(\bm{\theta})=\frac{1}{t}\sum_{s=1}^{t}\mathbb{E}_{x_{s}\sim%

\mathbf{p}_{{\widehat{\bm{\theta}}}_{s-1}}}[\ell_{s}(\bm{\theta})|\mathcal{F}^%

{s-1}]$ where, ${\widehat{\bm{\theta}}}_{t}=\operatorname*{argmin}_{\bm{\theta}∈\bm{\Theta}}%

\sum_{s=1}^{t}\ell_{s}(\bm{\theta})$ . If $t$ is large enough such that $\frac{\gamma\log(dt)}{t}≤ c^{\prime}\min\left\{\frac{1}{C_{1}C_{2}|V|^{4}},%

\frac{\max\limits_{\bm{\theta}∈\bm{\Theta}}\left(\!{\cal L}_{t}(\bm{\theta})%

\!-\!{\cal L}_{t}\left(\bm{\theta}_{*}\!\right)\right)}{C_{2}}\right\}$ then for a constant $\gamma≥ 2$ , universal constants $C_{1},C_{2},c^{\prime}$ , we have that

| | $\displaystyle\left(1-\rho_{t}\right)\frac{\sigma_{t}^{2}}{t}-\frac{C_{1}^{2}}{%

t^{\gamma/2}}$ | $\displaystyle≤\mathbb{E}\left[{\cal L}_{t}({\widehat{\bm{\theta}}}_{t})-{%

\cal L}_{t}\left(\bm{\theta}_{*}\right)\right]$ | |

| --- | --- | --- | --- |

where $\sigma^{2}_{t}\coloneqq\mathbb{E}\left[\frac{1}{2}\left\|∇\widehat{\cal L%

}_{t}\left(\bm{\theta}_{*}\right)\right\|_{\left(∇^{2}{\cal L}_{t}\left(%

\bm{\theta}_{*}\right)\right)^{-1}}^{2}\right]$ , and $\rho_{t}\coloneqq\left(C_{1}C_{2}+2\eta^{2}\lambda_{1}^{2}\right)\sqrt{\frac{%

\gamma\log(dt)}{t}}$ .*

1 bounds the difference between the estimated and true average loss functions, showing that this gap diminishes as the number of training tokens increases. Since ${\widehat{\bm{\theta}}}_{t}=\operatorname*{argmin}_{\bm{\theta}∈\bm{\Theta}}%

\sum_{s=1}^{t}\ell_{s}(\bm{\theta})$ , the Plugin model progressively refines its estimation of the unknown parameter $\bm{\theta}_{*}$ . As the transition matrix $T_{t}(\bm{\theta}_{*};x_{i},x_{j},\mathcal{F}^{t-1})$ is derived from $f_{I_{t}}(\bm{\theta}_{*};x_{i},x_{j},\mathcal{F}^{t-1})$ , which depends on $\bm{\theta}_{*}$ , training on sufficiently many tokens ensures an accurate estimation of each component of $T_{t}(\bm{\theta}_{*}|\mathcal{F}^{t-1})$ .

Our proof reformulates the problem as a sequential hypothesis testing setting to estimate the average loss function ${\cal L}_{t}({\widehat{\bm{\theta}}}_{t})$ using the sequence of losses $\ell_{1}(\bm{\theta}),...,\ell_{t}(\bm{\theta})$ (Naghshvar & Javidi, 2013; Lattimore & Szepesvári, 2020). Unlike prior work (Frostig et al., 2015; Chaudhuri et al., 2015), which assumes i.i.d. losses, the loss at time $t$ in our setting depends on all previous losses. Additionally, Mukherjee et al. (2022) study a different active regression setting without considering cross-entropy loss or transition noise matrices as in Patrini et al. (2017). We provide a brief overview of the proof technique in Remark B.9 (Appendix B), highlighting key novelties.

6 Related Work

Parameter-Efficient Fine-Tuning (PEFT).

PEFT methods adapt LLMs to downstream tasks while minimizing computational overhead. LoRA (Hu et al., 2021) and QLoRA (Dettmers et al., 2024) introduce low-rank updates and quantization for efficient fine-tuning, while prefix tuning (Li & Liang, 2021), adapters (Hu et al., 2023b), and soft prompting (Lester et al., 2021) modify task-specific representations through trainable layers or embeddings. Torroba-Hennigen et al. (2025) further explore the equivalence between gradient-based transformations and adapter-based tuning. However, these methods require access to model weights, gradients, or architecture details, making them unsuitable for closed-source LLMs and inapplicable as baselines in our setup. In contrast, our approach operates solely on token logits, enabling adaptation without modifying the underlying model. Thus, we emphasize that the Plugin model is not an alternative to fine-tuning, but rather an approach that uniquely stands for adapting black-box LLMs which only provide logit access.

Steering and Aligning LLMs.

LLM alignment methods primarily use reinforcement learning or instruction tuning. RLHF and DPO (Christiano et al., 2017; Ouyang et al., 2022; Rafailov et al., 2024) optimize model behavior via human preferences, with DPO eliminating reward modeling. Constitutional AI (Bai et al., 2022b) aligns models using self-generated principles, while instruction tuning (Wei et al., 2021; Sanh et al., 2022) adapts them via task-specific demonstrations. Unlike our approach, these methods require model weights and training data, limiting their applicability as baselines in our setup.

Calibration of LLMs.

LLM calibration methods aim to align model confidence with predictive accuracy and adjust confidence scores but do not alter token predictions (Ulmer et al., 2024; Shen et al., 2024; Huang et al., 2024; Kapoor et al., 2024; Zhu et al., 2023; Zhang et al., 2023). In contrast, our method reweights token probabilities at inference, enabling adaptation of black-box LLMs without modifying the model or requiring fine-tuning.

Black-box LLMs.

Prior work explores various approaches for adapting black-box LLMs without fine-tuning, though they differ fundamentally from our method. (Gao et al., 2024) infer user preferences through interactive edits but do not adapt models based on past language data. Diffusion-LM (Li et al., 2022) formulates text generation as a non-autoregressive denoising process, whereas our approach reweights token probabilities autoregressively without requiring black-box model weights. Discriminator-based methods (Dathathri et al., 2020; Mireshghallah et al., 2022; Yang & Klein, 2021; Krause et al., 2021) control generation based on predefined attributes, contrasting with our method, which enables free-form text adaptation. DExperts (Liu et al., 2021, 2024) combines expert and anti-expert probabilities; we incorporate a similar probability combining strategy in a modified baseline without a de-expert component. In-context learning (Long et al., 2023; Dong et al., 2024) offers a common adaptation technique for black-box models and serves as a baseline in our setup.

Table 1: Performance comparison on E2E NLG dataset. We show mean and standard deviation of the metrics over five seeds.

| Model | Method | BLEU | Rouge-1 | Rouge-2 | Rouge-L | METEOR | CIDEr | NIST |

| --- | --- | --- | --- | --- | --- | --- | --- | --- |

| GPT2-M | Zeroshot | 0.0247 | 0.3539 | 0.1003 | 0.2250 | 0.3015 | 0.0156 | 0.6133 |

| GPT2-M | ICL-1 | 0.0543 ±0.026 | 0.3431 ±0.048 | 0.1299 ±0.033 | 0.2280 ±0.047 | 0.3434 ±0.051 | 0.0260 ±0.042 | 0.7767 ±0.060 |

| GPT2-M | ICL-3 | 0.0750 ±0.035 | 0.3955 ±0.028 | 0.1676 ±0.020 | 0.2649 ±0.052 | 0.3977 ±0.063 | 0.0252 ±0.049 | 0.8993 ±0.076 |

| GPT2-M | NewModel | 0.2377 ±0.011 | 0.5049 ±0.014 | 0.2742 ±0.013 | 0.3902 ±0.006 | 0.4521 ±0.016 | 0.3938 ±0.019 | 1.1927 ±0.069 |

| GPT2-M | WeightedComb | 0.1709 ±0.008 | 0.4817 ±0.020 | 0.2447 ±0.011 | 0.3720 ±0.014 | 0.4071 ±0.025 | 0.3329 ±0.027 | 1.0864 ±0.002 |

| GPT2-M | TempNet | 0.1036 ±0.010 | 0.3425 ±0.016 | 0.1526 ±0.012 | 0.2735 ±0.010 | 0.2615 ±0.016 | 0.4116 ±0.023 | 0.2826 ±0.057 |

| GPT2-M | Plugin (Ours) | 0.1863 ±0.010 | 0.5227 ±0.011 | 0.2612 ±0.013 | 0.3728 ±0.003 | 0.4857 ±0.012 | 0.3544 ±0.013 | 1.1241 ±0.009 |

| GPT2-XL | Zeroshot | 0.0562 | 0.4013 | 0.1636 | 0.2862 | 0.3697 | 0.0187 | 0.5338 |

| GPT2-XL | ICL-1 | 0.0686 ±0.032 | 0.4016 ±0.042 | 0.1404 ±0.052 | 0.2745 ±0.025 | 0.3503 ±0.019 | 0.0353 ±0.015 | 0.7944 ±0.067 |

| GPT2-XL | ICL-3 | 0.0980 ±0.035 | 0.4188 ±0.040 | 0.1923 ±0.046 | 0.2912 ±0.031 | 0.3925 ±0.027 | 0.0250 ±0.017 | 0.9390 ±0.054 |

| GPT2-XL | NewModel | 0.2377 ±0.011 | 0.5049 ±0.014 | 0.2742 ±0.013 | 0.3902 ±0.006 | 0.4521 ±0.016 | 0.3938 ±0.019 | 1.1927 ±0.069 |

| GPT2-XL | WeightedComb | 0.1184 ±0.010 | 0.4237 ±0.016 | 0.1858 ±0.012 | 0.3004 ±0.010 | 0.3776 ±0.016 | 0.1818 ±0.023 | 1.0261 ±0.057 |

| GPT2-XL | TempNet | 0.1325 ±0.013 | 0.4642 ±0.017 | 0.2516 ±0.016 | 0.3021 ±0.022 | 0.4126 ±0.025 | 0.3627 ±0.033 | 0.8027 ±0.047 |

| GPT2-XL | Plugin (Ours) | 0.2470 ±0.009 | 0.5536 ±0.007 | 0.3084 ±0.007 | 0.4213 ±0.008 | 0.5057 ±0.009 | 0.5455 ±0.013 | 1.2736 ±0.051 |

| LLaMA-3.1-8B | Zeroshot | 0.3226 | 0.6917 | 0.4050 | 0.5004 | 0.6041 | 0.9764 | 1.1310 |

| LLaMA-3.1-8B | ICL-1 | 0.3301 ±0.037 | 0.6914 ±0.027 | 0.4126 ±0.026 | 0.5023 ±0.018 | 0.6037 ±0.015 | 0.9715 ±0.057 | 1.1735 ±0.066 |

| LLaMA-3.1-8B | ICL-3 | 0.3527 ±0.033 | 0.6936 ±0.036 | 0.4217 ±0.017 | 0.5127 ±0.017 | 0.6202 ±0.009 | 0.9927 ±0.018 | 1.1672 ±0.047 |

| LLaMA-3.1-8B | NewModel | 0.2452 ±0.008 | 0.5347 ±0.005 | 0.2905 ±0.006 | 0.4097 ±0.005 | 0.4812 ±0.009 | 0.4571 ±0.021 | 1.2281 ±0.041 |

| LLaMA-3.1-8B | WeightedComb | 0.3517 ±0.004 | 0.7040 ±0.004 | 0.4249 ±0.004 | 0.5181 ±0.003 | 0.6206 ±0.002 | 1.0947 ±0.010 | 1.1737 ±0.015 |

| LLaMA-3.1-8B | TempNet | 0.3502 ±0.023 | 0.6927 ±0.006 | 0.4216 ±0.023 | 0.5027 ±0.017 | 0.6124 ±0.019 | 0.9625 ±0.025 | 1.1713 ±0.027 |

| LLaMA-3.1-8B | Plugin (Ours) | 0.3691 ±0.013 | 0.7113 ±0.002 | 0.4374 ±0.004 | 0.5247 ±0.002 | 0.6392 ±0.009 | 1.1441 ±0.030 | 1.1749 ±0.034 |

Table 2: Performance comparison on Web NLG dataset. We show mean and standard deviation of the metrics over five seeds.

| Model | Method | BLEU | Rouge-1 | Rouge-2 | Rouge-L | METEOR | CIDEr | NIST |

| --- | --- | --- | --- | --- | --- | --- | --- | --- |

| GPT2-M | Zeroshot | 0.0213 | 0.2765 | 0.1014 | 0.1872 | 0.2111 | 0.0479 | 0.2340 |

| GPT2-M | ICL-1 | 0.0317 ±0.013 | 0.3388 ±0.021 | 0.1318 ±0.013 | 0.2346 ±0.019 | 0.2876 ±0.042 | 0.0732 ±0.053 | 0.2715 ±0.042 |

| GPT2-M | ICL-3 | 0.0461 ±0.014 | 0.3388 ±0.018 | 0.1378 ±0.016 | 0.2291 ±0.010 | 0.3408 ±0.027 | 0.0748 ±0.031 | 0.3283 ±0.037 |

| GPT2-M | NewModel | 0.1071 ±0.005 | 0.3260 ±0.010 | 0.1496 ±0.014 | 0.2724 ±0.013 | 0.2642 ±0.008 | 0.4327 ±0.023 | 0.2916 ±0.031 |

| GPT2-M | WeightedComb | 0.0692 ±0.007 | 0.3593 ±0.010 | 0.1568 ±0.008 | 0.2834 ±0.015 | 0.2379 ±0.030 | 0.1916 ±0.028 | 0.2996 ±0.037 |

| GPT2-M | TempNet | 0.1045 ±0.012 | 0.3526 ±0.014 | 0.1526 ±0.014 | 0.2731 ±0.018 | 0.3326 ±0.026 | 0.4237 ±0.033 | 0.3002 ±0.048 |

| GPT2-M | Plugin (Ours) | 0.1280 ±0.007 | 0.4590 ±0.005 | 0.2226 ±0.005 | 0.3515 ±0.006 | 0.3832 ±0.010 | 0.7280 ±0.039 | 0.3060 ±0.017 |

| GPT2-XL | Zeroshot | 0.0317 | 0.2992 | 0.1321 | 0.2417 | 0.1969 | 0.0491 | 0.1826 |

| GPT2-XL | ICL-1 | 0.0510 ±0.024 | 0.3223 ±0.026 | 0.1526 ±0.016 | 0.2562 ±0.031 | 0.2591 ±0.009 | 0.1336 ±0.029 | 0.2235 ±0.033 |

| GPT2-XL | ICL-3 | 0.0744 ±0.016 | 0.3383 ±0.036 | 0.1682 ±0.016 | 0.2651 ±0.028 | 0.3071 ±0.014 | 0.1675 ±0.024 | 0.2550 ±0.021 |

| GPT2-XL | NewModel | 0.1071 ±0.005 | 0.3260 ±0.010 | 0.1496 ±0.014 | 0.2724 ±0.013 | 0.2642 ±0.008 | 0.4327 ±0.023 | 0.2916 ±0.031 |

| GPT2-XL | WeightedComb | 0.0636 ±0.006 | 0.3453 ±0.007 | 0.1666 ±0.003 | 0.2782 ±0.005 | 0.2871 ±0.006 | 0.2460 ±0.005 | 0.2981 ±0.018 |

| GPT2-XL | TempNet | 0.0925 ±0.008 | 0.3357 ±0.009 | 0.1663 ±0.014 | 0.2764 ±0.011 | 0.3025 ±0.009 | 0.4226 ±0.013 | 0.2837 ±0.027 |

| GPT2-XL | Plugin (Ours) | 0.1673 ±0.004 | 0.4616 ±0.007 | 0.2527 ±0.007 | 0.3757 ±0.008 | 0.3895 ±0.007 | 0.8987 ±0.013 | 0.2646 ±0.003 |

| LLaMA-3.1-8B | Zeroshot | 0.1453 | 0.5278 | 0.3030 | 0.3982 | 0.4314 | 0.6991 | 0.2684 |

| LLaMA-3.1-8B | ICL-1 | 0.2166 ±0.031 | 0.5944 ±0.027 | 0.3706 ±0.025 | 0.4667 ±0.013 | 0.5651 ±0.045 | 1.5719 ±0.024 | 0.2462 ±0.038 |

| LLaMA-3.1-8B | ICL-3 | 0.2031 ±0.027 | 0.5937 ±0.019 | 0.3821 ±0.015 | 0.4653 ±0.024 | 0.5682 ±0.046 | 1.3826 ±0.051 | 0.2469 ±0.045 |

| LLaMA-3.1-8B | NewModel | 0.1284 ±0.005 | 0.3506 ±0.009 | 0.1673 ±0.007 | 0.2879 ±0.009 | 0.2921 ±0.008 | 0.4999 ±0.030 | 0.2973 ±0.008 |

| LLaMA-3.1-8B | WeightedComb | 0.1922 ±0.012 | 0.5986 ±0.019 | 0.3612 ±0.012 | 0.4659 ±0.008 | 0.4470 ±0.030 | 1.1855 ±0.075 | 0.2575 ±0.020 |

| LLaMA-3.1-8B | TempNet | 0.2315 ±0.010 | 0.5916 ±0.015 | 0.3794 ±0.012 | 0.4620 ±0.010 | 0.5581 ±0.036 | 1.4826 ±0.043 | 0.2513 ±0.020 |

| LLaMA-3.1-8B | Plugin (Ours) | 0.2542 ±0.004 | 0.6375 ±0.005 | 0.3873 ±0.005 | 0.4869 ±0.007 | 0.5724 ±0.004 | 1.5911 ±0.046 | 0.2590 ±0.003 |

Table 3: Performance comparison on CommonGen dataset. We show mean and standard deviation of the metrics over five seeds.

| Model | Method | BLEU | Rouge-1 | Rouge-2 | Rouge-L | METEOR | CIDEr | NIST |

| --- | --- | --- | --- | --- | --- | --- | --- | --- |

| GPT2-M | Zeroshot | 0.0153 | 0.2216 | 0.0409 | 0.1527 | 0.2848 | 0.0001 | 0.3686 |

| GPT2-M | ICL-1 | 0.0157 ±0.013 | 0.2580 ±0.024 | 0.0362 ±0.096 | 0.1388 ±0.102 | 0.2871 ±0.107 | 0.0222 ±0.076 | 0.3704 ±0.101 |

| GPT2-M | ICL-3 | 0.0552 ±0.010 | 0.3610 ±0.019 | 0.1248 ±0.045 | 0.2680 ±0.089 | 0.4079 ±0.133 | 0.1366 ±0.125 | 0.5340 ±0.087 |

| GPT2-M | NewModel | 0.1260 ±0.007 | 0.4106 ±0.016 | 0.1683 ±0.013 | 0.3740 ±0.009 | 0.3600 ±0.024 | 0.4570 ±0.058 | 0.7113 ±0.025 |

| GPT2-M | WeightedComb | 0.0567 ±0.005 | 0.3918 ±0.010 | 0.1353 ±0.005 | 0.3280 ±0.010 | 0.2929 ±0.016 | 0.2623 ±0.042 | 0.4353 ±0.028 |

| GPT2-M | TempNet | 0.1248 ±0.015 | 0.4048 ±0.014 | 0.1528 ±0.015 | 0.3526 ±0.014 | 0.3883 ±0.017 | 0.4492 ±0.023 | 0.4037 ±0.058 |

| GPT2-M | Plugin (Ours) | 0.1366 ±0.003 | 0.4533 ±0.007 | 0.1878 ±0.003 | 0.3934 ±0.006 | 0.4095 ±0.011 | 0.5572 ±0.022 | 0.6395 ±0.061 |

| GPT2-XL | Zeroshot | 0.0317 | 0.2992 | 0.1321 | 0.2417 | 0.1969 | 0.0491 | 0.1826 |

| GPT2-XL | ICL-1 | 0.0508 ±0.023 | 0.3201 ±0.035 | 0.1526 ±0.097 | 0.2562 ±0.103 | 0.2591 ±0.089 | 0.1336 ±0.092 | 0.2235 ±0.069 |

| GPT2-XL | ICL-3 | 0.0744 ±0.011 | 0.3383 ±0.014 | 0.1682 ±0.030 | 0.2651 ±0.072 | 0.3071 ±0.073 | 0.1675 ±0.066 | 0.2550 ±0.047 |

| GPT2-XL | NewModel | 0.1260 ±0.007 | 0.4106 ±0.016 | 0.1683 ±0.013 | 0.3740 ±0.009 | 0.3600 ±0.024 | 0.4570 ±0.058 | 0.7113 ±0.025 |

| GPT2-XL | WeightedComb | 0.0614 ±0.020 | 0.3364 ±0.024 | 0.1347 ±0.009 | 0.2969 ±0.019 | 0.2921 ±0.018 | 0.2763 ±0.010 | 0.3352 ±0.051 |

| GPT2-XL | TempNet | 0.1154 ±0.020 | 0.3937 ±0.026 | 0.1482 ±0.017 | 0.3625 ±0.013 | 0.3389 ±0.019 | 0.4376 ±0.018 | 0.5927 ±0.047 |

| GPT2-XL | Plugin (Ours) | 0.1791 ±0.014 | 0.4932 ±0.007 | 0.2288 ±0.004 | 0.4347 ±0.007 | 0.4702 ±0.006 | 0.7283 ±0.012 | 0.6554 ±0.038 |

| LLaMA-3.1-8B | Zeroshot | 0.0643 | 0.2776 | 0.1181 | 0.2488 | 0.3857 | 0.3155 | 0.3347 |

| LLaMA-3.1-8B | ICL-1 | 0.0615 ±0.027 | 0.2697 ±0.033 | 0.1158 ±0.062 | 0.2469 ±0.087 | 0.3822 ±0.069 | 0.3005 ±0.072 | 0.3059 ±0.094 |

| LLaMA-3.1-8B | ICL-3 | 0.0635 ±0.016 | 0.2748 ±0.024 | 0.1225 ±0.018 | 0.3120 ±0.047 | 0.4012 ±0.029 | 0.3250 ±0.022 | 0.3794 ±0.034 |

| LLaMA-3.1-8B | NewModel | 0.0753 ±0.004 | 0.3716 ±0.005 | 0.1122 ±0.003 | 0.3404 ±0.004 | 0.2665 ±0.006 | 0.1919 ±0.015 | 0.6900 ±0.046 |

| LLaMA-3.1-8B | WeightedComb | 0.1789 ±0.005 | 0.3485 ±0.012 | 0.1797 ±0.008 | 0.2981 ±0.012 | 0.3637 ±0.011 | 0.5503 ±0.046 | 0.5450 ±0.020 |

| LLaMA-3.1-8B | TempNet | 0.1524 ±0.008 | 0.3372 ±0.015 | 0.1524 ±0.010 | 0.3298 ±0.017 | 0.3676 ±0.015 | 0.3986 ±0.033 | 0.5286 ±0.023 |

| LLaMA-3.1-8B | Plugin (Ours) | 0.2665 ±0.010 | 0.5800 ±0.002 | 0.3139 ±0.005 | 0.5037 ±0.004 | 0.5829 ±0.003 | 1.0876 ±0.020 | 0.7031 ±0.007 |

Table 4: Performance comparison on Adidas dataset. We show mean and standard deviation of the metrics over five seeds.

| Model | Method | BLEU | Rouge-1 | Rouge-2 | Rouge-L | METEOR | CIDEr | NIST |

| --- | --- | --- | --- | --- | --- | --- | --- | --- |

| GPT2-M | Zeroshot | 0.0046 | 0.2488 | 0.0189 | 0.1353 | 0.1653 | 0.0312 | 0.6860 |

| GPT2-M | ICL-1 | 0.0088 ±0.054 | 0.2667 ±0.047 | 0.0247 ±0.66 | 0.1358 ±0.041 | 0.1762 ±0.028 | 0.0464 ±0.089 | 0.6793 ±0.078 |

| GPT2-M | ICL-3 | 0.0121 ±0.047 | 0.2693 ±0.028 | 0.0262 ±0.054 | 0.1470 ±0.020 | 0.1806 ±0.030 | 0.0415 ±0.104 | 0.7037 ±0.081 |

| GPT2-M | NewModel | 0.0515 ±0.016 | 0.2690 ±0.014 | 0.0637 ±0.014 | 0.1697 ±0.008 | 0.1918 ±0.013 | 0.0550 ±0.086 | 0.6682 ±0.047 |

| GPT2-M | WeightedComb | 0.0565 ±0.014 | 0.2630 ±0.028 | 0.0495 ±0.018 | 0.1565 ±0.015 | 0.1938 ±0.019 | 0.0585 ±0.088 | 0.6456 ±0.156 |

| GPT2-M | TempNet | 0.0442 ±0.017 | 0.2672 ±0.019 | 0.0482 ±0.022 | 0.1582 ±0.020 | 0.1902 ±0.017 | 0.0525 ±0.031 | 0.6533 ±0.098 |

| GPT2-M | Plugin (Ours) | 0.0486 ±0.006 | 0.2766 ±0.002 | 0.0515 ±0.007 | 0.1684 ±0.005 | 0.1994 ±0.004 | 0.0626 ±0.017 | 0.7919 ±0.024 |

| GPT2-XL | Zeroshot | 0.0075 | 0.2309 | 0.0278 | 0.1438 | 0.1487 | 0.0184 | 0.4956 |

| GPT2-XL | ICL-1 | 0.0109 ±0.039 | 0.2567 ±0.082 | 0.0265 ±0.054 | 0.1519 ±0.038 | 0.1649 ±0.052 | 0.0318 ±0.171 | 0.5133 ±0.162 |

| GPT2-XL | ICL-3 | 0.0295 ±0.037 | 0.2509 ±0.071 | 0.0395 ±0.043 | 0.1536 ±0.039 | 0.1658 ±0.041 | 0.0321 ±0.109 | 0.5176 ±0.116 |

| GPT2-XL | NewModel | 0.0515 ±0.016 | 0.2690 ±0.014 | 0.0637 ±0.014 | 0.1697 ±0.008 | 0.1918 ±0.013 | 0.0550 ±0.086 | 0.6682 ±0.047 |

| GPT2-XL | WeightedComb | 0.0567 ±0.016 | 0.2210 ±0.027 | 0.0714 ±0.015 | 0.1550 ±0.024 | 0.1674 ±0.017 | 0.0183 ±0.117 | 0.4105 ±0.109 |

| GPT2-XL | TempNet | 0.0539 ±0.018 | 0.2598 ±0.026 | 0.0686 ±0.014 | 0.1562 ±0.019 | 0.1863 ±0.029 | 0.0462 ±0.120 | 0.5263 ±0.117 |

| GPT2-XL | Plugin (Ours) | 0.0600 ±0.017 | 0.2710 ±0.025 | 0.0722 ±0.018 | 0.1725 ±0.017 | 0.1995 ±0.018 | 0.1195 ±0.138 | 0.6375 ±0.120 |

| LLaMA-3.1-8B | Zeroshot | 0.0120 | 0.2470 | 0.0318 | 0.1493 | 0.1526 | 0.0424 | 0.5285 |

| LLaMA-3.1-8B | ICL-1 | 0.0220 ±0.044 | 0.2472 ±0.072 | 0.0405 ±0.068 | 0.1434 ±0.057 | 0.1686 ±0.041 | 0.0555 ±0.133 | 0.5078 ±0.142 |

| LLaMA-3.1-8B | ICL-3 | 0.0177 ±0.041 | 0.2385 ±0.065 | 0.0364 ±0.071 | 0.1408 ±0.030 | 0.1712 ±0.029 | 0.0587 ±0.102 | 0.5775 ±0.145 |

| LLaMA-3.1-8B | NewModel | 0.0506 ±0.011 | 0.2700 ±0.011 | 0.0634 ±0.006 | 0.1749 ±0.006 | 0.1995 ±0.009 | 0.0575 ±0.051 | 0.6570 ±0.072 |

| LLaMA-3.1-8B | WeightedComb | 0.0357 ±0.017 | 0.2583 ±0.014 | 0.0661 ±0.015 | 0.1560 ±0.011 | 0.1706 ±0.016 | 0.0745 ±0.086 | 0.5927 ±0.077 |

| LLaMA-3.1-8B | TempNet | 0.0472 ±0.016 | 0.2647 ±0.022 | 0.0625 ±0.012 | 0.1625 ±0.020 | 0.1857 ±0.013 | 0.0586 ±0.103 | 0.5926 ±0.137 |

| LLaMA-3.1-8B | Plugin (Ours) | 0.0611 ±0.018 | 0.2714 ±0.029 | 0.0742 ±0.020 | 0.1759 ±0.019 | 0.1990 ±0.020 | 0.1293 ±0.152 | 0.6361 ±0.134 |

7 Experiments

We divide this section into four parts. Section 7.1 evaluates Plugin on four text generation datasets across three black-box language models. Since the Plugin model is trained on top of black-box models, we refer to black-box models interchangeably as base models. Section 7.2 discusses how Plugin can be applied on top of any prompt-tuning method as a wrapper, when logits are accessible. Section 7.3 presents ablation studies analyzing the impact of black-box model quality, Plugin ’s complexity, and architecture choices. Section 7.4 shows qualitative analysis and case studies.

We evaluate Plugin on four text generation benchmarks: (a) E2E NLG (Dušek et al., 2020), (b) Web NLG (Gardent et al., 2017), (c) CommonGen (Lin et al., 2020), and (d) the Adidas product description dataset (adi, 2023). For the first three datasets, we use the train-validation-test splits from the Transformers library (Wolf, 2020). To introduce distribution shifts, we filter Web NLG’s training data to include only infrastructure descriptions, while validation and test sets retain person descriptions. Similarly, CommonGen’s training set is restricted to samples having man, while validation and test sets remain unchanged. Details of this setup are in Section 7.4. The Adidas dataset is split into validation and test sets. Data statistics are provided in Table 6, Appendix C.1.

7.1 Text Generation Performance Comparison

We evaluate Plugin on the text generation task using only the validation and test splits of all four datasets, reserving the train split for ablation studies (Section 7.3). Plugin and baseline models are trained on the small validation set, with performance measured on the test set. Additionally, we allocate 40% of the validation data as hyper-validation for cross-validation of hyperparameters.

Performance is reported using seven standard natural language generation metrics: (a) BLEU (Papineni et al., 2002), (b) ROUGE-1 (Lin, 2004), (c) ROUGE-2 (Lin, 2004), (d) ROUGE-L (Lin & Och, 2004), (e) METEOR (Banerjee & Lavie, 2005), (f) CIDEr (Vedantam et al., 2015), and (g) NIST (Doddington, 2002). All experiments are repeated over five random seeds, and we report the mean and standard deviation for each metric.

We compare Plugin with the following baselines: (a) Zeroshot: The black-box model directly performs text generation without additional adaptation. (b) ICL-1 (Long et al., 2023): One randomly selected validation sample is used as an in-context example. (c) ICL-3 (Long et al., 2023): Three randomly selected validation samples are used as in-context examples. (d) NewModel: A new language model is trained using the validation data. (e) WeightedComb (Liu et al., 2021): A new model is trained alongside the black-box model, with token probabilities computed as $\alpha\bm{n}+(1-\alpha)\bm{b}$ , where $\bm{n}$ represents the probabilities from the new model and $\alpha$ is cross-validated in $\{0.25,0.50,0.75\}$ . (f) TempNet (Qiu et al., 2024), a recent logit-scaling approach that learns a global temperature per input and uniformly scales logits during generation. Since the black-box model weights are inaccessible, fine-tuning-based approaches are not applicable in our setting. Nonetheless, we include a comparison with LoRA in Appendix C.4 for completeness. This highlights Plugin ’s competitiveness despite operating under stricter access constraints than required for PEFT.

All methods use the same prompts where applicable (Appendix C.2) and employ greedy decoding. The base (black-box) models used are GPT2-M (Radford et al., 2019), GPT2-XL (Radford et al., 2019), and LLaMA-3.1-8B (Dubey et al., 2024). NewModel, WeightedComb, and the reweighting model in Plugin share the same architecture. For GPT-based models, these use a Transformer encoder with one hidden layer and default configurations. For LLaMA-based models, the architecture consists of a Transformer encoder with one hidden layer, 256 hidden size, 1024 intermediate size, and one attention head. Learning rate and weight decay are cross-validated over $\{1e-5,5e-5,1e-4,5e-4,1e-3,5e-3\}$ and $\{0.01,0.1,1,10\}$ , respectively. Models are trained using AdamW with warmup followed by linear decay, and early stopping is applied if the hyper-validation loss does not decrease for five consecutive epochs.

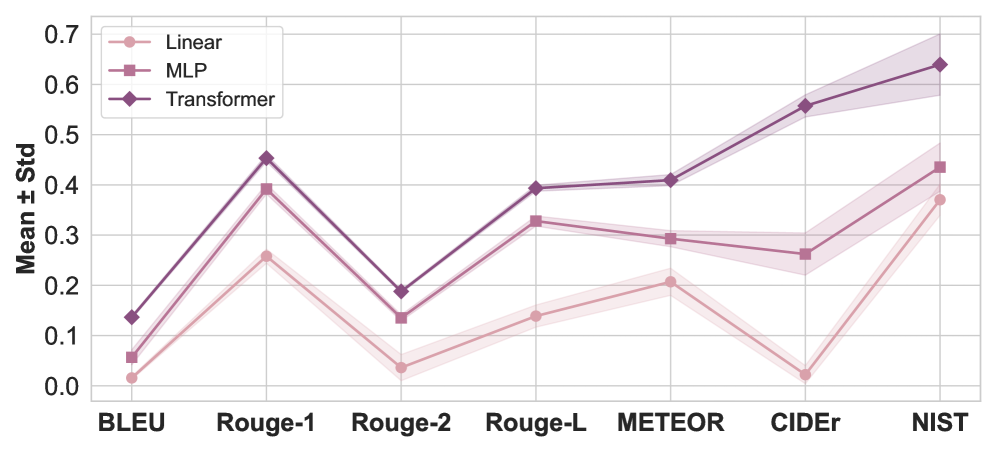

As shown in Tables 1 – 4 (the best is bold, the second best is underlined), Plugin outperforms baselines across nearly all datasets, black-box models, and evaluation metrics. NewModel occasionally achieves higher NIST scores due to increased repetition of less-frequent input tokens, but this comes at the cost of coherence, as reflected by other metrics. WeightedComb does not perform well, indicating one combination for all tokens is not a good modeling choice. TempNet, which learns a single temperature per input and uniformly scales logits during generation, also underperforms. In contrast, Plugin reweights logits at each timestep, enabling finer, context-sensitive adjustments.

<details>

<summary>x1.png Details</summary>

### Visual Description

# Technical Data Extraction: Performance Comparison of GPT2-M Models

This document provides a detailed extraction of data from two side-by-side bar charts comparing the performance of "Base" vs. "Plugin" configurations across different fine-tuning stages of the GPT2-M model.

## 1. Document Structure and Metadata

* **Image Type:** Grouped Bar Charts (2 panels).

* **Primary Language:** English.

* **X-Axis Categories (Common to both):**

1. GPT2-M (zeroshot)

2. GPT2-M (1FT)

3. GPT2-M (2FT)

4. GPT2-M (5FT)

* **Legend (Common to both):**

* **Base:** Light mauve/pink color (Left bar in each pair).

* **Plugin:** Dark purple/plum color (Right bar in each pair).

* **Legend Location:** Top-left quadrant of each chart.

* **Y-Axis Label:** "Value" (Shared/Centralized).

---

## 2. Chart 1: BLEU Score Analysis

### Component Isolation: BLEU Chart

* **Header:** BLEU

* **Y-Axis Range:** 0.00 to 0.30+ (Markers every 0.05).

* **Trend Verification:**

* **Base Series:** Shows a significant jump from zeroshot to 1FT, plateaus at 2FT, and increases slightly at 5FT.

* **Plugin Series:** Shows a consistent upward trend across all stages, significantly outperforming the Base model at every stage.

### Extracted Data Points (Approximate based on grid alignment)

| Model Stage | Base (Light Mauve) | Plugin (Dark Purple) |

| :--- | :--- | :--- |

| **GPT2-M (zeroshot)** | ~0.025 | ~0.185 |

| **GPT2-M (1FT)** | ~0.220 | ~0.300 |

| **GPT2-M (2FT)** | ~0.220 | ~0.310 |

| **GPT2-M (5FT)** | ~0.245 | ~0.320 |

---

## 3. Chart 2: Rouge-L Score Analysis

### Component Isolation: Rouge-L Chart

* **Header:** Rouge-L

* **Y-Axis Range:** 0.00 to 0.50 (Markers every 0.10).

* **Trend Verification:**

* **Base Series:** Shows a steady, linear-style upward trend from zeroshot through 5FT.

* **Plugin Series:** Shows a sharp increase from zeroshot to 1FT, followed by a very gradual upward slope through 5FT.

### Extracted Data Points (Approximate based on grid alignment)

| Model Stage | Base (Light Mauve) | Plugin (Dark Purple) |

| :--- | :--- | :--- |

| **GPT2-M (zeroshot)** | ~0.225 | ~0.390 |

| **GPT2-M (1FT)** | ~0.385 | ~0.465 |

| **GPT2-M (2FT)** | ~0.400 | ~0.470 |

| **GPT2-M (5FT)** | ~0.425 | ~0.485 |

---

## 4. Key Technical Observations

1. **Plugin Superiority:** In both BLEU and Rouge-L metrics, the "Plugin" configuration consistently outperforms the "Base" configuration across all training stages.

2. **Zeroshot Impact:** The most dramatic performance delta between Base and Plugin occurs in the "zeroshot" stage, particularly in the BLEU metric where the Base model is near zero while the Plugin model achieves a substantial score.

3. **Diminishing Returns:** While performance increases with more fine-tuning (from 1FT to 5FT), the rate of improvement slows down significantly after the first fine-tuning stage (1FT) for both metrics.

4. **Metric Scaling:** Rouge-L values are generally higher than BLEU values for the same model configurations, with the Plugin model approaching a 0.50 Rouge-L score at 5FT.

</details>

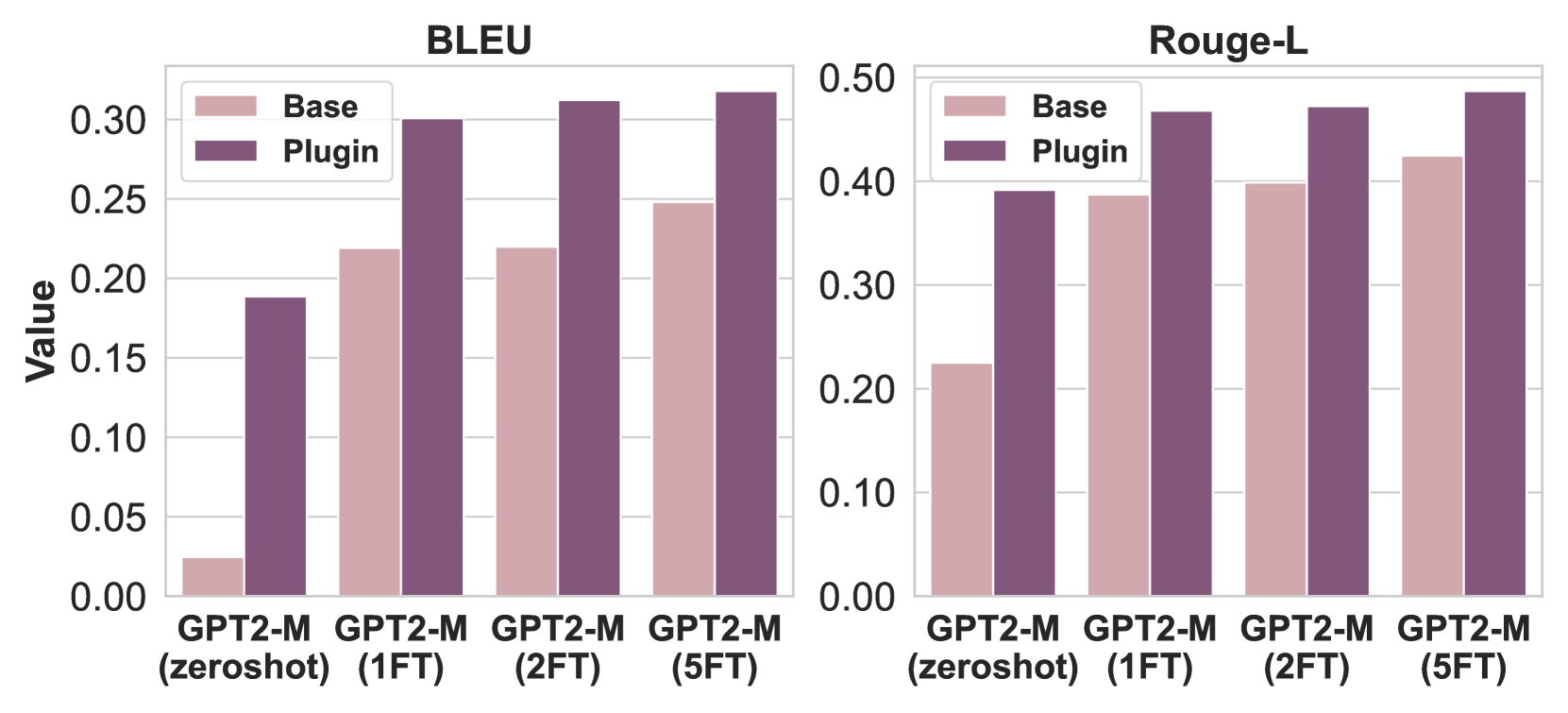

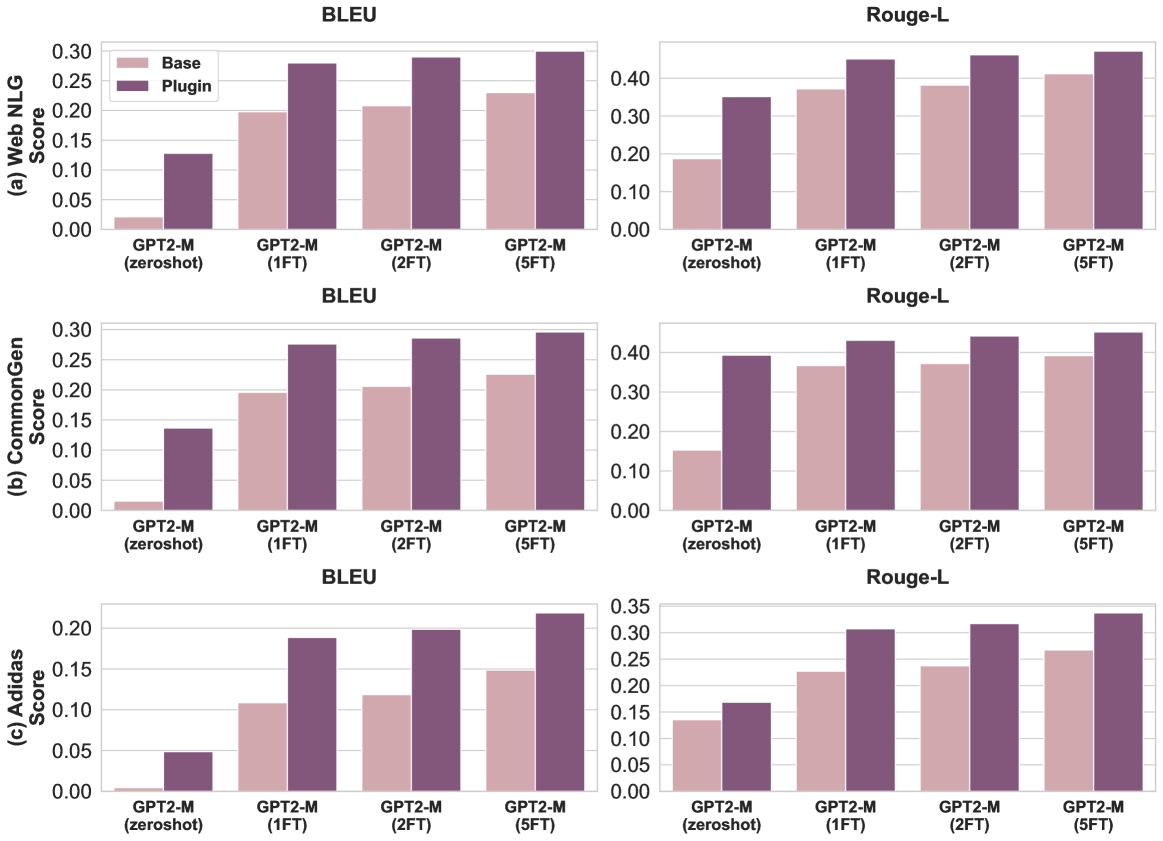

Figure 2: Plugin with increasingly fine-tuned GPT2-M models on the E2E NLG dataset. Results demonstrate that as the quality of the base model improves, the performance of the Plugin improves.

<details>

<summary>x2.png Details</summary>

### Visual Description

# Technical Data Extraction: Performance Comparison of Reweighting Modules

This document contains a detailed extraction of data from two side-by-side bar charts comparing the performance of different reweighting modules across two metrics: **BLEU** and **Rouge-L**.

## 1. General Layout and Metadata

- **Image Type:** Comparative Bar Charts with overlaid line plots.

- **Language:** English.

- **X-Axis Label (Both Charts):** "Choice of the reweighting module"

- **Y-Axis Label (Left Chart):** "Value"

- **X-Axis Categories (Common to both):**

1. No plugin

2. 1-layer

3. 2-layer

4. 4-layer

5. 8-layer

6. 12-layer

7. GPT2 Small

- **Visual Encoding:**

- Bars represent the value for each category.

- A dashed black line with circular markers connects the top of each bar to visualize the trend across configurations.

- Color Gradient: The bars transition from a light dusty rose (left) to a deep plum/purple (right).

---

## 2. Chart 1: BLEU Score Analysis

### Component Isolation: BLEU

- **Y-Axis Scale:** 0.00 to 0.30 with increments of 0.05.

- **Trend Description:** There is a sharp initial increase from "No plugin" to "1-layer," followed by a slight plateau/minor decrease through the "12-layer" configuration. A significant final spike occurs for the "GPT2 Small" module, which achieves the highest performance.

### Data Points (Estimated from Y-Axis)

| Reweighting Module | BLEU Value (Approx.) | Visual Trend |

| :--- | :--- | :--- |

| No plugin | 0.025 | Baseline |

| 1-layer | 0.188 | Sharp Increase |

| 2-layer | 0.162 | Slight Decrease |

| 4-layer | 0.160 | Plateau |

| 8-layer | 0.159 | Plateau |

| 12-layer | 0.158 | Plateau |

| GPT2 Small | 0.292 | Significant Spike (Peak) |

---

## 3. Chart 2: Rouge-L Score Analysis

### Component Isolation: Rouge-L

- **Y-Axis Scale:** 0.00 to 0.40+ (Markers go up to 0.45+) with increments of 0.10.

- **Trend Description:** Similar to the BLEU chart, there is a massive jump from "No plugin" to "1-layer." The performance remains remarkably stable (flat) across the "1-layer" to "12-layer" range, before another notable increase for the "GPT2 Small" module.

### Data Points (Estimated from Y-Axis)

| Reweighting Module | Rouge-L Value (Approx.) | Visual Trend |

| :--- | :--- | :--- |

| No plugin | 0.225 | Baseline |

| 1-layer | 0.392 | Sharp Increase |

| 2-layer | 0.380 | Minor Dip / Stable |

| 4-layer | 0.378 | Stable |

| 8-layer | 0.376 | Stable |

| 12-layer | 0.378 | Stable |

| GPT2 Small | 0.465 | Significant Spike (Peak) |

---

## 4. Summary of Findings

- **Impact of Plugin:** Adding any reweighting module (even a 1-layer version) provides a substantial performance boost over the "No plugin" baseline in both metrics.

- **Layer Scaling:** Increasing the number of layers in the reweighting module from 1 to 12 does not result in performance gains; in fact, performance remains largely stagnant or slightly regresses compared to the 1-layer version.

- **Model Superiority:** The "GPT2 Small" module consistently outperforms all other configurations, including the multi-layer custom modules, suggesting that the pre-trained architecture provides a superior basis for reweighting.

</details>

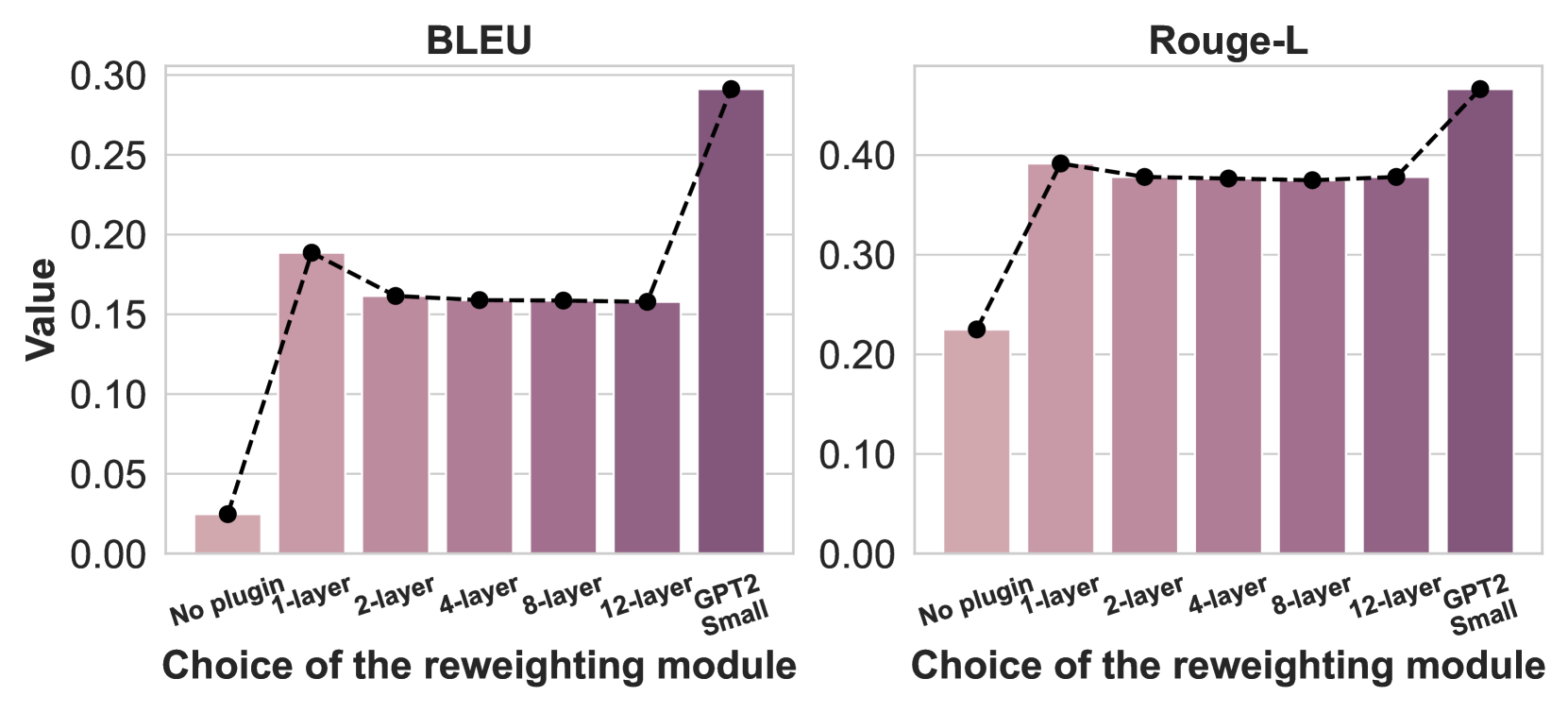

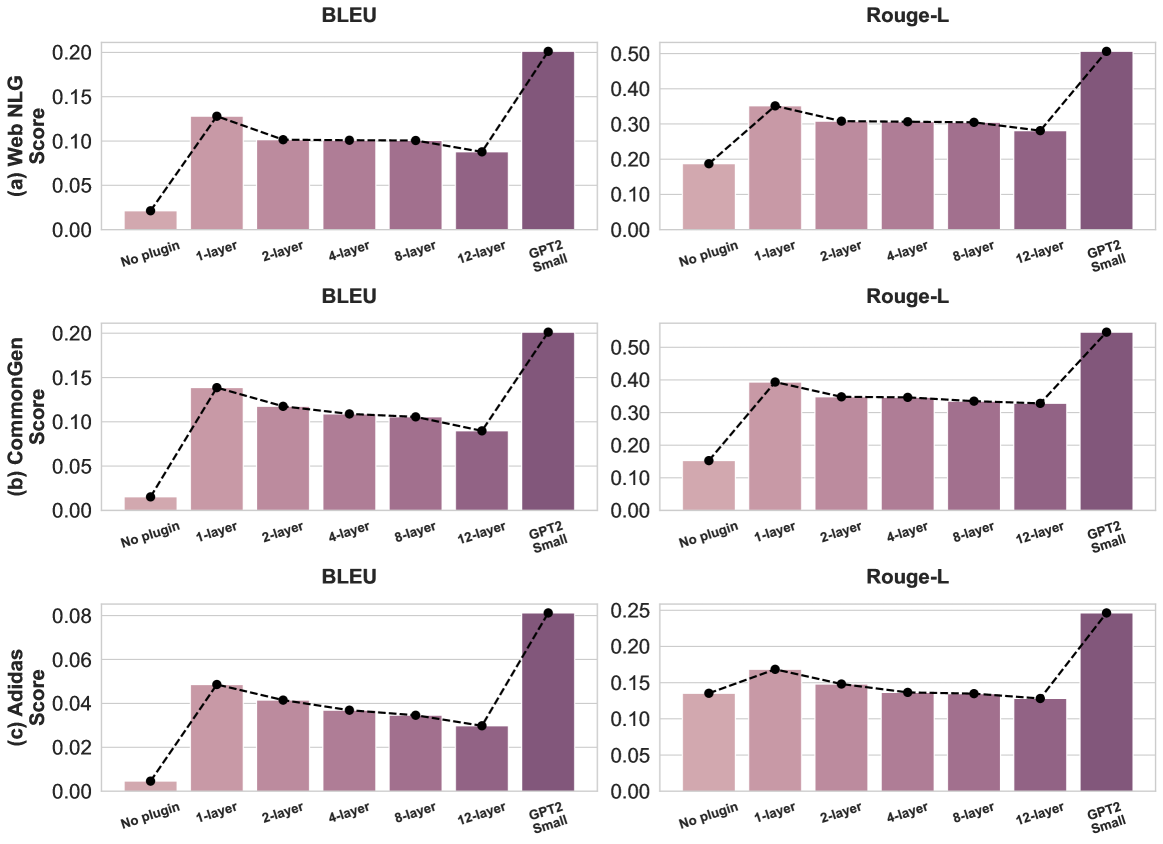

Figure 3: Performance of GPT2-M with varying reweighting model complexities on E2E NLG (BLEU, ROUGE-L). A single-layer reweighting model yields significant gains, while additional layers degrade performance due to overfitting. Initializing with GPT2-Small as the reweighting model improves performance, demonstrating the benefits of leveraging small pretrained models.

We note that the absolute numbers may not appear competitive with state-of-the-art results, because (a) we restrict to greedy decoding (Section 4.2), and (b) Web NLG and CommonGen use distribution-shifted subsets.

We also conduct a human evaluation on 100 Adidas dataset samples, where three subjects compare outputs from Plugin and ICL-3 using LLaMA-3.1 as the base model. Evaluators select the prediction closest to the ground truth, with Plugin preferred in 81% of cases. Details are in Appendix C.7.

<details>

<summary>extracted/6617062/icml2025/images/adidas_histogram.png Details</summary>

### Visual Description

# Technical Document: Analysis of Plugin Model Reweighting in Text Generation

This document provides a comprehensive extraction and analysis of the provided technical diagram, which illustrates the effect of a "Plugin Model" on text generation via a reweighting process.

## 1. Component Isolation

The image is structured into three primary horizontal segments:

* **Top Segment (Input & Reference):** Defines the task and the target output.

* **Middle Segment (Process):** Shows the transformation from a Base Model to a Plugin Model via "reweighting."

* **Bottom Segment (Data Visualization):** Three bar charts showing probability distributions at specific decoding steps.

---

## 2. Textual Content Extraction

### Region: Given Product Attributes (Top Left)

* **Icon:** A black long-sleeve zip-up shirt.

* **Header:** Given Product Attributes

* **Input Text:** "Given the following attributes of a product, write a description."

* **Data Fields:**

| Field | Value |

| :--- | :--- |

| `name` | [Adizero 1/2 Zip Long Sleeve Tee] |

| `Category` | [Clothing] |

| `Price` | [68] |

| `Color` | [Black] |

### Region: Ground-truth Reference (Top Right)

* **Icon:** A male character with glasses and a lightbulb (representing an expert/human).

* **Header:** Ground-truth Reference

* **Description:** "More of the warmth, less of the weight. This adidas running tee gives you a light, stretchy layer to wear while training and racing. AEROREADY wicks moisture, so you stay dry before it gets cold. Slip the thumbhole sleeves over your hands for extra coverage. This product is made with Primegreen, a series of high-performance recycled materials."

### Region: Base Model (Middle Left)

* **Icon:** A blue robot.

* **Header:** Base Model

* **Description:** "The adizero 1/2 zip long sleeve tee is a great way to keep your feet warm and dry. The soft, fabric has been designed with comfort in mind."

* **Note:** The model incorrectly mentions "feet" for a shirt product.

### Region: Plugin Model (Middle Right)

* **Icon:** A blue robot combined with a pink brain/circuit icon.

* **Header:** Plugin Model

* **Process Arrow:** A black arrow pointing from Base Model to Plugin Model labeled **"+ reweighting"**.

* **Description:** "It's a long sleeve tee that is made in the adidas style. It keeps all your busy days short to **<u>keep</u>** you feeling fresh, energized and ready for whatever comes next. This product belongs on this page:Stay **<u>comfortable</u>** through our **<u>ambition</u>**-driven line of high-performance recycled materials."

* **Formatting:** The words "keep", "comfortable", and "ambition" are highlighted in red and underlined, corresponding to the charts below.

---

## 3. Data Visualization: Probability Distributions

All three charts share the following characteristics:

* **Y-Axis:** "Normalized Probability" (Scale varies per chart).

* **X-Axis:** Categorical labels representing a vocabulary of tokens (e.g., adidas, made, recycled, shoes, etc.).

* **Legend:**

* **Light Blue:** Base Model

* **Light Red/Pink:** Plugin Model

* **Legend Placement:** Top right of each chart [x≈0.9, y≈0.9].

### Chart 1: Decoding Step 23

* **Target Token:** "keep"

* **Trend:** The Base Model has low probability across most tokens. The Plugin Model shows a massive spike for the token "keep".

* **Key Data Points:**

| Token | Base Model Prob | Plugin Model Prob |

| :--- | :--- | :--- |

| "keep" | ~0.01 | ~0.17 (Highest peak) |

| "soft" | ~0.02 | ~0.01 |

| "running" | ~0.01 | ~0.005 |

### Chart 2: Decoding Step 48

* **Target Token:** "comfortable"

* **Trend:** The Base Model distribution is nearly flat/zero for the target. The Plugin Model shows a singular dominant spike at "comfortable".

* **Key Data Points:**

| Token | Base Model Prob | Plugin Model Prob |

| :--- | :--- | :--- |

| "comfortable" | ≈ 0.00 | ≈ 0.09 (Highest peak) |

| "dry" | ≈ 0.005 | ≈ 0.032 |

### Chart 3: Decoding Step 51

* **Target Token:** "ambition"

* **Trend:** This chart shows a higher y-axis scale (up to 0.5). The Base Model has a notable peak at "materials", but the Plugin Model overrides this with an extremely high probability for "ambition".

* **Key Data Points:**

| Token | Base Model Prob | Plugin Model Prob |

| :--- | :--- | :--- |

| "ambition" | ≈ 0.00 | ≈ 0.51 (Dominant peak) |

| "materials" | ≈ 0.20 | ≈ 0.08 |

| "products" | ≈ 0.08 | ≈ 0.11 |

---

## 4. Summary of Findings

The image demonstrates a **reweighting mechanism** where a "Plugin Model" modifies the output of a "Base Model."

1. **Correction:** The Base Model produced a hallucination ("keep your feet warm" for a shirt).

2. **Mechanism:** The bar charts prove that at specific decoding steps (23, 48, 51), the Plugin Model significantly increases the probability of specific tokens ("keep", "comfortable", "ambition") that were nearly non-existent in the Base Model's original distribution.

3. **Result:** The final text is steered toward a specific vocabulary, likely derived from the "Ground-truth Reference" or a specific stylistic plugin.

</details>

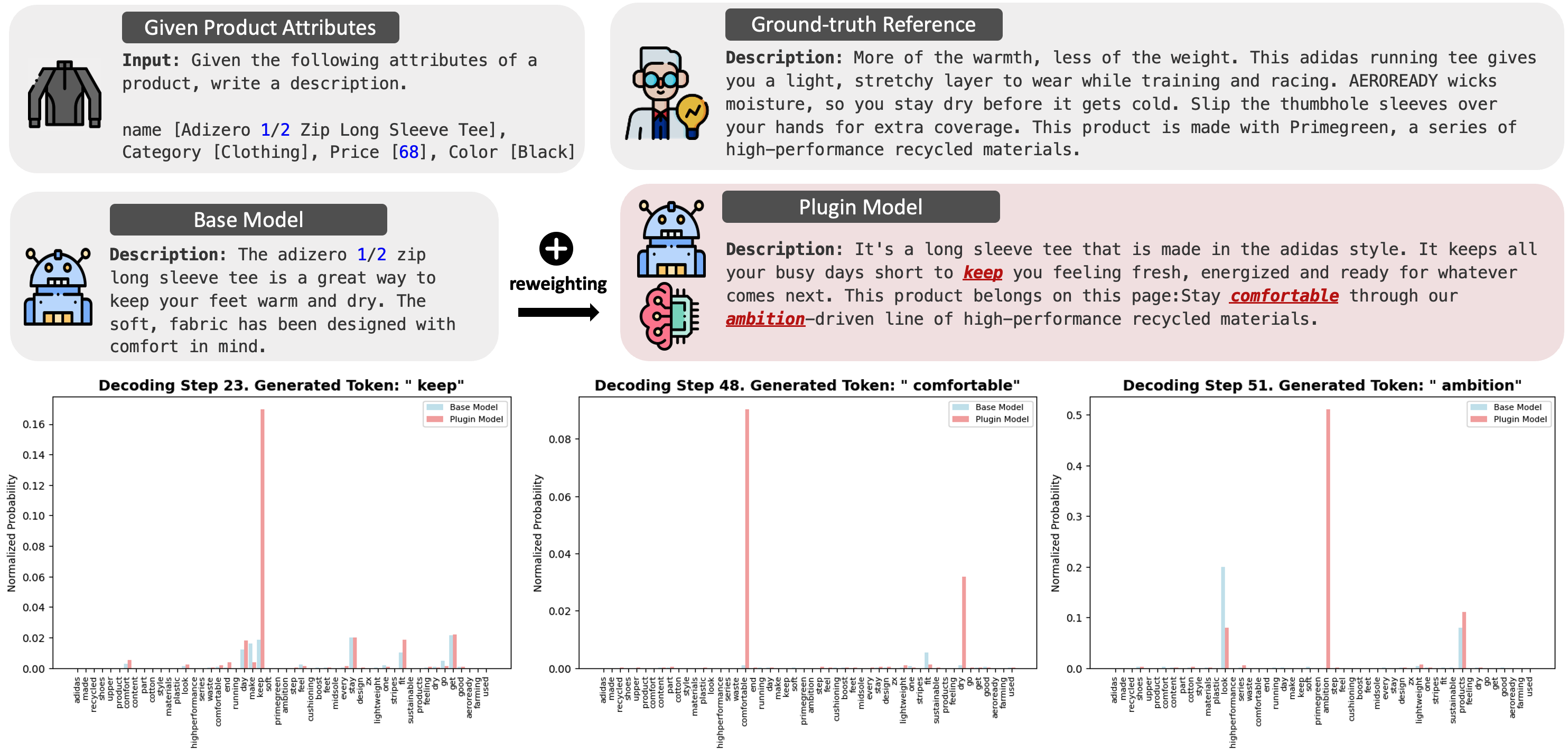

Figure 4: Comparison of the adaptation ability between the base model and Plugin on Adidas dataset. Plugin, enhanced with a reweighting model, generates text that better aligns with the “ Adidas domain ”. The bottom row illustrates token probabilities for key Adidas-related words at different decoding steps, showing how the reweighting model influences token selection.

7.2 Plugin as a Wrapper

Table 5: Performance comparison of BDPL and BDPL + Plugin.

| Dataset | Method | BLEU | | Rouge-L | METEOR | CIDEr | NIST |

| --- | --- | --- | --- | --- | --- | --- | --- |

| E2E NLG | BDPL | 0.2287 | | 0.3922 | 0.4628 | 0.4216 | 0.8625 |

| BDPL + Plugin | 0.4527 | | 0.6027 | 0.6214 | 0.7002 | 2.0817 | |

| WEB NLG | BDPL | 0.1024 | | 0.3017 | 0.3527 | 0.4321 | 0.2631 |

| BDPL + Plugin | 0.2137 | | 0.5928 | 0.5766 | 1.0826 | 0.6142 | |

| CommonGen | BDPL | 0.1023 | | 0.2936 | 0.3362 | 0.2517 | 0.4226 |

| BDPL + Plugin | 0.2614 | | 0.5241 | 0.5016 | 0.8251 | 0.9261 | |

| Adidas | BDPL | 0.0417 | | 0.1710 | 0.1826 | 0.0861 | 0.6034 |

| BDPL + Plugin | 0.0623 | | 0.1759 | 0.2148 | 0.1325 | 0.7024 | |

If logit access is available, Plugin can be applied on top of any prompt-based method using its best-found prompt. For example, our Zeroshot prompt is reused across methods. We also apply Plugin to the Black-box Discrete Prompt Learning (BDPL) approach from Diao et al. (2022), following their recommended 75 API call budget. Table 5 shows results on all datasets with GPT2-XL as the base model. Plugin outperforms BDPL (see Tables 1 – 4), and their combination yields further gains, underscoring the utility of logit-level access in strengthening prompt-based methods.

7.3 Ablation Study

We now show ablation studies that reflect various aspects of the Plugin model. We display the results using GPT2-M as base model on the E2E NLG dataset. The observation is similar on other base models and datasets (Appendix C.5).

Impact of Base Model Quality.

We fine-tune GPT2-M for varying epochs, denoted as 1FT (one epoch), 2FT (two epochs), and 5FT (five epochs), and train a Plugin model for each. Figure 2 shows that as the base model’s task-specific quality improves, the Plugin’s performance improves.

Complexity of the Reweighting Model in Plugin.

We train Plugin models with reweighting architectures varying from 1 to 12 transformer layers while keeping other configurations unchanged. Additionally, we train a variant where the reweighting model is initialized with GPT2-Small. As shown in Figure 3, a single-layer reweighting model yields significant improvements over the base GPT2-M model, while additional layers (e.g., 2, 4, 8, 12) offer diminishing returns and slight performance decline due to overfitting on the small validation set of E2E NLG. This suggests that more data is required for learning complex reweighting models. Notably, initializing with a pretrained GPT2-Small substantially improves performance, underscoring the advantage of using small pretrained models for reweighting due to their inherent autoregressive properties.

7.4 Qualitative Analysis and Case Study

Plugin adapting to distribution shift.

We evaluate Plugin on distribution-shifted Web NLG and CommonGen using LLaMA-3.1-8B as the base model. Web NLG training data contains only Infrastructure concepts, while validation and test sets include Person concepts. Similarly, CommonGen training data features man, whereas validation and test sets contain both man and woman. The base model is fine-tuned on the training data, and Plugin is trained on validation data using the fine-tuned model as the base. These settings reflect different degrees of domain shift, even adversarial to some extent as the training distributions induce biases (e.g., overemphasis on infrastructure or male-related concepts), and Plugin is tasked with correcting them during inference.

Using GPT-4o (Hurst et al., 2024) as an evaluator, the fine-tuned Web NLG model generates only 17.99% Person -related sentences, while Plugin increases this to 71.34%. On CommonGen, the fine-tuned model generates 10.37% Woman -related sentences, whereas Plugin improves this to 31.92%. These results highlight Plugin ’s ability to adapt under distribution shift and mitigate biases in the base model.

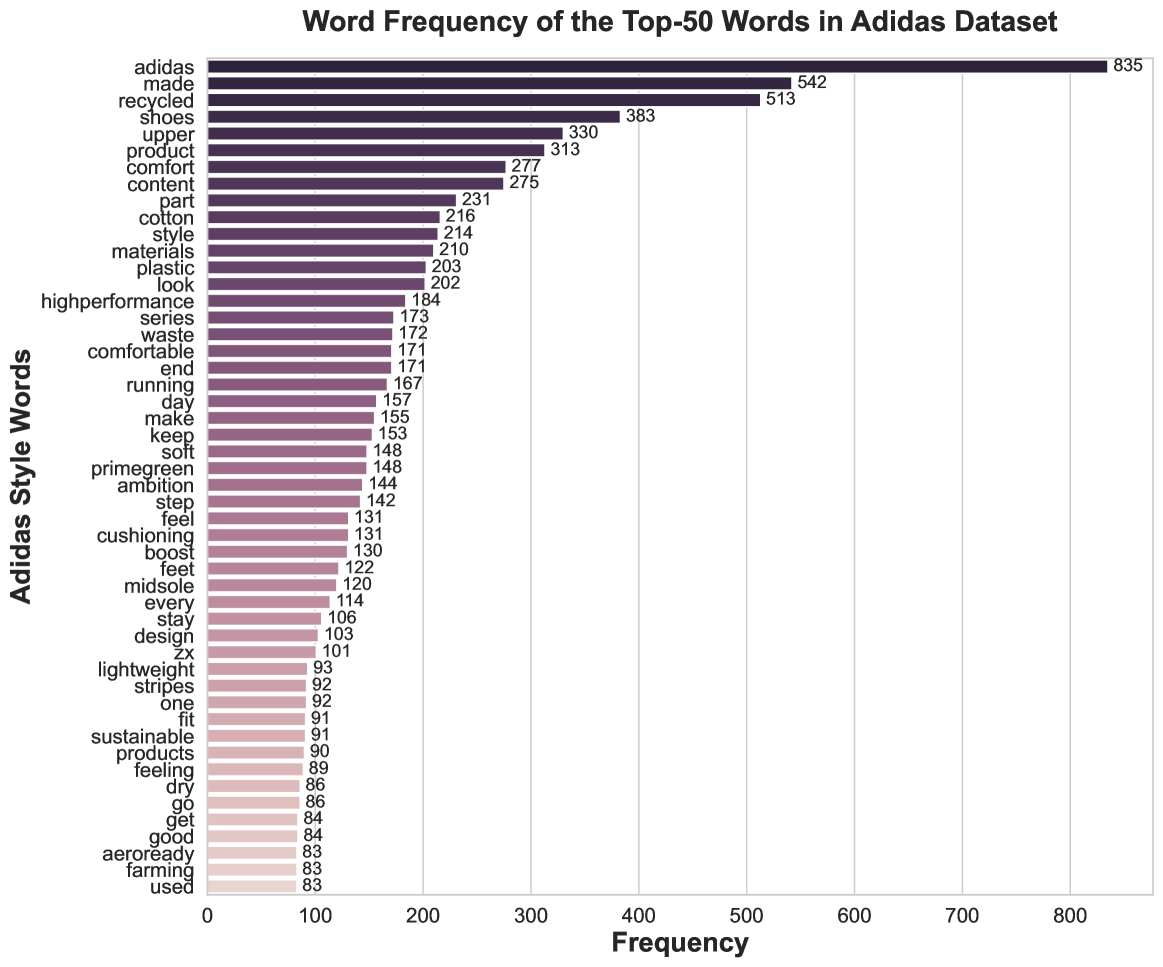

Case study: Plugin adapting to domain (extreme distirbution shifts).

We examine token probabilities during inference for LLaMA-3.1-8B and Plugin to assess domain adaptation in the Adidas dataset, which features product-centric language and a brand-specific tone that diverge significantly from the general pretraining distribution of the black-box LLMs. This experimental setup can also be viewed as extreme distribution shift. Removing stopwords, we extract the top-50 most frequent words, defining the “ Adidas domain ”. Figure 4 illustrates this adaptation: the first row shows product attributes and ground-truth references; the second row compares outputs from the base model (left) and Plugin (right); the third row visualizes model probabilities for “ Adidas domain ” words at three decoding steps.

As seen in Figure 4, Plugin dynamically reweights probabilities to align with domain-specific language. At step 23, “keep” is significantly upweighted. At step 48, “comfortable” and “dry” gain prominence over “fit,” which the base model favors. At step 59, “recycled” is preferred by Plugin, aligning with the ground truth, while the base model favors “running” and “products”. This demonstrates that Plugin effectively steers generation toward domain-specific terminology, whereas the base model, trained on broad corpora, lacks inherent domain preference.

Unlike methods that prune or suppress tokens, Plugin softly reweights token probabilities without eliminating any vocabulary candidates. This preserves full coverage while amplifying domain-specific terms. To quantify this, we measure the total occurrences of the top-50 “ Adidas domain ” words in generated outputs: Plugin includes 25.6% of these terms compared to 13.8% in the base model, indicating substantially improved alignment with domain language.

8 Conclusion

We propose Plugin, a token-level probability reweighting framework that adapts black-box LLMs using only logits and small task-specific data. Framing next-token prediction as a label noise correction problem, we demonstrate both theoretical guarantees and empirical effectiveness across multiple datasets and models. Our findings highlight the potential of logit-based adaptation and advocate for broader access to token logits in closed-source LLMs.

Acknowledgements

HW acknowledges support by Fonds de recherche du Québec – Nature et technologies (FRQNT) and Borealis AI. SK acknowledges support by NSF 2046795 and 2205329, IES R305C240046, the MacArthur Foundation, Stanford HAI, OpenAI, and Google.

Impact Statement

This work introduces a powerful middle ground between fully black-box APIs and fully white-box access to large language models (LLMs), addressing a critical constraint faced by developers: the inability to adapt models when weights and architecture are inaccessible. By leveraging token-level logits—without requiring access to model weights or architecture—our approach enables meaningful adaptation of closed-source LLMs for domain-specific tasks. This has far-reaching implications for both research and industry: it empowers developers to customize models within privacy-preserving, IP-sensitive environments while ensuring greater control, transparency, and safety. Our findings advocate for broader logit access as a scalable, secure, and effective interface—bridging the gap between usability and protection of proprietary models—and open new possibilities for equitable, context-aware language generation in real-world applications.

While Plugin effectively adapts black-box LLMs, it has some limitations, too. Since it only reweights token probabilities without modifying internal representations or embeddings, it may struggle with tasks requiring deep structural adaptations, such as executing complex reasoning. Further research on this aspect is needed. Additionally, although Plugin avoids full fine-tuning, training a separate reweighting model introduces computational overhead compared to prompt tuning or in-context learning, with efficiency depending on the complexity of the reweighting model and the availability of task-specific data.

References

- adi (2023) Adidas us retail products dataset. Kaggle, 2023. URL https://www.kaggle.com/datasets/whenamancodes/adidas-us-retail-products-dataset.

- Achiam et al. (2023) Achiam, J., Adler, S., Agarwal, S., Ahmad, L., Akkaya, I., Aleman, F. L., Almeida, D., Altenschmidt, J., Altman, S., Anadkat, S., et al. Gpt-4 technical report. arXiv preprint arXiv:2303.08774, 2023.

- Bai et al. (2022a) Bai, Y., Jones, A., Ndousse, K., Askell, A., Chen, A., DasSarma, N., Drain, D., Fort, S., Ganguli, D., Henighan, T., et al. Training a helpful and harmless assistant with reinforcement learning from human feedback. arXiv preprint arXiv:2204.05862, 2022a.

- Bai et al. (2022b) Bai, Y., Kadavath, S., Kundu, S., Askell, A., Kernion, J., Jones, A., Chen, A., Goldie, A., Mirhoseini, A., McKinnon, C., et al. Constitutional ai: Harmlessness from ai feedback. arXiv preprint arXiv:2212.08073, 2022b.

- Banerjee & Lavie (2005) Banerjee, S. and Lavie, A. Meteor: An automatic metric for mt evaluation with improved correlation with human judgments. In ACL workshop on intrinsic and extrinsic evaluation measures for machine translation and/or summarization, pp. 65–72, 2005.

- Chaudhuri et al. (2015) Chaudhuri, K., Kakade, S. M., Netrapalli, P., and Sanghavi, S. Convergence rates of active learning for maximum likelihood estimation. Advances in Neural Information Processing Systems, 28, 2015.

- Christiano et al. (2017) Christiano, P. F., Leike, J., Brown, T., Martic, M., Legg, S., and Amodei, D. Deep reinforcement learning from human preferences. Advances in neural information processing systems, 30, 2017.

- Dathathri et al. (2020) Dathathri, S., Madotto, A., Lan, J., Hung, J., Frank, E., Molino, P., Yosinski, J., and Liu, R. Plug and play language models: A simple approach to controlled text generation. In International Conference on Learning Representations, 2020.

- Dettmers et al. (2024) Dettmers, T., Pagnoni, A., Holtzman, A., and Zettlemoyer, L. Qlora: Efficient finetuning of quantized llms. Advances in Neural Information Processing Systems, 36, 2024.

- Diao et al. (2022) Diao, S., Huang, Z., Xu, R., Li, X., Lin, Y., Zhou, X., and Zhang, T. Black-box prompt learning for pre-trained language models. arXiv preprint arXiv:2201.08531, 2022.

- Doddington (2002) Doddington, G. Automatic evaluation of machine translation quality using n-gram co-occurrence statistics. In International conference on Human Language Technology Research, pp. 138–145, 2002.

- Dong et al. (2024) Dong, Q., Li, L., Dai, D., Zheng, C., Ma, J., Li, R., Xia, H., Xu, J., Wu, Z., Chang, B., et al. A survey on in-context learning. In Conference on Empirical Methods in Natural Language Processing, pp. 1107–1128, 2024.

- Dubey et al. (2024) Dubey, A., Jauhri, A., Pandey, A., Kadian, A., Al-Dahle, A., Letman, A., Mathur, A., Schelten, A., Yang, A., Fan, A., et al. The llama 3 herd of models. arXiv preprint arXiv:2407.21783, 2024.

- Dušek et al. (2020) Dušek, O., Novikova, J., and Rieser, V. Evaluating the state-of-the-art of end-to-end natural language generation: The e2e nlg challenge. Computer Speech & Language, 59:123–156, 2020.

- Frostig et al. (2015) Frostig, R., Ge, R., Kakade, S. M., and Sidford, A. Competing with the empirical risk minimizer in a single pass. In Conference on learning theory, pp. 728–763. PMLR, 2015.

- Gao et al. (2024) Gao, G., Taymanov, A., Salinas, E., Mineiro, P., and Misra, D. Aligning llm agents by learning latent preference from user edits. arXiv preprint arXiv:2404.15269, 2024.

- Gardent et al. (2017) Gardent, C., Shimorina, A., Narayan, S., and Perez-Beltrachini, L. Creating training corpora for nlg micro-planning. In Annual Meeting of the Association for Computational Linguistics, pp. 179–188, 2017.

- Hiranandani et al. (2021) Hiranandani, G., Mathur, J., Narasimhan, H., Fard, M. M., and Koyejo, S. Optimizing black-box metrics with iterative example weighting. In International Conference on Machine Learning, pp. 4239–4249. PMLR, 2021.

- Hsu et al. (2012) Hsu, D., Kakade, S., Zhang, T., et al. A tail inequality for quadratic forms of subgaussian random vectors. Electronic Communications in Probability, 17, 2012.

- Hu et al. (2021) Hu, E. J., Shen, Y., Wallis, P., Allen-Zhu, Z., Li, Y., Wang, S., Wang, L., and Chen, W. Lora: Low-rank adaptation of large language models. arXiv preprint arXiv:2106.09685, 2021.

- Hu et al. (2023a) Hu, Z., Wang, L., Lan, Y., Xu, W., Lim, E.-P., Bing, L., Xu, X., Poria, S., and Lee, R. LLM-adapters: An adapter family for parameter-efficient fine-tuning of large language models. In Conference on Empirical Methods in Natural Language Processing, pp. 5254–5276, 2023a.

- Hu et al. (2023b) Hu, Z., Wang, L., Lan, Y., Xu, W., Lim, E.-P., Bing, L., Xu, X., Poria, S., and Lee, R. Llm-adapters: An adapter family for parameter-efficient fine-tuning of large language models. In Conference on Empirical Methods in Natural Language Processing, pp. 5254–5276, 2023b.

- Huang et al. (2024) Huang, Y., Liu, Y., Thirukovalluru, R., Cohan, A., and Dhingra, B. Calibrating long-form generations from large language models. arXiv preprint arXiv:2402.06544, 2024.

- Hurst et al. (2024) Hurst, A., Lerer, A., Goucher, A. P., Perelman, A., Ramesh, A., Clark, A., Ostrow, A., Welihinda, A., Hayes, A., Radford, A., et al. Gpt-4o system card. arXiv preprint arXiv:2410.21276, 2024.

- Kapoor et al. (2024) Kapoor, S., Gruver, N., Roberts, M., Pal, A., Dooley, S., Goldblum, M., and Wilson, A. Calibration-tuning: Teaching large language models to know what they don’t know. In Workshop on Uncertainty-Aware NLP, pp. 1–14, 2024.

- Kojima et al. (2022) Kojima, T., Gu, S. S., Reid, M., Matsuo, Y., and Iwasawa, Y. Large language models are zero-shot reasoners. Advances in neural information processing systems, 35, 2022.

- Koyejo et al. (2014) Koyejo, O. O., Natarajan, N., Ravikumar, P. K., and Dhillon, I. S. Consistent binary classification with generalized performance metrics. Advances in neural information processing systems, 27, 2014.

- Krause et al. (2021) Krause, B., Gotmare, A. D., McCann, B., Keskar, N. S., Joty, S., Socher, R., and Rajani, N. F. Gedi: Generative discriminator guided sequence generation. In Findings of the Association for Computational Linguistics: EMNLP 2021, pp. 4929–4952, 2021.

- Lattimore & Szepesvári (2020) Lattimore, T. and Szepesvári, C. Bandit algorithms. Cambridge University Press, 2020.

- Lester et al. (2021) Lester, B., Al-Rfou, R., and Constant, N. The power of scale for parameter-efficient prompt tuning. In Conference on Empirical Methods in Natural Language Processing, pp. 3045–3059, 2021.

- Li et al. (2022) Li, X., Thickstun, J., Gulrajani, I., Liang, P. S., and Hashimoto, T. B. Diffusion-lm improves controllable text generation. Advances in Neural Information Processing Systems, 35:4328–4343, 2022.

- Li & Liang (2021) Li, X. L. and Liang, P. Prefix-tuning: Optimizing continuous prompts for generation. In Annual Meeting of the Association for Computational Linguistics, pp. 4582–4597, 2021.

- Lin et al. (2020) Lin, B. Y., Zhou, W., Shen, M., Zhou, P., Bhagavatula, C., Choi, Y., and Ren, X. Commongen: A constrained text generation challenge for generative commonsense reasoning. In Findings of the Association for Computational Linguistics, pp. 1823–1840, 2020.

- Lin (2004) Lin, C.-Y. Rouge: A package for automatic evaluation of summaries. In Text summarization branches out, pp. 74–81, 2004.

- Lin & Och (2004) Lin, C.-Y. and Och, F. J. Automatic evaluation of machine translation quality using longest common subsequence and skip-bigram statistics. In Annual meeting of the association for computational linguistics, pp. 605–612, 2004.