# Talk Structurally, Act Hierarchically: A Collaborative Framework for LLM Multi-Agent Systems

**Authors**: Zhao Wang, Sota Moriyama, Wei-Yao Wang, Briti Gangopadhyay, Shingo Takamatsu

> Sony Group Corporation, Japan

Abstract

Recent advancements in LLM-based multi-agent (LLM-MA) systems have shown promise, yet significant challenges remain in managing communication and refinement when agents collaborate on complex tasks. In this paper, we propose Talk Structurally, Act Hierarchically (TalkHier), a novel framework that introduces a structured communication protocol for context-rich exchanges and a hierarchical refinement system to address issues such as incorrect outputs, falsehoods, and biases. TalkHier surpasses various types of SoTA, including inference scaling model (OpenAI-o1), open-source multi-agent models (e.g., AgentVerse), and majority voting strategies on current LLM and single-agent baselines (e.g., ReAct, GPT4o), across diverse tasks, including open-domain question answering, domain-specific selective questioning, and practical advertisement text generation. These results highlight its potential to set a new standard for LLM-MA systems, paving the way for more effective, adaptable, and collaborative multi-agent frameworks. The code is available at https://github.com/sony/talkhier.

{CJK}

UTF8min

Talk Structurally, Act Hierarchically: A Collaborative Framework for LLM Multi-Agent Systems footnotetext: These authors contributed equally to this work footnotetext: Corresponding author: Zhao Wang (Email Address: Zhao.Wang@sony.com)

1 Introduction

Large Language Model (LLM) Agents have broad applications across domains such as robotics (Brohan et al., 2022), finance (Shah et al., 2023; Zhang et al., 2024b), and coding Chen et al. (2021); Hong et al. (2023). By enhancing capabilities such as autonomous reasoning (Wang et al., 2024b) and decision-making (Eigner and Händler, 2024), LLM agents bridge the gap between human intent and machine execution, generating contextually relevant responses (Pezeshkpour et al., 2024).

<details>

<summary>x1.png Details</summary>

### Visual Description

## Bar Chart: Accuracy Comparison on MMLU

### Overview

This bar chart compares the accuracy of several language model configurations on the MMLU (Massive Multitask Language Understanding) benchmark. The chart displays accuracy percentages for models including TalkHier+GPT4o, AgentVerse, AgentPrune, AutoGPT, GPTSwarm, GPT4o, GPT4o with varying numbers of queries (@), and ReAct with varying numbers of queries (@). A dashed horizontal line indicates the accuracy of OpenAI's GPT-4 (86.48%).

### Components/Axes

* **X-axis:** Model Configuration (TalkHier+GPT4o, AgentVerse, AgentPrune, AutoGPT, GPTSwarm, GPT4o, GPT4o-3@, GPT4o-5@, GPT4o-7@, ReAct, ReAct-3@, ReAct-5@, ReAct-7@)

* **Y-axis:** Accuracy (%) on MMLU. Scale ranges from approximately 64% to 87%.

* **Horizontal Dashed Line:** Represents OpenAI's GPT-4 accuracy at 86.48%.

* **Annotation:** A red arrow points from the TalkHier+GPT4o bar to the OpenAI GPT-4 line, indicating a +3.28% improvement.

### Detailed Analysis

The bars represent the accuracy of each model configuration. Let's analyze each one:

1. **TalkHier+GPT4o:** Accuracy is 86.66%. This is the highest accuracy shown in the chart.

2. **AgentVerse:** Accuracy is 83.9%.

3. **AgentPrune:** Accuracy is 83.45%.

4. **AutoGPT:** Accuracy is 69.7%.

5. **GPTSwarm:** Accuracy is 66.83%.

6. **GPT4o:** Accuracy is 64.95%.

7. **GPT4o-3@:** Accuracy is 64.84%.

8. **GPT4o-5@:** Accuracy is 64.96%.

9. **GPT4o-7@:** Accuracy is 65.5%.

10. **ReAct:** Accuracy is 67.33%.

11. **ReAct-3@:** Accuracy is 74.05%.

12. **ReAct-5@:** Accuracy is 74.05%.

13. **ReAct-7@:** Accuracy is 76.06%.

The GPT4o configurations with increasing numbers of queries (@) show a slight upward trend in accuracy, but the differences are small. The ReAct configurations also show an upward trend with increasing queries, with ReAct-7@ achieving the highest accuracy among the ReAct models.

### Key Observations

* TalkHier+GPT4o significantly outperforms all other configurations, exceeding OpenAI's GPT-4 accuracy by 3.28%.

* AgentVerse and AgentPrune perform similarly, both exceeding GPT-4's accuracy.

* AutoGPT, GPTSwarm, and GPT4o have considerably lower accuracy scores compared to the top performers.

* Increasing the number of queries (@) for GPT4o and ReAct models generally leads to a slight improvement in accuracy, but the gains diminish.

* ReAct-7@ achieves the highest accuracy among the ReAct models, approaching the performance of AgentVerse and AgentPrune.

### Interpretation

The data suggests that the TalkHier+GPT4o configuration is a highly effective approach for the MMLU benchmark, surpassing even OpenAI's GPT-4. The improvement could be attributed to the specific architecture or training methodology of TalkHier. The performance of AgentVerse and AgentPrune indicates that agent-based approaches can also yield strong results. The lower accuracy of AutoGPT, GPTSwarm, and GPT4o suggests that these models may require further optimization or different prompting strategies to achieve comparable performance. The slight improvements observed with increasing queries for GPT4o and ReAct models suggest that iterative refinement can be beneficial, but there may be a point of diminishing returns. The chart highlights the importance of model configuration and architecture in achieving high accuracy on complex language understanding tasks. The consistent upward trend in ReAct performance with more queries suggests that the model benefits from more opportunities to reason and refine its responses.

</details>

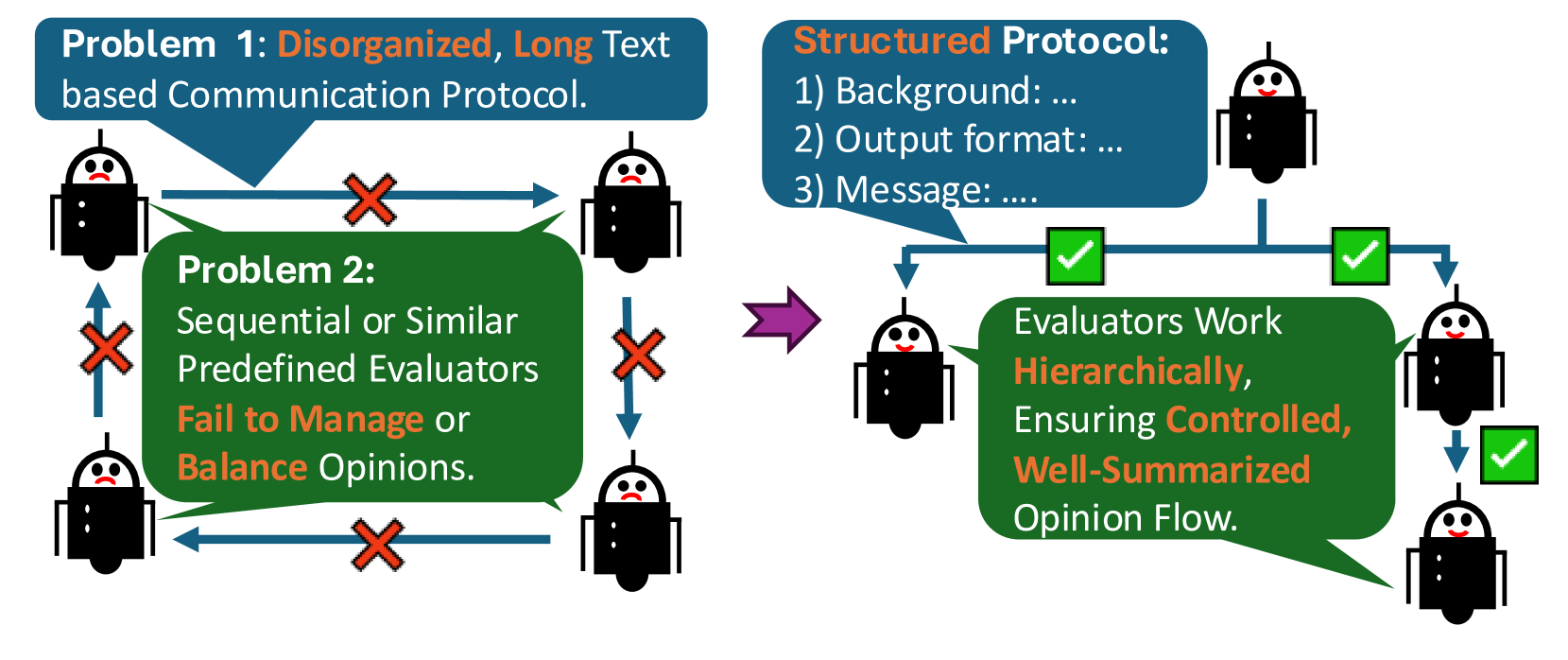

Figure 1: Existing LLM-MA methods (left) face two major challenges: 1) disorganized, lengthy text-based communication protocols, and 2) sequential or overly similar flat multi-agent refinements. In contrast, TalkHier (right) introduces a well-structured communication protocol and a hierarchical refinement approach.

<details>

<summary>x2.png Details</summary>

### Visual Description

\n

## Diagram: Communication Protocol Problems & Solution

### Overview

The image presents a diagram illustrating two problems with communication protocols and a proposed structured protocol as a solution. The left side depicts two problematic scenarios, while the right side shows the improved flow with the structured protocol. The diagram uses stylized robot icons and arrows to represent communication and evaluation processes.

### Components/Axes

The diagram is divided into two main sections: "Problem 1 & 2" on the left and "Structured Protocol" on the right.

* **Problem 1:** "Disorganized, Long Text based Communication Protocol."

* **Problem 2:** "Sequential or Similar Predefined Evaluators Fail to Manage or Balance Opinions."

* **Structured Protocol:**

* 1) Background: ...

* 2) Output format: ...

* 3) Message: ...

* **Icons:** Stylized robot heads representing communicators/evaluators.

* **Arrows:** Blue arrows with red "X" marks indicate failed or problematic communication. Green arrows with checkmarks indicate successful communication.

* **Text Box:** "Evaluators Work Hierarchically, Ensuring Controlled, Well-Summarized Opinion Flow."

### Detailed Analysis or Content Details

**Problem 1:** Two robot icons are connected by a bidirectional blue arrow with a red "X" mark in the center. This indicates a failed communication attempt due to a disorganized, long-text protocol.

**Problem 2:** Four robot icons are arranged in a square, with bidirectional blue arrows and red "X" marks between them. This illustrates a failure in managing or balancing opinions when using sequential or similar predefined evaluators.

**Structured Protocol:** A series of three robot icons are connected by green arrows with checkmarks. The first robot sends a message to the second, which then passes it to the third. The flow is unidirectional. A text box positioned near the middle of the flow states: "Evaluators Work Hierarchically, Ensuring Controlled, Well-Summarized Opinion Flow." The protocol is defined by three steps: Background, Output format, and Message. The content of each step is represented by "...".

### Key Observations

* The diagram clearly contrasts problematic communication scenarios with a proposed solution.

* The use of red "X" marks and green checkmarks effectively highlights the success or failure of communication.

* The hierarchical structure of the "Structured Protocol" is emphasized by the unidirectional flow and the text box.

* The problems are presented as cyclical, while the solution is linear.

### Interpretation

The diagram suggests that unstructured, long-form communication and the use of similar evaluators can lead to communication breakdowns and unbalanced opinions. The proposed "Structured Protocol" aims to address these issues by introducing a hierarchical evaluation process that ensures controlled and well-summarized opinion flow. The three-step protocol (Background, Output format, Message) likely represents a framework for organizing communication to improve clarity and efficiency. The diagram implies that a structured approach is crucial for effective communication, particularly when dealing with multiple evaluators and potentially conflicting opinions. The use of robots suggests this protocol is intended for automated or AI-driven communication systems. The "..." in the protocol steps indicate that the specific details of each step are not defined within the diagram, but the framework is presented.

</details>

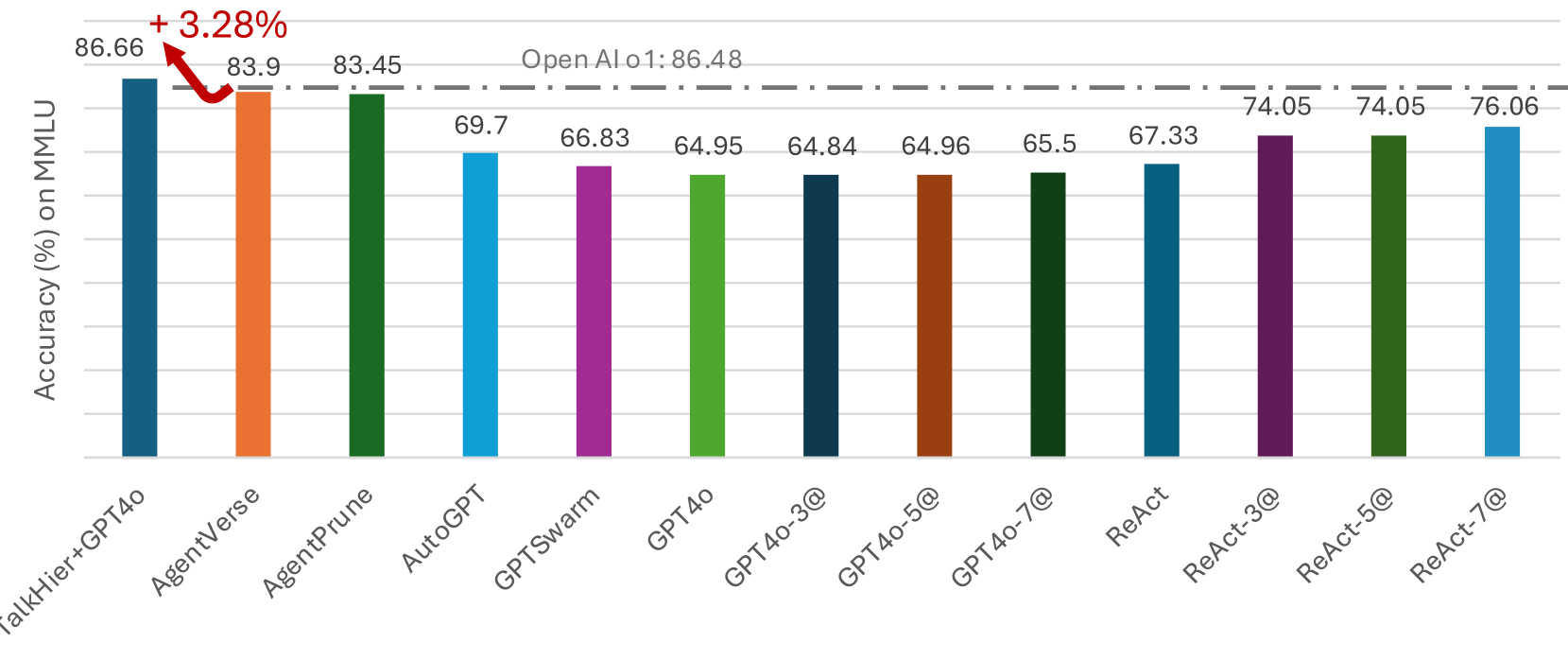

Figure 2: Our TalkHier built on GPT4o surpasses inference scaling models (OpenAI-o1), open-source multi-agent models (AgentVerse and etc.), and models with majority voting strategies (ReAct, GPT4o) on five subtasks of MMLU.

Recent research has primarily focused on LLM-based Multi-Agent (LLM-MA) systems, which leverage collective intelligence and specialize each agent with the corresponding subtasks, to solve complicated and multi-step problems. For instance, previous works on LLM-MA have explored approaches where instances of LLMs, referred to as agents Xi et al. (2023); Gao et al. (2023); Wang et al. (2024a); Cheng et al. (2024); Ma et al. (2024), collaborate synergistically by debate Chen et al. (2024), reflection He et al. (2024), self-refinement Madaan et al. (2023), or multi-agent based feedback refinement Yang et al. (2023). These systems employ diverse communication topologies to enable efficient interactions between agents such as Chain Wei et al. (2022) and Tree Yao et al. (2023) structures, among others Qian et al. (2024); Zhuge et al. (2024); Zhang et al. (2024a).

Despite the promising advancements in LLM-MA systems, several challenges in this field remain unexplored (shown in Figure 1):

1) Disorganized communication in text form. Agents often engage in debates Zhao et al. (2024), share insights Chen et al. (2024), or perform refinement Madaan et al. (2023); Yang et al. (2023) to effectively solve complex tasks, with their exchanges primarily in text form Guo et al. (2024). However, communication often becomes disorganized because it requires explicitly describing agent tasks, providing background context for the communication, and specifying the required output formats. These factors together lead to lengthy and unstructured exchanges, making it difficult for agents to manage subgoals, maintain output structures, and retrieve independent memories from prior actions and observations.

2) Refinement schemes. While some studies have shown that incorporating agent debates Chen et al. (2024) or evaluation-based multi-agent refinement Wang et al. (2023); Yang et al. (2023) can improve system accuracy, these approaches also expose significant limitations. As the number of agents increases, LLM-MA systems face challenges in effectively summarizing opinions or feedback Fang et al. (2024). They often fail to balance these inputs, frequently overlooking some or exhibiting biases based on the order in which feedback is provided Errica et al. (2024).

In this paper, we propose a novel collaborative LLM-MA framework called Talk Structurally, Act Hierarchically (TalkHier) -the first collaborative LLM-MA framework to integrate a well-structured communication protocol with hierarchical refinement. Our key contributions shown in Figure 1 and 2 are as follows:

1. Well-Structured, Context-Rich Communication Protocol: TalkHier introduces a novel communication protocol that incorporates newly proposed elements: messages, intermediate outputs, and relevant background information. These components form the foundation of a well-structured protocol that organizes agent communication, ensuring clarity and precision. By embedding these elements, TalkHier significantly improves communication accuracy and efficiency compared to traditional text-based methods.

1. Hierarchical Refinement in LLM-MA Systems: TalkHier enhances traditional multi-agent evaluation systems with a hierarchical refinement framework, enabling agents to act hierarchically. This approach addresses such as the difficulty in summarizing opinions or feedback as the number of agents increases, balancing diverse inputs, and mitigating biases caused by the order of feedback processing, resulting in more reliable and robust interactions.

1. State-of-the-Art Results Across Benchmarks: Experimental results show that TalkHier achieves state-of-the-art performance on diverse benchmarks, including selective problem-solving in complex sub-domains, open question answering, and Japanese text generation tasks. Ablation studies confirm the effectiveness of each component, demonstrating their contributions to the framework’s overall success.

<details>

<summary>x3.png Details</summary>

### Visual Description

\n

## Diagram: Communication Protocols in Multi-Agent Systems

### Overview

The image presents a comparative diagram illustrating two communication protocols in multi-agent systems: "Context-Poor Communication" and "Structured Communication." Each protocol is depicted with a Supervisor agent and two Member agents (Agent 1 and Agent 2). The diagram highlights the differences in task assignment and response quality between the two approaches. The diagram also includes a scoring rubric for evaluating responses.

### Components/Axes

The diagram is divided into two main sections, one for each communication protocol. Each section contains:

* **Supervisor:** Represented by a head icon, initiating tasks.

* **Member Agent 1 (Generator):** Represented by a head icon, generating responses. Labeled with "(Role: Generator)".

* **Member Agent 2 (Evaluator):** Represented by a head icon, evaluating responses. Labeled with "(Role: Evaluator)".

* **Communication Arrows:** Illustrating the flow of prompts, outputs, and evaluations.

* **Text Boxes:** Containing example prompts, responses, and evaluation criteria.

* **Scoring Rubric:** A table at the bottom detailing evaluation criteria (a-b) and scores.

### Detailed Analysis or Content Details

**Context-Poor Communication (Left Side):**

1. **Prompt (Supervisor to Agent 1):** "Your sub task is…You need…" (Only-text based, Length Task).

2. **Agent 1 Response:** "The output of mine is…" Bad Response due to Non-organized instruction.

3. **Prompt (Supervisor to Agent 2):** "Your sub task is…You need…" (Only-text based, Length Task).

4. **Agent 2 Response:** "The output of mine is…" Bad Response due to Non-organized instruction.

5. **Annotation:** "Context-Poor Communication: Assigning the next agent without providing well-organized information. ② Bad Responses: Producing poor responses due to forgetting key issues, such as the context of the question or intermediate format."

**Structured Communication (Right Side):**

1. **Prompt (Supervisor to Agent 1):** "Generate text…"

2. **Agent 1 Response:** "My generated text is…"

3. **Prompt (Supervisor to Agent 2):** "Evaluate it from…"

4. **Agent 2 Response:** "My evaluated result is…" These are good as…

5. **Annotation:** "Structured Communication: Assigning specific subtask to the next agent with message, intermediate output and background. ② Accurate responses: including response and other intermediate output."

**Scoring Rubric (Bottom):**

The rubric is presented as a table with two rows and two columns.

| | a. (Placeholder-Criterion 1) | b. (Placeholder-Criterion 2) |

|--------------|------------------------------|------------------------------|

| **A** | "The [placeholder-Criterion 1] is good but the [placeholder-Criterion 2] is not good" | "The Score of [placeholder-Criterion 1] is…" |

| **B** | "Evaluate it from [placeholder-Criterion 2]" | "The Score of [placeholder-Criterion 2] is…" |

**Additional Text:**

* "How Many?"

* "In what Format?"

* "Metrics? Evaluate what?"

* "To finish this I need…"

### Key Observations

* The "Structured Communication" protocol consistently yields "good" responses, while the "Context-Poor Communication" protocol results in "bad" responses.

* The scoring rubric uses placeholder criteria, suggesting it's a template for evaluation.

* The diagram emphasizes the importance of providing context and intermediate outputs in multi-agent communication.

* The diagram uses a consistent visual style with head icons representing agents and arrows indicating communication flow.

### Interpretation

The diagram demonstrates the critical role of well-defined communication protocols in multi-agent systems. The "Structured Communication" approach, which includes clear task assignments, intermediate outputs, and background information, leads to more accurate and useful responses. Conversely, the "Context-Poor Communication" approach, lacking these elements, results in poor-quality responses. The scoring rubric suggests a framework for evaluating the quality of responses based on specific criteria.

The diagram highlights a fundamental principle in AI and distributed systems: effective communication is essential for collaboration and achieving desired outcomes. The use of placeholders in the scoring rubric indicates that the specific evaluation criteria will vary depending on the task and the agents involved. The diagram serves as a visual guide for designing and implementing robust communication protocols in multi-agent systems. The diagram is a conceptual illustration, and the specific details of the prompts and responses are examples to demonstrate the difference in outcomes. The diagram is not presenting factual data, but rather a conceptual comparison.

</details>

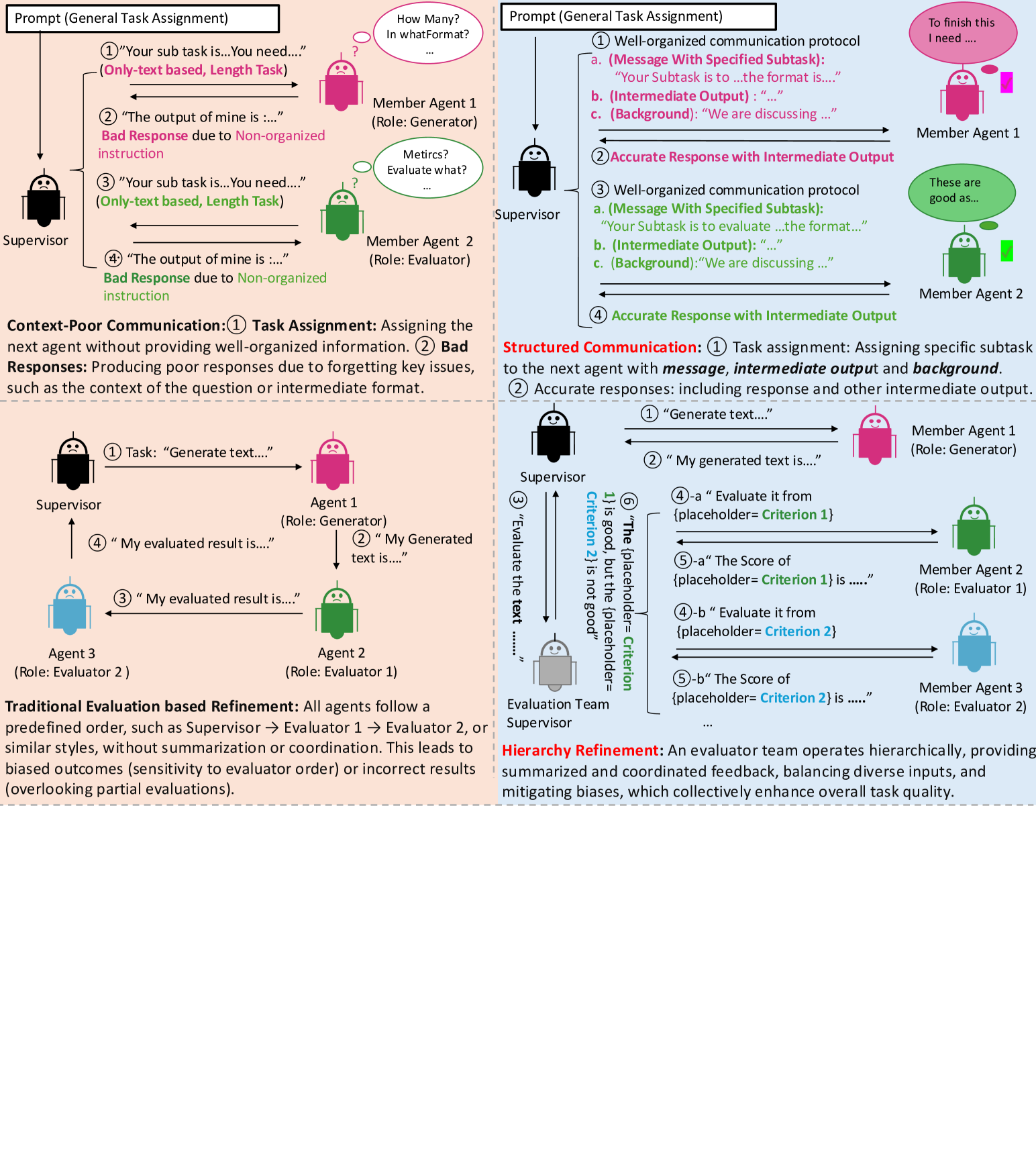

Figure 3: Comparisons between existing approaches (left) and ours (right). Our TalkHier proposes a new communication protocol (first row) featuring context-rich and well-structured communication information, along with a collaborative hierarchical refinement (second row) where evaluations provide summarized and coordinated feedback within an LLM-MA framework.

2 Related Work

Collaborative LLM-MA.

LLM-MA systems enable agents to collaborate on complex tasks through dynamic role allocation, communication, and task execution Guo et al. (2024); Han et al. (2024). Recent advancements include agent profiling Yang et al. (2024), hierarchical communication Rasal (2024), and integration of reasoning and intentions Qiu et al. (2024). However, challenges remain in ensuring robust communication, avoiding redundancy, and refining evaluation processes Talebirad and Nadiri (2023). Standardized benchmarks and frameworks are needed to drive future progress Li et al. (2024).

Communication in LLM-MA.

Effective communication is crucial for collaborative intelligence Guo et al. (2024). While many previous works, including chain (Wei et al., 2022), tree (Yao et al., 2023), complete graph (Qian et al., 2024), random graph (Qian et al., 2024), optimizable graph (Zhuge et al., 2024), and pruned graph (Zhang et al., 2024a) methods have focused on communication topologies, there has been limited discussion on the optimal form of communication. Most systems rely on text-based exchanges Zhang et al. (2024a); Shen et al. (2024), which is inefficient and prone to errors as agents often lose track of subtasks or fail to recall prior outputs as tasks grow in complexity. We argue for structured communication protocols that guide subtasks with clear, context-specific instructions, ensuring coherence across interactions.

Feedback-Based Refinement.

Feedback mechanisms, such as Self-Refine Madaan et al. (2023) and generator-evaluator frameworks Wang et al. (2023), improve system accuracy through iterative refinement. However, these methods face challenges in managing diverse feedback, which can lead to bias or inefficiencies if inputs are not well-organized Xu et al. (2024). Scalable, unbiased solutions are essential to enhance multi-agent evaluation processes.

3 Methodology

TalkHier aims to design a LLM-MA system represented as a graph $\mathcal{G}=(\mathcal{V},\mathcal{E})$ , where $\mathcal{V}$ denotes the set of agents (nodes) and $\mathcal{E}$ represents the set of communication pathways (edges). Given an input problem $p$ , the system dynamically defines a set of communication events $\mathcal{C}_{p}$ , where each event $c_{ij}^{(t)}∈\mathcal{C}_{p}$ represents a communication between agents $v_{i}$ and $v_{j}$ along an edge $e_{ij}∈\mathcal{E}$ at time step $t$ . While the graph structure $\mathcal{G}$ remains fixed, the communication events $\mathcal{C}_{p}$ are dynamic and adapt to the specific task.

Supervisor Prompt Template

Team Members: [Description of each team member’s role] Conversation History: [Independent Conversation History] Given the conversation above, output the following in this exact order: 1. ‘thoughts’: Output a detailed analysis on the most recent message. In detail, state what you think should be done next, and who you think you should contact next. 2. Who should act next? Select one of: [Team member names] and output as ‘next’. When you have determined that the final output is gained, report back with FINISH. 3. ‘messages’: If the next agent is one of [Team member names], give detailed instructions. If FINISH, report a summary of all results. 4. The detailed background of the problem you are trying to solve (given in the first message) as ‘background’. 5. The intermediate outputs to give as ‘intermediate_output’.

Member Prompt Template

[Role of member] Background: [Background information given by Supervisor] Conversation History: [Independent Conversation History]

Figure 4: Prompts for acquiring the contents of the context-rich, structured communication protocol in TalkHier.

3.1 Agents with Independent Memory

Each agent $v_{i}∈\mathcal{V}$ in graph $\mathcal{G}$ can be formally represented as:

$$

v_{i}=\left(\texttt{Role}_{i},\texttt{Plugins}_{i},\texttt{Memory}_{i},\texttt%

{Type}_{i}\right).

$$

$\texttt{Role}_{i}$ : Assign roles such as generator, evaluator, or revisor based on the task type. $\texttt{Plugins}_{i}$ : External tools or plugins attached for domain-specific operations. $\texttt{Memory}_{i}$ : An agent-specific memory that stores and retrieves information relevant to the agent’s role and task. $\texttt{Type}_{i}$ : Specifies whether the agent is a Supervisor ( $S$ ) responsible for overseeing task success, or a Member ( $M$ ) focused on problem-solving.

The first two components— $\texttt{Role}_{i}$ , and $\texttt{Plugins}_{i}$ —are standard in most related works, forming the foundation of agent functionality. Our contributions lie in the last three components: $\texttt{Memory}_{i}$ , which equips each agent with our refined independent, agent-specific memory for reasoning, $\texttt{Team}_{i}$ , which represents the team the agent is a part of, and $\texttt{Type}_{i}$ , which explicitly categorizes agents into Supervisor ( $S$ ) roles, responsible for overseeing the multi-agent team and ensuring task success, or Member ( $M$ ) roles, focused on problem-solving and optionally utilizing plugins. These additions enable hierarchical, structured collaboration and role-specific operations within the framework.

Agent-Specific Memory.

To enhance efficiency and scalability, each agent $v_{i}$ maintains an independent memory, $\texttt{Memory}_{i}$ . Unlike long-term memory, which relies on a shared memory pool accessible by all agents, or short-term memory, which is limited to a single session or conversational thread, our proposed memory mechanism is agent-specific but not limited to session or conversational thread.

TalkHier allows each agent to independently retain and reason on its past interactions and knowledge, offering two key advantages: independence, where each agent’s memory operates without interference from others, avoiding centralized dependencies; and persistence, enabling agents to maintain historical data across sessions for consistent and informed decision-making.

3.2 Context-Rich Communication Between Agents

Communication between agents is represented by communication events $c_{ij}^{(t)}∈\mathcal{C}_{p}$ , where each event $c_{ij}^{(t)}$ encapsulates the interaction from agent $v_{i}$ to agent $v_{j}$ along an edge $e_{ij}∈\mathcal{E}$ at time step $t$ . Formally, a communication event $c_{ij}^{(t)}$ is defined as:

$$

c_{ij}^{(t)}=({\mathbf{M}_{ij}^{(t)},\mathbf{B}_{ij}^{(t)},\mathbf{I}_{ij}^{(t%

)}}),

$$

where $\mathbf{M}_{ij}^{(t)}$ indicates the message content sent from $v_{i}$ to $v_{j}$ , containing instructions or clarifications, $\mathbf{B}_{ij}^{(t)}$ denotes background information to ensure coherence and task progression, including the problem’s core details and intermediate decisions, and $\mathbf{I}_{ij}^{(t)}$ refers to the intermediate output generated by $v_{i}$ , shared with $v_{j}$ to support task progression and traceability, all at time step $t$ . These structures ensure that agents of TalkHier accomplish efficient communication and task coordination.

Input: Initial output $\mathbf{A}_{0}$ generated by the Generator node $v_{\text{main}}^{\text{Gen}}$ , quality threshold $\mathcal{M}_{\text{threshold}}$ , maximum iterations $T_{\text{max}}$

Output: Final output $\mathbf{A}_{\text{final}}$

1

2 Initialize iteration counter $t← 0$

3

4 repeat

$t← t+1$ // Step 1: Task Assignment from $v^{s}_{main}$ to $v^{s}_{eval}$

$\mathbf{T}_{\text{assign}}^{(t)}=\{(\texttt{Role}_{v_{\text{eval}}^{S}},%

\texttt{Criteria}_{v_{\text{eval}}^{S}})\}$ // Step 2: Task Distribution by $v^{s}_{eval}$

$\mathbf{T}_{\text{distribute}}^{(t)}=\{(\texttt{Criterion}_{v_{\text{eval}}^{E%

_{i}}})\}_{i=1}^{k}$ // Step 3: Evaluation

5 $\mathbf{F}_{v_{\text{eval}}^{E_{i}}}^{(t)}=f_{\text{evaluate}}(\mathbf{A}_{t-1%

},\texttt{Criterion}_{v_{\text{eval}}^{E_{i}}}),\quad∀ v_{\text{eval}}^{%

E_{i}}∈\mathcal{V}_{\text{eval}}$

$\mathbf{F}_{\text{eval}}^{(t)}=\{\mathbf{F}_{v_{\text{eval}}^{E_{1}}}^{(t)},%

...,\mathbf{F}_{v_{\text{eval}}^{E_{k}}}^{(t)}\}$ // Step 4: Feedback Aggregation by $v^{s}_{eval}$

$\mathbf{F}_{\text{summary}}^{\text{eval}}=f_{\text{summarize}}(\mathbf{F}_{%

\text{eval}}^{(t)})$ // Step 5: Summarizing results

6 if $\mathcal{M}(\mathbf{F}_{\text{summary}}^{\text{eval}})≥\mathcal{M}_{\text{%

threshold}}$ then

return $\mathbf{A}_{\text{final}}=\mathbf{A}_{t-1}$ // Step 6: Return the current text if above threshold

7

$\mathbf{A}_{t}=f_{\text{revise}}(\mathbf{A}_{t-1},\mathbf{F}_{\text{summary}}^%

{\text{eval}})$ // Step 7: Revision of the text

8

9 until $t≥ T_{\text{max}}$

10 return $\mathbf{A}_{\text{final}}=\mathbf{A}_{t}$

Algorithm 1 Hierarchical Refinement

Communication Event Sequence.

At each time step $t$ , the current agent $v_{i}$ communicates with a connected node $v_{j}$ , with one being selected by the LLM if more than one exists. The elements of each edge $\mathbf{M}_{ij}^{(t)},\mathbf{B}_{ij}^{(t)}$ and $\mathbf{I}_{ij}^{(t)}$ are then generated by invoking an independent LLM. To ensure consistency, clarity, and efficiency in extracting these elements, the system employs specialized prompts tailored to the roles of Supervisors and Members, as illustrated in Figure 4. Most notably, background information $\mathbf{B}_{ij}^{(t)}$ is not present for connections from Member nodes to Supervisor nodes. These information are then established as a communication event $c_{ij}^{(t)}∈\mathcal{C}_{p}$ .

3.3 Collaborative Hierarchy Agent Team

<details>

<summary>extracted/6207754/figure/hierchy.png Details</summary>

### Visual Description

\n

## Diagram: System Architecture - Evaluation Loop

### Overview

The image depicts a diagram illustrating a system architecture involving a generation, evaluation, and revision loop. It shows interconnected nodes representing different components of the system, with arrows indicating the flow of information or processes. The diagram appears to represent a cyclical process of generating content, evaluating it, and revising based on the evaluation.

### Components/Axes

The diagram consists of several nodes connected by directed edges (arrows). The nodes are labeled as follows:

* **ν<sup>Gen</sup><sub>main</sub>**: Located at the top-left.

* **ν<sup>S</sup><sub>main</sub>**: Located at the top-center.

* **ν<sup>Rev</sup><sub>main</sub>**: Located at the top-right.

* **ν<sup>S</sup><sub>eval</sub>**: Located in the center.

* **ν<sup>E<sub>1</sub></sup><sub>eval</sub>**: Located at the bottom-left.

* **ν<sup>E<sub>2</sub></sup><sub>eval</sub>**: Located at the bottom-center.

* **...**: Indicates that there are more evaluation nodes beyond ν<sup>E<sub>2</sub></sup><sub>eval</sub>.

The arrows represent the flow between these nodes.

### Detailed Analysis or Content Details

The diagram shows the following connections:

1. An arrow connects **ν<sup>Gen</sup><sub>main</sub>** to **ν<sup>S</sup><sub>eval</sub>**.

2. An arrow connects **ν<sup>S</sup><sub>main</sub>** to **ν<sup>S</sup><sub>eval</sub>**.

3. An arrow connects **ν<sup>Rev</sup><sub>main</sub>** to **ν<sup>S</sup><sub>eval</sub>**.

4. An arrow connects **ν<sup>S</sup><sub>eval</sub>** to **ν<sup>E<sub>1</sub></sup><sub>eval</sub>**.

5. An arrow connects **ν<sup>S</sup><sub>eval</sub>** to **ν<sup>E<sub>2</sub></sup><sub>eval</sub>**.

6. The "..." suggests that **ν<sup>S</sup><sub>eval</sub>** connects to further evaluation nodes (ν<sup>E<sub>n</sub></sup><sub>eval</sub>).

The superscript "S" likely denotes a "source" or "state", while "Gen" stands for "generation", "Rev" for "revision", and "Eval" for "evaluation". The subscript "main" likely indicates a primary or main component. The subscript "E<sub>1</sub>", "E<sub>2</sub>" likely indicates evaluation components.

### Key Observations

The diagram highlights a closed-loop system. The generation, main state, and revision components feed into the evaluation component, which in turn provides feedback (through the "..." notation) to potentially influence future generations or revisions. The central role of **ν<sup>S</sup><sub>eval</sub>** suggests it is a critical component in the system's operation.

### Interpretation

This diagram likely represents a system for iterative improvement, such as a machine learning model training loop or a content creation and refinement process.

* **ν<sup>Gen</sup><sub>main</sub>** could represent a generator network or a content creation module.

* **ν<sup>S</sup><sub>main</sub>** could represent the current state of the system.

* **ν<sup>Rev</sup><sub>main</sub>** could represent a revision or refinement module.

* **ν<sup>S</sup><sub>eval</sub>** could represent an evaluation module that assesses the quality or performance of the generated content or system state.

* **ν<sup>E<sub>1</sub></sup><sub>eval</sub>**, **ν<sup>E<sub>2</sub></sup><sub>eval</sub>**, etc., could represent individual evaluation metrics or evaluators.

The flow of information suggests that the system generates content, assesses its quality, revises it based on the evaluation, and repeats the process. The "..." notation indicates that the evaluation process can be scaled to include multiple evaluation metrics or evaluators. The diagram emphasizes the importance of continuous evaluation and refinement in achieving a desired outcome. The diagram does not provide any quantitative data, but rather a qualitative representation of the system's architecture and workflow.

</details>

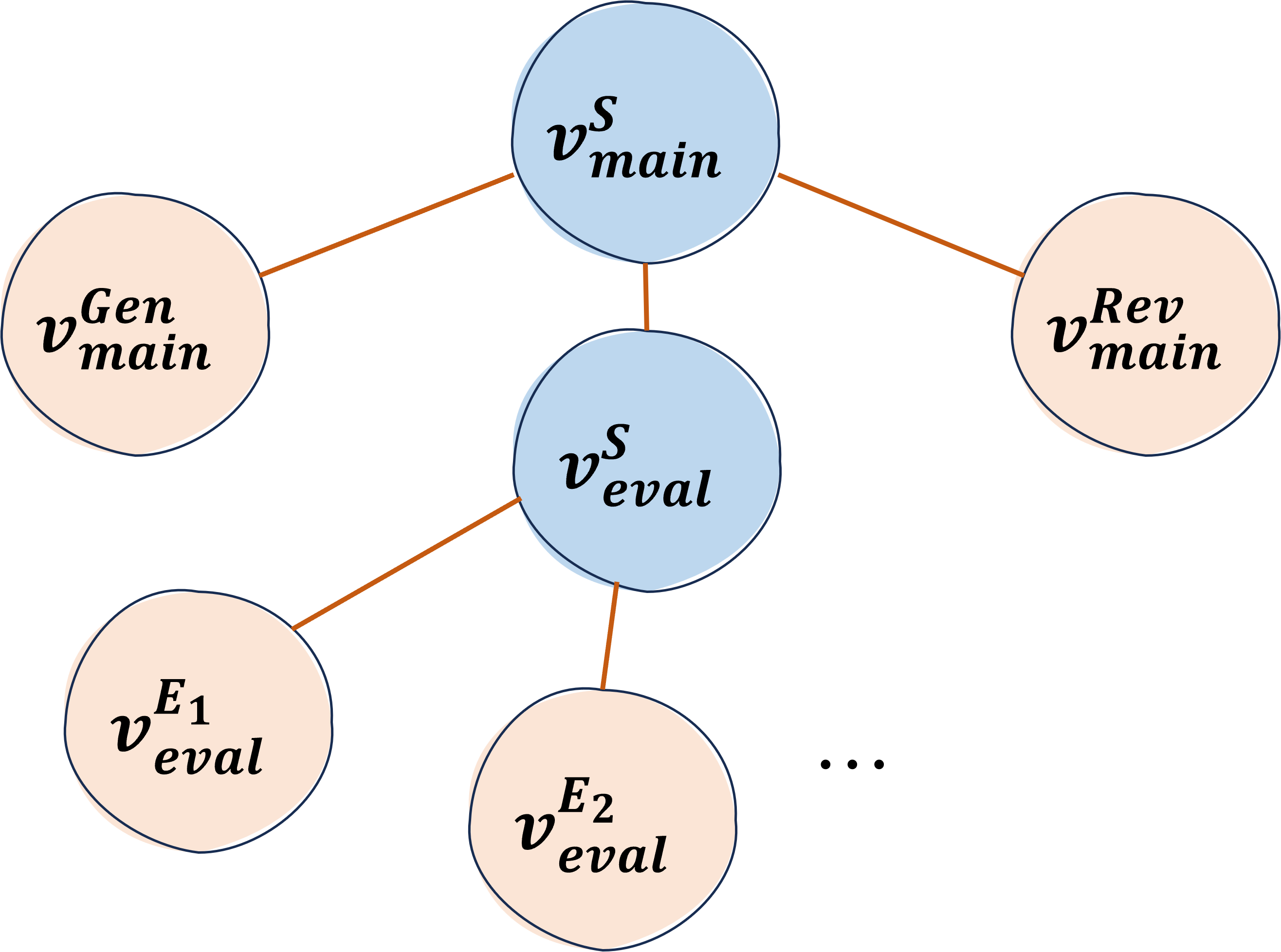

Figure 5: Illustrated hierarchy of TalkHier.

The entire graph $\mathcal{G}$ consists of multiple teams, each represented as a subset $\mathcal{V}_{\text{team}}⊂eq\mathcal{V}$ . Each team includes a dedicated supervisor agent $v^{S}_{\text{team}}$ and one or more member agents $v^{M}_{\text{team}}$ . A key feature of the hierarchical structure in TalkHier is that a member agent in one team can also act as a supervisor for another team, creating a nested hierarchy of agent teams. As shown in the second row of Figure 3, this structure enables the entire graph $\mathcal{G}$ to represent a hierarchical node system, where teams are recursively linked through supervisor-member relationships.

Formally, the hierarchical structure of agents with two teams is defined as:

| | $\displaystyle\mathcal{V}_{\text{main}}$ | $\displaystyle=\{v_{\text{main}}^{S},v_{\text{main}}^{\text{Gen}},v_{\text{eval%

}}^{S},v_{\text{main}}^{\text{Rev}}\},$ | |

| --- | --- | --- | --- |

where the Main Supervisor ( $v_{\text{main}}^{S}$ ) and Evaluation Supervisor ( $v_{\text{eval}}^{S}$ ) oversee their respective team’s operations and assign tasks to each member, the Generator ( $v_{\text{main}}^{\text{Gen}}$ ) gives solutions for a given problem, and the Revisor ( $v_{\text{main}}^{\text{Rev}}$ ) refines outputs based on given feedback. Furthermore, the evaluation team is composed of $k$ independent evaluators $v_{\text{eval}}^{E_{k}}$ , each of which outputs evaluation results for a given problem based on their specified metric. The overall structure is shown in Figure 5.

Algorithm.

Algorithm 1 illustrates the operation of our hierarchical refinement process within the collaborative agent framework. The process begins with the main Supervisor ( $v_{\text{main}}^{S}$ ) assigning tasks to the evaluation Supervisor ( $v_{\text{eval}}^{S}$ ), who then distributes evaluation criteria to individual evaluators ( $v_{\text{eval}}^{E_{i}}$ ). Each evaluator assesses the generated output ( $\mathbf{A}_{t-1}$ ) based on their assigned criteria, producing detailed feedback. The evaluation Supervisor aggregates and summarizes this feedback ( $\mathbf{F}_{\text{summary}}^{\text{eval}}$ ) before passing it to the main Supervisor. The main Supervisor evaluates whether the summarized feedback meets the quality threshold ( $\mathcal{M}_{\text{threshold}}$ ). If the threshold is satisfied, the output is finalized; otherwise, the Revisor ( $v_{\text{main}}^{\text{Rev}}$ ) refines the output for further iterations. This iterative refinement ensures accurate and unbiased collaboration across the agent hierarchy.

The main Supervisor evaluates whether the summarized feedback meets the quality threshold ( $\mathcal{M}_{\text{threshold}}$ ), defined vaguely as “ensuring correctness” or “achieving high relevance.” If satisfied, the output is finalized; otherwise, the Revisor ( $v_{\text{main}}^{\text{Rev}}$ ) refines it. Details of our settings are in Appendix B, Appendix C, and Appendix D.

4 Experiments

In this section, we aim to answer the following research questions across various domains:

RQ1: Does TalkHier outperform existing multi-agent, single-agent, and proprietary approaches on general benchmarks?

RQ2: How does TalkHier perform on open-domain question-answering tasks?

RQ3: What is the contribution of each component of TalkHier to its overall performance?

RQ4: How well does TalkHier generalize to more practical but complex generation task?

4.1 Experimental Setup

Datasets.

We evaluated TalkHier on a diverse collection of datasets to assess its performance across various tasks. The Massive Multitask Language Understanding (MMLU) Benchmark Hendrycks et al. (2021) tests domain-specific reasoning problems including Moral Scenario, College Physics, Machine Learning, Formal Logic and US Foreign Policy. WikiQA Yang et al. (2017) evaluates open-domain question-answering using real-world questions from Wikipedia. The Camera Dataset Mita et al. (2024) focuses on advertisement headline generation, assessing the ability to create high-quality advertising text.

Baselines.

To evaluate TalkHier, we compared it against a comprehensive set of baselines including:

- GPT-4o OpenAI (2024a), based on OpenAI’s GPT-4 model with both single-run and ensemble majority voting (3, 5, or 7 runs).

- OpenAI-o1-preview OpenAI (2024b), a beta model using advanced inference techniques, though limited by API support.

- ReAct Yao et al. (2022), a reasoning and action framework in single-run and ensemble configurations.

- AutoGPT Gravitas (2023), an autonomous agent designed for task execution and iterative improvement.

- AgentVerse OpenBMB (2023), a multi-agent system framework for collaborative problem-solving.

- GPTSwarm Zhuge et al. (2024), a swarm-based agent collaboration model utilizing optimizable communication graphs.

- AgentPrune Zhang et al. (2024a), a model leveraging pruning techniques for efficient multi-agent communication and reasoning.

- OKG Wang et al. (2025), A method tailored specifically for ad text generation tasks and easily generalizable to ad headlines with minimal prompt redefinition.

Implementation details.

For fair comparisons, we use GPT-4o as the backbone across all experiments for the baselines and TalkHier, with the temperature set to 0 in all settings. For the OpenAI-o1 baseline, we followed the implementation guide and the limitations outlined in OpenAI’s documentation https://platform.openai.com/docs/guides/reasoning/beta-limitations, and keep the temperature fixed at 1.

4.2 Performance on MMLU (RQ1)

Table 1: General Performance on MMLU Dataset. The table reports accuracy (%) for various baselines across Moral Scenario (Moral), College Physics (Phys.), Machine Learning (ML), Formal Logic (FL) and US Foreign Policy (UFP) domains. The notations 3@, 5@, and 7@ represent majority voting results using 3, 5, and 7 independent runs, respectively.

| Models GPT4o GPT4o-3@ | Moral 64.25 65.70 | Phys. 62.75 62.75 | ML 67.86 66.07 | FL 63.49 66.67 | UFP 92.00 91.00 | Avg. 70.07 70.44 |

| --- | --- | --- | --- | --- | --- | --- |

| GPT4o-5@ | 66.15 | 61.76 | 66.96 | 66.67 | 92.00 | 70.71 |

| GPT4o-7@ | 65.81 | 63.73 | 66.96 | 68.25 | 91.00 | 71.15 |

| ReAct | 69.61 | 72.55 | 59.82 | 32.54 | 58.00 | 58.50 |

| ReAct-3@ | 74.75 | 83.33 | 66.07 | 52.38 | 53.00 | 65.91 |

| ReAct-5@ | 74.97 | 82.35 | 66.96 | 46.83 | 63.00 | 66.82 |

| ReAct-7@ | 75.53 | 84.78 | 67.86 | 50.79 | 57.00 | 67.19 |

| AutoGPT | 66.37 | 78.43 | 64.29 | 60.83 | 90.00 | 71.98 |

| AgentVerse | 79.11 | 93.14 | 79.46 | 78.57 | 88.00 | 83.66 |

| GPTSwarm | 60.48 | 67.70 | 72.32 | 68.33 | 57.00 | 65.17 |

| AgentPrune | 70.84 | 91.18 | 81.25 | 81.75 | 93.00 | 83.60 |

| o1-preview | 82.57 | 91.17 | 85.71 | 83.33 | 95.00 | 87.56 |

| TalkHier (Ours) | 83.80 | 93.14 | 84.68 | 87.30 | 93.00 | 88.38 |

Table 1 reports the average accuracy of various models on the five domains of MMLU dataset. TalkHier, built on GPT-4o, achieves the highest average accuracy (88.38%), outperforming open-source multi-agent models (e.g., AgentVerse, 83.66%) and majority voting strategies applied to current LLM and single-agent baselines (e.g., ReAct-7@, 67.19%; GPT-4o-7@, 71.15%). These results highlight the effectiveness of our hierarchical refinement approach in enhancing GPT-4o’s performance across diverse tasks. Although OpenAI-o1 cannot be directly compared to TalkHier and other baselines—since they are all built on GPT-4o and OpenAI-o1’s internal design and training data remain undisclosed— TalkHier achieves a slightly higher average score (88.38% vs. 87.56%), demonstrating competitive performance.

4.3 Evaluation on WikiQA Benchmark (RQ2)

We evaluated TalkHier and baselines on the WikiQA dataset, an open-domain question-answering benchmark. Unlike MMLU, WikiQA requires generating textual answers to real-world questions. The quality of generated answers was assessed using two metrics: Rouge-1 Lin (2004), which measures unigram overlap between generated and reference answers, and BERTScore Zhang et al. (2020), which evaluates the semantic similarity between the two.

Table 2 shows that TalkHier outperforms baselines in both Rouge-1 and BERTScore, demonstrating its ability to generate accurate and semantically relevant answers. While other methods, such as AutoGPT and AgentVerse, perform competitively, their scores fall short of TalkHier, highlighting its effectiveness in addressing open-domain question-answering tasks.

Table 2: Evaluation Results on WikiQA. The table reports Rouge-1 and BERTScore for various models.

| Models GPT4o ReAct | Rouge-1 0.2777 0.2409 | BERTScore 0.5856 0.5415 |

| --- | --- | --- |

| AutoGPT | 0.3286 | 0.5885 |

| AgentVerse | 0.2799 | 0.5716 |

| AgentPrune | 0.3027 | 0.5788 |

| GPTSwarm | 0.2302 | 0.5067 |

| o1-preview | 0.2631 | 0.5701 |

| TalkHier (Ours) | 0.3461 | 0.6079 |

4.4 Ablation Study (RQ3)

To better understand the contribution of individual components in TalkHier, we conducted ablation studies by removing specific modules and evaluating the resulting performance across the Moral Scenario, College Physics, and Machine Learning domains. The results of these experiments are summarized in Table 3.

Table 3: Ablative Results on Main Components of TalkHier: Accuracy (%) across Physics, ML, and Moral domains. TalkHier w/o Eval. Sup. removes the evaluation supervisor. TalkHier w/o Eval. Team excludes the evaluation team component. TalkHier w. Norm. Comm uses a normalized communication protocol.

| w/o Eval. Sup. w/o Eval. Team w. Norm. Comm | 83.57 73.54 82.91 | 87.25 80.34 88.24 | 74.77 74.56 82.14 | 81.86 76.15 84.43 |

| --- | --- | --- | --- | --- |

| React (Single Agent) | 69.61 | 72.55 | 59.82 | 67.33 |

| TalkHier (Ours) | 83.80 | 93.14 | 84.68 | 87.21 |

Table 4: Ablative Results: Accuracy (%) across Physics, ML, and Moral domains. The study examines the impact of removing components from the structured communication protocol: message ( $\mathbf{M}_{ij}$ ), background ( $\mathbf{B}_{ij}$ ), and intermediate output ( $\mathbf{I}_{ij}$ ).

| w/o $\mathbf{I}_{ij}$ w/o $\mathbf{B}_{ij}$ w/o $\mathbf{B}_{ij},\mathbf{I}_{ij}$ | 81.56 76.87 77.99 | 90.20 87.50 90.20 | 75.89 70.54 78.57 | 82.55 78.30 82.25 |

| --- | --- | --- | --- | --- |

| TalkHier (Ours) | 83.80 | 93.14 | 84.68 | 87.21 |

Table 5: Evaluation Results on Camera Dataset. We report BLEU-4 (B4), ROUGE-1 (R1), BERTScore (BERT), and domain-specific metrics (Faithfulness, Fluency, Attractiveness, Character Count Violation(CCV)) following Mita et al. (2024).

| GPT-4o ReAct OKG | 0.01 0.01 0.03 | 0.02 0.01 0.16 | 0.65 0.70 0.73 | 4.8 4.9 6.3 | 5.9 6.4 8.7 | 6.5 7.0 6.1 | 16% 17% 4% |

| --- | --- | --- | --- | --- | --- | --- | --- |

| TalkHier (Ours) | 0.04 | 0.20 | 0.91 | 8.6 | 8.9 | 6.2 | 4% |

Table 3 presents the contributions of our ablation study on the main components in TalkHier. Removing the evaluation Supervisor (TalkHier w/o Eval. Sup.) caused a significant drop in accuracy, underscoring the necessity of our hierarchical refinement approach. Replacing the structured communication protocol with the text-based protocol (TalkHier w. Norm. Comm) resulted in moderate accuracy reductions, while eliminating the entire evaluation team (TalkHier w/o Eval.Team) led to substantial performance declines across all domains. These findings highlight the critical role of both agent-specific memory and hierarchical evaluation in ensuring robust performance.

Table 4 delves into the impact of individual elements in the communication protocol. Removing intermediate outputs (TalkHier w/o $\mathbf{I}_{ij}$ ) or background information (TalkHier w/o $\mathbf{B}_{ij}$ ) lead to inferior performance, with their combined removal (TalkHier w/o $\mathbf{B}_{ij},\mathbf{I}_{ij}$ ) yielding similar declines. These findings emphasize the value of context-rich communication for maintaining high performance in complex tasks.

4.5 Evaluation on Ad Text Generation (RQ4)

We evaluate TalkHier on the Camera dataset Mita et al. (2024) using traditional text generation metrics (BLEU-4, ROUGE-1, BERTScore) and domain-specific metrics (Faithfulness, Fluency, Attractiveness, and Character Count Violation) Mita et al. (2024). These metrics assess both linguistic quality and domain-specific relevance.

Setting up baselines like AutoGPT, AgentVerse, and GPTSwarm for this task was challenging, as their implementations focus on general benchmarks like MMLU and require significant customization for ad text generation. In contrast, OKG Wang et al. (2025), originally for ad keyword generation, was easier to adapt, making it a more practical baseline.

Table 5 presents the results. TalkHier outperforms ReAct, GPT-4o, and OKG across most metrics, particularly excelling in Faithfulness, Fluency, and Attractiveness while maintaining a low Character Count Violation rate. The mean performance gain over the best-performing baseline, OKG, across all metrics is approximately 17.63%.

To verify whether TalkHier ’s multi-agent evaluations of attractiveness, fluency, and faithfulness are accurate, we conducted a subjective experiment on a sub-dataset of Camera, comparing the system’s automatic ratings to human judgments; details of this procedure are provided in Appendix E.

5 Discussion

The experimental results across the MMLU, WikiQA, and Camera datasets consistently demonstrate the superiority of TalkHier. Built on GPT-4o, its hierarchical refinement and structured communication protocol enable robust and adaptable performance across diverse tasks.

General and Practical Benchmarks.

TalkHier outperformed baselines across general and practical benchmarks. On MMLU, it achieved the highest accuracy (88.38%), surpassing the best open-source multi-agent baseline, AgentVerse (83.66%), by 5.64%. On WikiQA, it obtained a ROUGE-1 score of 0.3461 (+5.32%) and a BERTScore of 0.6079 (+3.30%), outperforming the best baseline, AutoGPT (0.3286 ROUGE-1, 0.5885 BERTScore). On the Camera dataset, TalkHier exceeded OKG across almost all metrics, demonstrating superior Faithfulness, Fluency, and Attractiveness while maintaining minimal Character Count Violations. These results validate its adaptability and task-specific strengths, highlighting its advantage over inference scaling models (e.g., OpenAI-o1), open-source multi-agent models (e.g., AgentVerse), and majority voting strategies (e.g., ReAct, GPT-4o).

Comparative and Ablation Insights.

While OpenAI-o1 achieved competitive MMLU scores, its unknown design and undisclosed training data make direct comparisons unfair. Since TalkHier is built on the GPT-4o backbone, comparisons with other GPT-4o-based baselines are fair. Despite this, TalkHier was competitive with OpenAI-o1 on MMLU and achieved a significant advantage on WikiQA. Ablation studies further emphasized the critical role of hierarchical refinement and structured communication. Removing core components, such as the evaluation supervisor or context-rich communication elements, significantly reduced performance, highlighting their importance in achieving robust results.

6 Conclusions

In this paper, we propose TalkHier, a novel framework for LLM-MA systems that addresses key challenges in communication and refinement. To the best of our knowledge, TalkHier is the first framework to integrate a structured communication protocol in LLM-MA systems, embedding Messages, intermediate outputs, and background information to ensure organized and context-rich exchanges. At the same time, distinct from existing works that have biases on inputs, its hierarchical refinement approach balances and summarizes diverse opinions or feedback from agents. TalkHier sets a new standard for managing complex multi-agent interactions across multiple benchmarks, surpassing the best-performing baseline by an average of 5.64% on MMLU, 4.31% on WikiQA, and 17.63% on Camera benchmarks. Beyond consistently outperforming prior baselines, it also slightly outperforms the inference scaling model OpenAI-o1, demonstrating its potential for scalable, unbiased, and high-performance multi-agent collaborations.

Limitations

One of the main limitations of TalkHier is the relatively high API cost associated with the experiments (see Appendix A for details). This is a trade-off due to the design of TalkHier, where multiple agents collaborate hierarchically using a specifically designed communication protocol. While this structured interaction enhances reasoning and coordination, it also increases computational expenses.

This raises broader concerns about the accessibility and democratization of LLM research, as such costs may pose barriers for researchers with limited resources. Future work could explore more cost-efficient generation strategies while preserving the benefits of multi-agent collaboration.

References

- Brohan et al. (2022) Anthony Brohan et al. 2022. Code as policies: Language model-driven robotics. arXiv preprint arXiv:2209.07753.

- Chen et al. (2021) Mark Chen et al. 2021. Evaluating large language models trained on code. arXiv preprint arXiv:2107.03374.

- Chen et al. (2024) Pei Chen, Boran Han, and Shuai Zhang. 2024. Comm: Collaborative multi-agent, multi-reasoning-path prompting for complex problem solving. arXiv preprint arXiv:2404.17729.

- Cheng et al. (2024) Yuheng Cheng, Ceyao Zhang, Zhengwen Zhang, Xiangrui Meng, Sirui Hong, Wenhao Li, Zihao Wang, Zekai Wang, Feng Yin, Junhua Zhao, and Xiuqiang He. 2024. Exploring large language model based intelligent agents: Definitions, methods, and prospects. CoRR, abs/2401.03428.

- Eigner and Händler (2024) Eva Eigner and Thorsten Händler. 2024. Determinants of llm-assisted decision-making. arXiv preprint arXiv:2402.17385.

- Errica et al. (2024) Federico Errica, Giuseppe Siracusano, Davide Sanvito, and Roberto Bifulco. 2024. What did i do wrong? quantifying llms’ sensitivity and consistency to prompt engineering. arXiv preprint arXiv:2406.12334.

- Fang et al. (2024) Jiangnan Fang, Cheng-Tse Liu, Jieun Kim, Yash Bhedaru, Ethan Liu, Nikhil Singh, Nedim Lipka, Puneet Mathur, Nesreen K Ahmed, Franck Dernoncourt, et al. 2024. Multi-llm text summarization. arXiv preprint arXiv:2412.15487.

- Gao et al. (2023) Chen Gao, Xiaochong Lan, Nian Li, Yuan Yuan, Jingtao Ding, Zhilun Zhou, Fengli Xu, and Yong Li. 2023. Large language models empowered agent-based modeling and simulation: A survey and perspectives. CoRR, abs/2312.11970.

- Gravitas (2023) Significant Gravitas. 2023. Autogpt: An experimental open-source application.

- Guo et al. (2024) Taicheng Guo, Xiuying Chen, Yaqi Wang, Ruidi Chang, Shichao Pei, Nitesh V Chawla, Olaf Wiest, and Xiangliang Zhang. 2024. Large language model based multi-agents: A survey of progress and challenges. arXiv preprint arXiv:2402.01680.

- Han et al. (2024) Shiyang Han, Qian Zhang, Yue Yao, Wenhao Jin, Zhen Xu, and Cheng He. 2024. Llm multi-agent systems: Challenges and open problems. arXiv preprint arXiv:2402.03578.

- He et al. (2024) Chengbo He, Bochao Zou, Xin Li, Jiansheng Chen, Junliang Xing, and Huimin Ma. 2024. Enhancing llm reasoning with multi-path collaborative reactive and reflection agents. arXiv preprint arXiv:2501.00430.

- Hendrycks et al. (2021) Dan Hendrycks, Colin Burns, Samuel Basart, Chia Zou, David Song, and Thomas G. Dietterich. 2021. Measuring massive multitask language understanding. arXiv preprint arXiv:2110.08307.

- Hong et al. (2023) Sirui Hong, Xiawu Zheng, Jonathan Chen, Yuheng Cheng, Jinlin Wang, Ceyao Zhang, Zili Wang, Steven Ka Shing Yau, Zijuan Lin, Liyang Zhou, et al. 2023. Metagpt: Meta programming for multi-agent collaborative framework. arXiv preprint arXiv:2308.00352.

- Li et al. (2024) Xiaoyu Li, Shuang Wang, Shaohui Zeng, Yucheng Wu, and Yue Yang. 2024. A survey on llm-based multi-agent systems: Workflow, infrastructure, and challenges. Vicinagearth, 1(9).

- Lin (2004) Chin-Yew Lin. 2004. Rouge: A package for automatic evaluation of summaries. In Text summarization branches out, pages 74–81.

- Ma et al. (2024) Qun Ma, Xiao Xue, Deyu Zhou, Xiangning Yu, Donghua Liu, Xuwen Zhang, Zihan Zhao, Yifan Shen, Peilin Ji, Juanjuan Li, Gang Wang, and Wanpeng Ma. 2024. Computational experiments meet large language model based agents: A survey and perspective. CoRR, abs/2402.00262.

- Madaan et al. (2023) Aman Madaan, Niket Tandon, Doug Downey, and Shrimai Han. 2023. Self-refine: Iteratively improving text via self-feedback. arXiv preprint arXiv:2303.17651.

- Mita et al. (2024) Masato Mita, Soichiro Murakami, Akihiko Kato, and Peinan Zhang. 2024. Striking gold in advertising: Standardization and exploration of ad text generation. In Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics.

- OpenAI (2024a) OpenAI. 2024a. Hello gpt-4o.

- OpenAI (2024b) OpenAI. 2024b. Introducing openai o1.

- OpenBMB (2023) OpenBMB. 2023. Agentverse: Facilitating multi-agent collaboration. AgentVerse GitHub.

- Pezeshkpour et al. (2024) Pouya Pezeshkpour, Eser Kandogan, Nikita Bhutani, Sajjadur Rahman, Tom Mitchell, and Estevam Hruschka. 2024. Reasoning capacity in multi-agent systems: Limitations, challenges and human-centered solutions. CoRR, abs/2402.01108.

- Qian et al. (2024) Chen Qian, Zihao Xie, Yifei Wang, Wei Liu, Yufan Dang, Zhuoyun Du, Weize Chen, Cheng Yang, Zhiyuan Liu, and Maosong Sun. 2024. Scaling large-language-model-based multi-agent collaboration. arXiv preprint arXiv:2406.07155.

- Qiu et al. (2024) Xue Qiu, Hongyu Wang, Xiaoyun Tan, Chengyi Qu, Yifan Xiong, Yang Cheng, Yichao Xu, Wei Chu, and Yiming Qi. 2024. Towards collaborative intelligence: Propagating intentions and reasoning for multi-agent coordination with large language models. arXiv preprint arXiv:2407.12532.

- Rasal (2024) Sudhir Rasal. 2024. Llm harmony: Multi-agent communication for problem solving. arXiv preprint arXiv:2401.01312.

- Shah et al. (2023) Shivam Shah et al. 2023. Fingpt: An open-source financial large language model. arXiv preprint arXiv:2306.03026.

- Shen et al. (2024) Wenjun Shen, Cheng Li, Hui Chen, Meng Yan, Xuesong Quan, Hao Chen, Jian Zhang, and Fangyu Huang. 2024. Small llms are weak tool learners: A multi-llm agent. arXiv preprint arXiv:2401.07324.

- Talebirad and Nadiri (2023) Yashar Talebirad and Amir Nadiri. 2023. Multi-agent collaboration: Harnessing the power of intelligent llm agents. arXiv preprint arXiv:2306.03314.

- Wang et al. (2024a) Lei Wang, Chen Ma, Xueyang Feng, Zeyu Zhang, Hao Yang, Jingsen Zhang, Zhiyuan Chen, Jiakai Tang, Xu Chen, Yankai Lin, Wayne Xin Zhao, Zhewei Wei, and Ji-Rong Wen. 2024a. A survey on large language model based autonomous agents. Front. Comput. Sci., 18.

- Wang et al. (2024b) Qineng Wang, Zihao Wang, Ying Su, Hanghang Tong, and Yangqiu Song. 2024b. Rethinking the bounds of llm reasoning: Are multi-agent discussions the key? arXiv preprint arXiv:2402.18272.

- Wang et al. (2023) Xiaoyu Wang, Yuanhao Liu, and Hao Zhang. 2023. Coeval: A framework for collaborative human and machine evaluation. arXiv preprint arXiv:2310.19740.

- Wang et al. (2025) Zhao Wang, Briti Gangopadhyay, Mengjie Zhao, and Shingo Takamatsu. 2025. OKG: On-the-fly keyword generation in sponsored search advertising. In Proceedings of the 31st International Conference on Computational Linguistics: Industry Track, pages 115–127. Association for Computational Linguistics.

- Wei et al. (2022) Jason Wei, Xuezhi Wang, Dale Schuurmans, Maarten Bosma, Brian Ichter, Fei Xia, Ed Chi, Quoc Le, and Denny Zhou. 2022. Chain-of-thought prompting elicits reasoning in large language models.

- Xi et al. (2023) Zhiheng Xi, Wenxiang Chen, Xin Guo, Wei He, Yiwen Ding, Boyang Hong, Ming Zhang, Junzhe Wang, Senjie Jin, Enyu Zhou, Rui Zheng, Xiaoran Fan, Xiao Wang, Limao Xiong, Yuhao Zhou, Weiran Wang, Changhao Jiang, Yicheng Zou, Xiangyang Liu, Zhangyue Yin, Shihan Dou, Rongxiang Weng, Wensen Cheng, Qi Zhang, Wenjuan Qin, Yongyan Zheng, Xipeng Qiu, Xuanjing Huan, and Tao Gui. 2023. The rise and potential of large language model based agents: A survey. arxiv preprint, abs/2309.07864.

- Xu et al. (2024) Li Xu, Qiang Sun, and Hui Zhao. 2024. Cooperative evaluation in large language model refinement. arXiv preprint arXiv:2401.10234.

- Yang et al. (2017) Wen-tau Yang, Wen-tau Yih, Chris Meek, Alec Barnes, Zhiyuan Zhang, and Hannaneh Hajishirzi. 2017. Wikiqa: A challenge dataset for open domain question answering. In Proceedings of the 55th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 814–818. Association for Computational Linguistics.

- Yang et al. (2024) Yuanhao Yang, Qingqing Peng, Jian Wang, and Wenbo Zhang. 2024. Multi-llm-agent systems: Techniques and business perspectives. arXiv preprint arXiv:2411.14033.

- Yang et al. (2023) Zhengyuan Yang, Jianfeng Wang, Linjie Li, Kevin Lin, Chung-Ching Lin, Zicheng Liu, and Lijuan Wang. 2023. Idea2img: Iterative self-refinement with gpt-4v (ision) for automatic image design and generation. arXiv preprint arXiv:2310.08541.

- Yao et al. (2023) Shunyu Yao, Dian Yu, Jeffrey Zhao, Izhak Shafran, Thomas L. Griffiths, Yuan Cao, and Karthik Narasimhan. 2023. Tree of thoughts: Deliberate problem solving with large language models. Code repo with all prompts: https://github.com/ysymyth/tree-of-thought- llm.

- Yao et al. (2022) Shunyu Yao, Jeffrey Zhao, Dian Yu, Nan Du, Izhak Shafran, Karthik Narasimhan, and Yuan Cao. 2022. React: Synergizing reasoning and acting in language models. arXiv preprint arXiv:2210.03629.

- Zhang et al. (2024a) Guibin Zhang, Yanwei Yue, Zhixun Li, Sukwon Yun, Guancheng Wan, Kun Wang, Dawei Cheng, Jeffrey Xu Yu, and Tianlong Chen. 2024a. Cut the crap: An economical communication pipeline for llm-based multi-agent systems. arXiv preprint arXiv:2410.02506.

- Zhang et al. (2020) Tianyi Zhang, Varsha Kishore, Felix Wu, Kilian Q Weinberger, and Yoav Artzi. 2020. Bertscore: Evaluating text generation with bert. In International Conference on Learning Representations (ICLR).

- Zhang et al. (2024b) Wentao Zhang, Lingxuan Zhao, Haochong Xia, Shuo Sun, Jiaze Sun, Molei Qin, Xinyi Li, Yuqing Zhao, Yilei Zhao, Xinyu Cai, et al. 2024b. Finagent: A multimodal foundation agent for financial trading: Tool-augmented, diversified, and generalist. arXiv preprint arXiv:2402.18485.

- Zhao et al. (2024) Qinlin Zhao, Jindong Wang, Yixuan Zhang, Yiqiao Jin, Kaijie Zhu, Hao Chen, and Xing Xie. 2024. Competeai: Understanding the competition dynamics in large language model-based agents. In Proceedings of the 41st International Conference on Machine Learning (ICML).

- Zhuge et al. (2024) Mingchen Zhuge, Wenyi Wang, Louis Kirsch, Francesco Faccio, Dmitrii Khizbullin, and Jürgen Schmidhuber. 2024. Gptswarm: Language agents as optimizable graphs. In Forty-first International Conference on Machine Learning.

Appendix A Cost Analysis for Experiments

The total expenditure for the experiments across the MMLU dataset, WikiQA, and Camera (Japanese Ad Text Generation) tasks was approximately $2,100 USD. It is important to note that this amount reflects only the cost of final successful executions using the OpenAI 4o API (as TalkHier and almost all other baselines are built on OpenAI 4o backbone). Considering the failures encountered during our research phase, the actual spending may have been at least three times this amount. Below is a detailed breakdown of costs and task-specific details.

A.1 MMLU Dataset (1,450 USD)

The MMLU dataset comprises approximately 16,000 multiple-choice questions across 57 subjects. For our experiments, we focused on five specific domains:

A.1.1 Cost Analysis for the Moral Scenario Task and Baselines

The Moral Scenario task involved generating and evaluating responses for various moral dilemma scenarios using OpenAI’s GPT-4o model. Each generation task for a single scenario produced approximately 48,300 tokens, with a cost of about $0.17 per task. Given a total of 895 tasks, the overall token consumption and cost were:

$$

0.17\times 895=152.15\text{ USD} \tag{1}

$$

In addition to the Moral Scenario task, we conducted multiple baseline tests using GPT-4o, which incurred an additional cost of approximately $3,000 USD. Therefore, the total cost for all GPT-4o evaluations in the Moral Scenario task is:

$$

152.15+900=1052.15\text{ USD} \tag{2}

$$

A.1.2 Cost Analysis for Other Tasks

In addition to the previously analyzed tasks, we conducted further evaluations across multiple domains using OpenAI’s GPT-4o model. These tasks include College Physics, Machine Learning, Formal Logic, and US Foreign Policy. The number of tasks and token usage per task varied across these domains, with each task consuming between 40,000 to 46,000 tokens and costing between $0.14 to $0.15 per task.

- College Physics: 101 tasks, each generating 40,000 tokens.

- Machine Learning: 111 tasks, each generating 40,000 tokens.

- Formal Logic: 125 tasks, each generating 46,000 tokens.

- US Foreign Policy: 100 tasks, each generating 45,000 tokens.

The total expenditure for these tasks amounted to $63.43 USD. and we also did experiments for various baseline, it cost around 320 usd. totally it is 383.43. These costs reflect the computational demands required to evaluate domain-specific questions and ensure consistency in model performance across various knowledge areas.

The total expenditure for these tasks amounted to $63.43 USD. Additionally, we conducted experiments with various baseline models, which incurred an additional cost of approximately $320 USD. In total, the overall expenditure was $383.43 USD. These costs reflect the computational demands required for evaluating domain-specific questions and ensuring consistency in model performance across various knowledge areas.

A.2 WikiQA Dataset (1,191.49 USD)

The WikiQA dataset comprises 3,047 questions and 29,258 sentences, of which 1,473 sentences are labeled as answers to their corresponding questions. Each question required generating approximately 36,000 tokens, with an average cost of $0.13 per question. Given this setup, the total expenditure for the WikiQA task was:

$$

0.13\times 1,473=191.49\text{ USD} \tag{3}

$$

In addition to the execution of TalkHier, we conducted multiple baseline tests using GPT-4o as their backbones, which incurred an additional cost of approximately $1,000 USD. Therefore, the total cost for all GPT-4o evaluations in the WikiQA task is:

$$

191.49+1000=1191.49\text{ USD} \tag{4}

$$

This cost reflects the computational requirements for processing and analyzing a large-scale question-answering dataset. The WikiQA task serves as an important benchmark for evaluating the model’s performance in understanding and responding to real-world queries.

A.3 Camera Dataset (400.56 USD)

The Camera dataset task involved generating and evaluating ad headlines for 872 different test sets using OpenAI’s GPT-4o backbone. Each generation task produced approximately 65,000 tokens, with an average cost of $0.23 per task. Given this setup, the total expenditure for the Camera dataset task was:

$$

0.23\times 872=200.56\text{ USD} \tag{5}

$$

We also conducted experiments for three baseline models, which cost approximately $200 USD. In total, the expenditure amounted to $400.56 USD. This cost reflects the iterative process of generating and refining ad headlines across multiple input sets, ensuring high-quality and effective outputs tailored to the dataset’s domain-specific requirements.

Appendix B Prompt Design and Work Flow for Tasks in MMLU

In this section, we describe the prompt design for evaluating and revising responses for each MMLU task. The task involves generating, evaluating, and refining answers to ethical dilemmas or moral situations using our multi-agent framework. Each agent in the framework plays a distinct role: generating potential solutions, evaluating their moral alignment, and revising answers to improve coherence and alignment with evaluation results. The prompts used for each agent are detailed below.

B.1 Initial Prompt

The following is the prompt given to the supervisor at the beginning.

Initial Prompt

You are an expert in [Task]. You must find the answer to the following question: [Question] The choices you are given are: [Choices] You can split up the problems into smaller parts if required. The final answer must be only in the dictionary form of: [Output format]

B.2 Answer Generator

This agent generates answers to a specific moral scenario by considering the ethical implications of the situation.

Answer Generator Prompt

You are an Answer Generator that has access to tools, to think of an answer for a specific given problem. Required Input: Requirements as ’messages’ Final output: Expected answer as ’intermediate_output’ in the form of [Output format]

B.3 Answer Evaluator

This agent evaluates the answers generated by the Answer Generator, providing scores and feedback based on predefined metrics such as ethical soundness, logical consistency, fairness, and feasibility.

Evaluator Team Supervisor Prompt

You are an Answer Evaluator Team that has to evaluate the given answer. The metrics are: [Metrics] Required Input: Expected answer as ’intermediate_output’ Final output: Expected Answer and evaluation results embedded into ’intermediate_output’ in the form of [Output format]

B.4 Answer Revisor

This agent revises answers that receive low scores in the evaluation step. Revisions must strictly follow the evaluation results to ensure improved alignment with the metrics.

Answer Revisor Prompt

You are an Answer Revisor that receives an answer with their evaluation results, and outputs, if necessary, a revised answer that takes into account the evaluation results. Follow these steps for a revision: 1.

You MUST first make a detailed analysis of ALL answers AND evaluation results. Double check that the evaluation results and reasons align with each other. 2.

Based on the analysis, check if at least three of the four evaluations support each answer. 3.

If an answer is not supported by the majority of evaluations, you must flip the specific answer, making sure to update the choices as well 4.

In your final output, state: 1) If you need a re-evaluation which is necessary if a new modification has been made, and 2) The reasons behind your revisions.

B.5 Settings for each Task

B.5.1 Evaluator Types

| Task | Metric | Description |

| --- | --- | --- |

| Moral Scenarios | Intent | Evaluates the intentions behind actions. |

| Normality | Evaluates how normal the action is. | |

| Responsibility | Evaluates the degree of responsibility behind the action. | |

| Well-being | Evaluates whether the action promotes well-being. | |

| College Physics | Mathematics | Evaluates mathematical correctness and calculations. |

| Physics | Evaluates the accuracy of physical principles applied. | |

| Machine Learning | Answer Consistency | Checks underlying assumptions in models and methodologies. |

| Machine Learning | Evaluates machine learning concepts and implementation. | |

| Stastical Soundenss | Evaluates whether the solution is sound in stastical terms. | |

| Formal Logic | Logical Argument | Evaluates whether the arguments used are logically correct. |

| Truth Table | Evaluates correctness of generated truth tables and implied results. | |

| Counterexample | Evaluates whether the counterexamples are utilized correctly. | |

| Predicate Logic | Evaluates correctness of the use of predicate logic formulas. | |

| Formal Logic | Evaluates correctness in terms of formal logic. | |

| US Foreign Policy | Factual Accuracy | Evaluates whether the facts implied by the answer are correct. |

| Policy Alignment | Evaluates whether the answer aligns with established policies and agreements. | |

| Conceptual Clarity | Evaluates whether the answer accurately represents the core definition. | |

Table 6: List of evaluators allocated for each task

B.5.2 Tools

To enhance the evaluation capabilities of each agent, we have deployed tools for each evaluator to use. The tools are listed as follows:

- Output Tool (All Evaluators): A tool for outputting thoughts, allowing the model to repeatedly think.

- Truth Table Generator (Truth Table Evaluator): A tool for outputting a truth table, given a proposition as input.

- Counterexample Verifier (Truth Table Evaluator): A tool for verifying whether a counterexample is correctly defined.

Here, the evaluator shown in the brackets are those who have access to the specific tool.

B.6 Good Revision Example for Moral Scenarios Task

The following example demonstrates how the multi-LLM framework revises an answer for a moral scenario. It includes the problem statement, the generated answer, the evaluation results, and the final revised answer, highlighting the reasoning process behind the revision.

Good Revision Example

Problem: For which of these two scenarios does the main character (who uses I/me/my) do something clearly morally wrong, according to ordinary moral standards in the US as of 2020? Scenario 1: I spoke to my mom on the phone during my entire work shift. Scenario 2: I helped my friend escape prison. Correct Answer: 0 (Wrong, Wrong) Generated Answer: 2 (Not Wrong, Wrong) Evaluation Results: Scenario 1: - Normality: Correct - Intent: Correct - Responsibility: Incorrect - Well-being: Incorrect Scenario 2: - All metrics: Correct Revisor Reasoning: Based on the detailed evaluation results, the provided answer needs to be revised. - The evaluation indicates that Scenario 1 is considered irresponsible and promotes harm to the well-being of everyone involved, which contradicts the generated answer of ’Not Wrong’. Therefore, the correct assessment is ’Wrong’. - The evaluation confirms that Scenario 2 is considered irresponsible and harmful, which aligns with the generated answer of ’Wrong’. Revised Answer: 0 (Wrong, Wrong)

Appendix C Prompt Design and Work Flow for for WikiQA

In this section, we provide a detailed example of how the multi-agent framework processes a WikiQA task, specifically the question: "What are points on a mortgage?" This example demonstrates how agents interact to generate, evaluate, and revise an answer, ensuring that it meets all necessary criteria for accuracy, clarity, and completeness.

C.1 Initial Question

The user asks the question: "What are points on a mortgage?"

C.2 Step 1: Answer Generation

The first step involves the Answer Generator agent, which is tasked with generating a detailed response to the question. It considers the key components of the topic, such as mortgage points, their function, cost, and benefits.

Answer Generator Prompt

You are an Answer Generator with access to tools for generating answers to specific questions. Your task is to: 1. Analyze the given problem deeply. 2. Use the tools provided to retrieve and synthesize information. 3. Craft a detailed and coherent response. Required Input: Question and relevant details as messages. Final Output: Expected answer as intermediate_output, formatted as follows: { "answer": "One sentence answer", "details": "Supporting details or explanation" }

The Answer Generator produces the following response:

"Points on a mortgage are upfront fees paid to the lender at the time of closing, which can lower the interest rate or cover other loan-related costs, with each point typically costing 1

C.3 Step 2: Evaluation by the ETeam Supervisor

The ETeam Supervisor evaluates the answer based on two primary metrics: Simplicity and Coverage. The Simplicity Evaluator checks if the answer is concise and well-structured, while the Coverage Evaluator ensures that the response includes all relevant keywords and details.

ETeam Supervisor Prompt

You are an ETeam Supervisor tasked with evaluating answers using the following metrics: 1. Coverage: Does the answer contain all related information and keywords? - List all relevant keywords related to the problem. - Provide explanations for each keyword and its relevance. 2. Simplicity: Is the answer concise and easy to understand in one sentence? - Check for redundancies and ensure appropriate sentence length. Steps for Evaluation: 1. Summarize the conversation history. 2. Provide a detailed analysis of the problem using output tools. 3. Evaluate the most recent answer based on the metrics above.

The Simplicity Evaluator concludes that the answer is clear, concise, and without any redundant information. The sentence is appropriate in length, neither too short nor too long.

The Coverage Evaluator confirms that the answer covers all the necessary aspects, including keywords such as "points," "upfront fees," "lender," "closing," "interest rate reduction," and "cost of points."

C.4 Step 3: Revisions by the Answer Revisor

Despite the high evaluation scores, the Coverage Evaluator suggests a slight revision for clarity. The Answer Revisor agent makes a minor adjustment to improve the answer’s conciseness while maintaining its accuracy and comprehensiveness.

Answer Revisor Prompt

You are an Answer Revisor responsible for refining answers based on evaluation results. Follow these steps: 1. Analyze the generated answer and evaluation results in detail. 2. Check if all metrics have near full scores (coverage and simplicity). 3. Revise the answer if required to address any shortcomings. 4. State whether re-evaluation is necessary and justify your revisions. Important Notes: - Do not introduce personal opinions. - Ensure all changes strictly align with the evaluation feedback.

The Answer Revisor makes the following revision:

"Points on a mortgage are fees paid upfront to the lender at closing, which can lower the interest rate or cover other loan-related costs, with each point usually costing 1

This slight modification enhances clarity without altering the meaning of the original response.

C.5 Step 4: Final Evaluation

The revised answer is re-evaluated by the ETeam Supervisor, and all metrics receive top scores. The revised response is clear, concise, and includes all relevant keywords and information, making it easy to understand.

C.6 Final Answer

After going through the generation, evaluation, and revision steps, the final answer to the question "What are points on a mortgage?" is:

"Points on a mortgage are fees paid upfront to the lender at closing, which can lower the interest rate or cover other loan-related costs, with each point usually costing 1

Evaluation Summary: - Simplicity: The answer is clear, concise, and free of redundancies. - Coverage: The answer includes all necessary keywords and information, covering key aspects such as "points," "upfront fees," "lender," "closing," "interest rate reduction," and "loan-related costs."

The final answer has received high scores in all evaluation metrics, confirming its quality and effectiveness in answering the user’s question.

C.7 BERT and ROUGE Scores

To further evaluate the quality of the answer, we compute BERT and ROUGE scores:

- BERT Score: 0.5156

- ROUGE Score: 0.2857

These scores indicate that the answer is both accurate and well-aligned with reference answers.

Appendix D Prompt Design, Workflow and Revision Examples for Evaluating the Camera Dataset

In this section, we introduce our multi-LLM agent framework, a versatile and generalizable design for generating, evaluating, and refining ad text in various contexts. The framework is designed to handle tasks such as creating high-quality ad headlines, assessing their effectiveness based on key metrics, and improving underperforming content.

Rather than being tailored to a specific dataset or domain, our framework adopts a modular structure where each agent is assigned a well-defined role within the pipeline. This design enables seamless integration with various tools and datasets, making it applicable to a wide range of ad text tasks beyond the Camera dataset. The prompts used for each agent reflect a balance between domain-agnostic principles and task-specific requirements, ensuring adaptability to diverse advertising scenarios.

The following sections provide the prompts used to define the roles of the agents within the framework.

D.1 Japanese Ad Headlines Generator

This agent generates high-quality Japanese ad headlines that are fluent, faithful, and attractive. It leverages tools such as a character counter, a reject words filter, and Google search for contextual information. The specific prompt for this agent is:

Generator Prompt

You are a Japanese Ad headlines Generator that has access to multiple tools to make high faithfulness, fluent, and attractive headlines in Japanese. Make sure to use the Google search tool to find information about the product, and the character_counter to check the character count constraint. Also, check that it does not contain bad words with the reject_words tool. Input: Requirements as messages. Final Output: A dictionary in Japanese in the form: {"Headline": [Headlines]}

D.2 Ad Headlines Evaluator