# Unlocking the Potential of Generative AI through Neuro-Symbolic Architectures – Benefits and Limitations

**Authors**: Oualid Bougzime, Samir Jabbar, Christophe Cruz, Frédéric Demoly

> ICB UMR 6303 CNRS, Université Marie et Louis Pasteur, UTBM, 90010 Belfort Cedex, France

> ICB UMR 6303 CNRS, Université Bourgogne Europe, 21078 Dijon, France

> ICB UMR 6303 CNRS, Université Marie et Louis Pasteur, UTBM, 90010 Belfort Cedex, France; Institut universitaire de France (IUF), Paris, France

## Abstract

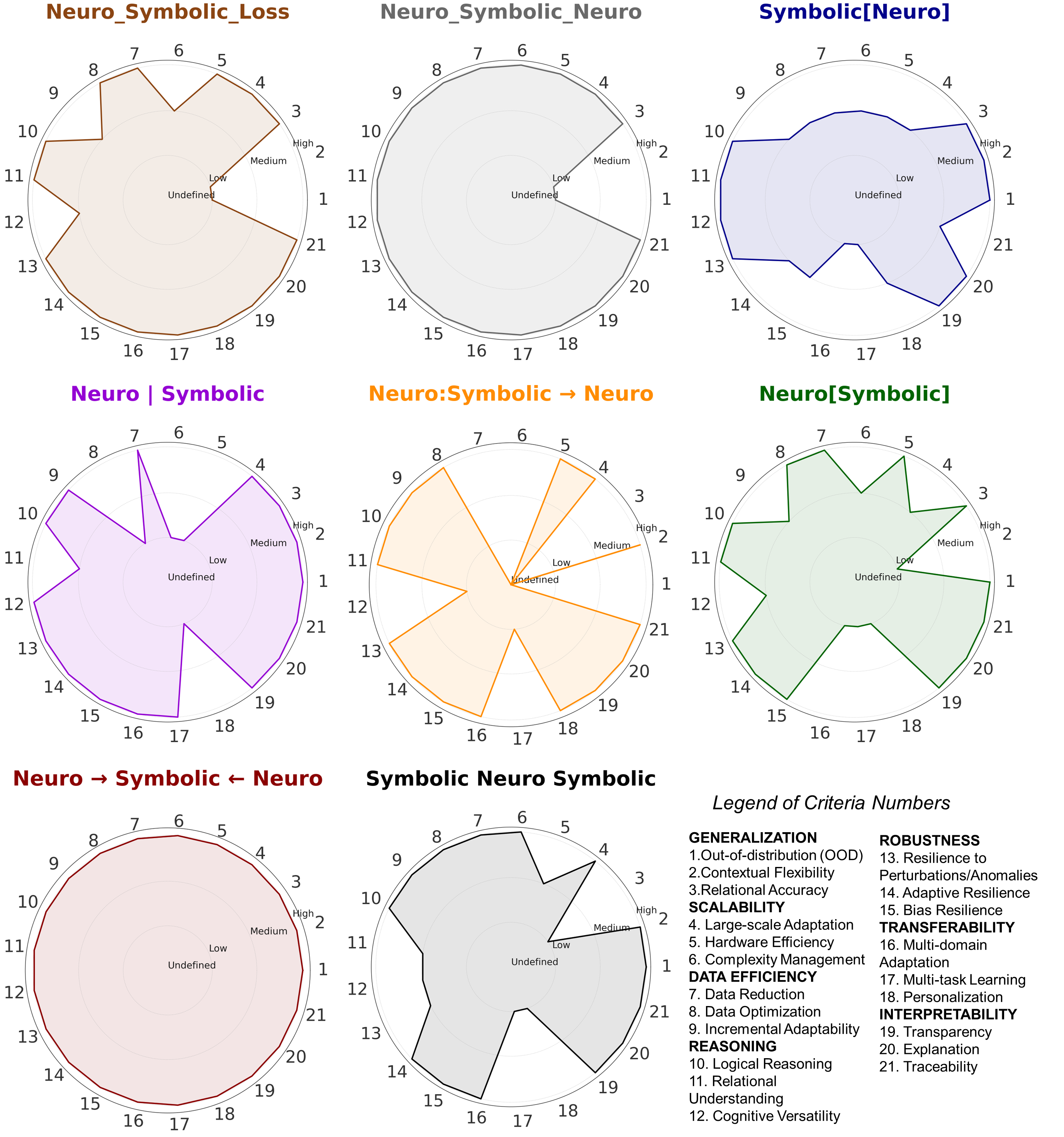

Neuro-symbolic artificial intelligence (NSAI) represents a transformative approach in artificial intelligence (AI) by combining deep learning’s ability to handle large-scale and unstructured data with the structured reasoning of symbolic methods. By leveraging their complementary strengths, NSAI enhances generalization, reasoning, and scalability while addressing key challenges such as transparency and data efficiency. This paper systematically studies diverse NSAI architectures, highlighting their unique approaches to integrating neural and symbolic components. It examines the alignment of contemporary AI techniques such as retrieval-augmented generation, graph neural networks, reinforcement learning, and multi-agent systems with NSAI paradigms. This study then evaluates these architectures against a comprehensive set of criteria, including generalization, reasoning capabilities, transferability, and interpretability, thereby providing a comparative analysis of their respective strengths and limitations. Notably, the Neuro → Symbolic ← Neuro model consistently outperforms its counterparts across all evaluation metrics. This result aligns with state-of-the-art research that highlights the efficacy of such architectures in harnessing advanced technologies like multi-agent systems.

Keywords: Neuro-symbolic Artificial Intelligence, Neural Network, Symbolic AI, Generative AI, Retrieval-Augmented Generation (RAG), Reinforcement Learning (RL), Natural Language Processing (NLP), Explainable AI (XAI), Benchmark

## 1 Introduction

Neuro-symbolic artificial intelligence (NSAI) is fundamentally defined as the combination of deep learning and symbolic reasoning [1]. This hybrid approach aims to overcome the limitations of both symbolic and neural artificial intelligence (AI) systems while harnessing their respective strengths. Symbolic AI excels in reasoning and interpretability, whereas neural AI thrives in learning from vast amounts of data. By merging these paradigms, NSAI aspires to embody two fundamental aspects of intelligent cognitive behavior: the ability to learn from experience and the capacity to reason based on acquired knowledge [1, 2].

The importance of NSAI has been increasingly recognized in recent years, especially after the 2019 Montreal AI Debate between Gary Marcus and Yoshua Bengio. This debate highlighted two contrasting perspectives on the future of AI: Marcus argued that “expecting a monolithic architecture to handle abstraction and reasoning is unrealistic,” emphasizing the limitations of current AI systems, while Bengio maintained that “sequential reasoning can be performed while staying in a deep learning framework” [3]. This discussion brought attention to the strengths and weaknesses of neural and symbolic approaches, catalyzing a surge of interest in hybrid solutions. Bengio’s subsequent remarks at IJCAI 2021 underscored the importance of addressing out-of-distribution (OOD) generalization, stating that “we need a new learning theory” to tackle this critical challenge [4]. This aligns with the broader consensus within the AI community that combining neural and symbolic paradigms is essential to developing more robust and adaptable systems. Drawing on concepts like Daniel Kahneman’s dual-process theory of reasoning, which contrasts fast, intuitive thinking (System 1) with deliberate, logical thought (System 2), NSAI seeks to bridge the gap between learning from data and reasoning with structured knowledge [5]. Despite ongoing debates about the optimal architecture for integrating these two paradigms, the 2019 Montreal AI Debate has played a pivotal role in shaping the trajectory of research in this promising field [6, 7, 8, 9, 10, 11].

NSAI offers a promising avenue for addressing the limitations of purely symbolic or neural systems. For instance, while neural networks (NNs) often struggle with interpretability, symbolic AI systems are rigid and require extensive domain knowledge. By combining the adaptability of neural models with the explicit reasoning capabilities of symbolic methods, NSAI systems aim to provide enhanced generalization, interpretability, and robustness. These characteristics make NSAI particularly well suited to complex, real-world problems where adaptability and transparency are critical [12]. Several NSAI architectures have been proposed to integrate these paradigms effectively. Examples include Symbolic → Neuro → Symbolic systems, Symbolic[Neuro], Neuro[Symbolic], Neuro | Symbolic coroutines, Neuro Symbolic, and others [13]. Each architecture offers unique advantages but also poses specific challenges in terms of scalability, interpretability, and adaptability. A systematic evaluation of these architectures is therefore needed to understand their potential and limitations and to guide future research in this rapidly evolving field.

Generative AI has witnessed remarkable advancements, encompassing a diverse range of technologies that address various challenges in data processing, reasoning, and decision-making. These advancements can be grouped into several major branches of AI. Natural language processing (NLP) [14] includes technologies such as retrieval-augmented generation (RAG) [15], sequence-to-sequence models [16], semantic parsing [17], named entity recognition (NER) [18], and relation extraction [19], which focus on understanding and generating human language. Reinforcement learning techniques, such as reinforcement learning from human feedback (RLHF) [20], enable systems to learn optimal actions through interaction with their environment. Advanced NNs include innovations such as graph neural networks (GNNs) [21] and generative adversarial networks (GANs) [22], which excel in handling structured data and generating realistic data samples, respectively. Multi-agent systems [23, 24] explore coordination and decision-making among multiple intelligent agents. Recent advances leverage mixture-of-experts (MoE) architectures to enhance scalability and specialization in collaborative frameworks. In MoE-based multi-agent systems, each expert operates as an autonomous agent specializing in distinct sub-tasks or data domains, while a dynamic gating mechanism orchestrates their contributions [25, 26]. Transfer learning [27], including pre-training [28], fine-tuning [29], and few-shot learning [30], allows AI models to adapt knowledge from one task to another efficiently. Explainable AI (XAI) [31] focuses on making AI systems transparent and interpretable, while efficient learning techniques, such as model distillation [32], aim to optimize resource usage. Reasoning and inference methods such as chain-of-thought (CoT) reasoning [33] and link prediction enhance logical decision-making capabilities. Lastly, continuous learning [34] paradigms ensure adaptability over time.
Together, these technologies form a comprehensive toolkit for tackling the increasingly complex demands of generative AI applications.
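The MoE gating idea mentioned above can be made concrete with a small sketch. The code below is a generic softmax-gated, top-k mixture of experts in plain Python; the three experts and the gate scores are hypothetical toy values, whereas in MoE systems such as those cited [25, 26] both the experts and the gating network are learned, and each expert may be a full agent rather than a simple function.

```python
import math
from typing import Callable, List, Sequence


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax over the gate's raw scores."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def moe_combine(x: float,
                experts: Sequence[Callable[[float], float]],
                gate_logits: Sequence[float],
                top_k: int = 2) -> float:
    """Route x to the top_k highest-scoring experts and return the
    gate-weighted sum of their outputs (sparse mixture of experts)."""
    weights = softmax(gate_logits)
    # Rank experts by gate weight and keep only the top_k of them.
    chosen = sorted(range(len(experts)), key=lambda i: weights[i], reverse=True)[:top_k]
    # Renormalise the surviving weights so they sum to 1.
    norm = sum(weights[i] for i in chosen)
    return sum(weights[i] / norm * experts[i](x) for i in chosen)


# Hypothetical "agents": three toy experts specialised in different sub-tasks.
experts = [lambda v: 2 * v, lambda v: v + 10, lambda v: -v]
print(moe_combine(3.0, experts, gate_logits=[2.0, 2.0, -5.0]))  # prints 9.5
```

With equal gate scores for the first two experts and a very low score for the third, only the first two are consulted and their outputs (6 and 13) are averaged.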

The classification of generative AI technologies within the NSAI framework is crucial for several reasons. Firstly, it provides a structured approach to understanding how these diverse technologies relate to and enhance NSAI capabilities. By mapping these techniques to specific NSAI architectures, researchers and practitioners can better grasp their potential applications and limitations. This classification also facilitates the identification of synergies between different AI approaches, potentially leading to more robust and versatile hybrid systems. Furthermore, it aids in decision-making processes when selecting appropriate technologies for specific tasks, considering factors like interpretability, reasoning capabilities, and generalization. As AI continues to evolve, this systematic categorization becomes increasingly valuable for bridging the gap between cutting-edge research and practical implementation, ultimately driving the field towards more integrated and powerful AI solutions.

Therefore, this research aims to explore the alignment of generative AI technologies with the core categories of NSAI and examines the insights this classification provides regarding their strengths and limitations. The proposed methodology is threefold: (i) to define and analyze existing NSAI architectures, (ii) to classify generative AI technologies within the NSAI framework to provide a unified perspective on their integration, and (iii) to develop a systematic framework for assessing NSAI architectures across various criteria.

## 2 Neuro-Symbolic AI: Combining Learning and Reasoning to Overcome AI’s Limitations

NNs have excelled at handling unstructured data, e.g., images, sounds, and text. Their capacity to acquire sophisticated patterns and representations from voluminous datasets has enabled major breakthroughs in disciplines ranging from computer vision and speech recognition to NLP [35, 14]. A major benefit of NNs is that they learn and improve directly from raw data without requiring pre-coded rules or expert knowledge. This makes them highly scalable and efficient in applications with large amounts of raw data. However, despite these benefits, NNs also have well-documented disadvantages. One of the most significant is their lack of transparency: neural models pose interpretability challenges, making it difficult to understand how they arrive at specific decisions or predictions. Such opacity is problematic in critical applications where explanations are necessary, such as healthcare, finance, legal frameworks, and engineering. Additionally, NNs are data-hungry, requiring substantial amounts of labeled training data to operate effectively. This reliance on large datasets makes them less effective in data-scarce or data-costly environments. Neural models also struggle with reasoning and generalizing beyond their training data, which limits their performance on tasks involving logical inference or commonsense reasoning, such as understanding causality, sequential problem-solving, and decision-making that relies on external world knowledge.

Symbolic AI is stronger precisely in the areas where NNs are weak. Symbolic systems operate on explicit rules and structured representations, which enables them to handle complex reasoning tasks such as mathematical proofs, planning, and expert systems. The most important advantage of symbolic AI is its transparency: since symbolic methods are grounded in known rules and logical formalisms, their decision-making processes are easy to interpret and explain. However, symbolic AI systems also have drawbacks. One of the biggest is their rigidity, which makes it difficult for them to adapt to new circumstances. They require manually defined rules and structured input data, making them difficult to apply to real-world situations where data may be noisy, incomplete, or unstructured. They are also susceptible to combinatorial explosion when handling large datasets or hard reasoning problems, which significantly degrades their performance at scale. Finally, symbolic systems are poorly suited to perception tasks such as image or speech recognition, since they cannot extract knowledge from raw data alone.

Traditional NNs are strong at recognizing patterns in data but falter when presented with new situations, whereas symbolic reasoning provides a rational foundation for decision-making but is limited in its ability to learn from new information and adapt dynamically. Combining these two approaches in NSAI mitigates these limitations, producing a more flexible, explainable, and effective AI system. Another distinguishing feature of NSAI is its ability to generalize beyond its training set. Traditional AI systems are prone to failure in novel situations, whereas NSAI, by combining learning with logical reasoning, tends to perform better in such cases. This feature is critical for real-world applications such as autonomous transport and medicine, where systems must perform well in uncontrolled environments. Moreover, in interdisciplinary engineering contexts such as 4D printing, which brings together materials science, additive manufacturing, and engineering, NSAI holds significant promise for improving the interpretability and reliability of design decisions concerning actuation, mechanical performance, and printability. Although these advantages seem promising, they remain hypotheses requiring more extensive validation and industrial-scale testing. Ongoing research must demonstrate, through empirical studies and real-world implementations, how NSAI can reliably accelerate the discovery of smart materials and structures [36]. A further key benefit of NSAI is its reduced need for large datasets. Traditional AI systems usually require a tremendous amount of data to operate, which can be very time- and resource-consuming. NSAI, by contrast, can perform well with considerably less data, owing to its symbolic reasoning ability. This makes it a more sustainable and viable option, especially for small organizations or emerging research areas with limited resources. In addition to this data efficiency, NSAI models also exhibit strong transferability, i.e., the capacity to apply knowledge learned in one task to another with less need for retraining. This property is highly desirable when little data is available for a new task.

## 3 Neuro-Symbolic AI Architectures

This section provides an overview of various NSAI architectures, offering insights into their design principles, integration strategies, and unique capabilities. While Kautz’s classification [13] serves as a foundational framework, we extend it by incorporating additional architectural perspectives to capture the evolving landscape of NSAI systems. These approaches range from symbolic systems augmented by neural modules for specialized tasks to deeply integrated models where explicit reasoning engines operate within neural frameworks. This expanded categorization highlights the diversity of design strategies and the broad applicability of NSAI techniques, emphasizing their potential for more interpretable, robust, and data-efficient AI solutions.

### 3.1 Sequential

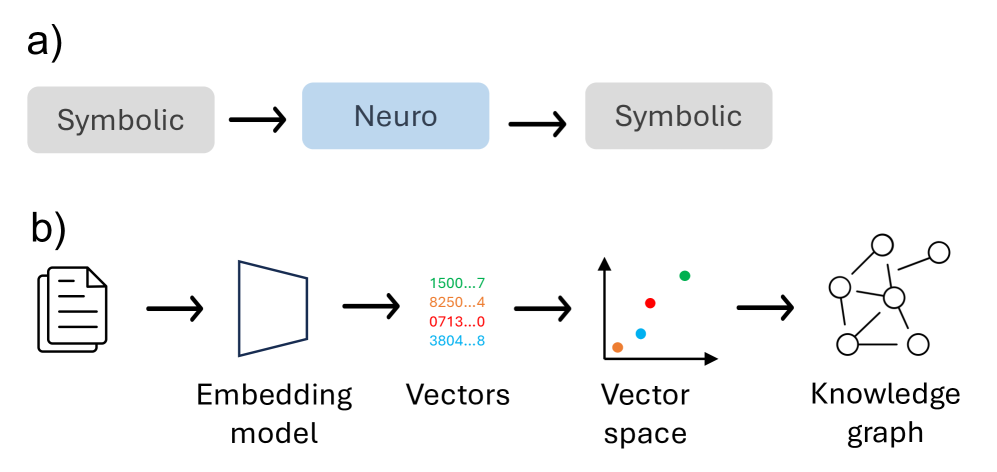

As part of the sequential NSAI category, the Symbolic $\to$ Neuro $\to$ Symbolic architecture involves systems in which both the input and output are symbolic, with an NN acting as a mediator for processing (Figure 1 a). Symbolic input, such as logical expressions or structured data, is first mapped into a continuous vector space through an encoding process. The NN operates on this encoded representation, enabling it to learn complex transformations or patterns that are difficult to model symbolically. Once processing is complete, the resulting vector is decoded back into symbolic form, ensuring that the final output aligns with the structure and semantics of the input domain. This framework is especially useful for tasks that require leveraging the generalization capabilities of NNs while preserving symbolic interpretability [37, 38]. A formulation of this architecture is presented below:

$$
y=f_{\text{neural}}(x) \tag{1}
$$

where $x$ is the symbolic input, $f_{\text{neural}}(x)$ represents the NN that processes the input, and $y$ is the symbolic output.
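Eq. (1) leaves the encoding and decoding steps implicit. A minimal sketch that makes them explicit is given below, assuming a hypothetical three-symbol vocabulary with hand-set embeddings, a fixed linear map standing in for the trained network $f_{\text{neural}}$, and nearest-neighbour decoding by cosine similarity; none of these choices come from the paper.

```python
import math
from typing import Dict, List

Vector = List[float]

# Hypothetical encoder table: hand-set 2-D embeddings for three symbols.
EMBEDDINGS: Dict[str, Vector] = {
    "cat": [1.0, 0.0],
    "animal": [0.0, 1.0],
    "vehicle": [-1.0, 0.0],
}


def encode(symbol: str) -> Vector:
    """Map a symbolic token into the continuous vector space."""
    return EMBEDDINGS[symbol]


def f_neural(v: Vector) -> Vector:
    """Stand-in for a trained network: a fixed linear map (90-degree rotation)."""
    w = [[0.0, -1.0],
         [1.0, 0.0]]
    return [sum(w[i][j] * v[j] for j in range(len(v))) for i in range(len(w))]


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))


def decode(v: Vector) -> str:
    """Map a vector back to the most similar symbol in the vocabulary."""
    return max(EMBEDDINGS, key=lambda s: cosine(v, EMBEDDINGS[s]))


def symbolic_neuro_symbolic(x: str) -> str:
    """Eq. (1) with the encoder/decoder made explicit: y = decode(f_neural(encode(x)))."""
    return decode(f_neural(encode(x)))


print(symbolic_neuro_symbolic("cat"))  # prints "animal"
```

Both ends of the pipeline are symbols; only the middle step operates on vectors, which is what distinguishes this architecture from a purely neural one.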

Figure 1 panels: (a) Symbolic → Neuro → Symbolic; (b) document → embedding model → vectors → vector space → knowledge graph.

Figure 1: Sequential architecture: (a) Principle and (b) application to knowledge graph construction.

This architecture can be used in a semantic parsing task, where the input is a sequence of symbolic tokens (e.g., words). Here, each token is mapped to a continuous embedding via word2vec, GloVe, or a similar method [39, 40]. The NN then processes these embeddings to learn compositional patterns or transformations. Finally, the network’s output layer decodes the processed information back into a structured logical form (such as knowledge-graph triples), as illustrated in Figure 1 b.
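To illustrate the encode, process, and decode steps of this semantic parsing example, the sketch below maps a sentence to a knowledge-graph triple. It is a toy under explicit assumptions: the word vectors are hand-set rather than trained word2vec or GloVe vectors, mean pooling stands in for the NN, a dot product against hypothetical relation prototypes stands in for the output layer, and entities are spotted with a fixed lexicon.

```python
from typing import Dict, List, Set, Tuple

# Hypothetical 3-D word vectors standing in for word2vec/GloVe embeddings.
# Dimensions loosely encode: "capital-ness", "birth-ness", "entity-ness".
WORD_VECS: Dict[str, List[float]] = {
    "paris": [0.0, 0.0, 1.0], "france": [0.0, 0.0, 1.0],
    "einstein": [0.0, 0.0, 1.0], "ulm": [0.0, 0.0, 1.0],
    "capital": [1.0, 0.0, 0.0], "born": [0.0, 1.0, 0.0],
    "is": [0.1, 0.1, 0.0], "was": [0.1, 0.1, 0.0],
    "of": [0.1, 0.1, 0.0], "in": [0.1, 0.1, 0.0], "the": [0.0, 0.0, 0.0],
}
ENTITIES: Set[str] = {"paris", "france", "einstein", "ulm"}
# Relation prototypes in the same space, standing in for a trained output layer.
RELATIONS: Dict[str, List[float]] = {"capitalOf": [1.0, 0.0, 0.0],
                                     "bornIn": [0.0, 1.0, 0.0]}


def mean_pool(vectors: List[List[float]]) -> List[float]:
    """Compose token embeddings into one sentence vector (stand-in for the NN)."""
    return [sum(col) / len(vectors) for col in zip(*vectors)]


def parse_to_triple(sentence: str) -> Tuple[str, str, str]:
    """Decode a sentence into a (subject, relation, object) knowledge-graph triple."""
    tokens = sentence.lower().split()
    pooled = mean_pool([WORD_VECS[t] for t in tokens])
    # Output layer: pick the relation whose prototype has the largest dot product.
    relation = max(RELATIONS,
                   key=lambda r: sum(a * b for a, b in zip(pooled, RELATIONS[r])))
    # Symbolic slot filling: first and last known entities become subject and object.
    entities = [t for t in tokens if t in ENTITIES]
    return entities[0], relation, entities[-1]


print(parse_to_triple("Paris is the capital of France"))  # ('paris', 'capitalOf', 'france')
```

A real semantic parser would learn both the composition and the decoding; the point here is only the shape of the data flow, from tokens to vectors to a structured triple.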

### 3.2 Nested

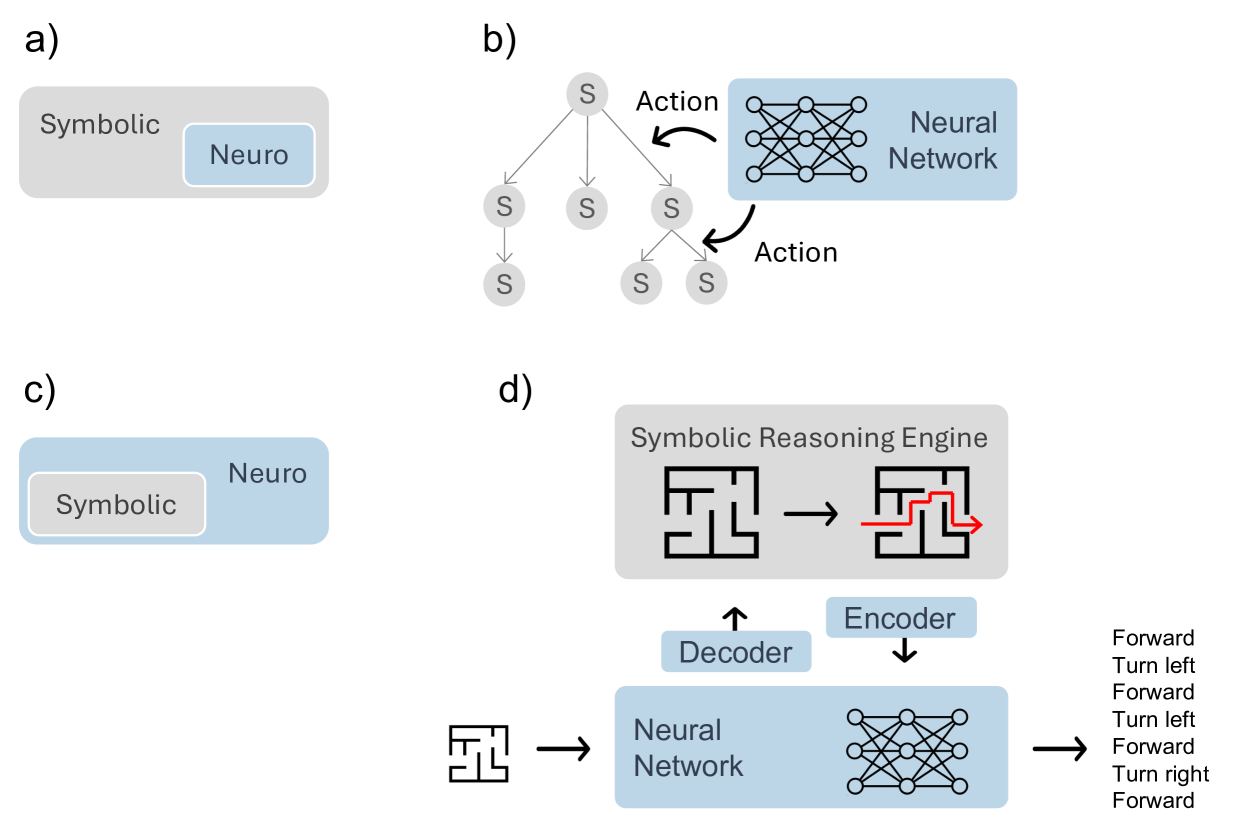

The nested NSAI category is composed of two different architectures. The first – Symbolic[Neuro] – places an NN as a subcomponent within a predominantly symbolic system (Figure 2 a). Here, the NN is used to perform tasks that require statistical pattern recognition, such as extracting features from raw data or making probabilistic inferences, which are then utilized by the symbolic system. The symbolic framework orchestrates the overall reasoning process, incorporating the neural outputs as intermediate results [41]. This architecture can be formally defined as follows:

$$
y=g_{\text{symbolic}}(x,f_{\text{neural}}(z)) \tag{2}
$$

where $x$ represents the symbolic context, $z$ is the input passed from the symbolic reasoner to the NN, $f_{\text{neural}}(z)$ expresses the neural model processing the input, and $g_{\text{symbolic}}$ is the symbolic reasoning engine that integrates neural outputs. A well-known instance of this architecture is AlphaGo [41], where a symbolic Monte Carlo tree search orchestrates high-level decision-making while an NN evaluates board states, providing a data-driven heuristic to guide the symbolic search process [42] (Figure 2 b). Similarly, in a medical diagnosis scenario, a rule-based engine oversees the core diagnostic process by applying expert guidelines to patient history, symptoms, and lab results. Meanwhile, an NN interprets unstructured radiological images and delivers key indicators such as tumor likelihood. The symbolic system then integrates these indicators into its final decision, combining transparent, rule-driven logic with robust pattern recognition.
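The medical diagnosis scenario maps directly onto Eq. (2). In the sketch below, the symbolic engine owns the decision logic and calls the neural model only for the image score. The rule thresholds, field names, and the stubbed image model are hypothetical placeholders chosen for illustration, not clinical guidance. Returning the id of the rule that fired shows where the transparency of this architecture comes from.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class PatientContext:
    """x in Eq. (2): the structured, symbolic part of the case."""
    age: int
    smoker: bool
    persistent_cough: bool


# Hypothetical, purely illustrative rules (NOT clinical guidelines), checked in order.
# Each rule sees the symbolic context x and the neural score p = f_neural(z).
Rule = Tuple[str, Callable[[PatientContext, float], bool], str]
RULES: List[Rule] = [
    ("R1", lambda x, p: p >= 0.8, "refer_to_oncology"),
    ("R2", lambda x, p: p >= 0.4 and (x.smoker or x.age >= 60), "order_follow_up_ct"),
    ("R3", lambda x, p: x.persistent_cough, "routine_follow_up"),
]


def g_symbolic(x: PatientContext, p: float) -> Tuple[str, str]:
    """Rule engine: return the decision and the id of the rule that fired."""
    for rule_id, condition, decision in RULES:
        if condition(x, p):
            return decision, rule_id
    return "no_action", "default"


def diagnose(x: PatientContext, image: object,
             f_neural: Callable[[object], float]) -> Tuple[str, str]:
    """Eq. (2): y = g_symbolic(x, f_neural(z)), with z the radiological image."""
    return g_symbolic(x, f_neural(image))


# A stub stands in for the image model; it always reports a 0.55 tumor likelihood.
patient = PatientContext(age=65, smoker=False, persistent_cough=True)
print(diagnose(patient, "scan_001.png", lambda img: 0.55))  # ('order_follow_up_ct', 'R2')
```

Swapping the stub for a trained classifier would not change the control flow: the neural component remains a callable subroutine of the symbolic engine.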

The second architecture – Neuro[Symbolic] – integrates a symbolic reasoning engine as a component within a neural system, allowing the network to incorporate explicit symbolic rules or relationships during its operation (Figure 2 c). The symbolic engine provides structured reasoning capabilities, such as rule-based inference or logic, which complement the NN’s ability to generalize from data. By embedding symbolic reasoning within the neural framework, the system gains interpretability and structured decision-making while retaining the flexibility and scalability of neural computation. This integration is particularly effective for tasks that require reasoning under constraints or adherence to predefined logical frameworks [43, 44]. This configuration can be described as follows:

$$
y=f_{\text{neural}}(x,g_{\text{symbolic}}(z)) \tag{3}
$$

where $x$ represents the input data to the neural system, $z$ is the input passed from the NN to the symbolic reasoner, $g_{\text{symbolic}}$ is the symbolic reasoning function, and $f_{\text{neural}}$ denotes the NN processing the combined inputs.

This architecture is currently applied in automated warehouses, where a robot navigates dynamically changing aisles. During normal operation, it relies on a neural policy to select routes based on learned patterns. When it encounters an unexpected obstacle, it offloads route computation to a symbolic solver (e.g., a pathfinding or constraint-satisfaction algorithm), which returns an alternative path. The solver’s output is then integrated back into the neural policy, and the robot resumes its usual pattern-based navigation. Over time, the robot also learns to identify which challenges call for the symbolic solver, effectively blending fast pattern recognition with precise combinatorial planning.
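The warehouse scenario can be sketched with a grid world. In the code below, a greedy stepping rule stands in for the learned neural policy and breadth-first search plays the role of the symbolic solver $g_{\text{symbolic}}$. The grid, the greedy rule, and the `horizon` parameter (how many solver steps are followed before control returns to the policy) are illustrative assumptions. The learned decision of when to invoke the solver is not modelled; here the solver is called only when the policy's next move is blocked.

```python
from collections import deque
from typing import Dict, List, Optional, Tuple

Cell = Tuple[int, int]                      # (row, col)
MOVES = [(0, 1), (1, 0), (0, -1), (-1, 0)]  # 4-connected grid moves


def free(grid: List[str], c: Cell) -> bool:
    """True if cell c lies inside the grid and is not an obstacle ('#')."""
    r, col = c
    return 0 <= r < len(grid) and 0 <= col < len(grid[0]) and grid[r][col] != "#"


def g_symbolic(grid: List[str], start: Cell, goal: Cell) -> Optional[List[Cell]]:
    """Symbolic solver: breadth-first search for a shortest path (None if unreachable)."""
    prev: Dict[Cell, Optional[Cell]] = {start: None}
    queue = deque([start])
    while queue:
        cur = queue.popleft()
        if cur == goal:
            path: List[Cell] = []
            node: Optional[Cell] = cur
            while node is not None:
                path.append(node)
                node = prev[node]
            return path[::-1]
        for dr, dc in MOVES:
            nxt = (cur[0] + dr, cur[1] + dc)
            if free(grid, nxt) and nxt not in prev:
                prev[nxt] = cur
                queue.append(nxt)
    return None


def neural_policy(pos: Cell, goal: Cell) -> Cell:
    """Stand-in for a learned policy: step greedily toward the goal (rows first)."""
    dr = (goal[0] > pos[0]) - (goal[0] < pos[0])
    dc = (goal[1] > pos[1]) - (goal[1] < pos[1])
    return (pos[0] + dr, pos[1]) if dr else (pos[0], pos[1] + dc)


def navigate(grid: List[str], start: Cell, goal: Cell,
             horizon: int = 3, max_steps: int = 100) -> Tuple[List[Cell], int]:
    """Follow the policy; when its move is blocked, call the solver and follow
    at most `horizon` steps of its path before handing control back."""
    pos, trace, plan, solver_calls = start, [start], [], 0
    for _ in range(max_steps):
        if pos == goal:
            break
        if not plan:
            step = neural_policy(pos, goal)
            if free(grid, step):
                plan = [step]
            else:
                path = g_symbolic(grid, pos, goal)
                solver_calls += 1
                if path is None:
                    break                       # goal unreachable
                plan = path[1:1 + horizon]
        pos = plan.pop(0)
        trace.append(pos)
    return trace, solver_calls


warehouse = ["......",
             "..#...",
             "..#...",
             "......"]
print(navigate(warehouse, start=(1, 0), goal=(1, 5)))
```

On this grid the policy walks into the obstacle after one step, the solver supplies a three-step detour over the top row, and the policy then finishes the route on its own.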

Figure 2 panels: (a) a Symbolic block containing a Neuro block; (b) a search tree of symbolic states (S) whose actions are passed to a neural network; (c) a Neuro block containing a Symbolic block; (d) a neural network with an encoder and a decoder linked to a symbolic reasoning engine that solves a maze, with the output action sequence Forward, Turn left, Forward, Turn left, Forward, Turn right, Forward.

Figure 2: Nested architectures: (a) Symbolic[Neuro] principle and (b) its application to tree search, (c) Neuro[Symbolic] principle and (d) its application to maze-solving.

Figure 2 d illustrates this framework: a symbolic reasoning engine processes structured data, such as a maze, to generate a solution path. An NN encodes the problem into a latent representation and decodes it into a symbolic sequence of actions (e.g., forward, turn left, turn right).
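The last stage of Figure 2 d, turning a solution path into a symbolic action sequence, can be illustrated with a hand-written decoder. In the figure this mapping is produced by the NN's decoder; the deterministic function below only shows what a correct output looks like, under the assumptions that the maze is a grid, the path is 4-connected, and the agent starts facing east.

```python
from typing import List, Tuple

Cell = Tuple[int, int]  # (row, col); row index grows downward ("south")
# Headings in clockwise order: north, east, south, west.
HEADINGS = [(-1, 0), (0, 1), (1, 0), (0, -1)]


def path_to_actions(path: List[Cell], heading: int = 1) -> List[str]:
    """Translate a 4-connected path of grid cells into relative commands.

    `heading` indexes HEADINGS (default 1 = facing east). A turn is emitted
    whenever the next move's direction differs from the current heading,
    followed by one "Forward" per cell moved.
    """
    actions: List[str] = []
    for (r0, c0), (r1, c1) in zip(path, path[1:]):
        target = HEADINGS.index((r1 - r0, c1 - c0))  # ValueError if not adjacent
        diff = (target - heading) % 4
        if diff == 1:
            actions.append("Turn right")
        elif diff == 3:
            actions.append("Turn left")
        elif diff == 2:
            actions.extend(["Turn right", "Turn right"])  # U-turn
        heading = target
        actions.append("Forward")
    return actions


solution = [(2, 0), (2, 1), (1, 1), (0, 1), (0, 2)]
print(path_to_actions(solution))
# ['Forward', 'Turn left', 'Forward', 'Forward', 'Turn right', 'Forward']
```

Because the commands are relative to the agent's heading, the same path yields different action sequences for different starting orientations.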

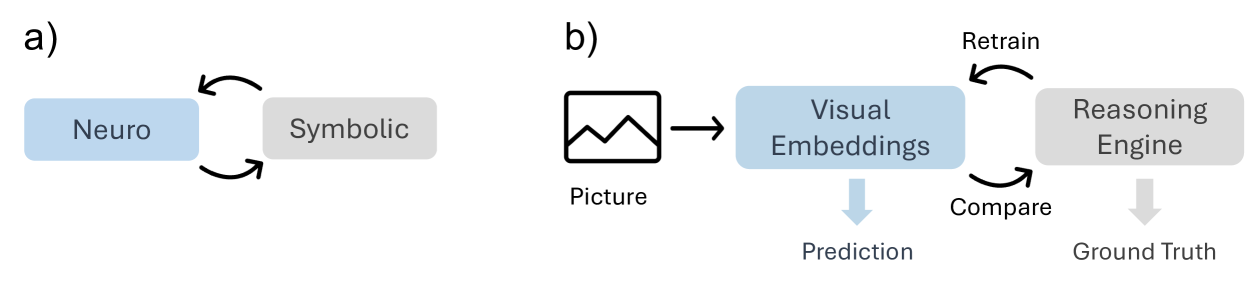

### 3.3 Cooperative

As a cooperative framework, Neuro $|$ Symbolic uses neural and symbolic components as interconnected coroutines, collaborating iteratively to solve a task (Figure 3 a). NNs process unstructured data, such as images or text, and convert it into symbolic representations that are easier to reason about. The symbolic reasoning component then evaluates and refines these representations, providing structured feedback to guide the NN’s updates. This feedback loop continues over multiple iterations until the system converges on a solution that meets predefined symbolic constraints or criteria. By combining the strengths of NNs for generalization and symbolic reasoning for interpretability, this approach achieves robust and adaptive problem-solving [45]. This architecture can be described as follows:

$$
z^{(t+1)}=f_{\text{neural}}(x,y^{(t)}),\quad y^{(t+1)}=g_{\text{symbolic}}(z^{(t+1)}),\quad\forall t\in\{0,1,\dots,n\} \tag{4}
$$

where $x$ represents the non-symbolic input data, $z^{(t)}$ is the intermediate symbolic representation at iteration $t$, $y^{(t)}$ is the symbolic reasoning output at iteration $t$, $f_{\text{neural}}(x,y^{(t)})$ is the NN that processes the input $x$ together with feedback from the symbolic output $y^{(t)}$, $g_{\text{symbolic}}(z^{(t+1)})$ is the symbolic reasoning engine that computes $y^{(t+1)}$ from the neural output $z^{(t+1)}$, and $n$ is the maximum number of iterations. The hybrid reasoning halts when the outputs converge (e.g., $|y^{(t+1)}-y^{(t)}|<\epsilon$, where $\epsilon$ is a small threshold denoting minimal change between successive outputs) or when the maximum number of iterations $n$ is reached.
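Eq. (4) and its stopping rule can be sketched as a plain loop. The two functions passed in are toy stand-ins chosen for illustration (assumptions, not trained models): the "neural" step blends the input with the previous symbolic output, and the "symbolic" step enforces a hypothetical rule capping the value at 5.

```python
def cooperative_loop(x, f_neural, g_symbolic, y0, n_max=50, eps=1e-6):
    """Iterate Eq. (4) until |y^(t+1) - y^(t)| < eps or n_max iterations are reached.

    Returns the final symbolic output and the number of iterations performed.
    """
    y = y0
    for t in range(n_max):
        z = f_neural(x, y)         # z^(t+1) = f_neural(x, y^(t))
        y_next = g_symbolic(z)     # y^(t+1) = g_symbolic(z^(t+1))
        if abs(y_next - y) < eps:  # convergence test on successive symbolic outputs
            return y_next, t + 1
        y = y_next
    return y, n_max


def f_neural(x, y):
    """Toy 'neural' step (hypothetical): blend the raw input with symbolic feedback."""
    return 0.5 * (x + y)


def g_symbolic(z):
    """Toy 'symbolic' step (hypothetical): enforce a rule-imposed maximum of 5."""
    return min(z, 5.0)


print(cooperative_loop(7.0, f_neural, g_symbolic, y0=0.0))  # (5.0, 3)
```

Here the neural estimate keeps drifting toward the raw input, while the symbolic step pulls it back to the rule boundary; the loop stops once two successive symbolic outputs agree.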

For instance, this architecture can be applied in autonomous driving systems, where an NN processes real-time images from vehicle cameras to detect and classify traffic signs. It identifies shapes, colors, and patterns to suggest potential signs, such as speed limits or stop signs. A symbolic reasoning engine then evaluates these detections against contextual rules, for example by verifying whether a detected speed limit sign matches the road type or whether a stop sign appears in a logical position (e.g., near an intersection). If inconsistencies are detected, such as a stop sign identified in the middle of a highway, the symbolic system flags the issue and prompts the NN to re-evaluate the scene. This iterative feedback loop continues until the system reaches consistent, high-confidence decisions, making traffic sign recognition more robust and reliable even in challenging conditions such as poor lighting or partial occlusion (Figure 3 b).
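The symbolic side of this example can be sketched as a small rule checker. This is a simplified sketch under several assumptions: the speed table, the label names, and the confidence cut-off are hypothetical. The "re-evaluate" request is also approximated by falling back to the detector's next-ranked hypothesis rather than re-running the network.

```python
# Hypothetical maximum legal speed per road type (km/h); real rules are jurisdiction-specific.
MAX_SPEED = {"urban": 50, "rural": 90, "highway": 130}


def is_consistent(label, context):
    """Symbolic check of one detection against the scene context."""
    if label == "stop":
        # A stop sign is only plausible off the highway and near an intersection.
        return context["road_type"] != "highway" and context["near_intersection"]
    if label.startswith("speed_limit_"):
        limit = int(label.rsplit("_", 1)[1])
        return limit <= MAX_SPEED[context["road_type"]]
    return True


def recognize(candidates, context, min_conf=0.3):
    """Return the most confident detection that passes the symbolic checks, else None.

    `candidates` are (label, confidence) pairs from the neural detector; rejecting a
    label and moving to the next one plays the role of the re-evaluation request.
    """
    for label, conf in sorted(candidates, key=lambda c: c[1], reverse=True):
        if conf < min_conf:
            break  # remaining hypotheses are too weak to accept
        if is_consistent(label, context):
            return label
    return None


detections = [("stop", 0.62), ("speed_limit_110", 0.35)]
print(recognize(detections, {"road_type": "highway", "near_intersection": False}))  # speed_limit_110
print(recognize(detections, {"road_type": "urban", "near_intersection": True}))     # stop
```

Returning `None` when no hypothesis survives corresponds to the case where the symbolic engine cannot validate any detection, and the system would have to request more evidence instead of acting.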

<details>

<summary>x3.png Details</summary>

### Visual Description

## Diagram: Neuro-Symbolic AI Architecture

### Overview

The image displays two related conceptual diagrams, labeled **a)** and **b)**, illustrating a neuro-symbolic artificial intelligence architecture. Diagram **a)** presents a high-level abstraction of the core concept, while diagram **b)** expands this into a specific pipeline for visual reasoning tasks. The diagrams use a consistent color scheme: blue for neural/perceptual components and gray for symbolic/reasoning components.

### Components/Axes

The image is divided into two distinct panels:

**Panel a) (Left Side):**

* **Component 1:** A blue rectangular box labeled **"Neuro"**.

* **Component 2:** A gray rectangular box labeled **"Symbolic"**.

* **Relationship:** Two curved, black arrows form a closed loop between the "Neuro" and "Symbolic" boxes, indicating a bidirectional, cyclical interaction or feedback loop.

**Panel b) (Right Side):**

* **Input:** An icon of a landscape picture labeled **"Picture"**.

* **Neural Component:** A blue rectangular box labeled **"Visual Embeddings"**.

* **Symbolic Component:** A gray rectangular box labeled **"Reasoning Engine"**.

* **Outputs:**

* A downward-pointing blue arrow from "Visual Embeddings" leads to the label **"Prediction"**.

* A downward-pointing gray arrow from "Reasoning Engine" leads to the label **"Ground Truth"**.

* **Process Flow & Feedback:**

* A straight black arrow points from the "Picture" icon to the "Visual Embeddings" box.

* A curved black arrow labeled **"Compare"** points from the "Visual Embeddings" box to the "Reasoning Engine" box.

* A second curved black arrow labeled **"Retrain"** points from the "Reasoning Engine" box back to the "Visual Embeddings" box, completing a feedback loop.

### Detailed Analysis

The diagrams describe a system where perception and reasoning are integrated.

* **In Diagram a):** The core principle is a continuous cycle between a "Neuro" module (likely a neural network for pattern recognition) and a "Symbolic" module (likely a logic-based system for structured reasoning). The bidirectional arrows imply that each module informs and updates the other.

* **In Diagram b):** This principle is applied to a visual task.

1. A **"Picture"** is processed by the **"Visual Embeddings"** module (the "Neuro" component), which generates a numerical representation.

2. This representation is used to make a **"Prediction"**.

3. Simultaneously, the embeddings are sent (**"Compare"**) to the **"Reasoning Engine"** (the "Symbolic" component).

4. The Reasoning Engine likely evaluates the prediction against logical rules or known facts to establish a **"Ground Truth"**.

5. Crucially, the Reasoning Engine can send feedback (**"Retrain"**) to the Visual Embeddings module, suggesting the symbolic reasoning is used to improve or correct the neural perception model over time.

### Key Observations

1. **Color-Coded Semantics:** The consistent use of blue for "Neuro"/"Visual Embeddings" and gray for "Symbolic"/"Reasoning Engine" visually reinforces the separation and collaboration between these two AI paradigms.

2. **Closed-Loop System:** Both diagrams emphasize a closed-loop, iterative process. This is not a simple feed-forward pipeline but a system designed for continuous learning and refinement.

3. **Direction of Feedback:** The "Retrain" arrow in diagram **b)** is particularly significant. It indicates that the symbolic reasoning component has authority to update the neural component, which is a specific architectural choice in neuro-symbolic AI.

4. **Abstraction to Application:** Diagram **a)** is a pure conceptual model, while **b)** instantiates it with specific modules ("Visual Embeddings," "Reasoning Engine") and data flows ("Picture," "Prediction," "Ground Truth").

### Interpretation

These diagrams illustrate a **neuro-symbolic AI approach** aimed at overcoming the limitations of purely neural or purely symbolic systems.

* **What it suggests:** The architecture proposes that robust AI requires both **subsymbolic perception** (the "Neuro" part, good at handling noisy, raw data like images) and **symbolic reasoning** (the "Symbolic" part, good at logic, explanation, and leveraging structured knowledge). The "Compare" and "Retrain" loop is the mechanism for integration, where symbolic knowledge guides and improves perceptual learning.

* **How elements relate:** The "Picture" is the real-world input. "Visual Embeddings" are the system's internal, learned representation of that picture. The "Prediction" is the system's initial guess. The "Reasoning Engine" acts as a critic or supervisor, using rules or knowledge to judge the prediction against a "Ground Truth" and then providing corrective feedback to make the perceptual model ("Visual Embeddings") more accurate or aligned with logical constraints.

* **Notable implication:** This model addresses a key challenge in AI: creating systems that can both perceive the world flexibly *and* reason about it reliably. The "Retrain" pathway suggests a focus on **explainability and corrective learning**, where errors can be traced back and fixed through logical analysis rather than just more data. The absence of numerical data or trends indicates this is a conceptual framework, not a performance report.

</details>

Figure 3: Cooperative architecture: (a) principle and (b) application to visual reasoning.

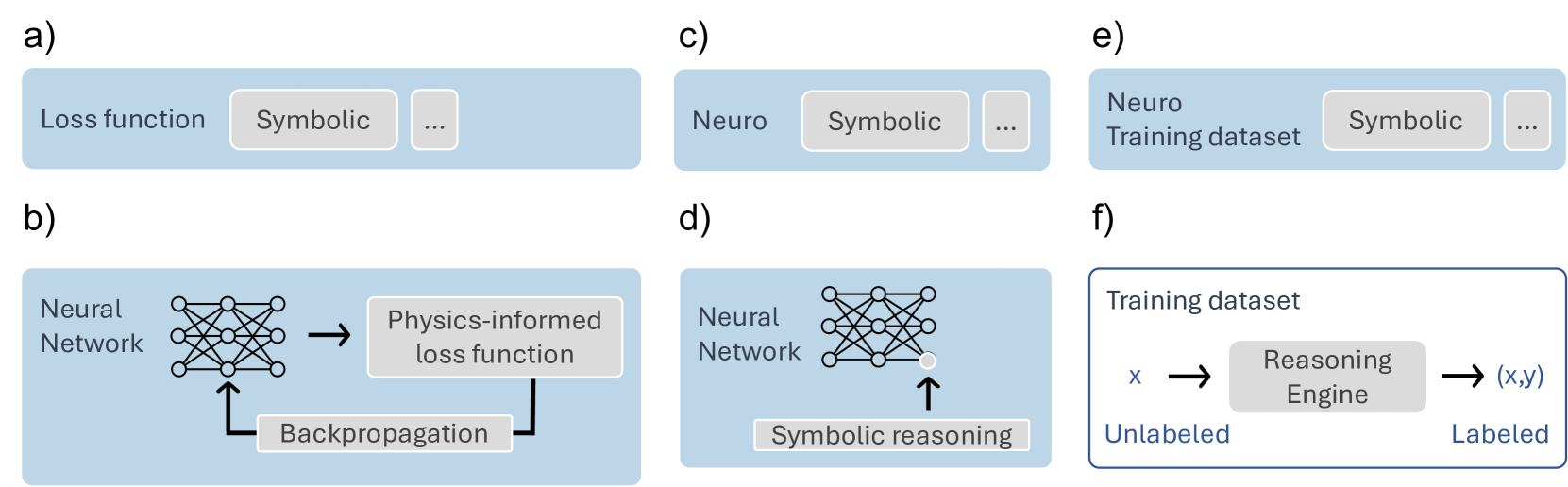

### 3.4 Compiled

As part of the compiled NSAI family, Neuro Symbolic Loss incorporates symbolic reasoning into the loss function of an NN (Figure 4 a). The loss function typically measures the discrepancy between the model’s predictions and the true outputs. By incorporating symbolic rules or constraints, the training process not only minimizes prediction error but also pushes the outputs to align with symbolic logic or predefined relational structures. The model thus learns not only from data but also from symbolic knowledge, which guides it toward solutions that are both accurate and consistent with symbolic principles.

$$
\mathcal{L}=\mathcal{L}_{\text{task}}(y,y_{\text{target}})+\lambda\cdot\mathcal{L}_{\text{symbolic}}(y) \tag{5}
$$

where $y$ is the model prediction, $y_{\text{target}}$ represents the ground truth labels, $\mathcal{L}_{\text{task}}$ is the task-specific loss (e.g., cross-entropy), $\mathcal{L}_{\text{symbolic}}$ is the penalty for violating symbolic rules, $\lambda$ is the weight balancing the two loss components, and $\mathcal{L}$ is the final loss, which combines the task-specific loss and the symbolic constraint penalty to guide model optimization. This architecture is particularly useful in 4D printing, where structures need to be optimized at the material level to achieve a target shape. In such a case, an NN predicts the material distribution and geometric configuration that allow the structure to adapt under external stimuli. Training uses a physics-informed loss function: in addition to minimizing the difference between predicted and desired mechanical behavior, the model is penalized whenever the predicted deformation violates symbolic mechanical constraints, such as equilibrium equations or the stress-strain relationship (Figure 4 b). By embedding these symbolic equations directly into the loss function, the NN learns to generate designs that are not only data-driven but also physically consistent, helping the final 4D-printed structure maintain the desired shape across different operating conditions.
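Eq. (5) can be illustrated by evaluating the two loss terms in NumPy. The following is a minimal sketch that assumes the network outputs (strain, stress) pairs and uses linear-elastic Hooke's law, stress = E · strain, as a stand-in for the symbolic mechanical constraint. A real physics-informed setup for 4D printing would use the structure's own equilibrium and constitutive equations and an automatic-differentiation framework to backpropagate through the loss; the function names, E, and λ here are hypothetical.

```python
import numpy as np


def task_loss(y, y_target):
    """L_task: mean squared error between predicted and desired behavior."""
    return float(np.mean((y - y_target) ** 2))


def symbolic_loss(y, youngs_modulus):
    """L_symbolic: penalty for violating Hooke's law, stress = E * strain (assumed constraint)."""
    strain, stress = y[:, 0], y[:, 1]
    residual = stress - youngs_modulus * strain
    return float(np.mean(residual ** 2))


def total_loss(y, y_target, youngs_modulus, lam=0.1):
    """Eq. (5): L = L_task + lambda * L_symbolic."""
    return task_loss(y, y_target) + lam * symbolic_loss(y, youngs_modulus)


# A prediction that matches its (noisy) target exactly but breaks the constraint:
# with E = 200 (arbitrary consistent units), stress should be 2.0, not 3.0.
y_target = np.array([[0.01, 3.0]])
print(total_loss(y_target, y_target, youngs_modulus=200.0))  # 0.1
```

Even with zero task error, the symbolic term keeps the loss positive, so gradient-based training is pushed toward outputs that respect the constraint; λ controls how strongly.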

A second compiled NSAI architecture, called Neuro Symbolic Neuro, uses symbolic reasoning at the neuron level by replacing traditional activation functions with mechanisms that incorporate symbolic reasoning (Figure 4 c). Rather than using standard mathematical operations like ReLU or sigmoid, the neuron activation is governed by symbolic rules or logic. This allows the NN to reason symbolically at a more granular level, integrating explicit reasoning steps into the learning process. This fusion of symbolic and neural operations enables more interpretable and constrained decision-making within the network, enhancing its ability to reason in a structured and rule-based manner while retaining the flexibility of neural computations. This architecture can be described as follows:

$$
y=g_{\text{symbolic}}(x) \tag{6}
$$

where $x$ represents the pre-activation input, $g_{\text{symbolic}}(x)$ is the symbolic reasoning-based activation function, and $y$ is the neuron's output. This architecture can find application in loan approval systems, where neural activations are driven by symbolic financial rules rather than traditional functions. One example is a collateral-based constraint neuron, which dynamically adjusts the risk score based on the value of the pledged collateral. When the collateral’s value falls below a predefined threshold relative to the loan amount, the neuron applies a strict penalty that substantially increases the risk score, preventing the system from underestimating the associated financial risk. This symbolic constraint ensures that, regardless of favorable patterns identified in other data, the model consistently accounts for the critical impact of insufficient collateral, leading to more reliable and regulation-compliant credit decisions (Figure 4 d).
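One possible shape for the collateral-based constraint neuron of Eq. (6) is a sigmoid whose output is overridden by a rule. The following sketch is purely illustrative: the threshold ratio, the risk floor, and the function name are hypothetical choices, and the "strict penalty" is modeled as a lower bound on the risk score.

```python
import math


def collateral_constraint_activation(x, collateral_value, loan_amount,
                                     min_ratio=1.2, risk_floor=0.8):
    """Symbolic activation g_symbolic for a risk-score neuron (hypothetical rule).

    Adequately collateralized loans use an ordinary sigmoid of the pre-activation x.
    If collateral / loan falls below `min_ratio`, the rule forces the risk score to at
    least `risk_floor`, however favorable x is.
    """
    risk = 1.0 / (1.0 + math.exp(-x))
    if collateral_value / loan_amount < min_ratio:
        risk = max(risk, risk_floor)
    return risk


# Same favorable pre-activation, different collateral coverage.
print(round(collateral_constraint_activation(-5.0, 150_000, 100_000), 4))  # 0.0067
print(collateral_constraint_activation(-5.0, 90_000, 100_000))             # 0.8
```

Because the rule is applied after the learned part, no combination of other input features can push the risk of an under-collateralized loan below the floor, which is the guarantee the paragraph describes.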

Finally, the third compiled architecture, Neuro:Symbolic $\to$ Neuro, uses a symbolic reasoner to generate labeled data pairs $(x,y)$, where $y$ is produced by applying symbolic rules or reasoning to the input $x$ (Figure 4 e). These pairs are then used to train an NN, which learns to map the input $x$ to the corresponding output $y$. The symbolic reasoner acts as a supervisor, providing high-quality, structured labels that guide the NN’s learning process [46]. This architecture can be formalized as follows:

$$
\mathcal{D}_{\text{train}}=\{(x,g_{\text{symbolic}}(x))\mid x\in\mathcal{X}\} \tag{7}
$$

where $\mathcal{D}_{\text{train}}$ is the training dataset, $x$ denotes an unlabeled input, $g_{\text{symbolic}}(x)$ represents the symbolic rules that generate its label, and $\mathcal{X}$ is the set of all input data (Figure 4 e).

Figure 4 f illustrates this architecture, where a reasoning engine is used to label unlabeled training data, transforming raw inputs into structured $(x,y)$ pairs, where symbolic rules enhance the data quality.
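The pipeline of Eq. (7) can be sketched end to end: a rule labels unlabeled points, and a learned model is fitted to the resulting pairs. This is a minimal sketch under explicit assumptions: the rule "class 1 iff x0 + x1 > 1" is a hypothetical reasoning engine, and a logistic-regression model trained by batch gradient descent stands in for the NN.

```python
import numpy as np


def reasoning_engine(x):
    """Hypothetical symbolic rule used as the labeler: class 1 iff x0 + x1 > 1."""
    return int(x[0] + x[1] > 1.0)


def build_training_set(X_unlabeled):
    """Eq. (7): D_train = {(x, g_symbolic(x)) | x in X}."""
    y = np.array([reasoning_engine(x) for x in X_unlabeled])
    return X_unlabeled, y


def train_logistic(X, y, lr=0.5, epochs=2000):
    """Fit a logistic-regression stand-in for the NN on the symbolically labeled pairs."""
    Xb = np.hstack([X, np.ones((len(X), 1))])  # append a bias column
    w = np.zeros(Xb.shape[1])
    for _ in range(epochs):
        p = 1.0 / (1.0 + np.exp(-(Xb @ w)))
        w -= lr * Xb.T @ (p - y) / len(y)      # gradient of the mean cross-entropy
    return w


def predict(w, X):
    Xb = np.hstack([X, np.ones((len(X), 1))])
    return (Xb @ w > 0).astype(int)


rng = np.random.default_rng(0)
X_train, y_train = build_training_set(rng.uniform(0.0, 1.0, size=(500, 2)))
w = train_logistic(X_train, y_train)

X_test = rng.uniform(0.0, 1.0, size=(200, 2))
y_test = np.array([reasoning_engine(x) for x in X_test])
print(f"test accuracy: {np.mean(predict(w, X_test) == y_test):.2f}")
```

No human annotation enters the loop. The labels are only as good as the rule, so label quality here depends entirely on the symbolic reasoner, which is the trade-off this architecture makes.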

<details>

<summary>x4.png Details</summary>

### Visual Description

## Diagram Set: Neuro-Symbolic AI Integration Schematics

### Overview

The image displays six schematic diagrams, labeled a) through f), illustrating different conceptual approaches to integrating neural ("Neuro") and symbolic ("Symbolic") components within artificial intelligence systems. The diagrams are presented in a 2x3 grid layout on a white background. Each diagram is contained within a light blue rectangular box, except for diagram f), which has a white background with a blue border. The diagrams use simple icons (a neural network graph, text boxes, arrows) to represent components and data flow.

### Components/Axes

There are no traditional chart axes. The components are labeled text boxes and icons. The key elements across all diagrams are:

* **Neural Network Icon:** A stylized graph of interconnected nodes, representing a neural network model.

* **Text Boxes:** Labeled with specific functions or components (e.g., "Loss function", "Symbolic", "Physics-informed loss function", "Reasoning Engine").

* **Arrows:** Indicate the direction of data flow, influence, or process steps.

* **Ellipsis (...):** Appears in diagrams a), c), and e), suggesting additional, unspecified components or steps.

### Detailed Analysis

The image is segmented into six independent panels. Each is analyzed below:

**a) Top-Left Panel**

* **Content:** A light blue box containing the text "Loss function" on the left, a gray box labeled "Symbolic" in the center, and a smaller gray box with an ellipsis "..." on the right.

* **Interpretation:** Suggests a symbolic component is part of or influences the loss function in a training process.

**b) Bottom-Left Panel**

* **Content:** A light blue box showing a process flow.

* Left: Text "Neural Network" next to a neural network icon.

* Center: A right-pointing arrow leads to a gray box labeled "Physics-informed loss function".

* Bottom: A gray box labeled "Backpropagation" has an arrow pointing up to the neural network icon.

* **Flow:** Neural Network -> Physics-informed loss function. The loss function's output is used for Backpropagation, which updates the Neural Network.

**c) Top-Center Panel**

* **Content:** A light blue box containing the text "Neuro" on the left, a gray box labeled "Symbolic" in the center, and a smaller gray box with an ellipsis "..." on the right.

* **Interpretation:** A high-level representation of a neuro-symbolic system, implying a "Neuro" component works alongside a "Symbolic" component and others.

**d) Bottom-Center Panel**

* **Content:** A light blue box.

* Left: Text "Neural Network" next to a neural network icon.

* Bottom: A gray box labeled "Symbolic reasoning" has an arrow pointing up to the neural network icon.

* **Flow:** Symbolic reasoning provides input or constraints directly to the Neural Network.

**e) Top-Right Panel**

* **Content:** A light blue box containing the text "Neuro Training dataset" on the left, a gray box labeled "Symbolic" in the center, and a smaller gray box with an ellipsis "..." on the right.

* **Interpretation:** Suggests a symbolic component is involved in the creation or processing of the training dataset for a neural model.

**f) Bottom-Right Panel**

* **Content:** A white box with a blue border, titled "Training dataset".

* Left: Text "Unlabeled" below the variable "x".

* Center: A right-pointing arrow leads to a gray box labeled "Reasoning Engine".

* Right: A right-pointing arrow leads from the engine to the tuple "(x,y)", with the text "Labeled" below it.

* **Flow:** Unlabeled data `x` is processed by a Reasoning Engine to produce labeled data `(x,y)`. This depicts a symbolic system generating labels for a dataset.

### Key Observations

1. **Integration Points:** The diagrams highlight four primary integration points for neuro-symbolic AI:

* **Loss Function Design** (a, b): Symbolic knowledge or physics constraints are embedded into the objective function.

* **Direct Model Influence** (d): Symbolic reasoning modules directly influence the neural network's parameters or activations.

* **Dataset Curation** (e, f): Symbolic systems are used to create, label, or structure training data.

* **High-Level Architecture** (c): A generic representation of combined neuro and symbolic components.

2. **Flow Direction:** Diagrams b), d), and f) explicitly show directional flow. In b) and d), the symbolic component influences the neural component. In f), the symbolic engine transforms data from unlabeled to labeled.

3. **Visual Consistency:** The neural network icon is identical in b) and d). The "Symbolic" text box is visually consistent in a), c), and e). The ellipsis box is consistent in a), c), and e).

### Interpretation

This set of diagrams serves as a taxonomy of fundamental strategies for combining neural and symbolic AI paradigms. It moves from abstract representations (a, c, e) to more concrete process flows (b, d, f).

* **What it demonstrates:** The core idea is that symbolic knowledge (rules, logic, physics) can be injected into the neural AI pipeline at different stages: during data preparation, within the model's objective function, or as a direct input to the model itself. Diagram f) is particularly notable as it shows a pure symbolic system ("Reasoning Engine") acting as a data labeler for a subsequent neural training process.

* **Relationships:** The diagrams contrast "soft" integration (where symbolic elements are part of a larger pipeline, as in a, b, e, f) with "hard" integration (where symbolic reasoning directly acts on the neural network, as in d). Panel b) specifically illustrates a popular sub-field: Physics-Informed Neural Networks (PINNs), where known physical laws (symbolic knowledge) are encoded into the loss function.

* **Notable Patterns:** The consistent use of the ellipsis in a), c), and e) implies these are simplified views of more complex systems. The shift from a blue background to a white background in f) may visually distinguish the data generation/labeling process from the model training processes shown in the other panels. The absence of any diagram showing a neural network influencing a symbolic system suggests the focus here is on using symbolic AI to enhance or structure neural AI, not the reverse.

</details>

Figure 4: Compiled architectures: (a) Neuro Symbolic Loss principle and (b) application to physics-informed learning; (c) Neuro Symbolic Neuro principle and (d) application of symbolic reasoning in NNs; (e) Neuro:Symbolic $\rightarrow$ Neuro principle and (f) application to data labeling.

### 3.5 Ensemble

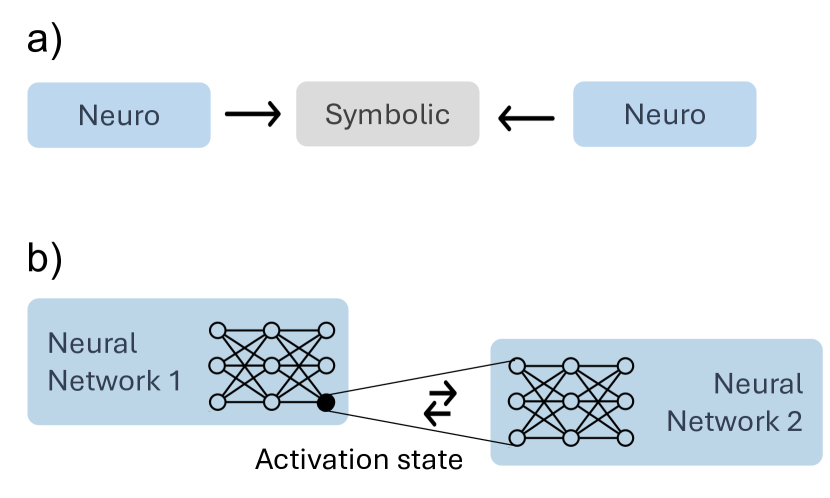

Another promising architecture, called Neuro $\to$ Symbolic $\leftarrow$ Neuro, interconnects multiple NNs via a symbolic fibring function, which enables them to collaborate and share information while adhering to symbolic constraints (Figure 5 a). The symbolic function acts as an intermediary, facilitating communication between the networks by ensuring that their interactions respect predefined symbolic rules or structures. The networks can thus exchange information in a structured manner and jointly solve problems, benefiting from both the statistical learning power of NNs and the logical constraints imposed by the symbolic system [47]. This architecture can be formally defined as follows:

$$
y=g_{\text{fibring}}(\{f_{i}\}_{i=1}^{n}) \tag{8}
$$

where $f_{i}$ represents the $i$-th NN, $g_{\text{fibring}}$ is the logic-aware aggregator that enforces symbolic constraints while unifying the outputs of multiple NNs, $n$ is the number of NNs, and $y$ is the combined output of the interconnected NNs, produced through the symbolic fibring function $g_{\text{fibring}}$. For instance, in smart cities and urban planning, multiple NNs can be employed, each handling a different urban data stream, such as real-time traffic flow, energy consumption, and air quality measurements. A symbolic fibring function then harmonizes these outputs, enforcing city-level constraints (e.g., ensuring that pollution alerts match local environmental regulations, or verifying that traffic predictions align with current road network rules). If one network forecasts a surge in vehicle congestion that would push pollution levels beyond acceptable thresholds, the symbolic aggregator identifies the conflict and directs all networks to converge on a coordinated strategy, such as adjusting traffic signals or encouraging public transport use. By leveraging each network’s specialized insight within logical urban-planning constraints, the system delivers efficient, consistent decisions across the city’s complex infrastructure.
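The smart-city example can be sketched as a rule-based aggregator over several predictors, in the spirit of Eq. (8). This is a simplified illustration rather than the formal fibring of neural networks from the literature. The predictors are hypothetical linear stand-ins for trained NNs. The pollution limit, the traffic-to-pollution coefficient, the grid capacity, and the action names are all assumed values.

```python
def g_fibring(networks, inputs, pollution_limit=50.0, traffic_to_pollution=0.4,
              grid_capacity=100.0):
    """Simplified logic-aware aggregator in the spirit of Eq. (8) (hypothetical rules).

    `networks` maps a data stream to a predictor f_i; each sees only its own input.
    The aggregator unifies their outputs and checks city-level constraints.
    """
    preds = {name: f(inputs[name]) for name, f in networks.items()}

    # Constraint 1: projected pollution (current level plus the share caused by the
    # forecast congestion) must stay within the regulatory limit.
    projected_pollution = preds["air_quality"] + traffic_to_pollution * preds["traffic"]
    violations, actions = [], []
    if projected_pollution > pollution_limit:
        violations.append("pollution_limit")
        actions += ["adjust_traffic_signals", "promote_public_transport"]
    # Constraint 2: forecast energy demand must not exceed grid capacity.
    if preds["energy"] > grid_capacity:
        violations.append("grid_capacity")
        actions.append("shift_flexible_loads")
    return {"predictions": preds, "projected_pollution": projected_pollution,
            "violations": violations, "actions": actions}


# Stand-ins for trained NNs (hypothetical linear predictors).
networks = {
    "traffic": lambda v: 1.2 * v,   # forecast congestion index
    "energy": lambda v: 0.9 * v,    # forecast demand
    "air_quality": lambda v: v,     # forecast pollutant level
}
result = g_fibring(networks, {"traffic": 50.0, "energy": 80.0, "air_quality": 30.0})
print(result["violations"], result["actions"])
# ['pollution_limit'] ['adjust_traffic_signals', 'promote_public_transport']
```

The constraint spans two networks' outputs (traffic and air quality), so neither network could detect the conflict alone; resolving it at the aggregator is what distinguishes this architecture from independent predictors.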

<details>

<summary>x5.png Details</summary>

### Visual Description

## Diagram: Neuro-Symbolic Integration and Neural Network Activation Transfer

### Overview

The image is a two-part technical diagram illustrating concepts in artificial intelligence, specifically focusing on neuro-symbolic integration and inter-network communication. It consists of two labeled sections, **a)** and **b)**, presented vertically on a plain white background. The diagram uses simple geometric shapes, lines, and text to convey architectural and process concepts.

### Components/Axes

The diagram is divided into two distinct components:

**Part a) - Neuro-Symbolic Integration Model**

* **Components:** Three rectangular boxes arranged horizontally.

* **Left Box:** Light blue rectangle containing the text "Neuro".

* **Center Box:** Light gray rectangle containing the text "Symbolic".

* **Right Box:** Light blue rectangle containing the text "Neuro".

* **Flow/Relationship:** Two black arrows indicate directionality.

* One arrow points from the left "Neuro" box to the central "Symbolic" box.

* Another arrow points from the right "Neuro" box to the central "Symbolic" box.

* **Spatial Layout:** The "Symbolic" box is centrally positioned, with the two "Neuro" boxes flanking it symmetrically on the left and right.

**Part b) - Neural Network Activation State Transfer**

* **Components:** Two stylized neural network diagrams and connecting elements.

* **Left Network:** A light blue rounded rectangle labeled "Neural Network 1". Inside is a schematic of a neural network with 3 layers of nodes (circles) connected by lines. One node in the middle layer is highlighted as a solid black circle.

* **Right Network:** A light blue rounded rectangle labeled "Neural Network 2". Inside is a similar schematic of a neural network with 3 layers of nodes.

* **Flow/Relationship:** A connection is shown between the two networks.

* Two thin black lines originate from the highlighted black node in "Neural Network 1" and connect to two different nodes in the first layer of "Neural Network 2".

* Between these connecting lines is a double-headed, zig-zag arrow (⇄) symbolizing a bidirectional exchange or transformation.

* Below this arrow is the label "Activation state".

* **Spatial Layout:** The two network boxes are placed side-by-side, with "Neural Network 1" on the left and "Neural Network 2" on the right. The connecting lines and arrow are positioned in the space between them.

### Detailed Analysis

* **Textual Content:** All text is in English. The extracted labels are:

* `a)`

* `Neuro`

* `Symbolic`

* `b)`

* `Neural Network 1`

* `Neural Network 2`

* `Activation state`

* **Visual Elements & Symbolism:**

* **Color Coding:** Light blue is used for neural ("Neuro") components. Light gray is used for the symbolic component. This color distinction visually separates the two paradigms.

* **Arrows in (a):** The arrows are unidirectional, pointing *toward* the "Symbolic" box. This suggests a flow of information or processing from neural modules into a symbolic reasoning or representation module.

* **Network Schematic in (b):** The networks are represented as fully connected layers (a common abstraction). The highlighted black node signifies a specific unit whose internal state (its "activation") is being extracted or referenced.

* **Connection in (b):** The lines from the single black node to multiple nodes in the second network illustrate a one-to-many relationship. The bidirectional arrow (⇄) labeled "Activation state" indicates that the state is not merely copied but may be transformed, compared, or used in a reciprocal process between the two networks.

### Key Observations

1. **Conceptual Progression:** Part **a)** presents a high-level architectural concept (neuro-symbolic integration), while part **b)** zooms in on a potential low-level mechanism (activation state transfer) that could enable such integration or collaboration between neural components.

2. **Directionality is Key:** In **a)**, the flow is convergent (many-to-one) towards the symbolic center. In **b)**, the flow is divergent (one-to-many) from a specific point in one network to another, with a bidirectional process indicated.

3. **Abstraction Level:** The diagram is highly abstract. It does not specify the nature of the "Neuro" modules, the rules of the "Symbolic" system, the architecture of the neural networks, or the mathematical form of the "Activation state".

### Interpretation

This diagram illustrates two complementary ideas in advanced AI systems:

1. **Hybrid Intelligence (Part a):** It proposes a model where sub-symbolic, pattern-recognition systems ("Neuro") feed processed information into a central, structured, rule-based system ("Symbolic"). This is a classic depiction of **neuro-symbolic AI**, aiming to combine the learning and perception strengths of neural networks with the reasoning, explainability, and precision of symbolic AI. The two "Neuro" boxes could represent different sensory modalities (e.g., vision and language) or different processing streams converging for integrated reasoning.

2. **Mechanism for Integration or Collaboration (Part b):** It suggests a technical method for how two distinct neural networks might communicate or share knowledge. By extracting the **activation state** (the pattern of activity) of a specific neuron or layer from "Network 1" and using it to influence or communicate with "Network 2", the system enables a form of **knowledge transfer, distillation, or collaborative processing**. The bidirectional arrow implies this could be part of a training procedure (like in some forms of adversarial or cooperative learning) or an inference-time communication protocol. This mechanism could be a building block for creating the "Neuro" components in part **a)** or for enabling them to interact effectively.

**Overall, the diagram moves from a conceptual framework for hybrid AI (a) to a potential implementation detail for enabling communication between neural components (b), which is a foundational challenge in building such integrated systems.**

</details>

Figure 5: Ensemble architecture: (a) principle and (b) application to NN collaboration.

Figure 5 b illustrates this architecture, where two NNs (Neural Network 1 and Neural Network 2) communicate through activation states, which enables dynamic exchange of learned representations.
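One simple way to realize the activation-state exchange of Figure 5 b is to expose one network's hidden activations and append them to the other network's input. The following sketch makes several assumptions: the layer sizes, the random untrained weights, and the `TinyMLP` class are hypothetical, and only the one-directional channel from Neural Network 1 to Neural Network 2 is shown. The figure's bidirectional exchange would add the symmetric channel.

```python
import numpy as np


def relu(z):
    return np.maximum(z, 0.0)


class TinyMLP:
    """Two-layer perceptron with random weights (untrained; for shape illustration only)."""

    def __init__(self, n_in, n_hidden, n_out, rng):
        self.W1 = rng.normal(0.0, 0.5, size=(n_in, n_hidden))
        self.W2 = rng.normal(0.0, 0.5, size=(n_hidden, n_out))

    def hidden(self, x):
        return relu(x @ self.W1)  # activation state that other networks can read

    def forward(self, x):
        return self.hidden(x) @ self.W2


rng = np.random.default_rng(0)
net1 = TinyMLP(n_in=4, n_hidden=8, n_out=2, rng=rng)
net2 = TinyMLP(n_in=3 + 8, n_hidden=6, n_out=1, rng=rng)  # own features + net1's state

x1 = rng.normal(size=(5, 4))  # data stream seen by Neural Network 1
x2 = rng.normal(size=(5, 3))  # data stream seen by Neural Network 2
shared_state = net1.hidden(x1)
y2 = net2.forward(np.concatenate([x2, shared_state], axis=1))
print(shared_state.shape, y2.shape)  # (5, 8) (5, 1)
```

In the ensemble architecture, a symbolic fibring function would sit on this channel and decide which activations may flow and how they are combined.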

## 4 Leveraging NSAI in AI Technologies

Generative AI is advancing at a remarkable pace, addressing increasingly complex challenges through the integration of diverse methodologies. A key development is the combination of NNs with symbolic reasoning, resulting in hybrid systems that leverage the strengths of both. Recent studies have demonstrated the effectiveness of this approach in various applications, including design generation and enhancing instructability in generative models [48, 49]. This section aims to classify contemporary AI techniques such as RAG, GNNs, agent-based AI, and transfer learning within the NSAI framework. This classification clarifies how generative AI aligns with neuro-symbolic approaches, bridging cutting-edge research with established paradigms. It also reveals how generative AI increasingly embodies both neural and symbolic characteristics, moving beyond siloed methods.

Additionally, this classification enhances our understanding of these techniques’ roles in AI’s broader landscape, particularly in addressing challenges like interpretability, reasoning, and generalization. It identifies synergies between methods, fostering robust hybrid models that combine neural learning’s adaptability with symbolic reasoning’s precision. Lastly, it supports informed decision-making, guiding researchers and practitioners in selecting the most suitable AI techniques for specific tasks.

### 4.1 Overview of Key AI Technologies

One of the most significant advancements is RAG, which integrates information retrieval with generative models to perform knowledge-intensive tasks. By combining a retrieval mechanism that extracts relevant external data with Seq2Seq models for generation [50], RAG excels in applications such as question answering and knowledge-driven conversational AI [51]. Seq2Seq models themselves, built as encoder-decoder architectures, have been pivotal in machine translation, text summarization, and conversational modeling, providing the foundation for many generative AI systems. An extension of RAG is the GraphRAG approach [52], which incorporates graph-based reasoning into the retrieval and generation process. By leveraging knowledge graphs (KGs) and ontologies to represent relationships between information elements, GraphRAG enhances query-focused summarization and reasoning tasks [53, 54]. This method has demonstrated success in producing coherent and contextually rich summaries by integrating local and global reasoning.
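The retrieve-then-generate pattern behind RAG can be sketched in a few lines. This is a minimal sketch under explicit assumptions: term-frequency cosine similarity stands in for a learned dense retriever, the three-passage corpus is hypothetical, and the generator itself is left out. The function `build_prompt` only assembles the augmented input that a Seq2Seq model or LLM would receive.

```python
import math
import re
from collections import Counter


def bow(text):
    """Bag-of-words vector as a term-frequency Counter."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query, corpus, k=2):
    """Return the k passages most similar to the query."""
    q = bow(query)
    return sorted(corpus, key=lambda doc: cosine(q, bow(doc)), reverse=True)[:k]


def build_prompt(query, corpus, k=2):
    """Augment the query with retrieved context; a generator model would consume this."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, corpus, k))
    return f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"


corpus = [
    "GraphRAG builds a knowledge graph from source documents before retrieval.",
    "Graph neural networks pass messages between neighboring nodes.",
    "Retrieval-augmented generation conditions a generator on retrieved passages.",
]
print(build_prompt("How does retrieval-augmented generation use passages?", corpus, k=1))
```

GraphRAG differs mainly in the retrieval step: instead of ranking flat passages, it retrieves entities, relations, or community summaries from a KG built over the corpus.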

GNNs [55] represent a breakthrough in extending neural architectures to graph-structured data, enabling advanced reasoning over interconnected entities. Their ability to model relationships between entities makes them well suited to a range of tasks, including link prediction, node classification, and recommendation systems, with notable success in KG reasoning. GNNs have also proven highly effective in named entity recognition (NER) [56], where they leverage graph representations to capture contextual dependencies and relationships between entities in text. This capability extends to relation extraction [57], where GNNs identify and classify semantic relationships between entities, which is crucial for building and enhancing KGs.
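The core operation that lets GNNs reason over interconnected entities is message passing, where each node updates its representation from its neighbors'. No specific GNN variant is fixed in the text, so the sketch below assumes a generic mean-aggregation layer (one of several common designs) with hypothetical random weights.

```python
import numpy as np


def gnn_layer(H, edges, W_self, W_neigh):
    """One message-passing layer with mean aggregation (generic sketch).

    H: (num_nodes, d_in) node features; edges: list of undirected (u, v) pairs.
    Each node combines its own features with the mean of its neighbors' features.
    """
    n = H.shape[0]
    A = np.zeros((n, n))
    for u, v in edges:
        A[u, v] = A[v, u] = 1.0
    deg = A.sum(axis=1, keepdims=True)
    neigh_mean = (A @ H) / np.maximum(deg, 1.0)  # isolated nodes receive a zero message
    return np.maximum(H @ W_self + neigh_mean @ W_neigh, 0.0)  # ReLU


rng = np.random.default_rng(0)
H = rng.normal(size=(4, 5))          # 4 nodes with 5 features each
edges = [(0, 1), (1, 2), (2, 3)]     # a simple chain graph
H_next = gnn_layer(H, edges, rng.normal(size=(5, 3)), rng.normal(size=(5, 3)))
print(H_next.shape)  # (4, 3)
```

Stacking k such layers lets information travel k hops, which is how link prediction or KG reasoning can draw on multi-step relational context.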

Advances in agentic AI systems, which leverage Large Language Models (LLMs), have shown significant potential in enabling autonomous decision-making and task execution. These systems are designed to function independently, interacting with environments, coordinating with other agents, and adapting to dynamic situations without human intervention. A notable example is AutoGen [58], a framework that enables the creation of autonomous agents that can interact with each other to solve tasks and improve through continual interactions. Recent work has further enhanced these systems through MoE architectures, which integrate specialized sub-models (“experts”) into multi-agent frameworks to optimize task-specific performance and computational efficiency. For instance, MoE-based coordination allows agents to dynamically activate subsets of experts based on context, enabling scalable specialization in complex environments [59, 60]. Xie et al. [61] explored the role of LLMs in these agentic systems, discussing how they facilitate autonomous cooperation and communication between agents in complex environments, an important step toward scalable and self-sufficient AI. By combining MoE principles with multi-agent collaboration, systems can achieve hierarchical decision-making: LLMs act as meta-controllers, routing tasks to specialized agents (e.g., vision, planning, or language experts) while maintaining global coherence.
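The context-dependent activation of a subset of experts can be illustrated with sparse top-k gating. In this sketch, the gate weights are fixed, hypothetical values rather than learned ones. The "experts" are simple functions standing in for specialized sub-models or agents.

```python
import numpy as np


def top_k_route(x, gate_weights, experts, k=2):
    """Sparse mixture-of-experts routing for a single input vector (sketch).

    Only the k experts with the highest gate scores are evaluated; their outputs are
    mixed with softmax weights renormalized over the selected experts.
    """
    logits = x @ gate_weights                   # one score per expert
    chosen = np.argsort(logits)[-k:][::-1]      # indices of the top-k experts, best first
    scores = np.exp(logits[chosen] - logits[chosen].max())
    weights = scores / scores.sum()
    output = sum(w * experts[i](x) for w, i in zip(weights, chosen))
    return output, chosen.tolist(), weights


# Hypothetical experts and gate: in practice the gate is learned from context.
experts = [lambda v: v * 0.0, lambda v: v + 1.0, lambda v: 2.0 * v]
gate = np.array([[0.1, 2.0, 1.0],
                 [0.0, 0.0, 0.0]])
out, chosen, weights = top_k_route(np.array([1.0, 0.0]), gate, experts, k=2)
print(chosen, np.round(weights, 3))
```

Expert 0 is never evaluated for this input, which is where the computational savings of MoE come from. In the agentic setting described above, an LLM meta-controller plays the role of the gate.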

However, the growing autonomy of such systems underscores the importance of XAI [62] for transparency and trust. XAI has gained prominence as a means to strengthen accountability and support ethical AI adoption. By providing insights into model behavior, XAI helps keep even highly autonomous systems interpretable and accountable, addressing concerns about their decisions and actions in sensitive and dynamic environments.

Recent advancements in AI have demonstrated the potential of integrating fine-tuning, distillation, and in-context learning to enhance model performance. Huang et al. [63] introduced in-context learning distillation, a novel method that transfers few-shot learning capabilities from large pre-trained LLMs to smaller models. By combining in-context learning objectives with traditional language modeling, this approach allows smaller models to perform effectively with limited data while maintaining computational efficiency.

Transfer learning [64] has similarly emerged as a foundational technique, enabling pre-trained models to adapt their extensive knowledge to new domains using minimal data. This capability is particularly advantageous in resource-constrained scenarios. Two common strategies illustrate its adaptability: feature extraction, where pre-trained layers are reused (typically frozen) as a feature extractor for a new task, and fine-tuning, where the weights of the pre-trained model are further adjusted on the new task.
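The difference between feature extraction and fine-tuning comes down to which parameters receive gradient updates. In the toy sketch below, a single linear layer `W` stands in for a pre-trained network (a strong simplification; real feature extraction reuses deep pre-trained layers), and a logistic-regression head is trained on top of it. The data, weights, and function names are hypothetical. With `fine_tune=False` the "pre-trained" weights stay frozen; with `fine_tune=True` they are updated by back-propagation together with the head.

```python
import math
from typing import List, Sequence, Tuple

Matrix = List[List[float]]
Example = Tuple[List[float], int]


def sigmoid(z: float) -> float:
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def extract(W: Matrix, x: Sequence[float]) -> List[float]:
    """Stand-in 'pre-trained' feature extractor: a single linear layer f = W x."""
    return [sum(w * xj for w, xj in zip(row, x)) for row in W]


def train_head(data: Sequence[Example], W_pretrained: Matrix, fine_tune: bool = False,
               epochs: int = 200, lr: float = 0.1) -> Tuple[Matrix, List[float], float]:
    """Train a logistic-regression head (v, b) on top of W with per-example SGD.

    fine_tune=False: feature extraction, W stays frozen.
    fine_tune=True:  W is updated together with the head.
    """
    W = [row[:] for row in W_pretrained]  # copy so the original weights are not mutated
    v = [0.0] * len(W)
    b = 0.0
    for _ in range(epochs):
        for x, y in data:
            f = extract(W, x)
            p = sigmoid(sum(vi * fi for vi, fi in zip(v, f)) + b)
            g = p - y  # gradient of binary cross-entropy w.r.t. the logit
            if fine_tune:
                # dLoss/dW[i][j] = g * v[i] * x[j], computed before v changes
                for i, row in enumerate(W):
                    for j in range(len(row)):
                        row[j] -= lr * g * v[i] * x[j]
            for i in range(len(v)):
                v[i] -= lr * g * f[i]
            b -= lr * g
    return W, v, b


def predict(W: Matrix, v: Sequence[float], b: float, x: Sequence[float]) -> int:
    return int(sum(vi * fi for vi, fi in zip(v, extract(W, x))) + b > 0)


if __name__ == "__main__":
    # Hypothetical target task: label 1 when x[0] > x[1].
    data = [([2.0, 0.0], 1), ([0.0, 2.0], 0), ([3.0, 1.0], 1), ([1.0, 3.0], 0)]
    W0 = [[1.0, -1.0], [0.5, 0.5]]  # hypothetical "pre-trained" weights
    W, v, b = train_head(data, W0)                     # feature extraction
    print([predict(W, v, b, x) for x, _ in data])      # [1, 0, 1, 0]
    W_ft, _, _ = train_head(data, W0, fine_tune=True)  # fine-tuning also moves W
    print(W_ft)
```

Feature extraction is cheaper (only the head is trained) and is a common choice when target data is scarce; fine-tuning can adapt better when the new domain differs more from the pre-training data.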

Complementing these methods, prompt engineering enables LLMs to perform task-specific functions through carefully designed prompts. Techniques such as CoT prompting [33], zero-shot prompting [65], and few-shot prompting improve the ability of LLMs to reason and generalize across diverse tasks without extensive retraining [66]. Additionally, knowledge distillation plays a crucial role in optimizing AI models by transferring knowledge from larger, more complex models to smaller, more efficient ones [67]. Variants of distillation, such as task-specific distillation, feature distillation, and response-based distillation, further adapt the process to edge computing and resource-limited environments.
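Response-based distillation is commonly implemented with the soft-target loss of Hinton et al.: the student matches the teacher's temperature-softened output distribution (a KL-divergence term scaled by $T^2$) while still fitting the true label (a cross-entropy term). The sketch below is a framework-free illustration of that widely used formulation; the temperature `T = 2` and weighting `alpha = 0.5` are arbitrary assumptions, and it is not the specific method of [67].

```python
import math
from typing import List, Sequence


def softmax(logits: Sequence[float], temperature: float = 1.0) -> List[float]:
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def kl_divergence(p: Sequence[float], q: Sequence[float]) -> float:
    """KL(p || q) for discrete distributions; terms with p_i = 0 contribute 0."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def distillation_loss(student_logits: Sequence[float], teacher_logits: Sequence[float],
                      label: int, temperature: float = 2.0, alpha: float = 0.5) -> float:
    """Soft teacher targets plus the usual hard-label cross-entropy."""
    soft_teacher = softmax(teacher_logits, temperature)
    soft_student = softmax(student_logits, temperature)
    # The T^2 factor keeps the soft-target gradients on a comparable scale as T changes.
    soft_term = temperature ** 2 * kl_divergence(soft_teacher, soft_student)
    hard_term = -math.log(softmax(student_logits)[label])  # cross-entropy with the true label
    return alpha * soft_term + (1 - alpha) * hard_term


if __name__ == "__main__":
    teacher = [4.0, 1.0, 0.5]
    print(distillation_loss([3.5, 1.2, 0.4], teacher, label=0))  # student close to teacher: small loss
    print(distillation_loss([0.5, 1.0, 4.0], teacher, label=0))  # student disagrees: larger loss
```

A higher temperature exposes more of the teacher's "dark knowledge" (the relative probabilities of wrong classes), which is what the smaller student learns from beyond the hard label.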

Reinforcement learning and its variant RLHF [68] focus on training agents to make sequential decisions in dynamic environments; RLHF additionally aligns agent behavior with human preferences, fostering ethical and adaptive AI systems. Finally, continuous learning, or lifelong learning, addresses the challenge of adapting AI systems to new data while retaining previously learned knowledge, so that they remain effective in changing environments [69].
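In a typical RLHF pipeline, human preferences enter through a reward model trained on pairwise comparisons, commonly with a Bradley-Terry loss $-\log \sigma\big(r(y_{\text{chosen}}) - r(y_{\text{rejected}})\big)$. The sketch below fits a linear reward over hypothetical hand-made features (`[helpfulness, toxicity]`) by gradient descent; real systems use a neural reward model over text, and the subsequent RL stage that optimizes the policy against this reward (e.g., with PPO) is not shown.

```python
import math
from typing import List, Sequence, Tuple

Pair = Tuple[List[float], List[float]]  # (features of preferred response, features of rejected one)


def sigmoid(z: float) -> float:
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def reward(w: Sequence[float], features: Sequence[float]) -> float:
    """Linear reward model r(y) = w . phi(y)."""
    return sum(wi * fi for wi, fi in zip(w, features))


def preference_loss(w: Sequence[float], pairs: Sequence[Pair]) -> float:
    """Bradley-Terry loss: mean of -log sigmoid(r(chosen) - r(rejected))."""
    return sum(-math.log(sigmoid(reward(w, c) - reward(w, r))) for c, r in pairs) / len(pairs)


def train_reward_model(pairs: Sequence[Pair], dim: int,
                       lr: float = 0.5, steps: int = 200) -> List[float]:
    """Full-batch gradient descent on the Bradley-Terry loss."""
    w = [0.0] * dim
    for _ in range(steps):
        grad = [0.0] * dim
        for chosen, rejected in pairs:
            margin = reward(w, chosen) - reward(w, rejected)
            # d/dw [-log sigmoid(m)] = -sigmoid(-m) * (phi(chosen) - phi(rejected))
            coeff = -sigmoid(-margin)
            for i in range(dim):
                grad[i] += coeff * (chosen[i] - rejected[i])
        w = [wi - lr * gi / len(pairs) for wi, gi in zip(w, grad)]
    return w


if __name__ == "__main__":
    # Hypothetical annotations: in each pair, annotators preferred the first response.
    pairs = [([1.0, 0.0], [0.0, 0.0]),
             ([0.5, 0.0], [0.5, 1.0]),
             ([1.0, 1.0], [0.0, 1.0])]
    w = train_reward_model(pairs, dim=2)
    print(w)  # helpfulness weight ends up positive, toxicity weight negative
```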

These techniques represent the cutting edge of generative AI, each contributing to solving complex challenges across diverse applications. The classification of these methods within the NSAI paradigms, explored in the following sections, offers a structured perspective on their synergies and practical relevance.

### 4.2 Classification of AI Technologies within NSAI Architectures

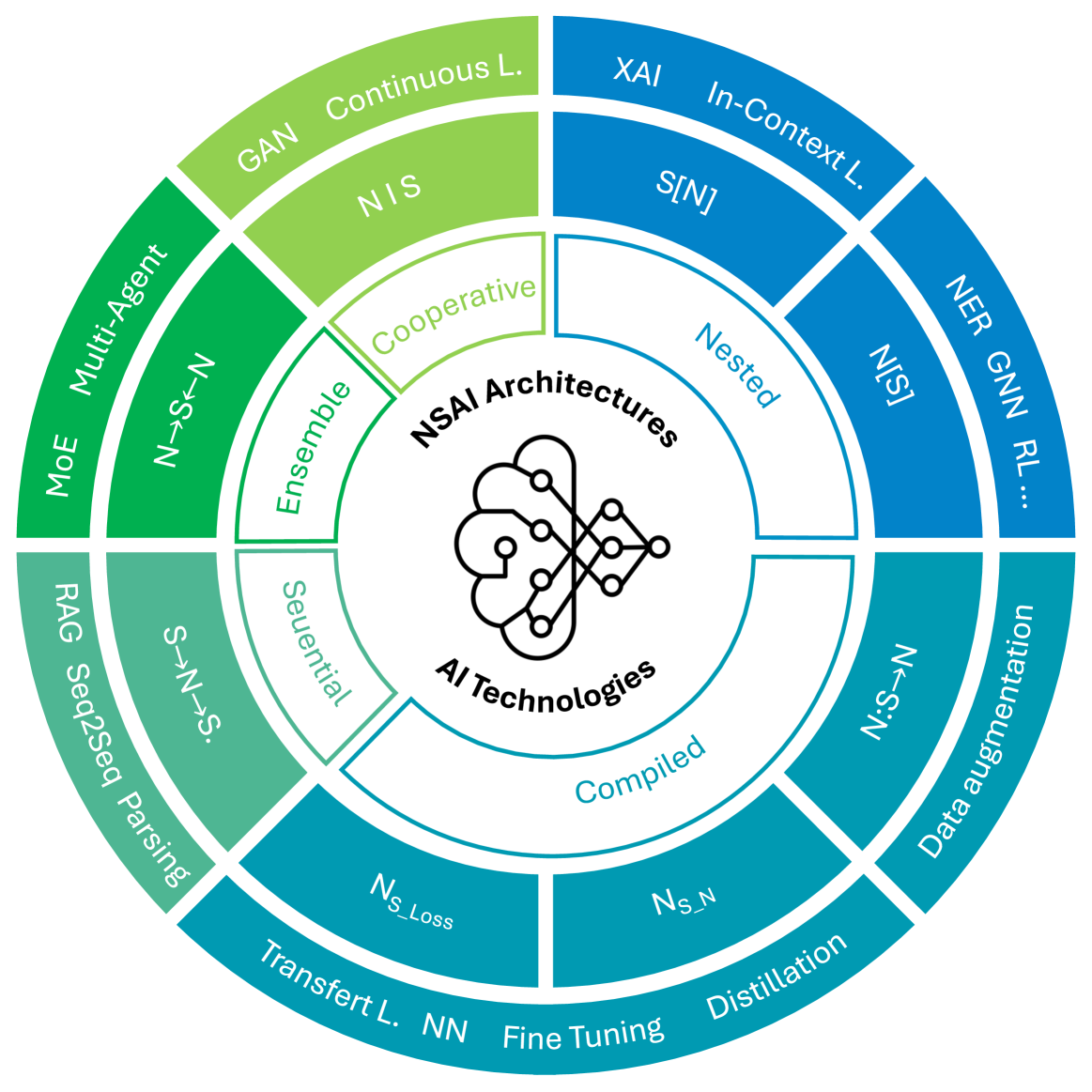

This section categorizes generative AI techniques within the eight distinct NSAI architectures, highlighting their underlying principles and practical applications. By classifying these approaches, we gain a clearer understanding of how neural and symbolic methods synergize to address diverse challenges in AI, as summarized in Figure 6.

Figure 6: Classification of AI technologies into NSAI architectures.

#### 4.2.1 The Sequential Paradigm: From Symbolic to Neural Reasoning

Techniques like RAG, GraphRAG, and Seq2Seq models (including LLMs, e.g., GPT [70]) align with this paradigm because they rely on neural encodings of symbolic data (e.g., text or structured information) to perform complex transformations before outputting results in symbolic form. Similarly, semantic parsing benefits from this framework by using NNs to uncover latent patterns in symbolic inputs and to generate interpretable symbolic conclusions. For instance, RAG-Logic proposes a dynamic example-based framework that uses RAG to enhance logical reasoning by integrating relevant, contextually appropriate examples [71]. It first encodes the symbolic input (e.g., logical premises) into neural representations using the RAG knowledge base search module. Neural processing then takes place in the translation module, which transforms the input into formal logical formulas. Finally, the fix module ensures syntactic correctness, and the solver module evaluates the logical consistency of the formulas, decoding the results back into symbolic output. This process preserves the interpretability of symbolic reasoning while leveraging NNs to improve flexibility and performance.
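The symbolic → neural → symbolic flow described above can be sketched end to end under heavy simplifying assumptions: bag-of-words cosine similarity stands in for the neural knowledge-base search, the translation module (a neural component in [71]) is replaced by hand-written formulas, the fix module is omitted, and a brute-force truth-table check plays the role of the solver. The example store, variable names, and functions are hypothetical and do not reproduce RAG-Logic's implementation.

```python
import itertools
import math
import re
from collections import Counter
from typing import Callable, Dict, List, Sequence, Tuple

Assignment = Dict[str, bool]
Formula = Callable[[Assignment], bool]


def bag_of_words(text: str) -> Counter:
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, store: Sequence[Tuple[str, str]], k: int = 1) -> List[Tuple[str, str]]:
    """Return the k stored (text, formalization) examples most similar to the query."""
    q = bag_of_words(query)
    return sorted(store, key=lambda ex: cosine(q, bag_of_words(ex[0])), reverse=True)[:k]


def entails(premises: Sequence[Formula], conclusion: Formula, variables: Sequence[str]) -> bool:
    """Truth-table check: the premises entail the conclusion if no assignment
    makes every premise true and the conclusion false."""
    for values in itertools.product([False, True], repeat=len(variables)):
        env = dict(zip(variables, values))
        if all(p(env) for p in premises) and not conclusion(env):
            return False
    return True


if __name__ == "__main__":
    store = [
        ("If it rains the ground is wet. It rains.", "P -> Q, P |- Q"),
        ("Either the light is on or off.", "P | Q"),
    ]
    query = "If it snows the road is icy. It snows."
    print(retrieve(query, store))  # closest match: the modus ponens example

    # Hypothetical output of the translation step: S = "it snows", I = "the road is icy".
    premises = [lambda e: (not e["S"]) or e["I"], lambda e: e["S"]]
    print(entails(premises, lambda e: e["I"], ["S", "I"]))  # True
```

The retrieved example serves as in-context guidance for the translation step, while the final verdict comes from an exact symbolic check, which is where the interpretability of the output comes from.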

#### 4.2.2 The Nested Paradigm: Embedding Symbolic Logic in Neural Systems

In-context learning techniques, such as few-shot learning and CoT reasoning, align with the Symbolic[Neuro] approach: NNs make context-aware predictions, while symbolic systems facilitate higher-order reasoning. Similarly, XAI falls into this category, as it often combines neural models that extract features with symbolic frameworks that produce explanations easily understood by humans.

Zhang et al. [72] presented a framework in which symbolic reasoning is enhanced by NNs. CoT is used to generate prompts that combine symbolic rules with neural reasoning. For example, inferring a relationship between entities, such as “Joseph’s sister is Katherine”, is approached by generating a reasoning path through CoT. The reasoning path is structured using symbolic rules, such as $\mathit{Sister}(A,C) \leftarrow \mathit{Brother}(A,B) \land \mathit{Sister}(B,C)$, which define the relationships between entities. These rules are then used to form CoT prompts that guide the model through the reasoning steps. The NN processes these prompts, performing feature extraction and probabilistic inference, while the symbolic system (including the knowledge base and logic rules) orchestrates the overall reasoning process. In this approach, the symbolic framework is the primary system for structuring the reasoning task, and the NN acts as a subcomponent that processes raw data and interprets the symbolic rules in the context of the query.
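A minimal sketch of how a symbolic rule can structure a CoT prompt: one forward-chaining pass applies $\mathit{Sister}(A,C) \leftarrow \mathit{Brother}(A,B) \land \mathit{Sister}(B,C)$ to a small fact base, and each derivation is rendered as an explicit reasoning step. It assumes that $R(X,Y)$ reads "Y is X's R", and the intermediate person "Daniel" is hypothetical; the prompt format is illustrative and not the one used by Zhang et al. [72]. The call to the LLM that would consume the prompt is omitted.

```python
from typing import List, Set, Tuple

Fact = Tuple[str, str, str]            # (relation, X, Y), read as "Y is X's <relation>"
Derivation = Tuple[Fact, Fact, Fact]   # (derived fact, first premise, second premise)


def apply_sister_rule(facts: Set[Fact]) -> List[Derivation]:
    """One forward-chaining pass of Sister(A,C) <- Brother(A,B) AND Sister(B,C)."""
    derivations: List[Derivation] = []
    for rel1, a, b in sorted(facts):
        if rel1 != "Brother":
            continue
        for rel2, b2, c in sorted(facts):
            # Join on the shared variable B; skip C == A (B's sister may be A herself).
            if rel2 == "Sister" and b2 == b and c != a:
                new = ("Sister", a, c)
                if new not in facts:
                    derivations.append((new, (rel1, a, b), (rel2, b2, c)))
    return derivations


def build_cot_prompt(question: str, derivations: List[Derivation]) -> str:
    """Render each rule application as an explicit reasoning step for the LLM."""
    lines = [
        f"Question: {question}",
        "Rule: Sister(A,C) <- Brother(A,B) and Sister(B,C).",
        "Let's think step by step.",
    ]
    for n, (new, (_, a, b), (_, _, c)) in enumerate(derivations, start=1):
        lines.append(f"Step {n}: {b} is {a}'s brother and {c} is {b}'s sister, "
                     f"so {new[2]} is {new[1]}'s sister.")
    lines.append("Answer:")
    return "\n".join(lines)


if __name__ == "__main__":
    facts = {("Brother", "Joseph", "Daniel"), ("Sister", "Daniel", "Katherine")}
    steps = apply_sister_rule(facts)
    print(build_cot_prompt("Who is Joseph's sister?", steps))
```

Running it prints a prompt whose single step reads "Daniel is Joseph's brother and Katherine is Daniel's sister, so Katherine is Joseph's sister.", so the symbolic layer fixes the structure of the reasoning while the neural model only has to follow and verbalize it.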