# A-Mem: Agentic Memory for LLM Agents

Abstract

While large language model (LLM) agents can effectively use external tools for complex real-world tasks, they require memory systems to leverage historical experiences. Current memory systems enable basic storage and retrieval but lack sophisticated memory organization, despite recent attempts to incorporate graph databases. Moreover, these systems’ fixed operations and structures limit their adaptability across diverse tasks. To address these limitations, this paper proposes a novel agentic memory system for LLM agents that can dynamically organize memories in an agentic way. Following the basic principles of the Zettelkasten method, we designed our memory system to create interconnected knowledge networks through dynamic indexing and linking. When a new memory is added, we generate a comprehensive note containing multiple structured attributes, including contextual descriptions, keywords, and tags. The system then analyzes historical memories to identify relevant connections, establishing links where meaningful similarities exist. Additionally, this process enables memory evolution – as new memories are integrated, they can trigger updates to the contextual representations and attributes of existing historical memories, allowing the memory network to continuously refine its understanding. Our approach combines the structured organization principles of Zettelkasten with the flexibility of agent-driven decision making, allowing for more adaptive and context-aware memory management. Empirical experiments on six foundation models show improvements over existing SOTA baselines.


Code for Benchmark Evaluation: https://github.com/WujiangXu/AgenticMemory


Code for Production-ready Agentic Memory: https://github.com/WujiangXu/A-mem-sys

1 Introduction

Large Language Model (LLM) agents have demonstrated remarkable capabilities in various tasks, with recent advances enabling them to interact with environments, execute tasks, and make decisions autonomously [23, 33, 7]. They integrate LLMs with external tools and carefully designed workflows to improve reasoning and planning abilities. Although LLM agents have strong reasoning performance, they still need a memory system to support long-term interaction with the external environment [35].

Existing memory systems [25, 39, 28, 21] for LLM agents provide basic memory storage functionality. These systems require agent developers to predefine memory storage structures, specify storage points within the workflow, and establish retrieval timing. Meanwhile, to improve structured memory organization, Mem0 [8], following the principles of RAG [9, 18, 30], incorporates graph databases for storage and retrieval processes. While graph databases provide structured organization for memory systems, their reliance on predefined schemas and relationships fundamentally limits their adaptability. This limitation manifests clearly in practical scenarios: when an agent learns a novel mathematical solution, current systems can only categorize and link this information within their preset framework, and cannot forge innovative connections or develop new organizational patterns as knowledge evolves. Such rigid structures, coupled with fixed agent workflows, severely restrict these systems’ ability to generalize across new environments and maintain effectiveness in long-term interactions. The challenge becomes increasingly critical as LLM agents tackle more complex, open-ended tasks, where flexible knowledge organization and continuous adaptation are essential. Therefore, how to design a flexible and universal memory system that supports LLM agents’ long-term interactions remains a crucial challenge.

<details>

<summary>x3.png Details</summary>

### Visual Description

## Diagram: LLM Agent Interaction

### Overview

The image is a diagram illustrating the interaction between an environment, LLM (Large Language Model) agents, and memory. It shows a cyclical flow of information between these three components.

### Components/Axes

* **Environment:** Represented by a globe icon with blue oceans and green landmasses.

* **LLM Agents:** Represented by a blue robot icon inside a speech bubble.

* **Memory:** Represented by a stack of three purple rectangles.

* **Interaction:** A bidirectional arrow connecting the Environment and LLM Agents.

* **Write:** A unidirectional arrow pointing from LLM Agents to Memory.

* **Read:** A unidirectional arrow pointing from Memory to LLM Agents.

### Detailed Analysis

* **Environment:** The globe icon suggests a real-world or simulated environment that the LLM agents interact with.

* **LLM Agents:** The robot icon represents the LLM agents, which are the central processing units in this diagram. They interact with the environment and the memory.

* **Memory:** The stack of rectangles represents a memory storage system where the LLM agents can write and read data.

* **Interaction:** The bidirectional arrow labeled "Interaction" indicates that the LLM agents can both receive information from and send information to the environment.

* **Write:** The unidirectional arrow labeled "Write" indicates that the LLM agents can store data in the memory.

* **Read:** The unidirectional arrow labeled "Read" indicates that the LLM agents can retrieve data from the memory.

### Key Observations

* The diagram shows a closed-loop system where the LLM agents interact with the environment, write data to memory, read data from memory, and then use that data to further interact with the environment.

* The LLM Agents are central to the diagram, acting as the intermediary between the Environment and Memory.

### Interpretation

The diagram illustrates a basic architecture for an LLM agent system. The LLM agents interact with an environment, learn from it, and store information in memory. This stored information can then be used to improve future interactions with the environment. The "Write" and "Read" operations highlight the LLM's ability to learn and adapt over time. The diagram suggests a continuous learning process where the LLM agents are constantly updating their knowledge based on their interactions with the environment and the data stored in memory. This model is applicable to various applications, such as robotics, natural language processing, and decision-making systems.

</details>

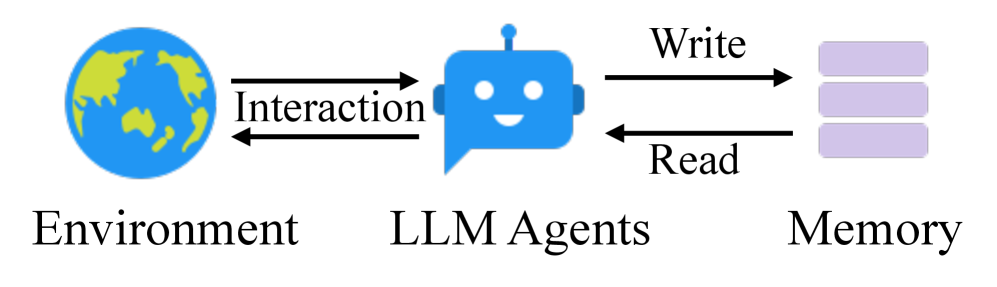

(a) Traditional memory system.

<details>

<summary>x4.png Details</summary>

### Visual Description

## Diagram: LLM Agent Interaction with Environment and Memory

### Overview

The image is a diagram illustrating the interaction of LLM (Large Language Model) Agents with an environment and an agentic memory. It shows a cyclical flow of information between these three components.

### Components/Axes

* **Environment:** Represented by a globe icon with blue oceans and green landmasses.

* **LLM Agents:** Represented by a blue robot-like icon with a speech bubble.

* **Agentic Memory:** Represented by a blue robot-like icon with a speech bubble, connected to three horizontal memory blocks.

* **Interaction:** A bidirectional arrow connecting the Environment and LLM Agents, labeled "Interaction".

* **Write:** A unidirectional arrow pointing from LLM Agents to Agentic Memory, labeled "Write".

* **Read:** A unidirectional arrow pointing from Agentic Memory to LLM Agents, labeled "Read".

### Detailed Analysis

* The "Environment" interacts with the "LLM Agents" through a two-way "Interaction".

* The "LLM Agents" "Write" to the "Agentic Memory".

* The "Agentic Memory" is read by the "LLM Agents" through a "Read" operation.

* The "Agentic Memory" consists of three horizontal blocks, each with an arrow pointing towards the "LLM Agents", indicating the flow of information during the "Read" operation.

### Key Observations

* The diagram illustrates a closed-loop system where LLM Agents interact with the environment, store information in memory, and retrieve information from memory.

* The use of robot-like icons for LLM Agents and Agentic Memory suggests that these components are intelligent and capable of processing information.

### Interpretation

The diagram depicts a system where LLM Agents learn and adapt through interaction with their environment and by utilizing a memory component. The "Interaction" with the environment provides the agents with new information, which they can then "Write" to their "Agentic Memory". This stored information can then be "Read" by the agents to inform their future interactions with the environment. This cycle allows the agents to improve their performance over time by learning from their experiences. The three memory blocks suggest that the agentic memory may be structured or tiered in some way.

</details>

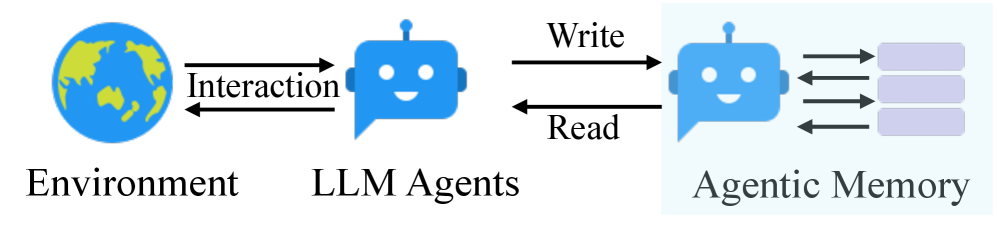

(b) Our proposed agentic memory.

Figure 1: Traditional memory systems require predefined memory access patterns specified in the workflow, limiting their adaptability to diverse scenarios. In contrast, our A-Mem enhances the flexibility of LLM agents by enabling dynamic memory operations.

In this paper, we introduce A-Mem, a novel agentic memory system for LLM agents that enables dynamic memory structuring without relying on static, predetermined memory operations. Our approach draws inspiration from the Zettelkasten method [15, 1], a sophisticated knowledge management system that creates interconnected information networks through atomic notes and flexible linking mechanisms. Our system introduces an agentic memory architecture that enables autonomous and flexible memory management for LLM agents. For each new memory, we construct a comprehensive note that integrates multiple representations: structured textual attributes (such as contextual descriptions, keywords, and tags) and embedding vectors for similarity matching. A-Mem then analyzes the historical memory repository to establish meaningful connections based on semantic similarities and shared attributes. This integration process not only creates new links but also enables dynamic evolution: when new memories are incorporated, they can trigger updates to the contextual representations of existing memories, allowing the entire memory network to continuously refine and deepen its understanding over time. The contributions are summarized as follows:

* We present A-Mem, an agentic memory system for LLM agents that enables autonomous generation of contextual descriptions, dynamic establishment of memory connections, and intelligent evolution of existing memories based on new experiences. This system equips LLM agents with long-term interaction capabilities without requiring predetermined memory operations.

* We design an agentic memory update mechanism where new memories automatically trigger two key operations: link generation and memory evolution. Link generation automatically establishes connections between memories by identifying shared attributes and similar contextual descriptions. Memory evolution enables existing memories to dynamically adapt as new experiences are analyzed, leading to the emergence of higher-order patterns and attributes.

* We conduct comprehensive evaluations of our system using a long-term conversational dataset, comparing performance across six foundation models using six distinct evaluation metrics, demonstrating significant improvements. Moreover, we provide t-SNE visualizations to illustrate the structured organization of our agentic memory system.

2 Related Work

2.1 Memory for LLM Agents

Prior works on LLM agent memory systems have explored various mechanisms for memory management and utilization [23, 21, 8, 39]. Some approaches adopt complete interaction storage, maintaining comprehensive historical records through dense retrieval models [39] or read-write memory structures [24]. Moreover, MemGPT [25] leverages cache-like architectures to prioritize recent information. Similarly, SCM [32] proposes a Self-Controlled Memory framework that enhances LLMs’ capability to maintain long-term memory through a memory stream and controller mechanism. However, these approaches face significant limitations in handling diverse real-world tasks. While they can provide basic memory functionality, their operations are typically constrained by predefined structures and fixed workflows. These constraints stem from their reliance on rigid operational patterns, particularly in memory writing and retrieval processes. Such inflexibility leads to poor generalization in new environments and limited effectiveness in long-term interactions. Therefore, designing a flexible and universal memory system that supports agents’ long-term interactions remains a crucial challenge.

2.2 Retrieval-Augmented Generation

Retrieval-Augmented Generation (RAG) has emerged as a powerful approach to enhance LLMs by incorporating external knowledge sources [18, 6, 10]. The standard RAG [37, 34] process involves indexing documents into chunks, retrieving relevant chunks based on semantic similarity, and augmenting the LLM’s prompt with this retrieved context for generation. Advanced RAG systems [20, 12] have evolved to include sophisticated pre-retrieval and post-retrieval optimizations. Building upon these foundations, recent research has introduced agentic RAG systems that demonstrate more autonomous and adaptive behaviors in the retrieval process. These systems can dynamically determine when and what to retrieve [4, 14], generate hypothetical responses to guide retrieval, and iteratively refine their search strategies based on intermediate results [31, 29].

However, while agentic RAG approaches demonstrate agency in the retrieval phase by autonomously deciding when and what to retrieve [4, 14, 38], our agentic memory system exhibits agency at a more fundamental level through the autonomous evolution of its memory structure. Inspired by the Zettelkasten method, our system allows memories to actively generate their own contextual descriptions, form meaningful connections with related memories, and evolve both their content and relationships as new experiences emerge. This fundamental distinction in agency between retrieval versus storage and evolution distinguishes our approach from agentic RAG systems, which maintain static knowledge bases despite their sophisticated retrieval mechanisms.

3 Methodology

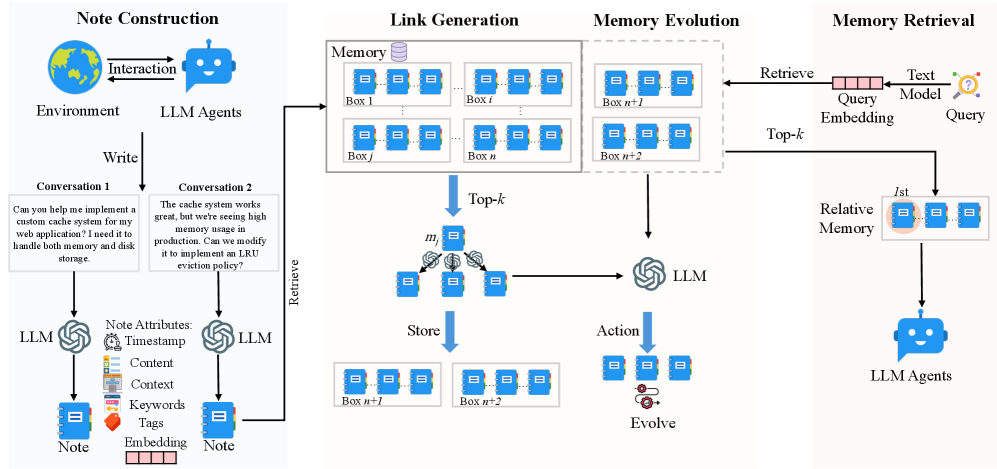

Our proposed agentic memory system draws inspiration from the Zettelkasten method, implementing a dynamic and self-evolving memory system that enables LLM agents to maintain long-term memory without predetermined operations. The system’s design emphasizes atomic note-taking, flexible linking mechanisms, and continuous evolution of knowledge structures.

<details>

<summary>x5.png Details</summary>

### Visual Description

## Diagram: LLM Memory Management

### Overview

The image is a diagram illustrating the process of memory management using Large Language Models (LLMs). It is divided into four main sections: Note Construction, Link Generation, Memory Evolution, and Memory Retrieval. The diagram shows how user interactions are converted into notes, linked in memory, evolved over time, and retrieved when needed.

### Components/Axes

* **Titles:**

* Note Construction (Top-Left)

* Link Generation (Top-Center-Left)

* Memory Evolution (Top-Center-Right)

* Memory Retrieval (Top-Right)

* **Note Construction:**

* Environment: Depicted as a globe.

* LLM Agents: Depicted as a blue chatbot icon.

* Interaction: A bidirectional arrow between the Environment and LLM Agents.

* Write: A downward arrow indicating the writing process.

* Conversation 1: A text box containing the question, "Can you help me implement a custom cache system for my web application? I need it to handle both memory and disk storage."

* Conversation 2: A text box containing the statement, "The cache system works great, but we're seeing high memory usage in production. Can we modify it to implement an LRU eviction policy?"

* LLM: Represented by the LLM logo.

* Note Attributes:

* Timestamp: Clock icon.

* Content: List icon.

* Context: Document icon.

* Keywords: Tag icon.

* Tags: Orange tag icon.

* Embedding: A series of small rectangles.

* Note: Represented as a blue notebook icon.

* **Link Generation:**

* Memory: Represented as a database icon.

* Boxes: Labeled as Box 1, Box i, Box j, Box n. Each box contains three blue notebook icons.

* Top-k: A downward arrow indicating the top-k selection process.

* m: A node with three blue notebook icons, connected to other nodes via lines and small circular icons.

* Store: A downward arrow indicating the storing process.

* Boxes: Labeled as Box n+1, Box n+2. Each box contains three blue notebook icons.

* **Memory Evolution:**

* Boxes: Labeled as Box n+1, Box n+2. Each box contains three blue notebook icons.

* LLM: Represented by the LLM logo.

* Action: A downward arrow indicating the action process.

* Evolve: A node with three blue notebook icons and a gear icon.

* **Memory Retrieval:**

* Retrieve: An arrow pointing from the "Memory Evolution" section to a "Query Embedding" box.

* Query Embedding: A horizontal box labeled "Query Embedding" with "Text Model" above it.

* Query: A question mark icon.

* Top-k: An arrow pointing from the "Query Embedding" box to the "Relative Memory" box.

* Relative Memory: A box containing three blue notebook icons, with the leftmost icon highlighted with a pink circle and labeled "1st".

* LLM Agents: Depicted as a blue chatbot icon.

### Detailed Analysis or Content Details

* **Note Construction:** The process starts with an interaction between the environment and LLM agents. The agents write conversations, which are then processed by LLMs to create notes. These notes have attributes such as timestamp, content, context, keywords, tags, and embeddings.

* **Link Generation:** The notes are stored in memory boxes (Box 1 to Box n). A top-k selection process retrieves relevant notes, which are then stored in new memory boxes (Box n+1 and Box n+2).

* **Memory Evolution:** The notes in memory boxes (Box n+1 and Box n+2) are processed by an LLM, which takes action and evolves the notes.

* **Memory Retrieval:** A query is embedded using a text model. A top-k selection process retrieves relative memory, and the first (1st) item is highlighted. The retrieved information is then used by LLM agents.

### Key Observations

* The diagram illustrates a cyclical process of note creation, storage, evolution, and retrieval.

* LLMs play a central role in processing and evolving the notes.

* The top-k selection process is used in both link generation and memory retrieval.

* The diagram highlights the importance of note attributes in the memory management process.

### Interpretation

The diagram presents a high-level overview of how LLMs can be used for memory management. The process involves converting user interactions into structured notes, linking these notes in memory, evolving them over time, and retrieving them when needed. The use of top-k selection suggests a mechanism for prioritizing relevant information. The diagram emphasizes the importance of context and attributes in managing and retrieving information effectively. The cyclical nature of the process indicates a continuous learning and adaptation mechanism.

</details>

Figure 2: Our A-Mem architecture comprises three integral parts in memory storage. During note construction, the system processes new interaction memories and stores them as notes with multiple attributes. The link generation process first retrieves the most relevant historical memories and then employs an LLM to determine whether connections should be established between them. The concept of a ’box’ describes how related memories become interconnected through their similar contextual descriptions, analogous to the Zettelkasten method. However, our approach allows individual memories to exist simultaneously within multiple different boxes. During the memory retrieval stage, we extract query embeddings using a text encoding model and search the memory database for relevant matches. When a related memory is retrieved, similar memories that are linked within the same box are also automatically accessed.

3.1 Note Construction

Building upon the Zettelkasten method’s principles of atomic note-taking and flexible organization, we introduce an LLM-driven approach to memory note construction. When an agent interacts with its environment, we construct structured memory notes that capture both explicit information and LLM-generated contextual understanding. Each memory note $m_{i}$ in our collection $\mathcal{M}=\{m_{1},m_{2},...,m_{N}\}$ is represented as:

$$

m_{i}=\{c_{i},t_{i},K_{i},G_{i},X_{i},e_{i},L_{i}\} \tag{1}

$$

where $c_{i}$ represents the original interaction content, $t_{i}$ is the timestamp of the interaction, $K_{i}$ denotes LLM-generated keywords that capture key concepts, $G_{i}$ contains LLM-generated tags for categorization, $X_{i}$ represents the LLM-generated contextual description that provides rich semantic understanding, $e_{i}$ is the dense embedding vector defined in Eq. (3), and $L_{i}$ maintains the set of linked memories that share semantic relationships. To enrich each memory note with meaningful context beyond its basic content and timestamp, we leverage an LLM to analyze the interaction and generate these semantic components. The note construction process involves prompting the LLM with carefully designed templates $P_{s1}$:

$$

K_{i},G_{i},X_{i}\leftarrow\text{LLM}(c_{i}\;\Vert t_{i}\;\Vert P_{s1}) \tag{2}

$$

Following the Zettelkasten principle of atomicity, each note captures a single, self-contained unit of knowledge. To enable efficient retrieval and linking, we compute a dense vector representation via a text encoder [27] that encapsulates all textual components of the note:

$$

e_{i}=f_{\text{enc}}[\;\text{concat}(c_{i},K_{i},G_{i},X_{i})\;] \tag{3}

$$

By using LLMs to generate enriched components, we enable autonomous extraction of implicit knowledge from raw interactions. The multi-faceted note structure ( $K_{i}$ , $G_{i}$ , $X_{i}$ ) creates rich representations that capture different aspects of the memory, facilitating nuanced organization and retrieval. Additionally, the combination of LLM-generated semantic components with dense vector representations provides both context and computationally efficient similarity matching.
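As a rough illustration of Eqs. (1) to (3), the note structure can be sketched as a small Python data class. This is a minimal sketch, not the authors' implementation: `MemoryNote`, `build_note`, `toy_annotate`, and `toy_encode` are hypothetical names, and the two `toy_*` functions are deterministic stand-ins for the LLM call and the text encoder.

```python
import zlib
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class MemoryNote:
    """One note m_i = {c_i, t_i, K_i, G_i, X_i, e_i, L_i} as in Eq. (1)."""
    content: str            # c_i: original interaction content
    timestamp: str          # t_i: time of the interaction
    keywords: List[str]     # K_i: LLM-generated keywords
    tags: List[str]         # G_i: LLM-generated tags
    context: str            # X_i: LLM-generated contextual description
    embedding: List[float]  # e_i: dense vector from Eq. (3)
    links: List[int] = field(default_factory=list)  # L_i: ids of linked notes


Annotator = Callable[[str, str], Tuple[List[str], List[str], str]]
Encoder = Callable[[str], List[float]]


def build_note(content: str, timestamp: str,
               annotate: Annotator, encode: Encoder) -> MemoryNote:
    # Eq. (2): obtain keywords, tags, and context from the (stand-in) LLM.
    keywords, tags, context = annotate(content, timestamp)
    # Eq. (3): embed the concatenation of all textual components.
    text = " ".join([content, *keywords, *tags, context])
    return MemoryNote(content, timestamp, keywords, tags, context, encode(text))


def toy_annotate(content: str, timestamp: str):
    """Hypothetical stand-in for the LLM prompted with P_s1."""
    words = [w.strip(".,?!").lower() for w in content.split()]
    keywords = sorted({w for w in words if len(w) > 5})
    return keywords, ["conversation"], f"Interaction at {timestamp}"


def toy_encode(text: str, dim: int = 8) -> List[float]:
    """Hypothetical stand-in encoder: hashed bag of words, not a real model."""
    vec = [0.0] * dim
    for word in text.lower().split():
        vec[zlib.crc32(word.encode("utf-8")) % dim] += 1.0
    return vec


note = build_note("Can you help me implement a custom cache system?",
                  "2025-01-01T10:00", toy_annotate, toy_encode)
```

In a real deployment the two stand-ins would be replaced by an actual LLM call and a sentence encoder; the data class itself only fixes which fields travel together.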

3.2 Link Generation

Our system implements an autonomous link generation mechanism that enables new memory notes to form meaningful connections without predefined rules. When the constructed memory note $m_{n}$ is added to the system, we first leverage its semantic embedding for similarity-based retrieval. For each existing memory note $m_{j}\in\mathcal{M}$, we compute a similarity score:

$$

s_{n,j}=\frac{e_{n}\cdot e_{j}}{|e_{n}||e_{j}|} \tag{4}

$$

The system then identifies the top-$k$ most relevant memories:

$$

\mathcal{M}_{\text{near}}^{n}=\{m_{j}|\;\text{rank}(s_{n,j})\leq k,m_{j}\in\mathcal{M}\} \tag{5}

$$

Based on these candidate nearest memories, we prompt the LLM to analyze potential connections based on their potential common attributes. Formally, the link set of memory $m_{n}$ is updated as:

$$

L_{n}\leftarrow\text{LLM}(m_{n}\;\Vert\mathcal{M}_{\text{near}}^{n}\;\Vert P_{s2}) \tag{6}

$$

The generated link set is structured as $L_{n}=\{m_{i},...,m_{k}\}$, i.e., the candidate memories for which the LLM decides a connection should be established. By using embedding-based retrieval as an initial filter, we enable efficient scalability while maintaining semantic relevance. A-Mem can quickly identify potential connections even in large memory collections without exhaustive comparison. More importantly, the LLM-driven analysis allows for nuanced understanding of relationships that goes beyond simple similarity metrics. The language model can identify subtle patterns, causal relationships, and conceptual connections that might not be apparent from embedding similarity alone. This implements the Zettelkasten principle of flexible linking while leveraging modern language models. The resulting network emerges organically from memory content and context, enabling natural knowledge organization.
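The two-stage procedure of Eqs. (4) to (6) can be sketched as follows. This is an illustrative sketch under stated assumptions: `cosine`, `top_k_neighbors`, and `generate_links` are hypothetical names, and the `judge` callable is a placeholder for the LLM prompted with $P_{s2}$, whose actual prompt and output format are not reproduced here.

```python
import math
from typing import Callable, List, Sequence


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity as in Eq. (4); returns 0.0 for a zero vector (assumption)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def top_k_neighbors(new_emb: Sequence[float],
                    embeddings: Sequence[Sequence[float]], k: int) -> List[int]:
    """Indices of the k most similar stored notes, as in Eq. (5)."""
    scored = [(cosine(new_emb, e), j) for j, e in enumerate(embeddings)]
    scored.sort(key=lambda pair: (-pair[0], pair[1]))  # best score first
    return [j for _, j in scored[:k]]


def generate_links(new_emb: Sequence[float],
                   embeddings: Sequence[Sequence[float]], k: int,
                   judge: Callable[[int], bool]) -> List[int]:
    """Eq. (6): keep only the candidates the (stand-in) LLM judge accepts."""
    return [j for j in top_k_neighbors(new_emb, embeddings, k) if judge(j)]


stored = [[1.0, 0.0], [0.0, 1.0], [0.9, 0.1], [-1.0, 0.0]]
candidates = top_k_neighbors([1.0, 0.05], stored, k=2)
# Toy judge that rejects note 0; a real judge would be an LLM decision.
links = generate_links([1.0, 0.05], stored, k=2, judge=lambda j: j != 0)
```

The embedding filter bounds how many notes the judge sees to $k$ per insertion, which is the scalability argument made in the text.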

3.3 Memory Evolution

After creating links for the new memory, A-Mem evolves the retrieved memories based on their textual information and relationships with the new memory. For each memory $m_{j}$ in the nearest neighbor set $\mathcal{M}_{\text{near}}^{n}$ , the system determines whether to update its context, keywords, and tags. This evolution process can be formally expressed as:

$$

m_{j}^{*}\leftarrow\text{LLM}(m_{n}\;\Vert\mathcal{M}_{\text{near}}^{n}\setminus m_{j}\;\Vert m_{j}\;\Vert P_{s3}) \tag{7}

$$

The evolved memory $m_{j}^{*}$ then replaces the original memory $m_{j}$ in the memory set $\mathcal{M}$. This evolutionary approach enables continuous updates and new connections, mimicking human learning processes. As the system processes more memories over time, it develops increasingly sophisticated knowledge structures, discovering higher-order patterns and concepts across multiple memories. This creates a foundation for autonomous memory learning where knowledge organization becomes progressively richer through the ongoing interaction between new experiences and existing memories.
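A minimal sketch of the evolution loop in Eq. (7) is given below. It assumes notes are plain dictionaries and that the LLM is abstracted as an `evolve` callable returning either the fields to overwrite or `None` when no update is needed; `evolve_neighbors` and `toy_evolve` are hypothetical names, and the toy rule merely merges tags when keywords overlap.

```python
from typing import Any, Callable, Dict, List, Optional

Note = Dict[str, Any]
Evolver = Callable[[Note, List[Note], Note], Optional[Note]]


def evolve_neighbors(memory: List[Note], new_note: Note,
                     neighbor_ids: List[int], evolve: Evolver) -> List[int]:
    """Apply Eq. (7) to each neighbor m_j and return the ids that changed."""
    updated = []
    for j in neighbor_ids:
        # M_near \ m_j: the other neighbors serve as additional context.
        others = [memory[i] for i in neighbor_ids if i != j]
        proposal = evolve(new_note, others, memory[j])
        if proposal is not None:
            memory[j] = {**memory[j], **proposal}  # m_j* replaces m_j
            updated.append(j)
    return updated


def toy_evolve(new: Note, others: List[Note], target: Note) -> Optional[Note]:
    """Hypothetical stand-in for the LLM prompted with P_s3."""
    if set(target["keywords"]) & set(new["keywords"]):
        return {"tags": sorted(set(target["tags"]) | set(new["tags"]))}
    return None


memory = [
    {"keywords": ["cache"], "tags": ["web"], "context": "custom cache system"},
    {"keywords": ["hiking"], "tags": ["outdoors"], "context": "weekend trip"},
]
new_note = {"keywords": ["cache", "lru"], "tags": ["performance"],
            "context": "high memory usage, LRU eviction"}
changed = evolve_neighbors(memory, new_note, [0, 1], toy_evolve)
```

Whether an evolved note should also be re-embedded is not specified in this section, so the sketch leaves embeddings untouched.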

3.4 Retrieve Relevant Memory

In each interaction, our A-Mem performs context-aware memory retrieval to provide the agent with relevant historical information. Given a query text $q$ from the current interaction, we first compute its dense vector representation using the same text encoder used for memory notes:

$$

e_{q}=f_{\text{enc}}(q) \tag{8}

$$

The system then computes similarity scores between the query embedding and all existing memory notes in $\mathcal{M}$ using cosine similarity:

$$

s_{q,i}=\frac{e_{q}\cdot e_{i}}{|e_{q}||e_{i}|},\text{where}\;e_{i}\in m_{i},\;\forall m_{i}\in\mathcal{M} \tag{9}

$$

Then we retrieve the $k$ most relevant memories from the historical memory storage to construct a contextually appropriate prompt:

$$

\mathcal{M}_{\text{retrieved}}=\{m_{i}|\text{rank}(s_{q,i})\leq k,m_{i}\in\mathcal{M}\} \tag{10}

$$

These retrieved memories provide relevant historical context that helps the agent better understand and respond to the current interaction. The retrieved context enriches the agent’s reasoning process by connecting the current interaction with related past experiences stored in the memory system.
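The retrieval step of Eqs. (8) to (10) can be sketched in a few lines. The sketch also pulls in notes linked to each hit, following the statement in the Figure 2 caption that linked memories are accessed together; how exactly that expansion is performed is an assumption here, as are the names `retrieve` and `cosine` and the dictionary layout of a note.

```python
import math
from typing import Any, Dict, List, Sequence


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity as in Eq. (9); returns 0.0 for a zero vector (assumption)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def retrieve(query_emb: Sequence[float],
             notes: List[Dict[str, Any]], k: int) -> List[int]:
    """Top-k notes by similarity (Eq. 10), followed by their linked notes."""
    ranked = sorted(range(len(notes)),
                    key=lambda i: -cosine(query_emb, notes[i]["embedding"]))
    hits = ranked[:k]
    result, seen = list(hits), set(hits)
    for i in hits:
        for j in notes[i]["links"]:  # assumed one-hop expansion over L_i
            if j not in seen:
                seen.add(j)
                result.append(j)
    return result


notes = [
    {"embedding": [1.0, 0.0], "links": [2]},
    {"embedding": [0.0, 1.0], "links": []},
    {"embedding": [-1.0, 0.0], "links": [0]},
]
top1 = retrieve([1.0, 0.1], notes, k=1)
top2 = retrieve([1.0, 0.1], notes, k=2)
```

Note 2 is the least similar to the query, yet it is returned because note 0 links to it, which illustrates how links can surface memories that embedding similarity alone would miss.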

4 Experiment

4.1 Dataset and Evaluation

To evaluate the effectiveness of our memory system in long-term conversations, we utilize the LoCoMo dataset [22], which contains significantly longer dialogues compared to existing conversational datasets [36, 13]. While previous datasets contain dialogues with around 1K tokens over 4-5 sessions, LoCoMo features much longer conversations averaging 9K tokens spanning up to 35 sessions, making it particularly suitable for evaluating models’ ability to handle long-range dependencies and maintain consistency over extended conversations. The LoCoMo dataset comprises diverse question types designed to comprehensively evaluate different aspects of model understanding: (1) single-hop questions answerable from a single session; (2) multi-hop questions requiring information synthesis across sessions; (3) temporal reasoning questions testing understanding of time-related information; (4) open-domain knowledge questions requiring integration of conversation context with external knowledge; and (5) adversarial questions assessing models’ ability to identify unanswerable queries. In total, LoCoMo contains 7,512 question-answer pairs across these categories. In addition, we use a new dataset, DialSim [16], to evaluate the effectiveness of our memory system. It is a question-answering dataset derived from long-term multi-party dialogues. The dataset is derived from popular TV shows (Friends, The Big Bang Theory, and The Office), covering 1,300 sessions spanning five years, containing approximately 350,000 tokens, and including more than 1,000 questions per session from refined fan quiz website questions and complex questions generated from temporal knowledge graphs.

For comparison, we use LoCoMo [22], ReadAgent [17], MemoryBank [39], and MemGPT [25] as baselines. A detailed introduction of the baselines can be found in Appendix A.1. For evaluation, we employ two primary metrics: the F1 score to assess answer accuracy by balancing precision and recall, and BLEU-1 [26] to evaluate generated response quality by measuring word overlap with ground truth responses. We also report the average token length for answering one question. In addition to reporting experiment results with four additional metrics (ROUGE-L, ROUGE-2, METEOR, and SBERT Similarity), we present experimental outcomes using different foundation models including DeepSeek-R1-32B [11], Claude 3.0 Haiku [2], and Claude 3.5 Haiku [3] in Appendix A.3.
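To make the two primary metrics concrete, the following sketch computes a token-level F1 score and a BLEU-1 score for one prediction against one reference. It is a simplified illustration, assuming lowercase whitespace tokenization and the standard BLEU brevity penalty; the exact tokenization and normalization used in the experiments are not specified in this section, so scores from this sketch need not match the reported numbers.

```python
import math
from collections import Counter
from typing import List


def tokens(text: str) -> List[str]:
    # Assumption: lowercase whitespace tokenization, no further normalization.
    return text.lower().split()


def f1_score(prediction: str, reference: str) -> float:
    """Token-level F1: harmonic mean of unigram precision and recall."""
    pred, ref = tokens(prediction), tokens(reference)
    overlap = sum((Counter(pred) & Counter(ref)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred)
    recall = overlap / len(ref)
    return 2 * precision * recall / (precision + recall)


def bleu1(prediction: str, reference: str) -> float:
    """BLEU-1: clipped unigram precision times the brevity penalty."""
    pred, ref = tokens(prediction), tokens(reference)
    if not pred:
        return 0.0
    clipped = sum((Counter(pred) & Counter(ref)).values())
    # Predictions shorter than the reference are penalized.
    penalty = 1.0 if len(pred) >= len(ref) else math.exp(1 - len(ref) / len(pred))
    return penalty * clipped / len(pred)


f1 = f1_score("the cat", "the cat sat")   # precision 1, recall 2/3
bleu = bleu1("the cat", "the cat sat")    # precision 1, penalty exp(-0.5)
```

Both metrics reward word overlap, but F1 penalizes missing reference words through recall while BLEU-1 does so only through the brevity penalty.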

4.2 Implementation Details

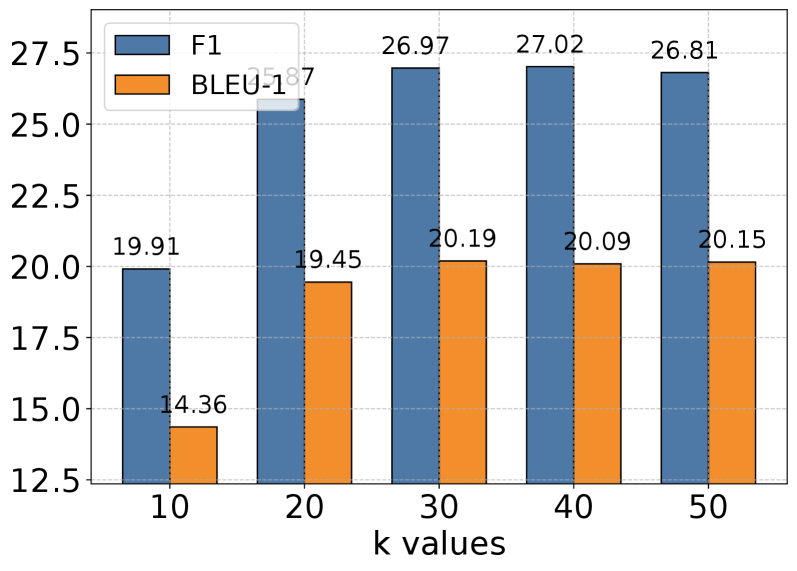

For all baselines and our proposed method, we maintain consistency by employing identical system prompts as detailed in Appendix B. The Qwen2.5 1.5B/3B and Llama 3.2 1B/3B models are deployed locally using Ollama (https://github.com/ollama/ollama), with LiteLLM (https://github.com/BerriAI/litellm) managing structured output generation. For GPT models, we utilize the official structured output API. In our memory retrieval process, we primarily employ $k=10$ for top-$k$ memory selection to maintain computational efficiency, while adjusting this parameter for specific categories to optimize performance. The detailed configurations of $k$ can be found in Appendix A.5. For text embedding, we use the all-minilm-l6-v2 model across all experiments.

Table 1: Experimental results on LoCoMo dataset of QA tasks across five categories (Multi Hop, Temporal, Open Domain, Single Hop, and Adversarial) using different methods. Results are reported in F1 and BLEU-1 (%) scores. The best performance is marked in bold, and our proposed method A-Mem (highlighted in gray) demonstrates competitive performance across six foundation language models.

| Model | Method | Multi Hop F1 | Multi Hop BLEU | Temporal F1 | Temporal BLEU | Open Domain F1 | Open Domain BLEU | Single Hop F1 | Single Hop BLEU | Adversarial F1 | Adversarial BLEU | Avg. Ranking F1 | Avg. Ranking BLEU | Avg. Token Length |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| GPT-4o-mini | LoCoMo | 25.02 | 19.75 | 18.41 | 14.77 | 12.04 | 11.16 | 40.36 | 29.05 | 69.23 | 68.75 | 2.4 | 2.4 | 16,910 |
| GPT-4o-mini | ReadAgent | 9.15 | 6.48 | 12.60 | 8.87 | 5.31 | 5.12 | 9.67 | 7.66 | 9.81 | 9.02 | 4.2 | 4.2 | 643 |
| GPT-4o-mini | MemoryBank | 5.00 | 4.77 | 9.68 | 6.99 | 5.56 | 5.94 | 6.61 | 5.16 | 7.36 | 6.48 | 4.8 | 4.8 | 432 |
| GPT-4o-mini | MemGPT | 26.65 | 17.72 | 25.52 | 19.44 | 9.15 | 7.44 | 41.04 | 34.34 | 43.29 | 42.73 | 2.4 | 2.4 | 16,977 |
| GPT-4o-mini | A-Mem | 27.02 | 20.09 | 45.85 | 36.67 | 12.14 | 12.00 | 44.65 | 37.06 | 50.03 | 49.47 | 1.2 | 1.2 | 2,520 |
| GPT-4o | LoCoMo | 28.00 | 18.47 | 9.09 | 5.78 | 16.47 | 14.80 | 61.56 | 54.19 | 52.61 | 51.13 | 2.0 | 2.0 | 16,910 |
| GPT-4o | ReadAgent | 14.61 | 9.95 | 4.16 | 3.19 | 8.84 | 8.37 | 12.46 | 10.29 | 6.81 | 6.13 | 4.0 | 4.0 | 805 |
| GPT-4o | MemoryBank | 6.49 | 4.69 | 2.47 | 2.43 | 6.43 | 5.30 | 8.28 | 7.10 | 4.42 | 3.67 | 5.0 | 5.0 | 569 |
| GPT-4o | MemGPT | 30.36 | 22.83 | 17.29 | 13.18 | 12.24 | 11.87 | 60.16 | 53.35 | 34.96 | 34.25 | 2.4 | 2.4 | 16,987 |
| GPT-4o | A-Mem | 32.86 | 23.76 | 39.41 | 31.23 | 17.10 | 15.84 | 48.43 | 42.97 | 36.35 | 35.53 | 1.6 | 1.6 | 1,216 |
| Qwen2.5 1.5b | LoCoMo | 9.05 | 6.55 | 4.25 | 4.04 | 9.91 | 8.50 | 11.15 | 8.67 | 40.38 | 40.23 | 3.4 | 3.4 | 16,910 |
| Qwen2.5 1.5b | ReadAgent | 6.61 | 4.93 | 2.55 | 2.51 | 5.31 | 12.24 | 10.13 | 7.54 | 5.42 | 27.32 | 4.6 | 4.6 | 752 |
| Qwen2.5 1.5b | MemoryBank | 11.14 | 8.25 | 4.46 | 2.87 | 8.05 | 6.21 | 13.42 | 11.01 | 36.76 | 34.00 | 2.6 | 2.6 | 284 |
| Qwen2.5 1.5b | MemGPT | 10.44 | 7.61 | 4.21 | 3.89 | 13.42 | 11.64 | 9.56 | 7.34 | 31.51 | 28.90 | 3.4 | 3.4 | 16,953 |
| Qwen2.5 1.5b | A-Mem | 18.23 | 11.94 | 24.32 | 19.74 | 16.48 | 14.31 | 23.63 | 19.23 | 46.00 | 43.26 | 1.0 | 1.0 | 1,300 |
| Qwen2.5 3b | LoCoMo | 4.61 | 4.29 | 3.11 | 2.71 | 4.55 | 5.97 | 7.03 | 5.69 | 16.95 | 14.81 | 3.2 | 3.2 | 16,910 |
| Qwen2.5 3b | ReadAgent | 2.47 | 1.78 | 3.01 | 3.01 | 5.57 | 5.22 | 3.25 | 2.51 | 15.78 | 14.01 | 4.2 | 4.2 | 776 |
| Qwen2.5 3b | MemoryBank | 3.60 | 3.39 | 1.72 | 1.97 | 6.63 | 6.58 | 4.11 | 3.32 | 13.07 | 10.30 | 4.2 | 4.2 | 298 |
| Qwen2.5 3b | MemGPT | 5.07 | 4.31 | 2.94 | 2.95 | 7.04 | 7.10 | 7.26 | 5.52 | 14.47 | 12.39 | 2.4 | 2.4 | 16,961 |
| Qwen2.5 3b | A-Mem | 12.57 | 9.01 | 27.59 | 25.07 | 7.12 | 7.28 | 17.23 | 13.12 | 27.91 | 25.15 | 1.0 | 1.0 | 1,137 |
| Llama 3.2 1b | LoCoMo | 11.25 | 9.18 | 7.38 | 6.82 | 11.90 | 10.38 | 12.86 | 10.50 | 51.89 | 48.27 | 3.4 | 3.4 | 16,910 |
| Llama 3.2 1b | ReadAgent | 5.96 | 5.12 | 1.93 | 2.30 | 12.46 | 11.17 | 7.75 | 6.03 | 44.64 | 40.15 | 4.6 | 4.6 | 665 |
| Llama 3.2 1b | MemoryBank | 13.18 | 10.03 | 7.61 | 6.27 | 15.78 | 12.94 | 17.30 | 14.03 | 52.61 | 47.53 | 2.0 | 2.0 | 274 |
| Llama 3.2 1b | MemGPT | 9.19 | 6.96 | 4.02 | 4.79 | 11.14 | 8.24 | 10.16 | 7.68 | 49.75 | 45.11 | 4.0 | 4.0 | 16,950 |
| Llama 3.2 1b | A-Mem | 19.06 | 11.71 | 17.80 | 10.28 | 17.55 | 14.67 | 28.51 | 24.13 | 58.81 | 54.28 | 1.0 | 1.0 | 1,376 |
| Llama 3.2 3b | LoCoMo | 6.88 | 5.77 | 4.37 | 4.40 | 10.65 | 9.29 | 8.37 | 6.93 | 30.25 | 28.46 | 2.8 | 2.8 | 16,910 |
| Llama 3.2 3b | ReadAgent | 2.47 | 1.78 | 3.01 | 3.01 | 5.57 | 5.22 | 3.25 | 2.51 | 15.78 | 14.01 | 4.2 | 4.2 | 461 |
| Llama 3.2 3b | MemoryBank | 6.19 | 4.47 | 3.49 | 3.13 | 4.07 | 4.57 | 7.61 | 6.03 | 18.65 | 17.05 | 3.2 | 3.2 | 263 |
| Llama 3.2 3b | MemGPT | 5.32 | 3.99 | 2.68 | 2.72 | 5.64 | 5.54 | 4.32 | 3.51 | 21.45 | 19.37 | 3.8 | 3.8 | 16,956 |
| Llama 3.2 3b | A-Mem | 17.44 | 11.74 | 26.38 | 19.50 | 12.53 | 11.83 | 28.14 | 23.87 | 42.04 | 40.60 | 1.0 | 1.0 | 1,126 |

4.3 Empirical Results

Performance Analysis. In our empirical evaluation, we compare A-Mem with four competitive baselines, LoCoMo [22], ReadAgent [17], MemoryBank [39], and MemGPT [25], on the LoCoMo dataset. For non-GPT foundation models, A-Mem consistently outperforms all baselines across different categories, demonstrating the effectiveness of our agentic memory approach. For GPT-based models, LoCoMo and MemGPT show strong performance in certain categories, such as Open Domain and Adversarial tasks, due to their robust pre-trained knowledge in simple fact retrieval; nevertheless, A-Mem demonstrates superior performance on Multi-Hop tasks, which require complex reasoning chains, achieving at least two times better performance. In addition to the experiments on the LoCoMo dataset, we also compare our method against LoCoMo and MemGPT on the DialSim dataset (Table 2). A-Mem consistently outperforms both baselines across evaluation metrics, achieving an F1 score of 3.45 (a 35% improvement over LoCoMo’s 2.55 and 192% higher than MemGPT’s 1.18). The effectiveness of A-Mem stems from its agentic memory architecture, which enables dynamic and structured memory management. Unlike traditional approaches that use static memory operations, our system creates interconnected memory networks through atomic notes with rich contextual descriptions, enabling more effective multi-hop reasoning. The system’s ability to dynamically establish connections between memories based on shared attributes, and to continuously update existing memory descriptions with new contextual information, allows it to better capture and utilize the relationships between different pieces of information.
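The Ranking columns of Table 1 are not defined in this section. The reported values are consistent with a mean rank over the five task categories, and the sketch below checks that reading against the GPT-4o-mini F1 scores. The mean-rank interpretation is our assumption, inferred from the numbers, and the helper name `mean_ranks` is hypothetical.

```python
# F1 scores from Table 1 (GPT-4o-mini), in the order:
# Multi Hop, Temporal, Open Domain, Single Hop, Adversarial.
F1_GPT_4O_MINI = {
    "LoCoMo":     [25.02, 18.41, 12.04, 40.36, 69.23],
    "ReadAgent":  [9.15, 12.60, 5.31, 9.67, 9.81],
    "MemoryBank": [5.00, 9.68, 5.56, 6.61, 7.36],
    "MemGPT":     [26.65, 25.52, 9.15, 41.04, 43.29],
    "A-Mem":      [27.02, 45.85, 12.14, 44.65, 50.03],
}


def mean_ranks(scores):
    """Rank methods within each category (1 = best) and average the ranks.

    Assumption: no ties within a category, which holds for the data above.
    """
    methods = list(scores)
    n_categories = len(next(iter(scores.values())))
    totals = {m: 0 for m in methods}
    for c in range(n_categories):
        # Highest score first, so position 1 is the best method.
        ordered = sorted(methods, key=lambda m: scores[m][c], reverse=True)
        for rank, m in enumerate(ordered, start=1):
            totals[m] += rank
    return {m: totals[m] / n_categories for m in methods}


ranks = mean_ranks(F1_GPT_4O_MINI)
for method, rank in ranks.items():
    print(f"{method:<10} {rank:.1f}")
```

Under this reading, a value of 1.0 means a method is best in every category, and lower is better.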

Table 2: Comparison of different memory mechanisms across multiple evaluation metrics on DialSim [16]. Higher scores indicate better performance, with A-Mem showing superior results across all metrics.

| Method | F1 | BLEU-1 | ROUGE-L | ROUGE-2 | METEOR | SBERT Similarity |
| --- | --- | --- | --- | --- | --- | --- |
| LoCoMo | 2.55 | 3.13 | 2.75 | 0.90 | 1.64 | 15.76 |
| MemGPT | 1.18 | 1.07 | 0.96 | 0.42 | 0.95 | 8.54 |
| A-Mem | 3.45 | 3.37 | 3.54 | 3.60 | 2.05 | 19.51 |
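The relative improvements quoted for DialSim follow directly from the F1 column of Table 2. A minimal sketch of that arithmetic, where the function name `relative_improvement` is ours:

```python
# F1 scores on DialSim, copied from Table 2.
DIALSIM_F1 = {"LoCoMo": 2.55, "MemGPT": 1.18, "A-Mem": 3.45}


def relative_improvement(new, baseline):
    """Percentage by which `new` exceeds `baseline`."""
    return (new - baseline) / baseline * 100.0


gains = {
    name: relative_improvement(DIALSIM_F1["A-Mem"], DIALSIM_F1[name])
    for name in ("LoCoMo", "MemGPT")
}
for name, gain in gains.items():
    print(f"A-Mem vs {name}: +{gain:.0f}% F1")
```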

Cost-Efficiency Analysis. A-Mem demonstrates significant computational and cost efficiency alongside strong performance. The system requires approximately 1,200 tokens per memory operation, an 85-93% reduction in token usage compared to baseline methods (LoCoMo and MemGPT, at roughly 16,900 tokens), achieved through our selective top-k retrieval mechanism. This substantial token reduction directly translates to lower operational costs, with each memory operation costing less than $0.0003 when using commercial API services, which makes large-scale deployments economically viable. Processing times average 5.4 seconds using GPT-4o-mini and only 1.1 seconds with locally hosted Llama 3.2 1B on a single GPU. Despite requiring multiple LLM calls during memory processing, A-Mem maintains this cost-effective resource utilization while consistently outperforming baseline approaches across all foundation models tested, particularly doubling performance on complex multi-hop reasoning tasks. This balance of low computational cost and superior reasoning capability highlights A-Mem’s practical advantage for real-world deployment.
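The 85-93% range can be reproduced from the Token Length column of Table 1. The sketch below assumes the rounded baseline of 16,900 tokens quoted above; the constant and function names are ours.

```python
# Approximate context length of the LoCoMo and MemGPT baselines (Table 1).
BASELINE_TOKENS = 16_900

# A-Mem token lengths per foundation model, copied from Table 1.
A_MEM_TOKENS = {
    "GPT-4o-mini": 2_520,
    "GPT-4o": 1_216,
    "Qwen2.5 1.5b": 1_300,
    "Qwen2.5 3b": 1_137,
    "Llama 3.2 1b": 1_376,
    "Llama 3.2 3b": 1_126,
}


def token_reduction(tokens, baseline=BASELINE_TOKENS):
    """Percentage of baseline tokens saved."""
    return (1 - tokens / baseline) * 100


reductions = {model: token_reduction(t) for model, t in A_MEM_TOKENS.items()}
for model, pct in reductions.items():
    print(f"{model:<13} {pct:.1f}% fewer tokens")

lowest = min(reductions.values())
highest = max(reductions.values())
print(f"range: {lowest:.0f}-{highest:.0f}%")
```

The lower end of the range comes from the GPT-4o-mini configuration (2,520 tokens); the other five models sit near the upper end.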

Table 3: Ablation study of our proposed method with GPT-4o-mini as the base model. The notation ’w/o’ indicates experiments where specific modules were removed. The abbreviations LG and ME denote the link generation module and memory evolution module, respectively.

| Method | Multi Hop F1 | Multi Hop BLEU-1 | Temporal F1 | Temporal BLEU-1 | Open Domain F1 | Open Domain BLEU-1 | Single Hop F1 | Single Hop BLEU-1 | Adversarial F1 | Adversarial BLEU-1 |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| w/o LG & ME | 9.65 | 7.09 | 24.55 | 19.48 | 7.77 | 6.70 | 13.28 | 10.30 | 15.32 | 18.02 |
| w/o ME | 21.35 | 15.13 | 31.24 | 27.31 | 10.13 | 10.85 | 39.17 | 34.70 | 44.16 | 45.33 |
| A-Mem | 27.02 | 20.09 | 45.85 | 36.67 | 12.14 | 12.00 | 44.65 | 37.06 | 50.03 | 49.47 |

4.4 Ablation Study

To evaluate the effectiveness of the Link Generation (LG) and Memory Evolution (ME) modules, we conduct an ablation study by systematically removing key components of our model. When both the LG and ME modules are removed, the system exhibits substantial performance degradation, particularly in Multi Hop reasoning and Open Domain tasks. The system with only LG active (w/o ME) shows intermediate performance, maintaining significantly better results than the version without both modules, which demonstrates the fundamental importance of link generation in establishing memory connections. Our full model, A-Mem, consistently achieves the best performance across all evaluation categories, with particularly strong results in complex reasoning tasks. These results reveal that while the link generation module serves as a critical foundation for memory organization, the memory evolution module provides essential refinements to the memory structure. The ablation study validates our architectural design choices and highlights the complementary nature of these two modules in creating an effective memory system.
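One way to read Table 3 is as two incremental steps: adding LG to the stripped system, then adding ME on top. The sketch below computes the F1 gain of each step per category from the table values. The variable names are ours, and attributing each difference to a single module is a simplification, since the ablation does not include a "w/o LG" variant.

```python
CATEGORIES = ["Multi Hop", "Temporal", "Open Domain", "Single Hop", "Adversarial"]

# F1 scores from Table 3, one value per category in the order above.
ABLATION_F1 = {
    "w/o LG & ME": [9.65, 24.55, 7.77, 13.28, 15.32],
    "w/o ME":      [21.35, 31.24, 10.13, 39.17, 44.16],
    "A-Mem":       [27.02, 45.85, 12.14, 44.65, 50.03],
}


def step_gain(before, after):
    """Per-category F1 difference between two configurations."""
    return [b - a for a, b in zip(ABLATION_F1[before], ABLATION_F1[after])]


gain_from_lg = step_gain("w/o LG & ME", "w/o ME")  # link generation added
gain_from_me = step_gain("w/o ME", "A-Mem")        # memory evolution added

for name, lg, me in zip(CATEGORIES, gain_from_lg, gain_from_me):
    print(f"{name:<12} +{lg:5.2f} F1 with LG, +{me:5.2f} F1 with ME")
```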

<details>

<summary>x6.png Details</summary>

### Visual Description

## Bar Chart: F1 and BLEU Scores vs. k Values

### Overview

The image is a bar chart comparing F1 and BLEU scores for different values of 'k'. The x-axis represents 'k values' ranging from 10 to 50, while the y-axis represents the scores, ranging from 12.5 to 27.5. Two data series are displayed: F1 (blue) and BLEU (orange).

### Components/Axes

* **Title:** There is no explicit title.

* **X-axis:** 'k values' with markers at 10, 20, 30, 40, and 50.

* **Y-axis:** Numerical scale ranging from 12.5 to 27.5, with increments of 2.5.

* **Legend:** Located in the top-left corner.

* Blue: F1

* Orange: BLEU

* **Gridlines:** Horizontal gridlines are present to aid in value estimation.

### Detailed Analysis

The chart presents F1 and BLEU scores for k values of 10, 20, 30, 40, and 50.

* **F1 (Blue):**

* k=10: 19.91

* k=20: 25.87

* k=30: 26.97

* k=40: 27.02

* k=50: 26.81

The F1 score increases significantly from k=10 to k=20, then shows a slight increase to k=40, followed by a minor decrease at k=50.

* **BLEU (Orange):**

* k=10: 14.36

* k=20: 19.45

* k=30: 20.19

* k=40: 20.09

* k=50: 20.15

The BLEU score increases from k=10 to k=30, then plateaus with minor fluctuations between k=30 and k=50.

### Key Observations

* The F1 score is consistently higher than the BLEU score for all k values.

* The F1 score shows a more pronounced increase with increasing k values compared to the BLEU score.

* The BLEU score plateaus after k=30, suggesting diminishing returns for higher k values.

### Interpretation

The chart suggests that increasing the 'k value' initially improves both F1 and BLEU scores. However, the BLEU score plateaus after k=30, while the F1 score reaches its peak at k=40. This indicates that there is an optimal 'k value' for maximizing the F1 score, beyond which further increases in 'k' do not significantly improve performance and may even lead to a slight decrease. The F1 score appears to be more sensitive to changes in 'k' compared to the BLEU score. The data suggests that a 'k value' around 30-40 might be optimal for this particular scenario, balancing both F1 and BLEU scores.

</details>

(a) Multi Hop

<details>

<summary>x7.png Details</summary>

### Visual Description

## Bar Chart: F1 and BLEU-1 Scores vs. k Values

### Overview

The image is a bar chart comparing F1 and BLEU-1 scores for different values of 'k'. The chart displays two sets of vertical bars for each 'k' value, one representing the F1 score (blue) and the other representing the BLEU-1 score (orange). The x-axis represents 'k values', and the y-axis represents the scores.

### Components/Axes

* **Title:** There is no explicit title on the chart.

* **X-axis:**

* Label: "k values"

* Values: 10, 20, 30, 40, 50

* **Y-axis:**

* Scale: 35.0 to 47.5, incrementing by 2.5

* **Legend:** Located in the top-left corner.

* Blue bar: "F1"

* Orange bar: "BLEU-1"

* **Grid:** The chart has a light gray grid in the background.

### Detailed Analysis

The chart presents F1 and BLEU-1 scores for k values of 10, 20, 30, 40, and 50.

**F1 Scores (Blue Bars):**

* k = 10: 43.60

* k = 20: 45.03

* k = 30: 45.22

* k = 40: 45.85

* k = 50: 45.60

Trend: The F1 score generally increases from k=10 to k=40, then slightly decreases at k=50.

**BLEU-1 Scores (Orange Bars):**

* k = 10: 35.53

* k = 20: 35.85

* k = 30: 36.44

* k = 40: 36.67

* k = 50: 35.76

Trend: The BLEU-1 score increases from k=10 to k=40, then decreases at k=50.

### Key Observations

* The F1 scores are consistently higher than the BLEU-1 scores for all 'k' values.

* Both F1 and BLEU-1 scores peak around k=40.

* The F1 score shows a more pronounced increase from k=10 to k=20 compared to the other intervals.

### Interpretation

The chart suggests that increasing the 'k' value initially improves both F1 and BLEU-1 scores, indicating better performance up to a certain point. The slight decrease in scores at k=50 suggests that there might be a point of diminishing returns or even a negative impact on performance with excessively high 'k' values. The F1 score, being consistently higher, might be a more robust metric in this context compared to BLEU-1. The optimal 'k' value appears to be around 40, where both metrics achieve relatively high scores.

</details>

(b) Temporal

<details>

<summary>x8.png Details</summary>

### Visual Description

## Bar Chart: F1 and BLEU-1 Scores vs. k Values

### Overview

The image is a bar chart comparing F1 and BLEU-1 scores for different values of 'k'. The x-axis represents 'k values' ranging from 10 to 50, and the y-axis represents the score. Each 'k value' has two bars, one for F1 score (blue) and one for BLEU-1 score (orange). Numerical values are displayed above each bar.

### Components/Axes

* **X-axis:** 'k values' with markers at 10, 20, 30, 40, and 50.

* **Y-axis:** Score, ranging from 6 to 14. No explicit units are given.

* **Legend:** Located in the top-left corner.

* Blue bar: F1

* Orange bar: BLEU-1

* **Gridlines:** Horizontal gridlines are present at intervals of 2, starting from 6.

### Detailed Analysis

The chart presents F1 and BLEU-1 scores for k values of 10, 20, 30, 40, and 50.

* **k = 10:**

* F1 (blue): 7.38

* BLEU-1 (orange): 7.03

* **k = 20:**

* F1 (blue): 10.29

* BLEU-1 (orange): 9.61

* **k = 30:**

* F1 (blue): 12.24

* BLEU-1 (orange): 10.57

* **k = 40:**

* F1 (blue): 10.35

* BLEU-1 (orange): 9.76

* **k = 50:**

* F1 (blue): 12.11

* BLEU-1 (orange): 12.00

**Trends:**

* **F1 Score:** The F1 score generally increases from k=10 to k=30, then decreases at k=40, and increases again at k=50.

* **BLEU-1 Score:** The BLEU-1 score generally increases from k=10 to k=30, then decreases at k=40, and increases again at k=50.

### Key Observations

* For all k values, the F1 score is higher than the BLEU-1 score; the gap is smallest at k=50 (12.11 vs. 12.00).

* The highest F1 score is observed at k=30 (12.24).

* The highest BLEU-1 score is observed at k=50 (12.00).

* Both F1 and BLEU-1 scores show a similar trend, increasing initially, then decreasing, and increasing again.

### Interpretation

The chart compares the performance of a system using F1 and BLEU-1 metrics for different values of 'k'. The data suggests that increasing 'k' initially improves both F1 and BLEU-1 scores, but there's a point (around k=40) where performance dips before recovering at k=50. The optimal 'k' value, based on this data, appears to be around 30 for F1 and 50 for BLEU-1. The relationship between F1 and BLEU-1 is generally consistent, with F1 scores being slightly higher than BLEU-1 scores for most 'k' values. This could indicate that the system is better at balancing precision and recall (F1) than at matching n-grams (BLEU-1), except at k=50 where they are nearly equal.

</details>

(c) Open Domain

<details>

<summary>x9.png Details</summary>

### Visual Description

## Bar Chart: F1 and BLEU-1 Scores vs. k Values

### Overview

The image is a bar chart comparing F1 and BLEU-1 scores for different values of 'k'. The x-axis represents 'k values' ranging from 10 to 50, and the y-axis represents the scores. The chart displays two data series: F1 scores (represented by blue bars) and BLEU-1 scores (represented by orange bars).

### Components/Axes

* **X-axis:** 'k values' with markers at 10, 20, 30, 40, and 50.

* **Y-axis:** Numerical scale ranging from approximately 25 to 45, with gridlines at intervals of 5.

* **Legend (top-left):**

* Blue: F1

* Orange: BLEU-1

### Detailed Analysis

* **F1 (Blue Bars):** The F1 score generally increases as the 'k value' increases.

* k = 10: F1 = 31.15

* k = 20: F1 = 33.67

* k = 30: F1 = 38.15

* k = 40: F1 = 41.55

* k = 50: F1 = 44.55

* **BLEU-1 (Orange Bars):** The BLEU-1 score also increases as the 'k value' increases.

* k = 10: BLEU-1 = 25.43

* k = 20: BLEU-1 = 28.31

* k = 30: BLEU-1 = 32.12

* k = 40: BLEU-1 = 34.32

* k = 50: BLEU-1 = 37.02

### Key Observations

* The F1 score is consistently higher than the BLEU-1 score for all 'k values'.

* Both F1 and BLEU-1 scores show a positive correlation with 'k values'.

* The increase in F1 score appears to be more pronounced than the increase in BLEU-1 score as 'k' increases.

### Interpretation

The chart suggests that increasing the 'k value' improves both F1 and BLEU-1 scores, indicating better performance in whatever task these metrics are evaluating. The F1 score consistently outperforms the BLEU-1 score, implying that the system or model being evaluated performs better according to the F1 metric. The increasing trend suggests that further increasing 'k' might lead to even higher scores, although this is not explicitly shown in the chart. The relationship between 'k' and these metrics is likely related to the specific algorithm or model being used, and further investigation would be needed to understand the underlying reasons for this trend.

</details>

(d) Single Hop

<details>

<summary>x10.png Details</summary>

### Visual Description

## Bar Chart: F1 and BLEU-1 Scores vs. k Values

### Overview

The image is a bar chart comparing F1 and BLEU-1 scores for different values of 'k'. The chart displays two sets of bars for each k value, one representing the F1 score (blue) and the other representing the BLEU-1 score (orange). The x-axis represents 'k values', and the y-axis represents the score.

### Components/Axes

* **X-axis:** 'k values' with markers at 10, 20, 30, 40, and 50.

* **Y-axis:** Numerical scale ranging from approximately 28 to 52, with no explicit label.

* **Legend:** Located in the top-left corner, indicating that the blue bars represent 'F1' and the orange bars represent 'BLEU-1'.

* **Gridlines:** Horizontal dashed gridlines are present.

### Detailed Analysis

The chart presents the following data points:

* **k = 10:**

* F1 (blue): 30.29

* BLEU-1 (orange): 29.49

* **k = 20:**

* F1 (blue): 39.11

* BLEU-1 (orange): 38.35

* **k = 30:**

* F1 (blue): 43.86

* BLEU-1 (orange): 43.19

* **k = 40:**

* F1 (blue): 50.03

* BLEU-1 (orange): 49.47

* **k = 50:**

* F1 (blue): 47.76

* BLEU-1 (orange): 47.24

**Trend Verification:**

* **F1 (blue):** Generally increases from k=10 to k=40, then decreases slightly at k=50.

* **BLEU-1 (orange):** Generally increases from k=10 to k=40, then decreases slightly at k=50.

### Key Observations

* Both F1 and BLEU-1 scores increase as 'k' increases from 10 to 40.

* Both scores peak at k=40 and then slightly decrease at k=50.

* The F1 score is consistently slightly higher than the BLEU-1 score for each 'k' value.

### Interpretation

The chart suggests that increasing the value of 'k' initially improves both F1 and BLEU-1 scores, indicating better performance up to a point. However, beyond k=40, the performance starts to decline slightly, suggesting that there might be an optimal value for 'k' around 40. The consistent difference between F1 and BLEU-1 scores might indicate inherent differences in what these metrics capture, or it could be a characteristic of the specific model or task being evaluated.

</details>

(e) Adversarial

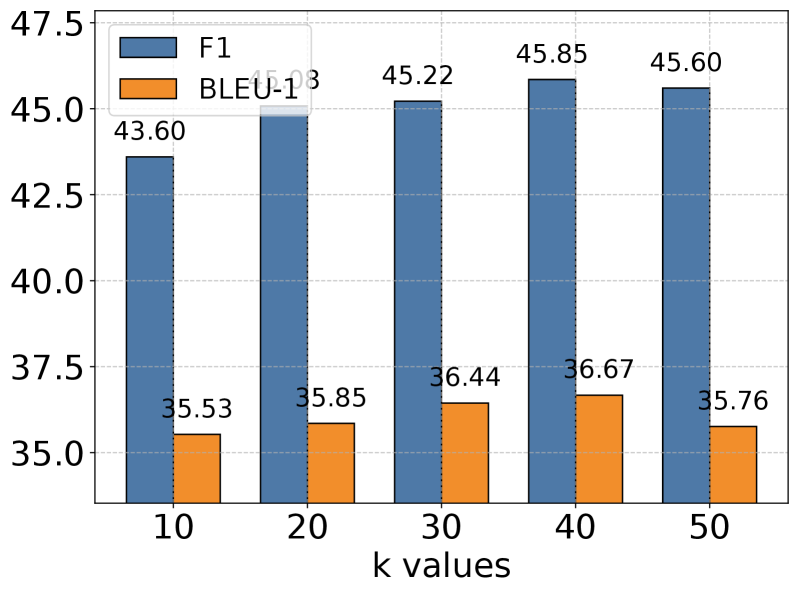

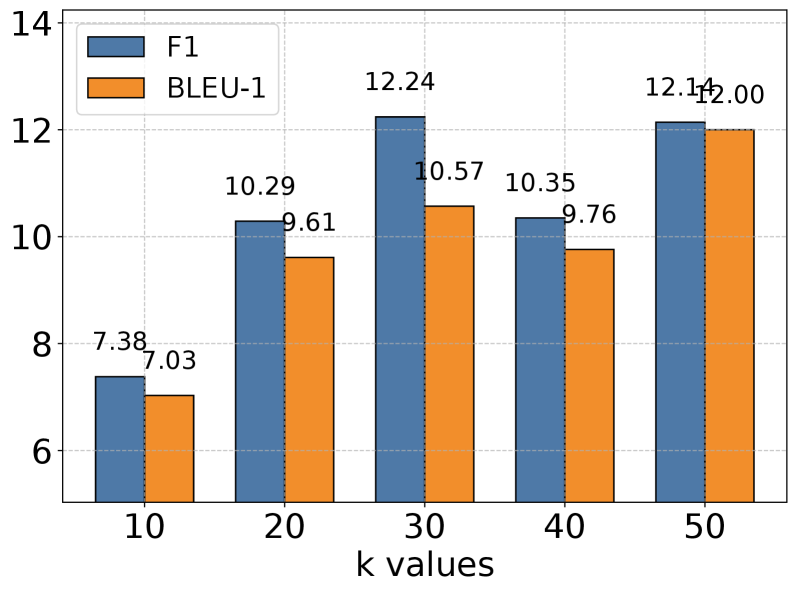

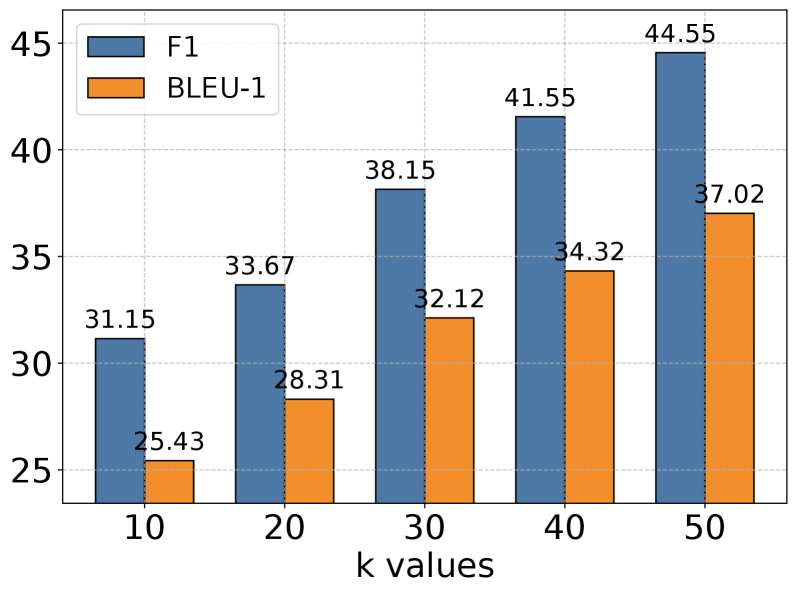

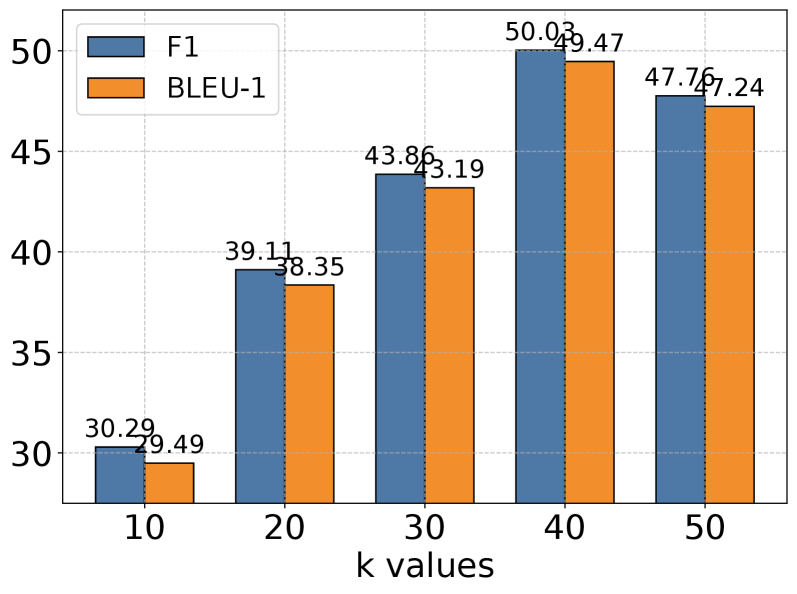

Figure 3: Impact of memory retrieval parameter k across different task categories with GPT-4o-mini as the base model. While larger k values generally improve performance by providing richer historical context, the gains diminish beyond certain thresholds, suggesting a trade-off between context richness and effective information processing. This pattern is consistent across all evaluation categories, indicating the importance of balanced context retrieval for optimal performance.

4.5 Hyperparameter Analysis

We conducted extensive experiments to analyze the impact of the memory retrieval parameter k, which controls the number of relevant memories retrieved for each interaction. As shown in Figure 3, we evaluated performance across different k values (10, 20, 30, 40, 50) on five categories of tasks using GPT-4o-mini as our base model. The results reveal an interesting pattern: while increasing k generally leads to improved performance, this improvement gradually plateaus and sometimes slightly decreases at higher values. This trend is particularly evident in Multi Hop and Open Domain tasks. The observation suggests a delicate balance in memory retrieval: while larger k values provide richer historical context for reasoning, they may also introduce noise and challenge the model’s capacity to process longer sequences effectively. Our analysis indicates that moderate k values strike an optimal balance between context richness and information processing efficiency.
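The plateau can be made concrete by tabulating the F1 values read from the panels of Figure 3 and locating the best k per category. This is only a sketch over the plotted values; the names `best_k` and `marginal_gains` are ours.

```python
K_VALUES = [10, 20, 30, 40, 50]

# F1 scores per k, as shown in the five panels of Figure 3.
F1_BY_CATEGORY = {
    "Multi Hop":   [19.91, 25.87, 26.97, 27.02, 26.81],
    "Temporal":    [43.60, 45.03, 45.22, 45.85, 45.60],
    "Open Domain": [7.38, 10.29, 12.24, 10.35, 12.11],
    "Single Hop":  [31.15, 33.67, 38.15, 41.55, 44.55],
    "Adversarial": [30.29, 39.11, 43.86, 50.03, 47.76],
}


def best_k(scores):
    """Return the k value with the highest score."""
    best_index = max(range(len(scores)), key=lambda i: scores[i])
    return K_VALUES[best_index]


def marginal_gains(scores):
    """Score change for each step of +10 in k."""
    return [round(b - a, 2) for a, b in zip(scores, scores[1:])]


best = {name: best_k(scores) for name, scores in F1_BY_CATEGORY.items()}
for name, scores in F1_BY_CATEGORY.items():
    print(f"{name:<12} best k = {best[name]}, gains per step = {marginal_gains(scores)}")
```

In these values, three of the five categories peak at k = 40, Open Domain peaks at k = 30, and Single Hop is still improving at k = 50.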

Table 4: Comparison of memory usage and retrieval time across different memory methods and scales.

| Memory Size | Method | Memory Usage (MB) | Retrieval Time ($\mu\text{s}$) |
| --- | --- | --- | --- |
| 1,000 | A-Mem | 1.46 | 0.31 ± 0.30 |
| 1,000 | MemoryBank [39] | 1.46 | 0.24 ± 0.20 |
| 1,000 | ReadAgent [17] | 1.46 | 43.62 ± 8.47 |
| 10,000 | A-Mem | 14.65 | 0.38 ± 0.25 |
| 10,000 | MemoryBank [39] | 14.65 | 0.26 ± 0.13 |
| 10,000 | ReadAgent [17] | 14.65 | 484.45 ± 93.86 |
| 100,000 | A-Mem | 146.48 | 1.40 ± 0.49 |
| 100,000 | MemoryBank [39] | 146.48 | 0.78 ± 0.26 |
| 100,000 | ReadAgent [17] | 146.48 | 6,682.22 ± 111.63 |
| 1,000,000 | A-Mem | 1464.84 | 3.70 ± 0.74 |
| 1,000,000 | MemoryBank [39] | 1464.84 | 1.91 ± 0.31 |
| 1,000,000 | ReadAgent [17] | 1464.84 | 120,069.68 ± 1,673.39 |

4.6 Scaling Analysis

To evaluate storage costs with accumulating memory, we examined the relationship between storage size and retrieval time across our A-Mem system and two baseline approaches: MemoryBank [39] and ReadAgent [17]. We evaluated these three memory systems with identical memory content across four scale points, increasing the number of entries by a factor of 10 at each step (from 1,000 to 10,000, 100,000, and finally 1,000,000 entries). The experimental results reveal key insights about A-Mem’s scaling properties. In terms of space complexity, all three systems exhibit identical linear memory usage scaling ($O(N)$), as expected for vector-based retrieval systems. This confirms that A-Mem introduces no additional storage overhead compared to baseline approaches. For retrieval time, A-Mem remains efficient, with minimal increases as memory size grows: even when scaling to 1 million memories, A-Mem’s retrieval time increases only from 0.31 $\mu\text{s}$ to 3.70 $\mu\text{s}$. While MemoryBank shows slightly faster retrieval times, A-Mem maintains comparable performance while providing richer memory representations and functionality. Based on our space complexity and retrieval time analysis, we conclude that A-Mem’s retrieval mechanisms maintain excellent efficiency even at large scales. The minimal growth in retrieval time across memory sizes addresses concerns about efficiency in large-scale memory systems, demonstrating that A-Mem provides a highly scalable solution for long-term conversation management. This combination of efficiency, scalability, and enhanced memory capabilities positions A-Mem as a significant advancement in building powerful long-term memory mechanisms for LLM agents.
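The scaling claims can be checked against the mean values in Table 4. The sketch below computes the per-entry storage footprint and the growth in retrieval time over the 1,000-fold increase in entries. It assumes 1 MB = 2^20 bytes. The per-entry footprint comes out at about 1,536 bytes, which would match a 384-dimensional float32 embedding; that reading is our inference and is not stated in this section.

```python
SIZES = [1_000, 10_000, 100_000, 1_000_000]

# Mean values copied from Table 4.
MEMORY_MB = [1.46, 14.65, 146.48, 1464.84]  # identical for all three methods
RETRIEVAL_US = {
    "A-Mem":      [0.31, 0.38, 1.40, 3.70],
    "MemoryBank": [0.24, 0.26, 0.78, 1.91],
    "ReadAgent":  [43.62, 484.45, 6682.22, 120069.68],
}

BYTES_PER_MB = 2 ** 20  # assumption: binary megabytes

# Linear O(N) storage implies a constant footprint per entry.
bytes_per_entry = [mb * BYTES_PER_MB / n for mb, n in zip(MEMORY_MB, SIZES)]

# How much slower retrieval gets when the store grows 1,000-fold.
growth = {name: times[-1] / times[0] for name, times in RETRIEVAL_US.items()}

print("bytes per entry:", [round(b) for b in bytes_per_entry])
for name, factor in growth.items():
    print(f"{name:<10} retrieval time grows {factor:,.1f}x for 1,000x more entries")
```

For A-Mem and MemoryBank the retrieval-time growth factor stays far below the 1,000-fold growth in entries, while ReadAgent's factor exceeds it.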

4.7 Memory Analysis

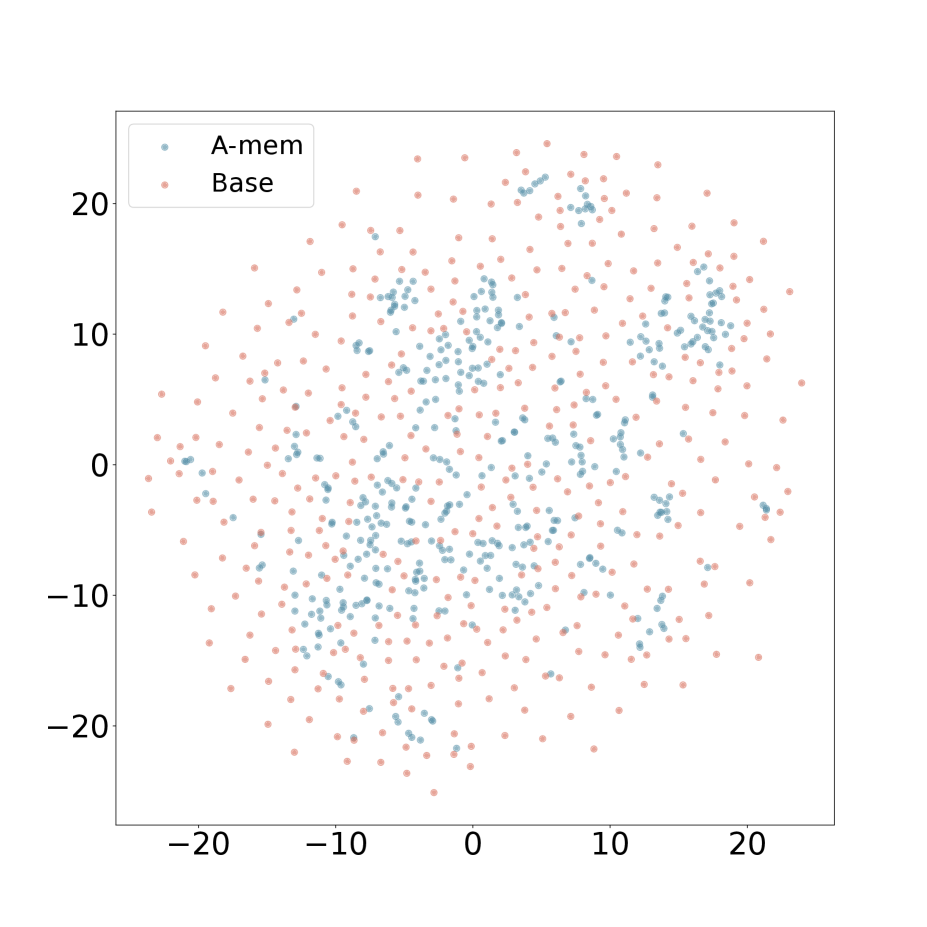

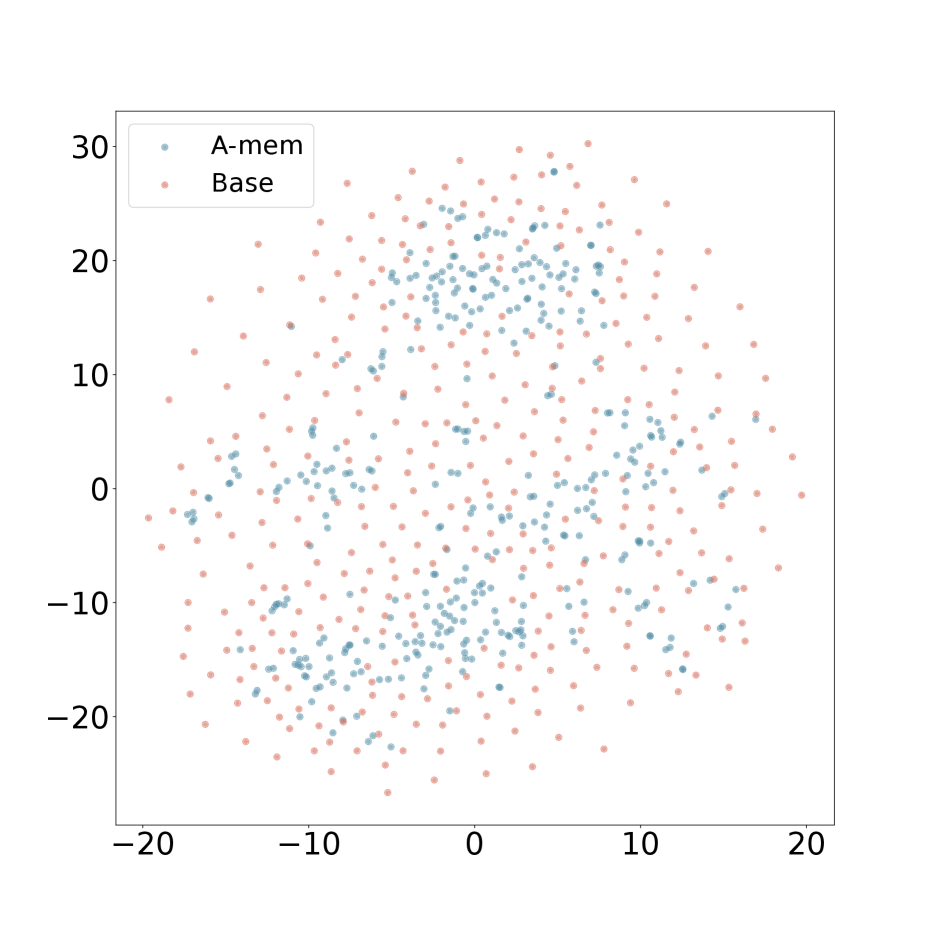

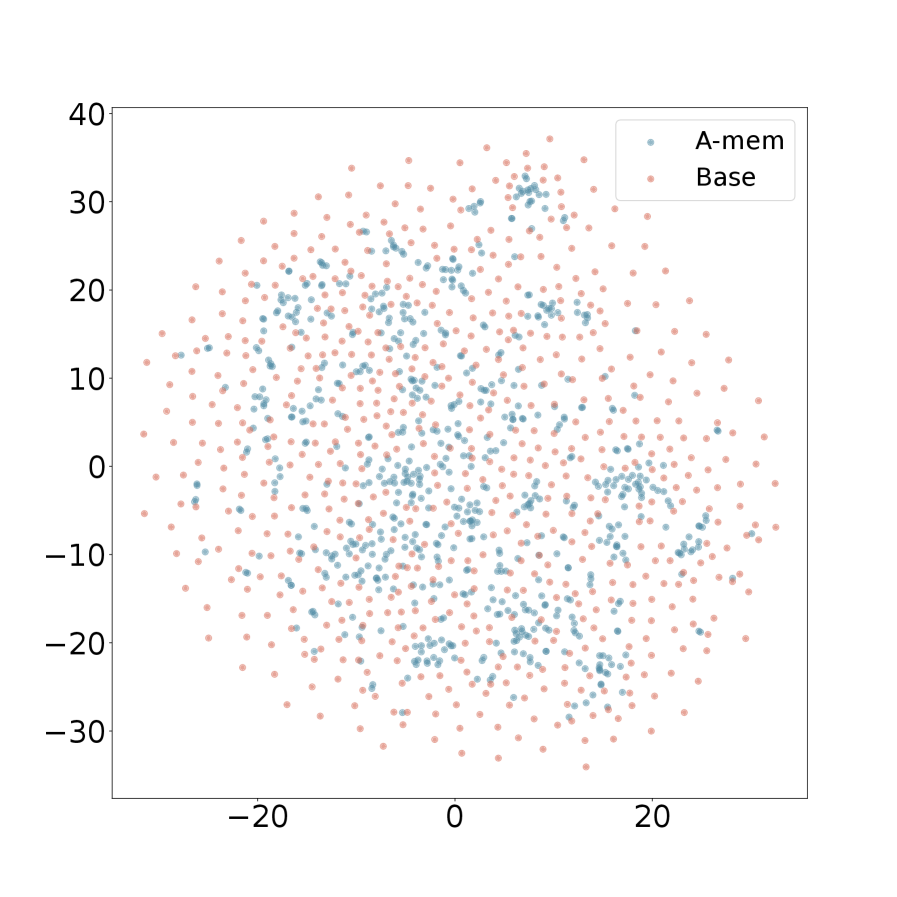

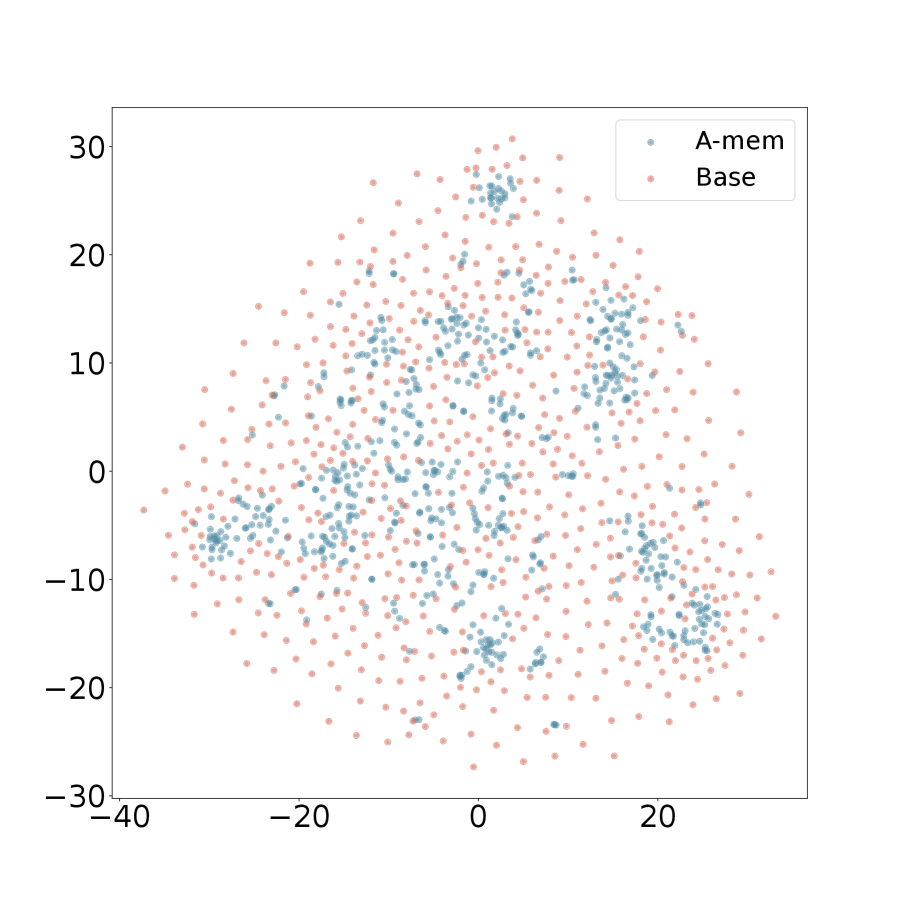

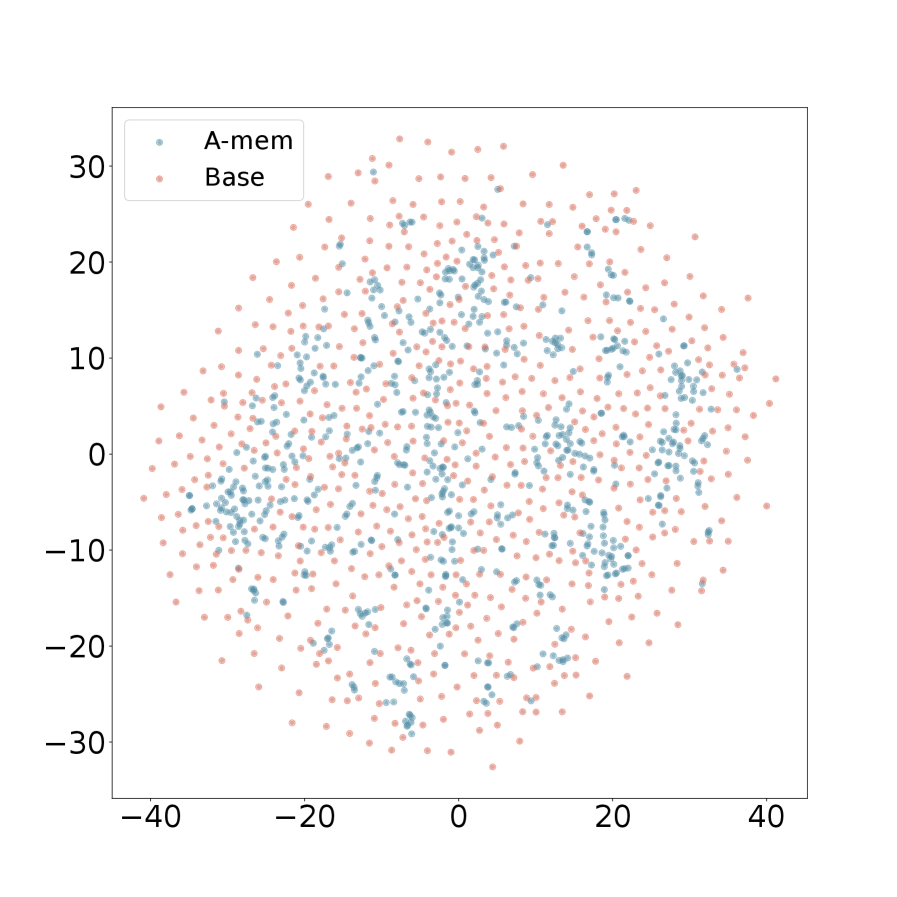

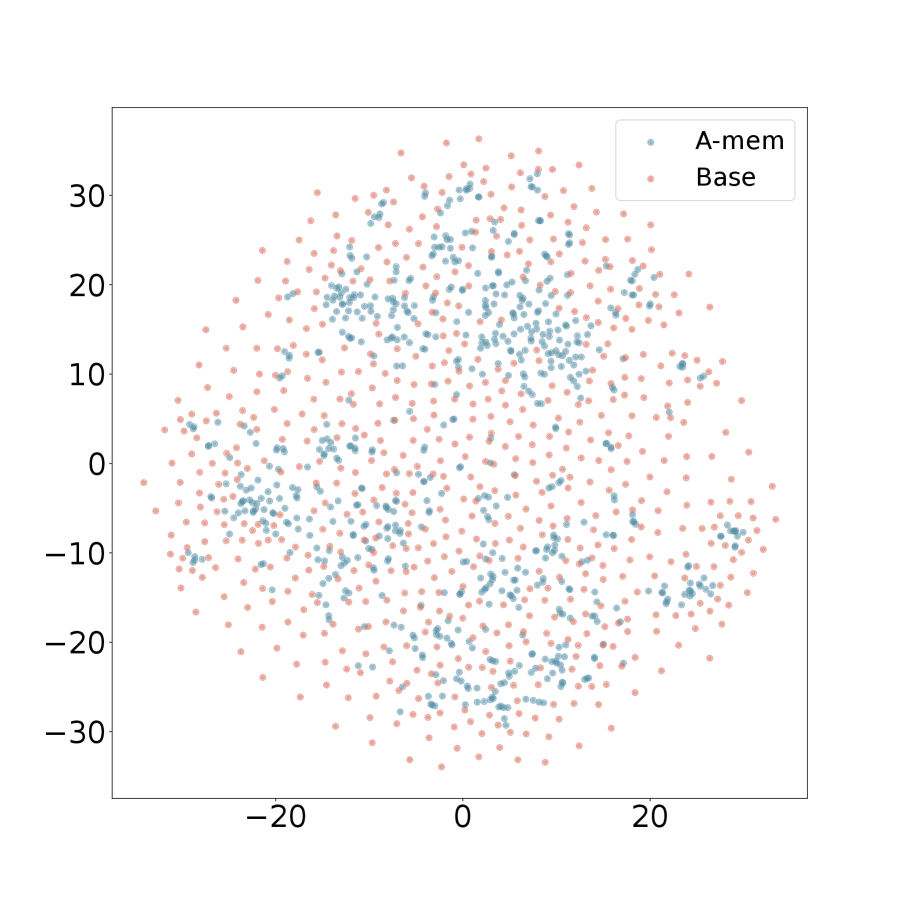

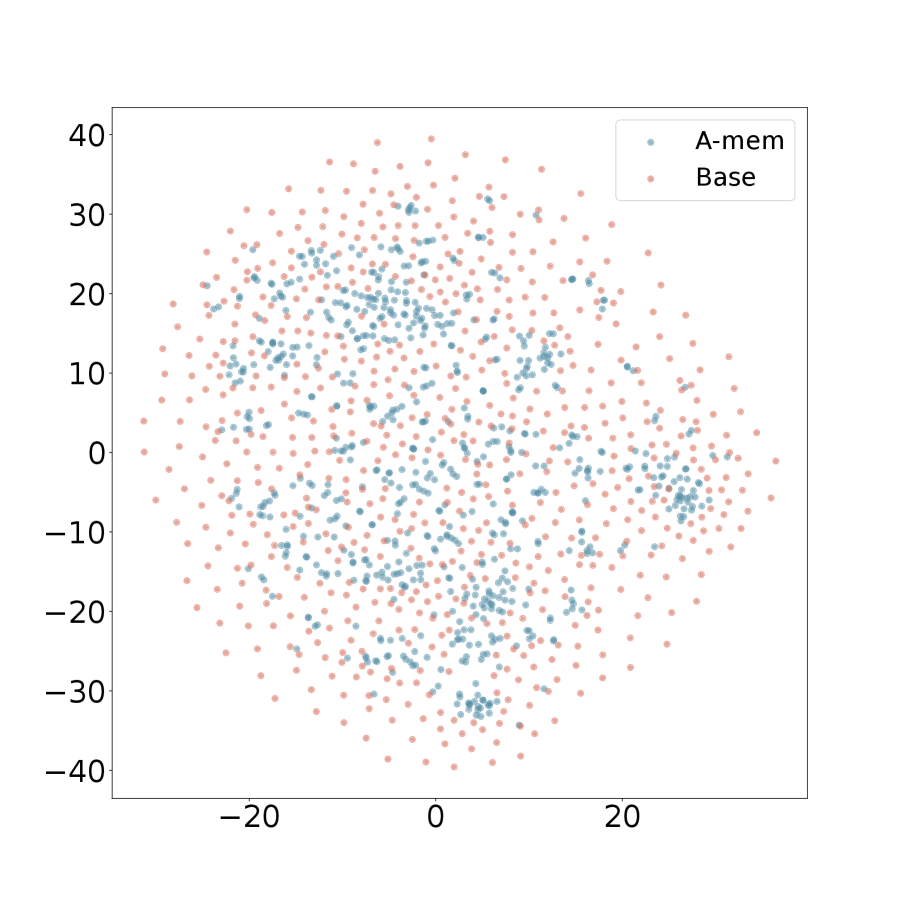

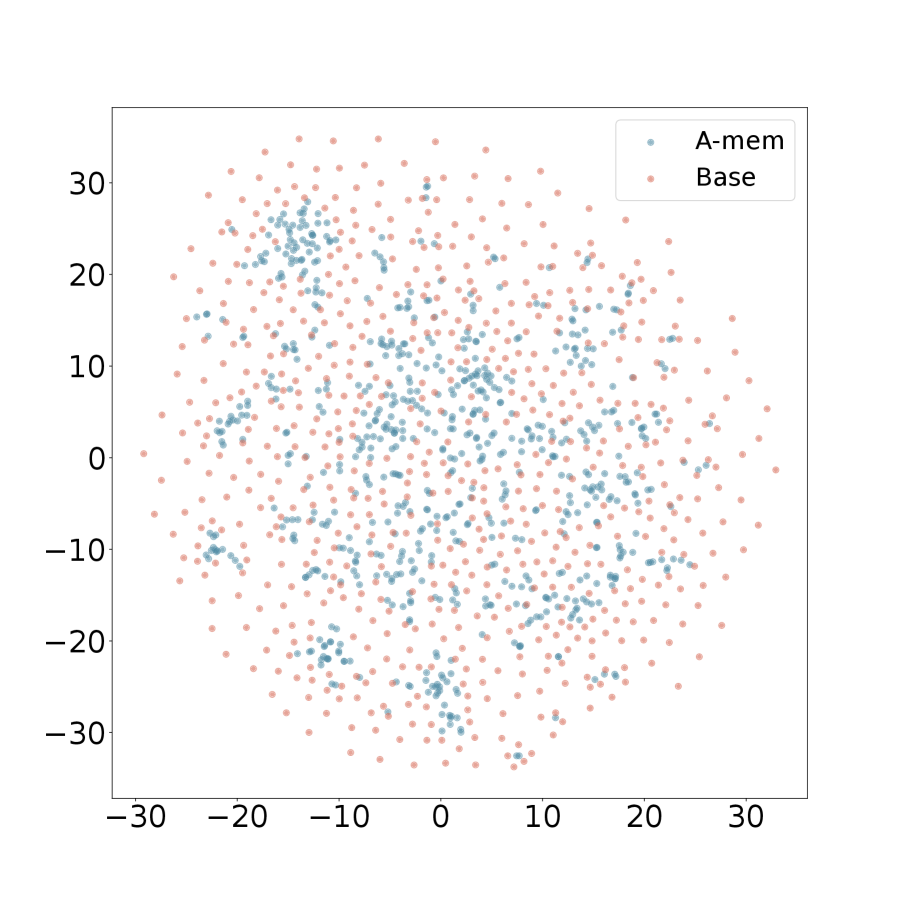

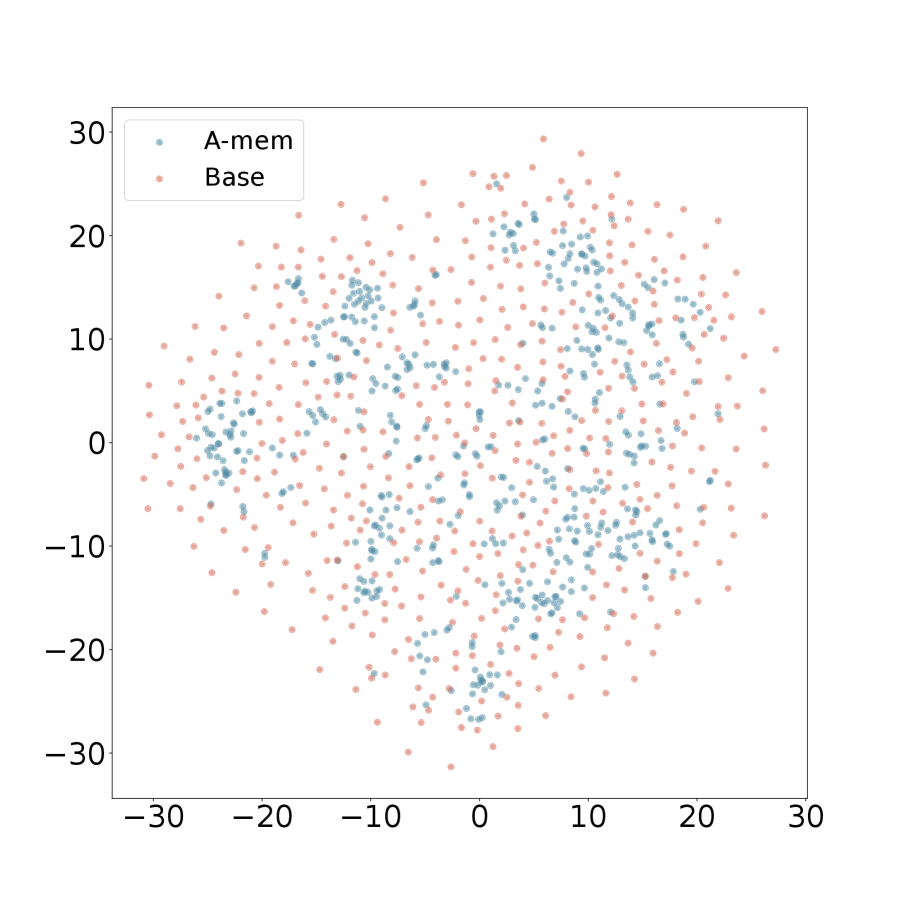

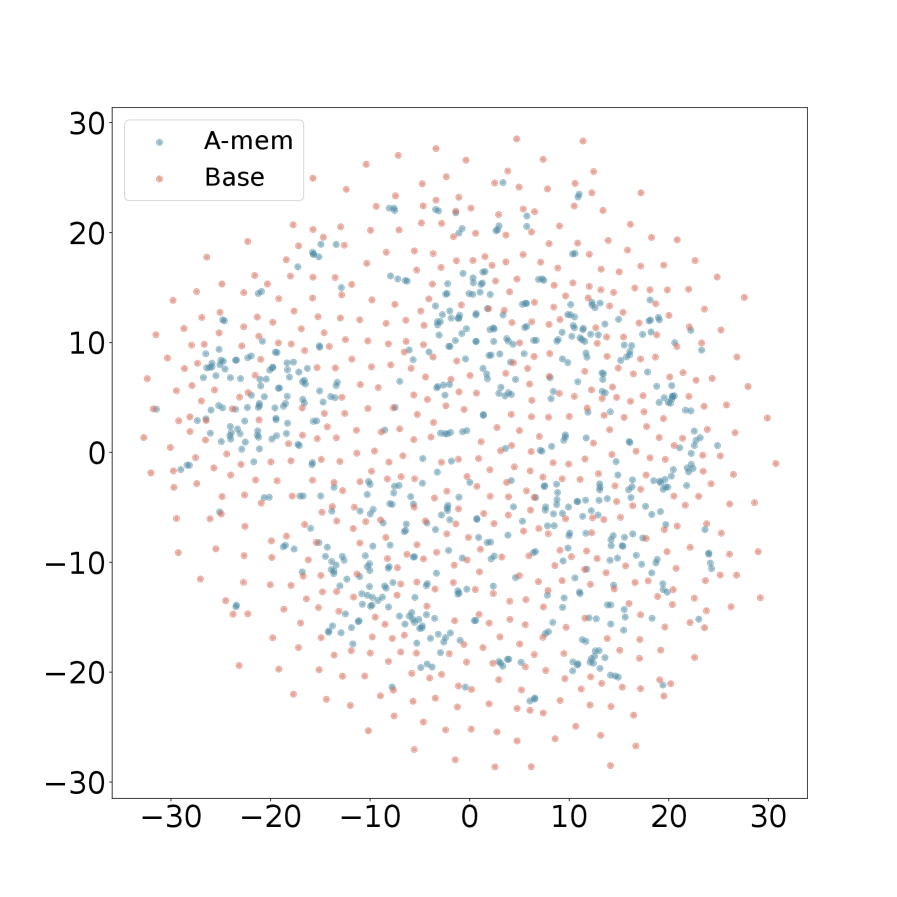

Figure 4 presents t-SNE visualizations of memory embeddings to demonstrate the structural advantages of our agentic memory system. Analyzing two dialogues sampled from long-term conversations in LoCoMo [22], we observe that A-Mem (shown in blue) consistently exhibits more coherent clustering patterns than the baseline system (shown in red). This structural organization is particularly evident in Dialogue 2, where well-defined clusters emerge in the central region, providing empirical evidence for the effectiveness of our memory evolution mechanism and contextual description generation. In contrast, the baseline memory embeddings display a more dispersed distribution, demonstrating that memories lack structural organization without our link generation and memory evolution components. These visualization results validate that A-Mem can autonomously maintain meaningful memory structures through dynamic evolution and linking mechanisms. More results can be seen in Appendix A.4.

<details>

<summary>x11.png Details</summary>

### Visual Description

## Scatter Plot: A-mem vs. Base

### Overview

The image is a scatter plot comparing two datasets, "A-mem" and "Base". The plot displays the distribution of data points for each dataset across a two-dimensional space, with no explicit x or y axis labels. The "A-mem" data points are represented in light blue, while the "Base" data points are in light red.

### Components/Axes

* **X-axis:** No explicit label, but ranges approximately from -25 to 25.

* **Y-axis:** No explicit label, but ranges approximately from -25 to 25.

* **Legend:** Located in the top-left corner.

* "A-mem": Light blue data points.

* "Base": Light red data points.

### Detailed Analysis

The scatter plot shows the distribution of "A-mem" and "Base" data points.

* **A-mem (Light Blue):** The light blue points are more concentrated in the central region of the plot, with a higher density around the origin (0,0). They are also present, but less dense, in the outer regions.

* **Base (Light Red):** The light red points are more evenly distributed across the entire plot area, including the outer regions. They appear to have a more uniform spread compared to the "A-mem" data.

Approximate data point ranges:

* **A-mem:**

* X-axis: Mostly between -15 and 15.

* Y-axis: Mostly between -15 and 15.

* **Base:**

* X-axis: Ranging from -25 to 25.

* Y-axis: Ranging from -25 to 25.

### Key Observations

* The "A-mem" data points are more clustered towards the center of the plot, indicating a higher concentration in that region.

* The "Base" data points are more dispersed, suggesting a broader distribution across the plot area.

* There is some overlap between the two datasets, particularly in the central region.

### Interpretation

The scatter plot suggests that the "A-mem" dataset exhibits a stronger central tendency compared to the "Base" dataset. This could indicate that "A-mem" data points are more similar to each other or that they are influenced by a common factor that pulls them towards the center. The "Base" dataset, on the other hand, appears to be more diverse or influenced by a wider range of factors, resulting in a more dispersed distribution. The lack of axis labels makes it difficult to interpret the specific meaning of the x and y dimensions, but the relative distributions of the two datasets provide valuable insights into their underlying characteristics.

</details>

(a) Dialogue 1

<details>

<summary>x12.png Details</summary>

### Visual Description

## Scatter Plot: A-mem vs Base

### Overview

The image is a scatter plot showing the distribution of two datasets, labeled "A-mem" and "Base". The plot displays the data points in a two-dimensional space, with no explicit x or y axis labels. The data points are scattered across the plot, with some overlap between the two datasets.

### Components/Axes

* **X-axis:** Ranges from approximately -20 to 20, with tick marks at -20, -10, 0, 10, and 20. No label is provided.

* **Y-axis:** Ranges from approximately -20 to 30, with tick marks at -20, -10, 0, 10, 20, and 30. No label is provided.

* **Legend (Top-Left):**

* A-mem: Represented by light blue dots.

* Base: Represented by light red dots.

### Detailed Analysis

* **A-mem (Light Blue):** The light blue data points are scattered throughout the plot, with a higher concentration in the central region.

* **Base (Light Red):** The light red data points are also scattered throughout the plot, with a distribution similar to the "A-mem" data, but perhaps slightly more dispersed.

**Specific Data Point Analysis:**

It is impossible to provide exact coordinates for each point due to the nature of a scatter plot and the lack of gridlines. However, we can describe the general distribution:

* **A-mem:**

* Most points are concentrated within the range of x = -10 to 10 and y = -10 to 20.

* There are fewer points in the extreme corners of the plot.

* **Base:**

* Similar distribution to A-mem, but with slightly more points extending towards the edges of the plot.

* The density of points appears slightly lower than A-mem in the central region.

### Key Observations

* Both datasets ("A-mem" and "Base") exhibit a roughly similar distribution pattern.

* There is significant overlap between the two datasets, suggesting that they are not easily separable in this two-dimensional space.

* The central region of the plot appears to have a higher density of points for both datasets.

### Interpretation

The scatter plot visualizes the relationship between two datasets, "A-mem" and "Base". The overlapping distributions suggest that the two datasets share similar characteristics or are influenced by similar factors. Without further context or axis labels, it is difficult to determine the specific meaning of the plot. However, the visualization provides a basis for comparing the two datasets and identifying potential patterns or clusters. The plot suggests that a simple linear separation of the two datasets is unlikely to be effective.

</details>

(b) Dialogue 2

Figure 4: t-SNE visualization of memory embeddings, showing a more organized distribution with A-Mem (blue) than with base memory (red) across different dialogues. Base memory represents A-Mem without link generation and memory evolution.

5 Conclusions

In this work, we introduced A-Mem, a novel agentic memory system that enables LLM agents to dynamically organize and evolve their memories without relying on predefined structures. Drawing inspiration from the Zettelkasten method, our system creates an interconnected knowledge network through dynamic indexing and linking mechanisms that adapt to diverse real-world tasks. The system’s core architecture features autonomous generation of contextual descriptions for new memories and intelligent establishment of connections with existing memories based on shared attributes. Furthermore, our approach enables continuous evolution of historical memories by incorporating new experiences and developing higher-order attributes through ongoing interactions. Through extensive empirical evaluation across six foundation models, we demonstrated that A-Mem achieves superior performance compared to existing state-of-the-art baselines in long-term conversational tasks. Visualization analysis further validates the effectiveness of our memory organization approach. These results suggest that agentic memory systems can significantly enhance LLM agents’ ability to utilize long-term knowledge in complex environments.

6 Limitations

While our agentic memory system achieves promising results, we acknowledge several areas for potential future exploration. First, although our system dynamically organizes memories, the quality of these organizations may still be influenced by the inherent capabilities of the underlying language models. Different LLMs might generate slightly different contextual descriptions or establish varying connections between memories. Additionally, while our current implementation focuses on text-based interactions, future work could explore extending the system to handle multimodal information, such as images or audio, which could provide richer contextual representations.

References

- [1] Sönke Ahrens. How to Take Smart Notes: One Simple Technique to Boost Writing, Learning and Thinking. Amazon, 2017. Second Edition.

- [2] Anthropic. The Claude 3 model family: Opus, Sonnet, Haiku. Anthropic, Mar 2024. Accessed May 2025.

- [3] Anthropic. Claude 3.5 Sonnet model card addendum. Technical report, Anthropic, 2025. Accessed May 2025.

- [4] Akari Asai, Zeqiu Wu, Yizhong Wang, Avirup Sil, and Hannaneh Hajishirzi. Self-RAG: Learning to retrieve, generate, and critique through self-reflection. arXiv preprint arXiv:2310.11511, 2023.

- [5] Satanjeev Banerjee and Alon Lavie. METEOR: An automatic metric for MT evaluation with improved correlation with human judgments. In Proceedings of the ACL workshop on intrinsic and extrinsic evaluation measures for machine translation and/or summarization, pages 65–72, 2005.

- [6] Sebastian Borgeaud, Arthur Mensch, Jordan Hoffmann, Trevor Cai, Eliza Rutherford, Katie Millican, George Bm Van Den Driessche, Jean-Baptiste Lespiau, Bogdan Damoc, Aidan Clark, et al. Improving language models by retrieving from trillions of tokens. In International conference on machine learning, pages 2206–2240. PMLR, 2022.

- [7] Xiang Deng, Yu Gu, Boyuan Zheng, Shijie Chen, Sam Stevens, Boshi Wang, Huan Sun, and Yu Su. Mind2web: Towards a generalist agent for the web. Advances in Neural Information Processing Systems, 36:28091–28114, 2023.

- [8] Khant Dev and Singh Taranjeet. mem0: The memory layer for ai agents. https://github.com/mem0ai/mem0, 2024.

- [9] Darren Edge, Ha Trinh, Newman Cheng, Joshua Bradley, Alex Chao, Apurva Mody, Steven Truitt, and Jonathan Larson. From local to global: A graph rag approach to query-focused summarization. arXiv preprint arXiv:2404.16130, 2024.

- [10] Yunfan Gao, Yun Xiong, Xinyu Gao, Kangxiang Jia, Jinliu Pan, Yuxi Bi, Yi Dai, Jiawei Sun, and Haofen Wang. Retrieval-augmented generation for large language models: A survey. arXiv preprint arXiv:2312.10997, 2023.

- [11] Daya Guo, Dejian Yang, Haowei Zhang, Junxiao Song, Ruoyu Zhang, Runxin Xu, Qihao Zhu, Shirong Ma, Peiyi Wang, Xiao Bi, et al. Deepseek-r1: Incentivizing reasoning capability in llms via reinforcement learning. arXiv preprint arXiv:2501.12948, 2025.

- [12] I. Ilin. Advanced rag techniques: An illustrated overview, 2023.

- [13] Jihyoung Jang, Minseong Boo, and Hyounghun Kim. Conversation chronicles: Towards diverse temporal and relational dynamics in multi-session conversations. arXiv preprint arXiv:2310.13420, 2023.

- [14] Zhengbao Jiang, Frank F Xu, Luyu Gao, Zhiqing Sun, Qian Liu, Jane Dwivedi-Yu, Yiming Yang, Jamie Callan, and Graham Neubig. Active retrieval augmented generation. arXiv preprint arXiv:2305.06983, 2023.

- [15] David Kadavy. Digital Zettelkasten: Principles, Methods, & Examples. Google Books, May 2021.

- [16] Jiho Kim, Woosog Chay, Hyeonji Hwang, Daeun Kyung, Hyunseung Chung, Eunbyeol Cho, Yohan Jo, and Edward Choi. Dialsim: A real-time simulator for evaluating long-term multi-party dialogue understanding of conversational agents. arXiv preprint arXiv:2406.13144, 2024.

- [17] Kuang-Huei Lee, Xinyun Chen, Hiroki Furuta, John Canny, and Ian Fischer. A human-inspired reading agent with gist memory of very long contexts. arXiv preprint arXiv:2402.09727, 2024.

- [18] Patrick Lewis, Ethan Perez, Aleksandra Piktus, Fabio Petroni, Vladimir Karpukhin, Naman Goyal, Heinrich Küttler, Mike Lewis, Wen-tau Yih, Tim Rocktäschel, et al. Retrieval-augmented generation for knowledge-intensive nlp tasks. Advances in Neural Information Processing Systems, 33:9459–9474, 2020.

- [19] Chin-Yew Lin. Rouge: A package for automatic evaluation of summaries. In Text summarization branches out, pages 74–81, 2004.

- [20] Xi Victoria Lin, Xilun Chen, Mingda Chen, Weijia Shi, Maria Lomeli, Rich James, Pedro Rodriguez, Jacob Kahn, Gergely Szilvasy, Mike Lewis, et al. Ra-dit: Retrieval-augmented dual instruction tuning. arXiv preprint arXiv:2310.01352, 2023.

- [21] Zhiwei Liu, Weiran Yao, Jianguo Zhang, Liangwei Yang, Zuxin Liu, Juntao Tan, Prafulla K Choubey, Tian Lan, Jason Wu, Huan Wang, et al. Agentlite: A lightweight library for building and advancing task-oriented llm agent system. arXiv preprint arXiv:2402.15538, 2024.

- [22] Adyasha Maharana, Dong-Ho Lee, Sergey Tulyakov, Mohit Bansal, Francesco Barbieri, and Yuwei Fang. Evaluating very long-term conversational memory of llm agents. arXiv preprint arXiv:2402.17753, 2024.

- [23] Kai Mei, Zelong Li, Shuyuan Xu, Ruosong Ye, Yingqiang Ge, and Yongfeng Zhang. Aios: Llm agent operating system. arXiv e-prints, pp. arXiv–2403, 2024.

- [24] Ali Modarressi, Ayyoob Imani, Mohsen Fayyaz, and Hinrich Schütze. Ret-llm: Towards a general read-write memory for large language models. arXiv preprint arXiv:2305.14322, 2023.

- [25] Charles Packer, Sarah Wooders, Kevin Lin, Vivian Fang, Shishir G Patil, Ion Stoica, and Joseph E Gonzalez. Memgpt: Towards llms as operating systems. arXiv preprint arXiv:2310.08560, 2023.

- [26] Kishore Papineni, Salim Roukos, Todd Ward, and Wei-Jing Zhu. Bleu: a method for automatic evaluation of machine translation. In Proceedings of the 40th annual meeting of the Association for Computational Linguistics, pages 311–318, 2002.

- [27] Nils Reimers and Iryna Gurevych. Sentence-bert: Sentence embeddings using siamese bert-networks. In Proceedings of the 2019 Conference on Empirical Methods in Natural Language Processing. Association for Computational Linguistics, 11 2019.

- [28] Aymeric Roucher, Albert Villanova del Moral, Thomas Wolf, Leandro von Werra, and Erik Kaunismäki. `smolagents`: a smol library to build great agentic systems. https://github.com/huggingface/smolagents, 2025.

- [29] Zhihong Shao, Yeyun Gong, Yelong Shen, Minlie Huang, Nan Duan, and Weizhu Chen. Enhancing retrieval-augmented large language models with iterative retrieval-generation synergy. arXiv preprint arXiv:2305.15294, 2023.

- [30] Zeru Shi, Kai Mei, Mingyu Jin, Yongye Su, Chaoji Zuo, Wenyue Hua, Wujiang Xu, Yujie Ren, Zirui Liu, Mengnan Du, et al. From commands to prompts: Llm-based semantic file system for aios. arXiv preprint arXiv:2410.11843, 2024.

- [31] Harsh Trivedi, Niranjan Balasubramanian, Tushar Khot, and Ashish Sabharwal. Interleaving retrieval with chain-of-thought reasoning for knowledge-intensive multi-step questions. arXiv preprint arXiv:2212.10509, 2022.

- [32] Bing Wang, Xinnian Liang, Jian Yang, Hui Huang, Shuangzhi Wu, Peihao Wu, Lu Lu, Zejun Ma, and Zhoujun Li. Enhancing large language model with self-controlled memory framework. arXiv preprint arXiv:2304.13343, 2023.

- [33] Xingyao Wang, Boxuan Li, Yufan Song, Frank F Xu, Xiangru Tang, Mingchen Zhuge, Jiayi Pan, Yueqi Song, Bowen Li, Jaskirat Singh, et al. Openhands: An open platform for ai software developers as generalist agents. arXiv preprint arXiv:2407.16741, 2024.

- [34] Zhiruo Wang, Jun Araki, Zhengbao Jiang, Md Rizwan Parvez, and Graham Neubig. Learning to filter context for retrieval-augmented generation. arXiv preprint arXiv:2311.08377, 2023.

- [35] Lilian Weng. Llm-powered autonomous agents. lilianweng.github.io, Jun 2023.

- [36] J Xu. Beyond goldfish memory: Long-term open-domain conversation. arXiv preprint arXiv:2107.07567, 2021.

- [37] Wenhao Yu, Hongming Zhang, Xiaoman Pan, Kaixin Ma, Hongwei Wang, and Dong Yu. Chain-of-note: Enhancing robustness in retrieval-augmented language models. arXiv preprint arXiv:2311.09210, 2023.

- [38] Zichun Yu, Chenyan Xiong, Shi Yu, and Zhiyuan Liu. Augmentation-adapted retriever improves generalization of language models as generic plug-in. arXiv preprint arXiv:2305.17331, 2023.

- [39] Wanjun Zhong, Lianghong Guo, Qiqi Gao, He Ye, and Yanlin Wang. Memorybank: Enhancing large language models with long-term memory. In Proceedings of the AAAI Conference on Artificial Intelligence, volume 38, pages 19724–19731, 2024.

APPENDIX

Appendix A Experiment

A.1 Detailed Baselines Introduction

LoCoMo [22] takes a direct approach by leveraging foundation models without memory mechanisms for question answering tasks. For each query, it incorporates the complete preceding conversation and questions into the prompt, evaluating the model’s reasoning capabilities.

ReadAgent [17] tackles long-context document processing through a sophisticated three-step methodology: it begins with episode pagination to segment content into manageable chunks, followed by memory gisting to distill each page into concise memory representations, and concludes with interactive look-up to retrieve pertinent information as needed.

MemoryBank [39] introduces an innovative memory management system that maintains and efficiently retrieves historical interactions. The system features a dynamic memory updating mechanism based on the Ebbinghaus Forgetting Curve theory, which intelligently adjusts memory strength according to time and significance. Additionally, it incorporates a user portrait building system that progressively refines its understanding of user personality through continuous interaction analysis.

MemGPT [25] presents a novel virtual context management system drawing inspiration from traditional operating systems’ memory hierarchies. The architecture implements a dual-tier structure: a main context (analogous to RAM) that provides immediate access during LLM inference, and an external context (analogous to disk storage) that maintains information beyond the fixed context window.

A.2 Evaluation Metric

The F1 score represents the harmonic mean of precision and recall, offering a balanced metric that combines both measures into a single value. This metric is particularly valuable when we need to balance between complete and accurate responses:

$$

\text{F1}=2\cdot\frac{\text{precision}\cdot\text{recall}}{\text{precision}+\text{recall}} \tag{11}

$$

where

$$

\text{precision}=\frac{\text{true positives}}{\text{true positives}+\text{false positives}} \tag{12}

$$

$$

\text{recall}=\frac{\text{true positives}}{\text{true positives}+\text{false negatives}} \tag{13}

$$

In question-answering systems, F1 is commonly computed over the tokens that the predicted and reference answers share, so a partially correct answer still earns partial credit. This is especially important for span-based QA tasks, where systems must identify precise text segments while maintaining comprehensive coverage of the answer.
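A minimal sketch of Eqs. (11)–(13) for a single question, assuming whitespace tokenization and lowercasing, with tokens shared by both answers counted as true positives. The helper name `token_f1` and the normalization are illustrative assumptions and are not taken from the paper's evaluation code.

```python
from collections import Counter


def token_f1(prediction: str, reference: str) -> float:
    """Token-level F1 between a predicted and a reference answer.

    Assumption: tokens are lowercased, whitespace-separated words.
    """
    pred_tokens = prediction.lower().split()
    ref_tokens = reference.lower().split()
    if not pred_tokens or not ref_tokens:
        # Both empty counts as a match; exactly one empty counts as a miss.
        return float(pred_tokens == ref_tokens)

    # True positives: tokens present in both answers, counted with multiplicity.
    overlap = sum((Counter(pred_tokens) & Counter(ref_tokens)).values())
    if overlap == 0:
        return 0.0

    precision = overlap / len(pred_tokens)  # Eq. (12)
    recall = overlap / len(ref_tokens)      # Eq. (13)
    return 2 * precision * recall / (precision + recall)  # Eq. (11)
```

For the prediction "the cat sat" against the reference "the cat", precision is 2/3 and recall is 1, so the score is 0.8.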

BLEU-1 [26] provides a method for evaluating the precision of unigram matches between system outputs and reference texts:

$$

\text{BLEU-1}=BP\cdot\exp\left(\sum_{n=1}^{1}w_{n}\log p_{n}\right) \tag{14}

$$

where

$$

BP=\begin{cases}1&\text{if }c>r\\ e^{1-r/c}&\text{if }c\leq r\end{cases} \tag{15}

$$

$$

p_{n}=\frac{\sum_{i}\sum_{k}\min(h_{ik},m_{ik})}{\sum_{i}\sum_{k}h_{ik}} \tag{16}

$$

Here, $c$ is the candidate length, $r$ is the reference length, $h_{ik}$ is the count of n-gram $i$ in candidate $k$, and $m_{ik}$ is the maximum count of that n-gram in any reference. In QA, BLEU-1 evaluates the lexical precision of generated answers, which is particularly useful for generative QA systems where exact matching might be too strict.
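The following sketch instantiates Eqs. (14)–(16) for a single candidate answer, under a few stated assumptions: whitespace tokenization, a single unigram weight $w_{1}=1$ (so the exponential reduces to $p_{1}$), and $r$ taken as the reference length closest to the candidate length. The function name `bleu1` is hypothetical, and at least one reference is expected.

```python
import math
from collections import Counter


def bleu1(candidate: str, references: list[str]) -> float:
    """Unigram BLEU with brevity penalty for one candidate answer."""
    cand = candidate.lower().split()
    refs = [ref.lower().split() for ref in references]
    if not cand:
        return 0.0

    # m: for each unigram, its maximum count in any single reference.
    max_ref_counts: Counter = Counter()
    for ref in refs:
        for token, count in Counter(ref).items():
            max_ref_counts[token] = max(max_ref_counts[token], count)

    # Clipped unigram precision p_1, Eq. (16).
    clipped = sum(min(count, max_ref_counts[token])
                  for token, count in Counter(cand).items())
    p1 = clipped / len(cand)
    if p1 == 0:
        return 0.0

    # Brevity penalty, Eq. (15). Assumption: r is the closest reference length,
    # with ties broken toward the shorter reference.
    c = len(cand)
    r = min((len(ref) for ref in refs), key=lambda n: (abs(n - c), n))
    bp = 1.0 if c > r else math.exp(1 - r / c)

    # Eq. (14) with n = 1 and w_1 = 1: exp(log p_1) = p_1.
    return bp * p1
```

A candidate that is shorter than its reference is penalized even when every token matches: "the cat" against "the cat sat" has $p_{1}=1$ but scores $e^{-0.5}$.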

ROUGE-L [19] measures the longest common subsequence between the generated and reference texts.

$$

\text{ROUGE-L}=\frac{(1+\beta^{2})R_{l}P_{l}}{R_{l}+\beta^{2}P_{l}} \tag{17}

$$

$$

R_{l}=\frac{\text{LCS}(X,Y)}{|X|} \tag{18}

$$

$$

P_{l}=\frac{\text{LCS}(X,Y)}{|Y|} \tag{19}

$$

where $X$ is the reference text, $Y$ is the candidate text, $|X|$ and $|Y|$ are their lengths in tokens, and LCS is the Longest Common Subsequence.
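As an illustration of Eqs. (17)–(19), the sketch below computes the LCS length with a standard dynamic program and then combines recall and precision. Whitespace tokenization and the default $\beta=1$ are assumptions, since the value of $\beta$ is not stated here; the names `lcs_length` and `rouge_l` are hypothetical.

```python
def lcs_length(x: list[str], y: list[str]) -> int:
    """Length of the longest common subsequence of two token lists."""
    prev = [0] * (len(y) + 1)
    for x_tok in x:
        curr = [0]
        for j, y_tok in enumerate(y, start=1):
            if x_tok == y_tok:
                curr.append(prev[j - 1] + 1)
            else:
                curr.append(max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]


def rouge_l(reference: str, candidate: str, beta: float = 1.0) -> float:
    """ROUGE-L F-measure; beta = 1 is an assumed default."""
    x = reference.lower().split()
    y = candidate.lower().split()
    if not x or not y:
        return 0.0
    lcs = lcs_length(x, y)
    if lcs == 0:
        return 0.0
    r_l = lcs / len(x)  # Eq. (18)
    p_l = lcs / len(y)  # Eq. (19)
    return (1 + beta**2) * r_l * p_l / (r_l + beta**2 * p_l)  # Eq. (17)
```

With the reference "the cat sat on the mat" and the candidate "the cat on mat", the LCS has length 4, so $R_{l}=4/6$, $P_{l}=1$, and the score is 0.8.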

ROUGE-2 [19] calculates the overlap of bigrams between the generated and reference texts.

$$

\text{ROUGE-2}=\frac{\sum_{\text{bigram}\in\text{ref}}\min(\text{Count}_{\text{ref}}(\text{bigram}),\text{Count}_{\text{cand}}(\text{bigram}))}{\sum_{\text{bigram}\in\text{ref}}\text{Count}_{\text{ref}}(\text{bigram})} \tag{20}

$$

Both ROUGE-L and ROUGE-2 are particularly useful for evaluating the fluency and coherence of generated answers, with ROUGE-L focusing on sequence matching and ROUGE-2 on local word order.
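Eq. (20) is a bigram recall with clipped counts, which can be sketched as follows. The whitespace tokenization and the names `bigrams` and `rouge_2` are assumptions made for illustration only.

```python
from collections import Counter


def bigrams(tokens: list[str]) -> list[tuple[str, str]]:
    """Adjacent token pairs, in order."""
    return list(zip(tokens, tokens[1:]))


def rouge_2(reference: str, candidate: str) -> float:
    """Clipped bigram recall of the candidate against one reference, Eq. (20)."""
    ref_counts = Counter(bigrams(reference.lower().split()))
    cand_counts = Counter(bigrams(candidate.lower().split()))
    total = sum(ref_counts.values())
    if total == 0:
        # A reference with fewer than two tokens has no bigrams.
        return 0.0
    matched = sum(min(count, cand_counts[bigram])
                  for bigram, count in ref_counts.items())
    return matched / total
```

The candidate "the cat sat" covers 2 of the 5 bigrams in "the cat sat on the mat", giving 0.4.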

METEOR [5] computes a score based on aligned unigrams between the candidate and reference texts, considering synonyms and paraphrases.

$$

\text{METEOR}=F_{\text{mean}}\cdot(1-\text{Penalty}) \tag{21}

$$

$$

F_{\text{mean}}=\frac{10P\cdot R}{R+9P} \tag{22}

$$

$$

\text{Penalty}=0.5\cdot\left(\frac{\text{ch}}{m}\right)^{3} \tag{23}

$$

where $P$ is precision, $R$ is recall, $\text{ch}$ is the number of chunks, and $m$ is the number of matched unigrams. METEOR is valuable for QA evaluation as it considers semantic similarity beyond exact matching, making it suitable for evaluating paraphrased answers.
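The scoring step in Eqs. (21)–(23) can be sketched on its own once an alignment is available. The function below takes the alignment statistics as inputs; it does not perform the unigram alignment itself (stemming, synonym, and paraphrase matching), and the name `meteor_from_counts` and its parameters are hypothetical.

```python
def meteor_from_counts(matched: int, chunks: int,
                       cand_len: int, ref_len: int) -> float:
    """METEOR score from precomputed alignment statistics.

    matched:  number of aligned unigrams (m)
    chunks:   number of contiguous aligned chunks (ch)
    cand_len: number of unigrams in the candidate
    ref_len:  number of unigrams in the reference
    """
    if matched == 0:
        return 0.0
    precision = matched / cand_len
    recall = matched / ref_len
    f_mean = 10 * precision * recall / (recall + 9 * precision)  # Eq. (22)
    penalty = 0.5 * (chunks / matched) ** 3                      # Eq. (23)
    return f_mean * (1 - penalty)                                # Eq. (21)
```

Because recall is weighted nine times as heavily as precision in $F_{\text{mean}}$, a short candidate that matches every one of its tokens is still scored mainly by how much of the reference it covers. Fewer, longer chunks lower the penalty.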

SBERT Similarity [27] measures the semantic similarity between two texts using sentence embeddings.

$$

\text{SBERT\_Similarity}=\cos(\text{SBERT}(x),\text{SBERT}(y)) \tag{24}

$$

$$

\cos(a,b)=\frac{a\cdot b}{\|a\|\|b\|} \tag{25}

$$

Here, $\text{SBERT}(x)$ denotes the sentence embedding of text $x$. SBERT Similarity is particularly useful for evaluating semantic understanding in QA systems, as it can capture similarity in meaning even when the lexical overlap is low.
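A small sketch of the cosine in Eq. (25), written in plain Python so that it runs without a model. It assumes the two sentence embeddings have already been produced by some sentence encoder and are passed in as equal-length vectors; the encoder call is deliberately not shown, and the name `cosine_similarity` is illustrative.

```python
import math
from typing import Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two embedding vectors, Eq. (25)."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        # Assumption: treat a zero vector as having no similarity.
        return 0.0
    return dot / (norm_a * norm_b)
```

The score depends only on direction, not magnitude: `[1, 2]` and `[2, 4]` have similarity 1, while orthogonal vectors have similarity 0.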