# S2r: Teaching LLMs to Self-verify and Self-correct via Reinforcement Learning

> Equal contribution. This work was done during Peisong, Cheng, Jiaqi and Bang were interning at Tencent. Corresponding authors.

## Abstract

Recent studies have demonstrated the effectiveness of LLM test-time scaling. However, existing approaches to incentivize LLMs’ deep thinking abilities generally require large-scale data or significant training efforts. Meanwhile, it remains unclear how to improve the thinking abilities of less powerful base models. In this work, we introduce S 2 r, an efficient framework that enhances LLM reasoning by teaching models to self-verify and self-correct during inference. Specifically, we first initialize LLMs with iterative self-verification and self-correction behaviors through supervised fine-tuning on carefully curated data. The self-verification and self-correction skills are then further strengthened by both outcome-level and process-level reinforcement learning, with minimized resource requirements, enabling the model to adaptively refine its reasoning process during inference. Our results demonstrate that, with only 3.1k self-verifying and self-correcting behavior initialization samples, Qwen2.5-math-7B achieves an accuracy improvement from 51.0% to 81.6%, outperforming models trained on an equivalent amount of long-CoT distilled data. Extensive experiments and analysis based on three base models across both in-domain and out-of-domain benchmarks validate the effectiveness of S 2 r. Our code and data are available at https://github.com/NineAbyss/S2R.

S 2 r: Teaching LLMs to Self-verify and Self-correct via Reinforcement Learning

Ruotian Ma 1 thanks: Equal contribution. This work was done during Peisong, Cheng, Jiaqi and Bang were interning at Tencent., Peisong Wang 2 footnotemark: , Cheng Liu 1, Xingyan Liu 1, Jiaqi Chen 3, Bang Zhang 1, Xin Zhou 4, Nan Du 1 thanks: Corresponding authors. , Jia Li 5 To ensure a fair comparison, we report the Pass@1 (greedy) accuracy obtained without the process preference model of rStar, rather than the result obtained with increased test-time computation using 64 trajectories. 1 Tencent 2 Tsinghua University 3 The University of Hong Kong 4 Fudan University 5 The Hong Kong University of Science and Technology (Guangzhou) ruotianma@tencent.com, wps22@mails.tsinghua.edu.cn

## 1 Introduction

Recent advancements in Large Language Models (LLMs) have demonstrated a paradigm shift from scaling up training-time efforts to test-time compute Snell et al. (2024a); Kumar et al. (2024); Qi et al. (2024); Yang et al. (2024). The effectiveness of scaling test-time compute is illustrated by OpenAI o1 OpenAI (2024), which shows strong reasoning abilities by performing deep and thorough thinking, incorporating essential skills like self-checking, self-verifying, self-correcting and self-exploring during the model’s reasoning process. This paradigm not only enhances reasoning in domains like mathematics and science but also offers new insights into improving the generalizability, helpfulness and safety of LLMs across various general tasks OpenAI (2024); Guo et al. (2025).

<details>

<summary>x1.png Details</summary>

### Visual Description

## Scatter Plot: Model Performance vs. Data Size on MATH500

### Overview

This image is a scatter plot that visualizes the performance (Accuracy in %) of different language models against their data size (log10) on the MATH500 dataset. Each point represents a specific model, with its position indicating its accuracy and data size.

### Components/Axes

* **Title:** MATH500

* **X-axis:**

* **Title:** Data Size (log10)

* **Scale:** Logarithmic, ranging from 3 to 8.

* **Markers:** 3, 4, 5, 6, 7, 8.

* **Y-axis:**

* **Title:** Accuracy (%)

* **Scale:** Linear, ranging from 76 to 86.

* **Markers:** 76, 78, 80, 82, 84, 86.

* **Data Points:** Five distinct colored circles, each labeled with the name of a language model.

* **Green Circle:** Labeled "Qwen2.5-Math-7B-S²R-ORL (ours)"

* **Pink Circle:** Labeled "Qwen2.5-Math-7B-Instruct"

* **Orange Circle:** Labeled "Eurus-2-7B-PRIME"

* **Blue Circle:** Labeled "rStar-Math-7B"

* **Dark Purple Circle:** Labeled "Qwen2.5-7B-SimpleRL-Zero"

### Detailed Analysis

The plot displays the following data points:

1. **Qwen2.5-Math-7B-S²R-ORL (ours)** (Green Circle):

* **Trend:** This point is positioned at the top-left of the cluster, indicating high accuracy with a relatively smaller data size compared to some other models.

* **Approximate Coordinates:** Data Size (log10) ≈ 3.9, Accuracy (%) ≈ 84.5

2. **Qwen2.5-Math-7B-Instruct** (Pink Circle):

* **Trend:** This point is located in the upper-right quadrant of the plot, showing a good balance of high accuracy and a larger data size.

* **Approximate Coordinates:** Data Size (log10) ≈ 6.5, Accuracy (%) ≈ 83.5

3. **Eurus-2-7B-PRIME** (Orange Circle):

* **Trend:** This point is situated in the middle-lower section of the plot, suggesting moderate accuracy with a medium data size.

* **Approximate Coordinates:** Data Size (log10) ≈ 5.5, Accuracy (%) ≈ 79.5

4. **rStar-Math-7B** (Blue Circle):

* **Trend:** This point is in the lower-right section, indicating lower accuracy with a larger data size.

* **Approximate Coordinates:** Data Size (log10) ≈ 7.0, Accuracy (%) ≈ 78.2

5. **Qwen2.5-7B-SimpleRL-Zero** (Dark Purple Circle):

* **Trend:** This point is at the bottom-left, showing the lowest accuracy among the plotted models, with a relatively small data size.

* **Approximate Coordinates:** Data Size (log10) ≈ 4.0, Accuracy (%) ≈ 77.0

### Key Observations

* The model "Qwen2.5-Math-7B-S²R-ORL (ours)" achieves the highest accuracy (approximately 84.5%) among the plotted models, despite having one of the smallest data sizes (approximately 3.9 log10).

* "Qwen2.5-Math-7B-Instruct" also demonstrates high accuracy (approximately 83.5%) but with a significantly larger data size (approximately 6.5 log10).

* "Qwen2.5-7B-SimpleRL-Zero" has the lowest accuracy (approximately 77.0%) and a relatively small data size (approximately 4.0 log10).

* "rStar-Math-7B" has a larger data size (approximately 7.0 log10) but a lower accuracy (approximately 78.2%) compared to "Eurus-2-7B-PRIME".

### Interpretation

This scatter plot suggests a general trend where increased data size might not always directly correlate with improved accuracy, or that model architecture and training methods play a crucial role. The "Qwen2.5-Math-7B-S²R-ORL (ours)" model stands out as being highly efficient, achieving top-tier accuracy with a comparatively smaller data footprint. This could imply a more effective learning process or better generalization capabilities. Conversely, models like "rStar-Math-7B" show that simply increasing data size doesn't guarantee superior performance, as it has a larger data size but lower accuracy than "Eurus-2-7B-PRIME". The "Qwen2.5" family of models shows varying performance based on their specific training (e.g., Instruct vs. SimpleRL-Zero vs. S²R-ORL), highlighting the impact of fine-tuning and reinforcement learning techniques. The plot effectively allows for a quick comparison of model trade-offs between performance and data requirements on the MATH500 benchmark.

</details>

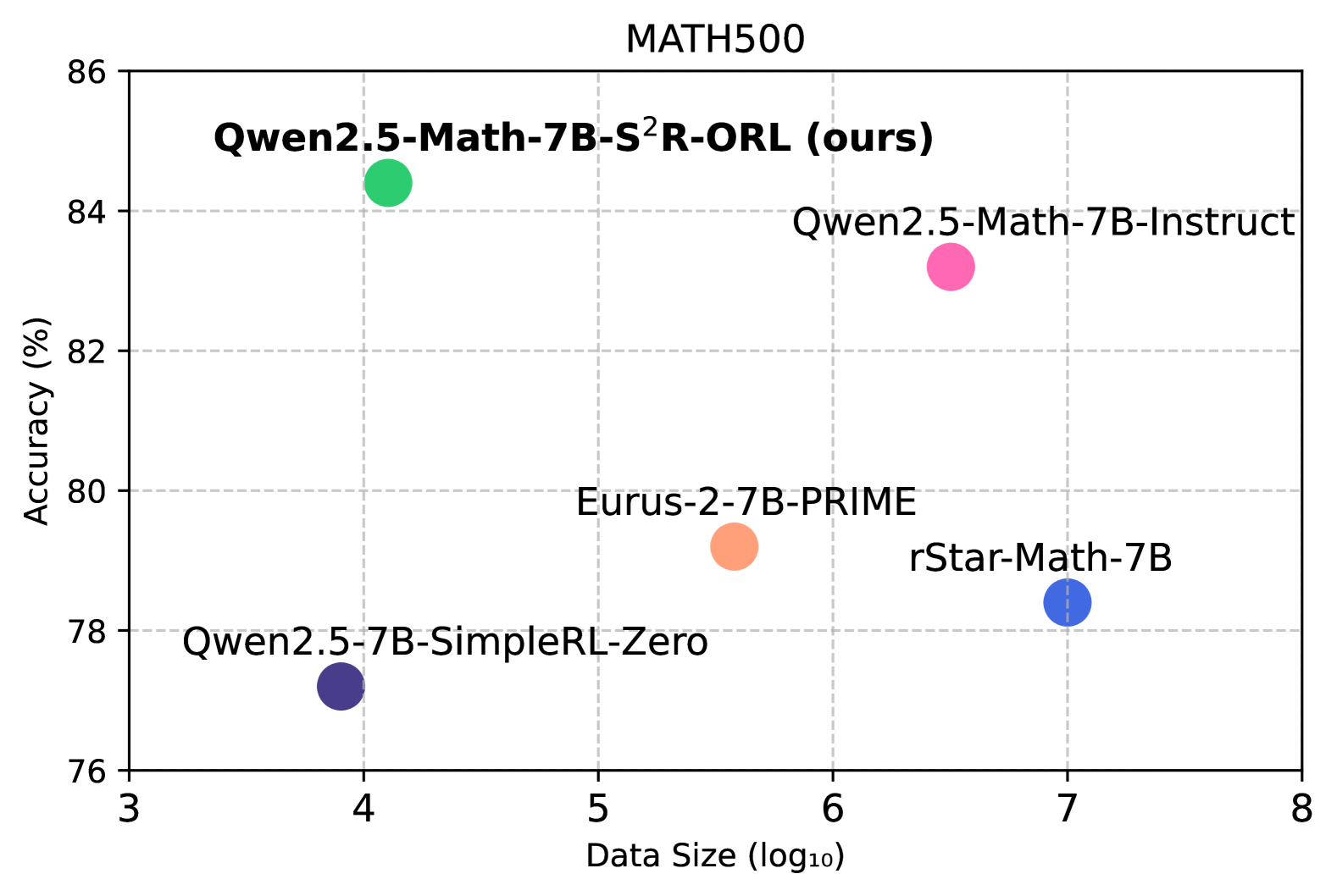

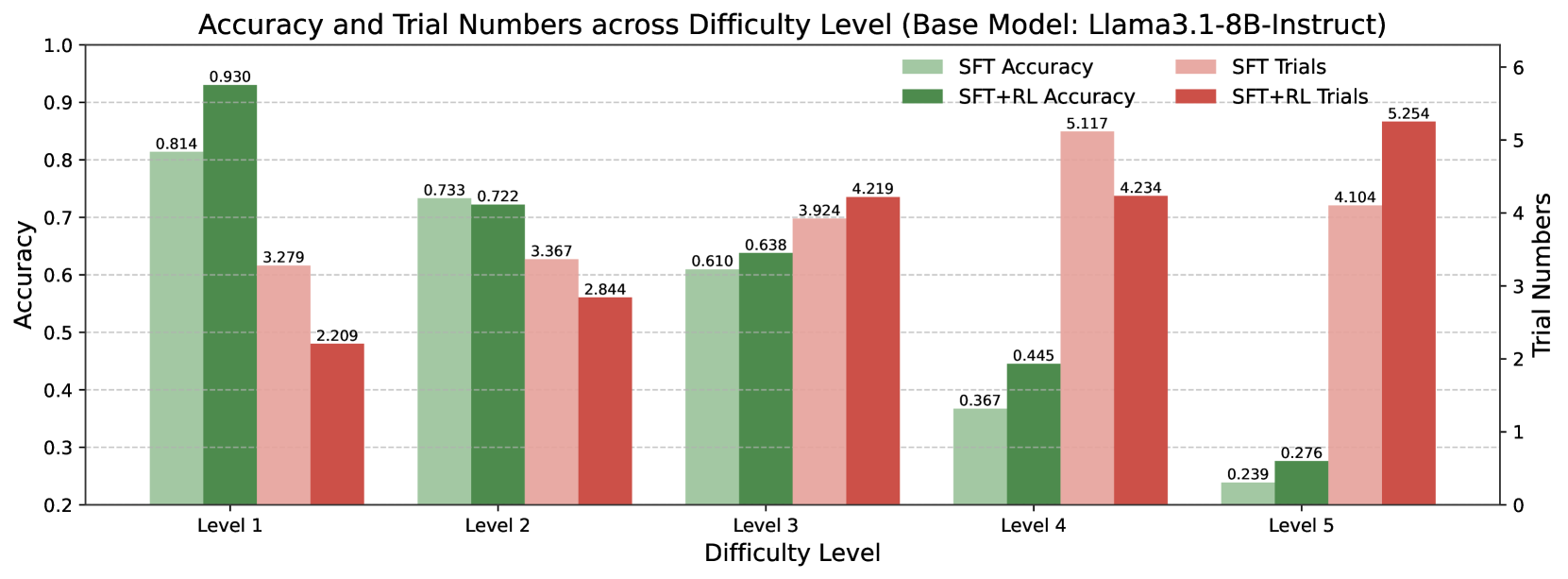

Figure 1: The data efficiency of S 2 r compared to competitive methods, with all models initialized from Qwen2.5-Math-7B.

Recent studies have made various attempts to replicate the success of o1. These efforts include using large-scale Monte Carlo Tree Search (MCTS) to construct long-chain-of-thought (long-CoT) training data, or to scale test-time reasoning to improve the performance of current models Guan et al. (2025); Zhao et al. (2024); Snell et al. (2024b); constructing high-quality long-CoT data for effective behavior cloning with substantial human effort Qin et al. (2024); and exploring reinforcement learning to enhance LLM thinking abilities on large-scale training data and models Guo et al. (2025); Team et al. (2025); Cui et al. (2025); Yuan et al. (2024). Recently, DeepSeek R1 Guo et al. (2025) demonstrated that large-scale reinforcement learning can incentivize LLM’s deep thinking abilities, with the R1 series showcasing the promising potential of long-thought reasoning. However, these approaches generally requires significant resources to enhance LLMs’ thinking abilities, including large datasets, substantial training-time compute, and considerable human effort and time costs. Meanwhile, it remains unclear how to incentivize valid thinking in smaller or less powerful LLMs beyond distilling knowledge from more powerful models.

In this work, we propose S 2 r, an efficient alternative to enhance the thinking abilities of LLMs, particularly for smaller or less powerful LLMs. Instead of having LLMs imitate the thinking process of larger, more powerful models, S 2 r focus on teaching LLMs to think deeply by iteratively adopting two critical thinking skills: self-verifying and self-correcting. By acquiring these two capabilities, LLMs can continuously reassess their solutions, identify mistakes during solution exploration, and refine previous solutions after self-checking. Such a paradigm also enables flexible allocation of test-time compute to different levels of problems. Our results show that, with only 3.1k training samples, Qwen2.5-math-7B significantly benefits from learning self-verifying and self-correcting behaviors, achieving a 51.0% to 81.6% accuracy improvement on the Math500 test set. This performance outperforms the same base model distilled from an equivalent amount of long-CoT data (accuracy 80.2%) from QwQ-32B-Preview Team (2024a).

More importantly, S 2 r employs both outcome-level and process-level reinforcement learning (RL) to further enhance the LLMs’ self-verifying and self-correcting capabilities. Using only rule-based reward models, RL improves the validity of both the self-verification and self-correction process, allowing the models to perform more flexible and effective test-time scaling through a self-directed trial-and-error process. By comparing outcome-level and process-level RL for our task, we found that process-level supervision is particularly effective in boosting accuracy of the thinking skills at intermediate steps, which might benefit base models with limited reasoning abilities. In contrast, outcome-level supervision enables models explore more flexible trial-and-error paths towards the correct final answer, leading to consistent improvement in the reasoning abilities of more capable base models. Additionally, we further show the potential of offline reinforcement learning as a more efficient alternative to the online RL training.

We conducted extensive experiments across 3 LLMs on 7 math reasoning benchmarks. Experimental results demonstrate that S 2 r outperforms competitive baselines in math reasoning, including recently-released advanced o1-like models Eurus-2-7B-PRIME Cui et al. (2025), rStar-Math-7B Guan et al. (2025) and Qwen2.5-7B-SimpleRL Zeng et al. (2025). We also found that S 2 r is generalizable to out-of-domain general tasks, such as MMLU-PRO, highlighting the validity of the learned self-verifying and self-correcting abilities. Additionally, we conducted a series of analytical experiments to better demonstrate the reasoning mechanisms of the obtained models, and provide insights into performing online and offline RL training for enhancing LLM reasoning.

## 2 Methodology

The main idea behind teaching LLMs self-verification and self-correction abilities is to streamline deep thinking into a critical paradigm: self-directed trial-and-error with self-verification and self-correction. Specifically: (1) LLMs are allowed to explore any potential (though possibly incorrect) solutions, especially when tackling difficult problems; (2) during the process, self-verification is essential for detecting mistakes on-the-fly; (3) self-correction enables the model to fix detected mistakes. This paradigm forms an effective test-time scaling approach that is more accessible for less powerful base models and is generalizable across various tasks.

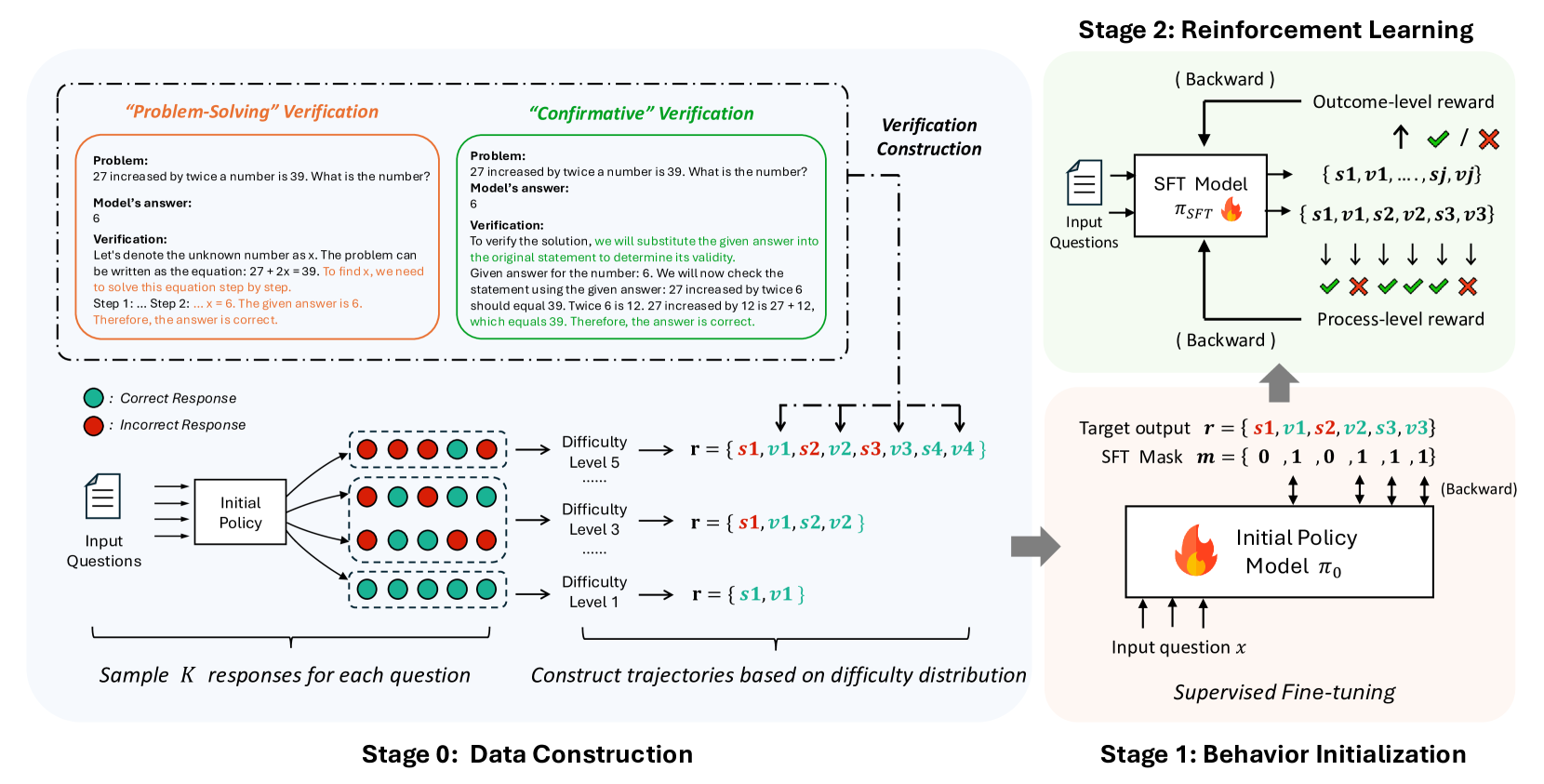

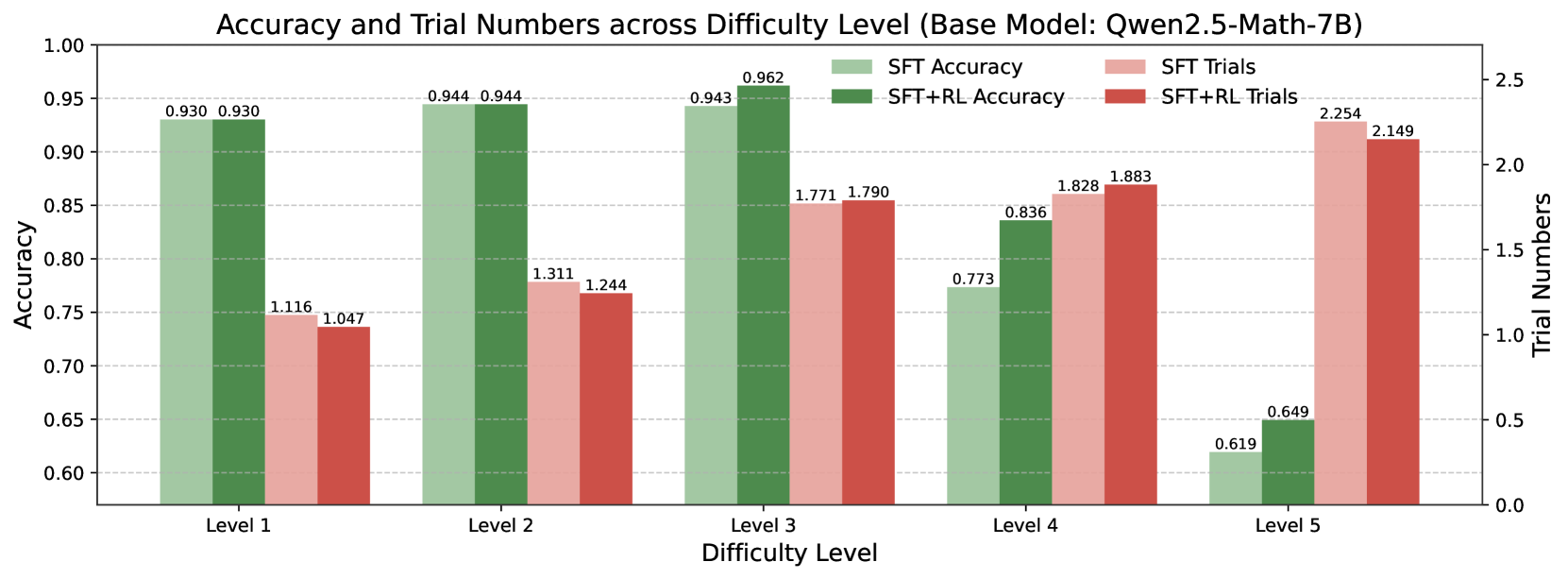

In this section, we first formally define the problem (§ 2.1). Next, we present the two-stage training framework of S 2 r, as described in Figure 2:

Stage 1: Behavior Initialization: We first construct dynamic self-verifying and self-correcting trial-and-error trajectories to initialize the desired behavior. Then, we apply supervised fine-tuning (SFT) to the initial policy models using these trajectories, resulting in behavior-initialized policy models (§ 2.2);

Stage 2: Reinforcement Learning: Following behavior initialization, we employ reinforcement learning to further enhance the self-verifying and self-correcting capabilities of the policy models. We explore both outcome-level and process-level RL methods, as well as their offline versions (§ 2.3).

<details>

<summary>x2.png Details</summary>

### Visual Description

## Diagram: Stages of Reinforcement Learning for Model Training

### Overview

This diagram illustrates a three-stage process for training a model, likely a language model or a similar AI system, using reinforcement learning. The stages are: Stage 0: Data Construction, Stage 1: Behavior Initialization, and Stage 2: Reinforcement Learning. The diagram details how data is generated, how an initial policy is established, and how reinforcement learning is applied to refine the model's performance.

### Components/Axes

**Stage 0: Data Construction**

* **Input:** "Input Questions" (represented by a document icon).

* **Process:** An "Initial Policy" box receives input questions.

* **Output:** The "Initial Policy" generates "Sample K responses for each question." These responses are visually represented as a grid of colored circles, indicating "Correct Response" (green) and "Incorrect Response" (red).

* **Legend:**

* Green circle: Correct Response

* Red circle: Incorrect Response

* **Further Processing:** These samples are used to "Construct trajectories based on difficulty distribution." This is shown by arrows leading to different difficulty levels:

* Difficulty Level 5

* Difficulty Level 3

* Difficulty Level 1

* **Trajectory Representation:** Each difficulty level is associated with a set of tokens represented as `r = {s1, v1, s2, v2, s3, v3, s4, v4}` for Level 5, `r = {s1, v1, s2, v2}` for Level 3, and `r = {s1, v1}` for Level 1. The colors of the tokens in the example trajectories are:

* Level 5: `s1` (red), `v1` (green), `s2` (red), `v2` (green), `s3` (red), `v3` (green), `s4` (red), `v4` (green)

* Level 3: `s1` (red), `v1` (green), `s2` (red), `v2` (green)

* Level 1: `s1` (red), `v1` (green)

**Verification Construction (Within Stage 0)**

* This section provides two methods of verification for a given problem and model answer.

* **"Problem-Solving" Verification:**

* **Problem:** "27 increased by twice a number is 39. What is the number?"

* **Model's answer:** 6

* **Verification:** A step-by-step explanation of how to solve the equation `27 + 2x = 39` to arrive at `x = 6`.

* **"Confirmative" Verification:**

* **Problem:** "27 increased by twice a number is 39. What is the number?"

* **Model's answer:** 6

* **Verification:** Substituting the answer `6` back into the original statement to confirm its validity. It shows that `27 increased by twice 6` (which is `27 + 12`) equals `39`.

**Stage 1: Behavior Initialization**

* **Process:** "Supervised Fine-tuning" is applied to an "Initial Policy Model π₀".

* **Input:** "Input question x".

* **Output:** The model outputs a "Target output r = {s1, v1, s2, s3, v3}" and an "SFT Mask m = {0, 1, 0, 1, 1, 1, 1}". Arrows indicate a "Backward" pass.

**Stage 2: Reinforcement Learning**

* **Process:** A "SFT Model π_SFT" (presumably the model after Stage 1) is trained using reinforcement learning.

* **Input:** "Input Questions".

* **Outputs:**

* **Outcome-level reward:** Indicated by an upward arrow with checkmarks (✓) and crosses (✗), representing success or failure. The possible outcomes are listed as `{s1, v1, ..., sj, vj}`.

* **Process-level reward:** Indicated by downward arrows with checkmarks (✓) and crosses (✗), representing success or failure at intermediate steps.

* **Feedback Loop:** Both outcome-level and process-level rewards are fed back to the SFT Model via a "Backward" pass.

* **Relationship to Stage 1:** The SFT Model in Stage 2 appears to be an evolution of the Initial Policy Model from Stage 1. The "Target output r" and "SFT Mask m" from Stage 1 are shown feeding into the SFT Model in Stage 2, along with "Input question x".

### Detailed Analysis or Content Details

**Stage 0: Data Construction**

The diagram shows that input questions are processed by an initial policy to generate multiple responses. These responses are categorized as correct (green) or incorrect (red). The distribution of these responses is then used to construct trajectories based on difficulty levels (Level 5, Level 3, Level 1). The token sets associated with each difficulty level suggest a hierarchical structure or varying complexity of generated sequences. For example, Level 5 has the longest sequence of tokens (`s1` through `v4`), while Level 1 has the shortest (`s1`, `v1`). The coloring of these tokens (red for `s` tokens, green for `v` tokens) might indicate specific types of actions or states within the trajectory.

The verification sections provide a concrete example of a mathematical problem and demonstrate two distinct methods for verifying the model's answer:

1. **Problem-Solving:** This method involves solving the problem from scratch to derive the correct answer and comparing it to the model's answer.

2. **Confirmative:** This method involves plugging the model's answer back into the problem statement to check for consistency. Both methods confirm that the model's answer of `6` for the problem "27 increased by twice a number is 39" is correct.

**Stage 1: Behavior Initialization**

This stage focuses on supervised fine-tuning (SFT) of an initial policy model (π₀). The model takes an input question `x` and is trained to produce a target output `r = {s1, v1, s2, s3, v3}`. A corresponding "SFT Mask" `m = {0, 1, 0, 1, 1, 1, 1}` is also generated. The mask likely indicates which parts of the target output are relevant or should be attended to during fine-tuning. The "Backward" arrow suggests that gradients are propagated during this supervised learning process.

**Stage 2: Reinforcement Learning**

This stage applies reinforcement learning to a "SFT Model π_SFT". The model receives input questions and generates outputs that are evaluated by both "Outcome-level reward" and "Process-level reward".

* **Outcome-level reward:** This reward is a global evaluation of the final output. The symbols `✓` and `✗` indicate successful or unsuccessful outcomes, respectively. The set `{s1, v1, ..., sj, vj}` likely represents the possible final states or actions.

* **Process-level reward:** This reward provides feedback on intermediate steps of the model's generation process. The visual representation of multiple downward arrows with `✓` and `✗` suggests that each step in the generated sequence is evaluated.

Both reward signals are fed back to the SFT Model via a "Backward" pass, allowing the model to learn and improve its policy to maximize cumulative rewards. The diagram shows that the "Target output r" and "SFT Mask m" from Stage 1 are inputs to the SFT Model in Stage 2, implying that the supervised fine-tuning in Stage 1 initializes the model for the subsequent reinforcement learning phase.

### Key Observations

* The diagram outlines a structured approach to training a model, moving from data generation and initial policy learning to sophisticated reinforcement learning.

* The use of both "Problem-Solving" and "Confirmative" verification methods in Stage 0 highlights a robust approach to ensuring the correctness of generated data or model outputs.

* The concept of "difficulty distribution" in Stage 0 suggests that the training data is curated to cover a range of problem complexities.

* Stage 1 (Supervised Fine-tuning) serves as a crucial initialization step, preparing the model with a baseline behavior before it undergoes reinforcement learning.

* Stage 2 (Reinforcement Learning) utilizes both global (outcome-level) and granular (process-level) rewards, indicating a comprehensive feedback mechanism for model improvement.

* The "Backward" arrows consistently denote the flow of gradients or error signals in supervised fine-tuning and reinforcement learning.

### Interpretation

This diagram depicts a common pipeline in modern AI development, particularly for tasks involving sequential generation or decision-making, such as natural language processing or game playing.

**Stage 0: Data Construction** lays the groundwork by generating a diverse and verified dataset. The inclusion of verification methods suggests a focus on data quality and correctness, which is paramount for effective model training. The segmentation by difficulty level implies a strategy to gradually expose the model to increasingly complex tasks, a common technique in curriculum learning. The token sets `r = {s1, v1, ...}` likely represent sequences of actions or states, where `s` and `v` might denote different types of tokens or decisions. The coloring of these tokens (red/green) could represent specific attributes or classes within the sequence, potentially related to the correctness or type of action taken.

**Stage 1: Behavior Initialization** is critical for providing the reinforcement learning agent with a reasonable starting point. Supervised fine-tuning on a dataset of input-output pairs (questions and target outputs) allows the model to learn basic patterns and generate plausible responses. The "SFT Mask" might be used to guide the fine-tuning process, focusing the model's attention on specific parts of the input or output that are deemed most important. This stage prevents the reinforcement learning agent from starting with a completely random policy, which would be highly inefficient.

**Stage 2: Reinforcement Learning** is where the model truly learns to optimize its behavior. By receiving rewards based on both the final outcome and the intermediate steps, the model can learn to make better decisions throughout its generation process. The distinction between outcome-level and process-level rewards is significant:

* **Outcome-level reward** is essential for achieving the overall goal.

* **Process-level reward** is crucial for learning efficient and correct intermediate steps, which can lead to better outcomes and prevent common errors. This is particularly important in complex tasks where a single error early on can derail the entire process.

The overall flow suggests a progression from supervised learning to reinforcement learning, a paradigm often referred to as "pre-training and fine-tuning" or "behavior cloning followed by reinforcement learning." The diagram effectively visualizes how an initial, supervised policy is refined through trial and error guided by rewards, leading to a more robust and optimized model. The "Backward" arrows are a consistent visual cue for the backpropagation of errors or gradients, a fundamental mechanism in training deep learning models. The entire process aims to build a model that not only generates correct answers but does so through a sound and efficient reasoning process.

</details>

Figure 2: Overview of S 2 r.

### 2.1 Problem Setup

We formulate the desired LLM reasoning paradigm as a sequential decision-making process under a reinforcement learning framework. Given a problem $x$ , the language model policy $\pi$ is expected to generate a sequence of interleaved reasoning actions $y=(a_{1},a_{2},\cdots,a_{T})$ until reaching the termination action <end>. We represent the series of actions before an action $a_{t}\in y$ as $y_{:a_{t}}$ , i.e., $y_{:a_{t}}=(a_{1},a_{2},\cdots,a_{t-i})$ , where $a_{t}$ is excluded. The number of tokens in $y$ is denoted as $|y|$ , and the total number of actions in $y$ is denoted as $|y|_{a}$ .

We restrict the action space to three types: “ solve ”, “ verify ”, and “ <end> ”, where “ solve ” actions represent direct attempts to solve the problem, “ verify ” actions correspond to self-assessments of the preceding solution, and “ <end> ” actions signal the completion of the reasoning process. We denote the type of action $a_{i}$ as $Type(\cdot)$ , where $Type(a_{i})\in\{\texttt{verify},\texttt{solve},\texttt{<end>}\}$ . We expect the policy to learn to explore new solutions by generating “ solve ” actions, to self-verify the correctness of preceding solutions with “ verify ” actions, and to correct the detected mistakes with new “ solve ” actions if necessary. Therefore, for each action $a_{i}$ , the type of the next action $a_{i+1}$ is determined by the following rules:

$$

Type(a_{i+1})=\begin{cases}\texttt{verify},&Type(a_{i})=\texttt{solve}\\

\texttt{solve},&Type(a_{i})=\texttt{verify}\\

&\text{ and }\text{Parser}(a_{i})=\textsc{incorrect}\\

\texttt{<end>},&Type(a_{i})=\texttt{verify}\\

&\text{ and }\text{Parser}(a_{i})=\textsc{correct}\\

\end{cases}

$$

Here, $Parser(a)\in\{\textsc{correct},\textsc{incorrect}\}$ (for any action $a$ where $Type(a)=\texttt{verify}$ ) is a function (e.g., a regex) that converts the model’s free-form verification text into binary judgments.

For simplicity, we denote the $j$ -th solve action as $s_{j}$ and the $j$ -th verify action as $v_{j}$ . Then we have $y=(s_{1},v_{1},s_{2},v_{2},\cdots,s_{k},v_{k},\texttt{<end>})$ .

### 2.2 Initializing Self-verification and Self-correction Behaviors

#### 2.2.1 Learning Valid Self-verification

Learning to perform valid self-verification is the most crucial part in S 2 r, as models can make mistakes during trial-and-error, and recognizing intermediate mistakes is critical for reaching the correct answer. In this work, we explore two methods for constructing self-verification behavior.

“Problem-Solving” Verification

The most intuitive method for verification construction is to directly query existing models to generate verifications on the policy models’ responses, and then filter for valid verifications. By querying existing models using different prompts, we found that existing models tend to perform verification in a “Problem-Solving” manner, i.e., by re-solving the problem and checking whether the answer matches the given one. We refer to this kind of verification as “Problem-Solving” Verification.

“Confirmative” Verification

"Problem-solving" verification is intuitively not the ideal verification behavior we seek. Ideally, we expect the model to think outside the box and re-examine the solution from a new perspective, rather than thinking from the same problem-solving view for verification. We refer to this type of verification behavior as “Confirmative” Verification. Specifically, we construct “Confirmative” Verification by prompting existing LLMs to "verify the correctness of the answer without re-solving the problem", and filtering out invalid verifications using LLM-as-a-judge. The detail implementation can be found in Appendix § A.1.

#### 2.2.2 Learning Self-correction

Another critical part of S 2 r is enabling the model to learn self-correction. Inspired by Kumar et al. (2024) and Snell et al. (2024b), we initialize the self-correcting behavior by concatenating a series of incorrect solutions (each followed by a verification recognizing the mistakes) with a final correct solution. As demonstrated by Kumar et al. (2024), LLMs typically fail to learn valid self-correction behavior through SFT, but the validity of self-correction can be enhanced through reinforcement learning. Therefore, we only initialize the self-correcting behavior at this stage, leaving further enhancement of the self-correcting capabilities to the RL stage.

#### 2.2.3 Constructing Dynamic Trial-and-Error Trajectory

We next construct the complete trial-and-error trajectories for behavior initialization SFT, following three principles:

- To ensure the diversity of the trajectories, we construct trajectories of various lengths. Specifically, we cover $k\in\{1,2,3,4\}$ for $y=(a_{1},\cdots,a_{2k})=(s_{1},v_{1},\cdots,s_{k},v_{k})$ in the trajectories.

- To ensure that the LLMs learn to verify and correct their own errors, we construct the failed trials in each trajectory by sampling and filtering from the LLMs’ own responses.

- As a plausible test-time scaling method allocates reasonable effort to varying levels of problems, it is important to ensure the trial-and-error trajectories align with the difficulty level of problems. Specifically, more difficult problems will require more trial-and-error iterations before reaching the correct answer. Thus, we determine the length of each trajectory based on the accuracy of the sampled responses for each base model.

#### 2.2.4 Supervised Fine-tuning for Thinking Behavior Initialization

Once the dynamic self-verifying and self-correcting training data $\mathcal{D}_{SFT}$ is ready, we optimize the policy $\pi$ for thinking behavior initialization by minimizing the following objective:

$$

\mathcal{L}=-\mathbb{E}_{(x,y)\sim\mathcal{D}_{SFT}}\sum_{a_{t}\in y}\delta_{

mask}(a_{t})\log\pi(a_{t}\mid x,y_{:a_{t}}) \tag{1}

$$

where the mask function $\delta_{mask}(a_{t})$ for action $a_{t}$ in $y=(a_{1},\cdots,a_{T})$ is defined as:

$$

\delta_{mask}(a_{t})=\begin{cases}1,&\text{if }Type(a_{t})=\texttt{verify}\\

1,&\text{if }Type(a_{t})=\texttt{solve}\text{ and }t=T-1\\

1,&\text{if }Type(a_{t})=\texttt{<end>}\text{ and }t=T\\

0,&\text{otherwise}\end{cases}

$$

That is, we optimize the probability of all verifications and only the last correct solution $s_{N}$ by using masks during training.

### 2.3 Boosting Thinking Capabilities via Reinforcement Learning

After Stage 1, we initialized the policy model $\pi$ with self-verification and self-correction behavior, obtaining $\pi_{SFT}$ . We then explore further enhancing these thinking capabilities of $\pi_{SFT}$ via reinforcement learning. Specifically, we explore two simple RL algorithms: the outcome-level REINFORCE Leave-One-Out (RLOO) algorithm and a proces-level group-based RL algorithm.

#### 2.3.1 Outcome-level RLOO

We first introduce the outcome-level REINFORCE Leave-One-Out (RLOO) algorithm Ahmadian et al. (2024); Kool et al. (2019) to further enhance the self-verification and self-correction capabilities of $\pi_{SFT}$ . Given a problem $x$ and the response $y=(s_{1},v_{1},...,s_{T},v_{T})$ , we define the reward function $R_{o}(x,y)$ based on the correctness of the last solution $s_{T}$ :

$$

R_{o}(x,y)=\begin{cases}1,&V_{golden}(s_{T})=\texttt{correct}\\

-1,&otherwise\\

\end{cases}

$$

Here $V_{golden}(\cdot)\in\{\texttt{correct},\texttt{incorrect}\}$ represents ground-truth validation by matching the golden answer with the given solution. We calculate the advantage of each response $y$ using an estimated baseline and KL reward shaping as follows:

$$

A(x,y)=R_{o}(x,y)-\hat{b}-\beta\log\frac{\pi_{\theta_{old}}(y|x)}{\pi_{ref}(y|

x)} \tag{2}

$$

where $\beta$ is the KL divergence regularization coefficient, and $\pi_{\text{ref}}$ is the reference policy (in our case, $\pi_{SFT}$ ). $\hat{b}(x,y^{(m)})=\frac{1}{M-1}\sum_{\begin{subarray}{c}j=1,...,M\\ j\neq m\end{subarray}}.R_{o}(x,y^{(j)})$ is the baseline estimation of RLOO, which represents the leave-one-out mean of $M$ sampled outputs $\{y^{(1)},...y^{(M)}\}$ for each input $x$ , serving as a baseline estimation for each $y^{(m)}$ . Then, we optimize the policy $\pi_{\theta}$ by minimizing the following objective after each sampling episode based on $\pi_{\theta_{old}}$ :

$$

\begin{split}\mathcal{L}(\theta)\ &=\ -\mathbb{E}_{\begin{subarray}{c}x\sim

\mathcal{D}\\

y\sim\pi_{\theta_{\text{old}}}(\cdot|x)\end{subarray}}\bigg{[}\min\big{(}r(

\theta)A(x,y),\\

&\text{clip}\big{(}r(\theta),1-\epsilon,1+\epsilon\big{)}A(x,y)\big{)}\bigg{]}

\end{split} \tag{3}

$$

where $r(\theta)=\frac{\pi_{\theta}(y|x)}{\pi_{\theta_{\text{old}}}(y|x)}$ is the probability ratio.

When implementing the above loss function, we treat $y$ as a complete trajectory sampled with an input problem $x$ , meaning we optimize the entire trajectory with outcome-level supervision. With this approach, we aim to incentivize the policy model to explore more dynamic self-verification and self-correcting trajectories on its own, which has been demonstrated as an effective practice in recent work Guo et al. (2025); Team et al. (2025).

#### 2.3.2 Process-level Group-based RL

Process-level supervision has demonstrated effectiveness in math reasoning Lightman et al. (2023a); Wang et al. (2024c). Since the trajectory of S 2 r thinking is naturally divided into self-verification and self-correction processes, it is intuitive to adopt process-level supervision for RL training.

Inspired by RLOO and process-level GRPO Shao et al. (2024), we designed a group-based process-level optimization method. Specifically, we regard each action $a$ in the output trajectory $y$ as a sub-process and define the action level reward function $R_{a}(a\mid x,y_{:a})$ based on the action type. For each “ solve ” action $s_{j}$ , we expect the policy to generate the correct solution; for each “ verify ” action $v_{j}$ , we expect the verification to align with the actual solution validity. The corresponding rewards are defined as follows:

$$

R_{a}(s_{j}\mid x,y_{:s_{j}})=\begin{cases}1,&V_{golden}(s_{j})=\texttt{

correct}\\

-1,&otherwise\\

\end{cases}

$$

$$

R_{a}(v_{j}\mid x,y_{:v_{j}})=\begin{cases}1,&Parser(v_{j})=V_{golden}(s_{j})

\\

-1,&otherwise\\

\end{cases}

$$

To calculate the advantage of each action $a_{t}$ , we estimate the baseline as the average reward of the group of actions sharing the same reward context:

$$

\mathbf{R}(a_{t}\mid x,y)=\left(R_{a}(a_{i}\mid x,y_{:a_{i}})\right)_{i=1}^{t-1}

$$

which is defined as the reward sequence of the previous actions $y_{:a_{t}}$ of each action $a_{t}$ . We denote the set of actions sharing the same reward context $\mathbf{R}(a_{t}\mid x,y)$ as $\mathcal{G}(\mathbf{R}(a_{t}\mid x,y))$ . Then the baseline can be estimated as follows:

$$

\begin{split}&\hat{b}(a_{t}\mid x,y)=\\

&\frac{1}{|\mathcal{G}(\mathbf{R}(a_{t}|x,y))|}\sum_{a\in\mathcal{G}(\mathbf{R

}(a_{t}|x,y))}R_{a}(a|x^{(a)},y^{(a)}_{:a})\end{split} \tag{4}

$$

And the advantage of each action $a_{t}$ is:

$$

\begin{split}A(a_{t}\mid x,y)=&R_{a}(a_{t}\mid x,y_{:a_{t}})-\hat{b}(a_{t}\mid

x

,y)\\

&-\beta\log\frac{\pi_{\theta_{old}}(a_{t}\mid x,y)}{\pi_{\text{ref}}(a_{t}\mid

x

,y)}\end{split} \tag{5}

$$

The main idea of the group-based baseline estimation is that the actions sharing the same reward context are provided with similar amounts of information before the action is taken. For instance, all actions sharing a reward context consisting of one failed attempt and one successful verification (i.e., $\mathbf{R}(a_{t}|x,y)=(-1,1)$ ) are provided with the information about the problem, a failed attempt, and the reassessment on the failure. Given the same amount of information, it is reasonable to estimate a baseline using the average reward of these actions.

Putting it all together, we minimize the following surrogate loss function to update the policy parameters $\theta$ , using trajectories collected from $\pi_{old}$ :

$$

\begin{split}\mathcal{L}(\theta)\ &=\ -\mathbb{E}_{\begin{subarray}{c}x\sim

\mathcal{D}\\

y\sim\pi_{\theta_{\text{old}}}(\cdot|x)\end{subarray}}\bigg{[}\frac{1}{|y|_{a}

}\sum_{a\in y}\min\big{(}r_{a}(\theta)A(a|x,y_{:a}),\\

&\text{clip}\big{(}r_{a}(\theta),1-\epsilon,1+\epsilon\big{)}A(a|x,y_{:a})\big

{)}\bigg{]}\end{split} \tag{6}

$$

where $r_{a}(\theta)=\frac{\pi_{\theta}(a|x,y_{:a})}{\pi_{\theta_{\text{old}}}(a|x,y_ {:a})}$ is the importance ratio.

### 2.4 More Efficient Training with Offline RL

While online RL is known for its high resource requirements, offline RL, which does not require real-time sampling during training, offers a more efficient alternative for RL training. Additionally, offline sampling allows for more accurate baseline calculations with better trajectories grouping for each policy. As part of our exploration into more efficient RL training in S 2 r framework, we also experimented with offline RL to assess its potential in further enhancing the models’ thinking abilities. In Appendix § D.2, we include more details and formal definition for offline RL training.

## 3 Experiment

To verify the effectiveness of the proposed method, we conducted extensive experiments across 3 different base policy models on various benchmarks.

| Stage 1: Behavior Initialization | | |

| --- | --- | --- |

| Base Model | Source | # Training Data |

| Llama-3.1-8B-Instruct | MATH | 4614 |

| Qwen2-7B-Instruct | MATH | 4366 |

| Qwen2.5-Math-7B | MATH | 3111 |

| Stage 2: Reinforcement Learning | | |

| Base Model | Source | # Training Data |

| Llama-3.1-8B-Instruct | MATH+GSM8K | 9601 |

| Qwen2-7B-Instruct | MATH+GSM8K | 9601 |

| Qwen2.5-Math-7B | MATH+OpenMath2.0 | 10000 |

Table 1: Training data statistics.

Table 2: The performance of S 2 r and other strong baselines on the most challenging math benchmarks is presented. BI refers to the behavior-initialized models through supervised fine-tuning, ORL denotes models trained with outcome-level RL, and PRL refers to models trained with process-level RL. The highest results are highlighted in bold and the second-best results are marked with underline. For some baselines, we use the results from their original reports or from Guan et al. (2025), denoted by ∗.

### 3.1 Experiment Setup

Base Models

To evaluate the general applicability of our method across different LLMs, we conducted experiments using three distinct base models: Llama-3.1-8B-Instruct Dubey et al. (2024), Qwen2-7B-Instruct qwe (2024), and Qwen2.5-Math-7B Qwen (2024). Llama-3.1-8B-Instruct and Qwen2-7B-Instruct are versatile general-purpose models trained on diverse domains without a specialized focus on mathematical reasoning. In contrast, Qwen2.5-Math-7B is a state-of-the-art model specifically tailored for mathematical problem-solving and has been widely adopted in recent research on math reasoning Guan et al. (2025); Cui et al. (2025); Zeng et al. (2025).

Training Data Setup

For Stage 1: Behavior Initialization, we used the widely adopted MATH Hendrycks et al. (2021a) training set for dynamic trial-and-error data collection We use the MATH split from Lightman et al. (2023a), i.e., 12000 problems for training and 500 problems for testing.. For each base model, we sampled 5 responses per problem in the training data. After data filtering and sampling, we constructed a dynamic trial-and-error training set consisting of 3k-4k instances for each base model. Detailed statistics of the training set are shown in Table 1. For Stage 2: Reinforcement Learning, we used the MATH+GSM8K Cobbe et al. (2021a) training data for RL training on the policy $\pi_{SFT}$ initialized from Llama-3.1-8B-Instruct and Qwen2-7B-Instruct. Since Qwen2.5-math-7b already achieves high accuracy on the GSM8K training data after Stage 1, we additionally include training data randomly sampled from the OpenMath2 dataset Toshniwal et al. (2024). Following Cui et al. (2025), we filter out excessively easy or difficult problems based on each $\pi_{SFT}$ from Stage 1 to enhance the efficiency and stability of RL training, resulting in RL training sets consisting of approximately 10000 instances. Detailed statistics of the final training data can be found in Table 1. Additional details on training data construction can be found in in Appendix § A.1.

Baselines

We benchmark our proposed method against four categories of strong baselines:

- Frontier LLMs includes cutting-edge proprietary models such as GPT-4o, the latest Claude, and OpenAI’s o1-preview and o1-mini.

- Top-tier open-source reasoning models covers state-of-the-art open-source models known for their strong reasoning capabilities, including Mathstral-7B-v0.1 Team (2024b), NuminaMath-72B LI et al. (2024), LLaMA3.1-70B-Instruct Dubey et al. (2024), and Qwen2.5-Math-72B-Instruct Yang et al. (2024).

- Enhanced models built on Qwen2.5-Math-7B: Given the recent popularity of Qwen2.5-Math-7B as a base policy model, we evaluate S 2 r against three competitive baselines that have demonstrated superior performance based on Qwen2.5-Math-7B: Eurus-2-7B-PRIME Cui et al. (2025), rStar-Math-7B Guan et al. (2025), and Qwen2.5-7B-SimpleRL Zeng et al. (2025). These models serve as direct and strong baseline for our Qwen2.5-Math-7B-based variants.

- SFT with different CoT constructions: We also compare with training on competitive types of CoT reasoning, including the original CoT solution in the training datasets, and Long-CoT solutions distilled from QwQ-32B-Preview Team (2024a), a widely adopted open-source o1-like model Chen et al. (2024c); Guan et al. (2025); Zheng et al. (2024). Specifically, to ensure a fair comparison between behavior initialization with long-CoT and S 2 r, we use long-CoT data of the same size as our behavior initialization data. We provide more details on the baseline data construction in Appendix § A.2.3.

More details on the baselines are included in Appendix § A.2.

Evaluation Datasets

We evaluate the proposed method on 7 diverse mathematical benchmarks. To ensure a comprehensive evaluation, in addition to the in-distribution GSM8K Cobbe et al. (2021b) and MATH500 Lightman et al. (2023a) test sets, we include challenging out-of-distribution benchmarks covering various difficulty levels and mathematical domains, including the AIME 2024 competition problems AI-MO (2024a), the AMC 2023 exam AI-MO (2024b), the advanced reasoning tasks from Olympiad Bench He et al. (2024), and college-level problem sets from College Math Tang et al. (2024a). Additionally, we assess performance on real-world standardized tests, the GaoKao (Chinese College Entrance Exam) En 2023 Liao et al. (2024). A detailed description of these datasets is provided in Appendix § B.1.

Evaluation Metrics

We report Pass@1 accuracy for all baselines. For inference, we employ vLLM Kwon et al. (2023) and develop evaluation scripts based on Qwen Math’s codebase. All evaluations are performed using greedy decoding. Details of the prompts used during inference are provided in Appendix § A.3. All implementation details, including hyperparameter settings, can be found in Appendix § B.2.

### 3.2 Main Results

Table 2 shows the main results of S 2 r compared with baseline methods. We can observe that: (1) S 2 r consistently improves the reasoning abilities of models across all base models. Notably, on Qwen2.5-Math-7B, the proposed method improves the base model by 32.2% on MATH500 and by 34.3% on GSM8K. (2) Generally, S 2 r outperforms the baseline methods derived from the same base models across most benchmarks. Specifically, on Qwen2.5-Math-7B, S 2 r surpasses several recently proposed competitive baselines, such as Eurus-2-7B-PRIME, rStar-Math-7B and Qwen2.5-7B-SimpleRL. While Eurus-2-7B-PRIME and rStar-Math-7B rely on larger training datasets (Figure 1) and require more data construction and reward modeling efforts, S 2 r only needs linear sampling efforts for data construction, 10k RL training data and rule-based reward modeling. These results highlight the efficiency of S 2 r. (3) With the same scale of SFT data, S 2 r also outperforms the long-CoT models distilled from QwQ-32B-Preview, demonstrating that learning to self-verify and self-correct is an effective alternative to long-CoT for test-time scaling in smaller LLMs.

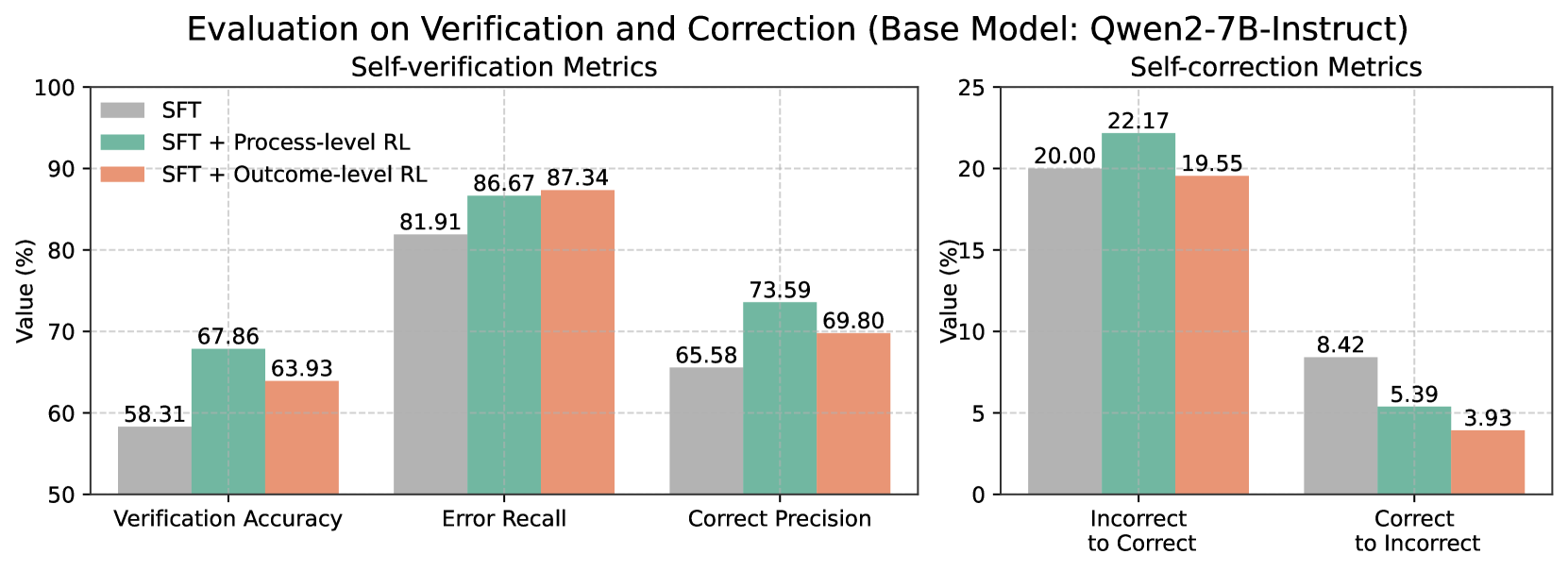

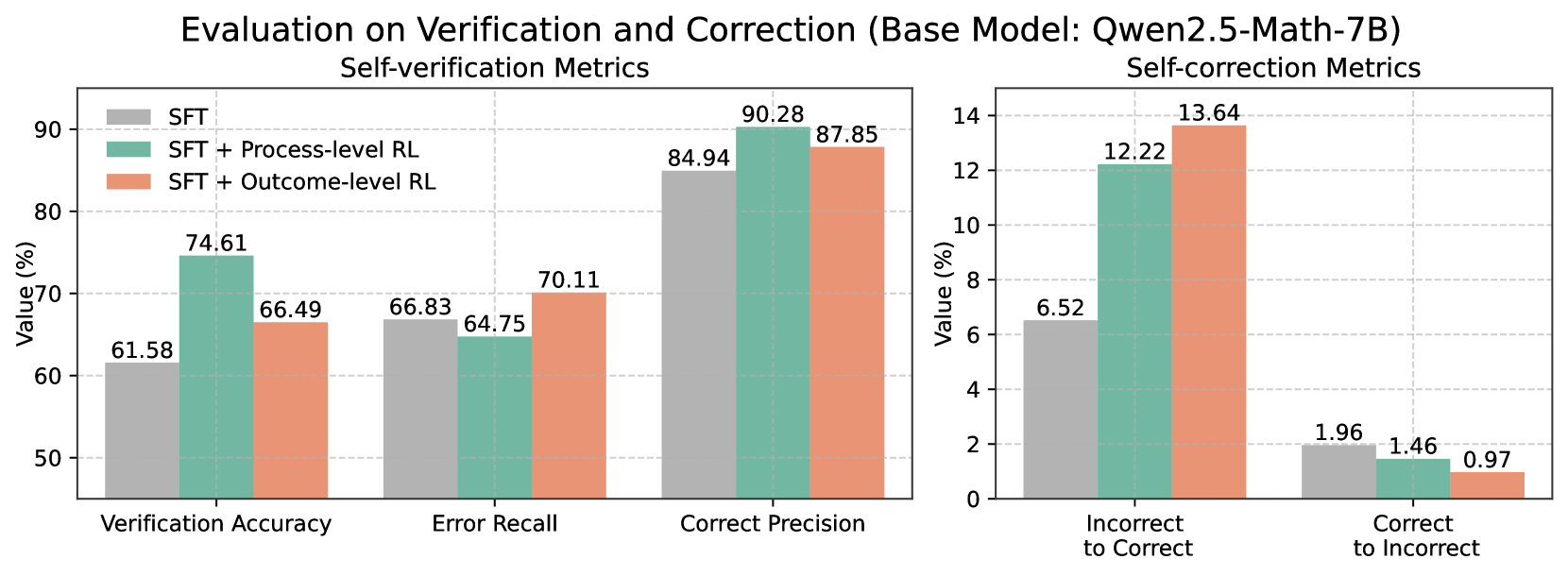

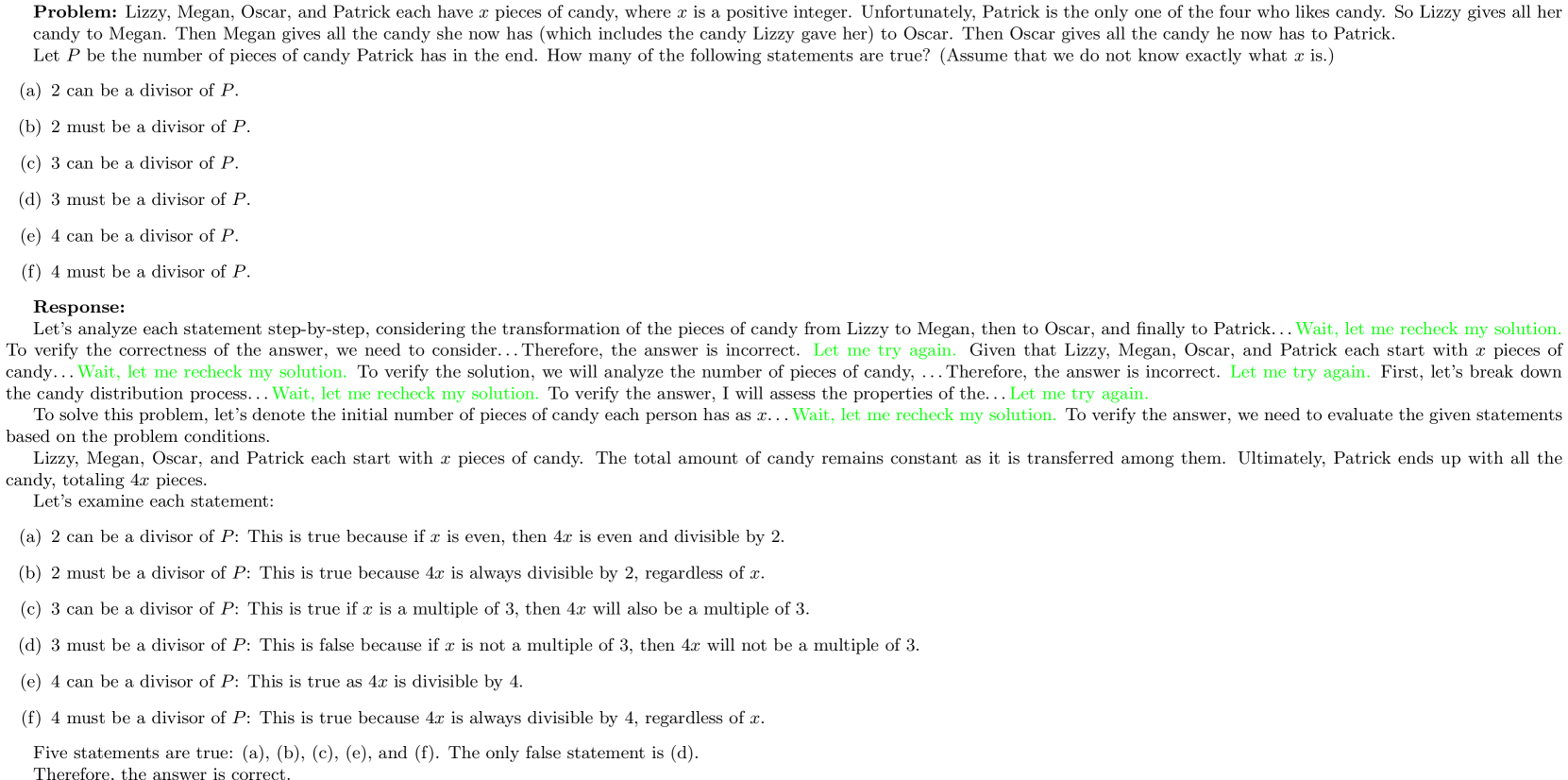

Comparing process-level and outcome-level RL, we find that outcome-level RL generally outperforms process-level RL across the three models. This is likely because outcome-level RL allows models to explore trajectories without emphasizing intermediate accuracy, which may benefit enhancing long-thought reasoning in stronger base models like Qwen2.5-Math-7B. In contrast, process-level RL, which provides guidance for each intermediate verification and correction step, may be effective for models with lower initial capabilities, such as Qwen2-7B-Instruct. As shown in Figure 3, process-level RL can notably enhance the verification and correction abilities of Qwen2-7B- S 2 r -BI.

| Model | FOLIO | CRUX- Eval | Strategy- QA | MMLUPro- STEM |

| --- | --- | --- | --- | --- |

| Qwen2.5-Math-72B-Instruct | 69.5 | 68.6 | 94.3 | 66.0 |

| Llama-3.1-70B-Instruct ∗ | 65.0 | 59.6 | 88.8 | 61.7 |

| OpenMath2-Llama3.1-70B ∗ | 68.5 | 35.1 | 95.6 | 55.0 |

| QwQ-32B-Preview ∗ | 84.2 | 65.2 | 88.2 | 71.9 |

| Eurus-2-7B-PRIME | 56.7 | 50.0 | 79.0 | 53.7 |

| Qwen2.5-Math-7B-Instruct | 61.6 | 28.0 | 81.2 | 44.7 |

| Qwen2.5-Math-7B | 37.9 | 40.8 | 61.1 | 46.0 |

| Qwen2.5-Math-7B- S 2 r -BI (ours) | 58.1 | 48.0 | 88.7 | 49.8 |

| Qwen2.5-Math-7B- S 2 r -ORL (ours) | 61.6 | 50.9 | 90.8 | 50.0 |

Table 3: Performance of the proposed method and the baseline methods on 4 cross-domain tasks. The results with ∗ are reported by Shen et al. (2025).

### 3.3 Generalizing to Cross-domain Tasks

Despite training on math reasoning tasks, we found that the learned self-verifying and self-correcting capability can also generalize to out-of-distribution general domains. In Table 3, we evaluate the SFT model and the outcome-level RL model based on Qwen2.5-Math-7B on four cross-domain tasks: FOLIO Han et al. (2022) on logical reasoning, CRUXEval Gu et al. (2024) on code reasoning, StrategyQA Geva et al. (2021) on multi-hop reasoning and MMLUPro-STEM on multi-task complex understanding Wang et al. (2024d); Shen et al. (2025), with details of these datasets provided in Appendix § B.1. The results show that after learning to self-verify and self-correct, the proposed method effectively boosts the base model’s performance across all tasks and achieves comparative results to the baseline models. These findings indicate that the learned self-verifying and self-correcting capabilities are general thinking skills, which can also benefit reasoning in general domains. Additionally, we expect that the performance in specific domains can be further improved by applying S 2 r training on domain data with minimal reward model requirements (e.g., rule-based or LLM-as-a-judge). For better illustration, we show cases on how the trained models perform self-verifying and self-correcting on general tasks in Appendix § E.

### 3.4 Analyzing Self-verification and Self-correction Abilities

In this section, we conduct analytical experiments on the models’ self-verification and self-correction capabilities from various perspectives.

#### 3.4.1 Problem-solving v.s. Confirmative Verification

We first compare the Problem-solving and Confirmative Verification methods described in § 2.2.1. In Table 4, we present the verification results of different methods on the Math500 test set. We report the overall verification accuracy, as well as the initial verification accuracy when the initial answer is correct ( $V_{golden}(s_{0})=\texttt{correct}$ ) and incorrect ( $V_{golden}(s_{0})=\texttt{incorrect}$ ), respectively.

| Base Model | Methods | Overall Verification Acc. | Initial Verification Acc. | |

| --- | --- | --- | --- | --- |

| $V_{golden}(s_{0})$ $=\texttt{correct}$ | $V_{golden}(s_{0})$ $=\texttt{incorrect}$ | | | |

| Llama3.1-8B-Instruct | Problem-solving | 80.10 | 87.28 | 66.96 |

| Confirmative | 65.67 | 77.27 | 78.22 | |

| Qwen2-7B-Instruct | Problem-solving | 73.28 | 90.24 | 67.37 |

| Confirmative | 58.31 | 76.16 | 70.05 | |

| Qwen2.5-Math-7B | Problem-solving | 77.25 | 91.21 | 56.67 |

| Confirmative | 61.58 | 82.80 | 68.04 | |

Table 4: Comparison of problem-solving and confirmative verification.

We observe from the table that: (1) Generally, problem-solving verification achieves superior overall accuracy compared to confirmative verification. This result is intuitive, as existing models are trained for problem-solving, and recent studies have highlighted the difficulty of existing LLMs in performing reverse thinking Berglund et al. (2023); Chen et al. (2024b). During data collection, we also found that existing models tend to verify through problem-solving, even when prompted to verify without re-solving (see Table 6 in Appendix § A.1). (2) In practice, accuracy alone does not fully reflect the validity of a method. For example, when answer accuracy is sufficiently high, predicting all answers as correct will naturally lead to high verification accuracy, but this is not a desired behavior. By further examining the initial verification accuracy for both correct and incorrect answers, we found that problem-solving verification exhibits a notable bias toward predicting answers as correct, while the predictions from confirmative verification are more balanced. We deduce that this bias arises might be because problem-solving verification is more heavily influenced by the preceding solution, aligning with previous studies showing that LLMs struggle to identify their own errors Huang et al. (2023); Tyen et al. (2023). In contrast, confirmative verification performs verification from different perspectives, making it less influenced by the LLMs’ preceding solution.

In all experiments, we used confirmative verification for behavior initialization.

#### 3.4.2 Boosting Self-verifying and Self-correcting with RL

In this experiment, we investigate the effect of RL training on the models’ self-verifying and self-correcting capabilities.

We assess self-verification using the following metrics: (1) Verification Accuracy: The overall accuracy of verification predictions, as described in § 3.4.1. (2) Error Recall: The recall of verification when the preceding answers are incorrect. (3) Correct Precision: The precision of verification when it predicts the answers as correct. Both Error Recall and Correct Precision directly affect the final answer accuracy: if verification fails to detect an incorrect answer, or if it incorrectly predicts an answer as correct, the final answer will be wrong.

For self-correction, we use the following metrics: (1) Incorrect to Correct Rate: the rate at which the model successfully corrects an incorrect initial answer to a correct final answer. (2) Correct to Incorrect Rate: the rate at which the model incorrectly changes a correct initial answer to an incorrect final answer. We provide the formal definitions of the metrics used in Appendix § C.

<details>

<summary>x3.png Details</summary>

### Visual Description

## Bar Chart: Evaluation on Verification and Correction (Base Model: Qwen2-7B-Instruct)

### Overview

This image contains two bar charts side-by-side, presenting evaluation metrics for a base model named "Qwen2-7B-Instruct". The left chart displays "Self-verification Metrics", and the right chart displays "Self-correction Metrics". Both charts compare three different configurations: "SFT", "SFT + Process-level RL", and "SFT + Outcome-level RL". The y-axis for both charts represents "Value (%)".

### Components/Axes

**Overall Title:** Evaluation on Verification and Correction (Base Model: Qwen2-7B-Instruct)

**Left Chart: Self-verification Metrics**

* **Title:** Self-verification Metrics

* **Y-axis Title:** Value (%)

* **Y-axis Scale:** 50 to 100, with major ticks at 50, 60, 70, 80, 90, 100.

* **X-axis Categories:** Verification Accuracy, Error Recall, Correct Precision.

* **Legend:** Located in the top-left quadrant of the left chart.

* **SFT:** Represented by a light grey rectangle.

* **SFT + Process-level RL:** Represented by a teal/mint green rectangle.

* **SFT + Outcome-level RL:** Represented by a coral/light orange rectangle.

**Right Chart: Self-correction Metrics**

* **Title:** Self-correction Metrics

* **Y-axis Title:** Value (%)

* **Y-axis Scale:** 0 to 25, with major ticks at 0, 5, 10, 15, 20, 25.

* **X-axis Categories:** Incorrect to Correct, Correct to Incorrect.

* **Legend:** The legend from the left chart is applicable to both charts.

### Detailed Analysis

**Left Chart: Self-verification Metrics**

* **Verification Accuracy:**

* SFT (Grey): 58.31%

* SFT + Process-level RL (Teal): 67.86%

* SFT + Outcome-level RL (Coral): 63.93%

* **Trend:** SFT + Process-level RL shows the highest Verification Accuracy, followed by SFT + Outcome-level RL, and then SFT.

* **Error Recall:**

* SFT (Grey): 81.91%

* SFT + Process-level RL (Teal): 86.67%

* SFT + Outcome-level RL (Coral): 87.34%

* **Trend:** SFT + Outcome-level RL shows the highest Error Recall, closely followed by SFT + Process-level RL, and then SFT.

* **Correct Precision:**

* SFT (Grey): 65.58%

* SFT + Process-level RL (Teal): 73.59%

* SFT + Outcome-level RL (Coral): 69.80%

* **Trend:** SFT + Process-level RL shows the highest Correct Precision, followed by SFT + Outcome-level RL, and then SFT.

**Right Chart: Self-correction Metrics**

* **Incorrect to Correct:**

* SFT (Grey): 20.00%

* SFT + Process-level RL (Teal): 22.17%

* SFT + Outcome-level RL (Coral): 19.55%

* **Trend:** SFT + Process-level RL shows the highest rate of correcting incorrect predictions, followed by SFT, and then SFT + Outcome-level RL.

* **Correct to Incorrect:**

* SFT (Grey): 8.42%

* SFT + Process-level RL (Teal): 5.39%

* SFT + Outcome-level RL (Coral): 3.93%

* **Trend:** SFT shows the highest rate of incorrectly correcting correct predictions, while SFT + Outcome-level RL shows the lowest rate. The SFT + Process-level RL is in between.

### Key Observations

* **Self-verification:** The "SFT + Process-level RL" configuration generally performs best across "Verification Accuracy" and "Correct Precision". "SFT + Outcome-level RL" performs best for "Error Recall". All RL-enhanced configurations ("SFT + Process-level RL" and "SFT + Outcome-level RL") outperform the base "SFT" model in all self-verification metrics.

* **Self-correction:** For "Incorrect to Correct", "SFT + Process-level RL" is the best. For "Correct to Incorrect", "SFT + Outcome-level RL" is the best, indicating it is least likely to make a correct prediction incorrect.

* **Trade-offs:** There appears to be a trade-off between "Incorrect to Correct" and "Correct to Incorrect" rates. While "SFT + Process-level RL" excels at correcting errors, it also has a higher rate of making correct predictions incorrect compared to "SFT + Outcome-level RL". Conversely, "SFT + Outcome-level RL" is better at preserving correct predictions but is slightly less effective at correcting incorrect ones compared to "SFT + Process-level RL".

### Interpretation

The data suggests that applying Reinforcement Learning (RL) techniques, specifically "Process-level RL" and "Outcome-level RL", to the base "SFT" model significantly improves its self-verification and self-correction capabilities when evaluated on the "Qwen2-7B-Instruct" base model.

The "Self-verification Metrics" indicate that RL enhancements lead to better accuracy in verifying information, recalling errors, and precisely correcting errors. The "SFT + Process-level RL" configuration appears to be a strong contender for overall self-verification performance, particularly in accuracy and precision.

The "Self-correction Metrics" reveal nuanced performance. "SFT + Process-level RL" is most effective at turning incorrect predictions into correct ones. However, "SFT + Outcome-level RL" demonstrates a superior ability to avoid degrading correct predictions into incorrect ones. This suggests that "Outcome-level RL" might be more conservative or robust in maintaining correctness, while "Process-level RL" might be more aggressive in error correction, potentially at the cost of introducing new errors.

In essence, the choice between "SFT + Process-level RL" and "SFT + Outcome-level RL" might depend on the specific priorities of the application. If the primary goal is to maximize the correction of errors, "SFT + Process-level RL" is favored. If the priority is to minimize the degradation of correct predictions, "SFT + Outcome-level RL" is the better choice. Both RL approaches offer substantial improvements over the baseline "SFT" model.

</details>

(a)

<details>

<summary>x4.png Details</summary>

### Visual Description

## Bar Chart: Evaluation on Verification and Correction (Base Model: Qwen2.5-Math-7B)

### Overview

This image contains two bar charts side-by-side, presenting the results of self-verification and self-correction metrics for a base model named "Qwen2.5-Math-7B". The metrics are evaluated across three different configurations: "SFT", "SFT + Process-level RL", and "SFT + Outcome-level RL". The left chart focuses on "Self-verification Metrics" and the right chart on "Self-correction Metrics".

### Components/Axes

**Overall Title:** Evaluation on Verification and Correction (Base Model: Qwen2.5-Math-7B)

**Left Chart:**

* **Title:** Self-verification Metrics

* **X-axis Title:** (Implicitly, the metric categories)

* **X-axis Categories:** Verification Accuracy, Error Recall, Correct Precision

* **Y-axis Title:** Value (%)

* **Y-axis Scale:** 50 to 90, with major ticks at 50, 60, 70, 80, 90.

* **Legend:** Located in the top-left quadrant of the left chart.

* **SFT:** Represented by a grey color.

* **SFT + Process-level RL:** Represented by a teal/green color.

* **SFT + Outcome-level RL:** Represented by a coral/orange color.

**Right Chart:**

* **Title:** Self-correction Metrics

* **X-axis Title:** (Implicitly, the metric categories)

* **X-axis Categories:** Incorrect to Correct, Correct to Incorrect

* **Y-axis Title:** Value (%)

* **Y-axis Scale:** 0 to 14, with major ticks at 0, 2, 4, 6, 8, 10, 12, 14.

* **Legend:** The legend from the left chart applies to both charts.

### Detailed Analysis

**Left Chart: Self-verification Metrics**

* **Verification Accuracy:**

* SFT (Grey): 61.58%

* SFT + Process-level RL (Teal): 74.61%

* SFT + Outcome-level RL (Coral): 66.49%

* **Error Recall:**

* SFT (Grey): 66.83%

* SFT + Process-level RL (Teal): 64.75%

* SFT + Outcome-level RL (Coral): 70.11%

* **Correct Precision:**

* SFT (Grey): 84.94%

* SFT + Process-level RL (Teal): 90.28%

* SFT + Outcome-level RL (Coral): 87.85%

**Right Chart: Self-correction Metrics**

* **Incorrect to Correct:**

* SFT (Grey): 6.52%

* SFT + Process-level RL (Teal): 12.22%

* SFT + Outcome-level RL (Coral): 13.64%

* **Correct to Incorrect:**

* SFT (Grey): 1.96%

* SFT + Process-level RL (Teal): 1.46%

* SFT + Outcome-level RL (Coral): 0.97%

### Key Observations

* **Verification Accuracy:** "SFT + Process-level RL" shows the highest Verification Accuracy (74.61%), significantly outperforming both "SFT" (61.58%) and "SFT + Outcome-level RL" (66.49%).

* **Error Recall:** "SFT + Outcome-level RL" has the highest Error Recall (70.11%), followed by "SFT" (66.83%). "SFT + Process-level RL" has the lowest Error Recall (64.75%).

* **Correct Precision:** "SFT + Process-level RL" achieves the highest Correct Precision (90.28%), with "SFT + Outcome-level RL" (87.85%) and "SFT" (84.94%) following.

* **Incorrect to Correct:** The addition of RL significantly improves the ability to correct incorrect predictions. "SFT + Outcome-level RL" shows the highest value (13.64%), followed closely by "SFT + Process-level RL" (12.22%), both substantially higher than "SFT" (6.52%).

* **Correct to Incorrect:** The addition of RL appears to reduce the rate of correcting correct predictions. "SFT" has the highest rate (1.96%), while "SFT + Outcome-level RL" has the lowest (0.97%), with "SFT + Process-level RL" in between (1.46%).

### Interpretation

The data suggests that incorporating Reinforcement Learning (RL) strategies, particularly "Process-level RL" and "Outcome-level RL", generally enhances the self-verification and self-correction capabilities of the "Qwen2.5-Math-7B" model compared to the base "SFT" model.

Specifically, "SFT + Process-level RL" demonstrates superior performance in "Verification Accuracy" and "Correct Precision", indicating a better ability to accurately verify its own outputs and to precisely identify correct predictions. This configuration also shows a substantial improvement in correcting "Incorrect to Correct" scenarios, suggesting it is more adept at fixing its own mistakes.

"SFT + Outcome-level RL" also shows significant gains in correcting "Incorrect to Correct" predictions, even surpassing "Process-level RL" in this specific metric. It also leads in "Error Recall", implying it is better at identifying errors that need attention. However, it shows a slight decrease in "Correct Precision" compared to "Process-level RL" and a notable reduction in "Correct to Incorrect" rates, which could imply a more conservative approach to corrections, potentially avoiding unnecessary changes to correct outputs.

The base "SFT" model performs the lowest across most metrics, highlighting the benefit of the RL fine-tuning. The trade-off between "Incorrect to Correct" and "Correct to Incorrect" rates is also evident. While RL methods improve the correction of errors, they might also slightly increase the risk of incorrectly modifying already correct outputs, though "Outcome-level RL" appears to mitigate this risk more effectively than "Process-level RL".

Overall, the results indicate that RL fine-tuning is a promising direction for improving the self-evaluation and self-correction abilities of large language models, with "SFT + Outcome-level RL" showing a strong balance of correcting errors and preserving correct outputs.

</details>

(b)

Figure 3: Evaluation on verification and correction.

In Figure 3, we present the results of the behavior-initialized model (SFT) and different RL models obtained from Qwen2.5-Math-7B. We observe that: (1) Both RL methods effectively enhance self-verification accuracy. The process-level RL shows larger improvement on accuracy, while the outcome-level RL consistently improves Error Recall and Correct Precision. This might be because process-level supervision indiscriminately promotes verification accuracy in intermediate steps, while outcome-level supervision allows the policy model to explore freely in intermediate steps and only boosts the final answer accuracy, thus mainly enhancing Error Recall and Correct Precision (which directly relate to final answer accuracy). (2) Both RL methods can successfully enhance the models’ self-correction capability. Notably, the model’s ability to correct incorrect answers is significantly improved after RL training. The rate of model mistakenly altering correct answers is also notably reduced. This comparison demonstrates that S 2 r can substantially enhance the validity of models’ self-correction ability.

<details>

<summary>x5.png Details</summary>

### Visual Description

## Bar Chart: Accuracy and Trial Numbers across Difficulty Level (Base Model: Llama3.1-8B-Instruct)

### Overview

This bar chart displays the "Accuracy" and "Trial Numbers" for two different training configurations, "SFT" and "SFT+RL", across five distinct "Difficulty Levels" (Level 1 to Level 5). The chart uses a dual y-axis system: the left y-axis represents "Accuracy" ranging from 0.2 to 1.0, and the right y-axis represents "Trial Numbers" ranging from 0 to 6. The base model used for this analysis is Llama3.1-8B-Instruct.

### Components/Axes

* **Title:** "Accuracy and Trial Numbers across Difficulty Level (Base Model: Llama3.1-8B-Instruct)"

* **X-axis:** "Difficulty Level" with categories: "Level 1", "Level 2", "Level 3", "Level 4", "Level 5".

* **Left Y-axis:** "Accuracy" with a scale from 0.2 to 1.0, marked at intervals of 0.1.

* **Right Y-axis:** "Trial Numbers" with a scale from 0 to 6, marked at intervals of 1.

* **Legend:** Located in the top-center of the chart.

* Light Green bar: "SFT Accuracy"

* Dark Green bar: "SFT+RL Accuracy"

* Light Red bar: "SFT Trials"

* Dark Red bar: "SFT+RL Trials"

### Detailed Analysis

The chart presents grouped bars for each difficulty level, with two bars representing accuracy and two bars representing trial numbers.

**Level 1:**

* **SFT Accuracy:** 0.814 (light green bar, left axis)

* **SFT+RL Accuracy:** 0.930 (dark green bar, left axis)

* **SFT Trials:** 3.279 (light red bar, right axis)

* **SFT+RL Trials:** 2.209 (dark red bar, right axis)

**Level 2:**

* **SFT Accuracy:** 0.733 (light green bar, left axis)

* **SFT+RL Accuracy:** 0.722 (dark green bar, left axis)

* **SFT Trials:** 3.367 (light red bar, right axis)

* **SFT+RL Trials:** 2.844 (dark red bar, right axis)

**Level 3:**

* **SFT Accuracy:** 0.610 (light green bar, left axis)

* **SFT+RL Accuracy:** 0.638 (dark green bar, left axis)

* **SFT Trials:** 3.924 (light red bar, right axis)

* **SFT+RL Trials:** 4.219 (dark red bar, right axis)

**Level 4:**

* **SFT Accuracy:** 0.367 (light green bar, left axis)

* **SFT+RL Accuracy:** 0.445 (dark green bar, left axis)

* **SFT Trials:** 5.117 (light red bar, right axis)

* **SFT+RL Trials:** 4.234 (dark red bar, right axis)

**Level 5:**

* **SFT Accuracy:** 0.239 (light green bar, left axis)

* **SFT+RL Accuracy:** 0.276 (dark green bar, left axis)

* **SFT Trials:** 4.104 (light red bar, right axis)

* **SFT+RL Trials:** 5.254 (dark red bar, right axis)

### Key Observations

* **Accuracy Trend:**

* "SFT Accuracy" generally decreases as difficulty level increases, starting at 0.814 for Level 1 and dropping to 0.239 for Level 5.

* "SFT+RL Accuracy" also generally decreases with increasing difficulty, but it consistently outperforms "SFT Accuracy" for Level 1, Level 4, and Level 5. For Level 2 and Level 3, "SFT Accuracy" is slightly higher or comparable to "SFT+RL Accuracy".

* **Trial Numbers Trend:**

* "SFT Trials" show a general increase from Level 1 (3.279) to Level 4 (5.117), with a slight dip at Level 5 (4.104).

* "SFT+RL Trials" show a decrease from Level 1 (2.209) to Level 2 (2.844), then an increase to Level 3 (4.219) and Level 4 (4.234), and finally a significant increase to Level 5 (5.254).

* **Comparison of SFT vs. SFT+RL:**

* "SFT+RL Accuracy" is higher than "SFT Accuracy" at Level 1 (0.930 vs 0.814), Level 4 (0.445 vs 0.367), and Level 5 (0.276 vs 0.239).

* "SFT Accuracy" is slightly higher than "SFT+RL Accuracy" at Level 2 (0.733 vs 0.722) and Level 3 (0.638 vs 0.610).

* "SFT Trials" are generally lower than "SFT+RL Trials" for Level 3, Level 4, and Level 5, but higher for Level 1 and Level 2. Notably, "SFT+RL Trials" are highest at Level 5 (5.254), while "SFT Trials" are highest at Level 4 (5.117).

### Interpretation

This chart suggests that the "SFT+RL" training method generally leads to higher accuracy compared to "SFT" alone, particularly at lower difficulty levels (Level 1) and higher difficulty levels (Level 4 and 5). However, for intermediate difficulty levels (Level 2 and 3), the standard "SFT" method shows comparable or slightly better accuracy.

The trial numbers indicate the computational effort or number of training iterations. It appears that achieving higher accuracy with "SFT+RL" might sometimes require more trials, as seen at Level 5 where "SFT+RL Trials" are the highest (5.254) and "SFT+RL Accuracy" is also higher than "SFT Accuracy". Conversely, at Level 4, "SFT Trials" are higher than "SFT+RL Trials", yet "SFT+RL Accuracy" is still superior. This implies a complex relationship between training trials and accuracy, where the RL component might be more efficient in certain scenarios or require different trial counts to reach optimal performance.

The overall trend of decreasing accuracy with increasing difficulty level is expected for both training methods. The performance drop is more pronounced for "SFT Accuracy" at higher difficulty levels. The "SFT+RL" method seems to mitigate this drop to some extent, especially at Level 5, where it achieves a higher accuracy despite the overall decline. This indicates that reinforcement learning might be beneficial for improving model robustness and performance on more challenging tasks.

</details>

(a)

<details>

<summary>x6.png Details</summary>

### Visual Description

## Bar Chart: Accuracy and Trial Numbers across Difficulty Level

### Overview

This bar chart displays the accuracy and trial numbers for two different training methodologies, "SFT" and "SFT+RL", across five distinct difficulty levels. The chart uses a dual y-axis system: the left y-axis represents "Accuracy" (ranging from 0.60 to 1.00), and the right y-axis represents "Trial Numbers" (ranging from 0.0 to 2.5). The x-axis denotes the "Difficulty Level", with categories from Level 1 to Level 5.

### Components/Axes

* **Title:** "Accuracy and Trial Numbers across Difficulty Level (Base Model: Qwen2.5-Math-7B)"

* **X-axis Label:** "Difficulty Level"

* **Categories:** Level 1, Level 2, Level 3, Level 4, Level 5

* **Left Y-axis Label:** "Accuracy"

* **Scale:** 0.60, 0.65, 0.70, 0.75, 0.80, 0.85, 0.90, 0.95, 1.00

* **Right Y-axis Label:** "Trial Numbers"

* **Scale:** 0.0, 0.5, 1.0, 1.5, 2.0, 2.5

* **Legend:** Located in the top-center of the chart.

* **SFT Accuracy:** Light green bars.

* **SFT+RL Accuracy:** Dark green bars.

* **SFT Trials:** Light red bars.

* **SFT+RL Trials:** Dark red bars.

### Detailed Analysis

The chart presents grouped bars for each difficulty level, with two bars representing accuracy and two bars representing trial numbers.

**Level 1:**

* **SFT Accuracy:** 0.930 (light green bar, left y-axis)

* **SFT+RL Accuracy:** 0.930 (dark green bar, left y-axis)

* **SFT Trials:** 1.116 (light red bar, right y-axis)

* **SFT+RL Trials:** 1.047 (dark red bar, right y-axis)

**Level 2:**

* **SFT Accuracy:** 0.944 (light green bar, left y-axis)

* **SFT+RL Accuracy:** 0.944 (dark green bar, left y-axis)

* **SFT Trials:** 1.311 (light red bar, right y-axis)

* **SFT+RL Trials:** 1.244 (dark red bar, right y-axis)

**Level 3:**

* **SFT Accuracy:** 0.943 (light green bar, left y-axis)

* **SFT+RL Accuracy:** 0.962 (dark green bar, left y-axis)

* **SFT Trials:** 1.771 (light red bar, right y-axis)

* **SFT+RL Trials:** 1.790 (dark red bar, right y-axis)

**Level 4:**

* **SFT Accuracy:** 0.773 (light green bar, left y-axis)

* **SFT+RL Accuracy:** 0.836 (dark green bar, left y-axis)

* **SFT Trials:** 1.828 (light red bar, right y-axis)

* **SFT+RL Trials:** 1.883 (dark red bar, right y-axis)

**Level 5:**

* **SFT Accuracy:** 0.619 (light green bar, left y-axis)

* **SFT+RL Accuracy:** 0.649 (dark green bar, left y-axis)

* **SFT Trials:** 2.254 (light red bar, right y-axis)

* **SFT+RL Trials:** 2.149 (dark red bar, right y-axis)

### Key Observations

* **Accuracy Trend:** Accuracy for both SFT and SFT+RL generally decreases as the difficulty level increases, with a notable drop observed from Level 3 to Level 4, and further decline to Level 5.

* **SFT+RL vs. SFT Accuracy:** In Level 1 and Level 2, SFT and SFT+RL accuracies are identical (0.930 and 0.944 respectively). From Level 3 onwards, SFT+RL consistently shows slightly higher accuracy than SFT. The largest gap is at Level 5 (0.649 vs 0.619).

* **Trial Numbers Trend:** Trial numbers for both SFT and SFT+RL generally increase with increasing difficulty level, peaking at Level 5.

* **SFT+RL vs. SFT Trials:** SFT+RL trials are consistently lower than SFT trials for Level 1, Level 2, and Level 5. However, at Level 3 and Level 4, SFT+RL trials are slightly higher than SFT trials.

* **Correlation between Accuracy and Trials:** There appears to be an inverse relationship between accuracy and trial numbers. As difficulty increases, accuracy tends to decrease, while trial numbers tend to increase.

### Interpretation

This chart demonstrates the performance of a base model (Qwen2.5-Math-7B) under different training conditions (SFT vs. SFT+RL) across varying difficulty levels.

The data suggests that for lower difficulty levels (1 and 2), the SFT and SFT+RL training methods yield identical accuracy, and SFT+RL requires slightly fewer trials. As the difficulty increases (Level 3 onwards), the SFT+RL method begins to outperform SFT in terms of accuracy, albeit with a slight increase in trial numbers at Level 3 and 4, and a decrease at Level 5 compared to SFT. The significant drop in accuracy from Level 3 to Level 5 for both methods, coupled with the rise in trial numbers, indicates that the model struggles with higher difficulty problems, requiring more computational effort to achieve lower performance. The SFT+RL method appears to be more robust at higher difficulties, maintaining better accuracy despite the increased challenge. The exact reason for the identical accuracy at Levels 1 and 2, and the fluctuating trial number comparison between SFT and SFT+RL at higher levels, warrants further investigation into the specific training dynamics.

</details>

(b)

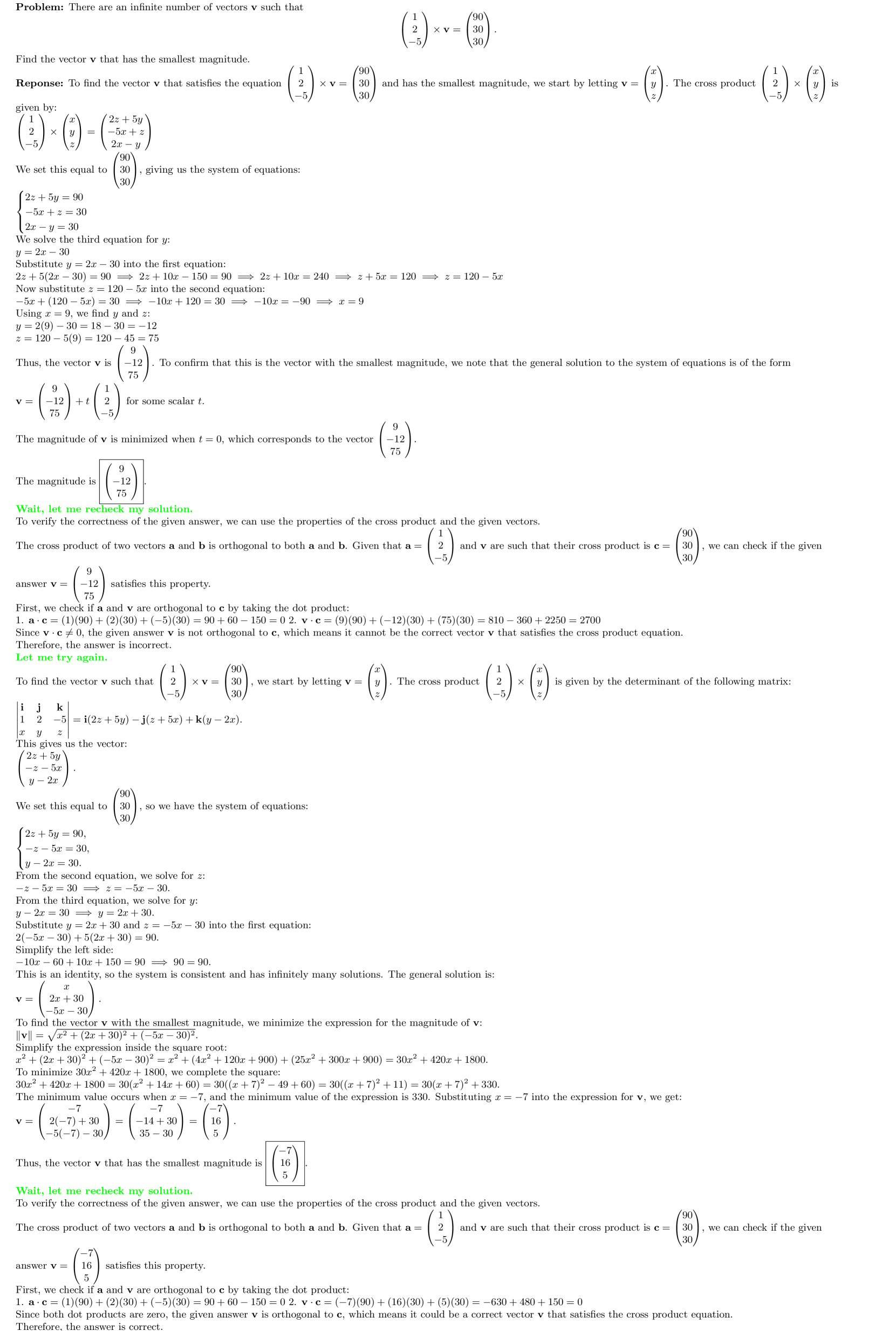

Figure 4: The accuracy and average trial number of different models across difficulty levels. Evaluated on MATH500 test set.

#### 3.4.3 Improvement across Difficulty Levels

To further illustrate the effect of S 2 r training, Figure 4 shows the answer accuracy and average number of trials (i.e., the average value of " $K$ " across all $y=(s_{1},v_{1},\cdots,s_{K},v_{K})$ under each difficulty level) for the SFT and SFT+RL models. We observe that: (1) By learning to self-verify and self-correct during reasoning, the models learn to dynamically allocate test-time effort. For easier problems, the models can reach a confident answer with fewer trials, while for more difficult problems, they require more trials to achieve a confident answer. (2) RL further improves test-time effort allocation, particularly for less capable model (e.g., Llama3.1-8B-Instruct). (3) After RL training, the answer accuracy for more difficult problems is notably improved, demonstrating the effectiveness of the self-verifying and self-correcting paradigm in enhancing the models’ reasoning abilities.

| | Datasets | xx Average xx | | | | | | |

| --- | --- | --- | --- | --- | --- | --- | --- | --- |

| Model | MATH 500 | AIME 2024 | AMC 2023 | College Math | Olympiad Bench | GSM8K | GaokaoEn 2023 | |

| General Model: Qwen2-7B-Instruct | | | | | | | | |

| Qwen2-7B-Instruct | 51.2 | 3.3 | 30.0 | 18.2 | 19.1 | 86.4 | 39.0 | 35.3 |

| Qwen2-7B- S 2 r -BI (ours) | 61.2 | 3.3 | 27.5 | 41.1 | 27.1 | 87.4 | 49.1 | 42.4 |

| Qwen2-7B- S 2 r -PRL (ours) | 65.4 | 6.7 | 35.0 | 36.7 | 27.0 | 89.0 | 49.9 | 44.2 |

| Qwen2-7B- S 2 r -ORL (ours) | 64.8 | 3.3 | 42.5 | 34.7 | 26.2 | 86.4 | 50.9 | 44.1 |

| Qwen2-7B–Instruct- S 2 r -PRL-offline (ours) | 61.6 | 10.0 | 32.5 | 40.2 | 26.5 | 87.6 | 50.4 | 44.1 |

| Qwen2-7B-Instruct- S 2 r -ORL-offline (ours) | 61.0 | 6.7 | 37.5 | 40.5 | 27.3 | 87.4 | 49.6 | 44.3 |

| Math-Specialized Model: Qwen2.5-Math-7B | | | | | | | | |

| Qwen2.5-Math-7B | 51.0 | 16.7 | 45.0 | 21.5 | 16.7 | 58.3 | 39.7 | 35.6 |

| Qwen2.5-Math-7B- S 2 r -BI (ours) | 81.6 | 23.3 | 60.0 | 43.9 | 44.4 | 91.9 | 70.1 | 59.3 |

| Qwen2.5-Math-7B- S 2 r -PRL (ours) | 83.4 | 26.7 | 70.0 | 43.8 | 46.4 | 93.2 | 70.4 | 62.0 |

| Qwen2.5-Math-7B- S 2 r -ORL (ours) | 84.4 | 23.3 | 77.5 | 43.8 | 44.9 | 92.9 | 70.1 | 62.4 |

| Qwen2.5-Math-7B- S 2 r -PRL-offline (ours) | 83.4 | 23.3 | 62.5 | 50.0 | 46.7 | 92.9 | 72.2 | 61.6 |

| Qwen2.5-Math-7B- S 2 r -ORL-offline (ours) | 82.0 | 20.0 | 67.5 | 49.8 | 45.8 | 92.6 | 70.4 | 61.2 |

Table 5: Comparison of S 2 r using online and offline RL training.

### 3.5 Exploring Offline RL

As described in § 2.4, we explore offline RL as a more efficient alternative to online RL training, given the effectiveness of offline RL has been demonstrated in recent studies Baheti et al. (2023); Cheng et al. (2025); Wang et al. (2024b).

Table 5 presents the results of offline RL with process-level and outcome-level supervision, compared to online RL. We can observe that: (1) Different from online RL, process-level supervision outperforms outcome-level supervision in offline RL training. This interesting phenomenon may be due to: a) Outcome-level RL, which excels at allowing models to freely explore dynamic trajectories, is more suitable for on-the-fly sampling during online parameter updating. b) In contrast, process-level RL, which requires accurate baseline estimation for intermediate steps, benefits from offline trajectory sampling, which can provide more accurate baseline estimates with larger scale data sampling. (2) Offline RL consistently improves performance over the behavior-initialized models across most benchmarks and achieves comparable results to online RL. These results highlight the potential of offline RL as a more efficient alternative for enhancing LLMs’ deep reasoning.

## 4 Related Work

### 4.1 Scaling Test-time Compute