# RAG-Gym: Systematic Optimization of Language Agents for Retrieval-Augmented Generation

**Authors**: Guangzhi Xiong, Qiao Jin, Xiao Wang, Yin Fang, Haolin Liu, Yifan Yang, Fangyuan Chen, Zhixing Song, Dengyu Wang, Minjia Zhang, Zhiyong Lu, Aidong Zhang

> University of Virginia

> National Institutes of Health

> University of Illinois at Urbana Champaign

> Dana-Farber Cancer Institute

> University of Alabama at Birmingham

> Yale School of Medicine

## Abstract

Retrieval-augmented generation (RAG) has shown great promise for knowledge-intensive tasks and recently advanced with agentic RAG, where language agents engage in multi-round interactions with external knowledge sources for adaptive information retrieval. However, existing agentic RAG methods often depend on ad-hoc prompt engineering and lack a unified optimization framework. We introduce RAG-Gym, a comprehensive platform that systematically explores three optimization dimensions: (1) prompt engineering, (2) actor tuning, and (3) critic training. For prompt engineering, we propose Re 2 Search, a novel agent incorporating reasoning reflection that significantly outperforms standard prompts. In actor tuning, we evaluate three popular post-training algorithms with fine-grained process supervision and identify direct preference optimization as the most effective. We further demonstrate that a trained critic can enhance inference by selecting higher-quality intermediate reasoning steps. Together, these findings lead to the optimized Re 2 Search++ agent, which surpasses most recent methods like Search-R1 by a relative increase of 3.2% to 11.6% in average F1. Finally, we examine the impact of different reward sources and analyze scaling properties in training and inference, offering practical insights for agentic RAG optimization. The project homepage is available at https://rag-gym.github.io/.

footnotetext: Equal contribution. † Co-correspondence.

## 1 Introduction

Large language models (LLMs) often struggle with knowledge-intensive questions when lacking sufficient or up-to-date domain knowledge, leading to inaccurate responses or hallucinations [97, 59, 28]. Retrieval-augmented generation (RAG) addresses this by grounding outputs in relevant information from information retrieval (IR) systems, improving both accuracy and verifiability of answers [42, 18]. Agentic pipelines such as ReAct [91] enhances conventional RAG by allowing LLMs to actively generate search queries and interact with IR systems in multiple rounds, which has been shown to be more effective in solving complex tasks that need multi-hop reasoning [91, 4, 65]. However, most existing agentic RAG methods focus on prompt engineering [73, 4, 31, 54], which demands substantial manual effort and often fails to generalize across tasks [40, 70, 2].

Meanwhile, although various LLM post-training algorithms have been developed to enhance downstream performance, they are not directly suited for agentic RAG, where the model must dynamically adjust its token-generation strategy in response to newly retrieved context during the reasoning process. Recent works have adapted reinforcement learning with outcome-based rewards for agentic RAG [69, 33, 8]. However, by overlooking process-level supervision, these approaches risk generating suboptimal intermediate search actions and exhibit limited generalization on unseen data. Given that the retrieval steps fundamentally shape the reasoning trajectory and ultimately influence the final answer, providing fine-grained supervision over these intermediate steps is essential for optimizing agentic RAG. Nevertheless, systematic analyses on how to optimize the language agent and identify best practices for enhancing overall agentic RAG performance are still lacking.

In this work, we present RAG-Gym, a systematic framework that enhances agentic RAG along three dimensions: prompt engineering, actor tuning, and critic training. We review and compare the functional components of existing agentic RAG pipelines (see Table 1) and introduce a novel agent design Re 2 Search that leverages reasoning reflection to improve performance. Our comprehensive experiments across three widely used LLM post-training algorithms reveal that fine-grained, process-level supervision substantially boosts performance, particularly when both positive and negative feedback are integrated. Furthermore, we show that training a critic to evaluate intermediate steps yields additional gains across diverse LLMs. By integrating these insights, our optimized Re 2 Search++ agent achieves superior performance than existing methods on challenging knowledge-intensive tasks (+ 3.2% $\sim$ 11.6% in average F1), especially on unseen datasets (+ 8.5% $\sim$ 24.7%). We also discuss reward sources as well as the training and inference scaling properties of agentic RAG, providing practical guidelines for future optimization. Our key contributions are summarized as follows:

- We introduce RAG-Gym, a comprehensive framework that integrates advanced prompt engineering, actor tuning, and critic training to enhance agentic RAG.

- Our extensive experiments uncover best practices across these dimensions and lead to the development of the optimized agent Re 2 Search++, which consistently outperforms existing methods on challenging knowledge-intensive tasks.

- We provide a detailed analysis of reward sources as well as training and inference scaling properties, offering actionable insights for future advancements in agentic RAG.

## 2 RAG-Gym Framework

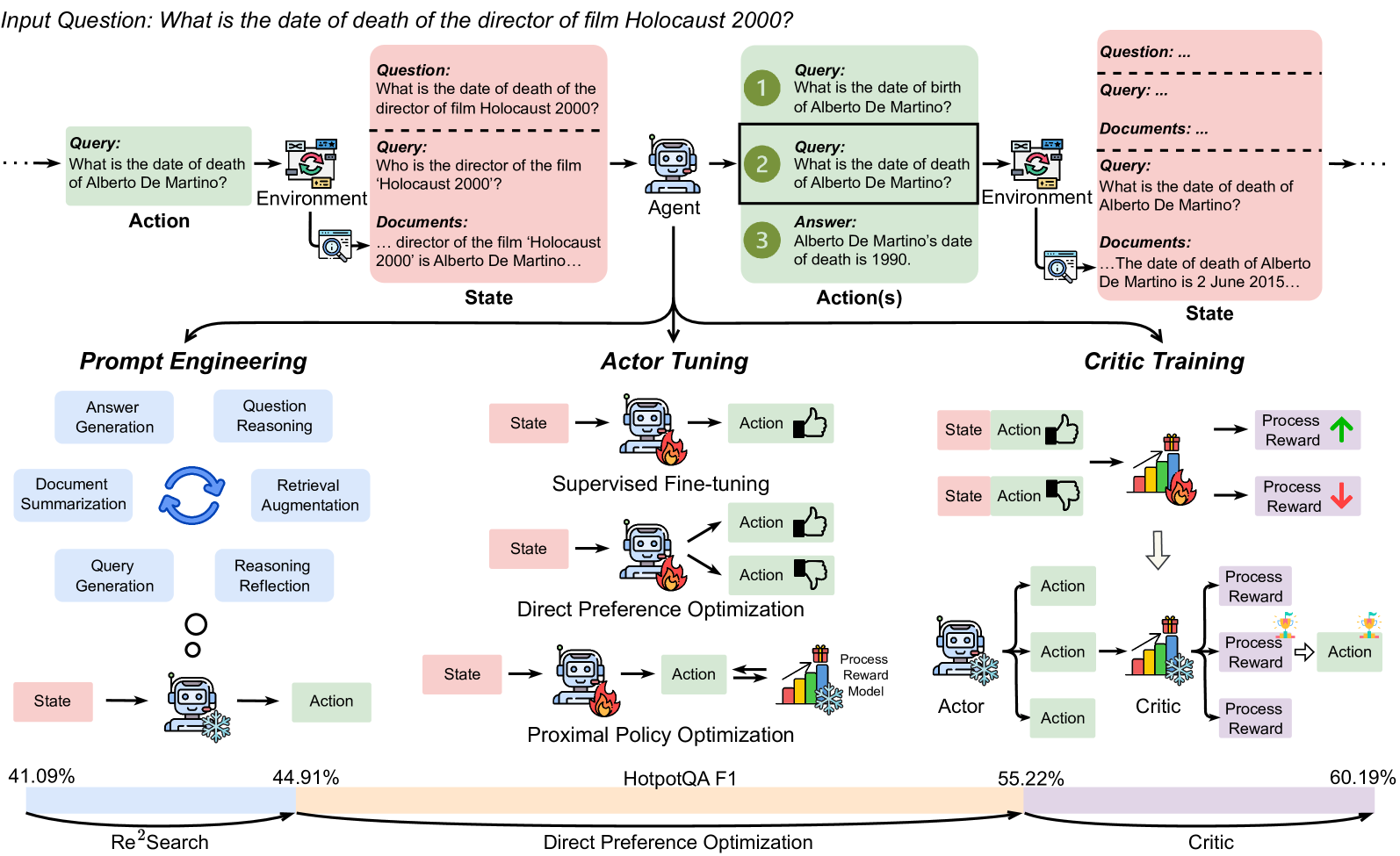

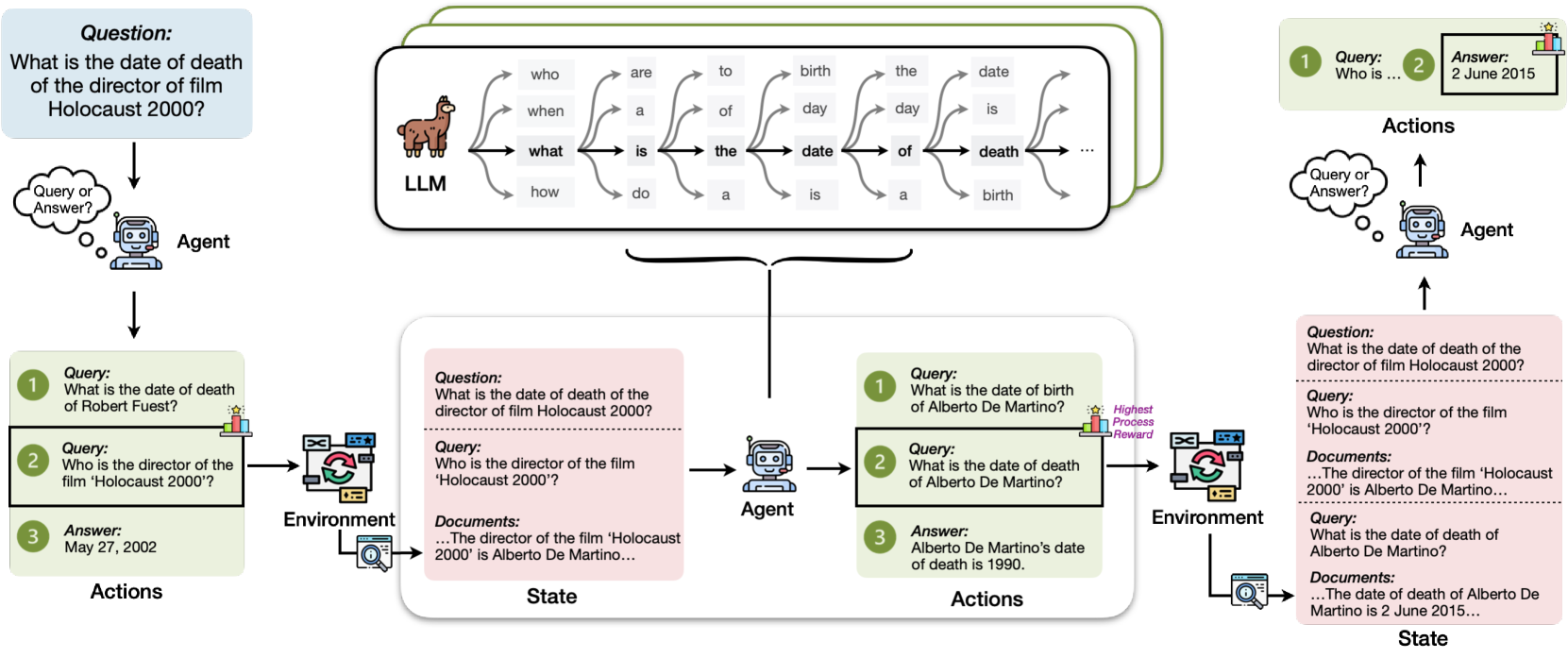

To facilitate fine-grained process-level supervision and systematic evaluation of optimization methods for agentic RAG, we introduce the RAG-Gym framework. RAG-Gym formulates knowledge-intensive question answering as a high-level MDP with well-defined intermediate actions, and provides a modular approach for optimizing language agents across three key components. An overview of RAG-Gym is presented in Figure 1.

<details>

<summary>x1.png Details</summary>

### Visual Description

\n

## Diagram: Reinforcement Learning for Question Answering

### Overview

This diagram illustrates a reinforcement learning pipeline for question answering, showcasing the interaction between an agent, environment, and the different stages of training: Prompt Engineering, Actor Tuning, and Critic Training. It depicts the flow of queries, documents, states, actions, and rewards. The diagram also includes performance metrics (F1 scores) for different training methods.

### Components/Axes

The diagram is segmented into three main sections: Prompt Engineering, Actor Tuning, and Critic Training, arranged horizontally. Each section demonstrates a different stage of the reinforcement learning process. The diagram also features a top section illustrating the initial question answering process.

* **Top Section:**

* **Input Question:** "What is the date of death of the director of film Holocaust 2000?"

* **Environment:** Represented by a computer icon.

* **Agent:** Represented by a robot head icon.

* **Query:** Text boxes showing the sequence of queries: "What is the date of death of Alberto De Martino?", "Who is the director of the film Holocaust 2000?", "director of the film 'Holocaust 2000' is Alberto De Martino..."

* **Documents:** Text boxes containing relevant documents.

* **Answer:** "Alberto De Martino's date of death is 1990."

* **State:** Text box labeled "State".

* **Action(s):** Text box labeled "Action(s)".

* **Prompt Engineering:**

* **Components:** Answer Generation, Question Reasoning, Document Summarization, Retrieval Augmentation, Query Generation, Reasoning Reflection.

* **State/Action:** Represented by circular nodes connected by arrows.

* **Actor Tuning:**

* **Components:** Supervised Fine-tuning, Direct Preference Optimization, Proximal Policy Optimization.

* **State/Action:** Represented by rectangular nodes connected by arrows.

* **Critic Training:**

* **Components:** A series of "State Action" pairs leading to "Process Reward" and "Action" nodes.

* **Performance Metrics:** 41.09%, 44.91%, 55.22%, 60.19% (F1 scores).

* **Legend (Implicit):** Colors are used to differentiate the stages and components. Pink represents Prompt Engineering, Blue represents Actor Tuning, and Green represents Critic Training.

* **Labels:** Re²Search, HotpotQA F1, Direct Preference Optimization, Critic.

### Detailed Analysis or Content Details

The diagram illustrates a multi-stage reinforcement learning process.

* **Initial Question Answering (Top Section):** The process begins with an input question. The agent interacts with the environment, formulating queries, retrieving documents, and ultimately arriving at an answer. The example shows the agent correctly determining Alberto De Martino's date of death as 1990.

* **Prompt Engineering:** This stage focuses on refining the prompts used for question answering. It involves components like answer generation, question reasoning, document summarization, retrieval augmentation, query generation, and reasoning reflection. The flow is represented by a circular loop of "State" and "Action". The F1 score is 41.09%.

* **Actor Tuning:** This stage involves training the agent's policy using methods like supervised fine-tuning, direct preference optimization, and proximal policy optimization. Each method is represented by a "State" and "Action" pair. The F1 score is 44.91%.

* **Critic Training:** This stage focuses on training a critic to evaluate the agent's actions. The process involves a series of "State Action" pairs, followed by "Process Reward" and "Action" nodes. The F1 scores increase progressively: 55.22% and 60.19%. The arrows indicate a flow of information and reward signals. The green color consistently represents the Critic Training section.

### Key Observations

* The F1 score progressively increases from Prompt Engineering (41.09%) to Actor Tuning (44.91%) and then to Critic Training (60.19%), indicating improved performance with each stage.

* The Critic Training stage shows two distinct F1 scores (55.22% and 60.19%), potentially representing different iterations or configurations within the critic training process.

* The diagram emphasizes the iterative nature of reinforcement learning, with cycles of state, action, and reward.

* The use of color-coding effectively distinguishes the different stages of the pipeline.

### Interpretation

This diagram demonstrates a reinforcement learning approach to improving question answering systems. The pipeline starts with a basic question answering process and then iteratively refines the system through prompt engineering, actor tuning, and critic training. The increasing F1 scores suggest that each stage contributes to improved performance. The diagram highlights the importance of both policy optimization (Actor Tuning) and value estimation (Critic Training) in achieving high-quality question answering. The diagram suggests that the system learns to formulate better queries, retrieve more relevant documents, and generate more accurate answers through this iterative process. The inclusion of specific methods like Direct Preference Optimization and Proximal Policy Optimization indicates a focus on advanced reinforcement learning techniques. The diagram is a high-level overview of the system and does not provide details on the specific algorithms or implementation details.

</details>

Figure 1: Overview of the RAG-Gym framework. RAG-Gym employs a modular design, comprising prompt engineering, actor tuning, and critic training, to systematically optimize agentic RAG performance. By leveraging all three components, RAG-Gym improves the F1 score of the ReAct agent on HotpotQA from 41.09% to 60.19%.

### 2.1 Knowledge-intensive Question Answering as Markov Decision Process

While sequential token generation in LLMs can be modeled as an MDP [43, 49, 93], the integration of interactions with the IR environment introduces complex and inconsistent state transitions across agent architectures. To address this, we propose a hierarchical MDP formulation in RAG-Gym that unifies diverse agentic RAG designs. At the high level, agentic RAG is represented as a sequence of reasoning steps that interact with an IR system, while at the low level, each action involves sequential token generation by LLMs. Below, we formally define the components of the high-level MDP.

States. For the agentic RAG process of a given question $\mathcal{Q}$ , we define the state $s_{t}$ at time step $t$ to be a set consisting of the original question $\mathcal{Q}$ and the information-seeking history $\mathcal{H}_{t}$ . The information-seeking history is a sequence of search queries $q_{1},\cdots,q_{t-1}$ and their corresponding sets of retrieved documents $D_{1},\cdots,D_{t-1}$ , and is used to augment the agent’s knowledge for answering the original question. The initial state is defined as $s_{1}=(\mathcal{Q},\mathcal{H}_{1})$ , where $\mathcal{H}_{1}$ is an empty set.

Actions. Although agents may employ various strategies to reason about the current state and generate different token sequences, RAG-Gym standardizes these outputs by defining a common macro-action space. At each time step $t$ , the action $a_{t}$ is either a search query or a predicted answer to the original question. While the detailed generated token sequences may differ among agent designs, they must always be semantically equivalent to a designated macro-action within the context of agentic RAG.

Environment. The high-level MDP environment in RAG-Gym is powered by an IR system, which is central to the agentic RAG approach. At each time step $t$ , if the agent’s action $a_{t}$ is a search query $q_{t}$ , the IR system returns a corresponding set of documents $D_{t}$ . The state is then updated from $s_{t}=(\mathcal{Q},\mathcal{H}_{t})$ to $s_{t+1}=(\mathcal{Q},\,\mathcal{H}_{t}\cup\{(q_{t},D_{t})\})$ . Conversely, if $a_{t}$ predicts an answer to $\mathcal{Q}$ , the episode terminates. To maintain stable and reproducible state transitions, the configuration of the IR system (e.g., the number of returned documents) remains constant throughout.

Rewards. For the high-level MDP, the immediate reward for a state-action pair $(s_{t},a_{t})$ is defined as zero when $a_{t}$ is a search query, and as the correctness of the predicted answer when $a_{t}$ is an answer. Moreover, by formulating knowledge-intensive QA as a high-level MDP, we can directly assess the quality of intermediate actions, with process-level rewards derived from various sources (e.g., human annotations, LLM evaluations, or rollouts). This enables both the evaluation of intermediate actions and the fine-grained supervision of language agents through process-level feedback.

### 2.2 Systematic Optimization of Agentic Retrieval-augmented Generation

With the high-level MDP formulation, RAG-Gym optimizes the agentic RAG system through three key components: (1) prompt engineering, which refines the language agent’s structure and operational design; (2) actor tuning, which adjusts the LLM parameters to improve decision-making; and (3) critic training, which develops an external verifier to assess the quality of generated macro-actions.

#### 2.2.1 Prompt Engineering

The first aspect of optimizing agentic RAG is crafting effective prompts that guide the language model in generating the appropriate actions. The system prompt defines the agent’s functional capabilities when processing a given state. RAG-Gym summarizes the essential functions into six distinct categories:

- Answer generation: The agent produces a final answer to the question.

- Question reasoning: The agent outlines reasoning steps before providing the answer.

- Retrieval augmentation: The agent incorporates retrieved content to enhance its answer.

- Query generation: The agent formulates queries to search for relevant documents.

- Document summarization: The agent condenses retrieved content to extract key information.

- Reasoning reflection: The agent reviews its reasoning to identify any unverified claims.

While the first five components have already been employed in existing agent architectures, the final component reasoning reflection is a novel addition by RAG-Gym. Inspired by recent advancements in reasoning models in which the models can reflect on their own reasoning process for self-correction [19], the newly introduced reasoning reflection directs the agent to scrutinize its reasoning process and identify claims that are unsupported by the information seeking history, thereby linking search query generation to answer reasoning to produce more precise and relevant queries.

Combining reasoning reflection with other existing components, we propose a new agent architecture called Re 2 Search, which stands for Re asoning, Re flection, and Search. A Re 2 Search agent first reasons about all available information to construct an answer to the original question. It then reflects on its reasoning process to identify unverified claims that lack sufficient justification based on available evidence. These unverified claims form the basis for generating the next search query that is designed to retrieve the missing information required for constructing the answer. Table 1 summarizes the presence or absence of these components in several existing agent architectures, including Direct, CoT [81], RAG [42], ReAct [91], Search-o1 [44], and our proposed Re 2 Search, each enabling different LLM capabilities through prompting.

Table 1: A comparative overview of agent architectures based on their functional components.

| Component | Direct | CoT [81] | RAG [42] | ReAct [91] | Search-o1 [44] | Re 2 Search |

| --- | --- | --- | --- | --- | --- | --- |

| Answer Generation | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ |

| Question Reasoning | ✗ | ✔ | ✔ | ✔ | ✔ | ✔ |

| Retrieval Augmentation | ✗ | ✗ | ✔ | ✔ | ✔ | ✔ |

| Query Generation | ✗ | ✗ | ✗ | ✔ | ✔ | ✔ |

| Document Summarization | ✗ | ✗ | ✗ | ✗ | ✔ | ✔ |

| Reasoning Reflection | ✗ | ✗ | ✗ | ✗ | ✗ | ✔ |

#### 2.2.2 Actor Tuning

The second aspect of optimizing agentic RAG is tuning LLM parameters to directly enhance reasoning capability. Decomposing knowledge-intensive QA into intermediate steps, the high-level MDP in RAG-Gym enables the targeted optimization of language agents by focusing on the generated action at each step, reducing the task to standard text generation. This streamlines the training process and facilitates the application of various LLM post-training algorithms to enhance agent performance.

Process Reward Data Collection. As discussed in our high-level MDP definition, the process reward for intermediate actions can be derived from multiple sources, including human annotations, LLM evaluations, or rollouts. In our implementation, we focus on collecting process reward data using advanced LLMs such as GPT-4o [1]. Specifically, we sample trajectories from an untuned agent and obtain process reward annotations from GPT-4o, while filtering out trajectories that do not result in a correct final answer using the outcome reward. This strategy enables us to efficiently gather high-quality process reward data, which is subsequently used to optimize the LLMs for agentic RAG. Further details on alternative process reward sources can be found in Section 4.1, with additional information about the data collection pipeline provided in Appendix E.

Process-based Training Algorithms.

Let $\mathfrak{D}$ denote the process reward dataset, which consists of tuples $(s,a^{+},a^{-})$ , where $s$ is a state, $a^{+}$ is a preferred (high-quality) action, and $a^{-}$ is a less-preferred (lower-quality) action. Each action is annotated based on the quality of the generated query or predicted answer. We assign the preference label to the entire token sequence produced when reasoning about the state, thereby reducing process-based actor tuning to a standard text generation problem. RAG-Gym implements and compares three widely used LLM post-training algorithms:

- Supervised fine-tuning (SFT) [52]: This method uses high-quality intermediate actions to train language agents by maximizing the log-likelihood of preferred actions ( $a^{+}$ ) conditioned on their respective states $s$ .

- Direct preference optimization (DPO) [56]: This approach employs a contrastive learning framework that utilizes both preferred ( $a^{+}$ ) and unpreferred ( $a^{-}$ ) actions. The DPO objective encourages the agent to increase the likelihood of preferred actions while decreasing that of unpreferred actions.

- Proximal policy optimization (PPO) [60]: This is an online reinforcement learning algorithm for policy optimization. The collected data $\mathfrak{D}$ is first used to train a process reward model $r_{\phi}(s,a)$ . PPO then optimizes the agent to maximize the process reward of newly generated actions, while constraining policy updates to ensure stability.

#### 2.2.3 Critic Training

The third aspect of optimizing agentic RAG involves training a critic, denoted as $r_{\phi}$ , to act as an external evaluator of generated actions. The critic is designed to predict process rewards for a given state-action pair $(s,a)$ . Its training objective employs a contrastive loss that distinguishes preferred actions from less-preferred ones, following the preference modeling approach widely used in LLM alignment and reward modeling [47, 52]:

$$

\mathcal{L}_{\text{critic}}(\phi)=-\mathbb{E}_{(s,a^{+},a^{-})\sim

\mathfrak{D}}\Big{[}\log\sigma\big{(}r_{\phi}(s,a^{+})-r_{\phi}(s,a^{-})\big{)

}\Big{]}, \tag{1}

$$

where $\sigma$ is the sigmoid function and $\mathfrak{D}$ denotes the process reward dataset containing both preferred ( $a^{+}$ ) and less-preferred ( $a^{-}$ ) actions.

While process reward modeling has been studied in the context of math reasoning [62, 46], its application to agentic RAG for knowledge-intensive question answering remains largely underexplored. In RAG-Gym, our process-level critic is tailored to evaluate intermediate actions such as search queries, rather than only final answers. This approach enables more fine-grained and actionable feedback, facilitating the optimization of agentic RAG systems through process-level supervision. Once trained, the critic provides targeted feedback on generated actions, guiding the language agent to make decisions that are more likely to lead to successful outcomes.

## 3 Main Results

### 3.1 Experimental Settings

To assess the performance of various agents on knowledge-intensive QA tasks and evaluate the benefits of different optimization methods in RAG-Gym, we consider four datasets that are both knowledge- and reasoning-intensive, spanning general and medical domains. Specifically, we use HotpotQA [90], 2WikiMultihopQA [21], and Bamboogle [54], which are popular multi-hop QA datasets constructed from Wikipedia, as well as the MedQA dataset [34], which consists of medical exam questions that require specialized domain knowledge and complex reasoning. Following prior work [61], HotpotQA, 2WikiMultihopQA, and Bamboogle are evaluated using Exact Match (EM) and F1 scores, while the multi-choice MedQA dataset is assessed with accuracy (Acc). We also compute the average EM and F1 scores across different tasks, treating accuracy as equivalent to both metrics in the multi-choice evaluation setting. For actor and critic training in RAG-Gym, 1k questions were sampled from the HotpotQA and MedQA training sets for process reward data collection. To test the generalizability of the tuned agents, 2WikiMultihopQA and Bamboogle were evaluated using LLMs trained on HotpotQA. More implementation details can be found in Appendices C, E, H.

### 3.2 Performance Improvements by Prompt Engineering and Actor Tuning

Table 2 presents a performance comparison of various agents and their tuned versions using different actor tuning algorithms in RAG-Gym. The results indicate that the Re 2 Search agent consistently outperforms other agents in both zero-shot and actor-tuned settings. Furthermore, when comparing Table 2 with Table 1, which details the functional components of each agent, it can be observed that more components generally leads to improved performance. This observation validates the effectiveness of the summarized functions in RAG-Gym, as well as the design of the Re 2 Search agent, which incorporates all identified components, including our newly proposed reasoning reflection. Additional case studies of our proposed Re 2 Search agent are provided in Appendices G.1 and G.2.

Table 2: Agent performance with Llama-3.1-8B backbone. Highest scores are bolded.

| Method | Agent | HotpotQA | 2Wiki | Bamboogle | MedQA | Average | | | | |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

| EM | F1 | EM | F1 | EM | F1 | Acc | EM | F1 | | |

| Zero-shot Learning | Direct | 21.10 | 27.93 | 24.10 | 27.68 | 9.60 | 14.89 | 61.82 | 29.16 | 33.08 |

| CoT | 27.10 | 35.17 | 25.70 | 30.08 | 37.60 | 49.50 | 69.60 | 40.00 | 46.09 | |

| RAG | 38.30 | 48.57 | 32.00 | 36.91 | 22.40 | 33.73 | 66.85 | 39.89 | 46.51 | |

| ReAct | 30.70 | 41.09 | 28.90 | 35.03 | 32.00 | 41.35 | 62.37 | 38.49 | 44.96 | |

| Search-o1 | 35.30 | 47.33 | 34.00 | 41.29 | 44.80 | 52.50 | 66.14 | 45.06 | 51.82 | |

| Re 2 Search | 34.00 | 44.91 | 41.50 | 49.06 | 44.80 | 55.33 | 70.31 | 47.65 | 54.90 | |

| RAG-Gym Supervised Fine-tuning | Direct | 22.80 | 31.67 | 28.00 | 33.17 | 20.00 | 27.21 | 63.63 | 33.61 | 38.92 |

| CoT | 26.50 | 35.60 | 27.30 | 32.10 | 42.40 | 53.89 | 69.68 | 41.47 | 47.82 | |

| RAG | 41.50 | 52.26 | 38.00 | 42.74 | 28.80 | 40.76 | 67.79 | 44.02 | 50.89 | |

| ReAct | 35.50 | 46.06 | 31.00 | 36.79 | 34.40 | 44.17 | 66.69 | 41.90 | 48.43 | |

| Search-o1 | 38.20 | 50.02 | 39.00 | 45.91 | 46.40 | 57.18 | 67.64 | 47.81 | 55.19 | |

| Re 2 Search | 37.60 | 49.16 | 44.00 | 50.54 | 44.80 | 56.78 | 69.52 | 48.98 | 56.50 | |

| RAG-Gym Direct Preference Optimization | Direct | 20.80 | 28.79 | 25.20 | 29.45 | 12.00 | 20.67 | 62.37 | 30.09 | 35.32 |

| CoT | 26.30 | 35.06 | 28.20 | 32.84 | 40.80 | 51.67 | 71.33 | 41.66 | 47.73 | |

| RAG | 38.00 | 49.38 | 37.60 | 42.88 | 28.80 | 39.57 | 67.79 | 43.05 | 49.91 | |

| ReAct | 33.00 | 43.96 | 32.20 | 39.24 | 44.80 | 54.35 | 68.89 | 44.72 | 51.61 | |

| Search-o1 | 42.20 | 54.34 | 44.10 | 52.66 | 42.40 | 55.59 | 70.23 | 49.73 | 58.21 | |

| Re 2 Search | 42.20 | 55.22 | 44.30 | 51.36 | 48.00 | 56.57 | 72.11 | 51.65 | 58.82 | |

| RAG-Gym Proximal Policy Optimization | Direct | 19.20 | 26.17 | 25.60 | 28.84 | 7.20 | 12.17 | 61.12 | 28.28 | 32.08 |

| CoT | 25.50 | 33.68 | 24.20 | 29.02 | 43.20 | 52.54 | 68.50 | 40.35 | 45.94 | |

| RAG | 37.70 | 47.60 | 32.00 | 36.29 | 28.80 | 40.24 | 68.03 | 41.63 | 41.44 | |

| ReAct | 35.80 | 47.56 | 33.20 | 40.06 | 36.80 | 46.79 | 67.32 | 43.28 | 50.43 | |

| Search-o1 | 38.30 | 50.24 | 32.60 | 39.34 | 50.40 | 59.92 | 70.15 | 47.86 | 54.91 | |

| Re 2 Search | 38.40 | 50.30 | 41.40 | 48.06 | 49.60 | 62.06 | 71.72 | 50.28 | 58.04 | |

By comparing different process supervision approaches for actor tuning, we observe that process supervision consistently enhances agent performance relative to the zero-shot learning (ZSL) baseline. This improvement underscores the critical role of process supervision in refining agentic RAG. Notably, for Direct, CoT, and RAG agents, where tuning focuses solely on answer generation, SFT slightly outperforms both DPO and PPO. In contrast, for ReAct, Search-o1, and Re 2 Search agents, where the tuning process also involves generating high-quality queries, DPO and PPO surpass SFT, with DPO demonstrating a slight edge over PPO on most tasks. These findings highlight the importance of utilizing both positive and negative samples during training, especially for agents that require complex, multi-step reasoning with environmental feedback. Furthermore, the tuned agents tend to generate more search queries during inference, as elaborated in Appendix F.

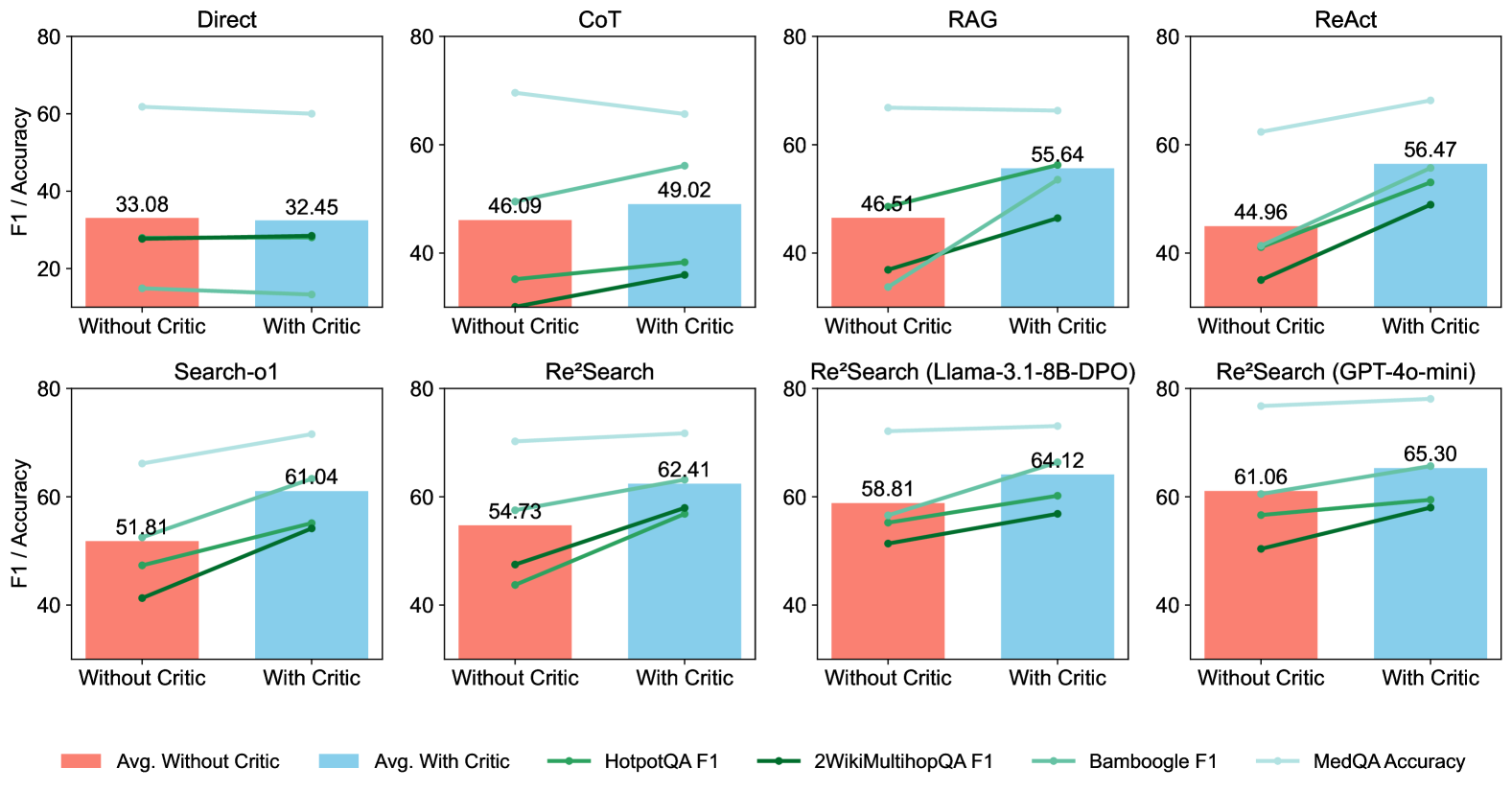

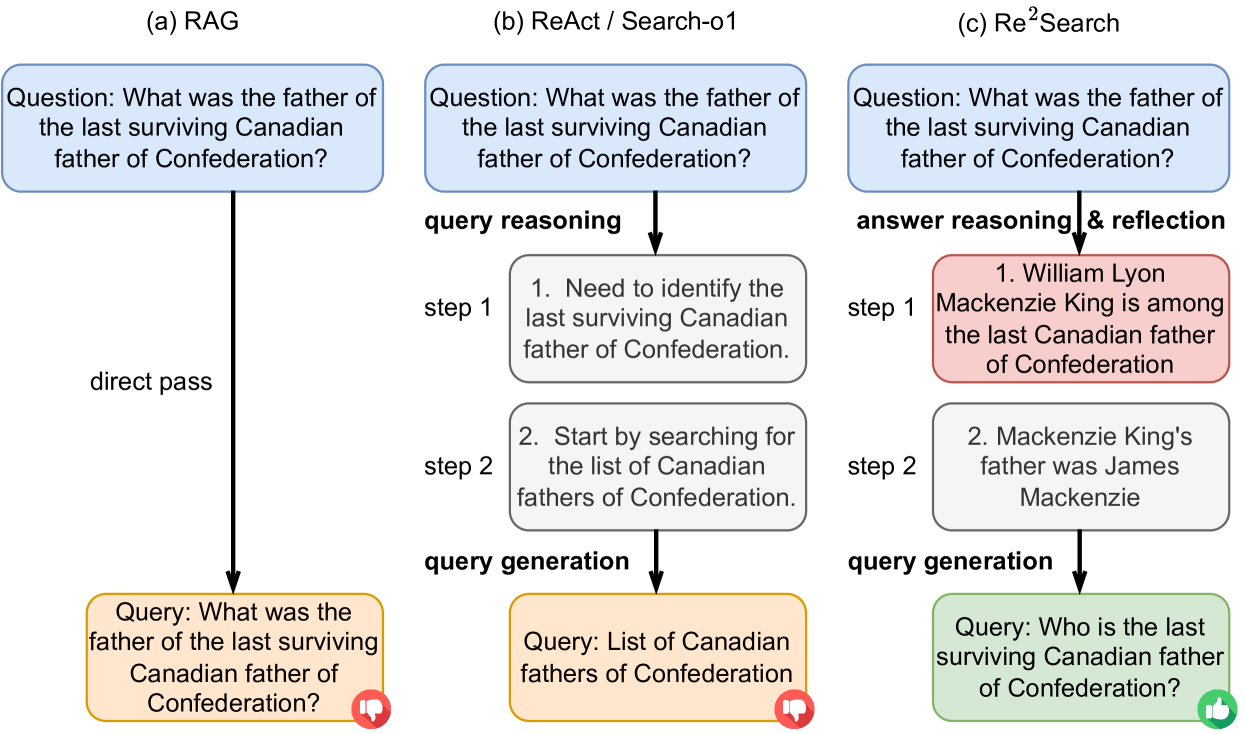

### 3.3 Performance Improvements by Critic Training

Figure 2 illustrates the performance improvements achieved through critic training. The label “With Critic” indicates that an external critic evaluates 10 sampled actions at each step to select the best one. In our experiments, all agents except for “Direct” consistently benefit from critic training. Moreover, these performance gains transfer to actors using different LLMs. As shown in the figure, not only does the original Llama-3.1-8B benefit from the trained critic, but both the DPO-tuned Llama-3.1-8B and GPT-4o-mini also experience significant improvements across all datasets using the same critic. This highlights the potential of employing trained critics as a plug-and-play module to enhance agentic RAG performance, particularly for proprietary LLMs where direct fine-tuning is not feasible. A case study of using trained critics during inference is provided in Appendix G.3.

<details>

<summary>x2.png Details</summary>

### Visual Description

\n

## Bar Chart with Line Overlays: Performance Comparison of Different Methods with and without Critic

### Overview

The image presents a series of bar charts, each representing the performance of a different method (Direct, CoT, RAG, ReAct, Search-01, ReSearch, Re²Search (Llama-3.1-8B-DPO), Re²Search (GPT-4o-mini)) on a task. Each method is evaluated twice: once "Without Critic" and once "With Critic". Performance is measured using F1 score and Accuracy, represented by bar heights and line trends respectively. The charts are arranged in a 2x4 grid.

### Components/Axes

* **Y-axis Label:** "F1 / Accuracy" (ranging from 0 to 80)

* **X-axis Labels:** "Without Critic", "With Critic"

* **Chart Titles:** "Direct", "CoT", "RAG", "ReAct", "Search-01", "ReSearch", "Re²Search (Llama-3.1-8B-DPO)", "Re²Search (GPT-4o-mini)"

* **Legend:**

* Avg. Without Critic (Peach/Light Orange)

* Avg. With Critic (Light Blue)

* HotpotQA F1 (Red)

* 2WikiMultihopQA F1 (Green)

* Bamboogle F1 (Blue)

* MedQA Accuracy (Purple)

### Detailed Analysis or Content Details

**1. Direct:**

* Avg. Without Critic: Approximately 33.08

* Avg. With Critic: Approximately 32.45

* HotpotQA F1: Line starts at ~20 and ends at ~25.

* 2WikiMultihopQA F1: Line starts at ~20 and ends at ~25.

* Bamboogle F1: Line starts at ~20 and ends at ~25.

* MedQA Accuracy: Line starts at ~20 and ends at ~25.

**2. CoT:**

* Avg. Without Critic: Approximately 46.09

* Avg. With Critic: Approximately 49.02

* HotpotQA F1: Line starts at ~35 and ends at ~40.

* 2WikiMultihopQA F1: Line starts at ~35 and ends at ~40.

* Bamboogle F1: Line starts at ~35 and ends at ~40.

* MedQA Accuracy: Line starts at ~35 and ends at ~40.

**3. RAG:**

* Avg. Without Critic: Approximately 46.61

* Avg. With Critic: Approximately 55.64

* HotpotQA F1: Line starts at ~35 and ends at ~45.

* 2WikiMultihopQA F1: Line starts at ~35 and ends at ~45.

* Bamboogle F1: Line starts at ~35 and ends at ~45.

* MedQA Accuracy: Line starts at ~35 and ends at ~45.

**4. ReAct:**

* Avg. Without Critic: Approximately 44.96

* Avg. With Critic: Approximately 56.47

* HotpotQA F1: Line starts at ~35 and ends at ~45.

* 2WikiMultihopQA F1: Line starts at ~35 and ends at ~45.

* Bamboogle F1: Line starts at ~35 and ends at ~45.

* MedQA Accuracy: Line starts at ~35 and ends at ~45.

**5. Search-01:**

* Avg. Without Critic: Approximately 51.81

* Avg. With Critic: Approximately 61.04

* HotpotQA F1: Line starts at ~40 and ends at ~50.

* 2WikiMultihopQA F1: Line starts at ~40 and ends at ~50.

* Bamboogle F1: Line starts at ~40 and ends at ~50.

* MedQA Accuracy: Line starts at ~40 and ends at ~50.

**6. ReSearch:**

* Avg. Without Critic: Approximately 54.73

* Avg. With Critic: Approximately 62.41

* HotpotQA F1: Line starts at ~40 and ends at ~50.

* 2WikiMultihopQA F1: Line starts at ~40 and ends at ~50.

* Bamboogle F1: Line starts at ~40 and ends at ~50.

* MedQA Accuracy: Line starts at ~40 and ends at ~50.

**7. Re²Search (Llama-3.1-8B-DPO):**

* Avg. Without Critic: Approximately 58.81

* Avg. With Critic: Approximately 64.12

* HotpotQA F1: Line starts at ~45 and ends at ~55.

* 2WikiMultihopQA F1: Line starts at ~45 and ends at ~55.

* Bamboogle F1: Line starts at ~45 and ends at ~55.

* MedQA Accuracy: Line starts at ~45 and ends at ~55.

**8. Re²Search (GPT-4o-mini):**

* Avg. Without Critic: Approximately 61.06

* Avg. With Critic: Approximately 65.30

* HotpotQA F1: Line starts at ~45 and ends at ~55.

* 2WikiMultihopQA F1: Line starts at ~45 and ends at ~55.

* Bamboogle F1: Line starts at ~45 and ends at ~55.

* MedQA Accuracy: Line starts at ~45 and ends at ~55.

In all charts, the lines representing the different F1 scores and accuracy metrics generally slope upwards from "Without Critic" to "With Critic", indicating an improvement in performance when using the "Critic" component.

### Key Observations

* The "Re²Search (GPT-4o-mini)" method consistently shows the highest average performance, both with and without the critic.

* The "Direct" method exhibits the lowest average performance.

* The performance improvement from "Without Critic" to "With Critic" is most pronounced in the RAG, ReAct, Search-01, ReSearch, Re²Search (Llama-3.1-8B-DPO), and Re²Search (GPT-4o-mini) methods.

* The lines representing the different metrics (HotpotQA F1, 2WikiMultihopQA F1, Bamboogle F1, MedQA Accuracy) are nearly identical within each chart, suggesting a consistent performance trend across these datasets.

### Interpretation

The data suggests that incorporating a "Critic" component generally improves the performance of various methods on the evaluated task. The magnitude of improvement varies depending on the method, with some methods (like Direct) showing minimal gains, while others (like RAG and ReAct) experience substantial improvements. The consistent upward trend of the lines indicates that the "Critic" is effectively refining the outputs of these methods. The superior performance of "Re²Search (GPT-4o-mini)" suggests that this method, combined with the "Critic", is particularly well-suited for the task. The near-identical trends of the different metrics within each chart imply that the "Critic" is improving performance across a range of datasets and evaluation criteria. This could indicate that the "Critic" is addressing fundamental issues in the reasoning or generation process, rather than being specific to a particular dataset.

</details>

Figure 2: Performance improvements across various agents with critics.

### 3.4 Comparisons with Outcome Supervision Methods

Combining the findings from previous sections, we introduce Re 2 Search++, an optimized agent that integrates the best choices from each optimization direction. Built on Re 2 Search and tuned with DPO while utilizing a trained critic for action selection, Re 2 Search++ is evaluated against recent methods such as Search-R1 [33] and R1-Searcher [69], which rely on outcome supervision via reinforcement learning (RL) with over 8k training questions. As these methods primarily focus on general-domain questions, we exclude MedQA from this evaluation for a fair comparison. Table 3 shows that Re 2 Search++ achieves performance comparable to that of the RL-tuned agents on the datasets used for their training (HotpotQA for Search-R1; HotpotQA and 2WikiMultihopQA for R1-Searcher), while significantly outperforming them on unseen datasets and achieving the best performance on average. This result underscores the overfitting issues of RL-based outcome supervision methods and highlights the robustness and generalizability of Re 2 Search++ through its fine-grained process supervision on intermediate steps.

Table 3: Comparison of Re 2 Search++ and other methods. Shading indicates in-domain model performance. CEM represents the “Cover Exact Match” used in [69].

| LLM | Method | HotpotQA | 2WikiMultihopQA | Bamboogle | Average | | | | | | | | |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

| EM | CEM | F1 | EM | CEM | F1 | EM | CEM | F1 | EM | CEM | F1 | | |

| Llama -3.1-8B | ReAct | 30.70 | 38.40 | 41.09 | 28.90 | 38.00 | 35.03 | 32.00 | 36.80 | 41.35 | 30.57 | 37.73 | 39.16 |

| Search-o1 | 35.30 | 43.80 | 47.33 | 34.00 | 45.80 | 41.29 | 44.80 | 48.80 | 52.50 | 38.03 | 46.13 | 47.04 | |

| R1-Searcher | 44.90 | 50.40 | 56.88 | 48.70 | 51.30 | 54.24 | 38.40 | 40.80 | 53.21 | 44.00 | 47.50 | 54.78 | |

| Re 2 Search++ | 46.50 | 57.80 | 60.19 | 48.90 | 60.50 | 56.85 | 55.20 | 63.20 | 66.37 | 50.20 | 60.50 | 61.14 | |

| Qwen -2.5-7B | ReAct | 36.00 | 40.10 | 45.84 | 38.60 | 44.50 | 45.02 | 35.20 | 38.40 | 44.94 | 36.60 | 41.00 | 45.27 |

| Search-o1 | 40.70 | 46.60 | 52.15 | 38.90 | 46.20 | 45.79 | 40.80 | 44.80 | 52.91 | 40.17 | 45.87 | 50.28 | |

| Search-R1 | 44.90 | 49.40 | 57.30 | 43.90 | 47.80 | 50.07 | 40.80 | 41.60 | 51.69 | 43.20 | 46.27 | 53.02 | |

| R1-Searcher | 46.80 | 53.70 | 59.61 | 48.80 | 55.00 | 55.36 | 44.80 | 48.00 | 54.01 | 46.80 | 52.23 | 56.33 | |

| Re 2 Search++ | 44.40 | 50.30 | 56.47 | 47.00 | 56.50 | 54.35 | 52.94 | 56.30 | 63.51 | 48.11 | 54.37 | 58.11 | |

## 4 Analysis and Discussion

### 4.1 Comparison of Different Reward Sources

As discussed in Section 2, the process reward can be collected from different sources. This section focuses on the evaluation of the effectiveness of these sources in guiding the agent’s action selection toward correct answers, as well as their alignment with human preferences, which are often considered to have the highest quality for process annotation [98]. Specifically, we compare the GPT-4o annotations with Llama-3.1-8B, as well as the rollout-based annotations using Math-Shepherd [77]. We collect process annotations from human experts on MedQA to examine the alignment between the trained reward models and human preferences.

Table 4: Comparison of various reward sources. ORM/PRM denotes the outcome/process reward model. Outcome sources are labeled for PRMs due to the trajectory filtering in RAG-Gym.

| Type | Outcome Source | Process Source | HotpotQA (EM / F1) | 2Wiki (EM / F1) | Bamboogle (EM / F1) | MedQA (Acc / Agree) |

| --- | --- | --- | --- | --- | --- | --- |

| ORM | Truth | – | 41.10 / 53.35 | 47.70 / 55.59 | 43.20 / 57.46 | 66.77 / – |

| PRM (Random) | – | – | 32.20 / 42.83 | 35.70 / 42.00 | 38.40 / 47.86 | 68.26 / 50.00 |

| PRM (Rollout) | Truth | Rollout | 39.60 / 51.85 | 42.94 / 49.57 | 48.80 / 56.05 | 68.34 / 71.03 |

| PRM (Llama) | Truth | Llama-3.1-8B | 40.30 / 51.74 | 40.70 / 48.22 | 44.80 / 54.36 | 68.50 / 65.99 |

| PRM (GPT) | Truth | GPT-4o | 44.10 / 56.84 | 50.20 / 57.94 | 51.20 / 63.15 | 71.96 / 85.85 |

The results are shown in Table 4. The reward model trained with GPT-4o annotations delivers the highest performance across all datasets, effectively providing accurate, fine-grained process rewards for agent optimization. Moreover, it exhibits the best alignment with human preferences, achieving an agreement rate of 85.85% with human annotators. In contrast, although rollouts and Llama-3.1-8B annotations improve action selection relative to a process reward model with random selections, they are generally less effective than GPT-4o annotations and sometimes even bring inferior outcomes on general-domain questions. This result underscores the limitations of current rollout-based methods, originally designed for math reasoning, in the context of complex reasoning and search tasks, and highlights the need for tailored approaches in agentic RAG.

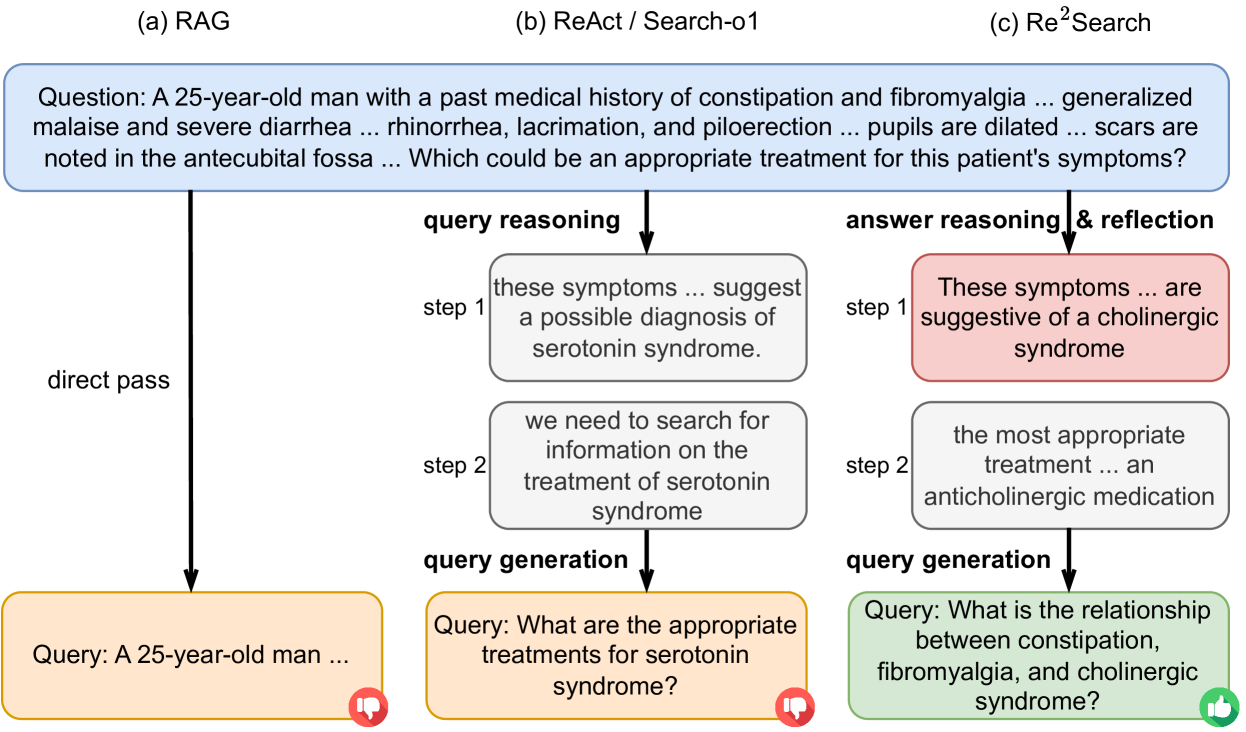

### 4.2 Training Time Scaling

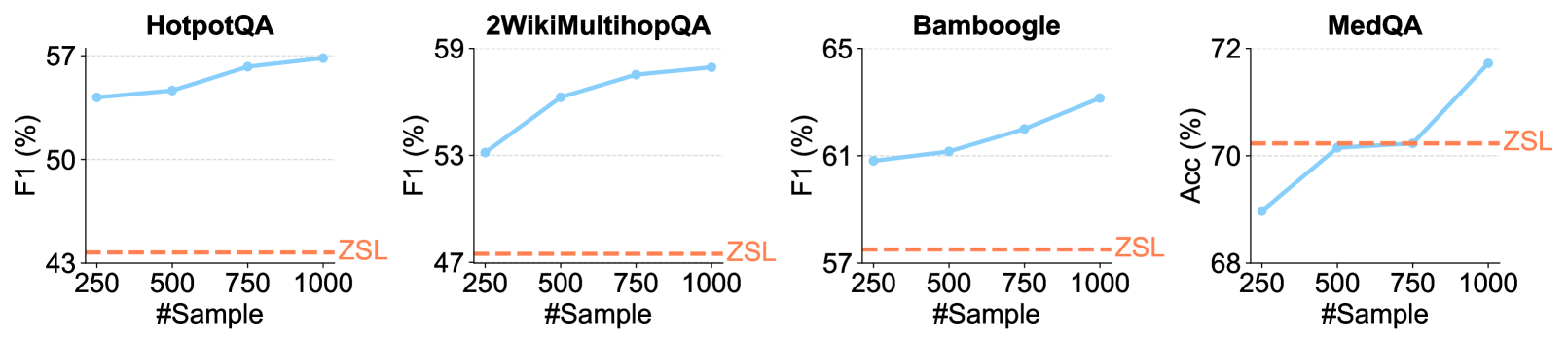

For the evaluation of training sample size and its impacts on the performance of Re 2 Search agents, we conducted experiments using critics trained on varying numbers of instances, ranging from 250 to 1000 questions. The results, presented in Figure 3, show how the agent’s performance scales with the availability of more training data across four datasets. In general, the performance of Re 2 Search improves with an increasing number of training samples, but the gains tend to converge as the sample size grows. Notably, there is a sharp improvement in F1 scores on HotpotQA, 2WikiMultihopQA, and Bamboogle when comparing the ZSL baseline to process reward models trained on 250 samples, showing that even a small amount of process reward data can yield significant performance gains. However, the improvements become less pronounced on HotpotQA and 2WikiMultihopQA when increasing the training samples from 500 to 1000, indicating diminishing returns as the model approaches a saturation point in its learning from additional data.

<details>

<summary>x3.png Details</summary>

### Visual Description

## Line Charts: Performance vs. Sample Size for Question Answering Models

### Overview

The image presents four separate line charts, each depicting the performance of a different question answering (QA) model (HotpotQA, 2WikiMultihopQA, Bamboogle, and MedQA) as a function of the number of training samples used. Performance is measured using either F1 score (for HotpotQA, 2WikiMultihopQA, and Bamboogle) or Accuracy (for MedQA). A dashed red line labeled "ZSL" represents a baseline performance level for each model.

### Components/Axes

Each chart shares the following components:

* **X-axis:** "#Sample" - Represents the number of training samples, ranging from 250 to 1000, with markers at 250, 500, 750, and 1000.

* **Y-axis:** Varies by chart:

* HotpotQA: "F1 (%)" - F1 score in percentage, ranging from approximately 43% to 57%.

* 2WikiMultihopQA: "F1 (%)" - F1 score in percentage, ranging from approximately 47% to 59%.

* Bamboogle: "F1 (%)" - F1 score in percentage, ranging from approximately 57% to 65%.

* MedQA: "Acc (%)" - Accuracy in percentage, ranging from approximately 68% to 72%.

* **Data Series:** A solid blue line representing the performance of the QA model.

* **Baseline:** A dashed red line labeled "ZSL" representing a zero-shot learning baseline.

* **Titles:** Each chart is labeled with the name of the corresponding QA model (HotpotQA, 2WikiMultihopQA, Bamboogle, MedQA).

### Detailed Analysis or Content Details

**HotpotQA:**

* The blue line starts at approximately 47% F1 score at 250 samples.

* It decreases slightly to around 44% at 500 samples.

* It then increases to approximately 52% at 750 samples.

* Finally, it reaches approximately 55% at 1000 samples.

* The ZSL baseline is consistently around 43%.

**2WikiMultihopQA:**

* The blue line begins at approximately 49% F1 score at 250 samples.

* It increases steadily to approximately 54% at 500 samples.

* It continues to rise to approximately 57% at 750 samples.

* It reaches approximately 58% at 1000 samples.

* The ZSL baseline is consistently around 47%.

**Bamboogle:**

* The blue line starts at approximately 60% F1 score at 250 samples.

* It increases to approximately 62% at 500 samples.

* It continues to rise to approximately 63% at 750 samples.

* It reaches approximately 65% at 1000 samples.

* The ZSL baseline is consistently around 57%.

**MedQA:**

* The blue line starts at approximately 69% Accuracy at 250 samples.

* It increases rapidly to approximately 71% at 500 samples.

* It continues to rise to approximately 71.5% at 750 samples.

* It reaches approximately 72% at 1000 samples.

* The ZSL baseline is consistently around 68%.

### Key Observations

* All four models show a general trend of increasing performance with an increasing number of training samples.

* MedQA exhibits the most significant performance improvement with increasing sample size, showing a steep upward slope.

* The ZSL baseline remains relatively constant across all models and sample sizes.

* HotpotQA shows a slight dip in performance between 250 and 500 samples, before recovering.

### Interpretation

The data suggests that increasing the amount of training data generally improves the performance of these question answering models. The magnitude of improvement varies between models, with MedQA benefiting the most from additional data. The consistent ZSL baseline indicates a level of performance achievable without any specific training data, likely due to inherent knowledge within the model or the ability to make reasonable guesses. The slight dip in HotpotQA's performance at 500 samples could be due to noise in the data or a temporary overfitting issue. Overall, the charts demonstrate the importance of data quantity in training effective question answering systems. The models are all performing above the ZSL baseline, indicating that training is beneficial. The differences in performance gains between models suggest varying degrees of data efficiency and model capacity.

</details>

Figure 3: Performance of Re 2 Search agents with critics trained on different numbers of samples.

For MedQA, which involves complex reasoning and information-seeking tasks requiring domain-specific knowledge, a different trend is observed. With only 250 training samples, the performance slightly drops below the ZSL baseline, highlighting the challenges of capturing intricate domain-specific processes with limited training data. As the sample size increases, however, the performance gradually recovers and eventually surpasses the ZSL baseline, achieving the highest accuracy of 71.72% with 1000 samples. This underscores the importance of sufficient training data in capturing the nuanced reasoning and query-generation processes required for specialized tasks.

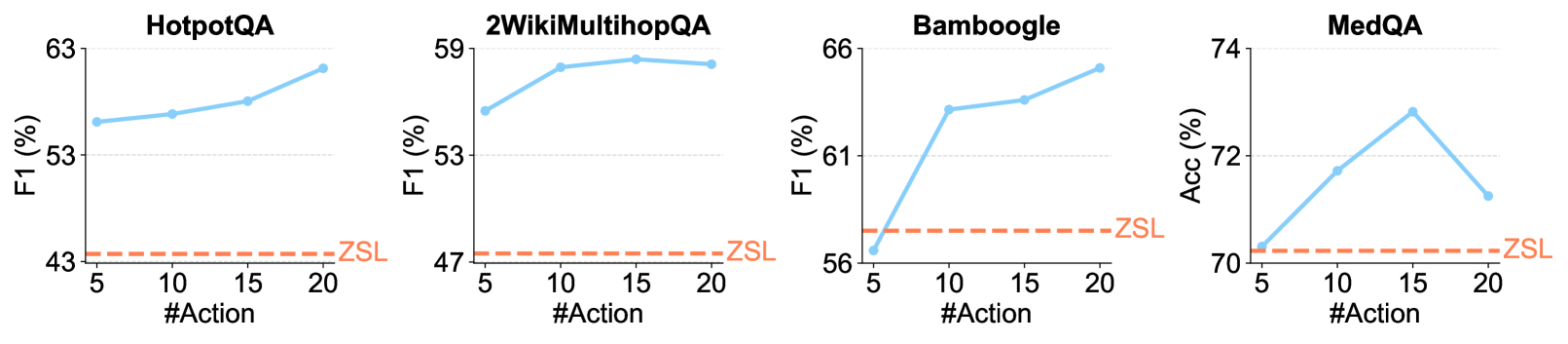

### 4.3 Inference Time Scaling

Since trained critics optimize action-taking by identifying high-quality actions from the generated candidates during inference, we explored how the agent performance changes with the increasing number of sampled actions at each time step. Figure 4 displays the results of our inference time scaling study, with Re 2 Search as the tested agent. We observe a consistent trend across multiple benchmarks, where increasing the number of sampled actions generally improves performance. Specifically, for HotpotQA and Bamboogle, the F1 score continues to rise as more actions are sampled, demonstrating the benefits of expanding the candidate set to enable better action selection at each step. However, performance gains gradually diminish, indicating that the agent reaches a point where additional sampled actions contribute less to improvement. This suggests that while action sampling is beneficial, there is a limit to how much additional sampling enhances decision-making.

<details>

<summary>x4.png Details</summary>

### Visual Description

\n

## Line Charts: Performance of Question Answering Models with Varying Action Counts

### Overview

The image presents four separate line charts, each depicting the performance of a different question answering (QA) model – HotpotQA, 2WikiMultihopQA, Bamboogle, and MedQA – as a function of the number of actions taken. The performance metric varies between models (F1 score for HotpotQA, 2WikiMultihopQA, and Bamboogle, and Accuracy (Acc) for MedQA). A dashed horizontal line represents the performance of a baseline method labeled "ZSL" across all charts.

### Components/Axes

Each chart shares the following components:

* **X-axis:** Labeled "#Action", representing the number of actions, with a scale ranging from 5 to 20 in increments of 5.

* **Y-axis:** Represents the performance metric.

* HotpotQA, 2WikiMultihopQA, and Bamboogle: Labeled "F1 (%)", with a scale ranging from approximately 43% to 63%.

* MedQA: Labeled "Acc (%)", with a scale ranging from approximately 70% to 74%.

* **Data Series:** Each chart contains two lines:

* A solid light-blue line representing the performance of the specific QA model.

* A dashed orange line representing the performance of the "ZSL" baseline.

* **Titles:** Each chart has a title indicating the QA model being evaluated (HotpotQA, 2WikiMultihopQA, Bamboogle, MedQA).

* **Legend:** The legend is implicit, with "ZSL" clearly labeled on the dashed orange line.

### Detailed Analysis or Content Details

**1. HotpotQA:**

* The light-blue line slopes upward, indicating increasing F1 score with increasing number of actions.

* At #Action = 5, the F1 score is approximately 54%.

* At #Action = 10, the F1 score is approximately 57%.

* At #Action = 15, the F1 score is approximately 60%.

* At #Action = 20, the F1 score is approximately 62%.

* The ZSL baseline maintains a constant F1 score of approximately 43%.

**2. 2WikiMultihopQA:**

* The light-blue line initially rises sharply, then plateaus.

* At #Action = 5, the F1 score is approximately 48%.

* At #Action = 10, the F1 score is approximately 56%.

* At #Action = 15, the F1 score is approximately 59%.

* At #Action = 20, the F1 score is approximately 59%.

* The ZSL baseline maintains a constant F1 score of approximately 47%.

**3. Bamboogle:**

* The light-blue line initially decreases, then increases.

* At #Action = 5, the F1 score is approximately 64%.

* At #Action = 10, the F1 score is approximately 58%.

* At #Action = 15, the F1 score is approximately 60%.

* At #Action = 20, the F1 score is approximately 63%.

* The ZSL baseline maintains a constant F1 score of approximately 56%.

**4. MedQA:**

* The light-blue line initially increases, then decreases.

* At #Action = 5, the Accuracy is approximately 71%.

* At #Action = 10, the Accuracy is approximately 72%.

* At #Action = 15, the Accuracy is approximately 73%.

* At #Action = 20, the Accuracy is approximately 73%.

* The ZSL baseline maintains a constant Accuracy of approximately 70%.

### Key Observations

* All models show some improvement in performance with increasing actions, although the nature of the improvement varies.

* Bamboogle exhibits a non-monotonic performance curve, initially decreasing before increasing.

* The ZSL baseline consistently performs worse than all the QA models across all action counts.

* 2WikiMultihopQA shows a rapid initial improvement, followed by diminishing returns.

* MedQA shows a relatively stable performance around 73% accuracy for action counts of 15 and 20.

### Interpretation

The charts demonstrate the impact of increasing the number of actions on the performance of different question answering models. The "ZSL" baseline likely represents a zero-shot learning approach, where the model is evaluated without any specific training on the task. The fact that all models outperform ZSL suggests that allowing the model to take more actions (potentially through reasoning or information retrieval steps) improves its ability to answer questions.

The varying trends across models suggest that different QA architectures benefit differently from increased action counts. The non-monotonic behavior of Bamboogle could indicate an optimal action count beyond which performance degrades, potentially due to increased noise or irrelevant information. The plateauing performance of 2WikiMultihopQA suggests that the model reaches a point of diminishing returns, where additional actions do not significantly improve its ability to find the correct answer. The MedQA results suggest that the model is relatively robust to changes in action count within the tested range.

These results highlight the importance of action selection and control in question answering systems. Further investigation could focus on identifying the optimal action count for each model and understanding the reasons behind the observed performance trends.

</details>

Figure 4: Performance of Re 2 Search agents with different numbers of actions sampled per step.

## 5 Related Work

### 5.1 Retrieval-Augmented Generation

Retrieval-Augmented Generation (RAG) has emerged as a powerful paradigm for enhancing large language models (LLMs) on knowledge-intensive tasks. A typical RAG framework comprises two core components: a retriever, which selects relevant documents from a large corpus, and a generator, which synthesizes information from these documents to produce coherent and contextually appropriate responses [42, 18, 9]. RAG has demonstrated strong performance across diverse domains, including open-domain question answering [37, 25, 7, 88, 63], fact-checking [78, 66], and summarization [3]. Subsequent research has focused on improving both the retriever’s ability to select relevant documents [95, 51, 89, 30, 32] and the generator’s capacity to effectively utilize retrieved information [15, 82, 80], thereby boosting overall system performance [26, 96, 36, 94]. Nevertheless, most RAG pipelines still rely on a single retrieval step, which can be inadequate for complex queries that require synthesizing information from multiple sources.

### 5.2 Multi-hop Question Answering

Multi-hop question answering (QA) tasks require systems to synthesize information from multiple, diverse sources to produce accurate answers [90, 21]. These tasks highlight the limitations of conventional RAG architectures, where a single retrieval step often fails to capture the comprehensive context needed for complex queries. To address this, language agents such as ReAct [91, 73, 4, 31, 54] have been proposed, interleaving reasoning and retrieval to dynamically accumulate relevant evidence [57, 79, 38, 61], which has shown promise in improving LLM performance [76, 64, 92, 27, 29]. However, most of these methods still rely heavily on prompt engineering, which can be fragile and may not effectively optimize language agents for knowledge-intensive tasks [40, 70, 2]. Recent studies have explored reinforcement learning (RL) to optimize language agents for multi-hop QA [69, 33, 8, 17, 55], but these approaches risk generating suboptimal intermediate search actions and show limited generalization to unseen data, as demonstrated in our experiments. Other concurrent work investigates process-level supervision [22, 12, 45, 71], but typically focuses on specific agent architectures and a narrow set of supervision methods, offering limited insight into the systematic optimization of language agents.

### 5.3 Post-training of Large Language Models

Beyond the foundational knowledge acquired during pre-training, post-training methods are essential for refining Large Language Models (LLMs) and aligning them with specific downstream tasks and desired behaviors. Supervised Fine-Tuning (SFT) adapts models using curated instruction-response pairs to promote task-specific capabilities [52, 10]. While SFT enhances instruction-following, further alignment with nuanced human preferences is often achieved through Reinforcement Learning from Human Feedback (RLHF) [52, 6, 5], typically implemented via Proximal Policy Optimization (PPO) [60]. More recently, critic-free approaches such as Direct Preference Optimization (DPO) have emerged as streamlined alternatives [56, 50, 14, 87], directly optimizing the LLM policy based on reward annotations and bypassing the need for a separately trained reward model. Although these techniques bring strong gains on text generation benchmarks, their integration into agentic RAG pipelines, where models must dynamically interact with retrieval systems and adapt reasoning strategies to evolving contexts, remains underexplored.

## 6 Conclusion

This work presents RAG-Gym as a unified and extensible framework for systematically optimizing agentic RAG along the axes of prompt engineering, actor tuning, and critic training. Through extensive empirical analysis, we demonstrate that integrating reasoning reflection, process-level direct preference optimization, and critic-guided inference yields substantial improvements over existing approaches. We hope RAG-Gym will serve as a foundation for further advances in robust, adaptive, and interpretable retrieval-augmented language agents.

## References

- Achiam et al. [2023] Josh Achiam, Steven Adler, Sandhini Agarwal, Lama Ahmad, Ilge Akkaya, Florencia Leoni Aleman, Diogo Almeida, Janko Altenschmidt, Sam Altman, Shyamal Anadkat, et al. Gpt-4 technical report. arXiv preprint arXiv:2303.08774, 2023.

- Akinwande et al. [2023] Victor Akinwande, Yiding Jiang, Dylan Sam, and J Zico Kolter. Understanding prompt engineering may not require rethinking generalization. arXiv preprint arXiv:2310.03957, 2023.

- An et al. [2021] Chenxin An, Ming Zhong, Zhichao Geng, Jianqiang Yang, and Xipeng Qiu. Retrievalsum: A retrieval enhanced framework for abstractive summarization. arXiv preprint arXiv:2109.07943, 2021.

- Asai et al. [2024] Akari Asai, Zeqiu Wu, Yizhong Wang, Avirup Sil, and Hannaneh Hajishirzi. Self-rag: Learning to retrieve, generate, and critique through self-reflection. In The Twelfth International Conference on Learning Representations, ICLR 2024, Vienna, Austria, May 7-11, 2024. OpenReview.net, 2024. URL https://openreview.net/forum?id=hSyW5go0v8.

- Askell et al. [2021] Amanda Askell, Yuntao Bai, Anna Chen, Dawn Drain, Deep Ganguli, Tom Henighan, Andy Jones, Nicholas Joseph, Ben Mann, Nova DasSarma, et al. A general language assistant as a laboratory for alignment. arXiv preprint arXiv:2112.00861, 2021.

- Bai et al. [2022] Yuntao Bai, Andy Jones, Kamal Ndousse, Amanda Askell, Anna Chen, Nova DasSarma, Dawn Drain, Stanislav Fort, Deep Ganguli, Tom Henighan, et al. Training a helpful and harmless assistant with reinforcement learning from human feedback. arXiv preprint arXiv:2204.05862, 2022.

- Borgeaud et al. [2022] Sebastian Borgeaud, Arthur Mensch, Jordan Hoffmann, Trevor Cai, Eliza Rutherford, Katie Millican, George Bm Van Den Driessche, Jean-Baptiste Lespiau, Bogdan Damoc, Aidan Clark, et al. Improving language models by retrieving from trillions of tokens. In International conference on machine learning, pages 2206–2240. PMLR, 2022.

- Chen et al. [2025] Mingyang Chen, Tianpeng Li, Haoze Sun, Yijie Zhou, Chenzheng Zhu, Haofen Wang, Jeff Z Pan, Wen Zhang, Huajun Chen, Fan Yang, et al. Research: Learning to reason with search for llms via reinforcement learning. arXiv preprint arXiv:2503.19470, 2025.

- Cheng et al. [2025] Mingyue Cheng, Yucong Luo, Jie Ouyang, Qi Liu, Huijie Liu, Li Li, Shuo Yu, Bohou Zhang, Jiawei Cao, Jie Ma, et al. A survey on knowledge-oriented retrieval-augmented generation. arXiv preprint arXiv:2503.10677, 2025.

- Chung et al. [2024] Hyung Won Chung, Le Hou, Shayne Longpre, Barret Zoph, Yi Tay, William Fedus, Yunxuan Li, Xuezhi Wang, Mostafa Dehghani, Siddhartha Brahma, et al. Scaling instruction-finetuned language models. Journal of Machine Learning Research, 25(70):1–53, 2024.

- Cormack et al. [2009] Gordon V Cormack, Charles LA Clarke, and Stefan Buettcher. Reciprocal rank fusion outperforms condorcet and individual rank learning methods. In Proceedings of the 32nd international ACM SIGIR conference on Research and development in information retrieval, pages 758–759, 2009.

- Dong et al. [2024] Guanting Dong, Chenghao Zhang, Mengjie Deng, Yutao Zhu, Zhicheng Dou, and Ji-Rong Wen. Progressive multimodal reasoning via active retrieval. arXiv preprint arXiv:2412.14835, 2024.

- Dubey et al. [2024] Abhimanyu Dubey, Abhinav Jauhri, Abhinav Pandey, Abhishek Kadian, Ahmad Al-Dahle, Aiesha Letman, Akhil Mathur, Alan Schelten, Amy Yang, Angela Fan, et al. The llama 3 herd of models. arXiv preprint arXiv:2407.21783, 2024.

- Ethayarajh et al. [2024] Kawin Ethayarajh, Winnie Xu, Niklas Muennighoff, Dan Jurafsky, and Douwe Kiela. Kto: Model alignment as prospect theoretic optimization. arXiv preprint arXiv:2402.01306, 2024.

- Fang et al. [2024] Feiteng Fang, Yuelin Bai, Shiwen Ni, Min Yang, Xiaojun Chen, and Ruifeng Xu. Enhancing noise robustness of retrieval-augmented language models with adaptive adversarial training. arXiv preprint arXiv:2405.20978, 2024.

- Fu et al. [2025] Jiayi Fu, Xuandong Zhao, Chengyuan Yao, Heng Wang, Qi Han, and Yanghua Xiao. Reward shaping to mitigate reward hacking in rlhf. arXiv preprint arXiv:2502.18770, 2025.

- Gao et al. [2024] Jingsheng Gao, Linxu Li, Weiyuan Li, Yuzhuo Fu, and Bin Dai. Smartrag: Jointly learn rag-related tasks from the environment feedback. arXiv preprint arXiv:2410.18141, 2024.

- Gao et al. [2023] Yunfan Gao, Yun Xiong, Xinyu Gao, Kangxiang Jia, Jinliu Pan, Yuxi Bi, Yi Dai, Jiawei Sun, and Haofen Wang. Retrieval-augmented generation for large language models: A survey. arXiv preprint arXiv:2312.10997, 2023.

- Guo et al. [2025] Daya Guo, Dejian Yang, Haowei Zhang, Junxiao Song, Ruoyu Zhang, Runxin Xu, Qihao Zhu, Shirong Ma, Peiyi Wang, Xiao Bi, et al. Deepseek-r1: Incentivizing reasoning capability in llms via reinforcement learning. arXiv preprint arXiv:2501.12948, 2025.

- Han et al. [2024] Binglan Han, Teo Susnjak, and Anuradha Mathrani. Automating systematic literature reviews with retrieval-augmented generation: A comprehensive overview. Applied Sciences, 14(19):9103, 2024.

- Ho et al. [2020] Xanh Ho, Anh-Khoa Duong Nguyen, Saku Sugawara, and Akiko Aizawa. Constructing a multi-hop qa dataset for comprehensive evaluation of reasoning steps. In Proceedings of the 28th International Conference on Computational Linguistics, pages 6609–6625, 2020.

- Hsu et al. [2024] Sheryl Hsu, Omar Khattab, Chelsea Finn, and Archit Sharma. Grounding by trying: Llms with reinforcement learning-enhanced retrieval. arXiv preprint arXiv:2410.23214, 2024.

- Hu et al. [2021] Edward J Hu, Yelong Shen, Phillip Wallis, Zeyuan Allen-Zhu, Yuanzhi Li, Shean Wang, Lu Wang, and Weizhu Chen. Lora: Low-rank adaptation of large language models. arXiv preprint arXiv:2106.09685, 2021.

- Hu et al. [2024] Jian Hu, Xibin Wu, Zilin Zhu, Weixun Wang, Dehao Zhang, Yu Cao, et al. Openrlhf: An easy-to-use, scalable and high-performance rlhf framework. arXiv preprint arXiv:2405.11143, 2024.

- Izacard and Grave [2021] Gautier Izacard and Édouard Grave. Leveraging passage retrieval with generative models for open domain question answering. In Proceedings of the 16th Conference of the European Chapter of the Association for Computational Linguistics: Main Volume, pages 874–880, 2021.

- Izacard et al. [2023] Gautier Izacard, Patrick Lewis, Maria Lomeli, Lucas Hosseini, Fabio Petroni, Timo Schick, Jane Dwivedi-Yu, Armand Joulin, Sebastian Riedel, and Edouard Grave. Atlas: Few-shot learning with retrieval augmented language models. Journal of Machine Learning Research, 24(251):1–43, 2023.

- Jeong et al. [2024] Soyeong Jeong, Jinheon Baek, Sukmin Cho, Sung Ju Hwang, and Jong-Cheol Park. Adaptive-rag: Learning to adapt retrieval-augmented large language models through question complexity. In 2024 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, pages 7036–7050. Association for Computational Linguistics, 2024.

- Ji et al. [2023] Ziwei Ji, Nayeon Lee, Rita Frieske, Tiezheng Yu, Dan Su, Yan Xu, Etsuko Ishii, Ye Jin Bang, Andrea Madotto, and Pascale Fung. Survey of hallucination in natural language generation. ACM Computing Surveys, 55(12):1–38, 2023.

- Jiang et al. [2025a] Pengcheng Jiang, Lang Cao, Ruike Zhu, Minhao Jiang, Yunyi Zhang, Jimeng Sun, and Jiawei Han. Ras: Retrieval-and-structuring for knowledge-intensive llm generation. arXiv preprint arXiv:2502.10996, 2025a.

- Jiang et al. [2025b] Pengcheng Jiang, Jiacheng Lin, Lang Cao, Runchu Tian, SeongKu Kang, Zifeng Wang, Jimeng Sun, and Jiawei Han. Deepretrieval: Hacking real search engines and retrievers with large language models via reinforcement learning. arXiv preprint arXiv:2503.00223, 2025b.

- Jiang et al. [2023] Zhengbao Jiang, Frank F Xu, Luyu Gao, Zhiqing Sun, Qian Liu, Jane Dwivedi-Yu, Yiming Yang, Jamie Callan, and Graham Neubig. Active retrieval augmented generation. In Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing, pages 7969–7992, 2023.

- Jiang et al. [2024] Ziyan Jiang, Xueguang Ma, and Wenhu Chen. Longrag: Enhancing retrieval-augmented generation with long-context llms. arXiv preprint arXiv:2406.15319, 2024.

- Jin et al. [2025] Bowen Jin, Hansi Zeng, Zhenrui Yue, Jinsung Yoon, Sercan Arik, Dong Wang, Hamed Zamani, and Jiawei Han. Search-r1: Training llms to reason and leverage search engines with reinforcement learning. arXiv preprint arXiv:2503.09516, 2025.

- Jin et al. [2021] Di Jin, Eileen Pan, Nassim Oufattole, Wei-Hung Weng, Hanyi Fang, and Peter Szolovits. What disease does this patient have? a large-scale open domain question answering dataset from medical exams. Applied Sciences, 11(14):6421, 2021.

- Jin et al. [2023] Qiao Jin, Won Kim, Qingyu Chen, Donald C Comeau, Lana Yeganova, W John Wilbur, and Zhiyong Lu. Medcpt: Contrastive pre-trained transformers with large-scale pubmed search logs for zero-shot biomedical information retrieval. Bioinformatics, 39(11):btad651, 2023.

- Jin et al. [2024] Zhuoran Jin, Hongbang Yuan, Tianyi Men, Pengfei Cao, Yubo Chen, Kang Liu, and Jun Zhao. Rag-rewardbench: Benchmarking reward models in retrieval augmented generation for preference alignment. arXiv preprint arXiv:2412.13746, 2024.

- Karpukhin et al. [2020] Vladimir Karpukhin, Barlas Oguz, Sewon Min, Patrick Lewis, Ledell Wu, Sergey Edunov, Danqi Chen, and Wen-tau Yih. Dense passage retrieval for open-domain question answering. In Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing (EMNLP), pages 6769–6781, 2020.

- Khot et al. [2023] Tushar Khot, Harsh Trivedi, Matthew Finlayson, Yao Fu, Kyle Richardson, Peter Clark, and Ashish Sabharwal. Decomposed prompting: A modular approach for solving complex tasks. In The Eleventh International Conference on Learning Representations, ICLR 2023, Kigali, Rwanda, May 1-5, 2023. OpenReview.net, 2023. URL https://openreview.net/forum?id=_nGgzQjzaRy.

- Lála et al. [2023] Jakub Lála, Odhran O’Donoghue, Aleksandar Shtedritski, Sam Cox, Samuel G Rodriques, and Andrew D White. Paperqa: Retrieval-augmented generative agent for scientific research. arXiv preprint arXiv:2312.07559, 2023.

- Lamba [2024] Divya Lamba. The role of prompt engineering in improving language understanding and generation. International Journal For Multidisciplinary Research, 2024. URL https://api.semanticscholar.org/CorpusID:274939741.

- Lang and Gürpinar [2025] Guido Lang and Tan Gürpinar. Ai-powered learning support: A study of retrieval-augmented generation (rag) chatbot effectiveness in an online course. Information Systems Education Journal, 23(2), 2025.

- Lewis et al. [2020] Patrick Lewis, Ethan Perez, Aleksandra Piktus, Fabio Petroni, Vladimir Karpukhin, Naman Goyal, Heinrich Küttler, Mike Lewis, Wen-tau Yih, Tim Rocktäschel, et al. Retrieval-augmented generation for knowledge-intensive nlp tasks. Advances in Neural Information Processing Systems, 33:9459–9474, 2020.

- Li et al. [2024a] Dongheng Li, Yongchang Hao, and Lili Mou. Llmr: Knowledge distillation with a large language model-induced reward. In Proceedings of the 2024 Joint International Conference on Computational Linguistics, Language Resources and Evaluation (LREC-COLING 2024), pages 10657–10664, 2024a.

- Li et al. [2025] Xiaoxi Li, Guanting Dong, Jiajie Jin, Yuyao Zhang, Yujia Zhou, Yutao Zhu, Peitian Zhang, and Zhicheng Dou. Search-o1: Agentic search-enhanced large reasoning models. arXiv preprint arXiv:2501.05366, 2025.

- Li et al. [2024b] Xingxuan Li, Weiwen Xu, Ruochen Zhao, Fangkai Jiao, Shafiq Joty, and Lidong Bing. Can we further elicit reasoning in llms? critic-guided planning with retrieval-augmentation for solving challenging tasks. arXiv preprint arXiv:2410.01428, 2024b.

- Lightman et al. [2024] Hunter Lightman, Vineet Kosaraju, Yuri Burda, Harrison Edwards, Bowen Baker, Teddy Lee, Jan Leike, John Schulman, Ilya Sutskever, and Karl Cobbe. Let’s verify step by step. In The Twelfth International Conference on Learning Representations, ICLR 2024, Vienna, Austria, May 7-11, 2024. OpenReview.net, 2024. URL https://openreview.net/forum?id=v8L0pN6EOi.

- Liu et al. [2020] Fei Liu et al. Learning to summarize from human feedback. In Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics, pages 583–592, 2020.

- Liu et al. [2025] Siru Liu, Allison B McCoy, and Adam Wright. Improving large language model applications in biomedicine with retrieval-augmented generation: a systematic review, meta-analysis, and clinical development guidelines. Journal of the American Medical Informatics Association, page ocaf008, 2025.

- Ma et al. [2024] Hao Ma, Tianyi Hu, Zhiqiang Pu, Liu Boyin, Xiaolin Ai, Yanyan Liang, and Min Chen. Coevolving with the other you: Fine-tuning llm with sequential cooperative multi-agent reinforcement learning. Advances in Neural Information Processing Systems, 37:15497–15525, 2024.

- Meng et al. [2024] Yu Meng, Mengzhou Xia, and Danqi Chen. Simpo: Simple preference optimization with a reference-free reward. Advances in Neural Information Processing Systems, 37:124198–124235, 2024.

- Nguyen et al. [2024] Thang Nguyen, Peter Chin, and Yu-Wing Tai. Reward-rag: Enhancing rag with reward driven supervision. arXiv preprint arXiv:2410.03780, 2024.

- Ouyang et al. [2022] Long Ouyang, Jeffrey Wu, Xu Jiang, Diogo Almeida, Carroll Wainwright, Pamela Mishkin, Chong Zhang, Sandhini Agarwal, Katarina Slama, Alex Ray, et al. Training language models to follow instructions with human feedback. Advances in neural information processing systems, 35:27730–27744, 2022.

- Pipitone and Alami [2024] Nicholas Pipitone and Ghita Houir Alami. Legalbench-rag: A benchmark for retrieval-augmented generation in the legal domain. arXiv preprint arXiv:2408.10343, 2024.

- Press et al. [2023] Ofir Press, Muru Zhang, Sewon Min, Ludwig Schmidt, Noah A Smith, and Mike Lewis. Measuring and narrowing the compositionality gap in language models. In Findings of the Association for Computational Linguistics: EMNLP 2023, pages 5687–5711, 2023.

- Qian et al. [2025] Cheng Qian, Emre Can Acikgoz, Qi He, Hongru Wang, Xiusi Chen, Dilek Hakkani-Tür, Gokhan Tur, and Heng Ji. Toolrl: Reward is all tool learning needs. arXiv preprint arXiv:2504.13958, 2025.

- Rafailov et al. [2023] Rafael Rafailov, Archit Sharma, Eric Mitchell, Christopher D Manning, Stefano Ermon, and Chelsea Finn. Direct preference optimization: Your language model is secretly a reward model. Advances in Neural Information Processing Systems, 36:53728–53741, 2023.

- Ram et al. [2023] Ori Ram, Yoav Levine, Itay Dalmedigos, Dor Muhlgay, Amnon Shashua, Kevin Leyton-Brown, and Yoav Shoham. In-context retrieval-augmented language models. Transactions of the Association for Computational Linguistics, 11:1316–1331, 2023.

- Robertson et al. [2009] Stephen Robertson, Hugo Zaragoza, et al. The probabilistic relevance framework: Bm25 and beyond. Foundations and Trends® in Information Retrieval, 3(4):333–389, 2009.

- Sahoo et al. [2024] Satya S Sahoo, Joseph M Plasek, Hua Xu, Özlem Uzuner, Trevor Cohen, Meliha Yetisgen, Hongfang Liu, Stéphane Meystre, and Yanshan Wang. Large language models for biomedicine: foundations, opportunities, challenges, and best practices. Journal of the American Medical Informatics Association, page ocae074, 2024.

- Schulman et al. [2017] John Schulman, Filip Wolski, Prafulla Dhariwal, Alec Radford, and Oleg Klimov. Proximal policy optimization algorithms. arXiv preprint arXiv:1707.06347, 2017.

- Shao et al. [2023] Zhihong Shao, Yeyun Gong, Yelong Shen, Minlie Huang, Nan Duan, and Weizhu Chen. Enhancing retrieval-augmented large language models with iterative retrieval-generation synergy. In Findings of the Association for Computational Linguistics: EMNLP 2023, pages 9248–9274, 2023.

- Shao et al. [2024] Zhihong Shao, Peiyi Wang, Qihao Zhu, Runxin Xu, Junxiao Song, Xiao Bi, Haowei Zhang, Mingchuan Zhang, YK Li, Y Wu, et al. Deepseekmath: Pushing the limits of mathematical reasoning in open language models. arXiv preprint arXiv:2402.03300, 2024.

- Shi et al. [2025] Yucheng Shi, Tianze Yang, Canyu Chen, Quanzheng Li, Tianming Liu, Xiang Li, and Ninghao Liu. Searchrag: Can search engines be helpful for llm-based medical question answering? arXiv preprint arXiv:2502.13233, 2025.

- Shi et al. [2024] Zhengliang Shi, Shuo Zhang, Weiwei Sun, Shen Gao, Pengjie Ren, Zhumin Chen, and Zhaochun Ren. Generate-then-ground in retrieval-augmented generation for multi-hop question answering. In Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 7339–7353, 2024.

- Shinn et al. [2024] Noah Shinn, Federico Cassano, Ashwin Gopinath, Karthik Narasimhan, and Shunyu Yao. Reflexion: Language agents with verbal reinforcement learning. Advances in Neural Information Processing Systems, 36, 2024.

- Shuster et al. [2021] Kurt Shuster, Spencer Poff, Moya Chen, Douwe Kiela, and Jason Weston. Retrieval augmentation reduces hallucination in conversation. In Findings of the Association for Computational Linguistics: EMNLP 2021, pages 3784–3803, 2021.

- Skalse et al. [2022] Joar Skalse, Nikolaus Howe, Dmitrii Krasheninnikov, and David Krueger. Defining and characterizing reward gaming. Advances in Neural Information Processing Systems, 35:9460–9471, 2022.

- Skarlinski et al. [2024] Michael D Skarlinski, Sam Cox, Jon M Laurent, James D Braza, Michaela Hinks, Michael J Hammerling, Manvitha Ponnapati, Samuel G Rodriques, and Andrew D White. Language agents achieve superhuman synthesis of scientific knowledge. arXiv preprint arXiv:2409.13740, 2024.

- Song et al. [2025] Huatong Song, Jinhao Jiang, Yingqian Min, Jie Chen, Zhipeng Chen, Wayne Xin Zhao, Lei Fang, and Ji-Rong Wen. R1-searcher: Incentivizing the search capability in llms via reinforcement learning. arXiv preprint arXiv:2503.05592, 2025.

- Subramonyam et al. [2025] Hari Subramonyam, Divy Thakkar, Andrew Ku, Juergen Dieber, and Anoop K Sinha. Prototyping with prompts: Emerging approaches and challenges in generative ai design for collaborative software teams. In Proceedings of the 2025 CHI Conference on Human Factors in Computing Systems, pages 1–22, 2025.

- Sun et al. [2025] Zhongxiang Sun, Qipeng Wang, Weijie Yu, Xiaoxue Zang, Kai Zheng, Jun Xu, Xiao Zhang, Song Yang, and Han Li. Rearter: Retrieval-augmented reasoning with trustworthy process rewarding. arXiv preprint arXiv:2501.07861, 2025.

- Swacha and Gracel [2025] Jakub Swacha and Michał Gracel. Retrieval-augmented generation (rag) chatbots for education: A survey of applications. Applied Sciences, 15(8):4234, 2025.

- Trivedi et al. [2023] Harsh Trivedi, Niranjan Balasubramanian, Tushar Khot, and Ashish Sabharwal. Interleaving retrieval with chain-of-thought reasoning for knowledge-intensive multi-step questions. In Proceedings of the 61st Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 10014–10037, 2023.

- Vaswani et al. [2017] Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N Gomez, Łukasz Kaiser, and Illia Polosukhin. Attention is all you need. Advances in neural information processing systems, 30, 2017.

- von Werra et al. [2020] Leandro von Werra, Younes Belkada, Lewis Tunstall, Edward Beeching, Tristan Thrush, Nathan Lambert, Shengyi Huang, Kashif Rasul, and Quentin Gallouédec. Trl: Transformer reinforcement learning. https://github.com/huggingface/trl, 2020.

- Wang et al. [2023] Keheng Wang, Feiyu Duan, Sirui Wang, Peiguang Li, Yunsen Xian, Chuantao Yin, Wenge Rong, and Zhang Xiong. Knowledge-driven cot: Exploring faithful reasoning in llms for knowledge-intensive question answering. arXiv preprint arXiv:2308.13259, 2023.

- Wang et al. [2024a] Peiyi Wang, Lei Li, Zhihong Shao, Runxin Xu, Damai Dai, Yifei Li, Deli Chen, Yu Wu, and Zhifang Sui. Math-shepherd: Verify and reinforce llms step-by-step without human annotations. In Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 9426–9439, 2024a.

- Wang et al. [2024b] Yuxia Wang, Minghan Wang, Muhammad Arslan Manzoor, Fei Liu, Georgi Georgiev, Rocktim Das, and Preslav Nakov. Factuality of large language models: A survey. In Proceedings of the 2024 Conference on Empirical Methods in Natural Language Processing, pages 19519–19529, 2024b.

- Wang et al. [2024c] Zihao Wang, Anji Liu, Haowei Lin, Jiaqi Li, Xiaojian Ma, and Yitao Liang. Rat: Retrieval augmented thoughts elicit context-aware reasoning in long-horizon generation. arXiv preprint arXiv:2403.05313, 2024c.

- Wang et al. [2024d] Zilong Wang, Zifeng Wang, Long Le, Huaixiu Steven Zheng, Swaroop Mishra, Vincent Perot, Yuwei Zhang, Anush Mattapalli, Ankur Taly, Jingbo Shang, et al. Speculative rag: Enhancing retrieval augmented generation through drafting. arXiv preprint arXiv:2407.08223, 2024d.

- Wei et al. [2022] Jason Wei, Xuezhi Wang, Dale Schuurmans, Maarten Bosma, Fei Xia, Ed Chi, Quoc V Le, Denny Zhou, et al. Chain-of-thought prompting elicits reasoning in large language models. Advances in neural information processing systems, 35:24824–24837, 2022.

- Wei et al. [2025] Zhepei Wei, Wei-Lin Chen, and Yu Meng. InstructRAG: Instructing retrieval-augmented generation via self-synthesized rationales. In The Thirteenth International Conference on Learning Representations, 2025. URL https://openreview.net/forum?id=P1qhkp8gQT.

- Wiratunga et al. [2024] Nirmalie Wiratunga, Ramitha Abeyratne, Lasal Jayawardena, Kyle Martin, Stewart Massie, Ikechukwu Nkisi-Orji, Ruvan Weerasinghe, Anne Liret, and Bruno Fleisch. Cbr-rag: case-based reasoning for retrieval augmented generation in llms for legal question answering. In International Conference on Case-Based Reasoning, pages 445–460. Springer, 2024.

- Xiao et al. [2023] Shitao Xiao, Zheng Liu, Peitian Zhang, and Niklas Muennighoff. C-pack: Packaged resources to advance general chinese embedding, 2023.