# Hallucination Detection in LLMs Using Spectral Features of Attention Maps

**Authors**:

- Jakub Binkowski¹, Denis Janiak¹, Albert Sawczyn¹, Bogdan Gabrys², Tomasz Kajdanowicz¹

- ¹ Wroclaw University of Science and Technology, ² University of Technology Sydney

- Correspondence: jakub.binkowski@pwr.edu.pl

Abstract

Large Language Models (LLMs) have demonstrated remarkable performance across various tasks but remain prone to hallucinations. Detecting hallucinations is essential for safety-critical applications, and recent methods leverage attention map properties to this end, though their effectiveness remains limited. In this work, we investigate the spectral features of attention maps by interpreting them as adjacency matrices of graph structures. We propose the $\operatorname{LapEigvals}$ method, which utilizes the top-$k$ eigenvalues of the Laplacian matrix derived from the attention maps as an input to hallucination detection probes. Empirical evaluations demonstrate that our approach achieves state-of-the-art hallucination detection performance among attention-based methods. Extensive ablation studies further highlight the robustness and generalization of $\operatorname{LapEigvals}$, paving the way for future advancements in the hallucination detection domain.

1 Introduction

The recent surge of interest in Large Language Models (LLMs), driven by their impressive performance across various tasks, has led to significant advancements in their training, fine-tuning, and application to real-world problems. Despite progress, many challenges remain unresolved, particularly in safety-critical applications with a high cost of errors. A significant issue is that LLMs are prone to hallucinations, i.e. generating "content that is nonsensical or unfaithful to the provided source content" (Farquhar et al., 2024; Huang et al., 2023). Since eliminating hallucinations is impossible (Lee, 2023; Xu et al., 2024), there is a pressing need for methods to detect when a model produces hallucinations. In addition, examining the internal behavior of LLMs in the context of hallucinations may yield important insights into their characteristics and support further advancements in the field. Recent studies have shown that hallucinations can be detected using internal states of the model, e.g., hidden states (Chen et al., 2024) or attention maps (Chuang et al., 2024a), and that LLMs can internally "know when they do not know" (Azaria and Mitchell, 2023; Orgad et al., 2025). We show that spectral features of attention maps coincide with hallucinations and, building on this observation, propose a novel method for their detection.

As highlighted by Barbero et al. (2024), attention maps can be viewed as weighted adjacency matrices of graphs. Building on this perspective, we performed a statistical analysis and demonstrated that the eigenvalues of a Laplacian matrix derived from attention maps serve as good predictors of hallucinations. We propose the $\operatorname{LapEigvals}$ method, which utilizes the top-$k$ eigenvalues of the Laplacian as input features of a probing model to detect hallucinations. We share the full implementation in a public repository: https://github.com/graphml-lab-pwr/lapeigvals.

We summarize our contributions as follows:

1. We perform statistical analysis of the Laplacian matrix derived from attention maps and show that it could serve as a better predictor of hallucinations compared to the previous method relying on the log-determinant of the maps.

2. Building on that analysis and advancements in the graph-processing domain, we propose leveraging the top-$k$ eigenvalues of the Laplacian matrix as features for hallucination detection probes and empirically show that it achieves state-of-the-art performance among attention-based approaches.

3. Through extensive ablation studies, we demonstrate properties, robustness and generalization of $\operatorname{LapEigvals}$ and suggest promising directions for further development.

2 Motivation

<details>

<summary>x1.png Details</summary>

### Visual Description

## Heatmaps: AttnScore vs. Laplacian Eigenvalues

### Overview

The image presents two heatmaps side-by-side, visualizing "AttnScore" and "Laplacian Eigenvalues." Both heatmaps share the same axes: "Layer Index" (vertical) and "Head Index" (horizontal), ranging from 0 to 28. The color intensity in each heatmap represents a "p-value," as indicated by the colorbar on the right, ranging from 0.0 (dark purple) to 0.8 (light orange).

### Components/Axes

* **Titles:**

* Left Heatmap: "AttnScore"

* Right Heatmap: "Laplacian Eigenvalues"

* **Axes:**

* Vertical Axis (both heatmaps): "Layer Index," with ticks at 0, 4, 8, 12, 16, 20, 24, and 28.

* Horizontal Axis (both heatmaps): "Head Index," with ticks at 0, 4, 8, 12, 16, 20, 24, and 28.

* **Colorbar (right side):**

* Label: "p-value"

* Scale: Ranges from 0.0 (dark purple) to 0.8 (light orange), with ticks at 0.0, 0.2, 0.4, 0.6, and 0.8.

### Detailed Analysis

**AttnScore Heatmap (Left):**

* The heatmap displays the p-values for AttnScore across different layers and heads.

* There is a distribution of p-values across the layers and heads.

* Layer 20, Head 4 has a p-value of approximately 0.6.

* Layer 12, Head 12 has a p-value of approximately 0.4.

* Layer 4, Head 4 has a p-value of approximately 0.8.

**Laplacian Eigenvalues Heatmap (Right):**

* The heatmap displays the p-values for Laplacian Eigenvalues across different layers and heads.

* The distribution of p-values appears less dense compared to the AttnScore heatmap.

* Layer 0, Head 4 has a p-value of approximately 0.6.

* Layer 28, Head 20 has a p-value of approximately 0.4.

* Layer 4, Head 28 has a p-value of approximately 0.8.

### Key Observations

* Both heatmaps show the distribution of p-values across different layers and heads.

* The AttnScore heatmap appears to have a higher density of non-zero p-values compared to the Laplacian Eigenvalues heatmap.

* The p-values range from 0.0 to 0.8 in both heatmaps.

### Interpretation

The heatmaps visualize the $p$-values obtained for AttnScore and Laplacian Eigenvalues across the layers and heads of the model. Lower p-values indicate a statistically significant difference in feature values between hallucinated and non-hallucinated examples, while higher p-values indicate no significant difference. The AttnScore heatmap contains more cells with high p-values than the Laplacian Eigenvalues heatmap, which suggests that Laplacian eigenvalues differ significantly in more layer-head combinations and may therefore be better predictors of hallucinations.

</details>

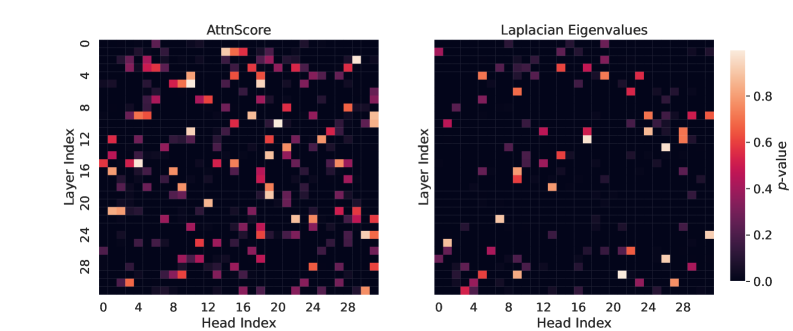

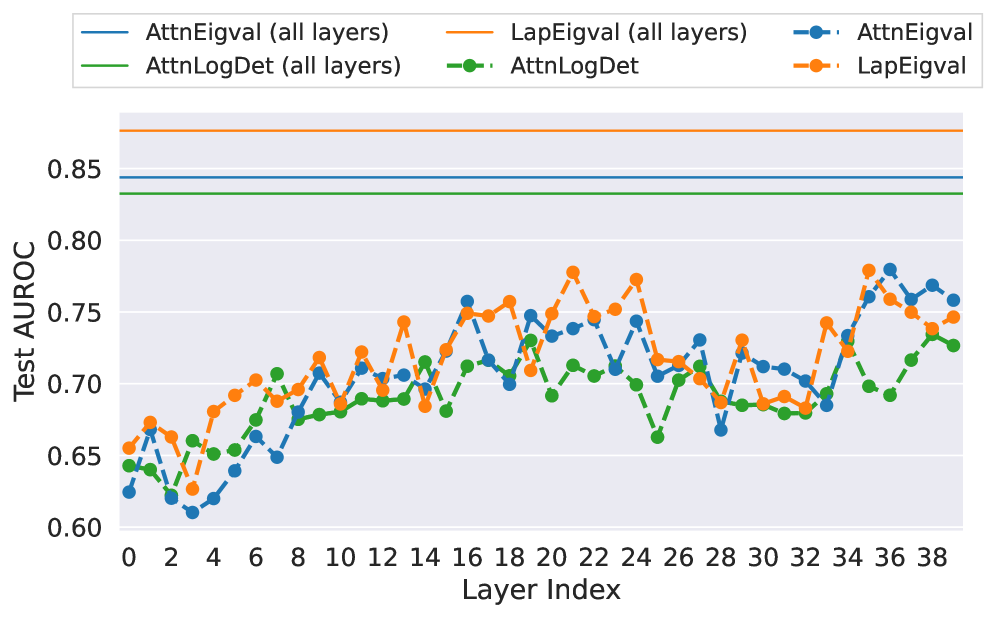

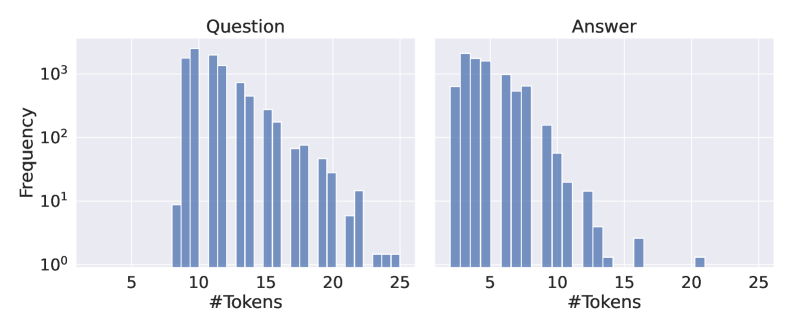

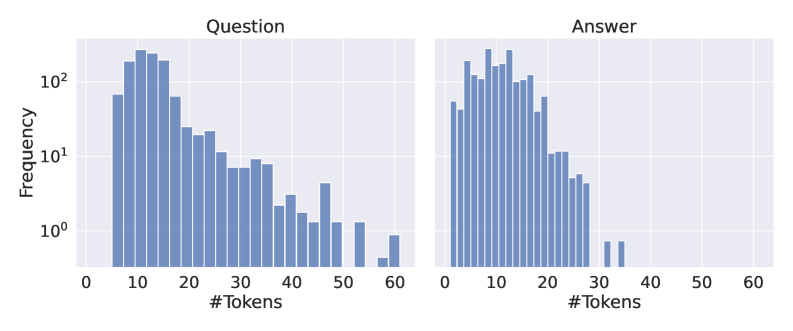

Figure 1: Visualization of $p$ -values from the two-sided Mann-Whitney U test for all layers and heads of Llama-3.1-8B across two feature types: $\operatorname{AttentionScore}$ and the $k{=}10$ Laplacian eigenvalues. These features were derived from attention maps collected when the LLM answered questions from the TriviaQA dataset. Higher $p$ -values indicate no significant difference in feature values between hallucinated and non-hallucinated examples. For $\operatorname{AttentionScore}$ , $80\%$ of heads have $p<0.05$ , while for Laplacian eigenvalues, this percentage is $91\%$ . Therefore, Laplacian eigenvalues may be better predictors of hallucinations, as feature values across more heads exhibit statistically significant differences between hallucinated and non-hallucinated examples.

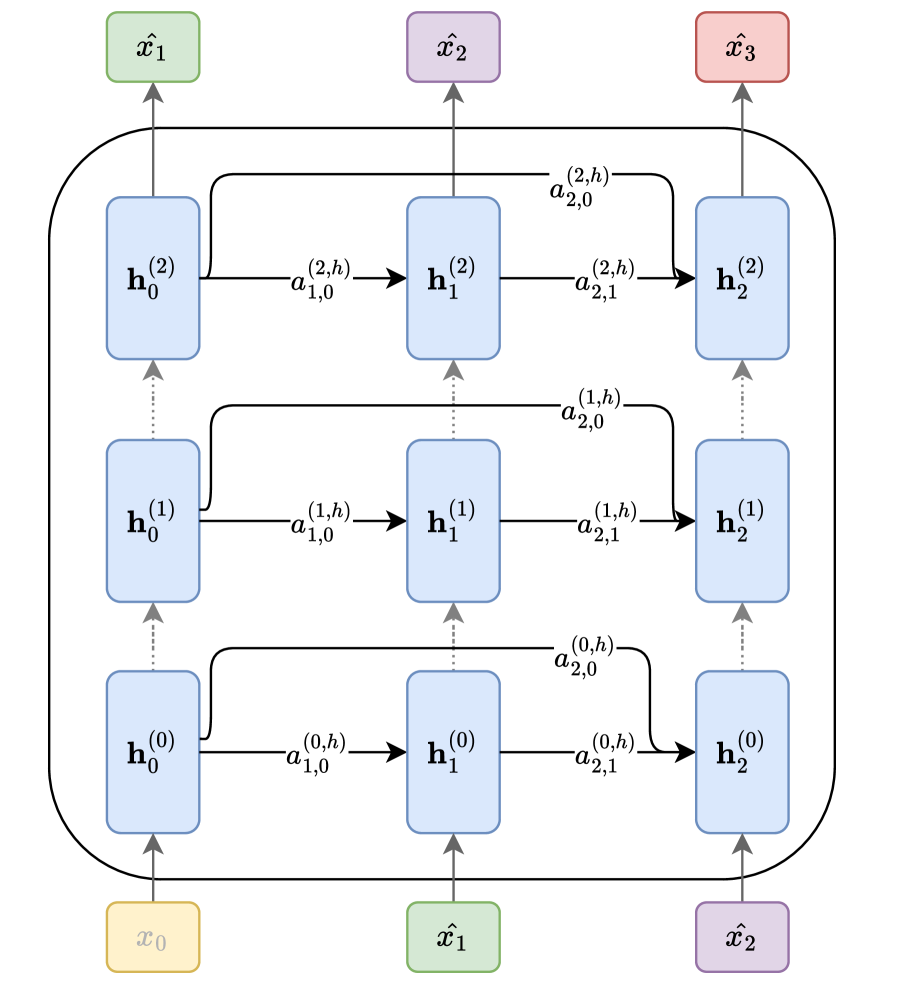

Considering the attention matrix as an adjacency matrix representing a set of Markov chains, each corresponding to one layer of an LLM (Wu et al., 2024) (see Figure 2), we can leverage its spectral properties, as was done in many successful graph-based methods (Mohar, 1997; von Luxburg, 2007; Bruna et al., 2013; Topping et al., 2022). In particular, it was shown that the graph Laplacian might help to describe several graph properties, like the presence of bottlenecks (Topping et al., 2022; Black et al., 2023). We hypothesize that hallucinations may arise from disruptions in information flow, such as bottlenecks, which could be detected through the graph Laplacian.

To assess whether our hypothesis holds, we computed graph spectral features and verified whether they coincide with hallucinations more strongly than the previous attention-based method, $\operatorname{AttentionScore}$ (Sriramanan et al., 2024). We prompted an LLM with questions from the TriviaQA dataset (Joshi et al., 2017) and extracted attention maps, differentiating by layers and heads. We then computed the spectral features, i.e., the 10 largest eigenvalues of the Laplacian matrix from each head and layer. Further, we conducted a two-sided Mann-Whitney U test (Mann and Whitney, 1947) to check whether the Laplacian eigenvalues and the values of $\operatorname{AttentionScore}$ differ between hallucinated and non-hallucinated examples. Figure 1 shows $p$-values for all layers and heads, indicating that $\operatorname{AttentionScore}$ often results in higher $p$-values compared to Laplacian eigenvalues. Overall, we studied 7 datasets and 5 LLMs and found similar results (see Appendix A). Based on these findings, we propose leveraging top-$k$ Laplacian eigenvalues as features for a hallucination probe.
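The per-head significance test described above can be sketched as follows. This is a minimal illustration on synthetic data, not the authors' analysis code: the function name `head_p_values`, the array layout, and the reduction to one scalar feature per head are assumptions made for the example (how the 10 eigenvalues per head enter the test is not specified here).

```python
import numpy as np
from scipy.stats import mannwhitneyu


def head_p_values(features, is_hallucination):
    """Two-sided Mann-Whitney U test per (layer, head).

    features: array (n_examples, n_layers, n_heads), one scalar feature per
              head (hypothetical layout, chosen for illustration).
    is_hallucination: boolean array (n_examples,).
    Returns an array of p-values with shape (n_layers, n_heads).
    """
    features = np.asarray(features, dtype=float)
    labels = np.asarray(is_hallucination, dtype=bool)
    n_layers, n_heads = features.shape[1:]
    p_values = np.empty((n_layers, n_heads))
    for layer in range(n_layers):
        for head in range(n_heads):
            hallucinated = features[labels, layer, head]
            faithful = features[~labels, layer, head]
            result = mannwhitneyu(hallucinated, faithful, alternative="two-sided")
            p_values[layer, head] = result.pvalue
    return p_values


# Synthetic data: only head (0, 0) is shifted for hallucinated examples.
rng = np.random.default_rng(0)
labels = np.arange(200) % 2 == 0
features = rng.normal(size=(200, 2, 3))
features[labels, 0, 0] += 2.0

p_values = head_p_values(features, labels)
# Share of heads whose feature differs significantly between the two groups.
fraction_significant = float((p_values < 0.05).mean())
print(p_values.round(3), fraction_significant)
```

The fraction computed at the end corresponds to the kind of summary reported in the caption of Figure 1 (share of heads with $p<0.05$).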

<details>

<summary>x2.png Details</summary>

### Visual Description

## Diagram: Autoregressive LLM Inference as an Attention Graph

### Overview

The image depicts autoregressive inference in an LLM as a graph with three layers and three token positions. It illustrates the flow of information between hidden states and input/output tokens, with attention-weighted connections within each layer and connections between consecutive layers.

### Components/Axes

* **Nodes:**

* Input nodes: x₀ (yellow), x̂₁ (green), x̂₂ (purple) at the bottom.

* Output nodes: x̂₁ (green), x̂₂ (purple), x̂₃ (red) at the top.

* Hidden state nodes: h₀⁽⁰⁾, h₁⁽⁰⁾, h₂⁽⁰⁾, h₀⁽¹⁾, h₁⁽¹⁾, h₂⁽¹⁾, h₀⁽²⁾, h₁⁽²⁾, h₂⁽²⁾ (all light blue).

* **Connections:**

* Vertical dotted arrows: Represent connections between layers at the same time step.

* Horizontal solid arrows: Represent attention-weighted connections between token positions within the same layer.

* Solid arrows from input nodes to the first layer of hidden states.

* Solid arrows from the last layer of hidden states to the output nodes.

* **Labels:**

* a₁,(i,h) labels the horizontal arrows from left to center

* a₂,(i,h) labels the horizontal arrows from center to right

* a₂,(i,0) labels the curved arrows from left to right

### Detailed Analysis

* **Layer 0 (Bottom):**

* Input: x₀ (yellow) connects to h₀⁽⁰⁾.

* Hidden states: h₀⁽⁰⁾ connects to h₁⁽⁰⁾ via a₁,(0,h), and h₁⁽⁰⁾ connects to h₂⁽⁰⁾ via a₂,(0,h).

* h₀⁽⁰⁾ connects to h₀⁽¹⁾ in the layer above via a dotted arrow.

* h₁⁽⁰⁾ connects to h₁⁽¹⁾ in the layer above via a dotted arrow.

* h₂⁽⁰⁾ connects to h₂⁽¹⁾ in the layer above via a dotted arrow.

* **Layer 1 (Middle):**

* Hidden states: h₀⁽¹⁾ connects to h₁⁽¹⁾ via a₁,(1,h), and h₁⁽¹⁾ connects to h₂⁽¹⁾ via a₂,(1,h).

* h₀⁽¹⁾ connects to h₀⁽²⁾ in the layer above via a dotted arrow.

* h₁⁽¹⁾ connects to h₁⁽²⁾ in the layer above via a dotted arrow.

* h₂⁽¹⁾ connects to h₂⁽²⁾ in the layer above via a dotted arrow.

* **Layer 2 (Top):**

* Hidden states: h₀⁽²⁾ connects to h₁⁽²⁾ via a₁,(2,h), and h₁⁽²⁾ connects to h₂⁽²⁾ via a₂,(2,h).

* Output: h₀⁽²⁾ connects to x̂₁ (green), h₁⁽²⁾ connects to x̂₂ (purple), and h₂⁽²⁾ connects to x̂₃ (red).

* **Curved Arrows:**

* h₀⁽⁰⁾ connects to h₂⁽¹⁾ via a curved arrow labeled a₂,(0,0).

* h₀⁽¹⁾ connects to h₂⁽²⁾ via a curved arrow labeled a₂,(1,0).

* h₀⁽²⁾ connects to h₂⁽²⁾ via a curved arrow labeled a₂,(2,0).

* **Input/Output Mapping:**

* x₀ (yellow) -> h₀⁽⁰⁾ -> ... -> x̂₁ (green)

* x̂₁ (green) -> h₁⁽⁰⁾ -> ... -> x̂₂ (purple)

* x̂₂ (purple) -> h₂⁽⁰⁾ -> ... -> x̂₃ (red)

### Key Observations

* The diagram shows attention-weighted connections between token positions within each layer and connections between consecutive layers.

* The hidden state at each token position is influenced by the input token at that position and by the hidden states at preceding positions.

* The output token at each position is determined by the top-layer hidden state at that position.

* Each generated token (x̂₁, x̂₂) is fed back as the input at the next position.

* The diagram shows three layers and three token positions, but the structure extends to more layers and more tokens.

### Interpretation

The diagram represents a simplified view of autoregressive inference for a single attention head. Each hidden state is a node, and the attention scores labeling the arrows weight the edges along which information flows from earlier token positions to later ones. Viewed this way, the attention map of a given layer and head acts as a weighted adjacency matrix of a directed graph over the processed tokens.

</details>

Figure 2: The autoregressive inference process in an LLM is depicted as a graph for a single attention head $h$ (as introduced by Vaswani, 2017) and three generated tokens ($\hat{x}_{1},\hat{x}_{2},\hat{x}_{3}$). Here, $\mathbf{h}^{(l)}_{i}$ represents the hidden state at layer $l$ for the input token $i$, while $a^{(l,h)}_{i,j}$ denotes the scalar attention score between tokens $i$ and $j$ at layer $l$ and attention head $h$. Arrow direction indicates information flow during inference.

3 Method

<details>

<summary>x3.png Details</summary>

### Visual Description

## Diagram: Hallucination Detection Pipeline

### Overview

The image is a flowchart illustrating a pipeline for detecting hallucinations in a Large Language Model (LLM). It shows the flow of data and processes from a QA Dataset to a Hallucination Probe.

### Components/Axes

* **Shapes:** The diagram uses rectangles and parallelograms to represent different stages or components. Rectangles with rounded corners are used for processes, while parallelograms are used for data.

* **Colors:** The diagram uses three colors: yellow, blue, and green. Yellow is used for the initial dataset, blue for LLM-related components, and green for intermediate data or labels.

* **Lines:** Solid lines indicate the primary flow of data, while dashed lines indicate secondary or feedback paths.

* **Text Labels:** Each shape is labeled with a descriptive name.

### Detailed Analysis

1. **QA Dataset:** A yellow rectangle on the left labeled "QA Dataset" serves as the starting point.

2. **LLM:** A blue rectangle labeled "LLM" is positioned to the right of the QA Dataset. A solid arrow connects the QA Dataset to the LLM.

3. **Answers:** A green parallelogram labeled "Answers" is positioned below the LLM. A dashed arrow connects the LLM to the Answers.

4. **Attention Maps:** A green parallelogram labeled "Attention Maps" is positioned to the right of the LLM. A solid arrow connects the LLM to the Attention Maps.

5. **Judge LLM:** A blue rectangle labeled "Judge LLM" is positioned to the right of the Answers. A dashed arrow connects the Answers to the Judge LLM.

6. **Hallucination Labels:** A green parallelogram labeled "Hallucination Labels" is positioned to the right of the Judge LLM. A dashed arrow connects the Judge LLM to the Hallucination Labels.

7. **Feature Extraction (LapEigvals):** A blue rectangle labeled "Feature Extraction (LapEigvals)" is positioned to the right of the Attention Maps. A solid arrow connects the Attention Maps to the Feature Extraction.

8. **Hallucination Probe (logistic regression):** A blue rectangle labeled "Hallucination Probe (logistic regression)" is positioned to the right of the Feature Extraction and above the Hallucination Labels. A solid arrow connects the Feature Extraction to the Hallucination Probe. A dashed arrow connects the Hallucination Labels to the Hallucination Probe.

9. **Feedback Loop:** A dashed arrow connects the Hallucination Labels back to the Judge LLM. A dashed arrow connects the Hallucination Labels back to the QA Dataset.

### Key Observations

* The pipeline starts with a QA Dataset and uses an LLM to generate answers and attention maps.

* Feature extraction is performed on the attention maps using LapEigvals.

* A Judge LLM evaluates the answers and generates hallucination labels.

* A Hallucination Probe, using logistic regression, combines the extracted features and hallucination labels to detect hallucinations.

* There is a feedback loop from the hallucination labels back to the Judge LLM and the QA Dataset.

### Interpretation

The diagram illustrates a method for detecting hallucinations in LLMs. The process involves generating answers and attention maps, extracting features from the attention maps, and using a Judge LLM to provide hallucination labels. These elements are then combined in a Hallucination Probe to identify instances of hallucination. The feedback loop suggests an iterative process where the hallucination labels are used to refine the Judge LLM and potentially improve the QA Dataset. The use of LapEigvals for feature extraction from attention maps indicates a focus on structural or relational aspects of the attention patterns.

</details>

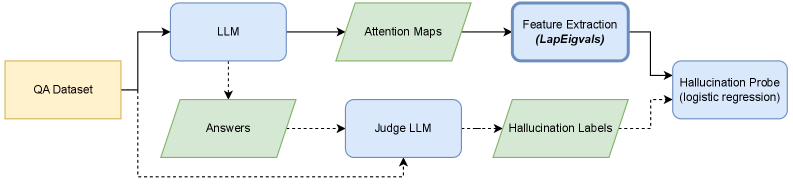

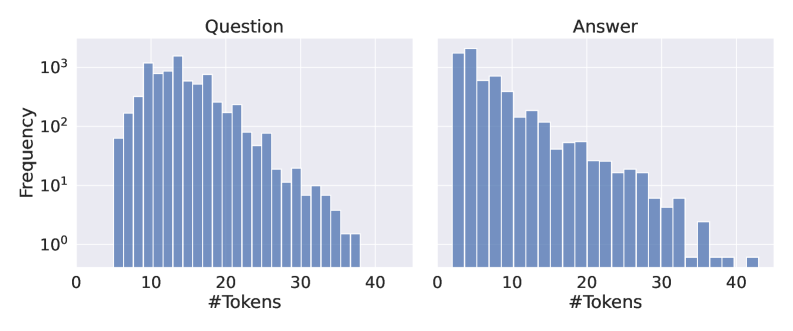

Figure 3: Overview of the methodology used in this work. Solid lines indicate the test-time pipeline, while dashed lines represent additional pipeline steps for generating labels for training the hallucination probe (logistic regression). The primary contribution of this work is leveraging the top- $k$ eigenvalues of the Laplacian as features for the hallucination probe, highlighted with a bold box on the diagram.

In our method, we train a hallucination probe using only attention maps, which we extracted during LLM inference, as illustrated in Figure 2. The attention map is a matrix containing attention scores for all tokens processed during inference, while the hallucination probe is a logistic regression model that uses features derived from attention maps as input. This work’s core contribution is using the top- $k$ eigenvalues of the Laplacian matrix as input features, which we detail below.

Denote $\mathbf{A}^{(l,h)}\in\mathbb{R}^{T\times T}$ as the attention map matrix for layer $l\in\{1,\dots,L\}$ and attention head $h\in\{1,\dots,H\}$, where $T$ is the total number of tokens generated by an LLM (including input tokens), $L$ the number of layers (transformer blocks), and $H$ the number of attention heads. The attention matrix is row-stochastic, meaning each row sums to 1 ($\sum_{j=0}^{T-1}a^{(l,h)}_{ij}=1$ for all $i$). It is also lower triangular ($a^{(l,h)}_{ij}=0$ for all $j>i$) and non-negative ($a^{(l,h)}_{ij}\geq 0$ for all $i,j$). We can view $\mathbf{A}^{(l,h)}$ as a weighted adjacency matrix of a directed graph, where each node represents a processed token, and each directed edge from token $i$ to token $j$ is weighted by the attention score, as depicted in Figure 2.

Then, we define the Laplacian of a layer $l$ and attention head $h$ as:

$$

\mathbf{L}^{(l,h)}=\mathbf{D}^{(l,h)}-\mathbf{A}^{(l,h)}, \tag{1}

$$

where $\mathbf{D}^{(l,h)}$ is a diagonal degree matrix. Since the attention map defines a directed graph, we distinguish between the in-degree and out-degree matrices. The in-degree is computed as the sum of attention scores from preceding tokens, and due to the softmax normalization, it is uniformly 1. Therefore, we define $\mathbf{D}^{(l,h)}$ as the out-degree matrix, which quantifies the total attention a token receives from tokens that follow it. To ensure these values remain independent of the sequence length, we normalize them by the number of subsequent tokens (i.e., the number of outgoing edges).

$$

d^{(l,h)}_{ii}=\frac{\sum_{u}{a^{(l,h)}_{ui}}}{T-i}, \tag{2}

$$

where $i,u\in\{0,\dots,T-1\}$ denote token indices. The Laplacian defined this way is bounded, i.e., $\mathbf{L}^{(l,h)}_{ij}\in\left[-1,1\right]$ (see Appendix B for proofs). Intuitively, the resulting Laplacian for each processed token represents the average attention score to previous tokens reduced by the attention score to itself. As eigenvalues of the Laplacian can summarize information flow in a graph (von Luxburg, 2007; Topping et al., 2022), we take eigenvalues of $\mathbf{L}^{(l,h)}$, which are diagonal entries due to the lower triangularity of the Laplacian matrix, and sort them:

$$

\tilde{z}^{(l,h)}=\operatorname{sort}\left(\operatorname{diag}\left(\mathbf{L}^{(l,h)}\right)\right) \tag{3}

$$
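Equations (1) to (3) can be illustrated with a short NumPy sketch. It assumes a single causal attention map given as a dense array and an ascending sort; the helper names `laplacian_eigenvalues` and `random_causal_attention` are hypothetical and do not come from the authors' repository.

```python
import numpy as np


def laplacian_eigenvalues(attn):
    """Sorted eigenvalues of L = D - A for one causal attention map.

    attn: (T, T) lower-triangular, row-stochastic attention matrix.
    Returns the eigenvalues in ascending order (sort order is an assumption).
    """
    attn = np.asarray(attn, dtype=float)
    T = attn.shape[0]
    # Eq. (2): column sums (attention received by token i), divided by T - i.
    out_degree = attn.sum(axis=0) / (T - np.arange(T))
    # L is lower triangular, so its eigenvalues are its diagonal: d_ii - a_ii.
    return np.sort(out_degree - np.diag(attn))


def random_causal_attention(T, seed=0):
    """Softmax over random logits with a causal mask (synthetic test input)."""
    rng = np.random.default_rng(seed)
    logits = rng.normal(size=(T, T))
    causal = np.tril(np.ones((T, T), dtype=bool))
    logits = np.where(causal, logits, -np.inf)
    weights = np.exp(logits - logits.max(axis=1, keepdims=True))
    return weights / weights.sum(axis=1, keepdims=True)


# Hand-checkable case with T = 2:
# d_00 = (1.0 + 0.25) / 2 = 0.625, d_11 = 0.75 / 1 = 0.75
# diag(L) = [0.625 - 1.0, 0.75 - 0.75] = [-0.375, 0.0]
A = np.array([[1.0, 0.0], [0.25, 0.75]])
small = laplacian_eigenvalues(A)

attn = random_causal_attention(6)
eigvals = laplacian_eigenvalues(attn)
print(small, eigvals)
```

Because only the diagonal of the Laplacian is needed, no eigendecomposition is performed; the cost per head is linear in the number of attention entries.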

Recently, Zhu et al. (2024) found that features from the entire token sequence, rather than a single token, improve hallucination detection. Similarly, Kim et al. (2024) demonstrated that information from all layers, instead of a single one in isolation, yields better results on this task. Motivated by these findings, our method uses features from all tokens and all layers as input to the probe. Therefore, we take the top-$k$ largest values from each head and layer and concatenate them into a single feature vector $z$, where $k$ is a hyperparameter of our method:

$$

z=\mathop{\Big\|}\limits_{l\in\{1,\dots,L\},\,h\in\{1,\dots,H\}}\left[\tilde{z}^{(l,h)}_{T-1},\tilde{z}^{(l,h)}_{T-2},\dotsc,\tilde{z}^{(l,h)}_{T-k}\right] \tag{4}

$$

Since LLMs contain dozens of layers and heads, the probe input vector $z\in\mathbb{R}^{L\cdot H\cdot k}$ can still be high-dimensional. Thus, we project it to a lower dimensionality using PCA (Jolliffe and Cadima, 2016). We call our approach $\operatorname{LapEigvals}$.
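The construction of the feature vector $z$ can be sketched as below, before any PCA projection. The sketch assumes that all attention maps of one example are stacked into a single array of shape $(L, H, T, T)$; the function name `lap_eigvals_features` and this layout are illustrative assumptions rather than the authors' implementation.

```python
import numpy as np


def lap_eigvals_features(attn_maps, k):
    """Concatenate the k largest Laplacian eigenvalues of every head.

    attn_maps: (L, H, T, T) stack of causal attention maps for one example
               (hypothetical layout, chosen for illustration).
    Returns a vector of length L * H * k, largest eigenvalue first per head.
    """
    attn_maps = np.asarray(attn_maps, dtype=float)
    n_layers, n_heads, T, _ = attn_maps.shape
    if k > T:
        raise ValueError("k must not exceed the number of tokens T")
    # Eq. (2): attention received by each token, normalized by T - i.
    out_degree = attn_maps.sum(axis=-2) / (T - np.arange(T))
    self_attention = np.diagonal(attn_maps, axis1=-2, axis2=-1)
    # Eq. (3): eigenvalues are the diagonal of L = D - A, sorted ascending.
    eigvals = np.sort(out_degree - self_attention, axis=-1)
    # Eq. (4): keep the k largest values per head and concatenate.
    top_k = eigvals[..., -k:][..., ::-1]
    return top_k.reshape(n_layers * n_heads * k)


# Hand-checkable map: diag(L) = [-0.375, 0.0] (see the column sums of A).
A = np.array([[1.0, 0.0], [0.25, 0.75]])
z_single = lap_eigvals_features(A[None, None], k=2)     # L = H = 1
z_stacked = lap_eigvals_features(np.tile(A, (2, 3, 1, 1)), k=2)  # L = 2, H = 3
print(z_single, z_stacked.shape)
```

In practice the number of tokens $T$ varies between examples, which is one reason a fixed $k$ per head is convenient: every example yields a vector of the same length.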

4 Experimental setup

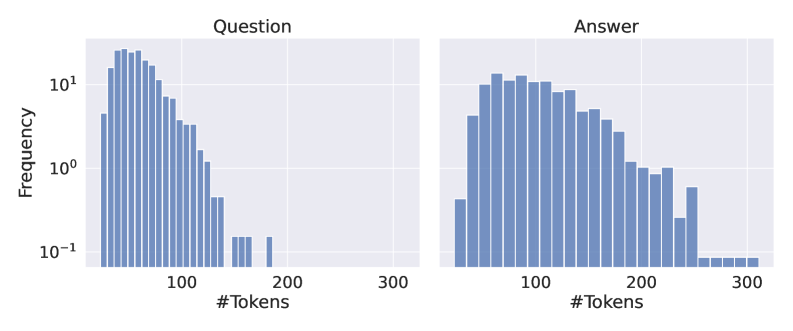

The overview of the methodology used in this work is presented in Figure 3. Next, we describe each step of the pipeline in detail.

4.1 Dataset construction

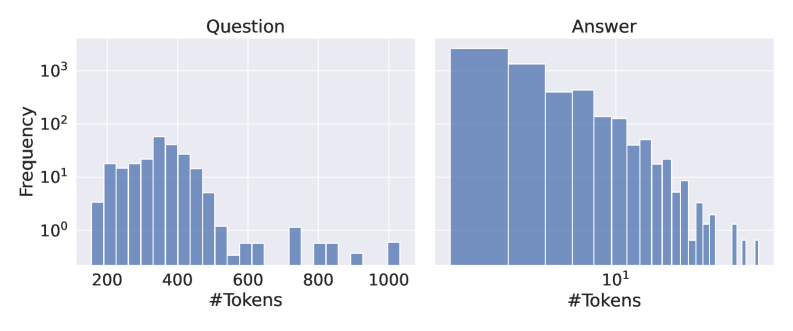

We use annotated QA datasets to construct the hallucination detection datasets and label incorrect LLM answers as hallucinations. To assess the correctness of generated answers, we followed prior work (Orgad et al., 2025) and adopted the llm-as-judge approach (Zheng et al., 2023), with the exception of one dataset where exact match evaluation against ground-truth answers was possible. For llm-as-judge, we prompted a large LLM to classify each response as either hallucination, non-hallucination, or rejected, where rejected indicates that it was unclear whether the answer was correct, e.g., the model refused to answer due to insufficient knowledge. Based on the manual qualitative inspection of several LLMs, we employed gpt-4o-mini (OpenAI et al., 2024) as the judge model since it provides the best trade-off between accuracy and cost. To confirm the reliability of the labels, we additionally verified agreement with the larger model, gpt-4.1, on Llama-3.1-8B and found that the agreement between models falls within the acceptable range widely adopted in the literature (see Appendix F).

For experiments, we selected 7 QA datasets previously utilized in the context of hallucination detection (Chen et al., 2024; Kossen et al., 2024; Chuang et al., 2024b; Mitra et al., 2024). Specifically, we used the validation set of NQ-Open (Kwiatkowski et al., 2019), comprising $3{,}610$ question-answer pairs, and the validation set of TriviaQA (Joshi et al., 2017), containing $7{,}983$ pairs. To evaluate our method on longer inputs, we employed the development set of CoQA (Reddy et al., 2019) and the rc.nocontext portion of the SQuADv2 (Rajpurkar et al., 2018) dataset, with $5{,}928$ and $9{,}960$ examples, respectively. Additionally, we incorporated the QA part of the HaluEvalQA (Li et al., 2023) dataset, containing $10{,}000$ examples, and the generation part of the TruthfulQA (Lin et al., 2022) benchmark with $817$ examples. Finally, we used the test split of the GSM8K dataset (Cobbe et al., 2021) with $1{,}319$ grade school math problems, evaluated by exact match against labels. For TriviaQA, CoQA, and SQuADv2, we followed the same preprocessing procedure as Chen et al. (2024).

We generate answers using 5 open-source LLMs: Llama-3.1-8B (hf.co/meta-llama/Llama-3.1-8B-Instruct) and Llama-3.2-3B (hf.co/meta-llama/Llama-3.2-3B-Instruct), both from Grattafiori et al. (2024), Phi-3.5 (hf.co/microsoft/Phi-3.5-mini-instruct; Abdin et al., 2024), Mistral-Nemo (hf.co/mistralai/Mistral-Nemo-Instruct-2407; Mistral AI Team and NVIDIA, 2024), and Mistral-Small-24B (hf.co/mistralai/Mistral-Small-24B-Instruct-2501; Mistral AI Team, 2025). We use two softmax temperatures for each LLM when decoding ($temp\in\{0.1,1.0\}$) and one prompt (for all datasets we used the prompt in Listing 3, except for GSM8K, which uses the prompt in Listing 5). Overall, we evaluated hallucination detection probes on 10 LLM configurations and 7 QA datasets. We present the frequency of classes for answers from each configuration in Figure 9 (Appendix E).

4.2 Hallucination Probe

As a hallucination probe, we take a logistic regression model, using the implementation from scikit-learn (Pedregosa et al., 2011) with all parameters default, except for $\texttt{max\_iter}{=}2000$ and $\texttt{class\_weight}{=}\texttt{"balanced"}$. For top-$k$ eigenvalues, we tested 5 values of $k\in\{5,10,20,50,100\}$ (for datasets with examples having fewer than 100 tokens, we stop at $k{=}50$) and selected the result with the highest efficacy. All eigenvalues are projected with PCA onto 512 dimensions, except in per-layer experiments where there may be fewer than 512 features. In these cases, we apply a PCA projection that matches the input feature dimensionality, i.e., it only decorrelates the features. As an evaluation metric, we use AUROC on the test split (additional results presenting Precision and Recall are reported in Appendix G.1).
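The probe configuration described above can be sketched with scikit-learn as follows. The data are synthetic and the function name `fit_probe` is hypothetical; the only settings taken from the text are `max_iter=2000`, `class_weight="balanced"`, the PCA target of 512 dimensions, and AUROC as the metric. Capping the number of components at the data dimensions is an assumption about how the fewer-than-512-features case could be handled.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.pipeline import make_pipeline


def fit_probe(X_train, y_train, max_components=512):
    """PCA projection followed by a logistic regression probe."""
    # PCA cannot keep more components than min(n_samples, n_features).
    n_components = min(max_components, *X_train.shape)
    probe = make_pipeline(
        PCA(n_components=n_components),
        LogisticRegression(max_iter=2000, class_weight="balanced"),
    )
    return probe.fit(X_train, y_train)


# Synthetic stand-in for eigenvalue features: the label depends on feature 0.
rng = np.random.default_rng(0)
X = rng.normal(size=(400, 40))
y = (X[:, 0] + 0.5 * rng.normal(size=400) > 0).astype(int)
X_train, X_test = X[:300], X[300:]
y_train, y_test = y[:300], y[300:]

probe = fit_probe(X_train, y_train)
# AUROC is computed from the predicted probability of the positive class.
test_auroc = roc_auc_score(y_test, probe.predict_proba(X_test)[:, 1])
print(f"test AUROC: {test_auroc:.3f}")
```

Wrapping PCA and the classifier in one pipeline keeps the projection fitted on the training split only, so the test split is never used to estimate the principal components.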

4.3 Baselines

Our method is a supervised approach for detecting hallucinations using only attention maps. For a fair comparison, we adapt the unsupervised $\operatorname{AttentionScore}$ (Sriramanan et al., 2024) by using log-determinants of each head’s attention scores as features instead of summing them (denoted $\operatorname{AttnLogDet}$), and we also include the original $\operatorname{AttentionScore}$, computed as the sum of log-determinants over heads, for reference. To evaluate the effectiveness of our proposed Laplacian eigenvalues, we compare them to the eigenvalues of raw attention maps, denoted as $\operatorname{AttnEigvals}$. Extended results for each approach on a per-layer basis are provided in Appendix G.2, while Appendix G.4 presents a comparison with a method based on hidden states. Implementation and hardware details are provided in Appendix C.
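The two log-determinant baselines can be illustrated with a minimal sketch. It relies on the fact that the determinant of a lower-triangular matrix is the product of its diagonal entries, so the log-determinant of a causal attention map is the sum of the logs of its diagonal. The function names, the $(L, H, T, T)$ layout, and the `eps` clipping are assumptions for illustration; any further normalization used in the original $\operatorname{AttentionScore}$ is not reproduced here.

```python
import numpy as np


def attn_log_det(attn_maps, eps=1e-12):
    """Per-head log-determinant features (supervised baseline input).

    attn_maps: (L, H, T, T) stack of lower-triangular attention maps.
    eps: clipping constant to avoid log(0); an assumption of this sketch.
    Returns an (L, H) array of log-determinants.
    """
    attn_maps = np.asarray(attn_maps, dtype=float)
    diag = np.diagonal(attn_maps, axis1=-2, axis2=-1)  # shape (L, H, T)
    return np.log(np.clip(diag, eps, None)).sum(axis=-1)


def attention_score(attn_maps):
    """Unsupervised score: sum of the per-head log-determinants."""
    return float(attn_log_det(attn_maps).sum())


A = np.array([[1.0, 0.0], [0.25, 0.75]])
attn_maps = A[None, None]              # one layer, one head
per_head = attn_log_det(attn_maps)     # log(1.0) + log(0.75)
score = attention_score(attn_maps)

# Cross-check against a general-purpose log-determinant routine.
sign, reference = np.linalg.slogdet(A)
print(per_head, score, reference)
```

In the supervised variant the $(L, H)$ array would be flattened and passed to the probe, whereas the unsupervised variant collapses it into a single scalar per example.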

5 Results

Table 1: Test AUROC for $\operatorname{LapEigvals}$ and several baseline methods. AUROC values were obtained in a single run of logistic regression training on features from a dataset generated with $temp{=}1.0$ . We mark results for $\operatorname{AttentionScore}$ in gray as it is an unsupervised approach, not directly comparable to the others. In bold, we highlight the best performance individually for each dataset and LLM. See Appendix G for extended results.

| LLM | Feature | CoQA | GSM8K | HaluEvalQA | NQ-Open | SQuADv2 | TriviaQA | TruthfulQA |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Llama3.1-8B | $\operatorname{AttentionScore}$ | 0.493 | 0.720 | 0.589 | 0.556 | 0.538 | 0.532 | 0.541 |
| Llama3.1-8B | $\operatorname{AttnLogDet}$ | 0.769 | 0.826 | 0.827 | 0.793 | 0.748 | 0.842 | 0.814 |
| Llama3.1-8B | $\operatorname{AttnEigvals}$ | 0.782 | 0.838 | 0.819 | 0.790 | 0.768 | 0.843 | 0.833 |
| Llama3.1-8B | $\operatorname{LapEigvals}$ | 0.830 | 0.872 | 0.874 | 0.827 | 0.791 | 0.889 | 0.829 |
| Llama3.2-3B | $\operatorname{AttentionScore}$ | 0.509 | 0.717 | 0.588 | 0.546 | 0.530 | 0.515 | 0.581 |
| Llama3.2-3B | $\operatorname{AttnLogDet}$ | 0.700 | 0.851 | 0.801 | 0.690 | 0.734 | 0.789 | 0.795 |
| Llama3.2-3B | $\operatorname{AttnEigvals}$ | 0.724 | 0.768 | 0.819 | 0.694 | 0.749 | 0.804 | 0.723 |
| Llama3.2-3B | $\operatorname{LapEigvals}$ | 0.812 | 0.870 | 0.828 | 0.693 | 0.757 | 0.832 | 0.787 |
| Phi3.5 | $\operatorname{AttentionScore}$ | 0.520 | 0.666 | 0.541 | 0.594 | 0.504 | 0.540 | 0.554 |
| Phi3.5 | $\operatorname{AttnLogDet}$ | 0.745 | 0.842 | 0.818 | 0.815 | 0.769 | 0.848 | 0.755 |
| Phi3.5 | $\operatorname{AttnEigvals}$ | 0.771 | 0.794 | 0.829 | 0.798 | 0.782 | 0.850 | 0.802 |
| Phi3.5 | $\operatorname{LapEigvals}$ | 0.821 | 0.885 | 0.836 | 0.826 | 0.795 | 0.872 | 0.777 |
| Mistral-Nemo | $\operatorname{AttentionScore}$ | 0.493 | 0.630 | 0.531 | 0.529 | 0.510 | 0.532 | 0.494 |
| Mistral-Nemo | $\operatorname{AttnLogDet}$ | 0.728 | 0.856 | 0.798 | 0.769 | 0.772 | 0.812 | 0.852 |
| Mistral-Nemo | $\operatorname{AttnEigvals}$ | 0.778 | 0.842 | 0.781 | 0.761 | 0.758 | 0.821 | 0.802 |
| Mistral-Nemo | $\operatorname{LapEigvals}$ | 0.835 | 0.890 | 0.833 | 0.795 | 0.812 | 0.865 | 0.828 |
| Mistral-Small-24B | $\operatorname{AttentionScore}$ | 0.516 | 0.576 | 0.504 | 0.462 | 0.455 | 0.463 | 0.451 |
| Mistral-Small-24B | $\operatorname{AttnLogDet}$ | 0.766 | 0.853 | 0.842 | 0.747 | 0.753 | 0.833 | 0.735 |
| Mistral-Small-24B | $\operatorname{AttnEigvals}$ | 0.805 | 0.856 | 0.848 | 0.751 | 0.760 | 0.844 | 0.765 |
| Mistral-Small-24B | $\operatorname{LapEigvals}$ | 0.861 | 0.925 | 0.882 | 0.791 | 0.820 | 0.876 | 0.748 |

Table 1 presents the results of our method compared to the baselines. $\operatorname{LapEigvals}$ achieved the best performance among all tested methods on 6 out of 7 datasets. Moreover, our method consistently performs well across all 5 LLM architectures ranging from 3 up to 24 billion parameters. TruthfulQA was the only exception where $\operatorname{LapEigvals}$ was the second-best approach, which might stem from the small size of the dataset or severe class imbalance (depicted in Figure 9). In contrast, using eigenvalues of vanilla attention maps in $\operatorname{AttnEigvals}$ leads to worse performance, which suggests that the transformation to the Laplacian is the crucial step to uncover latent features of an LLM corresponding to hallucinations. In Appendix G, we show that $\operatorname{LapEigvals}$ consistently demonstrates a smaller generalization gap, i.e., the difference between training and test performance is smaller for our method. While the $\operatorname{AttentionScore}$ method performed poorly, it is fully unsupervised and should not be directly compared to other approaches. However, its supervised counterpart, $\operatorname{AttnLogDet}$, remains inferior to methods based on spectral features, namely $\operatorname{AttnEigvals}$ and $\operatorname{LapEigvals}$. In Table 6 in Appendix G.2, we present extended results, including per-layer and all-layers breakdowns, two temperatures used during answer generation, and a comparison between training and test AUROC. Moreover, compared to probes based on hidden states, our method performs best in most of the tested settings, as shown in Appendix G.4.

6 Ablation studies

To better understand the behavior of our method under different conditions, we conduct a comprehensive ablation study. This analysis provides valuable insights into the factors driving the $\operatorname{LapEigvals}$ performance and highlights the robustness of our approach across various scenarios. In order to ensure reliable results, we perform all studies on the TriviaQA dataset, which has a moderate input size and number of examples.

6.1 How does the number of eigenvalues influence performance?

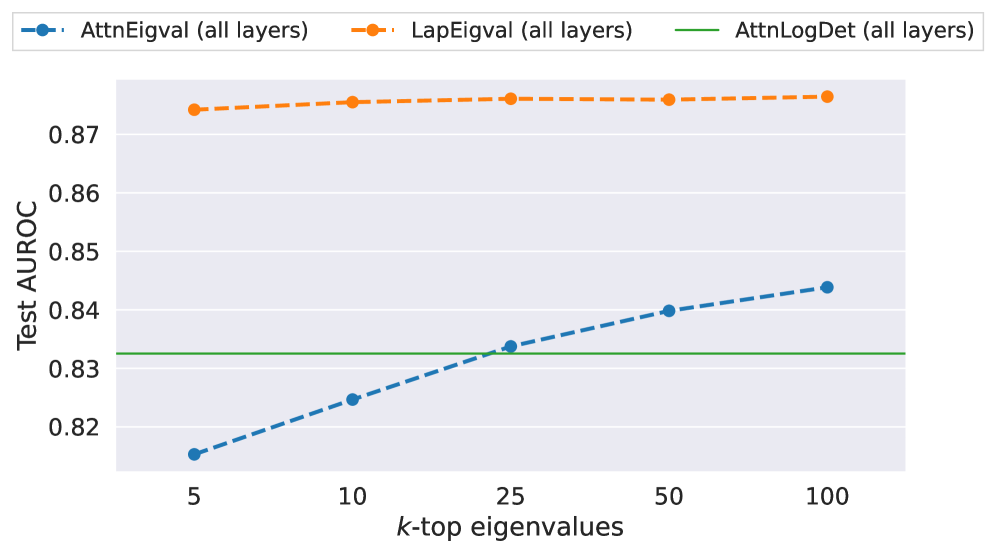

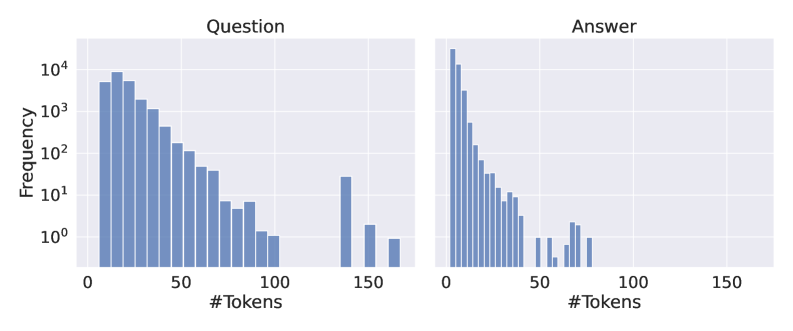

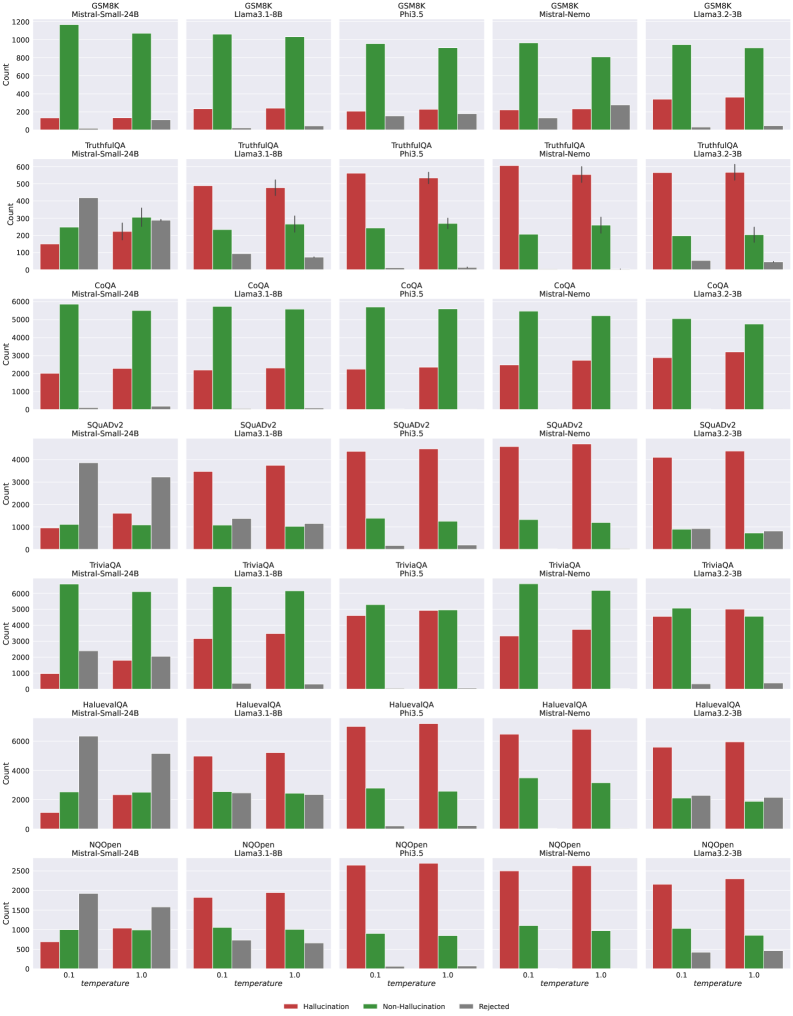

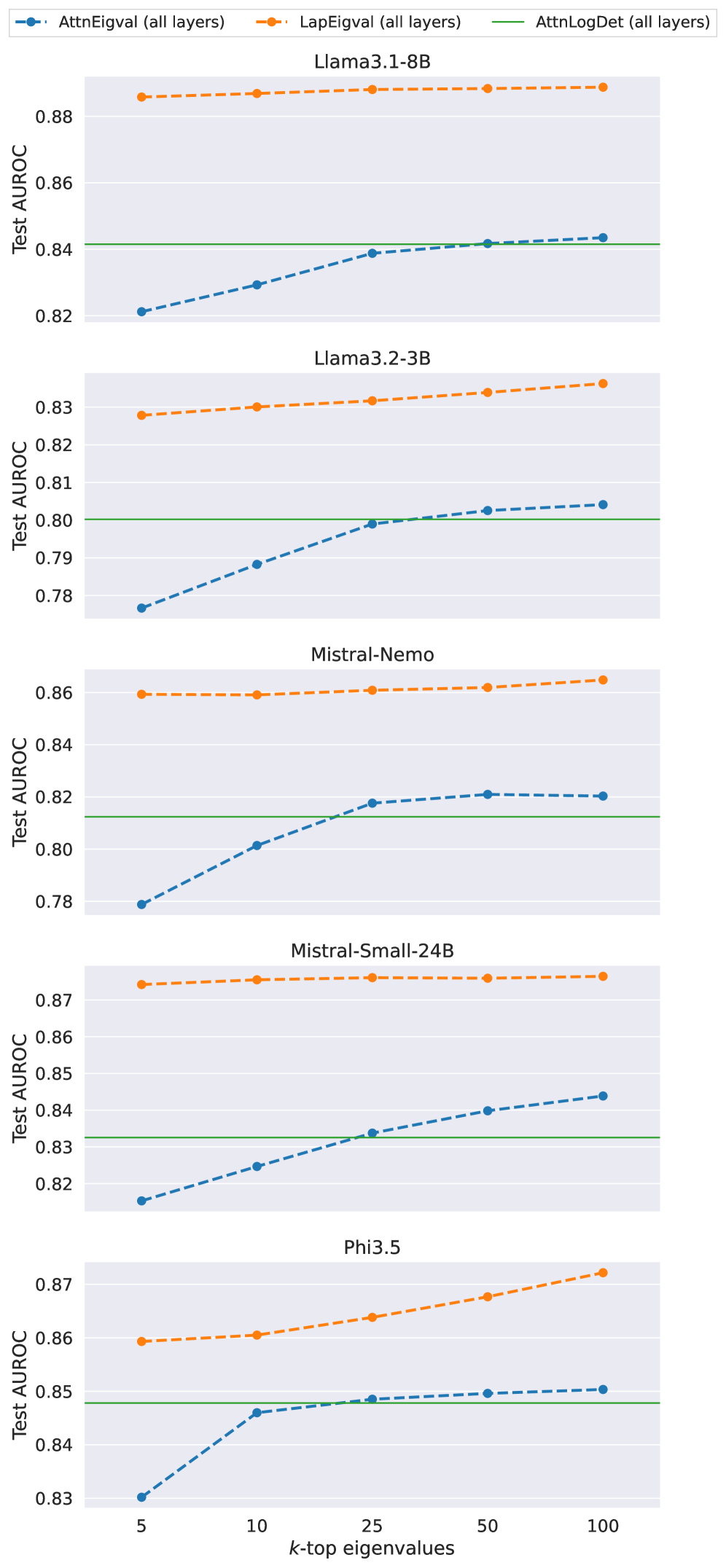

First, we verify how the number of eigenvalues influences the performance of the hallucination probe and present results for Mistral-Small-24B in Figure 4 (results for all models are showcased in Figure 10 in Appendix H). Generally, using more eigenvalues improves performance, but there is less variation in performance among different values of $k$ for $\operatorname{LapEigvals}$ compared to the baseline. Moreover, $\operatorname{LapEigvals}$ achieves significantly better performance with smaller input sizes, as $\operatorname{AttnEigvals}$ with the largest $k{=}100$ fails to surpass $\operatorname{LapEigvals}$ ’s performance at $k{=}5$ . These results confirm that spectral features derived from the Laplacian carry a robust signal indicating the presence of hallucinations and highlight the strength of our method.

<details>

<summary>x4.png Details</summary>

### Visual Description

## Line Chart: Test AUROC vs. k-top Eigenvalues

### Overview

The image is a line chart comparing the performance of three different methods ("AttnEigval", "LapEigval", and "AttnLogDet") across varying numbers of top eigenvalues (k-top eigenvalues). Performance is measured by the Test Area Under the Receiver Operating Characteristic curve (AUROC).

### Components/Axes

* **X-axis:** "k-top eigenvalues" with markers at 5, 10, 25, 50, and 100.

* **Y-axis:** "Test AUROC" ranging from 0.82 to 0.87.

* **Legend:** Located at the top of the chart.

* Blue dashed line with circular markers: "AttnEigval (all layers)"

* Orange dashed line with circular markers: "LapEigval (all layers)"

* Green solid line: "AttnLogDet (all layers)"

### Detailed Analysis

* **AttnEigval (all layers):** (Blue, dashed) The line slopes upward.

* k=5: AUROC ≈ 0.816

* k=10: AUROC ≈ 0.824

* k=25: AUROC ≈ 0.833

* k=50: AUROC ≈ 0.840

* k=100: AUROC ≈ 0.844

* **LapEigval (all layers):** (Orange, dashed) The line is relatively flat, with a slight upward trend.

* k=5: AUROC ≈ 0.873

* k=10: AUROC ≈ 0.874

* k=25: AUROC ≈ 0.875

* k=50: AUROC ≈ 0.875

* k=100: AUROC ≈ 0.876

* **AttnLogDet (all layers):** (Green, solid) The line is flat.

* AUROC ≈ 0.832 for all k values.

### Key Observations

* "LapEigval (all layers)" consistently outperforms "AttnEigval (all layers)" and "AttnLogDet (all layers)" across all k values.

* "AttnLogDet (all layers)" has a constant AUROC regardless of the number of top eigenvalues used.

* "AttnEigval (all layers)" shows a positive correlation between the number of top eigenvalues and AUROC.

### Interpretation

The chart suggests that "LapEigval (all layers)" is the most effective method for this particular task, as it achieves the highest AUROC scores. Increasing the number of top eigenvalues (k) improves the performance of "AttnEigval (all layers)", but has little to no impact on "LapEigval (all layers)" and "AttnLogDet (all layers)". The consistent performance of "AttnLogDet (all layers)" indicates that its effectiveness is independent of the number of top eigenvalues considered. The data implies that the information captured by the top eigenvalues is more relevant to "AttnEigval (all layers)" than to the other two methods.

</details>

Figure 4: Probe performance across different top- $k$ eigenvalues: $k∈\{5,10,25,50,100\}$ for TriviaQA dataset with $temp{=}1.0$ and Mistral-Small-24B LLM.
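To make the role of $k$ concrete, the following is a minimal sketch of extracting top-$k$ Laplacian eigenvalues from a single causal attention map. It assumes an unnormalized Laplacian $L = D - A$ with $D$ holding the column sums of $A$; the exact degree normalization of Eq. (1) and (2) is not reproduced here, and the function names `causal_attention` and `top_k_laplacian_eigvals` are hypothetical. Because a causal attention map is lower triangular, so is $L$, and its eigenvalues can be read directly from its diagonal.

```python
import numpy as np


def causal_attention(scores: np.ndarray) -> np.ndarray:
    """Row-wise softmax over a causally masked (lower-triangular) score matrix."""
    num_tokens = scores.shape[0]
    mask = np.tril(np.ones((num_tokens, num_tokens), dtype=bool))
    masked = np.where(mask, scores, -np.inf)
    masked = masked - masked.max(axis=1, keepdims=True)  # numerical stability
    weights = np.exp(masked)
    return weights / weights.sum(axis=1, keepdims=True)


def top_k_laplacian_eigvals(attn: np.ndarray, k: int) -> np.ndarray:
    """Top-k eigenvalues of L = D - A for a lower-triangular attention map A.

    Assumption: D is diagonal and holds the column sums of A (attention
    received by each token). The paper's definition may normalize differently.
    """
    num_tokens = attn.shape[0]
    if k > num_tokens:
        raise ValueError("k cannot exceed the number of tokens")
    degree = attn.sum(axis=0)
    # L is lower triangular, so its eigenvalues are its diagonal entries.
    eigvals = degree - np.diag(attn)
    return np.sort(eigvals)[::-1][:k]


# Synthetic example: a random 12-token attention map and k = 5.
rng = np.random.default_rng(0)
attn = causal_attention(rng.normal(size=(12, 12)))
features = top_k_laplacian_eigvals(attn, k=5)
```

The `k > num_tokens` check mirrors the minimum length requirement noted in the Limitations section. In practice, one such feature vector would be computed per head and per layer.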

6.2 Does using all layers at once improve performance?

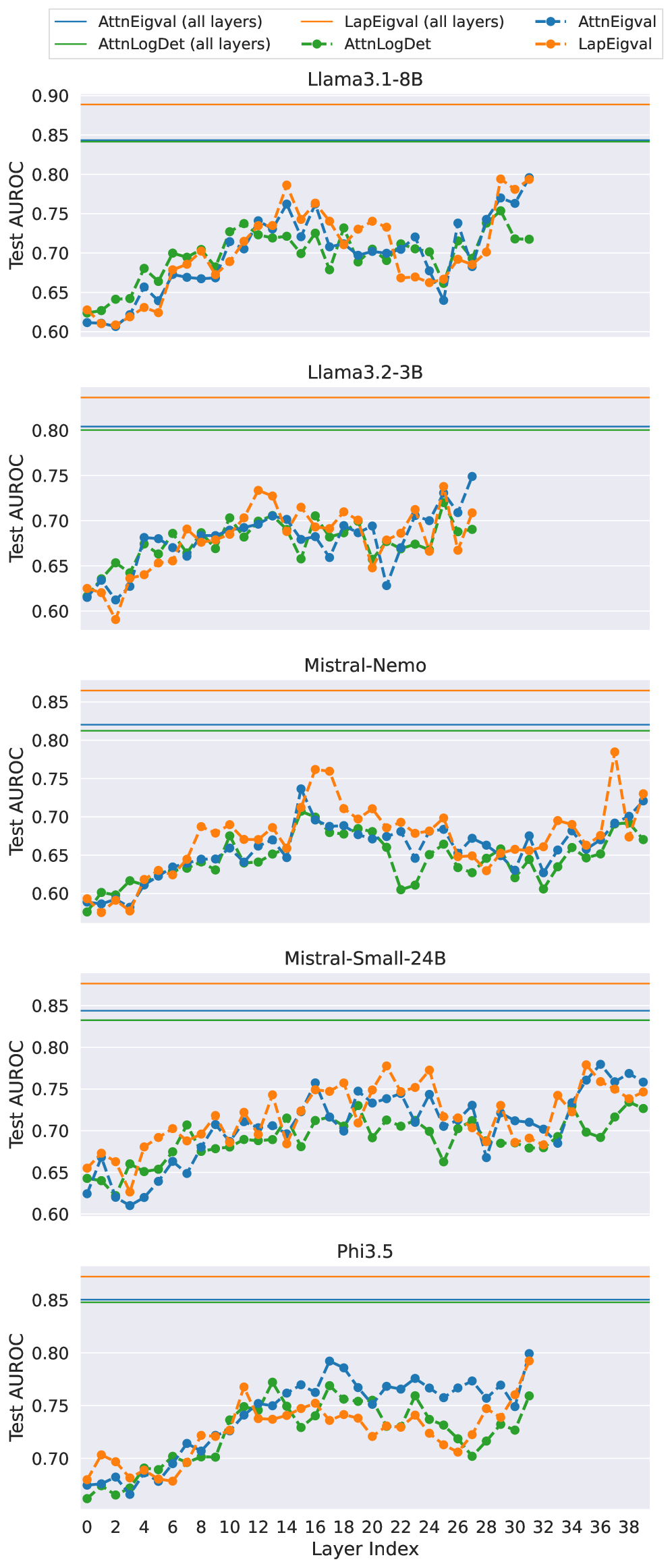

Second, we demonstrate that using all layers of an LLM instead of a single one improves performance. In Figure 5, we compare per-layer to all-layer efficacy for Mistral-Small-24B (results for all models are showcased in Figure 11 in Appendix H). For the per-layer approach, better performance is generally achieved with deeper LLM layers. Notably, the best-performing layer varies across LLMs, requiring an additional search for each new LLM. In contrast, the all-layer probes consistently outperform the best per-layer probes across all LLMs. This finding suggests that information indicating hallucinations is spread across many layers of an LLM, and considering them in isolation limits detection accuracy. Further, Table 6 in Appendix G summarises outcomes for the two variants on all datasets and LLM configurations examined in this work.

<details>

<summary>x5.png Details</summary>

### Visual Description

## Line Chart: Test AUROC vs. Layer Index

### Overview

The image is a line chart comparing the Test AUROC (Area Under the Receiver Operating Characteristic curve) performance across different layers of a model, using different methods: AttnEigval, LapEigval, and AttnLogDet. The chart displays the performance of these methods across individual layers and also shows the performance of AttnEigval (all layers), LapEigval (all layers), and AttnLogDet (all layers) as horizontal lines.

### Components/Axes

* **X-axis:** Layer Index, ranging from 0 to 38 in increments of 2.

* **Y-axis:** Test AUROC, ranging from 0.60 to 0.85 in increments of 0.05.

* **Legend (Top-Left):**

* Blue solid line: AttnEigval (all layers)

* Green solid line: AttnLogDet (all layers)

* Orange solid line: LapEigval (all layers)

* Blue dashed line with circle markers: AttnEigval

* Green dashed line with circle markers: AttnLogDet

* Orange dashed line with circle markers: LapEigval

### Detailed Analysis

* **AttnEigval (all layers):** A solid blue horizontal line at approximately 0.845.

* **AttnLogDet (all layers):** A solid green horizontal line at approximately 0.83.

* **LapEigval (all layers):** A solid orange horizontal line at approximately 0.87.

* **AttnEigval:** (Dashed blue line with circle markers) Starts at approximately 0.66 at Layer Index 0, dips to 0.61 at Layer Index 4, then generally increases with fluctuations, reaching around 0.78 at Layer Index 38.

* **AttnLogDet:** (Dashed green line with circle markers) Starts at approximately 0.64 at Layer Index 0, increases to 0.70 at Layer Index 6, fluctuates, and ends at approximately 0.73 at Layer Index 38.

* **LapEigval:** (Dashed orange line with circle markers) Starts at approximately 0.67 at Layer Index 0, dips to 0.62 at Layer Index 4, then generally increases with fluctuations, reaching around 0.75 at Layer Index 38.

### Key Observations

* The "all layers" versions of AttnEigval, AttnLogDet, and LapEigval provide a consistent AUROC score across all layers, represented by horizontal lines.

* The individual layer performance (AttnEigval, AttnLogDet, and LapEigval) fluctuates across different layers.

* LapEigval (all layers) consistently shows the highest AUROC score, followed by AttnEigval (all layers) and then AttnLogDet (all layers).

* The individual layer performances of AttnEigval, AttnLogDet, and LapEigval are generally lower than their "all layers" counterparts.

### Interpretation

The chart compares the performance of different methods (AttnEigval, LapEigval, and AttnLogDet) for evaluating a model's performance across different layers. The "all layers" versions represent a baseline or aggregate performance, while the individual layer performances show how the model behaves at each specific layer. The higher AUROC scores for the "all layers" versions suggest that aggregating information across all layers leads to better overall performance compared to relying on individual layers. The fluctuations in individual layer performance indicate that some layers might be more informative or contribute more to the model's decision-making process than others. LapEigval (all layers) consistently outperforms the other methods, suggesting it might be a more effective approach for this particular model or task.

</details>

Figure 5: Analysis of model performance across different layers for Mistral-Small-24B and TriviaQA dataset with $temp{=}1.0$ and $k{=}100$ top eigenvalues (results for models operating on all layers provided for reference).
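The difference between the per-layer and all-layer variants can be sketched as a difference in how probe inputs are assembled. The snippet below is illustrative only: it assumes the eigenvalue features are stored in an array of shape `(examples, layers, heads, k)` and that features are simply concatenated; the sizes are hypothetical, and any dimensionality reduction and the probe itself are omitted.

```python
import numpy as np


def layer_features(eigvals: np.ndarray, layer: int) -> np.ndarray:
    """Inputs for a single-layer probe: concatenated heads x k values of one layer."""
    num_examples = eigvals.shape[0]
    return eigvals[:, layer].reshape(num_examples, -1)


def all_layer_features(eigvals: np.ndarray) -> np.ndarray:
    """Inputs for an all-layer probe: concatenation over layers, heads, and k."""
    num_examples = eigvals.shape[0]
    return eigvals.reshape(num_examples, -1)


# Hypothetical sizes: 16 examples, 4 layers, 8 heads, k = 10 eigenvalues.
rng = np.random.default_rng(0)
eigvals = rng.random((16, 4, 8, 10))

per_layer = layer_features(eigvals, layer=0)  # shape (16, 80)
stacked = all_layer_features(eigvals)         # shape (16, 320)
```

The per-layer variant needs one probe per layer and a search over layers, whereas the all-layer variant trains a single probe on the wider input.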

6.3 Does sampling temperature influence results?

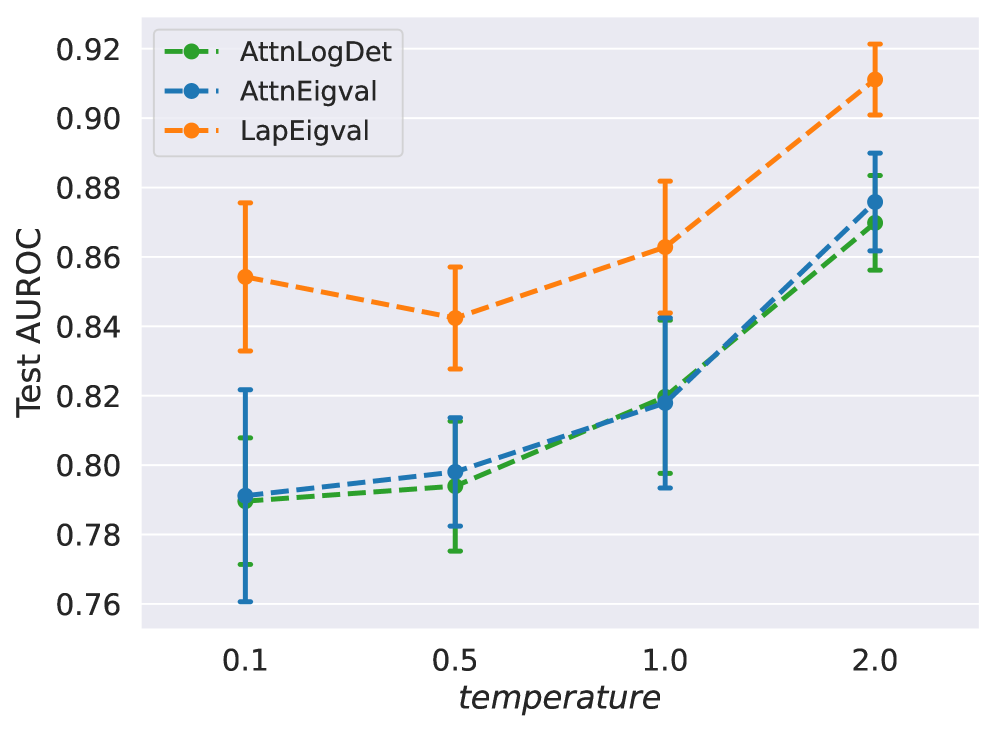

Here, we compare $\operatorname{LapEigvals}$ to the baselines on hallucination datasets, where each dataset contains answers generated at a specific decoding temperature. Higher temperatures typically produce more hallucinated examples (Lee, 2023; Renze, 2024), leading to dataset imbalance. Thus, to mitigate the effect of data imbalance, we sample a subset of $1{,}000$ hallucinated and $1{,}000$ non-hallucinated examples $10$ times for each temperature and train hallucination probes. Interestingly, in Figure 6, we observe that all methods improve their performance at higher temperatures, but $\operatorname{LapEigvals}$ consistently achieves the highest AUROC at all considered temperature values. The correlation of efficacy with temperature may be attributed to differences in the characteristics of hallucinations at higher temperatures compared to lower ones (Renze, 2024). Also, hallucination detection might be facilitated at higher temperatures due to underlying properties of the softmax function (Veličković et al., 2024), and further exploration of this direction is left for future work.

<details>

<summary>x6.png Details</summary>

### Visual Description

## Line Chart: Test AUROC vs. Temperature for Different Models

### Overview

The image is a line chart comparing the performance of three different models (AttnLogDet, AttnEigval, and LapEigval) based on their Test AUROC scores at varying temperatures (0.1, 0.5, 1.0, and 2.0). The chart includes error bars indicating the variability in the AUROC scores.

### Components/Axes

* **Title:** Test AUROC

* **X-axis:** temperature, with values 0.1, 0.5, 1.0, and 2.0

* **Y-axis:** Test AUROC, ranging from 0.76 to 0.92 with increments of 0.02.

* **Legend (top-left):**

* AttnLogDet (Green, dashed line)

* AttnEigval (Blue, dashed line)

* LapEigval (Orange, dashed line)

### Detailed Analysis

* **AttnLogDet (Green, dashed line):**

* At temperature 0.1, Test AUROC is approximately 0.79 with an error range of +/- 0.02.

* At temperature 0.5, Test AUROC is approximately 0.79 with an error range of +/- 0.02.

* At temperature 1.0, Test AUROC is approximately 0.82 with an error range of +/- 0.02.

* At temperature 2.0, Test AUROC is approximately 0.87 with an error range of +/- 0.01.

* Trend: Generally increasing with temperature.

* **AttnEigval (Blue, dashed line):**

* At temperature 0.1, Test AUROC is approximately 0.79 with an error range of +/- 0.03.

* At temperature 0.5, Test AUROC is approximately 0.795 with an error range of +/- 0.02.

* At temperature 1.0, Test AUROC is approximately 0.82 with an error range of +/- 0.03.

* At temperature 2.0, Test AUROC is approximately 0.875 with an error range of +/- 0.015.

* Trend: Generally increasing with temperature.

* **LapEigval (Orange, dashed line):**

* At temperature 0.1, Test AUROC is approximately 0.855 with an error range of +/- 0.02.

* At temperature 0.5, Test AUROC is approximately 0.84 with an error range of +/- 0.015.

* At temperature 1.0, Test AUROC is approximately 0.86 with an error range of +/- 0.02.

* At temperature 2.0, Test AUROC is approximately 0.91 with an error range of +/- 0.01.

* Trend: Relatively stable between 0.1 and 0.5, then increases at 1.0 and 2.0.

### Key Observations

* AttnLogDet and AttnEigval perform similarly across all temperatures.

* LapEigval generally outperforms AttnLogDet and AttnEigval, especially at lower temperatures.

* All models show an increase in Test AUROC as the temperature increases from 1.0 to 2.0.

* The error bars indicate some variability in the performance of each model at each temperature.

### Interpretation

The chart suggests that increasing the temperature generally improves the performance of all three models, as measured by Test AUROC. LapEigval appears to be the best-performing model overall, particularly at lower temperatures. The error bars indicate that the performance of each model can vary, so these results should be interpreted with caution. Further analysis with more data points and statistical significance testing would be needed to draw more definitive conclusions.

</details>

Figure 6: Test AUROC for different sampling $temp$ values during answer decoding on the TriviaQA dataset, using $k{=}100$ eigenvalues for $\operatorname{LapEigvals}$ and $\operatorname{AttnEigvals}$ with the Llama-3.1-8B LLM. Error bars indicate the standard deviation over 10 balanced samples containing $N=1000$ examples per class.
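The balanced evaluation protocol described above can be sketched as follows. This is a simplified illustration, not the authors' pipeline: the helper names are hypothetical, AUROC is computed with the rank-sum identity instead of a library call, and a fixed score vector stands in for the probes that the paper retrains on every balanced sample.

```python
import numpy as np


def balanced_indices(labels: np.ndarray, n_per_class: int, rng) -> np.ndarray:
    """Draw n_per_class hallucinated (1) and non-hallucinated (0) examples."""
    pos = np.flatnonzero(labels == 1)
    neg = np.flatnonzero(labels == 0)
    pick_pos = rng.choice(pos, size=n_per_class, replace=False)
    pick_neg = rng.choice(neg, size=n_per_class, replace=False)
    return rng.permutation(np.concatenate([pick_pos, pick_neg]))


def auroc(labels, scores) -> float:
    """AUROC via the rank-sum (Mann-Whitney U) identity; ties get average ranks."""
    labels = np.asarray(labels).ravel()
    scores = np.asarray(scores, dtype=float).ravel()
    _, inverse, counts = np.unique(scores, return_inverse=True, return_counts=True)
    last_rank = np.cumsum(counts)
    avg_rank = last_rank - (counts - 1) / 2.0
    ranks = avg_rank[inverse]
    n_pos = int(np.sum(labels == 1))
    n_neg = labels.size - n_pos
    rank_sum = ranks[labels == 1].sum()
    return float((rank_sum - n_pos * (n_pos + 1) / 2.0) / (n_pos * n_neg))


def repeated_balanced_auroc(labels, scores, n_per_class, n_repeats, seed=0):
    """Mean and standard deviation of AUROC over repeated balanced subsamples.

    Simplification: scores are fixed here; in the paper a probe is trained
    on each balanced sample before evaluation.
    """
    rng = np.random.default_rng(seed)
    values = []
    for _ in range(n_repeats):
        idx = balanced_indices(labels, n_per_class, rng)
        values.append(auroc(labels[idx], scores[idx]))
    return float(np.mean(values)), float(np.std(values))
```

With the setting reported in Figure 6, this would be called with `n_per_class=1000` and `n_repeats=10` for each temperature.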

6.4 How does $\operatorname{LapEigvals}$ generalize?

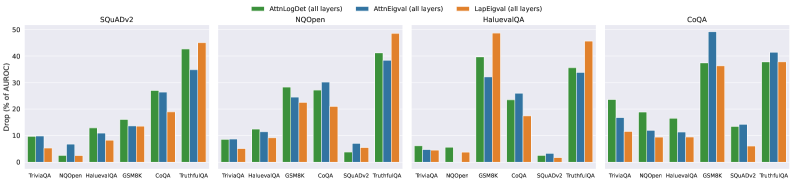

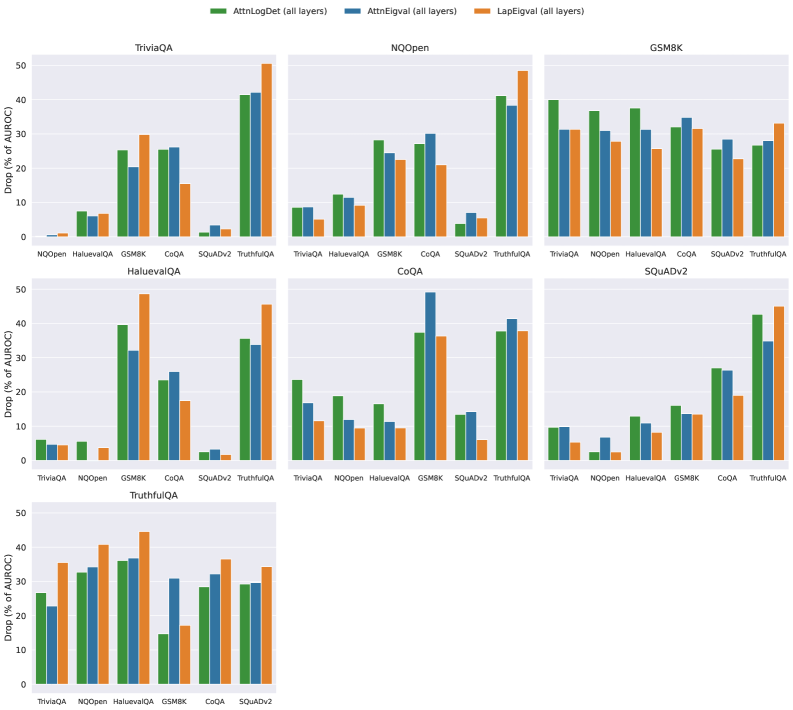

To check whether our method generalizes across datasets, we trained the hallucination probe on features from the training split of one QA dataset and evaluated it on the features from the test split of a different QA dataset. Due to space limitations, we present results for selected datasets and provide extended results and absolute efficacy values in Appendix I. Figure 7 showcases the percent drop in Test AUROC when using a different training dataset compared to training and testing on the same QA dataset. We can observe that $\operatorname{LapEigvals}$ provides a performance drop comparable to other baselines, and in several cases, it generalizes best. Interestingly, all methods exhibit poor generalization on TruthfulQA and GSM8K. We hypothesize that the weak performance on TruthfulQA arises from its limited size and class imbalance, whereas the difficulty on GSM8K likely reflects its distinct domain, which has been shown to hinder hallucination detection (Orgad et al., 2025). Additionally, in Appendix I, we show that $\operatorname{LapEigvals}$ achieves the highest test performance in all scenarios (except for TruthfulQA).

<details>

<summary>x7.png Details</summary>

### Visual Description

## Bar Chart: Drop in AUROC for Different Question Answering Datasets

### Overview

The image presents a series of bar charts comparing the drop in Area Under the Receiver Operating Characteristic Curve (AUROC) for three methods (AttnLogDet, AttnEigval, and LapEigval) when a hallucination probe is trained on one question answering dataset and tested on another. The charts are grouped by training dataset: SQuADv2, NQOpen, HaluevalQA, and CoQA. Each group shows the AUROC drop on the remaining test datasets.

### Components/Axes

* **Y-axis:** "Drop (% of AUROC)" with a scale from 0 to 50, incrementing by 10.

* **X-axis:** Test datasets (TriviaQA, NQOpen, HaluevalQA, GSM8K, CoQA, SQuADv2, TruthfulQA). Note that the training dataset of each panel does not appear on that panel's x-axis.

* **Legend:** Located at the top of the chart.

* Green: AttnLogDet (all layers)

* Blue: AttnEigval (all layers)

* Orange: LapEigval (all layers)

* **Chart Titles:**

* Top-left: SQuADv2

* Top-middle-left: NQOpen

* Top-middle-right: HaluevalQA

* Top-right: CoQA

### Detailed Analysis

**SQuADv2 Dataset:**

* TriviaQA: AttnLogDet ~9%, AttnEigval ~8%, LapEigval ~5%

* NQOpen: AttnLogDet ~2%, AttnEigval ~6%, LapEigval ~2%

* HaluevalQA: AttnLogDet ~12%, AttnEigval ~11%, LapEigval ~8%

* GSM8K: AttnLogDet ~15%, AttnEigval ~13%, LapEigval ~13%

* CoQA: AttnLogDet ~26%, AttnEigval ~26%, LapEigval ~18%

* TruthfulQA: AttnLogDet ~42%, AttnEigval ~30%, LapEigval ~19%

**NQOpen Dataset:**

* TriviaQA: AttnLogDet ~9%, AttnEigval ~9%, LapEigval ~4%

* HaluevalQA: AttnLogDet ~13%, AttnEigval ~12%, LapEigval ~9%

* GSM8K: AttnLogDet ~22%, AttnEigval ~21%, LapEigval ~17%

* CoQA: AttnLogDet ~38%, AttnEigval ~32%, LapEigval ~26%

* SQuADv2: AttnLogDet ~3%, AttnEigval ~7%, LapEigval ~3%

* TruthfulQA: AttnLogDet ~43%, AttnEigval ~33%, LapEigval ~29%

**HaluevalQA Dataset:**

* TriviaQA: AttnLogDet ~5%, AttnEigval ~5%, LapEigval ~5%

* NQOpen: AttnLogDet ~2%, AttnEigval ~1%, LapEigval ~1%

* GSM8K: AttnLogDet ~36%, AttnEigval ~17%, LapEigval ~13%

* CoQA: AttnLogDet ~37%, AttnEigval ~22%, LapEigval ~17%

* SQuADv2: AttnLogDet ~2%, AttnEigval ~13%, LapEigval ~2%

* TruthfulQA: AttnLogDet ~37%, AttnEigval ~22%, LapEigval ~45%

**CoQA Dataset:**

* TriviaQA: AttnLogDet ~17%, AttnEigval ~12%, LapEigval ~9%

* NQOpen: AttnLogDet ~16%, AttnEigval ~10%, LapEigval ~7%

* HaluevalQA: AttnLogDet ~15%, AttnEigval ~10%, LapEigval ~7%

* GSM8K: AttnLogDet ~37%, AttnEigval ~47%, LapEigval ~32%

* SQuADv2: AttnLogDet ~15%, AttnEigval ~14%, LapEigval ~4%

* TruthfulQA: AttnLogDet ~38%, AttnEigval ~38%, LapEigval ~35%

### Key Observations

* The drop in AUROC varies significantly across pairs of training and test datasets.

* AttnLogDet generally shows a higher drop in AUROC compared to AttnEigval and LapEigval, especially when tested on TruthfulQA after training on SQuADv2 or NQOpen.

* LapEigval often exhibits the lowest drop in AUROC, suggesting it transfers better across datasets.

* Testing on GSM8K and TruthfulQA tends to produce a higher drop in AUROC than testing on TriviaQA and NQOpen.

### Interpretation

The bar charts illustrate how well hallucination probes built on three feature types (AttnLogDet, AttnEigval, and LapEigval) transfer from a training dataset to a different test dataset, as measured by the percent drop in test AUROC. The data suggests that the choice of features can noticeably affect cross-dataset transfer, depending on the dataset pair. AttnLogDet appears to be more sensitive to the dataset shift, leading to a larger performance drop. The relative consistency of LapEigval suggests it might be a more stable choice across datasets. The higher drop in AUROC on GSM8K and TruthfulQA indicates that transferring probes to these datasets is more challenging, regardless of the features used.

</details>

Figure 7: Generalization across datasets measured as a percent performance drop in Test AUROC (less is better) when trained on one dataset and tested on the other. Training datasets are indicated in the plot titles, while test datasets are shown on the $x$ -axis. Results computed on Llama-3.1-8B with $k{=}100$ top eigenvalues and $temp{=}1.0$ . Results for all datasets are presented in Appendix I.
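For reference, the percent drop plotted in Figure 7 can be written as a small helper. This is a sketch under the assumption that the drop is measured relative to the AUROC obtained when training and testing on the same dataset; the function name and the example numbers are hypothetical.

```python
def auroc_drop_percent(in_domain_auroc: float, cross_domain_auroc: float) -> float:
    """Relative drop in test AUROC, in percent, when the probe is trained elsewhere.

    Assumption: the reference value is the AUROC of a probe trained and
    tested on the same dataset.
    """
    if in_domain_auroc <= 0:
        raise ValueError("in_domain_auroc must be positive")
    return 100.0 * (in_domain_auroc - cross_domain_auroc) / in_domain_auroc


# Hypothetical numbers: 0.80 in-domain versus 0.72 after cross-dataset transfer.
example_drop = auroc_drop_percent(0.80, 0.72)
```

A negative value would mean that the cross-dataset probe scored higher than the in-domain one.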

6.5 How does performance vary across prompts?

Lastly, to assess the stability of our method across different prompts used for answer generation, we compared the results of the hallucination probes trained on features extracted from answers generated with four distinct prompts, the content of which is included in Appendix M. As shown in Table 2, $\operatorname{LapEigvals}$ consistently outperforms all baselines across all four prompts. While we can observe variations in performance across prompts, $\operatorname{LapEigvals}$ demonstrates the lowest standard deviation ( $\approx 0.005$ ) compared to $\operatorname{AttnLogDet}$ ( $\approx 0.007$ ) and $\operatorname{AttnEigvals}$ ( $\approx 0.016$ ), computed from the values in Table 2, indicating its greater robustness.

Table 2: Test AUROC across four different prompts for answers on the TriviaQA dataset using Llama-3.1-8B with $temp{=}1.0$ and $k{=}50$ (some prompts led to answers with fewer than 100 tokens). Prompt $\boldsymbol{p_{3}}$ was the main one used to compare our method to baselines, as presented in Table 1.

| Method | $p_{1}$ | $p_{2}$ | $\boldsymbol{p_{3}}$ | $p_{4}$ |
| --- | --- | --- | --- | --- |
| $\operatorname{AttnLogDet}$ | 0.847 | 0.855 | 0.842 | 0.860 |
| $\operatorname{AttnEigvals}$ | 0.840 | 0.870 | 0.842 | 0.875 |
| $\operatorname{LapEigvals}$ | 0.882 | 0.890 | 0.888 | 0.895 |
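The spread across prompts can be recomputed from the Table 2 values with a few lines of NumPy. The sketch below reads the table with one row per method and one column per prompt, and it assumes the population standard deviation (`ddof=0`), since the text does not state which estimator was used.

```python
import numpy as np

# Test AUROC per prompt (p1..p4), copied from Table 2.
table2 = {
    "AttnLogDet": [0.847, 0.855, 0.842, 0.860],
    "AttnEigvals": [0.840, 0.870, 0.842, 0.875],
    "LapEigvals": [0.882, 0.890, 0.888, 0.895],
}

# Assumption: population standard deviation (ddof=0).
spread = {method: float(np.std(values)) for method, values in table2.items()}
most_stable = min(spread, key=spread.get)
```

Using the sample standard deviation (`ddof=1`) changes the magnitudes slightly but not the ordering of the methods.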

7 Related Work

Hallucinations in LLMs were proved to be inevitable (Xu et al., 2024), and to detect them, one can leverage either black-box or white-box approaches. The former approach uses only the outputs from an LLM, while the latter uses hidden states, attention maps, or logits corresponding to generated tokens.

Black-box approaches focus on the text generated by LLMs. For instance, Li et al. (2024) verified the truthfulness of factual statements using external knowledge sources, though this approach relies on the availability of additional resources. Alternatively, SelfCheckGPT (Manakul et al., 2023) generates multiple responses to the same prompt and evaluates their consistency, with low consistency indicating potential hallucination.

White-box methods have emerged as a promising approach for detecting hallucinations (Farquhar et al., 2024; Azaria and Mitchell, 2023; Arteaga et al., 2024; Orgad et al., 2025). These methods are universal across all LLMs and do not require additional domain adaptation compared to black-box ones (Farquhar et al., 2024). They draw inspiration from seminal works on analyzing the internal states of simple neural networks (Alain and Bengio, 2016), which introduced linear classifier probes – models operating on the internal states of neural networks. Linear probes have been widely applied to the internal states of LLMs, notably for detecting hallucinations.

One of the first such probes was SAPLMA (Azaria and Mitchell, 2023), which demonstrated that one could predict the correctness of generated text straight from an LLM’s hidden states. Further, the INSIDE method (Chen et al., 2024) tackled hallucination detection by sampling multiple responses from an LLM and evaluating consistency between their hidden states using a normalized sum of the eigenvalues from their covariance matrix. Also, Farquhar et al. (2024) proposed a complementary probabilistic approach, employing entropy to quantify the model’s intrinsic uncertainty. Their method involves generating multiple responses, clustering them by semantic similarity, and calculating Semantic Entropy using an appropriate estimator. To address concerns regarding the validity of LLM probes, Marks and Tegmark (2024) introduced a high-quality QA dataset with simple true / false answers and causally demonstrated that the truthfulness of such statements is linearly represented in LLMs, which supports the use of probes for short texts.

Self-consistency methods (Liang et al., 2024), like INSIDE or Semantic Entropy, require multiple runs of an LLM for each input example, which substantially lowers their applicability. Motivated by this limitation, Kossen et al. (2024) proposed the Semantic Entropy Probe, a small model trained to predict the expensive Semantic Entropy (Farquhar et al., 2024) from an LLM’s hidden states. Notably, Orgad et al. (2025) explored how LLMs encode information about truthfulness and hallucinations. First, they revealed that truthfulness is concentrated in specific tokens. Second, they found that probing classifiers on LLM representations do not generalize well across datasets, especially across datasets requiring different skills, which we confirmed in Section 6.4. Lastly, they showed that the probes could select the correct answer from multiple generated answers with reasonable accuracy, meaning LLMs make mistakes at the decoding stage despite knowing the correct answer.

Recent studies have started to explore hallucination detection exclusively from attention maps. Chuang et al. (2024a) introduced the lookback ratio, which measures how much attention LLMs allocate to relevant input parts when answering questions based on the provided context. The work most closely related to ours is Sriramanan et al. (2024), which introduces the $\operatorname{AttentionScore}$ method. Although the process is unsupervised and computationally efficient, the authors note that its performance can depend highly on the specific layer from which the score is extracted. Compared to $\operatorname{AttentionScore}$ , our method is fully supervised and grounded in graph theory, as we interpret inference in an LLM as a graph. While $\operatorname{AttentionScore}$ aggregates only the attention diagonal to compute its log-determinant, we instead derive features from the graph Laplacian, which captures all attention scores (see Eq. (1) and (2)). Additionally, we utilize all layers for detecting hallucinations rather than a single one, and demonstrate the effectiveness of this approach. We also show that $\operatorname{AttentionScore}$ performs poorly on the datasets we evaluated. Nonetheless, we drew inspiration from their approach, particularly using the lower triangular structure of matrices when constructing features for the hallucination probe.
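The remark that a log-determinant can be computed from the attention diagonal alone follows from a standard fact: the determinant of a triangular matrix is the product of its diagonal entries. A minimal sketch of this identity is given below; the function name and the `eps` clipping are assumptions for illustration, and any aggregation over heads or normalization used by $\operatorname{AttentionScore}$ is not reproduced.

```python
import numpy as np


def attention_logdet(attn: np.ndarray, eps: float = 1e-12) -> float:
    """Log-determinant of a lower-triangular attention map.

    For a triangular matrix, det(A) is the product of the diagonal, so
    log det(A) is the sum of the logs of the diagonal entries. The eps
    clipping (an assumption) guards against log(0).
    """
    diag = np.clip(np.diag(attn), eps, None)
    return float(np.sum(np.log(diag)))
```

Only the $T$ diagonal entries enter this score, whereas Laplacian-based features also depend on the off-diagonal attention through the degree terms.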

8 Conclusions

In this work, we demonstrated that the spectral features of LLMs’ attention maps, specifically the eigenvalues of the Laplacian matrix, carry a signal capable of detecting hallucinations. Specifically, we proposed the $\operatorname{LapEigvals}$ method, which employs the top- $k$ eigenvalues of the Laplacian as input to the hallucination detection probe. Through extensive evaluations, we empirically showed that our method consistently achieves state-of-the-art performance among all tested approaches. Furthermore, multiple ablation studies demonstrated that our method remains stable across varying numbers of eigenvalues, diverse prompts, and generation temperatures while offering reasonable generalization.

In addition, we hypothesize that self-supervised learning (Balestriero et al., 2023) could yield a more robust and generalizable approach while uncovering non-trivial intrinsic features of attention maps. Notably, results such as those in Section 6.3 suggest intriguing connections to recent advancements in LLM research (Veličković et al., 2024; Barbero et al., 2024), highlighting promising directions for future investigation.

Limitations

**Supervised method.** Our approach requires labelled hallucinated and non-hallucinated examples to train the hallucination probe. While labels can be obtained with an LLM-as-a-judge, this might introduce some noise or pose a risk of overfitting.

**Limited generalization across LLM architectures.** The method is incompatible with LLMs having different head and layer configurations. Developing architecture-agnostic hallucination probes is left for future work.

**Minimum length requirement.** Computing the top- $k$ Laplacian eigenvalues demands attention maps of at least $k$ tokens (e.g., $k{=}100$ requires at least 100 tokens).

**Open LLMs.** Our method requires access to the internal states of an LLM; thus, it cannot be applied to closed LLMs.

**Risks.** Please note that the proposed method was tested on selected LLMs and English data, so applying it to untested domains and tasks carries a considerable risk without additional validation.

Acknowledgements

We sincerely thank Piotr Bielak for his valuable review and insightful feedback, which helped improve this work. This work was funded by the European Union under the Horizon Europe grant OMINO – Overcoming Multilevel INformation Overload (grant number 101086321, https://ominoproject.eu/). Views and opinions expressed are those of the authors alone and do not necessarily reflect those of the European Union or the European Research Executive Agency. Neither the European Union nor the European Research Executive Agency can be held responsible for them. It was also co-financed with funds from the Polish Ministry of Education and Science under the programme entitled International Co-Financed Projects, grant no. 573977. We gratefully acknowledge the Wroclaw Centre for Networking and Supercomputing for providing the computational resources used in this work. This work was co-funded by the National Science Centre, Poland under CHIST-ERA Open & Re-usable Research Data & Software (grant number 2022/04/Y/ST6/00183). The authors used ChatGPT to improve the clarity and readability of the manuscript.

References

- Abdin et al. (2024) Marah Abdin, Jyoti Aneja, Hany Awadalla, Ahmed Awadallah, Ammar Ahmad Awan, Nguyen Bach, Amit Bahree, Arash Bakhtiari, Jianmin Bao, Harkirat Behl, Alon Benhaim, Misha Bilenko, Johan Bjorck, Sébastien Bubeck, Martin Cai, Qin Cai, Vishrav Chaudhary, Dong Chen, Dongdong Chen, Weizhu Chen, Yen-Chun Chen, Yi-Ling Chen, Hao Cheng, Parul Chopra, Xiyang Dai, Matthew Dixon, Ronen Eldan, Victor Fragoso, Jianfeng Gao, Mei Gao, Min Gao, Amit Garg, Allie Del Giorno, Abhishek Goswami, Suriya Gunasekar, Emman Haider, Junheng Hao, Russell J. Hewett, Wenxiang Hu, Jamie Huynh, Dan Iter, Sam Ade Jacobs, Mojan Javaheripi, Xin Jin, Nikos Karampatziakis, Piero Kauffmann, Mahoud Khademi, Dongwoo Kim, Young Jin Kim, Lev Kurilenko, James R. Lee, Yin Tat Lee, Yuanzhi Li, Yunsheng Li, Chen Liang, Lars Liden, Xihui Lin, Zeqi Lin, Ce Liu, Liyuan Liu, Mengchen Liu, Weishung Liu, Xiaodong Liu, Chong Luo, Piyush Madan, Ali Mahmoudzadeh, David Majercak, Matt Mazzola, Caio César Teodoro Mendes, Arindam Mitra, Hardik Modi, Anh Nguyen, Brandon Norick, Barun Patra, Daniel Perez-Becker, Thomas Portet, Reid Pryzant, Heyang Qin, Marko Radmilac, Liliang Ren, Gustavo de Rosa, Corby Rosset, Sambudha Roy, Olatunji Ruwase, Olli Saarikivi, Amin Saied, Adil Salim, Michael Santacroce, Shital Shah, Ning Shang, Hiteshi Sharma, Yelong Shen, Swadheen Shukla, Xia Song, Masahiro Tanaka, Andrea Tupini, Praneetha Vaddamanu, Chunyu Wang, Guanhua Wang, Lijuan Wang, Shuohang Wang, Xin Wang, Yu Wang, Rachel Ward, Wen Wen, Philipp Witte, Haiping Wu, Xiaoxia Wu, Michael Wyatt, Bin Xiao, Can Xu, Jiahang Xu, Weijian Xu, Jilong Xue, Sonali Yadav, Fan Yang, Jianwei Yang, Yifan Yang, Ziyi Yang, Donghan Yu, Lu Yuan, Chenruidong Zhang, Cyril Zhang, Jianwen Zhang, Li Lyna Zhang, Yi Zhang, Yue Zhang, Yunan Zhang, and Xiren Zhou. 2024. Phi-3 Technical Report: A Highly Capable Language Model Locally on Your Phone. arXiv preprint. ArXiv:2404.14219 [cs].

- Alain and Bengio (2016) Guillaume Alain and Yoshua Bengio. 2016. Understanding intermediate layers using linear classifier probes.

- Ansel et al. (2024) Jason Ansel, Edward Yang, Horace He, Natalia Gimelshein, Animesh Jain, Michael Voznesensky, Bin Bao, Peter Bell, David Berard, Evgeni Burovski, Geeta Chauhan, Anjali Chourdia, Will Constable, Alban Desmaison, Zachary DeVito, Elias Ellison, Will Feng, Jiong Gong, Michael Gschwind, Brian Hirsh, Sherlock Huang, Kshiteej Kalambarkar, Laurent Kirsch, Michael Lazos, Mario Lezcano, Yanbo Liang, Jason Liang, Yinghai Lu, CK Luk, Bert Maher, Yunjie Pan, Christian Puhrsch, Matthias Reso, Mark Saroufim, Marcos Yukio Siraichi, Helen Suk, Michael Suo, Phil Tillet, Eikan Wang, Xiaodong Wang, William Wen, Shunting Zhang, Xu Zhao, Keren Zhou, Richard Zou, Ajit Mathews, Gregory Chanan, Peng Wu, and Soumith Chintala. 2024. PyTorch 2: Faster Machine Learning Through Dynamic Python Bytecode Transformation and Graph Compilation. In 29th ACM International Conference on Architectural Support for Programming Languages and Operating Systems, Volume 2 (ASPLOS ’24). ACM.

- Arteaga et al. (2024) Gabriel Y. Arteaga, Thomas B. Schön, and Nicolas Pielawski. 2024. Hallucination Detection in LLMs: Fast and Memory-Efficient Finetuned Models. In Northern Lights Deep Learning Conference 2025.

- Azaria and Mitchell (2023) Amos Azaria and Tom Mitchell. 2023. The Internal State of an LLM Knows When It’s Lying. In Findings of the Association for Computational Linguistics: EMNLP 2023, pages 967–976, Singapore. Association for Computational Linguistics.

- Balestriero et al. (2023) Randall Balestriero, Mark Ibrahim, Vlad Sobal, Ari Morcos, Shashank Shekhar, Tom Goldstein, Florian Bordes, Adrien Bardes, Gregoire Mialon, Yuandong Tian, Avi Schwarzschild, Andrew Gordon Wilson, Jonas Geiping, Quentin Garrido, Pierre Fernandez, Amir Bar, Hamed Pirsiavash, Yann LeCun, and Micah Goldblum. 2023. A Cookbook of Self-Supervised Learning. arXiv preprint. ArXiv:2304.12210 [cs].

- Barbero et al. (2024) Federico Barbero, Andrea Banino, Steven Kapturowski, Dharshan Kumaran, João G. M. Araújo, Alex Vitvitskyi, Razvan Pascanu, and Petar Veličković. 2024. Transformers need glasses! Information over-squashing in language tasks. arXiv preprint. ArXiv:2406.04267 [cs].

- Black et al. (2023) Mitchell Black, Zhengchao Wan, Amir Nayyeri, and Yusu Wang. 2023. Understanding Oversquashing in GNNs through the Lens of Effective Resistance. In International Conference on Machine Learning, pages 2528–2547. PMLR. ArXiv:2302.06835 [cs].

- Bruna et al. (2013) Joan Bruna, Wojciech Zaremba, Arthur Szlam, and Yann LeCun. 2013. Spectral Networks and Locally Connected Networks on Graphs. CoRR.

- Chen et al. (2024) Chao Chen, Kai Liu, Ze Chen, Yi Gu, Yue Wu, Mingyuan Tao, Zhihang Fu, and Jieping Ye. 2024. INSIDE: LLMs’ Internal States Retain the Power of Hallucination Detection. In The Twelfth International Conference on Learning Representations.

- Chuang et al. (2024a) Yung-Sung Chuang, Linlu Qiu, Cheng-Yu Hsieh, Ranjay Krishna, Yoon Kim, and James R. Glass. 2024a. Lookback Lens: Detecting and Mitigating Contextual Hallucinations in Large Language Models Using Only Attention Maps. In Proceedings of the 2024 Conference on Empirical Methods in Natural Language Processing, pages 1419–1436, Miami, Florida, USA. Association for Computational Linguistics.

- Chuang et al. (2024b) Yung-Sung Chuang, Yujia Xie, Hongyin Luo, Yoon Kim, James R. Glass, and Pengcheng He. 2024b. DoLa: Decoding by Contrasting Layers Improves Factuality in Large Language Models. In The Twelfth International Conference on Learning Representations.

- Cobbe et al. (2021) Karl Cobbe, Vineet Kosaraju, Mohammad Bavarian, Mark Chen, Heewoo Jun, Lukasz Kaiser, Matthias Plappert, Jerry Tworek, Jacob Hilton, Reiichiro Nakano, Christopher Hesse, and John Schulman. 2021. Training verifiers to solve math word problems. arXiv preprint arXiv:2110.14168.

- Dao et al. (2022) Tri Dao, Daniel Y. Fu, Stefano Ermon, Atri Rudra, and Christopher Ré. 2022. FlashAttention: Fast and Memory-Efficient Exact Attention with IO-Awareness. In Proceedings of the 36th International Conference on Neural Information Processing Systems, NIPS ’22, New Orleans, LA, USA. Curran Associates Inc.

- Farquhar et al. (2024) Sebastian Farquhar, Jannik Kossen, Lorenz Kuhn, and Yarin Gal. 2024. Detecting hallucinations in large language models using semantic entropy. Nature, 630(8017):625–630.

- Grattafiori et al. (2024) Aaron Grattafiori, Abhimanyu Dubey, Abhinav Jauhri, Abhinav Pandey, Abhishek Kadian, Ahmad Al-Dahle, Aiesha Letman, Akhil Mathur, Alan Schelten, Alex Vaughan, Amy Yang, Angela Fan, Anirudh Goyal, Anthony Hartshorn, Aobo Yang, Archi Mitra, Archie Sravankumar, Artem Korenev, Arthur Hinsvark, Arun Rao, Aston Zhang, Aurelien Rodriguez, Austen Gregerson, Ava Spataru, Baptiste Roziere, Bethany Biron, Binh Tang, Bobbie Chern, Charlotte Caucheteux, Chaya Nayak, Chloe Bi, Chris Marra, Chris McConnell, Christian Keller, Christophe Touret, Chunyang Wu, Corinne Wong, Cristian Canton Ferrer, Cyrus Nikolaidis, Damien Allonsius, Daniel Song, Danielle Pintz, Danny Livshits, Danny Wyatt, David Esiobu, Dhruv Choudhary, Dhruv Mahajan, Diego Garcia-Olano, Diego Perino, Dieuwke Hupkes, Egor Lakomkin, Ehab AlBadawy, Elina Lobanova, Emily Dinan, Eric Michael Smith, Filip Radenovic, Francisco Guzmán, Frank Zhang, Gabriel Synnaeve, Gabrielle Lee, Georgia Lewis Anderson, Govind Thattai, Graeme Nail, Gregoire Mialon, Guan Pang, Guillem Cucurell, Hailey Nguyen, Hannah Korevaar, Hu Xu, Hugo Touvron, Iliyan Zarov, Imanol Arrieta Ibarra, Isabel Kloumann, Ishan Misra, Ivan Evtimov, Jack Zhang, Jade Copet, Jaewon Lee, Jan Geffert, Jana Vranes, Jason Park, Jay Mahadeokar, Jeet Shah, Jelmer van der Linde, Jennifer Billock, Jenny Hong, Jenya Lee, Jeremy Fu, Jianfeng Chi, Jianyu Huang, Jiawen Liu, Jie Wang, Jiecao Yu, Joanna Bitton, Joe Spisak, Jongsoo Park, Joseph Rocca, Joshua Johnstun, Joshua Saxe, Junteng Jia, Kalyan Vasuden Alwala, Karthik Prasad, Kartikeya Upasani, Kate Plawiak, Ke Li, Kenneth Heafield, Kevin Stone, Khalid El-Arini, Krithika Iyer, Kshitiz Malik, Kuenley Chiu, Kunal Bhalla, Kushal Lakhotia, Lauren Rantala-Yeary, Laurens van der Maaten, Lawrence Chen, Liang Tan, Liz Jenkins, Louis Martin, Lovish Madaan, Lubo Malo, Lukas Blecher, Lukas Landzaat, Luke de Oliveira, Madeline Muzzi, Mahesh Pasupuleti, Mannat Singh, Manohar Paluri, Marcin Kardas, Maria 
Tsimpoukelli, Mathew Oldham, Mathieu Rita, Maya Pavlova, Melanie Kambadur, Mike Lewis, Min Si, Mitesh Kumar Singh, Mona Hassan, Naman Goyal, Narjes Torabi, Nikolay Bashlykov, Nikolay Bogoychev, Niladri Chatterji, Ning Zhang, Olivier Duchenne, Onur Çelebi, Patrick Alrassy, Pengchuan Zhang, Pengwei Li, Petar Vasic, Peter Weng, Prajjwal Bhargava, Pratik Dubal, Praveen Krishnan, Punit Singh Koura, Puxin Xu, Qing He, Qingxiao Dong, Ragavan Srinivasan, Raj Ganapathy, Ramon Calderer, Ricardo Silveira Cabral, Robert Stojnic, Roberta Raileanu, Rohan Maheswari, Rohit Girdhar, Rohit Patel, Romain Sauvestre, Ronnie Polidoro, Roshan Sumbaly, Ross Taylor, Ruan Silva, Rui Hou, Rui Wang, Saghar Hosseini, Sahana Chennabasappa, Sanjay Singh, Sean Bell, Seohyun Sonia Kim, Sergey Edunov, Shaoliang Nie, Sharan Narang, Sharath Raparthy, Sheng Shen, Shengye Wan, Shruti Bhosale, Shun Zhang, Simon Vandenhende, Soumya Batra, Spencer Whitman, Sten Sootla, Stephane Collot, Suchin Gururangan, Sydney Borodinsky, Tamar Herman, Tara Fowler, Tarek Sheasha, Thomas Georgiou, Thomas Scialom, Tobias Speckbacher, Todor Mihaylov, Tong Xiao, Ujjwal Karn, Vedanuj Goswami, Vibhor Gupta, Vignesh Ramanathan, Viktor Kerkez, Vincent Gonguet, Virginie Do, Vish Vogeti, Vítor Albiero, Vladan Petrovic, Weiwei Chu, Wenhan Xiong, Wenyin Fu, Whitney Meers, Xavier Martinet, Xiaodong Wang, Xiaofang Wang, Xiaoqing Ellen Tan, Xide Xia, Xinfeng Xie, Xuchao Jia, Xuewei Wang, Yaelle Goldschlag, Yashesh Gaur, Yasmine Babaei, Yi Wen, Yiwen Song, Yuchen Zhang, Yue Li, Yuning Mao, Zacharie Delpierre Coudert, Zheng Yan, Zhengxing Chen, Zoe Papakipos, Aaditya Singh, Aayushi Srivastava, Abha Jain, Adam Kelsey, Adam Shajnfeld, Adithya Gangidi, Adolfo Victoria, Ahuva Goldstand, Ajay Menon, Ajay Sharma, Alex Boesenberg, Alexei Baevski, Allie Feinstein, Amanda Kallet, Amit Sangani, Amos Teo, Anam Yunus, Andrei Lupu, Andres Alvarado, Andrew Caples, Andrew Gu, Andrew Ho, Andrew Poulton, Andrew Ryan, Ankit Ramchandani, Annie Dong, Annie 
Franco, Anuj Goyal, Aparajita Saraf, Arkabandhu Chowdhury, Ashley Gabriel, Ashwin Bharambe, Assaf Eisenman, Azadeh Yazdan, Beau James, Ben Maurer, Benjamin Leonhardi, Bernie Huang, Beth Loyd, Beto De Paola, Bhargavi Paranjape, Bing Liu, Bo Wu, Boyu Ni, Braden Hancock, Bram Wasti, Brandon Spence, Brani Stojkovic, Brian Gamido, Britt Montalvo, Carl Parker, Carly Burton, Catalina Mejia, Ce Liu, Changhan Wang, Changkyu Kim, Chao Zhou, Chester Hu, Ching-Hsiang Chu, Chris Cai, Chris Tindal, Christoph Feichtenhofer, Cynthia Gao, Damon Civin, Dana Beaty, Daniel Kreymer, Daniel Li, David Adkins, David Xu, Davide Testuggine, Delia David, Devi Parikh, Diana Liskovich, Didem Foss, Dingkang Wang, Duc Le, Dustin Holland, Edward Dowling, Eissa Jamil, Elaine Montgomery, Eleonora Presani, Emily Hahn, Emily Wood, Eric-Tuan Le, Erik Brinkman, Esteban Arcaute, Evan Dunbar, Evan Smothers, Fei Sun, Felix Kreuk, Feng Tian, Filippos Kokkinos, Firat Ozgenel, Francesco Caggioni, Frank Kanayet, Frank Seide, Gabriela Medina Florez, Gabriella Schwarz, Gada Badeer, Georgia Swee, Gil Halpern, Grant Herman, Grigory Sizov, Guangyi, Zhang, Guna Lakshminarayanan, Hakan Inan, Hamid Shojanazeri, Han Zou, Hannah Wang, Hanwen Zha, Haroun Habeeb, Harrison Rudolph, Helen Suk, Henry Aspegren, Hunter Goldman, Hongyuan Zhan, Ibrahim Damlaj, Igor Molybog, Igor Tufanov, Ilias Leontiadis, Irina-Elena Veliche, Itai Gat, Jake Weissman, James Geboski, James Kohli, Janice Lam, Japhet Asher, Jean-Baptiste Gaya, Jeff Marcus, Jeff Tang, Jennifer Chan, Jenny Zhen, Jeremy Reizenstein, Jeremy Teboul, Jessica Zhong, Jian Jin, Jingyi Yang, Joe Cummings, Jon Carvill, Jon Shepard, Jonathan McPhie, Jonathan Torres, Josh Ginsburg, Junjie Wang, Kai Wu, Kam Hou U, Karan Saxena, Kartikay Khandelwal, Katayoun Zand, Kathy Matosich, Kaushik Veeraraghavan, Kelly Michelena, Keqian Li, Kiran Jagadeesh, Kun Huang, Kunal Chawla, Kyle Huang, Lailin Chen, Lakshya Garg, Lavender A, Leandro Silva, Lee Bell, Lei Zhang, Liangpeng Guo, Licheng 
Yu, Liron Moshkovich, Luca Wehrstedt, Madian Khabsa, Manav Avalani, Manish Bhatt, Martynas Mankus, Matan Hasson, Matthew Lennie, Matthias Reso, Maxim Groshev, Maxim Naumov, Maya Lathi, Meghan Keneally, Miao Liu, Michael L. Seltzer, Michal Valko, Michelle Restrepo, Mihir Patel, Mik Vyatskov, Mikayel Samvelyan, Mike Clark, Mike Macey, Mike Wang, Miquel Jubert Hermoso, Mo Metanat, Mohammad Rastegari, Munish Bansal, Nandhini Santhanam, Natascha Parks, Natasha White, Navyata Bawa, Nayan Singhal, Nick Egebo, Nicolas Usunier, Nikhil Mehta, Nikolay Pavlovich Laptev, Ning Dong, Norman Cheng, Oleg Chernoguz, Olivia Hart, Omkar Salpekar, Ozlem Kalinli, Parkin Kent, Parth Parekh, Paul Saab, Pavan Balaji, Pedro Rittner, Philip Bontrager, Pierre Roux, Piotr Dollar, Polina Zvyagina, Prashant Ratanchandani, Pritish Yuvraj, Qian Liang, Rachad Alao, Rachel Rodriguez, Rafi Ayub, Raghotham Murthy, Raghu Nayani, Rahul Mitra, Rangaprabhu Parthasarathy, Raymond Li, Rebekkah Hogan, Robin Battey, Rocky Wang, Russ Howes, Ruty Rinott, Sachin Mehta, Sachin Siby, Sai Jayesh Bondu, Samyak Datta, Sara Chugh, Sara Hunt, Sargun Dhillon, Sasha Sidorov, Satadru Pan, Saurabh Mahajan, Saurabh Verma, Seiji Yamamoto, Sharadh Ramaswamy, Shaun Lindsay, Shaun Lindsay, Sheng Feng, Shenghao Lin, Shengxin Cindy Zha, Shishir Patil, Shiva Shankar, Shuqiang Zhang, Shuqiang Zhang, Sinong Wang, Sneha Agarwal, Soji Sajuyigbe, Soumith Chintala, Stephanie Max, Stephen Chen, Steve Kehoe, Steve Satterfield, Sudarshan Govindaprasad, Sumit Gupta, Summer Deng, Sungmin Cho, Sunny Virk, Suraj Subramanian, Sy Choudhury, Sydney Goldman, Tal Remez, Tamar Glaser, Tamara Best, Thilo Koehler, Thomas Robinson, Tianhe Li, Tianjun Zhang, Tim Matthews, Timothy Chou, Tzook Shaked, Varun Vontimitta, Victoria Ajayi, Victoria Montanez, Vijai Mohan, Vinay Satish Kumar, Vishal Mangla, Vlad Ionescu, Vlad Poenaru, Vlad Tiberiu Mihailescu, Vladimir Ivanov, Wei Li, Wenchen Wang, Wenwen Jiang, Wes Bouaziz, Will Constable, Xiaocheng Tang, 
Xiaojian Wu, Xiaolan Wang, Xilun Wu, Xinbo Gao, Yaniv Kleinman, Yanjun Chen, Ye Hu, Ye Jia, Ye Qi, Yenda Li, Yilin Zhang, Ying Zhang, Yossi Adi, Youngjin Nam, Yu Wang, Yu Zhao, Yuchen Hao, Yundi Qian, Yunlu Li, Yuzi He, Zach Rait, Zachary DeVito, Zef Rosnbrick, Zhaoduo Wen, Zhenyu Yang, Zhiwei Zhao, and Zhiyu Ma. 2024. The Llama 3 Herd of Models. arXiv preprint. ArXiv:2407.21783 [cs].

- Huang et al. (2023) Lei Huang, Weijiang Yu, Weitao Ma, Weihong Zhong, Zhangyin Feng, Haotian Wang, Qianglong Chen, Weihua Peng, Xiaocheng Feng, Bing Qin, and Ting Liu. 2023. A Survey on Hallucination in Large Language Models: Principles, Taxonomy, Challenges, and Open Questions. arXiv preprint. ArXiv:2311.05232 [cs].

- Jolliffe and Cadima (2016) Ian T. Jolliffe and Jorge Cadima. 2016. Principal component analysis: a review and recent developments. Philosophical Transactions of the Royal Society A: Mathematical, Physical and Engineering Sciences, 374(2065):20150202.

- Joshi et al. (2017) Mandar Joshi, Eunsol Choi, Daniel Weld, and Luke Zettlemoyer. 2017. TriviaQA: A Large Scale Distantly Supervised Challenge Dataset for Reading Comprehension. In Proceedings of the 55th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 1601–1611, Vancouver, Canada. Association for Computational Linguistics.

- Kim et al. (2024) Hazel Kim, Adel Bibi, Philip Torr, and Yarin Gal. 2024. Detecting LLM Hallucination Through Layer-wise Information Deficiency: Analysis of Unanswerable Questions and Ambiguous Prompts. arXiv preprint. ArXiv:2412.10246 [cs].

- Kossen et al. (2024) Jannik Kossen, Jiatong Han, Muhammed Razzak, Lisa Schut, Shreshth Malik, and Yarin Gal. 2024. Semantic Entropy Probes: Robust and Cheap Hallucination Detection in LLMs. arXiv preprint. ArXiv:2406.15927 [cs].

- Kuprieiev et al. (2025) Ruslan Kuprieiev, skshetry, Peter Rowland, Dmitry Petrov, Pawel Redzynski, Casper da Costa-Luis, David de la Iglesia Castro, Alexander Schepanovski, Ivan Shcheklein, Gao, Batuhan Taskaya, Jorge Orpinel, Fábio Santos, Daniele, Ronan Lamy, Aman Sharma, Zhanibek Kaimuldenov, Dani Hodovic, Nikita Kodenko, Andrew Grigorev, Earl, Nabanita Dash, George Vyshnya, Dave Berenbaum, maykulkarni, Max Hora, Vera, and Sanidhya Mangal. 2025. DVC: Data Version Control - Git for Data & Models.

- Kwiatkowski et al. (2019) Tom Kwiatkowski, Jennimaria Palomaki, Olivia Redfield, Michael Collins, Ankur Parikh, Chris Alberti, Danielle Epstein, Illia Polosukhin, Jacob Devlin, Kenton Lee, Kristina Toutanova, Llion Jones, Matthew Kelcey, Ming-Wei Chang, Andrew M. Dai, Jakob Uszkoreit, Quoc Le, and Slav Petrov. 2019. Natural Questions: A Benchmark for Question Answering Research. Transactions of the Association for Computational Linguistics, 7:452–466.

- Lee (2023) Minhyeok Lee. 2023. A Mathematical Investigation of Hallucination and Creativity in GPT Models. Mathematics, 11(10):2320.

- Li et al. (2024) Junyi Li, Jie Chen, Ruiyang Ren, Xiaoxue Cheng, Xin Zhao, Jian-Yun Nie, and Ji-Rong Wen. 2024. The Dawn After the Dark: An Empirical Study on Factuality Hallucination in Large Language Models. In Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 10879–10899, Bangkok, Thailand. Association for Computational Linguistics.

- Li et al. (2023) Junyi Li, Xiaoxue Cheng, Wayne Xin Zhao, Jian-Yun Nie, and Ji-Rong Wen. 2023. HaluEval: A Large-Scale Hallucination Evaluation Benchmark for Large Language Models. arXiv preprint. ArXiv:2305.11747 [cs].

- Liang et al. (2024) Xun Liang, Shichao Song, Zifan Zheng, Hanyu Wang, Qingchen Yu, Xunkai Li, Rong-Hua Li, Feiyu Xiong, and Zhiyu Li. 2024. Internal Consistency and Self-Feedback in Large Language Models: A Survey. CoRR, abs/2407.14507.

- Lin et al. (2022) Stephanie Lin, Jacob Hilton, and Owain Evans. 2022. TruthfulQA: Measuring How Models Mimic Human Falsehoods. In Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 3214–3252, Dublin, Ireland. Association for Computational Linguistics.

- Manakul et al. (2023) Potsawee Manakul, Adian Liusie, and Mark Gales. 2023. SelfCheckGPT: Zero-Resource Black-Box Hallucination Detection for Generative Large Language Models. In Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing, pages 9004–9017, Singapore. Association for Computational Linguistics.