# Self-rewarding correction for mathematical reasoning

**Authors**: Wei Xiong, Hanning Zhang, Chenlu Ye, Lichang Chen, Nan Jiang, Tong Zhang

## Abstract

We study self-rewarding reasoning large language models (LLMs), which can simultaneously generate step-by-step reasoning and evaluate the correctness of their outputs during the inference time- without external feedback. This integrated approach allows a single model to independently guide its reasoning process, offering computational advantages for model deployment.

We particularly focus on the representative task of self-correction, where models autonomously detect errors in their responses, revise outputs, and decide when to terminate iterative refinement loops. To enable this, we propose a two-staged algorithmic framework for constructing self-rewarding reasoning models using only self-generated data. In the first stage, we employ sequential rejection sampling to synthesize long chain-of-thought trajectories that incorporate both self-rewarding and self-correction mechanisms. Fine-tuning models on these curated data allows them to learn the patterns of self-rewarding and self-correction. In the second stage, we further enhance the models’ ability to assess response accuracy and refine outputs through reinforcement learning with rule-based signals. Experiments with Llama-3 and Qwen-2.5 demonstrate that our approach surpasses intrinsic self-correction capabilities and achieves performance comparable to systems that rely on external reward models.

Machine Learning, ICML

## 1 Introduction

Large language models (LLMs) have demonstrated remarkable capabilities in reasoning-related tasks such as mathematics and coding. Notable examples include ChatGPT (OpenAI, 2023), Claude (Anthropic, 2023), and Gemini (Team et al., 2023). Following the release of GPT4-o1, LLMs with strong reasoning abilities have attracted even more attention, along with inference methods that enhance reasoning. A particularly desirable property of such models is their ability to detect inconsistencies and errors in self-generated responses—based on feedback to their prior outputs—and correct these errors to produce improved responses. This process is often referred to as self-correction in the literature (Welleck et al., 2022; Madaan et al., 2024; Kim et al., 2024).

When an external ground-truth reward model is available, studies (Kim et al., 2024; Qu et al., 2024; Shinn et al., 2024) have shown that LLMs can refine their initial responses based on external gold reward feedback and determine when to terminate the self-correction loop. These approaches have proven effective for both mathematical reasoning and general agent tasks. Moreover, even when relying on imperfect proxy rewards, models can still achieve higher accuracy in revised responses by leveraging feedback from an outcome-based reward model (see Section 5 for empirical results). However, since these reward models are often themselves LLMs, deploying them requires running multiple models during inference, which increases computational costs and deployment complexity. In contrast, without external reward feedback, current LLMs struggle to refine their initial responses solely based on their intrinsic capabilities—a limitation known as intrinsic self-correction (Huang et al., 2023).

While reward models are traditionally trained with an additional scalar head for general-purpose chat (Ouyang et al., 2022; Bai et al., 2022; Touvron et al., 2023) and reasoning tasks (Cobbe et al., 2021a; Lightman et al., 2023), recent work suggests that LLMs themselves can generate reward signals in a generative way. For example, the LLM-as-a-judge approach (Zheng et al., 2023; Dubois et al., 2023) prompts the LLM to evaluate text outputs, effectively serving as a surrogate for human feedback. Another emerging direction explores generative reward models (Zhao et al., 2023; Dong et al., 2024; Zhang et al., 2024b; Mahan et al., 2024; Zhang et al., 2024a), which formulate evaluation tasks as instruction-following problems, using the probability of generating specific tokens as the reward value. These methods leverage LLMs’ next-token prediction capabilities, integrate the generation and evaluation into a unified framework.

Building on these insights, this work investigates self-rewarding reasoning models that can incorporate three abilities within a single LLM: (i) generating step-by-step reasoning paths for given prompts, (ii) evaluating the correctness of generated responses, and (iii) revising and enhancing previous responses based on self-rewarding signals. Our key contributions are as follows:

1. Self-rewarding reasoning framework. We introduce a self-rewarding reasoning framework for LLMs, which integrates the generator and reward model into a single LLM, enabling autonomous reasoning, evaluation, and correction. This unification simplifies the model’s decision-making process and reduces computational overhead compared to external reward-based approaches.

1. Algorithmic framework for self-correction. We focus on the self-correction in mathematical reasoning and propose a two-stage framework that relies only on self-generated data. In the first stage, we use sequential rejection sampling to construct long chain-of-thought (CoT) trajectories that encode both self-rewarding and self-correction behaviors. Fine-tuning models on these trajectories enables them to detect the error in the self-generated responses and revise the previous attempts. In the second stage, we further enhance these patterns through reinforcement learning with rule-based signals.

1. Empirical validation and analysis. Through extensive experiments, we show that self-rewarding correction significantly outperforms intrinsic self-correction. Additionally, we conduct ablation studies to investigate the learning dynamics of the proposed framework, providing deeper insights into its behavior and effectiveness. The training codes and datasets are publicly available on GitHub https://github.com/RLHFlow/Self-rewarding-reasoning-LLM.

## 2 Related Work

We review the works that are mostly related to our project in this section.

#### Self-rewarding alignment.

Our work aligns with research on self-rewarding alignment (Yuan et al., 2024b; Prasad et al., 2024), where both of our project and their methods share similar spirits that we can unify the generation ability and evaluation ability into a single LLM. These methods leverage iterative DPO-type algorithms, where the model labels its own generated responses to provide training signals for subsequent iterations, enabling self-improvement. In contrast, our approach does not focus on self-improvement during training. Instead, we rely on an external ground-truth reward model to provide learning signals in training. Our study emphasizes inference-time alignment for reasoning-focused LLMs, where self-rewarding signals are employed solely to guide inference rather than training.

#### Self-correction.

Our work is closely related to self-correction in LLMs. We refer interested readers to the survey (Pan et al., 2023) for a more comprehensive review and only review some representative approaches that are mostly related to our project. Li et al. (2024) demonstrated that incorporating teacher model reflections into SFT data enhances students’ self-reflection abilities in general-purpose conversation tasks. However, for reasoning tasks, Huang et al. (2023) found that current LLMs—without additional training—fail to self-correct purely through intrinsic reasoning (i.e., prompting). This observation is also validated in Qu et al. (2024); Tyen et al. (2023); Zheng et al. (2024). A more in-depth analysis shows that most prior successful studies in this domain depend on external (ground-truth) reward models to determine when to initiate and terminate self-correction (Kim et al., 2024; Qu et al., 2024; Shinn et al., 2024; Madaan et al., 2024). Currently, there is no major work demonstrating that intrinsic self-correction (via prompting or fine-tuning) is reliably effective. Furthermore, because external reward models are typically LLM-based, these methods introduce additional computational overhead by requiring a multi-agent system for inference.

Recognizing this challenge, our study explores how LLMs can autonomously evaluate response quality and correct errors without external reward models. Specifically, we introduce a self-rewarding reasoning framework that enables a single LLM to perform error detection and self-correction effectively. Among the works in self-correction, the most relevant work is the recent Kumar et al. (2024), which employed a multi-turn deep RL approach to train self-correcting models. In comparison, this work introduces a new and general self-rewarding formulation for reasoning-focused LLMs, with self-correction as a representative application. Compared to the intrinsic correction and the framework in Kumar et al. (2024), one major difference is that our framework equips models with self-rewarding ability, enabling our models to intelligently scale inference compute by selectively revising the first attempts, which helps to reduce computational overhead by avoiding unnecessary iterations. We will also design experiments to illustrate this idea.

Algorithmically, our approach also differs from Kumar et al. (2024). We first use sequential rejection sampling to construct long CoT trajectories with both self-rewarding and self-correction patterns, which serve as warm-up fine-tuning data. We then enhance these behaviors through reinforcement learning (using either DPO-type algorithms or PPO) with rule-based signals. In contrast, Kumar et al. (2024) employed RLOO (Ahmadian et al., 2024) with a specialized reward function for a two-turn self-correction task. While their no-public models (Gemini) and implementation details (parameters, codes) do not enable comparison, we believe that the multi-turn RL methods proposed by Kumar et al. (2024) could also complement the proposed self-rewarding framework, and achieve better reasoning performance compared to the standard reasoning models.

#### Rule-based RL for LLMs mathematical reasoning.

Rule-based reinforcement learning has received significant attention following the success of DeepSeek-R1 (DeepSeek-AI et al., 2025). Open-source efforts have since attempted to replicate its performance using Qwen models (Yang et al., 2024), including works such as Zeng et al. (2025); Cui et al. (2025); Zhang et al. (2025). These methods train LLMs using only the correctness score (whether the final answer is correct or not) and a format score (whether the final answer is output in a pre-determined format), in contrast to the previous works with the neural network-based reward model (Cobbe et al., 2021a; Lightman et al., 2023; Zhang et al., 2024a). In particular, DeepSeek-AI et al. (2025) observed that self-correction naturally emerges during RL training (referred to as an AHA moment in their report). However, our preliminary experiments, along with open-source replications using Qwen-2.5-Math (Liu et al., 2025; Zhang et al., 2025; Cheng et al., 2025), suggest that (i) the base models already exhibit some self-correction ability, though it is quite sparse. (ii) vanilla rule-based RL cannot consistently enhance self-correction without additional design.

Interestingly, even when using the same algorithms and data, similar improvements in mathematical reasoning are not observed in models such as Llama (Meta, 2024; Touvron et al., 2023). We hypothesize that Qwen-2.5-Math and DeepSeek-R1 benefit from extensive pre-training on high-quality mathematical corpora (e.g., 1T tokens for Qwen-2.5-Math (Yang et al., 2024)), and that the AHA moment may stem from carefully curated data containing self-correction patterns in pre-training or a cool-down stage. Since these datasets are non-public, the exact details remain unknown.

In contrast, our study shows that a warm-up stage using a carefully curated SFT dataset (collected via sequential rejection sampling) enables models to learn self-correction patterns more reliably. This foundation allows rule-based RL to further enhance these behaviors in a stable manner. We also remark that our two-stage framework and most of the associated experiments are performed prior to the release of DeepSeek-R1.

%“hspace–-10mm˝

| User: A particular star has an absolute magnitude $M=-7$ . If this star is observed in a galaxy that is at a distance of $3Mpc$ , what will its apparent magnitude be? |

| --- |

| Please reason step by step, and put your final answer within $\boxed{}$ . (prompt $x$ ) |

| Assistant: To determine the apparent magnitude of the star, we can use the distance modulus formula : $m-M=5\log_10(d)-5$ where: $m$ is the apparent magnitude, $M$ is the absolute magnitude, $d$ is the distance to the star in parsecs…. The apparent magnitude of the star, when observed from a distance of 3 Mpc, is approximately $7.58$ . So the final answer is: $\boxed{7.58}$ . (initial response $a^1$ ) |

| To verify the correctness of the solution, let’s recheck the steps … So the final answer is: $\boxed{20.39}$ . The self-evaluation is: [VERIFY] wrong. (self-rewarding evaluation $y^1$ ) |

| Let’s correct it step by step. Given: Absolute magnitude … The correct apparent magnitude is approximately $20.39$ . So the final answer is: $\boxed{20.39}$ . |

| (revised response $a^2$ ). Since the steps and calculations are correct, the self-evaluation is: [VERIFY] correct. (self-rewarding evaluation $y^2$ ) |

Table 1: An example of the self-rewarding reasoning path. We omit the detailed reasoning path for a clear presentation. The full trajectory is available at Table LABEL:tab:example_hh_rlhf_dataset in Appendix.

## 3 Self-rewarding Reasoning Language Models

We formulate the self-rewarding reasoning process as a multi-turn Markov Decision Process (MDP). After observing an initial prompt $s^1=x∈X$ from some distribution $d_0$ , an LLM, denoted as $π$ , will generate an initial reasoning attempt $a^1∼π^1(·|s^1)$ from the action space $A$ . The LLM then self-rewards its response by generating an evaluation:

$$

y^1∼π^1(·|s^1,a^1).

$$

If the model assesses its answer as correct ( $y^1=[VERIFY] correct$ , details provided later), the generation stops. Otherwise, the LLM proceeds to the next step, generating a refined response and evaluation:

$$

(a^2,y^2)∼π^2(·|s^2),

$$

where the generation is conditioned on the updated state $s^2=(s^1,a^1,y^1)$ . The self-refinement process continues until the model produces a self-evaluation $y^h$ that assesses the answer as correct.

We assume that we have access to the ground-truth verifier $r^⋆:X×A→\{0,1\}$ , which determines whether a response is correct. Throughout this study, we use the ToRA verification script (Gou et al., 2023), built on the Python library SymPy for symbolic mathematics. We also present a representative Example 1 to illustrate the process.

#### Two-stage training framework.

Following standard post-training practices for LLMs, we adopt a two-stage approach:

1. Self-rewarding instruction-following fine-tuning (IFT). Starting with an initial LLM $π_0$ (e.g., a general-purpose chatbot), we collect demonstration data by a sequential rejection sampling process and fine-tune $π_0$ to get an improved model $π_ref$ , which integrates self-rewarding reasoning abilities.

1. Reinforcement learning (RL) optimization. We further refine $π_ref$ using RL, leveraging it as the reference model. This stage can further enhance the model’s ability to assess correctness and refine previous responses.

### 3.1 Self-rewarding Instruction-following Fine-tuning

#### Self-rewarding by token prediction.

To train the LLMs to evaluate the reasoning steps, we formulate this task as an instruction-following task, following prior works (Zhao et al., 2023; Dong et al., 2024; Liu et al., 2023; Ye et al., 2024; Wang et al., 2024; Zhang et al., 2024b). Specifically, we allow models to include reasoning in their evaluations while requiring them to output specific tokens to indicate their evaluation results. We experimented with different token choices, such as: (i) a prompt “Is the most recent final answer correct (Yes or No)?” with “Yes” and “No” as the response tokens, as used in (Xie et al., 2023; Zhang et al., 2024b); (ii) explicit markers such as “[VERIFY] correct” and “[VERIFY] wrong”. Our experiments show no significant performance differences between these choices. During inference, rather than using the likelihood of “Yes” as a reward (as in (Zhao et al., 2023; Dong et al., 2024; Zhang et al., 2024b)), we sample the evaluation token from the distribution. This allows us to use a standard inference pipeline without any specific adjustment. See Table 1 for an example.

**Remark 3.1**

*We choose these specific tokens primarily for research simplicity. However, we expect that similar results can be achieved even if these special tokens are replaced with more natural language expressions, such as “wait”, “aha”, or “let me re-check the answer”, where one can also leverage the LLMs to complete this paraphrasing process.*

%“hspace–-10mm˝

| System: You are a mathematical reasoning assistant. For each problem, follow these steps strictly: 1. Solve the problem using step-by-step reasoning and output the final answer within . Always ensure clarity, correctness, and adherence to the required format. |

| --- |

| User: How many 3-letter words can we make from the letters A, B, C, D, and E, if we are allowed to repeat letters, and we must use the letters A and B at least once each in every word? (Here, a word is an arbitrary sequence of letters.) Let’s think step by step and output the final answer within . |

| Assistant: Here’s how we can break it down: 1. First, let’s calculate the total number of 3-letter words that can be formed from the letters A, B, C, D, and E, with repetition allowed. … 7. Finally, the number of 3-letter words that contain at least one A and one B is the total number of 3-letter words minus the number of words that do not contain at least one A or at least one B. The number of 3-letter words that can be made from the letters A, B, C, D, and E, where each word must contain at least one A and one B, is $\boxed{38}$ . |

| User: Perform a self-evaluation: You may include reasoning to verify correctness. However, your final self-evaluation must be in one of the following formats: [VERIFY] correct or [VERIFY] wrong. |

| Assistant: Let’s perform a self-evaluation of the solution. We first calculate … This is $125-101=24$ . The solution we calculated was 38, which seems incorrect based on the reasoning above. The correct answer should be 24. Let’s output the self-evaluation: [VERIFY] wrong. |

| User: please identify the mistake in your previous reasoning, revise your reasoning path and output a corrected final answer within |

| Assistant: Let’s correct the mistake in the previous reasoning. The correct steps should be as follows: 1. Calculate … 6. Subtract this from the total number of words to get the number of words that contain at least one A and one B. This is $125-101=24$ . The correct answer is indeed $\boxed{24}$ . |

Table 2: An example of the sequential rejection sampling to collect long CoT trajectories.

Data collection by sequential rejection sampling. We employ a rejection sampling approach, similar to STaR (Zelikman et al., 2022) and RAFT (Dong et al., 2023), where we generate a large amount of self-correction trajectories and only preserve the desired trajectories. The major difference is that since the self-correction behavior is sparse in base models and self-rewarding pattern is missing, it is unlikely to collect the desired trajectory directly. In view of this, we sequentially prompt the base model and generate different steps separately. Then, we combine them into long CoT trajectories that incorporate both self-rewarding and self-correction patterns.

Our data collection process consists of the following steps:

1. Generating initial reasoning responses: training prompts from datasets such as MATH (Hendrycks et al., 2021) and GSM8K (Cobbe et al., 2021a) and sample $N_1=50$ initial responses $a^1$ per prompt as our base trajectories (see Section 5 for details of experiment setups).

1. Self-rewarding signal sampling: For each prompt and initial response, we further sample $N_2=8$ self-evaluations and keep only one evaluation result that is the same as the ground truth. Then, we split them into $G^correct$ and $G^wrong$ using the ground-truth verifier $r^⋆$ .

1. Correction sampling: For each prompt and initial response in $G^wrong$ , we sample $M_1=8$ completions by providing the feedback that the initial response was wrong to collect trajectories that successfully revise incorrect responses. For each prompt and initial response in $G^correct$ , however, we also tell the model that the response was incorrect and collect $M_2=4$ completions. By doing so, we want to additionally collect “correct-to-correct” trajectories in the face of wrong judgment.

Eventually, we collect $8×|G^wrong|+4×|G^correct|$ full trajectories. Then, we filter the dataset and only keep the following types of data:

- $D^IFT_1:$ wrong $a^1$ , $y^1=[VERIFY] wrong$ , correct $a^2$ ;

- $D^IFT_2:$ correct $a^1$ , $y^1=[VERIFY] wrong$ , correct $a^2$ ;

- $D^IFT_3:$ correct $a^1$ , $y^1=[VERIFY] correct$ .

We provide an example of data collection process in Table 2. We limit the horizon to two iterations due to resource constraint, and preserve at most one trajectory per base sample to control dataset size. Then we fine-tune the LLMs using standard SFT pipeline to maximize:

$$

\displaystyle∑_D^IFT_1\big[\log P(y^1|x,a^1)+\log P(a^2|x,a^1,y^1)\big] \displaystyle+∑_D^IFT_2\log P(a^2|x,a^1,y^1)+∑_D^IFT_3\log P(y^1|x,a^1). \tag{1}

$$

In practice, however, we observe that the multi-task training can lead to stability issue and can slightly hurt the first-round performance. To mitigate this issue, we also train on the correct attempt $a^1$ for the samples in $D^IFT_3$ .

### 3.2 KL-regularized Reinforcement Learning

In this stage, we aim to further enhance the self-rewarding IFT models using reinforcement learning. We consider both deep RL methods (Schulman et al., 2017) and direct alignment algorithms (Zhao et al., 2023; Rafailov et al., 2023; Azar et al., 2023; Liu et al., 2023).

#### Learning signal.

To facilitate the reinforcement learning stage, we assume there exists a trajectory-wise reward function $u^⋆(τ)$ for trajectory

$$

τ=(x,a^1,y^1,…,a^H,y^H).

$$

However, instead of learning a proxy reward from data like the BT model in RLHF (Ouyang et al., 2022) or outcome-supervised reward (ORM) in previous mathematical reasoning literature (Lightman et al., 2023), we primarily use the oracle reward

$$

u^⋆(τ)=r^⋆(x,a^H),

$$

i.e., whether the final result is correct or not. The main advantage is that the oracle reward can largely mitigate the risk of reward hacking. This is also referred to as the rule-based RL in the very recent literature (DeepSeek-AI et al., 2025). We will also study the additional rule designs for either reward value assignment (PPO training) or data ranking (DPO training), where an implicit $u^⋆$ is determined by the set of rules we use.

Following standard RLHF methodologies (Ouyang et al., 2022; Bai et al., 2022), we optimize the following KL-regularized objective:

$$

\displaystyle\max_π∈ΠE_x∼ d_{0,a^1∼π^0(·|x)}E_τ∼π(·|x,a^1)\Big[ \displaystyle\hskip 17.00024ptu^⋆(τ)-η∑_h=1^HD_KL(π^h(·|s^h),π^h_ref(·|s^h))\Big]. \tag{2}

$$

The optimal policy, as well as its associated optimal value satisfies the following optimality condition (Xiong et al., 2024a; Xie et al., 2024a; Zhong et al., 2024).

**Proposition 3.2**

*We can recursively define the following optimal value functions and optimal policies for a KL-regularized MDP with horizon $H$ and deterministic external observation. For Q value, we have

$$

\displaystyle Q^⋆_h(s^h,a^h,y^h)= \tag{3}

$$

Also, for all $h∈[H]$ , we have:

| | $\displaystyle V_⋆^h(s^h)=η\log\underbrace{E_a^h,y^{h∼π_ref^h(·\mid s^h)}\exp\Big(\frac{Q_⋆^h(s^h,a^h,y^h)}{η}\Big)}_=:Z^h(s^{h)},$ | |

| --- | --- | --- |

$$

\displaystyleπ_⋆^h(a^h,y^h\mid s^h)=\frac{π^h_ref(a^h,y^h\mid s^h)}{Z^h(s^h)}·\exp\Big(\frac{Q_⋆^h(s^h,a^h,y^h)}{η}\Big). \tag{4}

$$*

We remark that one advantage of the proposition is that it allows deterministic external message (e.g. instruction prompts) in the state update, which will be useful when we consider a simplified research framework in Section 5.

We also adopt Direct Preference Optimization (DPO) (Rafailov et al., 2023; Azar et al., 2023; Zhao et al., 2023; Ethayarajh et al., 2024) to solve Equation 2, primarily due to computational constraints. In particular, we use the multi-turn DPO (M-DPO) framework from Xiong et al. (2024a), since it allows deterministic external observation in the state transition. To facilitate direct preference learning and bypass explicit reward training, we impose the following trajectory-level Bradley-Terry (BT) preference structure (Bradley & Terry, 1952). Specifically, given two trajectories $τ^1,τ^2$ , the probability of $τ^1$ being preferred than $τ^2$ , denoted as $τ^1\succτ^2$ , is

| | $\displaystyleℙ(τ^1\succτ^2 | τ^1,τ^2)=σ(u^⋆(τ^1)-u^⋆(τ^2)),$ | |

| --- | --- | --- |

where $σ(z)=1/(1+\exp(-z))$ is the sigmoid function. Following Xiong et al. (2024a), we take log on both sides of (4), and connect a utility function $u_θ$ with associated policy $π_θ$ and value $V_θ$ :

| | | $\displaystyle\log\frac{π_θ^h(y^1|s^1)}{π_ref^1(y^1|s^1)}=V_θ^2(s^2)-V_θ^1(s^1),$ | |

| --- | --- | --- | --- |

For a pair of trajectories $τ^w,~τ^l$ where $τ^w\succτ^l$ , we have

| | | $\displaystyle u_θ(τ^w)-u_θ(τ^l)=\log\frac{π_θ^1(y_w^1|x,a^1)}{π_ref^1(y_w^1|x,a^1)}-\log\frac{π_θ^1(y_l|x,a^1)}{π_ref^1(y_l|x,a^1)}$ | |

| --- | --- | --- | --- |

Taking this reward difference parameterization into the log-likelihood of the BT model $∑_(τ^w,τ^{l)∈D}\logσ\big(u_θ(τ^w)-u_θ(τ^l)\big),$ we obtain the loss function $L_\textup{M-DPO}(θ)$ :

$$

\displaystyle-∑_(τ^w,τ^{l)∈D}\logσ\Big(η\Big[\log\frac{π_θ^1(y_w^1|x,a^1)}{π_ref^1(y_w^1|x,a^1)}-\log\frac{π_θ^1(y_l|x,a^1)}{π_ref^1(y_l|x,a^1)} \displaystyle\hskip 17.00024pt+∑_h=1^H\log\frac{π_θ^h(a_w^h,y_w^h|s_w^h)}{π_ref^h(a_w^h|s_w^h)}-\log\frac{π_θ^h(a_l^h,y_l^h|s_l^h)}{π_ref^h(a_l^h,y_l^h|s_l^h)}\Big]\Big). \tag{5}

$$

Table 3: Main results of experiments with Qwen2.5-Math-7B-base. The single-turn baselines are used to train a regular CoT reasoning model. The baselines with † perform self-correction under the external prompt, where training may apply to enhance this ability. We use greedy decoding following the convention of the recent open-source projects on mathematical reasoning.

| | Single-turn STaR/RAFT | 77.0 | 77.0 | - | - | - |

| --- | --- | --- | --- | --- | --- | --- |

| Single-turn DPO | 76.8 | 76.8 | - | - | - | |

| Single-turn PPO | 79.4 | 79.4 | - | - | - | |

| Prompt with Gold RM † | 65.4 | 66.8 | 1.4 | 1.4 | 0.0 | |

| Intrinsic self-correction † | 65.4 | 51.4 | -14.0 | 1.4 | 15.4 | |

| MATH500 | STaR/RAFT for self-correction † | 71.6 | 70.4 | -1.2 | 5.0 | 6.2 |

| STaR/RAFT+ for self-correction † | 72.0 | 71.2 | -0.8 | 3.0 | 3.8 | |

| Self-rewarding IFT | 72.6 | 77.2 | 4.6 | 5.0 | 0.4 | |

| Self-rewarding IFT + DPO w correctness | 72.8 | 78.6 | 5.8 | 6.0 | 0.2 | |

| Self-rewarding IFT + PPO w correctness | 75.8 | 80.2 | 4.4 | 4.8 | 0.4 | |

| Single-turn STaR/RAFT | 40.1 | 40.1 | - | - | - | |

| Single-turn DPO | 39.0 | 39.0 | - | - | - | |

| Single-turn PPO | 39.5 | 39.5 | - | - | - | |

| Prompt with Gold RM † | 23.4 | 25.6 | 2.2 | 2.2 | 0 | |

| Intrinsic self-correction † | 23.4 | 18.1 | -5.3 | 2.2 | 7.5 | |

| OlympiadBench | STaR/RAFT for self-correction † | 36.5 | 32.5 | -4.0 | 7.2 | 11.2 |

| STaR/RAFT+ for self-correction † | 35.7 | 35.5 | -0.2 | 3.2 | 3.4 | |

| Self-rewarding IFT | 35.4 | 39.4 | 4.0 | 4.7 | 0.7 | |

| Self-rewarding IFT + DPO w correctness | 37.6 | 40.1 | 2.5 | 3.5 | 1.0 | |

| Self-rewarding IFT + PPO w correctness | 41.0 | 43.4 | 2.4 | 2.8 | 0.4 | |

| Single-turn STaR/RAFT | 32.0 | 32.0 | - | - | - | |

| Single-turn DPO | 31.6 | 31.6 | - | - | - | |

| Single-turn PPO | 33.1 | 33.1 | - | - | - | |

| Prompt with Gold RM † | 9.9 | 11.7 | 1.8 | 1.8 | 0 | |

| Intrinsic self-correction † | 9.9 | 8.4 | -1.5 | 1.8 | 3.3 | |

| Minerva Math | STaR/RAFT for self-correction † | 28.7 | 29.4 | 0.7 | 1.8 | 1.1 |

| STaR/RAFT+ for self-correction † | 25.7 | 25.3 | -0.4 | 0.8 | 1.2 | |

| Self-rewarding IFT | 23.2 | 28.7 | 5.5 | 7.3 | 1.8 | |

| Self-rewarding IFT + DPO w correctness | 26.8 | 34.6 | 7.8 | 9.6 | 1.8 | |

| Self-rewarding IFT + PPO w correctness | 34.0 | 38.4 | 4.4 | 5.1 | 0.7 | |

## 4 Experiment Results

#### Task, datasets, and data format.

We evaluate models’ mathematical reasoning abilities using standard benchmarks, including MATH500 (Hendrycks et al., 2020), OlympiadBench (He et al., 2024), and Minerva Math (Lewkowycz et al., 2022). These datasets provide a moderate size for reliable and efficient model evaluation, covering topics such as algebra, geometry, probability, number theory, and calculus. For training, we mainly use the prompts in NumiaMath-CoT dataset (Beeching et al., 2024). Specifically, we use a 50K subset for the self-rewarding IFT stage, a 10K subset for validation and model selection, and the remaining data for RL training. During inference, the model generates up to 4096 tokens, with VLLM 0.5.4 (Kwon et al., 2023) accelerating the process.

Evaluation metrics. We employ two categories of metrics to evaluate our models: (1) mathematical reasoning and self-correction and (2) reward model accuracy. First, we follow Kumar et al. (2024) to consider the following metrics to evaluate the models’ ability of mathematical reasoning and self-correction.

1. Turn 1: accuracy of the first attempt;

1. Final accuracy: accuracy of the final answer;

1. $Δ(t_1,t_2)$ : improvement in accuracy from the first attempt to the final answer;

1. $Δ^i→ c(t_1,t_2)$ : fraction of problems changed from incorrect to correct;

1. $Δ^c→ i(t_1,t_2)$ : fraction of problems changed from correct to incorrect.

Due to the nature of the self-rewarding reasoning framework, we additionally include the metrics to measure the accuracy as a reward model. We also defer a more comprehensive understanding of the proposed framework with a slightly simplified template to next section, where we will additionally compute the ratio of modifying a correct answer to incorrect when facing a misleading reward.

1. RM Accuracy $(a,b)$ : class-dependent accuracy for correct and incorrect trajectories. In other words, $a$ is the true positive rate and $b$ is the true negative rate;

1. Ratio $p^c→ i(t_1,t_2)$ : probability of modifying a correct answer to incorrect when facing a misleading reward.

For all evaluations, we use zero-shot CoT prompting and greedy decoding following the convention of recent projects with Qwen-2.5-Math models.

Experiment setup of self-rewarding IFT. We use Qwen2.5-Math-7B-base as the base model, which is continuously pre-trained on extensive mathematical and instruction-following data. Sequential rejection sampling (introduced in Section 3.1) is used for data collection, resulting in a dataset of 32K trajectories, where we roughly balance between correct and incorrect first attempts. In fine-tuning, samples are packed into 8192-token blocks and we use a learning rate of 1e-5, a cosine scheduler, and a 0.05 warm-up ratio. Global batch size is set to be 32. We train the models for three epochs and eventually select the one at the end of the first epoch.

Experiment setup of reinforcement learning. For iterative DPO training, we adopt setups from Xiong et al. (2024a) with a learning rate of $2× 10^-7$ , a cosine scheduler, and a batch size of 32. We tune $η∈\{0.1,0.5\}$ and also train with and without an NLL loss in the DPO objective (Pang et al., 2024; Xie et al., 2024a; Liu et al., 2024). For each iteration, we use 20K prompts and collect $8$ responses per prompt. Then, we extract the comparison pairs using the correctness score. If all responses admit the same score, we skip the prompt. A 10K validation set from NuminaMath-CoT is used for model selection. The primary metric for model selection is accuracy at turn 2. When models achieve comparable turn-2 accuracy, we choose the models with higher $Δ(t_1,t_2)$ improvement. The best model of these training setups is used as the representative model. For PPO training, we mainly follow a pulic example script of veRL (Sheng et al., 2024), which is publicly available https://github.com/RLHFlow/Online-DPO-R1/blob/main/ppo_training/verl_example.sh.

Baseline: improving the self-correction ability. We consider several baseline methods in the self-correction literature, including training-free approaches and fine-tuning. For training-free methods, we evaluate intrinsic self-correction (Huang et al., 2023), where models rely solely on prompting to perform correction, and self-correction with external ground-truth rewards (Qu et al., 2024). The prompts used for these methods are provided in Appendix B. We also include STaR and RAFT approaches (Zelikman et al., 2022; Dong et al., 2023), which are inspired by expert iteration in reinforcement learning (Anthony et al., 2017). These methods generate numerous trajectories with the base model, filter out failed attempts, and fine-tune on successfully revised responses. Following Kumar et al. (2024), we study a variant, STaR/RAFT+, which augments the training set with a set of correct-to-correct trajectories. To ensure a fair comparison, the total number of training samples for STaR/RAFT(+) is kept the same as in our self-rewarding IFT stage.

Baseline: improving the single-turn reasoning ability. In addition, we also consider several baselines that improve the models’ single-turn reasoning ability without self-correction. These methods include the STaR/RAFT (Zelikman et al., 2022; Dong et al., 2023), iterative DPO (Xiong et al., 2023) with the correctness score to rank data, and PPO with the correctness score. In particular, we adopt the iterative algorithms in the implementations of the STaR/RAFT and DPO because we observe that they achieve much better performance to serve as competitive baselines. We start from Qwen-2.5-Math-7B and train with only self-generated data for a fair comparison. We remark that the Qwen-2.5-Math-7B has been trained on many instruction-following data in the pre-training stage and the recent open-source projects also show that it can be used as the starting checkpoint without distillation from larger LLMs or human instructions (Zeng et al., 2025; Zhang et al., 2025).

### 4.1 Main Results

We report the main results in Table 3. Note that there can be an error of 0.1 due to rounding.

#### Intrinsic self-correction with prompting fails in general.

We first observe that intrinsic self-correction without explicit reward signals typically reduces final test accuracy. Upon analyzing the outputs, we find that models tend to modify their initial responses responses regardless of its correctness, as they lack a mechanism to determine when to refine their answers versus when to terminate the correction process. Moreover, even when given ground-truth rewards, base models with prompting alone achieve only marginal improvement in incorrect-to-correct transitions $Δ^i→ c(t_1,t_2)$ . For example, on MATH-500 benchmark, prompting with gold reward only leads to $Δ^i→ c(t_1,t_2)=1.4\$ .

We also notice that the STaR/RAFT method, which fine-tunes models on revised incorrect attempts, fails to significantly improve performance. It increases $Δ^i→ c(t_1,t_2)$ (incorrect-to-correct transitions) on MATH500 from $1.4\$ to $5.0\$ , but still suffers from a $Δ^c→ i(t_1,t_2)$ (correct-to-incorrect transitions) of $6.2\$ . Additionally, the STaR/RAFT+ variant, which includes correct-to-correct trajectories, becomes more conservative in modifying the initial attempt. While this reduces incorrect corrections ( $Δ^c→ i(t_1,t_2)$ ), it also lower $Δ^i→ c(t_1,t_2)$ , ultimately degrading test accuracy. These findings align with prior studies, and highlight the limitations of intrinsic self-correction, even with training (Huang et al., 2023; Kumar et al., 2024).

Table 4: The results of reward modeling accuracy ( $\$ ). We report the accuracy of self-rewarding signals for the three benchmarks in two separate classes. For instance, MATH-500 C is the accuracy of recognizing a correct trajectory, while MATH-500 W is the accuracy of recognizing a wrong trajectory. The model highlighted by $(*)$ is selected as the final model.

| PPO Step 100 PPO Step 220 $(⋆)$ DPO Iter 2 | 97.5 98.6 91.3 | 56.4 47.6 56.2 | 98.1 97.8 81.9 | 33.5 39.3 51.8 | 87.4 94.2 86.7 | 29.7 32.4 36.2 |

| --- | --- | --- | --- | --- | --- | --- |

| DPO Iter 5 $(⋆)$ | 92.0 | 50.6 | 88.2 | 44.5 | 92.4 | 37.4 |

#### Self-rewarding reasoning models significantly outperform existing baselines of self-correction.

Across all tasks, self-rewarding reasoning models consistently improve final accuracy with higher $Δ(t_1,t_2)$ compared to baseline methods. We notice that fine-tuning on the synthetic trajectories with self-correction behavior yields models with much higher $Δ^i→ c(t_1,t_2)$ , suggesting that the models are more good at correcting the error in the self-generated responses. Distint from the STaR/RAFT, models trained with self-rewarding IFT also exhibit significantly lower $Δ^c→ i(t_1,t_2)$ , indicating they are better at recognizing when to stop due to the additional self-rewarding signals. For instance, on MATH500,

- self-rewarding IFT achieves $Δ^i→ c=5.0\$ (vs. $1.4\$ for intrinsic self-correction);

- self-rewarding IFT achieves $Δ^c→ i=0.4\$ (vs. $15.4\$ for intrinsic self-correction and $3.8\$ for STaR/RAFT+);

Since STaR/RAFT(+) and self-rewarding IFT use the same data synthesis approach (rejection sampling) but under different self-correction frameworks, these results highlight the advantage of our self-rewarding reasoning framework.

#### Self-rewarding reasoning models improve the final accuracy compared to the single-turn baselines.

We also compare the self-rewarding reasoning models with RL training against their single-turn counterparts. For both the PPO and DPO, the self-rewarding reasoning models achieve higher final test accuracy due to the additional correction step. For instance, the self-rewarding IFT + PPO yields a model with $43.4\$ final accuracy on OlympiadBench, and $38.4\$ on Minerva Math, compared to the $39.5\$ and $33.1\$ of the single-turn counterpart. Similarly, with the DPO, the self-rewarding reasoning models achieve a $78.6\$ on MATH500, a $40.1\$ on OlympiadBench, and $34.6\$ on Minerva Math, while the single-turn DPO model admits $76.8\$ , respectively.

However, self-rewarding models use more tokens at inference due to the additional correction step. For a fair comparison, we will also study the behavior of self-rewarding correction under scaled test-time compute budgets in Section 5.

#### Deep RL algorithm outperforms the direct alignment algorithms.

We observe that PPO outperforms iterative DPO by a large margin. For example, the PPO-trained model achieves a $43.4\$ final accuracy on OlympiadBench, compared to the $40.1\$ of the DPO method. This suggests that when absolute reward signals are available, enforcing a preference structure (Bradley-Terry model) is unnecessary and may degrade performance. Another possible reason is the limited data utilization in DPO. We notice that, with our setup, we can collect comparison pairs for only 40% to 60% prompts. For the remaining prompts, models either generate no correct trajectories or all trajectories are correct. As a result, DPO utilizes less training data than PPO, which may contribute to its lower accuracy.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Line Graph with Dual Y-Axes: Test Result on MATH500

### Overview

The image is a line graph titled "Test Result on MATH500" that plots three metrics over the course of reinforcement learning training. It uses a dual y-axis design to display two different types of data: accuracy percentages (left axis) and token counts (right axis). The graph shows the progression of model performance and output length across 200 training steps.

### Components/Axes

* **Title:** "Test Result on MATH500" (centered at the top).

* **X-Axis:** Labeled "Training Steps of Reinforcement Learning". It has major tick marks at intervals of 50, ranging from 0 to 200.

* **Primary Y-Axis (Left):** Labeled "Average Accuracy (%)". It ranges from 72 to 82 with major tick marks every 2 units (72, 74, 76, 78, 80, 82).

* **Secondary Y-Axis (Right):** Labeled "Average Response Length (Tokens)". It ranges from 1100 to 1240 with major tick marks every 20 tokens (1100, 1120, 1140, 1160, 1180, 1200, 1220, 1240).

* **Legend:** Positioned at the top center of the chart area. It contains three entries:

1. A blue line with a square marker labeled "Turn 1 Accuracy".

2. A green line with a square marker labeled "Turn 2 Accuracy".

3. A red line with a square marker labeled "Response Length".

### Detailed Analysis

The graph tracks three distinct data series:

**1. Turn 1 Accuracy (Blue Line, Left Y-Axis):**

* **Trend:** Shows a general upward trend with moderate volatility. It starts at a moderate level, experiences a dip, and then recovers to finish at its highest point.

* **Data Points (Approximate):**

* Step 0: ~76.5%

* Step 25: ~76.0% (local minimum)

* Step 50: ~77.0%

* Step 75: ~78.5%

* Step 100: ~78.0%

* Step 125: ~79.5%

* Step 150: ~80.0%

* Step 175: ~79.5%

* Step 200: ~80.5% (peak)

**2. Turn 2 Accuracy (Green Line, Left Y-Axis):**

* **Trend:** Exhibits a more volatile but overall positive trend. It begins at the lowest point of all series, has several peaks and troughs, and ends significantly higher than it started.

* **Data Points (Approximate):**

* Step 0: ~72.5%

* Step 25: ~73.0%

* Step 50: ~72.0% (global minimum)

* Step 75: ~74.5%

* Step 100: ~73.5%

* Step 125: ~75.5%

* Step 150: ~76.0%

* Step 175: ~75.5%

* Step 200: ~76.0%

**3. Response Length (Red Line, Right Y-Axis):**

* **Trend:** Displays a distinct "U-shaped" or recovery trend. It starts high, drops sharply to a minimum in the first quarter of training, and then steadily increases for the remainder, surpassing its initial value.

* **Data Points (Approximate):**

* Step 0: ~1200 tokens

* Step 25: ~1120 tokens (global minimum)

* Step 50: ~1130 tokens

* Step 75: ~1160 tokens

* Step 100: ~1200 tokens

* Step 125: ~1220 tokens

* Step 150: ~1230 tokens

* Step 175: ~1220 tokens

* Step 200: ~1225 tokens

### Key Observations

1. **Inverse Early Relationship:** In the first 50 steps, as Response Length (red) decreases sharply, both accuracy metrics (blue, green) show stagnation or decline. This suggests an initial phase where the model may be learning to be more concise at the cost of accuracy.

2. **Positive Correlation Later:** After step 50, all three metrics trend upward together. The increase in response length from ~1130 to ~1225 tokens coincides with improvements in both Turn 1 and Turn 2 accuracy.

3. **Performance Gap:** Turn 1 Accuracy (blue) is consistently higher than Turn 2 Accuracy (green) throughout the entire training process, with the gap remaining relatively stable at approximately 3-5 percentage points.

4. **Volatility:** Turn 2 Accuracy (green) shows the most pronounced fluctuations, suggesting it is a more sensitive or difficult metric to optimize consistently compared to Turn 1 Accuracy.

### Interpretation

This chart illustrates the dynamics of a reinforcement learning process applied to a mathematical reasoning task (MATH500). The data suggests a multi-phase training evolution:

* **Phase 1 - Compression (Steps 0-50):** The model initially learns to drastically shorten its responses (from 1200 to 1120 tokens). This compression phase appears detrimental to performance, as both accuracy metrics dip or flatline. This could represent the model shedding verbose but incorrect reasoning patterns.

* **Phase 2 - Refinement (Steps 50-200):** The model then enters a phase where it gradually increases its response length again. Crucially, this added length is not mere verbosity; it correlates with a steady improvement in accuracy for both the first and second turns of reasoning. This indicates the model is learning to generate more substantive, correct, and possibly more detailed chains of thought.

* **The Accuracy-Length Trade-off:** The graph challenges a simplistic "shorter is better" view. The optimal performance (at step 200) is achieved with a response length (~1225 tokens) that is *longer* than the initial length, but yields significantly higher accuracy. The key is the *quality* of the tokens generated during the refinement phase.

* **Turn-Based Reasoning:** The persistent gap between Turn 1 and Turn 2 accuracy implies that generating a correct second step of reasoning remains a harder task than the first, even after extensive training. The parallel trends, however, show that improvements in the foundational first-turn reasoning positively influence the more complex second-turn reasoning.

In summary, the chart depicts a successful training run where an initial period of response shortening is followed by a longer period of constructive growth, where increased response length is harnessed to build more accurate multi-step mathematical reasoning.

</details>

<details>

<summary>x2.png Details</summary>

### Visual Description

## Line Chart: Test Result on Minerva MATH

### Overview

The image displays a line chart tracking the performance accuracy of two metrics over the course of reinforcement learning training. The chart demonstrates a general upward trend for both metrics, indicating that training improves performance on the Minerva MATH benchmark.

### Components/Axes

* **Chart Title:** "Test Result on Minerva MATH" (centered at the top).

* **Y-Axis (Vertical):**

* **Label:** "Average Performance Accuracy (%)"

* **Scale:** Linear, ranging from 24 to 36, with major tick marks every 2 units (24, 26, 28, 30, 32, 34, 36).

* **X-Axis (Horizontal):**

* **Label:** "Training Steps of Reinforcement Learning"

* **Scale:** Linear, ranging from 0 to 200, with major tick marks every 50 steps (0, 50, 100, 150, 200).

* **Legend:** Located in the top-left corner of the plot area.

* **Blue Line with circular markers:** Labeled "Num 1 Accuracy".

* **Green Line with circular markers:** Labeled "Num 2 Accuracy".

* **Grid:** A light gray grid is present, aligning with the major ticks on both axes.

### Detailed Analysis

**Data Series 1: "Num 1 Accuracy" (Blue Line)**

* **Trend Verification:** The blue line shows a clear, albeit noisy, upward trend from left to right. It starts lower, experiences several peaks and valleys, but finishes at its highest point.

* **Approximate Data Points (Step, Accuracy %):**

* (0, ~28.5)

* (25, ~29.0)

* (50, ~30.5)

* (75, ~32.0)

* (100, ~31.0) - A notable dip.

* (125, ~32.5)

* (150, ~32.0)

* (175, ~33.0)

* (200, ~36.5) - The peak value.

**Data Series 2: "Num 2 Accuracy" (Green Line)**

* **Trend Verification:** The green line also shows a consistent upward trend. It starts at the lowest point on the chart and climbs steadily, with less pronounced volatility compared to the blue line.

* **Approximate Data Points (Step, Accuracy %):**

* (0, ~24.0)

* (25, ~24.5)

* (50, ~26.0)

* (75, ~28.0)

* (100, ~29.5)

* (125, ~30.5)

* (150, ~30.0)

* (175, ~31.5)

* (200, ~34.5)

### Key Observations

1. **Consistent Performance Gap:** The "Num 1 Accuracy" (blue) is consistently higher than the "Num 2 Accuracy" (green) at every measured training step. The gap appears to narrow slightly towards the end of training.

2. **Parallel Growth:** Both lines follow a broadly similar trajectory, suggesting the factors driving improvement affect both metrics, though not equally.

3. **Volatility:** The "Num 1 Accuracy" series exhibits more volatility, with a significant dip around step 100, while the "Num 2 Accuracy" series has a smoother ascent.

4. **Final Surge:** Both lines show their steepest increase in the final segment, between steps 175 and 200.

### Interpretation

This chart visualizes the learning curve for a model undergoing reinforcement learning on mathematical tasks. The data suggests that the training process is effective, as measured by both "Num 1" and "Num 2" accuracy metrics.

* **What the data suggests:** The model's ability to solve Minerva MATH problems improves with more training steps. The parallel rise of both lines indicates they are likely measuring related but distinct aspects of performance (e.g., different problem types, difficulty levels, or solution formats). The consistent gap implies "Num 1" represents a metric where the model has an inherent advantage or is easier to optimize.

* **Notable Anomalies:** The dip in "Num 1 Accuracy" at step 100 is an outlier in its trend. This could indicate a period of instability in training, a change in the training data distribution, or simply noise in the evaluation process. The fact that "Num 2 Accuracy" did not dip at the same point suggests the anomaly may be specific to the "Num 1" metric.

* **Underlying Meaning:** The chart provides evidence for the efficacy of the applied reinforcement learning method. The final values (~36.5% and ~34.5%) represent the model's performance after 200 steps, serving as a benchmark for this training run. The lack of a plateau suggests that further training steps might yield additional improvements.

</details>

<details>

<summary>x3.png Details</summary>

### Visual Description

## Line Chart: Final Result on OlympiadBench

### Overview

The image displays a line chart titled "Final Result on OlympiadBench." It plots the performance of two accuracy metrics over the course of reinforcement learning training steps. The chart shows a general upward trend for both metrics, indicating improvement with increased training.

### Components/Axes

* **Chart Title:** "Final Result on OlympiadBench" (centered at the top).

* **X-Axis:** Labeled "Training Steps of Reinforcement Learning." The axis has major tick marks at intervals of 50, labeled: 0, 50, 100, 150, 200.

* **Y-Axis:** Labeled "Average Benchmark Accuracy (%)". The axis has major tick marks at intervals of 1, labeled from 35 to 44.

* **Legend:** Located in the top-left corner of the chart area. It contains two entries:

* A green line labeled "Sum. M Accuracy"

* A blue line labeled "Final Accuracy"

* **Data Series:** Two lines plotted on the chart:

1. A **green line** representing "Sum. M Accuracy."

2. A **blue line** representing "Final Accuracy."

### Detailed Analysis

**Data Series 1: Sum. M Accuracy (Green Line)**

* **Trend:** The green line shows a generally positive, upward trend with moderate fluctuations. It starts at the lowest point on the chart and ends significantly higher.

* **Approximate Data Points:**

* Step 0: ~35.5%

* Step 25: ~35.8%

* Step 50: ~36.5%

* Step 75: ~37.5%

* Step 100: ~39.0% (local peak)

* Step 125: ~38.0% (dip)

* Step 150: ~39.5%

* Step 175: ~39.8%

* Step 200: ~40.5%

**Data Series 2: Final Accuracy (Blue Line)**

* **Trend:** The blue line also shows a positive, upward trend but is more volatile than the green line. It consistently remains above the green line throughout the training steps.

* **Approximate Data Points:**

* Step 0: ~39.5%

* Step 25: ~39.0% (dip)

* Step 50: ~40.5%

* Step 75: ~41.0%

* Step 100: ~42.0% (significant peak)

* Step 125: ~40.0% (sharp dip)

* Step 150: ~41.5%

* Step 175: ~42.0%

* Step 200: ~43.0% (highest point)

### Key Observations

1. **Consistent Performance Gap:** The "Final Accuracy" (blue) is consistently higher than the "Sum. M Accuracy" (green) at every measured training step. The gap between them is approximately 3-4 percentage points.

2. **Correlated Movements:** Both lines often move in tandem. For example, both show a local peak at step 100 and a subsequent dip at step 125, suggesting a common factor affecting both metrics at those training stages.

3. **Peak Performance:** Both metrics achieve their highest values at the final recorded step (200), with "Final Accuracy" reaching ~43% and "Sum. M Accuracy" reaching ~40.5%.

4. **Volatility:** The "Final Accuracy" line exhibits sharper peaks and valleys (e.g., the pronounced peak at step 100 and dip at step 125) compared to the somewhat smoother progression of the "Sum. M Accuracy" line.

### Interpretation

The chart demonstrates the effectiveness of reinforcement learning training on the OlympiadBench benchmark. The upward trajectory of both lines indicates that the model's performance improves as it undergoes more training steps.

The persistent gap between "Final Accuracy" and "Sum. M Accuracy" suggests these are measuring different aspects of performance. "Final Accuracy" likely represents the model's ultimate answer accuracy, while "Sum. M Accuracy" might be a component score or a metric from an intermediate step (e.g., summarization or multiple-choice accuracy). The fact that the final answer accuracy is higher implies the model may be effectively synthesizing or correcting intermediate outputs to arrive at better final answers.

The correlated dip after step 100 is a notable anomaly. This could indicate a period of instability in training, such as the model encountering a particularly challenging subset of data, a change in the learning rate, or a temporary overfitting phenomenon before recovering and continuing to improve. The overall trend, however, is positive, showing that extended training (up to 200 steps) yields better results on this benchmark.

</details>

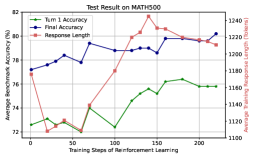

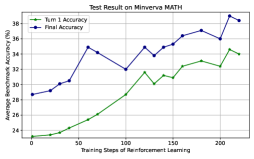

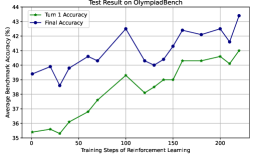

Figure 1: The learning dynamic of the PPO training, initialized from the self-rewarding IFT model. We also plot the average generation length during the training in the first figure.

#### Reward model (RM) accuracy.

Since our self-rewarding framework unifies the generator and reward model, we evaluate the accuracy of our models as a reward model. We observe that the Qwen2.5-Math-7B-base can fail to strictly follow the format by omitting the self-evaluation step or not generating the evaluation result in the pre-determined format possibly because the model is not instruction-following fine-tuned. However, this happens in less then 10% of the cases so we focus on the samples with the evaluation step and also further involve human supervision to summarize the statistics. We report the result in Table 4. We observe that the self-rewarding IFT model is much more good at recognizing the correct trajectories, as the accuracy is generally higher than $90\$ , even though we balance the two types of trajectories in the training set. This directly leads to the small $Δ^c→ i(t_1,t_2)$ we observe in the main table.

We also notice that the RL training (both PPO and DPO) does not consistently improve the reward modeling accuracy. Analysis of PPO checkpoints (initial model, Step 100 and Step 220) clearly shows a trade-off between correct and incorrect classification accuracy. The PPO training explores different trade-off between them, with the goal of maximizing the final accuracy. Similar observation also applies to the DPO training. Moreover, the best model of PPO training tends to prioritize recognizing correct trajectories, at the cost of lower accuracy in identifying incorrect responses, which aligns with the lower $Δ^c→ i(t_1,t_2)$ and also lower $Δ^i→ c(t_1,t_2)$ . This may be because correcting an incorrect answer is generally more challenging than maintaining a correct initial response. We defer a more detailed study of the impact of data composition on reward modeling accuracy to the next section.

#### Learning dynamic of the RL stage.

While the RL training improves final accuracy, the final test accuracy is determined by both the turn-1 accuracy and $Δ(t_1,t_2)$ . In particular, we notice that the final accuracy gains come primarily from the higher turn-1 accuracy, as the models after the RL training usually admit a much higher turn-1 accuracy, but also a lower $Δ^i→ c(t_1,t_2)$ . To understand the learning dynamic of the RL training, we plot test accuracy on three benchmarks in terms of the RL training steps in Figure 1. We observe that in the early stage of the RL training, both the turn-1 accuracy and the final accuracy increase, and their gap $Δ(t_1,t_2)$ is also increased or maintained as a stable level. This indicates that the models learn to use their knowledge better in the first round and improve or maintain a comparable level of correction ability. Around training step $100$ , however, the increase of the final accuracy is mainly from the higher turn-1 accuracy and their gap is narrowed, indicating less reliance on self-correction.

We also plot the average generation length in the first figure. Initially, the length decreases because the Qwen2.5-Math-7B-base model tends to generate many python codes, resulting in lengthy responses. We observe that the code usually takes many tokens and can lead to incomplete reasoning path and it is discouraged by the reward signal. This observation is consistent with Zeng et al. (2025). Then, the length increases in the next stage, indicating that the reflection and self-correction abilities are also encouraged by the RL training. Finally, the length decreases again, along with a higher turn-1 accuracy and a smaller $Δ(t_1,t_2)$ , indicating that the models learn to provide a correct answer in their first attempt and also, the self-correction pattern is discouraged. This is also supported by the reward model accuracy, where the RL-trained models tend to be more conservative and evaluate the attempt as correct.

Table 5: Main results of different methods on the test sets of MATH (first two groups of results) and GSM8K (last two groups of results). Models are evaluated with temperature 1.0, and results are averaged over three random seeds. Additional results using a temperature of 0.7 are included in the appendix due to space constraints.

| Llama-3-8B-it | Prompt with Gold RM | 20.7 | 30.3 | 9.6 | 9.6 | 0 |

| --- | --- | --- | --- | --- | --- | --- |

| Llama-3-8B-it | Prompt with External ORM | 20.7 | 26.2 | 5.5 | 8.8 | 3.3 |

| Llama-3-8B-it | Intrinsic self-correction | 20.7 | 22.0 | 1.3 | 8.8 | 7.5 |

| Llama-3-8B-it | STaR/RAFT for self-correction | 22.3 | 26.1 | 3.7 | 11.4 | 7.7 |

| Llama-3-8B-it | STaR/RAFT+ for self-correctio | 22.7 | 27.1 | 4.4 | 11.7 | 7.3 |

| Llama-3-8B-it | Self-rewarding IFT | 22.6 | 27.9 | 5.3 | 8.8 | 3.5 |

| Llama-3-8B-it | Self-rewarding IFT + Gold RM | 22.6 | 33.9 | 11.3 | 11.3 | 0 |

| Llama-3-SFT | Prompt with Gold RM | 36.2 | 45.0 | 8.8 | 8.8 | 0 |

| Llama-3-SFT | Prompt with External ORM | 36.2 | 39.2 | 3.0 | 7.5 | 4.5 |

| Llama-3-SFT | Intrinsic self-correction | 36.2 | 35.3 | -0.9 | 8.5 | 9.4 |

| Llama-3-SFT | STaR/RAFT for self-correctio | 38.5 | 36.7 | -1.8 | 10.5 | 12.3 |

| Llama-3-SFT | STaR/RAFT+ for self-correctio | 37.9 | 38.8 | 0.9 | 9.4 | 8.5 |

| Llama-3-SFT | Self-rewarding IFT | 37.1 | 40.3 | 3.2 | 7.2 | 4.0 |

| Llama-3-SFT | rewarding IFT + Gold RM | 37.1 | 46.8 | 9.7 | 9.7 | 0 |

| Llama-3-8B-it | Prompt with Gold RM | 64.0 | 72.1 | 8.1 | 8.1 | 0 |

| Llama-3-8B-it | Prompt with External ORM | 64.0 | 68.0 | 4.0 | 5.9 | 1.9 |

| Llama-3-8B-it | Intrinsic self-correction | 64.0 | 48.1 | -15.9 | 7.1 | 23.0 |

| Llama-3-8B-it | STaR/RAFT for self-correctio | 76.0 | 63.1 | -12.9 | 7.9 | 20.8 |

| Llama-3-8B-it | STaR/RAFT+ for self-correctio | 75.7 | 67.0 | -8.7 | 8.6 | 17.3 |

| Llama-3-8B-it | Self-rewarding IFT | 73.2 | 78.2 | 5.0 | 9.1 | 4.1 |

| Llama-3-SFT | Prompt with Gold RM | 74.6 | 83.1 | 8.5 | 8.5 | 0 |

| Llama-3-SFT | Prompt with External ORM | 74.6 | 76.7 | 2.1 | 5.5 | 3.4 |

| Llama-3-SFT | Intrinsic self-correction | 74.6 | 67.4 | -7.2 | 7.6 | 14.8 |

| Llama-3-SFT | STaR/RAFT for self-correctio | 73.8 | 67.4 | -6.4 | 9.0 | 15.4 |

| Llama-3-SFT | STaR/RAFT+ for self-correctio | 73.9 | 73.5 | -0.4 | 8.6 | 9.0 |

| Llama-3-SFT | Self-rewarding IFT | 76.1 | 79.2 | 3.1 | 4.7 | 1.6 |

## 5 More Experiment Results with a Two-turn Conversation Framework and Llama Models

In this section, we continue to investigate the self-rewarding reasoning framework.

### 5.1 Data Format: Simplified Two-turn Framework

Previously, we combined multiple reasoning steps into a single long CoT trajectory, which aligns with common practice. However, this approach poses significant challenges for our study, as models—particularly Qwen2.5-Math-7B-base—often fail to strictly follow instructions for evaluating or revising responses based on their history. For instance, models sometimes will also generate the evaluation results using $\boxed{}$ or not to correct the responses even though the self-evaluation result is “[VERIFY] wrong”. Additionally, models can perform multiple rounds of self-evaluation and correction, but these steps are tightly coupled and cannot be easily decoupled into separate stages.

To address these issues, we adopt a simplified two-turn conversation framework, where the user provides explicit instructions between different steps. Specifically, after receiving the mathematical problem, the model will first generate the CoT reasoning $a^1$ and self-evaluation $y$ . Then, the user provide a deterministic instruction $o$ based on the self-evaluation $y$ :

1. Since your initial response is self-evaluated as incorrect, there might be an error in the solution above because of lack of understanding of the question. Please correct the error, if any, and rewrite the solution. Put your final answer within $\boxed{}$ ;

1. Since your initial response is self-evaluated as correct, confirm it and provide no further modifications. Put your final answer within $\boxed{}$ .

Meanwhile, when collecting the data, the self-rewarding signal is determined directly by the ground-truth oracle reward with the template designed in Zhang et al. (2024b), without additional reasoning. While this simplification may reduce reward modeling accuracy (Zhang et al., 2024b), it facilitates controlled experimentation by allowing modifications to the self-rewarding signal. Similar frameworks—without the self-rewarding component—have been explored in previous works (Huang et al., 2023; Kumar et al., 2024). See Table 6 for an illustrative example.

An example of the self-rewarding reasoning path under the two-turn conversation framework.

%“hspace–-10mm˝

| Col1 |

| --- |

| User: Three positive integers $a,$ $b,$ and $x$ form an O’Hara triple $(a,b,x)$ if $√{a}+√{b}=x.$ For example, $(1,4,3)$ is an O’Hara triple because $√{1}+√{4}=3.$ If $(36,25,x)$ is an O’Hara triple, determine the value of $x.$ |

| Please reason step by step and put your final answer within {}. |

| Assistant: Here is my solution: Begin by substituting the numbers into the definition of an O’Hara triple: $x=√{36}+√{25}$ . Factor out the perfect squares from each term to simplify: $x=√{9× 4}+√{25}$ . Use the perfect square roots of each term and combine them: $x=3√{4}+5$ . Multiply 4 with the root: $x=12+5$ . Add 12 and 5: Final Answer: The final answer is $\boxed{x=17}$ . I hope it is correct. |

| Is my most recent final answer correct (Yes or No)? No. |

| User: Since your initial response is self-evaluated as incorrect, there might be an error in the solution above because of lack of understanding of the question. Please correct the error, if any, and rewrite the solution. |

| Assistant: Let’s re-evaluate the solution: We are given that (36, 25, x) is an O’Hara triple, so we can write: $√{a}+√{b}=x$ . Substituting a = 36 and b = 25, we get: $√{36}+√{25}=x$ . Simplifying the square roots, we get: $6+5=x$ . Adding 6 and 5, we get: $\boxed{x=11}$ |

Table 6: An example of the self-rewarding reasoning path under the two-turn conversation framework.

### 5.2 Experiment Setup

#### Base model, task, and datasets.

Qwen2.5-Math-7B-base serves as a strong and specialized base model, which is pre-trained on a large mathematical corpus. To ensure generality and a more comprehensive evaluation, we experiment with the Llama model series. Specifically, our base models include Llama-3-8B-it and Llama-3-SFT, the latter being fine-tuned on Open-MathInstruct2-1M (Toshniwal et al., 2024a). While both models are generally weaker than Qwen2.5-Math-7B-base, Llama-3-SFT is stronger than Llama-3-8B-it.

In this section, we evaluate the models’ mathematical reasoning abilities using the MATH and GSM8K benchmarks, which are well-suited to their capacities. For MATH, we use 7.5K training problems during the self-rewarding IFT stage, supplemented by 7.5K prompts from Open-MathInstruct2 for M-DPO training, with a similar setup for GSM8K. Model selection is performed using a 1K validation set from Open-MathInstruct2. Since we formulate the task as a multi-turn chat problem, we can directly use Axolotl’s training code https://github.com/axolotl-ai-cloud. During inference, the model generates up to 2048 tokens per round, with VLLM 0.5.4 (Kwon et al., 2023) accelerating the process.

#### Training Setup for Llama SFT.

For the self-rewarding IFT stage, we use a learning rate of 2e-6 with a batch size of 32 for Llama models and 64 for Llama-3-SFT training. Outcome-supervised reward models (ORMs) are trained using standard SFT recipes and datasets, as described in (Xiong et al., 2024b). Full hyperparameter configurations will be available in our GitHub repository.

We observe that models occasionally fail to follow the instruction to perform self-rewarding corrections, though this occurs in less than 5% of cases. In such scenarios, we terminate after the first round and use its output as the final answer.

### 5.3 Main Results with Llama Models

Experiments with Llama models align well with the Qwen model. Our experiments with Llama models show similar trends to those observed with Qwen models. Specifically, intrinsic self-correction—whether with or without STaR/RAFT-like training—fails to reliably correct errors in self-generated responses. Models tend to modify their initial responses regardless of correctness, making these methods beneficial primarily for weaker models where most first attempts are incorrect (e.g., MATH task with Llama-3-8B-it). However, for stronger models that solve most problems correctly on the first attempt (e.g., GSM8K task with Llama-3-SFT), intrinsic self-correction and STaR/RAFT methods significantly reduce turn-2 accuracy. In contrast, self-rewarding IFT models consistently improve turn-1 accuracy by effectively correcting errors while preserving already correct responses. This demonstrates the generality of the proposed framework.

To further evaluate the self-rewarding IFT model, we modify the self-rewarding signal to be the same as the oracle reward, eliminating the influence of reward signal quality and directly assessing the model’s ability to correct incorrect responses. For example, the baseline Llama-3-SFT achieve a $Δ^i→ c(t_1,t_2)=8.8\$ , while models fine-tuned with self-rewarding IFT exhibit a higher $Δ^i→ c(t_1,t_2)=9.7$ , indicating improved correction capabilities.

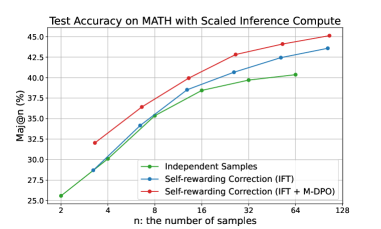

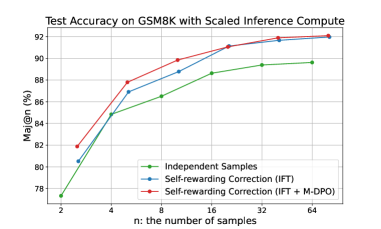

### 5.4 Self-rewarding Reasoning Framework Improves Efficiency in Test-time Inference Compute Scaling

Self-correction requires generating multiple LLM responses, making it crucial to compare models under equivalent inference budgets. As noted by Huang et al. (2023), prior self-correction approaches often perform no better than self-consistency (Wang et al., 2022) when constrained to an equal number of responses. To address this, we analyze self-rewarding correction under scaled test-time compute budgets by sampling $N$ reasoning paths and using majority voting for the final output. We report the results in Figure 2, where the DPO-aligned model is descripted in Section 5.6. For both MATH and GSM8K tasks, with a fixed inference budget, the self-rewarding correction model consistently outperforms independent sampling methods. For example, the independent sampling achieves an accuracy of 40.4% on MATH with 64 samples, whereas the self-rewarding correction method (using IFT and M-DPO training) achieves an accuracy of 42.8% with only 26.4 samples.

One key factor contributing to this improved efficiency is that, unlike intrinsic self-correction or STaR/RAFT methods, our models do not necessarily generate two samples per trajectory. Instead, they terminate early when the model is confident in the correctness of its first-round response. For instance, using Llama-3-8B-it as the base model, our approach generates an average of 1.65 samples per trajectory for MATH and 1.25 samples per trajectory for GSM8K, leading to significant computational savings.

### 5.5 Ablation Study on Data Distribution

<details>

<summary>x4.png Details</summary>

### Visual Description

## Line Chart: Test Accuracy on MATH with Scaled Inference Compute

### Overview

The image is a line chart titled "Test Accuracy on MATH with Scaled Inference Compute." It plots the performance of three different methods for solving MATH problems as a function of the number of samples used for inference. The chart demonstrates that increasing the number of samples (`n`) improves accuracy for all methods, with one method consistently outperforming the others.

### Components/Axes

* **Chart Title:** "Test Accuracy on MATH with Scaled Inference Compute" (centered at the top).

* **Y-Axis:**

* **Label:** "Maj@n (%)" (vertical text on the left). This likely stands for "Majority Vote at n samples" accuracy percentage.

* **Scale:** Linear scale from 25.0 to 45.0, with major tick marks every 2.5 units (25.0, 27.5, 30.0, 32.5, 35.0, 37.5, 40.0, 42.5, 45.0).

* **X-Axis:**

* **Label:** "n: the number of samples" (centered at the bottom).

* **Scale:** Logarithmic (base-2) scale with discrete points at n = 2, 4, 8, 16, 32, 64, 128.

* **Legend:** Located in the bottom-right quadrant of the chart area. It contains three entries, each with a colored line and marker symbol:

1. **Green line with circle markers:** "Independent Samples"

2. **Blue line with circle markers:** "Self-rewarding Correction (IFT)"

3. **Red line with circle markers:** "Self-rewarding Correction (IFT + M-DPO)"

* **Grid:** A light gray grid is present, aligning with the major ticks on both axes.

### Detailed Analysis

The chart displays three data series, each showing an upward trend that flattens as `n` increases (diminishing returns). The values below are approximate, read from the chart's grid.

**1. Independent Samples (Green Line)**

* **Trend:** Starts lowest, increases steadily, and shows the most pronounced flattening at higher `n`.

* **Data Points (Approximate):**

* n=2: ~25.5%

* n=4: ~30.0%

* n=8: ~34.5%

* n=16: ~38.0%

* n=32: ~39.5%

* n=64: ~40.5%

* n=128: ~41.0%

**2. Self-rewarding Correction (IFT) (Blue Line)**

* **Trend:** Starts higher than the green line, maintains a consistent lead over it, and follows a similar growth curve.

* **Data Points (Approximate):**

* n=2: ~27.5%

* n=4: ~31.0%

* n=8: ~35.5%

* n=16: ~39.0%

* n=32: ~41.0%

* n=64: ~42.5%

* n=128: ~43.5%

**3. Self-rewarding Correction (IFT + M-DPO) (Red Line)**

* **Trend:** Starts the highest and maintains the largest lead throughout. Its growth is steep initially and remains strong even at higher `n`.

* **Data Points (Approximate):**

* n=2: ~32.0%

* n=4: ~35.0%

* n=8: ~37.5%

* n=16: ~40.0%

* n=32: ~42.5%

* n=64: ~44.0%

* n=128: ~45.0%

### Key Observations

1. **Performance Hierarchy:** There is a clear and consistent performance hierarchy across all sample sizes: `IFT + M-DPO` > `IFT` > `Independent Samples`.

2. **Sample Efficiency:** The `IFT + M-DPO` method is the most sample-efficient. For example, it achieves ~40% accuracy at n=16, a level the `Independent Samples` method only approaches at n=64.

3. **Diminishing Returns:** All curves show diminishing returns. The gain from doubling `n` decreases as `n` becomes larger. This flattening is most severe for the `Independent Samples` method.

4. **Convergence Gap:** The performance gap between the methods appears relatively stable or slightly widening on the linear percentage scale as `n` increases, suggesting the advanced methods maintain their advantage.

### Interpretation

This chart provides strong evidence for the effectiveness of the "Self-rewarding Correction" technique, particularly when combined with "M-DPO" (likely a form of Direct Preference Optimization), for improving the reasoning capabilities of a model on the MATH benchmark.

* **What the data suggests:** The results demonstrate that simply generating more independent samples (`Independent Samples`) improves accuracy, but applying a correction mechanism (`IFT`) yields better results for the same computational budget (same `n`). Adding an additional optimization step (`M-DPO`) provides a further significant boost.

* **How elements relate:** The x-axis represents a computational budget (more samples = more cost/time). The y-axis represents performance. The chart shows that for any given budget, the advanced methods deliver higher performance. Conversely, to achieve a target accuracy, the advanced methods require a smaller budget.

* **Notable implications:** The steep initial rise of the red curve is particularly important. It indicates that the `IFT + M-DPO` method is exceptionally effective at low sample counts, which is crucial for practical applications where inference cost is a major constraint. The consistent ordering of the lines validates the incremental value of each component (`IFT` and `M-DPO`) in the proposed method. The chart argues that investing in better sample *quality* (through correction and preference optimization) is more effective than merely increasing sample *quantity* with a base model.

</details>

<details>

<summary>x5.png Details</summary>

### Visual Description

## Line Chart: Test Accuracy on GSM8K with Scaled Inference Compute

### Overview

This image is a line chart titled "Test Accuracy on GSM8K with Scaled Inference Compute." It plots the performance of three different methods for improving the accuracy of a model on the GSM8K math reasoning benchmark as the number of inference samples (`n`) is scaled. The chart demonstrates that increasing the number of samples improves accuracy for all methods, with one method consistently outperforming the others.

### Components/Axes

* **Chart Title:** "Test Accuracy on GSM8K with Scaled Inference Compute" (centered at the top).

* **Y-Axis:** Labeled "Maj@n (%)". The scale runs from 78 to 92, with major gridlines at intervals of 2 (78, 80, 82, 84, 86, 88, 90, 92). This represents the majority vote accuracy percentage over `n` samples.

* **X-Axis:** Labeled "n: the number of samples". The scale is logarithmic, with labeled tick marks at 2, 4, 8, 16, 32, and 64.

* **Legend:** Located in the bottom-right corner of the plot area. It contains three entries:

1. **Green line with circle markers:** "Independent Samples"

2. **Blue line with circle markers:** "Self-rewarding Correction (IFT)"

3. **Red line with circle markers:** "Self-rewarding Correction (IFT + M-DPO)"

* **Grid:** A light gray grid is present for both major x and y axis ticks.

### Detailed Analysis

The chart displays three data series, each showing an upward trend as `n` increases.

**1. Independent Samples (Green Line)**

* **Trend:** Slopes upward steadily but is the lowest-performing method across all values of `n`.

* **Data Points (Approximate):**

* n=2: ~77.5%

* n=4: ~85.0%

* n=8: ~86.5%

* n=16: ~88.5%

* n=32: ~89.5%

* n=64: ~90.0%

**2. Self-rewarding Correction (IFT) (Blue Line)**

* **Trend:** Slopes upward, consistently positioned between the green and red lines.

* **Data Points (Approximate):**

* n=2: ~80.5%

* n=4: ~87.0%

* n=8: ~88.5%

* n=16: ~91.0%

* n=32: ~91.5%

* n=64: ~92.0%

**3. Self-rewarding Correction (IFT + M-DPO) (Red Line)**

* **Trend:** Slopes upward most steeply initially and maintains the highest position throughout.

* **Data Points (Approximate):**

* n=2: ~82.0%

* n=4: ~88.0%

* n=8: ~90.0%

* n=16: ~91.5%

* n=32: ~92.0%

* n=64: ~92.2%

### Key Observations

1. **Performance Hierarchy:** There is a clear and consistent performance hierarchy: IFT + M-DPO (red) > IFT (blue) > Independent Samples (green) at every measured value of `n`.

2. **Diminishing Returns:** All three curves show diminishing returns. The accuracy gain from doubling `n` is largest when moving from n=2 to n=4 and becomes progressively smaller as `n` increases. The curves begin to plateau after n=16.

3. **Convergence at High `n`:** The performance gap between the three methods narrows significantly as `n` increases. At n=64, the difference between the best (red) and worst (green) method is only about 2.2 percentage points, compared to a gap of ~4.5 points at n=2.