# Statistical physics analysis of graph neural networks: Approaching optimality in the contextual stochastic block model

**Authors**: Duranthon, Lenka Zdeborová

> Statistical physics of computation laboratory, École polytechnique fédérale de Lausanne, Switzerland

(November 21, 2025)

## Abstract

Graph neural networks (GNNs) are designed to process data associated with graphs. They are finding an increasing range of applications; however, as with other modern machine learning techniques, their theoretical understanding is limited. GNNs can encounter difficulties in gathering information from nodes that are far apart by iterated aggregation steps. This situation is partly caused by so-called oversmoothing; and overcoming it is one of the practically motivated challenges. We consider the situation where information is aggregated by multiple steps of convolution, leading to graph convolutional networks (GCNs). We analyze the generalization performance of a basic GCN, trained for node classification on data generated by the contextual stochastic block model. We predict its asymptotic performance by deriving the free energy of the problem, using the replica method, in the high-dimensional limit. Calling depth the number of convolutional steps, we show the importance of going to large depth to approach the Bayes-optimality. We detail how the architecture of the GCN has to scale with the depth to avoid oversmoothing. The resulting large depth limit can be close to the Bayes-optimality and leads to a continuous GCN. Technically, we tackle this continuous limit via an approach that resembles dynamical mean-field theory (DMFT) with constraints at the initial and final times. An expansion around large regularization allows us to solve the corresponding equations for the performance of the deep GCN. This promising tool may contribute to the analysis of further deep neural networks.

## I Introduction

### I.1 Summary of the narrative

Graph neural networks (GNNs) emerged as the leading paradigm when learning from data that are associated with a graph or a network. Given the ubiquity of such data in sciences and technology, GNNs are gaining importance in their range of applications, including chemistry [1], biomedicine [2], neuroscience [3], simulating physical systems [4], particle physics [5] and solving combinatorial problems [6, 7]. As common in modern machine learning, the theoretical understanding of learning with GNNs is lagging behind their empirical success. In the context of GNNs, one pressing question concerns their ability to aggregate information from far away parts of the graph: the performance of GNNs often deteriorates as depth increases [8]. This issue is often attributed to oversmoothing [9, 10], a situation where a multi-layer GNN averages out the relevant information. Consequently, mostly relatively shallow GNNs are used in practice or other strategies are designed to avoid oversmoothing [11, 12].

Understanding the generalization properties of GNNs on unseen examples is a path towards yet more powerful models. Existing theoretical works addressed the generalization ability of GNNs mainly by deriving generalization bounds, with a minimal set of assumptions on the architecture and on the data, relying on VC dimension, Rademacher complexity or a PAC-Bayesian analysis; see for instance [13] and the references therein. Works along these lines that considered settings related to one of this work include [14], [15] or [16]. However, they only derive loose bounds for the test performance of the GNN and they do not provide insights on the effect of the structure of data. [14] provides sharper bounds; yet they do not take into account the data structure and depend on continuity constants that cannot be determined a priori. In order to provide more actionable outcomes, the interplay between the architecture of the GNN, the training algorithm and the data needs to be understood better, ideally including constant factors characterizing their dependencies on the variety of parameters.

Statistical physics traditionally plays a key role in understanding the behaviour of complex dynamical systems in the presence of disorder. In the context of neural networks, the dynamics refers to the training, and the disorder refers to the data used for learning. In the case of GNNs, the data is related to a graph. The statistical physics research strategy defines models that are simplified and allow analytical treatment. One models both the data generative process, and the learning procedure. A key ingredient is a properly defined thermodynamic limit in which quantities of interest self-average. One then aims to derive a closed set of equations for the quantities of interest, akin to obtaining exact expressions for free energies from which physical quantities can be derived. While numerous other research strategies are followed in other theoretical works on GNNs, see above, the statistical physics strategy is the main one accounting for constant factors in the generalization performance and as such provides invaluable insight about the properties of the studied systems. This line of research has been very fruitful in the context of fully connected feed-forward neural networks, see e.g. [17, 18, 19]. It is reasonable to expect that also in the context of GNNs this strategy will provide new actionable insights.

The analysis of generalization of GNNs in the framework of the statistical physics strategy was initiated recently in [20] where the authors studied the performance of a single-layer graph convolutional neural network (GCN) applied to data coming from the so-called contextual stochastic block model (CSBM). The CSBM, introduced in [21, 22], is particularly suited as a prototypical generative model for graph-structured data where each node belongs to one of several groups and is associated with a vector of attributes. The task is then the classification of the nodes into groups. Such data are used by practitioners as a benchmark for performance of GNNs [15, 23, 24, 25]. On the theoretical side, the follow-up work [26] generalized the analysis of [20] to a broader class of loss functions but also alerted to the relatively large gap between the performance of a single-layer GCN and the Bayes-optimal performance.

In this paper, we show that the close-formed analysis of training a GCN on data coming from the CSBM can be extended to networks performing multiple layers of convolutions. With a properly tuned regularization and strength of the residual connection this allows us to approach the Bayes-optimal performance very closely. Our analysis sheds light on the interplay between the different parameters –mainly the depth, the strength of the residual connection and the regularization– and on how to select the values of the parameters to mitigate oversmoothing. On a technical level the analysis relies on the replica method, with the limit of large depth leading to a continuous formulation similar to neural ordinary differential equations [27] that can be treated analytically via an approach that resembles dynamical mean-field theory with the position in the network playing the role of time. We anticipate that this type of infinite depth analysis can be generalized to studies of other deep networks with residual connections such a residual networks or multi-layer attention networks.

### I.2 Further motivations and related work

#### I.2.1 Graph neural networks:

In this work we focus on graph neural networks (GNNs). GNNs are neural networks designed to work on data that can be represented as graphs, such as molecules, knowledge graphs extracted from encyclopedias, interactions among proteins or social networks. GNNs can predict properties at the level of nodes, edges or the whole graph. Given a graph $\mathcal{G}$ over $N$ nodes, its adjacency matrix $A\in\mathbb{R}^{N\times N}$ and initial features $h_{i}^{(0)}\in\mathbb{R}^{M}$ on each node $i$ , a GNN can be expressed as the mapping

$$

\displaystyle h_{i}^{(k+1)}=f_{\theta^{(k)}}\left(h_{i}^{(k)},\mathrm{aggreg}(\{h_{j}^{(k)},j\sim i\})\right) \tag{1}

$$

for $k=0,\dots,K$ with $K$ being the depth of the network. where $f_{\theta^{(k)}}$ is a learnable function of parameters $\theta^{(k)}$ and $\mathrm{aggreg}()$ is a function that aggregates the features of the neighboring nodes in a permutation-invariant way. A common choice is the sum function, akin to a convolution on the graph

$$

\displaystyle\mathrm{aggreg}(\{h_{j},j\sim i\})=\sum_{j\sim i}h_{j}=(Ah)_{i}\ . \tag{2}

$$

Given this choice of aggregation the GNN is called graph convolutional network (GCN) [28]. For a GNN of depth $K$ the transformed features $h^{(K)}\in\mathbb{R}^{M^{\prime}}$ can be used to predict the properties of the nodes, the edges or the graph by a learnt projection.

In this work we will consider a GCN with the following architecture, that we will define more precisely in the detailed setting part II. We consider one trainable layer $w\in\mathbb{R}^{M}$ , since dealing with multiple layers of learnt weights is still a major issue [29], and since we want to focus on modeling the impact of numerous convolution steps on the generalization ability of the GCN.

$$

\displaystyle h^{(k+1)} \displaystyle=\left(\frac{1}{\sqrt{N}}\tilde{A}+c_{k}I_{N}\right)h^{(k)} \displaystyle\hat{y} \displaystyle=\operatorname{sign}\left(\frac{1}{\sqrt{N}}w^{T}h^{(K)}\right) \tag{3}

$$

where $\tilde{A}$ is a rescaling of the adjacency matrix, $I_{N}$ is the identity, $c_{k}\in\mathbb{R}$ for all $k$ are the residual connection strengths and $\hat{y}\in\mathbb{R}^{N}$ are the predicted labels of each node. We will call the number of layers $K$ the depth, but we reiterate that only the layer $w$ is learned.

#### I.2.2 Analyzable model of synthetic data:

Modeling the training data is a starting point to derive sharp predictions. A popular model of attributed graph, that we will consider in the present work and define in detail in sec. II.1, is the contextual stochastic block model (CSBM), introduced in [21, 22]. It consists in $N$ nodes with labels $y\in\{-1,+1\}^{N}$ , in a binary stochastic block model (SBM) to model the adjacency matrix $A\in\mathbb{R}^{N\times N}$ and in features (or attributes) $X\in\mathbb{R}^{N\times M}$ defined on the nodes and drawn according to a Gaussian mixture. $y$ has to be recovered given $A$ and $X$ . The inference is done in a semi-supervised way, in the sense that one also has access to a train subset of $y$ .

A key aspect in statistical physics is the thermodynamic limit, how should $N$ and $M$ scale together. In statistical physics we always aim at a scaling in which quantities of interest concentrate around deterministic values, and the performance of the system ranges between as bad as random guessing to as good as perfect learning. As we will see, these two requirements are satisfied in the high-dimensional limit $N\to\infty$ and $M\to\infty$ with $\alpha=N/M$ of order one. This scaling limit also aligns well with the common graph datasets that are of interest in practice, for instance Cora [30] ( $N=3.10^{3}$ and $M=3.10^{3}$ ), Coauthor CS [31] ( $N=2.10^{4}$ and $M=7.10^{3}$ ), CiteSeer [32] ( $N=4.10^{3}$ and $M=3.10^{3}$ ) and PubMed [33] ( $N=2.10^{4}$ and $M=5.10^{2}$ ).

A series of works that builds on the CSBM with lower dimensionality of features that is $M=o(N)$ exists. Authors of [34] consider a one-layer GNN trained on the CSBM by logistic regression and derive bounds for the test loss; however, they analyze its generalization ability on new graphs that are independent of the train graph and do not give exact predictions. In [35] they propose an architecture of GNN that is optimal on the CSBM with low-dimensional features, among classifiers that process local tree-like neighborhoods, and they derive its generalization error. In [36] the authors analyze the structure and the separability of the convolved data $\tilde{A}^{K}X$ , for different rescalings $\tilde{A}$ of the adjacency matrix, and provide a bound on the classification error. Compared to our work these articles consider a low-dimensional setting ([35]) where the dimension of the features $M$ is constant, or a setting where $M$ is negligible compared to $N$ ([34] and [36]).

#### I.2.3 Tight prediction on GNNs in the high-dimensional limit:

Little has been done as to tightly predicting the performance of GNNs in the high-dimensional limit where both the size of the graph and the dimensionality of the features diverge proportionally. The only pioneering references in this direction we are aware of are [20] and [26], where the authors consider a simple single-layer GCN that performs only one step of convolution, $K=1$ , trained on the CSBM in a semi-supervised setting. In these works the authors express the performance of the trained network as a function of a finite set of order parameters following a system of self-consistent equations.

There are two important motivations to extend these works and to consider GCNs with a higher depth $K$ . First, the GNNs that are used in practice almost always perform several steps of aggregation, and a more realistic model should take this in account. Second, [26] shows that the GCN it considers is far from the Bayes-optimal (BO) performance and the Bayes-optimal rate for all common losses. The BO performance is the best that any algorithm can achieve knowing the distribution of the data, and the BO rate is the rate of convergence toward perfect inference when the signal strength of the graph grows to infinity. Such a gap is intriguing in the sense that previous works [37, 38] show that a simple one-layer fully-connected neural network can reach or be very close to the Bayes-optimality on simple synthetic datasets, including Gaussian mixtures. A plausible explanation is that on the CSBM considering only one step of aggregation $K=1$ is not enough to retrieve all information, and one has to aggregate information from further nodes. Consequently, even on this simple dataset, introducing depth and considering a GCN with several convolution layers, $K>1$ , is crucial.

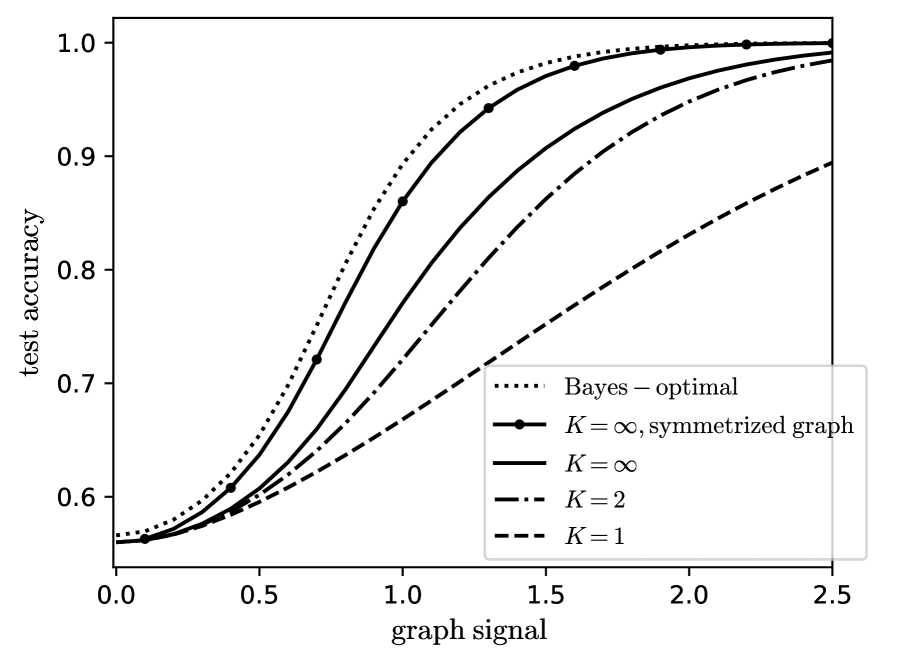

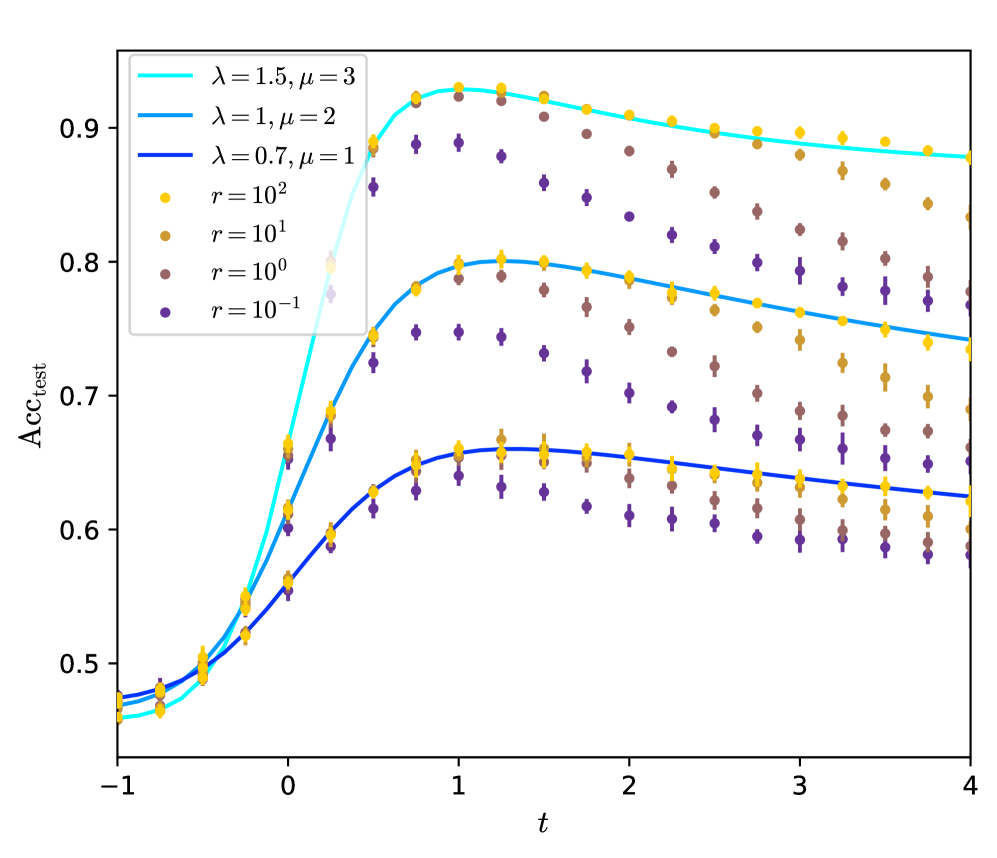

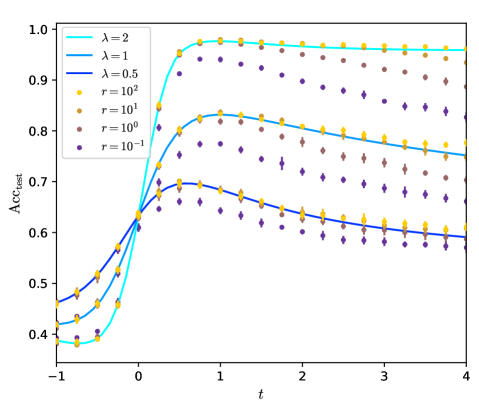

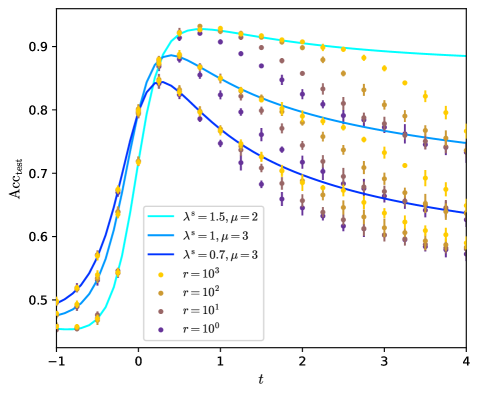

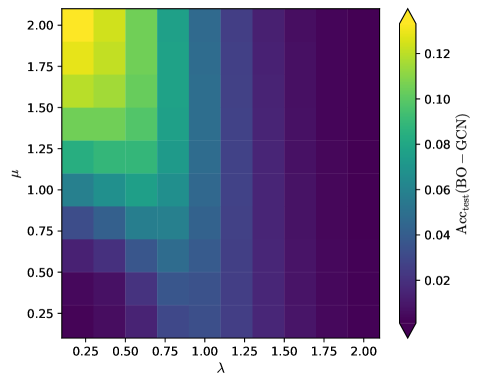

In the present work we study the effect of the depth $K$ of the convolution for the generalization ability of a simple GCN. A first part of our contribution consists in deriving the exact performance of a GCN performing several steps of convolution, trained on the CSBM, in the high-dimensional limit. We show that $K=2$ is the minimal number of steps to reach the BO learning rate. As to the performance at moderate signal strength, it appears that, if the architecture is well tuned, going to larger and larger $K$ increases the performance until it reaches a limit. This limit, if the adjacency matrix is symmetrized, can be close to the Bayes optimality. This is illustrated on fig. 1, which highlights the importance of numerous convolution layers.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Line Chart: Test Accuracy vs. Graph Signal

### Overview

The chart illustrates the relationship between test accuracy and graph signal strength for different model configurations. Test accuracy increases monotonically with graph signal strength, with distinct performance curves for various parameter settings (K values) and a theoretical Bayes-optimal baseline.

### Components/Axes

- **Y-axis**: Test accuracy (0.5 to 1.0)

- **X-axis**: Graph signal (0.0 to 2.5)

- **Legend**: Located in bottom-right corner, containing five entries:

1. Bayes-optimal (dotted line)

2. K=∞, symmetrized graph (solid line with dots)

3. K=∞ (solid line)

4. K=2 (dashed line)

5. K=1 (dash-dotted line)

### Detailed Analysis

1. **Bayes-optimal (dotted line)**:

- Starts at ~0.55 accuracy at graph signal 0.0

- Reaches ~1.0 accuracy at graph signal 2.5

- Shows smooth, consistent upward trend

2. **K=∞, symmetrized graph (solid line with dots)**:

- Begins at ~0.54 accuracy at graph signal 0.0

- Converges to Bayes-optimal performance by graph signal 1.5

- Matches Bayes-optimal curve exactly at graph signal 2.5

3. **K=∞ (solid line)**:

- Starts at ~0.53 accuracy at graph signal 0.0

- Approaches Bayes-optimal performance by graph signal 2.0

- Slightly lags behind symmetrized version at all points

4. **K=2 (dashed line)**:

- Begins at ~0.52 accuracy at graph signal 0.0

- Reaches ~0.95 accuracy at graph signal 2.5

- Shows gradual improvement with increasing graph signal

5. **K=1 (dash-dotted line)**:

- Starts at ~0.51 accuracy at graph signal 0.0

- Reaches ~0.90 accuracy at graph signal 2.5

- Demonstrates slowest improvement among all curves

### Key Observations

- All curves show positive correlation between graph signal and test accuracy

- Bayes-optimal performance serves as upper bound for all configurations

- Symmetrized graph with K=∞ achieves near-optimal performance

- Higher K values (∞ > 2 > 1) correspond to better performance

- Performance gap between K=∞ and K=1 narrows as graph signal increases

### Interpretation

The data demonstrates that increasing graph signal strength improves model performance across all configurations. The Bayes-optimal curve represents the theoretical maximum achievable accuracy. The K=∞, symmetrized graph configuration achieves performance nearly identical to the optimal, suggesting that graph symmetrization effectively captures essential information even with infinite parameters. Lower K values (1 and 2) show diminishing returns, indicating that model complexity (parameter count) significantly impacts performance when graph signal is limited. The convergence of K=∞ curves to Bayes-optimal performance at high graph signal strength implies that with sufficient data quality, even simple models can approach optimal performance through appropriate architectural choices like graph symmetrization.

</details>

Figure 1: Test accuracy of the graph neural network on data generated by the contextual stochastic block model vs the signal strength. We define the model and the network in section II. The test accuracy is maximized over all the hyperparameters of the network. The Bayes-optimal performance is from [39]. The line $K=1$ has been studied by [20, 26]; we improve it to $K>1$ , $K=\infty$ and symmetrized graphs. All the curves are theoretical predictions we derive in this work.

#### I.2.4 Oversmoothing and residual connections:

Going to larger depth $K$ is essential to obtain better performance. Yet, GNNs used in practice can be quite shallow, because of the several difficulties encountered at increasing depth, such that vanishing gradient, which is not specific to graph neural networks, or oversmoothing [9, 10]. Oversmoothing refers to the fact that the GNN tends to act like a low-pass filter on the graph and to smooth the features $h_{i}$ , which after too many steps may converge to the same vector for every node. A few steps of aggregation are beneficial but too many degrade the performance, as [40] shows for a simple GNN, close to the one we study, on a particular model. In the present work we show that the model we consider can suffer from oversmoothing at increasing $K$ if its architecture is not well-tuned and we precisely quantify it.

A way to mitigate vanishing gradient and oversmoothing is to allow the nodes to remember their initial features $h_{i}^{(0)}$ . This is done by adding residual (or skip) connections to the neural network, so the update function becomes

$$

\displaystyle h_{i}^{(k+1)}=c_{k}h_{i}^{(k)}+f_{\theta^{(k)}}\left(h_{i}^{(k)},\mathrm{aggreg}(\{h_{j}^{(k)},j\sim i\})\right) \tag{5}

$$

where the $c_{k}$ modulate the strength of the residual connections. The resulting architecture is known as residual network or resnet [41] in the context of fully-connected and convolutional neural networks. As to GNNs, architectures with residual connections have been introduced in [42] and used in [11, 12] to reach large numbers of layers with competitive accuracy. [43] additionally shows that residual connections help gradient descent. In the setting we consider we prove that residual connections are necessary to circumvent oversmoothing, to go to larger $K$ and to improve the performance.

#### I.2.5 Continuous neural networks:

Continuous neural networks can be seen as the natural limit of residual networks, when the depth $K$ and the residual connection strengths $c_{k}$ go to infinity proportionally, if $f_{\theta^{(k)}}$ is smooth enough with respect to $k$ . In this limit, rescaling $h^{(k+1)}$ with $c_{k}$ and setting $x=k/K$ and $c_{k}=K/t$ , the rescaled $h$ satisfies the differential equation

$$

\displaystyle\frac{\mathrm{d}h_{i}}{\mathrm{d}x}(x)=tf_{\theta(x)}\left(h_{i}(x),\mathrm{aggreg}(\{h_{j}(x),j\sim i\})\right)\ . \tag{6}

$$

This equation is called a neural ordinary differential equation [27]. The convergence of a residual network to a continuous limit has been studied for instance in [44]. Continuous neural networks are commonly used to model and learn the dynamics of time-evolving systems, by usually taking the update function $f_{\theta}$ independent of the time $t$ . For an example [45] uses a continuous fully-connected neural network to model turbulence in a fluid. As such, they are a building block of scientific machine learning; see for instance [46] for several applications. As to the generalization ability of continuous neural networks, the only theoretical work we are aware of is [47], that derives loose bounds based on continuity arguments.

Continuous neural networks have been extended to continuous GNNs in [48, 49]. For the GCN that we consider the residual connections are implemented by adding self-loops $c_{k}I_{N}$ to the graph. The continuous dynamic of $h$ is then

$$

\displaystyle\frac{\mathrm{d}h}{\mathrm{d}x}(x)=t\tilde{A}h(x)\ , \tag{7}

$$

with $t\in\mathbb{R}$ ; which is a diffusion on the graph. Other types of dynamics have been considered, such as anisotropic diffusion, where the diffusion factors are learnt, or oscillatory dynamics, that should avoid oversmoothing too; see for instance the review [50] for more details. No prior works predict their generalization ability. In this work we fill this gap by deriving the performance of the continuous limit of the simple GCN we consider.

### I.3 Summary of the main results:

We first generalize the work of [20, 26] to predict the performance of a simple GCN with arbitrary number $K$ of convolution steps. The network is trained in a semi-supervised way on data generated by the CSBM for node classification. In the high-dimensional limit and in the limit of dense graphs, the main properties of the trained network concentrate onto deterministic values, that do not depend on the particular realization of the data. The network is described by a few order parameters (or summary statistics), that satisfy a set of self-consistent equations, that we solve analytically or numerically. We thus have access to the expected train and test errors and accuracies of the trained network.

From these predictions we draw several consequences. Our main guiding line is to search for the architecture and the hyperparameters of the GCN that maximize its performance, and check whether the optimal GCN can reach the Bayes-optimal performance on the CSBM. The main parameters we considers are the depth $K$ , the residual connection strengths $c_{k}$ , the regularization $r$ and the loss function.

We consider the convergence rates towards perfect inference at large graph signal. We show that $K=2$ is the minimal depth to reach the Bayes-optimal rate, after which increasing $K$ or fine-tuning $c_{k}$ only leads to sub-leading improvements. In case of asymmetric graphs the GCN is not able to deal the asymmetry, for all $K$ or $c_{k}$ , and one has to pre-process the graph by symmetrizing it.

At finite graph signal the behaviour of the GCN is more complex. We find that large regularization $r$ maximizes the test accuracy in the case we consider, while the loss has little effect. The residual connection strengths $c_{k}$ have to be tuned to a same optimal value $c$ that depends on the properties of the graph.

An important point is that going to larger $K$ seems to improve the test accuracy. Yet the residual connection $c$ has to vary accordingly. If $c$ stays constant with respect to $K$ then the GCN will perform PCA on the graph $A$ , oversmooth and discard the information from the features $X$ . Instead, if $c$ grows with $K$ , the residual connections alleviate oversmoothing and the performance of the GCN keeps increasing with $K$ , if the diffusion time $t=K/c$ is well tuned.

The limit $K\to\infty,c\propto K$ is thus of particular interest. It corresponds to a continuous GCN performing diffusion on the graph. Our analysis can be extended to this case by directly taking the limit in the self-consistent equations. One has to further jointly expand them around $r\to+\infty$ and we keep the first order. At the end we predict the performance of the continuous GCN in an explicit and closed form. To our knowledge this is the first tight prediction of the generalization ability of a continuous neural network, and in particular of a continuous graph neural network. The large regularization limit $r\to+\infty$ is important: on one hand it appears to lead to the optimal performance of the neural network; on another hand, it is instrumental to analyze the continuous limit $K\to\infty$ and it allows to analytically solve the self-consistent equations describing the neural network.

We show that the continuous GCN at optimal time $t$ performs better than any finite- $K$ GCN. The optimal $t$ depends on the properties of the graph, and can be negative for heterophilic graphs. This result is a step toward solving one of the major challenges identified by [8]; that is, creating benchmarks where depth is necessary and building efficient deep networks.

The continuous GCN as large $r$ is optimal. Moreover, if run on the symmetrized graph, it approaches the Bayes-optimality on a broad range of configurations of the CSBM, as exemplified on fig. 1. We identify when the GCN fails to approach the Bayes-optimality: this happens when most of the information is contained in the features and not in the graph, and has to be processed in an unsupervised manner.

We provide the code that allows to evaluate our predictions in the supplementary material.

## II Detailed setting

### II.1 Contextual Stochastic Block Model for attributed graphs

We consider the problem of semi-supervised node classification on an attributed graph, where the nodes have labels and carry additional attributes, or features, and where the structure of the graph correlates with the labels. We consider a graph $\mathcal{G}$ made of $N$ nodes; each node $i$ has a binary label $y_{i}=\pm 1$ that is a Rademacher random variable.

The structure of the graph should be correlated with $y$ . We model the graph with a binary stochastic block model (SBM): the adjacency matrix $A\in\mathbb{R}^{N\times N}$ is drawn according to

$$

A_{ij}\sim\mathcal{B}\left(\frac{d}{N}+\frac{\lambda}{\sqrt{N}}\sqrt{\frac{d}{N}\left(1-\frac{d}{N}\right)}y_{i}y_{j}\right) \tag{8}

$$

where $\lambda$ is the signal-to-noise ratio (snr) of the graph, $d$ is the average degree of the graph, $\mathcal{B}$ is a Bernoulli law and the elements $A_{ij}$ are independent for all $i$ and $j$ . It can be interpreted in the following manner: an edge between $i$ and $j$ appears with a higher probability if $\lambda y_{i}y_{j}>1$ i.e. for $\lambda>0$ if the two nodes are in the same group. The scaling with $d$ and $N$ is chosen so that this model does not have a trivial limit at $N\to\infty$ both for $d=\Theta(1)$ and $d=\Theta(N)$ . Notice that we take $A$ asymmetric.

Additionally to the graph, each node $i$ carries attributes $X_{i}\in\mathbb{R}^{M}$ , that we collect in the matrix $X\in\mathbb{R}^{N\times M}$ . We set $\alpha=N/M$ the aspect ratio between the number of nodes and the dimension of the features. We model them by a Gaussian mixture: we draw $M$ hidden Gaussian variables $u_{\nu}\sim\mathcal{N}(0,1)$ , the centroid $u\in\mathbb{R}^{M}$ , and we set

$$

X=\sqrt{\frac{\mu}{N}}yu^{T}+W \tag{9}

$$

where $\mu$ is the snr of the features and $W$ is noise whose components $W_{i\nu}$ are independent standard Gaussians. We use the notation $\mathcal{N}(m,V)$ for a Gaussian distribution or density of mean $m$ and variance $V$ . The whole model for $(y,A,X)$ is called the contextual stochastic block model (CSBM) and was introduced in [21, 22].

We consider the task of inferring the labels $y$ given a subset of them. We define the training set $R$ as the set of nodes whose labels are revealed; $\rho=|R|/N$ is the training ratio. The test set $R^{\prime}$ is selected from the complement of $R$ ; we define the testing ratio $\rho^{\prime}=|R^{\prime}|/N$ . We assume that $R$ and $R^{\prime}$ are independent from the other quantities. The inference problem is to find back $y$ and $u$ given $A$ , $X$ , $R$ and the parameters of the model.

[22, 51] prove that the effective snr of the CSBM is

$$

\mathrm{snr}_{\mathrm{CSBM}}=\lambda^{2}+\mu^{2}/\alpha\ , \tag{10}

$$

in the sense that in the unsupervised regime $\rho=0$ for $\mathrm{snr}_{\mathrm{CSBM}}<1$ no information on the labels can be recovered while for $\mathrm{snr}_{\mathrm{CSBM}}>1$ partial information can be recovered. The information given by the graph is $\lambda^{2}$ while the information given by the features is $\mu^{2}/\alpha$ . As soon as a finite fraction of nodes $\rho>0$ is revealed the phase transition between no recovery and weak recovery disappears.

We work in the high-dimensional limit $N\to\infty$ and $M\to\infty$ while the aspect ratio $\alpha=N/M$ is of order one. The average degree $d$ should be of order $N$ , but taking $d$ growing with $N$ should be sufficient for our results to hold, as shown by our experiments. The other parameters $\lambda$ , $\mu$ , $\rho$ and $\rho^{\prime}$ are of order one.

### II.2 Analyzed architecture

In this work, we focus on the role of applying several data aggregation steps. With the current theoretical tools, the tight analysis of the generic GNN described in eq. (1) is not possible: dealing with multiple layers of learnt weights is hard; and even for a fully-connected two-layer perceptron this is a current and major topic [29]. Instead, we consider a one-layer GNN with a learnt projection $w$ . We focus on graph convolutional networks (GCNs) [28], where the aggregation is a convolution done by applying powers of a rescaling $\tilde{A}$ of the adjacency matrix. Last we remove the non-linearities. As we will see, the fact that the GCN is linear does not prevent it to approach the optimality in some regimes. The resulting GCN is referred to as simple graph convolutional network; it has been shown to have good performance while being much easier to train [52, 53]. The network we consider transforms the graph and the features in the following manner:

$$

h(w)=\prod_{k=1}^{K}\left(\frac{1}{\sqrt{N}}\tilde{A}+c_{k}I_{N}\right)\frac{1}{\sqrt{N}}Xw \tag{11}

$$

where $w\in\mathbb{R}^{M}$ is the layer of trainable weights, $I_{N}$ is the identity, $c_{k}\in\mathbb{R}$ is the strength of the residual connections and $\tilde{A}\in\mathbb{R}^{N\times N}$ is a rescaling of the adjacency matrix defined by

$$

\tilde{A}_{ij}=\left(\frac{d}{N}\left(1-\frac{d}{N}\right)\right)^{-1/2}\left(A_{ij}-\frac{d}{N}\right),\;\mathrm{for\;all\;}i,\,j. \tag{12}

$$

The prediction $\hat{y}_{i}$ of the label of $i$ by the GNN is then $\hat{y}_{i}=\operatorname{sign}h(w)_{i}$ .

$\tilde{A}$ is a rescaling of $A$ that is centered and normalized. In the limit of dense graphs, where $d$ is large, this will allow us to rely on a Gaussian equivalence property to analyze this GCN. The equivalence [54, 22, 20] states that in the high-dimensional limit, for $d$ growing with $N$ , $\tilde{A}$ can be approximated by the following spiked matrix $A^{\mathrm{g}}$ without changing the macroscopic properties of the GCN:

$$

A^{\mathrm{g}}=\frac{\lambda}{\sqrt{N}}yy^{T}+\Xi\ , \tag{13}

$$

where the components of the $N\times N$ matrix $\Xi$ are independent standard Gaussian random variables. The main reason for considering dense graphs instead of sparse graphs $d=\Theta(1)$ is to ease the theoretical analysis. The dense model can be described by a few order parameters; while a sparse SBM would be harder to analyze because many quantities, such as the degrees of the nodes, do not self-average, and one would need to take in account all the nodes, by predicting the performance on one realization of the graph or by running population dynamics. We believe that it would lead to qualitatively similar results, as for instance [55] shows, for a related model.

The above architecture corresponds to applying $K$ times a graph convolution on the projected features $Xw$ . At each convolution step $k$ a node $i$ updates its features by summing those of its neighbors and adding $c_{k}$ times its own features. In [20, 26] the same architecture was considered for $K=1$ ; we generalize these works by deriving the performance of the GCN for arbitrary numbers $K$ of convolution steps. As we will show this is crucial to approach the Bayes-optimal performance.

Compared to [20, 26], another important improvement towards the Bayes-optimality is obtained by symmetrizing the graph, and we will also study the performance of the GCN when it acts by applying the symmetrized rescaled adjacency matrix $\tilde{A}^{\mathrm{s}}$ defined by:

$$

\tilde{A}^{\mathrm{s}}=\frac{1}{\sqrt{2}}(\tilde{A}+\tilde{A}^{T})\ ,\quad A^{\mathrm{g,s}}=\frac{\lambda^{\mathrm{s}}}{\sqrt{N}}yy^{T}+\Xi^{\mathrm{s}}\ . \tag{14}

$$

$A^{\mathrm{g,s}}$ is its Gaussian equivalent, with $\lambda^{\mathrm{s}}=\sqrt{2}\lambda$ , $\Xi^{\mathrm{s}}$ is symmetric and $\Xi^{\mathrm{s}}_{i\leq j}$ are independent standard Gaussian random variables. In this article we derive and show the performance of the GNN both acting with $\tilde{A}$ and $\tilde{A}^{\mathrm{s}}$ but in a first part we will mainly consider and state the expressions for $\tilde{A}$ because they are simpler. We will consider $\tilde{A}^{\mathrm{s}}$ in a second part while taking the continuous limit. To deal with both cases, asymmetric or symmetrized, we define $\tilde{A}^{\mathrm{e}}\in\{\tilde{A},\tilde{A}^{\mathrm{s}}\}$ and $\lambda^{\mathrm{e}}\in\{\lambda,\lambda^{\mathrm{s}}\}$ .

The continuous limit of the above network (11) is defined by

$$

h(w)=e^{\frac{t}{\sqrt{N}}\tilde{A}^{\mathrm{e}}}\frac{1}{\sqrt{N}}Xw \tag{15}

$$

where $t$ is the diffusion time. It is obtained at large $K$ when the update between two convolutions becomes small, as follows:

$$

\left(\frac{t}{K\sqrt{N}}\tilde{A}^{\mathrm{e}}+I_{N}\right)^{K}\underset{K\to\infty}{\longrightarrow}e^{\frac{t}{\sqrt{N}}\tilde{A}^{\mathrm{e}}}\ . \tag{16}

$$

$h$ is the solution at time $t$ of the time-continuous diffusion of the features on the graph $\mathcal{G}$ with Laplacian $\tilde{A}^{\mathrm{e}}$ , defined by $\partial_{x}X(x)=\frac{1}{\sqrt{N}}\tilde{A}^{\mathrm{e}}X(x)$ and $X(0)=X$ . The discrete GCN can be seen as the discretization of the differential equation in the forward Euler scheme. The mapping with eq. (11) is done by taking $c_{k}=K/t$ for all $k$ and by rescaling the features of the discrete GCN $h(w)$ as $h(w)\prod_{k}c_{k}^{-1}$ so they remain of order one when $K$ is large. For the discrete GCN we do not directly consider the update $h_{k+1}=(I_{N}+c_{k}^{-1}\tilde{A}/\sqrt{N})h_{k}$ because we want to study the effect of having no residual connections, i.e. $c_{k}=0$ . The case where the diffusion coefficient depends on the position in the network is equivalent to a constant diffusion coefficient. Indeed, because of commutativity, the solution at time $t$ of $\partial_{x}X(x)=\frac{1}{\sqrt{N}}\mathrm{a}(x)\tilde{A}^{\mathrm{e}}X(x)$ for $\mathrm{a}:\mathbb{R}\to\mathbb{R}$ is $\exp\left(\int_{0}^{t}\mathrm{d}x\,\mathrm{a}(x)\frac{1}{\sqrt{N}}\tilde{A}^{\mathrm{e}}\right)X(0)$ .

The discrete and the continuous GCNs are trained by empirical risk minimization. We define the regularized loss

$$

L_{A,X}(w)=\frac{1}{\rho N}\sum_{i\in R}\ell(y_{i}h_{i}(w))+\frac{r}{\rho N}\sum_{\nu}\gamma(w_{\nu}) \tag{17}

$$

where $\gamma$ is a strictly convex regularization function, $r$ is the regularization strength and $\ell$ is a convex loss function. The regularization ensures that the GCN does not overfit the train data and has good generalization properties on the test set. We will focus on $l_{2}$ -regularization $\gamma(x)=x^{2}/2$ and on the square loss $\ell(x)=(1-x)^{2}/2$ (ridge regression) or the logistic loss $\ell(x)=\log(1+e^{-x})$ (logistic regression). Since $L$ is strictly convex it admits a unique minimizer $w^{*}$ . The key quantities we want to estimate are the average train and test errors and accuracies of this model, which are

$$

\displaystyle E_{\mathrm{train/test}}=\mathbb{E}\ \frac{1}{|\hat{R}|}\sum_{i\in\hat{R}}\ell(y_{i}h(w^{*})_{i}) \displaystyle\mathrm{Acc}_{\mathrm{train/test}}=\mathbb{E}\ \frac{1}{|\hat{R}|}\sum_{i\in\hat{R}\ ,}\delta_{y_{i}=\operatorname{sign}{h(w^{*})_{i}}} \tag{18}

$$

where $\hat{R}$ stands either for the train set $R$ or the test set $R^{\prime}$ and the expectation is taken over $y$ , $u$ , $A$ , $X$ , $R$ and $R^{\prime}$ . $\mathrm{Acc}_{\mathrm{train/test}}$ is the proportion of train/test nodes that are correctly classified. A main part of the present work is dedicated to the derivation of exact expressions for the errors and the accuracies. We will then search for the architecture of the GCN that maximizes the test accuracy $\mathrm{Acc}_{\mathrm{test}}$ .

Notice that one could treat the residual connection strengths $c_{k}$ as supplementary parameters, jointly trained with $w$ to minimize the train loss. Our analysis can straightforwardly be extended to deal with this case. Yet, as we will show, to take trainable $c_{k}$ degrades the test performances; and it is better to consider them as hyperparameters optimizing the test accuracy.

Table 1: Summary of the parameters of the model.

| $N$ $M$ $\alpha=N/M$ | number of nodes dimension of the attributes aspect ratio |

| --- | --- |

| $d$ | average degree of the graph |

| $\lambda$ | signal strength of the graph |

| $\mu$ | signal strength of the features |

| $\rho=|R|/N$ | fraction of training nodes |

| $\ell$ , $\gamma$ | loss and regularization functions |

| $r$ | regularization strength |

| $K$ | number of aggregation steps |

| $c_{k}$ , $c$ , $t$ | residual connection strengths, diffusion time |

### II.3 Bayes-optimal performance:

An interesting consequence of modeling the data as we propose is that one has access to the Bayes-optimal (BO) performance on this task. The BO performance is defined as the upper-bound on the test accuracy that any algorithm can reach on this problem, knowing the model and its parameters $\alpha,\lambda,\mu$ and $\rho$ . It is of particular interest since it will allow us to check how far the GCNs are from the optimality and how much improvement can one hope for.

The BO performance on this problem has been derived in [22] and [39]. It is expressed as a function of the fixed-point of an algorithm based on approximate message-passing (AMP). In the limit of large degrees $d=\Theta(N)$ this algorithm can be tracked by a few scalar state-evolution (SE) equations that we reproduce in appendix C.

## III Asymptotic characterization of the GCN

In this section we provide an asymptotic characterization of the performance of the GCNs previously defined. It relies on a finite set of order parameters that satisfy a system of self-consistent, or fixed-point, equations, that we obtain thanks to the replica method in the high-dimensional limit at finite $K$ . In a second part, for the continuous GCN, we show how to take the limit $K\to\infty$ for the order parameters and for their self-consistent equations. The continuous GCN is still described by a finite set of order parameters, but these are now continuous functions and the self-consistent equations are integral equations.

Notice that for a quadratic loss function $\ell$ there is an analytical expression for the minimizer $w^{*}$ of the regularized loss $L_{A,X}$ eq. (17), given by the regularized least-squares formula. Based on that, a computation of the performance of the GCN with random matrix theory (RMT) is possible. It would not be straightforward in the sense that the convolved features, the weights $w^{*}$ and the labels $y$ are correlated, and such a computation would have to take in account these correlations. Instead, we prefer to use the replica method, which has already been successfully applied to analyze several architectures of one (learnable) layer neural networks in articles such that [17, 38]. Compared to RMT, the replica method allows us to seamlessly handle the regularized pseudo-inverse of the least-squares and to deal with logistic regression, where no explicit expression for $w^{*}$ exists.

We compute the average train and test errors and accuracies eqs. (18) and (19) in the high-dimensional limit $N$ and $M$ large. We define the Hamiltonian

$$

H(w)=s\sum_{i\in R}\ell(y_{i}h(w)_{i})+r\sum_{\nu}\gamma(w_{\nu})+s^{\prime}\sum_{i\in R^{\prime}}\ell(y_{i}h(w)_{i}) \tag{20}

$$

where $s$ and $s^{\prime}$ are external fields to probe the observables. The loss of the test samples is in $H$ for the purpose of the analysis; we will take $s^{\prime}=0$ later and the GCN is minimizing the training loss (17). The free energy $f$ is defined as

$$

Z=\int\mathrm{d}w\,e^{-\beta H(w)}\ ,\quad f=-\frac{1}{\beta N}\mathbb{E}\log Z\ . \tag{21}

$$

$\beta$ is an inverse temperature; we consider the limit $\beta\to\infty$ where the partition function $Z$ concentrates over $w^{*}$ at $s=1$ and $s^{\prime}=0$ . The train and test errors are then obtained according to

$$

E_{\mathrm{train}}=\frac{1}{\rho}\frac{\partial f}{\partial s}\ ,\quad E_{\mathrm{test}}=\frac{1}{\rho^{\prime}}\frac{\partial f}{\partial s^{\prime}} \tag{22}

$$

both evaluated at $(s,s^{\prime})=(1,0)$ . One can, in the same manner, compute the average accuracies by introducing the observables $\sum_{i\in\hat{R}}\delta_{y_{i}=\operatorname{sign}{h(w)_{i}}}$ in $H$ . To compute $f$ we introduce $n$ replica:

$$

\mathbb{E}\log Z=\mathbb{E}\frac{\partial Z^{n}}{\partial n}(n=0)=\left(\frac{\partial}{\partial n}\mathbb{E}Z^{n}\right)(n=0)\ . \tag{23}

$$

To pursue the computation we need to precise the architecture of the GCN.

### III.1 Discrete GCN

#### III.1.1 Asymptotic characterization

In this section, we work at finite $K$ . We consider only the asymmetric graph. We define the state of the GCN after the $k^{\mathrm{th}}$ convolution step as

$$

h_{k}=\left(\frac{1}{\sqrt{N}}\tilde{A}+c_{k}I_{N}\right)h_{k-1}\ ,\quad h_{0}=\frac{1}{\sqrt{N}}Xw\ . \tag{24}

$$

$h_{K}=h(w)\in\mathbb{R}^{N}$ is the output of the full GCN. We introduce $h_{k}$ in the replicated partition function $Z^{n}$ and we integrate over the fluctuations of $A$ and $X$ . This couples the variables across the different layers $k=0\ldots K$ and one has to take in account the correlations between the different $h_{k}$ , which will result into order parameters of dimension $K$ . One has to keep separate the indices $i\in R$ and $i\notin R$ , whether the loss $\ell$ is active or not; consequently the free entropy of the problem will be a linear combination of $\rho$ times a potential with $\ell$ and $(1-\rho)$ times without $\ell$ . The limit $N\to\infty$ is taken thanks to Laplace’s method. The extremization is done in the space of the replica-symmetric ansatz, which is justified by the convexity of $H$ . The detailed computation is given in appendix A.

The outcome of the computation is that this problem is described by a set of twelve order parameters (or summary statistics). They are $\Theta=\{m_{w}\in\mathbb{R},Q_{w}\in\mathbb{R},V_{w}\in\mathbb{R},m\in\mathbb{R}^{K},Q\in\mathbb{R}^{K\times K},V\in\mathbb{R}^{K\times K}\}$ and their conjugates $\hat{\Theta}=\{\hat{m}_{w}\in\mathbb{R},\hat{Q}_{w}\in\mathbb{R},\hat{V}_{w}\in\mathbb{R},\hat{m}\in\mathbb{R},\hat{Q}\in\mathbb{R}^{K\times K},\hat{V}\in\mathbb{R}^{K\times K}\}$ , where

$$

\displaystyle m_{w}=\frac{1}{N}u^{T}w\ , \displaystyle m_{k}=\frac{1}{N}y^{T}h_{k}\ , \displaystyle Q_{w}=\frac{1}{N}w^{T}w\ , \displaystyle Q_{k,l}=\frac{1}{N}h^{T}_{k}h_{l}\ , \displaystyle V_{w}=\frac{\beta}{N}\operatorname{Tr}(\operatorname{Cov}_{\beta}(w,w))\ , \displaystyle V_{k,l}=\frac{\beta}{N}\operatorname{Tr}(\operatorname{Cov}_{\beta}(h_{k},h_{l}))\ . \tag{25}

$$

$m_{w}$ and $m_{k}$ are the magnetizations (or overlaps) between the weights and the hidden variables and between the $k^{\mathrm{th}}$ layer and the labels; the $Q$ s are the self-overlaps (or scalar products) between the different layers; and, writing $\operatorname{Cov}_{\beta}$ for the covariance under the density $e^{-\beta H}$ , the $V$ s are the covariances between different trainings on the same data, after rescaling by $\beta$ .

The order parameters $\Theta$ and $\hat{\Theta}$ satisfy the property that they extremize the following free entropy $\phi$ :

$$

\displaystyle\phi \displaystyle=\frac{1}{2}\left(\hat{V}_{w}V_{w}+\hat{V}_{w}Q_{w}-V_{w}\hat{Q}_{w}\right)-\hat{m}_{w}m_{w}+\frac{1}{2}\mathrm{tr}\left(\hat{V}V+\hat{V}Q-V\hat{Q}\right)-\hat{m}^{T}m \displaystyle\quad{}+\frac{1}{\alpha}\mathbb{E}_{u,\varsigma}\left(\log\int\mathrm{d}w\,e^{\psi_{w}(w)}\right)+\rho\mathbb{E}_{y,\xi,\zeta,\chi}\left(\log\int\prod_{k=0}^{K}\mathrm{d}h_{k}e^{\psi_{h}(h;s)}\right)+(1-\rho)\mathbb{E}_{y,\xi,\zeta,\chi}\left(\log\int\prod_{k=0}^{K}\mathrm{d}h_{k}e^{\psi_{h}(h;s^{\prime})}\right)\ , \tag{28}

$$

the potentials being

$$

\displaystyle\psi_{w}(w) \displaystyle=-r\gamma(w)-\frac{1}{2}\hat{V}_{w}w^{2}+\left(\sqrt{\hat{Q}_{w}}\varsigma+u\hat{m}_{w}\right)w \displaystyle\psi_{h}(h;\bar{s}) \displaystyle=-\bar{s}\ell(yh_{K})-\frac{1}{2}h_{<K}^{T}\hat{V}h_{<K}+\left(\xi^{T}\hat{Q}^{1/2}+y\hat{m}^{T}\right)h_{<K} \displaystyle\qquad{}+\log\mathcal{N}\left(h_{0}\left|\sqrt{\mu}ym_{w}+\sqrt{Q_{w}}\zeta;V_{w}\right.\right)+\log\mathcal{N}\left(h_{>0}\left|c\odot h_{<K}+\lambda ym+Q^{1/2}\chi;V\right.\right)\ , \tag{29}

$$

for $w\in\mathbb{R}$ and $h\in\mathbb{R}^{K+1}$ , where we introduced the Gaussian random variables $\varsigma\sim\mathcal{N}(0,1)$ , $\xi\sim\mathcal{N}(0,I_{K})$ , $\zeta\sim\mathcal{N}(0,1)$ and $\chi\sim\mathcal{N}(0,I_{K})$ , take $y$ Rademacher and $u\sim\mathcal{N}(0,1)$ , where we set $h_{>0}=(h_{1},\ldots,h_{K})^{T}$ , $h_{<K}=(h_{0},\ldots,h_{K-1})^{T}$ and $c\odot h_{<K}=(c_{1}h_{0},\ldots,c_{K}h_{K-1})^{T}$ and where $\bar{s}\in\{0,1\}$ controls whether the loss $\ell$ is active or not. We use the notation $\mathcal{N}(\cdot|m;V)$ for a Gaussian density of mean $m$ and variance $V$ . We emphasize that $\psi_{w}$ and $\psi_{h}$ are effective potentials taking in account the randomness of the model and that are defined over a finite number of variables, contrary to the initial loss function $H$ .

The extremality condition $\nabla_{\Theta,\hat{\Theta}}\,\phi=0$ can be stated in terms of a system of self-consistent equations that we give here. In the limit $\beta\to\infty$ one has to consider the extremizers of $\psi_{w}$ and $\psi_{h}$ defined as

$$

\displaystyle w^{*} \displaystyle=\operatorname*{argmax}_{w}\psi_{w}(w)\in\mathbb{R} \displaystyle h^{*} \displaystyle=\operatorname*{argmax}_{h}\psi_{h}(h;\bar{s}=1)\in\mathbb{R}^{K+1} \displaystyle h^{{}^{\prime}*} \displaystyle=\operatorname*{argmax}_{h}\psi_{h}(h;\bar{s}=0)\in\mathbb{R}^{K+1}\ . \tag{31}

$$

We also need to introduce $\operatorname{Cov}_{\psi_{h}}(h)$ and $\operatorname{Cov}_{\psi_{h}}(h^{\prime})$ the covariances of $h$ under the densities $e^{\psi_{h}(h,\bar{s}=1)}$ and $e^{\psi_{h}(h,\bar{s}=0)}$ . In the limit $\beta\to\infty$ they read

$$

\displaystyle\operatorname{Cov}_{\psi_{h}}(h) \displaystyle=\nabla\nabla\psi_{h}(h^{*};\bar{s}=1) \displaystyle\operatorname{Cov}_{\psi_{h}}(h^{\prime}) \displaystyle=\nabla\nabla\psi_{h}(h^{{}^{\prime}*};\bar{s}=0)\ , \tag{34}

$$

$\nabla\nabla$ being the Hessian with respect to $h$ . Last, for compactness we introduce the operator $\mathcal{P}$ that, for a function $g$ in $h$ , acts according to

$$

\mathcal{P}(g(h))=\rho g(h^{*})+(1-\rho)g(h^{{}^{\prime}*})\ . \tag{36}

$$

For instance $\mathcal{P}(hh^{T})=\rho h^{*}(h^{*})^{T}+(1-\rho)h^{{}^{\prime}*}(h^{{}^{\prime}*})^{T}$ and $\mathcal{P}(\operatorname{Cov}_{\psi_{h}}(h))=\rho\operatorname{Cov}_{\psi_{h}}(h)+(1-\rho)\operatorname{Cov}_{\psi_{h}}(h^{\prime})$ . Then the extremality condition gives the following self-consistent, or fixed-point, equations on the order parameters:

$$

\displaystyle m_{w}=\frac{1}{\alpha}\mathbb{E}_{u,\varsigma}\,uw^{*} \displaystyle Q_{w}=\frac{1}{\alpha}\mathbb{E}_{u,\varsigma}(w^{*})^{2} \displaystyle V_{w}=\frac{1}{\alpha}\frac{1}{\sqrt{\hat{Q}_{w}}}\mathbb{E}_{u,\varsigma}\,\varsigma w^{*} \displaystyle m=\mathbb{E}_{y,\xi,\zeta,\chi}\,y\mathcal{P}(h_{<K}) \displaystyle Q=\mathbb{E}_{y,\xi,\zeta,\chi}\mathcal{P}(h_{<K}h_{<K}^{T}) \displaystyle V=\mathbb{E}_{y,\xi,\zeta,\chi}\mathcal{P}(\operatorname{Cov}_{\psi_{h}}(h_{<K})) \displaystyle\hat{m}_{w}=\frac{\sqrt{\mu}}{V_{w}}\mathbb{E}_{y,\xi,\zeta,\chi}\,y\mathcal{P}(h_{0}-\sqrt{\mu}ym_{w}) \displaystyle\hat{Q}_{w}=\frac{1}{V_{w}^{2}}\mathbb{E}_{y,\xi,\zeta,\chi}\mathcal{P}\left((h_{0}-\sqrt{\mu}ym_{w}-\sqrt{Q}_{w}\zeta)^{2}\right) \displaystyle\hat{V}_{w}=\frac{1}{V_{w}}-\frac{1}{V_{w}^{2}}\mathbb{E}_{y,\xi,\zeta,\chi}\mathcal{P}(\operatorname{Cov}_{\psi_{h}}(h_{0})) \displaystyle\hat{m}=\lambda V^{-1}\mathbb{E}_{y,\xi,\zeta,\chi}\,y\mathcal{P}(h_{>0}-c\odot h_{<K}-\lambda ym) \displaystyle\hat{Q}=V^{-1}\mathbb{E}_{y,\xi,\zeta,\chi}\mathcal{P}\left((h_{>0}-c\odot h_{<K}-\lambda ym-Q^{1/2}\chi)^{\otimes 2}\right)V^{-1} \displaystyle\hat{V}=V^{-1}-V^{-1}\mathbb{E}_{y,\xi,\zeta,\chi}\mathcal{P}(\operatorname{Cov}_{\psi_{h}}(h_{>0}-c\odot h_{<K}))V^{-1} \tag{37}

$$

Once this system of equations is solved, the expected errors and accuracies can be expressed as

$$

\displaystyle E_{\mathrm{train}}=\mathbb{E}_{y,\xi,\zeta,\chi}\ell(yh_{K}^{*})\ , \displaystyle\mathrm{Acc}_{\mathrm{train}}=\mathbb{E}_{y,\xi,\zeta,\chi}\delta_{y=\operatorname{sign}(h_{K}^{*})} \displaystyle E_{\mathrm{test}}=\mathbb{E}_{y,\xi,\zeta,\chi}\ell(yh_{K}^{{}^{\prime}*})\ , \displaystyle\mathrm{Acc}_{\mathrm{test}}=\mathbb{E}_{y,\xi,\zeta,\chi}\delta_{y=\operatorname{sign}(h_{K}^{{}^{\prime}*})}\ . \tag{49}

$$

<details>

<summary>x2.png Details</summary>

### Visual Description

## Line Graphs: Accuracy vs. Parameter c for Different K and r Values

### Overview

The image contains six line graphs arranged in a 2x3 grid, each representing test accuracy (Acc_test) as a function of parameter c for different values of K (1, 2, 3) and r (10⁻², 10⁰, 10², 10⁴). A dashed "Bayes-optimal" reference line is present in all graphs. An inset contour plot in the center shows relationships between parameters c₁ and c₂.

### Components/Axes

- **X-axis**: Parameter c (ranges 0–2 or 0–3 depending on K)

- **Y-axis**: Test accuracy (Acc_test) from 0.6 to 1.0

- **Legend**: Located in top-right corner of main charts, with colors:

- Cyan: r = 10⁴

- Teal: r = 10²

- Olive: r = 10⁰

- Brown: r = 10⁻²

- Dashed black: Bayes-optimal

- **Inset**: Contour plot labeled "c₁ vs. c₂" with color-coded regions (yellow to purple)

### Detailed Analysis

#### K=1 (Top-left)

- **Trend**: All r values show sigmoidal growth toward Bayes-optimal (dashed line).

- **Data points**:

- r=10⁴: Peaks at ~0.85 Acc_test at c=1.5

- r=10²: Peaks at ~0.83 Acc_test at c=1.2

- r=10⁰: Peaks at ~0.80 Acc_test at c=1.0

- r=10⁻²: Peaks at ~0.75 Acc_test at c=0.8

#### K=2 (Top-middle)

- **Trend**: Faster convergence to Bayes-optimal than K=1.

- **Data points**:

- r=10⁴: Reaches ~0.92 Acc_test at c=1.8

- r=10²: Reaches ~0.90 Acc_test at c=1.5

- r=10⁰: Reaches ~0.87 Acc_test at c=1.2

- r=10⁻²: Reaches ~0.82 Acc_test at c=1.0

#### K=3 (Top-right)

- **Trend**: Nearest to Bayes-optimal across all c.

- **Data points**:

- r=10⁴: Maintains ~0.98 Acc_test from c=1.0 onward

- r=10²: Peaks at ~0.95 Acc_test at c=2.0

- r=10⁰: Peaks at ~0.93 Acc_test at c=1.8

- r=10⁻²: Peaks at ~0.89 Acc_test at c=1.5

#### K=1 (Bottom-left)

- **Trend**: Similar to top-left but lower baseline.

- **Data points**:

- r=10⁴: Peaks at ~0.78 Acc_test at c=1.5

- r=10²: Peaks at ~0.76 Acc_test at c=1.2

- r=10⁰: Peaks at ~0.73 Acc_test at c=1.0

- r=10⁻²: Peaks at ~0.68 Acc_test at c=0.8

#### K=2 (Bottom-middle)

- **Trend**: Improved over K=1 but less than top-middle.

- **Data points**:

- r=10⁴: Reaches ~0.88 Acc_test at c=1.8

- r=10²: Reaches ~0.86 Acc_test at c=1.5

- r=10⁰: Reaches ~0.83 Acc_test at c=1.2

- r=10⁻²: Reaches ~0.78 Acc_test at c=1.0

#### K=3 (Bottom-right)

- **Trend**: Slightly lower than top-right but still near-optimal.

- **Data points**:

- r=10⁴: Maintains ~0.95 Acc_test from c=1.0 onward

- r=10²: Peaks at ~0.92 Acc_test at c=2.0

- r=10⁰: Peaks at ~0.90 Acc_test at c=1.8

- r=10⁻²: Peaks at ~0.86 Acc_test at c=1.5

### Key Observations

1. **K Dependency**: Higher K values consistently show better convergence toward Bayes-optimal performance.

2. **r Dependency**: Larger r values (10⁴, 10²) outperform smaller r values (10⁰, 10⁻²) across all K.

3. **Inset Contour**: The yellow-to-purple gradient suggests optimal regions for c₁ and c₂, with yellow likely representing highest performance.

4. **Asymptotic Behavior**: All curves approach but never exceed the Bayes-optimal line, indicating theoretical limits.

### Interpretation

The data demonstrates that increasing K improves model performance by better capturing underlying patterns, while larger r values (potentially representing sample size or regularization strength) enhance accuracy. The contour plot implies that optimal parameter combinations (c₁, c₂) exist in specific regions, with yellow areas likely corresponding to highest performance. The consistent gap between observed accuracy and Bayes-optimal suggests inherent model limitations or data complexity. Notably, the bottom-row graphs (possibly validation/test sets) show lower performance than top-row training graphs, indicating potential overfitting for smaller r values.

</details>

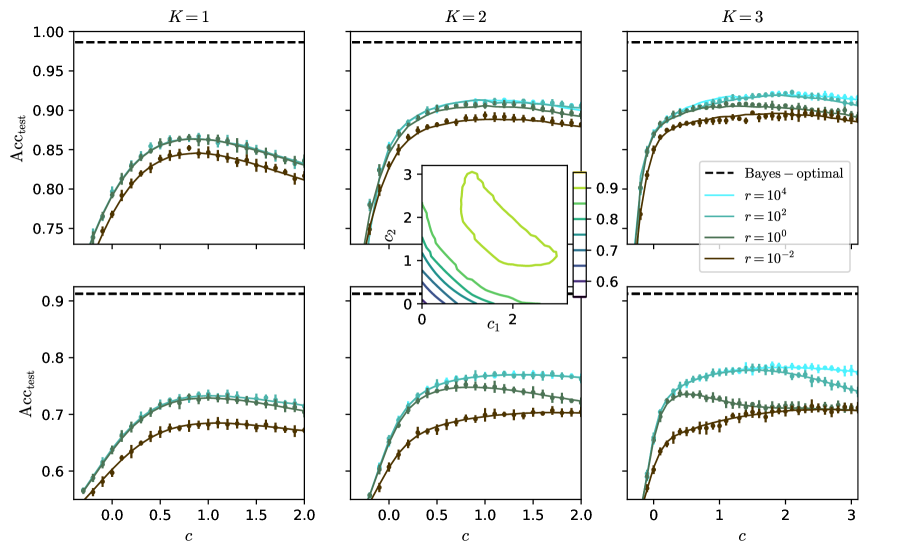

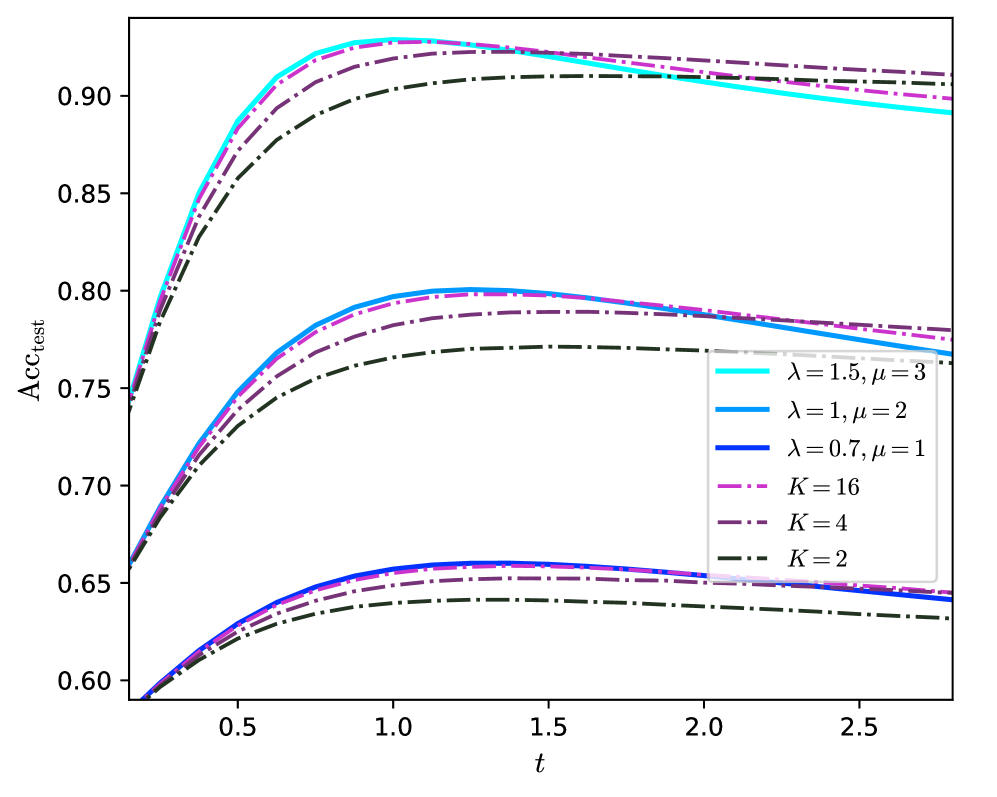

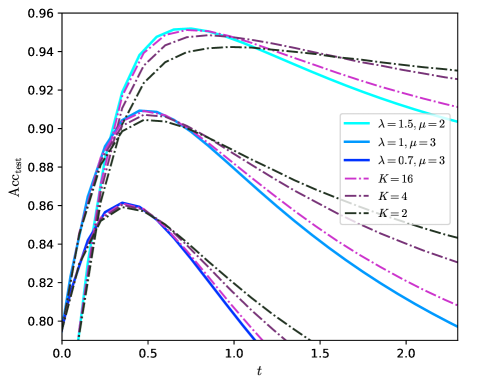

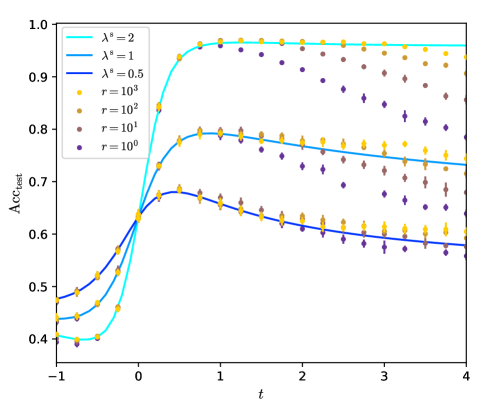

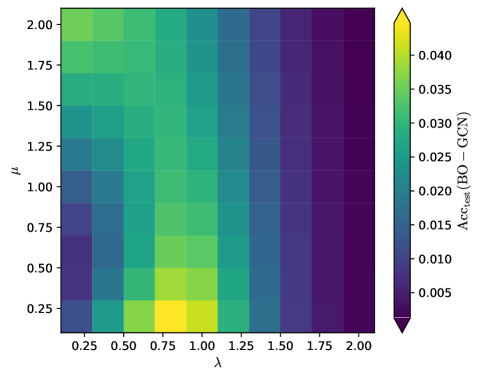

Figure 2: Predicted test accuracy $\mathrm{Acc}_{\mathrm{test}}$ for different values of $K$ . Top: for $\lambda=1.5$ , $\mu=3$ and logistic loss; bottom: for $\lambda=1$ , $\mu=2$ and quadratic loss; $\alpha=4$ and $\rho=0.1$ . We take $c_{k}=c$ for all $k$ . Inset: $\mathrm{Acc}_{\mathrm{test}}$ vs $c_{1}$ and $c_{2}$ at $K=2$ and at large $r$ . Dots: numerical simulation of the GCN for $N=10^{4}$ and $d=30$ , averaged over ten experiments.

#### III.1.2 Analytical solution

In general the system of self-consistent equations (37 - 48) has to be solved numerically. The equations are applied iteratively, starting from arbitrary $\Theta$ and $\hat{\Theta}$ , until convergence.

An analytical solution can be computed in some special cases. We consider ridge regression (i.e. quadratic $\ell$ ) and take $c=0$ no residual connections. Then $\operatorname{Cov}_{\psi_{h}}(h)$ , $\operatorname{Cov}_{\psi_{h}^{\prime}}(h)$ , $V$ and $\hat{V}$ are diagonal. We obtain that

$$

\mathrm{Acc}_{\mathrm{test}}=\frac{1}{2}\left(1+\mathrm{erf}\left(\frac{\lambda q_{y,K-1}}{\sqrt{2}}\right)\right)\ ,\quad q_{y,k}=\frac{m_{k}}{\sqrt{Q_{k,k}}}\ . \tag{51}

$$

The test accuracy only depends on the angle (or overlap) $q_{y,k}$ between the labels $y$ and the last hidden state $h_{K-1}$ of the GCN. $q_{y,k}$ can easily be computed in the limit $r\to\infty$ . In appendix A.3 we explicit the equations (37 - 50) and give their solution in that limit. In particular we obtain for any $k$

$$

\displaystyle m_{k} \displaystyle=\frac{\rho}{\alpha r}\left(\mu\lambda^{K+k}+\sum_{l=0}^{k}\lambda^{K-k+2l}\right) \displaystyle Q_{k,k} \displaystyle=\frac{\rho}{\alpha^{2}r^{2}}\left(\alpha\left(1+\rho\mu\lambda^{2K}+\rho\sum_{l=1}^{K}\lambda^{2l}\right)\right. \displaystyle\hskip 18.49988pt\left.{}+\sum_{l=0}^{k}\left(1+\rho\sum_{l^{\prime}=1}^{K-1-l}\lambda^{2l^{\prime}}+\frac{\alpha^{2}r^{2}}{\rho}m_{l}^{2}\right)\right)\,. \tag{52}

$$

#### III.1.3 Consequences: going to large $K$ is necessary

We derive consequences from the previous theoretical predictions. We numerically solve eqs. (37 - 48) for some plausible values of the parameters of the data model. We keep balanced the signals from the graph, $\lambda^{2}$ , and from the features, $\mu^{2}/\alpha$ ; we take $\rho=0.1$ to stick to the common case where few train nodes are available. We focus on searching the architecture that maximizes the test accuracy by varying the loss $\ell$ , the regularization $r$ , the residual connections $c_{k}$ and $K$ . For simplicity we will mostly consider the case where $c_{k}=c$ for all $k$ and for a given $c$ . We compare our theoretical predictions to simulations of the GCN for $N=10^{4}$ in fig. 2; as expected, the predictions are within the statistical errors. Details on the numerics are provided in appendix D. We provide the code to run our predictions in the supplementary material.

[26] already studies in detail the effect of $\ell$ , $r$ and $c$ at $K=1$ . It reaches the conclusion that the optimal regularization is $r\to\infty$ , that the choice of the loss $\ell$ has little effect and that there is an optimal $c=c^{*}$ of order one. According to fig. 2, it seems that these results can be extrapolated to $K>1$ . We indeed observe that, for both the quadratic and the logistic loss, at $K\in\{1,2,3\}$ , $r\to\infty$ seems optimal. Then the choice of the loss has little effect, because at large $r$ the output $h(w)$ of the network is small and only the behaviour of $\ell$ around 0 matters. Notice that, though $h(w)$ is small and the error $E_{\mathrm{train/test}}$ is trivially equal to $\ell(0)$ , the sign of $h(w)$ is mostly correct and the accuracy $\mathrm{Acc}_{\mathrm{train/test}}$ is not trivial. Last, according to the inset of fig. 2 for $K=2$ , to take $c_{1}=c_{2}$ is optimal and our assumption $c_{k}=c$ for all $k$ is justified.

To take trainable $c_{k}$ would degrade the test performances. We show this in fig. 15 in appendix E, where optimizing the train error $E_{\mathrm{train}}$ over $c_{k}=c$ trivially leads to $c=+\infty$ . Indeed, in this case the graph is discarded and the convolved features are proportional to the features $X$ ; which, if $\alpha\rho$ is small enough, are separable, and lead to a null train error. Consequently $c_{k}$ should be treated as a hyperparameter, tuned to maximize $\mathrm{Acc}_{\mathrm{test}}$ , as we do in the rest of the article.

<details>

<summary>x3.png Details</summary>

### Visual Description

## Scatter Plot: Error Rate vs. Regularization Parameter (λ²)

### Overview

This scatter plot compares the test error rate (1 - Acc_test) across different regularization parameter values (λ²) for various model configurations. The plot includes multiple data series differentiated by model complexity (K), graph symmetrization, and theoretical benchmarks (Bayes-optimal and τ_BO).

### Components/Axes

- **X-axis**: λ² (regularization parameter), logarithmic scale from 10 to 26

- **Y-axis**: 1 - Acc_test (test error rate), logarithmic scale from 10⁻⁶ to 10⁻²

- **Legend**:

- Symbols:

- `●` = α=4, μ=1

- `+` = α=2, μ=2

- Lines:

- `---` = Bayes-optimal

- `...` = ½τ_BO

- Colors:

- Dark brown = K=1

- Medium brown = K=2

- Light brown = K=3

- Yellow = Symmetrized graphs (K=2 and K=3)

### Detailed Analysis

1. **Bayes-optimal reference**: The dashed line shows the theoretical minimum error rate, serving as a performance benchmark.

2. **τ_BO reference**: The dotted line represents half the Bayes-optimal error rate (1/2τ_BO), acting as an intermediate target.

3. **Model complexity (K)**:

- K=1 (dark brown circles): Highest error rates, consistently above τ_BO line

- K=2 (medium brown plus signs): Moderate error rates, approaching τ_BO line

- K=3 (light brown circles): Lowest error rates, closest to τ_BO line

4. **Symmetrized graphs**:

- K=2 (yellow circles): Slightly higher error than non-symmetrized K=2

- K=3 (yellow circles): Similar performance to non-symmetrized K=3

5. **Parameter combinations**:

- α=4, μ=1 (dark brown circles): Best performance across all K values

- α=2, μ=2 (brown plus signs): Worst performance, consistently above τ_BO line

### Key Observations

1. **Error reduction trend**: All data series show decreasing error rates as λ² increases, following the Bayes-optimal trend line.

2. **Symmetrization impact**: Symmetrized graphs (yellow circles) show minimal performance difference compared to non-symmetrized versions for K≥2.

3. **Parameter sensitivity**: The α=4, μ=1 configuration (dark brown circles) consistently outperforms α=2, μ=2 (brown plus signs) across all K values.

4. **Convergence pattern**: For λ² > 18, all configurations approach within 10⁻⁴ of the Bayes-optimal error rate.

### Interpretation

The plot demonstrates that:

1. **Regularization strength** (λ²) is critical for error minimization, with optimal performance achieved at λ² > 18.

2. **Model complexity** (K) directly impacts error rates, with higher K values enabling better approximation of the Bayes-optimal solution.

3. **Symmetrization** provides limited benefits for K≥2, suggesting diminishing returns in graph structure optimization.

4. **Parameter selection** (α, μ) significantly affects performance, with α=4, μ=1 being the most effective combination.

5. The τ_BO line (1/2τ_BO) acts as a practical target, with all configurations except α=2, μ=2 approaching this threshold at higher λ² values.

This analysis suggests that optimal model configuration requires balancing regularization strength (λ² > 18), model complexity (K≥2), and parameter selection (α=4, μ=1), while graph symmetrization offers minimal additional benefits for K≥2.

</details>

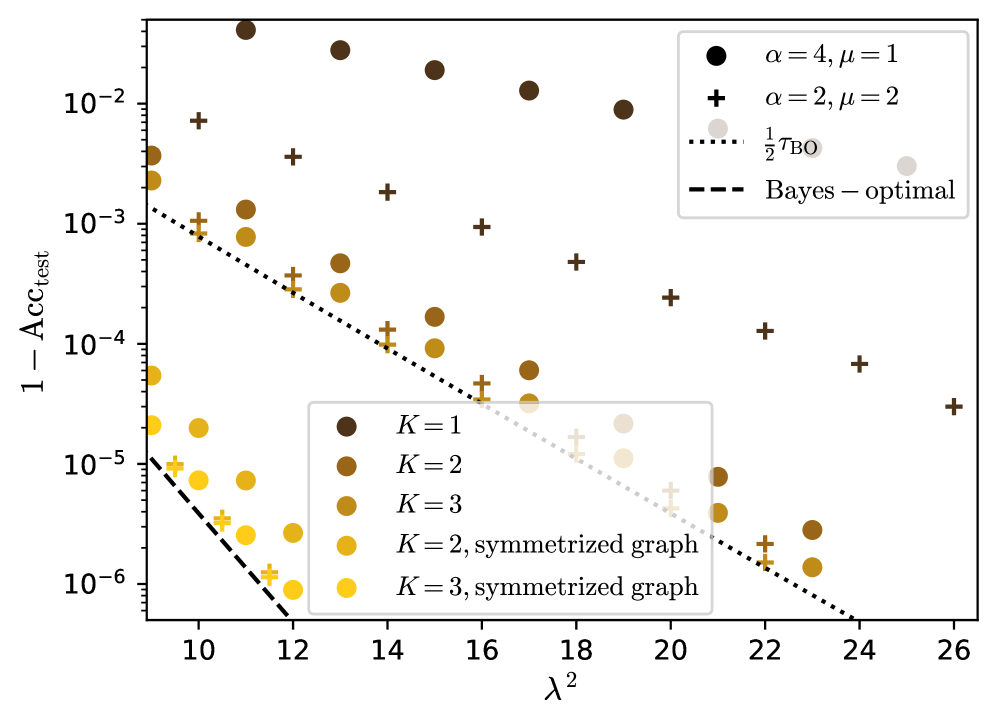

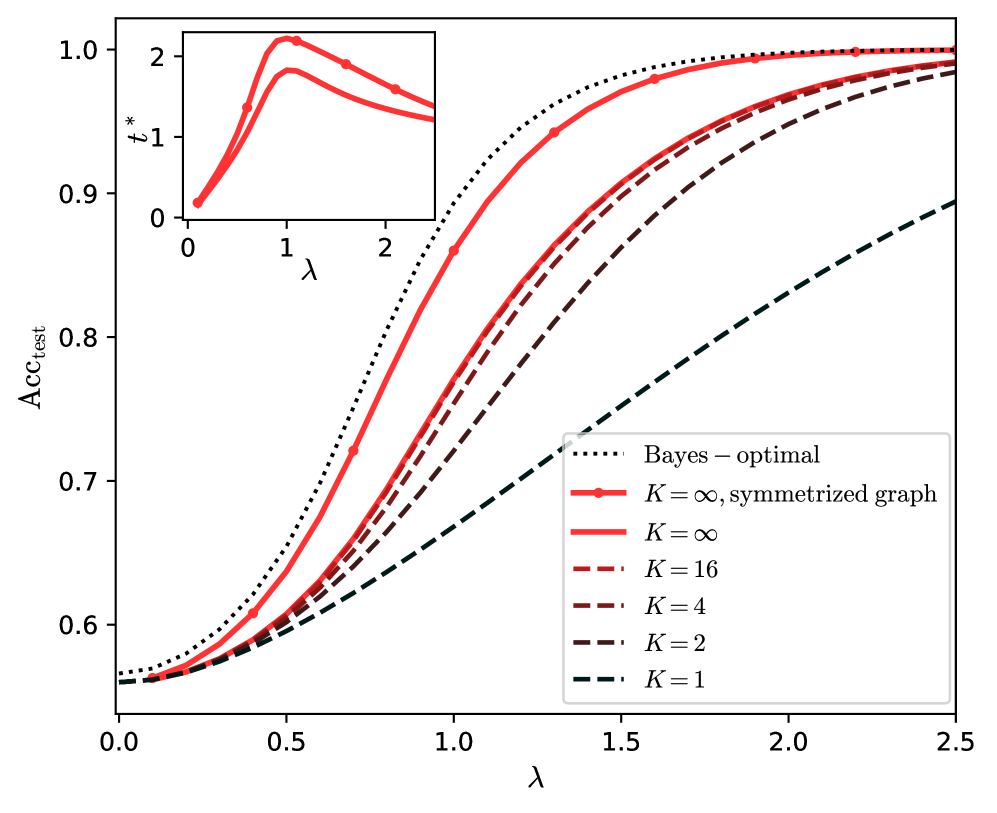

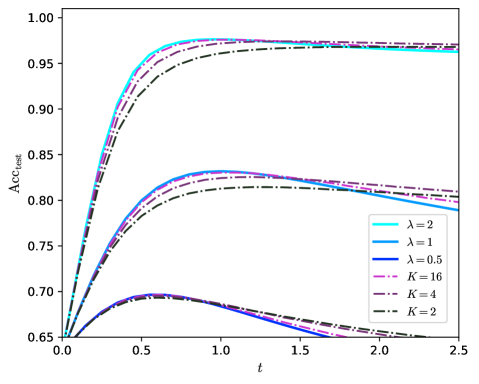

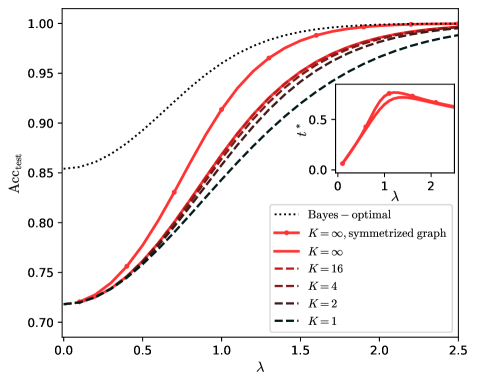

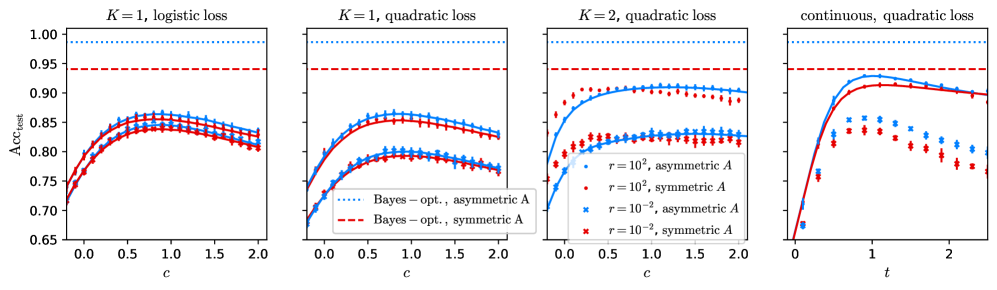

Figure 3: Predicted misclassification error $1-\mathrm{Acc}_{\mathrm{test}}$ at large $\lambda$ for two strengths of the feature signal. $r=\infty$ , $c=c^{*}$ is optimized by grid search and $\rho=0.1$ . The dots are theoretical predictions given by numerically solving the self-consistent equations (37 - 48) simplified in the limit $r\to\infty$ . For the symmetrized graph the self-consistent equations are eqs. (87 - 94) in the next part.

Finite $K$ :

We focus on the effect of varying the number $K$ of aggregation steps. [26] shows that at $K=1$ there is a large gap between the Bayes-optimal test accuracy and the best test accuracy of the GCN. We find that, according to fig. 2, for $K\in\{1,2,3\}$ , to increase $K$ reduces more and more the gap. Thus going to higher depth allows to approach the Bayes-optimality.

This also stands as to the learning rate when the signal $\lambda$ of the graph increases. At $\lambda\to\infty$ the GCN is consistent and correctly predicts the labels of all the test nodes, that is $\mathrm{Acc}_{\mathrm{test}}\underset{\lambda\to\infty}{\longrightarrow}1$ . The learning rate $\tau>0$ of the GCN is defined as

$$

\log(1-\mathrm{Acc}_{\mathrm{test}})\underset{\lambda\to\infty}{\sim}-\tau\lambda^{2}\ . \tag{54}

$$

As shown in [39], the rate $\tau_{\mathrm{BO}}$ of the Bayes-optimal test accuracy is

$$

\tau_{\mathrm{BO}}=1\ . \tag{55}

$$

For $K=1$ [26] proves that $\tau\leq\tau_{\mathrm{BO}}/2$ and that $\tau\to\tau_{\mathrm{BO}}/2$ when the signal from the features $\mu^{2}/\alpha$ diverges. We obtain that if $K>1$ then $\tau=\tau_{\mathrm{BO}}/2$ for any signal from the features. This is shown on fig. 3, where for $K=1$ the slope of the residual error varies with $\mu$ and $\alpha$ but does not reach half of the Bayes-optimal slope; while for $K>1$ it does, and the features only contribute with a sub-leading order.

Analytically, taking the limit in eqs. (52) and (53), at $c=0$ and $r\to\infty$ we have that

$$

\underset{\lambda\to\infty}{\mathrm{lim}}q_{y,K-1}\left\{\begin{array}[]{rr}=1&\mathrm{if}\,K>1\\

<1&\mathrm{if}\,K=1\end{array}\right. \tag{56}

$$

Since $\log(1-\operatorname{erf}(\lambda q_{y,K-1}/\sqrt{2}))\underset{\lambda\to\infty}{\sim}-\lambda^{2}q_{y,K-1}^{2}/2$ we recover the leading behaviour depicted on fig. 3. $c$ has little effect on the rate $\tau$ ; it only seems to vary the test accuracy by a sub-leading term.

Symmetrization:

We found that in order to reach the Bayes-optimal rate one has to further symmetrize the graph, according to eq. (14), and to perform the convolution steps by applying $\tilde{A}^{\mathrm{s}}$ instead of $\tilde{A}$ . Then, as shown on fig. 3, the GCN reaches the BO rate for any $K>1$ , at any signal from the features.

The reason of this improvement is the following. The GCN we consider is not able to deal with the asymmetry of the graph and the supplementary information it gives. This is shown on fig. 14 in appendix E for different values of $K$ at finite signal, in agreement with [20]. There is little difference in the performance of the simple GCN whether the graph is symmetric or not with same $\lambda$ . As to the rates, as shown by the computation in appendix C, a symmetric graph with signal $\lambda$ would lead to a BO rate $\tau_{\mathrm{BO}}^{\mathrm{s}}=1/2$ , which is the rate the GCN achieves on the asymmetric graph. It is thus better to let the GCN process the symmetrized the graph, which has a higher signal $\lambda^{\mathrm{s}}=\sqrt{2}\lambda$ , and which leads to $\tau=1=\tau_{\mathrm{BO}}$ .

Symmetrization is an important step toward the optimality and we will detail the analysis of the GCN on the symmetrized graph in part III.2.

Large $K$ and scaling of $c$ :

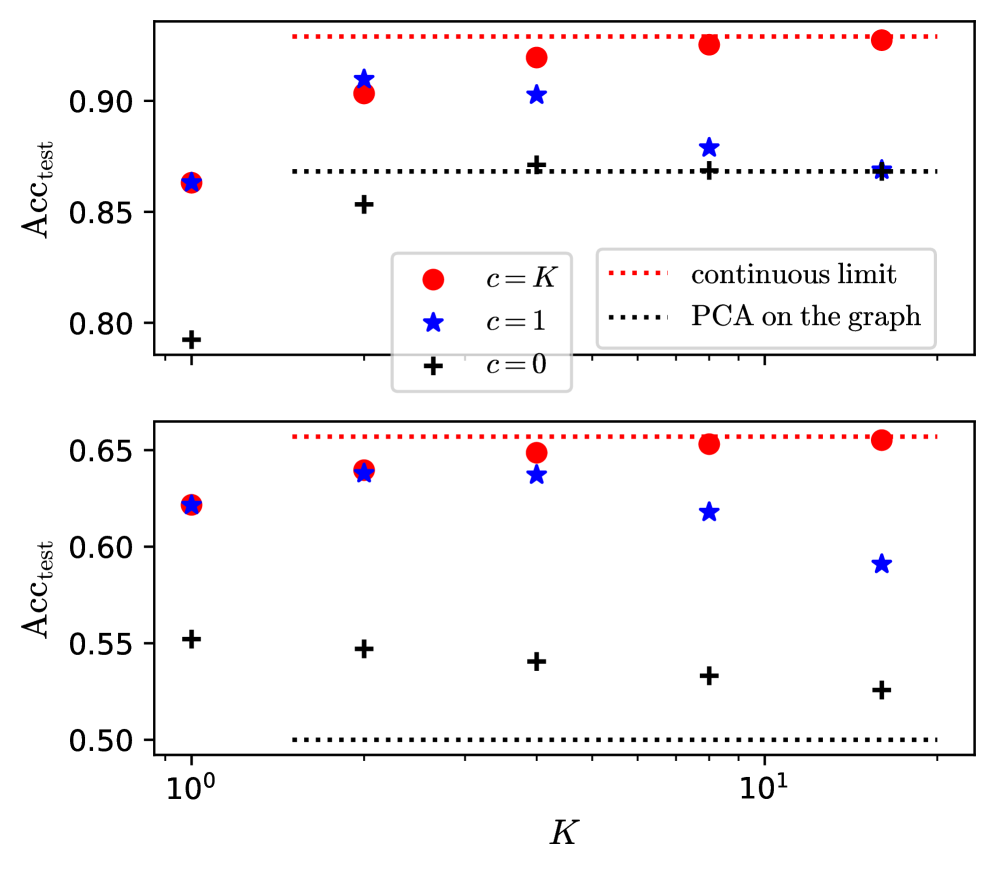

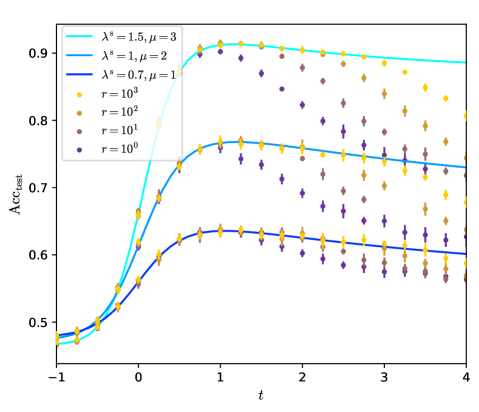

Going to larger $K$ is beneficial and allows the network to approach the Bayes optimality. Yet $K=3$ is not enough to reach it at finite $\lambda$ , and one can ask what happens at larger $K$ . An important point is that $c$ has to be well tuned. On fig. 2 we observe that $c^{*}$ , the optimal $c$ , is increasing with $K$ . To make this point more precise, on fig. 4 we show the predicted test accuracy for larger $K$ for different scalings of $c$ . We take $r=\infty$ since it appears to be the optimal regularization. We consider no residual connections, $c=0$ ; constant residual connections, $c=1$ ; or growing residual connections, $c\propto K$ .

<details>

<summary>x4.png Details</summary>

### Visual Description

## Line Chart: Accuracy Test (Acc_test) vs. Parameter K

### Overview

The image contains two vertically stacked line charts comparing test accuracy (Acc_test) across different values of parameter K (logarithmic scale from 10⁰ to 10¹). Three data series are plotted with distinct markers: red circles (c=K), blue stars (c=1), and black pluses (c=0). Two reference lines are shown: a red dotted line labeled "continuous limit" and a black dotted line labeled "PCA on the graph."

### Components/Axes

- **X-axis (K)**: Logarithmic scale from 10⁰ to 10¹, with gridlines at 10⁰, 10¹.

- **Y-axis (Acc_test)**: Ranges from 0.50 to 0.90 in the top subplot and 0.50 to 0.65 in the bottom subplot.

- **Legends**: Located in the top-left corner of each subplot, with:

- Red circles: `c = K`

- Blue stars: `c = 1`

- Black pluses: `c = 0`

- **Reference Lines**:

- Red dotted line: "continuous limit" (top subplot: ~0.90, bottom subplot: ~0.65)

- Black dotted line: "PCA on the graph" (top subplot: ~0.85, bottom subplot: ~0.50)

### Detailed Analysis

#### Top Subplot (Higher Accuracy Range)

- **Red Circles (c=K)**:

- Values: ~0.87 (K=1), ~0.90 (K=10), ~0.91 (K=100)

- Trend: Slightly increasing with K, plateauing near the red dotted line.

- **Blue Stars (c=1)**:

- Values: ~0.89 (K=1), ~0.88 (K=10), ~0.86 (K=100)

- Trend: Slightly decreasing with K, remaining below red circles.

- **Black Pluses (c=0)**:

- Values: ~0.85 (K=1), ~0.84 (K=10), ~0.83 (K=100)

- Trend: Gradual decline with K, consistently lowest.

#### Bottom Subplot (Lower Accuracy Range)

- **Red Circles (c=K)**:

- Values: ~0.63 (K=1), ~0.64 (K=10), ~0.65 (K=100)

- Trend: Slight increase with K, approaching the red dotted line.

- **Blue Stars (c=1)**:

- Values: ~0.62 (K=1), ~0.61 (K=10), ~0.59 (K=100)

- Trend: Decreasing with K, diverging from red circles.

- **Black Pluses (c=0)**:

- Values: ~0.55 (K=1), ~0.54 (K=10), ~0.53 (K=100)

- Trend: Steady decline with K, far below other series.

### Key Observations

1. **Red Circles (c=K)** consistently outperform other series, suggesting parameter adaptation to K improves accuracy.

2. **Black Pluses (c=0)** show the worst performance, indicating fixed parameters (c=0) degrade results as K increases.

3. **Blue Stars (c=1)** maintain mid-tier performance but degrade slightly with larger K.

4. **Reference Lines**:

- The red dotted line ("continuous limit") acts as an upper bound for both subplots.

- The black dotted line ("PCA on the graph") represents a lower bound, with data points generally above it.

### Interpretation

The data demonstrates that parameter scaling (`c=K`) yields the highest accuracy, particularly as K increases. This suggests adaptive parameter selection is critical for performance. The PCA method (`c=0`) underperforms, likely due to information loss from dimensionality reduction. The "continuous limit" line may represent an asymptotic upper bound achievable only with idealized conditions. The divergence between `c=1` and `c=K` in the bottom subplot highlights the importance of parameter tuning in low-resource regimes.

</details>

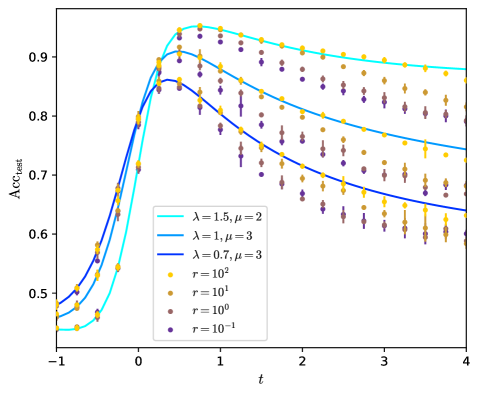

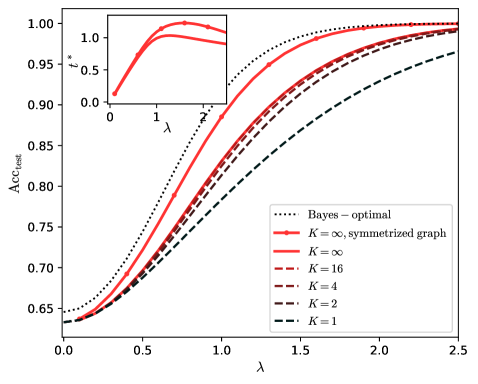

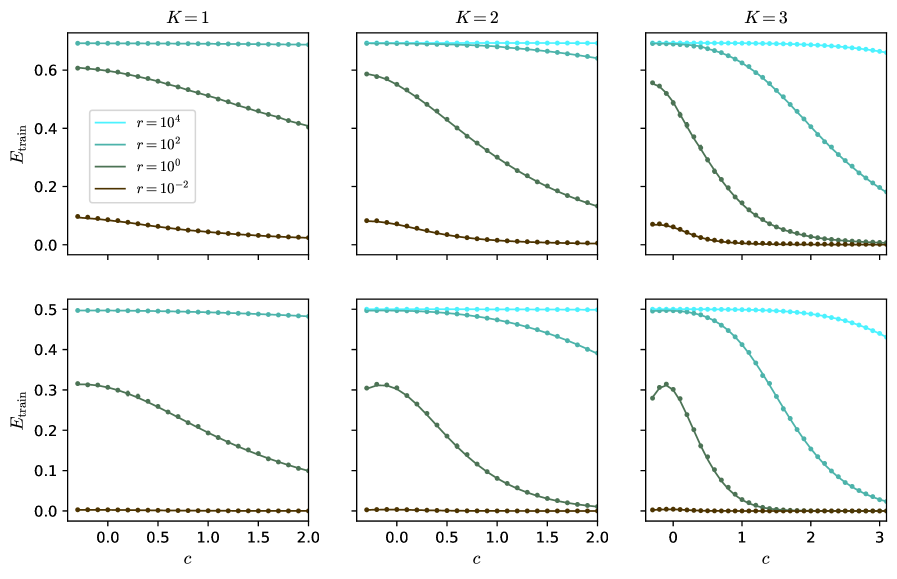

Figure 4: Predicted test accuracy $\mathrm{Acc}_{\mathrm{test}}$ vs $K$ for different scalings of $c$ , at $r=\infty$ . Top: for $\lambda=1.5$ , $\mu=3$ ; bottom: for $\lambda=0.7$ , $\mu=1$ ; $\alpha=4$ , $\rho=0.1$ . The predictions are given either by the explicit expression eqs. (51 - 53) for $c=0$ , either by solving the self-consistent equations (37 - 48) simplified in the limit $r\to\infty$ . The performance for the continuous limit are derived and given in the next section III.2, while the performance of PCA on the graph are given by eqs. (59 - 60).

A main observation is that, on fig. 4 for $K\to\infty$ , $c=0$ or $c=1$ converge to the same limit while $c\propto K$ converge to a different limit, that has higher accuracy.

In the case where $c=0$ or $c=1$ the GCN oversmooths at large $K$ . The limit it converges to corresponds to the accuracy of principal component analysis (PCA) on the sole graph; that is, it corresponds to the accuracy of the estimator $\hat{y}_{\mathrm{PCA}}=\operatorname{sign}\left(\mathrm{Re}(y_{1})\right)$ where $y_{1}$ is the leading eigenvector of $\tilde{A}$ . The overlap $q_{\mathrm{PCA}}$ between $y$ and $\hat{y}_{\mathrm{PCA}}$ and the accuracy are

$$

\displaystyle q_{\mathrm{PCA}}=\left\{\begin{array}[]{cr}\sqrt{1-\lambda^{-2}}&\mathrm{if}\,\lambda\geq 1\\

0&\mathrm{if}\,\lambda\leq 1\end{array}\right.\ , \displaystyle\mathrm{Acc}_{\mathrm{test,PCA}}=\frac{1}{2}\left(1+\mathrm{erf}\left(\frac{\lambda q_{\mathrm{PCA}}}{\sqrt{2}}\right)\right)\ . \tag{59}

$$

Consequently, if $c$ does not grow, the GCN will oversmooth at large $K$ , in the sense that all the information from the features $X$ vanishes. Only the information from the graph remains, that can still be informative if $\lambda>1$ . The formula (59 – 60) is obtained by taking the limit $K\to\infty$ in eqs. (51 – 53), for $c=0$ . For any constant $c$ it can also be recovered by considering the leading eigenvector $y_{1}$ of $\tilde{A}$ . At large $K$ , $(\tilde{A}/\sqrt{N}+cI)^{K}$ is dominated by $y_{1}$ and the output of the GCN is $h(w)\propto y_{1}$ for any $w$ . Consequently the GCN exactly acts like thresholded PCA on $\tilde{A}$ . The sharp transition at $\lambda=1$ corresponds to the BBP phase transition in the spectrum of $A^{g}$ and $\tilde{A}$ [56]. According to eqs. (51 – 53) the convergence of $q_{y,K-1}$ toward $q_{\mathrm{PCA}}$ is exponentially fast in $K$ if $\lambda>1$ ; it is like $1/\sqrt{K}$ , much slower, if $\lambda<1$ .

The fact that the oversmoothed features can be informative differs from several previous works where they are fully non-informative, such as [9, 10, 40]. This is mainly due to the normalization $\tilde{A}$ of $A$ we use and that these works do not use. It allows to remove the uniform eigenvector $(1,\ldots,1)^{T}$ , that otherwise dominates $A$ and leads to non-informative features. [36] emphasizes on this point and compares different ways of normalizing and correcting $A$ . This work concludes, as we do, that for a correct rescaling $\tilde{A}$ of $A$ , similar to ours, going to higher $K$ is always beneficial if $\lambda$ is high enough, and that the convergence to the limit is exponentially fast. Yet, at large $K$ it obtains bounds on the test accuracy that do not depend on the features: the network they consider still oversmooths in the precise sense we defined. This can be expected since it does not have residual connections, i.e. $c=0$ , that appear to be decisive.

In the case where $c\propto K$ the GCN does not oversmooth and it converges to a continuous limit, obtained as $(cI+\tilde{A}/\sqrt{N})^{K}\propto(I+t\tilde{A}/K\sqrt{N})^{K}\to e^{\frac{t\tilde{A}}{\sqrt{N}}}$ . We study this limit in detail in the next part where we predict the resulting accuracy for all constant ratios $t=c/K$ . In general the continuous limit has a better performance than the limit at constant $c$ that relies only on the graph, performing PCA, because it can take in account the features, which bring additional information.

Fig. 4 suggests that $\mathrm{Acc}_{\mathrm{test}}$ is monotonically increasing with $K$ if $c\propto K$ and that the continuous limit is the upper-bound on the performance at any $K$ . We will make this point more precise in the next part. Yet we can already see that, for this to be true, one has to correctly tune the ratio $c/K$ : for instance if $\lambda$ is small $\tilde{A}$ mostly contains noise and applying it to $X$ will mostly lower the accuracy. Shortly, if $c/K$ is optimized then $K\to\infty$ is better than any fixed $K$ . Consequently the continuous limit is the correct limit to maximize the test accuracy and it is of particular relevance.

### III.2 Continuous GCN

In this section we present the asymptotic characterization of the continuous GCN, both for the asymmetric graph and for its symmetrization. The continuous GCN is the limit of the discrete GCN when the number of convolution steps $K$ diverges while the residual connections $c$ become large. The order parameters that describe it, as well as the self-consistent equations they follow, can be obtained as the limit of those of the discrete GCN. We give a detailed derivation of how the limit is taken, since it is of independent interest.

The outcome is that the state $h$ of the GCN across the convolutions is described by a set of equations resembling the dynamical mean-field theory. The order parameters of the problem are continuous functions and the self-consistent equations can be expressed by expansion around large regularization $r\to\infty$ as integral equations, that specialize to differential equations in the asymmetric case. The resulting equations can be solved analytically; for asymmetric graphs, the covariance and its conjugate are propagators (or resolvant) of the two-dimensional Klein-Gordon equation. We show numerically that our approach is justified and agrees with simulations. Last we show that going to the continuous limit while symmetrizing the graph corresponds to the optimum of the architecture and allows to approach the Bayes-optimality.

#### III.2.1 Asymptotic characterization

To deal with both cases, asymmetric or symmetrized, we define $(\delta_{\mathrm{e}},\tilde{A}^{\mathrm{e}},\lambda^{\mathrm{e}})\in\{(0,\tilde{A},\lambda),(1,\tilde{A}^{\mathrm{s}},\lambda^{\mathrm{s}})\}$ , where we remind that $\tilde{A}^{\mathrm{s}}$ is the symmetrized $\tilde{A}$ with effective signal $\lambda^{\mathrm{s}}=\sqrt{2}\lambda$ . In particular $\delta_{\mathrm{e}}=0$ for the asymmetric and $\delta_{\mathrm{e}}=1$ for the symmetrized.

The continuous GCN is defined by the output function

$$

h(w)=e^{\frac{t}{\sqrt{N}}\tilde{A}^{\mathrm{e}}}\frac{1}{\sqrt{N}}Xw\ . \tag{61}

$$

We first derive the free entropy of the discretization of the GCN and then take the continuous limit. The discretization at finite $K$ is

$$

\displaystyle h(w)=h_{K}\ , \displaystyle h_{k+1}=\left(I_{N}+\frac{t}{K\sqrt{N}}\tilde{A}^{\mathrm{e}}\right)h_{k}\ , \displaystyle h_{0}=\frac{1}{\sqrt{N}}Xw\ . \tag{62}

$$

In the case of the asymmetric graph this discretization can be mapped to the discrete GCN of the previous section A as detailed in eq. (16) and the following paragraph; the free entropy and the order parameters of the two models are the same, up to a rescaling by $c$ .