# Graph-Augmented Reasoning: Evolving Step-by-Step Knowledge Graph Retrieval for LLM Reasoning

**Authors**: Wenjie Wu, Yongcheng Jing, Yingjie Wang, Wenbin Hu, Dacheng Tao

> Wenjie Wu and Wenbin Hu are with Wuhan University (Email: hwb@whu.edu.cn).

Yongcheng Jing, Yingjie Wang, and Dacheng Tao are with Nanyang Technological University (Email: dacheng.tao@ntu.edu.sg).

Abstract

Recent large language model (LLM) reasoning, despite its success, suffers from limited domain knowledge, susceptibility to hallucinations, and constrained reasoning depth, particularly in small-scale models deployed in resource-constrained environments. This paper presents the first investigation into integrating step-wise knowledge graph retrieval with step-wise reasoning to address these challenges, introducing a novel paradigm termed as graph-augmented reasoning. Our goal is to enable frozen, small-scale LLMs to retrieve and process relevant mathematical knowledge in a step-wise manner, enhancing their problem-solving abilities without additional training. To this end, we propose KG-RAR, a framework centered on process-oriented knowledge graph construction, a hierarchical retrieval strategy, and a universal post-retrieval processing and reward model (PRP-RM) that refines retrieved information and evaluates each reasoning step. Experiments on the Math500 and GSM8K benchmarks across six models demonstrate that KG-RAR yields encouraging results, achieving a 20.73% relative improvement with Llama-3B on Math500.

Index Terms: Large Language Model, Knowledge Graph, Reasoning

1 Introduction

Enhancing the reasoning capabilities of large language models (LLMs) continues to be a major challenge [1, 2, 3]. Conventional methods, such as chain-of-thought (CoT) prompting [4], improve inference by encouraging step-by-step articulation [5, 6, 7, 8, 9, 10], while external tool usage and domain-specific fine-tuning further refine specific task performance [11, 12, 13, 14, 15, 16]. Most recently, o1-like multi-step reasoning has emerged as a paradigm shift [17, 18, 19, 20, 21, 22], leveraging test-time compute strategies [5, 23, 24, 25, 26], exemplified by reasoning models like GPT-o1 [27] and DeepSeek-R1 [28]. These approaches, including Best-of-N [29] and Monte Carlo Tree Search [23] , allocate additional computational resources during inference to dynamically refine reasoning paths [30, 31, 29, 25].

<details>

<summary>x1.png Details</summary>

### Visual Description

\n

## Diagram: Comparison of Reasoning Approaches

### Overview

The image presents a comparative diagram illustrating three different reasoning approaches: Chain of Thought (CoT), Traditional Retrieval-Augmented Generation (RAG), and Step-by-Step Knowledge Graph Retrieval-Augmented Reasoning (KG-RAR). Each approach is depicted as a flow diagram, starting with a "Problem" input and culminating in an "Answer" output. The diagram highlights the different steps and components involved in each method.

### Components/Axes

The diagram consists of three vertically aligned columns, each representing a different approach. Each column is divided into stages:

1. **Problem:** Represented by a question mark inside a rounded rectangle.

2. **Reasoning Stage:** Labeled as "CoT-prompting", "CoT + RAG", and "CoT + KG-RAR" respectively. These are represented by rectangles.

3. **Step 1 & Step 2:** Represented by rectangles.

4. **Answer:** Represented by a rounded rectangle.

5. **Additional Components:** "Docs" and "KG" (Knowledge Graph) icons are present in the second and third columns, respectively. "Sub-KG" (Sub Knowledge Graph) is present in the third column. A magnifying glass icon is used to represent retrieval or search. A lightning bolt represents processing. A plus sign in a circle represents augmentation.

The bottom of each column is labeled with the name of the approach: "Chain of Thought", "Traditional RAG", and "Step-by-Step KG-RAR".

### Detailed Analysis or Content Details

**Column 1: Chain of Thought**

* Starts with "Problem".

* Proceeds to "CoT-prompting".

* Then to "Step1".

* Then to "Step2".

* Finally, to "Answer".

* A lightning bolt icon is placed between "Step2" and "Answer", indicating processing.

**Column 2: Traditional RAG**

* Starts with "Problem".

* Includes a "Docs" icon with a magnifying glass, indicating document retrieval.

* Proceeds to "CoT + RAG".

* Then to "Step1".

* Then to "Step2".

* Finally, to "Answer".

* A lightning bolt icon is placed between "Step2" and "Answer", indicating processing.

**Column 3: Step-by-Step KG-RAR**

* Starts with "Problem".

* Includes a "KG" icon with a magnifying glass, indicating knowledge graph retrieval.

* Proceeds to "CoT + KG-RAR".

* Then to "Step1".

* A "Sub-KG" icon with a magnifying glass is connected to "Step1".

* "KG-RAR of Step1" is placed below "Step1".

* Then to "Step2".

* A "Sub-KG" icon with a magnifying glass is connected to "Step2".

* Finally, to "Answer".

* A scale icon is placed between "Step2" and "Answer", indicating evaluation or refinement.

* A plus sign in a circle is placed between "KG" and "CoT + KG-RAR", and between "Sub-KG" and "Step1", and between "Sub-KG" and "Step2".

### Key Observations

* The "Traditional RAG" approach incorporates document retrieval ("Docs") while the "Step-by-Step KG-RAR" approach utilizes a knowledge graph ("KG").

* The "Step-by-Step KG-RAR" approach involves iterative retrieval from a "Sub-KG" at each step ("Step1" and "Step2").

* The "Step-by-Step KG-RAR" approach includes a refinement or evaluation step ("Scale" icon) before arriving at the "Answer".

* The "Chain of Thought" approach is the simplest, relying solely on prompting.

### Interpretation

The diagram illustrates a progression in complexity and sophistication of reasoning approaches. "Chain of Thought" represents a basic method, while "Traditional RAG" enhances it by incorporating external knowledge from documents. "Step-by-Step KG-RAR" represents the most advanced approach, leveraging a knowledge graph for iterative reasoning and refinement. The use of "Sub-KG" suggests a modular or hierarchical knowledge representation. The plus sign indicates augmentation of the reasoning process. The diagram suggests that incorporating external knowledge and iterative refinement can lead to more accurate and robust answers. The diagram is a conceptual illustration and does not contain specific data points or numerical values. It focuses on the *process* rather than the *results* of each approach. The diagram is intended to visually communicate the differences in architecture and flow between these three reasoning methods.

</details>

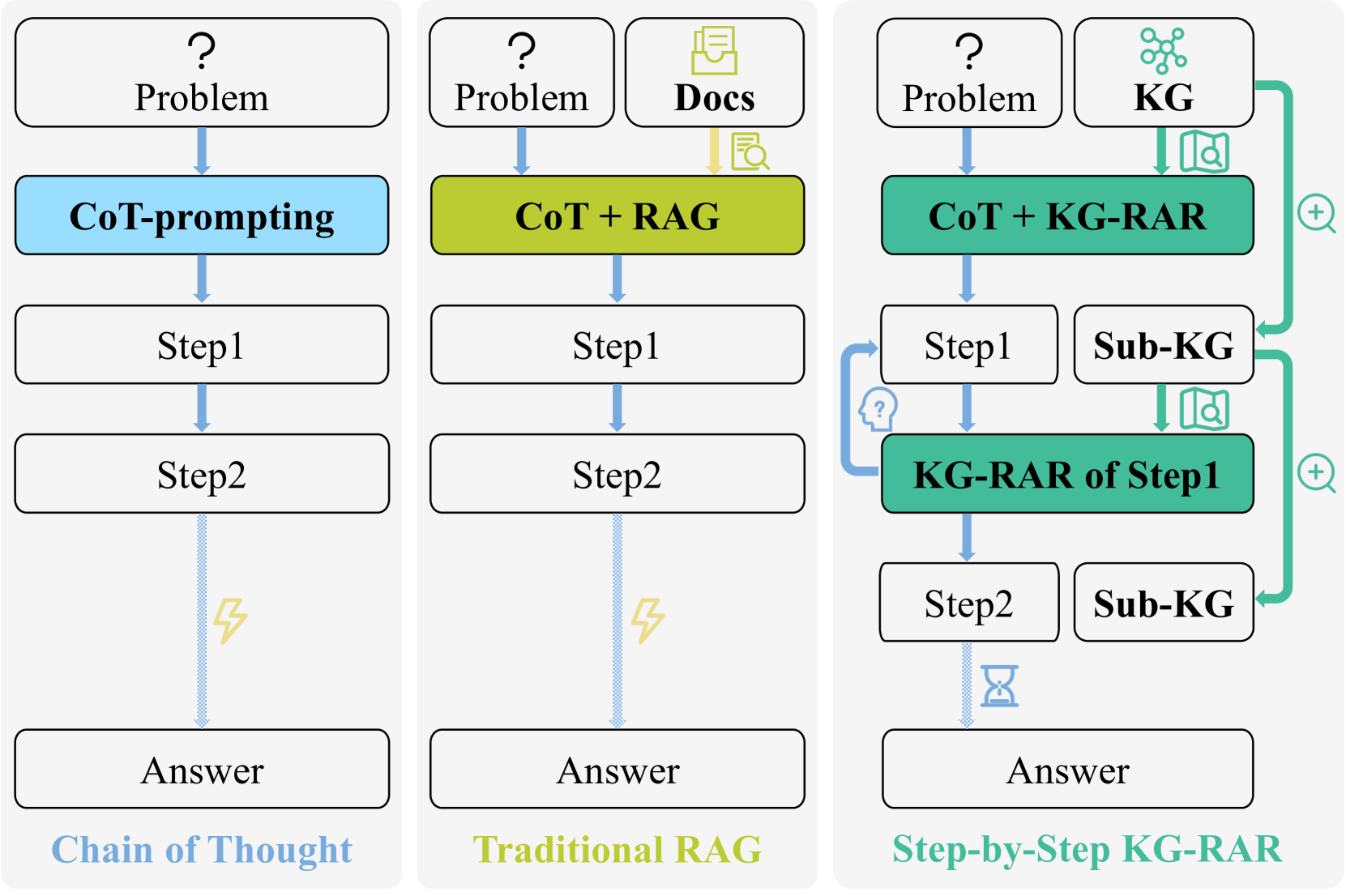

Figure 1: Illustration of the proposed step-by-step knowledge graph retrieval for o1-like reasoning, which dynamically retrieves and utilises structured sub-graphs (Sub-KGs) during reasoning. Our approach iteratively refines the reasoning process by retrieving relevant Sub-KGs at each step, enhancing accuracy, consistency, and reasoning depth for complex tasks, thereby offering a novel form of scaling test-time computation.

Despite encouraging advancements in o1-like reasoning, LLMs—particularly smaller and less powerful variants—continue to struggle with complex reasoning tasks in mathematics and science [2, 3, 32, 33]. These challenges arise from insufficient domain knowledge, susceptibility to hallucinations, and constrained reasoning depth [34, 35, 36]. Given the novelty of o1-like reasoning, effective solutions to these issues remain largely unexplored, with few studies addressing this gap in the literature [17, 18, 22]. One potential solution from the pre-o1 era is retrieval-augmented generation (RAG), which has been shown to mitigate hallucinations and factual inaccuracies by retrieving relevant information from external knowledge sources (Fig. 1, the \nth 2 column) [37, 38, 39]. However, in the context of o1-like multi-step reasoning, traditional RAG faces two significant challenges:

- (1) Step-wise hallucinations: LLMs may hallucinate during intermediate steps —a problem not addressed by applying RAG solely to the initial prompt [40, 41];

- (2) Missing structured relationships: Traditional RAG may retrieve information that lacks the structured relationships necessary for complex reasoning tasks, leading to inadequate augmentation that fails to capture the depth required for accurate reasoning [39, 42].

In this paper, we strive to address both challenges by introducing a novel graph-augmented multi-step reasoning scheme to enhance LLMs’ o1-like reasoning capability, as depicted in Fig. 1. Our idea is motivated by the recent success of knowledge graphs (KGs) in knowledge-based question answering and fact-checking [43, 44, 45, 46, 47, 48, 49]. Recent advances have demonstrated the effectiveness of KGs in augmenting prompts with retrieved knowledge or enabling LLMs to query KGs for factual information [50, 51]. However, little attention has been given to improving step-by-step reasoning for complex tasks with KGs [52, 53], such as mathematical reasoning, which requires iterative logical inference rather than simple knowledge retrieval.

To fill this gap, the objective of the proposed graph-augmented reasoning paradigm is to integrate structured KG retrieval into the reasoning process in a step-by-step manner, providing contextually relevant information at each reasoning step to refine reasoning paths and mitigate step-wise inaccuracies and hallucinations, thereby addressing both aforementioned challenges simultaneously. This approach operates without additional training, making it particularly well-suited for small-scale LLMs in resource-constrained environments. Moreover, it extends test-time compute by incorporating external knowledge into the reasoning context, transitioning from direct CoT to step-wise guided retrieval and reasoning.

Nevertheless, implementing this graph-augmented reasoning paradigm is accompanied with several key issues: (1) Frozen LLMs struggle to query KGs effectively [50], necessitating a dynamic integration strategy for iterative incorporation of graph-based knowledge; (2) Existing KGs primarily encode static facts rather than the procedural knowledge required for multi-step reasoning [54, 55], highlighting the need for process-oriented KGs; (3) Reward models, which are essential for validating reasoning steps [30, 29], often require costly fine-tuning and suffer from poor generalization [56], underscoring the need for a universal, training-free scoring mechanism tailored to KG.

To address these issues, we propose KG-RAR, a step-by-step knowledge graph based retrieval-augmented reasoning framework that retrieves, refines, and reasons using structured knowledge graphs in a step-wise manner. Specifically, to enable effective KG querying, we design a hierarchical retrieval strategy in which questions and reasoning steps are progressively matched to relevant subgraphs, dynamically narrowing the search space. Also, we present a process-oriented math knowledge graph (MKG) construction method that encodes step-by-step procedural knowledge, ensuring that LLMs retrieve and apply structured reasoning sequences rather than static facts. Furthermore, we introduce the post-retrieval processing and reward model (PRP-RM)—a training-free scoring mechanism that refines retrieved knowledge before reasoning and evaluates step correctness in real time. By integrating structured retrieval with test-time computation, our approach mitigates reasoning inconsistencies, reduces hallucinations, and enhances stepwise verification—all without additional training.

In sum, our contribution is therefore the first attempt that dynamically integrates step-by-step KG retrieval into an o1-like multi-step reasoning process. This is achieved through our proposed hierarchical retrieval, process-oriented graph construction method, and PRP-RM—a training-free scoring mechanism that ensures retrieval relevance and step correctness. Experiments on Math500 and GSM8K validate the effectiveness of our approach across six smaller models from the Llama3 and Qwen2.5 series. The best-performing model, Llama-3B on Math500, achieves a 20.73% relative improvement over CoT prompting, followed by Llama-8B on Math500 with a 15.22% relative gain and Llama-8B on GSM8K with an 8.68% improvement.

2 Related Work

<details>

<summary>x2.png Details</summary>

### Visual Description

\n

## Diagram: Problem Solving Process - Remainder Calculation

### Overview

The image depicts a diagram illustrating a problem-solving process, specifically for calculating the remainder when dividing 1234 by 35. The diagram is structured around two main branches: "Retrieving" and "Refining," with "Reasoning" steps interspersed. It uses boxes and arrows to show the flow of thought and calculations. The diagram also includes a "CoT" (Chain of Thought) section.

### Components/Axes

The diagram is divided into three main sections:

* **Question:** Located at the top, stating the problem.

* **Retrieving & Refining:** Two main branches representing different aspects of the problem-solving process.

* **CoT (Chain of Thought):** A vertical section on the right providing a step-by-step explanation.

The diagram also includes boxes labeled with concepts like "Congruence," "Modular Arithmetic," "Number Theory," "division algorithm," and "multiplication of relevant units digits." There are placeholders labeled "Is..." within several boxes, indicating where specific values or results would be inserted.

### Detailed Analysis or Content Details

**Question:** "A factory produces items in batches of 35. If today is the 1234th batch, what is the remainder?"

**Retrieving (Top Branch):**

* **Congruence:** Leads to a text block: "Suppose that a 305-digit integer SNS is composed of thirteen $78s and seventeen $33s. What is the remainder when SNS is divided by $365?"

* **Modular Arithmetic:** Connected to "multiplication of relevant units digits" and "application of last digit property." Placeholder: "modular arithmetic: Is..."

* **Number Theory:** Leads to a question: "What is the remainder when the product $1734 \times 5389 \times 806075$ is divided by 10?" Placeholder: "last digit property: Is..."

**Retrieving (Bottom Branch):**

* **Checking if the solution satisfies the congruence condition:** Leads to "performing division to find the quotient and remainder." Placeholder: "quotient: Is..."

* **Division Algorithm:** Connected to "expressing the number as a sum of powers of the base using the quotient and remainder." Placeholder: "remainder: Is..."

* **Finding the next multiple of the modulus:** Leads to "zero remainder: Is..." and "the solution does not satisfy the congruence condition."

**Refining (Right Branch):**

* **Retrieval:** "The question can be solved using modular arithmetic..."

* **Reasoning Step 1:** "Divide 1234 by 35: 1234 ÷ 35 = 35.2571"

* **Retrieval:** "The remainder is what remains after subtracting the largest multiple of 35 that fits into 1234..."

* **Reasoning Step 2:** "Subtract this product from 1234 to find the remainder: 1234 – 35 x 35 = 9"

**CoT (Chain of Thought):** "Let's think step by step..."

### Key Observations

The diagram illustrates a multi-step problem-solving approach. It combines theoretical concepts (Congruence, Modular Arithmetic) with practical calculations (division, subtraction). The "Retrieving" branches seem to explore different approaches or related problems, while the "Refining" branch focuses on the specific solution to the original question. The use of placeholders ("Is...") suggests this is a template for a more detailed solution.

### Interpretation

The diagram demonstrates a cognitive process for solving mathematical problems, particularly those involving remainders. It highlights the importance of both theoretical understanding (retrieving relevant concepts) and practical application (refining the solution through calculations). The "CoT" section emphasizes a step-by-step approach, which is crucial for complex problems. The inclusion of seemingly unrelated problems (the 305-digit integer and the product of large numbers) within the "Retrieving" branches suggests a strategy of drawing analogies or applying similar principles to the main problem. The diagram is not presenting a specific numerical answer, but rather a *process* for arriving at an answer. The placeholders indicate that the diagram is a framework for a complete solution, and the final remainder is calculated to be 9. The diagram is a visual representation of a Chain-of-Thought reasoning process.

</details>

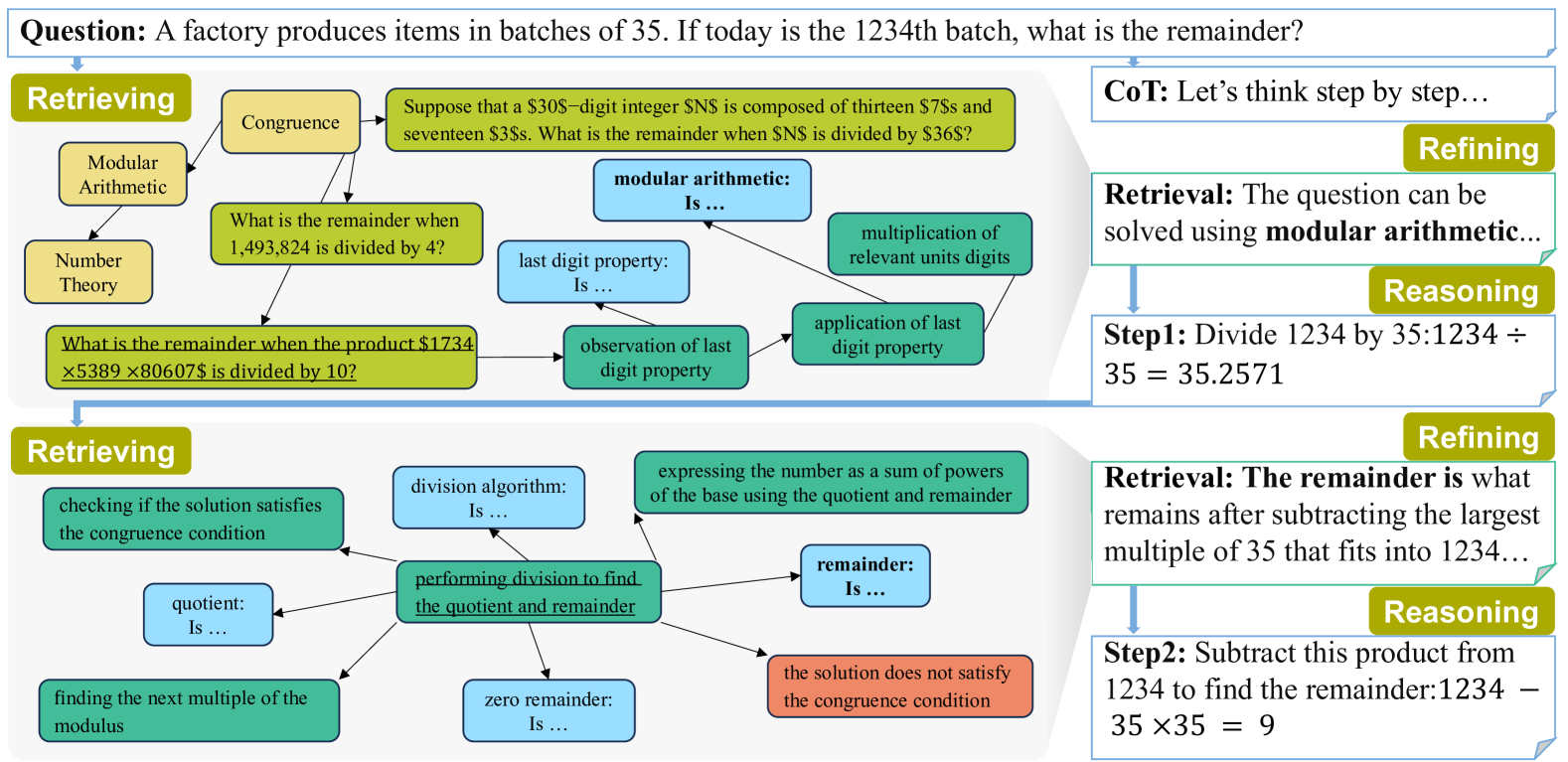

Figure 2: Example of Step-by-Step KG-RAR’s iterative process: 1) Retrieving: For a given question or intermediate reasoning step, the KG is retrieved to find the most similar problem or procedure (underlined in the figure) and extract its subgraph as the raw retrieval. 2) Refining: A frozen LLM processes the raw retrieval to generate a refined and targeted context for reasoning. 3) Reasoning: Using the refined retrieval, another LLM reflects on previous steps and generates next intermediate reasoning steps. This iterative workflow refines and guides the reasoning path to problem-solving.

2.1 LLM Reasoning

Large Language Models (LLMs) have advanced in structured reasoning through techniques like Chain-of-Thought (CoT) [4], Self-Consistency [5], and Tree-of-Thought [10], improving inference by generating intermediate steps rather than relying on greedy decoding [6, 7, 8, 57, 58, 9]. Recently, GPT-o1-like reasoning has emerged as a paradigm shift [17, 18, 25, 22], leveraging Test-Time Compute strategies such as Best-of-N [29], Beam Search [5], and Monte Carlo Tree Search [23], often integrated with reward models to refine reasoning paths dynamically [30, 31, 29, 59, 60, 61]. Reasoning models like DeepSeek-R1 exemplify this trend by iteratively searching, verifying, and refining solutions, significantly enhancing inference accuracy and robustness [27, 28]. However, these methods remain computationally expensive and challenging for small-scale LLMs, which struggle with hallucinations and inconsistencies due to limited reasoning capacity and lack of domain knowledge [32, 34, 3].

2.2 Knowledge Graphs Enhanced LLM Reasoning

Knowledge Graphs (KGs) are structured repositories of interconnected entities and relationships, offering efficient graph-based knowledge representation and retrieval [62, 63, 64, 65, 54]. Prior work integrating KGs with LLMs has primarily focused on knowledge-based reasoning tasks such as knowledge-based question answering [43, 44, 66, 67], fact-checking [45, 46, 68], and entity-centric reasoning [69, 47, 70, 48, 49]. However, in these tasks, ”reasoning” is predominantly limited to identifying and retrieving static knowledge rather than performing iterative, multi-step logical computations [71, 72, 73]. In contrast, our work is to integrate KGs with LLMs for o1-like reasoning in domains such as mathematics, where solving problems demands dynamic, step-by-step inference rather than static knowledge retrieval.

2.3 Reward Models

Reward models are essential across various domains such as computer vision [74, 75]. Notably, they play a crucial role in aligning LLM outputs with human preferences by evaluating accuracy, relevance, and logical consistency [76, 77, 78]. Fine-tuned reward models, including Outcome Reward Models (ORMs) [30] and Process Reward Models (PRMs) [31, 29, 60], improve validation accuracy but come at a high training cost and often lack generalization across diverse tasks [56]. Generative reward models [61] further enhance performance by integrating CoT reasoning into reward assessments, leveraging Test-Time Compute to refine evaluation. However, the reliance on fine-tuning makes these models resource-intensive and limits adaptability [56]. This underscores the need for universal, training-free scoring mechanisms that maintain robust performance while ensuring computational efficiency across various reasoning domains.

3 Pre-analysis

3.1 Motivation and Problem Definition

Motivation. LLMs have demonstrated remarkable capabilities across various domains [79, 4, 80, 81, 82], yet their proficiency in complex reasoning tasks remains limited [3, 83, 84]. Challenges such as hallucinations [34, 85], inaccuracies, and difficulties in handling complex, multi-step reasoning due to insufficient reasoning depth are particularly evident in smaller models or resource-constrained environments [86, 87, 88]. Moreover, traditional reward models, including ORMs [30] and PRMs [31, 29], require extensive fine-tuning, incurring significant computational costs for dataset collection, GPU usage, and prolonged training time [89, 90, 91]. Despite these efforts, fine-tuned reward models often suffer from poor generalization, restricting their effectiveness across diverse reasoning tasks [56].

To simultaneously overcome these challenges, this paper introduces a novel paradigm tailored for o1-like multi-step reasoning:

Remark 3.1 (Graph-Augmented Multi-Step Reasoning). The goal of graph-augmented reasoning is to enhance the step-by-step reasoning ability of frozen LLMs by integrating external knowledge graphs (KGs), eliminating the need for additional fine-tuning.

The proposed graph-augmented scheme aims to offer the following unique advantages:

- Improving Multi-Step Reasoning: Enhances reasoning capabilities, particularly for small-scale LLMs in resource-constrained environments;

- Scaling Test-Time Compute: Introduces a novel dimension of scaling test-time compute through dynamic integration of external knowledge;

- Transferability Across Reasoning Tasks: By leveraging domain-specific KGs, the framework can be easily adapted to various reasoning tasks, enabling transferability across different domains.

3.2 Challenge Analysis

However, implementing the proposed graph-augmented reasoning paradigm presents several critical challenges:

- Effective Integration: How can KGs be efficiently integrated with LLMs to support step-by-step reasoning without requiring model modifications? Frozen LLMs cannot directly query KGs effectively [50]. Additionally, since LLMs may suffer from hallucinations and inaccuracies during intermediate reasoning steps [34, 85, 41], it is crucial to dynamically integrate KGs at each step rather than relying solely on static knowledge retrieved at the initial stage;

- Knowledge Graph Construction: How can we design and construct process-oriented KGs tailored for LLM-driven multi-step reasoning? Existing KGs predominantly store static knowledge rather than the procedural and logical information required for reasoning [54, 55, 92]. A well-structured KG that represents reasoning steps, dependencies, and logical flows is necessary to support iterative reasoning;

- Universal Scoring Mechanism: How can we develop a training-free reward mechanism capable of universally evaluating reasoning paths across diverse tasks without domain-specific fine-tuning? Current approaches depend on fine-tuned reward models, which are computationally expensive and lack adaptability [56]. A universal, training-free scoring mechanism leveraging frozen LLMs is essential for scalable and efficient reasoning evaluation.

To address these challenges and unlock the full potential of graph-augmented reasoning, we propose a Step-by-Step Knowledge Graph based Retrieval-Augmented Reasoning (KG-RAR) framework, accompanied by a dedicated Post-Retrieval Processing and Reward Model (PRP-RM), which will be elaborated in the following section.

4 Proposed Approach

4.1 Overview

Our objective is to integrate KGs for o1-like reasoning with frozen, small-scale LLMs in a training-free and universal manner. This is achieved by integrating a step-by-step knowledge graph based retrieval-augmented reasoning (KG-RAR) module within a structured, iterative reasoning framework. As shown in Figure 2, the iterative process comprises three core phases: retrieving, refining, and reasoning.

4.2 Process-Oriented Math Knowledge Graph

<details>

<summary>x3.png Details</summary>

### Visual Description

\n

## Diagram: Math Problem Solving Workflow

### Overview

This diagram illustrates a workflow for solving math problems using datasets, a Large Language Model (LLM), and a Math Knowledge Graph. The process begins with a problem from a dataset, which is then processed by the LLM and ultimately interacts with the Math Knowledge Graph to arrive at a solution, potentially identifying errors along the way.

### Components/Axes

The diagram is divided into three main columns: "Datasets", "LLM", and "Math Knowledge Graph". Arrows indicate the flow of information between these components. Within each column, there are multiple steps or nodes.

* **Datasets:** Contains "Problem", "Step1", "Step2", "Step3". Each step is accompanied by an emoji: Step1 (✅), Step2 (✅), Step3 (🙁).

* **LLM:** A central column with an image of a llama. Arrows connect the datasets to the Math Knowledge Graph via the LLM.

* **Math Knowledge Graph:** Contains nodes representing "Branch", "SubField", "Problem", "Problem Type", "Procedure1", "Knowledge1", "Procedure2", "Knowledge2", "Error3", and "Knowledge3".

### Detailed Analysis or Content Details

The diagram shows a sequential process:

1. **Problem Input:** A "Problem" is initially presented from the "Datasets" column.

2. **LLM Processing:** The problem is passed to the "LLM" (represented by a llama image).

3. **Knowledge Graph Interaction - Branch/Subfield:** The LLM interacts with the "Math Knowledge Graph", initially connecting to "Branch" and "SubField" nodes.

4. **Problem Identification:** The LLM then identifies the "Problem" and its "Problem Type" within the Knowledge Graph.

5. **Step 1 Processing:** "Step1" from the "Datasets" column is processed by the LLM, leading to "Procedure1" and "Knowledge1" in the Knowledge Graph. Step1 is marked with a checkmark emoji (✅).

6. **Step 2 Processing:** "Step2" from the "Datasets" column is processed by the LLM, leading to "Procedure2" and "Knowledge2" in the Knowledge Graph. Step2 is marked with a checkmark emoji (✅).

7. **Step 3 Processing & Error Detection:** "Step3" from the "Datasets" column is processed by the LLM, leading to "Error3" and "Knowledge3" in the Knowledge Graph. Step3 is marked with a frowning face emoji (🙁), indicating a potential error.

The arrows indicate a unidirectional flow of information from left to right. The nodes in the "Math Knowledge Graph" are rectangular with rounded corners. The color of the nodes varies: green for successful steps ("Problem", "Procedure1", "Procedure2"), and red for error identification ("Error3"). The "Branch" and "SubField" nodes are yellow.

### Key Observations

* The diagram highlights a potential error detection mechanism in "Step3".

* The LLM acts as an intermediary between the datasets and the Math Knowledge Graph.

* The Knowledge Graph appears to be structured hierarchically, with "Branch" and "SubField" at the top level, followed by "Problem" and "Problem Type", and then "Procedure/Error" and "Knowledge".

* The use of emojis provides a quick visual indication of the success or failure of each step.

### Interpretation

This diagram illustrates a system designed to solve math problems by leveraging the reasoning capabilities of an LLM and the structured knowledge within a Math Knowledge Graph. The workflow suggests that the LLM not only uses the Knowledge Graph to find relevant information but also to validate its reasoning process. The inclusion of "Error3" suggests that the system is capable of identifying and flagging potential errors in its solution path. The emojis are a simple but effective way to communicate the status of each step in the process. The diagram implies a feedback loop where the LLM's interaction with the Knowledge Graph can lead to error detection and refinement of the solution. The overall system aims to combine the flexibility of LLMs with the reliability of structured knowledge representation.

</details>

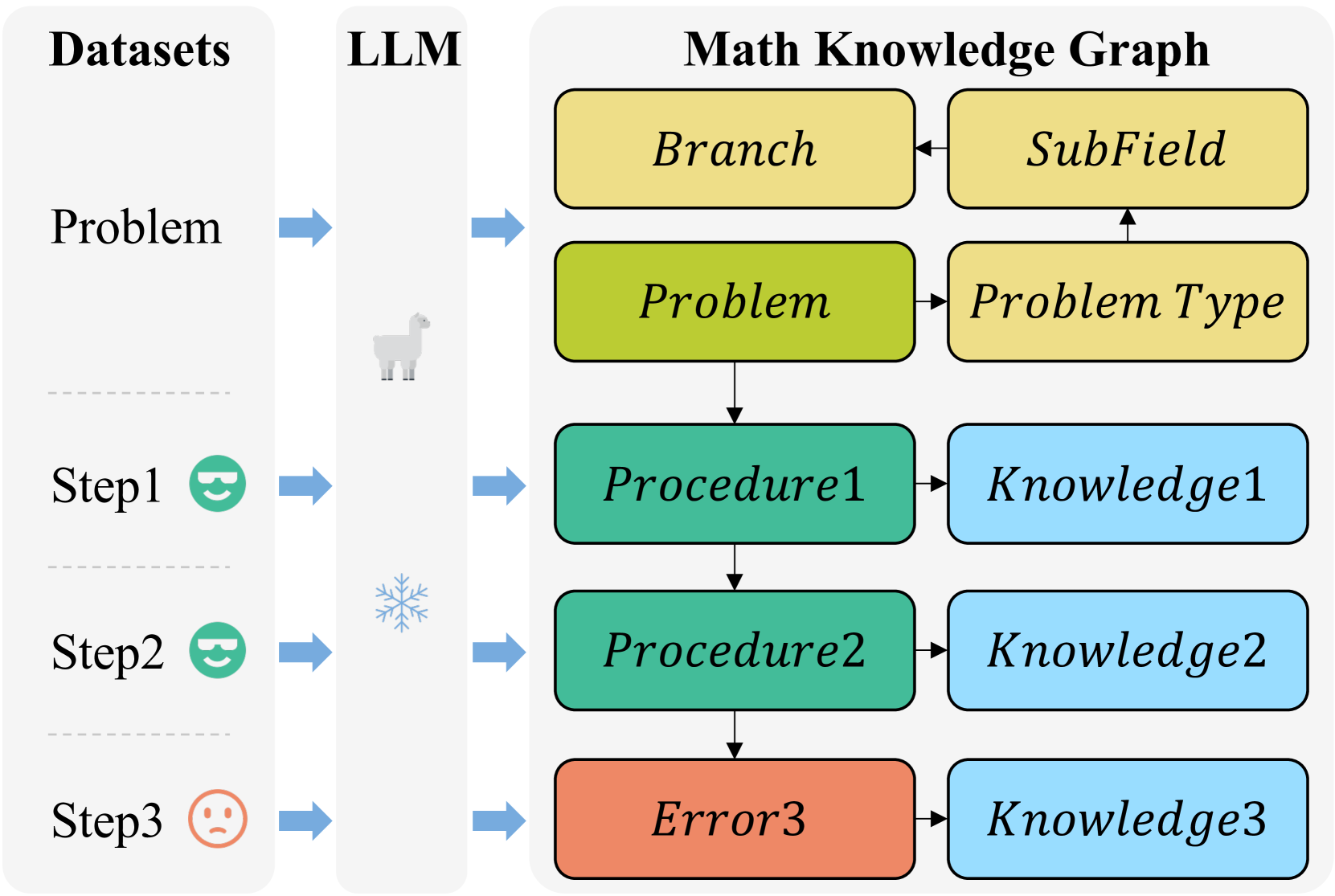

Figure 3: Pipeline for constructing the process-oriented math knowledge graph from process supervision datasets.

To support o1-like multi-step reasoning, we construct a Mathematical Knowledge Graph tailored for multi-step logical inference. Public process supervision datasets, such as PRM800K [29], provide structured problem-solving steps annotated with artificial ratings. Each sample will be decomposed into the following structured components: branch, subfield, problem type, problem, procedures, errors, and related knowledge.

The Knowledge Graph is formally defined as: $G=(V,E)$ , where $V$ represents nodes—including problems, procedures, errors, and mathematical knowledge—and $E$ represents edges encoding their relationships (e.g., ”derived from,” ”related to”).

As shown in Figure 3, for a given problem $P$ with solutions $S_{1},S_{2},...,S_{n}$ and human ratings, the structured representation is:

$$

P\mapsto\{B_{p},F_{p},T_{p},\mathbf{r}\},

$$

| | $\displaystyle S_{i}^{\text{good}}$ | $\displaystyle\mapsto\{S_{i},K_{i},\mathbf{r}_{i}^{\text{good}}\},\quad S_{i}^{%

\text{bad}}\mapsto\{E_{i},K_{i},\mathbf{r}_{i}^{\text{bad}}\},$ | |

| --- | --- | --- | --- |

where $B_{p},F_{p},T_{p}$ represent the branch, subfield, and type of $P$ , respectively. The symbols $S_{i}$ and $E_{i}$ denote the procedures derived from correct steps and the errors from incorrect steps, respectively. Additionally, $K_{i}$ contains relevant mathematical knowledge. The relationships between problems, steps, and knowledge are encoded through $\mathbf{r},\mathbf{r}_{i}^{\text{good}},\mathbf{r}_{i}^{\text{bad}}$ , which capture the edge relationships linking these elements.

To ensure a balance between computational efficiency and quality of KG, we employ a Llama-3.1-8B-Instruct model to process about 10,000 unduplicated samples from PRM800K. The LLM is prompted to output structured JSON data, which is subsequently transformed into a Neo4j-based MKG. This process yields a graph with approximately $80,000$ nodes and $200,000$ edges, optimized for efficient retrieval.

4.3 Step-by-Step Knowledge Graph Retrieval

KG-RAR for Problem Retrieval. For a given test problem $Q$ , the most relevant problem $P^{*}∈ V_{p}$ and its subgraph are retrieved to assist reasoning. The retrieval pipeline comprises the following steps:

1. Initial Filtering: Classify $Q$ by $B_{q},F_{q},T_{q}$ (branch, subfield, and problem type). The candidate set $V_{Q}⊂ V_{p}$ is filtered hierarchically, starting from $T_{q}$ , expanding to $F_{q}$ , and then to $B_{q}$ if no exact match is found.

2. Semantic Similarity Scoring:

$$

P^{*}=\arg\max_{P\in V_{Q}}\cos(\mathbf{e}_{Q},\mathbf{e}_{P}),

$$

where:

$$

\cos(\mathbf{e}_{Q},\mathbf{e}_{P})=\frac{\langle\mathbf{e}_{Q},\mathbf{e}_{P}%

\rangle}{\|\mathbf{e}_{Q}\|\|\mathbf{e}_{P}\|}

$$

and $\mathbf{e}_{Q},\mathbf{e}_{P}∈\mathbb{R}^{d}$ are embeddings of $Q$ and $P$ , respectively.

3. Context Retrieval: Perform Depth-First Search (DFS) on $G$ to retrieve procedures ( $S_{p}$ ), errors ( $E_{p}$ ), and knowledge ( $K_{p}$ ) connected to $P^{*}$ .

Input: Test problem $Q$ and MKG $G$

Output: Most relevant problem $P^{*}$ and its context $(S_{p},E_{p},K_{p})$

1 Filter $G$ using $B_{q}$ , $F_{q}$ , and $T_{q}$ to obtain $V_{Q}$ ;

2 foreach $P∈ V_{Q}$ do

3 Compute $\text{Sim}_{\text{semantic}}(Q,P)$ ;

4

5 $P^{*}←\arg\max_{P∈ V_{Q}}\text{Sim}_{\text{semantic}}(Q,P)$ ;

6 Retrieve $S_{p}$ , $E_{p}$ , and $K_{p}$ from $P^{*}$ using DFS;

7 return $P^{*}$ , $S_{p}$ , $E_{p}$ , and $K_{p}$ ;

Algorithm 1 KG-RAR for Problem Retrieval

KG-RAR for Step Retrieval. Given an intermediate reasoning step $S$ , the most relevant step $S^{*}∈ G$ and its subgraph is retrieved dynamically:

1. Contextual Filtering: Restrict the search space $V_{S}$ to the subgraph induced by previously retrieved top-k similar problems $\{P_{1},P_{2},...,P_{k}\}∈ V_{Q}$ .

2. Step Similarity Scoring:

$$

S^{*}=\arg\max_{S_{i}\in V_{S}}\cos(\mathbf{e}_{S},\mathbf{e}_{S_{i}}).

$$

3. Context Retrieval: Perform Breadth-First Search (BFS) on $G$ to extract subgraph of $S^{*}$ , including potential next steps, related knowledge, and error patterns.

4.4 Post-Retrieval Processing and Reward Model

Step Verification and End-of-Reasoning Detection. Inspired by previous works [93, 56, 94, 61], we use a frozen LLM to evaluate both step correctness and whether reasoning should terminate. The model is queried with an instruction, producing a binary classification decision:

$$

\textit{Is this step correct (Yes/No)? }\begin{cases}\text{Yes}\xrightarrow[%

Probability]{Token}p(\text{Yes})\\

\text{No}\xrightarrow[Probability]{Token}p(\text{No})\\

\text{Other Tokens.}\end{cases}

$$

The corresponding confidence score for step verification or reasoning termination is computed as:

$$

Score(S,I)=\frac{\exp(p(\text{Yes}|S,I))}{\exp(p(\text{Yes}|S,I))+\exp(p(\text%

{No}|S,I))}.

$$

For step correctness, the instruction $I$ is ”Is this step correct (Yes/No)?”, while for reasoning termination, the instruction $I_{E}$ is ”Has a final answer been reached (Yes/No)?”.

Post-Retrieval Processing. Post-retrieval processing is a crucial component of the retrieval-augmented generation (RAG) framework, ensuring that retrieved information is improved to maximize relevance while minimizing noise [37, 95, 96].

For a problem $P$ or a reasoning step $S$ :

$$

\mathcal{R}^{\prime}=\operatorname{LLM}_{\text{refine}}(P+\mathcal{R}\text{ or%

}S+\mathcal{R}),

$$

where $\mathcal{R}$ is the raw retrieved context, and $\mathcal{R}^{\prime}$ represents its rewritten, targeted form.

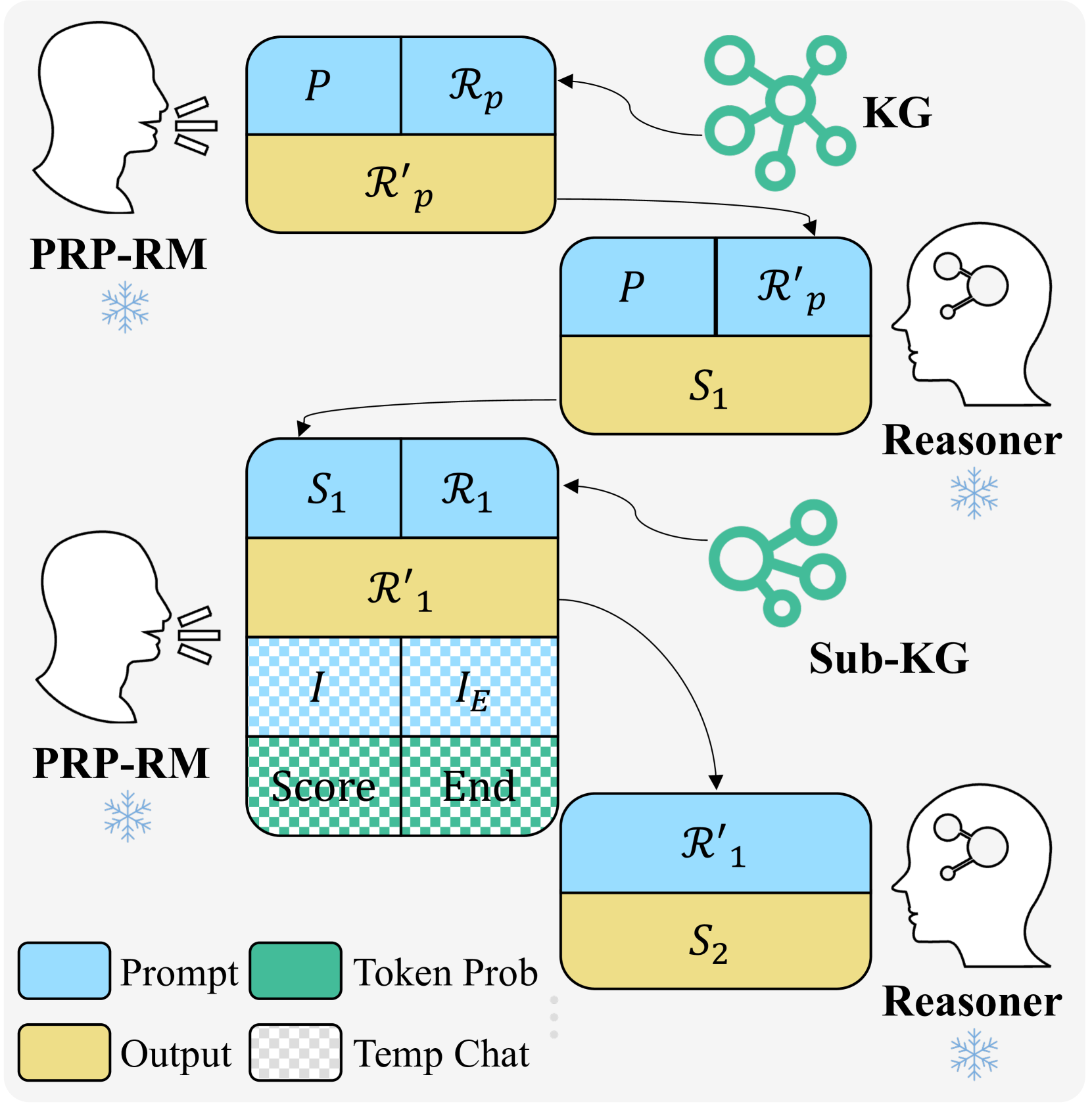

Iterative Refinement and Verification. Inspired by generative reward models [61, 97], we integrate retrieval refinement as a form of CoT reasoning before scoring each step. To ensure consistency in multi-step reasoning, we employ an iterative retrieval refinement and scoring mechanism, as illustrated in Figure 4.

<details>

<summary>x4.png Details</summary>

### Visual Description

\n

## Diagram: PRP-RM Reasoning Process

### Overview

This diagram illustrates the iterative reasoning process of a system called PRP-RM (likely Prompt-Reasoning-Response Model). It depicts two cycles of interaction between a user (represented by a head silhouette), a Knowledge Graph (KG), a Reasoner, and intermediate outputs. The process involves prompting, reasoning, generating sub-knowledge graphs, and ultimately producing a response.

### Components/Axes

The diagram consists of several key components:

* **PRP-RM (Prompt-Reasoning-Response Model):** Represented by a head silhouette with a snowflake icon, indicating user interaction.

* **KG (Knowledge Graph):** Represented by interconnected green circles.

* **Sub-KG (Sub Knowledge Graph):** Similar to KG, but smaller and representing a focused subset of knowledge.

* **Reasoner:** Represented by a brain silhouette with a snowflake icon, indicating the reasoning engine.

* **Prompt (P):** Represented by a blue rectangle.

* **Response (R):** Represented by a light blue rectangle.

* **Intermediate State (S1, S2):** Represented by a yellow rectangle.

* **Token Probability (I, IE):** Represented by a series of small blue rectangles within a larger rectangle.

* **Score & End:** Represented by a green and red striped rectangle.

* **Temp Chat:** Represented by a light gray rectangle.

* **Arrows:** Indicate the flow of information between components.

### Detailed Analysis or Content Details

The diagram shows two iterations of the reasoning process.

**Iteration 1:**

1. The top PRP-RM provides a **Prompt (P)**, which is passed to the **KG**.

2. The KG generates a **Response (Rp)**, and a revised prompt **(Rp')**.

3. The revised prompt **(Rp')** and the original **Prompt (P)** are passed to the **Reasoner**.

4. The Reasoner generates an intermediate state **(S1)**.

5. **S1** is then passed back to the PRP-RM.

**Iteration 2:**

1. The bottom PRP-RM provides **S1** as a new prompt.

2. **S1** is passed to the **Sub-KG**.

3. The **Sub-KG** generates a **Response (R1)**, and a revised prompt **(R1')**.

4. The revised prompt **(R1')** is passed to the **Reasoner**.

5. The Reasoner generates an intermediate state **(S2)**.

6. Within the bottom PRP-RM block, there is a section labeled with **Token Probability** containing two sub-sections **I** and **IE**. Below this is a section labeled **Score** and **End**.

7. **S2** is then passed to the Reasoner.

**Legend:**

* **Prompt:** Blue rectangle

* **Output:** Yellow rectangle

* **Token Prob:** Series of small blue rectangles

* **Temp Chat:** Light gray rectangle

### Key Observations

* The process is iterative, with the output of one cycle feeding into the next.

* The use of both a KG and a Sub-KG suggests a hierarchical knowledge representation.

* The Reasoner plays a central role in transforming prompts and knowledge into intermediate states.

* The "Token Prob," "Score," and "End" elements within the second PRP-RM block suggest a mechanism for generating and evaluating responses.

* The snowflake icons next to PRP-RM and Reasoner suggest a potential connection to a specific framework or technology.

### Interpretation

This diagram illustrates a complex reasoning pipeline. The PRP-RM acts as the interface, initiating the process with a prompt. The KG and Sub-KG provide the knowledge base, while the Reasoner performs the core reasoning steps. The iterative nature of the process allows the system to refine its understanding and generate more accurate responses. The inclusion of "Token Prob," "Score," and "End" suggests that the system employs a probabilistic approach to response generation, potentially using techniques like beam search or sampling. The diagram highlights the importance of knowledge representation, reasoning algorithms, and iterative refinement in building intelligent systems. The use of a Sub-KG suggests a strategy for focusing reasoning on relevant knowledge, improving efficiency and accuracy. The diagram does not provide specific data or numerical values, but rather a conceptual overview of the system's architecture and workflow.

</details>

Figure 4: Illustration of the Post-Retrieval Processing and Reward Model (PRP-RM). Given a problem $P$ and its retrieved context $\mathcal{R}_{p}$ from the Knowledge Graph (KG), PRP-RM refines it into $\mathcal{R^{\prime}}_{p}$ . The Reasoner LLM generates step $S_{1}$ based on $\mathcal{R^{\prime}}_{p}$ , followed by iterative retrieval and refinement ( $\mathcal{R}_{t}→\mathcal{R^{\prime}}_{t}$ ) for each step $S_{t}$ . Correctness is assessed using $I=$ ”Is this step correct?” to compute $\operatorname{Score}(S_{t})$ , while completion is checked via $I_{E}=$ ”Has a final answer been reached?” to compute $\operatorname{End}(S_{t})$ . The process continues until $\operatorname{End}(S_{t})$ surpasses a threshold or a predefined inference depth is reached.

Input: Current step $S$ and retrieved problems $\{P_{1},...,P_{k}\}$

Output: Relevant step $S^{*}$ and its context subgraph

1 Initialize step collection $V_{S}←\bigcup_{i=1}^{k}\text{Steps}(P_{i})$ ;

2 foreach $S_{i}∈ V_{S}$ do

3 Compute semantic similarity $\text{Sim}_{\text{semantic}}(S,S_{i})$ ;

4

5 $S^{*}←\arg\max_{S_{i}∈ V_{S}}\text{Sim}_{\text{semantic}}(S,S_{i})$ ;

6 Construct context subgraph via $\text{BFS}(S^{*})$ ;

7 return $S^{*}$ , $\text{subgraph}(S^{*})$ ;

Algorithm 2 KG-RAR for Step Retrieval

For a reasoning step $S_{t}$ , the iterative refinement history is:

$$

H_{t}=\{P+\mathcal{R}_{p},\mathcal{R}^{\prime}_{p},S_{1}+\mathcal{R}_{1},%

\mathcal{R}^{\prime}_{1},\dots,S_{t}+\mathcal{R}_{t},\mathcal{R}^{\prime}_{t}\}.

$$

The refined retrieval context is generated recursively:

$$

\mathcal{R}^{\prime}_{t}=\operatorname{LLM}_{\text{refine}}(H_{t-1},S_{t}+%

\mathcal{R}_{t}).

$$

The correctness and end-of-reasoning probabilities are:

$$

\operatorname{Score}(S_{t})=\frac{\exp(p(\text{Yes}|H_{t},I))}{\exp(p(\text{%

Yes}|H_{t},I))+\exp(p(\text{No}|H_{t},I))},

$$

$$

\operatorname{End}(S_{t})=\frac{\exp(p(\text{Yes}|H_{t},I_{E}))}{\exp(p(\text{%

Yes}|H_{t},I_{E}))+\exp(p(\text{No}|H_{t},I_{E}))}.

$$

This process iterates until $\operatorname{End}(S_{t})>\theta$ , signaling completion.

TABLE I: Performance evaluation across different levels of the Math500 dataset using various models and methods.

| Dataset: Math500 | Level 1 (+9.09%) | Level 2 (+5.38%) | Level 3 (+8.90%) | Level 4 (+7.61%) | Level 5 (+16.43%) | Overall (+8.95%) | | | | | | | |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

| Model | Method | Maj | Last | Maj | Last | Maj | Last | Maj | Last | Maj | Last | Maj | Last |

| Llama-3.1-8B (+15.22%) | CoT-prompting | 80.6 | 80.6 | 74.1 | 74.1 | 59.4 | 59.4 | 46.4 | 46.4 | 27.4 | 27.1 | 51.9 | 51.9 |

| Step-by-Step KG-RAR | 88.4 | 81.4 | 83.3 | 82.2 | 70.5 | 69.5 | 53.9 | 53.9 | 32.1 | 25.4 | 59.8 | 57.0 | |

| Llama-3.2-3B (+20.73%) | CoT-prompting | 63.6 | 65.1 | 61.9 | 61.9 | 51.1 | 51.1 | 43.2 | 43.2 | 20.4 | 20.4 | 43.9 | 44.0 |

| Step-by-Step KG-RAR | 83.7 | 79.1 | 68.9 | 68.9 | 61.0 | 52.4 | 49.2 | 47.7 | 29.9 | 28.4 | 53.0 | 50.0 | |

| Llama-3.2-1B (-4.02%) | CoT-prompting | 64.3 | 64.3 | 52.6 | 52.2 | 41.6 | 41.6 | 25.3 | 25.3 | 8.0 | 8.2 | 32.3 | 32.3 |

| Step-by-Step KG-RAR | 72.1 | 72.1 | 50.0 | 50.0 | 40.0 | 40.0 | 18.0 | 19.5 | 10.4 | 13.4 | 31.0 | 32.2 | |

| Qwen2.5-7B (+2.91%) | CoT-prompting | 95.3 | 95.3 | 88.9 | 88.9 | 86.7 | 86.3 | 77.3 | 77.1 | 50.0 | 49.8 | 75.6 | 75.4 |

| Step-by-Step KG-RAR | 95.3 | 93.0 | 90.0 | 90.0 | 87.6 | 88.6 | 79.7 | 79.7 | 54.5 | 56.7 | 77.8 | 78.4 | |

| Qwen2.5-3B (+3.13%) | CoT-prompting | 93.0 | 93.0 | 85.2 | 85.2 | 81.0 | 80.6 | 62.5 | 62.5 | 40.1 | 39.3 | 67.1 | 66.8 |

| Step-by-Step KG-RAR | 95.3 | 95.3 | 84.4 | 85.6 | 83.8 | 77.1 | 64.1 | 64.1 | 44.0 | 38.1 | 69.2 | 66.4 | |

| Qwen2.5-1.5B (-5.12%) | CoT-prompting | 88.4 | 88.4 | 78.5 | 77.4 | 71.4 | 68.9 | 49.2 | 49.5 | 34.6 | 34.3 | 58.6 | 57.9 |

| Step-by-Step KG-RAR | 97.7 | 93.0 | 78.9 | 75.6 | 66.7 | 67.6 | 48.4 | 44.5 | 24.6 | 23.1 | 55.6 | 53.4 | |

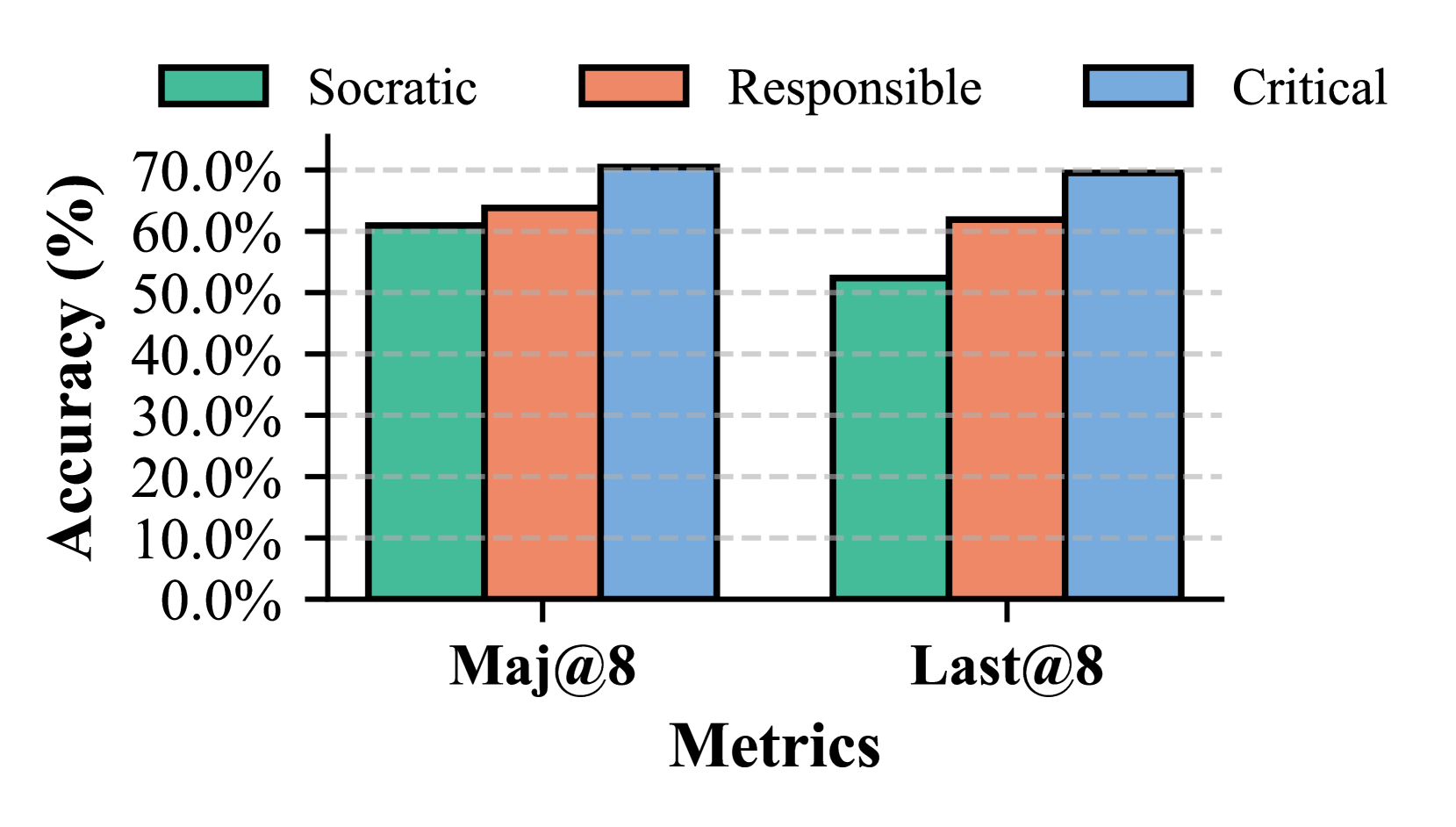

Role-Based System Prompting. Inspired by agent-based reasoning frameworks [98, 99, 100], we introduce role-based system prompting to further optimize our PRP-RM. In this approach, we define three distinct personas to enhance the reasoning process. The Responsible Teacher [101] processes retrieved knowledge into structured guidance and evaluates the correctness of each step. The Socratic Teacher [102], rather than pr oviding direct guidance, reformulates the retrieved content into heuristic questions, encouraging self-reflection. Finally, the Critical Teacher [103] acts as a critical evaluator, diagnosing reasoning errors before assigning a score. Each role focuses on different aspects of post-retrieval processing, improving robustness and interpretability.

5 Experiments

In this section, we evaluate the effectiveness of our proposed Step-by-Step KG-RAR and PRP-RM methods by comparing them with Chain-of-Thought (CoT) prompting [4] and finetuned reward models [30, 29]. Additionally, we perform ablation studies to examine the impact of individual components.

5.1 Experimental Setup

Following prior works [5, 29, 21], we evaluate on Math500 [104] and GSM8K [30], using Accuracy (%) as the primary metric. Experiments focus on instruction-tuned Llama3 [105] and Qwen2.5 [106], with Best-of-N [107, 30, 29] search ( $n=8$ for Math500, $n=4$ for GSM8K). We employ Majority Vote [5] for self-consistency and Last Vote [25] for benchmarking reward models. To evaluate PRP-RM, we compare against fine-tuned reward models: Math-Shepherd-PRM-7B [108], RLHFlow-ORM-Deepseek-8B and RLHFlow-PRM-Deepseek-8B [109]. For Step-by-Step KG-RAR, we set step depth to $8$ and padding to $4$ . The Socratic Teacher role is used in PRP-RM to minimize direct solving. Both the Reasoner LLM and PRP-RM remain consistent for fair comparison.

<details>

<summary>x5.png Details</summary>

### Visual Description

## Line Chart: Accuracy vs. Difficulty Level for Different Models

### Overview

This line chart displays the accuracy of four different models – Math-Shepherd-PRM-7B, RLHFlow-PRM-Deepseek-8B, RLHFlow-ORM-Deepseek-8B, and PRP-RM(frozen Llama-3.2-3B) – across five levels of difficulty. Accuracy is measured in percentage, and the x-axis represents the difficulty level, ranging from 1 to 5. The chart uses different colored lines to represent each model, with a legend in the top-right corner.

### Components/Axes

* **X-axis:** Difficulty Level (1 to 5)

* **Y-axis:** Accuracy (%) - Scale ranges from 0% to 80% with increments of 20%.

* **Legend:** Located at the top-right corner, identifying each line with its corresponding model name and size.

* Math-Shepherd-PRM-7B (Light Green)

* RLHFlow-PRM-Deepseek-8B (Blue)

* RLHFlow-ORM-Deepseek-8B (Gray)

* PRP-RM(frozen Llama-3.2-3B) (Red-Brown)

* **Horizontal dashed lines:** at 20%, 40%, 60%, and 80% to aid in visual assessment of accuracy.

### Detailed Analysis

Here's a breakdown of each model's performance across the difficulty levels, based on the visual data:

* **Math-Shepherd-PRM-7B (Light Green):** This line starts at approximately 72% accuracy at Difficulty Level 1, then decreases steadily to around 22% at Difficulty Level 5.

* Difficulty 1: ~72%

* Difficulty 2: ~64%

* Difficulty 3: ~56%

* Difficulty 4: ~48%

* Difficulty 5: ~22%

* **RLHFlow-PRM-Deepseek-8B (Blue):** This line begins at approximately 62% accuracy at Difficulty Level 1, and declines to around 28% at Difficulty Level 5.

* Difficulty 1: ~62%

* Difficulty 2: ~60%

* Difficulty 3: ~52%

* Difficulty 4: ~44%

* Difficulty 5: ~28%

* **RLHFlow-ORM-Deepseek-8B (Gray):** This line starts at approximately 68% accuracy at Difficulty Level 1, and decreases to around 30% at Difficulty Level 5.

* Difficulty 1: ~68%

* Difficulty 2: ~66%

* Difficulty 3: ~54%

* Difficulty 4: ~46%

* Difficulty 5: ~30%

* **PRP-RM(frozen Llama-3.2-3B) (Red-Brown):** This line begins at approximately 60% accuracy at Difficulty Level 1, and declines to around 24% at Difficulty Level 5.

* Difficulty 1: ~60%

* Difficulty 2: ~58%

* Difficulty 3: ~50%

* Difficulty 4: ~42%

* Difficulty 5: ~24%

All lines exhibit a downward trend, indicating that accuracy decreases as the difficulty level increases.

### Key Observations

* All models show a decline in accuracy as the difficulty level increases.

* Math-Shepherd-PRM-7B consistently performs slightly better than the other models at lower difficulty levels (1 and 2).

* The accuracy of all models converges at higher difficulty levels (4 and 5), suggesting that all models struggle with the most challenging problems.

* The differences in accuracy between models are more pronounced at lower difficulty levels.

### Interpretation

The data suggests that all four models are capable of solving relatively simple problems (Difficulty Level 1), but their performance degrades significantly as the problems become more complex. The consistent downward trend across all models indicates that the difficulty level is a significant factor in determining accuracy. The initial performance advantage of Math-Shepherd-PRM-7B suggests it may be better suited for simpler tasks, while the convergence of all models at higher difficulty levels implies that all models face similar challenges when tackling complex problems. The fact that accuracy drops below 50% for all models at Difficulty Level 3 suggests that further improvements are needed to achieve high performance on more challenging tasks. The data could be used to inform model selection based on the expected difficulty of the problems to be solved, or to guide further research into improving the performance of these models on complex tasks.

</details>

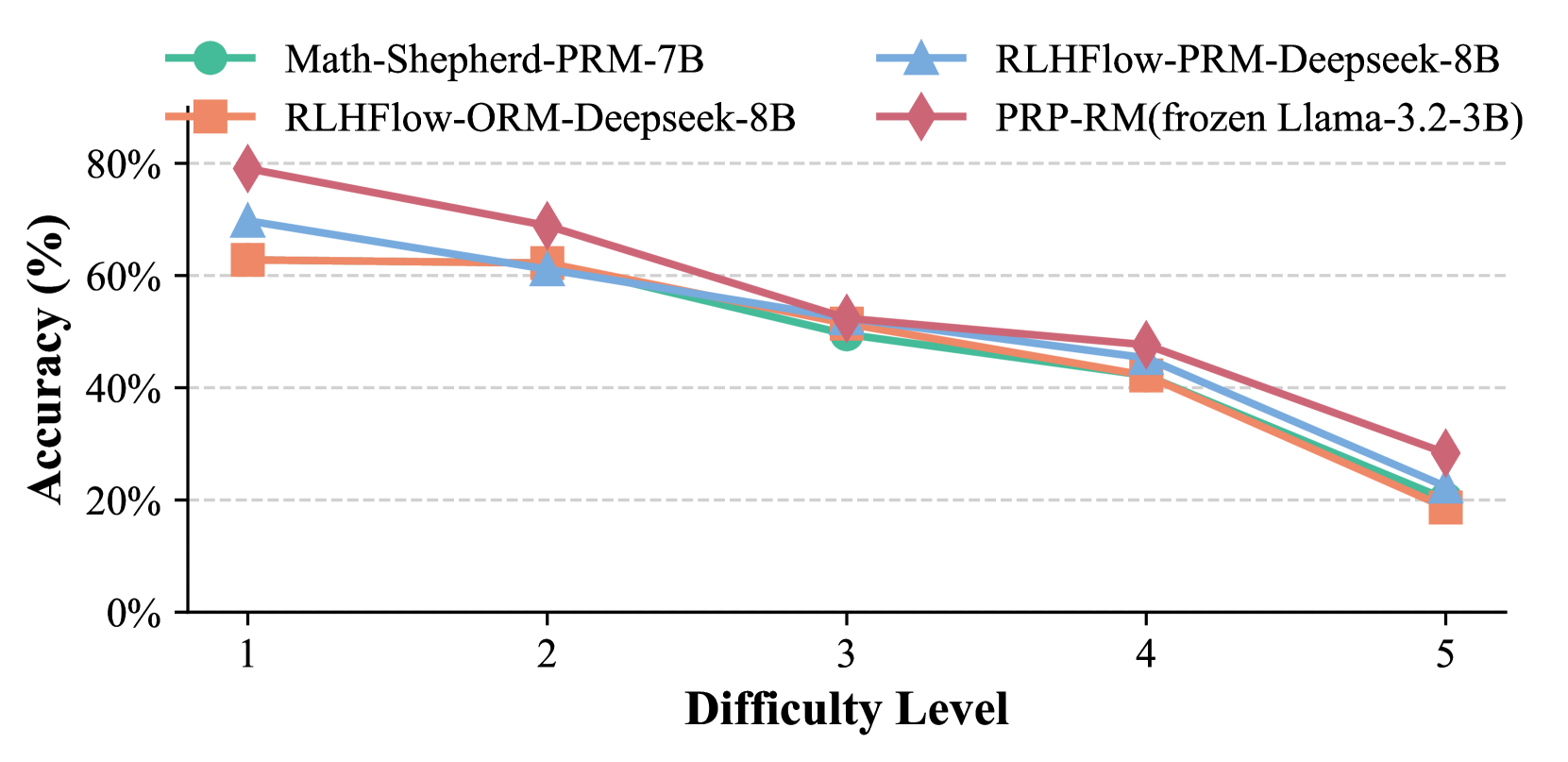

Figure 5: Comparison of reward models under Last@8.

5.2 Comparative Experimental Results

TABLE II: Evaluation results on the GSM8K dataset.

| Model | Method | Maj@4 | Last@4 |

| --- | --- | --- | --- |

| Llama-3.1-8B (+8.68%) | CoT-prompting | 81.8 | 82.0 |

| Step-by-Step KG-RAR | 88.9 | 88.0 | |

| Qwen-2.5-7B (+1.09%) | CoT-prompting | 91.6 | 91.1 |

| Step-by-Step KG-RAR | 92.6 | 93.1 | |

Table I shows that Step-by-Step KG-RAR consistently outperforms CoT-prompting across all difficulty levels on Math500, with more pronounced improvements in the Llama3 series compared to Qwen2.5, likely due to Qwen2.5’s higher baseline accuracy leaving less room for improvement. Performance declines for smaller models like Qwen-1.5B and Llama-1B on harder problems due to increased reasoning inconsistencies. Among models showing improvements, Step-by-Step KG-RAR achieves an average relative accuracy gain of 8.95% on Math500 under Maj@8, while Llama-3.2-8B attains a 8.68% improvement on GSM8K under Maj@4 (Table II). Additionally, PRP-RM achieves comparable performance to ORM and PRM. Figure 5 confirms its effectiveness with Llama-3B on Math500, highlighting its viability as a training-free alternative.

<details>

<summary>x6.png Details</summary>

### Visual Description

\n

## Bar Chart: Accuracy Comparison - Raw vs. Processed

### Overview

This bar chart compares the accuracy of "Raw" and "Processed" data across two metrics: "Maj@8" and "Last@8". Accuracy is measured in percentage (%). The chart consists of two sets of paired bars, one for each metric, representing the accuracy of the Raw and Processed data.

### Components/Axes

* **X-axis:** "Metrics" with two categories: "Maj@8" and "Last@8".

* **Y-axis:** "Accuracy (%)" ranging from 0% to 50%, with tick marks at 0%, 10%, 20%, 30%, 40%, and 50%.

* **Legend:** Located at the top-center of the chart.

* "Raw" - represented by a reddish-orange color.

* "Processed" - represented by a teal color.

### Detailed Analysis

**Maj@8:**

* The "Raw" bar for "Maj@8" reaches approximately 30% accuracy.

* The "Processed" bar for "Maj@8" reaches approximately 40% accuracy.

* The "Processed" bar is visually taller than the "Raw" bar, indicating higher accuracy.

**Last@8:**

* The "Raw" bar for "Last@8" reaches approximately 34% accuracy.

* The "Processed" bar for "Last@8" reaches approximately 42% accuracy.

* The "Processed" bar is visually taller than the "Raw" bar, indicating higher accuracy.

### Key Observations

* The "Processed" data consistently outperforms the "Raw" data for both metrics.

* The difference in accuracy between "Raw" and "Processed" is slightly larger for "Maj@8" than for "Last@8".

* Both metrics show accuracy values below 50%.

### Interpretation

The chart demonstrates that the processing step significantly improves accuracy for both "Maj@8" and "Last@8" metrics. This suggests that the processing method effectively refines the data, leading to more accurate results. The slightly larger improvement in "Maj@8" could indicate that the processing is particularly beneficial for this specific metric, or that the raw data for "Maj@8" was initially less accurate. The fact that both metrics remain below 50% suggests there is still room for improvement, even with the processing applied. The chart implies that the processing step is a valuable component of the overall system, but further optimization may be necessary to achieve higher accuracy levels.

</details>

Figure 6: Comparison of raw and processed retrieval.

<details>

<summary>x7.png Details</summary>

### Visual Description

\n

## Bar Chart: Accuracy Comparison of Different Retrieval Methods

### Overview

This bar chart compares the accuracy of three different retrieval methods – None, RAG (Retrieval-Augmented Generation), and KG-RAG (Knowledge Graph RAG) – across two metrics: Maj@8 and Last@8. Accuracy is measured in percentage (%).

### Components/Axes

* **X-axis:** "Metrics" with two categories: "Maj@8" and "Last@8".

* **Y-axis:** "Accuracy (%)" ranging from 0% to 60%, with increments of 10%.

* **Legend:** Located at the top-left corner, it defines the color coding for each retrieval method:

* Blue: "None"

* Red-Orange: "RAG"

* Green: "KG-RAG"

### Detailed Analysis

The chart consists of six bars, representing the accuracy of each method for each metric.

* **Maj@8:**

* "None": The blue bar reaches approximately 52% accuracy.

* "RAG": The red-orange bar reaches approximately 55% accuracy.

* "KG-RAG": The green bar reaches approximately 58% accuracy.

* **Last@8:**

* "None": The blue bar reaches approximately 45% accuracy.

* "RAG": The red-orange bar reaches approximately 43% accuracy.

* "KG-RAG": The green bar reaches approximately 52% accuracy.

### Key Observations

* KG-RAG consistently outperforms both "None" and "RAG" across both metrics.

* RAG performs slightly better than "None" for Maj@8, but slightly worse for Last@8.

* The difference in accuracy between "None" and "RAG" is relatively small.

* The largest performance gap is between "RAG" and "KG-RAG" for the Last@8 metric.

### Interpretation

The data suggests that incorporating a Knowledge Graph into the RAG process (KG-RAG) significantly improves accuracy in both "Majority at 8" (Maj@8) and "Last at 8" (Last@8) retrieval scenarios. The Maj@8 metric likely assesses whether the correct answer is within the top 8 retrieved results, while Last@8 likely assesses whether the correct answer is in the 8th retrieved result. The consistent improvement of KG-RAG indicates that leveraging knowledge graph information enhances the retrieval process, leading to more relevant and accurate results. The slight performance difference between "None" and "RAG" suggests that simple retrieval augmentation may not always be beneficial, and the quality of the retrieved context is crucial. The larger gap for Last@8 suggests that KG-RAG is particularly effective at improving the ranking of the correct answer, even if it's not among the top results. This could be due to the knowledge graph providing additional context that helps refine the ranking algorithm.

</details>

Figure 7: Comparison of various RAG types.

5.3 Ablation Studies

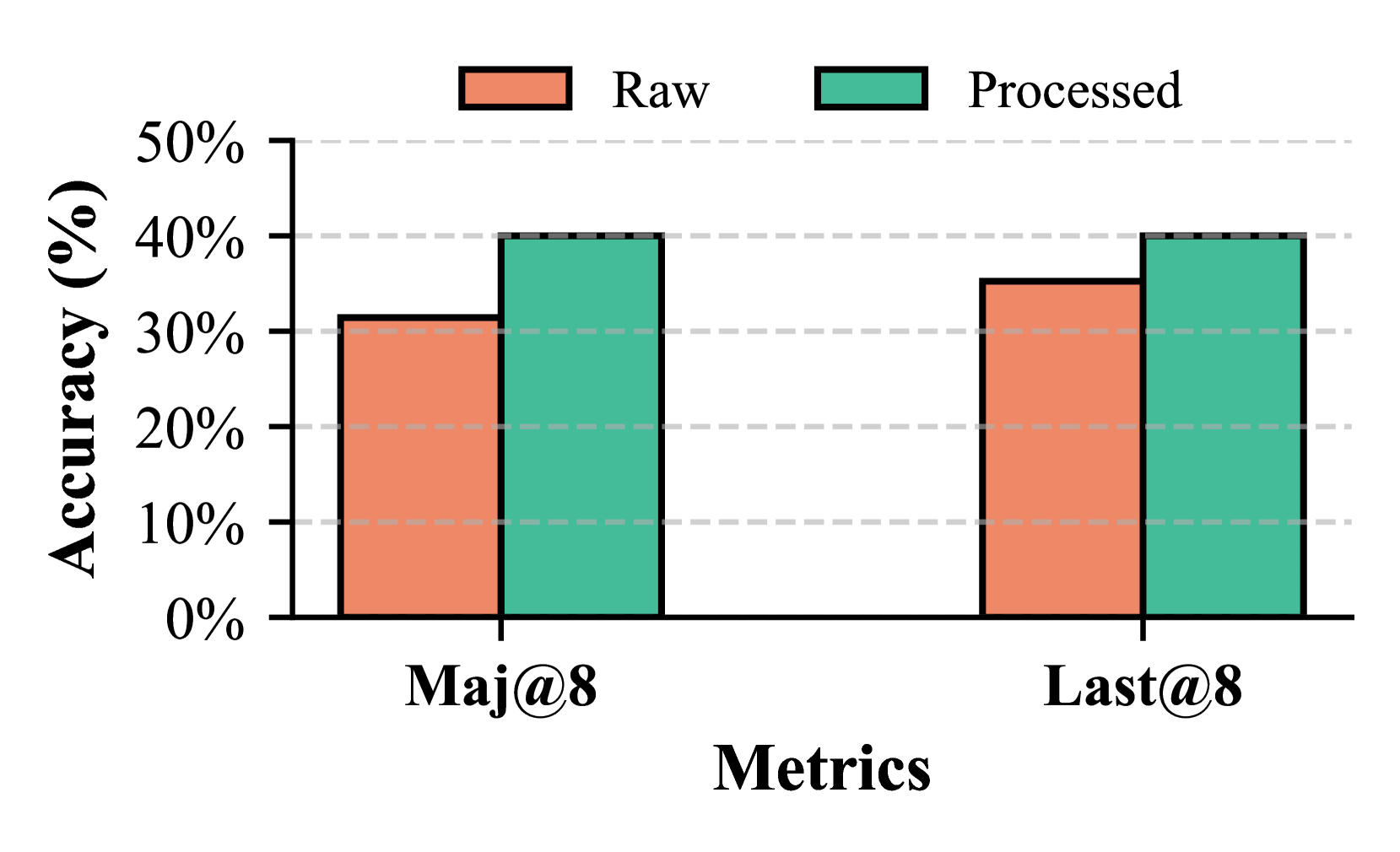

Effectiveness of Post-Retrieval Processing (PRP). We compare reasoning with refined retrieval from PRP-RM against raw retrieval directly from Knowledge Graphs. Figure 7 shows that refining the retrieval context significantly improves performance, with experiments using Llama-3B on Math500 Level 3.

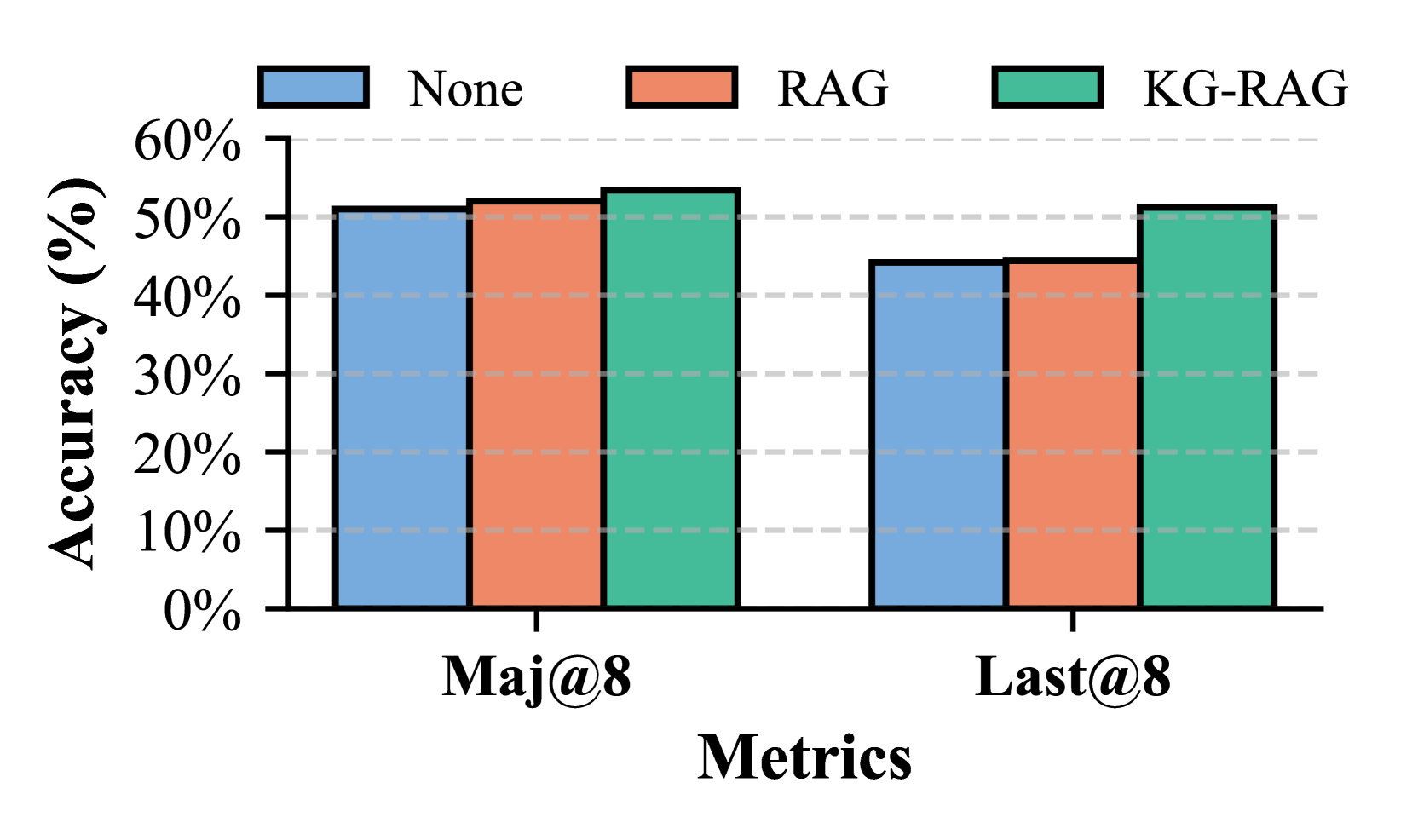

Effectiveness of Knowledge Graphs (KGs). KG-RAR outperforms both no RAG and unstructured RAG (PRM800K) baselines, demonstrating the advantage of structured retrieval (Figure 7, Qwen-0.5B Reasoner, Qwen-3B PRP-RM, Math500).

<details>

<summary>x8.png Details</summary>

### Visual Description

\n

## Line Chart: Accuracy vs. Step Padding

### Overview

This line chart illustrates the relationship between "Step Padding" on the x-axis and "Accuracy (%)" on the y-axis, for two different metrics: "Maj@8" and "Compute". A third axis on the right represents "Computation" (Less, Medium, More). The chart shows how accuracy changes as step padding increases, and how this change differs between the two metrics.

### Components/Axes

* **X-axis:** "Step Padding" with markers at 1, 4, and +∞ (infinity).

* **Y-axis:** "Accuracy (%)" ranging from 20% to 50%, with tick marks at 20%, 30%, 40%, and 50%.

* **Right Y-axis:** "Computation" with labels "Less", "Medium", and "More", positioned vertically.

* **Legend:** Located in the top-right corner, containing:

* "Maj@8" - represented by a teal circle with a teal line.

* "Compute" - represented by a black 'x' with a dashed black line.

* **Horizontal dashed lines:** at 30%, 40% and 20% accuracy.

### Detailed Analysis

**Maj@8 (Teal Line):**

The teal line representing "Maj@8" starts at approximately 34% accuracy at Step Padding = 1. It increases to a peak of approximately 42% accuracy at Step Padding = 4, then decreases slightly to approximately 36% accuracy at Step Padding = +∞. The trend is initially upward, then slightly downward.

* Step Padding = 1: Accuracy ≈ 34%

* Step Padding = 4: Accuracy ≈ 42%

* Step Padding = +∞: Accuracy ≈ 36%

**Compute (Black Dashed Line):**

The black dashed line representing "Compute" starts at approximately 47% accuracy at Step Padding = 1. It decreases steadily to approximately 36% accuracy at Step Padding = 4, and continues to decrease to approximately 23% accuracy at Step Padding = +∞. The trend is consistently downward.

* Step Padding = 1: Accuracy ≈ 47%

* Step Padding = 4: Accuracy ≈ 36%

* Step Padding = +∞: Accuracy ≈ 23%

**Computation Axis:**

The "Computation" axis is qualitatively linked to the x-axis. Step Padding of 1 is associated with "More" computation, Step Padding of 4 is associated with "Medium" computation, and Step Padding of +∞ is associated with "Less" computation.

### Key Observations

* "Maj@8" accuracy *increases* with step padding up to a point (Step Padding = 4), then decreases.

* "Compute" accuracy *decreases* consistently with increasing step padding.

* There is an inverse relationship between computation and step padding. As step padding increases, the amount of computation decreases.

* "Compute" starts with a significantly higher accuracy than "Maj@8" at Step Padding = 1, but ends with a lower accuracy at Step Padding = +∞.

### Interpretation

The chart suggests a trade-off between accuracy and computational cost. Increasing step padding initially improves "Maj@8" accuracy, likely by allowing for more refined calculations, but eventually leads to diminishing returns. However, increasing step padding consistently reduces the accuracy of "Compute", and also reduces the computational burden.

The diverging trends of "Maj@8" and "Compute" indicate that these metrics are sensitive to step padding in different ways. "Maj@8" benefits from a moderate amount of step padding, while "Compute" is negatively impacted by it. The relationship to the "Computation" axis suggests that the benefits of step padding for "Maj@8" come at the cost of increased computation, while the drawbacks for "Compute" are associated with reduced computation.

The initial high accuracy of "Compute" could indicate that it is a simpler metric that is less sensitive to the nuances captured by step padding. The eventual lower accuracy of "Compute" at higher step padding values suggests that it may lose information as the step size increases. The peak in "Maj@8" accuracy at Step Padding = 4 suggests an optimal balance between computational cost and accuracy for this metric.

</details>

Figure 8: Comparison of step padding settings.

<details>

<summary>x9.png Details</summary>

### Visual Description

\n

## Bar Chart: Accuracy Comparison of Different Approaches

### Overview

This bar chart compares the accuracy of three different approaches – Socratic, Responsible, and Critical – across two metrics: Maj@8 and Last@8. Accuracy is measured in percentage (%). The chart uses grouped bar representations to show the performance of each approach for each metric.

### Components/Axes

* **X-axis:** "Metrics" with two categories: "Maj@8" and "Last@8".

* **Y-axis:** "Accuracy (%)" ranging from 0.0% to 70.0% with increments of 10.0%. Horizontal dashed lines mark each 10% increment.

* **Legend:** Located at the top-center of the chart.

* Socratic: Green

* Responsible: Red-Brown

* Critical: Blue

* **Bars:** Grouped bars representing the accuracy for each approach and metric combination.

### Detailed Analysis

The chart presents accuracy values for each approach and metric.

**Maj@8:**

* **Socratic:** The green bar for Socratic at Maj@8 reaches approximately 62.0% accuracy.

* **Responsible:** The red-brown bar for Responsible at Maj@8 reaches approximately 65.0% accuracy.

* **Critical:** The blue bar for Critical at Maj@8 reaches approximately 70.0% accuracy.

**Last@8:**

* **Socratic:** The green bar for Socratic at Last@8 reaches approximately 52.0% accuracy.

* **Responsible:** The red-brown bar for Responsible at Last@8 reaches approximately 58.0% accuracy.

* **Critical:** The blue bar for Critical at Last@8 reaches approximately 70.0% accuracy.

### Key Observations

* The "Critical" approach consistently outperforms both "Socratic" and "Responsible" approaches across both metrics, achieving the highest accuracy in both Maj@8 and Last@8.

* The "Responsible" approach generally performs better than the "Socratic" approach, but the difference is not as significant as the difference between "Critical" and the other two.

* Accuracy is generally higher for Maj@8 than for Last@8 across all three approaches.

### Interpretation

The data suggests that the "Critical" approach is the most effective in terms of accuracy for both "Maj@8" and "Last@8" metrics. This could indicate that the "Critical" approach is better at identifying the correct answer when considering the top 8 most likely candidates ("Maj@8") and also when considering the last 8 candidates ("Last@8"). The lower accuracy for "Last@8" across all approaches might suggest that identifying the correct answer becomes more challenging when considering less probable candidates. The consistent performance difference between the approaches suggests a fundamental difference in their methodologies or underlying models. The fact that "Responsible" consistently outperforms "Socratic" suggests that the features or techniques used in the "Responsible" approach are more effective than those used in the "Socratic" approach. Further investigation into the specific characteristics of each approach would be needed to understand the reasons behind these performance differences.

</details>

Figure 9: Comparison of PRP-RM roles.

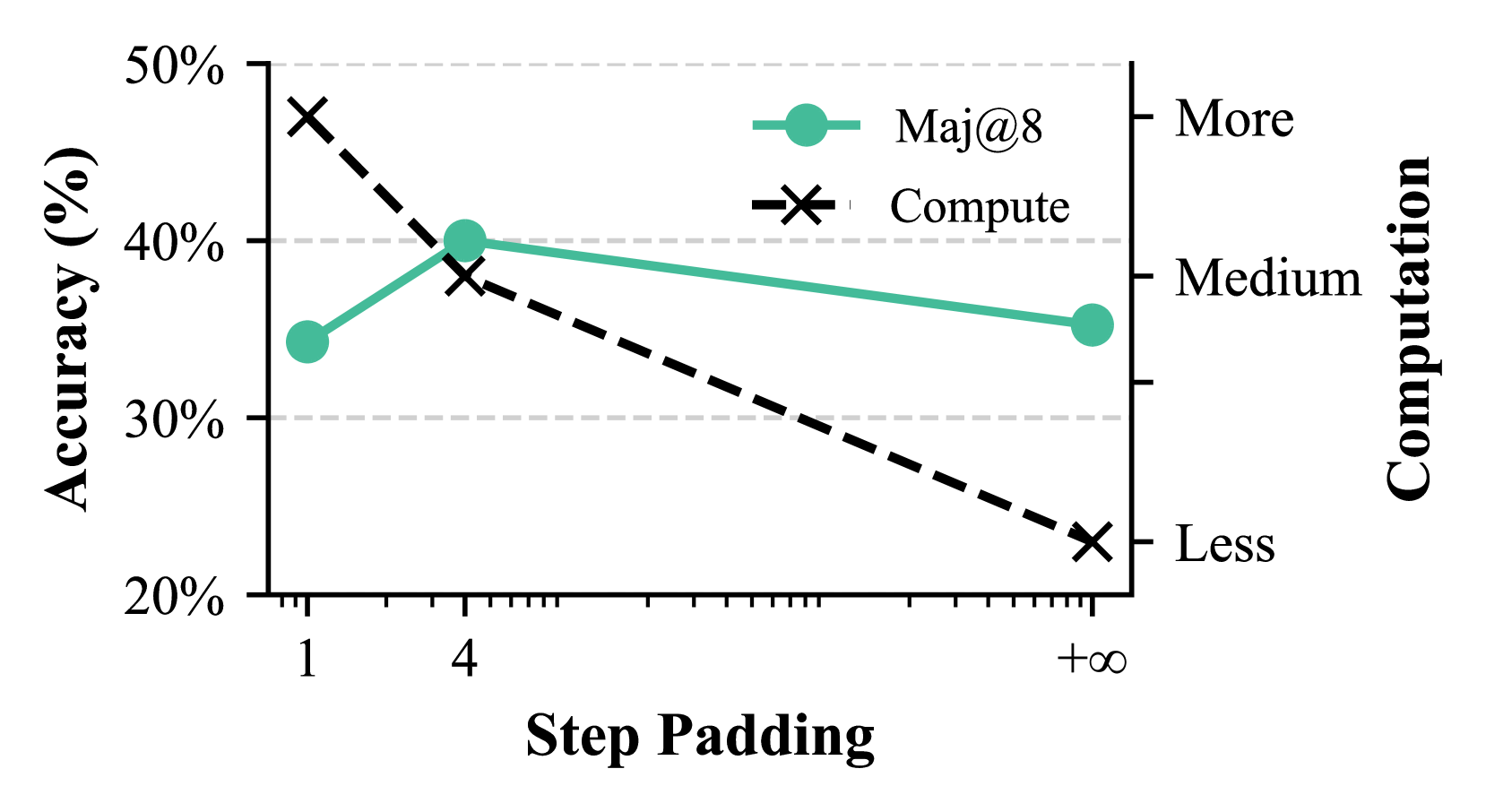

Effectiveness of Step-by-Step RAG. We evaluate step padding at 1, 4, and 1000. Small padding causes inconsistencies, while large padding hinders refinement. Figure 9 illustrates this trade-off (Llama-1B, Math500 Level 3).

<details>

<summary>x10.png Details</summary>

### Visual Description

\n

## Line Chart: Accuracy vs. Model Size

### Overview

This line chart depicts the relationship between model size (PRP-RM) and accuracy for two metrics: Maj@8 and Last@8. The chart shows how accuracy changes as the model size increases from 0.5B to 3B.

### Components/Axes

* **X-axis:** Model Size (PRP-RM) with markers at 0.5B, 1.5B, and 3B.

* **Y-axis:** Accuracy (%) with a scale ranging from 20% to 60%, incremented by 10%. Horizontal dashed lines mark 30%, 40%, and 50%.

* **Legend:** Located at the top-right of the chart.

* Maj@8: Represented by a teal line with circular markers.

* Last@8: Represented by a salmon-colored line with square markers.

### Detailed Analysis

* **Maj@8 (Teal Line):** The line slopes generally upward.

* At 0.5B, the accuracy is approximately 27%.

* At 1.5B, the accuracy is approximately 37%.

* At 3B, the accuracy is approximately 54%.

* **Last@8 (Salmon Line):** The line also slopes generally upward, but appears slightly steeper than the Maj@8 line.

* At 0.5B, the accuracy is approximately 24%.

* At 1.5B, the accuracy is approximately 44%.

* At 3B, the accuracy is approximately 52%.

### Key Observations

* Both metrics show a positive correlation between model size and accuracy. Larger models generally perform better.

* Last@8 consistently exhibits higher accuracy than Maj@8 across all model sizes.

* The increase in accuracy appears to diminish as the model size increases, suggesting a potential point of diminishing returns. The difference between 0.5B and 1.5B is larger than the difference between 1.5B and 3B for both metrics.

### Interpretation

The data suggests that increasing the model size (PRP-RM) leads to improved accuracy for both Maj@8 and Last@8 metrics. However, the rate of improvement decreases with larger model sizes. The consistently higher accuracy of Last@8 indicates that the model is better at predicting the last item in a sequence compared to the first. This could be due to the model learning more contextual information as it processes the sequence. The diminishing returns observed at larger model sizes suggest that further increasing the model size may not yield significant improvements in accuracy, and resources might be better allocated to other areas of model development, such as data quality or architectural improvements. The choice of metrics (Maj@8 and Last@8) implies an evaluation context focused on ranking or retrieval tasks where the position of the correct answer within a set of candidates is important.

</details>

Figure 10: Scaling PRP-RM sizes

<details>

<summary>x11.png Details</summary>

### Visual Description

\n

## Line Chart: Accuracy vs. Model Size

### Overview

This line chart depicts the relationship between model size (Reasoner) and accuracy for two metrics: Maj@8 and Last@8. The x-axis represents model size in billions of parameters (0.5B, 1.5B, 3B), and the y-axis represents accuracy as a percentage (ranging from 50% to 75%). The chart shows how accuracy changes as the model size increases for each metric.

### Components/Axes

* **X-axis:** "Model Size (Reasoner)" with markers at 0.5B, 1.5B, and 3B.

* **Y-axis:** "Accuracy (%)" with a scale ranging from 50% to 75% in 5% increments.

* **Legend:** Located at the top-right of the chart.

* "Maj@8" - Represented by a grey circular marker and a grey line.

* "Last@8" - Represented by a salmon/red square marker and a salmon/red line.

* **Gridlines:** Horizontal dashed grey lines at 50%, 55%, 60%, 65%, 70%, and 75%.

### Detailed Analysis

**Maj@8 (Grey Line):**

The grey line representing Maj@8 shows an upward trend as model size increases.

* At 0.5B, the accuracy is approximately 53%.

* At 1.5B, the accuracy increases to approximately 61%.

* At 3B, the accuracy reaches approximately 68%.

**Last@8 (Salmon/Red Line):**

The salmon/red line representing Last@8 also shows an upward trend, but it starts lower and ends higher than Maj@8.

* At 0.5B, the accuracy is approximately 51%.

* At 1.5B, the accuracy increases to approximately 63%.

* At 3B, the accuracy reaches approximately 69%.

### Key Observations

* Both metrics (Maj@8 and Last@8) demonstrate a positive correlation with model size – larger models generally achieve higher accuracy.

* Last@8 consistently exhibits slightly lower accuracy than Maj@8 at 0.5B and 1.5B, but surpasses Maj@8 at 3B.

* The increase in accuracy is more pronounced between 0.5B and 1.5B for both metrics than between 1.5B and 3B, suggesting diminishing returns with increasing model size.

### Interpretation

The data suggests that increasing the model size (Reasoner) improves performance on both Maj@8 and Last@8 metrics. The difference in performance between the two metrics indicates they are measuring different aspects of the model's reasoning ability. Maj@8 likely assesses the model's ability to identify the most relevant information, while Last@8 may evaluate its ability to consider all available information, even less prominent details. The diminishing returns observed at larger model sizes suggest that there's a point where increasing model size yields less significant improvements in accuracy. This could be due to factors like data limitations or the inherent complexity of the task. The crossover at 3B is interesting, and could indicate that Last@8 benefits more from increased model capacity than Maj@8. Further investigation would be needed to understand the underlying reasons for these trends.

</details>

Figure 11: Scaling reasoner LLM sizes

Comparison of PRP-RM Roles. Socratic Teacher minimizes direct problem-solving but sometimes introduces extraneous questions. Figure 9 shows Critical Teacher performs best among three roles (Llama-3B, Math500).

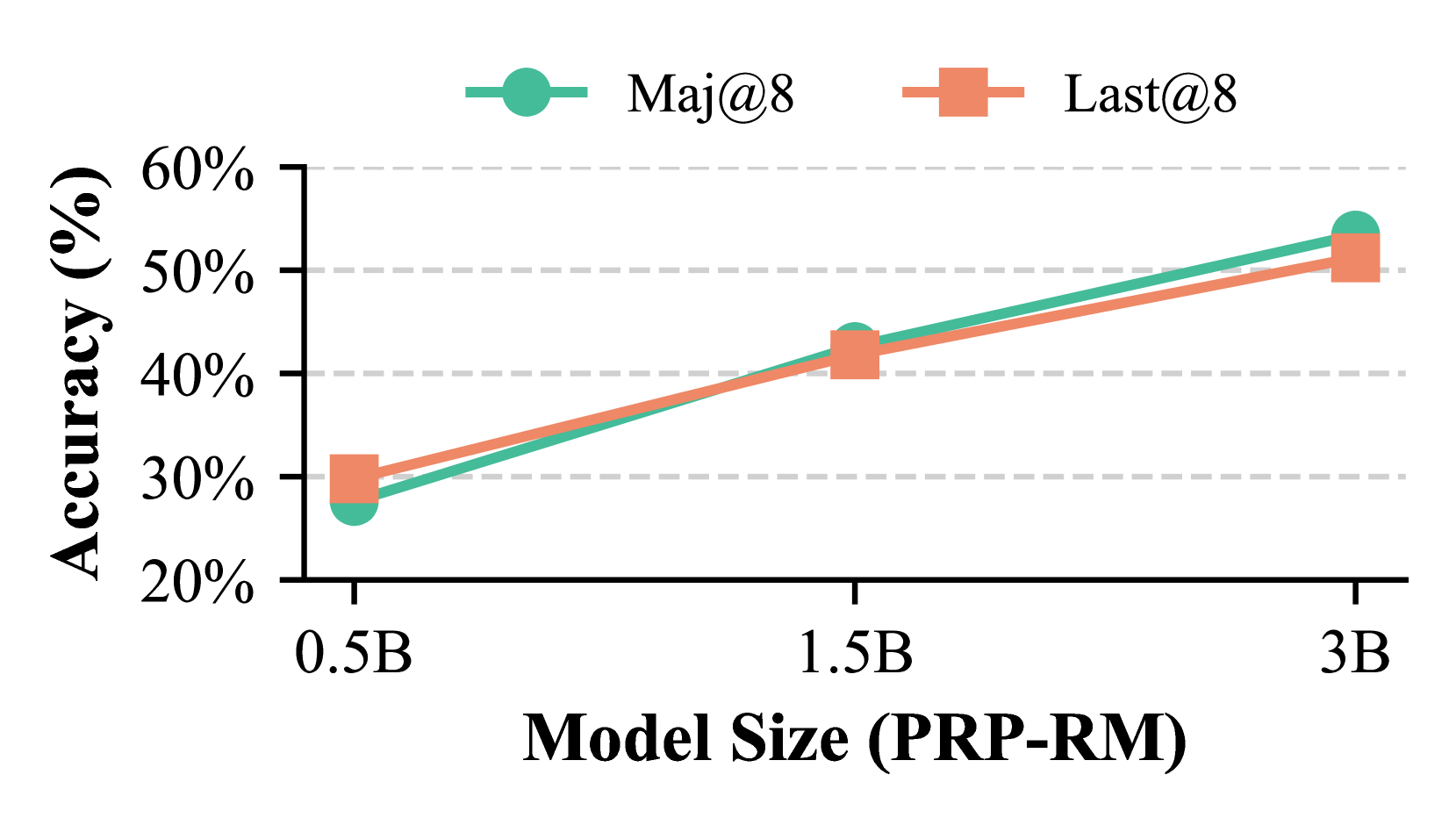

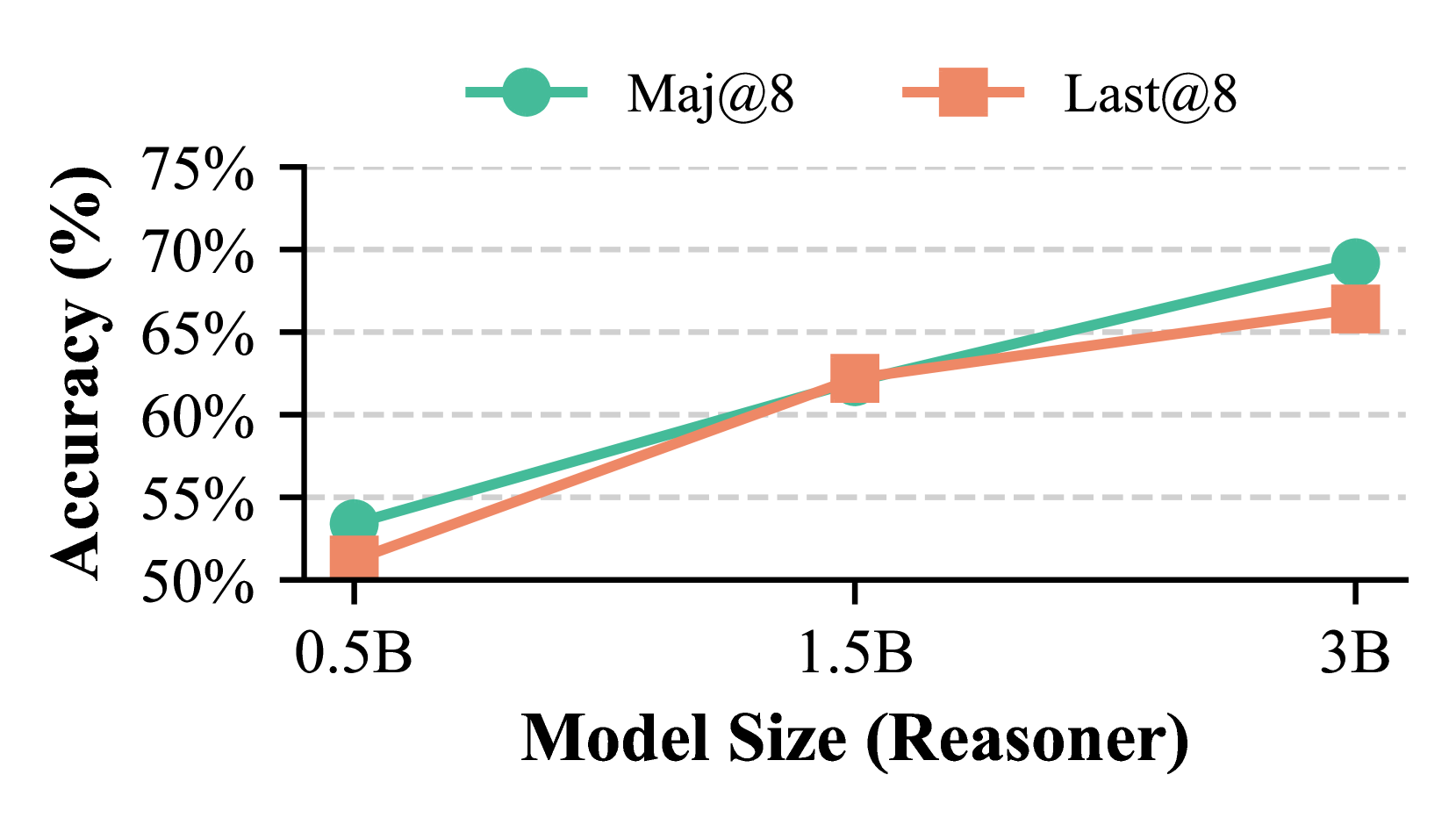

Scaling Model Size. Scaling trends in Section 5.2 are validated on Math500. Figures 11 and 11 confirm performance gains as both the Reasoner LLM and PRP-RM scale independently.

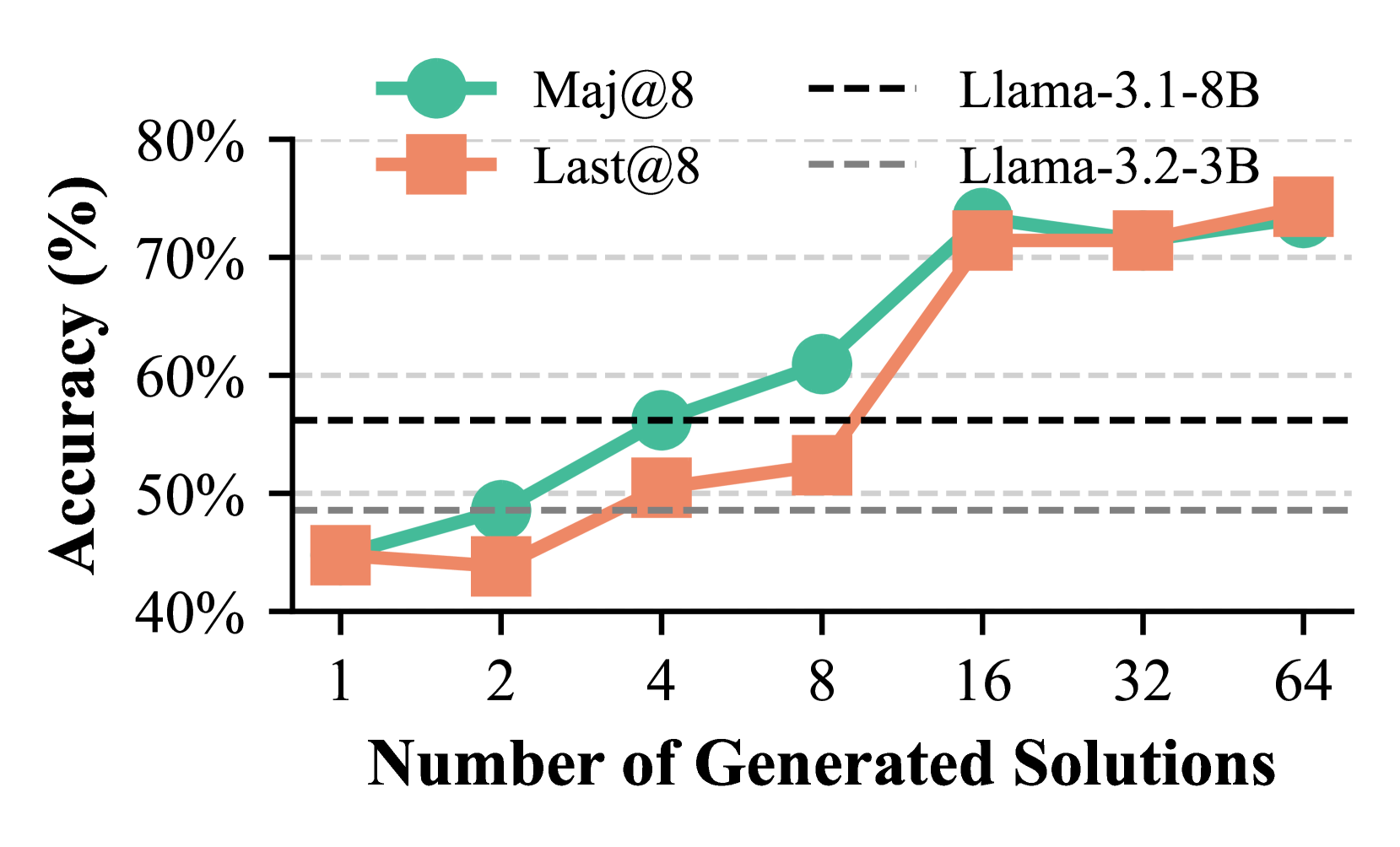

Scaling of Number of Solutions. We vary the number of generated solutions using Llama-3B on Math500 Level 3. Figure 13 shows accuracy improves incrementally with more solutions, underscoring the benefits of multiple candidates.

<details>

<summary>x12.png Details</summary>

### Visual Description

## Line Chart: Accuracy vs. Number of Generated Solutions

### Overview

This line chart compares the accuracy of two language models, Llama-3.1-8B and Llama-3.2-3B, across two metrics (Maj@8 and Last@8) as the number of generated solutions increases. Accuracy is measured in percentage, and the number of generated solutions ranges from 1 to 64.

### Components/Axes

* **X-axis:** Number of Generated Solutions. Scale: 1, 2, 4, 8, 16, 32, 64.

* **Y-axis:** Accuracy (%). Scale: 40% to 80%.

* **Legend:** Located at the top-right of the chart.

* Maj@8 (Solid Green Line with Circle Markers)

* Last@8 (Solid Orange Line with Square Markers)

* Llama-3.1-8B (Dashed Black Line)

* Llama-3.2-3B (Dashed Gray Line)

* **Horizontal Lines:** Two dashed horizontal lines are present at 50% and 60% accuracy.

### Detailed Analysis

**Maj@8 (Green Line):**

The green line representing Maj@8 starts at approximately 43% accuracy at 1 generated solution. It shows a generally upward trend, with a steeper increase between 4 and 8 generated solutions, reaching approximately 68% at 8 solutions. The line continues to rise, reaching around 72% at 16 solutions, and plateaus around 72-73% for 32 and 64 solutions.

* 1 Solution: ~43%

* 2 Solutions: ~47%

* 4 Solutions: ~53%

* 8 Solutions: ~68%

* 16 Solutions: ~72%

* 32 Solutions: ~72%

* 64 Solutions: ~73%

**Last@8 (Orange Line):**

The orange line representing Last@8 begins at approximately 45% accuracy at 1 generated solution. It fluctuates slightly, reaching around 47% at 2 solutions, then dips to approximately 44% at 4 solutions. It then increases to around 50% at 8 solutions, and continues to rise, reaching approximately 68% at 32 and 64 solutions.

* 1 Solution: ~45%

* 2 Solutions: ~47%

* 4 Solutions: ~44%

* 8 Solutions: ~50%

* 16 Solutions: ~58%

* 32 Solutions: ~68%

* 64 Solutions: ~68%

**Llama-3.1-8B (Black Dashed Line):**

The black dashed line representing Llama-3.1-8B starts at approximately 58% accuracy at 1 generated solution. It remains relatively stable, fluctuating around 58-60% across all values of generated solutions (1 to 64).

* 1 Solution: ~58%

* 2 Solutions: ~59%

* 4 Solutions: ~59%

* 8 Solutions: ~60%

* 16 Solutions: ~60%

* 32 Solutions: ~60%

* 64 Solutions: ~60%

**Llama-3.2-3B (Gray Dashed Line):**

The gray dashed line representing Llama-3.2-3B starts at approximately 48% accuracy at 1 generated solution. It shows a slight upward trend, reaching around 52% at 2 solutions, and then plateaus around 52-54% for all other values of generated solutions (4 to 64).

* 1 Solution: ~48%

* 2 Solutions: ~52%

* 4 Solutions: ~53%

* 8 Solutions: ~53%

* 16 Solutions: ~54%

* 32 Solutions: ~54%

* 64 Solutions: ~54%

### Key Observations

* Maj@8 shows the most significant improvement in accuracy as the number of generated solutions increases, particularly between 4 and 16 solutions.

* Last@8 shows a more gradual increase in accuracy.

* Llama-3.1-8B consistently outperforms Llama-3.2-3B across all numbers of generated solutions.

* Both Llama models show relatively stable accuracy beyond 16 generated solutions.

* The 60% accuracy threshold is surpassed by Llama-3.1-8B and approached by Last@8 at higher solution counts.

### Interpretation

The data suggests that increasing the number of generated solutions improves the accuracy of both metrics (Maj@8 and Last@8), but the effect is more pronounced for Maj@8. This indicates that generating more solutions allows the model to explore a wider range of possibilities and potentially identify better solutions. The consistent outperformance of Llama-3.1-8B suggests that it is a more capable model than Llama-3.2-3B, at least for this specific task and evaluation metrics. The plateauing of accuracy at higher solution counts suggests that there is a diminishing return to generating more solutions beyond a certain point. The horizontal lines at 50% and 60% serve as benchmarks, highlighting the performance improvements achieved by the models as the number of generated solutions increases. The difference between Maj@8 and Last@8 could indicate that the model is better at identifying the *best* solution among many (Maj@8) than at consistently placing the best solution *last* in the generated list (Last@8).

</details>

Figure 12: Scaling of the number of solutions.

<details>

<summary>x13.png Details</summary>

### Visual Description

\n

## Bar Chart: Accuracy of Voting Methods

### Overview

This image presents a bar chart comparing the accuracy of five different voting methods. The y-axis represents accuracy in percentage, while the x-axis lists the voting methods. Each method is represented by a green bar, with the height of the bar indicating the corresponding accuracy.

### Components/Axes

* **X-axis Label:** "Voting Methods"

* **Y-axis Label:** "Accuracy (%)"

* **Y-axis Scale:** Ranges from 50% to 70%, with tick marks at 55%, 60%, 65%, and 70%.

* **Voting Methods (Categories):**

* Maj-Vote

* Last-Vote

* Min-Vote

* Last-Max

* Min-Max

### Detailed Analysis

The chart displays the accuracy of each voting method as a vertical bar.

* **Maj-Vote:** The bar for Maj-Vote reaches approximately 65.5%.

* **Last-Vote:** The bar for Last-Vote reaches approximately 62.5%.

* **Min-Vote:** The bar for Min-Vote reaches approximately 60.5%.

* **Last-Max:** The bar for Last-Max reaches approximately 57.5%.

* **Min-Max:** The bar for Min-Max reaches approximately 55.5%.

The bars are arranged in order from left to right, corresponding to the order of the voting methods listed above. The height of each bar visually represents the accuracy percentage.

### Key Observations

* Maj-Vote exhibits the highest accuracy among the five methods.

* Min-Max demonstrates the lowest accuracy.

* There is a general decreasing trend in accuracy from Maj-Vote to Min-Max.

* The differences in accuracy between adjacent methods are relatively small, except for the drop from Last-Max to Min-Max.

### Interpretation

The data suggests that the "Maj-Vote" method is the most accurate among the tested voting methods, while "Min-Max" is the least accurate. The chart demonstrates a clear performance hierarchy, indicating that the choice of voting method can significantly impact the accuracy of the outcome. The decreasing trend suggests that methods prioritizing later votes or minimizing values may be less reliable. The relatively small differences between some methods (e.g., Maj-Vote and Last-Vote) suggest that the impact of the voting method might be less pronounced in certain scenarios. This data could be used to inform the selection of a voting method in applications where accuracy is a critical factor. The chart provides a direct comparison of the performance of these methods, allowing for a data-driven decision-making process.

</details>

Figure 13: Comparison of various voting methods.

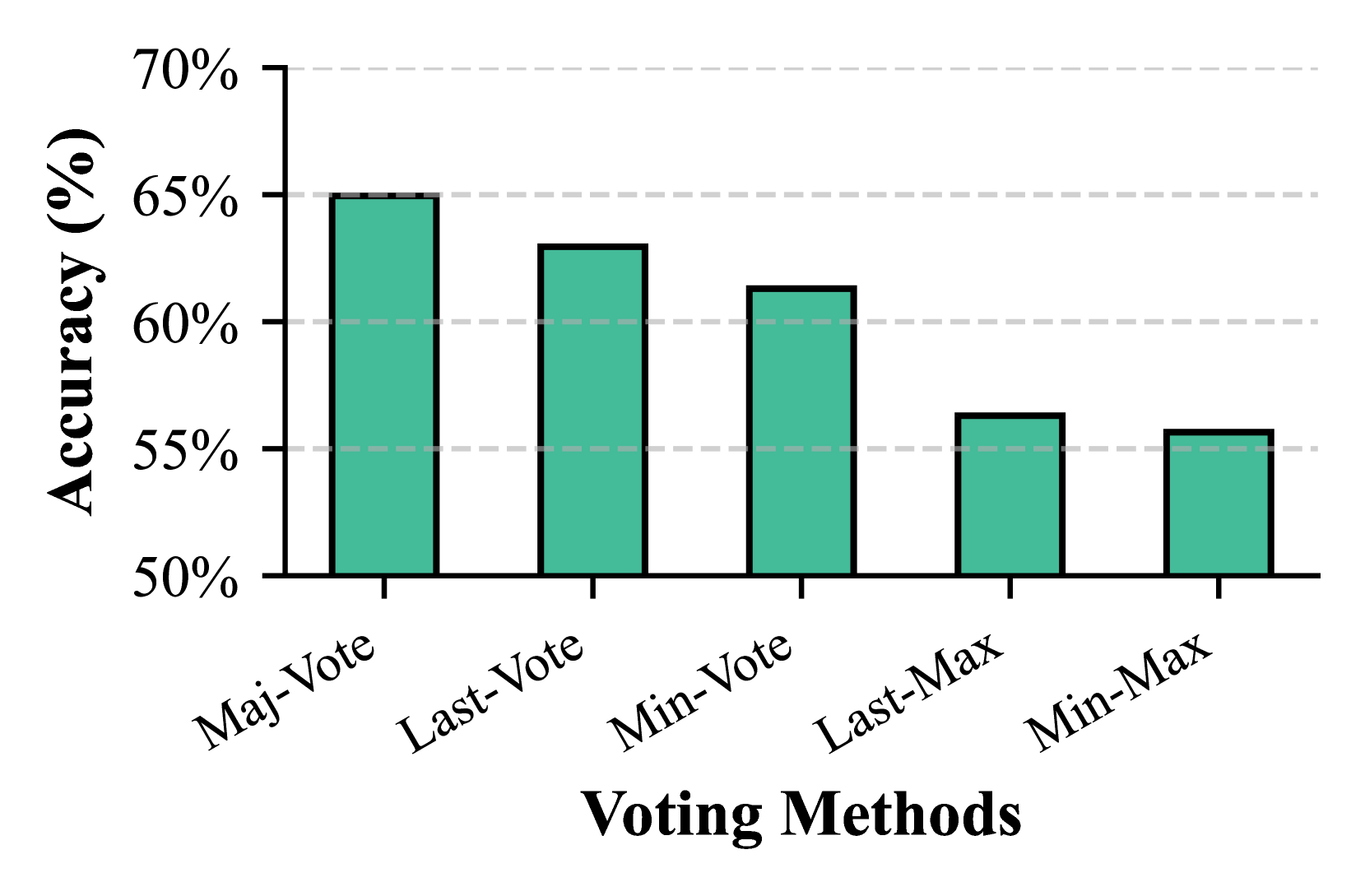

Comparison of Voting Methods. We widely evaluate five PRP-RM voting strategies: Majority Vote, Last Vote, Min Vote, Min-Max, and Last-Max [23, 21]. Majority Vote and Last Vote outperform others, as extreme-based methods are prone to PRP-RM overconfidence in incorrect solutions (Figure 13).

6 Conclusions and Limitations

In this paper, we introduce a novel graph-augmented reasoning paradigm that aims to enhance o1-like multi-step reasoning capabilities of frozen LLMs by integrating external KGs. Towards this end, we present step-by-step knowledge graph based retrieval-augmented reasoning (KG-RAR), a novel iterative retrieve-refine-reason framework that strengthens o1-like reasoning, facilitated by an innovative post-retrieval processing and reward model (PRP-RM) that refines raw retrievals and assigns step-wise scores to guide reasoning more effectively. Experimental results demonstrate an 8.95% relative improvement on average over CoT-prompting on Math500, with PRP-RM achieving competitive performance against fine-tuned reward models, yet without the heavy training or fine-tuning costs.