# TPU-Gen: LLM-Driven Custom Tensor Processing Unit Generator

**Authors**:

- Ramtin Zand, Shaahin Angizi (New Jersey Institute of Technology, Newark, NJ, USA; University of South Carolina, Columbia, SC, USA)

- E-mails: {dv336,shaahin.angizi}@njit.edu

Abstract

The increasing complexity and scale of Deep Neural Networks (DNNs) necessitate specialized tensor accelerators, such as Tensor Processing Units (TPUs), to meet various computational and energy efficiency requirements. Nevertheless, designing an optimal TPU remains challenging due to the high level of domain expertise required, the considerable manual design time, and the lack of high-quality, domain-specific datasets. This paper introduces TPU-Gen, the first Large Language Model (LLM) based framework designed to automate the exact and approximate TPU generation process, focusing on systolic array architectures. TPU-Gen is supported by a meticulously curated, comprehensive, and open-source dataset that covers a wide range of spatial array designs and approximate multiply-and-accumulate units, enabling design reuse, adaptation, and customization for different DNN workloads. The proposed framework leverages Retrieval-Augmented Generation (RAG) as an effective solution for building LLMs in a data-scarce hardware domain, addressing the most intriguing issue, hallucinations. TPU-Gen transforms high-level architectural specifications into optimized low-level implementations through an effective hardware generation pipeline. Our extensive experimental evaluations demonstrate superior performance, power, and area efficiency, with an average reduction in area and power of 92% and 96%, respectively, relative to the manual optimization reference values. These results set new standards for driving advancements in next-generation design automation tools powered by LLMs.

I Introduction

The rising computational demands of Deep Neural Networks (DNNs) have driven the adoption of specialized tensor processing accelerators, such as Tensor Processing Units (TPUs). These accelerators, characterized by low global data transfer, high clock frequencies, and deeply pipelined Processing Elements (PEs), excel in accelerating training and inference tasks by optimizing matrix multiplication [1]. Despite their effectiveness, the complexity and expertise required for their design remain significant barriers. Static accelerator design tools, such as Gemmini [2] and DNNWeaver [3], address some of these challenges by providing templates for systolic arrays, data flows, and software ecosystems [4, 5]. However, these tools still face limitations, including complex programming interfaces, high memory usage, and inefficiencies in handling diverse computational patterns [6, 7]. These constraints underscore the need for innovative solutions to streamline hardware design processes.

Large Language Models (LLMs) have emerged as a promising solution, offering the ability to generate hardware descriptions from high-level design intents. LLMs can potentially reduce the expertise and time required for DNN hardware development by encapsulating vast domain-specific knowledge. However, realizing this potential requires overcoming three critical challenges. First, existing datasets are often limited in size and detail, hindering the generation of reliable designs [8, 9]. Second, while fine-tuning is essential to minimize human intervention, fine-tuned LLMs often hallucinate, producing nonsensical or factually incorrect responses that compromise their applicability [10, 11]. Finally, an effective pipeline is needed to mitigate these hallucinations and ensure the generation of consistent, contextually accurate code [11]. Therefore, the core questions we seek to answer are the following: Is there an effective way to rely on an LLM to act as a critical mind and adopt techniques such as Retrieval-Augmented Generation (RAG) to minimize hallucinations? Can we leverage domain-specific LLMs with RAG through an effective pipeline to automate the TPU design process and meet various computational and energy efficiency requirements?

TABLE I: Comparison of the Selected LLM-based HDL/HLS generators.

| Property | Ours | [10] | [9] | [8] | [12] | [13] | [14] | [15] | [16] | [17] | [18] |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Function | TPU Gen. | Verilog Gen. | AI Accel. Gen. | Verilog Gen. | Verilog Gen. | Verilog Gen. | Hardware Verif. | Hardware Verif. | Verilog Gen. | $\dagger$ | AI Accel. Gen. |
| Chatbot ∗ | ✓ | ✗ | ✗ | ✗ | ✗ | ✗ | ✓ | ✓ | ✗ | ✗ | ✗ |
| Dataset | ✓ | ✓(Verilog) | ✗ | NA | NA | NA | ✗ | ✗ | ✓ | ✓ | ✓ |
| Output format | Verilog | Verilog | HLS | Verilog | Verilog | Verilog | Verilog | HDL | Verilog | Verilog | Chisel |
| Auto. Verif. | ✓ | ✗ | ✗ | ✗ | ✗ | ✓ | ✓ | ✗ | ✓ | ✗ | ✓ |
| Human in Loop | Low | Medium | Medium | Medium | High | Low | Low | Low | Low | Low | Low |
| Fine tuning | ✓ | ✓ | ✓ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✓ | ✗ |
| RAG | ✓ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✓ | ✗ | ✗ | ✗ |

∗ A user interface featuring Prompt template generation for the input of LLM. † Not applicable.

To answer these questions, we develop the first-of-its-kind TPU-Gen as an automated exact and approximate TPU design generation framework with a comprehensive dataset specifically tailored for ever-growing DNN topologies. Our contributions in this paper are threefold: (1) Due to the limited availability of annotated data necessary for efficient fine-tuning of an open-source LLM, we introduce a meticulously curated dataset that encompasses various levels of detail and corresponding hardware descriptions, designed to enhance LLMs’ learning and generative capabilities in the context of TPU design; (2) We develop TPU-Gen as a potential solution that reduces hallucinations by leveraging RAG and fine-tuning, aligning the LLM to streamline the approximate TPU design generation process under budgetary constraints (e.g., power, latency, area) and ensuring a seamless transition from high-level specifications to low-level implementations; and (3) We design extensive experiments to evaluate our approach’s performance and reliability, demonstrating its superiority over existing methods. We anticipate that TPU-Gen will provide a framework that influences the future trajectory of DNN hardware acceleration research. The dataset and fine-tuned models are open-sourced; the link is omitted to maintain anonymity, since an anonymous GitHub link must be under 2GB, a limit this study exceeds.

II Background

LLM for Hardware Design. LLMs show promise in generating Hardware Description Language (HDL) and High-Level Synthesis (HLS) code. Table I compares notable methods in this field. VeriGen [10] and ChatEDA [19] refine hardware design workflows, automating the RTL to GDSII process with fine-tuned LLMs. ChipGPT [8] and Autochip [13] integrate LLMs to generate and optimize hardware designs, with Autochip producing precise Verilog code through simulation feedback. Chip-Chat [12] demonstrates the use of interactive LLMs like ChatGPT-4 in accelerating design space exploration. MEV-LLM [20] proposes a multi-expert LLM architecture for Verilog code generation. RTLLM [21] and GPT4AIGChip [9] enhance design efficiency, showcasing LLMs’ ability to manage complex design tasks and broaden access to AI accelerator design. To the best of our knowledge, GPT4AIGChip [9] and SA-DS [18] are among the few initial works that focus on an extensive framework specifically aimed at generating domain-specific AI accelerator designs, where SA-DS focuses on creating a dataset in HLS and employs fine-tuning-free methods such as single-shot and multi-shot inputs to the LLM. Other LLM-for-hardware works include the creation of SPICE circuits [22, 23]. However, the absence of prompt optimization, tailored datasets, and model fine-tuning, together with LLM hallucination, poses a barrier to fully harnessing the potential of LLMs in such frameworks [19, 18]. This limitation confines their application to standard LLMs without fine-tuning or In-Context Learning (ICL) [19], which are among the most promising methods for optimizing LLMs [24].

Retrieval-Augmented Generation. RAG is a promising paradigm that combines deep learning with traditional retrieval techniques to help mitigate hallucinations in LLMs [25]. RAG leverages external knowledge bases, such as databases, to retrieve relevant information, facilitating the generation of more accurate and reliable responses [26, 25]. The primary challenge in deploying LLMs for hardware generation or any application lies in their tendency to deviate from the data and hallucinate, making it challenging to capture the essence of circuits and architectural components. LLMs tend to prioritize creativity and finding innovative solutions, which often results in straying from the data [11]. As previous works show, the RAG model can be a cost-efficient solution by retrieving and augmenting data, avoiding heavy computational demands [27].

Approximate MAC Units. Approximate computing has been widely explored as a means to trade reduced accuracy for gains in design metrics, including area, power consumption, and performance [28, 29, 30, 31, 32, 33]. As the computation core in various PEs in TPUs, several approximate Multiply-and-Accumulate (MAC) units have been proposed as alternatives to precise multipliers and adders and extensively analyzed in accelerating deep learning [34, 35]. These MAC units are composed of two arithmetic stages—multiplication and accumulation with previous products—each of which can be independently approximated. Most approximate multipliers, such as logarithmic multipliers, are composed of two key components: low-precision arithmetic logic and a pre-processing unit that acts as steering logic to prepare the operands for low-precision computation [36]. These multipliers typically balance accuracy and power efficiency. For example, the logarithmic multiplier introduced in [29] emphasizes accuracy, while the multipliers in [37] are designed to reduce power and latency. On the other hand, most approximate adders, such as lower part OR adder (LOA) [38], exploit the fact that extended carry propagation is infrequent, allowing adders to be divided into independent sub-adders shortening the critical path. To preserve computational accuracy, the approximation is applied to the least significant bits of the operands, while the most significant bits remain accurate.
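To make the adder-side approximation concrete, the following is a minimal behavioral sketch of a lower-part OR adder in Python. It is not the RTL from this work: the function name `loa_add` and its parameters are hypothetical, operands are assumed unsigned, and the carry hint into the exact upper part (the AND of the top bits of the two lower parts) follows the commonly described LOA structure, which is an assumption here rather than something stated in the text above.

```python
def loa_add(a: int, b: int, width: int, imprecise: int) -> int:
    """Approximately add two unsigned `width`-bit operands.

    The `imprecise` least significant bits are OR-ed instead of added,
    while the remaining upper bits go through an exact adder.
    """
    if not 0 <= imprecise <= width:
        raise ValueError("imprecise must be between 0 and width")
    mask = (1 << width) - 1
    a, b = a & mask, b & mask
    if imprecise == 0:
        return a + b  # no approximation: plain exact addition

    low_mask = (1 << imprecise) - 1
    low = (a | b) & low_mask  # approximate lower part: bitwise OR

    # Assumed carry hint: AND of the most significant bits of the lower parts.
    carry_in = (a >> (imprecise - 1)) & (b >> (imprecise - 1)) & 1

    high = (a >> imprecise) + (b >> imprecise) + carry_in  # exact upper part
    return (high << imprecise) | low


def error_distance(a: int, b: int, width: int, imprecise: int) -> int:
    """Absolute difference between the approximate and the exact sum."""
    return abs(loa_add(a, b, width, imprecise) - (a + b))
```

Because only the lower `imprecise` bits are approximated, the error stays bounded by the weight of that lower part, which is the accuracy-preserving property the paragraph describes.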

<details>

<summary>x1.png Details</summary>

### Visual Description

## Dataflow Diagram: Neural Network Accelerator Architecture

### Overview

The image is a dataflow diagram illustrating the architecture of a neural network accelerator. It shows the flow of data from input memory through a series of processing units (PAU and APE) arranged in a grid-like structure, controlled by a central controller, and finally to output memory. The diagram highlights the parallel processing capabilities of the architecture.

### Components/Axes

* **Input Memory:** Labeled "Weight/IFMAP Memory" (light blue rectangle on the left and top). IFMAP likely stands for Input Feature Map.

* **Demultiplexer (DEMUX):** A pink trapezoid that splits the input data stream (located on the left and top).

* **First-In, First-Out (FIFO) Buffers:** Represented by yellow stacks of rectangles.

* **Pre-Approximate Units (PAU):** Represented by yellow squares.

* **Approximate Processing Elements (APE):** Represented by green squares.

* **Multiplexer (MUX):** A pink trapezoid that combines the output data streams (located at the bottom).

* **Output Memory:** Labeled "Output Memory (OFMAP)" (light blue rectangle at the bottom). OFMAP likely stands for Output Feature Map.

* **Controller:** A dashed-line rectangle in the top-left, connected to various components with dashed lines, indicating control signals.

### Detailed Analysis

The diagram depicts a dataflow architecture with the following key elements:

1. **Input Data:** Data, likely weights and input feature maps (IFMAP), is read from the "Weight/IFMAP Memory". There are two input memories, one on the left and one on the top.

2. **Demultiplexing:** The "DEMUX" splits the input data stream into multiple parallel streams.

3. **FIFO Buffers:** The data streams are fed into "FIFO" buffers, which likely act as temporary storage to synchronize data flow. Each FIFO appears to hold 4 data elements.

4. **Pre-Approximate Units (PAU):** The data from the FIFOs is processed by "PAU" units.

5. **Approximate Processing Elements (APE):** The output of the PAUs is then fed into a grid of "APE" units. The diagram shows a 3x2 grid of APEs, but the "..." notation indicates that the grid can be extended.

6. **Multiplexing:** The outputs of the APEs are combined by the "MUX".

7. **Output Data:** The final result is written to the "Output Memory (OFMAP)".

8. **Controller:** The "Controller" manages the data flow and processing within the architecture. It sends control signals (dashed lines) to the DEMUX, FIFOs, PAUs, and APEs.

The data flows from left to right and top to bottom. The dotted lines indicate that the PAU outputs are connected to the APEs in the next row.

### Key Observations

* The architecture is designed for parallel processing, with multiple PAUs and APEs operating simultaneously.

* The FIFO buffers likely play a crucial role in synchronizing data flow and handling variations in processing time.

* The controller is responsible for orchestrating the entire dataflow and ensuring correct operation.

### Interpretation

The diagram illustrates a hardware architecture optimized for neural network computations. The parallel arrangement of PAUs and APEs allows for efficient processing of large amounts of data, which is essential for deep learning applications. The use of FIFO buffers and a central controller ensures that the data flows smoothly and that the processing units are utilized effectively. The architecture is likely designed to accelerate matrix multiplications and other common neural network operations. The presence of separate input memories for weights and IFMAP suggests that the architecture is optimized for convolutional neural networks (CNNs).

</details>

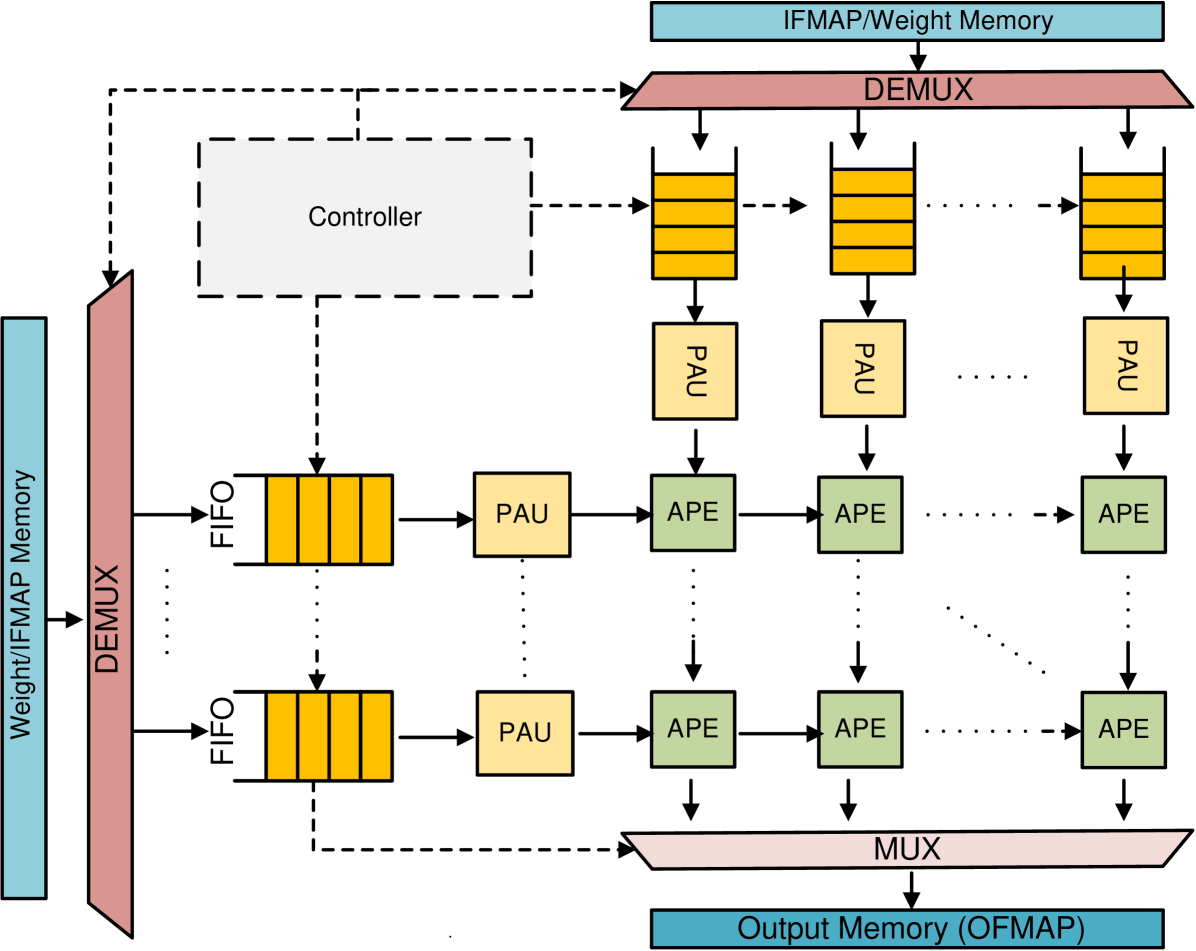

Figure 1: The overall template for TPU design.

III TPU-Gen Framework

III-A Architectural Template

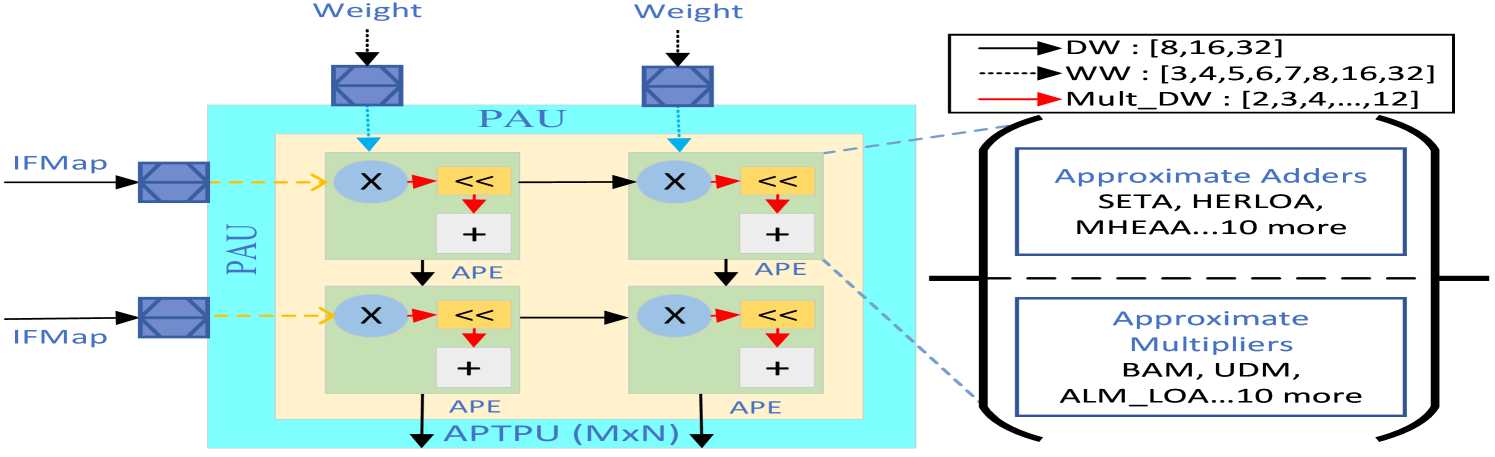

Developing a Generic Template. The TPU architecture utilizes a systolic array of PEs with MAC units for efficient matrix and vector computations. This design enhances performance and reduces energy consumption by reusing data, minimizing buffer operations [1]. Input data propagates diagonally through the array in parallel. The TPU template, illustrated in Fig. 1, extends the TPU’s systolic array with Output Stationary (OS) dataflow to enable concurrent approximation of input feature maps (IFMaps) and weights. It comprises five components: weight/IFMap memory, FIFOs, a controller, Pre-Approximate Units (PAUs), and Approximate Processing Elements (APEs). The weights and IFMaps are stored in their respective memories, with the controller managing memory access and data transfer to FIFOs per the OS dataflow. PAUs, positioned between FIFOs and APEs, dynamically truncate high-precision operands to lower precision before sending them to APEs, which perform MAC operations using approximate multipliers and adders. Sharing PAUs across rows and columns reduces hardware overhead, introducing minimal latency but significantly improving overall performance [39].
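The dataflow described above can be sketched behaviorally in Python. This is an illustrative model only, not the authors' RTL: the function names are hypothetical, operands are assumed to be unsigned integers, and the PAU is modeled as a leading-one truncation that keeps a fixed number of most significant bits, which is one possible reading of "dynamically truncate". Each PE at position (i, j) keeps its own accumulator (output stationary) and, because inputs are skewed, sees the k-th operand pair at cycle i + j + k.

```python
def pau_truncate(x: int, keep_bits: int) -> int:
    """Keep the `keep_bits` most significant bits, counted from the leading one."""
    shift = max(x.bit_length() - keep_bits, 0)
    return (x >> shift) << shift


def os_systolic_matmul(ifmap, weights, keep_bits=None):
    """Cycle-by-cycle model of an output-stationary systolic array.

    ifmap is rows x inner, weights is inner x cols. Returns the accumulator
    grid and the number of cycles needed to drain the skewed inputs.
    """
    rows, inner, cols = len(ifmap), len(weights), len(weights[0])
    acc = [[0] * cols for _ in range(rows)]  # one stationary output per PE
    total_cycles = rows + cols + inner - 2
    for t in range(total_cycles):
        for i in range(rows):
            for j in range(cols):
                k = t - i - j  # operand index reaching PE(i, j) at cycle t
                if 0 <= k < inner:
                    a, w = ifmap[i][k], weights[k][j]
                    if keep_bits is not None:
                        # In hardware the shared PAU truncates once per operand;
                        # applying it here per PE is numerically equivalent.
                        a = pau_truncate(a, keep_bits)
                        w = pau_truncate(w, keep_bits)
                    acc[i][j] += a * w
    return acc, total_cycles
```

Calling `os_systolic_matmul(A, B)` without `keep_bits` gives the exact product, so the same model can be used to compare exact and approximate results for a given operand precision.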

Highly-Parameterized RTL Code. We design highly flexible and parameterized RTL codes for 13 different approximate adders and 12 different approximate multipliers as representative approximate circuits. For the approximate adders, we have two tunable parameters: the bit-width and the imprecise part. The bit-width specifies the number of bits for each operand and the imprecise part specifies the number of inexact bits in the adder output. For the approximate multipliers, we have one common parameter, i.e., Width (W), which specifies the bit-width of the multiplication operands. We also have more tunable parameters based on specific multipliers, some of which are listed in Table II.

TABLE II: Approximate multiplier hyper-parameters

| Design | Parameter | Description | Default |
| --- | --- | --- | --- |
| BAM [40] | VBL | No. of zero bits during partial product generation | W/2 |
| ALM_LOA [41] | M | Inaccurate part of LOA adder | W/2 |
| ALM_MAA3 [41] | M | Inaccurate part of MAA3 adder | W/2 |
| ALM_SOA [41] | M | Inaccurate part of SOA adder | W/2 |
| ASM [42] | Nibble_Width | Number of precomputed alphabets | 4 |
| DRALM [37] | MULT_DW | Truncated bits of each operand | W/2 |
| RoBA [43] | ROUND_WIDTH | Scales the widths of the shifter | 1 |

We leveraged the parameterized RTL library of approximate arithmetic circuits to build a TPU library that enables automatic selection of the systolic array size $S$, bit precision $n$, and one of the approximate multipliers and approximate adders. The internal parameters used to tune the approximate arithmetic libraries are also included in the TPU parameterized RTL library, thus allowing the user complete flexibility to adjust their designs to meet specific hardware specifications and application accuracy requirements. Moreover, we developed a design automation methodology that enables the automatic implementation and simulation of many TPU circuits on various platforms such as Design Compiler and Vivado. In addition to the highly parameterized RTL code, we developed TCL and Python scripts to autonomously measure error, area, performance, and power dissipation under various constraints.
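As an illustration of how the parameters above might be resolved for one design point, here is a small Python sketch. The multiplier names, parameter names, and defaults are transcribed from Table II; everything else is an assumption made for the example, including the function name `tpu_parameters`, the dictionary layout, the use of integer division for W/2, and the treatment of the bit precision as the multiplier width W.

```python
# Defaults transcribed from Table II; each entry maps a multiplier to its
# tunable parameter and a function of the operand width W giving the default.
MULTIPLIER_DEFAULTS = {
    "BAM": ("VBL", lambda w: w // 2),
    "ALM_LOA": ("M", lambda w: w // 2),
    "ALM_MAA3": ("M", lambda w: w // 2),
    "ALM_SOA": ("M", lambda w: w // 2),
    "ASM": ("Nibble_Width", lambda w: 4),
    "DRALM": ("MULT_DW", lambda w: w // 2),
    "RoBA": ("ROUND_WIDTH", lambda w: 1),
}


def tpu_parameters(systolic_size, width, multiplier, adder, overrides=None):
    """Resolve one TPU design point into a flat parameter dictionary.

    `overrides` lets the user replace any default, mirroring the idea that
    internal arithmetic parameters remain adjustable at the TPU level.
    """
    if multiplier not in MULTIPLIER_DEFAULTS:
        raise KeyError(f"unknown multiplier: {multiplier}")
    param_name, default = MULTIPLIER_DEFAULTS[multiplier]
    params = {
        "S": systolic_size,       # systolic array size
        "W": width,               # operand bit-width
        "MULTIPLIER": multiplier,
        "ADDER": adder,           # adder name is passed through unchecked
        param_name: default(width),
    }
    params.update(overrides or {})
    return params
```

For example, `tpu_parameters(16, 8, "BAM", "LOA")` resolves `VBL` to half the operand width, while `overrides={"VBL": 2}` would replace that default for a more accurate variant.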

III-B Framework Overview

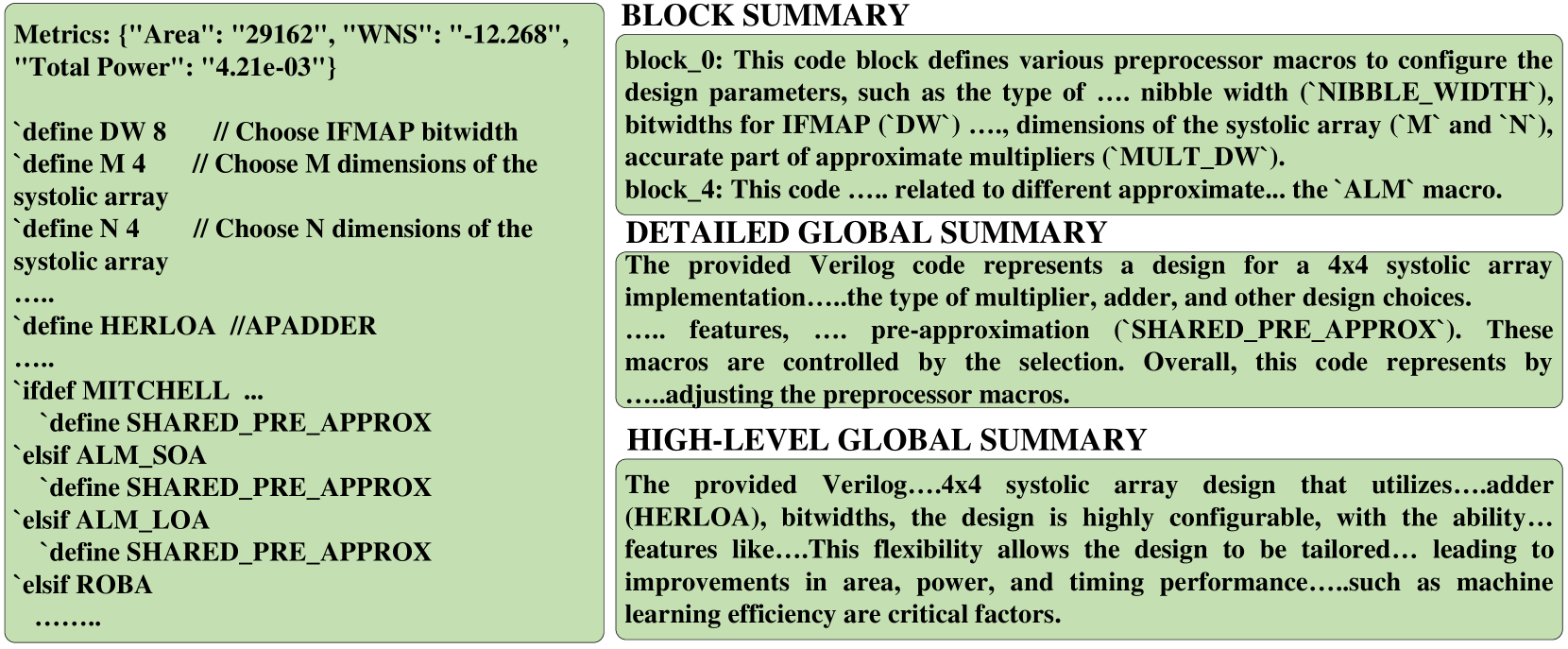

The TPU-Gen framework, depicted in Fig. 2, targets the development of domain-specific LLMs, emphasizing the interplay between the model’s responses and two key factors: the input prompt and the model’s learned parameters. The framework optimizes both elements to enhance the LLM’s performance. In Step 1, the Prompt Generator produces an initial prompt conveying the user’s intent and the key software and hardware specifications of the intended TPU design and application. A verbal description of a tensor processing accelerator design can often result in a many-to-one mapping, as shown in Fig. 3 (a), especially when such descriptions do not align with the format of the training dataset. This misalignment increases the likelihood of hallucinations in the LLM’s output, potentially leading to faulty designs [44]. Studies have shown that inputs adhering closely to patterns observed in the training data produce more accurate and desirable results [17, 18]. However, this critical aspect has often been overlooked in previous state-of-the-art research [9], with some researchers opting instead to address the issue through prompt optimization techniques [18]. In this framework, we tackle the problem by employing a script that extracts key features, such as systolic size and relevant metrics, from any verbal input given by the user. These features are then embedded into a template, which serves as the prompt for the LLM. As a domain-specific LLM, TPU-Gen focuses on generating the RTL top file, the most valuable output, which details the circuit and the blocks of the architectural template presented in Section III-A.
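The feature-extraction-plus-template idea can be sketched as follows. This is a hypothetical illustration rather than the script used in TPU-Gen: the regular expressions, the `PROMPT_TEMPLATE` wording, and the function names are all assumptions, and only two features (array size and bit-width) are extracted.

```python
import re

# Hypothetical template; the actual TPU-Gen template is not reproduced here.
PROMPT_TEMPLATE = (
    "Generate the entire code for a {rows}x{cols} systolic array "
    "with {bitwidth}-bit inputs."
)


def extract_features(description: str) -> dict:
    """Pull the array size and operand bit-width out of free-form text."""
    size = re.search(r"(\d+)\s*[xX×]\s*(\d+)", description)
    bits = re.search(r"(\d+)\s*-?\s*bits?\b", description, re.IGNORECASE)
    if size is None or bits is None:
        raise ValueError("description must state an array size and a bit-width")
    return {
        "rows": int(size.group(1)),
        "cols": int(size.group(2)),
        "bitwidth": int(bits.group(1)),
    }


def build_prompt(description: str) -> str:
    """Map any phrasing of the same design onto one canonical prompt."""
    return PROMPT_TEMPLATE.format(**extract_features(description))
```

Two differently worded requests for the same design, such as "I need a 16x16 systolic array with 8-bit inputs" and "Please build an 8 bit TPU, size 16 x 16", yield the same prompt string, which is how the many-to-one mapping is collapsed before the LLM sees the input.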

<details>

<summary>x2.png Details</summary>

### Visual Description

## Diagram: APTPU Generation Framework

### Overview

The image is a diagram illustrating the APTPU Generation Framework. It depicts the flow of information and processes involved in generating an APTPU based on user input and validation. The diagram is divided into three main sections: Input, APTPU Generation Framework, and Output.

### Components/Axes

* **Input (Left)**:

* "User prompt" (gray box with a user icon)

* "Prompt Generator" (green box with gear and document icons)

* Arrow labeled "1" indicating the flow from "User prompt" to "Prompt Generator"

* **APTPU Generation Framework (Center)**:

* "Multi-shot Learning\Fine-tuned LLM" (top green box with a brain icon)

* Arrow labeled "2" indicating the flow from "Prompt Generator" to "Multi-shot Learning\Fine-tuned LLM"

* "Retrieval-Augmented Generation (RAG)" (top-right green box with document icons)

* Arrow labeled "3" indicating the flow from "Multi-shot Learning\Fine-tuned LLM" to "Retrieval-Augmented Generation (RAG)"

* "Generate Code" (green box with a document icon)

* Arrow labeled "4" indicating the flow from "Retrieval-Augmented Generation (RAG)" to "Generate Code"

* "Automated Code Validation" (green box)

* Arrow labeled "5" indicating the flow from "Generate Code" to "Automated Code Validation"

* Green circular arrows indicating a loop between "Generate Code" and "Retrieval-Augmented Generation (RAG)"

* "LLM" (green box)

* "Data-set" (green cylinder)

* Red arrow labeled "6" indicating a feedback loop from "Data-set" to "Multi-shot Learning\Fine-tuned LLM"

* "Invalid" (orange box) indicating a negative validation result

* "Valid" (green box) indicating a positive validation result

* Arrow labeled "7" indicating the flow from "Automated Code Validation" to "Valid"

* **Output (Right)**:

* "APTPU w. needed perf" (green box with a factory and chip icon)

* Checkboxes labeled "Power", "Delay", and "Area"

* Ellipsis (...) indicating more parameters

* Arrow indicating the flow from "Valid" to "APTPU w. needed perf"

### Detailed Analysis or Content Details

The diagram illustrates the process of generating an APTPU using a combination of user input, machine learning, and code generation techniques.

1. **Input**: The process starts with a "User prompt" which is then processed by a "Prompt Generator".

2. **Multi-shot Learning/Fine-tuned LLM**: The output of the "Prompt Generator" is fed into a "Multi-shot Learning/Fine-tuned LLM" model.

3. **Retrieval-Augmented Generation (RAG)**: The LLM interacts with a "Retrieval-Augmented Generation (RAG)" module to generate code.

4. **Generate Code**: The RAG module generates code based on the input.

5. **Automated Code Validation**: The generated code is then validated automatically.

6. **Feedback Loop**: If the code is invalid, a feedback loop (indicated by the red arrow labeled "6") sends information back to the "Multi-shot Learning/Fine-tuned LLM" model, potentially using the "Data-set" to refine the model. The green circular arrows indicate a loop between "Generate Code" and "Retrieval-Augmented Generation (RAG)".

7. **Output**: If the code is valid, it leads to the generation of an "APTPU w. needed perf" (APTPU with needed performance), with parameters like "Power", "Delay", and "Area" being considered.

### Key Observations

* The diagram highlights the iterative nature of the APTPU generation process, with a feedback loop for invalid code.

* The use of machine learning (LLM) and retrieval-augmented generation (RAG) is central to the framework.

* The output is an APTPU that meets the required performance specifications.

### Interpretation

The diagram presents a high-level overview of an automated APTPU generation framework. It leverages user prompts, machine learning models, and code generation techniques to create tensor processing units that match the user's specification. The feedback loop ensures that the generated code is validated and refined until it meets the desired performance criteria. The framework aims to automate the design and optimization of APTPUs, potentially reducing the time and effort required for manual design. The inclusion of parameters like "Power", "Delay", and "Area" suggests that the framework considers various performance metrics during the generation process.

</details>

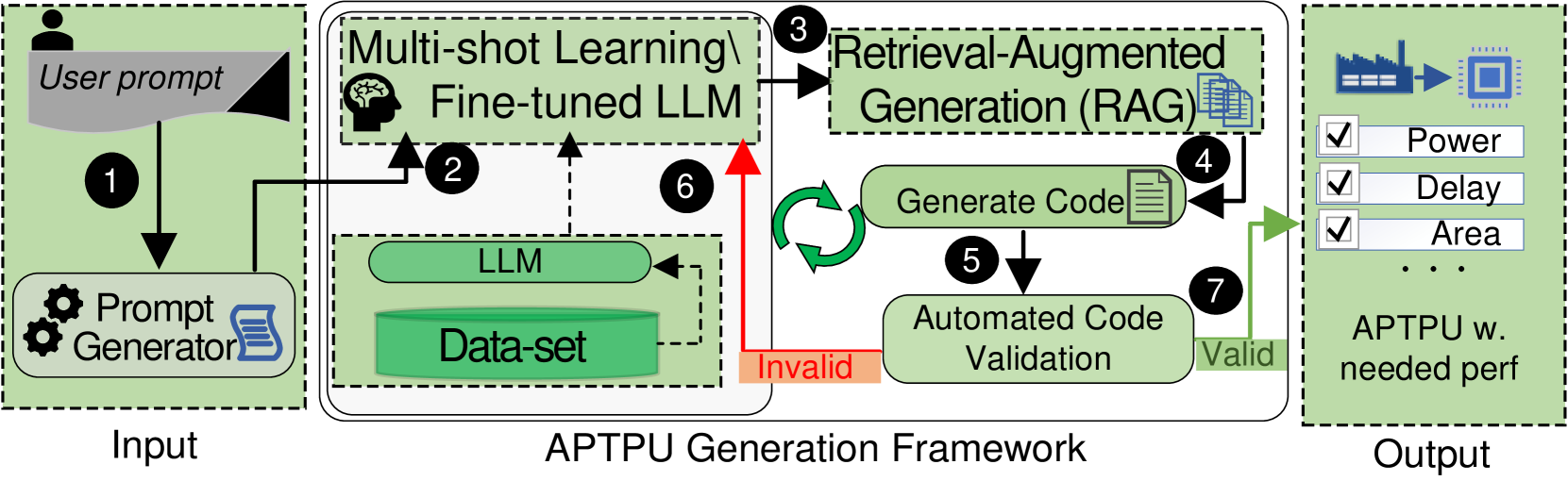

Figure 2: The proposed TPU-Gen framework.

<details>

<summary>x3.png Details</summary>

### Visual Description

## Diagram: LLM-Based Hardware Design Flow

### Overview

The image presents a diagram illustrating the evolution of a hardware design process using a Large Language Model (LLM). It contrasts an initial, potentially flawed design approach with a refined, desired design achieved through LLM and prompt engineering. The diagram is divided into three sections, labeled (a), (b), and (c), representing different stages of the design process.

### Components/Axes

* **Section (a): Initial Design (Wrong Design)**

* **User:** Represented by a human figure at the bottom-left.

* **Descriptions 1, 2, 3:** Cloud-shaped containers representing user input or design specifications.

* **LLM:** A green box labeled "LLM" with a brain icon, indicating the Large Language Model.

* **Wrong Design:** An orange box with an "X" mark, indicating a flawed design output.

* **Chip Icon:** A blue chip icon.

* **Section (b): Prompt Generation**

* **Different User Inputs:** Title of the section.

* **Descriptions 1, 2, n:** Gray boxes representing different user inputs.

* **Prompt Generator:** A green box with gear icons, indicating a prompt generation process.

* **Code Generation:** A green box containing text "Generate the entire code for the <systolic_size> with... following.. input <bitwidth>....".

* **Document Icon:** A blue document icon.

* **Section (c): Desired Design**

* **User:** Represented by a human figure at the bottom-left.

* **Descriptions 1, 2, 3:** Cloud-shaped containers representing user input or design specifications.

* **Prompt Generator:** A green box with gear icons, indicating a prompt generation process.

* **LLM:** A green box labeled "LLM" with a brain icon, indicating the Large Language Model.

* **Desired Design:** A blue chip icon with a checkmark, indicating a successful design output.

* **Chip Icon:** A blue chip icon.

### Detailed Analysis or Content Details

* **Section (a): Initial Design**

* The user provides descriptions (1, 2, 3) as input.

* These descriptions are fed into the LLM.

* The LLM generates a design, which is marked as "Wrong Design".

* A text box near the bottom-left states: "I need a 16x16 systolic array with a dataflow. With support...bits...for app..."

* **Section (b): Prompt Generation**

* The section is titled "different user inputs".

* Multiple descriptions (1, 2, ... n) are used as input.

* These descriptions are fed into a "Prompt Generator".

* The "Prompt Generator" creates code for the <systolic_size> with specific input <bitwidth>.

* **Section (c): Desired Design**

* The user provides descriptions (1, 2, 3) as input.

* These descriptions are fed into a "Prompt Generator".

* The output of the "Prompt Generator" is fed into the LLM.

* The LLM generates a design, which is marked as "Desired Design".

### Key Observations

* The diagram highlights the iterative nature of hardware design.

* The "Prompt Generator" plays a crucial role in refining the design process.

* The LLM is used in both the initial and final design stages, but with different inputs and outcomes.

* The initial design (a) is directly generated from user descriptions, while the desired design (c) is generated after prompt engineering.

### Interpretation

The diagram illustrates how an LLM can be used to design hardware, specifically a TPU (Tensor Processing Unit). The initial attempt (a) results in a "Wrong Design," likely due to insufficient or ambiguous input. By introducing a "Prompt Generator" (b), the user can refine the input and generate more specific instructions for the LLM. This refined input leads to a "Desired Design" (c), indicating that prompt engineering is essential for successful hardware design using LLMs. The diagram suggests that simply feeding user descriptions to an LLM is not enough; a prompt generation step is necessary to achieve the desired outcome. The mention of "systolic_size" and "bitwidth" indicates that the design involves specific hardware parameters that need to be carefully controlled through prompt engineering.

</details>

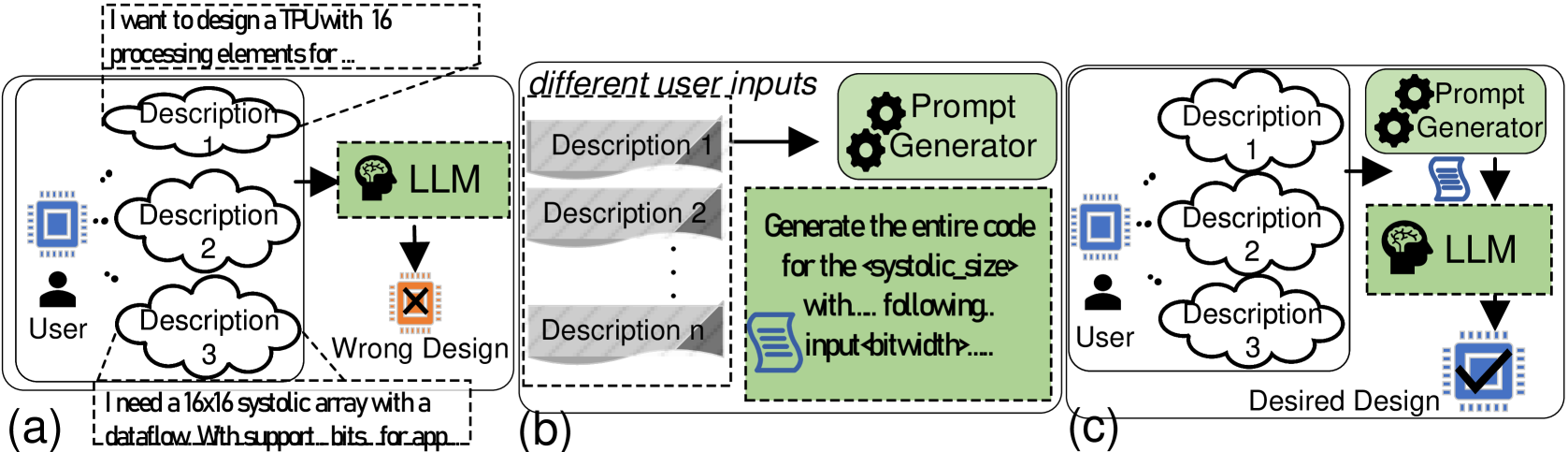

Figure 3: (a) Multiple descriptions for a single TPU design demonstrate that a design can be verbally defined in numerous ways, potentially misleading LLMs in generating the intended design, (b) Proposed prompt generator extracts the required features from the given verbal descriptions, (c) Using a script to generate a verbal description aligned with the training data.

An immediate use of the dataset described in Section III-C is to fine-tune a generic LLM for the task of TPU design, where the generated prompt is fed to the LLM (Step 2 in Fig. 2). Alternatively, one may employ ICL, or multi-shot learning, as a more computationally efficient compromise to fine-tuning [24]. In that case, the proposed dataset serves as the source of multi-shot examples. Given that the TPU-Gen dataset pairs verbal descriptions with the corresponding TPU systolic array designs, the LLM generates a TPU’s top-level file in Verilog as the output. This top-level file includes all necessary architectural module dependencies to ensure a fully functional design (Step 3). Further, we propose to leverage the RAG module to bring the remaining dependency files into the project, completing the design (Step 4). Next, a third-party quality evaluation tool can be employed to provide a quantitative evaluation of the design, verify functional correctness, and integrate the design with the full stack (Step 5). Here, for quality and functional evaluation, the generated designs, initially described in Verilog, are synthesized using YOSYS [45]. This synthesis process incorporates an automated RTL-to-GDSII validation stage, where the generated designs are classified as either Valid or Invalid based on the completeness of their code sequences and the correctness of their input-output relationships. Valid designs proceed to resource validation, where they are optimized with respect to Power, Performance, and Area (PPA) metrics. In contrast, designs flagged as Invalid initiate a feedback loop for error analysis and subsequent LLM retraining, enabling iterative refinement (Steps 2 to 6) until the predefined performance criteria are met. Ultimately, designs that successfully pass these stages in Step 7 are ready for submission to the foundry.
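The control flow of Steps 2 to 7 can be sketched in Python with the LLM and the validator injected as callables. This is a simplified illustration under several assumptions: the retrieval step is reduced to a dictionary lookup by module name, the instantiation scan is a rough regular expression rather than a Verilog parser, and the feedback loop regenerates with an error message instead of retraining the LLM as the paper describes. All function and variable names are hypothetical.

```python
import re

VERILOG_KEYWORDS = {
    "module", "endmodule", "assign", "always", "initial", "if", "else",
    "for", "case", "begin", "end", "generate", "function", "task",
}

# Matches "<module> [#(params)] <instance> (" at the start of a line.
_INSTANCE = re.compile(
    r"^\s*([A-Za-z_]\w*)\s+(?:#\s*\([^;]*?\)\s*)?([A-Za-z_]\w*)\s*\(",
    re.MULTILINE,
)


def instantiated_modules(top_verilog: str) -> list:
    """Names of modules instantiated in a top file (approximate scan)."""
    names = {m.group(1) for m in _INSTANCE.finditer(top_verilog)}
    return sorted(names - VERILOG_KEYWORDS)


def generate_design(prompt, generate_top, library, validate, max_attempts=3):
    """Generate a top file, fetch its dependencies, validate, and retry."""
    feedback = None
    for attempt in range(1, max_attempts + 1):
        top = generate_top(prompt, feedback)             # Steps 2-3
        needed = instantiated_modules(top)
        missing = [name for name in needed if name not in library]
        if missing:                                      # likely hallucinated
            feedback = "missing modules: " + ", ".join(missing)
            continue
        files = {"top.v": top}
        files.update({f"{name}.v": library[name] for name in needed})  # Step 4
        ok, feedback = validate(files)                   # Step 5
        if ok:
            return files, attempt                        # Step 7: Valid
    return None, max_attempts                            # still Invalid
```

Treating a reference to a module absent from the library as an immediate failure is a design choice made for this sketch: it catches one common form of hallucination before the more expensive synthesis-based validation runs.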

<details>

<summary>x4.png Details</summary>

### Visual Description

## Diagram: APTPU Generation Process

### Overview

The image is a diagram illustrating the process of generating APTPU configurations. The diagram shows a series of steps, starting from configuration files, moving through verification and OpenRoad, and culminating in the APTPU-Gen dataset. The process involves iterative refinement and the incorporation of metrics and descriptions.

### Components/Axes

The diagram consists of the following key components:

1. **APTPU CONFIG FILES:** Represented by a stack of blue documents.

2. **Verification:** A light blue box containing the text "Verification" and "Verify, Synthesize."

3. **OpenRoad:** A light blue box containing the text "OpenRoad" and "PPA reports."

4. **APTPU + Metrics corpus:** Represented by a stack of orange documents.

5. **granulated prompt:** Represented by a scroll icon.

6. **APTPU + Metrics + Descriptions:** Represented by a stack of green documents.

7. **APTPU-Gen:** Represented by a light blue cylinder (database) with a scroll icon.

8. **Arrows:** Black arrows indicate the flow of the process. A pair of curved white arrows indicates an iterative process.

9. **Numbers:** Black circles containing white numbers (1-5) indicate the sequence of steps.

### Detailed Analysis

* **Step 1:** APTPU CONFIG FILES (blue) flow downward to a process involving gears and the text "Tune variables, features." This leads to the "Verification" box (light blue) containing the text "Verify, Synthesize."

* **Step 2:** The "Verification" box (light blue) flows rightward to the "OpenRoad" box (light blue) containing the text "PPA reports."

* **Step 3:** The "OpenRoad" box (light blue) flows upward to the "APTPU + Metrics corpus" (orange).

* **Iterative Process:** A pair of curved white arrows indicates an iterative process between the "APTPU + Metrics corpus" (orange) and the "Tune variables, features" step.

* **Step 4:** The "APTPU + Metrics corpus" (orange) flows rightward, combined with a "granulated prompt" (scroll icon), to "APTPU + Metrics + Descriptions" (green).

* **Step 5:** The "APTPU + Metrics + Descriptions" (green) flows downward to the "APTPU-Gen" database (light blue cylinder).

### Key Observations

* The process starts with configuration files and involves iterative tuning and verification.

* Metrics and descriptions are added to the APTPU data as the process progresses.

* The final output is stored in an "APTPU-Gen" database.

### Interpretation

The diagram illustrates a systematic process for generating APTPU configurations. The iterative nature of the process suggests that the configurations are refined over time, likely based on performance metrics and other feedback. The addition of metrics and descriptions indicates an effort to improve the quality and usability of the generated configurations. The use of OpenRoad suggests that the process is related to physical design automation (PDA) or electronic design automation (EDA). The "granulated prompt" suggests that the process may involve some form of automated prompt generation or refinement.

</details>

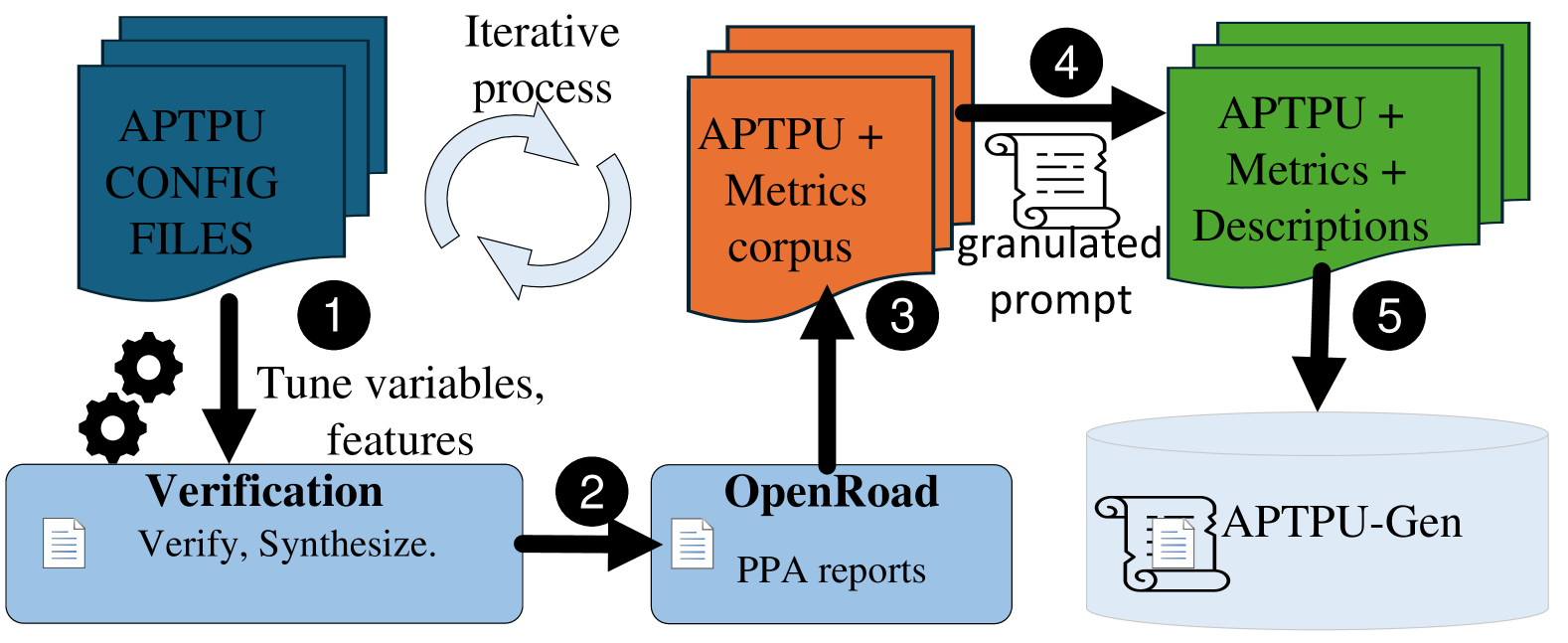

Figure 4: TPU-Gen dataset curation.

III-C Dataset Curation

Leveraging the parameterized RTL code of the TPU, we develop a script to systematically explore various architectural configurations and generate a wide range of designs within the proposed framework (step 1 in Fig. 4). The generated designs undergo synthesis and functional verification (step 2). Subsequently, the OpenROAD suite [46] is employed to produce PPA metrics (step 3). The PPA data is parsed using Pyverilog (step 4), resulting in the creation of a detailed, multi-level dataset that captures the reported PPA metrics (step 5). Steps 1 to 3 are iterated until all architectural variations are generated. The time required to generate each data point varies with the specific configuration. To efficiently populate the TPU-Gen dataset, we utilize multiple scripts that automate the generation of data points across different systolic array sizes, ensuring comprehensive coverage of the design space. Fig. 4 shows the detailed methodology underpinning our dataset creation. A fair comparison of this flow with prior works [10, 47] is difficult because we operate at a different design-space abstraction. However, given the scale of operation and the framework’s efficiency, our approach requires comparatively minimal effort.
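The configuration sweep in step 1 can be illustrated with a short enumeration script. The sketch below is an assumption about how such a sweep might look, not the authors' script: the function names are hypothetical, only a subset of the approximate adders and multipliers named in Fig. 5 is used, and the `WW` macro name is assumed by analogy with the `DW`, `M`, and `N` macros visible in Fig. 6.

```python
from itertools import product

DATA_WIDTHS = [8, 16, 32]                    # DW values listed in Fig. 5
WEIGHT_WIDTHS = [3, 4, 5, 6, 7, 8, 16, 32]   # WW values listed in Fig. 5
ADDERS = ["SETA", "HERLOA", "MHEAA"]         # subset of adders named in Fig. 5
MULTIPLIERS = ["BAM", "UDM", "ALM_LOA"]      # subset of multipliers named in Fig. 5


def make_header(dw, ww, adder, mult, m, n):
    """Build one Verilog configuration header as a string of `define lines."""
    lines = [
        f"`define DW {dw}",   # IFMAP bit width
        f"`define WW {ww}",   # weight bit width (macro name is an assumption)
        f"`define M {m}",     # systolic array rows
        f"`define N {n}",     # systolic array columns
        f"`define {adder}",   # selected approximate adder
        f"`define {mult}",    # selected approximate multiplier
    ]
    return "\n".join(lines)


def enumerate_headers(m=4, n=4):
    """Yield one header per (DW, WW, adder, multiplier) combination."""
    for dw, ww, adder, mult in product(DATA_WIDTHS, WEIGHT_WIDTHS, ADDERS, MULTIPLIERS):
        yield make_header(dw, ww, adder, mult, m, n)


if __name__ == "__main__":
    headers = list(enumerate_headers())
    print(len(headers))   # 3 * 8 * 3 * 3 combinations for one array size
    print(headers[0])
```

Each generated header would then be handed to the synthesis and PPA steps; repeating the sweep for other values of `m` and `n` covers the different systolic array sizes.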

<details>

<summary>x5.png Details</summary>

### Visual Description

## Diagram: Approximate Processing-in-Memory Architecture

### Overview

The image presents a diagram of an Approximate Processing-in-Memory (APiM) architecture. It illustrates the flow of data through Processing Array Units (PAUs) containing Approximate Processing Elements (APEs). The diagram also highlights the use of approximate adders and multipliers within the architecture.

### Components/Axes

* **Inputs:**

* `IFMap` (Input Feature Map): Two inputs labeled "IFMap" enter from the left, feeding into the PAU.

* `Weight`: Two inputs labeled "Weight" enter from the top, feeding into the PAU.

* **Processing Units:**

* `PAU` (Processing Array Unit): A light blue box containing the APEs.

* `APE` (Approximate Processing Element): Green boxes containing a multiplier, a shift operation, and an adder.

* `APTPU (MxN)`: Approximate Tensor Processing Unit, the overall architecture.

* **Operations:**

* `X`: Multiplication operation.

* `<<`: Shift operation.

* `+`: Addition operation.

* **Legend (Top-Right):**

* `DW`: Solid black line with an arrow, associated with the values `[8, 16, 32]`.

* `WW`: Dashed black line with an arrow, associated with the values `[3, 4, 5, 6, 7, 8, 16, 32]`.

* `Mult_DW`: Solid red line with an arrow, associated with the values `[2, 3, 4, ..., 12]`.

* **Approximate Components (Right):**

* `Approximate Adders`: Listed as "SETA, HERLOA, MHEAA...10 more".

* `Approximate Multipliers`: Listed as "BAM, UDM, ALM_LOA...10 more".

### Detailed Analysis

* **Data Flow:** The `IFMap` inputs enter the PAU and are processed by the APEs. The `Weight` inputs also feed into the PAU. Within each APE, the data undergoes multiplication, a shift operation, and addition. The output of each APE feeds into the next, and the final output goes to the `APTPU (MxN)`.

* **PAU Structure:** The PAU consists of a 2x2 grid of APEs. Each APE contains a multiplier (X), a shift operation (<<), and an adder (+).

* **Legend Interpretation:**

* The `DW` (Data Width) parameter takes values of 8, 16, or 32.

* The `WW` (Weight Width) parameter takes values from 3 to 8, and also 16 and 32.

* The `Mult_DW` (Multiplier Data Width) parameter takes values from 2 to 12.

* **Approximate Components:** The diagram highlights that approximate adders and multipliers are used within the architecture. Specific examples of approximate adders include SETA, HERLOA, and MHEAA. Examples of approximate multipliers include BAM, UDM, and ALM_LOA. The "...10 more" indicates that there are at least 10 other approximate adders and multipliers used.

### Key Observations

* The architecture utilizes approximate computing techniques to reduce power consumption and improve performance.

* The PAU is the core processing unit, containing multiple APEs.

* The diagram emphasizes the use of approximate adders and multipliers as key components of the architecture.

### Interpretation

The diagram illustrates a hardware architecture designed for efficient processing of data using approximate computing. The use of approximate adders and multipliers allows for trade-offs between accuracy and performance, potentially leading to significant improvements in energy efficiency. The PAU structure suggests a parallel processing approach, where multiple APEs operate simultaneously to accelerate computation. The diagram highlights the key components and data flow within the architecture, providing a high-level overview of its functionality. The architecture is likely intended for applications where some degree of error is tolerable in exchange for improved performance and energy efficiency, such as image processing or machine learning.

</details>

Figure 5: An example of one category and its design space parameters.

Fig. 5 visualizes the selection of different circuits to build PAUs and APEs that accommodate different input Data Widths (DW) (8, 16, 32 bits) and Weight Widths (WW) (ranging from 3 to 32 bits) to generate approximate MAC units. These feature units highlight the flexible template of the TPU and enhance its adaptability and performance across various DNN workloads. Including lower bit-width weights is particularly advantageous for highly quantized models, enabling efficient processing with reduced computational resources.

<details>

<summary>x6.png Details</summary>

### Visual Description

## Code Analysis and Summary

### Overview

The image presents a code snippet and summaries related to a Verilog design for a 4x4 systolic array. It includes metrics, preprocessor definitions, and block summaries at different levels of detail.

### Components/Axes

* **Metrics:** A dictionary containing "Area", "WNS", and "Total Power".

* **Preprocessor Definitions:** A series of `define` and `ifdef` statements that configure design parameters.

* **Block Summary:** A brief description of the functionality of specific code blocks.

* **Detailed Global Summary:** A more in-depth explanation of the Verilog code's purpose and features.

* **High-Level Global Summary:** A concise overview of the design's capabilities and benefits.

### Detailed Analysis

**Metrics:**

* Area: 29162

* WNS (Worst Negative Slack): -12.268

* Total Power: 4.21e-03

**Preprocessor Definitions:**

* `define DW 8`: Chooses IFMAP bitwidth.

* `define M 4`: Chooses M dimensions of the systolic array.

* `define N 4`: Chooses N dimensions of the systolic array.

* `define HERLOA //APADDER`

* `ifdef MITCHELL`:

* `define SHARED_PRE_APPROX`

* `elsif ALM_SOA`:

* `define SHARED_PRE_APPROX`

* `elsif ALM_LOA`:

* `define SHARED_PRE_APPROX`

* `elsif ROBA`:

* (Further definitions are truncated in the image)

**Block Summary:**

* block_0: Defines preprocessor macros to configure design parameters like nibble width (`NIBBLE_WIDTH`), IFMAP bitwidths (`DW`), systolic array dimensions (`M` and `N`), and approximate multipliers (`MULT_DW`).

* block_4: Related to different approximate methods, specifically the `ALM` macro.

**Detailed Global Summary:**

* The Verilog code represents a 4x4 systolic array implementation.

* It involves choices for multipliers, adders, and other design elements.

* Features include pre-approximation (`SHARED_PRE_APPROX`), controlled by macro selection.

* The code allows for adjusting preprocessor macros.

**High-Level Global Summary:**

* The Verilog code is for a 4x4 systolic array design using an adder (HERLOA) and configurable bitwidths.

* The design is highly configurable, allowing tailoring for improvements in area, power, and timing performance.

* This is beneficial for applications like machine learning.

### Key Observations

* The code uses preprocessor macros extensively for configuration.

* The design is focused on a 4x4 systolic array architecture.

* Approximation techniques are used, likely for optimization purposes.

* The design aims for improvements in area, power, and timing performance, which are crucial for machine learning applications.

### Interpretation

The image provides a snapshot of a configurable Verilog design for a systolic array. The preprocessor definitions allow for customization of various parameters, such as bitwidths and array dimensions. The summaries highlight the design's focus on optimization and its suitability for machine learning applications. The metrics provide a quantitative assessment of the design's performance in terms of area, timing (WNS), and power consumption. The use of conditional compilation (`ifdef`, `elsif`) suggests that different approximation techniques or design choices can be selected based on specific requirements or constraints.

</details>

Figure 6: An example of a data point by adapting MG-V format.

The TPU-Gen dataset offers 29,952 possible variations per systolic array size, with 8 different systolic array sizes to facilitate various workloads, spanning from 4 $×$ 4 for smaller loads to 256 $×$ 256 for larger DNN workloads. Accounting for the systolic size variations, the TPU-Gen dataset promises a total of 29,952 $×$ 8 = 239,616 data points with PPA metrics reported. While TPU-Gen is constantly growing with newer data points, we checkpoint our dataset creation at the currently reported 25,000 individual TPU designs. We provide two variations: $(i)$ a top module file containing the details of the entire circuit implementation, which can be used in cases such as RAG implementation to save computation resources, and $(ii)$ a detailed, multi-level granulated dataset, as depicted in Fig. 6, curated by adapting MG-Verilog [17] to assist the LLM in generating Verilog code and to support the development of a highly sophisticated, fine-tuned model. This model facilitates the automated generation of individual hardware modules, intelligent integration, deployment, and reuse across various designs and architectures. Please note that due to the domain-specific nature of the dataset, some data redundancy is inevitable, as similar modules are reused and reconfigured to construct new TPUs with varying architectural configurations. This structured dataset enables efficient exploration and customization of TPU designs while ensuring that the generated modules can be systematically adapted for different design requirements, leading to enhanced flexibility and scalability in hardware design automation. Additionally, we provide detailed metrics for each design iteration, which aid the LLM in generating budget-constrained designs or in creating an efficient design space exploration strategy to accelerate the result optimization process.
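The dataset size arithmetic above can be checked in a few lines. The coverage figure printed at the end is derived here from the stated numbers and is not reported in the text.

```python
VARIATIONS_PER_ARRAY_SIZE = 29_952   # stated in the text
ARRAY_SIZES = 8                      # 4x4 up to 256x256, stated in the text
CHECKPOINTED_DESIGNS = 25_000        # current checkpoint, stated in the text

# Full design space promised by the dataset
total_points = VARIATIONS_PER_ARRAY_SIZE * ARRAY_SIZES

# Share of that space covered by the current checkpoint (derived, not reported)
coverage = CHECKPOINTED_DESIGNS / total_points

print(f"total design points: {total_points:,}")   # 239,616
print(f"checkpoint coverage: {coverage:.1%}")     # 10.4%
```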

TABLE III: Prompts to successfully generate exact TPU modules via TPU-Gen.

| LLM Model | Module Generation Pass@1 | Module Generation Pass@3 | Module Generation Pass@5 | Module Generation Pass@10 | Module Integration Pass@1 | Module Integration Pass@3 | Module Integration Pass@5 | Module Integration Pass@10 |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Mistral-7B (Q3) | 17% | 83% | 100% | 100% | 0% | 25% | 75% | 100% |
| CodeLlama-7B (Q4) | 0% | 50% | 83% | 100% | 0% | 50% | 75% | 100% |
| CodeLlama-13B (Q4) | 66% | 83% | 100% | 100% | 25% | 75% | 100% | 100% |
| Claude 3.5 Sonnet | 83% | 100% | 100% | 100% | 75% | 100% | 100% | 100% |
| ChatGPT-4o | 83% | 100% | 100% | 100% | 50% | 100% | 100% | 100% |
| Gemini Advanced | 50% | 50% | 74% | 91% | 25% | 75% | 74% | 91% |

IV Experiment Results

IV-A Objectives

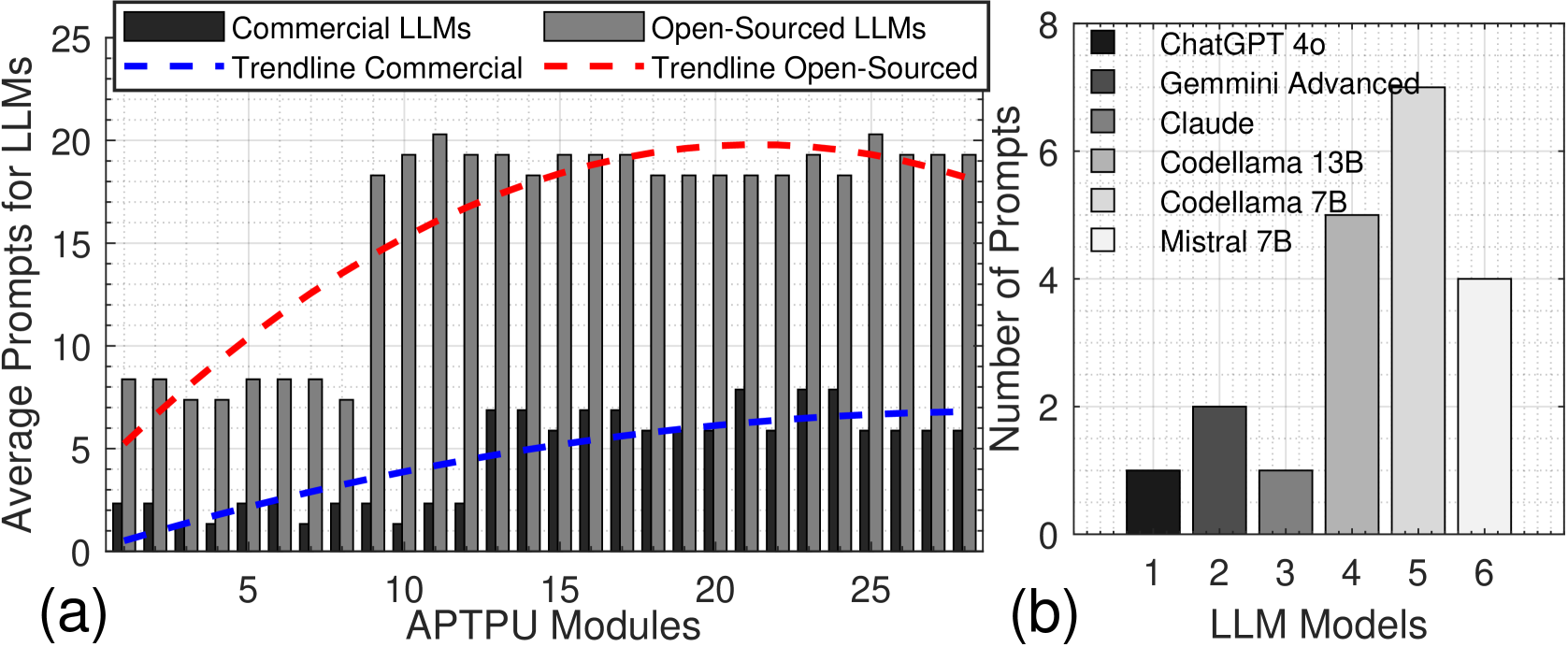

We designed four distinct experiments employing various approaches, each tailored to the unique capabilities of LLMs such as GPT [48], Gemini [49], and Claude [50], as well as the best open-source models from the leaderboard [51]. Each model is deployed in experiments aligning with the study’s objectives and anticipated outcomes. Experiment 1 observes the prompting mechanism that helps the LLM generate the desired output through ICL; with this knowledge, we develop the prompt template discussed in Section III-B. Experiment 2 focuses on adapting the proposed TPU-Gen framework by fine-tuning LLMs. For fine-tuning, we used 4 $×$ A100 GPUs with 80GB VRAM. Experiment 3 demonstrates the effectiveness of RAG in TPU-Gen and its applicability to hardware design. Experiment 4 tests the TPU-Gen framework’s ability to generate designs efficiently with an industry-standard 45nm technology library. Throughout the process, we also consider hardware under the given PPA budget to ensure the feasibility of achieving the objectives outlined in the initial phases.

IV-B Experiments and Results

<details>

<summary>x7.png Details</summary>

### Visual Description

## Bar Charts: LLM Prompt Analysis

### Overview

The image presents two bar charts comparing the average prompts for different Large Language Models (LLMs). Chart (a) compares commercial and open-sourced LLMs against the number of APTPU modules. Chart (b) compares specific LLM models based on the number of prompts.

### Components/Axes

**Chart (a):**

* **Title:** Average Prompts for LLMs vs. APTPU Modules

* **X-axis:** APTPU Modules, ranging from 1 to 25 in increments of 5.

* **Y-axis:** Average Prompts for LLMs, ranging from 0 to 25 in increments of 5.

* **Data Series:**

* Commercial LLMs (dark gray bars)

* Open-Sourced LLMs (light gray bars)

* Trendline Commercial (blue dashed line)

* Trendline Open-Sourced (red dashed line)

* **Legend:** Located at the top of chart (a).

**Chart (b):**

* **Title:** Number of Prompts vs. LLM Models

* **X-axis:** LLM Models, numbered 1 to 6.

* **Y-axis:** Number of Prompts, ranging from 0 to 8 in increments of 2.

* **Data Series:**

* Model 1: ChatGPT 4o (dark gray bar)

* Model 2: Gemini Advanced (gray bar)

* Model 3: Claude (light gray bar)

* Model 4: Codellama 13B (lighter gray bar)

* Model 5: Codellama 7B (even lighter gray bar)

* Model 6: Mistral 7B (white bar)

* **Legend:** Located on the right side of chart (b).

### Detailed Analysis

**Chart (a):**

* **Commercial LLMs:** The dark gray bars representing commercial LLMs show a generally low number of average prompts, ranging from approximately 1 to 3 across different APTPU Modules. The trendline (blue dashed line) shows a slight upward slope, indicating a marginal increase in average prompts as the number of APTPU Modules increases.

* APTPU Modules = 1: ~1.5 prompts

* APTPU Modules = 5: ~2 prompts

* APTPU Modules = 10: ~2.5 prompts

* APTPU Modules = 15: ~2.7 prompts

* APTPU Modules = 20: ~3 prompts

* APTPU Modules = 25: ~3.2 prompts

* **Open-Sourced LLMs:** The light gray bars representing open-sourced LLMs show a significantly higher number of average prompts compared to commercial LLMs. The values range from approximately 8 to 20. The trendline (red dashed line) shows an initial steep upward slope, peaking around 15 APTPU Modules, then slightly decreasing.

* APTPU Modules = 1: ~8 prompts

* APTPU Modules = 5: ~8 prompts

* APTPU Modules = 10: ~18 prompts

* APTPU Modules = 15: ~20 prompts

* APTPU Modules = 20: ~18 prompts

* APTPU Modules = 25: ~19 prompts

**Chart (b):**

* **ChatGPT 4o (Model 1):** The dark gray bar indicates approximately 1 prompt.

* **Gemini Advanced (Model 2):** The gray bar indicates approximately 2 prompts.

* **Claude (Model 3):** The light gray bar indicates approximately 2 prompts.

* **Codellama 13B (Model 4):** The lighter gray bar indicates approximately 7 prompts.

* **Codellama 7B (Model 5):** The even lighter gray bar indicates approximately 5 prompts.

* **Mistral 7B (Model 6):** The white bar indicates approximately 4 prompts.

### Key Observations

* Open-sourced LLMs generally require more prompts than commercial LLMs, especially in the range of 10-20 APTPU Modules.

* The number of APTPU Modules has a more significant impact on Open-Sourced LLMs than Commercial LLMs.

* Codellama 13B (Model 4) requires the most prompts among the specific LLM models compared in chart (b).

* ChatGPT 4o (Model 1) requires the fewest prompts among the specific LLM models compared in chart (b).

### Interpretation

The data suggests that open-sourced LLMs may be less efficient or require more interaction (prompts) to achieve desired results compared to commercial LLMs, particularly as the number of APTPU modules increases. This could be due to differences in model architecture, training data, or optimization strategies. The trendline for open-sourced LLMs indicates a non-linear relationship with APTPU modules, suggesting that there may be an optimal range for prompt efficiency.

The comparison of specific LLM models in chart (b) highlights the variability in prompt requirements across different models. Codellama 13B stands out as requiring significantly more prompts than other models, while ChatGPT 4o requires the fewest. This information could be valuable for users selecting an LLM based on their specific needs and resource constraints.

</details>

Figure 7: Average TPU-Gen prompts for (a) Module Generation, and (b) Module Integration via LLMs.

IV-B 1 Experiment 1: ICL-Driven TPU Generation and Approximate Design Adaptation.

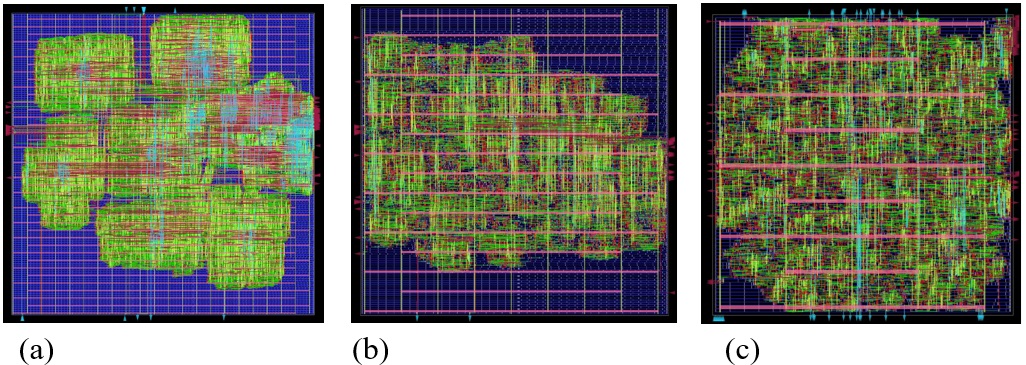

We evaluate the capability of LLMs to generate and synthesize a novel TPU architecture and its approximate version using TPU-Gen. We refined the prompt template from [18] to better harness LLM capabilities. LLM performance is assessed on two metrics: $(i)$ Module Generation, the ability to generate the required modules, and $(ii)$ Module Integration, the capability to construct the top module by integrating components. We tested commercial models such as [48, 49] via chat interfaces and the open-source models listed in Table III using LM Studio [52]. For the TPU, we successfully developed the design and obtained the GDSII layout (Fig. 8 (a)). Commercial models performed well with a single prompt, averaging 72% in module generation and 50% in integration at pass@1. Open-source models improved as k increased: per Table III, their average rose from 72% at pass@3 to 100% at pass@10 in module generation, and from 50% to 100% over the same range in integration. For the approximate TPU, which involves approximate circuit algorithms, we provided example circuits and used ICL and Chain of Thought (CoT) to guide the LLMs. Open-source models struggled due to a lack of specialized knowledge, as shown in Fig. 7. The design layout from this experiment is in Fig. 8 (b). All outputs were manually verified using test benches. This is the first work to generate both exact and approximate TPU architectures through LLM prompting. However, significant human expertise and intervention are required, especially for complex architectures such as approximate circuits. To minimize human involvement, we implement fine-tuning.

Takeaway 1. LLMs with efficient prompting are capable of generating exact and approximate TPU modules and integrating them to create complete designs. However, extensive human involvement is required, especially for novel architectures. Fine-tuning LLMs is necessary to reduce human intervention and facilitate the exploration of new designs.
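The group averages quoted for Experiment 1 can be reproduced from the entries of Table III. The sketch below hard-codes the table values; the grouping into commercial and open-source models follows the text, while the dictionary layout and the helper name `average` are illustrative choices.

```python
from statistics import mean

# Per model: (module generation, module integration), each as pass@1, 3, 5, 10 in percent
TABLE_III = {
    "Mistral-7B (Q3)":    ((17, 83, 100, 100), (0, 25, 75, 100)),
    "CodeLlama-7B (Q4)":  ((0, 50, 83, 100), (0, 50, 75, 100)),
    "CodeLlama-13B (Q4)": ((66, 83, 100, 100), (25, 75, 100, 100)),
    "Claude 3.5 Sonnet":  ((83, 100, 100, 100), (75, 100, 100, 100)),
    "ChatGPT-4o":         ((83, 100, 100, 100), (50, 100, 100, 100)),
    "Gemini Advanced":    ((50, 50, 74, 91), (25, 75, 74, 91)),
}
COMMERCIAL = ["Claude 3.5 Sonnet", "ChatGPT-4o", "Gemini Advanced"]
OPEN_SOURCE = ["Mistral-7B (Q3)", "CodeLlama-7B (Q4)", "CodeLlama-13B (Q4)"]

GENERATION, INTEGRATION = 0, 1
K_INDEX = {1: 0, 3: 1, 5: 2, 10: 3}


def average(models, task, k):
    """Mean pass@k (percent) over a group of models for one task."""
    return mean(TABLE_III[m][task][K_INDEX[k]] for m in models)


print(average(COMMERCIAL, GENERATION, 1))     # 72
print(average(COMMERCIAL, INTEGRATION, 1))    # 50
print(average(OPEN_SOURCE, GENERATION, 3))    # 72
print(average(OPEN_SOURCE, INTEGRATION, 3))   # 50
print(average(OPEN_SOURCE, GENERATION, 10))   # 100
```

For comparison, the same helper gives an open-source module generation average of about 27.7% at pass@1, which shows how strongly these models depend on repeated sampling.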

IV-B 2 Experiment 2: Full TPU-Gen Implementation

This experiment investigates cost-efficient approaches for adapting domain-specific language models to hardware design. In the previous experiment, we observed that limited spatial and hierarchical hardware knowledge hindered LLM performance in integrating circuits. The TPU-Gen template (Fig. 2) addresses this by delegating creative tasks to the LLM and retrieving dependent modules via RAG, optimizing AI accelerator design while reducing computational overhead and minimizing LLM hallucinations. ICL experiments show that fine-tuning enhances LLM reliability. TPU-Gen proposes a way to develop domain-specific LLMs with minimal data. The experiment used version 1 of the TPU-Gen dataset, comprising 5,000 Verilog headers with DW and WW inputs. This dataset comprises systolic array implementations with biased approximate circuit variations. We split the data statically 80:20 for training and testing open-source LLMs [51], with two primary goals: $(1)$ analyzing the impact of the prompt template generator on the fine-tuned LLM’s performance (Table IV), and $(2)$ investigating the RAG model for hardware development.

<details>

<summary>extracted/6256789/Figures/GDSII.jpg Details</summary>

### Visual Description

## Integrated Circuit Layouts

### Overview

The image presents three different layouts of integrated circuits, labeled (a), (b), and (c). Each layout shows a complex arrangement of interconnected components and wiring on a grid-based background. The layouts differ in their overall structure and component density.

### Components/Axes

* **Grid Background:** A blue grid provides a spatial reference for the layout.

* **Components:** Represented by green and other colored shapes, indicating different functional blocks or circuit elements.

* **Wiring:** Red, light blue, and other colored lines represent the interconnections between components.

* **Labels:** The layouts are labeled (a), (b), and (c) at the bottom.

### Detailed Analysis

**Layout (a):**

* The layout is organized into several distinct clusters or blocks of components.

* The clusters are interconnected by a network of wiring.

* The wiring density appears to be higher within the clusters than between them.

* The layout is not uniform, with some areas having a higher concentration of components than others.

* There are several red triangles along the left edge of the layout.

**Layout (b):**

* The layout is more uniformly distributed compared to layout (a).

* The components are arranged in a more regular pattern.

* There are horizontal red lines that appear to be power or ground rails.

* The wiring density is relatively consistent across the layout.

* There are several red triangles along the left edge of the layout.

**Layout (c):**

* Similar to layout (b), the components are arranged in a relatively uniform pattern.

* There are horizontal red lines that appear to be power or ground rails.

* The wiring density is relatively consistent across the layout.

* There are several light blue triangles along the top edge of the layout.

### Key Observations

* Layout (a) has a clustered structure, while layouts (b) and (c) have a more uniform structure.

* The wiring density varies across the layouts, with layout (a) having higher density within the clusters.

* The red lines in layouts (b) and (c) suggest a structured power or ground distribution network.

### Interpretation

The image shows different approaches to integrated circuit layout. Layout (a) might represent a design where functional blocks are physically grouped together, while layouts (b) and (c) might represent designs optimized for uniform component distribution and power delivery. The differences in layout structure can impact performance, power consumption, and manufacturability of the integrated circuit. The red triangles along the edges of layouts (a) and (b), and the light blue triangles along the top edge of layout (c) might be alignment marks or test points.

</details>

Figure 8: A GDSII layout of (a) TPU, (b) TPU by prompting LLM, (c) approximate TPU by TPU-Gen framework.

All models used Low-Rank Adaptation (LoRA) fine-tuning with the Adam optimizer at a learning rate of $1\times10^{-5}$. The fine-tuned models were evaluated on whether they generate the desired TPU from a random prompt at pass@$1$. From Table IV, we can observe that the outputs without the prompt generator are labeled as failures, as they were unsuitable for further development and RAG integration. When the same prompt is passed through the prompt-template generator, a single try achieves an accuracy of 86.6%. Further, we passed the generated Verilog headers to RAG for module retrieval. According to [11], LLMs tend to prioritize creativity and finding innovative solutions, which often results in straying from the data. To address this, we employed RAG, a compute- and cost-efficient method. This shows that fine-tuning combined with RAG can greatly enhance performance. Fig. 8 (c) shows the GDSII layout of the design generated by the TPU-Gen framework.

TABLE IV: Prompt Generator vs Human inputs to Fine-tuned models.

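| Model | Prompt Generator: Pass | Prompt Generator: Fail | Human Input: Pass | Human Input: Fail |
| --- | --- | --- | --- | --- |
| CodeLlama-7B-hf | 27 | 03 | 01 | 29 |
| CodeQwen1.5-7B | 25 | 05 | 0 | 30 |
| Mistral-7B | 28 | 02 | 02 | 28 |
| Starcoder2-7B | 24 | 06 | 0 | 30 |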
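The 86.6% accuracy quoted for the prompt generator is consistent with the counts in Table IV. The check below assumes that the four numeric columns read as pass and fail counts with the prompt generator, followed by pass and fail counts with human inputs; this reading is inferred from each pair summing to 30 and is not stated explicitly in the table.

```python
# Table IV counts per model as (pass, fail).
# ASSUMPTION: columns are read as prompt generator first, human input second.
TABLE_IV = {
    "CodeLlama-7B-hf": {"generator": (27, 3), "human": (1, 29)},
    "CodeQwen1.5-7B":  {"generator": (25, 5), "human": (0, 30)},
    "Mistral-7B":      {"generator": (28, 2), "human": (2, 28)},
    "Starcoder2-7B":   {"generator": (24, 6), "human": (0, 30)},
}


def pass_rate(condition: str) -> float:
    """Fraction of passing designs across all models for one input condition."""
    passed = sum(row[condition][0] for row in TABLE_IV.values())
    total = sum(sum(row[condition]) for row in TABLE_IV.values())
    return passed / total


print(f"prompt generator: {pass_rate('generator'):.2%}")   # 104 of 120 designs
print(f"human input:      {pass_rate('human'):.2%}")       # 3 of 120 designs
```

Under this reading, 104 of 120 designs pass with the prompt generator, about 86.67%, which matches the reported 86.6% up to rounding.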

Takeaway 2. Prompting techniques such as the prompt template steer the LLM to generate the desired results after fine-tuning, as shown by the 86% success rate in generation. RAG is a cost-efficient method to generate the hardware modules reliably, completing the entire Verilog design for an application with minimal computational overhead.

IV-B 3 Experiment 3: Significance of RAG

To assess the effectiveness of RAG in the TPU-Gen framework, we evaluated 1,000 Verilog header codes generated by fine-tuned LLMs under two conditions: with and without RAG integration. Table V presents results over 30 designs tested by our framework to generate complete project files. Without RAG, failures occurred due to output token limitations and hallucinated variables. RAG is essential because the design cannot be compiled from a standalone file. In the RAG-enabled pipeline, validated header codes were provided and the required modules were dynamically retrieved from the RAG database, ensuring fully functional and accurate designs. Conversely, models without RAG relied solely on internal knowledge, leading to hallucinations, token constraints, and incomplete designs. Models using RAG consistently achieved pass rates of at least 95%, with Mistral-7B and CodeLlama-7B-hf attaining 100% success. In contrast, all models failed entirely without RAG, underscoring its pivotal role in ensuring design accuracy and addressing LLM limitations. RAG provides a robust solution to key challenges in fine-tuned LLMs for TPU hardware design by retrieving external information from the RAG database, ensuring contextual accuracy, and significantly reducing hallucinations. Additionally, RAG dynamically fetches dependencies in a modular manner, enabling the generation of complete and accurate designs without exceeding token limits. RAG is a promising solution in this context because our models were fine-tuned with only Verilog header data detailing design features. By contrast, fine-tuning models with the entire design data would expose LLMs to severe hallucinations and token limitations, making it challenging to generate detailed and functional designs.

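TABLE V: Significance of RAG in TPU-Gen.

| Model | With RAG: Pass (%) | With RAG: Fail (%) | Without RAG: Pass (%) | Without RAG: Fail (%) |
| --- | --- | --- | --- | --- |
| CodeLlama-7B-hf | 100 | 0 | 0 | 100 |
| Mistral-7B | 100 | 0 | 0 | 100 |
| CodeQwen1.5-7B | 95 | 5 | 0 | 100 |
| StarCoder2-7B | 98 | 2 | 0 | 100 |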
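The modular dependency fetching described in Experiment 3 can be illustrated with a small resolver. This is a deliberately simplified sketch, not the TPU-Gen retrieval pipeline: the in-memory `MODULE_DB` dictionary stands in for the RAG database, retrieval is done by exact module name rather than by embedding similarity, and all module names and sources are hypothetical.

```python
import re

# Hypothetical module store standing in for the RAG database: name -> Verilog source
MODULE_DB = {
    "systolic_array": "module systolic_array(); pe u_pe(); endmodule",
    "pe": "module pe(); adder u_add(); endmodule",
    "adder": "module adder(); endmodule",
}

# Matches "<type> <instance_name> (" pairs; keywords such as "module" also
# match and are filtered out below because they are not keys in the store.
INSTANCE_RE = re.compile(r"\b([A-Za-z_]\w*)\s+[A-Za-z_]\w*\s*\(")


def resolve_dependencies(top_source, db):
    """Collect every module reachable from the top-level source, transitively."""
    resolved = {}
    pending = [top_source]
    while pending:
        source = pending.pop()
        for name in INSTANCE_RE.findall(source):
            if name in db and name not in resolved:
                resolved[name] = db[name]
                pending.append(db[name])   # scan the dependency for its own instances
    return resolved


top = "module tpu_top(); systolic_array u_sa(); endmodule"
print(sorted(resolve_dependencies(top, MODULE_DB)))
```

Because each dependency is fetched from the store instead of being generated token by token, the LLM only has to emit the short top-level header, which is the property the text credits for avoiding token limits and hallucinated variables.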

Takeaway 3. The experiment highlights the significance of using RAG with a fine-tuned model to avoid hallucinations while still letting the LLM be creative consistently.

IV-B 4 Experiment 4: Design Generation Efficiency

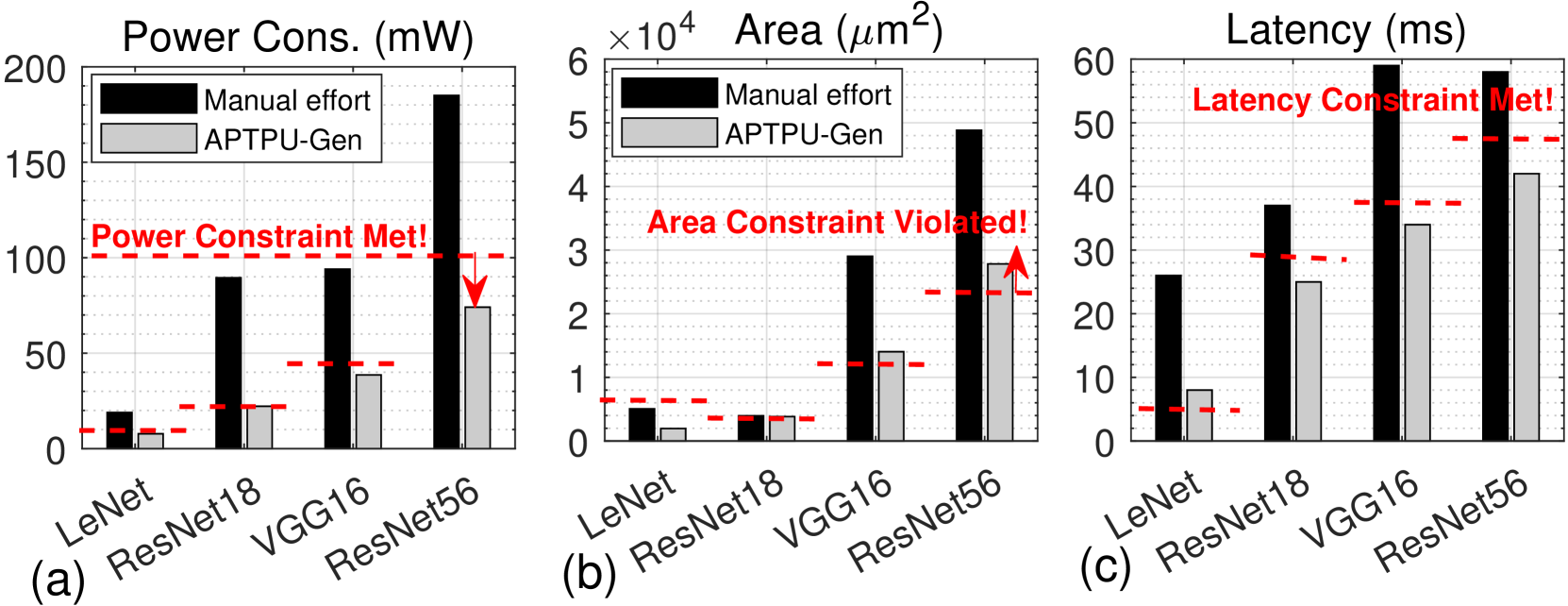

Building on the successful generation of the approximate TPU in Experiment 2, we evaluate and benchmark the architectures produced by the TPU-Gen framework. Because this work is the first of its kind, we compare against manual optimization performed by expert human designers, focusing on power, area, and latency, as shown in Fig. 9 (a)-(c). We utilize four DNN architectures for this evaluation: LeNet, ResNet18, VGG16, and ResNet56, performing inference tasks on the MNIST, CIFAR-10, SVHN, and CIFAR-100 datasets. In the manually optimized designs, a skilled hardware engineer fine-tunes parameters within the TPU template. This iterative optimization process is repeated until no further performance gains can be achieved within a reasonable timeframe of approximately one day [9], or until the expert determines, based on empirical results, that additional refinements would yield minimal benefits. Using the PPA metrics as reference values (e.g., 100 mW, 0.25 mm$^2$, 48 ms for ResNet56), both TPU-Gen and the manual user are tasked with generating the TPU architecture. Fig. 9 illustrates that across a range of network architectures, TPU-Gen consistently yields results with minimal deviation from the reference benchmarks. In contrast, the manual designs exhibit significant violations in terms of PPA.
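Checking a candidate design against PPA reference values can be sketched as follows. The budget uses the ResNet56 reference values quoted above; the `PPA` class, the function name, and both candidate designs are hypothetical and are not measurements from this work.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class PPA:
    power_mw: float
    area_mm2: float
    latency_ms: float


# Reference values quoted in the text for ResNet56
RESNET56_BUDGET = PPA(power_mw=100.0, area_mm2=0.25, latency_ms=48.0)


def violated_metrics(design: PPA, budget: PPA) -> List[str]:
    """Return the names of the metrics on which the design exceeds the budget."""
    checks = {
        "power": design.power_mw > budget.power_mw,
        "area": design.area_mm2 > budget.area_mm2,
        "latency": design.latency_ms > budget.latency_ms,
    }
    return [name for name, exceeded in checks.items() if exceeded]


# Hypothetical candidates for illustration only, NOT results from the paper
within_budget = PPA(power_mw=80.0, area_mm2=0.20, latency_ms=42.0)
over_budget = PPA(power_mw=170.0, area_mm2=0.50, latency_ms=47.0)

print(violated_metrics(within_budget, RESNET56_BUDGET))   # []
print(violated_metrics(over_budget, RESNET56_BUDGET))     # ['power', 'area']
```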

<details>

<summary>x8.png Details</summary>

### Visual Description

## Bar Chart: Power Consumption, Area, and Latency Comparison

### Overview

The image presents three bar charts comparing the power consumption, area, and latency of different neural network architectures (LeNet, ResNet18, VGG16, and ResNet56) using manual effort versus an automated approach (APTPU-Gen). Each chart also indicates whether a constraint (power, area, latency) was met or violated.

### Components/Axes

* **Chart Titles:**

* (a) Power Cons. (mW)

* (b) Area (µm²)

* (c) Latency (ms)

* **Y-Axes:**

* Power Consumption: Scale from 0 to 200 mW, incrementing by 50 mW.

* Area: Scale from 0 to 6 x 10^4 µm², incrementing by 1 x 10^4 µm².

* Latency: Scale from 0 to 60 ms, incrementing by 10 ms.

* **X-Axes:**

* Categories: LeNet, ResNet18, VGG16, ResNet56

* **Legend:** Located in the top-left of each chart.

* Black: Manual effort

* Gray: APTPU-Gen

* **Constraints:**

* Power Constraint Met! (Chart a)

* Area Constraint Violated! (Chart b)

* Latency Constraint Met! (Chart c)

### Detailed Analysis

**Chart (a): Power Consumption (mW)**

* **LeNet:**

* Manual effort: ~15 mW

* APTPU-Gen: ~5 mW

* **ResNet18:**

* Manual effort: ~90 mW

* APTPU-Gen: ~25 mW

* **VGG16:**

* Manual effort: ~95 mW

* APTPU-Gen: ~40 mW

* **ResNet56:**

* Manual effort: ~170 mW

* APTPU-Gen: ~80 mW

* **Power Constraint Met!:** A horizontal dashed red line is present at approximately 100 mW, with a downward-pointing arrow indicating that the APTPU-Gen power consumption for ResNet56 is below this constraint. Another dashed red line is present at approximately 25 mW.

**Chart (b): Area (µm²)**

* **LeNet:**

* Manual effort: ~0.4 x 10^4 µm²

* APTPU-Gen: ~0.2 x 10^4 µm²

* **ResNet18:**

* Manual effort: ~0.4 x 10^4 µm²

* APTPU-Gen: ~0.3 x 10^4 µm²

* **VGG16:**

* Manual effort: ~1.5 x 10^4 µm²

* APTPU-Gen: ~1.2 x 10^4 µm²

* **ResNet56:**

* Manual effort: ~5.0 x 10^4 µm²

* APTPU-Gen: ~3.0 x 10^4 µm²

* **Area Constraint Violated!:** A horizontal dashed red line is present at approximately 2.5 x 10^4 µm², with an upward-pointing arrow indicating that the manual effort area for ResNet56 exceeds this constraint. Another dashed red line is present at approximately 0.5 x 10^4 µm².

**Chart (c): Latency (ms)**

* **LeNet:**

* Manual effort: ~8 ms

* APTPU-Gen: ~2 ms

* **ResNet18:**

* Manual effort: ~27 ms

* APTPU-Gen: ~25 ms

* **VGG16:**

* Manual effort: ~35 ms

* APTPU-Gen: ~28 ms

* **ResNet56:**

* Manual effort: ~47 ms

* APTPU-Gen: ~42 ms

* **Latency Constraint Met!:** A horizontal dashed red line is present at approximately 35 ms, indicating that the latency for ResNet56 is below this constraint. Another dashed red line is present at approximately 5 ms.

### Key Observations

* APTPU-Gen consistently results in lower power consumption, area, and latency compared to manual effort across all network architectures.

* The power constraint is met for all architectures using APTPU-Gen.

* The area constraint is violated by the manual effort for ResNet56.

* The latency constraint is met for all architectures.

### Interpretation

The data suggests that the APTPU-Gen automated approach is more efficient than manual effort in terms of power consumption, area, and latency for the tested neural network architectures. The fact that the area constraint is violated by manual effort for ResNet56 highlights the potential benefits of using automated tools for optimizing network design, especially for larger and more complex architectures. The "constraints met" annotations suggest that the APTPU-Gen tool is designed to operate within predefined performance limits.

</details>

Figure 9: PPA metrics comparison for TPU architectures generated by TPU-Gen and the manual user: (a) Power consumption, (b) Area, (c) Latency.

Takeaway 4. TPU-Gen consistently yields results with minimal deviation from the PPA reference, whereas the manual designs exhibit significant violations.
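The comparison against the PPA reference can be illustrated with a minimal sketch. It assumes that "deviation" means the signed relative difference from the reference and that a "violation" is any metric exceeding its reference value; the paper does not spell out these definitions. The reference tuple uses the ResNet56 values quoted in the text, while the two candidate designs and all names (`PPA`, `relative_deviation`, `violations`) are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class PPA:
    """Power, area, and latency of one design point."""
    power_mw: float
    area_mm2: float
    latency_ms: float


def relative_deviation(design: PPA, reference: PPA) -> Dict[str, float]:
    """Signed relative deviation per metric; positive means over the reference."""
    return {
        "power": (design.power_mw - reference.power_mw) / reference.power_mw,
        "area": (design.area_mm2 - reference.area_mm2) / reference.area_mm2,
        "latency": (design.latency_ms - reference.latency_ms) / reference.latency_ms,
    }


def violations(design: PPA, reference: PPA, tolerance: float = 0.0) -> List[str]:
    """Names of the metrics that exceed the reference by more than `tolerance`."""
    deviations = relative_deviation(design, reference)
    return [name for name, dev in deviations.items() if dev > tolerance]


# Reference values quoted in the text for ResNet56.
reference = PPA(power_mw=100.0, area_mm2=0.25, latency_ms=48.0)

# Hypothetical design points, NOT measurements from the paper.
candidate_a = PPA(power_mw=90.0, area_mm2=0.24, latency_ms=46.0)
candidate_b = PPA(power_mw=150.0, area_mm2=0.40, latency_ms=47.0)

for label, design in (("candidate_a", candidate_a), ("candidate_b", candidate_b)):
    print(label, relative_deviation(design, reference), violations(design, reference))
```

A non-zero `tolerance` would allow a small overshoot before a metric counts as violated; whether the evaluation in Fig. 9 uses such a margin is not stated.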

V Conclusions

This paper introduces TPU-Gen, a novel dataset and framework for TPU generation, addressing the complexities of generating AI accelerators amidst rapid AI model evolution. A key challenge, hallucinated variables, is mitigated using a RAG approach that dynamically adapts hardware modules. RAG enables cost-effective, full-scale RTL code generation, achieving budget-constrained outputs via fine-tuned models. Our extensive experimental evaluations demonstrate superior performance, power, and area efficiency, with an average reduction in area and power of 92% and 96% from the manual optimization reference values. These results set new standards for driving advancements in next-generation design automation tools powered by LLMs. We are committed to releasing the dataset and fine-tuned models publicly if accepted.

References

- [1] N. Jouppi, C. Young, N. Patil, and D. Patterson, “Motivation for and evaluation of the first tensor processing unit,” IEEE Micro, vol. 38, no. 3, pp. 10–19, 2018.

- [2] H. Genc et al., “Gemmini: Enabling systematic deep-learning architecture evaluation via full-stack integration,” in 2021 58th ACM/IEEE Design Automation Conference (DAC). IEEE, 2021, pp. 769–774.

- [3] H. Sharma, J. Park, D. Mahajan, E. Amaro, J. K. Kim, C. Shao, A. Mishra, and H. Esmaeilzadeh, “From high-level deep neural models to fpgas,” in 2016 49th Annual IEEE/ACM International Symposium on Microarchitecture (MICRO). IEEE, 2016, pp. 1–12.

- [4] W.-Q. Ren et al., “A survey on collaborative dnn inference for edge intelligence,” Machine Intelligence Research, vol. 20, no. 3, pp. 370–395, 2023.

- [5] D. Vungarala, M. Morsali, S. Tabrizchi, A. Roohi, and S. Angizi, “Comparative study of low bit-width dnn accelerators: Opportunities and challenges,” in 2023 IEEE 66th International Midwest Symposium on Circuits and Systems (MWSCAS). IEEE, 2023, pp. 797–800.

- [6] P. Xu and Y. Liang, “Automatic code generation for rocket chip rocc accelerators,” 2020.

- [7] S. Angizi, Z. He, A. Awad, and D. Fan, “Mrima: An mram-based in-memory accelerator,” IEEE Transactions on Computer-Aided Design of Integrated Circuits and Systems, vol. 39, no. 5, pp. 1123–1136, 2019.

- [8] K. Chang, Y. Wang, H. Ren, M. Wang, S. Liang, Y. Han, H. Li, and X. Li, “Chipgpt: How far are we from natural language hardware design,” arXiv preprint arXiv:2305.14019, 2023.

- [9] Y. Fu, Y. Zhang, Z. Yu, S. Li, Z. Ye, C. Li, C. Wan, and Y. C. Lin, “Gpt4aigchip: Towards next-generation ai accelerator design automation via large language models,” in 2023 IEEE/ACM International Conference on Computer Aided Design (ICCAD). IEEE, 2023, pp. 1–9.

- [10] S. Thakur, B. Ahmad, H. Pearce, B. Tan, B. Dolan-Gavitt, R. Karri, and S. Garg, “Verigen: A large language model for verilog code generation,” ACM Transactions on Design Automation of Electronic Systems, vol. 29, no. 3, pp. 1–31, 2024.

- [11] X. Jiang, Y. Tian, F. Hua, C. Xu, Y. Wang, and J. Guo, “A survey on large language model hallucination via a creativity perspective,” arXiv preprint arXiv:2402.06647, 2024.

- [12] J. Blocklove, S. Garg, R. Karri, and H. Pearce, “Chip-chat: Challenges and opportunities in conversational hardware design,” in 2023 ACM/IEEE 5th Workshop on Machine Learning for CAD (MLCAD). IEEE, 2023, pp. 1–6.

- [13] S. Thakur, J. Blocklove, H. Pearce, B. Tan, S. Garg, and R. Karri, “Autochip: Automating hdl generation using llm feedback,” arXiv preprint arXiv:2311.04887, 2023.

- [14] R. Ma, Y. Yang, Z. Liu, J. Zhang, M. Li, J. Huang, and G. Luo, “Verilogreader: Llm-aided hardware test generation,” arXiv:2406.04373v1, 2024.

- [15] W. Fang et al., “Assertllm: Generating and evaluating hardware verification assertions from design specifications via multi-llms,” arXiv:2402.00386v1, 2024.

- [16] M. Liu, N. Pinckney, B. Khailany, and H. Ren, “Verilogeval: Evaluating large language models for verilog code generation,” arXiv:2309.07544v2, 2024.

- [17] Y. Zhang, Z. Yu, Y. Fu, C. Wan, and Y. C. Lin, “Mg-verilog: Multi-grained dataset towards enhanced llm-assisted verilog generation,” arXiv preprint arXiv:2407.01910, 2024.

- [18] D. Vungarala, M. Nazzal, M. Morsali, C. Zhang, A. Ghosh, A. Khreishah, and S. Angizi, “Sa-ds: A dataset for large language model-driven ai accelerator design generation,” arXiv e-prints, pp. arXiv–2404, 2024.

- [19] H. Wu et al., “Chateda: A large language model powered autonomous agent for eda,” IEEE Transactions on Computer-Aided Design of Integrated Circuits and Systems, 2024.

- [20] B. Nadimi and H. Zheng, “A multi-expert large language model architecture for verilog code generation,” arXiv preprint arXiv:2404.08029, 2024.

- [21] Y. Lu, S. Liu, Q. Zhang, and Z. Xie, “Rtllm: An open-source benchmark for design rtl generation with large language model,” in 2024 29th Asia and South Pacific Design Automation Conference (ASP-DAC). IEEE, 2024, pp. 722–727.

- [22] D. Vungarala, S. Alam, A. Ghosh, and S. Angizi, “Spicepilot: Navigating spice code generation and simulation with ai guidance,” arXiv preprint arXiv:2410.20553, 2024.

- [23] Y. Lai, S. Lee, G. Chen, S. Poddar, M. Hu, D. Z. Pan, and P. Luo, “Analogcoder: Analog circuit design via training-free code generation,” arXiv preprint arXiv:2405.14918, 2024.

- [24] D. Dai, Y. Sun, L. Dong, Y. Hao, S. Ma, Z. Sui, and F. Wei, “Why can gpt learn in-context? language models implicitly perform gradient descent as meta-optimizers,” arXiv preprint arXiv:2212.10559, 2022.

- [25] G. Izacard et al., “Atlas: Few-shot learning with retrieval augmented language models,” Journal of Machine Learning Research, vol. 24, no. 251, pp. 1–43, 2023.

- [26] J. Chen, H. Lin, X. Han, and L. Sun, “Benchmarking large language models in retrieval-augmented generation,” arXiv preprint arXiv:2309.01431, 2023.

- [27] R. Qin et al., “Robust implementation of retrieval-augmented generation on edge-based computing-in-memory architectures,” arXiv:2405.04700v1, 2024.

- [28] A. Roohi, S. Sheikhfaal, S. Angizi, D. Fan, and R. F. DeMara, “Apgan: Approximate gan for robust low energy learning from imprecise components,” IEEE Transactions on Computers, vol. 69, no. 3, pp. 349–360, 2019.

- [29] M. S. Ansari, B. Cockburn, and J. Han, “An improved logarithmic multiplier for energy-efficient neural computing,” IEEE Trans. on Comput., vol. 70, pp. 614–625, 2021.

- [30] S. Angizi, M. Morsali, S. Tabrizchi, and A. Roohi, “A near-sensor processing accelerator for approximate local binary pattern networks,” IEEE Transactions on Emerging Topics in Computing, vol. 12, no. 1, pp. 73–83, 2023.

- [31] H. Jiang, S. Angizi, D. Fan, J. Han, and L. Liu, “Non-volatile approximate arithmetic circuits using scalable hybrid spin-cmos majority gates,” IEEE Transactions on Circuits and Systems I: Regular Papers, vol. 68, no. 3, pp. 1217–1230, 2021.

- [32] S. Angizi, Z. He, A. S. Rakin, and D. Fan, “Cmp-pim: an energy-efficient comparator-based processing-in-memory neural network accelerator,” in Proceedings of the 55th Annual Design Automation Conference, 2018, pp. 1–6.

- [33] S. Angizi, H. Jiang, R. F. DeMara, J. Han, and D. Fan, “Majority-based spin-cmos primitives for approximate computing,” IEEE Transactions on Nanotechnology, vol. 17, no. 4, pp. 795–806, 2018.

- [34] M. E. Elbtity, H.-W. Son, D.-Y. Lee, and H. Kim, “High speed, approximate arithmetic based convolutional neural network accelerator,” 2020 International SoC Design Conference (ISOCC), pp. 71–72, 2020. [Online]. Available: https://api.semanticscholar.org/CorpusID:231826033

- [35] H. Younes, A. Ibrahim, M. Rizk, and M. Valle, “Algorithmic level approximate computing for machine learning classifiers,” 2019 26th IEEE Int. Conf. on Electron., Circuits and Syst. (ICECS), pp. 113–114, 2019.

- [36] S. Hashemi, R. I. Bahar, and S. Reda, “DRUM: A dynamic range unbiased multiplier for approximate applications,” 2015 IEEE/ACM Int. Conf. on Comput.-Aided Design (ICCAD), pp. 418–425, 2015.

- [37] P. Yin, C. Wang, H. Waris, W. Liu, Y. Han, and F. Lombardi, “Design and analysis of energy-efficient dynamic range approximate logarithmic multipliers for machine learning,” IEEE Transactions on Sustainable Computing, vol. 6, no. 4, pp. 612–625, 2021.

- [38] A. Dalloo, A. Najafi, and A. Garcia-Ortiz, “Systematic design of an approximate adder: The optimized lower part constant-or adder,” IEEE Transactions on Very Large Scale Integration (VLSI) Systems, vol. 26, no. 8, pp. 1595–1599, 2018.

- [39] M. E. Elbtity, P. S. Chandarana, B. Reidy, J. K. Eshraghian, and R. Zand, “Aptpu: Approximate computing based tensor processing unit,” IEEE Transactions on Circuits and Systems I: Regular Papers, vol. 69, no. 12, pp. 5135–5146, 2022.

- [40] F. Farshchi et al., “New approximate multiplier for low power digital signal processing,” The 17th CSI International Symposium on Computer Architecture & Digital Systems (CADS 2013), pp. 25–30, 2013.

- [41] W. Liu et al., “Design and evaluation of approximate logarithmic multipliers for low power error-tolerant applications,” IEEE Trans. on Circuits and Syst. I: Reg. Papers, vol. 65, pp. 2856–2868, 2018.

- [42] S. S. Sarwar et al., “Energy-efficient neural computing with approximate multipliers,” ACM Journal on Emerging Technologies in Computing Systems (JETC), vol. 14, pp. 1 – 23, 2018.

- [43] R. Zendegani et al., “Roba multiplier: A rounding-based approximate multiplier for high-speed yet energy-efficient digital signal processing,” IEEE Transactions on Very Large Scale Integration (VLSI) Systems, vol. 25, pp. 393–401, 2017. [Online]. Available: https://api.semanticscholar.org/CorpusID:206810935

- [44] M. Niu, H. Li, J. Shi, H. Haddadi, and F. Mo, “Mitigating hallucinations in large language models via self-refinement-enhanced knowledge retrieval,” arXiv preprint arXiv:2405.06545, 2024.

- [45] (2024) Yosys. [Online]. Available: https://github.com/YosysHQ/yosys

- [46] (2018) Openroad. [Online]. Available: https://github.com/The-OpenROAD-Project/OpenROAD

- [47] H. Pearce et al., “Dave: Deriving automatically verilog from english,” in MLCAD, 2020, pp. 27–32.

- [48] (2024) Openai gpt-4. [Online]. Available: https://openai.com/index/hello-gpt-4o/

- [49] (2024) Gemini. [Online]. Available: https://deepmind.google

- [50] (2023) Anthropic. [Online]. Available: https://www.anthropic.com

- [51] Evalplus leaderboard. [Online]. Available: https://evalplus.github.io/leaderboard.html. Accessed: 2024-09-21.

- [52] Lm studio - discover, download, and run local llms. [Online]. Available: https://lmstudio.ai/. Accessed: 2024-09-21.