# Learning richness modulates equality reasoning in neural networks

**Authors**: Double blind review

## Abstract

Equality reasoning is ubiquitous and purely abstract: sameness or difference may be evaluated no matter the nature of the underlying objects. As a result, same-different (SD) tasks have been extensively studied as a starting point for understanding abstract reasoning in humans and across animal species. With the rise of neural networks that exhibit striking apparent proficiency for abstractions, equality reasoning in these models has also gained interest. Yet despite extensive study, conclusions about equality reasoning vary widely and with little consensus. To clarify the underlying principles in learning SD tasks, we develop a theory of equality reasoning in multi-layer perceptrons (MLP). Following observations in comparative psychology, we propose a spectrum of behavior that ranges from conceptual to perceptual outcomes. Conceptual behavior is characterized by task-specific representations, efficient learning, and insensitivity to spurious perceptual details. Perceptual behavior is characterized by strong sensitivity to spurious perceptual details, accompanied by the need for exhaustive training to learn the task. We develop a mathematical theory to show that an MLP’s behavior is driven by learning richness. Rich-regime MLPs exhibit conceptual behavior, whereas lazy-regime MLPs exhibit perceptual behavior. We validate our theoretical findings in vision SD experiments, showing that rich feature learning promotes success by encouraging hallmarks of conceptual behavior. Overall, our work identifies feature learning richness as a key parameter modulating equality reasoning, and suggests that equality reasoning in humans and animals may similarly depend on learning richness in neural circuits.

Keywords: equality reasoning; same-different; neural network; conceptual and perceptual behavior

## 1 Introduction

The ability to reason abstractly is a hallmark of human intelligence. Fluency with abstractions drives both our highest intellectual achievements and many of our daily necessities like telling time, navigating traffic, and planning leisure. At the same time, neural networks have grown tremendously in sophistication and scale. The latest examples exhibit increasingly impressive competency, and the potential to automate the reasoning process itself seems imminent (OpenAI, 2024, 2023; Bubeck ., 2023; Guo ., 2025). Nonetheless, it remains unclear to what extent these models are able to reason abstractly, and how consistently they behave (McCoy ., 2023; Mahowald ., 2024; Ullman, 2023). To begin answering these questions, we require a principled understanding of how neural networks can reason.

A particularly simple and salient form of abstract reasoning is equality reasoning: determining whether two objects are the same or different. The “sense of sameness is the very keel and backbone of our thinking,” (James, 1905) promoting its study as a tractable viewport into abstract reasoning across humans and animals (E A. Wasserman Young, 2010). Despite many decades of study, the history of equality reasoning abounds with widely varying conclusions. Success at same-different (SD) tasks have been documented in a large number of animals, including non-human primates (Vonk, 2003), honeybees (Giurfa ., 2001), pigeons (E A. Wasserman Young, 2010), crows (Smirnova ., 2015), and parrots (Obozova ., 2015). Others, however, have argued that animals employ perceptual shortcuts to solve these tasks like using stimulus variability, and lack a true conception of sameness or difference (Penn ., 2008). Competence at equality reasoning may require exposure to language or some form of symbolic training (Premack, 1983). Meanwhile, pre-lingual human infants have demonstrated sensitivity to same-different relations (G F. Marcus ., 1999; Saffran Thiessen, 2003; Rabagliati ., 2019).

Equality reasoning in neural networks is no less debated. G F. Marcus . (1999) discovered that seven-month-old infants succeed at an SD task where neural networks fail, launching a lively debate that continues to present day (Seidenberg ., 1999; Seidenberg Elman, 1999; Alhama Zuidema, 2019). Others have demonstrated severe shortcomings in neural networks directed to solve visual same-different reasoning and relational tasks (Kim ., 2018; Stabinger ., 2021; Vaishnav ., 2022; Webb ., 2023). Such failures motivate a growing literature in bespoke architectural advancements geared towards relational reasoning (Webb ., 2023, 2020; Santoro ., 2017; Battaglia ., 2018). At the same time, modern large language models routinely solve complex reasoning problems (Bubeck ., 2023). Their surprising success tempers earlier categorical claims against neural networks’ reasoning abilities. Even simple models like multi-layer perceptrons (MLPs) have recently been shown to solve equality and relational reasoning tasks with surprisingly efficacy (A. Geiger ., 2023; Tong Pehlevan, 2024).

The lack of consensus on equality reasoning in either organic or silicate brains speaks to the need for a stronger theoretical foundation. To this end, we present a theory of equality reasoning in MLPs that highlights the central role of a hitherto overlooked parameter: learning richness, a measure of how much internal representations change over the course of training (Chizat ., 2019). We find that MLPs in a rich learning regime exhibit conceptual behavior, where they develop salient, conceptual representations of sameness and difference, learn the task from few training examples, and remain largely insensitive to spurious perceptual details. In contrast, lazy regime MLPs exhibit perceptual behavior, where they solve the task only after exhaustive training and show strong sensitivity to perceptual variations. Our specific contributions are the following.

Contributions

- We hand-craft a solution to our same-different task that is expressible by an MLP, demonstrating the possibility for our model to solve this task. Our solution suggests what conceptual representations may look like, guiding subsequent analysis.

- We argue that an MLP trained in a rich feature learning regime attains the hand-crafted solution, and exhibits three hallmarks of conceptual behavior: conceptual representations, efficient learning, and insensitivity to spurious perceptual details.

- We prove that an MLP trained in a lazy learning regime can also solve an equality reasoning task, but exhibits perceptual behavior: it requires exhaustive training data and shows strong sensitivity to spurious perceptual details.

- We extend our results to same-different tasks with noise, calculating Bayes optimal performance under priors that either generalize to arbitrary inputs or memorize the training set. We demonstrate that rich MLPs attain Bayes optimal performance under the generalizing prior.

- We validate our results on complex visual SD tasks, showing that our theoretical predictions continue to hold.

Our theory clarifies the understudied role of learning richness in driving successful reasoning, with potential implications for both neural network design and animal cognition.

### 1.1 Related work

In studying same-different tasks comparatively across animal species, E. Wasserman . (2017) observe a continuum between perceptual and conceptual behavior. Some animals focus on spurious perceptual details in the task stimuli like image variability, and slowly gain competence through exhaustive repetition. Other animals and humans appear to develop a conceptual understanding of sameness, allowing them to learn the task quickly and ignore irrelevant percepts. Many others fall somewhere in between, exhibiting behavior with both perceptual and conceptual components. These observations lend themselves to a theory where representations and learning mechanisms operate over a continuous domain (Carstensen Frank, 2021).

Neural networks offer a natural instantiation of such a continuous theory. However, the extent to which neural networks can reason at all remains a hotly contested topic. Famously, Fodor Pylyshyn (1988) argue that connectionist models are poorly equipped to describe human reasoning. G F. Marcus (1998) further contends that neural networks are altogether incapable of solving many simple symbolic tasks (see also G F. Marcus . (1999); G F. Marcus (2003); G. Marcus (2020)). Boix-Adsera . (2023) have also argued that MLPs are unable to generalize on relational problems like our same-different task, though this finding has been contested (Tong Pehlevan, 2024; A. Geiger ., 2023).

Negative assertions about neural network reasoning appear to weaken when considering modern LLMs, which routinely solve complex math and logic problems (Bubeck ., 2023; OpenAI, 2024; Guo ., 2025). But even here, doubts remain about whether LLMs truly reason or merely reproduce superficial aspects of their enormous training set (McCoy ., 2023; Mahowald ., 2024). Nonetheless, A. Geiger . (2023) found that simple MLPs convincingly solve same-different tasks after moderate training. Tong Pehlevan (2024) further showed that MLPs solve a wide variety of relational reasoning tasks. We support these findings by arguing that MLPs solve a same-different task, but their performance is modulated by learning richness. In resonance with E. Wasserman . (2017), varying richness pushes an MLP along a spectrum between a perceptual and conceptual solutions to the task.

Learning richness itself refers to the degree of change in a neural network’s internal representations during training. A number of network parameters were recently discovered to control learning richness, including the readout scale, initialization scheme, and learning rate (Chizat ., 2019; Woodworth ., 2020; Yang Hu, 2021; Bordelon Pehlevan, 2022). In the brain, learning richness may correspond to forming adaptive representations that encode task-specific variables, in contrast to fixed representations that remain task agnostic (Farrell ., 2023). Studies have used learning richness to understand the neural representations underlying diverse phenomena like context-dependent decision making (Flesch ., 2022), multitask cognition (Ito Murray, 2023), generalizing knowledge to new tasks (Johnston Fusi, 2023), and even consciousness (Mastrovito ., 2024).

## 2 Setup

We consider the following same-different task, inspired by the setup in A. Geiger . (2023). The task consists of input pairs $\mathbf{z}_{1},\mathbf{z}_{2}\in\mathbb{R}^{d}$ , where $\mathbf{z}_{i}=\mathbf{s}_{i}+\mathbf{\eta}_{i}$ . The labeling function $y$ is given by

$$

y(\mathbf{z}_{1},\mathbf{z}_{2})=\begin{cases}1&\mathbf{s}_{1}=\mathbf{s}_{2}\\

0&\mathbf{s}_{1}\neq\mathbf{s}_{2}\end{cases}\,.

$$

Quantities $\mathbf{s}$ correspond to “symbols,” perturbed by a small amount of noise $\mathbf{\eta}$ . Noise is distributed as $\mathbf{\eta}\sim\mathcal{N}(\mathbf{0},\sigma^{2}\mathbf{I}/d)$ , for some choice of $\sigma^{2}$ . Initially we will take $\sigma^{2}=0$ so $\mathbf{z}=\mathbf{s}$ , but we will allow $\sigma^{2}$ to be nonzero when considering a noisy extension to the task. Our definition of equality implies exact identity, up to possible noise. Other commonly studied variants include equality up to transformation (Fleuret ., 2011), hierarchical equality (Premack, 1983), context-dependent equality (Raven, 2003), among many others. We pursue exact identity for its tractability and ubiquity in the literature, and investigate more general notions of equality later with experiments in the noisy case and in vision tasks.

The model consists of a two-layer MLP without bias parameters

$$

f(\mathbf{x})=\frac{1}{\gamma\sqrt{d}}\sum_{i=1}^{m}a_{i}\,\phi(\mathbf{w}_{i}\cdot\mathbf{x})\,, \tag{1}

$$

where $\phi$ is a ReLU activation applied point-wise to its inputs. We use the standard logit link function to produce predictions $\hat{y}=1/(1+e^{-f})$ . Inputs are concatenated as $\mathbf{x}=(\mathbf{z}_{1};\mathbf{z}_{2})\in\mathbb{R}^{2d}$ before being passed to $f$ . The model is trained using binary cross entropy loss with a learning rate $\alpha=\gamma^{2}d\,\alpha_{0}$ , for a fixed $\alpha_{0}$ . Hidden weight vectors are initialized as $\mathbf{w}_{i}\sim\mathcal{N}(\mathbf{0},\mathbf{I}/m)$ , and readouts as $a_{i}\sim\mathcal{N}(0,1/m)$ . To enable interpolation between rich and lazy learning regimes, the MLP is centered such that $f(\mathbf{x})=0$ at initialization, for all inputs $\mathbf{x}$ . We use a standard procedure for centering, described in Appendix F. Occasionally, we gather all readouts $a$ and hidden weights $\mathbf{w}$ into a single set $\mathbf{\theta}$ , and write $f(\mathbf{x};\mathbf{\theta})$ to mean an MLP $f$ parameterized by weights $\mathbf{\theta}$ . We avoid considering bias parameters to simplify the analysis. In practice, because the task is symmetric about the origin, we find that bias plays little role.

The parameter $\gamma$ controls learning richness, where higher values of $\gamma$ correspond to greater richness (Chizat ., 2019; M. Geiger ., 2020; Woodworth ., 2020; Bordelon Pehlevan, 2022). A neural network trained in a rich regime experiences significant changes to its hidden activations $\phi(\mathbf{w_{i}\cdot\mathbf{x}})$ , resulting in task-specific representations. In contrast, a neural network trained in a lazy regime retains task-agnostic representations determined by their initialization. The limit $\gamma\rightarrow 0$ induces lazy behavior. Increasing $\gamma$ increases learning richness. For our tasks, we find that $\gamma=1$ produces sufficiently rich learning $\gamma=1$ produces a scaling that is similar to $\mu$ P or mean-field parametrization common elsewhere in the rich learning literature (Yang Hu, 2021; Mei ., 2018; Rotskoff Vanden-Eijnden, 2022). However, these scalings technically consider an infinite width limit. Our setting considers an infinite input dimension limit (Biehl Schwarze, 1995; Saad Solla, 1995; Goldt ., 2019), resulting in an extra $1/\sqrt{d}$ prefactor that is not present in these other scalings., and increasing $\gamma$ beyond 1 does not qualitatively change our results (Figure C3). Appendix F elaborates on our scaling scheme.

Crucially, the training set consists of a finite number of symbols $\mathbf{s}_{1},\mathbf{s}_{2},\ldots,\mathbf{s}_{L}$ . These $L$ symbols are sampled before training begins as $\mathbf{s}\sim\mathcal{N}(\mathbf{0},\mathbf{I}/d)$ , then used exclusively to train the model. Training examples are balanced such that half consist of same examples and half consist of different examples. During testing, symbols $\mathbf{s}$ are sampled afresh for every input, measuring the model’s ability to generalize on unseen test examples. If a model has learned equality reasoning, then it should attain perfect test accuracy despite having never witnessed the particular inputs. When $\sigma^{2}=0$ , this procedure is precisely equivalent to using one-hot encoded symbol inputs with a fixed embedding matrix, where the model is trained on a subset of all possible symbols. Additional details on our model and setup are enumerated in Appendix G.

### 2.1 Conceptual and perceptual behavior

Central to our framework is the distinction between conceptual and perceptual behavior. Conceptual behavior refers to a facility with abstract concepts, enabling the reasoner to learn an abstract task quickly and generalize with limited dependency on spurious details. Perceptual behavior refers to the opposite, where the reasoner solves a task through sensory association. Such learning is typically characterized by exhaustive training and marked sensitivity to spurious perceptual details.

We posit that learning richness moves an MLP between conceptual and perceptual behavior. We identify three specific characteristics of a conceptual outcome:

1. Conceptual representations. We look for evidence of task-specific representations that denote sameness or difference. Such representations should be crucial to solving the task, and contribute towards the model’s efficiency and insensitivity to spurious perceptual details (below).

1. Efficiency. We measure learning efficiency using the number of different symbols $L$ observed during training. A conceptual reasoner should solve the task with a smaller $L$ than a perceptual reasoner.

1. Insensitivity to spurious perceptual details. Spurious perceptual details refer to aspects of the task that influence the input but not the correct output. A readily measurable example is the input dimension $d$ . Sameness or difference can be evaluated regardless of $d$ . A conceptual reasoner should perform equally well when training on tasks across a variety of $d$ , whereas a perceptual reasoner may find certain $d$ harder to learn with than others. We therefore evaluate this insensitivity by comparing the test accuracy of models trained across a large range of input dimensions.

A perceptual solution is characterized by the negation of each point: it does not develop task-specific representations, it requires a large $L$ to solve the task, and test accuracy changes substantially with $d$ . While potentially possible to have a mixed solution that exhibits a subset of these points, we do not observe them in practice, and the conceptual/perceptual distinction is sufficiently descriptive of our model.

<details>

<summary>x1.png Details</summary>

### Visual Description

## [Multi-Panel Scientific Figure]: Comparison of Rich (γ=1) vs. Lazy (γ≈0) Learning Regimes

### Overview

This image is a composite scientific figure containing six subplots (a-f) arranged in two rows and three columns. The top row (a, b, c) illustrates the "Rich regime (γ = 1)", while the bottom row (d, e, f) illustrates the "Lazy regime (γ ≈ 0)". The figure compares the learning dynamics, generalization performance, and internal representations of a machine learning model (likely a neural network) under these two distinct regimes. The plots include scatter plots, heatmaps, and line graphs.

### Components/Axes

**Global Structure:**

- **Top Row Title:** "Rich regime (γ = 1)"

- **Bottom Row Title:** "Lazy regime (γ ≈ 0)"

- **Panel Labels:** a, b, c (top row, left to right); d, e, f (bottom row, left to right).

**Panel a (Top-Left):**

- **Type:** Scatter plot.

- **X-axis Label:** `a_i`

- **Y-axis Label:** `(v_i^T · v_i^T) / l_i`

- **Y-axis Range:** Approximately -1 to 1.

- **X-axis Range:** Approximately -10 to 0.

- **Data:** A dense collection of blue points forming a sharp, step-like transition from y ≈ -1 to y ≈ 1 at x ≈ 0.

**Panel b (Top-Center):**

- **Type:** Heatmap.

- **X-axis Label:** `Input dimension (d)`

- **X-axis Ticks:** 2, 4, 7, 14, 27, 50, 93, 178, 382, 737.

- **Y-axis Label:** `# symbols (L)`

- **Y-axis Ticks:** 3, 5, 10, 20, 38, 74, 143, 275, 531, 1024.

- **Color Bar Label:** `Test acc.`

- **Color Bar Scale:** 0.5 (dark purple/black) to 1.0 (light peach/white).

- **Key Feature:** A horizontal dashed black line at approximately L = 5. The heatmap shows high test accuracy (light colors) across most of the space, particularly for larger d and L.

**Panel c (Top-Right):**

- **Type:** Line graph.

- **X-axis Label:** `# symbols (L)`

- **X-axis Scale:** Logarithmic, with ticks at 2³, 2⁶, 2⁹.

- **Y-axis Label:** `Test accuracy`

- **Y-axis Range:** 0.5 to 1.0.

- **Legend:** Located in the top-right corner. Contains:

- `Theory` (red dashed line)

- `γ = 1.00` (dark purple line with circle markers)

- `γ = 0.50` (purple line with circle markers)

- `γ = 0.25` (lighter purple line with circle markers)

- `γ = 0.10` (light purple line with circle markers)

- `γ = 0.05` (very light purple line with circle markers)

- `γ ≈ 0.00` (lightest purple/pink line with circle markers)

- **Horizontal Reference:** A dashed gray line labeled `chance` at y ≈ 0.5.

**Panel d (Bottom-Left):**

- **Type:** Scatter plot.

- **X-axis Label:** `a_i`

- **Y-axis Label:** `(v_i^T · v_i^T) / l_i` (same as panel a).

- **Y-axis Range:** Approximately -1 to 1.

- **X-axis Range:** Approximately -0.05 to 0.05.

- **Data:** A dense cloud of blue points centered around y = 0, with no sharp transition. The distribution is roughly symmetric and concentrated.

**Panel e (Bottom-Center):**

- **Type:** Heatmap.

- **X-axis Label:** `Input dimension (d)`

- **X-axis Ticks:** 128, 159, 198, 247, 307, 382, 476, 593, 737, 918.

- **Y-axis Label:** `# symbols (L)`

- **Y-axis Ticks:** 143, 178, 221, 275, 343, 427, 531, 661, 823, 1024.

- **Color Bar Label:** `Test acc.`

- **Color Bar Scale:** 0.6 (dark purple) to 1.0 (light peach/white).

- **Key Feature:** A diagonal dashed black line running from bottom-left to top-right. The heatmap shows a gradient where accuracy is highest (lightest) in the top-left (low d, high L) and lowest (darkest) in the bottom-right (high d, low L).

**Panel f (Bottom-Right):**

- **Type:** Line graph.

- **X-axis Label:** `Input dimension (d)`

- **X-axis Scale:** Logarithmic, with ticks at 2⁵, 2⁷, 2⁹.

- **Y-axis Label:** `Test accuracy`

- **Y-axis Range:** 0.5 to 1.0.

- **Legend:** Same as panel c (implied by color and marker consistency).

- **Horizontal Reference:** A dashed gray line labeled `chance` at y ≈ 0.5.

### Detailed Analysis

**Panel a (Rich Regime Scatter):** The plot shows a clear phase transition. For `a_i < 0`, the normalized inner product `(v_i^T · v_i^T) / l_i` is consistently -1. At `a_i ≈ 0`, there is a sharp, vertical jump to +1, which holds for `a_i > 0`. This indicates a binary, all-or-nothing change in the represented feature.

**Panel b (Rich Regime Heatmap):** Test accuracy is generally high (>0.8) across the explored space of input dimension `d` and number of symbols `L`. The horizontal dashed line at L≈5 may indicate a critical threshold for the number of symbols needed for good generalization in this regime. Accuracy appears to saturate near 1.0 for most combinations where L > 5.

**Panel c (Rich Regime Lines):** For the rich regime (γ=1.00, dark purple), test accuracy rapidly reaches ~1.0 as the number of symbols `L` increases beyond 2³ (8). As γ decreases (moving to lighter lines), the accuracy for a given `L` decreases, and more symbols are required to achieve high accuracy. The `γ ≈ 0.00` line shows the poorest performance, only slightly above chance for large `L`. The red dashed "Theory" line represents an upper bound or ideal performance.

**Panel d (Lazy Regime Scatter):** In contrast to panel a, the data points are scattered in a cloud centered at y=0, with a range of `a_i` values from -0.05 to 0.05. There is no sharp transition, suggesting a more gradual, distributed change in representations.

**Panel e (Lazy Regime Heatmap):** The accuracy pattern is fundamentally different from panel b. There is a strong diagonal trend: high accuracy is achieved only when the number of symbols `L` is large relative to the input dimension `d`. The diagonal dashed line likely represents a theoretical boundary (e.g., L ∝ d). Accuracy drops significantly in the region of high `d` and low `L` (bottom-right).

**Panel f (Lazy Regime Lines):** For the lazy regime, test accuracy is plotted against input dimension `d`. For all γ values, accuracy *decreases* as `d` increases. The rate of decrease is slower for higher γ values. Even for γ=1.00 (dark purple), accuracy falls from ~1.0 at d=2⁵ to ~0.8 at d=2⁹. For γ≈0.00, accuracy is near chance (0.5) for all `d`.

### Key Observations

1. **Regime Dichotomy:** The "Rich" and "Lazy" regimes exhibit qualitatively different behaviors in representation learning (a vs. d) and generalization scaling (b vs. e, c vs. f).

2. **Phase Transition vs. Gradual Change:** The rich regime shows a sharp, threshold-based transition in its internal metric (panel a), while the lazy regime shows a smooth, centered distribution (panel d).

3. **Scaling Laws:** In the rich regime, generalization improves with more symbols (`L`) and is robust to increasing input dimension (`d`). In the lazy regime, generalization degrades with increasing `d` and requires `L` to scale with `d` to maintain performance.

4. **Role of γ:** The parameter γ acts as a interpolation between regimes. As γ decreases from 1.00 towards 0.00, performance consistently degrades across all metrics, moving from the rich to the lazy regime's characteristics.

### Interpretation

This figure demonstrates a fundamental dichotomy in how neural networks can learn, governed by a hyperparameter γ (likely related to initialization scale or learning rate, akin to the "rich" vs. "lazy" or "feature" vs. "kernel" learning regimes in recent literature).

- **What the data suggests:** The "Rich regime" (γ=1) enables the model to learn discrete, symbolic representations (evidenced by the sharp transition in panel a) that generalize well and scale efficiently with problem complexity (panels b, c). The model actively shapes its internal features. The "Lazy regime" (γ≈0) results in a model that makes only small adjustments to its initial random features (panel d's cloud). Its generalization is akin to a kernel method, where performance is fundamentally limited by the ratio of symbols to input dimensions (panels e, f), leading to poor scaling with high-dimensional inputs.

- **How elements relate:** The scatter plots (a, d) explain the *mechanism* behind the performance curves (c, f) and heatmaps (b, e). The sharp transition in (a) allows for efficient coding and robust generalization, leading to the flat, high-accuracy curves in (c). The diffuse representation in (d) leads to the fragile, dimension-dependent performance in (f).

- **Notable anomalies/insights:** The most striking insight is the reversal of the scaling trend with input dimension `d`. In the rich regime, increasing `d` does not harm accuracy (panel b), while in the lazy regime, it is detrimental (panel e, f). This has critical implications for applying such models to high-dimensional real-world data. The diagonal boundary in panel e is a key quantitative finding, suggesting a precise scaling law (L ∝ d) for the lazy regime's capacity.

</details>

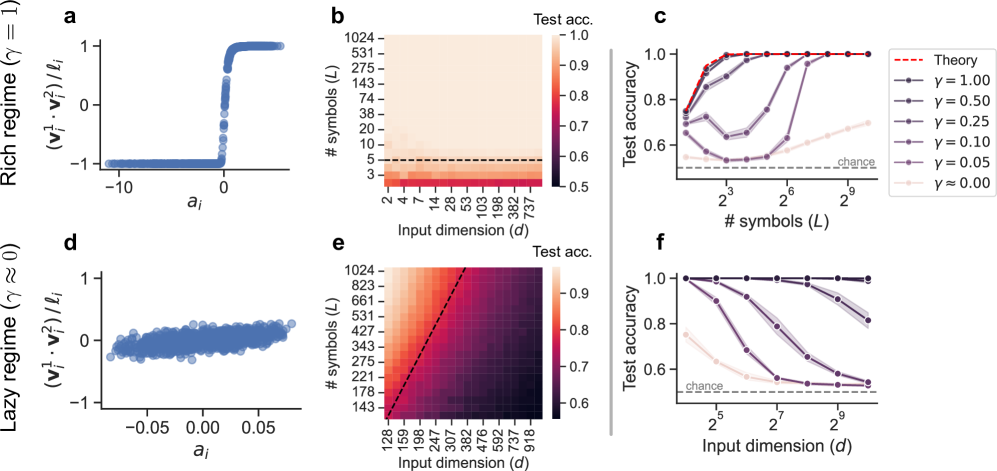

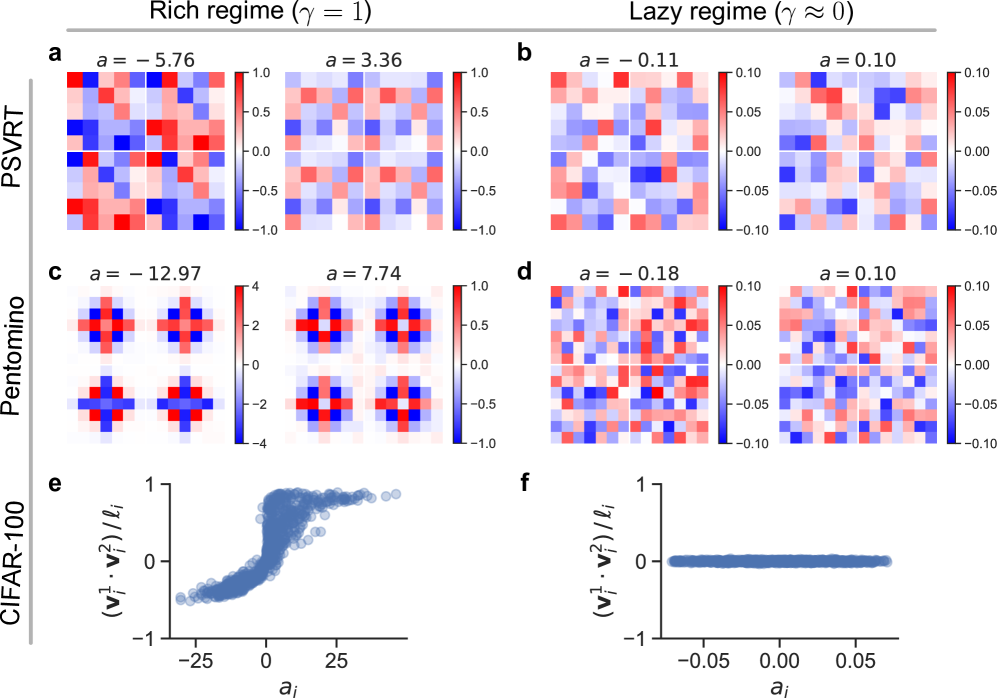

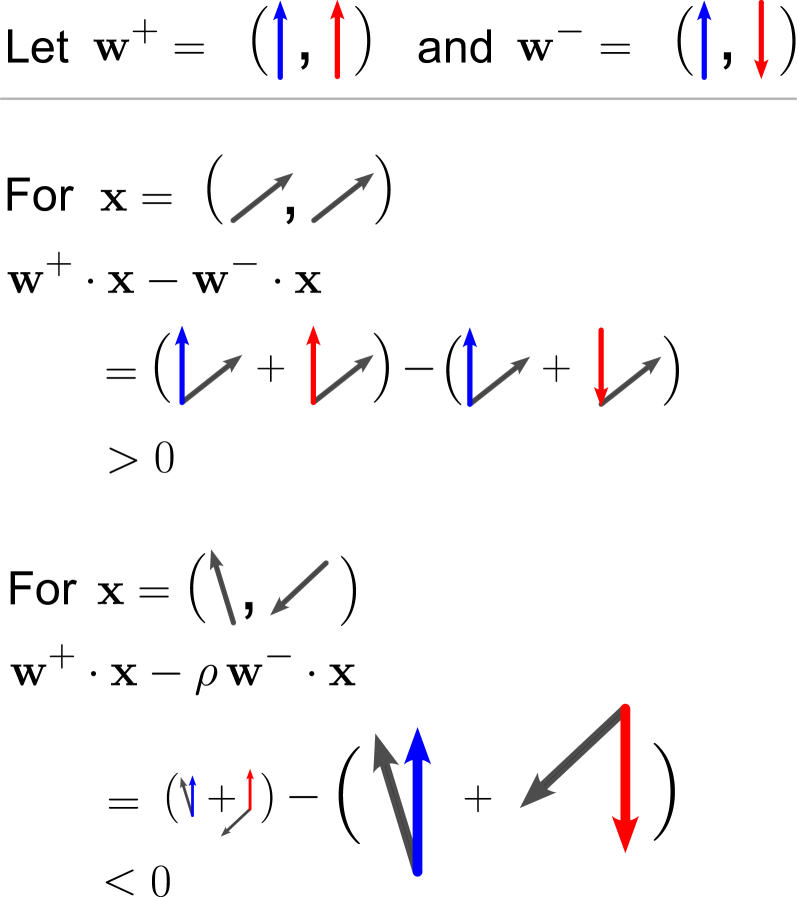

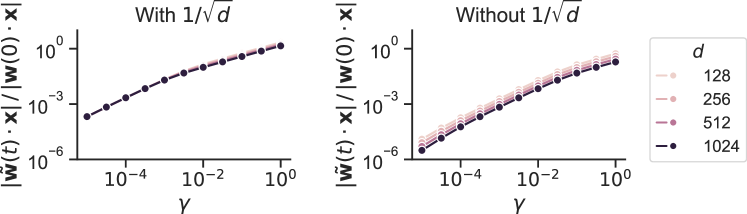

Figure 1: Rich and lazy regime simulations. We confirm our theoretical predictions with numeric simulations. (a). Hidden weight alignment plotted against readout weights for a rich model. Weights become parallel or antiparallel, with generally higher magnitudes among negative readouts. (b) Test accuracy across different input dimensions and training symbols for a rich model ( $m=4096$ ). Accuracy is not affected by input dimension. (c) Test accuracy across different numbers of training symbols, for varying learning richness ( $d=256$ , $m=1024$ ). Richer models attain high performance with substantially fewer training symbols. The theoretically predicted rich test accuracy shows excellent agreement with our richest model. Finer-grain validation is plotted in Figure C3. (d) Hidden weight alignment plotted against readout weights for a lazy model. There is some correlation between alignment and readout weight, but the weights are nowhere near as close to being parallel/antiparallel as in the rich regime. (e) Test accuracy across input dimensions and training symbols for a lazy model ( $m=4096$ ). Accuracy is substantially affected by input dimension. Theory predicts that the number of training symbols required to maintain high accuracy scales at worst as $L\propto d^{2}$ , plotted in black. (f) Test accuracy across different input dimensions, for varying learning richness ( $L=16,m=1024$ ). Richer models show less performance decay with increasing dimension. (all) Results are computed across six runs. Shading corresponds to empirical 95 percent confidence intervals.

## 3 Same-different task analysis

We present our analysis of the SD task. We first hand-craft a solution that is expressible by our MLP, and in the process suggest what conceptual representations of sameness and difference may look like (Section 3.1). We proceed to argue that a rich-regime MLP attains the hand-crafted solution through training. It leverages its conceptual representations to learn the task with few training symbols and insensitivity to the input dimension (Section 3.2), exhibiting conceptual behavior. In contrast, a lazy-regime MLP is unable to adapt its representations to the task, and consequently incurs a high training cost and substantial sensitivity to input dimension (Section 3.3), exhibiting perceptual behavior. In an extension to a noisy version of our task, we show that a rich MLP approaches Bayes optimal performance under a generalizing prior across different noise variance $\sigma^{2}$ (Section 3.3). We validate our results on more complex, image-based tasks in Section 4, and discuss broader implications in Section 5.

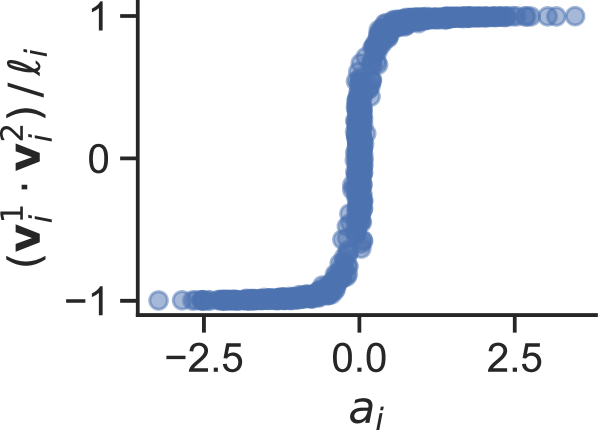

### 3.1 Hand-crafted solution

To establish whether an MLP can solve the same-different task at all, we first outline a hand-crafted solution using $m=4$ hidden units. Let $\mathbf{1}=(1,1,\ldots,1)\in\mathbb{R}^{d}$ . Define the weight vector $\mathbf{w}_{1}^{+}$ by concatenation: $\mathbf{w}_{1}^{+}=(\mathbf{1};\mathbf{1})\in\mathbb{R}^{2d}$ . Further define $\mathbf{w}_{2}^{+}=(-\mathbf{1};-\mathbf{1})$ , $\mathbf{w}_{1}^{-}=(\mathbf{1};-\mathbf{1})$ , and $\mathbf{w}_{2}^{-}=(-\mathbf{1};\mathbf{1})$ . Let $a^{+}=1$ and $a^{-}=\rho$ , for some value $\rho>0$ . Our MLP is given by

$$

\displaystyle f(\mathbf{x})=\,\, \displaystyle a^{+}\big{(}\phi(\mathbf{w}_{1}^{+}\cdot\mathbf{x})+\phi(\mathbf{w}_{2}^{+}\cdot\mathbf{x})\big{)} \displaystyle- \displaystyle a^{-}\big{(}\phi(\mathbf{w}_{1}^{-}\cdot\mathbf{x})+\phi(\mathbf{w}_{2}^{-}\cdot\mathbf{x})\big{)}\,. \tag{2}

$$

Note that the weight vectors $\mathbf{w}_{1}^{+},\mathbf{w}_{2}^{+}$ , which correspond to the positive readout $a^{+}$ , are parallel: their components point in the same direction with the same magnitude. Meanwhile, weight vectors $\mathbf{w}_{1}^{-},\mathbf{w}_{2}^{-}$ corresponding to the negative readout $a^{-}$ are antiparallel: their components point in exact opposite directions with the same magnitude. Only the sign of $f$ impacts the classification, so we assign $a^{+}=1$ and $a^{-}=\rho$ to represent the relative magnitude of $a^{-}$ against $a^{+}$ .

To see how this weight configuration solves the same-different task, suppose we receive a same example $\mathbf{x}=(\mathbf{z},\mathbf{z})$ . Plugging this into Eq (2) reveals that the negative terms vanish through our antiparallel weights, leaving $f(\mathbf{x})=2\,|\mathbf{1}\cdot\mathbf{z}|>0$ , correctly classifying this example.

Now suppose we receive a different example $\mathbf{x}^{\prime}=(\mathbf{z},\mathbf{z}^{\prime})$ . Recall that these quantities are sampled independently as $\mathbf{z},\mathbf{z}^{\prime}\sim\mathcal{N}(\mathbf{0},\mathbf{I}/d)$ . As a result, we can no longer rely on a convenient cancellation. The quantity $\phi(\mathbf{w}_{1}^{+}\cdot\mathbf{x}^{\prime})+\phi(\mathbf{w}_{2}^{+}\cdot\mathbf{x}^{\prime})$ is equal in distribution to the quantity $\phi(\mathbf{w}_{1}^{-}\cdot\mathbf{x}^{\prime})+\phi(\mathbf{w}_{2}^{-}\cdot\mathbf{x}^{\prime})$ , with respect to the randomness in $\mathbf{x}^{\prime}$ . Hence, to implement a consistent negative classification, we need to raise the relative magnitude $\rho$ of our negative readout weight. Indeed, we calculate $p(f(\mathbf{x}^{\prime})<0)=\frac{2}{\pi}\tan^{-1}(\rho)$ , which approaches $1$ for $\rho\gg 1$ . Full details are recorded in Appendix B. Hence, by maintaining a large negative readout, we classify negative examples correctly with high probability. An illustration of this solution is provided in Figure B1.

An MLP need not implement this precise weight configuration to solve the SD task. Rather, our hand-crafted solution suggests two general conditions:

1. Parallel/antiparallel weight vectors. Weights associated with positive readouts must be parallel, and weight associated with negative readouts must be antiparallel. This allows us to classify any same example by canceling the contribution from negative readouts.

1. Large negative readouts. The cumulative magnitude of the negative readouts must be larger than that of the positive readouts. This allows us to classify any different example by raising the contribution from negative readouts.

Observe also that parallel and antiparallel weights are suggestive of conceptual representations for sameness and difference. Parallel weights contribute to a same classification, and exemplify the structure of a same example: the two components point in the same direction. Antiparallel weights contribute to a different classification, and exemplify the structure of a different example: the two components point as far apart as possible. We look for parallel/antiparallel weight vectors as evidence for conceptual representations of our SD task.

### 3.2 Rich regime

The rich learning regime is characterized by substantial weight changes throughout the course of training. For the MLP given in Eq (1), larger values of $\gamma$ lead to rich learning behavior. We allow $\gamma$ to vary between 0 and 1. The range $\gamma>1$ is considered in Figure C3, where we see that no qualitative changes to our results occur for larger values of $\gamma$ .

To study the rich regime, we take two approaches. First, recent theoretical work (Morwani ., 2023; Wei ., 2019; Chizat Bach, 2020) suggest that MLPs trained in a rich learning regime on a classification task discover a max margin solution: the weights maximize the distance between training points of different classes. We derive the max margin weights for an MLP with quadratic activations in Theorem 1, finding that the max margin solution consists of parallel/antiparallel weight vectors, just as required from our hand-crafted solution. We defer the proof of this theorem to Appendix C.

**Theorem 1**

*Let $\mathcal{D}=\{\mathbf{x}_{n},y_{n}\}_{n=1}^{P}$ be a training set consisting of $P$ points sampled across $L$ training symbols, as specified in Section 2. Let $f$ be the MLP given by Eq 1, with two changes:

1. Fix the readouts $a_{i}=\pm 1$ , where exactly $m/2$ readouts are positive and the remaining are negative.

1. Use quadratic activations $\phi(\cdot)=(\cdot)^{2}$ .

For weights $\mathbf{\theta}=\left\{\mathbf{w}_{i}\right\}_{i=1}^{m}$ , define the max margin set $\Delta(\mathbf{\theta})$ to be

$$

\Delta(\mathbf{\theta})=\operatorname*{arg\,max}_{\mathbf{\theta}}\frac{1}{P}\sum_{n=1}^{P}\left[(2y_{n}-1)f(\mathbf{x}_{n};\mathbf{\theta})\right]\,,

$$

subject to the norm constraints $\left|\left|\mathbf{w}_{i}\right|\right|=1$ . If $P,L\rightarrow\infty$ , then for any $\mathbf{w}_{i}=(\mathbf{v}_{i}^{1};\mathbf{v}_{i}^{2})\in\Delta(\mathbf{\theta})$ and $\ell_{i}=\left|\left|\mathbf{v}_{i}^{1}\right|\right|\,\left|\left|\mathbf{v}_{i}^{2}\right|\right|$ , we have that $\mathbf{v}_{i}^{1}\cdot\mathbf{v}_{i}^{2}/\ell_{i}=1$ if $a_{i}=1$ and $\mathbf{v}_{i}^{1}\cdot\mathbf{v}_{i}^{2}/\ell_{i}=-1$ if $a_{i}=-1$ . Further, $\left|\left|\mathbf{v}_{i}^{1}\right|\right|=\left|\left|\mathbf{v}_{i}^{2}\right|\right|$ .*

However, the max margin result does not use ReLU MLPs, relies on fixed readouts $a_{i}$ , and says nothing about learning efficiency or insensitivity to spurious perceptual details, two additional properties we require from a conceptual solution. To address these shortcomings, we extend the analysis by proposing a heuristic construction that approximates a rich ReLU MLP as an ensemble of independent Markov processes (Section C.2). Doing so enables a deeper characterization of rich learning dynamics, resulting in the following approximation of the test accuracy. Given an unseen test point $\mathbf{x},y$ , and prediction $\hat{y}$ ,

$$

p(y=\hat{y}(\mathbf{x}))\approx\frac{1}{2}+\frac{1}{2}\Phi\left(\sqrt{\frac{2(L^{2}-L)}{13(\pi-2)}}\right)\,, \tag{3}

$$

where $L$ is the number of training symbols and $\Phi$ is the CDF of a standard normal distribution. This estimate is for $L\geq 3$ . For $L=2$ , $p(y=\hat{y})=3/4$ . See Section C.5 for details. This estimate suggests that the model attains over 95 percent test accuracy with as few as $L=5$ training symbols, and test accuracy does not change with different $d$ .

We confirm our theoretical predictions with simulations in Figure 1. At the end of training, the hidden weights indeed become parallel and antiparallel, with negative coefficients gaining larger magnitude (Figure 1 a). Figures 1 b and c show that the rich model learns the same-different task with substantially fewer training symbols than lazier models, and exhibits excellent agreement with our theoretical test accuracy prediction. As predicted, the rich model’s performance does not vary with input dimension (Figure 1 b).

Altogether, the rich model develops conceptual representations, learns the same-different task given only a small number of training symbols, and exhibits clear insensitivity to input dimension. In this way, it exhibits conceptual behavior on the same-different task.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Multi-Panel Line Chart: Test Accuracy vs. Number of Symbols under Varying Noise Levels

### Overview

The image displays a series of four line charts arranged horizontally. Each chart plots "Test accuracy" on the y-axis against the "# symbols (L)" on the x-axis (logarithmic scale). The four panels represent experiments conducted under different noise levels, denoted by σ² (sigma squared), with values of 0, 1, 2, and 4 from left to right. Each panel contains multiple data series corresponding to different values of a regularization parameter γ (gamma), along with two baseline performance lines labeled "Bayes gen" and "Bayes mem".

### Components/Axes

* **Panels:** Four distinct plots, each titled with its noise level: `σ² = 0`, `σ² = 1`, `σ² = 2`, `σ² = 4`.

* **Y-Axis (All Panels):** Label: `Test accuracy`. Scale: Linear, ranging from 0.5 to 1.0. Major ticks are at 0.5 and 1.0.

* **X-Axis (All Panels):** Label: `# symbols (L)`. Scale: Logarithmic (base 2). Major ticks are labeled at `2^5` (32) and `2^10` (1024). The axis spans approximately from 2^0 (1) to 2^12 (4096).

* **Legend (Located to the right of the fourth panel):**

* **Data Series (Solid lines with circular markers):**

* `γ = 10^0` (Dark purple/black)

* `γ = 10^-1` (Dark purple)

* `γ = 10^-2` (Medium purple)

* `γ = 10^-3` (Light purple)

* `γ = 10^-4` (Lighter purple/pink)

* `γ = 0` (Light pink)

* **Baseline Series (Dashed lines):**

* `Bayes gen` (Red dashed line)

* `Bayes mem` (Magenta/pink dashed line)

### Detailed Analysis

**Panel 1: σ² = 0 (No Noise)**

* **Trend:** All models achieve high accuracy (>0.9) as the number of symbols (L) increases. The convergence speed depends heavily on γ.

* **Data Points (Approximate):**

* `γ = 10^0` and `γ = 10^-1`: Reach near-perfect accuracy (~1.0) very quickly, by L ≈ 2^3 (8).

* `γ = 10^-2`: Reaches ~1.0 by L ≈ 2^5 (32).

* `γ = 10^-3`: Reaches ~1.0 by L ≈ 2^7 (128).

* `γ = 10^-4`: Reaches ~0.98 by L ≈ 2^10 (1024).

* `γ = 0`: Shows a distinct dip in accuracy at low L (minimum ~0.6 at L≈2^2), then recovers to ~0.95 by L≈2^10.

* `Bayes gen` (Red dashed): Constant at 1.0.

* `Bayes mem` (Magenta dashed): Not visible/plotted in this panel, likely coincides with the top axis or is not applicable.

**Panel 2: σ² = 1 (Low Noise)**

* **Trend:** All models show reduced final accuracy compared to the noiseless case. Higher γ values lead to faster convergence but potentially lower asymptotic performance.

* **Data Points (Approximate):**

* `γ = 10^0`: Converges fastest, plateauing at ~0.95 by L≈2^7.

* `γ = 10^-1`: Plateaus at ~0.95 by L≈2^8.

* `γ = 10^-2`: Plateaus at ~0.93 by L≈2^9.

* `γ = 10^-3`: Plateaus at ~0.88 by L≈2^10.

* `γ = 10^-4`: Plateaus at ~0.82 by L≈2^11.

* `γ = 0`: Shows a shallow dip, then slowly rises to ~0.75 by L≈2^11.

* `Bayes gen` (Red dashed): Constant at ~0.98.

* `Bayes mem` (Magenta dashed): Constant at ~0.65.

**Panel 3: σ² = 2 (Medium Noise)**

* **Trend:** Performance degradation continues. The gap between high-γ and low-γ models widens. The `Bayes mem` baseline becomes more relevant.

* **Data Points (Approximate):**

* `γ = 10^0`: Plateaus at ~0.90 by L≈2^8.

* `γ = 10^-1`: Plateaus at ~0.88 by L≈2^9.

* `γ = 10^-2`: Plateaus at ~0.85 by L≈2^10.

* `γ = 10^-3`: Plateaus at ~0.78 by L≈2^11.

* `γ = 10^-4`: Plateaus at ~0.70 by L≈2^11.

* `γ = 0`: Rises slowly to ~0.65 by L≈2^11.

* `Bayes gen` (Red dashed): Constant at ~0.92.

* `Bayes mem` (Magenta dashed): Constant at ~0.60.

**Panel 4: σ² = 4 (High Noise)**

* **Trend:** All models struggle. Final accuracies are significantly lower. The benefit of increasing L diminishes for low-γ models.

* **Data Points (Approximate):**

* `γ = 10^0`: Plateaus at ~0.82 by L≈2^9.

* `γ = 10^-1`: Plateaus at ~0.80 by L≈2^10.

* `γ = 10^-2`: Plateaus at ~0.75 by L≈2^10.

* `γ = 10^-3`: Plateaus at ~0.68 by L≈2^11.

* `γ = 10^-4`: Plateaus at ~0.62 by L≈2^11.

* `γ = 0`: Rises very slowly to ~0.58 by L≈2^11.

* `Bayes gen` (Red dashed): Constant at ~0.80.

* `Bayes mem` (Magenta dashed): Constant at ~0.55.

### Key Observations

1. **Noise Impact:** Increasing noise (σ²) universally reduces the maximum achievable test accuracy for all models and baselines.

2. **Regularization (γ) Effect:** Higher γ values (stronger regularization) lead to faster learning (steeper initial slope) and better robustness to noise, as seen by their higher plateau in noisy conditions (σ²=2,4). However, in the noiseless case (σ²=0), very high γ (`10^0`) converges quickly but all high-γ models perform similarly at the top.

3. **Pathological Case (γ=0):** The model with no regularization (`γ=0`) performs worst in all scenarios. In the noiseless case, it exhibits a characteristic "U-shaped" learning curve, initially getting worse before improving.

4. **Baselines:** The `Bayes gen` (generalization) line represents an upper bound that decreases with noise. The `Bayes mem` (memorization) line represents a lower bound that also decreases with noise. The gap between these baselines narrows as noise increases.

5. **Data Efficiency:** For a fixed noise level, achieving a target accuracy requires exponentially more symbols (L) as γ decreases. For example, at σ²=1, reaching 0.9 accuracy requires L≈2^6 for γ=10^0 but L>2^10 for γ=10^-3.

### Interpretation

This chart demonstrates the **bias-variance tradeoff** in the context of learning from symbolic data under noise. The parameter γ controls model complexity/regularization.

* **High γ (High Bias, Low Variance):** These models learn simple patterns quickly and are robust to noise, as they are less likely to fit the noise. Their performance plateaus earlier but at a higher level in noisy environments.

* **Low γ (Low Bias, High Variance):** These models are more complex and can fit intricate patterns. In noiseless data, they eventually reach perfect accuracy but require more data (symbols). In noisy data, they are prone to overfitting the noise, leading to poorer generalization and lower final accuracy, as seen by their performance falling below the `Bayes gen` bound and sometimes approaching the `Bayes mem` bound.

* **The γ=0 case** is an extreme of low bias/high variance, where the model initially fits noise (causing the accuracy dip) before learning the true signal with enough data.

* The **Bayes baselines** provide theoretical benchmarks. The fact that models with appropriate regularization (e.g., γ=10^-1, 10^-2) can approach the `Bayes gen` line suggests they are achieving near-optimal generalization for the given noise level. The convergence of all models towards the `Bayes mem` line at very low L suggests that with insufficient data, all models are essentially memorizing.

**In summary, the visualization argues for the necessity of regularization (γ > 0) to achieve robust and data-efficient learning, especially in the presence of noise. The optimal γ is noise-dependent: stronger regularization is beneficial as noise increases.**

</details>

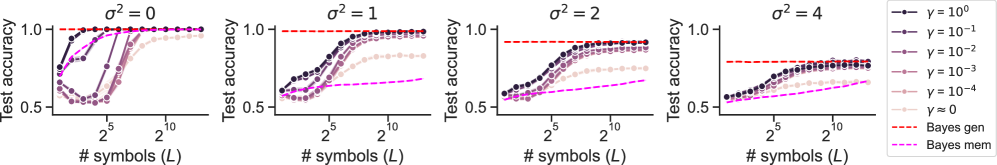

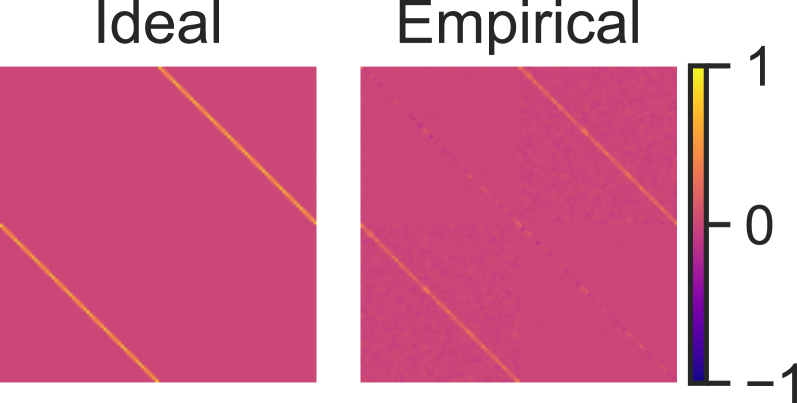

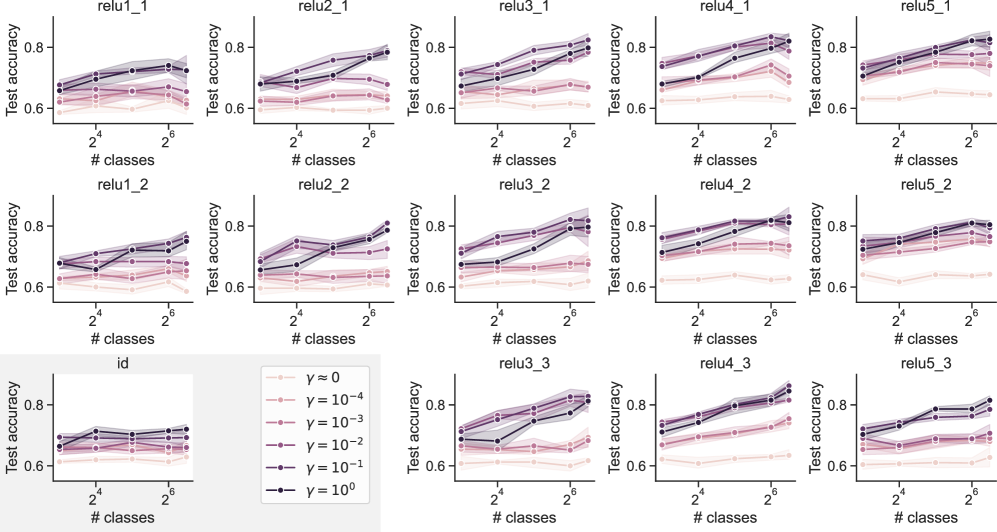

Figure 2: Bayesian simulations. Test accuracy across different numbers of training symbols, for varying richness and noise ( $d=64$ , $m=1024$ ). Bayes optimal accuracy for both generalizing and memorizing priors are plotted with dashed lines. In all cases, rich models attain the Bayes optimal test accuracy under a generalizing prior after sufficiently many training symbols. Shaded error regions are computed across six runs and correspond to empirical 95 percent confidence intervals.

### 3.3 Lazy regime

The lazy learning regime is characterized by vanishingly small change in the model’s hidden representations after training. Smaller values of $\gamma$ lead to lazy learning behavior. The limit $\gamma\rightarrow 0$ corresponds to the Neural Tangent Kernel (NTK) regime, where the network is well-described by a linearization around its initialization (Jacot ., 2018). In our numerics, we approximate this limit by using $\gamma=(1\times 10^{-5})/\sqrt{d}$ .

Because a lazy neural network cannot adapt its representations to an arbitrary pattern, it is impossible for a lazy MLP to learn parallel/antiparallel weights. However, because the statistics of a same example differ from that of a different example, it may still be possible for a lazy MLP to succeed at the task given enough training data For example, for a same input $\mathbf{x}=(\mathbf{z};\mathbf{z})$ and a different input $\mathbf{x}^{\prime}=(\mathbf{z}_{1},\mathbf{z}_{2})$ , the variance of $\mathbf{1}\cdot\mathbf{x}$ is twice that of $\mathbf{1}\cdot\mathbf{x}^{\prime}$ . Leveraging distinct statistics like this may still allow the lazy model to learn this task.. Using standard kernel arguments (Cho Saul, 2009; Jacot ., 2018), we bound the test error of a lazy MLP in Theorem 2. The proof is deferred to Appendix D.

**Theorem 2 (informal)**

*Let $f$ be an infinite-width ReLU MLP. If $f$ is trained on a dataset consisting of $P$ points constructed from $L$ symbols with input dimension $d$ , then the test error of $f$ is upper bounded by $\mathcal{O}\left(\exp\left\{-L/d^{2}\right\}\right)$ .*

This bound suggests that to maintain a consistently low test error (or equivalently, high test accuracy), the number of training symbols $L$ needs to scale quadratically (at worst) with the input dimension: $L\propto d^{2}$ .

We support our theoretical predictions with simulations in Figure 1. Because the model is in a lazy regime, the hidden weights do not move far from initialization, and no clear parallel/antiparallel structure emerges (Figure 1 d). Figure 1 c shows how models require increasingly more training data as richness decreases. Lazier models are also substantially more impacted by changes in input dimension (Figure 1 e and f), and the scaling of training symbols with input dimension is consistent with our theory (Figure 1 e).

Altogether, the lazy model is unable to learn conceptual representations, instead relying on statistical associations that require a large amount of training data to learn and exhibit strong sensitivity to input dimension. In this way, the lazy model exhibits perceptual behavior on the same-different task.

### 3.4 Same-different with noise

Up until now, we defined equality by exact identity: even a minuscule deviation in a single coordinate is enough to break equality and classify an example as different. Reality is far less clean, and real-world objects are rarely equal up to exact identity. As a first step towards this broader setting, we relax our dependence on exact identity and consider a noisy SD task. In the notation of our setup (Section 2), we allow $\sigma^{2}>0$ .

To understand optimal performance under noise, we apply the following Bayesian framework. As a baseline, we consider a prior corresponding to an idealized model which memorizes the training symbols. This memorizing prior assumes every input symbol is distributed uniformly among the training symbols. To contrast this baseline, we consider a gold-standard prior corresponding to a model which generalizes to novel symbols. This generalizing prior assumes every input symbol follows the true underlying distribution. By comparing the test accuracy of the trained models to the posteriors computed in these two settings, we identify which prior more closely reflects the models’ operation. The calculation of these posteriors are recorded in Appendix E.

Results are plotted in Figure 2. In all cases, we find that the rich model approaches Bayes optimal under the generalizing prior. Lazier models tend to plateau at lower test accuracies; they nonetheless tend to exceed the performance of the memorizing prior at higher noise, indicating some level of generalization. Overall, learning richness appears to support convincing generalization to novel training symbols in the noisy SD task.

<details>

<summary>x3.png Details</summary>

### Visual Description

## [Multi-Panel Figure]: Visual Recognition Task Analysis (PSVRT, Pentomino, CIFAR-100)

### Overview

The image is a composite of 8 panels (a–h) analyzing three visual recognition datasets: **PSVRT** (top row), **Pentomino** (middle row), and **CIFAR-100** (bottom row). Each dataset includes a visual example (a, d, g) and two test accuracy plots (b–c, e–f, h) with varying parameters (γ values) and task complexities (x-axes: number of bit-patterns, shapes, classes, or patches).

### Components/Axes

- **Datasets (Rows)**:

- Top: PSVRT (panels a, b, c)

- Middle: Pentomino (panels d, e, f)

- Bottom: CIFAR-100 (panels g, h)

- **Visual Examples (a, d, g)**:

- **a (PSVRT)**: Black/white pixel patterns (bit-patterns) on a gray background (two sets of patterns).

- **d (Pentomino)**: Black pentomino shapes (T, L, cross) on a gray background (two sets of shapes).

- **g (CIFAR-100)**: Color natural images (bee on flower, lamp, tree) on a gray background.

- **Accuracy Plots (b, c, e, f, h)**:

- **Y-axis**: Test accuracy (range: 0.6–1.0).

- **X-axes**:

- b: Number of bit-patterns (2⁶=64 to 2⁹=512).

- c: Number of patches (5–10).

- e: Number of shapes (5–15).

- f: Number of patches (2–10).

- h: Number of classes (2⁴=16 to 2⁶=64).

- **Legend (bottom-right)**: γ values (γ=10⁰, 10⁻¹, 10⁻², 10⁻³, 10⁻⁴, γ≈0) with line styles/colors (dark purple → light pink).

### Detailed Analysis

#### Panel a (PSVRT Visuals)

Two sets of black/white pixel patterns (bit-patterns) on a gray background. Left: Two blocky patterns; right: Two patterns (one checkerboard, one blocky).

#### Panel b (PSVRT: # bit-patterns vs Test Accuracy)

- **X**: Number of bit-patterns (2⁶=64, 2⁷=128, 2⁸=256, 2⁹=512).

- **Y**: Test accuracy (0.6–1.0).

- **Trend**: All lines *increase* with # bit-patterns.

- γ=10⁰ (dark purple): Highest accuracy (~1.0 at 2⁹).

- γ≈0 (lightest pink): Lowest accuracy (~0.75 at 2⁹).

#### Panel c (PSVRT: # patches vs Test Accuracy)

- **X**: Number of patches (5–10).

- **Y**: Test accuracy (0.6–1.0).

- **Trend**: All lines *decrease* with # patches.

- γ=10⁰ (dark purple): Highest accuracy (~1.0 at 5, ~0.95 at 10).

- γ≈0 (lightest pink): Lowest accuracy (~0.75 at 5, ~0.7 at 10).

#### Panel d (Pentomino Visuals)

Two sets of black pentomino shapes (T, L, cross) on a gray background. Left: T and L; right: L and cross.

#### Panel e (Pentomino: # shapes vs Test Accuracy)

- **X**: Number of shapes (5–15).

- **Y**: Test accuracy (0.6–1.0).

- **Trend**: All lines *increase* with # shapes.

- γ=10⁰ (dark purple): Highest accuracy (~0.95 at 15).

- γ≈0 (lightest pink): Lowest accuracy (~0.7 at 15).

#### Panel f (Pentomino: # patches vs Test Accuracy)

- **X**: Number of patches (2–10).

- **Y**: Test accuracy (0.6–1.0).

- **Trend**: All lines *decrease* with # patches.

- γ=10⁰ (dark purple): Highest accuracy (~0.9 at 2, ~0.85 at 10).

- γ≈0 (lightest pink): Lowest accuracy (~0.65 at 2, ~0.6 at 10).

#### Panel g (CIFAR-100 Visuals)

Four color natural images:

- Left: Bee on a yellow flower.

- Middle-left: Bee on a pink flower.

- Middle-right: Lamp on a table.

- Right: Tree in a landscape.

#### Panel h (CIFAR-100: # classes vs Test Accuracy)

- **X**: Number of classes (2⁴=16, 2⁵=32, 2⁶=64).

- **Y**: Test accuracy (0.6–1.0).

- **Trend**: All lines *increase* with # classes.

- γ=10⁰ (dark purple): Highest accuracy (~0.9 at 2⁶).

- γ≈0 (lightest pink): Lowest accuracy (~0.65 at 2⁶).

### Key Observations

1. **γ Impact**: Higher γ (e.g., γ=10⁰) consistently yields higher test accuracy across all datasets. Lower γ (e.g., γ≈0) reduces accuracy.

2. **Task Complexity**:

- For PSVRT (b) and Pentomino (e), increasing the number of bit-patterns/shapes *improves* accuracy.

- For PSVRT (c) and Pentomino (f), increasing the number of patches *decreases* accuracy.

- For CIFAR-100 (h), increasing the number of classes *improves* accuracy.

3. **Visual Diversity**: Each dataset uses distinct input types (pixel blocks, pentomino shapes, natural images), highlighting task-specific challenges.

### Interpretation

This figure explores how a parameter γ (likely a regularization/scaling factor) and task complexity (number of patterns, shapes, classes, or patches) influence test accuracy in visual recognition. Higher γ values improve performance, suggesting γ controls a trade-off (e.g., between model complexity and generalization). The x-axis variations reveal how task complexity (more patterns/shapes/classes) or data granularity (more patches) impacts recognition. The visual examples contextualize the tasks, showing input data diversity. This analysis informs model optimization for visual tasks by clarifying how parameters and task characteristics interact.

</details>

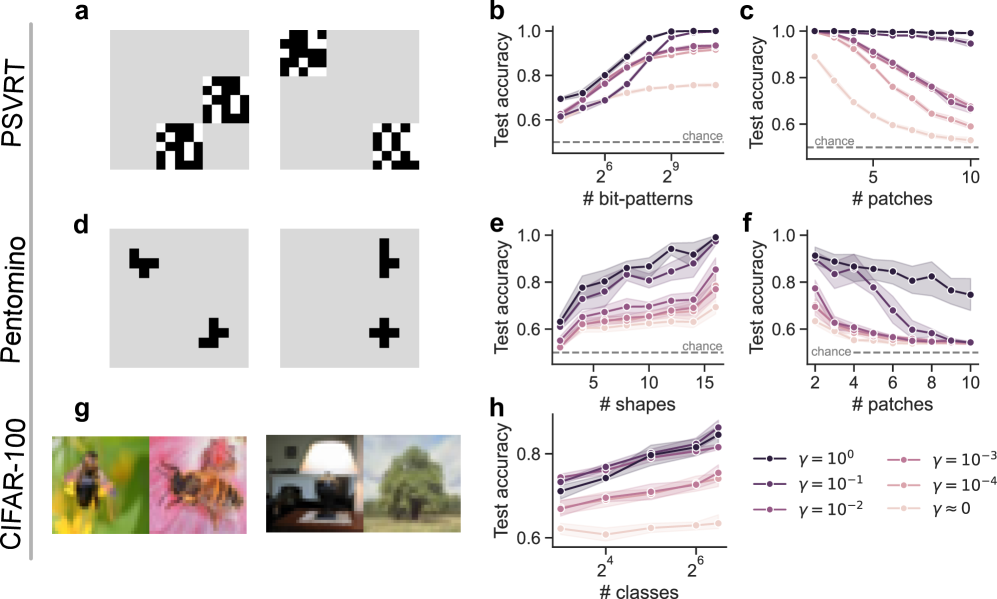

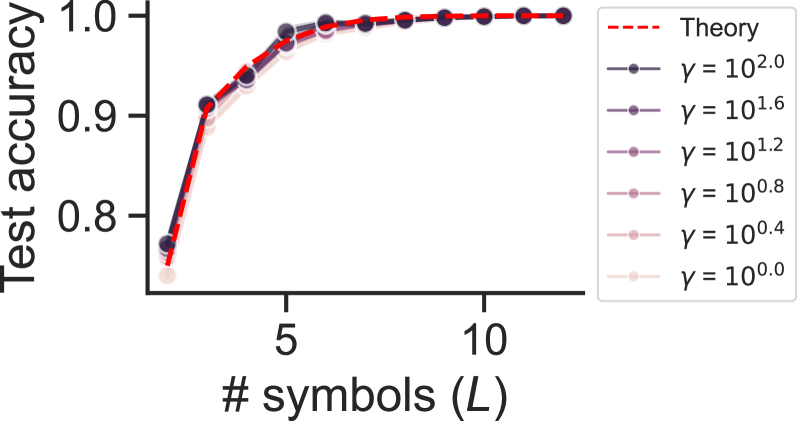

Figure 3: Visual same-different results. (a) PSVRT examples for same (left) and different (right). (b,c) Test accuracy on PSVRT across different numbers of training bit-patterns and image widths. Richer models learn the task with fewer patterns and exhibit less sensitivity to larger sizes. (d) Pentomino examples for same (left) and different (right). (e,f) Test accuracy on Pentomino across training shapes and image widths. As before, richer models learn the task with fewer training shapes and exhibit less sensitivity to larger sizes, though performance across models tends to diminish somewhat with increasing image size. (g) CIFAR-100 examples for same (left) and different (right). (h) Test accuracy on CIFAR-100 same-different across training classes. Richer models tend to perform better with fewer classes, though the richest model in this example performs worse. For this task, very rich models may overfit, necessitating an optimal richness level. (all) Shaded error regions are computed across six runs and correspond to empirical 95 percent confidence intervals.

## 4 Validation in vision tasks

To validate our theoretical findings in a more complex, naturalistic setting, we turn to visual same-different tasks. Specifically, we examine three datasets designed originally to study visual reasoning and computer vision: 1) PSVRT (Kim ., 2018), 2) Pentomino (Gülçehre Bengio, 2016), and 3) CIFAR-100 (Krizhevsky Hinton, 2009). These tasks offer significantly more challenge over the simple SD task we examine before. Rather than reason over symbol embeddings, a model must now reason over complex visual objects. Inputs are now images, and equality is no longer exact identity: inputs can be equal up to translation (in PSVRT), rotation (in Pentomino), or merely share a class label (CIFAR-100). All additional details on model and task configurations are enumerated in Appendix G.

We continue to use the same MLP model as before. Images are flattened before input to the model. Though better performance may be attained using CNNs or Vision Transformers, our ultimate goal is to study learning richness rather than maximize performance. Nonetheless, as we will soon see, an MLP performs astonishingly well on these tasks despite its simplicity — provided it remains in a rich learning regime. To validate our theoretical findings, we should continue to see the three hallmarks of a conceptual solution (conceptual representations, efficiency, and insensitivity to spurious perceptual details), but only in rich MLPs.

### 4.1 PSVRT

The parameterized-SVRT (PSVRT) dataset is a version of the Synthetic Visual Reasoning Test (SVRT), a collection of challenging visual reasoning tasks based on abstract shapes (Kim ., 2018). PSVRT replaces the original shapes with random bit-patterns in order to better control image variability. The task input consists of an image that has two blocks of bit-patterns, placed randomly on a blank background. The model must determine whether the blocks contain the same bit pattern, or different patterns. The training set consists of a fixed number of predetermined bit patterns. The test set consists of novel bit-patterns never encountered during training.

Bit-patterns are patch-aligned: they occur in non-overlapping locations that tile the image. The width of an image may be specified by the number of patches. Figure 3 a illustrates examples from PSVRT that are three patches wide.

Results. Figure 3 b plots a model’s test accuracy on PSVRT as a function of the number of training patterns. As our theory suggests, richer models learn the task more easily and generalize after substantially fewer training patterns. To test our models under perceptual variation, we consider larger image sizes. We keep the same size of bit-patterns, but increase the number of patches to make a bigger input. Figure 3 c indicates that a rich model continues to perform perfectly irrespective of image size, whereas lazier models exhibit a performance decay with larger inputs.

Finally, we identify parallel/antiparallel analogs for PSVRT in the weights of a rich model (Figure A1 a). The presence of these conceptual representations suggests that our theory remains a reasonable description for how a rich MLP may learn a conceptual solution to the PSVRT same-different task.

### 4.2 Pentomino

The Pentomino task uses inputs that are pentomino polygons: shapes consisting of five squares glued by edge (Gülçehre Bengio, 2016). The input consists of an image with two pentominoes, placed arbitrarily on a blank background. The pentominoes may either be the same shape, or different. In contrast with the PSVRT task, sameness in this task implies equality up to rotation. After training on a fixed set of pentomino shapes, the model must generalize to entirely novel shapes. Like with PSVRT, shapes are patch-aligned. Figure 3 d illustrates example inputs from this task that are three patches wide.

Results. Figure 3 e plots a model’s test accuracy on Pentomino as a function of the number of training shapes. Consistent with our theory, richer models learn the task more easily and generalize after substantially fewer training shapes. To test our models under perceptual variation, we consider larger image sizes. Like with PSVRT, we add additional patches to enlarge the input. Figure 3 f indicates that a rich model continues to perform well on larger image sizes, though its performance does start to decay somewhat. Performance decays substantially faster for lazier models.

We again identify parallel/antiparallel analogs for Pentomino in the weights of a rich model (Figure A1 c). The presence of these conceptual representations continues to support our theoretical perspective. Notably, Gülçehre Bengio (2016) introduced this task to motivate curriculum learning, finding that their MLP fails to perform above chance. We found that curriculum learning is unnecessary in the presence of sufficient richness.

### 4.3 CIFAR-100

The CIFAR-100 dataset consists of 60 thousand real-world images, each 32 by 32 pixels (Krizhevsky Hinton, 2009). Images belong to one of 100 different classes. In this task, the input consists of two different unlabeled images that belong either to the same or different classes. After training on images from a fixed set of labels, the model must generalize to entirely novel labels. The sets of train and test labels are disjoint, making this an extremely challenging task. The labels themselves are not provided in any form during training. Example inputs are illustrated in Figure 3 g. We also experiment with providing features from VGG-16 pretrained on ImageNet (Simonyan Zisserman, 2014). We pass CIFAR-100 images to VGG-16, then use intermediate features as inputs to our MLP. The weights of VGG-16 are fixed throughout the whole process. Note also that ImageNet is disjoint from CIFAR-100, so there is limited possibility of contamination in the test images.

Results. Figure 3 h plots a model’s test accuracy on CIFAR-100 images as a function of the number of training classes. We use outputs from VGG-16 block 4, layer 3, which performed the best with our model. As before, richer models tend to perform better with fewer training classes, but with a curious exception: in contrast to the previous two tasks, the richest model does not always perform decisively the best. This is particularly evident using the activations from other intermediate VGG layers, plotted in Figure G 1. For certain layers and number of training classes, the optimal $\gamma$ appears to be somewhat less than 1. This outcome may be in part an artifact of overfitting. Given the complexity of the task and the limited data, richer models are plausibly more susceptible to idiosyncratic features of the training set that generalize poorly, analogous to overfitting effects in classical statistics that degrade the performance of powerful models. In this case, slightly less learning richness may be the optimal setting. Since CIFAR-100 images are fixed to 32 by 32 pixels, we skipped testing variable image size for this task.

As before, we identify parallel/antiparallel analogs for this task in the weights of a rich model (Figure A1 e). The general benefit of richness together with the presence of conceptual representations continues to align with our theoretical perspective. Across our three visual same-different tasks, we identified generally consistent relationships between learning richness, conceptual solutions, and good performance, supporting our theoretical findings.

## 5 Discussion

We studied equality reasoning using a simple same-different task. We showed that learning richness drives the development of either conceptual or perceptual behavior. Rich MLPs develop conceptual representations, learn from few training examples, and remain largely insensitive to perceptual variation. Meanwhile, lazy MLPs require exhaustive training examples and deteriorate substantially with spurious perceptual changes.

Varying learning richness recapitulates E. Wasserman . (2017) ’s continuum between perceptual and conceptual behavior on same-different tasks. Perhaps a pigeon’s competency at equality reasoning may be broadly comparable to a lazy MLP’s, requiring a great deal of training and exhibiting persistent sensitivity to spurious details. Perhaps equality reasoning in human or even language-trained great apes may be comparable to a rich MLP, where learning is faster, less sensitive to spurious details, and presumably involves conceptual abstractions. We suggest that a key parameter underlying these behavioral differences may be learning richness.

Learning richness is a concept imported from machine learning theory, and it is not altogether clear how to measure richness in a living brain. Since richness specifies the degree of change in a neural network’s hidden representations, the most direct analogy in the brain is to look for adaptive representations that seem to encode task-specific variables. Such approaches have implicated richness as an essential property for context-dependent decision making, multitask cognition, generalizing knowledge, among many other phenomena (Flesch ., 2022; Ito Murray, 2023; Johnston Fusi, 2023; Farrell ., 2023). Our theory predicts that greater learning richness relates to faster generalization in equality reasoning, and look forward to possible experimental validation of this principle.

Our work also contributes to the longstanding debate on a neural network’s facility with abstract reasoning. Rich MLPs demonstrate successful generalization to unseen symbols irrespective of input dimension or even high noise variance. Further, the rich MLP’s development of parallel/antiparallel components suggests the formation of abstractions, supporting the account that neural networks may indeed learn to develop and manipulate symbolic representations.

Practically, we demonstrate that learning richness is a vital hyperparameter. Increasing richness generally increases test performance substantially, improves data efficiency, and reduces sensitivity to spurious details. For complex tasks tuned with a large range of $\gamma$ , there may be an optimal level of richness. Indeed, for CIFAR-100, we observed that more richness is not always better, and an optimal level exists. We encourage more widespread application of richness parametrizations like $\mu$ P, and advocate for adding $\gamma$ to the list of tunable hyperparameters that every practitioner must consider when developing neural networks (Atanasov ., 2024).

Acknowledgments.

Special thanks to Alex Atanasov for a serendipitous conversation that inspired much of this project. We also thank Hamza Chaudhry, Ben Ruben, Sab Sainathan, Jacob Zavatone-Veth, and members of the Pehlevan Group for many helpful comments and discussions on our manuscript. WLT is supported by a Kempner Graduate Fellowship. CP is supported by NSF grant DMS-2134157, NSF CAREER Award IIS-2239780, DARPA grant DIAL-FP-038, a Sloan Research Fellowship, and The William F. Milton Fund from Harvard University. This work has been made possible in part by a gift from the Chan Zuckerberg Initiative Foundation to establish the Kempner Institute for the Study of Natural and Artificial Intelligence. The computations in this paper were run on the FASRC cluster supported by the FAS Division of Science Research Computing Group at Harvard University.

## References

- Alhama Zuidema (2019) Alhama2019-review Alhama, R G. Zuidema, W. 20191 08. A review of computational models of basic rule learning: The neural-symbolic debate and beyond A review of computational models of basic rule learning: The neural-symbolic debate and beyond. Psychon Bull Rev2641174–1194.

- Atanasov . (2024) atanasov2024ultrarich Atanasov, A., Meterez, A., Simon, J B. Pehlevan, C. 2024. The Optimization Landscape of SGD Across the Feature Learning Strength The optimization landscape of sgd across the feature learning strength. arXiv preprint arXiv:2410.04642.

- Battaglia . (2018) Battaglia2018-graph Battaglia, P W., Hamrick, J B., Bapst, V., Sanchez-Gonzalez, A., Zambaldi, V., Malinowski, M. Pascanu, R. 201817 10. Relational inductive biases, deep learning, and graph networks Relational inductive biases, deep learning, and graph networks. arXiv.

- Bernstein . (2018) signSGD Bernstein, J., Wang, Y X., Azizzadenesheli, K. Anandkumar, A. 2018. signSGD: Compressed optimisation for non-convex problems signsgd: Compressed optimisation for non-convex problems. International Conference on Machine Learning International conference on machine learning ( 560–569).

- Biehl Schwarze (1995) biehl1995learning_online_gd Biehl, M. Schwarze, H. 1995. Learning by on-line gradient descent Learning by on-line gradient descent. Journal of Physics A: Mathematical and general283643.

- Boix-Adsera . (2023) boix_abbe Boix-Adsera, E., Saremi, O., Abbe, E., Bengio, S., Littwin, E. Susskind, J. 2023. When can transformers reason with abstract symbols? When can transformers reason with abstract symbols? arXiv preprint arXiv:2310.09753.

- Bordelon Pehlevan (2022) bordelon_self_consistent Bordelon, B. Pehlevan, C. 2022. Self-consistent dynamical field theory of kernel evolution in wide neural networks Self-consistent dynamical field theory of kernel evolution in wide neural networks. Advances in Neural Information Processing Systems3532240–32256.

- Bubeck . (2023) Bubeck2023_sparks_of_agi Bubeck, S., Chandrasekaran, V., Eldan, R., Gehrke, J., Horvitz, E., Kamar, E. Zhang, Y. 202313 04. Sparks of Artificial General Intelligence: Early experiments with GPT-4 Sparks of artificial general intelligence: Early experiments with GPT-4. arXiv.

- Carstensen Frank (2021) carstensen_graded_abs Carstensen, A. Frank, M C. 2021. Do graded representations support abstract thought? Do graded representations support abstract thought? Current Opinion in Behavioral Sciences3790–97.

- Chizat Bach (2020) chizat_bach_max_margin Chizat, L. Bach, F. 2020. Implicit bias of gradient descent for wide two-layer neural networks trained with the logistic loss Implicit bias of gradient descent for wide two-layer neural networks trained with the logistic loss. Conference on learning theory Conference on learning theory ( 1305–1338).

- Chizat . (2019) chizat2019lazy_rich Chizat, L., Oyallon, E. Bach, F. 2019. On lazy training in differentiable programming On lazy training in differentiable programming. Advances in neural information processing systems32.

- Cho Saul (2009) cho_saul_kernel Cho, Y. Saul, L. 2009. Kernel methods for deep learning Kernel methods for deep learning. Advances in neural information processing systems22.

- Farrell . (2023) Farrell2023-lazy_rich Farrell, M., Recanatesi, S. Shea-Brown, E. 20231 12. From lazy to rich to exclusive task representations in neural networks and neural codes From lazy to rich to exclusive task representations in neural networks and neural codes. Curr. Opin. Neurobiol.83102780102780.

- Flesch . (2022) Flesch2022-orthogonal Flesch, T., Juechems, K., Dumbalska, T., Saxe, A. Summerfield, C. 20226 04. Orthogonal representations for robust context-dependent task performance in brains and neural networks Orthogonal representations for robust context-dependent task performance in brains and neural networks. Neuron11071258–1270.e11.

- Fleuret . (2011) fleuret2011svrt Fleuret, F., Li, T., Dubout, C., Wampler, E K., Yantis, S. Geman, D. 2011. Comparing machines and humans on a visual categorization test Comparing machines and humans on a visual categorization test. Proceedings of the National Academy of Sciences1084317621–17625.

- Fodor Pylyshyn (1988) fodor_and_pylyshyn Fodor, J A. Pylyshyn, Z W. 1988. Connectionism and cognitive architecture: A critical analysis Connectionism and cognitive architecture: A critical analysis. Cognition281-23–71.

- A. Geiger . (2023) geiger_nonsym Geiger, A., Carstensen, A., Frank, M C. Potts, C. 2023. Relational reasoning and generalization using nonsymbolic neural networks. Relational reasoning and generalization using nonsymbolic neural networks. Psychological Review1302308.

- M. Geiger . (2020) geiger2020disentangling_feature_and_lazy Geiger, M., Spigler, S., Jacot, A. Wyart, M. 2020. Disentangling feature and lazy training in deep neural networks Disentangling feature and lazy training in deep neural networks. Journal of Statistical Mechanics: Theory and Experiment202011113301.

- Giurfa . (2001) Giurfa2001-bee Giurfa, M., Zhang, S., Jenett, A., Menzel, R. Srinivasan, M V. 200119 04. The concepts of ’sameness’ and ’difference’ in an insect The concepts of ’sameness’ and ’difference’ in an insect. Nature4106831930–933.

- Goldt . (2019) goldt2019dynamics Goldt, S., Advani, M., Saxe, A M., Krzakala, F. Zdeborová, L. 2019. Dynamics of stochastic gradient descent for two-layer neural networks in the teacher-student setup Dynamics of stochastic gradient descent for two-layer neural networks in the teacher-student setup. Advances in neural information processing systems32.

- Gülçehre Bengio (2016) gulccehre2016pentomino Gülçehre, Ç. Bengio, Y. 2016. Knowledge matters: Importance of prior information for optimization Knowledge matters: Importance of prior information for optimization. The Journal of Machine Learning Research171226–257.

- Guo . (2025) guo2025deepseek_r1 Guo, D., Yang, D., Zhang, H., Song, J., Zhang, R., Xu, R. others 2025. Deepseek-r1: Incentivizing reasoning capability in llms via reinforcement learning Deepseek-r1: Incentivizing reasoning capability in llms via reinforcement learning. arXiv preprint arXiv:2501.12948.

- Ito Murray (2023) Ito2023-multitask Ito, T. Murray, J D. 2023 02. Multitask representations in the human cortex transform along a sensory-to-motor hierarchy Multitask representations in the human cortex transform along a sensory-to-motor hierarchy. Nat. Neurosci.262306–315.

- Jacot . (2018) jacot_ntk Jacot, A., Gabriel, F. Hongler, C. 2018. Neural tangent kernel: Convergence and generalization in neural networks Neural tangent kernel: Convergence and generalization in neural networks. Advances in neural information processing systems31.

- James (1905) james1905principles_of_psych James, W. 1905. The Principles of Psychology The principles of psychology. New York,: H. Holt.

- Johnston Fusi (2023) johnston2023abstract_rep Johnston, W J. Fusi, S. 2023. Abstract representations emerge naturally in neural networks trained to perform multiple tasks Abstract representations emerge naturally in neural networks trained to perform multiple tasks. Nature Communications1411040.

- Kim . (2018) Kim2018-not_so_clevr Kim, J., Ricci, M. Serre, T. 201815 06. Not-So-CLEVR: learning same–different relations strains feedforward neural networks Not-so-CLEVR: learning same–different relations strains feedforward neural networks. Interface Focus8420180011.

- Kingma (2014) kingma2014adam Kingma, D P. 2014. Adam: A method for stochastic optimization Adam: A method for stochastic optimization. arXiv preprint arXiv:1412.6980.

- Krizhevsky Hinton (2009) krizhevsky2009cifar100 Krizhevsky, A. Hinton, G. 2009. Learning multiple layers of features from tiny images Learning multiple layers of features from tiny images. Technical report.

- Mahowald . (2024) Mahowald2024-dissociate Mahowald, K., Ivanova, A A., Blank, I A., Kanwisher, N., Tenenbaum, J B. Fedorenko, E. 20241 06. Dissociating language and thought in large language models Dissociating language and thought in large language models. Trends Cogn. Sci.286517–540.

- G. Marcus (2020) Marcus2020-next_decade Marcus, G. 202019 02. The Next Decade in AI: Four Steps Towards Robust Artificial Intelligence The next decade in AI: Four steps towards robust artificial intelligence. arXiv.

- G F. Marcus (1998) marcus1998rethinking Marcus, G F. 1998. Rethinking eliminative connectionism Rethinking eliminative connectionism. Cognitive psychology373243–282.

- G F. Marcus (2003) Marcus2003-algebraic Marcus, G F. 2003. The algebraic mind: Integrating connectionism and cognitive science The algebraic mind: Integrating connectionism and cognitive science. Cambridge, MABradford Books.

- G F. Marcus . (1999) Marcus1999-rule_learning Marcus, G F., Vijayan, S., Bandi Rao, S. Vishton, P M. 19991 01. Rule learning by seven-month-old infants Rule learning by seven-month-old infants. Science283539877–80.

- Mastrovito . (2024) Mastrovito2024-consciousness Mastrovito, D., Liu, Y H., Kusmierz, L., Shea-Brown, E., Koch, C. Mihalas, S. 202415 05. Transition to chaos separates learning regimes and relates to measure of consciousness in recurrent neural networks Transition to chaos separates learning regimes and relates to measure of consciousness in recurrent neural networks. bioRxivorg2024.05.15.594236.

- McCoy . (2023) McCoy2023-embers McCoy, R T., Yao, S., Friedman, D., Hardy, M. Griffiths, T L. 202324 09. Embers of Autoregression: Understanding Large Language Models Through the Problem They are Trained to Solve Embers of autoregression: Understanding large language models through the problem they are trained to solve. arXiv.

- Mei . (2018) mei2018mean_field Mei, S., Montanari, A. Nguyen, P M. 2018. A mean field view of the landscape of two-layer neural networks A mean field view of the landscape of two-layer neural networks. Proceedings of the National Academy of Sciences11533E7665–E7671.

- Morwani . (2023) morwani_max_margin Morwani, D., Edelman, B L., Oncescu, C A., Zhao, R. Kakade, S. 2023. Feature emergence via margin maximization: case studies in algebraic tasks Feature emergence via margin maximization: case studies in algebraic tasks. arXiv preprint arXiv:2311.07568.

- Obozova . (2015) Obozova2015-parrot Obozova, T., Smirnova, A., Zorina, Z. Wasserman, E. 201518 11. Analogical reasoning in amazons Analogical reasoning in amazons. Anim. Cogn.1861363–1371.

- OpenAI (2023) gpt4 OpenAI. 202315 03. GPT-4 Technical Report GPT-4 technical report. arXiv.

- OpenAI (2024) o1 OpenAI. 2024. Learning to Reason with LLMs. Learning to reason with LLMs. https://openai.com/index/learning-to-reason-with-llms/.

- Penn . (2008) Penn2008-darwin_mistake Penn, D C., Holyoak, K J. Povinelli, D J. 2008 04. Darwin’s mistake: Explaining the discontinuity between human and nonhuman minds Darwin’s mistake: Explaining the discontinuity between human and nonhuman minds. Behav. Brain Sci.312109–130.

- Premack (1983) Premack1983-codes Premack, D. 1983 03. The codes of man and beasts The codes of man and beasts. Behav. Brain Sci.61125–136.

- Rabagliati . (2019) Rabagliati2019-infant_abstract_rule_learning Rabagliati, H., Ferguson, B. Lew-Williams, C. 20191 01. The profile of abstract rule learning in infancy: Meta-analytic and experimental evidence The profile of abstract rule learning in infancy: Meta-analytic and experimental evidence. Dev. Sci.221e12704.

- Raven (2003) raven2003raven_prog_mats Raven, J. 2003. Raven progressive matrices Raven progressive matrices. Handbook of nonverbal assessment Handbook of nonverbal assessment ( 223–237). Springer.