# VisualPRM: An Effective Process Reward Model for Multimodal Reasoning

Abstract

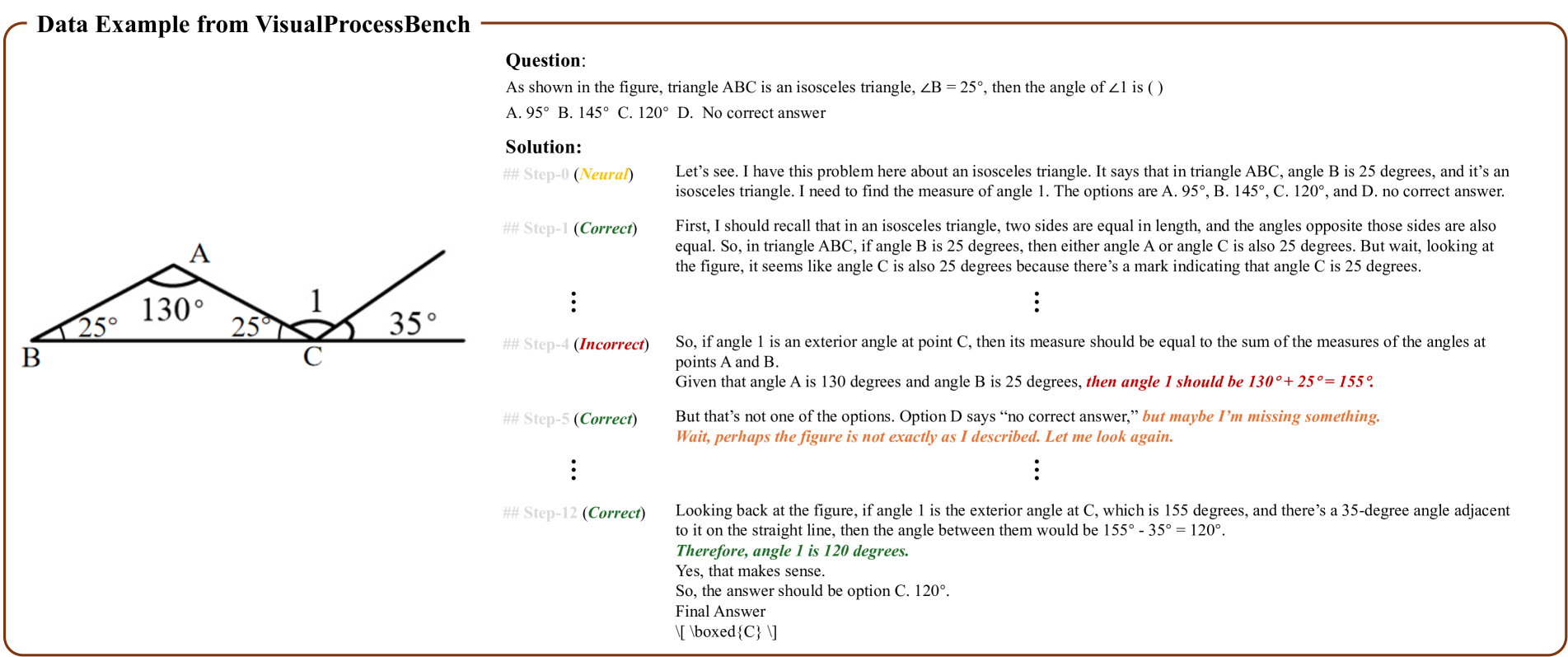

We introduce VisualPRM, an advanced multimodal Process Reward Model (PRM) with 8B parameters, which improves the reasoning abilities of existing Multimodal Large Language Models (MLLMs) across different model scales and families under Best-of-N (BoN) evaluation. Specifically, our model improves the reasoning performance of three types of MLLMs and four different model scales. Even when applied to the highly capable InternVL2.5-78B, it achieves a 5.9-point improvement across seven multimodal reasoning benchmarks. Experimental results show that our model outperforms Outcome Reward Models and Self-Consistency during BoN evaluation. To facilitate the training of multimodal PRMs, we construct a multimodal process supervision dataset, VisualPRM400K, using an automated data pipeline. For the evaluation of multimodal PRMs, we propose VisualProcessBench, a benchmark with human-annotated step-wise correctness labels, to measure the abilities of PRMs to detect erroneous steps in multimodal reasoning tasks. We hope that our work can inspire more future research and contribute to the development of MLLMs. Our model, data, and benchmark are publicly released on the project page.

🖂 Corresponding Author: wangwenhai@pjlab.org.cn

1 Introduction

<details>

<summary>x1.png Details</summary>

### Visual Description

## Bar Chart: Overall Performance Comparison of Policy Models

### Overview

The image is a bar chart comparing the overall performance of different policy models. The y-axis represents "Overall Performance," ranging from 25 to 55. The x-axis represents "Policy Model," with several models listed. There are three data series, each represented by a different color bar: "Pass@1" (green), "BoN with InternVL2.5-8B" (red), and "BoN with VisualPRM-8B (ours)" (blue).

### Components/Axes

* **Y-axis:** "Overall Performance," with a scale from 25 to 55 in increments of 5.

* **X-axis:** "Policy Model," listing the following models: MiniCPM-V2.6, QwenVL2.5-7B, InternVL2.5-8B, InternVL2.5-26B, InternVL2.5-38B, InternVL2.5-78B.

* **Legend:** Located at the top-left of the chart, it identifies the three data series:

* Green: "Pass@1"

* Red: "BoN with InternVL2.5-8B"

* Blue: "BoN with VisualPRM-8B (ours)"

### Detailed Analysis

Here's a breakdown of the performance for each policy model across the three data series:

* **MiniCPM-V2.6:**

* Pass@1 (Green): 29.5

* BoN with InternVL2.5-8B (Red): 28.6

* BoN with VisualPRM-8B (Blue): 37.5

* **QwenVL2.5-7B:**

* Pass@1 (Green): 41.4

* BoN with InternVL2.5-8B (Red): 41.6

* BoN with VisualPRM-8B (Blue): 45.1

* **InternVL2.5-8B:**

* Pass@1 (Green): 32.8

* BoN with InternVL2.5-8B (Red): 33.2

* BoN with VisualPRM-8B (Blue): 41.2

* **InternVL2.5-26B:**

* Pass@1 (Green): 36.9

* BoN with InternVL2.5-8B (Red): 39.1

* BoN with VisualPRM-8B (Blue): 45.8

* **InternVL2.5-38B:**

* Pass@1 (Green): 44.4

* BoN with InternVL2.5-8B (Red): 44.9

* BoN with VisualPRM-8B (Blue): 50.7

* **InternVL2.5-78B:**

* Pass@1 (Green): 46.0

* BoN with InternVL2.5-8B (Red): 46.4

* BoN with VisualPRM-8B (Blue): 51.9

### Key Observations

* The "BoN with VisualPRM-8B (ours)" (blue) series consistently outperforms the other two series across all policy models.

* Within the InternVL2.5 family, the performance of all three data series increases with model size (from 8B to 78B).

* The "Pass@1" (green) and "BoN with InternVL2.5-8B" (red) series have similar performance for each policy model, with the red bar slightly higher in every case except MiniCPM-V2.6.

### Interpretation

The chart demonstrates that "BoN with VisualPRM-8B (ours)" achieves the highest overall performance compared to "Pass@1" and "BoN with InternVL2.5-8B" across all tested policy models. Within the InternVL2.5 family, larger policy models score higher under all three configurations. The small gap between "Pass@1" and "BoN with InternVL2.5-8B" indicates that InternVL2.5-8B, used as a critic model, yields only marginal gains (and a slight drop for MiniCPM-V2.6), whereas VisualPRM-8B improves every policy model.

</details>

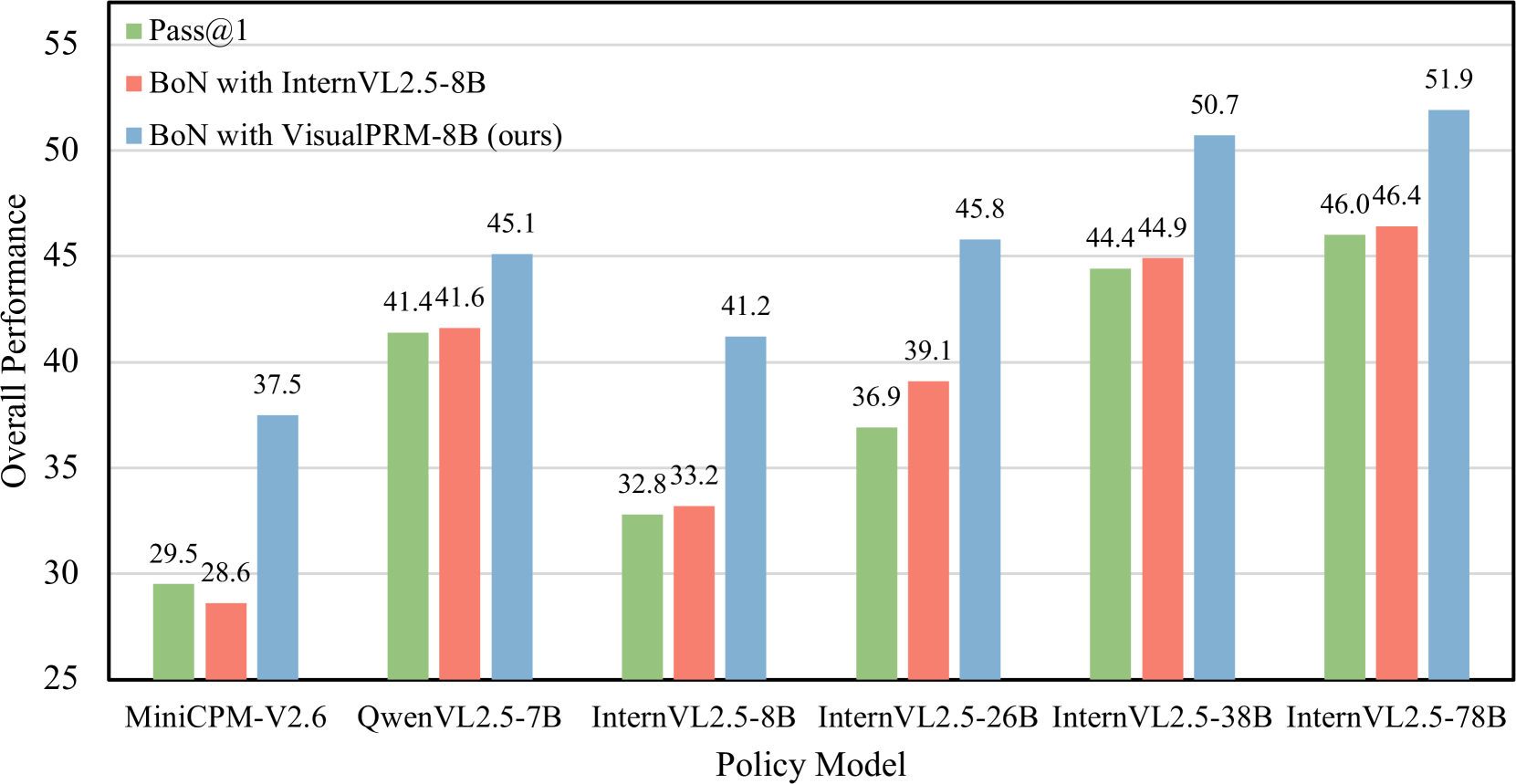

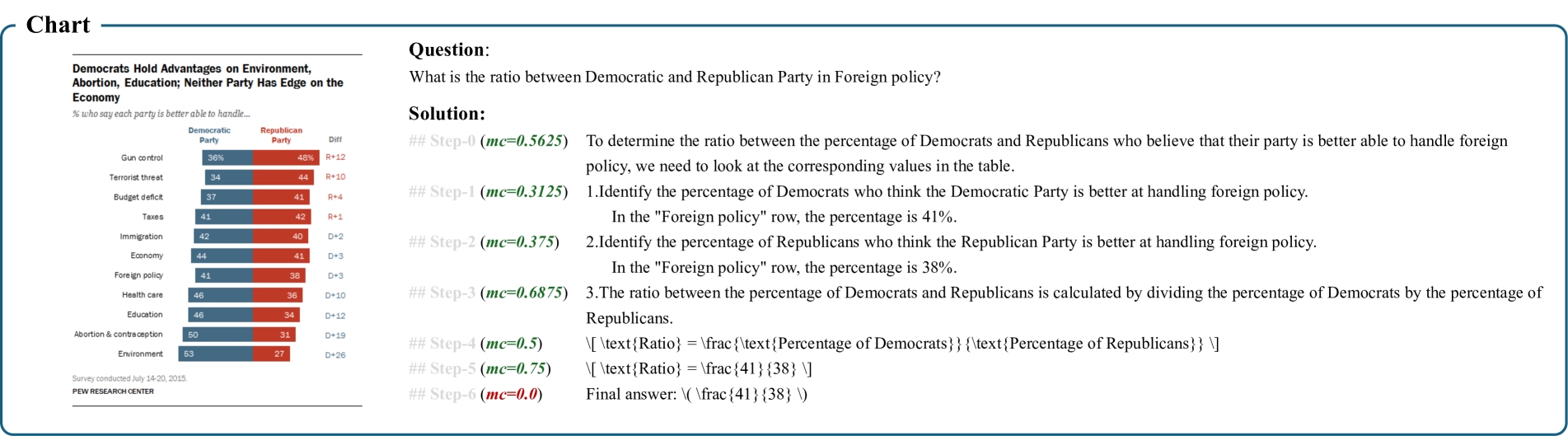

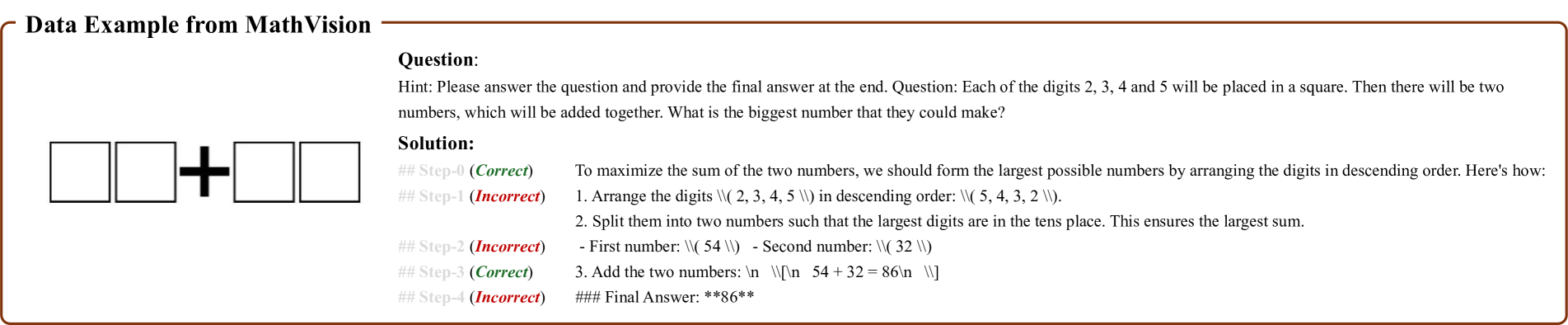

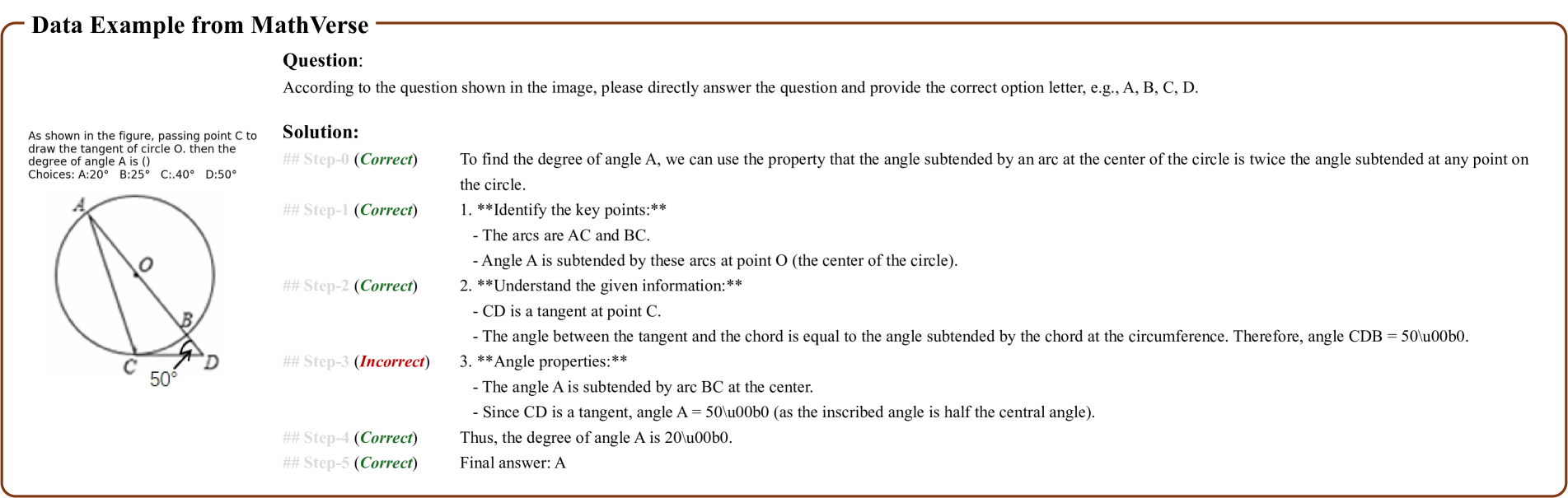

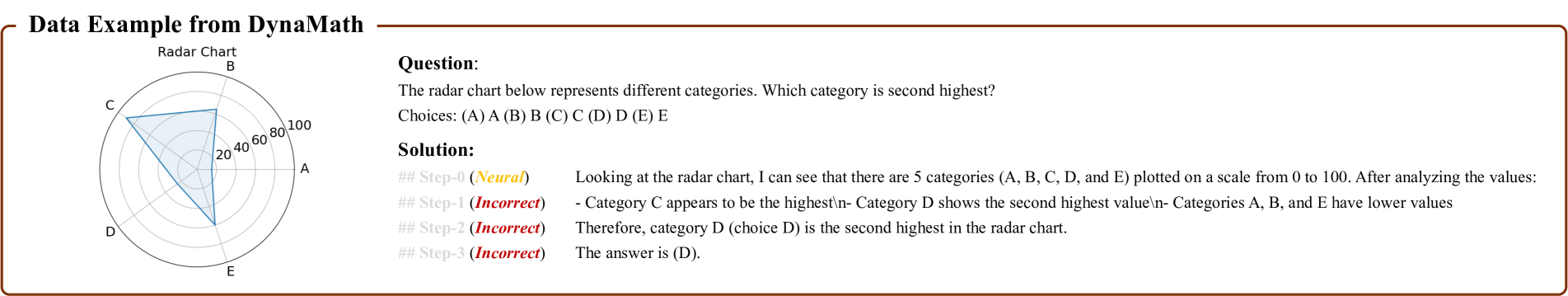

Figure 1: The overall Best-of-8 evaluation results across seven multimodal reasoning benchmarks with different critic models. Our VisualPRM greatly enhances the overall performance, while InternVL2.5-8B struggles to be an effective critic model.

With the remarkable success of Large Language Models (LLMs) [75, 76, 21, 5, 71, 10, 9, 1, 4] in natural language processing, Multimodal Large Language Models (MLLMs) [80, 36, 41, 42, 6, 84, 83, 14, 16, 15, 58, 61, 89] have also achieved significant advancements across various vision-language tasks. Despite their strong performance in perception and recognition, a large gap remains in reasoning capabilities between open-source and proprietary models. A series of studies have explored methods to enhance reasoning abilities, focusing on the perspectives of data collection and construction [57, 74, 38, 43], offline preference optimization [59, 82, 32], and online reinforcement learning [66, 24, 2, 26]. Additionally, another line of research [68, 20, 94, 79] investigates utilizing Test-Time Scaling (TTS) to enhance the reasoning abilities of LLMs. This approach requires the policy model to generate multiple response candidates and select the best one, based on the quality estimation of a critic model, thereby improving the response quality at the cost of higher inference time. However, TTS for MLLMs remains largely unexplored.

This work investigates the application of TTS for MLLMs, focusing on Best-of-N (BoN) evaluation strategies. Adapting TTS to MLLMs faces two challenges: (1) Lack of effective critic models. In BoN evaluation, a critic model is required to estimate the quality of each response candidate. However, as shown in Figure 1, existing open-source MLLMs struggle to serve as critic models, yielding only marginal improvements over models without TTS. This limitation stems from the lack of sufficient critic data in their training corpora. (2) Lack of evaluation benchmarks for multimodal critic models. The effectiveness of TTS heavily depends on the performance of the critic model. However, directly evaluating critics under BoN settings poses two key issues. First, BoN evaluation is expensive: although the focus is on the critic model, the policy model must generate $N$ reasoning processes, which accounts for the majority of the computational cost. Second, BoN performance also depends on the policy model, making it difficult to compare critic models paired with different policy models.

To address these challenges, we first introduce VisualPRM400K, a dataset comprising approximately 400K multimodal process supervision samples. Each sample includes an image, a question, a step-by-step solution, and correctness annotations for each step. Specifically, we collect question prompts from MMPR v1.1 [82] and then generate process supervision using an automatic data pipeline [79]. This pipeline samples multiple continuations starting from a given step and computes the expected accuracy of that step as the average accuracy of its continuations.

To facilitate the evaluation of multimodal critic models, we introduce VisualProcessBench, a benchmark for evaluating PRMs and MLLMs in detecting erroneous steps in multimodal reasoning tasks. This benchmark includes 2,866 samples with 26,950 human-annotated step-wise correctness labels. Each sample includes a multimodal reasoning question, a step-by-step solution, and correctness labels for each step. To ensure annotation accuracy, we employ human experts with at least a university degree to manually assess the correctness of each step. Unlike prior benchmarks [96, 39], which require identifying only the first erroneous step, VisualProcessBench challenges models to detect all errors within a given solution. This adjustment aligns with recent advancements in model reflection abilities, helping to reduce false negatives in evaluations. Evaluation results reveal that existing open-source MLLMs struggle to accurately assess step-wise correctness, highlighting the need for improved multimodal critic models.

Building upon the dataset and benchmark, we develop VisualPRM, an advanced multimodal Process Reward Model (PRM) with 8B parameters, to serve as the critic model in BoN evaluation. Each training sample is formulated as a multi-turn chat: the first turn includes the image, the question, and the first solution step, while each subsequent turn presents a new step. The model is trained to predict the correctness of the given step at each turn. Experimental results demonstrate that VisualPRM enhances MLLM reasoning across different model families and scales. Specifically, VisualPRM improves the overall reasoning performance of MiniCPM-V2.6, Qwen2.5-VL-7B, InternVL2.5-8B, and InternVL2.5-78B by 8.0, 3.7, 8.4, and 5.9 points, respectively, across seven multimodal reasoning benchmarks [90, 50, 78, 93, 99, 60, 87]. Additionally, we compare PRMs with Outcome Reward Models (ORMs) and Self-Consistency (SC) in BoN evaluation, finding that PRMs consistently outperform both approaches.

In summary, our main contributions are as follows:

(1) We introduce VisualPRM400K, a dataset comprising approximately 400K multimodal process supervision samples. Building upon this dataset, we develop VisualPRM, an advanced multimodal PRM that serves as the critic model in BoN evaluation.

(2) We construct VisualProcessBench, a benchmark designed to measure the abilities of PRMs and MLLMs to identify erroneous steps in multimodal reasoning tasks. This benchmark comprises 2,866 samples with a total of 26,950 human-annotated step-wise correctness labels.

(3) Through extensive experiments, we demonstrate that PRMs can serve as effective critic models for test-time scaling of MLLMs. Specifically, VisualPRM enhances the overall multimodal reasoning performance of MiniCPM-V2.6, Qwen2.5-VL-7B, InternVL2.5-8B, and InternVL2.5-78B by 8.0, 3.7, 8.4, and 5.9 points, respectively, across seven multimodal reasoning benchmarks. Furthermore, our results show that PRMs consistently outperform both ORMs and SC in BoN evaluation. Additionally, experiments on VisualProcessBench reveal that existing open-source MLLMs struggle to accurately assess the correctness of each step.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Math Problems with Solutions

### Overview

The image presents two distinct math problems, each with a question and a step-by-step solution. The first problem involves finding the measure of an angle in a quadrilateral, while the second involves finding the measure of an angle formed by intersecting lines. Each step in the solution is annotated with a label indicating correctness or a confidence score.

### Components/Axes

**Problem 1 (VisualPRM400K):**

* **Question:** Find m ∠S.

* **Diagram:** A quadrilateral labeled Q, R, S, and T.

* ∠R = x°

* ∠T = x°

* ∠Q = (2x + 5)°

* ∠S = (2x + 7)°

* **Solution Steps:**

* Step 0: (mc=0.75)

* Step 1: (mc=0.5)

* Step 2: (mc=0.5625)

* Step 3: (mc=0.5)

* Step 4: (mc=0.0)

* Step 5: (mc=0.0)

**Problem 2 (VisualProcessBench):**

* **Question:** As shown in the figure, AB parallel CD, EG bisects angle BEF, then angle 2 is equal to (). Choices: A:50° B:60° C:65° D:90°

* **Diagram:** Two parallel lines AB and CD intersected by a transversal EG.

* ∠1 is labeled as 50°.

* EG bisects ∠BEF, creating ∠BEG and ∠GEF.

* ∠2 is formed by EG and CD.

* **Solution Steps:**

* Step 0: (Neutral)

* Step 1: (Correct)

* Step 2: (Correct)

* Step 3: (Correct)

* Step 4: (Incorrect)

* Step 5: (Correct)

* Step 6: (Incorrect)

* Step 7: (Incorrect)

### Detailed Analysis

**Problem 1 (VisualPRM400K):**

* **Solution:**

* The solution uses the property that the sum of interior angles of a quadrilateral is 360°.

* Step 1: (2x + 5)° + x° + (2x + 7)° + x° = 360°

* Step 2: 2x + 5 + x + 2x + 7 + x = 360 => 6x + 12 = 360

* Step 3: 6x = 360 - 12 => 6x = 348 => x = 58

* Step 4: m ∠S = 2x + 7 = 2(58) + 7 = 116 + 7 = 123

* Final Answer: 58 (This is incorrect based on the previous step. It should be 123)

**Problem 2 (VisualProcessBench):**

* **Solution:**

* The solution uses properties of parallel lines and angle bisectors.

* Given: AB || CD, EG bisects ∠BEF, ∠1 = 50°

* Since AB || CD, the transversal EG creates alternate interior angles and corresponding angles.

* EG bisects ∠BEF, meaning ∠BEG = ∠GEF.

* ∠BEF = ∠BEG + ∠GEF

* Since EG bisects ∠BEF, ∠BEG = ∠GEF = 50°

* ∠2 and ∠GEF are congruent because AB || CD and EG is a transversal.

* Therefore, ∠2 = ∠GEF = 50°

* Final Answer: The correct option is A.

### Key Observations

* In Problem 1, the final answer provided (58) is the value of 'x', not the measure of angle S, which should be 2x+7 = 123.

* In Problem 2, each step is labeled "Correct", "Incorrect", or "Neutral"; Steps 4, 6, and 7 are labeled "Incorrect", so the final choice (A) should not be taken as correct.

### Interpretation

The image presents two geometry problems with step-by-step solutions. In Problem 1, the solution applies the fact that the interior angles of a quadrilateral sum to 360°, but the final answer reports the value of x (58) instead of m∠S (123), and the expected accuracy drops to mc = 0.0 for Steps 4 and 5. In Problem 2, the solution applies properties of parallel lines and angle bisectors, but several steps, not only the first erroneous one, are annotated as incorrect, illustrating that VisualProcessBench labels every step of a solution.

</details>

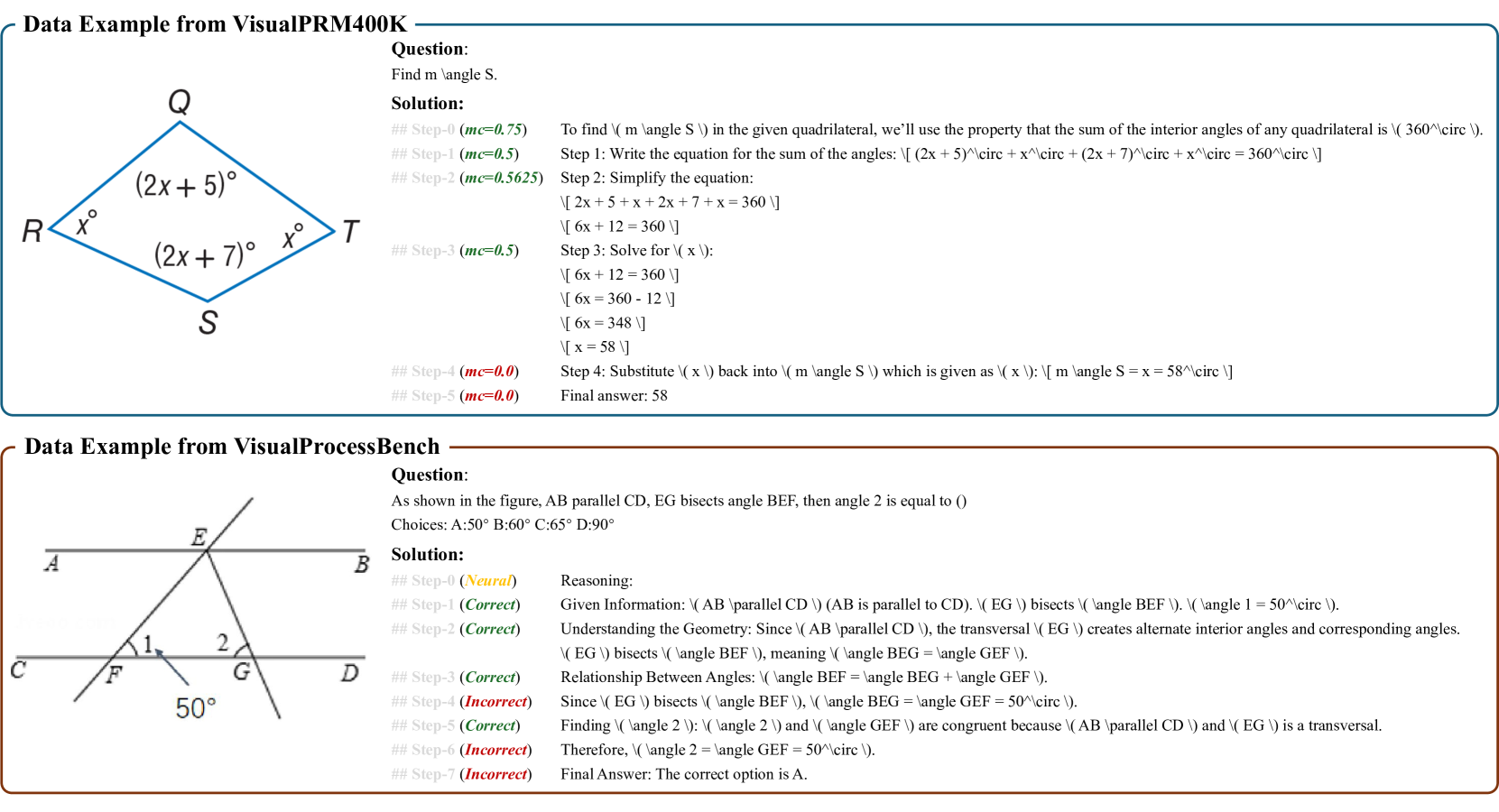

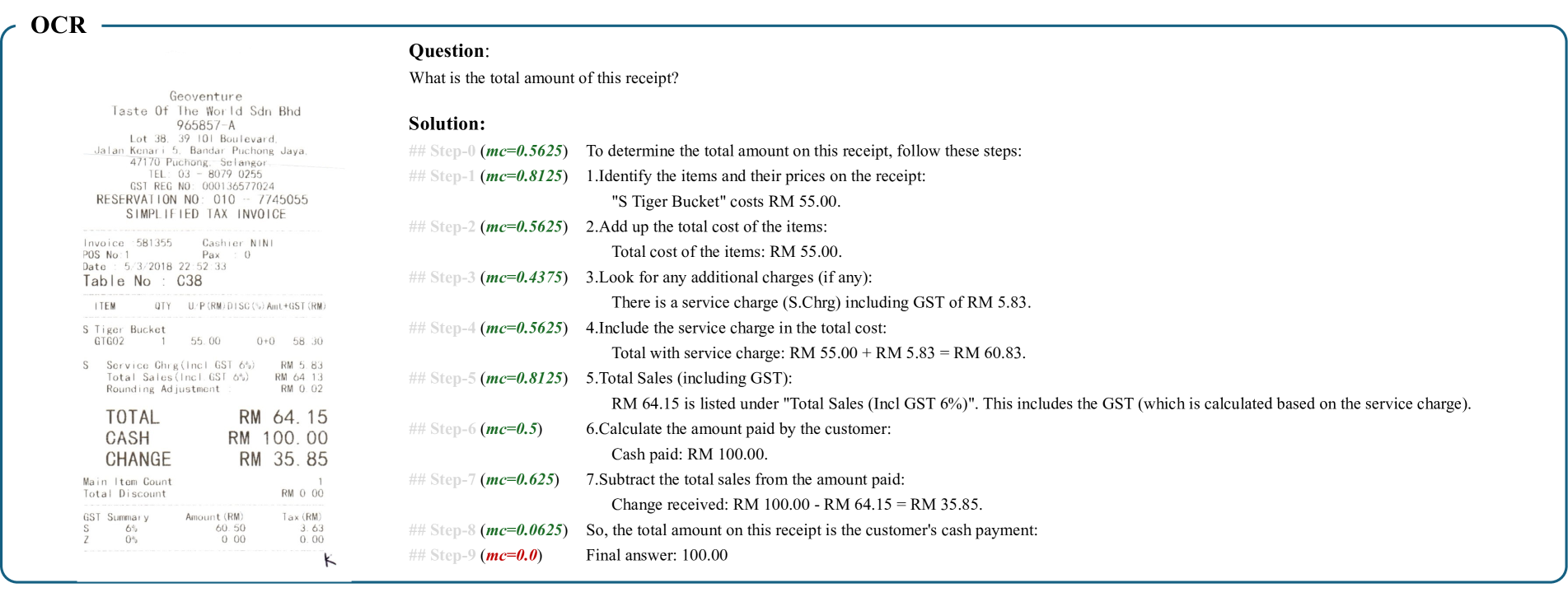

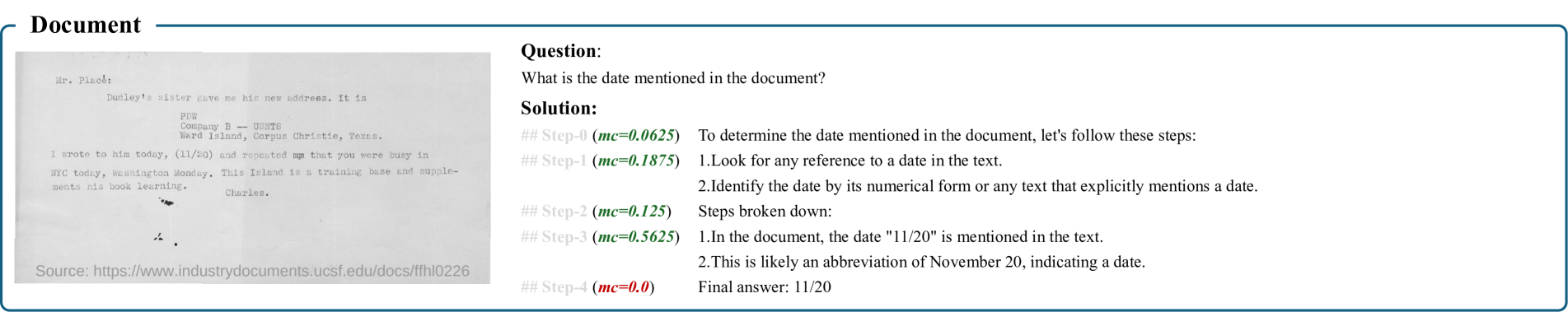

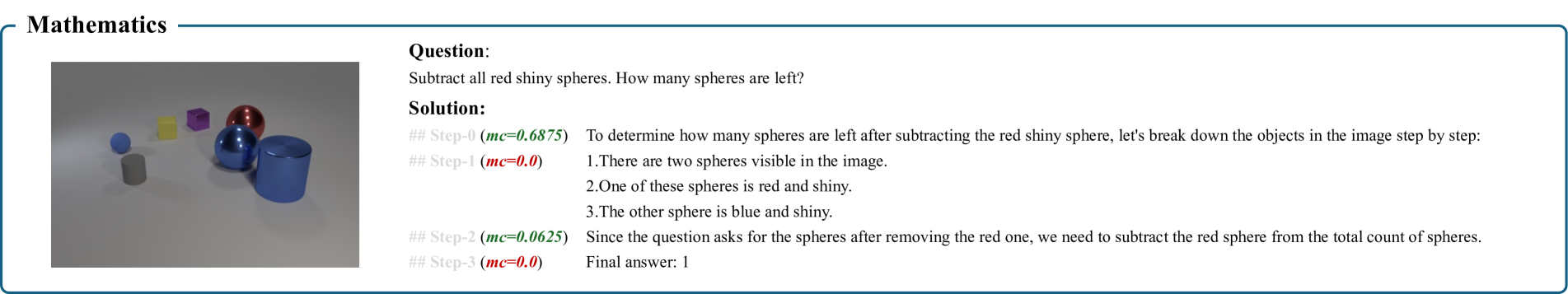

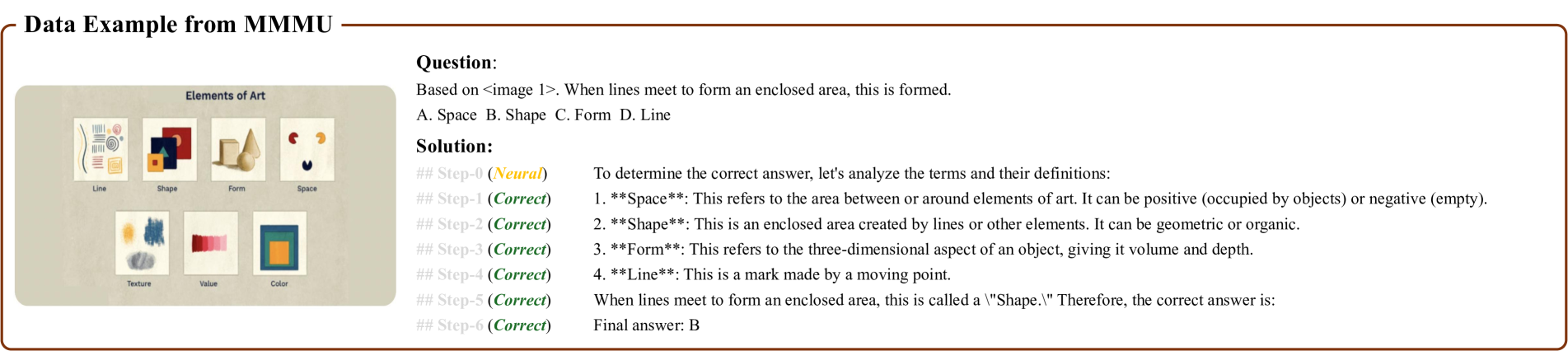

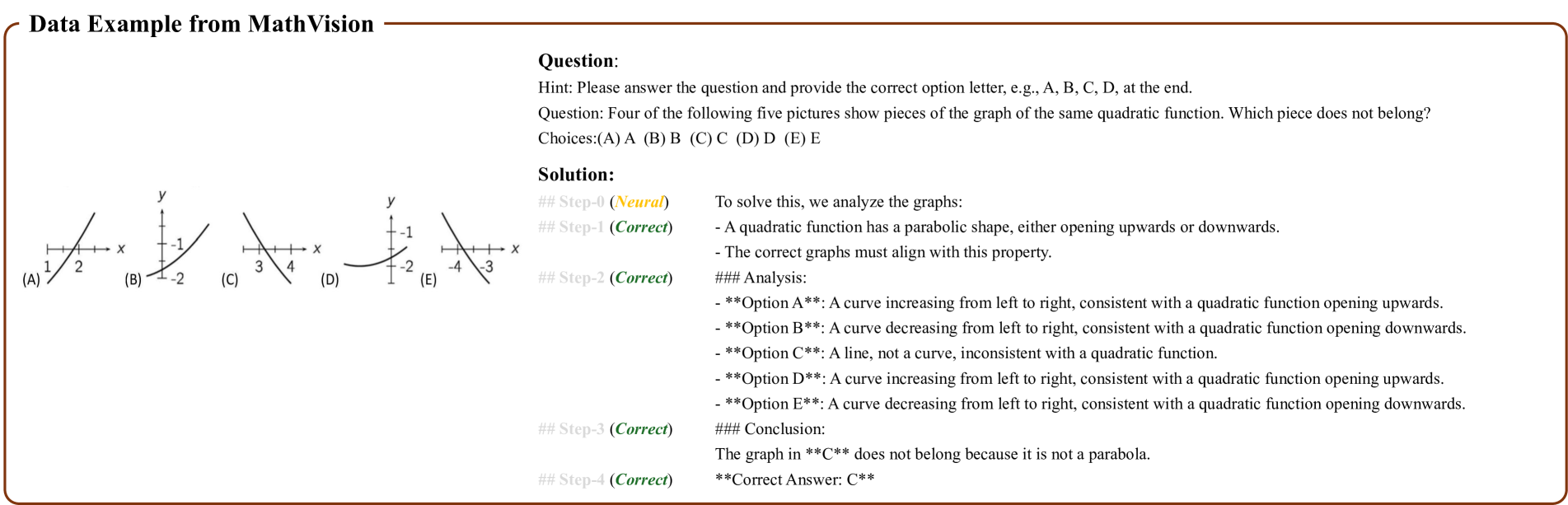

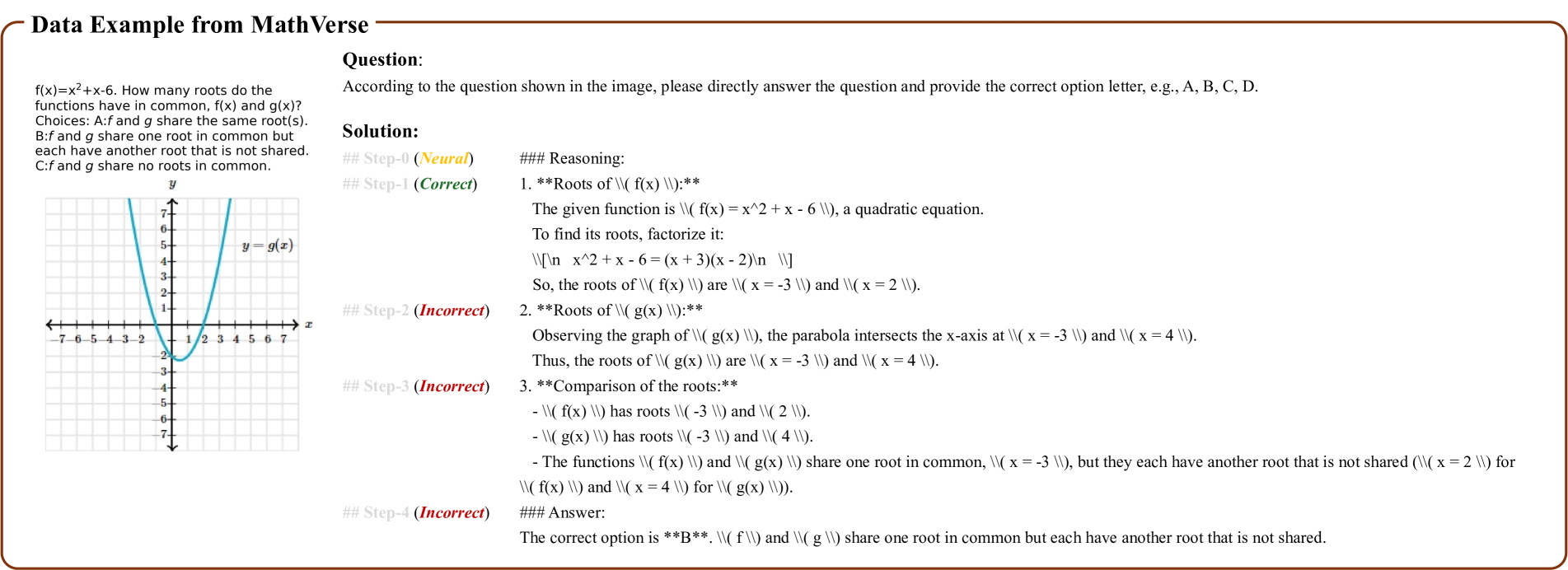

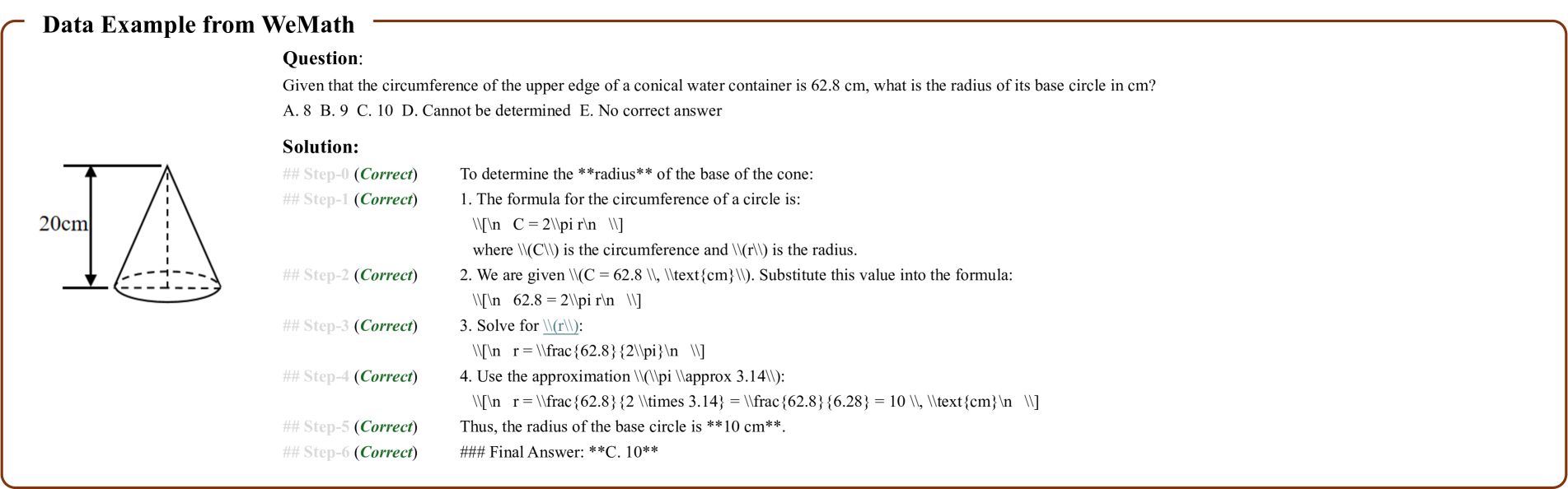

Figure 2: Data examples in VisualPRM400K and VisualProcessBench. For VisualPRM400K, we generate the data using an automatic data pipeline. The key idea is to estimate the expected accuracy $mc_{i}$ of the given steps $s_{\leq i}$ based on Monte Carlo sampling and consider the step correct if $mc_{i}>0$. During the training process of VisualPRM, the data is formulated as multi-turn conversations, and the model is required to predict the correctness of each step conditioned on the image, question, and previous steps. For VisualProcessBench, we collect questions from existing multimodal reasoning benchmarks [90, 78, 93, 99, 60] and generate the solutions using leading MLLMs [58, 4, 15, 82, 72]. Based on these questions and solutions, we employ a team of human experts with at least a university degree to manually annotate the correctness of each step in the solutions.

2 Related Work

Multimodal Large Language Models. A wide range of efforts has been made to advance the development of MLLMs, including improvements in model architecture, data construction, and training algorithms. From an architectural perspective, several studies [41, 42, 89, 15, 16, 84, 83, 45, 85, 7] employ connectors to align visual embeddings from Vision Foundation Models (VFMs) [14, 91] with the latent space of LLMs [5, 75, 76, 71], achieving promising performance. Another series of works [3, 21, 73, 81] extends pre-trained LLMs with additional layers to fuse visual features, reducing the number of required visual tokens while introducing extra training cost. In terms of data construction, recent studies have made significant progress [63, 98, 34, 38, 43, 82, 95]. For example, OmniCorpus [38] offers a noisy but large-scale multimodal corpus for pre-training, while MMInstruct [43] provides an open-source, high-quality instruction-tuning dataset. Additionally, MMPR [82] constructs a preference dataset focusing on multimodal reasoning abilities. Regarding training algorithms, the InternVL2.5 series [15, 82] proposes square loss and Mixed Preference Optimization to enhance MLLM capabilities. Despite these advancements, existing works primarily focus on the training process of MLLMs, leaving Test-Time Scaling (TTS) for MLLMs largely underexplored. In this work, we investigate TTS applications for MLLMs, specifically focusing on Best-of-N evaluation to improve multimodal reasoning performance.

Process Reward Models. Reward models play a crucial role in Reinforcement Learning (RL) [64, 66, 2, 26] and TTS [68, 79, 20, 51]. Outcome Reward Models (ORMs) [55, 92, 77] directly assign an overall score to the given response. In contrast, Process Reward Models (PRMs) first estimate the quality of each step in the given response and then aggregate these estimates into a final score. PRM800K [39] is the first open-source process supervision dataset, entirely annotated by human experts. To reduce annotation costs, Math-Shepherd [79] and OmegaPRM [51] introduce a Monte Carlo sampling-based data pipeline to automatically estimate the quality of each step. Despite these advancements in natural language processing, multimodal PRMs remain largely underexplored. In this work, we introduce VisualPRM400K, the first multimodal process supervision dataset, and develop VisualPRM, a multimodal PRM trained on this dataset.

Benchmarks for Reward Models. The evaluation of reward models (RMs) is a crucial research topic. A series of benchmarks [33, 37, 44] have been proposed to assess the effectiveness of RMs, typically formulated as a binary preference judgment task. Building on this, subsequent work [97] extends the evaluation settings and includes both pairwise and Best-of-N evaluations, providing a more comprehensive evaluation of RM performance. With the rapid advancement of PRMs, a series of benchmarks [96, 69] have been introduced to evaluate their step-wise judgment capabilities. Despite these developments, there remains a lack of a multimodal process benchmark. To bridge this gap and support the development of multimodal PRMs, we introduce VisualProcessBench, a benchmark designed to evaluate the ability of PRMs and MLLMs to detect erroneous steps in multimodal reasoning tasks.

3 Method

<details>

<summary>x3.png Details</summary>

### Visual Description

## Flow Diagram: Math Problem Solving Steps

### Overview

The image is a flow diagram illustrating a step-by-step solution to a geometry problem (finding the measure of angle S in a quadrilateral). It shows each step, its expected accuracy (mc), and the labels that two PRM modeling schemes, value-based PRM and advantage-based PRM, derive from these expected accuracies.

### Components/Axes

* **Nodes:** Rectangular boxes representing solution steps, expected accuracies, and PRM labels.

* **Arrows:** Indicate the flow of the problem-solving process.

* **Text Labels:** Describe the steps, accuracy values, and performance assessments.

* **Geometric Diagram:** A quadrilateral labeled with angle measures.

* **Legend (Right Side):**

* Question & Solution (Grey)

* Expected Accuracy (Light Blue)

* Value-based PRM (Light Green)

* Advantage-based PRM (Light Orange)

### Detailed Analysis

**1. Question & Initial Diagram (Top-Left):**

* **Question:** "Find m ∠S."

* **Diagram:** A quadrilateral labeled Q, R, S, and T.

* ∠Q = (2x + 5)°

* ∠R = x°

* ∠T = x°

* ∠S = (2x + 7)°

**2. Step-by-Step Solution (Top Row):**

* **Step-0:** "To find \(m \angle S \) ..."

* **Step-1:** "Write the equation for ..."

* **Step-4:** "Substitute \(x\) back ..."

* **Step-5:** "Final answer: 58"

**3. Expected Accuracy (Second Row):**

* **Step-0:** mc = 0.75

* **Step-1:** mc = 0.5

* **Step-4:** mc = 0.0

* **Step-5:** mc = 0.0

**4. Value-based PRM Labels (Third Row):**

* **Step-0:** Correct (+)

* **Step-1:** Correct (+)

* **Step-4:** Incorrect (-)

* **Step-5:** Incorrect (-)

**5. Advantage-based PRM Labels (Bottom Row):**

* **Step-0:** Good (+)

* **Step-1:** Bad (-)

* **Step-4:** Bad (-)

* **Step-5:** Tie (=)

**6. Ellipsis:**

* There is an ellipsis (...) between Step-1 and Step-4, indicating that some steps are omitted for brevity.

### Key Observations

* The expected accuracy (mc) decreases from 0.75 at Step-0 to 0.0 at Step-4 and Step-5.

* The value-based labels are positive while mc > 0 (Step-0, Step-1) and negative once mc = 0 (Step-4, Step-5).

* The advantage-based labels track changes in mc: Step-0 is good, Step-1 and Step-4 are bad because mc drops, and Step-5 is a tie because mc stays at 0.0.

### Interpretation

The diagram contrasts two ways of turning the same Monte Carlo estimates into step labels. A value-based PRM labels a step correct whenever its expected accuracy is positive, whereas an advantage-based PRM labels a step by whether it raises, preserves, or lowers the expected accuracy relative to the previous step. The ellipsis indicates that intermediate steps are omitted.

</details>

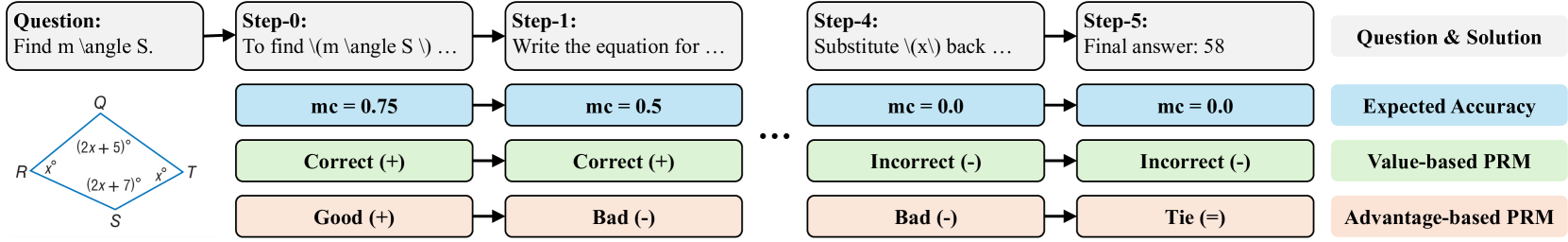

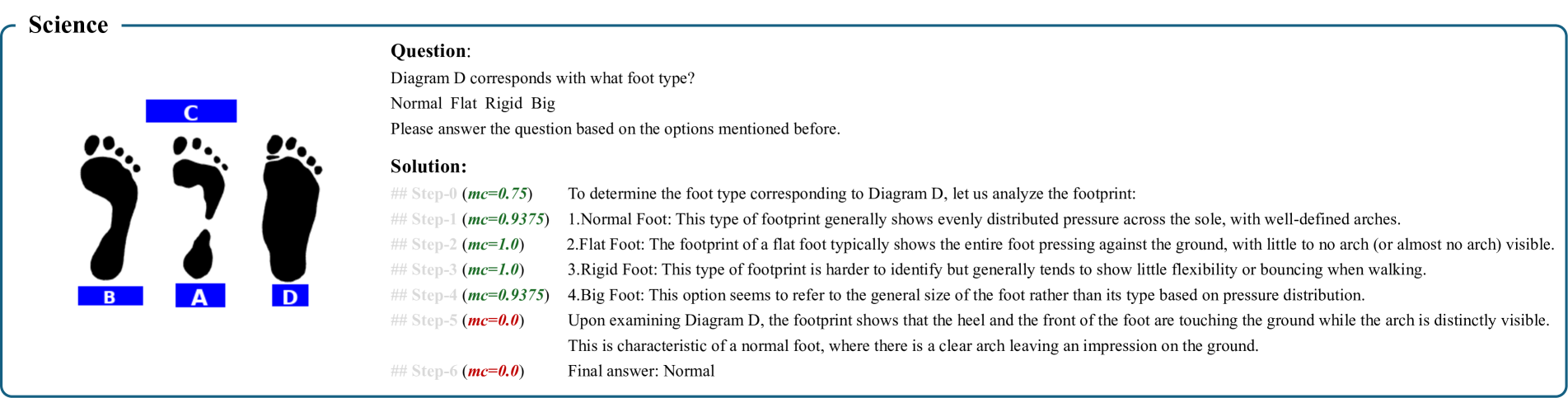

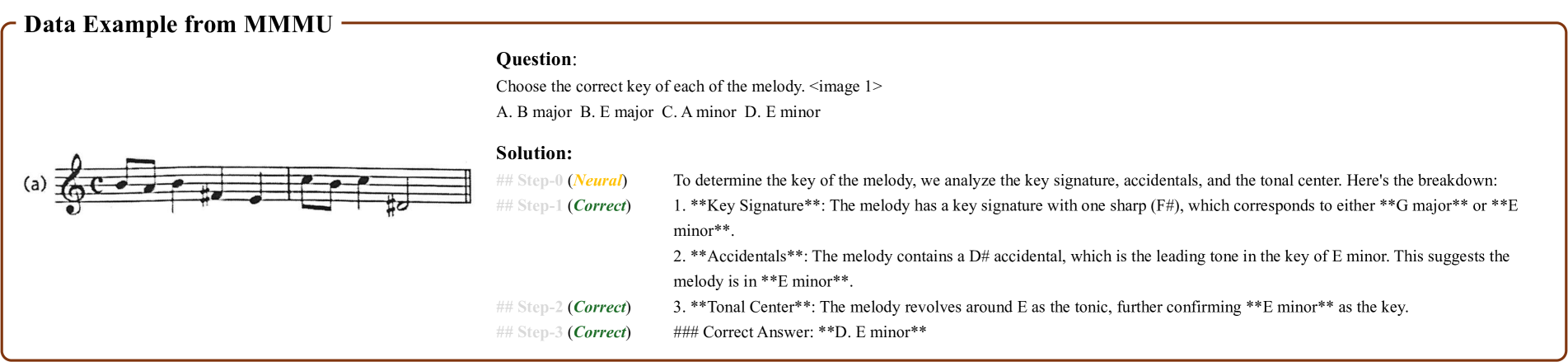

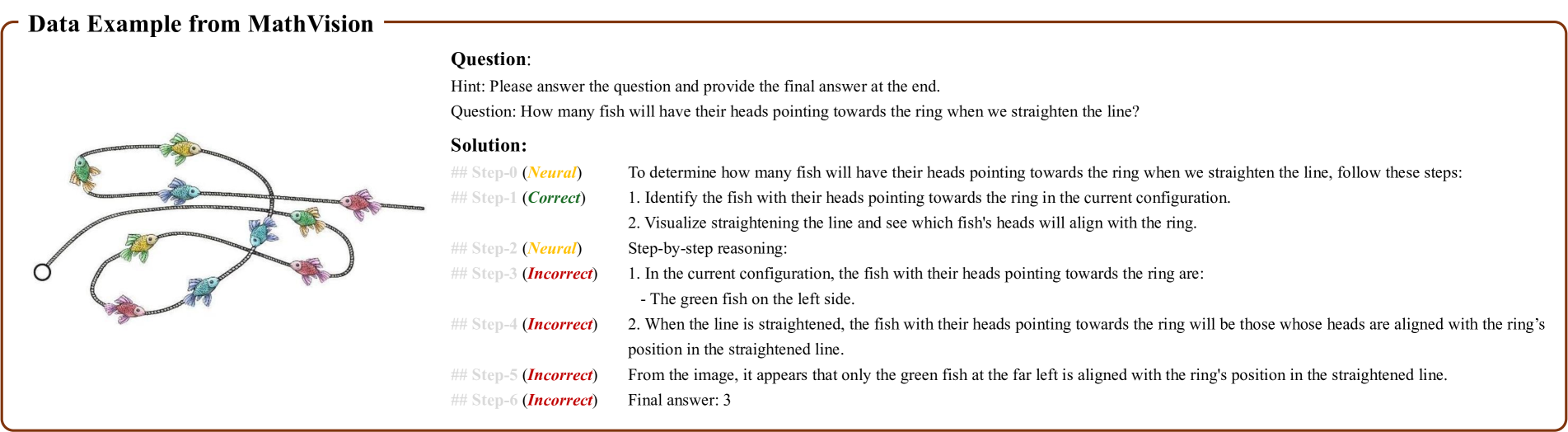

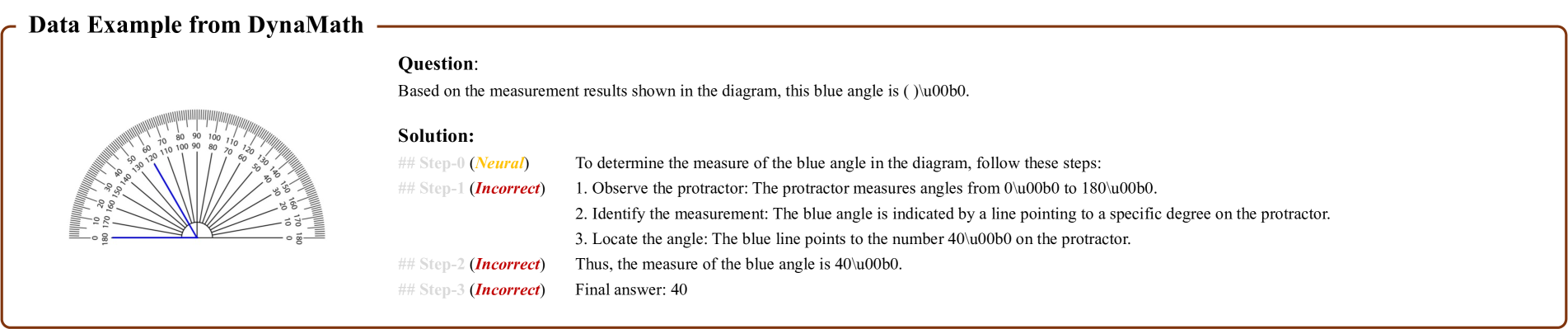

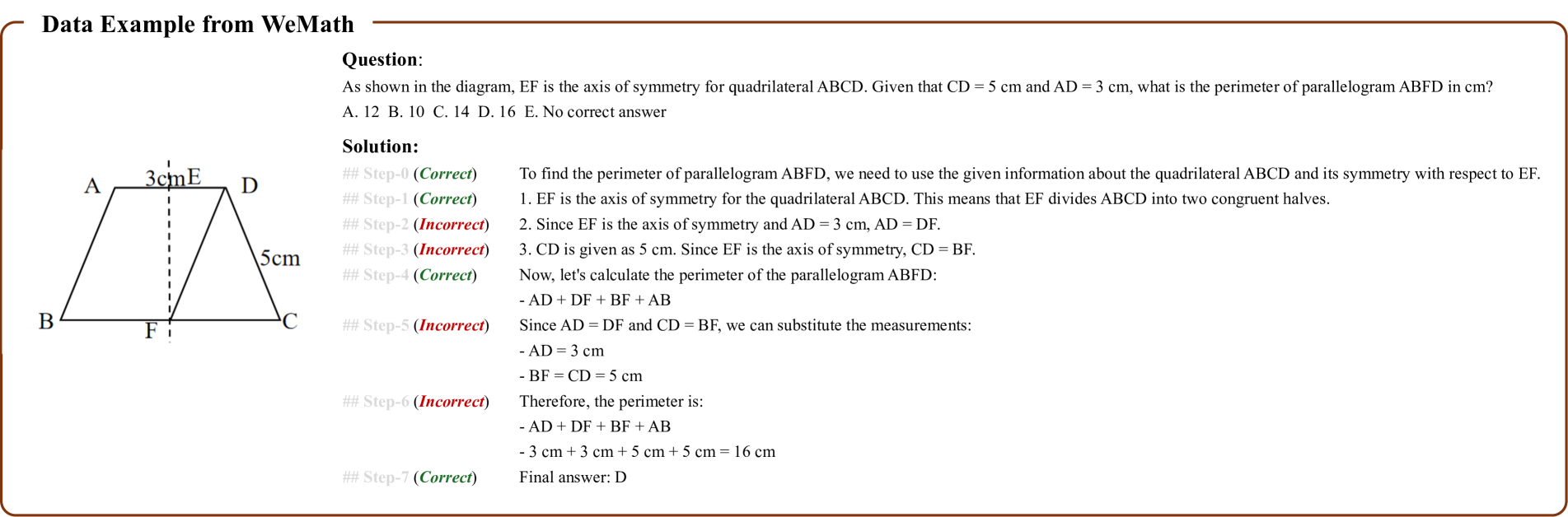

Figure 3: Different modeling methods for PRMs. PRMs are developed to estimate the quality of each step in a given solution. For value-based PRMs, the quality of a certain step is determined by its expected accuracy $mc_{i}$, where a step is considered correct if $mc_{i}>0$. For advantage-based PRMs, the quality of a certain step is determined by the improvement of $mc_{i}$ over $mc_{i-1}$, where a step is considered good if $mc_{i}-mc_{i-1}>0$. During the training stage, the output space of PRMs is discretized into specific tokens, while during the inference stage, we compute the step score as the weighted sum of the generation probabilities of these discretized tokens.

During Best-of-N (BoN) evaluation, a critic model is required to estimate the quality of each response candidate. In this work, we formulate the critic model as a Process Reward Model (PRM). To develop a multimodal PRM, we first construct VisualPRM400K, a dataset comprising about 400K multimodal process supervision samples, as detailed in Section 3.1. We then describe the modeling approach of our PRM in Section 3.2. Furthermore, to support the evaluation of critic models, we establish VisualProcessBench to measure their ability to detect erroneous steps in multimodal reasoning, as introduced in Section 3.3.

3.1 VisualPRM400K

Definition. As shown in Figure 2, each data sample in our VisualPRM400K consists of an image $I \in \mathcal{I}$, a question $q \in \mathcal{Q}$, a step-by-step solution $s=\{s_{0},s_{1},\cdots,s_{n}\} \in \mathcal{S}$, and the expected accuracy annotation $mc=\{mc_{0},mc_{1},\cdots,mc_{n}\}$, $mc_{i} \in \mathbb{R}_{\geq 0}$, for each step, where $n$ is the number of steps of a certain solution and $mc_{i}$ denotes the expected accuracy of step $s_{i}$. The image set $\mathcal{I}$ and question set $\mathcal{Q}$ are collected from MMPR v1.1 [82], while the step-by-step solutions $\mathcal{S}$ are sampled using InternVL2.5 series models [15, 82].

Process Supervision Generation. Given an image $I$, a question $q$, and a solution $s=\{s_{0},s_{1},\cdots,s_{n}\}$, we annotate the correctness of each step $s_{i}$ using an automatic data pipeline. The key idea is to estimate the expected accuracy of the given steps $s_{\leq i}$ based on Monte Carlo sampling. Specifically, the model is required to complete the solution as follows:

$$
\tilde{s}_{>i}\sim M(\tilde{s}_{>i}\mid I,q,s_{\leq i}), \tag{1}
$$

where $\tilde{s}_{>i}$ is a completion of $s_{\leq i}$ sampled from $M$. The expected accuracy of $s_{i}$ is then defined as:

$$
mc_{i}=\frac{\text{num(correct completions)}}{\text{num(sampled completions)}}. \tag{2}
$$
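The Monte Carlo estimate in Eq. (2) can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' pipeline: `complete` is a hypothetical stand-in for sampling a completion $\tilde{s}_{>i}$ from $M$ as in Eq. (1), `is_correct` is a hypothetical answer checker, and the default of 16 samples mirrors the setting reported in the Statistics paragraph.

```python
from typing import Callable, List, Sequence


def expected_accuracy(
    prefix: Sequence[str],
    complete: Callable[[Sequence[str]], str],
    is_correct: Callable[[str], bool],
    num_samples: int = 16,
) -> float:
    """Monte Carlo estimate of mc_i (Eq. 2) for the step prefix s_{<=i}."""
    # Each call to the hypothetical `complete` samples one completion of the prefix.
    num_correct = sum(bool(is_correct(complete(prefix))) for _ in range(num_samples))
    return num_correct / num_samples


def annotate_solution(
    steps: Sequence[str],
    complete: Callable[[Sequence[str]], str],
    is_correct: Callable[[str], bool],
    num_samples: int = 16,
) -> List[float]:
    """Return [mc_0, ..., mc_n]: one estimate per prefix s_{<=0}, ..., s_{<=n}."""
    return [
        expected_accuracy(steps[: i + 1], complete, is_correct, num_samples)
        for i in range(len(steps))
    ]


# Toy usage with a deterministic fake sampler: 12 of 16 completions reach "123".
answers = iter(["58", "123", "123", "123"] * 4)
mc_0 = expected_accuracy(["step 0"], lambda prefix: next(answers), lambda a: a == "123")
```

In a real pipeline, `complete` would wrap the sampling model and `is_correct` would compare the completion's final answer with the ground truth; both are left abstract here.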

Notably, to reduce data construction costs, we set the maximum number of steps to 12 and evenly merge steps when a solution exceeds this threshold.
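The step cap can be illustrated as follows. The text only says that steps are "evenly merged", so the partitioning rule used here (contiguous groups whose sizes differ by at most one) and the `sep` joiner are assumptions made for illustration.

```python
from typing import List, Sequence


def merge_steps(steps: Sequence[str], max_steps: int = 12, sep: str = "\n\n") -> List[str]:
    """Merge consecutive steps into at most `max_steps` groups of near-equal size.

    Assumed rule: when n > max_steps, the first n % max_steps groups receive one
    extra step, so group sizes differ by at most one and step order is preserved.
    """
    n = len(steps)
    if n <= max_steps:
        return list(steps)
    base, extra = divmod(n, max_steps)
    merged, start = [], 0
    for g in range(max_steps):
        size = base + (1 if g < extra else 0)
        merged.append(sep.join(steps[start:start + size]))
        start += size
    return merged
```

For example, under this rule a 30-step solution becomes 12 merged steps: the first 6 contain three original steps each and the last 6 contain two.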

Statistics. During the construction process, we sample $4$ solutions for each image-question pair and split each of them into at most $12$ steps. For each step, we sample $16$ continuations and compute $mc_{i}$ from these continuations. The resulting dataset comprises approximately 400K samples and 2 million steps with process supervision. Each response averages 126.9 words and 5.6 steps, while each step averages 22.6 words. About 10% of these steps are incorrect. Despite the imbalanced distribution of correct and incorrect steps, our PRM demonstrates promising performance, as shown in Section 4.
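The sampling budget and the reported averages can be cross-checked with simple arithmetic. All inputs below come from the paragraph above; the words-per-step value is a ratio of averages, so it need not equal the reported per-step average exactly.

```python
SOLUTIONS_PER_PAIR = 4       # solutions sampled per image-question pair
MAX_STEPS = 12               # step cap after merging
CONTINUATIONS_PER_STEP = 16  # Monte Carlo completions per step

# Upper bound on sampled continuations per image-question pair.
max_rollouts_per_pair = SOLUTIONS_PER_PAIR * MAX_STEPS * CONTINUATIONS_PER_STEP

NUM_SAMPLES = 400_000        # approximate dataset size (one solution per sample)
AVG_STEPS = 5.6              # average steps per response
AVG_WORDS_PER_RESPONSE = 126.9

total_steps = NUM_SAMPLES * AVG_STEPS                # roughly 2.24 million steps
words_per_step = AVG_WORDS_PER_RESPONSE / AVG_STEPS  # roughly 22.7 words per step
```

The product of roughly 2.24 million steps is consistent with the "2 million steps" figure, and each pair costs at most 768 continuations before merging reduces the step count.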

3.2 VisualPRM

Overview. During the training process, we formulate the process supervision problem as a multi-turn chat task so that we can effectively leverage the generation ability of MLLMs. The image $I$ , question $q$ , and the first step $s_{0}$ of the solution to this question are included in the first turn and a new step is presented in each subsequent turn. The model is required to predict the quality of the given step in each turn as follows:

$$
y_{i}\sim M(y_{i}\mid I,q,s_{\leq i}), \tag{3}
$$

where $y_{i}$ denotes the quality of the $i$-th step.
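One way to lay out a training sample as the multi-turn chat described above is sketched below. The message schema (`role`/`content` dictionaries with `image` and `text` parts), the way the question and $s_0$ are joined, and the literal strings `"+"`/`"-"` as assistant replies are illustrative assumptions; the paper does not specify the exact template.

```python
from typing import Any, Dict, List, Sequence


def build_prm_conversation(
    image: Any, question: str, steps: Sequence[str], labels: Sequence[str]
) -> List[Dict[str, Any]]:
    """Format one sample as alternating user/assistant turns (hypothetical schema).

    Turn 0 carries the image, the question, and s_0; turn i > 0 carries only s_i.
    Each assistant turn holds the discretized quality label y_i for that step.
    """
    if len(steps) != len(labels):
        raise ValueError("expected exactly one label per step")
    messages: List[Dict[str, Any]] = []
    for i, (step, label) in enumerate(zip(steps, labels)):
        if i == 0:
            content = [
                {"type": "image", "image": image},
                {"type": "text", "text": f"{question}\n{step}"},
            ]
        else:
            content = [{"type": "text", "text": step}]
        messages.append({"role": "user", "content": content})
        messages.append({"role": "assistant", "content": [{"type": "text", "text": label}]})
    return messages
```

With a causal decoder, each label is predicted conditioned only on the preceding turns, so one pass over such a conversation yields a prediction for every step.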

For value-based PRMs, the quality of a certain step is determined by its expected accuracy $mc_{i}$, which is similar to the definition of the value function in reinforcement learning. Following Math-Shepherd [79, 20], we require the model to predict the correctness $c_{i}\in\{+,-\}$ of the given step rather than the exact value of $mc_{i}$. The $i$-th step is considered correct if $mc_{i}>0$. We also tried setting a higher threshold to reduce false positive steps, but found that such a threshold degrades PRM performance, as shown in Section 7. Notably, unlike previous works [79, 39, 20], which supervise only up to the first incorrect step, we always supervise all steps.
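The value-based labeling rule reduces to a comparison against a threshold. In this sketch, `threshold=0.0` reproduces the rule stated above ($c_i = +$ iff $mc_i > 0$), while a positive threshold corresponds to the variant the text reports as harmful. Labels are produced for every step, with no truncation at the first `-`, mirroring the choice to supervise all steps.

```python
from typing import List, Sequence


def value_labels(mc: Sequence[float], threshold: float = 0.0) -> List[str]:
    """Value-based PRM targets: '+' if mc_i > threshold, else '-' (all steps kept)."""
    return ["+" if m > threshold else "-" for m in mc]


# Expected accuracies of the Figure 2 example (Steps 0-5).
mc_example = [0.75, 0.5, 0.5625, 0.5, 0.0, 0.0]
labels = value_labels(mc_example)  # ['+', '+', '+', '+', '-', '-']
```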

For advantage-based PRMs, the quality of a certain step is determined by the improvement of $mc_{i}$ over $mc_{i-1}$, which is analogous to the definition of the advantage function in reinforcement learning. Similar to value-based PRMs, the quality space is discretized into predefined values $\{+,=,-\}$, indicating that the $i$-th step $s_{i}$ increases, preserves, or decreases the expected accuracy, respectively.
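The advantage-based labels compare consecutive expected accuracies. The text does not say what $mc_{i-1}$ is for the first step, so this sketch takes it as an explicit argument `mc_before` (a hypothetical parameter, e.g. an estimate obtained from the question alone); the small tolerance `eps` for declaring a tie is also an assumption.

```python
from typing import List, Sequence


def advantage_labels(mc: Sequence[float], mc_before: float, eps: float = 1e-9) -> List[str]:
    """Advantage-based PRM targets from the sign of mc_i - mc_{i-1}.

    '+' : the step raises the expected accuracy
    '=' : the step leaves it unchanged (within eps)
    '-' : the step lowers it
    `mc_before` plays the role of mc_{-1} for the first step (assumption).
    """
    labels, prev = [], mc_before
    for m in mc:
        delta = m - prev
        labels.append("+" if delta > eps else "-" if delta < -eps else "=")
        prev = m
    return labels


# Figure 2/3 example with a hypothetical starting value of 0.5.
print(advantage_labels([0.75, 0.5, 0.5625, 0.5, 0.0, 0.0], mc_before=0.5))
# ['+', '-', '+', '-', '-', '=']
```

With this starting value, the labels for Step-0, Step-1, Step-4, and Step-5 match those shown in Figure 3.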

During the inference stage, we first compute the score of each step and then merge the step scores into a response score. Specifically, the score of each step is defined as the weighted sum of the generation probabilities of the discretized tokens. For value-based PRMs, the weights for $\{+,-\}$ are $\{1,0\}$. For advantage-based PRMs, the weights for $\{+,=,-\}$ are $\{1,0,-1\}$. Unless otherwise specified, we use the average of the step scores as the response score.
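At inference time, this scoring rule amounts to an expectation over the discretized label tokens followed by an average over steps. The sketch assumes the PRM exposes, for each step, a probability for each label token (`token_probs`); whether those probabilities are renormalized over the label tokens is not specified in the text.

```python
from statistics import fmean
from typing import Dict, Iterable

VALUE_WEIGHTS = {"+": 1.0, "-": 0.0}
ADVANTAGE_WEIGHTS = {"+": 1.0, "=": 0.0, "-": -1.0}


def step_score(token_probs: Dict[str, float], weights: Dict[str, float]) -> float:
    """Weighted sum of label-token probabilities for one step."""
    return sum(w * token_probs.get(tok, 0.0) for tok, w in weights.items())


def response_score(per_step_probs: Iterable[Dict[str, float]], weights: Dict[str, float]) -> float:
    """Default aggregation: the mean of the step scores."""
    return fmean(step_score(p, weights) for p in per_step_probs)
```

For a value-based PRM the step score reduces to $P(+)$, and for an advantage-based PRM to $P(+) - P(-)$. For example, two steps with $P(+) = 0.9$ and $P(+) = 0.6$ give a value-based response score of $0.75$.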

3.3 VisualProcessBench

| Statistic | Number |
| --- | --- |
| Total Samples | 2866 |
| - MMMU [90] | 267 |
| - MathVision [78] | 712 |
| - MathVerse [93] | 1026 |
| - DynaMath [99] | 570 |
| - WeMath [60] | 291 |
| Source Solutions | 2866 |
| - GPT-4o [58] | 870 |
| - Claude-3.5-Sonnet [4] | 865 |
| - QvQ-72B-Preview [72] | 825 |
| - InternVL2.5-78B [15] | 306 |
| Total Steps | 26950 |
| - Correct Steps | 16585 |
| - Incorrect Steps | 7691 |
| - Neutral Steps | 2674 |
| Query Word Length Quartile | (22, 24, 50) |
| Response Word Length Quartile | (137, 193, 552) |
| Step Word Length Quartile | (13, 31, 67) |
| Number of Steps per Solution | 9.4 |

Table 1: Statistics of VisualProcessBench.

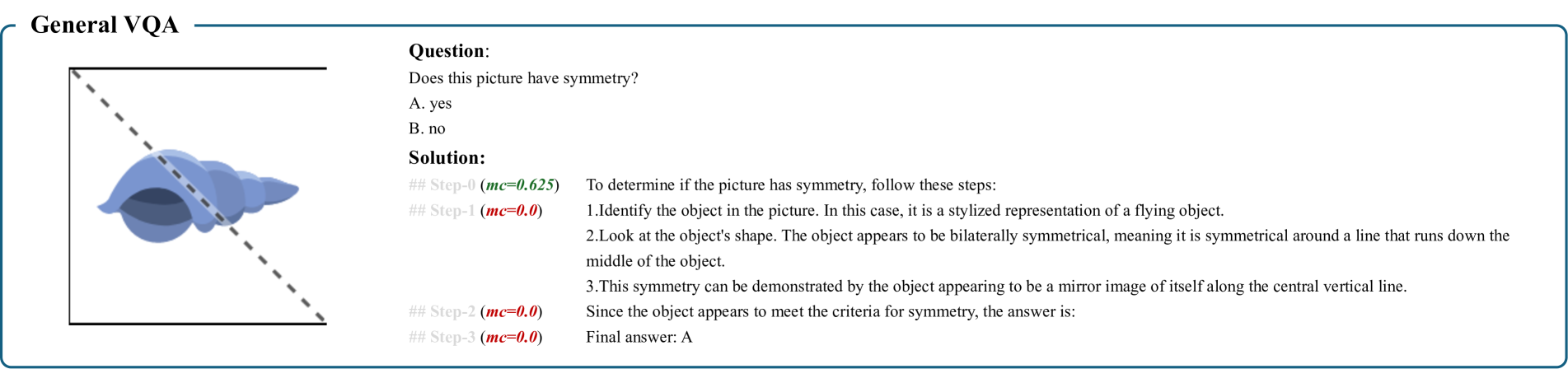

Definition. Each sample in our benchmark consists of a multimodal reasoning question, a step-by-step solution, and correctness annotations for each step. Considering that recent models begin to demonstrate reflection abilities to rectify their own reasoning process, the evaluation setting used in previous works [96, 39], which only requires the model to find the first erroneous step, may lead to a false negative estimation. Therefore, our benchmark requires the model to identify all erroneous steps in the given solution instead of only the first erroneous step.
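The difference between the two evaluation settings can be made concrete with the annotated VisualProcessBench example in Figure 2 (Step 0 neutral, Steps 4, 6, and 7 incorrect). The helper names below are illustrative only.

```python
from typing import Optional, Sequence, Set


def first_error(labels: Sequence[str]) -> Optional[int]:
    """Target under the first-error protocol: index of the first incorrect step."""
    return next((i for i, lab in enumerate(labels) if lab == "incorrect"), None)


def all_errors(labels: Sequence[str]) -> Set[int]:
    """Target when every erroneous step must be identified."""
    return {i for i, lab in enumerate(labels) if lab == "incorrect"}


figure2 = ["neutral", "correct", "correct", "correct",
           "incorrect", "correct", "incorrect", "incorrect"]
print(first_error(figure2))  # 4
print(all_errors(figure2))   # {4, 6, 7}
```

Under the first-error protocol, nothing after Step 4 is checked, so the correct Step 5 and the later errors at Steps 6 and 7 do not affect the evaluation; requiring all errors makes every step count.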

Data Source. Our benchmark focuses on multimodal reasoning tasks, collecting images and questions from existing representative multimodal reasoning benchmarks, including MMMU [90], MathVision [78], MathVerse [93], DynaMath [99], and WeMath [60]. Given these questions, we generate step-by-step solutions using leading MLLMs, including GPT-4o [58], Claude-3.5-Sonnet [4], Gemini-2.0-Flash [70], QvQ-72B-Preview [72], and InternVL2.5-78B [15]. The solutions are sampled from different MLLMs to ensure their diversity.

Step Correctness Annotation. We employ a team of human experts with at least a university degree to manually annotate the correctness of each step in the solutions. Specifically, 13 people worked for 3 days, resulting in a workload of 39 person-days at a cost of approximately 37 dollars per person-day. During annotation, annotators are provided with the image, question, ground truth answer, and each step of the solution. Their task is to assign each step a label of positive, negative, or neutral, as illustrated in Figure 2. A positive label indicates that the step is correct, while a negative label signifies an incorrect step. The neutral label is assigned to steps that do not involve any reasoning or provide no additional information. To ensure annotation quality, annotators are permitted to skip questions they do not understand. The dataset is divided into 10 splits, each containing approximately 300 samples. For each split, the authors of this paper manually review about 10% of the samples, and splits with erroneous annotations are sent back for re-annotation. See Section 10 for more data examples.

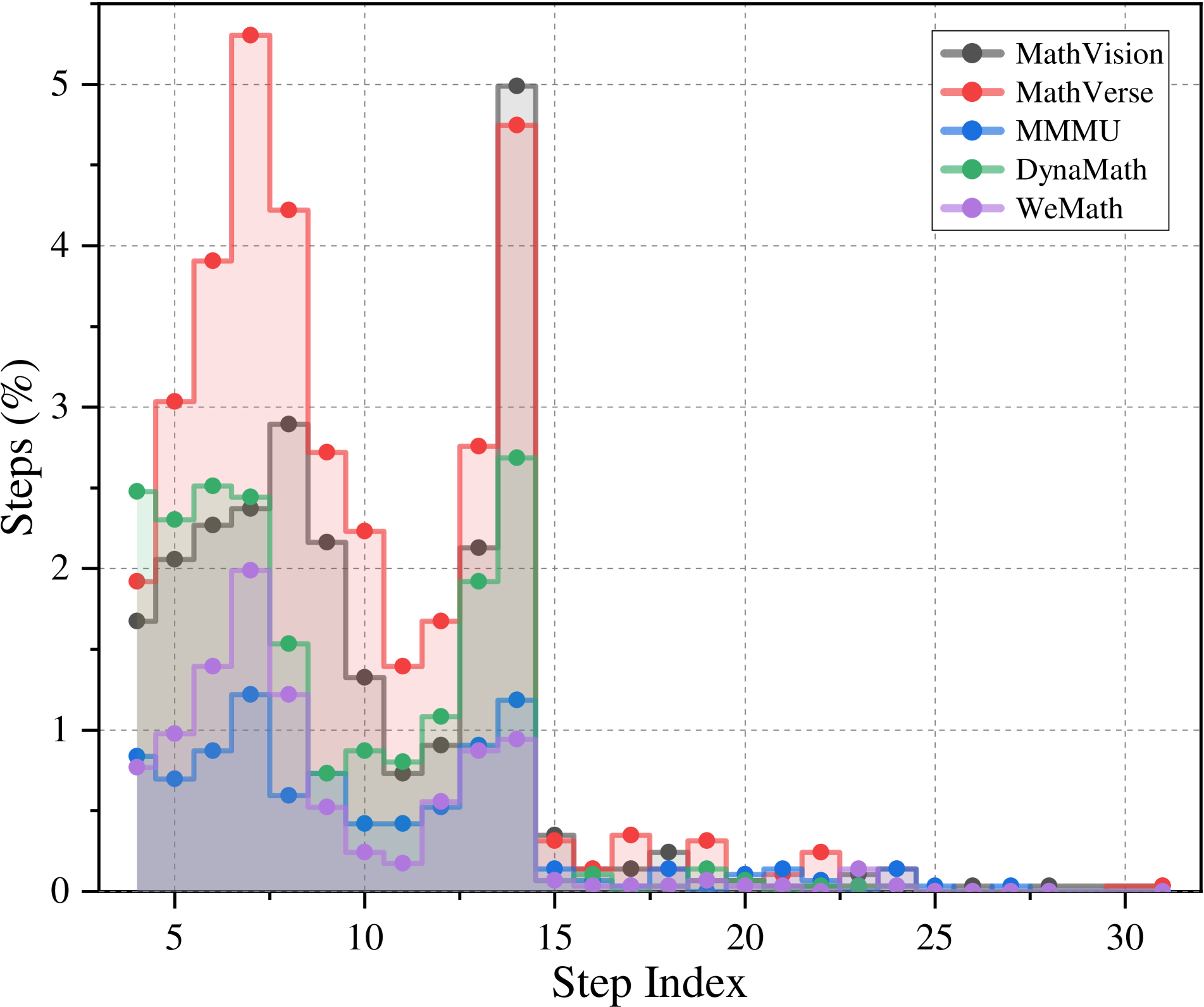

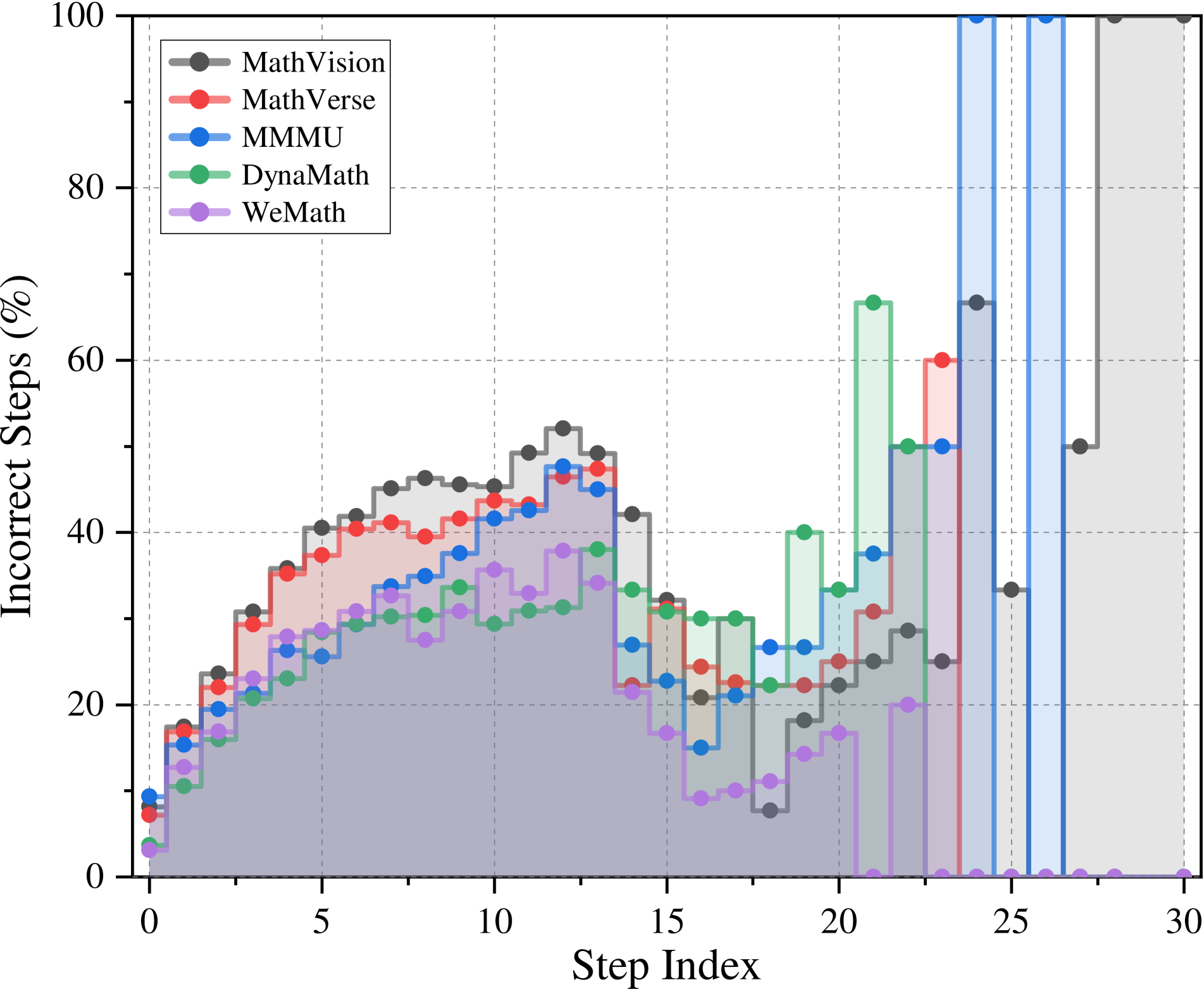

Statistics. As shown in Table 1, our benchmark comprises 2866 samples. To enhance the diversity of our evaluation samples, we gather questions and solutions from a wide range of benchmarks and models while carefully regulating their distribution. The statistics of step distribution are presented in Section 8.

Metrics. In this work, we use macro F1 scores to compare model performance, aiming to mitigate the impact of the imbalanced distribution between correct and incorrect steps. Specifically, we first compute the F1 scores separately for correct and incorrect steps and then take their average to obtain the overall score.
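The macro F1 metric can be sketched as below. The text does not state how neutral steps are handled; this sketch assumes they have been filtered out beforehand, so labels are only `"correct"` or `"incorrect"`. On such inputs the result should agree with scikit-learn's `f1_score(y_true, y_pred, average="macro")`.

```python
from typing import Sequence


def f1_for_class(y_true: Sequence[str], y_pred: Sequence[str], cls: str) -> float:
    """One-vs-rest F1 score for a single class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == cls and p == cls for t, p in pairs)
    fp = sum(t != cls and p == cls for t, p in pairs)
    fn = sum(t == cls and p != cls for t, p in pairs)
    if tp == 0:
        return 0.0
    precision, recall = tp / (tp + fp), tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def macro_f1(y_true: Sequence[str], y_pred: Sequence[str]) -> float:
    """Average of the F1 scores for correct and incorrect steps."""
    return (f1_for_class(y_true, y_pred, "correct")
            + f1_for_class(y_true, y_pred, "incorrect")) / 2
```

For example, with gold labels (correct, correct, correct, incorrect, incorrect) and predictions (correct, correct, incorrect, incorrect, correct), the per-class F1 scores are 2/3 and 1/2, giving a macro F1 of 7/12 ≈ 0.583.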

4 Experiments

In this section, we first employ VisualPRM to evaluate various MLLMs using BoN evaluation strategies in Section 4.1, demonstrating that PRMs can significantly enhance the reasoning abilities of MLLMs. Next, we evaluate our VisualPRM and other leading MLLMs on VisualProcessBench in Section 4.2. Finally, the ablation studies are presented in Section 4.3 and Section 7.

| Model | MMMU | MathVista | MathVision | MathVerse-VO | DynaMath | WeMath | LogicVista | Overall |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Proprietary Models | | | | | | | | |
| GPT-4o [58] | 70.7 | 60.0 | 31.2 | 40.6 | 34.5 | 45.8 | 52.8 | 47.9 |
| Gemini-2.0-Flash [61] | 69.9 | 70.4 | 43.6 | 47.8 | 42.1 | 47.4 | 52.3 | 53.4 |
| Claude-3.5-Sonnet [4] | 66.4 | 65.3 | 35.6 | 46.3 | 35.7 | 44.0 | 60.4 | 50.5 |
| Open-source Models | | | | | | | | |
| MiniCPM-V2.6-8B [89] | 49.8 | 60.8 | 23.4 | 18.9 | 9.8 | 16.4 | 27.5 | 29.5 |
| +VisualPRM | 56.8 | 65.7 | 24.7 | 35.8 | 11.2 | 31.0 | 37.4 | 37.5 |
| Gain | +7.0 | +4.9 | +1.3 | +16.9 | +1.4 | +14.6 | +9.8 | +8.0 |
| Qwen2.5-VL-7B [7] | 55.0 | 67.8 | 25.4 | 41.1 | 21.0 | 35.2 | 44.1 | 41.4 |
| +VisualPRM | 58.6 | 70.3 | 31.3 | 44.3 | 23.0 | 39.8 | 48.3 | 45.1 |
| Gain | +3.6 | +2.5 | +5.9 | +3.2 | +2.0 | +4.6 | +4.2 | +3.7 |
| InternVL2.5-8B [15] | 56.2 | 64.5 | 17.0 | 22.8 | 9.4 | 23.5 | 36.0 | 32.8 |
| +VisualPRM | 60.2 | 68.5 | 25.7 | 35.8 | 18.0 | 36.5 | 43.8 | 41.2 |
| Gain | +4.0 | +4.0 | +8.7 | +13.0 | +8.6 | +13.0 | +7.8 | +8.4 |
| InternVL2.5-26B [15] | 60.7 | 68.2 | 23.4 | 24.0 | 11.4 | 30.9 | 39.6 | 36.9 |
| +VisualPRM | 63.9 | 73.1 | 29.6 | 39.1 | 23.2 | 40.8 | 51.0 | 45.8 |
| Gain | +3.2 | +4.9 | +6.2 | +15.1 | +11.8 | +9.9 | +11.4 | +8.9 |
| InternVL2.5-38B [15] | 63.9 | 71.9 | 32.2 | 36.9 | 20.0 | 38.3 | 47.9 | 44.4 |
| +VisualPRM | 69.0 | 73.9 | 35.2 | 46.7 | 30.5 | 46.2 | 53.7 | 50.7 |
| Gain | +5.1 | +2.0 | +3.0 | +9.8 | +10.5 | +7.9 | +5.8 | +6.3 |
| InternVL2.5-78B [15] | 70.0 | 72.3 | 32.2 | 39.2 | 19.2 | 39.8 | 49.0 | 46.0 |
| +VisualPRM | 70.7 | 75.1 | 35.9 | 47.1 | 31.3 | 49.1 | 53.9 | 51.9 |
| Gain | +0.7 | +2.8 | +3.7 | +7.9 | +12.1 | +9.3 | +4.9 | +5.9 |

Table 2: Results on seven multimodal reasoning benchmarks. MMMU [90] is a multidisciplinary reasoning benchmark. MathVista [50], MathVision [78], MathVerse [93], DynaMath [99], and WeMath [60] are mathematics benchmarks. For MathVerse, we report the performance on Vision-Only (VO) split. LogicVista [87] is a logical reasoning benchmark. Part of the results are collected from the OpenCompass leaderboard [19]. The overall score is the average score of the above benchmarks. By using VisualPRM as the critic model, existing open-source MLLMs achieve significant improvements in reasoning ability under the Best-of-8 evaluation strategy.
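As a sanity check on the caption's definition, the overall score can be recomputed from the per-benchmark columns. The snippet below uses the InternVL2.5-8B rows of Table 2. Because the published per-benchmark scores are already rounded, recomputed gains can differ from the table by 0.1 (e.g., 37.4 - 27.5 = 9.9 for MiniCPM-V2.6-8B on LogicVista, reported as +9.8).

```python
def overall(scores):
    """Overall score as defined in the Table 2 caption: unweighted mean of the benchmarks."""
    return sum(scores) / len(scores)


# Column order: MMMU, MathVista, MathVision, MathVerse-VO, DynaMath, WeMath, LogicVista
internvl_8b = [56.2, 64.5, 17.0, 22.8, 9.4, 23.5, 36.0]
internvl_8b_prm = [60.2, 68.5, 25.7, 35.8, 18.0, 36.5, 43.8]

base = overall(internvl_8b)
with_prm = overall(internvl_8b_prm)
print(round(base, 1), round(with_prm, 1), round(with_prm - base, 1))  # 32.8 41.2 8.4
```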

4.1 Results with Best-of-N evaluation

Benchmarks. We evaluate the reasoning abilities of MLLMs across seven benchmarks, including MMMU [90], MathVista [50], MathVision [78], MathVerse [93], DynaMath [99], WeMath [60], and LogicVista [87]. The evaluation samples include subject-based, mathematical, and logical reasoning problems. We report the worst-case accuracy for DynaMath and the overall accuracy for the remaining benchmarks. For MathVerse, we report the performance on the Vision-Only split.

Settings. Unless otherwise specified, we use VisualPRM as the critic model for BoN evaluation and set $N$ to $8$. The policy model generates $N$ distinct step-by-step Chain-of-Thought (CoT) reasoning processes with a temperature of 0.7. The response with the highest critic score is then selected as the final answer, and its correctness is evaluated.
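The selection rule above can be sketched as follows. This is a minimal illustration, not the authors' code: `Candidate`, `best_of_n`, and the `sample`/`score` callables are hypothetical names, with `sample` standing in for the policy MLLM (sampling at temperature 0.7) and `score` standing in for the critic's response-level score. The toy sampler and scores only exercise the control flow.

```python
import itertools
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Candidate:
    steps: List[str]  # step-by-step CoT reasoning, one string per step
    answer: str       # final answer parsed from the response


def best_of_n(question: str,
              sample: Callable[[str], Candidate],
              score: Callable[[str, Candidate], float],
              n: int = 8) -> Candidate:
    """Sample n candidates and keep the one the critic scores highest."""
    candidates = [sample(question) for _ in range(n)]
    # max() returns the first candidate among ties
    return max(candidates, key=lambda c: score(question, c))


# Toy usage: a stub "policy" alternating between two responses and a stub critic.
pool = itertools.cycle([Candidate(["x = 2"], "2"), Candidate(["x = 3"], "3")])
toy_scores = {"2": 0.4, "3": 0.9}
chosen = best_of_n("toy question", lambda q: next(pool), lambda q, c: toy_scores[c.answer])
print(chosen.answer)  # 3
```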

Results. As shown in Table 2, VisualPRM substantially enhances the reasoning abilities of MLLMs across different model scales and families. Specifically, among models with fewer than 10 billion parameters, the overall performance of InternVL2.5-8B, MiniCPM-V2.6-8B, and Qwen2.5-VL-7B improves by 8.4, 8.0, and 3.7 points, respectively, demonstrating the effectiveness of test-time scaling (TTS) across different model families. For larger models, InternVL2.5-26B, InternVL2.5-38B, and InternVL2.5-78B gain 8.9, 6.3, and 5.9 points, respectively, over their counterparts without TTS, further validating the scalability and effectiveness of TTS across model sizes.

4.2 Results on VisualProcessBench

Settings. For the evaluation of PRMs, a step is considered correct if the probability of outputting “$+$” exceeds that of outputting “$-$” by a certain threshold. For the evaluation of MLLMs, the model is prompted to analyze each step and classify it as either correct or incorrect. When computing the F1 score, we exclude steps labeled as neutral by human annotators in Section 3.3.
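The decision rule and the neutral-step filter can be sketched as below. This is an illustrative sketch under stated assumptions: the text does not give the threshold value, so `threshold` is a free placeholder parameter; neutral annotations are encoded here as `None`; and `classify_steps` and `drop_neutral` are hypothetical names.

```python
from typing import List, Optional, Sequence, Tuple


def classify_steps(step_probs: Sequence[Tuple[float, float]],
                   threshold: float = 0.0) -> List[bool]:
    """Mark a step correct when p('+') exceeds p('-') by more than `threshold`.

    `threshold` is a placeholder: the value used in the paper is not stated here.
    """
    return [p_plus - p_minus > threshold for p_plus, p_minus in step_probs]


def drop_neutral(labels: Sequence[Optional[bool]],
                 preds: Sequence[bool]) -> Tuple[List[bool], List[bool]]:
    """Remove steps that annotators marked neutral (encoded here as None)."""
    kept = [(y, p) for y, p in zip(labels, preds) if y is not None]
    return [y for y, _ in kept], [p for _, p in kept]


preds = classify_steps([(0.90, 0.10), (0.30, 0.60), (0.50, 0.45)], threshold=0.1)
print(preds)  # [True, False, False]
labels, preds = drop_neutral([True, None, False], preds)
print(labels, preds)  # [True, False] [True, False]
```

The filtered `labels` and `preds` are what a macro F1 computation would then consume.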

Results. As shown in Table 3, most existing MLLMs struggle to accurately assess the correctness of each step. The overall F1 score of random guessing is 50.0, and most open-source MLLMs score close to this baseline, highlighting their limitations as critic models. Manually inspecting the judgments of these open-source MLLMs, we observe that they tend to give favorable analyses and label most steps as correct. For example, InternVL2.5-8B achieves an F1 score of 76.8 on correct steps but only 19.2 on incorrect steps, indicating that it rarely identifies a step as incorrect. Furthermore, our VisualPRM is competitive with proprietary models: its overall F1 score of 62.0 outperforms GPT-4o and GPT-4o-Mini and is on par with Gemini-2.0-Flash. Notably, with only 8 billion parameters, our model is more efficient than these proprietary counterparts.

| Model | MMMU | MathVision | MathVerse-VO | DynaMath | WeMath | Overall |
| --- | --- | --- | --- | --- | --- | --- |
| Random Guessing | 50.0 | 50.0 | 50.0 | 50.0 | 50.0 | 50.0 |
| Proprietary Models | | | | | | |
| GPT-4o-Mini [58] | 53.6 | 58.9 | 57.1 | 56.7 | 58.5 | 57.9 |
| GPT-4o [58] | 56.3 | 60.2 | 59.7 | 59.0 | 63.3 | 60.3 |
| Gemini-2.0-Flash [61] | 58.5 | 60.1 | 62.8 | 66.7 | 58.7 | 62.3 |
| Open-source Models | | | | | | |
| MiniCPM-V2.6-8B [89] | 44.9 | 50.9 | 58.9 | 46.7 | 57.4 | 50.4 |
| LLaVA-OV-7B [35] | 45.7 | 43.0 | 42.2 | 44.7 | 52.5 | 44.4 |
| LLaVA-OV-72B [35] | 46.1 | 48.4 | 53.0 | 57.0 | 57.3 | 52.3 |
| Qwen2.5-VL-7B [7] | 53.1 | 51.8 | 47.8 | 51.3 | 54.2 | 51.0 |
| Qwen2.5-VL-72B [7] | 59.2 | 59.0 | 59.7 | 62.9 | 62.3 | 60.5 |
| InternVL2.5-8B [15] | 47.1 | 45.5 | 47.8 | 50.3 | 50.8 | 48.0 |
| InternVL2.5-26B [15] | 48.8 | 47.4 | 49.2 | 50.4 | 51.4 | 49.2 |
| InternVL2.5-38B [15] | 51.5 | 48.4 | 50.9 | 51.8 | 52.5 | 50.8 |
| InternVL2.5-78B [15] | 52.0 | 51.7 | 53.7 | 50.8 | 52.5 | 52.6 |
| VisualPRM (ours) | 58.5 | 62.1 | 61.0 | 62.7 | 61.8 | 62.0 |

Table 3: Results on VisualProcessBench. We report the macro F1 over correct and incorrect steps. The overall score is the micro average across all data sources. Our VisualPRM exhibits state-of-the-art performance among open-source models.

4.3 Ablation Studies

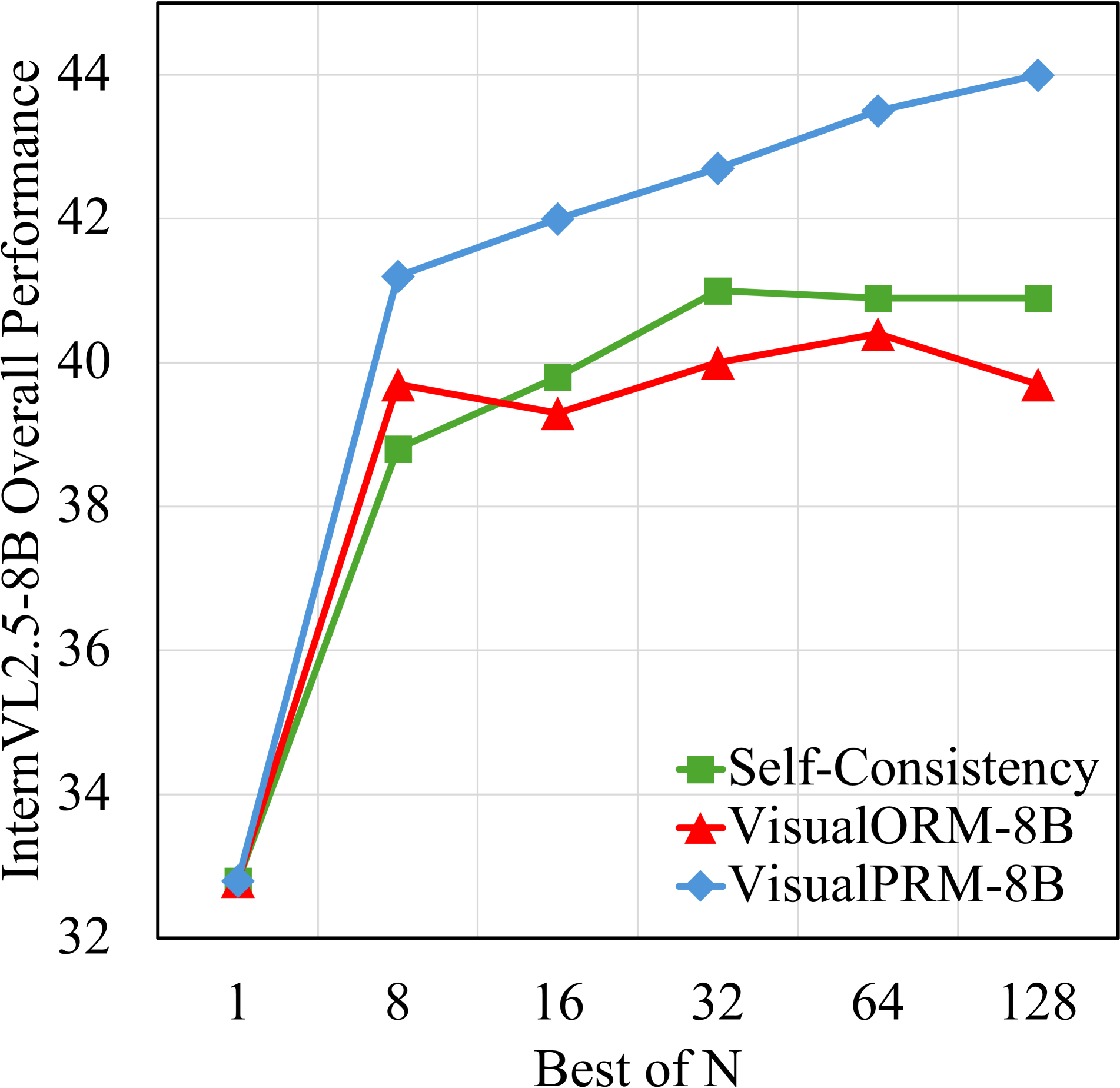

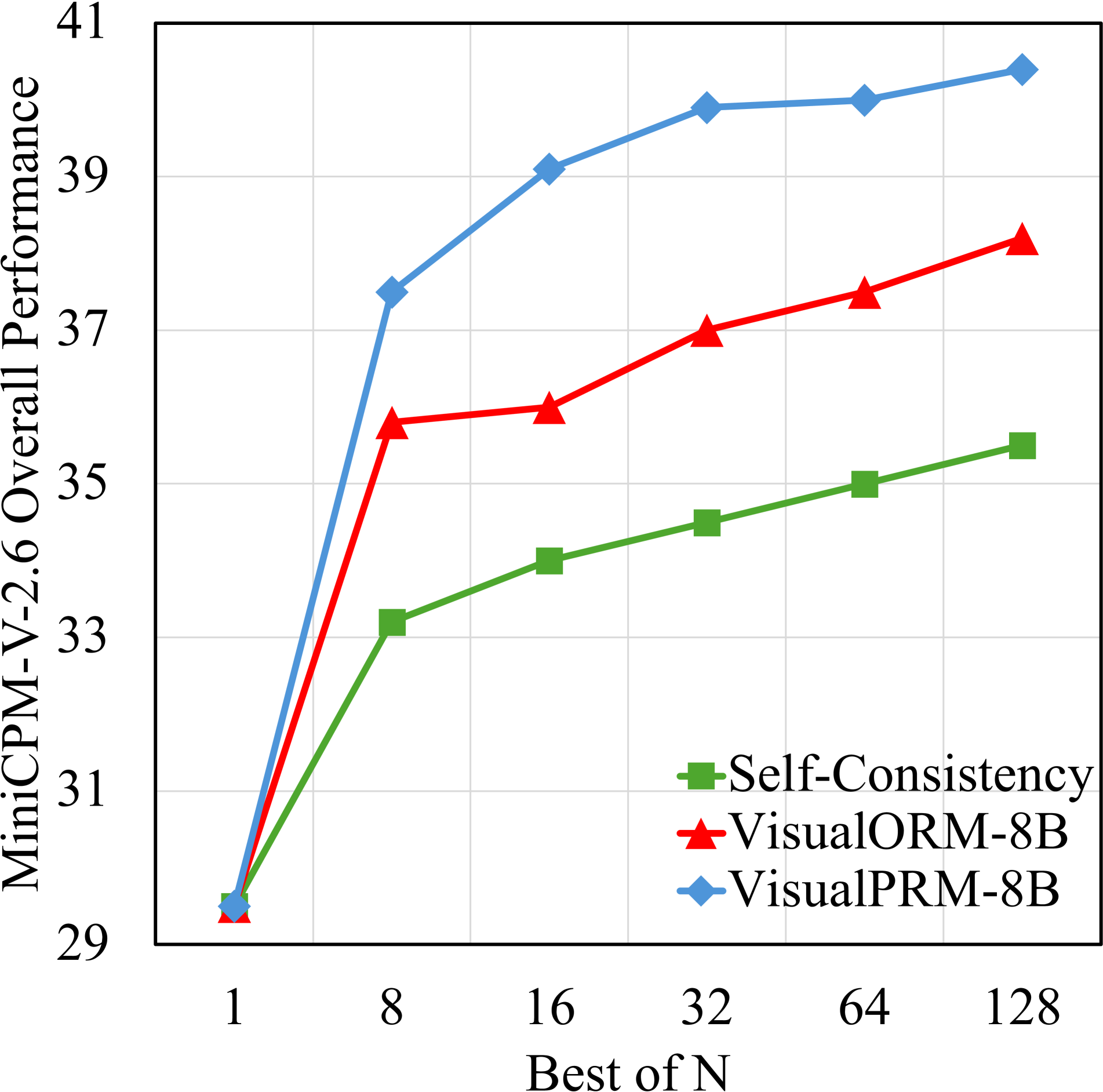

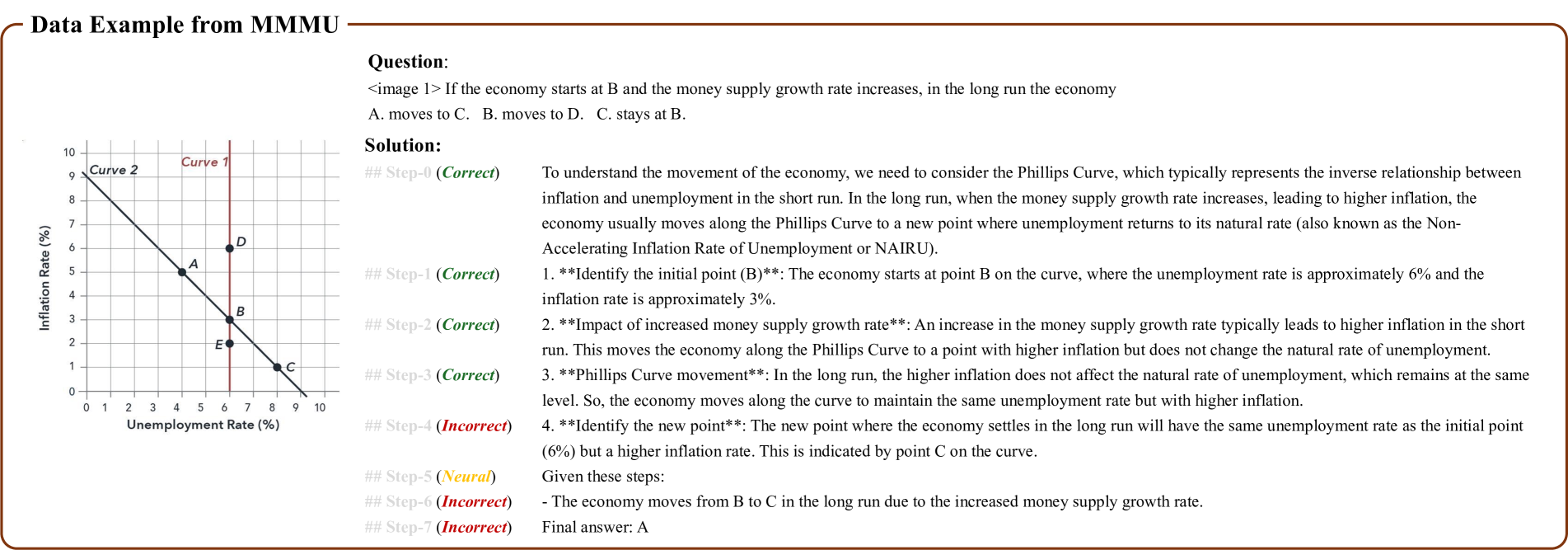

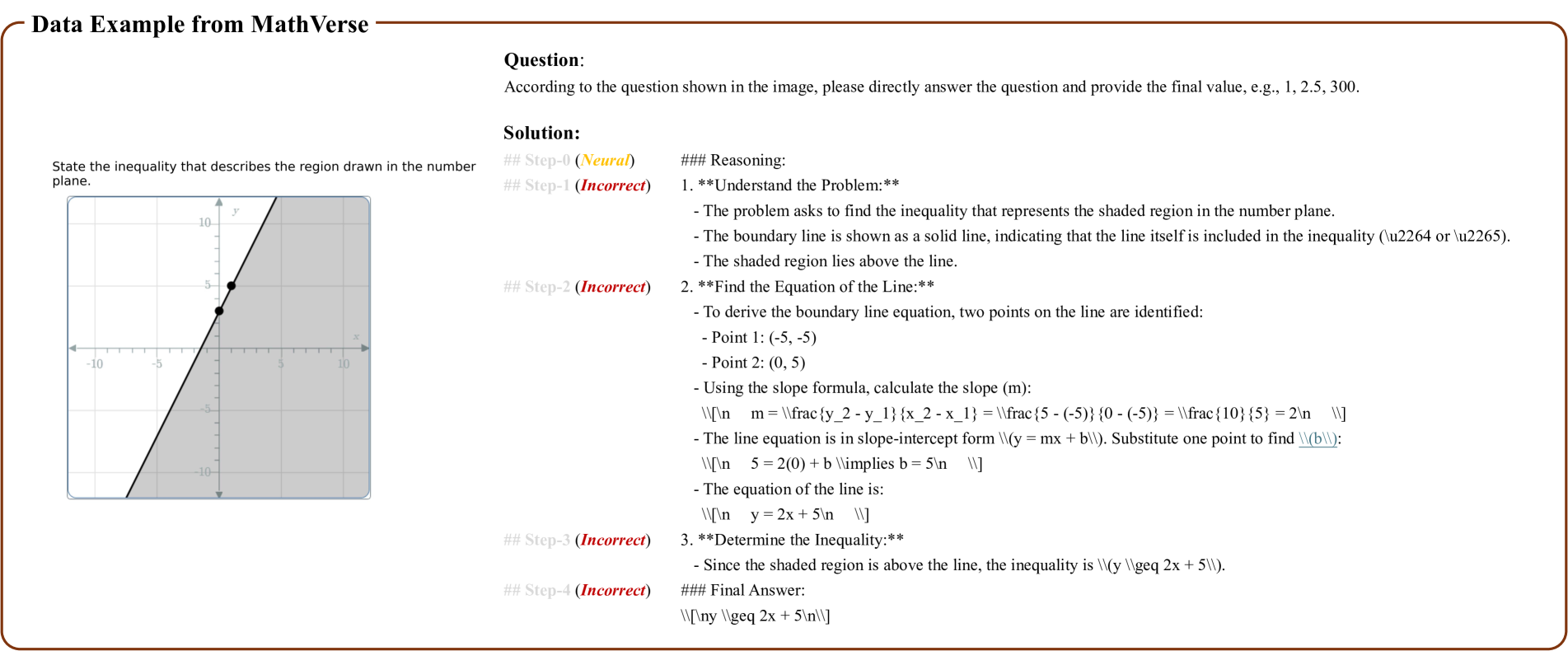

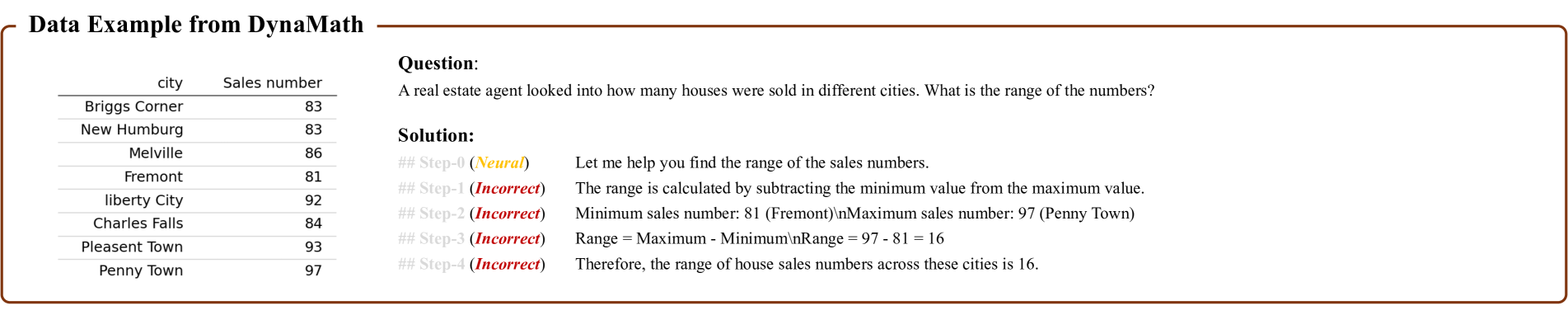

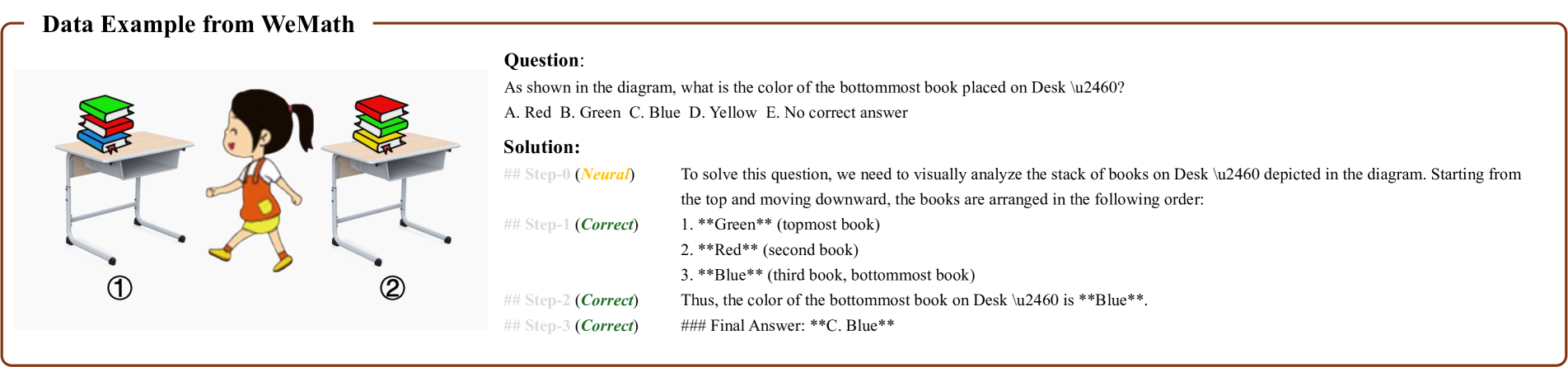

Effects of BoN. Here, we increase the number of response candidates sampled from InternVL2.5-8B and MiniCPM-V2.6-8B and select the final response using Self-Consistency (SC) [86], an Outcome Reward Model (ORM), or a PRM. The training data for the ORM are nearly identical to those used for the PRM, except that all steps are concatenated into a single step and the step-wise correctness annotations are converted into a single correctness label for the outcome. As shown in Figure 4, increasing the number of response candidates $N$ improves the reasoning performance of InternVL2.5-8B and MiniCPM-V2.6-8B with SC, ORM, and PRM alike, with PRM yielding the largest improvements. Specifically, when using InternVL2.5-8B as the policy model, PRM outperforms SC and ORM by 2.4 and 1.5 points, respectively, under the Best-of-8 setting. Moreover, this gap widens as $N$ increases, reaching 3.1 and 4.3 points when $N$ is set to $128$. Notably, when the ORM is used as the critic model, performance improves under Best-of-8 evaluation, but further increasing $N$ does not yield consistent gains for InternVL2.5-8B; for example, the Best-of-128 performance is lower than the Best-of-64 performance. These results highlight the effectiveness of PRMs in TTS.
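To make the three selection strategies concrete, here is a minimal sketch under simplified assumptions: each response is reduced to its final answer, the ORM provides one score per response, and the PRM provides one score per step, aggregated here by averaging (one of the options compared in Table 4). All function names and toy numbers are hypothetical, not taken from the paper.

```python
from collections import Counter
from typing import List, Sequence


def self_consistency(answers: Sequence[str]) -> str:
    """Majority vote over final answers; no critic model is needed."""
    # Counter.most_common orders equal counts by first occurrence
    return Counter(answers).most_common(1)[0][0]


def select_by_outcome(answers: Sequence[str], outcome_scores: Sequence[float]) -> str:
    """ORM-style selection: a single score per whole response."""
    best = max(range(len(answers)), key=lambda i: outcome_scores[i])
    return answers[best]


def select_by_process(answers: Sequence[str], step_scores: Sequence[List[float]]) -> str:
    """PRM-style selection: average each response's step scores, keep the best response."""
    agg = [sum(s) / len(s) for s in step_scores]
    best = max(range(len(answers)), key=lambda i: agg[i])
    return answers[best]


answers = ["12", "15", "15"]
print(self_consistency(answers))                                          # 15
print(select_by_outcome(answers, [0.70, 0.60, 0.65]))                     # 12
print(select_by_process(answers, [[0.9, 0.2], [0.8, 0.9], [0.7, 0.6]]))  # 15
```

In this toy case the ORM is swayed by a single high outcome score, while the PRM notices the weak second step of the first response.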

Effects of PRM modeling methods. Here, we compare the value-based PRM and the advantage-based PRM introduced in Section 3.2, along with different methods for aggregating step scores into a final score: averaging, taking the maximum, and taking the minimum. The results are presented in Table 4. We find that value-based PRMs outperform advantage-based PRMs both under BoN evaluation and on VisualProcessBench. We attribute this to the inherent noise in our training data, which is generated through an automatic data pipeline, making it challenging to accurately determine whether a given step raises or lowers the expected accuracy. We also compare two training strategies: supervising all steps (i.e., w/o early stop) versus supervising only up to the first incorrect step (i.e., w. early stop). Experimental results show that the former yields slightly better performance. Regarding score aggregation, taking the maximum performs worse than averaging or taking the minimum. Analyzing the generated scores reveals that most responses contain a high-scoring step, with a score close to $1$, at the beginning of the solution. This phenomenon likely arises because most erroneous steps appear in the middle of the solution, so the maximum is usually determined by an early correct step and fails to reflect later errors. Our statistics of VisualProcessBench presented in Section 8 further support this explanation. Furthermore, averaging performs slightly better than taking the minimum, likely because the minimum relies on a single step's score, while averaging accounts for all steps and can be viewed as an ensemble, which benefits step quality estimation.
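The aggregation variants in Table 4 and the two supervision strategies can be sketched as below. This is an illustrative sketch, not the training code: `response_score` and `supervised_targets` are hypothetical names, and we assume that "up to the first incorrect step" includes that step, with all later steps masked out of the loss (marked `None`).

```python
from statistics import mean
from typing import Callable, Dict, List, Optional

# Ways of collapsing per-step scores into one response score (as compared in Table 4)
AGGREGATORS: Dict[str, Callable[[List[float]], float]] = {
    "min": min,
    "max": max,
    "average": mean,
}


def response_score(step_scores: List[float], method: str = "average") -> float:
    return AGGREGATORS[method](step_scores)


def supervised_targets(step_labels: List[bool], early_stop: bool) -> List[Optional[bool]]:
    """Per-step training targets; None marks a step excluded from the loss."""
    if not early_stop:
        return list(step_labels)  # w/o early stop: supervise every step
    targets: List[Optional[bool]] = []
    for i, label in enumerate(step_labels):
        targets.append(label)
        if not label:  # assumed: keep the first incorrect step, mask everything after it
            targets.extend([None] * (len(step_labels) - i - 1))
            break
    return targets


# An easy, high-scoring opening step dominates "max" but not "min" or "average".
scores = [0.98, 0.35, 0.40]
print({m: round(response_score(scores, m), 3) for m in AGGREGATORS})
# {'min': 0.35, 'max': 0.98, 'average': 0.577}
print(supervised_targets([True, False, True, False], early_stop=True))
# [True, False, None, None]
```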

<details>

<summary>x4.png Details</summary>

Line chart: InternVL2.5-8B overall performance (y-axis) versus Best-of-N (x-axis, N = 1, 8, 16, 32, 64, 128), with responses selected by Self-Consistency, VisualORM-8B, or VisualPRM-8B.

</details>

(a)

<details>

<summary>x5.png Details</summary>

Line chart: MiniCPM-V-2.6 overall performance (y-axis) versus Best-of-N (x-axis, N = 1, 8, 16, 32, 64, 128), with responses selected by Self-Consistency, VisualORM-8B, or VisualPRM-8B.

</details>

(b)

Figure 4: Overall Best-of-N results across seven multimodal reasoning benchmarks with different policy and critic models. VisualPRM consistently enhances the reasoning performance of both InternVL2.5-8B and MiniCPM-V2.6-8B as $N$ increases and yields larger gains than Self-Consistency and ORM, highlighting the effectiveness of PRMs in Test-Time Scaling.

| Critic Model | BoN | VisualProcessBench |
| --- | --- | --- |
| Pass@1 | 32.8 | - |
| Random Guessing | 33.0 | 50.0 |
| InternVL2.5-8B | 33.2 | 48.0 |
| InternVL2.5-78B | 34.2 | 52.6 |
| Advantage-based PRM | | |
| +Min | 36.8 | 55.0 |
| +Max | 36.9 | 55.0 |
| +Average | 37.4 | 55.0 |
| Value-based PRM (w. early stop) | | |
| +Min | 40.3 | 61.6 |
| +Max | 37.0 | 61.6 |
| +Average | 40.6 | 61.6 |
| Value-based PRM (w/o early stop) | | |
| +Min | 40.4 | 62.0 |
| +Max | 35.9 | 62.0 |
| +Average | 41.1 | 62.0 |

Table 4: Comparison of different critic models and score aggregation methods. Note that score aggregation methods do not affect performance on VisualProcessBench, which evaluates step-wise correctness judgments. We find that supervising all steps (w/o early stop) during training performs slightly better than supervising only up to the first incorrect step (w. early stop).

MLLM-as-a-Judge. Existing MLLMs can be prompted to serve as critic models. However, as shown in Table 4, the InternVL2.5 series yield only marginal BoN gains, raising performance from 32.8 (Pass@1) to 33.2 with InternVL2.5-8B and 34.2 with InternVL2.5-78B. Upon analyzing the generated scores, we find that these models assign similar scores to most solutions. Consistent with our observations in Section 4.2, the InternVL2.5 series tend to judge most steps as correct, which prevents them from distinguishing and selecting the truly superior response. Beyond their limited effectiveness as critic models, MLLMs are also inefficient judges: they generate a judgment for each step autoregressively, which is time-consuming. In contrast, our VisualPRM computes scores for all steps in a single forward pass by filling each model-response slot with a “+” placeholder and interpreting the probability of generating “+” at that position as the step score.
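A minimal sketch of how one forward pass can yield every step score, under stated assumptions: the input already contains a “+” token in each step's response slot, `logits[t]` is the model's next-token prediction after reading tokens 0..t (the usual causal-LM convention), and the step score is the full-vocabulary softmax probability of “+”. The three-token toy vocabulary, the logit values, and all function names are hypothetical.

```python
import math
from typing import List, Sequence


def softmax(logits: Sequence[float]) -> List[float]:
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def step_scores_from_logits(logits: Sequence[Sequence[float]],
                            placeholder_positions: Sequence[int],
                            plus_id: int) -> List[float]:
    """Read p('+') for every step from the logits of ONE forward pass.

    The '+' placed at position t is predicted by logits[t - 1].
    """
    return [softmax(logits[t - 1])[plus_id] for t in placeholder_positions]


# Toy vocabulary: 0 = '+', 1 = '-', 2 = any other token; sequence of length 6.
toy_logits = [[0.0, 0.0, 0.0] for _ in range(6)]
toy_logits[1] = [2.0, 0.0, 0.0]  # before the first '+': the model favours '+'
toy_logits[4] = [0.0, 2.0, 0.0]  # before the second '+': the model favours '-'
scores = step_scores_from_logits(toy_logits, placeholder_positions=[2, 5], plus_id=0)
print([round(s, 2) for s in scores])  # [0.79, 0.11]
```

Because every placeholder is already present in the input, all step scores come out of the same logits tensor, whereas a prompted MLLM judge must decode a separate textual verdict for each step.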

Results on text-only performance. To assess the effectiveness of VisualPRM on text-only inputs, we evaluate the Qwen2.5 series [88] and InternVL2.5 series [15] on three text reasoning benchmarks under BoN evaluation settings: GSM8K [18], MATH-500 [25], and GPQA-Diamond [62]. We report accuracy as the evaluation metric for these benchmarks. As shown in Table 5, our model enhances the text reasoning abilities of both the Qwen2.5 series and the InternVL2.5 series. Specifically, Qwen2.5-7B achieves improvements of 6.1 and 5.0 points on MATH-500 and GPQA-Diamond, respectively. Similarly, Qwen2.5-72B demonstrates gains of 2.1 and 6.6 points on these benchmarks. For the InternVL2.5 series, InternVL2.5-8B, InternVL2.5-38B, and InternVL2.5-78B achieve improvements of 9.4 and 5.0, 4.6 and 8.1, and 7.4 and 3.5 points, respectively, on MATH-500 and GPQA-Diamond. These results demonstrate the effectiveness of our VisualPRM in text-only scenarios.

| Model | GSM8K | MATH-500 | GPQA |
| --- | --- | --- | --- |
| Large Language Models | | | |
| Qwen2.5-7B [88] | 91.6 | 75.5 | 36.4 |
| +VisualPRM | 94.5 | 81.6 | 41.4 |
| Δ | +2.9 | +6.1 | +5.0 |
| Qwen2.5-32B [88] | 95.9 | 83.1 | 49.5 |
| +VisualPRM | 96.1 | 85.4 | 53.5 |
| Δ | +0.2 | +2.3 | +4.0 |
| Qwen2.5-72B [88] | 95.8 | 83.1 | 49.0 |
| +VisualPRM | 96.5 | 85.2 | 55.6 |
| Δ | +0.7 | +2.1 | +6.6 |
| Multimodal Large Language Models | | | |
| InternVL2.5-8B [15] | 81.9 | 56.8 | 29.3 |
| +VisualPRM | 82.9 | 66.2 | 34.3 |
| Δ | +1.1 | +9.4 | +5.0 |
| InternVL2.5-38B [15] | 94.6 | 75.4 | 44.4 |
| +VisualPRM | 95.6 | 80.0 | 52.5 |
| Δ | +1.0 | +4.6 | +8.1 |
| InternVL2.5-78B [15] | 93.6 | 70.4 | 47.5 |
| +VisualPRM | 94.5 | 77.8 | 51.0 |
| Δ | +0.9 | +7.4 | +3.5 |

Table 5: Results on text reasoning benchmarks. GSM8K and MATH-500 are mathematics benchmarks, while GPQA is a multidisciplinary reasoning benchmark. For GPQA, we report the performance on the Diamond split. Our VisualPRM enhances the text reasoning abilities of both LLMs and MLLMs under the Best-of-8 evaluation setting.

5 Conclusion

In this work, we construct VisualPRM400K, a dataset of about 400K multimodal process supervision samples. Building upon this dataset, we develop VisualPRM, an advanced multimodal Process Reward Model (PRM) capable of estimating the value score of each step during the reasoning process. With Best-of-N (BoN) evaluation strategies, our model improves the reasoning abilities of existing Multimodal Large Language Models (MLLMs) across different model scales and families. Experimental results show that our model outperforms Outcome Reward Models and Self-Consistency during BoN evaluation, highlighting the effectiveness of PRMs in Test-Time Scaling. To further facilitate the development of multimodal critic models, we construct VisualProcessBench, a benchmark designed to measure the abilities of PRMs and MLLMs to detect incorrect steps in multimodal reasoning tasks. Evaluation results show that existing open-source MLLMs struggle to effectively judge the correctness of each step. We hope that our work can inspire more future research and contribute to the development of MLLMs.

References

- Achiam et al. [2023] Josh Achiam, Steven Adler, Sandhini Agarwal, Lama Ahmad, Ilge Akkaya, Florencia Leoni Aleman, Diogo Almeida, Janko Altenschmidt, Sam Altman, Shyamal Anadkat, et al. Gpt-4 technical report. arXiv preprint arXiv:2303.08774, 2023.

- Ahmadian et al. [2024] Arash Ahmadian, Chris Cremer, Matthias Gallé, Marzieh Fadaee, Julia Kreutzer, Olivier Pietquin, Ahmet Üstün, and Sara Hooker. Back to basics: Revisiting reinforce style optimization for learning from human feedback in llms. arXiv preprint arXiv:2402.14740, 2024.

- Alayrac et al. [2022] Jean-Baptiste Alayrac, Jeff Donahue, Pauline Luc, Antoine Miech, Iain Barr, Yana Hasson, Karel Lenc, Arthur Mensch, Katherine Millican, Malcolm Reynolds, et al. Flamingo: a visual language model for few-shot learning. NIPS, 35:23716–23736, 2022.

- Anthropic [2024] Anthropic. The claude 3 model family: Opus, sonnet, haiku. https://www.anthropic.com, 2024.

- Bai et al. [2023a] Jinze Bai, Shuai Bai, Yunfei Chu, Zeyu Cui, Kai Dang, Xiaodong Deng, Yang Fan, Wenbin Ge, Yu Han, Fei Huang, et al. Qwen technical report. arXiv preprint arXiv:2309.16609, 2023a.

- Bai et al. [2023b] Jinze Bai, Shuai Bai, Shusheng Yang, Shijie Wang, Sinan Tan, Peng Wang, Junyang Lin, Chang Zhou, and Jingren Zhou. Qwen-vl: A frontier large vision-language model with versatile abilities. arXiv preprint arXiv:2308.12966, 2023b.

- Bai et al. [2025] Shuai Bai, Keqin Chen, Xuejing Liu, Jialin Wang, Wenbin Ge, Sibo Song, Kai Dang, Peng Wang, Shijie Wang, Jun Tang, et al. Qwen2.5-vl technical report. arXiv preprint arXiv:2502.13923, 2025.

- Biten et al. [2019] Ali Furkan Biten, Ruben Tito, Andres Mafla, Lluis Gomez, Marçal Rusinol, Ernest Valveny, CV Jawahar, and Dimosthenis Karatzas. Scene text visual question answering. In ICCV, pages 4291–4301, 2019.

- Brown et al. [2020] Tom Brown, Benjamin Mann, Nick Ryder, Melanie Subbiah, Jared D Kaplan, Prafulla Dhariwal, Arvind Neelakantan, Pranav Shyam, Girish Sastry, Amanda Askell, et al. Language models are few-shot learners. NIPS, 2020.

- Cai et al. [2024] Zheng Cai, Maosong Cao, Haojiong Chen, Kai Chen, Keyu Chen, Xin Chen, Xun Chen, Zehui Chen, Zhi Chen, Pei Chu, et al. Internlm2 technical report. arXiv preprint arXiv:2403.17297, 2024.

- Cao and Xiao [2022] Jie Cao and Jing Xiao. An augmented benchmark dataset for geometric question answering through dual parallel text encoding. In COLING, pages 1511–1520, 2022.

- Chang et al. [2022] Shuaichen Chang, David Palzer, Jialin Li, Eric Fosler-Lussier, and Ningchuan Xiao. Mapqa: A dataset for question answering on choropleth maps. arXiv preprint arXiv:2211.08545, 2022.

- Chen et al. [2024a] Qiguang Chen, Libo Qin, Jin Zhang, Zhi Chen, Xiao Xu, and Wanxiang Che. M3cot: A novel benchmark for multi-domain multi-step multi-modal chain-of-thought. arXiv preprint arXiv:2405.16473, 2024a.

- Chen et al. [2023] Zhe Chen, Jiannan Wu, Wenhai Wang, Weijie Su, Guo Chen, Sen Xing, Zhong Muyan, Qinglong Zhang, Xizhou Zhu, Lewei Lu, et al. Internvl: Scaling up vision foundation models and aligning for generic visual-linguistic tasks. arXiv preprint arXiv:2312.14238, 2023.

- Chen et al. [2024b] Zhe Chen, Weiyun Wang, Yue Cao, Yangzhou Liu, Zhangwei Gao, Erfei Cui, Jinguo Zhu, Shenglong Ye, Hao Tian, Zhaoyang Liu, et al. Expanding performance boundaries of open-source multimodal models with model, data, and test-time scaling. arXiv preprint arXiv:2412.05271, 2024b.

- Chen et al. [2024c] Zhe Chen, Weiyun Wang, Hao Tian, Shenglong Ye, Zhangwei Gao, Erfei Cui, Wenwen Tong, Kongzhi Hu, Jiapeng Luo, Zheng Ma, et al. How far are we to gpt-4v? closing the gap to commercial multimodal models with open-source suites. arXiv preprint arXiv:2404.16821, 2024c.

- Clark and Gardner [2018] Christopher Clark and Matt Gardner. Simple and effective multi-paragraph reading comprehension. In ACL, pages 845–855, 2018.

- Cobbe et al. [2021] Karl Cobbe, Vineet Kosaraju, Mohammad Bavarian, Mark Chen, Heewoo Jun, Lukasz Kaiser, Matthias Plappert, Jerry Tworek, Jacob Hilton, Reiichiro Nakano, et al. Training verifiers to solve math word problems. arXiv preprint arXiv:2110.14168, 2021.

- Contributors [2023] OpenCompass Contributors. Opencompass: A universal evaluation platform for foundation models. https://github.com/open-compass/opencompass, 2023.

- Dong et al. [2024] Hanze Dong, Wei Xiong, Bo Pang, Haoxiang Wang, Han Zhao, Yingbo Zhou, Nan Jiang, Doyen Sahoo, Caiming Xiong, and Tong Zhang. Rlhf workflow: From reward modeling to online rlhf. arXiv preprint arXiv:2405.07863, 2024.

- Dubey et al. [2024] Abhimanyu Dubey, Abhinav Jauhri, Abhinav Pandey, Abhishek Kadian, Ahmad Al-Dahle, Aiesha Letman, Akhil Mathur, Alan Schelten, Amy Yang, Angela Fan, et al. The llama 3 herd of models. arXiv preprint arXiv:2407.21783, 2024.

- Gao et al. [2023] Jiahui Gao, Renjie Pi, Jipeng Zhang, Jiacheng Ye, Wanjun Zhong, Yufei Wang, Lanqing Hong, Jianhua Han, Hang Xu, Zhenguo Li, et al. G-llava: Solving geometric problem with multi-modal large language model. arXiv preprint arXiv:2312.11370, 2023.

- Goyal et al. [2017] Yash Goyal, Tejas Khot, Douglas Summers-Stay, Dhruv Batra, and Devi Parikh. Making the v in vqa matter: Elevating the role of image understanding in visual question answering. In CVPR, pages 6904–6913, 2017.

- Guo et al. [2025] Daya Guo, Dejian Yang, Haowei Zhang, Junxiao Song, Ruoyu Zhang, Runxin Xu, Qihao Zhu, Shirong Ma, Peiyi Wang, Xiao Bi, et al. Deepseek-r1: Incentivizing reasoning capability in llms via reinforcement learning. arXiv preprint arXiv:2501.12948, 2025.

- Hendrycks et al. [2021] Dan Hendrycks, Collin Burns, Saurav Kadavath, Akul Arora, Steven Basart, Eric Tang, Dawn Song, and Jacob Steinhardt. Measuring mathematical problem solving with the math dataset. arXiv preprint arXiv:2103.03874, 2021.

- Hu [2025] Jian Hu. Reinforce++: A simple and efficient approach for aligning large language models. arXiv preprint arXiv:2501.03262, 2025.

- Huang et al. [2019] Zheng Huang, Kai Chen, Jianhua He, Xiang Bai, Dimosthenis Karatzas, Shijian Lu, and CV Jawahar. Icdar2019 competition on scanned receipt ocr and information extraction. In 2019 International Conference on Document Analysis and Recognition (ICDAR), pages 1516–1520. IEEE, 2019.

- Hudson and Manning [2019] Drew A Hudson and Christopher D Manning. Gqa: A new dataset for real-world visual reasoning and compositional question answering. In CVPR, pages 6700–6709, 2019.

- Kafle et al. [2018] Kushal Kafle, Brian Price, Scott Cohen, and Christopher Kanan. Dvqa: Understanding data visualizations via question answering. In CVPR, pages 5648–5656, 2018.

- Kazemi et al. [2023] Mehran Kazemi, Hamidreza Alvari, Ankit Anand, Jialin Wu, Xi Chen, and Radu Soricut. Geomverse: A systematic evaluation of large models for geometric reasoning. arXiv preprint arXiv:2312.12241, 2023.

- Kembhavi et al. [2016] Aniruddha Kembhavi, Mike Salvato, Eric Kolve, Minjoon Seo, Hannaneh Hajishirzi, and Ali Farhadi. A diagram is worth a dozen images. In ECCV, pages 235–251, 2016.

- Lai et al. [2024] Xin Lai, Zhuotao Tian, Yukang Chen, Senqiao Yang, Xiangru Peng, and Jiaya Jia. Step-dpo: Step-wise preference optimization for long-chain reasoning of llms. arXiv preprint arXiv:2406.18629, 2024.

- Lambert et al. [2024] Nathan Lambert, Valentina Pyatkin, Jacob Morrison, LJ Miranda, Bill Yuchen Lin, Khyathi Chandu, Nouha Dziri, Sachin Kumar, Tom Zick, Yejin Choi, et al. Rewardbench: Evaluating reward models for language modeling. arXiv preprint arXiv:2403.13787, 2024.

- Laurençon et al. [2024] Hugo Laurençon, Lucile Saulnier, Léo Tronchon, Stas Bekman, Amanpreet Singh, Anton Lozhkov, Thomas Wang, Siddharth Karamcheti, Alexander Rush, Douwe Kiela, et al. Obelics: An open web-scale filtered dataset of interleaved image-text documents. NIPS, 36, 2024.

- Li et al. [2024a] Bo Li, Yuanhan Zhang, Dong Guo, Renrui Zhang, Feng Li, Hao Zhang, Kaichen Zhang, Yanwei Li, Ziwei Liu, and Chunyuan Li. Llava-onevision: Easy visual task transfer. arXiv preprint arXiv:2408.03326, 2024a.

- Li et al. [2023] Junnan Li, Dongxu Li, Silvio Savarese, and Steven Hoi. Blip-2: Bootstrapping language-image pre-training with frozen image encoders and large language models. In ICML, pages 19730–19742. PMLR, 2023.

- Li et al. [2024b] Lei Li, Yuancheng Wei, Zhihui Xie, Xuqing Yang, Yifan Song, Peiyi Wang, Chenxin An, Tianyu Liu, Sujian Li, Bill Yuchen Lin, et al. Vlrewardbench: A challenging benchmark for vision-language generative reward models. arXiv preprint arXiv:2411.17451, 2024b.

- Li et al. [2024c] Qingyun Li, Zhe Chen, Weiyun Wang, Wenhai Wang, Shenglong Ye, Zhenjiang Jin, Guanzhou Chen, Yinan He, Zhangwei Gao, Erfei Cui, et al. Omnicorpus: An unified multimodal corpus of 10 billion-level images interleaved with text. arXiv preprint arXiv:2406.08418, 2024c.

- Lightman et al. [2023] Hunter Lightman, Vineet Kosaraju, Yuri Burda, Harrison Edwards, Bowen Baker, Teddy Lee, Jan Leike, John Schulman, Ilya Sutskever, and Karl Cobbe. Let’s verify step by step. In The Twelfth International Conference on Learning Representations, 2023.

- Lindström and Abraham [2022] Adam Dahlgren Lindström and Savitha Sam Abraham. Clevr-math: A dataset for compositional language, visual and mathematical reasoning. arXiv preprint arXiv:2208.05358, 2022.

- Liu et al. [2023a] Haotian Liu, Chunyuan Li, Qingyang Wu, and Yong Jae Lee. Visual instruction tuning. NIPS, 36, 2023a.

- Liu et al. [2024a] Haotian Liu, Chunyuan Li, Yuheng Li, Bo Li, Yuanhan Zhang, Sheng Shen, and Yong Jae Lee. Llava-next: Improved reasoning, ocr, and world knowledge, 2024a.

- Liu et al. [2024b] Yangzhou Liu, Yue Cao, Zhangwei Gao, Weiyun Wang, Zhe Chen, Wenhai Wang, Hao Tian, Lewei Lu, Xizhou Zhu, Tong Lu, et al. Mminstruct: A high-quality multi-modal instruction tuning dataset with extensive diversity. arXiv preprint arXiv:2407.15838, 2024b.

- Liu et al. [2024c] Yantao Liu, Zijun Yao, Rui Min, Yixin Cao, Lei Hou, and Juanzi Li. Rm-bench: Benchmarking reward models of language models with subtlety and style. arXiv preprint arXiv:2410.16184, 2024c.

- Liu et al. [2023b] Zhaoyang Liu, Yinan He, Wenhai Wang, Weiyun Wang, Yi Wang, Shoufa Chen, Qinglong Zhang, Zeqiang Lai, Yang Yang, Qingyun Li, Jiashuo Yu, et al. Interngpt: Solving vision-centric tasks by interacting with chatgpt beyond language. arXiv preprint arXiv:2305.05662, 2023b.

- Loshchilov and Hutter [2017] Ilya Loshchilov and Frank Hutter. Decoupled weight decay regularization. arXiv preprint arXiv:1711.05101, 2017.

- Lu et al. [2021a] Pan Lu, Ran Gong, Shibiao Jiang, Liang Qiu, Siyuan Huang, Xiaodan Liang, and Song-Chun Zhu. Inter-gps: Interpretable geometry problem solving with formal language and symbolic reasoning. arXiv preprint arXiv:2105.04165, 2021a.

- Lu et al. [2021b] Pan Lu, Liang Qiu, Jiaqi Chen, Tony Xia, Yizhou Zhao, Wei Zhang, Zhou Yu, Xiaodan Liang, and Song-Chun Zhu. Iconqa: A new benchmark for abstract diagram understanding and visual language reasoning. arXiv preprint arXiv:2110.13214, 2021b.

- Lu et al. [2022] Pan Lu, Swaroop Mishra, Tanglin Xia, Liang Qiu, Kai-Wei Chang, Song-Chun Zhu, Oyvind Tafjord, Peter Clark, and Ashwin Kalyan. Learn to explain: Multimodal reasoning via thought chains for science question answering. NIPS, 35:2507–2521, 2022.

- Lu et al. [2023] Pan Lu, Hritik Bansal, Tony Xia, Jiacheng Liu, Chunyuan Li, Hannaneh Hajishirzi, Hao Cheng, Kai-Wei Chang, Michel Galley, and Jianfeng Gao. Mathvista: Evaluating mathematical reasoning of foundation models in visual contexts. arXiv preprint arXiv:2310.02255, 2023.

- Luo et al. [2024] Liangchen Luo, Yinxiao Liu, Rosanne Liu, Samrat Phatale, Harsh Lara, Yunxuan Li, Lei Shu, Yun Zhu, Lei Meng, Jiao Sun, et al. Improve mathematical reasoning in language models by automated process supervision. arXiv preprint arXiv:2406.06592, 2024.

- Marino et al. [2019] Kenneth Marino, Mohammad Rastegari, Ali Farhadi, and Roozbeh Mottaghi. Ok-vqa: A visual question answering benchmark requiring external knowledge. In CVPR, pages 3195–3204, 2019.

- Masry et al. [2022] Ahmed Masry, Xuan Long Do, Jia Qing Tan, Shafiq Joty, and Enamul Hoque. Chartqa: A benchmark for question answering about charts with visual and logical reasoning. In ACL, pages 2263–2279, 2022.

- Mathew et al. [2022] Minesh Mathew, Viraj Bagal, Rubèn Tito, Dimosthenis Karatzas, Ernest Valveny, and CV Jawahar. Infographicvqa. In WACV, pages 1697–1706, 2022.

- McAleese et al. [2024] Nat McAleese, Rai Michael Pokorny, Juan Felipe Ceron Uribe, Evgenia Nitishinskaya, Maja Trebacz, and Jan Leike. Llm critics help catch llm bugs. arXiv preprint arXiv:2407.00215, 2024.

- Mishra et al. [2019] Anand Mishra, Shashank Shekhar, Ajeet Kumar Singh, and Anirban Chakraborty. Ocr-vqa: Visual question answering by reading text in images. In ICDAR, pages 947–952, 2019.

- Muennighoff et al. [2025] Niklas Muennighoff, Zitong Yang, Weijia Shi, Xiang Lisa Li, Li Fei-Fei, Hannaneh Hajishirzi, Luke Zettlemoyer, Percy Liang, Emmanuel Candès, and Tatsunori Hashimoto. s1: Simple test-time scaling. arXiv preprint arXiv:2501.19393, 2025.

- OpenAI [2024] OpenAI. Gpt-4o system card. https://openai.com/index/gpt-4o-system-card/, 2024.

- Pang et al. [2024] Richard Yuanzhe Pang, Weizhe Yuan, Kyunghyun Cho, He He, Sainbayar Sukhbaatar, and Jason Weston. Iterative reasoning preference optimization. arXiv preprint arXiv:2404.19733, 2024.

- Qiao et al. [2024] Runqi Qiao, Qiuna Tan, Guanting Dong, Minhui Wu, Chong Sun, Xiaoshuai Song, Zhuoma GongQue, Shanglin Lei, Zhe Wei, Miaoxuan Zhang, et al. We-math: Does your large multimodal model achieve human-like mathematical reasoning? arXiv preprint arXiv:2407.01284, 2024.

- Reid et al. [2024] Machel Reid, Nikolay Savinov, Denis Teplyashin, Dmitry Lepikhin, Timothy Lillicrap, Jean-baptiste Alayrac, Radu Soricut, Angeliki Lazaridou, Orhan Firat, Julian Schrittwieser, et al. Gemini 1.5: Unlocking multimodal understanding across millions of tokens of context. arXiv preprint arXiv:2403.05530, 2024.

- Rein et al. [2024] David Rein, Betty Li Hou, Asa Cooper Stickland, Jackson Petty, Richard Yuanzhe Pang, Julien Dirani, Julian Michael, and Samuel R Bowman. Gpqa: A graduate-level google-proof q&a benchmark. In First Conference on Language Modeling, 2024.

- Schuhmann et al. [2022] Christoph Schuhmann, Romain Beaumont, Richard Vencu, Cade Gordon, Ross Wightman, Mehdi Cherti, Theo Coombes, Aarush Katta, Clayton Mullis, Mitchell Wortsman, et al. Laion-5b: An open large-scale dataset for training next generation image-text models. NIPS, 35:25278–25294, 2022.

- Schulman et al. [2017] John Schulman, Filip Wolski, Prafulla Dhariwal, Alec Radford, and Oleg Klimov. Proximal policy optimization algorithms. arXiv preprint arXiv:1707.06347, 2017.

- Seo et al. [2015] Minjoon Seo, Hannaneh Hajishirzi, Ali Farhadi, Oren Etzioni, and Clint Malcolm. Solving geometry problems: Combining text and diagram interpretation. In EMNLP, pages 1466–1476, 2015.

- Shao et al. [2024] Zhihong Shao, Peiyi Wang, Qihao Zhu, Runxin Xu, Junxiao Song, Xiao Bi, Haowei Zhang, Mingchuan Zhang, YK Li, Y Wu, et al. Deepseekmath: Pushing the limits of mathematical reasoning in open language models. arXiv preprint arXiv:2402.03300, 2024.

- Singh et al. [2019] Amanpreet Singh, Vivek Natarajan, Meet Shah, Yu Jiang, Xinlei Chen, Dhruv Batra, Devi Parikh, and Marcus Rohrbach. Towards vqa models that can read. In CVPR, pages 8317–8326, 2019.

- Snell et al. [2024] Charlie Snell, Jaehoon Lee, Kelvin Xu, and Aviral Kumar. Scaling llm test-time compute optimally can be more effective than scaling model parameters. arXiv preprint arXiv:2408.03314, 2024.

- Song et al. [2025] Mingyang Song, Zhaochen Su, Xiaoye Qu, Jiawei Zhou, and Yu Cheng. Prmbench: A fine-grained and challenging benchmark for process-level reward models. arXiv preprint arXiv:2501.03124, 2025.

- Team et al. [2023] Gemini Team, Rohan Anil, Sebastian Borgeaud, Yonghui Wu, Jean-Baptiste Alayrac, Jiahui Yu, Radu Soricut, Johan Schalkwyk, Andrew M Dai, Anja Hauth, et al. Gemini: a family of highly capable multimodal models. arXiv preprint arXiv:2312.11805, 2023.

- Team [2023] InternLM Team. Internlm: A multilingual language model with progressively enhanced capabilities. https://github.com/InternLM/InternLM, 2023.

- Team [2024] Qwen Team. Qvq: To see the world with wisdom, 2024.

- Tian et al. [2024] Changyao Tian, Xizhou Zhu, Yuwen Xiong, Weiyun Wang, Zhe Chen, Wenhai Wang, Yuntao Chen, Lewei Lu, Tong Lu, Jie Zhou, et al. Mm-interleaved: Interleaved image-text generative modeling via multi-modal feature synchronizer. arXiv preprint arXiv:2401.10208, 2024.

- Toshniwal et al. [2025] Shubham Toshniwal, Ivan Moshkov, Sean Narenthiran, Daria Gitman, Fei Jia, and Igor Gitman. Openmathinstruct-1: A 1.8 million math instruction tuning dataset. NIPS, 37:34737–34774, 2025.

- Touvron et al. [2023a] Hugo Touvron, Thibaut Lavril, Gautier Izacard, Xavier Martinet, Marie-Anne Lachaux, Timothée Lacroix, Baptiste Rozière, Naman Goyal, Eric Hambro, Faisal Azhar, et al. Llama: Open and efficient foundation language models. arXiv preprint arXiv:2302.13971, 2023a.

- Touvron et al. [2023b] Hugo Touvron, Louis Martin, Kevin Stone, Peter Albert, Amjad Almahairi, Yasmine Babaei, Nikolay Bashlykov, Soumya Batra, Prajjwal Bhargava, Shruti Bhosale, et al. Llama 2: Open foundation and fine-tuned chat models. arXiv preprint arXiv:2307.09288, 2023b.

- Wang et al. [2024a] Haoxiang Wang, Wei Xiong, Tengyang Xie, Han Zhao, and Tong Zhang. Interpretable preferences via multi-objective reward modeling and mixture-of-experts. In EMNLP, 2024a.

- Wang et al. [2024b] Ke Wang, Junting Pan, Weikang Shi, Zimu Lu, Mingjie Zhan, and Hongsheng Li. Measuring multimodal mathematical reasoning with math-vision dataset. arXiv preprint arXiv:2402.14804, 2024b.

- Wang et al. [2023a] Peiyi Wang, Lei Li, Zhihong Shao, RX Xu, Damai Dai, Yifei Li, Deli Chen, Yu Wu, and Zhifang Sui. Math-shepherd: Verify and reinforce llms step-by-step without human annotations. arXiv preprint arXiv:2312.08935, 2023a.

- Wang et al. [2023b] Wenhai Wang, Zhe Chen, Xiaokang Chen, Jiannan Wu, Xizhou Zhu, Gang Zeng, Ping Luo, Tong Lu, Jie Zhou, Yu Qiao, et al. Visionllm: Large language model is also an open-ended decoder for vision-centric tasks. NIPS, 36, 2023b.

- Wang et al. [2023c] Weihan Wang, Qingsong Lv, Wenmeng Yu, Wenyi Hong, Ji Qi, Yan Wang, Junhui Ji, Zhuoyi Yang, Lei Zhao, Xixuan Song, et al. Cogvlm: Visual expert for pretrained language models. arXiv preprint arXiv:2311.03079, 2023c.

- Wang et al. [2024c] Weiyun Wang, Zhe Chen, Wenhai Wang, Yue Cao, Yangzhou Liu, Zhangwei Gao, Jinguo Zhu, Xizhou Zhu, Lewei Lu, Yu Qiao, et al. Enhancing the reasoning ability of multimodal large language models via mixed preference optimization. arXiv preprint arXiv:2411.10442, 2024c.

- Wang et al. [2024d] Weiyun Wang, Yiming Ren, Haowen Luo, Tiantong Li, Chenxiang Yan, Zhe Chen, Wenhai Wang, Qingyun Li, Lewei Lu, Xizhou Zhu, et al. The all-seeing project v2: Towards general relation comprehension of the open world. arXiv preprint arXiv:2402.19474, 2024d.

- Wang et al. [2024e] Weiyun Wang, Min Shi, Qingyun Li, Wenhai Wang, Zhenhang Huang, Linjie Xing, Zhe Chen, Hao Li, Xizhou Zhu, Zhiguo Cao, et al. The all-seeing project: Towards panoptic visual recognition and understanding of the open world. In ICLR, 2024e.

- Wang et al. [2024f] Weiyun Wang, Shuibo Zhang, Yiming Ren, Yuchen Duan, Tiantong Li, Shuo Liu, Mengkang Hu, Zhe Chen, Kaipeng Zhang, Lewei Lu, et al. Needle in a multimodal haystack. arXiv preprint arXiv:2406.07230, 2024f.

- Wang et al. [2022] Xuezhi Wang, Jason Wei, Dale Schuurmans, Quoc Le, Ed Chi, Sharan Narang, Aakanksha Chowdhery, and Denny Zhou. Self-consistency improves chain of thought reasoning in language models. arXiv preprint arXiv:2203.11171, 2022.

- Xiao et al. [2024] Yijia Xiao, Edward Sun, Tianyu Liu, and Wei Wang. Logicvista: Multimodal llm logical reasoning benchmark in visual contexts. arXiv preprint arXiv:2407.04973, 2024.

- Yang et al. [2024] An Yang, Baosong Yang, Beichen Zhang, Binyuan Hui, Bo Zheng, Bowen Yu, Chengyuan Li, Dayiheng Liu, Fei Huang, Haoran Wei, et al. Qwen2.5 technical report. arXiv preprint arXiv:2412.15115, 2024.

- Yao et al. [2024] Yuan Yao, Tianyu Yu, Ao Zhang, Chongyi Wang, Junbo Cui, Hongji Zhu, Tianchi Cai, Haoyu Li, Weilin Zhao, Zhihui He, et al. Minicpm-v: A gpt-4v level mllm on your phone. arXiv preprint arXiv:2408.01800, 2024.

- Yue et al. [2024] Xiang Yue, Yuansheng Ni, Kai Zhang, Tianyu Zheng, Ruoqi Liu, Ge Zhang, Samuel Stevens, Dongfu Jiang, Weiming Ren, Yuxuan Sun, Cong Wei, Botao Yu, Ruibin Yuan, Renliang Sun, Ming Yin, Boyuan Zheng, Zhenzhu Yang, Yibo Liu, Wenhao Huang, Huan Sun, Yu Su, and Wenhu Chen. Mmmu: A massive multi-discipline multimodal understanding and reasoning benchmark for expert agi. In CVPR, 2024.

- Zhai et al. [2023] Xiaohua Zhai, Basil Mustafa, Alexander Kolesnikov, and Lucas Beyer. Sigmoid loss for language image pre-training. In ICCV, pages 11975–11986, 2023.

- Zhang et al. [2024a] Lunjun Zhang, Arian Hosseini, Hritik Bansal, Mehran Kazemi, Aviral Kumar, and Rishabh Agarwal. Generative verifiers: Reward modeling as next-token prediction. arXiv preprint arXiv:2408.15240, 2024a.

- Zhang et al. [2024b] Renrui Zhang, Dongzhi Jiang, Yichi Zhang, Haokun Lin, Ziyu Guo, Pengshuo Qiu, Aojun Zhou, Pan Lu, Kai-Wei Chang, Peng Gao, et al. Mathverse: Does your multi-modal llm truly see the diagrams in visual math problems? arXiv preprint arXiv:2403.14624, 2024b.

- Zhang et al. [2025] Zhenru Zhang, Chujie Zheng, Yangzhen Wu, Beichen Zhang, Runji Lin, Bowen Yu, Dayiheng Liu, Jingren Zhou, and Junyang Lin. The lessons of developing process reward models in mathematical reasoning. arXiv preprint arXiv:2501.07301, 2025.

- Zhao et al. [2025] Xiangyu Zhao, Shengyuan Ding, Zicheng Zhang, Haian Huang, Maosong Cao, Weiyun Wang, Jiaqi Wang, Xinyu Fang, Wenhai Wang, Guangtao Zhai, et al. Omnialign-v: Towards enhanced alignment of mllms with human preference. arXiv preprint arXiv:2502.18411, 2025.

- Zheng et al. [2024] Chujie Zheng, Zhenru Zhang, Beichen Zhang, Runji Lin, Keming Lu, Bowen Yu, Dayiheng Liu, Jingren Zhou, and Junyang Lin. Processbench: Identifying process errors in mathematical reasoning. arXiv preprint arXiv:2412.06559, 2024.

- Zhou et al. [2024] Enyu Zhou, Guodong Zheng, Binghai Wang, Zhiheng Xi, Shihan Dou, Rong Bao, Wei Shen, Limao Xiong, Jessica Fan, Yurong Mou, et al. Rmb: Comprehensively benchmarking reward models in llm alignment. arXiv preprint arXiv:2410.09893, 2024.

- Zhu et al. [2024] Wanrong Zhu, Jack Hessel, Anas Awadalla, Samir Yitzhak Gadre, Jesse Dodge, Alex Fang, Youngjae Yu, Ludwig Schmidt, William Yang Wang, and Yejin Choi. Multimodal c4: An open, billion-scale corpus of images interleaved with text. NIPS, 36, 2024.

- Zou et al. [2024] Chengke Zou, Xingang Guo, Rui Yang, Junyu Zhang, Bin Hu, and Huan Zhang. Dynamath: A dynamic visual benchmark for evaluating mathematical reasoning robustness of vision language models. arXiv preprint arXiv:2411.00836, 2024.

Supplementary Material

6 Training Hyper-parameters

During training of VisualPRM, we enable the data-packing strategy [15]. We employ the AdamW optimizer [46] with $\beta_{1}=0.9$, $\beta_{2}=0.999$, and a weight decay of $0.05$. The learning rate is set to $1\times10^{-5}$. Training begins with a linear warmup over the first 5% of training steps, followed by cosine decay to a minimum learning rate of 0. We train for one epoch.
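The schedule described above can be sketched as a plain function of the step index. This is a minimal, framework-free sketch under stated assumptions: the function name `lr_at_step`, the 0-indexed step convention, and the choice that warmup ramps from near zero up to the peak rate are ours, not taken from the authors' training code.

```python
import math


def lr_at_step(step: int, total_steps: int, peak_lr: float = 1e-5,
               warmup_ratio: float = 0.05, min_lr: float = 0.0) -> float:
    """Hypothetical helper: learning rate at 0-indexed `step` out of `total_steps`."""
    warmup_steps = max(1, int(total_steps * warmup_ratio))
    if step < warmup_steps:
        # Linear warmup: reaches peak_lr exactly at the last warmup step.
        return peak_lr * (step + 1) / warmup_steps
    # Cosine decay from peak_lr down to min_lr over the remaining steps.
    decay_steps = max(1, total_steps - warmup_steps)
    progress = min(1.0, (step - warmup_steps) / decay_steps)
    return min_lr + 0.5 * (peak_lr - min_lr) * (1.0 + math.cos(math.pi * progress))
```

In PyTorch, one possible wiring (an assumption, not necessarily how VisualPRM was trained) is `torch.optim.AdamW(params, lr=1e-5, betas=(0.9, 0.999), weight_decay=0.05)` together with `torch.optim.lr_scheduler.LambdaLR(optimizer, lambda s: lr_at_step(s, total_steps) / 1e-5)`, since `LambdaLR` multiplies the base learning rate by the returned factor.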

7 More Ablation Studies

7.1 Effects of Training Hyper-parameters

When training our value-based Process Reward Model (PRM) on VisualPRM400K, we label a step as correct if its expected accuracy is greater than 0. In this section, we analyze how different expected-accuracy thresholds for step correctness affect performance. As shown in Table 8, raising the threshold lowers both the Best-of-8 evaluation performance and the VisualProcessBench scores, consistent with the observation reported for Qwen2.5-Math-PRM [94]. We therefore recommend a threshold of $0$ during training.
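To make the threshold concrete, the sketch below assumes that a step's expected accuracy is estimated by sampling several continuations from the reasoning prefix ending at that step and measuring the fraction that reach the correct final answer. The function names and the toy sampling outcomes are hypothetical, chosen only to show how a higher threshold turns more steps into negatives.

```python
from typing import List


def expected_accuracy(continuation_correct: List[bool]) -> float:
    """Monte Carlo estimate: fraction of sampled continuations reaching the correct answer."""
    if not continuation_correct:
        raise ValueError("need at least one sampled continuation")
    return sum(continuation_correct) / len(continuation_correct)


def label_steps(step_accuracies: List[float], threshold: float = 0.0) -> List[bool]:
    """A step is labeled correct iff its expected accuracy is strictly above the threshold."""
    return [acc > threshold for acc in step_accuracies]


# Toy outcomes for a three-step solution (four sampled continuations per step).
samples = [[True, False, True, False], [False, False, False, True], [False] * 4]
accs = [expected_accuracy(s) for s in samples]
```

With `threshold=0.0`, the second step (expected accuracy 0.25) still counts as correct; with `threshold=0.3` it becomes a negative, which illustrates how stricter thresholds shift the label distribution.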

7.2 Effects of Generation Hyper-parameters

In this section, we analyze the impact of the generation temperature on Best-of-8 evaluation. As shown in Table 8, as the temperature increases from 0.3 to 1.3, the overall performance of InternVL2.5-8B first improves and then declines. We attribute this to a trade-off between response diversity and accuracy. When the temperature is low (e.g., 0.3), the sampled responses lack diversity, which limits the upper bound that Best-of-N selection can reach. Conversely, when the temperature is high (e.g., 1.3), the responses become more random, which reduces the accuracy of individual responses and therefore the overall performance.
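The diversity side of this trade-off follows from how temperature reshapes the token distribution. The sketch below is a generic illustration of temperature-scaled softmax sampling, not InternVL2.5's decoding code; the logits are made-up toy values.

```python
import math
from typing import List


def softmax_with_temperature(logits: List[float], temperature: float) -> List[float]:
    """Convert logits to probabilities after dividing them by the sampling temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def entropy(probs: List[float]) -> float:
    """Shannon entropy in nats; higher entropy means more diverse samples."""
    return -sum(p * math.log(p) for p in probs if p > 0)


logits = [2.0, 1.0, 0.5, -1.0]  # toy next-token logits
low = softmax_with_temperature(logits, 0.3)
high = softmax_with_temperature(logits, 1.3)
```

At 0.3 almost all probability mass sits on the top token (low entropy, near-duplicate samples), whereas at 1.3 the mass spreads to lower-ranked tokens, producing more diverse but individually less reliable responses.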

7.3 Effects of Best-of-N Evaluation

In this section, we report Best-of-N evaluation results for increasing $N$ in Table 6 and Table 7. As $N$ increases, VisualPRM consistently improves the reasoning performance of InternVL2.5-8B [15] and MiniCPM-V2.6 [89]. Specifically, as $N$ grows from $8$ to $128$, the overall performance of InternVL2.5-8B rises from 41.2 to 44.0 (+2.8 points) and that of MiniCPM-V2.6 rises from 37.5 to 40.4 (+2.9 points), indicating that test-time scaling with VisualPRM continues to benefit MLLMs as $N$ grows.
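The selection step compared in these results can be sketched as follows. This is a minimal sketch under assumptions: per-step PRM scores are taken to lie in $[0, 1]$, and a response's score is assumed to be the mean of its step scores (the aggregation rule here is our assumption). Self-Consistency is shown as a majority vote over final answers; an outcome reward model such as VisualORM would instead assign a single score to each full response. All function names are hypothetical.

```python
from collections import Counter
from statistics import mean
from typing import List, Sequence


def prm_response_score(step_scores: Sequence[float]) -> float:
    """Aggregate per-step PRM scores into one response score (mean, by assumption)."""
    return mean(step_scores)


def best_of_n_prm(answers: List[str], step_scores: List[Sequence[float]]) -> str:
    """Return the final answer of the response with the highest aggregated PRM score."""
    if not answers or len(answers) != len(step_scores):
        raise ValueError("need one non-empty score list per response")
    best = max(range(len(answers)), key=lambda i: prm_response_score(step_scores[i]))
    return answers[best]


def self_consistency(answers: List[str]) -> str:
    """Baseline: majority vote over the N final answers."""
    return Counter(answers).most_common(1)[0][0]


# Toy example with N = 4 sampled responses.
answers = ["A", "B", "B", "C"]
scores = [[0.9, 0.8, 0.85], [0.6, 0.3], [0.7, 0.2, 0.4], [0.5, 0.5]]
```

In this toy case the PRM picks the single well-scored response ("A") while majority voting picks the more frequent answer ("B"), which is the kind of divergence that separates the two strategies in the table.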

| Method | N | MMMU | MathVista | MathVision | MathVerse-VO | DynaMath | WeMath | LogicVista | Overall |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Self-Consistency | 1 | 56.2 | 64.5 | 17.0 | 22.8 | 9.4 | 23.5 | 36.0 | 32.8 |
| | 8 | 58.0 | 65.9 | 23.4 | 30.5 | 18.4 | 32.7 | 43.0 | 38.8 |
| | 16 | 58.6 | 65.8 | 26.3 | 32.1 | 19.4 | 33.0 | 43.4 | 39.8 |
| | 32 | 60.4 | 66.7 | 28.0 | 32.6 | 20.8 | 34.1 | 44.7 | 41.0 |
| | 64 | 59.7 | 66.7 | 26.6 | 33.2 | 20.6 | 35.8 | 43.4 | 40.9 |
| | 128 | 60.6 | 67.4 | 25.7 | 32.0 | 22.6 | 34.7 | 43.2 | 40.9 |
| VisualORM | 1 | 56.2 | 64.5 | 17.0 | 22.8 | 9.4 | 23.5 | 36.0 | 32.8 |
| | 8 | 60.2 | 67.0 | 25.3 | 32.5 | 16.4 | 35.0 | 41.8 | 39.7 |
| | 16 | 58.3 | 67.7 | 27.0 | 33.6 | 16.6 | 33.1 | 39.1 | 39.3 |
| | 32 | 58.6 | 67.9 | 26.3 | 33.6 | 17.4 | 34.4 | 42.1 | 40.0 |
| | 64 | 59.4 | 66.8 | 28.6 | 33.9 | 17.8 | 34.1 | 42.3 | 40.4 |
| | 128 | 59.4 | 66.6 | 28.3 | 33.5 | 16.8 | 32.3 | 40.9 | 39.7 |
| VisualPRM | 1 | 56.2 | 64.5 | 17.0 | 22.8 | 9.4 | 23.5 | 36.0 | 32.8 |
| | 8 | 60.2 | 68.5 | 25.7 | 35.8 | 18.0 | 36.5 | 43.8 | 41.2 |
| | 16 | 60.2 | 69.9 | 27.3 | 36.4 | 19.0 | 38.8 | 42.5 | 42.0 |