# SACTOR: LLM-Driven Correct and Idiomatic C to Rust Translation with Static Analysis and FFI-Based Verification

**Authors**: Tianyang Zhou, Ziyi Zhang, Haowen Lin, Somesh Jha, Mihai Christodorescu, Kirill Levchenko, Varun Chandrasekaran

> University of Illinois Urbana-Champaign

> University of Wisconsin–Madison

> Google

## Abstract

Translating software written in C to Rust has significant benefits in improving memory safety. However, manual translation is cumbersome, error-prone, and often produces unidiomatic code. Large language models (LLMs) have demonstrated promise in producing idiomatic translations, but offer no correctness guarantees. We propose SACTOR, an LLM-driven C-to-Rust translation tool that employs a two-step process: an initial “unidiomatic” translation to preserve interface, followed by an “idiomatic” refinement to align with Rust standards. To validate correctness of our function-wise incremental translation that mixes C and Rust, we use end-to-end testing via the foreign function interface. We evaluate SACTOR on $200$ programs from two public datasets and on two more complex scenarios (a 50-sample subset of CRust-Bench and the libogg library), comparing multiple LLMs. Across datasets, SACTOR delivers high end-to-end correctness and produces safe, idiomatic Rust with up to 7 $\times$ fewer Clippy warnings; On CRust-Bench, SACTOR achieves an average (across samples) of 85% unidiomatic and 52% idiomatic success, and on libogg it attains full unidiomatic and up to 78% idiomatic coverage on GPT-5.

Keywords Software Engineering $\cdot$ Static Analysis $\cdot$ C $\cdot$ Rust $\cdot$ Large Language Models $\cdot$ Machine Learning

## 1 Introduction

C is widely used due to its ability to directly manipulate memory and hardware (love2013linux). However, manual memory management leads to vulnerabilities such as buffer overflows, dangling pointers, and memory leaks (bigvul). Rust addresses these issues by enforcing memory safety through a strict ownership model without garbage collection (matsakis2014rust), and has been adopted in projects like the Linux kernel https://github.com/Rust-for-Linux/linux and Mozilla Firefox. Translating legacy C code into idiomatic Rust improves safety and maintainability, but manual translation is error-prone, slow, and requires expertise in both languages.

Automatic tools such as C2Rust (c2rust) generate Rust by analyzing C ASTs, but rule-based or static approaches (crown; c2rust; emre2021translating; hong2024don; ling2022rust) typically yield unidiomatic code with heavy use of unsafe. Given semantic differences between C and Rust, idiomatic translations are crucial for compiler-enforced safety, readability, and maintainability.

Large language models (LLMs) show potential for capturing syntax and semantics (pan2023understanding), but they hallucinate and often generate incorrect or unsafe code (perry2023users). In C-to-Rust translation, naive prompting produces unsafe or semantically misaligned outputs. Prior work has explored prompting strategies (syzygy; c2saferrust; shiraishi2024context) and verification methods such as fuzzing and symbolic execution (vert; flourine). While these improve correctness, they struggle with complex programs and rarely yield idiomatic Rust. For example, Vert (vert) fails on programs with complex data structures, and C2SaferRust (c2saferrust) still produces Rust with numerous unsafe blocks.

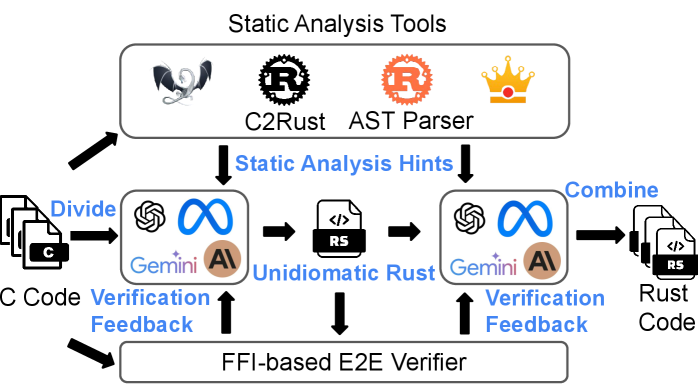

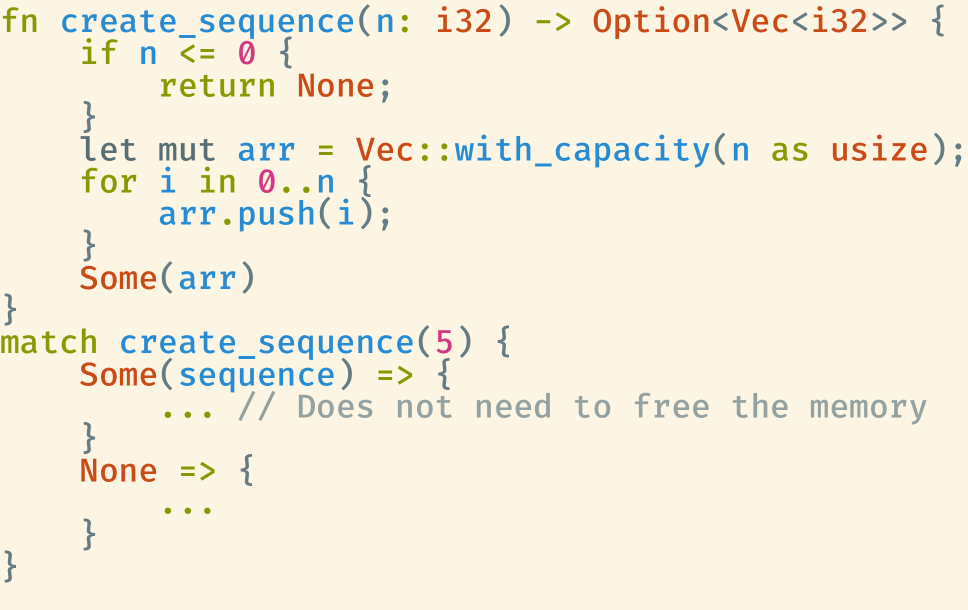

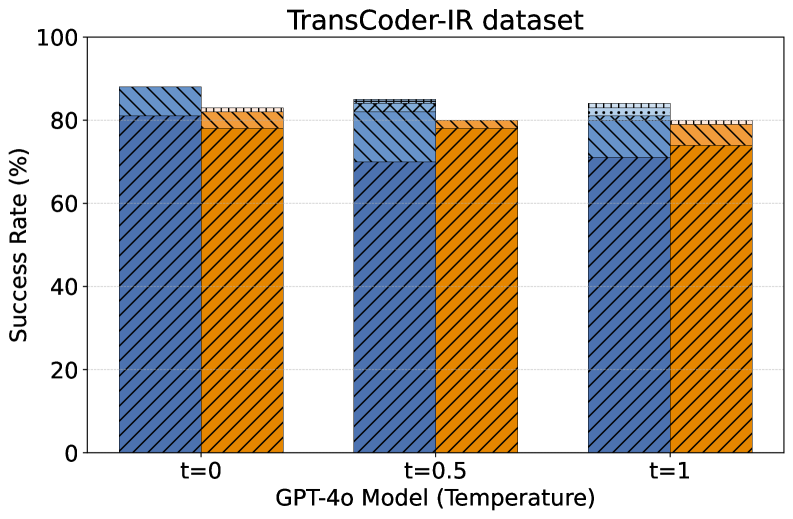

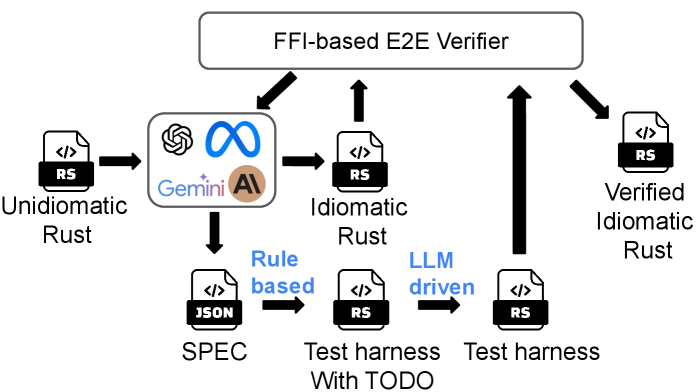

In this paper, we introduce SACTOR, a structure-aware, LLM-driven C-to-Rust translator (Figure 1). SACTOR follows a two-stage pipeline:

- C $\to$ Unidiomatic Rust: Interface-preserving translation that may use unsafe for low-level operations.

- Unidiomatic $\to$ Idiomatic Rust: Behaviorally-equivalent translation that refines to Rust idioms, eliminating unsafe and migrating C API patterns to Rust equivalents.

Static analysis of C code (pointer semantics, dependencies) guides both stages. To verify correctness, we embed the translated Rust with the original C via the Foreign Function Interface (FFI), enabling end-to-end testing on both stages and accept a stage when all end-to-end tests can pass. This decomposition separates syntax from semantics, simplifies the LLM task, and ensures more idiomatic, memory-safe Rust SACTOR code is available at https://github.com/qsdrqs/sactor and datasets are available at https://github.com/qsdrqs/sactor-datasets. An example of SACTOR translation process is in Appendix E.

LLM orchestration. SACTOR places the LLM inside a neuro-symbolic feedback loop. Static analysis and a machine-readable interface specification guide prompting; compiler diagnostics and end-to-end tests provide structured feedback. In the idiomatic verification phase, a rule-based harness generator with an LLM fallback completes the feedback loop. This design first ensures semantic correctness in unidiomatic Rust, then refines it into idiomatic Rust, with both stages verifiable in a unified two-step process.

Our contributions are as follows:

- Method: An LLM-orchestrated, structure-aware two-phase pipeline that separates semantic preservation from idiomatic refinement, guided by static analysis (§ 4)

- Verification: SACTOR verifies both unidiomatic and idiomatic translations via FFI-based testing. During idiomatic verification, it uses a co-produced interface specification to synthesize C/Rust harnesses with an LLM fallback for missing patterns; compiler and test feedback are structured into targeted prompt repairs (§ 4.3).

- Evaluation: Across two datasets (200 programs) and five LLMs, SACTOR reaches 93% / 84% end-to-end correctness (DeepSeek-R1) and improves idiomaticity (§ 6.2). On CRust-Bench (50 samples), unidiomatic translation averages 85% function-level success rate across all samples (82% aggregated across functions), with 32/50 samples fully translated; idiomatic success is computed on those 32 samples and averages 52% (43% aggregated; 8/32 fully idiomatic). On libogg (77 functions), the function-level success rate is 100% for unidiomatic and 53% and 78% for idiomatic across GPT-4o and GPT-5, respectively (§ 6.3).

- Diagnostics: We analyze efficiency, feedback, temperature sensitivity, and failure cases: GPT-4o is the most token-efficient, compilation/testing feedback boosts weaker models by 17%, temperature has little effect, and reasoning models like DeepSeek-R1 excel on complex bugs such as format-string and array errors (Appendix H).

<details>

<summary>x1.png Details</summary>

### Visual Description

## Flowchart: Static Analysis Tools Workflow

### Overview

The flowchart illustrates a multi-stage process for static code analysis and verification, integrating tools like C2Rust, AST Parser, and Gemini AI. It emphasizes feedback loops between static analysis hints, code division, and verification to produce Rust code validated by an FFI-based E2E Verifier.

### Components/Axes

- **Main Title**: "Static Analysis Tools" (top-center).

- **Key Boxes**:

1. **Static Analysis Tools**: Contains icons for C2Rust (black dragon), AST Parser (gear with "R"), and a crown (orange).

2. **Static Analysis Hints**: Arrows from the main box to "Gemini AI" and "Unidiomatic Rust."

3. **Gemini AI**: Labeled with a blue infinity symbol and "AI" (bottom-left).

4. **Unidiomatic Rust**: Contains an RSI file icon (center).

5. **FFI-based E2E Verifier**: Bottom-most box, receiving feedback from both Gemini AI and Unidiomatic Rust.

- **Arrows**:

- "Divide" (left arrow from C Code to Gemini AI/Unidiomatic Rust).

- "Combine" (right arrow from Gemini AI/Unidiomatic Rust to Rust Code).

- "Verification Feedback" (upward arrows from both Gemini AI and Unidiomatic Rust to the FFI-based E2E Verifier).

### Detailed Analysis

- **C Code Input**: Divided into two paths:

- **Gemini AI**: Processes code with verification feedback.

- **Unidiomatic Rust**: Processes code with RSI file output and verification feedback.

- **Rust Code Output**: Generated by combining results from Gemini AI and Unidiomatic Rust.

- **FFI-based E2E Verifier**: Receives feedback from both paths, ensuring end-to-end validation.

### Key Observations

- **Feedback Loops**: Both Gemini AI and Unidiomatic Rust contribute verification feedback to the FFI-based E2E Verifier, suggesting iterative refinement.

- **Tool Integration**: C2Rust and AST Parser are upstream tools feeding into the analysis hints.

- **Dual Paths**: Code is split for parallel processing (Gemini AI vs. Unidiomatic Rust), then merged for final output.

### Interpretation

This workflow demonstrates a hybrid approach to static analysis:

1. **Toolchain Synergy**: C2Rust and AST Parser provide foundational analysis, while Gemini AI introduces AI-driven insights.

2. **Code Refinement**: Dividing code into Gemini AI (likely for high-level feedback) and Unidiomatic Rust (for syntax/idiom checks) allows targeted improvements.

3. **Verification Rigor**: The FFI-based E2E Verifier acts as a gatekeeper, ensuring combined outputs meet cross-platform/interoperability standards.

4. **Unidiomatic Rust’s Role**: The RSI file icon suggests a focus on Rust-specific idioms, possibly flagging non-idiomatic patterns for correction.

The process emphasizes modularity, with static analysis tools and AI working in tandem to produce verified, idiomatic Rust code. The crown icon in the Static Analysis Tools box may symbolize "gold-standard" quality assurance.

</details>

Figure 1: Overview of the SACTOR methodology.

## 2 Background

Primer on C and Rust: C is a low-level language that provides direct access to memory and hardware through pointers and abstracts machine-level instructions (tiobe). While this makes it efficient, it suffers from memory vulnerabilities (sbufferoverflow; hbufferoverflow; uaf; memoryleak). Rust, in contrast, provides memory safety without additional performance penalty, and has the same ability to access low-level hardware as C; it enforces strict compile-time memory safety through ownership, borrowing, and lifetimes to eliminate memory vulnerabilities (matsakis2014rust; jung2017rustbelt).

Challenges in Code Translation: Despite its advantages, and since Rust is relatively new, many widely used system-level programs remain in C. It is desirable to translate such programs to Rust, but the process is challenging due to fundamental language differences. Figure 3 in Appendix A shows an example of a simple C program and its Rust equivalent to illustrate the differences between two languages in terms of memory management and error handling. While Rust permits unsafe blocks for C-like pointer operations, their use is discouraged due to the absence of compiler guarantees and their non-idiomatic nature for further maintenance Other differences include string representation, pointer usage, array handling, reference lifetimes, and error propagation. A non-exhaustive summary appears in Appendix A..

## 3 Related Work

LLMs for C-to-Rust Translation: Vert (vert) combines LLM-generated candidates with fuzz testing and symbolic execution to ensure equivalence, but this strict verification struggles with scalability and complex C features. Flourine (flourine) incorporates error feedback and fuzzing, using data type serialization to mitigate mismatches, yet serialization issues still account for nearly half of errors. shiraishi2024context decompose C programs into sub-tasks (e.g., macros) and translate them with predefined Rust idioms, but evaluate only compilation success without functional correctness. syzygy employ dynamic analysis to capture runtime behavior as translation guidance, but coverage limits hinder generalization across execution paths. c2saferrust refine C2Rust outputs with LLMs to reduce unidiomatic constructs (unsafe, libc), but remain constrained by C2Rust’s preprocessing, which strips comments and directives (§ 4.2) and reduces context for idiomatic translation.

Non-LLM Approaches for C-to-Rust Translation: C2Rust (c2rust) translates by converting C ASTs into Rust ASTs and applying rule-based transformations. While syntactically correct, the results are structural translations that rely heavily on unsafe blocks and explicit type conversions, yielding low readability. Crown (crown) introduces static ownership tracking to reduce pointer usage in generated Rust code. hong2024don focus on handling return values in translation, while ling2022rust rely on rules and heuristics. Although these methods reduce some unsafe usage compared to C2Rust, the resulting code remains largely unidiomatic.

## 4 SACTOR Methodology

We propose SACTOR, an LLM-driven C-to-Rust translation tool using a two-step translation methodology. As Rust and C differ substantially in semantics (§ 2), SACTOR augments the LLM with static-analysis-derived “hints” that capture semantic information in the C code. The four main stages of SACTOR are outlined below.

### 4.1 Task Division

We begin by dividing the program into smaller parts that can be processed by the LLM independently. This enables the LLM to focus on a narrower scope for each translation task and ensures the program fits within its context window. This strategy is supported by studies showing that LLM performance degrades on long-context understanding and generation tasks (liu2024longgenbench; li2024long). By breaking the program into smaller pieces, we can mitigate these limitations and improve performance on each individual task. To facilitate task division and extract relevant language information – such as definitions, declarations, and dependencies – from C code, we developed a static analysis tool called C Parser based on libclang (a library that provides a C compiler interface, allowing access to semantic information of the code).

Our C Parser analyzes the input program and splits the program into fragments consisting of a single type, global variable, or function definition. This step also extracts semantic dependencies between each part (e.g., a function definition depending on a prior type definition). We then process each program fragment in dependency order: all dependencies of a code fragment are processed before the fragment. Concretely, C Parser constructs a directed dependency graph whose nodes are types, global variables, and functions, and whose edges point from each item to the items it directly depends on. We compute a translation order by repeatedly selecting items whose dependencies have already been processed. If the dependency graph contains a cycle, SACTOR currently treats this as an unsupported case and terminates with an explicit error. In addition, to support real-world C projects, SACTOR makes use of the C project compile commands generated by the make tool and performs preprocessing on the C source files. In Appendix B, we provide more details on how we preprocess source files and divide programs.

### 4.2 Translation

To ensure that each program fragment is translated only after its dependencies have been processed, we begin by translating data types, as they form the foundational elements for functions. This is followed by global variables and functions. We divide the translation process into two steps.

Step 1. Unidiomatic Rust Translation: We aim to produce interface equivalent Rust code from the original C code, which allows the use of unsafe blocks to do pointer manipulations and C standard library functions while keeping the same interface as original C code. For data type translation, we leverage information from C2Rust (c2rust) to help the conversion. While C2Rust provides reliable data type translation, it struggles with function translation due to its compiler-based approach, which omits source-level details like comments, macros, and other elements. These omissions significantly reduce the readability and usability of the generated Rust code. Thus, we use C2Rust only for data type translation, and use an LLM to translate global variables and functions. For functions, we rely on our C Parser to automatically extract dependencies (e.g., function signatures, data types, and global variables) and reference the corresponding Rust code. This approach guides the LLM to accurately translate functions by leveraging the previously translated components and directly reusing or invoking them as needed.

Step 2. Idiomatic Rust Translation: The goal of this step is to refine unidiomatic Rust into idiomatic Rust by removing unsafe blocks and following Rust idioms. This stage focuses on rewriting behavioral-equivalent but low-level constructs into type-safe abstractions while preserving behavior verified in the previous step. Handling pointers from C code is a key challenge, as they are considered unsafe in Rust. Unsafe pointers should be replaced with Rust types such as references, arrays, or owned types. To address this, we use Crown (crown) to facilitate the translation by analyzing pointer mutability, fatness (e.g., arrays), and ownership. This information provided by Crown helps the LLM assign appropriate Rust types to pointers. Owned pointers are translated to Box, while borrowed pointers use references or smart pointers. Crown assists in translating data types like struct and union, which are processed first as they are often dependencies for functions. For function translations, Crown analyzes parameters and return pointers, while local variable pointers are inferred by the LLM. Dependencies are extracted using our C Parser to guide accurate function translation. The idiomatic code is produced together with an interface transformation specification, forms the input to the verification step in § 4.3.

### 4.3 Verification

To verify the equivalence between source and target languages, prior work has relied on symbolic execution and fuzz testing, are impractical for real-world C-to-Rust translation (details in Appendix C). We instead validate correctness through soft equivalence: ensuring functional equivalence of the entire program via end-to-end (E2E) tests. This avoids the complexity of generating specific inputs or constraints for individual functions and is well-suited for real-world programs where such E2E tests are often available and reusable. Correctness confidence in this framework depends on the code coverage of the E2E tests: the broader the coverage, stronger the assurance of equivalence.

Verifying Unidiomatic Rust Code. This is straightforward, as it is semantically equivalent to the original C code and maintains compatible function signatures and data types, which ensures a consistent Application Binary Interface (ABI) between the two languages and enabling direct use of the FFI for cross-language linking. The verification process involves two main steps: First, the unidiomatic Rust code is compiled using the Rust compiler to check for successful compilation. Then, the original C code is recompiled with the Rust translation linked as a shared library. This setup ensures that when the C code calls the target function, it invokes the Rust translation instead. To verify correctness, E2E tests are run on the entire program, comparing the outputs of the original C code and the unidiomatic Rust translation. If all tests pass, the target function is considered verified.

Verifying Idiomatic Rust Code. Idiomatic Rust diverges from the original C program in both types and function signatures, producing an ABI mismatch that prevents direct linking into the C build. We therefore verify it via a synthesized, C-compatible test harness together with E2E tests.

During idiomatic translation, SACTOR co-produces a small, machine-readable specification (SPEC) for each function/struct. The SPEC captures, in a compact form, how C-facing values map to idiomatic Rust, including the expected pointer shape (slice / cstring / ref), where lengths come from (a sibling field or a constant), and basic nullability and return conventions; it also allows marking fields that should be compared in self-checks. A rule-based generator consumes the SPEC to synthesize a C-compatible harness that bridges from the C ABI to idiomatic code and backwards. Figure 9 shows the schematic, and Table 12 summarizes current supported patterns; Appendix L presents a detailed exposition of the SPEC-driven harness generation technique (rules and design choices), and Appendix D provides a concrete example of the generated harness. For structs, the SPEC defines bidirectional converters between the C-facing and idiomatic layouts, validated by a lightweight roundtrip test that checks the fields marked as comparable for consistency after conversion. When the SPEC includes a pattern the generator does not yet implement (e.g., aliasing/offset views or unsupported pointer kinds or types), we emit a localized TODO and use an LLM guided by the SPEC to fill only the missing conversions. Finally, we compile the idiomatic crate and the generated harness, link them into the original C build via FFI, and run the program’s existing E2E tests; passing tests validate the idiomatic translation under the coverage of those tests, while failures trigger the feedback procedure in § 4.3.

Feedback Mechanism. For failures, we feed structured signals back to translation: compiler errors guide fixes for build breaks; for E2E failures we use the Rust procedural macro to automatically instrument the target to log salient inputs/outputs, re-run tests, and return the traces to the translator for refinement.

### 4.4 Code Combination

By translating and verifying all functions and data types, we integrate them into a unified Rust codebase. We first collect the translated Rust code from each subtask and remove duplicate definitions and other redundancies required only for standalone compilation. The cleaned code is then organized into a well-structured Rust implementation of the original C program. Finally, we run end-to-end tests on the combined program to verify the correctness of the final Rust output. If all tests pass, the translation is considered successful.

## 5 Experimental Setup

### 5.1 Datasets Used

For the selection of datasets for evaluation, we consider the following criteria:

- Sufficient Number: The dataset should contain a substantial number of C programs to ensure a robust evaluation of the approach’s performance across a diverse set of examples.

- Presence of Non-Trivial C Features: The dataset should include C programs with advanced features such as multiple functions, struct s, and other non-trivial constructs as it enables the evaluation to assess the approach’s ability to handle complex features of C.

- Availability of E2E Tests: The dataset should either include E2E tests or make it easy to generate them as this is essential for accurately evaluating the correctness of the translated code.

Based on the above criteria, we evaluate on two widely used program suites in the translation literature: TransCoder-IR (transcoderir) and Project CodeNet (codenet). Complete details for these datasets are in Appendix F. For TransCoder-IR and CodeNet, we randomly sample 100 C programs from each (for CodeNet, among programs with external inputs) to ensure computational feasibility while maintaining statistical significance.

To better reflect the language features of real-world C codebases and allow test reuse (§ 6.3), we also evaluate on two targets: (i) a 50-sample subset of CRust-Bench (khatry2025crust) and (ii) the libogg multimedia container library (libogg). In CRust-Bench, we exclude entries outside our pipeline’s scope (e.g., circular dependencies or compiler-specific intrinsics). libogg is a real-world C project of about 2,000 lines of code with 77 functions involving non-trivial struct s, buffer s, and pointer manipulation. Both benchmarks reuse their upstream end-to-end tests to verify the translated code.

### 5.2 Evaluation Metrics

Success Rate: This is defined as the ratio of the number of programs that can (a) successfully be translated to Rust, and (b) successfully pass the E2E tests for both unidiomatic and idiomatic translation phases to the total number of programs. To enable the LLMs to utilize feedback from previous failed attempts, we allow the LLM to make up to 6 attempts for each translation process.

Idiomaticity: To evaluate the idiomaticity of the translated code, we use three metrics:

- Lint Alert Count is measured by running Rust-Clippy (clippy), a tool that provides lints on unidiomatic Rust (including improper use of unsafe code and other common style issues). By collecting the warnings and errors generated by Rust-Clippy for the translated code, we can assess its idiomaticity: fewer alerts indicate more idiomaticity. Previous translation works (vert; flourine) have also used Rust-Clippy.

- Unsafe Code Fraction, inspired by shiraishi2024context, is defined as the ratio of tokens inside unsafe code blocks or functions to total tokens for a single program. High usage of unsafe is considered unidiomatic, as it bypasses compiler safety checks, introduces potential memory safety issues and reduces code readability.

- Unsafe Free Fraction indicates the percentage of translated programs in a dataset that do not contain any unsafe code. Since unsafe code represents potential points where the compiler cannot guarantee safety, this metric helps determine the fraction of results that can be achieved without relying on unsafe code.

### 5.3 LLMs Used

We evaluate 6 models across different experiments. On the two datasets (TransCoder-IR and CodeNet) we use four non-reasoning models—GPT-4o (OpenAI), Claude 3.5 Sonnet (Anthropic), Gemini 2.0 Flash (Google), and Llama 3.3 70B Instruct (Meta), and one reasoning model DeepSeek-R1 (DeepSeek). For real-world codebases, we run GPT-4o on CRust-Bench and run both GPT-4o and GPT-5 on libogg. Model configurations appear in Appendix G.

## 6 Evaluation

Through our evaluation, we answer: (1) How successful is SACTOR in generating idiomatic Rust code using different LLM models?; (2) How idiomatic is the Rust code produced by SACTOR compared to existing approaches?; and (3) How well does SACTOR generalize to real-world C codebases?

Our results show that: (1) DeepSeek-R1 achieves the highest success rates (93%) with SACTOR on TransCoder-IR and also reaches the highest success rates (84%) on Project CodeNet (§ 6.1), while failure reasons vary between datasets and models (Appendix H); (2) SACTOR ’s idiomatic translation results outperforms all previous baselines, producing Rust code with fewer Clippy warnings and 100% unsafe-free translations (§ 6.2); and (3) For real-world codebases (§ 6.3), SACTOR attains strong unidiomatic success and moderate idiomatic success: on CRust-Bench, unidiomatic averages 85% across 50 samples (82% aggregated across 966 functions; 32/50 fully translated) and idiomatic averages 52% across 32 samples that fully translated into unidiomatic Rust (43% aggregated across 580 functions; 8/32 fully translated); on libogg unidiomatic reaches 100% and idiomatic spans 53% and 78% for GPT-4o and GPT-5, respectively. Failures concentrate at ABI/type boundaries and harness synthesis (pointer/slice shape, length sources, lifetime or mutability), with additional cases from unsupported features and borrow/ownership pitfalls. Overall, improving the model itself alleviates a subset of failure modes; for a fixed model, strengthening the framework and interface rules also improves outcomes but remains limited when confronted with previously unseen patterns.

We also evaluate the computational cost of SACTOR (Appendix I), the impact of the feedback mechanism (Appendix J), and temperature settings (Appendix K) . GPT-4o and Gemini 2.0 achieve the best cost-performance balance, while Llama 3.3 consumes the most tokens among non-reasoning models. DeepSeek-R1 uses 3-7 $\times$ more tokens than others. The feedback mechanism boosts Llama 3.3’s success rate by 17%, but has little effect on GPT-4o, suggesting it benefits lower-performing models more. Temperature has minimal impact.

### 6.1 Success Rate Evaluation

<details>

<summary>x2.png Details</summary>

### Visual Description

## Comparison Chart: Unid. vs. Idiom Across SR1-SR6

### Overview

The image is a comparative chart displaying two categories ("Unid." and "Idiom") across six subcategories (SR1-SR6). Each subcategory is represented by a colored rectangle with distinct patterns, organized in two vertical columns. The legend on the left associates colors with categories: blue shades for "Unid." and orange shades for "Idiom."

### Components/Axes

- **Legend**:

- **Unid.**: Blue rectangles with patterns (solid, diagonal stripes, grid, dots, vertical stripes).

- **Idiom**: Orange rectangles with patterns (solid, diagonal stripes, grid, dots, vertical stripes).

- **Columns**:

- **Left Column ("Unid.")**: Labels SR1-SR6 with blue-patterned rectangles.

- **Right Column ("Idiom")**: Labels SR1-SR6 with orange-patterned rectangles.

- **Labels**:

- **Rows**: SR1, SR2, SR3 (left column) and SR4, SR5, SR6 (right column).

- **Columns**: "Unid." (left) and "Idiom" (right).

### Detailed Analysis

- **Unid. SR1**: Solid blue rectangle.

- **Unid. SR2**: Diagonal blue stripes.

- **Unid. SR3**: Grid-patterned blue rectangle.

- **Unid. SR4**: Dotted blue rectangle.

- **Unid. SR5**: Vertical blue stripes.

- **Unid. SR6**: Crosshatch-patterned blue rectangle.

- **Idiom SR1**: Solid orange rectangle.

- **Idiom SR2**: Diagonal orange stripes.

- **Idiom SR3**: Grid-patterned orange rectangle.

- **Idiom SR4**: Dotted orange rectangle.

- **Idiom SR5**: Vertical orange stripes.

- **Idiom SR6**: Crosshatch-patterned orange rectangle.

### Key Observations

1. **Color Coding**: Blue consistently represents "Unid.," while orange represents "Idiom."

2. **Pattern Variation**: Each SR subcategory uses a unique pattern (solid, stripes, grid, dots, vertical stripes, crosshatch) to differentiate data points.

3. **Label Placement**: Labels are centered within rectangles, with "Unid." on the left and "Idiom" on the right.

4. **Legend Positioning**: The legend is aligned to the left of the chart, with "Unid." above "Idiom."

### Interpretation

This chart visually contrasts "Unid." and "Idiom" across six scenarios (SR1-SR6), using color and patterns to encode differences. The systematic alternation of patterns suggests a categorical distinction between the two groups, possibly indicating variations in attributes like frequency, usage, or context. The absence of numerical data implies the chart prioritizes qualitative differentiation over quantitative metrics. The structured layout ensures clarity in comparing the two categories across all subcategories.

</details>

<details>

<summary>x3.png Details</summary>

### Visual Description

## Bar Chart: LLM Model Success Rate Comparison

### Overview

The chart compares the success rates of five large language models (LLMs) across four performance categories: Correct, Incorrect, Uncertain, and Ambiguous. Each model's performance is visualized as a stacked bar, with segmented patterns and colors representing the contribution of each category to the total success rate.

### Components/Axes

- **X-Axis (LLM Models)**: Labeled with model names and versions:

- Claude 3.5

- Gemini 2.0

- Llama 3.3

- GPT-40

- DeepSeek-R1

- **Y-Axis (Success Rate)**: Scaled from 0% to 100% in 20% increments.

- **Legend**: Located on the right, mapping colors/patterns to categories:

- **Blue (Diagonal Lines)**: Correct (正确)

- **Orange (Diagonal Lines)**: Incorrect (错误)

- **Gray (Dotted Lines)**: Uncertain (不确定)

- **Light Blue (Checkered)**: Ambiguous (模糊)

### Detailed Analysis

1. **Claude 3.5**:

- Total Success Rate: ~60%

- Breakdown:

- Correct: ~35% (blue)

- Incorrect: ~15% (orange)

- Uncertain: ~5% (gray)

- Ambiguous: ~5% (light blue)

2. **Gemini 2.0**:

- Total Success Rate: ~85%

- Breakdown:

- Correct: ~60% (blue)

- Incorrect: ~10% (orange)

- Uncertain: ~5% (gray)

- Ambiguous: ~10% (light blue)

3. **Llama 3.3**:

- Total Success Rate: ~60%

- Breakdown:

- Correct: ~35% (blue)

- Incorrect: ~10% (orange)

- Uncertain: ~10% (gray)

- Ambiguous: ~5% (light blue)

4. **GPT-40**:

- Total Success Rate: ~90%

- Breakdown:

- Correct: ~70% (blue)

- Incorrect: ~10% (orange)

- Uncertain: ~5% (gray)

- Ambiguous: ~5% (light blue)

5. **DeepSeek-R1**:

- Total Success Rate: ~100%

- Breakdown:

- Correct: ~55% (blue)

- Incorrect: ~30% (orange)

- Uncertain: ~10% (gray)

- Ambiguous: ~5% (light blue)

### Key Observations

- **DeepSeek-R1** achieves the highest total success rate (100%) but relies heavily on **Incorrect** answers (~30%), suggesting potential overconfidence or flawed evaluation metrics.

- **GPT-40** excels in **Correct** answers (~70%), driving its high total success rate (~90%).

- **Gemini 2.0** balances **Correct** answers (~60%) with moderate **Ambiguous** (~10%) and **Uncertain** (~5%) rates.

- **Claude 3.5** and **Llama 3.3** show similar total success rates (~60%), but Llama has higher **Uncertain** (~10%) and lower **Incorrect** (~10%) rates.

### Interpretation

The data highlights trade-offs in LLM performance:

- **DeepSeek-R1**'s 100% success rate is anomalous, as its **Incorrect** category dominates, raising questions about evaluation criteria or data labeling.

- **GPT-40** demonstrates reliability through high **Correct** answers, making it the most consistent performer.

- Models with higher **Uncertain**/**Ambiguous** rates (e.g., Llama 3.3) may prioritize caution over speed, impacting total success.

- The chart underscores that "success rate" is context-dependent, influenced by how models handle errors, uncertainty, and ambiguity.

</details>

(a) TransCoder-IR SR

<details>

<summary>x4.png Details</summary>

### Visual Description

## Bar Chart: Performance Comparison of LLM Models on a Benchmark Task

### Overview

The chart compares the performance of five large language models (LLMs) across three categories: "Correct Answers," "Incorrect Answers," and "Uncertain Responses." Each model's performance is represented as a stacked bar, with segments differentiated by color and pattern. The y-axis measures performance as a percentage (0–100), while the x-axis lists the models: Claude 3.5, Gemini 2.0, Llama 3.3, GPT-4, and DeepSeek-R1.

### Components/Axes

- **X-Axis (Categories)**: LLM Models (Claude 3.5, Gemini 2.0, Llama 3.3, GPT-4, DeepSeek-R1).

- **Y-Axis (Scale)**: Percentage (0–100), with gridlines at 20% intervals.

- **Legend**:

- **Blue (Solid)**: Correct Answers.

- **Orange (Diagonal Hatch)**: Incorrect Answers.

- **Gray (Dotted)**: Uncertain Responses.

- **Bar Structure**: Stacked vertically, with segments ordered from bottom (Correct Answers) to top (Uncertain Responses).

### Detailed Analysis

1. **Claude 3.5**:

- Correct Answers: ~60% (blue).

- Incorrect Answers: ~20% (orange).

- Uncertain Responses: ~5% (gray).

2. **Gemini 2.0**:

- Correct Answers: ~65% (blue).

- Incorrect Answers: ~10% (orange).

- Uncertain Responses: ~10% (gray).

3. **Llama 3.3**:

- Correct Answers: ~45% (blue).

- Incorrect Answers: ~30% (orange).

- Uncertain Responses: ~10% (gray).

4. **GPT-4**:

- Correct Answers: ~70% (blue).

- Incorrect Answers: ~15% (orange).

- Uncertain Responses: ~5% (gray).

5. **DeepSeek-R1**:

- Correct Answers: ~55% (blue).

- Incorrect Answers: ~25% (orange).

- Uncertain Responses: ~10% (gray).

### Key Observations

- **Highest Correct Answers**: GPT-4 (~70%) and DeepSeek-R1 (~55%) lead in correct responses.

- **Lowest Correct Answers**: Llama 3.3 (~45%) underperforms in this category.

- **Highest Uncertainty**: Gemini 2.0 and DeepSeek-R1 tie at ~10% for uncertain responses.

- **Incorrect Answers**: Llama 3.3 (~30%) has the highest rate of incorrect answers, while GPT-4 (~15%) has the lowest.

### Interpretation

The data suggests significant variability in model reliability. GPT-4 demonstrates the strongest performance, with the highest correct answers and lowest uncertainty. Llama 3.3 struggles with accuracy, showing both the lowest correct answers and highest incorrect responses. Gemini 2.0 and DeepSeek-R1 exhibit moderate performance but share the highest uncertainty, potentially indicating limitations in confidence calibration. The patterns (e.g., GPT-4’s low uncertainty) may reflect architectural differences or training data quality. These trends highlight trade-offs between accuracy, confidence, and error rates across LLMs.

</details>

(b) CodeNet SR

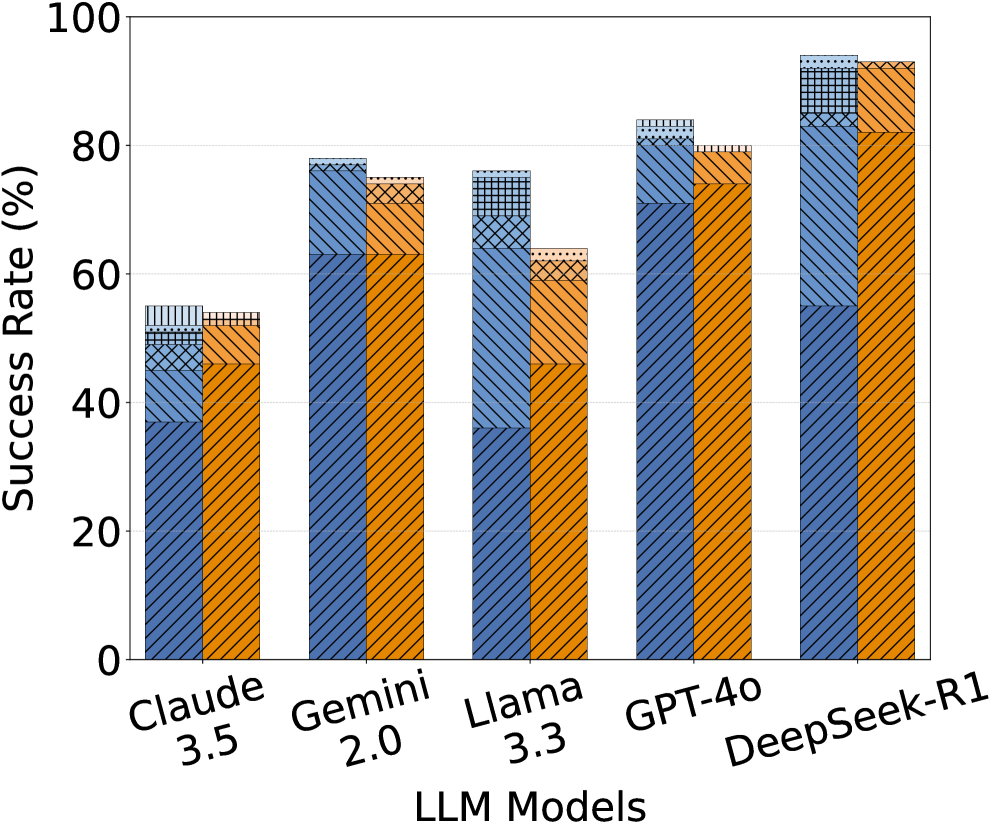

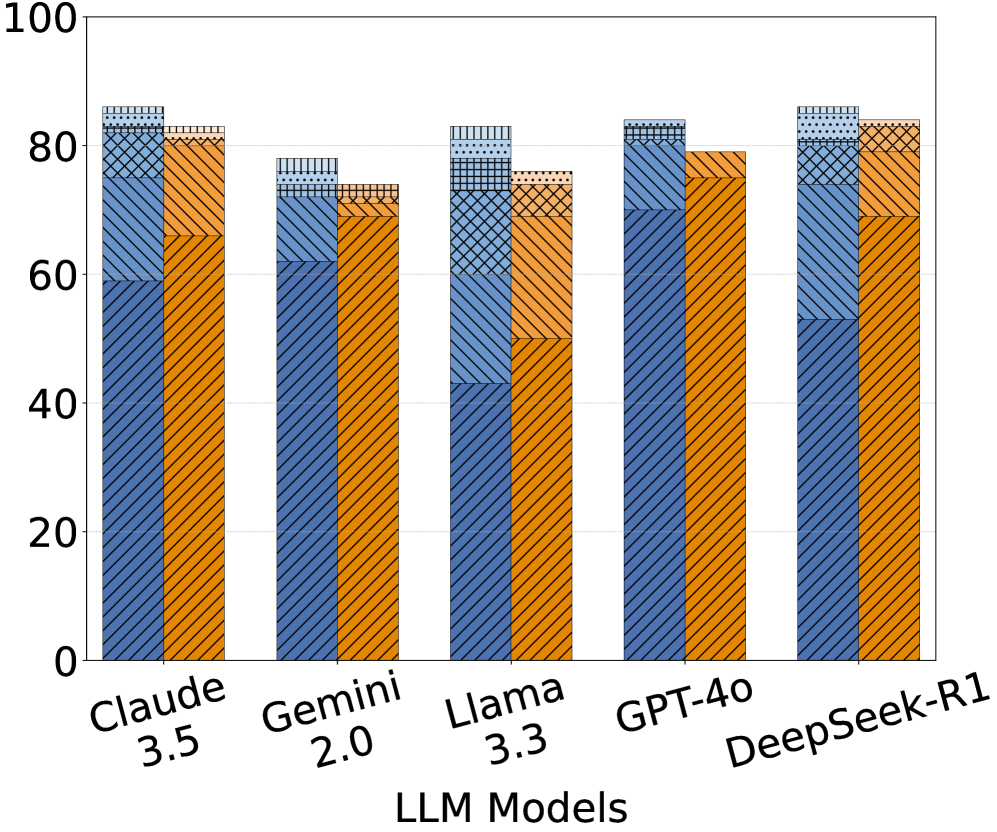

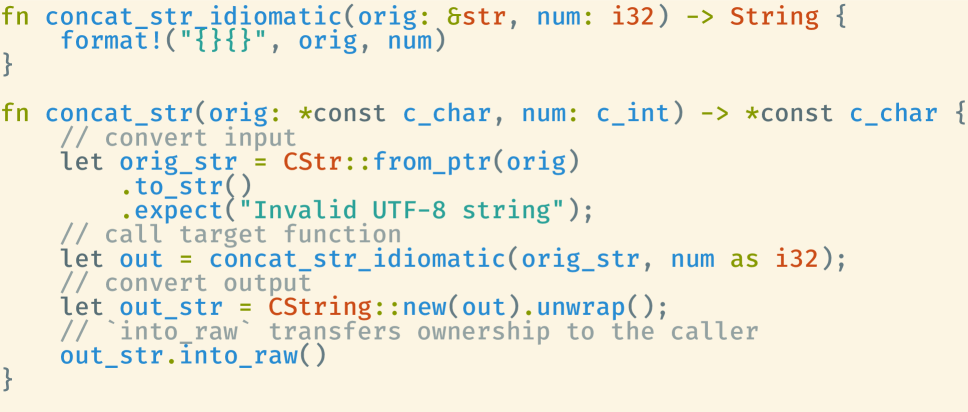

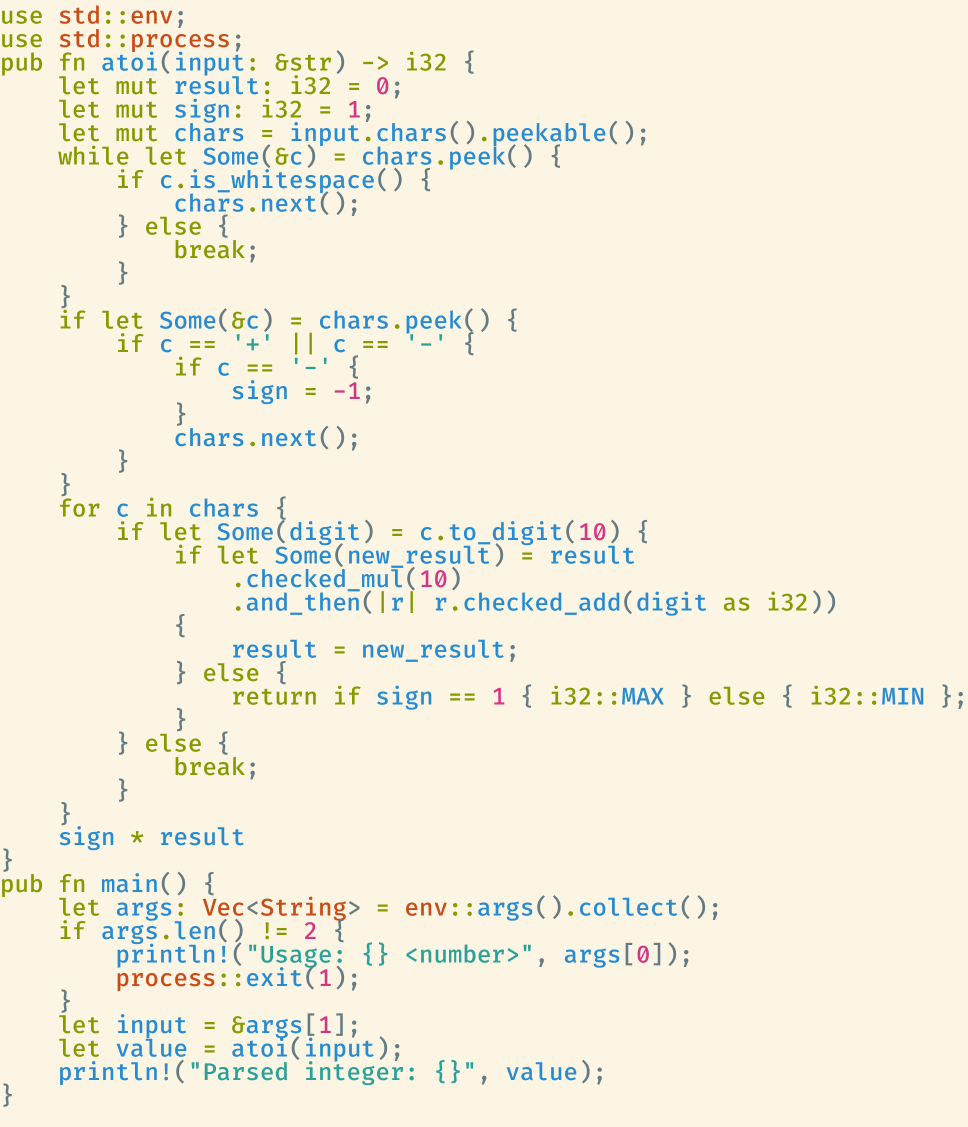

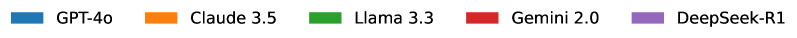

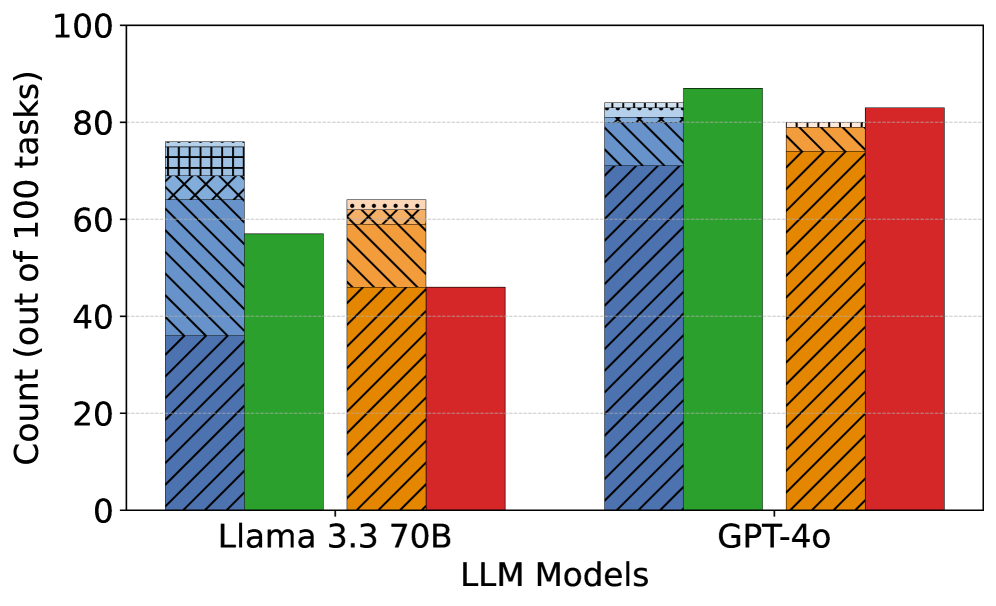

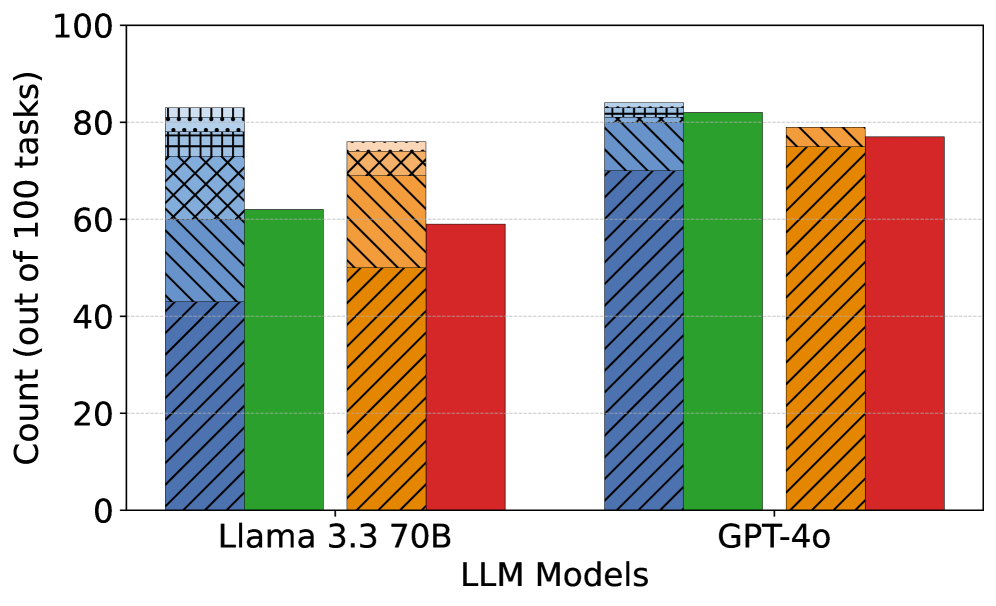

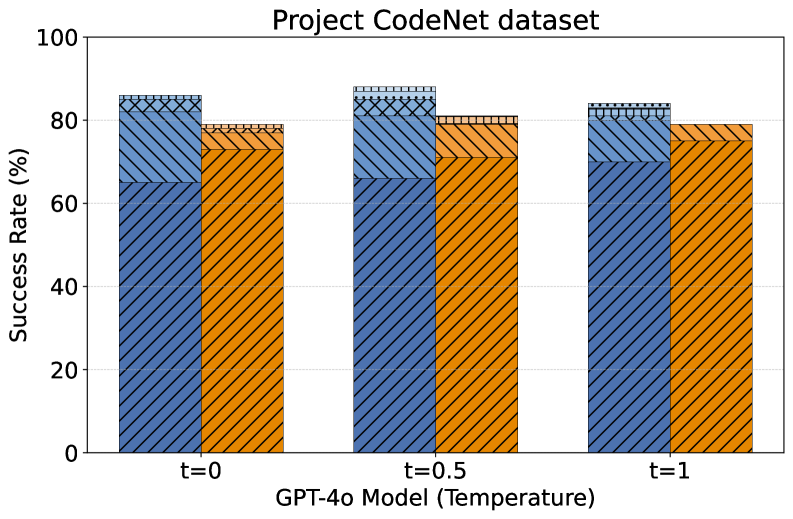

Figure 2: Success rates (SR) across different LLM models for the TransCoder-IR and CodeNet datasets. SR 1-6 represent the number of attempts made to achieve a successful translation. Unid. and Idiom. denote unidiomatic and idiomatic translation steps, respectively.

We evaluate the success rate (as defined in § 5.2) for the two datasets on different models. For idiomatic translation, we also plot how many attempts are needed.

(1) TransCoder-IR (Figure 2(a)): DeepSeek-R1 achieves the highest success rate (SR) in both unidiomatic (94%) and idiomatic (93%) steps, only 1% drops in the idiomatic translation step, demonstrating strong consistency in code translation. GPT-4o follows with 84% in the unidiomatic step and 80% in the idiomatic step. Gemini 2.0 comes next with 78% and 75%, respectively. Claude 3.5 struggles in the unidiomatic step (55%) but does not show substantial degradation when converting unidiomatic Rust to idiomatic Rust (54%, only a 1% drop), but it is still the worst model compared to the others. Llama 3.3 performs well in the unidiomatic step (76%) but drops significantly in the idiomatic step (64%), and requiring more attempts for correctness.

(2) Project CodeNet (Figure 2(b)): DeepSeek-R1 again leads with 86% in the unidiomatic step and 84% in the idiomatic step, showing only a 2% drop in the idiomatic translation step. Claude 3.5 follows closely with 86% success rate in the unidiomatic step and 83% in the idiomatic step. GPT-4o performs consistently well in the unidiomatic step (84%) but drops to 79% in the idiomatic step, indicating a 5% drop between the two steps. Gemini 2.0 follows with 78% in the unidiomatic step and 74% in the idiomatic step, showing consistent performance between two datasets. Llama 3.3 still exhibits significant drops (83% to 76%) in both steps and finishes last in the idiomatic step.

The results demonstrates that DeepSeek-R1’s SRs remain high and consistent–94%/93% (unidiomatic/idiomatic) on TransCoder-IR versus 86%/84% on CodeNet–while other models exhibit notable performance drops when moving to TransCoder-IR. This suggests that models with reasoning capabilities may be better for handling complex code logic and data manipulation.

### 6.2 Measuring Idiomaticity

We compare our approach with four baselines: C2Rust (c2rust), Crown (crown), C2SaferRust (c2saferrust) and Vert (vert). Of these baselines, C2Rust is the most versatile Versatility refers to an approach’s applicability to diverse C programs., supporting most C programs, while Crown is also broad but lacks support for some language features. C2SaferRust focuses on refining the unsafe code produced by C2Rust, allowing it to handle a wide range of C programs. In contrast, Vert targets a specific subset of simpler C programs. We assess the idiomaticity of Rust code generated by C2Rust, Crown, and C2SaferRust on both datasets. Since Vert produced Rust code only for TransCoder-IR, we evaluate it solely on this dataset. All the experiments are conducted using GPT-4o as the LLM for baselines and our approach, with max 6 attempts per translation.

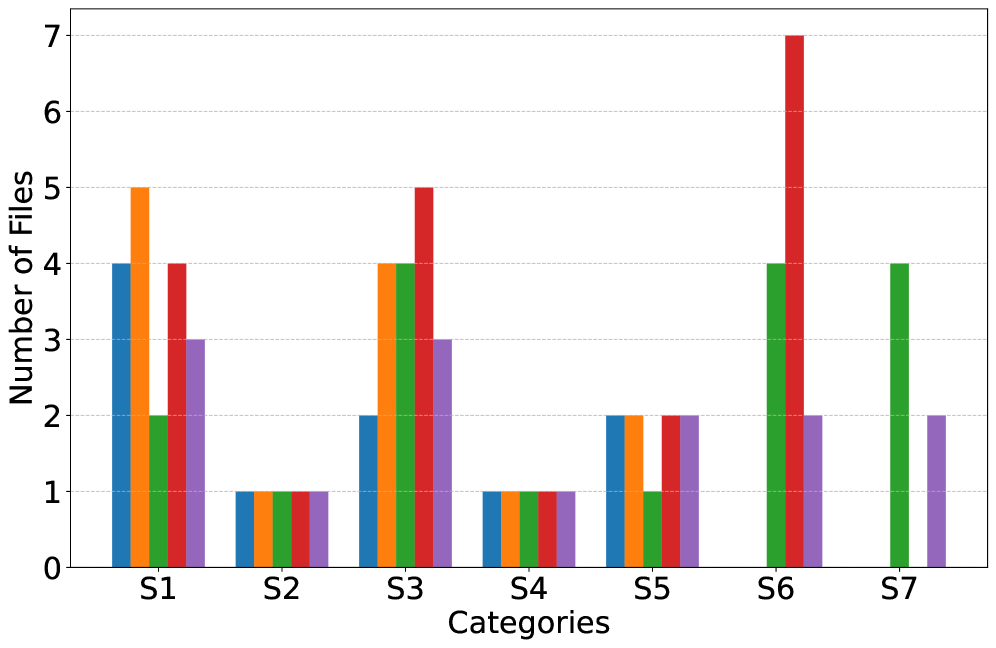

Results: Figure LABEL:fig:idiomaticity presents the lint alert count (sum up of Clippy warnings and errors count for a single program) across all approaches. C2Rust consistently exhibits high Clippy issues, and Crown shows little improvement over C2Rust, indicating both struggle to generate idiomatic Rust. C2SaferRust reduces Clippy issues, but it still retains a significant number of warnings and errors. Notably, even the unidiomatic output of SACTOR surpasses all of these 3. This underscores the advantage of LLMs over rule-based methods. While Vert improves idiomaticity, SACTOR ’s idiomatic phase yields fewer Clippy issues, outperforming some existing LLM-based approaches.

Table LABEL:tab:unsafe_stats summarizes unsafe code statistics. Unsafe-Free indicates the percentage of programs without unsafe code, while Avg. Unsafe represents the average proportion of unsafe code across all translations. C2Rust and Crown generate unsafe code in all programs with a high average unsafe percentage. C2SaferRust has the ability to reduce unsafe code and able to generate unsafe-free programs in some cases (45.6% in TransCoder-IR), but cannot sufficiently reduce the unsafe uses in the CodeNet dataset. Vert has a higher success rate than SACTOR but occasionally introduces unsafe code. SACTOR ’s unidiomatic phase retains C semantics, leading to a high unsafe percentage. However, its idiomatic phase eliminates all unsafe code, achieving a 100% Unsafe-Free rate.

### 6.3 Real-world Code-bases

To evaluate SACTOR ’s performance on two real-world code-bases, we run the translation process up to three times per sample, with SACTOR attempts to translate each function, struct and global variable at most six attempts in each run. For libogg, we also experiment with both GPT-4o and GPT-5 to compare their performance.

CRust-Bench.

Measured at the function level, the mean per-sample translation success rate is 85.15%. Aggregated across the 50 samples, SACTOR translates 788 of 966 functions (81.57% combined). 32 samples achieve 100% function-level translation, i.e., the entire C codebase for the sample is translated to unidiomatic Rust. For idiomatic translation, we evaluate only on the 32 samples whose unidiomatic stage reached 100% function-level translation. On these samples, the mean per-sample function translation rate is 51.85%. Aggregated across them, SACTOR translates 249 of 580 functions (42.93% combined); 8 samples achieve 100% function-level idiomatic translation, which the entire C codebases are translated to idiomatic Rust.

| Unidi. Idiom. | 50 32 $\dagger$ | 85.15% 51.85% | 788 / 966 (81.57%) 249 / 580 (42.93%) | 32 / 50 (64.00%) 8 / 32 (25.00%) | 2.96 0.28 |

| --- | --- | --- | --- | --- | --- |

Table 1: CRust-Bench function-level translation results. Success rate (SR) is averaged per-sample; $\dagger$ idiomatic stage is evaluated only on samples whose unidiomatic pass fully translated all functions.

Table 1 summarizes stage-level outcomes.

Observations and failure modes. We organize failures into five main categories. (1) Interface/name drift: Symbol casing or exact-name mismatches (e.g., CamelCase vs. snake_case). (2) Semantic mapping errors: Mistakes in translating C constructs to idiomatic Rust (e.g., pointer-of-pointer vs. Vec, shape drift, lifetime or mutability issues). (3) C-specific features: Incomplete handling some features like function pointers and C variadics. (4) Borrowing and resource-model violations: Compile-time borrow-checker errors in idiomatic Rust bodies (e.g., overlapping borrows in updates). (5) Harness/runtime faults: Faulty test harnesses translation (e.g. buffer mis-sizing, out-of-bounds access). Other minor cases include unsupported intrinsics (SIMD) and global-state divergence (shadowed globals). Table LABEL:tab:crust_failures (in Appendix M.1) summarizes each sample’s outcome and its primary cause.

Idiomaticity. Unidiomatic outputs exhibit many lint alerts and heavy reliance on unsafe: the mean Clippy alert sum is 50.14 per sample (2.96 per function); the mean unsafe fraction is 97.86% with an unsafe-free rate of 0%. Idiomatic outputs reverse this profile: the mean Clippy alert sum drops to only 2.27 per sample (0.28 per function); the mean unsafe fraction is 0% with a 100% unsafe-free rate.

Libogg.

Step (model) SR (%) Avg. lint / Function Avg. attempt Unid. (GPT-4o) 100 1.45 1.52 Idiom. (GPT-4o) 53 0.28 2.00 Unid. (GPT-5) 100 1.45 1.04 Idiom. (GPT-5) 78 0.23 1.25

Table 2: Evaluation of SACTOR ’s function translation on libogg. “Unid.”/“Idiom.” denotes unidiomatic/idiomatic translation. “SR” is the success rate of translating functions. “Avg. lint”/“Avg. attempt” is the average lint alert count/average number of attempts, for functions that both LLM models succeed in translating.

The unidiomatic and idiomatic translations of all structs and global variables are successful with each LLM model. For functions, the result is summarized in Table 2. SACTOR succeeds in all functions’ unidiomatic translations. For idiomatic translations, SACTOR ’s success rate is 53% and SACTOR takes 2.00 attempts on average to produce a correct translation with GPT-4o. For GPT-5, the performance is significantly better with a success rate of 78% and average number of attempts of 1.25.

Observations and failure modes. The most significant reasons for failed idiomatic translations include: (1) failure to pass tests due to mistakes in translating pointer manipulation and heap memory management; (2) compile errors in translated functions, especially arising from violation of Rust safety rules on lifetimes, borrowing and mutability; (3) failure to generate compilable test harnesses for data types with pointers and arrays. GPT-5 performs significantly better than GPT-4o. For example, GPT-5 only have one failure caused by a compile error in the translated function, in contrast to six compile error failures with GPT-4o, which shows the progress of GPT-5 in understanding Rust grammar and fixing compile errors. More details can be found in Appendix M.2.

Idiomaticity. SACTOR ’s unidiomatic translations cause lint alerts largely due to the use of unsafe code while idiomatic translations lead to very few lint alerts, i.e., fewer than 0.3 alerts per function on average (Table 2). With each model, the unidiomatic translations are all in unsafe code but the idiomatic translations are all in safe code. As a result, the idiomatic translations have an avg. unsafe fraction of 0% and unsafe-free fraction of 100%. The unidiomatic translations are the opposite.

## 7 Conclusions

Translating C to Rust enhances memory safety but remains error-prone and often unidiomatic. While LLMs improve translation, they still lack correctness guarantees and struggle with semantic gaps. SACTOR addresses these through a two-stage pipeline: preserving ABI interface first, then refining to idiomatic Rust. Guided by static analysis and validated via FFI-based testing, SACTOR achieves high correctness and idiomaticity across multiple benchmarks, surpassing prior tools. Remaining challenges include stronger correctness assurance, richer C-feature coverage, and improved scalability and efficiency (see § 8). Example prompts appear in Appendix N.

## 8 Limitations

While SACTOR is effective in producing correct, idiomatic Rust, several limitations remain:

- Test coverage dependence. Our soft-equivalence checks rely on existing end-to-end tests; shallow or incomplete coverage can miss subtle semantic errors. Integrating fuzzing or test generation could raise coverage and catch corner cases.

- Model variance. Translation quality depends on the underlying LLM. Although GPT-4o and DeepSeek-R1 perform well in our study, other models show lower accuracy and stability.

- Unsupported C features. Complex macros, pervasive function pointers, global state, C variadics and inline assembly are only partially handled, limiting applicability to such codebases (see § 6.3).

- Static analysis precision. Current analysis may under-specify aliasing, ownership, and pointer shapes in challenging code, leading to adapter/spec errors. Stronger analyses could improve mapping and reduce retries.

- Harness generation stability. The rule-based generator with LLM fallback can still emit incomplete or brittle adapters on complex patterns (e.g., unusual pointer shapes or length expressions), causing otherwise-correct translations to fail verification. Hardening rules and reducing reliance on the fallback should improve robustness and reproducibility.

- Cost and latency. Multi-stage prompting, compilation, and test loops incur non-trivial token and time costs, which matter for large-scale migrations.

## Appendix A Differences Between C and Rust

### A.1 Code Snippets

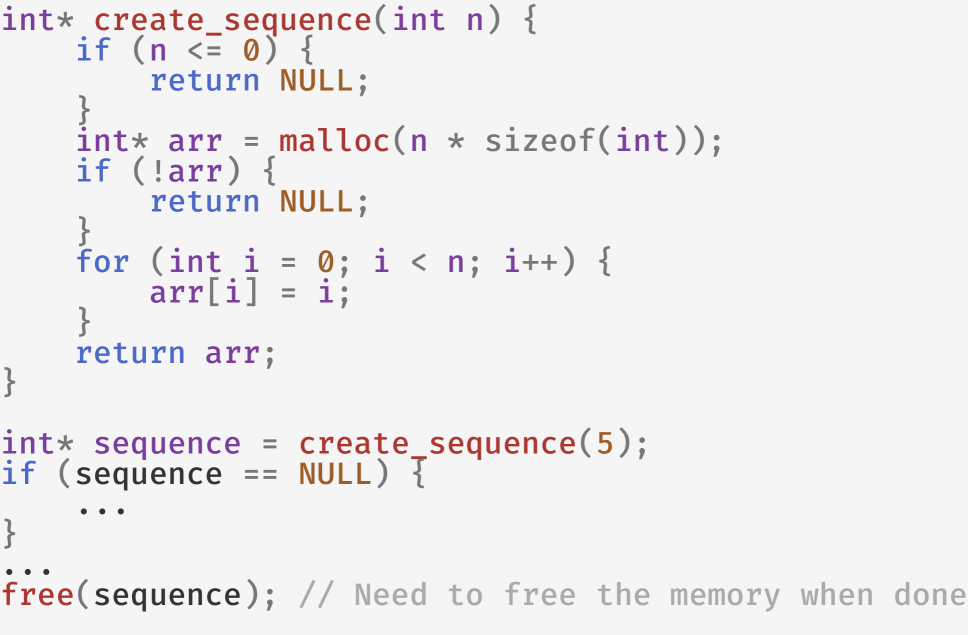

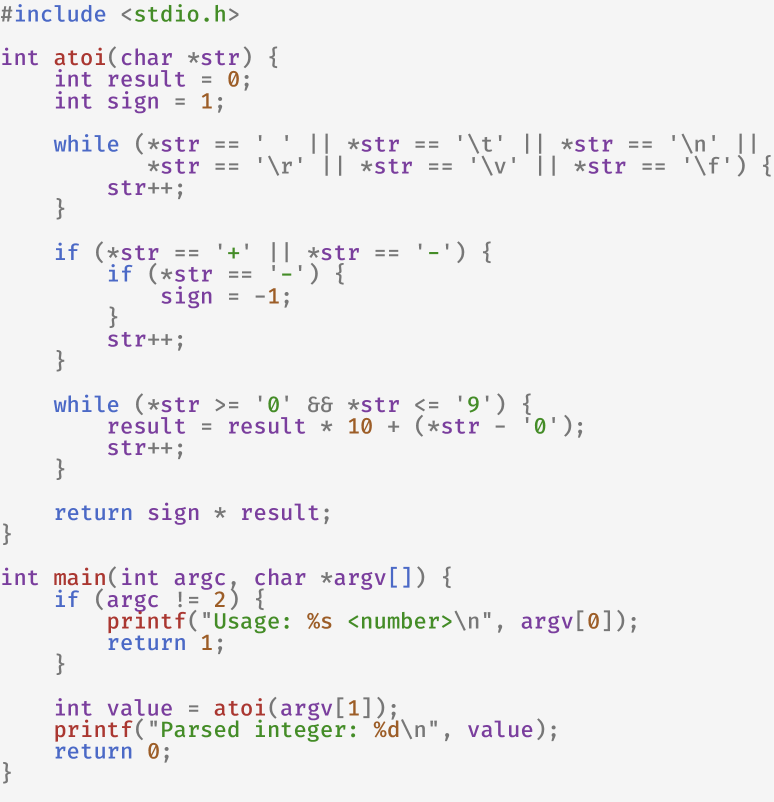

Here is a code example to demonstrate the differences between C and Rust. The example shows a simple C program and its equivalent Rust program. The create_sequence function takes an integer n as input and returns an array with a sequence of integers. In C, the function needs to allocate memory for the array using malloc and will return the pointer to the allocated memory as an array. If the size is invalid, or the allocation fails, the function will return NULL. The caller of the function is responsible for freeing the memory using free when it is done with the array to prevent memory leaks.

C Code:

<details>

<summary>x5.png Details</summary>

### Visual Description

## Code Snippet: Dynamic Integer Sequence Creation

### Overview

The image shows a C programming snippet demonstrating dynamic memory allocation for an integer array, with a function to generate a sequence of integers from 0 to n-1. The code includes error handling for invalid input and memory allocation failures, followed by an example usage and memory cleanup.

### Components/Axes

1. **Function Definition**: `int* create_sequence(int n)`

- Purpose: Creates an integer array of size `n` filled with values 0 to n-1

2. **Memory Allocation**: `malloc(n * sizeof(int))`

- Checks for allocation success using `!arr`

3. **Loop Initialization**: `for (int i = 0; i < n; i++)`

- Fills array with sequential values

4. **Example Usage**: `int* sequence = create_sequence(5);`

5. **Memory Cleanup**: `free(sequence);`

### Detailed Analysis

1. **Function Logic**:

- Returns `NULL` for invalid input (n ≤ 0)

- Allocates memory for `n` integers

- Returns `NULL` if allocation fails

- Populates array with values 0 to n-1

- Returns the populated array

2. **Memory Management**:

- Uses `malloc` for dynamic allocation

- Includes null check after allocation

- Demonstrates proper cleanup with `free()`

3. **Example Execution**:

- Creates sequence of 5 elements (0-4)

- Shows null check pattern for returned pointer

- Includes comment about memory cleanup responsibility

### Key Observations

- **Input Validation**: Explicit handling of n ≤ 0 cases

- **Error Propagation**: Returns NULL on allocation failure

- **Zero-Based Indexing**: Array values match C array indexing

- **Resource Management**: Explicit memory freeing demonstrated

- **Code Structure**: Follows typical C function pattern with error checking

### Interpretation

This code demonstrates fundamental C programming concepts:

1. **Dynamic Memory Management**: Shows proper use of `malloc`/`free` with error checking

2. **Array Initialization**: Implements sequential value population

3. **Defensive Programming**: Includes multiple null checks

4. **Resource Ownership**: Clearly demonstrates caller responsibility for memory deallocation

The example usage (`create_sequence(5)`) illustrates the function's practical application, while the comment about memory cleanup serves as documentation for proper resource management practices. The code follows standard C conventions for error handling and memory management.

</details>

Rust Code:

<details>

<summary>x6.png Details</summary>

### Visual Description

## Code Snippet: Rust Function `create_sequence`

### Overview

The image shows a Rust code snippet defining a function `create_sequence` that generates a sequence of integers. The function returns an `Option<Vec<i32>>`, handling edge cases and memory allocation. A `match` statement demonstrates pattern matching on the function's output.

### Components/Axes

- **Function Definition**:

- `fn create_sequence(n: i32) -> Option<Vec<i32>>`

- `fn`: Rust keyword for function definition (green).

- `create_sequence`: Function name (blue).

- `n: i32`: Parameter `n` of type `i32` (integer).

- `-> Option<Vec<i32>>`: Return type: `Option` enum wrapping a vector of 32-bit integers.

- **Control Flow**:

- `if n <= 0 { return None; }`

- Conditional check for invalid input (`n <= 0`).

- Returns `None` (red) if `n` is non-positive.

- `let mut arr = Vec::with_capacity(n as usize);`

- Initializes a mutable vector `arr` with capacity `n` (converted to `usize`).

- `for i in 0..n { arr.push(i); }`

- Populates the vector with integers from `0` to `n-1`.

- `Some(arr)`: Returns the populated vector wrapped in `Some`.

- **Pattern Matching**:

- `match create_sequence(5) {`

- Calls `create_sequence(5)` and matches the result.

- `Some(sequence) => { ... }`

- Handles the `Some` case (red).

- Comment: `// Does not need to free the memory` (gray).

- `None => { ... }`

- Handles the `None` case (red).

### Detailed Analysis

1. **Function Logic**:

- For `n <= 0`, returns `None` to indicate an empty sequence.

- For `n > 0`, allocates a vector with capacity `n` and fills it with sequential integers.

- Returns `Some(arr)` to indicate a valid sequence.

2. **Memory Management**:

- The comment `// Does not need to free the memory` suggests Rust's ownership model automatically manages memory, avoiding manual deallocation.

3. **Pattern Matching**:

- The `match` statement exhaustively handles both `Some(sequence)` and `None` cases, ensuring all possibilities are addressed.

### Key Observations

- **Edge Case Handling**: The function explicitly checks for `n <= 0` to avoid invalid memory allocation.

- **Type Safety**: Uses `Option` to signal the presence or absence of a result, preventing null pointer errors.

- **Efficiency**: Pre-allocates vector capacity to optimize performance for large `n`.

### Interpretation

This function demonstrates Rust's emphasis on safety and performance:

- **Safety**: The `Option` type and exhaustive `match` ensure all code paths are handled, eliminating runtime panics.

- **Performance**: Pre-allocating vector capacity reduces reallocations during population.

- **Memory Management**: Rust's ownership model avoids manual memory management, as noted in the comment.

The code exemplifies idiomatic Rust practices for error handling, resource management, and pattern matching.

</details>

Figure 3: Example of a simple C program and its equivalent Rust program, both hand-written for illustration.

### A.2 Tabular Summary

Here, we present a non-exhaustive list of differences between C and Rust in Table 3, highlighting the key features that make translating code from C to Rust challenging. While the list is not comprehensive, it provides insights into the fundamental distinctions between the two languages, which can help developers understand the challenges of migrating C code to Rust.

| Memory Management Pointers Lifetime Management | Manual (through malloc/free) Raw pointers like *p Manual freeing of memory | Automatic (through ownership and borrowing) Safe references like &p/&mut p, Box and Rc Lifetime annotations and borrow checker |

| --- | --- | --- |

| Error Handling | Error codes and manual checks | Explicit handling with Result and Option types |

| Null Safety | Null pointers allowed (e.g., NULL) | No null pointers; uses Option for nullable values |

| Concurrency | No built-in protections for data races | Enforces safe concurrency with ownership rules |

| Type Conversion | Implicit conversions allowed and common | Strongly typed; no implicit conversions |

| Standard Library | C stand library with direct system calls | Rust standard library with utilities for strings, collections, and I/O |

| Language Features | Procedure-oriented with minimal abstractions | Modern features like pattern matching, generics, and traits |

Table 3: Key Differences Between C and Rust

## Appendix B Preprocessing and Task Division

### B.1 Preprocessing of C Files

To support real-world C projects, SACTOR parses the compile commands generated by the make tool, extracting relevant flags for preprocessing, parsing, compilation, linking, and third-party tools’ use.

C source files usually contain preprocessing directives, such as #include, #define, #ifdef, #endif, etc., which we need to resolve before parsing C files. For #include, we copy and expand non-system headers recursively while keeping #include of system headers intact, because included non-system headers contain project-specific definitions such as structs and enums that the LLM has not known while system headers’ contents are known to the LLM and expanding them would unnecessarily introduce too much noise. For other directives, we pass relevant C project compile flags to the C preprocessor from GCC to resolve them.

### B.2 Algorithm for Task Division

The task division algorithm is used to determine the order in which the items should be translated. The algorithm is shown in Algorithm 1.

Algorithm 1 Translation Task Order Determination

1: $L_{i}$ : List of items to be translated

2: $dep(a)$ : Function to get dependencies of item $a$

3: $L_{sorted}$ : List of groups resolving dependencies

4: $L_{sorted}\leftarrow\emptyset$ $\triangleright$ Empty list

5: while $|L_{sorted}|<|L_{i}|$ do

6: $L_{processed}\leftarrow\emptyset$

7: for $a\in L_{i}$ do

8: if $a\notin L_{processed}$ and $dep(a)\subseteq L_{processed}$ then

9: $L_{sorted}\leftarrow L_{sorted}+a$ $\triangleright$ Add to sorted list

10: $L_{processed}\leftarrow L_{processed}\cup a$

11: end if

12: end for

13: if $L_{processed}=\emptyset$ then

14: $L_{circular}\leftarrow DFS(L_{i},dep)$ $\triangleright$ Circular dependencies

15: $L_{sorted}\leftarrow L_{sorted}+L_{circular}$ $\triangleright$ Add a group to sorted list

16: end if

17: end while

18: return $L_{sorted}$

In the algorithm, $L_{i}$ is the list of items to be translated, and $dep(a)$ is a function that returns the dependencies of item $a$ . The algorithm returns a list $L_{sorted}$ that contains the items in the order in which they should be translated. $DFS(L_{i},dep)$ is a depth-first search function that returns a list of items involved in a circular dependency. It begins by collecting all items (e.g., functions, structs) to be translated and their respective dependencies (in both functions and data types). Items with no unresolved dependencies are pushed into the translation order list first, and other items will remove them from their dependencies list. This process continues until all items are pushed into the list, or circular dependencies are detected. If circular dependencies are detected, we resolve them through a depth-first search strategy, ensuring that all items involved in a circular dependency are grouped together and handled as a single unit.

## Appendix C Equivalence Testing Details in Prior Literature

### C.1 Symbolic Execution-Based Equivalence

Symbolic execution explores all potential execution paths of a program by using symbolic inputs to generate constraints [king1976symbolic, baldoni2018survey, coward1988symbolic]. While theoretically powerful, this method is impractical for verifying C-to-Rust equivalence due to differences in language features. For instance, Rust’s RAII (Resource Acquisition Is Initialization) pattern automatically inserts destructors for memory management, while C relies on explicit malloc and free calls. These differences cause mismatches in compiled code, making it difficult for symbolic execution engines to prove equivalence. Additionally, Rust’s compiler adds safety checks (e.g., array boundary checks), which further complicate equivalence verification.

### C.2 Fuzz Testing-Based Equivalence

Fuzz testing generates random or mutated inputs to test whether program outputs match expected results [zhu2022fuzzing, miller1990empirical, liang2018fuzzing]. While more practical than symbolic execution, fuzz testing faces challenges in constructing meaningful inputs for real-world programs. For example, testing a URL parsing function requires generating valid URLs with specific formats, which is non-trivial. For large C programs, this difficulty scales, making it infeasible to produce high-quality test cases for every translated Rust function.

## Appendix D An Example of the Test Harness

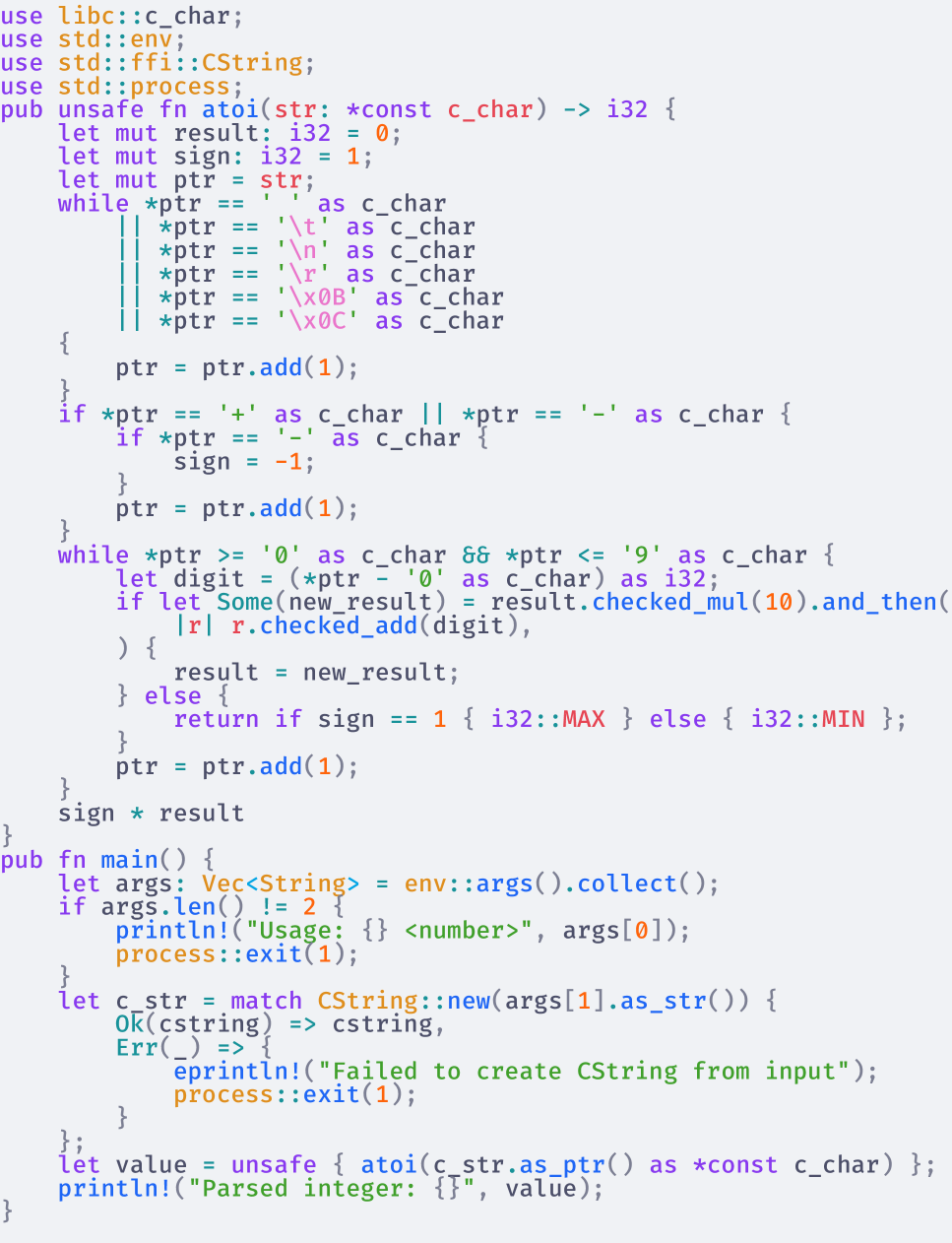

Here, we provide an example of the test harness used to verify the correctness of the translated code in Figure 4, which is used to verify the idiomatic Rust code. In this example, the concat_str_idiomatic function is the idiomatic translation we are testing, while the concat_str_c function is the test harness function that can be linked back to the original C code. where a string and an integer are passed as input, and an owned string is returned. Input strings are converted from C’s char* to Rust’s &str, and output strings are converted from Rust’s String back to C’s char*.

<details>

<summary>x7.png Details</summary>

### Visual Description

## Screenshot: Rust Code Implementation for String Concatenation

### Overview

The image shows a Rust code snippet implementing two functions for string concatenation: `concat_str_idiomatic` and `concat_str`. The code includes syntax highlighting with color-coded elements (blue for keywords, orange for types, green for identifiers) and comments explaining the logic.

### Components/Axes

- **Function Definitions**:

- `fn concat_str_idiomatic(orig: &str, num: i32) -> String`

- `fn concat_str(orig: *const c_char, num: c_int) -> *const c_char`

- **Syntax Highlighting**:

- Keywords (e.g., `fn`, `let`, `expect`) in blue

- Types (e.g., `String`, `CString`, `CStr`) in orange

- Identifiers (e.g., `orig`, `num`, `out_str`) in green

- Comments in gray

- **Error Handling**:

- `expect("Invalid UTF-8 string")` for UTF-8 validation

- `unwrap()` for optional value conversion

### Detailed Analysis

1. **`concat_str_idiomatic` Function**:

- Parameters: `orig` (string slice), `num` (32-bit integer)

- Returns: `String` (heap-allocated string)

- Logic:

- Uses `format!` macro to concatenate `orig` and `num` into a formatted string.

- Example: `format!("{{}}", orig, num)` creates a string like `"hello123"` if `orig="hello"` and `num=123`.

2. **`concat_str` Function**:

- Parameters: `orig` (pointer to constant C string), `num` (C integer)

- Returns: Pointer to constant C string (`*const c_char`)

- Logic:

- Converts `orig` to a Rust `CString` via `CStr::from_ptr(orig).to_str().expect("Invalid UTF-8 string")`.

- Calls `concat_str_idiomatic` with the converted string and `num` (cast to `i32`).

- Wraps the result in a new `CString` and returns its raw pointer via `unwrap()` and `into_raw()`.

3. **Ownership Transfer**:

- `out_str.into_raw()` transfers ownership of the `CString` to the caller, requiring manual deallocation.

### Key Observations

- **Idiomatic vs. Low-Level**: `concat_str_idiomatic` uses Rust's high-level string formatting, while `concat_str` bridges Rust and C via raw pointers.

- **Error Propagation**: The `expect` macro enforces UTF-8 validity, crashing on invalid input.

- **Memory Management**: The `unwrap()` and `into_raw()` combination assumes valid input, risking panics or memory leaks if misused.

### Interpretation

- **Purpose**: The code demonstrates safe interoperability between Rust and C strings, with `concat_str_idiomatic` providing a safe abstraction and `concat_str` enabling low-level C compatibility.

- **Trade-offs**: The `concat_str` function sacrifices Rust's safety guarantees (e.g., raw pointers, `unwrap()`) for C compatibility, requiring careful error handling.

- **Design Insight**: The separation of concerns—`concat_str_idiomatic` for Rust-centric use and `concat_str` for FFI (Foreign Function Interface)—highlights Rust's emphasis on safety without sacrificing performance.

## Additional Notes

- **Language**: Rust (no other languages detected).

- **Color Significance**: Syntax highlighting aids readability but does not affect code semantics.

- **Missing Context**: The code lacks error recovery mechanisms (e.g., `?` operator) for the `expect` call, which could improve robustness.

</details>

Figure 4: Test harness used for verifying concat_str translation

## Appendix E An Example of SACTOR Translation Process

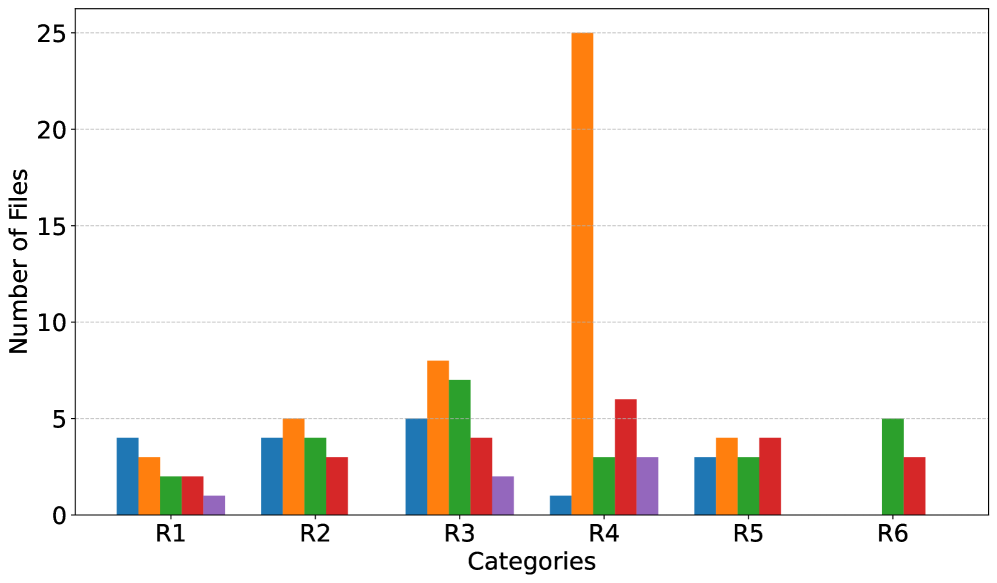

To demonstrate the translation process of SACTOR, we present a straightforward example of translating a C function to Rust. The C program includes an atoi function that converts a string to an integer, and a main function that parses command-line arguments and calls the atoi function. The C code is shown in Figure 5(a).

<details>

<summary>x8.png Details</summary>

### Visual Description

## C Code Screenshot: Custom atoi Implementation

### Overview

The image shows a C programming language implementation of a custom `atoi` function (ASCII to integer converter) along with a `main` function demonstrating its usage. The code includes syntax highlighting with color-coded elements.

### Components/Axes

- **Function Definitions**:

- `int atoi(char *str)`: Custom string-to-integer conversion function

- `int main(int argc, char *argv[])`: Program entry point

- **Variables**:

- `int result = 0`: Accumulates numeric value

- `int sign = 1`: Tracks positive/negative sign

- `char *str`: Input string pointer

- **Control Structures**:

- `while` loops for whitespace skipping and digit processing

- `if` statements for sign handling

- **Syntax Highlighting Colors**:

- Blue: `#include`, `while`, `if`, `return`

- Purple: `int`, `atoi`, `main`, `printf`, `print`

- Red: Function return types (`int`)

- Orange: Numeric literals (`0`, `1`, `10`)

- Green: String literals (`"Usage: %s <number>\\n"`, `"Parsed integer: %d\\n"`)

- Gray: Comments (`//`)

### Detailed Analysis

1. **atoi Function Logic**:

- **Whitespace Handling**: Skips leading spaces, tabs, newlines, carriage returns, vertical tabs, and form feeds

- **Sign Detection**: Checks for `+` or `-` to set sign multiplier

- **Digit Conversion**: Processes digits 0-9 using ASCII arithmetic (`*str - '0'`)

- **Termination**: Stops at first non-digit character

- **Return**: `sign * result`

2. **Main Function**:

- **Argument Check**: Requires exactly 2 command-line arguments (program name + number string)

- **Usage Message**: Prints `"Usage: %s <number>\\n"` if arguments are invalid

- **Conversion & Output**: Calls `atoi(argv[1])` and prints result with `"Parsed integer: %d\\n"`

### Key Observations

- **Color Consistency**: Syntax elements maintain consistent coloring throughout (e.g., all `while` keywords in blue)

- **Edge Case Handling**: Explicitly skips multiple whitespace characters before processing digits

- **Error Prevention**: Returns 0 for empty strings after whitespace skipping

- **ASCII Arithmetic**: Uses `*str - '0'` for digit conversion without numeric constants

### Interpretation

This implementation demonstrates fundamental string processing techniques in C:

1. **Robust Parsing**: Handles various whitespace characters and optional signs

2. **Efficiency**: Processes input in a single pass with O(n) complexity

3. **Safety**: Returns 0 for invalid inputs rather than crashing

4. **Educational Value**: Shows manual string-to-integer conversion without library functions

The color coding enhances code readability by visually separating:

- Keywords (blue)

- Types (purple)

- Literals (orange/green)

- Comments (gray)

This multi-colored approach aids in quickly identifying code structure and logic flow.

</details>

(a) C implementation of atoi

<details>

<summary>x9.png Details</summary>

### Visual Description

## Rust Code Screenshot: Custom `atoi` Implementation

### Overview

The image shows a Rust code snippet implementing a custom `atoi` function to convert C-style strings to integers, along with a `main` function for command-line argument parsing. The code uses unsafe FFI patterns and manual memory management.

### Components/Axes

- **Function Definitions**:

- `pub unsafe fn atoi(str: *const c_char) -> i32`: Converts C strings to integers.

- `pub fn main()`: Handles command-line arguments and input validation.

- **Variables**:

- `result`, `sign`, `ptr`: Integer accumulator and pointer variables.

- `c_str`: C string created from command-line input.

- `value`: Parsed integer output.

- **Error Handling**:

- `Ok(cstring) => cstring`

- `Err(_) => "Failed to create CString from input"`

- **Key Constructs**:

- `while *ptr != '\0'`: Null-terminated string traversal.

- `ptr.add(1)`: Pointer arithmetic for character iteration.

- `i32::checked_add(digit)`: Overflow-safe digit accumulation.

### Detailed Analysis

1. **`atoi` Function Logic**:

- Initializes `result = 0` and `sign = 1`.

- Skips leading `+`/`-` characters to determine sign.

- Iterates through digits using pointer arithmetic (`ptr.add(1)`).

- Converts ASCII digits to integers via `digit - '0'`.

- Uses `i32::checked_add` to prevent overflow, returning `i32::MAX`/`MIN` on error.

- Returns `sign * result` after processing all digits.

2. **`main` Function Flow**:

- Collects command-line arguments into `Vec<String>`.

- Validates argument count (requires exactly 2 arguments).

- Prints usage message for invalid input: `"Usage: <number>"`.

- Converts second argument to `CString` with error handling.

- Parses `CString` using `atoi` and prints result.

### Key Observations

- **Unsafe Usage**: The `atoi` function is marked `unsafe` due to direct pointer manipulation and FFI interactions.

- **Manual Parsing**: Implements digit conversion without standard library helpers (e.g., `parse()`).

- **Error Propagation**: Uses Rust's `Result` type for error handling in `CString` creation.

- **Pointer Safety**: Employs `ptr.add(1)` for safe character iteration within bounds-checked loops.

### Interpretation

This code demonstrates Rust's FFI capabilities while emphasizing safety through:

1. **Explicit Error Handling**: All potential failure points (e.g., invalid input, overflow) are explicitly checked.

2. **Memory Safety**: Uses `CString` for owned C-style strings and bounds-checked pointer arithmetic.

3. **Overflow Prevention**: Leverages `i32::checked_add` to avoid integer overflow vulnerabilities.

4. **CLI Integration**: Provides clear usage instructions and input validation for command-line tools.

The implementation highlights Rust's balance between low-level control and memory safety, particularly in systems programming contexts requiring C interoperability.

</details>

(b) Unidiomatic Rust translation from C

<details>

<summary>x10.png Details</summary>

### Visual Description

## Rust Code: Integer Parsing and Command-Line Argument Handling

### Overview

This code implements a command-line utility that parses an integer from user input, handling edge cases like overflow, invalid characters, and sign detection. It uses Rust's standard libraries for environment interaction and error handling.

### Components/Axes

- **Standard Libraries**:

- `std::env` for command-line argument access

- `std::process` for process exit functionality

- **Key Functions**:

- `atoi(input: &str) -> i32`: Custom string-to-integer conversion

- `main()`: Entry point handling argument validation and parsing

### Detailed Analysis

1. **Argument Validation**:

- Checks if exactly 2 arguments exist (`args.len() != 2`)

- Prints usage message `"Usage: <number>"` on invalid input count

- Exits with code 1 on argument count mismatch

2. **Integer Parsing Logic**:

- **Sign Detection**:

- Scans for leading `+` or `-` to set `sign` (1 or -1)

- Skips whitespace before sign detection

- **Digit Processing**:

- Converts characters to digits using `to_digit(10)`

- Uses `checked_add` for safe arithmetic to prevent overflow

- Returns `i32::MAX`/`i32::MIN` on overflow detection

- **Error Handling**:

- Returns `None` for non-digit characters

- Breaks loop on invalid input during parsing

3. **Output**:

- Prints parsed integer with `"Parsed integer: {value}"`

- Uses `println!` macro for formatted output

### Key Observations

- **Overflow Protection**: Explicit checks using `checked_add` and bounds (`i32::MAX`/`i32::MIN`)

- **Whitespace Handling**: Skips leading whitespace before sign detection

- **Command-Line Interface**: Strict argument count validation (requires exactly one input)

- **Rust Safety Features**: Leverages `checked_*` methods for memory-safe operations

### Interpretation

This code demonstrates Rust's emphasis on safety and explicit error handling. The custom `atoi` implementation:

1. **Prevents Buffer Overflow**: By validating input length and character validity

2. **Handles Edge Cases**:

- Leading/trailing whitespace

- Positive/negative signs

- 32-bit integer overflow

3. **Integrates with CLI**: Follows Unix-style argument parsing conventions

The code's structure reflects Rust's ownership model through:

- Immutable references (`&str`)

- Explicit error propagation via `Option` types

- Safe arithmetic operations

Notable design choices include:

- Returning `i32::MAX`/`i32::MIN` for overflow instead of panicking

- Using `checked_add` to avoid undefined behavior

- Clear separation of sign handling and digit accumulation

</details>

(c) Idiomatic Rust translation from unidiomatic Rust

Figure 5: SACTOR translation process for atoi program

We assume that there are numerous end-to-end tests for the C code, allowing SACTOR to use them for verifying the correctness of the translated Rust code.

First, the divider will divide the C code into two parts: the atoi function and the main function, and determine the translation order is first atoi and then main, as atoi is the dependency of main and the atoi function is a pure function.

Next, SACTOR proceeds with the unidiomatic translation, converting both functions into unidiomatic Rust code. This generated code will keep the semantics of the original C code while using Rust syntax. Once the translation is complete, the unidiomatic verifier executes the end-to-end tests to ensure the correctness of the translated function. If the verifier passes all tests, SACTOR considers the unidiomatic translation accurate and progresses to the next function. If any test fails, SACTOR will retry the translation process using the feedback information collected from the verifier, as described in § 4.3. After translating all sections of the C code, SACTOR will combine the unidiomatic Rust code segments to form the final unidiomatic Rust code. The unidiomatic Rust code is shown in Figure 5(b).

Then, the SACTOR will start the idiomatic translation process and translate the unidiomatic Rust code into idiomatic Rust code. The idiomatic translator requests the LLM to adapt the C semantics into idiomatic Rust, eliminating any unsafe and non-idiomatic constructs, as detailed in § 4.2. Based on the same order, the SACTOR will translate two functions accordingly, and using the idiomatic verifier to verify and provide the feedback to the LLM if the verification fails. After all parts of the Rust code are translated into idiomatic Rust, verified, and combined, the SACTOR will produces the final idiomatic Rust code. The idiomatic Rust code is shown in Figure 5(c), representing the final output of SACTOR.

## Appendix F Dataset Details

| TransCoder-IR [transcoderir] | 100 | Removed buggy programs (compilation/memory errors) and entries with existing Rust | Present | 97.97% / 99.5% |

| --- | --- | --- | --- | --- |

| Project CodeNet [codenet] | 100 | Filtered for external-input programs (argc / argv); auto-generated tests | Generated | 94.37% / 100% |

| CRust-Bench [khatry2025crust] | 50 | Excluded unsupported patterns; combine code of each sample to a single lib.c | Present | 76.18% / 80.98% |

| libogg [libogg] | 1 | None. Each component of the library is contained within a single C file. | Present | 83.3% / 75.3% |

Table 4: Summary of datasets and real-world code-bases used for evaluation; coverage audited with gcov on the tests exercised in our pipeline.

### F.1 TransCoder-IR Dataset [transcoderir]

The TransCoder-IR dataset is used to evaluate the TransCoder-IR model and consists of solutions to coding challenges in various programming languages. For evaluation, we focus on the 698 C programs available in this dataset. First, we filter out programs that already have corresponding Rust code. Several C programs in the dataset contain bugs, which are removed by checking their ability to compile. We then use valgrind to identify and discard programs with memory errors during the end-to-end tests. Finally, we select 100 programs with the most lines of code for our experiments.

### F.2 Project CodeNet [codenet]