# Reasoning Effort and Problem Complexity: A Scaling Analysis in LLMs

**Authors**: Benjamin Estermann, ETH Zürich; Roger Wattenhofer, ETH Zürich

Abstract

Large Language Models (LLMs) have demonstrated remarkable text generation capabilities, and recent advances in training paradigms have led to breakthroughs in their reasoning performance. In this work, we investigate how the reasoning effort of such models scales with problem complexity. We use the infinitely scalable Tents puzzle, which has a known linear-time solution, to analyze this scaling behavior. Our results show that reasoning effort scales with problem size, but only up to a critical problem complexity. Beyond this threshold, the reasoning effort does not continue to increase, and may even decrease. This observation highlights a critical limitation in the logical coherence of current LLMs as problem complexity increases, and underscores the need for strategies to improve reasoning scalability. Furthermore, our results reveal significant performance differences between current state-of-the-art reasoning models when faced with increasingly complex logical puzzles.

1 Introduction

Large language models (LLMs) have demonstrated remarkable abilities in a wide range of natural language tasks, from text generation to complex problem-solving. Recent advances, particularly with models trained for enhanced reasoning, have pushed the boundaries of what machines can achieve in tasks requiring logical inference and deduction.

<details>

<summary>extracted/6290299/Figures/tents.png Details</summary>

### Visual Description


## Grid Diagram: Distribution of Trees and Triangles

### Overview

The image presents a 6x6 grid containing two types of symbols: green trees and yellow triangles. The grid is annotated with numerical values along both the horizontal and vertical axes. The arrangement appears to represent a distribution or mapping of these symbols across a two-dimensional space.

### Components/Axes

* **Grid:** A 6x6 square grid.

* **Symbols:** Green tree shapes and yellow triangle shapes.

* **Horizontal Axis:** Labeled with numbers 1, 1, 1, 2, 0, 2.

* **Vertical Axis:** Labeled with numbers 1, 1, 2, 0, 0, 3.

* **Grid Cells:** Each cell contains either a tree, a triangle, or is empty.

### Detailed Analysis

The grid can be described as follows, referencing row and column indices (starting from 1):

* **(1,1):** Tree

* **(1,2):** Triangle

* **(1,6):** Tree

* **(2,1):** Tree

* **(2,4):** Triangle

* **(3,3):** Tree

* **(4,2):** Tree

* **(4,5):** Tree

* **(5,1):** Tree

* **(5,3):** Triangle

* **(5,6):** Tree

* **(6,1):** Triangle

* **(6,3):** Triangle

* **(6,6):** Triangle

The number of trees is 8.

The number of triangles is 6.

The number of empty cells is 22.

### Key Observations

* The distribution of trees and triangles is not uniform across the grid.

* The bottom row (row 6) has a higher concentration of triangles.

* The first column (column 1) has a mix of trees and triangles.

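* The values on the horizontal and vertical axes are the puzzle's column and row clues: each gives the number of tents (triangles) required in that column or row. Because the puzzle is only partially solved, the triangles placed so far need not match every clue yet.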

### Interpretation

The image shows a partially solved instance of the Tents puzzle. Trees are fixed parts of the puzzle, and triangles mark tents that have already been placed. The numbers along the axes state how many tents each column and row must contain once the puzzle is solved, so they constrain where the remaining tents can go.

</details>

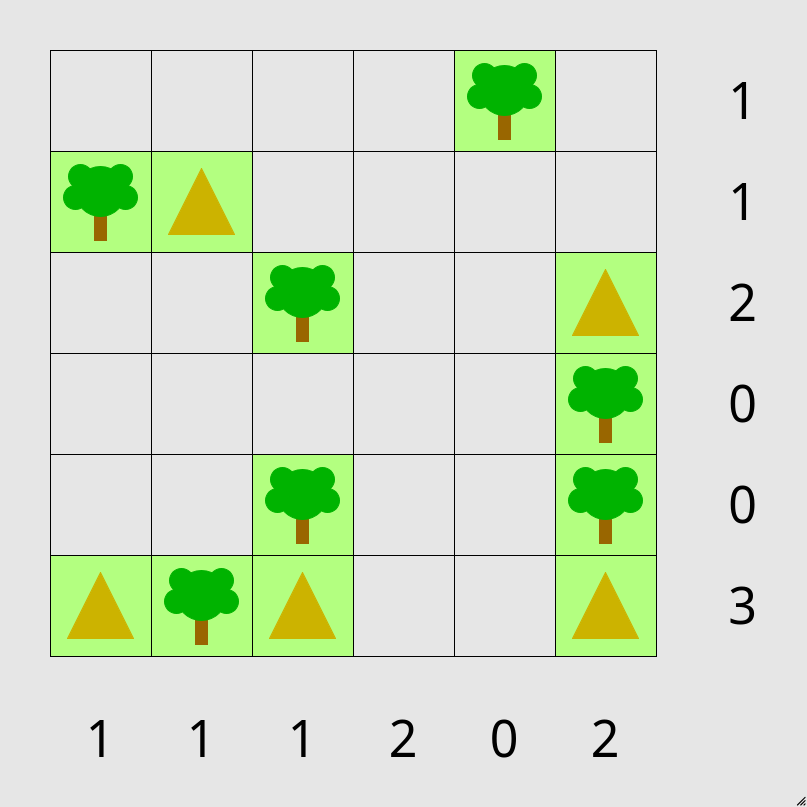

Figure 1: An example instance of a partially solved 6 by 6 tents puzzle. Tents need to be placed next to trees, away from other tents and fulfilling the row and column constraints.

A critical factor in the success of these advanced models is the ability to leverage increased computational resources at test time, allowing them to explore more intricate solution spaces. This capability raises a fundamental question: how does the “reasoning effort” of these models scale as the complexity of the problem increases?

Understanding this scaling relationship is crucial for several reasons. First, it sheds light on the fundamental nature of reasoning within LLMs, moving beyond simply measuring accuracy on isolated tasks. By examining how the computational demands, reflected in token usage, evolve with problem difficulty, we can gain insights into the efficiency and potential bottlenecks of current LLM architectures. Second, characterizing this scaling behavior is essential for designing more effective and resource-efficient reasoning models in the future.

In this work, we address this question by investigating the scaling of reasoning effort in LLMs using a specific, infinitely scalable logic puzzle: the Tents puzzle (see Figure 1), which is available to play in the browser at https://www.chiark.greenend.org.uk/~sgtatham/puzzles/js/tents.html. This puzzle offers a controlled environment for studying algorithmic reasoning, as its problem size can be systematically increased, and it possesses a known linear-time solution. Our analysis focuses on how the number of tokens used by state-of-the-art reasoning LLMs changes as the puzzle grid size grows. In addition to reasoning effort, we also evaluate the success rate across different puzzle sizes to provide a comprehensive view of their performance.

2 Related Work

The exploration of reasoning abilities in large language models (LLMs) is a rapidly evolving field with significant implications for artificial intelligence. Several benchmarks have been developed to evaluate the reasoning capabilities of LLMs across various domains. These benchmarks provide standardized tasks and evaluation metrics to assess and compare different models. Notable benchmarks include GSM8K (Cobbe et al., 2021), ARC-AGI (Chollet, 2019), GPQA (Rein et al., 2023), MMLU (Hendrycks et al., 2020), SWE-bench (Jimenez et al., 2023) and NPhard-eval (Fan et al., 2023). These benchmarks cover topics from mathematics to commonsense reasoning and coding. More recently, math competitions such as AIME 2024 (Mathematical Association of America, 2024) have also been used to evaluate the newest models. Estermann et al. (2024) have introduced PUZZLES, a benchmark focusing on algorithmic and logical reasoning for reinforcement learning. While PUZZLES does not focus on LLMs, except for a short ablation in the appendix, we argue that the scalability provided by the underlying puzzles makes it an ideal testbed for extrapolative reasoning capabilities in LLMs.

The reasoning capabilities of traditional LLMs without specific prompting strategies are quite limited (Huang & Chang, 2022). Prompting techniques such as chain-of-thought (Wei et al., 2022), least-to-most (Zhou et al., 2022) and tree-of-thought (Yao et al., 2023) can greatly improve the reasoning capabilities of traditional LLMs. Lee et al. (2024) have introduced the Language of Thought Hypothesis, based on the idea that human reasoning is rooted in language. They propose viewing reasoning capabilities through three properties: logical coherence, compositionality, and productivity. In this work, we mostly focus on algorithmic reasoning, which falls under logical coherence. Specifically, we analyze the limits of logical coherence.

With the release of OpenAI’s o1 model, it became apparent that new training strategies based on reinforcement learning are able to boost the reasoning performance even further. Since o1, there now exist a number of different models capable of enhanced reasoning (Guo et al., 2025; DeepMind, 2025; Qwen, 2024; OpenAI, 2025). Key to the success of these models is the scaling of test-time compute. Instead of directly producing an answer, or thinking for a few steps using chain-of-thought, the models are now trained to think using several thousands of tokens before coming up with an answer.

3 Methods

3.1 The Tents Puzzle Problem

In this work, we employ the Tents puzzle, a logic problem that is both infinitely scalable and solvable in linear time, making it an ideal testbed for studying algorithmic reasoning in LLMs. A description of the solver algorithm is available as part of the PUZZLES benchmark: https://github.com/ETH-DISCO/rlp/blob/main/puzzles/tents.c#L206C3-L206C67. The Tents puzzle, popularized by Simon Tatham’s Portable Puzzle Collection (Tatham, ), requires deductive reasoning to solve. The puzzle is played on a rectangular grid, where some cells are pre-filled with trees. The objective is to place tents in the remaining empty cells according to the following rules:

- no two tents are adjacent, even diagonally

- there are exactly as many tents as trees and the number of tents in each row and column matches the numbers around the edge of the grid

- it is possible to match all tents to trees so that each tent is orthogonally adjacent to its own tree (a tree may also be adjacent to other tents).
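The three rules above can be checked mechanically, which is what a binary success measure requires. The following is a minimal sketch of such a checker, not the validation code used in this work. The cell symbols (`T` for tree, `A` for tent, `.` for empty), the function name `is_valid_solution`, and the list-of-strings grid format are assumptions made for illustration. The third rule is checked with a standard augmenting-path bipartite matching between tents and trees.

```python
# Hypothetical cell symbols; the textual format used in the paper's prompt may differ.
TREE, TENT = "T", "A"


def is_valid_solution(grid, row_counts, col_counts):
    """Return True if `grid` satisfies all three Tents rules."""
    rows, cols = len(grid), len(grid[0])
    cells = [(r, c) for r in range(rows) for c in range(cols)]
    tents = [(r, c) for r, c in cells if grid[r][c] == TENT]
    trees = {(r, c) for r, c in cells if grid[r][c] == TREE}

    # Rule 2: as many tents as trees, and row/column counts match the clues.
    if len(tents) != len(trees):
        return False
    for r in range(rows):
        if sum(grid[r][c] == TENT for c in range(cols)) != row_counts[r]:
            return False
    for c in range(cols):
        if sum(grid[r][c] == TENT for r in range(rows)) != col_counts[c]:
            return False

    # Rule 1: no two tents touch, not even diagonally.
    tent_set = set(tents)
    for r, c in tents:
        for dr in (-1, 0, 1):
            for dc in (-1, 0, 1):
                if (dr or dc) and (r + dr, c + dc) in tent_set:
                    return False

    # Rule 3: every tent gets its own orthogonally adjacent tree.
    # Augmenting-path bipartite matching (Kuhn's algorithm).
    tent_of_tree = {}

    def try_assign(tent, seen):
        r, c = tent
        for tree in ((r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1)):
            if tree in trees and tree not in seen:
                seen.add(tree)
                # Take a free tree, or re-route the tent currently holding it.
                if tree not in tent_of_tree or try_assign(tent_of_tree[tree], seen):
                    tent_of_tree[tree] = tent
                    return True
        return False

    return all(try_assign(tent, set()) for tent in tents)


# A made-up 3x3 instance with two trees and two tents.
example = ["TA.",
           "...",
           "AT."]
example_ok = is_valid_solution(example, row_counts=[1, 0, 1], col_counts=[1, 1, 0])
```

A greedy pairing of tents to neighbouring trees can fail on instances where a tree is adjacent to several tents, which is why the sketch uses a matching rather than a simple adjacency test.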

An example instance of the Tents puzzle is visualized in Figure 1 in the Introduction. The scalability of the puzzle is achieved by varying the grid dimensions, allowing for systematic exploration of problem complexity. Where not otherwise specified, we used the “easy” difficulty preset available in the Tents puzzle generator, with “tricky” being evaluated in Section A.2.1. Crucially, the Tents puzzle is designed to test extrapolative reasoning as puzzle instances, especially larger ones, are unlikely to be present in the training data of LLMs. We utilized a text-based interface for the Tents puzzle, extending the code base provided by Estermann et al. (2024) to generate puzzle instances and represent the puzzle state in a format suitable for LLMs.

Models were presented with the same prompt (detailed in Appendix A.1) for all puzzle sizes and models tested. The prompt included the puzzle rules and the initial puzzle state in a textual format. Models were tasked with directly outputting the solved puzzle grid in JSON format. This one-shot approach contrasts with the interactive or cursor-based approaches used previously by Estermann et al. (2024), isolating the reasoning process from potential planning or action-selection complexities.
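Because the answer is requested as a JSON grid, the response has to be parsed before it can be checked. The sketch below shows one way this might be done. It is an illustration under stated assumptions, not the parsing code used in this work: the exact response format is specified by the prompt in Appendix A.1, and the helper names `extract_grid` and `is_grid`, as well as the choice to take the last well-formed grid in the response, are hypothetical.

```python
import json


def is_grid(value):
    """True if `value` is a non-empty list of non-empty, equal-length lists."""
    return bool(
        isinstance(value, list)
        and value
        and all(isinstance(row, list) and row for row in value)
        and len({len(row) for row in value}) == 1
    )


def extract_grid(response):
    """Return the last rectangular JSON array found in `response`, or None.

    Assumption: the model may wrap its answer in free text or a JSON object,
    so every '[' is tried as a possible start of the grid.
    """
    decoder = json.JSONDecoder()
    grid = None
    idx = response.find("[")
    while idx != -1:
        try:
            value, end = decoder.raw_decode(response, idx)
        except json.JSONDecodeError:
            # Not valid JSON from this bracket; try the next one.
            idx = response.find("[", idx + 1)
            continue
        if is_grid(value):
            grid = value
        idx = response.find("[", end)
    return grid


# Made-up response text for illustration.
demo = 'Counts are [1, 0]. Final answer: {"grid": [["T", "A"], [".", "."]]}'
demo_grid = extract_grid(demo)
```

Returning `None` when no grid is found lets the caller count an unparseable response as a failed attempt.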

3.2 Evaluation Criteria

Our evaluation focuses on two key metrics: success rate and reasoning effort. Success is assessed as a binary measure: whether the LLM successfully outputs a valid solution to the Tents puzzle instance, adhering to all puzzle rules and constraints. We quantify problem complexity by the problem size, defined as the product of the grid dimensions (rows $\times$ columns). To analyze the scaling of reasoning effort, we measure the total number of tokens generated by the LLMs to produce the final answer, including all thinking tokens. We hypothesize a linear scaling relationship between problem size and reasoning effort, and evaluate this hypothesis by fitting a linear model to the observed token counts as a function of problem size. The goodness of fit is quantified using the $R^{2}$ metric, where scores closer to 1 indicate that a larger proportion of the variance in reasoning effort is explained by a linear relationship with problem size. Furthermore, we analyze the percentage of correctly solved puzzles across different problem sizes to assess the performance limits of each model.
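The linear fit and its $R^{2}$ score can be computed directly from pairs of problem size and token count. The sketch below uses ordinary least squares and the usual definition $R^{2} = 1 - SS_{\text{res}} / SS_{\text{tot}}$. It is a minimal illustration: the function name `fit_line` is hypothetical, the fitting procedure is assumed to be ordinary least squares, and the numbers in the example are made up rather than measured.

```python
def fit_line(sizes, tokens):
    """Least-squares fit tokens ~ slope * size + intercept, plus R^2."""
    n = len(sizes)
    mean_x = sum(sizes) / n
    mean_y = sum(tokens) / n

    sxx = sum((x - mean_x) ** 2 for x in sizes)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(sizes, tokens))
    if sxx == 0:
        raise ValueError("need at least two distinct problem sizes")

    slope = sxy / sxx
    intercept = mean_y - slope * mean_x

    # Residual and total sums of squares.
    ss_res = sum((y - (slope * x + intercept)) ** 2 for x, y in zip(sizes, tokens))
    ss_tot = sum((y - mean_y) ** 2 for y in tokens)
    if ss_tot == 0:
        raise ValueError("token counts are constant; R^2 is undefined")

    return slope, intercept, 1 - ss_res / ss_tot


# Made-up (problem size, token count) pairs, not results from this paper.
sizes = [16, 25, 36, 49]
tokens = [2100, 3000, 4100, 5400]
slope, intercept, r2 = fit_line(sizes, tokens)
```

An $R^{2}$ close to 1 on real data would support the linear-scaling hypothesis, while a low value can also simply reflect too few solved instances at larger sizes.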

3.3 Considered Models

We evaluated the reasoning performance of the following large language models known for their enhanced reasoning capabilities: Gemini 2.0 Flash Thinking (DeepMind, 2025), OpenAI o3-mini (OpenAI, 2025), DeepSeek R1 (Guo et al., 2025), and Qwen/QwQ-32B-Preview (Qwen, 2024).

4 Results

<details>

<summary>x1.png Details</summary>

### Visual Description

## Scatter Plot: Reasoning Tokens vs. Problem Size

### Overview

This image presents a scatter plot comparing the number of reasoning tokens generated by different language models (deepseek/deepseek-r1, o3-mini, and qwen/qwq-32b-preview) as a function of problem size. Each model is represented by a different color and shape, with trend lines indicating the overall relationship between problem size and reasoning tokens. R-squared values are provided for each trend line, indicating the goodness of fit.

### Components/Axes

* **X-axis:** Problem Size (ranging from approximately 15 to 85)

* **Y-axis:** Reasoning Tokens (ranging from approximately 2000 to 22000)

* **Legend:** Located in the top-left corner.

* **deepseek/deepseek-r1:** Represented by blue circles.

* **deepseek/deepseek-r1 fit (R2: 0.667):** Represented by a solid blue line.

* **o3-mini:** Represented by orange squares.

* **o3-mini fit (R2: 0.833):** Represented by a dashed orange line.

* **qwen/qwq-32b-preview:** Represented by green triangles.

* **qwen/qwq-32b-preview fit (R2: 0.087):** Represented by a dashed green line.

* **Gridlines:** Present to aid in visual estimation of data point values.

### Detailed Analysis

**deepseek/deepseek-r1 (Blue Circles & Line):**

The blue line slopes generally upward, indicating a positive correlation between problem size and reasoning tokens.

* At Problem Size ≈ 20, Reasoning Tokens ≈ 6000.

* At Problem Size ≈ 30, Reasoning Tokens ≈ 7000.

* At Problem Size ≈ 40, Reasoning Tokens ≈ 8500.

* At Problem Size ≈ 50, Reasoning Tokens ≈ 9500.

* At Problem Size ≈ 60, Reasoning Tokens ≈ 10500.

* At Problem Size ≈ 70, Reasoning Tokens ≈ 12000.

* At Problem Size ≈ 80, Reasoning Tokens ≈ 14000.

**o3-mini (Orange Squares & Line):**

The orange line also slopes upward, but with a steeper gradient than the blue line.

* At Problem Size ≈ 20, Reasoning Tokens ≈ 3000.

* At Problem Size ≈ 30, Reasoning Tokens ≈ 4500.

* At Problem Size ≈ 40, Reasoning Tokens ≈ 6500.

* At Problem Size ≈ 50, Reasoning Tokens ≈ 8500.

* At Problem Size ≈ 60, Reasoning Tokens ≈ 11000.

* At Problem Size ≈ 70, Reasoning Tokens ≈ 14000.

* At Problem Size ≈ 80, Reasoning Tokens ≈ 18000.

**qwen/qwq-32b-preview (Green Triangles & Line):**

The green line is relatively flat, indicating a weak correlation between problem size and reasoning tokens.

* At Problem Size ≈ 20, Reasoning Tokens ≈ 4000.

* At Problem Size ≈ 30, Reasoning Tokens ≈ 4500.

* At Problem Size ≈ 40, Reasoning Tokens ≈ 5000.

* At Problem Size ≈ 50, Reasoning Tokens ≈ 6000.

* At Problem Size ≈ 60, Reasoning Tokens ≈ 8000.

* At Problem Size ≈ 70, Reasoning Tokens ≈ 11000.

* At Problem Size ≈ 80, Reasoning Tokens ≈ 16000.

### Key Observations

* The o3-mini model exhibits the highest number of reasoning tokens for larger problem sizes.

* The qwen/qwq-32b-preview model shows the least sensitivity to problem size, with a relatively constant number of reasoning tokens.

* The R-squared values indicate that the o3-mini model has the best fit to its trend line (0.833), while the qwen/qwq-32b-preview model has a very poor fit (0.087). This suggests that the relationship between problem size and reasoning tokens is not linear for the qwen model.

* There is significant scatter in the data points for all models, indicating that other factors besides problem size may influence the number of reasoning tokens.

### Interpretation

The data suggests that different language models scale differently with problem size in terms of reasoning token usage. The o3-mini model appears to be the most computationally expensive for larger problems, generating significantly more reasoning tokens than the other models. Conversely, the qwen/qwq-32b-preview model is the most efficient, maintaining a relatively constant level of reasoning token usage regardless of problem size. The R-squared values provide a quantitative measure of how well each model's trend line fits the data, with higher values indicating a stronger relationship between problem size and reasoning tokens. The scatter in the data suggests that the relationship is not perfectly linear and that other factors, such as the specific characteristics of the problem, may also play a role. This information is valuable for understanding the computational costs associated with using these models for different types of tasks and for selecting the most appropriate model for a given application. The low R2 value for qwen suggests that a linear model is not appropriate for describing its behavior, and a different functional form might be needed.

</details>

(a)

<details>

<summary>x2.png Details</summary>

### Visual Description

## Line Chart: Success Rate vs. Problem Size for Different Models

### Overview

This line chart depicts the success rate of four different models (deepseek/deepseek-r1, o3-mini, gemini-2.0-flash-thinking-exp-01-21, and qwen/qwq-32b-preview) as a function of problem size. The x-axis represents the problem size, and the y-axis represents the success rate in percentage.

### Components/Axes

* **X-axis Title:** "Problem Size" - Scale ranges from approximately 10 to 120.

* **Y-axis Title:** "Success Rate (%)" - Scale ranges from 0 to 100.

* **Legend:** Located in the top-right corner of the chart.

* **deepseek/deepseek-r1:** Solid blue line.

* **o3-mini:** Dashed orange line.

* **gemini-2.0-flash-thinking-exp-01-21:** Dashed green line.

* **qwen/qwq-32b-preview:** Dotted red line.

### Detailed Analysis

* **deepseek/deepseek-r1 (Blue Line):** This line starts at approximately 10% success rate at a problem size of 10, increases to approximately 95% at a problem size of 30, then sharply declines to approximately 0% at a problem size of 60, and remains at 0% for the rest of the range.

* **o3-mini (Orange Dashed Line):** This line begins at approximately 70% success rate at a problem size of 10, remains relatively stable around 95-100% until a problem size of 60, then decreases to approximately 40% at a problem size of 80, and then drops to approximately 0% at a problem size of 100.

* **gemini-2.0-flash-thinking-exp-01-21 (Green Dashed Line):** This line starts at approximately 0% success rate at a problem size of 10, rises to approximately 90% at a problem size of 20, then quickly drops to approximately 0% at a problem size of 40, and remains at 0% for the rest of the range.

* **qwen/qwq-32b-preview (Red Dotted Line):** This line starts at approximately 65% success rate at a problem size of 10, increases to approximately 98% at a problem size of 20, then decreases to approximately 35% at a problem size of 80, and then rises to approximately 65% at a problem size of 80, and then drops to approximately 0% at a problem size of 100.

### Key Observations

* The `deepseek/deepseek-r1` model exhibits a very sharp decline in success rate after a problem size of 30.

* The `o3-mini` model maintains a high success rate for smaller problem sizes (up to 60) but degrades rapidly beyond that.

* The `gemini-2.0-flash-thinking-exp-01-21` model shows a quick rise and fall in success rate, peaking around a problem size of 20.

* The `qwen/qwq-32b-preview` model has the most volatile performance, with significant fluctuations in success rate across different problem sizes.

### Interpretation

The chart demonstrates the scalability of different models in solving problems of varying sizes. The success rate is a metric of how well each model performs on the given task as the complexity (represented by problem size) increases.

* **deepseek/deepseek-r1** appears to be effective only for very small problem sizes. Its rapid decline suggests it struggles with increased complexity.

* **o3-mini** is robust for problems up to size 60, but becomes ineffective beyond that point. This indicates a limitation in its capacity to handle larger problems.

* **gemini-2.0-flash-thinking-exp-01-21** shows a quick initial success, but quickly becomes unreliable as the problem size increases. This could be due to overfitting or a lack of generalization ability.

* **qwen/qwq-32b-preview** exhibits the most complex behavior. Its fluctuating success rate suggests it may be sensitive to specific problem characteristics or have a more nuanced relationship with problem size. The initial high success rate, followed by a dip and then a rise, could indicate a learning curve or adaptation process.

The differences in performance highlight the importance of selecting a model appropriate for the expected problem size. The chart suggests that no single model consistently outperforms the others across all problem sizes. The optimal choice depends on the specific application and the range of problem sizes encountered.

</details>

(b)

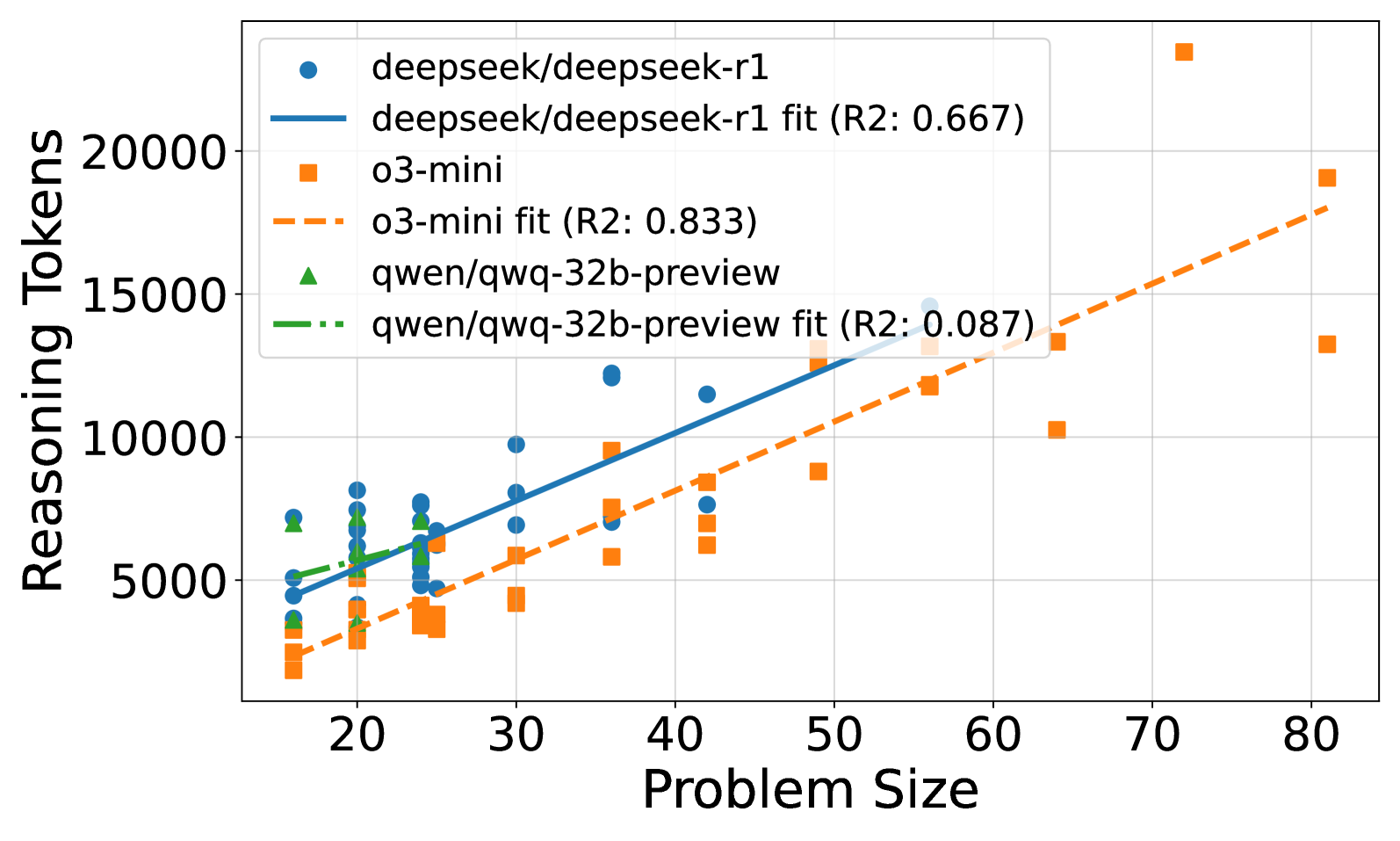

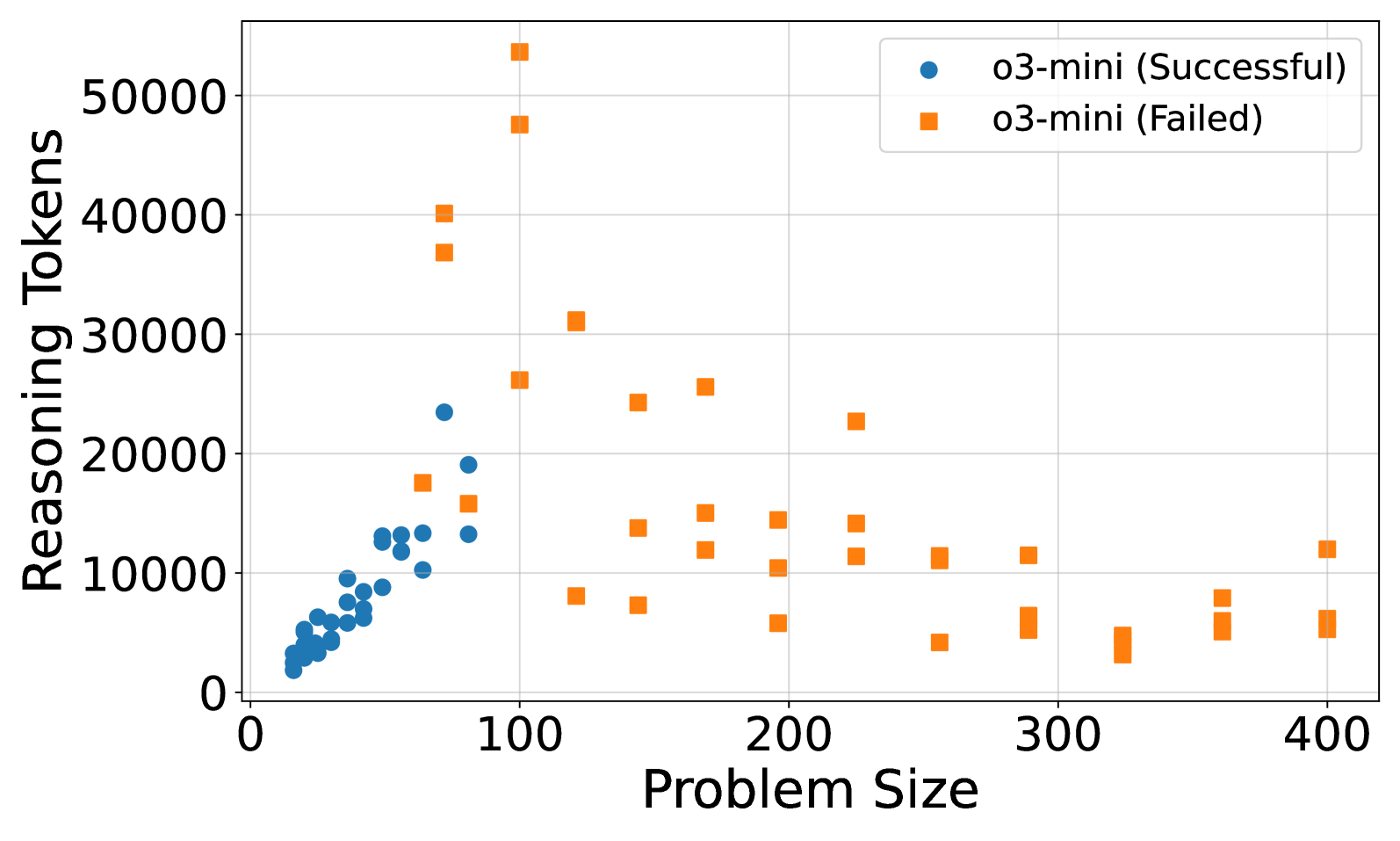

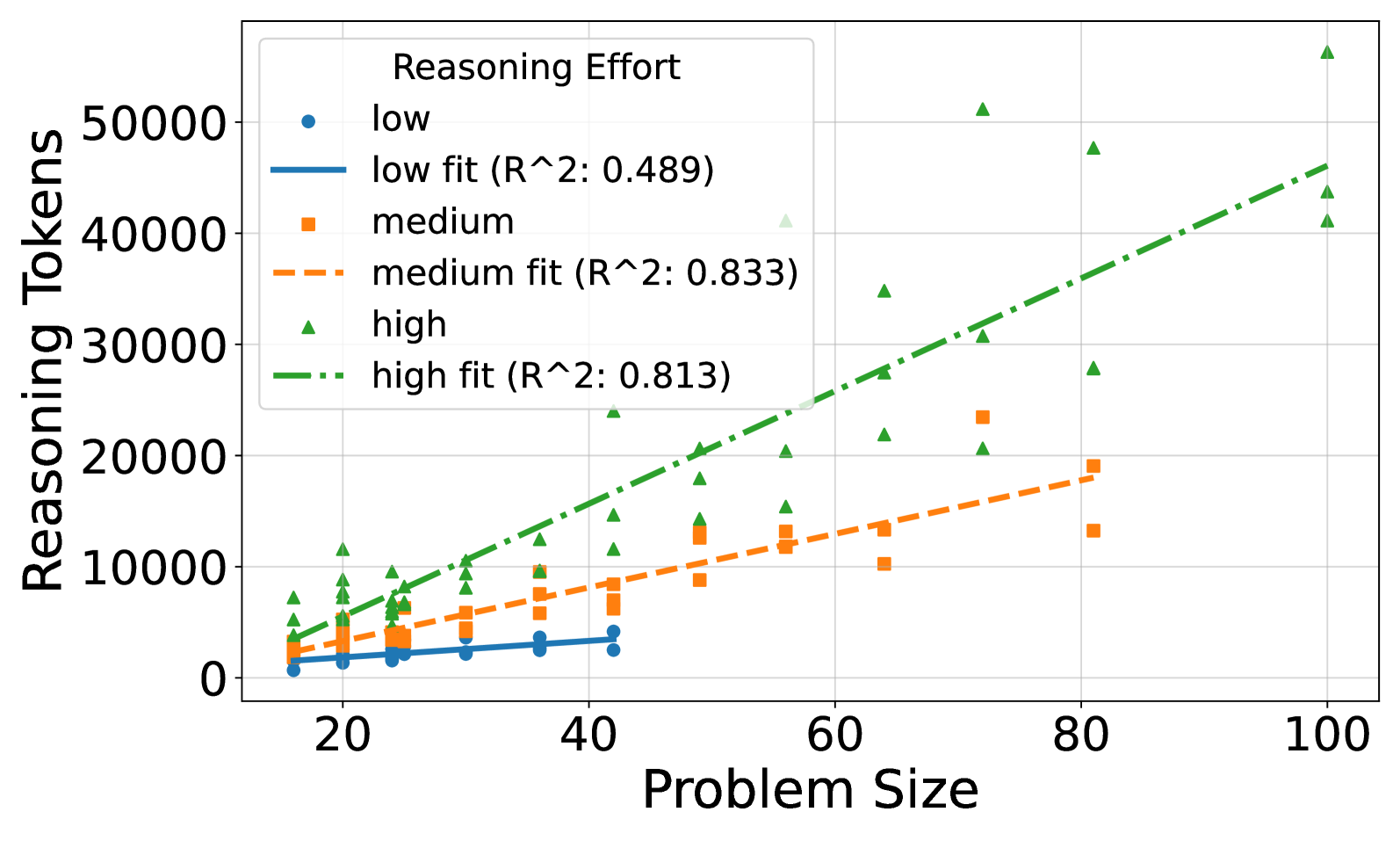

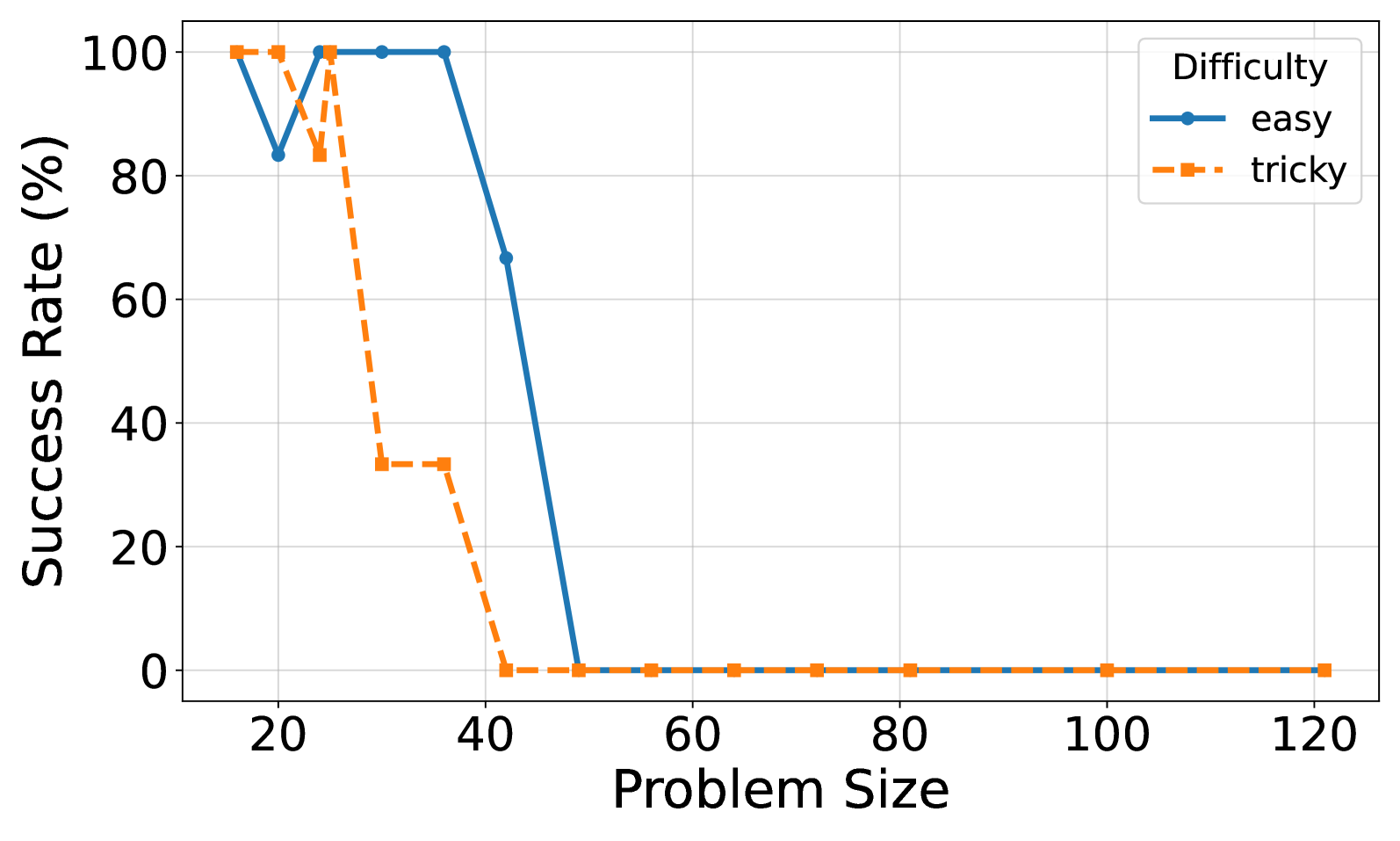

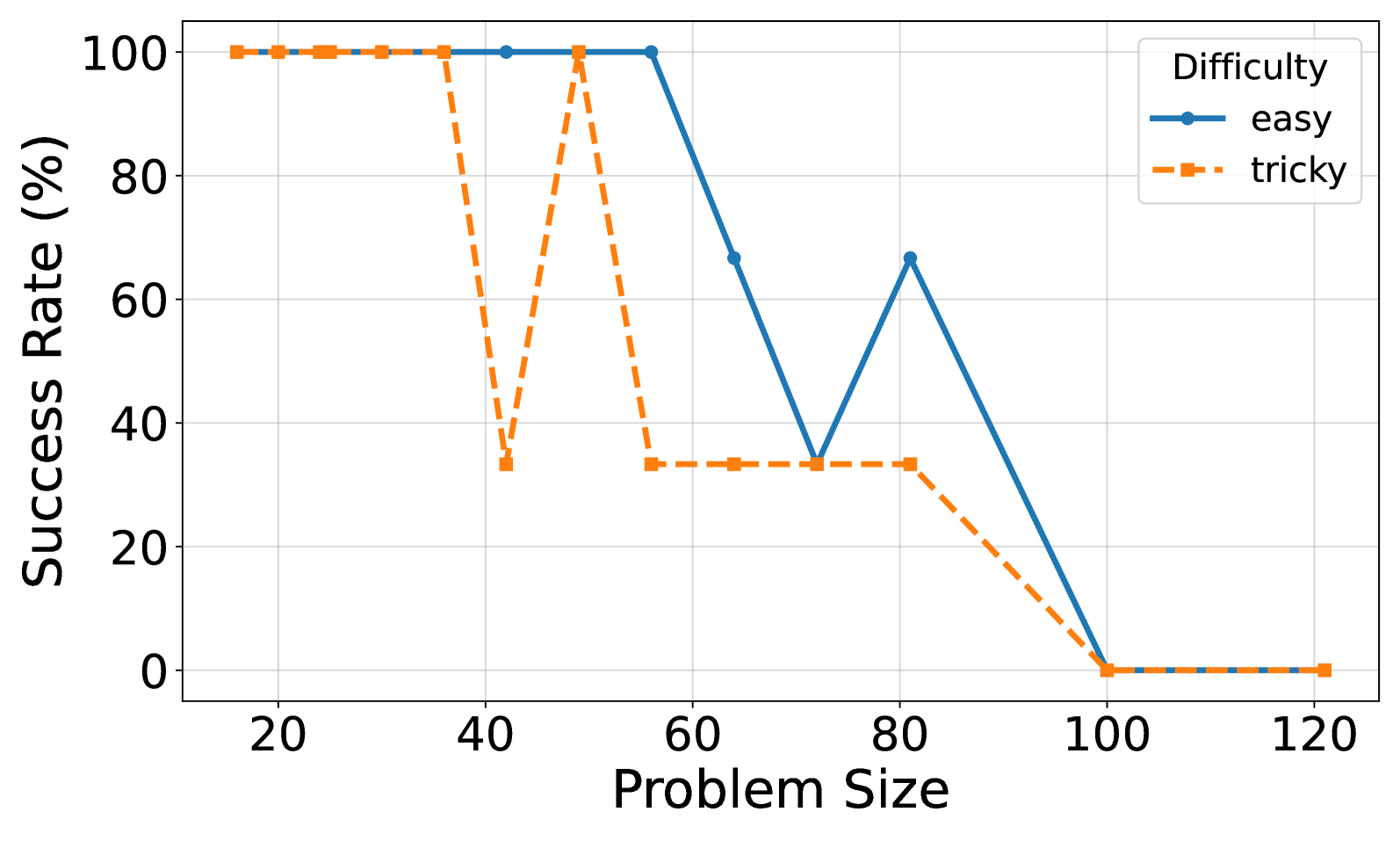

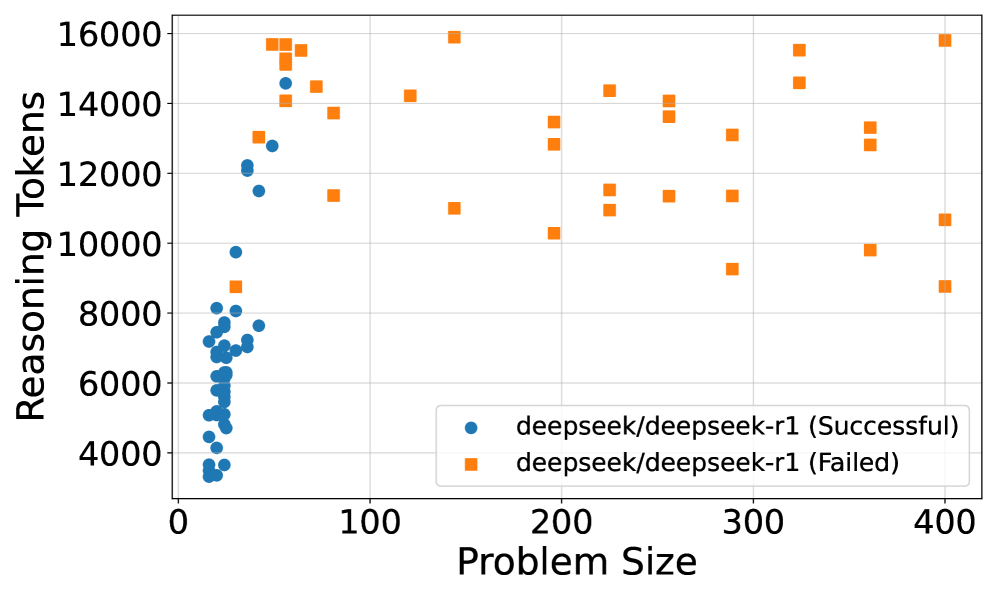

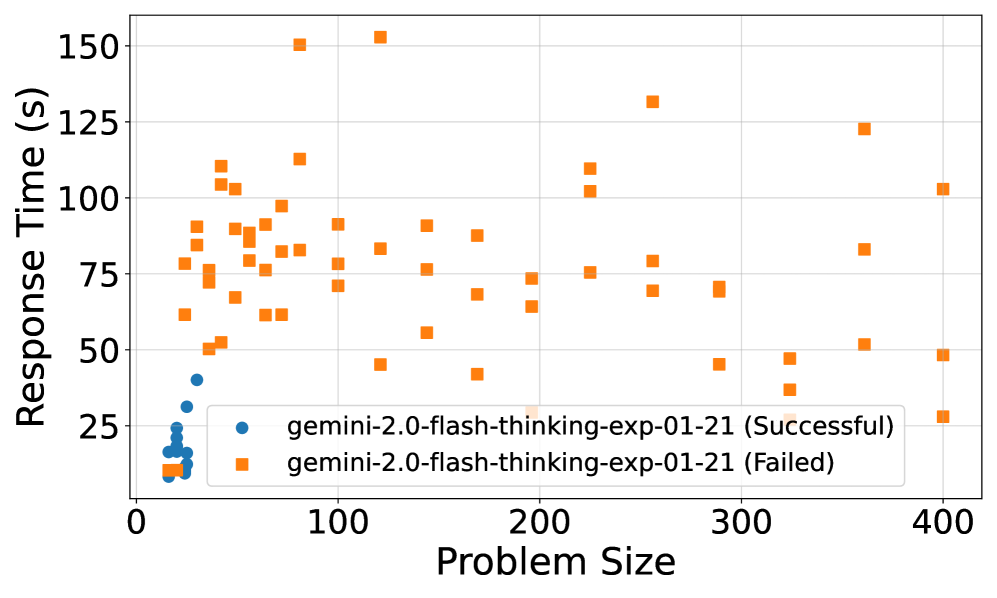

Figure 2: (a) Reasoning effort in number of reasoning tokens versus problem size for DeepSeek R1, o3-mini, and Qwen/QwQ-32B-Preview. Successful attempts only. Linear fits are added for each model. Gemini 2.0 Flash Thinking is excluded due to unknown number of thinking tokens. (b) Solved percentage versus problem size for all models. No model solved problems larger than size 100. o3-mini achieves the highest success rate, followed by DeepSeek R1 and Gemini 2.0 Flash Thinking. Qwen/QwQ-32B-Preview struggles with problem instances larger than size 20.

The relationship between reasoning effort and problem size reveals interesting scaling behaviors across the evaluated models. Figure 2(a) illustrates the scaling of reasoning effort, measured by the number of reasoning tokens, as the problem size increases for successfully solved puzzles. For DeepSeek R1 and o3-mini, we observe a roughly linear increase in reasoning effort with problem size. Notably, the slopes of the linear fits for R1 and o3-mini are very similar, suggesting comparable scaling behavior in reasoning effort for these models, although DeepSeek R1 consistently uses more tokens than o3-mini across problem sizes. Qwen/QwQ-32B-Preview shows a weaker linear correlation, likely due to the limited number of larger puzzles it could solve successfully.

The problem-solving capability of the models, shown in Figure 2(b), reveals performance limits as problem size increases. None of the models solved puzzles with a problem size exceeding 100. o3-mini demonstrates the highest overall solvability, managing to solve the largest problem instances, followed by DeepSeek R1 and Gemini 2.0 Flash Thinking. Qwen/QwQ-32B-Preview’s performance significantly degrades with increasing problem size, struggling to solve instances larger than 25.
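The solved percentage per problem size is a simple aggregation over individual attempts. The sketch below illustrates the computation. The record layout `(rows, cols, solved)` and the function name `success_rate_by_size` are assumptions for illustration, and the attempts listed are invented, not taken from the experiments.

```python
from collections import defaultdict


def success_rate_by_size(attempts):
    """Map problem size (rows * cols) to the percentage of solved attempts.

    `attempts` is an iterable of (rows, cols, solved) tuples.
    """
    total = defaultdict(int)
    solved = defaultdict(int)
    for rows, cols, ok in attempts:
        size = rows * cols  # problem size as defined in Section 3.2
        total[size] += 1
        solved[size] += int(ok)
    return {size: 100.0 * solved[size] / total[size] for size in sorted(total)}


# Invented attempts for illustration only.
attempts = [
    (4, 4, True), (4, 4, True),
    (5, 5, True), (5, 5, False),
    (10, 10, False),
]
rates = success_rate_by_size(attempts)
```

Grouping by the product of the dimensions means that grids such as 4 by 6 and 6 by 4 fall into the same bucket, which matches the definition of problem size used here.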

<details>

<summary>x3.png Details</summary>

### Visual Description


## Scatter Plot: Reasoning Tokens vs. Problem Size

### Overview

This image presents a scatter plot visualizing the relationship between "Problem Size" and "Reasoning Tokens" for two categories: successful and failed attempts, both labeled as "o3-mini". The plot aims to show how the number of reasoning tokens used changes with the size of the problem, and whether success or failure correlates with token usage.

### Components/Axes

* **X-axis:** "Problem Size" - ranging from approximately 0 to 420.

* **Y-axis:** "Reasoning Tokens" - ranging from 0 to 52000.

* **Legend:** Located in the top-right corner.

* Blue circles: "o3-mini (Successful)"

* Orange squares: "o3-mini (Failed)"

* **Grid:** A light gray grid is present, aiding in the reading of values.

### Detailed Analysis

The plot contains two distinct data series: successful and failed "o3-mini" attempts.

**Successful Attempts (Blue Circles):**

The trend for successful attempts is generally upward, but with significant variation.

* At a Problem Size of approximately 10, Reasoning Tokens are around 2000.

* As Problem Size increases to around 80, Reasoning Tokens increase to approximately 22000.

* Around a Problem Size of 100, Reasoning Tokens reach a peak of around 25000.

* From Problem Size 100 to 400, Reasoning Tokens fluctuate between 5000 and 15000, with a general decreasing trend.

**Failed Attempts (Orange Squares):**

The trend for failed attempts is more scattered and generally shows a decrease in Reasoning Tokens as Problem Size increases.

* At a Problem Size of approximately 10, Reasoning Tokens are around 1000.

* Between Problem Sizes of 50 and 150, Reasoning Tokens vary widely, ranging from approximately 8000 to 50000.

* From Problem Size 200 to 400, Reasoning Tokens generally decrease, fluctuating between 5000 and 10000.

* There is a notable outlier at a Problem Size of approximately 120, with Reasoning Tokens around 52000.

### Key Observations

* **Positive Correlation (Successful):** There's a positive correlation between Problem Size and Reasoning Tokens for successful attempts, up to a certain point (around Problem Size 100). Beyond that, the correlation weakens.

* **Negative Correlation (Failed):** There's a general negative correlation between Problem Size and Reasoning Tokens for failed attempts, though it's less consistent.

* **Outlier:** The failed attempt at a Problem Size of approximately 120 with 52000 Reasoning Tokens is a significant outlier.

* **Token Usage:** Successful attempts generally use fewer tokens than failed attempts for larger problem sizes.

### Interpretation

The data suggests that for smaller problem sizes, successful "o3-mini" attempts require more reasoning tokens. This could indicate that the algorithm needs to explore more possibilities to find a solution when the problem is relatively simple. However, as the problem size increases, the number of tokens needed for success decreases, potentially because the problem becomes more constrained or the algorithm converges more quickly.

The failed attempts show a more erratic pattern, with a high outlier suggesting a case where the algorithm spent a significant amount of resources without finding a solution. The general decrease in token usage for failed attempts with increasing problem size could indicate that the algorithm gives up more quickly on larger problems, or that the search space becomes less navigable.

The difference in token usage between successful and failed attempts, particularly for larger problem sizes, suggests that there's a threshold of reasoning effort beyond which the algorithm is unlikely to succeed. The data could be used to optimize the algorithm's resource allocation, potentially by setting a maximum token limit or by dynamically adjusting the search strategy based on problem size.

</details>

(a)

<details>

<summary>x4.png Details</summary>

### Visual Description


## Scatter Plot: Reasoning Tokens vs. Problem Size

### Overview

This image presents a scatter plot illustrating the relationship between "Problem Size" (on the x-axis) and "Reasoning Tokens" (on the y-axis) for three different levels of "Reasoning Effort" (low, medium, and high). Each effort level is represented by a different color and a corresponding trendline. The plot aims to demonstrate how the amount of reasoning required (measured in tokens) scales with the complexity of the problem, categorized by the level of effort involved.

### Components/Axes

* **X-axis:** "Problem Size" - Scale ranges from approximately 15 to 105, with markings at 20, 40, 60, 80, and 100.

* **Y-axis:** "Reasoning Tokens" - Scale ranges from 0 to 55,000, with markings at 0, 10,000, 20,000, 30,000, 40,000, and 50,000.

* **Legend:** Located in the top-right corner.

* "low" - Represented by blue circles.

* "medium" - Represented by orange squares.

* "high" - Represented by green triangles.

* **Trendlines:**

* "low fit (R^2: 0.489)" - Solid blue line.

* "medium fit (R^2: 0.833)" - Dashed orange line.

* "high fit (R^2: 0.813)" - Dashed green line.

* **Data Points:** Scatter points representing individual data instances for each reasoning effort level.

### Detailed Analysis

**Low Reasoning Effort (Blue):**

The blue data points are scattered relatively close to the x-axis, with most values between 0 and 5,000 tokens. The trendline is nearly flat, indicating a minimal increase in reasoning tokens with increasing problem size.

* At Problem Size = 20, Reasoning Tokens ≈ 1,000

* At Problem Size = 40, Reasoning Tokens ≈ 2,000

* At Problem Size = 60, Reasoning Tokens ≈ 3,000

* At Problem Size = 80, Reasoning Tokens ≈ 3,000

* At Problem Size = 100, Reasoning Tokens ≈ 4,000

**Medium Reasoning Effort (Orange):**

The orange data points show a more pronounced upward trend than the low effort level. The trendline is steeper, indicating a more significant increase in reasoning tokens with increasing problem size.

* At Problem Size = 20, Reasoning Tokens ≈ 4,000

* At Problem Size = 40, Reasoning Tokens ≈ 7,000

* At Problem Size = 60, Reasoning Tokens ≈ 12,000

* At Problem Size = 80, Reasoning Tokens ≈ 22,000

* At Problem Size = 100, Reasoning Tokens ≈ 28,000

**High Reasoning Effort (Green):**

The green data points exhibit the strongest upward trend, with values ranging from approximately 5,000 to 50,000 tokens. The trendline is the steepest, indicating a substantial increase in reasoning tokens with increasing problem size.

* At Problem Size = 20, Reasoning Tokens ≈ 7,000

* At Problem Size = 40, Reasoning Tokens ≈ 15,000

* At Problem Size = 60, Reasoning Tokens ≈ 25,000

* At Problem Size = 80, Reasoning Tokens ≈ 40,000

* At Problem Size = 100, Reasoning Tokens ≈ 52,000

The R^2 values indicate the goodness of fit for each trendline: 0.489 for low, 0.833 for medium, and 0.813 for high. Higher R^2 values suggest a stronger linear relationship between problem size and reasoning tokens.

### Key Observations

* The relationship between problem size and reasoning tokens is strongly influenced by the reasoning effort level.

* The "low" effort level shows the weakest correlation (lowest R^2 value) and minimal increase in reasoning tokens with problem size.

* The "medium" and "high" effort levels exhibit strong correlations (high R^2 values) and significant increases in reasoning tokens with problem size.

* The "high" effort level consistently requires the most reasoning tokens for any given problem size.

* There is some scatter in the data points around the trendlines, indicating variability in the reasoning token requirements for individual problems within each effort level.

### Interpretation

The data suggests that the amount of reasoning required to solve a problem increases with both the problem's size and the level of reasoning effort applied. The R^2 values indicate that the relationship is more linear and predictable for medium and high reasoning effort levels. The low effort level shows a weaker relationship, possibly because simpler strategies are employed that are less sensitive to problem size.

The increasing trendlines for medium and high effort levels suggest that as problems become more complex, more sophisticated reasoning processes are needed, leading to a greater demand for reasoning tokens. This could be due to the need for more steps, more complex calculations, or more extensive search through possible solutions.

The scatter around the trendlines indicates that there is inherent variability in the reasoning process. Even for problems of the same size and effort level, the exact number of reasoning tokens required can vary depending on the specific problem instance and the approach taken. This variability highlights the complexity of the reasoning process and the challenges in accurately predicting its resource requirements. The data could be used to estimate the computational resources needed for solving problems of different sizes and complexities, depending on the desired level of reasoning effort.

</details>

(b)

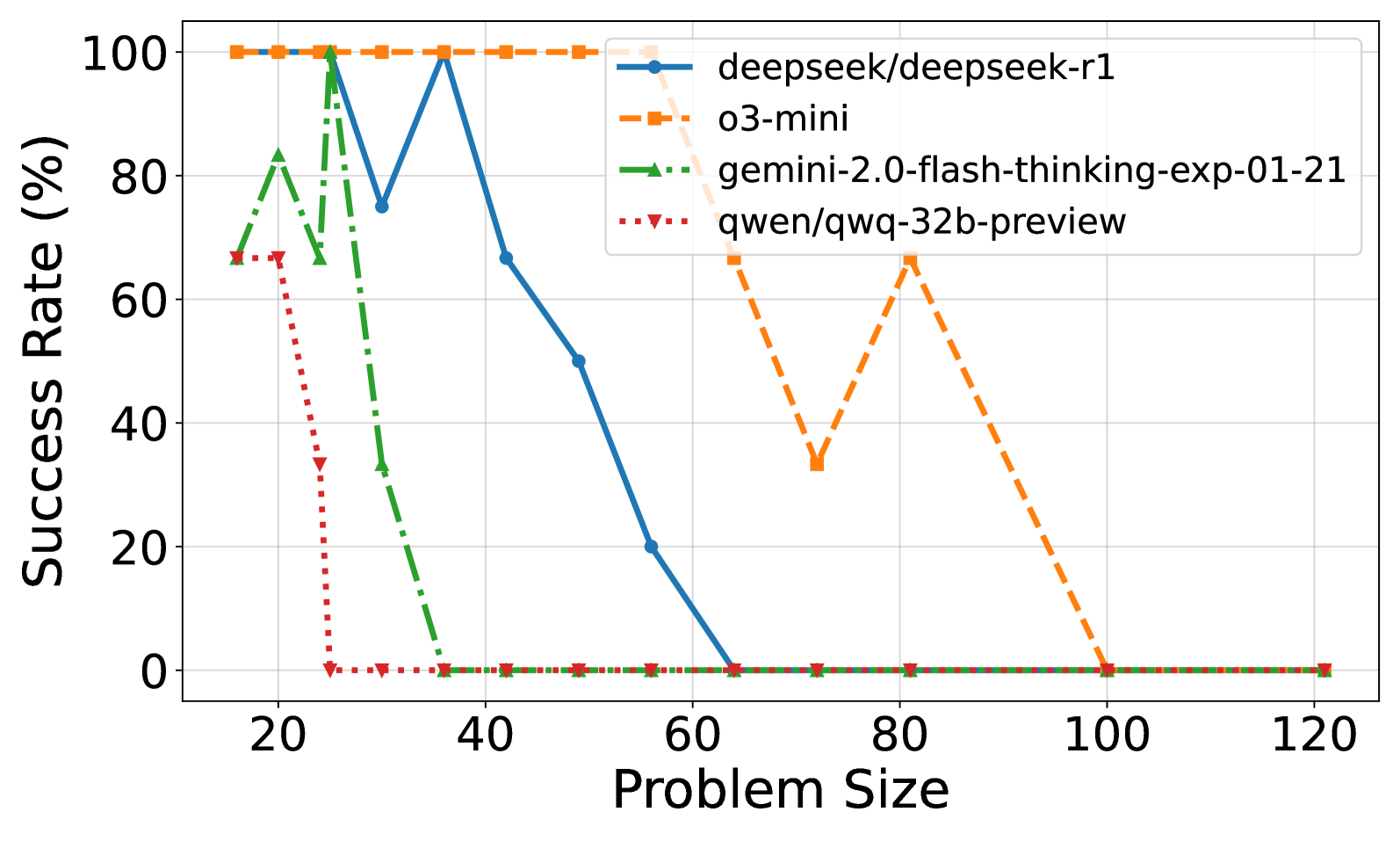

Figure 3: (a) Reasoning effort in number of reasoning tokens versus problem size for o3-mini. A peak in reasoning effort is observed around problem size 100, followed by a decline for larger problem sizes. (b) Reasoning effort in number of reasoning tokens versus problem size for o3-mini, categorized by low, medium, and high reasoning effort strategies. Steeper slopes are observed for higher reasoning effort strategies. High reasoning effort enables solving larger instances but also increases token usage for smaller, already solvable problems.

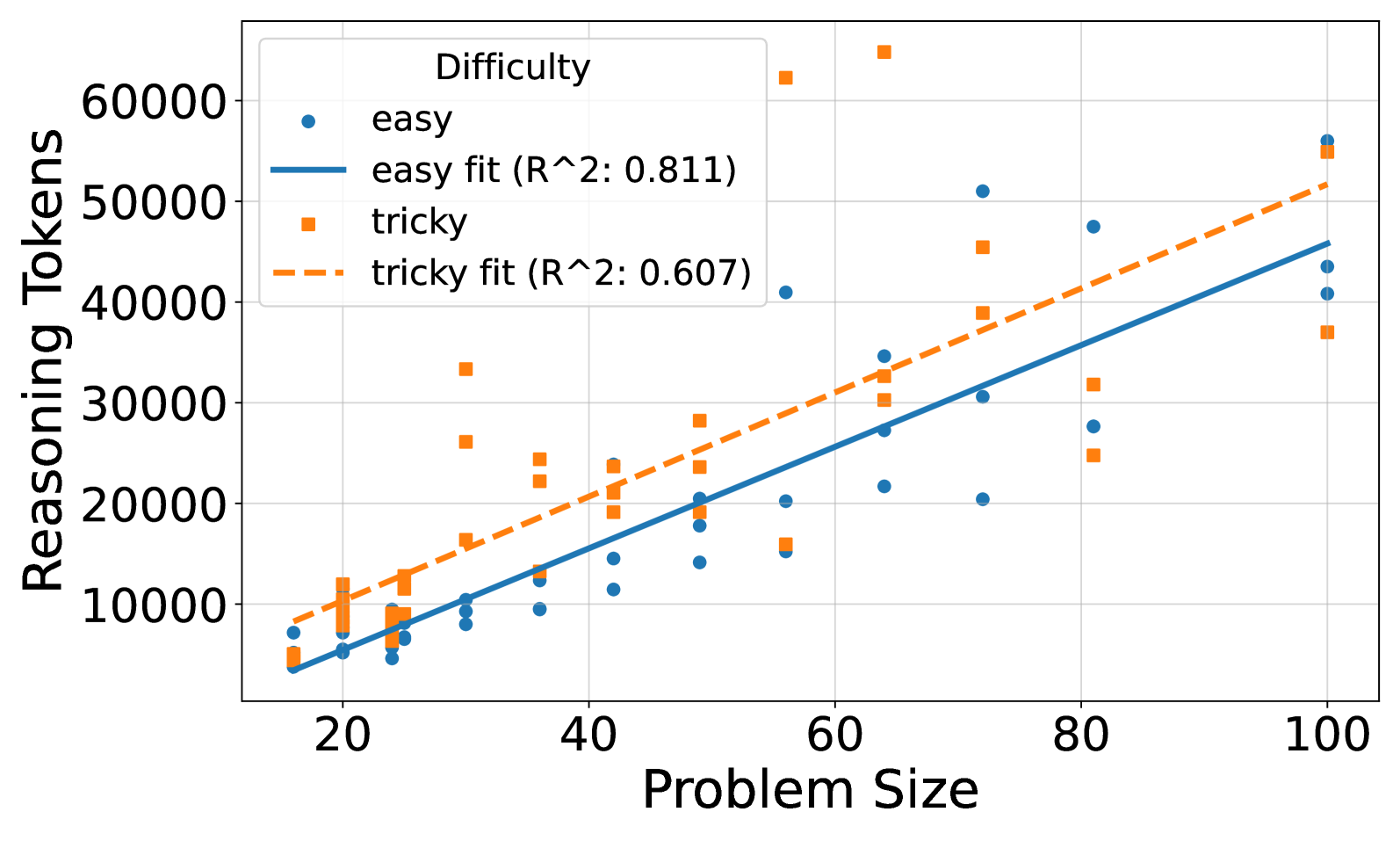

A more detailed analysis of o3-mini’s reasoning effort (Figure 3(a)) reveals a non-monotonic trend. Reasoning effort initially increases with problem size and peaks around a problem size of 100. Beyond this point, it decreases, suggesting a potential "frustration" effect in which increased complexity no longer leads to proportionally increased reasoning. This behavior was not observed for the other models (see Section A.2.2). It would be interesting to see what effect recent work on optimizing reasoning length (Luo et al., 2025) would have on these results.
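One way to locate such a peak is to average the reasoning tokens per problem size and take the size with the largest mean. The following is a minimal sketch under stated assumptions, not the analysis code used for the figure: the function names and the `(problem_size, reasoning_tokens)` record format are hypothetical.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, Iterable, Tuple


def mean_tokens_by_size(records: Iterable[Tuple[int, int]]) -> Dict[int, float]:
    """Average reasoning tokens for each problem size, in ascending size order."""
    grouped = defaultdict(list)
    for size, tokens in records:
        grouped[size].append(tokens)
    return {size: mean(values) for size, values in sorted(grouped.items())}


def find_effort_peak(records: Iterable[Tuple[int, int]]) -> Tuple[int, bool]:
    """Return (peak_size, is_interior).

    peak_size is the problem size with the highest mean token count
    (the smallest such size if several tie). is_interior is True when
    larger problem sizes exist in the data, i.e. the mean effort does
    not keep growing up to the largest size measured.
    """
    means = mean_tokens_by_size(records)
    if not means:
        raise ValueError("no records given")
    peak_size = max(means, key=means.get)
    is_interior = any(size > peak_size for size in means)
    return peak_size, is_interior
```

An interior peak only indicates non-monotonic scaling on average; with few samples per size, the scatter around the means should be inspected before drawing conclusions.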

Figure 3(b) further explores o3-mini’s behavior by categorizing reasoning effort into low, medium, and high strategies. The steepness of the scaling slope increases with reasoning effort, indicating that higher effort strategies lead to a more pronounced increase in token usage as problem size grows. While high reasoning effort enables solving larger puzzles (up to 10x10), it also results in a higher token count even for smaller problems that were already solvable with lower effort strategies. This suggests a trade-off where increased reasoning effort can extend the solvable problem range but may also introduce inefficiencies for simpler instances.
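The slope comparison between effort levels rests on an ordinary least-squares line fitted to (problem size, reasoning tokens) pairs, with R^2 as the goodness of fit. A minimal sketch of that computation follows. It is an illustration, not the authors' code; the function name `linear_fit_r2` and its input format are assumptions.

```python
from typing import Sequence, Tuple


def linear_fit_r2(sizes: Sequence[float], tokens: Sequence[float]) -> Tuple[float, float, float]:
    """Least-squares line tokens ~ slope * size + intercept, plus R^2."""
    n = len(sizes)
    if n != len(tokens) or n < 2:
        raise ValueError("need at least two (size, tokens) pairs of equal length")

    mean_x = sum(sizes) / n
    mean_y = sum(tokens) / n
    sxx = sum((x - mean_x) ** 2 for x in sizes)
    if sxx == 0:
        raise ValueError("all problem sizes are identical; slope is undefined")

    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(sizes, tokens))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x

    # R^2 = 1 - (residual sum of squares) / (total sum of squares)
    ss_res = sum((y - (slope * x + intercept)) ** 2 for x, y in zip(sizes, tokens))
    ss_tot = sum((y - mean_y) ** 2 for y in tokens)
    r2 = 1.0 - ss_res / ss_tot if ss_tot > 0 else 1.0
    return slope, intercept, r2
```

Running the function once per effort level on successful attempts only would yield one slope and one R^2 per level. Note that a steeper slope and a higher R^2 are independent properties: the first measures how fast token usage grows, the second how well a line describes the data.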

5 Conclusion

This study examined how reasoning effort scales in LLMs using the Tents puzzle. We found that reasoning effort generally scales linearly with problem size for solvable instances. Model performance varied, with o3-mini and DeepSeek R1 showing better performance than Qwen/QwQ-32B-Preview and Gemini 2.0 Flash Thinking. These results suggest that while LLMs can adapt reasoning effort to problem complexity, their logical coherence has limits, especially for larger problems. Future work should extend this analysis to a wider variety of puzzles from the PUZZLES benchmark, including puzzles with different algorithmic complexity. These insights could guide strategies for improving reasoning scalability and efficiency, potentially by optimizing reasoning length or refining prompting techniques. Understanding these limitations is crucial for advancing LLMs in complex problem-solving.

References

- Chollet (2019) François Chollet. On the measure of intelligence. arXiv preprint arXiv:1911.01547, 2019.

- Cobbe et al. (2021) Karl Cobbe, Vineet Kosaraju, Mohammad Bavarian, Mark Chen, Heewoo Jun, Lukasz Kaiser, Matthias Plappert, Jerry Tworek, Jacob Hilton, Reiichiro Nakano, et al. Training verifiers to solve math word problems. arXiv preprint arXiv:2110.14168, 2021.

- DeepMind (2025) DeepMind. Gemini flash thinking. https://deepmind.google/technologies/gemini/flash-thinking/, 2025. Accessed: February 6, 2025.

- Estermann et al. (2024) Benjamin Estermann, Luca A Lanzendörfer, Yannick Niedermayr, and Roger Wattenhofer. Puzzles: A benchmark for neural algorithmic reasoning. In The Thirty-eight Conference on Neural Information Processing Systems Datasets and Benchmarks Track, 2024.

- Fan et al. (2023) Lizhou Fan, Wenyue Hua, Lingyao Li, Haoyang Ling, and Yongfeng Zhang. Nphardeval: Dynamic benchmark on reasoning ability of large language models via complexity classes. arXiv preprint arXiv:2312.14890, 2023.

- Guo et al. (2025) Daya Guo, Dejian Yang, Haowei Zhang, Junxiao Song, Ruoyu Zhang, Runxin Xu, Qihao Zhu, Shirong Ma, Peiyi Wang, Xiao Bi, et al. Deepseek-r1: Incentivizing reasoning capability in llms via reinforcement learning. arXiv preprint arXiv:2501.12948, 2025.

- Hendrycks et al. (2020) Dan Hendrycks, Collin Burns, Steven Basart, Andy Zou, Mantas Mazeika, Dawn Song, and Jacob Steinhardt. Measuring massive multitask language understanding. arXiv preprint arXiv:2009.03300, 2020.

- Huang & Chang (2022) Jie Huang and Kevin Chen-Chuan Chang. Towards reasoning in large language models: A survey. arXiv preprint arXiv:2212.10403, 2022.

- Jimenez et al. (2023) Carlos E Jimenez, John Yang, Alexander Wettig, Shunyu Yao, Kexin Pei, Ofir Press, and Karthik Narasimhan. Swe-bench: Can language models resolve real-world github issues? arXiv preprint arXiv:2310.06770, 2023.

- Lee et al. (2024) Seungpil Lee, Woochang Sim, Donghyeon Shin, Wongyu Seo, Jiwon Park, Seokki Lee, Sanha Hwang, Sejin Kim, and Sundong Kim. Reasoning abilities of large language models: In-depth analysis on the abstraction and reasoning corpus. ACM Transactions on Intelligent Systems and Technology, 2024.

- Luo et al. (2025) Haotian Luo, Li Shen, Haiying He, Yibo Wang, Shiwei Liu, Wei Li, Naiqiang Tan, Xiaochun Cao, and Dacheng Tao. O1-pruner: Length-harmonizing fine-tuning for o1-like reasoning pruning. arXiv preprint arXiv:2501.12570, 2025.

- Mathematical Association of America (2024) Mathematical Association of America. 2024 AIME I problems. https://artofproblemsolving.com/wiki/index.php/2024_AIME_I, 2024. Accessed: February 6, 2025.

- OpenAI (2025) OpenAI. Openai o3 mini. https://openai.com/index/openai-o3-mini/, 2025. Accessed: February 6, 2025.

- Qwen (2024) Qwen. Qwq: Reflect deeply on the boundaries of the unknown, November 2024. URL https://qwenlm.github.io/blog/qwq-32b-preview/.

- Rein et al. (2023) David Rein, Betty Li Hou, Asa Cooper Stickland, Jackson Petty, Richard Yuanzhe Pang, Julien Dirani, Julian Michael, and Samuel R Bowman. Gpqa: A graduate-level google-proof q&a benchmark. arXiv preprint arXiv:2311.12022, 2023.

- Tatham (n.d.) Simon Tatham. Simon Tatham’s portable puzzle collection. https://www.chiark.greenend.org.uk/~sgtatham/puzzles/. Accessed: 2025-02-06.

- Wei et al. (2022) Jason Wei, Xuezhi Wang, Dale Schuurmans, Maarten Bosma, Fei Xia, Ed Chi, Quoc V Le, Denny Zhou, et al. Chain-of-thought prompting elicits reasoning in large language models. Advances in neural information processing systems, 35:24824–24837, 2022.

- Yao et al. (2023) Shunyu Yao, Dian Yu, Jeffrey Zhao, Izhak Shafran, Thomas L Griffiths, Yuan Cao, and Karthik Narasimhan. Tree of thoughts: Deliberate problem solving with large language models. arXiv preprint arXiv:2305.10601, 2023.

- Zhou et al. (2022) Denny Zhou, Nathanael Schärli, Le Hou, Jason Wei, Nathan Scales, Xuezhi Wang, Dale Schuurmans, Claire Cui, Olivier Bousquet, Quoc Le, et al. Least-to-most prompting enables complex reasoning in large language models. arXiv preprint arXiv:2205.10625, 2022.

Appendix A Appendix

A.1 Full Prompt

The full prompt used in the experiments is shown below, using a 4x4 puzzle as an example:


You are a logic puzzle expert. You will be given a logic puzzle to solve. Here is a description of the puzzle:

You have a grid of squares, some of which contain trees. Your aim is to place tents in some of the remaining squares, in such a way that the following conditions are met:

There are exactly as many tents as trees.

The tents and trees can be matched up in such a way that each tent is directly adjacent (horizontally or vertically, but not diagonally) to its own tree. However, a tent may be adjacent to other trees as well as its own.

No two tents are adjacent horizontally, vertically or diagonally.

The number of tents in each row, and in each column, matches the numbers given in the row or column constraints.

Grass indicates that there cannot be a tent in that position.

You receive an array representation of the puzzle state as a grid. Your task is to solve the puzzle by filling out the grid with the correct values. You need to solve the puzzle on your own, you cannot use any external resources or run any code. Once you have solved the puzzle, tell me the final answer without explanation. Return the final answer as a JSON array of arrays.

Here is the current state of the puzzle as a string of the internal state representation:

A 0 represents an empty cell, a 1 represents a tree, a 2 represents a tent, and a 3 represents a grass patch.

Tents puzzle state:

Current grid:

[[0 0 1 0]

[0 1 0 0]

[1 0 0 0]

[0 0 0 0]]

The column constraints are the following:

[1 1 0 1]

The row constraints are the following:

[2 0 0 1]
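The rules stated in the prompt can be checked mechanically for any returned grid. The sketch below is one possible checker and is not taken from the paper: the function name `is_valid_tents_solution` and its signature are hypothetical, and the cell encoding (0 empty, 1 tree, 2 tent, 3 grass) follows the prompt. The tent-to-tree matching condition is verified with a standard augmenting-path bipartite matching.

```python
from typing import List

EMPTY, TREE, TENT, GRASS = 0, 1, 2, 3  # encoding from the prompt
Grid = List[List[int]]


def is_valid_tents_solution(puzzle: Grid, solution: Grid,
                            col_constraints: List[int],
                            row_constraints: List[int]) -> bool:
    n_rows, n_cols = len(puzzle), len(puzzle[0])
    if len(solution) != n_rows or any(len(row) != n_cols for row in solution):
        return False

    # Trees must stay where they were; grass cells must not receive a tent.
    for r in range(n_rows):
        for c in range(n_cols):
            if (puzzle[r][c] == TREE) != (solution[r][c] == TREE):
                return False
            if puzzle[r][c] == GRASS and solution[r][c] == TENT:
                return False

    cells = [(r, c) for r in range(n_rows) for c in range(n_cols)]
    trees = [p for p in cells if solution[p[0]][p[1]] == TREE]
    tents = [p for p in cells if solution[p[0]][p[1]] == TENT]

    # Exactly as many tents as trees.
    if len(tents) != len(trees):
        return False

    # Row and column tent counts must match the constraints.
    for r in range(n_rows):
        if sum(1 for c in range(n_cols) if solution[r][c] == TENT) != row_constraints[r]:
            return False
    for c in range(n_cols):
        if sum(1 for r in range(n_rows) if solution[r][c] == TENT) != col_constraints[c]:
            return False

    # No two tents may touch, not even diagonally.
    tent_set = set(tents)
    for r, c in tents:
        for dr in (-1, 0, 1):
            for dc in (-1, 0, 1):
                if (dr, dc) != (0, 0) and (r + dr, c + dc) in tent_set:
                    return False

    # Each tent needs its own orthogonally adjacent tree (perfect matching).
    tree_index = {pos: i for i, pos in enumerate(trees)}
    adjacent_trees = [
        [tree_index[(r + dr, c + dc)]
         for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1))
         if (r + dr, c + dc) in tree_index]
        for r, c in tents
    ]
    tent_of_tree = [-1] * len(trees)

    def try_assign(tent: int, seen: set) -> bool:
        for tree in adjacent_trees[tent]:
            if tree in seen:
                continue
            seen.add(tree)
            if tent_of_tree[tree] == -1 or try_assign(tent_of_tree[tree], seen):
                tent_of_tree[tree] = tent
                return True
        return False

    return all(try_assign(t, set()) for t in range(len(tents)))
```

For the 4x4 example above, the grid `[[0, 2, 1, 2], [0, 1, 0, 0], [1, 0, 0, 0], [2, 0, 0, 0]]` passes this check with column constraints `[1, 1, 0, 1]` and row constraints `[2, 0, 0, 1]`. Whether empty cells are left as 0 or filled with 3 does not affect the result.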

A.2 Additional Figures

A.2.1 Easy vs. Tricky Puzzles

<details>

<summary>x5.png Details</summary>

### Visual Description


## Scatter Plot: Reasoning Tokens vs. Problem Size

### Overview

This image presents a scatter plot illustrating the relationship between "Problem Size" and "Reasoning Tokens" for two difficulty levels: "easy" and "tricky". Two linear regression lines are fitted to each dataset, showing the trend for each difficulty. The plot includes a legend identifying the data series and their corresponding R-squared values.

### Components/Axes

* **X-axis:** "Problem Size" ranging from approximately 15 to 42.

* **Y-axis:** "Reasoning Tokens" ranging from approximately 500 to 5000.

* **Legend:** Located in the top-right corner, it identifies:

  * "easy" (blue circles) with a solid blue line labeled "easy fit (R^2: 0.468)"

  * "tricky" (orange squares) with a dashed orange line labeled "tricky fit (R^2: 0.502)"

* **Grid:** A light gray grid is present to aid in reading values.

### Detailed Analysis

**Easy Data (Blue Circles):**

The "easy" data points are scattered, generally increasing with problem size. The fitted line slopes upward, indicating a positive correlation.

* At Problem Size ≈ 15, Reasoning Tokens ≈ 800.

* At Problem Size ≈ 20, Reasoning Tokens ≈ 1600.

* At Problem Size ≈ 25, Reasoning Tokens ≈ 2200.

* At Problem Size ≈ 30, Reasoning Tokens ≈ 2300.

* At Problem Size ≈ 35, Reasoning Tokens ≈ 2500.

* At Problem Size ≈ 40, Reasoning Tokens ≈ 3900.

**Tricky Data (Orange Squares):**

The "tricky" data points are also scattered, but generally show a steeper upward trend than the "easy" data. The fitted line has a larger slope, indicating that reasoning tokens grow faster with problem size.

* At Problem Size ≈ 16, Reasoning Tokens ≈ 1500.

* At Problem Size ≈ 20, Reasoning Tokens ≈ 2500.

* At Problem Size ≈ 25, Reasoning Tokens ≈ 3500.

* At Problem Size ≈ 30, Reasoning Tokens ≈ 4500.

* At Problem Size ≈ 35, Reasoning Tokens ≈ 4800.

* At Problem Size ≈ 40, Reasoning Tokens ≈ 5000.

### Key Observations

* The "tricky" problems consistently require more reasoning tokens than "easy" problems for the same problem size.

* The R-squared value for the "tricky" fit (0.502) is slightly higher than for the "easy" fit (0.468), suggesting a better linear fit for the "tricky" data.

* Both datasets exhibit considerable scatter, indicating that problem size is not the sole determinant of reasoning tokens.

* There is a noticeable gap between the two lines, especially at larger problem sizes.

### Interpretation

The data suggests that the complexity of a problem (as indicated by "difficulty") significantly impacts the number of reasoning tokens required to solve it. As problem size increases, the number of reasoning tokens also tends to increase, but this relationship is not perfectly linear. The higher R-squared value for the "tricky" data suggests that the relationship between problem size and reasoning tokens is more predictable for complex problems. The scatter in both datasets indicates that other factors, beyond problem size and difficulty, influence the reasoning process. The increasing gap between the two lines as problem size grows suggests that the difference in reasoning effort between "easy" and "tricky" problems becomes more pronounced with larger problem sizes. This could be due to the increased need for more complex strategies or deeper reasoning when tackling larger, more challenging problems.

</details>

(a)

<details>

<summary>x6.png Details</summary>

### Visual Description


## Line Chart: Success Rate vs. Problem Size for Different Difficulties

### Overview

This line chart depicts the relationship between problem size and success rate for two difficulty levels: "easy" and "tricky". The x-axis represents the problem size, and the y-axis represents the success rate in percentage. The chart shows how the success rate changes as the problem size increases for each difficulty level.

### Components/Axes

* **X-axis Title:** "Problem Size" (ranging from approximately 15 to 125)

* **Y-axis Title:** "Success Rate (%)" (ranging from 0 to 100)

* **Legend Title:** "Difficulty"

* **Legend Labels:**

  * "easy" (represented by a solid blue line)

  * "tricky" (represented by a dashed orange line)

* **Gridlines:** Present, providing a visual aid for reading values.

### Detailed Analysis

**Easy Difficulty (Blue Solid Line):**

The line starts at approximately 98% success rate at a problem size of 15. It initially decreases slightly to around 85% at a problem size of 20. Then, it rapidly drops to approximately 50% at a problem size of 35, and continues to decline to around 5% at a problem size of 60. From 60 to 120, the line remains relatively flat, hovering around 5% success rate.

* (15, 98)

* (20, 85)

* (35, 50)

* (40, 0)

* (60, 5)

* (120, 5)

**Tricky Difficulty (Orange Dashed Line):**

The line begins at approximately 100% success rate at a problem size of 15. It decreases to around 80% at a problem size of 20. It then drops sharply to approximately 35% at a problem size of 30, and then plummets to 0% at a problem size of 40. From 40 to 120, the line remains consistently at 0% success rate.

* (15, 100)

* (20, 80)

* (30, 35)

* (40, 0)

* (60, 0)

* (120, 0)

### Key Observations

* The "easy" difficulty maintains a significantly higher success rate than the "tricky" difficulty across all problem sizes.

* Both difficulties experience a sharp decline in success rate as the problem size increases.

* For the "tricky" difficulty, the success rate drops to 0% at a problem size of 40, and remains at 0% for larger problem sizes.

* The "easy" difficulty shows a more gradual decline in success rate, but still reaches a very low success rate (around 5%) for larger problem sizes.

### Interpretation

The data suggests that the problem difficulty has a substantial impact on the success rate. While the "easy" problems can be solved with a reasonable success rate for smaller problem sizes, both difficulty levels become increasingly challenging as the problem size grows. The "tricky" problems are particularly sensitive to problem size, with success rate dropping to zero relatively quickly. This could indicate that the algorithm or approach used for the "tricky" problems does not scale well with increasing problem size. The chart highlights the importance of considering problem complexity and scalability when designing algorithms or solving problems. The rapid decline in success rate for both difficulties suggests a potential computational limit or inherent difficulty in solving these types of problems beyond a certain size.

</details>

(b)

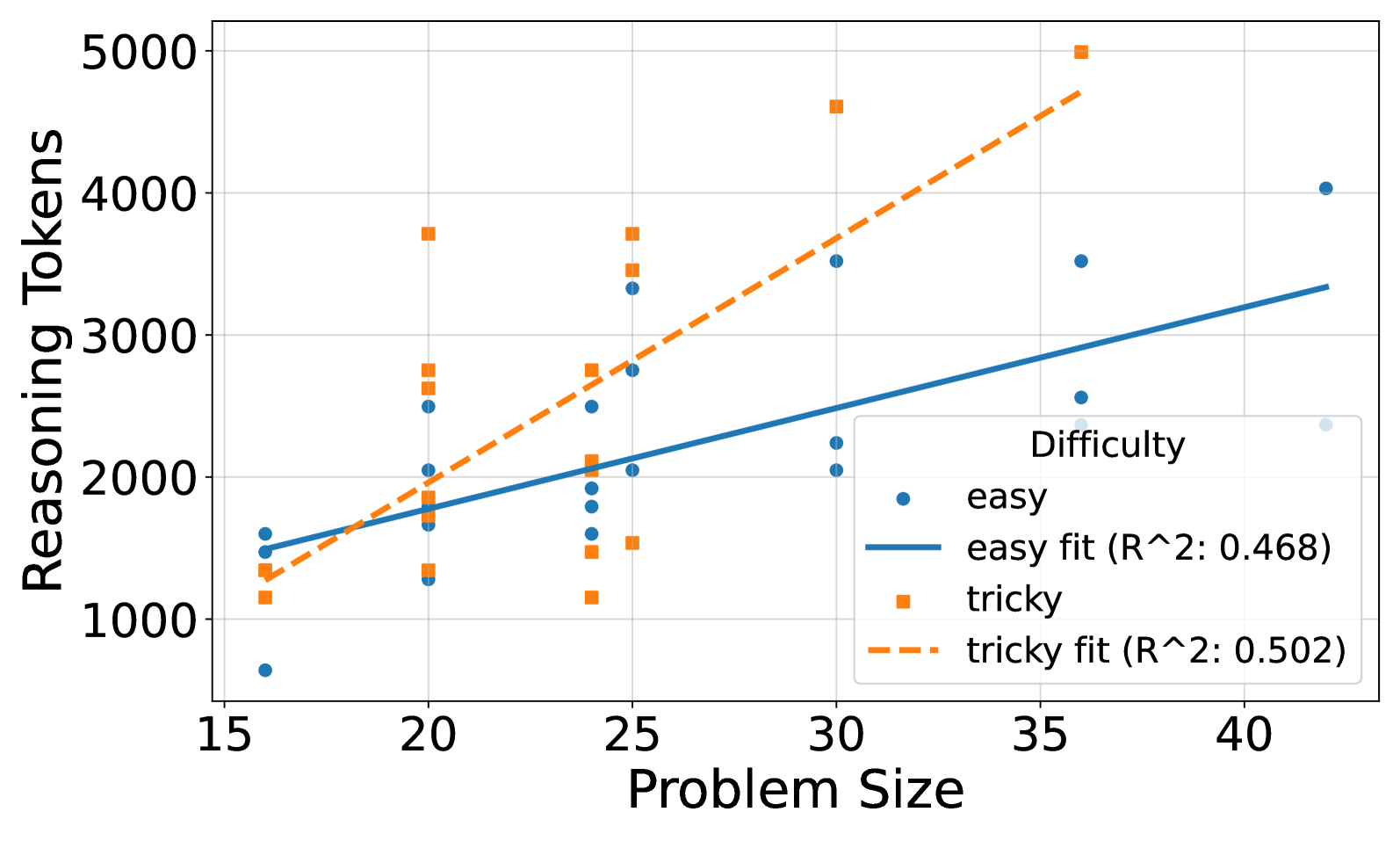

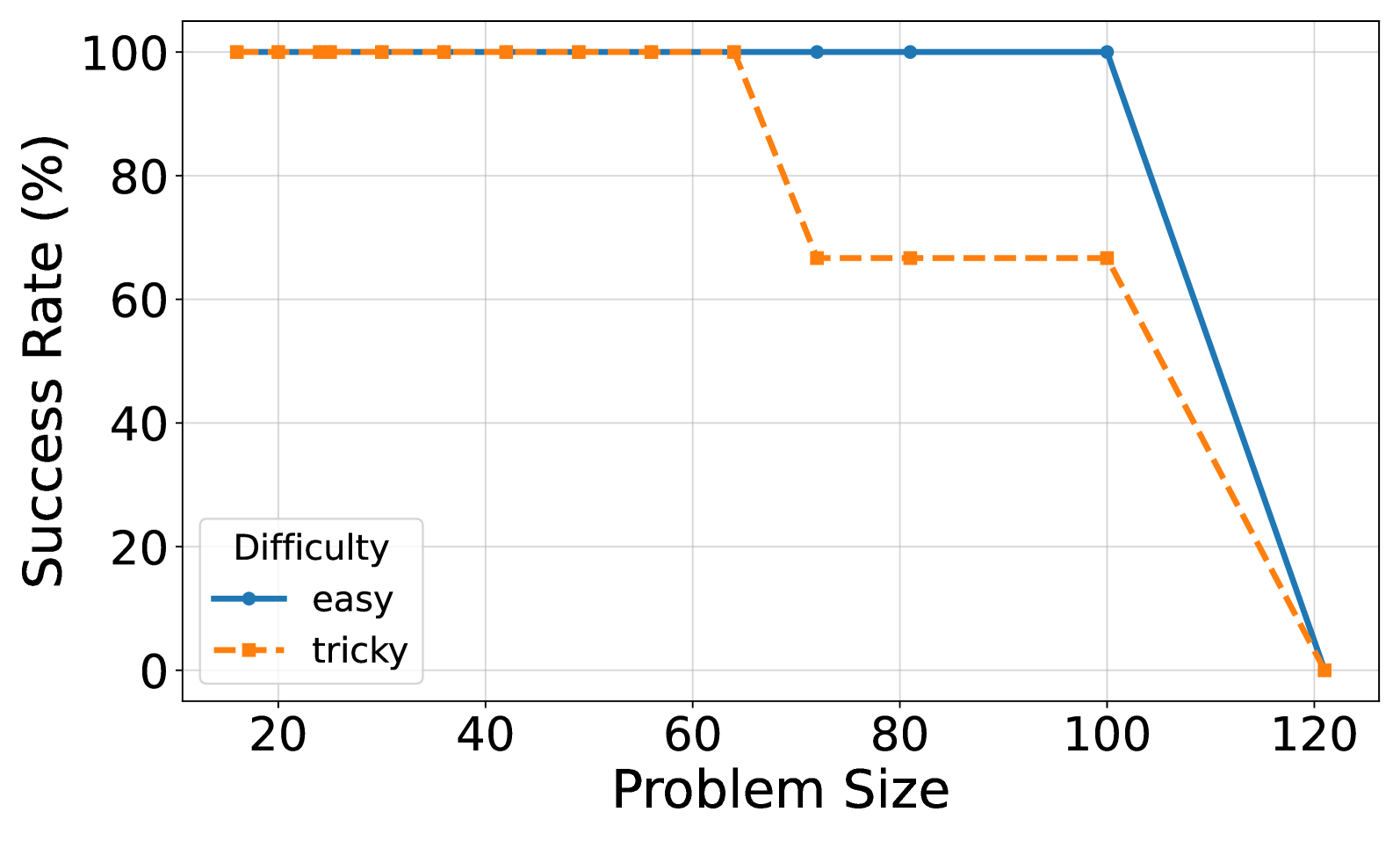

Figure 4: (a) Reasoning effort in number of reasoning tokens versus problem size for o3-mini with low reasoning effort. Only successful attempts are shown. Linear fits are added for each difficulty level. (b) Solved percentage versus problem size for o3-mini with low reasoning effort.

<details>

<summary>x7.png Details</summary>

### Visual Description

## Scatter Plot: Reasoning Tokens vs. Problem Size by Difficulty

### Overview

This image presents a scatter plot illustrating the relationship between Problem Size (x-axis) and Reasoning Tokens (y-axis) for two levels of Difficulty: "easy" and "tricky". Linear regression fits are overlaid on each data set, with R-squared values provided. The plot aims to demonstrate how the computational effort (Reasoning Tokens) scales with the size of the problem, and whether this scaling differs based on the problem's difficulty.

### Components/Axes

* **X-axis:** Problem Size (ranging approximately from 18 to 82).

* **Y-axis:** Reasoning Tokens (ranging approximately from 2000 to 32000).

* **Legend:** Located in the top-left corner.

  * "easy" - Represented by blue circles.

  * "easy fit (R^2: 0.829)" - Represented by a solid blue line.

  * "tricky" - Represented by orange squares.

  * "tricky fit (R^2: 0.903)" - Represented by a dashed orange line.

* **Gridlines:** Present for both axes, aiding in value estimation.

### Detailed Analysis

**Easy Data (Blue):**

The "easy" data points (blue circles) generally show an upward trend, indicating that as Problem Size increases, so do Reasoning Tokens. The data is somewhat scattered, but a linear fit is provided.

* At Problem Size ≈ 20, Reasoning Tokens ≈ 3000.

* At Problem Size ≈ 30, Reasoning Tokens ≈ 4500.

* At Problem Size ≈ 40, Reasoning Tokens ≈ 7000.

* At Problem Size ≈ 50, Reasoning Tokens ≈ 9500.

* At Problem Size ≈ 60, Reasoning Tokens ≈ 11000.

* At Problem Size ≈ 70, Reasoning Tokens ≈ 15000.

* At Problem Size ≈ 80, Reasoning Tokens ≈ 18000.

The linear fit (solid blue line) starts at approximately Reasoning Tokens ≈ 2000 at Problem Size ≈ 18 and ends at approximately Reasoning Tokens ≈ 20000 at Problem Size ≈ 82.

**Tricky Data (Orange):**

The "tricky" data points (orange squares) also exhibit an upward trend, but are generally positioned *above* the "easy" data points, suggesting that "tricky" problems require more Reasoning Tokens for the same Problem Size. The data is also scattered, but the linear fit appears to be a better fit than the "easy" data.

* At Problem Size ≈ 20, Reasoning Tokens ≈ 3500.

* At Problem Size ≈ 30, Reasoning Tokens ≈ 6000.

* At Problem Size ≈ 40, Reasoning Tokens ≈ 9000.

* At Problem Size ≈ 50, Reasoning Tokens ≈ 20000.

* At Problem Size ≈ 60, Reasoning Tokens ≈ 16000.

* At Problem Size ≈ 70, Reasoning Tokens ≈ 31000.

* At Problem Size ≈ 80, Reasoning Tokens ≈ 28000.

The linear fit (dashed orange line) starts at approximately Reasoning Tokens ≈ 2500 at Problem Size ≈ 18 and ends at approximately Reasoning Tokens ≈ 31000 at Problem Size ≈ 82.

### Key Observations

* The R-squared value for the "tricky" fit (0.903) is higher than that for the "easy" fit (0.829), indicating that the linear model explains a larger proportion of the variance in the "tricky" data.

* For a given Problem Size, "tricky" problems consistently require more Reasoning Tokens than "easy" problems.

* The "tricky" data points at Problem Size ≈ 50 and 70 appear to be outliers, with significantly higher Reasoning Token values than the surrounding points.

* The "tricky" data at Problem Size ≈ 80 appears to be an outlier, with a significantly lower Reasoning Token value than the surrounding points.

### Interpretation

The data suggests a positive correlation between Problem Size and Reasoning Tokens for both "easy" and "tricky" problems. This indicates that the computational cost of solving these problems increases as the problem becomes larger. The higher Reasoning Token requirements for "tricky" problems suggest that they are more complex and require more computational effort to solve.

The higher R-squared value for the "tricky" data suggests that the relationship between Problem Size and Reasoning Tokens is more linear for "tricky" problems than for "easy" problems. This could be due to the fact that "tricky" problems have a more consistent structure or require a more predictable set of operations to solve.

The outliers in the "tricky" data may represent problems that are particularly difficult or require a different approach to solve. Further investigation would be needed to understand the reasons for these outliers. The data suggests that the scaling of Reasoning Tokens with Problem Size is not perfectly linear, and that there may be other factors that influence the computational cost of solving these problems.

</details>

(a)

<details>

<summary>x8.png Details</summary>

### Visual Description


## Line Chart: Success Rate vs. Problem Size for Different Difficulties

### Overview

This line chart depicts the relationship between problem size and success rate for two difficulty levels: "easy" and "tricky". The chart shows how the success rate changes as the problem size increases.

### Components/Axes

* **X-axis:** "Problem Size" - ranging from approximately 20 to 120.

* **Y-axis:** "Success Rate (%)" - ranging from 0 to 100.

* **Legend:** Located in the top-right corner, labeling the two lines:

  * "easy" - represented by a solid blue line.

  * "tricky" - represented by a dashed orange line.

* **Gridlines:** Present to aid in reading values.

### Detailed Analysis

**Easy Difficulty (Blue Solid Line):**

The blue line representing "easy" difficulty starts at approximately 98% success rate at a problem size of 20. It remains relatively stable until around a problem size of 60, maintaining a success rate near 100%. From 60 to 80, the line slopes downward, decreasing to approximately 65% success rate at a problem size of 80. It then sharply declines from 80 to 100, reaching approximately 2% success rate at a problem size of 100. The line remains near 0% for problem sizes between 100 and 120.

* (20, 98)

* (40, 98)

* (60, 100)

* (80, 65)

* (100, 2)

* (120, 0)

**Tricky Difficulty (Orange Dashed Line):**

The orange dashed line representing "tricky" difficulty starts at approximately 98% success rate at a problem size of 20. It remains stable until around a problem size of 40, where it begins to decline. The line dips to approximately 33% success rate at a problem size of 40. It then rises slightly to around 35% at a problem size of 60, before declining again. From 60 to 80, the line remains relatively stable around 33%. From 80 to 100, the line slopes downward, decreasing to approximately 0% success rate at a problem size of 100. The line remains near 0% for problem sizes between 100 and 120.

* (20, 98)

* (40, 33)

* (60, 35)

* (80, 33)

* (100, 0)

* (120, 0)

### Key Observations

* The "easy" difficulty consistently outperforms the "tricky" difficulty across all problem sizes.

* Both difficulties experience a significant drop in success rate as the problem size increases beyond 60.

* The "easy" difficulty shows a more dramatic decline in success rate for problem sizes between 80 and 100.

* For problem sizes greater than 100, both difficulties have a success rate near 0%.

### Interpretation

The data suggests that the problem's difficulty significantly impacts the success rate, especially as the problem size increases. The "easy" problems remain solvable for larger problem sizes than the "tricky" problems, but both become intractable beyond a certain point (around a problem size of 100). The sharp decline in success rate for both difficulties beyond a certain problem size indicates a potential computational limit or algorithmic inefficiency. The initial high success rates for both difficulties suggest that the algorithms or methods used are effective for smaller problem sizes. The difference in performance between the two difficulties highlights the importance of problem formulation or the complexity of the underlying problem structure. The data could be used to inform the selection of appropriate algorithms or to identify areas for optimization to improve performance on larger, more complex problems.

</details>

(b)

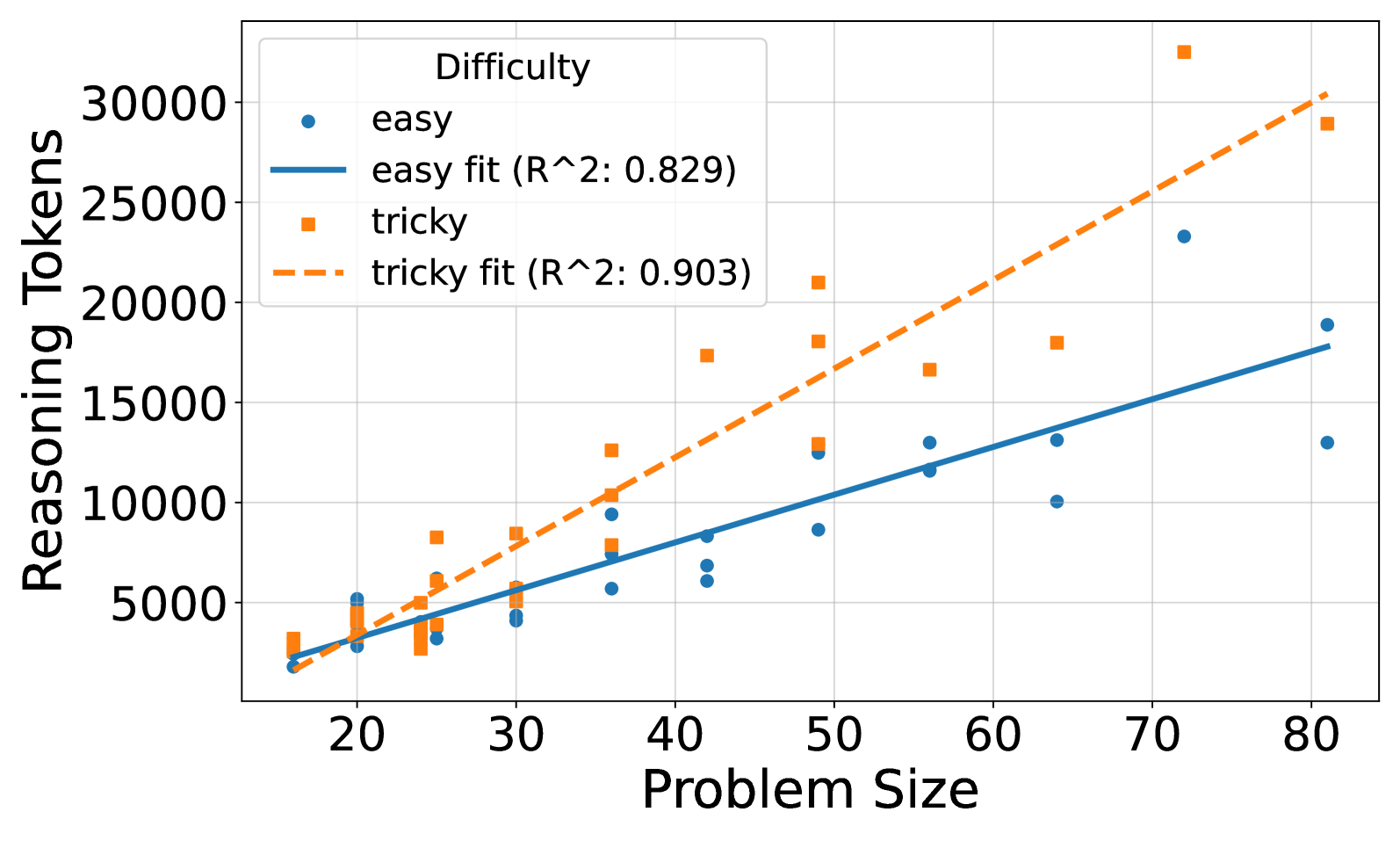

Figure 5: (a) Reasoning effort in number of reasoning tokens versus problem size for o3-mini with medium reasoning effort. Only successful attempts are shown. Linear fits are added for each difficulty level. (b) Solved percentage versus problem size for o3-mini with medium reasoning effort.

<details>

<summary>x9.png Details</summary>

### Visual Description

## Scatter Plot: Reasoning Tokens vs. Problem Size

### Overview

This image presents a scatter plot illustrating the relationship between "Problem Size" and "Reasoning Tokens" for two levels of "Difficulty": "easy" and "tricky". Linear regression fits are overlaid on each data series, with R-squared values provided. The plot aims to demonstrate how the number of reasoning tokens required scales with problem size, and how this scaling differs between easy and tricky problems.

### Components/Axes

* **X-axis:** "Problem Size" - Scale ranges from approximately 15 to 105, with tick marks at 20, 40, 60, 80, and 100.

* **Y-axis:** "Reasoning Tokens" - Scale ranges from approximately 5000 to 65000, with tick marks at 10000, 20000, 30000, 40000, 50000, and 60000.

* **Legend:** Located in the top-left corner.

  * "easy" - Represented by blue circles.

  * "easy fit (R^2: 0.811)" - Represented by a solid blue line.

  * "tricky" - Represented by orange squares.

  * "tricky fit (R^2: 0.607)" - Represented by a dashed orange line.

* **Title:** Not explicitly present, but the plot's content suggests a title relating to reasoning token scaling.

### Detailed Analysis

**Easy Data Series:**

The "easy" data series (blue circles) shows a generally upward trend. The data points are scattered around the blue regression line.

* At Problem Size ≈ 20, Reasoning Tokens ≈ 7000.

* At Problem Size ≈ 40, Reasoning Tokens ≈ 14000.

* At Problem Size ≈ 60, Reasoning Tokens ≈ 24000.

* At Problem Size ≈ 80, Reasoning Tokens ≈ 34000.

* At Problem Size ≈ 100, Reasoning Tokens ≈ 43000.

The "easy fit" line has a positive slope, indicating that as problem size increases, the number of reasoning tokens also increases. The R-squared value of 0.811 suggests a strong linear fit.

**Tricky Data Series:**

The "tricky" data series (orange squares) also exhibits an upward trend, but with more variability than the "easy" data.

* At Problem Size ≈ 20, Reasoning Tokens ≈ 8000.

* At Problem Size ≈ 40, Reasoning Tokens ≈ 20000.

* At Problem Size ≈ 60, Reasoning Tokens ≈ 32000.

* At Problem Size ≈ 80, Reasoning Tokens ≈ 25000.

* At Problem Size ≈ 100, Reasoning Tokens ≈ 50000.

The "tricky fit" line also has a positive slope; judging from the approximate points listed above, it is at least as steep as the "easy fit" line. The R-squared value of 0.607 indicates a moderate linear fit.

### Key Observations

* The "tricky" problems generally require more reasoning tokens than "easy" problems for the same problem size, especially at larger problem sizes.

* The "easy" data has a tighter distribution around its regression line, indicating a more predictable relationship between problem size and reasoning tokens.

* The "tricky" data is more scattered, suggesting that the relationship between problem size and reasoning tokens is less consistent for these problems.

* There is an outlier in the "tricky" data at Problem Size ≈ 80, where Reasoning Tokens ≈ 25000, which is lower than the trendline would suggest.

* The R-squared value for the "easy" fit is significantly higher than for the "tricky" fit, indicating a stronger linear relationship for the "easy" problems.

### Interpretation

The data suggests that the computational cost of reasoning (as measured by reasoning tokens) increases with problem size. However, the rate of increase, and the consistency of that increase, are affected by the difficulty of the problem. "Easy" problems exhibit a more predictable, linear scaling, while "tricky" problems show greater variability. This could indicate that "tricky" problems require more complex or nuanced reasoning strategies, leading to a wider range of token usage. The higher R-squared value for the "easy" fit suggests that a linear model is a better approximation of the relationship between problem size and reasoning tokens for these problems. The outlier in the "tricky" data might represent a problem that was solved in an unexpectedly efficient way, or a data recording error. The difference in slopes between the two regression lines suggests that the marginal cost of increasing problem size is higher for "tricky" problems than for "easy" problems.

</details>

(a)

<details>

<summary>x10.png Details</summary>

### Visual Description

## Line Chart: Success Rate vs. Problem Size for Different Difficulties

### Overview

This line chart depicts the success rate (in percentage) as a function of problem size, comparing two difficulty levels: "easy" and "tricky". The x-axis represents the problem size, and the y-axis represents the success rate.

### Components/Axes

* **X-axis Title:** Problem Size

* **Y-axis Title:** Success Rate (%)

* **Legend:** Located in the bottom-left corner.

  * **Difficulty: easy** (Solid Blue Line)

  * **Difficulty: tricky** (Dashed Orange Line)

* **X-axis Scale:** Ranges from approximately 20 to 120, with markers at 20, 40, 60, 80, 100, and 120.

* **Y-axis Scale:** Ranges from 0 to 100, with markers at 0, 20, 40, 60, 80, and 100.

* **Gridlines:** Present to aid in reading values.

### Detailed Analysis

**Easy Difficulty (Blue Line):**

The blue line representing "easy" difficulty starts at approximately 98% success rate at a problem size of 20. It remains relatively constant at around 98-100% until a problem size of approximately 100. After 100, the success rate drops sharply, reaching approximately 20% at a problem size of 110 and 0% at a problem size of 120.

**Tricky Difficulty (Orange Dashed Line):**

The orange dashed line representing "tricky" difficulty begins at approximately 98% success rate at a problem size of 20. It remains relatively constant at around 98-100% until a problem size of approximately 60, then falls to approximately 70% at a problem size of 80. From 80 to 100, the success rate decreases from approximately 70% to 65%. Between 100 and 120, the success rate declines rapidly, reaching approximately 0% at a problem size of 120.

**Data Points (Approximate):**

| Problem Size | Easy Success Rate (%) | Tricky Success Rate (%) |
|--------------|-----------------------|-------------------------|
| 20 | 98 | 98 |
| 40 | 99 | 99 |
| 60 | 100 | 99 |
| 80 | 100 | 70 |
| 100 | 98 | 65 |
| 110 | 20 | 10 |
| 120 | 0 | 0 |

### Key Observations

* Both difficulty levels exhibit high success rates for small problem sizes (up to approximately 80).

* The "easy" difficulty maintains a significantly higher success rate across most problem sizes.

* The "tricky" difficulty experiences a more substantial decline in success rate as the problem size increases, particularly after a problem size of 80.

* Both difficulties reach 0% success rate at a problem size of 120.

* The inflection point where the "tricky" difficulty begins to decline is around a problem size of 80.

### Interpretation

The data suggests that the "easy" problems are solvable with high accuracy even as the problem size increases, up to a certain point (around 100). Beyond this point, the success rate drops dramatically, indicating a limit to the algorithm's or solver's ability to handle larger instances of the "easy" problem.

The "tricky" problems are more sensitive to problem size. While they also have a high success rate for small problem sizes, their performance degrades much more rapidly as the problem size grows. This suggests that the "tricky" problems may require more computational resources or a different approach to solve effectively.

The fact that both difficulties reach 0% success rate at a problem size of 120 indicates a fundamental limitation in the method being used to solve these problems. This could be due to the problem becoming computationally intractable, or it could be due to limitations in the algorithm's design. The sharp decline in success rate for both difficulties around problem size 100-120 suggests a phase transition or a critical threshold in the problem's complexity.

</details>

(b)

Figure 6: (a) Reasoning effort in number of reasoning tokens versus problem size for o3-mini with high reasoning effort. Only successful attempts are shown. Linear fits are added for each difficulty level. (b) Solved percentage versus problem size for o3-mini with high reasoning effort.

A.2.2 Reasoning Effort for All Models

<details>

<summary>x11.png Details</summary>

### Visual Description

## Scatter Plot: Reasoning Tokens vs. Problem Size

### Overview

This image presents a scatter plot visualizing the relationship between "Problem Size" and "Reasoning Tokens" for a model named "qwen/qwq-32b-preview". The data points are color-coded to distinguish between successful and failed attempts.

### Components/Axes

* **X-axis:** "Problem Size" - Scale ranges from approximately 0 to 400, with tick marks at intervals of 100.

* **Y-axis:** "Reasoning Tokens" - Scale ranges from approximately 0 to 20000, with tick marks at intervals of 5000.

* **Legend:** Located in the top-right corner.

* Blue circles: "qwen/qwq-32b-preview (Successful)"

* Orange squares: "qwen/qwq-32b-preview (Failed)"

* **Gridlines:** Present to aid in reading values.

### Detailed Analysis

The plot shows two distinct data series: one for successful attempts (blue circles) and one for failed attempts (orange squares).

**Successful Attempts (Blue Circles):**

The successful attempts are concentrated in the lower-left portion of the plot. The trend is relatively flat, with a slight upward slope.

* At Problem Size ≈ 0, Reasoning Tokens range from approximately 2000 to 6000.

* At Problem Size ≈ 50, Reasoning Tokens range from approximately 3000 to 7000.

* At Problem Size ≈ 100, Reasoning Tokens range from approximately 4000 to 6000.

* At Problem Size ≈ 200, Reasoning Tokens range from approximately 4000 to 7000.

* At Problem Size ≈ 300, Reasoning Tokens range from approximately 5000 to 7000.

* At Problem Size ≈ 400, Reasoning Tokens range from approximately 6000 to 8000.

**Failed Attempts (Orange Squares):**

The failed attempts are more dispersed throughout the plot, with a higher concentration in the upper-left and center regions. The trend is more variable.

* At Problem Size ≈ 0, Reasoning Tokens range from approximately 2000 to 12000.

* At Problem Size ≈ 50, Reasoning Tokens range from approximately 5000 to 16000.

* At Problem Size ≈ 100, Reasoning Tokens range from approximately 6000 to 20000.

* At Problem Size ≈ 150, Reasoning Tokens range from approximately 5000 to 13000.

* At Problem Size ≈ 200, Reasoning Tokens range from approximately 5000 to 11000.

* At Problem Size ≈ 300, Reasoning Tokens range from approximately 6000 to 12000.

* At Problem Size ≈ 400, Reasoning Tokens range from approximately 8000 to 15000.

### Key Observations

* Successful attempts generally require fewer reasoning tokens than failed attempts.

* The number of reasoning tokens required for successful attempts appears to increase slightly with problem size, but the increase is relatively small.

* Failed attempts exhibit a wider range of reasoning token usage, suggesting more variability in the model's performance on challenging problems.

* There is a cluster of failed attempts with very high reasoning token counts (above 15000) even for relatively small problem sizes.

### Interpretation

The data suggests that the "qwen/qwq-32b-preview" model is more likely to succeed when the problem size is smaller and the number of reasoning tokens required is lower. The wide range of reasoning token usage for failed attempts indicates that the model sometimes struggles with problems that require more extensive reasoning, even if the problem size is not particularly large. The presence of failed attempts with high reasoning token counts suggests that the model can get "stuck" in a reasoning loop without reaching a solution. This could be due to issues with the model's architecture, training data, or decoding strategy. The relatively flat trend for successful attempts suggests that the model's reasoning efficiency does not significantly degrade as the problem size increases, at least within the observed range. Further investigation could explore the characteristics of the problems that lead to high reasoning token counts and failures, and identify potential strategies for improving the model's performance on these challenging cases.

</details>

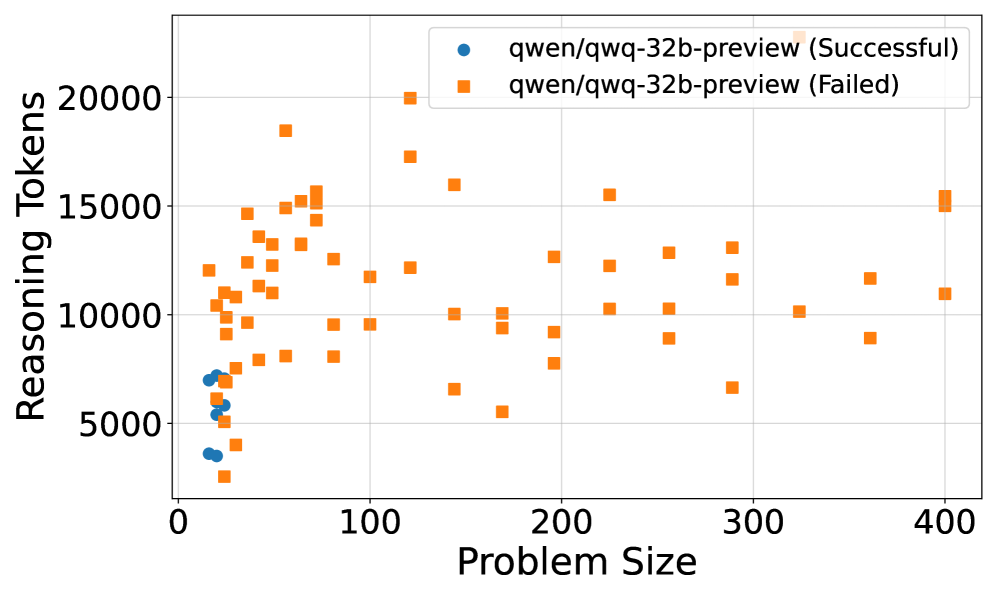

Figure 7: Reasoning effort in tokens for Qwen QwQ.

<details>

<summary>x12.png Details</summary>

### Visual Description

## Scatter Plot: Reasoning Tokens vs. Problem Size for deepseek/deepseek-r1

### Overview

This scatter plot visualizes the relationship between "Problem Size" and "Reasoning Tokens" for the model `deepseek/deepseek-r1`, differentiating between successful and failed attempts. The x-axis represents Problem Size, and the y-axis represents Reasoning Tokens. Two distinct data series are plotted: one for successful runs (blue circles) and one for failed runs (orange squares).

### Components/Axes

* **X-axis:** Problem Size (ranging from approximately 0 to 400)

* **Y-axis:** Reasoning Tokens (ranging from approximately 4000 to 16000)

* **Legend:** Located in the bottom-right corner.

* Blue circles: `deepseek/deepseek-r1 (Successful)`

* Orange squares: `deepseek/deepseek-r1 (Failed)`

* **Gridlines:** Present to aid in reading values.

### Detailed Analysis

**Successful Runs (Blue Circles):**

The blue data series shows a cluster of points concentrated at lower Problem Sizes (0-50). The trend is generally upward, but with significant scatter.

* At Problem Size 0, Reasoning Tokens range from approximately 4500 to 9500.

* At Problem Size 20, Reasoning Tokens range from approximately 6000 to 10000.

* At Problem Size 50, Reasoning Tokens range from approximately 6000 to 12000.

* Beyond Problem Size 100, the successful runs become more sparse, with Reasoning Tokens ranging from approximately 7000 to 14000.

* At Problem Size 400, there is one successful run at approximately 8000 Reasoning Tokens.

**Failed Runs (Orange Squares):**

The orange data series exhibits a wider distribution across the Problem Size range.

* At Problem Size 50, Reasoning Tokens range from approximately 11000 to 15000.

* At Problem Size 100, Reasoning Tokens range from approximately 11000 to 16000.

* At Problem Size 200, Reasoning Tokens range from approximately 11000 to 14000.

* At Problem Size 300, Reasoning Tokens range from approximately 11000 to 15000.

* At Problem Size 400, Reasoning Tokens range from approximately 9000 to 15000.

### Key Observations

* Successful runs tend to occur with lower Reasoning Token counts, especially for smaller Problem Sizes.

* Failed runs generally use more Reasoning Tokens than successful runs across the Problem Size range.

* There is significant variability in Reasoning Tokens for both successful and failed runs, suggesting other factors influence the outcome.

* The density of successful runs decreases as Problem Size increases, while the density of failed runs remains relatively consistent.

* There is an outlier failed run at Problem Size 400 with a Reasoning Token count of approximately 9000, which is lower than most other failed runs.

### Interpretation

The data suggests a correlation between Problem Size, Reasoning Tokens, and success rate for the `deepseek/deepseek-r1` model. Larger Problem Sizes generally require more Reasoning Tokens, and a higher Reasoning Token count is associated with a higher probability of failure. The model appears to struggle with larger problems, potentially due to limitations in its reasoning capacity or the complexity of the problem space. The scatter suggests that Reasoning Tokens are not the *sole* determinant of success; other factors likely play a role. The outlier at Problem Size 400 indicates that failure isn't always tied to high token usage, and there may be specific problem instances that are particularly challenging even with relatively low reasoning costs. The concentration of successful runs at lower Problem Sizes and Reasoning Token counts suggests an optimal operating range for this model.

</details>

Figure 8: Reasoning effort in tokens for Deepseek R1.
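
Figures 7 and 8 separate successful from failed attempts. One simple way to compare the two groups numerically is to take the median token count per outcome. The snippet below is a hedged sketch under assumed inputs: the tuple layout `(problem_size, reasoning_tokens, solved)`, the helper name `median_tokens_by_outcome`, and the sample values are hypothetical and do not come from the paper.

```python
from statistics import median


def median_tokens_by_outcome(runs):
    """Median reasoning-token count for successful and for failed runs."""
    groups = {"successful": [], "failed": []}
    for _size, tokens, solved in runs:
        groups["successful" if solved else "failed"].append(tokens)
    # Skip an outcome entirely if no run falls into it.
    return {name: median(vals) for name, vals in groups.items() if vals}


# Hypothetical placeholder records, NOT measurements from the paper.
runs = [
    (25, 5000, True),
    (25, 6000, True),
    (100, 7000, True),
    (100, 12000, False),
    (225, 14000, False),
]

summary = median_tokens_by_outcome(runs)
print(summary)
```

The median is used here rather than the mean because the scatter plots show a few runs with very high token counts, which would dominate an average.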

<details>

<summary>x13.png Details</summary>

### Visual Description

## Scatter Plot: Response Time vs. Problem Size for gemini-2.0-flash-thinking-exp-01-21

### Overview

This scatter plot visualizes the relationship between Problem Size and Response Time for successful and failed runs of the model "gemini-2.0-flash-thinking-exp-01-21". Each data point represents one run, with color-coding used to distinguish between successful and failed attempts.

### Components/Axes

* **X-axis:** Problem Size (ranging from approximately 0 to 400).

* **Y-axis:** Response Time (s) (ranging from approximately 0 to 160 seconds).

* **Legend:** Located in the bottom-left corner.

* Blue circles: "gemini-2.0-flash-thinking-exp-01-21 (Successful)"

* Orange squares: "gemini-2.0-flash-thinking-exp-01-21 (Failed)"

* **Gridlines:** Light gray horizontal and vertical lines provide a visual reference for data point positioning.

### Detailed Analysis

The plot shows a distribution of data points for both successful and failed experiments.

**Successful Runs (Blue Circles):**

For the successful runs, Response Time rises from the smallest Problem Sizes and then levels off, with significant variability.

* At Problem Size ≈ 0, Response Time ranges from approximately 10s to 30s.

* At Problem Size ≈ 50, Response Time ranges from approximately 20s to 60s.

* At Problem Size ≈ 100, Response Time ranges from approximately 30s to 50s.

* At Problem Size ≈ 200, Response Time ranges from approximately 30s to 60s.

* At Problem Size ≈ 300, Response Time ranges from approximately 30s to 50s.

* At Problem Size ≈ 400, Response Time ranges from approximately 20s to 40s.

**Failed Runs (Orange Squares):**

For the failed runs, Response Time rises sharply from the smallest Problem Sizes and then stays in a broadly similar range, with a wider spread of values and a tendency towards longer response times compared to successful runs.

* At Problem Size ≈ 0, Response Time ranges from approximately 10s to 30s.

* At Problem Size ≈ 50, Response Time ranges from approximately 40s to 120s.

* At Problem Size ≈ 100, Response Time ranges from approximately 50s to 150s.

* At Problem Size ≈ 200, Response Time ranges from approximately 60s to 120s.

* At Problem Size ≈ 300, Response Time ranges from approximately 50s to 130s.

* At Problem Size ≈ 400, Response Time ranges from approximately 80s to 120s.

### Key Observations

* **Response Time Distribution:** Failed runs generally have higher response times than successful runs for the same problem size.

* **Variability:** There is significant variability in response times for both successful and failed runs, suggesting other factors influence performance.

* **Outliers:** Several failed runs exhibit exceptionally long response times (e.g., around Problem Size 100, Response Time ≈ 150s).

* **Overlap:** There is overlap in response times between successful and failed runs, particularly at smaller problem sizes.

### Interpretation

The data suggests that response time for the gemini-2.0-flash-thinking-exp-01-21 model rises with problem size only at small sizes and then levels off for successful runs. Failed runs tend to have longer response times than successful runs at the same problem size. This could indicate that runs which exceed a certain response time are unlikely to succeed. The variability in response times suggests that factors beyond problem size influence performance, such as the specific characteristics of the problem instance or the system's current load. The outliers in the failed-run data may represent particularly challenging problem instances or instances where the system encountered unexpected issues. The overlap in response times between successful and failed runs at smaller problem sizes suggests that response time alone does not separate successes from failures there. Further investigation is needed to understand the underlying causes of the variability and the factors that contribute to failure.

</details>

Figure 9: Reasoning effort quantified by response time for Gemini-2.0-flash-thinking.
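
When effort is quantified by response time, as in Figure 9, the measurement reduces to timing each request. The following is a minimal sketch of that idea, not the authors' harness: `timed_call` and `fake_model` are hypothetical names, and `fake_model` is a local stand-in that makes no API call.

```python
import time


def timed_call(solve_fn, puzzle):
    """Run solve_fn(puzzle) and return (answer, elapsed wall-clock seconds)."""
    start = time.perf_counter()
    answer = solve_fn(puzzle)
    elapsed = time.perf_counter() - start
    return answer, elapsed


def fake_model(puzzle):
    """Hypothetical stand-in for a model request; it only reverses the input."""
    time.sleep(0.01)  # simulate latency
    return puzzle[::-1]


answer, seconds = timed_call(fake_model, "abc")
print(answer, f"{seconds:.3f}s")
```

Wall-clock time measured this way also includes network latency and server load, so it is a noisier proxy for effort than a token count.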

A.3 Cost

The total cost of these experiments was around 80 USD in API credits.