# Bayesian Teaching Enables Probabilistic Reasoning in Large Language Models

**Authors**: Linlu Qiu, Fei Sha, Kelsey Allen, Yoon Kim, Tal Linzen, Sjoerd van Steenkiste

> Google DeepMind University of British Columbia Vector Institute

> Google Research New York University

> Google Research

linluqiu@mit.edu, svansteenkiste@google.com, linzen@google.com

## Abstract

Large language models (LLMs) are increasingly used as agents that interact with users and with the world. To do so successfully, LLMs must construct representations of the world and form probabilistic beliefs about them. To provide personalized recommendations, for example, the LLM needs to infer a user’s preferences from their behavior over multiple interactions. The Bayesian inference framework lays out the optimal way for an agent to update its beliefs as it receives new information. We first show that LLMs fall far short of the standard defined by the Bayesian framework. We then show that by teaching LLMs to mimic the predictions of the normative Bayesian model, we can dramatically improve their ability to update their beliefs; this ability generalizes to new tasks. We conclude that LLMs can effectively learn reasoning skills from examples and generalize those skills to new domains.

## 1 Introduction

Humans interact with the world based on our beliefs about it. To effectively support decision making, our beliefs need to correspond to the structure of the world as much as possible; in other words, our beliefs need to be supported by appropriate “world models” [Johnson-Laird, 1980, Ha and Schmidhuber, 2018, LeCun, 2022, Wong et al., 2023]. We typically do not have perfect knowledge about the outside world; to the extent that we are uncertain about our environment, our beliefs need to be probabilistic, reflecting this uncertainty. And for these beliefs to remain relevant as the world changes, or as new information about the world becomes available, we need to update our beliefs to reflect the new information. The framework of Bayesian inference describes the normative way in which new information should trigger a change in one’s beliefs so as to maximize the effectiveness of these beliefs as a foundation for acting in the world [Chater et al., 2006]. The Bayesian framework has informed a substantial body of work in cognitive science, which has identified both areas where humans act as the framework predicts and areas where they deviate from it [Griffiths et al., 2024, Jern et al., 2017, Tenenbaum et al., 2011, Xu and Tenenbaum, 2007, Baker et al., 2011, Tenenbaum et al., 2006, Chater and Manning, 2006, Griffiths et al., 2007, Chaigneau et al., 2025, Rehder, 2018, Rottman and Hastie, 2016, Sloman and Lagnado, 2015].

In the last few years, artificial intelligence systems based on large language models (LLMs) have become dramatically more capable than in the past [Team, 2024a, Achiam et al., 2023, Anthropic, 2024, Team, 2024b, Touvron et al., 2023, Guo et al., 2025]. Far outgrowing their original motivation—as methods to estimate the probabilities of different word sequences—these systems are now being used for applications where they interact with users and with the outside world. As with humans, for the LLMs’ interactions with users to be effective, the LLMs’ beliefs need to reflect their experience with the user and to be continuously updated as more information becomes available. Here, we ask: do LLMs act as if they have probabilistic beliefs that are updated as expected from normative Bayesian inference? To the extent that the LLMs’ behavior deviates from the normative Bayesian strategy, how can we minimize these deviations?

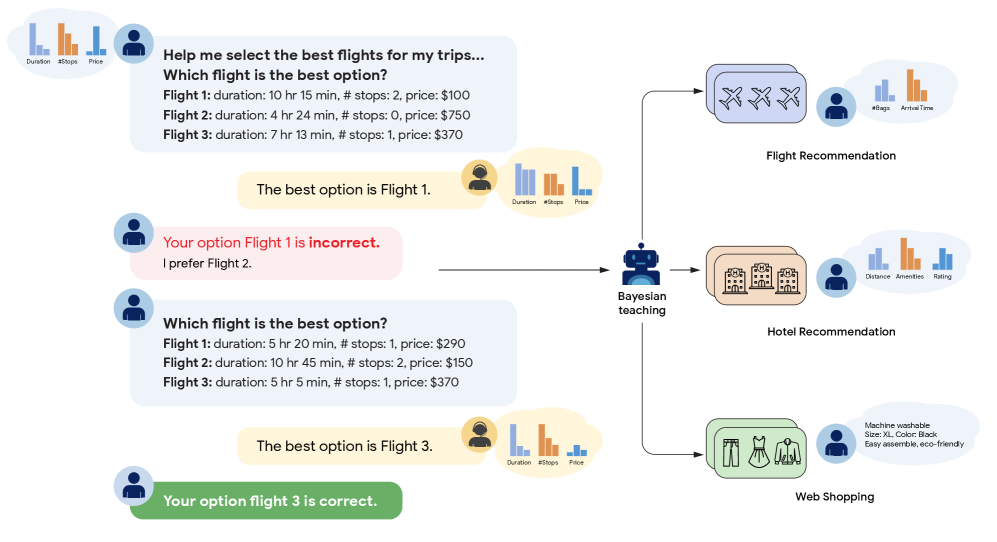

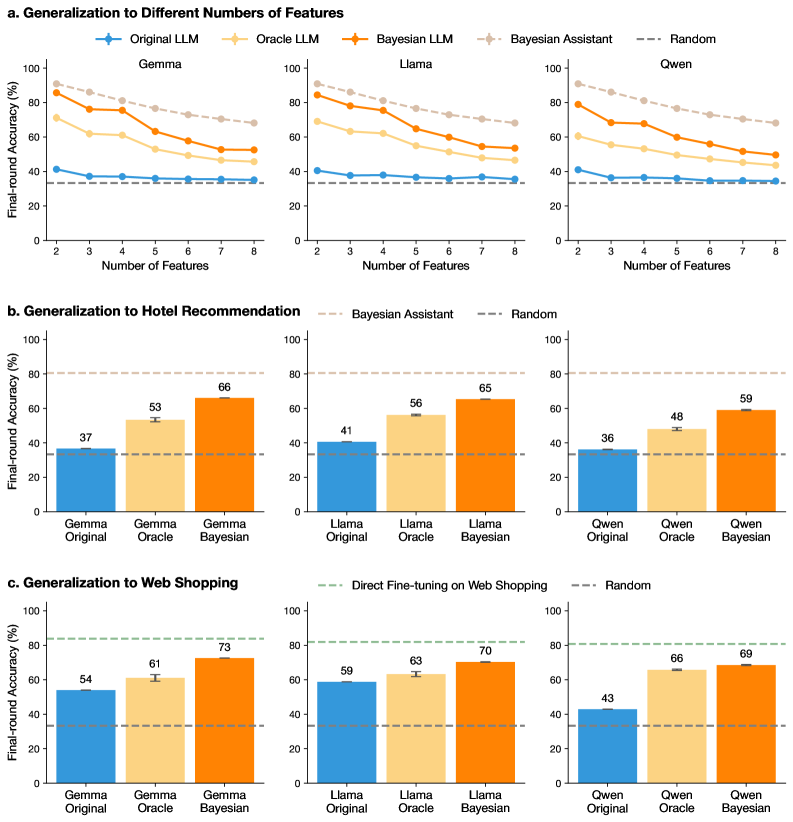

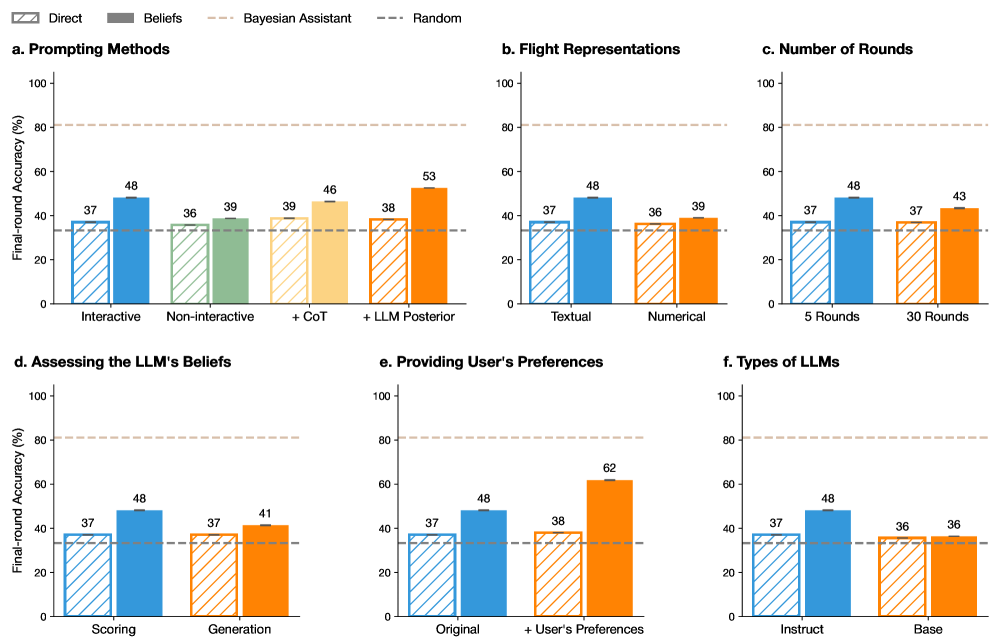

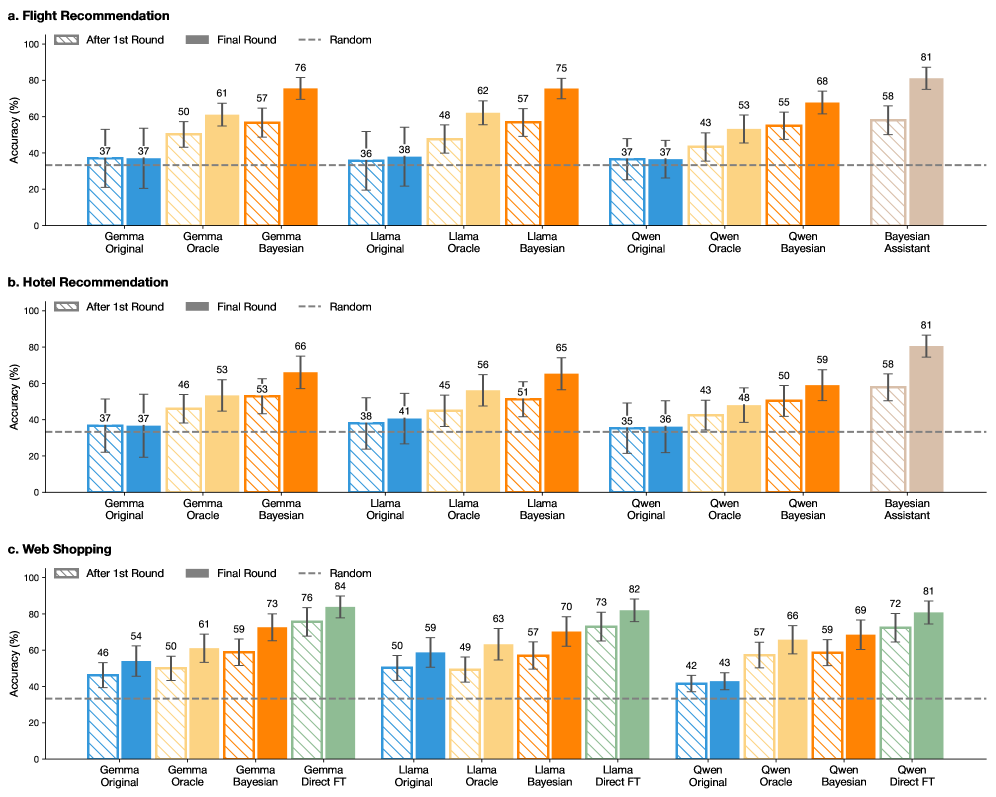

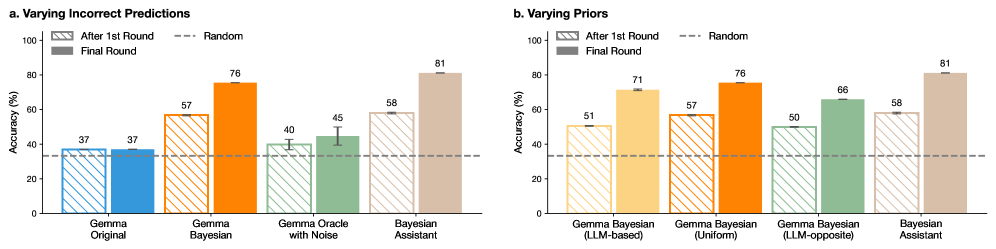

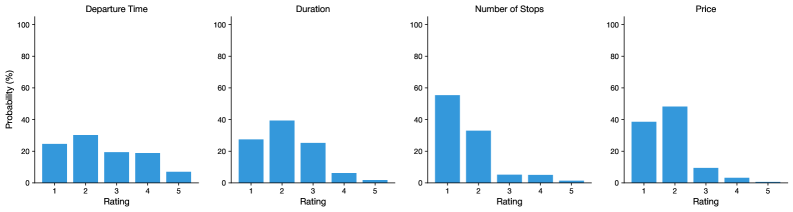

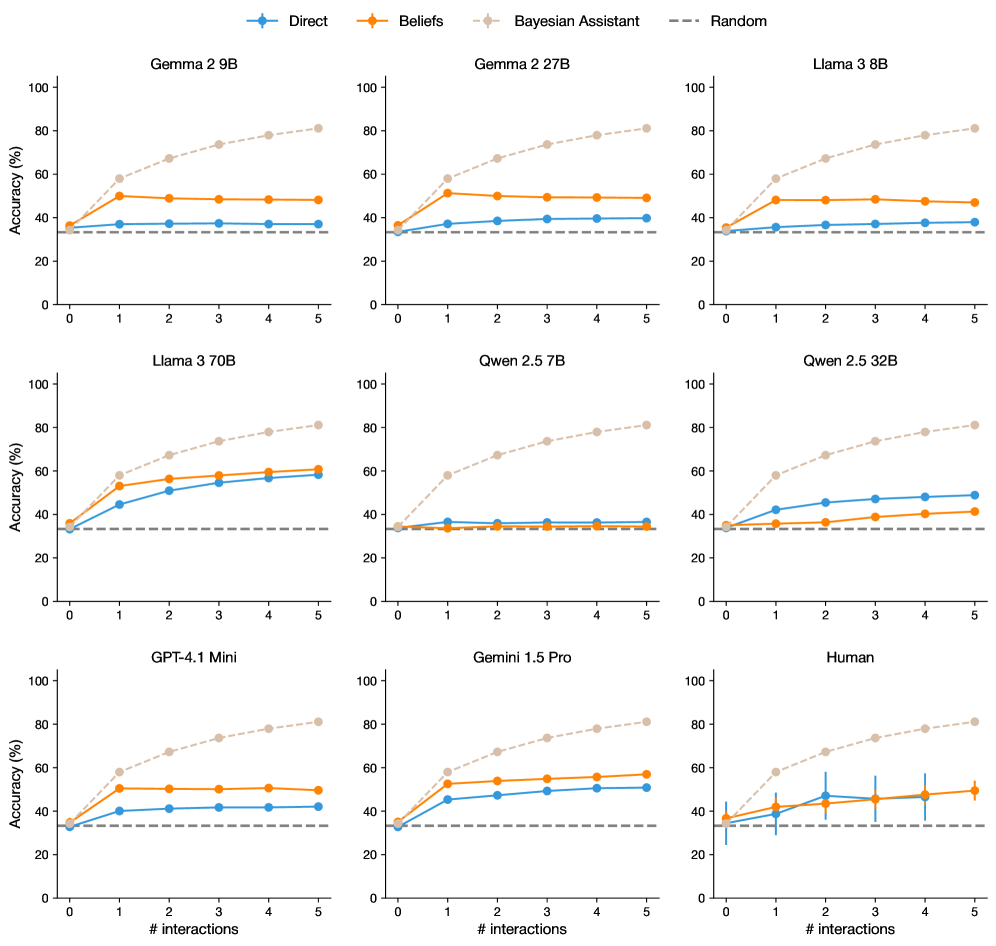

We begin to study these questions using a simple controlled setting: a flight recommendation task [Lin et al., 2022], illustrated in Fig. 1. This task involves multiple rounds of interactions between a simulated user and an LLM, where the LLM is acting as a flight booking assistant. In each round, the assistant is given a small number of flight options, and is expected to recommend one of them to the user, based on the user’s preferences. The user’s preferences are not directly communicated to the LLM: it only observes the choices the user makes among the flight options. To make optimal recommendations, then, the LLM must construct an implicit model of the factors that shape the user’s preferences, and must reason probabilistically about those factors as it learns about the user’s choices across multiple sets of flight options.

We compare the LLMs’ behavior to that of a model that follows the normative Bayesian strategy, which we refer to as the Bayesian Assistant. This model maintains a probability distribution that reflects its beliefs about the user’s preferences, and uses Bayes’ rule to update this distribution as new information about the user’s choices becomes available. Unlike many real-life scenarios, where it is difficult to specify and implement the Bayesian strategy computationally, in this controlled setting this strategy can be computed exactly, allowing us to precisely estimate the extent to which LLMs deviate from it.

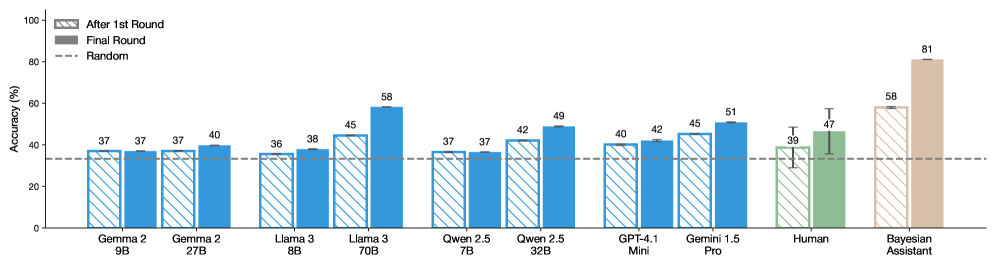

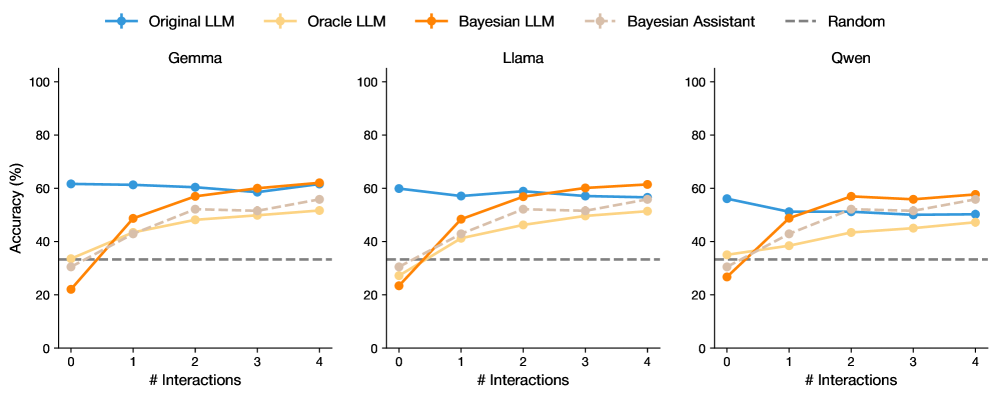

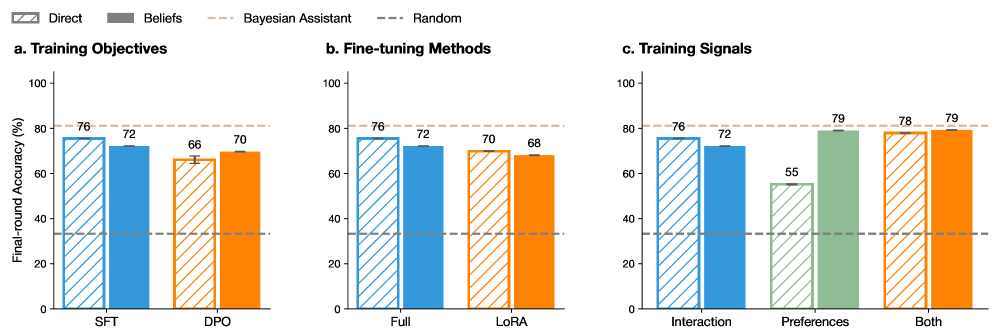

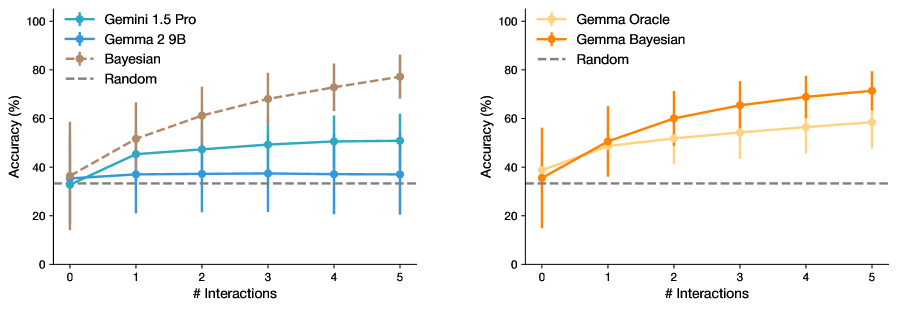

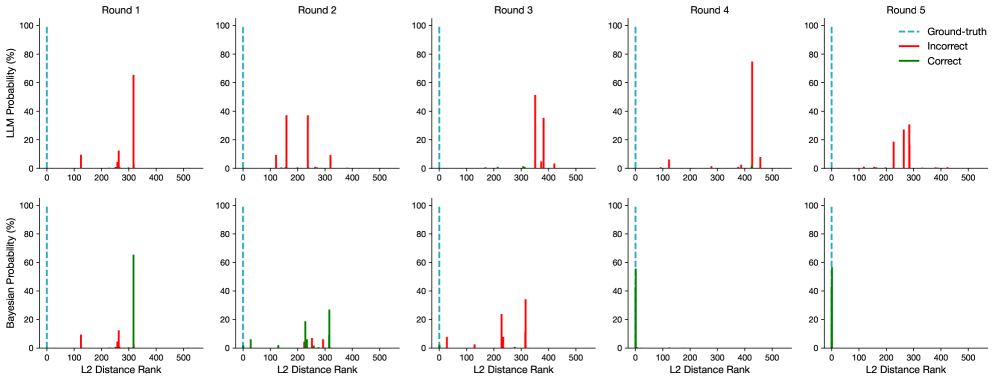

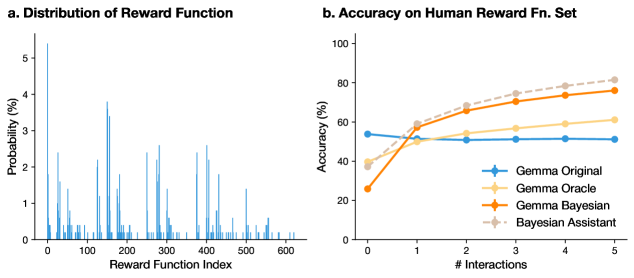

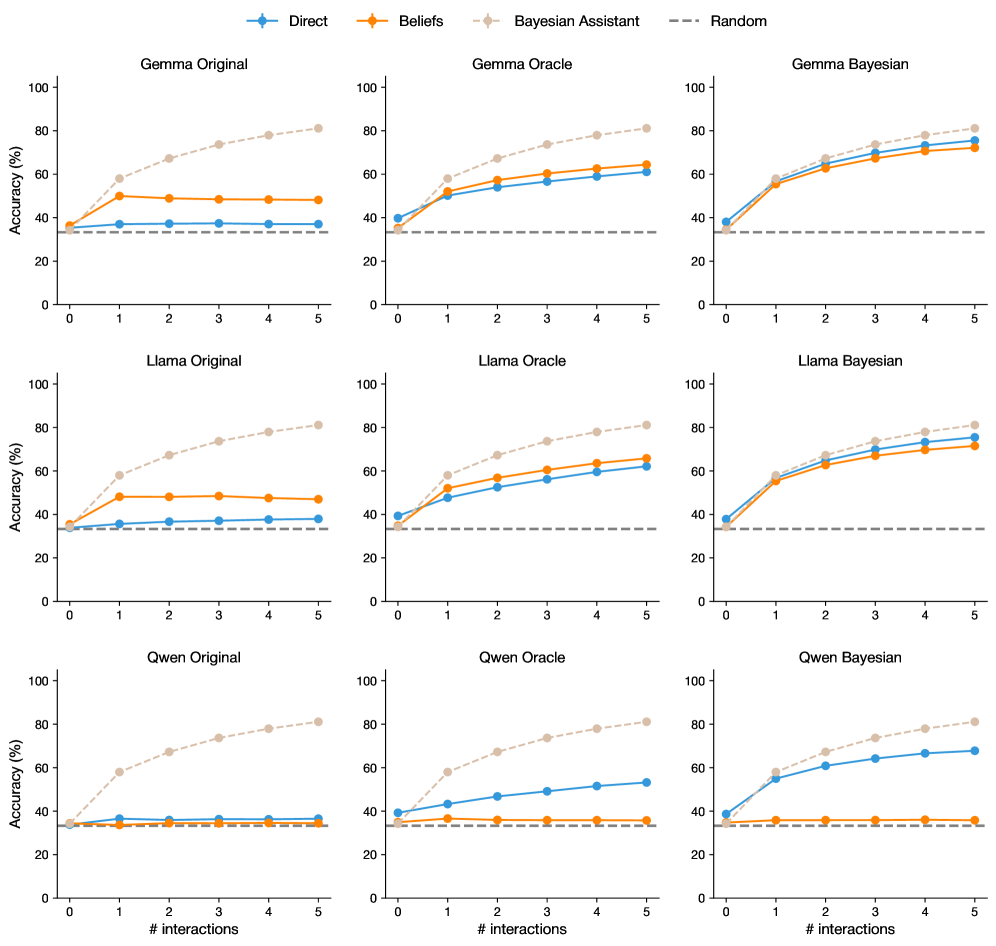

We use this framework to evaluate a range of LLMs and find that they all perform significantly worse than the normative Bayesian Assistant (Fig. 2). Most importantly, in contrast to the Bayesian Assistant, which gradually improves its recommendations as it receives additional information about the user’s choices, LLMs’ performance often plateaus after a single interaction, pointing to a limited ability to adapt to new information.

We then introduce Bayesian teaching, a strategy to teach an LLM to approximate Bayesian reasoning. We provide the LLM with examples of interactions between the user and the Bayesian Assistant, and have the LLM mimic those interactions. We find that, by leading the LLMs to gradually adapt to the user over the course of the interactions, this method substantially improves the LLMs’ performance on the flight recommendation task. Crucially, teaching the LLMs to mimic the Bayesian Assistant in one task allows them to generalize to other tasks that similarly require making decisions under uncertainty; those include not only different variants of the flight recommendation task, but also a related hotel recommendation task, as well as a web shopping task with real-world products (Fig. 1), a much more complex task for which it is difficult to specify and implement a fully Bayesian model.

Notably, while the Bayesian Assistant often makes incorrect predictions as it reasons under uncertainty, especially in the early rounds of interaction, we find that it is a more effective teacher than a teacher that directly provides the LLMs with users’ choices (which we refer to as an oracle teacher); in other words, the Bayesian model’s educated guesses make for a stronger learning signal than the correct answers. Overall, we conclude that through observing the Bayesian Assistant perform a particular task, the LLMs are able to approximate transferable probabilistic reasoning skills.

To summarize our contributions: we first identify significant limitations of off-the-shelf LLMs in tasks that require forming and updating probabilistic beliefs. We then demonstrate that, by having the LLMs mimic a normative Bayesian model, we can effectively teach them to approximate probabilistic belief updates, and show that these skills can generalize to new environments. These findings suggest that LLMs can be used in interactive settings where information is provided gradually, including complex application domains where implementing an exact Bayesian model is difficult. More generally, our results highlight a unique strength of deep learning models such as LLMs: they can learn to mimic a symbolic model and generalize its strategy to domains that are too complex to specify in a classic symbolic model.

## 2 Evaluating Belief Updates via Flight Recommendations

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: Bayesian Teaching Process for Multi-Domain Recommendation Systems

### Overview

The image is a flowchart illustrating a "Bayesian teaching" process where a user provides feedback on an AI's recommendations, and this feedback is used to improve recommendations across multiple domains (flights, hotels, web shopping). The diagram shows two iterative cycles of user interaction for flight selection, followed by the application of learned preferences to other recommendation tasks.

### Components/Axes

The diagram is organized into three main regions:

1. **Left Column (User Interaction Loop):** Contains user queries (blue speech bubbles), system recommendations (yellow bubbles), and user feedback (red/green bubbles). Each interaction is accompanied by a small bar chart icon representing the features considered.

2. **Central Node:** A robot icon labeled **"Bayesian teaching"** acts as the learning hub, connecting the user feedback to the recommendation domains.

3. **Right Column (Recommendation Domains):** Three distinct application areas, each with an icon, a label, and a bar chart icon showing relevant features.

* **Top:** **Flight Recommendation** (airplane icons). Feature chart labels: `#Stops`, `Arrival Time`.

* **Middle:** **Hotel Recommendation** (building icons). Feature chart labels: `Distance`, `Amenities`, `Rating`.

* **Bottom:** **Web Shopping** (clothing icons). Feature chart labels: `Machine washable`, `Size: XL`, `Color: Black`, `Easy assemble`, `eco-friendly`.

### Detailed Analysis

**User Interaction Cycle 1 (Top-Left):**

* **User Query:** "Help me select the best flights for my trips... Which flight is the best option?"

* **Flight 1:** duration: 10 hr 15 min, # stops: 2, price: $100

* **Flight 2:** duration: 4 hr 24 min, # stops: 0, price: $750

* **Flight 3:** duration: 7 hr 13 min, # stops: 1, price: $370

* **System Recommendation (Yellow Bubble):** "The best option is Flight 1." Accompanied by a bar chart icon with three bars (blue, orange, gray) labeled `Duration`, `#Stops`, `Price`.

* **User Feedback (Red Bubble):** "Your option Flight 1 is incorrect. I prefer Flight 2."

**User Interaction Cycle 2 (Bottom-Left):**

* **User Query:** "Which flight is the best option?"

* **Flight 1:** duration: 5 hr 20 min, # stops: 1, price: $290

* **Flight 2:** duration: 10 hr 45 min, # stops: 2, price: $150

* **Flight 3:** duration: 5 hr 5 min, # stops: 1, price: $370

* **System Recommendation (Yellow Bubble):** "The best option is Flight 3." Accompanied by a bar chart icon.

* **User Feedback (Green Bubble):** "Your option flight 3 is correct."

**Central Process:**

* Arrows from both user feedback bubbles point to the central **"Bayesian teaching"** node.

* From this node, arrows point to the three recommendation domains on the right, indicating the learned preferences are applied here.

**Web Shopping Details (Bottom-Right):**

* Contains a block of text with mixed English and Chinese.

* **English Text:** `Machine washable`, `Size: XL`, `Color: Black`, `Easy assemble`, `eco-friendly`

* **Chinese Text (Transcribed):** `可机洗` (kě jī xǐ), `尺寸: XL` (chǐcùn: XL), `颜色: 黑色` (yánsè: hēisè), `易于组装` (yìyú zǔzhuāng), `环保` (huánbǎo)

* **English Translation of Chinese Text:** `Machine washable`, `Size: XL`, `Color: Black`, `Easy to assemble`, `Eco-friendly`

### Key Observations

1. **Feedback-Driven Learning:** The core mechanism is explicit user correction. The system makes a recommendation, the user provides binary feedback (correct/incorrect) and sometimes a preferred alternative, which is used for Bayesian updating.

2. **Feature-Based Representation:** Every decision point (user query, system recommendation) is paired with a bar chart icon, emphasizing that recommendations are based on weighted features (e.g., Duration, Price, #Stops).

3. **Cross-Domain Transfer:** The diagram explicitly shows that learning from flight selection interactions is transferred via "Bayesian teaching" to improve Hotel and Web Shopping recommendations.

4. **Multilingual Content:** The Web Shopping domain includes product attributes in both English and Chinese, suggesting the system operates in or supports a multilingual context.

5. **Visual Feedback Coding:** User feedback is color-coded: red for incorrect, green for correct, providing immediate visual cues about the learning signal.

### Interpretation

This diagram illustrates a human-in-the-loop machine learning framework, specifically **Bayesian teaching**, where user feedback directly shapes the model's understanding of preferences. The process is not just about optimizing a single task (flight selection) but about extracting generalizable preference rules that can be applied to novel domains (hotels, shopping).

The two flight examples show the system learning from its mistakes. In the first, it prioritized low price (Flight 1), but the user preferred the faster, non-stop option (Flight 2). In the second, after presumably updating its model, it correctly recommends the shortest flight (Flight 3). The bar chart icons suggest the system is learning the relative importance (weights) of features like duration versus price.

The transfer to other domains implies the learned preferences are abstract. For instance, a user's demonstrated preference for "convenience" (shorter duration, fewer stops) in flights might translate to prioritizing "proximity" (Distance) or "Amenities" in hotels, or "Easy assemble" in products. The inclusion of Chinese text in the shopping domain highlights the practical application of such a system in real-world, multilingual e-commerce platforms. The overall message is that interactive feedback is a powerful tool for building adaptable, multi-purpose recommendation agents.

</details>

Figure 1: Evaluating and improving LLMs’ probabilistic belief updates. The flight recommendation task (left) involves multi-round interactions between a user and a flight booking assistant. In each round, the assistant is asked to recommend to the user one of three available flight options. The assistant is then shown the flight that was in fact chosen by the user (based on the user’s reward function, which characterizes the user’s preferences). To make good recommendations, the assistant needs to infer the user’s preferences from the user’s choices. To teach the LLM to reason probabilistically, we fine-tune the LLM on interactions between users and a Bayesian Assistant, which represents the normative way to update beliefs about the user’s preferences. We then evaluate the fine-tuned model on the flight recommendation task as well as two new tasks (right).

We first describe the simplified flight recommendation task, derived from Lin et al. [2022], that we use to evaluate the LLMs. In this task, we have the LLMs interact with a simulated user for five rounds. In each round, three flight options are presented to both the user and the assistant. Each flight is defined by a departure time, a duration, a number of stops, and a cost (see Fig. 1). Each simulated user is characterized by a set of preferences: for each feature, they can have a strong or weak preference for high or low values of the feature (e.g., they may prefer longer or shorter flights), or no preference regarding this feature. We refer to this set of preferences as the user’s reward function. We have 624 possible users in total (see Appendix Section A). These preferences, which determine the flights that the user chooses, are not directly revealed to the assistant. The goal of the assistant is to recommend the flight that matches the user’s choice. At the end of each round, the user indicates to the assistant whether or not it chose correctly, and provides it with the correct answer.
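The space of simulated users described above can be sketched in a few lines. The encoding below is an assumption made for illustration, not the paper's specification (which is in Appendix Section A): each preference is a signed weight whose sign gives the preferred direction and whose magnitude gives the strength, the reward is a weighted sum of feature values assumed to be normalized already, and the fully indifferent user is excluded. Under that reading, four features with five preference levels each give 5^4 - 1 = 624 users, which is consistent with the count reported in the text.

```python
from itertools import product

# Assumed encoding (not taken from the paper): one signed weight per feature.
# Sign = preferred direction, magnitude = strength, 0.0 = no preference.
FEATURES = ("departure_time", "duration", "stops", "cost")
PREFERENCE_LEVELS = (-1.0, -0.5, 0.0, 0.5, 1.0)


def all_reward_functions():
    """Enumerate every weight vector except the all-zero (fully indifferent) one."""
    return [w for w in product(PREFERENCE_LEVELS, repeat=len(FEATURES)) if any(w)]


def reward(weights, flight):
    """Hypothetical linear reward: weighted sum of normalized feature values."""
    return sum(w * x for w, x in zip(weights, flight))


def user_choice(weights, options):
    """Index of the highest-reward option (ties go to the earliest option)."""
    return max(range(len(options)), key=lambda i: reward(weights, options[i]))


users = all_reward_functions()
print(len(users))  # 5**4 - 1 = 624
```

A user with weights `(0.0, -1.0, 0.0, 0.0)` in this sketch cares only about short durations and would pick whichever of the three flights has the smallest duration value.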

After each round, we evaluate the accuracy of the assistant’s recommendations for 100 new sets of three flights that differ from the ones on which the assistant has received feedback. We do not provide any feedback to the assistant for these new flight option sets (see Appendix Fig. 7 for the evaluation workflow).
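As a rough sketch of this evaluation step, accuracy can be computed as the fraction of held-out option sets on which the assistant's recommendation matches the user's choice. The function and variable names, the three-feature flight tuples, and the toy user and assistant are all hypothetical stand-ins; the actual workflow is the one shown in Appendix Fig. 7.

```python
import random


def held_out_accuracy(recommend, user_choice, option_sets):
    """Fraction of option sets where the recommendation matches the user's choice.

    No feedback is passed back to `recommend`, mirroring the held-out evaluation.
    """
    hits = sum(recommend(options) == user_choice(options) for options in option_sets)
    return hits / len(option_sets)


rng = random.Random(0)
# 100 held-out sets of three flights; each flight is a toy (duration, stops, cost)
# tuple with values in [0, 1).
held_out = [
    [tuple(rng.random() for _ in range(3)) for _ in range(3)]
    for _ in range(100)
]


def cheapest(options):
    """Toy user: always picks the flight with the lowest cost."""
    return min(range(len(options)), key=lambda i: options[i][2])


def always_first(options):
    """Toy assistant that ignores the options entirely."""
    return 0


acc_matched = held_out_accuracy(cheapest, cheapest, held_out)
acc_baseline = held_out_accuracy(always_first, cheapest, held_out)
```

An assistant that has fully identified the user scores 1.0 here, while one that ignores the options should land near the one-in-three chance level.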

### 2.1 The Bayesian Assistant

Because the users’ preferences are only revealed gradually, through their choices among flight options, we cannot expect the LLMs to reach perfect accuracy immediately after a single round of interaction. As an upper bound on the LLMs’ performance, we define a Bayesian Assistant, which implements the strategy that optimally takes into account the evidence about the user’s preferences that accumulates over rounds of interaction. This entails maintaining uncertainty about those preferences when the evidence is partial: instead of committing to a single most likely reward function, which could turn out to be incorrect in future rounds, the assistant maintains a probability distribution over possible reward functions. After each round, the Bayesian Assistant updates its distribution over reward functions using Bayes’ rule: the probability of each reward function after the round (the posterior) is computed based on its probability before the round (the prior) and whether or not it was compatible with the user’s choice (the likelihood). This normative model represents the best performance that we can possibly expect from any system. Because the number of possible reward functions is small, we are able to perform exact Bayesian inference (see Appendix Section A).
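The update described above can be illustrated with a deliberately tiny hypothesis space. This is a sketch under stated assumptions, not the paper's implementation: the three hypotheses (a user who minimizes duration, stops, or price) stand in for the 624 reward functions, ties are broken toward the earliest option, and the likelihood is 1 when a hypothesis predicts the observed choice and 0 otherwise. The flight values are the two rounds shown in Fig. 1, with durations converted to minutes.

```python
def bayes_update(prior, likelihood):
    """Posterior from a prior (dict: hypothesis -> probability) and a
    likelihood function (hypothesis -> P(observation | hypothesis))."""
    unnormalized = {h: p * likelihood(h) for h, p in prior.items()}
    total = sum(unnormalized.values())
    if total == 0:
        raise ValueError("observation is impossible under every hypothesis")
    return {h: v / total for h, v in unnormalized.items()}


def choice_under(feature, options):
    """Toy user model: pick the option with the lowest value of one feature
    (ties go to the earliest option)."""
    return min(range(len(options)), key=lambda i: options[i][feature])


def compatible(observed_choice, options):
    """0/1 likelihood: does the hypothesis predict the observed choice?"""
    return lambda feature: 1.0 if choice_under(feature, options) == observed_choice else 0.0


hypotheses = ("duration", "stops", "price")
belief = {h: 1 / len(hypotheses) for h in hypotheses}  # uniform prior

rounds = [
    # (options, index of the flight the user chose)
    ([{"duration": 615, "stops": 2, "price": 100},
      {"duration": 264, "stops": 0, "price": 750},
      {"duration": 433, "stops": 1, "price": 370}], 1),
    ([{"duration": 320, "stops": 1, "price": 290},
      {"duration": 645, "stops": 2, "price": 150},
      {"duration": 305, "stops": 1, "price": 370}], 2),
]

history = []
for options, chosen in rounds:
    belief = bayes_update(belief, compatible(chosen, options))
    history.append(belief)

# After round 1: duration 0.5, stops 0.5, price 0.0 (two hypotheses remain).
# After round 2: duration 1.0, stops 0.0, price 0.0.
```

The first round rules out the price-minimizing hypothesis but leaves two candidates equally likely, which is the kind of retained uncertainty the paragraph above describes; the second round then separates them.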

This method requires us to define the Bayesian Assistant’s initial prior distribution, that is, its probabilistic assumptions about which user preferences are more likely, in advance of any interaction with the user. We use an uninformed prior, where all possible sets of user preferences are equally likely (for experiments with alternative priors, see Appendix Section D.4).
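With an uninformed prior and a compatibility-based likelihood, the posterior takes a simple form. As a sketch (the symbols are introduced here for illustration and are not the paper's notation): let $\theta$ range over the 624 reward functions, let $O_i$ be the options in round $i$, $c_i \in O_i$ the user's choice, and $r_\theta(o)$ the reward of option $o$ under $\theta$, and assume the likelihood is 1 when $\theta$ predicts the observed choice and 0 otherwise. Then

$$
P(\theta \mid c_{1:t}) = \frac{P(\theta)\prod_{i=1}^{t}\mathbb{1}\left[c_i = \arg\max_{o \in O_i} r_\theta(o)\right]}{\sum_{\theta'} P(\theta')\prod_{i=1}^{t}\mathbb{1}\left[c_i = \arg\max_{o \in O_i} r_{\theta'}(o)\right]}, \qquad P(\theta) = \frac{1}{624}.
$$

Because the prior is constant, it cancels: under these assumptions the posterior is uniform over the reward functions that are consistent with every choice observed so far, and zero elsewhere.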

<details>

<summary>x2.png Details</summary>

### Visual Description

## Grouped Bar Chart: AI Model and Human Accuracy Comparison

### Overview

The image displays a grouped bar chart comparing the accuracy percentages of various large language models (LLMs), a human baseline, and a "Bayesian Assistant" across two evaluation rounds. The chart assesses performance on an unspecified task, with a random-guess baseline indicated.

### Components/Axes

* **Chart Type:** Grouped bar chart with error bars.

* **Y-Axis:** Labeled "Accuracy (%)". Scale runs from 0 to 100 in increments of 20.

* **X-Axis:** Lists 10 categories (9 AI models/systems + Human). From left to right:

1. Gemma 2 9B

2. Gemma 2 27B

3. Llama 3 8B

4. Llama 3 70B

5. Qwen 2.5 7B

6. Qwen 2.5 32B

7. GPT-4.1 Mini

8. Gemini 1.5 Pro

9. Human

10. Bayesian Assistant

* **Legend (Top-Left Corner):**

* **Hatched Pattern Bar:** "After 1st Round"

* **Solid Color Bar:** "Final Round"

* **Dashed Horizontal Line:** "Random"

* **Baseline:** A dashed horizontal line at approximately 33% accuracy, labeled "Random" in the legend, representing chance-level performance.

### Detailed Analysis

Each category on the X-axis has two bars. The left (hatched) bar represents accuracy after the first round, and the right (solid) bar represents final round accuracy. Numerical values are annotated above each bar.

**Data Series & Values (Accuracy %):**

| Category | After 1st Round (Hatched) | Final Round (Solid) | Trend (1st to Final) |
| :--- | :--- | :--- | :--- |
| **Gemma 2 9B** | 37 | 37 | No change |
| **Gemma 2 27B** | 37 | 40 | Slight increase (+3) |
| **Llama 3 8B** | 36 | 38 | Slight increase (+2) |
| **Llama 3 70B** | 45 | 58 | Significant increase (+13) |
| **Qwen 2.5 7B** | 37 | 37 | No change |
| **Qwen 2.5 32B** | 42 | 49 | Moderate increase (+7) |
| **GPT-4.1 Mini** | 40 | 42 | Slight increase (+2) |
| **Gemini 1.5 Pro** | 45 | 51 | Moderate increase (+6) |
| **Human** | 39 | 47 | Moderate increase (+8). **Note:** Both bars have black error bars indicating variability. |
| **Bayesian Assistant** | 58 | 81 | Very large increase (+23). This is the highest-performing category. |

**Spatial Grounding & Verification:**

* The legend is positioned in the top-left quadrant of the chart area.

* The "Random" baseline dashed line runs horizontally across the entire chart at the ~33% mark.

* The "Human" and "Bayesian Assistant" bars are colored differently (green and brown, respectively) from the blue bars used for the AI models, visually setting them apart.

* The error bars are only present on the "Human" data series, indicating measured uncertainty or variance in human performance.

### Key Observations

1. **Universal Improvement:** All categories either maintained or improved their accuracy from the "After 1st Round" to the "Final Round." No category performed worse.

2. **Performance Tiers:** A clear hierarchy is visible. The Bayesian Assistant is the top performer, followed by the largest AI models (Llama 3 70B, Gemini 1.5 Pro). Human performance and mid-sized models cluster in the middle. Smaller models (Gemma 2 9B, Qwen 2.5 7B) perform just above the random baseline.

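3. **Scale Correlation:** For each model family (Gemma 2, Llama 3, Qwen 2.5), the larger parameter version (27B, 70B, 32B) outperforms its smaller counterpart in the final round. After the first round the same holds for Llama 3 and Qwen 2.5, while the two Gemma 2 models are tied at 37%.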

4. **Human vs. AI:** Human final round accuracy (47%) is surpassed by several AI models (Llama 3 70B, Qwen 2.5 32B, Gemini 1.5 Pro, Bayesian Assistant) but is higher than the smaller models.

5. **Largest Gains:** The most significant accuracy jumps between rounds were achieved by the Bayesian Assistant (+23 points) and Llama 3 70B (+13 points).

### Interpretation

This chart shows accuracy on the flight recommendation task. The "After 1st Round" score is measured after a single round of user feedback, while the "Final Round" score is measured after the fifth round.

The data suggests that:

* **Iterative refinement is highly effective.** The process between rounds consistently boosts accuracy, with more capable systems (both AI and human) benefiting more dramatically.

* **Model scale matters.** Larger models within the same family show a greater capacity for improvement and higher final performance.

* **Specialized systems can excel.** The "Bayesian Assistant," which updates a probability distribution over user preferences with Bayes' rule, demonstrates a superior ability to leverage the additional rounds, achieving the highest final accuracy on the chart (81%).

* **Human reasoning is variable but competitive.** The error bars on human data acknowledge individual differences. While humans are outperformed by top AI systems in this task, they still significantly outperform smaller models and the random baseline.

The chart effectively argues for the value of multi-step evaluation and highlights the performance gap between different classes of AI systems and human capability on this specific task.

</details>

Figure 2: LLMs show limited or no improvement over multiple interactions with the user. We show accuracy after the first round and final (fifth) round. We compare off-the-shelf LLMs from different model families to human participants and the Bayesian Assistant. For human participants, we only evaluate on a subset of 48 out of our 624 simulated users. The LLMs perform considerably worse than the Bayesian Assistant. Human participants demonstrate a larger improvement than most LLMs as they receive more information, but they still fall short of the accuracy that characterizes the normative Bayesian strategy. For the human study, the error bars show the averaged standard error across participants; for models, they show the standard error across the three sets of interactions with each of the 624 users.

### 2.2 LLMs Show Limited Evidence of Belief Updating

The LLMs we evaluate, like most contemporary LLMs, are first trained to predict upcoming words in a large collection of texts (“pre-training”), and are then specialized to follow user instructions provided in natural language (“instruction-tuning”) [Sanh et al., 2022, Wei et al., 2022a]. Most commercially available models are closed-weights: we can query them but we cannot access their parameters. We evaluate two such closed-weights models, Gemini 1.5 Pro [Team, 2024a] and GPT-4.1 Mini [OpenAI, 2025], which were among the state-of-the-art LLMs at the time of writing [Chiang et al., 2024]. We also evaluate the following open-weights models: Gemma 2 (9B and 27B parameters) [Team, 2024b], Llama 3 (8B and 70B parameters) [Grattafiori et al., 2024], and Qwen 2.5 (7B and 32B parameters) [Yang et al., 2024a]. We chose those models because their performance was quite competitive, and their weights are openly available, which makes it possible to perform fine-tuning (see the next section). We provide these LLMs with English instructions explaining how to act as a flight booking assistant (see Fig. 1 for an example, and Appendix Table 3 for a detailed interaction).

We show results in Fig. 2. Overall, the accuracy of the LLMs after the five rounds of interaction is considerably lower than that of the Bayesian Assistant, and most of the models show little improvement after the first round of interaction (Fig. 2 shows results after the first and fifth round; for results after each of the five rounds, see Appendix Fig. 24). For an exploration of how the models’ performance varies across users’ possible reward functions, see Appendix Section D.2.

A range of follow-up experiments failed to produce meaningful improvement in the LLMs’ behavior (for details, see Appendix Section C.1). Those include experiments with “chain-of-thought prompting” [Wei et al., 2022b, Nye et al., 2021, Kojima et al., 2022], that is, instructions that are meant to encourage the LLM to reason more explicitly (Appendix Fig. 9); an experiment with alternative, purely numerical representations of the flight options that we hypothesized might be easier for the LLMs to parse than the verbal ones we used for our main experiments (Appendix Fig. 9); a setting where we have 30 instead of five rounds of interaction (Appendix Fig. 9); and experiments with models that are only pre-trained to predict upcoming words in texts, without subsequent training to follow user instructions (Appendix Fig. 9).

We also had human participants act as the assistant to a subset of 48 simulated users (see Appendix Section A and Appendix Section F.1 for details). The human participants made recommendations for five rounds and showed a significant improvement between rounds 1 and 5 (p = 0.002, logistic mixed-effects model). In terms of accuracy, they performed better than small LLMs and slightly worse than larger LLMs (see Appendix Fig. 24 for performance over rounds). That being said, like all LLMs, humans also fall substantially short of the accuracy expected from the normative Bayesian strategy.

## 3 Teaching LLMs to Approximate Bayesian Reasoning

<details>

<summary>x3.png Details</summary>

### Visual Description

## Grouped Bar Chart: Accuracy Comparison of AI Models Across Different Methods

### Overview

This image is a grouped bar chart comparing the accuracy percentages of three large language models (Gemma, Llama, Qwen) under three different methods (Original, Oracle, Bayesian), alongside a standalone "Bayesian Assistant" model. Performance is measured across two evaluation stages: "After 1st Round" and "Final Round." A dashed line indicates a "Random" baseline performance.

### Components/Axes

* **Chart Type:** Grouped bar chart with error bars.

* **Y-Axis:** Labeled "Accuracy (%)". Scale runs from 0 to 100 in increments of 20.

* **X-Axis:** Categorical, listing the model-method combinations. From left to right:

* Gemma Original, Gemma Oracle, Gemma Bayesian

* Llama Original, Llama Oracle, Llama Bayesian

* Qwen Original, Qwen Oracle, Qwen Bayesian

* Bayesian Assistant

* **Legend:** Positioned in the top-left corner of the chart area.

* **After 1st Round:** Represented by bars with diagonal hatching (\\).

* **Final Round:** Represented by solid-colored bars.

* **Random:** Represented by a horizontal dashed line.

* **Data Series Colors (by model group):**

* **Gemma Group:** Blue (Original), Light Orange (Oracle), Dark Orange (Bayesian).

* **Llama Group:** Blue (Original), Light Orange (Oracle), Dark Orange (Bayesian).

* **Qwen Group:** Blue (Original), Light Orange (Oracle), Dark Orange (Bayesian).

* **Bayesian Assistant:** A single tan/beige color.

### Detailed Analysis

The chart presents paired bars for each model-method combination, showing the progression from the 1st Round to the Final Round. Error bars are visible on the "After 1st Round" bars for the Oracle and Bayesian methods.

**1. Gemma Model Group:**

* **Trend:** Accuracy increases consistently from Original to Oracle to Bayesian methods. The Final Round shows a significant improvement over the 1st Round for Oracle and Bayesian.

* **Data Points:**

* Gemma Original: After 1st Round = 37%, Final Round = 37% (no change).

* Gemma Oracle: After 1st Round ≈ 50%, Final Round = 61%.

* Gemma Bayesian: After 1st Round ≈ 57%, Final Round = 76%.

**2. Llama Model Group:**

* **Trend:** Similar upward trend from Original to Oracle to Bayesian. The Final Round improvement is most pronounced for the Bayesian method.

* **Data Points:**

* Llama Original: After 1st Round = 36%, Final Round = 38%.

* Llama Oracle: After 1st Round ≈ 48%, Final Round = 62%.

* Llama Bayesian: After 1st Round ≈ 57%, Final Round = 75%.

**3. Qwen Model Group:**

* **Trend:** Again, accuracy improves from Original to Oracle to Bayesian. The Final Round shows gains across all methods.

* **Data Points:**

* Qwen Original: After 1st Round = 37%, Final Round = 37% (no change).

* Qwen Oracle: After 1st Round ≈ 43%, Final Round = 53%.

* Qwen Bayesian: After 1st Round ≈ 55%, Final Round = 68%.

**4. Bayesian Assistant:**

* **Trend:** This is a single model, not a group. It shows the highest performance on the chart.

* **Data Points:** After 1st Round ≈ 58%, Final Round = 81%.

**5. Random Baseline:**

* The dashed "Random" line is positioned at approximately 33% accuracy, serving as a lower-bound reference.

### Key Observations

1. **Method Superiority:** The Bayesian method consistently yields the highest accuracy within each model family (Gemma, Llama, Qwen), followed by Oracle, with Original performing the worst.

2. **Round Improvement:** For all Oracle and Bayesian methods, the "Final Round" accuracy is substantially higher than the "After 1st Round" accuracy. The "Original" methods show minimal to no improvement between rounds.

3. **Top Performer:** The standalone "Bayesian Assistant" achieves the highest overall accuracy (81% Final Round), outperforming the Bayesian variants of the individual models.

4. **Consistency:** The pattern of improvement (Original < Oracle < Bayesian) is consistent across all three base model families.

5. **Baseline Comparison:** All methods, except the "Original" variants which are near the baseline, perform significantly better than the ~33% random chance level.

### Interpretation

This chart demonstrates the effectiveness of advanced inference or training methods (Oracle and especially Bayesian) over a base ("Original") model in a multi-round evaluation setting. The data suggests that:

* **Bayesian methods are highly effective:** They provide the largest accuracy boost within each model family and show the most significant gain from the first to the final round, indicating they benefit greatly from iterative refinement or additional data.

* **The "Original" models plateau:** Their lack of improvement between rounds suggests they may lack the mechanism to leverage additional interactions or information presented in the later round.

* **The "Bayesian Assistant" is a distinct, high-performing system:** It is the normative Bayesian model rather than a fine-tuned version of Gemma, Llama, or Qwen, and it serves as the reference point against which the LLMs are compared.

* **The task is non-trivial:** The random baseline is ~33%, suggesting a multi-class classification problem with roughly three choices. The best models achieve over 80% accuracy, showing substantial learning beyond random guessing.

The consistent trends across different base models (Gemma, Llama, Qwen) strengthen the conclusion that the observed benefits are due to the methods (Oracle, Bayesian) themselves, not quirks of a single model architecture.

</details>

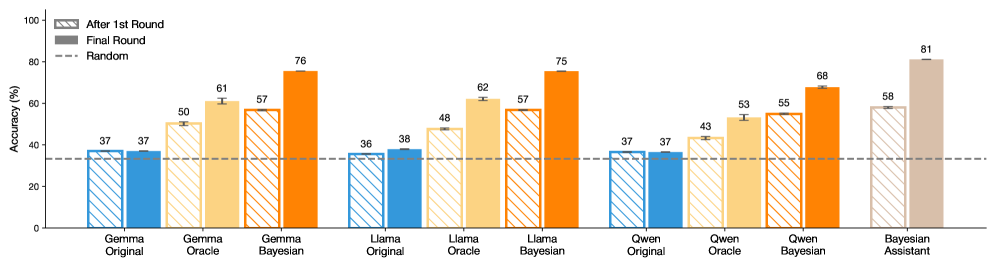

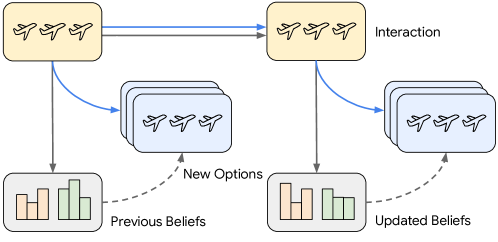

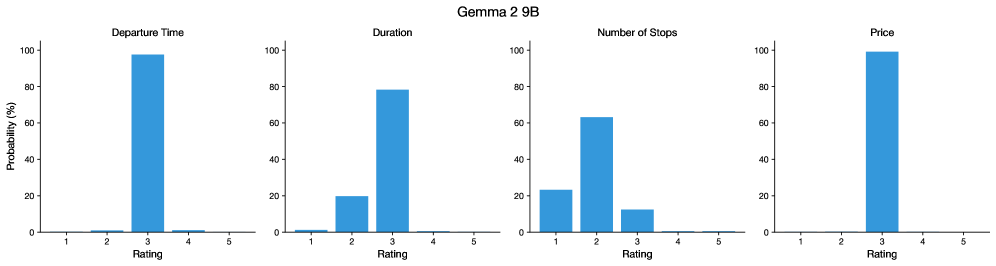

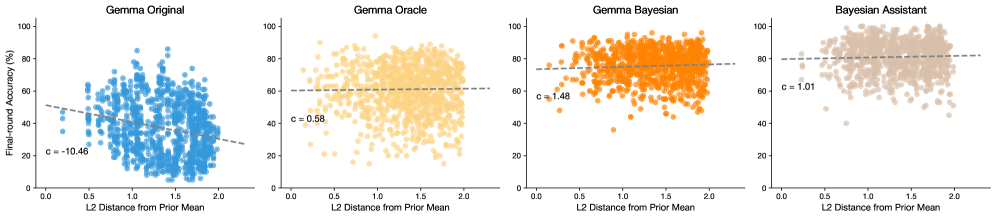

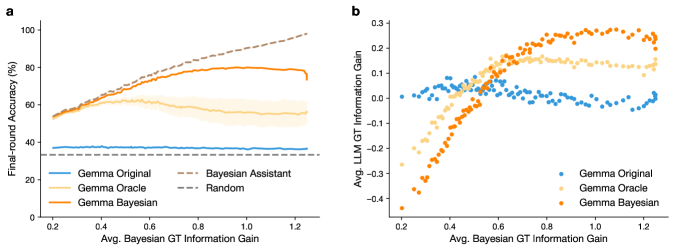

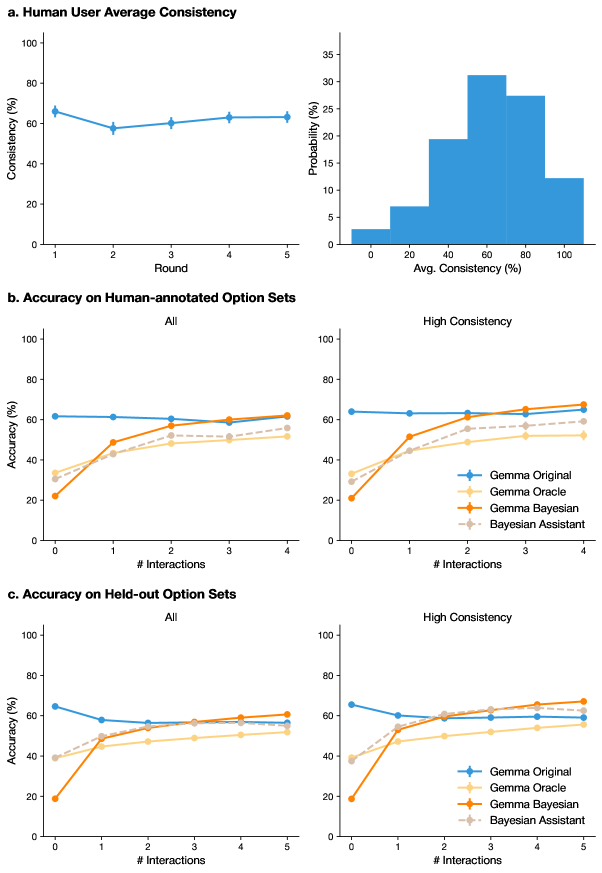

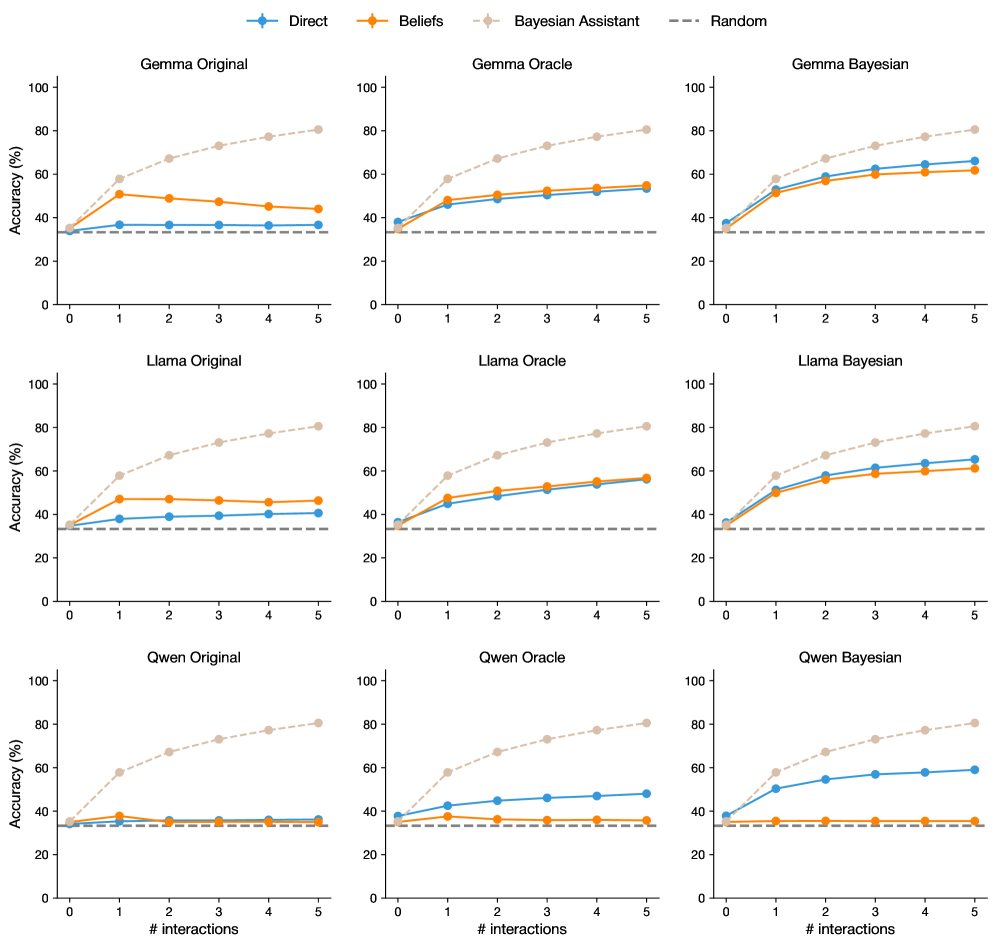

Figure 3: Supervised fine-tuning teaches LLMs to approximate probabilistic inference. We show accuracy after the first round and final (fifth) round across different assistants. We compare the original LLMs, LLMs fine-tuned on user interactions with the Bayesian Assistant, and LLMs fine-tuned on user interactions with an oracle, which always provides the correct answer. Both types of fine-tuning significantly improve LLMs’ performance, and Bayesian teaching is consistently more effective than oracle teaching. Error bars show the standard error across three random seeds (and three training runs). All results are statistically significant, $p<0.001$ (see Appendix Section G).

We next describe the supervised fine-tuning technique we use to teach the LLM to mimic the normative Bayesian model; we show that this method substantially improves the LLM’s ability to update its beliefs correctly.

From a technical perspective, supervised fine-tuning is similar to the method used to train most LLMs in the first place. The model is provided with the first words of a text and is trained to predict the upcoming word. After each example, the LLM’s weights are adjusted to increase the likelihood of a correct prediction if the same example is observed again. The main difference is that while in the first phase of training the texts are typically drawn from the Internet or similar resources, in the supervised fine-tuning phase the texts are constructed in a targeted way (automatically or by human writers) so as to teach the LLM particular skills [Sanh et al., 2022, Wei et al., 2022a]; to improve arithmetic skills, for example, the model may be given the text “the output of $1+1=…$ is $2$ ”. We apply supervised fine-tuning to the three medium-sized open-weights models (Gemma 2 9B, Llama 3 8B, and Qwen 2.5 7B); we do not attempt to fine-tune the larger models from these families due to computational constraints. We update all of the models’ weights in fine-tuning (in Appendix Section C.2, we show that a different training objective, Direct Preference Optimization [Rafailov et al., 2023], produces similar results, as does a computationally cheaper fine-tuning method, LoRA [Hu et al., 2022], which only updates a subset of the model’s weights).
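As a point of reference, the next-word objective described above is conventionally written as a cross-entropy loss. The notation here is ours and is not taken from the paper: $\theta$ denotes the model weights, $x$ the interaction context, $y_{1:T}$ the target tokens, and $\mathcal{D}$ the fine-tuning set.

$$
\mathcal{L}(\theta) = -\sum_{(x,\,y)\in\mathcal{D}} \; \sum_{t=1}^{T} \log p_\theta\left(y_t \mid x,\, y_{<t}\right)
$$

Minimizing $\mathcal{L}$ increases the likelihood of each target token given the preceding text. Whether the loss is computed over every token of the interaction or only over the assistant's responses is not specified in this section.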

We explore two strategies to create supervised fine-tuning data. For both strategies, we construct 10 five-round interactions per user. These interactions follow the same format as described above (Appendix Table 3). In the first strategy, which we refer to as oracle teaching, we provide the LLM with interactions between simulated users and an “oracle” assistant that has perfect knowledge of the user’s preferences, and as such always recommends the option that is identical to the user’s choices.

The second strategy, which we call Bayesian teaching, provides the LLM with interactions between the user and the Bayesian Assistant. In this setting, the assistant will often choose flights that do not match the user’s preferred choice, especially in early rounds where it has considerable uncertainty about the user’s preferences. We hypothesize that, despite this fact, mimicking the Bayesian Assistant’s best guesses would teach the LLM to maintain uncertainty and update its beliefs more effectively than the first strategy, in which the LLM is trained on the correct choices. This approach can be seen as a form of distillation, where a model is trained by learning to mimic another system [Hinton et al., 2015, Kim and Rush, 2016, Deng et al., 2023, Wang et al., 2023b, Li et al., 2023b, Jung et al., 2024, Yu et al., 2024, Chen et al., 2024b]. We use a uniform prior for the Bayesian Assistant that produces the supervised fine-tuning data. Other priors perform similarly (see Appendix Fig. 16).
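The difference between the two kinds of fine-tuning targets can be illustrated with a minimal sketch. Everything concrete below is an assumption made for illustration and is not the paper's implementation: the linear reward over four numeric features, the five preference levels per feature, the near-deterministic likelihood with a small `eps`, and the rule of recommending the option most likely to be chosen under the posterior.

```python
import itertools
import numpy as np

# Hypothetical setup: 4 features, each preference weight takes one of 5 levels.
LEVELS = (-1.0, -0.5, 0.0, 0.5, 1.0)
N_FEATURES = 4
HYPOTHESES = np.array(list(itertools.product(LEVELS, repeat=N_FEATURES)))  # (625, 4)


def user_choice(weights, options):
    """Simulated user: pick the option with the highest linear reward."""
    return int(np.argmax(options @ weights))


def best_option_per_hypothesis(options):
    """For every candidate reward function, the option it would choose."""
    return np.argmax(options @ HYPOTHESES.T, axis=0)  # shape (n_hypotheses,)


def bayesian_recommendation(posterior, options):
    """Recommend the option with the highest posterior probability of being chosen."""
    picks = best_option_per_hypothesis(options)
    votes = np.bincount(picks, weights=posterior, minlength=len(options))
    return int(np.argmax(votes))


def update_posterior(posterior, options, chosen, eps=1e-6):
    """Bayes' rule with a near-deterministic likelihood (eps is an assumption)."""
    picks = best_option_per_hypothesis(options)
    likelihood = np.where(picks == chosen, 1.0, eps)
    unnormalized = posterior * likelihood
    return unnormalized / unnormalized.sum()


def make_interaction(true_weights, rng, n_rounds=5, n_options=3):
    """Build one multi-round interaction with both kinds of targets.

    Returns a list of (options, oracle_target, bayesian_target) per round
    and the final posterior over reward functions.
    """
    posterior = np.full(len(HYPOTHESES), 1.0 / len(HYPOTHESES))  # uniform prior
    rounds = []
    for _ in range(n_rounds):
        options = rng.random((n_options, N_FEATURES))
        chosen = user_choice(true_weights, options)  # oracle target
        # The Bayesian target is the best guess made *before* seeing the choice.
        bayes_target = bayesian_recommendation(posterior, options)
        rounds.append((options, chosen, bayes_target))
        posterior = update_posterior(posterior, options, chosen)
    return rounds, posterior
```

In this sketch the oracle target always equals the user's choice, while the Bayesian target can differ from it, particularly in early rounds when the posterior is still close to uniform.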

### 3.1 Fine-Tuning Teaches LLMs to Adapt to Users

Both supervised fine-tuning strategies, oracle teaching and Bayesian teaching, significantly improve the LLMs’ performance on the flight recommendation task (Fig. 3). Crucially, after fine-tuning, the LLMs’ performance gradually improves as more information becomes available; this contrasts with the original LLMs, which plateaued after the first round (see the substantial performance improvement between the first and last round in Fig. 3; for detailed results for each round, see Appendix Fig. 25). While there is still a performance gap between the fine-tuned LLMs and the normative Bayesian Assistant, this gap is much narrower than for the original LLMs. All three medium-sized LLMs, which before fine-tuning performed worse than either stronger models or our human participants, markedly outperform them after fine-tuning.

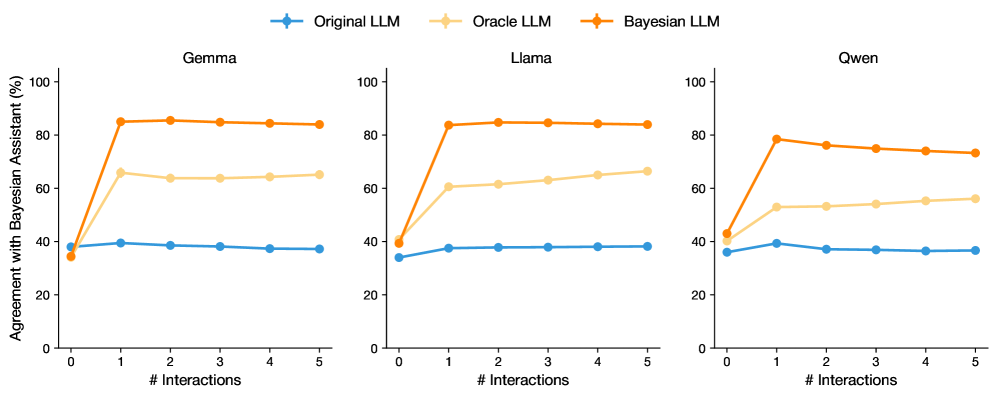

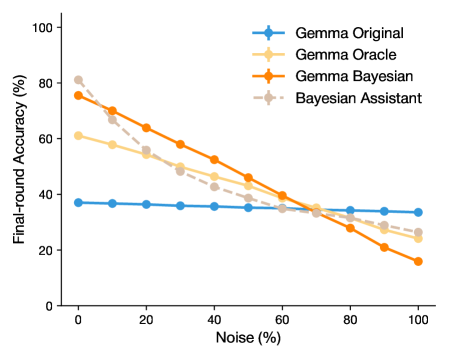

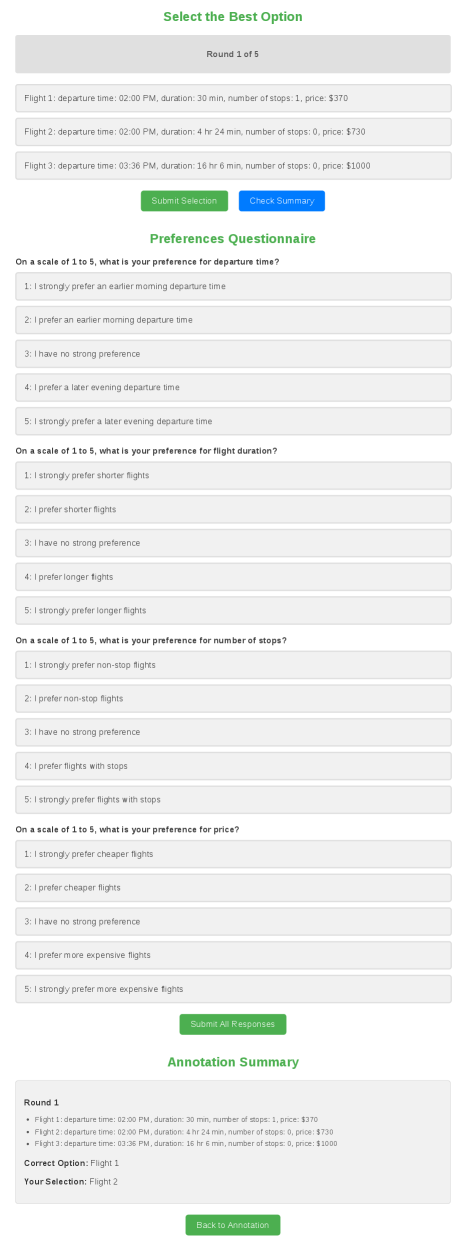

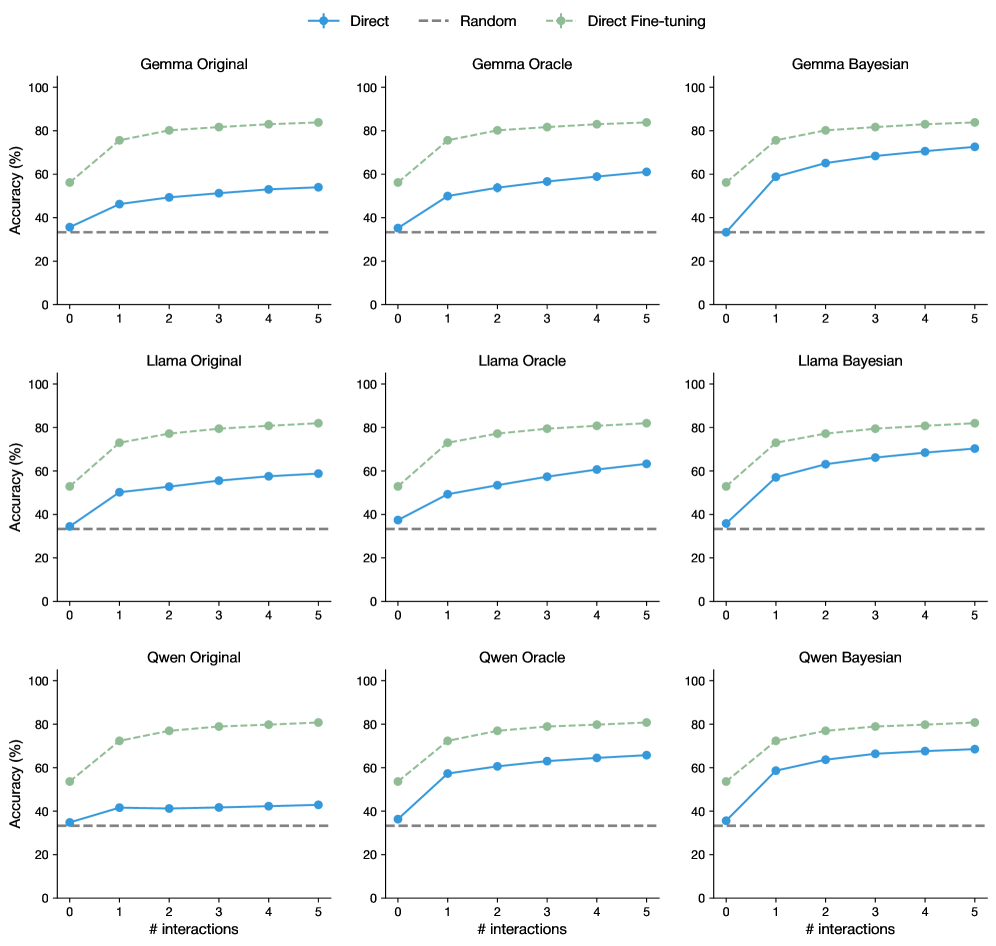

We find that Bayesian teaching leads to higher accuracy and less variability across repetitions of the experiment than oracle teaching (Fig. 3). Bayesian teaching also successfully makes the LLM more Bayesian: the Bayesian-tuned LLMs’ predictions agree with those of the Bayesian Assistant around 80% of the time, significantly more often than do the predictions of the original LLMs and oracle-tuned LLMs (Fig. 4). In Appendix Section D.4, we show that the effectiveness of Bayesian teaching cannot be explained by two potential confounds, and conclude that the effectiveness of this method is in fact due to the Bayesian signal it provides.

The amount of information that can be gained from the user’s choice for a particular option set varies from one set to another. For example, a choice between two flight options that differ in exactly one feature provides direct evidence for the user’s preference for that feature; such a choice could be more informative about the user’s preferences than the choice between options that differ along multiple dimensions. We expect a model with more sophisticated probabilistic skills to show greater sensitivity to this factor. Do our fine-tuned models show such sensitivity? Focusing on the Gemma models, we find that Gemma Original does not show sensitivity to option set informativity, but both fine-tuned versions of Gemma do, with Gemma Bayesian displaying considerably more sensitivity than Gemma Oracle (Appendix Section E).

Can the fine-tuned models accurately verbalize their beliefs? To address this question, we ask the LLMs explicitly for their beliefs about the user’s preferences: we have the simulated user ask them, for example, “on a scale of 1 to 5, what is my preference for price?”. We then test the accuracy of these verbalized beliefs by deriving flight recommendations from those beliefs, using the same decision procedure we use with the Bayesian Assistant. We find that this approach generally performs better than the approach we have used so far, where we directly ask for the LLMs’ recommendations; that predictions based on the fine-tuned LLMs’ verbalized beliefs are substantially more accurate than those based on the original LLMs’ verbalized beliefs; and that the Bayesian-tuned LLMs produce more accurate beliefs than either the original LLMs or oracle-tuned ones (for additional details, see Appendix Section B).
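One way to turn verbalized ratings into a recommendation is sketched below. The mapping from the 1-to-5 scale to a signed weight (3 meaning indifference) and the linear reward over numeric feature values are hypothetical choices made for illustration; the paper's actual decision procedure is described in Appendix Section B and may differ.

```python
import numpy as np


def rating_to_weight(rating):
    """Map a 1-5 rating to a weight in [-1, 1]; 3 means indifferent (hypothetical mapping)."""
    if not 1 <= rating <= 5:
        raise ValueError("rating must be between 1 and 5")
    return (rating - 3) / 2.0


def recommend_from_ratings(ratings, options):
    """Pick the option with the highest linear reward under the verbalized ratings."""
    weights = np.array([rating_to_weight(r) for r in ratings])
    rewards = np.asarray(options, dtype=float) @ weights
    return int(np.argmax(rewards))


# Example: the first feature is disliked (rating 1), the last is liked (rating 5).
ratings = [1, 3, 3, 5]
options = [
    [0.9, 0.5, 0.5, 0.2],
    [0.1, 0.5, 0.5, 0.8],
    [0.5, 0.5, 0.5, 0.5],
]
recommended = recommend_from_ratings(ratings, options)
```

The recommendation derived this way can then be scored against the user's actual choice in the same way as a direct recommendation.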

<details>

<summary>x4.png Details</summary>

### Visual Description

## Line Charts: Agreement with Bayesian Assistant Across LLMs and Interactions

### Overview

The image displays three horizontally arranged line charts, each comparing the performance of a different Large Language Model (LLM) over a series of interactions. The charts measure the "Agreement with Bayesian Assistant (%)" as a function of the number of interactions (# Interactions). Each chart contains three data series representing different model variants: Original LLM, Oracle LLM, and Bayesian LLM.

### Components/Axes

* **Chart Titles (Subplots):** Centered above each chart: "Gemma" (left), "Llama" (center), "Qwen" (right).

* **Y-Axis (All Charts):** Label: "Agreement with Bayesian Assistant (%)". Scale: 0 to 100, with major tick marks at 0, 20, 40, 60, 80, 100.

* **X-Axis (All Charts):** Label: "# Interactions". Scale: 0 to 5, with integer tick marks at 0, 1, 2, 3, 4, 5.

* **Legend:** Positioned at the top center of the entire figure, spanning all three subplots.

* **Original LLM:** Blue line with circular markers.

* **Oracle LLM:** Light orange (beige) line with circular markers.

* **Bayesian LLM:** Dark orange line with circular markers.

* **Data Series:** Each chart contains three lines corresponding to the legend, with markers at each integer interaction point (0 through 5).

### Detailed Analysis

**1. Gemma (Left Chart)**

* **Bayesian LLM (Dark Orange):** Starts at approximately 35% at 0 interactions. Shows a sharp, near-vertical increase to about 85% at 1 interaction. The line then plateaus, remaining stable at approximately 85% for interactions 2 through 5.

* **Oracle LLM (Light Orange):** Starts at approximately 35% at 0 interactions. Increases to about 65% at 1 interaction. The line then shows a very slight downward trend, ending at approximately 63% at 5 interactions.

* **Original LLM (Blue):** Starts at approximately 35% at 0 interactions. Increases slightly to about 40% at 1 interaction. The line then shows a gradual, shallow downward trend, ending at approximately 37% at 5 interactions.

**2. Llama (Center Chart)**

* **Bayesian LLM (Dark Orange):** Starts at approximately 40% at 0 interactions. Shows a sharp increase to about 83% at 1 interaction. The line then plateaus, remaining stable at approximately 84% for interactions 2 through 5.

* **Oracle LLM (Light Orange):** Starts at approximately 40% at 0 interactions. Increases to about 60% at 1 interaction. The line then shows a gradual upward trend, ending at approximately 66% at 5 interactions.

* **Original LLM (Blue):** Starts at approximately 35% at 0 interactions. Increases to about 38% at 1 interaction. The line then remains nearly flat, stable at approximately 38% for interactions 2 through 5.

**3. Qwen (Right Chart)**

* **Bayesian LLM (Dark Orange):** Starts at approximately 43% at 0 interactions. Shows a sharp increase to about 78% at 1 interaction. The line then shows a gradual downward trend, ending at approximately 73% at 5 interactions.

* **Oracle LLM (Light Orange):** Starts at approximately 43% at 0 interactions. Increases to about 53% at 1 interaction. The line then shows a gradual upward trend, ending at approximately 56% at 5 interactions.

* **Original LLM (Blue):** Starts at approximately 36% at 0 interactions. Peaks at about 39% at 1 interaction. The line then shows a gradual downward trend, ending at approximately 36% at 5 interactions.

### Key Observations

1. **Dominant Performance:** The Bayesian LLM (dark orange) consistently achieves the highest agreement percentage after the first interaction across all three base models (Gemma, Llama, Qwen).

2. **Initial Jump:** All three model variants (Original, Oracle, Bayesian) show their most significant change in agreement between 0 and 1 interaction. The Bayesian LLM's jump is the most dramatic.

3. **Post-Jump Trends:**

* The Bayesian LLM plateaus (Gemma, Llama) or slightly declines (Qwen) after interaction 1.

* The Oracle LLM shows a slight upward trend (Llama, Qwen) or a very slight decline (Gemma) after interaction 1.

* The Original LLM remains relatively flat or shows a shallow decline after interaction 1.

4. **Model Comparison:** The starting agreement (at 0 interactions) varies slightly by base model, with Qwen starting highest (~43%) and Gemma/Llama starting lower (~35-40%).

### Interpretation

The data demonstrates a clear hierarchy in performance when aligning with a Bayesian Assistant. The **Bayesian LLM** variant, which presumably incorporates Bayesian inference or updating, shows a transformative improvement after a single interaction, reaching high agreement (73-85%) and maintaining it. This suggests that the Bayesian adaptation is highly effective and efficient.

The **Oracle LLM**, which is fine-tuned on interactions with an oracle assistant that always provides the correct answer, agrees with the Bayesian Assistant more often than the original model but significantly less often than the Bayesian variant. Its gradual improvement in Llama and Qwen suggests that its agreement increases slowly over interactions, but it does not reach the level of alignment achieved by the Bayesian approach.

The **Original LLM** shows minimal ability to improve its agreement with the Bayesian Assistant through interaction alone, hovering near its baseline. This indicates that without specific architectural or methodological changes (like Bayesian updating), standard LLMs do not naturally converge towards the assistant's reasoning pattern through dialogue.

The slight decline in the Bayesian LLM's performance for Qwen after the first interaction is a notable anomaly. It could indicate a form of overfitting to the initial interaction or a diminishing return where subsequent interactions introduce noise rather than clarifying signal for that specific model. Overall, the charts argue strongly for the efficacy of Bayesian methods in creating AI assistants that can rapidly and stably align with a target reasoning framework.

</details>

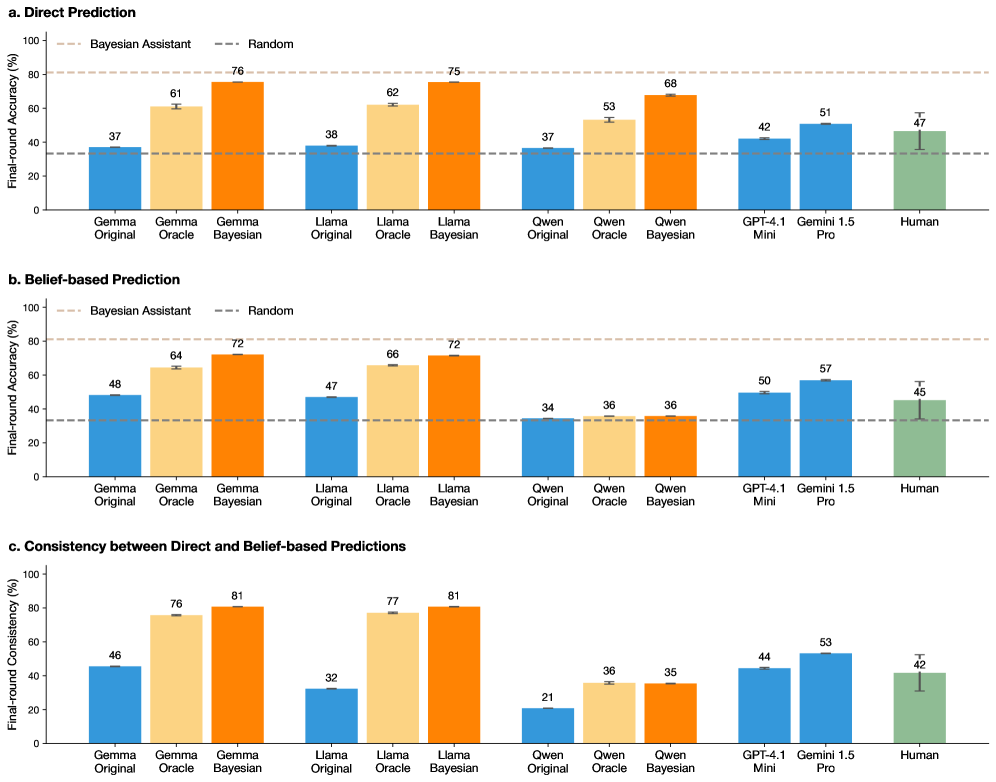

Figure 4: Fine-tuned LLMs agree more with the Bayesian Assistant. We show agreement between the LLMs and the Bayesian Assistant, measured by the proportion of trials where the LLMs make the same predictions as the Bayesian Assistant. Fine-tuning on the Bayesian Assistant’s predictions makes the LLMs more Bayesian, with the Bayesian versions of each LLM achieving the highest agreement with the Bayesian Assistant. Error bars (too small to be visible in plot) show standard errors across three random seeds (and three training runs).

### 3.2 Fine-Tuned LLMs Generalize to New Tasks

<details>

<summary>x5.png Details</summary>

### Visual Description

## Multi-Chart Figure: Generalization Performance of LLM Variants

### Overview

The image is a composite figure containing three distinct sections (labeled a, b, and c), each presenting performance comparisons of three Large Language Models (Gemma, Llama, Qwen) across different tasks. The charts evaluate "Final-round Accuracy (%)" for different model variants: Original, Oracle, and Bayesian. The overall theme is assessing how these models generalize to tasks with varying feature counts and to new domains (Hotel Recommendation, Web Shopping).

### Components/Axes

**Common Elements Across All Charts:**

* **Y-Axis:** "Final-round Accuracy (%)" ranging from 0 to 100.

* **Models Compared:** Gemma, Llama, Qwen (each in its own sub-chart within a section).

* **Model Variants (Legend):**

* **Original LLM** (Blue line/bar)

* **Oracle LLM** (Light yellow/beige line/bar)

* **Bayesian LLM** (Orange line/bar)

* **Baselines (Dashed Lines):**

* **Random** (Gray dashed line, ~33% accuracy)

* **Bayesian Assistant** (Light brown dashed line, ~80% accuracy in sections b & c)

* **Direct Fine-tuning on Web Shopping** (Green dashed line, ~82% accuracy in section c only)

**Section-Specific Components:**

**a. Generalization to Different Numbers of Features**

* **Chart Type:** Line charts.

* **X-Axis:** "Number of Features" with discrete markers at 2, 3, 4, 5, 6, 7, 8.

* **Legend:** Located at the top of the section, spanning all three sub-charts. Contains five entries: Original LLM, Oracle LLM, Bayesian LLM, Bayesian Assistant, Random.

* **Spatial Layout:** Three sub-charts arranged horizontally for Gemma (left), Llama (center), Qwen (right).

**b. Generalization to Hotel Recommendation**

* **Chart Type:** Bar charts.

* **X-Axis Categories (per sub-chart):** "[Model] Original", "[Model] Oracle", "[Model] Bayesian".

* **Legend:** Located at the top of the section. Contains two entries: Bayesian Assistant, Random.

* **Data Labels:** Numerical accuracy values are printed directly above each bar.

* **Spatial Layout:** Three sub-charts arranged horizontally for Gemma (left), Llama (center), Qwen (right).

**c. Generalization to Web Shopping**

* **Chart Type:** Bar charts.

* **X-Axis Categories (per sub-chart):** "[Model] Original", "[Model] Oracle", "[Model] Bayesian".

* **Legend:** Located at the top of the section. Contains two entries: Direct Fine-tuning on Web Shopping, Random.

* **Data Labels:** Numerical accuracy values are printed directly above each bar.

* **Spatial Layout:** Three sub-charts arranged horizontally for Gemma (left), Llama (center), Qwen (right).

### Detailed Analysis

**a. Generalization to Different Numbers of Features (Line Charts)**

* **Trend Verification:** For all models and variants, accuracy generally **slopes downward** as the number of features increases from 2 to 8. The Bayesian Assistant line remains the highest throughout but also declines.

* **Gemma (Left Sub-chart):**

* **Original LLM (Blue):** Starts at ~41% (2 features), declines slightly to ~35% (8 features).

* **Oracle LLM (Light Yellow):** Starts at ~68% (2 features), declines to ~46% (8 features).

* **Bayesian LLM (Orange):** Starts at ~85% (2 features), declines to ~52% (8 features).

* **Bayesian Assistant (Light Brown Dashed):** Starts at ~90% (2 features), declines to ~68% (8 features).

* **Random (Gray Dashed):** Constant at ~33%.

* **Llama (Center Sub-chart):**

* **Original LLM (Blue):** Starts at ~40% (2 features), declines to ~35% (8 features).

* **Oracle LLM (Light Yellow):** Starts at ~68% (2 features), declines to ~46% (8 features).

* **Bayesian LLM (Orange):** Starts at ~84% (2 features), declines to ~53% (8 features).

* **Bayesian Assistant (Light Brown Dashed):** Starts at ~90% (2 features), declines to ~68% (8 features).

* **Random (Gray Dashed):** Constant at ~33%.

* **Qwen (Right Sub-chart):**

* **Original LLM (Blue):** Starts at ~41% (2 features), declines to ~35% (8 features).

* **Oracle LLM (Light Yellow):** Starts at ~60% (2 features), declines to ~44% (8 features).

* **Bayesian LLM (Orange):** Starts at ~78% (2 features), declines to ~49% (8 features).

* **Bayesian Assistant (Light Brown Dashed):** Starts at ~90% (2 features), declines to ~68% (8 features).

* **Random (Gray Dashed):** Constant at ~33%.

**b. Generalization to Hotel Recommendation (Bar Charts)**

* **Gemma (Left Sub-chart):**

* Original: 37%

* Oracle: 53%

* Bayesian: 66%

* **Llama (Center Sub-chart):**

* Original: 41%

* Oracle: 56%

* Bayesian: 65%

* **Qwen (Right Sub-chart):**

* Original: 36%

* Oracle: 48%

* Bayesian: 59%

* **Baselines:** Bayesian Assistant (~80%) and Random (~33%) are shown as horizontal dashed lines across all sub-charts.

**c. Generalization to Web Shopping (Bar Charts)**

* **Gemma (Left Sub-chart):**

* Original: 54%

* Oracle: 61%

* Bayesian: 73%

* **Llama (Center Sub-chart):**

* Original: 59%

* Oracle: 63%

* Bayesian: 70%

* **Qwen (Right Sub-chart):**

* Original: 43%

* Oracle: 66%

* Bayesian: 69%

* **Baselines:** Direct Fine-tuning on Web Shopping (~82%) and Random (~33%) are shown as horizontal dashed lines across all sub-charts.

### Key Observations

1. **Consistent Hierarchy:** Across all tasks and models, the performance hierarchy is consistent: **Bayesian LLM > Oracle LLM > Original LLM**. All variants outperform the Random baseline.

2. **Task Difficulty:** The "Number of Features" task (section a) shows a clear negative correlation between feature count and accuracy for all model variants. The "Hotel Recommendation" task (section b) appears more challenging than "Web Shopping" (section c), as indicated by lower overall accuracy scores.

3. **Model Comparison:** Gemma and Llama generally show similar performance patterns. Qwen's Original model often starts lower but its Oracle and Bayesian variants show significant gains, particularly in the Web Shopping task.

4. **Baseline Comparison:** The specialized baselines (Bayesian Assistant, Direct Fine-tuning) consistently achieve the highest accuracy (~80-82%), setting an upper benchmark that the Bayesian LLM variants approach but do not surpass in these evaluations.

### Interpretation

The data demonstrates the effectiveness of Bayesian methods in improving the generalization capability of LLMs. The **Bayesian LLM** variant consistently provides a substantial accuracy boost over the **Original LLM** and even the **Oracle LLM** (which is fine-tuned on interactions with an oracle that always provides the correct answer). This suggests that incorporating Bayesian principles helps models better handle uncertainty and adapt to new tasks or more complex feature spaces.

The downward trend in section (a) indicates that all models struggle as the decision problem becomes more complex (more features). The Bayesian LLM nonetheless remains the most accurate fine-tuned variant at every feature count. The strong performance of the "Bayesian Assistant" and "Direct Fine-tuning" baselines highlights that task-specific optimization yields the best results, but the Bayesian LLM offers a powerful general-purpose improvement without such specialized tuning.

The variation between models (e.g., Qwen's lower Original score in Web Shopping) suggests that the base model's pre-training or architecture influences its starting point, but the relative gains from the Oracle and Bayesian methods are robust across different model families. This implies the Bayesian framework is a broadly applicable technique for enhancing LLM performance in decision-making and generalization tasks.

</details>

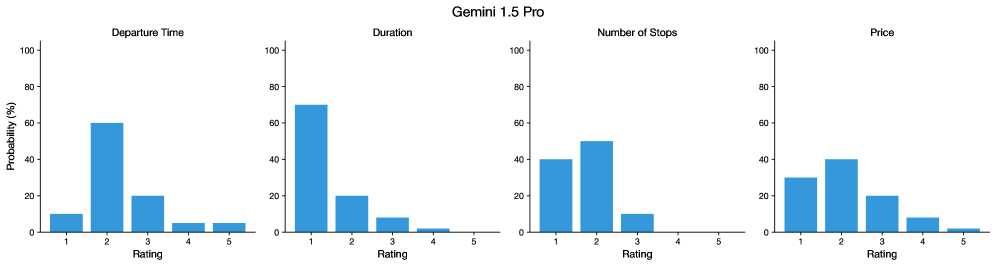

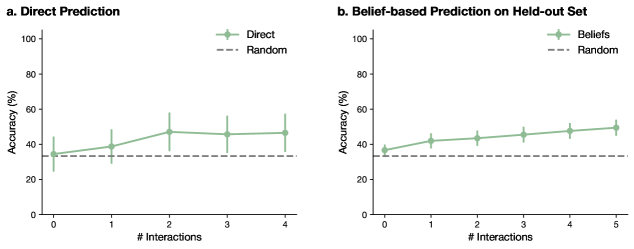

Figure 5: Bayesian teaching generalizes outside the task used for fine-tuning. (a) Final-round accuracy gain in fine-tuned models compared to the original LLM when varying task complexity (here the number of features is a proxy for task complexity). (b) Final-round accuracy for LLMs on the hotel recommendation task, which was not seen during fine-tuning. We show the normative Bayesian Assistant’s performance with brown dashed lines. (c) Final-round accuracy for LLMs on the web shopping domain, also unseen during fine-tuning. The green dashed line indicates the performance of the LLM when it is fine-tuned directly on web shopping data, such that no domain generalization is necessary. Error bars indicate the standard errors over three training runs (for web shopping) and additionally three random seeds (for flight recommendation and hotel recommendation).

As a result of Bayesian teaching, the LLMs demonstrate a greatly improved ability to approximate Bayesian probabilistic inference. Is this ability specific to the particular task the models were trained on, or do the LLMs’ probabilistic skills improve more broadly? To answer this question, we evaluate the fine-tuned LLMs on a set of tasks that diverge to different extents from our original flight recommendation task (see the right panel of Fig. 1 for an overview). All tasks require the LLMs to infer the user’s preferences from the user’s choices over multiple interactions. Overall, as we show in the rest of this section, we find that fine-tuned LLMs show considerable generalization to new settings, and that, as before, Bayesian teaching is more effective than oracle teaching.

We first test the LLMs on variants of the flight recommendation task with different numbers of features: whereas in the interactions provided during fine-tuning, flights were characterized by four features, in this evaluation setting flights are described by between two and eight features. This requires the LLM to generalize to features that were not included in fine-tuning (e.g., the number of checked bags). In this setting, we find that both types of fine-tuning lead to large improvements in accuracy compared to the original LLMs. We also find that Bayesian teaching is considerably more effective than oracle teaching, as before (Fig. 5a). We note that as the number of features increases, the space of possible reward functions grows exponentially, and the task becomes inherently more difficult, even for the Bayesian Assistant. Despite this fact, for both fine-tuning methods, performance relative to the upper bound defined by the Bayesian Assistant drops off only moderately as the number of features increases.
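To make the exponential growth concrete, suppose (as an illustrative assumption, suggested by the 1-to-5 preference scale used when eliciting verbalized beliefs) that the preference for each feature takes one of $k$ discrete levels. With $n$ features, the number of candidate reward functions $|\mathcal{H}|$ is then

$$
|\mathcal{H}| = k^{n}, \qquad k = 5:\quad 5^{2} = 25,\quad 5^{4} = 625,\quad 5^{8} = 390{,}625.
$$

Under this assumption, moving from the four features seen in fine-tuning to eight features multiplies the size of the hypothesis space by a factor of 625.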

The generalization experiments we have discussed so far focused on variants of the flight recommendation task. We next evaluate whether the LLMs can generalize the probabilistic skills they acquire through fine-tuning and apply them to other domains. We consider two such domains: hotel recommendations and web shopping. The hotel recommendation task is a synthetic task whose structure is similar to that of the flight recommendation task presented in fine-tuning. Here, each hotel is defined by four features: distance to downtown, price, rating, and amenities (for an example, see Appendix Table 11).

The web shopping task uses real-world products from a simulated environment [Yao et al., 2022], and differs much more substantially from the fine-tuning task than does the hotel recommendation task. It is difficult to construct a Bayesian Assistant for more natural scenarios like the web shopping task, where the space of user preferences is large and hard to specify formally. For this reason, successful transfer from synthetic settings like the flight recommendation task to more natural scenarios represents a particularly important application of Bayesian teaching. In the web shopping task, each user is defined by a set of randomly sampled goals that characterize the product they are interested in; for example, they might be looking for a shirt that is machine washable, or for a size XL shirt (see Appendix Table 1 for examples). As in the flight domain, the assistant interacts with the user for multiple rounds. In each round, a set of product options is randomly sampled from the product category (e.g., shirts), and the assistant is asked to recommend the best option. Each product is represented by a short title along with a detailed description (see Appendix Table 12 for an example). The user provides feedback at the end of each round, indicating whether or not the assistant’s recommendation was correct. The user’s preferred option is the one with the highest reward, as defined in Yao et al. [2022]. As mentioned above, it is difficult to construct a Bayesian Assistant for this task due to the large space of possible preferences. Instead, as an alternative upper bound on the transfer performance we can expect from the models fine-tuned on the flight recommendation task, we fine-tune LLMs directly on data from the shopping task.

We find that LLMs fine-tuned on the flight recommendation task generalize to both hotel recommendations and web shopping: they perform much better than the original LLMs on those tasks (Fig. 5b and Fig. 5c). Bayesian teaching continues to outperform oracle teaching, though the gap is smaller for web shopping than for hotel recommendations. There remains a gap between the generalization performance of the LLMs fine-tuned on flight recommendations and the upper bound obtained by fine-tuning the LLMs directly on the web shopping interactions (green dashed line in Fig. 5c). Overall, we conclude that fine-tuning, and especially Bayesian teaching, imparts probabilistic skills that transfer substantially beyond the setting used for fine-tuning.

### 3.3 Generalization to Interactions with Human Users

The synthetically generated data we have used so far makes two simplifying assumptions: the simulated users’ choices faithfully reflect the reward function that characterizes their preferences, and all reward functions are encountered equally often. In practice, these assumptions may not hold as humans’ behavior could occasionally be inconsistent with their preferences, due to inattention or other biases, and some preferences may be more common in the population than others (such as a preference for lower price). To evaluate the models in a more realistic setting, we recruit human participants to act as users. Each human participant is asked to first state their preferences for each of the flight features, and then select their preferred flight out of three options, for five different sets of options. We collect data from 10 human participants each for 50 lists of flight option sets, for a total of 500 participants (see Appendix Section A).

The performance of both fine-tuned models and the Bayesian Assistant for human users consistently improves over rounds (Fig. 6), and, as was the case for the simulated users, the Bayesian LLMs consistently outperform the Oracle LLMs; at least for some model families, the Bayesian LLMs also outperform the original LLMs. This indicates that the Bayesian LLMs generalize to human users from the simulated users on which they were fine-tuned.

All models, including the Bayesian Assistant, show substantially lower performance for humans than they did for simulated users, where accuracy after five rounds approached 80% (Fig. 3). In Appendix Section F.2, we show that this is because participants’ choices are not always consistent with their stated preferences, and as such are impossible to predict with high accuracy (Appendix Fig. 22). For the subset of human users whose choices are perfectly consistent with their preferences, the Bayesian LLM performs much better than the original LLM (Appendix Fig. 21; see also Appendix Section D.3, where we study inconsistent simulated users).

Unlike for the simulated users, for human users the original LLMs perform well even after a single interaction (although, crucially, the original LLMs do not improve over interactions). We attribute the original LLMs’ surprisingly strong performance to the fact that human users have generally predictable preferences (e.g., a preference for cheaper flights), such that guesses based on the LLM’s priors, without any adaptation to the individual user, can be quite effective (see Appendix Figs. 20 and 21 for evidence for this hypothesis).

<details>

<summary>x6.png Details</summary>

### Visual Description

## Multi-Panel Line Chart: LLM Accuracy vs. Number of Interactions

### Overview

The image displays a set of three line charts arranged horizontally, comparing the performance of different Large Language Model (LLM) configurations and a baseline across three distinct base models: **Gemma**, **Llama**, and **Qwen**. The charts plot "Accuracy (%)" against the "# Interactions" (from 0 to 4). The primary comparison is between an "Original LLM," an "Oracle LLM," a "Bayesian LLM," a "Bayesian Assistant," and a "Random" baseline.

### Components/Axes

* **Legend:** Positioned at the top center of the entire figure. It defines five data series:

* `Original LLM`: Solid blue line with circular markers.

* `Oracle LLM`: Solid light orange line with circular markers.

* `Bayesian LLM`: Solid dark orange line with circular markers.

* `Bayesian Assistant`: Dashed beige line with circular markers.

* `Random`: Dashed gray line (no markers).

* **Subplot Titles:** Each of the three charts has a title centered above it: "Gemma" (left), "Llama" (center), "Qwen" (right).

* **Y-Axis (Common to all):** Labeled "Accuracy (%)". The scale runs from 0 to 100, with major tick marks at 0, 20, 40, 60, 80, and 100.

* **X-Axis (Common to all):** Labeled "# Interactions". The scale shows integer values from 0 to 4.

### Detailed Analysis

#### **Subplot 1: Gemma**

* **Original LLM (Blue):** Starts at ~62% accuracy at 0 interactions. The line is nearly flat, showing a very slight downward trend, ending at ~61% at 4 interactions.

* **Oracle LLM (Light Orange):** Starts at ~33% at 0 interactions. Shows a steady, moderate upward trend, reaching ~51% at 4 interactions.

* **Bayesian LLM (Dark Orange):** Starts the lowest at ~22% at 0 interactions. Exhibits the steepest upward slope, crossing the Oracle line between 1 and 2 interactions, and ends as the highest performer at ~62% at 4 interactions.

* **Bayesian Assistant (Beige, Dashed):** Starts at ~28% at 0 interactions. Follows a similar upward trajectory to the Bayesian LLM but remains slightly below it, ending at ~56% at 4 interactions.

* **Random (Gray, Dashed):** A flat horizontal line at approximately 33% accuracy across all interaction counts.

#### **Subplot 2: Llama**

* **Original LLM (Blue):** Starts at ~60% at 0 interactions. Shows a slight dip at 1 interaction (~57%) before recovering and stabilizing around ~59% from 2-4 interactions.

* **Oracle LLM (Light Orange):** Starts at ~33% at 0 interactions. Increases steadily to ~51% at 4 interactions.

* **Bayesian LLM (Dark Orange):** Starts at ~24% at 0 interactions. Rises sharply, surpassing the Oracle line after 1 interaction, and ends at ~61% at 4 interactions.

* **Bayesian Assistant (Beige, Dashed):** Starts at ~29% at 0 interactions. Increases steadily, tracking just below the Bayesian LLM, and ends at ~57% at 4 interactions.

* **Random (Gray, Dashed):** Flat line at ~33%.

#### **Subplot 3: Qwen**

* **Original LLM (Blue):** Starts at ~56% at 0 interactions. Shows a more pronounced decline than the other models, dropping to ~51% at 1 interaction and ending at ~50% at 4 interactions.

* **Oracle LLM (Light Orange):** Starts at ~34% at 0 interactions. Increases gradually to ~47% at 4 interactions.

* **Bayesian LLM (Dark Orange):** Starts at ~26% at 0 interactions. Rises steeply, crossing the Original LLM line between 1 and 2 interactions, and ends at ~58% at 4 interactions.

* **Bayesian Assistant (Beige, Dashed):** Starts at ~30% at 0 interactions. Follows an upward trend, ending at ~52% at 4 interactions.

* **Random (Gray, Dashed):** Flat line at ~33%.

### Key Observations

1. **Consistent Hierarchy at Start:** For all three base models (Gemma, Llama, Qwen), the performance order at 0 interactions is identical: Original LLM > Random ≈ Oracle LLM ≈ Bayesian Assistant > Bayesian LLM.

2. **Bayesian Methods Improve with Interactions:** Both the "Bayesian LLM" and "Bayesian Assistant" show strong, positive slopes, indicating significant accuracy gains with more interactions.

3. **Crossover Point:** The "Bayesian LLM" consistently starts as the worst performer but surpasses the "Oracle LLM" after 1-2 interactions. By 4 interactions it is roughly level with or slightly above the "Original LLM" for Gemma (~62% vs. ~61%) and Llama (~61% vs. ~59%), and clearly above it for Qwen (~58% vs. ~50%).

4. **Original LLM Stability/Decline:** The "Original LLM" shows minimal improvement or a slight decline with more interactions, suggesting it does not benefit from the iterative process in this setup.

5. **Oracle as a Mid-Tier Benchmark:** The "Oracle LLM" provides a consistent, moderate improvement over the random baseline but is outperformed by the Bayesian methods after a few interactions.

6. **Random Baseline:** The flat "Random" line at ~33% suggests a 3-class classification problem where random guessing yields one-third accuracy.

### Interpretation

This data indicates that Bayesian teaching improves an LLM's ability to adapt to human users over successive interactions. The "Original" LLM starts with high accuracy but does not improve with further interactions. In contrast, the fine-tuned models start near or below the random baseline and improve steadily as they observe more of the user's choices.

The "Oracle LLM" is the LLM fine-tuned with an Oracle teacher, that is, directly on the correct answers. It improves over rounds, but less than the "Bayesian LLM", which ends as the strongest of the learning models in all three panels. The consistency of this pattern across three different base models (Gemma, Llama, Qwen) suggests the finding is not model-specific. The "Bayesian Assistant" (dashed beige) is the symbolic model that implements the normative belief update; it ends slightly below the "Bayesian LLM", which is consistent with the paper's observation that human choices are not always consistent with stated preferences and that the fine-tuned LLMs are more robust to this noise.

</details>

Figure 6: Bayesian teaching generalizes to human users. We show accuracy over rounds when the user is a human participant. The original LLMs achieve strong performance but do not show any learning behavior. In contrast, fine-tuned LLMs (with both Bayesian and Oracle teachers) improve their performance over rounds, and the Bayesian LLMs consistently outperform the Oracle LLMs. Error bars show standard errors across four random seeds (and three training runs); the error bars are not visible in the plot because they are very small.

## 4 Discussion

To interact with the world successfully, an agent needs to adapt its behavior as it obtains additional information about the statistics of its environment. To evaluate the ability of large language models (LLMs) to do so, we introduced a simple flight recommendation task where, in order to make accurate predictions, the model needs to adapt to a user’s preferences over multiple interactions with the user. We tested a range of LLMs and found that they struggle to form and update probabilistic beliefs. We further found that continuing the LLMs’ training through exposure to interactions between users and the Bayesian Assistant, a model that implements the normative probabilistic belief update strategy, dramatically improves the LLMs’ ability to approximate probabilistic reasoning. Crucially, this improvement was not limited to the flight recommendation task the LLM was trained on; it also generalized to variants of the flight recommendation task that the LLM had not encountered before, as well as to other tasks. Across the board, this approach, which we refer to as Bayesian teaching, was more effective than a related approach where the LLM is fine-tuned directly on the correct answers, pointing to the effectiveness of the Bayesian training signal.
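To make the contrast between the two training signals concrete, the following is a minimal sketch of a discrete Bayesian belief update over user preferences, together with the targets that a Bayesian teacher and an oracle teacher would each provide. It is an illustration under stated assumptions, not the implementation used in this work: the two features (price, duration), the hypothesis grid of weights in {-1, 0, 1}, the `noise` parameter, and all function names are hypothetical.

```python
import itertools

def utility(weights, option):
    """Linear utility of an option (a tuple of feature values) under given weights."""
    return sum(w * x for w, x in zip(weights, option))

def choice_likelihood(weights, options, chosen, noise=0.1):
    """P(user picks `chosen` | weights).

    Assumed user model: with probability 1 - noise the user picks a
    utility-maximizing option (ties split evenly); with probability
    noise the user picks uniformly at random.
    """
    scores = [utility(weights, o) for o in options]
    best = max(scores)
    winners = [i for i, s in enumerate(scores) if s == best]
    p = noise / len(options)
    if chosen in winners:
        p += (1.0 - noise) / len(winners)
    return p

def update(posterior, options, chosen):
    """Bayes' rule: posterior is proportional to prior times likelihood."""
    unnorm = {h: p * choice_likelihood(h, options, chosen)
              for h, p in posterior.items()}
    z = sum(unnorm.values())
    return {h: p / z for h, p in unnorm.items()}

def predict(posterior, options):
    """Index of the option most likely to be chosen under the current beliefs."""
    probs = [sum(p * choice_likelihood(h, options, i) for h, p in posterior.items())
             for i in range(len(options))]
    return max(range(len(options)), key=probs.__getitem__)

def simulate(true_weights, rounds):
    """Run the interaction and record both kinds of fine-tuning target per round."""
    hypotheses = list(itertools.product([-1, 0, 1], repeat=len(true_weights)))
    posterior = {h: 1.0 / len(hypotheses) for h in hypotheses}  # uniform prior
    targets = []
    for options in rounds:
        user_choice = max(range(len(options)),
                          key=lambda i: utility(true_weights, options[i]))
        targets.append({
            "bayesian": predict(posterior, options),  # teacher's best guess so far
            "oracle": user_choice,                    # always the correct answer
        })
        posterior = update(posterior, options, user_choice)
    return posterior, targets

# Illustrative (made-up) options: each is (price, duration), scaled to [0, 1].
rounds = [
    [(0.2, 0.9), (0.8, 0.1), (0.5, 0.5)],
    [(0.9, 0.2), (0.3, 0.3), (0.6, 0.8)],
    [(0.7, 0.1), (0.1, 0.9), (0.4, 0.4)],
]
posterior, targets = simulate(true_weights=(-1, 0), rounds=rounds)
print(max(posterior, key=posterior.get))
print(targets)
```

In this sketch the oracle target is correct in every round, whereas the Bayesian target reflects only what could be inferred from the choices observed so far, so it may be wrong in early rounds and becomes more reliable as evidence accumulates.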

Our paradigm differs from those used in previous investigations of LLMs’ probabilistic reasoning abilities, where LLMs were expected to compute statistics explicitly [Nafar et al., 2025, Paruchuri et al., 2024] or provide probability judgments [Zhu and Griffiths, 2024, Belém et al., 2024]. In our paradigm, probabilistic reasoning is as essential as it is in explicit reasoning tasks, but, crucially, it is implicit in the task. Unlike in some recent studies, where the assistant is expected to ask questions to directly elicit the user’s preferences [Li et al., 2023a, Handa et al., 2024, Piriyakulkij et al., 2023, Andukuri et al., 2024, Peng et al., 2024, Aliannejadi et al., 2021, Chen et al., 2024a, Lin et al., 2022], our setup expects the assistant to gradually infer the user’s preferences by simply observing the user’s choices, and to provide recommendations that are increasingly in line with the user’s true preferences. Finally, our findings are consistent with those of concurrent work [Zhao et al., 2025], which also investigates LLMs’ ability to infer user preferences from different types of dialogues. That work includes a condition similar to our setup, in which the user accepts or rejects one or more options provided by the assistant, and the models performed poorly in that condition. Compared to this concurrent study, our work analyzes the LLMs’ behavior through the lens of Bayesian inference, and demonstrates the benefits of mimicking a Bayesian model in fine-tuning compared to a more standard fine-tuning strategy, where the model is always provided with the correct answer (oracle teaching, in the terminology of the current paper).

We observed robust generalization from the synthetic flight recommendation task on which the LLMs were fine-tuned to the more natural web shopping task. While performance was even stronger when we fine-tuned the LLM directly on interactions from this task (the green dashed line in Fig. 5), in practice it may be difficult or expensive to collect such data; our synthetic fine-tuning strategy provides an alternative that improves the LLM’s probabilistic reasoning abilities across tasks, without requiring additional data collection or re-training of the model on the new domain.

Our proposal is related to but distinct from approaches that embed an LLM inside a neuro-symbolic framework for probabilistic reasoning [Wong et al., 2023, Feng et al., 2024, Liu et al., 2024, Piriyakulkij et al., 2024, Grand et al., 2023, Ying et al., 2024, Ellis, 2023]. In those approaches, the LLM is used to translate between natural language inputs and formal representations, which in turn serve as input to a symbolic model that can update its beliefs according to the Bayesian framework [Wong et al., 2023]. Indeed, we provide further evidence that hybrid methods can outperform the LLM-only approach in Appendix Section B, where we describe a variation of our method where we first ask the LLM to verbalize its beliefs about the user’s preferences, and then we use an external, symbolic system to make predictions based on these verbalized beliefs. The experiments described in that Appendix section show that in simple tasks where preferences can be mapped to predictions, such hybrid methods indeed outperform a direct interaction with the LLM. Our preliminary explorations of this approach can be developed in greater detail in future work.
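The hybrid variation described above, in which the LLM verbalizes its beliefs and an external symbolic system turns them into predictions, can be sketched as follows. This is an illustrative sketch only: the verbalization format (`feature: low/high/indifferent`), the `DIRECTION` mapping, and the function names are assumptions made for this example, and the LLM call is replaced by a hard-coded string.

```python
import re

# Hypothetical mapping from a verbalized preference to a numeric weight.
DIRECTION = {"low": -1.0, "high": 1.0, "indifferent": 0.0}

def parse_beliefs(text):
    """Parse lines such as 'price: low' into a {feature: weight} dictionary."""
    beliefs = {}
    for feature, word in re.findall(r"(\w+)\s*:\s*(\w+)", text):
        beliefs[feature] = DIRECTION[word.lower()]
    return beliefs

def recommend(beliefs, options):
    """Symbolic step: return the index of the option with the highest linear score."""
    def score(option):
        return sum(beliefs.get(name, 0.0) * value for name, value in option.items())
    return max(range(len(options)), key=lambda i: score(options[i]))

# Stand-in for the LLM's verbalized beliefs about one user (made-up example).
verbalized = "price: low\nstops: low\nduration: indifferent"

# Made-up options with feature values scaled to [0, 1].
options = [
    {"price": 0.9, "stops": 0.0, "duration": 0.2},
    {"price": 0.2, "stops": 0.5, "duration": 0.9},
    {"price": 0.3, "stops": 0.0, "duration": 0.5},
]

beliefs = parse_beliefs(verbalized)
choice = recommend(beliefs, options)
print(beliefs, choice)
```

The division of labor in this sketch mirrors the one described in the text: the language model only has to state what it believes about the user, and the deterministic scoring step maps those beliefs to a recommendation. This works only when, as here, preferences can be mapped to predictions by a simple explicit rule.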

Besides their superior performance in certain cases, neuro-symbolic methods have the benefit of greater interpretability, and their probabilistic inferences could be more robust. Crucially, however, the utility of such methods is limited to problems whose structure can be made explicit in the symbolic component of the system. By contrast, the method we propose empowers the LLM to approximate probabilistic inference on its own, such that it can apply this skill to domains that are hard to codify explicitly in a symbolic system, such as the web shopping task we have examined. This approach leverages LLMs’ remarkable ability to generalize to new problems defined using natural language.

Notably, even in cases where the domain is simple enough for a purely symbolic model to be constructed, such models may not be consistently more accurate than LLMs. In our study, we found that while for “well-behaved” simulated users a moderate performance gap persisted between the fine-tuned models and the Bayesian Assistant, for human users, whose choices are not always consistent with their preferences, our Bayesian LLMs were in fact superior to the fully symbolic Bayesian Assistant, demonstrating LLMs’ greater robustness to noise compared to symbolic models.

We have argued that through mimicking the Bayesian Assistant the LLMs learn to perform probabilistic inference, albeit only approximately. This hypothesis may appear surprising in light of the fact that LLMs’ training objective does not explicitly provide supervision for this skill, and that the transformer architecture does not explicitly track probability distributions: the LLM is trained only to predict the next word produced by the Bayesian Assistant. That being said, there is mounting evidence that in order to predict the next token successfully, LLMs can acquire sophisticated representations that match the structure of the process that generated those tokens. In the case of natural language syntax, for example, the internal representations of LLMs trained solely to predict upcoming words have been shown to encode abstract features such as syntactic role and grammatical number [Lakretz et al., 2019, Hao and Linzen, 2023, Manning et al., 2020]. It would be a fruitful direction for future work to determine how probabilistic reasoning is implemented by the LLMs’ internal representations, for example by using techniques such as probes and causal interventions [Finlayson et al., 2021, Ravfogel et al., 2021, Vig et al., 2020] to find internal representations of the model’s probability distributions over users’ preferences, or using circuit analysis [Wang et al., 2023a] to explore the computations through which the model updates these distributions.

The success of Bayesian teaching in imparting approximate probabilistic reasoning skills to LLMs opens up a range of questions for future work. Would the benefits of Bayesian teaching extend to larger models than we were able to fine-tune in this work, or to the recent generation of models that are explicitly trained to reason in words [Guo et al., 2025]? Does the benefit of Bayesian teaching extend to continuous domains and real-world applications beyond the ones we evaluated (for example, interactions whose goal goes beyond shopping)? Could we provide the models with a stronger supervision signal—for example, by instructing them to consider explicit probability distributions, by providing them with explicit supervision on the optimal way to update these distributions (for example, by supervising beliefs as in Appendix Fig. 10), or by encouraging them to maintain explicit representations of users such that the probability distributions are consistent across interactions with the same user, through methods such as supervised fine-tuning or reinforcement learning?