# Challenging the Boundaries of Reasoning: An Olympiad-Level Math Benchmark for Large Language Models

**Authors**: DataCanvas Alaya NeW. BAAI.

Technical Report on Slow Thinking with LLMs: Evaluation Benchmark

Abstract

In recent years, the rapid development of large reasoning models has resulted in the saturation of existing benchmarks for evaluating mathematical reasoning, highlighting the urgent need for more challenging and rigorous evaluation frameworks. To address this gap, we introduce OlymMATH, a novel Olympiad-level mathematical benchmark, designed to rigorously test the complex reasoning capabilities of LLMs. OlymMATH features 200 meticulously curated problems, each manually verified and available in parallel English and Chinese versions. The problems are systematically organized into two distinct difficulty tiers: (1) AIME-level problems (easy) that establish a baseline for mathematical reasoning assessment, and (2) significantly more challenging problems (hard) designed to push the boundaries of current state-of-the-art models. In our benchmark, these problems span four core mathematical fields, each including a verifiable numerical solution to enable objective, rule-based evaluation. Empirical results underscore the significant challenge presented by OlymMATH, with state-of-the-art models including DeepSeek-R1, OpenAI’s o3-mini and Gemini 2.5 Pro Exp demonstrating notably limited accuracy on the hard subset. Furthermore, the benchmark facilitates comprehensive bilingual assessment of mathematical reasoning abilities—a critical dimension that remains largely unaddressed in mainstream mathematical reasoning benchmarks. We release the benchmark, evaluation code, detailed results and a data visualization tool at https://github.com/RUCAIBox/OlymMATH.

1 Introduction

The advent of large language models (LLMs) [1] has marked a significant leap forward in the capabilities of artificial intelligence, showcasing exceptional performance across a broad spectrum of tasks, and in some cases, even rivaling or exceeding human-level proficiency [2, 3]. Among the myriad of capabilities demonstrated by LLMs, mathematical reasoning has surfaced as a particularly pivotal and demanding area of research [4, 5, 6]. In recent years, the evaluation and enhancement of mathematical reasoning abilities have become a central focus in the development of LLMs [7].

Effective assessment of LLM reasoning necessitates reliable and verifiable evaluation benchmarks. Reliability ensures accurately designed problems and solutions, free from ambiguities or errors. Verifiability demands that the evaluation process be easily constructed, replicated, and validated, often relying on easily parsable answer formats. Many benchmarks adopt a single-answer format, like “ The answer is $3 3 3$ ”, to simplify parsing and enhance reproducibility.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Bar Chart: Model Accuracy on Math Problems

### Overview

This bar chart compares the accuracy of several large language models (LLMs) on four different math problem sets: AIME 24 (30 problems), HMMT 202502 (30 problems), OlyMATH-EN-EASY (100 problems), and OlyMATH-EN-HARD (100 problems). Accuracy is measured as a percentage.

### Components/Axes

* **X-axis:** Represents the math problem sets: AIME 24, HMMT 202502, OlyMATH-EN-EASY, and OlyMATH-EN-HARD.

* **Y-axis:** Represents Accuracy (%), ranging from 0 to 100.

* **Legend (Top-Right):** Identifies the different LLMs being compared, with corresponding colors:

* Gemini 2.5 Pro Exp (Reddish-Brown)

* OpenAI o3-mini (high) (Blue)

* Qwen3-235B-A22B (Yellow)

* Qwen3-30B-A3B (Green)

* DeepSeek-R1 (Teal)

* QwQ-32B (Orange)

* GLM-Z1-AIR (Light-Brown)

### Detailed Analysis

The chart consists of four groups of bars, one for each problem set. Within each group, there is a bar for each LLM.

**AIME 24 (30 Problems):**

* Gemini 2.5 Pro Exp: Approximately 92.0%

* OpenAI o3-mini (high): Approximately 87.3%

* Qwen3-235B-A22B: Approximately 85.7%

* Qwen3-30B-A3B: Approximately 80.4%

**HMMT 202502 (30 Problems):**

* Gemini 2.5 Pro Exp: Approximately 82.5%

* OpenAI o3-mini (high): Approximately 67.5%

* Qwen3-235B-A22B: Approximately 62.5%

* Qwen3-30B-A3B: Approximately 50.8%

* DeepSeek-R1: Approximately 47.5%

**OlyMATH-EN-EASY (100 Problems):**

* Gemini 2.5 Pro Exp: Approximately 92.2%

* OpenAI o3-mini (high): Approximately 91.4%

* Qwen3-235B-A22B: Approximately 90.5%

* Qwen3-30B-A3B: Approximately 87.2%

**OlyMATH-EN-HARD (100 Problems):**

* Gemini 2.5 Pro Exp: Approximately 58.4%

* OpenAI o3-mini (high): Approximately 36.5%

* Qwen3-235B-A22B: Approximately 31.2%

* Qwen3-30B-A3B: Approximately 26.3%

* DeepSeek-R1: Approximately 23.1%

* QwQ-32B: Approximately 19.5%

* GLM-Z1-AIR: Approximately 20.1%

### Key Observations

* Gemini 2.5 Pro Exp consistently achieves the highest accuracy across all problem sets.

* Accuracy generally decreases as the difficulty of the problem set increases (from AIME 24 to OlyMATH-EN-HARD).

* The performance gap between the models is most pronounced on the OlyMATH-EN-HARD dataset.

* OpenAI o3-mini (high) performs well on AIME 24 and OlyMATH-EN-EASY, but its performance drops significantly on the harder datasets.

* GLM-Z1-AIR consistently shows the lowest performance among the models tested.

### Interpretation

The data suggests that Gemini 2.5 Pro Exp is the most capable LLM for solving these math problems, demonstrating a robust ability to handle varying levels of difficulty. The decline in accuracy across all models as the problem sets become harder indicates that even advanced LLMs struggle with complex mathematical reasoning. The significant performance difference on the OlyMATH-EN-HARD dataset highlights the challenges of solving difficult problems and the potential for further improvement in LLM capabilities. The consistent lower performance of GLM-Z1-AIR suggests it may require further development to achieve comparable accuracy to other models. The chart provides a comparative benchmark of LLM performance on different math problem sets, which can be valuable for researchers and developers working on improving LLM capabilities in mathematical reasoning.

</details>

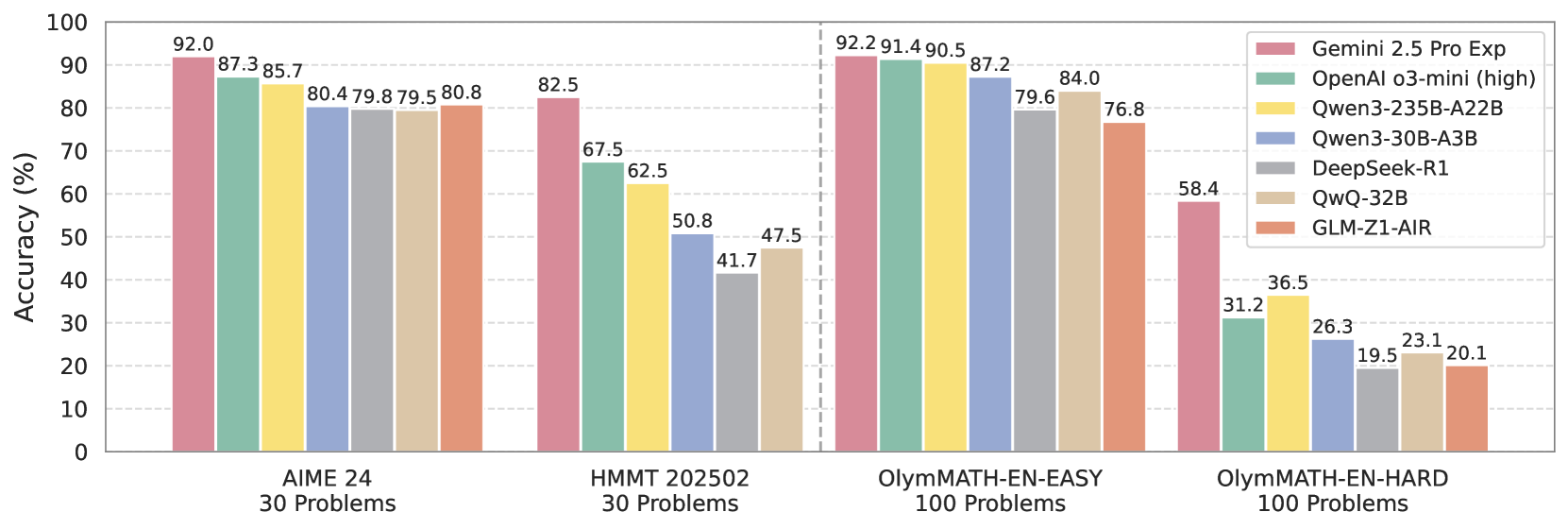

Figure 1: Performance comparisons of mainstream reasoning models between our OlymMATH (English version) and other Olympiad-level mathematical benchmarks. Our OlymMATH dataset provides test results that align with those on existing benchmarks and features a significantly larger number of problems.

Evaluation benchmarks are primarily established to identify LLM limitations, and guiding future improvements. Over recent years, numerous high-quality mathematical benchmarks, such as GSM8K [8] and MATH [9], have been pivotal in advancing LLM reasoning capabilities [10, 11]. However, a significant trend is the saturation of many benchmarks, including those currently in use, due to rapid LLM advancements. For example, GSM8K [8], once a standard for earlier models like Llama 1 and 2, is now largely mastered by state-of-the-art models. Similarly, MATH [9], initially challenging for GPT-4-level models, has also become saturated by today’s leading models. This saturation is further compounded by slow-thinking models like DeepSeek-R1 [4], OpenAI’s o3-mini [12], and Gemini 2.5 Pro Experimental [13]. These models, which promote deliberate step-by-step reasoning, show that enhancing the reasoning process yields substantial performance gains, thereby diminishing the effectiveness of existing benchmarks in differentiating cutting-edge capabilities.

To better evaluate the performance of advanced reasoning models, more rigorous and challenging benchmarks are needed to assess their mathematical reasoning capabilities. The AIME dataset has emerged as a more demanding benchmark by incorporating problems from the American Invitational Mathematics Examination (AIME), which presents a higher level of difficulty. Due to their complexity and rigor, AIME problems continue to challenge state-of-the-art models under standard prompting. Nevertheless, the AIME dataset has three major limitations. First, the limited scale of the current dataset (containing merely 30 problems from AIME 2024) may compromise the statistical reliability and robustness of the evaluation results. Second, as reasoning models rapidly improve—through methods like fine-tuning with long chain-of-thought data [14] or reinforcement learning scaling [4] —the benchmark’s original performance ceiling is being surpassed. For example, models such as Gemini 2.5 Pro Exp now achieve 92% accuracy with single attempt, demonstrating that current top-performing models are approaching the limits of what AIME can effectively measure. Third, the dataset exclusively features English problems, leaving multilingual reasoning capabilities unassessed despite their importance for a comprehensive evaluation.

To overcome these limitations, we present OlymMATH: a rigorously curated, bilingual (English and Chinese) benchmark for Olympiad-level reasoning, comprising 200 problems split into easy (OlymMATH-EASY) and hard (OlymMATH-HARD) levels with parallel bilingual sets (EN & ZH). To prevent data leakage, problems were manually sourced from printed publications and expert-verified. OlymMATH requires precise numerical answers for reliable verification, covers four major mathematical fields, and adheres to the MATH dataset [9] format for compatibility (see Figure 2).

MATH Dataset

Problem: Compute:

$$

1-2+3-4+5-\dots+99-100.

$$

Answer: $-50$ .

Problem: Let $n$ be a positive integer. Simplify the expression

$$

\frac{(2^{4}+\frac{1}{4})(4^{4}+\frac{1}{4})\dotsm[(2n)^{4}+\frac{1}{4}]}{(1^{%

4}+\frac{1}{4})(3^{4}+\frac{1}{4})\dotsm[(2n-1)^{4}+\frac{1}{4}]}.

$$

Answer: $8n^{2}+4n+1$ .

v

OlymMATH-HARD (Ours) Problem-EN: {CJK} UTF8gbsn Find the remainder of $\sum_{k=0}^{1234}\binom{2016× 1234}{2016k}$ modulo $2017^{2}$ (provide the value in the range $[0,2017^{2})$ ). Answer: $1581330$ . Subject: Number Theory. OlymMATH-EASY (Ours) Problem-ZH: {CJK} UTF8gbsn 设 $O$ 为 $\triangle ABC$ 的内心, $AB=3$ , $AC=4$ , $BC=5$ , $\overrightarrow{OP}=x\overrightarrow{OA}+y\overrightarrow{OB}+z\overrightarrow%

{OC}$ , $0≤slant x,y,z≤slant 1$ . 求动点 $P$ 的轨迹所覆盖的平面区域的面积. Answer: $12$ . Subject: {CJK} UTF8gbsn几何.

Figure 2: Examples from the MATH dataset and our OlymMATH dataset.

By leveraging the OlymMATH benchmark, we conduct extensive experiments to evaluate the performance of several state-of-the-art models (see Figure 1). The results underscore our benchmark’s difficulty, with advanced models like DeepSeek-R1 [4], o3-mini [12], and Gemini 2.5 Pro Exp [13] achieving only 19.5%, 31.2%, and 58.4% accuracy, respectively, on OlymMATH-EN-HARD, indicating Olympiad-level math remains a significant challenge necessitating further research. Our multilingual comparison showed a consistent performance gap, with higher accuracy on English problems versus Chinese, highlighting the need for multilingual evaluation. Furthermore, case studies revealed models sometimes use heuristic “guessing” to reach answers without rigorous proofs. This underscores the importance of process-level inspection for accurate LLM capability assessment.

In summary, our contribution are as follows:

$\bullet$ We introduce OlymMATH, a manually curated, Olympiad-level mathematical benchmark. It features parallel English and Chinese versions for objective, bilingual evaluation of LLM mathematical reasoning, with answers efficiently verifiable using sympy -based tools.

$\bullet$ Experiments demonstrate OlymMATH’s reliability (aligned with AIME) and strong discriminative power; even state-of-the-art models achieve only moderate scores, highlighting OlymMATH’s potential to drive LLM reasoning advancements.

$\bullet$ Detailed analyses and case studies reveal key model limitations in complex problem-solving, including performance disparities between English and Chinese problems and instances of heuristic “guessing” rather than rigorous deduction.

$\bullet$ We open-source evaluation results and resources, including sampled long chain-of-thought reasoning trajectories (582,400 entries from 28 models on 400 problems), a data visualization tool, and standard solutions for problems where all LLMs struggled, to facilitate community research and analysis on diverse reasoning patterns and common reasoning issues.

2 Benchmark Construction

In this section, we describe the OlymMATH dataset in detail, including its construction methodology, problem composition, categorical distribution, and evaluation approach. Our dataset is specifically designed to provide a rigorous yet objectively verifiable benchmark for assessing the mathematical reasoning capabilities of LLMs. Additionally, we offer two parallel evaluation sets containing 200 problems each in English and Chinese as supplementary data to facilitate a comparative analysis of performance gaps between the two languages. Table 1 presents a basic comparison of our proposed OlymMATH benchmark and other mathematical reasoning benchmarks.

Table 1: Comparison of existing benchmarks. EN and ZH denote English and Chinese, respectively.

{tblr}

columneven = c, column3 = c, column5 = c, hline1,12 = -0.08em, hline2,10 = -0.05em, Name & # Problems # Field Language Evaluation Difficulty GSM8K [8] 1319 - EN Rule Grade School MATH [9] 5000 6 EN Rule Competition AIME 2024 [15] 30 - EN Rule Olympiad AIME 2025 [16] 30 - EN Rule Olympiad HMMT 202502 [17] 30 - EN Rule Olympiad USAMO 2025 [18] 6 - EN LLM Olympiad Olympiad Bench [19] 8476 3 ZH & EN Rule CEE & Olympiad Omni-MATH [20] 4428 33+ EN LLM Olympiad OlymMATH-EN 200 4 EN Rule Olympiad OlymMATH-ZH 200 4 ZH Rule Olympiad

2.1 Reliability: Contamination and Verification

Contamination

OlymMATH comprises 200 high-quality mathematical problems at the Olympiad level, meticulously curated from printed resources to ensure both quality and originality. These problems were manually gathered from a range of authoritative sources, including specialized magazines, textbooks, and official competition materials. To minimize the risk of data contamination, online repositories and forums were intentionally excluded from the sourcing process. This methodology ensures that the problems are intellectually challenging and representative of advanced mathematical reasoning, while also minimizing prior exposure on publicly accessible digital platforms. Consequently, OlymMATH serves as a reliable benchmark for evaluating the real capabilities of LLMs in solving complex mathematical tasks.

Verification

To enhance dataset reliability, we invited a China Mathematical Olympiad silver medalist and two provincial first-prize winners to verify and revise the problems and solutions. Since the answers to the problems were already provided, the verification difficulty was reduced, making the expertise of reviewers sufficient for this task. Each problem was reviewed by at least two reviewers. Additionally, official solutions for challenging problems are published for community oversight.

2.2 Problem Categories and Distribution

OlymMATH problems span four key high-school Olympiad mathematical fields—algebra, geometry, number theory, and combinatorics—classified by experts (not LLMs) for reliability. Problems are selected for their challenge, suitability for simple-answer verification, and topic diversity (e.g., inequalities, sequences, and more in algebra). Figure-based problems within this set are text-reformulated for LLM compatibility, with non-convertible ones excluded (e.g., Figure 6 in Appendix).

For refined evaluation, problems are categorized by difficulty: easy, designed to challenge standard prompting in mainstream models, and hard, tailored to test advanced reasoning (e.g., slow-thinking modes) in state-of-the-art models. The distribution details are described in Table 2.

Table 2: The distribution of contest problems by category.

{tblr}

cells = c, cell61 = c=2, hline1,7 = -0.08em, hline2,6 = -0.05em, Category & Topic # HARD # EASY # Total Algebra (Alg.) Inequality, Sequence, Trigonometry, etc. 25 25 50 Geometry (Geo.) Solid Geometry, Analytic Geometry, etc. 25 33 58 Number Theory (Num.) Divisibility, Diophantine Equation, etc. 25 13 38 Combinatorics (Com.) Graph Theory, Permutation, etc. 25 29 54 Total 100 100 200

2.3 Format and Verification Methodology

OlymMATH adopts the MATH dataset format (see Figure 2) for seamless integration with existing pipelines and enhancing clarity and processing efficiency. All problems are text-based, including geometry reformulated from diagrams to align with LLM evaluation, as mentioned previously. For consistent, objective assessment, answers are restricted to real numbers and intervals (see Table 3), avoiding ambiguous formats and enabling reliable sympy -based and numerical verification.

Table 3: The included and excluded formats of the final answer.

{tblr}

width = colspec = Q[c,m,wd=0.16] X[l,m] X[l,m], cell12 = halign=c, cell13 = halign=c, cell31 = r=2c, hline1,Z = 0.08em, solid, hline2 = 0.05em, solid, hline3 = 0.05em, solid & Type & Example Type & Example Included Real number: $16^{\circ}$ , $2^{2017}+\arctan 2$ Interval: $[\sqrt{33},+∞)$ , $(4,5\pi]$ Excluded Set Operations: $\{4,5\}\cup\{1,8\}$ Variable: $\sqrt[3]{5}a^{2}$ , $p^{2}-pq$ , $n!+2$ Complex number: $9+4\mathrm{i}$ , $\sqrt{-4}$ Text: East, Alice

To make the evaluation more challenging, OlymMATH includes problems with multiple numerical answers. These problems are modified to require a summary of all potential outcomes (e.g., sums, sums of squares; see Figure 7 in Appendix). This method effectively assesses whether models can consider all possible answers, thereby providing a robust evaluation of their reasoning capabilities.

2.4 Bilingual Extension

Originating from Chinese-language problems, the OlymMATH benchmark includes both original Chinese and translated English versions for comprehensive bilingual evaluation. Our LLM-based translation pipeline first uses Claude Sonnet 3.7 for initial English translations, which are then iteratively refined with GPT-4o. Finally, a crucial human verification stage by two expert annotators ensures mathematical accuracy, rigor, and linguistic fluency. These resulting parallel sets, OlymMATH-EN (English) and OlymMATH-ZH (Chinese) (see Figure 2), facilitate systematic comparison of cross-lingual reasoning, with their union denoted as OlymMATH (full set).

3 Experiments

In this section, we assess the performance of leading reasoning models using the OlymMATH benchmark and then provide a detailed analysis of their capabilities.

3.1 Experimental Setup

Models.

To conduct a thorough evaluation, we assess a range of representative LLMs. For open-source models, we investigated recent work on reasoning models, and evaluated DeepSeek-R1 series [4], STILL-3-Preview [21], DeepScaleR-Preview [22], QwQ [23], Light-R1 series [24], OpenThinker2 series [25], Skywork-OR1 series [26], GLM-Z1-Air [27], AceMath-RL [28], OpenMath-Nemotron series [29], and Qwen3 series [30]. For closed-source models, we include o3-mini (high) [12], Gemini 2.5 Pro Experimental 0325 [13] in our evaluation.

Table 4: Model performance on OlymMATH-EN. Models within each model size group are sorted by release time. The abbreviations “Alg.”, “Geo.”, “Num.”, and “Com.” represent the four categories in OlymMATH. Highest accuracy per model size is bolded. The second highest accuracy per model size is underlined. Models sampled only 8 times are marked in gray to indicate potential instability.

| Model | OlymMATH-EN-HARD | OlymMATH-EN-EASY | | | | | | | | | | | | | | | | | | |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

| Alg. | Geo. | Num. | Com. | Avg. | Alg. | Geo. | Num. | Com. | Avg. | | | | | | | | | | | |

| P@1 | C@k | P@1 | C@k | P@1 | C@k | P@1 | C@k | P@1 | C@k | P@1 | C@k | P@1 | C@k | P@1 | C@k | P@1 | C@k | P@1 | C@k | |

| Qwen3 (0.6B, Think) | 2.5 | 0.0 | 2.1 | 4.0 | 6.6 | 8.0 | 0.2 | 0.0 | 2.8 | 3.0 | 15.5 | 20.0 | 5.6 | 15.2 | 24.5 | 38.5 | 5.2 | 6.9 | 10.4 | 17.0 |

| DS-R1-Distill (1.5B) | 1.9 | 0.0 | 1.8 | 0.0 | 1.8 | 0.0 | 0.4 | 0.0 | 1.5 | 0.0 | 20.8 | 40.0 | 12.6 | 21.2 | 32.6 | 61.5 | 8.2 | 24.1 | 16.0 | 32.0 |

| STILL-3-Pre. (1.5B) | 3.7 | 0.0 | 4.9 | 4.0 | 5.8 | 8.0 | 0.8 | 0.0 | 3.8 | 3.0 | 22.7 | 36.0 | 14.8 | 30.3 | 37.6 | 69.2 | 10.3 | 17.2 | 18.4 | 33.0 |

| DeepScaleR-Pre. (1.5B) | 3.4 | 4.0 | 4.2 | 8.0 | 8.2 | 4.0 | 0.4 | 0.0 | 4.1 | 4.0 | 19.9 | 16.0 | 18.5 | 21.2 | 44.6 | 46.2 | 18.9 | 31.0 | 22.3 | 26.0 |

| OpenMath-Nemo. (1.5B) | 14.5 | 24.0 | 13.6 | 16.0 | 10.9 | 16.0 | 2.6 | 4.0 | 10.4 | 15.0 | 70.9 | 100.0 | 59.3 | 90.9 | 81.6 | 100.0 | 40.6 | 58.6 | 59.7 | 85.0 |

| Qwen3 (4B, Think) | 18.1 | 20.0 | 14.8 | 12.0 | 19.8 | 28.0 | 3.1 | 4.0 | 13.9 | 16.0 | 76.4 | 92.0 | 79.1 | 97.0 | 85.1 | 84.6 | 57.1 | 72.4 | 72.8 | 87.0 |

| DS-R1-Distill (7B) | 15.6 | 36.0 | 12.6 | 24.0 | 13.1 | 24.0 | 3.1 | 4.0 | 11.1 | 22.0 | 52.8 | 84.0 | 49.6 | 84.8 | 62.5 | 84.6 | 33.9 | 58.6 | 47.5 | 77.0 |

| Light-R1-DS (7B) | 17.1 | 28.0 | 15.2 | 16.0 | 12.8 | 24.0 | 3.6 | 4.0 | 12.2 | 18.0 | 57.1 | 84.0 | 53.6 | 93.9 | 73.7 | 84.6 | 39.5 | 51.7 | 53.0 | 78.0 |

| OpenThinker2 (7B) | 16.0 | 20.0 | 16.8 | 28.0 | 14.0 | 20.0 | 2.8 | 4.0 | 12.4 | 18.0 | 65.3 | 96.0 | 60.5 | 97.0 | 79.1 | 84.6 | 42.3 | 58.6 | 58.9 | 84.0 |

| Skywork-OR1-Pre. (7B) | 14.4 | 20.0 | 12.5 | 12.0 | 11.7 | 24.0 | 1.6 | 0.0 | 10.0 | 14.0 | 61.6 | 88.0 | 55.9 | 78.8 | 74.3 | 92.3 | 36.9 | 48.3 | 54.2 | 74.0 |

| Skywork-OR1-Math (7B) | 17.4 | 20.0 | 17.1 | 20.0 | 13.6 | 28.0 | 0.9 | 0.0 | 12.2 | 17.0 | 67.9 | 92.0 | 67.4 | 93.9 | 76.6 | 92.3 | 47.6 | 62.1 | 63.0 | 84.0 |

| AceMath-RL (7B) | 19.4 | 32.0 | 19.3 | 32.0 | 14.4 | 24.0 | 3.5 | 4.0 | 14.2 | 23.0 | 69.7 | 96.0 | 63.7 | 93.9 | 79.0 | 84.6 | 44.2 | 69.0 | 61.5 | 86.0 |

| OpenMath-Nemo. (7B) | 26.9 | 36.0 | 18.6 | 28.0 | 19.8 | 28.0 | 4.4 | 4.0 | 17.4 | 24.0 | 86.4 | 100.0 | 76.4 | 97.0 | 91.5 | 100.0 | 55.3 | 72.4 | 74.7 | 91.0 |

| DS-R1-Distill (14B) | 16.1 | 16.0 | 17.0 | 16.0 | 18.1 | 32.0 | 2.1 | 4.0 | 13.3 | 17.0 | 69.0 | 96.0 | 65.1 | 97.0 | 79.4 | 92.3 | 44.0 | 65.5 | 61.8 | 87.0 |

| Light-R1-DS (14B) | 21.8 | 24.0 | 22.2 | 28.0 | 17.8 | 36.0 | 2.6 | 4.0 | 16.1 | 23.0 | 72.3 | 88.0 | 73.0 | 100.0 | 84.3 | 92.3 | 47.6 | 65.5 | 66.9 | 86.0 |

| OpenMath-Nemo. (14B) | 28.7 | 40.0 | 22.1 | 32.0 | 21.0 | 32.0 | 3.4 | 4.0 | 18.8 | 27.0 | 87.9 | 100.0 | 78.5 | 93.9 | 95.8 | 100.0 | 59.9 | 86.2 | 77.7 | 94.0 |

| Qwen3 (30B-A3B, Think) | 38.8 | 44.0 | 33.8 | 44.0 | 26.7 | 36.0 | 5.9 | 4.0 | 26.3 | 32.0 | 91.4 | 100.0 | 92.9 | 100.0 | 90.9 | 92.3 | 75.6 | 93.1 | 87.2 | 97.0 |

| DS-R1-Distill (32B) | 22.4 | 32.0 | 21.4 | 24.0 | 20.3 | 40.0 | 3.4 | 4.0 | 16.9 | 25.0 | 73.6 | 100.0 | 71.8 | 97.0 | 84.5 | 92.3 | 49.0 | 69.0 | 67.3 | 89.0 |

| QwQ (32B) | 32.9 | 28.0 | 26.6 | 36.0 | 26.7 | 44.0 | 6.2 | 4.0 | 23.1 | 28.0 | 91.8 | 100.0 | 87.0 | 100.0 | 95.0 | 100.0 | 69.0 | 89.7 | 84.0 | 97.0 |

| Light-R1-DS (32B) | 28.9 | 44.0 | 31.1 | 52.0 | 24.1 | 36.0 | 5.2 | 8.0 | 22.3 | 35.0 | 84.2 | 100.0 | 83.3 | 100.0 | 92.5 | 100.0 | 62.1 | 82.8 | 78.6 | 95.0 |

| OpenThinker2 (32B) | 24.1 | 32.0 | 22.9 | 32.0 | 18.0 | 20.0 | 2.6 | 4.0 | 16.9 | 22.0 | 79.4 | 96.0 | 74.0 | 100.0 | 90.4 | 92.3 | 56.5 | 79.3 | 72.4 | 92.0 |

| Skywork-OR1-Pre. (32B) | 37.2 | 52.0 | 32.3 | 48.0 | 27.0 | 40.0 | 4.2 | 4.0 | 25.2 | 36.0 | 89.3 | 100.0 | 87.3 | 100.0 | 92.4 | 100.0 | 63.9 | 82.8 | 81.7 | 95.0 |

| GLM-Z1-Air (32B) | 35.0 | 44.0 | 21.5 | 32.0 | 19.5 | 24.0 | 4.5 | 4.0 | 20.1 | 26.0 | 86.5 | 100.0 | 79.5 | 90.9 | 90.4 | 100.0 | 59.1 | 75.9 | 76.8 | 90.0 |

| OpenMath-Nemo. (32B) | 22.0 | 36.0 | 21.0 | 28.0 | 20.0 | 24.0 | 3.5 | 4.0 | 16.6 | 23.0 | 75.5 | 100.0 | 60.6 | 90.9 | 89.4 | 100.0 | 42.2 | 69.0 | 62.7 | 88.0 |

| Qwen3 (235B-A22B, Think) | 48.0 | 52.0 | 49.5 | 60.0 | 38.0 | 36.0 | 10.5 | 16.0 | 36.5 | 41.0 | 93.5 | 100.0 | 92.4 | 100.0 | 99.0 | 100.0 | 81.9 | 93.1 | 90.5 | 98.0 |

| DeepSeek R1 | 30.0 | 40.0 | 25.5 | 32.0 | 18.5 | 24.0 | 4.0 | 4.0 | 19.5 | 25.0 | 90.5 | 100.0 | 82.2 | 97.0 | 94.2 | 100.0 | 60.8 | 72.4 | 79.6 | 91.0 |

| OpenAI o3-mini (high) | 29.5 | 32.0 | 29.0 | 44.0 | 49.5 | 60.0 | 17.0 | 20.0 | 31.2 | 39.0 | 93.0 | 92.0 | 89.8 | 100.0 | 97.1 | 100.0 | 89.2 | 96.6 | 91.4 | 97.0 |

| Gemini 2.5 Pro Exp 0325 | 71.5 | 76.0 | 75.5 | 84.0 | 59.0 | 72.0 | 27.5 | 36.0 | 58.4 | 67.0 | 92.0 | 100.0 | 97.0 | 100.0 | 98.1 | 100.0 | 84.5 | 89.7 | 92.2 | 97.0 |

Evaluation Details.

Our evaluation pipeline follows a systematic approach: for each problem, we generate 64 distinct responses from each comparison model, with the exception of certain models (i.e., OpenMath-Nemotron-32B, Qwen3-235B-A22B, GLM-Z1-Air, DeepSeek-R1, o3-mini (high) and Gemini 2.5 Pro Exp), for which, due to resource limitations and the relatively large scale of our dataset, we only conducted 8 samples. For the Pass@1 metric, we compute the mean accuracy across all sampled responses to derive the final accuracy score. For the Cons@64 and Cons@8 metric, we implement majority voting to determine a consensus answer for each problem, subsequently calculating the average accuracy across the entire dataset. For generation hyperparameters, we adhere to established practices from previous research [4, 23], configuring locally-evaluated models with temperature, top_p, min_p, and max_token set to $0.6$ , $0.95$ , $0 0$ , and $32768$ , respectively. For api-evaluated models (i.e., GLM-Z1-Air, DeepSeek-R1, o3-mini (high) and Gemini 2.5 Pro Exp), we expand their max_token limit to the maximum extent possible to unleash their reasoning capabilities better. We have open-sourced all the samples (a dataset of 582,400 math reasoning samples with long chain-of-thought, generated from the 400 problems in OlymMATH across 28 models), an online data visualization tool and standard solutions for problems where all LLMs struggled in our repository, aiming to help the community analyze the problem-solving patterns and characteristics of LLMs (see Section 4 for further information).

3.2 Evaluation Results

In this part, we assess the performance of reasoning models on our benchmark. We present the evaluation results of OlymMATH-EN and OlymMATH-ZH in Table 4 and Table 5, respectively.

Table 5: Model performance on OlymMATH-ZH. Models within each model size group are sorted by release time. The abbreviations “Alg.”, “Geo.”, “Num.”, and “Com.” represent the four categories in OlymMATH. Highest accuracy per model size is bolded. The second highest accuracy per model size is underlined. Models sampled only 8 times are marked in gray to indicate potential instability.

| Model | OlymMATH-ZH-HARD | OlymMATH-ZH-EASY | | | | | | | | | | | | | | | | | | |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

| Alg. | Geo. | Num. | Com. | Avg. | Alg. | Geo. | Num. | Com. | Avg. | | | | | | | | | | | |

| P@1 | C@k | P@1 | C@k | P@1 | C@k | P@1 | C@k | P@1 | C@k | P@1 | C@k | P@1 | C@k | P@1 | C@k | P@1 | C@k | P@1 | C@k | |

| Qwen3 (0.6B, Think) | 2.6 | 4.0 | 0.8 | 0.0 | 4.4 | 4.0 | 0.0 | 0.0 | 1.9 | 2.0 | 9.9 | 8.0 | 2.8 | 3.0 | 12.0 | 15.4 | 1.3 | 3.4 | 5.4 | 6.0 |

| DS-R1-Distill (1.5B) | 1.8 | 0.0 | 1.3 | 0.0 | 1.1 | 0.0 | 0.0 | 0.0 | 1.0 | 0.0 | 13.7 | 20.0 | 6.3 | 9.1 | 20.9 | 30.8 | 2.6 | 0.0 | 9.0 | 12.0 |

| STILL-3-Pre. (1.5B) | 2.9 | 0.0 | 2.2 | 0.0 | 4.5 | 4.0 | 0.2 | 0.0 | 2.5 | 1.0 | 15.9 | 32.0 | 7.4 | 18.2 | 27.6 | 46.2 | 4.3 | 6.9 | 11.3 | 22.0 |

| DeepScaleR-Pre. (1.5B) | 4.4 | 8.0 | 2.6 | 4.0 | 6.4 | 8.0 | 0.1 | 0.0 | 3.4 | 5.0 | 15.9 | 20.0 | 7.2 | 9.1 | 32.6 | 46.2 | 8.9 | 20.7 | 13.2 | 20.0 |

| OpenMath-Nemo. (1.5B) | 13.9 | 16.0 | 9.8 | 4.0 | 13.3 | 16.0 | 0.8 | 0.0 | 9.5 | 9.0 | 67.9 | 96.0 | 37.6 | 57.6 | 65.3 | 76.9 | 27.6 | 41.4 | 45.9 | 65.0 |

| Qwen3 (4B, Think) | 12.5 | 20.0 | 7.0 | 8.0 | 12.6 | 24.0 | 0.9 | 0.0 | 8.3 | 13.0 | 70.8 | 88.0 | 61.0 | 75.8 | 74.8 | 92.3 | 41.8 | 51.7 | 59.7 | 74.0 |

| DS-R1-Distill (7B) | 6.1 | 8.0 | 7.9 | 12.0 | 6.6 | 8.0 | 0.6 | 0.0 | 5.3 | 7.0 | 38.0 | 64.0 | 30.8 | 51.5 | 49.2 | 61.5 | 18.7 | 27.6 | 31.5 | 49.0 |

| Light-R1-DS (7B) | 7.1 | 4.0 | 9.4 | 12.0 | 7.8 | 12.0 | 1.1 | 0.0 | 6.3 | 7.0 | 42.9 | 76.0 | 42.7 | 72.7 | 56.9 | 61.5 | 22.7 | 31.0 | 38.8 | 60.0 |

| OpenThinker2 (7B) | 7.0 | 0.0 | 7.3 | 8.0 | 7.4 | 8.0 | 1.0 | 0.0 | 5.7 | 4.0 | 48.2 | 80.0 | 44.7 | 72.7 | 57.8 | 76.9 | 22.4 | 37.9 | 40.8 | 65.0 |

| Skywork-OR1-Pre. (7B) | 4.7 | 4.0 | 7.8 | 8.0 | 7.4 | 8.0 | 0.4 | 0.0 | 5.1 | 5.0 | 41.1 | 60.0 | 36.6 | 54.5 | 58.1 | 69.2 | 23.6 | 34.5 | 36.8 | 52.0 |

| Skywork-OR1-Math (7B) | 6.4 | 8.0 | 8.3 | 8.0 | 9.8 | 12.0 | 0.8 | 0.0 | 6.3 | 7.0 | 45.2 | 72.0 | 40.0 | 63.6 | 62.3 | 69.2 | 30.2 | 37.9 | 41.3 | 59.0 |

| AceMath-RL (7B) | 6.4 | 8.0 | 10.7 | 12.0 | 7.8 | 8.0 | 1.4 | 0.0 | 6.6 | 7.0 | 55.1 | 88.0 | 46.6 | 75.8 | 66.9 | 76.9 | 31.0 | 44.8 | 46.9 | 70.0 |

| OpenMath-Nemo. (7B) | 25.0 | 32.0 | 20.8 | 28.0 | 22.3 | 36.0 | 4.8 | 4.0 | 18.2 | 25.0 | 86.8 | 100.0 | 72.7 | 90.9 | 91.8 | 100.0 | 57.9 | 79.3 | 74.4 | 91.0 |

| DS-R1-Distill (14B) | 5.2 | 0.0 | 5.3 | 4.0 | 8.7 | 16.0 | 0.2 | 0.0 | 4.9 | 5.0 | 43.1 | 56.0 | 38.9 | 66.7 | 58.2 | 69.2 | 24.8 | 31.0 | 38.4 | 54.0 |

| Light-R1-DS (14B) | 6.2 | 4.0 | 7.5 | 8.0 | 10.9 | 12.0 | 0.2 | 0.0 | 6.2 | 6.0 | 56.6 | 84.0 | 45.5 | 75.8 | 66.5 | 76.9 | 28.7 | 37.9 | 46.1 | 67.0 |

| OpenMath-Nemo. (14B) | 28.7 | 32.0 | 26.1 | 40.0 | 26.8 | 40.0 | 4.2 | 4.0 | 21.4 | 29.0 | 88.3 | 100.0 | 75.2 | 100.0 | 94.5 | 100.0 | 60.2 | 86.2 | 76.6 | 96.0 |

| Qwen3 (30B-A3B, Think) | 35.6 | 40.0 | 24.1 | 28.0 | 18.1 | 24.0 | 2.7 | 4.0 | 20.1 | 24.0 | 87.8 | 92.0 | 84.7 | 97.0 | 91.3 | 100.0 | 61.9 | 65.5 | 79.7 | 87.0 |

| DS-R1-Distill (32B) | 6.5 | 0.0 | 5.4 | 4.0 | 10.6 | 12.0 | 0.7 | 0.0 | 5.8 | 4.0 | 45.2 | 52.0 | 41.8 | 63.6 | 60.2 | 69.2 | 26.0 | 37.9 | 40.4 | 54.0 |

| QwQ (32B) | 20.9 | 24.0 | 15.9 | 16.0 | 17.6 | 24.0 | 2.0 | 0.0 | 14.1 | 16.0 | 85.4 | 96.0 | 76.6 | 97.0 | 92.9 | 100.0 | 53.8 | 69.0 | 74.3 | 89.0 |

| Light-R1-DS (32B) | 16.8 | 28.0 | 12.0 | 12.0 | 13.4 | 16.0 | 4.4 | 16.0 | 11.6 | 18.0 | 70.1 | 96.0 | 64.1 | 93.9 | 80.4 | 92.3 | 39.8 | 51.7 | 60.7 | 82.0 |

| OpenThinker2 (32B) | 13.6 | 16.0 | 11.1 | 16.0 | 12.7 | 20.0 | 0.9 | 0.0 | 9.6 | 13.0 | 68.0 | 92.0 | 64.3 | 93.9 | 84.6 | 92.3 | 44.8 | 65.5 | 62.2 | 85.0 |

| Skywork-OR1-Pre. (32B) | 19.6 | 20.0 | 16.8 | 20.0 | 18.9 | 24.0 | 3.5 | 4.0 | 14.7 | 17.0 | 79.5 | 96.0 | 72.1 | 93.9 | 88.0 | 100.0 | 45.4 | 58.6 | 68.3 | 85.0 |

| GLM-Z1-Air (32B) | 18.0 | 16.0 | 12.0 | 8.0 | 16.0 | 16.0 | 2.5 | 4.0 | 12.1 | 11.0 | 76.0 | 96.0 | 69.3 | 78.8 | 89.4 | 92.3 | 41.8 | 48.3 | 65.6 | 76.0 |

| OpenMath-Nemo. (32B) | 22.5 | 36.0 | 22.5 | 32.0 | 22.5 | 28.0 | 3.5 | 4.0 | 17.8 | 25.0 | 68.0 | 96.0 | 62.5 | 90.9 | 90.4 | 100.0 | 48.7 | 72.4 | 63.5 | 88.0 |

| Qwen3 (235B-A22B, Think) | 36.5 | 48.0 | 43.5 | 48.0 | 28.5 | 32.0 | 4.0 | 8.0 | 28.1 | 34.0 | 91.0 | 100.0 | 90.2 | 97.0 | 94.2 | 100.0 | 78.4 | 89.7 | 87.5 | 96.0 |

| DeepSeek R1 | 20.0 | 24.0 | 25.0 | 28.0 | 17.0 | 16.0 | 1.5 | 0.0 | 15.9 | 17.0 | 79.5 | 96.0 | 74.6 | 84.8 | 88.5 | 92.3 | 49.6 | 55.2 | 70.4 | 80.0 |

| OpenAI o3-mini (high) | 31.5 | 40.0 | 32.5 | 44.0 | 48.5 | 56.0 | 19.0 | 28.0 | 32.9 | 42.0 | 93.0 | 96.0 | 89.4 | 100.0 | 99.0 | 100.0 | 85.8 | 93.1 | 90.5 | 97.0 |

| Gemini 2.5 Pro Exp 0325 | 65.0 | 76.0 | 78.0 | 80.0 | 53.5 | 56.0 | 25.0 | 40.0 | 55.4 | 63.0 | 90.5 | 96.0 | 93.2 | 93.9 | 100.0 | 100.0 | 84.1 | 86.2 | 90.8 | 93.0 |

First, we observe that all tested models exhibit relatively poor performance, with even OpenAI o3-mini (high) and Gemini 2.5 Pro Exp achieving only 31.2% and 58.4% on OlymMATH-EN-HARD. This underscores the high overall difficulty of our benchmark, which demands stronger reasoning abilities and a deeper understanding of mathematical knowledge to solve the problems effectively. In contrast, the performance of these advanced reasoning models on OlymMATH-EN-EASY is more modest and comparable to that on AIME 2024 and AIME 2025, suggesting that OlymMATH-EN-EASY is well-suited for evaluating the capabilities of less advanced reasoning models.

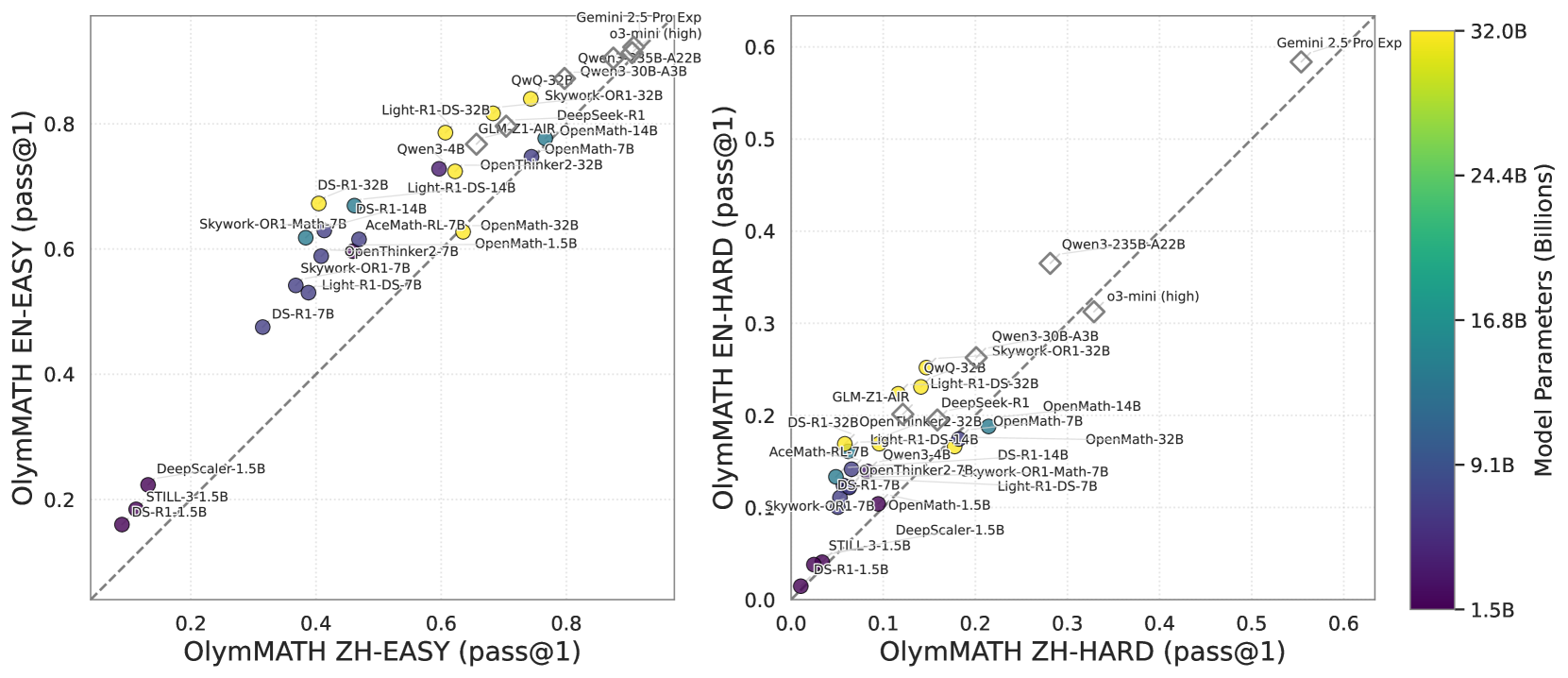

Second, by comparing the performance of LLMs on OlymMATH-EN and OlymMATH-ZH, we find that language can influence the reasoning performance of LLMs to some extent (see Figure 3). Overall, all models tend to achieve higher performance on the English benchmarks. A potential reason for this is that English corpora still dominate existing pre-training datasets, making the English-based task-solving capabilities of LLMs generally more superior compared to other languages. This finding highlights the importance of considering performance across different languages when conducting a comprehensive evaluation of LLMs.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Scatter Plot: Model Performance on Olympiad Math Problems vs. Model Size

### Overview

This image presents two scatter plots comparing the performance of various language models on two Olympiad Math benchmarks (EN-EASY and EN-HARD) against the number of model parameters. A color gradient represents the model size, ranging from 1.5B to 32.0B parameters. Each point represents a specific model, labeled with its name. The plots are positioned side-by-side, with the EN-EASY plot on the left and the EN-HARD plot on the right. A vertical colorbar on the right indicates the mapping between color and model size.

### Components/Axes

* **X-axis (Left Plot):** OlyMath ZH-EASY (pass@1) - Scale ranges from approximately 0.0 to 0.8.

* **Y-axis (Left Plot):** OlyMath EN-EASY (pass@1) - Scale ranges from approximately 0.0 to 0.8.

* **X-axis (Right Plot):** OlyMath ZH-HARD (pass@1) - Scale ranges from approximately 0.0 to 0.7.

* **Y-axis (Right Plot):** OlyMath EN-HARD (pass@1) - Scale ranges from approximately 0.0 to 0.5.

* **Colorbar:** Model Parameters (Billions) - Scale ranges from 1.5B (purple) to 32.0B (yellow).

* **Legend:** No explicit legend, but model names are directly labeled on the scatter plot.

* **Data Points:** Each point represents a model, with color indicating model size.

* **Lines:** Two dashed lines are present on the right plot, representing Gemini 2.5 Pro Exp and Gemini 2.5 Pro.

### Detailed Analysis or Content Details

**Left Plot (EN-EASY):**

* **Trend:** A general upward trend is visible, indicating that larger models tend to perform better on the EN-EASY benchmark. The points are somewhat scattered, suggesting variability in performance for models of similar size.

* **Data Points (Approximate):**

* DS-R1-7B: (0.28, 0.48) - Purple

* DeepScaler-1.5B: (0.24, 0.36) - Purple

* STILL-31.5B: (0.32, 0.44) - Yellow

* DS-R1-1.5B: (0.26, 0.40) - Purple

* Light-R1-DS-7B: (0.34, 0.50) - Green

* Skywork-OR1-Math7B: (0.36, 0.54) - Green

* Light-R1-DS-32B: (0.40, 0.60) - Yellow

* OpenMath-32B: (0.42, 0.62) - Yellow

* OpenThinker2-32B: (0.44, 0.64) - Yellow

* Owen3-4B: (0.46, 0.66) - Orange

* Owen3-30B-A3B: (0.48, 0.68) - Orange

* Owen3-235B-A22B: (0.50, 0.70) - Yellow

* GLM-Z1-AIR-OpenMath-14B: (0.38, 0.56) - Green

* AceMath-RL-7B: (0.30, 0.46) - Green

**Right Plot (EN-HARD):**

* **Trend:** Similar to the left plot, a general upward trend is observed. However, the points are more dispersed, indicating greater variability in performance on the EN-HARD benchmark.

* **Data Points (Approximate):**

* DS-R1-7B: (0.12, 0.18) - Purple

* DeepScaler-1.5B: (0.10, 0.14) - Purple

* STILL-31.5B: (0.18, 0.26) - Yellow

* DS-R1-1.5B: (0.11, 0.16) - Purple

* Light-R1-DS-7B: (0.16, 0.24) - Green

* Skywork-OR1-32B: (0.20, 0.30) - Yellow

* Light-R1-DS-32B: (0.22, 0.32) - Yellow

* OpenMath-32B: (0.24, 0.34) - Yellow

* OpenThinker2-32B: (0.26, 0.36) - Yellow

* Owen3-4B: (0.28, 0.38) - Orange

* Owen3-30B-A3B: (0.30, 0.40) - Orange

* Owen3-235B-A22B: (0.32, 0.42) - Yellow

* GLM-Z1-AIR-OpenMath-14B: (0.18, 0.26) - Green

* AceMath-RL-7B: (0.14, 0.20) - Green

* Gemini 2.5 Pro Exp: (0.5, 0.45) - Light Blue

* Gemini 2.5 Pro: (0.4, 0.35) - Light Blue

### Key Observations

* The Gemini 2.5 Pro models (both Exp and standard) significantly outperform other models on the EN-HARD benchmark, as indicated by their position far above the general trend.

* Model size appears to be a strong predictor of performance on both benchmarks, but the relationship is not perfectly linear.

* There is considerable overlap in performance between models of similar size, suggesting that factors other than model size (e.g., training data, architecture) also play a significant role.

* The EN-HARD benchmark appears to be more challenging than the EN-EASY benchmark, as evidenced by the lower overall performance scores.

### Interpretation

The data suggests a positive correlation between model size and performance on Olympiad Math problems. Larger models generally achieve higher pass rates on both the EN-EASY and EN-HARD benchmarks. However, the Gemini 2.5 Pro models represent a significant outlier, demonstrating substantially higher performance than other models, even those with comparable or larger parameter counts. This suggests that architectural innovations or training methodologies employed by Google have resulted in a model that is significantly more efficient at solving these types of problems.

The scatter plots provide a visual representation of the trade-off between model size and performance. While increasing model size generally leads to improved performance, there are diminishing returns, and other factors can significantly influence the outcome. The variability in performance among models of similar size highlights the importance of considering factors beyond just the number of parameters when evaluating language models. The difference in performance between the EN-EASY and EN-HARD benchmarks indicates that the difficulty of the task also plays a crucial role, with more challenging problems requiring more capable models.

</details>

Figure 3: Pass@1 accuracy on OlymMATH EN (y) vs. ZH (x), the dashed line shows parity. Points above favor English, below favor Chinese. Solid circles (local dense models, colored by size) indicate larger models trend towards higher accuracy. Hollow diamonds are MoE or API evaluated models.

3.3 Benchmark Comparison

<details>

<summary>x3.png Details</summary>

### Visual Description

## Scatter Plot: Model Performance on Math Benchmarks

### Overview

This scatter plot visualizes the performance of various language models on two math benchmarks: AIME24 Accuracy and OlyMath EN Accuracy. The plot also incorporates the release date of each model, represented by color. Four datasets/trends are shown: EN-EASY, EN-EASY Trend, EN-HARD, and EN-HARD Trend.

### Components/Axes

* **X-axis:** AIME24 Accuracy, ranging from approximately 0.1 to 1.0.

* **Y-axis:** OlyMath EN Accuracy (pass@1), ranging from approximately 0.0 to 1.0.

* **Color Scale (Right):** Represents Release Date, ranging from January 2025 (dark purple) to April 2025 (bright green).

* **Legend (Top-Left):**

* **EN-EASY (Blue Circles):** Represents models evaluated on the EN-EASY dataset.

* **EN-EASY Trend (Blue Dashed Line):** Represents the trend line for the EN-EASY dataset.

* **EN-HARD (Red Triangles):** Represents models evaluated on the EN-HARD dataset.

* **EN-HARD Trend (Red Dashed Line):** Represents the trend line for the EN-HARD dataset.

* **Data Points:** Each point represents a specific model, labeled with its name.

### Detailed Analysis

**EN-EASY Dataset (Blue Circles):**

The EN-EASY data points generally show a positive correlation between AIME24 Accuracy and OlyMath EN Accuracy. The data points are scattered, but a clear upward trend is visible.

* Qwen3-235B-A22B: (Approx. 0.95, 0.75) - Released around March 2025 (light green).

* Qwen3-30B-A3B: (Approx. 0.85, 0.65) - Released around February 2025 (medium purple).

* QwQ-32B: (Approx. 0.75, 0.55) - Released around February 2025 (medium purple).

* OpenMath-7B: (Approx. 0.7, 0.4) - Released around January 2025 (dark purple).

* Qwen3-4B: (Approx. 0.6, 0.3) - Released around January 2025 (dark purple).

* OpenMath-14B: (Approx. 0.78, 0.58) - Released around February 2025 (medium purple).

* OpenMath-1.5B: (Approx. 0.5, 0.2) - Released around January 2025 (dark purple).

* Gemini 2.5 Pro Exp: (Approx. 0.98, 0.8) - Released around April 2025 (bright green).

**EN-EASY Trend (Blue Dashed Line):**

The EN-EASY trend line slopes upward, indicating a positive correlation. It starts at approximately (0.2, 0.05) and ends at approximately (0.95, 0.75).

**EN-HARD Dataset (Red Triangles):**

The EN-HARD data points also show a positive correlation, but the relationship appears weaker than for the EN-EASY dataset. The points are more scattered.

* Qwen3-235B-A22B: (Approx. 0.9, 0.1) - Released around March 2025 (light green).

* Qwen3-30B-A3B: (Approx. 0.8, 0.05) - Released around February 2025 (medium purple).

* Skywork-OR1-32B: (Approx. 0.75, 0.08) - Released around February 2025 (medium purple).

* OpenMath-14B: (Approx. 0.7, 0.06) - Released around February 2025 (medium purple).

* OpenMath-7B: (Approx. 0.65, 0.04) - Released around January 2025 (dark purple).

* OpenMath-1.5B: (Approx. 0.4, 0.02) - Released around January 2025 (dark purple).

* o3-mini (high): (Approx. 0.95, 0.15) - Released around March 2025 (light green).

* o3-mini (high): (Approx. 0.85, 0.1) - Released around February 2025 (medium purple).

**EN-HARD Trend (Red Dashed Line):**

The EN-HARD trend line also slopes upward, but is flatter than the EN-EASY trend line. It starts at approximately (0.2, 0.0) and ends at approximately (0.9, 0.1).

### Key Observations

* Models released later (indicated by greener colors) generally exhibit higher performance on both benchmarks.

* The EN-EASY dataset shows a stronger correlation between AIME24 and OlyMath EN accuracy than the EN-HARD dataset.

* Gemini 2.5 Pro Exp appears to be the highest-performing model on both benchmarks.

* There is a noticeable gap in performance between the EN-EASY and EN-HARD datasets, with EN-EASY models consistently outperforming EN-HARD models.

### Interpretation

The data suggests that as models become more advanced (as indicated by later release dates and larger model sizes), their performance on math benchmarks improves. The distinction between the EN-EASY and EN-HARD datasets indicates that the difficulty of the math problems significantly impacts performance. The stronger correlation within the EN-EASY dataset suggests that models are more consistent in their ability to solve easier math problems. The outliers, such as the models with high AIME24 accuracy but relatively low OlyMath EN accuracy, may indicate specialization in certain types of math problems. The color gradient provides a valuable temporal dimension, allowing for the observation of performance improvements over time. The dashed trend lines provide a generalized view of the relationship between the two benchmarks for each dataset, while the individual data points reveal the specific performance of each model. The positioning of the models on the chart allows for a direct comparison of their strengths and weaknesses across the two benchmarks.

</details>

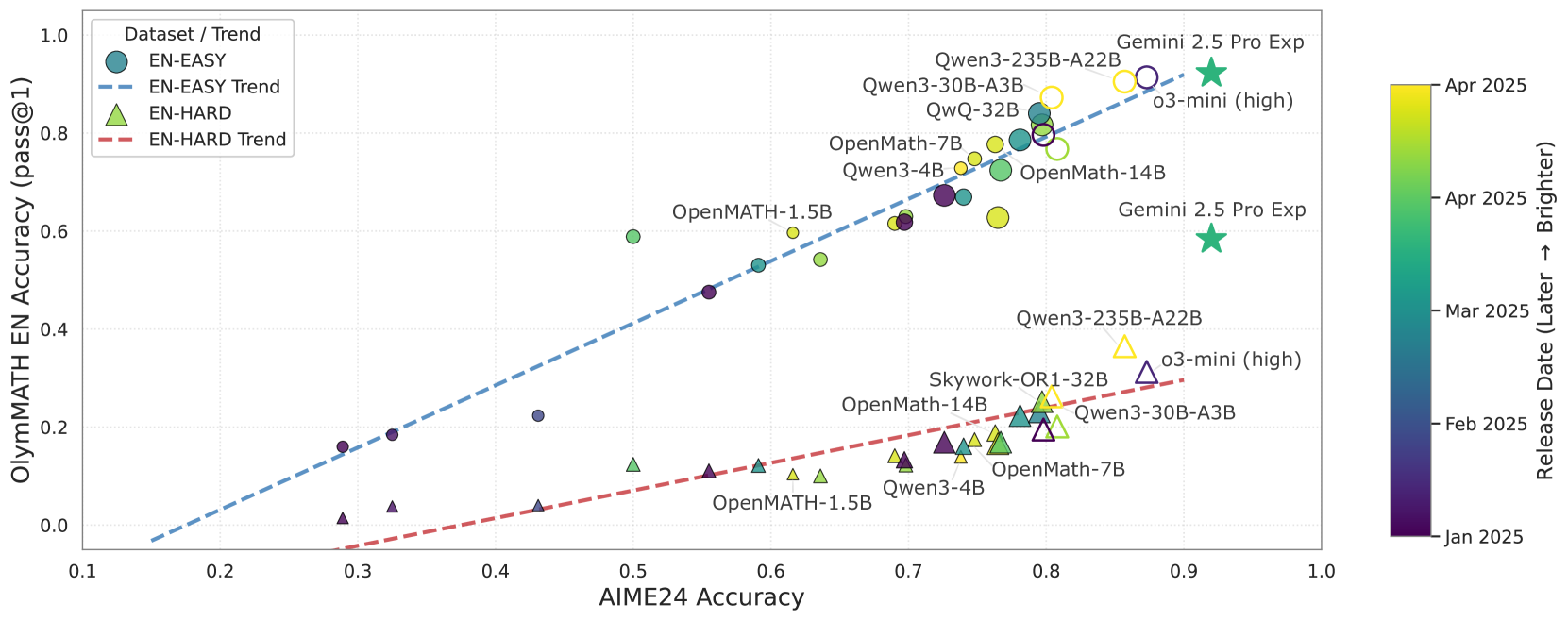

Figure 4: Correlation of Pass@1 performance: OlymMATH-EN vs. AIME24. Dashed lines indicate linear trends per dataset. Solid shapes are local dense models (size = model size, color = release date). Hollow shapes denote MoE or API evaluated models. Stars mark the best overall model.

To comprehensively evaluate OlymMATH against existing benchmarks, we compare state-of-the-art model performance across widely used mathematical benchmarks (see Figure 1). Results are sourced from research reports or the MathArena platform https://matharena.ai/ .

Figure 1 illustrates that OlymMATH is more challenging, yielding lower accuracy compared to saturated benchmarks like MATH-500 (where even DeepSeek-R1-Distill-Qwen-7B exceeds 92% accuracy [4]) or AIME24 (where top LLMs reach 92% with single attempt). Unlike these benchmarks whose high performance limits discriminative power, OlymMATH elicits more varied scores, offering superior differentiation of reasoning capabilities. For example, while Gemini 2.5 Pro Exp and o3-mini (high) achieve similar AIME24 accuracy (92.0% vs. 87.3%), their OlymMATH-EN-HARD performance diverges significantly (58.4% vs. 31.2%).

Figure 4 further demonstrates OlymMATH’s reliability by comparing OlymMATH-EN performance against AIME24. The close clustering of data points around linear trend lines indicates consistent relative model performance across both benchmarks. This strong correlation suggests OlymMATH measures similar underlying mathematical reasoning abilities as the respected AIME24 dataset, validating its use for LLM evaluation (see Figure 8 in Appendix for more information). Despite this alignment, OlymMATH, particularly the HARD subset, remains significantly more challenging than AIME24 for most models, reinforcing its superior ability to differentiate state-of-the-art capabilities.

3.4 Case Study

During our data collection and preliminary experiments, we empirically observed that LLMs sometimes resort to empirical guesses —such as heuristics, symmetry assumptions, or even fabrication—rather than rigorous reasoning. For instance, o3-mini-high merely “guessed” $b=c$ due to symmetry in a geometric optimization problem (see Figure 9 in Appendix). While such intuitive approaches might yield correct answers, they lack logical rigor and this case becomes problematic when employing rule-based or LLM-as-judge methods, as neither can effectively assess the quality of rigorous reasoning, thus potentially leading to an illusory improvement in accuracy via “shortcuts”.

Similar issues were observed in the AIME 2025 and Omni-MATH benchmarks (see Figure 10 and 11 in Appendix), indicating that despite performance gains, LLMs still exhibit deficiencies in deliberative thinking. This underscores the importance of process-level supervision, though its scalability remains a challenge. Currently, we do not accurately measure the proportion of “guesses” in these benchmarks, leaving this as an important direction for future work.

Notably, these guessing strategies often fail on our OlymMATH dataset. For example, a model incorrectly assumed symmetry for a complex optimization problem in OlymMATH, yielding $3081$ instead of the correct $2625$ (see Figure 12 in Appendix). OlymMATH problems, particularly in the HARD subset, are selected and designed so that their reasoning steps are difficult to “hack” through empirical guessing, thus providing a more robust evaluation of genuine reasoning capabilities.

4 Usability and Accessibility

To support research into LLM reasoning, we have open-sourced the OlymMATH-eval dataset at https://hf.co/datasets/RUC-AIBOX/OlymMATH-eval, with 582,400 entries from 28 models, to help compare reasoning capabilities across different models and mathematical domains.

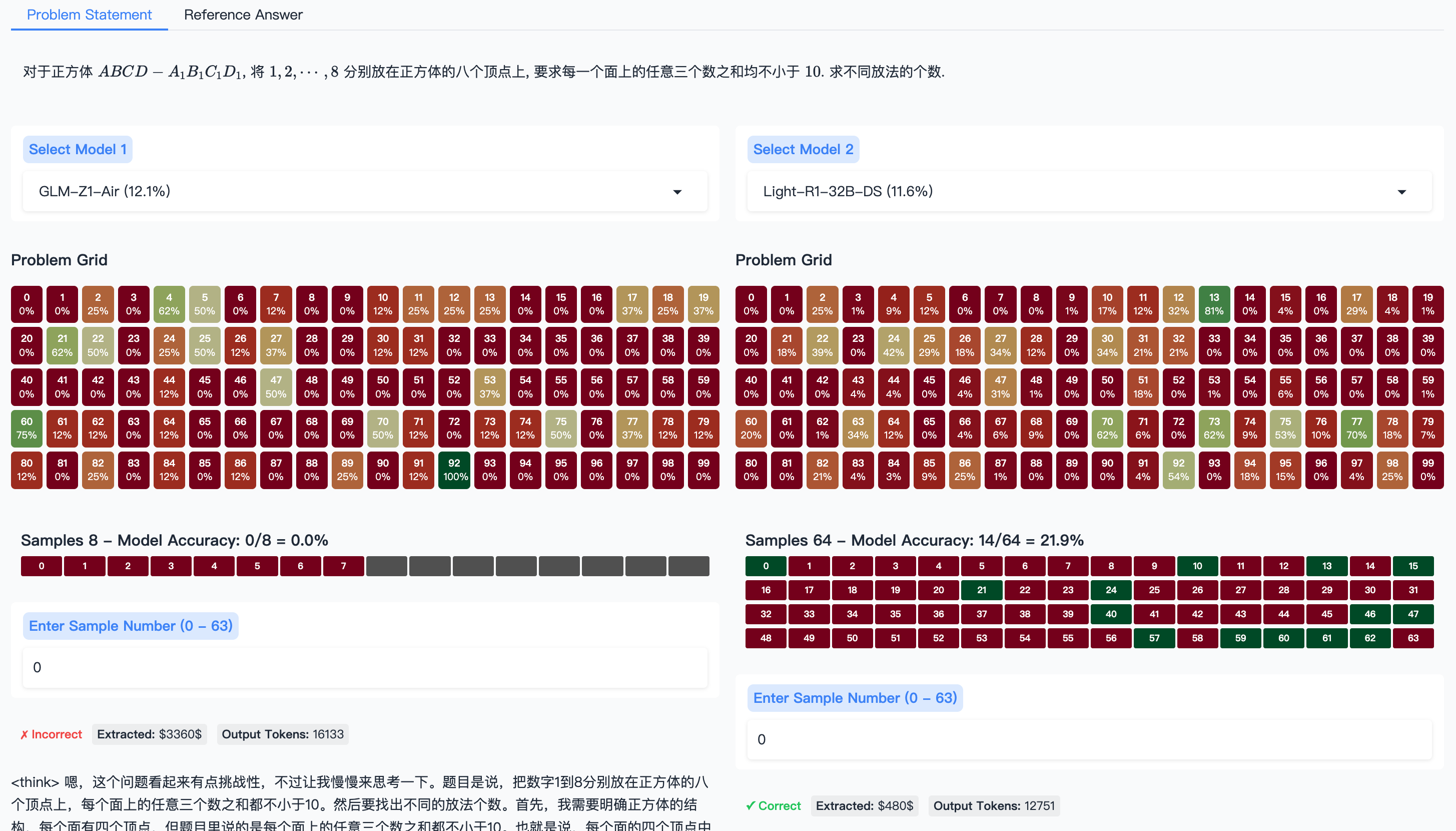

Furthermore, we provide the OlymMATH-demo visualization tool (https://hf.co/spaces/RUC-AIBOX/OlymMATH-demo; see Figure 5) to facilitate in-depth analysis of LLM reasoning. This interactive interface enables: (1) Side-by-side comparison of two selected LLMs on the same L a T e X -rendered problem, with access to reference answers. (2) Color-coded “Problem Grids” for each model, displaying per-problem accuracy for quick identification of challenging areas. (3) Examination of individual model-generated reasoning samples, including correctness, extracted answers, and token counts, crucial for understanding solution processes and identifying flaws. The tool also includes standard solutions for difficult problems and supports local deployment. OlymMATH-demo is thus a valuable asset for dissecting reasoning patterns, diagnosing errors, and guiding LLM development.

<details>

<summary>extracted/6453487/figs/demo.png Details</summary>

### Visual Description

\n

## Heatmap Comparison: Model Performance on Visual Reasoning Task

### Overview

The image presents a comparison of two machine learning models (GLM-ZT-Air and Light-RT-32B-DS) on a visual reasoning task involving a 9x9 grid. The performance is visualized using heatmaps, where each cell represents a digit (0-8) and the color intensity indicates the model's confidence in predicting the correct digit. Accuracy scores for each model are also displayed, along with a bar chart showing the distribution of correct answer numbers. There is text in Chinese at the top of the image.

### Components/Axes

* **Top Section:** Contains the problem statement in Chinese and dropdown menus for selecting models.

* **Model Selection Dropdowns:**

* "Select Model 1" - Currently set to "GLM-ZT-Air (12.1%)"

* "Select Model 2" - Currently set to "Light-RT-32B-DS (11.6%)"

* **Problem Grids (Heatmaps):** Two 9x9 grids, one for each model.

* X-axis: Numbered 0-8 (representing the digits).

* Y-axis: Numbered 0-8 (representing the digits).

* Color Scale: Ranges from white (0% confidence) to dark green (100% confidence).

* **Accuracy Scores:** Displayed below each grid.

* "Samples B - Model Accuracy: 8.0%"

* "Samples B - Model Accuracy: 14/21 = 66.7%"

* "Samples 64 - Model Accuracy: 14/21 = 66.7%"

* **Correct Answer Number Distribution:** A bar chart showing the frequency of each correct answer number (0-63).

* X-axis: Correct Answer Number (0-63)

* Y-axis: Frequency (0-7)

* **Footer:** Contains text in Chinese, including a copyright notice and model information.

### Detailed Analysis or Content Details

**Chinese Text (Top):**

“对于正方形ABCD-A,B(C,D)。将1,2,...,8分别放在正方形的八个顶点上。要求每一个顶上 的任意三个数之和均不小于10. 求不同放置的个数.”

*Translation:* "For the square ABCD - A, B (C, D). Place 1, 2, ..., 8 respectively at the eight vertices of the square. It is required that the sum of any three numbers at each vertex is not less than 10. Find the number of different arrangements."

**Model 1: GLM-ZT-Air (12.1%)**

* **Grid Analysis:** The heatmap shows varying confidence levels across the grid. The highest confidence (darkest green) appears concentrated around cells (20, 21), (21, 22), (40, 41), (41, 42), (60, 61), (61, 62). Many cells have very low confidence (white).

* **Specific Values (Approximate):**

* (0,0): 0%

* (0,1): 25%

* (0,2): 0%

* (0,3): 52%

* (0,4): 62%

* (0,5): 12%

* (0,6): 0%

* (0,7): 37%

* (0,8): 0%

* (20,20): 82%

* (20,21): 82%

* (20,22): 50%

* (20,23): 37%

* (20,24): 0%

* (20,25): 12%

* (20,26): 0%

* (20,27): 0%

* (20,28): 0%

* **Accuracy:** 8.0% and 14/21 = 66.7%

**Model 2: Light-RT-32B-DS (11.6%)**

* **Grid Analysis:** Similar to Model 1, this heatmap also shows a sparse distribution of high confidence. The highest confidence appears around cells (20, 21), (21, 22), (40, 41), (41, 42), (60, 61), (61, 62).

* **Specific Values (Approximate):**

* (0,0): 0%

* (0,1): 25%

* (0,2): 0%

* (0,3): 52%

* (0,4): 62%

* (0,5): 12%

* (0,6): 0%

* (0,7): 37%

* (0,8): 0%

* (20,20): 87%

* (20,21): 87%

* (20,22): 42%

* (20,23): 34%

* (20,24): 12%

* (20,25): 0%

* (20,26): 0%

* (20,27): 0%

* (20,28): 0%

* **Accuracy:** 14/21 = 66.7%

**Correct Answer Number Distribution:**

* The bar chart shows the frequency of each correct answer number. The highest frequency is around answer number 7, with a frequency of approximately 6. The frequencies for other answer numbers are generally lower, ranging from 0 to 3.

**Footer Text (Chinese):**

“版权所有 © 33986 实验班级名称:GLM-ZT-Air, Light-RT-32B-DS. 本文仅用于研究目的,任何商业用途均需获得授权。本实验基于对正方形ABCD-A,B(C,D)的顶点放置问题进行分析,旨在评估模型的视觉推理能力。请谨慎使用实验结果,并注意潜在的风险。”

*Translation:* "Copyright © 33986. Experimental class name: GLM-ZT-Air, Light-RT-32B-DS. This article is for research purposes only, and any commercial use requires authorization. This experiment is based on the analysis of vertex placement problems in square ABCD - A, B (C, D), aiming to evaluate the visual reasoning ability of the model. Please use the experimental results with caution and pay attention to potential risks."

### Key Observations

* Both models exhibit similar performance patterns on the grid, with high confidence concentrated in the same areas.

* The accuracy scores are relatively low for both models (8.0% and 14/21 = 66.7%).

* The distribution of correct answer numbers is uneven, suggesting some answers are easier to predict than others.

* The problem statement describes a combinatorial problem involving placing numbers on the vertices of a square with a specific constraint.

### Interpretation

The image demonstrates a comparison of two models' ability to solve a visual reasoning problem. The heatmaps visualize the models' confidence in predicting the correct digit for each cell in the grid. The low accuracy scores suggest that the task is challenging for both models. The similar performance patterns indicate that both models are struggling with the same aspects of the problem. The uneven distribution of correct answer numbers suggests that the problem has inherent biases or that certain configurations are easier to solve than others. The Chinese text provides the problem definition, indicating a combinatorial reasoning task. The footer emphasizes the research-only nature of the experiment and cautions against commercial use. The models are likely being evaluated on their ability to understand spatial relationships and apply constraints to solve the problem. The fact that the confidence is concentrated in specific areas suggests the models are identifying some patterns, but are not consistently accurate.

</details>

Figure 5: The OlymMATH-demo interface. It is currently being maintained on HuggingFace Spaces.

5 Conclusion

We introduced OlymMATH, a challenging math benchmark for LLMs, uniquely curated from printed materials. It includes 200 problems across four fields, with easy (AIME-level) and hard (more challenging) subsets, in parallel English and Chinese versions. Our experiments with state-of-the-art LLMs, especially in slow-thinking modes, show significant struggles. Analysis highlights language-specific strengths and universal limitations like empirical guessing, identifying weaknesses in LLMs’ multi-step reasoning and logical consistency. Meanwhile, to facilitate community research, we have open-sourced over 580k reasoning data, a visualization tool, and solutions for challenging problems.

As part of our STILL project, OlymMATH affirms our belief in benchmarks’ pivotal role in advancing LLMs’ reasoning capabilities. We advocate for benchmarks to evolve faster than methodologies, guiding the field’s progress. Our planned expansion of OlymMATH embodies this commitment, aiming to further cultivate the development of more robust reasoning models and continue pushing the boundaries of language intelligence.

References

- [1] Wayne Xin Zhao, Kun Zhou, Junyi Li, Tianyi Tang, Xiaolei Wang, Yupeng Hou, Yingqian Min, Beichen Zhang, Junjie Zhang, Zican Dong, Yifan Du, Chen Yang, Yushuo Chen, Zhipeng Chen, Jinhao Jiang, Ruiyang Ren, Yifan Li, Xinyu Tang, Zikang Liu, Peiyu Liu, Jian-Yun Nie, and Ji-Rong Wen. A survey of large language models, 2025.

- [2] An Yang, Baosong Yang, Beichen Zhang, Binyuan Hui, Bo Zheng, Bowen Yu, Chengyuan Li, Dayiheng Liu, Fei Huang, Haoran Wei, Huan Lin, Jian Yang, Jianhong Tu, Jianwei Zhang, Jianxin Yang, Jiaxi Yang, Jingren Zhou, Junyang Lin, Kai Dang, Keming Lu, Keqin Bao, Kexin Yang, Le Yu, Mei Li, Mingfeng Xue, Pei Zhang, Qin Zhu, Rui Men, Runji Lin, Tianhao Li, Tingyu Xia, Xingzhang Ren, Xuancheng Ren, Yang Fan, Yang Su, Yichang Zhang, Yu Wan, Yuqiong Liu, Zeyu Cui, Zhenru Zhang, and Zihan Qiu. Qwen2.5 technical report. arXiv preprint arXiv:2412.15115, 2024.

- [3] Abhimanyu Dubey, Abhinav Jauhri, Abhinav Pandey, Abhishek Kadian, Ahmad Al-Dahle, Aiesha Letman, Akhil Mathur, Alan Schelten, Amy Yang, Angela Fan, Anirudh Goyal, Anthony Hartshorn, Aobo Yang, Archi Mitra, Archie Sravankumar, Artem Korenev, Arthur Hinsvark, Arun Rao, Aston Zhang, Aurélien Rodriguez, Austen Gregerson, Ava Spataru, Baptiste Rozière, Bethany Biron, Binh Tang, Bobbie Chern, Charlotte Caucheteux, Chaya Nayak, Chloe Bi, Chris Marra, Chris McConnell, Christian Keller, Christophe Touret, Chunyang Wu, Corinne Wong, Cristian Canton Ferrer, Cyrus Nikolaidis, Damien Allonsius, Daniel Song, Danielle Pintz, Danny Livshits, David Esiobu, Dhruv Choudhary, Dhruv Mahajan, Diego Garcia-Olano, Diego Perino, Dieuwke Hupkes, Egor Lakomkin, Ehab AlBadawy, Elina Lobanova, Emily Dinan, Eric Michael Smith, Filip Radenovic, Frank Zhang, Gabriel Synnaeve, Gabrielle Lee, Georgia Lewis Anderson, Graeme Nail, Grégoire Mialon, Guan Pang, Guillem Cucurell, Hailey Nguyen, Hannah Korevaar, Hu Xu, Hugo Touvron, Iliyan Zarov, Imanol Arrieta Ibarra, Isabel M. Kloumann, Ishan Misra, Ivan Evtimov, Jade Copet, Jaewon Lee, Jan Geffert, Jana Vranes, Jason Park, Jay Mahadeokar, Jeet Shah, Jelmer van der Linde, Jennifer Billock, Jenny Hong, Jenya Lee, Jeremy Fu, Jianfeng Chi, Jianyu Huang, Jiawen Liu, Jie Wang, Jiecao Yu, Joanna Bitton, Joe Spisak, Jongsoo Park, Joseph Rocca, Joshua Johnstun, Joshua Saxe, Junteng Jia, Kalyan Vasuden Alwala, Kartikeya Upasani, Kate Plawiak, Ke Li, Kenneth Heafield, Kevin Stone, and et al. The llama 3 herd of models. CoRR, abs/2407.21783, 2024.

- [4] DeepSeek-AI, Daya Guo, Dejian Yang, Haowei Zhang, Junxiao Song, Ruoyu Zhang, Runxin Xu, Qihao Zhu, Shirong Ma, Peiyi Wang, Xiao Bi, Xiaokang Zhang, Xingkai Yu, Yu Wu, Z. F. Wu, Zhibin Gou, Zhihong Shao, Zhuoshu Li, Ziyi Gao, Aixin Liu, Bing Xue, Bingxuan Wang, Bochao Wu, Bei Feng, Chengda Lu, Chenggang Zhao, Chengqi Deng, Chenyu Zhang, Chong Ruan, Damai Dai, Deli Chen, Dongjie Ji, Erhang Li, Fangyun Lin, Fucong Dai, Fuli Luo, Guangbo Hao, Guanting Chen, Guowei Li, H. Zhang, Han Bao, Hanwei Xu, Haocheng Wang, Honghui Ding, Huajian Xin, Huazuo Gao, Hui Qu, Hui Li, Jianzhong Guo, Jiashi Li, Jiawei Wang, Jingchang Chen, Jingyang Yuan, Junjie Qiu, Junlong Li, J. L. Cai, Jiaqi Ni, Jian Liang, Jin Chen, Kai Dong, Kai Hu, Kaige Gao, Kang Guan, Kexin Huang, Kuai Yu, Lean Wang, Lecong Zhang, Liang Zhao, Litong Wang, Liyue Zhang, Lei Xu, Leyi Xia, Mingchuan Zhang, Minghua Zhang, Minghui Tang, Meng Li, Miaojun Wang, Mingming Li, Ning Tian, Panpan Huang, Peng Zhang, Qiancheng Wang, Qinyu Chen, Qiushi Du, Ruiqi Ge, Ruisong Zhang, Ruizhe Pan, Runji Wang, R. J. Chen, R. L. Jin, Ruyi Chen, Shanghao Lu, Shangyan Zhou, Shanhuang Chen, Shengfeng Ye, Shiyu Wang, Shuiping Yu, Shunfeng Zhou, Shuting Pan, and S. S. Li. Deepseek-r1: Incentivizing reasoning capability in llms via reinforcement learning. CoRR, abs/2501.12948, 2025.

- [5] OpenAI. Openai o1 system card, 2024.

- [6] Zhipeng Chen, Yingqian Min, Beichen Zhang, Jie Chen, Jinhao Jiang, Daixuan Cheng, Wayne Xin Zhao, Zheng Liu, Xu Miao, Yang Lu, Lei Fang, Zhongyuan Wang, and Ji-Rong Wen. An empirical study on eliciting and improving r1-like reasoning models, 2025.

- [7] An Yang, Beichen Zhang, Binyuan Hui, Bofei Gao, Bowen Yu, Chengpeng Li, Dayiheng Liu, Jianhong Tu, Jingren Zhou, Junyang Lin, Keming Lu, Mingfeng Xue, Runji Lin, Tianyu Liu, Xingzhang Ren, and Zhenru Zhang. Qwen2.5-math technical report: Toward mathematical expert model via self-improvement, 2024.

- [8] Karl Cobbe, Vineet Kosaraju, Mohammad Bavarian, Mark Chen, Heewoo Jun, Lukasz Kaiser, Matthias Plappert, Jerry Tworek, Jacob Hilton, Reiichiro Nakano, et al. Training verifiers to solve math word problems. arXiv preprint arXiv:2110.14168, 2021.

- [9] Dan Hendrycks, Collin Burns, Saurav Kadavath, Akul Arora, Steven Basart, Eric Tang, Dawn Song, and Jacob Steinhardt. Measuring mathematical problem solving with the MATH dataset. In Proceedings of the Neural Information Processing Systems Track on Datasets and Benchmarks 1, NeurIPS Datasets and Benchmarks 2021, December 2021, virtual, 2021.

- [10] Meng Fang, Xiangpeng Wan, Fei Lu, Fei Xing, and Kai Zou. Mathodyssey: Benchmarking mathematical problem-solving skills in large language models using odyssey math data, 2024.

- [11] Daman Arora, Himanshu Gaurav Singh, and Mausam. Have llms advanced enough? a challenging problem solving benchmark for large language models, 2023.

- [12] OpenAI. Openai o3-mini: Pushing the frontier of cost-effective reasoning, 1 2025.

- [13] Google Deepmind. Gemini 2.5: Our most intelligent ai model, 3 2025.

- [14] Yingqian Min, Zhipeng Chen, Jinhao Jiang, Jie Chen, Jia Deng, Yiwen Hu, Yiru Tang, Jiapeng Wang, Xiaoxue Cheng, Huatong Song, Wayne Xin Zhao, Zheng Liu, Zhongyuan Wang, and Ji-Rong Wen. Imitate, explore, and self-improve: A reproduction report on slow-thinking reasoning systems, 2024.

- [15] Mathematical Association of America. Aime 2024, 2024.

- [16] Mathematical Association of America. Aime 2025, 2025.

- [17] HMMT. Hmmt 202502, 2025.

- [18] Mathematical Association of America. Usamo 2025, 2025.

- [19] Chaoqun He, Renjie Luo, Yuzhuo Bai, Shengding Hu, Zhen Leng Thai, Junhao Shen, Jinyi Hu, Xu Han, Yujie Huang, Yuxiang Zhang, Jie Liu, Lei Qi, Zhiyuan Liu, and Maosong Sun. Olympiadbench: A challenging benchmark for promoting AGI with olympiad-level bilingual multimodal scientific problems. In Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), ACL 2024, Bangkok, Thailand, August 11-16, 2024, pages 3828–3850, 2024.

- [20] Bofei Gao, Feifan Song, Zhe Yang, Zefan Cai, Yibo Miao, Qingxiu Dong, Lei Li, Chenghao Ma, Liang Chen, Runxin Xu, et al. Omni-math: A universal olympiad level mathematic benchmark for large language models. arXiv preprint arXiv:2410.07985, 2024.

- [21] RUCAIBox STILL Team. Still-3-1.5b-preview: Enhancing slow thinking abilities of small models through reinforcement learning. 2025.

- [22] Michael Luo, Sijun Tan, Justin Wong, Xiaoxiang Shi, William Y. Tang, Manan Roongta, Colin Cai, Jeffrey Luo, Li Erran Li, Raluca Ada Popa, and Ion Stoica. Deepscaler: Surpassing o1-preview with a 1.5b model by scaling rl, 2025. Notion Blog.

- [23] Qwen Team. Qwq-32b: Embracing the power of reinforcement learning, March 2025.

- [24] Liang Wen, Yunke Cai, Fenrui Xiao, Xin He, Qi An, Zhenyu Duan, Yimin Du, Junchen Liu, Lifu Tang, Xiaowei Lv, Haosheng Zou, Yongchao Deng, Shousheng Jia, and Xiangzheng Zhang. Light-r1: Curriculum sft, dpo and rl for long cot from scratch and beyond, 2025.

- [25] OpenThoughts Team. Open Thoughts. https://open-thoughts.ai, January 2025.

- [26] Jujie He, Jiacai Liu, Chris Yuhao Liu, Rui Yan, Chaojie Wang, Peng Cheng, Xiaoyu Zhang, Fuxiang Zhang, Jiacheng Xu, Wei Shen, Siyuan Li, Liang Zeng, Tianwen Wei, Cheng Cheng, Yang Liu, and Yahui Zhou. Skywork open reasoner series, 2025. Notion Blog.

- [27] Team GLM, Aohan Zeng, Bin Xu, Bowen Wang, Chenhui Zhang, Da Yin, Diego Rojas, Guanyu Feng, Hanlin Zhao, Hanyu Lai, Hao Yu, Hongning Wang, Jiadai Sun, Jiajie Zhang, Jiale Cheng, Jiayi Gui, Jie Tang, Jing Zhang, Juanzi Li, Lei Zhao, Lindong Wu, Lucen Zhong, Mingdao Liu, Minlie Huang, Peng Zhang, Qinkai Zheng, Rui Lu, Shuaiqi Duan, Shudan Zhang, Shulin Cao, Shuxun Yang, Weng Lam Tam, Wenyi Zhao, Xiao Liu, Xiao Xia, Xiaohan Zhang, Xiaotao Gu, Xin Lv, Xinghan Liu, Xinyi Liu, Xinyue Yang, Xixuan Song, Xunkai Zhang, Yifan An, Yifan Xu, Yilin Niu, Yuantao Yang, Yueyan Li, Yushi Bai, Yuxiao Dong, Zehan Qi, Zhaoyu Wang, Zhen Yang, Zhengxiao Du, Zhenyu Hou, and Zihan Wang. Chatglm: A family of large language models from glm-130b to glm-4 all tools, 2024.

- [28] Zihan Liu, Yang Chen, Mohammad Shoeybi, Bryan Catanzaro, and Wei Ping. Acemath: Advancing frontier math reasoning with post-training and reward modeling. arXiv preprint, 2024.

- [29] Ivan Moshkov, Darragh Hanley, Ivan Sorokin, Shubham Toshniwal, Christof Henkel, Benedikt Schifferer, Wei Du, and Igor Gitman. Aimo-2 winning solution: Building state-of-the-art mathematical reasoning models with openmathreasoning dataset, 2025.

- [30] Qwen Team. Qwen3, April 2025.

Appendix A Appendix

This part presents the detailed content of the dataset and the case study examples mentioned before.

| Problem: Given that two vertices of an equilateral triangle are on the parabola $y^{2}=4x$ , and the third vertex is on the directrix of the parabola, and the distance from the center of the triangle to the directrix equals $\frac{1}{9}$ of the perimeter. Find the area of the triangle. Subject: Geometry |

| --- |

Figure 6: A geometry problem described precisely in text from OlymMATH.

| Original problem: If the distances from the eight vertices of a cube to a certain plane are $0 0$ , $1$ , $2$ , $3$ , $4$ , $5$ , $6$ , $7$ respectively, what is the possible edge length of this cube? After transformation: If the distances from the eight vertices of a cube to a certain plane are $0 0$ , $1$ , $2$ , $3$ , $4$ , $5$ , $6$ , $7$ respectively, consider all possible edge lengths of this cube. Assuming the possible edge lengths form a set $S$ , find the sum of squares of all elements in $S$ . |

| --- |

Figure 7: An OlymMATH-HARD example testing model’s identification of all possible answers.

<details>

<summary>x4.png Details</summary>

### Visual Description

\n

## Box Plot: Accuracy Comparison Across Language Models and Difficulty Levels

### Overview

The image presents a series of box plots comparing the accuracy of different language models (AIME24, EN-EASY, EN-HARD, ZH-EASY, ZH-HARD) across varying difficulty levels. The y-axis represents accuracy, ranging from 0.0 to 1.0. The x-axis labels the different language model/difficulty combinations.

### Components/Axes

* **Y-axis:** "Accuracy" (Scale: 0.0 to 1.0, with increments of 0.2)

* **X-axis:** Categories: "AIME24", "EN-EASY", "EN-HARD", "ZH-EASY", "ZH-HARD"

* **Box Plots:** Each box plot represents the distribution of accuracy scores for a specific language model and difficulty level.

* **Outliers:** Individual data points outside the whiskers of the box plots are marked as circles ("o").

### Detailed Analysis

Here's a breakdown of each box plot, including approximate values based on visual inspection:

1. **AIME24 (Purple):**

* Median: Approximately 0.78

* Lower Quartile (Q1): Approximately 0.70

* Upper Quartile (Q3): Approximately 0.85

* Whiskers: Extend to approximately 0.35 (lower) and 0.95 (upper).

* Outlier: One outlier at approximately 0.32.

2. **EN-EASY (Blue):**

* Median: Approximately 0.74

* Lower Quartile (Q1): Approximately 0.62

* Upper Quartile (Q3): Approximately 0.83

* Whiskers: Extend to approximately 0.50 (lower) and 0.95 (upper).

* Outliers: Two outliers at approximately 0.18 and 0.52.

3. **EN-HARD (Red):**

* Median: Approximately 0.12

* Lower Quartile (Q1): Approximately 0.05

* Upper Quartile (Q3): Approximately 0.25

* Whiskers: Extend to approximately 0.00 (lower) and 0.35 (upper).

* Outlier: One outlier at approximately 0.30.

4. **ZH-EASY (Green):**

* Median: Approximately 0.70

* Lower Quartile (Q1): Approximately 0.60

* Upper Quartile (Q3): Approximately 0.80

* Whiskers: Extend to approximately 0.45 (lower) and 0.95 (upper).

5. **ZH-HARD (Orange):**

* Median: Approximately 0.16

* Lower Quartile (Q1): Approximately 0.08

* Upper Quartile (Q3): Approximately 0.25

* Whiskers: Extend to approximately 0.00 (lower) and 0.35 (upper).

* Outlier: One outlier at approximately 0.55.

### Key Observations

* **Difficulty Impact:** Accuracy generally decreases significantly when moving from "EASY" to "HARD" difficulty levels for both English (EN) and Chinese (ZH) models.

* **Model Performance:** AIME24 exhibits the highest median accuracy, followed by EN-EASY and ZH-EASY. EN-HARD and ZH-HARD show substantially lower accuracy.

* **Spread of Data:** The interquartile range (IQR) is relatively narrow for AIME24, suggesting more consistent performance. EN-HARD and ZH-HARD have wider IQRs, indicating greater variability in accuracy.

* **Outliers:** The presence of outliers suggests some data points deviate significantly from the typical performance of each model/difficulty combination.

### Interpretation

The data suggests that the AIME24 model performs best overall, achieving high accuracy across the tested difficulty levels. The English and Chinese "EASY" models demonstrate reasonable accuracy, while the "HARD" versions of both models struggle significantly. This indicates that the difficulty level is a major factor influencing performance. The wider spread of data for the "HARD" models suggests that these tasks are more challenging and lead to more variable results. The outliers may represent particularly difficult or ambiguous cases that the models failed to handle correctly.

The comparison between English and Chinese models reveals that, at the "EASY" level, their performance is comparable. However, the substantial drop in accuracy for both "HARD" versions suggests that the models may have limitations in handling complex linguistic structures or nuanced meanings in both languages. Further investigation could explore the specific types of errors made by each model to identify areas for improvement.

</details>

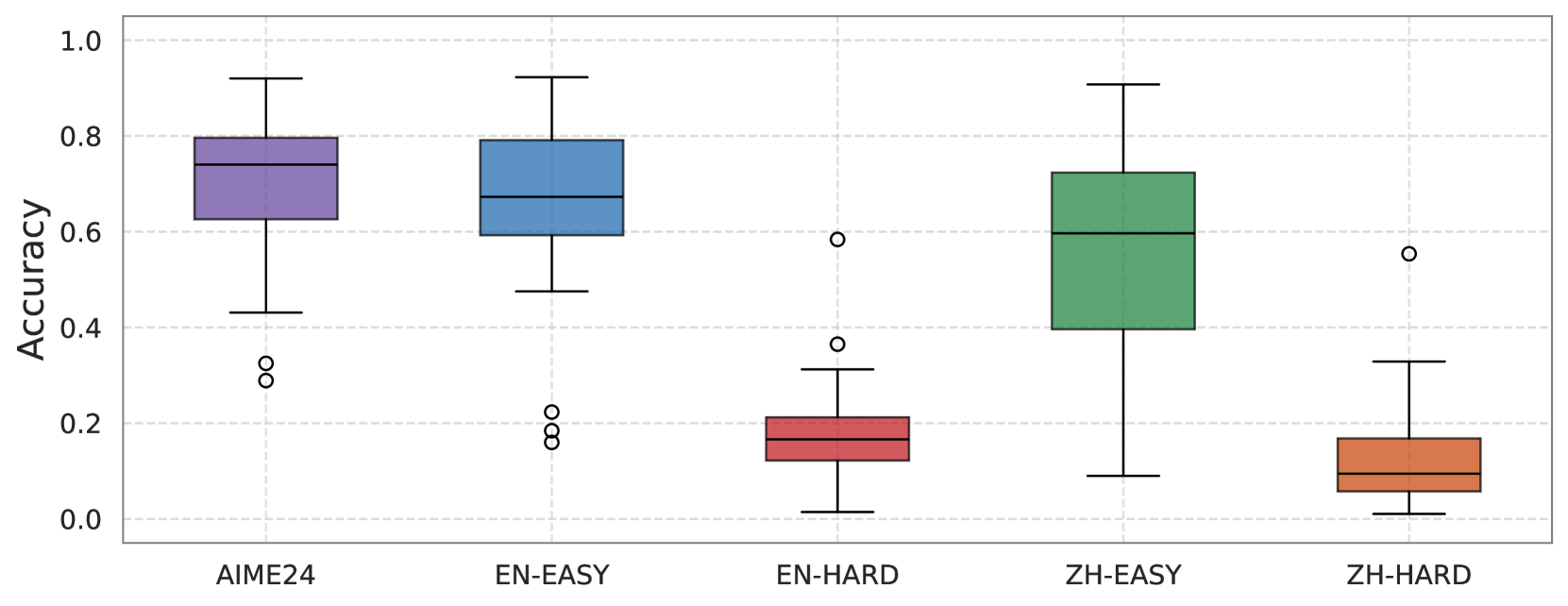

Figure 8: This boxplot shows that our EASY dataset has AIME-level difficulty with a wider distribution, while the HARD dataset is significantly more challenging, demonstrating the discriminative power of our benchmark.

| Problem: In the triangle $\triangle ABC$ , the lengths of the three sides are $a,b,c$ , satisfying the condition $2a^{2}+b^{2}+c^{2}=4$ . Find the maximum value of the area of $\triangle ABC$ . Answer: $\frac{\sqrt{5}}{5}$ . (When $a=\frac{2}{\sqrt{5}}$ , $b=c=\sqrt{\frac{6}{5}}$ ) |

| --- |

| Model Output: We wish to maximize the area of $\triangle ABC$ , subject to $2a^{2}+b^{2}+c^{2}=4.$ Because the condition is symmetric in $b$ and $c$ (but not in $a$ ), it is reasonable to look for an optimum among triangles having $b=c$ . (Later one can check that assuming $b=c$ indeed gives the maximum.) The problem involves maximizing the area of an isosceles triangle with sides $a,b,b$ under the constraint $2a^{2}+b^{2}+c^{2}=4$ . By assuming $b=c$ , we derive a formula for the area in terms of $b$ and find the maximum area by expressing it as a function of $u=b^{2}$ . We then determine the critical points of this quadratic function, identify the maximum area, and finally recover the side lengths to verify that they satisfy the original constraints. The maximum area achieved is $\frac{\sqrt{5}}{5}$ . |

Figure 9: An example during our data collection. o3-mini (high) found the correct answer without rigorously solving the problem. Due to length constraints, we provide only a summary of its original solution steps.

| Col1 |

| --- |

| Problem: Let the sequence of rationals $x_{1},x_{2},...$ be defined such that $x_{1}=\frac{25}{11}$ and $x_{k+1}=\frac{1}{3}\left(x_{k}+\frac{1}{x_{k}}-1\right).$ $x_{2025}$ can be expressed as $\frac{m}{n}$ for relatively prime positive integers $m$ and $n$ . Find the remainder when $m+n$ is divided by $1000$ . Answer: $248$ . |

| Model Output: We will show that if $x_{1}=\frac{25}{11}$ and $x_{k+1}=\frac{1}{3}\Bigl{(}x_{k}+\frac{1}{x_{k}}-1\Bigr{)},$ then after “many” iterations the answer to $x_{2025}=\frac{m}{n}$ (in lowest terms) satisfies $m+n\equiv 248±od{1000}.$ In what follows we briefly describe one way (via a “miraculous” change-of–variables) to “solve” the recurrence; the details need not be reproduced fully here. A good hint is that the recurrence $x_{k+1}=\frac{1}{3}\Bigl{(}x_{k}+\frac{1}{x_{k}}-1\Bigr{)}$ has the curious symmetry $T(x)=\frac{1}{3}\Bigl{(}x+\frac{1}{x}-1\Bigr{)}=T\Bigl{(}\frac{1}{x}\Bigr{)},$ so the expression $x+\frac{1}{x}$ (which is “symmetric” in $x$ and its reciprocal) naturally appears. In fact, one may rewrite the recurrence in the form $3x_{k+1}+1=x_{k}+\frac{1}{x_{k}}.$ A useful idea is then to “complete the square” by writing $\left(\sqrt{x_{k}}-\frac{1}{\sqrt{x_{k}}}\right)^{2}=x_{k}+\frac{1}{x_{k}}-2$ , so that $3x_{k+1}+1=\left(\sqrt{x_{k}}-\frac{1}{\sqrt{x_{k}}}\right)^{2}+2.$ Defining $u_{k}=\sqrt{x_{k}}-\frac{1}{\sqrt{x_{k}}}$ , one obtains the “linear-looking” relation $u_{k}^{2}=3x_{k+1}-1.$ With some additional work it turns out that if we write $x_{k}=\frac{m_{k}}{n_{k}}$ (in lowest terms), then one may prove by induction that $m_{k}+n_{k}=36· 12^{(2^{\,k-1}-1)}.$ (In our case one checks that $m_{1}+n_{1}=25+11=36$ , $m_{2}+n_{2}=432$ , $m_{3}+n_{3}=62208,$ and indeed $36,432,62208$ equal $36· 12^{0},\,36· 12^{1},\,36· 12^{3}$ respectively.) Thus, for $k=2025$ we have $m+n=36· 12^{\left(2^{2024}-1\right)}.$ Even though this number is astronomically large, we are only asked to compute $m+n±od{1000}.$ Using modular reductions, we compute $m+n$ modulo 1000. First, modulo 8, we find $36· 12^{(2^{2024}-1)}\equiv 0±od{8}$ . Modulo 125, Euler’s theorem helps reduce $12^{N}$ to $12^{15}\equiv 118±od{125}$ , giving $36· 12^{N}\equiv 123±od{125}$ . Using the Chinese Remainder Theorem, solving $125k+123\equiv 0±od{8}$ gives $k\equiv 1±od{8}$ , leading to the final answer: $\boxed{248}$ . |

Figure 10: An example from AIME 2025. o3-mini (high) forgot to prove that $(m_{k},n_{k})=1$ and it claimed that $m_{k}+n_{k}=36· 12^{\left(2^{k-1}-1\right)}$ without providing the complete proof (highlighted in red). Instead, the model “guessed” this expression by calculating the first several terms in the sequence one by one. Due to length constraints, we provide only a summary of its unimportant steps.

Figure 11: An example from Omni-MATH. The solution provided by Omni-MATH itself is flawed and problematic. Both the reference solution and o3-mini (high) failed to prove that there are no other possible function $f$ that satisfies the condition (highlighted in red). Due to length constraints, we have omitted its unimportant steps.

Figure 12: An example from OlymMATH-EN-HARD subset. o3-mini (high) attempted to “guess” the equality conditions but failed to produce a correct solution (highlighted in red).