2504.02654v1


# SymDQN: Symbolic Knowledge and Reasoning in Neural Network-based Reinforcement Learning

**Authors**:

- Ivo Amador

- Nina Gierasimczuk (Technical University of Denmark)

- ivo.amador@gmail.com, nigi@dtu.dk

## Abstract

We propose a learning architecture that allows symbolic control and guidance in reinforcement learning with deep neural networks. We introduce SymDQN, a novel modular approach that augments the existing Dueling Deep Q-Networks (DuelDQN) architecture with modules based on the neuro-symbolic framework of Logic Tensor Networks (LTNs). The modules guide action policy learning and allow reinforcement learning agents to display behavior consistent with reasoning about the environment. Our experiment is an ablation study performed on the modules. It is conducted in a reinforcement learning environment of a 5x5 grid navigated by an agent that encounters various shapes, each associated with a given reward. The underlying DuelDQN attempts to learn the optimal behavior of the agent in this environment, while the modules facilitate shape recognition and reward prediction. We show that our architecture significantly improves learning, both in terms of performance and the precision of the agent. The modularity of SymDQN allows reflecting on the intricacies and complexities of combining neural and symbolic approaches in reinforcement learning.

## 1 Introduction

Despite its rapidly growing impact on society, Artificial Intelligence technology is beset by reliability issues, such as lack of interpretability, propagation of biases, difficulty in generalizing across domains, and susceptibility to adversarial attacks. One possible path towards more interpretable, controlled and guided algorithms leads through the field of neuro-symbolic AI, which explores new ways of integrating symbolic, logic-based knowledge into neural networks (NNs). In particular, the framework of Logic Tensor Networks (LTNs, for short) Serafini and d’Avila Garcez (2016); Badreddine et al. (2022) enhances learning by interpreting first-order logic formulas concretely on the data used by NN algorithms. Such formulas express properties of the data and, given a fuzzy semantics, can be integrated into the loss function, thus guiding the learning process.

In this paper, we apply LTNs to a reinforcement learning problem. By integrating LTNs into the training process, our learning agent uses logic to learn the structure of the environment, to predict how different objects in the environment interact with each other, and to guide its actions by performing elementary reasoning about rewards. We investigate how such integration affects the learning performance of a robust, established and well-studied framework, the Dueling Deep Q-Network (DuelDQN, for short) Wang et al. (2016). The structure of the paper is as follows. In Section 2 we briefly recall Logic Tensor Networks and elements of the underlying Real Logic. In Section 3 we introduce our methodology: the SymDQN architecture and its training process. We follow up with the presentation of the experiment in Section 4. We discuss the results in Section 5. Section 6 concludes and outlines directions for future work.

#### Related Work

Since its conception, the LTN framework has been applied in various domains. In computer vision, LTNs were used to inject prior knowledge about object relationships and properties, improving interpretability and accuracy in object detection Donadello et al. (2017). Adding them to convolutional neural networks improves robustness to noisy data Manigrasso et al. (2021). They enhance accuracy in reasoning tasks in both open-world and closed-world scenarios Wagner and d’Avila Garcez (2022). In Bianchi and Hitzler (2019), LTNs are leveraged for deductive reasoning tasks. Finally, in reinforcement learning, LTNs were used to integrate prior knowledge into agents, improving both the learning rate and the robustness to environmental changes Badreddine and Spranger (2019). The latter work is similar to ours in the choice of tools, but differs in its methodology. In Badreddine and Spranger (2019), the LTN is a separate pre-training module that interacts with DuelDQN only by creating its inputs. In contrast, SymDQN integrates the LTN into the training process, so that it learns alongside DuelDQN.

Our work uses logic to adjust elements of a reinforcement learning framework. In this respect, it is related to reward shaping approaches, where the learner is given external symbolic information about the environment, e.g., in the form of linear temporal logic formulas (as in the restraining bolts of Giacomo et al. (2019)) or of an induced automaton in Furelos-Blanco et al. (2021). The LTN approach is similar in that logical formulas adjust the reinforcement learning process. However, our technique is a more distributed form of reward shaping. First, formulas of Real Logic guide the learner towards a symbolic representation of the environment; next, they are used to predict the immediate rewards of encountering the objects of the environment. Finally, a logical formula helps the learner align the $q$-values (the agent’s long-term policy) with the predicted immediate rewards of symbolically represented objects. In other words, we constrain the reinforcement learner by expecting it to reason about its behavior as it learns, and we investigate the impact of this constraint on learning precision and performance. We return to this issue in Section 5.1, after detailing all the components.

## 2 Real Logic

Real Logic is the basis of the functioning of LTNs. In this section we provide a rudimentary introduction (for a full exposition consult Badreddine et al. (2022)). Let us start with a simple example.

**Example 1**

*Consider two datasets: a dataset of humans (with two features: age and gender), and a dataset of pets (with three features: height, weight and color). Assume that Alice appears in the dataset of humans (for instance, as a five-year-old female), and that Max and Mittens are listed in the dataset of pets. To talk about Alice, Max and Mittens, we need a logical language that includes constants referring to objects (particular rows of the datasets). Note that such constants can be of different types: in our example, humans are described by two features, while pets are described by three.*

The signature of the language of Real Logic $\mathcal{L}$ contains a set $\mathcal{C}$ of constant symbols, a set $\mathcal{F}$ of function symbols, a set $\mathcal{P}$ of predicate symbols, and a set $\mathcal{X}$ of variable symbols. Let $\mathcal{D}$ be a non-empty set of domain symbols (that represent types). Domain symbols are used by functions $\mathbf{D}$ , $\mathbf{D_{in}}$ , and $\mathbf{D_{out}}$ which for a given element of the signature output its type, in the following way. $\mathbf{D}:\mathcal{X}\cup\mathcal{C}\rightarrow\mathcal{D}$ specifies the types for variables and constants; $\mathbf{D_{in}}:\mathcal{F}\cup\mathcal{P}\rightarrow\mathcal{D}^{\ast}$ specifies the types of the sequence of arguments allowed by function and predicate symbols ( $\mathcal{D}^{\ast}$ stands for the set of all finite sequences of elements from $\mathcal{D}$ ); $\mathbf{D_{out}}:\mathcal{F}\rightarrow\mathcal{D}$ specifies the type of the range of a function symbol.

**Example 2**

*Continuing Example 1, let the language of pet-ownership $\mathcal{L}_{pets}$ have the signature consisting of the set of constants $\mathcal{C}=\{\textsc{Alice},\textsc{Max},\textsc{Mit}\}$ , a set of function symbols $\mathcal{F}=\{\textsc{owner}\}$ , a set of predicate symbols $\mathcal{P}=\{\textsc{isOwner}\}$ , and two variable symbols $\mathcal{X}=\{\textsc{pet},\textsc{person}\}$ . Further, we have two domain symbols, one for the domain of humans and one for pets, $\mathcal{D}=\{H,P\}$ . Then, our domain functions can be defined in the following way. $\mathbf{D}(\textsc{Alice})=H$ (Alice is a constant of type $H$ ), $\mathbf{D}(\textsc{Max})=\mathbf{D}(\textsc{Mit})=P$ (Max and Mittens are of type $P$ ). Further, each dataset will have its own variable: $\mathbf{D}(\textsc{pet})=P$ , $\mathbf{D}(\textsc{person})=H$ . We also need to specify inputs for predicates: $\mathbf{D_{in}}(\textsc{isOwner})=HP$ (isOwner is a predicate taking two arguments, a human and a pet). Finally, for functions, we need both the input and the output types: $\mathbf{D_{in}}(\textsc{owner})=P$ , and $\mathbf{D_{out}}(\textsc{owner})=H$ (owner takes as input a pet and outputs the human who owns it).*

The language of Real Logic corresponds to first-order logic, and so it allows for more complex expressions. Terms are built from constants, variables, and function symbols as follows: each $t\in\mathcal{X}\cup\mathcal{C}$ is a term of domain $\mathbf{D}(t)$; if $t_{1},\ldots,t_{n}$ are terms, then $t_{1}\ldots t_{n}$ is a term of domain $\mathbf{D}(t_{1})\ldots\mathbf{D}(t_{n})$; if $t$ is a term of domain $\mathbf{D_{in}}(f)$, then $f(t)$ is a term of domain $\mathbf{D_{out}}(f)$. Finally, the formulae of Real Logic are as follows: $t_{1}=t_{2}$ is an atomic formula for any terms $t_{1}$ and $t_{2}$ with $\mathbf{D}(t_{1})=\mathbf{D}(t_{2})$; $P(t)$ is an atomic formula if $\mathbf{D}(t)=\mathbf{D_{in}}(P)$; if $\varphi$ and $\psi$ are formulae and $x_{1},\ldots,x_{n}$ are variable symbols, then $\neg\varphi$, $\varphi\wedge\psi$, $\varphi\vee\psi$, $\varphi\rightarrow\psi$, $\varphi\leftrightarrow\psi$, $\forall x_{1}\ldots x_{n}\varphi$ and $\exists x_{1}\ldots x_{n}\varphi$ are formulae.
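To make the typing rules concrete, here is a minimal Python sketch that encodes the signature of $\mathcal{L}_{pets}$ from Example 2 as dictionaries and checks whether a term or an atomic formula $P(t)$ is well-typed. The names `D`, `D_IN`, `D_OUT`, `term_domain` and `is_atomic`, and the `("apply", f, t)` encoding of function application, are hypothetical choices for illustration, not part of Real Logic or of LTNtorch.

```python
# Hypothetical encoding of the signature of L_pets (Example 2).
# Domains are returned as tuples so that a sequence t1...tn gets D(t1)...D(tn).
D = {"Alice": "H", "Max": "P", "Mit": "P", "person": "H", "pet": "P"}
D_IN = {"isOwner": ("H", "P"), "owner": ("P",)}
D_OUT = {"owner": "H"}


def term_domain(term):
    """Return the domain of a term as a tuple of domain symbols.

    A term is a constant/variable name (str), a function application
    written ("apply", f, t), or a tuple of terms t1...tn.
    """
    if isinstance(term, str):
        return (D[term],)
    if term and term[0] == "apply":
        _, f, arg = term
        if term_domain(arg) != D_IN[f]:
            raise TypeError(f"{f} expects {D_IN[f]}, got {term_domain(arg)}")
        return (D_OUT[f],)
    # A sequence t1 ... tn has domain D(t1) ... D(tn).
    return tuple(d for t in term for d in term_domain(t))


def is_atomic(predicate, term):
    """P(t) is well-formed iff D(t) = D_in(P)."""
    return term_domain(term) == D_IN[predicate]


print(is_atomic("isOwner", ("Alice", "Max")))                    # True
print(is_atomic("isOwner", ("Max", "Alice")))                    # False
print(is_atomic("isOwner", (("apply", "owner", "Mit"), "Mit")))  # True
```

The last line checks $isOwner(owner(Mit), Mit)$: $owner(Mit)$ has domain $H$, so the argument sequence has domain $HP$, as required.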

Let us turn to the semantics of Real Logic. Domain symbols allow grounding the logic in numerical, data-driven representations; to be precise, Real Logic is grounded in tensors over the real numbers. Tensor grounding is the key concept that allows the interplay of Real Logic with neural networks. It refers to the process of mapping high-level symbols to tensor representations, allowing the integration of reasoning and differentiable functions. A grounding $\mathcal{G}$ assigns to each domain symbol $D\in\mathcal{D}$ a set of tensors $\mathcal{G}(D)\subseteq\bigcup_{n_{1}\ldots n_{d}\in\mathbb{N}^{\ast}}\mathbb{R}^{n_{1}\times\ldots\times n_{d}}$. For every $D_{1}\ldots D_{n}\in\mathcal{D}^{\ast}$, $\mathcal{G}(D_{1}\ldots D_{n})=\mathcal{G}(D_{1})\times\ldots\times\mathcal{G}(D_{n})$. Given a language $\mathcal{L}$, a grounding $\mathcal{G}$ of $\mathcal{L}$ assigns to each constant symbol $c$ a tensor $\mathcal{G}(c)$ in the domain $\mathcal{G}(\mathbf{D}(c))$; to a variable $x$ it assigns a finite sequence of tensors $d_{1}\ldots d_{k}$, each in $\mathcal{G}(\mathbf{D}(x))$, representing the instances of $x$; to a function symbol $f$ it assigns a function taking tensors from $\mathcal{G}(\mathbf{D_{in}}(f))$ as input and producing a tensor in $\mathcal{G}(\mathbf{D_{out}}(f))$ as output; to a predicate symbol $P$ it assigns a function taking tensors from $\mathcal{G}(\mathbf{D_{in}}(P))$ as input and producing a truth degree in the interval $[0,1]$ as output.

In other words, $\mathcal{G}$ assigns to a variable the concatenation of the instances in its domain. Real Logic treats free variables in the same way, departing from the usual interpretation of free variables in FOL. Thus, applying a function or predicate to terms with free variables results in the point-wise application of that function or predicate to the sequence of all instances of the variable (see p. 5 of Badreddine et al. (2022) for examples). Semantically, logical connectives are fuzzy operators applied recursively to the relevant subformulas: conjunction is interpreted by a t-norm, disjunction by a t-conorm, and implication and negation by their fuzzy counterparts. The semantics of formulae with quantifiers ($\forall$ and $\exists$) is given by symmetric and continuous aggregation operators $Agg:\bigcup_{n\in\mathbb{N}}[0,1]^{n}\rightarrow[0,1]$. Intuitively, quantifiers reduce the dimensions associated with the quantified variables.
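The following minimal sketch, in plain Python rather than tensors, illustrates the point-wise semantics of free variables and the dimension-reducing effect of quantifiers. The pet features, the logistic weights standing in for an MLP grounding of $Dog$, and the truth degrees of $isOwner$ are all made up, and `min` is used only as one simple symmetric, continuous aggregator.

```python
import math

# Hypothetical grounding of the variable `pet`: a sequence of feature
# vectors (height, weight, colour code), one per instance.
pet = [
    [0.6, 30.0, 1.0],
    [0.25, 4.0, 0.0],
    [0.5, 20.0, 2.0],
]


def dog(features):
    """Grounding of Dog: one logistic unit with made-up weights, standing
    in for an MLP followed by a sigmoid; returns a degree in [0, 1]."""
    weights, bias = [2.0, 0.1, 0.0], -2.0
    z = sum(w * x for w, x in zip(weights, features)) + bias
    return 1.0 / (1.0 + math.exp(-z))


# Dog(pet) with `pet` free: point-wise application, one degree per instance.
dog_pet = [dog(x) for x in pet]

# A two-place predicate over (person, pet) yields one degree per pair;
# hypothetical values with rows = persons and columns = pets.
is_owner = [
    [0.9, 0.8, 0.1],
    [0.2, 0.1, 0.7],
]

# Quantifying over `pet` aggregates away the pet dimension.
forall_pet_dog = min(dog_pet)                     # a single degree
forall_pet_owns = [min(row) for row in is_owner]  # one degree per person
```

Quantifying over `pet` turns the length-3 vector `dog_pet` into a scalar and the 2x3 table `is_owner` into a vector with one entry per person.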

**Example 3**

*Continuing our running example, we could enrich our signature with predicates $Dog$ and $Cat$. Then, $Dog(Max)$ might return $0.8$, while $Dog(Mit)$ might return $0.3$, indicating that Max is likely a dog, while Mittens probably is not. In practice, the truth degrees of these atomic formulas could be obtained, for example, by a Multi-layer Perceptron (MLP) followed by a sigmoid function, taking the object’s features as input and returning a value in $[0,1]$. Similarly, a new function symbol $age$ could be grounded as an MLP that takes Max’s features and outputs a scalar $age(Max)$ representing Max’s age. An example of a formula is $Dog(Max)\vee Cat(Max)$, which could return $0.95$, indicating that Max is almost certainly either a dog or a cat. A formula with a universal quantifier can express that Alice owns all of the cats: $\forall pet(Cat(pet)\rightarrow isOwner(Alice,pet))$.*

Real Logic allows some flexibility in the choice of appropriate fuzzy operators for the semantics of connectives and quantifiers. However, note that not all fuzzy operators are suitable for differentiable learning van Krieken et al. (2022). In Appendix B of Badreddine et al. (2022), the authors discuss some particularly suitable fuzzy operators. In this work, we follow their recommendation and adopt the Product Real Logic semantics (product t-norm for conjunction, standard negation, the Reichenbach implication, p-mean for the existential, and p-mean error for the universal quantifier).
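As a sketch of the Product Real Logic operators named above, the functions below operate on plain floats. The probabilistic sum for disjunction is the t-conorm dual to the product t-norm; the exponent $p=2$ for both quantifiers and the truth degrees in the usage lines are assumptions for illustration. This is not LTNtorch's API, which provides its own operator classes.

```python
# Product Real Logic operators on floats in [0, 1] (sketch; p = 2 assumed).
def neg(a):
    return 1.0 - a                  # standard negation


def conj(a, b):
    return a * b                    # product t-norm


def disj(a, b):
    return a + b - a * b            # probabilistic sum (dual t-conorm)


def implies(a, b):
    return 1.0 - a + a * b          # Reichenbach implication


def exists(values, p=2):
    # p-mean: (mean of v^p)^(1/p); tends to max(values) as p grows.
    return (sum(v ** p for v in values) / len(values)) ** (1.0 / p)


def forall(values, p=2):
    # p-mean error: 1 - (mean of (1 - v)^p)^(1/p); tends to min(values).
    return 1.0 - (sum((1.0 - v) ** p for v in values) / len(values)) ** (1.0 / p)


# Example 3: forall pet (Cat(pet) -> isOwner(Alice, pet)), with
# hypothetical truth degrees for three pets.
cat = [0.1, 0.9, 0.2]
alice_owns = [0.3, 0.95, 0.0]
sat = forall([implies(c, o) for c, o in zip(cat, alice_owns)])
```

Raising $p$ moves the existential towards the maximum and the universal towards the minimum of its arguments.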

LTNs make use of Real Logic: they learn parameters that maximize the aggregated satisfiability of the formulas in a so-called knowledge base of Real Logic formulas. The LTNtorch library Carraro (2022) is a PyTorch implementation of the LTN framework, and we make substantial use of it in our experiments.

## 3 Methodology

### 3.1 The game

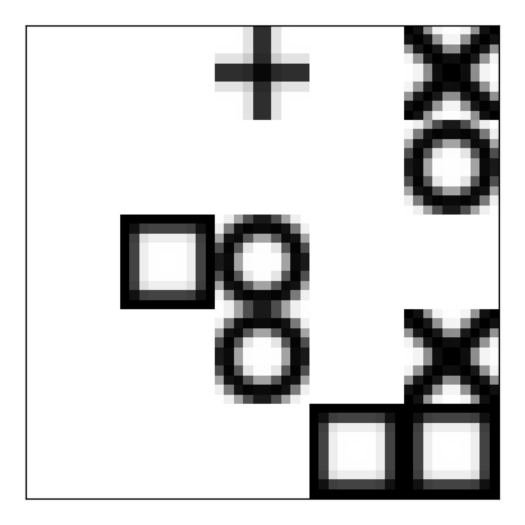

The environment used for the experiments was a custom Gymnasium Towers et al. (2024) environment ShapesGridEnv designed for the experiments in Badreddine and Spranger (2019), see Fig. 1. The game is played on an image showing a 5x5 grid with cells occupied by one agent, represented by the symbol ‘+’, and a number of other objects: circles, squares, and crosses. The agent moves across the board (up, right, down, left) and when it enters a space occupied by an object, it ‘consumes’ that object. Each object shape is associated with a reward. The agent’s goal is to maximize its cumulative reward throughout an episode. An episode terminates when either all shapes with positive reward have been consumed, or when a predefined number of steps has been reached.


Figure 1: ShapesGridEnv environment

We chose this environment because of its simplicity, and because it allows comparing our setting with that of Badreddine and Spranger (2019). The environment is very flexible in its parameters: density (the minimum and maximum number of shapes initialized; in our case the maximum is $18$), rewards (in our case $+1$ for a cross, $-1$ for a circle and $0$ for a square), colors (in our case the background is white and objects are black), and maximum episode length (in our case $50$ steps). Altering the environment configuration allows investigating the adaptability of the learner, as done in Badreddine and Spranger (2019).
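A minimal sketch of the game mechanics described above, using the configuration values listed in this paragraph. It is not the ShapesGridEnv code: the dictionary layout, the function names, and the minimum of one shape per board are hypothetical.

```python
import random

# Hypothetical configuration mirroring the settings listed above.
CONFIG = {
    "grid_size": 5,
    "min_shapes": 1,          # assumed; the text gives only the maximum
    "max_shapes": 18,
    "rewards": {"cross": 1.0, "circle": -1.0, "square": 0.0},
    "max_steps": 50,
}


def random_board(config, seed=None):
    """Place the agent and a random number of shapes on distinct cells."""
    rng = random.Random(seed)
    n = config["grid_size"]
    cells = [(r, c) for r in range(n) for c in range(n)]
    rng.shuffle(cells)
    agent = cells[0]
    n_shapes = rng.randint(config["min_shapes"], config["max_shapes"])
    kinds = list(config["rewards"])
    board = {cell: rng.choice(kinds) for cell in cells[1:1 + n_shapes]}
    return agent, board


def consume(board, cell, config):
    """Remove the shape at `cell`, if any, and return its reward."""
    shape = board.pop(cell, None)
    return config["rewards"][shape] if shape is not None else 0.0


def episode_done(board, step, config):
    """Terminal when no positive-reward shape is left or steps run out."""
    no_positive = all(config["rewards"][s] <= 0 for s in board.values())
    return no_positive or step >= config["max_steps"]
```

Consuming the last cross ends the episode even if circles and squares remain, matching the termination rule stated for ShapesGridEnv.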

### 3.2 The Model

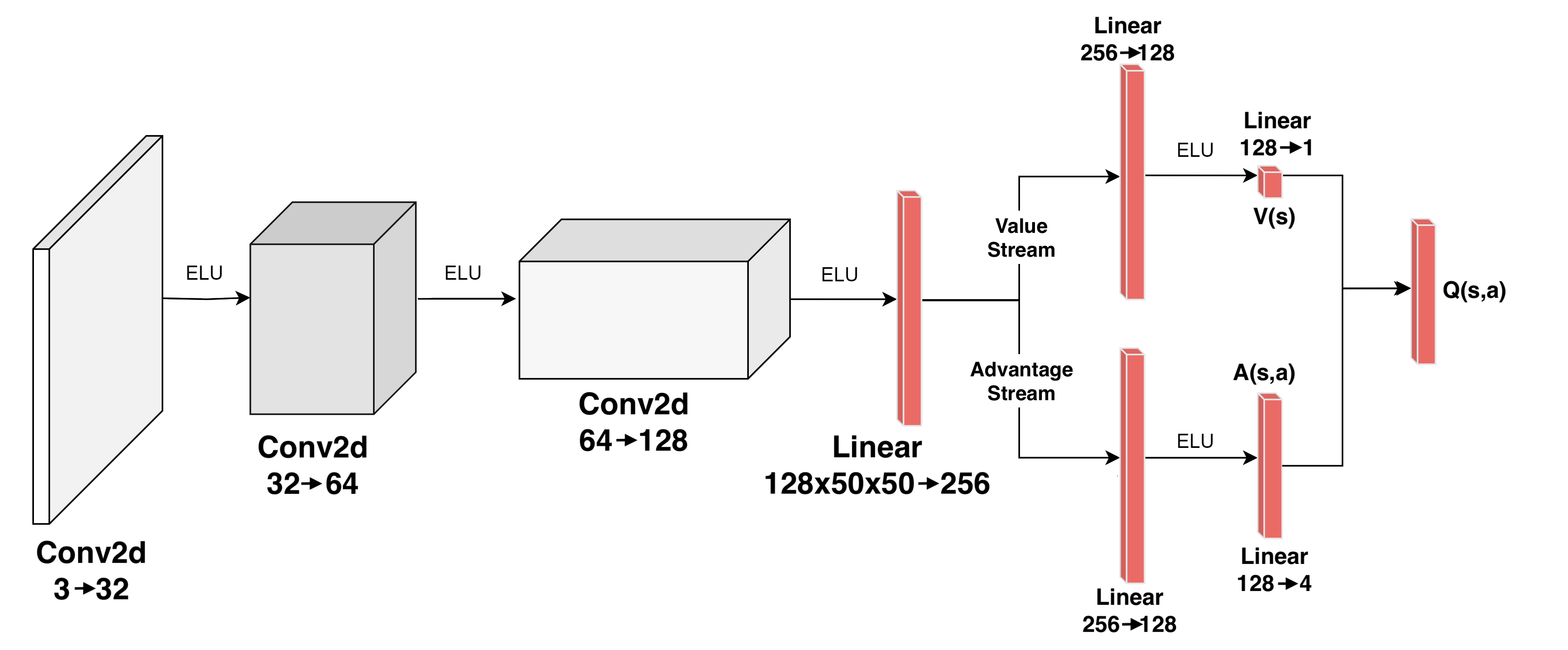

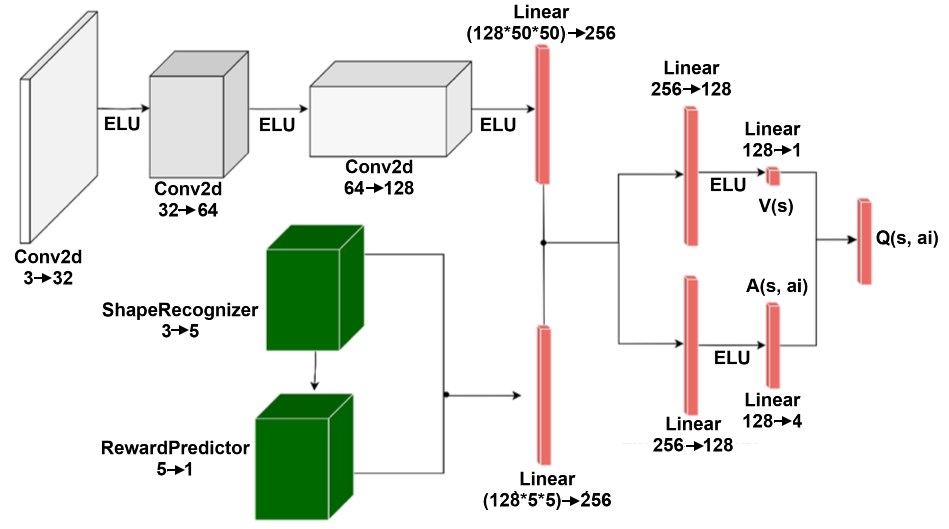

A suitable approach to learning to play such a game could be the existing Dueling Deep Q-Network (DuelDQN) Wang et al. (2015). The architecture is composed of several convolutional layers, which extract relevant features from the raw image input, and then pass them to the two main components, a Value Stream and an Advantage Stream (see Fig. 2). The Value Stream estimates how good it is for the agent to be in the given state, while the Advantage Stream estimates how good it is to perform each action in that given state. The two streams are then combined to calculate the final output.

Figure 2 layer labels: Conv2d 3->32, Conv2d 32->64, Conv2d 64->128, then a shared Linear 128x50x50->256; Value Stream: Linear 256->128, Linear 128->1, output V(s); Advantage Stream: Linear 256->128, Linear 128->4, output A(s,a); the two streams are combined into Q(s,a). ELU activations are used between layers.

Figure 2: Network Architecture of DuelDQN, with the convolutional layers in white, and the dense layers in red
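The text only states that the two streams are combined. A minimal sketch, assuming SymDQN keeps the mean-subtracted aggregation introduced for DuelDQN by Wang et al., is shown below; the function name and the sample values are illustrative.

```python
def dueling_q_values(value, advantages):
    """Combine V(s) and A(s, .) into Q(s, .) as in Wang et al.:
    Q(s, a) = V(s) + A(s, a) - mean over a' of A(s, a').
    Subtracting the mean advantage makes V and A identifiable."""
    mean_adv = sum(advantages) / len(advantages)
    return [value + a - mean_adv for a in advantages]


# Four actions (up, right, down, left) with illustrative stream outputs.
q = dueling_q_values(0.5, [1.0, 0.0, -1.0, 0.0])   # [1.5, 0.5, -0.5, 0.5]
```

With this aggregation, the average of the $q$-values over actions equals the Value Stream output, so the Advantage Stream only encodes the relative merit of each action.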

The DuelDQN architecture will be our starting point. We will extend it with new symbolic, cognitively-motivated components:

- shape recognition (ShapeRecognizer),

- reward prediction (RewardPredictor),

- action reasoning (ActionReasoner), and

- action filtering (ActionFilter).

In the following, we will discuss each component in more detail.

#### ShapeRecognizer

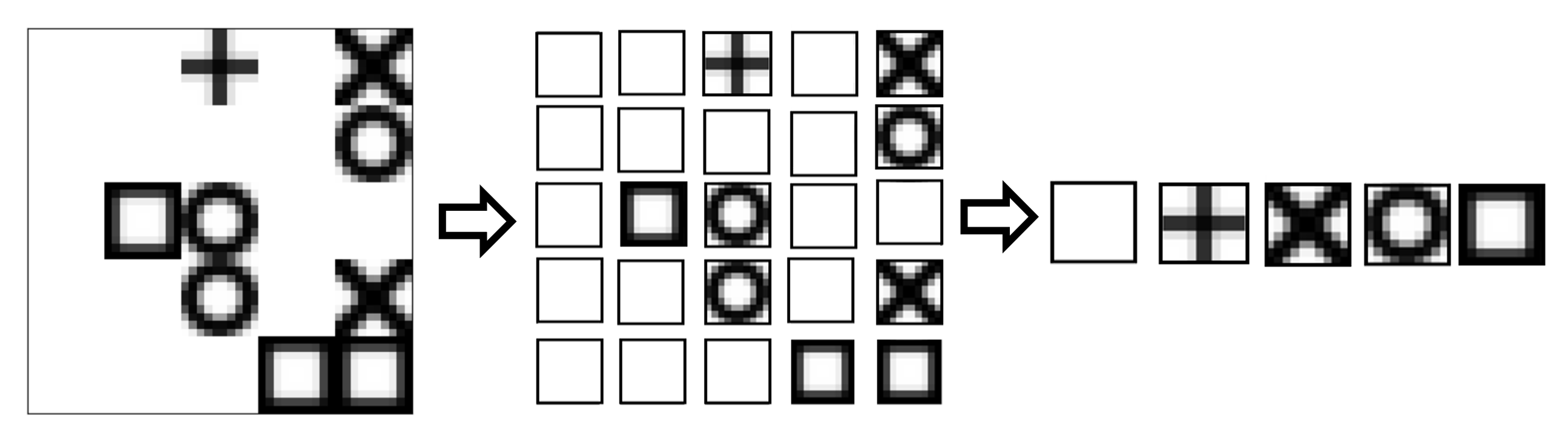

The function of ShapeRecognizer is to estimate how likely a given observation is to be of a particular kind. ShapeRecognizer consists of a pre-processing function, which divides the raw input image into 25 patches, followed by a Convolutional Neural Network (CNN) that processes each patch and outputs a 5-dimensional tensor.

The choice of the number of patches and of the output dimension is an instance of soft knowledge injection: the environment is a 5x5 grid, so dividing it into 25 patches immediately separates the content of each grid cell. As for the output size, 5 is the number of different kinds of content a patch might contain: empty, agent, circle, cross, square. This allows the agent to perform multi-label classification over these object types. The theoretical intention of this module is to give the agent the possibility of a symbolic understanding of the different entities in the environment, by labeling their types.
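A minimal sketch of the pre-processing step, in plain Python on a list-of-rows image. The function name is hypothetical, and the 50x50 image in the usage example is an assumption chosen so that each patch is 10x10 pixels; the CNN applied to each patch is omitted.

```python
def split_into_patches(image, grid=5):
    """Split an H x W image (a list of rows) into grid * grid patches.

    Patches are returned row-major, so patch k covers grid cell
    (k // grid, k % grid). H and W must be divisible by `grid`.
    """
    h, w = len(image), len(image[0])
    if h % grid or w % grid:
        raise ValueError("image size must be divisible by the grid size")
    ph, pw = h // grid, w // grid
    patches = []
    for r in range(grid):
        for c in range(grid):
            rows = image[r * ph:(r + 1) * ph]
            patches.append([row[c * pw:(c + 1) * pw] for row in rows])
    return patches


# Hypothetical 50x50 image whose pixel value encodes its position.
image = [[r * 50 + c for c in range(50)] for r in range(50)]
patches = split_into_patches(image)   # 25 patches of 10x10 pixels
```

Because the patch boundaries coincide with the grid lines, each patch holds exactly the content of one cell, which is the soft knowledge injection described above.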

Given the simple nature of ShapesGridEnv, representing the environment is easy. The state is composed of $25$ positions, each occupied by one of five shapes (empty, agent, circle, square, cross), which bounds the size of the state space by $5^{25}$. To generate this representation, we start by instantiating five one-hot representations of the classes, which are stored in the LTN Variable $shape\_types$. Then, for each state it visits, the agent keeps in memory all the distinct patches it has seen so far, together with a list of the patches present in the current state.
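The bookkeeping of distinct patches illustrated in Fig. 3 can be sketched as follows. We assume, for illustration only, that two patches count as the same when their pixels are identical; the function name and the dictionary-based memory are hypothetical.

```python
def update_patch_memory(memory, patches):
    """Register unseen patches and return an id for each current patch.

    `memory` maps a patch (as a hashable tuple of rows) to the id it was
    given when first seen; dicts keep insertion order, so ids are 0, 1, ...
    """
    ids = []
    for patch in patches:
        key = tuple(tuple(row) for row in patch)
        if key not in memory:
            memory[key] = len(memory)
        ids.append(memory[key])
    return ids


memory = {}
empty = [[0, 0], [0, 0]]
cross = [[1, 0], [0, 1]]
ids = update_patch_memory(memory, [empty, cross, empty])   # [0, 1, 0]
```

The keys of `memory` play the role of the distinct shapes $s$ that the axioms below quantify over, while `ids` lists the patches of the current state.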


Figure 3: The process of obtaining unique labels

Once the variables have been set up, the ShapeRecognizer module can be used to estimate the likelihood of a grid cell containing a given unique shape. To guide its learning, the aggregated satisfiability of three axioms is maximized. The axioms represent the first instance of actual knowledge injection in the system:

$$
\forall s\ \exists l\ IS(s,l) \tag{A1}
$$

$$
\neg\exists s\ l_{1}\ l_{2}\ \big(IS(s,l_{1})\wedge IS(s,l_{2})\wedge(l_{1}\neq l_{2})\big) \tag{A2}
$$

$$
\forall s_{1}\ s_{2}\ l\ \big((IS(s_{1},l)\wedge(s_{1}\neq s_{2}))\rightarrow\neg IS(s_{2},l)\big) \tag{A3}
$$

In the above formulas, $s$ stands for a shape, $l$ for a label, and $IS(x,y)$ for ‘$x$ has label $y$’. A1 says that every shape has a label; A2 says that no shape has two different labels; A3 says that different shapes cannot have the same label. At each step of every episode, the aggregated satisfaction (truth) degree of these axioms is calculated, and its complement, $1-AggSat(A1,A2,A3)$, is used as a loss to train the agent.
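To show how the loss $1-AggSat(A1,A2,A3)$ can be evaluated, here is a plain-Python sketch using the Product Real Logic operators with $p=2$ (an assumption), on a truth table `is_[s][l]` standing in for the ShapeRecognizer's output. The inequality atoms are treated as crisp 0/1 values, and the quantifiers range over all tuples exactly as written in A2 and A3; an LTNtorch implementation would differ in details such as numerical stabilization.

```python
from itertools import product


def pmean(values, p=2):
    """Existential quantifier: generalized mean."""
    return (sum(v ** p for v in values) / len(values)) ** (1.0 / p)


def pmean_error(values, p=2):
    """Universal quantifier: 1 - generalized mean of the errors."""
    return 1.0 - pmean([1.0 - v for v in values], p)


def axiom_loss(is_):
    """Return 1 - SatAgg(A1, A2, A3) for the table is_[s][l] = IS(s, l)."""
    shapes, labels = range(len(is_)), range(len(is_[0]))

    # A1: forall s exists l IS(s, l)
    a1 = pmean_error([pmean([is_[s][l] for l in labels]) for s in shapes])

    # A2: not exists s l1 l2 (IS(s, l1) and IS(s, l2) and l1 != l2)
    a2 = 1.0 - pmean([is_[s][l1] * is_[s][l2] * float(l1 != l2)
                      for s, l1, l2 in product(shapes, labels, labels)])

    # A3: forall s1 s2 l ((IS(s1, l) and s1 != s2) -> not IS(s2, l))
    a3_terms = []
    for s1, s2, l in product(shapes, shapes, labels):
        antecedent = is_[s1][l] * float(s1 != s2)
        consequent = 1.0 - is_[s2][l]
        a3_terms.append(1.0 - antecedent + antecedent * consequent)  # Reichenbach
    a3 = pmean_error(a3_terms)

    return 1.0 - pmean_error([a1, a2, a3])


perfect = [[1, 0, 0, 0, 0], [0, 1, 0, 0, 0], [0, 0, 1, 0, 0]]
uniform = [[0.5] * 5 for _ in range(3)]
duplicate = [[1, 0, 0, 0, 0], [1, 0, 0, 0, 0], [0, 0, 1, 0, 0]]
```

With these settings, a one-hot assignment of distinct labels (`perfect`) yields a lower loss than an undecided uniform table, and giving two shapes the same label (`duplicate`) raises the loss through A3.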

Intuitively, ShapeRecognizer gives the learner a way to distinguish between different shapes. In that, our approach is somewhat similar to the framework of semi-supervised symbol grounding Umili et al. (2023).

#### RewardPredictor

Once the environment is symbolically represented, we will make the agent understand the properties of different objects and their dynamics. The only truly dynamic element in the environment is the agent itself—nothing else moves. The agent can move to a cell that was previously occupied by a different shape, which results in the shape being consumed, and the agent being rewarded with the value of the respective shape. Hence, there are three key pieces of knowledge that the learner must acquire to successfully navigate the environment. It must identify which shape represents the agent, it must understand how each action influences the future position of the agent, and it must associate each shape with its respective reward.

The task of self-recognition can be approached in numerous ways, depending on the information we have about the environment and on our understanding of its dynamics. In our approach, leveraging the ShapeRecognizer, in each episode we count the occurrences of each shape in the environment and add them to a variable that tracks this shape’s count over time. The agent is the only shape that always appears exactly once in the environment. This is enough to determine quickly and with confidence which shape represents the agent. This step demonstrates a specific advantage of using the neuro-symbolic framework: our reinforcement learning agent is now equipped with memory of previous states of the environment (i.e., the count of shapes), which can be used to make decisions or to further process symbolic knowledge.
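A minimal sketch of the self-recognition heuristic, assuming one predicted label per grid cell and counting per observed state; the function names and the data layout are hypothetical.

```python
def update_counts(history, labels_in_state):
    """Append each known label's count in the current state to `history`.

    `history` maps a label to the list of its per-state counts so far;
    `labels_in_state` holds one predicted label per grid cell.
    """
    counts = {}
    for label in labels_in_state:
        counts[label] = counts.get(label, 0) + 1
    for label in set(history) | set(counts):
        history.setdefault(label, []).append(counts.get(label, 0))


def find_agent(history, n_states):
    """Return the only label seen exactly once in every state, else None."""
    candidates = [label for label, c in history.items()
                  if len(c) == n_states and all(x == 1 for x in c)]
    return candidates[0] if len(candidates) == 1 else None


# Label 1 is the agent; label 3 also appears once at first, until consumed.
history = {}
states = [[1, 0, 0, 2, 2, 3], [1, 0, 0, 0, 2, 3], [1, 0, 0, 0, 0, 0]]
for labels in states:
    update_counts(history, labels)
agent_label = find_agent(history, len(states))   # 1
```

Returning `None` while several labels are still tied reflects that the identification only becomes confident once other single shapes have been consumed.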

Understanding the impact of different actions is crucial for the agent to make informed decisions in the environment. Each action represents a direction (up, right, down, left), and taking an action leads to one of two outcomes. If the agent is at the edge of the environment and attempts to move against the edge, it remains in the same cell; otherwise, it moves one cell in the given direction. Given the simplicity of these dynamics, we define a function that takes as input a position and an action and outputs the resulting position.
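The transition function can be sketched as below. The action indices (0 = up, 1 = right, 2 = down, 3 = left) and the convention that row indices grow downwards are assumptions for illustration.

```python
# Hypothetical action encoding in the order up, right, down, left;
# rows grow downwards, columns grow to the right.
MOVES = {0: (-1, 0), 1: (0, 1), 2: (1, 0), 3: (0, -1)}


def next_position(pos, action, size=5):
    """Return the cell reached by `action`; moving into an edge is a no-op."""
    dr, dc = MOVES[action]
    r, c = pos[0] + dr, pos[1] + dc
    if 0 <= r < size and 0 <= c < size:
        return (r, c)
    return pos


# next_position((0, 2), 0) -> (0, 2): blocked by the top edge
# next_position((0, 2), 2) -> (1, 2)
```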

Our RewardPredictor is a Multi-layer Perceptron (MLP) that takes as input a ShapeRecognizer’s prediction and outputs one scalar. The module is trained to predict the reward associated with each object shape, using the symbolic representations generated by the ShapeRecognizer together with high-level reasoning during training. It thus gives the agent a high-level way of knowing the reward associated with any shape and, consequently, with any action.

In reinforcement learning environments, agents learn action policies by maximizing their expected rewards. When building an agent that symbolically represents and reasons about the environment, one of the key elements is the agent’s ability to understand how to obtain rewards. Given that the agent has the capability of identifying the shapes in the grid, recognizing its own shape, and calculating the position it will take given an action, it can now determine the shape that will be consumed by that action. By using the RewardPredictor module and passing it this shape, the agent obtains a prediction of the reward associated with that shape. Over time, by calculating the loss between that prediction and the actual reward obtained after taking an action, the module learns to accurately predict the reward associated with every shape.
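The training signal described above can be sketched with a linear model standing in for the RewardPredictor MLP. We assume a confident ShapeRecognizer (one-hot outputs in the class order empty, agent, circle, cross, square), a reward of 0 for moving onto an empty cell, and randomly sampled consumed shapes instead of real episodes; the class and function names are hypothetical.

```python
import random

# Class order of the ShapeRecognizer output, as listed in the text.
CLASSES = ["empty", "agent", "circle", "cross", "square"]
# Rewards from the environment configuration; 0 for an empty cell is assumed.
TRUE_REWARD = {"empty": 0.0, "circle": -1.0, "cross": 1.0, "square": 0.0}


def one_hot(name):
    """Idealized ShapeRecognizer output for a cell containing `name`."""
    return [1.0 if c == name else 0.0 for c in CLASSES]


class LinearRewardPredictor:
    """Hypothetical stand-in for the RewardPredictor MLP: a linear map from
    the 5-dim shape prediction to a scalar, trained by SGD on squared error."""

    def __init__(self, n_classes=5, lr=0.1):
        self.w = [0.0] * n_classes
        self.b = 0.0
        self.lr = lr

    def predict(self, shape_probs):
        return sum(w * x for w, x in zip(self.w, shape_probs)) + self.b

    def update(self, shape_probs, observed_reward):
        """One SGD step on 0.5 * (prediction - reward)**2; returns the loss."""
        error = self.predict(shape_probs) - observed_reward
        self.w = [w - self.lr * error * x for w, x in zip(self.w, shape_probs)]
        self.b -= self.lr * error
        return 0.5 * error ** 2


rng = random.Random(0)
model = LinearRewardPredictor()
for _ in range(2000):
    # Shape consumed by the chosen action; sampled here, whereas in the
    # paper's setting it is the shape at the agent's next position.
    consumed = rng.choice(list(TRUE_REWARD))
    model.update(one_hot(consumed), TRUE_REWARD[consumed])
```

After training, `model.predict(one_hot("cross"))` is close to $+1$ and `model.predict(one_hot("circle"))` close to $-1$.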

#### ActionReasoner

Once the agent can predict the expected reward of its own actions, we can guide its policy learning so that it takes the actions it expects to give the highest immediate reward. To this end, we specify an axiom ensuring that the $q$-value outputs of the Q-Network are aligned with the predicted rewards. The expected reward of every possible action is calculated with the RewardPredictor, and the $q$-values are calculated by calling the SymDQN. Our axiom expresses the following condition: if the predicted reward of action $a_{1}$ is higher than the predicted reward of action $a_{2}$, then the $q$-value of $a_{1}$ must also be higher than the $q$-value of $a_{2}$. Learning is then guided by the LTN framework, with the following formula of Real Logic used in the loss function.

$$

\forall\ \textup{Diag}((r_{1},q_{1}),(r_{2},q_{2}))(r_{1}>r_{2}):(q_{1}>q_{2})

$$

Two standard operators of Real Logic Badreddine et al. (2022) are applied in this axiom: $Diag$ and guarded quantification with the condition $(r_{1}>r_{2})$. First, the $Diag$ operator restricts the range of the quantifier, which then runs not through all the pairs from $\{r_{1},r_{2},q_{1},q_{2}\}$, but only through the pairs of (reward, $q$-value) that correspond to the same action. Specifically, when $r_{1}$ is the predicted reward of action ‘up’, then $q_{1}$ is the $q$-value of action ‘up’. Second, we use guarded quantification, restricting the range of the quantifier to only those cases in which $(r_{1}>r_{2})$. Had we used an implication instead, the whole condition would evaluate to true whenever the antecedent $(r_{1}>r_{2})$ is false. This is problematic when most pairs do not fulfill the antecedent: the universal quantifier then evaluates to true for most of the instances, even if the important ones, those with a true antecedent, are false. Guarded quantification gives a satisfaction degree that is much closer to the value we are interested in.
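To illustrate why the guard matters, the sketch below evaluates the ActionReasoner axiom on plain lists, both with guarded quantification and with the implication it replaces. Pairing `rewards[i]` with `q_values[i]` plays the role of $Diag$. The smooth grounding of $q_{1}>q_{2}$ as a scaled sigmoid of the difference, the crisp guard, returning 1 when no pair passes the guard, and $p=2$ are assumptions; the example vectors are the ones shown in Fig. 4.

```python
import math
from itertools import permutations


def greater(x, y, scale=5.0):
    """Hypothetical smooth grounding of the predicate x > y."""
    return 1.0 / (1.0 + math.exp(-scale * (x - y)))


def forall(values, p=2):
    """Universal quantifier as p-mean error."""
    return 1.0 - (sum((1.0 - v) ** p for v in values) / len(values)) ** (1.0 / p)


def action_reasoner_sat(rewards, q_values, guarded=True):
    """Truth degree of: forall Diag((r1,q1),(r2,q2)) (r1 > r2) : (q1 > q2).

    Indexing rewards and q-values by the same action i plays the role of
    Diag, so the quantifier runs over ordered pairs of distinct actions.
    """
    pairs = list(permutations(range(len(rewards)), 2))
    if guarded:
        # Guard: only pairs with r_i > r_j are aggregated (crisp condition).
        terms = [greater(q_values[i], q_values[j])
                 for i, j in pairs if rewards[i] > rewards[j]]
        if not terms:
            return 1.0  # nothing to check
    else:
        # Implication variant: (r_i > r_j) -> (q_i > q_j), Reichenbach.
        terms = []
        for i, j in pairs:
            a = 1.0 if rewards[i] > rewards[j] else 0.0
            terms.append(1.0 - a + a * greater(q_values[i], q_values[j]))
    return forall(terms)


r = [0.0, 1.0, 1.0, -1.0]          # predicted rewards (Fig. 4)
q = [0.34, -0.2, -0.13, 0.3]       # q-values (Fig. 4)
guarded_sat = action_reasoner_sat(r, q)
implication_sat = action_reasoner_sat(r, q, guarded=False)
```

On these vectors the guarded version reports a low satisfaction degree, roughly 0.15, because the $q$-values contradict the reward ordering, whereas the implication variant is inflated to roughly 0.45 by the seven ordered pairs whose antecedent is false.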

#### ActionFilter

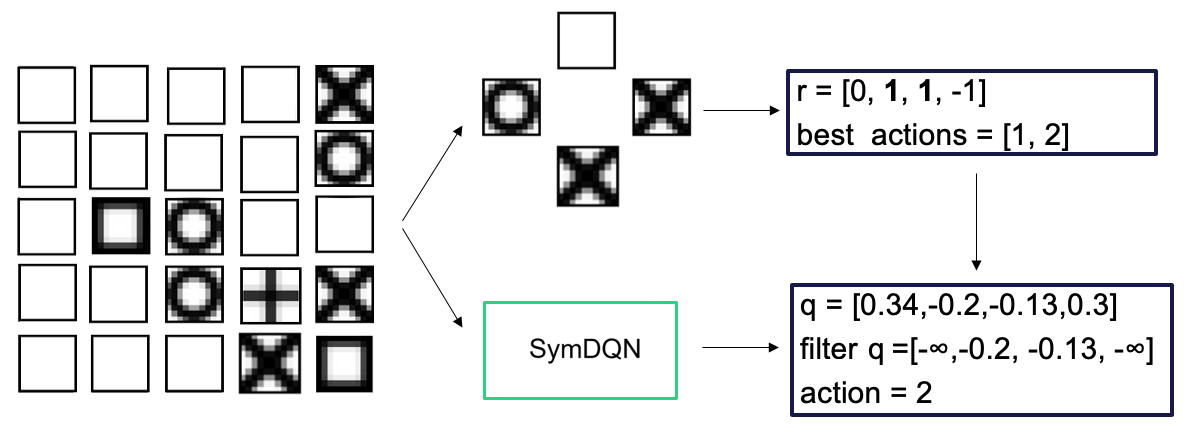

Our learner can now predict the reward for each shape. For each action in a given state, it therefore knows which shape would be consumed and what the corresponding immediate reward is. ActionFilter eliminates the actions whose predicted reward falls short of the maximum immediately obtainable reward in that state by more than a predefined threshold (we set it at 0.5); among the remaining actions, the one with the highest $q$-value is selected. This balances the strictness of symbolically selecting the immediately best actions against the information about rewards available in the network as a whole. This is illustrated in Fig. 4.
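A minimal sketch of ActionFilter on the values shown in Fig. 4. Keeping an action whose reward gap equals the threshold exactly is an assumption, as is the function name.

```python
import math


def action_filter(rewards, q_values, threshold=0.5):
    """Mask actions whose predicted reward is more than `threshold` below
    the best predicted reward, then pick the highest remaining q-value."""
    best = max(rewards)
    filtered = [q if best - r <= threshold else -math.inf
                for r, q in zip(rewards, q_values)]
    action = max(range(len(filtered)), key=filtered.__getitem__)
    return filtered, action


filtered, action = action_filter([0.0, 1.0, 1.0, -1.0], [0.34, -0.2, -0.13, 0.3])
# filtered == [-inf, -0.2, -0.13, -inf], action == 2 (as in Fig. 4)
```

Here the two actions with predicted reward 1 survive, and the agent picks action 2, whose $q$-value is the larger of the two remaining ones, even though action 0 has the highest unfiltered $q$-value.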

[Diagram: the grid state and the candidate moves are passed to SymDQN; predicted rewards r = [0, 1, 1, -1], best actions = [1, 2]; q-values q = [0.34, -0.2, -0.13, 0.3], filtered q = [-∞, -0.2, -0.13, -∞]; selected action = 2.]

Figure 4: The process of action filtering

ActionFilter severely restricts action choice. To prevent it from dictating the outcomes, we switch it off during training; it is used only when the agent acts in the environment, to improve decision making. How ActionFilter could be incorporated into training remains to be studied, as we want to retain the asymptotic optimality of DuelDQNs while enhancing them with reasoning where relevant.
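The filtering step and the train/evaluation switch can be sketched as follows, reproducing the numbers of Fig. 4. This is a minimal sketch under stated assumptions: the per-action rewards are taken as already predicted by the RewardPredictor, an action whose reward gap equals the threshold exactly is kept, and training uses plain epsilon-greedy exploration (suggested by the epsilon values in Appendix A). The names `action_filter` and `select_action` are hypothetical.

```python
import math
import random


def action_filter(rewards, q_values, threshold=0.5):
    """Mask with -inf every action whose predicted reward is more than
    `threshold` below the best predicted reward in this state."""
    best = max(rewards)
    return [q if best - r <= threshold else -math.inf for r, q in zip(rewards, q_values)]


def argmax(values):
    return max(range(len(values)), key=lambda i: values[i])


def select_action(q_values, rewards, training, epsilon=0.05, rng=random):
    if training:
        # Filter switched off: epsilon-greedy over the raw q-values (assumed exploration scheme).
        if rng.random() < epsilon:
            return rng.randrange(len(q_values))
        return argmax(q_values)
    # Acting in the environment: greedy choice among the actions that survive the filter.
    return argmax(action_filter(rewards, q_values))


r = [0, 1, 1, -1]                 # predicted immediate rewards (Fig. 4)
q = [0.34, -0.2, -0.13, 0.3]      # SymDQN q-values (Fig. 4)
print(action_filter(r, q))        # [-inf, -0.2, -0.13, -inf]
print(select_action(q, r, training=False))  # 2
```

Masking with $-\infty$ rather than deleting entries keeps the action indices intact, so the argmax over the filtered vector directly returns the index of the action to execute.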

Fig. 5 shows the complete SymDQN architecture, in which ShapeRecognizer, RewardPredictor, ActionReasoner and ActionFilter are integrated into the original DuelDQN.

[Diagram: SymDQN architecture. Conv2d 3→32, 32→64, 64→128 with ELU activations; ShapeRecognizer 3→5 and RewardPredictor 5→1; Linear (128\*50\*50)→256 and Linear (128\*5\*5)→256; value stream Linear 256→128→1 giving V(s); advantage stream Linear 256→128→4 giving A(s, a_i); V(s) and A(s, a_i) combined into Q(s, a_i).]

Figure 5: SymDQN network architecture integrating DuelDQN with ShapeRecognizer and RewardPredictor modules
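Fig. 5 shows the value stream V(s) and the four-way advantage stream A(s, a_i) being combined into Q(s, a_i). The combination rule is not spelled out here; the sketch below assumes the standard dueling aggregation with mean subtraction, Q(s, a_i) = V(s) + A(s, a_i) - mean_j A(s, a_j), written in plain Python for a single state. The function name `dueling_q` is hypothetical.

```python
def dueling_q(value, advantages):
    """Combine V(s) and A(s, a_i) into Q(s, a_i) = V(s) + A(s, a_i) - mean_j A(s, a_j).

    Subtracting the mean advantage makes the split into V and A identifiable:
    the average of the resulting q-values equals V(s).
    """
    mean_adv = sum(advantages) / len(advantages)
    return [value + a - mean_adv for a in advantages]


# Four actions, matching the 128 -> 4 advantage head.
print(dueling_q(1.0, [2.0, 0.0, 1.0, -3.0]))  # [3.0, 1.0, 2.0, -2.0]
```

Because the same constant is subtracted from every advantage, the ranking of actions, and hence the greedy action, is the same as the ranking by A(s, a_i) alone.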

## 4 The experiment

By comparing the baseline DuelDQN model with our SymDQN model, this study attempts to answer the following questions:

1. Does SymDQN converge to a stable action policy faster than the baseline DuelDQN?

1. Does SymDQN outperform the baseline DuelDQN in average reward accumulation?

1. Is SymDQN more precise in its performance than DuelDQN, i.e., is it better at avoiding shapes with negative rewards?

In the experiment, we analyze the impact of each individual modification on the performance of SymDQN, comparing the variants with each other and with the baseline DuelDQN. We therefore consider five experimental conditions:

- DuelDQN: the baseline model, with no symbolic components;
- SymDQN: DuelDQN enriched with ShapeRecognizer (which uses A1-A3) and with RewardPredictor;
- SymDQN(AR): SymDQN with ActionReasoner (which uses axiom A4);
- SymDQN(AF): SymDQN with ActionFilter;
- SymDQN(AR,AF): SymDQN enriched with both ActionReasoner and ActionFilter.

Our experiment runs for $250$ epochs, after which the empirically observed learning progress of all variants is no longer noticeable. Each epoch consists of $50$ training episodes, after which the agent’s performance is evaluated as the average score over $50$ new episodes. The score of an episode is the ratio of the reward actually obtained to the maximum reward obtainable in that episode. The second performance measure is the percentage of negative-reward objects consumed. The hardware and software specifications and the hyperparameters of the experiment are given in Appendix A.
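The two evaluation measures can be sketched as below for one epoch's batch of evaluation episodes. The per-episode record format is hypothetical, and two definitions are assumptions rather than details given in the text: the negative-object percentage is taken as the share of consumed objects that carry a negative reward (in line with "inverse of precision" in Appendix A), and both measures are averaged per episode rather than pooled.

```python
from statistics import mean


def episode_score(obtained, max_obtainable):
    """Score of one episode: reward obtained divided by the maximum obtainable reward (> 0 assumed)."""
    return obtained / max_obtainable


def negative_ratio(negative_consumed, total_consumed):
    """Assumed definition: share of consumed objects that carry a negative reward."""
    return negative_consumed / total_consumed if total_consumed else 0.0


def evaluate_epoch(episodes):
    """episodes: hypothetical per-episode records from the evaluation episodes of one epoch."""
    scores = [episode_score(e["obtained"], e["max_obtainable"]) for e in episodes]
    negatives = [negative_ratio(e["neg_consumed"], e["consumed"]) for e in episodes]
    return mean(scores), mean(negatives)


episodes = [
    {"obtained": 3, "max_obtainable": 4, "neg_consumed": 0, "consumed": 3},
    {"obtained": 1, "max_obtainable": 2, "neg_consumed": 1, "consumed": 4},
]
print(evaluate_epoch(episodes))  # (0.625, 0.125)
```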

## 5 Results

In this section, we report the results of our ablation study, which isolates the impact of each component on the agent’s performance. We first compare the scores obtained under the different experimental conditions and then turn to the precision of the agent.

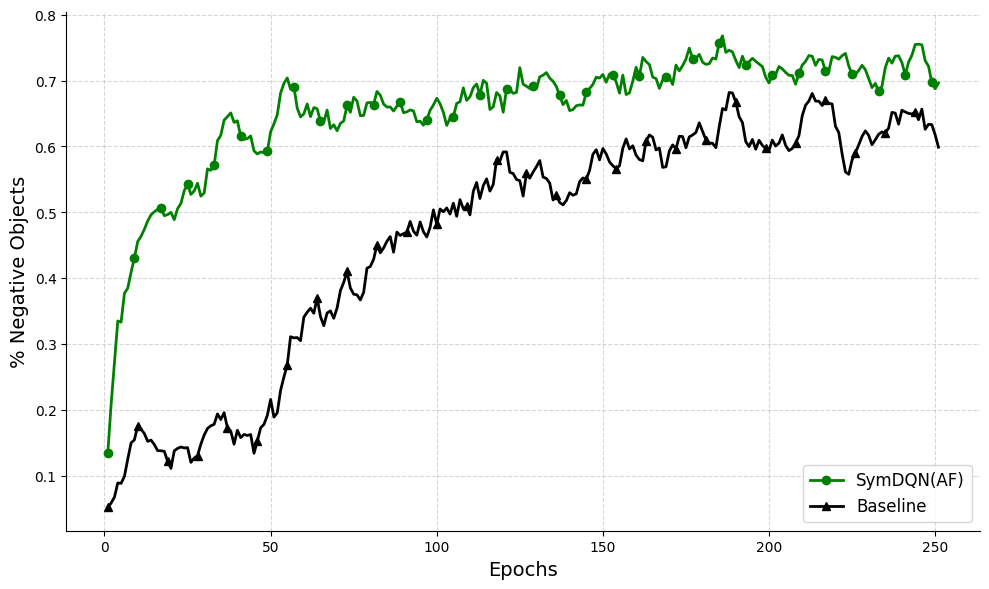

We first compare our best-performing condition, SymDQN(AF), with the purely non-symbolic baseline, DuelDQN; see Fig. 6.

[Plot: y-axis 0 to 0.8 over epochs 0 to 250; legend: SymDQN(AF) (green), Baseline (black).]

Figure 6: SymDQN(AF) (in green) vs. DuelDQN (in black): the $x$-axis represents epochs and the $y$-axis represents the ratio of the score obtained in an episode to the maximum obtainable score in that episode.

The SymDQN agent equipped with ActionFilter outperforms DuelDQN both in speed of convergence (a high initial learning rate) and in final performance: according to the tables in Appendix A, its score ratio is 0.65 vs. 0.24 at epoch 50 and 0.71 vs. 0.64 at epoch 250.

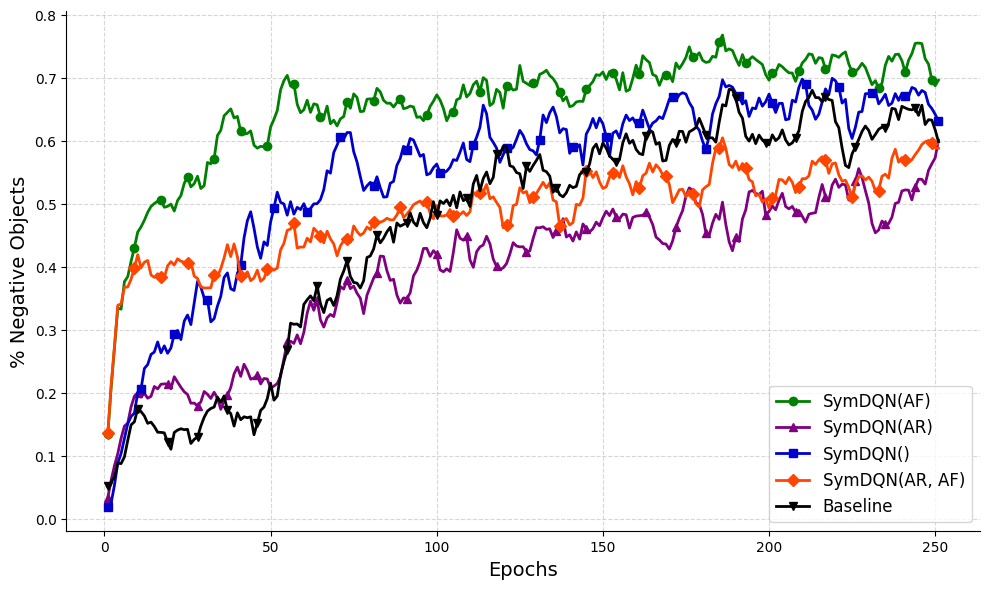

Let us now look at different versions of our SymDQN to better understand what contributes to its performance. In Fig. 7 we show the performance of all four versions of SymDQN.

[Plot: y-axis 0.0 to 0.8 over epochs 0 to 250; legend: SymDQN(AF), SymDQN(AR), SymDQN(), SymDQN(AR, AF), Baseline.]

Figure 7: All versions of SymDQN: SymDQN(AF) (in green), SymDQN(AR,AF) (in red), SymDQN (in blue) and SymDQN(AR) (in purple); the $x$-axis represents epochs and the $y$-axis represents the ratio of the score obtained in an episode to the maximum obtainable score in that episode. Standard deviations are reported in the Appendix.

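From this graph, we conclude that ActionReasoner, despite giving a slight boost in the initial learning rate, hampers the overall performance of the agent (red and purple graphs): at epoch 250, SymDQN(AR) reaches 0.53 vs. 0.66 for SymDQN, and SymDQN(AR,AF) reaches 0.59 vs. 0.71 for SymDQN(AF) (Appendix A). In contrast, ActionFilter improves initial performance (green and red graphs): at epoch 50, SymDQN(AF) reaches 0.65 vs. 0.43 for SymDQN, and SymDQN(AR,AF) reaches 0.40 vs. 0.26 for SymDQN(AR).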

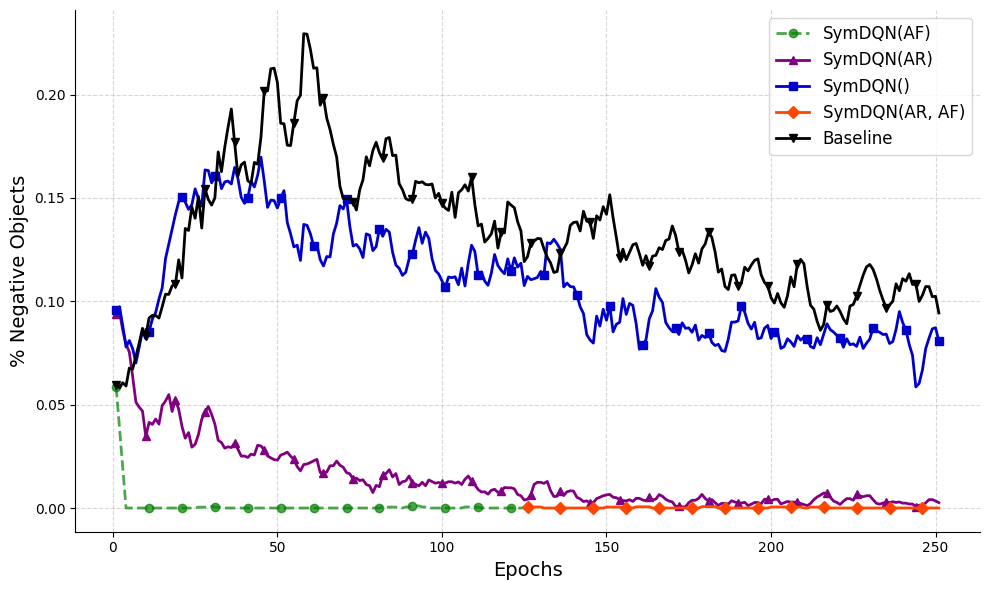

Let us now turn to another performance measure: the precision of the agent in avoiding objects whose shape is associated with negative rewards. Here we compare all five experimental conditions; see Fig. 8.

[Plot: y-axis 0.00 to 0.20 over epochs 0 to 250; legend: SymDQN(AF), SymDQN(AR), SymDQN(), SymDQN(AR, AF), Baseline.]

Figure 8: Agent’s precision in all conditions: SymDQN(AF) (in green), SymDQN(AR,AF) (in red), SymDQN(AR) (in purple), SymDQN (in blue) and DuelDQN (in black); the $x$-axis represents epochs and the $y$-axis represents the percentage of negative-reward objects consumed by the agent. Note that the green and red lines overlap.

While ActionReasoner (in purple) substantially improves precision, it is ActionFilter that eliminates the consumption of negative-reward objects entirely (red and green graphs; 0.00 at all reported epochs in Appendix A). The baseline DuelDQN and the plain SymDQN perform similarly and do not learn to avoid negative rewards completely (0.10 and 0.08, respectively, at epoch 250).

### 5.1 Interpretation and Discussion

The integration of symbolic knowledge into reinforcement learning, as demonstrated by SymDQN, offers several insights into the potential of neuro-symbolic approaches in AI. SymDQN’s ability to extract and use key environmental features drives a significant boost in initial learning rate and overall performance, suggesting that symbolic representations give neural networks a valuable advantage by letting them exploit these features quickly for better decision-making. ActionFilter dramatically enhances early-stage performance, allowing the model to make good decisions as soon as the symbolic representation is available: by pruning sub-optimal actions, it aligns the agent’s behavior with its symbolic understanding of the environment. The role of ActionReasoner is less clear: while it provides a slight boost in initial performance, it hampers overall learning. It seems that forcing the model output to comply with the logical axiom diminishes the model’s ability to capture information that the logical formulas do not describe.

The two components ActionReasoner and ActionFilter use symbolic information to adjust (the impact of) $q$-values and could be seen as a form of reward shaping. ActionReasoner uses axiom (A4) to align $q$-values with predicted immediate rewards. As Fig. 7 shows, this ‘reward shaping’ is detrimental to overall performance. A possible reason is illustrated by the following example. Suppose the agent is separated from many positive shapes by a thin wall of negative shapes. Accepting a small immediate loss to cross the wall pays off in the long run, but this option can be blocked if too much weight is given to the immediate punishment. Note, however, that although it lowers the score, this ‘reward shaping’ makes the agent more cautious, i.e., more precise (see Fig. 8). While ActionFilter does not shape the reward function directly (it is switched off during training), it performs reasoning based on rewards (Fig. 4): in a given state, it rules out actions whose predicted immediate reward falls too far below the best immediately obtainable reward.
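A worked illustration of the thin-wall example, with hypothetical numbers: assume positive and negative shapes give rewards of $+1$ and $-1$ (the values that appear in Fig. 4), that crossing the wall means consuming one negative shape, that three positive shapes lie directly behind it, and the discount factor $\gamma = 0.99$ from Appendix A. Compared with a path over empty cells that collects nothing:

$$
G_{\text{cross}} = -1 + \gamma + \gamma^{2} + \gamma^{3} = -1 + 0.99 + 0.9801 + 0.970299 \approx 1.94 \;>\; 0 = G_{\text{avoid}}
$$

Axiom (A4), however, only compares immediate rewards, $-1 < 0$, and therefore pushes the $q$-value of the step into the wall below that of the step onto an empty cell, which is the reverse of the ordering of the discounted returns.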

The advantages of SymDQN come with trade-offs. The computational cost introduced by the additional components is non-trivial, and the logical constraints imposed on learning might hamper performance in more complex environments. In this respect, the use of LTNs in reinforcement learning sheds light on ‘thinking fast and slow’ effects in learning. On the one hand, the use of axioms (A1)-(A3) in ShapeRecognizer gives a sharp increase in initial performance thanks to an understanding of the environment’s structure (SymDQN scores 0.43 vs. 0.24 for DuelDQN at epoch 50; see Appendix A). On the other hand, steering action choice by predicted rewards with ActionReasoner and ActionFilter increases precision (as is normally assumed of System 2 behavior), but it can also hamper overall performance, as it does in the case of ActionReasoner (see Fig. 7).

## 6 Conclusions and Future Work

This research introduces a novel modular approach to integrating symbolic knowledge with deep reinforcement learning through the implementation of SymDQN. We contribute to the field of Neuro-Symbolic AI in several ways. Firstly, we demonstrate a successful integration of LTNs into reinforcement learning, a promising and under-explored research direction. This integration touches on key challenges: interpretability, alignment, and knowledge injection. Secondly, SymDQN augments reinforcement learning through symbolic environment representation, modeling of object relationships and dynamics, and guiding action based on symbolic knowledge, effectively improving both initial learning rates and the end performance of the reinforcement learning agents. These contributions advance the field of neuro-symbolic AI, bridging the gap between symbolic reasoning and deep learning systems. Our findings demonstrate the potential of integrative approaches in creating more aligned, controllable and interpretable models for safe and reliable AI systems.

### 6.1 Future Work

We see several potential avenues for future research.

#### Enhancing Environment Representation

The ShapeRecognizer can readily be adapted to any grid-like environment. It could also be developed further to represent more complex environments symbolically, e.g., by integrating a more advanced object-detection component (such as Faster-LTN Manigrasso et al. (2021)). With precise bounding-box detection and multi-class labeling, the component could be extended to hierarchical representations, e.g., recognizing independent objects and their parts, or constructing abstract taxonomies.

#### Automatic Axiom Discovery

Investigating automatic axiom discovery through iterative learning, or meta-learning, is an interesting direction that opens the door to extracting knowledge from a model (see, e.g., Hasanbeig et al. (2021); Umili et al. (2021); Meli et al. (2024)). In theory, tabular Q-learning converges to an optimal policy in any finite environment given sufficient exploration and suitably decaying learning rates, so the iterative development and assessment of axioms might allow us to extract knowledge from deep learning systems that outperform human experts.

#### Broader Evaluation

While a version of SymDQN was shown to be advantageous, it was only tested in a single, simple environment. A broader suite of experiments in more complex environments, such as Atari games or Pac-Man, is needed to assess how well the findings generalize. Such environments pose more complex and diverse challenges, potentially offering deeper insights into the advantages of SymDQN.

## Appendix A

#### Hardware and software specifications

The experiments, hyperparameter tuning, and model-variant comparisons were performed on a computing-center machine with an NVIDIA Tesla V100 GPU (16 or 32 GB). The coding environment was Python 3.9.5 and PyTorch 2.3.1 with CUDA Toolkit 12.1. To integrate LTNs, we used the LTNtorch library Carraro (2022), a PyTorch implementation of LTNs by Tommaso Carraro. For experiment tracking, we used the ClearML platform cle (2023). The code is provided as a separate .zip file.

#### Hyperparameters

The hyperparameters used for training were:

| Hyperparameter | Value |
| --- | --- |
| explore steps | 25000 |
| update steps | 1000 |
| initial epsilon | 0.95 |
| end epsilon | 0.05 |
| memory capacity | 1000 |
| batch size | 16 |
| gamma | 0.99 |
| learning rate | 0.0001 |
| maximum gradient norm | 1 |

The semantics of the LTN quantifiers used $p=8$.
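For reproduction, the values above can be collected in a configuration object. The exploration schedule below is an assumption: the appendix lists only the start and end epsilon and the number of explore steps, so a linear decay that then stays constant is used for illustration. The meaning of "update steps" (plausibly a target-network update interval) is likewise not stated and is not used here. The names `HYPERPARAMS` and `epsilon_at` are hypothetical.

```python
HYPERPARAMS = {
    "explore_steps": 25_000,
    "update_steps": 1_000,
    "initial_epsilon": 0.95,
    "end_epsilon": 0.05,
    "memory_capacity": 1_000,
    "batch_size": 16,
    "gamma": 0.99,
    "learning_rate": 1e-4,
    "max_grad_norm": 1.0,
    "ltn_p": 8,
}


def epsilon_at(step, hp=HYPERPARAMS):
    """Assumed linear decay from initial to end epsilon over explore_steps, then constant."""
    frac = min(step / hp["explore_steps"], 1.0)
    return hp["initial_epsilon"] + frac * (hp["end_epsilon"] - hp["initial_epsilon"])


for step in (0, 12_500, 25_000, 50_000):
    print(step, round(epsilon_at(step), 3))
```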

#### Standard deviations

Performance was assessed through 5 independent experimental runs per configuration. In each run, the average score per epoch was computed over 50 distinct environments. In the tables, ($\ast$) stands for SymDQN($\ast$), and values are given for epochs 50, 150 and 250 (smoothed with a rolling window of 5). First table: overall performance (rewards, r) and standard deviations (sd). Second table: ratio of negative shapes collected (inverse of precision, p) and sd.

| Condition | r (epoch 50) | sd | r (epoch 150) | sd | r (epoch 250) | sd |
| --- | --- | --- | --- | --- | --- | --- |
| (AF) | 0.65 | 0.03 | 0.70 | 0.01 | 0.71 | 0.01 |
| (AR) | 0.26 | 0.03 | 0.47 | 0.01 | 0.53 | 0.04 |
| () | 0.43 | 0.05 | 0.65 | 0.02 | 0.66 | 0.02 |
| (AR,AF) | 0.40 | 0.02 | 0.53 | 0.02 | 0.59 | 0.02 |
| DuelDQN | 0.24 | 0.06 | 0.57 | 0.02 | 0.64 | 0.01 |

| Condition | p (epoch 50) | sd | p (epoch 150) | sd | p (epoch 250) | sd |
| --- | --- | --- | --- | --- | --- | --- |
| (AF) | 0.00 | 0.00 | 0.00 | 0.00 | 0.00 | 0.00 |
| (AR) | 0.02 | 0.00 | 0.01 | 0.00 | 0.00 | 0.00 |
| () | 0.17 | 0.02 | 0.12 | 0.02 | 0.08 | 0.00 |
| (AR,AF) | 0.00 | 0.00 | 0.00 | 0.00 | 0.00 | 0.00 |
| DuelDQN | 0.19 | 0.03 | 0.14 | 0.01 | 0.10 | 0.00 |
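The smoothing and the spread across runs described above can be sketched as follows. Several details are assumptions, since the appendix does not specify them: a trailing (not centered) rolling window, smoothing each run before aggregating across runs, and the sample standard deviation. The function names are hypothetical.

```python
from statistics import mean, stdev


def rolling_mean(values, window=5):
    """Trailing rolling mean; the first window-1 epochs average over fewer points."""
    out = []
    for i in range(len(values)):
        chunk = values[max(0, i - window + 1): i + 1]
        out.append(sum(chunk) / len(chunk))
    return out


def summarize(runs, epoch, window=5):
    """runs: one list of per-epoch average scores per independent run; epoch is 1-based.
    Returns the mean and sample standard deviation across runs of the smoothed value."""
    smoothed = [rolling_mean(run, window)[epoch - 1] for run in runs]
    return mean(smoothed), stdev(smoothed)


print(rolling_mean([1, 2, 3, 4, 5, 6]))  # [1.0, 1.5, 2.0, 2.5, 3.0, 4.0]
```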

## References

- Badreddine and Spranger (2019) Samy Badreddine and Michael Spranger. Injecting prior knowledge for transfer learning into reinforcement learning algorithms using logic tensor networks. CoRR, abs/1906.06576, 2019.

- Badreddine et al. (2022) Samy Badreddine, Artur d’Avila Garcez, Luciano Serafini, and Michael Spranger. Logic tensor networks. Artificial Intelligence, 303:103649, 2022.

- Bianchi and Hitzler (2019) Federico Bianchi and Pascal Hitzler. On the capabilities of logic tensor networks for deductive reasoning. In AAAI Spring Symposium Combining Machine Learning with Knowledge Engineering, 2019.

- Carraro (2022) Tommaso Carraro. LTNtorch: PyTorch implementation of Logic Tensor Networks, March 2022.

- cle (2023) ClearML: Your entire MLOps stack in one open-source tool, 2023. Software available from http://github.com/allegroai/clearml.

- Donadello et al. (2017) Ivan Donadello, Luciano Serafini, and Artur d’Avila Garcez. Logic tensor networks for semantic image interpretation. In Proceedings of the Twenty-Sixth International Joint Conference on Artificial Intelligence, IJCAI-17, pages 1596–1602, 2017.

- Furelos-Blanco et al. (2021) Daniel Furelos-Blanco, Mark Law, Anders Jonsson, Krysia Broda, and Alessandra Russo. Induction and exploitation of subgoal automata for reinforcement learning. J. Artif. Intell. Res., 70:1031–1116, 2021.

- Giacomo et al. (2019) Giuseppe De Giacomo, Luca Iocchi, Marco Favorito, and Fabio Patrizi. Foundations for restraining bolts: Reinforcement learning with LTLf/LDLf restraining specifications. In J. Benton, Nir Lipovetzky, Eva Onaindia, David E. Smith, and Siddharth Srivastava, editors, Proceedings of the Twenty-Ninth International Conference on Automated Planning and Scheduling, ICAPS 2019, Berkeley, CA, USA, July 11-15, 2019, pages 128–136. AAAI Press, 2019.

- Hasanbeig et al. (2021) Mohammadhosein Hasanbeig, Natasha Yogananda Jeppu, Alessandro Abate, Tom Melham, and Daniel Kroening. DeepSynth: Automata synthesis for automatic task segmentation in deep reinforcement learning. In Thirty-Fifth AAAI Conference on Artificial Intelligence, AAAI 2021, Thirty-Third Conference on Innovative Applications of Artificial Intelligence, IAAI 2021, The Eleventh Symposium on Educational Advances in Artificial Intelligence, EAAI 2021, Virtual Event, February 2-9, 2021, pages 7647–7656. AAAI Press, 2021.

- Manigrasso et al. (2021) Francesco Manigrasso, Filomeno Davide Miro, Lia Morra, and Fabrizio Lamberti. Faster-LTN: A neuro-symbolic, end-to-end object detection architecture. In Igor Farkaš, Paolo Masulli, Sebastian Otte, and Stefan Wermter, editors, Artificial Neural Networks and Machine Learning – ICANN 2021, pages 40–52, Cham, 2021. Springer International Publishing.

- Meli et al. (2024) Daniele Meli, Alberto Castellini, and Alessandro Farinelli. Learning logic specifications for policy guidance in POMDPs: An inductive logic programming approach. Journal of Artificial Intelligence Research, 79:725–776, February 2024.

- Serafini and d’Avila Garcez (2016) Luciano Serafini and Artur S. d’Avila Garcez. Logic tensor networks: Deep learning and logical reasoning from data and knowledge. CoRR, abs/1606.04422, 2016.

- Towers et al. (2024) Mark Towers, Jordan K. Terry, Ariel Kwiatkowski, John U. Balis, Gianluca de Cola, Tristan Deleu, Manuel Goulão, Andreas Kallinteris, Arjun KG, Markus Krimmel, Rodrigo Perez-Vicente, Andrea Pierré, Sander Schulhoff, Jun Jet Tai, Andrew Tan Jin Shen, and Omar G. Younis. Gymnasium, June 2024.

- Umili et al. (2021) Elena Umili, Emanuele Antonioni, Francesco Riccio, Roberto Capobianco, Daniele Nardi, Giuseppe De Giacomo, et al. Learning a symbolic planning domain through the interaction with continuous environments. In Workshop on Bridging the Gap Between AI Planning and Reinforcement Learning (PRL), workshop at ICAPS 2021, 2021.

- Umili et al. (2023) Elena Umili, Roberto Capobianco, and Giuseppe De Giacomo. Grounding LTLf Specifications in Image Sequences. In Proceedings of the 20th International Conference on Principles of Knowledge Representation and Reasoning, pages 668–678, August 2023.

- van Krieken et al. (2022) Emile van Krieken, Erman Acar, and Frank van Harmelen. Analyzing differentiable fuzzy logic operators. Artificial Intelligence, 302:103602, 2022.

- Wagner and d’Avila Garcez (2022) Benedikt Wagner and Artur S. d’Avila Garcez. Neural-symbolic reasoning under open-world and closed-world assumptions. In Proceedings of the AAAI 2022 Spring Symposium on Machine Learning and Knowledge Engineering for Hybrid Intelligence (AAAI-MAKE 2022), 2022.

- Wang et al. (2015) Ziyu Wang, Nando de Freitas, and Marc Lanctot. Dueling network architectures for deep reinforcement learning. CoRR, abs/1511.06581, 2015.

- Wang et al. (2016) Ziyu Wang, Tom Schaul, Matteo Hessel, Hado Van Hasselt, Marc Lanctot, and Nando De Freitas. Dueling network architectures for deep reinforcement learning. In Proceedings of the 33rd International Conference on International Conference on Machine Learning - Volume 48, ICML’16, pages 1995–2003. JMLR.org, 2016.