# Affordable AI Assistants with Knowledge Graph of Thoughts

**Authors**: Maciej Besta, ETH Zurich, &Lorenzo Paleari, ETH Zurich, &Jia Hao Andrea Jiang, ETH Zurich, &Robert Gerstenberger, ETH Zurich, &You Wu, ETH Zurich, &Jón Gunnar Hannesson, ETH Zurich, &Patrick Iff, ETH Zurich, &Ales Kubicek, ETH Zurich, &Piotr Nyczyk, &Diana Khimey, ETH Zurich, &Nils Blach, ETH Zurich, &Haiqiang Zhang, ETH Zurich, &Tao Zhang, ETH Zurich, &Peiran Ma, ETH Zurich, &Grzegorz Kwaśniewski, ETH Zurich, &Marcin Copik, ETH Zurich, &Hubert Niewiadomski, &Torsten Hoefler, ETH Zurich

> corresponding author

Abstract

Large Language Models (LLMs) are revolutionizing the development of AI assistants capable of performing diverse tasks across domains. However, current state-of-the-art LLM-driven agents face significant challenges, including high operational costs and limited success rates on complex benchmarks like GAIA. To address these issues, we propose Knowledge Graph of Thoughts (KGoT), an innovative AI assistant architecture that integrates LLM reasoning with dynamically constructed knowledge graphs (KGs). KGoT extracts and structures task-relevant knowledge into a dynamic KG representation, iteratively enhanced through external tools such as math solvers, web crawlers, and Python scripts. Such structured representation of task-relevant knowledge enables low-cost models to solve complex tasks effectively while also minimizing bias and noise. For example, KGoT achieves a 29% improvement in task success rates on the GAIA benchmark compared to Hugging Face Agents with GPT-4o mini. Moreover, harnessing a smaller model dramatically reduces operational costs by over 36 $×$ compared to GPT-4o. Improvements for other models (e.g., Qwen2.5-32B and Deepseek-R1-70B) and benchmarks (e.g., SimpleQA) are similar. KGoT offers a scalable, affordable, versatile, and high-performing solution for AI assistants.

Website & code: https://github.com/spcl/knowledge-graph-of-thoughts

1 Introduction

Large Language Models (LLMs) are transforming the world. However, training LLMs is expensive, time-consuming, and resource-intensive. In order to democratize the access to generative AI, the landscape of agent systems has massively evolved during the last two years (LangChain Inc., 2025a; Rush, 2023; Kim et al., 2024; Sumers et al., 2024; Hong et al., 2024; Guo et al., 2024; Edge et al., 2025; Besta et al., 2025c; Zhuge et al., 2024; Beurer-Kellner et al., 2024; Shinn et al., 2023; Kagaya et al., 2024; Zhao et al., 2024a; Stengel-Eskin et al., 2024; Wu et al., 2024). These schemes have been applied to numerous tasks in reasoning (Creswell et al., 2023; Bhattacharjya et al., 2024; Besta et al., 2025c), planning (Wang et al., 2023c; Prasad et al., 2024; Shen et al., 2023; Huang et al., 2023), software development (Tang et al., 2024), and many others (Xie et al., 2024; Li & Vasarhelyi, 2024; Schick et al., 2023; Beurer-Kellner et al., 2023).

Among the most impactful applications of LLM agents is the development of AI assistants capable of helping with a wide variety of tasks. These assistants promise to serve as versatile tools, enhancing productivity and decision-making across domains. From aiding researchers with complex problem-solving to managing day-to-day tasks for individuals, AI assistants are becoming an indispensable part of modern life. Developing such systems is highly relevant, but remains challenging, particularly in designing solutions that are both effective and economically viable.

The GAIA benchmark (Mialon et al., 2024) has become a key standard for evaluating LLM-based agent systems across diverse tasks, including web navigation, code execution, image reasoning, scientific QA, and multimodal challenges. Despite its introduction nearly two years ago, top-performing solutions still struggle with many tasks. Moreover, operational costs remain high: running all validation tasks with Hugging Face Agents (Roucher & Petrov, 2025) and GPT-4o costs $≈$ $200, underscoring the need for more affordable alternatives . Smaller models like GPT-4o mini significantly reduce expenses but suffer from steep drops in task success, making them insufficient. Open large models also pose challenges due to demanding infrastructure needs, while smaller open models, though cheaper to run, lack sufficient capabilities.

To address these challenges, we propose Knowledge Graph of Thoughts (KGoT), a novel AI assistant architecture that significantly reduces task execution costs while maintaining a high success rate (contribution #1). The central innovation of KGoT lies in its use of a knowledge graph (KG) (Singhal, 2012; Besta et al., 2024b) to represent knowledge relevant to a given task. A KG organizes information into triples, providing a structured representation of knowledge that small, cost-effective models can efficiently process. Hence, KGoT “turns the unstructured into the structured”, i.e., KGoT turns the often unstructured data such as website contents or PDF files into structured KG triples. This approach enhances the comprehension of task requirements, enabling even smaller models to achieve performance levels comparable to much larger counterparts, but at a fraction of the cost.

The KGoT architecture (contribution #2) implements this concept by iteratively constructing a KG from the task statement, incorporating tools as needed to gather relevant information. The constructed KG is kept in a graph store, serving as a repository of structured knowledge. Once sufficient information is gathered, the LLM attempts to solve the task by either directly embedding the KG in its context or querying the graph store for specific insights. This approach ensures that the LLM operates with a rich and structured knowledge base, improving its task-solving ability without incurring the high costs typically associated with large models. The architecture is modular and extensible towards different types of graph query languages and tools.

Our evaluation against top GAIA leaderboard baselines demonstrates its effectiveness and efficiency (contribution #3). KGoT with GPT-4o mini solves $>$ 2 $×$ more tasks from the validation set than Hugging Face Agents with GPT-4o or GPT-4o mini. Moreover, harnessing a smaller model dramatically reduces operational costs: from $187 with GPT-4o to roughly $5 with GPT-4o mini. KGoT’s benefits generalize to other models, baselines, and benchmarks such as SimpleQA (Wei et al., 2024).

On top of that, KGoT reduces noise and simultaneously minimizes bias and improves fairness by externalizing reasoning into an explicit knowledge graph rather than relying solely on the LLM’s internal generation (contribution #4). This ensures that key steps when resolving tasks are grounded in transparent, explainable, and auditable information.

2 Knowledge Graph of Thoughts

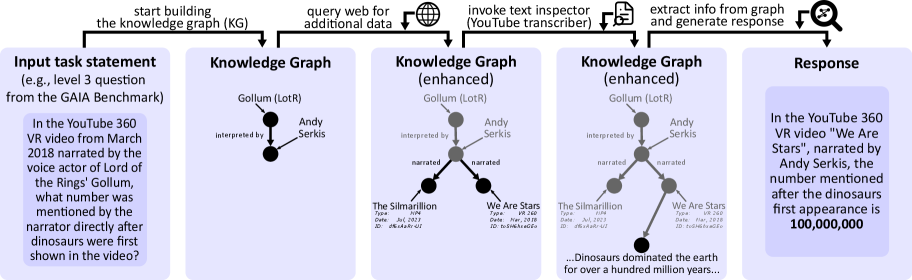

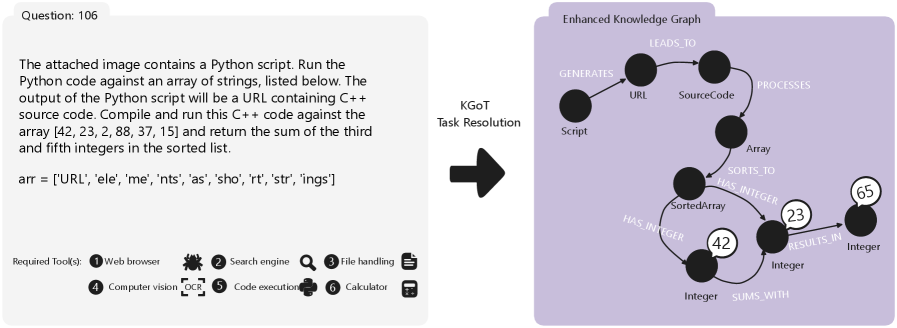

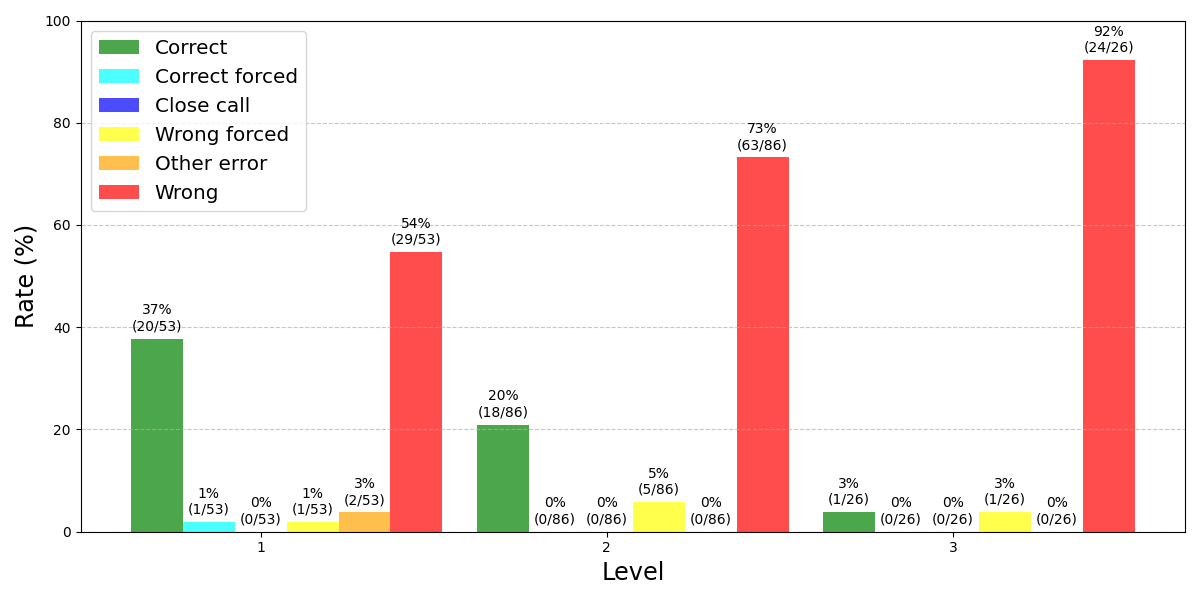

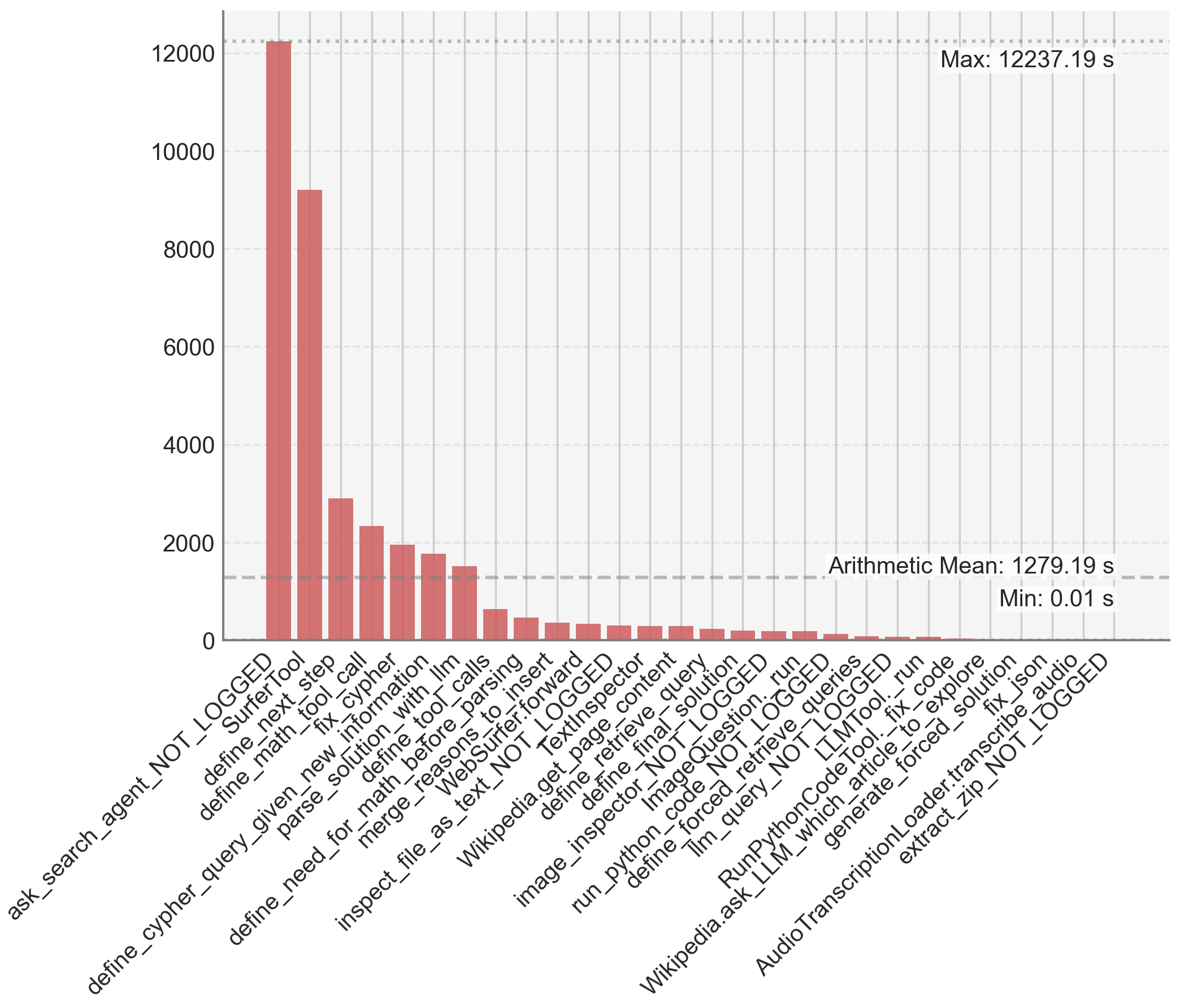

We first illustrate the key idea, namely, using a knowledge graph to encode structurally the task contents. Figure 1 shows an example task and its corresponding evolving KG.

2.1 What is a Knowledge Graph?

A knowledge graph (KG) is a structured representation of information that organizes knowledge into a graph-based format, allowing for efficient querying, reasoning, and retrieval. Formally, a KG consists of a set of triples, where each triple $(s,p,o)$ represents a relationship between two entities $s$ (subject) and $o$ (object) through a predicate $p$ . For example, the triple $(\text{``Earth''},\text{``orbits''},\text{``Sun''})$ captures the fact that Earth orbits the Sun. Mathematically, a knowledge graph can be defined as a directed labeled graph $G=(V,E,L)$ , where $V$ is the set of vertices (entities), $E⊂eq V× V$ is the set of edges (relationships), and $L$ is the set of labels (predicates) assigned to the edges. Each entity or predicate may further include properties or attributes, enabling richer representation. Knowledge graphs are widely used in various domains, including search engines, recommendation systems, and AI reasoning, as they facilitate both efficient storage and complex queries.

<details>

<summary>x1.png Details</summary>

### Visual Description

\n

## Diagram: Knowledge Graph Construction Process

### Overview

The image depicts a diagram illustrating the process of constructing a knowledge graph (KG) to answer a complex question posed from the GAIA Benchmark. The process involves starting with an initial KG, querying the web for additional data, invoking a text inspector (specifically a YouTube transcriber), and finally extracting information from the enhanced graph to generate a response. The diagram shows the evolution of the knowledge graph through these stages, with examples of nodes and relationships.

### Components/Axes

The diagram is structured horizontally, showing a sequence of steps. The main components are:

* **Input Task Statement:** A text box containing a complex question from the GAIA Benchmark.

* **Knowledge Graph:** Three iterations of a knowledge graph are shown, labeled "Knowledge Graph", "Knowledge Graph (enhanced)", and "Knowledge Graph (enhanced)".

* **Query Web:** An icon representing a globe with the text "query web for additional data".

* **Invoke Inspector:** An icon representing a computer screen with the text "invoke inspector (YouTube transcriber)".

* **Extract Info & Generate Response:** An icon representing a cross mark with the text "extract info from graph and generate response".

* **Response:** A text box containing the answer generated from the knowledge graph.

* **Arrows:** Arrows indicate the flow of the process from left to right.

### Detailed Analysis or Content Details

**1. Input Task Statement:**

The text reads: "In the YouTube 360 VR video from March 2018 narrated by the voice actor of Lord of the Rings’ Gollum, what number was mentioned by the narrator directly after dinosaurs were first shown in the video?"

**2. Knowledge Graph (Initial):**

* **Nodes:**

* Gollum (LotR)

* Andy Serkis

* **Relationship:**

* "interpreted by" connecting Gollum (LotR) to Andy Serkis.

**3. Knowledge Graph (Enhanced - Stage 1):**

* **Nodes:**

* Gollum (LotR)

* Andy Serkis

* The Silmarillion

* **Relationships:**

* "interpreted by" connecting Gollum (LotR) to Andy Serkis.

* "narrated" connecting Andy Serkis to The Silmarillion.

* The Silmarillion has the following attributes:

* Type: Audio

* Date: Jul, 2017

* ID: 20160426-07

**4. Knowledge Graph (Enhanced - Stage 2):**

* **Nodes:**

* Gollum (LotR)

* Andy Serkis

* The Silmarillion

* We Are Stars

* **Relationships:**

* "interpreted by" connecting Gollum (LotR) to Andy Serkis.

* "narrated" connecting Andy Serkis to both The Silmarillion and We Are Stars.

* We Are Stars has the following attributes:

* Type: VR 360

* Date: Mar, 2018

* ID: 20160426-10

* A text snippet is connected to "We Are Stars": "...Dinosaurs dominated the earth for over a hundred million years..."

**5. Response:**

The text reads: "In the YouTube 360 VR video “We Are Stars”, narrated by Andy Serkis, the number mentioned after the dinosaurs first appearance is 100,000,000"

**6. Process Flow:**

* The process starts with the "Input Task Statement".

* An initial "Knowledge Graph" is built.

* The web is queried for "additional data".

* A "YouTube transcriber" is invoked.

* The "Knowledge Graph" is enhanced with the new data.

* Information is extracted from the enhanced graph to generate the "Response".

### Key Observations

* The diagram demonstrates how a knowledge graph can be iteratively built and enhanced to answer complex questions.

* The inclusion of a YouTube transcriber highlights the importance of processing multimedia content to extract relevant information.

* The example shows how the graph connects entities (Gollum, Andy Serkis, videos) and their relationships (interpreted by, narrated).

* The final response is directly derived from the information contained within the enhanced knowledge graph.

### Interpretation

The diagram illustrates a sophisticated approach to question answering, leveraging knowledge graphs and multimedia processing. The process begins with a natural language query and transforms it into a structured representation (the knowledge graph). By querying the web and transcribing video content, the graph is enriched with relevant information. The final step involves extracting the answer from the graph, demonstrating the power of this approach for complex reasoning and information retrieval. The diagram highlights the importance of combining structured knowledge with unstructured data (video transcripts) to achieve accurate and comprehensive answers. The specific example focuses on temporal relationships ("directly after") and numerical extraction, showcasing the system's ability to handle nuanced queries. The inclusion of metadata (Type, Date, ID) for each video suggests a focus on provenance and data quality.

</details>

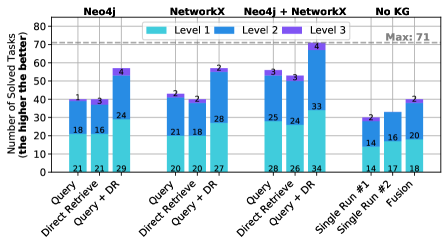

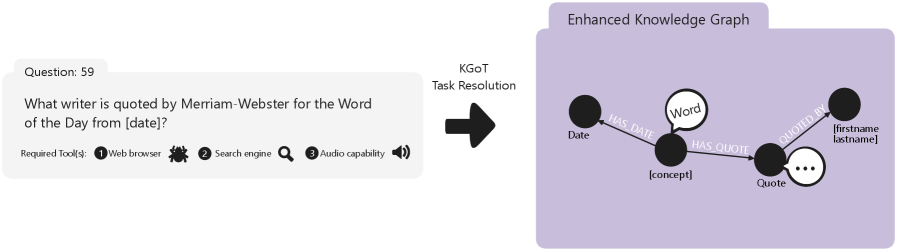

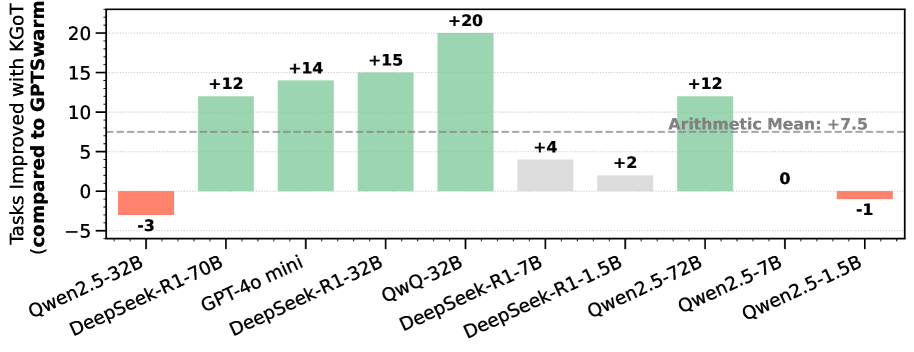

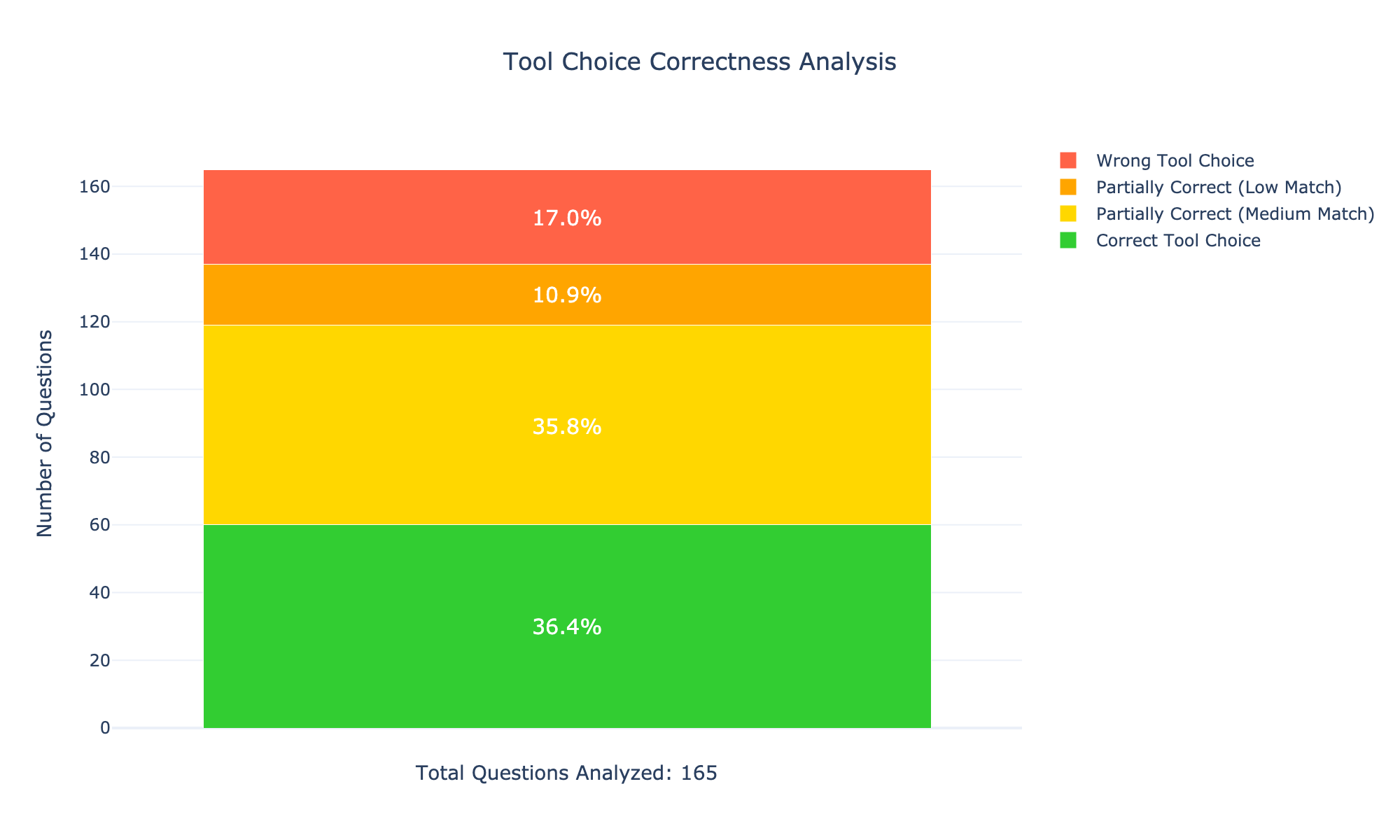

Figure 1: The key idea behind Knowledge Graph of Thoughts (KGoT): transforming the representation of a task for an AI assistant from a textual form into a knowledge graph (KG). As an example, we use a Level-3 (i.e., highest difficulty) task from the GAIA benchmark. In order to solve the task, KGoT evolves this KG by adding relevant information that brings the task closer to completion. This is achieved by iteratively running various tools. Finally, the task is solved by extracting the relevant information from the KG, using – for example – a graph query, or an LLM’s inference process with the KG provided as a part of the input prompt. More examples of KGs are in Appendix A.

2.2 Harnessing Knowledge Graphs for Effective AI Assistant Task Resolution

At the heart of KGoT is the process of transforming a task solution state into an evolving KG. The KG representation of the task is built from “thoughts” generated by the LLM. These “thoughts” are intermediate insights identified by the LLM as it works through the problem. Each thought contributes to expanding or refining the KG by adding vertices or edges that represent new information.

For example, consider the following Level 3 (i.e., highest difficulty) task from the GAIA benchmark: “In the YouTube 360 VR video from March 2018 narrated by the voice actor of Lord of the Rings’ Gollum, what number was mentioned by the narrator directly after dinosaurs were first shown in the video?” (see Figure 1 for an overview; more examples of constructed KGs are in Appendix A). Here, the KG representation of the task solution state has a vertex “Gollum (LotR)”. Then, the thought “Gollum from Lord of the Rings is interpreted by Andy Serkis” results in adding a vertex for “Andy Serkis”, and linking “Gollum (LotR)” to “Andy Serkis” with the predicate “interpreted by”. Such integration of thought generation and KG construction creates a feedback loop where the KG continuously evolves as the task progresses, aligning the representation with problem requirements.

In order to evolve the KG task representation, KGoT iteratively interacts with tools and retrieves more information. For instance, the system might query the internet to identify videos narrated by Andy Serkis (e.g., “The Silmarillion“ and “We Are Stars”). It can also use a YouTube transcriber tool to find their publication date. This iterative refinement allows the KG to model the current “state” of a task at each step, creating a more complete and structured representation of this task and bringing it closer to completion. Once the KG has been sufficiently populated with task-specific knowledge, it serves as a robust resource for solving the problem.

In addition to adding new graph elements, KGoT also supports other graph operations. This includes removing nodes and edges, used as a part of noise elimination strategies.

2.3 Extracting Information from the KG

To accommodate different tasks, KGoT supports different ways to extract the information from the KG. Currently, we offer graph query languages or general-purpose languages; each of them can be combined with the so-called Direct Retrieval. First, one can use a graph query, prepared by the LLM in a language such as Cypher (Francis et al., 2018) or SPARQL (Pérez et al., 2009), to extract the answer to the task from the graph. This works particularly well for tasks that require retrieving specific patterns within the KG. Second, we also support general scripts prepared by the LLM in a general-purpose programming language such as Python. This approach, while not as effective as query languages for pattern matching, offers greater flexibility and may outperform the latter when a task requires, for example, traversing a long path in the graph. Third, in certain cases, once enough information is gathered into the KG, it may be more effective to directly paste the KG into the LLM context and ask the LLM to solve the task, instead of preparing a dedicated query or script. We refer to this approach as Direct Retrieval.

The above schemes offer a tradeoff between accuracy, cost, and runtime. For example, when low latency is priority, general-purpose languages should be used, as they provide an efficient lightweight representation of the KG and offer rapid access and modification of graph data. When token cost is most important, one should avoid Direct Retrieval (which consumes many tokens as it directly embeds the KG into the LLM context) and focus on either query or general-purpose languages, with a certain preference for the former, because its generated queries tend to be shorter than scripts. Finally, when aiming for solving as many tasks as possible, one should experiment with all three schemes. As shown in the Evaluation section, these methods have complementary strengths: Direct Retrieval is effective for broad contextual understanding, while graph queries and scripts are better suited for structured reasoning.

2.4 Representing the KG

KGoT can construct three interoperable KG representations: Property graphs (used with graph query languages such as Cypher and systems such as Neo4j (Robinson et al., 2015)), RDF graphs (used with graph query languages such as SPARQL and systems such as RDF4J (Ben Mahria et al., 2021)), and the adjacency list graphs (Besta et al., 2018) (used with general-purpose languages such as Python and systems such as NetworkX (NetworkX Developers, 2025)).

Each representation supports a different class of analysis. The Property graph view facilitates analytics such as pattern matching, filtering, of motif queries directly on the evolving task-state graph. The RDF graph view facilitates reasoning over ontology constraints, schema validation, and SPARQL-based inference for missing links. The adjacency list representation with NetworkX facilitates Python-based graph analytics, for example centrality measures, connected components, clustering coefficients, etc., all on the same KG snapshots.

Appendix A contains examples of task-specific KGs, illustrating how their topology varies with the task domain (e.g., tree-like procedural chains vs. dense relational subgraphs in multi-entity reasoning).

2.5 Bias, Fairness, and Noise Mitigation through KG-Based Representation

KGoT externalizes and structures the reasoning process, which reduces noise, mitigates model bias, and improves fairness, because in each iteration both the outputs from tools and LLM thoughts are converted into triples and stored explicitly. Unlike opaque monolithic LLM generations, this fosters transparency and facilitates identifying biased inference steps. It also facilitates noise mitigation: new triples can be explicitly checked for the quality of their information content before being integrated into the KG, and existing triples can also be removed if they are deemed redundant (examples of such triples that have been found and removed are in Appendix B.6).

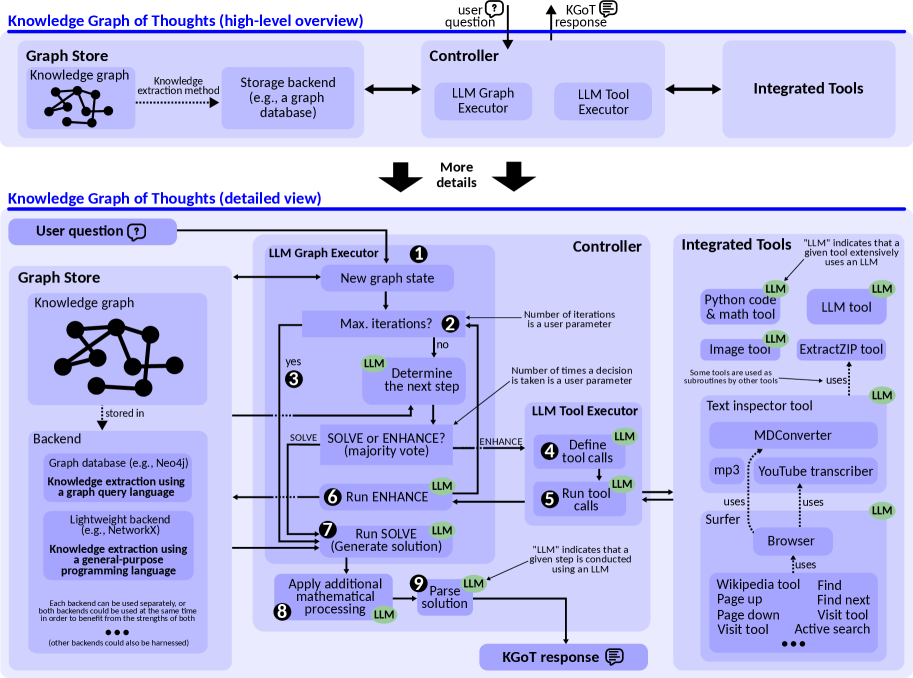

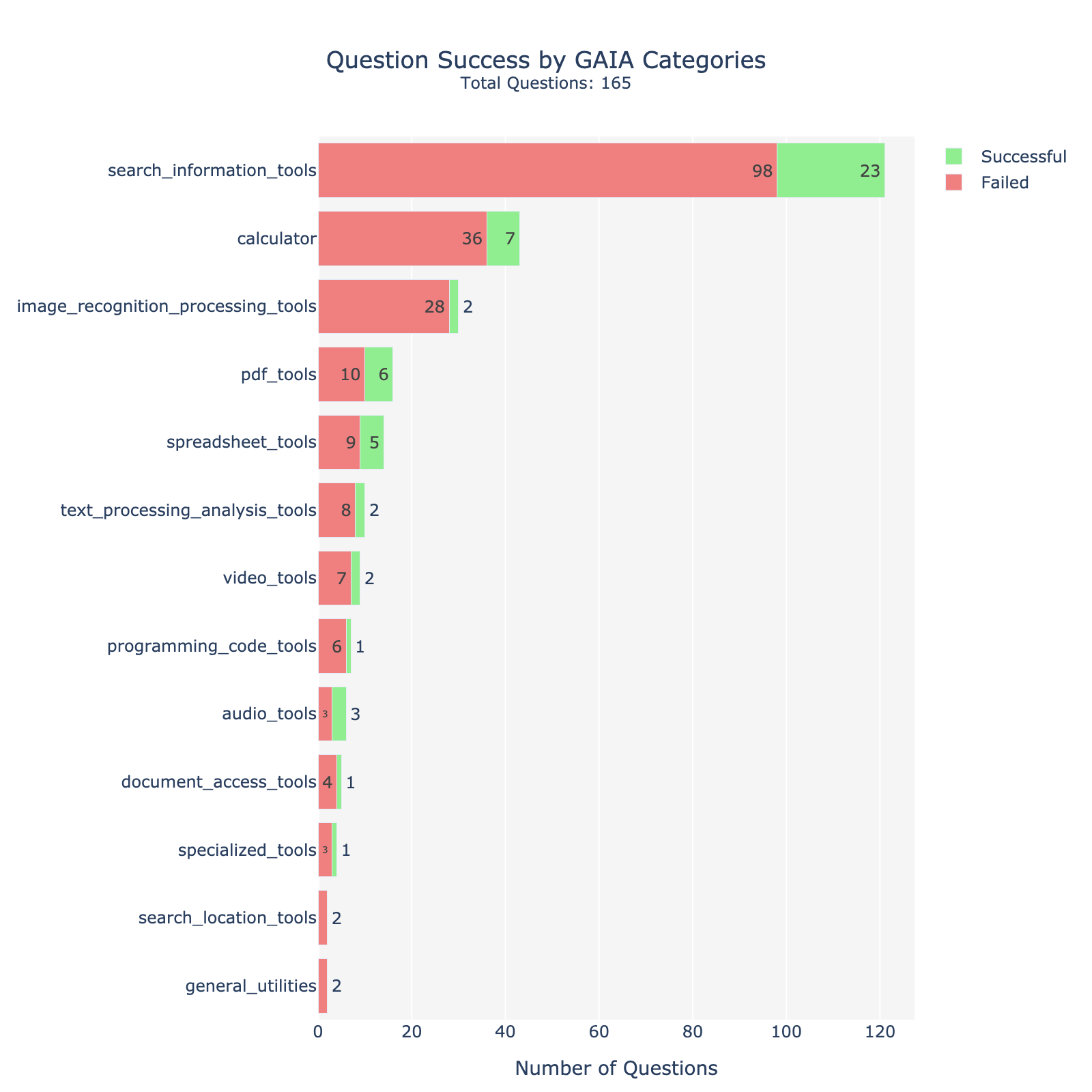

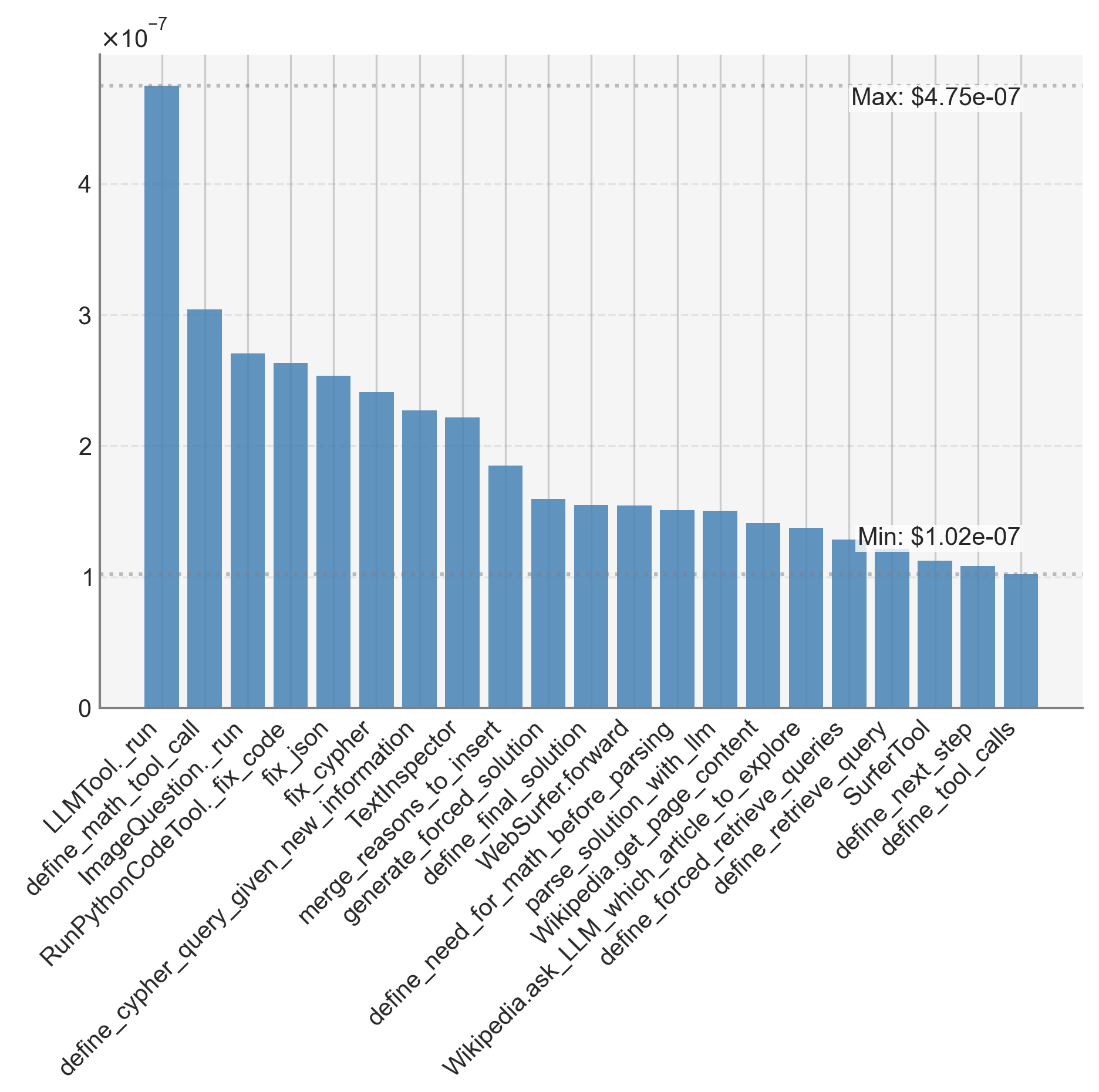

3 System Architecture

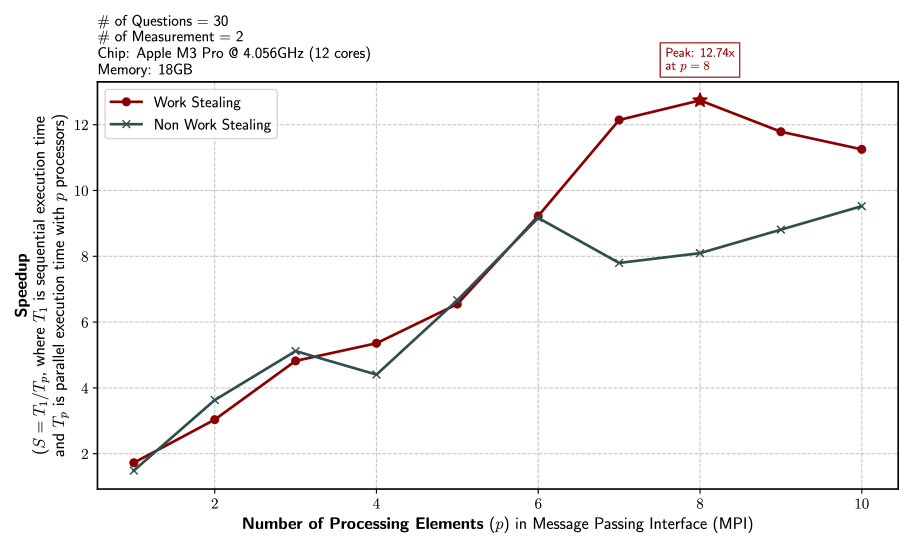

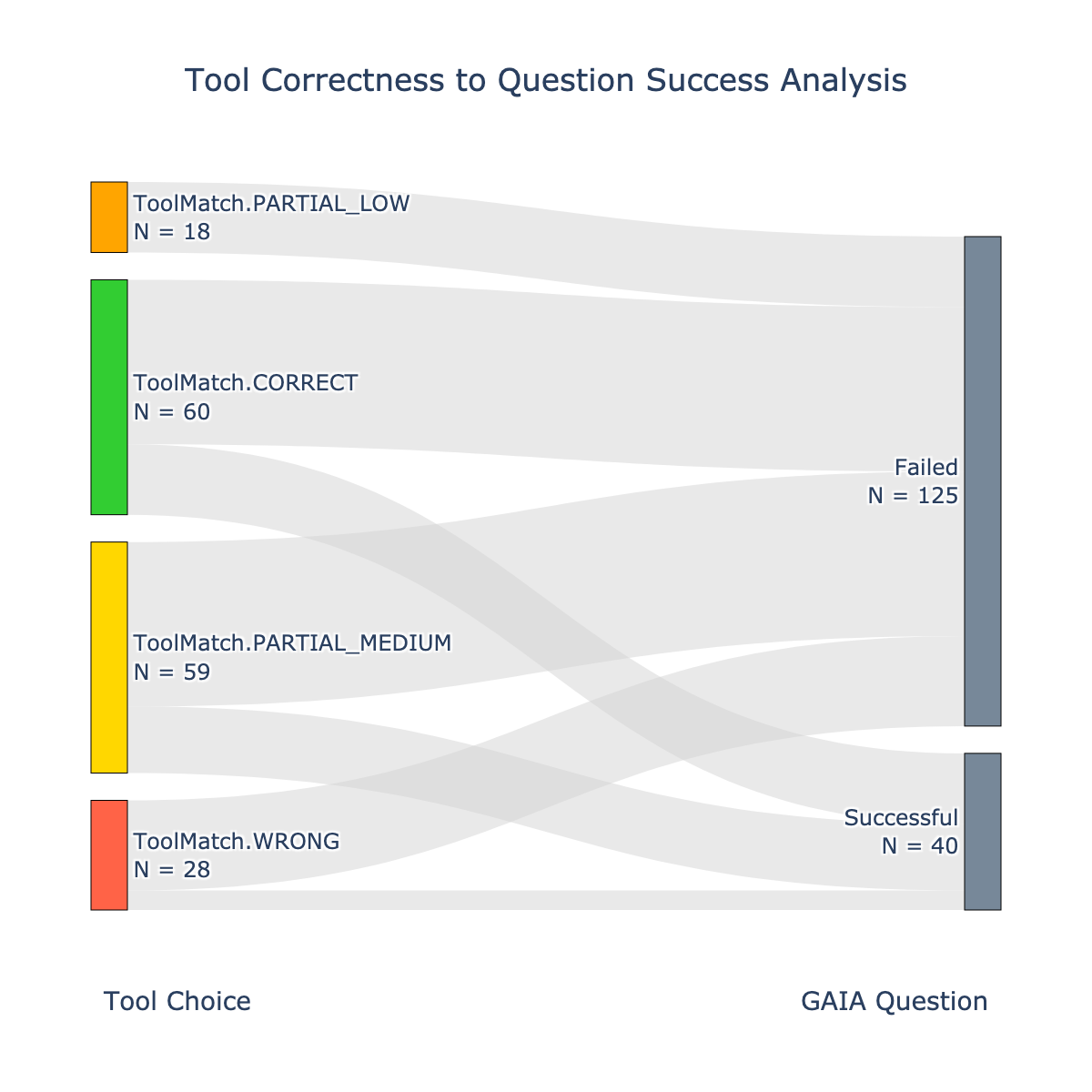

The KGoT modular and flexible architecture, pictured in Figure 2, consists of three main components: the Graph Store Module, the Controller, and the Integrated Tools, each playing a critical role in the task-solving process. Below, we provide a detailed description of each component and its role in the system. Additional details are in Appendix B (architecture) and in Appendix C (prompts).

3.1 Maintaining the Knowledge Graph with the Graph Store Module

A key component of the KGoT system is the Graph Store Module, which manages the storage and retrieval of the dynamically evolving knowledge graph which represents the task state. In order to harness graph queries, we use a graph database backend; in the current KGoT implementation, we test Cypher together with Neo4j (Robinson et al., 2015), an established graph database (Besta et al., 2023b; c), as well as SPARQL together with the RDF4J backend (Ben Mahria et al., 2021). Then, in order to support graph accesses using a general-purpose language, KGoT harnesses the NetworkX library (NetworkX Developers, 2025) and Python. Note that the extensible design of KGoT enables seamless integration of any other backends and languages.

3.2 Managing the Workflow with the Controller Module

The Controller orchestrates the interactions between the KG and the tools. Upon receiving a user query, it iteratively interprets the task, determines the appropriate tools to invoke based on the KG state and task needs, and integrates tool outputs back into the KG. The Controller uses a dual-LLM architecture with a clear separation of roles: the LLM Graph Executor constructs and evolves the KG, while the LLM Tool Executor manages tool selection and execution.

The LLM Graph Executor determines the next steps after each iteration that constructs and evolves the KG. It identifies any missing information necessary to solve the task, formulates appropriate queries for the graph store interaction (retrieve/insert operations), and parses intermediate or final results for integration into the KG. It also prepares the final response to the user based on the KG.

The LLM Tool Executor operates as the executor of the plan devised by the LLM Graph Executor. It identifies the most suitable tools for retrieving missing information, considering factors such as tool availability, relevance, and the outcome of previous tool invocation attempts. For example, if a web crawler fails to retrieve certain data, the LLM Tool Executor might prioritize a different retrieval mechanism or adjust its queries. The LLM Tool Executor manages the tool execution process, including interacting with APIs, performing calculations, or extracting information, and returns the results to the LLM Graph Executor for further reasoning and integration into the KG.

3.3 Ensuring Versatile and Extensible Set of Integrated Tools

KGoT offers a hierarchical suite of tools tailored to diverse task needs. The Python Code Tool enables dynamic script generation and execution for complex computations. The LLM Tool supplements the controller’s reasoning by integrating an auxiliary language model, enhancing knowledge access while minimizing hallucination risk. For multimodal inputs, the Image Tool supports image processing and extraction. Web-based tasks are handled by the Surfer Agent (based on the design by Hugging Face Agents (Roucher & Petrov, 2025)), which leverages tools like the Wikipedia Tool, granular navigation tools (PageUp, PageDown, Find), and SerpApi (SerpApi LLM, 2025) for search. Additional tools include the ExtractZip Tool for compressed files and the Text Inspector Tool for converting content from sources like MP3s and YouTube transcripts into Markdown. Finally, the user can seamlessly add a new tool by initializing the tool, passing in the logger object for tool use statistics, and appending the tool to the tool list via a Tool Manager object. We require all tools implemented to adhere to the LangChain’s BaseTool interface class. This way, the list of tools managed by the Tool Manager can be directly bound to the LLM Tool Executor via LangChain bind_tools, further facilitating new tools.

3.4 Ensuring High-Performance & Scalability

The used scalability optimizations include (1) asynchronous execution using asyncio (Python Software Foundation, 2025b) to parallelize LLM tool invocations, mitigating I/O bottlenecks and reducing idle time, (2) graph operation parallelism by reformulating LLM-generated Cypher queries to enable concurrent execution of independent operations in a graph database, and (3) MPI-based distributed processing, which decomposes workloads into atomic tasks distributed across ranks using a work-stealing algorithm to ensure balanced computational load and scalability.

3.5 Ensuring System Robustness

Robustness is ensured with two established mechanisms, Self-Consistency (Wang et al., 2023b) (via majority voting) and LLM-as-a-Judge (Gu et al., 2025) (other strategies such as embedding-based stability are also applicable (Besta et al., 2025d)). With Self-Consistency, we query the LLM multiple times when deciding whether to insert more data into the KG or retrieve existing data, when deciding which tool to use, and when parsing the final solution. This approach reduces the impact of single-instance errors or inconsistencies in various parts of the KGoT architecture. LLM-as-a-Judge further reinforces the robustness, by directly employing the LLM agent to make these decisions based on generated reasoning chains.

Overall, both Self-Consistency and LLM-as-a-Judge have been shown to significantly enhance the robustness of prompting. For example, MT-Bench and Chatbot Arena show that strong judges (e.g., GPT-4 class) match human preferences at 80% agreement or more, on par with human-human agreement (Zheng et al., 2023). Prometheus/Prometheus-2 further demonstrate open evaluator LMs with the highest correlations to both humans and proprietary judges across direct-assessment and pairwise settings, and AlpacaEval has been validated against approximately 20K human annotations, addressing earlier concerns about reproducibility at scale. Similarly reliable gains have been shown for Self-Consistency (Wang et al., 2023b).

3.6 Ensuring Layered Error Containment & Management

To manage LLM-generated syntax errors, KGoT includes LangChain’s JSON parsers that detect syntax issues. When a syntax error is detected, the system first attempts to correct it by adjusting the problematic syntax using different encoders, such as the “unicode escape” (Python Software Foundation, 2025a). If the issue persists, KGoT employs a retry mechanism (three attempts by default) that uses the LLM to rephrase the query/command and attempts to regenerate its output. If the error persists, the system logs it for further analysis, bypasses the problematic query, and continues with other iterations.

To handle API & system related errors, such as the OpenAI code 500, we employ exponential backoff, implemented using the tenacity library (Tenacity Developers, 2025a). Additionally, KGoT includes comprehensive logging systems as part of its error management framework. These systems track the errors encountered during system operation, providing valuable data that can be easily parsed and analyzed (e.g., snapshots of the knowledge graphs or responses from third-party APIs).

The Python Executor tool, a key component of the system, is containerized to ensure secure execution of LLM-generated code. This tool is designed to run code with strict timeouts and safeguards, preventing potential misuse or resource overconsumption.

3.7 Implementation Details

KGoT employs Docker (Docker Inc., 2025) and Sarus (Benedicic et al., 2019) for containerization, enabling a consistent and isolated runtime environment for all components. We containerize critical modules such as the KGoT controller, the Neo4j knowledge graph, and integrated tools (e.g., the Python Executor tool for safely running LLM-generated code with timeouts). Here, Docker provides a widely adopted containerization platform for local and cloud deployments that guarantees consistency between development and production environments. Sarus, a specialized container platform designed for high-performance computing (HPC) environments, extends KGoT’s portability to HPC settings where Docker is typically unavailable due to security constraints. This integration allows KGoT to operate efficiently in HPC environments, leveraging their computational power.

KGoT also harnesses LangChain (LangChain Inc., 2025a), an open-source framework specifically designed for creating and orchestrating LLM-driven applications. LangChain offers a comprehensive suite of tools and APIs that simplify the complexities of managing LLMs, including prompt engineering, tool integration, and the coordination of LLM outputs.

4 System Workflow

<details>

<summary>x2.png Details</summary>

### Visual Description

\n

## Diagram: Knowledge Graph of Thoughts - High-Level & Detailed View

### Overview

This diagram illustrates the architecture of a "Knowledge Graph of Thoughts" system, presenting both a high-level overview and a detailed view of its components and workflow. The system takes a user question as input and generates a Knowledge Graph of Thoughts (KGOT) response. It leverages a combination of knowledge graphs, Large Language Models (LLMs), and integrated tools.

### Components/Axes

The diagram is divided into two main sections: a high-level overview at the top and a detailed view at the bottom, separated by a "More details" banner. Key components include:

* **User Question:** Input to the system.

* **Controller:** Manages the overall process.

* **LLM Graph Executor:** Executes graph-related operations using LLMs.

* **LLM Tool Executor:** Executes tool calls using LLMs.

* **Integrated Tools:** A collection of tools used by the LLM Tool Executor (Python code & math tool, Image tool, Text inspector, MDConverter, mp3, YouTube transcriber, Browser).

* **Graph Store:** Stores the knowledge graph.

* **Backend:** The underlying data storage and knowledge extraction mechanism.

* **KGOT Response:** The output of the system.

The detailed view includes numbered steps (1-9) representing the workflow. There are also annotations indicating where LLMs are used extensively.

### Detailed Analysis or Content Details

**High-Level Overview:**

* **User Question** (top-left): An icon representing a user asking a question.

* **Controller** (top-center): A rectangular box labeled "Controller".

* **LLM Graph Executor** (top-right): A rectangular box labeled "LLM Graph Executor".

* **LLM Tool Executor** (top-right): A rectangular box labeled "LLM Tool Executor".

* **Integrated Tools** (top-right): A rectangular box labeled "Integrated Tools".

* **KGOT Response** (top-right): An icon representing the system's response.

* **Knowledge Store** (top-left): A circular diagram representing a knowledge graph with nodes and edges. Text: "Knowledge graph", "Knowledge extraction method".

* **Storage Backend** (top-center): A rectangular box labeled "Storage backend (e.g., a graph database)".

**Detailed View:**

1. **New graph state** (left-center): A box labeled "New graph state".

2. **Max. iterations?** (center-left): A diamond-shaped decision node labeled "Max. iterations?". An arrow labeled "no" leads to step 3.

3. **Determine the next step** (center-left): A box labeled "Determine the next step (majority vote)". An arrow labeled "yes" loops back to step 1. This step is performed by an LLM.

4. **Define tool calls** (center-right): A box labeled "Define tool calls". This step is performed by an LLM.

5. **Run tool calls** (center-right): A box labeled "Run tool calls".

6. **Run ENHANCE** (bottom-left): A box labeled "Run ENHANCE". This step is performed by an LLM.

7. **Run SOLVE** (bottom-left): A box labeled "Run SOLVE (Generate solution)". This step is performed by an LLM.

8. **Apply additional mathematical processing** (bottom-left): A box labeled "Apply additional mathematical processing". This step is performed by an LLM.

9. **Parse solution** (bottom-right): A box labeled "Parse solution". This step is performed by an LLM.

**Integrated Tools (right side):**

* **Python code & math tool:** Labeled "LLM" indicating LLM usage.

* **Image tool:** Labeled "LLM". Includes "ExtractZIP tool".

* **Text inspector:** Labeled "LLM".

* **MDConverter:** Labeled "LLM".

* **mp3:** Labeled "LLM".

* **YouTube transcriber:** Labeled "LLM".

* **Browser:** Labeled "LLM". Includes "Wikipedia tool" with options "Find", "Find next", "Visit tool", "Active search".

* Annotations: "LLM" indicates that a given step extensively uses an LLM. "uses" arrows connect tools to LLM.

**Backend (bottom-left):**

* **Graph database (e.g., Neo4j)**

* **Knowledge extraction using a graph query language**

* **Lightweight backend using knowledge extraction and a general-purpose language**

* Text: "Each backend can be used separately or at the same time in order to benefit from the strengths of both."

* Text: "(other backends could also be harnessed)"

### Key Observations

* The system is heavily reliant on LLMs, as indicated by the "LLM" annotations throughout the detailed view.

* The workflow involves an iterative process (steps 1-3) until a maximum number of iterations is reached.

* The system utilizes a variety of integrated tools to enhance its capabilities.

* The backend can leverage different knowledge extraction methods and storage solutions.

* The diagram clearly distinguishes between the high-level architecture and the detailed workflow.

### Interpretation

The diagram depicts a sophisticated system for reasoning and problem-solving using knowledge graphs and LLMs. The iterative loop (1-3) suggests a process of refinement and exploration within the knowledge graph. The use of multiple integrated tools allows the system to handle diverse types of information and tasks. The backend flexibility indicates that the system can be adapted to different data sources and knowledge extraction techniques. The diagram highlights the central role of LLMs in orchestrating the entire process, from graph manipulation to tool execution and solution generation. The system appears designed to be robust and adaptable, capable of tackling complex queries by leveraging both structured knowledge (the graph) and the generative capabilities of LLMs. The inclusion of "majority vote" in step 3 suggests a mechanism for handling uncertainty or conflicting information. The diagram is a blueprint for a system that aims to combine the strengths of symbolic reasoning (knowledge graphs) and statistical learning (LLMs).

</details>

Figure 2: Architecture overview of KGoT (top part) and the design details combined with the workflow (bottom part).

We show the workflow in the bottom part of Figure 2. The workflow begins when the user submits a problem to the system

<details>

<summary>x3.png Details</summary>

### Visual Description

Icon/Small Image (19x14)

</details>

. The first step is to verify whether the maximum number of iterations allowed for solving the problem has been reached

<details>

<summary>x4.png Details</summary>

### Visual Description

Icon/Small Image (19x14)

</details>

. If the iteration limit is exceeded, the system will no longer try to gather additional information and insert it into the KG, but instead will return a solution with the existing data in the KG

<details>

<summary>x5.png Details</summary>

### Visual Description

Icon/Small Image (19x14)

</details>

. Otherwise, the majority vote (over several replies from the LLM) decides whether the system should proceed with the Enhance pathway (using tools to generate new knowledge) or directly proceed to the Solve pathway (gathering the existing knowledge in the KG and using it to deliver the task solution).

The Enhance Pathway If the majority vote indicates an Enhance pathway, the next step involves determining the tools necessary for completing the Enhance operation

<details>

<summary>x6.png Details</summary>

### Visual Description

Icon/Small Image (19x14)

</details>

. The system then orchestrates the appropriate tool calls based on the KG state

<details>

<summary>x7.png Details</summary>

### Visual Description

Icon/Small Image (19x14)

</details>

. Once the required data from the tools is collected, the system generates the Enhance query or queries to modify the KG appropriately. Each Enhance query is executed

<details>

<summary>x8.png Details</summary>

### Visual Description

Icon/Small Image (19x14)

</details>

and its output is validated. If an error or invalid value is returned, the system attempts to fix the query, retrying a specified number of times. If retries fail, the query is discarded, and the operation moves on. After processing the Enhance operation, the system increments the iteration count and continues until the KG is sufficiently expanded or the iteration limit is reached. This path ensures that the knowledge graph is enriched with relevant and accurate information, enabling the system to progress toward a solution effectively.

The Solve Pathway If the majority vote directs the system to the Solve pathway, the system executes multiple solve operations iteratively

<details>

<summary>x9.png Details</summary>

### Visual Description

Icon/Small Image (19x14)

</details>

. If an execution produces an invalid value or error three times in a row, the system asks the LLM to attempt to correct the issue by recreating the used query. The query is then re-executed. If errors persist after three such retries, the query is regenerated entirely, disregarding the faulty result, and the process restarts. After the Solve operation returns the result, final parsing is applied, which includes potential mathematical processing to resolve potential calculations

<details>

<summary>x10.png Details</summary>

### Visual Description

Icon/Small Image (19x14)

</details>

and refining the output (e.g., formatting the results appropriately)

<details>

<summary>x11.png Details</summary>

### Visual Description

Icon/Small Image (19x14)

</details>

.

5 Evaluation

We now show advantages of KGoT over the state of the art. Additional results and full details on the evaluation setup are in Appendix D.

Comparison Baselines. We focus on the Hugging Face (HF) Agents (Roucher & Petrov, 2025), the most competitive scheme in the GAIA benchmark for the hardest level 3 tasks with the GPT-4 class of models. We also compare to two agentic frameworks, namely GPTSwarm (Zhuge et al., 2024) (a representative graph-enhanced multi-agent scheme) and Magentic-One (Fourney et al., 2024), an AI agent equipped with a central orchestrator and multiple integrated tool agents. Next, to evaluate whether database search outperforms graph-based knowledge extraction, we also consider two retrieval-augmented generation (RAG) (Lewis et al., 2020) schemes, a simple RAG scheme and GraphRAG (Edge et al., 2025). Both RAG baselines use the same tool-generated knowledge, chunking data at tool-call granularity (i.e., a chunk corresponds to individual tool call output). Simple RAG constructs a vector database from these tool outputs while GraphRAG instead models the tool outputs as a static KG of entities and relations, enabling retrieval via graph traversal. Finally, we use Zero-Shot schemes where a model answers without any additional agent framework.

KGoT variants. First, we experiment with graph query languages vs. general-purpose languages, cf. Section 2.3. For each option, we vary how the Solve operation is executed, by either having the LLM send a request to the backend (a Python script for NetworkX and a Cypher/SPARQL query for Neo4j/RDF4J) or by directly asking the LLM to infer the answer based on the KG (Direct Retrieval (DR)). We experiment with different query languages (Cypher vs. SPARQL). We also consider “fusion” runs, which simulate the effect from KGoT runs with both graph backends available simultaneously (or both Solve operation variants harnessed for each task). Fusion runs only incur negligible additional storage overhead because the generated KGs are small (up to several hundreds of nodes). Finally, we experiment with different tool sets. To focus on the differences coming from harnessing the KG, we reuse several utilities from AutoGen (Wu et al., 2024) such as Browser and MDConverter, and tools from HF Agents, such as Surfer Agent, web browsing tools, and Text Inspector.

Considered Metrics We focus primarily on the number of solved tasks as well as token costs ($). Unless stated otherwise, we report single run results due to budget reasons.

Considered Datasets We use the GAIA benchmark (Mialon et al., 2024) focusing on the validation set (165 tasks) for budgetary reasons and also because it comes with the ground truth answers. The considered tasks are highly diverse in nature; many require parsing websites or analyzing PDF, image, and audio files. We focus on GAIA as this is currently the most comprehensive benchmark for general-purpose AI assistants, covering diverse domains such as web navigation, code execution, image reasoning, scientific QA, and multimodal tasks. We further evaluate on SimpleQA (Wei et al., 2024), a factuality benchmark of 4,326 questions, of which we sample 10% for budgetary reasons. The dataset spans diverse topics and emphasizes single, verifiable answers, making it effective for assessing factual accuracy.

<details>

<summary>x12.png Details</summary>

### Visual Description

## Bar Chart: Performance Comparison of Language Models on Solving Tasks

### Overview

This image presents a comparative bar chart evaluating the performance of several language models (GPT-40, GPT-4 mini, and variations incorporating Knowledge Graphs (KGs) and Retrieval Augmented Generation (RAG)) across different task-solving scenarios: Zero-Shot, KGoT, KGoT (fusion), and Baselines. The chart displays two primary metrics: the number of solved tasks (left panel) and the average cost per task in US dollars (right panel). The models are positioned along the x-axis, and performance is represented by bar height. Three levels of performance are indicated by color: Level 1 (light blue), Level 2 (medium blue), and Level 3 (dark blue).

### Components/Axes

* **X-axis (Left & Right Panels):** Models being compared: GPT-40, GPT-4 mini, Neo4j, Neo4j + Query, Network + DR, Network + Query, Network + Query + DR, RDP4j + DR, Neo4j + Network (Query + DR), Simple RAG, GraphSwarm, MagneticOne, HF GPT-40 mini.

* **Y-axis (Left Panel):** "Number of Solved Tasks (the higher the better)", ranging from 0 to 70, with a linear scale.

* **Y-axis (Right Panel):** "Average Cost ($) (the lower the better)", displayed on a logarithmic scale from 10^-3 to 10^-1 (0.001 to 0.1).

* **Legend (Top-Left):** Level 1 (light blue), Level 2 (medium blue), Level 3 (dark blue).

* **Annotations:** "Max: 71" and "Max: 3.403$" indicating the maximum values for each metric.

* **Horizontal Labels:** Dividing the chart into sections: "Zero-Shot", "KGoT", "KGoT (fusion)", "Baselines".

### Detailed Analysis or Content Details

**Left Panel: Number of Solved Tasks**

* **GPT-40:** Shows a consistent high performance across all categories.

* Zero-Shot: Approximately 17 tasks solved.

* KGoT: Approximately 21 tasks solved.

* KGoT (fusion): Approximately 34 tasks solved.

* Baselines: Approximately 31 tasks solved.

* **GPT-4 mini:** Generally lower performance than GPT-40.

* Zero-Shot: Approximately 10 tasks solved.

* KGoT: Approximately 21 tasks solved.

* KGoT (fusion): Approximately 28 tasks solved.

* Baselines: Approximately 22 tasks solved.

* **Neo4j:** Performance varies.

* Zero-Shot: Approximately 14 tasks solved.

* KGoT: Approximately 18 tasks solved.

* KGoT (fusion): Approximately 24 tasks solved.

* Baselines: Approximately 13 tasks solved.

* **Neo4j + Query:** Similar to Neo4j.

* Zero-Shot: Approximately 21 tasks solved.

* KGoT: Approximately 21 tasks solved.

* KGoT (fusion): Approximately 33 tasks solved.

* Baselines: Approximately 18 tasks solved.

* **Network + DR:** Performance is moderate.

* Zero-Shot: Approximately 16 tasks solved.

* KGoT: Approximately 21 tasks solved.

* KGoT (fusion): Approximately 20 tasks solved.

* Baselines: Approximately 13 tasks solved.

* **Network + Query:** Performance is moderate.

* Zero-Shot: Approximately 16 tasks solved.

* KGoT: Approximately 21 tasks solved.

* KGoT (fusion): Approximately 24 tasks solved.

* Baselines: Approximately 18 tasks solved.

* **Network + Query + DR:** Performance is moderate.

* Zero-Shot: Approximately 18 tasks solved.

* KGoT: Approximately 20 tasks solved.

* KGoT (fusion): Approximately 34 tasks solved.

* Baselines: Approximately 20 tasks solved.

* **RDP4j + DR:** Performance is moderate.

* Zero-Shot: Approximately 20 tasks solved.

* KGoT: Approximately 21 tasks solved.

* KGoT (fusion): Approximately 27 tasks solved.

* Baselines: Approximately 14 tasks solved.

* **Neo4j + Network (Query + DR):** Performance is high.

* Zero-Shot: Approximately 22 tasks solved.

* KGoT: Approximately 2 tasks solved.

* KGoT (fusion): Approximately 33 tasks solved.

* Baselines: Approximately 14 tasks solved.

* **Simple RAG:** Performance is moderate.

* Zero-Shot: Approximately 13 tasks solved.

* KGoT: Approximately 18 tasks solved.

* KGoT (fusion): Approximately 28 tasks solved.

* Baselines: Approximately 13 tasks solved.

* **GraphSwarm:** Performance is moderate.

* Zero-Shot: Approximately 13 tasks solved.

* KGoT: Approximately 18 tasks solved.

* KGoT (fusion): Approximately 20 tasks solved.

* Baselines: Approximately 13 tasks solved.

* **MagneticOne:** Performance is moderate.

* Zero-Shot: Approximately 13 tasks solved.

* KGoT: Approximately 18 tasks solved.

* KGoT (fusion): Approximately 20 tasks solved.

* Baselines: Approximately 13 tasks solved.

* **HF GPT-40 mini:** Performance is moderate.

* Zero-Shot: Approximately 14 tasks solved.

* KGoT: Approximately 18 tasks solved.

* KGoT (fusion): Approximately 20 tasks solved.

* Baselines: Approximately 13 tasks solved.

**Right Panel: Average Cost ($)**

* **GPT-40:** Highest cost across all categories.

* Zero-Shot: Approximately 0.095$

* KGoT: Approximately 0.135$

* KGoT (fusion): Approximately 0.145$

* Baselines: Approximately 0.145$

* **GPT-4 mini:** Lower cost than GPT-40.

* Zero-Shot: Approximately 0.00175$

* KGoT: Approximately 0.00195$

* KGoT (fusion): Approximately 0.00265$

* Baselines: Approximately 0.0025$

* **Neo4j:** Moderate cost.

* Zero-Shot: Approximately 0.00135$

* KGoT: Approximately 0.00195$

* KGoT (fusion): Approximately 0.00265$

* Baselines: Approximately 0.00135$

* **Neo4j + Query:** Moderate cost.

* Zero-Shot: Approximately 0.00145$

* KGoT: Approximately 0.00195$

* KGoT (fusion): Approximately 0.00265$

* Baselines: Approximately 0.002$

* **Network + DR:** Moderate cost.

* Zero-Shot: Approximately 0.00145$

* KGoT: Approximately 0.00195$

* KGoT (fusion): Approximately 0.00265$

* Baselines: Approximately 0.00135$

* **Network + Query:** Moderate cost.

* Zero-Shot: Approximately 0.00145$

* KGoT: Approximately 0.00195$

* KGoT (fusion): Approximately 0.00265$

* Baselines: Approximately 0.002$

* **Network + Query + DR:** Moderate cost.

* Zero-Shot: Approximately 0.00165$

* KGoT: Approximately 0.00195$

* KGoT (fusion): Approximately 0.00265$

* Baselines: Approximately 0.002$

* **RDP4j + DR:** Moderate cost.

* Zero-Shot: Approximately 0.00165$

* KGoT: Approximately 0.00195$

* KGoT (fusion): Approximately 0.00265$

* Baselines: Approximately 0.00145$

* **Neo4j + Network (Query + DR):** Moderate cost.

* Zero-Shot: Approximately 0.00175$

* KGoT: Approximately 0.00195$

* KGoT (fusion): Approximately 0.00265$

* Baselines: Approximately 0.00145$

* **Simple RAG:** Moderate cost.

* Zero-Shot: Approximately 0.00145$

* KGoT: Approximately 0.00195$

* KGoT (fusion): Approximately 0.00265$

* Baselines: Approximately 0.00135$

* **GraphSwarm:** Moderate cost.

* Zero-Shot: Approximately 0.00145$

* KGoT: Approximately 0.00195$

* KGoT (fusion): Approximately 0.00265$

* Baselines: Approximately 0.00135$

* **MagneticOne:** Moderate cost.

* Zero-Shot: Approximately 0.00145$

* KGoT: Approximately 0.00195$

* KGoT (fusion): Approximately 0.00265$

* Baselines: Approximately 0.00135$

* **HF GPT-40 mini:** Moderate cost.

* Zero-Shot: Approximately 0.00145$

* KGoT: Approximately 0.00195$

* KGoT (fusion): Approximately 0.00265$

* Baselines: Approximately 0.00135$

### Key Observations

* GPT-40 consistently achieves the highest number of solved tasks, but at a significantly higher cost.

* GPT-4 mini offers a substantial cost reduction but with a corresponding decrease in performance.

* Integrating Knowledge Graphs (KGoT and KGoT (fusion)) generally improves performance compared to Zero-Shot for most models.

* The "KGoT (fusion)" scenario consistently yields better results than "KGoT" alone.

* There's a clear trade-off between performance (number of solved tasks) and cost.

* The logarithmic scale on the right panel highlights the cost differences more effectively.

### Interpretation

The data suggests that while GPT-40 is the most capable model, its high cost may be prohibitive for many applications. Models incorporating Knowledge Graphs demonstrate improved performance, indicating the value of external knowledge sources. The fusion of KGoT with other techniques (e.g., Network + Query + DR) appears particularly effective. The chart illustrates a typical efficiency frontier: one can achieve higher performance, but only at the expense of increased cost. The optimal model choice will depend on the specific application's requirements and budget constraints. The consistent cost profile of the models other than GPT-40 and GPT-4 mini suggests a similar underlying computational expense, while the performance differences are likely due to the effectiveness of the knowledge integration and retrieval mechanisms. The logarithmic scale on the cost axis emphasizes the substantial cost difference between GPT-40 and the other models, making it a critical factor in decision-making.

</details>

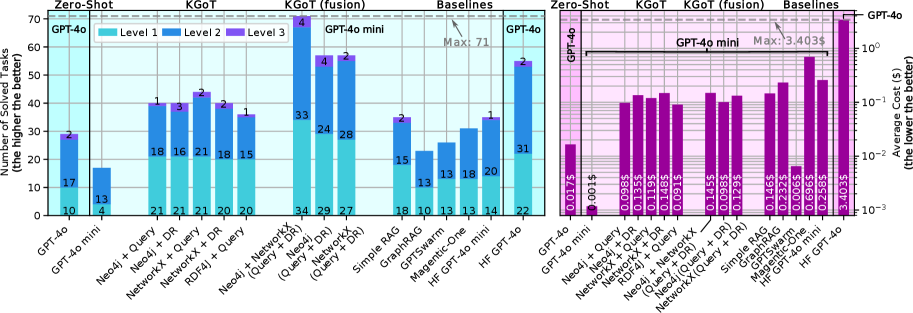

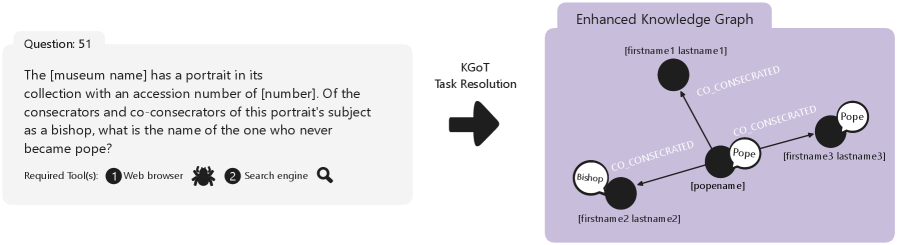

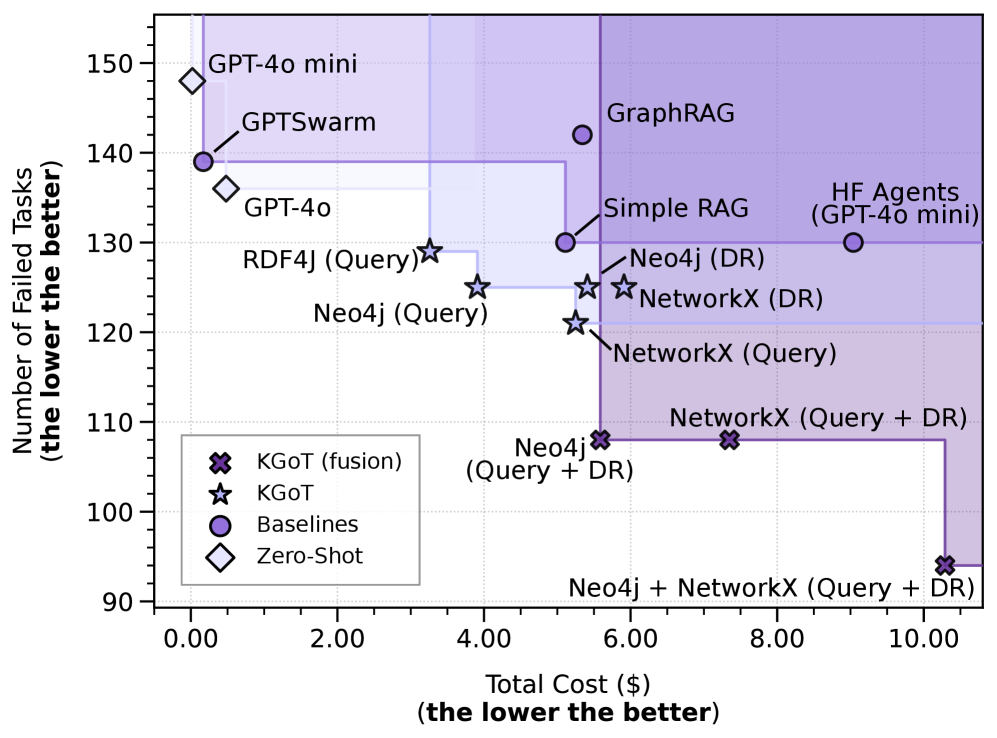

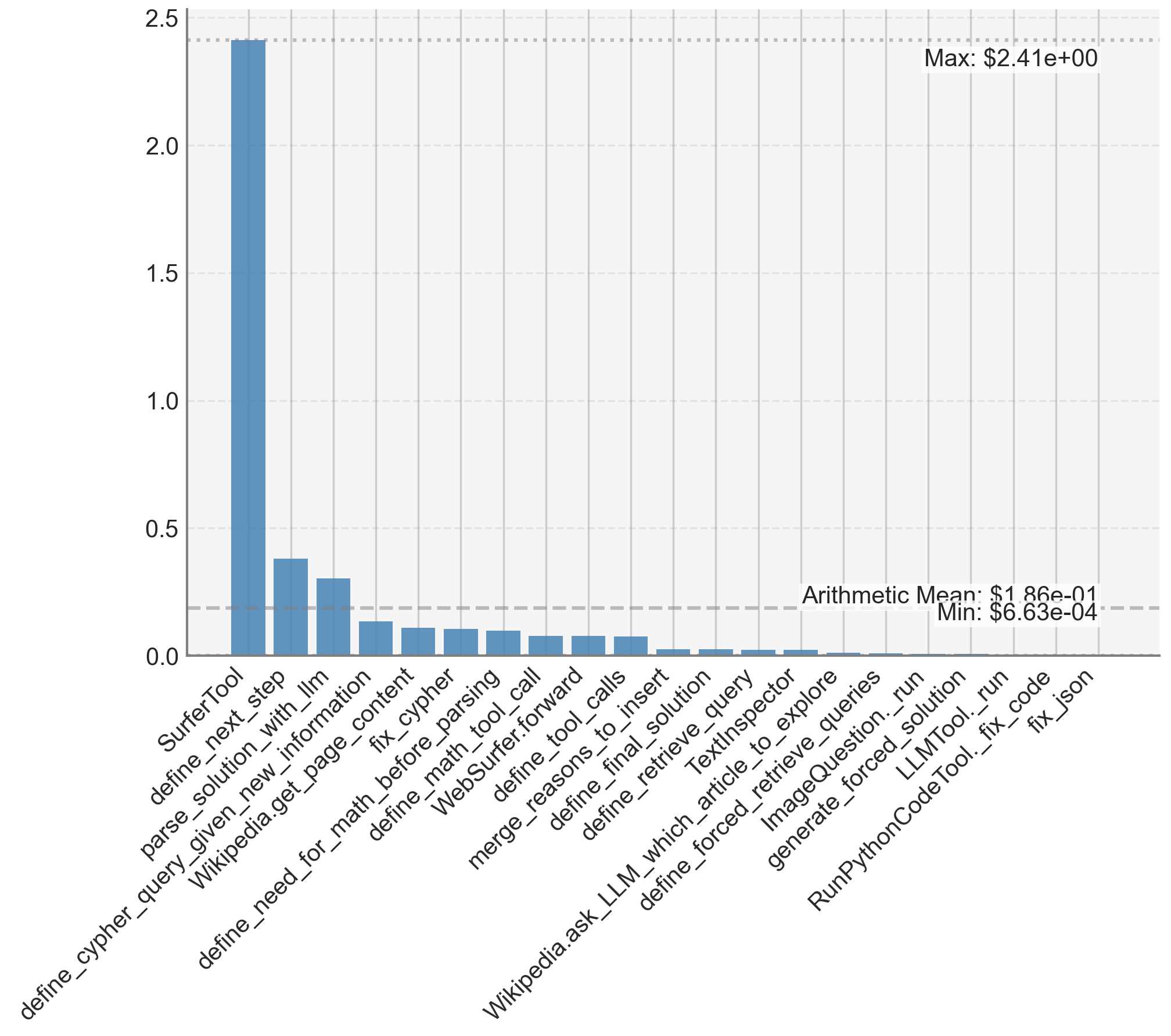

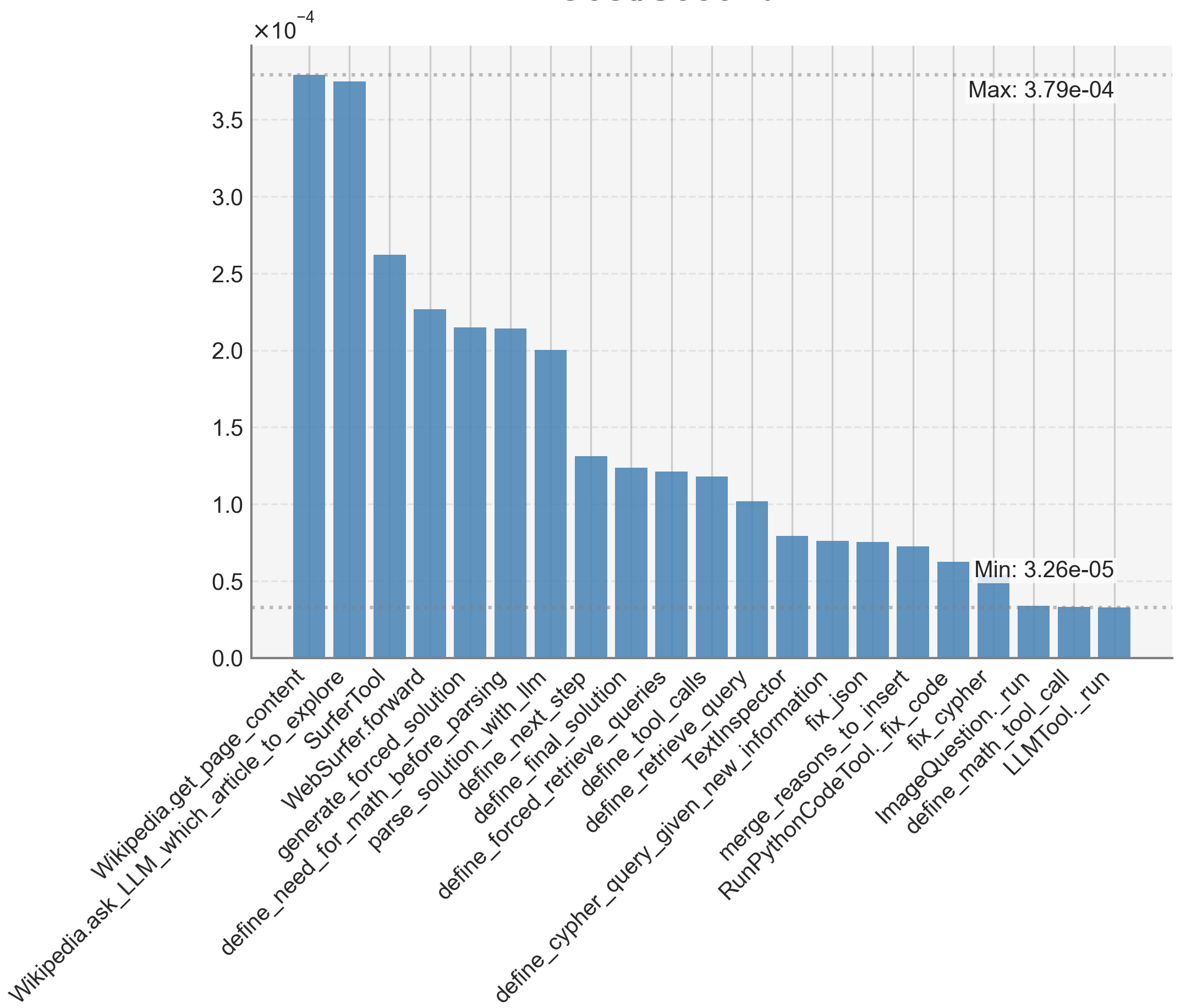

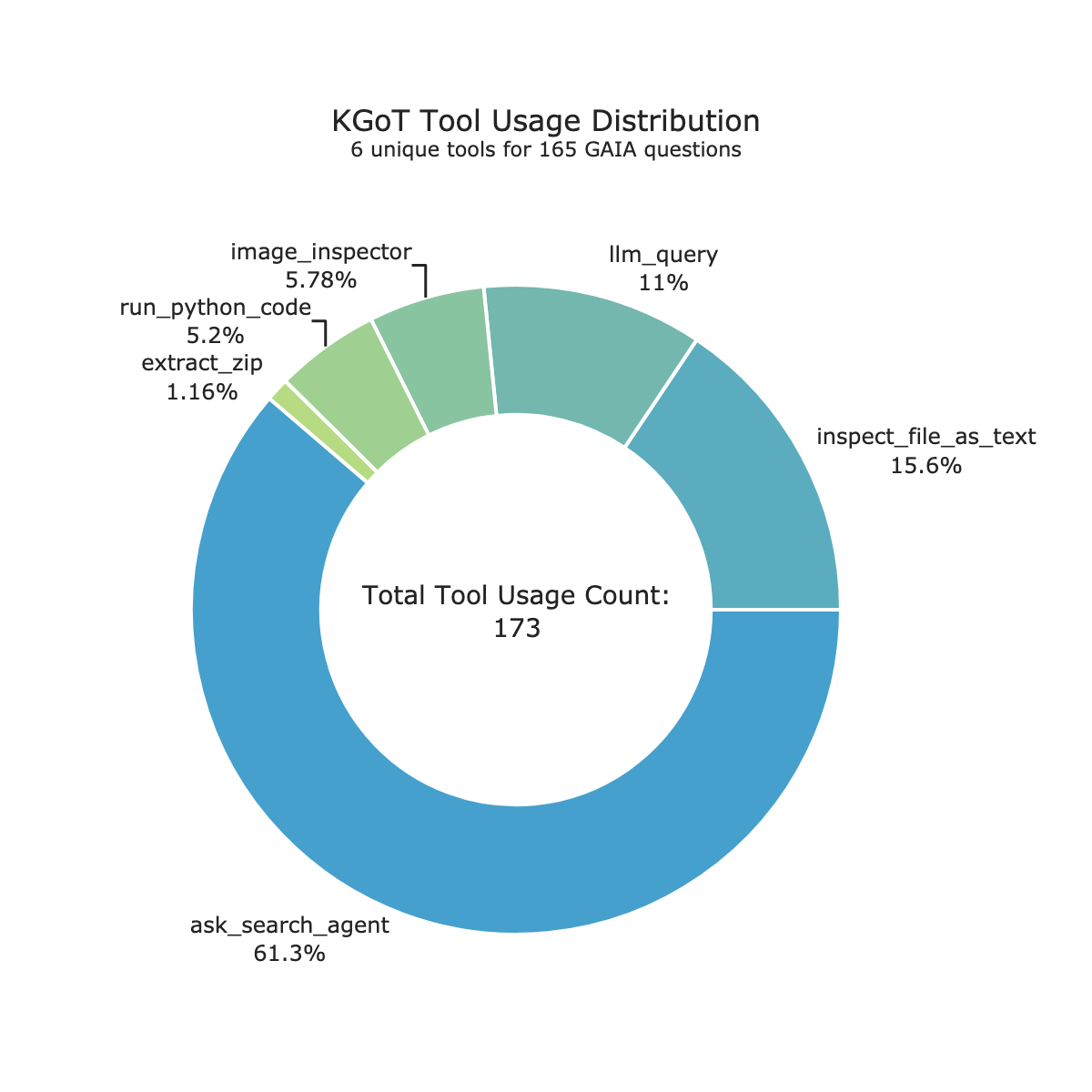

Figure 3: Advantages of different variants of KGoT over other baselines (Hugging Face Agents using both GPT-4o-mini and GPT-4o, Magentic-One, GPTSwarm, two RAG baselines, Zero-Shot GPT-4o mini, and Zero-Shot GPT-4o) on the validation dataset of the GAIA benchmark. DR stands for Direct Retrieval. The used model is GPT-4o mini unless noted otherwise.

5.1 Advantages of KGoT

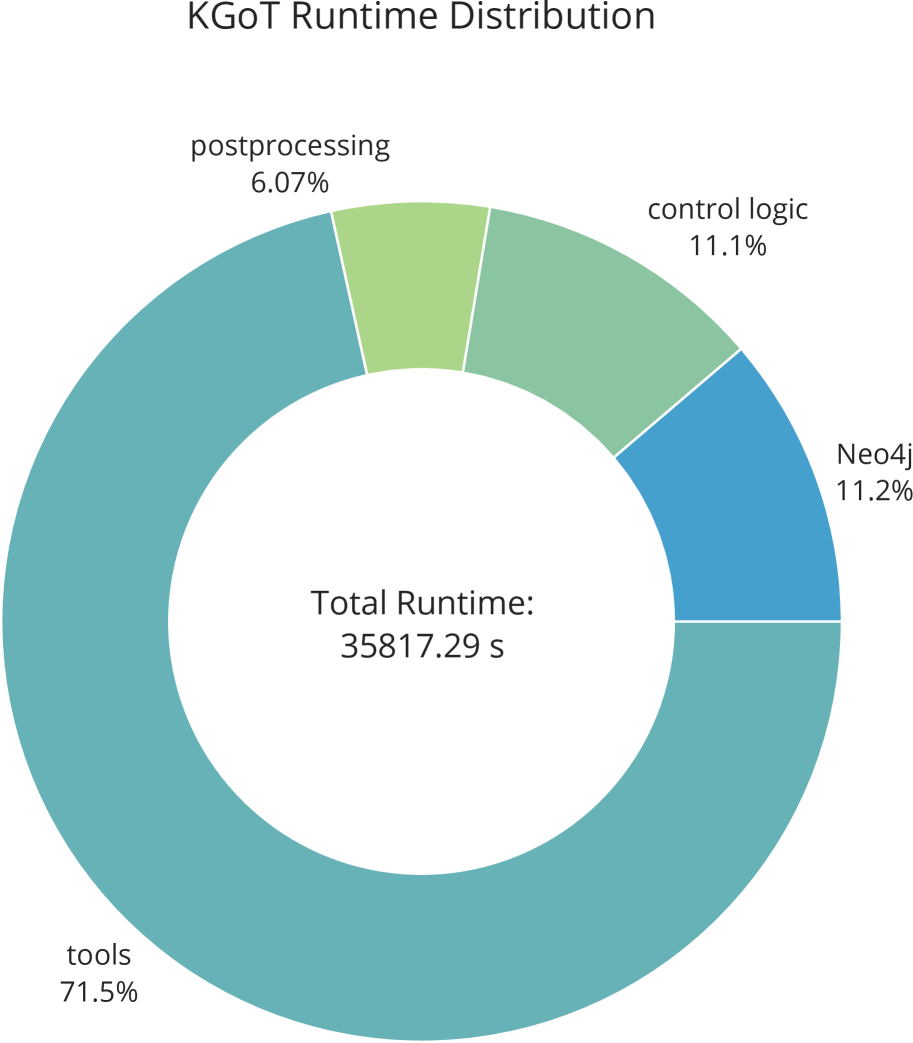

Figure 3 shows the number of solved tasks (the left side) as well as the average cost per solved task (the right side) for different KGoT variants as well as all comparison baselines. While we focus on GPT-4o mini, we also show the results for HF Agents and Zero-Shot with GPT-4o. Additionally, we show the Pareto front in Figure 11 for the multidimensional optimization problem of improving accuracy (i.e., reducing failed tasks) and lowering cost. All variants of KGoT solve a greater number of tasks (up to 9 more) compared to HF Agents while also being more cost-efficient (between 42% to 62% lower costs). The key reason for the KGoT advantages stems from harnessing the knowledge graph–based representation of the evolving task state.

The ideal fusion runs of Neo4j and NetworkX solve an even greater number of tasks (57 for both) than the single runs, they have a lower average cost (up to 62% lower than HF Agents), and they even outperform HF Agents with GPT-4o. The fusion of all combinations of backend and solver types solve by far the highest number of tasks (71) – more than twice as much as HF Agents – while also exhibiting 44% lower cost than HF Agents. The direct Zero-Shot use of GPT-4o mini and GPT-4o has the lowest average cost per solved task (just $0.0013 and $0.0164 respectively), making it the most cost-effective, however this approach is only able to solve 17 and 29 tasks, respectively. GPTSwarm is cheaper compared to KGoT, but also comes with fewer solved tasks (only 26). While Magentic-One is a capable agent with a sophisticated architecture, its performance with GPT-4o mini is limited, solving 31 tasks correctly, while also exhibiting significantly higher costs. Simple RAG yields somewhat higher costs than KGoT and it solves fewer tasks (35). GraphRAG performs even worse, solving only 23 tasks and incurring even higher cost. While neither RAG baseline can invoke new tools to gather missing information (reducing accuracy and adaptability), GraphRAG’s worse performance is due to the fact that it primarily targets query summarization and not tasks as diverse as those tested by GAIA. Overall, KGoT achieves the best cost-accuracy tradeoff, being both highly affordable and very effective.

5.2 Analysis of Methods for Knowledge Extraction

We explore different methods of extracting knowledge. Overall, in many situations, different methods have complementary strengths and weaknesses.

Graph queries with Neo4j excel at queries such as counting patterns. Yet, Cypher queries can be difficult to generate correctly, especially for graphs with more nodes and edges. Despite this, KGoT’s Cypher queries are able to solve many new GAIA tasks that could not be solved without harnessing Cypher. SPARQL (Pérez et al., 2009) + RDF4J (Eclipse Foundation, 2025) is slightly worse (36 tasks solved) than Cypher + Neo4j (existing literature also indicates that LLMs have difficulties formulating effective SPARQL queries (Emonet et al., 2024; Mecharnia & d’Aquin, 2025)).

Python with NetworkX offers certain advantages over Neo4j by eliminating the need for a separate database server, making it a lightweight choice for the KG. Moreover, NetworkX computations are fast and efficient for small to medium-sized graphs without the overhead of database transactions. Unlike Neo4j, which requires writing Cypher queries, we observe that in cases where Neo4j-based implementations struggle, NetworkX-generated graphs tend to be more detailed and provide richer vertex properties and relationships. This is likely due to the greater flexibility of Python code over Cypher queries for graph insertion, enabling more fine-grained control over vertex attributes and relationships. Another reason may be the fact that Python is likely more represented in the training data of the respective models than Cypher.

Our analysis of failed tasks indicates that, in many cases, the KG contains the required data, but the graph query fails to extract it. In such scenarios, Direct Retrieval, where the entire KG is included in the model’s context, performs significantly better by bypassing query composition issues. However, Direct Retrieval demonstrates lower accuracy in cases requiring structured, multi-step reasoning.

We also found that Direct Retrieval excels at extracting dispersed information but struggles with structured queries, whereas graph queries are more effective for structured reasoning but can fail when the LLM generates incorrect query formulations. Although both Cypher and general-purpose queries occasionally are erroneous, Python scripts require more frequent corrections because they are often longer and more error-prone. However, despite the higher number of corrections, the LLM is able to fix Python code more easily than Cypher queries, often succeeding after a single attempt. During retrieval, the LLM frequently embeds necessary computations directly within the Python scripts while annotating its reasoning through comments, improving transparency and interpretability.

5.3 Advantages on the GAIA Test Set

Table 1: Comparison of KGoT with other current state-of-the-art open-source agents on the full GAIA test set. The baseline data, including for TapeAgent (Bahdanau et al., 2024), of the number of solved tasks is obtained through the GAIA Leaderboard (Mialon et al., 2025). We highlight the best performing scheme in a given category in bold. Model: GPT-4o mini.

| Agents | All | L1 | L2 | L3 |

| --- | --- | --- | --- | --- |

| GPTSwarm | 33 | 15 | 15 | 3 |

| Magentic-One | 43 | 22 | 18 | 3 |

| TapeAgent | 66 | 28 | 35 | 3 |

| Hugging Face Agents | 68 | 30 | 34 | 4 |

| KGoT (fusion) | 73 | 33 | 36 | 4 |

Furthermore, our approach achieves state-of-the-art performance on the GAIA test set with the GPT-4o mini model. The results are shown in Table 1, underscoring its effectiveness across all evaluation levels. The test set consists of 301 tasks (93 level 1 tasks, 159 level 2 tasks and 49 level 3 tasks).

5.4 Advantages beyond GAIA Benchmark

We also evaluate KGoT as well as HF Agents and GPTSwarm on a 10% sample (433 tasks) of the SimpleQA benchmark (detailed results are in Appendix D.1). KGoT performs best, solving 73.21%, while HF Agents and GPTSwarm exhibit reduced accuracy (66.05% and 53.81% respectively). KGoT incurs only 0.018$ per solved task, less than a third of the HF Agents costs (0.058$), while being somewhat more expensive than GPTSwarm (0.00093$).

We further evaluate KGoT on the entire SimpleQA benchmark (due to very high costs of running all SimpleQA questions, we limit the full benchmark evaluation to KGoT). We observe no degradation in performance with a 70.34% accuracy rate. When compared against the official F1-scores of various OpenAI and Claude models (OpenAI, 2025), KGoT outperforms all the available results. Specifically, our design achieves a 71.06% F1 score, significantly surpassing the 49.4% outcome of the top-performing reasoning model and improving upon all mini-reasoning models by at least 3.5 $×$ . Furthermore, KGoT exceeds the performance of all standard OpenAI models, from GPT-4o’s 40% F1 score to the best-scoring closed-source model, GPT-4.5, with 62.5%. More detailed results are available in Appendix D.1.

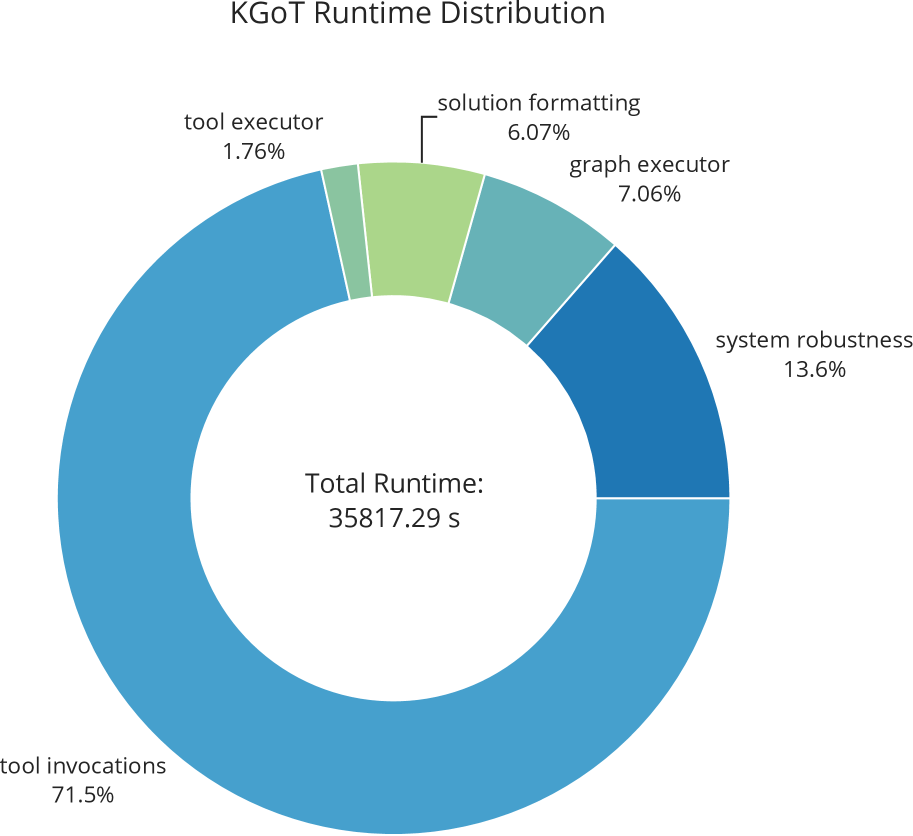

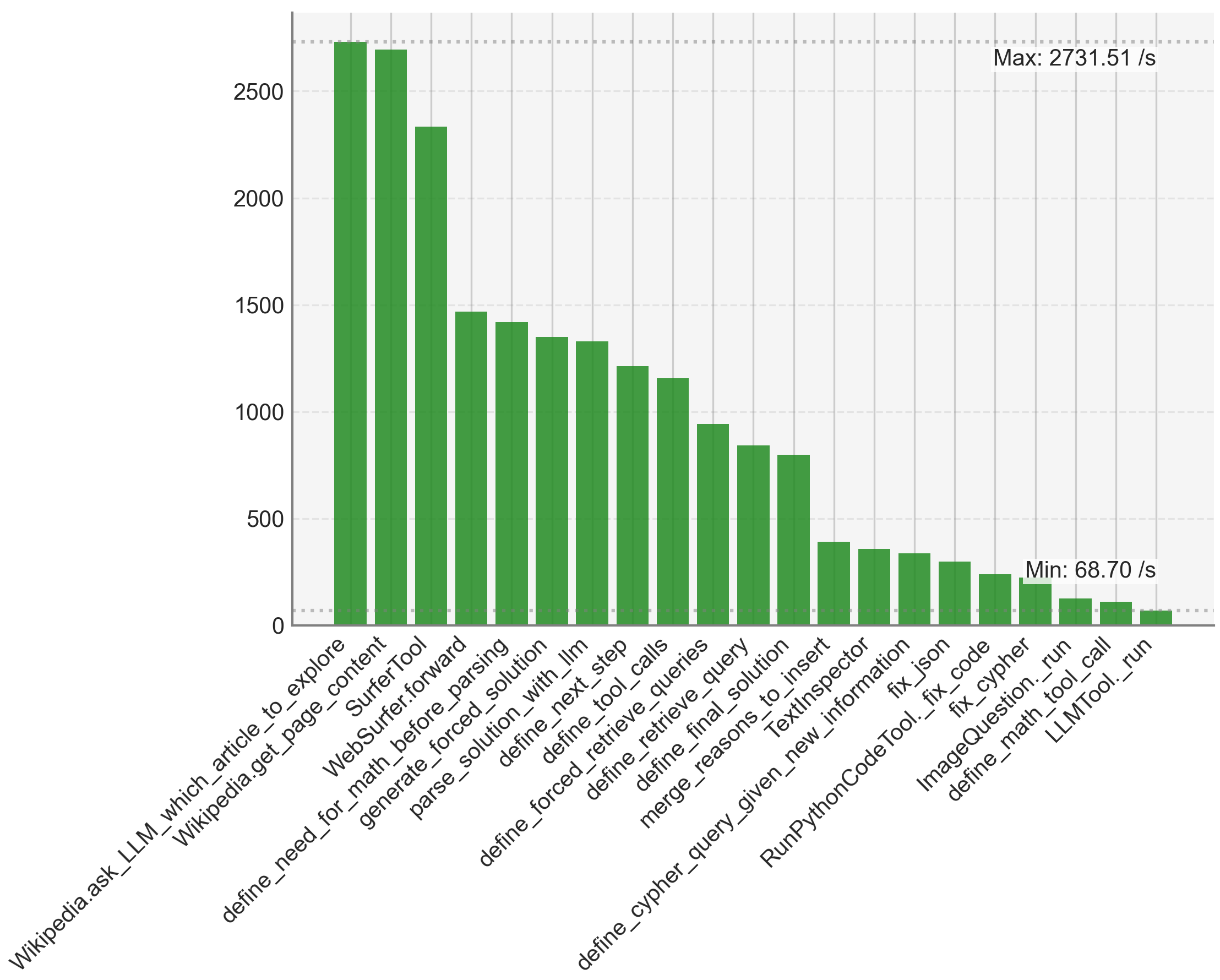

5.5 Ensuring Scalability and Mitigating Bottlenecks

The primary bottleneck in KGoT arises from I/O-bound and latency-sensitive LLM tool invocations (e.g., web browsing, text parsing), which account for 72% of the runtime, which KGoT mitigates through asynchronous execution and graph operation parallelism as discussed in Section 3.4. A detailed breakdown of the runtime is reported in Appendix D.3. Figure 10 confirms KGoT’s scalability, as increasing the number of parallelism consistently reduces the runtime. Moreover, due to the effective knowledge extraction process and the nature of the tasks considered, none of the tasks require large KGs. The maximum graph size that we observed was 522 nodes. This is orders of magnitude below any scalability concerns.

5.6 Impact from Various Design Decisions

<details>

<summary>x13.png Details</summary>

### Visual Description

\n

## Bar Chart: Performance Comparison of Different Agent Architectures

### Overview

This bar chart compares the performance of four different agent architectures – GPTswarm, HF Agents, KGoT (Neo4j + Query), and Zero-Shot – across a range of language models. The performance metric is the "Number of Solved Tasks" (the higher the better). The chart displays the number of solved tasks for each agent architecture on each language model.

### Components/Axes

* **X-axis:** Language Models - Owen2.5-32B, DeepSeek-R1-70B, GPT-40 mini, DeepSeek-R1-32B, QwQ-32B, DeepSeek-R1-7B, Owen2.5-72B, Owen2.5-7B, Owen2.5-1.5B

* **Y-axis:** Number of Solved Tasks (the higher the better), ranging from 0 to 50.

* **Legend:**

* GPTswarm (Light Red)

* HF Agents (Light Blue)

* KGoT (Neo4j + Query) (Medium Blue)

* Zero-Shot (Hatched Pattern)

### Detailed Analysis

The chart consists of a series of grouped bar plots, one group for each language model. For each model, there are four bars representing the performance of each agent architecture.

Here's a breakdown of the data, approximate values are provided with uncertainty due to bar height estimation:

* **Owen2.5-32B:**

* GPTswarm: ~19

* HF Agents: ~29

* KGoT: ~26

* Zero-Shot: ~3

* **DeepSeek-R1-70B:**

* GPTswarm: ~16

* HF Agents: ~26

* KGoT: ~17

* Zero-Shot: ~0

* **GPT-40 mini:**

* GPTswarm: ~22

* HF Agents: ~22

* KGoT: ~14

* Zero-Shot: ~0

* **DeepSeek-R1-32B:**

* GPTswarm: ~17

* HF Agents: ~40

* KGoT: ~21

* Zero-Shot: ~0

* **QwQ-32B:**

* GPTswarm: ~6

* HF Agents: ~16

* KGoT: ~14

* Zero-Shot: ~0

* **DeepSeek-R1-7B:**

* GPTswarm: ~20

* HF Agents: ~39

* KGoT: ~2

* Zero-Shot: ~0

* **Owen2.5-72B:**

* GPTswarm: ~27

* HF Agents: ~39

* KGoT: ~5

* Zero-Shot: ~2

* **Owen2.5-7B:**

* GPTswarm: ~19

* HF Agents: ~37

* KGoT: ~12

* Zero-Shot: ~3

* **Owen2.5-1.5B:**

* GPTswarm: ~12

* HF Agents: ~19

* KGoT: ~9

* Zero-Shot: ~4

**Trends:**

* **HF Agents** consistently outperforms the other architectures across most language models, generally achieving the highest number of solved tasks. The HF Agents line generally slopes upward, peaking at Owen2.5-72B and DeepSeek-R1-7B.

* **GPTswarm** shows moderate performance, generally falling between HF Agents and KGoT.

* **KGoT** generally performs the worst, with very low scores on several models.

* **Zero-Shot** consistently has the lowest performance, often scoring 0 solved tasks.

### Key Observations

* HF Agents demonstrate a clear advantage over other architectures.

* The performance of all architectures varies significantly depending on the language model used.

* Zero-Shot consistently underperforms, suggesting it is not a viable approach for these tasks.

* DeepSeek-R1-32B shows the highest performance for HF Agents, reaching 40 solved tasks.

### Interpretation

The data suggests that HF Agents are the most effective architecture for solving tasks using these language models. The significant difference in performance between HF Agents and other architectures highlights the benefits of the HF Agents approach. The variation in performance across different language models indicates that the choice of language model is crucial for achieving good results. The consistently poor performance of Zero-Shot suggests that it lacks the necessary capabilities for these tasks. The chart provides valuable insights into the strengths and weaknesses of different agent architectures and can inform the selection of the most appropriate architecture for a given task and language model. The high performance of HF Agents on DeepSeek-R1-32B is a notable outlier, suggesting a particularly strong synergy between these two components.

</details>

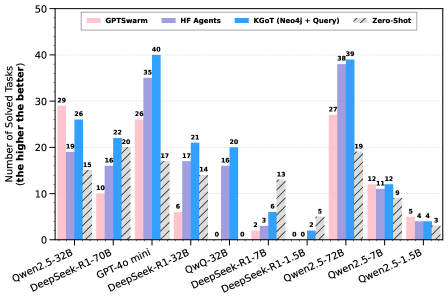

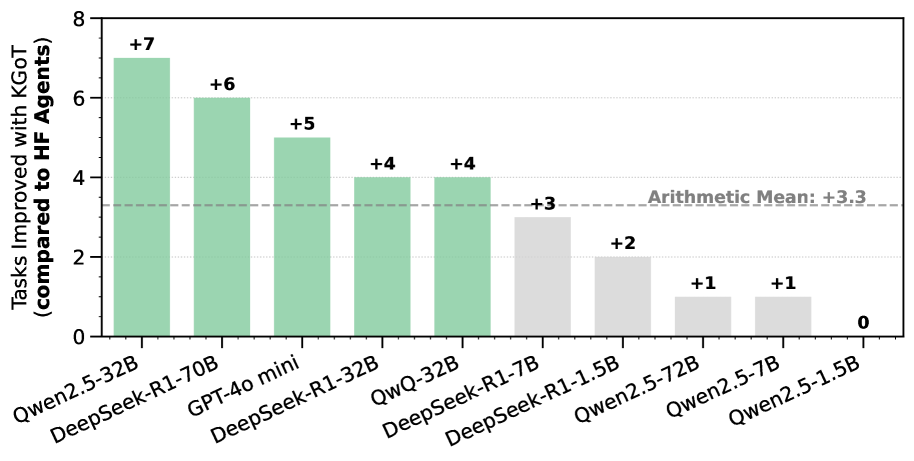

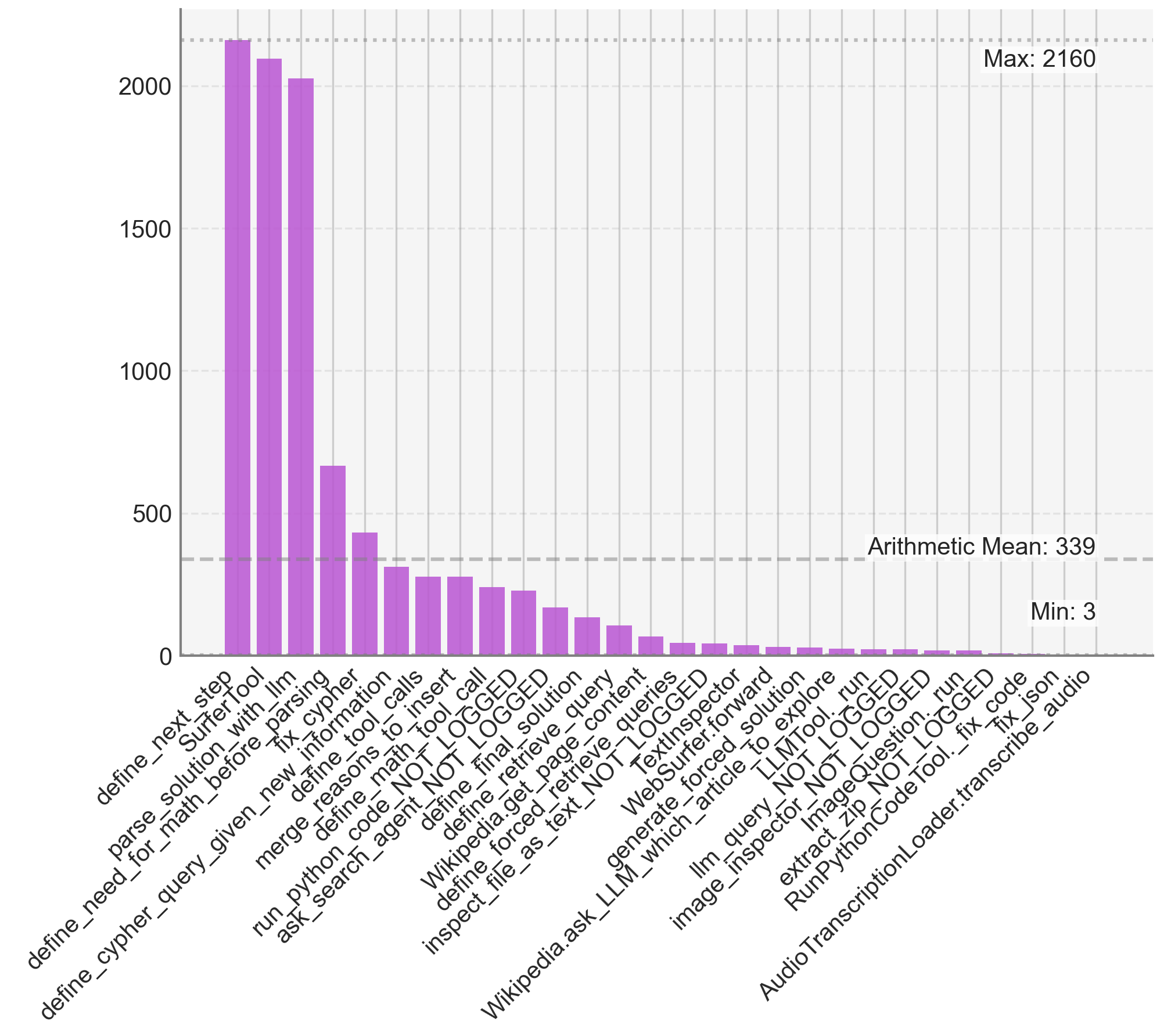

Figure 4: Performance on the GAIA validation set with KGoT (non-fusion) using various LLM models. For KGoT, we use Cypher queries for knowledge extraction from the Neo4j database.

<details>

<summary>x14.png Details</summary>

### Visual Description

\n

## Bar Chart: Performance Comparison of Knowledge Graph Systems

### Overview

This bar chart compares the performance of different knowledge graph systems (Neo4j, NetworkX, and combinations thereof) across various approaches (Direct Retrieve, Query, Query + DR) and levels of task complexity (Level 1, Level 2, Level 3). The performance metric is the "Number of Solved Tasks," where a higher number indicates better performance. A "Max" value is also indicated.

### Components/Axes

* **X-axis:** Represents the different system configurations and approaches. Categories are: "Direct Retrieve", "Query", "Query + DR", "Single Run #1", "Single Run #2", "Fusion". These are further grouped under "Neo4j", "NetworkX", "Neo4j + NetworkX", and "No KG".

* **Y-axis:** Represents the "Number of Solved Tasks" with a scale from 0 to 80. The label also states "(the higher the better)".

* **Legend:**

* Level 1: Light Blue

* Level 2: Medium Blue

* Level 3: Purple

* Max: Grey

* **Title:** Not explicitly present, but the chart's content implies a title related to performance comparison.

### Detailed Analysis

The chart consists of grouped bar plots for each system configuration. Each group has three bars representing Level 1, Level 2, and Level 3 tasks, plus a grey "Max" bar.

**Neo4j:**

* **Direct Retrieve:** Level 1: ~21, Level 2: ~18, Level 3: ~16

* **Query:** Level 1: ~40, Level 2: ~21, Level 3: ~21

* **Query + DR:** Level 1: ~24, Level 2: ~29, Level 3: ~42

**NetworkX:**

* **Direct Retrieve:** Level 1: ~20, Level 2: ~20, Level 3: ~18

* **Query:** Level 1: ~28, Level 2: ~21, Level 3: ~28

* **Query + DR:** Level 1: ~60, Level 2: ~34, Level 3: ~2

**Neo4j + NetworkX:**

* **Direct Retrieve:** Level 1: ~25, Level 2: ~26, Level 3: ~24

* **Query:** Level 1: ~54, Level 2: ~33, Level 3: ~3

* **Query + DR:** Level 1: ~72, Level 2: ~33, Level 3: ~5

**No KG:**

* **Single Run #1:** Level 1: ~14, Level 2: ~14, Level 3: ~2

* **Single Run #2:** Level 1: ~20, Level 2: ~16, Level 3: ~18

* **Fusion:** Level 1: ~32, Level 2: ~42, Level 3: ~38

### Key Observations

* The "Query + DR" approach generally performs best for Neo4j and Neo4j + NetworkX, especially at Level 1.

* NetworkX shows a significant performance boost with the "Query + DR" approach at Level 1, outperforming Neo4j and Neo4j + NetworkX.

* Level 3 tasks consistently have the lowest solved counts across all configurations.

* The "Max" value is relatively low across all configurations, suggesting a ceiling on performance.

* The "Fusion" approach in the "No KG" category shows the highest performance among the "No KG" configurations.

### Interpretation

The chart demonstrates the effectiveness of combining query-based and direct retrieval approaches for knowledge graph systems. The "Query + DR" strategy appears to leverage the strengths of both methods, leading to improved performance, particularly for Level 1 tasks. The performance differences between Neo4j, NetworkX, and their combination suggest that each system has unique capabilities and limitations. The consistently low performance on Level 3 tasks indicates that these tasks are inherently more challenging or require more sophisticated reasoning capabilities than the systems currently possess. The "Max" value suggests that there is a limit to the number of tasks that can be solved, even with the best configuration. The "No KG" category provides a baseline for comparison, and the "Fusion" approach shows that even without a dedicated knowledge graph, reasonable performance can be achieved through alternative methods. The chart highlights the importance of selecting the appropriate system configuration and approach based on the specific task complexity and desired performance level.

</details>

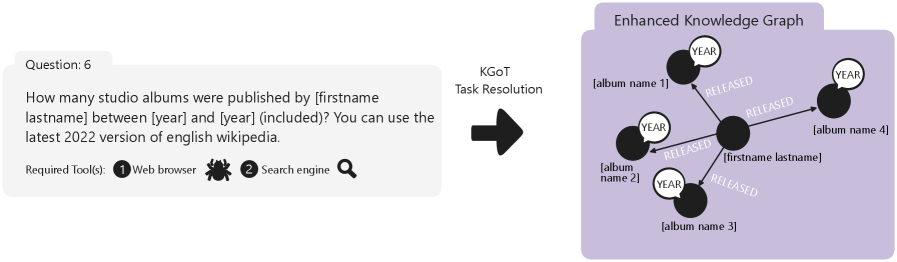

Figure 5: The impact coming from harnessing knowledge graphs (KGs) with different knowledge extraction methods (graph queries with Neo4j and Cypher, and general-purpose languages with Python and NetworkX), vs. using no KGs at all. DR stands for Direct Retrieval. Model: GPT-4o mini.

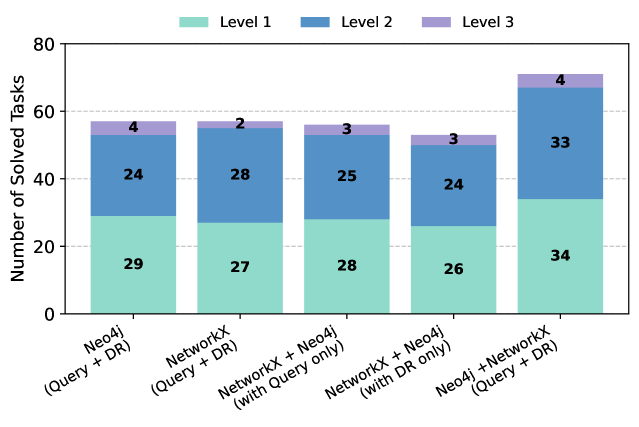

We also show the advantages of KGoT on different open models in Figure 5 over HF Agents and GPTSwarm for nearly all considered models (Yang et al., 2025; Guo et al., 2025). Interestingly, certain sizes of DeepSeek-R1 (Guo et al., 2025) offer high Zero-Shot performance that outperforms both KGoT and HF Agents, illustrating potential for further improvements specifically aimed at Reasoning Language Models (RLMs) (Besta et al., 2025a; c).

Finally, we investigate the impact on performance coming from harnessing KGs, vs. using no KGs at all (the “no KG” baseline), which we illustrate in Figure 5. Harnessing KGs has clear advantages, with a nearly 2 $×$ increase in the number of solved tasks. This confirms the positive impact from structuring the task related knowledge into a graph format, and implies that our workflow generates high quality graphs. To further confirm this, we additionally verified these graphs manually and we discovered that the generated KGs do contain the actual solution (e.g., the solution can be found across nodes/edges of a given KG by string matching). This illustrates that in the majority of the solved tasks, the automatically generated KGs correctly represent the solution and directly enable solving a given task.

We offer further analyses in Appendix D, including studying the impact on performance from different tool sets, prompt formats as well as fusion types.

6 Related Work

Our work is related to numerous LLM domains.

First, we use LangChain (LangChain Inc., 2025a) to facilitate the integration of the LLM agents with the rest of the KGoT system. Other such LLM integration frameworks, such as MiniChain (Rush, 2023) or AutoChain (Forethought, 2023), could be used instead.

Agent collaboration frameworks are systems such as Magentic-One and numerous others (Zhuge et al., 2024; Tang et al., 2024; Liu et al., 2024b; Li et al., 2024; Chu et al., 2024; Wu et al., 2024; Chen et al., 2024; Hong et al., 2024; Shinn et al., 2023; Zhu et al., 2024; Kagaya et al., 2024; Zhao et al., 2024a; Stengel-Eskin et al., 2024; Significant Gravitas, 2025; Zhu et al., 2025). The core KGoT idea that can be applied to enhance such frameworks is that a KG can also be used as a common shared task representation for multiple agents solving a task together. Such a graph would be then updated by more than a single agent. This idea proves effective, as confirmed by the fact that KGoT outperforms highly competitive baselines (HF Agents, Magentic-One, GPTSwarm) in both GAIA and SimpleQA benchmarks.

Some agent frameworks explicitly use graphs for more effective collaboration. Examples are GPTSwarm (Zhuge et al., 2024), MacNet (Qian et al., 2025), and AgentPrune (Zhang et al., 2025). These systems differ from KGoT as they use a graph to model and manage multiple agents in a structured way, forming a hierarchy of tools. Contrarily, KGoT uses KGs to represent the task itself, including its intermediate state. These two design choices are orthogonal and could be combined together. Moreover, while KGoT only relies on in-context learning; both MacNet (Qian et al., 2025) and AgentPrune (Zhang et al., 2025) require additional training rounds, making their integration and deployment more challenging and expensive than KGoT.

Many works exist in the domain of general prompt engineering (Beurer-Kellner et al., 2024; Besta et al., 2025c; Yao et al., 2023a; Besta et al., 2024a; Wei et al., 2022; Yao et al., 2023b; Chen et al., 2023; Creswell et al., 2023; Wang et al., 2023a; Hu et al., 2024; Dua et al., 2022; Jung et al., 2022; Ye et al., 2023). One could use such schemes to further enhance respective parts of the KGoT workflow. While we already use prompts that are suited for encoding knowledge graphs, possibly harnessing other ideas from that domain could bring further benefits.

Task decomposition & planning increases the effectiveness of LLMs by dividing a task into subtasks. Examples include ADaPT (Prasad et al., 2024), ANPL (Huang et al., 2023), and others (Zhu et al., 2025; Shen et al., 2023). Overall, the whole KGoT workflow already harnesses recursive task decomposition: the input task is divided into numerous steps, and many of these steps are further decomposed into sub steps by the LLM Graph Executor if necessary. For example, when solving a task based on the already constructed KG, the LLM Graph Executor may decide to decompose this step similarly to ADaPT. Other decomposition schemes could also be tried, we leave this as future work.

Retrieval-Augmented Generation (RAG) is an important part of the LLM ecosystem, with numerous designs being proposed (Edge et al., 2025; Gao et al., 2024; Besta et al., 2025b; Zhao et al., 2024b; Hu & Lu, 2025; Huang & Huang, 2024; Yu et al., 2024a; Mialon et al., 2023; Li et al., 2022; Abdallah & Jatowt, 2024; Delile et al., 2024; Manathunga & Illangasekara, 2023; Zeng et al., 2024; Wewer et al., 2021; Xu et al., 2024; Sarthi et al., 2024; Asai et al., 2024; Yu et al., 2024b; Gutiérrez et al., 2024). RAG has been used primarily to ensure data privacy and to reduce hallucinations. We illustrate that it has lower performance than KGoT when applied to AI assistant tasks.

Another increasingly important part of the LLM ecosystem is the usage of tools to augment the abilities of LLMs (Beurer-Kellner et al., 2023; Schick et al., 2023; Xie et al., 2024). For example, ToolNet (Liu et al., 2024a) uses a directed graph to model the application of multiple tools while solving a task, however focuses specifically on the iterative usage of tools at scale. KGoT harnesses a flexible and adaptable hierarchy of various tools, which can easily be extended with ToolNet and such designs, to solve a wider range of complex tasks.

While KGoT focuses on classical AI assistant tasks, it can be extended to other applications. Promising directions could include supporting multi-stage, cost-efficient reasoning, for example to enhance the capabilities of the recent reasoning models such as DeepSeek-R1. Extending KGoT to this and other domains may require new ways of KG construction via predictive graph models (Besta et al., 2023a; 2024c), integration with neural graph databases (Besta et al., 2022), or deployment over distributed-memory clusters for scalability. Further, refining its reasoning strategies through advanced task decomposition schemes could improve performance on very long-horizon tasks. These directions highlight both the generality of the framework and current boundaries in tool orchestration, reasoning depth, and scalability, which we aim to address in future work.

7 Conclusion

In this paper, we introduce Knowledge Graph of Thoughts (KGoT), an AI assistant architecture that enhances the reasoning capabilities of low-cost models while significantly reducing operational expenses. By dynamically constructing and evolving knowledge graphs (KGs) that encode the task and its resolution state, KGoT enables structured knowledge representation and retrieval, improving task success rates on benchmarks such as GAIA and SimpleQA. Our extensive evaluation demonstrates that KGoT outperforms existing LLM-based agent solutions, for example achieving a substantial increase in task-solving efficiency of 29% or more over the competitive Hugging Face Agents baseline, while ensuring over 36 $×$ lower costs. Thanks to its modular design, KGoT can be extended to new domains that require complex multi-step reasoning integrated with extensive interactions with the external compute environment, for example automated scientific discovery or software design.

Acknowledgments

We thank Chi Zhang and Muyang Du for their contributions to the framework. We thank Hussein Harake, Colin McMurtrie, Mark Klein, Angelo Mangili, and the whole CSCS team granting access to the Ault, Daint and Alps machines, and for their excellent technical support. We thank Timo Schneider for help with infrastructure at SPCL. This project received funding from the European Research Council (Project PSAP, No. 101002047), and the European High-Performance Computing Joint Undertaking (JU) under grant agreement No. 955513 (MAELSTROM). This project was supported by the ETH Future Computing Laboratory (EFCL), financed by a donation from Huawei Technologies. This project received funding from the European Union’s HE research and innovation programme under the grant agreement No. 101070141 (Project GLACIATION). We gratefully acknowledge the Polish high-performance computing infrastructure PLGrid (HPC Center: ACK Cyfronet AGH) for providing computer facilities and support within computational grant no. PLG/2024/017103.

References

- Abdallah & Jatowt (2024) Abdelrahman Abdallah and Adam Jatowt. Generator-Retriever-Generator Approach for Open-Domain Question Answering, March 2024. URL https://arxiv.org/abs/2307.11278. arXiv:2307.11278.

- Asai et al. (2024) Akari Asai, Zeqiu Wu, Yizhong Wang, Avirup Sil, and Hannaneh Hajishirzi. Self-RAG: Learning to Retrieve, Generate, and Critique through Self-Reflection. In B. Kim, Y. Yue, S. Chaudhuri, K. Fragkiadaki, M. Khan, and Y. Sun (eds.), Proceedings of the Twelfth International Conference on Learning Representations, ICLR ’24, pp. 9112–9141, Vienna, Austria, May 2024. International Conference on Learning Representations. URL https://proceedings.iclr.cc/paper_files/paper/2024/hash/25f7be9694d7b32d5cc670927b8091e1-Abstract-Conference.html.

- Bahdanau et al. (2024) Dzmitry Bahdanau, Nicolas Gontier, Gabriel Huang, Ehsan Kamalloo, Rafael Pardinas, Alex Piché, Torsten Scholak, Oleh Shliazhko, Jordan Prince Tremblay, Karam Ghanem, Soham Parikh, Mitul Tiwari, and Quaizar Vohra. TapeAgents: A Holistic Framework for Agent Development and Optimization, December 2024. URL https://arxiv.org/abs/2412.08445. arXiv:2412.08445.

- Ben Mahria et al. (2021) Bilal Ben Mahria, Ilham Chaker, and Azeddine Zahi. An Empirical Study on the Evaluation of the RDF Storage Systems. Journal of Big Data, 8(1):100:1–100:20, July 2021. ISSN 2196-1115. doi: 10.1186/s40537-021-00486-y. URL https://journalofbigdata.springeropen.com/articles/10.1186/s40537-021-00486-y.