# Enhancing Trust in AI Marketplaces: Evaluating On-Chain Verification of Personalized AI Models using zk-SNARKs

**Authors**: Nishant Jagannath, Christopher Wong, Braden McGrath, MD Farhad Hossain, Asuquo A. Okon, Abbas Jamalipour, Kumudu S. Munasinghe

> School of IT and Systems, University of Canberra, ACT, Australia

> School of Engineering and Technology, University of New South Wales, ACT, Australia

> School of Electrical and Information Engineering, University of Sydney, NSW, Australia

## Abstract

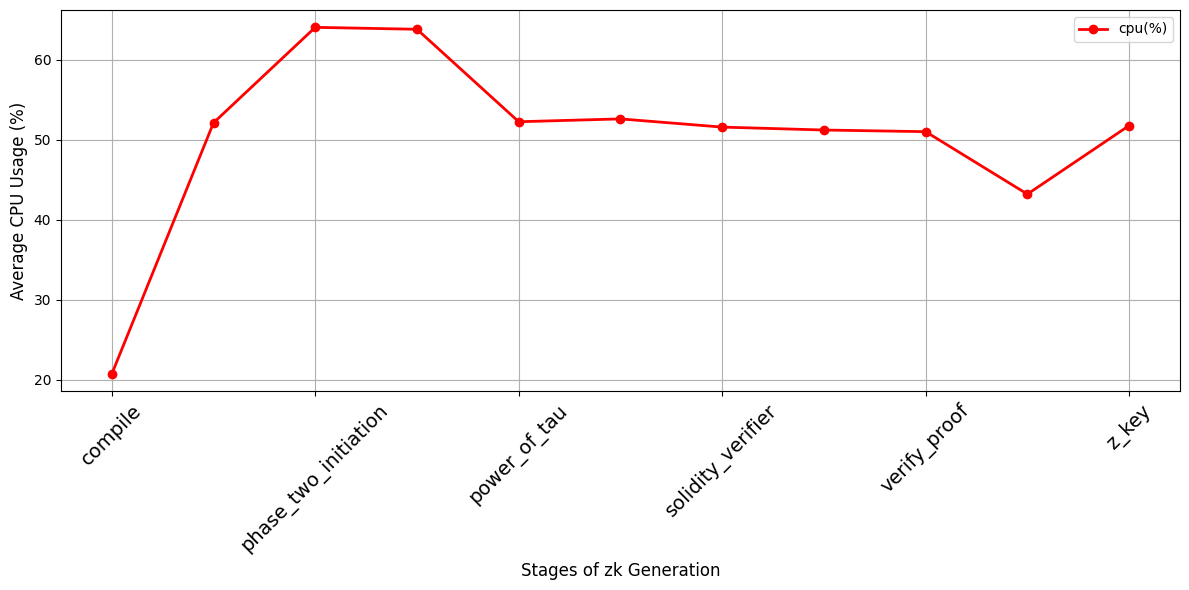

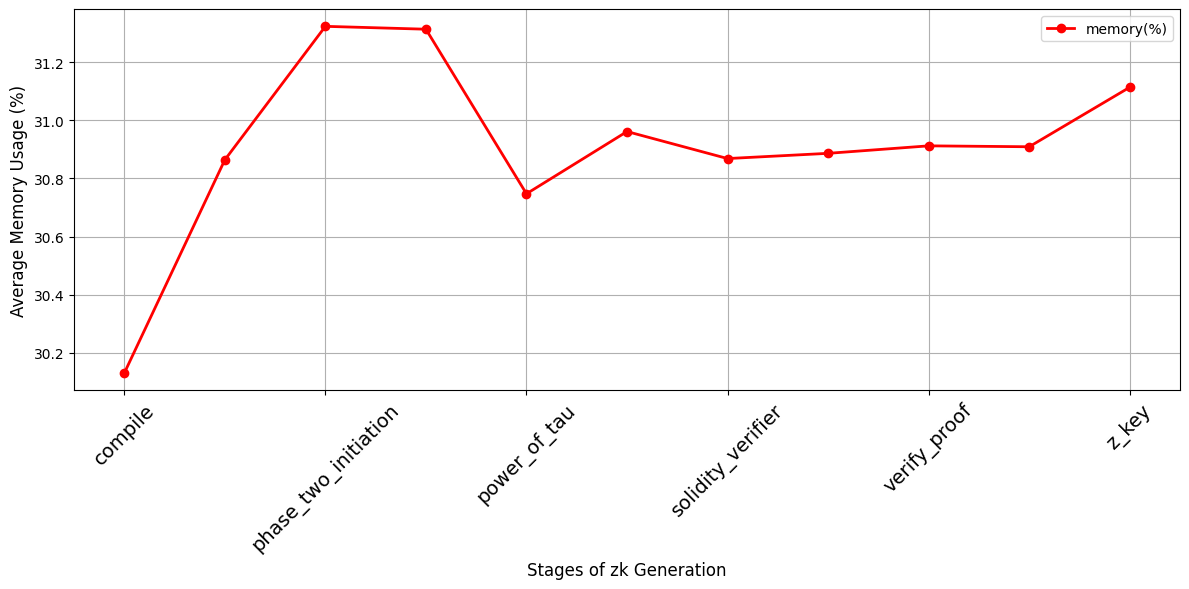

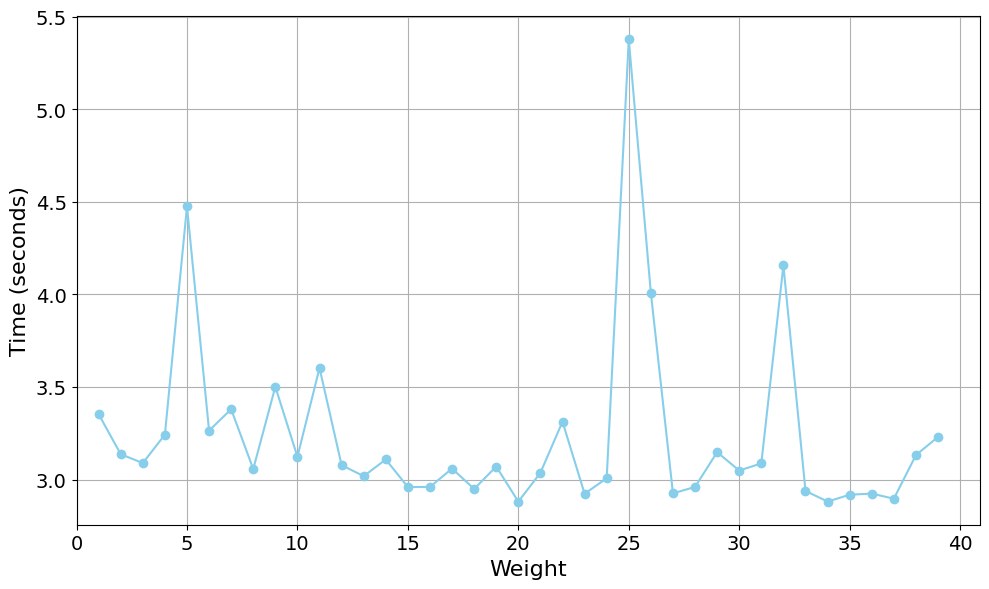

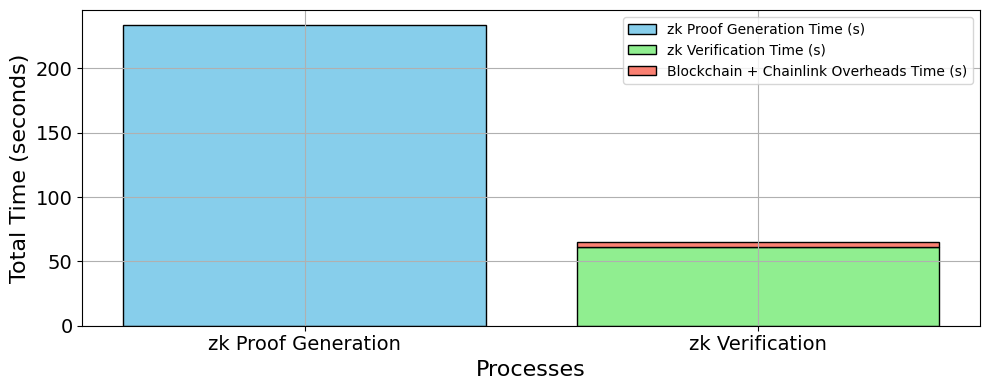

The rapid advancement of artificial intelligence (AI) has produced sophisticated models capable of tasks ranging from image recognition to natural language processing. As these models grow in complexity, ensuring their trustworthiness and transparency becomes critical, particularly in decentralized environments where traditional trust mechanisms are absent. This paper addresses the challenge of verifying personalized AI models in such environments, focusing on their integrity and privacy. We propose a novel framework that integrates zero-knowledge succinct non-interactive arguments of knowledge (zk-SNARKs) with Chainlink decentralized oracles to verify AI model performance claims on blockchain platforms. Our key contribution is the use of Chainlink oracles to securely fetch and verify the external data on which zk-SNARK verification depends, enabling trustless verification of AI models on a blockchain. This approach addresses the limitations of using unverified external data for AI verification on the blockchain while preserving sensitive information about AI models and enhancing transparency. We demonstrate our methodology with a linear regression model that predicts Bitcoin prices from on-chain data, verified on the Sepolia testnet. Our results indicate the framework’s efficacy, with key metrics including an average proof generation time of 233.63 seconds and a verification time of 61.50 seconds. This research paves the way for transparent and trustless verification processes in blockchain-enabled AI ecosystems, addressing key challenges such as model integrity and model privacy protection. The proposed framework, while exemplified with linear regression, is designed for broader applicability to more complex AI models, setting the stage for future advancements in transparent AI verification.

## 1 Introduction

The proliferation of artificial intelligence (AI) has revolutionized the digital landscape, driving a growing demand for personalized, efficient, and reliable AI models. Developing such models, however, is resource-intensive and requires specialized expertise [1]. To bridge this gap, AI marketplaces have emerged as pivotal platforms that facilitate the exchange of personalized AI services. These marketplaces empower developers to monetize their models, providing access to sophisticated AI tools for users who may lack the capacity to develop them independently. A prime example of this trend is the ChatGPT Store [2], which offers diverse AI models tailored to various user needs. By enabling the buying, selling, and sharing of pre-trained AI models, AI marketplaces function much like software app stores but with a focus on AI capabilities rather than applications.

<details>

<summary>extracted/6340811/Strip-1.png Details</summary>

### Visual Description

## Diagram: Three Technical Challenges in Blockchain and AI Systems

### Overview

The image is a horizontal diagram composed of three distinct, side-by-side illustrations. Each illustration pairs a textual statement with a symbolic graphic to represent a specific technical challenge related to trust, integrity, and security in the context of blockchain and personalized AI models. The overall theme is the vulnerability and verification difficulties in decentralized and AI-driven systems.

### Components/Axes

The diagram is divided into three sections, each with a title and an accompanying icon/diagram.

**1. Left Section:**

* **Title Text:** "Blockchain – Lack of Inherent trust"

* **Graphic Components:**

* **Left Side:** Two identical orange-brown neural network icons (each with 3 input nodes, 2 hidden nodes, and 1 output node connected by arrows).

* **Center:** A black, hexagonal blockchain structure composed of interconnected cubes. Inside the central hexagon is a white circle containing various cryptocurrency and blockchain-related symbols (e.g., a Bitcoin '₿' symbol, an Ethereum diamond, a Polkadot logo, a generic chain link).

* **Flow Indicators:** Two curved, dark blue arrows point from the neural network icons towards the central blockchain structure, suggesting data or model inputs being sent to the chain.

**2. Middle Section:**

* **Title Text:** "Personalized AI Model – model’s integrity and confidentiality issues"

* **Graphic Components:**

* A single, larger orange-brown neural network diagram. It has a more complex, interconnected structure with multiple nodes and directional arrows.

* One specific node on the right side of the network is emphasized with a double circle (a circle within a circle), likely representing a personalized or specific output node of concern.

**3. Right Section:**

* **Title Text:** "Detecting changes to the model during inference is challenging."

* **Graphic Components:**

* A light blue line-art icon of a robot's head. The robot has 'X's for eyes and a straight line for a mouth, conveying a non-functional or erroneous state.

* Overlapping the bottom-right of the robot icon is a light blue warning triangle containing an exclamation mark.

### Detailed Analysis

* **Spatial Grounding:** The three challenges are presented in a linear, left-to-right sequence. The titles are positioned directly above their respective graphics. The graphics are centered within their conceptual "columns."

* **Visual Flow & Symbolism:**

* **Left (Blockchain):** The flow arrows indicate a process of feeding external models (neural networks) into a blockchain. The core message, stated in the title, is that the blockchain itself does not inherently solve the trust problem for the data or models being recorded on it.

* **Middle (AI Model):** The isolated, complex neural network with a highlighted node visually represents a "personalized" model. The title specifies the dual issues of **integrity** (has the model been tampered with?) and **confidentiality** (is the model's proprietary structure or data exposed?).

* **Right (Inference):** The "dead" robot icon with a warning sign is a direct metaphor for a model that has been altered or corrupted during its operational phase (inference), making its outputs unreliable. The title explicitly states the difficulty of detecting such changes.

### Key Observations

1. **Consistent Color Coding:** The neural network elements (left and middle) share the same orange-brown color, creating a visual link between the "input models" and the "personalized model." The blockchain is in stark black, and the inference problem is in a distinct light blue.

2. **Progression of Complexity:** The diagrams move from a system interaction (models + blockchain) to a focus on a single model's internal structure, and finally to a symbol of system failure.

3. **Textual Precision:** The titles are concise but technically specific, using terms like "inherent trust," "integrity," "confidentiality," and "inference."

### Interpretation

This diagram outlines a critical security and verification pipeline for AI models operating within or alongside blockchain systems. It presents a logical sequence of problems:

1. **The Foundational Problem:** Even if you use a blockchain to record or coordinate AI models, the chain's immutability doesn't guarantee the *trustworthiness* of the models being put onto it. The "lack of inherent trust" points to the need for external verification mechanisms.

2. **The Core Asset Problem:** The personalized AI model itself is a valuable and vulnerable asset. Its integrity (provenance and lack of tampering) and confidentiality (protection of its architecture and training data) are paramount concerns, especially in decentralized settings where control is distributed.

3. **The Operational Problem:** The most insidious threat may occur during runtime. A model that was verified at deployment could be subtly altered during inference (e.g., via data poisoning or runtime attacks), and detecting these changes in real-time is a significant technical challenge, as symbolized by the malfunctioning robot.

The overarching message is that deploying AI in trust-sensitive environments like blockchain requires solving a multi-layered security challenge: verifying inputs, protecting the model asset, and monitoring its ongoing operation. The diagram serves as a high-level threat model or problem statement for researchers and engineers working on secure, verifiable AI.

</details>

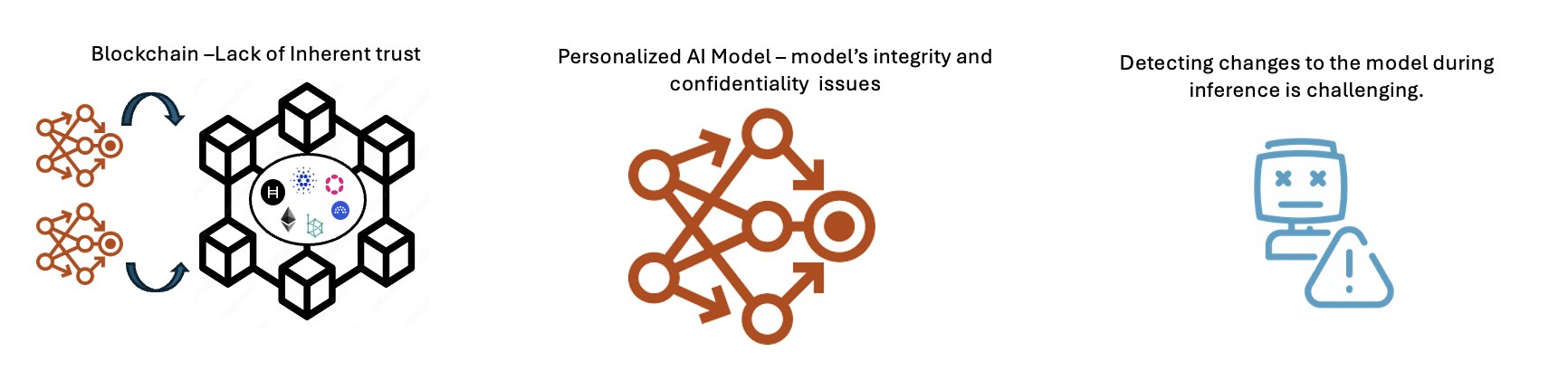

Figure 1: The importance of personalized AI model verification on blockchain.

Despite the promise of AI marketplaces, the dominance of a few global tech giants in AI technology has raised significant concerns regarding transparency, fairness, and equitable access [3]. Model weights are essential for providing experimental reproducibility and fostering innovation. The push towards commercializing AI models has led to a trend of closed-source models, keeping model weights and other details confidential. This confidentiality is due to the significant investments in data acquisition, computational resources, and algorithmic optimization. Even if developers wish to substantiate the performance claims of their models, publishing these weights could result in the misuse of AI models, leading to advanced cyberattacks or the propagation of disinformation [4]. These limitations hinder the examination of model performance and the verification of any claims regarding their effectiveness.

The problem is exacerbated in AI marketplaces operating in decentralized settings, such as blockchain, where there is no inherent trust among users [5]. This lack of transparency makes it difficult to assess the performance characteristics of production AI models or to check the claims made about them. Ensuring the integrity and reliability of personalized AI models in these marketplaces is crucial, as providers must guarantee model performance and consumers seek assurance of quality and value. Currently, methods like SingularityNET’s decentralized reputation system rely on community participation to rate AI services [6]. However, this method lacks the rigour necessary for comprehensive validation. These issues, as seen in Fig. 1, highlight the need for a decentralized and transparent verification mechanism that fosters trust.

<details>

<summary>extracted/6340811/Strip-2.png Details</summary>

### Visual Description

## Technical Diagram: Secure AI Model Evaluation and Verification via Zero-Knowledge Proofs

### Overview

The image is a two-part technical diagram illustrating a cryptographic workflow for establishing trust in personalized AI models. It details a process for generating a verifiable proof of a model's performance and subsequently verifying its inferences in a decentralized environment using zero-knowledge proofs (specifically zkSNARKs) and blockchain technology.

### Components/Axes

The diagram is divided into two primary horizontal sections, labeled **A** and **B**, each depicting a sequential process flow from left to right.

**Section A: Generate a secure and trusted evaluation proof**

* **Stage 1 (Left):** Labeled "Benchmarked Personalized AI Model". Represented by an orange network graph icon with interconnected nodes and arrows.

* **Stage 2 (Center):** Labeled "Generation of Zero knowledge proofs". This is a composite illustration containing:

* A purple human figure icon labeled "Developer".

* A blue safe/vault icon labeled "zkSNARK".

* A circular arrow flow connecting the developer, a lock icon, and a document icon with a neural network symbol.

* **Stage 3 (Right):** Labeled "Validated Proof shared on the blockchain". Represented by a black blockchain network icon (hexagonal arrangement of cubes) containing a central document icon with a checkmark and various cryptographic symbols (e.g., "H", a diamond, a gear).

**Section B: Verifying model inference on decentralized oracle networks**

* **Stage 1 (Left):** Labeled "Personalized AI models deployed in a decentralized marketplace". Shows two orange AI model network icons feeding into a black blockchain network icon (identical to the one in Section A, Stage 3).

* **Stage 2 (Center):** Labeled "Decentralized oracle network". Represented by a complex, interconnected black network sphere containing small icons (a blue "S", a purple hexagon, a blue gear).

* **Stage 3 (Center-Right):** A dashed box labeled "zk-verification". Contains:

* A chip/processor icon displaying binary code ("10100", "11010").

* An arrow pointing down to a blue document icon with a checkmark.

* Text below: "The result is returned to the blockchain".

* **Stage 4 (Right):** Shows the black blockchain network icon again. To its right, a dashed box contains a question mark icon, an equals sign, and two document icons (one blue with a checkmark, one black with a checkmark). Accompanying text: "The new proof is matched against the validated proof previously shared on the blockchain".

### Detailed Analysis

The diagram outlines a two-phase cryptographic protocol:

**Phase A (Proof Generation):**

1. A personalized AI model is benchmarked.

2. A developer uses a zkSNARK system to generate a zero-knowledge proof. This proof cryptographically attests to the model's performance (e.g., its accuracy on a benchmark) without revealing the model's proprietary weights or the specific test data.

3. This validated proof is published and stored on a blockchain, creating an immutable, timestamped record.

**Phase B (Inference Verification):**

1. The same AI model is deployed for use in a decentralized marketplace.

2. When a user or system requests an inference (a prediction or output) from the model, the request is processed through a decentralized oracle network.

3. The oracle network performs a "zk-verification" step. It generates a new zero-knowledge proof for that specific inference.

4. This new proof is sent back to the blockchain.

5. The blockchain system automatically matches this new inference proof against the original, validated performance proof stored in Phase A. A match confirms that the inference was correctly generated by the authentic, benchmarked model.

### Key Observations

* **Visual Consistency:** The orange network icon consistently represents the AI model. The black blockchain icon is used in both phases, linking the proof storage and verification steps.

* **Flow Direction:** Both processes use clear left-to-right arrows to indicate sequential steps.

* **Cryptographic Core:** The "zkSNARK" safe and the "zk-verification" chip are central visual metaphors for the privacy-preserving and verifiable computation at the heart of the system.

* **Decentralization Elements:** The "decentralized oracle network" is depicted as a complex, peer-to-peer mesh, contrasting with the more structured blockchain icon.

### Interpretation

This diagram presents a solution to the "black box" problem in AI deployment. It proposes a system where:

1. **Trust is Established:** A model's capabilities are cryptographically certified once (Phase A).

2. **Trust is Maintained:** Every subsequent use of that model can be independently verified to ensure it is the genuine, certified model producing the output, and that the output is correct (Phase B).

The system leverages blockchain as a trust anchor for storing the original proof and coordinating verification. Zero-knowledge proofs are the critical enabling technology, allowing verification without exposing sensitive intellectual property (the model) or user data. The "decentralized oracle network" suggests the verification process itself is distributed, avoiding a single point of failure or trust.

**Notable Implication:** This architecture could enable a marketplace for AI models where users can trust the model's advertised performance without having to trust the model provider blindly, and providers can protect their IP. It shifts trust from the provider to mathematics and decentralized networks.

</details>

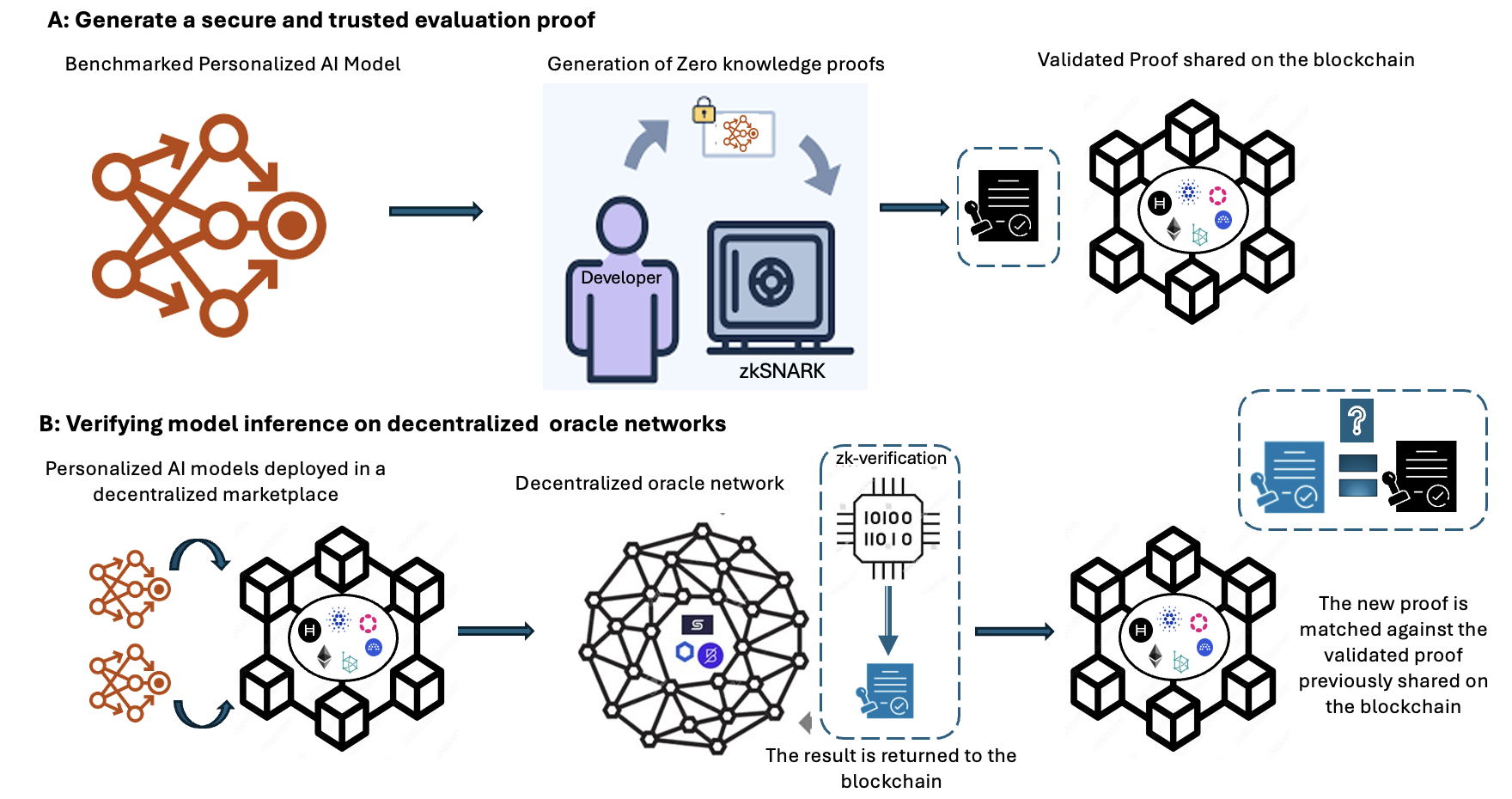

Figure 2: A high-level overview of the system design.

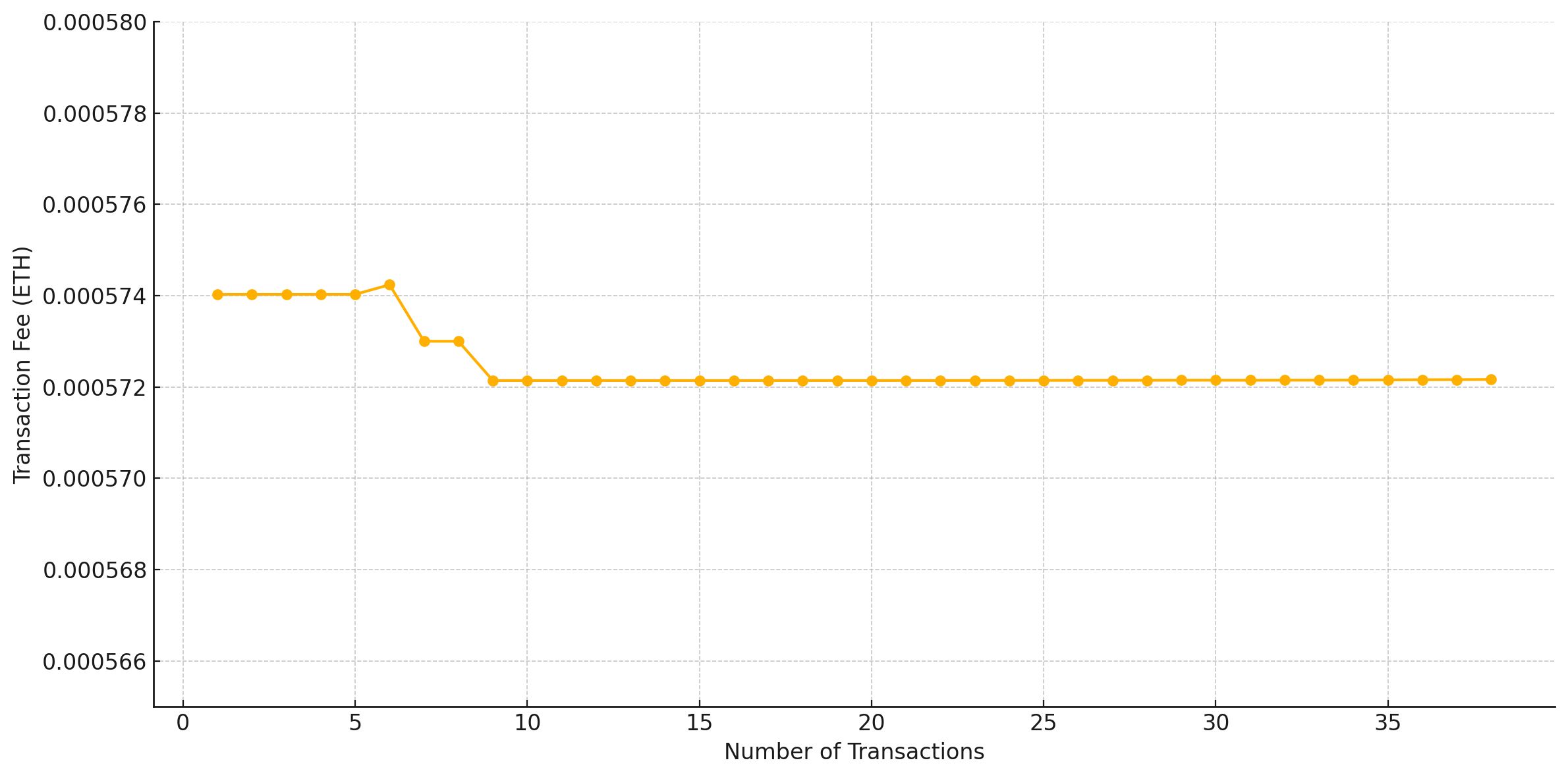

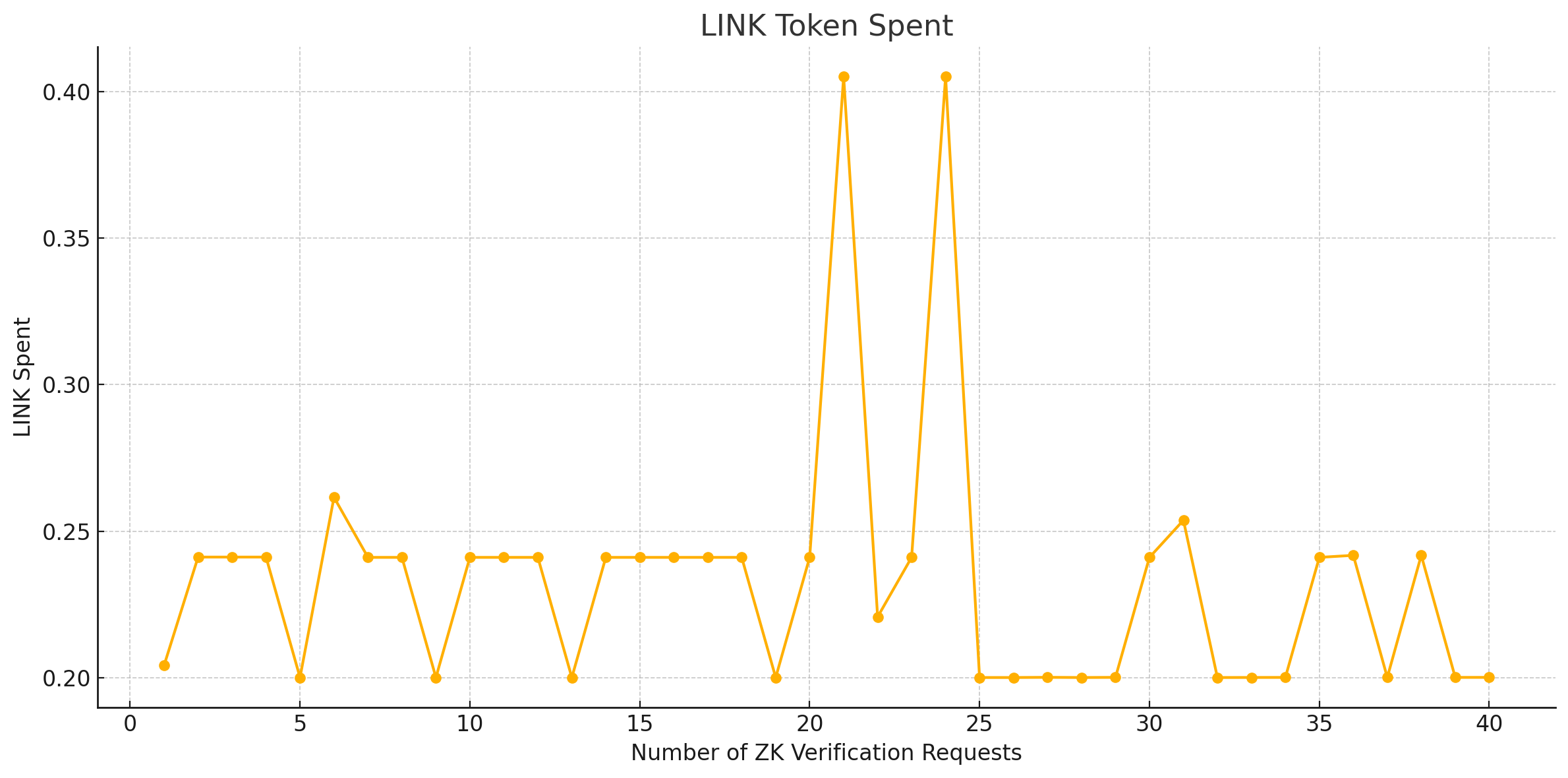

Technologies like Zero-Knowledge Succinct Non-Interactive Arguments of Knowledge (zk-SNARKs) can help address trust and model privacy issues in this context. zk-SNARKs provide powerful cryptographic proofs that verify the correctness of computations without revealing the underlying data [7]. However, using zk-SNARKs to verify AI models’ integrity and performance claims on blockchain-based marketplaces presents several challenges. Firstly, the compactness of zk-SNARK proofs is offset by the substantial resources needed for proof verification, potentially causing bottlenecks [8], especially when the blockchain handles multiple transactions and interactions simultaneously. Secondly, zk-SNARK proofs involve complex mathematical computations that are both time-consuming and costly in terms of blockchain gas fees on platforms like Ethereum [8]. Thirdly, verifying claims of AI models using zk-SNARKs often requires external data that is inaccessible within the blockchain [9]. These considerations highlight the need for a decentralized approach that combines off-chain computation for data collection and verification with on-chain verification, in order to optimize the performance and scalability of blockchain-based AI marketplaces.

Decentralized oracles are critical in bridging blockchain technology with the external world to validate transactions. They present untapped potential for verifying AI models in marketplaces. By bridging the digital and physical realms, oracles can conduct rigorous assessments of AI models’ claims, ensuring they meet high standards before being made available. This paper explores a novel approach, shown in Fig. 2, that integrates zk-SNARKs on Chainlink’s [10] decentralized oracle network with blockchain to verify AI models. This approach could revolutionize the development and distribution of personalized AI services by enhancing trust in blockchain-enabled AI marketplaces. It has practical applications across sectors that require verifiable computation, such as finance, healthcare, education and supply chain management, where accurate AI model predictions are critical and transparency is paramount. By implementing such a solution, we can create a more open, equitable, and reliable AI marketplace, driving the next wave of advancements in AI technology.

### 1.1 Contributions

This paper addresses the challenges of secure and efficient verification of personalized AI models in a blockchain-enabled AI marketplace. We present a comprehensive study using zk-SNARKs and Chainlink oracles. The key contributions of this paper are as follows:

- A novel comprehensive framework that leverages decentralized oracles (Chainlink) to validate unverified data from off-chain data sources for zk-SNARK proof verification, ensuring transparent and trustless verification of AI models on blockchain while preserving model privacy.

- A working implementation that integrates zk-SNARKs with Chainlink oracles, demonstrating their practical use in AI model verification scenarios.

- Analysis of the efficiency and resource consumption of zk-SNARK proof generation and verification to identify key areas for optimization.

- Analysis of the computational costs, such as transaction fees and LINK token costs, associated with zk-SNARK verification, providing insights into the costs involved.

This article is organised as follows. Section 2 provides an overview of relevant work, emphasising current research on the verification of AI models in decentralized settings. Section 3 covers the system architecture and is divided into four subsections: the first describes the method used to generate a secure and trusted evaluation proof, the second describes the method used to verify model inference, the third provides an overview of the proposed framework, and the fourth describes the proposed system model. Section 4 describes the experimental setup, and Section 5 presents the results and their interpretation. Finally, Section 6 summarises our findings and conclusions and outlines areas for further research.

## 2 Literature Review

Recent advances in AI models have led to significant progress in various decentralized systems, particularly in the integration of AI with blockchain technology. This development has considerable potential to transform a range of industries and domains [11]. The benefits of this integration, as highlighted by [12] and [13], include improved system performance and a more equitable development of AI. Furthermore, various techniques and applications of decentralized AI, such as decentralized machine learning (ML) frameworks and distributed AI marketplaces, are explored in [14].

Traditional trust mechanisms for ensuring the trustworthiness of AI models have been extensively researched, with various approaches proposed. Key issues include transparency and interpretability [15], robustness and fairness [16], uncertainty quantification [17], and causal reasoning [18]. Transparency and interpretability are crucial for building trust in AI models and making the decision-making process understandable to humans. Techniques such as model visualization, saliency maps, Local Interpretable Model-agnostic Explanations (LIME), and SHapley Additive exPlanations (SHAP) support these goals [15]. Robustness and fairness are also vital components of trustworthy AI systems, with techniques like adversarial training and data augmentation enhancing robustness against attacks, while debiasing algorithms and fairness constraints mitigate discriminatory biases [16]. Uncertainty quantification, using methods such as Bayesian neural networks, ensemble methods, and conformal prediction, provides a measure of confidence in AI model predictions, particularly important in critical domains such as healthcare and autonomous systems [17]. Causal reasoning, facilitated by tools such as causal inference, structural causal models, and counterfactual reasoning, is essential for achieving a more interpretable and robust decision-making framework in AI models [18].

Despite these multi-faceted strategies for developing trustworthy centralized AI systems, traditional trust mechanisms often fail to preserve data privacy and confidentiality in decentralized systems, where data is replicated across multiple nodes. In addition, decentralized systems face scalability and performance limitations, making it challenging to handle large-scale applications and high transaction volumes using traditional centralised approaches. Major challenges of traditional trust mechanisms in decentralized environments include the lack of a central authority, identity verification issues, Sybil attacks, scalability and consistency issues, and legal and regulatory uncertainty [19], [20], [21].

The landscape of AI has entered a new era with the advent of blockchain-enabled AI marketplaces. These marketplaces enable individuals and organisations to share, trade, and use AI models in a decentralized manner that democratises access to advanced AI technologies [6]. Despite their numerous benefits, decentralized marketplaces present unique challenges for authenticating and verifying AI models. The diversity and volume of AI models exchanged on these platforms render traditional centralised verification and validation processes impractical. Consequently, there is an urgent need for novel approaches that perform these crucial functions efficiently and dependably. The Neuromation platform, for example, is an AI marketplace that leverages synthetic data for training models, substantially reducing the time and cost of developing AI models. It also provides a distributed computing platform designed for model training.

Chainlink is a pioneering decentralized oracle network that seamlessly connects smart contracts on blockchains with off-chain data and systems [10]. As a secure middleware, it enables blockchain applications to reliably access and leverage real-world information, unlocking a vast array of innovative use cases. At its core, Chainlink employs a decentralized network of independent oracle nodes that retrieve and deliver data to smart contracts, mitigating single points of failure [22]. Through crypto-economic incentives and penalties, it ensures the reliability and correctness of oracles, even against well-resourced adversaries.
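To make the idea of mitigating single points of failure concrete, the following minimal sketch aggregates independent oracle reports by taking their median, so that a single faulty or missing report does not determine the delivered value. It is a simplified illustration rather than Chainlink's actual protocol; the node identifiers, the price values and the `min_responses` threshold are all hypothetical.

```python
from statistics import median


def aggregate_reports(reports, min_responses=3):
    """Return the median of the values reported by independent oracle nodes.

    `reports` maps a (hypothetical) node id to the value that node observed,
    or to None if the node did not respond.
    """
    values = [v for v in reports.values() if v is not None]
    if len(values) < min_responses:
        raise ValueError("not enough oracle responses to aggregate")
    return median(values)


# Hypothetical reports: node-d is faulty and node-f did not respond.
reports = {
    "node-a": 64010.5,
    "node-b": 64012.0,
    "node-c": 64011.0,
    "node-d": 1.0,
    "node-e": 64013.0,
    "node-f": None,
}
aggregated = aggregate_reports(reports)
print(aggregated)  # 64011.0
```

The median is used here because it stays within the range of honest reports as long as fewer than half of the responding nodes are faulty, which is the property that motivates querying several independent nodes instead of one.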

Chainlink enhances blockchain scalability and efficiency by enabling secure off-chain computations and data processing, which are then integrated on-chain, facilitating the development of advanced hybrid smart contracts [22]. Through its confidentiality measures and trust minimization achieved via decentralization and cryptographic assurances, Chainlink acts as a secure conduit between blockchains and real-world data, driving the evolution and broader adoption of sophisticated decentralized applications across various sectors [23]. Recent research suggests that the integration of AI and blockchain could be further enhanced with Chainlink [24], which ensures the integrity and transparency of data inputs used in AI models, thereby providing a robust foundation for the ethical and verifiable deployment of AI technologies.

Verification and validation (V&V) are necessary quality assurance procedures for preserving the trust and dependability of AI systems. Verification ensures that the AI model was implemented accurately and behaves as intended per its mathematical description [25]. It is comparable to "building the model properly". Validation, conversely, ensures that the AI model satisfies the requirements of the context or problem it was designed to solve; it involves "building the right model". Despite their robust capabilities, AI models occasionally generate inaccurate predictions and exhibit unintended behaviour.

These risks may be exacerbated in high-stakes domains such as healthcare or finance, where errors may result in severe adverse outcomes, from incorrect medical diagnoses to substantial financial losses. This makes V&V processes essential for the safety and dependability of AI systems, assuring that their decisions are accurate, trustworthy, and dependable [26]. As these models take on increasingly complex duties, their verification and validation become paramount [27]. These procedures are essential for maintaining confidence in AI systems because they help identify and mitigate risks associated with inaccurate predictions or biased outcomes [26].

A key challenge in AI is verifying personalized, closed-source models in a way that safeguards sensitive information, preserves intellectual property, and enhances transparency, as traditional methods often rely on trust or costly re-evaluation. To this end, zero-knowledge proofs have emerged as a powerful tool for privacy-preserving authentication [28]. This cryptographic technique allows one party to prove to another that a given statement is true without revealing any additional information about the statement. Initially, zero-knowledge proofs were designed to be interactive and could not be re-verified by other validators without new interactions. This led to the development of Non-Interactive Zero-Knowledge Proofs (NIZKPs) [29], which allow a proof to be re-verified by multiple parties.
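The step from interactive to non-interactive proofs can be illustrated with a Schnorr proof of knowledge made non-interactive through the Fiat-Shamir transform: the verifier's random challenge is replaced by a hash of the public transcript, so anyone can re-check the same proof. This is a standard textbook construction chosen for illustration, not the zk-SNARK scheme discussed in this paper, and the group parameters below are toy values that are far too small to be secure.

```python
import hashlib
import secrets

# Toy parameters (insecure, illustration only): P = 2Q + 1 with P and Q prime,
# and G generating the subgroup of prime order Q modulo P.
P, Q, G = 2039, 1019, 2


def challenge(*values):
    """Fiat-Shamir: derive the challenge by hashing the public transcript."""
    data = "|".join(str(v) for v in values).encode()
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % Q


def prove(x):
    """Prove knowledge of x with y = G^x mod P, without revealing x."""
    y = pow(G, x, P)
    r = secrets.randbelow(Q)   # one-time secret nonce
    t = pow(G, r, P)           # commitment
    c = challenge(G, y, t)     # replaces the verifier's random challenge
    s = (r + c * x) % Q        # response
    return y, (t, s)


def verify(y, proof):
    """Check G^s == t * y^c mod P; needs only public values."""
    t, s = proof
    c = challenge(G, y, t)
    return pow(G, s, P) == (t * pow(y, c, P)) % P


secret = 123
y, proof = prove(secret)
print(verify(y, proof))                # True
t, s = proof
print(verify(y, (t, (s + 1) % Q)))     # False: a tampered response is rejected
```

Because `verify` takes no input from the prover beyond the published pair `(y, proof)`, any number of parties can run it independently, which is the re-verifiability property that motivates non-interactive proofs.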

There are several popular implementations of zero-knowledge proofs, including zk-SNARKs [30], Zero-Knowledge Scalable Transparent Arguments of Knowledge (zk-STARKs) [31] and bulletproofs [32]. One of the primary differences between them is the trusted setup process: an initial trusted setup is required for zk-SNARKs but not for zk-STARKs or bulletproofs. zk-STARKs have larger proof sizes, resulting in higher verification costs and storage requirements on the blockchain. Bulletproofs have smaller proof sizes but require interactive verification, which is less practical for decentralized systems. Beyond zero-knowledge proof systems, there exist other cryptographic techniques for verifying computations with privacy guarantees, such as Homomorphic Encryption (HE), Verifiable Computing (VC) [33] and Secure Multiparty Computation (MPC) [34]. While these methods are widely used for general secure computation and data confidentiality, they are not specifically tailored for AI-based tasks.

For this research, we consider zk-SNARKs, despite their reliance on a trusted setup. zk-SNARKs achieve significantly smaller proof sizes than zk-STARKs and bulletproofs, resulting in shorter verification times and lower gas costs [35]. In the context of personalized AI models, zk-SNARKs can be leveraged to verify the correctness of a model’s predictions without disclosing the underlying model parameters or training data [36]. This is particularly relevant when AI models are deployed in environments handling sensitive user data.

The US Department of Energy implemented a secure neural network verification system using zk-SNARKs for Nuclear Treaty Verification [37]. The system verifies the neural network output, the input hash and a Rivest–Shamir–Adleman (RSA) signature with a zero-knowledge proof, enabling a secure, adaptable way to disclose sensitive data on nuclear materials and facilities. The work in [38] investigates verifiable evaluation attestations using zk-SNARKs, enabling independent validation of model performance claims without exposing the models’ internal weights or outputs. The authors employ a "predict, then prove" strategy, in which models are converted to a standard format, evaluated on benchmark datasets, and accompanied by proofs of correct inference. These proofs are aggregated into attestations that can be independently verified.

The authors in [39] presented a practical approach to verifying ML model inference for a full-resolution ImageNet model using zk-SNARKs and explored other scenarios, such as verifying MLaaS predictions and accuracy. The zk-SNARK construction provides a non-interactive way to verify ML model execution, with a reported accuracy of 79%. A scheme called zkCNN was proposed to prove to other parties the accuracy of a convolutional neural network (CNN) model’s predictions on a public dataset without revealing sensitive information about the model [40].

Based on our literature review, it is evident that technologies like zk-SNARKs can help address trust and AI model privacy issues in this context [37], [38], [39], [40]. However, using zk-SNARKs to verify AI models’ integrity and performance claims on blockchain-based marketplaces presents several challenges. Firstly, verifying claims of AI models using zk-SNARKs often requires external data that is inaccessible within the blockchain [9]. As in [40], models can be trained on public datasets, but proving accuracy claims then requires access to high-quality public datasets. Secondly, the compactness of zk-SNARK proofs is offset by the substantial resources needed for proof verification, potentially causing bottlenecks [8], [41], especially when the blockchain handles multiple transactions and interactions simultaneously. Thirdly, zk-SNARK proofs involve complex mathematical computations that are both time-consuming and costly [42], especially in terms of blockchain gas fees on platforms like Ethereum [8]. These considerations highlight the need for a decentralized approach that combines off-chain computation for data collection and verification with on-chain zk verification, in order to optimize performance and scalability and to enhance trust within blockchain-based AI marketplaces.

This paper addresses existing gaps by proposing a novel framework that leverages zk-SNARKs integrated with Chainlink oracles to verify AI model performance claims on blockchain platforms. Our approach allows for the verification of personalized AI models without disclosing sensitive information, preserving intellectual property and enhancing transparency. We demonstrate our approach with a linear regression model predicting Bitcoin prices using on-chain data, verified on the Sepolia testnet.

<details>

<summary>x1.png Details</summary>

### Visual Description

## System Architecture Diagram: Decentralized AI Marketplace with zkSNARK Verification

### Overview

This image is a technical system architecture diagram illustrating a two-part process for creating and verifying AI models in a decentralized marketplace. The diagram is divided into two main sections labeled **A** and **B**, connected by a dashed line representing the flow of a "Validated Proof." The overall system combines on-chain (blockchain) and off-chain (oracle network) components to enable secure, trustless AI model evaluation and inference.

### Components/Axes

The diagram is structured into distinct regions and components, each with specific labels and functions.

**Part A: Generate a secure and trusted evaluation proof** (Top section, light green background)

* **1. On-Chain Data:** Represented by a database icon. This is the starting point.

* **Training Data Processing Pipeline:** A dashed box containing:

* **Data Cleaning** (blue box)

* **Data Normalization** (blue box)

* **Correlation Analysis** (blue box)

* **2. Personalized AI Model:** Represented by a neural network icon.

* **3. Generation of Zero knowledge proofs:** Shows a developer icon, a zkSNARK icon, and a lock icon, indicating the creation of a cryptographic proof.

* **Output:** A document icon labeled "Validated Proof shared on the blockchain" connects Part A to Part B.

**Part B: Verifying model inference on decentralized oracle networks using zkSNARK** (Bottom section, light blue background)

This section is divided into three vertical zones: **Decentralized AI Marketplace** (left), **On-chain** (center), and **Off-chain** (right).

* **Decentralized AI Marketplace (Left Zone):**

* **Sellers:** Represented by purple person icons.

* **5. AI Models:** Three distinct AI chip icons (blue, orange, green) in a dashed box, representing models for sale.

* **Buyers:** Represented by green person icons.

* **Flow:** Sellers provide AI models, which are then available to Buyers.

* **On-chain (Center Zone):**

* **4. Blockchain:** A central hexagonal network diagram containing logos for Hyperledger (H), Ethereum (ETH), and others.

* **13. Blockchain Interaction:** Arrows show data flow to/from the blockchain.

* **14. Proof Matching:** A dashed box showing a question mark icon and two document icons with checkmarks. Text: "The new proof is matched against the validated proof previously shared on the blockchain."

* **Off-chain (Right Zone):**

* **6. Smart Contract:** A document icon that sends a "request" and receives a "result."

* **7. Decentralized Oracle Network:** A large, complex network diagram with interconnected nodes. It contains logos for Chainlink (link icon) and API3 (hexagon icon).

* **8. Computation request:** An arrow pointing from the Oracle Network to a cloud.

* **9. API providers for on-chain and off-chain data:** A database icon.

* **10. API request/response:** Arrows showing communication between the Oracle Network and API providers.

* **11. Result:** A document icon returning from the Oracle Network.

* **12. Result:** A document icon returning to the Smart Contract.

* **Sandboxed Execution (SE):** A cloud icon containing a chip and a document icon, labeled "zk verification." Multiple "SE" clouds are connected to the Oracle Network.

**Numbered Process Steps:** The diagram includes circled numbers (1 through 14) indicating a sequential or logical flow of operations, though the exact sequence is not strictly linear.

### Detailed Analysis

The diagram details a multi-stage workflow:

1. **Proof Generation (Part A):** On-chain data is cleaned, normalized, and analyzed to train a personalized AI model. A developer then uses zkSNARK technology to generate a cryptographic proof of the model's properties or performance. This "Validated Proof" is shared on the blockchain.

2. **Marketplace & Verification (Part B):**

* Sellers list AI models in a decentralized marketplace.

* A buyer's interaction triggers a **Smart Contract (6)** on the blockchain.

* The Smart Contract sends an **Oracle request (7)** to a **Decentralized Oracle Network**.

* The Oracle Network coordinates a **Computation request (8)** to **Sandboxed Execution (SE)** environments for secure, verified processing. It also fetches necessary data via **API requests (9, 10)** to external providers.

* Results are returned through the Oracle Network **(11)** to the Smart Contract **(12)**.

* Crucially, the system performs **Proof Matching (14)**: the new proof generated from the inference is matched against the original "Validated Proof" stored on the blockchain to ensure the model's integrity and correct execution.

### Key Observations

* **Hybrid Architecture:** The system explicitly separates on-chain (blockchain, smart contracts) and off-chain (oracle network, API providers, sandboxed execution) components, highlighting a common pattern in decentralized applications.

* **zkSNARK Integration:** Zero-knowledge proofs are used in two key places: initially to create a trusted evaluation proof (Part A) and later for verification within the sandboxed execution environment (Part B, SE cloud).

* **Trust Model:** The architecture aims to create trust between anonymous sellers and buyers in a marketplace by using cryptographic proofs and decentralized verification, removing the need for a central trusted authority.

* **Complex Flow:** The numbered steps (1-14) suggest a complex, multi-transaction process involving data preparation, model training, proof generation, marketplace listing, request handling, off-chain computation, and on-chain verification.

### Interpretation

This diagram outlines a sophisticated framework for a **trustless AI economy**. It addresses the core problem of verifying that an AI model sold in a decentralized marketplace is both the one advertised and that it performs computations correctly, without requiring the buyer to trust the seller or a central platform.

* **How Elements Relate:** The "Validated Proof" from Part A is the linchpin. It acts as a cryptographic benchmark stored immutably on the blockchain. Part B's entire verification process (steps 11-14) exists to check new model inferences against this benchmark. The Decentralized Oracle Network acts as the secure bridge between the deterministic blockchain and the external world (APIs, computation), while zkSNARKs provide the mathematical guarantees for verification.

* **Purpose:** The system enables **verifiable AI-as-a-service**. A buyer can purchase an inference from a model and receive not just the result, but also a cryptographic proof that the result was generated correctly by the specific, validated model.

* **Notable Anomalies/Considerations:** The diagram is conceptual and does not specify the blockchain platform, the exact zk-SNARK circuit design, or the economic incentives for oracle nodes and sellers. The complexity of the flow (14 steps) implies significant overhead, which would be a practical consideration for implementation. The security of the entire system hinges on the correctness of the initial proof generation (Part A) and the security of the sandboxed execution environments (SE).

</details>

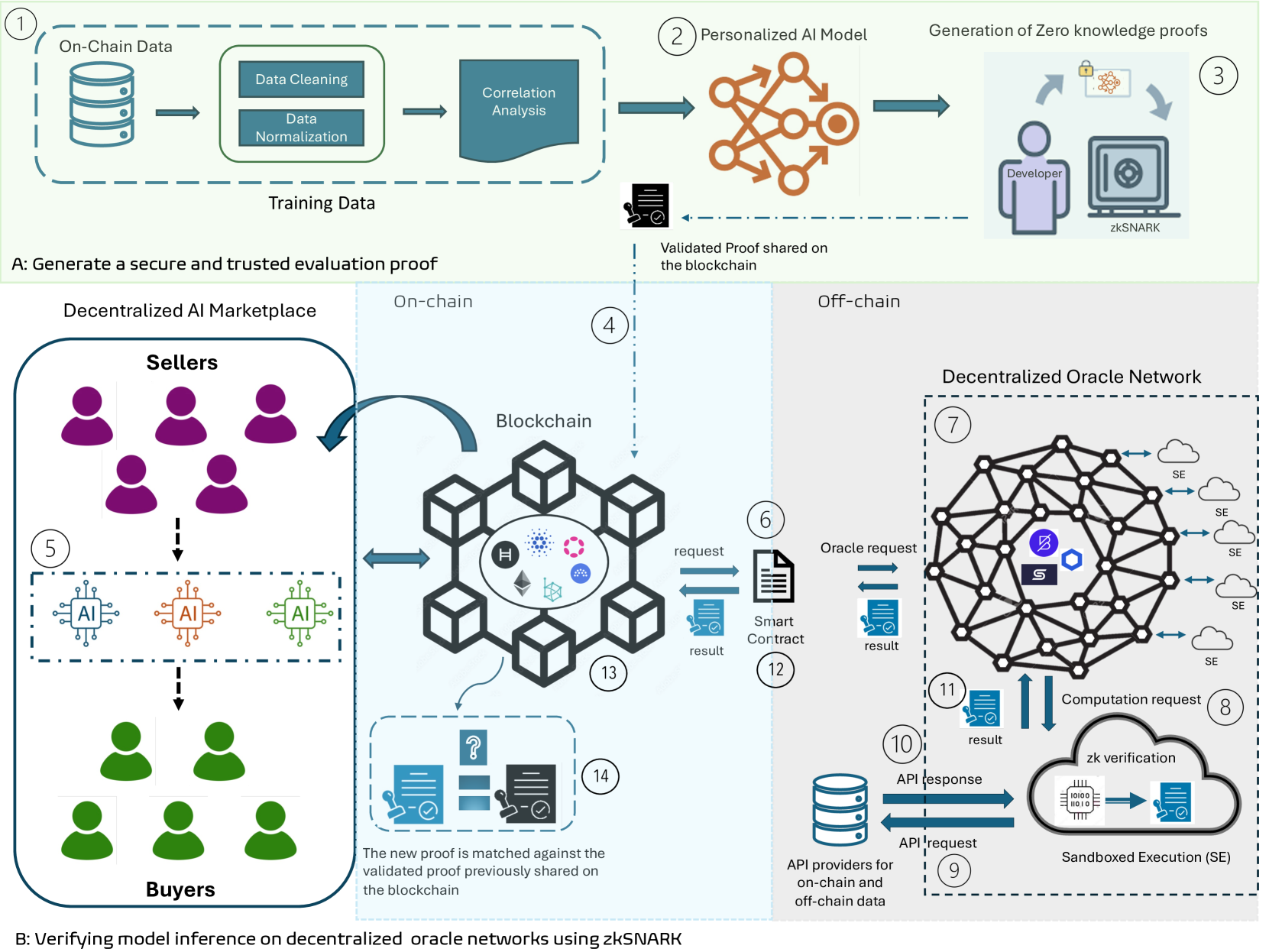

Figure 3: Proposed verification framework.

## 3 Methodology and System Design

This section describes the methodology, shown in Fig. 3, for verifying the performance claims of a personalized AI model without revealing its weights. The model is trained on on-chain and user-specific data to predict Bitcoin prices. The verification process is computed on Chainlink’s decentralized oracle network using zk-SNARKs. We divide the section into three parts and explain them with respect to Fig. 3. Section 3.1 provides the system overview of our proposed framework. Section 3.2 outlines the steps to generate a secure and trusted evaluation proof (Part A of Fig. 3), and Section 3.3 describes the verification process for the model inference on a decentralized oracle network using zk-SNARKs (Part B of Fig. 3).

### 3.1 System Overview

Trust is a major concern for users in the Web3 domain, particularly on the blockchain. Trust issues also extend to the blockchain-enabled AI marketplace, where the credibility of developers’ performance claims for personalized AI models is questioned. The blockchain-enabled AI marketplace combines on-chain and off-chain elements to make these performance claims verifiable. The framework represented in Fig. 3 is specifically designed to enable personalized AI model performance verification using zk-SNARKs. The interaction between on-chain smart contracts and off-chain Chainlink oracles is crucial for the functioning of the blockchain-enabled AI marketplace, because it ensures that the data, computation, and proof validation are carried out securely and efficiently. We analyze the on-chain data from external API providers and eliminate inaccurate data points. The data is then scaled so that the relationships between the on-chain metrics and the model’s output can be examined. After the training and testing of the personalized AI model, developers generate zk-SNARK proofs to verify the AI model’s claim without exposing sensitive data such as model weights. These verifiable proofs are shared on the blockchain.

Prior to purchasing the personalized AI model, the buyer demands proof to verify its performance claim. The decentralized oracle network performs the verification using Chainlink Functions, as requested by the blockchain. The Chainlink nodes coordinate data acquisition from external API providers for on-chain data and the execution of computations. Each Chainlink node carries out sandboxed execution of the provided source code to ensure transparency. The aggregated results are sent to the smart contract using Chainlink’s Off-Chain Reporting (OCR) protocol [43]. The smart contract on Sepolia receives the aggregated result and zk-SNARK proof.

The blockchain verifies the proof using stored verification keys and updates the state of the blockchain based on the verification outcome. Verified proofs are stored on-chain for future reference, ensuring a transparent and tamper-proof record of all computations. This framework provides a practical approach to verifying personalized AI models. Incorporating zk-SNARKs ensures the privacy of model weights during verification, enhancing trust and transparency in AI model marketplaces. The integration of zk-SNARKs into Chainlink Functions facilitates secure and reliable data fetching and computation, offering a robust AI model verification framework that can be implemented in real-world scenarios.

To summarize the interactions in the proposed verification framework, Fig. 3 represents the framework for verifying personalized AI model performance using zk-SNARKs. In Step 1, the process begins with data cleaning, normalization, and correlation analysis, after which developers train the personalized AI models. In Step 2, developers generate zk-SNARK proofs to verify model performance claims without revealing sensitive data and upload these proofs to the blockchain. In Step 3, buyers initiate verification requests, which the decentralized oracle network processes by fetching data from external APIs and performing zk-SNARK verification in a sandboxed environment. In Step 4, the Chainlink oracles communicate results back to the blockchain smart contract via Chainlink’s Off-Chain Reporting protocol. In Step 5, the blockchain validates the proofs using stored verification keys and updates the state of the decentralized marketplace, ensuring a transparent and tamper-proof record of all zk-SNARK verifications.

### 3.2 Generate a Secure and Trusted Evaluation Proof

#### 3.2.1 Personalized AI model - Introduction to Personalization

Personalized AI models provide customized predictions by utilizing on-chain data and user data. For example, the model can be personalized when predicting Bitcoin prices to consider the user’s unique trading patterns, preferences, and other data points affecting their investment choices.

Data Collection:

Developers acquire on-chain data in two ways. The first method involves collecting and processing raw on-chain data from the public Bitcoin blockchain. The second method uses external application programming interface (API) providers, where the on-chain data is already preprocessed and ready to use. We obtained on-chain data from 2016 to 2023 from API providers such as [44], [45]. With the on-chain data collected from these sources, we categorized and analyzed metrics from each category against Bitcoin’s price. We also use user-specific data such as transaction history and wallet activity. The following metrics are obtained from the on-chain data: block size, block height, transaction count, daily active addresses, miners revenue, miner fees, miner to exchanges, total new addresses, transactions rate, transfers count, hash rate, transactions difficulty, transfer rate, wallet addresses holding more than 1, 10 and 100 coins, exchange deposits, exchange withdrawals and total addresses.

Data Analysis:

The on-chain metrics that correlate most closely with the Bitcoin price are identified. This step uses Pearson and Spearman correlation analysis to understand the linear and non-linear (monotonic) relationships between the on-chain datasets and the Bitcoin price. The Pearson correlation is given by equation (1):

$$

r=\frac{\sum_{i=1}^{n}(x_{i}-\bar{x})(y_{i}-\bar{y})}{\sqrt{\sum_{i=1}^{n}(x_{i}-\bar{x})^{2}\sum_{i=1}^{n}(y_{i}-\bar{y})^{2}}} \tag{1}

$$

where:

- $x_{i}$ is the $i$ -th data point of features of on-chain data

- $y_{i}$ is the $i$ -th data point of bitcoin price

- $\bar{x}$ is the mean of the $x$ values

- $\bar{y}$ is the mean of $y$ values

- $n$ is the total number of data points

The Spearman rank correlation coefficient [46] is a nonparametric measure of correlation used to evaluate the monotonic relationship between two variables, as given by equation (2):

$$

\rho=1-\frac{6\sum{d_{i}}^{2}}{n({n}^{2}-1)} \tag{2}

$$

In (2), the difference between the ranks of the $i$ -th pair of values is represented by $d_{i}$ and $n$ represents the total number of data points.

Pearson correlation coefficients quantify the linear relationship between variables. In contrast, Spearman correlation coefficients capture monotonic relationships, in which variables tend to move in the same or opposite direction but not necessarily at a constant rate. In a linear relationship, the rate is constant.
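As a minimal sketch of equations (1) and (2), the following pure-Python functions compute both coefficients. The function names and the toy series are illustrative assumptions rather than the authors' code, and the Spearman form assumes no tied values, which is the case in which equation (2) is exact.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson coefficient r from equation (1)."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    num = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    den = sqrt(sum((x - mean_x) ** 2 for x in xs)
               * sum((y - mean_y) ** 2 for y in ys))
    return num / den


def ranks(values):
    """Ranks 1..n of each value; assumes there are no ties."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0] * len(values)
    for rank, index in enumerate(order, start=1):
        result[index] = rank
    return result


def spearman(xs, ys):
    """Spearman coefficient rho from equation (2)."""
    n = len(xs)
    d_squared = sum((rx - ry) ** 2 for rx, ry in zip(ranks(xs), ranks(ys)))
    return 1 - 6 * d_squared / (n * (n ** 2 - 1))


# Toy data (hypothetical): a monotonic but non-linear relationship.
metric = [1, 2, 3, 4, 5]
price = [1, 4, 9, 16, 25]
print(pearson(metric, price), spearman(metric, price))
```

On a monotonic but non-linear series such as this one, the Spearman coefficient reaches 1 while the Pearson coefficient stays below 1, which is the distinction drawn above.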

We rescale the data to the range [0, 1]. The normalized value $z$ is calculated using equation (3).

$$

z=\frac{x_{i}-\min(x)}{\max(x)-\min(x)} \tag{3}

$$
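Equation (3) can be sketched in a few lines of Python. The function name and the handling of a constant column (mapped to zeros to avoid division by zero) are assumptions made for illustration.

```python
def min_max_scale(values):
    """Rescale a list of numbers to [0, 1] following equation (3)."""
    lo, hi = min(values), max(values)
    if hi == lo:
        # Assumption: a constant feature carries no information, map it to 0.
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


# Hypothetical daily transaction counts.
print(min_max_scale([10, 20, 30]))  # [0.0, 0.5, 1.0]
```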

By conducting correlation analysis, we can pinpoint important on-chain metrics that can be incorporated into advanced predictive algorithms. Conversely, we can also identify metrics that could be more relevant and should be considered.

Introduction to zk-SNARKs:

A zk-SNARK allows a prover to convince a verifier that they know a solution to a computational problem without disclosing the solution itself. These proofs are short and fast to verify, and they do not require ongoing interaction between the prover and the verifier after the initial setup. A zk-SNARK system comprises three core algorithms: Generation (Gen), Prover (P) and Verification (V).

Non-Interactive Zero-Knowledge Argument

Arithmetic circuits play a critical role in zk-SNARKs: they represent the computational problem that the prover aims to show the verifier has been solved correctly. In the context of the non-interactive zero-knowledge argument, let $C$ be an arithmetic circuit such that $C:F^{n}\times F^{n^{\prime}}\rightarrow F^{l}$ . Here, $F$ denotes a finite field and $F^{n}$ represents a vector space of dimension $n$ over the finite field $F$ . Similarly, $F^{n^{\prime}}$ and $F^{l}$ indicate vector spaces of dimensions $n^{\prime}$ and $l$ over $F$ , respectively. The NP language $L$ is defined as the set of statements $x$ in $F^{n}$ for which there exists a valid witness $w$ in $F^{n^{\prime}}$ . This is captured by the relation $R$ defined as $R:=\{(x,w)\in F^{n}\times F^{n^{\prime}}:C(x,w)=0^{l}\}$ , where $w$ is the witness and $x$ is the statement.

A non-interactive zero-knowledge argument for the relation $R$ consists of the triple of polynomial-time algorithms: Generation (Gen), Prover (P), and Verification (V).

- Generation (Gen): Produces a common reference string (crs) and a private verification state.

$$

(\text{crs})\leftarrow\text{Gen}(1^{n},R)

$$

- Prover (P): Produces a proof $\pi$ for a statement $x$ using a witness $w$ .

$$

\pi\leftarrow\text{P}(\text{crs},x,w)

$$

- Verification (V): Verifies the proof $\pi$ for the statement $x$ .

$$

\text{V}(\text{crs},x,\pi)\rightarrow\{0,1\}

$$

Properties of zk-SNARKs

The following properties [47] must be met by a non-interactive zero-knowledge proof $\pi$ for the relation $R$ :

- Completeness: For a statement $x\in F^{n}$ with a witness $w\in F^{n^{\prime}}$ such that $(x,w)\in R$ , the prover acting honestly always produces a valid proof $\pi$ . This proof should be sufficient to convince an honest verifier. The completeness of the non-interactive zero-knowledge proof can be expressed as follows [48]:

$$

\Pr\left[\begin{array}{c}(\text{crs})\leftarrow\text{Gen}(1^{n},R)\\
\pi\leftarrow\text{P}(\text{crs},x,w)\\
\text{V}(\text{crs},x,\pi)=1\text{ if }(x,w)\in R\end{array}\right]=1 \tag{4}

$$

- Soundness: When an adversary attempts to deceive by providing a proof $\pi$ for a false statement $x\notin L$ , the verification algorithm V rejects the proof with high probability. The soundness requirement thus ensures that any accepted statement $x$ has a valid witness under the relation $R$ [28]:

$$

\Pr\left[\begin{array}{c}(\text{crs})\leftarrow\text{Gen}(1^{n},R)\\
(x,\pi)\leftarrow\mathcal{A}(\text{crs})\\
\text{V}(\text{crs},x,\pi)=1\text{ and }(x,w)\notin R\end{array}\right]\leq\text{negl}(n) \tag{5}

$$

Furthermore, knowledge soundness requires an extractor $\mathcal{E}$ that can generate the witness $w\leftarrow\mathcal{E}_{\mathcal{A}}(\text{crs})$ whenever an adversary $\mathcal{A}$ produces a valid argument $(x,\pi)\leftarrow\mathcal{A}(\text{crs})$ :

$$

\Pr\left[\begin{array}{c}(\text{crs})\leftarrow\text{Gen}(1^{n},R)\\
(x,\pi)\leftarrow\mathcal{A}(\text{crs})\\
w\leftarrow\mathcal{E}_{\mathcal{A}}(\text{crs})\\
\text{V}(\text{crs},x,\pi)=1\text{ and }(x,w)\notin R\end{array}\right]\leq\text{negl}(n) \tag{6}

$$

- Zero-Knowledge: This property ensures that the verifier learns nothing beyond the truth of the statement. In zk-SNARKs, Tau refers to the trusted setup parameter generated during the initial phase, creating a secure cryptographic environment. The Powers of Tau (PoT) ceremony generates these parameters, which are necessary for generating and verifying zk-SNARK proofs while preserving privacy. In Phase 2, the crs is further refined to support the specific zk-SNARK application, introducing additional complexity as it tailors the parameters to the operations of the AI model being verified. Together, PoT and Phase 2 form the backbone of the trusted setup, providing a reliable foundation for zk-SNARK operations. Without knowing the witness $w$ , the proof or argument $\pi$ for a valid assertion $x$ can be simulated using a polynomial-time procedure known as a simulator. Simulator 1 $(S_{1})$ generates a simulated crs together with the random Tau parameter. This demonstrates that the proof system can function without accessing private data, thus maintaining the zero-knowledge property. Simulator 2 $(S_{2})$ simulates the zk-SNARK proof using the crs, the statement (the input-output pair) and the random Tau. This confirms that the system can generate valid proofs without revealing sensitive information, completing the zero-knowledge simulation. The zero-knowledge property can be expressed as follows [48]:

$$

\Pr\left[\begin{array}{c}(\text{crs})\leftarrow\text{Gen}(1^{n},R)\\
(x,w)\leftarrow\mathcal{A}(\text{crs})\\
\pi\leftarrow\text{P}(\text{crs},x,w)\\
\mathcal{A}(\pi)=1\end{array}\right]=\Pr\left[\begin{array}{c}(\text{crs},\tau)\leftarrow S_{1}(1^{n},R)\\
(x,w)\leftarrow\mathcal{A}(\text{crs})\\
\pi\leftarrow S_{2}(\text{crs},x,\tau)\\
\mathcal{A}(\pi)=1\end{array}\right] \tag{7}

$$

#### 3.2.2 Conversion of Linear Regression Model to zk-SNARK Circuit for Validation

We use zk-SNARKs to generate on-chain verifiable proofs of the model’s computations without revealing its weights. The linear regression model is converted into a zk-SNARK circuit to represent the model’s internal operations. The following steps are used in converting the linear regression model into a zk-SNARK circuit:

Step 1: Model Representation

The developer trains the personalized AI model, specifically a linear regression model that predicts Bitcoin prices based on historical on-chain data. The model takes various features (independent variables) from the on-chain data and user-specific data, such as transaction history and wallet activity of the user, and predicts the price (dependent variable) of Bitcoin. The linear regression model is represented using (8):

$$

y=a_{0}+a_{1}x_{1}+a_{2}x_{2}+\ldots+a_{n}x_{n}+C \tag{8}

$$

where:

- $y$ is the predicted bitcoin price.

- $x_{i}$ are the features of on-chain and user-specific data.

- $a_{i}$ are the coefficients (weights) learned during training.

- $C$ is the intercept.

Step 2: Arithmetic Circuit Construction

The linear regression model equation is converted into an arithmetic circuit so that statements about its computation can be proven with zk-SNARKs. Each mathematical operation in the linear regression model is mapped to either a multiplication gate or an addition gate. For example, the product $a_{1}x_{1}$ is handled by a multiplication gate and the sum $a_{0}+a_{1}x_{1}$ is handled by an addition gate. The final output $y$ is computed by addition gates that add all terms together. This process transforms the linear regression equation into an arithmetic circuit that is compatible with zk-SNARKs.
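The gate-level view can be illustrated with a small Python sketch that evaluates a linear model using only field multiplications and additions. Several choices here are assumptions for illustration and are not taken from the paper: the toy prime modulus, the fixed-point scale used to encode real-valued weights as field elements, and the folding of the constant terms of equation (8) into a single `intercept`.

```python
P = 2**61 - 1      # toy prime field modulus (assumption; real circuits use a curve-specific prime)
SCALE = 10**4      # fixed-point scale for encoding reals as integers (assumption)


def to_field(value):
    """Encode a real number as a field element with SCALE fractional precision."""
    return round(value * SCALE) % P


def mul_gate(a, b):
    """Multiplication gate over the field."""
    return (a * b) % P


def add_gate(a, b):
    """Addition gate over the field."""
    return (a + b) % P


def circuit_predict(weights, intercept, features):
    """Evaluate intercept + sum(a_i * x_i) using only the two gate types."""
    # Products carry a factor SCALE**2, so the intercept is lifted to match.
    acc = mul_gate(to_field(intercept), SCALE)
    for a, x in zip(weights, features):
        acc = add_gate(acc, mul_gate(to_field(a), to_field(x)))
    return acc


def from_field(element):
    """Decode a circuit output back to a real number (upper half of the field is negative)."""
    signed = element - P if element > P // 2 else element
    return signed / SCALE**2


# Hypothetical weights and normalized features.
y = circuit_predict([0.5, 0.25], 0.1, [0.2, 0.8])
print(from_field(y))
```

One multiplication gate is used per feature, so the gate count grows linearly with the number of features in this sketch.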

Step 3: QAP Conversion

The model’s arithmetic circuit is converted into a Quadratic Arithmetic Program (QAP), providing a framework for zk-SNARKs to check the correctness of the operations in the arithmetic circuit. A QAP for a function $f$ is defined by three sets of polynomials $\{v_{i}(x)\},\{w_{i}(x)\},\{y_{i}(x)\}$ and a target polynomial $t(x)$ .

For an arithmetic circuit $C$ with $m$ gates:

$$

p(x)=\left(\sum_{i=0}^{m}a_{i}\cdot v_{i}(x)\right)\cdot\left(\sum_{i=0}^{m}a_{i}\cdot w_{i}(x)\right)-\left(\sum_{i=0}^{m}a_{i}\cdot y_{i}(x)\right) \tag{9}

$$

where $t(x)$ divides $p(x)$ and $a_{i}$ represents the coefficients of the polynomials.

The QAP introduces constraints that must be satisfied to ensure all operations in the arithmetic circuit are represented correctly in zk-SNARK form. The complexity of these QAP constraints increases with the number of features in the linear regression model. As the complexity of the AI model increases, more gates are required to represent its internal operations, leading to higher computational resource usage and longer proof generation times.
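The divisibility condition in equation (9) can be checked numerically on a toy circuit. The sketch below is an illustration under stated assumptions, not the authors' implementation: it uses a small prime field, a three-gate circuit for $y=a_{1}x_{1}+a_{2}x_{2}$, gate constraints written as rows of hypothetical matrices `A`, `B`, `C`, and Lagrange interpolation at the points 1, 2, 3 to obtain $v_{i}$, $w_{i}$, $y_{i}$. Polynomials are coefficient lists in ascending order.

```python
P = 2**61 - 1  # toy prime field modulus (assumption)


def poly_add(a, b):
    n = max(len(a), len(b))
    a, b = a + [0] * (n - len(a)), b + [0] * (n - len(b))
    return [(x + y) % P for x, y in zip(a, b)]


def poly_scale(a, k):
    return [(x * k) % P for x in a]


def poly_mul(a, b):
    out = [0] * (len(a) + len(b) - 1)
    for i, x in enumerate(a):
        for j, y in enumerate(b):
            out[i + j] = (out[i + j] + x * y) % P
    return out


def poly_divmod(num, den):
    """Long division over the field; returns (quotient, remainder)."""
    num = num[:]
    quot = [0] * max(len(num) - len(den) + 1, 1)
    inv_lead = pow(den[-1], P - 2, P)
    for i in range(len(num) - len(den), -1, -1):
        q = num[i + len(den) - 1] * inv_lead % P
        quot[i] = q
        for j, d in enumerate(den):
            num[i + j] = (num[i + j] - q * d) % P
    return quot, num[: len(den) - 1]


def lagrange(xs, ys):
    """Polynomial through the points (xs[j], ys[j])."""
    result = [0]
    for j, (xj, yj) in enumerate(zip(xs, ys)):
        basis, denom = [1], 1
        for k, xk in enumerate(xs):
            if k != j:
                basis = poly_mul(basis, [(-xk) % P, 1])
                denom = denom * (xj - xk) % P
        result = poly_add(result, poly_scale(basis, yj * pow(denom, P - 2, P) % P))
    return result


def combine(matrix, witness, xs):
    """sum_i a_i * poly_i(x), with one interpolated polynomial per witness column."""
    acc = [0]
    for column, a_i in zip(zip(*matrix), witness):
        acc = poly_add(acc, poly_scale(lagrange(xs, list(column)), a_i % P))
    return acc


def qap_remainder(A, B, C, witness):
    """Remainder of p(x) divided by t(x); all zeros means t(x) divides p(x)."""
    xs = list(range(1, len(A) + 1))
    v, w, y = (combine(M, witness, xs) for M in (A, B, C))
    p = poly_add(poly_mul(v, w), poly_scale(y, P - 1))  # v*w - y
    t = [1]
    for root in xs:
        t = poly_mul(t, [(-root) % P, 1])
    return poly_divmod(p, t)[1]


# Witness layout (assumption): [1, x1, x2, a1, a2, m1, m2, y]
A = [[0, 0, 0, 1, 0, 0, 0, 0], [0, 0, 0, 0, 1, 0, 0, 0], [0, 0, 0, 0, 0, 1, 1, 0]]
B = [[0, 1, 0, 0, 0, 0, 0, 0], [0, 0, 1, 0, 0, 0, 0, 0], [1, 0, 0, 0, 0, 0, 0, 0]]
C = [[0, 0, 0, 0, 0, 1, 0, 0], [0, 0, 0, 0, 0, 0, 1, 0], [0, 0, 0, 0, 0, 0, 0, 1]]
good = [1, 3, 5, 2, 4, 6, 20, 26]   # 2*3 + 4*5 = 26
print(qap_remainder(A, B, C, good))
```

A witness with an incorrect output value leaves a non-zero remainder, which is how the QAP exposes an inconsistent computation.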

Step 4: zk-SNARK Proof Generation and Verification

The prover generates a proof $\pi$ demonstrating they know $\{a_{i}\}$ satisfying the Quadratic Arithmetic Program (QAP) equations:

$$

\pi=(A,B,C) \tag{10}

$$

where:

$$

A=\sum_{i=0}^{m}a_{i}\cdot g^{v_{i}(s)},\quad B=\sum_{i=0}^{m}a_{i}\cdot g^{w_{i}(s)},\quad C=\sum_{i=0}^{m}a_{i}\cdot g^{y_{i}(s)}

$$

The polynomials ${v_{i}(s)},{w_{i}(s)},{y_{i}(s)}$ represent the QAP for the arithmetic circuit. These polynomials are evaluated at a secret value $s$ . The components of the zk-SNARK proof are represented by $A,B,C$ and the generator of a cryptographic group by $g$ , which is used to generate all the elements of the group through its powers. The verifier checks the proof by ensuring:

$$

e(A,B)=e(g,C)\cdot e(g^{t(s)},g) \tag{11}

$$

where $e(\cdot,\cdot)$ denotes the bilinear pairing function used for verification and $t(s)$ is the target polynomial evaluated at the secret value $s$ .

### 3.3 Verifying Model Inference on Decentralized Oracle Network Using zk-SNARKs

In this paper, we use the Chainlink Decentralized Oracle Network (DON), hereafter referred to as Chainlink oracles, to perform off-chain computations and relay data to the blockchain. The blockchain component in our framework is represented by the Sepolia testnet, which serves as a proxy for a production blockchain environment. Chainlink Functions enable smart contracts to access a trust-minimized computing infrastructure. Smart contracts can access on-chain and off-chain data from APIs and perform custom computations. By integrating these functions with the Sepolia testnet, we can execute zero-knowledge (zk) verification computations on Chainlink’s decentralized oracle network, ensuring that verified results are returned to the blockchain.

Smart contracts use the Chainlink nodes to retrieve data from external APIs by sending requests that carry the source code to execute. Every Chainlink node executes the code within a secure, sandboxed environment, handling the required computations. The zk-SNARK circuits use the obtained data to perform computations without disclosing confidential details. The process yields zk-SNARK proofs that demonstrate correct computation on the input data. Once the proofs are complete, the results are sent to the smart contracts on the Sepolia testnet. These smart contracts validate the proofs and update the state of the blockchain. Once the results have been verified, they can be accessed by other smart contracts, ensuring secure and reliable interactions.

## 4 Experimental Setup

The experimental setup used in our study consists of two phases: the proof generation phase and the proof verification phase. The proof generation phase involves an in-depth exploration of the processing environment and configuration details pertinent to a personalized AI model’s zk proof generation process. The proof verification phase delves into the implementation steps associated with deploying zero-knowledge proof on the blockchain and verifying zero-knowledge proofs using Chainlink oracles.

### 4.1 Proof Generation Phase

The proof generation setup uses an NVIDIA Jetson TX2, a device known for its high computational power and energy efficiency. The specifications of the NVIDIA Jetson TX2 are listed in Table 1.

| Component | Specification |
| --- | --- |
| CPU | 6 ARM Cortex-A57 |
| GPU | 256-core NVIDIA Pascal |
| Memory | 8GB LPDDR4 |

Table 1: Nvidia Jetson TX2 specifications

We selected this device due to its suitability for AI applications, which are known to require significant computational resources. Our objective was to develop zk-SNARK circuits designed to generate zero-knowledge proofs. These circuits are specifically tailored for a linear regression model, utilizing characteristics obtained from the on-chain data of Bitcoin as a CSV file. The linear regression model coefficients, including the model weights, were saved in a JSON file. We used Python scripts to automate the process of generating circuit files. These scripts received the JSON data and produced multiple Circom files, each representing a distinct number of weights.
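A script of this kind might look like the following sketch, which reads model coefficients from JSON and emits one Circom source per number of weights. Everything specific here is an assumption for illustration: the JSON keys `weights` and `intercept`, the file naming, the fixed-point scale, and the Circom template itself, which is a hypothetical circuit and not the authors' published one.

```python
import json

# Hypothetical Circom 2 template: y = b + sum_i a[i] * x[i] on fixed-point integers.
CIRCOM_TEMPLATE = """pragma circom 2.0.0;

template LinearRegression(n) {{
    signal input x[n];   // public, scaled features
    signal input a[n];   // private, scaled weights
    signal input b;      // private intercept, scaled by SCALE^2
    signal output y;
    signal prod[n];

    var acc = b;
    for (var i = 0; i < n; i++) {{
        prod[i] <== a[i] * x[i];   // one multiplication constraint per feature
        acc += prod[i];
    }}
    y <== acc;
}}

component main {{public [x]}} = LinearRegression({n});
"""


def render_circuit(n_weights):
    """Return Circom source for a model with n_weights features."""
    return CIRCOM_TEMPLATE.format(n=n_weights)


def circuits_from_json(model_json, scale=10**4):
    """Build {filename: source} for 1..n weights plus scaled private inputs.

    Sign handling for negative weights is omitted in this sketch.
    """
    model = json.loads(model_json)
    weights = [round(w * scale) for w in model["weights"]]
    intercept = round(model["intercept"] * scale * scale)
    sources = {
        f"linreg_{n}.circom": render_circuit(n)
        for n in range(1, len(weights) + 1)
    }
    inputs = {"a": [str(w) for w in weights], "b": str(intercept)}
    return sources, inputs


sources, inputs = circuits_from_json(
    '{"weights": [0.5, 0.25, 0.125], "intercept": 0.1}'
)
print(sorted(sources), inputs)
```

Generating one file per weight count makes it straightforward to measure how proof generation time scales with the size of the model.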

Creating and confirming proofs involves building zk circuits using the Circom programming language, generating witnesses, and then proving and checking the proofs using the Snarkjs library. The automated script managed the complete procedure, encompassing compilation, witness generation, contribution to the ceremony, preparation for phase 2, zkey generation, and proof generation and verification. We used the Circom and Snarkjs toolchain to generate a smart contract-based verifier that allows proofs to be verified on the blockchain. Remix was used to deploy the Verifier smart contract on the blockchain. The trusted setup was conducted by a consortium of stakeholders, including model developers, auditors and decentralized oracle providers. This collaborative approach ensures trust in the setup process and mitigates the risk of a single point of failure.
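The stages listed above map onto a sequence of Circom and Snarkjs command-line calls. The sketch below only builds that command list in Python; the circuit name, file names and ceremony size are hypothetical, and the use of the Groth16 proving system is an assumption, since the text does not name the scheme. The contribute steps prompt for entropy when run interactively.

```python
def snarkjs_pipeline(name="linreg", power=12):
    """Return the ordered CLI commands for one circuit (names are illustrative)."""
    ptau0 = f"pot{power}_0000.ptau"
    ptau1 = f"pot{power}_0001.ptau"
    ptau_final = f"pot{power}_final.ptau"
    zkey0, zkey_final = f"{name}_0000.zkey", f"{name}_final.zkey"
    return [
        # 1. Compile the circuit to R1CS and a WASM witness generator.
        ["circom", f"{name}.circom", "--r1cs", "--wasm", "--sym"],
        # 2. Generate the witness from the input file.
        ["node", f"{name}_js/generate_witness.js", f"{name}_js/{name}.wasm",
         "input.json", "witness.wtns"],
        # 3. Powers of Tau ceremony: start, contribute, prepare phase 2.
        ["snarkjs", "powersoftau", "new", "bn128", str(power), ptau0],
        ["snarkjs", "powersoftau", "contribute", ptau0, ptau1, "--name=first"],
        ["snarkjs", "powersoftau", "prepare", "phase2", ptau1, ptau_final],
        # 4. Circuit-specific setup and zkey contribution (Groth16 assumed).
        ["snarkjs", "groth16", "setup", f"{name}.r1cs", ptau_final, zkey0],
        ["snarkjs", "zkey", "contribute", zkey0, zkey_final, "--name=first"],
        ["snarkjs", "zkey", "export", "verificationkey", zkey_final,
         "verification_key.json"],
        # 5. Prove and verify off-chain.
        ["snarkjs", "groth16", "prove", zkey_final, "witness.wtns",
         "proof.json", "public.json"],
        ["snarkjs", "groth16", "verify", "verification_key.json",
         "public.json", "proof.json"],
        # 6. Export the Solidity verifier for on-chain deployment.
        ["snarkjs", "zkey", "export", "solidityverifier", zkey_final,
         "verifier.sol"],
    ]


for command in snarkjs_pipeline():
    print(" ".join(command))
```

Each entry can be passed to `subprocess.run(command, check=True)` on a machine where both tools are installed; timing each call separately is one way to obtain per-stage measurements.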

### 4.2 Proof Verification Phase

For this experiment, we chose the Sepolia testnet because it is widely used among developers and one of the few testnets supported by Chainlink. The experimental findings are relevant and applicable to live production settings like the Ethereum main network. We deployed the verifier smart contract on the testnet for zk verification purposes, ensuring the thoroughness of our testing process.

We set up Chainlink Functions to integrate the decentralized oracle network to the Sepolia testnet. We cloned the Chainlink Functions starter kit from the official GitHub repository [49].

This configuration offered the essential resources to interact with the blockchain and Chainlink oracle networks. Subsequently, we modified the Functions request configuration file to explicitly define the source code for API calls and perform computations based on the smart contract request. We established the environment variables using encrypted data for access. This process involved setting the environment variable file’s password and configuring each environment variable by specifying its key and value. We used four keys to set up the experiment:

- A private key obtained from the MetaMask wallet.

- A Remote Procedure Call (RPC) URL derived from the Alchemy website for the Sepolia testnet.

- An API token for GitHub.

- An API key for the blockchain explorer Etherscan.

<details>

<summary>extracted/6340811/12.png Details</summary>

### Visual Description

## Screenshot: Blockchain Contract Deployment Log

### Overview

The image is a screenshot of a terminal or command-line interface displaying log output from a blockchain development tool. The text documents the process of deploying and verifying a smart contract named `FunctionsConsumer` on the Ethereum Sepolia test network. The output is presented as a series of timestamped or sequential status messages.

### Components/Axes

The image contains only textual log entries. There are no graphical axes, charts, or legends. The text is monospaced, typical of a terminal console, and appears in a light color (likely white or light gray) against a dark background.

### Detailed Analysis

The following text is transcribed from the image, line by line:

1. `Waiting 2 blocks for transaction 0x7fdca8a958ef7c843a02fbb7fe8bad116b4201579e615d97dbd04b2ef46ec8e2 to be confirmed...`

* **Content:** A status message indicating the system is waiting for blockchain confirmations for a specific transaction.

* **Key Data:** Transaction Hash: `0x7fdca8a958ef7c843a02fbb7fe8bad116b4201579e615d97dbd04b2ef46ec8e2`

2. `Deployed FunctionsConsumer contract to: 0xe953b197CC443e3d8664962C1e1d40abc33701d`

* **Content:** A confirmation that the contract named `FunctionsConsumer` has been deployed.

* **Key Data:**

* Contract Name: `FunctionsConsumer`

* Deployed Contract Address: `0xe953b197CC443e3d8664962C1e1d40abc33701d`

3. `Verifying contract...`

* **Content:** A status message indicating the start of the contract verification process on a block explorer (e.g., Etherscan).

4. `The contract 0xe953b197CC443e3d8664962C1e1d40abc33701d has already been verified`

* **Content:** A status message indicating the verification step was skipped because the contract's source code was already verified on the block explorer.

* **Key Data:** Confirms the contract address from line 2.

5. `Contract verified`

* **Content:** A final confirmation of the verification status.

6. `FunctionsConsumer contract deployed to 0xe953b197CC443e3d8664962C1e1d40abc33701d on ethereumSepolia`

* **Content:** A summary line confirming the successful deployment and network.

* **Key Data:**

* Contract Name: `FunctionsConsumer`

* Deployed Contract Address: `0xe953b197CC443e3d8664962C1e1d40abc33701d` (matches lines 2 & 4)

* Network: `ethereumSepolia` (Ethereum's Sepolia test network)

### Key Observations

* **Successful Deployment:** The log sequence indicates a successful deployment of the `FunctionsConsumer` contract.

* **Pre-verified Contract:** The contract source code was already verified on the block explorer before the deployment script attempted verification, suggesting it may have been deployed previously or the verification was handled in an earlier step.

* **Test Network:** The deployment target is explicitly stated as `ethereumSepolia`, confirming this is a testnet deployment, not on the Ethereum mainnet.

* **Consistent Address:** The contract address `0xe953b197CC443e3d8664962C1e1d40abc33701d` is consistently reported across multiple log lines.

### Interpretation

This log output captures a routine but critical phase in smart contract development: deploying code to a test blockchain and ensuring its source code is publicly verifiable. The process shown is likely automated via a development framework (like Hardhat or Foundry).

The key takeaway is the successful deployment of the `FunctionsConsumer` contract to the Sepolia testnet. The "already verified" status is a positive sign, indicating the contract's source code is publicly available and matches the deployed bytecode on the block explorer. This is essential for transparency and trust, allowing users and other developers to inspect the contract's logic. The use of the Sepolia testnet confirms this is a development or testing activity, allowing for safe experimentation before any potential mainnet deployment. The transaction hash provided in the first line could be used to look up the specific deployment transaction on a Sepolia block explorer for further details like gas used and exact block number.

</details>

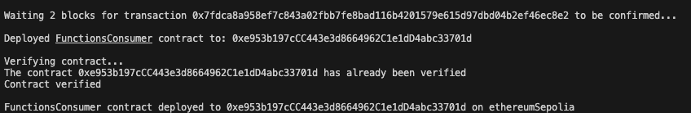

Figure 4: Oracle functions consumer contract deployed to Sepolia.

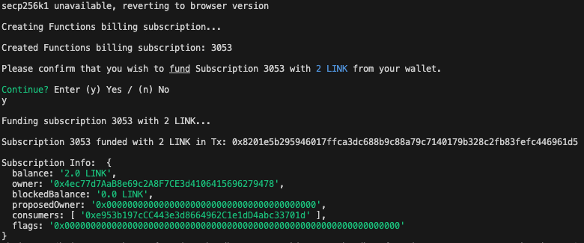

After the environment variables were configured, the Functions consumer contract was deployed to the Sepolia testnet, as shown in Fig. 4, completing the integration with Chainlink oracles.

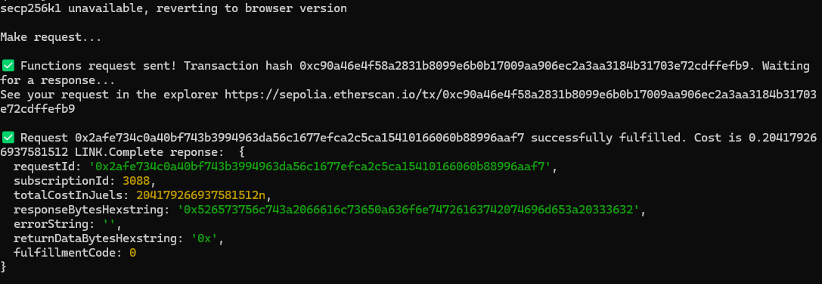

The consumer contract address is then used to create and fund the billing subscription for Chainlink Functions with LINK tokens acquired from the Chainlink Faucet, as shown in Fig. 5.

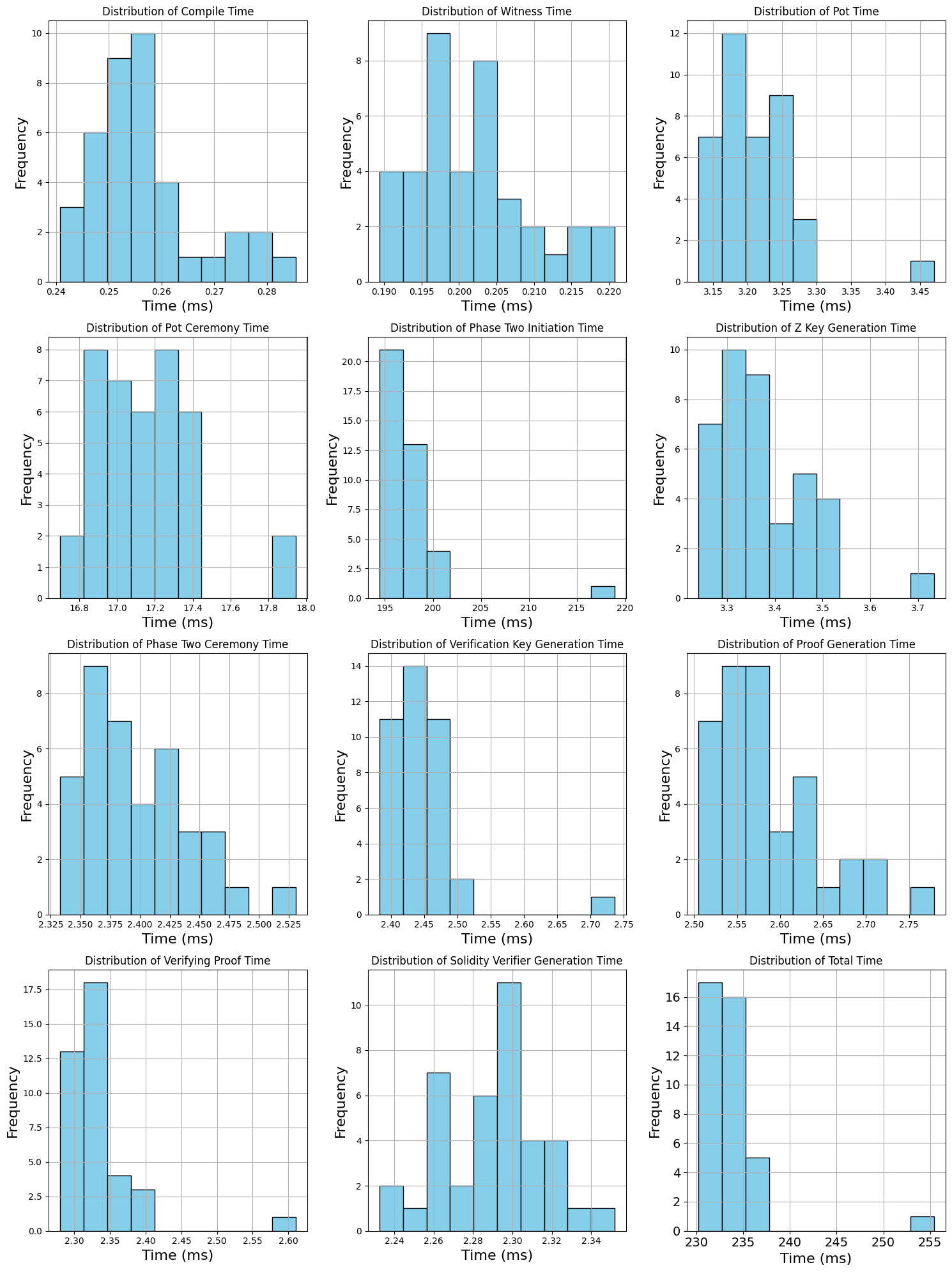

The Chainlink smart contract requests the oracle nodes to perform the zk computations and return the result. The proof size of our model is 806 bytes and the verification key size is 2922 bytes. Before making an on-chain transaction, the script runs the functions in a sandbox environment, as seen in Fig. 5, to ensure that they are correctly configured and to estimate the fulfilment cost of the request. As shown in Fig. 6, on-chain data retrieval was implemented with Chainlink Functions by sending API queries to external API providers.

<details>

<summary>extracted/6340811/123.png Details</summary>

### Visual Description

## Terminal Output: Blockchain Subscription Funding Transaction

### Overview

The image is a screenshot of a terminal or command-line interface displaying the process of funding a blockchain-based subscription service using LINK cryptocurrency tokens. The output shows a sequence of system messages, user prompts, and transaction confirmation data.

### Components/Axes

The content is structured as a chronological log of a command-line interaction:

1. **Initial System Message**: A cryptographic library fallback notice.

2. **Subscription Creation**: A billing subscription is initiated.

3. **User Prompt**: A confirmation request to fund the subscription.

4. **User Input**: The user's affirmative response.

5. **Funding Execution**: A message indicating the funding process has started.

6. **Transaction Confirmation**: A success message with a transaction hash.

7. **Subscription Details**: A structured data block (JSON-like) showing the subscription's state post-funding.

### Detailed Analysis

The text is transcribed verbatim below. All text is in English.

**Line-by-Line Transcription:**

1. `secp256k1 unavailable, reverting to browser version`

* *Note: secp256k1 is the elliptic curve used by Bitcoin and Ethereum for digital signatures.*

2. `Creating Functions billing subscription...`

3. `Created Functions billing subscription: 3053`

4. `Please confirm that you wish to fund Subscription 3053 with 2 LINK from your wallet.`

5. `Continue? Enter (y) Yes / (n) No`

6. `y`

* *This is the user's input.*

7. `Funding subscription 3053 with 2 LINK...`

8. `Subscription 3053 funded with 2 LINK in Tx: 0x8201e5b295946017ffca3dc688b9c88a79c7140179b328c2fb83fefc446961d5`

* *Tx: Transaction Hash.*

9. `Subscription Info: {`

10. ` balance: '2.0',`

11. ` usedBalance: '0.27708668692A0F7CE34106415696279478',`

12. ` blockedBalance: '0.0 LINK',`

13. ` proposedOwner: '0x0000000000000000000000000000000000000000',`

14. ` consumers: [ '0x95b019cc11483e3800044962c1e1804abc237810' ],`

15. ` flags: '0x0000000000000000000000000000000000000000000000000000000000000000'`

16. `}`

### Key Observations

* **Transaction Success**: The primary action—funding subscription #3053 with 2 LINK tokens—was successful, as confirmed by the transaction hash.

* **Balance State**: Post-funding, the subscription's `balance` is exactly '2.0' (LINK). The `usedBalance` shows a non-zero value (~0.277 LINK), indicating some funds have already been consumed or allocated, possibly for prior operations or fees.

* **Zero Addresses**: The `proposedOwner` and `flags` fields are set to zero-address values, suggesting these features are not currently configured or active for this subscription.

* **Single Consumer**: The subscription has one authorized `consumer` address listed.

* **Library Fallback**: The initial line indicates a software environment where the native `secp256k1` cryptographic library was not available, causing a fallback to a browser-based implementation.

### Interpretation

This log captures a routine but critical administrative action within a blockchain-based service ecosystem, likely related to Chainlink Functions or a similar decentralized oracle network. The user is allocating financial resources (LINK tokens) to a specific subscription contract (ID: 3053) to pay for future computational services or data requests.

The presence of a `usedBalance` alongside a fresh `balance` suggests this is a top-up transaction for an existing, active subscription rather than the creation of a new one. The single `consumer` address implies a specific smart contract or externally owned account is authorized to spend from this subscription's balance. The zeroed `proposedOwner` and `flags` indicate a simple, default configuration. The transaction hash is the immutable proof of this funding event on the blockchain.

</details>

Figure 5: Funding the subscription
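
Fig. 5 states the funding amount in LINK, whereas Chainlink Functions reports fulfilment costs in juels (the `totalCostInJuels` field in Fig. 6). One LINK equals 10^18 juels. The sketch below converts between the two units; the helper names are illustrative and are not part of any Chainlink library.

```python
from decimal import Decimal

# 1 LINK = 10**18 juels (the smallest LINK denomination).
JUELS_PER_LINK = 10 ** 18


def link_to_juels(amount_link: str) -> int:
    """Convert a LINK amount given as a decimal string into integer juels."""
    return int(Decimal(amount_link) * JUELS_PER_LINK)


def juels_to_link(amount_juels: int) -> Decimal:
    """Convert an integer juel amount into LINK as an exact Decimal."""
    return Decimal(amount_juels) / JUELS_PER_LINK


if __name__ == "__main__":
    # The 2 LINK used to fund the subscription in Fig. 5, expressed in juels.
    print(link_to_juels("2"))
```

Using `Decimal` rather than floating point keeps the conversion exact for amounts with up to 18 decimal places.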

<details>

<summary>extracted/6340811/n123.png Details</summary>

### Visual Description

## Terminal/Console Output: Blockchain Transaction Log

### Overview

The image displays a terminal or console output showing the process and result of a blockchain transaction, likely on the Ethereum Sepolia test network. It details a request being sent, a transaction hash being generated, and the subsequent fulfillment of that request with associated data.

### Components/Axes

The output is structured as a series of text lines, primarily in green and white on a black background. Key components include:

1. **Status Message:** `secp256k1 unavailable, reverting to browser version`

2. **Action Prompt:** `Make request...`

3. **Transaction Submission Log:** Details of a sent function request.

4. **Request Fulfillment Log:** Details of the completed request response.

### Detailed Analysis

The text can be segmented into two main blocks:

**Block 1: Transaction Submission**

* **Line 1:** `Functions request sent! Transaction hash 0xc90a46e4f58a2831b8099e6b0b17009aa906ec2a3aa3184b31703e72cdffefb9. Waiting for a response...`

* **Line 2:** `See your request in the explorer https://sepolia.etherscan.io/tx/0xc90a46e4f58a2831b8099e6b0b17009aa906ec2a3aa3184b31703e72cdffefb9`

**Block 2: Request Fulfillment**

* **Line 1:** `Request 0x2afe734c0a40bf743b3994963da56c1677efca2c5ca15410166060b88996aaf7 LINK.Complete reponse. Cost is 0.2841792696397581512n`

* **Data Object (Key-Value Pairs):**

* `requestId: '0x2afe734c0a40bf743b3994963da56c1677efca2c5ca15410166060b88996aaf7'`

* `subscriptionId: 3088`

* `totalCostInJuels: 2841792696397581512n`

* `responseBytesHexstring: '0x526573756c743a2066616c6c65642062656361757365206973206e6f7420612076616c696420726573706f6e73652e'`

* `errorString: ''`

* `returnDataBytesHexstring: '0x'`

* `fulfillmentCode: 0`

### Key Observations

1. **Successful Transaction:** The process completed successfully, indicated by `LINK.Complete reponse` and a `fulfillmentCode` of `0`.

2. **Cost:** The transaction cost `0.2841792696397581512n` LINK (the native token of the Chainlink network). The `n` suffix denotes a BigInt number.

3. **Identifiers:** A unique `requestId` and a `subscriptionId` (3088) are provided for tracking.

4. **Response Data:** The `responseBytesHexstring` contains a hex-encoded ASCII message. As transcribed, the string decodes to `Result: falled because is not a valid response.`; the byte `6c` in `falled` is presumably a mis-transcribed `69`, which would give `failed`.

5. **No Errors:** The `errorString` is empty, and `returnDataBytesHexstring` is `0x` (empty), suggesting the primary response was carried in the `responseBytesHexstring` field.

### Interpretation

This log captures a complete lifecycle of a decentralized request, likely using the Chainlink Functions service. A user or contract initiated a request (transaction hash provided), which was later fulfilled by the Chainlink network.

The critical piece of information is the decoded response: **"Result: failed because is not a valid response."** This indicates that while the Chainlink node successfully processed and delivered the request (hence the `fulfillmentCode: 0`), the *result of the computation or data fetch* itself was a failure. The external data source or computation returned an invalid or erroneous result.

The cost in LINK and the subscription model (`subscriptionId`) are standard for Chainlink's decentralized services. The initial `secp256k1 unavailable` message suggests the environment may have fallen back to a different cryptographic library, which is a technical note but did not prevent the transaction from proceeding.

**In summary:** The image documents a technically successful blockchain transaction that delivered a failed business logic result. The infrastructure worked, but the requested task could not be completed successfully by the external system.

</details>

Figure 6: Chainlink Functions API and computation output.
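
The response in Fig. 6 is returned as a hex string, so it has to be decoded before it can be read. The following minimal sketch decodes the `responseBytesHexstring` value exactly as it is transcribed in the figure description; the function name is illustrative. Note that the transcribed bytes spell `falled` rather than `failed`, which most likely reflects a single mis-transcribed byte in the figure text rather than the actual on-chain response.

```python
def decode_response(hex_string: str) -> str:
    """Decode a hex string (with or without a 0x prefix) into UTF-8 text."""
    digits = hex_string[2:] if hex_string.startswith("0x") else hex_string
    return bytes.fromhex(digits).decode("utf-8")


# Value of responseBytesHexstring as transcribed in the Fig. 6 description.
RESPONSE_HEX = "0x526573756c743a2066616c6c65642062656361757365206973206e6f7420612076616c696420726573706f6e73652e"

if __name__ == "__main__":
    print(decode_response(RESPONSE_HEX))
```

An empty payload such as the `returnDataBytesHexstring` value `0x` decodes to an empty string.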

## 5 Experimental Results and Analysis

<details>

<summary>extracted/6340811/Distribution.png Details</summary>

### Visual Description

## Histograms: Performance Timing Distributions

### Overview

The image displays a 4x3 grid of 12 individual histograms. Each histogram visualizes the frequency distribution of execution times (in milliseconds, ms) for a specific step or component of a computational process, likely related to a cryptographic protocol or zero-knowledge proof system. All plots share the same visual style: light blue bars with black outlines on a white background with gray grid lines.

### Components/Axes

* **Grid Structure:** 12 separate plots arranged in 4 rows and 3 columns.

* **Common Elements per Plot:**

* **Title:** Each plot has a unique title at the top, following the pattern "Distribution of [Process Name] Time".

* **X-axis:** Labeled "Time (ms)" for all plots. The scale and range vary significantly between plots.

* **Y-axis:** Labeled "Frequency" for all plots. The scale varies between plots.

* **Plot Titles (in reading order, left-to-right, top-to-bottom):**

1. Distribution of Compile Time

2. Distribution of Witness Time

3. Distribution of Pot Time

4. Distribution of Pot Ceremony Time

5. Distribution of Phase Two Initiation Time

6. Distribution of Z Key Generation Time

7. Distribution of Phase Two Ceremony Time

8. Distribution of Verification Key Generation Time

9. Distribution of Proof Generation Time

10. Distribution of Verifying Proof Time

11. Distribution of Solidity Verifier Generation Time

12. Distribution of Total Time

### Detailed Analysis

**Row 1:**

1. **Compile Time:** X-axis range ~0.24 to 0.285 ms. Distribution is roughly unimodal, peaking at the bin centered near 0.255 ms with a frequency of 10. The distribution is slightly right-skewed.

2. **Witness Time:** X-axis range ~0.190 to 0.220 ms. Distribution is bimodal, with a primary peak at ~0.197 ms (frequency ~9) and a secondary peak at ~0.203 ms (frequency 8).