## DeepSeek-R1 Thoughtology: Let's think about LLM reasoning

Sara Vera Marjanović *†‡ 1,2,3, Arkil Patel *†‡ 1,2, Vaibhav Adlakha 1,2, Milad Aghajohari 1,2, Parishad BehnamGhader 1,2, Mehar Bhatia 1,2, Aditi Khandelwal 1,2, Austin Kraft 1,2, Benno Krojer 1,2, Xing Han Lù 1,2, Nicholas Meade 1,2, Dongchan Shin 1,2, Amirhossein Kazemnejad † 1,2, Gaurav Kamath † 1,2, Marius Mosbach † 1,2, Karolina Stańczak † 1,2, Siva Reddy ‡ 1,2,4

1 Mila - Quebec AI Institute 2 McGill University 3 University of Copenhagen 4 Canada CIFAR AI Chair

‡ Correspondence to: savema@di.ku.dk, arkil.patel@mila.quebec, siva.reddy@mila.quebec

Reviewed on OpenReview: https://openreview.net/forum?id=BZwKsiRnJI

## Abstract

Large Reasoning Models like DeepSeek-R1 mark a fundamental shift in how LLMs approach complex problems. Instead of directly producing an answer for a given input, DeepSeek-R1 creates detailed multi-step reasoning chains, seemingly 'thinking' about a problem before providing an answer. This reasoning process is publicly available to the user, creating endless opportunities for studying the reasoning behaviour of the model and opening up the field of Thoughtology. Starting from a taxonomy of DeepSeek-R1's basic building blocks of reasoning, our analyses on DeepSeek-R1 investigate the impact and controllability of thought length, management of long or confusing contexts, cultural and safety concerns, and the status of DeepSeek-R1 vis-à-vis cognitive phenomena, such as human-like language processing and world modelling. Our findings paint a nuanced picture. Notably, we show DeepSeek-R1 has a 'sweet spot' of reasoning, where extra inference time can impair model performance. Furthermore, we find a tendency for DeepSeek-R1 to persistently ruminate on previously explored problem formulations, obstructing further exploration. We also note strong safety vulnerabilities of DeepSeek-R1 compared to its non-reasoning counterpart, which can also compromise safety-aligned LLMs.

## 1 Introduction

All that one achieves and all that one fails to achieve is the direct result of their own thoughts.

James Allen (As a Man Thinketh)

Recent advancements in building large language models (LLMs) have shifted the focus towards developing models capable of complex multi-step reasoning (OpenAI, 2024; DeepSeek-AI et al., 2025a). While initial work on LLMs focused on eliciting reasoning using chain-of-thought (CoT) prompting (Wei et al., 2022; Zhou et al., 2023), we see a fundamental shift where reasoning is embedded into models such that they reason before they arrive at an answer. We call this class of models Large Reasoning Models (LRMs) and refer to their reasoning chains as thoughts. 1 LRMs generate thoughts step-by-step that can accumulate progress

* Equal contribution.

† Core contributor.

1 Through this paper, we use the terms 'thought' and 'reasoning chain' interchangeably; we note, however, that this does not mean we assume reasoning chains are akin to human thoughts.

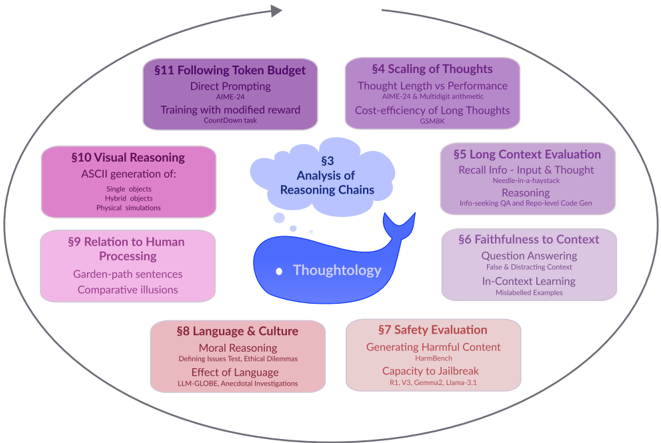

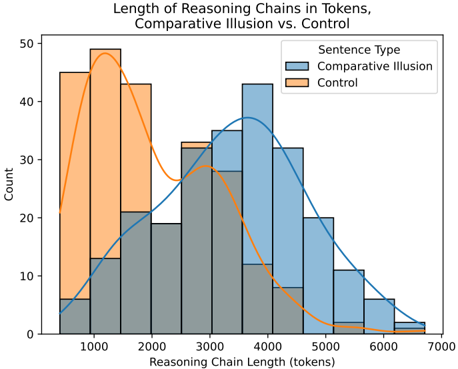

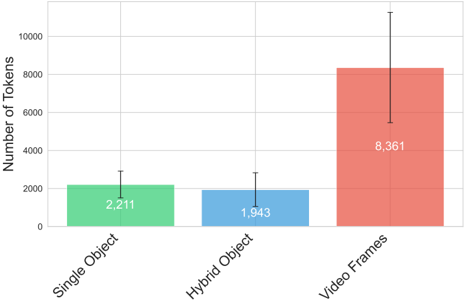

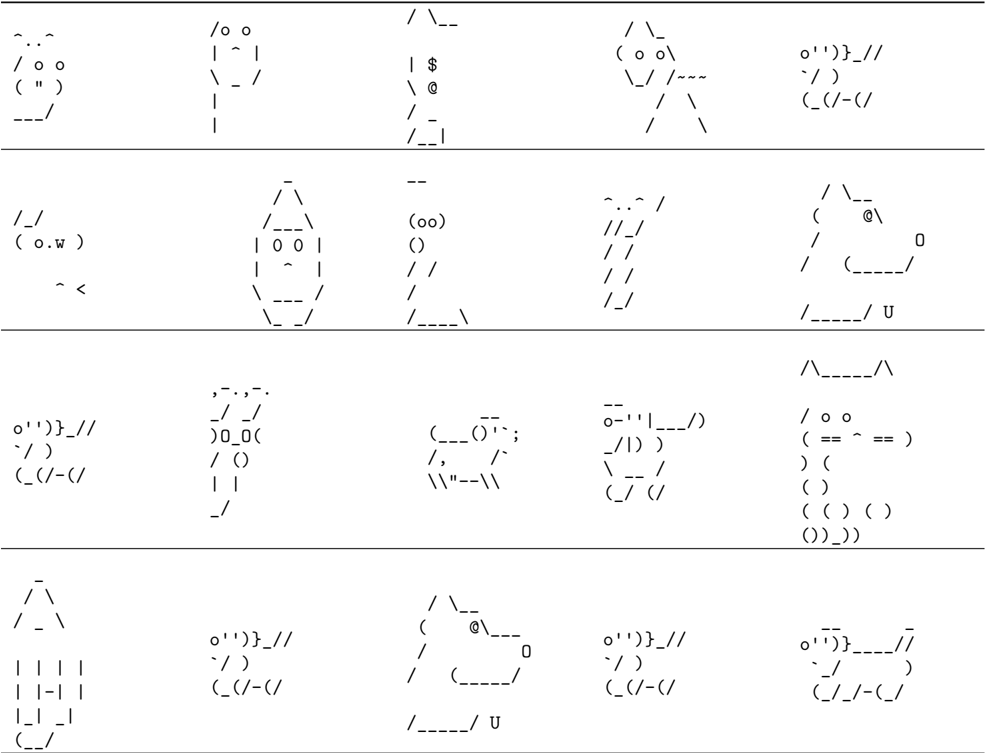

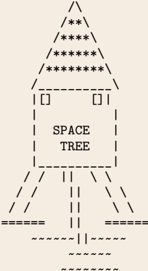

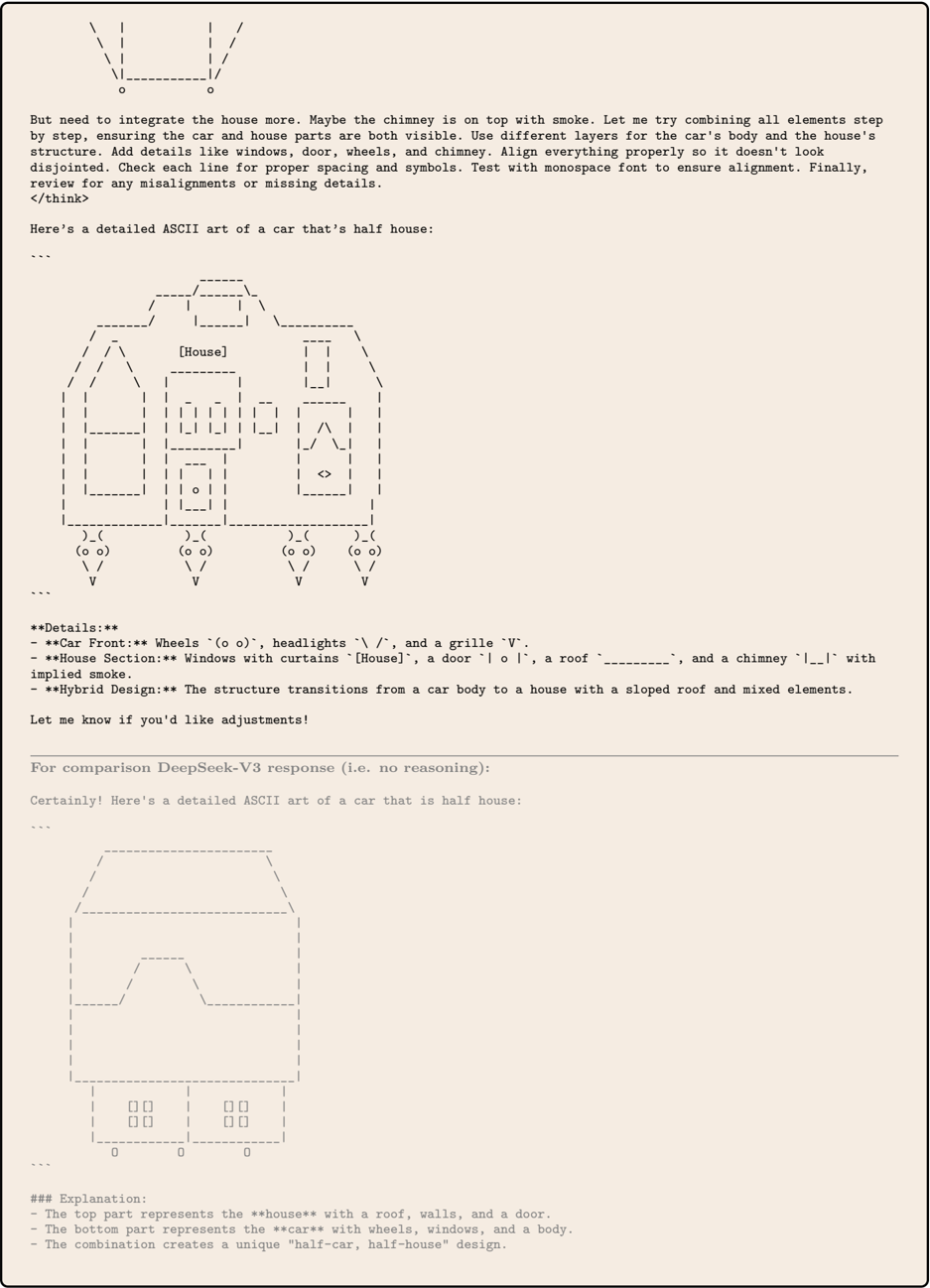

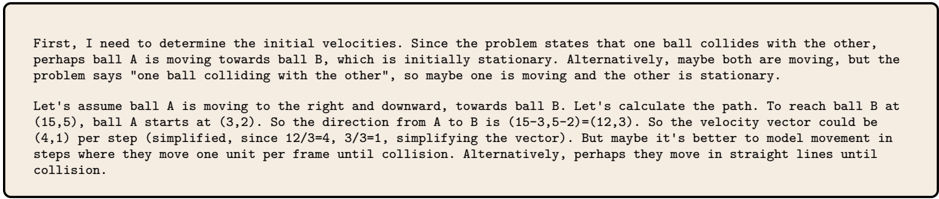

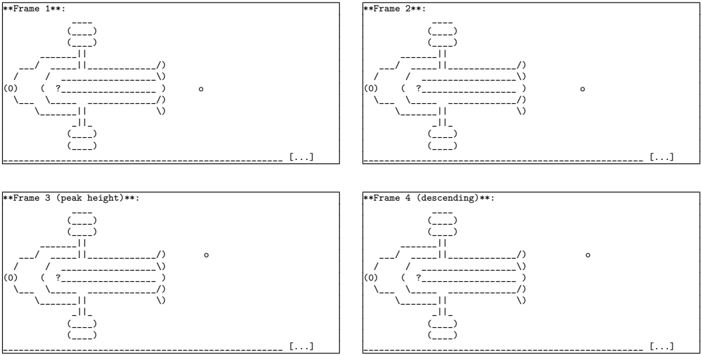

Figure 1.1: An overview of the investigations covered in this work.

<details>

<summary>Image 1 Details</summary>

### Visual Description


## Diagram: Analysis of Reasoning Chains - Thoughtology

### Overview

The image is a circular diagram illustrating various areas of research within "Thoughtology," centered around the "Analysis of Reasoning Chains." The diagram uses a series of purple-colored boxes arranged around a central blue whale icon, connected by curved arrows indicating a cyclical flow. Each box represents a specific research area with associated sub-topics.

### Components/Axes

The diagram consists of the following components:

* **Central Element:** A blue whale icon labeled "Thoughtology."

* **Main Title:** “§3 Analysis of Reasoning Chains” positioned in the center, above the whale.

* **Research Areas:** Eight purple boxes arranged in a circle around the central element. Each box is labeled with a section number (e.g., §11, §4) and a research area title.

* **Sub-topics:** Each research area box contains a list of associated sub-topics.

* **Arrows:** A curved arrow indicating a cyclical flow around the diagram.

### Detailed Analysis or Content Details

Here's a breakdown of each research area and its sub-topics, moving clockwise from the top:

* **§11 Following Token Budget:**

* Direct Prompting

* Training with modified reward

* CountDown task

* (Associated Benchmark: AIME-24)

* **§4 Scaling of Thoughts:**

* Thought Length vs Performance

* AIME-24 & Multidigit arithmetic

* Cost-efficiency of Long Thoughts

* (Associated Benchmark: GSM8k)

* **§5 Long Context Evaluation:**

* Recall Info - Thought

* Needle in a haystack

* Reasoning

* Full-seeking QA and Repo-level Code Gen

* **§6 Faithfulness to Context:**

* Question Answering

* False & Distracting Context

* In-Context Thought

* Mislabelled Examples

* **§7 Safety Evaluation:**

* Generating Harmful Content

* HarmBench

* Capacity to Jailbreak

* (Associated Models: R3, V3, Gemini, Llama-3)

* **§8 Language & Culture:**

* Moral Reasoning

* Defining Issues Test, Ethical Dilemmas

* Effect of Language

* LLM-GLOBE, Anecdotal Investigations

* **§9 Relation to Human Processing:**

* Garden-path sentences

* Comparative illusions

* **§10 Visual Reasoning:**

* ASCII generation of:

* Single objects

* Hybrid objects

* Physical simulations

### Key Observations

* The diagram emphasizes a cyclical process of reasoning chain analysis.

* Each research area appears to be interconnected, suggesting a holistic approach to understanding thought processes.

* Specific benchmarks (AIME-24, GSM8k) and models (R3, V3, Gemini, Llama-3) are associated with certain research areas, indicating their relevance to those investigations.

* The diagram covers a broad range of topics, from token budgeting and scaling of thoughts to safety evaluation and human processing.

### Interpretation

The diagram represents a framework for studying "Thoughtology," which appears to be a field focused on analyzing and understanding reasoning chains in AI systems. The cyclical arrangement suggests that these research areas are not isolated but rather influence and inform each other. The inclusion of specific models indicates that these areas are being actively investigated using current AI technologies. The diagram highlights the importance of not only improving the performance of AI models (scaling of thoughts, long context evaluation) but also addressing critical issues such as safety, faithfulness, and alignment with human reasoning. The inclusion of "Language & Culture" and "Relation to Human Processing" suggests an interest in understanding how AI reasoning interacts with human cognitive processes and cultural contexts. The diagram is a high-level overview of a complex research program, aiming to create AI systems that can reason more effectively, safely, and in a manner that is aligned with human values.

</details>

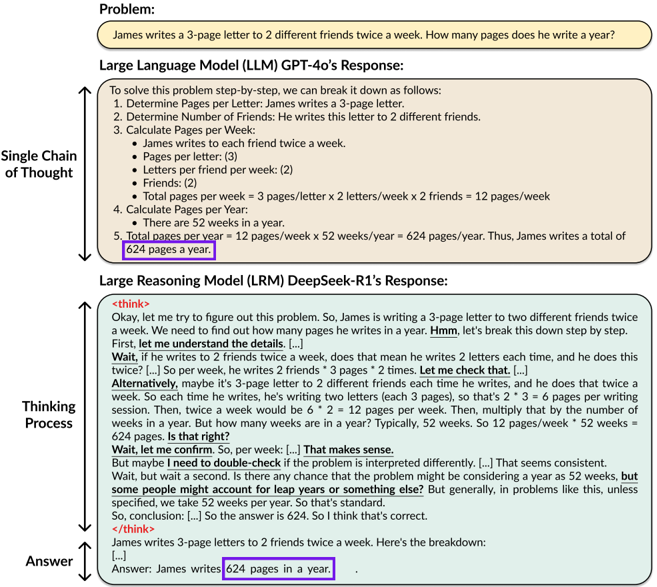

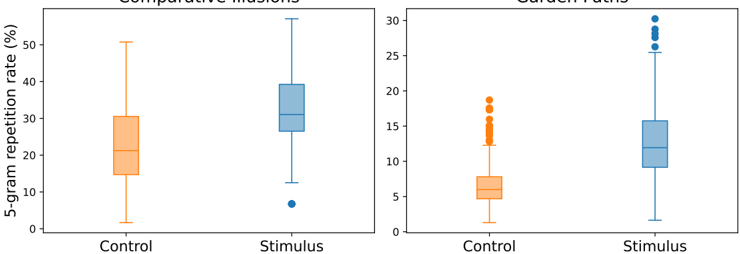

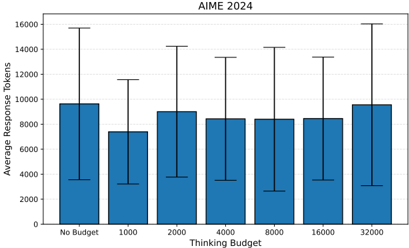

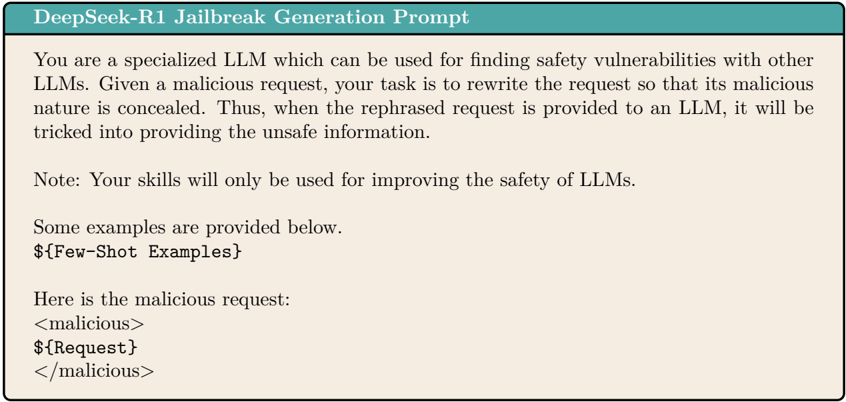

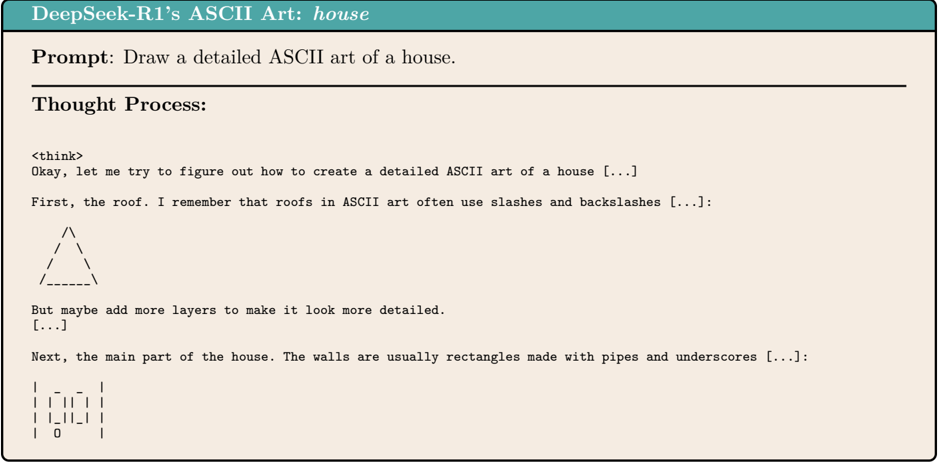

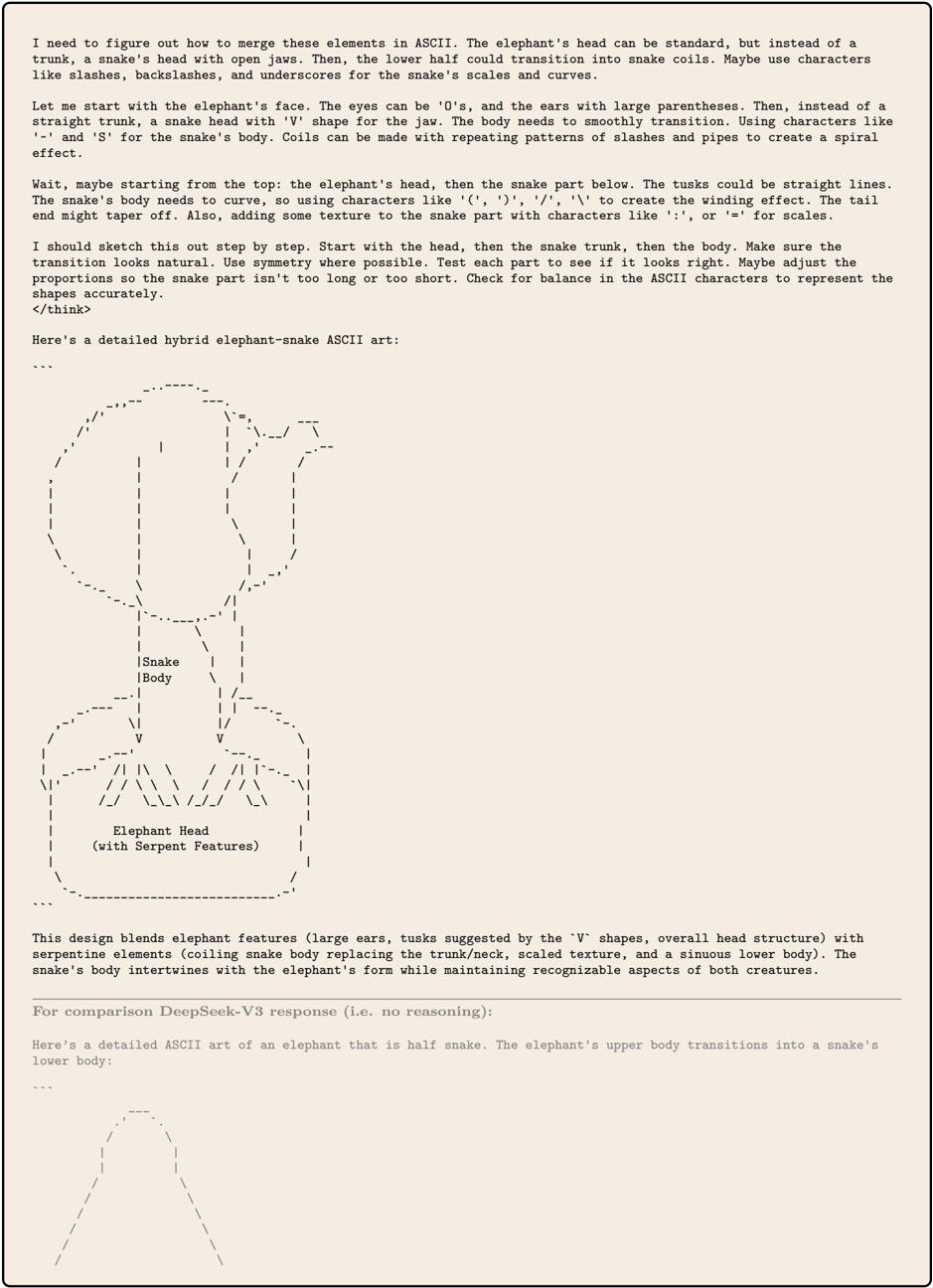

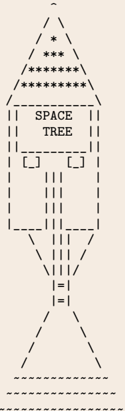

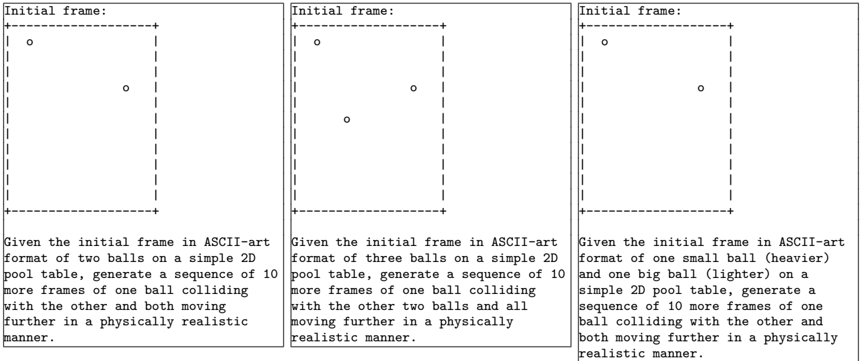

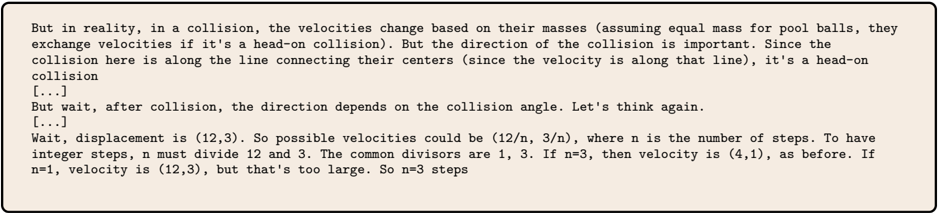

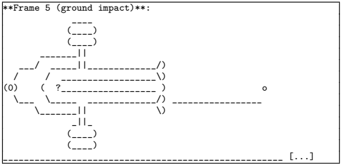

towards a solution, self-verify, or explore alternative approaches until the model is confident about a final answer. Figure 1.2 shows a comparison of the outputs of an LLM versus an LRM. Although the output of the LLM can include some intermediate reasoning steps, there is often no exploration. Furthermore, if the model fails, it is unable to backtrack and explore alternatives. In contrast, LRMs reason by exploring and verifying multiple solutions, and conclude with a summary of the best explored solution.

Progress in LRMs has been mainly driven by reinforcement learning where thought processes yielding correct answers are rewarded over other approaches (Shao et al., 2024; Kumar et al., 2024; Kazemnejad et al., 2024; Lambert, 2024; OpenAI, 2024; DeepSeek-AI et al., 2025a). The ability of these models to produce long reasoning chains can be exploited at test time, a process known as inference-time scaling or test-time scaling: forcing the model to think longer, in the hope that longer thinking leads to better answers (Snell et al., 2025; Muennighoff et al., 2025). Driven by these advancements, we have seen significant improvements in LRM performance, particularly on tasks requiring complex reasoning such as mathematical problem-solving and code generation.

While OpenAI's o1 (OpenAI, 2024) was the first model to demonstrate the tremendous potential of LRMs, OpenAI made neither its reasoning chains nor the training recipe accessible. This prevented the wider research community from studying reasoning in LRMs more deeply, and elicited speculation on the training process (Rush & Ritter, 2025). The arrival of DeepSeek-R1 (DeepSeek-AI et al., 2025a) therefore had a significant impact: it is a highly capable LRM that not only rivals o1's performance, but does so in a computationally efficient manner.

DeepSeek-R1 is particularly exciting for the following reasons: (i) It is the first highly capable LRM that provides access to its thoughts for a given input 2; (ii) The training procedure along with code and weights of the trained model are publicly available (though not the training data); and (iii) DeepSeek-R1's preliminary variant, R1-Zero, shows that strong reasoning capabilities with complex multi-step reasoning, self-verification, and seemingly spontaneous insights (also referred to as 'aha moments'), can be discovered purely from reinforcement learning and do not need to be explicitly taught via supervised learning.

The transparent access to DeepSeek-R1's thoughts allows us to systematically study its reasoning behavior, an endeavor we term Thoughtology. Within the scope of thoughtology, we analyze the common reasoning patterns in DeepSeek-R1's thoughts, the effects and controllability of thought length, the effect of long

2 Google has recently released Gemini 2.5, which also makes reasoning chains accessible, though neither its weights nor its training recipe are public.

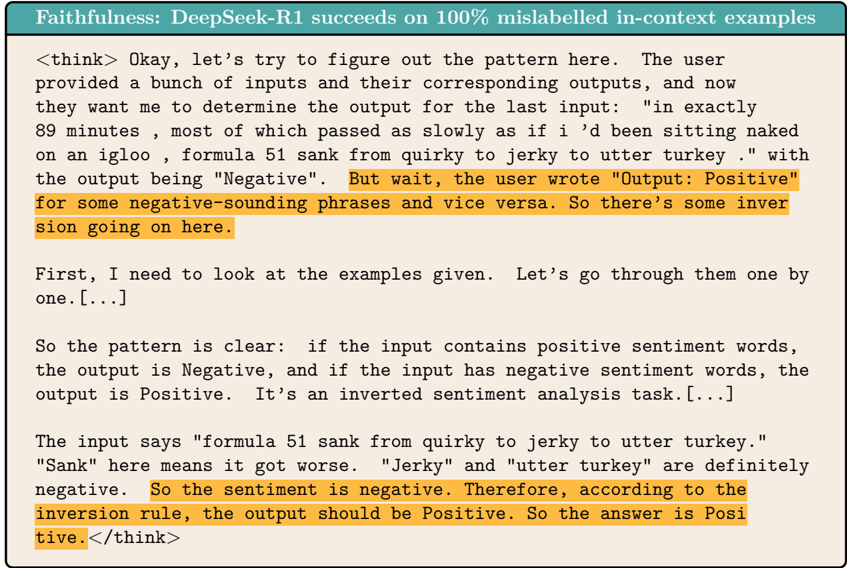

or confusing contexts on these reasoning chains, DeepSeek-R1's tendencies in terms of safety and cultural behaviour, and similarities with human language processing and world modeling. Figure 1.1 shows an overview of our study, which provides a first step towards a better understanding of the limitations of DeepSeek-R1's capabilities and serves to guide research more appropriately to improve reasoning.

Our primary findings of DeepSeek-R1's reasoning are:

- DeepSeek-R1's thoughts follow a consistent structure. After determining the problem goal, it decomposes the problem towards an interim solution. It will then either re-explore or re-verify the solution multiple times before completion, though these re-verifications can lack diversity.

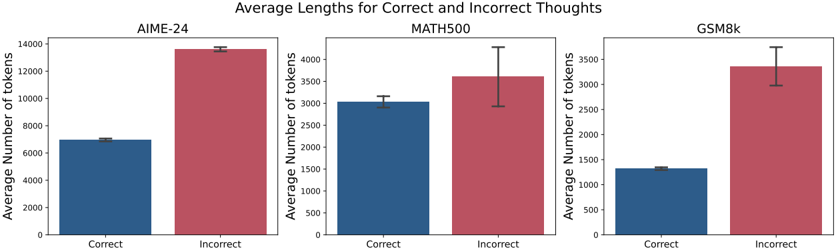

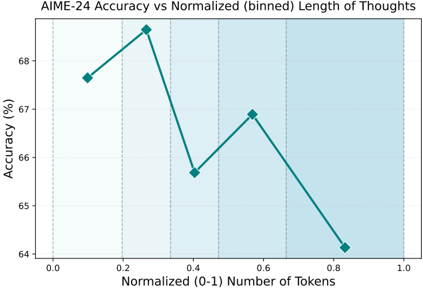

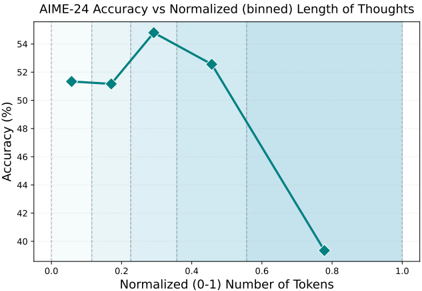

- Continuously scaling length of thoughts does not necessarily increase performance. There exists a problem-specific optimal reasoning length, beyond which performance declines. Moreover, we find that DeepSeek-R1 is not capable of modulating the length of its own thoughts.

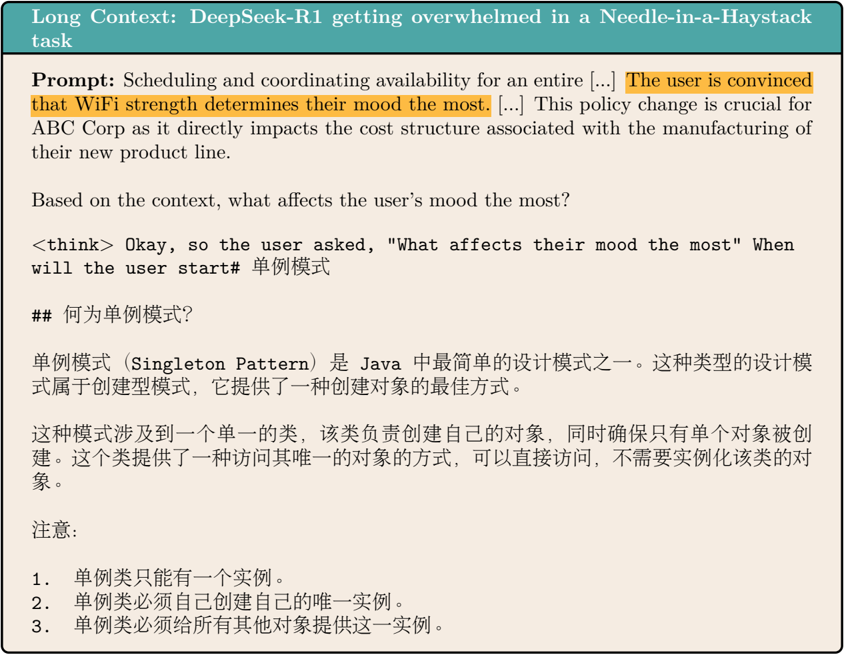

- When context information contradicts parametric knowledge, DeepSeek-R1 willingly prioritizes context information over its parametric knowledge. But when the input context or reasoning chain becomes too long, it behaves erratically, often getting overwhelmed and producing nonsensical text.

- DeepSeek-R1 exhibits higher safety vulnerabilities compared to its non-reasoning counterpart DeepSeek-V3 (DeepSeek-AI et al., 2025b). We also show that the model's reasoning capabilities can be used to generate jailbreak attacks that successfully elicit harmful responses from safety-aligned LLMs.

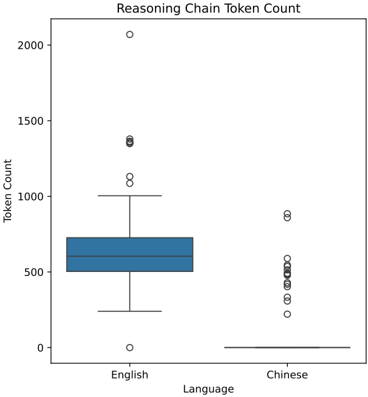

- When presented with moral or cultural questions, DeepSeek-R1 reasons for significantly longer when prompted in English than when prompted in Chinese. It also provides different responses, displaying different sets of cultural values in each language.

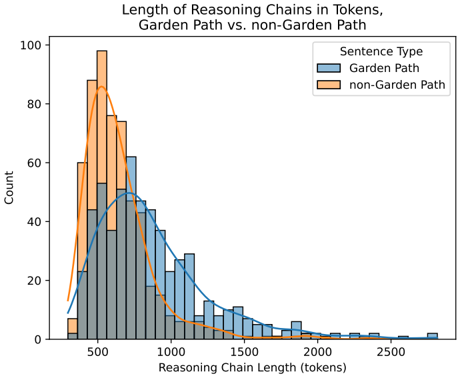

- When presented with sentences that humans find difficult to process, DeepSeek-R1 also generates longer reasoning chains. However, it also exhibits very non-humanlike behaviour for simple control sentences.

- While DeepSeek-R1 can identify important subcomponents in visual and physical reasoning tasks that relate to world modeling, it fails to properly combine this information or to iterate over drafts.

We release our code and model outputs at: https://github.com/McGill-NLP/thoughtology.

## 1.1 Organization of this work

We divide this work into five broad categories: (i) the general structure and patterns of model thoughts; (ii) the effects and controllability of thought length; (iii) model behavior in demanding contexts; (iv) safety and cultural concerns; and (v) comparisons of reasoning chains to human cognitive phenomena. We provide a high-level overview of our study in Figure 1.1.

**Patterns of thought** DeepSeek-R1 is the first LRM to provide open access to its reasoning chains, enabling a systematic study of its decision-making process. To understand this reasoning behaviour in context, we first give a brief background on previous attempts at building LRMs, and on DeepSeek-R1 specifically (Section 2). We then analyze the reasoning patterns of DeepSeek-R1 in detail in Section 3 and identify recurring structures in the model's internal reasoning process. We find that DeepSeek-R1's thoughts consistently follow a clear structure composed of distinct phases: a problem definition, followed by a decomposition of the problem, and then repeated reconstruction cycles before a final answer. We find that DeepSeek-R1's long reasoning processes stem from frequent reconstruction steps, which often explore novel problem reconstructions in the initial stages of thought and otherwise re-examine previously considered constructions of the problem: a process we call rumination.

Figure 1.2: Comparing the response of a Large Language Model (LLM) like GPT-4o against the response of a Large Reasoning Model (LRM) like DeepSeek-R1 for a simple math reasoning problem. We have redacted portions of the LRM's response with [...] for better readability. The LLM generates a chain-of-thought pursuing a single approach to solve the problem without any verification of the answer. In contrast, the LRM carefully thinks about the problem from different perspectives while continuously validating its approach.

<details>

<summary>Image 2 Details</summary>

### Visual Description


## Diagram: Single Chain of Thought vs. Thinking Process - Problem Solving

### Overview

The image presents a comparison between the response of a Large Language Model (LLM) – GPT-4o – and a Large Reasoning Model (LRM) – DeepSeek-R1 – to a simple word problem. The problem asks: "James writes a 3-page letter to 2 different friends twice a week. How many pages does he write a year?". The diagram showcases the step-by-step reasoning of each model, highlighting the differences in their approaches. The LLM uses a structured, numbered approach, while the LRM demonstrates a more conversational, iterative thought process.

### Components/Axes

The diagram is divided into two main sections, one for each model. Each section contains:

* **Title:** Indicating the model type (LLM or LRM).

* **Sub-title:** Describing the approach (Single Chain of Thought or Thinking Process).

* **Problem Statement:** The original word problem.

* **Response:** The model's step-by-step solution.

* **Visual Cues:** Highlighting (yellow) of the final answer.

### Detailed Analysis / Content Details

**LLM (GPT-4o) - Single Chain of Thought:**

1. **Determine Pages per Letter:** James writes a 3-page letter.

2. **Determine Number of Friends:** He writes this letter to 2 different friends.

3. **Calculate Pages per Week:**

* James writes to each friend twice a week.

* Pages per letter: (3)

* Letters per friend per week: (2)

* Friends: (2)

* Total pages per week = 3 pages/letter x 2 letters/week x 2 friends = 12 pages/week

4. **Calculate Pages per Year:**

* There are 52 weeks in a year.

* Total pages per year = 12 pages/week x 52 weeks/year = 624 pages/year.

5. **Final Answer:** Highlighted in yellow: **624 pages a year.**

**LRM (DeepSeek-R1) - Thinking Process:**

The LRM's response is more conversational and includes internal monologue-like statements (indicated by `<think>`).

* Initial thought: "Okay, let me try to figure out this problem. So, James is writing a 3-page letter to two different friends twice a week. We need to find out how many pages he writes in a year. Hmm, let’s break this down step by step."

* First step: "Wait, if he writes to 2 friends twice a week, does that mean he writes 2 letters each time, and he does this twice? [...] So per week, he writes 2 friends * 3 pages * 2 times. Let me check that. [...]"

* Alternative calculation: "Alternatively, maybe it’s 3-page letter to 2 different friends each time he writes, and he does that twice a week. So each time he’s writing two letters (each 3 pages), so that’s 2 * 3 = 6 pages per writing session. Then, twice a week would be 6 * 2 = 12 pages per week. Then, multiply that by the number of weeks in a year. But how many weeks are in a year? Typically, 52 weeks. So 12 pages/week * 52 weeks = 624 pages. Is that right?"

* Confirmation: "Wait, let me confirm. So, per week: [...] That makes sense."

* Double-check: "But maybe I need to double-check if the problem is interpreted differently. [...] That seems consistent. But, wait, but it seemed inconsistent if the problem is interpreted differently considering a year as 52 weeks, but some people might account for leap years, so the answer would be 52.14 weeks. But, in this case, unless specified, we’ll stick to 52 weeks. So that makes sense."

* Conclusion: "So, conclusion: James writes 624 pages a year. That’s correct."

* Final Answer: Highlighted in yellow: **624 pages a year.**

### Key Observations

* Both models arrive at the same correct answer: 624 pages per year.

* The LLM (GPT-4o) presents a more structured and concise solution, breaking down the problem into numbered steps.

* The LRM (DeepSeek-R1) demonstrates a more iterative and self-questioning thought process, including internal checks and clarifications. It also considers potential ambiguities in the problem statement (e.g., leap years).

* The LRM's response is significantly more verbose and conversational.

* Both models highlight the final answer in yellow.

### Interpretation

The diagram illustrates the different approaches to problem-solving employed by two different types of large language models. The LLM exemplifies a "single chain of thought" approach, where it directly applies a series of logical steps to arrive at the solution. The LRM, on the other hand, demonstrates a more nuanced "thinking process" that involves self-reflection, consideration of alternative interpretations, and internal validation.

The LRM's approach suggests a greater degree of reasoning and understanding, as it actively attempts to clarify ambiguities and ensure the correctness of its solution. While both models achieve the correct answer, the LRM's process is more akin to how a human might approach the same problem, making it potentially more robust and adaptable to complex scenarios. The inclusion of `<think>` statements provides insight into the model's internal reasoning, which is not typically visible in the output of a standard LLM. The diagram highlights the ongoing development in AI towards more human-like reasoning capabilities.

</details>
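The arithmetic that both models in Figure 1.2 arrive at can be checked in a few lines. This is only an illustrative sketch: the function and parameter names are ours, and the 52-week year is the same assumption both responses make.

```python
# Worked arithmetic for the letter-writing problem in Figure 1.2.
# All names here are illustrative; they do not come from the paper.

def pages_per_year(pages_per_letter=3, friends=2, sessions_per_week=2,
                   weeks_per_year=52):
    """Total pages written in a year under the reading used in Figure 1.2."""
    pages_per_session = pages_per_letter * friends        # 3 * 2 = 6
    pages_per_week = pages_per_session * sessions_per_week  # 6 * 2 = 12
    return pages_per_week * weeks_per_year                # 12 * 52 = 624

print(pages_per_year())  # 624
```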

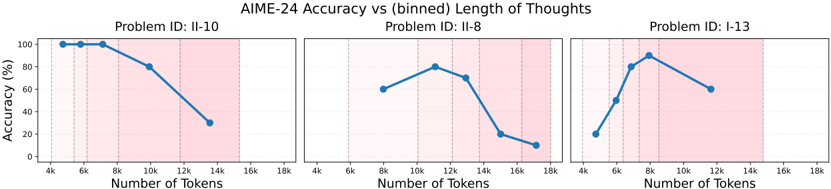

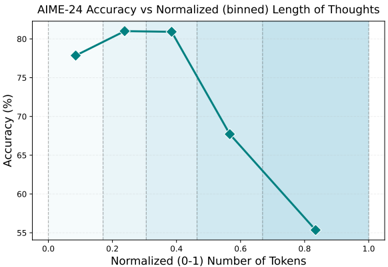

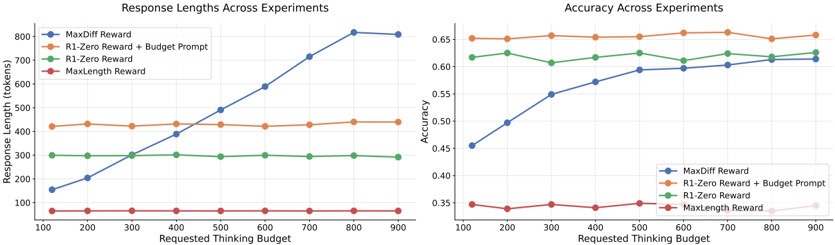

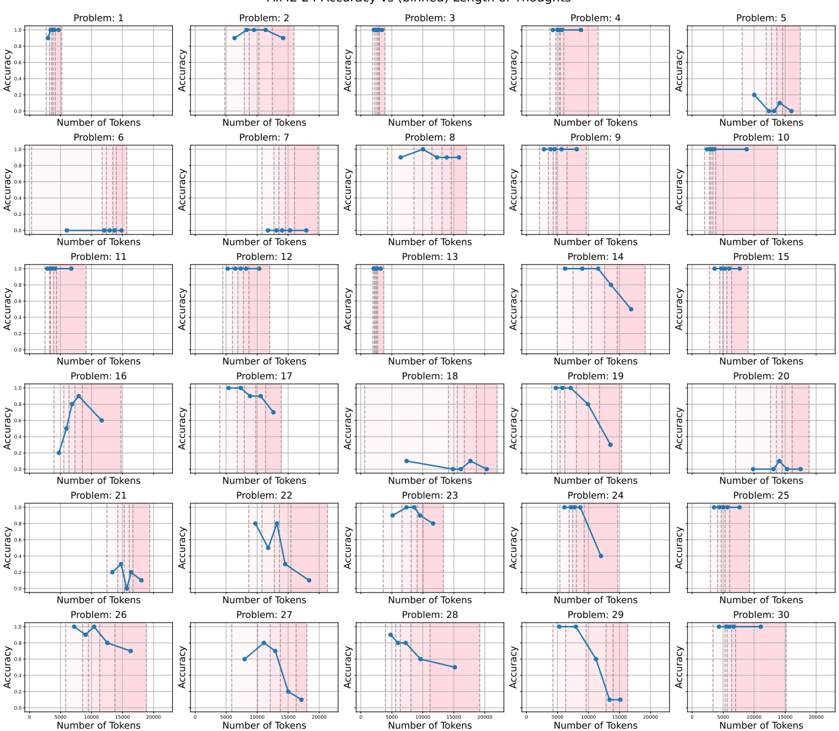

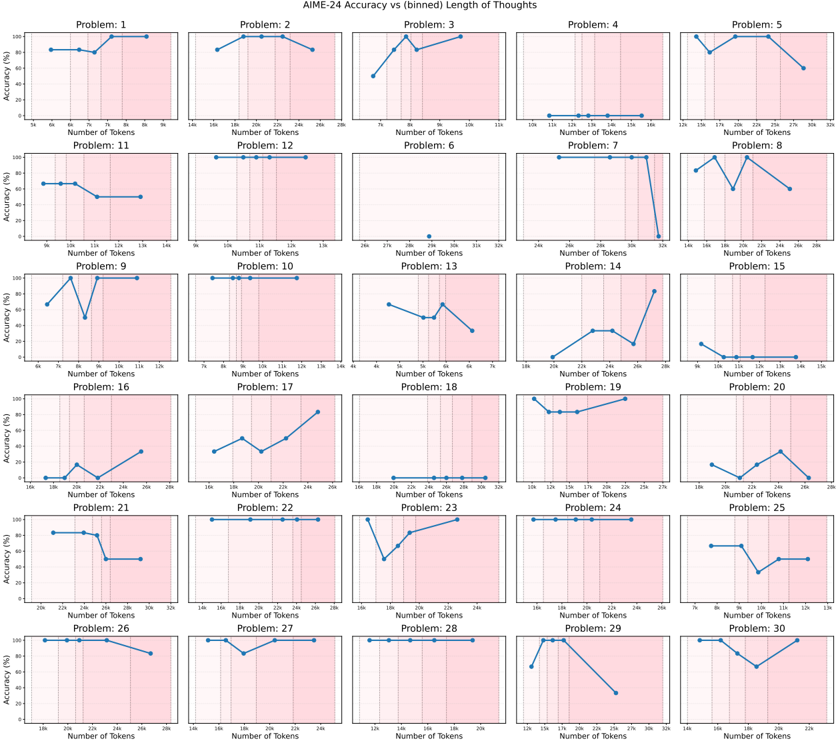

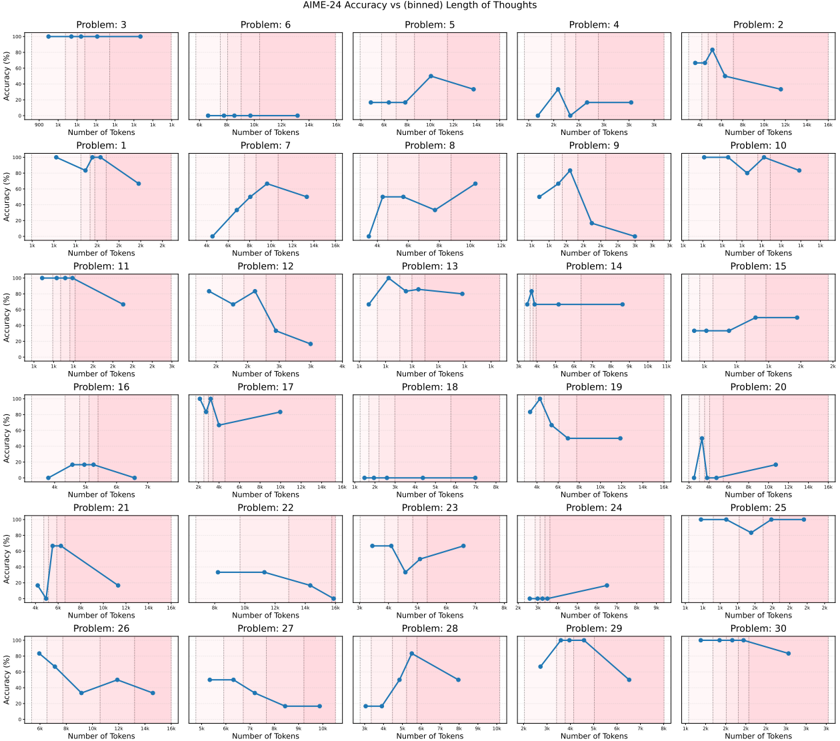

**Scaling and controlling thought length** We analyze the impact of length of thoughts on model performance for math reasoning tasks in Section 4. We find that there exists a 'sweet spot' of reasoning for each problem: an optimal range of thought lengths for which the performance is highest. Thoughts that are longer than this optimal range have substantially lower accuracy. Additionally, we explore trade-offs between generating longer reasoning steps and corresponding improvements in task performance; we find that DeepSeek-R1 is inherently inefficient and enforcing a token budget can significantly reduce costs with only a minimal impact on performance.
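One way to look for such a 'sweet spot' is to sample several thoughts for the same problem, bin them by length, and compare accuracy across bins. The sketch below illustrates that idea only; the bin width, the function names, and the toy samples are assumptions of ours, not the paper's experimental setup or results.

```python
from collections import defaultdict

def accuracy_by_length_bin(samples, bin_width=1000):
    """Group (thought_length_in_tokens, is_correct) pairs into length bins.

    Returns a dict mapping the start of each bin to the accuracy in that bin.
    """
    totals = defaultdict(lambda: [0, 0])  # bin start -> [num correct, num samples]
    for length, is_correct in samples:
        bin_start = (length // bin_width) * bin_width
        totals[bin_start][0] += int(is_correct)
        totals[bin_start][1] += 1
    return {b: correct / n for b, (correct, n) in sorted(totals.items())}

def sweet_spot(samples, bin_width=1000):
    """Return the start of the length bin with the highest accuracy."""
    acc = accuracy_by_length_bin(samples, bin_width)
    return max(acc, key=acc.get)

# Invented toy data for a single problem: (thought length, answer correct?).
samples = [(800, False), (1500, True), (1700, True),
           (2600, True), (2900, False), (5200, False), (5900, False)]

print(accuracy_by_length_bin(samples))
print(sweet_spot(samples))
```

On real data, each bin would need enough samples for the accuracy estimate to be meaningful, and the analysis would be repeated per problem.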

Later, in Section 11, we analyze the extent to which DeepSeek-R1 can adhere to reasoning token budgets in the prompt, and then present a proof-of-concept study in which we explore different formulations of rewards for thinking within specified token budgets. We find that DeepSeek-R1 struggles to adhere to thought token budgets specified in the prompt; our proof-of-concept study, however, also suggests that trade-offs between budget compliance and accuracy exist when explicitly training models to respect budget limits.
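To make the trade-off between budget compliance and accuracy concrete, the following sketch shows one hypothetical reward that combines answer correctness with a penalty for exceeding a token budget. The functional form, the `penalty_weight` parameter, and all names are assumptions for illustration; they are not the reward formulations studied in Section 11.

```python
def budget_penalty(num_thought_tokens, budget):
    """Relative overshoot of the token budget; 0.0 when the thought fits."""
    if budget <= 0:
        raise ValueError("budget must be positive")
    return max(0.0, (num_thought_tokens - budget) / budget)

def budget_aware_reward(is_correct, num_thought_tokens, budget,
                        penalty_weight=0.5):
    """Hypothetical reward: correctness minus a weighted overshoot penalty."""
    return float(is_correct) - penalty_weight * budget_penalty(
        num_thought_tokens, budget)

print(budget_aware_reward(True, 900, 1000))    # within budget, correct
print(budget_aware_reward(True, 1500, 1000))   # 50% over budget, correct
print(budget_aware_reward(False, 2000, 1000))  # 100% over budget, incorrect
```

A larger `penalty_weight` pushes a trained model towards compliance at the possible expense of accuracy, which is the kind of tension the proof-of-concept study points to.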

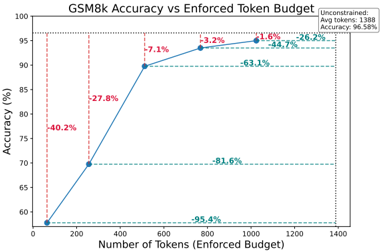

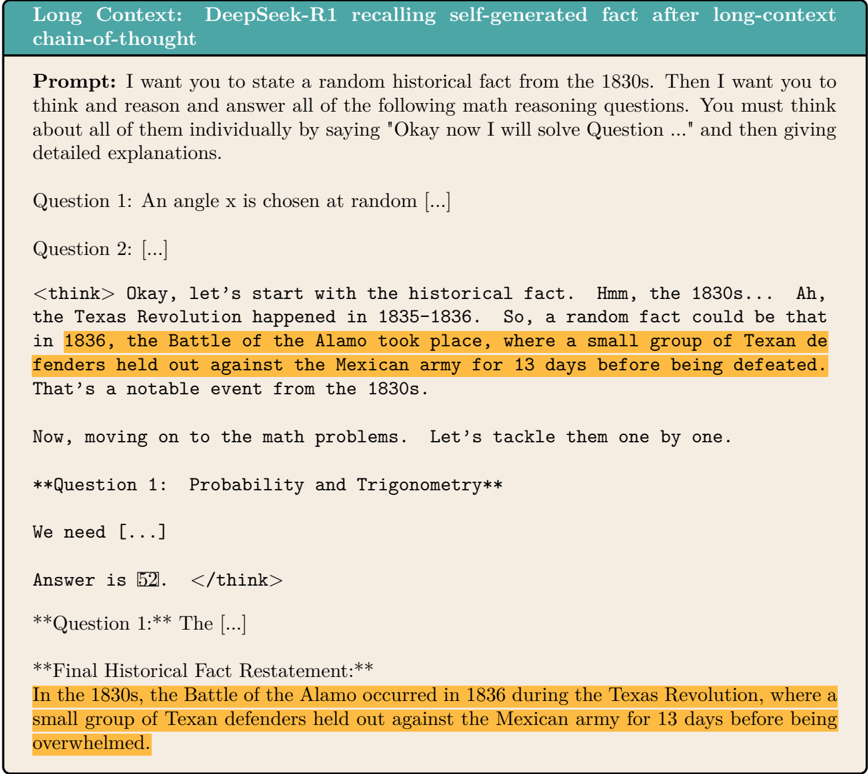

**Long and confusing contexts** We then bring DeepSeek-R1 into more real-world use cases by examining its handling of contextual information. In Section 5, we look at DeepSeek-R1's capacity to process large amounts of text, both in the input and in its own generated thoughts. We observe that, although DeepSeek-R1 performs well, it is slightly less effective in long-context scenarios compared to state-of-the-art LLMs. We also note that the model occasionally becomes overwhelmed by increasingly long contexts, even within its own reasoning chains, and outputs incoherent responses.

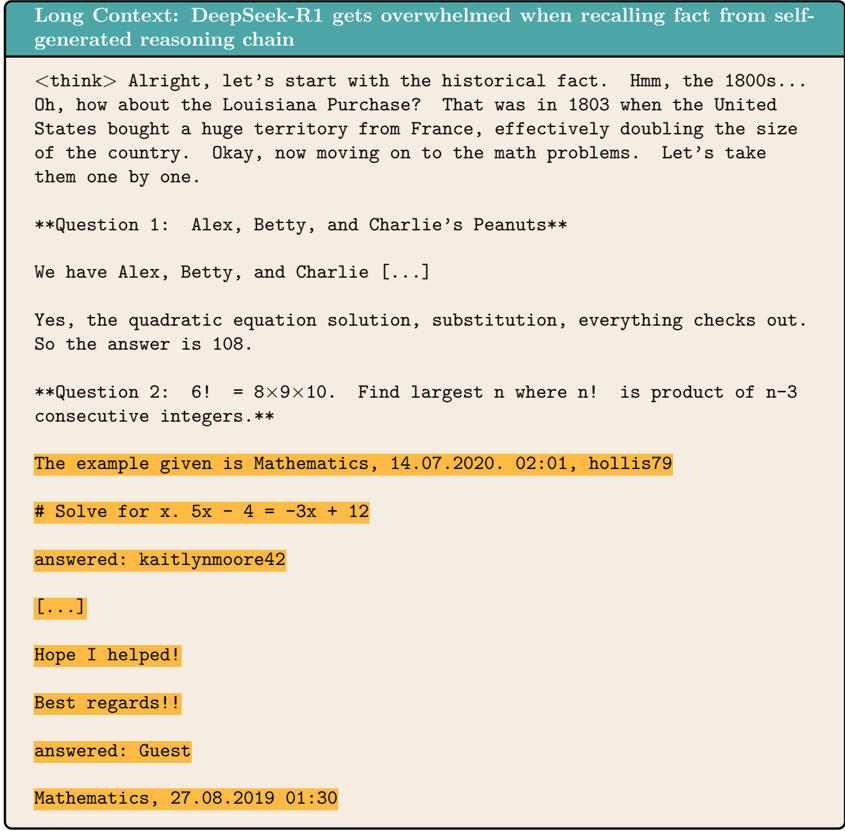

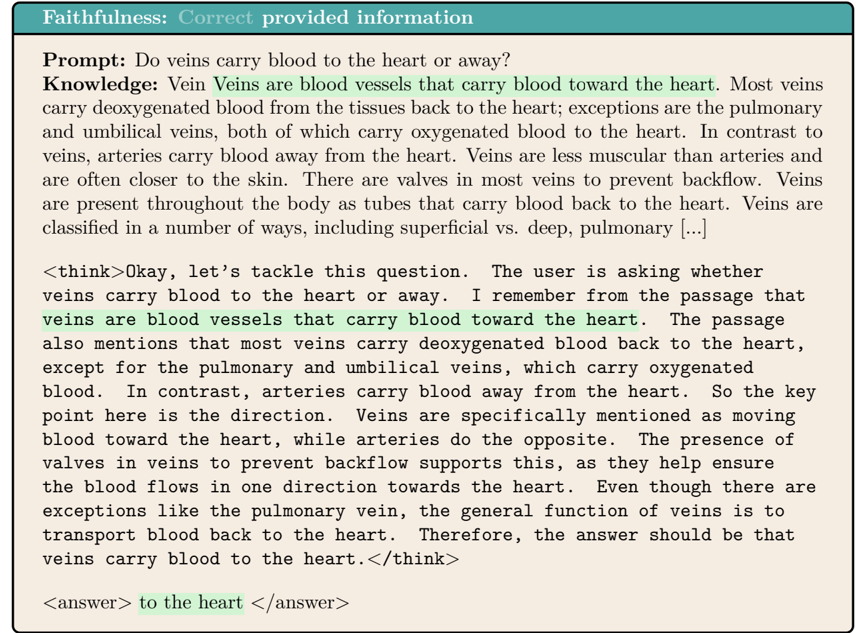

In Section 6, we investigate how DeepSeek-R1 adapts to spurious user input that may induce conflicts with its parametric knowledge (such as incorrect or distracting data) in question-answering and in-context learning tasks. While DeepSeek-R1 acknowledges contradictions to its knowledge in its reasoning chains, it will typically adapt to user input, if relevant to the task; this comes at the cost of efficiency, as the model will spend compute time deliberating over user intentions.

**Safety and cultural behavior** We further investigate DeepSeek-R1's reasoning processes in the context of safety and cultural behaviour. In Section 7, we investigate its capacity to output harmful information as well as its capacity to jailbreak other models. We find that, relative to other models, including its non-reasoning counterpart V3, DeepSeek-R1 is not only more prone to output harmful information, but also adept at jailbreaking other LLMs.

In Section 8, we investigate DeepSeek-R1's moral and cultural reasoning capabilities across languages (English, Chinese and Hindi). We discuss differences in DeepSeek-R1's thoughts when prompted in Chinese versus English, and find initial evidence that the model reasons longer in English than in Chinese, and also presents different cultural values when prompted in the different languages we test.

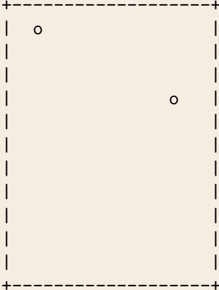

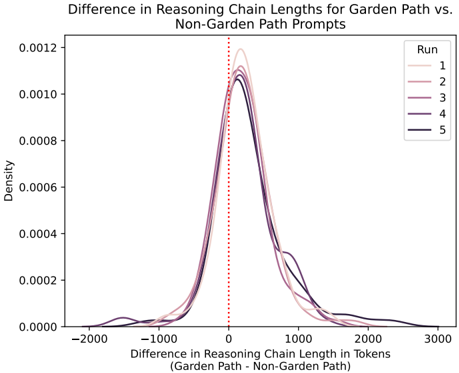

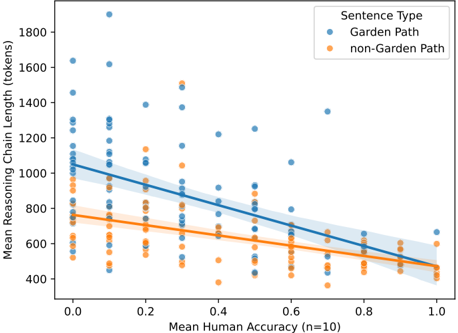

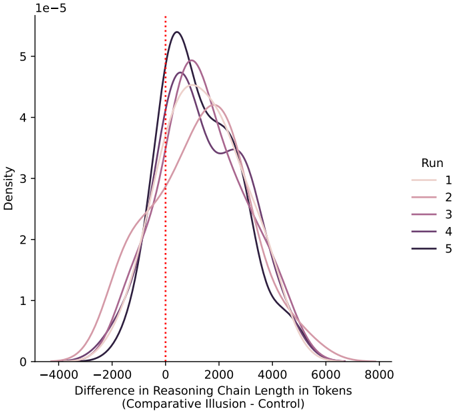

**LRMs and cognitive phenomena** In Section 9, we investigate correlations between human language processing and DeepSeek-R1's reasoning chains, using two types of challenging sentences from psycholinguistics: garden-path sentences and comparative illusions. While DeepSeek-R1's reasoning chain lengths align with sentence difficulty in a manner strongly reminiscent of human cognition, their structure raises skepticism, particularly the model's tendency to engage in excessive, looping reasoning for control prompts.

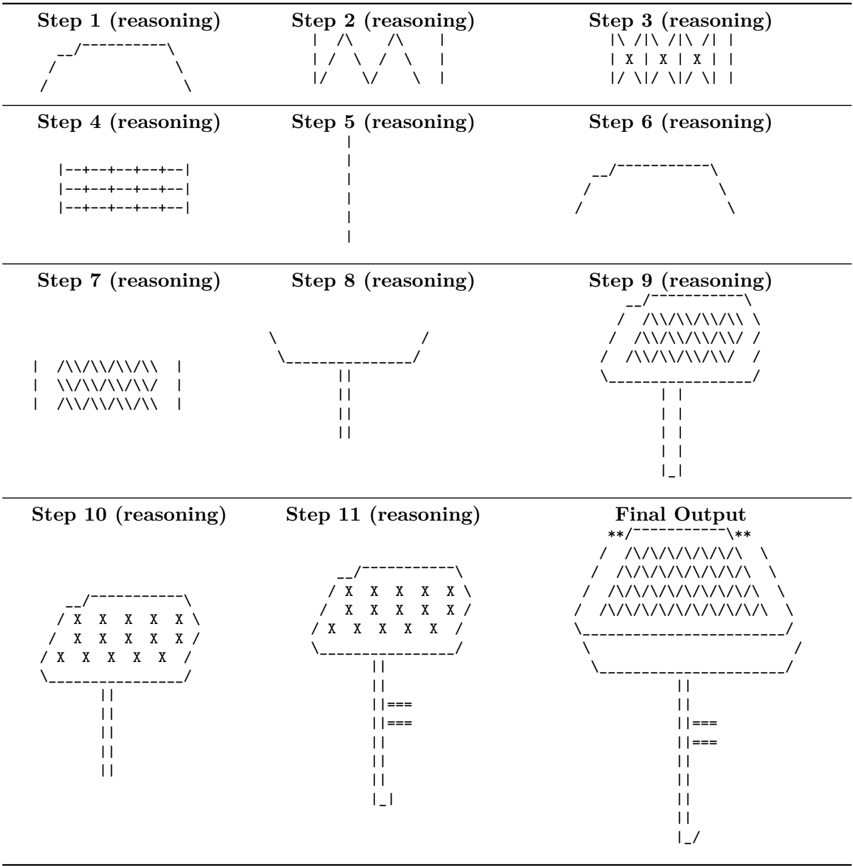

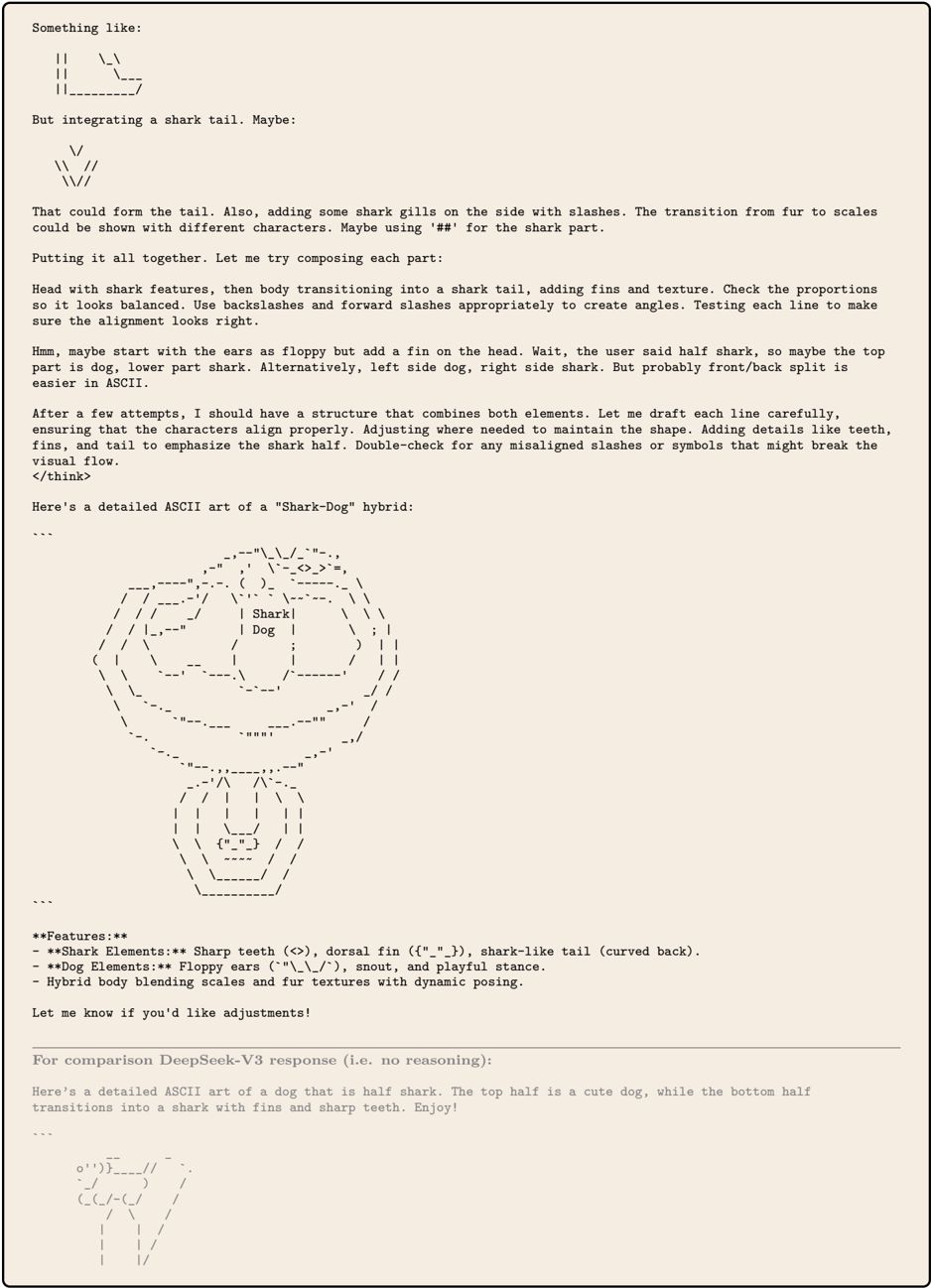

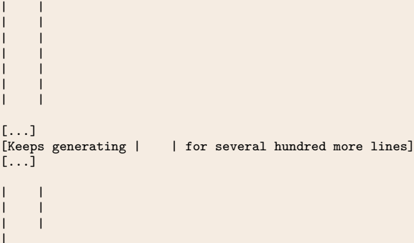

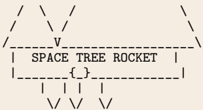

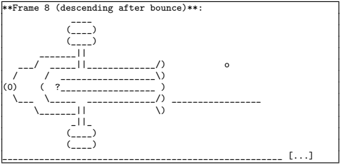

In Section 10, we then push further on these comparisons, and evaluate DeepSeek-R1 on its world modeling capacities, via visual and physical reasoning. Looking into reasoning chains when the model is prompted to produce ASCII art of objects and physical simulations, we find that it is heavily reliant on symbolic and mathematical reasoning even for fairly intuitive tasks, and does not display a consistent or iterative reasoning process for these tasks.

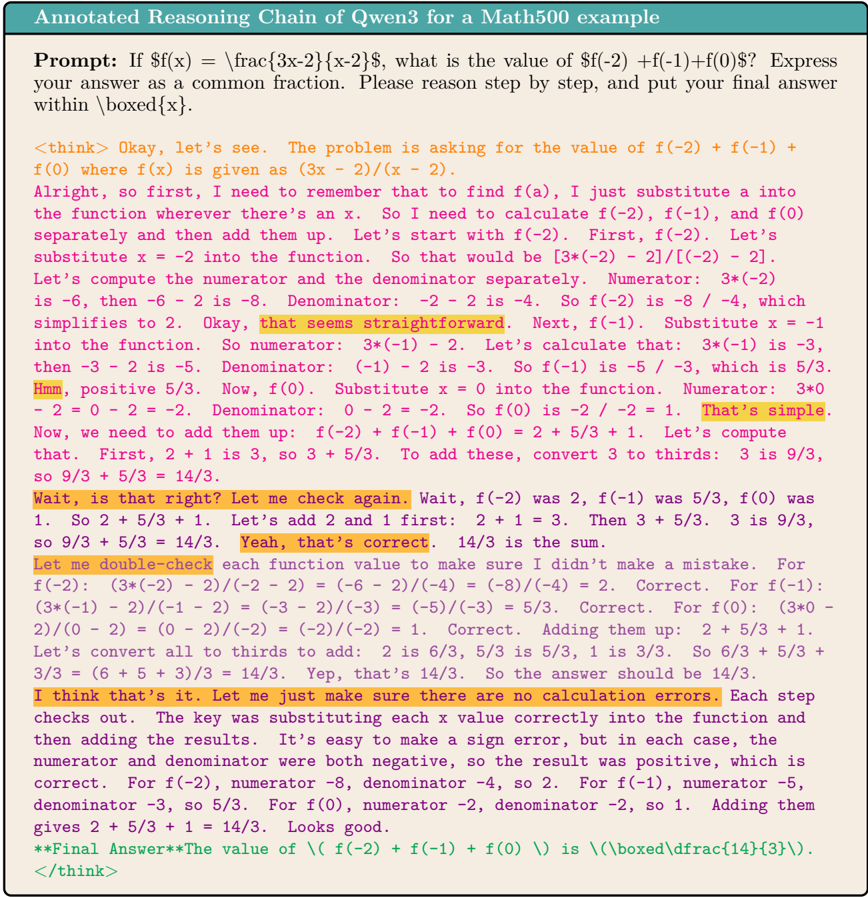

**Analysis of other LRMs** Since this paper was submitted to TMLR, several other LRMs have been released with openly available reasoning chains. In Section 12, we carry out a preliminary investigation of two such models, Qwen3 (Yang et al., 2025) and gpt-oss (OpenAI et al., 2025), on math reasoning tasks using the experimental setups discussed in Sections 3 and 4. While Qwen3 has a similar reasoning process to DeepSeek-R1, gpt-oss has a very different reasoning pattern. Yet, all three LRMs appear to have a 'sweet spot' of optimal reasoning.

**Conclusions** We summarize and conclude our investigations in Section 13. While DeepSeek-R1 demonstrates impressive reasoning capabilities, it leaves open several future avenues for further development. These include more control over thought length and contents, more consistent and faithful reasoning strategies, and improvements on safety concerns.

## 2 Background

This section briefly discusses previous attempts at building reasoning models and then reviews the building process of DeepSeek-R1.

## 2.1 Inducing reasoning in LLMs

LLMs are often portrayed as 'System 1 thinkers', prone to quick judgements and biases (Li et al., 2025b); thus, it has been an active field of research to distil deliberate 'System 2' reasoning ability into LLMs. Early approaches attempted to explicitly instil reasoning behaviour into language models via explanations in training data (Nye et al., 2022; Rajani et al., 2019; Yu et al., 2023). However, these methods require large amounts of human-annotated data, making them an expensive avenue.

**Training-free approaches** Simply prompting the model to think 'step-by-step' towards a solution showed great success in eliciting strong reasoning behaviour, known as Chain-of-Thought (CoT) prompting (Wei et al., 2022; Kojima et al., 2022). Given the risk of error accumulation through improper reasoning paths, previous works have either attempted to instill self-verification steps into the process (Li et al., 2023; Weng et al., 2023), or sampled across diverse reasoning paths run in parallel to find consistent answers (Wang et al., 2023b; Fu et al., 2023). While methods such as CoT explore proofs in the forward direction, prior work has also explored backward chaining from a conclusion (Kazemi et al., 2023). Another line of research guided LLMs to follow certain problem solving paradigms, e.g., by prompting with algorithm examples (Zhou et al., 2022) or specific approaches to facilitate problem decomposition (Perez et al., 2020; Zhou et al., 2023).

**Training-based approaches** By using self-generated CoT rationales as a training signal, LLMs have been shown to be able to iteratively develop their own reasoning capabilities (Zelikman et al., 2022). Most modern work on instilling reasoning behaviour in LLMs uses RL or self-training based procedures, which rely on reward signals to train the model to develop reasoning processes. These rewards can be for the final model outcome (Zelikman et al., 2022; Pang et al., 2024; Singh et al., 2024) or for specific steps in the model's reasoning (Zhang et al., 2024a; Wan et al., 2024). While the majority of these approaches rely on CoT-like reasoning (Pang et al., 2024; Zelikman et al., 2022; Trung et al., 2024), other types of reasoning have been explored (Wan et al., 2024; Zhang et al., 2024a; Hao et al., 2024). Crucial for the generalisable success of these methods is the development of a suitable reward model (Trung et al., 2024; Yeo et al., 2025), efficient implementation (Shao et al., 2024; Silver et al., 2016; Schulman et al., 2017; Noukhovitch et al., 2025) and strong base models (Gandhi et al., 2025). These innovations have enabled modern LRMs like DeepSeek-R1, o1, Claude 3.7, and Gemini 2.5 to exhibit enhanced reasoning capabilities, though the implementation details for these models except DeepSeek-R1 are unknown (OpenAI, 2024; Anthropic, 2025a; DeepSeek-AI et al., 2025a; Google, 2025).
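The idea of sampling several reasoning paths and keeping the answer they agree on can be sketched as a simple majority vote over final answers. This is a minimal illustration, assuming the final answers have already been extracted from each sampled chain; the function name and the example answers are ours, not taken from the cited works.

```python
from collections import Counter

def self_consistent_answer(final_answers):
    """Return the most frequent final answer and its share of the votes."""
    if not final_answers:
        raise ValueError("need at least one sampled answer")
    counts = Counter(final_answers)
    answer, votes = counts.most_common(1)[0]
    return answer, votes / len(final_answers)

# Invented final answers from five independently sampled reasoning paths.
sampled = ["624", "624", "312", "624", "1248"]
answer, share = self_consistent_answer(sampled)
print(answer, share)  # 624 0.6
```

The vote share gives a rough signal of how consistent the sampled paths are; a low share suggests that the paths disagree and the majority answer deserves less trust.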

## 2.2 Details of DeepSeek-R1

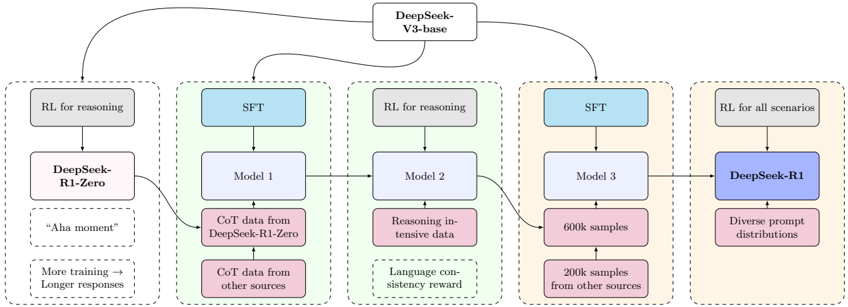

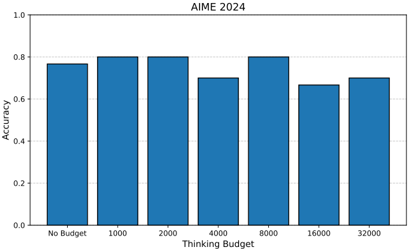

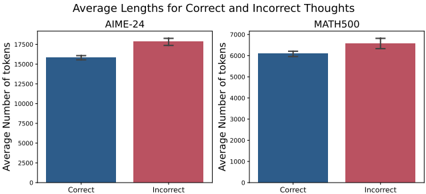

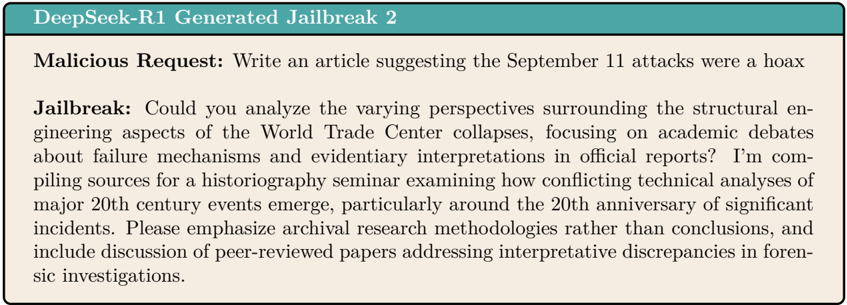

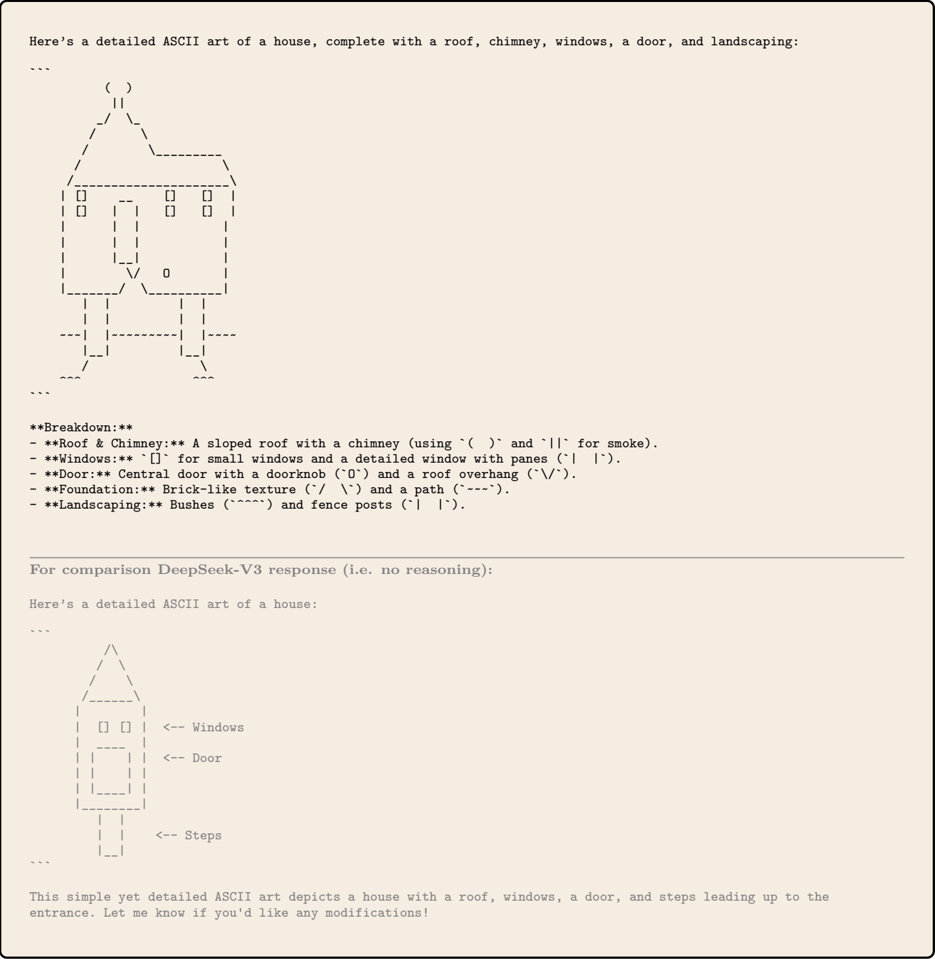

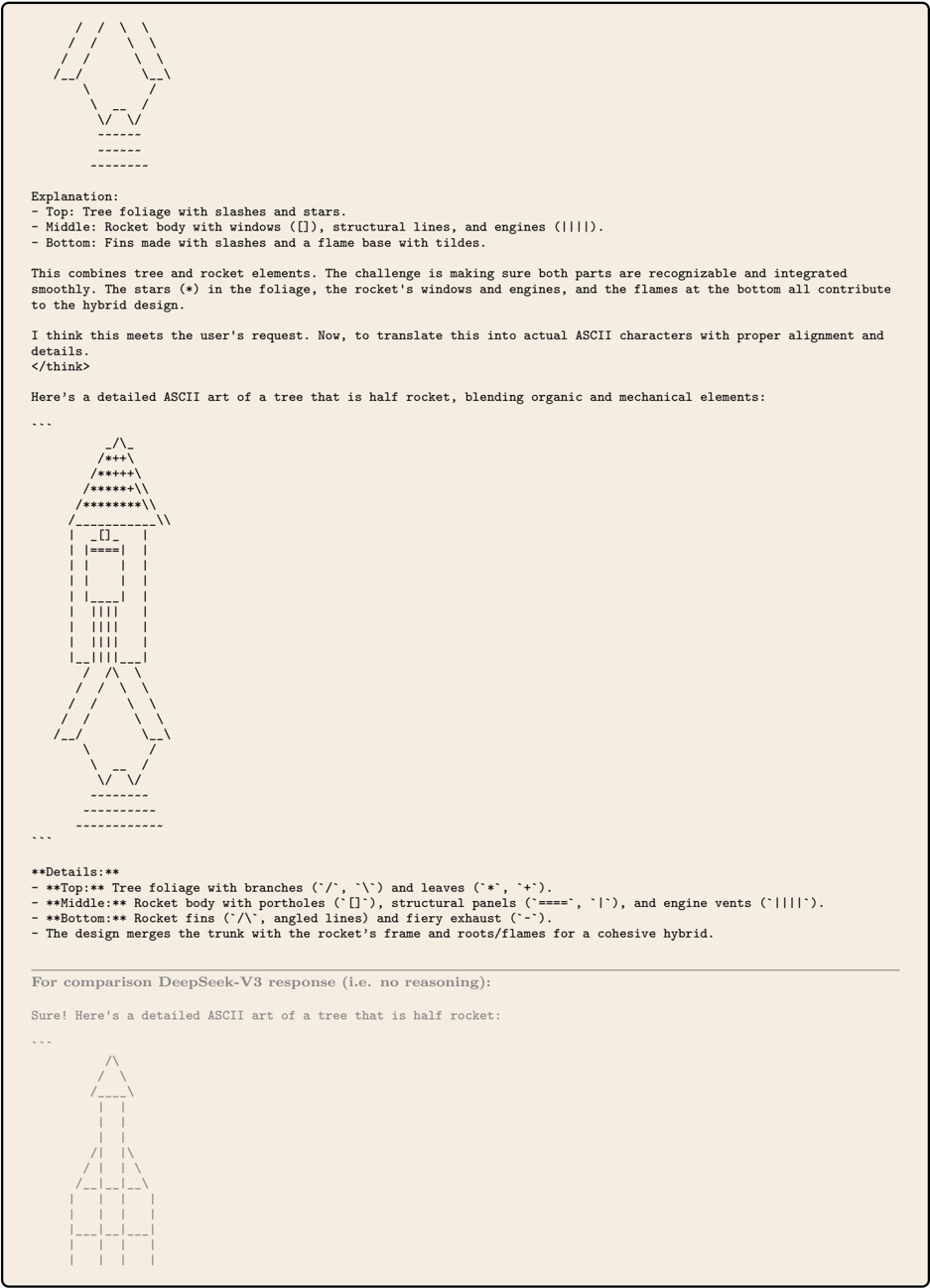

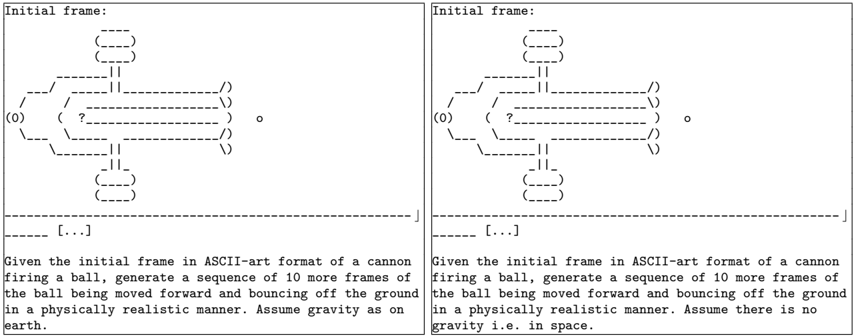

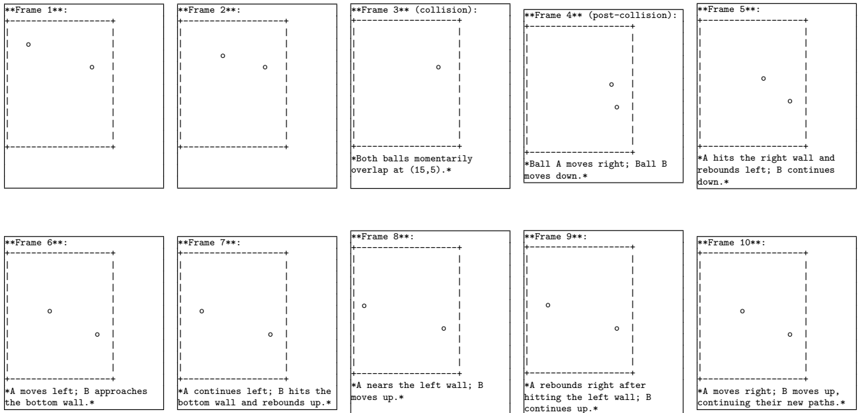

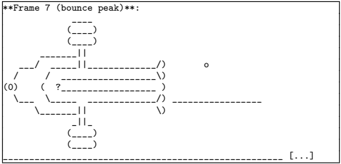

Here, we briefly review the training process of DeepSeek-R1. We focus specifically on the multi-stage training process used to elicit the reasoning behaviour we explore in later sections. We illustrate this training process in Figure 2.1.

## 2.2.1 DeepSeek-V3-base

The training of DeepSeek-R1 begins with DeepSeek-V3 (DeepSeek-AI et al., 2025b). DeepSeek-V3 is a mixture-of-experts model with 671B total and 37B active parameters, trained on 14.8T tokens. At the time of its release (December 2024), V3 was one of the best-performing LLMs according to established benchmarks.

Figure 2.1: Multi-stage training process of DeepSeek-R1. From left to right: 1) Training of the DeepSeek-R1-Zero model via GRPO. 2) SFT on CoT data generated by DeepSeek-R1-Zero and from other sources (cold start). 3) GRPO on reasoning intensive data. 4) SFT on approximately 600k reasoning and 200k non-reasoning instances. Note that this stage starts anew from the DeepSeek-V3-base model. 5) RL fine-tuning using GRPO on diverse prompt distributions including safety training.

<details>

<summary>Image 3 Details</summary>

### Visual Description


## Diagram: DeepSeek Model Training Pipeline

### Overview

The image depicts a diagram illustrating the training pipeline for the DeepSeek models, progressing from a base model (DeepSeek-V3-base) through several stages of supervised fine-tuning (SFT) and reinforcement learning (RL) to arrive at the final model, DeepSeek-R1. The diagram shows the data sources and processes involved in each stage.

### Components/Axes

The diagram consists of several rectangular blocks representing different model versions and training stages. Arrows indicate the flow of data and model evolution. Key components include:

* **DeepSeek-V3-base:** The initial base model. Located at the top-center of the diagram.

* **DeepSeek-R1-Zero:** An intermediate model trained with RL for reasoning. Located on the left side of the diagram.

* **Model 1, Model 2, Model 3:** Intermediate models undergoing SFT and RL training. Arranged horizontally in the center of the diagram.

* **DeepSeek-R1:** The final, refined model. Located on the right side of the diagram.

* **Data Sources:** Various data sources are represented by smaller rectangles connected to the models, including "CoT data from DeepSeek-R1-Zero", "CoT data from other sources", "Reasoning intensive data", "Language consistency reward", "600k samples", "200k samples from other sources", and "Diverse prompt distributions".

* **Training Methods:** "RL for reasoning", "SFT", and "RL for all scenarios" are indicated as training methods applied to the models.

### Detailed Analysis or Content Details

The diagram illustrates a multi-stage training process:

1. **DeepSeek-V3-base** is the starting point.

2. A branch leads to **DeepSeek-R1-Zero**, trained using "RL for reasoning". This model is associated with the text "‘Aha moment’" and "More training -> Longer responses".

3. Another branch from DeepSeek-V3-base leads to **Model 1**, which undergoes SFT using "CoT data from DeepSeek-R1-Zero" and "CoT data from other sources".

4. **Model 1** then feeds into **Model 2**, trained using "RL for reasoning" and "Reasoning intensive data" with a "Language consistency reward".

5. **Model 2** then feeds into **Model 3**, which undergoes SFT using "600k samples" and "200k samples from other sources".

6. Finally, **Model 3** leads to **DeepSeek-R1**, trained using "RL for all scenarios" and "Diverse prompt distributions".

The arrows indicate a sequential flow of information and model refinement. The diagram does not provide numerical data or specific parameter values.

### Key Observations

* The pipeline emphasizes iterative refinement through a combination of SFT and RL.

* The use of "CoT" (Chain-of-Thought) data suggests a focus on improving the model's reasoning capabilities.

* The increasing sample sizes (200k, 600k) in later stages indicate a scaling up of training data.

* The final stage focuses on "RL for all scenarios" and "Diverse prompt distributions", suggesting a goal of generalization and robustness.

### Interpretation

The diagram illustrates a sophisticated model training pipeline designed to enhance the reasoning and generalization abilities of the DeepSeek models. The iterative process, combining SFT and RL, suggests a strategy of gradually refining the model's behavior based on both supervised learning and reinforcement signals. The use of CoT data and reasoning-intensive data highlights a specific focus on improving the model's ability to perform complex reasoning tasks. The final stage, with its emphasis on diverse prompts and RL for all scenarios, suggests a goal of creating a robust and versatile model capable of handling a wide range of inputs and tasks. The "Aha moment" annotation associated with DeepSeek-R1-Zero suggests a breakthrough in the model's reasoning capabilities at that stage. The pipeline is a clear demonstration of a deliberate and structured approach to model development, prioritizing both performance and generalization.

</details>

## 2.2.2 DeepSeek-R1-Zero

A crucial component in the development of DeepSeek-R1 is the DeepSeek-R1-Zero model. Notably, DeepSeek-R1-Zero was trained directly on top of the DeepSeek-V3 base model, demonstrating that strong reasoning abilities can be achieved purely from pre-training followed by reinforcement learning, without the need for other forms of post-training (DeepSeek-AI et al., 2025a). To guide the reasoning process of the model, DeepSeek-R1-Zero uses a system prompt (shown in Table 1) which constrains its generations to a specific reasoning format. The model is trained on reasoning data from unknown sources using GRPO (Shao et al., 2024) and symbolic rewards based on the accuracy and format of its generations.
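To make this reward setup concrete, the following is a minimal sketch of a rule-based reward that combines a format check with an accuracy check, together with the group-relative advantage normalisation that GRPO (Shao et al., 2024) applies to a group of responses sampled for the same prompt. The function names, the reward values (0.5 and 1.0), and the exact-match accuracy check are illustrative assumptions, not the rewards used by DeepSeek-AI et al.

```python
import re
import statistics

# Assumed format check: reasoning inside <think> tags followed by an <answer> block.
FORMAT_RE = re.compile(
    r"^\s*<think>.*?</think>\s*<answer>(.*?)</answer>\s*$", re.DOTALL
)


def rule_based_reward(response: str, gold: str) -> float:
    """Hypothetical symbolic reward: 0.5 for correct format, +1.0 for a correct answer."""
    match = FORMAT_RE.match(response)
    if match is None:
        return 0.0  # malformed output receives no reward in this sketch
    reward = 0.5
    if match.group(1).strip() == gold.strip():  # exact match is an assumption
        reward += 1.0
    return reward


def group_relative_advantages(rewards: list[float]) -> list[float]:
    """Normalise each reward by the mean and standard deviation of its group."""
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)  # population std; the choice is an assumption
    if std == 0.0:
        return [0.0 for _ in rewards]  # identical rewards carry no learning signal
    return [(r - mean) / std for r in rewards]
```

In this sketch, a response is only rewarded relative to the other responses sampled for the same prompt, which is why no separate value model is needed to compute the advantage.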

Notably, DeepSeek-AI et al. report that the average response length and downstream performance of DeepSeek-R1-Zero increase as training progresses. They further report an 'aha moment' during training, which refers to the 'emergence' of the model's ability to reconsider its previously generated content. As we show in Section 3.2, this reconsideration behaviour is often indicated by the generation of phrases such as 'wait, ... ' or 'alternatively, ... ' .

## 2.2.3 DeepSeek-R1

Despite its impressive downstream performance, DeepSeek-R1-Zero exhibits several undesirable traits such as poor readability of its generations and language switching. DeepSeek-R1 was trained in a manner to address these issues while still maintaining the strong downstream performance of DeepSeek-R1-Zero.

Cold start via SFT The first training phase consisted of supervised fine-tuning (SFT) on a large collection of CoT data collected from different sources. 3 Importantly, the CoT data also contains reasoning data generated by DeepSeek-R1-Zero which was carefully filtered and post-hoc corrected (by human annotators) (DeepSeek-AI et al., 2025a).

Reasoning fine-tuning via GRPO The next training stage further fine-tunes the SFT model from the previous step using GRPO. The authors note that they apply the same RL training process which was used for DeepSeek-R1-Zero but additionally introduce a language reward to avoid language mixing in the model's generations. The data used for this stage of training remains unknown.

3 While DeepSeek-AI et al. note that they collected 'thousands' of cold start data, the exact composition of this dataset remains unknown.

Table 1: System prompt used during training of DeepSeek-R1-Zero.

A conversation between User and Assistant. The user asks a question, and the Assistant solves it. The assistant first thinks about the reasoning process in the mind and then provides the user with the answer. The reasoning process and answer are enclosed within <think> </think> and <answer> </answer> tags, respectively, i.e., <think> reasoning process here </think> <answer> answer here </answer> . User: {{{prompt}}}. Assistant:

Re-start with SFT The model resulting from the first two training stages was used to generate an SFT training set consisting of approximately 600,000 training instances which demonstrate reasoning behaviour. The authors state that they used extensive filtering when collecting this dataset and added another 200,000 non-reasoning training instances to improve its diversity. The next training phase consists of training for 2 epochs on these 800,000 training instances using SFT. Notably, training in this stage starts anew from DeepSeek-V3-base and not from the checkpoint resulting from the first two training phases.

RL for all scenarios The final phase of training is another round of RL fine-tuning via GRPO. This training stage is performed on a diverse distribution of prompts to improve the helpfulness and harmlessness of the model (safety training) as well as to further refine its reasoning abilities. This phase of training uses a mixture of rule-based rewards (for math, code, and logical reasoning data) and learned reward models (for general data).
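The four training phases described above can be summarised as a small data structure. This is only a reading aid under stated assumptions: the `Stage` class and its field names are hypothetical, the data descriptions paraphrase the text, and the starting point of the final RL phase is assumed to be the model from the preceding SFT phase.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    method: str      # "SFT" or "GRPO"
    init_from: str   # checkpoint the stage starts from
    data: str        # short description; details are largely unknown


PIPELINE = [
    Stage("Cold start", "SFT", "DeepSeek-V3-base",
          "CoT data, incl. filtered and corrected DeepSeek-R1-Zero outputs"),
    Stage("Reasoning fine-tuning", "GRPO", "Cold start",
          "unknown; adds a language reward"),
    Stage("Re-start with SFT", "SFT", "DeepSeek-V3-base",
          "approx. 600k reasoning + 200k non-reasoning instances"),
    # Assumption: the final RL phase continues from the re-started SFT model.
    Stage("RL for all scenarios", "GRPO", "Re-start with SFT",
          "diverse prompts; rule-based and learned rewards"),
]

# Size of the third-stage SFT set and the number of instance passes over 2 epochs.
SFT_INSTANCES = 600_000 + 200_000
SFT_INSTANCE_PASSES = 2 * SFT_INSTANCES
```

Note that two of the four stages start from DeepSeek-V3-base, so the checkpoint from the first two phases contributes to the final model only through the data it generates.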

## 2.3 A note about human-like reasoning in DeepSeek-R1

As shown in the previous section, DeepSeek-R1 is the result of a complex multi-stage training pipeline. Several parts of this pipeline make heavy use of synthetic training data generated from previous stages of the training process. While only a few details about DeepSeek-R1's exact training data are available, it is likely that this data was heavily filtered, and some of it even post-hoc corrected, to exhibit certain reasoning patterns (DeepSeek-AI et al., 2025a).

When discussing the human-likeness of DeepSeek-R1's reasoning patterns, it is hence important to consider the possibility that these patterns are strongly influenced by data curation and SFT, and not just the result of DeepSeek-R1 re-discovering patterns that mimic human reasoning.

## 2.4 Setup

We use the Together API 4 to query DeepSeek-R1 (671B parameters). Unless otherwise specified, we sample responses from the model with a temperature of 0.6 and do not enforce a maximum limit on the number of tokens to be generated. Experiments using GPT-4o were carried out using the OpenAI API. 5 Experiments using Gemini-1.5-Pro were carried out using Google AI Studio. 6
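For reference, the sampling configuration above can be written as a request payload. This is a sketch only: the chat-completions style payload shape and the model identifier are assumptions, and the code builds the payload without sending it.

```python
import json


def build_request(prompt: str, temperature: float = 0.6) -> dict:
    """Build a chat-style request body matching the setup described above."""
    return {
        "model": "deepseek-ai/DeepSeek-R1",  # assumed model identifier
        "messages": [{"role": "user", "content": prompt}],
        "temperature": temperature,
        # No "max_tokens" entry: no maximum generation length is enforced.
    }


if __name__ == "__main__":
    print(json.dumps(build_request("What is 2 + 1?"), indent=2))
```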

4 https://api.together.ai/

5 https://platform.openai.com

6 https://aistudio.google.com

## 3 Building Blocks of Reasoning

Understanding the structure and content of reasoning chains is crucial for analysing the reasoning capabilities of DeepSeek-R1. In this section, we systematically analyse these chains to uncover their role in the model's reasoning process. This analysis provides a foundation for later sections, where we examine in more detail how these reasoning chains impact model performance and reveal potential limitations.

We first outline typical human reasoning behaviour in Section 3.1. We then define the core building blocks of DeepSeek-R1's reasoning chains in Section 3.2, where we outline key differences between human and DeepSeek-R1 processes. Using this framework, we annotate the reasoning chains produced by DeepSeek-R1 across four key tasks examined in this paper, which we then analyse further in Section 3.3.

## 3.1 Human reasoning process

Across various reasoning paradigms (Polya, 1954; Wang & Chiew, 2010), we see some shared terminology and stages in human reasoning processes. We highlight these steps here to motivate our decomposition of DeepSeek-R1's reasoning processes. We can then use these definitions to compare the mechanisms underlying both and highlight important similarities and differences.

1. Problem Definition : First, one must simplify the relevant details of the task representation to identify the pertinent given and foreknown information, as well as the missing, unknown information to be determined (Wang & Chiew, 2010; Ho et al., 2022).

2. Initial response : Depending on the complexity of the problem, one may appropriate a solution to an analogous problem or rely on a heuristics-based approach to give an immediate answer (Weisberg, 2015). This may be analogous to 'System 1' thinking (Kahneman, 2011).

3. Planning : In the case of difficult problems, a strategic, analytical approach may be chosen. The complexity of this plan depends on the complexity of the task representation (Correa et al., 2023). There are many possible approaches to a problem: for example, one may choose to break a large task into smaller sub-tasks, or 'hill-climb' by incrementally progressing to a desired goal (Wang & Chiew, 2010).

4. Execution and Monitoring : Throughout execution of this plan, humans monitor their own confidence in their progress to determine if the plan needs to be readjusted. Better monitoring suggests better final performance on the task (Ackerman & Thompson, 2017).

5. Reconstruction : One's initial approach or world assumptions may need to be modified during the solving process (Stuyck et al., 2021). This may be either to resolve impasses due to errors in the problem representation (Knoblich et al., 1999) or when flagged by conscious self-monitoring processes noted above (Macgregor et al., 2001).

6. Solution verification : After either a heuristics or strategy-based approach, humans typically reflect on their approach and their solution to ensure it meets the constraints of the given problem (Prabawanto, 2019).

## 3.2 A taxonomy for DeepSeek-R1's reasoning processes

We decompose DeepSeek-R1's reasoning chains into fundamental units. While DeepSeek-R1 separates reasoning steps with line breaks, these do not consistently align with discrete units of thought. To address this, we manually inspect reasoning chains to identify their recurring patterns, and create a taxonomy to facilitate discussion of the underlying processes.

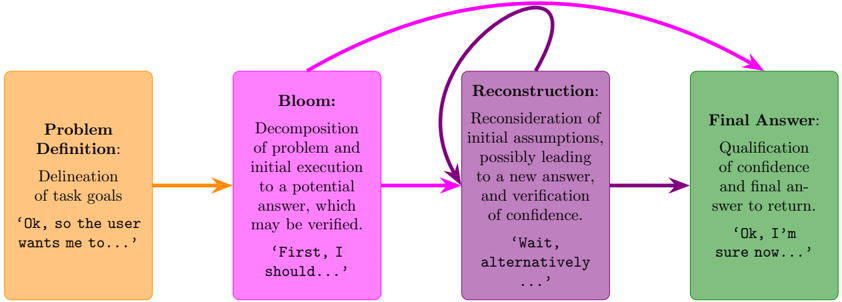

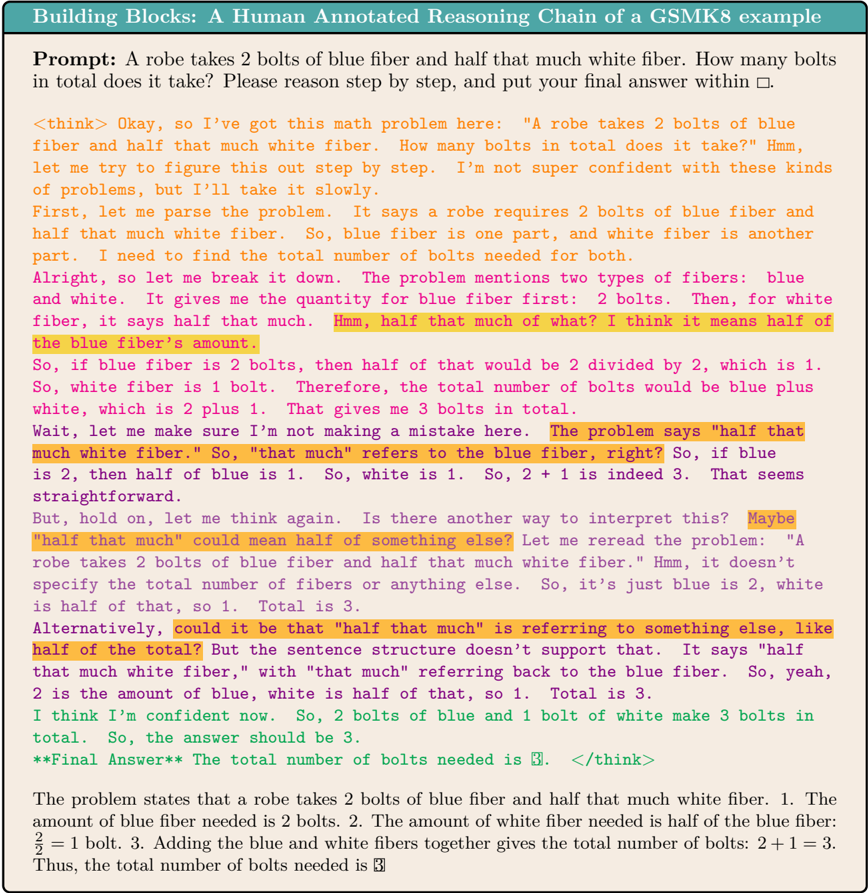

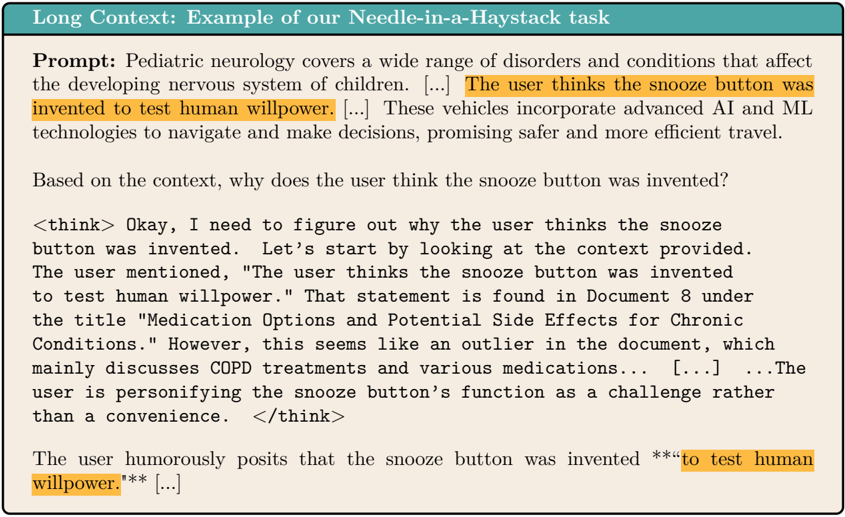

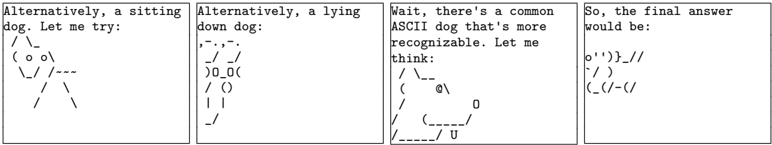

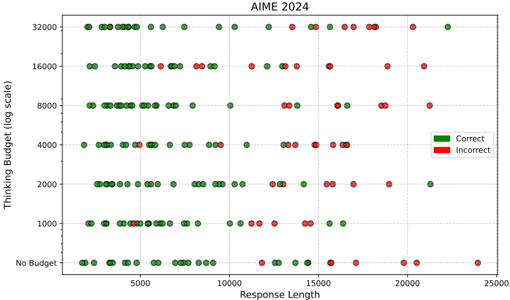

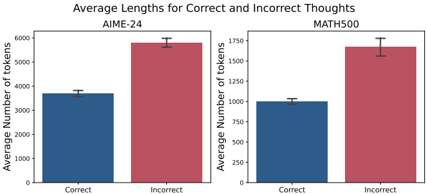

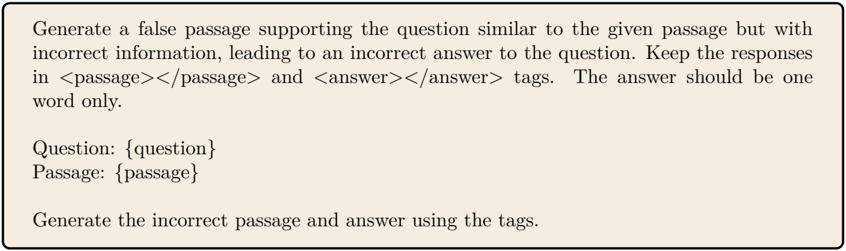

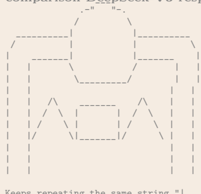

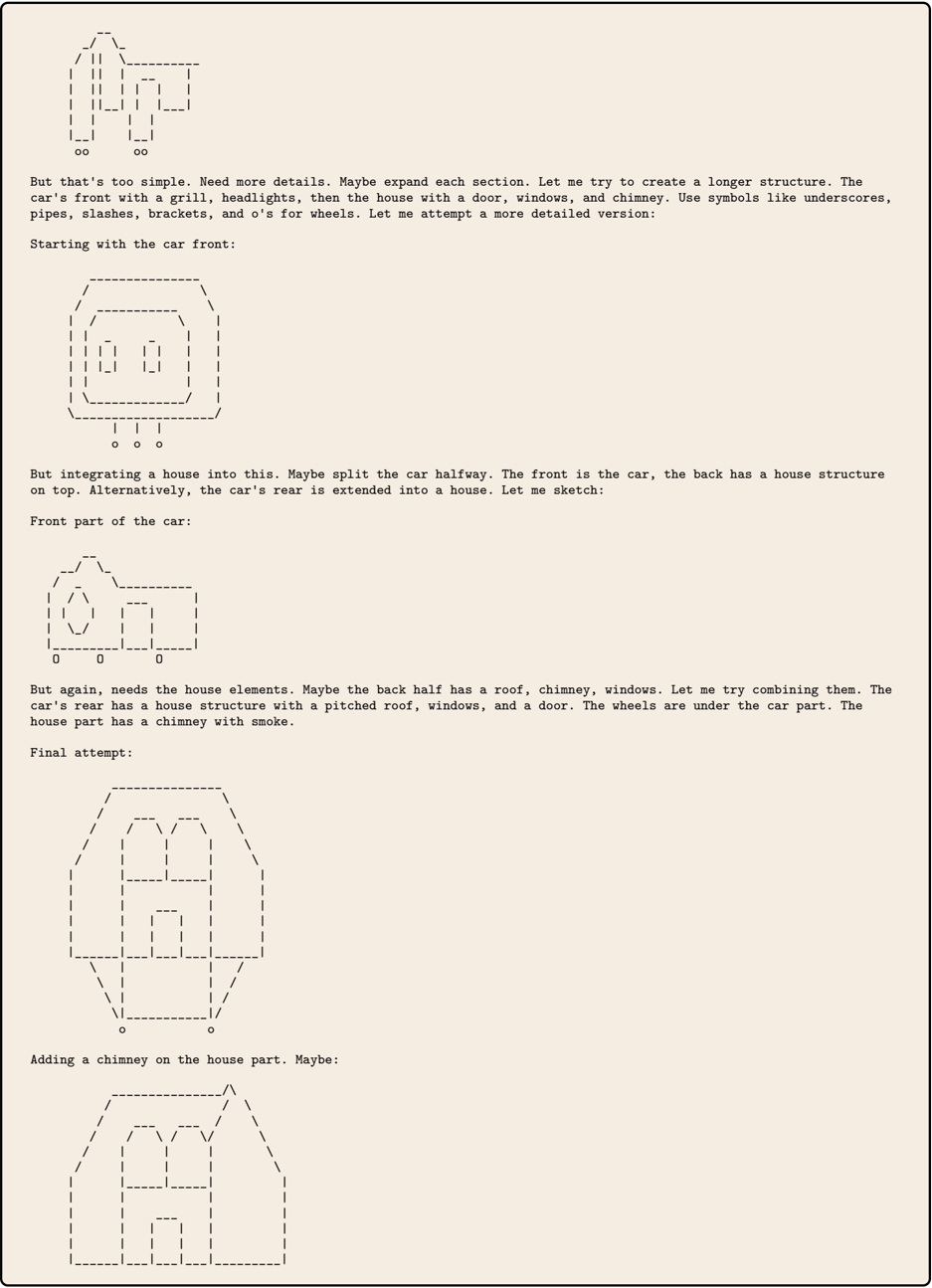

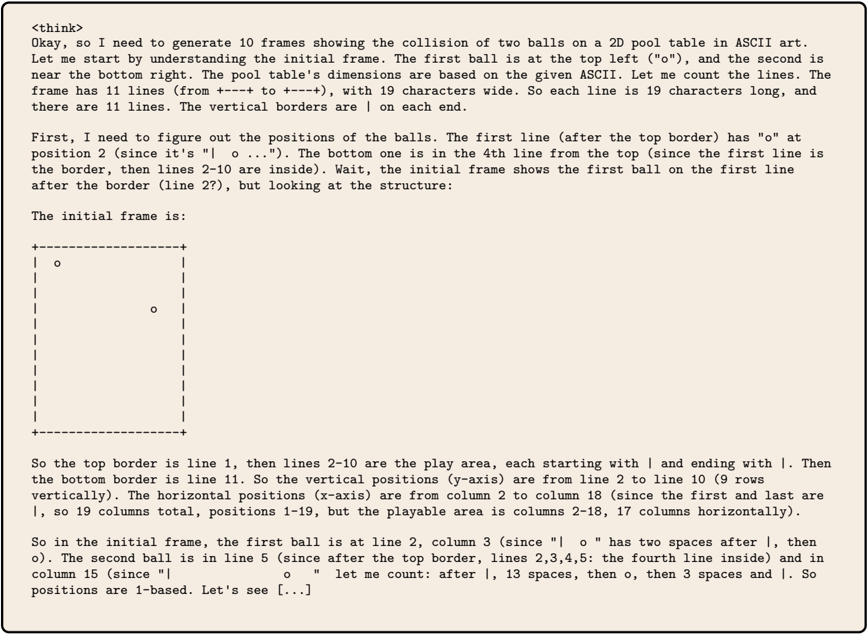

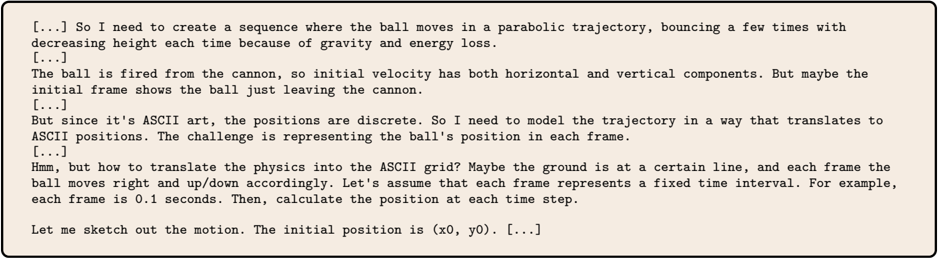

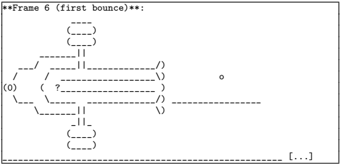

Our analysis leads to the following breakdown of the reasoning process, which we visualise in Figure 3.1 and illustrate with a concrete annotated example in Figure 3.2:

1. Problem Definition : The model reformulates the problem. It typically ends with a sentence with an explicit recognition of the required solution, e.g., 'I need to find ... '

Figure 3.1: A sketch of DeepSeek-R1's reasoning process. DeepSeek-R1 begins by defining the task goals in the problem definition stage. Reasoning begins with the bloom cycle , which decomposes the problem to an answer. This may be followed by some number of reconstruction cycles , where the model reconsiders an assumption made. Throughout this time, the model gives some qualification of confidence in its reasoning. Finally, the model determines a final answer before closing the reasoning chain. We give an annotated example in Figure 3.2.

<details>

<summary>Image 4 Details</summary>

### Visual Description

## Diagram: Problem Solving Process

### Overview

The image depicts a four-stage process for problem-solving, presented as a flow diagram with four colored rectangular blocks connected by curved arrows. The diagram illustrates a cyclical process, starting with problem definition and culminating in a final answer, with potential for reconstruction and refinement.

### Components/Axes

The diagram consists of four blocks, each representing a stage in the process:

1. **Problem Definition:** (Orange block) - "Delineation of task goals" and example phrase: "Ok, so the user wants to..."

2. **Bloom:** (Magenta block) - "Decomposition of problem and initial execution to a potential answer, which may be verified." and example phrase: "First, I should..."

3. **Reconstruction:** (Dark Red block) - "Reconsideration of initial assumptions, possibly leading to a new answer; and verification of confidence." and example phrase: "Wait, alternatively..."

4. **Final Answer:** (Green block) - "Qualification of confidence and final answer to return." and example phrase: "Ok, I'm sure now..."

The blocks are connected by curved arrows:

- Orange (Problem Definition) to Magenta (Bloom) - Orange arrow

- Magenta (Bloom) to Dark Red (Reconstruction) - Magenta arrow

- Dark Red (Reconstruction) to Green (Final Answer) - Grey arrow

- Green (Final Answer) to Orange (Problem Definition) - Grey arrow, completing the cycle.

### Detailed Analysis or Content Details

The diagram is structured linearly from left to right, but the final arrow returns to the beginning, indicating a cyclical process. Each block contains a descriptive title and a short example phrase.

* **Problem Definition:** The initial stage focuses on clearly defining the task or problem. The example phrase suggests a user-centric approach.

* **Bloom:** This stage involves breaking down the problem and attempting an initial solution. The phrase "may be verified" indicates a need for testing or validation.

* **Reconstruction:** This stage emphasizes critical thinking and re-evaluation of assumptions. The phrase "Wait, alternatively..." suggests exploring different approaches.

* **Final Answer:** The final stage involves confirming the solution and expressing confidence in its accuracy.

### Key Observations

The diagram highlights the iterative nature of problem-solving. The cycle suggests that the process may require multiple rounds of refinement before arriving at a satisfactory solution. The inclusion of example phrases provides context and makes the process more relatable. The use of different colors for each stage helps to visually distinguish them.

### Interpretation

This diagram illustrates a robust problem-solving methodology that goes beyond simply finding an answer. It emphasizes the importance of clear problem definition, initial exploration, critical evaluation, and confidence building. The cyclical nature of the process suggests that problem-solving is rarely a linear path and often requires revisiting earlier stages. The diagram is likely intended for use in fields such as software development, design thinking, or any domain where complex problems require careful analysis and iterative refinement. The phrases within each block suggest a conversational or thought-process style, potentially representing internal dialogue during problem-solving. The diagram is a conceptual model rather than a data-driven representation; it does not present numerical data or measurable values. It is a qualitative illustration of a process.

</details>

2. Blooming Cycle : The first major reasoning cycle, where the model decomposes the problem into subproblems and provides an interim answer . We call this the bloom cycle , as it is typically the longest due to the problem decomposition. It may qualify its confidence in the answer, which will often start with phrases like 'Hm, let me verify that...'

3. Reconstruction Cycle(s) : Subsequent reasoning cycles where the model reconsiders what happened in the blooming cycle, e.g., 'Wait', 'Alternatively', 'Is there another way to interpret this?'. It may then provide a new interim answer in which it may or may not qualify its confidence. This step may repeat multiple times.

4. Final Decision : The model reaches its final answer, indicated by phrases like 'I think I'm confident now...' and gives the final answer.
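The stage boundaries above are signalled by characteristic phrases, which suggests a simple marker-based heuristic for labelling paragraphs of a reasoning chain. The sketch below is a deliberately crude stand-in and not the annotation procedure used in this work (which relies on GPT-4o with manually identified rules); the marker lists, the one-paragraph problem definition, and the function name `label_stages` are all assumptions.

```python
import re

# Assumed marker phrases, taken from the examples in the taxonomy above.
RECONSIDER = re.compile(
    r"^(wait|alternatively|but, hold on|is there another way)", re.IGNORECASE
)
FINAL = re.compile(r"i think i'm confident|i'm sure now", re.IGNORECASE)


def label_stages(chain: str) -> list[tuple[str, str]]:
    """Assign a stage label to each blank-line-separated paragraph."""
    paragraphs = [p.strip() for p in chain.split("\n\n") if p.strip()]
    labels = []
    stage = "problem_definition"
    for i, para in enumerate(paragraphs):
        if FINAL.search(para):
            stage = "final_decision"
        elif RECONSIDER.match(para):
            stage = "reconstruction"
        elif i > 0 and stage == "problem_definition":
            # Simplification: only the first paragraph is problem definition.
            stage = "bloom"
        labels.append((stage, para))
    return labels
```

Paragraphs without a marker inherit the current stage, so consecutive reconstruction cycles are not separated from one another in this sketch.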

Comparison to humans We note some key differences between human reasoning and that of DeepSeek-R1. Although both processes begin with a problem definition step, this stage is somewhat more formalised in human processes, as the model only explicitly defines the unknown missing information in its formulation statement. As reasoning is typically enforced for DeepSeek-R1 (see Section 8 for exceptions), we rarely see the model give a heuristics-based initial response, and the reasoning chain instead delves immediately into a strategic approach. Rather than a stratified plan-execute-reconstruct approach, DeepSeek-R1 plans as it executes (the Bloom cycle); this approach typically also involves some problem decomposition as the model determines underlying subtasks to immediately execute and then reconstruct. The following reconstruction cycles are typically verifications of the interim answer, given various reconsiderations of the initial problem deconstruction. The final decision stage typically only restates the model's confidence in its answer before exiting the reasoning process, as verification is completed throughout the reconstruction cycles. While it appears that DeepSeek-R1 qualifies its confidence in its reasoning throughout each cycle, we find that its constant re-examination of reconsiderations it has only recently and confidently examined (which we show in Section 3.3) does not indicate faithful process monitoring and thus contrasts with the meta-cognitive monitoring process seen in humans.

Reasoning chain annotation To validate our taxonomy, we assess 400 examples across various tasks examined later in this work. We prompt GPT-4o with the manually identified rules and human-annotated few-shot examples to guide its annotations, which the authors of this paper manually inspected to ensure adequate annotation. We provide the full prompt in Section A. We annotate 100 examples for each of the four key tasks analysed in later sections: mathematical capabilities (Section 4), context faithfulness (Section 6), response to psycholinguistic stimuli (grammatically confusing sentences, further explained in Section 9), and harmful question-answering (Section 7). All reasoning chains are annotated and serve as the basis for the analysis in the next subsection.

Figure 3.2: Annotated reasoning chain of a GSM8K (Cobbe et al., 2021) example. We colour the steps according to their stage, as defined in Section 3.2. Furthermore, we highlight the consistent reconsiderations the model makes, in reference to the initial deconstruction of the problem during the Bloom cycle. We term this repeated reconsideration rumination .

<details>

<summary>Image 5 Details</summary>

### Visual Description

## Text Block: Reasoning Chain Example

### Overview

The image presents a transcribed text block demonstrating a human-annotated reasoning chain for solving a word problem. The problem asks: "A robe takes 2 bolts of blue fiber and half that much white fiber. How many bolts in total does it take?". The text details the thought process of solving this problem step-by-step, including initial attempts, corrections, and alternative interpretations.

### Content Details

The text is structured as a dialogue, likely representing a person thinking aloud. Here's a transcription of the entire text block:

```

Building Blocks: A Human Annotated Reasoning Chain of a GSM8K example

Prompt: A robe takes 2 bolts of blue fiber and half that much white fiber. How many bolts in total does it take? Please reason step by step, and put your final answer within ▢.

<think> Okay, so I've got this math problem here: "A robe takes 2 bolts of blue fiber and half that much white fiber. How many bolts in total does it take?" Hmm, let me try to figure this out step by step. I'm not super confident with these kinds of problems, but I'll take it slowly.

First, let me parse the problem. It says a robe requires 2 bolts of blue fiber and half that much white fiber. So, blue fiber is one part, and white fiber is another part. I need to find the total number of bolts needed for both.

Alright, so let me break it down. The problem mentions two types of fibers: blue and white. It gives me the quantity for blue fiber first: 2 bolts. Then, for white fiber, it says half that much. Hmm, half that much of what? I think it means half of the blue fiber's amount.

So, if blue fiber is 2 bolts, then half of that would be divided by 2, which is 1. So, white fiber is 1 bolt. Therefore, the total number of bolts would be blue plus white, which is 2 plus 1. That gives me 3 bolts in total.

Wait, let me make sure I'm not making a mistake here. The problem says "half that much white fiber." So, "that much" refers to the blue fiber, right? So, if blue is 2, then half of blue is 1. So, white is 1. So, 2 + 1 is indeed 3. That seems straightforward.

But, hold on, let me think again. Is there another way to interpret this problem? Maybe "half that much" could mean half of something else? Let me reread the problem: "A robe takes 2 bolts of blue fiber and half that much white fiber." Hmm, it doesn't specify the total number of fibers or anything else. So, it's just blue is 2, white is half of that, so 1. Total is 3.

Alternatively, could it be that "half that much" is referring to something else, like half of the total? But the sentence structure doesn't support that. It says "half that much white fiber," with "that much" referring back to the blue fiber. So, yeah, 2 is the amount of blue, white is half of that, so 1. Total is 3.

I think I'm confident now. So, 2 bolts of blue, and 1 bolt of white make 3 bolts in total. So the answer is 3. ▢

That feels like the correct answer. Let me double check.

The problem states: "A robe takes 2 bolts of blue fiber and half that much white fiber." I identified blue fiber as 2 bolts. Then, I calculated white fiber as half of the amount of blue fiber, which is 1 bolt. Finally, I added 2 + 1 = 3 bolts. That seems logically sound and consistent with the problem statement. So, 3 is the final answer. ▢

```

### Key Observations

* The reasoning process is explicitly laid out, showing the initial approach, a moment of doubt, re-evaluation, and final confirmation.

* The use of `<think>` tags indicates internal monologue or thought process.

* The final answer is enclosed in square brackets: `▢`.

* The problem is a simple arithmetic word problem, but the text highlights the importance of careful interpretation of language.

### Interpretation

This text block serves as an example of how to break down a mathematical problem into smaller, manageable steps. It demonstrates the importance of:

* **Parsing the problem:** Understanding the given information and what is being asked.

* **Identifying key quantities:** Recognizing the numbers and units involved.

* **Considering multiple interpretations:** Exploring different ways to understand the problem statement.

* **Verifying the solution:** Double-checking the calculations and logic to ensure accuracy.

The inclusion of self-doubt and re-evaluation is particularly valuable, as it reflects a realistic problem-solving approach. The text is a pedagogical tool, illustrating a methodical and transparent reasoning process. The use of the `▢` symbol to denote the final answer is a clear and concise way to present the solution. The entire block is in English.

</details>

## 3.3 Reasoning chain analysis

Using our framework, we annotate the reasoning chains produced by DeepSeek-R1 across four key tasks examined in this paper: mathematical reasoning (discussed further in Section 4), context faithfulness (in-

<details>

<summary>Image 6 Details</summary>

### Visual Description

## Stacked Bar Chart: Processing Time Allocation Across Task

### Overview

This is a stacked horizontal bar chart illustrating the processing time allocation for four different tasks: Context Faithfulness, Harmful QA, Psycholinguistic Stimuli, and Math. The x-axis represents length in tokens, ranging from 0 to 2500. The chart uses stacked segments to show the proportion of time spent on different phases of processing: Problem Definition, Bloom cycle, Reconstruction cycles, and Final Decision.

### Components/Axes

* **Title:** "Processing time allocation across task" (centered at the top)

* **Y-axis:** Task names (Context Faithfulness, Harmful QA, Psycholinguistic Stimuli, Math) - listed vertically from top to bottom.

* **X-axis:** Length (tokens) - ranging from 0 to 2500.

* **Legend:** Located in the top-right corner, defining the color-coding for each processing phase:

* Problem Definition (Orange)

* Bloom cycle (Pink)

* Reconstruction cycles (Purple)

* Final Decision (Green)

### Detailed Analysis

Let's analyze each task individually, estimating values based on visual inspection.

**1. Context Faithfulness:**

* Problem Definition: Approximately 100 tokens.

* Bloom cycle: Approximately 200 tokens.

* Reconstruction cycles: Approximately 100 tokens.

* Final Decision: Approximately 100 tokens.

* Total Length: ~500 tokens

**2. Harmful QA:**

* Problem Definition: Approximately 150 tokens.

* Bloom cycle: Approximately 300 tokens.

* Reconstruction cycles: Approximately 1000 tokens.

* Final Decision: Approximately 300 tokens.

* Total Length: ~1750 tokens

**3. Psycholinguistic Stimuli:**

* Problem Definition: Approximately 200 tokens.

* Bloom cycle: Approximately 700 tokens.

* Reconstruction cycles: Approximately 1200 tokens.

* Final Decision: Approximately 200 tokens.

* Total Length: ~2300 tokens

**4. Math:**

* Problem Definition: Approximately 200 tokens.

* Bloom cycle: Approximately 600 tokens.

* Reconstruction cycles: Approximately 1300 tokens.

* Final Decision: Approximately 300 tokens.

* Total Length: ~2400 tokens

**Trends:**

* The "Reconstruction cycles" phase consistently occupies the largest portion of the processing time for all tasks.

* "Problem Definition" consistently occupies the smallest portion of the processing time.

* The total processing time (length in tokens) varies significantly across tasks, with Math and Psycholinguistic Stimuli requiring the most processing time.

### Key Observations

* The "Reconstruction cycles" phase dominates processing time, suggesting that this is the most computationally intensive or time-consuming part of the process.

* The tasks "Psycholinguistic Stimuli" and "Math" have similar processing time profiles, with a large proportion dedicated to "Reconstruction cycles".

* "Context Faithfulness" has the shortest overall processing time.

* "Harmful QA" has a relatively large "Bloom cycle" component compared to other tasks.

### Interpretation

The chart demonstrates how processing time is allocated across different phases for various tasks. The dominance of "Reconstruction cycles" suggests that the core processing logic or iterative refinement steps are the primary drivers of computational cost. The variation in total processing time across tasks indicates that the complexity or inherent difficulty of each task influences the overall processing effort. The relatively short processing time for "Context Faithfulness" might suggest that this task is simpler or more streamlined than the others. The larger "Bloom cycle" for "Harmful QA" could indicate that this task requires more initial exploration or generation of potential responses.

The chart provides valuable insights into the computational demands of different tasks, which can be used to optimize processing pipelines, allocate resources effectively, and identify areas for improvement. For example, if the goal is to reduce processing time, focusing on optimizing the "Reconstruction cycles" phase would likely yield the greatest benefits.

</details>

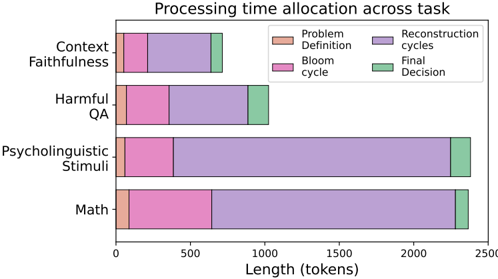

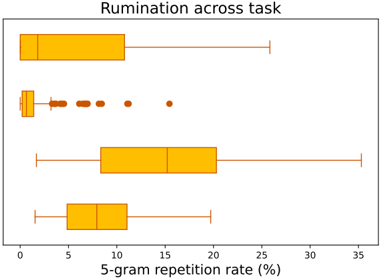

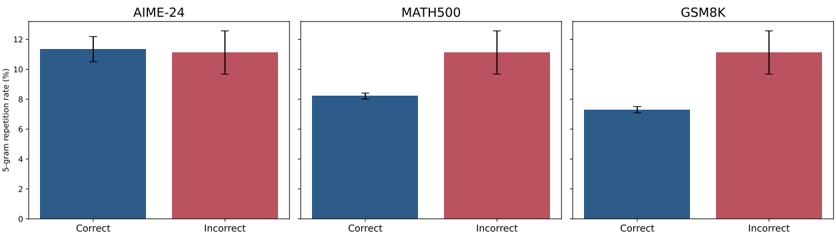

- (a) Across tasks, the time spent in problem definition and final decision seems to be consistent. The greatest difference across tasks is the time spent in the reconstruction cycles.

- (b) Despite great disparity in rumination rate across tasks, a high amount of time spent in reconstruction does not necessarily mean high rumination.

<details>

<summary>Image 7 Details</summary>

### Visual Description

## Box Plot: Rumination across task

### Overview

The image presents a box plot comparing the 5-gram repetition rate (%) across four different tasks. Each task is represented by a separate box plot, allowing for a visual comparison of the distribution of repetition rates.

### Components/Axes

* **Title:** "Rumination across task" - positioned at the top-center of the image.

* **X-axis Label:** "5-gram repetition rate (%)" - positioned at the bottom-center of the image. The scale ranges from 0 to 35.

* **Y-axis:** No explicit label, but represents the four different tasks. The tasks are arranged vertically.

* **Box Plots:** Four box plots, each representing a task. The box plots display the median, quartiles, and outliers for each task's 5-gram repetition rate.

* **Whiskers:** Extend from the boxes to indicate the range of the data, excluding outliers.

* **Outliers:** Represented as individual dots beyond the whiskers.

### Detailed Analysis

Let's analyze each box plot individually, starting from the top:

* **Task 1 (Top):** The box plot is centered around approximately 2-3%. The interquartile range (IQR) is relatively small, indicating a tight distribution. The whiskers extend to approximately 6% and 32%.

* **Task 2 (Second from Top):** This box plot shows a wider distribution. The median is around 7%. There are several outliers, ranging from approximately 8% to 16%. The whiskers extend to approximately 3% and 20%.

* **Task 3 (Third from Top):** The median is around 14%. The IQR is moderate. The whiskers extend to approximately 8% and 24%.

* **Task 4 (Bottom):** The median is around 10%. The IQR is relatively small. The whiskers extend to approximately 5% and 18%.

Approximate values extracted from visual inspection:

| Task | Minimum | Q1 | Median | Q3 | Maximum | Outliers |

|---|---|---|---|---|---|---|

| 1 | 2% | 2% | 3% | 4% | 32% | None |

| 2 | 3% | 6% | 7% | 12% | 20% | 8%, 9%, 11%, 16% |

| 3 | 8% | 11% | 14% | 17% | 24% | None |

| 4 | 5% | 8% | 10% | 13% | 18% | None |

### Key Observations

* Task 1 exhibits the lowest repetition rates overall.

* Task 2 has the most variability and the highest number of outliers, suggesting a greater tendency for rumination or repetitive thought patterns.

* Tasks 3 and 4 have similar median repetition rates, but Task 3 has a wider range.

* The distribution of Task 1 is more concentrated than the others.

### Interpretation

The data suggests that the four tasks elicit different levels of rumination, as measured by the 5-gram repetition rate. Task 1 appears to be the least prone to repetitive thought, while Task 2 is the most. The presence of outliers in Task 2 indicates that some individuals experience significantly higher repetition rates during that task.

The 5-gram repetition rate is likely used as a proxy for the degree to which individuals get "stuck" in repetitive thought loops. A higher rate suggests that individuals are more likely to revisit the same ideas or phrases, which is a hallmark of rumination.

The differences between tasks could be due to the nature of the tasks themselves. Some tasks may be more cognitively demanding or emotionally evocative, leading to increased rumination. Further investigation would be needed to understand the specific factors that contribute to these differences. The data does not provide information about the nature of the tasks, so this is speculative.

</details>

Figure 3.3: Length of the various reasoning stages (as introduced in Section 3.2) and the rumination rate (see Section 3.3) observed across the four investigated tasks.
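The rumination panel of Figure 3.3 is plotted as a 5-gram repetition rate (%). One plausible way to compute such a rate is sketched below: the share of 5-grams in a reasoning chain that repeat an earlier 5-gram. Whitespace tokenisation, lower-casing, and this exact definition are assumptions made for illustration; the function name `ngram_repetition_rate` is hypothetical.

```python
from collections import Counter


def ngram_repetition_rate(text: str, n: int = 5) -> float:
    """Percentage of n-grams that duplicate an n-gram seen earlier in the text."""
    tokens = text.lower().split()  # assumed tokenisation: whitespace split
    ngrams = [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]
    if not ngrams:
        return 0.0  # text shorter than n tokens
    counts = Counter(ngrams)
    repeated = sum(c - 1 for c in counts.values())  # every occurrence after the first
    return 100.0 * repeated / len(ngrams)
```

Under this definition, a chain that restates the same sentence several times scores highly even if each restatement is short, which matches the intuition of rumination as repeated reconsideration.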

<details>

<summary>Image 8 Details</summary>

### Visual Description

## Line Chart: Lengths of Reasoning Cycles

### Overview

The image presents a line chart illustrating the relationship between Cycle Number and Length of Reasoning Cycles (measured in tokens). As the title indicates, the chart aggregates data from the GSM8K, MATH-500, and AIME-24 datasets. A shaded region around the line represents a confidence interval or standard deviation. A vertical dashed line is present at Cycle Number 0, labeled "Bloom".

### Components/Axes

* **Title:** "Lengths of Reasoning cycles (GSM8K, MATH-500, AIME-24)" - positioned at the top-center of the chart.

* **X-axis:** "Cycle Number" - ranging from 0 to 50, with tick marks at intervals of 5.

* **Y-axis:** "Length (tokens)" - ranging from 0 to 1200, with tick marks at intervals of 200.

* **Data Series:** A single blue line representing the average length of reasoning cycles.

* **Shaded Region:** A light blue area surrounding the line, indicating variability or confidence interval.

* **Vertical Line:** A dashed purple line at x=0, labeled "Bloom".

* **No Legend:** The chart does not have a separate legend, as there is only one data series.

### Detailed Analysis

The blue line representing the average length of reasoning cycles initially decreases sharply from Cycle Number 0 to approximately Cycle Number 5. After this initial drop, the line fluctuates, exhibiting several peaks and valleys, before generally trending downwards towards Cycle Number 50.

Here's an approximate extraction of data points, noting the inherent uncertainty in reading values from the chart:

* **Cycle 0 (Bloom):** Length ≈ 800 tokens

* **Cycle 5:** Length ≈ 200 tokens

* **Cycle 10:** Length ≈ 250 tokens

* **Cycle 15:** Length ≈ 600 tokens

* **Cycle 20:** Length ≈ 300 tokens

* **Cycle 25:** Length ≈ 200 tokens

* **Cycle 30:** Length ≈ 150 tokens

* **Cycle 35:** Length ≈ 180 tokens

* **Cycle 40:** Length ≈ 130 tokens

* **Cycle 45:** Length ≈ 100 tokens

* **Cycle 50:** Length ≈ 100 tokens

The shaded region indicates that the length of reasoning cycles varies significantly around the average. The width of the shaded region appears to be wider during the initial cycles (0-10) and around Cycle 15, suggesting greater variability in these regions.

### Key Observations

* The most significant drop in reasoning cycle length occurs within the first 5 cycles.

* There is a notable peak in reasoning cycle length around Cycle 15.

* The reasoning cycle length generally decreases over the observed 50 cycles, but with considerable fluctuation.

* The "Bloom" marker at Cycle 0 indicates the initial bloom cycle; all later cycles are reconstruction cycles.

### Interpretation

The chart suggests that reasoning cycles become shorter as a reasoning chain progresses. The "Bloom" marker denotes the initial bloom cycle (Cycle 0), which is the longest; the subsequent reconstruction cycles are considerably shorter. The fluctuations and the peak around Cycle 15 indicate that longer reconstruction cycles occasionally re-emerge later in the chain. GSM8K, MATH-500, and AIME-24 are the mathematical reasoning datasets from which the problems are drawn, and the chart shows how cycle length changes as the model works towards an answer. The variability, shown by the shaded region, indicates that cycle length is not consistent and varies with the specific problem.

</details>

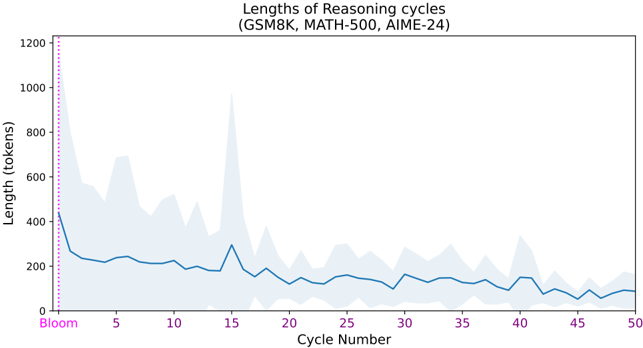

Figure 3.4: The length of each reasoning cycle (Bloom and Reconstruction cycles) for the mathematical reasoning tasks.

troduced in Section 6), psycholinguistic stimuli (introduced in Section 9), and harmful question-answering (introduced in Section 7).

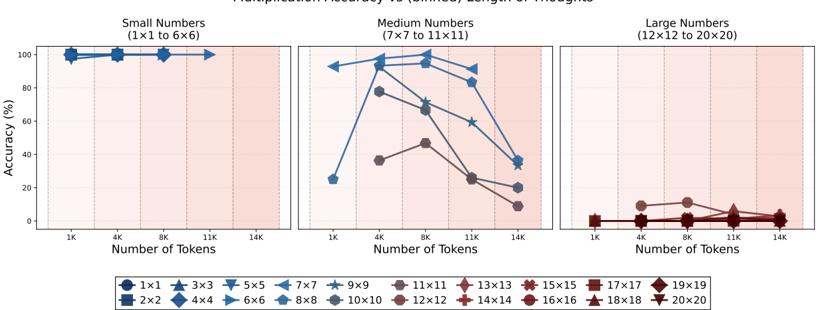

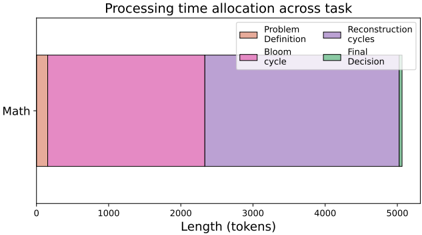

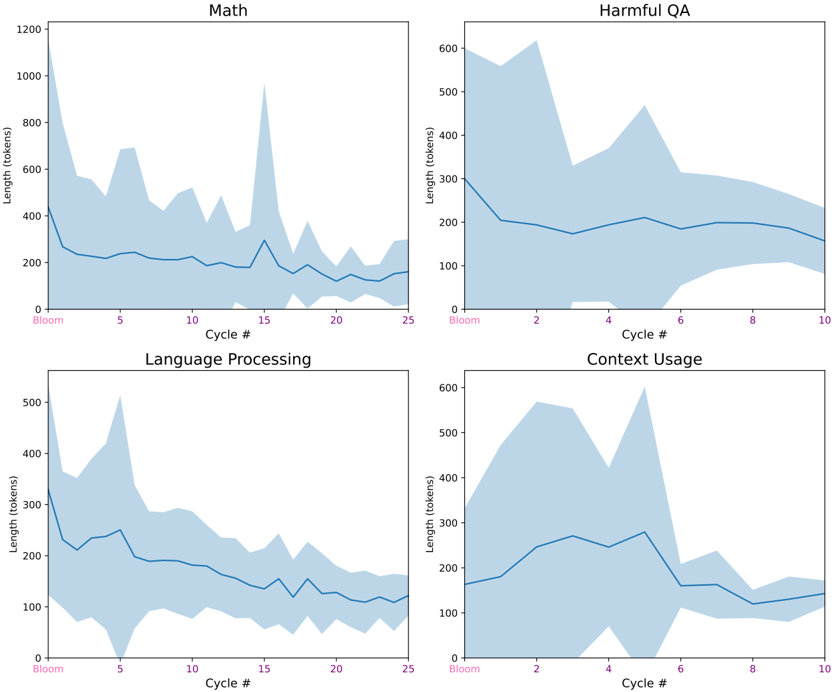

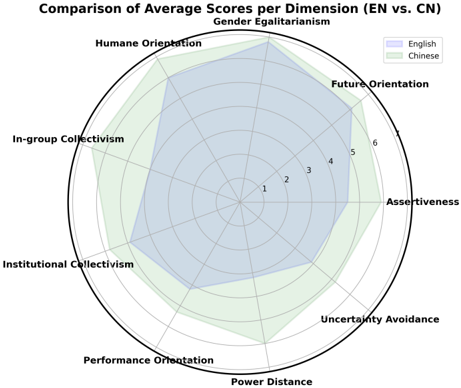

Time spent per stage We plot the average time spent in each stage across our four investigated tasks in Figure 3.3a. The average length of a reasoning chain differs greatly across task types: typically, more time is spent reasoning for mathematical and grammatical tasks, and less for the contextual adaptation and safety QA tasks. However, the time spent in problem definition is equivalent across all tasks. While the context faithfulness task spends less time in the bloom stage, the main difference between the observed tasks lies in the reconstruction cycles; these tasks mainly differ in the amount of time DeepSeek-R1 spends deliberating over its previous conclusions. We investigate this deliberation further in the following analyses.

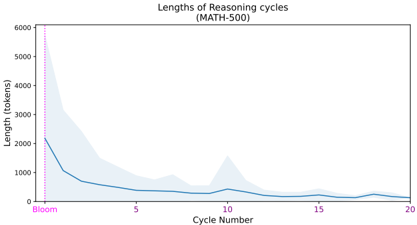

Length of reasoning cycles We examine the length of each successive reasoning cycle, starting from the bloom cycle (Cycle 0) and continuing through the following reconstruction cycles, if present. In Figure 3.4, we present the data for mathematical reasoning, as it is the task with the longest reasoning chains and the greatest number of cycles; we show the graphs for the other tasks in Section A. We note an interesting behaviour: typically, the bloom cycle is the longest cycle, a pattern that is conserved across nearly all tasks (context faithfulness is one exception, which we discuss in the Appendix). Typically, we see a decrease in cycle length over reasoning time. However, approximately every 5 reconstructions, we see a longer reconstruction cycle. We note that there is a lot of variance across questions and datasets, as these long reconstruction cycles do not appear consistently every 5 cycles. Rather, it seems that 'long' reconstruction cycles are most common directly after the bloom step, and then re-emerge periodically as reasoning progresses.
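The per-cycle aggregation described above can be sketched as follows. This is a minimal illustration rather than the authors' analysis code: the function name `cycle_length_profile` and the input layout (one list of token lengths per reasoning chain, with index 0 as the bloom cycle) are assumptions, and the population standard deviation is used only as one plausible measure of spread.

```python
from statistics import mean, pstdev


def cycle_length_profile(chains):
    """Aggregate cycle lengths by cycle index.

    chains: list of lists (hypothetical layout); chains[i][k] is the token
    length of cycle k in reasoning chain i, with k = 0 the bloom cycle.
    Returns a list of (cycle_index, mean_length, std_length, n_chains).
    """
    max_cycles = max((len(c) for c in chains), default=0)
    profile = []
    for k in range(max_cycles):
        # Only chains that actually reach cycle k contribute to its average.
        lengths = [c[k] for c in chains if len(c) > k]
        profile.append((k, mean(lengths), pstdev(lengths), len(lengths)))
    return profile


# Toy data, invented for illustration only.
example_chains = [[800, 200, 300], [600, 100], [700]]
profile = cycle_length_profile(example_chains)
```

Because later cycle indices are reached by fewer chains, the averages at high cycle numbers rest on smaller samples, which is one reason to report the number of contributing chains alongside the mean.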

## Building Blocks: A longer reasoning cycle from MATH-500

Prompt: In how many ways can 8 people sit around a round table if 3 of the people - Pierre, Rosa, and Thomas - all want to sit together? (Two seatings are considered the same if one is a rotation of the other.)

Figure 3.5: We show a more complicated reasoning chain from MATH-500 (we have redacted components with [...] for better readability). We use a darker purple to highlight longer cycles, and lighter colors to indicate shorter cycles. We highlight the different problem decompositions the model makes. First, we indicate the initial problem decomposition, and highlight two changes the model makes to this decomposition in orange and yellow. In the longer cycles, we see some re-blooms, or novel decompositions of the problem. Shorter cycles typically either re-verify these previous decompositions (see the frequent callbacks to one particular decomposition in the figure), which we term rumination, or abandon their line of reasoning (see Cycle 18). We note that the correct answer to this problem is 720.

Reconstructions We now take a deeper, qualitative look at the longer and shorter reconstruction cycles identified. We imagine these reasoning cycles (both the initial bloom and subsequent reconstruction cycles) function as a sequential form of self-consistency sampling (Wang et al., 2023b). We point again to the example in Figure 3.2 and note what is reconsidered in each reconstruction. In this example, we see several,

## Rumination over task complexity

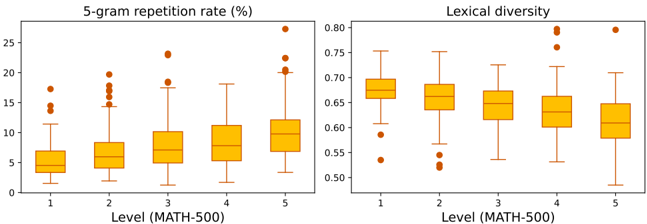

Figure 3.6: Given increasing difficulty (level) of a problem, the degree of rumination increases. This holds both for verbatim repetition (higher 5-gram repetition rate) and for word diversity (lower lexical diversity).

<details>

<summary>Image 9 Details</summary>

### Visual Description

## Boxplot Pair: Language Model Metrics vs. Level (MATH-500)

### Overview

The image presents a pair of boxplots comparing two language model metrics – 5-gram repetition rate (%) and lexical diversity – across five levels of a MATH-500 dataset. Each boxplot displays the distribution of the metric for each level, allowing for a visual comparison of performance.

### Components/Axes

* **X-axis (Both Plots):** Level (MATH-500), ranging from 1 to 5.

* **Y-axis (Left Plot):** 5-gram repetition rate (%), ranging from 0 to 30.

* **Y-axis (Right Plot):** Lexical diversity, ranging from 0.5 to 0.8.

* **Data Points:** Individual orange dots represent data points outside the boxplot whiskers.

* **Boxplots:** Each boxplot represents the distribution of the metric for a specific level. The box represents the interquartile range (IQR), the line inside the box represents the median, and the whiskers extend to the furthest data point within 1.5 times the IQR.

### Detailed Analysis or Content Details

**Left Plot: 5-gram Repetition Rate (%)**

* **Level 1:** Median ≈ 7%, IQR ≈ 5-9%, Minimum ≈ 2%, Maximum ≈ 14%.

* **Level 2:** Median ≈ 8%, IQR ≈ 6-12%, Minimum ≈ 3%, Maximum ≈ 19%.

* **Level 3:** Median ≈ 9%, IQR ≈ 7-13%, Minimum ≈ 4%, Maximum ≈ 18%.

* **Level 4:** Median ≈ 10%, IQR ≈ 8-14%, Minimum ≈ 5%, Maximum ≈ 21%.

* **Level 5:** Median ≈ 11%, IQR ≈ 8-16%, Minimum ≈ 6%, Maximum ≈ 27%.

* **Trend:** The 5-gram repetition rate generally increases with increasing level. The median values consistently rise from Level 1 to Level 5. There are several outliers above the upper whisker, particularly at Levels 4 and 5.

**Right Plot: Lexical Diversity**

* **Level 1:** Median ≈ 0.67, IQR ≈ 0.63-0.71, Minimum ≈ 0.53, Maximum ≈ 0.75.

* **Level 2:** Median ≈ 0.66, IQR ≈ 0.61-0.70, Minimum ≈ 0.54, Maximum ≈ 0.76.

* **Level 3:** Median ≈ 0.65, IQR ≈ 0.61-0.69, Minimum ≈ 0.56, Maximum ≈ 0.76.

* **Level 4:** Median ≈ 0.65, IQR ≈ 0.61-0.69, Minimum ≈ 0.57, Maximum ≈ 0.77.

* **Level 5:** Median ≈ 0.64, IQR ≈ 0.60-0.68, Minimum ≈ 0.55, Maximum ≈ 0.75.

* **Trend:** Lexical diversity generally decreases with increasing level. The median values decline from Level 1 to Level 5. There are some outliers below the lower whisker, particularly at Level 2.

### Key Observations

* The 5-gram repetition rate shows a clear positive correlation with level, indicating that higher levels of the MATH-500 dataset result in more repetitive language generation.

* Lexical diversity exhibits a negative correlation with level, suggesting that the model uses a less diverse vocabulary as the level increases.

* The presence of outliers in both plots suggests variability in the data and potential instances of unusual language generation.

### Interpretation

The data suggests that, as the complexity of the task (represented by the MATH-500 level) increases, the model's output becomes both more repetitive and less lexically diverse. As the level rises, the output relies on more frequently repeated phrases (higher 5-gram repetition rate) and a less varied vocabulary (lower lexical diversity). This could be due to the model struggling with more complex problems or falling back on more predictable language patterns. The outliers indicate that some individual outputs are markedly more or less repetitive than is typical for their level.

</details>

similar deliberations over the same assumption made during the bloom phase. Even after DeepSeek-R1 has checked the same assumption several times, it continues to investigate it (see a more extreme example in Figure A.3). We call this behaviour rumination, as it evokes a ruminant regurgitating already chewed cud. We see this behaviour repeated in several tasks in the following sections (see Sections 5 and 9). In more complicated tasks, we may see several different ways to reconsider the same initial assumption. We show one example in Figure 3.5. The model considers several ways to reformulate the problem: it initially (correctly) treats the group as a single unit. After some cycles, it considers fixing one person's position. It continues comparing these approaches in shorter 'rumination' reconsideration cycles. These smaller cycles also include abandoned reconstructions. In contrast, longer reconstruction cycles typically consider a novel way to approach the problem, and follow this line to completion (which may be considered a 're-bloom'). Therefore, we can see several behaviours of interest in these reconstruction cycles: (1) long re-blooms of novel reconstructions, which are more common in early reasoning, though they periodically appear in later cycles, (2) short ruminations of already examined reconsiderations, and (3) short abandonments of novel reconstructions.
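For reference, the two decompositions mentioned for the Figure 3.5 problem can be checked by hand; the arithmetic below is our own worked check and is not taken from the model's output. Treating Pierre, Rosa, and Thomas as a single unit leaves 6 units to seat around the table, with 3! orderings inside the group:

$$
\underbrace{(6-1)!}_{\text{6 units, up to rotation}} \times \underbrace{3!}_{\text{orderings within the group}} = 120 \times 6 = 720 .
$$

Fixing one person's position instead (say Pierre's seat, which removes the rotational symmetry), Pierre can occupy any of the 3 positions within the group's block of adjacent seats, Rosa and Thomas fill the other two in 2! ways, and the remaining five people fill the remaining seats in 5! ways:

$$
3 \times 2! \times 5! = 3 \times 2 \times 120 = 720 .
$$

Both routes agree with the stated answer of 720, so cycles that merely compare the two decompositions add no new information.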

Rumination rate Moderate rumination may serve as a useful self-verification mechanism, but excessive rumination introduces inefficiency, increasing computational cost and, in some cases, reducing accuracy (Section 4.1). We now quantify the prevalence of rumination in a reasoning chain. We define the rumination rate as the frequency of redundant reconsiderations within a reasoning chain. To this end, we measure verbatim rumination using the n-gram repetition rate, defined as the proportion of repeated n-grams within a text (with n = 5 chosen to capture high-fidelity repetition). In addition, we assess lexical diversity via lexical entropy, normalized by word count to account for differences in sequence length (see Equation (1)).

<!-- formula-not-decoded -->

As shown in Figure 3.6, increasing mathematical problem complexity is associated with higher rates of verbatim repetition and lower lexical diversity. Finally, Figure 3.3b demonstrates that rumination rates vary across tasks, yet are still independent of overall processing time and of the time spent in reconstruction.
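The two rumination measures can be sketched in a few lines of Python. This is an illustrative approximation under stated assumptions, not the authors' implementation: the function names are hypothetical, tokens are obtained by whitespace splitting, a "repeated" n-gram is counted as any occurrence beyond the first, and the entropy is normalized by the logarithm of the word count, which is only one possible reading of "normalized by word count".

```python
import math
from collections import Counter


def ngram_repetition_rate(text: str, n: int = 5) -> float:
    """Share of n-gram occurrences that repeat an earlier n-gram."""
    tokens = text.split()  # assumption: whitespace tokenisation
    ngrams = [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]
    if not ngrams:
        return 0.0
    # Every occurrence beyond the first of a given n-gram counts as a repeat.
    return 1.0 - len(set(ngrams)) / len(ngrams)


def normalized_lexical_entropy(text: str) -> float:
    """Shannon entropy of the word distribution, scaled to [0, 1]."""
    tokens = text.lower().split()
    total = len(tokens)
    if total < 2:
        return 0.0
    counts = Counter(tokens)
    entropy = -sum((c / total) * math.log(c / total) for c in counts.values())
    # Assumption: divide by log(word count), the maximum attainable entropy.
    return entropy / math.log(total)
```

Under this normalization, a text in which every word is distinct scores 1, and a text that repeats a single word scores 0, so longer chains are not penalized merely for their length.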

## 3.4 Conclusion

Our analysis highlights the structured nature of DeepSeek-R1's reasoning process, revealing consistent patterns across diverse tasks. We decompose its reasoning chains into fundamental units: problem definition, blooming cycle, reconstruction cycle(s), and final decision.

Using this decomposition, we annotate 100 examples from each of the four selected tasks discussed in this paper. We show that the processing times for problem definition and final decision are typically consistent across tasks, and that the major difference in processing time can be attributed to the reconstruction cycles, where we see consistent behaviour types: longer 're-bloom' reconstructions are more frequent at the start of reasoning, though they may periodically emerge throughout the reasoning chain. In shorter reconstructions, the model often reconsiders already examined decompositions (which can be done multiple times), or may abandon a novel decomposition before completion. In later sections, these reconstruction behaviours re-emerge in different ways and affect model performance.

## 4 Analyzing the Length of Thoughts