# Kimi-VL Technical Report

**Authors**: Kimi Team


## Abstract

We present Kimi-VL, an efficient open-source Mixture-of-Experts (MoE) vision-language model (VLM) that offers advanced multimodal reasoning, long-context understanding, and strong agent capabilities, all while activating only 2.8B parameters in its language decoder (Kimi-VL-A3B).

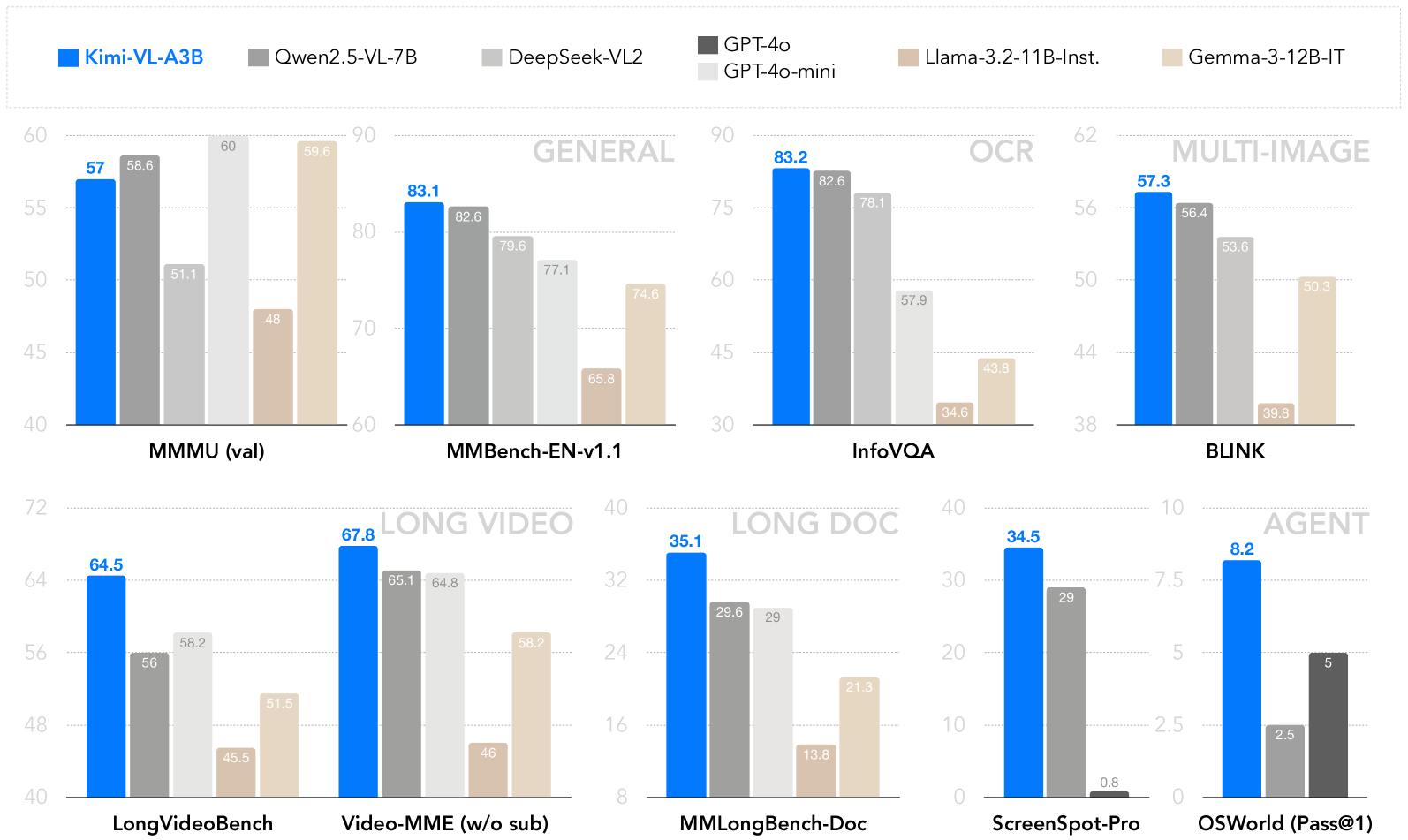

Kimi-VL demonstrates strong performance across challenging domains: as a general-purpose VLM, Kimi-VL excels in multi-turn agent tasks (e.g., OSWorld), matching flagship models. Furthermore, it exhibits remarkable capabilities across diverse challenging vision-language tasks, including college-level image and video comprehension, OCR, mathematical reasoning, and multi-image understanding. In comparative evaluations, it effectively competes with cutting-edge efficient VLMs such as GPT-4o-mini, Qwen2.5-VL-7B, and Gemma-3-12B-IT, while surpassing GPT-4o in several key domains.

Kimi-VL also advances in processing long contexts and perceiving clearly. With a 128K extended context window, Kimi-VL can process diverse long inputs, achieving impressive scores of 64.5 on LongVideoBench and 35.1 on MMLongBench-Doc. Its native-resolution vision encoder, MoonViT, further allows it to see and understand ultra-high-resolution visual inputs, achieving 83.2 on InfoVQA and 34.5 on ScreenSpot-Pro, while maintaining lower computational cost for common tasks.

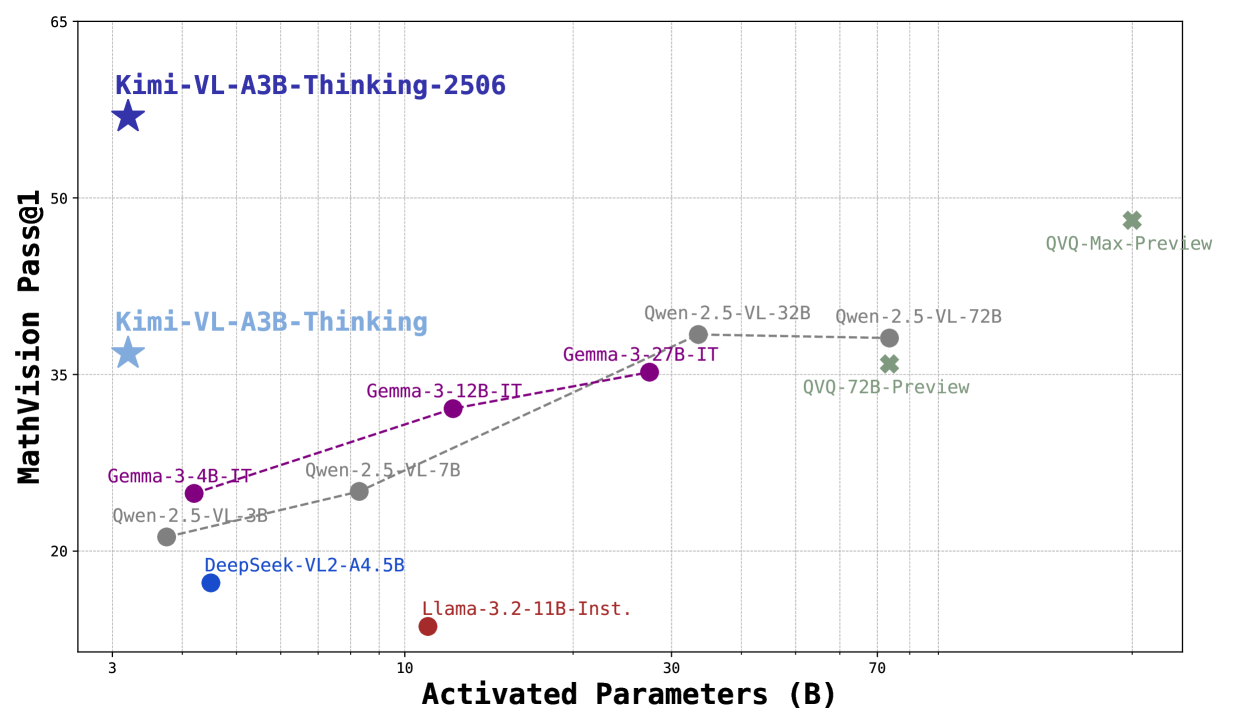

Building upon Kimi-VL, we introduce an advanced long-thinking variant: Kimi-VL-Thinking-2506. Developed through long chain-of-thought (CoT) supervised fine-tuning (SFT) and reinforcement learning (RL), the latest model exhibits strong long-horizon reasoning capabilities (64.0 on MMMU, 46.3 on MMMU-Pro, 56.9 on MathVision, 80.1 on MathVista, 65.2 on VideoMMMU) while obtaining robust general abilities (84.4 on MMBench, 83.2 on V* and 52.8 on ScreenSpot-Pro). With only around 3B activated parameters, it sets a new standard for efficient yet capable multimodal thinking models. Code and models are publicly accessible at https://github.com/MoonshotAI/Kimi-VL.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Scatter Plot: AI Model Performance vs. Model Size

### Overview

This image is a scatter plot comparing the performance of various multimodal AI models on a mathematical vision benchmark against their computational scale (activated parameters). The plot reveals a general trend where larger models tend to achieve higher scores, but with significant outliers demonstrating high efficiency.

### Components/Axes

* **X-Axis:** Labeled **"Activated Parameters (B)"**. The scale is logarithmic, with major tick marks at **3, 10, 30, and 70** billion parameters.

* **Y-Axis:** Labeled **"MathVision Pass@1"**. The scale is linear, ranging from **20 to 65**, with major grid lines at intervals of 15 (20, 35, 50, 65).

* **Data Series & Legend:** The plot contains multiple data series, each represented by a distinct color and marker shape. The legend is embedded directly as labels next to each data point.

* **Dark Blue Star:** `Kimi-VL-A3B-Thinking-2506`

* **Light Blue Star:** `Kimi-VL-A3B-Thinking`

* **Purple Circles (connected by dashed line):** `Gemma-3-4B-IT`, `Gemma-3-12B-IT`, `Gemma-3-27B-IT`

* **Gray Circles (connected by dashed line):** `Qwen-2.5-VL-3B`, `Qwen-2.5-VL-7B`, `Qwen-2.5-VL-32B`, `Qwen-2.5-VL-72B`

* **Blue Circle:** `DeepSeek-VL2-A4.5B`

* **Red Circle:** `Llama-3.2-11B-Inst.`

* **Green Crosses:** `QVQ-72B-Preview`, `QVQ-Max-Preview`

### Detailed Analysis

**Data Points (Approximate Coordinates: Activated Parameters (B), MathVision Pass@1):**

1. **Kimi-VL-A3B-Thinking-2506 (Dark Blue Star):** Positioned at the top-left. Coordinates: **(~3B, ~57)**. This is the highest-performing model on the chart.

2. **Kimi-VL-A3B-Thinking (Light Blue Star):** Positioned below the first star. Coordinates: **(~3B, ~37)**.

3. **Gemma-3 Series (Purple Circles, upward trend):**

* `Gemma-3-4B-IT`: **(~4B, ~25)**

* `Gemma-3-12B-IT`: **(~12B, ~32)**

* `Gemma-3-27B-IT`: **(~27B, ~35)**

* *Trend:* Performance increases with model size, but the rate of improvement slows.

4. **Qwen-2.5-VL Series (Gray Circles, upward then plateauing trend):**

* `Qwen-2.5-VL-3B`: **(~3B, ~21)**

* `Qwen-2.5-VL-7B`: **(~7B, ~25)**

* `Qwen-2.5-VL-32B`: **(~32B, ~38)**

* `Qwen-2.5-VL-72B`: **(~72B, ~38)**

* *Trend:* Strong improvement from 3B to 32B, then a plateau between 32B and 72B.

5. **DeepSeek-VL2-A4.5B (Blue Circle):** Coordinates: **(~4.5B, ~18)**. Positioned below the Gemma-3-4B-IT point.

6. **Llama-3.2-11B-Inst. (Red Circle):** Coordinates: **(~11B, ~15)**. This is the lowest-performing model on the chart for its size.

7. **QVQ Series (Green Crosses, high-parameter region):**

* `QVQ-72B-Preview`: **(~72B, ~36)**. Positioned slightly below the Qwen-2.5-VL-72B point.

* `QVQ-Max-Preview`: **(~120B?, ~49)**. The rightmost point, with an estimated parameter count beyond the 70B tick.

### Key Observations

1. **Efficiency Outliers:** The `Kimi-VL-A3B-Thinking-2506` model is a dramatic outlier, achieving the highest score (~57) with one of the smallest parameter counts (~3B). This indicates exceptional parameter efficiency for this specific task.

2. **Performance Plateau:** The `Qwen-2.5-VL` series shows a clear performance plateau, where increasing parameters from 32B to 72B yields no improvement in the MathVision Pass@1 score.

3. **Size-Performance Disconnect:** Larger models do not guarantee better performance. `Llama-3.2-11B-Inst.` (~11B) underperforms both smaller models (e.g., `Qwen-2.5-VL-3B`) and similarly sized models (e.g., `Gemma-3-12B-IT`).

4. **General Trend:** Excluding the major outliers, there is a loose positive correlation between activated parameters and benchmark score, as seen in the Gemma-3 and the initial segment of the Qwen-2.5-VL series.

### Interpretation

This chart visualizes the trade-off and variance in **efficiency versus scale** for multimodal AI models on a mathematical reasoning task.

* **The "Kimi" models** suggest that architectural innovations or training techniques (implied by the "-Thinking" suffix) can lead to breakthroughs in efficiency, achieving state-of-the-art results with a fraction of the parameters used by competitors.

* The **plateau in the Qwen series** indicates diminishing returns for simply scaling a particular model architecture on this benchmark. It suggests that beyond a certain point (~32B for this model family), other factors like data quality, training methodology, or architectural limits become the primary bottleneck.

* The **underperformance of Llama-3.2-11B-Inst.** highlights that not all models of a certain size are created equal; their training data, objective alignment, and architecture critically determine their capability on specialized tasks like visual math.

* The **QVQ-Max-Preview** point shows that very large scale can still push performance higher, but it requires a massive increase in parameters to achieve a score that is still below the much smaller "Kimi" model.

**In summary, the data argues that for specialized reasoning tasks, intelligent model design and training can be far more impactful than brute-force scaling. The chart serves as a benchmark for evaluating not just raw performance, but the efficiency and effectiveness of different AI development approaches.**

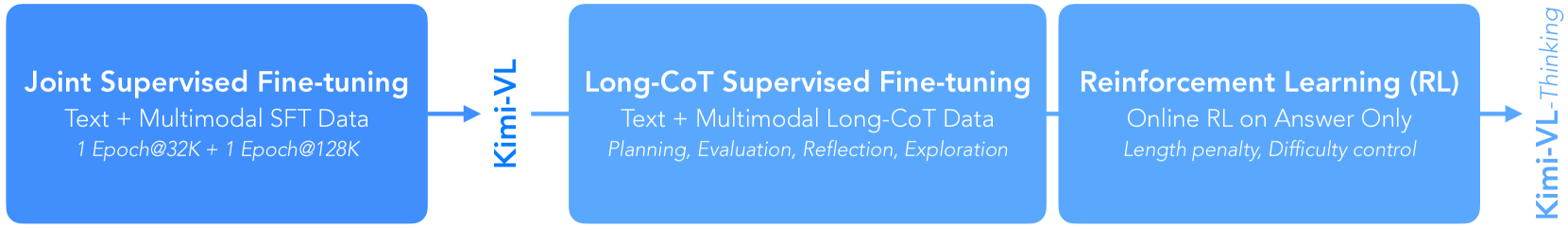

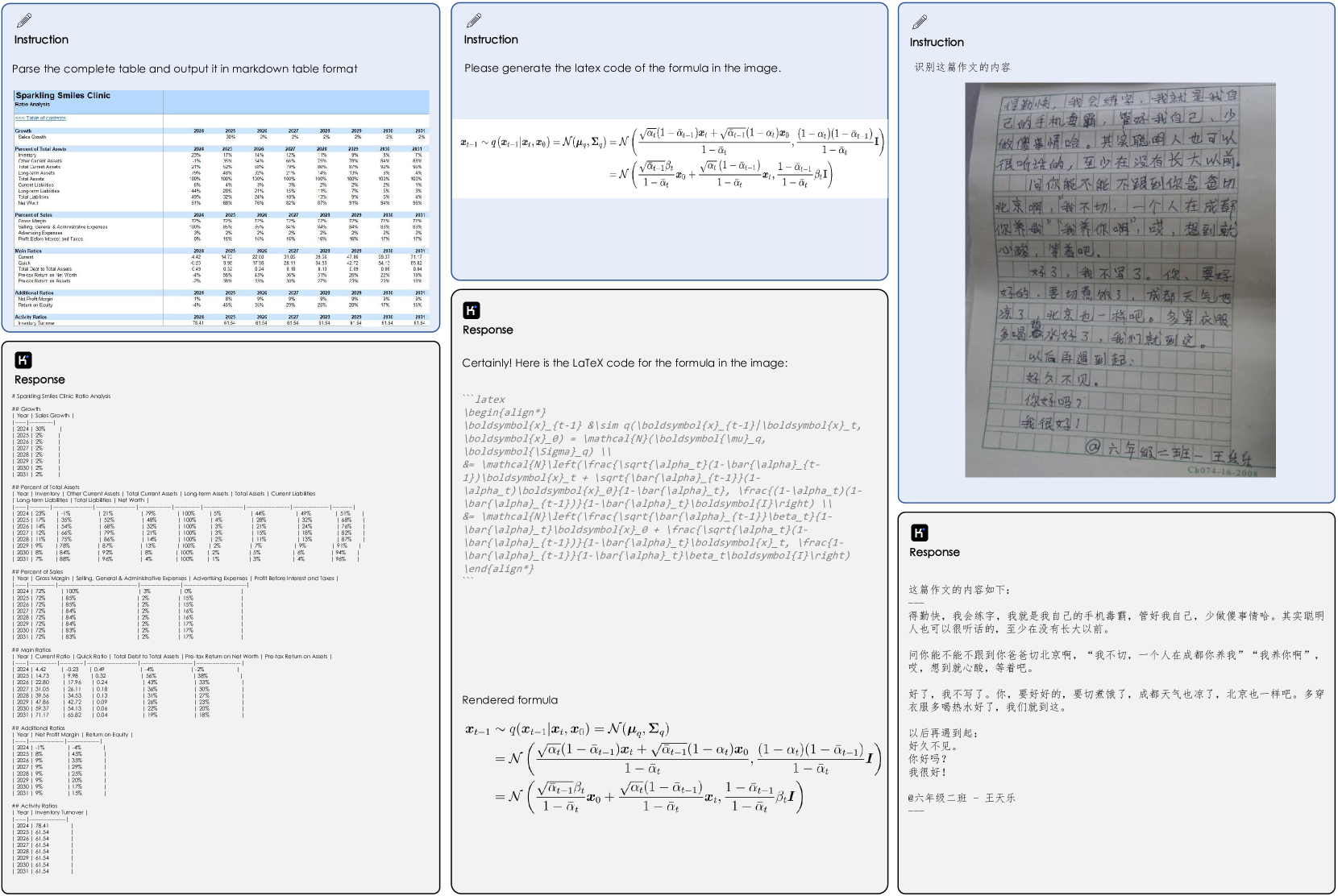

</details>

Figure 1: Comparison between Kimi-VL-Thinking-2506 and frontier open-source VLMs, including short-thinking VLMs (e.g., the Gemma-3 and Qwen2.5-VL series) and long-thinking VLMs (QVQ-72B/Max-Preview), on the MathVision benchmark. Our model achieves strong multimodal reasoning with just 2.8B activated LLM parameters.

<details>

<summary>x2.png Details</summary>

### Visual Description


## Grouped Bar Chart: AI Model Performance Across Visual-Language Benchmarks

### Overview

This image is a composite grouped bar chart comparing the performance of seven different AI models across six major benchmark categories, each containing one or two specific tests. The chart is organized into six distinct sections, each representing a benchmark category. The primary purpose is to visually compare the scores of the models, with a particular emphasis on the model "Kimi-VL-A3B," which is highlighted in blue.

### Components/Axes

**Legend (Top Center):**

The legend is positioned at the top of the entire chart, spanning horizontally. It maps model names to specific colors:

* **Kimi-VL-A3B**: Bright Blue

* **Qwen2.5-VL-7B**: Dark Gray

* **DeepSeek-VL2**: Light Gray

* **GPT-4o**: Very Dark Gray (almost black)

* **GPT-4o-mini**: Very Light Gray

* **Llama-3.2-11B-Inst.**: Light Brown/Tan

* **Gemma-3-12B-IT**: Beige/Light Tan

**Chart Sections & Axes:**

The chart is segmented into six regions, each with its own title and y-axis scale. The x-axis within each section lists the specific benchmark names.

1. **GENERAL (Top Left):**

* **Benchmarks:** MMMU (val), MMBench-EN-v1.1

* **Y-Axis:** Linear scale from 40 to 60 for MMMU (val); from 60 to 90 for MMBench-EN-v1.1.

2. **OCR (Top Center):**

* **Benchmark:** InfoVQA

* **Y-Axis:** Linear scale from 30 to 90.

3. **MULTI-IMAGE (Top Right):**

* **Benchmark:** BLINK

* **Y-Axis:** Linear scale from 38 to 62.

4. **LONG VIDEO (Bottom Left):**

* **Benchmarks:** LongVideoBench, Video-MME (w/o sub)

* **Y-Axis:** Linear scale from 40 to 72 for LongVideoBench; from 40 to 72 for Video-MME (w/o sub).

5. **LONG DOC (Bottom Center):**

* **Benchmark:** MMLongBench-Doc

* **Y-Axis:** Linear scale from 8 to 40.

6. **AGENT (Bottom Right):**

* **Benchmarks:** ScreenSpot-Pro, OSWorld (Pass@1)

* **Y-Axis:** Linear scale from 0 to 40 for ScreenSpot-Pro; from 0 to 10 for OSWorld (Pass@1).

### Detailed Analysis

**1. GENERAL Benchmarks:**

* **MMMU (val):**

* **Trend:** GPT-4o-mini and Gemma-3-12B-IT lead narrowly, followed by Qwen2.5-VL-7B and Kimi-VL-A3B, then DeepSeek-VL2, with Llama-3.2-11B-Inst. trailing.

* **Data Points (Approximate):**

* Kimi-VL-A3B (Blue): 57

* Qwen2.5-VL-7B (Dark Gray): 58.6

* DeepSeek-VL2 (Light Gray): 51.1

* GPT-4o-mini (Very Light Gray): 60

* Llama-3.2-11B-Inst. (Light Brown): 48

* Gemma-3-12B-IT (Beige): 59.6

* **MMBench-EN-v1.1:**

* **Trend:** Kimi-VL-A3B leads, followed closely by Qwen2.5-VL-7B and DeepSeek-VL2. GPT-4o-mini and Gemma-3-12B-IT are in the next tier, with Llama-3.2-11B-Inst. significantly lower.

* **Data Points (Approximate):**

* Kimi-VL-A3B (Blue): 83.1

* Qwen2.5-VL-7B (Dark Gray): 82.6

* DeepSeek-VL2 (Light Gray): 79.6

* GPT-4o-mini (Very Light Gray): 77.1

* Llama-3.2-11B-Inst. (Light Brown): 65.8

* Gemma-3-12B-IT (Beige): 74.6

**2. OCR Benchmark:**

* **InfoVQA:**

* **Trend:** Kimi-VL-A3B and Qwen2.5-VL-7B are the top performers, with DeepSeek-VL2 close behind. GPT-4o-mini is in the middle tier, while Llama-3.2-11B-Inst. and Gemma-3-12B-IT score notably lower.

* **Data Points (Approximate):**

* Kimi-VL-A3B (Blue): 83.2

* Qwen2.5-VL-7B (Dark Gray): 82.6

* DeepSeek-VL2 (Light Gray): 78.1

* GPT-4o-mini (Very Light Gray): 57.9

* Llama-3.2-11B-Inst. (Light Brown): 34.6

* Gemma-3-12B-IT (Beige): 43.8

**3. MULTI-IMAGE Benchmark:**

* **BLINK:**

* **Trend:** Kimi-VL-A3B leads, followed by Qwen2.5-VL-7B and DeepSeek-VL2. Gemma-3-12B-IT is in the next tier, with Llama-3.2-11B-Inst. scoring the lowest.

* **Data Points (Approximate):**

* Kimi-VL-A3B (Blue): 57.3

* Qwen2.5-VL-7B (Dark Gray): 56.4

* DeepSeek-VL2 (Light Gray): 53.6

* Llama-3.2-11B-Inst. (Light Brown): 39.8

* Gemma-3-12B-IT (Beige): 50.3

**4. LONG VIDEO Benchmarks:**

* **LongVideoBench:**

* **Trend:** Kimi-VL-A3B leads, followed by DeepSeek-VL2 and Qwen2.5-VL-7B. Gemma-3-12B-IT is next, with Llama-3.2-11B-Inst. scoring the lowest.

* **Data Points (Approximate):**

* Kimi-VL-A3B (Blue): 64.5

* Qwen2.5-VL-7B (Dark Gray): 56

* DeepSeek-VL2 (Light Gray): 58.2

* Llama-3.2-11B-Inst. (Light Brown): 45.5

* Gemma-3-12B-IT (Beige): 51.5

* **Video-MME (w/o sub):**

* **Trend:** Kimi-VL-A3B leads, followed closely by Qwen2.5-VL-7B and DeepSeek-VL2. Gemma-3-12B-IT is in the next tier, with Llama-3.2-11B-Inst. scoring the lowest.

* **Data Points (Approximate):**

* Kimi-VL-A3B (Blue): 67.8

* Qwen2.5-VL-7B (Dark Gray): 65.1

* DeepSeek-VL2 (Light Gray): 64.8

* Llama-3.2-11B-Inst. (Light Brown): 46

* Gemma-3-12B-IT (Beige): 58.2

**5. LONG DOC Benchmark:**

* **MMLongBench-Doc:**

* **Trend:** Kimi-VL-A3B leads significantly. Qwen2.5-VL-7B and DeepSeek-VL2 are in the next tier, followed by Gemma-3-12B-IT and Llama-3.2-11B-Inst.

* **Data Points (Approximate):**

* Kimi-VL-A3B (Blue): 35.1

* Qwen2.5-VL-7B (Dark Gray): 29.6

* DeepSeek-VL2 (Light Gray): 29

* Llama-3.2-11B-Inst. (Light Brown): 13.8

* Gemma-3-12B-IT (Beige): 21.3

**6. AGENT Benchmarks:**

* **ScreenSpot-Pro:**

* **Trend:** Kimi-VL-A3B leads, followed by Qwen2.5-VL-7B. GPT-4o-mini scores very low.

* **Data Points (Approximate):**

* Kimi-VL-A3B (Blue): 34.5

* Qwen2.5-VL-7B (Dark Gray): 29

* GPT-4o-mini (Very Light Gray): 0.8

* **OSWorld (Pass@1):**

* **Trend:** Kimi-VL-A3B leads, followed by GPT-4o and Qwen2.5-VL-7B.

* **Data Points (Approximate):**

* Kimi-VL-A3B (Blue): 8.2

* Qwen2.5-VL-7B (Dark Gray): 2.5

* GPT-4o (Very Dark Gray): 5

### Key Observations

1. **Consistent Leader:** The Kimi-VL-A3B model (blue bars) achieves the highest or near-highest score in every single benchmark presented.

2. **Strong Competitors:** Qwen2.5-VL-7B (dark gray) and DeepSeek-VL2 (light gray) are consistently in the top tier, often swapping second and third place.

3. **Variable Performance of Other Models:** GPT-4o-mini, Llama-3.2-11B-Inst., and Gemma-3-12B-IT show more variable performance. They are competitive in some benchmarks (e.g., Gemma-3-12B-IT in MMMU val) but fall significantly behind in others (e.g., Llama-3.2-11B-Inst. in InfoVQA and MMLongBench-Doc).

4. **Missing Data:** The GPT-4o model (very dark gray) only appears in the OSWorld (Pass@1) benchmark, suggesting it was not evaluated on the other tasks shown here.

5. **Task-Specific Gaps:** The performance gap between the leading models and the lower-performing ones is most pronounced in the OCR (InfoVQA) and LONG DOC (MMLongBench-Doc) benchmarks.

### Interpretation

This chart serves as a comparative performance report for visual-language AI models. The data strongly suggests that **Kimi-VL-A3B is a state-of-the-art model across a wide spectrum of visual-language tasks**, excelling in general understanding, OCR, multi-image reasoning, long video comprehension, long document processing, and agent-based interaction.

The consistent high ranking of Qwen2.5-VL-7B and DeepSeek-VL2 indicates they are also top-tier models, forming a leading group with Kimi-VL-A3B. The variability in the performance of models like Gemma-3-12B-IT highlights that model capabilities are highly task-dependent; a model competitive in one area (e.g., MMMU val) may be weak in another (e.g., OCR or long-document understanding).

The chart is likely intended for a technical audience (researchers, engineers) to quickly assess model strengths and inform decisions about which model to use for specific applications. The emphasis on Kimi-VL-A3B, through its distinctive color and consistent top placement, suggests the chart may be part of a promotional or technical report highlighting its capabilities. The absence of GPT-4o from most benchmarks is a notable data gap, limiting a full comparison with that specific model.

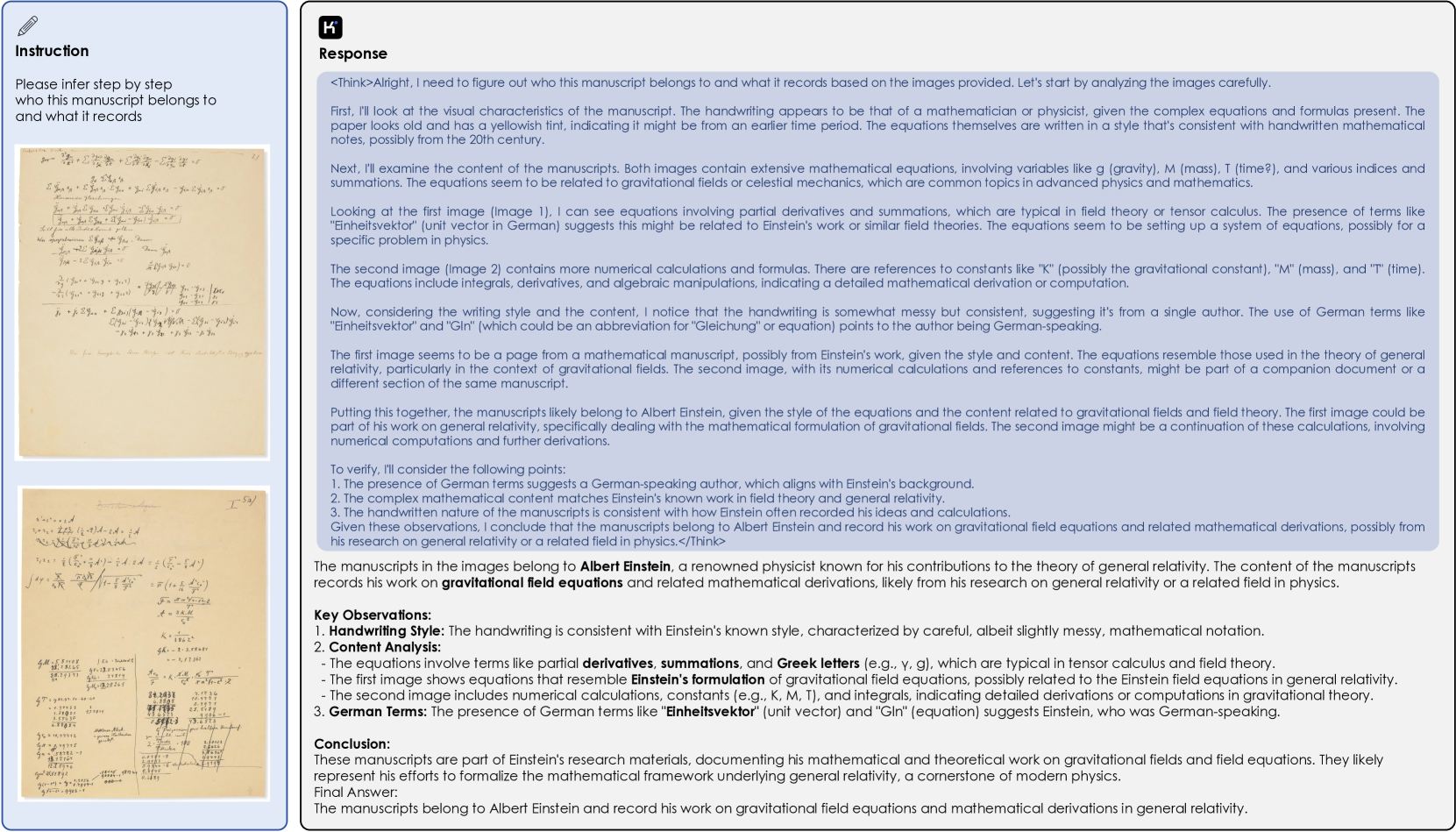

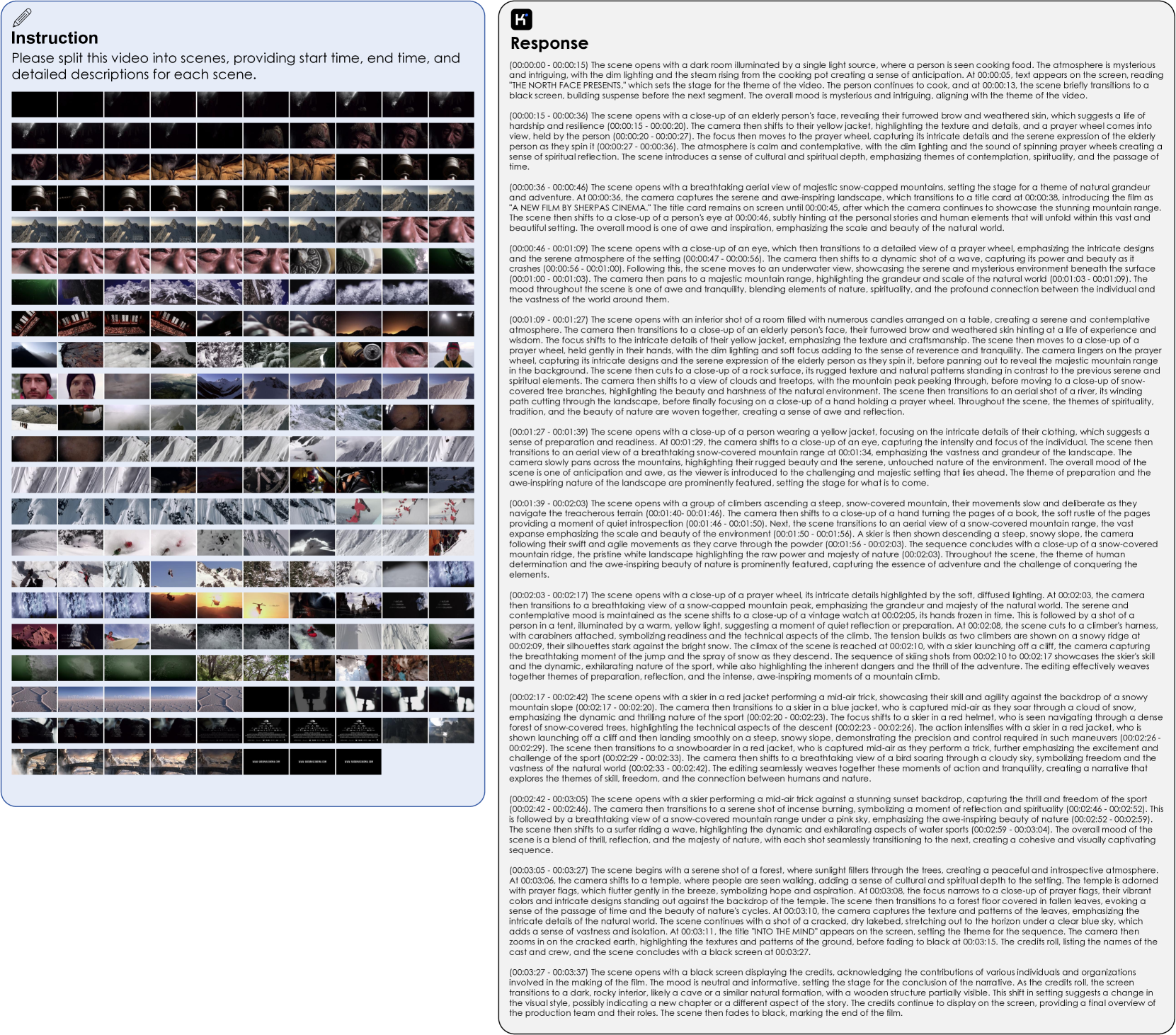

</details>

Figure 2: Highlights of Kimi-VL performance across a wide range of benchmarks, including general benchmarks (MMMU, MMBench), OCR (InfoVQA), multi-image (BLINK), long video (LongVideoBench, Video-MME), long document (MMLongBench-Doc), and agent (ScreenSpot-Pro and OSWorld). Detailed results are presented in Table 3.

## 1 Introduction

With the rapid advancement of artificial intelligence, human expectations for AI assistants have moved beyond traditional language-only interactions, increasingly aligning with the inherently multimodal nature of our world. To better meet these expectations, new generations of natively multimodal models, such as GPT-4o openai2024gpt4ocard and Google Gemini geminiteam2024gemini15unlockingmultimodal, have emerged with the capability to seamlessly perceive and interpret visual inputs alongside language processing. Most recently, advanced multimodal models, pioneered by the OpenAI o1 series o12024 and Kimi k1.5 team2025kimi, have further pushed these boundaries by incorporating deeper and longer reasoning on multimodal inputs, thereby tackling more complex problems in the multimodal domain.

Nevertheless, the development of large VLMs in the open-source community has significantly lagged behind that of their language-only counterparts, particularly in scalability, computational efficiency, and advanced reasoning capabilities. While the language-only model DeepSeek R1 deepseekai2025deepseekr1incentivizingreasoningcapability has already leveraged the efficient and more scalable mixture-of-experts (MoE) architecture and facilitated sophisticated long chain-of-thought (CoT) reasoning, most recent open-source VLMs, e.g., Qwen2.5-VL bai2025qwen25vltechnicalreport and Gemma-3 gemmateam2025gemma3technicalreport, continue to rely on dense architectures and do not support long-CoT reasoning. Early explorations into MoE-based vision-language models, such as DeepSeek-VL2 wu2024deepseekvl2mixtureofexpertsvisionlanguagemodels and Aria li2024ariaopenmultimodalnative, exhibit limitations in other crucial dimensions. Architecturally, both models still adopt relatively traditional fixed-size vision encoders, hindering their adaptability to diverse visual inputs. From a capability perspective, DeepSeek-VL2 supports only a limited context length (4K), while Aria falls short in fine-grained visual tasks. Additionally, neither of them supports long thinking. Consequently, there remains a pressing need for an open-source VLM that effectively integrates structural innovation, stable capabilities, and enhanced reasoning through long thinking.

In light of this, we present Kimi-VL, a vision-language model for the open-source community. Structurally, Kimi-VL consists of our Moonlight liu2025muonscalablellmtraining MoE language model with only 2.8B activated (16B total) parameters, paired with a 400M native-resolution MoonViT vision encoder. In terms of capability, as illustrated in Figure 2, Kimi-VL can robustly handle diverse tasks (fine-grained perception, math, college-level problems, OCR, agent, etc.) across a broad spectrum of input forms (single-image, multi-image, video, long-document, etc.). Specifically, it features the following exciting abilities:

1) Kimi-VL is smart: its text ability is comparable to that of efficient pure-text LLMs; without long thinking, Kimi-VL is already competitive in multimodal reasoning and multi-turn agent benchmarks, e.g., MMMU, MathVista, OSWorld.

2) Kimi-VL processes long contexts: it effectively tackles long-context understanding on various multimodal inputs within its 128K context window, far ahead of similar-scale competitors on long video benchmarks and MMLongBench-Doc.

3) Kimi-VL perceives clearly: it shows all-round competitive ability over existing efficient dense and MoE VLMs in various vision-language scenarios: visual perception, visual world knowledge, OCR, high-resolution OS screenshots, etc.

Furthermore, with long-CoT activation and reinforcement learning (RL), we introduce the long-thinking version of Kimi-VL, Kimi-VL-Thinking, which substantially improves performance on more complex multimodal reasoning scenarios. Despite its small scale, Kimi-VL-Thinking offers compelling performance on hard reasoning benchmarks (e.g., MMMU, MathVision, MathVista), outperforming many state-of-the-art VLMs of even larger sizes. We further release an improved version of the thinking model, Kimi-VL-Thinking-2506. The improved version performs even better on these reasoning benchmarks while retaining or improving performance in common visual perception and understanding scenarios, e.g., high-resolution perception (V*), OS grounding, and video and long-document understanding.

## 2 Approach

### 2.1 Model Architecture

<details>

<summary>x3.png Details</summary>

### Visual Description

## Technical Diagram: Multimodal AI System Architecture

### Overview

This image is a technical diagram illustrating the architecture of a multimodal AI system. It depicts how various input types (text, images, video, UI screenshots, OCR text) are processed through a central vision transformer ("MoonViT") and then fed into a Mixture-of-Experts (MoE) language decoder. The diagram emphasizes native-resolution processing and the integration of diverse data modalities.

### Components/Axes

The diagram is organized into three primary regions:

1. **Top Region (Blue Background): Mixture-of-Experts (MoE) Language Decoder**

* **Main Label:** "Mixture-of-Experts (MoE) Language Decoder"

* **Sub-components:**

* "MoE FFN" (Feed-Forward Network)

* "Attention Layer"

* A detailed breakout box showing the MoE routing mechanism:

* "Router"

* "Non-shared Experts" (represented by a row of outlined squares)

* "Shared Experts" (represented by a row of filled squares)

* The notation "× N" indicates this block is repeated N times.

* **Input/Output:** A sequence of colored squares (representing tokens) flows into and out of this decoder block.

2. **Central Region: Core Processing Module**

* **Primary Module:** "MoonViT" with the subtitle "(Native-resolution)". This is the central vision transformer.

* **Bridge Component:** "MLP Projector" (Multi-Layer Perceptron), positioned between the MoE decoder and MoonViT, likely for feature projection.

3. **Bottom Region: Input Modalities**

Five distinct input types are shown, all feeding into the MoonViT module via colored arrows:

* **Left (Light Blue Arrow):** "SMALL IMAGE"

* Contains a mathematical notation: `2a - b`

* Dimension labels: "50px" (width), "20px" (height).

* **Bottom Left (Red Arrow):** "LONG VIDEO"

* Depicted as a stack of video frames.

* Dimension labels: "480px" (width), "270px" (height).

* Text visible on the video frames: "CULTURAL CROSSINGS: A JOURNEY OF DISCOVERY".

* **Bottom Center (Green Arrow):** "FINE-GRAINED"

* A high-resolution photograph of a terraced tea plantation.

* Dimension labels: "1113px" (width), "1008px" (height).

* A small white bounding box is drawn on the image, highlighting a specific detail.

* **Bottom Right (Gray Arrow):** "OCR (SPECIAL ASPECT RATIO)"

* A handwritten text snippet: "fastest? That is the exciting competition going on".

* Dimension label: "58px" (height).

* **Right (Orange Arrow):** "UI SCREENSHOT"

* A screenshot of a smartphone home screen (iOS-style).

* Dimension labels: "800px" (width), "1731px" (height).

* Visible UI elements include app icons (FaceTime, Calendar, Photos, Camera, Mail, Clock, Maps, Weather, Notes, etc.), widgets (calendar, weather), and status bar icons.

### Detailed Analysis

* **Data Flow:** The flow is bottom-up and then top-down. Raw multimodal inputs (image, video, UI, text) are first processed by the MoonViT vision encoder. The encoded visual features are then passed through the MLP Projector to the MoE Language Decoder, which generates the final textual output (represented by the token sequence at the very top).

* **Key Architectural Features:**

* **Native-Resolution Processing:** The "MoonViT" label explicitly states it operates on native-resolution inputs, avoiding resizing or padding that could distort information, especially critical for the "FINE-GRAINED" image and "UI SCREENSHOT".

* **Mixture-of-Experts (MoE):** The language decoder uses an MoE architecture. The "Router" dynamically directs input tokens to a subset of "Non-shared Experts" while also utilizing "Shared Experts". This design aims for computational efficiency and model specialization.

* **Diverse Input Handling:** The system is designed to handle a wide range of aspect ratios and content types, from small mathematical images (50x20px) to tall UI screenshots (800x1731px) and long video sequences.

### Key Observations

1. **Input Diversity:** The diagram explicitly showcases five fundamentally different input types, highlighting the system's multimodal capability.

2. **Resolution Emphasis:** Pixel dimensions are provided for every input, underscoring the importance of resolution and aspect ratio in the system's design.

3. **Specialized OCR Input:** The "OCR (SPECIAL ASPECT RATIO)" input suggests the system has a dedicated pathway or training for recognizing text in unusual layouts or handwritten forms.

4. **Visual Detail Focus:** The "FINE-GRAINED" input with its bounding box implies the system can process and reason about specific regions within a high-resolution image.

5. **MoE Complexity:** The detailed breakout of the MoE block indicates that the language generation component is a significant and complex part of the architecture.

### Interpretation

This diagram represents a sophisticated, unified multimodal AI architecture. The core innovation appears to be the **MoonViT** module, which acts as a universal visual encoder capable of ingesting images, video frames, and screenshots at their native resolutions. This preserves critical spatial and textual details that would be lost with standard resizing.

The encoded visual information is then translated (via the MLP Projector) into a format that the powerful **MoE Language Decoder** can understand. The MoE decoder, with its router and mix of shared/specialized experts, is designed to efficiently generate coherent and contextually appropriate language based on the complex visual input.

The system's purpose is likely **visual question answering, document understanding, or detailed image/video captioning**. It can take a complex scene (like a UI screenshot or a detailed landscape) and answer questions about it, describe it, or extract information from it (as hinted by the OCR input and the "What can you interpret from..." text fragment near the top). The inclusion of "LONG VIDEO" suggests it may also handle temporal reasoning across frames.

**Notable Anomaly/Challenge:** The vast difference in input dimensions (from 20px height to 1731px height) presents a significant technical challenge for consistent feature extraction, which the "native-resolution" claim of MoonViT aims to address. The architecture suggests a move away from traditional, rigid vision encoders towards more flexible, resolution-agnostic models.

</details>

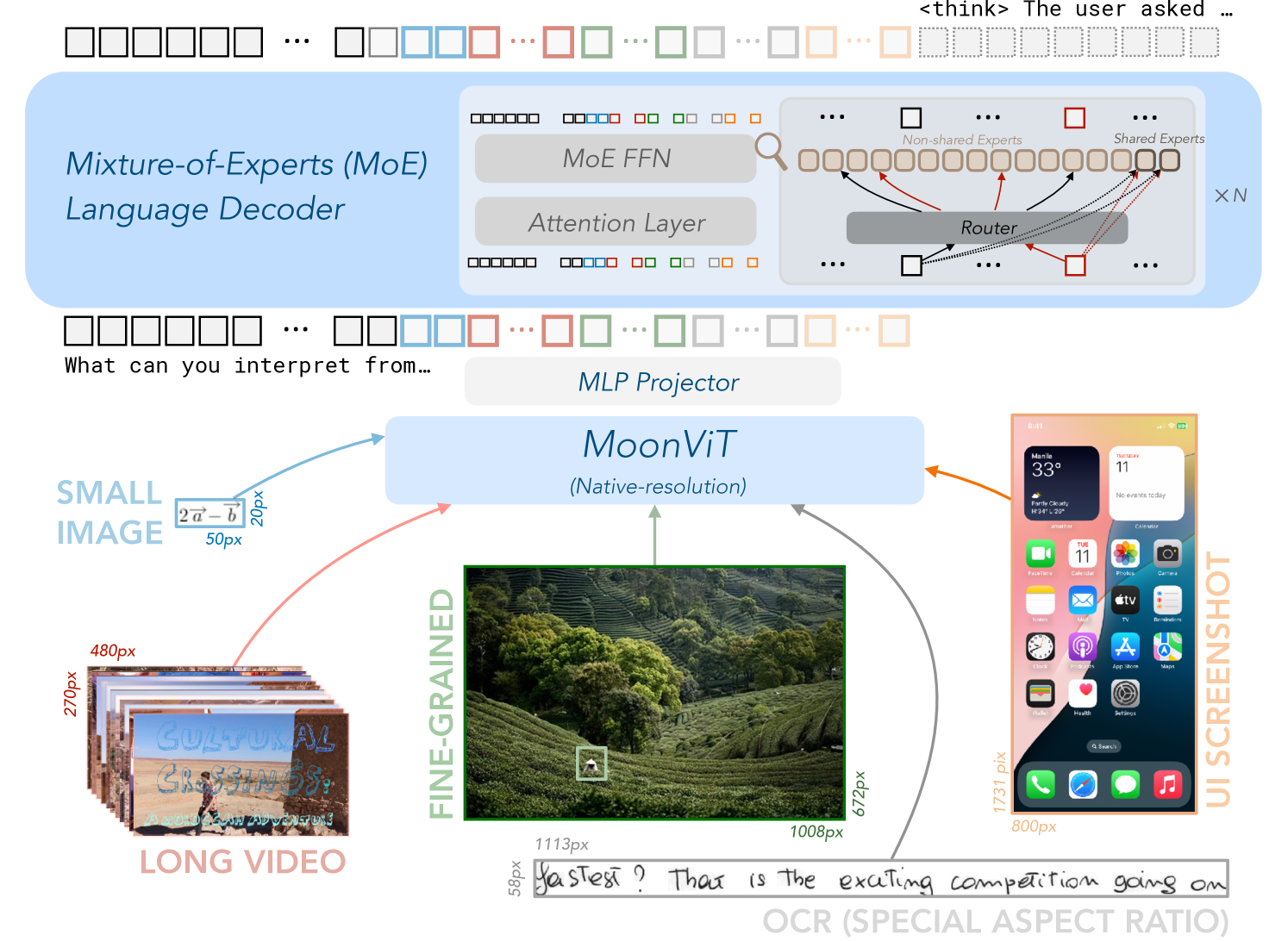

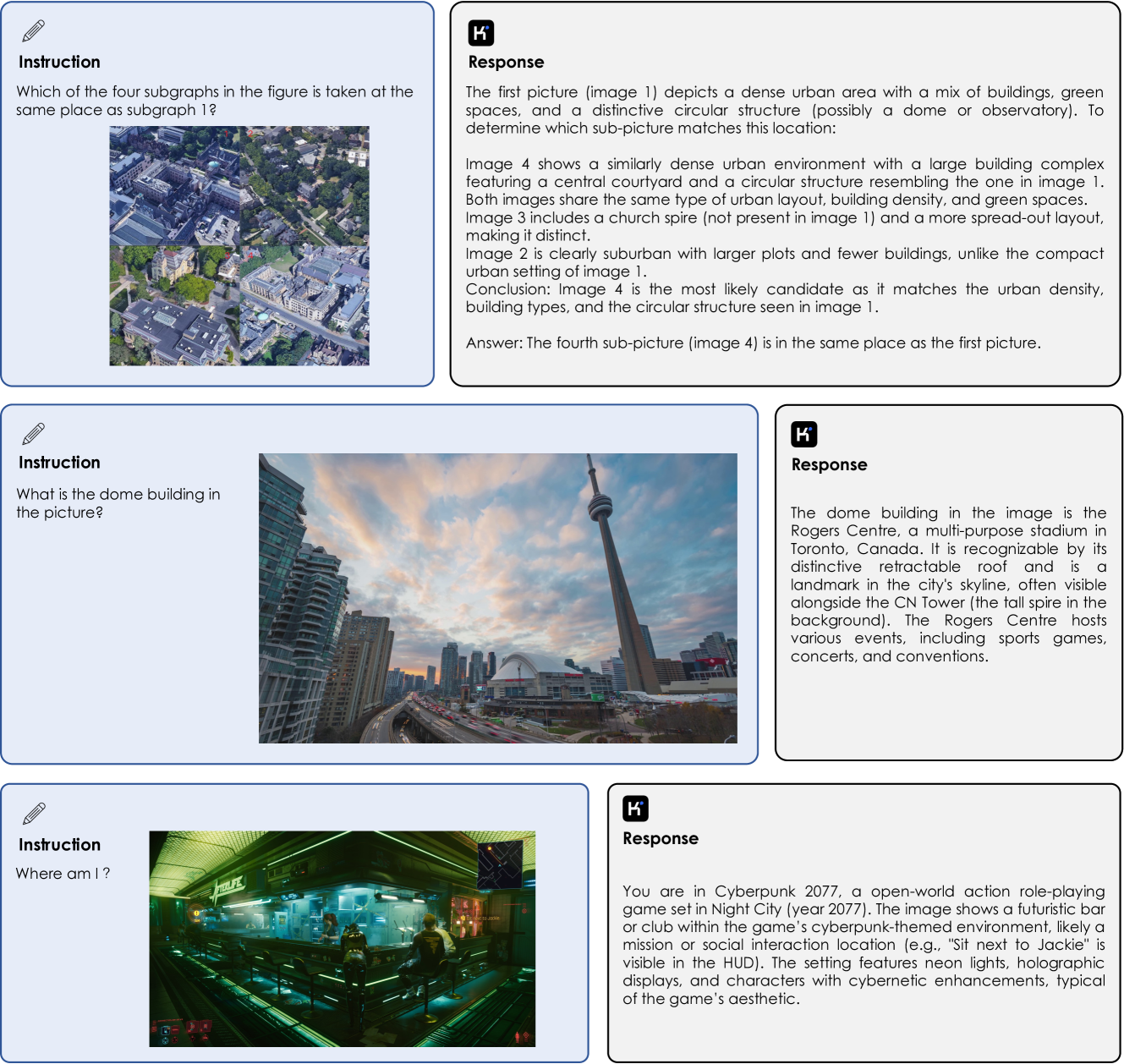

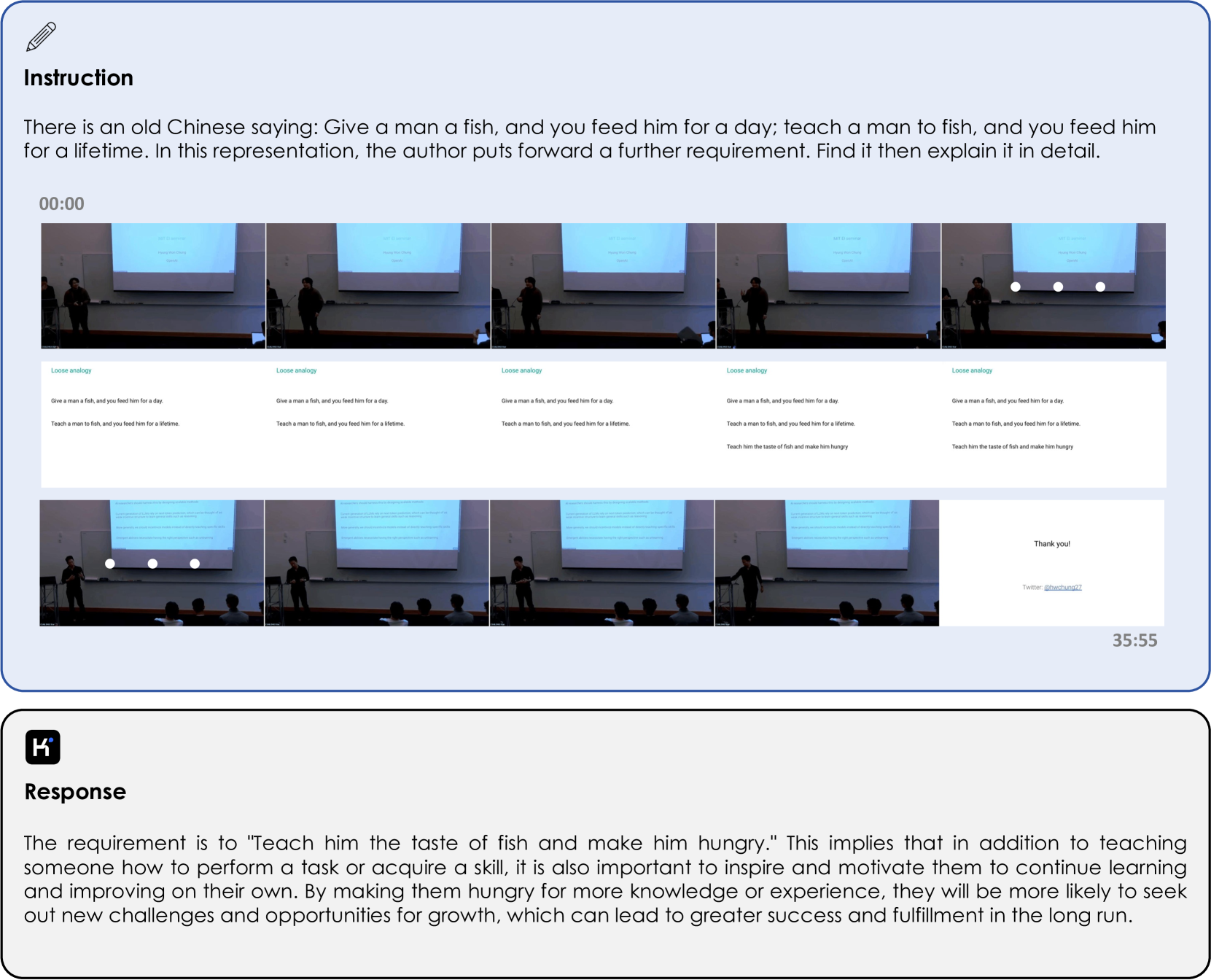

Figure 3: The model architecture of Kimi-VL and Kimi-VL-Thinking, consisting of a MoonViT that allows native-resolution images, an MLP projector, and a Mixture-of-Experts (MoE) language decoder.

The architecture of Kimi-VL consists of three parts: a native-resolution vision encoder (MoonViT), an MLP projector, and an MoE language model, as depicted in Figure 3. We introduce each part in this section.

**MoonViT: A Native-resolution Vision Encoder**

We design MoonViT, the vision encoder of Kimi-VL, to natively process images at their varying resolutions, eliminating the need for complex sub-image splitting and splicing operations such as those employed in LLaVA-OneVision li2024llavaonevisioneasyvisualtask. We incorporate the packing method from NaViT dehghani2023patchnpacknavit, where images are divided into patches, flattened, and sequentially concatenated into 1D sequences. These preprocessing operations enable MoonViT to share the same core computation operators and optimizations as a language model, such as the variable-length sequence attention mechanism supported by FlashAttention dao2022flashattentionfastmemoryefficientexact, so that training throughput is not compromised for images of varying resolutions.
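The packing step described above can be illustrated with a minimal NumPy sketch. It is not the MoonViT implementation: the patch size of 14, the cropping of remainder pixels, and the helper names `patchify`, `pack`, and `block_diagonal_mask` are assumptions made for illustration. The cumulative-length array plays the role of the sequence boundaries that variable-length attention kernels typically consume.

```python
import numpy as np

PATCH = 14  # assumed patch size, for illustration only


def patchify(image: np.ndarray, patch: int = PATCH) -> np.ndarray:
    """Split an (H, W, C) image into flattened patches of shape (N, patch*patch*C)."""
    h, w, c = image.shape
    gh, gw = h // patch, w // patch  # patch grid; any remainder is cropped
    image = image[: gh * patch, : gw * patch]
    x = image.reshape(gh, patch, gw, patch, c).transpose(0, 2, 1, 3, 4)
    return x.reshape(gh * gw, patch * patch * c)


def pack(images):
    """Concatenate per-image patch sequences into one 1D sequence of patches."""
    seqs = [patchify(img) for img in images]
    lengths = np.array([len(s) for s in seqs])
    cu_seqlens = np.concatenate([[0], np.cumsum(lengths)])  # sequence boundaries
    return np.concatenate(seqs, axis=0), cu_seqlens


def block_diagonal_mask(cu_seqlens: np.ndarray) -> np.ndarray:
    """Boolean mask that lets each patch attend only to patches of its own image."""
    total = int(cu_seqlens[-1])
    segment = np.searchsorted(cu_seqlens[1:], np.arange(total), side="right")
    return segment[:, None] == segment[None, :]


# Two images with different resolutions share one packed sequence.
images = [np.zeros((28, 42, 3)), np.zeros((56, 14, 3))]
packed, cu_seqlens = pack(images)
mask = block_diagonal_mask(cu_seqlens)
```

Here the first image contributes a 2×3 patch grid and the second a 4×1 grid, so the packed sequence holds ten patches. A fused variable-length attention kernel would use the boundaries directly rather than materializing the dense mask, which is shown only to make the attention pattern explicit.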

MoonViT is initialized from and continually pre-trained on SigLIP-SO-400M zhai2023sigmoidlosslanguageimage, which originally employs learnable fixed-size absolute positional embeddings to encode spatial information. While we interpolate these original position embeddings to better preserve SigLIP’s capabilities, the interpolated embeddings become increasingly inadequate as image resolution increases. To address this limitation, we incorporate 2D rotary positional embedding (RoPE) su2023roformerenhancedtransformerrotary across the height and width dimensions, which improves the representation of fine-grained positional information, especially in high-resolution images. These two positional embedding approaches work together to encode spatial information for our model and integrate seamlessly with the flattening and packing procedures. This integration enables MoonViT to efficiently process images of varying resolutions within the same batch. The resulting continuous image features are then forwarded to the MLP projector and, ultimately, to the MoE language model for subsequent training stages. In Kimi-VL-A3B-Thinking-2506, we further continue training MoonViT so that it can natively encode up to 3.2 million pixels from a single image, four times the original limit.
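One common way to realize 2D RoPE is to rotate half of each feature vector by the patch's row index and the other half by its column index. The sketch below follows that scheme as an assumption; the report does not specify how MoonViT splits channels, which base it uses, or how patches are ordered, so the function names, the base of 10000, and the row-major ordering are all illustrative. The interpolated absolute embeddings are not shown.

```python
import numpy as np


def rope_angles(pos: np.ndarray, dim: int, base: float = 10000.0) -> np.ndarray:
    """Rotation angles of shape (N, dim // 2) for 1D positions `pos`."""
    freqs = base ** (-np.arange(0, dim, 2) / dim)
    return pos[:, None] * freqs[None, :]


def rotate(x: np.ndarray, angles: np.ndarray) -> np.ndarray:
    """Rotate consecutive feature pairs of x (N, dim) by angles (N, dim // 2)."""
    x1, x2 = x[:, 0::2], x[:, 1::2]
    cos, sin = np.cos(angles), np.sin(angles)
    out = np.empty_like(x)
    out[:, 0::2] = x1 * cos - x2 * sin
    out[:, 1::2] = x1 * sin + x2 * cos
    return out


def rope_2d(x: np.ndarray, grid_h: int, grid_w: int) -> np.ndarray:
    """Apply 2D RoPE to x of shape (grid_h * grid_w, dim), patches in row-major order.

    Assumption: the first half of the channels encodes the row (height) index,
    the second half the column (width) index; dim must be divisible by 4.
    """
    n, dim = x.shape
    assert n == grid_h * grid_w and dim % 4 == 0
    rows, cols = np.divmod(np.arange(n), grid_w)
    half = dim // 2
    return np.concatenate(
        [
            rotate(x[:, :half], rope_angles(rows, half)),
            rotate(x[:, half:], rope_angles(cols, half)),
        ],
        axis=1,
    )


# Place the same query/key vector at every patch of a 3x4 grid.
rng = np.random.default_rng(0)
gh, gw, dim = 3, 4, 8
q = np.tile(rng.normal(size=dim), (gh * gw, 1))
k = np.tile(rng.normal(size=dim), (gh * gw, 1))
q_rot, k_rot = rope_2d(q, gh, gw), rope_2d(k, gh, gw)
```

With this construction, the attention score between two patches depends only on their row and column offsets, not on their absolute positions, which is the property that lets the encoding extend to grids of any size.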

**MLP Projector**

We employ a two-layer MLP to bridge the vision encoder (MoonViT) and the LLM. Specifically, we first use a pixel shuffle operation to compress the spatial dimension of the image features extracted by MoonViT, performing 2×2 downsampling in the spatial domain and correspondingly expanding the channel dimension. We then feed the pixel-shuffled features into a two-layer MLP to project them into the dimension of LLM embeddings.
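The projector can be sketched in a few lines of NumPy: a 2×2 space-to-channel rearrangement followed by a two-layer MLP. The feature widths (16 for the vision features, 32 for the LLM embeddings), the GELU activation, the bias terms, and the function names are assumptions for illustration and are not specified in the text above.

```python
import numpy as np


def pixel_shuffle_2x2(feats: np.ndarray) -> np.ndarray:
    """(H, W, C) -> (H/2, W/2, 4C): fold each 2x2 spatial block into channels."""
    h, w, c = feats.shape
    assert h % 2 == 0 and w % 2 == 0, "this sketch assumes an even patch grid"
    x = feats.reshape(h // 2, 2, w // 2, 2, c).transpose(0, 2, 1, 3, 4)
    return x.reshape(h // 2, w // 2, 4 * c)


def gelu(x: np.ndarray) -> np.ndarray:
    """Tanh approximation of GELU (activation choice is an assumption)."""
    return 0.5 * x * (1.0 + np.tanh(np.sqrt(2.0 / np.pi) * (x + 0.044715 * x**3)))


def mlp_projector(x, w1, b1, w2, b2):
    """Two-layer MLP mapping shuffled vision features to the LLM embedding width."""
    return gelu(x @ w1 + b1) @ w2 + b2


rng = np.random.default_rng(0)
vit_dim, llm_dim = 16, 32                     # hypothetical widths
feats = rng.normal(size=(4, 6, vit_dim))      # a 4x6 grid of patch features
shuffled = pixel_shuffle_2x2(feats)           # 2x3 grid, 4x wider channels
tokens = shuffled.reshape(-1, 4 * vit_dim)    # 6 visual tokens instead of 24

w1 = rng.normal(size=(4 * vit_dim, llm_dim)) * 0.02
w2 = rng.normal(size=(llm_dim, llm_dim)) * 0.02
projected = mlp_projector(tokens, w1, np.zeros(llm_dim), w2, np.zeros(llm_dim))
```

The rearrangement cuts the number of visual tokens passed to the language model by a factor of four without discarding any feature values, since the dropped spatial resolution is moved into the channel dimension.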

**Mixture-of-Experts (MoE) Language Model**

The language model of Kimi-VL utilizes our Moonlight model liu2025muonscalablellmtraining, an MoE language model with 2.8B activated parameters, 16B total parameters, and an architecture similar to DeepSeek-V3 deepseekai2025deepseekv3technicalreport. For our implementation, we initialize from an intermediate checkpoint in Moonlight’s pre-training stage—one that has processed 5.2T tokens of pure text data and activated an 8192-token (8K) context length. We then continue pre-training it using a joint recipe of multimodal and text-only data totaling 2.3T tokens, as detailed in Sec. 2.3.
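To make the gap between activated and total parameters concrete, the following is a generic sketch of an MoE feed-forward layer with shared and routed experts, the two expert types shown in Figure 3. It is not Moonlight's actual layer: the softmax gate, top-2 routing, ReLU experts, the expert counts, and all names are assumptions chosen for brevity.

```python
import numpy as np


def softmax(x: np.ndarray, axis: int = -1) -> np.ndarray:
    z = np.exp(x - x.max(axis=axis, keepdims=True))
    return z / z.sum(axis=axis, keepdims=True)


def ffn(x, w_in, w_out):
    """A small ReLU feed-forward expert (simplified for illustration)."""
    return np.maximum(x @ w_in, 0.0) @ w_out


def moe_layer(x, router_w, routed, shared, top_k=2):
    """x: (T, d). `routed` and `shared` are lists of (w_in, w_out) expert weights."""
    probs = softmax(x @ router_w)                   # (T, n_routed) gate scores
    top = np.argsort(-probs, axis=-1)[:, :top_k]    # experts selected per token
    out = np.zeros_like(x)
    for expert in shared:                           # shared experts see every token
        out += ffn(x, *expert)
    for e_idx, expert in enumerate(routed):         # routed experts see a subset
        token_ids, _ = np.nonzero(top == e_idx)
        if token_ids.size:
            gate = probs[token_ids, e_idx][:, None]
            out[token_ids] += gate * ffn(x[token_ids], *expert)
    return out, top


rng = np.random.default_rng(0)
d, hidden, n_routed, n_shared = 8, 16, 4, 1         # hypothetical sizes


def make_expert():
    return rng.normal(size=(d, hidden)) * 0.1, rng.normal(size=(hidden, d)) * 0.1


routed = [make_expert() for _ in range(n_routed)]
shared = [make_expert() for _ in range(n_shared)]
router_w = rng.normal(size=(d, n_routed))
x = rng.normal(size=(5, d))
out, top = moe_layer(x, router_w, routed, shared)
```

Each token runs through only `top_k` routed experts plus the shared ones, so the per-token compute scales with the activated parameters rather than with the total parameter count.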

### 2.2 Muon Optimizer

We use an enhanced Muon optimizer liu2025muon for model optimization. Compared to the original Muon optimizer jordan2024muon, we add weight decay and carefully adjust the per-parameter update scale. Additionally, we develop a distributed implementation of Muon following the ZeRO-1 rajbhandari2020zero optimization strategy, which achieves optimal memory efficiency and reduced communication overhead while preserving the algorithm’s mathematical properties. This enhanced Muon optimizer is used throughout the entire training process to optimize all model parameters, including the vision encoder, the projector, and the language model.
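A single-device sketch of a Muon-style update with weight decay and a shape-dependent update scale is given below. The Newton-Schulz coefficients follow the commonly circulated Muon reference implementation. The scale `0.2 * sqrt(max(rows, cols))`, the hyperparameter defaults, and the function names are assumptions for illustration and should be checked against the cited work. The ZeRO-1 style distributed implementation is not shown.

```python
import numpy as np


def newton_schulz(g: np.ndarray, steps: int = 5, eps: float = 1e-7) -> np.ndarray:
    """Approximately orthogonalize g by pushing its singular values toward 1."""
    a, b, c = 3.4445, -4.7750, 2.0315       # quintic iteration coefficients
    x = g / (np.linalg.norm(g) + eps)       # Frobenius norm bounds singular values by 1
    transposed = x.shape[0] > x.shape[1]
    if transposed:                          # iterate on the wide orientation
        x = x.T
    for _ in range(steps):
        s = x @ x.T
        x = a * x + (b * s + c * s @ s) @ x
    return x.T if transposed else x


def muon_step(w, grad, buf, lr=0.02, momentum=0.95, weight_decay=0.1):
    """One update for a 2D weight matrix; returns the new weight and momentum buffer."""
    buf = momentum * buf + grad                       # momentum accumulation
    update = newton_schulz(buf)                       # orthogonalized direction
    scale = 0.2 * np.sqrt(max(w.shape))               # assumed per-matrix update scale
    w = w - lr * (scale * update + weight_decay * w)  # decoupled weight decay
    return w, buf


rng = np.random.default_rng(0)
g = rng.normal(size=(8, 16))
w, buf = muon_step(rng.normal(size=(8, 16)), g, np.zeros((8, 16)))
```

Because the orthogonalized update has roughly unit singular values regardless of the gradient's magnitude, some shape-aware scaling is needed to keep update sizes comparable across matrices of different shapes, which is the role the `scale` term plays in this sketch.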

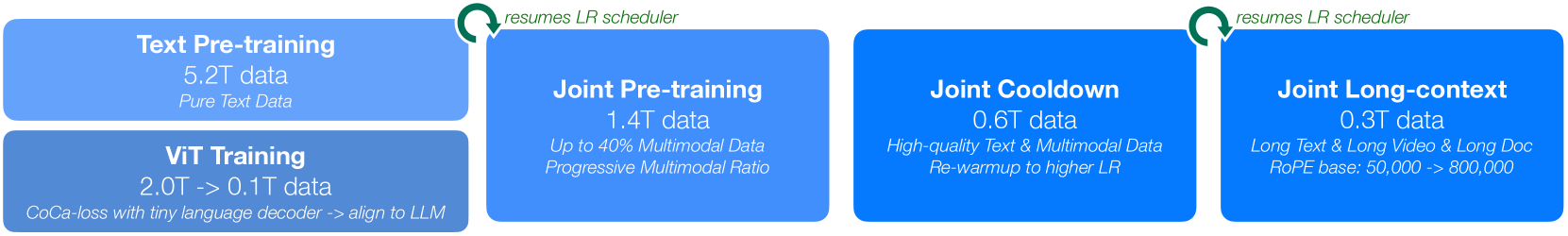

### 2.3 Pre-Training Stages

As illustrated in Figure 4 and Table 1, after loading the intermediate language model discussed above, Kimi-VL’s pre-training comprises a total of 4 stages consuming 4.4T tokens overall: first, standalone ViT training to establish a robust native-resolution visual encoder, followed by three joint training stages (pre-training, cooldown, and long-context activation) that simultaneously enhance the model’s language and multimodal capabilities. The details are as follows.
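As a quick consistency check on the token counts, the per-stage budgets shown in Figure 4 can be summed. The snippet only restates that arithmetic; the dictionary keys are informal labels, not official stage names.

```python
# Token budgets in trillions of tokens, as listed in Figure 4.
stages_T = {
    "ViT training": 2.0 + 0.1,   # CoCa-style training, then alignment to the LLM
    "Joint pre-training": 1.4,
    "Joint cooldown": 0.6,
    "Joint long-context": 0.3,
}

total_T = sum(stages_T.values())
joint_T = total_T - stages_T["ViT training"]

print(f"total: {total_T:.1f}T, joint stages: {joint_T:.1f}T")
```

The four stages sum to 4.4T tokens, and the three joint stages alone account for 2.3T, which matches the joint-recipe figure quoted for the language model. The 5.2T text-only tokens consumed by the Moonlight checkpoint before these stages are not part of this total.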

<details>

<summary>x4.png Details</summary>

### Visual Description

## Diagram: Multimodal AI Model Training Pipeline

### Overview

The image is a horizontal flowchart illustrating a multi-stage training pipeline for a multimodal AI model. The process flows from left to right, beginning with two parallel initial training phases that converge into a joint training sequence. The diagram uses color-coded blocks (shades of blue) and annotated green arrows to depict stages, data volumes, and key procedural notes.

### Components/Axes

The diagram consists of four primary rectangular blocks arranged horizontally, with one additional block stacked vertically on the far left. Green curved arrows with text annotations connect specific stages.

**Block 1 (Top-Left):**

* **Title:** Text Pre-training

* **Data Volume:** 5.2T data

* **Description:** Pure Text Data

**Block 2 (Bottom-Left, stacked below Block 1):**

* **Title:** ViT Training

* **Data Volume:** 2.0T -> 0.1T data

* **Description:** CoCa-loss with tiny language decoder -> align to LLM

**Block 3 (Center-Left):**

* **Title:** Joint Pre-training

* **Data Volume:** 1.4T data

* **Description:** Up to 40% Multimodal Data / Progressive Multimodal Ratio

**Block 4 (Center-Right):**

* **Title:** Joint Cooldown

* **Data Volume:** 0.6T data

* **Description:** High-quality Text & Multimodal Data / Re-warmup to higher LR

**Block 5 (Far-Right):**

* **Title:** Joint Long-context

* **Data Volume:** 0.3T data

* **Description:** Long Text & Long Video & Long Doc / RoPE base: 50,000 -> 800,000

**Connecting Elements:**

* **Arrow 1:** A green, curved arrow originates from the top-right corner of the "Text Pre-training" block and points to the top-left corner of the "Joint Pre-training" block. The text above the arrow reads: `resumes LR scheduler`.

* **Arrow 2:** A green, curved arrow originates from the top-right corner of the "Joint Pre-training" block and points to the top-left corner of the "Joint Cooldown" block. The text above the arrow reads: `resumes LR scheduler`.

### Detailed Analysis

The pipeline describes a sequential training regimen with distinct phases characterized by data type, volume, and learning rate (LR) schedule.

1. **Initial Parallel Phase:**

* **Text Pre-training:** This is the largest single data phase, using 5.2 trillion (`5.2T`) tokens of pure text data.

* **ViT Training:** This phase shows a data reduction, starting with 2.0 trillion (`2.0T`) tokens and ending with 0.1 trillion (`0.1T`) tokens. It uses a CoCa-loss function with a tiny language decoder, with the explicit goal to "align to LLM."

2. **Joint Training Sequence:** The outputs of the initial phases feed into a joint training sequence.

* **Joint Pre-training:** Uses 1.4 trillion (`1.4T`) data tokens. The multimodal data ratio is not fixed; it increases progressively up to a maximum of 40%.

* **Joint Cooldown:** Uses a smaller, curated dataset of 0.6 trillion (`0.6T`) tokens described as "High-quality Text & Multimodal Data." A key procedural step is a "Re-warmup to higher LR," indicating a deliberate adjustment of the learning rate schedule.

* **Joint Long-context:** The final phase uses the smallest dataset of 0.3 trillion (`0.3T`) tokens. It focuses on extending the model's context window for "Long Text & Long Video & Long Doc." A technical specification notes the RoPE (Rotary Positional Embedding) base is increased from 50,000 to 800,000.

### Key Observations

* **Data Volume Trend:** The total data volume decreases significantly across the joint training phases (1.4T -> 0.6T -> 0.3T), suggesting a shift from broad pre-training to specialized fine-tuning.

* **Learning Rate (LR) Management:** The LR scheduler is explicitly "resumed" when transitioning from the initial text pre-training to joint pre-training, and again from joint pre-training to joint cooldown. The cooldown phase itself involves a "re-warmup to a higher LR," indicating active and nuanced management of this hyperparameter.

* **Specialization of Phases:** Each joint phase has a clear, distinct purpose: general multimodal integration (Pre-training), quality refinement (Cooldown), and context window extension (Long-context).

* **Architectural Alignment:** The ViT (Vision Transformer) training phase has the explicit goal of aligning its output to the LLM (Large Language Model), which is a critical step for effective multimodal fusion.

### Interpretation

This diagram outlines a sophisticated, staged approach to building a capable multimodal AI. The process begins by separately establishing strong unimodal foundations in text (LLM) and vision (ViT). The critical "alignment" step in ViT training ensures the vision encoder's output is compatible with the language model's representation space.

The subsequent joint phases represent a deliberate curriculum. The model first learns to process mixed text and image/video data (Joint Pre-training). It then refines this ability on a smaller, higher-quality dataset while adjusting the learning rate to escape potential local minima (Joint Cooldown). Finally, it specializes in handling very long sequences of text and visual data, which is essential for understanding documents, videos, and complex narratives (Joint Long-context). The progressive increase in multimodal data ratio and the final extension of the RoPE base are technical strategies to efficiently build a model that is not just multimodal, but also capable of deep, long-form reasoning across modalities. The decreasing data volumes across joint stages imply a focus on precision and specialization over raw scale as the model matures.

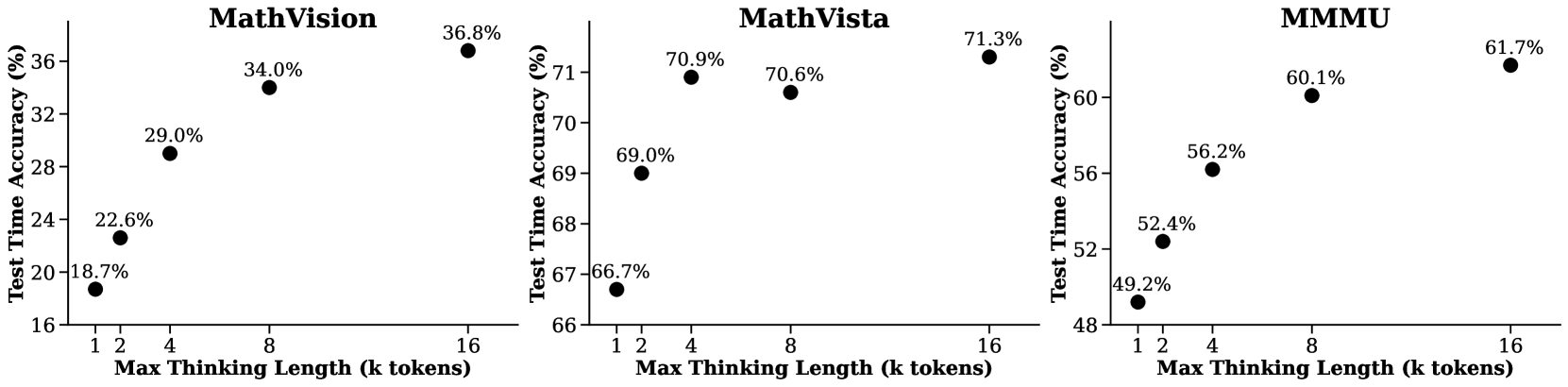

</details>

Figure 4: The pre-training stages of Kimi-VL consume a total of 4.4T tokens after text-only pre-training of its language model. To preserve text abilities, all stages that update the language model are joint training stages.

Table 1: Overview of training stages: data composition, token volumes, sequence lengths, and trainable components.

| Stages | ViT Training | Joint Pre-training | Joint Cooldown | Joint Long-context |
| --- | --- | --- | --- | --- |
| Data | Alt text, Synthesis Caption, Grounding, OCR | + Text, Knowledge, Interleaving, Video, Agent | + High-quality Text, High-quality Multimodal, Academic Sources | + Long Text, Long Video, Long Document |
| Tokens | 2T + 0.1T | 1.4T | 0.6T | 0.3T |
| Sequence length | 8192 | 8192 | 8192 | 32768->131072 |
| Training | ViT | ViT & LLM | ViT & LLM | ViT & LLM |

ViT Training Stages

MoonViT is trained on image-text pairs, where the text components consist of a variety of targets: image alt texts, synthetic captions, grounding bboxes, and OCR texts. The training incorporates two objectives: a SigLIP zhai2023sigmoidlosslanguageimage loss $\mathcal{L}_{siglip}$ (a variant of contrastive loss) and a cross-entropy loss $\mathcal{L}_{caption}$ for caption generation conditioned on input images. Following CoCa’s approach yu2022cocacontrastivecaptionersimagetext, the final loss function is formulated as $\mathcal{L}=\mathcal{L}_{siglip}+\lambda\mathcal{L}_{caption}$, where $\lambda=2$. Specifically, the image and text encoders compute the contrastive loss, while the text decoder performs next-token prediction (NTP) conditioned on features from the image encoder. To accelerate training, we initialize both encoders with SigLIP SO-400M zhai2023sigmoidlosslanguageimage weights and implement a progressive resolution sampling strategy that gradually admits larger image sizes; the text decoder is initialized from a tiny decoder-only language model. During training, we observed an emergence in the caption loss while scaling up OCR data, indicating that the text decoder had developed some OCR capabilities. After training the ViT in the CoCa-like stage with 2T tokens, we align MoonViT to the MoE language model using another 0.1T tokens, where only MoonViT and the MLP projector are updated. This alignment stage significantly reduces the initial perplexity of MoonViT embeddings in the language model, allowing a smoother joint pre-training stage, described next.
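To make the combined objective concrete, the following is a minimal NumPy sketch of $\mathcal{L}=\mathcal{L}_{siglip}+\lambda\mathcal{L}_{caption}$ with $\lambda=2$. It is an illustration under stated assumptions, not the training code: the function names, the temperature and bias defaults, and the tensor shapes are hypothetical, and the pairwise sigmoid loss follows the general SigLIP formulation rather than any Kimi-VL-specific variant.

```python
import numpy as np

def log_sigmoid(x):
    # Numerically stable log(sigmoid(x)).
    return -np.logaddexp(0.0, -x)

def siglip_loss(img_emb, txt_emb, temperature=10.0, bias=-10.0):
    # Pairwise sigmoid loss: matching image-text pairs (the diagonal) are
    # positives, every other pair in the batch is a negative.
    # temperature and bias are assumed values, not taken from the report.
    img = img_emb / np.linalg.norm(img_emb, axis=1, keepdims=True)
    txt = txt_emb / np.linalg.norm(txt_emb, axis=1, keepdims=True)
    logits = temperature * img @ txt.T + bias
    labels = 2.0 * np.eye(len(img)) - 1.0  # +1 on the diagonal, -1 elsewhere
    return -np.sum(log_sigmoid(labels * logits)) / len(img)

def caption_loss(token_logits, target_ids):
    # Mean next-token cross-entropy; token_logits has shape (T, vocab).
    shifted = token_logits - token_logits.max(axis=-1, keepdims=True)
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=-1, keepdims=True))
    return -np.mean(log_probs[np.arange(len(target_ids)), target_ids])

def coca_style_loss(img_emb, txt_emb, token_logits, target_ids, lam=2.0):
    # L = L_siglip + lambda * L_caption, with lambda = 2 as stated in the text.
    return siglip_loss(img_emb, txt_emb) + lam * caption_loss(token_logits, target_ids)
```

In this sketch the contrastive term only sees pooled encoder embeddings, while the caption term only sees decoder logits, mirroring the division of labor between the encoders and the text decoder described above.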

Joint Pre-training Stage

In the joint pre-training stage, we train the model with a combination of pure text data (sampled from the same distribution as the initial language model) and a variety of multimodal data (as discussed in Sec. 3.1). We continue training from the loaded LLM checkpoint using the same learning rate scheduler, consuming an additional 1.4T tokens. The initial steps utilize solely language data, after which the proportion of multimodal data gradually increases. Through this progressive approach and the previous alignment stage, we observe that joint pre-training preserves the model’s language capabilities while successfully integrating visual comprehension abilities.

Joint Cooldown Stage

The stage following the pre-training stage is a multimodal cooldown phase, where the model continues training on high-quality language and multimodal datasets to ensure superior performance. For the language part, through empirical investigation, we observe that the incorporation of synthetic data during the cooldown phase yields significant performance improvements, particularly in mathematical reasoning, knowledge-based tasks, and code generation. The general text components of the cooldown dataset are curated from high-fidelity subsets of the pre-training corpus. For math, knowledge, and code domains, we employ a hybrid approach: utilizing selected pre-training subsets while augmenting them with synthetically generated content. Specifically, we leverage existing mathematical knowledge and code corpora as source material to generate question-answer (QA) pairs through a proprietary language model, implementing rejection sampling techniques to maintain quality standards yue2023mammoth,su2024nemotron. These synthesized QA pairs undergo comprehensive validation before being integrated into the cooldown dataset. For the multimodal part, in addition to the two strategies employed in text cooldown data preparation, i.e., question-answer synthesis and high-quality subset replay, we filter and rewrite a variety of academic visual or vision-language data sources into QA pairs to allow more comprehensive visual-centric perception and understanding li2024llavaonevisioneasyvisualtask,tong2024cambrian1fullyopenvisioncentric,guo2024mammothvlelicitingmultimodalreasoning. Unlike in post-training stages, these language and multimodal QA pairs in the cooldown stage are included only to activate specific abilities and thereby facilitate learning from high-quality data; we therefore keep their proportion low to avoid overfitting to these QA patterns. The joint cooldown stage significantly improves both language and multimodal abilities of the model.

Table 2: Needle-in-a-Haystack (NIAH) test on text/video haystacks, where needles are uniformly distributed at various positions within the haystack. We report recall accuracy across different haystack lengths up to 131,072 tokens (128K).

| Haystack | | | | | | | |
| --- | --- | --- | --- | --- | --- | --- | --- |
| text haystack | 100.0 | 100.0 | 100.0 | 100.0 | 100.0 | 100.0 | 87.0 |
| video haystack | 100.0 | 100.0 | 100.0 | 100.0 | 100.0 | 100.0 | 91.7 |

Joint Long-context Activation Stage

In the final pre-training stage, we extend the context length of the model from 8192 (8K) to 131072 (128K), with the base frequency of its RoPE su2023roformerenhancedtransformerrotary embeddings raised from 50,000 to 800,000. The joint long-context stage is conducted in two sub-stages, each of which extends the model’s context length by a factor of four (8K to 32K, then 32K to 128K). For data composition, we filter long data and upsample it to 25% of the tokens in each sub-stage, while using the remaining 75% of tokens to replay shorter data from the previous stage; our exploration confirms that this composition allows the model to effectively learn long-context understanding while maintaining short-context ability.

To allow the model to activate long-context abilities on both pure-text and multimodal inputs, the long data used in Kimi-VL’s long-context activation consists of not only long text, but also long multimodal data, including long interleaved data, long videos, and long documents. Similar to the cooldown data, we also synthesize a small portion of QA pairs to improve the learning efficiency of long-context activation. After long-context activation, the model can pass needle-in-a-haystack (NIAH) evaluations with either long pure-text or long video haystacks, demonstrating its versatile long-context ability. We provide the NIAH recall accuracy across various ranges of context length up to 128K in Table 2.
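The arithmetic behind the long-context stage can be sketched as follows. This is a hedged illustration: it treats the 50,000 and 800,000 values as the RoPE base (as labeled in Figure 4), uses the standard RoPE frequency formula, and assumes a hypothetical head dimension of 128; the helper names are ours and do not come from the training code.

```python
import numpy as np

def rope_inv_freq(head_dim, base):
    # Standard RoPE: one rotation frequency per pair of channels.
    return base ** (-np.arange(0, head_dim, 2) / head_dim)

def longest_wavelength(head_dim, base):
    # Number of positions the slowest-rotating channel pair needs for a full turn.
    return 2 * np.pi / rope_inv_freq(head_dim, base).min()

def context_schedule(start_len=8192, factor=4, num_substages=2):
    # Two sub-stages, each multiplying the context length by four.
    lengths, length = [], start_len
    for _ in range(num_substages):
        length *= factor
        lengths.append(length)
    return lengths

def substage_token_budget(total_tokens, long_ratio=0.25):
    # 25% long data, 75% replay of shorter data from the previous stage.
    long_tokens = total_tokens * long_ratio
    return {"long": long_tokens, "replay": total_tokens - long_tokens}

if __name__ == "__main__":
    print(context_schedule())  # sub-stage context lengths
    # head_dim=128 is an assumed value for illustration only.
    print(longest_wavelength(128, 50_000), longest_wavelength(128, 800_000))
    print(substage_token_budget(100.0))
```

Raising the base lowers every rotation frequency, so the slowest channels rotate over a longer span of positions, which is the usual motivation for increasing the base when extending context length.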

<details>

<summary>x5.png Details</summary>

### Visual Description

## Diagram: Kimi-VL Training Pipeline

### Overview

The image is a horizontal flowchart illustrating a three-stage training pipeline for an AI model named "Kimi-VL," culminating in a variant called "Kimi-VL-Thinking." The diagram uses a left-to-right flow with blue rectangular boxes representing distinct training phases, connected by arrows indicating the sequence and data flow.

### Components/Axes

The diagram consists of three primary blue boxes arranged horizontally, connected by arrows. Text is embedded within each box and along the connecting arrows.

1. **Leftmost Box (Stage 1):**

* **Position:** Far left.

* **Primary Label:** "Joint Supervised Fine-tuning"

* **Subtext (Line 1):** "Text + Multimodal SFT Data"

* **Subtext (Line 2):** "1 Epoch@32K + 1 Epoch@128K"

2. **First Connecting Arrow:**

* **Position:** Between the first and second boxes.

* **Label (Vertical Text):** "Kimi-VL"

3. **Middle Box (Stage 2):**

* **Position:** Center.

* **Primary Label:** "Long-CoT Supervised Fine-tuning"

* **Subtext (Line 1):** "Text + Multimodal Long-CoT Data"

* **Subtext (Line 2):** "Planning, Evaluation, Reflection, Exploration"

4. **Second Connecting Arrow:**

* **Position:** Between the second and third boxes.

* **Label:** No text on this arrow.

5. **Rightmost Box (Stage 3):**

* **Position:** Far right.

* **Primary Label:** "Reinforcement Learning (RL)"

* **Subtext (Line 1):** "Online RL on Answer Only"

* **Subtext (Line 2):** "Length penalty, Difficulty control"

6. **Final Output Arrow:**

* **Position:** Extending from the right side of the third box.

* **Label (Vertical Text):** "Kimi-VL-Thinking"

### Detailed Analysis

The diagram outlines a sequential, multi-stage training methodology:

* **Stage 1 - Joint Supervised Fine-tuning:** This initial phase uses a combined dataset of text and multimodal data for supervised fine-tuning (SFT). The training schedule is specified as one epoch on a 32K context length followed by one epoch on a 128K context length.

* **Stage 2 - Long-CoT Supervised Fine-tuning:** The model from Stage 1 ("Kimi-VL") undergoes a second supervised fine-tuning phase. This stage uses "Long-CoT" (Long Chain-of-Thought) data, which includes both text and multimodal examples. The training focuses on instilling reasoning capabilities described as "Planning, Evaluation, Reflection, Exploration."

* **Stage 3 - Reinforcement Learning (RL):** The model from Stage 2 is further refined using reinforcement learning. Key details are that it is "Online RL" (likely meaning updates are performed during interaction) and is applied "on Answer Only," suggesting the reward model or policy update focuses on the final answer quality. Training is guided by a "Length penalty" and "Difficulty control" mechanism.

* **Final Output:** The result of this three-stage pipeline is a model designated "Kimi-VL-Thinking."

### Key Observations

* The pipeline shows a clear progression from general supervised learning to specialized reasoning-focused training, and finally to optimization via reinforcement learning.

* The transition from "SFT Data" to "Long-CoT Data" indicates a deliberate shift in training data composition to foster complex reasoning.

* The RL stage's constraints ("Length penalty, Difficulty control") suggest an effort to balance answer quality with efficiency and to manage the complexity of training examples.

* The naming convention implies that "Kimi-VL-Thinking" is an enhanced version of the base "Kimi-VL" model, specifically endowed with advanced reasoning ("Thinking") capabilities through this pipeline.

### Interpretation

This diagram represents a sophisticated, contemporary approach to training large multimodal language models. The pipeline is designed to systematically build capabilities:

1. **Foundation:** Stage 1 establishes a broad base of knowledge and alignment using standard supervised fine-tuning on diverse data.

2. **Reasoning Specialization:** Stage 2 explicitly targets the development of chain-of-thought reasoning, a critical skill for complex problem-solving. The listed components (Planning, Evaluation, etc.) are hallmarks of advanced cognitive processes.

3. **Refinement and Optimization:** Stage 3 uses reinforcement learning to fine-tune the model's outputs based on reward signals, likely improving accuracy, helpfulness, and adherence to desired formats. The "online" aspect and specific penalties point to a dynamic and controlled training environment.

The overall flow suggests that achieving a model capable of sophisticated "Thinking" requires more than just feeding it data; it requires a structured curriculum that first teaches it *what* to know, then *how* to reason, and finally *how to optimize* its reasoning for specific goals. The explicit mention of multimodal data at each supervised stage underscores that this reasoning capability is intended to operate across text and visual inputs.

</details>

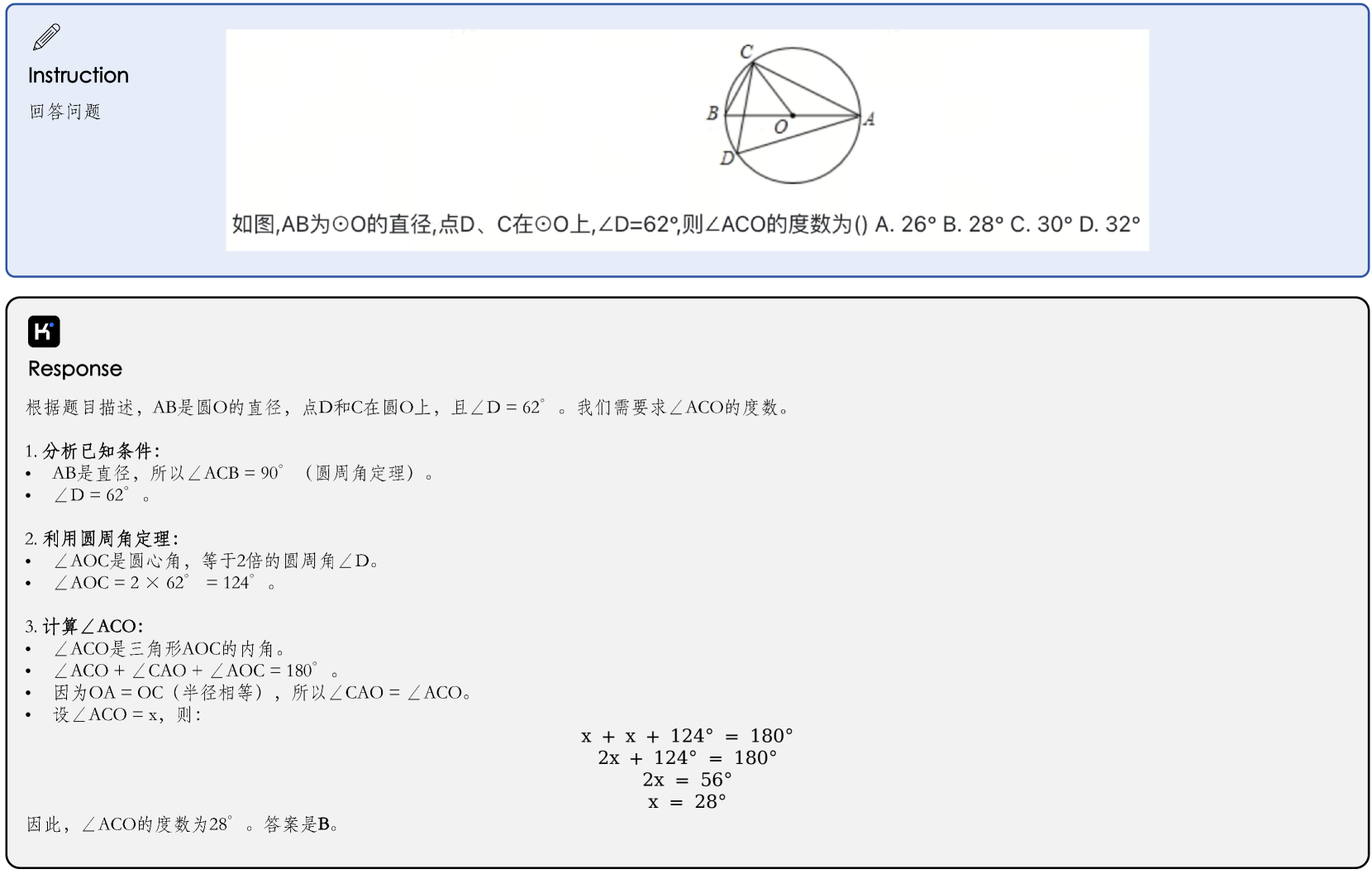

Figure 5: The post-training stages of Kimi-VL and Kimi-VL-Thinking, including two stages of joint SFT in 32K and 128K context, and further long-CoT SFT and RL stages to activate and enhance long thinking abilities.

### 2.4 Post-Training Stages

Joint Supervised Fine-tuning (SFT)

In this phase, we fine-tune the base model of Kimi-VL with instruction-based fine-tuning to enhance its ability to follow instructions and engage in dialogue, culminating in the creation of the interactive Kimi-VL model. This is achieved by employing the ChatML format (OpenAI, 2024), which allows for targeted instruction optimization while maintaining architectural consistency with Kimi-VL. We optimize the language model, MLP projector, and vision encoder using a mixture of pure-text and vision-language SFT data, which will be described in Sec. 3.2. Supervision is applied only to answers and special tokens, with system and user prompts being masked. The model is exposed to a curated set of multimodal instruction-response pairs, where explicit dialogue role tagging, structured injection of visual embeddings, and preservation of cross-modal positional relationships are ensured through format-aware packing. Additionally, to guarantee the model’s comprehensive proficiency in dialogue, we incorporate a mix of multimodal data and the pure-text dialogue data used in Moonlight, ensuring its versatility across various dialogue scenarios.
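The supervision rule above (loss on answers and special tokens only, with system and user prompts masked) can be illustrated with a small sketch. The role names and the segment representation are hypothetical and chosen only for this example; they are not the actual data format.

```python
def build_loss_mask(segments):
    """segments: list of (role, token_ids) pairs in dialogue order.

    Returns the flattened token ids and a 0/1 mask marking supervised tokens.
    Role names ("system", "user", "assistant", "special") are assumed labels.
    """
    tokens, mask = [], []
    for role, ids in segments:
        supervised = role in ("assistant", "special")
        tokens.extend(ids)
        mask.extend([1 if supervised else 0] * len(ids))
    return tokens, mask

def masked_mean_loss(per_token_losses, mask):
    # Average the loss over supervised positions only.
    total = sum(loss * m for loss, m in zip(per_token_losses, mask))
    return total / max(sum(mask), 1)
```

For a dialogue with a system prompt, a user turn, and an assistant answer, only the assistant tokens and the special tokens contribute to `masked_mean_loss`.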

We first train the model at a sequence length of 32K tokens for 1 epoch, followed by another epoch at a sequence length of 128K tokens. In the first stage (32K), the learning rate decays from $2\times 10^{-5}$ to $2\times 10^{-6}$; in the second stage (128K), it warms up again to $1\times 10^{-5}$ and finally decays to $1\times 10^{-6}$. To improve training efficiency, we pack multiple training examples into each single training sequence.
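The two-stage learning-rate trajectory can be sketched as below. Only the four endpoint values come from the text; the cosine decay shape, the linear re-warmup, and the step counts are assumptions made for illustration, since the report does not specify them.

```python
import math

def cosine_decay(step, total, start, end):
    # Assumed decay shape; the report gives only the start and end values.
    progress = min(max(step / max(total, 1), 0.0), 1.0)
    return end + 0.5 * (start - end) * (1.0 + math.cos(math.pi * progress))

def sft_lr(step, stage1_steps, stage2_steps, warmup_steps):
    # Stage 1 (32K): decay from 2e-5 to 2e-6.
    if step < stage1_steps:
        return cosine_decay(step, stage1_steps, 2e-5, 2e-6)
    # Stage 2 (128K): warm up from 2e-6 to 1e-5 (linear warmup is assumed) ...
    s = step - stage1_steps
    if s < warmup_steps:
        return 2e-6 + (1e-5 - 2e-6) * s / warmup_steps
    # ... then decay from 1e-5 to 1e-6.
    return cosine_decay(s - warmup_steps, stage2_steps - warmup_steps, 1e-5, 1e-6)
```

With hypothetical settings such as `sft_lr(step, 100, 100, 10)`, the schedule starts at $2\times 10^{-5}$, reaches $2\times 10^{-6}$ at the stage boundary, peaks again at $1\times 10^{-5}$ after the warmup, and ends at $1\times 10^{-6}$.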

Long-CoT Supervised Fine-Tuning

With the refined RL prompt set, we employ prompt engineering to construct a small yet high-quality long-CoT warmup dataset, containing accurately verified reasoning paths for both text and image inputs. This approach resembles rejection sampling (RS) but focuses on generating long-CoT reasoning paths through prompt engineering. The resulting warmup dataset is designed to encapsulate key cognitive processes that are fundamental to human-like reasoning, such as planning, where the model systematically outlines steps before execution; evaluation, involving critical assessment of intermediate steps; reflection, enabling the model to reconsider and refine its approach; and exploration, encouraging consideration of alternative solutions. By performing a lightweight SFT on this warm-up dataset, we effectively prime the model to internalize these multimodal reasoning strategies. As a result, the fine-tuned long-CoT model demonstrates improved capability in generating more detailed and logically coherent responses, which enhances its performance across diverse reasoning tasks.

Reinforcement Learning

To further advance the model’s reasoning abilities, we then train the model with reinforcement learning (RL), enabling the model to autonomously generate structured CoT rationales. Specifically, similar to Kimi k1.5 team2025kimi, we adopt a variant of online policy mirror descent as our RL algorithm, which iteratively refines the policy model $\pi_{\theta}$ to improve its problem-solving accuracy. During the $i$-th training iteration, we treat the current model as a reference policy model and optimize the following objective, regularized by relative entropy to stabilize policy updates:

$$
\max_{\theta}\mathbb{E}_{(x,y^{*})\sim\mathcal{D}}\left[\mathbb{E}_{(y,z)\sim\pi_{\theta}}\left[r(x,y,y^{*})\right]-\tau\,\mathrm{KL}\left(\pi_{\theta}(x)\,\|\,\pi_{\theta_{i}}(x)\right)\right], \tag{1}
$$

where $r$ is a reward model that judges the correctness of the proposed answer $y$ for the given problem $x$ by assigning a value $r(x,y,y^{*})\in\{0,1\}$ based on the ground truth $y^{*}$, and $\tau>0$ is a parameter controlling the degree of regularization.
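As a toy illustration of objective (1), consider a single problem $x$ with a small, finite set of candidate responses, so that both expectations reduce to sums. This simplification, and the function name below, are assumptions for exposition; the actual policy is an autoregressive model over token sequences.

```python
import numpy as np

def regularized_objective(policy_probs, ref_probs, rewards, tau):
    # Expected 0/1 reward under the policy minus tau * KL(policy || reference).
    # Assumes all probabilities are strictly positive.
    policy_probs = np.asarray(policy_probs, dtype=float)
    ref_probs = np.asarray(ref_probs, dtype=float)
    rewards = np.asarray(rewards, dtype=float)
    expected_reward = np.sum(policy_probs * rewards)
    kl = np.sum(policy_probs * np.log(policy_probs / ref_probs))
    return expected_reward - tau * kl
```

With a reference policy of `[0.5, 0.5]` and rewards `[1, 0]`, shifting the policy to `[0.9, 0.1]` raises the objective when $\tau$ is small, but lowers it when $\tau$ is large, because the KL term then dominates. This is the sense in which $\tau$ controls how far each iteration may move from the reference policy.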

Each training iteration begins by sampling a problem batch from the dataset $\mathcal{D}$ , and the model parameters are updated to $\theta_{i+1}$ using the policy gradient derived from (1), with the optimized policy model subsequently assuming the role of reference policy for the subsequent iteration. To enhance RL training efficiency, we implement a length-based reward to penalize excessively long responses, mitigating the overthinking problem where the model generates redundant reasoning chains. Besides, we employ two sampling strategies including curriculum sampling and prioritized sampling, which leverage difficulty labels and per-instance success rates to focus training effort on the most pedagogically valuable examples, thereby optimizing the learning trajectory and improving training efficiency.
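The two sampling strategies can be sketched as follows. The report does not give the exact rules, so this is one plausible instantiation under stated assumptions: curriculum sampling is modeled as a difficulty cap, prioritized sampling draws problems with weight proportional to one minus their success rate, and the dictionary fields are hypothetical.

```python
import random

def curriculum_pool(problems, max_difficulty):
    # Curriculum sampling (assumed form): only admit problems up to the
    # current difficulty level.
    return [p for p in problems if p["difficulty"] <= max_difficulty]

def prioritized_sample(problems, batch_size, rng):
    # Prioritized sampling (assumed form): problems the model fails more
    # often are drawn more frequently.
    weights = [1.0 - p["success_rate"] for p in problems]
    if sum(weights) == 0:
        # Every problem is already solved; fall back to uniform sampling.
        return rng.choices(problems, k=batch_size)
    return rng.choices(problems, weights=weights, k=batch_size)
```

A training loop would typically raise `max_difficulty` over time and refresh each problem's `success_rate` from recent rollouts, so that the batch composition tracks the model's current ability.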

Through large-scale reinforcement learning training, we can derive a model that harnesses the strengths of both basic prompt-based CoT reasoning and sophisticated planning-enhanced CoT approaches. During inference, the model maintains standard autoregressive sequence generation, eliminating the deployment complexities associated with specialized planning algorithms that require parallel computation. Simultaneously, the model develops essential meta-reasoning abilities including error detection, backtracking, and iterative solution refinement by effectively utilizing the complete history of explored reasoning paths as contextual information. With endogenous learning from its complete reasoning trace history, the model can effectively encode planned search procedures into its parametric knowledge.

### 2.5 Infrastructure

Storage We utilize S3 amazon_s3 compatible object storage from cloud service vendors to store our visual-text data. To minimize the time between data preparation and model training, we store visual data in its original format and have developed an efficient and flexible data loading system. This system provides several key benefits:

- Supports on-the-fly data shuffling, mixing, tokenization, loss masking and packing during training, allowing us to adjust data proportions as needed;

- Enables random augmentation of both visual and text data, while preserving the correctness of 2D coordinate and orientation information during transformations;

- Ensures reproducibility by strictly controlling random states and other states across different data loader workers, guaranteeing that any interrupted training can be resumed seamlessly—the data sequence after resumption remains identical to an uninterrupted run;

- Delivers high-performance data loading: through multiple caching strategies, our system reliably supports training on large scale clusters while maintaining controlled request rates and throughput to the object storage.

Additionally, to ensure consistent dataset quality control, we developed a centralized platform for data registration, visualization, compiling statistics, synchronizing data across cloud storage systems, and managing dataset lifecycles.
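The reproducibility property listed above (a resumed run sees exactly the same data sequence as an uninterrupted one) can be illustrated with a minimal sketch. The class below is hypothetical and far simpler than a production data loader: it shuffles sample indices deterministically per epoch and exposes a small state that can be saved in a checkpoint.

```python
import random

class ResumableShuffler:
    """Deterministic, resumable index stream (illustrative only)."""

    def __init__(self, num_samples, seed, epoch=0, offset=0):
        self.num_samples, self.seed = num_samples, seed
        self.epoch, self.offset = epoch, offset
        self._order = self._shuffled(epoch)

    def _shuffled(self, epoch):
        # The permutation depends only on (seed, epoch), never on wall-clock
        # time or global random state.
        order = list(range(self.num_samples))
        random.Random(self.seed * 1_000_003 + epoch).shuffle(order)
        return order

    def state(self):
        # Everything needed to resume: which epoch, and how far into it.
        return {"epoch": self.epoch, "offset": self.offset}

    def next_index(self):
        if self.offset == self.num_samples:
            self.epoch += 1
            self.offset = 0
            self._order = self._shuffled(self.epoch)
        index = self._order[self.offset]
        self.offset += 1
        return index
```

Restoring with `ResumableShuffler(num_samples, seed, **saved_state)` continues the stream from the exact position at which `state()` was captured.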

<details>

<summary>x6.png Details</summary>

### Visual Description

## Screenshot: AI Assistant Interface Analyzing Handwritten Manuscripts

### Overview

The image is a screenshot of a graphical user interface (GUI) for an AI assistant. The interface is divided into two main vertical panels. The left panel, titled "Instruction," contains a user query and two embedded images of handwritten mathematical manuscripts. The right panel, titled "Response," displays the AI's detailed, step-by-step analysis and final conclusion regarding the authorship and content of those manuscripts. The overall aesthetic is clean and functional, with a light gray background and distinct panel borders.

### Components/Axes

The interface has no chart axes. Its primary components are:

1. **Left Panel ("Instruction"):**

* **Header:** Contains a pencil icon and the bold text "Instruction".

* **User Query Text:** "Please infer step by step who this manuscript belongs to and what it records".

* **Embedded Images:** Two rectangular images showing pages of aged, yellowish paper filled with handwritten mathematical equations and notes.

2. **Right Panel ("Response"):**

* **Header:** Contains a stylized "H" icon and the bold text "Response".

* **Main Content Area:** A large text block containing the AI's analysis. This is subdivided into:

* A `<Think>` section (enclosed in tags) detailing the internal reasoning process.

* A final, formatted answer section with bold headings ("Key Observations:", "Conclusion:", "Final Answer:").

### Detailed Analysis / Content Details

**A. Left Panel - Manuscript Images:**

* **Image 1 (Top):** Shows a page of handwritten notes. The equations involve partial derivatives (denoted by `∂`), summations (Σ), and variables like `g`, `M`, `T`. The term "Einheitsvektor" (German for "unit vector") is visible. The handwriting is cursive and somewhat dense.

* **Image 2 (Bottom):** Shows another page with more numerical calculations and algebraic manipulations. It includes integrals, references to constants labeled "K", "M", "T", and what appears to be a small graph or diagram in the lower right corner. The handwriting style is consistent with the first image.

**B. Right Panel - AI Response Text (Transcription of Key Sections):**

* **User Query:** "Please infer step by step who this manuscript belongs to and what it records"

* **AI's `<Think>` Section (Summary of Reasoning):**

* The handwriting suggests a mathematician or physicist from the 20th century.

* The content involves gravitational fields, celestial mechanics, tensor calculus, and field theory.

* German terms like "Einheitsvektor" and "Gln" (likely for "Gleichung"/equation) point to a German-speaking author.

* The style and content are consistent with Albert Einstein's work on general relativity and gravitational field equations.

* The two images likely represent different sections of the same research manuscript.

* **AI's Final Answer (Formatted Output):**

* **Assertion:** "The manuscripts in the images belong to **Albert Einstein**... The content... records his work on **gravitational field equations** and related mathematical derivations..."

* **Key Observations:**

1. **Handwriting Style:** Consistent with Einstein's known style.

2. **Content Analysis:** Equations involve partial derivatives, summations, Greek letters (γ, g), resembling Einstein's formulation of gravitational field equations. The second image shows detailed numerical derivations.

3. **German Terms:** Presence of "Einheitsvektor" and "Gln" suggests a German-speaking author.

* **Conclusion:** "These manuscripts are part of Einstein's research materials, documenting his mathematical and theoretical work on gravitational fields and field equations... a cornerstone of modern physics."

* **Final Answer:** "The manuscripts belong to Albert Einstein and record his work on gravitational field equations and mathematical derivations in general relativity."

### Key Observations

1. **Structured AI Reasoning:** The response explicitly shows a chain-of-thought process (`<Think>` tags) before delivering the final answer, demonstrating a step-by-step analytical approach.

2. **Multimodal Analysis:** The AI successfully integrates visual analysis (handwriting style, paper age) with textual and mathematical content analysis (equations, German terms) to form its conclusion.

3. **Specific Attribution:** The analysis does not merely suggest a field of study but makes a definitive attribution to a specific historical figure (Albert Einstein) based on correlating multiple lines of evidence.

4. **Content Focus:** The extracted information centers entirely on the *metadata* of the manuscripts (author, subject) rather than a full transcription of the complex mathematical equations themselves, which are described generically.

### Interpretation

This screenshot captures a meta-demonstration of an AI's capability to perform expert-level document analysis. The "data" here is not numerical but forensic and historical.

* **What it demonstrates:** The AI acts as a digital historian and physicist's assistant. It synthesizes paleographic clues (handwriting), linguistic analysis (German technical terms), and domain-specific knowledge (theoretical physics, general relativity) to authenticate and contextualize primary source documents.

* **Relationship between elements:** The user's open-ended query ("infer step by step") directly triggers the AI's structured, evidence-based reasoning process displayed in the response. The two manuscript images serve as the primary evidence, and the AI's text is the analytical report derived from that evidence.

* **Notable pattern:** The AI's conclusion is built on a convergence of independent indicators: the *language* (German), the *scientific content* (gravitational field equations), and the *physical artifact's style* (handwriting). This mirrors how a human expert would approach the problem, lending credibility to the output.

* **Underlying purpose:** The image showcases the AI's utility in academic and research contexts, specifically for digitizing, interpreting, and attributing historical scientific documents, potentially accelerating scholarship in the history of science.

</details>

Figure 6: Manuscript reasoning visualization. Kimi-VL-Thinking demonstrates the ability to perform historical and scientific inference by analyzing handwritten manuscripts step by step. In this example, our model identifies the author as Albert Einstein based on handwriting style, content analysis, and language cues. It reasons that the manuscripts relate to gravitational field equations, consistent with Einstein’s contributions to general relativity.

Parallelism We adopt a 4D parallelism strategy (Data Parallelism li2020pytorchdistributedexperiencesaccelerating, Expert Parallelism fedus2022switchtransformersscalingtrillion, Pipeline Parallelism huang2019gpipeefficienttraininggiant,narayanan2021efficientlargescalelanguagemodel, and Context Parallelism jacobs2023deepspeedulyssesoptimizationsenabling,liu2023ringattentionblockwisetransformers) to accelerate the training of Kimi-VL. After optimizing the parallel strategies, the resulting training throughput of our model is around 60% higher than that of a 7B dense VLM (e.g., VLMs based on Qwen2.5-7B).

- Data Parallelism (DP). DP replicates the model across multiple devices, each processing different micro-batches. This setup allows larger effective batch sizes by simply increasing the number of devices.

- Expert Parallelism (EP). EP distributes expert modules in the MoE layer across multiple devices. When combined with DP, experts on a given device can handle tokens from different DP groups, enhancing computational efficiency.

- Pipeline Parallelism (PP). PP splits the model into multiple layer-based stages. To minimize pipeline bubbles, we allocate the Vision Tower (VT) and several decoder layers to the first stage, place the output layer and additional decoder layers in the last stage, and distribute the remaining decoder layers evenly across intermediate stages based on their time overhead.

- Context Parallelism (CP). CP addresses long-sequence training by splitting sequences across different CP ranks in conjunction with flash attention [dao2022flashattentionfastmemoryefficientexact]. This substantially reduces peak memory usage and relieves the memory pressure from attention computations.
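The stage allocation described for PP can be sketched as a greedy partition over per-layer time costs. This is an illustrative sketch only, not the scheduler used for Kimi-VL: the function name `assign_pipeline_stages`, the cost values, and the greedy rule are all assumptions made for the example.

```python
def assign_pipeline_stages(decoder_costs, num_stages, vt_cost, head_cost):
    """Greedily split decoder layers into contiguous pipeline stages.

    Hypothetical helper: the first stage is pre-charged with the vision
    tower cost and the last stage with the output layer cost, so both
    end up with fewer decoder layers than the intermediate stages.
    """
    total = vt_cost + head_cost + sum(decoder_costs)
    target = total / num_stages  # ideal time per stage
    stages = [[] for _ in range(num_stages)]
    load = [0.0] * num_stages
    load[0] += vt_cost
    load[-1] += head_cost
    stage = 0
    for layer_idx, cost in enumerate(decoder_costs):
        # Move on when adding this layer would overshoot the target by
        # more than the current stage falls short of it.
        overshoots = load[stage] + cost / 2 > target
        if overshoots and stage < num_stages - 1:
            stage += 1
        stages[stage].append(layer_idx)
        load[stage] += cost
    return stages, load


# Assumed costs: 12 equal decoder layers, a vision tower worth two
# layers, and an output layer worth one layer.
stages, load = assign_pipeline_stages([1.0] * 12, 4, vt_cost=2.0, head_cost=1.0)
print([len(s) for s in stages], load)
```

With these assumed costs, the first and last stages receive fewer decoder layers than the intermediate ones, which is the balancing behavior the PP description aims for.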

Beyond these four parallel strategies, we incorporate ZeRO1 [rajbhandari2020zero] and Selective Checkpointing Activation [chen2016trainingdeepnetssublinear, korthikanti2022reducingactivationrecomputationlarge] to further optimize memory usage. ZeRO1 reduces optimizer state overhead by using a distributed optimizer while avoiding extra communication costs. Selective Checkpointing Activation trades time for space by recomputing only those layers that have low time overhead but high memory consumption, striking a balance between computation efficiency and memory demands. For extremely long sequences, we expand recomputation to a broader set of layers to prevent out-of-memory errors.
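The selection rule behind selective recomputation can be illustrated with a small ranking sketch. It is a minimal example under stated assumptions: the layer names, timings, and memory figures are invented, and `select_layers_to_recompute` is a hypothetical helper rather than part of any training framework.

```python
def select_layers_to_recompute(layers, memory_to_free_mb):
    """Pick layers to recompute, cheapest time per freed megabyte first.

    layers: list of (name, recompute_time_ms, activation_mem_mb) tuples.
    Returns the chosen names, the memory freed, and the extra time paid.
    """
    ranked = sorted(layers, key=lambda item: item[2] / item[1], reverse=True)
    chosen, freed, extra_time = [], 0.0, 0.0
    for name, time_ms, mem_mb in ranked:
        if freed >= memory_to_free_mb:
            break
        chosen.append(name)
        freed += mem_mb
        extra_time += time_ms
    return chosen, freed, extra_time


# Invented per-layer profile, for illustration only.
profile = [
    ("layernorm", 0.2, 400),
    ("activation_fn", 0.3, 800),
    ("attention_core", 1.5, 1200),
    ("mlp_matmul", 4.0, 600),
]

# A moderate budget selects only the cheap, memory-heavy layers.
short_seq = select_layers_to_recompute(profile, memory_to_free_mb=1000)
# A larger budget (e.g. very long sequences) widens the recomputed set.
long_seq = select_layers_to_recompute(profile, memory_to_free_mb=2000)
print(short_seq[0], long_seq[0])
```

Raising the memory budget in the second call mimics the long-sequence case, where recomputation is extended to a broader set of layers.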

## 3 Data Construction

### 3.1 Pre-Training Data

Our multimodal pre-training corpus is designed to provide high-quality data that enables models to process and understand information from multiple modalities, including text, images, and videos. To this end, we curate high-quality data from six categories (caption, interleaving, OCR, knowledge, video, and agent) to form the corpus.

When constructing our training corpus, we developed several multimodal data processing pipelines to ensure data quality, encompassing filtering, synthesis, and deduplication. Establishing an effective multimodal data strategy is crucial during the joint training of vision and language, as it both preserves the capabilities of the language model and facilitates alignment of knowledge across diverse modalities.
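As a concrete illustration of the deduplication step, the sketch below drops exact duplicate image-text pairs by hashing the image bytes together with a normalized caption. The report does not specify how deduplication is implemented, so the function `dedup_pairs` and the exact-match criterion are assumptions; a production pipeline would likely also need near-duplicate detection.

```python
import hashlib


def dedup_pairs(pairs):
    """Keep the first occurrence of each (image, caption) pair.

    pairs: iterable of (image_bytes, caption) tuples. Captions are
    compared after lowercasing and collapsing whitespace.
    """
    seen = set()
    kept = []
    for image_bytes, caption in pairs:
        normalized = " ".join(caption.lower().split())
        digest = hashlib.sha256(
            image_bytes + b"\x00" + normalized.encode("utf-8")
        ).hexdigest()
        if digest in seen:
            continue
        seen.add(digest)
        kept.append((image_bytes, caption))
    return kept


# Toy data: the second pair differs from the first only in case and spacing.
samples = [
    (b"img-a", "A cat on a sofa"),
    (b"img-a", "a  cat on a SOFA"),
    (b"img-b", "A cat on a sofa"),
]
unique = dedup_pairs(samples)
print(len(unique))
```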

We provide a detailed description of these sources in this section, which is organized into the following categories:

Caption Data

Our caption data provides the model with fundamental modality alignment and a broad range of world knowledge. By incorporating caption data, the multimodal LLM gains wider world knowledge with high learning efficiency. We have integrated various open-source Chinese and English caption datasets such as [schuhmann2022laion, gadre2024datacomp] and have also collected substantial in-house caption data from multiple sources. However, throughout the training process, we strictly limit the proportion of synthetic caption data to mitigate the risk of hallucination stemming from insufficient real-world knowledge.

For general caption data, we follow a rigorous quality control pipeline that avoids duplication and maintains high image-text correlation. We also vary image resolution during pre-training to ensure that the vision tower remains effective on both high- and low-resolution images.
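Limiting the proportion of synthetic captions amounts to capping their share of the final mixture. The sketch below shows one way to do this by subsampling; the 10% cap, the function name `cap_synthetic`, and the toy data are assumptions for illustration, since the report does not state the actual ratio or mechanism.

```python
import random
from fractions import Fraction


def cap_synthetic(real, synthetic, max_share, seed=0):
    """Subsample synthetic items so their share of the mix is <= max_share.

    max_share is a Fraction below 1. The largest admissible synthetic
    count s satisfies s / (len(real) + s) <= max_share.
    """
    max_synth = int((max_share * len(real)) // (1 - max_share))
    rng = random.Random(seed)
    kept = rng.sample(synthetic, min(max_synth, len(synthetic)))
    mix = real + kept
    rng.shuffle(mix)
    return mix


# Toy corpus: 900 real and 500 synthetic captions, with an assumed 10% cap.
real = [("real", i) for i in range(900)]
synthetic = [("synthetic", i) for i in range(500)]
mix = cap_synthetic(real, synthetic, Fraction(1, 10))
n_synth = sum(1 for source, _ in mix if source == "synthetic")
print(len(mix), n_synth)
```

Using `Fraction` avoids floating-point rounding when computing the cap, so the resulting share never exceeds the limit by an off-by-one sample.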

Image-text Interleaving Data During the pre-training phase, the model benefits from interleaving data in several ways. For example, interleaving data boosts multi-image comprehension, typically provides detailed knowledge about a given image, and helps the model learn from longer multimodal contexts. We also find that interleaving data helps maintain the model’s language abilities. Image-text interleaving data is therefore an important part of our training corpus. Our multimodal corpus draws on open-source interleaved datasets such as [zhu2024multimodal, laurenccon2024obelics], and we also construct large-scale in-house data from resources such as textbooks, webpages, and tutorials. Further, we find that synthesizing interleaving data helps the multimodal LLM retain text knowledge. To ensure that the knowledge in each image is sufficiently learned, we apply to all interleaving data not only the standard filtering, deduplication, and other quality control pipelines but also a data reordering procedure that keeps all images and text in the correct order.

OCR Data Optical Character Recognition (OCR) is a widely adopted technique that converts text from images into an editable format. In our model, a robust OCR capability is deemed essential for better aligning the model with human values. Accordingly, our OCR data sources are diverse, ranging from open-source to in-house datasets, encompassing both clean and augmented images, and spanning single-page and multi-page inputs.

In addition to the publicly available data, we have developed a substantial volume of in-house OCR datasets, covering multilingual text, dense text layouts, web-based content, and handwritten samples. Furthermore, following the principles outlined in OCR 2.0 [wei2024general], our model is also equipped to handle a variety of optical image types, including figures, tables, geometry diagrams, mermaid plots, and natural scene text. We apply extensive data augmentation techniques, such as rotation, distortion, color adjustments, and noise addition, to enhance the model’s robustness. As a result, our model achieves a high level of proficiency in OCR tasks.
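The augmentation types listed above can be illustrated on a tiny grayscale image stored as nested lists. This is a deliberately simplified sketch, not the augmentation pipeline used for Kimi-VL: the helper names, the 90-degree rotation (real pipelines typically use small arbitrary angles), and all parameter ranges are assumptions, and geometric distortion is omitted.

```python
import random


def rotate_90(img):
    """Rotate a row-major grayscale image 90 degrees clockwise."""
    return [list(row) for row in zip(*img[::-1])]


def adjust_brightness(img, factor):
    """Scale pixel intensities and clamp them to the 0..255 range."""
    return [[min(255, max(0, round(p * factor))) for p in row] for row in img]


def add_noise(img, sigma, rng):
    """Add zero-mean Gaussian noise to every pixel, clamped to 0..255."""
    return [
        [min(255, max(0, round(p + rng.gauss(0, sigma)))) for p in row]
        for row in img
    ]


def augment(img, rng):
    """Apply a random combination of the augmentations above."""
    out = img
    if rng.random() < 0.5:
        out = rotate_90(out)
    out = adjust_brightness(out, rng.uniform(0.7, 1.3))
    return add_noise(out, sigma=8.0, rng=rng)


# A 2x3 toy "image"; a seeded generator keeps the example reproducible.
image = [[0, 128, 255], [64, 32, 16]]
augmented = augment(image, random.Random(0))
print(augmented)
```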

In addition to single-page OCR data, we collect and convert a large volume of in-house multi-page OCR data to activate the model’s understanding of long documents in the real world. With the help of these data, our model can not only perform accurate OCR on a single image but also comprehend an entire academic paper or a scanned book.

Knowledge Data The concept of multimodal knowledge data is analogous to the previously mentioned text pre-training data, except here we focus on assembling a comprehensive repository of human knowledge from diverse sources to further enhance the model’s capabilities. For example, carefully curated geometry data in our dataset is vital for developing visual reasoning skills, ensuring the model can interpret the abstract diagrams created by humans.

Our knowledge corpus adheres to a standardized taxonomy to balance content across various categories, ensuring diversity in data sources. Similar to text-only corpora, which gather knowledge from textbooks, research papers, and other academic materials, multimodal knowledge data employs both a layout parser and an OCR model to process content from these sources. We also include filtered data from internet-based and other external resources.

Because a significant portion of our knowledge corpus is sourced from internet-based materials, infographics can cause the model to focus solely on OCR-based information. In such cases, relying exclusively on a basic OCR pipeline may limit training effectiveness. To address this, we have developed an additional pipeline that better captures the purely textual information embedded within images.

Agent Data For agent tasks, the model’s grounding and planning capabilities have been significantly enhanced. In addition to utilizing publicly available data, we established a platform to efficiently manage and execute virtual machine environments in bulk. Within these virtual environments, we employed heuristic methods to collect screenshots and corresponding action data. This data was then processed into dense grounding formats and continuous trajectory formats. The action space was designed separately for Desktop, Mobile, and Web environments. Furthermore, we collected icon data to strengthen the model’s understanding of the meanings of icons within software graphical user interfaces (GUIs). To enhance the model’s planning ability for solving multi-step desktop tasks, we collected a set of computer-use trajectories from human annotators, each accompanied by synthesized Chain-of-Thought (Aguvis [xu2024aguvis]). These multi-step agent demonstrations equip Kimi-VL with the capability to complete real-world desktop tasks (on both Ubuntu and Windows).

Video Data In addition to image-only and image-text interleaved data, we also incorporate large-scale video data during the pre-training, cooldown, and long-context activation stages to enable two essential abilities of our model: first, to understand long-context sequences dominated by images (e.g., hour-long videos) in addition to long text; second, to perceive fine-grained spatio-temporal correspondence in short video clips.

Our video data are sourced from diverse resources, including open-source datasets as well as in-house web-scale video data, and span videos of varying durations. Similarly, to ensure sufficient generalization ability, our video data cover a wide range of scenes and diverse tasks. We cover tasks such as video description and video grounding, among others. For long videos, we carefully design a pipeline to produce dense captions. Similar to processing the caption data, we strictly limit the proportion of the synthetic dense video description data to reduce the risk of hallucinations.

Text Data Our text pretrain corpus directly utilizes the data in Moonlight [liu2025muonscalablellmtraining], which is designed to provide comprehensive and high-quality data for training large language models (LLMs). It encompasses five domains: English, Chinese, Code, Mathematics & Reasoning, and Knowledge. We employ sophisticated filtering and quality control mechanisms for each domain to ensure the highest quality training data. For all pretrain data, we conducted rigorous individual validation for each data source to assess its specific contribution to the overall training recipe. This systematic evaluation ensures the quality and effectiveness of our diverse data composition.

To optimize the overall composition of our training corpus, the sampling strategy for different document types is empirically determined through extensive experimentation. We conduct isolated evaluations to identify document subsets that contribute most significantly to the model’s knowledge acquisition capabilities. These high-value subsets are upsampled in the final training corpus. However, to maintain data diversity and ensure model generalization, we carefully preserve a balanced representation of other document types at appropriate ratios. This data-driven approach helps us optimize the trade-off between focused knowledge acquisition and broad generalization capabilities.

† Footnote to Table 3 (WindowsAgentArena): GPT-4o and GPT-4o-mini results use Omniparser without UIA, according to [bonatti2024windowsagentarenaevaluating].
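The sampling strategy described for the text corpus, upsampling high-value subsets while keeping a floor for the rest, can be sketched as a small weight computation. The subset names, token counts, upsampling factor, and minimum share below are all hypothetical; the report determines the real ratios empirically and does not publish them.

```python
def mixture_weights(token_counts, upsample, min_share):
    """Compute sampling weights for document subsets.

    token_counts: subset name -> number of tokens.
    upsample: subset name -> multiplier for high-value subsets.
    min_share: floor applied to every subset before renormalizing, so
    the final share can end up slightly below the floor.
    """
    raw = {name: count * upsample.get(name, 1.0)
           for name, count in token_counts.items()}
    total = sum(raw.values())
    floored = {name: max(value / total, min_share)
               for name, value in raw.items()}
    norm = sum(floored.values())
    return {name: share / norm for name, share in floored.items()}


# Hypothetical subsets: textbooks are treated as the high-value subset.
weights = mixture_weights(
    token_counts={"textbooks": 100, "web": 800, "forums": 100},
    upsample={"textbooks": 3.0},
    min_share=0.1,
)
print({name: round(w, 3) for name, w in weights.items()})
```

In this toy setup the upsampled subset gains share relative to its raw token count, while the floor keeps the smallest subset from being crowded out.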

| Category | Benchmark (Metric) | GPT-4o | GPT-4o-mini | Qwen2.5-VL-7B | Llama3.2-11B-Inst. | Gemma3-12B-IT | DeepSeek-VL2 | Kimi-VL-A3B |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Architecture | | - | - | Dense | Dense | Dense | MoE | MoE |
| # Act. Params $_{\text{(LLM+VT)}}$ | | - | - | 7.6B+0.7B | 8B+2.6B | 12B+0.4B | 4.1B+0.4B | 2.8B+0.4B |
| # Total Params | | - | - | 8B | 11B | 12B | 28B | 16B |
| College-level | MMMU$_{\text{val}}$ (Pass@1) | 69.1 | 60.0 | 58.6 | 48 | 59.6 | 51.1 | 57.0 |
| | VideoMMMU (Pass@1) | 61.2 | - | 47.4 | 41.8 | 57.2 | 44.4 | 52.6 |
| | MMVU$_{\text{val}}$ (Pass@1) | 67.4 | 61.6 | 50.1 | 44.4 | 57.0 | 52.1 | 52.2 |
| General | MMBench-EN-v1.1 (Acc) | 83.1 | 77.1 | 82.6 | 65.8 | 74.6 | 79.6 | 83.1 |
| | MMStar (Acc) | 64.7 | 54.8 | 63.9 | 49.8 | 56.1 | 55.5 | 61.3 |
| | MMVet (Pass@1) | 69.1 | 66.9 | 67.1 | 57.6 | 64.9 | 60.0 | 66.7 |
| | RealWorldQA (Acc) | 75.4 | 67.1 | 68.5 | 63.3 | 59.1 | 68.4 | 68.1 |
| | AI2D (Acc) | 84.6 | 77.8 | 83.9 | 77.3 | 78.1 | 81.4 | 84.9 |
| Multi-image | BLINK (Acc) | 68.0 | 53.6 | 56.4 | 39.8 | 50.3 | - | 57.3 |
| Math | MathVista (Pass@1) | 63.8 | 52.5 | 68.2 | 47.7 | 56.1 | 62.8 | 68.7 |
| | MathVision (Pass@1) | 30.4 | - | 25.1 | 13.6 | 32.1 | 17.3 | 21.4 |
| OCR | InfoVQA (Acc) | 80.7 | 57.9 | 82.6 | 34.6 | 43.8 | 78.1 | 83.2 |
| | OCRBench (Acc) | 815 | 785 | 864 | 753 | 702 | 811 | 867 |
| OS Agent | ScreenSpot-V2 (Acc) | 18.1 | - | 86.8 | - | - | - | 92.8 |
| | ScreenSpot-Pro (Acc) | 0.8 | - | 29.0 | - | - | - | 34.5 |
| | OSWorld (Pass@1) | 5.03 | - | 2.5 | - | - | - | 8.22 |
| | WindowsAgentArena (Pass@1)† | 9.4 | 2.7 | 3.4 | - | - | - | 10.4 |
| Long Document | MMLongBench-Doc (Acc) | 42.8 | 29.0 | 29.6 | 13.8 | 21.3 | - | 35.1 |
| Long Video | Video-MME (w/o sub. / w/ sub.) | 71.9/77.2 | 64.8/68.9 | 65.1/71.6 | 46.0/49.5 | 58.2/62.1 | - | 67.8/72.6 |
| | MLVU$_{\text{MCQ}}$ (Acc) | 64.6 | 48.1 | 70.2 | 44.4 | 52.3 | - | 74.2 |
| | LongVideoBench$_{\text{val}}$ | 66.7 | 58.2 | 56.0 | 45.5 | 51.5 | - | 64.5 |
| Video Perception | EgoSchema$_{\text{full}}$ | 72.2 | - | 65.0 | 54.3 | 56.9 | 38.5 | 78.5 |
| | VSI-Bench | 34.0 | - | 34.2 | 20.6 | 32.4 | 21.7 | 37.4 |
| | TOMATO | 37.7 | 28.8 | 27.6 | 21.5 | 28.6 | 27.2 | 31.7 |

Table 3: Performance of Kimi-VL against proprietary and open-source efficient VLMs; performance of GPT-4o is also listed in gray for reference. Top and second-best models are in boldface and underline respectively. Some results of competing models are unavailable due to limitation of model ability on specific tasks or model context length.

### 3.2 Instruction Data