## Scaling Laws for Native Multimodal Models

Mustafa Shukor 2

Enrico Fini 1

Victor Guilherme Turrisi da Costa 1

Matthieu Cord 2

Joshua Susskind 1

Alaaeldin El-Nouby 1

1 Apple

2 Sorbonne University

## Abstract

Building general-purpose models that can effectively perceive the world through multimodal signals has been a long-standing goal. Current approaches involve integrating separately pre-trained components, such as connecting vision encoders to LLMs and continuing multimodal training. While such approaches exhibit remarkable sample efficiency, it remains an open question whether such late-fusion architectures are inherently superior. In this work, we revisit the architectural design of native multimodal models (NMMs), those trained from the ground up on all modalities, and conduct an extensive scaling laws study, spanning 457 trained models with different architectures and training mixtures. Our investigation reveals no inherent advantage to late-fusion architectures over early-fusion ones, which do not rely on image encoders or tokenizers. On the contrary, early-fusion exhibits stronger performance at lower parameter counts, is more efficient to train, and is easier to deploy. Motivated by the strong performance of the early-fusion architectures, we show that incorporating Mixture of Experts (MoEs) allows models to learn modality-specific weights, significantly benefiting performance.

## 1. Introduction

Multimodality provides a rich signal for perceiving and understanding the world. Advances in vision [23, 52, 55, 80] and language models [3, 19, 67] have enabled the development of powerful multimodal models that understand language, images, and audio. A common approach involves grafting separately pre-trained unimodal models, such as connecting a vision encoder to the input layer of an LLM [6, 9, 35, 43, 62, 64, 73, 78].

Although this seems like a convenient approach, it remains an open question whether such late-fusion strategies are inherently optimal for understanding multimodal signals. Moreover, with abundant multimodal data available, initializing from unimodal pre-training is potentially detrimental, as it may introduce biases that prevent the model

<details>

<summary>Image 1 Details</summary>

### Visual Description

## Chart: Validation Loss and N/D vs. FLOPs

### Overview

The image presents two line charts comparing the performance of "Early," "Late," and "MoE" models. The top chart shows Validation Loss as a function of FLOPs (floating-point operations, i.e., total training compute), while the bottom chart shows the ratio N/D as a function of FLOPs. Both charts use a logarithmic scale for the x-axis (FLOPs).

### Components/Axes

**Top Chart:**

* **Y-axis:** "Validation Loss" (linear scale, range approximately 2 to 4)

* **X-axis:** "FLOPs" (logarithmic scale, range 10^18 to 10^24)

* **Legend (top-right):**

* Orange dotted line: "Early: L ∝ C^-0.0492"

* Blue dotted line: "Late: L ∝ C^-0.0494"

* Green dotted line: "MoE: L ∝ C^-0.0474"

**Bottom Chart:**

* **Y-axis:** "N/D" (linear scale, range approximately 0 to 4 * 10^-2)

* **X-axis:** "FLOPs" (logarithmic scale, range 10^18 to 10^24)

* **Legend (top-right):**

* Orange dotted line: "Early: N/D ∝ C^0.053"

* Blue dotted line: "Late: N/D ∝ C^0.076"

* Green dotted line: "MoE: N/D ∝ C^-0.312"

### Detailed Analysis

**Top Chart (Validation Loss vs. FLOPs):**

* **Early (Orange):** The validation loss decreases as FLOPs increase. At 10^18 FLOPs, the validation loss is approximately 3.7. At 10^24 FLOPs, the validation loss is approximately 2.0.

* **Late (Blue):** The validation loss decreases as FLOPs increase. At 10^18 FLOPs, the validation loss is approximately 3.6. At 10^24 FLOPs, the validation loss is approximately 2.0.

* **MoE (Green):** The validation loss decreases as FLOPs increase. At 10^18 FLOPs, the validation loss is approximately 3.5. At 10^24 FLOPs, the validation loss is approximately 1.9.

**Bottom Chart (N/D vs. FLOPs):**

* **Early (Orange):** The N/D ratio increases as FLOPs increase. At 10^18 FLOPs, the N/D ratio is approximately 0.012. At 10^24 FLOPs, the N/D ratio is approximately 0.018.

* **Late (Blue):** The N/D ratio increases as FLOPs increase. At 10^18 FLOPs, the N/D ratio is approximately 0.014. At 10^24 FLOPs, the N/D ratio is approximately 0.028.

* **MoE (Green):** The N/D ratio decreases as FLOPs increase. At 10^18 FLOPs, the N/D ratio is approximately 0.048. At 10^24 FLOPs, the N/D ratio is approximately 0.001.

### Key Observations

* In the top chart, all three models ("Early," "Late," and "MoE") show a decrease in validation loss as FLOPs increase, indicating improved performance with more computation.

* In the bottom chart, the "Early" and "Late" models show an increase in the N/D ratio as FLOPs increase, while the "MoE" model shows a significant decrease in the N/D ratio as FLOPs increase.

* The "MoE" model has the lowest validation loss at higher FLOPs.

### Interpretation

The charts suggest that increasing FLOPs generally leads to a decrease in validation loss for all three models, indicating better model performance. However, the behavior of the N/D ratio differs significantly between the "MoE" model and the "Early" and "Late" models. The decreasing N/D ratio for the "MoE" model as FLOPs increase could indicate a more efficient use of computational resources or a different scaling behavior compared to the other models. The "MoE" model appears to be the most effective in reducing validation loss at higher FLOPs, suggesting it may be a more scalable architecture for this particular task. The relationships L ∝ C^-0.0492, L ∝ C^-0.0494, L ∝ C^-0.0474, N/D ∝ C^0.053, N/D ∝ C^0.076, and N/D ∝ C^-0.312 describe the power-law scaling of Validation Loss (L) and N/D with respect to FLOPs (C) for each model.

</details>


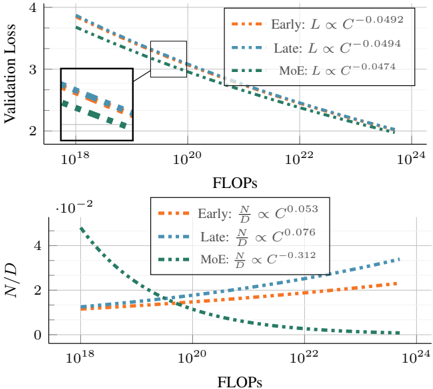

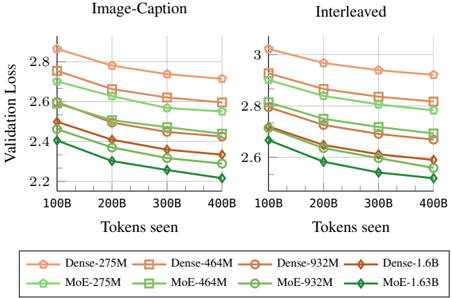

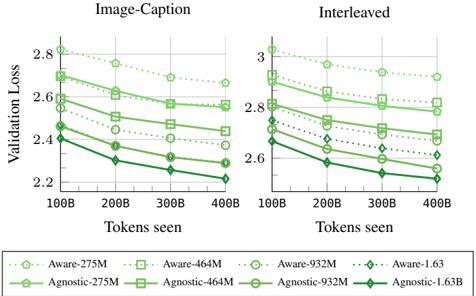

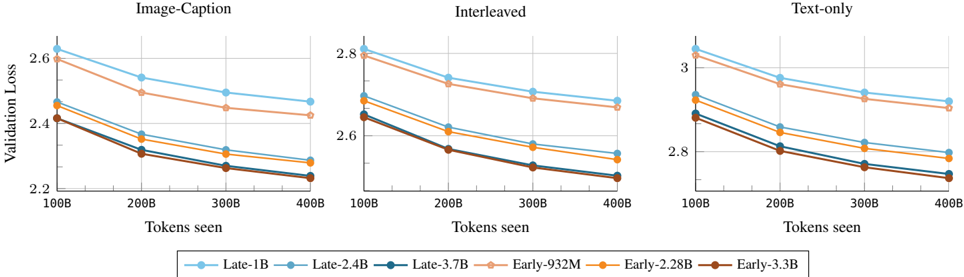

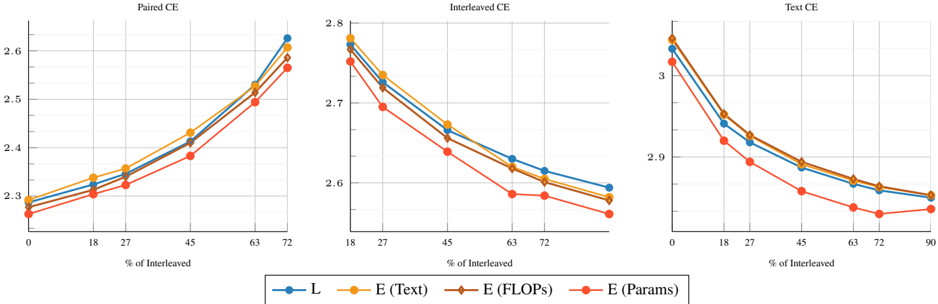

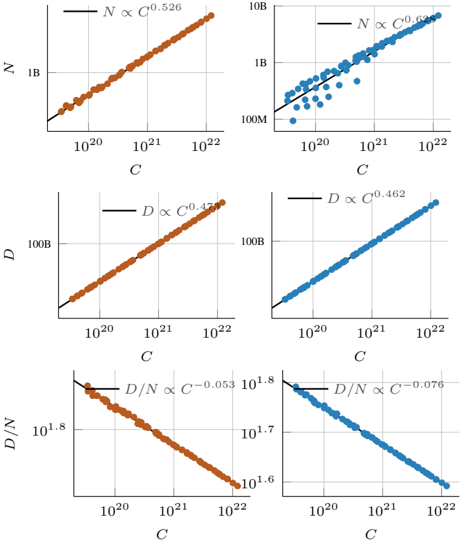

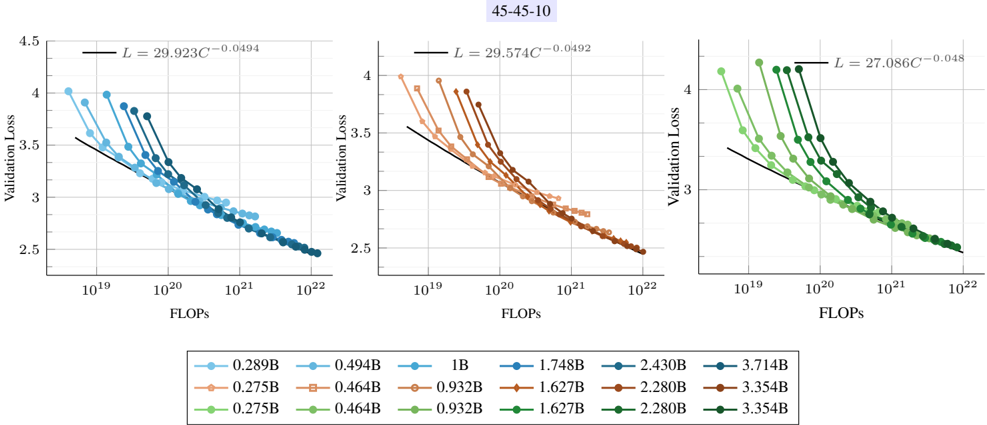

Figure 1. Scaling properties of Native Multimodal Models. Based on the scaling laws study in § 3.1, we observe: (1) early- and late-fusion models achieve similar validation loss L when trained with the same compute budget C (FLOPs); (2) this performance is reached via a different trade-off between parameters N and number of training tokens D, where early-fusion models require fewer parameters; (3) sparse early-fusion models achieve lower loss and require more training tokens for a given FLOP budget.

from fully leveraging cross-modality co-dependencies. An additional challenge is scaling such systems: each component (e.g., vision encoder, LLM) has its own set of hyperparameters, pre-training data mixtures, and scaling properties with respect to the amount of data and compute applied. A more flexible architecture might allow the model to dynamically allocate its capacity across modalities, simplifying scaling efforts.

In this work, we focus on the scaling properties of native multimodal models trained from the ground up on multimodal data. We first investigate whether the commonly adopted late-fusion architectures hold an intrinsic advantage by comparing them to early-fusion models, which process raw multimodal inputs without relying on dedicated vision encoders. We conduct scaling experiments on early and late fusion architectures, deriving scaling laws to predict their performance and compute-optimal configurations. Our findings indicate that late fusion offers no inherent advantage when trained from scratch. Instead, early-fusion models are more efficient and easier to scale. Furthermore, we observe that native multimodal models follow scaling laws similar to those of LLMs [26], albeit with slight variations in scaling coefficients across modalities and datasets. Our results suggest that model parameters and training tokens should be scaled roughly equally for optimal performance. Moreover, we find that different multimodal training mixtures exhibit similar overall trends, indicating that our findings are likely to generalize to a broader range of settings.

While our findings favor early fusion, multimodal data is inherently heterogeneous, suggesting that some degree of parameter specialization may still offer benefits. To investigate this, we explore leveraging Mixture of Experts (MoEs) [59], a technique that enables the model to dynamically allocate specialized parameters across modalities in a symmetric and parallel manner, in contrast to late-fusion models, which are asymmetric and process data sequentially. Training native multimodal models with MoEs results in significantly improved performance and, therefore, faster convergence. Our scaling laws for MoEs suggest that scaling the number of training tokens is more important than scaling the number of active parameters. This unbalanced scaling differs from what is observed for dense models, due to the higher number of total parameters in sparse models. In addition, our analysis reveals that experts tend to specialize in different modalities, with this specialization being particularly prominent in the early and last layers.

## 1.1. Summary of our findings

Our findings can be summarized as follows:

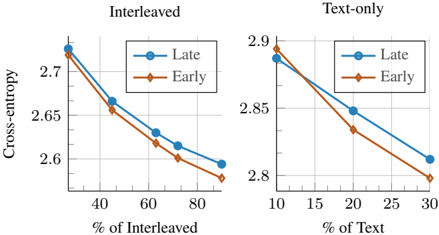

Native Early and Late fusion perform on par: Early-fusion models trained from scratch perform on par with their late-fusion counterparts, with a slight advantage to early-fusion models for low compute budgets (Figure 3). Furthermore, our scaling laws study indicates that the compute-optimal models for early and late fusion perform similarly as the compute budget increases (Figure 1 Top).

NMMs scale similarly to LLMs: The scaling laws of native multimodal models are similar to those of text-only LLMs, with slightly varying scaling exponents depending on the target data type and training mixture (Table 2).

Late-fusion requires more parameters: Compute-optimal late-fusion models require a higher parameters-to-data ratio when compared to early-fusion (Figure 1 Bottom).

Sparsity significantly benefits early-fusion NMMs: Sparse NMMs exhibit significant improvements compared to their dense counterparts at the same inference cost (Figure 10). Furthermore, they implicitly learn modality-specific weights when trained with sparsity (Figure 12). In

Table 1. Definitions of the expressions used throughout the paper.

| Expression | Definition |
|------------|------------|
| N | Number of parameters in the multimodal decoder. For MoEs this refers to the active parameters only. |
| D | Total number of multimodal tokens. |
| N_v | Number of parameters in the vision-specific encoder. Only exists in late-fusion architectures. |
| D_v | Number of vision-only tokens. |
| C | Total number of FLOPs, estimated as C = 6ND for early-fusion and C = 6(N_v D_v + ND) for late-fusion. |
| L | Validation loss measured as the average over interleaved image-text, image-caption, and text-only data mixtures. |

addition, compute-optimal models rely more on scaling the number of training tokens than the number of active parameters as the compute budget grows (Figure 1 Bottom).

Modality-agnostic routing beats Modality-aware routing for Sparse NMMs: Training sparse mixture of experts with modality-agnostic routing consistently outperforms models with modality-aware routing (Figure 11).

## 2. Preliminaries

## 2.1. Definitions

Native Multimodal Models (NMMs): Models that are trained from scratch on all modalities simultaneously without relying on pre-trained LLMs or vision encoders. Our focus is on the representative image and text modalities, where the model processes both text and images as input and generates text as output.

Early fusion: Enabling multimodal interaction from the beginning, using almost no modality-specific parameters (e.g., only a linear layer to patchify images). Using a single transformer model, this approach processes raw multimodal input (tokenized text and continuous image patches) with no image discretization. In this paper, we refer to the main transformer as the decoder.

Late fusion: Delaying the multimodal interaction to deeper layers, typically after separate unimodal components have processed each modality independently (e.g., a vision encoder connected to a decoder).

Modality-agnostic routing: In sparse mixture-of-experts, modality-agnostic routing refers to relying on a learned router module that is trained jointly with the model.

Modality-aware routing: Routing based on pre-defined rules, such as the modality type of each token (e.g., vision tokens, text tokens).
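To make the two routing definitions concrete, the following is a minimal Python sketch. Everything in it is illustrative: the function names, the top-1 selection, the two-expert setup, and the toy router scores are assumptions made for exposition and are not taken from the experiments described here.

```python
from typing import Dict, List, Sequence


def modality_aware_route(modalities: Sequence[str],
                         expert_of: Dict[str, int]) -> List[int]:
    """Rule-based routing: the expert is fixed by the token's modality."""
    return [expert_of[m] for m in modalities]


def modality_agnostic_route(router_scores: Sequence[Sequence[float]]) -> List[int]:
    """Learned routing: pick the expert with the highest router score.

    In a real model the scores come from a router trained jointly with the
    network; here they are hard-coded toy values (an assumption).
    """
    return [max(range(len(s)), key=lambda e: s[e]) for s in router_scores]


tokens = ["image", "image", "text", "text"]
aware = modality_aware_route(tokens, {"image": 0, "text": 1})
agnostic = modality_agnostic_route(
    [[0.9, 0.1], [0.2, 0.8], [0.3, 0.7], [0.6, 0.4]]
)
```

With the rule-based variant, the assignment depends only on the modality label; with the learned variant, two tokens of the same modality can end up on different experts.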

## 2.2. Scaling Laws

We aim to understand the scaling properties of NMMs and how different architectural choices influence trade-offs. To this end, we analyze our models within the scaling laws framework proposed by Hoffmann et al. [26] and Kaplan et al. [31]. We compute FLOPs based on the total number of parameters, using the approximation C = 6ND, as adopted in prior work [2, 26]. However, we modify this estimation to suit our setup: for late-fusion models, FLOPs is computed

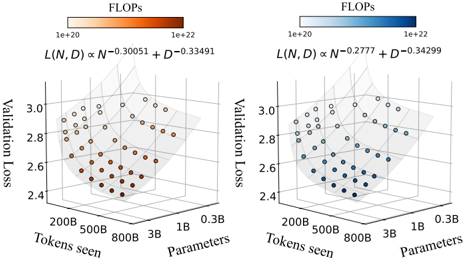

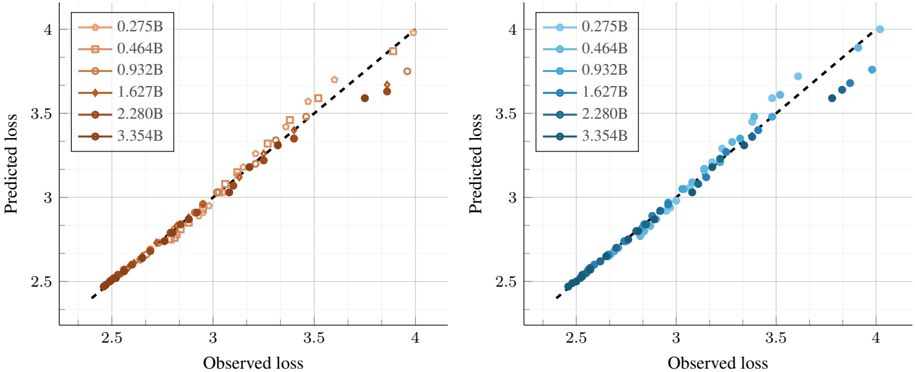

Figure 2. Scaling laws for early-fusion and late-fusion native multimodal models. Each point represents a model (300M to 3B parameters) trained on a varying number of tokens (250M to 400B). We report the average cross-entropy loss on the validation sets of interleaved (Obelics), image-caption (HQITP), and text-only data (DCLM).

<details>

<summary>Image 2 Details</summary>

### Visual Description

## 3D Scatter Plots: Validation Loss vs. Tokens Seen and Parameters

### Overview

The image presents two 3D scatter plots, each visualizing the relationship between validation loss, tokens seen, and the number of parameters. The plots differ in the exponents used in the formula L(N, D) ∝ N^exponent + D^exponent, and the color of the data points represents the FLOPs (floating-point operations, i.e., total training compute).

### Components/Axes

**Left Plot:**

* **X-axis:** Tokens seen, labeled with values 200B, 500B, 800B, and 3B.

* **Y-axis:** Validation Loss, ranging from 2.4 to 3.0.

* **Z-axis:** Parameters, labeled with values 0.3B, 1B, and 3B.

* **Color Legend (Top-Left):** FLOPs, ranging from 1e+20 (light orange) to 1e+22 (dark orange).

* **Formula:** L(N, D) ∝ N^-0.30051 + D^-0.33491

**Right Plot:**

* **X-axis:** Tokens seen, labeled with values 200B, 500B, 800B, and 3B.

* **Y-axis:** Validation Loss, ranging from 2.4 to 3.0.

* **Z-axis:** Parameters, labeled with values 0.3B, 1B, and 3B.

* **Color Legend (Top-Right):** FLOPs, ranging from 1e+20 (light blue) to 1e+22 (dark blue).

* **Formula:** L(N, D) ∝ N^-0.2777 + D^-0.34299

### Detailed Analysis

**Left Plot (Orange):**

* **Trend:** The validation loss generally decreases as both tokens seen and parameters increase, forming a curved surface.

* **Data Points:**

* At 200B tokens seen and 0.3B parameters, validation loss ranges from approximately 2.8 to 3.0 (light orange, lower FLOPs).

* At 3B tokens seen and 3B parameters, validation loss is approximately 2.4 to 2.6 (dark orange, higher FLOPs).

* The lowest validation loss values (darkest orange) are clustered towards the higher tokens seen and higher parameter values.

**Right Plot (Blue):**

* **Trend:** Similar to the left plot, the validation loss decreases as both tokens seen and parameters increase.

* **Data Points:**

* At 200B tokens seen and 0.3B parameters, validation loss ranges from approximately 2.8 to 3.0 (light blue, lower FLOPs).

* At 3B tokens seen and 3B parameters, validation loss is approximately 2.4 to 2.6 (dark blue, higher FLOPs).

* The lowest validation loss values (darkest blue) are clustered towards the higher tokens seen and higher parameter values.

### Key Observations

* Both plots show a similar trend: increasing tokens seen and parameters leads to lower validation loss.

* The color gradient indicates that higher FLOPs are generally associated with lower validation loss, especially at higher tokens seen and parameter values.

* The exponents in the formulas for L(N, D) are slightly different between the two plots, which may contribute to subtle differences in the shape of the curved surface and the distribution of data points.

### Interpretation

The plots demonstrate the relationship between model size (parameters), training data size (tokens seen), computational cost (FLOPs), and model performance (validation loss). The data suggests that increasing both the size of the model and the amount of training data generally leads to improved performance, as indicated by the lower validation loss. The color gradient further suggests that achieving lower validation loss often requires higher computational cost (FLOPs). The difference in exponents between the two plots could represent different optimization strategies or model architectures, leading to slightly different trade-offs between model size, data size, and computational cost.

</details>

as 6(N_v D_v + ND). We consider a setup where, given a compute budget C, our goal is to predict the model's final performance, as well as determine the optimal number of parameters or number of training tokens. Consistent with prior studies on LLM scaling [26], we assume a power-law relationship between the final model loss and both model size (N) and training tokens (D):

$$
L = E + \frac{A}{N^{\alpha}} + \frac{B}{D^{\beta}} \tag{1}
$$

Here, E represents the lowest achievable loss on the dataset, while A/N^α captures the effect of increasing the number of parameters, where a larger model leads to lower loss, with the rate of improvement governed by α. Similarly, B/D^β accounts for the benefits of a higher number of tokens, with β determining the rate of improvement. Additionally, we assume a linear relationship between compute budget (FLOPs) and both N and D (C ∝ ND). This further leads to power-law relationships detailed in Appendix C.7.
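The compute estimates and the parametric loss above can be written down in a few lines of Python. This is a sketch for illustration only: the function names are hypothetical, the values of E, α, and β are the AVG early-fusion entries of Table 2, and the coefficients A and B as well as the vision-encoder sizes are placeholder values, since they are not reported in this section.

```python
import math


def flops_early(n_params: float, n_tokens: float) -> float:
    """C = 6 N D for early-fusion models."""
    return 6.0 * n_params * n_tokens


def flops_late(n_params: float, n_tokens: float,
               n_vision_params: float, n_vision_tokens: float) -> float:
    """C = 6 (N_v D_v + N D) for late-fusion models."""
    return 6.0 * (n_vision_params * n_vision_tokens + n_params * n_tokens)


def parametric_loss(n_params: float, n_tokens: float, E: float,
                    A: float, B: float, alpha: float, beta: float) -> float:
    """L = E + A / N^alpha + B / D^beta."""
    return E + A / n_params ** alpha + B / n_tokens ** beta


c_early = flops_early(1e9, 1e11)             # 1B parameters, 100B tokens
c_late = flops_late(1e9, 1e11, 3e8, 5e10)    # vision encoder sizes are made up

# A and B below are placeholders, not fitted values.
fit = dict(E=1.904, A=400.0, B=400.0, alpha=0.301, beta=0.335)
loss_small = parametric_loss(1e9, 1e11, **fit)
loss_large = parametric_loss(2e9, 1e11, **fit)
```

Whatever positive values A and B take, the loss decreases monotonically in both N and D and approaches E from above.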

## 2.3. Experimental setup

Our models are based on the autoregressive transformer architecture [71] with SwiGLU FFNs [58] and QK-Norm [17] following Li et al. [39]. In early-fusion models, image patches are linearly projected to match the text token dimension, while late-fusion follows the CLIP architecture [55]. We adopt causal attention for text tokens and bidirectional attention for image tokens, which we found to work better. Training is conducted on a mixture of public and private multimodal datasets, including DCLM [39], Obelics [34], DFN [21], COYO [11], and a private collection of High-Quality Image-Text Pairs (HQITP). Images are resized to 224×224 resolution with a 14×14 patch size. We use a context length of 1k for the multimodal sequences. For training efficiency, we train our models with bfloat16, Fully Sharded Data Parallel (FSDP) [82], activation checkpointing, and gradient accumulation. We also use sequence packing for the image captioning dataset to reduce the amount of padded tokens. Similar to previous works [2, 5, 26], we evaluate performance on held-out subsets of interleaved (Obelics), image-caption (HQITP), and text-only data (DCLM). Further implementation details are provided in Appendix A.

Table 2. Scaling laws for native multimodal models. We report the scaling laws results for early and late fusion models. We fit the scaling laws for different target data types as well as their average loss (AVG). E, α, and β are the parameters of L = E + A/N^α + B/D^β.

| Model | Data | E | α | β | a (N ∝ C^a) | b (D ∝ C^b) | c (L ∝ C^c) | d (D ∝ N^d) |
|---------------------------|---------------|-------|--------|--------|--------|--------|---------|--------|
| GPT3 [10] | Text | - | - | - | - | - | -0.048 | - |
| Chinchilla [26] | Text | 1.693 | 0.339 | 0.285 | 0.46 | 0.54 | - | - |
| NMM (early-fusion) | Text | 2.222 | 0.3084 | 0.3375 | 0.5246 | 0.4774 | -0.0420 | 0.9085 |
| | Image-Caption | 1.569 | 0.3111 | 0.3386 | 0.5203 | 0.4785 | -0.0610 | 0.9187 |
| | Interleaved | 1.966 | 0.2971 | 0.338 | 0.5315 | 0.4680 | -0.0459 | 0.8791 |
| | AVG | 1.904 | 0.301 | 0.335 | 0.5262 | 0.473 | -0.0492 | 0.8987 |
| NMM (late-fusion) | AVG | 1.891 | 0.2903 | 0.3383 | 0.6358 | 0.4619 | -0.0494 | 0.6732 |
| Sparse NMM (early-fusion) | AVG | 2.158 | 0.710 | 0.372 | 0.361 | 0.656 | -0.047 | 1.797 |
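Two details of the experimental setup lend themselves to a short illustration: the number of image tokens implied by the resolution and patch size, and the mixed causal/bidirectional attention pattern. The Python sketch below is only one plausible reading. In particular, the rule that patches attend bidirectionally only within their own image, the `segment_ids` labelling convention, and the function names are assumptions, not a description of the actual implementation.

```python
from typing import List


def num_patches(image_size: int = 224, patch_size: int = 14) -> int:
    """Number of non-overlapping square patches in a square image."""
    assert image_size % patch_size == 0
    per_side = image_size // patch_size
    return per_side * per_side


def attention_mask(segment_ids: List[int]) -> List[List[bool]]:
    """mask[i][j] is True when position i may attend to position j.

    segment_ids[i] is 0 for a text token and a positive image index for an
    image patch (a labelling convention assumed for this sketch).
    """
    n = len(segment_ids)
    mask = [[j <= i for j in range(n)] for i in range(n)]  # causal baseline
    for i in range(n):
        for j in range(i + 1, n):
            # Patches of the same image also see each other's later positions.
            if segment_ids[i] > 0 and segment_ids[i] == segment_ids[j]:
                mask[i][j] = True
    return mask


patches = num_patches()                  # (224 / 14) ** 2 = 256 patches per image
mask = attention_mask([1, 1, 1, 0, 0])   # three patches of one image, two text tokens
```

In this toy sequence the first patch can attend to the third one, while the first text token cannot attend to the text token that follows it.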

## 3. Scaling native multimodal models

In this section, we present a scaling laws study of native multimodal models, examining various architectural choices (§ 3.1), exploring different data mixtures (§ 3.2), analyzing the practical trade-offs between late- and early-fusion NMMs, and comparing the performance of native pre-training and continual pre-training of NMMs (§ 3.3).

Setup. We train models ranging from 0.3B to 4B active parameters, scaling the width while keeping the depth constant. For smaller training token budgets, we reduce the warm-up phase to 1K steps, while maintaining 5K steps for larger budgets. Following Hägele et al. [25], models are trained with a constant learning rate, followed by a cool-down phase using an inverse square root scheduler. The cool-down phase spans 20% of the total steps spent at the constant learning rate. To estimate the scaling coefficients in Eq 1, we apply the L-BFGS algorithm [51] and Huber loss [28] (with δ = 10^-3), performing a grid search over initialization ranges.
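The learning-rate schedule described in the setup can be sketched as follows. The exact cool-down formula is not spelled out here, so the sketch assumes a (1 - sqrt) decay to zero as one reading of "inverse square root"; the linear warm-up shape, the function name, and the peak learning rate used in the example are likewise assumptions.

```python
import math


def learning_rate(step: int, peak_lr: float, warmup_steps: int,
                  constant_steps: int, cooldown_fraction: float = 0.2) -> float:
    """Warm-up, constant phase, then a (1 - sqrt) cool-down to zero."""
    # The cool-down lasts a fixed fraction of the constant phase (20% here).
    cooldown_steps = max(1, int(round(cooldown_fraction * constant_steps)))
    if step < warmup_steps:
        # Linear warm-up (assumed shape).
        return peak_lr * (step + 1) / warmup_steps
    if step < warmup_steps + constant_steps:
        return peak_lr
    # Progress through the cool-down, clipped to [0, 1].
    t = min(1.0, (step - warmup_steps - constant_steps) / cooldown_steps)
    return peak_lr * (1.0 - math.sqrt(t))


# Example: 1K warm-up steps, 10K constant steps, hence 2K cool-down steps.
lr_constant = learning_rate(5_000, 1e-3, 1_000, 10_000)
lr_quarter = learning_rate(11_500, 1e-3, 1_000, 10_000)   # 25% into the cool-down
lr_final = learning_rate(13_000, 1e-3, 1_000, 10_000)
```

A practical consequence of a constant phase followed by a short cool-down is that one long run can be branched at several points to obtain models for different token budgets, instead of retraining from scratch for each budget.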

## 3.1. Scaling laws of NMMs

Scaling laws for early-fusion and late-fusion models. Figure 2 (left) presents the final loss averaged across interleaved, image-caption, and text datasets for early-fusion NMMs. The lowest-loss frontier follows a power law as a function of FLOPs. Fitting the power law yields the expression L ∝ C^-0.049, indicating the rate of improvement with increasing compute. When analyzing the scaling laws per data type (e.g., image-caption, interleaved, text), we observe that the exponent varies (Table 2). For instance, the model achieves a higher rate of improvement for image-

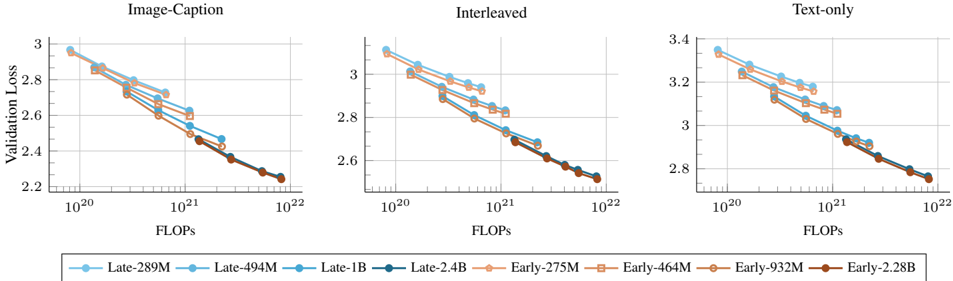

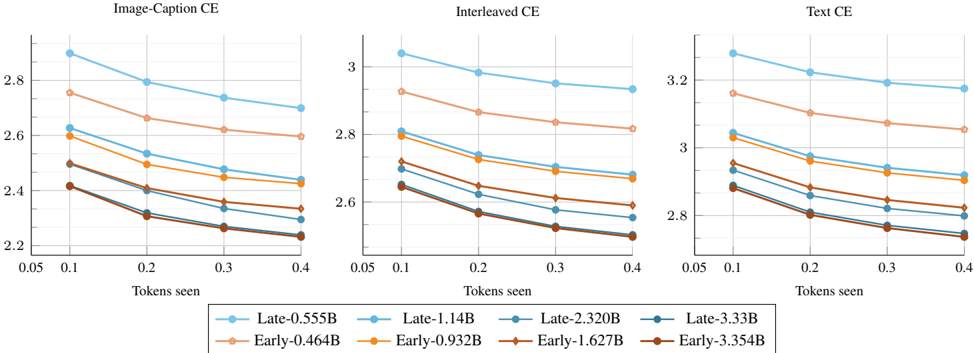

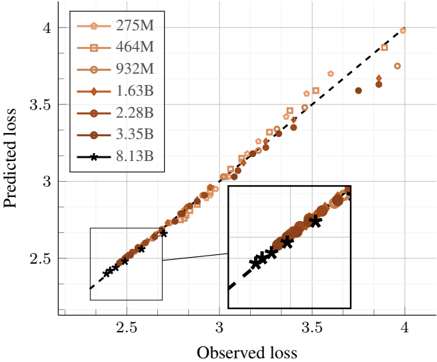

Figure 3. Early vs late fusion: scaling training FLOPs. We compare early and late fusion models when scaling both the number of model parameters and the number of training tokens. Overall, early fusion shows a slight advantage, especially at smaller model sizes, and the gap decreases when scaling the number of parameters N .

<details>

<summary>Image 3 Details</summary>

### Visual Description

## Line Charts: Validation Loss vs. FLOPs for Different Input Types

### Overview

The image presents three line charts comparing the validation loss of different models against the number of floating-point operations (FLOPs) used during training. The charts are titled "Image-Caption", "Interleaved", and "Text-only", representing different input types used for training. Each chart displays multiple lines, each representing a different model size, with the model sizes ranging from 275M to 2.4B parameters. The x-axis represents FLOPs on a logarithmic scale, and the y-axis represents validation loss.

### Components/Axes

* **Titles:**

* Left Chart: "Image-Caption"

* Middle Chart: "Interleaved"

* Right Chart: "Text-only"

* **X-axis (Horizontal):**

* Label: "FLOPs"

* Scale: Logarithmic, with markers at 10^20, 10^21, and 10^22.

* **Y-axis (Vertical):**

* Label: "Validation Loss"

* Scale: Linear.

* Left Chart: Ranges from 2.2 to 3.0, with tick marks at intervals of 0.2.

* Middle Chart: Ranges from 2.6 to 3.4, with tick marks at intervals of 0.2.

* Right Chart: Ranges from 2.8 to 3.4, with tick marks at intervals of 0.2.

* **Legend (Bottom):**

* "Late-289M" (light blue, circle marker)

* "Late-494M" (light blue, square marker)

* "Late-1B" (light blue, no marker)

* "Late-2.4B" (dark blue, circle marker)

* "Early-275M" (light orange, circle marker)

* "Early-464M" (light orange, square marker)

* "Early-932M" (light orange, no marker)

* "Early-2.28B" (brown, circle marker)

### Detailed Analysis

**General Trend:** All lines in all three charts show a downward trend, indicating that validation loss decreases as the number of FLOPs increases. This suggests that the models generally improve with more training.

**Image-Caption Chart:**

* **Late-289M (light blue, circle):** Starts at approximately 2.9 and decreases to around 2.3.

* **Late-494M (light blue, square):** Starts at approximately 2.9 and decreases to around 2.3.

* **Late-1B (light blue, no marker):** Starts at approximately 2.8 and decreases to around 2.3.

* **Late-2.4B (dark blue, circle):** Starts at approximately 2.7 and decreases to around 2.3.

* **Early-275M (light orange, circle):** Starts at approximately 2.9 and decreases to around 2.3.

* **Early-464M (light orange, square):** Starts at approximately 2.8 and decreases to around 2.3.

* **Early-932M (light orange, no marker):** Starts at approximately 2.7 and decreases to around 2.3.

* **Early-2.28B (brown, circle):** Starts at approximately 2.7 and decreases to around 2.2.

**Interleaved Chart:**

* **Late-289M (light blue, circle):** Starts at approximately 3.1 and decreases to around 2.6.

* **Late-494M (light blue, square):** Starts at approximately 3.0 and decreases to around 2.6.

* **Late-1B (light blue, no marker):** Starts at approximately 3.0 and decreases to around 2.6.

* **Late-2.4B (dark blue, circle):** Starts at approximately 2.9 and decreases to around 2.6.

* **Early-275M (light orange, circle):** Starts at approximately 3.1 and decreases to around 2.6.

* **Early-464M (light orange, square):** Starts at approximately 3.0 and decreases to around 2.6.

* **Early-932M (light orange, no marker):** Starts at approximately 2.9 and decreases to around 2.6.

* **Early-2.28B (brown, circle):** Starts at approximately 2.9 and decreases to around 2.5.

**Text-only Chart:**

* **Late-289M (light blue, circle):** Starts at approximately 3.3 and decreases to around 2.9.

* **Late-494M (light blue, square):** Starts at approximately 3.3 and decreases to around 2.9.

* **Late-1B (light blue, no marker):** Starts at approximately 3.2 and decreases to around 2.9.

* **Late-2.4B (dark blue, circle):** Starts at approximately 3.1 and decreases to around 2.9.

* **Early-275M (light orange, circle):** Starts at approximately 3.3 and decreases to around 2.9.

* **Early-464M (light orange, square):** Starts at approximately 3.2 and decreases to around 2.9.

* **Early-932M (light orange, no marker):** Starts at approximately 3.1 and decreases to around 2.9.

* **Early-2.28B (brown, circle):** Starts at approximately 3.0 and decreases to around 2.8.

### Key Observations

* The "Text-only" chart generally shows higher validation loss values than the "Image-Caption" and "Interleaved" charts, i.e., the loss is higher on the text-only validation data than on the two multimodal validation sets.

* The "Image-Caption" chart shows the lowest validation loss values of the three data types.

* The "Early-2.28B" model (brown line) consistently achieves the lowest validation loss across all three charts; it is the largest early-fusion model shown.

* The validation loss decreases more rapidly in the beginning and then plateaus as FLOPs increase, indicating diminishing returns from additional training.

### Interpretation

The data suggests that validation loss is lowest on image-caption data and highest on text-only data. Larger models (like "Early-2.28B") tend to achieve lower validation loss. The diminishing returns observed with increasing FLOPs suggest that there is a point beyond which additional training provides minimal improvement in validation loss. "Early" and "Late" denote early-fusion and late-fusion architectures rather than stages of training; the early-fusion models generally reach slightly lower loss, especially the largest one.

</details>

caption data (L ∝ C^-0.061) when compared to interleaved documents (L ∝ C^-0.046).

To model the loss as a function of the number of training tokens D and model parameters N, we fit the parametric function in Eq 1, obtaining scaling exponents α = 0.301 and β = 0.335. These describe the rates of improvement when scaling the number of model parameters and training tokens, respectively. Assuming a linear relationship between compute, N, and D (i.e., C ∝ ND), we derive the law relating model parameters to the compute budget (see Appendix C for details). Specifically, for a given compute budget C, we compute the corresponding model size N at logarithmically spaced D values and determine N_opt, the parameter count that minimizes loss. Repeating this across different FLOPs values produces a dataset of (C, N_opt) pairs, to which we fit a power law predicting the compute-optimal model size as a function of compute: N* ∝ C^0.526.
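The sweep-and-fit procedure just described can be reproduced in miniature. The Python sketch below assumes C = 6ND, uses the exponents α = 0.301 and β = 0.335 quoted above, and sets A = B = 1 as placeholders because the fitted coefficients are not given in this section (they shift N_opt by a constant factor but do not change the fitted exponent). The grid bounds and function names are hypothetical choices.

```python
import math

ALPHA, BETA = 0.301, 0.335   # exponents quoted in the text
A, B = 1.0, 1.0              # placeholders: fitted values are not given here


def optimal_params(compute: float, num_grid: int = 6001) -> float:
    """Return N_opt for one compute budget by sweeping log-spaced D values."""
    best_n, best_loss = 0.0, float("inf")
    for i in range(num_grid):
        d = 10.0 ** (7.0 + 6.0 * i / (num_grid - 1))   # D from 1e7 to 1e13
        n = compute / (6.0 * d)                         # from C = 6 N D
        loss = A / n ** ALPHA + B / d ** BETA           # E is constant, so omitted
        if loss < best_loss:
            best_n, best_loss = n, loss
    return best_n


def fit_exponent(xs, ys) -> float:
    """Least-squares slope of log(y) against log(x)."""
    lx = [math.log(x) for x in xs]
    ly = [math.log(y) for y in ys]
    mx, my = sum(lx) / len(lx), sum(ly) / len(ly)
    num = sum((u - mx) * (v - my) for u, v in zip(lx, ly))
    den = sum((u - mx) ** 2 for u in lx)
    return num / den


budgets = [10.0 ** e for e in range(18, 25)]
a_fit = fit_exponent(budgets, [optimal_params(c) for c in budgets])
closed_form = BETA / (ALPHA + BETA)   # analytic exponent under C = 6 N D
```

Under these assumptions the fitted slope agrees with the closed-form value β/(α + β) ≈ 0.527, which is close to the reported exponent of 0.526.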

Similarly, we fit power laws to estimate the compute-optimal training dataset size as a function of compute and model size:

$$
D^{*} \propto C^{0.473}, \qquad D^{*} \propto N^{0.899}
$$

These relationships allow practitioners to determine the optimal model and dataset size given a fixed compute budget. When analyzing by data type, we find that interleaved data benefits more from larger models (a = 0.532) compared to image-caption data (a = 0.520), whereas the opposite trend holds for training tokens.
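As a worked example of how these power laws can be used, the short Python sketch below converts a change in compute budget into the implied change in compute-optimal model and dataset size. The exponents are the AVG early-fusion values from Table 2; the helper name is hypothetical, and because the proportionality constants are not reported here, only relative growth can be computed, not absolute sizes.

```python
def growth_factor(compute_multiplier: float, exponent: float) -> float:
    """Factor by which a quantity X ∝ C^exponent grows when C is multiplied."""
    return compute_multiplier ** exponent


# Exponents for the average loss of early-fusion models (Table 2).
a, b = 0.526, 0.473
params_growth = growth_factor(10.0, a)   # growth of N* for 10x more compute
tokens_growth = growth_factor(10.0, b)   # growth of D* for 10x more compute
```

With these exponents, a tenfold increase in compute corresponds to roughly 3.4 times more parameters and roughly 3.0 times more training tokens, so the two are scaled at nearly the same rate.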

We conduct a similar study on late-fusion models in Figure 2 (right) and observe comparable scaling behaviors. In particular, the loss scaling exponent ( c = -0 . 0494 ) is nearly identical to that of early fusion ( c = -0 . 0492 ). This trend is evident in Figure 3, where early fusion outperforms late fusion at smaller model scales, while both architectures converge to similar performance at larger model sizes. We also observe similar trends when varying late-fusion con-

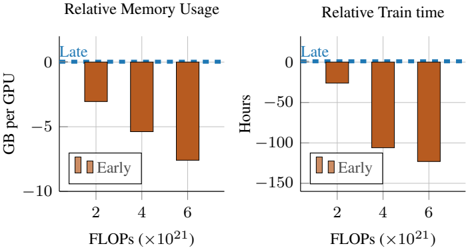

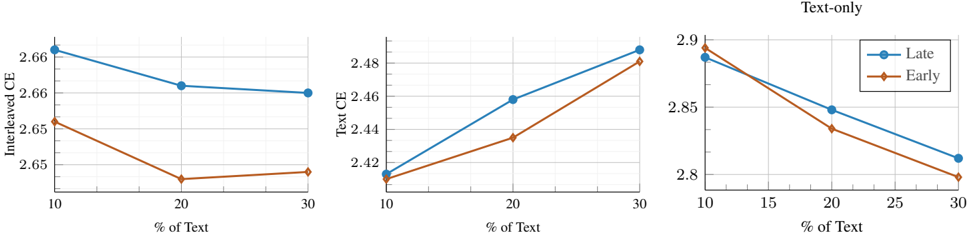

Figure 4. Early vs late: pretraining efficiency. Early-fusion is faster to train and consumes less memory. Models are trained on 16 H100 GPUs for 160k steps (300B tokens).

<details>

<summary>Image 4 Details</summary>

### Visual Description

## Bar Charts: Relative Memory Usage and Relative Train Time

### Overview

The image contains two bar charts comparing "Early" and "Late" training strategies. The left chart shows "Relative Memory Usage" in GB per GPU, while the right chart shows "Relative Train time" in hours. Both charts have FLOPs (x10^21) on the x-axis.

### Components/Axes

**Left Chart: Relative Memory Usage**

* **Title:** Relative Memory Usage

* **Y-axis:** GB per GPU

* Scale: -10 to 0

* Markers: -10, -5, 0

* **X-axis:** FLOPs (x10^21)

* Categories: 2, 4, 6

* **Legend:**

* "Early": Brown bars

* **Horizontal Line:** Dashed blue line at 0, labeled "Late"

**Right Chart: Relative Train time**

* **Title:** Relative Train time

* **Y-axis:** Hours

* Scale: -150 to 0

* Markers: -150, -100, -50, 0

* **X-axis:** FLOPs (x10^21)

* Categories: 2, 4, 6

* **Legend:**

* "Early": Brown bars

* **Horizontal Line:** Dashed blue line at 0, labeled "Late"

### Detailed Analysis

**Left Chart: Relative Memory Usage**

* **Early (Brown Bars):**

* At 2 FLOPs: Approximately -3 GB per GPU

* At 4 FLOPs: Approximately -5.5 GB per GPU

* At 6 FLOPs: Approximately -8 GB per GPU

* Trend: Memory usage decreases (becomes more negative) as FLOPs increase.

**Right Chart: Relative Train time**

* **Early (Brown Bars):**

* At 2 FLOPs: Approximately -30 GB per GPU

* At 4 FLOPs: Approximately -105 GB per GPU

* At 6 FLOPs: Approximately -130 GB per GPU

* Trend: Train time decreases (becomes more negative) as FLOPs increase.

### Key Observations

* In both charts, the "Early" training strategy consistently uses less memory and takes less time than the "Late" strategy (represented by the 0 line).

* The difference between "Early" and "Late" becomes more pronounced as FLOPs increase.

### Interpretation

The charts suggest that the "Early" training strategy is more efficient in terms of both memory usage and training time compared to the "Late" strategy. The efficiency gain of the "Early" strategy increases with higher computational demands (FLOPs). This could be due to the "Early" strategy optimizing resource allocation or convergence speed as the complexity of the training task grows. The data implies that for computationally intensive tasks, adopting the "Early" training strategy could lead to significant resource savings and faster training cycles.

</details>

figurations, such as using a smaller vision encoder with a larger text decoder Appendix B.

Scaling laws of NMMs vs LLMs. Upon comparing the scaling law coefficients of our NMMs to those reported for text-only LLMs (e.g., GPT-3, Chinchilla), we find them to be within similar ranges. In particular, for predicting the loss as a function of compute, GPT-3 [10] follows $L \propto C^{-0.048}$, while our models follow $L \propto C^{-0.049}$, suggesting that the performance of NMMs adheres to similar scaling laws as LLMs. Similarly, our estimates of the $\alpha$ and $\beta$ parameters in Eq 1 ($\alpha = 0.301$, $\beta = 0.335$) closely match those reported by Hoffmann et al. [26] ($\alpha = 0.339$, $\beta = 0.285$). Likewise, our computed values of $a = 0.526$ and $b = 0.473$ align closely with $a = 0.46$ and $b = 0.54$ from [26], reinforcing the idea that, for native multimodal models, the number of training tokens and model parameters should be scaled proportionally. However, since the gap between $a$ and $b$ is smaller than in LLMs, this principle holds even more strongly for NMMs. Additionally, as $a = 0.526$ is greater than $b = 0.473$ in our case, the optimal model size for NMMs is larger than that of LLMs,

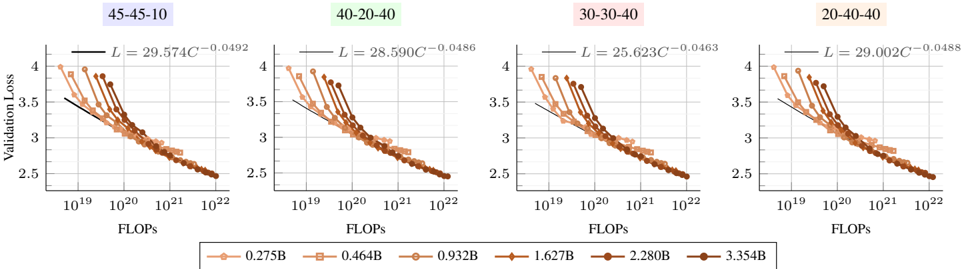

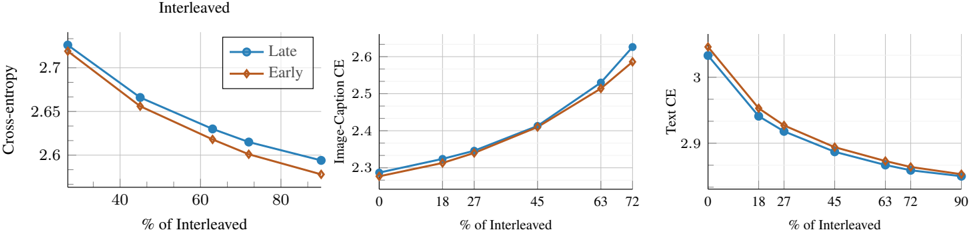

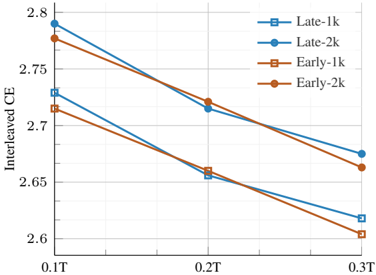

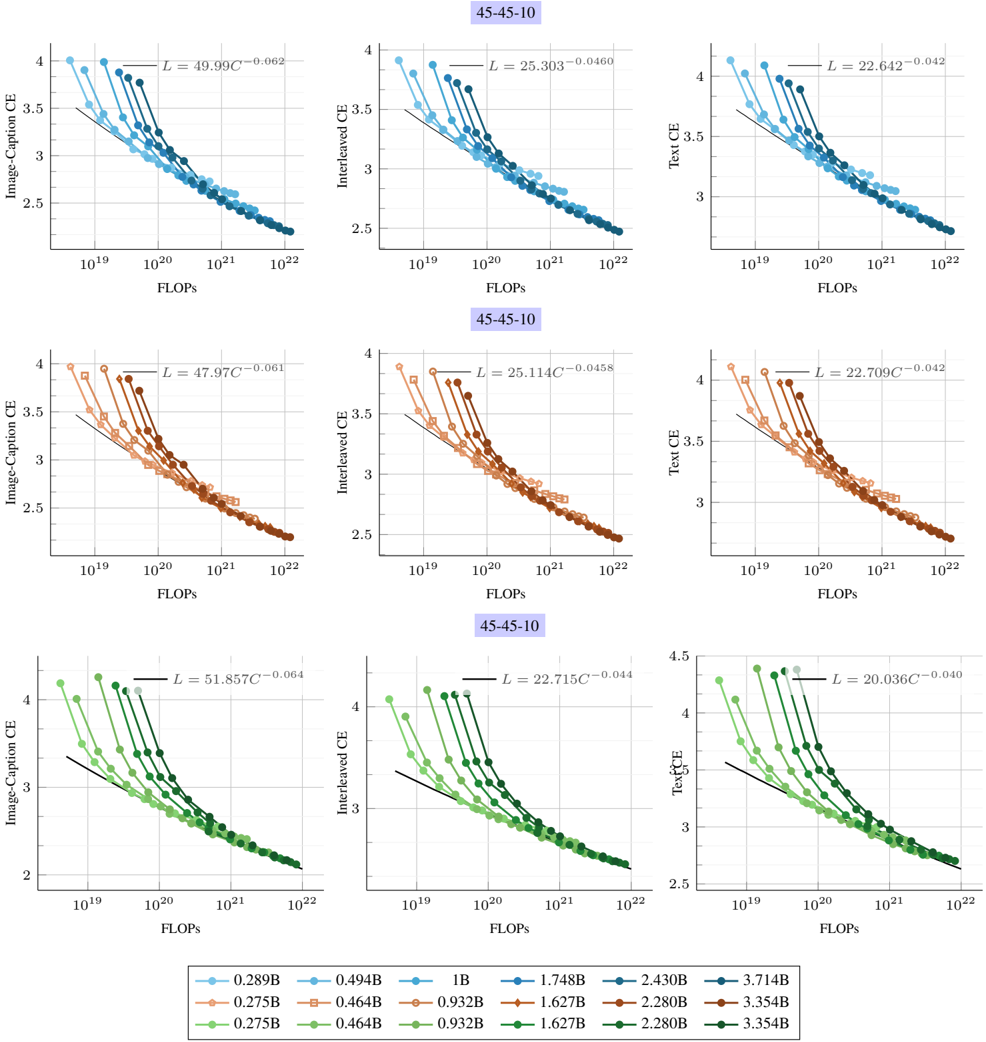

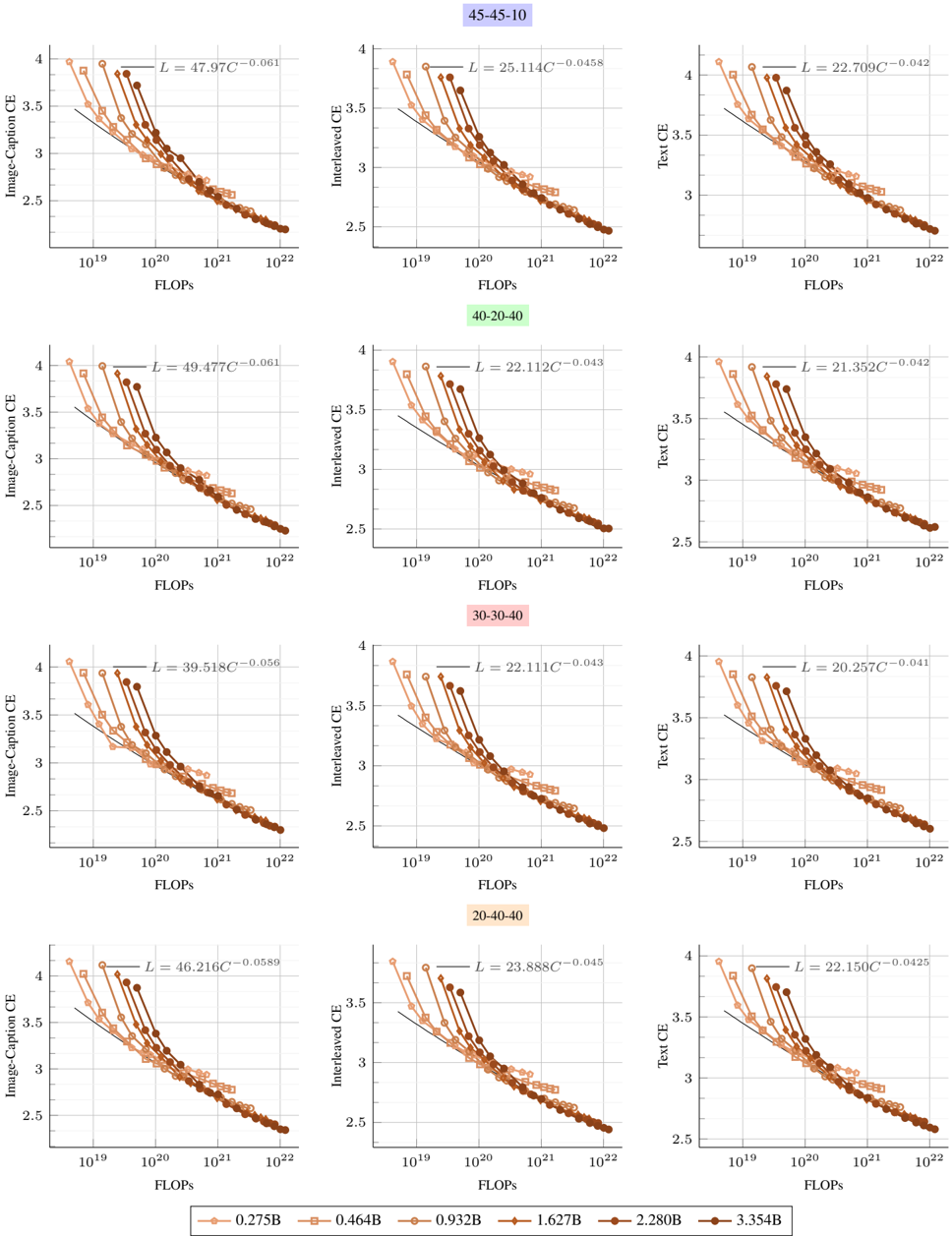

Figure 5. Scaling laws with different training mixtures. Early-fusion models follow similar scaling trends when changing the pretraining mixtures. However, increasing the image captions leads to a higher scaling exponent norm (see Table 3).

<details>

<summary>Image 5 Details</summary>

### Visual Description

## Chart: Validation Loss vs. FLOPs for Different Model Configurations

### Overview

The image presents four line charts comparing validation loss against FLOPs (floating-point operations) for different model configurations. Each chart represents a different configuration, labeled as "45-45-10", "40-20-40", "30-30-40", and "20-40-40". Each chart contains multiple lines, each representing a different model size, ranging from 0.275B to 3.354B parameters. The x-axis (FLOPs) is on a logarithmic scale.

### Components/Axes

* **X-axis:** FLOPs (floating-point operations). Logarithmic scale from approximately 10^19 to 10^22.

* **Y-axis:** Validation Loss. Linear scale from 2.5 to 4.0.

* **Chart Titles (Top):**

* Top-left: "45-45-10" (Blue text)

* Top-middle-left: "40-20-40" (Green text)

* Top-middle-right: "30-30-40" (Pink text)

* Top-right: "20-40-40" (Yellow-Orange text)

* **Legend (Bottom):**

* 0.275B (Light Brown, circle markers)

* 0.464B (Light Brown, square markers)

* 0.932B (Light Brown, diamond markers)

* 1.627B (Brown, triangle markers)

* 2.280B (Dark Brown, circle markers)

* 3.354B (Dark Brown, no marker)

* **Trendline:** Each chart has a black trendline represented by the equation L = aC^-b, where 'L' is the validation loss, 'C' is the FLOPs, and 'a' and 'b' are constants specific to each configuration.

### Detailed Analysis

**Chart 1: 45-45-10**

* Equation: L = 29.574C^-0.0492

* 0.275B: Starts at approximately (10^19, 3.6), decreases to (10^22, 2.6)

* 0.464B: Starts at approximately (10^19, 3.7), decreases to (10^22, 2.7)

* 0.932B: Starts at approximately (10^19, 3.8), decreases to (10^22, 2.7)

* 1.627B: Starts at approximately (10^19, 3.9), decreases to (10^22, 2.8)

* 2.280B: Starts at approximately (10^19, 4.0), decreases to (10^22, 2.8)

* 3.354B: Starts at approximately (10^19, 4.1), decreases to (10^22, 2.9)

**Chart 2: 40-20-40**

* Equation: L = 28.590C^-0.0486

* 0.275B: Starts at approximately (10^19, 3.8), decreases to (10^22, 2.5)

* 0.464B: Starts at approximately (10^19, 3.9), decreases to (10^22, 2.6)

* 0.932B: Starts at approximately (10^19, 4.0), decreases to (10^22, 2.7)

* 1.627B: Starts at approximately (10^19, 4.1), decreases to (10^22, 2.8)

* 2.280B: Starts at approximately (10^19, 4.2), decreases to (10^22, 2.9)

* 3.354B: Starts at approximately (10^19, 4.3), decreases to (10^22, 3.0)

**Chart 3: 30-30-40**

* Equation: L = 25.623C^-0.0463

* 0.275B: Starts at approximately (10^19, 3.7), decreases to (10^22, 2.6)

* 0.464B: Starts at approximately (10^19, 3.8), decreases to (10^22, 2.7)

* 0.932B: Starts at approximately (10^19, 3.9), decreases to (10^22, 2.8)

* 1.627B: Starts at approximately (10^19, 4.0), decreases to (10^22, 2.9)

* 2.280B: Starts at approximately (10^19, 4.1), decreases to (10^22, 3.0)

* 3.354B: Starts at approximately (10^19, 4.2), decreases to (10^22, 3.1)

**Chart 4: 20-40-40**

* Equation: L = 29.002C^-0.0488

* 0.275B: Starts at approximately (10^19, 3.8), decreases to (10^22, 2.6)

* 0.464B: Starts at approximately (10^19, 3.9), decreases to (10^22, 2.7)

* 0.932B: Starts at approximately (10^19, 4.0), decreases to (10^22, 2.8)

* 1.627B: Starts at approximately (10^19, 4.1), decreases to (10^22, 2.9)

* 2.280B: Starts at approximately (10^19, 4.2), decreases to (10^22, 3.0)

* 3.354B: Starts at approximately (10^19, 4.3), decreases to (10^22, 3.1)

### Key Observations

* **General Trend:** For all configurations and model sizes, the validation loss decreases as FLOPs increase. The rate of decrease diminishes as FLOPs increase, indicating diminishing returns.

* **Model Size Impact:** Larger models (higher parameter counts) generally exhibit higher validation loss for a given FLOP count, but also tend to achieve lower final validation loss as FLOPs increase significantly.

* **Configuration Impact:** The "45-45-10" configuration appears to have a slightly lower validation loss compared to the other configurations for similar FLOPs and model sizes.

* **Trendline Fit:** The trendlines provide a reasonable approximation of the overall trend, but they do not perfectly capture the behavior of individual model sizes.

### Interpretation

The charts illustrate the relationship between computational effort (FLOPs) and model performance (validation loss) for different model configurations and sizes. The data suggests that increasing FLOPs generally leads to improved model performance, but the benefit diminishes as FLOPs increase. Larger models tend to have higher initial validation loss but can achieve lower final validation loss with sufficient computational resources. The specific configuration "45-45-10" may be more efficient in terms of validation loss compared to the others. The equations provided above each chart, L = aC^-b, are power law models that describe the relationship between validation loss (L) and FLOPs (C). The exponent 'b' indicates the rate at which validation loss decreases with increasing FLOPs. A smaller absolute value of 'b' indicates a slower rate of decrease.

</details>

while the optimal number of training tokens is lower, given a fixed compute budget.

Table 3. Scaling laws for different training mixtures. Early-fusion models. C-I-T refers to image-caption, interleaved, and text.

| Mixture | C-I-T (%) | I/T ratio | E | α | β | a | b | d | c |
|---------|-----------|-----------|-------|-------|-------|-------|-------|-------|---------|
| 1 | 45-45-10 | 1.19 | 1.906 | 0.301 | 0.335 | 0.527 | 0.474 | 0.901 | -0.0492 |
| 2 | 40-20-40 | 0.65 | 1.965 | 0.328 | 0.348 | 0.518 | 0.486 | 0.937 | -0.0486 |
| 3 | 30-30-40 | 0.59 | 1.847 | 0.253 | 0.338 | 0.572 | 0.428 | 0.748 | -0.0463 |
| 4 | 20-40-40 | 0.49 | 1.836 | 0.259 | 0.354 | 0.582 | 0.423 | 0.726 | -0.0488 |

Compute-optimal trade-offs for early vs. late fusion NMMs. While late- and early-fusion models reduce loss at similar rates with increasing FLOPs, we observe distinct trade-offs in their compute-optimal models. Specifically, $N_{opt}$ is larger for late-fusion models, whereas $D_{opt}$ is larger for early-fusion models. This indicates that, given a fixed compute budget, late-fusion models require a higher number of parameters, while early-fusion models benefit more from a higher number of training tokens. This trend is also reflected in the lower $\frac{N_{opt}}{D_{opt}} \propto C^{0.053}$ for early fusion compared to $\frac{N_{opt}}{D_{opt}} \propto C^{0.076}$ for late fusion. As shown in Figure 1 (bottom), when scaling FLOPs, the number of parameters of early-fusion models becomes significantly lower, which is crucial for reducing inference costs and, consequently, lowering serving costs after deployment.
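The exponent of the parameter-to-token ratio can be read off from the individual compute-optimal laws. The short derivation below is a sketch that assumes the Chinchilla-style parameterization $N_{opt} \propto C^{a}$ and $D_{opt} \propto C^{b}$ (the roles in which $a$ and $b$ are compared with [26]) and plugs in the values $a = 0.526$ and $b = 0.473$ reported earlier for our NMMs:

$$
\frac{N_{opt}}{D_{opt}} \propto \frac{C^{a}}{C^{b}} = C^{a-b}, \qquad a - b = 0.526 - 0.473 = 0.053 .
$$

Under the same assumption, the late-fusion exponent of $0.076$ corresponds to a larger gap $a - b$, i.e., the compute-optimal parameter count grows faster relative to the token count as compute increases.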

Early-fusion is more efficient to train. We compare the training efficiency of late- and early-fusion architectures. As shown in Figure 4, early-fusion models consume less memory and train faster under the same compute budget. This advantage becomes even more pronounced as compute increases, highlighting the superior training efficiency of early fusion while maintaining comparable performance to late fusion at scale. Notably, for the same FLOPs, late-fusion models have a higher parameter count and higher effective depth (i.e., additional vision encoder layers alongside decoder layers) compared to early-fusion models.

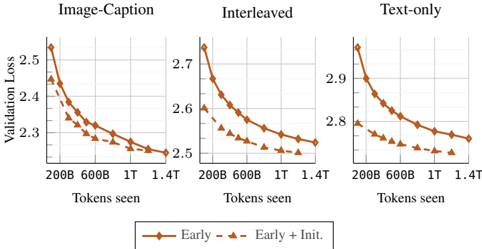

Figure 7. Early vs late fusion: changing the training mixture. We vary the training mixtures and plot the final training loss. Early-fusion models attain favorable performance when increasing the proportion of interleaved documents and text-only data.

<details>

<summary>Image 6 Details</summary>

### Visual Description

## Line Charts: Cross-Entropy vs. Percentage of Interleaved/Text

### Overview

The image presents two line charts comparing "Late" and "Early" models in terms of cross-entropy. The left chart shows the relationship between cross-entropy and the percentage of interleaved data, while the right chart shows the relationship between cross-entropy and the percentage of text-only data. Both charts display two data series: "Late" and "Early."

### Components/Axes

**Left Chart (Interleaved):**

* **Title:** Interleaved

* **Y-axis:** Cross-entropy

* Scale ranges from approximately 2.58 to 2.78.

* **X-axis:** % of Interleaved

* Scale: 40, 60, 80

* **Legend:** Located in the top-right corner of the chart.

* Blue line with circle markers: Late

* Brown line with diamond markers: Early

**Right Chart (Text-only):**

* **Title:** Text-only

* **Y-axis:** Cross-entropy

* Scale ranges from approximately 2.78 to 2.9.

* **X-axis:** % of Text

* Scale: 10, 20, 30

* **Legend:** Located in the top-right corner of the chart.

* Blue line with circle markers: Late

* Brown line with diamond markers: Early

### Detailed Analysis

**Left Chart (Interleaved):**

* **Late (Blue):** The line slopes downward.

* At 40% Interleaved, Cross-entropy ≈ 2.73

* At 60% Interleaved, Cross-entropy ≈ 2.63

* At 80% Interleaved, Cross-entropy ≈ 2.59

* **Early (Brown):** The line slopes downward.

* At 40% Interleaved, Cross-entropy ≈ 2.66

* At 60% Interleaved, Cross-entropy ≈ 2.62

* At 80% Interleaved, Cross-entropy ≈ 2.57

**Right Chart (Text-only):**

* **Late (Blue):** The line slopes downward.

* At 10% Text, Cross-entropy ≈ 2.88

* At 20% Text, Cross-entropy ≈ 2.85

* At 30% Text, Cross-entropy ≈ 2.80

* **Early (Brown):** The line slopes downward.

* At 10% Text, Cross-entropy ≈ 2.89

* At 20% Text, Cross-entropy ≈ 2.83

* At 30% Text, Cross-entropy ≈ 2.79

### Key Observations

* In both charts, cross-entropy decreases as the percentage of interleaved or text-only data increases.

* The "Early" model generally has a slightly lower cross-entropy than the "Late" model for both interleaved and text-only data.

* The decrease in cross-entropy appears to be more pronounced in the "Text-only" chart compared to the "Interleaved" chart.

### Interpretation

The charts suggest that increasing the percentage of interleaved or text-only data improves the performance of both "Late" and "Early" models, as indicated by the decrease in cross-entropy. The "Early" model seems to perform slightly better than the "Late" model under both conditions. The more significant drop in cross-entropy in the "Text-only" chart might indicate that the models benefit more from increasing the percentage of text-only data compared to interleaved data. This could be due to the nature of the task or the specific characteristics of the models.

</details>

## 3.2. Scaling laws for different data mixtures

We investigate how variations in the training mixture affect the scaling laws of native multimodal models. To this end, we study four different mixtures that reflect common community practices [34, 41, 46, 81], with Image Caption-Interleaved-Text ratios of 45-45-10 (our default setup), 30-30-40, 40-20-40, and 20-40-40. For each mixture, we conduct a separate scaling study by training 76 different models, following our setup in § 3.1. Overall, Figure 5 shows that different mixtures follow similar scaling trends; however, the scaling coefficients vary depending on the mixture (Table 3). Interestingly, increasing the proportion of image-caption data (mixtures 1 and 2) leads to lower $a$ and higher $b$, whereas increasing the ratio of interleaved and text data (mixtures 3 and 4) has the opposite effect. Notably, image-caption data contains more image tokens than text tokens; therefore, increasing its proportion results in more image tokens, while increasing interleaved and text data increases text token counts. This suggests that, when image tokens are prevalent, training for longer decreases the loss faster than increasing the model size. We also found that, for a fixed model size, increasing the ratio of text-only and interleaved data favors early fusion (Figure 7).
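To make the link between the fitted $\alpha$, $\beta$ and the compute-optimal exponents $a$, $b$ concrete, the sketch below recomputes $a$ and $b$ for each mixture in Table 3. It assumes that Eq 1 has the Chinchilla form $L = E + A/N^{\alpha} + B/D^{\beta}$ with $C = 6ND$, in which case $a = \beta/(\alpha+\beta)$ and $b = \alpha/(\alpha+\beta)$. The helper name `optimal_exponents` is hypothetical, and this closed form is not confirmed to be the procedure used to produce the table.

```python
# Illustrative check, assuming Eq 1 has the Chinchilla form
# L(N, D) = E + A / N**alpha + B / D**beta under the constraint C = 6 * N * D.
# Under that assumption: a = beta / (alpha + beta), b = alpha / (alpha + beta).

# (alpha, beta, a, b) as reported in Table 3, keyed by C-I-T mixture.
TABLE_3 = {
    "45-45-10": (0.301, 0.335, 0.527, 0.474),
    "40-20-40": (0.328, 0.348, 0.518, 0.486),
    "30-30-40": (0.253, 0.338, 0.572, 0.428),
    "20-40-40": (0.259, 0.354, 0.582, 0.423),
}


def optimal_exponents(alpha: float, beta: float) -> tuple[float, float]:
    """Return (a, b) such that N_opt ~ C**a and D_opt ~ C**b."""
    total = alpha + beta
    return beta / total, alpha / total


for mixture, (alpha, beta, a_reported, b_reported) in TABLE_3.items():
    a, b = optimal_exponents(alpha, beta)
    print(f"{mixture}: a={a:.3f} (reported {a_reported}), "
          f"b={b:.3f} (reported {b_reported})")
```

Under this assumption the recomputed exponents agree with the reported ones to within about 0.005, and the ordering across mixtures is preserved: the caption-heavy mixtures 1 and 2 have lower $a$ and higher $b$ than mixtures 3 and 4.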

Figure 8. Early native vs initializing from LLMs: initializing from pre-trained models and scaling training tokens. We compare training with and without initializing from DCLM-1B.

<details>

<summary>Image 7 Details</summary>

### Visual Description

## Chart: Validation Loss vs. Tokens Seen for Different Training Methods

### Overview

The image presents three line charts comparing the validation loss of a model trained with different data interleaving methods (Image-Caption, Interleaved, and Text-only) against the number of tokens seen during training. Two training strategies are compared: "Early" and "Early + Init."

### Components/Axes

* **X-axis (horizontal):** "Tokens seen" with markers at 200B, 600B, 1T, and 1.4T.

* **Y-axis (vertical):** "Validation Loss" with a scale ranging from approximately 2.3 to 2.5 for "Image-Caption", 2.5 to 2.7 for "Interleaved", and 2.7 to 2.9 for "Text-only".

* **Titles:** "Image-Caption", "Interleaved", and "Text-only" are the titles for the three charts, respectively.

* **Legend (bottom):**

* Solid brown line with diamond markers: "Early"

* Dashed brown line with triangle markers: "Early + Init."

### Detailed Analysis

**1. Image-Caption Chart:**

* **Early (solid brown line, diamond markers):** The validation loss starts at approximately 2.5 and decreases to about 2.25 over the range of tokens seen.

* 200B: ~2.5

* 600B: ~2.35

* 1T: ~2.27

* 1.4T: ~2.25

* **Early + Init. (dashed brown line, triangle markers):** The validation loss starts at approximately 2.45 and decreases to about 2.25 over the range of tokens seen.

* 200B: ~2.45

* 600B: ~2.3

* 1T: ~2.27

* 1.4T: ~2.25

**2. Interleaved Chart:**

* **Early (solid brown line, diamond markers):** The validation loss starts at approximately 2.7 and decreases to about 2.52 over the range of tokens seen.

* 200B: ~2.72

* 600B: ~2.6

* 1T: ~2.55

* 1.4T: ~2.52

* **Early + Init. (dashed brown line, triangle markers):** The validation loss starts at approximately 2.6 and decreases to about 2.5 over the range of tokens seen.

* 200B: ~2.6

* 600B: ~2.53

* 1T: ~2.5

* 1.4T: ~2.5

**3. Text-only Chart:**

* **Early (solid brown line, diamond markers):** The validation loss starts at approximately 2.92 and decreases to about 2.75 over the range of tokens seen.

* 200B: ~2.92

* 600B: ~2.85

* 1T: ~2.78

* 1.4T: ~2.75

* **Early + Init. (dashed brown line, triangle markers):** The validation loss starts at approximately 2.8 and decreases to about 2.7 over the range of tokens seen.

* 200B: ~2.8

* 600B: ~2.75

* 1T: ~2.72

* 1.4T: ~2.7

### Key Observations

* In all three charts, the "Early" training strategy consistently shows a higher validation loss compared to the "Early + Init." strategy across all token counts.

* The validation loss decreases as the number of tokens seen increases for both training strategies in all three charts.

* The "Text-only" chart exhibits the highest validation loss values compared to the "Image-Caption" and "Interleaved" charts.

* The "Image-Caption" chart exhibits the lowest validation loss values compared to the "Interleaved" and "Text-only" charts.

* The rate of decrease in validation loss diminishes as the number of tokens seen increases, suggesting diminishing returns with more training data.

### Interpretation

The data suggests that initializing the model ("Early + Init.") leads to a lower validation loss compared to training from scratch ("Early"). This indicates that pre-training or using a good initialization point can improve model performance.

The different data interleaving methods ("Image-Caption", "Interleaved", "Text-only") impact the validation loss. "Image-Caption" results in the lowest validation loss, suggesting that incorporating image captions during training is beneficial. "Text-only" results in the highest validation loss, implying that relying solely on text data might not be as effective.

The decreasing validation loss with increasing tokens seen demonstrates that the model learns as it is exposed to more data. However, the diminishing rate of decrease suggests that there is a point of diminishing returns, where adding more training data provides less significant improvements in performance.

The relative performance of the different training methods and data interleaving strategies can inform decisions about how to train the model most effectively.

</details>

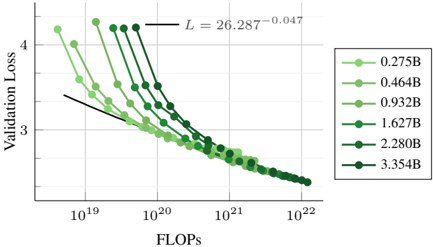

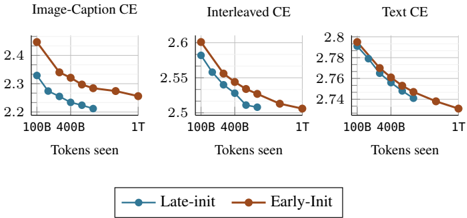

## 3.3. Native multimodal pre-training vs. continual training of LLMs

In this section, we compare training natively from scratch to continual training after initializing from a pre-trained LLM. We initialize the model from DCLM-1B [21], which is trained on more than 2T tokens. Figure 8 shows that native multimodal models can close the gap with initialized models when trained for longer. Specifically, on image captioning data, the model requires fewer than 100B multimodal tokens to reach comparable performance. However, on interleaved and text data, the model may need longer training, up to 1T tokens. Considering the cost of pre-training, these results suggest that training natively could be a more efficient approach for achieving the same performance on multimodal benchmarks.

## 4. Towards multimodal specialization

Previously, we demonstrated that early-fusion models achieve performance on par with late-fusion models under a fixed compute budget. However, multimodal data is inherently heterogeneous, and training a unified model to fit such diverse distributions may be suboptimal. Here, we argue for multimodal specialization within a unified architecture. Ideally, the model should implicitly adapt to each modality, for instance, by learning modality-specific weights or specialized experts. Mixture of Experts is a strong candidate for this approach, having demonstrated effectiveness in LLMs. In this section, we highlight the advantages of sparse early-fusion models over their dense counterparts.

Setup. Our sparse models are based on the dropless-MoE implementation of Gale et al. [24], which eliminates token dropping during training caused by expert capacity constraints. We employ a top-$k$ expert-choice routing mechanism, where each token selects its top-$k$ experts among the $E$ available experts. Specifically, we set $k = 1$ and $E = 8$, as we find this configuration to work effectively. Additionally, we incorporate an auxiliary load-balancing loss [59] with a weight of 0.01 to ensure balanced expert utilization.
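As a rough illustration of the routing described in this setup, the NumPy sketch below lets each token pick its top-$k$ experts from softmax router scores and computes an auxiliary balancing term. It is not the dropless-MoE implementation of [24]. The function names are hypothetical, and the loss form ($E \sum_e f_e P_e$, as popularized by Switch-Transformer-style routers) is an assumption, since the exact formulation of [59] is not restated here.

```python
import numpy as np


def top_k_route(logits: np.ndarray, k: int = 1):
    """Each token selects its top-k experts.

    logits: (num_tokens, E) router scores.
    Returns expert indices (num_tokens, k), gate weights (num_tokens, k),
    and the full softmax probabilities (num_tokens, E).
    """
    shifted = logits - logits.max(axis=-1, keepdims=True)  # numerical stability
    probs = np.exp(shifted)
    probs /= probs.sum(axis=-1, keepdims=True)
    # Indices of the k largest probabilities per token (unordered within the k).
    top_idx = np.argpartition(-probs, k - 1, axis=-1)[:, :k]
    top_gate = np.take_along_axis(probs, top_idx, axis=-1)
    return top_idx, top_gate, probs


def load_balancing_loss(top_idx: np.ndarray, probs: np.ndarray) -> float:
    """Assumed auxiliary loss: E * sum_e f_e * P_e.

    f_e: fraction of routing assignments sent to expert e.
    P_e: mean router probability assigned to expert e.
    """
    num_experts = probs.shape[-1]
    counts = np.bincount(top_idx.ravel(), minlength=num_experts)
    f = counts / top_idx.size
    p = probs.mean(axis=0)
    return float(num_experts * np.sum(f * p))


rng = np.random.default_rng(0)
router_logits = rng.normal(size=(1024, 8))   # 1024 tokens, E = 8 experts
idx, gate, probs = top_k_route(router_logits, k=1)
aux = 0.01 * load_balancing_loss(idx, probs)  # weight 0.01 as in the setup
```

In a training loop, `aux` would be added to the language-modeling loss. The term is smallest when both the assignment fractions and the mean router probabilities are spread evenly across experts.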

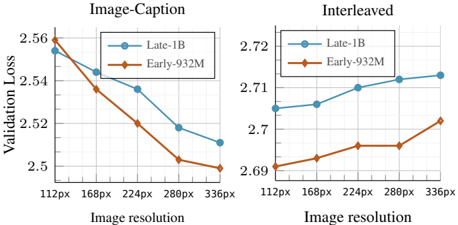

Figure 9. Scaling laws for sparse early-fusion NMMs. We report the final validation loss averaged across interleaved, image-caption, and text data.

<details>

<summary>Image 8 Details</summary>

### Visual Description

## Log-Log Plot: Validation Loss vs. FLOPs for Varying Model Sizes

### Overview

The image is a log-log plot showing the relationship between validation loss and FLOPs (floating-point operations) for different model sizes. The plot illustrates how validation loss decreases as FLOPs increase, with different lines representing models of varying sizes (0.275B to 3.354B parameters). A power-law fit is also shown on the plot.

### Components/Axes

* **X-axis:** FLOPs (floating-point operations), logarithmic scale from 10^19 to 10^22.

* **Y-axis:** Validation Loss, linear scale from approximately 2.5 to 4.5.

* **Legend:** Located on the right side of the plot, indicating the model size corresponding to each line color. The model sizes are:

* 0.275B (lightest green)

* 0.464B (light green)

* 0.932B (medium green)

* 1.627B (green)

* 2.280B (dark green)

* 3.354B (darkest green)

* **Power-Law Fit:** A black line representing the power-law fit to the data, with the equation L = 26.287 * FLOPs^(-0.047).

### Detailed Analysis

* **0.275B (lightest green):** Starts at approximately (10^19, 4.2) and decreases to approximately (10^22, 2.5).

* **0.464B (light green):** Starts at approximately (10^19, 3.9) and decreases to approximately (10^22, 2.4).

* **0.932B (medium green):** Starts at approximately (10^19, 3.7) and decreases to approximately (10^22, 2.3).

* **1.627B (green):** Starts at approximately (10^19, 3.5) and decreases to approximately (10^22, 2.2).

* **2.280B (dark green):** Starts at approximately (10^19, 3.3) and decreases to approximately (10^22, 2.1).

* **3.354B (darkest green):** Starts at approximately (10^19, 3.2) and decreases to approximately (10^22, 2.0).

All lines show a decreasing trend, indicating that as FLOPs increase, validation loss decreases. The rate of decrease appears to slow down as FLOPs increase, suggesting diminishing returns.

### Key Observations

* **Trend:** All model sizes exhibit a decreasing validation loss as FLOPs increase.

* **Model Size Impact:** Larger models (higher parameter count) generally have lower validation loss for a given number of FLOPs.

* **Power-Law Fit:** The black line represents a power-law fit to the data, suggesting a relationship of the form L = a * FLOPs^b, where 'a' and 'b' are constants. The equation provided is L = 26.287 * FLOPs^(-0.047).

* **Log-Log Scale:** The use of a log-log scale allows for the visualization of a wide range of FLOPs values and highlights the power-law relationship.

### Interpretation

The plot demonstrates the relationship between model size, computational effort (FLOPs), and validation loss. The data suggests that increasing the model size and/or the number of FLOPs used during training leads to a reduction in validation loss, indicating improved model performance. The power-law fit suggests that there is a predictable relationship between FLOPs and validation loss, which can be used to estimate the expected performance of a model given a certain computational budget. The diminishing returns observed at higher FLOPs suggest that there may be a point beyond which increasing FLOPs yields only marginal improvements in validation loss. The different curves for different model sizes indicate that larger models generally achieve lower validation loss for a given number of FLOPs, highlighting the importance of model size in achieving good performance.

</details>

Following Abnar et al. [2], we compute training FLOPs as $6ND$, where $N$ represents the number of active parameters.
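As a quick arithmetic illustration of this convention, the snippet below evaluates $C = 6ND$ for one configuration. The function name and the chosen values (1.63B active parameters, 300B tokens) are illustrative assumptions rather than a specific run from the paper. For a sparse model, $N$ counts only the parameters active per token, not the parameters of all experts.

```python
def training_flops(active_params: float, tokens: float) -> float:
    """Approximate training compute as C = 6 * N * D."""
    return 6.0 * active_params * tokens


# Hypothetical example: 1.63B active parameters trained on 300B tokens.
flops = training_flops(1.63e9, 300e9)
print(f"{flops:.3e}")  # about 2.9e21 FLOPs
```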

## 4.1. Sparse vs dense NMMs when scaling FLOPs

We compare sparse MoE models to their dense counterparts by training models with different numbers of active parameters and varying amounts of training tokens. Figure 10 shows that, under the same inference cost (or number of active parameters), MoEs significantly outperform dense models. Interestingly, this performance gap is more pronounced for smaller model sizes. This suggests that MoEs enable models to handle heterogeneous data more effectively and specialize in different modalities. However, as dense models become sufficiently large, the gap between the two architectures gradually closes.

## 4.2. Scaling laws for sparse early-fusion models

We train different models (ranging from 300M to 3.4B active parameters) on varying amounts of tokens (ranging from 250M to 600B) and report the final loss in Figure 9. We fit a power law to the convex hull of the lowest loss as a function of compute (FLOPs). Interestingly, the exponent ($-0.048$) is close to that of dense NMMs ($-0.049$), indicating that both architectures scale similarly. However, the multiplicative constant is smaller for MoEs ($27.086$) compared to dense models ($29.574$), revealing lower loss. Additionally, MoEs require longer training to reach saturation compared to dense models (see Appendix C for more details). We also predict the coefficients of Eq 1 by considering $N$ as the number of active parameters. Table 2 shows significantly higher $\alpha$ compared to dense models. Interestingly, $b$ is significantly higher than $a$, revealing that the training tokens should be scaled at a higher rate than the number of parameters when training sparse NMMs. We also experiment with a scaling law that takes sparsity into account [2] and reach similar conclusions (Appendix C.7).
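A power law of the form $L = aC^{c}$ is linear in log-log space, so it can be fit with ordinary least squares. The sketch below shows one way to do this with NumPy. It assumes the lowest-loss frontier points have already been selected, uses synthetic points generated from the dense-model law quoted above rather than real measurements, and the function name `fit_power_law` is hypothetical. The paper's actual fitting procedure may differ.

```python
import numpy as np


def fit_power_law(compute: np.ndarray, loss: np.ndarray) -> tuple[float, float]:
    """Least-squares fit of L = a * C**c in log-log space. Returns (a, c)."""
    slope, intercept = np.polyfit(np.log(compute), np.log(loss), deg=1)
    return float(np.exp(intercept)), float(slope)


# Synthetic frontier points generated from L = 29.574 * C**-0.0492
# (illustrative only, not measured losses).
compute = np.logspace(19, 22, num=8)
loss = 29.574 * compute ** -0.0492

a, c = fit_power_law(compute, loss)
print(f"L = {a:.3f} * C^{c:.4f}")
```

Because the exponents are small, a modest change in the multiplicative constant (for example 27.086 versus 29.574) shifts the whole curve down while leaving the slope in log-log space nearly unchanged.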

## 4.3. Modality-aware vs. Modality-agnostic routing

Another alternative to MoEs is modality-aware routing, where multimodal tokens are assigned to experts based on

Figure 10. MoE vs Dense: scaling training FLOPs. We compare MoE and dense early-fusion models when scaling both the amount of training tokens and model sizes. MoEs beat dense models when matching the number of active parameters.

<details>

<summary>Image 9 Details</summary>

### Visual Description

## Chart: Validation Loss vs. Tokens Seen

### Overview

The image presents two line charts comparing the validation loss of different model architectures (Dense and MoE) with varying parameter sizes (275M, 464M, 932M, 1.6B) as a function of the number of tokens seen during training. The left chart is labeled "Image-Caption" and the right chart is labeled "Interleaved". Both charts share the same x and y axes.

### Components/Axes

* **Title (Left Chart):** Image-Caption

* **Title (Right Chart):** Interleaved

* **Y-axis Label:** Validation Loss

* Scale: 2.2 to 3.0, with tick marks at 2.2, 2.4, 2.6, 2.8, and 3.0.

* **X-axis Label:** Tokens seen

* Scale: 100B to 400B, with tick marks at 100B, 200B, 300B, and 400B.

* **Legend (Bottom):**

* Dense-275M (light brown, circle marker)

* Dense-464M (light brown, square marker)

* Dense-932M (light brown, no marker)

* Dense-1.6B (brown, diamond marker)

* MoE-275M (light green, circle marker)

* MoE-464M (light green, square marker)

* MoE-932M (light green, no marker)

* MoE-1.63B (dark green, diamond marker)

### Detailed Analysis

**Image-Caption Chart (Left)**

* **Dense-275M (light brown, circle):** Starts at approximately 2.85 at 100B tokens and decreases to approximately 2.72 at 400B tokens.

* **Dense-464M (light brown, square):** Starts at approximately 2.78 at 100B tokens and decreases to approximately 2.70 at 400B tokens.

* **Dense-932M (light brown, no marker):** Starts at approximately 2.65 at 100B tokens and decreases to approximately 2.58 at 400B tokens.

* **Dense-1.6B (brown, diamond):** Starts at approximately 2.52 at 100B tokens and decreases to approximately 2.42 at 400B tokens.

* **MoE-275M (light green, circle):** Starts at approximately 2.72 at 100B tokens and decreases to approximately 2.60 at 400B tokens.

* **MoE-464M (light green, square):** Starts at approximately 2.65 at 100B tokens and decreases to approximately 2.52 at 400B tokens.

* **MoE-932M (light green, no marker):** Starts at approximately 2.50 at 100B tokens and decreases to approximately 2.38 at 400B tokens.

* **MoE-1.63B (dark green, diamond):** Starts at approximately 2.40 at 100B tokens and decreases to approximately 2.20 at 400B tokens.

**Interleaved Chart (Right)**

* **Dense-275M (light brown, circle):** Starts at approximately 3.00 at 100B tokens and decreases to approximately 2.80 at 400B tokens.

* **Dense-464M (light brown, square):** Starts at approximately 2.88 at 100B tokens and decreases to approximately 2.75 at 400B tokens.

* **Dense-932M (light brown, no marker):** Starts at approximately 2.75 at 100B tokens and decreases to approximately 2.65 at 400B tokens.

* **Dense-1.6B (brown, diamond):** Starts at approximately 2.68 at 100B tokens and decreases to approximately 2.55 at 400B tokens.

* **MoE-275M (light green, circle):** Starts at approximately 2.80 at 100B tokens and decreases to approximately 2.70 at 400B tokens.

* **MoE-464M (light green, square):** Starts at approximately 2.72 at 100B tokens and decreases to approximately 2.60 at 400B tokens.

* **MoE-932M (light green, no marker):** Starts at approximately 2.60 at 100B tokens and decreases to approximately 2.48 at 400B tokens.

* **MoE-1.63B (dark green, diamond):** Starts at approximately 2.50 at 100B tokens and decreases to approximately 2.30 at 400B tokens.

### Key Observations

* In both charts, validation loss generally decreases as the number of tokens seen increases.

* For both Dense and MoE architectures, larger models (higher parameter counts) tend to have lower validation loss.

* The MoE-1.63B model consistently exhibits the lowest validation loss across both charts.

* The "Interleaved" chart generally shows higher validation loss values compared to the "Image-Caption" chart for all models.

### Interpretation

The charts suggest that increasing the number of tokens seen during training improves the validation loss for all models. Furthermore, larger models, particularly the MoE-1.63B model, achieve lower validation loss, indicating better performance. The difference in validation loss between the "Image-Caption" and "Interleaved" charts suggests that the training data distribution or task setup in the "Interleaved" scenario is more challenging, leading to higher loss values. The MoE models generally outperform the Dense models, indicating that the Mixture of Experts architecture is more effective for this task.

</details>

their modalities, similar to previous works [7, 75]. We train models with distinct image and text experts in the form of FFNs, where image tokens are processed only by the image FFN and text tokens only by the text FFN. Compared to modality-aware routing, MoEs exhibit significantly better performance on both image-caption and interleaved data as presented in Figure 11.

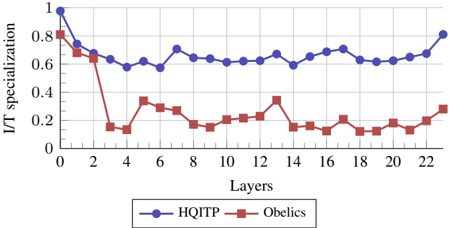

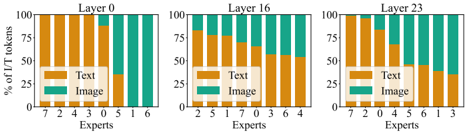

## 4.4. Emergence of expert specialization and sharing

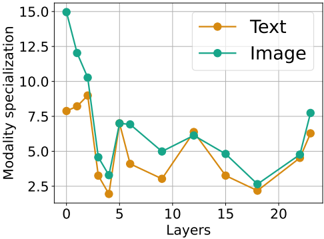

We investigate multimodal specialization in MoE architectures. In Figure 13, we visualize the normalized number of text and image tokens assigned to each expert across layers. To quantify this specialization, we compute a specialization score, defined as the average, across all experts within a layer, of $1 - H(p)$, where $H$ is the binary entropy of each expert's text/image token distribution. We plot this specialization score in Figure 12. Higher specialization scores indicate a tendency for experts to focus on either text or image tokens, while lower scores indicate a shared behavior. These visualizations provide clear evidence of modality-specific experts, particularly in the early layers. Furthermore, the specialization score decreases as the number of layers increases, before rising again in the last layers. This suggests that early and final layers exhibit higher modality specialization compared to mid-layers. This behavior is intuitive, as middle layers are expected to hold higher-level features that may generalize across modalities, and is consistent with findings in [61] that show increasing alignment between modalities across layers. The emergence of both expert specialization and cross-modality sharing in our modality-agnostic MoE suggests it may be a preferable approach compared to modality-aware sparsity. All data displayed here is from an early-fusion MoE model with 1B active parameters trained for 300B tokens.
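The specialization score defined above can be sketched in a few lines. The version below assumes that $p$ is the fraction of an expert's tokens that are text, that entropy is measured in bits (so the score lies in $[0, 1]$), and that experts receiving no tokens are skipped. None of these details is specified in the text, and the function names are hypothetical.

```python
import numpy as np


def binary_entropy(p: np.ndarray) -> np.ndarray:
    """Binary entropy in bits; clipping keeps H(0) and H(1) finite (about 0)."""
    p = np.clip(p, 1e-12, 1.0 - 1e-12)
    return -(p * np.log2(p) + (1.0 - p) * np.log2(1.0 - p))


def specialization_score(text_counts: np.ndarray, image_counts: np.ndarray) -> float:
    """Average of 1 - H(p) over the experts of one layer.

    text_counts, image_counts: shape (E,), tokens of each modality routed
    to each expert. p is the text fraction of an expert's tokens.
    """
    total = text_counts + image_counts
    used = total > 0  # assumption: ignore experts that received no tokens
    p = text_counts[used] / total[used]
    return float(np.mean(1.0 - binary_entropy(p)))


# Fully specialized layer: each expert sees a single modality.
specialized = specialization_score(np.array([100.0, 0.0]), np.array([0.0, 100.0]))
# Fully shared layer: each expert sees an even text/image split.
shared = specialization_score(np.array([50.0, 50.0]), np.array([50.0, 50.0]))
```

Here `specialized` is close to 1 and `shared` is close to 0, matching the reading that higher scores indicate modality-specific experts and lower scores indicate shared ones.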

Table 4. Supervised finetuning on the LLaVA mixture. All models are native at 1.5B scale and pre-trained on 300B tokens.

| Model | AVG (Acc.) | VQAv2 (Acc.) | TextVQA (Acc.) | OKVQA (Acc.) | GQA (Acc.) | VizWiz (Acc.) | COCO (CIDEr) | TextCaps (CIDEr) |
|--------------|------|------|------|------|------|------|------|------|
| Late-fusion | 46.8 | 69.4 | 25.8 | 50.1 | 65.8 | 22.8 | 70.7 | 50.9 |
| Early-fusion | 47.6 | 69.3 | 28.1 | 52.1 | 65.4 | 23.2 | 72.0 | 53.8 |
| Early-MoEs | 48.2 | 69.8 | 30.0 | 52.1 | 65.4 | 23.6 | 69.6 | 55.7 |

Figure 11. Modality-aware vs. modality-agnostic routing for sparse NMMs. We compare modality-agnostic routing with modality-aware routing when scaling both the amount of training tokens and model sizes.

<details>

<summary>Image 10 Details</summary>

### Visual Description

## Line Charts: Validation Loss vs. Tokens Seen

### Overview

The image presents two line charts comparing the validation loss of "Aware" and "Agnostic" models with varying parameter sizes (275M, 464M, 932M, and 1.63B) against the number of tokens seen during training. The left chart is labeled "Image-Caption," and the right chart is labeled "Interleaved." Both charts share the same x and y axes, allowing for a direct comparison of the models' performance under different training conditions.

### Components/Axes

* **Titles:** "Image-Caption" (left chart), "Interleaved" (right chart)

* **Y-axis Label:** "Validation Loss"

* Scale: 2.2 to 2.8 for "Image-Caption", 2.6 to 3.0 for "Interleaved"

* **X-axis Label:** "Tokens seen"

* Scale: 100B, 200B, 300B, 400B

* **Legend:** Located at the bottom of the image.

* Aware-275M (light green, dotted line, circle marker)

* Aware-464M (light green, dotted line, square marker)

* Aware-932M (light green, dotted line, no marker)

* Aware-1.63 (light green, dotted line, diamond marker)

* Agnostic-275M (dark green, solid line, circle marker)

* Agnostic-464M (dark green, solid line, square marker)

* Agnostic-932M (dark green, solid line, no marker)

* Agnostic-1.63B (dark green, solid line, diamond marker)

### Detailed Analysis

#### Image-Caption Chart

* **Aware-275M:** (light green, dotted line, circle marker) Starts at approximately 2.8, decreases to about 2.7 by 400B tokens.

* **Aware-464M:** (light green, dotted line, square marker) Starts at approximately 2.7, decreases to about 2.65 by 400B tokens.

* **Aware-932M:** (light green, dotted line, no marker) Starts at approximately 2.6, decreases to about 2.5 by 400B tokens.

* **Aware-1.63:** (light green, dotted line, diamond marker) Starts at approximately 2.45, decreases to about 2.4 by 400B tokens.

* **Agnostic-275M:** (dark green, solid line, circle marker) Starts at approximately 2.6, decreases to about 2.4 by 400B tokens.

* **Agnostic-464M:** (dark green, solid line, square marker) Starts at approximately 2.5, decreases to about 2.3 by 400B tokens.

* **Agnostic-932M:** (dark green, solid line, no marker) Starts at approximately 2.4, decreases to about 2.25 by 400B tokens.

* **Agnostic-1.63B:** (dark green, solid line, diamond marker) Starts at approximately 2.45, decreases to about 2.2 by 400B tokens.

#### Interleaved Chart

* **Aware-275M:** (light green, dotted line, circle marker) Starts at approximately 3.05, decreases to about 2.9 by 400B tokens.

* **Aware-464M:** (light green, dotted line, square marker) Starts at approximately 2.9, decreases to about 2.8 by 400B tokens.

* **Aware-932M:** (light green, dotted line, no marker) Starts at approximately 2.8, decreases to about 2.7 by 400B tokens.

* **Aware-1.63:** (light green, dotted line, diamond marker) Starts at approximately 2.7, decreases to about 2.6 by 400B tokens.

* **Agnostic-275M:** (dark green, solid line, circle marker) Starts at approximately 2.7, decreases to about 2.5 by 400B tokens.

* **Agnostic-464M:** (dark green, solid line, square marker) Starts at approximately 2.7, decreases to about 2.45 by 400B tokens.

* **Agnostic-932M:** (dark green, solid line, no marker) Starts at approximately 2.6, decreases to about 2.35 by 400B tokens.

* **Agnostic-1.63B:** (dark green, solid line, diamond marker) Starts at approximately 2.65, decreases to about 2.2 by 400B tokens.

### Key Observations

* In both charts, the "Agnostic" models generally exhibit lower validation loss compared to the "Aware" models for a given parameter size.

* Larger parameter sizes (1.63B) tend to result in lower validation loss compared to smaller parameter sizes (275M, 464M, 932M) for both "Aware" and "Agnostic" models.

* Validation loss on "Interleaved" data is generally higher than on "Image-Caption" data for both "Aware" and "Agnostic" models.

* The validation loss decreases as the number of tokens seen increases for all models on both data types.

### Interpretation

The data suggests that "Agnostic" (modality-agnostic routing) models reach lower validation loss than "Aware" (modality-aware routing) models at every parameter size shown. Increasing the parameter size of the models also improves performance, as expected. Loss values are higher on "Interleaved" data than on "Image-Caption" data, which reflects the two data types rather than two training methods, so the panels are not directly comparable in absolute terms. The decreasing validation loss with more tokens seen indicates that all models continue to improve as they are exposed to more data. Among the plotted curves, "Agnostic-1.63B" reaches the lowest validation loss in both panels.

</details>

## 5. Evaluation on downstream tasks with SFT

Following previous work on scaling laws, we primarily rely on validation losses, which we generally find to correlate well with performance on downstream tasks. To validate this, we conduct a multimodal instruction tuning stage (SFT) on the LLaVA mixture [43] and report accuracy and CIDEr scores across several VQA and captioning tasks. Table 4 confirms the ranking of the different model configurations: in average accuracy, early fusion outperforms late fusion, and MoEs outperform dense models. However, since the models are relatively small (1.5B scale), trained from scratch, and fine-tuned on a small dataset, the overall scores are lower than the current state of the art. Further implementation details can be found in Appendix A.
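As a quick arithmetic check of the ranking, the AVG column of Table 4 can be recomputed from the per-task numbers. The sketch below assumes that AVG is the mean of the five accuracy columns (VQAv2, TextVQA, OKVQA, GQA, VizWiz) and excludes the two CIDEr columns; this is inferred from the values and is not stated explicitly in the text. The variable names are illustrative.

```python
# Accuracy columns of Table 4: VQAv2, TextVQA, OKVQA, GQA, VizWiz.
table4 = {
    "Late-fusion": {"reported_avg": 46.8, "accuracy": [69.4, 25.8, 50.1, 65.8, 22.8]},
    "Early-fusion": {"reported_avg": 47.6, "accuracy": [69.3, 28.1, 52.1, 65.4, 23.2]},
    "Early-MoEs": {"reported_avg": 48.2, "accuracy": [69.8, 30.0, 52.1, 65.4, 23.6]},
}

# Mean accuracy per model, under the assumption stated above.
mean_accuracy = {
    name: sum(row["accuracy"]) / len(row["accuracy"])
    for name, row in table4.items()
}

# Models ordered from best to worst mean accuracy.
ranking = sorted(mean_accuracy, key=mean_accuracy.get, reverse=True)

for name in ranking:
    print(f"{name}: mean accuracy {mean_accuracy[name]:.2f} "
          f"(reported AVG {table4[name]['reported_avg']})")
```

Under this assumption the recomputed means agree with the reported AVG values to one decimal place. The ordering holds for the average; individual columns can differ, for example COCO CIDEr, where the MoE model scores lowest.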

## 6. Related work

Large multimodal models. A long-standing research goal has been to develop models capable of perceiving the world through multiple modalities, akin to human sensory experience. Recent progress in vision and language processing has shifted the research focus from smaller, task-specific models toward large, generalist models that can handle diverse inputs [29, 67]. Crucially, pre-trained vision and language backbones often require surprisingly little adaptation to enable effective cross-modal communication [32, 47, 62, 68, 69]. Simply integrating a vision encoder with either an encoder-decoder architecture [45, 48, 63, 72]

Figure 12. MoE specialization score. Entropy-based image/text specialization score (as described in § 4.4) across layers for two data sources: HQITP and Obelics. HQITP has a more imbalanced image-to-text token distribution, resulting in generally higher specialization. Despite this difference, both data sources exhibit a similar trend: the specialization score decreases in the early layers before increasing again in the final layers.

<details>

<summary>Image 11 Details</summary>

### Visual Description

## Line Chart: I/T Specialization vs. Layers

### Overview

The image is a line chart comparing the image/text (I/T) specialization score for two data sources, "HQITP" and "Obelics," across layers. The x-axis represents the layers, and the y-axis represents the I/T specialization, ranging from 0 to 1.

### Components/Axes

* **X-axis:** "Layers," with numerical markers at 0, 2, 4, 6, 8, 10, 12, 14, 16, 18, 20, and 22.

* **Y-axis:** "I/T specialization," with numerical markers at 0, 0.2, 0.4, 0.6, 0.8, and 1.

* **Legend:** Located at the bottom of the chart.

* Blue line with circular markers: "HQITP"

* Red line with square markers: "Obelics"

### Detailed Analysis

* **HQITP (Blue line, circular markers):**

* Trend: Generally decreasing from layer 0 to layer 4, then relatively stable with minor fluctuations from layer 4 to layer 22.

* Data Points:

* Layer 0: approximately 0.98

* Layer 2: approximately 0.65

* Layer 4: approximately 0.59

* Layer 6: approximately 0.61

* Layer 8: approximately 0.64

* Layer 10: approximately 0.63

* Layer 12: approximately 0.59

* Layer 14: approximately 0.63

* Layer 16: approximately 0.69

* Layer 18: approximately 0.63

* Layer 20: approximately 0.62

* Layer 22: approximately 0.72

* **Obelics (Red line, square markers):**

* Trend: Decreasing sharply from layer 0 to layer 4, then relatively stable with minor fluctuations from layer 4 to layer 22.

* Data Points:

* Layer 0: approximately 0.83

* Layer 2: approximately 0.68

* Layer 4: approximately 0.13

* Layer 6: approximately 0.32

* Layer 8: approximately 0.20

* Layer 10: approximately 0.15

* Layer 12: approximately 0.34

* Layer 14: approximately 0.18

* Layer 16: approximately 0.13

* Layer 18: approximately 0.18

* Layer 20: approximately 0.14

* Layer 22: approximately 0.28

### Key Observations

* HQITP shows a higher I/T specialization than Obelics at every listed layer except layer 2 (approximately 0.65 vs. 0.68).

* Obelics experiences a significant drop in I/T specialization between layers 2 and 4.

* Both HQITP and Obelics show relatively stable I/T specialization after layer 4, with minor fluctuations and an uptick at the final layer (22).

### Interpretation

The chart suggests that experts are more modality-specialized on HQITP than on Obelics at most layers. The sharp decline for Obelics between layers 2 and 4 indicates that, on this data, experts beyond the first layers receive a more even mix of image and text tokens. After layer 4 both curves fluctuate within a narrow band, with a modest rise at the final layer. Per the figure caption, the two curves correspond to two data sources rather than to different architectures.

</details>

or a decoder-only LLM has yielded highly capable multimodal systems [1, 6, 9, 13, 16, 35, 43, 49, 64, 73, 78, 83]. This late-fusion approach, where modalities are processed separately before being combined, is now well-understood, with established best practices for training effective models [34, 41, 46, 81]. In contrast, early-fusion models [8, 18, 66], which combine modalities at an earlier stage, remain relatively unexplored, with only a limited number of publicly released models [8, 18]. Unlike [18, 66], our models utilize only a single linear layer and rely exclusively on a next-token prediction loss. Furthermore, we train our models from scratch on all modalities without image tokenization.

Native Multimodal Models. We define native multimodal models as those trained from scratch on all modalities simultaneously [67] rather than adapting LLMs to accommodate additional modalities. Due to the high cost of training such models, they remain relatively underexplored, with most relying on late-fusion architectures [27, 79]. Some multimodal models trained from scratch [4, 66, 76] relax this constraint by utilizing pre-trained image tokenizers such as [20, 70] to convert images into discrete tokens, integrating them into the text vocabulary. This approach enables models to understand and generate text and images, facilitating a more seamless multimodal learning process.