# Knowledge Graph-extended Retrieval Augmented Generation for Question Answering

**Authors**: Jasper Linders, Jakub M. Tomczak

> jasper.linders@gmail.com

> jmk.tomczak@gmail.com (currently working at Chan Zuckerberg Initiative: jtomczak@chanzuckerberg.com)

Department of Mathematics and Computer Science, Eindhoven University of Technology, De Zaale, 5600 MB Eindhoven, the Netherlands

Abstract

Large Language Models (LLMs) and Knowledge Graphs (KGs) offer a promising approach to robust and explainable Question Answering (QA). While LLMs excel at natural language understanding, they suffer from knowledge gaps and hallucinations. KGs provide structured knowledge but lack natural language interaction. Ideally, an AI system should be both robust to missing facts and easy to communicate with. This paper proposes such a system that integrates LLMs and KGs without requiring training, ensuring adaptability across different KGs with minimal human effort. The resulting approach can be classified as a specific form of Retrieval Augmented Generation (RAG) with a KG; it is therefore dubbed Knowledge Graph-extended Retrieval Augmented Generation (KG-RAG). It includes a question decomposition module to enhance multi-hop information retrieval and answer explainability. Using In-Context Learning (ICL) and Chain-of-Thought (CoT) prompting, it generates explicit reasoning chains that are processed separately to improve truthfulness. Experiments on the MetaQA benchmark show increased accuracy for multi-hop questions, though with a slight trade-off in single-hop performance compared to LLM-with-KG baselines. These findings demonstrate KG-RAG’s potential to improve transparency in QA by bridging unstructured language understanding with structured knowledge retrieval.

keywords: Knowledge Graphs, Large Language Models, Retrieval-Augmented Generation, Question Answering

1 Introduction

As our world becomes increasingly digital and information is more widely available than ever before, technologies that enable information retrieval and processing have become indispensable in both our personal and professional lives. The advent of Large Language Models (LLMs) has had a great impact by changing the way many internet users interact with information, through models like ChatGPT (https://chatgpt.com/). This has arguably played a large role in sparking an immense interest in solutions that build on artificial intelligence.

The rapid adoption of LLMs has transformed the fields of natural language processing (NLP) and information retrieval (IR). Understanding of natural language, with its long range dependencies and contextual meanings, as well as human-like text generation capabilities, allows these models to be applied to a wide variety of tasks. Additionally, LLMs have proven to be few-shot learners, meaning that they have the ability to perform unseen tasks with only a couple of examples [1]. Unfortunately, the benefits of LLMs come at the cost of characteristic downsides, which are important to consider.

LLMs can hallucinate [2], generating untruthful or incoherent outputs. They also miss knowledge not present during training, leading to knowledge cutoff, and cannot guarantee that certain training data is remembered [3]. Because of their massive size and data requirements, LLMs are expensive to train, deploy, and maintain [4]. Thus, smaller models or those needing only fine-tuning can be more practical for many use cases.

By contrast, Knowledge Graphs (KGs) store information explicitly as entities and relationships, allowing symbolic reasoning and accurate answers [5]. Even if a direct link between entities is missing, inferences can be drawn from their shared associations. KGs may also recall underrepresented knowledge better than LLMs [3]. However, they are costly to build, specialized to a domain, and typically require querying languages rather than natural language [6]. They also do not easily generalize to other domains [5].

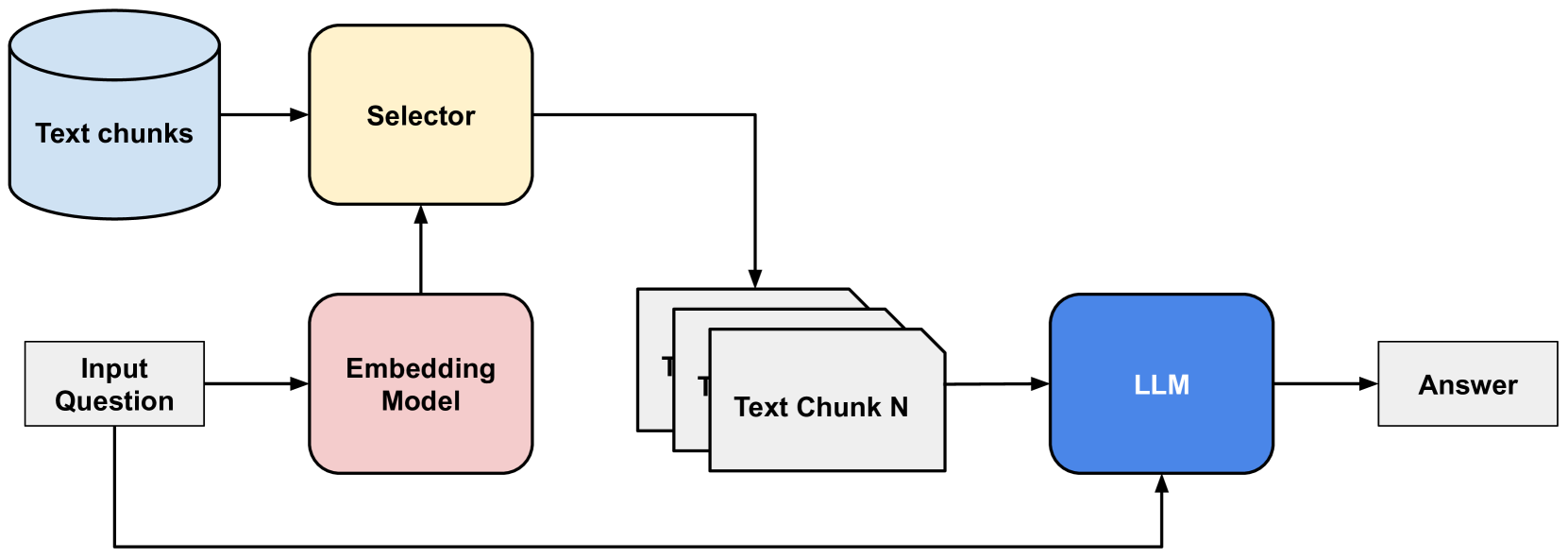

Retrieval-Augmented Generation (RAG) [7] addresses LLMs’ lack of external knowledge by augmenting them with a text document database. Text documents are split into chunks, embedded, and stored in a vector database; the most similar chunks to an input query are retrieved and added to a prompt so the LLM can generate an answer based on this external information [8] (see Figure 1). However, relying on unstructured text can miss comprehensive entity data and even introduce distracting misinformation [8].

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: Retrieval Augmented Generation (RAG) Pipeline

### Overview

The image depicts a diagram illustrating a Retrieval Augmented Generation (RAG) pipeline. This pipeline combines information retrieval with a Large Language Model (LLM) to generate answers to input questions. The diagram shows the flow of data from an input question, through embedding, selection of relevant text chunks, and finally to the LLM for answer generation.

### Components/Axes

The diagram consists of the following components:

* **Input Question:** A rectangular box labeled "Input Question".

* **Embedding Model:** A rectangular box labeled "Embedding Model".

* **Selector:** A rectangular box labeled "Selector".

* **Text Chunks:** A cylindrical shape labeled "Text chunks" and a stack of rectangular boxes labeled "Text Chunk 1" through "Text Chunk N".

* **LLM:** A rectangular box labeled "LLM".

* **Answer:** A rectangular box labeled "Answer".

Arrows indicate the flow of data between these components.

### Detailed Analysis or Content Details

The data flow is as follows:

1. An "Input Question" is fed into an "Embedding Model".

2. The "Embedding Model" processes the question and sends its output to the "Selector".

3. The "Selector" also receives input from "Text chunks".

4. The "Selector" identifies and retrieves relevant "Text Chunk(s)" (from "Text Chunk 1" to "Text Chunk N") based on the embedded question.

5. The selected "Text Chunk(s)" and the original "Input Question" are fed into the "LLM".

6. The "LLM" processes this combined information and generates an "Answer".

There are no numerical values or scales present in the diagram. The diagram is purely conceptual, illustrating the process flow.

### Key Observations

The diagram highlights the key stages of a RAG pipeline: embedding, retrieval, and generation. The "Selector" component is central to the process, acting as the bridge between the knowledge base ("Text chunks") and the LLM. The diagram emphasizes that the LLM doesn't operate solely on its pre-trained knowledge but is augmented with retrieved information.

### Interpretation

This diagram illustrates a common architecture for building question-answering systems using LLMs. The RAG approach addresses the limitations of LLMs by providing them with access to external knowledge sources. This allows the LLM to generate more accurate and contextually relevant answers. The "Embedding Model" transforms the question and text chunks into vector representations, enabling semantic similarity search by the "Selector". The "Selector" then identifies the most relevant text chunks to provide to the LLM. This process is crucial for mitigating the problem of LLMs generating incorrect or outdated information (hallucinations). The diagram suggests a modular design, where each component can be independently developed and optimized. The "N" in "Text Chunk N" indicates that the system can handle a variable number of text chunks, suggesting scalability.

</details>

Figure 1: An example of a Retrieval-Augmented Generation (RAG) system, which combines information retrieval and text generation techniques. The red block indicates processing by a text embedding model, whereas the blue block depicts processing by an LLM. The yellow block shows a selector of nearest text chunks in the database.

To overcome these limitations, RAGs can utilize KGs. The resulting system integrates structured data from Knowledge Graphs in a RAG, enabling precise retrieval and complex reasoning. For example, KAPING [9] performs Knowledge Graph Question Answering (KGQA) without requiring any training. When training is needed for KG-enhanced LLMs, issues arise such as limited training data, domain specificity, and the need for frequent retraining as KGs evolve [10, 11]. In short, while RAG enhances LLMs by providing explainable, natural language outputs, incorporating structured Knowledge Graphs may offer improved reasoning and domain adaptability.

In this paper, we propose the Knowledge Graph-extended Retrieval Augmented Generation (KG-RAG) system, which combines the reliability of Retrieval Augmented Generation (RAG) with the high precision of Knowledge Graphs (KGs) and operates without any training or fine-tuning. We focus on the task of Knowledge Graph Question Answering; although this focus is narrow, our findings may have broader implications. For instance, certain insights could be applied to the development of other systems that utilize KG-based information retrieval, such as chatbots. The primary objective of this work is to investigate how LLMs can be enhanced through the integration of KGs. Since the term "enhance" can encompass various improvements, we define it as follows. First, we aim to enable LLMs to be more readily applied across different domains requiring specialized or proprietary knowledge. Second, we seek to improve answer explainability, thereby assisting end users in validating LLM outputs. Ultimately, we aim to answer the following research questions:

1. How can Large Language Models be enhanced with Knowledge Graphs without requiring any training?

2. How can answer explainability be improved with the use of Knowledge Graph-extended Retrieval Augmented Generation systems?

2 Related Work

Knowledge Graphs

Knowledge Graphs (KGs) are structured databases that model real-world entities and their relationships as graphs, which makes them highly amenable to machine processing. They enable efficient querying to retrieve all entities related to a given entity, a task that would be significantly more challenging with unstructured text databases. Complex queries are executed using specialized languages such as SPARQL [12]. As noted in recent research, "the success of KGs can largely be attributed to their ability to provide factual information about entities with high accuracy" [3]. Typically, the information in KGs is stored as triples, i.e. $(subject, relation, object)$.

Large Language Models

Large Language Models (LLMs) learn natural language patterns from extensive text data, enabling various NLP tasks such as text generation and sentiment classification. Their emergence was enabled by the Transformer architecture, introduced in Attention Is All You Need [13], which efficiently models sequential data via attention mechanisms. Scaling these models—by increasing compute, dataset size, and parameter count—yields performance improvements following a power law [14], with LLMs typically comprising hundreds of millions to hundreds of billions of parameters.

LLMs generate text in an autoregressive manner. Given a sequence $x_{1:t}$ , the model produces a probability distribution $p(x_{t+1}|x_{1:t})=\mathrm{softmax}(z/T)$ over its vocabulary, where $z$ are the raw logits and $T$ is a temperature parameter that controls randomness. Instead of selecting tokens via simple $\mathrm{argmax}$ , more sophisticated sampling methods are employed (see Section 3.5) to generate coherent and diverse output consistent with the input context [15].
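The effect of the temperature $T$ on the next-token distribution can be illustrated with a minimal sketch. The logits below are made-up values, the function names are hypothetical, and plain multinomial sampling stands in for the more sophisticated sampling methods mentioned above.

```python
import math
import random


def softmax_with_temperature(logits, temperature=1.0):
    """Return softmax(z / T) as a list of probabilities."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_next_token(logits, temperature=1.0, rng=None):
    """Draw one token index from softmax(z / T) (plain multinomial sampling)."""
    rng = rng or random.Random()
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]


# Hypothetical logits for a three-token vocabulary.
logits = [2.0, 1.0, 0.1]
sharp = softmax_with_temperature(logits, temperature=0.5)  # more peaked
flat = softmax_with_temperature(logits, temperature=2.0)   # closer to uniform
```

A lower temperature concentrates probability mass on the highest-logit token, while a higher temperature spreads it out, which is why $T$ is said to control randomness.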

In-Context Learning & Chain-of-Thought

In-Context Learning (ICL) improves LLM performance by providing few-shot examples instead of zero-shot queries. This method boosts task performance through prompt engineering without altering model parameters [16]. It is often combined with Chain-of-Thought (CoT) prompting, which can significantly enhance performance without incurring the high cost of fine-tuning [17]. A CoT prompt instructs the model to generate intermediate reasoning steps that culminate in the final answer, rather than directly mapping a query to an answer [17]. This approach naturally decomposes complex queries into simpler steps, yielding more interpretable results.

Knowledge Graph Question Answering

Knowledge Graph Question Answering (KGQA) is the task of answering questions using a specific knowledge graph (KG). Benchmarks such as Mintaka [18], WebQuestionsSP [19], and MetaQA [20] provide datasets where each row includes a question, its associated entity/entities, and the answer entity/entities, along with the corresponding KG (provided as a file of triples or accessible via an API). In these benchmarks, the question entity is pre-identified (avoiding the need for entity matching or linking), and performance is evaluated using the binary Hit@1 metric.

KGQA systems are typically classified into three categories [9]:

- Neural Semantic Parsing-Based Methods: These map a question to a KG query (e.g., in SPARQL), reducing the search space between question and answer entities. Although effective [19], they require labor-intensive semantic parse labels.

- Differentiable KG-Based Methods: These employ differentiable representations of the KG (using sparse matrices for subjects, objects, and relations) to perform query execution in the embedding space. They enable end-to-end training on question-answer pairs [21, 22], but necessitate ample training data and may not generalize across different KGs.

- Information Retrieval-Based Methods: These combine KGs with LLMs by retrieving relevant facts—which are then injected into the prompt—to generate answers [9]. Although they leverage off-the-shelf components, they often require fine-tuning on KG-specific datasets [11].

Knowledge Graph-extended Retrieval Augmented Generation

Information retrieval-based KGQA (IR-KGQA) systems differ from neural semantic parsing and differentiable KG methods by delegating part of the reasoning over triples to the LLM. The process is split into retrieving candidate triples and then having the LLM reason over them to formulate an answer, whereas the other methods map directly from the question to the answer entities [21, 23].

KG-RAG is defined as an IR-KGQA system that employs a similarity-based retrieval mechanism using off-the-shelf text embedding models, akin to the original RAG system [7]. In KG-RAG (exemplified by the KAPING system [9]), candidate triples are retrieved up to $N$ hops from the question entity/entities, verbalized, and embedded alongside the question. Their similarity to the question is computed via the dot product or cosine similarity, and the Top-$K$ most similar triples are passed to an answer generation LLM, which then outputs the answer.
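The retrieval steps described above (collect triples up to $N$ hops, verbalize them, embed, and keep the Top-$K$ by similarity) can be sketched as follows. This is an illustration under stated assumptions, not the KAPING implementation: the toy KG, all function names, and the triple-form verbalization are hypothetical, and a bag-of-words vector replaces the off-the-shelf text embedding model so that the example runs without external dependencies.

```python
import math
import re
from collections import Counter


def retrieve_candidates(kg, entities, n_hops=1):
    """Collect triples within n_hops of the question entities (either direction)."""
    frontier = set(entities)
    visited = set(entities)
    found = []
    for _ in range(n_hops):
        next_frontier = set()
        for triple in kg:
            s, _r, o = triple
            if (s in frontier or o in frontier) and triple not in found:
                found.append(triple)
                next_frontier.update({s, o} - visited)
        visited |= next_frontier
        frontier = next_frontier
    return found


def verbalize(triple):
    """Render a triple in triple-form text (assumed format)."""
    s, r, o = triple
    return f"({s}, {r.replace('_', ' ')}, {o})"


def embed(text):
    """Toy bag-of-words embedding; a stand-in for a real sentence embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(u, v):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(u[t] * v[t] for t in u.keys() & v.keys())
    norm = math.sqrt(sum(c * c for c in u.values())) * math.sqrt(sum(c * c for c in v.values()))
    return dot / norm if norm else 0.0


def top_k_triples(question, triples, k=2):
    """Rank verbalized triples by similarity to the question and keep the best k."""
    q_vec = embed(question)
    ranked = sorted(triples, key=lambda t: cosine(q_vec, embed(verbalize(t))), reverse=True)
    return ranked[:k]


# Hypothetical toy KG in the style of a movie benchmark.
kg = [
    ("Inception", "directed_by", "Christopher Nolan"),
    ("Inception", "release_year", "2010"),
    ("Inception", "starred_actors", "Leonardo DiCaprio"),
    ("Interstellar", "directed_by", "Christopher Nolan"),
]
one_hop = retrieve_candidates(kg, ["Inception"], n_hops=1)
best = top_k_triples("Who directed Inception?", one_hop, k=2)
```

In a real system the selected triples would then be inserted into the prompt of the answer generation LLM; that step is omitted here.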

3 Methodology

3.1 Problem Statement

Let $G$ be a knowledge graph, defined as a set of triples of the form $(s,r,o)$ where:

- Each triple $(s,r,o)\in G\subseteq\mathcal{E}\times\mathcal{R}\times\mathcal{E}$ represents a fact;

- $s,o\in\mathcal{E}$ are entities from the set of all entities $\mathcal{E}$;

- $r\in\mathcal{R}$ is a relation from the set of all relations $\mathcal{R}$.

We assume that the following objects are given:

- A question $q$ that can be answered using facts from $G$

- The question entity/entities $e_{q}\in\mathcal{E}$ that are part of that question.

Moreover, let us introduce the following variables:

- $a$ denotes a natural language answer that can be derived from the facts in $G$ ;

- $c$ is a reasoning chain in natural language, explaining the logical steps from $q$ and $e_{q}$ to $a$

Our objective is to develop a function $f$ that maps the given objects to both an answer and a reasoning chain, namely:

$$

f:q\times e_{q}\times G\rightarrow(a,c)

$$

Additionally, we aim for the following:

- Answer Accuracy: The function $f$ should have high answer accuracy, as evaluated by the Hit@1 metric.

- Answer Explainability: For each answer $a$ generated by the function $f$ , the reasoning chain $c$ must provide a clear logical explanation of how the answer was derived, so that it is more easily verifiable by the user.

- Application Generalizability: The function $f$ must operate without training or fine-tuning on specific Knowledge Graphs, using only In-Context Learning examples. The Knowledge Graphs must include sufficient amounts of natural language information, as the system relies on natural language-based methods.

The degree to which the function $f$ achieves the objectives is evaluated using both quantitative and qualitative methods, based on experiments with a KGQA benchmark, namely:

- Quantitative evaluation of answer accuracy, based on the Hit@1 metric.

- Qualitative analysis of reasoning chain clarity and logical soundness, as judged by a human evaluator on a sample of results.
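For the quantitative part, the Hit@1 computation can be sketched as below. The matching rule is an assumption made for illustration: a generated answer counts as a hit when any gold answer entity appears in it as a case-insensitive substring. The function names and example data are hypothetical.

```python
def hit_at_1(prediction, gold_answers):
    """Return 1 if any gold answer entity occurs in the generated answer, else 0.

    Assumption: case-insensitive substring matching defines a hit.
    """
    pred = prediction.lower()
    return int(any(gold.lower() in pred for gold in gold_answers))


def mean_hit_at_1(predictions, gold):
    """Average the binary Hit@1 score over a set of questions."""
    if len(predictions) != len(gold):
        raise ValueError("predictions and gold must have the same length")
    if not predictions:
        return 0.0
    return sum(hit_at_1(p, g) for p, g in zip(predictions, gold)) / len(predictions)


# Hypothetical generated answers and gold answer entities.
predictions = [
    "The film was directed by Christopher Nolan.",
    "I do not know.",
]
gold = [["Christopher Nolan"], ["2010"]]
score = mean_hit_at_1(predictions, gold)
```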

3.2 State-of-the-Art

Recent advances in question answering have seen the development of several state-of-the-art methods that leverage a diverse array of Large Language Models alongside innovative baseline strategies. For instance, one method employs multiple scales of models such as T5, T0, OPT, and GPT-3, while experimenting with baselines ranging from no knowledge to generated knowledge on datasets like WebQSP [19] and Mintaka [18]. Another approach expands this exploration by integrating Llama-2, Flan-T5, and ChatGPT, and introducing baselines that utilize triple-form knowledge and alternative KG-to-Text techniques, evaluated on datasets that include WebQSP, MetaQA [20], and even a Chinese benchmark, ZJQA [11]. Additionally, methods centered on ChatGPT are further compared with systems like StructGPT and KB-BINDER across varying complexities of MetaQA and WebQSP. The overview of the SOTA methods is presented in Table 1.

Table 1: Comparison of the question answering LLMs, baselines and benchmark datasets that were used for the different models. The full set of QA LMs is as follows: T0 [24], T5 [25], Flan-T5 [26], OPT [27], GPT-3 [1], ChatGPT, AlexaTM [28], and Llama-2 [29]. The full set of datasets is as follows: WebQuestions [30], WebQSP [19], ComplexWebQuestions [31], MetaQA [20], Mintaka [18], LC-QuAD [32], and ZJQA [11].

| Model | QA LLMs | Baselines | Datasets |
| --- | --- | --- | --- |
| KAPING [9] | T5 (0.8B, 3B, 11B); T0 (3B, 11B); OPT (2.7B, 6.7B); GPT-3 (6.7B, 175B) | No knowledge; Random knowledge; Popular knowledge; Generated knowledge | WebQSP (w/ 2 KGs); Mintaka |
| Retrieve-Rewrite-Answer [11] | Llama-2 (7B, 13B); T5 (0.8B, 3B, 11B); Flan-T5 (80M, 3B, 11B); T0 (3B, 11B); ChatGPT | No knowledge; Triple-form knowledge; 2x Alternative KG-to-Text; 2x Rival model | WebQSP; WebQ; MetaQA; ZJQA (Chinese) |
| Keqing [10] | ChatGPT | ChatGPT; StructGPT; KB-BINDER | WebQSP; MetaQA-1hop; MetaQA-2hop; MetaQA-3hop |

3.2.1 KAPING

KAPING [9] is one of the best IR-KGQA models that requires no training. Because the number of candidate triples can be very large (27% of entities in WebQSP [19] have more than 1000 triples), it selects triples with a text embedding-based mechanism, typically using cosine similarity [33], instead of appending all triples directly to the prompt. KAPING outperforms many baselines presented in Table 1 in terms of Hit@1, especially those with smaller LLMs, suggesting that external knowledge compensates for the limited parameter space. Notably, using 2-hop triples degrades performance, so only 1-hop triples are selected; when retrieval fails to fetch relevant triples, performance drops below a no-knowledge baseline. An additional finding is that triple-form text outperforms free-form text for retrieval, as converting triples to free-form via a KG-to-Text model often leads to semantic incoherence, and using free-form text in prompts does not improve answer generation.

3.2.2 Retrieve-Rewrite-Answer

Motivated by KAPING’s limitations, the Retrieve-Rewrite-Answer (RRA) architecture was developed for KGQA [11]. Unlike KAPING, which overlooked the impact of triple formatting, RRA introduces a novel triple verbalization module, among other changes. Specifically, question entities are extracted from annotated datasets (with entity matching deferred). The retrieval process consists of three steps: (i) a hop number is predicted via a classification task on the question embedding; (ii) relation paths (sequences of KG relationships) are predicted by sampling and selecting the top-$K$ candidates based on total probability; (iii) selected relation paths are transformed into free-form text using a fine-tuned LLM. This verbalized output, together with the question, is fed to a QA LLM via a prompt template.

For training, the hop number and relation path classifiers, as well as the KG-to-Text LLM, are tuned on each benchmark. Due to the lack of relation path labels and subgraph-text pairs in most benchmarks, the authors employ various data construction techniques, limiting the model’s generalizability across domains and KGs.

As detailed in Table 1, RRA was evaluated with several QA LLMs against a set of baselines (no knowledge, triple-form knowledge, and two standard KG-to-Text models) and compared with the models from [9] and [22] on WebQ [30] and WebQSP [19] using the Hit@1 metric. The main results show that RRA significantly outperforms rival models, achieving an improvement of 1–8% over triple-form text and 1–5% over the best standard KG-to-Text model. Moreover, RRA is about $100\times$ more likely to produce a correct answer when the no-knowledge baseline fails, confirming the added value of IR-based KGQA models over vanilla LLMs.

3.2.3 Keqing

Keqing, proposed in [10], is the third SOTA model and is positioned as an alternative to SQL-based retrieval systems. Its key innovation is a question decomposition module that uses a fine-tuned LLM to break a question into sub-questions. These sub-questions are matched to predefined templates via cosine similarity, with each template linked to specific KG relation paths. Candidate triples are retrieved based on these relation paths, and sub-questions are answered sequentially, so that the answer to one sub-question seeds the next. The triples obtained are verbalized and processed through a prompt template by a question answering (QA) LLM, ultimately generating a final answer that reflects the model’s reasoning chain.

In this approach, only the question decomposition LLM is trained using LoRA [34], which adds only a small fraction of trainable weights. However, the construction of sub-question templates and the acquisition of relation path labels are not clearly detailed, which may limit the system’s scalability.

According to Table 1, Keqing outperforms vanilla ChatGPT and two rival models, achieving Hit@1 scores of 98.4% to 99.9% on the MetaQA benchmark and superior performance on the WebQSP benchmark. Its ability to clearly explain its reasoning through sub-question chains further underscores its contribution to answer explainability.

3.2.4 Research Gap

After KAPING was introduced as the first KG-Augmented LLM for KGQA, RRA [11] and Keqing [10] followed, each employing different triple retrieval methods. Although all three use an LLM for question answering, KAPING relies on an untrained similarity-based retriever, while RRA and Keqing develop trainable retrieval modules, improving performance at the cost of significant engineering. Specifically, RRA trains separate modules (hop number classifier, relation path classifier, and KG-to-Text LLM) for each benchmark, requiring two custom training datasets (one for questions with relation path labels and one for triples with free-form text labels). The need for KG-specific techniques limits generalizability and raises concerns about the extra labor required when no Q&A dataset is available. Keqing fine-tunes an LLM for question decomposition to enhance answer interpretability and triple retrieval. This approach also demands a training dataset with sub-question templates and relation path labels, though the methods for constructing these remain unclear. Consequently, it is debatable whether the performance gains justify the additional engineering effort.

In summary, these shortcomings reveal a gap for models that are both as generalizable as KAPING and as explainable as Keqing. KAPING’s training-free design allows minimal human intervention across diverse KGs and domains, even in the absence of benchmark datasets. For this reason, we propose an improvement to the KAPING model by introducing a question decomposition module.

3.3 Our Approach

KAPING, a SOTA method combining KGs and LLMs, outperforms many zero-shot baselines. However, its retrieval process, which is vital for accurate answer generation, can benefit from reducing the inclusion of irrelevant triples [9]. Therefore, we build on top of the KAPING model and propose to enhance it by integrating a question decomposition module to improve triple retrieval, answer accuracy, and explainability while maintaining application generalizability.

The proposed question decomposition module decomposes complex, multi-hop questions into simpler sub-questions. This allows the similarity-based retriever to focus on smaller, manageable pieces of information, thereby improving retrieval precision and yielding a more interpretable reasoning chain. Unlike conventional Chain-of-Thought prompting, which may induce hallucinated reasoning [35], decomposing the question forces the LLM to independently resolve each sub-question, ensuring fidelity to the stated reasoning. Our question decomposition module uses manually curated in-context learning examples for the KGQA benchmark, obviating the need for additional training and minimizing human labor. As a result, our approach aligns well with the goals of enhanced generalizability and answer explainability while potentially outperforming KAPING for multi-hop questions. The following section details the overall system architecture and the roles of its individual components.

3.4 System Architecture

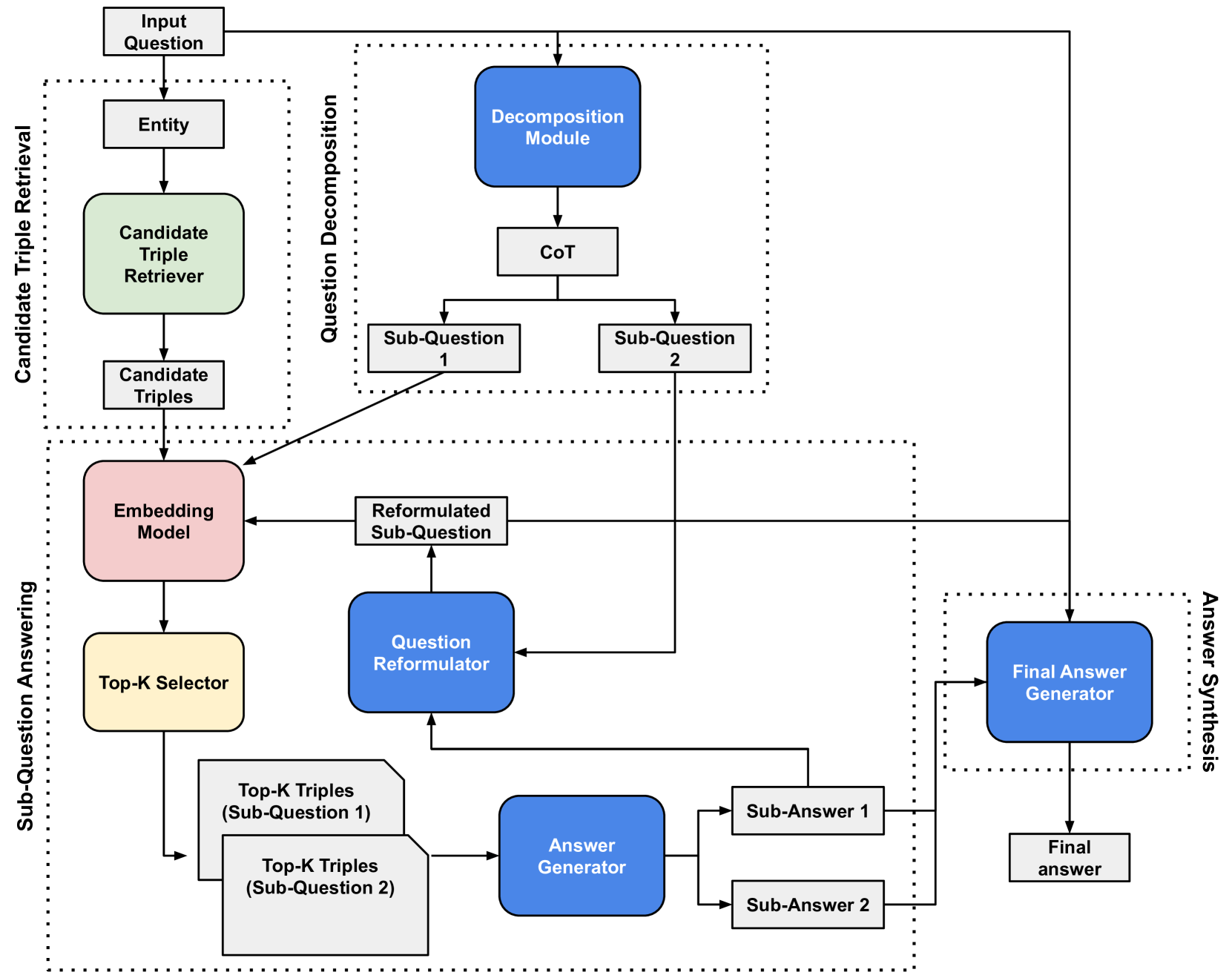

Our system comprises multiple components, each executing a specific role in answering KG-based questions. The overall process involves four primary steps, with the first two being non-sequential:

1. Question Decomposition: The decomposition module splits the question into sub-questions. For simple queries, it avoids unnecessary decomposition.

2. Candidate Triple Retrieval: Given the question entity, the system retrieves all triples up to $N$ hops from the KG. Each triple is verbalized into text for subsequent selection via a sentence embedding model.

3. Sub-Question Answering: This sequential step answers each sub-question using the candidate triples. The process involves embedding the candidate triples to form a vector database, selecting the Top-$K$ similar triples for the sub-question, and reformulating subsequent sub-questions based on prior sub-answers.

4. Answer Synthesis: Finally, the system synthesizes the final answer from the sub-questions and their corresponding answers. The output also includes the chain-of-thought from the decomposition stage, enhancing interpretability.
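The control flow of the four steps above can be sketched as a single function that receives each component as a callable. This is only an outline of the orchestration under stated assumptions: every name is hypothetical, the `{answer}` placeholder convention is invented for the demo, and the rule-based stubs merely stand in for the KG retriever, the embedding-based selector, and the LLM modules.

```python
def kg_rag_answer(question, entities, kg, *, decompose, retrieve, select,
                  generate, reformulate, synthesize, n_hops=2, k=5):
    """Run the four steps; all components are injected (hypothetical interface)."""
    cot, sub_questions = decompose(question)        # step 1: question decomposition
    candidates = retrieve(kg, entities, n_hops)     # step 2: independent of step 1
    qa_pairs = []
    for sub_q in sub_questions:                     # step 3: sequential answering
        if qa_pairs:                                # rewrite using prior sub-answers
            sub_q = reformulate(sub_q, qa_pairs)
        triples = select(sub_q, candidates, k)
        qa_pairs.append((sub_q, generate(sub_q, triples)))
    final = synthesize(question, qa_pairs)          # step 4: answer synthesis
    return final, cot, qa_pairs


# Rule-based stubs for a toy 2-hop question (stand-ins for the real modules).
toy_kg = [
    ("Inception", "starred_actors", "Leonardo DiCaprio"),
    ("Inception", "directed_by", "Christopher Nolan"),
]

def stub_decompose(question):
    cot = "First find the films of the actor, then find who directed them."
    return cot, ["Which films did Leonardo DiCaprio star in?", "Who directed {answer}?"]

def stub_select(sub_q, candidates, k):
    relation = "starred_actors" if "star" in sub_q else "directed_by"
    return [t for t in candidates if t[1] == relation][:k]

def stub_generate(sub_q, triples):
    s, r, o = triples[0]
    return s if r == "starred_actors" else o

final, cot, qa_pairs = kg_rag_answer(
    "Who directed the films that Leonardo DiCaprio starred in?",
    ["Leonardo DiCaprio"],
    toy_kg,
    decompose=stub_decompose,
    retrieve=lambda kg, entities, n_hops: list(kg),
    select=stub_select,
    generate=stub_generate,
    reformulate=lambda sub_q, pairs: sub_q.replace("{answer}", pairs[-1][1]),
    synthesize=lambda question, pairs: pairs[-1][1],
)
```

Returning the reasoning chain and the sub-question/answer pairs alongside the final answer mirrors the stated goal of making the output easier to verify.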

<details>

<summary>x2.png Details</summary>

### Visual Description

## Diagram: Question Decomposition and Answering System

### Overview

The image depicts a diagram of a question decomposition and answering system. The system is broken down into four main modules: Candidate Triple Retrieval, Question Decomposition, Sub-Question Answering, and Answer Synthesis. The diagram illustrates the flow of information between these modules, starting with an "Input Question" and ending with a "Final answer". The diagram uses boxes to represent modules and arrows to represent the flow of data.

### Components/Axes

The diagram is divided into four main sections, visually demarcated by dotted-line rectangles and labeled as follows:

1. **Candidate Triple Retrieval:** Located in the top-left corner.

2. **Question Decomposition:** Located in the top-right corner.

3. **Sub-Question Answering:** Located in the bottom-left corner.

4. **Answer Synthesis:** Located in the bottom-right corner.

Key components within these modules include:

* **Input Question:** The starting point of the process.

* **Entity:** A component within Candidate Triple Retrieval.

* **Candidate Triple Retriever:** A component within Candidate Triple Retrieval.

* **Candidate Triples:** The output of the Candidate Triple Retriever.

* **Decomposition Module:** A component within Question Decomposition.

* **CoT:** Chain of Thought, a component within Question Decomposition.

* **Sub-Question 1 & 2:** Outputs of the Decomposition Module.

* **Embedding Model:** A component within Sub-Question Answering.

* **Reformulated Sub-Question:** Output of the Embedding Model.

* **Question Reformulator:** A component within Sub-Question Answering.

* **Top-K Selector:** A component within Sub-Question Answering.

* **Top-K Triples (Sub-Question 1 & 2):** Outputs of the Top-K Selector.

* **Answer Generator:** A component within Sub-Question Answering.

* **Sub-Answer 1 & 2:** Outputs of the Answer Generator.

* **Final Answer Generator:** A component within Answer Synthesis.

* **Final answer:** The final output of the system.

Arrows indicate the flow of information between these components. Dotted arrows represent indirect or feedback loops.

### Detailed Analysis or Content Details

The system operates as follows:

1. An **Input Question** is received.

2. The **Candidate Triple Retriever** extracts **Entity** information and generates **Candidate Triples**.

3. The **Decomposition Module** breaks down the input question using **CoT** (Chain of Thought) into **Sub-Question 1** and **Sub-Question 2**.

4. **Sub-Question 1** and **Sub-Question 2** are passed through an **Embedding Model** and then a **Question Reformulator** to create **Reformulated Sub-Question**s.

5. The **Top-K Selector** retrieves the **Top-K Triples** for each sub-question.

6. The **Answer Generator** uses these triples to generate **Sub-Answer 1** and **Sub-Answer 2**.

7. Finally, the **Final Answer Generator** synthesizes these sub-answers into a **Final answer**.

There are feedback loops:

* From the Decomposition Module back to the Candidate Triple Retrieval.

* From the Sub-Question Answering module back to the Answer Synthesis module.

### Key Observations

The diagram highlights a modular approach to question answering, emphasizing the decomposition of complex questions into simpler sub-questions. The use of "Top-K" suggests a ranking or selection process based on relevance. The inclusion of "CoT" indicates the use of Chain of Thought prompting or reasoning. The system appears to leverage knowledge triples for answering.

### Interpretation

This diagram illustrates a sophisticated question answering system that employs a decomposition strategy to tackle complex queries. The system's architecture suggests a focus on knowledge retrieval and reasoning. The decomposition into sub-questions allows for a more targeted search for relevant information. The use of an embedding model and question reformulation likely aims to improve the accuracy and relevance of the retrieved knowledge. The feedback loops suggest a refinement process, where the system iteratively improves its understanding of the question and its answers. The overall design suggests a system intended to handle questions requiring multi-hop reasoning or access to a knowledge base. The diagram does not provide any quantitative data or performance metrics, but it clearly outlines the system's functional components and their interactions.

</details>

Figure 2: The architecture of the proposed system. An example of a 2-hop question is included, to give an idea of the data structures that are involved in the end-to-end process. The green color indicates processing with the KG; the red block shows the embedding model and the blue modules utilize an LLM.

Figure 2 illustrates the system architecture, highlighting the data structures and interactions between components. The diagram shows how the question reformulation module, which processes all previous sub-answers, enables the sequential resolution of sub-questions until the final answer is generated by the answer synthesis module.

Different components utilize distinct data sources and models. The candidate triple retriever directly accesses the KG, while the similarity-based triple selection leverages an off-the-shelf sentence embedding model trained on question-answer pairs. The remaining modules (the decomposition module, sub-answer generator, question reformulator, and final answer generator) are implemented using an LLM.

3.5 System Components

3.5.1 Question Decomposition

Overview

The question decomposition module splits a complex question into simpler sub-questions while generating an explicit reasoning chain, thereby enhancing both triple retrieval and answer explainability (Section 3.3). Inspired by Chain-of-Thought and In-Context Learning techniques [35], the module uses manually constructed ICL examples from the benchmark (Section 4.1). The prompt is designed to first elicit the reasoning chain (CoT) followed by the sub-questions, aligning with the natural text-based reasoning of LLMs.

Inputs and Outputs

As illustrated in Figure 2, the module takes a natural language question as input and outputs a string containing the reasoning chain and sub-questions. This output is post-processed to extract the CoT and store the sub-questions in a list.

Techniques

The decomposition prompt instructs the LLM to decide if a question requires decomposition. If so, it generates a CoT followed by sub-questions, strictly adhering to a specified format and avoiding irrelevant content. In-context examples, covering three question types from the MetaQA benchmark, guide the LLM, with the stop token “$<$END$>$” marking completion.
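The post-processing step can be sketched as follows. The exact output format of the prompt is not given here, so the `Reasoning:` and `Sub-questions:` markers below are hypothetical, as is the function name `parse_decomposition`; only the stop token is taken from the text.

```python
import re

def parse_decomposition(raw: str) -> tuple[str, list[str]]:
    """Split raw LLM output into (reasoning_chain, sub_questions)."""
    # Drop anything after the stop token, in case it was echoed back.
    text = raw.split("<END>", 1)[0]
    # Hypothetical layout: reasoning first, then a "Sub-questions:" header.
    head, _, tail = text.partition("Sub-questions:")
    reasoning = head.replace("Reasoning:", "", 1).strip()
    sub_questions = []
    for line in tail.splitlines():
        # Strip list markers such as "1." or "-" before storing the question.
        cleaned = re.sub(r"^\s*(?:\d+[.)]|[-*])\s*", "", line).strip()
        if cleaned:
            sub_questions.append(cleaned)
    return reasoning, sub_questions
```

An empty list of sub-questions would then signal that the LLM judged the question not to require decomposition.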

Implementation Details

Here, we use a 4-bit quantized version of Mistral-7B-Instruct-v0.2 [29, 36], originally a 7.24B-parameter model that outperforms Llama 2 and Llama 1 in reasoning, mathematics, and code generation. The quantized model, sized at 4.37 GB (https://huggingface.co/TheBloke/Mistral-7B-Instruct-v0.2-GGUF), is compatible with consumer-grade hardware, e.g., an NVIDIA RTX 3060 12GB (https://www.msi.com/Graphics-Card/GeForce-RTX-3060-VENTUS-2X-12G-OC). Fast inference is achieved using the llama.cpp package (https://github.com/ggerganov/llama.cpp), and prompts are designed with LM Studio (https://lmstudio.ai/).

Inference parameters (see Table 2) include a max tokens limit (256) to prevent runaway generation, a temperature of 0.3 to reduce randomness, and top-k (40) and min-p (0.05) settings to ensure controlled token sampling [37].

Table 2: The inference parameters that were used for the question decomposition LLM.

| Parameter | Value |
| --- | --- |
| Max Tokens | 256 |
| Temperature | 0.3 |
| Min-p | 0.05 |
| Top-k | 40 |

3.5.2 Candidate Triple Retrieval

Overview

Candidate triple retrieval collects all triples up to $N$ hops from a given question entity in the KG, converting each triple into a text string of the form $(subject,relation,object)$ . Although the worst-case complexity is exponential in the number of hops—approximately $\Theta(d^{N})$ for an undirected KG with average degree $d$ —real-world KGs are sparse, making the average or median complexity more relevant (Section 4.1). The value of $N$ is treated as a hyperparameter.
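As a rough worked bound, assume every entity has exactly $d$ neighbors and no entity is reached twice (a simplification, not a property of the MetaQA KG). Hop $h$ then contributes $d^{h}$ triples, so the number of candidates within $N$ hops is

$$T(N)=\sum_{h=1}^{N} d^{h}=\frac{d\,(d^{N}-1)}{d-1}=\Theta(d^{N}),\qquad d>1.$$

For illustration, $d=4$ and $N=3$ give $T(3)=4+16+64=84$. The symbol $T(N)$ is notation introduced here for this estimate only.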

Inputs and Outputs

This component accepts the question entity/entities as a natural language string and retrieves candidate triples from the KG. The output is a list of lists, where each sub-list corresponds to the candidate triples for each hop up to $N$ . Each triple is stored as a formatted text string, with underscores replaced by spaces (e.g., "acted_in" becomes "acted in").

Techniques

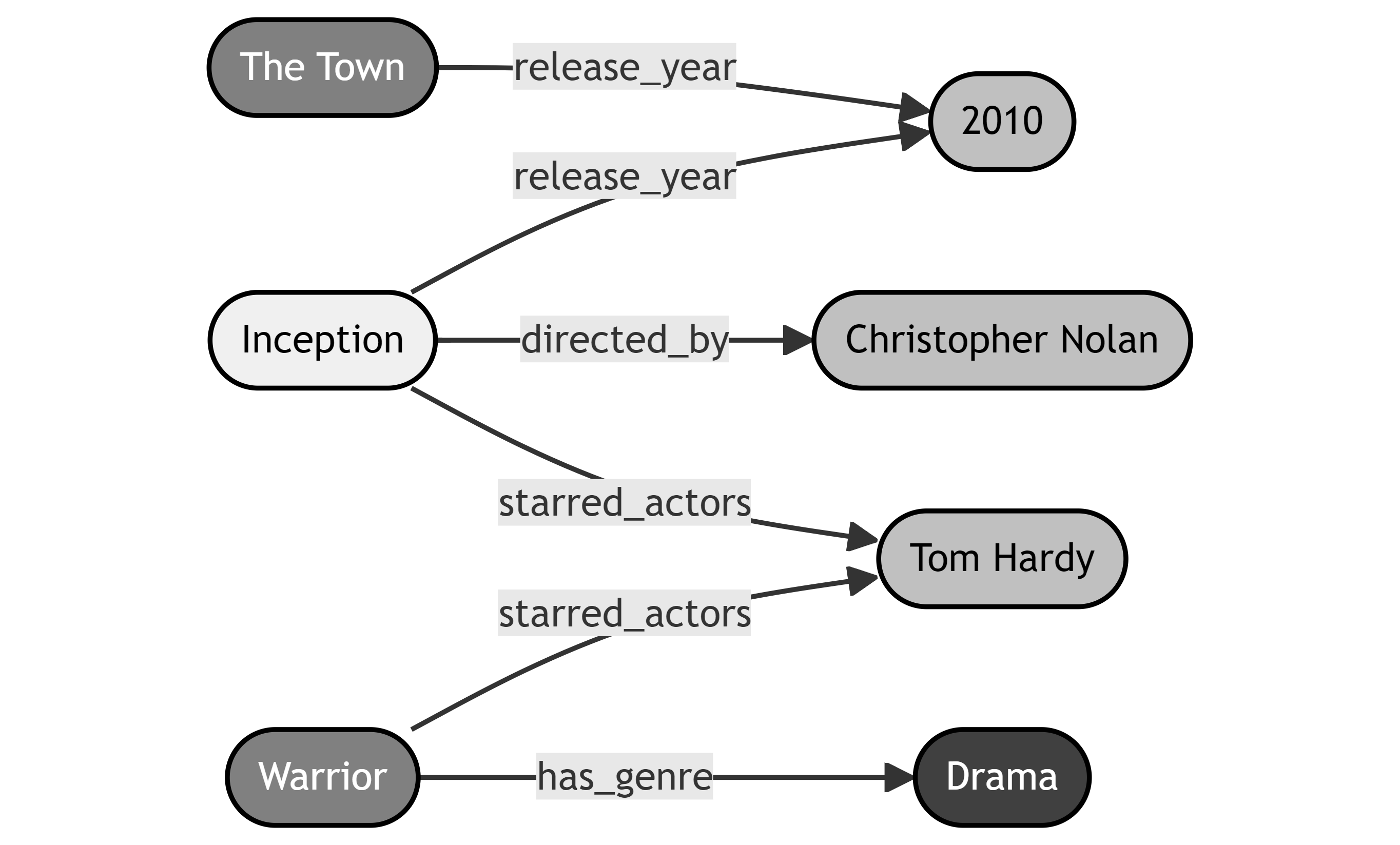

Candidate triple retrieval employs a breadth-first search strategy. In the MetaQA benchmark, which uses a directed KG, retrieval can be unidirectional (considering only outgoing edges) or bidirectional (including both outgoing and incoming edges). For example, as illustrated in Figure 3, unidirectional retrieval from the Inception entity would only yield entities like 2010, Christopher Nolan, and Tom Hardy, whereas bidirectional retrieval expands the search across successive hops. This example underscores the impact of retrieval direction on both the candidate set and computational load.

<details>

<summary>extracted/6354852/Figs/KG_Example.png Details</summary>

### Visual Description


## Diagram: Movie Attribute Graph

### Overview

The image presents a directed graph illustrating relationships between movies and their attributes. Nodes represent movies or attributes, and directed edges indicate the type of relationship between them. The graph appears to be a simplified knowledge representation of movie data.

### Components/Axes

The diagram consists of nodes (rounded rectangles) and directed edges (lines with arrowheads). The nodes contain text labels representing movie titles, attribute names, or attribute values. The edges are labeled with the relationship type.

### Detailed Analysis or Content Details

The diagram shows the following relationships:

1. **The Town** – *release_year* → **2010**

* The movie "The Town" has a release year of 2010.

2. **The Town** – *release_year* → **2010**

* The movie "The Town" has a release year of 2010. (Duplicate entry)

3. **Inception** – *directed_by* → **Christopher Nolan**

* The movie "Inception" was directed by Christopher Nolan.

4. **Inception** – *starred_actors* → **Tom Hardy**

* The movie "Inception" starred Tom Hardy.

5. **Inception** – *starred_actors* → **Tom Hardy**

* The movie "Inception" starred Tom Hardy. (Duplicate entry)

6. **Warrior** – *has_genre* → **Drama**

* The movie "Warrior" has the genre "Drama".

The nodes are positioned vertically, with the relationships flowing generally from left to right. The nodes are white with black text, and the edges are black.

### Key Observations

* There are duplicate relationships for "The Town" release year and "Inception" starred actors. This could indicate redundancy in the data or a deliberate representation of multiple instances.

* The graph is relatively small, representing only a limited subset of movie data.

* The relationships are simple and direct, focusing on single attributes or values.

### Interpretation

The diagram demonstrates a basic knowledge graph structure for representing movie information. It shows how movies can be linked to their attributes (director, actors, genre, release year) through specific relationship types. The duplication of relationships suggests that the data might be representing multiple instances of the same attribute, or it could be an artifact of the data representation. This type of graph could be used for querying movie data, recommending movies based on attributes, or building a more comprehensive knowledge base of movies and their characteristics. The simplicity of the graph suggests it's a conceptual model rather than a detailed database representation.

</details>

Figure 3: A simple subgraph of triples from MetaQA [20]. As indicated by the arrows, this KG is a directed graph, which has implications for candidate triple retrieval. If Inception were the entity we were retrieving for, each darker tint of gray shows the entities that would be reached for a hop deeper.
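The breadth-first retrieval described above can be sketched as follows. This is a minimal illustration, not the authors' implementation: the function name, the in-memory adjacency maps, and the toy triples (loosely modeled on Figure 3) are assumptions, and underscores are replaced in the relation only.

```python
from collections import defaultdict

def retrieve_candidates(triples, entity, n_hops, bidirectional=True):
    """Return one list of formatted triple strings per hop, up to n_hops."""
    outgoing, incoming = defaultdict(list), defaultdict(list)
    for s, r, o in triples:
        outgoing[s].append((s, r, o))
        incoming[o].append((s, r, o))

    visited, seen = {entity}, set()
    frontier, hops = [entity], []
    for _ in range(n_hops):
        hop_triples, next_frontier = [], []
        for node in frontier:
            edges = list(outgoing[node])
            if bidirectional:
                # Also follow edges that point at the current node.
                edges += incoming[node]
            for s, r, o in edges:
                if (s, r, o) in seen:
                    continue
                seen.add((s, r, o))
                # Store the triple as text, e.g. "acted_in" -> "acted in".
                hop_triples.append(f"({s}, {r.replace('_', ' ')}, {o})")
                neighbor = o if s == node else s
                if neighbor not in visited:
                    visited.add(neighbor)
                    next_frontier.append(neighbor)
        hops.append(hop_triples)
        frontier = next_frontier
    return hops

# Toy KG for illustration only.
toy_kg = [
    ("Inception", "release_year", "2010"),
    ("Inception", "directed_by", "Christopher Nolan"),
    ("Inception", "starred_actors", "Tom Hardy"),
    ("The Town", "release_year", "2010"),
    ("Warrior", "starred_actors", "Tom Hardy"),
    ("Warrior", "has_genre", "Drama"),
]
per_hop = retrieve_candidates(toy_kg, "Inception", n_hops=3)
```

With `bidirectional=False`, the search from Inception stops after the first hop in this toy graph, because the year, director, and actor entities have no outgoing edges.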

Implementation Details

The MetaQA benchmark provides the KG as a text file with one triple per row. This file is pre-processed into a compressed KG with indexed entities and relationships to streamline retrieval and minimize memory usage. Each triple is embedded using a sentence embedding model (introduced in Section 3.5.3), forming a dictionary of embeddings that enhances retrieval efficiency by avoiding redundant computations. Retrieval is performed bidirectionally up to 3 hops, i.e., $N \in \{1,2,3\}$ .
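A minimal sketch of the indexing step is given below. It assumes each row has the form `subject|relation|object` (the separator is an assumption about the file layout), and the function name `build_index` is hypothetical. The embedding cache is not shown.

```python
def build_index(lines):
    """Map entities and relations to integer ids and store triples as id tuples."""
    entity_ids, relation_ids, triples = {}, {}, []
    for line in lines:
        line = line.strip()
        if not line:
            continue
        # Assumed row layout: subject|relation|object
        subj, rel, obj = line.split("|")
        s = entity_ids.setdefault(subj, len(entity_ids))
        r = relation_ids.setdefault(rel, len(relation_ids))
        o = entity_ids.setdefault(obj, len(entity_ids))
        triples.append((s, r, o))
    return entity_ids, relation_ids, triples
```

Storing each triple as three small integers instead of three strings is one simple way to reduce memory usage, since every entity name is kept only once.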

3.5.3 Sub-Question Answering

Overview

Once the question is decomposed into sub-questions and candidate triples are retrieved for the given entity/entities, the sub-question answering process begins. Iteratively, the sub-question and candidate triples are embedded using a sentence embedding model, and the top- $K$ similar triples are selected to generate a sub-answer via an LLM. This sub-answer is then used to reformulate subsequent sub-questions if needed (see Figure 2), continuing until all sub-questions are answered.

Inputs and Outputs

Inputs include candidate triples (a list of strings, pre-embedded from the MetaQA KG) and a list of sub-questions. The output comprises two lists of strings: one containing the sub-answers and another with the reformulated sub-questions, both of which contribute to the final answer synthesis.
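The iterative process can be sketched as a simple loop. The three callables stand in for the top-$K$ selector, the answer-generating LLM, and the reformulation LLM; their names and signatures are assumptions made for this illustration.

```python
def answer_sub_questions(sub_questions, select_top_k, generate_answer, reformulate):
    """Answer sub-questions in order, feeding earlier sub-answers forward."""
    sub_answers, asked = [], []
    for i, question in enumerate(sub_questions):
        # From the second sub-question on, rewrite it using all earlier sub-answers.
        if i > 0:
            question = reformulate(question, sub_answers)
        context = select_top_k(question)
        sub_answers.append(generate_answer(question, context))
        asked.append(question)
    # Two lists, as described above: sub-answers and (reformulated) sub-questions.
    return sub_answers, asked
```

Passing the components in as functions keeps the loop independent of any particular LLM or embedding backend.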

Techniques

The process employs similarity-based retrieval where both the sub-question and candidate triples are embedded with the same model, and their dot-product similarity is computed. The top- $K$ triples are then passed to a zero-shot LLM answer generator along with the sub-question. Unlike Keqing’s multiple-choice approach [10] (Section 3.2.3), this method allows the LLM to reason over the context. A zero-shot LLM also performs question reformulation.
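The dot-product selection can be illustrated with plain Python lists. The vectors below are toy values; in the system they would come from the sentence embedding model. The function names are hypothetical.

```python
def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))

def top_k_triples(question_vec, triple_vecs, triples, k):
    """Return the k triples whose embeddings score highest against the question."""
    scored = sorted(
        zip(triples, triple_vecs),
        key=lambda pair: dot(question_vec, pair[1]),
        reverse=True,
    )
    return [triple for triple, _ in scored[:k]]

# Toy example: the second triple is most similar, followed by the third.
selected = top_k_triples(
    [1.0, 0.0],
    [[0.2, 0.9], [0.9, 0.1], [0.5, 0.5]],
    ["triple a", "triple b", "triple c"],
    k=2,
)
```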

Implementation Details

The similarity-based triple selection uses the multi-qa-mpnet-base-dot-v1 model (https://huggingface.co/sentence-transformers/multi-qa-mpnet-base-dot-v1) from the sentence_transformers package (https://www.sbert.net/), which embeds text into 768-dimensional vectors. Similarity is computed as the dot product between these vectors, and the model is run locally on the GPU. Both the sub-question answering and question reformulation LLMs use parameters from Table 2 with minor adjustments: the sub-question answering LLM employs a repeat_penalty of 1.1 to mitigate repetitive output, while the reformulation module uses "?" as the stop token to restrict its output to a properly reformulated question.

3.5.4 Answer Synthesis

Overview

The final step synthesizes an answer to the original question using the generated reasoning chain, sub-questions, and sub-answers. This output, which includes the reasoning chain, provides transparency into the system’s decision-making process.

Inputs and Outputs

Inputs comprise the main question, reasoning chain, sub-questions (reformulated if applicable), and sub-answers—all as strings. The output is a single natural language string that integrates both the final answer and the reasoning chain.

Techniques

A custom zero-shot prompt instructs the LLM to formulate the final answer from the provided context. The prompt template merges the main question, sub-questions, and sub-answers, and subsequently incorporates the reasoning chain into the final output. This straightforward zero-shot approach was preferred over ICL due to the simplicity of the final synthesis task compared to the more complex decomposition step.

Implementation Details

The LLM parameters mirror those in Table 2, with the exception of max_tokens, which is increased to 512 to accommodate the typically more complex final answers.
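The per-module settings from Sections 3.5.1 to 3.5.4 can be summarized as a base configuration plus overrides. This is only a sketch: the module labels and the dictionary layout are assumptions, and key names other than `max_tokens` and `repeat_penalty` are chosen here for illustration.

```python
# Shared inference parameters from Table 2.
BASE_PARAMS = {"max_tokens": 256, "temperature": 0.3, "top_k": 40, "min_p": 0.05}

# Adjustments stated in the text for each LLM-based module.
MODULE_OVERRIDES = {
    "decomposition": {"stop": ["<END>"]},
    "sub_answer": {"repeat_penalty": 1.1},
    "reformulation": {"stop": ["?"]},
    "synthesis": {"max_tokens": 512},
}

def params_for(module):
    """Return the base parameters with the module-specific overrides applied."""
    return {**BASE_PARAMS, **MODULE_OVERRIDES[module]}
```

For example, `params_for("synthesis")` keeps the temperature and sampling settings of Table 2 but raises the token limit to 512.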

4 Experiments

The goal of our experiments is to check whether a KG is useful in question answering and whether our approach, i.e., using an additional question decomposition module, results in better performance. For this purpose, we use a widely used Knowledge Graph Question Answering (KGQA) benchmark called MetaQA [20]. To verify whether we achieved our objectives, we assess three baselines: a stand-alone LLM, an LLM with an LLM-based question decomposition module, and an LLM with a KG (i.e., KAPING). Finally, the experimental results are presented and discussed.

4.1 Dataset

The MetaQA benchmark, introduced in 2017, addresses the need for KGQA benchmarks featuring multi-hop questions over large-scale KGs, extending the original WikiMovies benchmark with movie-domain questions of varying hop counts [20].

Several factors motivated the selection of MetaQA for this research. First, its questions are categorized by hop count, enabling detailed analysis of multi-hop performance, a key area for improvement via question decomposition. Second, each question includes an entity label, avoiding the complexities of entity linking; many benchmarks, which focus on neural semantic parsing for SPARQL query generation, lack such labels [38]. Third, MetaQA’s simplicity and locally processable KG make it ideal for studies with limited resources, in contrast to highly complex KGs like Wikidata (over 130 GB, 1.57 billion triples, 12,175 relation types; see https://www.wikidata.org/wiki/Wikidata:Main_Page).

Data

MetaQA consists of three datasets (1-hop, 2-hop, and 3-hop), each split into train, validation, and test sets, and further divided into three components: vanilla, NTM text data, and audio data [20]. This research utilizes only the vanilla data, where the 1-hop dataset contains original WikiMovies questions and the 2-hop and 3-hop datasets are generated using predefined templates. Each dataset row includes a question, its associated entity, and answer entities.

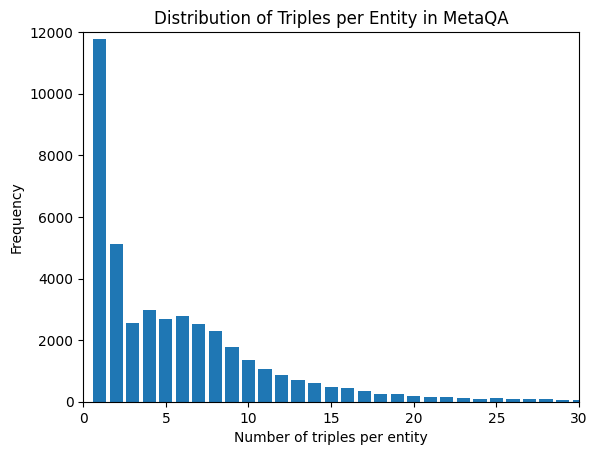

Knowledge Graph

The MetaQA benchmark provides a KG as a text file with each row representing a triple. The KG comprises 43,234 entities and 9 relation types, with movie titles as subjects. Figure 4 illustrates the degree distribution: most entities have few associated triples (median of 4), while the long-tailed distribution includes entities with up to 4431 triples.

<details>

<summary>extracted/6354852/Figs/MetaQA_KG.png Details</summary>

### Visual Description

## Bar Chart: Distribution of Triples per Entity in MetaQA

### Overview

The image presents a bar chart illustrating the distribution of the number of triples per entity within the MetaQA dataset. The chart displays the frequency of entities having a specific number of triples.

### Components/Axes

* **Title:** "Distribution of Triples per Entity in MetaQA" - positioned at the top-center of the chart.

* **X-axis:** "Number of triples per entity" - ranging from 0 to approximately 30, with tick marks at integer values.

* **Y-axis:** "Frequency" - ranging from 0 to 12000, with tick marks at 2000-unit intervals.

* **Data Series:** A single series of blue bars representing the frequency of each number of triples per entity.

### Detailed Analysis

The chart shows a highly skewed distribution. The frequency of entities decreases rapidly as the number of triples per entity increases.

* **0 Triples:** Approximately 11,800 entities have 0 triples.

* **1 Triple:** Approximately 5,300 entities have 1 triple.

* **2 Triples:** Approximately 2,400 entities have 2 triples.

* **3 Triples:** Approximately 2,100 entities have 3 triples.

* **4 Triples:** Approximately 1,800 entities have 4 triples.

* **5 Triples:** Approximately 1,600 entities have 5 triples.

* **6 Triples:** Approximately 1,400 entities have 6 triples.

* **7 Triples:** Approximately 1,200 entities have 7 triples.

* **8 Triples:** Approximately 1,000 entities have 8 triples.

* **9 Triples:** Approximately 800 entities have 9 triples.

* **10 Triples:** Approximately 650 entities have 10 triples.

* **11 Triples:** Approximately 500 entities have 11 triples.

* **12 Triples:** Approximately 400 entities have 12 triples.

* **13 Triples:** Approximately 300 entities have 13 triples.

* **14 Triples:** Approximately 200 entities have 14 triples.

* **15 Triples:** Approximately 150 entities have 15 triples.

* **16 Triples:** Approximately 100 entities have 16 triples.

* **17 Triples:** Approximately 80 entities have 17 triples.

* **18 Triples:** Approximately 60 entities have 18 triples.

* **19 Triples:** Approximately 40 entities have 19 triples.

* **20 Triples:** Approximately 30 entities have 20 triples.

* **21 Triples:** Approximately 20 entities have 21 triples.

* **22 Triples:** Approximately 10 entities have 22 triples.

* **23 Triples:** Approximately 10 entities have 23 triples.

* **24 Triples:** Approximately 5 entities have 24 triples.

* **25 Triples:** Approximately 5 entities have 25 triples.

* **26-30 Triples:** Fewer than 5 entities have 26-30 triples.

The bar heights decrease consistently from 0 to approximately 15 triples, then the decrease becomes more gradual.

### Key Observations

* The distribution is heavily skewed towards entities with a small number of triples (0-5).

* A significant portion of entities (over 11,000) have no triples associated with them.

* The frequency drops off rapidly as the number of triples increases.

* There are very few entities with a large number of triples (above 20).

### Interpretation

The chart suggests that the MetaQA dataset contains a large number of entities that are not well-connected or have limited information associated with them. This could be due to several factors, such as incomplete data, the nature of the entities themselves, or the way the dataset was constructed. The high concentration of entities with zero triples indicates that a substantial portion of the dataset may consist of entities that are placeholders or have not yet been fully populated with data. The rapid decline in frequency as the number of triples increases suggests that the dataset follows a power-law distribution, where a small number of entities have a large number of triples, while the vast majority have very few. This type of distribution is common in many real-world datasets, such as social networks and the web. The data suggests that any analysis relying on the number of triples per entity should account for this skewed distribution and potentially focus on the entities with a higher number of triples to avoid being biased by the large number of entities with few or no triples.

</details>

Figure 4: The distribution of degrees (triples per entity) in the MetaQA KG. (Note that the distribution is long-tailed, so the cut-off at the value of 30 is for the purpose of visualization.)
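The degree statistics reported above can be computed along the following lines. The sketch assumes that a triple counts toward the degree of both its subject and its object, which is an interpretation of "triples per entity" rather than something stated in the text. The function name is hypothetical.

```python
from collections import Counter
import statistics

def degree_stats(triples):
    """Return (median, maximum) number of triples per entity."""
    degree = Counter()
    for subj, _rel, obj in triples:
        # Assumption: each triple counts for both of its endpoints.
        degree[subj] += 1
        degree[obj] += 1
    values = list(degree.values())
    return statistics.median(values), max(values)
```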

4.2 Experimental design

In this study, we carry out two experiments:

1. The goal of experiment 1 is to find out how the model parameters impact performance, in order to find a parameter configuration that leads to consistent performance over the different question types. The chosen parameter configuration can then be used to compare the system to baselines in the second experiment.

2. The main goal of the second experiment is to find out how different components of the system impact performance and overall behavior. This is achieved by comparing the performance of the system with specific baselines, which are essentially made up of combinations of system components.

4.2.1 Experiment 1: Model selection

Experiment 1 investigates the effect of model parameters on performance to determine a configuration that yields consistent results across different question types. The parameters under examination are the number of hops $N$ for candidate triple retrieval (tested with values 1, 2, 3) and the number of top triples $K$ selected for each sub-question (tested with values 10, 20, 30), consistent with values reported in the literature (Section 2).

For each MetaQA test dataset, 100 questions are sampled using a fixed seed, and the system is evaluated across all parameter combinations. This process is repeated with 10 different seeds (0–9) to capture performance variability, and all LLM components use the same seed for inference to ensure reproducibility.
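The seeded sampling protocol can be sketched as follows. Here `score_fn` is a placeholder for running the system on one question and scoring the result, and the use of the sample standard deviation is an assumption, since the text does not specify which estimator is used.

```python
import random
import statistics

def evaluate_over_seeds(questions, score_fn, sample_size=100, seeds=range(10)):
    """Return (mean, standard deviation) of the per-seed average score."""
    per_seed = []
    for seed in seeds:
        # A dedicated generator per seed makes each sample reproducible.
        sample = random.Random(seed).sample(questions, sample_size)
        per_seed.append(statistics.mean([score_fn(q) for q in sample]))
    return statistics.mean(per_seed), statistics.stdev(per_seed)
```

Because each seed creates its own `random.Random` instance, repeated calls with the same inputs return the same samples and therefore the same scores.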

Performance is measured using the Hit@1 metric, which checks if the generated answer exactly matches any of the label answer entities (after lowercasing and stripping). For example, if the label is "Brad Pitt" and the generated answer is "Pitt is the actor in question," the response is deemed incorrect. The final score for each dataset sample is the average Hit@1.
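A minimal sketch of this exact-match check is shown below. The function name `hit_at_1` is hypothetical, and applying the same normalization to the labels as to the generated answer is an assumption.

```python
def hit_at_1(generated, label_entities):
    """Return 1 if the normalized answer equals any normalized label, else 0."""
    normalized = generated.strip().lower()
    return int(any(normalized == label.strip().lower() for label in label_entities))
```

Under this definition, a verbose but correct response still counts as a miss, which makes the metric strict with respect to the output format of the LLM.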

4.2.2 Experiment 2: A Comparative Analysis with Baselines

Experiment 2 assesses how individual system components influence overall performance by comparing the full system to three baselines:

1. LLM: Uses only an LLM with a simple zero-shot prompt to directly answer the question.

2. LLM+QD: Incorporates the question decomposition module to split questions and reformulate sub-questions before answering with the same zero-shot prompt as the LLM baseline.

3. LLM+KG: The full system without the question decomposition component. This is equivalent to KAPING [9]: it employs candidate triple retrieval, top- $K$ triple selection, and the sub-question answering module.

Both the full system and the LLM+KG baseline use the parameter configuration selected in Section 4.3.1. The sampling procedure follows Experiment 1, but with 500 questions sampled per MetaQA dataset for each of 8 different seeds (0–7). Performance is quantitatively evaluated using the Hit@1 metric to determine the impact of different components, and results are qualitatively analyzed for error insights and to assess accuracy, explainability, and generalizability as outlined in Section 3.1.

4.3 Results and Discussion

4.3.1 Experiment 1: Quantitative analysis

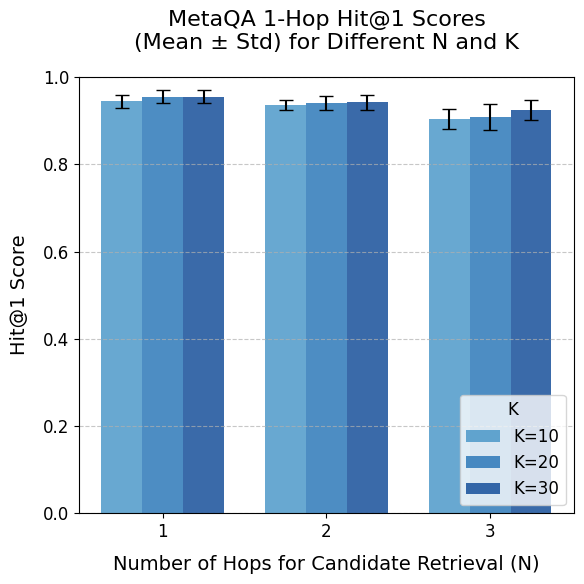

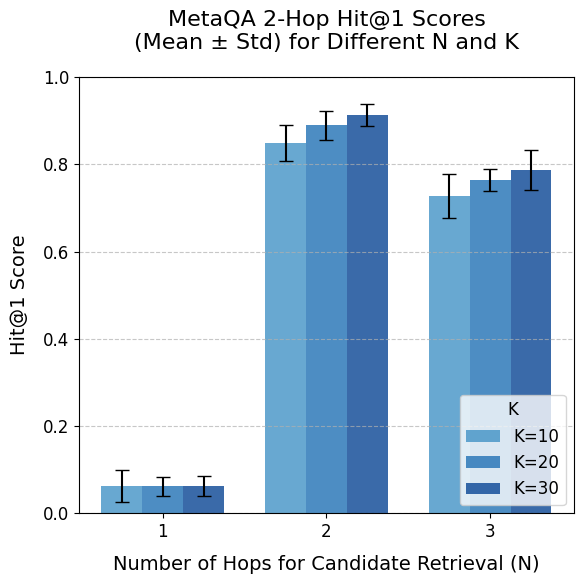

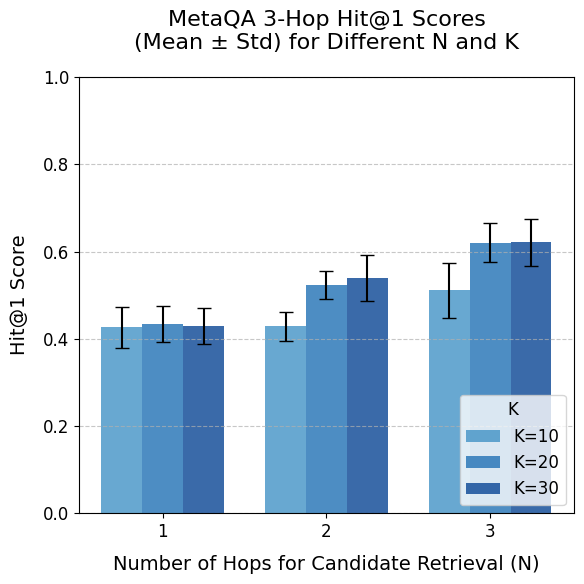

The results of Experiment 1 (Figure 5) indicate high overall performance that decreases with increasing question complexity, with standard deviations remaining low ( $\leq 0.063$ ) across samples.

<details>

<summary>extracted/6354852/Figs/exp1_metaqa_1.png Details</summary>

### Visual Description


## Bar Chart: MetaQA 1-Hop Hit@1 Scores

### Overview

This bar chart displays the Mean ± Standard Deviation of Hit@1 scores for the MetaQA dataset, varying the number of hops (N) for candidate retrieval and the value of K. The chart compares performance across three different values of K (10, 20, and 30) for each hop value (1, 2, and 3).

### Components/Axes

* **Title:** "MetaQA 1-Hop Hit@1 Scores (Mean ± Std) for Different N and K" - positioned at the top-center of the chart.

* **X-axis:** "Number of Hops for Candidate Retrieval (N)" - labeled with values 1, 2, and 3.

* **Y-axis:** "Hit@1 Score" - scaled from 0.0 to 1.0 with increments of 0.2.

* **Legend:** Located in the top-right corner, identifying the different values of K:

* K=10 (Light Blue)

* K=20 (Medium Blue)

* K=30 (Dark Blue)

* **Error Bars:** Represent the standard deviation for each data point.

### Detailed Analysis

The chart consists of three groups of bars, one for each value of N (1, 2, and 3). Within each group, there are three bars representing the Hit@1 scores for K=10, K=20, and K=30.

* **N=1:**

* K=10: The bar reaches approximately 0.92 ± 0.01.

* K=20: The bar reaches approximately 0.94 ± 0.01.

* K=30: The bar reaches approximately 0.93 ± 0.01.

* **N=2:**

* K=10: The bar reaches approximately 0.91 ± 0.01.

* K=20: The bar reaches approximately 0.93 ± 0.01.

* K=30: The bar reaches approximately 0.92 ± 0.01.

* **N=3:**

* K=10: The bar reaches approximately 0.89 ± 0.01.

* K=20: The bar reaches approximately 0.92 ± 0.01.

* K=30: The bar reaches approximately 0.91 ± 0.01.

The error bars are consistently small, indicating low variance in the scores.

### Key Observations

* The Hit@1 scores are generally high across all conditions, consistently above 0.85.

* The highest scores are achieved when N=1 and K=20, with a score of approximately 0.94.

* As the number of hops (N) increases, the Hit@1 scores tend to slightly decrease, although the differences are small.

* The difference in performance between different values of K is minimal.

### Interpretation

The data suggests that the MetaQA model performs well in retrieving the correct answer within one hop (N=1). Increasing the number of hops (N) does not significantly improve performance and may even lead to a slight decrease in Hit@1 scores. The value of K (number of candidates retrieved) has a relatively small impact on performance, with K=20 yielding the highest scores in this dataset. The consistently low standard deviation indicates that the results are reliable and not heavily influenced by random variations. This implies that the model is robust and consistently finds the correct answer when limited to a single hop. The slight decrease in performance with increasing hops suggests that the model may struggle to identify relevant information beyond the immediate context.

</details>

<details>

<summary>extracted/6354852/Figs/exp1_metaqa_2.png Details</summary>

### Visual Description

## Bar Chart: MetaQA 2-Hop Hit@1 Scores

### Overview

This bar chart displays the Mean ± Standard Deviation of Hit@1 scores for the MetaQA 2-Hop dataset, varying the number of hops (N) for candidate retrieval and the value of K (number of candidates). The chart uses bar groupings to compare the performance for different K values at each N value. Error bars are included to represent the standard deviation.

### Components/Axes

* **Title:** "MetaQA 2-Hop Hit@1 Scores (Mean ± Std) for Different N and K" - positioned at the top-center of the chart.

* **X-axis:** "Number of Hops for Candidate Retrieval (N)" - labeled with values 1, 2, and 3.

* **Y-axis:** "Hit@1 Score" - scaled from 0.0 to 1.0 with increments of 0.2. Horizontal gridlines are present at each 0.2 increment.

* **Legend:** Located in the bottom-right corner, identifying the K values:

* K=10 (Light Blue)

* K=20 (Medium Blue)

* K=30 (Dark Blue)

### Detailed Analysis

The chart consists of three groups of bars, one for each value of N (1, 2, and 3). Within each group, there are three bars representing the Hit@1 score for K=10, K=20, and K=30. Each bar is accompanied by an error bar indicating the standard deviation.

* **N=1:**

* K=10: The bar is approximately 0.12 tall, with an error bar extending from roughly 0.08 to 0.16.

* K=20: The bar is approximately 0.13 tall, with an error bar extending from roughly 0.09 to 0.17.

* K=30: The bar is approximately 0.14 tall, with an error bar extending from roughly 0.10 to 0.18.

* **N=2:**

* K=10: The bar is approximately 0.82 tall, with an error bar extending from roughly 0.76 to 0.88.

* K=20: The bar is approximately 0.90 tall, with an error bar extending from roughly 0.85 to 0.95.

* K=30: The bar is approximately 0.92 tall, with an error bar extending from roughly 0.87 to 0.97.

* **N=3:**

* K=10: The bar is approximately 0.73 tall, with an error bar extending from roughly 0.67 to 0.79.

* K=20: The bar is approximately 0.78 tall, with an error bar extending from roughly 0.72 to 0.84.

* K=30: The bar is approximately 0.80 tall, with an error bar extending from roughly 0.74 to 0.86.

### Key Observations

* The Hit@1 score increases significantly when the number of hops (N) increases from 1 to 2.

* The Hit@1 score decreases slightly when the number of hops (N) increases from 2 to 3.

* For each value of N, increasing K (from 10 to 30) generally leads to a higher Hit@1 score, although the difference is more pronounced at N=2.

* The error bars indicate that the standard deviation is relatively small, suggesting that the results are consistent.

### Interpretation

The data suggests that using two hops for candidate retrieval (N=2) yields the best performance in terms of Hit@1 score for the MetaQA 2-Hop dataset. Increasing the number of hops beyond two results in a slight decrease in performance. The positive correlation between K and Hit@1 score indicates that considering more candidates improves the chances of finding the correct answer, but the benefit diminishes as K increases. The relatively small standard deviations suggest that these trends are robust and not due to random chance. The chart demonstrates the importance of selecting an appropriate number of hops and candidate retrieval size for optimal performance in a multi-hop question answering system. The peak performance at N=2 could indicate that two hops are sufficient to retrieve the necessary information for answering the questions in this dataset, while additional hops introduce noise or irrelevant information.

</details>

<details>

<summary>extracted/6354852/Figs/exp1_metaqa_3.png Details</summary>

### Visual Description

## Bar Chart: MetaQA 3-Hop Hit@1 Scores

### Overview

This bar chart displays the Mean ± Standard Deviation of Hit@1 scores for the MetaQA 3-Hop dataset, varying the number of hops (N) for candidate retrieval and the value of K. The chart compares performance across three different K values (10, 20, and 30) for each hop count (1, 2, and 3).

### Components/Axes

* **Title:** "MetaQA 3-Hop Hit@1 Scores (Mean ± Std) for Different N and K" - positioned at the top-center.

* **X-axis:** "Number of Hops for Candidate Retrieval (N)" - with markers at 1, 2, and 3.

* **Y-axis:** "Hit@1 Score" - ranging from 0.0 to 1.0, with gridlines at 0.2, 0.4, 0.6, and 0.8.

* **Legend:** Located in the bottom-right corner, identifying the K values:

* K=10 (Light Blue)

* K=20 (Medium Blue)

* K=30 (Dark Blue)

* **Error Bars:** Represent the standard deviation for each data point.

### Detailed Analysis

The chart consists of three groups of bars, one for each value of N (1, 2, and 3). Within each group, there are three bars representing the Hit@1 score for K=10, K=20, and K=30. The error bars indicate the variability of the scores.

**N = 1:**

* K=10: The bar is approximately at 0.42, with an error bar extending from roughly 0.38 to 0.46.

* K=20: The bar is approximately at 0.44, with an error bar extending from roughly 0.40 to 0.48.

* K=30: The bar is approximately at 0.41, with an error bar extending from roughly 0.37 to 0.45.

**N = 2:**

* K=10: The bar is approximately at 0.46, with an error bar extending from roughly 0.42 to 0.50.

* K=20: The bar is approximately at 0.53, with an error bar extending from roughly 0.49 to 0.57.

* K=30: The bar is approximately at 0.55, with an error bar extending from roughly 0.51 to 0.59.

**N = 3:**

* K=10: The bar is approximately at 0.56, with an error bar extending from roughly 0.52 to 0.60.

* K=20: The bar is approximately at 0.60, with an error bar extending from roughly 0.56 to 0.64.

* K=30: The bar is approximately at 0.62, with an error bar extending from roughly 0.58 to 0.66.

### Key Observations

* The Hit@1 score generally increases as the number of hops (N) increases.

* For each value of N, increasing K (from 10 to 30) generally leads to a higher Hit@1 score.

* The error bars suggest that the variability in scores decreases slightly as N increases.

* The difference in performance between K=20 and K=30 is relatively small, especially at N=3.

### Interpretation

The data suggests that increasing the number of hops for candidate retrieval (N) improves the Hit@1 score in the MetaQA 3-Hop dataset. Furthermore, increasing the value of K (the number of candidates retrieved) also generally improves performance, although the benefit diminishes as K increases. The consistent upward trend with increasing N indicates that exploring more candidate paths is beneficial for this task. The relatively small error bars at N=3 suggest that the performance is more stable with a larger number of hops. This could be due to the model being able to better identify relevant candidates with more hops, or it could be a result of the dataset characteristics. The chart demonstrates a clear relationship between retrieval strategy (N and K) and the quality of the retrieved results (Hit@1 score).

</details>

Figure 5: MetaQA performance results for experiment 1, over 10 samples of 100 questions for each of the three datasets. The bars show the mean Hit@1 for different parameter configurations; the error bars show the standard deviation.

Performance is highest when the parameter $N$ equals the actual number of hops in the questions. As expected, for the 2-hop dataset, $N=1$ yields poor results; however, for the 3-hop dataset, performance with $N<3$ is unexpectedly high due to MetaQA’s question templates. For instance, some 3-hop questions (e.g., "Who are the directors of the films written by the writer of Blue Collar?") can be answered with $N=1$ triples. This represents a limitation of the MetaQA benchmark.

When holding the dataset and $N$ constant, increasing $K$ (the number of top triples selected) from 10 to 30 shows minimal effect on the 1-hop dataset, with slight improvements observed for the 2-hop and 3-hop datasets. Given that a higher $K$ is unlikely to reduce performance and is more likely to include the necessary triples, $K=30$ is chosen.
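The roles of $N$ and $K$ can be illustrated with a small sketch. It assumes an undirected breadth-first traversal to collect candidate triples within $N$ hops of the question entity, and it uses a simple word-overlap score as a stand-in for the actual triple ranking of the system. The toy graph and the names `candidates_within_n_hops`, `overlap_score`, and `top_k` are hypothetical.

```python
from collections import deque

Triple = tuple[str, str, str]


def candidates_within_n_hops(triples, start, n_hops):
    """Collect all triples reachable within n_hops of the start entity."""
    neighbours = {}
    for triple in triples:
        head, _, tail = triple
        neighbours.setdefault(head, []).append((tail, triple))
        neighbours.setdefault(tail, []).append((head, triple))

    seen_entities = {start}
    found = []
    frontier = deque([(start, 0)])
    while frontier:
        entity, depth = frontier.popleft()
        if depth == n_hops:
            continue  # do not expand beyond the hop limit
        for other, triple in neighbours.get(entity, []):
            if triple not in found:
                found.append(triple)
            if other not in seen_entities:
                seen_entities.add(other)
                frontier.append((other, depth + 1))
    return found


def overlap_score(question, triple):
    """Toy relevance score: number of question words present in the triple."""
    q_words = set(question.lower().replace("?", "").split())
    t_words = set(" ".join(triple).lower().split())
    return len(q_words & t_words)


def top_k(question, candidates, k):
    """Keep the k highest-scoring candidate triples."""
    ranked = sorted(candidates, key=lambda t: overlap_score(question, t), reverse=True)
    return ranked[:k]


# Toy knowledge graph; illustrative only.
kg = [
    ("the crow", "written by", "john shirley"),
    ("the crow", "has genre", "action"),
    ("the crow", "directed by", "alex proyas"),
    ("dark city", "directed by", "alex proyas"),
]
question = "What genres are the movies written by John Shirley in?"

one_hop = candidates_within_n_hops(kg, "john shirley", n_hops=1)
two_hop = candidates_within_n_hops(kg, "john shirley", n_hops=2)
print(len(one_hop), len(two_hop))
print(top_k(question, two_hop, k=2))
print(top_k(question, two_hop, k=3))
```

In this toy graph, $K=2$ drops the genre triple while $K=3$ retains it, which mirrors the argument that a larger $K$ is more likely to include the necessary triples.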

Considering the trade-offs across datasets, a balanced configuration is selected. Since $N=1$ is unacceptable for 2-hop questions and improved performance on 3-hop questions likely requires all candidate triples up to 3 hops, $N=3$ is deemed the best choice despite a minor reduction in 2-hop performance (0.787 $\pm$ 0.046). Consequently, the optimal parameter configuration for MetaQA is $N=3$ and $K=30$.

4.3.2 Experiment 2: Quantitative analysis

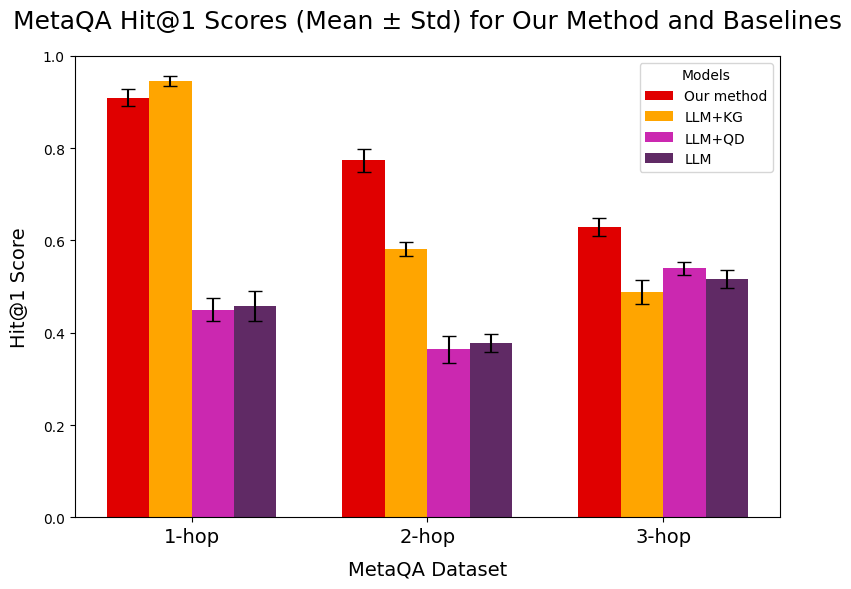

Figure 6 presents the performance results for Experiment 2 across 8 samples of 500 questions per MetaQA dataset. Our system significantly outperforms the baselines on 2-hop and 3-hop questions with minimal variance, while the LLM+KG baseline performs slightly better on 1-hop questions. This is expected, as question decomposition adds unnecessary overhead for simple queries.

<details>

<summary>extracted/6354852/Figs/results_exp2_MetaQA.png Details</summary>

### Visual Description

## Bar Chart: MetaQA Hit@1 Scores

### Overview

This bar chart presents the Hit@1 scores (Mean ± Std) for a new method and several baseline models on the MetaQA dataset, categorized by the number of hops (1-hop, 2-hop, and 3-hop). Error bars are included for each data point, representing the standard deviation.

### Components/Axes

* **Title:** "MetaQA Hit@1 Scores (Mean ± Std) for Our Method and Baselines" - positioned at the top-center.

* **X-axis:** "MetaQA Dataset" - with markers "1-hop", "2-hop", and "3-hop".

* **Y-axis:** "Hit@1 Score" - ranging from 0.0 to 1.0.

* **Legend:** Located in the top-right corner, listing the models:

* "Our method" (Red)

* "LLM+KG" (Orange)

* "LLM+QD" (Purple)

* "LLM" (Magenta)

### Detailed Analysis

The chart consists of three groups of bars, one for each hop value (1-hop, 2-hop, 3-hop). Each group contains four bars, representing the Hit@1 score for each of the four models. Error bars are present on top of each bar.

**1-hop:**

* **Our method (Red):** Approximately 0.93 ± 0.02. The bar extends to roughly 0.95 and down to 0.91.

* **LLM+KG (Orange):** Approximately 0.95 ± 0.02. The bar extends to roughly 0.97 and down to 0.93.

* **LLM+QD (Purple):** Approximately 0.46 ± 0.03. The bar extends to roughly 0.49 and down to 0.43.

* **LLM (Magenta):** Approximately 0.43 ± 0.03. The bar extends to roughly 0.46 and down to 0.40.

**2-hop:**

* **Our method (Red):** Approximately 0.78 ± 0.04. The bar extends to roughly 0.82 and down to 0.74.

* **LLM+KG (Orange):** Approximately 0.62 ± 0.04. The bar extends to roughly 0.66 and down to 0.58.

* **LLM+QD (Purple):** Approximately 0.39 ± 0.03. The bar extends to roughly 0.42 and down to 0.36.

* **LLM (Magenta):** Approximately 0.36 ± 0.03. The bar extends to roughly 0.39 and down to 0.33.

**3-hop:**

* **Our method (Red):** Approximately 0.65 ± 0.04. The bar extends to roughly 0.69 and down to 0.61.

* **LLM+KG (Orange):** Approximately 0.53 ± 0.04. The bar extends to roughly 0.57 and down to 0.49.

* **LLM+QD (Purple):** Approximately 0.51 ± 0.04. The bar extends to roughly 0.55 and down to 0.47.

* **LLM (Magenta):** Approximately 0.48 ± 0.04. The bar extends to roughly 0.52 and down to 0.44.

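### Key Observations

* "Our method" outperforms all baseline models (LLM+KG, LLM+QD, and LLM) on the 2-hop and 3-hop datasets; on the 1-hop dataset, LLM+KG is slightly ahead (approximately 0.95 vs. 0.93).

* LLM+KG generally performs better than LLM+QD and LLM.

* The scores of "Our method" and LLM+KG decrease as the number of hops increases, whereas LLM+QD and LLM score lowest on 2-hop and highest on 3-hop.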

* The error bars indicate that the standard deviation is relatively small, suggesting consistent results.

### Interpretation

The data suggests that the proposed method improves the Hit@1 score on the MetaQA 2-hop and 3-hop datasets compared to the baseline models, while LLM+KG remains slightly ahead on 1-hop questions. The lower scores of "Our method" and LLM+KG at higher hop counts indicate that the task becomes more challenging for KG-based retrieval as the reasoning chain lengthens. The relatively small standard deviations suggest that the results are not heavily influenced by random variation across samples. The gap between "Our method" and LLM+KG is largest at 2-hop (approximately 0.16) and narrows at 3-hop (approximately 0.12), so the advantage of the proposed method does not keep growing with the hop count.

</details>

Figure 6: MetaQA performance results for experiment 2, over 8 samples of 500 questions for each of the three datasets. The bars show the mean Hit@1, and the error bars show the standard deviation. The results for both the system and the baselines are shown.

Comparing the baselines, the advantage of the KG retrieval module is most pronounced for 1-hop questions, but diminishes for 2-hop questions and disappears for 3-hop questions—likely because complex queries increase the difficulty of retrieving relevant triples. The integration of question decomposition in our system, however, maintains the benefits of KG retrieval for multi-hop questions while also enhancing answer explainability.

In summary, our system achieves improved performance on multi-hop questions with only a minor loss for 1-hop queries compared to the LLM+KG baseline. Although both the relative and the absolute advantage over the baselines decrease from 2-hop to 3-hop questions, these quantitative results, combined with the qualitative analysis in Section 4.4, support the effectiveness of our approach.
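The absolute and relative advantage over the LLM+KG baseline can be made concrete with a short calculation. The values below are approximate means read from Figure 6, not exact reported numbers, and the helper `advantage` is a hypothetical name.

```python
# Approximate mean Hit@1 values read from Figure 6 (assumption: rounded readings).
hit_at_1 = {
    "2-hop": {"ours": 0.78, "llm_kg": 0.62},
    "3-hop": {"ours": 0.65, "llm_kg": 0.53},
}


def advantage(ours, baseline):
    """Return the absolute and relative advantage of ours over the baseline."""
    absolute = ours - baseline
    relative = absolute / baseline
    return absolute, relative


for dataset, scores in hit_at_1.items():
    absolute, relative = advantage(scores["ours"], scores["llm_kg"])
    print(f"{dataset}: absolute {absolute:+.2f}, relative {relative:+.1%}")
```

With these readings, the absolute advantage drops from roughly 0.16 to 0.12 and the relative advantage from roughly 26% to 23% when moving from 2-hop to 3-hop questions.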

4.4 Qualitative Analysis

This section examines the model outputs to identify recurring behaviors, strengths, and weaknesses, and to suggest directions for future improvements. Given the inherent limitations of a small, quantized LLM, our focus is on common patterns rather than isolated errors.

Table 3: The datasets that were analyzed for the qualitative analysis.

| Dataset | System | | | Parameters |
| --- | --- | --- | --- | --- |
| MetaQA 1-hop | KG-RAG | 1 | 0 | N=3, K=30 |
| MetaQA 2-hop | KG-RAG | 1 | 0 | N=3, K=30 |
| MetaQA 3-hop | KG-RAG | 1 | 0 | N=3, K=30 |

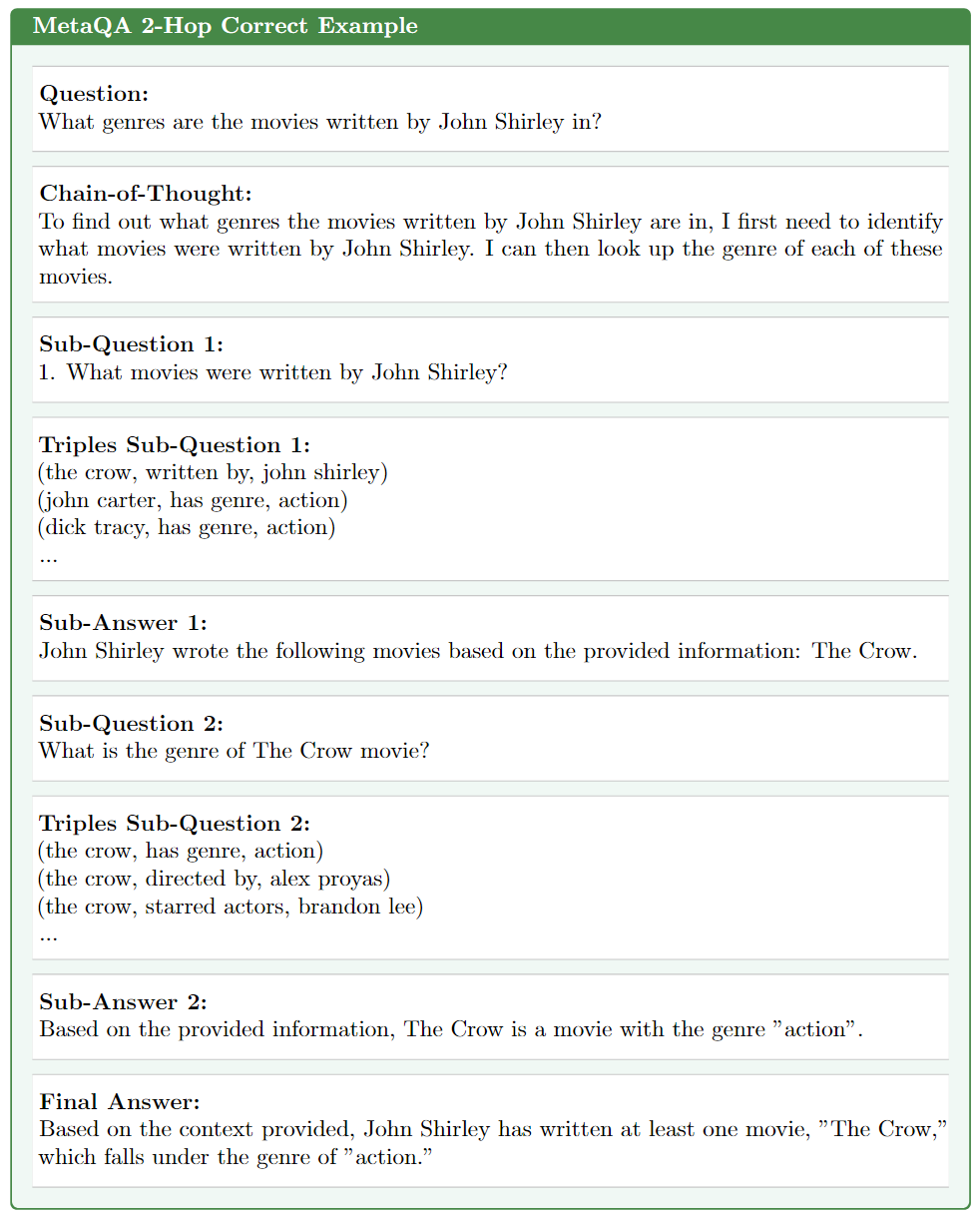

Table 3 lists the generated outputs used in this analysis. First, we present an example output from the MetaQA 2-hop dataset (Figure 7). This example demonstrates how the system correctly decomposes a question into coherent sub-questions, substitutes sub-answers appropriately, and synthesizes a final answer that accurately addresses the main question while maintaining a logical chain-of-thought.

<details>

<summary>extracted/6354852/Figs/output_example.png Details</summary>

### Visual Description

## Document: MetaQA 2-Hop Correct Example

### Overview

The image presents a document demonstrating a "Chain-of-Thought" reasoning process for answering a question using a multi-hop approach. The document outlines a question, a chain of thought to solve it, sub-questions, triples derived from those sub-questions, sub-answers, and a final answer. The document is formatted with a light green background.

### Components/Axes

The document is structured into the following sections:

* **Question:** The initial query.

* **Chain-of-Thought:** The reasoning process.

* **Sub-Question 1:** A breakdown of the initial question into smaller parts.

* **Triples Sub-Question 1:** Knowledge triples extracted to answer Sub-Question 1.

* **Sub-Answer 1:** The answer to Sub-Question 1.

* **Sub-Question 2:** A further breakdown of the problem.

* **Triples Sub-Question 2:** Knowledge triples extracted to answer Sub-Question 2.

* **Sub-Answer 2:** The answer to Sub-Question 2.

* **Final Answer:** The ultimate answer to the original question.

### Content Details

Here's a transcription of the text within the document:

**MetaQA 2-Hop Correct Example**

**Question:**

What genres are the movies written by John Shirley in?

**Chain-of-Thought:**

To find out what genres the movies written by John Shirley are in, I first need to identify what movies were written by John Shirley. I can then look up the genre of each of these movies.

**Sub-Question 1:**

1. What movies were written by John Shirley?

**Triples Sub-Question 1:**

(the crow, written by, john shirley)

(john carter, has genre, action)

(dick tracy, has genre, action)

...

**Sub-Answer 1:**

John Shirley wrote the following movies based on the provided information: The Crow.

**Sub-Question 2:**

What is the genre of The Crow movie?

**Triples Sub-Question 2:**

(the crow, has genre, action)

(the crow, directed by, alex proyas)

(the crow, starred actors, brandon lee)

...

**Sub-Answer 2:**

Based on the provided information, The Crow is a movie with the genre "action".

**Final Answer:**

Based on the context provided, John Shirley has written at least one movie, "The Crow," which falls under the genre of "action."

### Key Observations

* The document demonstrates a step-by-step reasoning process.

* The use of "Triples" suggests a knowledge graph or semantic web approach.

* The "..." indicates that the lists of triples are not exhaustive.

* The example focuses on a single movie ("The Crow") to illustrate the process.

### Interpretation

This document exemplifies a method for answering complex questions by breaking them down into smaller, manageable sub-questions. The "Chain-of-Thought" approach mimics human reasoning, and the use of knowledge triples allows for structured information retrieval. The example demonstrates how a question about movie genres can be answered by first identifying the movies written by a specific author and then determining the genre of each movie. The document highlights the importance of context and the ability to extract relevant information from a knowledge base. The ellipsis suggests that the system can handle more extensive knowledge and a larger number of movies, but the example is simplified for clarity. The document is a demonstration of a question answering system, and the "Correct Example" label suggests it is a successful case study.

</details>

Figure 7: An example of the system’s intermediate outputs, which lead to the final answer. The example was taken from the MetaQA 2-hop sample that was analyzed for the qualitative analysis.
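The flow in Figure 7 can be summarized as a loop over sub-questions. The following is a minimal sketch under stated assumptions, not the implementation of the system: the LLM and KG components are passed in as plain callables, and earlier sub-answers are substituted through hypothetical `#1`, `#2`, ... placeholders. All function names and the toy stubs are illustrative.

```python
from typing import Callable

Triple = tuple[str, str, str]


def answer_with_decomposition(
    question: str,
    decompose: Callable[[str], list[str]],
    retrieve: Callable[[str], list[Triple]],
    answer: Callable[[str, list[Triple]], str],
    synthesize: Callable[[str, list[tuple[str, str]]], str],
) -> dict:
    """Answer a question via sub-questions, keeping every intermediate step."""
    sub_questions = decompose(question)
    if not sub_questions:  # simple question: answer it directly
        return {"steps": [], "final": answer(question, retrieve(question))}

    steps: list[tuple[str, str]] = []
    for template in sub_questions:
        sub_q = template
        # Substitute earlier sub-answers for placeholders such as "#1".
        for j, (_, previous_answer) in enumerate(steps, start=1):
            sub_q = sub_q.replace(f"#{j}", previous_answer)
        steps.append((sub_q, answer(sub_q, retrieve(sub_q))))
    return {"steps": steps, "final": synthesize(question, steps)}


# Toy stand-ins for the LLM and KG components; illustrative only.
KG = [("the crow", "written by", "john shirley"), ("the crow", "has genre", "action")]


def toy_decompose(question):
    return ["What movies were written by John Shirley?", "What is the genre of #1?"]


def toy_retrieve(question):
    q = question.lower()
    return [t for t in KG if t[0] in q or t[2] in q]


def toy_answer(question, triples):
    q = question.lower()
    for head, relation, tail in triples:
        if any(word in q for word in relation.split()):
            return tail if head in q else head
    return "unknown"


def toy_synthesize(question, steps):
    return steps[-1][1]


result = answer_with_decomposition(
    "What genres are the movies written by John Shirley in?",
    toy_decompose, toy_retrieve, toy_answer, toy_synthesize,
)
for sub_q, sub_a in result["steps"]:
    print(sub_q, "->", sub_a)
print("Final:", result["final"])
```

Returning the intermediate steps alongside the final answer is what makes the reasoning chain inspectable, as in the example of Figure 7.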

4.4.1 Question Decomposition

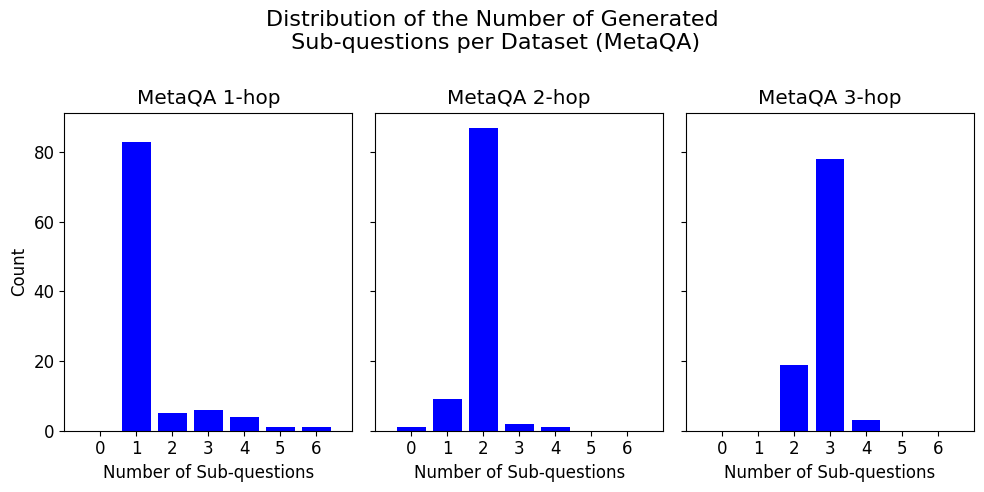

By analyzing the distribution of the number of generated sub-questions per dataset (Figure 8), we observe that the model generally recognizes the appropriate complexity of MetaQA multi-hop questions. For 1-hop questions, the model typically avoids decomposition, though ambiguous queries (e.g., asking for a movie description) sometimes lead to unnecessary sub-questions. For 2-hop and 3-hop questions, the model usually generates the expected number of sub-questions, although there are occasional cases of under-decomposition.
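Such a distribution can be derived directly from the generated decompositions. The sketch below assumes that each output is stored as a list of sub-questions, with an empty list meaning that no decomposition took place. The function names and the toy data are hypothetical.

```python
from collections import Counter


def subquestion_distribution(decompositions):
    """Map each number of sub-questions to how often it occurs."""
    counts = Counter(len(sub_questions) for sub_questions in decompositions)
    return dict(sorted(counts.items()))


def under_decomposition_rate(decompositions, expected):
    """Fraction of questions with fewer sub-questions than expected."""
    too_few = sum(1 for subs in decompositions if len(subs) < expected)
    return too_few / len(decompositions)


# Toy decompositions for four 2-hop questions; illustrative only.
toy_2hop = [
    ["sub-question 1", "sub-question 2"],
    ["sub-question 1", "sub-question 2"],
    ["sub-question 1"],
    [],
]

distribution = subquestion_distribution(toy_2hop)
rate = under_decomposition_rate(toy_2hop, expected=2)
print(distribution, rate)
```

Here `expected` would be 2 for the 2-hop dataset and 3 for the 3-hop dataset, under the assumption that one sub-question is generated per hop.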

<details>

<summary>extracted/6354852/Figs/subq_distribution.png Details</summary>

### Visual Description

## Bar Chart: Distribution of Generated Sub-questions per Dataset (MetaQA)

### Overview

This image presents three bar charts displaying the distribution of the number of generated sub-questions for the MetaQA dataset, broken down by hop count (1-hop, 2-hop, and 3-hop). The y-axis represents the "Count" of occurrences, while the x-axis represents the "Number of Sub-questions," ranging from 0 to 6.

### Components/Axes

* **Title:** "Distribution of the Number of Generated Sub-questions per Dataset (MetaQA)" - positioned at the top-center of the image.

* **Subtitles:** "MetaQA 1-hop", "MetaQA 2-hop", "MetaQA 3-hop" - positioned above each respective bar chart.

* **X-axis Label:** "Number of Sub-questions" - positioned at the bottom-center of each chart.

* **Y-axis Label:** "Count" - positioned on the left side of each chart.

* **X-axis Markers:** 0, 1, 2, 3, 4, 5, 6.

* **Y-axis Scale:** 0 to 80, with increments of 20.

* **Bar Color:** Blue.

### Detailed Analysis

The image consists of three separate bar charts, each representing a different hop count for the MetaQA dataset.

**1. MetaQA 1-hop:**

* The distribution is heavily skewed towards 0 sub-questions.

* The bar at 0 sub-questions has a height of approximately 82.

* The bar at 1 sub-question has a height of approximately 8.

* The bar at 2 sub-questions has a height of approximately 4.

* The bars at 3, 4, 5, and 6 sub-questions have heights of approximately 2, 1, 1, and 1 respectively.

**2. MetaQA 2-hop:**

* The distribution is concentrated around 0 and 1 sub-questions.

* The bar at 0 sub-questions has a height of approximately 85.

* The bar at 1 sub-question has a height of approximately 10.

* The bar at 2 sub-questions has a height of approximately 3.

* The bars at 3, 4, 5, and 6 sub-questions have heights of approximately 1, 0, 0, and 0 respectively.

**3. MetaQA 3-hop:**

* The distribution is centered around 3 sub-questions.

* The bar at 0 sub-questions has a height of approximately 18.