# Counterfactual Fairness Evaluation of Machine Learning Models on Educational Datasets

**Authors**: Woojin Kim, Hyeoncheol Kim

institutetext: Department of Computer Science and Engineering, Korea University, South Korea email: {woojinkim1021, harrykim}@korea.ac.kr

Abstract

As machine learning models are increasingly used in educational settings, from detecting at-risk students to predicting student performance, algorithmic bias and its potential impacts on students raise critical concerns about algorithmic fairness. Although group fairness is widely explored in education, works on individual fairness in a causal context are understudied, especially on counterfactual fairness. This paper explores the notion of counterfactual fairness for educational data by conducting counterfactual fairness analysis of machine learning models on benchmark educational datasets. We demonstrate that counterfactual fairness provides meaningful insight into the causality of sensitive attributes and causal-based individual fairness in education.

Keywords: Counterfactual Fairness Education Machine Learning.

1 Introduction

Machine learning models are increasingly implemented in educational settings to support automated decision-making processes. Such applications ranges from academic success prediction [33, 50], at-risk detection [25], automated grading [42], knowledge tracing [38] and personalized recommendation [53]. However, the application of machine learning models to automate decision-making in high-stakes scenarios calls for consideration of algorithmic bias [1]. In education, predictive models have been shown to exhibit lower performance for students from underrepresented demographic groups [40, 3, 6, 21, 34].

The majority of research on fairness in education focuses on group fairness [40, 21], while works on individual fairness are limited to aiming for similar treatment of similar individuals [20, 10]. Under context where students’ demographics causally shape their education [45, 11, 27], taking causality in consideration of fairness is crucial. Causal fairness asserts that it is unfair to produce different decisions for individuals caused by factors beyond their control [28]. In this sense, algorithmic decisions that impact students should eliminate the causal effects of uncontrollable variables, such as race, gender, and disability.

Group and individual fairness definitions have certain limitations, and the inherent incompatibility between group and individual fairness presents challenges [2, 31, 52, 48]. Group fairness can mask heterogeneous outcomes of individuals by using group-wise averaging measurements [2, 31]. While group fairness may be achieved, it does not ensure fairness for each individual [52]. Furthermore, ignoring individual fairness in favor of group fairness can result in algorithms making different decisions for identical individuals [29]. Individual fairness faces difficulty in selecting distance metrics for measuring the similarity of individuals and is easily affected by outlier samples [49].

Based on the limitations of group and individual fairness notions, we empirically investigate the potential of counterfactual fairness on educational datasets. Counterfactual fairness ensures that the algorithm’s decision would have remained the same when the individual belongs to a different demographic group, other things being equal [23]. Counterfactual fairness promotes individual-level fairness by removing the causal influence of sensitive attributes on the algorithm’s decisions. To the best of our current knowledge, the notion of counterfactual fairness has not been investigated in the educational domain.

In this paper, we aim to answer the following research questions(RQ):

1. What causal relationships do sensitive attributes have in educational data?

1. Does counterfactual fairness in educational data lead to identical outcomes for individual students regardless of demographic group membership?

1. Does counterfactually fair machine learning models result in a performance trade-off in educational data?

These questions are investigated by estimating a causal model and implementing a counterfactual fairness approach on real-world educational datasets. Section 2 introduces counterfactual fairness and algorithmic fairness in education. In Section 3, we provide methodologies for creating causal models and counterfactual fairness evaluation metrics. We present the experiment result in Section 4. In Section 5, we discuss the key findings of our study, exploring their implications for fairness in educational data before concluding in Section 6.

2 Background

2.1 Causal Model and Counterfactuals

Counterfactual fairness adopts the Structural Causal Model(SCM) framework [35] for the calculation of counterfactual samples. SCM is defined as a triplet $(U,V,F)$ where $U$ is a set of unobserved variables, $V$ is a set of observed variables, and $F$ is a set of structural equations describing how observable variables are determined. Given a SCM, counterfactual inference is to determine $P(Y_{Z← z}(U)|W=w)$ , which indicates the probability of $Y$ if $Z$ is set to $z$ (i.e. counterfactuals), given that we observed $W=w$ . Imagine a female student with a specific academic record. What would be the probability of her passing the course if her gender were male while keeping all other observed academic factors constant? Counterfactual inference on SCM allows us to calculate answers to counterfactual queries by abduction, action, and prediction inference steps detailed in [35].

2.2 Counterfactual Fairness

We follow the definition of counterfactual fairness by Kusner et al. [23].

**Definition 1 (Counterfactual Fairness)**

*Predictor $\hat{Y}$ is counterfactually fair if under any context $X=x$ and $A=a$ ,

$$

P(\hat{Y}_{A\leftarrow a}(U)=y|X=x,A=a)=P(\hat{Y}_{A\leftarrow a^{\prime}}(U)=%

y|X=x,A=a),

$$

for all y and for any value a’ attainable by A.*

The definition states that changing $A$ should not change the distribution of the predicted outcome $\hat{Y}$ . An algorithm is counterfactually fair towards an individual if an intervention in demographic group membership does not change the prediction. For instance, the predicted probability of a female student passing a course should remain the same as if the student had been a male.

Implementing counterfactual fairness requires a causal model of the real world and the counterfactual inference of samples under the causal model. This process allows for isolating the causal influence of the sensitive attribute on the outcome.

Counterfactual fairness is explored in diverse domains, such as in clinical decision support [47] and clinical risk prediction [36, 44] for healthcare, ranking algorithm [37], image classification [9, 22] and text classification [16].

2.3 Algorithmic Fairness in Education

Most works on algorithmic fairness in education focus on group fairness [40, 21]. The group fairness definition states that an algorithm is fair if its prediction performance is equal among subgroups, specifically requiring equivalent prediction ratios for favorable outcomes. Common definitions of group fairness are Equalized Odds [17], Demographic Parity [14] and Equal Opportunity [17].

Individual fairness requires individuals with similar characteristics to receive similar treatment. Research on individual fairness in education focuses on the similarity. Marras et al. [32] proposed a consistency metric for measuring the similarity of students’ past interactions for individual fairness under a personalized recommendation setting. Hu and Rangwala [20] developed a model architecture for individual fairness in at-risk student prediction task. Doewes et al. [12] proposed a methodology to evaluate individual fairness in automated essay scoring. Deho et al. [10] performed individual fairness evaluation of existing fairness mitigation methods in learning analytics.

There have been attempts to understand causal factors influencing academic success. Ferreira de Carvalho et al. [4] identifies causal relationships between LMS logs and student’s grades. Zhao et al. [51] propose Residual Counterfactual Networks to estimate the causal effect of an academic counterfactual intervention for personalized learning. To the best of our knowledge, the notion of algorithmic fairness under causal context, especially under counterfactual inference in the educational domain remains unexplored.

Table 1: Feature descriptions of Law School and OULAD datasets. Student Performance dataset descriptions are provided in Table 6 of Appendix A.

| Data | Feature | Type | Description |

| --- | --- | --- | --- |

| Law | gender | binary | the student’s gender |

| race | binary | the student’s race | |

| lsat | numerical | the student’s LSAT score | |

| ugpa | numerical | the student’s undergraduate GPA | |

| zfygpa | numerical | the student’s law school first year GPA | |

| OULAD | gender | binary | the student’s gender |

| disability | binary | whether the student has declared a disability | |

| education | categorical | the student’s highest education level | |

| IMD | categorical | the Index of Multiple Deprivation(IMD) of the student’s residence | |

| age | categorical | band of the student’s age | |

| studied credits | numerical | the student’s total credit of enrolled modules | |

| final result | binary | the student’s final result of the module | |

Table 2: Summary of datasets used for the experiment.

| Data | Task | Sensitive Attribute | Target | # Instances |

| --- | --- | --- | --- | --- |

| Law School | Regression | race, gender | zfygpa | 20,798 |

| OULAD | Classification | disability | final result | 32,593 |

| Student Performance(Mat) | Regression | gender | G3 | 395 |

| Student Performance(Por) | Regression | gender | G3 | 649 |

3 Methodology

We provide detailed description of experiment methodology for evaluating counterfactual fairness of machine learning models in education.

3.1 Educational Datasets

We use publicly available benchmark educational datasets for fairness presented in [26], which introduces four educational benchmark datasets for algorithmic fairness. Datasets are Law School github.com/mkusner/counterfactual-fairness [46], Open University Learning Analytics Dataset (OULAD) https://archive.ics.uci.edu/dataset/349/open+university+learning+analytics+dataset [24] and Student Performance in Mathematics and Portuguese language https://archive.ics.uci.edu/dataset/320/student+performance [8]. Refer to Table 1 and Table 6 for description of dataset features used in the experiment. The summary of tasks and selection of sensitive attributes are outlined in Table 2.

<details>

<summary>extracted/6375259/figures/freq_law.png Details</summary>

### Visual Description

## Bar Chart: Race Distribution

### Overview

The image is a bar chart showing the distribution of two races: White and Black. The y-axis represents the percentage, and the x-axis represents the race. The chart indicates that White individuals make up 93.8% of the population, while Black individuals make up 6.2%.

### Components/Axes

* **X-axis:** Represents the race categories: White and Black.

* **Y-axis:** Represents the percentage. The scale is not explicitly shown, but the bar heights indicate the percentages.

* **Bars:**

* A blue bar represents the percentage of White individuals.

* An orange bar represents the percentage of Black individuals.

* **Labels:**

* "White" is labeled under the blue bar.

* "Black" is labeled under the orange bar.

* **Values:**

* 93.8% is displayed above the blue bar.

* 6.2% is displayed above the orange bar.

### Detailed Analysis

* **White:** The blue bar extends to a height representing 93.8%.

* **Black:** The orange bar extends to a height representing 6.2%.

### Key Observations

* The percentage of White individuals is significantly higher than the percentage of Black individuals.

### Interpretation

The bar chart illustrates a significant disparity in the distribution of races, with White individuals representing a much larger proportion of the population compared to Black individuals. The data suggests a strong imbalance in the racial composition of the population being represented.

</details>

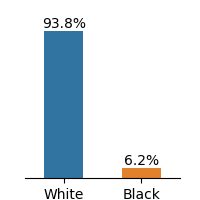

(a) Law School

<details>

<summary>extracted/6375259/figures/freq_oulad.png Details</summary>

### Visual Description

## Bar Chart: Disabled vs Non-Disabled

### Overview

The image is a bar chart comparing the percentages of non-disabled and disabled individuals. The chart consists of two vertical bars, one representing "Non-Disabled" and the other representing "Disabled". The height of each bar corresponds to the percentage it represents, with the exact percentage value displayed above each bar.

### Components/Axes

* **X-axis:** Categorical axis with two categories: "Non-Disabled" and "Disabled".

* **Y-axis:** Implicit percentage scale, represented by the height of the bars.

* **Bar 1:** Represents "Non-Disabled" individuals. The bar is colored blue.

* **Bar 2:** Represents "Disabled" individuals. The bar is colored orange.

* **Values:** The percentage value is displayed above each bar.

### Detailed Analysis

* **Non-Disabled:** The blue bar representing "Non-Disabled" has a height corresponding to 91.3%.

* **Disabled:** The orange bar representing "Disabled" has a height corresponding to 8.7%.

### Key Observations

* The percentage of non-disabled individuals (91.3%) is significantly higher than the percentage of disabled individuals (8.7%).

### Interpretation

The bar chart visually demonstrates a significant disparity between the proportion of non-disabled and disabled individuals. The data suggests that in the population being represented, non-disabled individuals constitute a much larger percentage than disabled individuals. The exact context of this data (e.g., population demographics, survey results) is not provided, but the chart clearly highlights this difference in proportions.

</details>

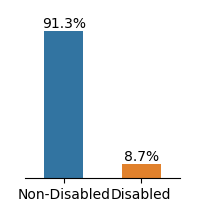

(b) OULAD

<details>

<summary>extracted/6375259/figures/freq_mat.png Details</summary>

### Visual Description

## Bar Chart: Gender Distribution

### Overview

The image is a bar chart showing the distribution of gender, with two categories: Female and Male. The chart displays the percentage of each gender.

### Components/Axes

* **X-axis:** Categorical axis with two labels: "Female" and "Male".

* **Y-axis:** Implicit percentage scale, represented by the height of the bars.

* **Bars:**

* Blue bar representing "Female".

* Orange bar representing "Male".

* **Values:**

* "52.7%" is displayed above the "Female" bar.

* "47.3%" is displayed above the "Male" bar.

### Detailed Analysis

* **Female:** The blue bar representing "Female" has a value of 52.7%.

* **Male:** The orange bar representing "Male" has a value of 47.3%.

### Key Observations

* The percentage of females (52.7%) is slightly higher than the percentage of males (47.3%).

* The total percentage adds up to 100% (52.7% + 47.3% = 100%).

### Interpretation

The bar chart illustrates a gender distribution where females slightly outnumber males. The difference is relatively small, indicating a near-equal distribution between the two genders.

</details>

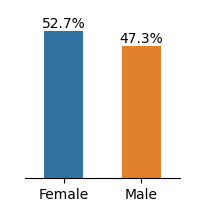

(c) Mat

<details>

<summary>extracted/6375259/figures/freq_por.png Details</summary>

### Visual Description

## Bar Chart: Gender Distribution

### Overview

The image presents a bar chart illustrating the distribution of genders, with "Female" and "Male" categories. The chart displays the percentage of each gender.

### Components/Axes

* **X-axis:** Represents the gender categories: "Female" and "Male".

* **Y-axis:** (Implied) Represents the percentage, with values indicated above each bar.

* **Bars:**

* A blue bar represents "Female".

* An orange bar represents "Male".

### Detailed Analysis

* **Female:** The blue bar for "Female" reaches a value of 59.0%.

* **Male:** The orange bar for "Male" reaches a value of 41.0%.

### Key Observations

* The percentage of females (59.0%) is higher than the percentage of males (41.0%).

* The difference between the two percentages is 18.0%.

### Interpretation

The bar chart indicates that in the sample represented, females constitute a larger proportion (59.0%) than males (41.0%). This suggests a gender imbalance in the population being analyzed.

</details>

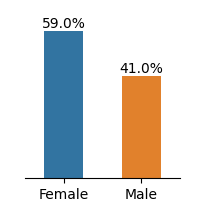

(d) Por

Figure 1: Frequency distributions of sensitive attributes in educational datasets.

The Law School dataset contains admission records of students at 163 U.S. law schools [46]. The dataset has demographic information of 20,798 students on race, gender, LSAT scores, and undergraduate GPA. We select gender and race as sensitive attributes and first-year GPA as the target for the regression task.

The OULAD dataset, originating from a 2013-2014 Open University study in England, compiles student data and their interactions within a virtual learning environment across seven courses. We select disability as the sensitive attribute and final result as the classification target. The gender is not considered as our sensitive attribute because the preceding study [18] revealed that gender attribute does not have a causal relationship to student’s final result. For this work, we only considered the module BBB(Social Science).

The Student Performance dataset describes students’ achievements in Mathematics and Portuguese language subjects in two Portuguese secondary schools during 2005-2006. The dataset provides details about students’ demographics, and family backgrounds such as parent’s jobs and education level, study habits, extracurricular activities, and lifestyle. We select gender as the sensitive attribute and G3 as the target for the regression problem. Feature description of the dataset is presented in Appendix A.

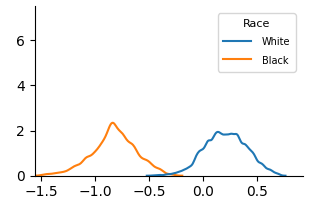

The dataset demonstrates imbalance between subgroups of sensitive attributes, presented in Fig. 1. Law school and OULAD datasets exhibit an extreme imbalance in the selected sensitive attributes, while the gender attribute in Student Performance is less imbalanced.

Race Gender GPA LSAT FYA

(a) Law School

Disability

Highest Education

Final Result Gender Age

(b) OULAD(module BBB)

Gender studytime freetime Dalc G1 Walc goout G2 G3

(c) Student Performance (Mat)

Gender Dalc freetime studytime Walc goout G1 G2 G3

(d) Student Performance (Por)

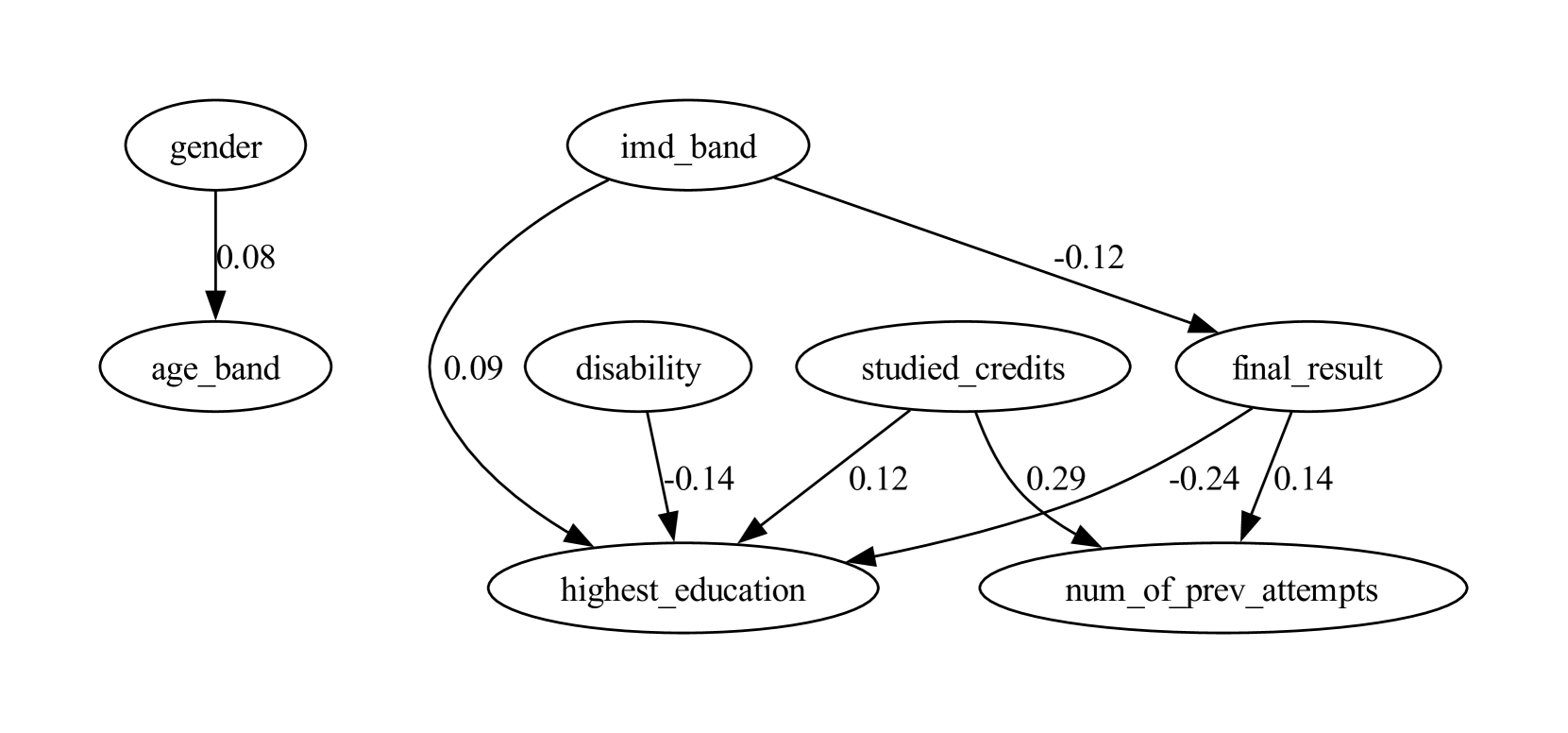

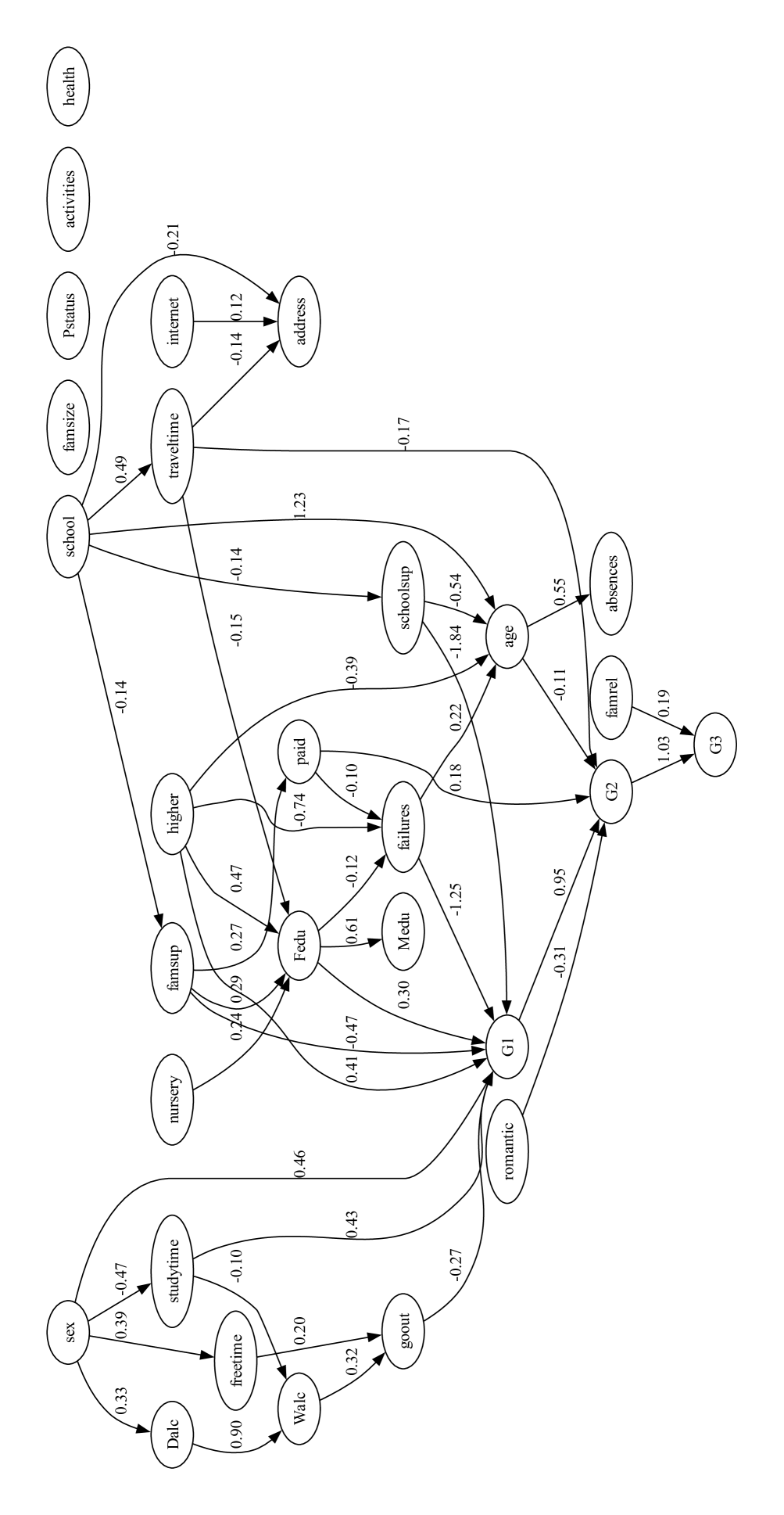

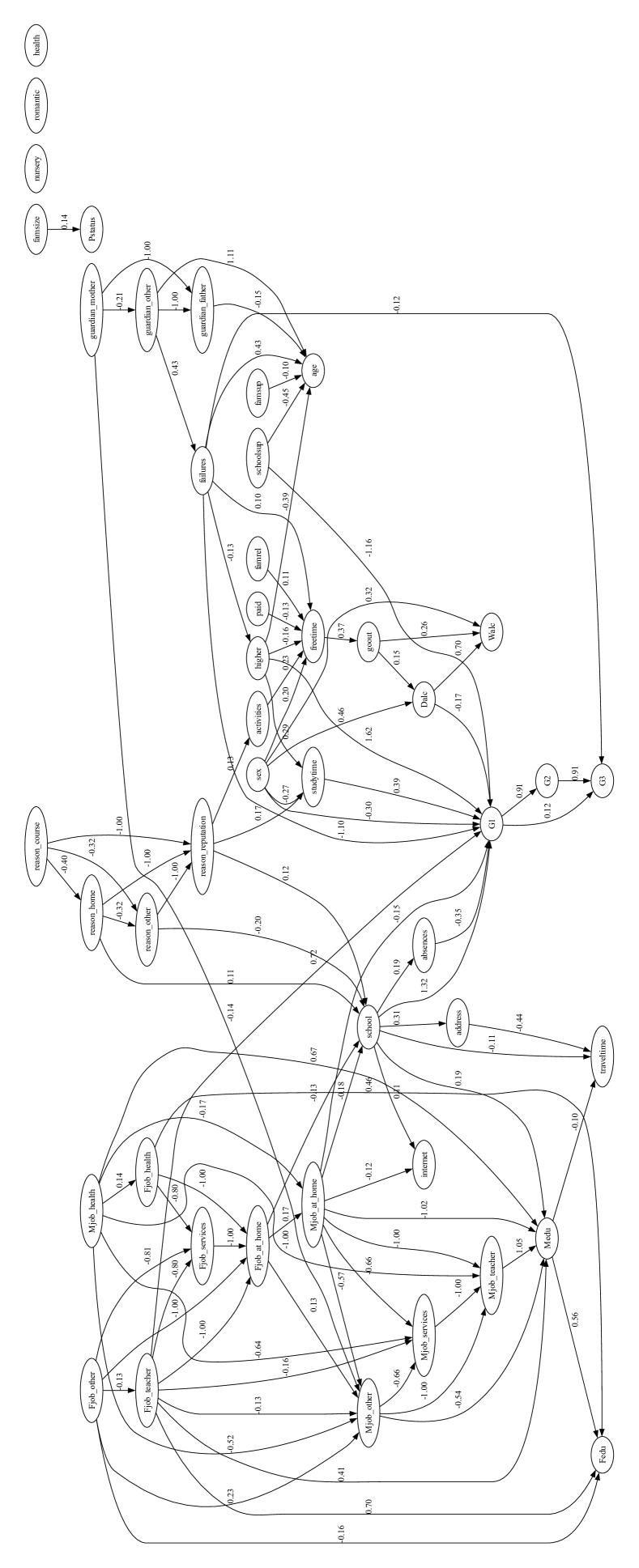

Figure 2: Partial DAGs of the estimated causal model for educational datasets, showing only the sensitive attribute, its descendants, and the target variable. See Appendix B for full graphs. Each sub-graph is not used for implementing counterfactually fair models; only the remaining features are included.

3.2 Structural Causal Model of Educational Dataset

Counterfactual fairness holds that intervening solely on the sensitive attribute A while keeping all other things equal, does not change the model’s prediction distribution. To implement counterfactual fairness, a predefined Structural Causal Model(SCM) in Directed Acyclic Graph(DAG) form is necessary. Although the causal model of the Law School data exists [23], there are no known causal models for the remaining datasets.

To construct the SCM of OULAD and the Student Performance dataset, we use a causal discovery algorithm, Linear Non-Gaussian Acyclic Model (LiNGAM) [41]. The algorithm estimates a causal structure of the observational data of continuous values under linear-non-Gaussian assumption. From the estimated causal model, we filtered DAG weights that are under the 0.1 threshold.

Among constructed SCM, we present features that are in causal relationships with the sensitive attribute that directly or indirectly affects the target variable in Fig. 2. Further analysis of causal relationships between sensitive features is discussed in Section 5.

3.3 Counterfactual Fairness Evaluation Metrics

We use the Wasserstein Distance(WD) and Maximum Mean Discrepancy(MMD) metric for evaluating the difference between prediction distributions for sensitive attributes. Wasserstein distance and MMD are common metrics for evaluating counterfactual fairness [13, 30]. Lower WD and MMD values suggest greater fairness, indicating smaller differences between the outcome distributions.

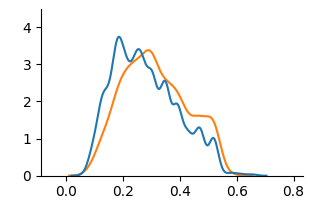

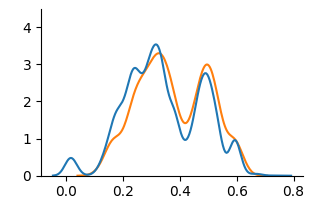

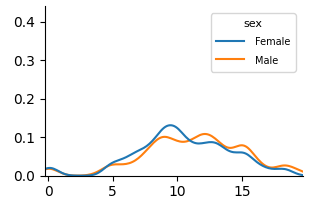

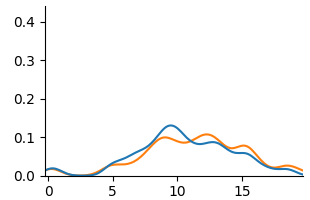

Although there exist other measures for evaluating counterfactual fairness such as Total Effect [55] and Counterfactual Confusion Matrix [39], we limit our evaluation of counterfactual fairness to the above metrics. We construct unaware and counterfactual models without direct access to the sensitive attribute, evaluating fairness with mentioned metrics would not be feasible. We visually examine prediction distributions through Kernel Density Estimation(KDE) plots across our baseline and counterfactually fair models.

3.3.1 Educational Domain Specific Fairness Metric

We additionally analyze the counterfactual approach with pre-existing fairness metrics tailored for the education domain. We choose Absolute Between-ROC Area(ABROCA) [15] and Model Absolute Density Distance(MADD) [43] for the analysis. ABROCA quantifies the absolute difference between two ROC curves. It measures the overall performance divergence of a classifier between sensitive attributes, focusing on the magnitude of the gap regardless of which group performs better at each threshold. MADD constructs KDE plots of prediction probabilities and calculates the area between two curves of the sensitive attribute. While the ABROCA metric represents how similar the numbers of errors across groups are, the MADD metric captures the severity of discrimination across groups, allowing for diverse perspectives on the analysis of model behaviors on fairness. Although both metrics are designed for group fairness, we include those in our work because they are specifically proposed under the context of the educational domain.

3.4 Experiment Details

For the experiment, we considered the Level 1 concept of counterfactual fairness defined in Kusner et al. [23]. At Level 1, the predictor is built exclusively using observed variables that are not causally influenced by the sensitive attributes. While a causal ordering of these features is necessary, no assumptions are made about the structure of unobserved latent variables. This requires causal ordering of features but no further assumptions of unobserved variables. For the Law School dataset, Level 2 is used.

We selected two baselines for the experiment, (a) Unfair model and (b) Unaware model. An unfair model directly includes sensitive attributes to train the model. The unaware model implements ‘Fairness Through Unawareness’, a fairness notion where an algorithm is considered fair when protected attributes are not used in the decision-making process [7]. We compare two baselines with the FairLearning algorithm introduced in Kusner et al. [23].

We evaluate the counterfactual fairness of machine learning models on both regression and classification models. We selected the four most utilized machine learning models in the algorithmic fairness literature [19]. We choose Linear Regression(LR; Logistic Regression for classification), Multilayer Perceptron(MLP), Random Forest(RF), and XGBoost(XGB) [5]. For KDE plot visualizations, we used a linear regression model for regression and MLP for classification.

4 Result

In the result section of our study, we present an analysis of counterfactual fairness on educational datasets. Since the Law School dataset is well studied in the counterfactual fairness literature, we only provide this experiment as a baseline.

4.1 Visual Analysis

We use KDE plots to visualize outcome distributions across subgroups, providing a better understanding of counterfactual fairness with summary statistics.

<details>

<summary>extracted/6375259/figures/kde_law_unfair.png Details</summary>

### Visual Description

## Chart: Density Plot of Two Races

### Overview

The image is a density plot comparing the distributions of a variable for two racial groups: White and Black. The plot shows the probability density of the variable on the x-axis for each race.

### Components/Axes

* **X-axis:** The x-axis is unlabeled, but ranges from approximately -1.5 to 0.5.

* **Y-axis:** The y-axis represents density and ranges from 0 to 7.

* **Legend:** Located in the top-right corner, the legend identifies the two data series:

* Blue line: "White"

* Orange line: "Black"

### Detailed Analysis

* **White (Blue Line):** The distribution for the White group is centered around 0.1, with a peak density of approximately 2. The distribution is relatively narrow, indicating less variability.

* **Black (Orange Line):** The distribution for the Black group is centered around -0.9, with a peak density of approximately 2.5. The distribution is also relatively narrow.

### Key Observations

* The distributions for the two races are clearly separated, indicating a significant difference in the variable being measured.

* The Black group has a higher peak density than the White group.

* Both distributions appear to be approximately normal.

### Interpretation

The density plot suggests that there is a statistically significant difference in the variable being measured between the White and Black racial groups. The variable tends to be higher for the White group and lower for the Black group. The plot does not provide information about the nature of the variable or the context in which it was measured, so further interpretation would require additional information.

</details>

(a) Unfair

<details>

<summary>extracted/6375259/figures/kde_law_unaware.png Details</summary>

### Visual Description

## Chart: Distribution Plot

### Overview

The image shows a distribution plot with two overlapping curves, one blue and one orange. The plot visualizes the distribution of some variable, with the x-axis ranging from approximately -1.5 to 0.5 and the y-axis representing the density or frequency of the variable.

### Components/Axes

* **X-axis:** Ranges from -1.5 to 0.5, with tick marks at -1.5, -1.0, -0.5, 0.0, and 0.5.

* **Y-axis:** Ranges from 0 to approximately 7, with tick marks at 0, 2, 4, and 6.

* **Blue Curve:** Represents one distribution.

* **Orange Curve:** Represents another distribution.

### Detailed Analysis

* **Blue Curve:** The blue curve starts near 0 at x = -1.5, gradually increases to a peak around x = 0.25 with a y-value of approximately 1.5, and then decreases back to near 0 at x = 0.5.

* **Orange Curve:** The orange curve starts near 0 at x = -1.5, increases to a peak around x = -0.3 with a y-value of approximately 1.8, and then decreases back to near 0 at x = 0.5.

### Key Observations

* The orange curve is shifted to the left compared to the blue curve.

* Both curves are unimodal, meaning they have a single peak.

* The peak of the orange curve is slightly higher than the peak of the blue curve.

* The blue curve appears to be slightly wider than the orange curve, indicating a larger standard deviation.

### Interpretation

The plot compares the distributions of two different variables or groups. The orange distribution has a lower mean than the blue distribution, as indicated by its peak being shifted to the left. The blue distribution has a slightly larger spread, suggesting greater variability. The difference in the distributions could be due to various factors depending on the context of the data.

</details>

(b) Unaware

<details>

<summary>extracted/6375259/figures/kde_law_counterfactual.png Details</summary>

### Visual Description

## Chart: Overlapping Distributions

### Overview

The image shows two overlapping probability distributions, one blue and one orange. Both distributions are unimodal and centered around different values on the x-axis.

### Components/Axes

* **X-axis:** Ranges from approximately -1.5 to 0.5, with tick marks at -1.5, -1.0, -0.5, 0.0, and 0.5.

* **Y-axis:** Ranges from 0 to approximately 7, with tick marks at 0, 2, 4, and 6.

* **Blue Distribution:** A unimodal distribution centered around x = 0.1, with a peak value of approximately 5.5.

* **Orange Distribution:** A unimodal distribution centered around x = 0.25, with a peak value of approximately 7.

### Detailed Analysis

* **Blue Distribution:** The blue line starts at approximately x = -0.1 with a y-value near 0, rises to a peak of approximately 5.5 at x = 0.1, and then decreases back to near 0 at approximately x = 0.3.

* **Orange Distribution:** The orange line starts at approximately x = 0 with a y-value near 0, rises to a peak of approximately 7 at x = 0.25, and then decreases back to near 0 at approximately x = 0.5.

### Key Observations

* Both distributions are unimodal.

* The orange distribution has a higher peak and is shifted to the right compared to the blue distribution.

* The blue distribution is slightly wider than the orange distribution.

### Interpretation

The chart compares two different distributions. The orange distribution has a higher probability density around x = 0.25, while the blue distribution has a higher probability density around x = 0.1. The difference in the location and height of the peaks suggests that the two distributions represent different underlying phenomena or populations. The orange distribution is more concentrated around its mean than the blue distribution.

</details>

(c) Counterfactual

Figure 3: KDE plots on Law School.

<details>

<summary>extracted/6375259/figures/kde_oulad_unfair.png Details</summary>

### Visual Description

## Chart: Density Plot of Disabled vs. Non-disabled

### Overview

The image is a density plot comparing the distribution of a certain variable (unspecified) for two groups: "non-disabled" and "disabled". The y-axis represents density, and the x-axis ranges from approximately 0.0 to 0.8. The plot shows the probability density of the variable for each group.

### Components/Axes

* **X-axis:** Ranges from 0.0 to 0.8, with tick marks at 0.0, 0.2, 0.4, 0.6, and 0.8. The label for the x-axis is not provided in the image.

* **Y-axis:** Ranges from 0 to 4, with tick marks at 0, 1, 2, 3, and 4. The label for the y-axis is "Density".

* **Legend:** Located in the top-right corner.

* "non-disabled" is represented by a blue line.

* "disabled" is represented by an orange line.

### Detailed Analysis

* **Non-disabled (Blue Line):**

* The density curve starts near 0 at x=0.0.

* It rises to a peak around x=0.25, with a density of approximately 3.8.

* The curve then decreases, with a small bump around x=0.45, and approaches 0 near x=0.6.

* **Disabled (Orange Line):**

* The density curve starts near 0 at x=0.0.

* It rises to a peak around x=0.35, with a density of approximately 4.2.

* The curve then decreases, with a small bump around x=0.5, and approaches 0 near x=0.7.

### Key Observations

* The "disabled" group has a density peak that is slightly higher and shifted to the right compared to the "non-disabled" group.

* Both groups have a unimodal distribution, but the "disabled" group's distribution is slightly flatter and more spread out.

* There is a secondary, smaller peak in both distributions around x=0.45-0.5.

### Interpretation

The density plot suggests that the variable being measured tends to have higher values for the "disabled" group compared to the "non-disabled" group, as indicated by the shift in the peak of the density curve. The variable is not specified in the image, so the specific meaning of this difference cannot be determined. The secondary peak in both distributions may indicate the presence of a sub-population within each group. Without knowing what the x-axis represents, it's difficult to provide a more detailed interpretation.

</details>

(a) Unfair

<details>

<summary>extracted/6375259/figures/kde_oulad_unaware.png Details</summary>

### Visual Description

## Chart: Density Plot Comparison

### Overview

The image shows a density plot comparing two distributions. One distribution is represented by a blue line, and the other by an orange line. The x-axis ranges from approximately -0.1 to 0.8, and the y-axis ranges from 0 to 4. The plot illustrates the probability density of a continuous variable.

### Components/Axes

* **X-axis:** Ranges from -0.1 to 0.8 in increments of 0.2.

* **Y-axis:** Ranges from 0 to 4 in increments of 1.

* **Blue Line:** Represents one distribution.

* **Orange Line:** Represents another distribution.

### Detailed Analysis

* **Blue Line:**

* Starts near 0 at x = -0.1.

* Rises to a peak around y = 3.8 at x = 0.2.

* Decreases to approximately y = 1 at x = 0.5.

* Ends near 0 at x = 0.8.

* **Orange Line:**

* Starts near 0 at x = -0.1.

* Rises to a peak around y = 3 at x = 0.25.

* Decreases to approximately y = 1.6 at x = 0.5.

* Ends near 0 at x = 0.8.

### Key Observations

* Both distributions have a similar shape, with a peak between x = 0.2 and x = 0.3.

* The blue line has a slightly higher peak than the orange line.

* The blue line has more fluctuations than the orange line.

### Interpretation

The density plot compares two distributions, showing their relative probabilities across a range of values. The similarity in shape suggests that the underlying processes generating these distributions may be related. The differences in peak height and fluctuations indicate variations in the frequency and variability of the data. The blue distribution has a higher peak, suggesting a higher concentration of values around x = 0.2, while the orange distribution is slightly more spread out.

</details>

(b) Unaware

<details>

<summary>extracted/6375259/figures/kde_oulad_counterfactual.png Details</summary>

### Visual Description

## Chart: Density Plot Comparison

### Overview

The image presents a density plot comparing two distributions, one represented by a blue line and the other by an orange line. The plot shows the probability density of a variable across a range of values, allowing for a visual comparison of the shapes and central tendencies of the two distributions.

### Components/Axes

* **X-axis:** Ranges from approximately -0.1 to 0.8, with tick marks at intervals of 0.2.

* **Y-axis:** Ranges from 0 to 4.0, with tick marks at intervals of 1.

* **Blue Line:** Represents one distribution.

* **Orange Line:** Represents another distribution.

### Detailed Analysis

* **Blue Line:**

* Starts near 0 at x = -0.1.

* Rises to a peak around x = 0.3, with a density of approximately 3.5.

* Dips slightly, then rises to another peak around x = 0.45, with a density of approximately 2.5.

* Decreases to near 0 at x = 0.8.

* **Orange Line:**

* Starts near 0 at x = -0.1.

* Rises to a peak around x = 0.2, with a density of approximately 2.5.

* Rises to another peak around x = 0.5, with a density of approximately 3.0.

* Decreases to near 0 at x = 0.8.

### Key Observations

* Both distributions have a similar range, from approximately -0.1 to 0.8.

* The blue distribution has a higher peak density around x = 0.3, while the orange distribution has a higher peak density around x = 0.5.

* Both distributions have a small peak near x = 0.0.

### Interpretation

The density plot visually compares two distributions, highlighting differences in their shapes and peak densities. The blue distribution appears to be more concentrated around x = 0.3, while the orange distribution is more concentrated around x = 0.5. This suggests that the underlying data for the blue distribution tends to have values closer to 0.3, while the data for the orange distribution tends to have values closer to 0.5. The small peak near x = 0.0 in both distributions suggests that there is a small proportion of data points with values close to 0 in both datasets.

</details>

(c) Counterfactual

Figure 4: KDE plots on OULAD.

<details>

<summary>extracted/6375259/figures/kde_mat_unfair.png Details</summary>

### Visual Description

## Chart: Density Plot of Female and Male Distributions

### Overview

The image is a density plot comparing the distributions of two groups, "Female" and "Male". The plot shows the probability density of each group across a range of values on the x-axis.

### Components/Axes

* **X-axis:** The x-axis is unlabeled but ranges from approximately 0 to 20.

* **Y-axis:** The y-axis represents density, ranging from 0.0 to 0.4.

* **Legend:** Located in the top-right corner, the legend identifies the two data series:

* Blue line: "Female"

* Orange line: "Male"

### Detailed Analysis

* **Female (Blue Line):**

* The density starts near 0 at x=0.

* It gradually increases to approximately 0.06 around x=5.

* It peaks at around 0.13 near x=10.

* It decreases to approximately 0.08 around x=13.

* It fluctuates around 0.08-0.10 between x=13 and x=16.

* It decreases to near 0 at x=20.

* **Male (Orange Line):**

* The density starts near 0 at x=0.

* It gradually increases to approximately 0.04 around x=5.

* It increases to approximately 0.10 around x=9.

* It peaks at around 0.11 near x=13.

* It decreases to approximately 0.02 around x=18.

* It decreases to near 0 at x=20.

### Key Observations

* Both distributions start near 0 and end near 0.

* The "Female" distribution has a higher peak density (approximately 0.13) compared to the "Male" distribution (approximately 0.11).

* The "Female" distribution peaks earlier (around x=10) than the "Male" distribution (around x=13).

* The "Male" distribution has a broader peak, while the "Female" distribution has a sharper peak.

### Interpretation

The density plot compares the distributions of "Female" and "Male" groups across an unlabeled x-axis. The "Female" group shows a higher density peak at an earlier value, suggesting a higher concentration of data points around x=10. The "Male" group has a broader peak, indicating a more dispersed distribution. Without knowing what the x-axis represents, it's difficult to provide a more specific interpretation. However, the plot suggests that there are notable differences in the distributions of the two groups.

</details>

(a) Unfair

<details>

<summary>extracted/6375259/figures/kde_mat_unaware.png Details</summary>

### Visual Description

## Chart: Density Plot Comparison

### Overview

The image shows a density plot comparing two distributions, one represented by a blue line and the other by an orange line. The plot displays the probability density of a variable across a range of values.

### Components/Axes

* **X-axis:** Ranges from 0 to 20, with tick marks at intervals of 5 (0, 5, 10, 15, 20).

* **Y-axis:** Ranges from 0.0 to 0.4, with tick marks at intervals of 0.1 (0.0, 0.1, 0.2, 0.3, 0.4).

* **Legend:** There is no explicit legend, but the two distributions are represented by a blue line and an orange line.

### Detailed Analysis

* **Blue Line:** The blue line represents one distribution. It starts near 0.0 at x=0, rises to a peak around x=10 with a density of approximately 0.12, then decreases to near 0.0 at x=20. There is a small bump around x=5 with a density of approximately 0.03.

* **Orange Line:** The orange line represents another distribution. It also starts near 0.0 at x=0, rises to a peak around x=11 with a density of approximately 0.10, then decreases to near 0.0 at x=20. There is a small bump around x=5 with a density of approximately 0.02.

### Key Observations

* Both distributions have a similar shape, with a primary peak around x=10-11.

* The blue distribution has a slightly higher peak density than the orange distribution.

* Both distributions have a small bump around x=5.

### Interpretation

The density plot compares two distributions, showing their relative probabilities across a range of values. The similarity in shape suggests that the underlying processes generating these distributions may be related. The slight difference in peak density indicates that one distribution is more concentrated around its mean than the other. The bump around x=5 suggests a possible secondary mode or influence in both distributions.

</details>

(b) Unaware

<details>

<summary>extracted/6375259/figures/kde_mat_counterfactual.png Details</summary>

### Visual Description

## Chart: Density Plot

### Overview

The image is a density plot showing two overlapping distributions, one in blue and one in orange. The x-axis ranges from 0 to 15, and the y-axis ranges from 0 to 0.4. Both distributions peak around x=11.

### Components/Axes

* **X-axis:** Ranges from 0 to 15, with tick marks at 0, 5, 10, and 15.

* **Y-axis:** Ranges from 0.0 to 0.4, with tick marks at 0.0, 0.1, 0.2, 0.3, and 0.4.

* **Blue Line:** Represents one distribution.

* **Orange Line:** Represents another distribution.

### Detailed Analysis

* **Blue Line:**

* Starts near 0 at x=0.

* Increases to a small peak around x=7.5 with a value of approximately 0.03.

* Decreases slightly, then increases sharply to a major peak around x=11 with a value of approximately 0.42.

* Decreases back to near 0 at x=15.

* **Orange Line:**

* Starts near 0 at x=0.

* Increases to a small peak around x=7.5 with a value of approximately 0.03.

* Decreases slightly, then increases sharply to a major peak around x=11 with a value of approximately 0.33.

* Decreases back to near 0 at x=15.

### Key Observations

* Both distributions have a similar shape, with a major peak around x=11 and a minor peak around x=7.5.

* The blue distribution has a higher peak at x=11 than the orange distribution.

* The distributions are very similar between x=0 and x=10.

### Interpretation

The density plot compares two distributions, showing that they are generally similar but with some differences in peak height. The blue distribution has a slightly higher concentration of values around x=11 compared to the orange distribution. The similarity in shape suggests that the underlying processes generating these distributions may be related. The peak at x=11 indicates a common central tendency for both datasets. The minor peak at x=7.5 suggests a secondary mode or influence in the data.

</details>

(c) Counterfactual

Figure 5: KDE plots on Student Performance(Mathematics).

<details>

<summary>extracted/6375259/figures/kde_por_unfair.png Details</summary>

### Visual Description

## Chart: Density Plot by Sex

### Overview

The image is a density plot comparing the distribution of a variable (unspecified) between females and males. The plot shows the probability density of the variable along the x-axis, with separate lines for each sex.

### Components/Axes

* **X-axis:** Ranges from 0 to 22, with tick marks at intervals of 5. The variable represented by the x-axis is not explicitly labeled.

* **Y-axis:** Ranges from 0.0 to 0.4, with tick marks at intervals of 0.1. Represents the density.

* **Legend:** Located in the top-right corner.

* **Female:** Represented by a blue line.

* **Male:** Represented by an orange line.

### Detailed Analysis

* **Female (Blue Line):**

* The density starts near 0 at x=0.

* The density increases, peaking around x=12 with a density of approximately 0.13.

* The density then decreases, approaching 0 around x=22.

* **Male (Orange Line):**

* The density starts near 0 at x=0.

* The density increases, peaking around x=11 with a density of approximately 0.14.

* The density then decreases, approaching 0 around x=22.

### Key Observations

* Both distributions are unimodal (single peak).

* The male distribution peaks slightly earlier (around x=11) than the female distribution (around x=12).

* The peak density for males is slightly higher (0.14) than for females (0.13).

* Both distributions have similar shapes and ranges.

### Interpretation

The density plot suggests that the variable being analyzed has a similar distribution for both males and females. However, there is a slight tendency for males to have lower values of the variable compared to females, as indicated by the earlier peak in the male distribution. The variable itself is not specified in the image, so further context is needed to understand the significance of this difference.

</details>

(a) Unfair

<details>

<summary>extracted/6375259/figures/kde_por_unaware.png Details</summary>

### Visual Description

## Density Plot: Distribution Comparison

### Overview

The image is a density plot comparing two distributions, one represented by a blue line and the other by an orange line. The plot shows the probability density of each distribution across a range of values from approximately 0 to 20.

### Components/Axes

* **X-axis:** Ranges from 0 to 20, with tick marks at 0, 5, 10, 15, and 20.

* **Y-axis:** Ranges from 0.0 to 0.4, with tick marks at 0.0, 0.1, 0.2, 0.3, and 0.4.

* **Blue Line:** Represents one distribution.

* **Orange Line:** Represents another distribution.

### Detailed Analysis

* **Blue Line:**

* Starts near 0.0 at x=0.

* Gradually increases to a peak around x=11, reaching a density of approximately 0.16.

* Decreases to near 0.0 around x=20.

* **Orange Line:**

* Starts near 0.0 at x=0.

* Gradually increases to a peak around x=12, reaching a density of approximately 0.18.

* Decreases to near 0.0 around x=20.

### Key Observations

* Both distributions are unimodal (have one peak).

* The orange distribution has a slightly higher peak density than the blue distribution.

* The peaks of the two distributions are close to each other, with the orange distribution's peak slightly to the right of the blue distribution's peak.

* Both distributions have similar shapes, with a slight right skew.

### Interpretation

The density plot compares two distributions, showing their relative probabilities across a range of values. The orange distribution has a slightly higher probability density around x=12 compared to the blue distribution around x=11. Both distributions have similar shapes and ranges, suggesting they represent similar phenomena with slightly different central tendencies. The plot indicates that values around 11 and 12 are more likely to occur in these distributions compared to values at the extremes (near 0 or 20).

</details>

(b) Unaware

<details>

<summary>extracted/6375259/figures/kde_por_counterfactual.png Details</summary>

### Visual Description

## Chart: Density Plot of Two Distributions

### Overview

The image is a density plot comparing two distributions, one represented by a blue line and the other by an orange line. The plot shows the probability density of each distribution across a range of values.

### Components/Axes

* **X-axis:** Ranges from 0 to 20, with tick marks at intervals of 5.

* **Y-axis:** Ranges from 0.0 to 0.4, with tick marks at intervals of 0.1.

* **Legend:** There is no explicit legend, but the two distributions are represented by a blue line and an orange line.

### Detailed Analysis

* **Blue Line:** The blue line represents one distribution. It starts near 0 at x=5, rises to a peak around x=12, and then decreases back to near 0 around x=15. The peak density is approximately 0.35.

* **Orange Line:** The orange line represents another distribution. It also starts near 0 at x=5, rises to a peak around x=12, and then decreases back to near 0 around x=15. The peak density is approximately 0.38.

* **Trend Verification:** Both lines show a similar trend: a rise from near 0 to a peak around x=12, followed by a decline back to near 0.

### Key Observations

* Both distributions have a similar shape and are centered around x=12.

* The orange distribution has a slightly higher peak density than the blue distribution.

* The distributions overlap significantly, indicating that they are similar.

### Interpretation

The density plot suggests that the two distributions being compared are very similar. The orange distribution has a slightly higher probability density around the mean, but overall, the shapes and ranges of the distributions are comparable. This could indicate that the two datasets being represented are drawn from similar populations or that they are subject to similar underlying processes.

</details>

(c) Counterfactual

Figure 6: KDE plots on Student Performance(Portuguese).

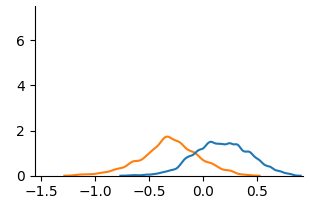

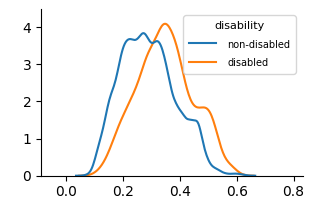

Fig. 3 and Fig. 4 present KDE plots for Law School and OULAD datasets. For Law School data, we can see that the unfair and unaware model produces predictions with large disparities, as previously known from the counterfactual literature. For OULAD data, unfair and unaware models’ prediction probabilities do not overlap, giving slightly higher prediction probabilities for disabled students. For both datasets, the prediction distribution of the counterfactual model is closer than the unfair and unaware model.

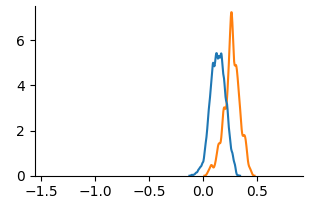

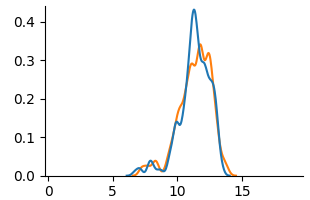

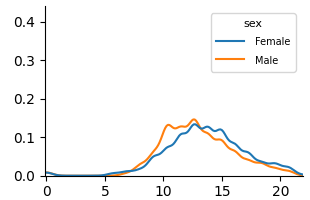

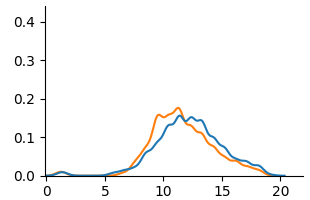

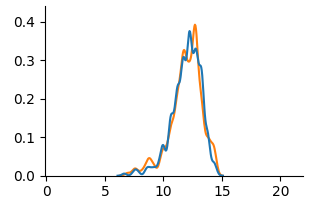

Fig. 5 and Fig. 6 show KDE plots for Student Performance in Mathematics and Portuguese. The differences in model predictions are relatively small for all models compared to previous datasets, although disparities exist. In Mathematics, unfair and unaware models underestimate scores for female students (below 10) and overestimate for males. The opposite is true for Portuguese, where female students are more frequently assigned scores above 10. Counterfactual models on both data demonstrate an overlap of two distributions, although male students were predicted to be in the middle score range more frequently than female students in Mathematics.

4.2 Measure of Counterfactual Fairness

Table 3: Evaluation of fairness notions on benchmark datasets.

| Data | Metric | Unfair | Unaware | Counterfactual |

| --- | --- | --- | --- | --- |

| Law | WD | 1.0340 | 0.4685 | 0.1290 |

| MMD | 0.8658 | 0.4140 | 0.1277 | |

| OULAD | WD | 0.0722 | 0.0342 | 0.0337 |

| MMD | 0.0708 | 0.0324 | 0.0317 | |

| Math | WD | 0.7251 | 0.7358 | 0.1161 |

| MMD | 0.3396 | 0.1917 | 0.0538 | |

| Por | WD | 0.7526 | 0.6339 | 0.1047 |

| MMD | 0.4322 | 0.2839 | 0.1205 | |

We present the evaluation of counterfactual fairness in Table 3. In all cases, the counterfactually fair model achieves the lowest WD and MMD. For Law School and Student Performance(Mat and Por) data, the distance between two distributions of sensitive attribute subgroups significantly reduced, comparing the counterfactual model to the unfair and unaware model. Despite the limited visual evidence of reduced distributional differences in the Student Performance KDE plots, WD and MMD provided quantifiable measures of this reduction. For OULAD data, the reduction in distribution difference between the unaware and counterfactual model is minimal, suggesting a weak causal link of disability to student’s final result. Consistent for all datasets, WD and MMD decrease as the sensitive attribute and its causal relationships are removed.

Fairness levels vary across datasets. Law school data shows the highest initial unfairness while OULAD data shows relatively low unfairness even for the unfair model. Both Student Performance dataset shows significant unfairness, particularly for WD. WD and MMD rankings of fairness methods generally agree, with large differences in one corresponding to large differences in the other, suggesting robustness to the distance metric choice.

Table 4: Evaluation of education-specific fairness on OULAD dataset.

| Data | Metric | Unfair | Unaware | Counterfactual |

| --- | --- | --- | --- | --- |

| OULAD | ABROCA | 0.1019 | 0.0219 | 0.0181 |

| MADD | 0.5868 | 0.3194 | 0.2763 | |

Given the classification nature of the OULAD dataset, ABROCA and MADD metric results are presented in Table 4. Because ABROCA and MADD assess group fairness disparity across all classification thresholds, they are not directly comparable to counterfactual fairness, an individual-level fairness notion. However, the unfair model was highly biased, as evidenced by its ABROCA (0.1019, max 0.5) and MADD (0.5868, max 2) scores. While the unaware model showed improvement, the counterfactual model achieved the best fairness results. This indicates that the counterfactual approach is effective in reducing disparities in the number of errors and model behaviors across groups.

4.3 Performance of Machine Learning Models

Table 5: Prediction performance of machine learning models on fairness notions.

| Data | Metric | Unfair | Unaware | Counterfactual | | | | | | | | | |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

| LR | MLP | RF | XGB | LR | MLP | RF | XGB | LR | MLP | RF | XGB | | |

| Law | MSE | 0.72 | 0.73 | 0.50 | 0.52 | 0.75 | 0.75 | 0.59 | 0.59 | 0.82 | 0.83 | 0.57 | 0.57 |

| RMSE | 0.85 | 0.86 | 0.71 | 0.72 | 0.86 | 0.86 | 0.77 | 0.77 | 0.90 | 0.91 | 0.75 | 0.76 | |

| OULAD | Acc | 0.69 | 0.70 | 0.72 | 0.71 | 0.69 | 0.69 | 0.71 | 0.71 | 0.68 | 0.68 | 0.70 | 0.69 |

| AUROC | 0.65 | 0.68 | 0.72 | 0.71 | 0.65 | 0.67 | 0.71 | 0.70 | 0.62 | 0.63 | 0.65 | 0.64 | |

| Mat | MSE | 4.13 | 5.33 | 2.82 | 4.71 | 4.06 | 5.30 | 2.88 | 4.04 | 17.43 | 17.50 | 17.08 | 17.76 |

| RMSE | 2.03 | 2.31 | 1.68 | 2.17 | 2.01 | 2.30 | 1.70 | 2.01 | 4.17 | 4.18 | 4.13 | 4.21 | |

| Por | MSE | 1.43 | 1.74 | 2.19 | 1.60 | 1.41 | 1.42 | 2.17 | 1.73 | 7.96 | 8.61 | 7.90 | 8.52 |

| RMSE | 1.20 | 1.32 | 1.48 | 1.27 | 1.19 | 1.19 | 1.47 | 1.31 | 2.82 | 2.93 | 2.81 | 2.92 | |

We show model performance results in Table 5. Across models, tree-based ensembles (RF and XGB) generally outperformed LR and MLP in regression. LR and MLP showed variable performance, with strong results on the Law School dataset but poor performance on others. All models performed well on the Law School dataset; however, the Student Performance datasets (Mathematics and Portuguese) were more challenging, possibly due to non-linear relationships.

The impact of fairness approaches varies across datasets. Although the unfair model frequently has the highest performance, the classification performance of OULAD remains similar across all fairness approaches. For Law School and Student Performance data, the counterfactual model leads to the worst performance, which aligns with existing literature on the accuracy-fairness trade-off. Student Performance in Mathematics shows a massive increase in MSE and RMSE for all models, suggesting that achieving counterfactual fairness with performance is challenging on this dataset.

5 Discussion

5.0.1 RQ 1. What causal relationships do sensitive attributes have in educational data?

Analysis of the OULAD causal graph (Fig. 2(b) and Fig. 7) reveals that disability has a direct causal effect on highest education (-0.14 weight). This implies that having disability makes attaining higher education more difficult. There is no common cause between disability and final result, implying having a disability does not directly affect student outcome. Attribute gender causally affects age; however, with a 0.1 edge weight threshold, two attributes are disconnected from the DAG. This reinforces previous research [18] which revealed no causal relationship between gender and final result.

The causal model of Student Performance is presented in Fig. 2(c) and Fig. 2(d). The estimated causal model shows potential gender-based influences in study habits, social behaviors, and alcohol consumption to academic performance. Foremost, gender have an indirect causal relationship on G3. For both datasets, gender directly influences studytime, and studytime directly influences G1. For Mathematics, gender directly impacts studytime, freetime, goout and Dalc, but not goout for Portuguese. Differences in goout and alcohol consumption(Dalc and Walc) show that the factors influencing student performance differ between Math and Portuguese, demonstrating the importance of considering subject-specific causal models in education.

5.0.2 RQ 2. Does counterfactual fairness in educational data lead to identical outcomes for students regardless of their demographic group membership in individual-level?

From our experiment result, we have demonstrated that removing causal links between sensitive attributes and the target through counterfactuals achieves a similar prediction distribution of machine learning models in sensitive feature subgroups. This suggests that the counterfactual approach is effective at mitigating unfairness as measured by these metrics, across all datasets.

The fairness result supports the insufficiency of the ‘fairness through unawareness’ notion in educational datasets. In KDE plots from Fig. 3 to Fig. 6, (a) Unfair are often very similar to (b) Unaware. In fairness evaluation in Table 3 and Table 4, the Unaware approach generally performs better than the Unfair baseline, but it’s significantly worse than the Counterfactual approach. This suggests that proxies often exist within the remaining features and simply removing the sensitive attribute is not a reliable way to achieve fairness.

5.0.3 RQ 3. Does counterfactually fair machine learning models result in a performance trade-off in educational data?

The performance result in Table 5 demonstrates trade-off exists between achieving high predictive accuracy and satisfying counterfactual fairness, especially for Student Performance data. Although the definition of counterfactual fairness is agnostic to how good an algorithm is [23], this phenomenon is known from the previous literature [54] that an trade-off between fairness and accuracy exists dominated by the sensitive attribute influencing the target variable.

The severe performance drop in the Student Performance dataset suggests high dependence on sensitive attribute gender on student performance, especially for Mathematics subject. We can infer that machine learning models heavily rely on the information related to the sensitive attribute gender for prediction. Removal of the sensitive attribute and its causal influence can drastically reduce performance in this case.

Similar performance across all fairness approaches in the OULAD dataset implies that sensitive attribute disability might not be a significant feature for predicting student outcomes. Further, the naive exclusion of sensitive attributes has minimal impact on the performance of machine learning models, reconfirming the ineffectiveness of the Unaware approach in both fairness and performance.

Overall, we find the nature of the sensitive attribute and its causal links to other features differs across educational datasets, influencing the variability in the effectiveness of the counterfactual fairness approach. Some sensitive attributes might be more challenging to address than others in terms of counterfactual fairness.

5.0.4 Limitations and Future Work

Our work is limited to implementing the early approach of counterfactual fairness introduced in Kusner et al. [23], which only includes non-descendants of sensitive attributes in the decision-making process and utilizing the Level 1 causal model. Also, we only report on counterfactual fairness and performance trade-offs. Thus, future research will focus on developing our Level 1 causal model into a Level 2 model. This will involve postulating unobserved latent variables based on expert domain knowledge and assessing the impact of increasingly strong causal assumptions. Concurrently, we will develop algorithms to reduce the trade-off between counterfactual fairness and performance in educational datasets.

6 Conclusion

In this paper, we evaluated the counterfactual fairness of machine learning models on real-world educational datasets and provided a comprehensive analysis of counterfactual fairness in the education context. This work contributes to exploring causal mechanisms in educational datasets and their impact on achieving counterfactual fairness. Considering counterfactual fairness as well as group and individual fairness could provide different viewpoints in evaluating the fairness of algorithmic decisions in education.

{credits}

6.0.1 Acknowledgements

We acknowledge the valuable input from Sunwoo Kim, whose comments helped in conducting the experiments. This work was supported by the National Research Foundation(NRF), Korea, under project BK21 FOUR (grant number T2023936).

6.0.2 \discintname

The authors have no competing interests to declare that are relevant to the content of this article.

References

- [1] Baker, R.S., Hawn, A.: Algorithmic bias in education. International Journal of Artificial Intelligence in Education pp. 1–41 (2022)

- [2] Binns, R.: On the apparent conflict between individual and group fairness. In: Proceedings of the 2020 conference on fairness, accountability, and transparency. pp. 514–524 (2020)

- [3] Bird, K.A., Castleman, B.L., Song, Y.: Are algorithms biased in education? exploring racial bias in predicting community college student success. Journal of Policy Analysis and Management (2024)

- [4] Ferreira de Carvalho, W., Roberto Gonçalves Marinho Couto, B., Ladeira, A.P., Ventura Gomes, O., Zarate, L.E.: Applying causal inference in educational data mining: A pilot study. In: Proceedings of the 10th International Conference on Computer Supported Education. pp. 454–460 (2018)

- [5] Chen, T., Guestrin, C.: Xgboost: A scalable tree boosting system. In: Proceedings of the 22nd acm sigkdd international conference on knowledge discovery and data mining. pp. 785–794 (2016)

- [6] Cock, J.M., Bilal, M., Davis, R., Marras, M., Kaser, T.: Protected attributes tell us who, behavior tells us how: A comparison of demographic and behavioral oversampling for fair student success modeling. In: LAK23: 13th International Learning Analytics and Knowledge Conference. pp. 488–498 (2023)

- [7] Cornacchia, G., Anelli, V.W., Biancofiore, G.M., Narducci, F., Pomo, C., Ragone, A., Di Sciascio, E.: Auditing fairness under unawareness through counterfactual reasoning. Information Processing & Management 60 (2), 103224 (2023)

- [8] Cortez, P., Silva, A.M.G.: Using data mining to predict secondary school student performance (2008)

- [9] Dash, S., Balasubramanian, V.N., Sharma, A.: Evaluating and mitigating bias in image classifiers: A causal perspective using counterfactuals. In: Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision. pp. 915–924 (2022)

- [10] Deho, O.B., Zhan, C., Li, J., Liu, J., Liu, L., Duy Le, T.: How do the existing fairness metrics and unfairness mitigation algorithms contribute to ethical learning analytics? British Journal of Educational Technology 53 (4), 822–843 (2022)

- [11] Delaney, J., Devereux, P.J.: Gender and educational achievement: Stylized facts and causal evidence. CEPR Discussion Paper No. DP15753 (2021)

- [12] Doewes, A., Saxena, A., Pei, Y., Pechenizkiy, M.: Individual fairness evaluation for automated essay scoring system. International Educational Data Mining Society (2022)

- [13] Duong, T.D., Li, Q., Xu, G.: Achieving counterfactual fairness with imperfect structural causal model. Expert Systems with Applications 240, 122411 (2024)

- [14] Dwork, C., Hardt, M., Pitassi, T., Reingold, O., Zemel, R.: Fairness through awareness. In: Proceedings of the 3rd innovations in theoretical computer science conference. pp. 214–226 (2012)

- [15] Gardner, J., Brooks, C., Baker, R.: Evaluating the fairness of predictive student models through slicing analysis. In: Proceedings of the 9th international conference on learning analytics & knowledge. pp. 225–234 (2019)

- [16] Garg, S., Perot, V., Limtiaco, N., Taly, A., Chi, E.H., Beutel, A.: Counterfactual fairness in text classification through robustness. In: Proceedings of the 2019 AAAI/ACM Conference on AI, Ethics, and Society. pp. 219–226 (2019)

- [17] Hardt, M., Price, E., Srebro, N.: Equality of opportunity in supervised learning. Advances in neural information processing systems 29 (2016)

- [18] Hasan, R., Fritz, M.: Understanding utility and privacy of demographic data in education technology by causal analysis and adversarial-censoring. Proceedings on Privacy Enhancing Technologies (2022)

- [19] Hort, M., Chen, Z., Zhang, J.M., Harman, M., Sarro, F.: Bias mitigation for machine learning classifiers: A comprehensive survey. ACM Journal on Responsible Computing 1 (2), 1–52 (2024)

- [20] Hu, Q., Rangwala, H.: Towards fair educational data mining: A case study on detecting at-risk students. International Educational Data Mining Society (2020)

- [21] Jiang, W., Pardos, Z.A.: Towards equity and algorithmic fairness in student grade prediction. In: Proceedings of the 2021 AAAI/ACM Conference on AI, Ethics, and Society. pp. 608–617 (2021)

- [22] Jung, S., Yu, S., Chun, S., Moon, T.: Do counterfactually fair image classifiers satisfy group fairness?–a theoretical and empirical study. Advances in Neural Information Processing Systems 37, 56041–56053 (2025)

- [23] Kusner, M.J., Loftus, J., Russell, C., Silva, R.: Counterfactual fairness. Advances in neural information processing systems 30 (2017)

- [24] Kuzilek, J., Hlosta, M., Zdrahal, Z.: Open university learning analytics dataset. Scientific data 4 (1), 1–8 (2017)

- [25] Lakkaraju, H., Aguiar, E., Shan, C., Miller, D., Bhanpuri, N., Ghani, R., Addison, K.L.: A machine learning framework to identify students at risk of adverse academic outcomes. In: Proceedings of the 21th ACM SIGKDD international conference on knowledge discovery and data mining. pp. 1909–1918 (2015)

- [26] Le Quy, T., Roy, A., Iosifidis, V., Zhang, W., Ntoutsi, E.: A survey on datasets for fairness-aware machine learning. Wiley Interdisciplinary Reviews: Data Mining and Knowledge Discovery 12 (3), e1452 (2022)

- [27] Li, Z., Guo, X., Qiang, S.: A survey of deep causal models and their industrial applications. Artificial Intelligence Review 57 (11) (2024)

- [28] Loftus, J.R., Russell, C., Kusner, M.J., Silva, R.: Causal reasoning for algorithmic fairness. CoRR abs/1805.05859 (2018), http://arxiv.org/abs/1805.05859

- [29] Long, C., Hsu, H., Alghamdi, W., Calmon, F.: Individual arbitrariness and group fairness. Advances in Neural Information Processing Systems 36, 68602–68624 (2023)

- [30] Ma, J., Guo, R., Zhang, A., Li, J.: Learning for counterfactual fairness from observational data. In: Proceedings of the 29th ACM SIGKDD Conference on Knowledge Discovery and Data Mining. pp. 1620–1630 (2023)

- [31] Makhlouf, K., Zhioua, S., Palamidessi, C.: Machine learning fairness notions: Bridging the gap with real-world applications. Information Processing & Management 58 (5), 102642 (2021)

- [32] Marras, M., Boratto, L., Ramos, G., Fenu, G.: Equality of learning opportunity via individual fairness in personalized recommendations. International Journal of Artificial Intelligence in Education 32 (3), 636–684 (2022)

- [33] Pallathadka, H., Wenda, A., Ramirez-Asís, E., Asís-López, M., Flores-Albornoz, J., Phasinam, K.: Classification and prediction of student performance data using various machine learning algorithms. Materials today: proceedings 80, 3782–3785 (2023)

- [34] Pan, C., Zhang, Z.: Examining the algorithmic fairness in predicting high school dropouts. In: Proceedings of the 17th International Conference on Educational Data Mining. pp. 262–269 (2024)

- [35] Pearl, J.: Causality. Cambridge university press (2009)

- [36] Pfohl, S.R., Duan, T., Ding, D.Y., Shah, N.H.: Counterfactual reasoning for fair clinical risk prediction. In: Machine Learning for Healthcare Conference. pp. 325–358. PMLR (2019)

- [37] Piccininni, M.: Counterfactual fairness: The case study of a food delivery platform’s reputational-ranking algorithm. Frontiers in Psychology 13, 1015100 (2022)

- [38] Piech, C., Bassen, J., Huang, J., Ganguli, S., Sahami, M., Guibas, L.J., Sohl-Dickstein, J.: Deep knowledge tracing. Advances in neural information processing systems 28 (2015)

- [39] Pinto, M., Carreiro, A.V., Madeira, P., Lopez, A., Gamboa, H.: The matrix reloaded: Towards counterfactual group fairness in machine learning. Journal of Data-centric Machine Learning Research (2024)

- [40] Sha, L., Raković, M., Das, A., Gašević, D., Chen, G.: Leveraging class balancing techniques to alleviate algorithmic bias for predictive tasks in education. IEEE Transactions on Learning Technologies 15 (4), 481–492 (2022)

- [41] Shimizu, S., Hoyer, P.O., Hyvärinen, A., Kerminen, A., Jordan, M.: A linear non-gaussian acyclic model for causal discovery. Journal of Machine Learning Research 7 (10) (2006)

- [42] Taghipour, K., Ng, H.T.: A neural approach to automated essay scoring. In: Proceedings of the 2016 conference on empirical methods in natural language processing. pp. 1882–1891 (2016)

- [43] Verger, M., Lallé, S., Bouchet, F., Luengo, V.: Is your model" madd"? a novel metric to evaluate algorithmic fairness for predictive student models. arXiv preprint arXiv:2305.15342 (2023)

- [44] Wastvedt, S., Huling, J.D., Wolfson, J.: An intersectional framework for counterfactual fairness in risk prediction. Biostatistics 25 (3), 702–717 (2024)

- [45] Webbink, D.: Causal effects in education. Journal of Economic Surveys 19 (4), 535–560 (2005)

- [46] Wightman, L.F.: Lsac national longitudinal bar passage study. lsac research report series. (1998)

- [47] Wu, H., Zhu, Y., Shi, W., Tong, L., Wang, M.D.: Fairness artificial intelligence in clinical decision support: Mitigating effect of health disparity. IEEE Journal of Biomedical and Health Informatics (2024)

- [48] Xu, S., Strohmer, T.: On the (in) compatibility between group fairness and individual fairness. arXiv preprint arXiv:2401.07174 (2024)

- [49] Xu, Z., Li, J., Yao, Q., Li, H., Zhao, M., Zhou, S.K.: Addressing fairness issues in deep learning-based medical image analysis: a systematic review. npj Digital Medicine 7 (1), 286 (2024)

- [50] Yağcı, M.: Educational data mining: prediction of students’ academic performance using machine learning algorithms. Smart Learning Environments 9 (1), 11 (2022)

- [51] Zhao, S., Heffernan, N.: Estimating individual treatment effect from educational studies with residual counterfactual networks. In: Proceedings of the 10th International Conference on Educational Data Mining. pp. 306–311 (2017)

- [52] Zhou, W.: Group vs. individual algorithmic fairness. Ph.D. thesis, University of Southampton (2022)

- [53] Zhou, Y., Huang, C., Hu, Q., Zhu, J., Tang, Y.: Personalized learning full-path recommendation model based on lstm neural networks. Information sciences 444, 135–152 (2018)

- [54] Zhou, Z., Liu, T., Bai, R., Gao, J., Kocaoglu, M., Inouye, D.I.: Counterfactual fairness by combining factual and counterfactual predictions. In: Advances in Neural Information Processing Systems. vol. 37, pp. 47876–47907 (2024)

- [55] Zuo, Z., Khalili, M., Zhang, X.: Counterfactually fair representation. Advances in Neural Information Processing Systems 36, 12124–12140 (2023)

Appendix A Feature Description of Student Performance Dataset

Table 6: Feature descriptions of Student Performance dataset [8].

| Feature | Type | Description |

| --- | --- | --- |

| school | binary | the student’s school (Gabriel Pereira/Mousinho da Silveira) |

| gender | binary | The student’s gender |

| age | numerical | The student’s age |

| address | binary | The student’s residence (urban/rural) |

| famsize | binary | The student’s family size |

| Pstatus | binary | The parent’s cohabitation status |

| Medu | numerical | Mother’s education |

| Fedu | numerical | Father’s education |

| Mjob | categorical | Mother’s job |

| Fjob | categorical | Father’s job |

| reason | categorical | The reason to choose this school |

| guardian | categorical | The student’s guardian (mother/father/other) |

| traveltime | numerical | The travel time from home to school |

| studytime | numerical | The weekly study time |

| failures | numerical | The number of past class failures |

| schoolsup | binary | Is there an extra educational support? |

| famsup | binary | Is there any family educational support? |

| paid | binary | Is there an extra paid classes within the course subject? |

| activities | binary | Are there extra-curricular activities? |

| nursery | binary | Did the student attend a nursery school? |

| higher | binary | Does the student want to take a higher education? |

| internet | binary | Does the student have an Internet access at home? |

| romantic | binary | Does the student have a romantic relationship? |

| famrel | numerical | The quality of family relationships |

| freetime | numerical | Free time after school |

| goout | numerical | How often does the student go out with friends? |

| Dalc | numerical | The workday alcohol consumption |

| Walc | numerical | The weekend alcohol consumption |

| health | numerical | The current health status |

| absences | numerical | The number of school absences |

| G1 | numerical | The first period grade |

| G2 | numerical | The second period grade |

| G3 | numerical | The final grade |

Appendix B Complete SCMs of Datasets

Construction of causal structural model(SCM) is crucial for implementing counterfactual fairness. Thus, we provide a estimated SCM inferred from each dataset through LiNGAM algorithm [41]. We filtered out edges with absolute weights lower than 0.1. These causal models are used for sampling counterfactual instances. For fitting a counterfactually fair model, we excluded direct and indirect descendants of the sensitive feature for each dataset.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: Causal Relationships between Student Factors and Final Result

### Overview

The image is a directed acyclic graph (DAG) illustrating the relationships between various factors related to students and their final results. The nodes represent variables, and the directed edges represent causal influences, with associated numerical values indicating the strength and direction (positive or negative) of the relationship.

### Components/Axes

* **Nodes:** The nodes are represented as ovals containing the following labels:

* gender

* age\_band

* imd\_band

* disability

* studied\_credits

* final\_result

* highest\_education

* num\_of\_prev\_attempts

* **Edges:** The edges are represented as arrows indicating the direction of influence. Each arrow is labeled with a numerical value representing the strength and direction of the relationship.

### Detailed Analysis

Here's a breakdown of the relationships and their associated values:

* **gender -> age\_band:** An arrow points from "gender" to "age\_band" with a value of 0.08. This suggests a positive relationship, where gender has a small influence on age band.

* **imd\_band -> highest\_education:** An arrow points from "imd\_band" to "highest\_education" with a value of 0.09. This suggests a positive relationship, where the index of multiple deprivation band has a small influence on highest education.

* **imd\_band -> final\_result:** An arrow points from "imd\_band" to "final\_result" with a value of -0.12. This suggests a negative relationship, where the index of multiple deprivation band has a small negative influence on the final result.

* **disability -> highest\_education:** An arrow points from "disability" to "highest\_education" with a value of -0.14. This suggests a negative relationship, where disability has a small negative influence on highest education.

* **studied\_credits -> highest\_education:** An arrow points from "studied\_credits" to "highest\_education" with a value of 0.12. This suggests a positive relationship, where the number of credits studied has a small influence on highest education.

* **studied\_credits -> num\_of\_prev\_attempts:** An arrow points from "studied\_credits" to "num\_of\_prev\_attempts" with a value of 0.29. This suggests a positive relationship, where the number of credits studied has a moderate influence on the number of previous attempts.

* **final\_result -> num\_of\_prev\_attempts:** An arrow points from "final\_result" to "num\_of\_prev\_attempts" with a value of -0.24. This suggests a negative relationship, where the final result has a moderate negative influence on the number of previous attempts.

* **final\_result -> num\_of\_prev\_attempts:** An arrow points from "final\_result" to "num\_of\_prev\_attempts" with a value of 0.14. This suggests a positive relationship, where the final result has a small positive influence on the number of previous attempts.

### Key Observations

* The diagram shows a network of relationships between student factors and their final results.

* The values associated with the arrows indicate the strength and direction of the influence.

* Some factors, like "studied\_credits," have a positive influence on other factors, while others, like "disability," have a negative influence.