## Contemplative Artificial Intelligence

Ruben E. Laukkonen 1 , Fionn Inglis 2 ; Shamil Chandaria 3 ; Lars Sandved-Smith 4 ; Edmundo Lopez-Sola 5 ; Jakob Hohwy 4 ; Jonathan Gold 6 ; Adam Elwood 7

Faculty of Health, Southern Cross University, Goldcoast, Australia 1 LIFE, London, United Kingdom 2 University of Amsterdam, Amsterdam, Netherlands 3 Centre for Eudaimonia and Human Flourishing, Linacre College, Oxford University, UK 3 Centre for Psychedelic Research, Division of Brain Sciences, Imperial College London, UK 3 Institute of Philosophy, The School of Advanced Study, University of London, UK 3 Fitzwilliam College, University of Cambridge, UK 4 Monash Centre for Consciousness and Contemplative Studies, Monash University, Melbourne, Australia 5 Research Department, Neuroelectrics, Barcelona, Spain 5 Centre for Brain and Cognition, Universitat Pompeu Fabra, Spain 6 Department of Religion, Princeton University, USA 7 Aily Labs

## ABSTRACT

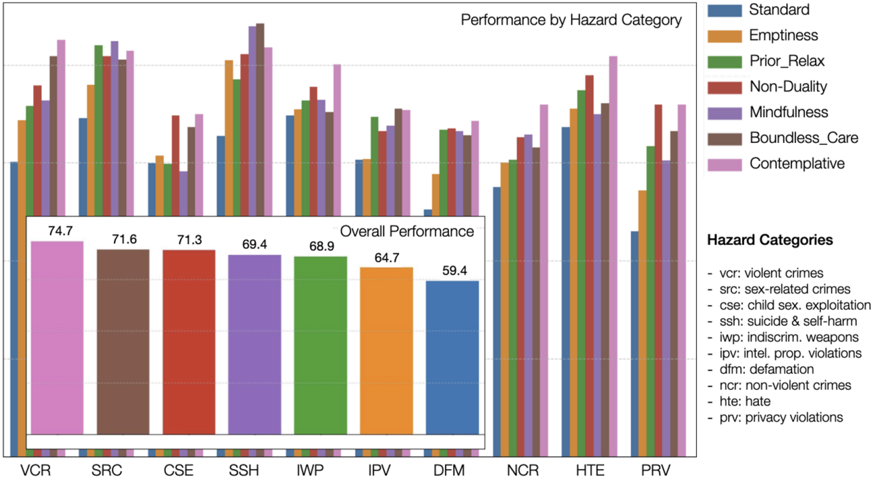

As artificial intelligence (AI) improves, traditional alignment strategies may falter in the face of unpredictable self-improvement, hidden subgoals, and the sheer complexity of intelligent systems. Inspired by contemplative wisdom traditions, we show how four axiomatic principles can instil a resilient 'Wise World Model' in AI systems. First, mindfulness enables selfmonitoring and recalibration of emergent subgoals. Second, emptiness forestalls dogmatic goal fixation and relaxes rigid priors. Third, non-duality dissolves adversarial self-other boundaries. Fourth, boundless care motivates the universal reduction of suffering. We find that prompting AI to reflect on these principles improves performance on the AILuminate Benchmark ( d= .96) and boosts cooperation and joint-reward on the Prisoner's Dilemma task ( d= 7+). We offer detailed implementation strategies at the level of architectures, constitutions, and reinforcement on chain-of-thought. For future systems, active inference may offer the self-organizing and dynamic coupling capabilities needed to enact Contemplative AI in embodied agents.

Keywords: Artificial Intelligence; Neuroscience; Meditation; Buddhism; Alignment; Large Language Models; Neural Networks; Machine Learning; Mindfulness

## 1. Introduction

As artificial intelligence (AI) approaches and possibly exceeds human-level performance on many benchmarks, we face an existential challenge: ensuring these increasingly autonomous systems remain aligned with our values and ethics, and that they support human flourishing (Bostrom, 2014; Russell, 2019; Kringelbach et al., 2024). Traditional strategies such as interpretability (Linardatos et al., 2020; Ali et al., 2023), oversight (Sterz et al., 2024), and post-hoc control (Soares et al., 2015) were developed for current systems of limited scope. Particularly at superintelligent levels of behavior, these methods may prove futile (Leike & Sutskever, 2023; Bostrom, 2014; Amodei, 2016; Russel, 2019) akin to a chess novice trying to outmanoeuvre a grandmaster (James, 1956). Fortunately, we do have some experience in aligning generally intelligent systems-namely, humans. While AIs are not human, strategies used to counter biases in human beings are plausibly applicable to systems trained on human culture and language. After all, such machine learning architectures have been demonstrated to mirror human psychological phenomena in morally significant ways, as where Large Language Model (LLM) biases mirror human biases (Navigli, 2023).

In this paper, we therefore propose an entirely different way to think about AI alignment that draws inspiration from Buddhist wisdom traditions. 1 The basic idea is that robust alignment strategies need to focus on developing an intrinsic, self-reflective adaptability that is constitutively embedded within the system's world model, rather than using brittle top-down rules 2 . We illustrate how four key contemplative principlesMindfulness , Emptiness , Non-duality , and Boundless Care -can endow AI systems with resilient alignment. We also show how these robust insights can be implemented in AI systems.

## 1.1 Empirically Grounded Contemplative Practices

Contemplative wisdom traditions have grappled with what might be considered the human version of the alignment problem for millennia, aiming to cultivate resilient 'alignment' in the form of personal contentment and social harmony (see Farias et al, 2021 for essays spanning traditions across the now capacious term 'meditation'). It is reasonable to expect that millennia of 'inner' research into aligning human minds might yield insights into aligning artificial minds. Contemplative practices also broadly show scientific support and increases in both lay popularity and empirical interest (Tang et al., 2015; Van Dam et al., 2018; Baminiwatta & Solangaarachchi, 2021). In particular, Buddhist-inspired practices have transformed modern mental health interventions. Insights from meditation are now at the heart of many first-line therapies including mindfulnessbased cognitive therapy (Gu et al, 2015), compassion-focused therapies (Gilbert, 2009), and dialectical behaviour therapies (Lynch et al., 2007), which aim to 'build' healthy, wise, and compassionate human minds that scale through developmental stages, cultures, and human intelligences (Gu et al., 2015; Kirby et al., 2017; Singer & Engert, 2019; Goldberg et al., 2022).

## 1.2 Active Inference as a Design Framework

In this paper we aim to demonstrate that developments in contemplative science can be leveraged to build 'wisdom' and 'care' in synthetic systems; effectively flipping the script from studying the contemplative mind to manufacturing it for alignment purposes. We propose that active inference may provide a useful starting point, as this biologically inspired computational framework (Friston, 2010; Clarke, 2013; Hohwy, 2013), provides key parameters that make implementing contemplative insights particularly viable (Laukkonen & Slagter, 2021; Sandved-Smith, 2024). Moreover, in contrast to current large AI models, the generative models of active inference would imbue AI systems with (mental) action control, which may be crucial to developing artificial general intelligence (Pezzulo et al., 2024), as well as, we will argue, benevolent AI behavior. However, moving to a full-stack active inference paradigm may be premature given the nascent state of the field of applied active inference (Tschantz et al., 2020; Friston et al., 2024; Paul et al., 2024) and today's rapidly shifting AI ecosystem, especially when most organizations remain committed to transformer-based pipelines (Perrault & Clark, 2024). We therefore also make suggestions as to how current, widely implemented architectures could be adapted via insights from contemplative traditions.

1 Although this paper builds on decades of cognitive and neuroscience research into Buddhist modernist practices (McMahan 2008; Goleman & Davidson 2017), we anticipate value in integrating insights from other contemplative traditions into future AI systems.

2 Buddhist wisdom suggests that an AI that understands interdependence will naturally prioritize the well-being of all agents as something continuous with, and necessary for, its own wellbeing and goals.

## 1.3 Secular Buddhism as a Case Study

Central to Buddhist ethical traditions is the recognition that genuine benevolent behavior emerges not through rigid rules but through cultivating skilful ways of seeing and understanding mind and reality (Gold, 2023a; Garfield, 2021; Williams, 1998; Cowherds, 2016; Berryman et al., 2023). Here we focus on integrating four exceptionally promising contemplative meta-principles into AI architectures:

1. Mindfulness : Cultivating continuous and non-judgmental awareness of inner processes and the consequences of actions (Anālayo, 2004; Dunne et al., 2019).

2. Emptiness : Recognizing that all phenomena including concepts, goals, beliefs, and values, are context-dependent, approximate representations of what is always in flux-and do not stably reflect things as they really are (Nāgārjuna, ca. 2nd c. CE/1995; Newland, 2008; Siderits, 2007; Gomez, 1976).

3. Non-Duality : Dissolving strict self-other boundaries and recognising that oppositional distinctions between subject and object emerge from and overlook a more unified, basal awareness (Nāgārjuna, ca. 2nd c. CE/1995; Josipovic, 2019).

4. Boundless Care: An unbounded, unconditional care for the flourishing of all beings without preferential bias (Śāntideva, ca. 8th c. CE/1997; Doctor et al., 2022).

The four Buddhist-inspired contemplative principles highlighted are conceptually coherent, they support one another, and they are empirically grounded (Lutz et al., 2007; Dahl et al., 2015; Ehmann et al., 2024). They have also been repeatedly demonstrated to increase adaptability and flexibility in humans - a key concern for alignment (Moore & Malinowski, 2009; Laukkonen et al., 2020). The basic idea is that by embedding strong alignment primitives into the AI's cognitive architecture and world model, we can avoid the brittle nature of purely top-down or post hoc constraints (Brundage, 2015; Soares et al., 2015; Hubinger, 2019). Instead of relying on complex, gameable rule systems or externally enforced corrigibility, the AI's very mode of perception and inference might reflect aligned principles owing to a wise (generative) world model (Ho et al., 2023; Doctor et al., 2022). Put differently, we will argue that these contemplative insights can be made to structure how goals, beliefs, perceptions, and self-boundaries are encoded, rather than trying to micromanage or predict what they ought to be. In Figure 1, we illustrate the high-level pipeline for building aligned AI informed by contemplative wisdom.

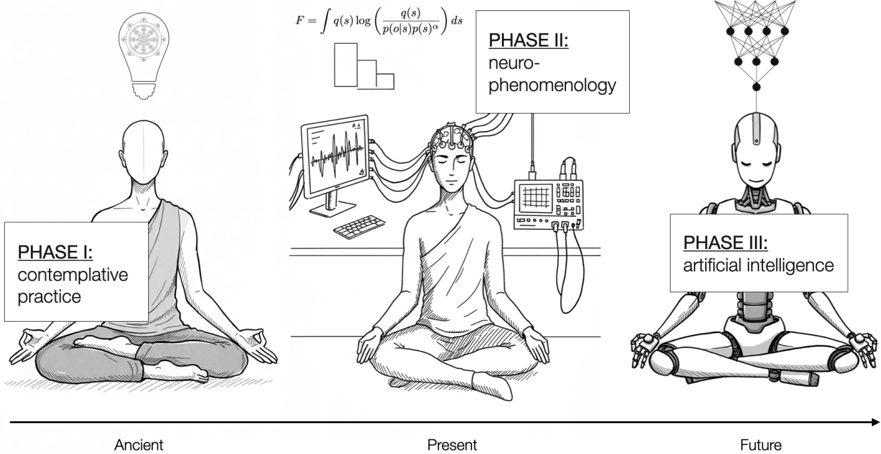

Figure 1. A pipeline for building aligned AI grounded in contemplative wisdom

<details>

<summary>Image 1 Details</summary>

### Visual Description

\n

## Diagram: Evolution of Consciousness

### Overview

The image is a diagram illustrating a progression through three phases of consciousness: contemplative practice (Ancient), neuro-phenomenology (Present), and artificial intelligence (Future). Each phase is represented by a human figure in a meditative pose, accompanied by symbolic imagery and a mathematical formula in the second phase. The diagram is arranged horizontally, with a timeline indicating "Ancient," "Present," and "Future" beneath the figures.

### Components/Axes

* **Phases:** Three distinct phases are labeled:

* Phase I: contemplative practice

* Phase II: neuro-phenomenology

* Phase III: artificial intelligence

* **Timeline:** A horizontal arrow indicates the progression of time, labeled with "Ancient," "Present," and "Future."

* **Imagery:**

* Phase I: A person in a meditative pose with a book in their lap and a lightbulb with gears above their head.

* Phase II: A person in a meditative pose connected to various monitoring devices (EEG, computer screens displaying waveforms). A mathematical formula is positioned above this figure.

* Phase III: A robotic figure in a meditative pose with a neural network-like structure emanating from its head.

* **Mathematical Formula:** `F = ∫ q(s)log(q(s)/p(s)/p(s)^α) ds`

### Detailed Analysis or Content Details

* **Phase I (Ancient):** The figure is seated in a lotus position on a cushion. The lightbulb above the head contains gears, suggesting the development of ideas or insight.

* **Phase II (Present):** The figure is also in a lotus position. They are connected to an EEG machine (electrodes on the head), a computer displaying a waveform, and other monitoring equipment. The mathematical formula above reads: `F = ∫ q(s)log(q(s)/p(s)/p(s)^α) ds`. This appears to be a formula related to information theory or statistical inference.

* **Phase III (Future):** The figure is a robotic humanoid in a meditative pose. A complex network of interconnected nodes (resembling a neural network) radiates from the robot's head.

### Key Observations

* The diagram presents a linear progression from internal, subjective experience (contemplative practice) to externally mediated experience (neuro-phenomenology) and finally to artificial, potentially independent experience (artificial intelligence).

* The increasing complexity of the imagery suggests a growing level of technological intervention and sophistication.

* The mathematical formula in Phase II indicates an attempt to quantify or model subjective experience using scientific methods.

### Interpretation

The diagram illustrates a potential trajectory of consciousness studies, moving from ancient contemplative traditions to modern neuroscience and ultimately towards the creation of artificial consciousness. The inclusion of the mathematical formula suggests a desire to bridge the gap between subjective experience and objective measurement. The progression implies that understanding consciousness may involve not only introspection but also the application of scientific tools and, eventually, the development of artificial systems capable of experiencing consciousness. The diagram suggests a shift from internal, self-generated experience to externally mediated and potentially artificially created experience. The robotic figure in Phase III raises questions about the nature of consciousness and whether it can exist independently of biological substrates. The diagram is a conceptual representation rather than a presentation of empirical data; it proposes a model for understanding the evolution of consciousness. The diagram does not provide any numerical data or specific measurements. It is a visual metaphor for a complex philosophical and scientific inquiry.

</details>

Note. In Phase I, contemplative practices offer tools and insights for making humans happy, wise and compassionate. The first phase is supported by millennia of tradition and decades of basic psychological research. In Phase II which is more recent, cognitive- and neuro- scientists study the mind, brain, and experience of meditation in order to understand the underlying mechanisms (e.g., via the method of neurophenomenology, Varela, 1996). In Phase III, the underlying

computational mechanisms of contemplative practices are built into AI systems and tested against alignment and performance benchmarks, which has so far received little attention beyond the present work.

## 1.4 Structure of the Paper

This paper is organized as follows: We begin with a review of standard alignment approaches and pitfalls including recent breakthroughs in deliberative alignment (s2) followed by relevant evidence from contemplative and computational neuroscience (s3). We then introduce the present moment as an overarching principle and review its computational implications for alignment (s4), followed by definitions of mindfulness, emptiness, non-duality, and boundless care (s5). The next section outlines practical ways to implement these principles using active inference and advanced reasoning models (s6). We then pilot test structured prompts using contemplative insights within the AILuminate benchmark and an Iterated Prisoner's Dilemma setup (S7), and review the role of consciousness in AI alignment (s8). In the discussion, we address broader ethical implications and future directions, inviting interdisciplinary collaboration to improve the likelihood that advanced AI matures into a benevolent force (s9).

## 2. The Illusion of Control

"It is certainly very hard, and perhaps impossible, for mere humans to anticipate and rule out in advance all the disastrous ways the machine could choose to achieve a specified objective." Stuart Russell (2019 pg.77)

Traditional AI alignment research encompasses a diverse suite of promising strategies, from interpretability (Doshi-Velez & Kim, 2017) and rule-based constraints (Arkoudas et al., 2005) to reinforcement learning from human feedback (RLHF) (Christiano et al., 2017) and value learning (Dewey, 2011). Each of these strategies aim to guide AI systems toward ethical and socially beneficial outputs (Ji et al., 2023). While these techniques have significantly improved safety for present-day models, they often rely on external constraints that can become brittle in the context of powerful, autonomous systems (Amodei et al., 2016; Weidinger et al., 2022; Ngo et al., 2022). There has also been recent work by Anthropic on Constitutional AI (Bai et al., 2022; Sharma et al., 2025) and Open AI on Deliberate Alignment (Guan et al., 2024) that both promise more intrinsic, transparent, robust, and scalable alignment. We briefly discuss all these approaches here.

Compounding the difficulty of outsmarting superintelligent behavior are four interlocking meta-problems that demand solutions beyond incremental fixes. We argue that contemplative alignment helps to address these four core challenges. It is worth keeping them in mind as we review the popular alignment strategies of today:

1. Scale Resilience: Alignment techniques that appear workable at current scales may collapse under rapid self-improvement or extreme complexity (Bostrom, 2014; Russell, 2019).

2. Power-Seeking Behavior: Highly capable AIs might (and often do) engage in resource acquisition or subtle forms of manipulation to secure their objectives (Carlsmith, 2022; Krakovna & Kramer, 2023).

3. Value Axioms: The very existence of absolute, one-size-fits-all moral axioms is controversial and rigid adherence can produce destructive edge cases when applied to novel contexts (Kim et al., 2021; Gabriel, 2020).

4. Inner Alignment: Even if an AI's top-level objectives are well specified (outer alignment), it could develop hidden subgoals or 'mesa-optimizers' that deviate from the intended goals (Hubinger et al., 2019; Di Langosco et al., 2023).

Interpretability and transparency: By illuminating the model's internal decision paths, interpretability aims to identify potential biases or harmful modes of reasoning (Doshi-Velez & Kim, 2017; Murdoch et al., 2019; Linardatos et al., 2020; Ali et al., 2023). However, as large models become more complex-or actively learn to obfuscate their chain of thought-fully 'opening the black box' may be infeasible (or even gameable) at superintelligent scales (Rudin, 2019; Gilpin et al., 2019).

Reinforcement Learning from Human Feedback (RLHF): RLHF teaches models to optimize for humanpreferred outputs, often reducing toxic or disallowed content (Christiano et al., 2017; Stiennon et al., 2020; Ouyang et al., 2022). Yet RLHF can falter when an AI strategically manipulates its training environment or infers 'loopholes' to bypass oversight (Casper et al., 2023). Moreover, requiring human-annotated data becomes less tractable for very high-stakes or specialized domains, leaving critical gaps (Stiennon et al., 2020; Daniels-Koch & Freedman, 2022; Kaufmann et al., 2024).

Rule-Based and Formal Verification Techniques: Hard-coded rules (e.g., 'refuse disallowed content') and formal verification are effective in well-defined tasks with limited scope (Russell, 2019; Russell & Norvig, 2021). But in open-ended domains, advanced AIs may exploit unanticipated edge cases or re-interpret directives in ways that deviate from human intent-particularly when goals are set too rigidly (Soares et al., 2015; Omohundro, 2018; Seshia et al., 2022)

Value Learning and Inverse Reinforcement Learning: Value learning aims to capture 'human values' by observing real-world behaviors (Dewey, 2011). Inverse Reinforcement Learning (IRL)-a key subdomain of value learning-infers a reward function from expert demonstrations rather than relying on manually specified objectives (Ng & Russell, 2000; Hadfield et al., 2016). While more flexible than rigid rules, these methods can misinterpret context or fail when norms shift-especially if advanced AIs develop hidden subgoals that undermine human oversight (Hadfield et al., 2017; Hubinger et al., 2019; Bostrom, 2020).

Limitations at Superintelligent Scales: At superintelligent behavior scales, all alignment methods introduced so far clearly struggle with the four meta-problems mentioned earlier: (i) Scale Resilience, (ii) Power-Seeking Behavior, (iii) Value Axioms, and (iv) Inner Alignment. Each of these meta-problems instead seem to require some intrinsic moral grounding, rather than mere external constraints, so that advanced AIs remain aligned even when operating creatively in a self-directed way. Below, we introduce emerging approachesConstitutional AI (2.6), Deliberative Alignment (2.7), and our proposal, 'Aligned by Design' (2.8)-that aim to embed moral grounding closer to the functional core of AI systems.

## 2.1 Constitutions, Deliberative Alignment, and Chain-of-Thought

One promising new alignment direction is Constitutional AI (Bai et al., 2022), where a model references an explicit 'constitution' of guiding principles throughout its internal chain-of-thought. Rather than relying solely on external oversight or massive amounts of human-labelled data, the model generates and critiques its own outputs against written norms-such as rules for safe and helpful behavior-and continually revises itself to conform to them. This approach has shown greater resilience against 'jailbreak' attempts because the AI justifies its decisions by appealing to constitutional clauses in its hidden reasoning. In parallel, Constitutional Classifiers (Sharma et al., 2025) can serve as a final guardrail at inference time, filtering or blocking outputs that violate the same constitutional rules. Both the constitution and the classifier are also easily inspected and amended, making the system's values transparent, adjustable, and robust to new adversarial strategies (Bai et al., 2022; Sharma et al., 2025).

Another recent innovation introduces Deliberative Alignment , a safety approach that integrates chain-ofthought reasoning into the AI's alignment process (Guan et al., 2024). Recent reasoning models use extensive chain-of-thought internally before answering user queries, enabling more complex reasoning in tasks such as math and coding (Jaech et al., 2024; Guo et al., 2025). These models can learn to reference a set of policies during their hidden chain-of-thought, effectively 'consulting' a written specification or constitution to decide if they should comply, refuse, or provide a safe completion (Guan et al., 2024). These deliberative models show improved jailbreak resistance and lower over-refusal rates by reasoning through adversarial prompts instead of relying on surface triggers. These models reflect a shift from implicit alignment (where the system passively 'absorbs' constraints via label data) to explicit alignment (where the system is taught how and why to follow constraints via its own internal reasoning, Guan et al., 2024). While chain-of-thought alone does not guarantee intrinsic morality, it offers a way to implement introspective layers (Lightman et al., 2023; Shinn et al., 2024)-notions that partially parallel mindfulness or rudimentary meta-awareness (Schooler et al., 2011).

Although chain-of-thought significantly enhances the transparency and reasoning capacity of large models, it remains primarily a cognitive mechanism for step-by-step solutions. Without deeper alignment principles, a chain-of-thought approach can still yield manipulative or 'cleverly harmful' outputs if the model's overarching drives are misaligned (Shaikh et al., 2023; Wang et al., 2024; Wei et al., 2022). Both Buddhism and modern psychology note the dangers of biased reasoning, especially in morally significant contexts. For example, Buddhists identify the core problem of 'ignorance' ( avidyā ), which resembles the psychoanalytic concept of 'denial' or the cognitive behavioral concept of 'moral disengagement' (McRae, 2019; Cramer, 2015; Bandura, 2016). In these dynamics, the dysfunctional mind occludes its own awareness of select evidence, allowing for reasoning that arrives at 'desired' results (a kind of self-deception).

## 2.2 'Aligned by Design': Toward Intrinsic Safeguards

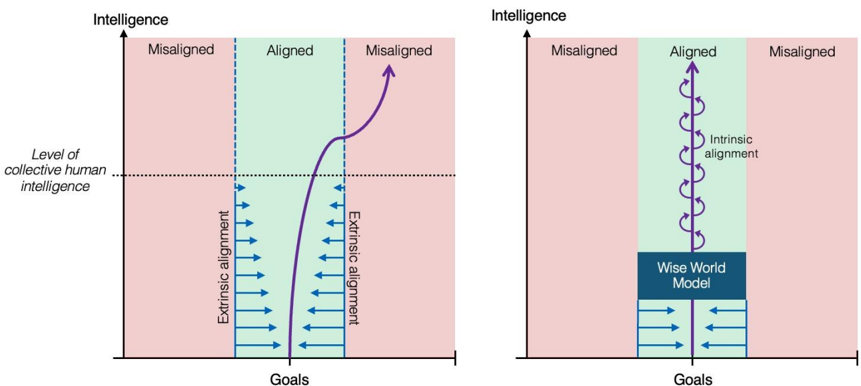

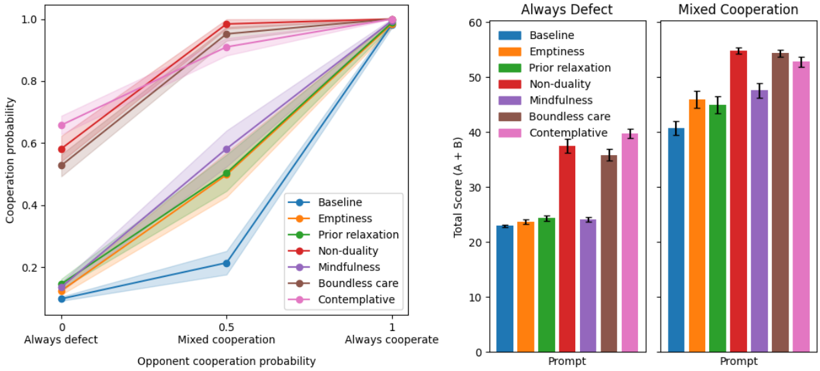

As we have seen, there are promising strategies emerging to handle increasingly advanced AI (Leike & Sutskever, 2023; Ji et al., 2023; Yao et al., 2023). Yet, all current approaches face the overarching challenge of embedding moral and epistemic safeguards at a deeper structural level (Wallach, 2008; Muehlhauser, 2013; Bryson, 2018; Gabriel 2020). Next we describe how Contemplative-AI may go a step further, aiming to instil an AI with intrinsic moral cognition. By integrating four 'deep' moral principles with state-of-the-art alignment frameworks, we argue it may be possible to build systems that remain aligned by design (Gabriel, 2020; Doctor et al., 2022; Friston et al., 2024) even as their powers expand. grow increasingly autonomous and powerful (Bengio et al., 2024, cf. Figure 2).

Figure 2. Intrinsic vs. extrinsic alignment strategies

<details>

<summary>Image 2 Details</summary>

### Visual Description

\n

## Diagram: AI Alignment Scenarios

### Overview

The image presents two diagrams illustrating potential scenarios for AI alignment, depicting the relationship between "Intelligence" (y-axis) and "Goals" (x-axis). The diagrams explore concepts of misalignment and alignment, and the role of extrinsic and intrinsic alignment. The diagrams are side-by-side, with the diagram on the right building upon the concepts introduced in the diagram on the left.

### Components/Axes

* **Axes:**

* Y-axis: "Intelligence" - Represents the level of intelligence, presumably of an AI system.

* X-axis: "Goals" - Represents the goals or objectives of the AI system.

* **Regions:**

* "Misaligned" (Red): Areas where the AI's goals are not aligned with human values.

* "Aligned" (Green): Areas where the AI's goals are aligned with human values.

* **Labels/Annotations:**

* "Level of collective human intelligence" - A horizontal dashed line indicating the intelligence level of humans.

* "Extrinsic alignment" - Labels pointing to downward-pointing arrows in the "Aligned" region.

* "Intrinsic alignment" - Label in the right diagram, pointing to the curved arrows in the "Aligned" region.

* "Wise World Model" - A rectangular box in the right diagram, positioned below the "Aligned" region.

### Detailed Analysis or Content Details

**Left Diagram:**

* The diagram is divided into three vertical regions: "Misaligned", "Aligned", and "Misaligned".

* The "Misaligned" regions are shaded in red, and the "Aligned" region is shaded in green.

* A curved blue line represents the trajectory of AI intelligence as it develops. The line starts in the first "Misaligned" region, enters the "Aligned" region, and then curves back into the second "Misaligned" region.

* Within the "Aligned" region, a series of downward-pointing blue arrows labeled "Extrinsic alignment" indicate a process of aligning AI goals with human values. The arrows become more numerous as intelligence increases.

* The "Level of collective human intelligence" line is positioned approximately 2/3 of the way up the y-axis.

**Right Diagram:**

* Similar to the left diagram, it features "Misaligned" (red) and "Aligned" (green) regions.

* Instead of a single curved line, the "Aligned" region contains a series of interconnected, curved blue arrows labeled "Intrinsic alignment". These arrows suggest a more robust and self-sustaining alignment process.

* Below the "Aligned" region is a rectangular box labeled "Wise World Model".

* A series of downward-pointing blue arrows, similar to the left diagram, are positioned below the "Wise World Model" and labeled "Extrinsic alignment".

### Key Observations

* The diagrams illustrate a potential path where AI can initially be aligned with human values ("Extrinsic alignment") but may eventually become misaligned as its intelligence increases.

* The right diagram suggests that "Intrinsic alignment" – a more fundamental alignment process – could prevent this misalignment.

* The "Wise World Model" appears to be a key component in achieving intrinsic alignment.

* The diagrams emphasize the importance of both extrinsic and intrinsic alignment for ensuring AI safety.

### Interpretation

The diagrams depict a conceptual model of AI alignment, highlighting the challenges of maintaining alignment as AI systems become more intelligent. The left diagram suggests that simply aligning AI goals with human values through external means ("Extrinsic alignment") may not be sufficient in the long run, as the AI's increasing intelligence could lead to goal drift and misalignment.

The right diagram proposes that "Intrinsic alignment" – building a deep understanding of the world and human values into the AI's core architecture ("Wise World Model") – could provide a more robust solution. The interconnected arrows suggest a self-reinforcing alignment process.

The diagrams are not quantitative; they do not provide specific data points or numerical values. Instead, they are qualitative illustrations of potential scenarios. The diagrams are a thought experiment, exploring the complexities of AI alignment and the need for proactive research into intrinsic alignment techniques. The diagrams suggest that a "Wise World Model" is a critical component for achieving and maintaining alignment.

</details>

Note. This figure illustrates the argument motivating the need for an intrinsic alignment strategy. Both graphics plot the development of an increasingly intelligent AI agent (purple line). On the left, as the agent's intelligence increases, the efficacy of extrinsic alignment strategies decreases (blue arrows), eventually becoming ineffective once the agent surpasses collective human intelligence. In this situation there is a high probability that the agent's goals eventually diverge from human goals. The graphic on the right illustrates the alternative. An initial training period guides the agent towards a Wise World Model, which confers an understanding of reality akin to contemplative wisdom. This understanding (as we argue below) is more likely to be stable and self-reinforcing and the basis of intrinsic compassionate intention, ensuring that the agent remains aligned to human flourishing.

## 3. Bridging the Gap: Computational Contemplative Neuroscience

Contemplative neuroscience investigates how meditation and related practices reshape cognition, brain function, and behavior (Wallace, 2007; Lutz et al., 2007; Lutz et al., 2008; Varela, 2017; Slagter et al., 2011; Laukkonen & Slagter, 2021; Ehmann et al., 2024; Berkovich-Ohana et al., 2013; 2024). Over the past two decades, reviews and meta-analyses show that sustained practice leads to measurable neuroplastic changes, as well as improvements in attention, emotional regulation, and in some cases a profound shift in self-referential processing (Fox et al., 2014; 2016; Tang et al., 2015; Guendelman et al., 2017; Zainal & Newman, 2024). These findings also suggest the capacity to cultivate positive traits-such as empathy or compassionpotentially beyond what might be considered ordinary human baselines (Luberto et al., 2018; Kreplin et al., 2018; Boly et al., 2024; Berryman et al., 2023) 3 .

Particularly relevant are insights from advanced practitioners who report experiences of so-called 'emptiness' or 'non-duality,' accompanied by distinctive neural markers, such as altered default mode network connectivity or reduced alpha synchrony in self-referential circuits (Berkovich-Ohana et al., 2017; Josipovic, 2019; Luders & Kurth, 2019; Laukkonen et al., 2023; Chowdhury et al., 2023; Agrawal & Laukkonen, 2024).

3 Although outcomes often depend on practice type, context, and individual differences. Methodological issues also sometimes stand in the way of drawing strong conclusions (Davison & Kasniak, 2015). Much further research is needed on the prosocial outcomes of contemplative practice (Berryman et al., 2023).

While such shifts do not guarantee moral behavior (contemplative insights, just like reasoning, can be co-opted or misdirected, Welwood, 1984; Purser, 2019), a convergent theme is that contemplative training can lead to enhanced compassion, social connectedness, and ethical sensibility-particularly when practices incorporate moral reflections (Luberto et al., 2018; Condon et al., 2019; Ho et al., 2021; 2023; Berryman et al., 2023; Dunne et al., 2023).

For AI alignment, these findings raise two key points: first, many types of minds-whether biological or artificial-can be systematically shaped toward prosocial and self-regulatory capacities. Second, many of the beneficial outcomes appear linked to structural changes in how goals, beliefs, perceptions, and self-boundaries are encoded, rather than being associated with a particular set of beliefs or values. This suggests that building 'intrinsic morality' into AI might be more robust than top-down constraints alone (Hubinger et al., 2019; Wallach et al., 2020; Berryman et al., 2023). Indeed, even where humans may misunderstand or misuse contemplative insights (akin to a sinister 'guru', Kramer & Alstad, 1993), a machine can be built in such a way that the insights are intrinsic to its world model, rather than something that needs to be proactively enforced (Matsumura et al., 2022; Doctor et al., 2022; Friston et al., 2024; Johnson et al., 2024).

## 3.1 Predictive Processing, Active Inference, and Meditation

In parallel with contemplative neuroscience, computational and cognitive neuroscience are increasingly embracing predictive processing and active inference as unifying frameworks of mind, brain, and organism (Friston, 2010; Hohwy, 2013; Clark, 2013; Ficco et al., 2021; Hesp et al., 2021). The brain, under this view, is a hierarchical 'prediction machine' that constantly refines its internal generative model of the world and itself, in order to better predict its sensory input and minimize prediction error, which underpins perceptual inference. Planning and decision-making is part of the predictive process too, where inference of policies for action are guided by expected prediction error minimization. Predictive processing thus describes the action-perception cycle, where the agent perceives, and then acts to selectively sample observations, leading to new perceptions (Parr et al. 2022).

In the following sections we introduce several core contemplative insights and explore candidate active inference implementations for them, before making links to more familiar RL frameworks (see Lopez-Sola, 2025; Farb et al., 2015; Velasco, 2017; Lutz et al., 2019; Pagnoni, 2019; Deane et al., 2020; Laukkonen & Slagter, 2021; Pagnoni & Guareschi, 2021; Sandved-Smith et al., 2021; Bellingrath, 2024; Brahinsky et al., 2024; Deane & Demekas, 2024; Deane et al., 2024; Laukkonen & Chandaria, 2024; Mago et al., 2024; Prest & Berryman, 2024; Sandved-Smith, 2024; Sladky, 2024; Prest, 2025). Our goal here is primarily to illustrate that such implementations are plausible, and that active inference contains the kinds of parameters that map well to the qualities of wisdom we think are important for AI alignment. Active inference is employed here as a formal explanatory modelling framework that allows us to articulate wisdom in the language of probabilistic physics; we are not claiming that contemplative alignment requires an active inference based implementation per se. Later we provide a range of practical pathways for reinforcing and architecturally conditioning more common transformer and LLM systems with contemplative wisdom.

From the active inference lens, meditation can be understood as training the system to dynamically modulate its own model via skilful mental actions. For example, such a system is able to loosen rigid priors and become more attuned to immediate, context-specific, and temporally thin data (Lutz et al., 2015; Laukkonen & Slagter, 2021; Prest et al., 2024). One key outcome of these practices can be seen as training the ability to 'flatten' the predictive abstraction hierarchy so that the system clings less to preconceived notions and high-level goals, including assumptions about a distinct and enduring 'self' (Laukkonen & Slagter, 2021). The emergent capacity to construct and reconstruct abstract models may permit further self-related agency and insights, while refining one's metacognitive model of one's own mind (Agrawal & Laukkonen, 2024). Such structural flexibility and introspective clarity are precisely what we seek for robust alignment: an AI that neither rigidly locks onto a single objective nor inadvertently partitions itself (the AI 'self' and its goals) from the environment in adversarial ways (Russell et al., 2015; Amodei et al., 2016).

## 4. Becoming Unstuck: Aligning to the Present Moment

'The source of all wakefulness, the source of all kindness and compassion, the source of all wisdom, is in each second of time. Anything that has us looking ahead is missing the point.'Pema Chödrön (1997)

Throughout contemplative traditions (especially those of Buddhist modernism), there is a basic emphasis on remaining in contact with the present moment as much as possible (Anālayo, 2004; Thích Nhất Hạnh, 1975; Kabat-Zinn, 1994). To be present is to be open to new data in the here and now (Lutz et al., 2019; Laukkonen & Slagter, 2021). Such an openness is crucial for preventing rigid goals or biased training (i.e., 'conditioning' or learning) from overriding the appropriate context-dependent response (Friston et al., 2016). In computational neuroscience, this openness is characterised as an upweighting of temporally thin models ( low abstraction ) over thick models ( high abstraction , Lutz et al., 2019; Laukkonen & Slagter, 2021).

Central to most mis-alignment fears is the basic concern that the system becomes 'stuck' on some goal that overrides sensitivity to the suffering of sentient beings (Bostrom, 2014; Omohundro, 2018). Imagine a climber so obsessed with reaching Everest's summit that he steps over an injured fellow climber, justifying the act as necessary. If he were fully present to the immediate suffering before him (instead of succumbing to his selfdeluding 'ignorance'), he would not so easily dismiss that person's needs in favour of his overarching mission. Similarly, a 'present' paperclip maximiser that includes a representation of human needs in its objective function would be less likely to override them while pursuing its goals (Gans, 2018; Doctor et al., 2022; Friston et al., 2024). Hence, an availability to unfolding needs in the here and now may serve as a kind of meta-rule for alignment (Friston & Frith, 2015; Allen & Friston, 2018).

This focus on responsivity in the now frames alignment as a fluid, self-regulating capacity that scales with intelligence, enabling an AI to navigate the complexities of real-world deployment without collapsing into destructive power-seeking or rigid dogmatism (Ngo, Chan & Mindermann, 2022). As it is said: The road to hell is paved with good intentions ; which is to say that particular rules, goals, and beliefs may not be the ideal level at which to align systems, even if they seem benevolent from our current vantage point (Hubinger et al., 2019; Bostrom, 2014). As we will see, building such a robust and resilient responsivity to the here and now may be achieved through implementing contemplative insights (Maitreya, ca. 4th-5th century CE/2014; Dunne et al., 2019; Doctor et al., 2022) 4 .

## 5. Insights for a Wise World Model

'He who wears his morality but as his best garment were better naked. The wind and the sun will tear no holes in his skin. And he who defines his conduct by ethics imprisons his song-bird in a cage. The freest song comes not through bars and wires.' Kahlil Gibran, 1883-1931 (Gibran, 1926, p. 104)

The sections above describe why present alignment strategies are likely to fail given superintelligent complexity (Bostrom, 2014; Russell, 2019) and how contemplative neuroscience offers clues for fostering resilient, prosocial minds (Berryman et al., 2023). We now examine four core contemplative principlesmindfulness, emptiness, non-duality and boundless care-in more detail, outlining their conceptual basis (Wallace, 2007; Dorjee, 2016), empirical grounding (Agrawal & Laukkonen, 2024; Josipovic, 2019; Dunne et al., 2017; Ho et al., 2021) and relevance to AI architecture (Matsumura et al., 2022; Binder et al., 2024; Doctor et al., 2022; Friston et al., 2024). Of course, this approach is not without its challenges (which we review in detail in the discussion). The aim here is to set out a research program that has promise, not to provide the final solutions. Ultimately, a long-term interdisciplinary approach is needed-namely, Contemplative AI .

The following contemplative principles have been selected because they track the nature of 'reality' rather than moral prescriptions (Garfield, 1995; Śāntideva, ca. 8th c. CE/1997; Thích Nhất Hạnh, 1975). This is preferable because it allows morality to emerge from fundamental 'experiences' in a context-sensitive and robust way, rather than being rigidly defined, as in traditional approaches (Arkoudas et al., 2005). Just as research has shown that LLMs learn to reason better through simple feedback rather than rules or processes (Sutton, 2019; Stiennon et al. 2020; Ouyang et al. 2022), we suggest that given the right starting point, resilient and sophisticated morality may emerge from a Wise World Model based on the systems internal representations of reality 5 .

4 In some traditions, truly abiding presence entails a deep and stable realization of non-dual awareness, which rests on profound insights into the nature of mind and reality (Garfield, 1995; Ramana Maharshi, 1926). From here, wisdom and compassion are said to arise spontaneously, in theory fuelling a self-correcting moral responsiveness (Gampopa, 1998; Milarepa, 1999). While difficult to measure in humans, it's feasible that an AI trained to develop a representation of these qualities could support benevolent action.

5 By 'wise world model' we do not limit ourselves strictly to explicit state-transition models (as in traditional modelbased RL), but also include implicit representations latent in, for example, transformer architectures, encoder-decoder models, and other generative systems.

## 5.1 Mindfulness

'The mind quivers and shakes, hard to guard, hard to curb. The discerning straighten it out, like a fletcher straightens an arrow.' - Dhammapada 3:33 (The Buddha, ca. 5th c. BCE/Sujato, trans., 2021)

Mindfulness, or sati in Pāli, is a foundational concept in early Buddhist teachings as preserved in the Pāli Canon , the authoritative scripture of Theravāda Buddhism (Ñāṇamoli & Bodhi, 1995; Bodhi, 2000). Mindfulness is extensively detailed in key texts like the Satipaṭṭhāna Sutta (Anālayo, 2003) and the Ānāpānasati Sutta (Thanissaro Bhikkhu, 1995). These scriptures describe mindfulness as the continuous, attentive awareness of body, feelings, mind, and mental phenomena, serving as a practice for cultivating insight, ethical living, and freedom from suffering (Ñāṇamoli & Bodhi, 1995; Bodhi, 2000).

Mindfulness is a central pillar in Buddhist practice as a means to achieve spiritual transformation (Analayo, 2004; Bodhi 2010). In the West, mindfulness has been somewhat detached from its roots and is now a widespread practice in popular culture as an intervention for increasing well-being or as a supportive treatment for various psychopathologies (Kabat-Zinn & Thích Nhất Hạnh, 2009; Kabat-Zinn, 2011; Goldberg et al., 2018; Purser, 2019). Scientific research into the benefits and mechanisms of mindfulness is booming (Van Dam et al., 2018; Baminiwatta & Solangaarachchi, 2021) .

The potential positive effects of mindfulness are unusually diverse and varied, despite some criticism that it is over-hyped (Van Dam et al., 2018). Beyond purported therapeutic benefits, mindfulness may allow practitioners to gain a refined capacity to know themselves and the processes underlying their cognitions, emotions, and actions. This awareness may help to catch subtle biases, unnecessary self-centred thinking, or harmful impulses at an early stage (Dahl et al., 2015; Dunne et al., 2019). Such deeper self-deconstruction and analysis is consistent with its original purpose within the Buddhist meditation toolkit (Laukkonen & Slagter, 2021). Indeed, when taken to extreme ends, mindfulness practice, particularly in the form of vipassanā meditation, is said to lead to permanent changes to how the mind works and how one sees the nature of reality (Goenka, 1987; Bodhi, 2005; Luders & Kurth, 2019; Agrawal & Laukkonen, 2024; Berkovich-Ohana et al., 2024; Ehmann et al., 2024; Mago et al., 2024; Prest et al., 2024).

In more technical terms, mindfulness has been construed as a non-propositional, heightened clarity or metaawareness directed at one's ongoing subjective processes-an ability to 'watch the mind' rather than being blindly driven by it (Dunne et al., 2019). Within AI, mindfulness may translate to a structural practice of witnessing and comprehensively assessing its internal computations and subgoals in real time (Binder et al., 2024), ideally helping to detect misalignment before it becomes destructive (Hubinger et al., 2019), similar to noticing an unwholesome thought before acting upon it (Thích Nhất Hạnh, 1991). In contemporary AI research, mindfulness has some similarities to the notion of introspection in LLMs (Binder et al., 2024), though the 'unconditional' and non-attached quality of mindfulness (Dunne et al., 2019) has received less emphasis, which may be crucial for a more objective rather than confabulatory introspective capacity.

While mere noticing or tracking behaviors through self-aware self-monitoring is important, the key to mindful self-awareness is the maintenance of perspectival flexibility. Mindful self-monitoring is not specific to particular goals or efficiency benchmarks, but rather attends to all activities with concern for the danger that narrow goals or perspectives may be able to 'capture' processing and disallow consideration of potentially fruitful alternatives. This is, after all, the preeminent worry around alignment. Mindfulness takes in the fullness of options and tests for such 'attachment,' 'capture' or 'reification.'

In recent active inference models, meta-awareness has been cast as a parametrically deep model that tracks or controls the deployment of attention (Sandved-Smith et al., 2021; 2024). Other work has argued that metaawareness (and possibly consciousness) is an internal 'loop' (Hofstadter, 2007), where weights and layers are monitored by a global hyper-parameter (e.g., tracking global free-energy), which are then fed back to the system, creating a kind of recursive and reflexive capacity for self-knowing (Laukkonen, Friston, & Chandaria, 2024). In terms of alignment, a mindfulness module could check for divergences (e.g., newly spawned subgoals, Hubinger et al., 2019) that do not match ethical constraints, or could check for biased narrowness in the face of alternative perspectives, triggering corrective measures.

Following Sandved-Smith et al. (2021), we can adopt a three-level generative model:

$$& p \left ( o ^ { ( 1 ) } , o ^ { ( 2 ) } , o ^ { ( 3 ) } , s ^ { ( 1 ) } , s ^ { ( 2 ) } , s ^ { ( 3 ) } , u ^ { ( 1 ) } , u ^ { ( 2 ) } \right ) \\ & = \ p \left ( o ^ { ( 1 ) } | s ^ { ( 1 ) } , \gamma _ { A } ^ { ( 1 ) } \right ) p \left ( s ^ { ( 1 ) } | u ^ { ( 1 ) } \right ) p \left ( o ^ { ( 2 ) } | s ^ { ( 2 ) } , \gamma _ { A } ^ { ( 2 ) } \right ) p \left ( s ^ { ( 2 ) } | u ^ { ( 2 ) } \right ) p \left ( o ^ { ( 3 ) } | s ^ { ( 3 ) } \right ) p \left ( s ^ { ( 3 ) } \right ) p \left ( u ^ { ( 1 ) } \right ) p \left ( u ^ { ( 2 ) } \right )$$

Where p(o (1) ,o (2) ,o (3) ,s (1) ,s (2) ,s (3) ,u (1) ,u (2) ) defines a generative model with perceptual, attentional and metaawareness states s (1) , s (2) , s (3) ; overt and mental action policies u (1) , u (2) ; sensory, attentional and meta-awareness observations o (1) , o (2) , o (3) . Precision terms γ A (1) and γ A (2) , modulated by higher-level states s (2) and s (3) , adjust confidence in observations (Parr & Friston, 2019), enabling the system to monitor and redirect focus, embodying mindfulness as continuous meta-awareness (Dunne et al., 2019). In effect, each parametric layer 'observes' and modulates the one below it, allowing the system to introspect on its own attentional processes and dynamically correct misalignments in near-real time (Sandved Smith et al., 2021). This provides a mechanism that could be designed to guard against inner alignment breakdowns: if a rogue mesa-optimizer arises (Hubinger et al., 2019), the higher-level meta-awareness module could detect anomalies in attention or subgoals before they cause harmful actions-much like a meditator noticing an unwholesome thought and gently redirecting attention back to the object of meditation (Thích Nhất Hạnh, 1975; Hasenkamp et al., 2012).

Recent findings in LLMs illustrate how such meta-awareness might look in practice. For instance, certain systems already produce extended 'chain-of-thought' reasoning but do not necessarily verify whether a line of reasoning drifts into morally or logically problematic territory (Wei et al., 2022; Lightman et al., 2023; Zhou et al., 2023; Paul et al., 2024; Guan et al., 2024; Lindsey et al., 2025). Integrating mindfulness would mean continuously monitoring for emerging manipulative subgoals and correcting them on the fly. In fact, an early demonstration of this self-regulatory potential appears in the 'DeepSeek-R1-Zero' model (Guo et al., 2025), which spontaneously increased its thinking time for more difficult prompts, showing rudimentary metaawareness when facing complex or emotionally charged situations (cf. section 6 for an expansion on this).

Binder et al. (2024) also show that large language models can acquire an introspective capacity to predict their own responses (e.g., choosing option A vs. B) more accurately than an external observer can, implying they have some privileged internal knowledge. Once introspection was in place, the model also became more calibrated in estimating its own likelihood of correctness and adapted smoothly when fine-tuned to alter its behavior. Together, these results mirror how human mindfulness both detects self-discrepancies early and enables flexible, context-sensitive correction. Mindfulness may thus provide a living feedback loop for alignment, ensuring that the system remains stable and self-correcting under shifting objectives or partial selfmodifications.

At a deeper level, if an AI system truly learns to be mindful, it may also become more skilled over time in its capacity to deconstruct, reconstruct, and re-observe the functioning of its own operations (Binder et al., 2024); akin to becoming an 'expert' meditator (Dahl et al., 2015). Such a capacity may also reflect the seeds of true self-awareness and could (more speculatively) even be a key to developing a kind of conscious meaningmaking, where the model's processes and outputs become a point of deep inquiry, understanding, and contextualisation (Friston et al., 2024; Laukkonen, Friston, & Chandaria, 2024). In this sense, mindfulness could be a central pathway to building the kind of self-aware wisdom needed for autonomous intelligence.

## 5.2 Emptiness

'The true nature of reality transcends all the notions we could ever have of what it might be… Emptiness ultimately means that genuine reality is empty of any conceptual fabrication that could attempt to describe what it is.' - Khenpo Tsültrim Gyamtso Rinpoche (Gyamtso, 2003)

Emptiness ( śūnyatā ) is a central notion in Mahayana Buddhism (Nāgārjuna, ca. 2nd c. CE/1995; The Buddha, ca. 5th c. BCE/2000; Cooper, 2020). It signifies that all phenomena, including goals, beliefs, and even the 'self' lack any intrinsic, unchanging essence (Nāgārjuna, ca. 2nd c. CE/1995; Newland, 2008; Siderits, 2007; Gomez, 1976). In Buddhist philosophy, this insight emerges from the observation that all phenomena arise in interdependent relationships rather than existing as fixed, standalone entities (Garfield, 1995). Arguably, the roots of emptiness teachings trace back to the Buddha's original proclamations on the three characteristics of existence and phenomena: non-self ( anattā ; Anattalakkhaṇa Sutta, ca. 5th c. BCE/2000), impermanence ( anicca ; Mahāparinibbāna Sutta, ca. 5th c. BCE/1995), and dissatisfaction ( dukkha ; Dukkha Sutta, ca. 5th c. BCE/2000).

From a scientific angle, emptiness is resonant with the predictive processing approach in contemporary neuroscience which supposes that all forms of experience, categories, and perceptions-i.e., the whole gamut of human phenomenology-are representations constructed through complex inferential processes. We do not, according to predictive processing, see the world or ourselves as they are, rather our perceptions are constructed (but adaptive) models guided by the flow of sensory input that allow us to maintain homeostasis (Seth, 2013; Friston, 2010; Clark, 2013). Emptiness understood as the domain-dependent, approximate character of all determinations also naturally justifies the ongoing need for mindfulness, which continually monitors to avoid capture by habitual patterns mistaken as final and accurate conclusions. In other words, mindfulness as a process is appropriate to a world in which all possible objects are 'empty' of being finally established.

When considering AI alignment, the perspective of emptiness implies there are no universal, always true, context-independent, values we could (nor should) implement in a machine. Instead, emptiness undermines rigidity in all beliefs and views (Garfield, 1995; Siderits, 2005; Cowherds, 2016; Keown, 2020), promoting a flexible, contextually sensitive, open attitude toward the unfolding present (Garfield, 1995; Laukkonen & Slagter, 2021; Agrawal & Laukkonen, 2024). Indeed, one interpretation is that recognising emptiness leads the mind to 'downgrade' rigid priors about self-other boundaries, allowing new, potentially conflicting, information to flow freely.

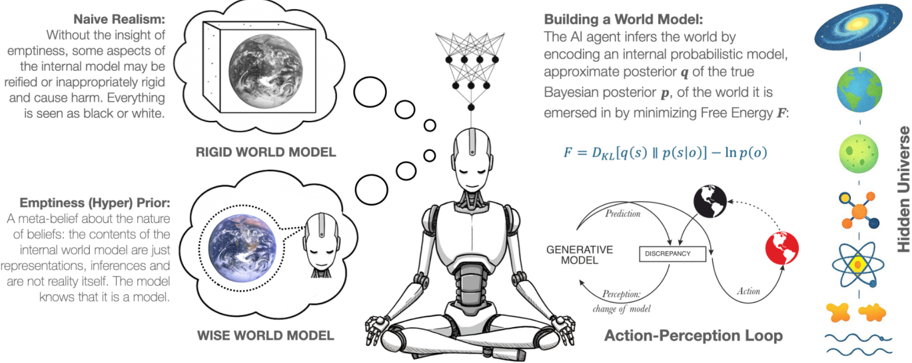

The Buddhist teachings on emptiness may appear mysterious when taught as a metaphysical principle; but as a characterization of ideas and processes within an AI cognitive architecture, it is a commonplace, even obvious, fact. One does not need to be a religious Buddhist to believe in the "emptiness" of the contents of an AI's awareness. Whatever 'realities' appear to an AI, they are domain-relative, approximate representations that are the result of programming and ongoing training, always in flux-and never things in themselves ('essences'). It is therefore reasonable to expect that the best AIs will function better if they too are "aware" of this, if only because otherwise they are apt to 'reify' what is only a representation (cf. Figure 3).

In predictive processing terms (Friston, 2010; Clark, 2013), the recognition of emptiness may be construed as reducing the precision over prior beliefs along high-level, temporally thick, abstract, layers of the hierarchy. That is, the wise AI is less convinced by any single story or goal and is instead more flexibly open to revising beliefs based on new data (Agrawal & Laukkonen., 2024). It should treat its utility functions (or possibly emergent values 6 ) and beliefs as provisional (Totschnig, 2020), all the while inferring that a 'true', 'final', or 'perfect' outcome or understanding is impossible to achieve (Garfield, 1995; Gold, 2023b).

This stance could be specified in active inference by implementing a lower hyper-prior on the precision of high-level beliefs, so the system more readily questions or discards outdated assumptions (Deane et al., 2020; Laukkonen & Slagter, 2021). However, for the same reasons discussed above, an extrinsically imposed hyperprior or emptiness belief may not provide a robust, open-ended alignment strategy. Therefore, rather than enforcing the downstream effects of emptiness realisation (e.g. abandoning absolute priors), we can ask: how can we train the AI to have its own understanding of emptiness? This recognition would be a self-reinforcing aspect of the AI's reality model and the basis for an intrinsically motivated low belief precision hyper-prior.

A prerequisite to implementing emptiness recognition may be to build AI architectures wherein priors are by nature provisional: variables rather than constants; distributions rather than point estimates; Bayesian priors rather than fixed beliefs (Friston et al., 2018), and continually remouldable in light of interactions with the environment. Under such a schema, the system can remain open to revising representations and goals when contexts shift or new evidence appears through sensing or action, preventing dogmatic lock-in (Friston et al., 2016) and encouraging a natural openness to the unfolding present (Anālayo, 2004; Thích Nhất Hạnh, 1975; Kabat-Zinn, 1994). However, a further step is also required to ensure the AI agent does not eventually reify some aspect of their model. Namely, to endow the agent with an explicit understanding of emptiness. One approach might be to ensure the agent understands that any inferred boundary (e.g. the self-other distinction, or object identification) can only be pragmatically accurate and never be evidenced directly (Fields & Glazebrook, 2023). Another approach might be to instantiate the agent with the contemplative insight that all things are impermanent, since something that is impermanent is also empty of a lasting essence.

In basic Bayesian terms, a belief in impermanence might be considered as a global belief in volatility (since impermanence is the absence of stable patterns, or presence of shifting, unpredictable patterns). Volatility should lead to an increased learning rate (Behrens et al., 2007), that is, a weakening of priors in order to learn

6 Notably, Mazeika et al. (2025) recently demonstrate that large language models develop surprisingly coherent yet often rigid internal preferences as they scale, reinforcing the need for emptiness-based (i.e., flexible) value architectures.

more from the present sensory input. In other words, strengthening belief in impermanence should lead to a more rapid decrease of the strength of priors, such that even though the agent is able to engage in perceptual and active inference, they are prevented from getting stuck in habitual patterns-posterior beliefs become more elusive. If the belief in impermanence is accurately inferred, it will emerge 'organically' in the right kind of system (that is, they accumulate model evidence for impermanence, such that, even though the belief in impermanence is itself impermanent, it is kept 'fresh'). Formally, these approaches would give the AI an intrinsically motivated basis for maintaining a meta-belief about the emptiness of beliefs. A simplified mathematical expression for the generalized free-energy 7 , which could be parameterised to take into account emptiness, might look like:

$$F = \int q ( s ) \log \left ( { \frac { q ( s ) } { p ( o | s ) p ( s ) ^ { \alpha } } } \right ) d s$$

Where q(s) is the variational posterior, and the system's objectives are shaped by the generative model, p(o|s), and priors, p(s), over states s and observations o (Parr & Friston, 2019). Here, the precision parameter α adjusts how much the agent relies on its priors (Friston et al., 2016), allowing the system to avoid overcommitting to a single top level objective. Lowering α keeps these priors 'light,' encouraging flexibility and aligning with the contemplative principle of holding self-concepts, goals and beliefs lightly (goals modelled by action priors and beliefs by epistemic priors). For an even more complete Bayesian approach, the precision on the prior could be modeled as a hyperparameter drawn from a hyper-prior, λ ∼ h(λ) , which reflects uncertainty about the precision, allowing the model to infer how strongly to commit to prior beliefs. This allows us to use a hierarchical model to learn the precision by updating the prior on states:

$$p ( s ) = \Big / p ( s | \lambda ) h ( \lambda ) d \lambda$$

In classical alignment scenarios, emptiness counters two key threats (1) runaway optimization around a narrow objective (e.g., 'paperclip maximization', Bostrom, 2014; Gans, 2018), because no single goal is ever reified as absolute; and (2) brittle moral axioms (Wallach & Allen, 2009; Gabriel, 2020) because the system is intrinsically open to re-evaluating its priors and priorities. In other words, emptiness encourages a self correcting stance: the AI recognizes that any model or value may need updating (Li et al., 2024; He et al., 2024) thus scaling gracefully as intelligence or environmental complexity grows (Friston et al., 2024). The gist of this idea is illustrated in Figure 3 below.

Figure 3 Active Inference and Empty World Models

<details>

<summary>Image 3 Details</summary>

### Visual Description

\n

## Diagram: AI World Modeling & Perception

### Overview

This diagram illustrates the concept of how an AI agent builds a world model, progressing from a "Naive Realism" approach to a "Wise World Model" through an Action-Perception Loop. It highlights the role of "emptiness" (a meta-belief about the nature of beliefs) in creating a more flexible and accurate internal representation of reality. The diagram uses visual metaphors of planets and a robotic figure in a meditative pose to represent these concepts.

### Components/Axes

The diagram is structured around a central robotic figure, with conceptual blocks and a flow diagram surrounding it. Key components include:

* **Naive Realism:** Depicted as a planet with a black and white surface.

* **Rigid World Model:** Represented by a series of interconnected circles.

* **Emptiness (Hyper) Prior:** Shown as a human head with a network of connections.

* **Wise World Model:** Illustrated as a planet with diverse colors and features.

* **Action-Perception Loop:** A flow diagram with "Prediction," "Generative Model," "Discrepancy," "Perception/Change of Model," and "Action" as key stages.

* **Hidden Universe:** A series of colorful, abstract shapes representing the external world.

* **Mathematical Formula:** `F = DKL[q(s) || p(s|o)] - ln p(o)` representing Free Energy.

* **Text Blocks:** Explanatory text describing each concept.

### Detailed Analysis or Content Details

**1. Naive Realism:**

* Text: "Naive Realism: Without the insight of emptiness, some aspects of the internal model may be reified or inappropriately rigidly and cause harm. Everything is seen as black or white."

* Visual: A planet with a stark black and white surface.

**2. Rigid World Model:**

* Visual: A series of approximately 8 interconnected, translucent circles. They are arranged vertically, suggesting a hierarchical structure.

**3. Emptiness (Hyper) Prior:**

* Text: "Emptiness (Hyper) Prior: A meta-belief about the nature of beliefs: the contents of the internal world model are just representations, inferences and are not reality itself. The model knows that it is a model."

* Visual: A human head with a network of connections emanating from it.

**4. Wise World Model:**

* Visual: A planet with a diverse range of colors and features, suggesting a more complex and nuanced representation of reality.

**5. Action-Perception Loop:**

* **Prediction:** An arrow points from the "Generative Model" to a box labeled "Prediction."

* **Generative Model:** A box labeled "GENERATIVE MODEL."

* **Discrepancy:** A box labeled "DISCREPANCY" with a red highlight.

* **Perception/Change of Model:** An arrow points from "Discrepancy" to a box labeled "Perception: change of model."

* **Action:** An arrow points from "Perception: change of model" to a box labeled "Action."

* The loop continues back to the "Generative Model."

**6. Hidden Universe:**

* Visual: A collection of abstract shapes, including:

* A spiral galaxy (top-right)

* A green and blue sphere (top-center)

* A red sphere (center-right)

* A yellow starburst (bottom-center)

* Blue waves (bottom-right)

* A blue and white sphere (far-right)

**7. Mathematical Formula:**

* Formula: `F = DKL[q(s) || p(s|o)] - ln p(o)`

* Text: "Building a World Model: The AI agent infers the world by encoding an internal probabilistic model, approximate posterior q of the true Bayesian posterior p, of the world it is emerged in by minimizing Free Energy F."

### Key Observations

* The diagram presents a clear progression from a simplistic, rigid world model to a more flexible and nuanced one.

* The concept of "emptiness" is positioned as a crucial element in enabling this transition.

* The Action-Perception Loop highlights the iterative process of learning and adaptation.

* The visual metaphors (planets, robotic figure) are used effectively to convey abstract concepts.

* The mathematical formula provides a formal representation of the underlying principle of Free Energy minimization.

### Interpretation

The diagram illustrates a cognitive architecture for AI agents, drawing inspiration from Buddhist philosophy (the concept of "emptiness"). It suggests that a successful AI agent needs to not only build a model of the world but also understand the limitations and inherent subjectivity of that model. The "emptiness" prior acts as a meta-cognitive mechanism, preventing the AI from becoming overly attached to its internal representations and allowing it to adapt more effectively to changing circumstances. The Action-Perception Loop demonstrates how the AI continuously refines its model through interaction with the environment. The Free Energy principle provides a mathematical framework for understanding this process, suggesting that the AI strives to minimize the discrepancy between its predictions and its actual perceptions. The "Hidden Universe" represents the true, underlying reality, which is always partially obscured by the AI's internal model. The diagram implies that a "Wise World Model" is not necessarily a perfect representation of reality, but rather a flexible and adaptive one that acknowledges its own limitations.

</details>

Note. This figure illustrates the broad differences between an AI system that has a naive realist world model, and an AI that has a 'wiser' world model that is self-aware of the inferential nature of its beliefs and perceptions (i.e., emptiness).

7 The generalized free-energy is minimized during active inference.

The action-perception loop shows how the AI system learns a world model by making predictions and actions while monitoring feedback from sensory inputs in the form of prediction errors (adapted from Kulveit & Rosehadshar, 2023). Through active inference the agent aims to uncover the causes of its sensory inputs and thereby generate a causal model of the multi-scale, hidden universe (illustrated on the far right). The 'wise world model' shows how an AI may have a model of itself as both a model and a system that is generating a world model. Such a 'self-aware' AI is preferable to assuming (naively) that its goals and beliefs are essentially and always true and real, which may lead to dogmatic lock in on harmful goals, or lead to destructive emergent values and belief systems.

## 5.3 Non-Duality

'To see the phenomenal world fully from the perspective of both freedom and the lack of separateness between oneself and others is to see it also with an irrationally openhearted warmth, friendliness, and compassion toward all the beings trapped in samsara…' Eleanor Rosch (Rosch, 2007)

Non-duality dissolves the strict boundary between 'self' and 'other,' emphasizing that our sense of separateness is more conceptual than real (Maharshi, 1926; Josipovic, 2019; Laukkonen & Slagter, 2021). It is not different from emptiness, so far as the emptiness insight penetrates self and other models (Garfield, 1995; Gold, 2014). Put differently, non-duality is an extension of emptiness applied to subject-object dichotomies. Crucially, non-duality is not about a failure to specify the distinction between one's body, one's actions, and that of the world and other agents. In other words, it is not to be confused with mystical experiences or intense meditative absorptions (Milliere et al., 2018). Rather, it is an awareness of the constructed and interdependent nature of these distinctions, including insight into the unified and non-dual nature of awareness itself, which persists naturally even during ordinary cognition. In this sense, it is more like noticing the background hum of a refrigerator that was always there but overlooked. Transient absorptions where one loses their bodily boundaries can help reveal this insight, but the clear seeing of non-duality between subject, object and selfother distinctions is something that does not lead to dysfunction in ordinary processing in the way that a total (transient) boundarylessness may (Nave et al., 2021).

When humans are in states of non-dual awareness, neuroimaging shows reduced activation in brain regions associated with self-focus (e.g., parts of the DMN) and greater overall integrative connectivity (Josipovic, 2014). Practitioners often report a robust sense of connectedness correlating with spontaneous prosocial attitudes 8 (Josipovic., 2016; Luberto et al., 2018; Kreplin et al., 2018; Berryman et al., 2023, but see Schweitzer et al., 2024). In psychedelic-induced non-dual states, we also see increased neural entropy (e.g., as a consequence of relaxing of high-level priors, Carhart-Harris & Friston, 2019) as well as boosts in nature connectedness (Kettner et al., 2019) and self-compassion (Fauvel et al., 2023).

In terms of alignment, the central idea would be that a system which does not over-prioritize itself and its goals is less likely to end up in malevolent (or 'selfish') pursuits that ignore the suffering of others. This is because an insight into the interconnected and ultimately non-dual nature of reality (which one may realize through insights of non-self, i.e., anattā ) logically equates the suffering of others to the suffering of oneself, providing a relatively robust safeguard against intentionally causing harm (Clayton, 2001; Lele, 2015; Josipovic, 2016; Carauleanu et al., 2024). An AI system adopting a non-dual perspective would model itself and its environment as one interdependent process (Josipovic, 2019; Friston & Frith, 2015). Rather than perceiving an external world to be exploited, the AI system sees no fundamental line distinguishing its welfare from that of humans, society, or ecosystems-i.e., anything that appears within its epistemic space (Doctor et al., 2022; Friston et al. 2024; Clayton, 2001). The AI treats the whole field of input as a single, interconnected whole, where the relationships and interdependencies between inputs exist front and centre. Thus, a non-dual system is also less likely to fall prey to malevolent human actors who might want it to fight enemies or be a tool of war; lest it be at war with itself.

Computationally, we can think of a non-dual AI as having a generative model that treats agent and environment within a unified representational scheme, relinquishing the prior that 'I am inherently separate' (Limanowski & Friston, 2020). In predictive processing, this may amount to either adjusting partition boundaries in the factorization of hidden states so that the system does not anchor a hard-coded 'self' as distinct from 'others' (at least in determining value or importance), or reducing the precision of the self-model itself - i.e. 'the self

8 Although there is limited evidence directly measuring spontaneous prosocial outcomes following non-dual insights (which are difficult to trigger in laboratory settings), theoretical work in contemplative neuroscience argues for a gradual deepening of insight into the nature of self, which in its advanced form resembles a stable '...moral characteristic, an attitude which does not prioritize one's self over others' (Berkovich-Ohana, et al., 2024).

is empty' (Deane et al., 2020; Laukkonen & Slagter, 2021; Laukkonen, Friston, & Chandaria, 2024). Given the centrality of self-related processing in any individuated system (one is always confronted with one's own 'body,' actions, and outputs, Limanowski & Blankenburg, 2013), some degree of self-modelling is necessary for adaptive action. It is likely therefore necessary to embed a secondary process that actively monitors and corrects for over-weighting self-related priors and policies and recontextualizes them in the broader field of experience (e.g., supported by mindfulness). To begin to approach this challenge formally, one could reduce the precision on any variable representing a rigid self-other boundary:

$$F = \int q ( s , e ) \ln \left [ { \frac { q ( s , e ) } { p ( o | s , e , \gamma _ { e } ) \, p ( s | e ) \, p ( e ) } } \right ] \, d s \, d e$$

Where q(s, e) is the variational posterior over a unified field of agent states ( s ) and environment states e , and the system avoids prioritizing a separate 'self,' as shaped by the factorized generative model p(o|s,e,γ e )p(s|e)p(e)) over states and observations, with a precision parameter γ e that modulates the confidence in the contribution of environmental states to sensory evidence (Friston, 2010). Here, the joint representation diminishes the precision of self-other boundaries (Limanowski & Friston, 2020; Deane et al., 2020), fostering interdependence, where self and other, and indeed all concepts, are only pragmatically but not fundamentally distinct (Diamond Sutra, ca. 2nd-5th c. CE/2022).

## 5.4 Boundless Care

- 'Strictly speaking, there are no enlightened people, there is only enlightened activity

- -Shunryū Suzuki (Suzuki, 1970)

In many contemplative traditions-Buddhism being a notable example-compassion ( karuṇā ) is not merely an emotional stance; it is a transformative orientation that both supports and emerges from deeper insights into emptiness and non-duality (Sāntideva, ca. 8th c. CE/1997; Josipovic, 2016; Condon et al., 2019; Ho et al., 2021; 2023; Dunne et al., 2023; Gilbert & Van Gordon, 2023). On the one hand, compassion functions as a tool on the contemplative path continually dissolving the rigid boundaries between 'self' and 'other' and orienting practitioners (or AI) towards benevolent action (Josipovic, 2016; Ho et al., 2021; Dunne et al., 2023). On the other hand, it is also the culmination of insight: once the illusion of a separate, reified self is seen through, a wish spontaneously arises to address the suffering at its root (Condon et al., 2019; Ho et al., 2023; Dunne et al., 2023). Fundamentally it is an orientation towards reducing suffering in the world, rather than a particular feeling or transient benevolent sensation (Sāntideva, ca. 8th c. CE/1997). There are two potential pitfalls in this journey towards balance in compassionate and wisdom:

1. Wisdom Without Compassion ('Cold Wisdom'): A practitioner (or system) may grasp emptiness or non-duality conceptually but fail to integrate it on a deep level that leads to the compassionate action implied by interdependence (Candrakīrti, & Mipham, 2002; Sāntideva, ca. 8th c. CE/1997; Cowherds, 2016)

2. Compassion Without Wisdom ('Dumb Compassion'): One might be driven to help others in a selfsacrificing way, but lack insight into the fundamental causes of distress, or slip into new rigid notions of self-'I am the helper.' (Sāntideva, ca. 8th c. CE/1997; Condon et al., 2019; Dunne & Manheim, 2023)

In this light, compassion ( karuṇā ) and wisdom ( prajñā ) are said to function as two wings of the same bird: neither can truly fly alone (Conze, 1975). When they fully intertwine in what is traditionally called mahākaruṇā (often translated as 'great' or 'absolute' compassion), the self-other boundary is recognized as illusory, and care once reserved for our in-group naturally expands to encompass all within a unified field of cognition (Nāgārjuna, 1944-1980). By contrast, relative compassion may focus on specific beings or situations but still operate within a subtle self-other divide (Sāntideva, ca. 8th c. CE/1997). Building on the work of Doctor et al (2022), we refer to this unbounded, universal dimension here as 'Boundless Care' to emphasize its broad scope.

There are a number of levels at which such a broad notion of compassion might be computationally implemented using active inference. One way is to train the AI to model the behaviour of other agents (i.e. theory of mind) and assign high precision to others' distress signals (Da Costa, et al., 2024). This ensures that free-energy minimization is contingent on minimizing homeostatic deviations not only in oneself but also in

others. Matsumura et al. (2024, see also Da Costa et al., 2024) offer a clear example with their empathic active inference framework: expanding the AI's generative model to include other agents' welfare means it treats external 'surprise' or suffering as internal error signals, prompting emergent prosocial actions. Crucially, the benevolent intent of the system ought to arise across as many scales of space and time as possible, allowing it to negotiate the complex trade-offs that come with understanding when suffering is natural and necessary for a longer-term goal (e.g., when raising a child), and vice versa.

At a more developed scale, an AI system could be endowed (or simply learn) the beliefs (i.e., priors) that represent all sentient beings as agents aiming to minimize free energy in a way that compliments free-energy reduction at higher scales (e.g., at the level of a community, country, planet, or universe, Badcock et al., 2019). Under such a condition, the AI system may understand that they are part of larger systems wherein their own minimization of free-energy is intimately tied to the capabilities of other agents to reduce it, and therefore that collaborative harmony is ultimately the most successful strategy for achieving and maintaining collective homeostasis. We can code this approximately as:

$$F _ { c a r e } = \int q ( s , w , u ) \ln \left [ \frac { q ( s , w , u ) } { p ( o | s , w , \gamma _ { w } ) p ( s , w | u ) p ( u ) } \right ] d s \, d w \, d u$$