# Process Reward Models That Think


Abstract

Step-by-step verifiers—also known as process reward models (PRMs)—are a key ingredient for test-time scaling, but training them requires expensive step-level supervision. This work aims to build data-efficient PRMs as verbalized step-wise reward models that verify every step in the solution by generating a verification chain-of-thought (CoT). We propose ThinkPRM, a long CoT verifier fine-tuned on orders of magnitude fewer process labels than those required by discriminative PRMs. Our approach capitalizes on the inherent reasoning abilities of long CoT models, and outperforms LLM-as-a-Judge and discriminative verifiers—using only 1% of the process labels in PRM800K—across several challenging benchmarks. Specifically, ThinkPRM beats the baselines on ProcessBench, MATH-500, and AIME ’24 under best-of-N selection and reward-guided search. In an out-of-domain evaluation over subsets of GPQA-Diamond and LiveCodeBench, our PRM surpasses discriminative verifiers trained with the full PRM800K by 8% and 4.5%, respectively. Lastly, under the same token budget, ThinkPRM scales up verification compute more effectively compared to LLM-as-a-Judge, outperforming it by 7.2% on a subset of ProcessBench. This work highlights the value of generative, long CoT PRMs that can scale test-time compute for verification while requiring minimal supervision for training. Our code, data, and models are released at https://github.com/mukhal/thinkprm.

<details>

<summary>x1.png Details</summary>

### Visual Description


## Charts: Training Data Efficiency & Verifier-Guided Search

### Overview

The image presents two charts side-by-side. The left chart illustrates the training data efficiency on the ProcessBench dataset, showing verification F1 score against the number of training samples for different models. The right chart depicts verifier-guided search performance on the MATH-500 dataset, displaying reasoning accuracy against the number of beams used.

### Components/Axes

**Left Chart:**

* **Title:** Training data efficiency: ProcessBench

* **X-axis:** Training samples (logarithmic scale, from 10^3 to 10^5)

* **Y-axis:** Verification F1 score (from 70 to 90)

* **Legend:**

* ThinkPRM (Orange star)

* DiscPRM (Teal circle)

* LLM-as-a-judge (Blue dashed line)

* **Annotations:** "8K process labels" near the first ThinkPRM data point, "~700K process labels" near the last DiscPRM data point.

**Right Chart:**

* **Title:** Verifier-guided search: MATH-500

* **X-axis:** Number of beams (logarithmic scale, from 2^0 to 2^4, or 1 to 16)

* **Y-axis:** Reasoning accuracy (from 50 to 70)

* **Legend:**

* ThinkPRM (Orange solid line)

* DiscPRM (Teal solid line)

* LLM-as-a-judge (Blue dashed line)

### Detailed Analysis or Content Details

**Left Chart - Training Data Efficiency:**

* **ThinkPRM (Orange Star):** A single marker at roughly 10^3 training samples (annotated "8K process labels"), at approximately 86 F1.

* **DiscPRM (Teal Circle):** The line shows a slight upward trend. Approximate data points: (10^3, ~74), (10^4, ~75), (10^5, ~77).

* **LLM-as-a-judge (Blue Dashed Line):** The line is relatively flat. Approximate data points: (10^3, ~71), (10^4, ~71), (10^5, ~71).

**Right Chart - Verifier-Guided Search:**

* **ThinkPRM (Orange Solid Line):** The line slopes upward. Approximate data points: (2^0, ~64), (2^1, ~65), (2^2, ~67), (2^3, ~68), (2^4, ~69).

* **DiscPRM (Teal Solid Line):** The line slopes upward, but less steeply than ThinkPRM. Approximate data points: (2^0, ~58), (2^1, ~60), (2^2, ~62), (2^3, ~64), (2^4, ~65).

* **LLM-as-a-judge (Blue Dashed Line):** The line slopes upward, starting lower than the other two. Approximate data points: (2^0, ~54), (2^1, ~56), (2^2, ~59), (2^3, ~61), (2^4, ~63).

### Key Observations

* **Left Chart:** ThinkPRM, trained on about 8K process labels, reaches a higher verification F1 score than DiscPRM, including the DiscPRM point annotated with ~700K process labels. DiscPRM shows a modest increase in F1 score with more training data. LLM-as-a-judge remains relatively constant.

* **Right Chart:** All three models show improved reasoning accuracy with an increasing number of beams. ThinkPRM consistently outperforms DiscPRM and LLM-as-a-judge. The performance gap between the models widens as the number of beams increases.

### Interpretation

The left chart suggests that ThinkPRM is far more data-efficient than DiscPRM on ProcessBench: with about 8K process labels it exceeds the F1 score that DiscPRM reaches with roughly 100x more data. The right chart indicates that increasing the number of beams improves reasoning accuracy for all models on MATH-500, with ThinkPRM ahead of DiscPRM and LLM-as-a-judge at every beam count. The logarithmic scales on the x-axes emphasize the relative changes in training samples and beam numbers.

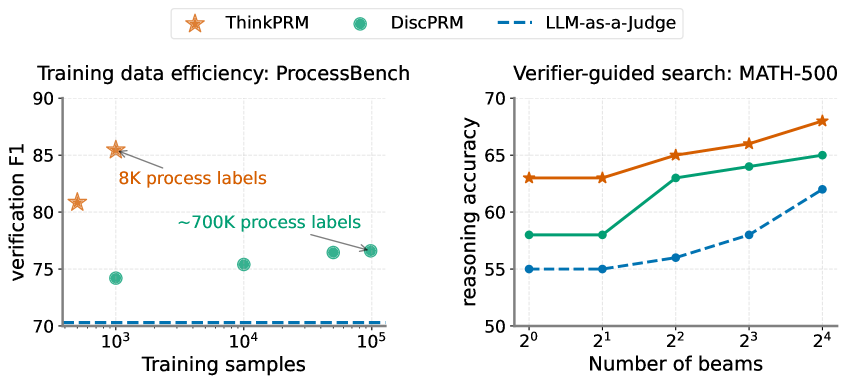

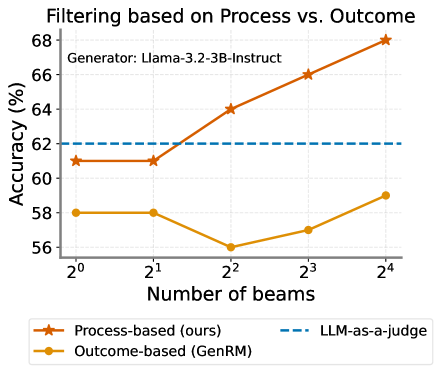

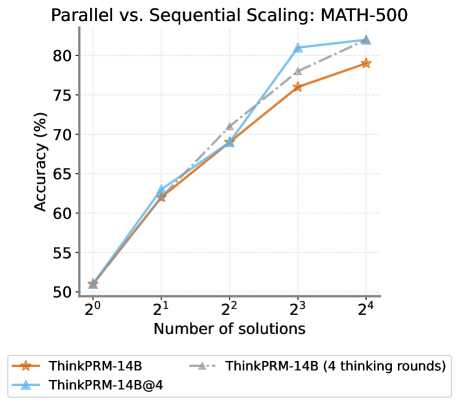

</details>

Figure 1: Left: Verifier F1-score on ProcessBench (Zheng et al., 2024). ThinkPRM-14B, trained on 8K process labels, i.e., 1K synthetic examples, outperforms discriminative PRMs trained on about 100x more data. Right: Verifier-guided search accuracy on MATH-500 with Llama-3.2-3B-Instruct as generator. ThinkPRM-1.5B, trained using the same 8K labels, outperforms LLM-as-a-judge and discriminative verifiers in reward-guided search on MATH-500. The LLM-as-a-judge in both figures uses the same base model as ThinkPRM.

1 Introduction

Reasoning with large language models (LLMs) can substantially benefit from utilizing more test-time compute (Jaech et al., 2024; Guo et al., 2025; Akyürek et al., 2024). This typically depends on a high-quality process reward model (PRM)—also known as a process verifier—that scores (partial) solutions for selecting promising paths for search or ranking (Cobbe et al., 2021; Li et al., 2023; Wu et al., 2024; Brown et al., 2024). PRMs have typically assumed the form of discriminative classifiers, trained to discern correct from incorrect reasoning (Uesato et al., 2022; Zhang et al., 2025). However, training discriminative PRMs requires access to process labels, i.e., step-level annotations, which either require extensive human annotation (Lightman et al., 2023; Zheng et al., 2024), gold step-by-step solutions (Khalifa et al., 2023), or compute-intensive rollouts (Luo et al., 2024; Chen et al., 2024a). For instance, training reasonably performing math PRMs requires hundreds of thousands of step-level annotations (Lightman et al., 2023; Wang et al., 2023b).

Generative verification, either via LLM-as-a-judge (Wang et al., 2023a; Liu et al., 2023b; Zheng et al., 2023) or GenRM (Zhang et al., 2024a), treats verification as the problem of generating a rationale followed by a decision. However, LLM-as-a-judge is known to perform poorly compared to specialized reward models (Lambert et al., 2024; Zhang et al., 2024b; Chen et al., 2024c), as general-purpose LLMs frequently fail to recognize reasoning errors (Huang et al., 2023; Zhang et al., 2024a; Ye et al., 2024). Moreover, GenRM is limited to outcome verification via short chains-of-thought (CoTs), fundamentally limiting its ability for test-time scaling.

<details>

<summary>x2.png Details</summary>

### Visual Description


## Line Chart: Scaling verifier compute: ProcessBench

### Overview

This line chart illustrates the performance of different verification methods (ThinkPRM, DiscPRM, and LLM-as-a-judge) on the ProcessBench dataset as a function of the number of tokens used for "thinking" (up to 32K tokens). The performance metric is F1-score, expressed as a percentage.

### Components/Axes

* **Title:** Scaling verifier compute: ProcessBench

* **X-axis:** Thinking up to (#tokens) - with markers at 8K, 16K, 24K, and 32K.

* **Y-axis:** F1-score (%) - ranging from approximately 74% to 89%. The axis has tick marks at 76%, 78%, 80%, 82%, 84%, 86%, 88%.

* **Legend:** Located at the bottom of the chart.

* ThinkPRM (Orange line with star markers)

* DiscPRM (Teal dashed line)

* LLM-as-a-judge (Blue line with circle markers)

### Detailed Analysis

* **ThinkPRM (Orange):** The line slopes upward from 8K to 16K tokens, then plateaus.

* 8K tokens: Approximately 84% F1-score.

* 16K tokens: Approximately 88% F1-score.

* 24K tokens: Approximately 89% F1-score.

* 32K tokens: Approximately 89% F1-score.

* **DiscPRM (Teal):** The line is nearly flat across all token values.

* 8K tokens: Approximately 74% F1-score.

* 16K tokens: Approximately 74% F1-score.

* 24K tokens: Approximately 74% F1-score.

* 32K tokens: Approximately 74% F1-score.

* **LLM-as-a-judge (Blue):** The line rises slightly from 8K to 16K tokens, then declines.

* 8K tokens: Approximately 80% F1-score.

* 16K tokens: Approximately 82% F1-score.

* 24K tokens: Approximately 80% F1-score.

* 32K tokens: Approximately 79% F1-score.

### Key Observations

* ThinkPRM consistently outperforms both DiscPRM and LLM-as-a-judge.

* DiscPRM maintains a relatively constant, but lower, F1-score regardless of the number of tokens.

* LLM-as-a-judge shows a slight decrease in performance beyond 16K tokens.

* The performance gains from ThinkPRM appear to saturate after 16K tokens.

### Interpretation

The data suggests that increasing the "thinking" capacity (number of tokens) significantly improves the performance of the ThinkPRM method on the ProcessBench dataset, but the benefits diminish beyond 16K tokens. DiscPRM does not benefit from increased token capacity, indicating it is not leveraging the additional context. LLM-as-a-judge's performance slightly degrades with more tokens, potentially due to increased noise or computational challenges. This implies that ThinkPRM is the most effective method for this task, but there are diminishing returns to increasing the token limit. The flat performance of DiscPRM suggests it may be a simpler or less context-aware approach. The slight decline in LLM-as-a-judge performance is an interesting anomaly that warrants further investigation. The chart highlights the importance of model architecture and context utilization in verification tasks.

</details>

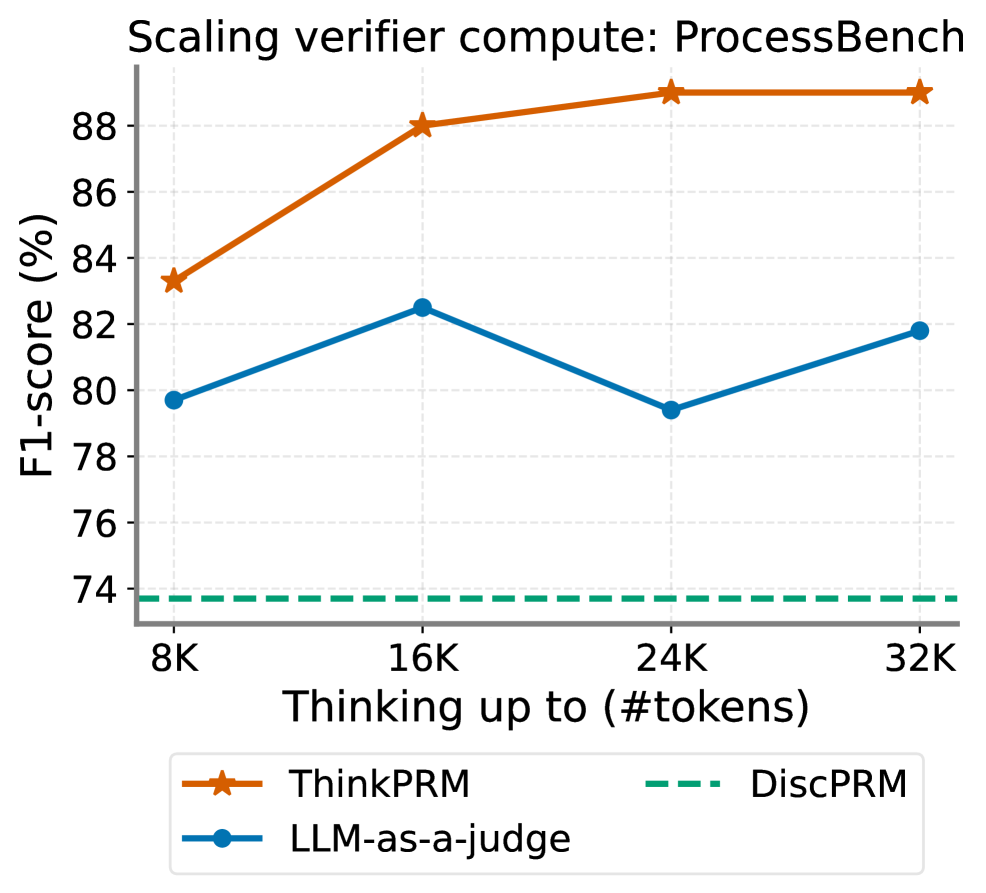

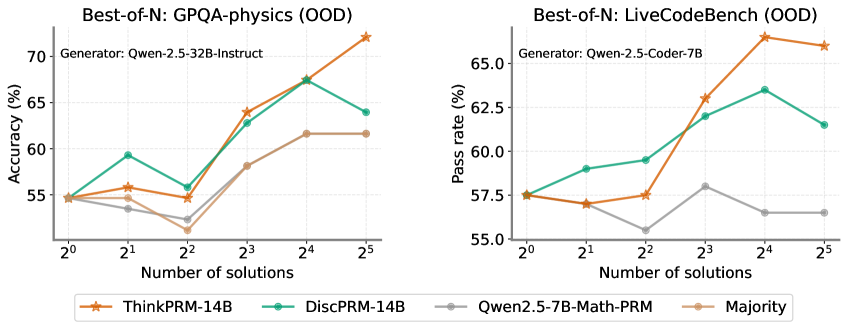

Figure 2: ThinkPRM enables scaling verification compute with more CoT tokens.

This paper builds on the insight that generative step-by-step verification can greatly benefit from scaling up the verifier’s inference compute, specifically by enabling it to think through a CoT. To this end, we repurpose open-weight large reasoning models (LRMs) as the foundation for generative PRMs through lightweight training. This training uses synthetic data (Kim et al., 2023; Zhu et al., 2023; Wang et al., 2024), utilizing as few as 8K step labels, and yields ThinkPRM, a PRM that not only surpasses LLM-as-a-judge but also outperforms discriminative PRMs trained on two orders of magnitude more data across a variety of test-time scaling scenarios.

We obtain ThinkPRM by training four reasoning models, namely R1-Distill-Qwen-{1.5B, 7B, 14B} (Guo et al., 2025) and QwQ-32B-Preview (Team, 2024), and extensively evaluate it both as a standalone verifier on ProcessBench (Zheng et al., 2024) and combined with a generator under best-of-N and verifier-guided beam search. ThinkPRM-14B outperforms a discriminative PRM based on the same base model in terms of accuracy while using far fewer supervision signals, as shown in Fig. 1 (left). In addition, ThinkPRM-1.5B demonstrates strong performance on MATH-500 (Hendrycks et al., 2021) under guided beam search, as shown in Fig. 1 (right). Lastly, as shown in Fig. 2, ThinkPRM can utilize more verification compute than LLM-as-a-judge when forced to think for more tokens. All these results are obtained while training on only 8K step labels.

Our work highlights the promise of long CoT PRMs that verify reasoning with reasoning, effectively scaling both generator and verifier compute. Our main findings are as follows. First, ThinkPRM outperforms strong PRM baselines in best-of-N and guided-search setups on two math reasoning benchmarks, MATH-500 and AIME 2024, and surpasses LLM-as-a-judge baselines under the same base model by thinking longer during verification (section 4). Second, ThinkPRM generalizes under two types of domain shift: it outperforms baselines on out-of-domain tasks such as scientific reasoning and code generation and, despite being trained only on short solutions, it generalizes to long-form reasoning without explicit step delimiters (section 5.3). Third, ThinkPRM outperforms self-consistency (Wang et al., 2022) when using the same compute budget, especially under high sampling regimes (section 5.4). Finally, fine-grained filtering of synthetic data based on step supervision is crucial for training high-quality PRMs (section 5.7).

2 Background and Related Work

Discriminative PRMs.

Discriminative PRMs are trained as classifiers that directly predict numerical correctness scores for each solution step, and typically rely on extensive step-level annotations (Uesato et al., 2022; Lightman et al., 2023; Zhang et al., 2025). Given a solution prefix, discriminative PRMs encode the solution text and employ a classification head to produce step-level scores, usually optimized with binary cross-entropy. An overall correctness score for a solution is obtained by aggregating these step-level scores (Beeching et al., ). PRMs are effective and straightforward but they do not utilize the language-modeling head of the base language model, making training expensive and labor-intensive (Yuan et al., 2024). Additionally, they offer limited interpretability and utilize fixed compute, restricting their dynamic scalability at test-time (Zhang et al., 2024a; Mahan et al., 2024). Thus, there is a need for data-efficient PRMs that can scale with more test-time compute.
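
To make the training objective and the aggregation step concrete, the following is a minimal sketch of how step-level probabilities from a discriminative PRM could be scored with binary cross-entropy and combined into a solution-level score. The function names and the set of aggregators (`min`, `prod`, `last`) are illustrative assumptions, not the exact recipe of the works cited above.

```python
import math
from typing import Sequence


def step_bce(probs: Sequence[float], labels: Sequence[int]) -> float:
    """Mean binary cross-entropy between step probabilities and 0/1 step labels."""
    eps = 1e-12  # guards against log(0)
    losses = [
        -(y * math.log(p + eps) + (1 - y) * math.log(1 - p + eps))
        for p, y in zip(probs, labels)
    ]
    return sum(losses) / len(losses)


def aggregate(probs: Sequence[float], how: str = "min") -> float:
    """Combine per-step correctness probabilities into one solution score.

    The aggregator names are assumptions made for this sketch.
    """
    if how == "min":   # a solution is only as good as its weakest step
        return min(probs)
    if how == "prod":  # treats steps as independent events
        return math.prod(probs)
    if how == "last":  # score of the final step only
        return probs[-1]
    raise ValueError(f"unknown aggregator: {how}")
```

For example, `aggregate([0.9, 0.5, 0.8], "min")` returns the score of the weakest step, which penalizes a solution containing a single doubtful step.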

Generative Verification.

Generative verification (Zheng et al., 2023; Zhu et al., 2023; Zhang et al., 2024a) frames verification as a language-generation task, producing step-level decisions as tokens (e.g., “correct” or “incorrect”), typically accompanied by a chain-of-thought (CoT). One can train generative verifiers using the standard language modeling objective on verification rationales rather than on binary labels. This approach leverages the strengths of LLMs in text generation, making generative verifiers inherently interpretable and scalable (Zhang et al., 2024a; Mahan et al., 2024; Wang et al., 2023a; Ankner et al., 2024). However, prior work on generative verifiers has relied mainly on short verification CoTs (e.g., a few hundred tokens) (Zhang et al., 2024a), which severely limits their scalability. Thus, there is a need for verifiers that can “think” longer through verification, utilizing test-time compute effectively. While LLM-as-a-Judge has been employed for step-level verification (Zheng et al., 2024), it tends to be sensitive to prompt phrasing and prone to invalid outputs, such as infinite looping or excessive overthinking (Bavaresco et al., 2024), issues we further confirm in this work. Prior results with reasoning models like QwQ-32B-Preview (Team, 2024) show promise, but their practical utility in test-time scaling remains limited without additional training (Zheng et al., 2024).

Test-Time Scaling with PRMs.

Test-time scaling techniques, such as Best-of-N selection (Charniak & Johnson, 2005) and tree-based search (Yao et al., 2023; Chen et al., 2024c; Wan et al., 2024), leverage additional inference-time compute to improve reasoning performance. Central to these approaches is the quality of the verifier used to score and select solutions. A major advantage of generative PRMs is that they uniquely support simultaneous scaling of both generator and verifier compute (Zhang et al., 2024a; Kalra & Tang, 2025). In particular, our work shows that generative PRMs trained based on long CoT models (Jaech et al., 2024; Guo et al., 2025) enable both parallel and sequential scaling of verifier compute.
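
As a rough illustration of Best-of-N selection, the sketch below picks the candidate solution that a verifier scores highest. The `score_fn` callable stands in for any PRM and is a hypothetical interface chosen for this example.

```python
from typing import Callable, Sequence


def best_of_n(candidates: Sequence[str], score_fn: Callable[[str], float]) -> str:
    """Return the candidate solution with the highest verifier score.

    `score_fn` is a placeholder for a PRM that maps a full solution to a
    scalar score; ties are broken in favor of the earliest candidate.
    """
    if not candidates:
        raise ValueError("need at least one candidate")
    return max(candidates, key=score_fn)
```

The quality of the selected solution depends entirely on `score_fn`: sampling more candidates only helps if the verifier ranks correct solutions above incorrect ones.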

3 ThinkPRM

<details>

<summary>x3.png Details</summary>

### Visual Description


## Diagram: Sample Verification Chains

### Overview

This diagram illustrates a process for verifying reasoning chains generated by a "Reasoning Model" to create a dataset for finetuning. It depicts a workflow where potential solutions are evaluated against process labels, and only valid chains are retained for use as finetuning data.

### Components/Axes

The diagram consists of three main sections, arranged horizontally:

1. **Problem & Solution:** A red rounded rectangle labeled "Problem" with a question mark inside, connected by an arrow to a pink rounded rectangle labeled "Solution" with "Step 1:", "Step 2:", and "Step 3:" listed vertically.

2. **Verification & Comparison:** Two rectangular boxes, one labeled "1. Sample verification chains" and the other "2. Compare against process labels".

3. **Data Retention:** A rectangular box labeled "3. Keep good chains" connected to a cylinder labeled "Finetuning data".

There are also visual indicators (checkmarks and crosses) used to represent the correctness of each step in the reasoning chain.

### Detailed Analysis or Content Details

**Section 1: Problem & Solution**

- The "Problem" is represented by a red rectangle with a question mark.

- The "Solution" is represented by a pink rectangle, outlining a three-step process.

**Section 2: Verification & Comparison**

- **Box 1 ("Sample verification chains"):** Contains two examples of reasoning chains within green boxes. The text within these boxes is formatted as code:

- **Chain 1 (Discarded):**

```

```

This chain is marked with red "X" symbols next to steps 2 and 3.

- **Chain 2 (Kept):**

```

```

This chain is marked with green checkmarks next to steps 1 and 2, and a red "X" next to step 3.

- **Box 2 ("Compare against process labels"):** Shows a list of "Step" evaluations:

- Step 1: Correct

- Step 2: Incorrect

- Step 3: Incorrect

This box has a large red "X" symbol indicating the entire chain is discarded.

**Section 3: Data Retention**

- **Box 3 ("Keep good chains"):** Shows a list of "Step" evaluations:

- Step 1: Correct

- Step 2: Correct

- Step 3: Incorrect

This box has a green checkmark symbol.

- The output of this box is connected to a yellow cylinder labeled "Finetuning data".

### Key Observations

- The diagram highlights a filtering process. Reasoning chains are evaluated step-by-step.

- A chain is discarded if any of its step judgments disagrees with the gold process labels; a kept chain may still judge a step as incorrect when the gold label says so.

- The `<think>` tags suggest the content within represents the internal reasoning process of the model.

- The `boxed[correct]` and `boxed[incorrect]` notations indicate the outcome of evaluating each step.

- The diagram visually emphasizes that every step judgment must agree with the process labels for a chain to be kept.

### Interpretation

The diagram illustrates a quality control mechanism for generating training data for a verifier. A reasoning model samples verification chains that judge each step of a given solution, and these judgments are compared against gold process labels. A chain is retained only if its step-level judgments match the labels; chains with any mismatching judgment are discarded. The retained chains form the finetuning data. Visual cues (checkmarks, crosses, colors) communicate the outcome of each comparison and the overall flow of the process.

</details>

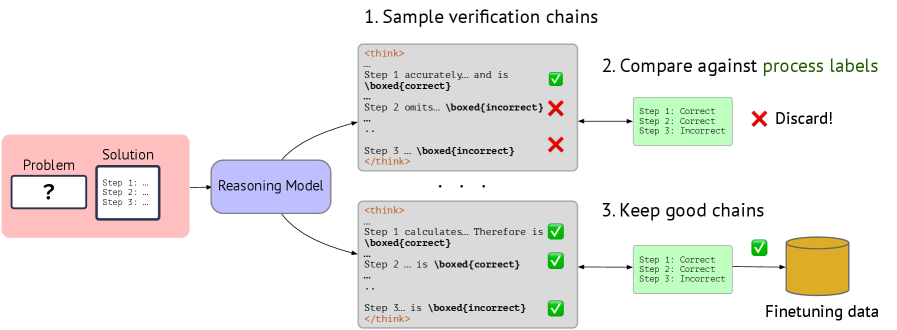

Figure 3: Collecting verification chains for finetuning. First, we prompt a reasoning model, in our case QwQ-32B-Preview, to critique a given solution to a problem. Then, we sample multiple verification chains, which we judge against gold process labels from PRM800K, only keeping chains that match the gold process labels.

Our goal is a verbalized PRM that, given a problem-solution pair, verifies every step in the solution via an extended chain-of-thought (CoT) such as the one shown in Fig. 44 in App. G. This section introduces issues with LLM-as-a-judge verification and proposes a data collection process (shown in Fig. 3) to curate high-quality synthetic verification CoTs for training such a PRM. The rest of the paper addresses the following research questions:

- RQ1: How well do LRMs perform under LLM-as-a-judge for process-level verification? (Section 3.1)

- RQ2: Can lightweight finetuning on synthetic verification CoTs improve the reliability and effectiveness of these models as process verifiers? (Section 3.2)

- RQ3: How does a finetuned verbalized PRM (ThinkPRM) compare to discriminative PRMs and LLM-as-a-Judge baselines under different test-time scaling scenarios? (Section 4)

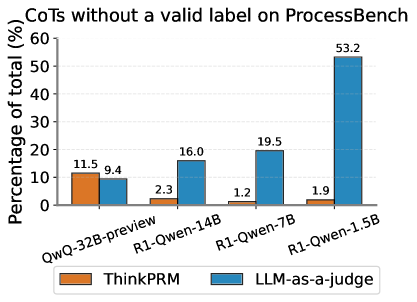

3.1 LLM-as-a-judge PRMs are suboptimal

This section highlights limitations we observe when using off-the-shelf reasoning models as process verifiers, suggesting the need for finetuning. For evaluation, we use ProcessBench (Zheng et al., 2024), which includes problem-solution pairs with problems sourced from existing math benchmarks, along with ground-truth correctness labels. We report the binary F1-score by instructing models to verify full solutions and judge whether there exists a mistake. We use the two most challenging subsets of ProcessBench, OlympiadBench (He et al., 2024) and OmniMath (Gao et al., 2024), each comprising 1K problem-prefix pairs. For LLM-as-a-judge, we use the same prompt template as in Zheng et al. (2024), shown in Fig. 42, which we found to work best overall. Table 3 shows LLM-as-a-judge F1 scores, and a sample output by QwQ-32B-Preview is displayed in Fig. 41 in App. F.
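
The following sketch shows one way a binary F1-score could be computed when some verification chains yield no extractable label. Two choices here are assumptions made for illustration and are not confirmed by the text: the positive class is passed in as a boolean, and an invalid judgment (`None`) is scored as the opposite of the gold label, so it always counts as an error.

```python
from typing import Optional, Sequence


def binary_f1(gold: Sequence[bool], pred: Sequence[Optional[bool]]) -> float:
    """Binary F1 where `True` is the positive class.

    A prediction of None (no extractable label) is treated as wrong by
    mapping it to the opposite of the gold label. This is an assumption
    of this sketch, not necessarily the benchmark's convention.
    """
    tp = fp = fn = 0
    for g, p in zip(gold, pred):
        if p is None:
            p = not g  # invalid judgment counts as an error
        if p and g:
            tp += 1
        elif p and not g:
            fp += 1
        elif g and not p:
            fn += 1
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)
```

Under this convention, a model that frequently fails to emit a final label is penalized even when its reasoning is otherwise sound, which is why invalid judgments matter for the reported scores.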

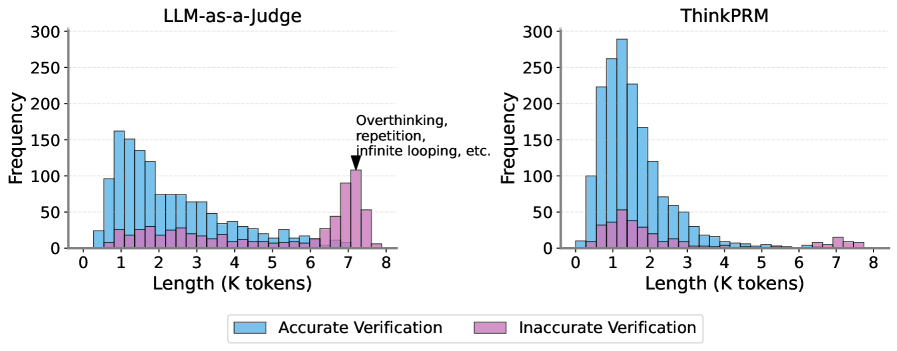

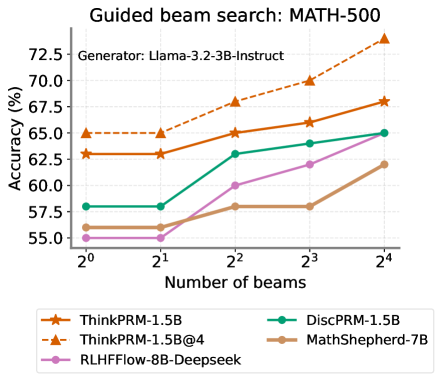

We observe several issues with LLM-as-a-judge verification. First, the verification quality is highly sensitive to the instruction wording: a slight change in the instruction can affect the F1-score by up to 3-4 points. Second, a substantial number of the generated chains include invalid judgments, i.e., chains without an extractable overall label, as shown in Fig. 10. Such invalid judgments have two main causes. In some cases, the final decision is not in the instructed format, e.g., the model tries to solve the problem rather than verify the given solution, a behavior likely stemming from the model's training. In other cases, we noted overthinking (Chen et al., 2024b; Cuadron et al., 2025), which prevents the model from terminating within the token budget, and infinite looping/repetitions, where the model gets stuck trying alternative techniques to verify the solutions.

Fig. 4 (left) shows a histogram of verification CoT lengths generated by R1-Qwen-14B in the LLM-as-a-judge setting. Accurate CoTs tend to be shorter, typically under 3K tokens, while inaccurate CoTs are more evenly distributed and spike sharply around 7K-8K tokens, highlighting the prevalence of overthinking and looping in long chains. We show examples of these behaviors in App. B. In the next section, we mostly fix these issues via lightweight finetuning over synthetic verification CoTs.

3.2 Finetuning on synthetic data boosts LLM-as-a-judge verification

Inspired by recent work on reducing overthinking in long CoT models via training (Yu et al., 2024; Kang et al., 2024), we aim to improve LLM-as-a-judge performance via finetuning on high-quality verification data. Collecting real data would be expensive, so we rely on filtered synthetic data (Zelikman et al., 2022; Singh et al., 2023; Dong et al., 2023; Zhang et al., 2024b; Wang et al., 2024), an approach also known as rejection sampling finetuning. To keep our approach simple, we refrain from more expensive training techniques, such as reinforcement learning or preference-based learning.

Synthetic data collection.

As training data, we sample synthetic verification CoTs from QwQ-32B-Preview, prompting it to verify each step in a solution prefix, using the instruction shown in Fig. 21. The problems and corresponding step-by-step solutions come from the PRM800K dataset (Lightman et al., 2023), which provides both model-generated solutions and human-verified step-level labels.

The sampling process continues until we obtain 1K verification CoTs, which correspond to 8K step labels in total. For data filtering, we use the following criteria: (i) the CoT must follow the expected format (i.e., include an extractable decision label for each step inside \boxed{}, as shown in Fig. 20), (ii) the generated step judgements must match the gold step labels from PRM800K, and (iii) the CoT length must be within a maximum budget, to avoid the excessive overthinking behavior we observed in Fig. 4 (left). The filtering process ensures our training data is of sufficient quality. Note that process-based filtering is crucial for the performance of the resulting PRM, as we show in Section 5.7. Data collection is illustrated in Fig. 3, data statistics are in Section A.1, and a training example is in Fig. 20.
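
The three filtering criteria can be sketched as a single predicate over a sampled chain. This is a minimal illustration under stated assumptions: the label strings `\boxed{correct}` and `\boxed{incorrect}` are assumed from the description of the format, whitespace splitting stands in for a real tokenizer, and the function names are hypothetical.

```python
import re
from typing import List, Optional

# Assumed label format: one \boxed{correct} or \boxed{incorrect} per step.
BOXED = re.compile(r"\\boxed\{(correct|incorrect)\}")


def extract_step_labels(cot: str) -> List[bool]:
    """Return one boolean per boxed step decision found in the chain."""
    return [label == "correct" for label in BOXED.findall(cot)]


def keep_chain(
    cot: str,
    gold: List[bool],
    max_tokens: int,
    n_tokens: Optional[int] = None,
) -> bool:
    """Apply the three filters: format, agreement with gold labels, length."""
    labels = extract_step_labels(cot)
    if len(labels) != len(gold):  # (i) one extractable label per step
        return False
    if labels != gold:            # (ii) judgments match gold step labels
        return False
    # (iii) length budget; whitespace count is a stand-in for a tokenizer
    length = n_tokens if n_tokens is not None else len(cot.split())
    return length <= max_tokens
```

Sampling would then repeat until enough chains pass `keep_chain`; in practice `n_tokens` would come from the model's own tokenizer rather than from whitespace splitting.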

Notably, our filtering relies only on step-level annotations, not on gold verification rationales or CoTs, which makes this pipeline scalable and low-overhead. In the absence of gold step-level annotations, one can obtain silver labels via Monte Carlo rollouts (Wang et al., 2023b; Chen et al., 2024a). While we train only on math data, the resulting PRM remains robust in other domains such as science QA and code generation, as we show in Section 4.2. We then proceed to train our models on the 1K collected chains. Our training is very lightweight; finetuning QwQ-32B-Preview takes only 4.5 hours on a single A100 80GB GPU. Refer to Section C.1 for training details.
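
For readers unfamiliar with Monte Carlo rollouts, the sketch below shows the general idea of deriving silver step labels: from each solution prefix, sample several completions and label the last step of the prefix by how often the completions reach the correct final answer. The `rollout_fn` interface, the number of rollouts, and the thresholding rule are all assumptions for illustration and are not taken from the cited works.

```python
from typing import Callable, List


def mc_step_labels(
    steps: List[str],
    rollout_fn: Callable[[List[str]], bool],
    n_rollouts: int = 8,
    threshold: float = 0.0,
) -> List[bool]:
    """Estimate a silver correctness label for every step of a solution.

    `rollout_fn(prefix)` is a hypothetical callable that samples one
    completion from the prefix and returns True if it reaches the
    correct final answer. A step is labeled correct when the success
    rate of rollouts from its prefix exceeds `threshold`.
    """
    labels = []
    for i in range(1, len(steps) + 1):
        prefix = steps[:i]
        wins = sum(rollout_fn(prefix) for _ in range(n_rollouts))
        labels.append(wins / n_rollouts > threshold)
    return labels
```

With the default `threshold=0.0`, a step counts as correct if at least one rollout from its prefix succeeds; the cost grows with the number of steps times the number of rollouts, which is why this route is compute-intensive.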

<details>

<summary>x4.png Details</summary>

### Visual Description


## Histograms: Verification Accuracy vs. Length (K tokens)

### Overview

The image presents two histograms, side-by-side, comparing the distribution of response lengths (in K tokens) for "Accurate Verification" and "Inaccurate Verification" cases. The left histogram represents data for "LLM-as-a-judge", and the right histogram represents data for "ThinkPRM". Both histograms share the same x and y axes scales. A text annotation on the right histogram points to the "Inaccurate Verification" distribution, labeling it as relating to "Overthinking, repetition, infinite looping, etc.".

### Components/Axes

* **X-axis:** "Length (K tokens)" - Ranges from 0 to 8, with tick marks at integer values.

* **Y-axis:** "Frequency" - Ranges from 0 to 300, with tick marks at intervals of 50.

* **Legend (bottom-center):**

* "Accurate Verification" - Represented by a light blue color.

* "Inaccurate Verification" - Represented by a light purple/pink color.

* **Titles:**

* Left Histogram: "LLM-as-a-judge"

* Right Histogram: "ThinkPRM"

* **Annotation:** "Overthinking, repetition, infinite looping, etc." - Points to the tail of the "Inaccurate Verification" distribution in the "ThinkPRM" histogram.

### Detailed Analysis or Content Details

**LLM-as-a-judge (Left Histogram):**

* **Accurate Verification (Blue):** The distribution is unimodal, peaking at approximately 0.8 K tokens. It slopes downward relatively smoothly from the peak, with a long tail extending towards 8 K tokens.

* Frequency at 0.8 K tokens: ~270

* Frequency at 1.6 K tokens: ~150

* Frequency at 2.4 K tokens: ~70

* Frequency at 3.2 K tokens: ~40

* Frequency at 4.0 K tokens: ~25

* Frequency at 4.8 K tokens: ~15

* Frequency at 5.6 K tokens: ~10

* Frequency at 6.4 K tokens: ~5

* Frequency at 7.2 K tokens: ~5

* **Inaccurate Verification (Purple):** The distribution is relatively flat with a small peak around 6.4 K tokens. It has a more pronounced tail extending towards 8 K tokens compared to the accurate verification distribution.

* Frequency at 0.8 K tokens: ~10

* Frequency at 1.6 K tokens: ~15

* Frequency at 2.4 K tokens: ~20

* Frequency at 3.2 K tokens: ~25

* Frequency at 4.0 K tokens: ~30

* Frequency at 4.8 K tokens: ~40

* Frequency at 5.6 K tokens: ~50

* Frequency at 6.4 K tokens: ~60

* Frequency at 7.2 K tokens: ~50

**ThinkPRM (Right Histogram):**

* **Accurate Verification (Blue):** Similar to LLM-as-a-judge, the distribution is unimodal, peaking at approximately 1.6 K tokens. It slopes downward from the peak.

* Frequency at 1.6 K tokens: ~280

* Frequency at 0.8 K tokens: ~200

* Frequency at 2.4 K tokens: ~120

* Frequency at 3.2 K tokens: ~60

* Frequency at 4.0 K tokens: ~30

* Frequency at 4.8 K tokens: ~15

* Frequency at 5.6 K tokens: ~10

* Frequency at 6.4 K tokens: ~5

* **Inaccurate Verification (Purple):** The distribution is more concentrated towards higher lengths (6.4-7.2 K tokens) than in the LLM-as-a-judge histogram. The annotation "Overthinking, repetition, infinite looping, etc." points to this region.

* Frequency at 1.6 K tokens: ~20

* Frequency at 2.4 K tokens: ~30

* Frequency at 3.2 K tokens: ~40

* Frequency at 4.0 K tokens: ~50

* Frequency at 4.8 K tokens: ~60

* Frequency at 5.6 K tokens: ~70

* Frequency at 6.4 K tokens: ~80

* Frequency at 7.2 K tokens: ~60

### Key Observations

* For both LLM-as-a-judge and ThinkPRM, accurate verifications tend to have shorter lengths than inaccurate verifications.

* The "Inaccurate Verification" distribution for ThinkPRM is more heavily skewed towards longer lengths (6-8 K tokens) and has a more pronounced peak in that region compared to LLM-as-a-judge.

* The annotation suggests that longer lengths in ThinkPRM are associated with issues like overthinking and repetition.

* The peak of accurate verifications is higher for ThinkPRM than for LLM-as-a-judge.

### Interpretation

The data suggests that both LLM-as-a-judge and ThinkPRM exhibit a correlation between response length and verification accuracy: longer responses are more likely to be inaccurate. However, this correlation is stronger for ThinkPRM. The annotation highlights a potential issue with ThinkPRM – it appears to be prone to generating overly verbose responses that are characterized by overthinking, repetition, or infinite looping, leading to inaccuracies.

The difference in distributions between the two models suggests that ThinkPRM might be more susceptible to these issues than LLM-as-a-judge. The higher peak in accurate verifications for ThinkPRM could indicate that when it *does* provide accurate responses, they are often more concise.

The histograms provide a visual representation of the trade-off between response length and accuracy. A potential area for improvement for ThinkPRM is to implement mechanisms to constrain response length and prevent the generation of overly detailed or repetitive outputs. The data supports the hypothesis that controlling length can improve accuracy.

</details>

Figure 4: Verifier performance on ProcessBench in light of CoT lengths. On the left, LLM-as-a-judge produces excessively long chains including repetition, infinite looping, and overthinking, leading to worse verifier performance since the output never terminates. Training on collected synthetic data substantially reduces these issues, as shown in the ThinkPRM plot on the right.

Finetuning on synthetic verification CoTs substantially improves the verifier.

We finetune each base model on the 1K chains to obtain ThinkPRM, evaluate it on ProcessBench, and compare it to LLM-as-a-judge under the same base model. Fig. 10 shows verifier accuracy of different models before and after our finetuning. We note a substantial boost in F1 across all models, with the 1.5B model improving the most, by over 70 F1 points, and the 14B model performing best. Looking at the ratio of invalid judgements in Fig. 10, we also note a significant reduction in invalid labels with all models except QwQ, where it slightly increases. Lastly, the reduction in the overthinking and infinite looping behavior discussed in the last section is evident in Fig. 4 (right), where ThinkPRM generations maintain a reasonable length (1K-5K tokens) while being substantially more accurate.


<details>

<summary>x5.png Details</summary>

### Visual Description

\n

## Bar Chart: CoTs without a valid label on ProcessBench

### Overview

This bar chart visualizes the percentage of total CoTs (Chain of Thoughts) without a valid label on the ProcessBench dataset, for different model configurations. Two evaluation methods, "ThinkPRM" and "LLM-as-a-judge", are compared across four model versions: QwQ-32B-preview, R1-Qwen-14B, R1-Qwen-7B, and R1-Qwen-1.5B. The y-axis represents the percentage of total CoTs, ranging from 0% to 60%.

### Components/Axes

* **Title:** "CoTs without a valid label on ProcessBench" (Top-center)

* **X-axis Label:** Model Configurations (Bottom-center)

* Categories: QwQ-32B-preview, R1-Qwen-14B, R1-Qwen-7B, R1-Qwen-1.5B

* **Y-axis Label:** "Percentage of total (%)" (Left-center)

* Scale: 0%, 10%, 20%, 30%, 40%, 50%, 60%

* **Legend:** (Bottom-left)

* "ThinkPRM" - Orange

* "LLM-as-a-judge" - Blue

### Detailed Analysis

The chart consists of paired bars for each model configuration, representing the results from "ThinkPRM" and "LLM-as-a-judge".

* **QwQ-32B-preview:**

* ThinkPRM: Approximately 11.5% (Orange bar)

* LLM-as-a-judge: Approximately 9.4% (Blue bar)

* **R1-Qwen-14B:**

* ThinkPRM: Approximately 2.3% (Orange bar)

* LLM-as-a-judge: Approximately 16.0% (Blue bar)

* **R1-Qwen-7B:**

* ThinkPRM: Approximately 1.2% (Orange bar)

* LLM-as-a-judge: Approximately 19.5% (Blue bar)

* **R1-Qwen-1.5B:**

* ThinkPRM: Approximately 1.9% (Orange bar)

* LLM-as-a-judge: Approximately 53.2% (Blue bar)

The "LLM-as-a-judge" bars generally increase in height from left to right, with a particularly large jump for R1-Qwen-1.5B. The "ThinkPRM" bars remain relatively low and consistent across all model configurations.

### Key Observations

* The percentage of CoTs without a valid label is significantly higher when evaluated using "LLM-as-a-judge", especially for the R1-Qwen-1.5B model.

* "ThinkPRM" consistently reports a low percentage of invalid labels across all models.

* There is a clear trend of increasing invalid labels with "LLM-as-a-judge" as the model size decreases (from QwQ-32B-preview to R1-Qwen-1.5B).

### Interpretation

The chart measures how often each verifier's CoT fails to end with a parsable label, not how strictly it judges solutions. LLM-as-a-judge leaves a large share of CoTs without a valid label, and that share grows as the model gets smaller, from about 9.4% with QwQ-32B-preview to about 53.2% with R1-Qwen-1.5B. ThinkPRM keeps the rate at or below roughly 2.3% for the three R1-Qwen models, which is consistent with finetuning reducing unterminated or unparsable outputs. QwQ-32B-preview is the exception: its rate is slightly higher for ThinkPRM (about 11.5%) than for LLM-as-a-judge (about 9.4%).

</details>

Figure 7: LLM-as-a-judge suffers from a significant ratio of verification CoTs that do not terminate with a parsable label, i.e., \boxed{yes} or \boxed{no}. Our finetuning process, which yields ThinkPRM, substantially mitigates this issue. Both verifiers are based on R1-Distill-Qwen-14B.
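The "parsable label" criterion in the caption can be illustrated with a minimal sketch. It assumes the verdict is read from the last `\boxed{yes}` or `\boxed{no}` in a verification CoT. The function names `parse_verdict` and `invalid_ratio` are hypothetical and are not taken from the released code.

```python
import re

# Matches a verdict such as \boxed{yes} or \boxed{no} (case-insensitive).
_LABEL_RE = re.compile(r"\\boxed\{\s*(yes|no)\s*\}", re.IGNORECASE)


def parse_verdict(cot):
    """Return True for yes, False for no, or None if no parsable label exists.

    Assumption: when several boxed labels appear, the last one is the verdict.
    """
    matches = _LABEL_RE.findall(cot)
    if not matches:
        return None
    return matches[-1].lower() == "yes"


def invalid_ratio(cots):
    """Fraction of verification CoTs that carry no parsable label."""
    if not cots:
        return 0.0
    return sum(parse_verdict(c) is None for c in cots) / len(cots)
```

Under this reading, a chain that loops until the token limit without emitting a boxed label counts as invalid, which is the quantity plotted per model in the bar chart.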

<details>

<summary>x6.png Details</summary>

### Visual Description

## Bar Chart: Verifier Performance on ProcessBench

### Overview

This bar chart displays the F1-score performance of two verifiers, "ThinkPRM" and "LLMs-as-a-judge", across four different models: QwQ-32B-preview, R1-Qwen-14B, R1-Qwen-7B, and R1-Qwen-1.5B. A horizontal dashed line indicates the performance of a "random" verifier, serving as a baseline.

### Components/Axes

* **Title:** "Verifier performance on ProcessBench" (top-center)

* **Y-axis:** "F1-score" (left-side, ranging from 0 to 100, with tick marks at 0, 20, 40, 60, 80, and 100)

* **X-axis:** Model names: "QwQ-32B-preview", "R1-Qwen-14B", "R1-Qwen-7B", "R1-Qwen-1.5B" (bottom-center)

* **Legend:** Located at the bottom-center.

* Orange: "ThinkPRM"

* Blue: "LLMs-as-a-judge"

* **Baseline:** A horizontal dashed line labeled "random" at an F1-score of approximately 40.

### Detailed Analysis

The chart consists of paired bars for each model, representing the F1-scores of "ThinkPRM" and "LLMs-as-a-judge".

* **QwQ-32B-preview:**

* ThinkPRM: Approximately 73.2 F1-score. (Orange bar)

* LLMs-as-a-judge: Approximately 53.0 F1-score. (Blue bar)

* **R1-Qwen-14B:**

* ThinkPRM: Approximately 86.5 F1-score. (Orange bar) - Highest score for ThinkPRM.

* LLMs-as-a-judge: Approximately 70.3 F1-score. (Blue bar)

* **R1-Qwen-7B:**

* ThinkPRM: Approximately 73.7 F1-score. (Orange bar)

* LLMs-as-a-judge: Approximately 45.2 F1-score. (Blue bar)

* **R1-Qwen-1.5B:**

* ThinkPRM: Approximately 76.0 F1-score. (Orange bar)

* LLMs-as-a-judge: Approximately 5.2 F1-score. (Blue bar) - Lowest score for LLMs-as-a-judge.

The "random" baseline is a horizontal dashed line at approximately 40 F1-score.

### Key Observations

* "ThinkPRM" consistently outperforms "LLMs-as-a-judge" across all models.

* The highest performance for "ThinkPRM" is achieved with the "R1-Qwen-14B" model (86.5 F1-score).

* "LLMs-as-a-judge" shows a significant drop in performance with the "R1-Qwen-1.5B" model (5.2 F1-score), falling well below the random baseline.

* Both verifiers perform above the random baseline for the QwQ-32B-preview, R1-Qwen-14B, and R1-Qwen-7B models.

### Interpretation

The data suggests that "ThinkPRM" is a more reliable verifier than "LLMs-as-a-judge" on the ProcessBench dataset. The performance of "LLMs-as-a-judge" is particularly sensitive to the underlying model, with a drastic decrease in F1-score when used with "R1-Qwen-1.5B". This could indicate that "LLMs-as-a-judge" requires larger or more complex models to achieve acceptable performance. The random baseline provides a crucial point of reference, highlighting that both verifiers offer value beyond random chance, except for the "LLMs-as-a-judge" with the "R1-Qwen-1.5B" model. The consistent outperformance of "ThinkPRM" suggests it may be a more robust and generalizable verifier across different model architectures. The chart demonstrates the importance of evaluating verifiers on a variety of models to understand their limitations and strengths.

</details>

Figure 10: Verification accuracy on 2K question-solution pairs from the two most challenging subsets of ProcessBench: OlympiadBench and OmniMath. ThinkPRM, obtained by finetuning the corresponding model on only 1K verification chains, performs better.

4 Test-time Scaling Experiments

This section aims to answer RQ3, introduced in Section 3, by comparing ThinkPRM to baselines under different scaling scenarios. We study how ThinkPRM performs under different generation budgets with (i) best-of-N selection (Wu et al., 2024; Brown et al., 2020) and (ii) guided beam search (Snell et al., 2024; Beeching et al., ). We also explore how ThinkPRM performs when verifier compute is scaled either in parallel, by aggregating decisions over multiple verification CoTs, or sequentially, through longer CoTs obtained by forcing the model to double-check or self-correct its verification.
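As a rough illustration of guided beam search, the sketch below runs a generic step-level beam search in which a verifier score decides which partial solutions survive. It is not the configuration used in the experiments (those details are in App. E). The callables `expand`, `score`, and `is_done` are hypothetical stand-ins for the generator, the PRM, and a stopping check.

```python
def guided_beam_search(expand, score, is_done, beam_width, max_steps):
    """Step-level beam search over partial solutions (lists of steps).

    expand(prefix)  -> list of candidate next steps (hypothetical generator call)
    score(prefix)   -> float, verifier score of a partial solution
    is_done(prefix) -> bool, True once the solution is complete
    """
    beams = [[]]  # start from a single empty solution
    for _ in range(max_steps):
        candidates = []
        for prefix in beams:
            if prefix and is_done(prefix):
                candidates.append(prefix)  # carry finished solutions forward
                continue
            candidates.extend(prefix + [step] for step in expand(prefix))
        if not candidates:
            break
        # Keep only the beam_width prefixes the verifier scores highest.
        beams = sorted(candidates, key=score, reverse=True)[:beam_width]
        if all(is_done(b) for b in beams):
            break
    return max(beams, key=score)
```

Because the verifier is called on every candidate prefix at every step, search costs far more verifier compute than best-of-N, which scores each complete solution once.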

4.1 Experimental Setup

In the remainder of the paper, we will mainly use our finetuned verifiers based on R1-Distill-Qwen-1.5B and R1-Distill-Qwen-14B, as these provide the best tradeoff between size and performance. We will refer to these as ThinkPRM-1.5B and ThinkPRM-14B, respectively.

Baselines.

We compare ThinkPRM to DiscPRM, which uses the same base model as ThinkPRM, finetuned with binary cross-entropy on the entire PRM800K dataset, totaling 712K process labels, which is two orders of magnitude larger than our training data. Details on finetuning DiscPRMs are in Section C.2. We also compare to unweighted majority voting, which merely selects the most frequent answer across the samples (Wang et al., 2022), and to LLM-as-a-Judge using the same base model as ThinkPRM, prompted as in Section 3.1.
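To make the selection rules concrete, the sketch below contrasts unweighted majority voting with verifier-based best-of-N selection. It assumes each sampled solution has been reduced to a final answer string and a scalar verifier score. The exact way scores are turned into a final answer in the experiments is described in App. E, so `best_of_n` here is only the simplest variant, picking the single highest-scoring solution.

```python
from collections import Counter


def majority_vote(answers):
    """Unweighted majority voting: return the most frequent final answer."""
    if not answers:
        raise ValueError("answers must be non-empty")
    return Counter(answers).most_common(1)[0][0]


def best_of_n(answers, scores):
    """Return the final answer of the solution the verifier scores highest."""
    if not answers or len(answers) != len(scores):
        raise ValueError("answers and scores must be non-empty and aligned")
    best_index = max(range(len(answers)), key=lambda i: scores[i])
    return answers[best_index]
```

The two rules can disagree: with answers `["42", "7", "42"]` and scores `[0.2, 0.9, 0.4]`, majority voting returns `"42"`, while best-of-N returns `"7"`.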

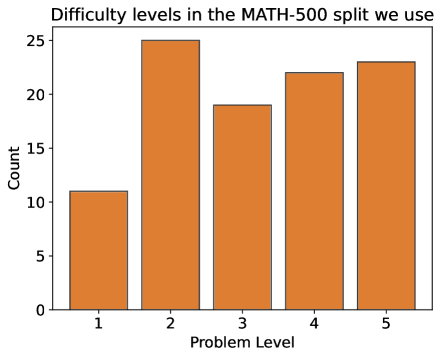

Tasks and Models.

We show results on three math reasoning tasks, namely 100 problems from MATH-500 (Hendrycks et al., 2021) covering all difficulty levels (see Section E.5 for more details), and American Invitational Mathematics Examination (AIME) problems for 2024. Since ThinkPRM was finetuned only on math data, we study the out-of-domain generalization on two tasks: scientific reasoning and code generation. For scientific reasoning, we use the physics subset of GPQA-Diamond (Rein et al., 2024), consisting of 86 PhD-level multiple choice questions. For code generation, we use a 200-problem subset from the v5 release of LiveCodeBench (Jain et al., 2024).

Over MATH-500, we show results with ThinkPRM-1.5B and ThinkPRM-14B on two different generator models: Qwen-2.5-14B and Llama-3.2-3B-Instruct. The former model is used for best-of-N and the latter for beam search, as search is compute-intensive. Showing results with different generators helps ensure that our conclusions are not specific to a certain model family or size. For the more challenging tasks, namely AIME ’24 and GPQA, we use a more capable model, namely Qwen-2.5-32B-Instruct. For code generation, we use Qwen-2.5-Coder-7B (Hui et al., 2024). Implementation and hyperparameter details on how we select the final answer with best-of-N and beam search are in App. E.

<details>

<summary>x7.png Details</summary>

### Visual Description

\n

## Line Chart: Model Accuracy vs. Number of Solutions

### Overview

The image presents two line charts comparing the accuracy of different Large Language Models (LLMs) on two mathematical reasoning benchmarks: AIME '24 and MATH-500. The charts show how accuracy changes as the number of solutions generated by the models increases.

### Components/Axes

**Chart 1 (AIME '24):**

* **Title:** Best-of-N: AIME '24

* **Generator:** Qwen2.5-32B-Instruct

* **X-axis:** Number of solutions (labeled as 2⁰, 2¹, 2², 2³) – representing 1, 2, 4, and 8 solutions respectively.

* **Y-axis:** Accuracy (%) – ranging from approximately 20% to 33%.

* **Legend:**

* ThinkPRM-14B (Orange)

* DiscPRM-14B (Teal)

* LLM-as-a-judge (Gray)

* Majority (Peach)

**Chart 2 (MATH-500):**

* **Title:** Best-of-N: MATH-500

* **Generator:** Qwen2.5-14B

* **X-axis:** Number of solutions (labeled as 2¹, 2², 2³, 2⁴, 2⁵, 2⁶) – representing 2, 4, 8, 16, 32, and 64 solutions respectively.

* **Y-axis:** Accuracy (%) – ranging from approximately 50% to 85%.

* **Legend:**

* ThinkPRM-14B (Orange)

* DiscPRM-14B (Teal)

* LLM-as-a-judge (Gray)

* Majority (Peach)

The legend is positioned at the bottom-center of the image, spanning both charts.

### Detailed Analysis or Content Details

**Chart 1 (AIME '24):**

* **ThinkPRM-14B (Orange):** Starts at approximately 21% accuracy with 1 solution (2⁰), increases to roughly 26% with 2 solutions (2¹), then rises to about 29% with 4 solutions (2²), and finally reaches approximately 32.5% with 8 solutions (2³). The line slopes upward consistently.

* **DiscPRM-14B (Teal):** Begins at approximately 21% accuracy with 1 solution (2⁰), increases to around 25% with 2 solutions (2¹), then rises to about 29% with 4 solutions (2²), and reaches approximately 30.5% with 8 solutions (2³). The line slopes upward, but less steeply than ThinkPRM-14B.

* **LLM-as-a-judge (Gray):** Remains relatively flat, starting at approximately 21% with 1 solution (2⁰) and ending at around 23% with 8 solutions (2³).

* **Majority (Peach):** Starts at approximately 21% accuracy with 1 solution (2⁰) and remains relatively flat, fluctuating around 21-22% throughout.

**Chart 2 (MATH-500):**

* **ThinkPRM-14B (Orange):** Starts at approximately 51% accuracy with 2 solutions (2¹), increases sharply to around 74% with 4 solutions (2²), then rises to about 77% with 8 solutions (2³), plateaus around 77-78% with 16 solutions (2⁴), and finally reaches approximately 83% with 64 solutions (2⁶).

* **DiscPRM-14B (Teal):** Begins at approximately 51% accuracy with 2 solutions (2¹), increases to around 72% with 4 solutions (2²), then rises to about 75% with 8 solutions (2³), plateaus around 75-76% with 16 solutions (2⁴), and reaches approximately 79% with 64 solutions (2⁶).

* **LLM-as-a-judge (Gray):** Starts at approximately 51% accuracy with 2 solutions (2¹), increases to around 64% with 4 solutions (2²), then rises to about 73% with 8 solutions (2³), plateaus around 73-74% with 16 solutions (2⁴), and reaches approximately 75% with 64 solutions (2⁶).

* **Majority (Peach):** Starts at approximately 51% accuracy with 2 solutions (2¹), increases to around 68% with 4 solutions (2²), then rises to about 75% with 8 solutions (2³), plateaus around 75-76% with 16 solutions (2⁴), and reaches approximately 78% with 64 solutions (2⁶).

### Key Observations

* In both charts, increasing the number of solutions generally improves accuracy, but with diminishing returns.

* ThinkPRM-14B consistently outperforms the other methods on both benchmarks, especially as the number of solutions increases.

* LLM-as-a-judge and Majority perform similarly, but their accuracy gains are less pronounced than those of ThinkPRM-14B and DiscPRM-14B.

* The AIME '24 benchmark shows lower overall accuracy compared to the MATH-500 benchmark.

* The accuracy of all models on AIME '24 plateaus at a lower level than on MATH-500.

### Interpretation

These charts demonstrate the effectiveness of generating multiple solutions to mathematical problems and then selecting the best one. The "Best-of-N" approach, where N represents the number of solutions, significantly improves accuracy compared to relying on a single solution. The Qwen2.5 models used as generators appear to be effective in producing diverse solutions.

The superior performance of ThinkPRM-14B suggests that its prompting strategy or architecture is particularly well-suited for mathematical reasoning. The relatively flat performance of LLM-as-a-judge indicates that simply using an LLM to judge the correctness of solutions is not as effective as more sophisticated methods like ThinkPRM-14B or DiscPRM-14B.

The diminishing returns observed as the number of solutions increases suggest that there is a point at which generating additional solutions provides only marginal improvements in accuracy. This highlights the importance of balancing computational cost with performance gains. The difference in overall accuracy between the two benchmarks (AIME '24 and MATH-500) suggests that AIME '24 is a more challenging benchmark, requiring more sophisticated reasoning abilities.

</details>

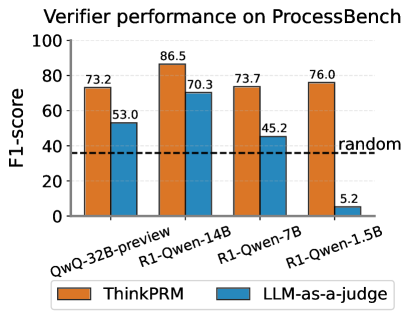

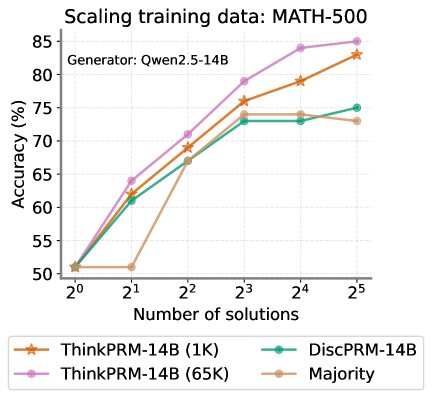

Figure 11: Best-of-N on AIME ’24 and MATH-500. Compared to LLM-as-a-judge, DiscPRM, and (unweighted) majority vote, ThinkPRM-14B exhibits the best accuracy scaling curve.

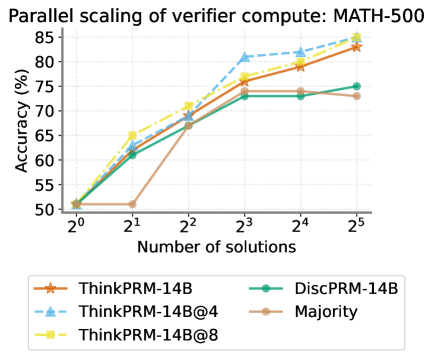

Scaling verifier compute.

Compared to DiscPRMs, generative reward models enable an extra dimension of scaling to squeeze out more performance: scaling the verifier compute. Specifically, ThinkPRM allows for two types of scaling. First, we use parallel scaling (Mahan et al., 2024; Brown et al., 2024) by sampling $K$ independent CoTs and averaging their scores. We will refer to this scaling using “@K” throughout the rest of the paper. Second, and more specific to long reasoning models, we use sequential scaling, e.g., by enabling the model to double-check its initial verification (Xiong et al., 2025; Kumar et al., 2024; Ye et al., 2024). Inspired by Muennighoff et al. (2025), we use a trigger phrase such as “Let’s verify again” to elicit self-correction of earlier verification. See Section E.4 for more details.
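The two scaling modes can be sketched as follows, under stated assumptions. `sample_score` and `generate` are hypothetical callables standing in for one sampled verification CoT reduced to a score and for a text-completion call, respectively. The actual prompts and stopping rules are in Section E.4 and are not reproduced here.

```python
from statistics import mean


def parallel_score(sample_score, k):
    """Parallel scaling ("@K"): average the scores of k independent CoTs."""
    if k < 1:
        raise ValueError("k must be at least 1")
    return mean(sample_score() for _ in range(k))


def sequential_verify(generate, prompt, rounds, trigger="Let's verify again"):
    """Sequential scaling: extend one CoT by re-prompting with a trigger phrase.

    After the initial verification, the trigger is appended `rounds` times and
    the model continues from the accumulated text each time.
    """
    cot = generate(prompt)
    for _ in range(rounds):
        cot += "\n" + trigger + "\n"
        cot += generate(prompt + cot)
    return cot
```

Parallel scaling spends its budget on K short, independent chains that can be sampled concurrently, whereas sequential scaling spends it on one chain that grows with every trigger.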


<details>

<summary>x8.png Details</summary>

### Visual Description

## Line Chart: Guided Beam Search - MATH-500 Accuracy

### Overview

This line chart illustrates the relationship between the number of beams used in a guided beam search and the resulting accuracy on the MATH-500 dataset. The chart compares the performance of several different models: ThinkPRM-1.5B, ThinkPRM-1.5B@4, DiscPRM-1.5B, MathShepherd-7B, and RLHFflow-8B-Deepseek. The generator used for all models is Llama-3.2-3B-Instruct.

### Components/Axes

* **Title:** "Guided beam search: MATH-500"

* **Subtitle:** "Generator: Llama-3.2-3B-Instruct" (positioned centrally below the title)

* **X-axis:** "Number of beams" with markers at 2⁰, 2¹, 2², 2³, and 2⁴.

* **Y-axis:** "Accuracy (%)" with a scale ranging from approximately 55.0% to 72.5%.

* **Legend:** Located at the bottom of the chart, horizontally aligned. It contains the following labels and corresponding colors:

* ThinkPRM-1.5B (Orange)

* ThinkPRM-1.5B@4 (Dark Orange, dashed line)

* DiscPRM-1.5B (Teal)

* MathShepherd-7B (Gray)

* RLHFflow-8B-Deepseek (Purple)

### Detailed Analysis

The chart displays five distinct lines, each representing a different model's accuracy as the number of beams increases.

* **ThinkPRM-1.5B (Orange):** The line slopes upward consistently.

* At 2⁰ beams: Approximately 62.2% accuracy.

* At 2¹ beams: Approximately 63.5% accuracy.

* At 2² beams: Approximately 65.0% accuracy.

* At 2³ beams: Approximately 67.0% accuracy.

* At 2⁴ beams: Approximately 68.2% accuracy.

* **ThinkPRM-1.5B@4 (Dark Orange, dashed):** This line shows the most significant upward trend.

* At 2⁰ beams: Approximately 65.0% accuracy.

* At 2¹ beams: Approximately 66.5% accuracy.

* At 2² beams: Approximately 68.5% accuracy.

* At 2³ beams: Approximately 70.0% accuracy.

* At 2⁴ beams: Approximately 72.5% accuracy.

* **DiscPRM-1.5B (Teal):** The line shows a moderate upward trend, with a slight flattening at the higher beam counts.

* At 2⁰ beams: Approximately 57.5% accuracy.

* At 2¹ beams: Approximately 58.5% accuracy.

* At 2² beams: Approximately 62.0% accuracy.

* At 2³ beams: Approximately 63.0% accuracy.

* At 2⁴ beams: Approximately 63.5% accuracy.

* **MathShepherd-7B (Gray):** The line shows a relatively flat trend, with a slight increase.

* At 2⁰ beams: Approximately 55.0% accuracy.

* At 2¹ beams: Approximately 56.0% accuracy.

* At 2² beams: Approximately 57.5% accuracy.

* At 2³ beams: Approximately 57.5% accuracy.

* At 2⁴ beams: Approximately 61.0% accuracy.

* **RLHFflow-8B-Deepseek (Purple):** The line shows an upward trend, but less pronounced than ThinkPRM-1.5B@4.

* At 2⁰ beams: Approximately 55.5% accuracy.

* At 2¹ beams: Approximately 57.0% accuracy.

* At 2² beams: Approximately 58.5% accuracy.

* At 2³ beams: Approximately 60.5% accuracy.

* At 2⁴ beams: Approximately 62.5% accuracy.

### Key Observations

* ThinkPRM-1.5B@4 consistently outperforms all other models across all beam counts.

* The performance gap between ThinkPRM-1.5B@4 and the other models widens as the number of beams increases.

* MathShepherd-7B exhibits the lowest overall accuracy and the flattest trend.

* Increasing the number of beams generally improves accuracy for all models, but the rate of improvement varies.

### Interpretation

The data suggests that guided beam search is an effective technique for improving the accuracy of language models on the MATH-500 dataset. The model ThinkPRM-1.5B@4, in particular, demonstrates a strong ability to leverage the benefits of increasing the number of beams. The relatively poor performance of MathShepherd-7B may indicate that it is less well-suited to this particular task or that it requires different optimization strategies. The consistent upward trends across most models suggest that there is still potential for further accuracy gains by exploring even larger beam counts. The difference in performance between ThinkPRM-1.5B and ThinkPRM-1.5B@4 suggests that the "@4" modification significantly improves the model's ability to utilize beam search. The use of Llama-3.2-3B-Instruct as the generator provides a common baseline for comparing the performance of these different models.

</details>

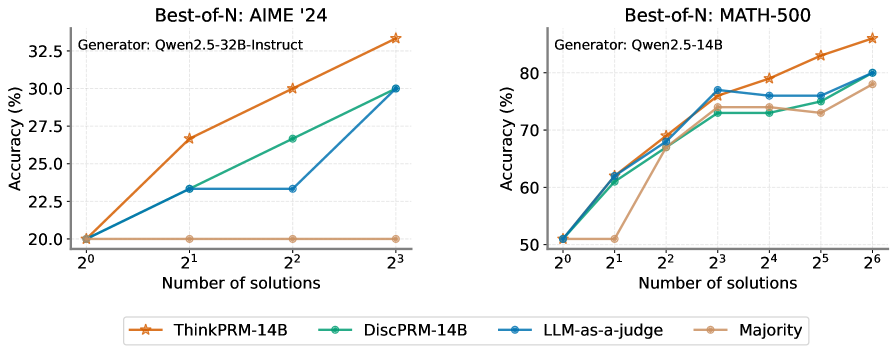

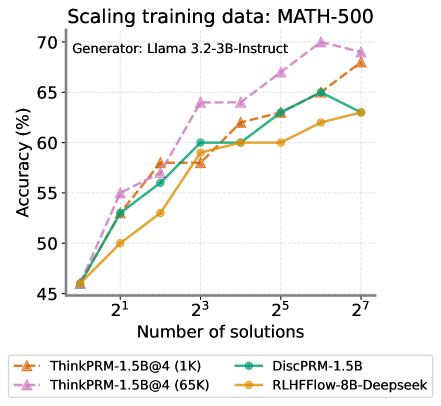

Figure 14: Comparison to off-the-shelf PRMs trained on many more step labels than ThinkPRM. $@K$ represents parallel scaling by averaging scores over K CoTs.

<details>

<summary>x9.png Details</summary>

### Visual Description

\n

## Line Chart: Filtering based on Process vs. Outcome

### Overview

This line chart compares the accuracy of two filtering methods – process-based (labeled "ours") and outcome-based (GenRM) – as a function of the number of beams used. A baseline accuracy score from an LLM-as-a-judge is also presented for comparison. The generator used is Llama-3.2-3B-Instruct.

### Components/Axes

* **Title:** Filtering based on Process vs. Outcome

* **Subtitle:** Generator: Llama-3.2-3B-Instruct

* **X-axis:** Number of beams. Markers are at 2⁰, 2¹, 2², 2³, and 2⁴.

* **Y-axis:** Accuracy (%) with a scale ranging from approximately 56% to 68%.

* **Legend:**

* Process-based (ours) - Solid orange line with star markers.

* Outcome-based (GenRM) - Solid yellow line with star markers.

* LLM-as-a-judge - Dashed blue line.

### Detailed Analysis

* **Process-based (ours):** The orange line shows an upward trend.

* At 2⁰ (1 beam): Approximately 62% accuracy.

* At 2¹ (2 beams): Approximately 63% accuracy.

* At 2² (4 beams): Approximately 64% accuracy.

* At 2³ (8 beams): Approximately 66% accuracy.

* At 2⁴ (16 beams): Approximately 68% accuracy.

* **Outcome-based (GenRM):** The yellow line shows a downward trend initially, then a slight increase.

* At 2⁰ (1 beam): Approximately 61% accuracy.

* At 2¹ (2 beams): Approximately 58% accuracy.

* At 2² (4 beams): Approximately 56% accuracy.

* At 2³ (8 beams): Approximately 57% accuracy.

* At 2⁴ (16 beams): Approximately 59% accuracy.

* **LLM-as-a-judge:** The blue dashed line is approximately horizontal, indicating a relatively constant accuracy.

* Accuracy is consistently around 62%.

### Key Observations

* The process-based filtering method consistently outperforms the outcome-based method across all beam numbers.

* The process-based method shows a clear positive correlation between the number of beams and accuracy. Increasing the number of beams leads to improved accuracy.

* The outcome-based method initially decreases in accuracy as the number of beams increases, then shows a slight recovery.

* The LLM-as-a-judge provides a stable baseline for comparison.

### Interpretation

The data suggests that filtering based on the *process* of generation (the "ours" method) is more effective than filtering based on the *outcome* (GenRM) when using the Llama-3.2-3B-Instruct generator. The increasing accuracy of the process-based method with more beams indicates that a more thorough evaluation of the generation process leads to better results. The relatively flat line for the LLM-as-a-judge suggests that its accuracy doesn't significantly change with the number of beams, implying it's a consistent but potentially less sensitive metric. The initial drop in accuracy for the outcome-based method could indicate that simply evaluating the final output is less reliable, and may be susceptible to noise or superficial characteristics. The slight recovery at higher beam numbers might suggest that with more samples, the outcome-based method can identify some improvements, but it still doesn't reach the performance of the process-based approach. This implies that understanding *how* a model generates text is more valuable than simply assessing *what* it generates.

</details>

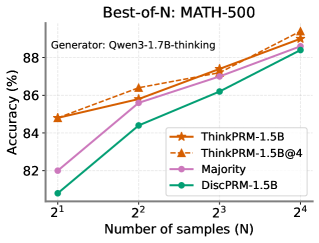

Figure 17: Ablating the data filtering mechanism, where our process-based filtering yields better PRMs. LLM-as-a-judge is shown with number of beams = 16.

4.2 Results

ThinkPRM outperforms DiscPRM and LLM-as-a-Judge.

Under best-of-N selection on MATH-500, shown in Fig. 11 (right), ThinkPRM achieves reasoning accuracy higher than or comparable to DiscPRM under all sampling budgets. The trend holds on the more challenging AIME ’24, shown in Fig. 11 (left). Additionally, Fig. 1 (right) shows beam search results on MATH-500, with ThinkPRM-1.5B surpassing DiscPRM and LLM-as-a-Judge.

ThinkPRM surpasses off-the-shelf PRMs.

We compare ThinkPRM-1.5B to two strong off-the-shelf PRMs, namely RLHFFlow-Deepseek-PRM (Xiong et al., 2024) and MATH-Shepherd-PRM (Wang et al., 2023b). These PRMs are trained on even more data than PRM800K and are larger than 1.5B. We show results under verifier-guided search on MATH-500 in Fig. 17, with ThinkPRM-1.5B’s scaling curve surpassing all baselines and outperforming RLHFFlow-Deepseek-PRM, the best off-the-shelf PRM among the ones we tested, by more than 7% across all beam sizes.

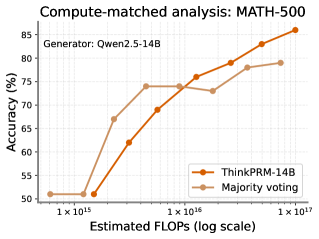

ThinkPRM excels on out-of-domain tasks.

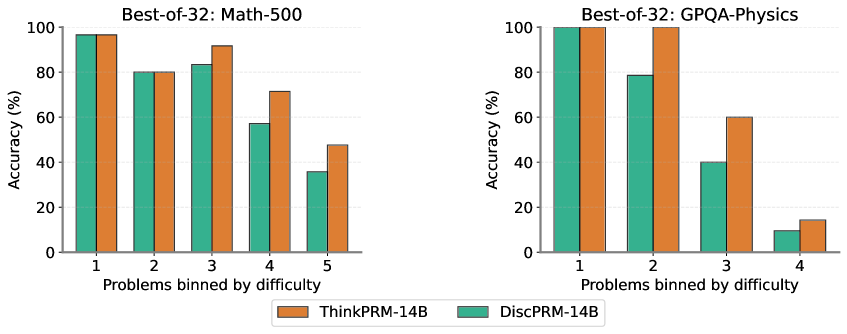

As for OOD performance on GPQA-physics (Fig. 18 left), ThinkPRM scales better than DiscPRM, which drops substantially at N=32, and outperforms it by 8%. On LiveCodeBench (Fig. 18 right), ThinkPRM also outperforms DiscPRM by 4.5%. On LiveCodeBench, Qwen2.5-7B-Math-PRM (Zhang et al., 2025), a discriminative PRM trained on a substantial amount of process labels obtained from LLM-as-a-judge data and Monte Carlo rollouts, struggles when applied out-of-domain. Our results shed light on the fragility of discriminative PRMs under domain shifts, in contrast with generative PRMs.

Scaling ThinkPRM compute boosts performance.

Under verifier-guided search (shown in Fig. 17), parallel scaling with ThinkPRM-1.5B@4 boosts accuracy by more than 5 percentage points and yields the best accuracy on MATH-500. In addition, parallel scaling with ThinkPRM-14B@4 and ThinkPRM-14B@8 boosts best-of-N performance on MATH-500, as shown in Fig. 31 in Section E.6. We now move to sequential scaling of verifier compute by forcing ThinkPRM to recheck its own verification. Since this can be compute-intensive, we only run this on 200 problems from the OmniMath subset of ProcessBench, and observe how verification F1 improves as we force the model to think for longer, as shown in Fig. 2. ThinkPRM exhibits better scaling behavior than LLM-as-a-judge, whose performance drops after 16K tokens, and outperforms DiscPRM-14B by 15 F1 points. In summary, ThinkPRM is consistently better than LLM-as-a-judge under parallel and sequential scaling.

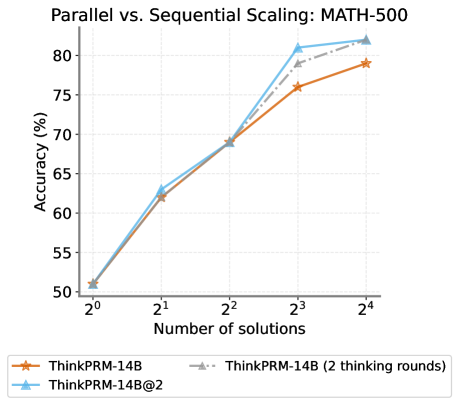

Parallel scaling vs. sequential scaling.

Is it preferable to scale verifier compute in parallel or sequentially? We investigate this by comparing the two modes of scaling under the same token budget. Fig. 32 in Section E.6 shows best-of-N performance with Qwen-2.5-14B under parallel and sequential scaling with $K=2,4$. Overall, the performance of both methods is fairly close, but we observe a slight advantage for parallel scaling under certain budgets.

<details>

<summary>x10.png Details</summary>

### Visual Description

## Charts: Best-of-N Performance Comparison

### Overview

The image presents two line charts comparing the performance of different language models on two out-of-distribution (OOD) datasets: GPQA-physics and LiveCodeBench. The charts plot performance metrics (Accuracy for GPQA-physics and Pass Rate for LiveCodeBench) against the number of solutions generated by the models.

### Components/Axes

Both charts share the following components:

* **X-axis:** "Number of solutions" with markers at 2⁰, 2¹, 2², 2³, 2⁴, and 2⁵.

* **Y-axis:** The left chart displays "Accuracy (%)", ranging from approximately 54% to 72%. The right chart displays "Pass rate (%)", ranging from approximately 55% to 67%.

* **Legend:** Located at the bottom of each chart, identifying the different models/strategies:

* ThinkPRM-14B (Orange, dashed line)

* DiscPRM-14B (Green, solid line)

* Qwen2.5-7B-Math-PRM (Gray, dashed-dotted line)

* Majority (Gray, solid line)

* **Title:** Each chart has a title indicating the dataset being evaluated:

* Left Chart: "Best-of-N: GPQA-physics (OOD)"

* Right Chart: "Best-of-N: LiveCodeBench (OOD)"

* **Generator:** Each chart also indicates the generator used:

* Left Chart: "Generator: Qwen-2.5-32B-Instruct"

* Right Chart: "Generator: Qwen-2.5-Coder-7B"

### Detailed Analysis or Content Details

**Chart 1: GPQA-physics (OOD)**

* **ThinkPRM-14B (Orange):** Starts at approximately 54.5% at 2⁰, increases steadily to around 68% at 2⁴, and peaks at approximately 71.5% at 2⁵.

* **DiscPRM-14B (Green):** Begins at approximately 56% at 2⁰, rises to around 64% at 2³, then declines to approximately 62% at 2⁵.

* **Qwen2.5-7B-Math-PRM (Gray, dashed-dotted):** Starts at approximately 55% at 2⁰, increases to around 61% at 2⁴, and remains relatively stable at approximately 61% at 2⁵.

* **Majority (Gray, solid):** Starts at approximately 54% at 2⁰, increases to around 58% at 2³, and remains relatively stable at approximately 58% at 2⁵.

**Chart 2: LiveCodeBench (OOD)**

* **ThinkPRM-14B (Orange):** Starts at approximately 57.5% at 2⁰, increases to around 66% at 2⁴, and declines slightly to approximately 65% at 2⁵.

* **DiscPRM-14B (Green):** Begins at approximately 59% at 2⁰, rises to around 64% at 2³, and declines to approximately 62% at 2⁵.

* **Qwen2.5-7B-Math-PRM (Gray, dashed-dotted):** Starts at approximately 57.5% at 2⁰, increases to around 61% at 2³, and declines to approximately 58% at 2⁵.

* **Majority (Gray, solid):** Starts at approximately 55% at 2⁰, increases to around 59% at 2³, and remains relatively stable at approximately 58% at 2⁵.

### Key Observations

* In both charts, ThinkPRM-14B generally outperforms the other models, especially at higher numbers of solutions (2⁴ and 2⁵).

* DiscPRM-14B shows an initial increase in performance but then plateaus or declines.

* Qwen2.5-7B-Math-PRM and Majority consistently perform at a lower level than ThinkPRM-14B and DiscPRM-14B.

* The performance gap between the models tends to widen as the number of solutions increases.

### Interpretation

The data suggests that increasing the number of solutions generated by the models can improve performance on both GPQA-physics and LiveCodeBench datasets. ThinkPRM-14B appears to be the most effective strategy, benefiting significantly from generating more solutions. The decline in performance for DiscPRM-14B after 2³ might indicate a point of diminishing returns or potential overfitting to the generated solutions. The relatively stable performance of the Majority baseline suggests that simply selecting the most frequent solution doesn't yield the same benefits as more sophisticated generation and selection strategies. The difference in performance between the two generators (Qwen-2.5-32B-Instruct for GPQA-physics and Qwen-2.5-Coder-7B for LiveCodeBench) suggests that the choice of generator is also important and may be task-dependent. The "Best-of-N" approach, where multiple solutions are generated and the best one is selected, is a promising technique for improving the performance of language models on challenging tasks. The OOD nature of the datasets highlights the importance of evaluating models on data that differs from their training distribution.

</details>

Figure 18: Best-of-N on two out-of-domain tasks: science QA (GPQA-Physics) and code generation (LiveCodeBench). Although ThinkPRM was only finetuned on math, it achieves better OOD performance than the baselines, especially at larger sampling budgets, where the baselines fall short. Discriminative PRMs struggle despite being trained on orders of magnitude more process labels.

5 Analysis and Discussion

5.1 Training data efficiency

A major strength of ThinkPRM is its training data efficiency compared to discriminative PRMs. Here, we study the training scaling behavior of ThinkPRM-14B by training it on 500 and 1K examples collected using the pipeline in Section 3.2, which roughly correspond to 4K and 8K process labels from PRM800K. We compare this to DiscPRM-14B trained with 1K, 10K, 50K, and 98K examples, where 98K corresponds to the full PRM800K train set with 712K step labels. Fig. 1 (Left) contrasts the training data scaling behavior of ThinkPRM-14B with that of DiscPRM-14B: ThinkPRM-14B's performance scales substantially better while using roughly two orders of magnitude fewer process labels. This primarily stems from ThinkPRM's use of the text generation and reasoning abilities of the underlying models.
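As a quick sanity check on the label counts quoted above, the following sketch computes what fraction of the full PRM800K step labels the 1K-example setting uses, and how large the gap is in orders of magnitude. The variable names are illustrative, and the counts are the rounded figures from the text rather than exact dataset statistics.

```python
import math

# Rounded label counts taken from the paragraph above.
thinkprm_labels = 8_000    # ~1K verification CoTs, roughly 8K process labels
discprm_labels = 712_000   # full PRM800K train set (98K examples)

# Fraction of the full label set used by the 1K-example setting.
fraction = thinkprm_labels / discprm_labels

# Gap between the two label budgets, in orders of magnitude (base 10).
orders_of_magnitude = math.log10(discprm_labels / thinkprm_labels)

print(f"fraction of labels used: {fraction:.1%}")
print(f"gap in orders of magnitude: {orders_of_magnitude:.2f}")
```

With these rounded counts the fraction comes out at about 1%, and the gap is just under two orders of magnitude.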

While we train ThinkPRM using only 1K data points, we investigate whether it will benefit from training on more data. Using the pipeline, we collect and filter additional verification CoTs and obtain a total of 65K chains. We then finetune R1-Distill-Qwen-1.5B and R1-Distill-Qwen-14B on these for a single epoch while keeping all other training hyperparameters fixed. We then compare the resulting models to the 1K-trained version of ThinkPRM under best-of-N selection on MATH-500. Figs. 38 and 38 in Section E.7 show a performance boost from training on the 65K examples compared to only 1K. This suggests that ThinkPRM can utilize more training data when available.

5.2 Effect of Verification CoT Length on PRM Quality

We study whether the length of verification chains of thought affects the quality of the resulting generative verifier. Specifically, we compare ThinkPRM trained on the full, long synthetic CoTs with a variant trained on short, compressed versions of the same 1K CoTs. To obtain the short CoTs, we instruct gpt-4o-mini to rewrite each original CoT into a concise version that preserves only the essential reasoning. We then train R1-Qwen-1.5B and R1-Qwen-14B on these short CoTs and evaluate verification F1 on ProcessBench. Table 1 reports the comparison.

| Model |  |  |  |  |
| --- | --- | --- | --- | --- |
| R1-Qwen-1.5B | 87.3 | 75.7 | 64.8 | 66.7 |
| R1-Qwen-14B | 87.3 | 85.7 | 55.3 | 60.8 |

Table 1: Verification F1 when training R1 models on long versus short CoTs.

The substantial performance drop when training on short CoTs emphasizes how much ThinkPRM benefits from extended reasoning. Since verification is a complex task, spending more reasoning effort on it through extended thinking improves performance. These results support the value of using long verification CoTs for training.

5.3 Reasoning traces without clear step boundaries

So far, we have used ThinkPRM to verify short CoTs with clear step delimiters. Here, we investigate whether ThinkPRM can still verify long CoTs that involve extended reasoning, backtracking, and self-correction. As a generator, we use Qwen3-1.7B (Yang et al., 2025) in thinking mode. Although ThinkPRM was only trained on short solutions from PRM800K, it can still verify long CoTs and outperforms the baselines, as shown in Fig. 19 (left). Inspecting ThinkPRM's outputs, we found that it extracts and verifies individual steps embedded in the long CoT; an example is in Fig. 45.

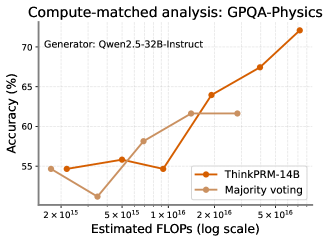

5.4 Compute-matched comparison to self-consistency

Under a fixed test-time compute budget for best-of-N, how does ThinkPRM compare to simply sampling more solutions from the generator and applying majority voting? To investigate this, we conduct a compute-matched analysis on MATH-500 and GPQA-Physics. Fig. 19 (middle and right) plots solution accuracy as a function of sampling FLOPs for MATH-500 and GPQA-Physics. At low sampling budgets, best-of-N with ThinkPRM performs comparably to self-consistency, but as the compute budget increases, ThinkPRM has a clear advantage. These findings agree with recent work on outcome reward models (Singhi et al., 2025).
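To make the comparison concrete, here is a minimal sketch of the two selection strategies and of how a compute-matched sample count could be derived. It rests on several assumptions that are not taken from the paper: the cost model uses the common approximation of about 2 FLOPs per parameter per generated token, and the token counts, scores, and function names are hypothetical. The paper's exact FLOPs accounting is not described in this section.

```python
from collections import Counter


def majority_vote(answers):
    """Self-consistency: return the most frequent final answer."""
    return Counter(answers).most_common(1)[0][0]


def best_of_n(answers, verifier_scores):
    """Best-of-N: return the answer whose solution the verifier scores highest."""
    best_index = max(range(len(answers)), key=lambda i: verifier_scores[i])
    return answers[best_index]


def estimate_flops(num_params, num_tokens):
    """Rough inference cost, assuming ~2 FLOPs per parameter per token."""
    return 2 * num_params * num_tokens


def matched_majority_samples(n, gen_params, gen_tokens, ver_params, ver_tokens):
    """Number of generator samples majority voting can afford with the same
    budget that best-of-N spends on n samples plus n verifications."""
    gen_cost = estimate_flops(gen_params, gen_tokens)
    ver_cost = estimate_flops(ver_params, ver_tokens)
    return (n * (gen_cost + ver_cost)) // gen_cost


# Hypothetical final answers and verifier scores for six sampled solutions.
answers = ["12", "15", "12", "15", "15", "7"]
scores = [0.91, 0.40, 0.85, 0.35, 0.30, 0.10]

print(majority_vote(answers))      # most frequent answer: "15"
print(best_of_n(answers, scores))  # highest-scored answer: "12"

# Hypothetical sizes: 14B generator and verifier, 500 solution tokens,
# 1500 verification tokens per solution.
print(matched_majority_samples(8, 14_000_000_000, 500, 14_000_000_000, 1500))
```

In this toy setting, verifying 8 solutions costs as much as letting majority voting draw 32 samples, which is why the comparison is plotted against FLOPs rather than against the number of samples.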

5.5 ThinkPRM with Monte Carlo step labels

To train ThinkPRM, we have relied on manual step labels from PRM800K. Since automatic labels, e.g., those obtained via Monte Carlo rollouts (Luo et al., 2024), are cheaper, we test whether ThinkPRM can be trained with them instead. We train ThinkPRM-1.5B on 1K synthetic chains based on labels from the Math-Shepherd dataset (Wang et al., 2023b). Performance on ProcessBench is shown in Table 4: training ThinkPRM with automatic labels yields performance comparable to training with manual labels, suggesting that our training pipeline is agnostic to the step-labeling strategy.
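For readers unfamiliar with Monte Carlo step labeling, the sketch below illustrates the general idea: from each solution prefix, sample several completions and label the last step of the prefix by how often those completions reach the correct final answer. This is a simplified illustration, not the procedure used to build Math-Shepherd or the labels in this paper. The `rollout_fn` stub, the threshold, and the number of rollouts are all hypothetical.

```python
import random


def monte_carlo_step_labels(steps, rollout_fn, num_rollouts=8, threshold=0.0):
    """Label each step by the success rate of rollouts from its prefix.

    A step is labeled correct if the fraction of successful rollouts
    exceeds `threshold`. With threshold=0.0, a single successful rollout
    is enough to mark the step as correct.
    """
    labels = []
    for i in range(1, len(steps) + 1):
        prefix = steps[:i]
        successes = sum(rollout_fn(prefix) for _ in range(num_rollouts))
        labels.append(successes / num_rollouts > threshold)
    return labels


# Seeded generator so the toy example is reproducible.
_rng = random.Random(0)


def toy_rollout(prefix):
    """Hypothetical stand-in for sampling a completion from a generator.

    A prefix containing an erroneous step never reaches the correct answer;
    otherwise the completion succeeds with probability 0.7.
    """
    if any("ERROR" in step for step in prefix):
        return False
    return _rng.random() < 0.7


steps = [
    "Let x be the unknown.",
    "Then 2x + 3 = 11.",
    "ERROR: so x = 7.",
    "Therefore the answer is 7.",
]
labels = monte_carlo_step_labels(steps, toy_rollout)
print(labels)
```

In a real pipeline, `rollout_fn` would call the generator model and compare the completed solution's final answer against the reference answer; steps after the first error inherit the failure because no completion from that prefix can recover.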

<details>

<summary>x11.png Details</summary>

### Visual Description

## Line Chart: Best-of-N: MATH-500

### Overview

This line chart displays the accuracy of different models on the MATH-500 dataset as a function of the number of samples (N) used in a "Best-of-N" approach. The x-axis represents the number of samples, expressed as powers of 2 (2<sup>1</sup> to 2<sup>4</sup>), and the y-axis represents the accuracy in percentage. The chart compares the performance of four models: ThinkPRM-1.5B, ThinkPRM-1.5B@4, Majority, and DiscPRM-1.5B.

### Components/Axes

* **Title:** Best-of-N: MATH-500

* **Subtitle:** Generator: Qwen3-1.7B-thinking

* **X-axis Label:** Number of samples (N)

* **X-axis Markers:** 2<sup>1</sup>, 2<sup>2</sup>, 2<sup>3</sup>, 2<sup>4</sup>

* **Y-axis Label:** Accuracy (%)

* **Legend:**

* ThinkPRM-1.5B (Orange, dashed line with triangle markers)

* ThinkPRM-1.5B@4 (Dark Orange, dashed line with square markers)

* Majority (Purple, solid line with circle markers)

* DiscPRM-1.5B (Teal, solid line with diamond markers)

### Detailed Analysis

The chart shows four lines representing the accuracy of each model as the number of samples increases.

* **ThinkPRM-1.5B (Orange):** The line slopes upward, indicating increasing accuracy with more samples.

* At 2<sup>1</sup> (N=2): Approximately 84.7% accuracy.

* At 2<sup>2</sup> (N=4): Approximately 86.2% accuracy.

* At 2<sup>3</sup> (N=8): Approximately 87.2% accuracy.

* At 2<sup>4</sup> (N=16): Approximately 89.1% accuracy.

* **ThinkPRM-1.5B@4 (Dark Orange):** The line also slopes upward, generally above ThinkPRM-1.5B.

* At 2<sup>1</sup> (N=2): Approximately 85.2% accuracy.

* At 2<sup>2</sup> (N=4): Approximately 86.7% accuracy.

* At 2<sup>3</sup> (N=8): Approximately 87.8% accuracy.

* At 2<sup>4</sup> (N=16): Approximately 89.4% accuracy.

* **Majority (Purple):** The line slopes upward, starting lower than the ThinkPRM models but converging towards the higher values.

* At 2<sup>1</sup> (N=2): Approximately 82.5% accuracy.

* At 2<sup>2</sup> (N=4): Approximately 84.2% accuracy.

* At 2<sup>3</sup> (N=8): Approximately 86.2% accuracy.

* At 2<sup>4</sup> (N=16): Approximately 88.8% accuracy.

* **DiscPRM-1.5B (Teal):** The line slopes upward, starting at the lowest accuracy and consistently increasing with more samples.

* At 2<sup>1</sup> (N=2): Approximately 81.2% accuracy.

* At 2<sup>2</sup> (N=4): Approximately 83.2% accuracy.

* At 2<sup>3</sup> (N=8): Approximately 85.2% accuracy.

* At 2<sup>4</sup> (N=16): Approximately 88.2% accuracy.

### Key Observations

* All models show improved accuracy as the number of samples increases.

* ThinkPRM-1.5B@4 consistently outperforms ThinkPRM-1.5B.

* The "Majority" model starts with lower accuracy but shows a significant improvement with more samples, approaching the performance of the ThinkPRM models.

* DiscPRM-1.5B consistently has the lowest accuracy across all sample sizes.

* The differences in accuracy between the models become less pronounced at higher sample sizes (N=16).

### Interpretation

The data suggests that a "Best-of-N" approach is effective in improving accuracy on the MATH-500 dataset. Increasing the number of samples (N) leads to better performance for all methods. ThinkPRM-1.5B@4 appears to be the most robust, consistently achieving the highest accuracy. The "Majority" baseline shows that simple majority voting can be competitive, especially with a larger number of samples. The performance gap between the methods narrows as N increases, indicating that all of them benefit from more samples, but some are more sensitive to sample size than others. The generator used, Qwen3-1.7B-thinking, produces the candidate solutions that each method selects from. This chart compares different verifiers and selection strategies for solving mathematical problems.

</details>

<details>

<summary>x12.png Details</summary>

### Visual Description

## Line Chart: Compute-matched analysis: MATH-500

### Overview

This image presents a line chart comparing the accuracy of two methods, "ThinkPRM-14B" and "Majority voting", as a function of estimated FLOPS (total floating-point operations, i.e., a measure of compute spent rather than a rate) on the MATH-500 dataset. The chart is designed to show how performance scales with computational resources.

### Components/Axes

* **Title:** Compute-matched analysis: MATH-500

* **Subtitle:** Generator: Qwen2.5-14B

* **X-axis Label:** Estimated FLOPS (log scale)

* **X-axis Scale:** Logarithmic, ranging from approximately 1 x 10<sup>15</sup> to 1 x 10<sup>17</sup>. Markers are at 1 x 10<sup>15</sup>, 5 x 10<sup>15</sup>, 1 x 10<sup>16</sup>, 5 x 10<sup>16</sup>, and 1 x 10<sup>17</sup>.

* **Y-axis Label:** Accuracy (%)

* **Y-axis Scale:** Linear, ranging from 50% to 85%. Markers are at 50%, 55%, 60%, 65%, 70%, 75%, 80%, and 85%.

* **Legend:** Located in the bottom-right corner.

* "ThinkPRM-14B" – Represented by a solid orange line with circular markers.

* "Majority voting" – Represented by a dashed orange line with circular markers.

### Detailed Analysis

**ThinkPRM-14B (Solid Orange Line):**

The line slopes upward, indicating increasing accuracy with increasing FLOPS.

* At 1 x 10<sup>15</sup> FLOPS: Approximately 51% accuracy.

* At 5 x 10<sup>15</sup> FLOPS: Approximately 62% accuracy.

* At 1 x 10<sup>16</sup> FLOPS: Approximately 72% accuracy.

* At 5 x 10<sup>16</sup> FLOPS: Approximately 78% accuracy.

* At 1 x 10<sup>17</sup> FLOPS: Approximately 85% accuracy.

**Majority Voting (Dashed Orange Line):**

The line also slopes upward, but with a different trajectory than ThinkPRM-14B.

* At 1 x 10<sup>15</sup> FLOPS: Approximately 51% accuracy.

* At 5 x 10<sup>15</sup> FLOPS: Approximately 52% accuracy.

* At 1 x 10<sup>16</sup> FLOPS: Approximately 75% accuracy.

* At 5 x 10<sup>16</sup> FLOPS: Approximately 78% accuracy.

* At 1 x 10<sup>17</sup> FLOPS: Approximately 79% accuracy.

### Key Observations

* Both methods show an increase in accuracy with increasing FLOPS.

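* Based on the approximate values above, ThinkPRM-14B matches or outperforms Majority voting at most FLOPS levels; the exception is 1 x 10<sup>16</sup> FLOPS, where Majority voting reads slightly higher (about 75% vs. 72%).

* The gap in favor of ThinkPRM-14B is largest at 5 x 10<sup>15</sup> FLOPS (about 62% vs. 52%) and at 1 x 10<sup>17</sup> FLOPS (about 85% vs. 79%).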

* The accuracy of Majority voting plateaus at higher FLOPS levels (above 1 x 10<sup>16</sup> FLOPS).

### Interpretation

The data suggests that ThinkPRM-14B is a more effective method for solving MATH-500 problems than Majority voting, especially when significant computational resources are available. The increasing accuracy with FLOPS indicates that both methods benefit from increased computational power, but ThinkPRM-14B demonstrates a stronger scaling effect. The plateau in Majority voting's accuracy suggests that its performance is limited by factors other than computational resources, such as the inherent limitations of the voting mechanism itself. The generator used, Qwen2.5-14B, likely influences the performance of ThinkPRM-14B. The chart highlights the trade-off between computational cost and accuracy, and suggests that investing in more FLOPS can lead to substantial improvements in performance for ThinkPRM-14B. The initial low accuracy for both methods at the lowest FLOPS level suggests that a minimum level of computation is required to achieve meaningful results.

</details>

<details>

<summary>x13.png Details</summary>

### Visual Description

## Line Chart: Compute-matched analysis: GPQA-Physics

### Overview

This image presents a line chart illustrating the relationship between Estimated FLOPS (on a logarithmic scale) and Accuracy (%) for two different methods: ThinkPRM-14B and Majority voting. The chart focuses on the GPQA-Physics dataset and uses data generated by Qwen2.5-32B-Instruct.

### Components/Axes

* **Title:** Compute-matched analysis: GPQA-Physics