# Agentic Reasoning and Tool Integration for LLMs via Reinforcement Learning

Abstract

Large language models (LLMs) have achieved remarkable progress in complex reasoning tasks, yet they remain fundamentally limited by their reliance on static internal knowledge and text-only reasoning. Real-world problem solving often demands dynamic, multi-step reasoning, adaptive decision making, and the ability to interact with external tools and environments. In this work, we introduce ARTIST (A gentic R easoning and T ool I ntegration in S elf-improving T ransformers), a unified framework that tightly couples agentic reasoning, reinforcement learning, and tool integration for LLMs. ARTIST enables models to autonomously decide when, how, and which tools to invoke within multi-turn reasoning chains, leveraging outcome-based RL to learn robust strategies for tool use and environment interaction without requiring step-level supervision. Extensive experiments on mathematical reasoning and multi-turn function calling benchmarks show that ARTIST consistently outperforms state-of-the-art baselines, with up to 22% absolute improvement over base models and strong gains on the most challenging tasks. Detailed studies and metric analyses reveal that agentic RL training leads to deeper reasoning, more effective tool use, and higher-quality solutions. Our results establish agentic RL with tool integration as a powerful new frontier for robust, interpretable, and generalizable problem-solving in LLMs.

1 Introduction

Large language models (LLMs) have achieved remarkable advances in complex reasoning tasks (Wei et al., 2023; Kojima et al., 2023), fueled by innovations in model architecture, scale, and training. Among these, reinforcement learning (RL) (Sutton et al., 1998) stands out as a particularly effective method for amplifying LLMs’ reasoning abilities. By leveraging outcome-based reward signals, RL enables models to autonomously refine their reasoning strategies (Shao et al., 2024), resulting in longer, more coherent chains of thought (CoT). Notably, RL-trained LLMs show strong improvements not only during training but also at test time (Zuo et al., 2025), dynamically adapting their reasoning depth and structure to each task’s complexity. This has led to state-of-the-art results on a range of benchmarks, highlighting RL’s promise for scaling LLM reasoning.

However, despite these advances, RL-enhanced LLMs still rely primarily on internal knowledge and language modeling (Wang et al., 2024). This reliance becomes a major limitation for time-sensitive or knowledge-intensive questions, where the model’s static knowledge base may be outdated or incomplete, often resulting in inaccuracies or hallucinations (Hu et al., 2024). Moreover, such models struggle with complex, domain-specific, or open-ended problems that require precise computation, structured manipulation, or specialized tool use. These challenges underscore the inadequacy of purely text-based reasoning and the need for LLMs to access and integrate external information sources during the reasoning process.

<details>

<summary>x1.png Details</summary>

### Visual Description

\n

## Diagram: Agent-Environment Interaction Loop

### Overview

The image depicts a diagram illustrating an agent-environment interaction loop, likely representing a reinforcement learning or similar system. It shows a sequence of steps involving a task, a policy model, reasoning, tools, an environment, and a reward, culminating in an answer. The diagram uses arrows to indicate the flow of information and actions.

### Components/Axes

The diagram consists of the following components, arranged horizontally:

1. **Task:** Represented by a blue rectangle with a document icon.

2. **Policy Model:** Represented by a yellow rectangle with a robot icon.

3. **Reasoning:** Represented by a light-green rectangle.

4. **Tools:** Represented by a lavender rectangle.

5. **Environment:** Represented by a light-blue rectangle with icons representing observations ("Obs") and actions ("Action").

6. **Answer:** Represented by a grey rectangle.

7. **Reward:** Represented by a pink rectangle with a ribbon icon.

Arrows connect these components, indicating the flow of information and actions. The arrows are black with arrowheads.

### Detailed Analysis or Content Details

The flow of the diagram is as follows:

1. A **Task** is presented.

2. The **Task** is input to a **Policy Model**.

3. The **Policy Model** outputs to **Reasoning**.

4. **Reasoning** outputs to **Tools**.

5. **Tools** interact with the **Environment**, receiving observations ("Obs") and sending actions ("Action").

6. The **Environment** produces an **Answer**.

7. The **Answer** is evaluated by a **Reward** system.

8. The **Reward** is fed back to the **Policy Model**, completing the loop.

There are no numerical values or scales present in the diagram. The diagram is purely conceptual.

### Key Observations

The diagram highlights a closed-loop system where an agent (represented by the Policy Model, Reasoning, and Tools) interacts with an environment to achieve a task and receives feedback in the form of a reward. The inclusion of "Tools" suggests a more complex agent capable of utilizing external resources. The explicit labeling of "Obs" and "Action" within the Environment block emphasizes the input/output relationship between the agent and its surroundings.

### Interpretation

This diagram illustrates a common paradigm in artificial intelligence, particularly in reinforcement learning and embodied AI. The agent learns to perform a task by iteratively interacting with the environment, receiving rewards for successful actions, and adjusting its policy to maximize those rewards. The "Reasoning" component suggests a level of cognitive processing beyond simple stimulus-response behavior. The "Tools" component indicates the agent is not limited to its inherent capabilities but can leverage external resources to improve performance. The loop structure emphasizes the iterative nature of learning and adaptation. The diagram is a high-level representation and does not specify the details of the algorithms or mechanisms used within each component. It is a conceptual model for understanding how an intelligent agent can interact with and learn from its environment.

</details>

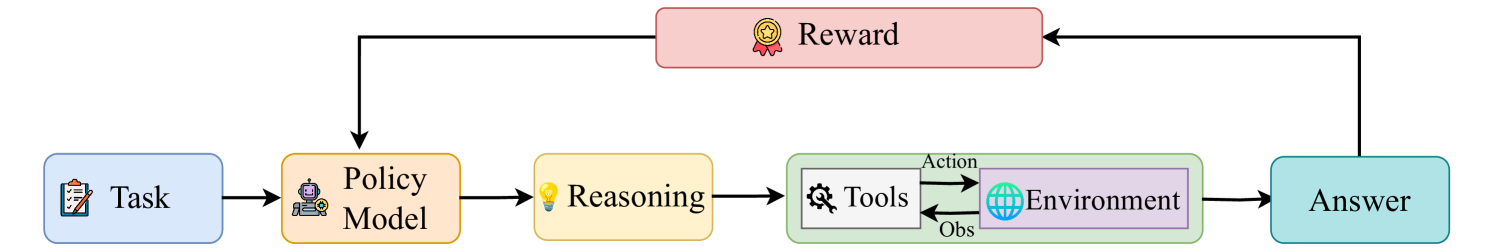

Figure 1: The ARTIST architecture. Agentic reasoning is achieved by interleaving text-based thinking, tool queries, and tool outputs, enabling dynamic coordination of reasoning, tool use, and environment interaction within a unified framework.

Agentic reasoning addresses these limitations by enabling LLMs to dynamically interact with both external resources and environments throughout the reasoning process (Xiong et al., 2025; Patil, 2025). External resources include web search, code execution, API calls, and structured memory, while environments encompass interactive settings such as web browsers (e.g., WebArena (Zhou et al., 2024)) or operating systems (e.g., WindowsAgentArena (Bonatti et al., 2024)). Unlike conventional approaches relying solely on internal inference, agentic reasoning empowers models to orchestrate tool use and adaptively coordinate research, computation, and logical deduction actively engaging with external resources and environments to solve complex, real-world problems (Wu et al., 2025; Rasal and Hauer, 2024).

Many real-world tasks such as math reasoning (Mouselinos et al., 2024), multi-step mathematical derivations, programmatic data analysis (Zhao et al., 2024), or up-to-date fact retrieval demand capabilities that extend beyond the scope of language modeling alone. For example, function-calling frameworks like BFCLv3 (Yan et al., 2024) allow LLMs to invoke specialized functions for file operations, data parsing, or API-based retrieval, while agentic environments such as WebArena empower models to autonomously plan and execute sequences of web interactions to accomplish open-ended objectives. In math problem solving, external libraries like SymPy (Meurer et al., 2017) or NumPy (Harris et al., 2020) empower LLMs to perform symbolic algebra and computation with far greater accuracy and efficiency than text-based reasoning alone.

Despite these advances, current tool-integration strategies for LLMs face major scalability and robustness challenges (Sun et al., 2024). Most approaches rely on hand-crafted prompts or fixed heuristics, which are labor-intensive and do not generalize to complex or unseen scenarios (Luo et al., 2025). While prompting (Gao et al., 2023) and supervised fine-tuning (Gou et al., 2024; Paranjape et al., 2023) can teach tool use, these methods are limited by curated data and struggle to adapt to new tasks or recover from tool failures. As a result, models often misuse tools or revert to brittle behaviors, highlighting the need for scalable, data-efficient, and adaptive tool-use frameworks.

To overcome the limitations of text-only reasoning, we introduce a new paradigm for LLMs: agentic reasoning through tool integration. Our framework, ARTIST (Agentic Reasoning and Tool Integration in Self-Improving Transformers), empowers LLMs to learn optimal strategies for leveraging external tools and interacting with complex environments via reinforcement learning (see Figure 1). Here, “self-improving transformers” refers to models that iteratively generate and learn from their own solutions, progressively tackling harder problems while maintaining the standard transformer architecture. ARTIST interleaves tool queries and tool outputs directly within the reasoning chain, treating tool usage as a first-class operation. In this framework, tool usage is not limited to isolated API calls or computations, but can involve active interaction within external environments—such as navigating a web browser or operating system interface—through sequences of tool invocations. Specifically, the reasoning process alternates between segments of text-based thinking (<think>...</think>), tool queries (e.g., <tool_name>...</tool_name>), and tool outputs (<output>...</output>), allowing the model to seamlessly coordinate reasoning, tool usage and environment interaction.

Motivating Example. Consider a Math Olympiad problem requiring a closed-form expression or complex integral. Traditional RL-trained LLMs (DeepSeek-AI et al., 2025) rely on text-based reasoning, often compounding errors in symbolic manipulation. In contrast, ARTIST enables the model to generate Python code, call a Python interpreter, and use libraries like SymPy (Meurer et al., 2017) for precise computation—seamlessly integrating results into the reasoning chain. This dynamic interplay of language and tool use boosts accuracy and allows iterative self-correction, overcoming the limitations of purely text-based approaches.

This agentic structure enables the model to autonomously decide not only which tools to use, but also when and how to invoke them during multi-turn reasoning—continually adapting its strategy based on context and feedback from both the environment and tool outputs. Tool results inform subsequent reasoning, creating a tightly coupled loop between text-based inference and tool-augmented actions. Notably, ARTIST requires no supervision for intermediate steps or tool calls; instead, we employ reinforcement learning—specifically, the GRPO (Shao et al., 2024) algorithm—to guide the model using only outcome-based rewards. This enables LLMs to develop adaptive, robust, and generalizable tool-use behaviors, overcoming the brittleness and scalability issues of prior methods.

<details>

<summary>x2.png Details</summary>

### Visual Description

\n

## Diagram: Agentic Reasoning + Tool Integration

### Overview

The image is a diagram illustrating a system for agentic reasoning and tool integration. It depicts a process flow starting with an "Input", passing through a "Policy Model", generating an "Answer", and then calculating "Rewards" and "Advantage". The diagram highlights the iterative nature of reasoning and tool use within the policy model.

### Components/Axes

The diagram consists of the following components:

* **Input:** The starting point of the process.

* **Policy Model:** A rectangular block containing multiple layers of "RTO" (Reasoning, Tool, Output) sequences.

* **Agentic Reasoning + Tool Integration:** A label describing the functionality of the Policy Model.

* **Answer:** The output generated by the Policy Model.

* **Reference Model:** A block that receives the "Answer" and outputs a validation indicator ("A" with a checkmark or cross).

* **Reward Model:** A block that receives the "Answer" and outputs "Rewards" ("r").

* **Group Computation:** A block that receives "Rewards" and outputs "Advantage" ("Adv").

* **Rewards:** The output of the Reward Model.

* **Advantage:** The output of the Group Computation.

The diagram also includes labels within the Policy Model:

* **R:** Reasoning

* **T:** Tool

* **O:** Output

### Detailed Analysis or Content Details

The Policy Model is the most complex component. It consists of three horizontal layers, each containing a sequence of "RTO" blocks.

* **Layer 1:** Contains a longer sequence of "RTO" blocks, extending to "..." indicating continuation.

* **Layer 2:** Contains a shorter sequence of "RTO" blocks.

* **Layer 3:** Contains a sequence of "RTO" blocks, followed by "R", "T", "O".

The "Answer" is generated from the Policy Model and is fed into both the "Reference Model" and the "Reward Model". The Reference Model outputs "A" with either a checkmark (positive validation) or a cross (negative validation). The Reward Model outputs "r", which represents the reward signal. The "Rewards" are then processed by the "Group Computation" block to produce "Advantage", represented by "Adv".

### Key Observations

* The iterative "RTO" structure within the Policy Model suggests a cyclical process of reasoning, tool application, and output generation.

* The parallel processing of the "Answer" through the Reference and Reward Models indicates a dual evaluation of the generated output – both for correctness (Reference Model) and value (Reward Model).

* The "Group Computation" step implies that the "Advantage" is derived from a collective assessment of the "Rewards".

* The "..." notation suggests that the process can continue indefinitely, with the Policy Model refining its reasoning and tool usage over time.

### Interpretation

This diagram illustrates a reinforcement learning framework for agentic reasoning. The agent (represented by the Policy Model) interacts with an environment (implicitly represented by the "Tool" component) to achieve a goal. The agent's actions are evaluated by both a Reference Model (ensuring correctness) and a Reward Model (quantifying progress). The Advantage signal is then used to update the Policy Model, guiding it towards more effective reasoning and tool usage. The iterative "RTO" loop suggests a process of trial and error, where the agent learns from its mistakes and refines its strategy over time. The diagram highlights the importance of both accuracy and reward in shaping the agent's behavior. The use of a Reference Model alongside a Reward Model is a notable feature, suggesting a focus on both correctness and efficiency in the agent's decision-making process. The diagram does not provide specific data or numerical values, but rather a conceptual overview of the system's architecture and functionality.

</details>

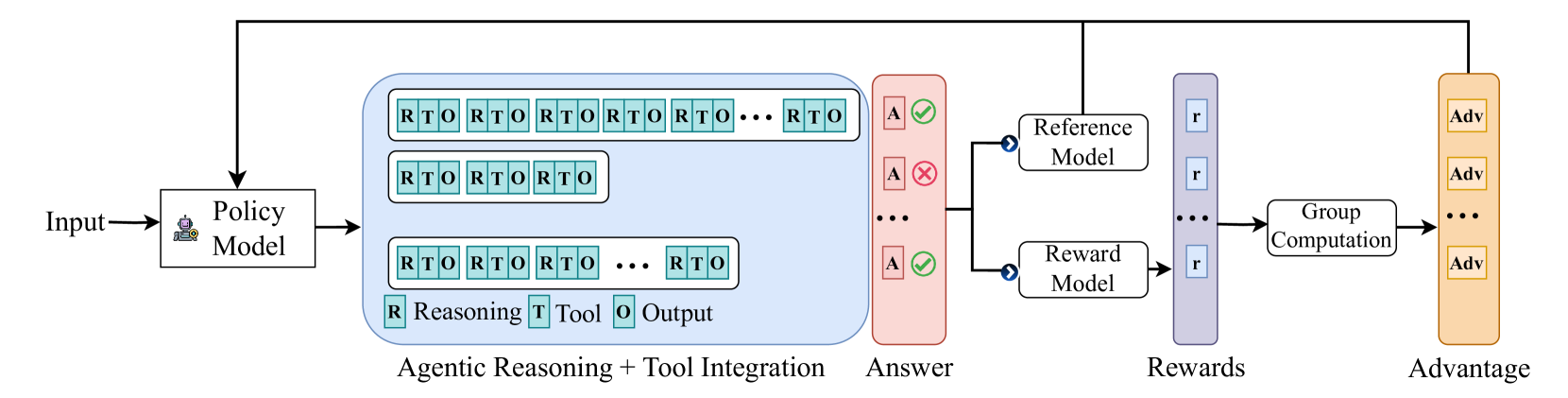

Figure 2: Overview of the ARTIST methodology. The framework illustrates how reasoning rollouts alternate between internal thinking, tool use, and environment interaction, with outcome-based rewards guiding learning. This enables the model to iteratively refine its reasoning and tool-use strategies through reinforcement learning.

To rigorously assess the effectiveness and generality of ARTIST, we conduct extensive experiments in two core domains: complex mathematical problem solving and multi-turn function calling. Our evaluation covers a broad range of challenging benchmarks, including MATH-500 (Hendrycks et al., 2021), AIME (hug, a), AMC (hug, b), and Olympiad Bench (He et al., 2024) for math reasoning, as well as $\tau$ -bench (Yao et al., 2024) and multiple BFCL v3 (Yan et al., 2024) subcategories for multi-turn tool use. We benchmark ARTIST on both 7B and 14B Qwen2.5-Instruct models (Qwen et al., 2025), comparing against a comprehensive suite of baselines—spanning frontier LLMs (e.g., GPT-4o (OpenAI, 2024), DeepSeek-R1 (DeepSeek-AI et al., 2025)), open-source tool-augmented models (e.g., ToRA (Gou et al., 2024), NuminaMath-TIR (Beeching et al., 2024)), prompt-based tool integration, and base models with or without external tools.

ARTIST consistently outperforms all baselines, achieving substantial gains on the most complex tasks. In mathematical reasoning, it delivers up to 22% absolute improvement over base models and surpasses GPT-4o and other leading models on AMC, AIME, and Olympiad. For multi-turn function calling, ARTIST more than doubles the accuracy of base and prompt-based models on $\tau$ -bench and achieves strong gains on the hardest BFCL v3 subsets. Detailed ablations and metric analyses show that agentic RL training in ARTIST leads to deeper reasoning, more effective tool use, and higher-quality solutions.

Across both domains, we observe that ARTIST exhibits emergent agentic behaviors —including adaptive tool selection, iterative self-correction, and context-aware multi-step reasoning —all arising naturally from its unified agentic RL framework. These findings provide compelling evidence that agentic reasoning, when tightly integrated with reinforcement learning and dynamic tool use, marks a paradigm shift in LLM capabilities. As a result, ARTIST not only surpasses prior approaches but also sets a new standard for robust, interpretable, and generalizable problem-solving in real-world scenarios.

Our key contributions are:

- A unified agentic RL framework: We introduce ARTIST, the first framework to tightly couple agentic reasoning, dynamic tool integration, and reinforcement learning for LLMs, enabling adaptive, multi-turn problem solving across diverse domains.

- Generalizable tool use and environment interaction: ARTIST supports seamless integration with arbitrary external tools and environments, allowing LLMs to learn not just which tools to use, but when and how to invoke them within complex reasoning chains.

- Extensive, rigorous evaluation: We provide the most comprehensive evaluation to date, covering both mathematical and multi-turn function calling tasks, a wide range of benchmarks, model scales, and baseline categories, along with detailed ablations and metric analyses.

2 ARTIST Overview

We present ARTIST (Agentic Reasoning and Tool Integration in Self-Improving Transformers), a general and extensible framework that enables large language models (LLMs) to reason with and act upon external tools and environments via reinforcement learning. Unlike prior methods (Schick et al., 2023; Gao et al., 2023) that focus on isolated tool use or narrow domains, ARTIST supports seamless integration with a wide range of tools—including code interpreters, web search engines, and domain-specific APIs—as well as interactive environments such as web browsers and operating systems. This section details the methodology, RL training procedure, prompt templates for structuring reasoning and tool interaction, and our approach to reward modeling.

2.1 Methodology

ARTIST treats tool usage and environment interaction as core components of the model’s reasoning trajectory. The LLM dynamically decides which tools or environments to engage, when to invoke them, and how to incorporate their outputs into multi-step solutions, making it applicable to a broad spectrum of real-world tasks.

Figure 2 illustrates the methodology: for each input, the policy model generates multiple reasoning rollouts, alternating between text-based reasoning (<think>...</think>) and tool interactions. At each step, the model determines which tool to call, when to invoke it, and how to formulate the query based on the current context. The tool interacts with the external environment (e.g., executing code, searching the web, or calling an API) and returns an output, which is incorporated back into the reasoning chain. This iterative process allows the model to explore diverse reasoning and tool-use trajectories. By making tool usage and environment interaction first-class operations, ARTIST enables LLMs to develop flexible, adaptive, and context-aware strategies for complex, multi-step tasks. This agentic approach supports robust self-correction and iterative refinement through continuous interaction with external resources.

2.2 Reinforcement Learning Algorithm

Training agentic LLMs with tool and environment integration requires a reinforcement learning (RL) algorithm that is sample-efficient, stable, and effective with outcome-based rewards. Recent work, such as DeepSeek-R1 (DeepSeek-AI et al., 2025), has shown that Group Relative Policy Optimization (GRPO) (Shao et al., 2024) achieves strong performance in language model RL by leveraging groupwise outcome rewards and removing the need for value function approximation. This reduces training cost and simplifies optimization, making GRPO well-suited for our framework.

Group Relative Policy Optimization.

GRPO extends Proximal Policy Optimization (PPO) (Schulman et al., 2017) by eliminating the critic and instead estimating the baseline from a group of sampled responses. For each question $q$ , a group of responses $\{y_{1},y_{2},...,y_{G}\}$ is sampled from the old policy $\pi_{\text{old}}$ , and the policy model $\pi_{\theta}$ is optimized by maximizing the following objective:

$$

\displaystyle\mathcal{J}_{\text{GRPO}}(\theta)=\mathbb{E}_{x\sim\mathcal{D},\{%

y_{i}\}_{i=1}^{G}\sim\pi_{\text{old}}(\cdot\mid x;\mathcal{R})}\Bigg{[}\frac{1%

}{G}\sum_{i=1}^{G}\frac{1}{\sum_{t=1}^{|y_{i}|}\mathbb{I}(y_{i,t})}\sum_{%

\begin{subarray}{c}t=1\\

(y_{i,t}=1)\end{subarray}}^{|y_{i}|}\min\left(\frac{\pi_{\theta}(y_{i,t}\mid x%

,y_{i,<t};\mathcal{R})}{\pi_{\text{old}}(y_{i,t}\mid x,y_{i,<t};\mathcal{R})}%

\hat{A}_{i,t},\right. \displaystyle\left.\text{clip}\left(\frac{\pi_{\theta}(y_{i,t}\mid x,y_{i,<t};%

\mathcal{R})}{\pi_{\text{old}}(y_{i,t}\mid x,y_{i,<t};\mathcal{R})},1-\epsilon%

,1+\epsilon\right)\hat{A}_{i,t}\right)-\beta\mathbb{D}_{\text{KL}}[\pi_{\theta%

}\|\pi_{\text{ref}}]\Bigg{]} \tag{1}

$$

where $\epsilon$ and $\beta$ are hyperparameters, and $\hat{A}_{i,t}$ represents the advantage, computed based on the relative rewards of outputs within each group.

Adapting GRPO for Agentic Reasoning with Tool Integration.

In ARTIST, rollouts alternate between model-generated reasoning steps and tool outputs, capturing agentic interactions with external tools and environments. Applying token-level loss uniformly can cause the model to imitate deterministic tool outputs, rather than learning effective tool invocation strategies. To prevent this, we employ a loss masking strategy: tokens from tool outputs are masked during loss computation, ensuring gradients are only propagated through model-generated tokens. This focuses optimization on the agent’s reasoning and decision-making, while avoiding spurious updates from deterministic tool responses. The complete training procedure for ARTIST with GRPO is summarized in Algorithm 1.

Algorithm 1 Training ARTIST with Group Relative Policy Optimization (GRPO)

1: Policy model $\pi_{\theta}$ , old policy $\pi_{\text{old}}$ , task dataset $\mathcal{D}$ , group size $G$ , masking function $\mathcal{M}$

2: for each training iteration do

3: for each task $q$ in batch do

4: Sample $G$ rollouts $\{y_{1},...,y_{G}\}$ from $\pi_{\text{old}}$ :

5: for each rollout $y_{i}$ do

6: Initialize reasoning chain

7: while not end of episode do

8: Generate next segment: <think> or <tool_name>

9: if tool is invoked then

10: Interact with environment, obtain <output>

11: Append output to reasoning chain

12: end if

13: end while

14: Compute outcome reward $R(y_{i})$

15: end for

16: Compute groupwise advantages $\hat{A}_{i,t}$ for all $y_{i}$

17: Compute importance weights $r_{i,t}$

18: Apply loss masking $\mathcal{M}$ to exclude tool output tokens

19: Compute GRPO loss $\mathcal{L}_{\text{GRPO}}$ and update $\pi_{\theta}$

20: end for

21: end for

2.3 Rollouts in ARTIST

In ARTIST, rollouts are structured to alternate between internal reasoning and interaction with external tools or environments. Unlike standard RL rollouts that consist solely of model-generated tokens, ARTIST employs an iterative framework where the LLM interleaves text generation with tool and environment queries.

Prompt Template.

ARTIST uses a structured prompt template that organizes outputs into four segments: (1) internal reasoning (<think> … </think>), (2) tool or environment queries (<tool_name> … </tool_name>), (3) tool outputs (<output> … </output>), and (4) the final answer (<answer> … </answer>). Upon issuing a tool query, the model invokes the corresponding tool or environment, appends the output, and continues the reasoning cycle until the answer is produced. The complete prompt template is provided in Appendix A.

Rollout Process.

Each rollout consists of these structured segments, with the policy model deciding at each step whether to reason internally or interact with an external resource. Tool invocations may include code execution, API calls, web search, file operations, or actions in interactive environments like web browsers or operating systems. Outputs from these interactions are incorporated back into the reasoning chain, enabling iterative refinement and adaptive strategy adjustment based on feedback. See Appendix B for illustrative rollout examples in various scenarios.

2.4 Reward Design

A well-designed reward function is essential for effective RL training, as it provides the optimization signal that steers the policy toward desirable behaviors. In GRPO, outcome-based rewards have proven both efficient and effective, supporting robust policy improvement without requiring dense intermediate supervision. However, ARTIST introduces new challenges for reward design: beyond producing correct final answers, the model must also structure its reasoning, tool use, and environment interactions coherently and reliably. To address this, we use a composite reward mechanism that provides fine-grained feedback for each rollout. The reward function in ARTIST consists of three key components:

Answer Reward:

This component assigns a positive reward when the model generates the correct final answer, as identified within the <answer> … </answer> tags. The answer reward directly incentivizes the model to solve the task correctly, ensuring that the ultimate objective of the reasoning process is met.

Format Reward:

To promote structured and interpretable reasoning, we introduce a format reward that encourages adherence to the prescribed prompt template. This reward checks two main criteria: (1) the correct order of execution—reasoning (<think>), tool call (<tool_name>), and tool output (<output>) is maintained throughout the rollout; and (2) the final answer is properly enclosed within <answer> tags. The format reward helps the model learn to organize its outputs in a way that is both consistent and easy to parse, which is essential for reliable tool invocation and downstream evaluation.

Tool Execution Reward:

During each tool interaction, the model’s queries may or may not be well-formed or executable. To encourage robust and effective tool use, we introduce a tool execution reward, defined as the fraction of successful tool calls:

$$

\text{Tool Execution Reward}=\frac{Tool_{success}}{Tool_{total}}

$$

where $Tool_{success}$ and $Tool_{total}$ denote the number of successful and total tool calls, respectively. This reward ensures that the model learns to formulate tool queries that are syntactically correct and executable in the target environment.

3 Case Study

We present two case studies to illustrate the versatility of ARTIST. The first focuses on complex mathematical reasoning, where ARTIST leverages external tools like a Python interpreter for multi-step computation. The second examines multi-turn function calling, demonstrating how ARTIST orchestrates sequential tool use and adapts its reasoning strategy in dynamic, real-world environments.

3.1 Complex Mathematical Reasoning with Agentic Tool Use

While LLMs have demonstrated strong performance on mathematical reasoning tasks using natural language, they often struggle with complex problems that require precise, multi-step calculations—such as multiplying large numbers or evaluating definite integrals. Purely text-based reasoning in these cases can be inefficient and error-prone. To address this, ARTIST augments LLMs with access to an external Python interpreter, enabling the model to offload complex computations and verify intermediate results programmatically.

Prompt Template

During rollouts, the model is prompted to structure its output using special tokens. Internal reasoning is enclosed within <think> … </think> tags, while any code intended for execution is placed within <python> … </python> tags. The code is executed by an external Python interpreter, and the resulting output is returned to the model inside <output> … </output> tags. The final answer is provided within <answer> … </answer> tags. The complete prompt used for guiding the model is listed in Appendix A.

Reward Design

To guide the RL algorithm, we employ three reward components tailored to the mathematical reasoning setting:

- Answer Reward: The model receives a reward of 2 if the final answer exactly matches the ground truth, and 0 otherwise:

$$

R_{\text{answer}}=\begin{cases}2,&\text{if }y_{\text{pred}}=y_{\text{ground}}%

\\

0,&\text{otherwise}\end{cases}

$$

- Format Reward: To encourage structured outputs, we provide both relaxed and strict format rewards:

- Relaxed: For each of the four required tag pairs (<think>, <python>, <output>, <answer>) present in the rollout, a reward of 0.125 is given, up to a maximum of 0.5.

- Strict: An additional reward of 0.5 is awarded if (1) all tags are present, (2) the internal order of opening/closing tags is correct, and (3) the overall structure follows the sequence: <think> $→$ <python> $→$ <output> $→$ <answer>.

- Tool Execution Reward: This reward is proportional to the fraction of successful Python code executions:

$$

R_{\text{tool}}=\frac{\text{Tool}_{\text{success}}}{\text{Tool}_{\text{total}}}

$$

where $\text{Tool}_{\text{success}}$ and $\text{Tool}_{\text{total}}$ denote the number of successful and total tool calls, respectively. The reward ranges from 0, i.e no python code or all python code resulted in compilation error to a maximum of 1, i.e all python code executed successfully.

Example Analysis

Appendix D shows two examples of ARTIST on complex math tasks. Across both examples, ARTIST demonstrates a robust agentic reasoning process that tightly integrates language-based thinking with programmatic tool use. The model systematically decomposes complex math problems into logical steps, alternates between internal reasoning and external computation, and iteratively refines its approach based on intermediate results.

A key strength of ARTIST is the emergence of advanced agentic reasoning capabilities:

- Self-Refinement: The model incrementally adjusts its strategy, such as increasing candidate values or restructuring code, to converge on a correct solution.

- Self-Correction: When encountering errors (e.g., tool execution failures or incorrect intermediate results), the model diagnoses the issue and adapts its subsequent actions accordingly.

- Self-Reflection: At each step, the model evaluates and explains its reasoning, validating results through repeated computation or cross-verification.

These capabilities are not explicitly supervised, but arise naturally from the agentic rollout structure and reward design in ARTIST. As a result, the model is able to solve complex, multi-step mathematical problems with high reliability, leveraging both its language understanding and external tools. This highlights the effectiveness of reinforcement learning with tool integration for enabling flexible, adaptive, and interpretable problem-solving in LLMs. See Appendix D for more details.

3.2 Multi-Turn Function Calling with Agentic Reasoning and Tool Use

Function calling is a core capability in agentic LLM applications, enabling models to answer user queries by invoking external functions to fetch information, perform deterministic operations, or automate workflows. In an agentic setup, access to a suite of functions and their responses forms an interactive environment for LLM agents. This paradigm is increasingly relevant for real-world automation, especially with the adoption of standards like the Model Context Protocol (MCP) Hou et al. (2025) that streamline tool and context integration for LLMs.

In this work, we focus on enhancing LLMs’ ability to perform multi-turn, multi-function tasks—scenarios that require the agent to coordinate multiple function calls, manage intermediate state, and interact with users over extended dialogues. We evaluate ARTIST on BFCL v3 Yan et al. (2024) and $\tau$ Bench Yao et al. (2024), two challenging benchmarks that require long-context reasoning, multiple user interactions, and cascaded function calls (see Experimental setup for more details).

Prompt Template

To maximize performance, we prompt the model to explicitly reason step by step using <reasoning> … </reasoning> tags before issuing function calls within <tool> … </tool> tags. This structure encourages the model to articulate its logic, plan tool use, and adaptively respond to environment feedback. The prompt is provided in Appendix A.

Reward Design

We employ two reward components tailored to the function calling setting. Each is designed to reinforce a critical aspect of agentic reasoning and tool use:

- State Reward: This reward encourages the model to maintain and update the correct state throughout a multi-turn interaction. In the context of function calling, State_match is the number of state variables (e.g., current working directory, selected files, user preferences) that the model correctly tracks or updates, while State_total is the total number of relevant state variables for the task. For example, if the model needs to keep track of which files have been selected and which have been compressed, correctly maintaining both would yield a higher state reward. The reward is scaled by $SR_{\text{max}}$ (set to 0.5), the maximum possible state reward:

$$

R_{\text{state}}=SR_{\text{max}}\times\frac{State_{\text{match}}}{State_{\text%

{total}}}

$$

This reward ensures the agent maintains coherent context and does not lose track of important information across multiple tool calls.

- Function Reward: This reward incentivizes the model to issue the correct sequence of function calls. Here, Functions_matched is the number of function calls that match the expected calls (in terms of both function name and arguments), and Functions_total is the total number of function calls required for the task. For example, if a task requires three specific function calls (listing files, compressing, and emailing), and the model gets two correct, it receives partial credit. $FR_{\text{max}}$ (set to 0.5) is the maximum function reward:

$$

R_{\text{function}}=FR_{\text{max}}\times\frac{Functions_{\text{matched}}}{%

Functions_{\text{total}}}

$$

This reward encourages the agent to plan and execute the correct sequence of actions, mirroring how a human would follow a checklist to complete a task.

- Format Reward: To encourage structured outputs, we provide both relaxed and strict format rewards:

- Relaxed: For the two required tag pairs (<reasoning>, <tool>) present in the rollout, a reward of 0.025 is given, up to a maximum of 0.1.

- Strict: An additional reward of 0.1 is awarded if (1) all tags are present, (2) the internal order of opening/closing tags is correct, and (3) the overall structure follows the sequence: <reasoning> $→$ <\reasoning> $→$ <tool> $→$ <\tool>.

Example Analysis

Appendix E presents four representative examples of ARTIST on multi-turn function calling tasks drawn from the BFCLv3 and $\tau$ -Bench datasets. Across these diverse scenarios—including sequential vehicle control, travel booking and cancellation, item exchange with preference handling, and persistent customer support— ARTIST exhibits a robust agentic reasoning process that tightly integrates step-by-step language-based planning with dynamic tool invocation in interactive environments. The model systematically interprets user requests, sequences and adapts multiple function calls, manages dependencies and state, handles ambiguous or incomplete information, and flexibly recovers from tool errors or workflow constraints.

A key strength of ARTIST is the emergence of advanced agentic reasoning capabilities:

- Self-Refinement: The model incrementally updates its plan in response to evolving requirements, user clarifications, or environment feedback—such as reordering actions, filtering options based on nuanced preferences, or skipping unnecessary steps to efficiently achieve the user’s goal.

- Self-Correction: When faced with tool execution errors, unmet preconditions, or mistaken assumptions (e.g., missing dependencies, unsupported tool actions, or incorrect order/item IDs), the model diagnoses the cause, executes corrective actions, and retries the intended operation without external intervention.

- Self-Reflection: At each stage, the model articulates its reasoning, summarizes the current state, confirms details with the user, and validates outcomes before proceeding, ensuring the overall workflow remains coherent, interpretable, and user-aligned.

See Appendix E for detailed analysis of the examples on multi-turn function calling tasks.

4 Experimental Setup

4.1 Dataset and Evaluation Metrics

We assess ARTIST in two key reasoning domains: complex mathematical problem solving and multi-turn function calling. For each setting, we detail the training and evaluation datasets, along with the metrics used to measure performance.

4.1.1 Complex Mathematical Reasoning

Training Dataset

We curate a training set of 20,000 math word problems, primarily sourced from NuminaMath (LI et al., 2024). The NuminaMath dataset spans a wide range of complexity, from elementary arithmetic and algebra to advanced competition-level problems, ensuring the model is exposed to diverse question types and reasoning depths during training. Each problem is paired with a ground-truth final answer, enabling outcome-based reinforcement learning without requiring intermediate step supervision.

Evaluation Dataset

To assess generalization and robustness, we evaluate on four established math benchmarks:

- MATH-500 (Hendrycks et al., 2021): A diverse set of 500 competition-style math problems.

- AIME (hug, a) and AMC (hug, b): Standardized high school mathematics competition datasets.

- Olympiad Bench (He et al., 2024): A challenging set of olympiad-level problems requiring multi-step reasoning.

Evaluation metrics

We report Pass@1 accuracy: the percentage of problems for which the model’s final answer exactly matches the ground truth. This metric reflects the model’s ability to arrive at a correct solution in a single attempt.

4.1.2 Multi-Turn Function Calling

Training Dataset

For multi-turn function calling, we use a subset of 100 annotated tasks from the base multi-turn category of BFCL v3 (Berkeley Function-Calling Leaderboard) as the training dataset. The remaining 100 annotated tasks are used as validation dataset. Each task requires the agent to issue and coordinate multiple function calls in response to user queries, often involving state tracking and error recovery.

Evaluation Datasets

We evaluate on two major benchmarks:

- BFCL v3 (Yan et al., 2024): This benchmark covers a range of domains (vehicle control, trading bots, travel booking, file systems, and cross-functional APIs) and includes several subcategories:

- Missing parameters: Tasks where the agent must identify and request missing information.

- Missing function: Scenarios where no available function can fulfill the user’s request.

- Long context: Tasks with extended, information-dense user interactions.

- $\tau$ -bench (Yao et al., 2024): A conversational benchmark simulating realistic user-agent dialogues in airline (50 tasks) and retail (115 tasks) domains. The agent must use domain-specific APIs and follow policy guidelines to achieve a predefined goal state in the system database.

Evaluation Metric

We use Pass@1 accuracy, defined as the fraction of tasks for which the agent’s final response is correct and the resulting environment state matches the benchmark’s ground truth.

4.2 Implementation Details

Complex Mathematical Reasoning

We train ARTIST using the Qwen/Qwen2.5-7B-Instruct (Qwen et al., 2025) and Qwen/Qwen2.5-14B-Instruct (Qwen et al., 2025) models. For each training instance, we sample 6 reasoning rollouts per question with a temperature of 1.0 to encourage exploration. Following prior work (Xu et al., 2025), we set a high generation budget of 8,000 tokens to accommodate long-form, multi-step reasoning. During rollouts, the model alternates between text generation and tool invocation, using a Python interpreter as the external tool. The interpreter executes code via Python’s exec() function, and returns structured feedback to the model, including successful outputs, missing print statements, or detailed error messages.

Multi-Turn Function Calling

For multi-turn function calling, we use Qwen/Qwen2.5-7B-Instruct as the base model. Training is performed with GRPO, sampling 8 rollouts per question at a temperature of 1.0. Each rollout consists of multiple tool calls and their outputs, with the number of user turns per task set to 1 to control rollout complexity. The system returns the output of each function call in <tool_result> tags, including explicit failure messages when applicable. The maximum context window is set to 16384 tokens, and the maximum response length per rollout is 2048 tokens. Losses for rollouts which exceed the maximum completion length are masked.

See Appendix C for additional details, including batch size, optimizer settings, learning rate schedule, hardware specifications, and the exact formatting of tool outputs and error handling All code, hyperparameters, and configuration files will be released soon..

4.3 Baselines

To rigorously evaluate the effectiveness of ARTIST, we compare its performance against a comprehensive set of baselines spanning four distinct categories in both complex mathematical reasoning and multi-turn function calling tasks. This diverse selection ensures a fair and thorough assessment of ARTIST ’s capabilities relative to both state-of-the-art and widely used approaches.

- Frontier LLMs (Frontier): Leading proprietary models such as GPT-4o (OpenAI, 2024) and DeepSeek R1 (DeepSeek-AI et al., 2025), representing the current state-of-the-art in large-scale language modeling and serving as strong upper bounds for text-based and reasoning performance.

- Open-Source Tool-Augmented LLMs (Tool-OS): Models such as Numina (Beeching et al., 2024), ToRA (Gou et al., 2024), and PAL (Gao et al., 2023), which are designed to leverage external tools or code execution. These models are directly relevant for comparison with ARTIST ’s tool-augmented approach.

- Base LLMs (Base): Standard open-source models such as Qwen 2.5-7B and Qwen 2.5-14B, evaluated in their vanilla form without tool augmentation. These provide a transparent, reproducible, and widely adopted baseline.

- Base LLMs + External Tools with Prompt Modifications (Base-Prompt+Tools): Base LLMs equipped with access to external tools, but relying on prompt engineering or reasoning token modifications (e.g., explicit tool-use instructions or reasoning tags). This tests the effectiveness of prompt-based tool integration and reasoning.

5 Results

| Method Frontier LLMs GPT-4o | MATH-500 0.630 | AIME 0.080 | AMC 0.430 | Olympiad 0.290 |

| --- | --- | --- | --- | --- |

| Frontier Open-Source LLMs | | | | |

| DeepSeek-R1-Distill-Qwen-7B | 0.858 | 0.211 | 0.675 | 0.395 |

| DeepSeek-R1 | 0.850 | 0.300 | 0.810 | 0.460 |

| Open-Source Tool-Augmented LLMs | | | | |

| NuminaMath-TIR-7B | 0.530 | 0.060 | 0.240 | 0.190 |

| ToRA-7B | 0.410 | 0.000 | 0.070 | 0.130 |

| ToRA-Code-7B | 0.460 | 0.000 | 0.100 | 0.160 |

| Qwen2.5-7B (PAL) | 0.100 | 0.000 | 0.050 | 0.020 |

| Base LLMs | | | | |

| Qwen2.5-7B-Instruct | 0.620 | 0.040 | 0.350 | 0.210 |

| Qwen2.5-14B-Instruct | 0.700 | 0.060 | 0.330 | 0.240 |

| Base LLMs + Tools via Prompt | | | | |

| Qwen2.5-7B-Instruct + Python Tool | 0.629 | 0.122 | 0.349 | 0.366 |

| Qwen2.5-14B-Instruct + Python Tool | 0.671 | 0.100 | 0.410 | 0.371 |

| ARTIST | | | | |

| Qwen2.5-7B-Instruct + ARTIST | 0.676 | 0.156 | 0.470 | 0.379 |

| Qwen2.5-14B-Instruct + ARTIST | 0.726 | 0.122 | 0.550 | 0.420 |

Table 1: Pass@1 accuracy on four mathematical reasoning benchmarks. ARTIST consistently outperforms all baselines, especially on complex tasks.

5.1 Results: Complex Math Reasoning

We conduct a comprehensive evaluation of ARTIST against a diverse set of baselines for complex mathematical reasoning.

5.1.1 Quantitative Results and Comparison

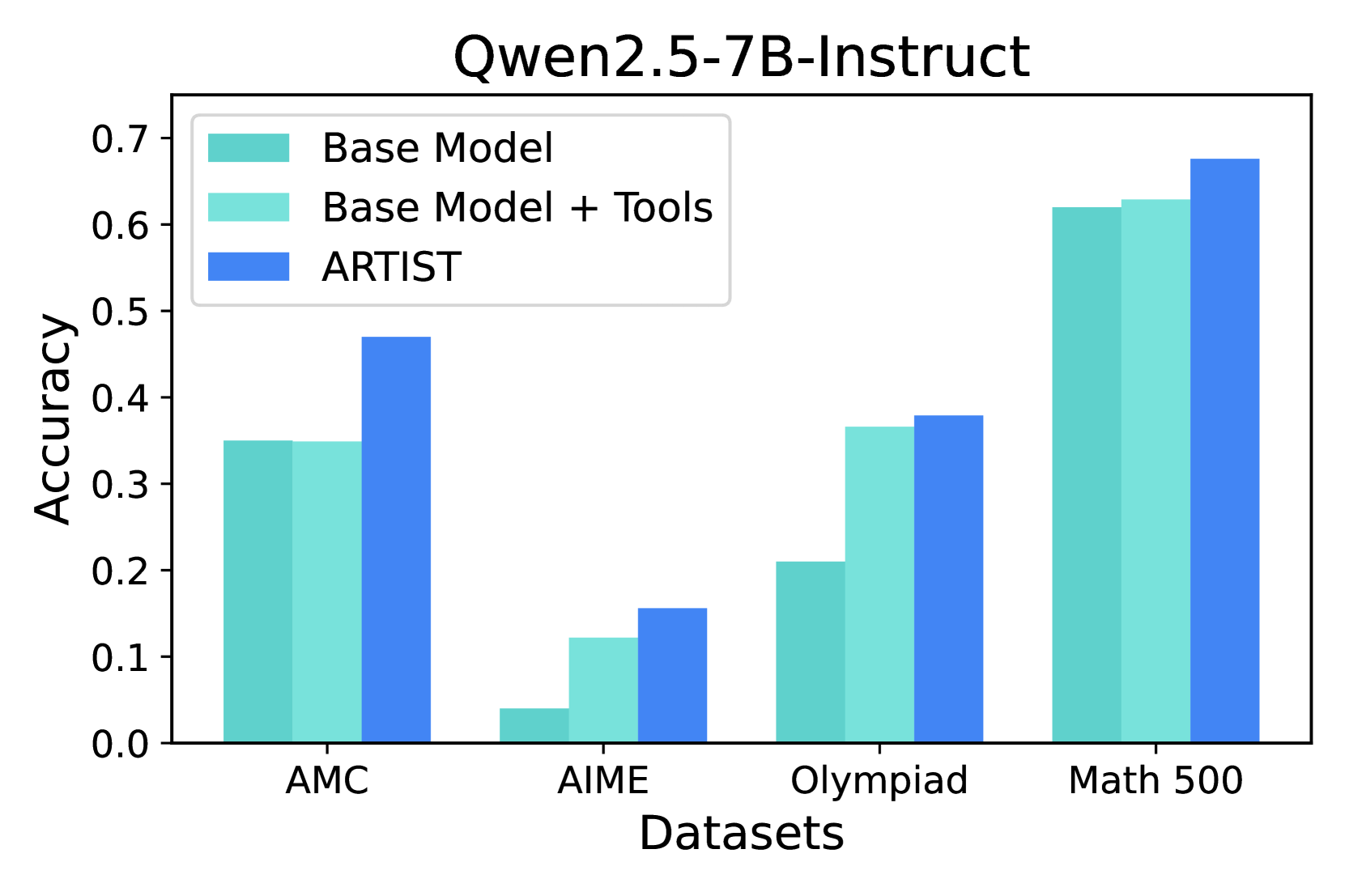

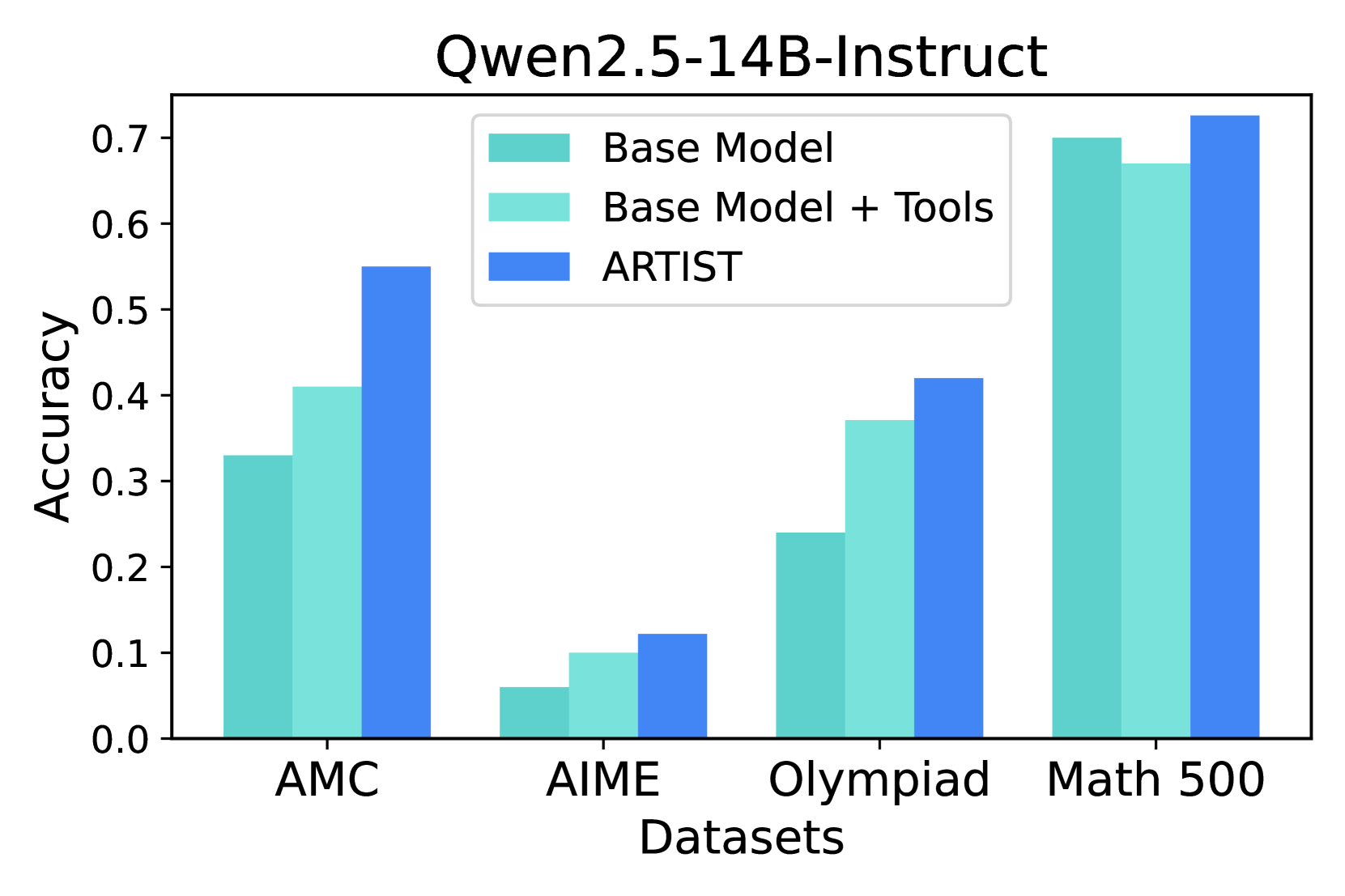

AMC, AIME, and Olympiad: Impact of Complexity

Table 1 reports Pass@1 accuracy across four challenging benchmarks: MATH-500, AIME, AMC, and Olympiad Bench. On the most challenging benchmarks—AMC, AIME, and Olympiad— ARTIST delivers substantial absolute improvements over all baseline categories. For example, on AMC, Qwen2.5-7B- ARTIST achieves 0.47 Pass@1, outperforming the base model (0.35) by +12.0%, the prompt-based tool baseline (0.349) by +12.1%, and the best open-source tool-augmented baseline (NuminaMath-TIR, 0.24) by +23.0%. The gains are even larger for Qwen2.5-14B- ARTIST, which achieves 0.55 on AMC an absolute improvement of +22.0% over the base model and +14.0% over the prompt-based tool baseline.

Similar trends are observed on AIME and Olympiad. On AIME, Qwen2.5-7B- ARTIST improves over the base model by +11.6% (0.156 vs. 0.04), and over the prompt-based tool baseline by +3.4% (0.156 vs. 0.122). On Olympiad, the improvements are +16.9% over the base (0.379 vs. 0.21) and +1.3% over the prompt-based tool baseline (0.379 vs. 0.366). For Qwen2.5-14B- ARTIST, the gains are even more pronounced: +18.0% on Olympiad (0.42 vs. 0.24) and +6.2% on AIME (0.122 vs. 0.06) compared to base model. Similarly, when compared with Base-Prompt+Tools, Qwen2.5-14B- ARTIST we see a boost of 14% on AMC, 5.5% on MATH-500, 5.9% on olympiad and 2.2% on AIME. Compared to open-source tool-augmented LLMs, ARTIST achieves up to +35.9% improvement over PAL on Olympiad and +9.6% over NuminaMath-TIR on AIME.

These results highlight that as the complexity of the reasoning task increases, the advantages of dynamic tool use and agentic reasoning become more significant. ARTIST is able to decompose complex problems, invoke external computation when needed, and iteratively refine its solutions—capabilities that are critical for high-level competition math (see Figure 4).

MATH-500: Internal Knowledge vs. Tool Use

On MATH-500, which contains a broader mix of problem difficulties but is generally less challenging than AMC, AIME, or Olympiad, the absolute improvements of ARTIST over baselines are more modest. For Qwen2.5-7B, ARTIST achieves 0.676, a +5.6% improvement over the base model (0.62) and +4.7% over the prompt-based tool baseline (0.629). For Qwen2.5-14B, the gains are +2.6% over base (0.726 vs. 0.7) and +5.5% over the prompt-based tool baseline (0.726 vs. 0.671). This suggests that for less complex problems, the model’s internal knowledge is often sufficient, and the marginal benefit of agentic tool use is reduced (see Figure 4).

Summary: ARTIST delivers the largest gains on the most complex benchmarks (AMC, AIME, Olympiad), where dynamic tool integration and multi-step agentic reasoning are essential, highlighting that RL-driven tool use is critical for solving advanced mathematical problems.

<details>

<summary>x3.png Details</summary>

### Visual Description

\n

## Bar Chart: Qwen2.5-7B-Instruct Accuracy on Math Datasets

### Overview

This bar chart compares the accuracy of three different models – Base Model, Base Model + Tools, and ARTIST – on four math datasets: AMC, AIME, Olympiad, and Math 500. The accuracy is measured on the y-axis, ranging from 0.0 to 0.7, while the datasets are listed on the x-axis.

### Components/Axes

* **Title:** Qwen2.5-7B-Instruct

* **X-axis Label:** Datasets

* **Y-axis Label:** Accuracy

* **Datasets (X-axis):** AMC, AIME, Olympiad, Math 500

* **Models (Legend):**

* Base Model (Light Blue)

* Base Model + Tools (Turquoise)

* ARTIST (Blue)

### Detailed Analysis

The chart consists of grouped bar plots for each dataset, representing the accuracy of each model.

**AMC Dataset:**

* Base Model: Approximately 0.34

* Base Model + Tools: Approximately 0.48

* ARTIST: Approximately 0.46

**AIME Dataset:**

* Base Model: Approximately 0.08

* Base Model + Tools: Approximately 0.14

* ARTIST: Approximately 0.09

**Olympiad Dataset:**

* Base Model: Approximately 0.21

* Base Model + Tools: Approximately 0.34

* ARTIST: Approximately 0.38

**Math 500 Dataset:**

* Base Model: Approximately 0.61

* Base Model + Tools: Approximately 0.64

* ARTIST: Approximately 0.68

**Trends:**

* For all datasets, ARTIST generally outperforms the Base Model.

* Adding tools to the Base Model consistently improves performance.

* The largest performance difference between models is observed on the Math 500 dataset.

* The Base Model + Tools and ARTIST models show similar performance on the AMC dataset.

### Key Observations

* The ARTIST model achieves the highest accuracy across all datasets.

* The Base Model performs relatively poorly on the AIME and Olympiad datasets.

* The Math 500 dataset shows the highest overall accuracy scores for all models.

* The addition of tools significantly boosts the performance of the Base Model, particularly on the AMC and Olympiad datasets.

### Interpretation

The data suggests that the ARTIST model is the most effective at solving problems from these math datasets, followed by the Base Model with added tools. The Base Model alone exhibits lower accuracy, especially on more challenging datasets like AIME and Olympiad. The consistent improvement observed when tools are added to the Base Model indicates that these tools provide valuable assistance in problem-solving. The higher accuracy scores on the Math 500 dataset may be due to the dataset's characteristics, potentially being less complex or more aligned with the models' training data. The differences in performance across datasets highlight the varying difficulty levels and the models' ability to generalize to different types of math problems. The chart demonstrates the effectiveness of model enhancement through tool integration and the potential for further improvement in AI-driven math problem-solving.

</details>

Figure 3: Qwen2.5-7B-Instruct: Performance on Math datasets.

<details>

<summary>x4.png Details</summary>

### Visual Description

\n

## Bar Chart: Qwen2.5-14B-Instruct Accuracy on Math Datasets

### Overview

This bar chart compares the accuracy of three models – Base Model, Base Model + Tools, and ARTIST – on four different math datasets: AMC, AIME, Olympiad, and Math 500. Accuracy is measured on the y-axis, and the datasets are displayed on the x-axis.

### Components/Axes

* **Title:** Qwen2.5-14B-Instruct (top-center)

* **X-axis Label:** Datasets (bottom-center)

* **Y-axis Label:** Accuracy (left-center)

* **Legend:** Located in the top-left corner.

* Base Model (light teal)

* Base Model + Tools (light blue)

* ARTIST (dark blue)

* **Datasets (X-axis Markers):** AMC, AIME, Olympiad, Math 500.

### Detailed Analysis

The chart consists of four groups of three bars, one for each dataset and model combination.

**AMC Dataset:**

* Base Model: Approximately 0.41 accuracy.

* Base Model + Tools: Approximately 0.53 accuracy.

* ARTIST: Approximately 0.55 accuracy.

*Trend:* All three models show positive accuracy, with ARTIST and Base Model + Tools performing better than the Base Model.

**AIME Dataset:**

* Base Model: Approximately 0.08 accuracy.

* Base Model + Tools: Approximately 0.09 accuracy.

* ARTIST: Approximately 0.11 accuracy.

*Trend:* Accuracy is significantly lower for all models on the AIME dataset compared to the AMC dataset. ARTIST performs best, but the difference between the models is smaller.

**Olympiad Dataset:**

* Base Model: Approximately 0.27 accuracy.

* Base Model + Tools: Approximately 0.29 accuracy.

* ARTIST: Approximately 0.32 accuracy.

*Trend:* Accuracy is higher than AIME but lower than AMC. ARTIST consistently outperforms the other two models.

**Math 500 Dataset:**

* Base Model: Approximately 0.68 accuracy.

* Base Model + Tools: Approximately 0.71 accuracy.

* ARTIST: Approximately 0.73 accuracy.

*Trend:* The highest accuracy scores are observed on the Math 500 dataset. ARTIST again shows the highest performance, followed closely by Base Model + Tools.

### Key Observations

* ARTIST consistently outperforms both the Base Model and the Base Model + Tools across all datasets.

* The Base Model + Tools generally performs better than the Base Model alone.

* Accuracy varies significantly depending on the dataset, with the Math 500 dataset yielding the highest scores and the AIME dataset the lowest.

* The performance gap between the models is most pronounced on the AMC and Math 500 datasets.

### Interpretation

The data suggests that the ARTIST model is the most effective at solving math problems across the tested datasets. The addition of tools to the Base Model provides a moderate improvement in accuracy. The varying performance across datasets indicates that the difficulty and nature of the problems within each dataset influence the models' ability to solve them. The Math 500 dataset, with its higher accuracy scores, may contain problems that are more aligned with the models' training data or capabilities. The AIME dataset, with its lower scores, may present unique challenges. The consistent outperformance of ARTIST suggests that its architecture or training methodology is particularly well-suited for tackling these types of math problems. The data demonstrates a clear hierarchy of performance: ARTIST > Base Model + Tools > Base Model. This could be due to the ARTIST model's ability to leverage more complex reasoning or problem-solving strategies.

</details>

Figure 4: Qwen2.5-14B-Instruct: Performance on Math datasets.

ARTIST vs. Base LLMs + External Tools with Prompt Modifications (Base-Prompt+Tools)

Even though the base model with prompt modifications and tool access (Base-Prompt+Tools) is provided with the ability to call external tools, it consistently underperforms compared to ARTIST. Results indicate that, without explicit agentic training, the model struggles to learn when and how to invoke tools effectively, often failing to integrate tool outputs into the broader reasoning process. In contrast, ARTIST is explicitly trained to coordinate tool use within its reasoning chain, enabling it to leverage external computation in a dynamic and context-aware manner. This highlights the importance of agentic reinforcement learning for teaching LLMs to not only access tools, but to strategically incorporate their capabilities to enhance overall problem-solving performance.

Summary: Prompting base models to use tools yields limited improvements; explicit agentic RL training in ARTIST enables models to learn effective tool use, resulting in up to 12.1% higher accuracy on AMC and consistently superior performance across all tasks.

ARTIST vs. Open-Source Tool-Augmented LLMs (Tool-OS)

Compared to tool-integrated baselines such as ToRA, NuminaMath-TIR, ToRA-Code, and PAL, ARTIST achieves substantial improvements at the same model scale. For Qwen2.5-7B, ARTIST outperforms ToRA-7B by an average of 26.7% (15.25% $→$ 42%), NuminaMath-TIR by 16.5% (25.5% $→$ 42%), ToRA-Code by 24% (18% $→$ 42%), and PAL by 37.7% (4.25% $→$ 42%) across all benchmarks. These gains highlight that RL-driven agentic reasoning enables more effective and adaptive tool use than approaches relying on tool finetuning or inference-only tool integration.

Summary: RL-based agentic training in ARTIST enables LLMs to integrate and reason with tools far more effectively than prior tool-augmented methods, yielding double-digit accuracy improvements across challenging math tasks.

ARTIST vs. Frontier LLMs (Frontier)

ARTIST outperforms GPT-4o across all benchmarks, even at the 7B scale, with absolute gains of 8.9% on Olympiad (37.9% vs. 29%), 7.6% on AIME (15.6% vs. 8%), 4.6% on MATH-500 (67.6% vs. 63%), and 4% on AMC (47% vs. 43%). At 14B, the gap widens further, with improvements of 13% on Olympiad, 12% on AMC, 9.6% on MATH-500, and 4.2% on AIME. While DeepSeek-R1 and its distilled variant perform strongly, they require large teacher models or additional supervised alignment, whereas ARTIST achieves competitive results using only outcome-based RL and tool integration. Incorporating such techniques could further enhance ARTIST, which we leave for future work.

Summary: ARTIST surpasses state-of-the-art frontier models like GPT-4o on all math benchmarks, demonstrating that agentic RL with tool integration can close and even exceed the performance gap with much larger proprietary models.

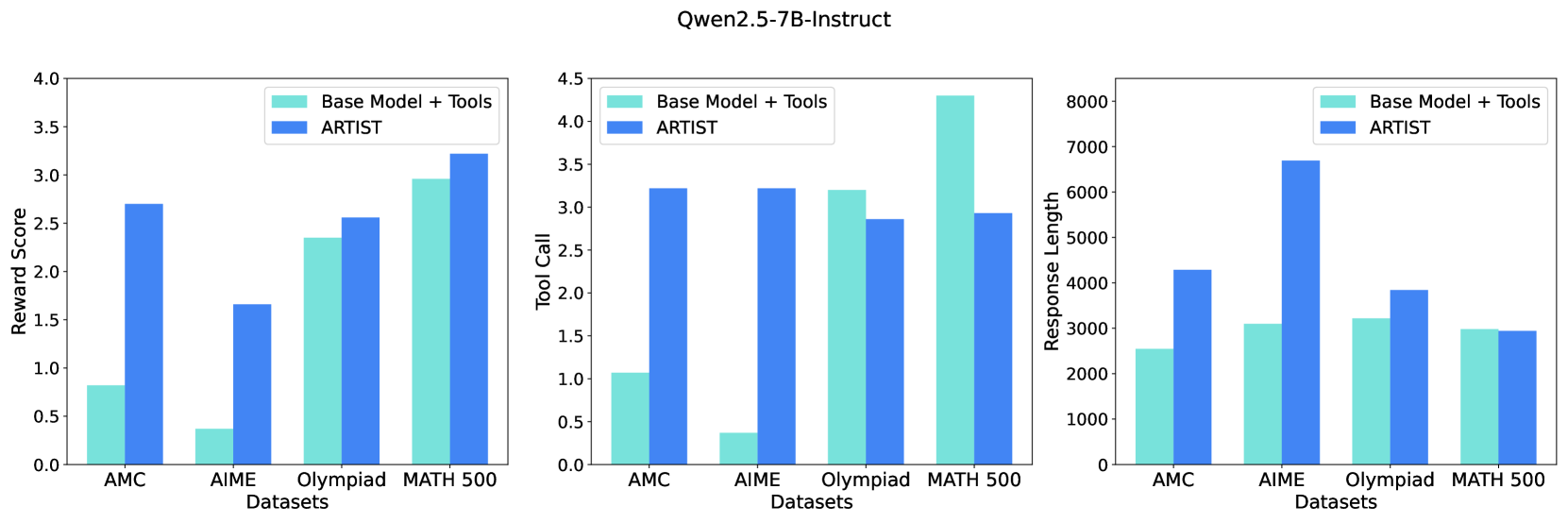

5.1.2 Metrics

We evaluate the effectiveness of ARTIST using three key metrics: (1) Reward Score (solution quality), (2) Number of Tool Calls (external tool utilization), and (3) Response Length (reasoning depth). Figure 5 compares ARTIST with the Base-Prompt+Tools across these dimensions.

<details>

<summary>x5.png Details</summary>

### Visual Description

## Bar Chart: Qwen2.5-7B-Instruct Performance Comparison

### Overview

The image presents three bar charts comparing the performance of "Base Model + Tools" and "ARTIST" across four datasets: AMC, AIME, Olympiad, and MATH 500. Each chart visualizes a different metric: Reward Score, Tool Call, and Response Length. The charts are arranged horizontally, side-by-side.

### Components/Axes

Each chart shares the following components:

* **X-axis:** "Datasets" with categories: AMC, AIME, Olympiad, MATH 500.

* **Y-axis:** Varies per chart:

* Chart 1: "Reward Score" (Scale: 0.0 to 4.0)

* Chart 2: "Tool Call" (Scale: 0.0 to 4.5)

* Chart 3: "Response Length" (Scale: 0 to 8000)

* **Legend:** Located at the top-left of each chart, distinguishing between "Base Model + Tools" (light blue) and "ARTIST" (dark blue).

### Detailed Analysis or Content Details

**Chart 1: Reward Score**

* **AMC:** "Base Model + Tools" ≈ 2.6, "ARTIST" ≈ 0.7

* **AIME:** "Base Model + Tools" ≈ 2.1, "ARTIST" ≈ 1.7

* **Olympiad:** "Base Model + Tools" ≈ 2.7, "ARTIST" ≈ 2.3

* **MATH 500:** "Base Model + Tools" ≈ 3.2, "ARTIST" ≈ 3.1

The "Base Model + Tools" consistently achieves higher reward scores than "ARTIST" across all datasets. The difference is most pronounced for the AMC dataset.

**Chart 2: Tool Call**

* **AMC:** "Base Model + Tools" ≈ 3.1, "ARTIST" ≈ 3.0

* **AIME:** "Base Model + Tools" ≈ 3.2, "ARTIST" ≈ 3.1

* **Olympiad:** "Base Model + Tools" ≈ 3.5, "ARTIST" ≈ 3.3

* **MATH 500:** "Base Model + Tools" ≈ 4.0, "ARTIST" ≈ 3.8

"Base Model + Tools" generally exhibits a higher tool call rate than "ARTIST", with the largest difference observed in the MATH 500 dataset.

**Chart 3: Response Length**

* **AMC:** "Base Model + Tools" ≈ 3000, "ARTIST" ≈ 3000

* **AIME:** "Base Model + Tools" ≈ 3000, "ARTIST" ≈ 3000

* **Olympiad:** "Base Model + Tools" ≈ 6500, "ARTIST" ≈ 7000

* **MATH 500:** "Base Model + Tools" ≈ 4000, "ARTIST" ≈ 4000

The response length is similar for both models on AMC, AIME, and MATH 500. However, "ARTIST" generates significantly longer responses for the Olympiad dataset.

### Key Observations

* "Base Model + Tools" consistently outperforms "ARTIST" in Reward Score and Tool Call.

* Response Length is comparable for most datasets, except for Olympiad where "ARTIST" produces longer responses.

* The performance gap between the two models is most significant for the AMC dataset in terms of Reward Score.

### Interpretation

The data suggests that augmenting the base model with tools improves its performance, as measured by Reward Score and Tool Call, across various datasets. The longer response length of "ARTIST" on the Olympiad dataset might indicate a tendency to provide more verbose or detailed answers, potentially at the cost of conciseness or relevance (as reflected in the lower Reward Score). The consistent advantage of "Base Model + Tools" suggests that the tools are effectively utilized to enhance problem-solving capabilities. The relatively small difference in response length for AMC, AIME, and MATH 500 indicates that the tool integration doesn't drastically alter the length of the generated responses for those datasets. The data points to a trade-off between reward/tool usage and response length, with "ARTIST" favoring length and "Base Model + Tools" favoring efficiency and reward.

</details>

Figure 5: Average reward score, Tool call and the response length metric across all math datasets (ARTIST vs. Base-Prompt+Tools).

Reward Score (Solution Quality)

ARTIST dramatically improves solution quality, as measured by the reward score, on both AMC and AIME datasets. For example, on AMC, the average reward score nearly triples from 0.9 (Base-Prompt+Tools) to 2.8 (ARTIST), while on AIME it quadruples from 0.4 to 1.7. On Olympiad, ARTIST improves the reward score from 2.4 to 2.6, and on MATH 500, from 2.9 to 3.3. This reflects ARTIST ’s ability to produce more correct, well-structured, and complete solutions, especially on challenging problems.

Number of Tool Calls (External Tool Utilization)

ARTIST learns to invoke external tools far more frequently and strategically than the baseline, particularly on harder datasets. On AIME, the base model averages only 0.3 tool calls per query, whereas ARTIST averages over 3 tool calls per query. On Olympiad, ARTIST ’s tool usage is slightly lower than the baseline, but remains high and effective for solving complex tasks. Interestingly, on MATH 500, ARTIST reduces tool usage compared to the baseline, suggesting that it selectively avoids unnecessary tool calls when internal reasoning suffices. This adaptive and context-sensitive tool usage is strongly correlated with improved reward scores, demonstrating that dynamic tool invocation based on difficulty of dataset is critical for solving complex problems.

Response Length (Reasoning Depth)

ARTIST generates substantially longer and more detailed responses, with average response length up to twice that of the baseline, especially on AIME. This increase in length indicates that ARTIST engages in more thorough, multi-step reasoning, rather than shortcutting to an answer. On Olympiad, response length increases moderately, reflecting deeper reasoning. However, on MATH 500, the response lengths are roughly comparable between ARTIST and the baseline, indicating that ARTIST maintains concise reasoning when appropriate without sacrificing quality. Overall the richer reasoning traces produced by ARTIST directly contribute to its superior performance on complex mathematical problems.

Summary: These metrics collectively demonstrate that ARTIST not only achieves higher solution quality, but does so by leveraging external tools more effectively and engaging in deeper, more interpretable reasoning.

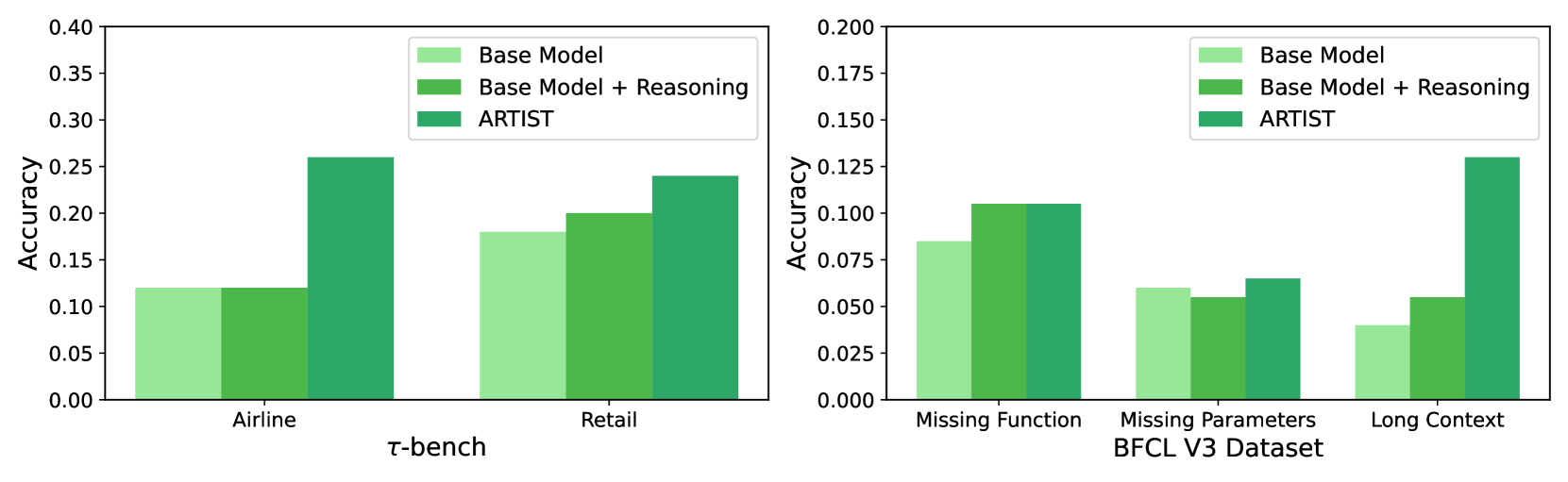

5.2 Results: Multi-Turn Function Calling

We evaluate ARTIST on two challenging multi-turn function calling benchmarks: $\tau$ -bench (Airline and Retail domains) and BFCL v3 (Missing Function, Missing Parameters, and Long Context subsets). These benchmarks test the agent’s ability to reason over extended dialogues, manage state, and coordinate multiple tool calls under realistic and adversarial conditions.

| Benchmark | $\tau$ bench | BFCL v3 | | | |

| --- | --- | --- | --- | --- | --- |

| Method | Airline | Retail | Missing Function | Missing Parameters | Long Context |

| Frontier LLMs | | | | | |

| GPT-4o | 0.460 | 0.604 | 0.410 | 0.355 | 0.545 |

| Frontier Open-Source LLMs | | | | | |

| Llama-3-70B* | 0.148 | 0.144 | 0.130 | 0.105 | 0.095 |

| Deepseek-R1 | – | – | 0.155 | 0.110 | 0.115 |

| Qwen2.5-72B-Instruct | – | – | 0.245 | 0.200 | 0.155 |

| Base LLMs | | | | | |

| Qwen2.5-7B-Instruct | 0.120 | 0.180 | 0.085 | 0.060 | 0.040 |

| Base LLMs + Reasoning via Prompt | | | | | |

| Qwen2.5-7B-Instruct + Reasoning | 0.120 | 0.200 | 0.105 | 0.055 | 0.055 |

| ARTIST | | | | | |

| Qwen2.5-7B-Instruct + ARTIST | 0.260 | 0.240 | 0.105 | 0.065 | 0.130 |

Table 2: Pass@1 accuracy on five multi-turn multi-step function calling benchmarks. ARTIST consistently outperforms baselines, especially on complex tasks.*Llama-3.1-70B was used for BFCLv3 evaluation.

5.2.1 Quantitative Results and Comparison

Table 2 reports Pass@1 accuracy across five challenging benchmarks: $\tau$ -bench (Airline, Retail) and BFCL v3 (Missing Parameters, Missing Function, Long Context).

ARTIST vs. Base LLMs

ARTIST delivers consistent and substantial improvements over base LLMs without explicit agentic reasoning or tool integration. On $\tau$ -bench, Qwen2.5-7B- ARTIST achieves 0.260 (Airline) and 0.240 (Retail), more than doubling the performance of the base model (0.120 and 0.180, respectively). On BFCL v3, ARTIST improves accuracy on Long Context by +9.0% (0.130 vs. 0.040), with smaller but consistent gains on Missing Function (+2.0%, 0.105 vs. 0.085) and Missing Parameters (+0.5%, 0.065 vs. 0.060). These results demonstrate that agentic RL with tool integration enables the model to better manage multi-step workflows, recover from errors, and maintain context over extended interactions.

ARTIST vs. Base LLMs + Reasoning via Prompt (Base-Prompt+Tools)

Compared to prompt-based reasoning, ARTIST shows clear gains, particularly on the hardest tasks. On $\tau$ -bench, ARTIST outperforms the prompt baseline by +14.0% (Airline, 0.260 vs. 0.120) and +4.0% (Retail, 0.240 vs. 0.200). On BFCL v3, the improvement is most pronounced on Long Context (+7.5%, 0.130 vs. 0.055), while performance is comparable on Missing Function and Missing Parameters. This highlights that, as with math, prompt engineering alone is insufficient for robust multi-turn tool use; explicit RL-based agentic training is crucial for learning when and how to invoke tools in complex, evolving scenarios (see Figure 6).

ARTIST vs. Frontier and Open-Source LLMs (FRONT)

While GPT-4o achieves the highest scores on most BFCL v3 subsets (e.g., 0.410 on Missing Function, 0.545 on Long Context), ARTIST narrows the gap and in some cases outperforms much larger open-source models. For example, on BFCL v3 Long Context, ARTIST (0.130) surpasses Meta-Llama-3-70B (Aaron Grattafiori, 2024) (0.095) and Deepseek-R1 (0.115), and matches or exceeds Qwen2.5-72B-Instruct on Missing Function and Parameters. On $\tau$ -bench, ARTIST more than doubles the performance of Meta-Llama-3-70B (Aaron Grattafiori, 2024) (0.260 vs. 0.148 on Airline; 0.240 vs. 0.144 on Retail), despite being a much smaller model. These results demonstrate the scalability and generalization of agentic RL with tool integration.

Summary: ARTIST achieves the largest gains on the most challenging tasks (Long Context, Airline), where multi-turn reasoning, context tracking, and adaptive tool use are critical. Its ability to maintain state, recover from errors, and flexibly invoke external tools leads to substantial outperformance over base and prompt-based baselines, demonstrating that explicit agentic RL training is essential for robust, context-aware tool use and reliable function calling in complex environments.

<details>

<summary>x6.png Details</summary>

### Visual Description

\n

## Bar Charts: Model Accuracy Comparison

### Overview

The image presents two bar charts comparing the accuracy of three models – "Base Model", "Base Model + Reasoning", and "ARTIST" – across different datasets. The first chart focuses on the datasets "Airline", "τ-bench", and "Retail". The second chart focuses on "Missing Function", "Missing Parameters BFCL V3 Dataset", and "Long Context". The y-axis represents accuracy, ranging from 0.00 to 0.40 in the first chart and 0.00 to 0.20 in the second chart.

### Components/Axes

* **X-axis (Chart 1):** Datasets - Airline, τ-bench, Retail

* **X-axis (Chart 2):** Datasets - Missing Function, Missing Parameters BFCL V3 Dataset, Long Context

* **Y-axis (Both Charts):** Accuracy (ranging from 0.00 to 0.40 for Chart 1 and 0.00 to 0.20 for Chart 2)

* **Legend (Both Charts):**

* Light Green: Base Model

* Medium Green: Base Model + Reasoning

* Dark Green: ARTIST

### Detailed Analysis or Content Details

**Chart 1: Airline, τ-bench, Retail**

* **Airline:**

* Base Model: Approximately 0.12

* Base Model + Reasoning: Approximately 0.18

* ARTIST: Approximately 0.26

* **τ-bench:**

* Base Model: Approximately 0.16

* Base Model + Reasoning: Approximately 0.22

* ARTIST: Approximately 0.28

* **Retail:**

* Base Model: Approximately 0.18

* Base Model + Reasoning: Approximately 0.22

* ARTIST: Approximately 0.25

**Chart 2: Missing Function, Missing Parameters BFCL V3 Dataset, Long Context**

* **Missing Function:**

* Base Model: Approximately 0.10

* Base Model + Reasoning: Approximately 0.11

* ARTIST: Approximately 0.13

* **Missing Parameters BFCL V3 Dataset:**

* Base Model: Approximately 0.05

* Base Model + Reasoning: Approximately 0.07

* ARTIST: Approximately 0.10

* **Long Context:**

* Base Model: Approximately 0.04

* Base Model + Reasoning: Approximately 0.08

* ARTIST: Approximately 0.13

### Key Observations

* ARTIST consistently outperforms both the Base Model and the Base Model + Reasoning across all datasets.

* The addition of reasoning to the Base Model consistently improves performance, but not to the level of ARTIST.

* The largest performance difference between the models appears on the "τ-bench" dataset in the first chart, and "Long Context" in the second chart.

* The performance gains from reasoning are more modest on the "Missing Function" dataset.

### Interpretation

The data suggests that the ARTIST model is significantly more effective than the Base Model and the Base Model + Reasoning across a variety of datasets. This indicates that ARTIST possesses capabilities that the other models lack, potentially related to its architecture or training data. The consistent improvement gained by adding reasoning to the Base Model suggests that reasoning is a valuable component for enhancing model performance, but it is not sufficient to match ARTIST's capabilities. The varying degree of improvement across datasets suggests that the effectiveness of reasoning may be dataset-dependent. The datasets themselves represent different challenges – from structured airline data to more complex reasoning tasks like missing function and long context. ARTIST's superior performance on these more challenging datasets highlights its ability to handle complex reasoning and contextual understanding. The data implies that ARTIST is a more robust and versatile model compared to the others.

</details>

Figure 6: Qwen2.5-7B-Instruct: Performance on $\tau$ -bench and BFCL v3 datasets for Multi-turn Function calling.

<details>

<summary>x7.png Details</summary>

### Visual Description

\n

## Line Chart: BFCL V3 Training and Evaluation Reward

### Overview

The image presents two line charts side-by-side, both depicting reward values over training steps. The left chart shows the "Train Reward" and the right chart shows the "Eval Reward". Both charts share the same x-axis, representing "Steps", and have different y-axis scales representing reward values. The title "BFCL V3" is centered above both charts.

### Components/Axes

* **Title:** BFCL V3 (centered at the top)

* **Left Chart:**

* **X-axis Label:** Steps (ranging from approximately 0 to 900)

* **Y-axis Label:** Train Reward (ranging from approximately 0.60 to 0.86)

* **Data Series:** A single blue line representing the training reward.

* **Right Chart:**

* **X-axis Label:** Steps (ranging from approximately 0 to 900)

* **Y-axis Label:** Eval Reward (ranging from approximately 0.58 to 0.72)

* **Data Series:** A single blue line representing the evaluation reward.

### Detailed Analysis

**Left Chart (Train Reward):**

The blue line representing the Train Reward starts at approximately 0.60 at Step 0. It exhibits a steep upward trend until approximately Step 200, reaching a value of around 0.78. From Step 200 to Step 600, the line fluctuates around a mean of approximately 0.81, with oscillations between roughly 0.79 and 0.83. From Step 600 to Step 900, the line shows a slight upward trend, ending at approximately 0.85.

**Right Chart (Eval Reward):**

The blue line representing the Eval Reward starts at approximately 0.72 at Step 0. It decreases to a minimum of around 0.68 at Step 150. From Step 150 to Step 400, the line fluctuates, decreasing to approximately 0.67 at Step 400. From Step 400 to Step 700, the line fluctuates around a mean of approximately 0.68, with oscillations between roughly 0.66 and 0.70. From Step 700 to Step 900, the line shows a clear upward trend, ending at approximately 0.71.

### Key Observations

* The Train Reward consistently remains higher than the Eval Reward throughout the entire training process.

* The Train Reward exhibits a more stable trend after Step 200, while the Eval Reward shows more pronounced fluctuations.

* Both charts show an overall increasing trend in reward values as the number of steps increases, suggesting that the model is learning.

* The Eval Reward shows a dip in performance around Step 150-400, which could indicate a period of instability or overfitting.

### Interpretation

The charts demonstrate the training progress of the BFCL V3 model. The increasing Train Reward indicates that the model is successfully learning to maximize its reward on the training data. The Eval Reward, while lower than the Train Reward (as expected due to generalization), also shows an increasing trend, suggesting that the model is generalizing well to unseen data. The initial dip in Eval Reward could be due to the model initially overfitting to the training data, but it recovers as training continues. The divergence between Train and Eval Reward is a common phenomenon in machine learning, indicating the gap between performance on seen and unseen data. The overall positive trends in both charts suggest that the BFCL V3 model is effectively learning and improving its performance over time.

</details>

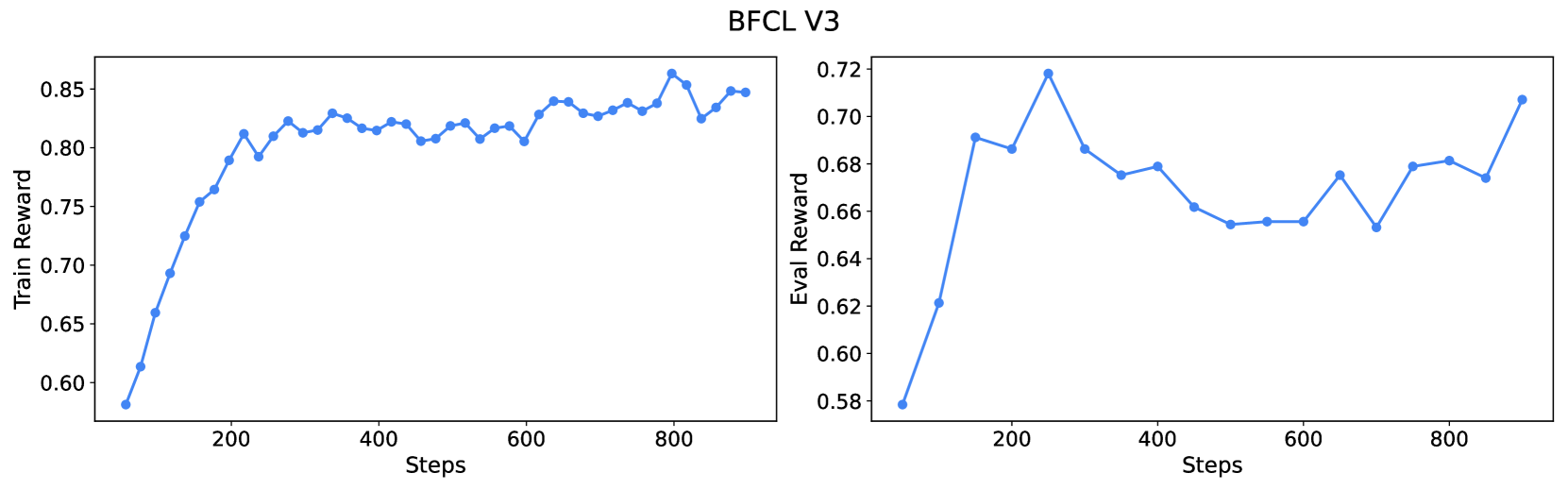

Figure 7: Average reward score at different training steps for BFCL v3.

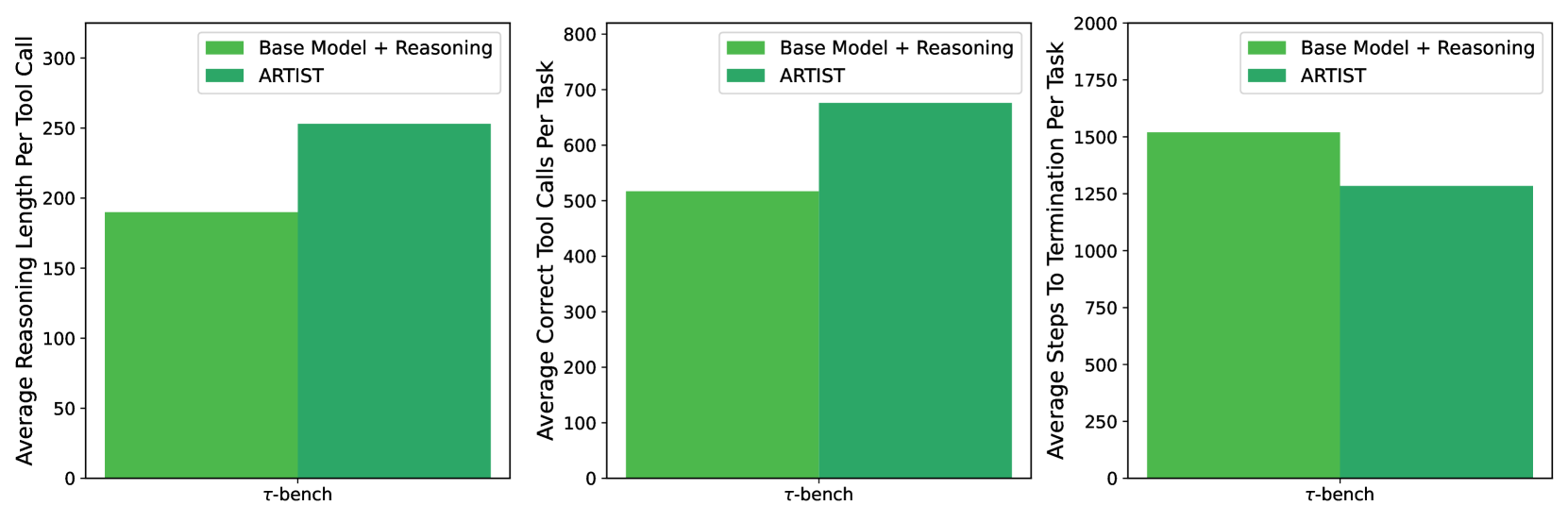

5.2.2 Metrics Analysis for Multi-Turn Function Calling

We evaluate the effectiveness of ARTIST using correctness reward score (state reward + function reward) on BFCL v3 during training, and three key metrics on $\tau$ -bench: (1) reasoning length per tool call, (2) total correct tool calls, and (3) total steps to task completion.

Reward Score (BFCL v3).

Figure 7 shows how reward score improves on both train and eval sets during training. During training on BFCL v3, ARTIST ’s average reward score improves from 0.55 to 0.85 within 900 steps. On evaluation, the reward score rises from 0.58 to 0.72 (a relative gain of 24%), demonstrating robust generalization and effective learning of tool-use strategies.

<details>

<summary>x8.png Details</summary>

### Visual Description

\n

## Bar Charts: Performance Comparison of Models

### Overview

The image presents three bar charts comparing the performance of two models, "Base Model + Reasoning" and "ARTIST", on the "τ-bench" benchmark. Each chart measures a different aspect of performance: Average Reasoning Length Per Tool Call, Average Correct Tool Calls Per Task, and Average Steps To Termination Per Task.

### Components/Axes

Each chart shares the following components:

* **X-axis:** Labeled "τ-bench". This appears to represent a single category or benchmark.

* **Y-axis:** Each chart has a different Y-axis label:

* Chart 1: "Average Reasoning Length Per Tool Call" (Scale: 0 to 300, increments of 50)

* Chart 2: "Average Correct Tool Calls Per Task" (Scale: 0 to 800, increments of 100)

* Chart 3: "Average Steps To Termination Per Task" (Scale: 0 to 2000, increments of 250)

* **Legend:** Located in the top-left corner of each chart. It identifies the two data series: