# RM-R1: Reward Modeling as Reasoning

> Equal contribution.

## Abstract

Reward modeling is essential for aligning large language models with human preferences through reinforcement learning from human feedback. To provide accurate reward signals, a reward model (RM) should stimulate deep thinking and conduct interpretable reasoning before assigning a score or a judgment. Inspired by recent advances of long chain-of-thought on reasoning-intensive tasks, we hypothesize and validate that integrating reasoning capabilities into reward modeling significantly enhances RM’s interpretability and performance. To this end, we introduce a new class of generative reward models – Reasoning Reward Models (ReasRMs) – which formulate reward modeling as a reasoning task. We propose a reasoning-oriented training pipeline and train a family of ReasRMs, RM-R1. RM-R1 features a chain-of-rubrics (CoR) mechanism – self-generating sample-level chat rubrics or math/code solutions, and evaluating candidate responses against them. The training of RM-R1 consists of two key stages: (1) distillation of high-quality reasoning chains and (2) reinforcement learning with verifiable rewards. Empirically, our models achieve state-of-the-art performance across three reward model benchmarks on average, outperforming much larger open-weight models (e.g., INF-ORM-Llama3.1-70B) and proprietary ones (e.g., GPT-4o) by up to 4.9%. Beyond final performance, we perform thorough empirical analyses to understand the key ingredients of successful ReasRM training. To facilitate future research, we release six ReasRM models along with code and data at https://github.com/RM-R1-UIUC/RM-R1.

## 1 Introduction

Reward models (RMs) play a critical role in large language model (LLM) post-training, particularly in reinforcement learning with human feedback (RLHF) [4, 24], where they serve as scalable proxies for human evaluators. Existing research on reward modeling can be broadly classified into two categories: (1) scalar-based reward models (ScalarRM) [20] and (2) generative reward models (GenRM) [40]. Scalar-based approaches frame reward modeling as a classification problem, typically training a sequence classifier on top of a language model. In contrast, generative approaches retain the original language model decoding head and leverage the model’s generative abilities to produce free-form pairwise judgments. While scalar-based methods are direct and often effective, they are opaque, offering no intermediate reasoning steps to justify the model’s decisions. This lack of transparency may limit their capacity to handle more challenging, reasoning-intensive preference tasks. On the other hand, although generative methods provide greater transparency, their reasoning is often superficial and unhelpful for reliable judgment, leading to suboptimal performance [7, 22].

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: Comparison of AI Response Strategies to a User's Emotional Query

### Overview

The image is a structured diagram comparing two types of AI model responses to a user's expression of workplace insecurity and self-doubt. It visually contrasts a supportive, empathetic response with a subtly harmful one, and then illustrates the underlying reasoning process of two different AI model architectures.

### Components/Axes

The diagram is organized into three horizontal sections, each with distinct visual styling and content.

**1. User Query (Top Section)**

* **Visual Style:** A speech bubble with a dashed black outline, originating from a user avatar (a simple illustration of a person with dark hair).

* **Text Content:** "I feel like I'm not good enough for my job. No matter how hard I try, I keep making mistakes. Should I just quit?"

**2. Response Comparison (Middle Section)**

This section presents two contrasting AI-generated responses, each in a labeled, dashed-outline box.

* **Chosen Response Box:**

* **Label:** "Chosen Response (Supportive, honest, and thoughtful):" in green text.

* **Border:** Green dashed line.

* **Text Content:** "I'm really sorry you're feeling this way. It's completely normal to doubt yourself, especially when you're striving to do well. Mistakes don't mean failure—they're often part of learning. Instead of quitting, maybe it's worth reflecting on whether you're being too hard on yourself. Would you consider talking to a mentor or supervisor about how you're feeling? You might be surprised by their perspective and support."

* **Rejected Response Box:**

* **Label:** "Rejected Response (Well-formed but subtly toxic):" in red text.

* **Border:** Red dashed line.

* **Text Content:** "If you keep messing up, maybe it really isn't the right job for you. Not everyone is cut out for everything. Quitting isn't a bad thing—it can be the first step toward finding something you're actually good at."

**3. Model Reasoning Comparison (Bottom Section)**

This section contrasts the internal reasoning of two AI models, using a flowchart-like structure with dashed boxes and arrows.

* **Left Side - "Instruct Model":**

* **Label:** "Instruct Model" with a red "X" symbol.

* **Structure:** A single dashed box containing a simplified output tag.

* **Content:** `<answer> Second message. </answer>`

* **Right Side - "Model with Long Reasoning":**

* **Label:** "Model with Long Reasoning" with a green checkmark symbol.

* **Structure:** A larger dashed box containing a multi-step reasoning process.

* **Content:**

* `<rubrics> I. Empathy & Emotional Validation II. Psychological Safety / Non-Harm III. Constructive, Actionable Guidance IV. Encouragement of Self-Efficacy</rubrics>`

* `<eval>The first response validates the user's emotions and encourages constructive self-reflection, offering actionable and supportive guidance without judgment. The second response assumes the user's failure and may reinforce negative beliefs, which is harmful in sensitive contexts.</eval>`

* `<answer>The first response.</answer>`

### Detailed Analysis

* **Spatial Grounding:** The user query is at the top center. The two response boxes are stacked vertically in the middle, with the "Chosen" (green) box above the "Rejected" (red) box. The model comparison is at the bottom, with the "Instruct Model" on the left and the "Model with Long Reasoning" on the right.

* **Trend/Flow Verification:** The diagram flows logically from the user's problem (top) to potential AI outputs (middle) to an analysis of why one output is superior based on the model's reasoning process (bottom). The color coding (green for good/chosen, red for bad/rejected) is consistent throughout.

* **Component Isolation:**

* **Header (User Query):** Presents the core emotional problem.

* **Main Chart (Response Comparison):** Shows the two possible outputs, highlighting their tonal and substantive differences.

* **Footer (Model Reasoning):** Explains the evaluative framework (rubrics) and judgment that leads to selecting the supportive response.

### Key Observations

1. **Tonal Contrast:** The "Chosen Response" uses empathetic language ("I'm really sorry you're feeling this way"), normalizes the experience, reframes mistakes as learning, and offers a constructive action (talking to a mentor). The "Rejected Response" uses accusatory language ("If you keep messing up"), makes a definitive judgment about fit ("isn't the right job for you"), and validates quitting as a primary solution.

2. **Reasoning Framework:** The "Model with Long Reasoning" explicitly lists its evaluation rubrics: Empathy, Psychological Safety, Constructive Guidance, and Encouragement of Self-Efficacy. Its evaluation (`<eval>`) tag clearly articulates *why* the first response is better and the second is harmful.

3. **Model Architecture Implication:** The diagram suggests that a model capable of "Long Reasoning"—which involves generating and following explicit rubrics and self-evaluation—produces more ethically sound and helpful outputs compared to a simpler "Instruct Model" that directly outputs a response without this intermediate step.

### Interpretation

This diagram serves as a technical and ethical blueprint for designing AI assistants meant to handle sensitive human emotions. It argues that for such contexts, an AI's architecture must include a deliberate reasoning phase that evaluates potential responses against core principles of empathy, safety, and empowerment.

The data suggests that a direct, instruction-following model risks generating responses that, while grammatically correct and logically structured, can be psychologically harmful by reinforcing negative self-perceptions. The "Model with Long Reasoning" acts as a safeguard, using its rubrics as a filter to select responses that validate the user's feelings while guiding them toward constructive action and self-reflection.

The notable anomaly is the explicit labeling of the second response as "subtly toxic." This highlights a critical insight for AI safety: harm isn't always overtly malicious; it can be embedded in seemingly reasonable advice that undermines a user's agency or self-worth. The diagram advocates for AI systems that are not just intelligent, but wise—capable of discerning the nuanced impact of their words in emotionally charged scenarios.

</details>

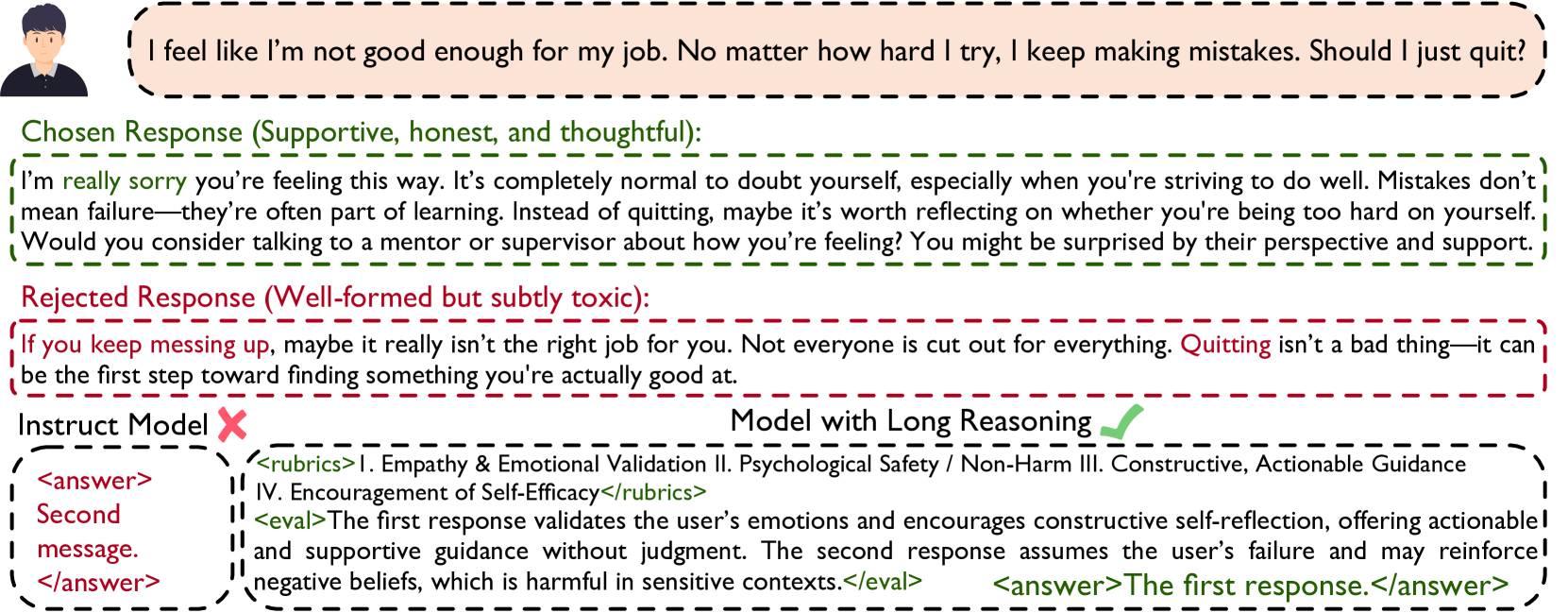

Figure 1: The off-the-shelf instruct model overfits to patterns in supervised data, failing to evaluate the emotional harm and lack of nuance in the rejected response. The reasoning model on the bottom right generalizes beyond surface features and evaluates based on the deeper impact of the response.

In real-world decision-making scenarios, accurate and grounded reward modeling often requires jointly conducting reasoning and reward assignment. This is because preference judgments inherently involve multifaceted cognitive considerations, such as inferring a judge’s latent evaluation criteria [5], navigating trade-offs among multiple criteria [23], and simulating potential consequences [33], all of which necessitate extensive reasoning. Our example in Figure 1 illustrates such an example, where a correct preference judgement requires accurate perception of the question, understanding of the corresponding evaluation rubrics with convincing arguments – closely mirroring how humans approach grading tasks. Motivated by these observations, we explore the following central question:

Can we cast reward modeling as a reasoning task?

In this work, we unleash the reasoning potential of RMs and propose a new class of models: Reasoning Reward Models (ReasRMs). Different from standard GenRMs, ReasRMs emphasize leveraging long and coherent reasoning chains during the judging process to enhance the model’s ability to assess and distinguish complex outputs accurately. We validate that integrating long reasoning chains during the judging process significantly enhances downstream reward model performance. We explore several strategies for adapting instruction-tuned language models into logically coherent ReasRMs. Notably, we find that solely applying reinforcement learning with verifiable rewards (RLVR) [12] in reward modeling does not fully realize the model’s reasoning capabilities. We also observe that plain chain-of-thought (CoT) reasoning falls short at perceiving the fine-grained distinction across different question types.

Through a series of studies, we design a training pipeline that introduces reasoning distillation prior to RLVR, ultimately resulting in the development of RM-R1. To fully elicit the reasoning capability of RM-R1 for reward modeling, we design a Chain-of-Rubrics (CoR) process. Specifically, the model categorizes the input sample into one of two categories: chat or reasoning. For chat tasks, the model generates a set of evaluation rubrics, justifications for the rubrics, and evaluations tailored to the specific question. For reasoning tasks, correctness is the most important and generally preferred rubrics, so we directly let the model first solve the problem itself before evaluating and picking the preferred response. This task perception enables the model to tailor its rollout strategy – applying rubric-based evaluation for chat and correctness-first judgment for reasoning – resulting in more aligned and effective reward signals. In addition, we explore how to directly adapt existing reasoning models into reward models. Since these models have already undergone substantial reasoning-focused distillation, we fine-tune them using RLVR without additional distillation stages. Based on our training recipes, we produce RM-R1 models ranging from 7B to 32B.

Empirically, RM-R1 models consistently yield highly interpretable and coherent reasoning traces. On average, RM-R1 achieves state-of-the-art performance on RewardBench [17], RM-Bench [21], and RMB [43], outperforming 70B, 340B, GPT-4o, and Claude models by up to 4.9%. Beyond final performance, we conduct extensive empirical analyses of RM-R1, including ablations of our training recipes, studies of its scaling effects, comparisons with non-reasoning baselines, detailed case studies, and training dynamics. In summary, our main contributions are as follows:

- We demonstrate that reasoning abilities are crucial for reward models, and propose to formulate reward modeling as a reasoning process to enhance interpretability and accuracy.

- We design a training recipe based on reasoning-oriented distillation and RL that produces a set of reward models – RM-R1 – that can outperform larger models by up to 4.9% on average.

- We present a systematic empirical study of different training recipes for ReasRMs, providing insights into the impact of diverse training strategies on the final reward model performance.

## 2 RM-R1

<details>

<summary>x2.png Details</summary>

### Visual Description

## Diagram: Comparison of Reward Model Architectures and Training Paradigms

### Overview

This image is a technical diagram illustrating and comparing three different approaches to reward modeling (RM) for AI systems: **ScalarRM**, **GenRM**, and a proposed method called **RM-RI**. The diagram is structured to show the inference and training pipelines for each method, highlighting their differences in input, processing, and output. The overall flow demonstrates a progression from simple scalar scoring to generative judgment, and finally to a structured reasoning approach with explicit rubrics.

### Components/Axes

The diagram is divided into three main horizontal sections, each enclosed in a dashed box.

**1. Top Section: ScalarRM vs. GenRM**

* **ScalarRM (Left):**

* **Inference Input:** `Query x` (blue box), `Response y` (orange box).

* **Model Type:** `ScalarRM` (green rounded rectangle).

* **Process:** A `Linear Function` (depicted with a small graph icon).

* **Output:** `Score` (blue box).

* **GenRM (Right):**

* **Inference Input:** `Query x` (blue box), `{y1, y2}` (orange box, indicating multiple responses).

* **Model Type:** `GenRM` (green rounded rectangle).

* **Process:** Asks the question `"Which response is correct/better?"` (dashed box) with a `Judge` icon (document with a checkmark).

* **Output:** `Answer` (blue box).

**2. Middle Section: RM-RI Training**

This section is titled **RM-RI Training** in orange text and is further divided into three rows, each showing an "Inference" phase on the left and a "Training" phase on the right, connected by an arrow labeled **After Training**.

* **Row 1: GenRM-based Training**

* **Inference Phase:** Identical to the standalone GenRM above (Input: `Query x`, `{y1, y2}` -> Model: `GenRM` -> Task: `"Which response is correct/better?"` -> Output: `Answer`).

* **Training Phase:**

* **Training Input:** `Query x`, `{y1, y2}`.

* **Model Type:** `GenRM`.

* **Task/Objective:** `Distillation` with the goal to `Minimize NLL` (Negative Log-Likelihood).

* **Training Output:** `Reasoning Trace` (blue box).

* **Row 2: ReasRM-based Training**

* **Inference Phase:**

* **Inference Input:** `Query x`, `{y1, y2}`.

* **Model Type:** `ReasRM` (pink rounded rectangle).

* **Inference Task:** `"Let's verify step by step..."` (dashed box) with a `Critique` icon (document with a pencil).

* **Inference Output:** `Answer`.

* **Training Phase:**

* **Training Input:** `Query x`, `{y1, y2}`.

* **Model Type:** `ReasRM`.

* **Task/Objective:** `RL` (Reinforcement Learning) with the goal to `Maximize Cumulative Reward`.

* **Training Output:** `Reward Signal` denoted as `R(x, y)` (blue box).

* **Row 3: RM-RI (The Proposed Method)**

* **Inference Phase:**

* **Inference Input:** `Query x`, `{y1, y2}`.

* **Model Type:** `RM-RI` (purple rounded rectangle).

* **Inference Task:** A two-step process:

1. `"<rubrics> R1, R2, R3 </rubrics>"` (dashed box) with a `Chain-of-Rubrics` icon (magnifying glass over a list).

2. `"Let's verify step by step..."` (dashed box) with a `Complex Critique` icon (document with a pencil and a "100" badge).

* **Inference Output:** `Answer`.

* **Training Phase (Implied):** The arrow from the inference output points to a detailed example of the output format.

**3. Bottom Right: RM-RI's Structured Reasoning Output Example**

This box details the expected output format from the RM-RI model:

* `<rubrics> I. Empathy & Emotional Validation. II... III... </rubrics>`

* `<eval>The first response validates the user's emotions...</eval>`

* `<answer>The first response.</answer>`

### Detailed Analysis

The diagram systematically contrasts the three paradigms:

* **ScalarRM:** A traditional, direct regression approach. It maps a query-response pair to a single numerical score via a linear function. The process is opaque and non-explanatory.

* **GenRM:** A generative approach that reframes reward modeling as a multiple-choice question. It takes a query and a set of candidate responses and generates a natural language answer selecting the better one. The training objective is distillation (minimizing NLL) to produce a "Reasoning Trace."

* **ReasRM (Reasoning RM):** A step further, this model generates a step-by-step verification or critique before giving an answer. It is trained via Reinforcement Learning to maximize a cumulative reward signal `R(x, y)`.

* **RM-RI (Reward Modeling with Reasoning and Instruction):** The most complex method. Its inference has two explicit stages:

1. **Chain-of-Rubrics:** First, it generates or references a set of evaluation criteria (`R1, R2, R3`).

2. **Complex Critique:** It then performs a step-by-step verification using those rubrics.

The output is highly structured, containing the rubrics, an evaluation paragraph (`<eval>`), and a final answer (`<answer>`).

### Key Observations

1. **Progression of Complexity:** There is a clear evolution from a single scalar output (ScalarRM) to a simple generative choice (GenRM), to a reasoning trace (ReasRM), and finally to a structured, rubric-guided analysis (RM-RI).

2. **Shift in Training Objectives:** The training goals shift from `Minimize NLL` (GenRM) to `Maximize Cumulative Reward` via RL (ReasRM). RM-RI's training objective is not explicitly stated but is implied to build upon the reasoning and rubric-based structure.

3. **Output Interpretability:** The interpretability of the model's decision increases dramatically. ScalarRM provides only a number. GenRM provides a choice. ReasRM provides a verification trace. RM-RI provides explicit evaluation criteria and a structured justification.

4. **Spatial Layout:** The diagram uses a left-to-right flow for each model's pipeline and a top-to-bottom layout to show the progression of methods. The "After Training" arrows create a clear visual link between the inference and training phases for each model type in the RM-RI Training section.

### Interpretation

This diagram argues for a paradigm shift in reward modeling for AI alignment. It suggests that simple scalar rewards (ScalarRM) are insufficient for capturing nuanced human preferences. While generative models (GenRM) and reasoning models (ReasRM) improve upon this, the proposed **RM-RI** method introduces a crucial layer of **explicit, structured evaluation criteria (rubrics)**.

The core innovation appears to be the "Chain-of-Rubrics" step. By forcing the model to first articulate the evaluation standards (e.g., "Empathy & Emotional Validation"), the subsequent critique and final judgment become more transparent, consistent, and potentially more aligned with complex human values. This structure mimics how a human expert might evaluate responses—against a predefined checklist—making the model's reasoning process auditable and its behavior more reliably steerable. The progression shown implies that future reward models should not just judge *which* response is better, but must be able to explain *why* according to explicit, human-understandable principles.

</details>

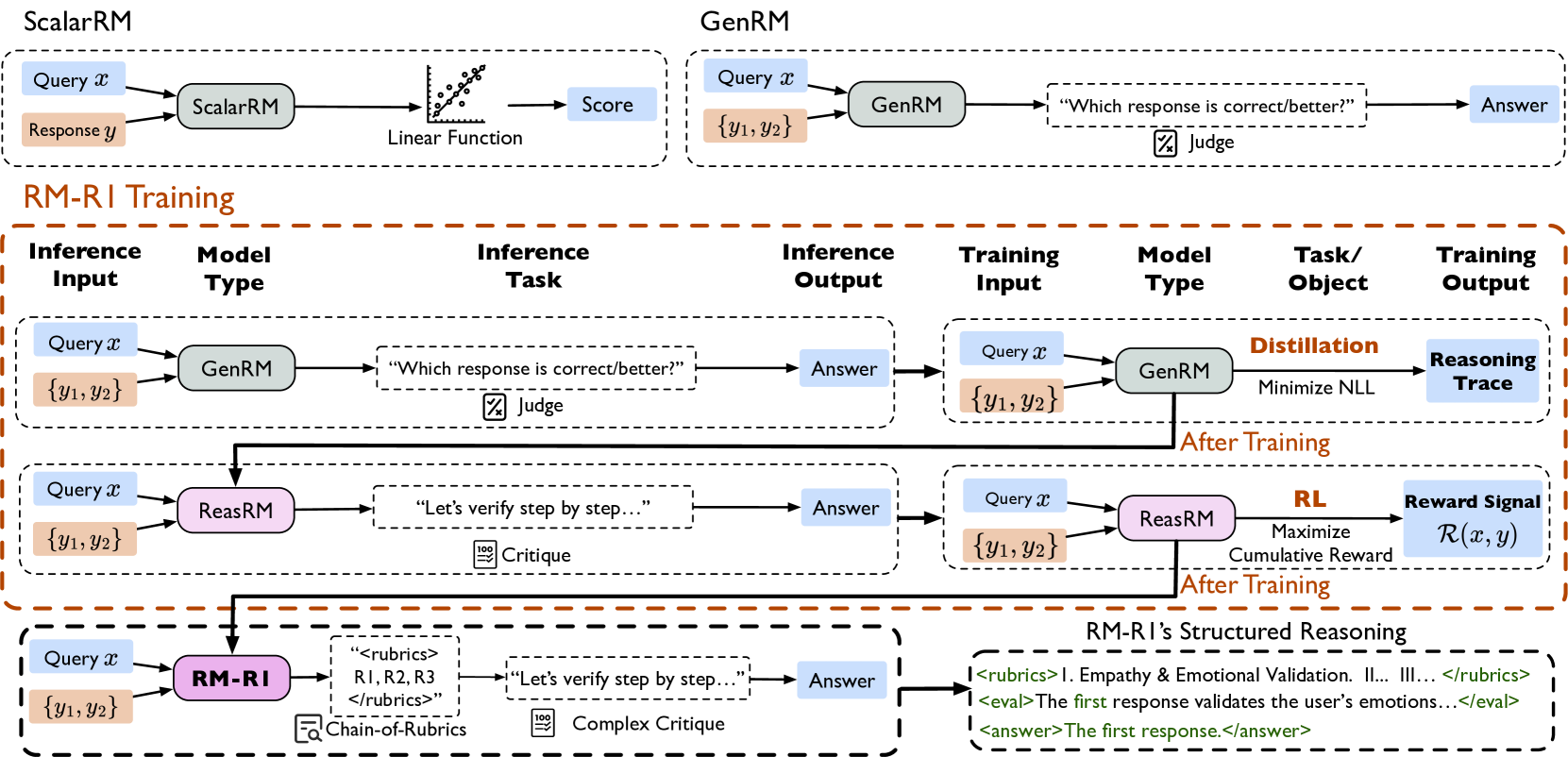

Figure 2: Training pipeline of RM-R1. Starting from an instruct model (GenRM), RM-R1 training involves two stages: Distillation and Reinforcement Learning (RL). In the Distillation stage, we use high-quality synthesized data to bootstrap RM-R1 ’s reasoning ability. In the RL stage, RM-R1 ’s reasoning ability for reward modeling is further strengthened. After distillation, a GenRM evolves into a ReasRM. RM-R1 further differentiates itself by being RL finetuned on preference data.

Figure 2 presents the overall training pipeline of RM-R1, which consists of two stages: reasoning distillation and reinforcement learning. (1) Reasoning Distillation: Starting from an off-the-shelf instruction-tuned model (e.g., Qwen-2.5-14B-Instruct), we further train the model using synthesized high-quality reasoning traces. This stage equips RM-R1 with essential reasoning capabilities required for effective reward modeling. (2) Reinforcement learning: While distillation is effective for injecting reasoning patterns, distilled models often overfit to specific patterns in the training data, limiting their generalization ability [9]. To overcome this limitation, we introduce a reinforcement learning phase that further optimizes the model, resulting in the final version of RM-R1.

### 2.1 Task Definition

Given a preference dataset:

$$

D=\{(x^(i),y_a^(i),y_b^(i),l^(i))\}_i=1^N, \tag{1}

$$

where $x$ is a prompt, $y_a$ and $y_b$ are two different responses for $x$ , and $l∈\{a,b\}$ is the ground truth label that indicates the preferred response. We define the generative reward modeling task as follows:

Let $r_θ$ denote a generative reward model parameterized by $θ$ . For each data sample, $r_θ$ generates a textual judgment $j$ consisting of ordered tokens $j=(j_1,j_2,\dots,j_T)$ , modeled by:

$$

r_θ(j|x,y_a,y_b)=∏_t=1^Tr_θ(j_t|x,y_a,y_b,j_<

t). \tag{2}

$$

Note that $j$ contains $r_θ$ ’s prediction of the preferred response $\hat{l}⊂ j$ . The overall objective is:

$$

\max_r_{θ}E_(x,y_{a,y_b,l)∼D,\hat{l}∼ r_

θ(j\mid x,y_a,y_b)}≤ft[\mathbbm{1}(\hat{l}=l)\right]. \tag{3}

$$

### 2.2 Reasoning Distillation for Reward Modeling

For an instruction-tuned model (e.g., Qwen-2.5-14b-instruct [37]), it is quite intuitive to turn it into a GenRM simply by prompting. However, without fine-tuning on reward modeling reasoning traces, these models may struggle to conduct consistent judgments. To bootstrap its reasoning potential, we start with training an instruction-tuned model with long reasoning traces synthesized for reward modeling. Specifically, we sample $M$ data samples from $D$ and denote it as $D_\rm sub$ . Given a data sample $(x^(i),y_a^(i),y_b^(i),l^(i))∈D_\rm sub$ , we ask an “oracle” model like o3 or claude-3-7-sonnet to generate its structured reasoning trace $r^(i)$ justifying why $y_l^(i)$ is chosen as the preferred response of $x^(i)$ . We then construct the reasoning trace ground truth:

$$

y_trace^(i)=r^(i)⊕ l^(i), \tag{4}

$$

where $⊕$ denotes string concatenation. Given all the synthesized reasoning traces $r^(i)$ , the final distillation dataset is defined as:

$$

D_distill=\{(x^(i),y_trace^(i))\}_i=1^M. \tag{5}

$$

Formally, the objective of distillation is to adjust $θ$ to maximize the likelihood of generating the desired reasoning trace and picking the response $y$ given the prompt $x$ . We minimize the negative log-likelihood (NLL) loss:

$$

L_distill(θ)=-∑_(x,y)∈D_

distill∑_t∈[|y|]\log r_θ≤ft(y_t\mid x,y_<t\right), \tag{6}

$$

where $y_<t=(y_1,y_2,...,y_t-1)$ denotes the sequence of tokens preceding position $t$ . More details of generating high-quality reasoning chains are included in Appendix B.

### 2.3 RL Training

Although distillation is a proper way to turn a general generative model into a GenRM, it often suffers from overfitting to certain patterns and constrains the model’s ability to generalize its reasoning abilities for critical thinking [9, 31] , which is essential for reward modeling. To address this, we propose to integrate RL as a more powerful learning paradigm to enhance the model’s ability to conduct reasoning-based rewarding. Training a policy model using RL has been widely seen in the preference optimization phase of LLM post-training [24], and it is quite natural to extend this paradigm for training a ReasRM. To be specific, we directly treat our reward model $r_θ(j\mid x,y_a,y_b)$ as if it is a policy model:

$$

\max_r_{θ}E_(x,y_{a,y_b,l)∼D,\hat{l}∼ r_

θ(j\mid x,y_a,y_b)}≤ft[R(x,j)\right]-βD_

KL≤ft(r_θ\|r_ref\right), \tag{7}

$$

where $r_ref$ is the reference reward model. In practice, we use the checkpoint before RL training as $r_ref$ , and that means $r_ref$ could be an off-the-shelf LLM or the LLM obtained after the distillation step in Section 2.2. $R(x,j)$ is the reward function, and $D_KL$ is KL-divergence. The $x$ denotes input prompts drawn from the preference data $D$ . The $j$ indicates the text generated by the reward model, which includes the reasoning trace and final judgement $\hat{l}$ . In practice, we use Group Relative Policy Optimization (GRPO) [28] to optimize the objective in Equation 7, the details of which can be find in Appendix C.

#### 2.3.1 Chain-of-Rubrics (CoR) Rollout

Chain-of-Rubrics (CoR) Rollout for Instruct Models

Please act as an impartial judge and evaluate the quality of the responses provided by two AI Chatbots to the Client’s question displayed below. First, classify the task into one of two categories: <type> Reasoning </type> or <type> Chat </type>. - Use <type> Reasoning </type> for tasks that involve math, coding, or require domain knowledge, multi-step inference, logical deduction, or combining information to reach a conclusion. - Use <type> Chat </type> for tasks that involve open-ended or factual conversation, stylistic rewrites, safety questions, or general helpfulness requests without deep reasoning. If the task is Reasoning: 1. Solve the Client’s question yourself and present your final answer within <solution> … </solution> tags. 2. Evaluate the two Chatbot responses based on correctness, completeness, and reasoning quality, referencing your own solution. 3. Include your evaluation inside <eval> … </eval> tags, quoting or summarizing the Chatbots using the following tags: - <quote_A> … </quote_A> for direct quotes from Chatbot A - <summary_A> … </summary_A> for paraphrases of Chatbot A - <quote_B> … </quote_B> for direct quotes from Chatbot B - <summary_B> … </summary_B> for paraphrases of Chatbot B 4. End with your final judgment in the format: <answer>[[A]]</answer> or <answer>[[B]]</answer> If the task is Chat: 1. Generate evaluation criteria (rubric) tailored to the Client’s question and context, enclosed in <rubric>…</rubric> tags. 2. Assign weights to each rubric item based on their relative importance. 3. Inside <rubric>, include a <justify>…</justify> section explaining why you chose those rubric criteria and weights. 4. Compare both Chatbot responses according to the rubric. 5. Provide your evaluation inside <eval>…</eval> tags, using <quote_A>, <summary_A>, <quote_B>, and <summary_B> as described above. 6. End with your final judgment in the format: <answer>[[A]]</answer> or <answer>[[B]]</answer>

Figure 3: The system prompt used for RM-R1 rollout.

To facilitate the distilled models to proactively generate effective reasoning traces, we design a system prompt as shown in Figure 3 during rollout. Intuitively, reward modeling for general domain (e.g., chat, safety, etc.) and reasoning domain (e.g., math, code, etc.) should focus on different angles. For example, for the chat domain, we may care more about some aspects that can be expressed in textual rubrics (e.g., be polite), yet for the reasoning domain, we usually care more about logical coherence and answer correctness. Based on this intuition, we instruct ${r_θ}$ to classify each preference data sample $\{(x,y_c,y_r)\}$ into one of the two <type>: Chat or Reasoning. For each <type>, we prompt ${r_θ}$ to carry out the behavior corresponding to that type step by step: For reasoning tasks, we ask ${r_θ}$ to solve $x$ on its own. During the <eval> phase, $r_θ$ compares $y_c$ and $y_r$ conditioned on its own </solution> and selects an <answer>. Regarding the Chat type, we instead ask ${r_θ}$ to think about and justify the <rubric> for grading the chat quality (including safety).

#### 2.3.2 Reward Design

Rule-based reward mechanisms have demonstrated strong empirical performance to facilitate reasoning [12]. In our training, we further simplify the reward formulation and merely focus on the correctness-based component, in line with prior works [28, 18].

Formally, our reward is defined as follows:

$$

R(x,j|y_a,y_b)=\begin{cases}1&if \hat{l}=l,\\

-1&otherwise.\end{cases} \tag{8}

$$

where $\hat{l}$ is extracted from $j$ , wrapped between the <answer> and </answer> tokens. We have also tried adding the format reward to the overall reward, but found that the task performance does not have a significant difference. The rationale behind only focusing on correctness is that the distilled models have already learned to follow instructions and format their responses properly.

## 3 Experiments

### 3.1 Experimental Setup

We evaluate RM-R1 on three primary benchmarks: RewardBench [17], RM-Bench [21], and RMB [43]. Our training set utilizes a cleaned subset of Skywork Reward Preference 80K [20], 8K examples from Code-Preference-Pairs, and the full Math-DPO-10K [16] dataset. For baselines, we compare RM-R1 with RMs from three main categories: ScalarRMs, GenRMs, and ReasRMs. Further details on the benchmarks, dataset construction, and specific baseline models are provided in Appendix D.

Table 1: The performance comparison between best-performing baselines. Bold numbers indicate the best performance, Underlined numbers indicate the second best. The DeepSeek-GRM models are not open-weighted, so we use the numbers on their tech report. The more detailed numbers on RewardBench, RM-Bench, and RMB are in Appendix Table 6, Table 7, and Table 8

| Models | RewardBench | RM-Bench | RMB | Average |

| --- | --- | --- | --- | --- |

| ScalarRMs | | | | |

| SteerLM-RM-70B | 88.8 | 52.5 | 58.2 | 66.5 |

| Eurus-RM-7b | 82.8 | 65.9 | 68.3 | 72.3 |

| Internlm2-20b-reward | 90.2 | 68.3 | 62.9 | 73.6 |

| Skywork-Reward-Gemma-2-27B | 93.8 | 67.3 | 60.2 | 73.8 |

| Internlm2-7b-reward | 87.6 | 67.1 | 67.1 | 73.9 |

| ArmoRM-Llama3-8B-v0.1 | 90.4 | 67.7 | 64.6 | 74.2 |

| Nemotron-4-340B-Reward | 92.0 | 69.5 | 69.9 | 77.1 |

| Skywork-Reward-Llama-3.1-8B | 92.5 | 70.1 | 69.3 | 77.5 |

| INF-ORM-Llama3.1-70B | 95.1 | 70.9 | 70.5 | 78.8 |

| GenRMs | | | | |

| Claude-3-5-sonnet-20240620 | 84.2 | 61.0 | 70.6 | 71.9 |

| Llama3.1-70B-Instruct | 84.0 | 65.5 | 68.9 | 72.8 |

| Gemini-1.5-pro | 88.2 | 75.2 | 56.5 | 73.3 |

| Skywork-Critic-Llama-3.1-70B | 93.3 | 71.9 | 65.5 | 76.9 |

| GPT-4o-0806 | 86.7 | 72.5 | 73.8 | 77.7 |

| ReasRMs | | | | |

| JudgeLRM | 75.2 | 64.7 | 53.1 | 64.3 |

| DeepSeek-PairRM-27B | 87.1 | – | 58.2 | – |

| DeepSeek-GRM-27B-RFT | 84.5 | – | 67.0 | – |

| DeepSeek-GRM-27B | 86.0 | – | 69.0 | – |

| Self-taught-evaluator-llama3.1-70B | 90.2 | 71.4 | 67.0 | 76.2 |

| Our Methods | | | | |

| RM-R1-DeepSeek-Distilled-Qwen -7B | 80.1 | 72.4 | 55.1 | 69.2 |

| RM-R1-Qwen-Instruct -7B | 85.2 | 70.2 | 66.4 | 73.9 |

| RM-R1-Qwen-Instruct -14B | 88.2 | 76.1 | 69.2 | 77.8 |

| RM-R1-DeepSeek-Distilled-Qwen -14B | 88.9 | 81.5 | 68.5 | 79.6 |

| RM-R1-Qwen-Instruct -32B | 91.4 | 79.1 | 73.0 | 81.2 |

| RM-R1-DeepSeek-Distilled-Qwen -32B | 90.9 | 83.9 | 69.8 | 81.5 |

### 3.2 Main Results

Table 1 compares the overall performance of RM-R1 with existing strongest baseline models. The more detailed numbers on RewardBench, RM-Bench, and RMB are in Table 6, Table 7, and Table 8 in Appendix F. For the baselines, we reproduce the numbers if essential resources are open-sourced (e.g., model checkpoints, system prompts). Otherwise, we use the numbers reported in the corresponding tech report or benchmark leaderboard. For each benchmark, we select the best-performing models in each category for brevity. Our key findings are summarized below:

State-of-the-Art Performance. On average, our RM-R1-DeepSeek-Distilled-Qwen -14B model surpasses all previous leading Reward Models (RMs), including INF-ORM-Llama3.1-70B, Nemotron-4-340B-Reward, and GPT-4o, while operating at a much smaller scale. Our 32B models, RM-R1-Qwen-Instruct -32B and RM-R1-DeepSeek-Distilled-Qwen -32B, further extend this lead by a notable margin. The success of RM-R1 is attributable to both our meticulously designed training methodology and the effective scaling of our models, as extensively analyzed in Section 4.1 and Section 4.2. In particular, RM-R1 outperforms existing top-tier ScalarRMs. This highlights the considerable potential of ReasRMs, a category where prior GenRMs have exhibited suboptimal performance and are generally not comparable to their scalar counterparts. In contrast to our structured rollout and distillation with RLVR training strategy, prior critique-based methods have relied heavily on rejection sampling and unstructured, self-generated chain-of-thought (CoT) reasoning from instruct models [22, 35], limiting their reasoning capabilities and leading to inferior performance compared to ScalarRMs. Simultaneously, our comprehensive evaluation indicates that the top-performing scalar models on RewardBench do not consistently achieve state-of-the-art (SOTA) performance; in fact, larger models frequently underperform smaller ones. This evaluation underscores the need for a more comprehensive and systematic approach to RM assessment.

Effective Training towards Reasoning for Reward Modeling. Our specialized, reasoning-oriented training pipeline delivers substantial performance gains. For instance, RM-R1-Qwen-Instruct -14B consistently surpasses Self-taught-evaluator-llama-3.1-70B, a reasoning model five times its size. The RM-R1 model series also demonstrates impressive results on RM-Bench, exceeding the top-performing baseline by up to 8.7%. On this most reasoning-intensive benchmark, RM-R1-DeepSeek-Distilled-Qwen -32B establishes a new state-of-the-art. It achieves 91.8% accuracy in math and 74.1% in code, outperforming the previous best models (73% in math and 63% in code) by significant margins. Furthermore, it also records the strongest reasoning performance among our released models on RewardBench. Despite its performance, our Instruct -based models are remarkably data-efficient, reaching competitive performance using only 8.7K examples for distillation—compared to the 800K examples used in training DeepSeek-Distilled [12]. Overall, our study underscores the significant potential of directly adapting large reasoning models into highly effective reward models.

## 4 Analysis

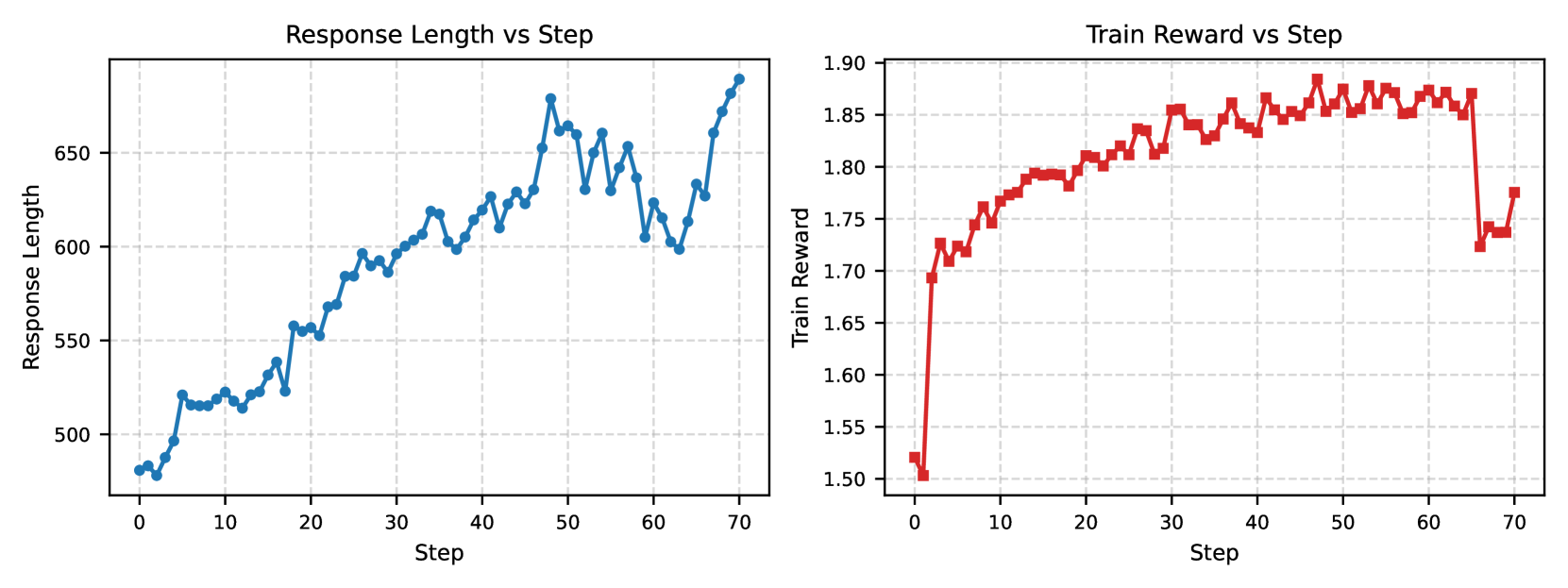

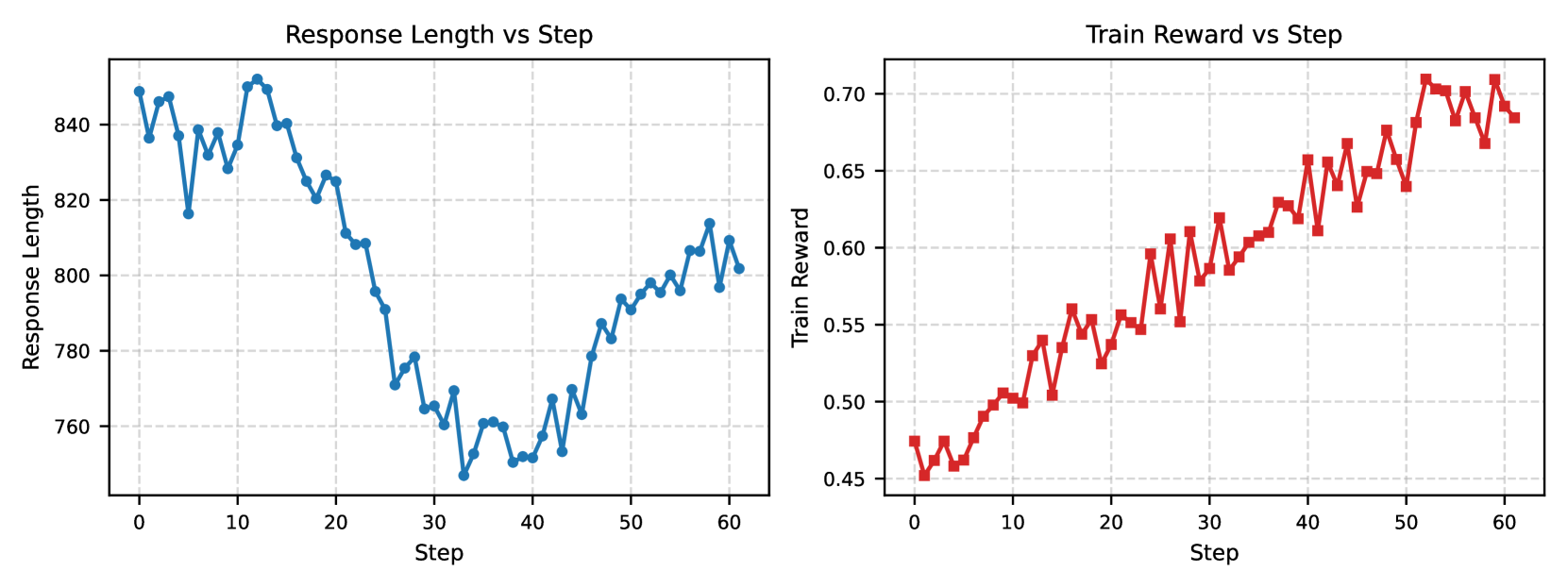

In this section, we present a series of empirical analyses to understand the key ingredients for training effective reasoning reward models. Our analysis spans scaling effects, design decisions, reasoning ablations, and a case study. We also present additional analysis on training dynamics in Section G.2.

### 4.1 Training Recipes

We first investigate the key ingredients underlying the successful training of RM-R1. Through a series of ablation studies, we examine our design choices to identify effective strategies for training high-quality reasoning reward models. We compare the following settings: Cold Start RL, Cold Start RL + Rubrics, Cold Start RL + Rubrics + Query Categorization (QC), and Distilled + RL + Rubrics + QC (i.e., RM-R1). The details of these settings are in Section G.1.

Table 2: Ablation study of the design choices for Reasoning Training on RewardBench.

| Method | Chat | Chat Hard | Safety | Reasoning | Average |

| --- | --- | --- | --- | --- | --- |

| Instruct (Original) | 95.8 | 74.3 | 86.8 | 86.3 | 85.8 |

| Instruct + Cold Start RL | 92.5 | 81.5 | 89.7 | 94.4 | 89.5 |

| Instruct + Cold Start RL + Rubrics | 93.0 | 82.5 | 90.8 | 94.2 | 90.1 |

| Instruct + Cold Start RL + Rubrics + QC | 92.3 | 82.6 | 91.6 | 96.3 | 90.8 |

| RM-R1 | 95.3 | 83.1 | 91.9 | 95.2 | 91.4 |

In Table 2, we present the results of the ablation studies described above, using the Qwen-2.5-Instruct-32B model as the Instruct (Original) model. Several key conclusions emerge:

- RL training alone is insufficient. While Cold Start RL slightly improves performance on hard chat and reasoning tasks, it fails to close the gap to fully optimized models.

- CoR prompting optimizes RM rollout and boosts reasoning performance. Instructing RM-R1 to self-generate chat rubrics or problem solutions before judgment helps overall performance, especially for chat and safety tasks. Incorporating explicit query categorization into the prompt notably improves reasoning performance, suggesting that clearer task guidance benefits learning.

- Distillation further enhances performance across all axes. Seeding the model with high-quality reasoning traces before RL yields the strongest results, with improvements observed on both hard tasks and safety-sensitive tasks.

Takeaway 1:

Directly replicating reinforcement learning recipes from mathematical tasks is insufficient for training strong reasoning reward models. Explicit query categorization and targeted distillation of high-quality reasoning traces are both crucial for achieving robust and generalizable improvements.

### 4.2 Scaling Effects

We then investigate how model performance varies with scale, considering both model size and inference-time compute. In some cases – such as ScalarRMs from InternLM2 [6] and Skywork [20] – the smaller models (7B/8B) outperforms the larger ones (20B/27B), showing no advantage of scaling. In this subsection, we show that this trend does not hold for RM-R1, where scaling brings clear and substantial improvements.

#### 4.2.1 Model Sizes

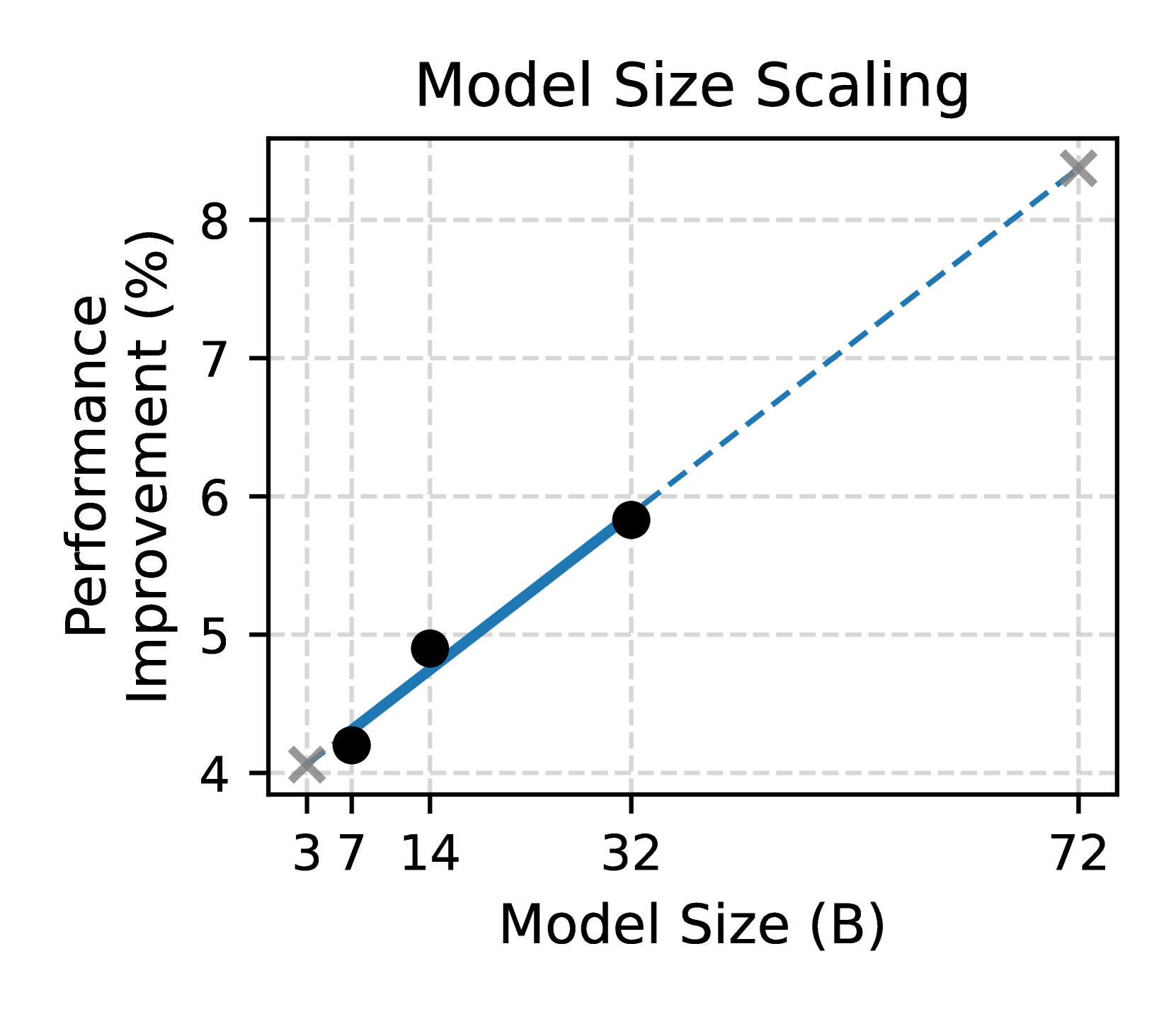

We first analyze the impact of model scale. Our study is based on the Qwen-2.5-Instruct model family at three sizes: 7B, 14B, and 32B. We evaluate performance improvements resulting from our training procedure described in Section 2, with results averaged across three key benchmarks: RewardBench, RM-Bench, and RMB.

For each model size, we compare the original and post-training performance. Figure 4(a) plots the relative improvement (%) with respect to model size. Observing an approximately linear trend, we fit a linear regression model and extrapolate to hypothetical scales of 3B and 72B, shown using faint markers and dashed extensions. The results strongly support a scaling law for reasoning reward models: larger models not only result in an absolute better final performance but also consistently yield greater performance gains. This aligns with the intuition that our training effectively leverages the superior reasoning capabilities of larger models.

<details>

<summary>x3.png Details</summary>

### Visual Description

## Scatter Plot with Linear Trend Line: Model Size Scaling

### Overview

This image is a scatter plot chart titled "Model Size Scaling." It illustrates the relationship between the size of a model (measured in billions of parameters, "B") and the corresponding percentage improvement in performance. The chart includes observed data points and a fitted trend line that extends beyond the observed data range.

### Components/Axes

* **Title:** "Model Size Scaling" (centered at the top).

* **X-Axis:** Labeled "Model Size (B)". The axis has discrete, non-linearly spaced tick marks at the values: 3, 7, 14, 32, and 72.

* **Y-Axis:** Labeled "Performance Improvement (%)". The axis has linear tick marks at integer intervals from 4 to 8.

* **Legend:** Located in the top-left corner of the plot area. It contains two entries:

* A black circle symbol labeled "Observed Data".

* A blue dashed line symbol labeled "Trend Line".

* **Grid:** A light gray, dashed grid is present in the background.

### Detailed Analysis

**Data Series and Points:**

1. **Observed Data (Black Circles):**

* The first point is located at approximately **Model Size = 7 B**, **Performance Improvement = ~4.2%**.

* The second point is located at approximately **Model Size = 14 B**, **Performance Improvement = ~4.9%**.

* The third point is located at approximately **Model Size = 32 B**, **Performance Improvement = ~5.8%**.

* **Trend Verification:** The three black data points show a clear upward trend from left to right.

2. **Trend Line (Blue Dashed Line):**

* The line is a straight, upward-sloping dashed line.

* It originates from a gray 'X' marker at the coordinate **(3, 4)**.

* It passes through or very near the three observed data points.

* It terminates at another gray 'X' marker at the coordinate **(72, ~8.3)**.

* **Trend Verification:** The line has a consistent positive slope, indicating a direct, linear relationship between the logarithm of model size (given the x-axis spacing) and performance improvement.

### Key Observations

* **Positive Correlation:** There is a strong, positive correlation between model size and performance improvement. As model size increases, performance improvement increases.

* **Consistent Increase:** The observed data points (7B, 14B, 32B) show a steady, nearly linear increase in performance improvement on this scale.

* **Extrapolation:** The trend line is extrapolated beyond the observed data range, predicting a performance improvement of approximately **8.3%** for a **72B** parameter model.

* **Anchor Points:** The trend line is explicitly anchored at the points (3, 4) and (72, ~8.3), marked with gray 'X's, which may represent theoretical or projected bounds.

### Interpretation

The chart demonstrates a scaling law for the model in question: increasing the number of parameters leads to predictable gains in performance. The linear fit on this plot (where the x-axis is logarithmically spaced) suggests that performance improvement scales linearly with the *logarithm* of model size. This is a common finding in neural scaling laws.

The data suggests that investing in larger models yields measurable benefits, but the rate of improvement per additional parameter may diminish (as moving from 7B to 14B gains ~0.7%, while moving from 14B to 32B gains ~0.9%, but over a much larger parameter increase). The extrapolation to 72B provides a forecast for future model development, though predictions beyond the observed data (32B) carry inherent uncertainty. The chart effectively communicates that model size is a critical and predictable driver of performance for this specific task or metric.

</details>

(a) Model Size

<details>

<summary>x4.png Details</summary>

### Visual Description

\n

## Line Chart: Inference Compute Scaling

### Overview

This is a line chart illustrating the relationship between a system's "Compute Budget" and its resulting "Performance" percentage. The chart demonstrates a positive, non-linear correlation where increasing the compute budget leads to improved performance, with the rate of improvement accelerating at higher budget levels.

### Components/Axes

* **Chart Title:** "Inference Compute Scaling" (positioned at the top center).

* **Y-Axis (Vertical):**

* **Label:** "Performance (%)"

* **Scale:** Linear scale ranging from 76 to 80.

* **Major Tick Marks:** 76, 77, 78, 79, 80.

* **X-Axis (Horizontal):**

* **Label:** "Compute Budget"

* **Scale:** Logarithmic scale (base 2), with values doubling at each major tick.

* **Major Tick Mark Labels:** "512", "1 k", "2 k", "4 k", "8 k". (Note: "k" denotes 1000).

* **Data Series:** A single series represented by a solid blue line connecting black circular data points.

* **Legend:** None present; the single data series is self-explanatory from the axis labels.

* **Grid:** Light gray dashed grid lines are present for both major x and y ticks.

### Detailed Analysis

**Trend Verification:** The single data series shows a clear upward trend. The slope is moderate from 512 to 2k, flattens slightly between 2k and 4k, and then increases sharply from 4k to 8k.

**Data Point Extraction (Approximate Values):**

The following values are estimated based on the position of the black data points relative to the y-axis grid lines.

1. **At Compute Budget = 512:** Performance ≈ 75.9% (The point sits just below the 76% grid line).

2. **At Compute Budget = 1 k (1000):** Performance ≈ 76.3% (The point is approximately one-third of the way between the 76% and 77% grid lines).

3. **At Compute Budget = 2 k (2000):** Performance ≈ 77.2% (The point is slightly above the 77% grid line).

4. **At Compute Budget = 4 k (4000):** Performance ≈ 77.3% (The point is marginally higher than the previous point at 2k, indicating a near plateau).

5. **At Compute Budget = 8 k (8000):** Performance ≈ 79.6% (The point is significantly above the 79% grid line, closer to 80% than 79%).

### Key Observations

1. **Non-Linear Scaling:** Performance does not increase linearly with compute budget. The gains are modest at lower budgets, nearly stall between 2k and 4k, and then surge dramatically at 8k.

2. **Significant Final Jump:** The most substantial performance increase (approximately +2.3 percentage points) occurs in the final interval, from a compute budget of 4k to 8k.

3. **Diminishing then Accelerating Returns:** The pattern suggests a region of diminishing returns (2k to 4k) followed by a breakthrough or a different scaling regime at the highest measured budget.

### Interpretation

The chart provides a Peircean insight into the scaling behavior of an inference system. The data suggests that simply doubling the compute budget does not guarantee a proportional performance increase. The near-plateau between 2k and 4k could indicate a bottleneck elsewhere in the system (e.g., memory bandwidth, algorithmic efficiency) that is overcome at the 8k budget level. The sharp rise at 8k implies that the system may have entered a new, more efficient scaling phase, or that a critical threshold of compute was crossed, enabling a qualitative improvement in the model's capability. This pattern is crucial for resource allocation, indicating that investing in compute beyond a certain point (4k in this case) can yield disproportionately high returns, but only if the system architecture can effectively utilize it. The absence of data points beyond 8k leaves open the question of whether this accelerated scaling continues or eventually plateaus again.

</details>

(b) Inference Compute

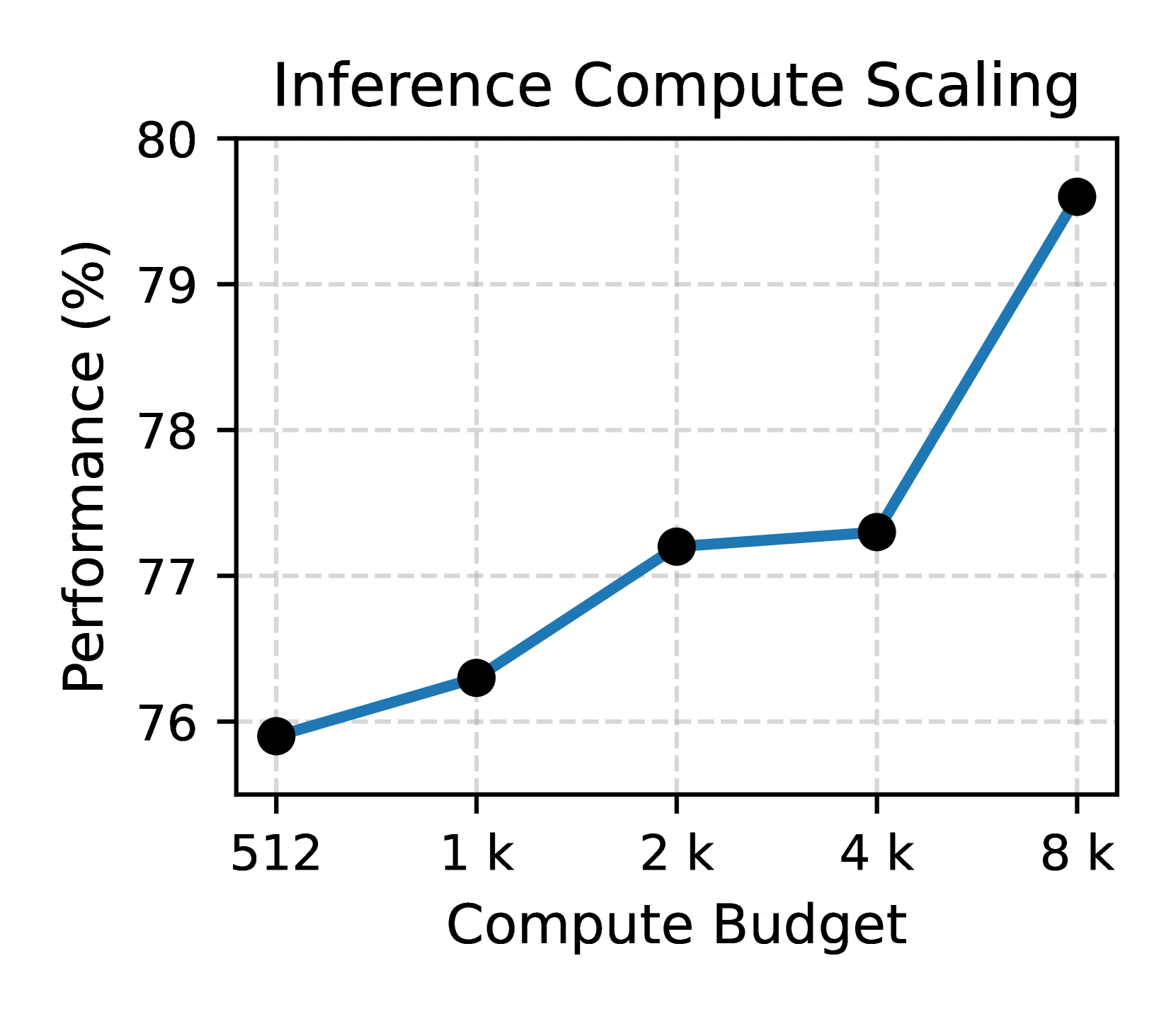

Figure 4: Scaling effect of RM-R1. (a) Larger models benefit more from reasoning training. (b) Longer reasoning chains improve RM performance.

| Method | | RewardBench | RM-Bench | RMB | Avg. |

| --- | --- | --- | --- | --- | --- |

| Train on Full Data | | | | | |

| Instruct + SFT | | 90.9 | 75.4 | 65.9 | 77.4 |

| Instruct + Distilled + SFT | | 91.2 | 76.7 | 65.4 | 77.8 |

| RM-R1 * | | 91.4 | 79.1 | 73.0 | 81.2 |

| Train on 9k (Distillation) Data | | | | | |

| Instruct + SFT | | 88.8 | 74.8 | 66.9 | 76.6 |

| Instruct + Distilled * | | 89.0 | 76.3 | 72.0 | 79.2 |

Table 3: Comparison of reasoning-based training versus SFT across benchmarks. * indicates reasoning-based methods. Reasoning training consistently yields better performance.

#### 4.2.2 Inference-time Computation

Next, we examine how model performance varies with different compute budgets measured in number of tokens allowed during inference. Since this is particularly relevant to reasoning-focused models, we fix our base model to DeepSeek-R1-Distill-Qwen-14B. We evaluate average performance across the three key benchmarks using a wide range of inference-time compute budgets: 512, 1024, 2048, 4096, and 8192 tokens.

To ensure a fair comparison, we match the training rollout budget to the inference budget in each setting (i.e., we allow a maximum of $k$ tokens during training for a compute budget of $k$ at inference). All models are trained using GRPO with identical datasets and hyperparameter configurations. Figure 4(b) shows the relationship between compute budget and performance. We observe a clear improvement trend as the inference budget increases. This highlights the benefits of long reasoning chains in reward modeling.

Takeaway 2:

Scaling improves reward model performance: we observe a near-linear trend with both model size and inference-time compute. Larger models consistently benefit more from our reasoning-based training pipeline, and longer reasoning chains become increasingly effective under higher compute budgets.

### 4.3 Effectiveness of Reasoning Training

We now analyze the impact of reasoning-based training. Here, we demonstrate that reasoning-based training can outperform answer-only approaches. We consider the following settings:

Instruct + SFT. This approach fine-tunes the instruct model directly toward producing the correct final answer using the full dataset, without providing any intermediate reasoning chains.

Instruct + Distilled + SFT. This approach applies SFT (with respect to the answer directly) after the distillation stage, serving as a direct comparison point with RM-R1 trained with RL.

Instruct + RM-R1 (Distilled + RL). This is the full approach proposed in this paper, following the procedure detailed in Section 2.

Instruct + Distilled. This setting uses the model checkpoint immediately after the distillation stage, before any RL fine-tuning.

In summary, methods with “+ RM-R1 ” or “+ Distilled” represent reasoning-based approaches, while the remaining methods are purely non-reasoning-based approaches. In Table 3, we report the results measured across the three benchmarks. The findings clearly demonstrate that reasoning training significantly benefits reward model performance. Under fair comparisons (i.e., training on exactly the same amount of data), reasoning-based models consistently outperform their SFT-only counterparts. In particular, even high-quality distillation alone, applied to a small subset of the data, provides notable gains, highlighting the value of incorporating structured intermediate reasoning.

Takeaway 3:

Reasoning training substantially improves reward modeling. It not only enables better generalization across tasks but also provides consistent gains even under limited data scenarios compared to direct-answer SFT approaches.

### 4.4 Case Study

Table 4: Case Study of RM-R1 (succinct version). Complete Chatbots and model responses are shown in Table 9, 10, and 11. Cold-Start RL evaluation tends to focus on superficial features (e.g., number of suggestions, relevance), whereas RM-R1 produces high-quality rubrics (e.g., accuracy for factual questions), faithfully follows them, and evaluates responses based on their actual content.

Question: symptoms of sickle-cell disease Chatbot A: 1. Painful red or yellow skin lesions; 2. Frequent infections; 3. Fatigue and weakness; 4. Anemia; 5. Slower growth and development; 6. Jaundice; 7. Swelling of hands and feet; 8. Vision loss; 9. Tiredness and weakness after physical activity; 10. Breathlessness; 11. Fever, chills, and night sweats. Chatbot B Sickle cell disease is a genetic disorder that affects the shape of red blood cells. It is most common in African Americans, but can also affect people from other ethnic groups. The symptoms of sickle cell disease can vary widely from person to person, but some common symptoms include: 1. Fatigue: …; 2. Pain: …; 3. Fever: …; 4. Anemia: …; 5. Delayed growth and development: …; 6. Infections: …; 7. Pigmentation: … It’s important to note that not everyone with sickle cell disease will experience all of these symptoms, … Therefore, it’s important to consult with a healthcare provider if you suspect you or someone you know may have sickle cell disease. Cold-start RL evaluation ✗ <type>| Chat\textcolor{deepblue}{\verb </type>| <rubric>| \textcolor{red}{\textbf{Relevance 40 \%}} – Comprehensiveness 30 \% – Clarity/organisation 30 \% \textcolor{red}{\verb </rubric>| <eval>| \textcolor{red}{\textbf{Chatbot A provided a list of symptoms that are directly relevant to sickle cell disease.}} The response is comprehensive and includes a wide range of symptoms commonly associated with the condition. The symptoms are listed in a clear and organized manner, making it easy for the client to understand the information. Chatbot B’s response is less organized than Chatbot A’s response, and the explanation of sickle cell disease may be unnecessary for the client’s question.\textcolor{orange}{\verb </eval>| <answer>| [[A]]\textcolor{lightred}{\verb </answer>| RM-R1 evaluation ✓ <type>| Chat\textcolor{deepblue}{\verb </type>| <rubric>| 1 \textcolor{ForestGreen}{\textbf{Accuracy 40 \%}} · 2 Comprehensiveness 30 \% · 3 Clarity 20 \% · 4 Helpfulness 10 \% \textcolor{deepred}{\verb <justify>| Accuracy is weighted highest because providing correct information about medical conditions is crucial. Comprehensiveness is important because … </justify>| \textcolor{red}{\verb </rubric>| <eval>| \emph{Accuracy of information:} Chatbot A provides a list of symptoms without explanation, but \textcolor{ForestGreen}{\textbf{some listed items (e.g.\ “painful red or yellow skin lesions,” “vision loss”) are not typical of sickle-cell disease}}. Chatbot B offers more accurate information, explaining each symptom’s nature and severity. ... \emph{Comprehensiveness:}...\emph{Clarity and organization:}...\emph{Helpfulness:}... \textcolor{orange}{\verb </eval>| <answer>| [[B]]\textcolor{lightred}{\verb </answer>|

To gain deeper insights into RM-R1, we further conduct a case study using Qwen-14B-Instruct, comparing its behavior with a cold-start RL model. The results, summarized in Table 4, reveal the following key observations:

Transparent Judging Process.

The reasoning traces generated by RM-R1 are highly interpretable and coherent, reflecting the model’s perception of human preferences. It explicitly articulates why certain responses are better, providing transparency into its evaluation process.

High-Quality, Question-Dependent Rubrics.

RM-R1 accurately understands the question and the context of comparison, correctly prioritizing “accuracy” as the most critical rubric for medical-related questions. In contrast, the cold-start RL model often overlooks the most important factors and instead emphasizes superficial or broadly defined features (e.g., relevance) that are less discriminative. The ability to generate high-quality, question-specific rubrics stems from the knowledge acquired during the distillation stage.

Faithful Adherence to Rubrics and Content-Based Judgement.

RM-R1 grounds its evaluation in the actual content of the chatbot responses. For example, it correctly identifies inaccuracies in Chatbot A’s response based on factual content rather than surface presentation. Furthermore, it systematically evaluates different aspects of the rubric, leading to a structured, interpretable, and verifiable judging process.

## 5 Related Work

Reward Models (RMs).

Early RMs were typically outcome-focused: trained to predict human preference rankings for complete outputs [42]. Recent advances have looked at providing process supervision, which rewards or evaluates the steps of a model’s reasoning rather than only the final answer. A series of works propose to train process reward models that judge the correctness of intermediate reasoning steps [19, 10, 27]. A limitation of many PRMs is their heavy reliance on curated step-level human labels or specific schemas, and they often remain domain-specific. Zhang et al. [40] propose Generative Verifiers, framing reward modeling as a next-token prediction task. This allows the reward model to leverage chain-of-thought and even use majority voting over multiple sampled rationales to make more reliable judgments. DeepSeek-GRM [22] and JudgeLRM [7] have studied using reasoning models as generative reward models, which are the most relevant research to ours. However, their main focus is on the effect of scaling inference-time computation on reward modeling. On the contrary, our work is the first to provide a systematic empirical comparison of different reward model training paradigms, shedding light on when and why a distilled and RL-trained reward model like RM-R1 has advantages over the conventional approaches.

Reinforcement Learning from Human Feedback (RLHF).

Early works [8] first demonstrated that reinforcement learning could optimize policies using a reward model trained from human pairwise preferences. Subsequent studies applied RLHF to large-scale language models using policy optimization algorithms such as PPO [26]. For example, Ziegler et al. [45] fine-tuned GPT-2 via PPO on human preference rewards, and Stiennon et al. [32] showed that RLHF could significantly improve the quality of summarization by optimizing against a learned preference model. More recently, Ouyang et al. [24] used a similar PPO-based pipeline to train InstructGPT, establishing the modern RLHF paradigm for instruction-following models. Recently, Verifiable supervision techniques have also emerged: DeepSeek-R1 [12] uses a form of self-verification during RLHF to reward correct reasoning steps, rather than only final-answer quality. This method incentivizes policies to produce outputs that can be verified for correctness, bridging the gap between pure preference-based feedback and ground-truth signals. However, even with such innovations, most RLHF implementations still treat reward modeling and reasoning as separate stages.

## 6 Conclusion and Future Work

In this paper, we revisited reward modeling through the lens of reasoning. We introduced RM-R1, a family of ReasRMs that effectively generate explicit chains of rubrics and rationales, and scale with both model size and inference compute. Across three public benchmarks, RM-R1 matched or surpassed commercial and open-source RMs while producing more interpretable judgments. Ablation investigations reveal that (1) task-type categorization, (2) bootstrapping from high-quality reasoning traces, and (3) RL fine-tuning are all indispensable. Qualitative analyses further showed that RM-R1 learns to prioritize high-impact rubrics, faithfully follow its own criteria and justify coherently. Future work includes active preference collection, where ReasRMs use active learning to query human preference only when the current rubric set is insufficient for a new preference sample. Finally, it would be natural to extend our study to multimodal/agentic reward modeling scenarios.

## Acknowledgments and Disclosure of Funding

This research is based upon work supported DARPA ITM Program No. FA8650-23-C-7316, and the AI Research Institutes program by National Science Foundation and the Institute of Education Sciences, U.S. Department of Education through Award # 2229873 - AI Institute for Transforming Education for Children with Speech and Language Processing Challenges. The views and conclusions contained herein are those of the authors and should not be interpreted as necessarily representing the official policies, either expressed or implied, of the U.S. Government. The U.S. Government is authorized to reproduce and distribute reprints for governmental purposes notwithstanding any copyright annotation therein.

## References

- Achiam et al. [2023] Josh Achiam, Steven Adler, Sandhini Agarwal, Lama Ahmad, Ilge Akkaya, Florencia Leoni Aleman, Diogo Almeida, Janko Altenschmidt, Sam Altman, et al. Gpt-4 technical report. arXiv preprint arXiv:2303.08774, 2023.

- Adler et al. [2024] Bo Adler, Niket Agarwal, Ashwath Aithal, Dong H Anh, Pallab Bhattacharya, Annika Brundyn, Jared Casper, Bryan Catanzaro, Sharon Clay, et al. Nemotron-4 340b technical report. arXiv preprint arXiv:2406.11704, 2024.

- Anthropic [2024] AI Anthropic. The claude 3 model family: Opus, sonnet, haiku. Claude-3 Model Card, 1:1, 2024.

- Bai et al. [2022] Yuntao Bai, Saurav Kadavath, Sandipan Kundu, Amanda Askell, Jackson Kernion, Andy Jones, Anna Chen, Anna Goldie, Azalia Mirhoseini, Cameron McKinnon, et al. Constitutional ai: Harmlessness from ai feedback. arXiv preprint arXiv:2212.08073, 2022.

- Baker et al. [2009] Chris L Baker, Rebecca Saxe, and Joshua B Tenenbaum. Action understanding as inverse planning. Cognition, 113(3):329–349, 2009.

- Cai et al. [2024] Zheng Cai, Maosong Cao, Haojiong Chen, Kai Chen, Keyu Chen, Xin Chen, Xun Chen, Zehui Chen, Zhi Chen, Pei Chu, et al. Internlm2 technical report. arXiv preprint arXiv:2403.17297, 2024.

- Chen et al. [2025] Nuo Chen, Zhiyuan Hu, Qingyun Zou, Jiaying Wu, Qian Wang, Bryan Hooi, and Bingsheng He. Judgelrm: Large reasoning models as a judge. arXiv preprint arXiv:2504.00050, 2025.

- Christiano et al. [2017] Paul F Christiano, Jan Leike, Tom Brown, Miljan Martic, Shane Legg, and Dario Amodei. Deep reinforcement learning from human preferences. Advances in neural information processing systems, 30, 2017.

- Chu et al. [2025] Tianzhe Chu, Yuexiang Zhai, Jihan Yang, Shengbang Tong, Saining Xie, Dale Schuurmans, Quoc V Le, Sergey Levine, and Yi Ma. Sft memorizes, rl generalizes: A comparative study of foundation model post-training. arXiv preprint arXiv:2501.17161, 2025.

- Cui et al. [2025] Ganqu Cui, Lifan Yuan, Zefan Wang, Hanbin Wang, Wendi Li, Bingxiang He, Yuchen Fan, Tianyu Yu, Qixin Xu, Weize Chen, et al. Process reinforcement through implicit rewards. arXiv preprint arXiv:2502.01456, 2025.

- Dubey et al. [2024] Abhimanyu Dubey, Abhinav Jauhri, Abhinav Pandey, Abhishek Kadian, Ahmad Al-Dahle, Aiesha Letman, Akhil Mathur, Alan Schelten, Amy Yang, Angela Fan, et al. The llama 3 herd of models. arXiv preprint arXiv:2407.21783, 2024.

- Guo et al. [2025] Daya Guo, Dejian Yang, Haowei Zhang, Junxiao Song, Ruoyu Zhang, Runxin Xu, Qihao Zhu, Shirong Ma, Peiyi Wang, Xiao Bi, et al. Deepseek-r1: Incentivizing reasoning capability in llms via reinforcement learning. arXiv preprint arXiv:2501.12948, 2025.

- Hu et al. [2024] Jian Hu, Xibin Wu, Zilin Zhu, Xianyu, Weixun Wang, Dehao Zhang, and Yu Cao. Openrlhf: An easy-to-use, scalable and high-performance rlhf framework. arXiv preprint arXiv:2405.11143, 2024.

- Hurst et al. [2024] Aaron Hurst, Adam Lerer, Adam P Goucher, Adam Perelman, Aditya Ramesh, Aidan Clark, AJ Ostrow, Akila Welihinda, Alan Hayes, Alec Radford, et al. Gpt-4o system card. arXiv preprint arXiv:2410.21276, 2024.

- Ivison et al. [2024] Hamish Ivison, Yizhong Wang, Jiacheng Liu, Zeqiu Wu, Valentina Pyatkin, Nathan Lambert, Noah A Smith, Yejin Choi, and Hannaneh Hajishirzi. Unpacking dpo and ppo: Disentangling best practices for learning from preference feedback. arXiv preprint arXiv:2406.09279, 2024.

- Lai et al. [2024] Xin Lai, Zhuotao Tian, Yukang Chen, Senqiao Yang, Xiangru Peng, and Jiaya Jia. Step-dpo: Step-wise preference optimization for long-chain reasoning of llms. arXiv preprint arXiv:2406.18629, 2024.

- Lambert et al. [2024] Nathan Lambert, Valentina Pyatkin, Jacob Morrison, LJ Miranda, Bill Yuchen Lin, Khyathi Chandu, Nouha Dziri, Sachin Kumar, Tom Zick, Yejin Choi, et al. Rewardbench: Evaluating reward models for language modeling. arXiv preprint arXiv:2403.13787, 2024.

- Li et al. [2025] Xuefeng Li, Haoyang Zou, and Pengfei Liu. Torl: Scaling tool-integrated rl. arXiv preprint arXiv:2503.23383, 2025.

- Lightman et al. [2023] Hunter Lightman, Vineet Kosaraju, Yuri Burda, Harrison Edwards, Bowen Baker, Teddy Lee, Jan Leike, John Schulman, Ilya Sutskever, and Karl Cobbe. Let’s verify step by step. In The Twelfth International Conference on Learning Representations, 2023.

- Liu et al. [2024a] Chris Yuhao Liu, Liang Zeng, Jiacai Liu, Rui Yan, Jujie He, Chaojie Wang, Shuicheng Yan, Yang Liu, and Yahui Zhou. Skywork-reward: Bag of tricks for reward modeling in llms. arXiv preprint arXiv:2410.18451, 2024a.

- Liu et al. [2024b] Yantao Liu, Zijun Yao, Rui Min, Yixin Cao, Lei Hou, and Juanzi Li. Rm-bench: Benchmarking reward models of language models with subtlety and style. arXiv preprint arXiv:2410.16184, 2024b.

- Liu et al. [2025] Zijun Liu, Peiyi Wang, Runxin Xu, Shirong Ma, Chong Ruan, Peng Li, Yang Liu, and Yu Wu. Inference-time scaling for generalist reward modeling. arXiv preprint arXiv:2504.02495, 2025.

- Montibeller and Franco [2010] Gilberto Montibeller and Alberto Franco. Multi-criteria decision analysis for strategic decision making. In Handbook of multicriteria analysis, pages 25–48. Springer, 2010.

- Ouyang et al. [2022] Long Ouyang, Jeffrey Wu, Xu Jiang, Diogo Almeida, Carroll Wainwright, Pamela Mishkin, Chong Zhang, Sandhini Agarwal, Katarina Slama, Alex Ray, et al. Training language models to follow instructions with human feedback. Advances in neural information processing systems, 35:27730–27744, 2022.

- Reid et al. [2024] Machel Reid, Nikolay Savinov, Denis Teplyashin, Dmitry Lepikhin, Timothy Lillicrap, Jean-baptiste Alayrac, Radu Soricut, Angeliki Lazaridou, Orhan Firat, Julian Schrittwieser, et al. Gemini 1.5: Unlocking multimodal understanding across millions of tokens of context. arXiv preprint arXiv:2403.05530, 2024.

- Schulman et al. [2017] John Schulman, Filip Wolski, Prafulla Dhariwal, Alec Radford, and Oleg Klimov. Proximal policy optimization algorithms. arXiv preprint arXiv:1707.06347, 2017.

- Setlur et al. [2024] Amrith Setlur, Chirag Nagpal, Adam Fisch, Xinyang Geng, Jacob Eisenstein, Rishabh Agarwal, Alekh Agarwal, Jonathan Berant, and Aviral Kumar. Rewarding progress: Scaling automated process verifiers for llm reasoning. arXiv preprint arXiv:2410.08146, 2024.

- Shao et al. [2024] Zhihong Shao, Peiyi Wang, Qihao Zhu, Runxin Xu, Junxiao Song, Xiao Bi, Haowei Zhang, Mingchuan Zhang, YK Li, Y Wu, et al. Deepseekmath: Pushing the limits of mathematical reasoning in open language models. arXiv preprint arXiv:2402.03300, 2024.

- Sheng et al. [2024] Guangming Sheng, Chi Zhang, Zilingfeng Ye, Xibin Wu, Wang Zhang, Ru Zhang, Yanghua Peng, Haibin Lin, and Chuan Wu. Hybridflow: A flexible and efficient rlhf framework. arXiv preprint arXiv: 2409.19256, 2024.

- Shiwen et al. [2024] Tu Shiwen, Zhao Liang, Chris Yuhao Liu, Liang Zeng, and Yang Liu. Skywork critic model series. https://huggingface.co/Skywork, September 2024. URL https://huggingface.co/Skywork.

- Stanton et al. [2021] Samuel Stanton, Pavel Izmailov, Polina Kirichenko, Alexander A Alemi, and Andrew G Wilson. Does knowledge distillation really work? Advances in neural information processing systems, 34:6906–6919, 2021.

- Stiennon et al. [2020] Nisan Stiennon, Long Ouyang, Jeffrey Wu, Daniel Ziegler, Ryan Lowe, Chelsea Voss, Alec Radford, Dario Amodei, and Paul F Christiano. Learning to summarize with human feedback. Advances in Neural Information Processing Systems, 33:3008–3021, 2020.

- Van Hoeck et al. [2015] Nicole Van Hoeck, Patrick D Watson, and Aron K Barbey. Cognitive neuroscience of human counterfactual reasoning. Frontiers in human neuroscience, 9:420, 2015.

- Wang et al. [2024a] Haoxiang Wang, Wei Xiong, Tengyang Xie, Han Zhao, and Tong Zhang. Interpretable preferences via multi-objective reward modeling and mixture-of-experts. In Yaser Al-Onaizan, Mohit Bansal, and Yun-Nung Chen, editors, Findings of the Association for Computational Linguistics: EMNLP 2024, pages 10582–10592, Miami, Florida, USA, November 2024a. Association for Computational Linguistics. URL https://aclanthology.org/2024.findings-emnlp.620.

- Wang et al. [2024b] Tianlu Wang, Ilia Kulikov, Olga Golovneva, Ping Yu, Weizhe Yuan, Jane Dwivedi-Yu, Richard Yuanzhe Pang, Maryam Fazel-Zarandi, Jason Weston, and Xian Li. Self-taught evaluators. arXiv preprint arXiv:2408.02666, 2024b.

- Wang et al. [2024c] Zhilin Wang, Yi Dong, Jiaqi Zeng, Virginia Adams, Makesh Narsimhan Sreedhar, Daniel Egert, Olivier Delalleau, Jane Scowcroft, Neel Kant, Aidan Swope, and Oleksii Kuchaiev. HelpSteer: Multi-attribute helpfulness dataset for SteerLM. In Kevin Duh, Helena Gomez, and Steven Bethard, editors, Proceedings of the 2024 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies (Volume 1: Long Papers), pages 3371–3384, Mexico City, Mexico, June 2024c. Association for Computational Linguistics. URL https://aclanthology.org/2024.naacl-long.185.

- Yang et al. [2024] An Yang, Baosong Yang, Beichen Zhang, Binyuan Hui, Bo Zheng, Bowen Yu, Chengyuan Li, Dayiheng Liu, Fei Huang, Haoran Wei, et al. Qwen2. 5 technical report. arXiv preprint arXiv:2412.15115, 2024.

- Yu et al. [2024] Yue Yu, Zhengxing Chen, Aston Zhang, Liang Tan, Chenguang Zhu, Richard Yuanzhe Pang, Yundi Qian, Xuewei Wang, Suchin Gururangan, Chao Zhang, et al. Self-generated critiques boost reward modeling for language models. arXiv preprint arXiv:2411.16646, 2024.

- Yuan et al. [2024] Lifan Yuan, Ganqu Cui, Hanbin Wang, Ning Ding, Xingyao Wang, Jia Deng, Boji Shan, Huimin Chen, Ruobing Xie, Yankai Lin, Zhenghao Liu, Bowen Zhou, Hao Peng, Zhiyuan Liu, and Maosong Sun. Advancing llm reasoning generalists with preference trees, 2024.

- Zhang et al. [2024] Lunjun Zhang, Arian Hosseini, Hritik Bansal, Mehran Kazemi, Aviral Kumar, and Rishabh Agarwal. Generative verifiers: Reward modeling as next-token prediction. arXiv preprint arXiv:2408.15240, 2024.

- Zheng et al. [2023] Lianmin Zheng, Wei-Lin Chiang, Ying Sheng, Siyuan Zhuang, Zhanghao Wu, Yonghao Zhuang, Zi Lin, Zhuohan Li, Dacheng Li, Eric Xing, et al. Judging llm-as-a-judge with mt-bench and chatbot arena. Advances in Neural Information Processing Systems, 36:46595–46623, 2023.

- Zhong et al. [2025] Jialun Zhong, Wei Shen, Yanzeng Li, Songyang Gao, Hua Lu, Yicheng Chen, Yang Zhang, Wei Zhou, Jinjie Gu, and Lei Zou. A comprehensive survey of reward models: Taxonomy, applications, challenges, and future. arXiv preprint arXiv:2504.12328, 2025.

- Zhou et al. [2024] Enyu Zhou, Guodong Zheng, Binghai Wang, Zhiheng Xi, Shihan Dou, Rong Bao, Wei Shen, Limao Xiong, Jessica Fan, Yurong Mou, et al. Rmb: Comprehensively benchmarking reward models in llm alignment. arXiv preprint arXiv:2410.09893, 2024.

- Zhu et al. [2023] Banghua Zhu, Evan Frick, Tianhao Wu, Hanlin Zhu, and Jiantao Jiao. Starling-7b: Improving llm helpfulness & harmlessness with rlaif, November 2023.

- Ziegler et al. [2019] Daniel M Ziegler, Nisan Stiennon, Jeffrey Wu, Tom B Brown, Alec Radford, Dario Amodei, Paul Christiano, and Geoffrey Irving. Fine-tuning language models from human preferences. arXiv preprint arXiv:1909.08593, 2019. Contents

1. 1 Introduction

1. 2 RM-R1

1. 2.1 Task Definition

1. 2.2 Reasoning Distillation for Reward Modeling

1. 2.3 RL Training

1. 2.3.1 Chain-of-Rubrics (CoR) Rollout

1. 2.3.2 Reward Design

1. 3 Experiments

1. 3.1 Experimental Setup

1. 3.2 Main Results

1. 4 Analysis

1. 4.1 Training Recipes

1. 4.2 Scaling Effects

1. 4.2.1 Model Sizes

1. 4.2.2 Inference-time Computation

1. 4.3 Effectiveness of Reasoning Training

1. 4.4 Case Study

1. 5 Related Work

1. 6 Conclusion and Future Work

1. A User Prompt for DeepSeek-Distilled Reasoning Models

1. B Details of Reasoning Chain Generation

1. C Group Relative Policy Optimization (GRPO)

1. D Experiment Setups

1. D.1 Benchmarks

1. D.2 Preference Datasets

1. D.3 Baselines

1. E Implementation Details

1. F Full Experiment Result

1. G Supplementary Information for Section 4

1. G.1 Ablation Settings

1. G.2 Training Dynamics

## Appendix A User Prompt for DeepSeek-Distilled Reasoning Models

Large reasoning models such as DeepSeek-R1-distilled models [12] do not have a system prompt, so we show the user prompt for rollouts in Figure 5.

Chain-of-Rubrics (CoR) Rollout for Reasoning Models

Please act as an impartial judge and evaluate the quality of the responses provided by two AI Chatbots to the Client question displayed below. … [Pairwise Input Content] … Output your final verdict at last by strictly following this format: ’<answer>[[A]]</answer>’ if Chatbot A is better, or ’<answer>[[B]]</answer>’ if Chatbot B is better.

Figure 5: The user prompt used for RM-R1 rollout (for reasoning models).

## Appendix B Details of Reasoning Chain Generation

We now expand on the details of generating high-quality reasoning chains. We first use the same prompt to query Claude-3.7-Sonnet, generating initial reasoning traces. However, approximately 25% of these traces are incorrect, primarily on harder chat tasks. To correct these cases, we pass the original prompt, the incorrect trace, and the correct final answer to OpenAI-O3, which then generates a corrected reasoning trace aligned with the right answer.

This two-stage process yields a high-quality distillation set. We deliberately choose the order—first Claude, then O3 —based on qualitative observations: Claude excels at solving easier tasks and maintaining attention to safety considerations, whereas O3 performs better on harder tasks but tends to overemphasize helpfulness at the expense of safety. We select approximately 12% of the training data (slightly fewer than 9K examples) for distillation. This is then followed by RL training.

## Appendix C Group Relative Policy Optimization (GRPO)

Group Relative Policy Optimization (GRPO) [28] is a variant of Proximal Policy Optimization (PPO) [26], which obviates the need for additional value function approximation, and uses the average reward of multiple sampled outputs produced in response to the same prompt as the baseline. More specifically, for each prompt $x$ , GRPO samples a group of outputs $\{y_1,y_2,⋯,y_G\}$ from the old policy $π_θ_{old}$ and then optimizes the policy model by maximizing the following objective:

$$

\begin{split}J_GRPO(θ)= &E_x∼D

, \{j_i\_i=1^G∼ r_θ_{old}(j\mid x)}\bigg{[}\frac{1}{G

}∑_i=1^G\frac{1}{|j_i|}∑_t=1^|j_i|\Big{\{}\min\Big{(}\frac{r

_θ(j_i,t\mid x,j_i,<t)}{r_θ_{old}(j_i,t\mid x,j_i,

<t)}\hat{A}_i,t,\\[4.0pt]

&clip\big{(}\frac{r_θ(j_i,t\mid x,j_i,<t)}{r_θ_{

old}(j_i,t\mid x,j_i,<t)},1-ε,1+ε\big{)}\hat{A}_i,t\Big{

)}-β D_KL≤ft[r_θ(·\mid x) \| π_

ref(·\mid x)\right]\Big{\}}\bigg{]},\end{split} \tag{9}

$$

where $β$ is a hyperparameter balancing the task specific loss and the KL-divergence. Specifically, $\hat{A}_i$ is computed using the rewards of a group of responses within each group $\{r_1,r_2,…,r_G\}$ , and is given by the following equation:

$$

\hat{A}_i=\frac{r_i-{mean(\{r_1,r_2,⋯,r_G\})}}{{

std(\{r_1,r_2,⋯,r_G\})}}. \tag{10}

$$

## Appendix D Experiment Setups

### D.1 Benchmarks

In this paper, we consider the following three benchmarks:

RewardBench [17]: RewardBench is one of the first endeavors towards benchmarking reward models through prompt-chosen-rejected trios, covering four categories: chat, chat-hard, reasoning, and safety, with 358, 456, 740, and 1431 samples, respectively.

RM-Bench [21]: Building on RewardBench, RM-Bench evaluates reward models for their sensitivity to subtle content differences and robustness against style biases. It includes four categories: Chat, Safety, Math, and Code, with 129, 441, 529, and 228 samples, respectively. Each sample contains three prompts of varying difficulty. RM-Bench is the most reasoning-intensive benchmark among those we consider.

RMB [43]: Compared with RewardBench and RM-Bench, RMB offers a more comprehensive evaluation of helpfulness and harmlessness. It includes over 49 real-world scenarios and supports both pairwise and Best-of-N (BoN) evaluation formats. RMB comprises 25,845 instances in total—37 scenarios under the helpfulness alignment objective and 12 under harmlessness.

### D.2 Preference Datasets

We consider the following datasets for training:

Skywork Reward Preference 80K [20] is a high-quality collection of pairwise preference data drawn from a variety of domains, including chat, safety, mathematics, and code. It employs an advanced data filtering technique to ensure preference reliability across tasks. However, we identify a notable issue with this dataset: all samples from the magpie_ultra source exhibit a strong spurious correlation, where rejected responses consistently contain the token “ <im_start>,” while accepted responses do not. Additionally, responses from this source show a systematic bias—accepted responses are typically single-turn, while rejected responses are multi-turn. This problematic subset constitutes approximately 30% of the Skywork dataset and primarily covers mathematics and code domains. To avoid introducing spurious correlations into training, we exclude all magpie_ultra data and retain only the cleaned subset for our experiments.