# Attestable builds: compiling verifiable binaries on untrusted systems using trusted execution environments

**Authors**: Daniel Hugenroth, Mario Lins, René Mayrhofer, Alastair R. Beresford

> ORCID 0000-0003-3413-1722, University of Cambridge, Cambridge, United Kingdom

> ORCID 0000-0003-1713-3347, Johannes Kepler University, Linz, Austria

> ORCID 0000-0003-1566-4646, Johannes Kepler University, Linz, Austria

> ORCID 0000-0003-0818-6535, University of Cambridge, Cambridge, United Kingdom

## Abstract

In this paper we present attestable builds, a new paradigm to provide strong source-to-binary correspondence in software artifacts. We tackle the challenge of opaque build pipelines that disconnect the trust between source code, which can be understood and audited, and the final binary artifact, which is difficult to inspect. Our system uses modern trusted execution environments (TEEs) and sandboxed build containers to provide strong guarantees that a given artifact was correctly built from a specific source code snapshot. As such it complements existing approaches like reproducible builds which typically require time-intensive modifications to existing build configurations and dependencies, and require independent parties to continuously build and verify artifacts. In comparison, an attestable build requires only minimal changes to an existing project, and offers nearly instantaneous verification of the correspondence between a given binary and the source code and build pipeline used to construct it. We evaluate it by building open-source software libraries—focusing on projects which are important to the trust chain and those which have proven difficult to be built deterministically. Overall, the overhead (42 seconds start-up latency and 14% increase in build duration) is small in comparison to the overall build time. Importantly, our prototype builds even complex projects such as LLVM Clang without requiring any modifications to their source code and build scripts. Finally, we formally model and verify the attestable build design to demonstrate its security against well-resourced adversaries.


## 1. Introduction

Executable binaries are digital black boxes. Once compiled, it is hard to reason about their behavior and whether they are trustworthy. On the other hand, source code is easier to inspect. However, few have the ability, resources, and patience to compile all their software from scratch. Therefore, we want to allow recipients to verify that an artifact has been truthfully built from a given source code snapshot. This challenge has been popularized in the now-famous Turing Lecture by Ken Thompson on “Trusting Trust” (Thompson, 1984).

The problem of trusting build artifacts also presents itself in commercial settings where source code is typically not shared. Here as well the source code is the only source-of-truth that is inspectable by the employed engineers and auditors. During code review it is code changes and not binary output that is examined and, likewise, audit reports generally reference repository commits and not the hash of the shipped artifact. Hence, companies are interested in verifiable source-to-binary correspondence in an enterprise setting too. Where this correspondence cannot be verified, defects are difficult to identify—allowing them to spread down the supply chain to many targets.

There have been recent attacks which successfully targeted the build process. During the 2020 SolarWinds hack, attackers compromised the company’s build server to inject additional code into updates for network management system software (Zetter, 2023). As there were no changes to the source code repository, only forensic inspection of the build machines eventually unveiled the malicious change. In the meantime, the software was distributed to many customers in industry and government that relied on it to secure access to their internal networks. The US Cybersecurity & Infrastructure Security Agency (CISA) issued an emergency directive requesting immediate disconnect of all potentially affected products (Cybersecurity & Infrastructure Security Agency, 2021).

In 2024 a complex supply chain attack (CVE-2024-3094) against the XZ Utils package was uncovered that allowed adversaries to compromise vulnerable servers running OpenSSH (Lins et al., 2024). A key aspect that made this attack possible is that, for (open-source) projects utilizing Autoconf, it is common practice for maintainers to manually create certain build assets (e.g., a configure script), add them to a tarball, and then provide it to the packager, who builds the final artifact. In the case of XZ, this tarball contained a malicious asset covertly included by the adversary that was not part of the repository. Here both the maintainer and the packager have the opportunity to meddle with the final binary artifact.

Reproducible Builds (R-Bs, § 2.2) are the typically proposed solution to address potential discrepancies between source code and compiled binaries. Correctly implemented, R-Bs ensure source-to-binary correspondence by making the build process perfectly deterministic. Thus, they guarantee that the same source code always results in a bit-to-bit identical binary artifact output. This enables independent parties to reproduce binary artifacts, thus verifying that a given source input generated a given output. There are many successful projects that implement R-Bs (Project, 2024; Perry and Project, 2024; Project, 2024).

However, R-Bs come with their own challenges: They require substantial changes to the build process, which is time-intensive and therefore costly—not just as a one-time cost, but also as a continuing maintenance burden. Further, for closed-source software, the downstream consumer cannot check if their supplier has correctly applied R-B principles, since they are typically not given access to the required source code. Additionally, even for source-available software, the build process and compiler are often not available, for example due to intellectual property or licensing concerns. In reality, R-Bs only provide effective security benefits when there are independent builders who are continuously verifying that distributed artifacts are identical to their locally built ones.

We propose Attestable Builds (A-Bs) as a practical and scalable alternative where R-Bs are infeasible or costly to implement—including as a complement to extend R-B guarantees to consumers who cannot verify R-Bs themselves even if the primary build chain has R-B properties. For this we leverage Trusted Execution Environments (TEEs) to ensure that the build process is performed correctly and is verifiable. Unlike previous generations of TEEs (e.g., Intel SGX, Arm TrustZone), modern TEE implementations (e.g., AMD SEV-SNP, Intel TDX, AWS Nitro Enclaves) support full virtual machines with strong protection against interference by the hypervisor and physical attacks. Whereas this technology is typically used to achieve data confidentiality, in this work we leverage its integrity properties.

In our approach, the build process can be performed by an untrusted build service running at an untrusted cloud service provider (CSP), as long as the TEE hardware is trusted. We start by booting an open source machine image embedded inside a modern TEE. The embedded machine downloads the source code repository and commits to a hash of the downloaded files, including build instructions, in a secure manner before executing the build process inside a sandbox. Afterwards, the TEE hardware trust anchor attests to the booted image, the committed hash value, and the built artifact. This attestation certificate is shared alongside the artifact and is recorded in a transparency log. Recipients of the artifact can check the certificate locally and query the transparency log to verify that a given artifact has been built from a particular source code snapshot.
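The verifier's local check described above can be sketched as follows. This is a minimal illustration, not the prototype's implementation: the `Attestation` field names, the `check_artifact` helper, and the idea of an allow-list of trusted image measurements are assumptions made for this example. Verifying the hardware signature on the attestation and querying the transparency log are deliberately left out.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class Attestation:
    """Hypothetical record of the three values the TEE attests to."""
    image_measurement: str  # digest identifying the booted machine image
    source_commit: str      # hash committed to before the build started
    artifact_digest: str    # SHA-256 of the produced artifact


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def check_artifact(artifact: bytes, att: Attestation,
                   trusted_images: set, expected_commit: str) -> bool:
    """Return True only if all three attested values match expectations.

    ASSUMPTION: the signature on `att` has already been verified against
    the hardware vendor's certificate chain (not shown here).
    """
    return (
        att.image_measurement in trusted_images
        and att.source_commit == expected_commit
        and att.artifact_digest == sha256_hex(artifact)
    )


# Example values; all of them are placeholders.
artifact = b"\x7fELF...example build output"
att = Attestation("image-digest-1", "commit-abc123", sha256_hex(artifact))
ok = check_artifact(artifact, att, {"image-digest-1"}, "commit-abc123")
tampered = check_artifact(artifact + b"!", att, {"image-digest-1"}, "commit-abc123")
```

In this sketch, changing a single byte of the artifact, presenting an unknown image, or claiming a different commit each causes the check to fail.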

The paradigms of A-B and R-B can be composed to achieve stronger trust models that do not require trusting a single Confidential Computing vendor (see § 3.4). Table 1 highlights the similarities and differences between R-Bs and A-Bs. We believe that A-Bs would have prevented or substantially mitigated the mentioned SolarWinds and XZ Utils attacks (see § 7.1).

In this paper, we make the following contributions:

- We present a new paradigm called Attestable Builds (A-Bs) that provides strong source-to-binary correspondence with transparency and accountability.

- We discuss shortcomings of alternative approaches and devise a design relying on a sandbox and an integrity-protected observer.

- We implement an open-source prototype to demonstrate the practicality of A-Bs by building real-world software including complex projects like Clang and the Linux Kernel as well as packages that are hard to build reproducibly.

- We evaluate the performance of our system and find that it adds a (mitigable) 42 second start-up cost, which is small compared to typical build durations. It also imposes a performance overhead of around 14% in our default configuration and up to 68% when using hardened sandboxes.

- We formally verify the system using Tamarin and discuss the underlying trust assumptions required.

Table 1. Comparison of Reproducible Builds (R-Bs) and Attestable Builds (A-Bs).

| Reproducible Builds | Attestable Builds |
| --- | --- |
| + Strong source-to-binary correspondence | + Strong source-to-binary correspondence |
| - High engineering effort for both initial setup and ongoing build maintenance | + Only small changes to the build environment needed<br>+ Cloud service compatible |
| - Dependencies and tool chain need to be deterministic | Dependencies and tool chain can be R-B or A-B |
| - Environment might leak into build process undetected | + Enforces hermetic builds |
| + Machine independent | - Requires modern CPU |
| Requires trusting at least one party and their machine | Requires trusting the hardware vendor |
| - Requires open source | + Supports closed source and signed intermediate artifacts |
| + Can be composed to an anytrust setup (§ 3.4) | + Can be composed to an anytrust setup (§ 3.4) |

## 2. Background

Attestable builds integrate with modern software engineering and CI/CD patterns (§ 2.1) and provide an alternative to reproducible builds (§ 2.2). For this we leverage Confidential Computing technology (§ 2.3) and verifiable logs (§ 2.4). This section introduces the required background and building blocks.

### 2.1. Modern software engineering & CI/CD

Modern Software Engineering (SWE) involves large teams whose collaboration requires efficient mediation through software. Many projects rely on source control management (SCM) software like Git (project, 2024a) and Mercurial (community, 2024). The underlying repositories are often hosted by online services, such as GitHub (Inc., 2024c) or Bitbucket (Ltd, 2024). We call these Repository Hosting Providers (RHPs).

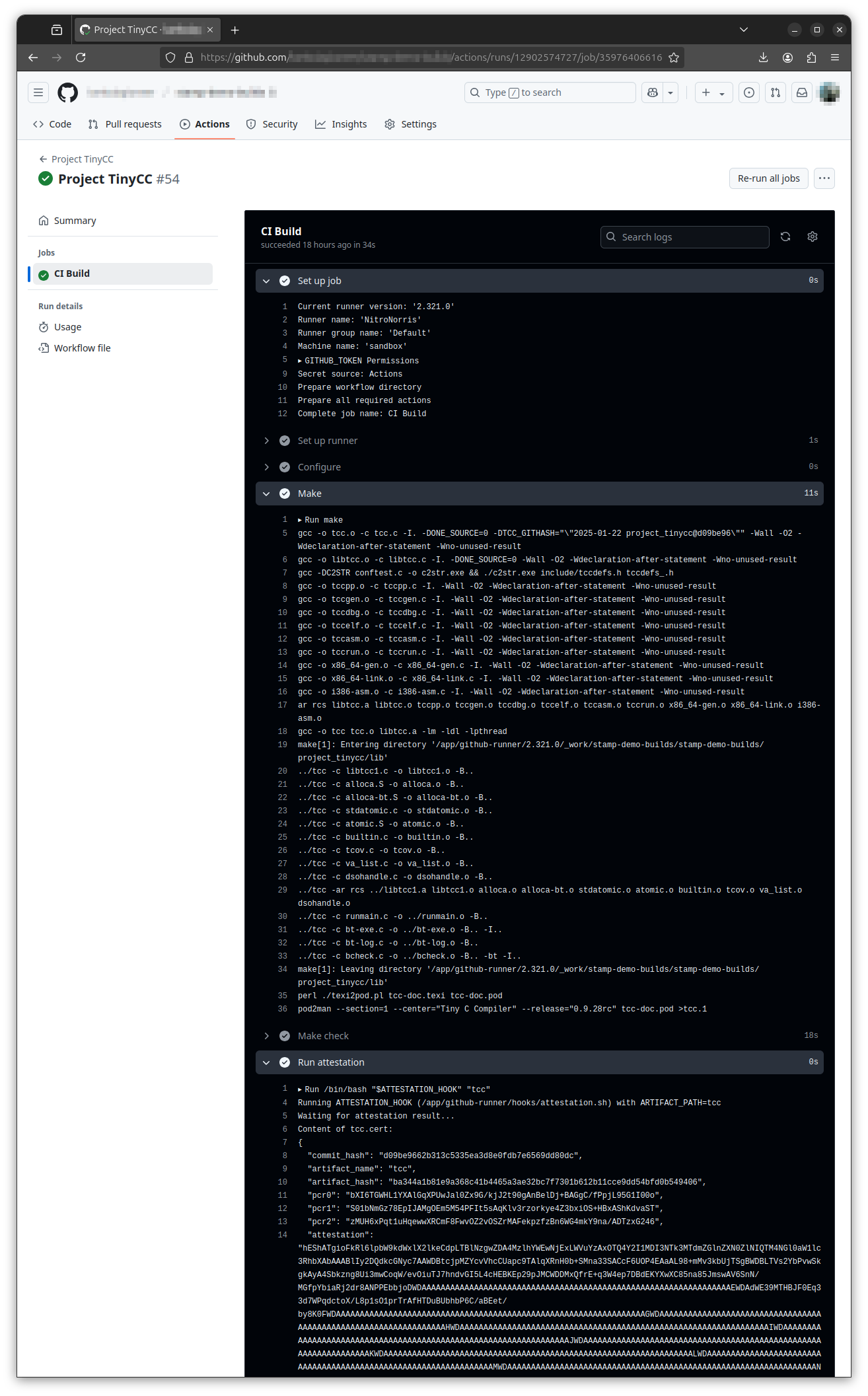

With increasing complexity, Continuous Integration (CI) has become an important component in modern software projects. Every published code change triggers a new execution of the project’s CI pipeline that builds, tests, and verifies the new code snapshot. In addition, some code changes might trigger a (separate) Continuous Deployment (CD) pipeline which, after passing all checks, distributes binaries automatically and re-deploys them to the production system. Such CI/CD pipelines are described in configuration files within the source code repository and then executed by online services, so-called build service providers (BSPs), such as Jenkins (project, 2024a) or GitHub Actions (Inc., 2024b). The latter is an example where the RHP is also a BSP. Our prototype uses GitHub Actions to demonstrate how A-Bs can integrate into existing infrastructure.

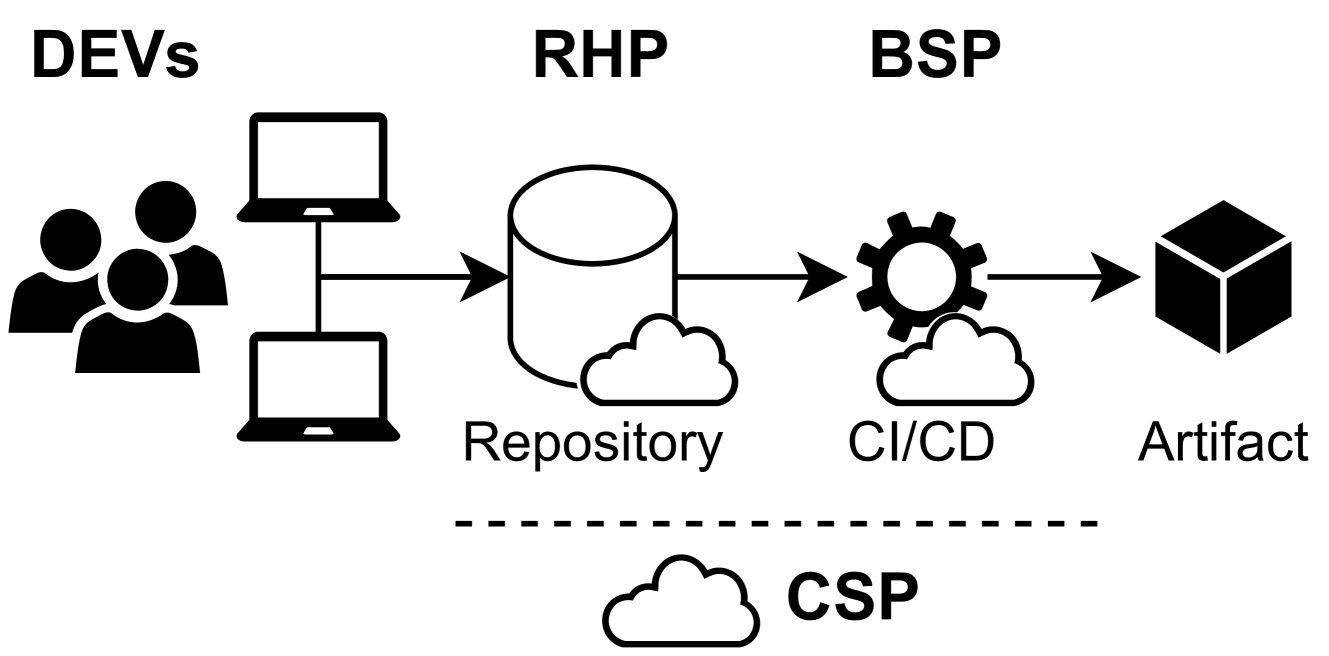

Both RHPs and BSPs often do not manage their own machines, but use cloud infrastructure provided by cloud service providers (CSPs) such as Amazon Web Services (AWS), Microsoft Azure, or Google Cloud Platform (GCP). Although there are self-hosted alternatives, such as GitLab (Inc., 2024d), even those are often deployed via a CSP. We illustrate the involved parties in Figure 1.

<details>

<summary>x1.png Details</summary>

### Visual Description


## Diagram: Software Development and Deployment Pipeline

### Overview

The image depicts a simplified software development and deployment pipeline. It illustrates the flow of code from developers (DEVs) through a repository (RHP), continuous integration/continuous delivery (CI/CD) system (BSP), and finally to an artifact. A cloud service provider (CSP) is shown below the pipeline, suggesting an underlying infrastructure.

### Components/Axes

The diagram consists of the following components, arranged horizontally from left to right:

* **DEVs:** Represented by three black silhouettes of people, with two laptop icons.

* **RHP:** A cylindrical shape with a cloud-like base, labeled "Repository".

* **BSP:** A gear-shaped icon with a cloud-like base, labeled "CI/CD".

* **Artifact:** A cube-shaped icon, labeled "Artifact".

* **CSP:** A cloud-shaped icon, labeled "CSP", positioned below the pipeline and separated by a dashed line.

Arrows indicate the flow of information/code between these components.

### Detailed Analysis or Content Details

The diagram shows a linear progression:

1. **DEVs** (Developers) create code on their laptops.

2. The code is pushed to the **Repository** (RHP).

3. The **CI/CD** system (BSP) automatically builds, tests, and packages the code.

4. The resulting **Artifact** is produced.

5. The entire process is underpinned by a **CSP** (Cloud Service Provider).

The arrows indicate a unidirectional flow from left to right. There are no numerical values or specific data points present in the diagram.

### Key Observations

The diagram is a high-level representation of a common DevOps pipeline. It emphasizes the automation of the build, test, and deployment process. The CSP is shown as a foundational element, implying that the infrastructure is cloud-based. The dashed line separating the CSP from the pipeline suggests a separation of concerns or a layer of abstraction.

### Interpretation

This diagram illustrates a modern software development workflow. The use of CI/CD and a cloud provider (CSP) indicates a focus on agility, scalability, and automation. The pipeline aims to reduce manual effort and accelerate the delivery of software updates. The diagram doesn't provide specifics about the technologies used, but it conveys the core principles of DevOps. The separation of the CSP suggests that the infrastructure is managed separately from the application code and deployment process. The diagram is a conceptual overview and doesn't contain any quantitative data. It's a visual aid for understanding the flow of code and the roles of different components in a software delivery pipeline.

</details>

Figure 1. Developers (DEVs) commit to a source code repository at a repository hosting provider (RHP). Changes trigger the CI/CD pipeline at a build service provider (BSP) and generate new binary artifacts. RHP and BSP typically run on servers provided by a cloud service provider (CSP).

### 2.2. Reproducible builds (R-Bs)

The use of CI/CD brings many benefits to developers: automated checks ensure that no “broken code” is checked in; builds are easily repeatable since they are fully described in versioned configuration files; and long compile/deploy cycles happen asynchronously. However, CI/CD also shifts a lot of trust to the RHP, BSP, and CSP. These online services are opaque and any of them can interfere with the build process. Therefore, the conveniently outsourced CI/CD pipeline undermines the trustworthiness of the generated artifacts. This leads to a particularly tricky situation, as the pipeline's binary output is hard to inspect and understand. Therefore, trust in the process itself is just as important as trust in its input.

R-Bs have been proposed as a solution to ensure source-to-binary correspondence. The underlying approach is to make the build process fully deterministic such that the same source input always yields perfectly identical binary output. In a project with R-Bs, malicious build servers can be uncovered by repeating the build process on a different machine. When set up correctly, the builds are replicated by independent parties that then compare their results.

However, introducing R-Bs to a software project is challenging (Fourné et al., 2023; Shi et al., 2021; Butler et al., 2023). For bit-to-bit identical outputs, the build process needs to be fully described in the committed files and all steps need to be fully deterministic. Yet sources of non-determinism are plentiful, as outputs can be affected by timestamps, archive metadata, unspecified filesystem order, build location paths, and uninitialized memory (Fourné et al., 2023; Shi et al., 2021).
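To make one of these sources concrete, the following sketch shows how archive metadata alone can break bit-for-bit reproducibility. It uses Python's standard `gzip` module, whose output header embeds a modification timestamp. The `build_archive` helper and the chosen timestamps are illustrative assumptions and are not taken from any of the evaluated projects.

```python
import gzip
import hashlib


def build_archive(payload: bytes, mtime: int) -> bytes:
    """Compress `payload`; the gzip header records `mtime` as a timestamp."""
    return gzip.compress(payload, mtime=mtime)


def digest(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


source = b"int main(void) { return 0; }\n"

# Two "builds" of identical input, one minute apart: the outputs differ
# only in the embedded timestamp, yet their digests no longer match.
build_a = build_archive(source, mtime=1_700_000_000)
build_b = build_archive(source, mtime=1_700_000_060)
differs = digest(build_a) != digest(build_b)

# Pinning the timestamp to a fixed value removes this source of
# non-determinism, so independent builders obtain identical bytes.
fixed_a = build_archive(source, mtime=0)
fixed_b = build_archive(source, mtime=0)
identical = digest(fixed_a) == digest(fixed_b)
```

Pinning timestamps in this way is the kind of change that R-B tooling applies across a build, for instance through the `SOURCE_DATE_EPOCH` convention. Every tool in the chain has to cooperate, which is where much of the engineering effort goes.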

While many sources of non-determinism can be eliminated with effort and tooling, other steps, such as digital signatures used to sign intermediate artifacts in multi-layered images, cannot easily be made deterministic. This is because typical signature algorithms break when random/nonce parameters become predictable and might leak private key material as a result (Katz and Lindell, 2007). For example, consider a build process for a smartphone firmware image that builds a signed boot loader during its process. This inner signature will affect the following build artifacts and is not easily hoisted to a later stage. In other instances, this signing process might happen by an external service or in a hardware security module (HSM) to protect the private key and therefore can never be deterministic.

Critically, for the downstream package to be reproducible, all its dependencies need to be reproducible as well. This also applies to dependencies that are shipped as source code, as R-B is a property of the build system. Facing non-determinism in any of the (transitive) upstream dependencies, a developer either needs to fix the upstream dependency or fork the respective sub-tree. In practice, the verification of having achieved R-B is often done heuristically and newly identified sources of non-determinism can cause a project to lose its status (Fourné et al., 2023). Despite the challenges, there are large real-world projects that have successfully adopted R-Bs. Examples are Debian (Project, 2024), NetBSD (Inc., 2024e), Chromium (Project, 2024), and Tor (Perry and Project, 2024). However, these came at considerable expense in terms of required upgrades to the build system and ongoing maintenance costs (Lamb and Zacchiroli, 2021; Fourné et al., 2023).

The Debian R-B project stands out due to its scale and highlights the challenges of R-Bs, taking twelve years to produce the first fully reproducible Debian image (Gevers, 2023; LWN.net, 2025). A typical challenge is to motivate upstream developers to provide reproducible packages. This even led to the introduction of a bounty system (Gevers, 2023). The project’s dashboard (et al., 2025) shows that the share of unreproducible packages dropped from 6.1% (Stretch, released 2017) to 2.0% (Bookworm, released 2023). This suggests that the remaining packages are particularly difficult to convert to R-Bs. Therefore, we picked some of these packages for our practical evaluation (§ 4.1).

### 2.3. Confidential Computing

Executing code in a trustworthy manner on untrusted machines is a long-standing challenge. Enterprises face this challenge when processing sensitive data in the cloud and financial institutions need to establish trust in installed banking apps. These scenarios require a solution that ensures that the data is not only protected while in-transit or at-rest, but also when in-use. Trusted Execution Environments (TEEs) allow the execution of code inside an enclave, a specially privileged mode such that execution and memory are shielded from the operating system and hypervisor. Typically, the allocated memory is encrypted with a non-extractable key such that it resists even a physical attack with probes used to intercept (and potentially interfere with) communication between CPU and RAM. Even the hypervisor can only communicate with the enclaves via dedicated channels, e.g., vsock or shared memory, although the hypervisor maintains the ability to pause or stop code execution inside an enclave.

Earlier technologies such as ARM TrustZone (Pinto and Santos, 2019) and Intel SGX (Costan, 2016) create enclaves on a process level. This requires application developers to rewrite parts of their application using special SDKs so secure functionalities are run inside an enclave. In particular, Intel SGX has proven to be vulnerable to side-channel attacks that allow adversaries to extract secret information from enclaves (Chen et al., 2019; Skarlatos et al., 2019; Murdock et al., 2020; Van Schaik et al., 2020). It also imposes further practical limitations, such as a maximum enclave memory size and performance overhead.

More recent technologies such as Intel TDX (Corporation, 2024) and AMD SEV-SNP (Inc., 2024a) boot entire virtual machines (VMs) in a confidential context. This promises to simplify the development of new use-cases as existing applications and libraries can be used with little to no modification. In addition, VMs can be pinned to specified CPU cores, reducing the risk of timing and cache side-channel attacks. AWS Nitro is a similar technology, built on the proprietary AWS Nitro hypervisor and dedicated hardware. The trust model is slightly weaker as the trusted components sit outside the main processor. We choose AWS Nitro for our prototype due to its accessible tooling, but it can be substituted with equivalent technologies.

It is important for the critical software to verify that it is running inside a secure enclave. Likewise, users and other services interacting with critical software need to verify the software is running securely and is protected from outside interference and inspection. This is typically achieved using remote attestation. At a high level, the curious client presents a challenge to the software that claims to run inside an enclave. The software then forwards this challenge to the TEE and its backing hardware, which signs the challenge and binds it to the enclave’s Platform Configuration Registers (PCRs). The PCRs are digests of hash-chained measurements that cover the boot process and configuration of the system that claims to have been started inside the TEE (Simpson et al., 2019).
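The two mechanisms in this paragraph, hash-chained measurements and a challenge bound to them, can be illustrated with a short sketch. It is a simplified model under stated assumptions, not the attestation format of any particular TEE: the register width and SHA-384 are chosen for illustration, and an HMAC under `HARDWARE_KEY` stands in for the asymmetric, vendor-certified signature that real hardware would produce. All function names are hypothetical.

```python
import hashlib
import hmac
import os
from typing import Sequence


def extend(pcr: bytes, measurement: bytes) -> bytes:
    """Hash-chain a measurement into a register: H(old || H(measurement))."""
    return hashlib.sha384(pcr + hashlib.sha384(measurement).digest()).digest()


def measure_boot(components: Sequence[bytes]) -> bytes:
    """Fold all boot components, in order, into one register value."""
    pcr = bytes(48)  # the register starts zeroed
    for component in components:
        pcr = extend(pcr, component)
    return pcr


# ASSUMPTION: a symmetric key replaces the hardware's private signing key
# purely to keep this example within the standard library.
HARDWARE_KEY = os.urandom(32)


def attest(nonce: bytes, pcr: bytes) -> bytes:
    """Hardware side: bind the client's challenge to the measured state."""
    return hmac.new(HARDWARE_KEY, nonce + pcr, hashlib.sha384).digest()


def verify(nonce: bytes, expected_pcr: bytes, quote: bytes) -> bool:
    """Client side: accept only a fresh quote over the expected state."""
    expected = hmac.new(HARDWARE_KEY, nonce + expected_pcr, hashlib.sha384).digest()
    return hmac.compare_digest(expected, quote)


good_image = [b"kernel", b"initramfs", b"build-runner"]
bad_image = [b"kernel", b"initramfs", b"build-runner (modified)"]

nonce = os.urandom(16)  # fresh challenge chosen by the client
accepted = verify(nonce, measure_boot(good_image), attest(nonce, measure_boot(good_image)))
rejected = verify(nonce, measure_boot(good_image), attest(nonce, measure_boot(bad_image)))
```

Because each register value depends on every earlier measurement, changing or reordering any boot component yields a different final digest. The fresh nonce prevents an old quote from being replayed.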

It is typically not possible to run an enclave inside another enclave or to compose these in a hierarchical manner, although new designs are being discussed (Castes et al., 2023). This presents a challenge in our case as we need to run untrusted code, i.e., the build scripts stored in the repository, inside the enclave. We work around this technical limitation by sandboxing those processes inside the TEE.

### 2.4. Verifiable logs

A verifiable log (Eijdenberg et al., 2015) incorporates an append-only data structure which prevents retroactive insertions, modifications, and deletions of its records. In summary, it is based on a binary Merkle tree and provides two cryptographic proofs required for verification. The inclusion proof allows verification of the existence of a particular leaf in the Merkle tree, while the consistency proof secures the append-only property of the tree and can be used to detect whether an attacker has retroactively modified an already logged entry. While such a transparency log is not strictly necessary to verify the attested certificate of an artifact, it provides additional benefits such as ensuring the distribution of revocation notices, e.g., after discovering vulnerabilities or leaked secrets. Artifact providers can also monitor it to detect when modified versions are shared or their signing key is being used unexpectedly. A central log can also be used to include additional information, such as linking a security audit to a given source code commit (§ 7).
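The inclusion proof can be sketched in a few lines. The example below is a simplified illustration that assumes the tree layout and domain-separation prefixes used by Certificate Transparency (RFC 6962). It is not the log implementation used in this work, the function names are hypothetical, and consistency proofs are omitted.

```python
import hashlib
from typing import List, Sequence


def leaf_hash(data: bytes) -> bytes:
    return hashlib.sha256(b"\x00" + data).digest()  # prefix separates leaves


def node_hash(left: bytes, right: bytes) -> bytes:
    return hashlib.sha256(b"\x01" + left + right).digest()  # from inner nodes


def split_point(n: int) -> int:
    """Largest power of two strictly smaller than n (requires n >= 2)."""
    k = 1
    while k * 2 < n:
        k *= 2
    return k


def root(leaves: Sequence[bytes]) -> bytes:
    if not leaves:
        raise ValueError("this sketch requires a non-empty log")
    if len(leaves) == 1:
        return leaf_hash(leaves[0])
    k = split_point(len(leaves))
    return node_hash(root(leaves[:k]), root(leaves[k:]))


def inclusion_proof(leaves: Sequence[bytes], index: int) -> List[bytes]:
    """Sibling hashes on the path from leaf `index` up to the root."""
    if len(leaves) == 1:
        return []
    k = split_point(len(leaves))
    if index < k:
        return inclusion_proof(leaves[:k], index) + [root(leaves[k:])]
    return inclusion_proof(leaves[k:], index - k) + [root(leaves[:k])]


def root_from_proof(entry: bytes, index: int, size: int,
                    proof: Sequence[bytes]) -> bytes:
    """Recompute the root from one entry, its position, and its proof."""
    if size == 1:
        if proof:
            raise ValueError("proof too long")
        return leaf_hash(entry)
    if not proof:
        raise ValueError("proof too short")
    k = split_point(size)
    sibling, rest = proof[-1], proof[:-1]  # last element is the topmost sibling
    if index < k:
        return node_hash(root_from_proof(entry, index, k, rest), sibling)
    return node_hash(sibling, root_from_proof(entry, index - k, size - k, rest))


log = [f"attestation-{i}".encode() for i in range(7)]  # placeholder entries
proof = inclusion_proof(log, 4)
included = root_from_proof(log[4], 4, len(log), proof) == root(log)
forged = root_from_proof(b"forged entry", 4, len(log), proof) == root(log)
```

A verifier only needs the signed root, the entry, and a logarithmic number of sibling hashes, which is why clients can check inclusion without downloading the whole log.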

## 3. Attestable Builds (A-Bs)

This section introduces the involved stakeholders, the considered threat model, the design of a typical A-B architecture, and how it can be composed with R-Bs.

### 3.1. Stakeholders

The verifier receives an artifact, e.g., an executable, either directly from a specific BSP or via third-party channels. This could be a user downloading software or a developer receiving a pre-built dependency from a package repository. In general, the verifier does not trust the CI/CD pipeline and therefore wants to verify the authenticity of the respective artifact.

The artifact author, e.g., a developer or a company, regularly builds artifacts for their project and distributes them to downstream participants. Thus, the system should integrate with existing version control systems hosted by an RHP. The artifact author also does not trust the CI/CD pipeline, as they do not control the involved hardware. Therefore, they need to detect any unauthorized manipulation.

All other stakeholders (RHP, BSP, CSP, HSP, …) are untrusted. We assume there are no restrictions on combining multiple roles on one stakeholder, which is the realistic and more difficult set-up as it makes interference less likely to be detected. For example, a self-hosted GitLab operator would take over the role of RHP by managing the source code using Git, the role of BSP by providing build workflows, and the role of CSP by providing the underlying servers that execute the build steps. Only the transparency log requires a threshold of honest operators, e.g., in the form of independent witnesses tracking the consistency of the log, similar to what is already done in established infrastructure such as Certificate Transparency (Laurie, 2014) and SigStore (Newman et al., 2022).

### 3.2. Threat Model

The main security objective is to provide an attested build process with strong source-to-binary correspondence guarantees. We do not consider confidentiality or availability as security objectives in A-Bs, assuming that the source code is not inherently confidential and that ensuring availability of relevant components in the build pipeline is the responsibility of the infrastructure provider. However, since the TEEs can also provide confidentiality, A-Bs can be adapted accordingly. Our threat model focuses on the build process as illustrated in Figure 1, describing pipelines where an artifact author publishes code to a repository, which is then built and deployed by the BSP.

#### 3.2.1. Assumptions

We make the following assumptions for our threat model: we assume that the enclave itself is trusted including the hardware-backed attestation provided by the TEE. We assume that the transparency log is trustworthy as potential tampering attempts are detectable. We also assume that the transparency log is protected against split-view attacks by having sufficient witnesses in place.

#### 3.2.2. Adversary modeling

The following list defines relevant adversary models, including information about the respective attack surface, in accordance with the scope of our research.

1. **A1: Physical adversary.** An adversary with physical access to hardware, including storage, or the respective infrastructure. We assume that a physical adversary could also be an insider (A3), as our threat model treats attacks that require physical access identically regardless of whether the attacker is external or internal.

2. **A2: On-path adversary (OPA).** An on-path adversary has access to the network infrastructure (e.g., via a machine-in-the-middle [MitM] attack) and is capable of modifying code, the attestation data, or the artifact sent within that network.

3. **A3: Insider adversary.** An insider adversary can be a privileged employee with access to the platform layer, such as the hypervisor of the CSP running the VMs or the hosting environment (e.g., Docker host) of the BSP. This category of adversary includes malicious service providers. Physical attacks are covered through A1.

#### 3.2.3. Threats

We introduce threats for generic build systems that we considered while designing A-Bs. The following section on architecture explains how A-Bs effectively mitigate these.

1. **T1: Compromise the build server.** An adversary (A1, A3) might compromise the build server infrastructure by modifying aspects of the build process, including source code, which could result in a malicious build artifact. This threat addresses all kinds of unauthorized modifications during the build process, such as directly manipulating the source code, the respective build scripts (e.g., shell scripts triggering the build), or parts of the build machine itself, like the OS.

2. **T2: Cross-tenant threats.** Any adversary that uses shared infrastructure might use its privilege to temporarily or permanently compromise the host and thus affect subsequent or parallel builds. It also potentially renders any response from the service untrustworthy. This is particularly important for build processes as they generally allow developers to execute arbitrary code.

3. **T3: Implant a backdoor in code or assets.** An adversary (A3) might implant a backdoor within the repository through intentionally incorrect code or within files that are committed as binary assets. For this to be successful the adversary might need to execute a social engineering attack to become a co-maintainer of an open-source repository. An example of this is the compromise of XZ Utils (Lins et al., 2024) which we discuss in Section 7. Unlike T1, implanting a backdoor in this manner does not directly compromise the build process itself, but rather is an orthogonal supply chain concern.

4. **T4: Spoofing the repository.** An adversary might clone an open-source project, introduce malicious modifications, and attempt to make it appear as the original repository as shown in recent attacks (Harush, 2025). This is similar to typo-squatting of dependencies in package managers (Neupane et al., 2023; Taylor et al., 2020). A common mitigation of such threats is the use of digital signatures for signing the artifact. However, an insider adversary (A3) might be able to exfiltrate such a key.

5. **T5: Compromise build assets during transmission.** An adversary with network access (A2) might compromise build assets (e.g., source code, dependencies, configuration, …) transferred between the parties involved in the build process by intercepting the network traffic. We consider well-resourced adversaries that might issue valid SSL certificates or compromise the servers of any other party. This threat also includes side-loading potentially malicious libraries from external sources.

6. **T6: Compromise the hardware layer.** An adversary with physical access (A1) might perform classical physical attacks such as interrupting execution, intercepting access to the RAM, and running arbitrary code on the CPU cores that are not part of a secure enclave. This aligns with the threat model of Confidential Computing technologies, although they all vary slightly and they do have known vulnerabilities.

7. **T7: Undermine verification results.** An adversary (A1, A2, A3) can undermine verification results, e.g., authenticity or integrity checks, by manipulating verification data either directly in the infrastructure or while in transit. Similarly, an adversary (A2) might pursue a split-view attack where some users are given different results for queries against central logs.

### 3.3. Architecture

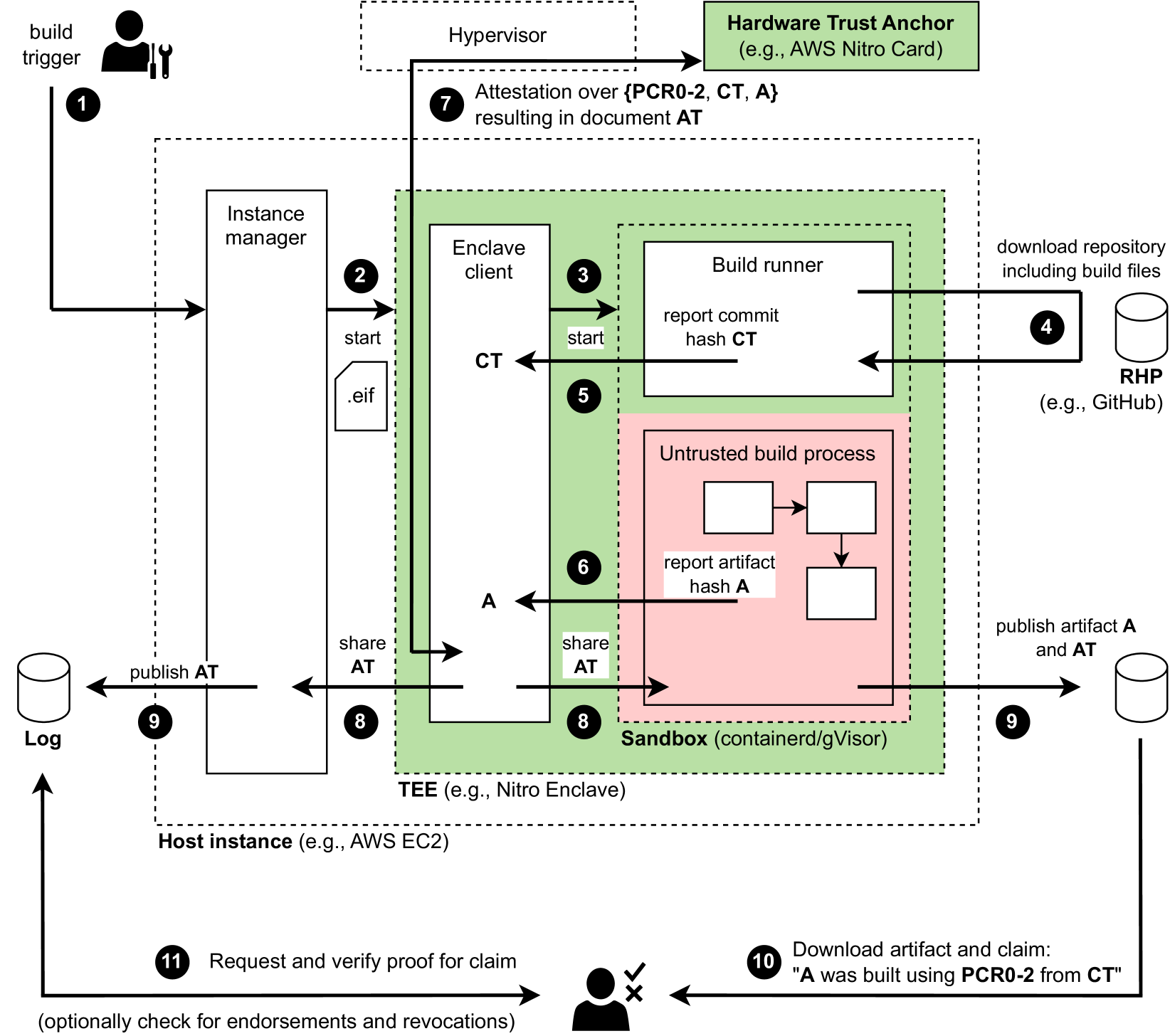

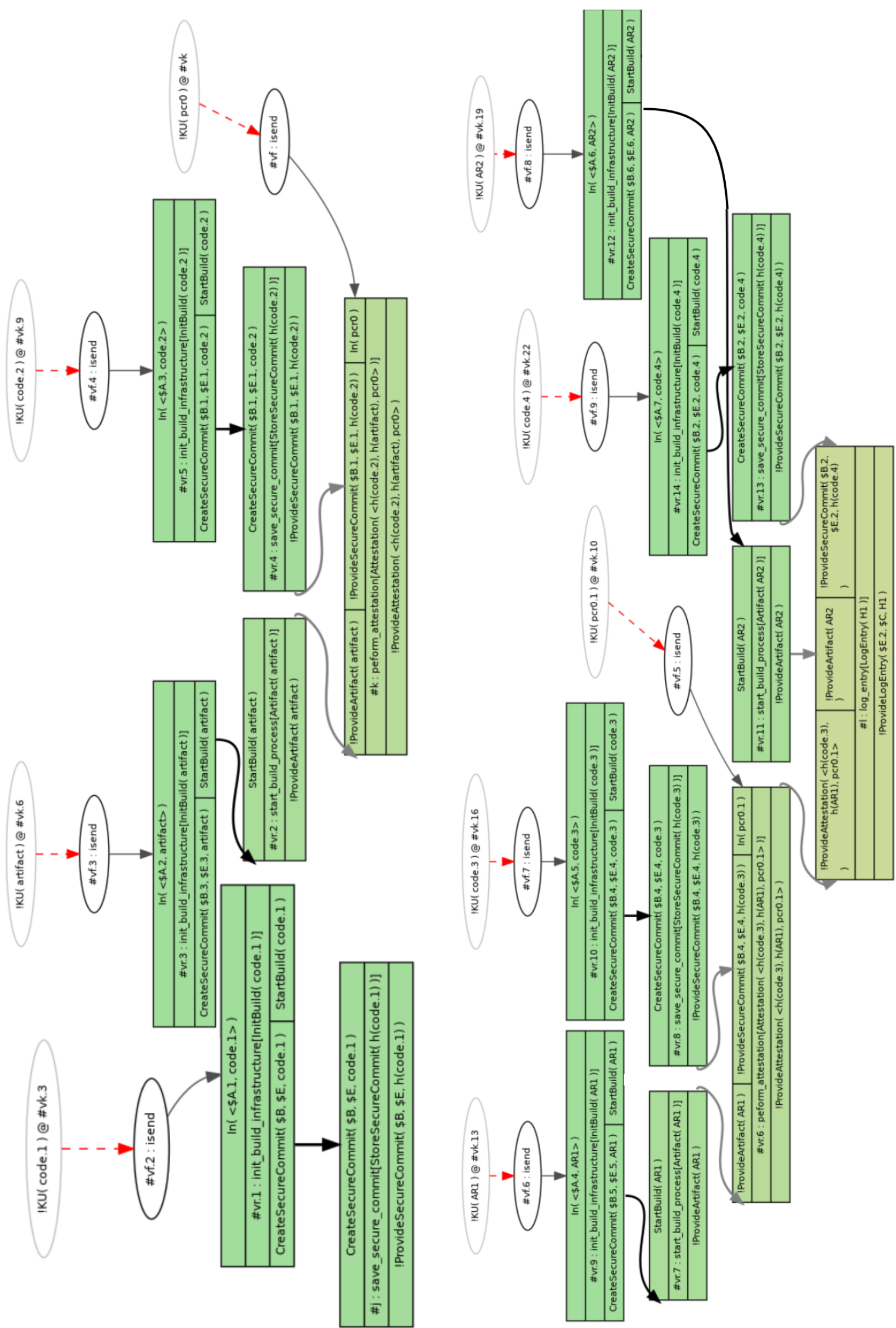

We designed A-Bs with cloud-based CI/CD pipelines in mind. In particular, such a system can be provided by a BSP who rents infrastructure from an untrusted CSP (see Figure 1). Our design is compatible with different Confidential Computing technologies. While our practical implementation (§ 4) uses a particular technology, we describe our architecture and its design challenges in general terms (e.g., TEE, sandbox). Figure 2 provides an architectural overview which is described in more detail in this section.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Diagram: Secure Build Process Flow

### Overview

This diagram illustrates a secure build process utilizing a Trusted Execution Environment (TEE) to ensure the integrity and provenance of software artifacts. The process begins with a build trigger and culminates in the verification of the built artifact. The diagram highlights the interaction between various components, including a Hardware Trust Anchor, Hypervisor, Instance Manager, Enclave Client, Build Runner, Sandbox, and external repositories.

### Components/Axes

The diagram consists of several key components:

* **Hardware Trust Anchor:** (e.g., AWS Nitro Card) - Provides a root of trust.

* **Hypervisor:** Responsible for attestation.

* **Host Instance:** (e.g., AWS EC2) - The underlying infrastructure.

* **Instance Manager:** Manages instances within the host.

* **Enclave Client:** Initiates and manages the secure build process within the TEE.

* **Build Runner:** Executes the build process.

* **Untrusted Build Process:** The actual build steps.

* **Sandbox:** (containerd/gVisor) - Isolates the build process.

* **RHP (Repository Host Provider):** (e.g., GitHub) - Source code repository.

* **Log:** Stores build-related information.

* **TEE (Trusted Execution Environment):** (e.g., Nitro Enclave) - Provides a secure environment for the build process.

The diagram uses numbered arrows to indicate the flow of control and data. Key data elements include:

* **CT:** Commit Hash

* **A:** Artifact Hash

* **AT:** Attestation Document

### Detailed Analysis / Content Details

1. **Build Trigger:** Initiates the build process.

2. **Instance Manager to Enclave Client:** The Instance Manager starts the Enclave Client, passing an `.eif` file.

3. **Enclave Client to Build Runner:** The Enclave Client starts the Build Runner.

4. **Build Runner to RHP:** The Build Runner downloads the repository, including build files, from the RHP (e.g., GitHub).

5. **Build Runner to Enclave Client:** The Build Runner reports the commit hash (CT) to the Enclave Client.

6. **Untrusted Build Process to Sandbox:** The untrusted build process executes within the Sandbox.

7. **Hypervisor Attestation:** The Hypervisor performs attestation over (PCR0-2, CT, A) resulting in the Attestation Document (AT).

8. **Enclave Client to Sandbox and Instance Manager:** The Enclave Client shares the Attestation Document (AT) with the build process in the Sandbox and with the Instance Manager.

9. **Publishing AT and Artifact:** The Attestation Document (AT) and the artifact (A) are published to a Log.

10. **Download and Claim Verification:** An external entity downloads the artifact and claims "A was built using PCR0-2 from CT". This is represented with a checkmark and an X, indicating successful and failed verification respectively.

11. **Request and Verify Proof:** A request is made to verify the proof of claim, optionally checking for endorsements and revocations.

The arrows indicate the following flow:

* A solid arrow indicates a direct flow of control or data.

* A dashed arrow indicates an attestation or verification process.

### Key Observations

* The process emphasizes attestation as a critical security measure. The Hypervisor attests to the integrity of the build environment.

* The use of a TEE (Nitro Enclave) provides a secure environment for the build process, isolating it from the potentially compromised Host Instance.

* The diagram highlights the importance of hashing (CT and A) for verifying the integrity of the source code and the resulting artifact.

* The process includes a mechanism for publishing and verifying the Attestation Document (AT), providing transparency and accountability.

* The diagram shows a clear separation between the trusted and untrusted components of the build process.

### Interpretation

This diagram demonstrates a secure software build pipeline designed to mitigate supply chain attacks. By leveraging a Hardware Trust Anchor, TEE, and attestation mechanisms, the process aims to establish a strong chain of trust from the source code to the final artifact. The attestation process ensures that the build environment is in a known and trusted state, and the hashing of the source code and artifact provides a means to verify their integrity. The publishing of the Attestation Document allows external parties to independently verify the provenance of the artifact.

The inclusion of PCR0-2 in the attestation process suggests that the integrity of the boot process and the initial system configuration are also considered part of the trust establishment. The optional check for endorsements and revocations indicates a potential mechanism for managing trust relationships and responding to security vulnerabilities.

The diagram suggests a robust and comprehensive approach to secure software builds, addressing key concerns related to integrity, provenance, and transparency. The use of specific technologies like AWS Nitro Card and Nitro Enclave indicates a cloud-native security model.

</details>

Figure 2. Overview of the protocol steps during build and verification. Dashed borders indicate separate or sandboxed execution environments. Only the TEE and the hardware trust anchor are fully trusted. (1) The build process is triggered manually or as a result of code changes. Either will cause a webhook call to the Instance Manager. (2) The Instance Manager starts a fresh enclave from a publicly known .eif file with the measurements PCR0-2. (3) Once booted, the Enclave Client starts the inner sandbox. (4) The sandbox executes the action runner, which fetches the repository snapshot. That snapshot includes both the source code and build instructions. (5) A hash of the snapshot is reported to the Enclave Client for safeguarding. Now the untrusted build process is started. (6) Once it finishes, the sandbox reports the hash of the produced artifact. (7) The Enclave Client then requests an attestation document from the Nitro Card covering PCR0-2, the repository snapshot hash, and the artifact hash. (8) The results are shared with both the build process and the outer Instance Manager. (9) The build process can now publish the artifact and certificate, and the Instance Manager publishes the attestation. (10) When a user downloads the artifact, it can contain a certificate specifying how it was built. (11) The user can verify this certificate by checking that it is included in the public transparency log.

The core unit of an A-B system is the host instance which runs control software, the instance manager, and can start our TEE. Each build request is forwarded to an instance manager which then starts a fresh enclave from a public image inside the TEE. These images are open source and therefore have known PCR values that can later be attested to.

The TEE provides both confidentiality and integrity of data-in-use through hardware-backed encryption of memory, which protects it from being read or modified, even by adversaries with physical access, the host, and the hypervisor. The enclave will later use remote attestation to verify that it has booted a particular secure image in a secure context. These guarantees mitigate T1 and are essential to the integrity of the final attestation. However, the TEE alone is not sufficient, as the build process might otherwise manipulate its internal state, and thus the input we later attest to. Therefore, we designed a protocol with an integrity-protected observer, the Enclave Client, that interacts with a sandbox that is embedded inside the TEE.

Once the enclave has booted, it starts the Enclave Client. As it runs inside the TEE, we can assume that it is integrity-protected. The Enclave Client first establishes a bi-directional communication channel with the Instance Manager outside the TEE via shared memory. Through this channel, the Instance Manager provides short-lived authentication tokens for accessing the repository at the RHP and receives updates about the build process.

The Enclave Client then manages a sandbox inside the enclave. The sandbox ensures that the untrusted build process (which might execute arbitrary build steps and code) cannot modify the important state kept by the Enclave Client. In particular, we need to protect the initial measurement of the received source code files and build instructions. This mitigates T2. The sandbox optionally captures complete, attested logs of all incoming and outgoing communication of the build execution, which can help audits and investigations.

Once the sandbox has started, the Enclave Client forwards a short-lived authentication token to the build runner inside the sandbox. The build runner uses the token to fetch both the code and build instructions from the RHP. Since the enclave has no direct internet access, all TCP/IP communication is tunneled via shared memory as well. Upon downloading the source code and instructions, the sandbox computes the commit hash CT and reports it to the Enclave Client. The commit hash not only covers the content of the code and build instructions, but also the repository metadata. This includes the individual commit messages, which can include signatures made with the developers' private keys (project, 2024b). By checking and verifying these during the build steps, the system also attests to the origin of the source code, i.e. the latest developer implicitly signs off on the current repository state at this commit. This mitigates T5.
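To illustrate why a commit hash can cover both content and metadata, the following minimal sketch computes Git-style SHA-1 object IDs. It assumes that the RHP serves a Git repository (as GitHub does); the helper names, the author line, and the commit messages are made up for illustration and are not taken from our implementation.

```python
import hashlib

def git_object_id(kind: str, body: bytes) -> str:
    """Return the SHA-1 ID Git assigns to an object: sha1('<kind> <len>\\0' + body)."""
    header = f"{kind} {len(body)}\0".encode()
    return hashlib.sha1(header + body).hexdigest()

# The empty tree is used here only to keep the example short.
EMPTY_TREE = git_object_id("tree", b"")

def commit_id(tree: str, message: str, parent: str = "") -> str:
    """Hash a commit object, which names its tree, parent, author, and message."""
    lines = [f"tree {tree}"]
    if parent:
        lines.append(f"parent {parent}")
    who = "Example Dev <dev@example.org> 1700000000 +0000"  # illustrative only
    lines += [f"author {who}", f"committer {who}", "", message]
    return git_object_id("commit", ("\n".join(lines) + "\n").encode())

c1 = commit_id(EMPTY_TREE, "Initial commit")
c2 = commit_id(EMPTY_TREE, "Initial commit (altered message)")
c3 = commit_id(EMPTY_TREE, "Second commit", parent=c1)

# Changing only the message yields a different commit ID, and c3 depends on c1.
print(c1 != c2)
```

Because each commit object embeds the ID of its parent, the ID of the latest commit transitively depends on the whole history, including earlier messages and any signatures stored in them.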

Once the commit hash has been committed to the Enclave Client, the sandbox starts the build process by executing the build instructions from the repository, and from that moment we consider the inner state of the sandbox untrusted. The sandbox expects the build process to eventually report the path of the artifact that it intends to publish. Once the build process is complete, the sandbox computes the hash A of the artifact and forwards it to the Enclave Client. Note: while the inner state of the sandbox is untrusted, the Enclave Client as an integrity-protected observer has safeguarded the input measurements (CT) from manipulation. A ratcheting mechanism ensures that it will only accept CT once at the beginning from the build runner before any untrusted processes are started inside the sandbox. The hash of the artifact (A) can be received from the untrusted build process as it will later be compared by the user against the received artifact.
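The ratcheting behaviour can be pictured as a small state machine that only moves forward. The sketch below is a simplified illustration in Python, not the code of our prototype; the class, method, and phase names are hypothetical.

```python
from enum import Enum, auto

class Phase(Enum):
    AWAITING_COMMIT = auto()  # nothing untrusted has run yet
    BUILDING = auto()         # CT is fixed, untrusted build may run
    FINISHED = auto()         # artifact hash received

class RatchetError(Exception):
    """Raised when a message arrives in a phase that no longer accepts it."""

class EnclaveClientState:
    def __init__(self):
        self.phase = Phase.AWAITING_COMMIT
        self.commit_hash = None
        self.artifact_hash = None

    def record_commit(self, ct: str) -> None:
        # CT is accepted exactly once, before the untrusted build starts.
        if self.phase is not Phase.AWAITING_COMMIT:
            raise RatchetError("CT was already recorded")
        self.commit_hash = ct
        self.phase = Phase.BUILDING

    def record_artifact(self, a: str) -> None:
        if self.phase is not Phase.BUILDING:
            raise RatchetError("artifact hash not expected in this phase")
        self.artifact_hash = a
        self.phase = Phase.FINISHED

state = EnclaveClientState()
state.record_commit("ct-from-build-runner")
try:
    state.record_commit("ct-from-untrusted-build")  # late attempt to replace CT
    overwritten = True
except RatchetError:
    overwritten = False
state.record_artifact("hash-of-artifact")
print(overwritten, state.commit_hash)
```

Encoding the phases explicitly makes it impossible for a later message to roll the observer back to a state in which CT could be replaced.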

The Enclave Client then uses the TEE attestation mechanism to request an attestation document AT over the booted image PCR values (including both the Enclave Client and the sandbox image), the initial input measurement CT, and the artifact hash A. The response AT is then shared with the sandbox, so that the build process can include it with the published artifact, and it is also published to the transparency log. Together with proper verification by the client this mitigates T7.
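On the consumer side, the claim carried by AT can be checked against the downloaded artifact and the values the user expects. The following minimal sketch assumes SHA-256 as the artifact hash and uses hypothetical field and function names; it deliberately omits verifying the signature on the attestation document against the hardware vendor's root of trust and checking inclusion in the transparency log.

```python
import hashlib
from dataclasses import dataclass

@dataclass(frozen=True)
class AttestationClaim:
    pcrs: tuple          # (PCR0, PCR1, PCR2) of the booted image
    commit_hash: str     # CT
    artifact_hash: str   # A

def check_claim(claim, artifact: bytes, trusted_pcrs, expected_commit: str) -> bool:
    """Return True if the claim matches the image, commit, and artifact at hand."""
    return (
        claim.pcrs == tuple(trusted_pcrs)
        and claim.commit_hash == expected_commit
        and claim.artifact_hash == hashlib.sha256(artifact).hexdigest()
    )

artifact = b"example binary contents"
pcrs = ("pcr0-value", "pcr1-value", "pcr2-value")  # placeholders
claim = AttestationClaim(pcrs, "commit-abc", hashlib.sha256(artifact).hexdigest())

print(check_claim(claim, artifact, pcrs, "commit-abc"))
print(check_claim(claim, artifact + b"!", pcrs, "commit-abc"))
```

This is also why A may come from the untrusted build process: a wrong value only makes the comparison with the downloaded artifact fail.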

Importantly, the transparency log ensures that revocation notices (e.g., after discovering hardware vulnerabilities) are visible to all users. By requiring up-to-date inclusion proofs for artifacts, the end consumer can efficiently verify that they are still considered secure. As such, it lessens the impact of T3 and T6. Furthermore, transparency logs allow the developer to monitor for leaked signing keys. They assure users that observed rotations of signing keys are intentional as they know that developers are being notified about them as well. This mitigates T4.
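As an illustration of what an inclusion proof involves, the sketch below builds a Merkle tree over log entries and verifies an audit path in the style of Certificate Transparency (RFC 6962). That the log is such a Merkle tree is an assumption made for this example, and all function names are hypothetical.

```python
import hashlib

def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()

def leaf_hash(entry: bytes) -> bytes:
    return _h(b"\x00" + entry)          # domain separation for leaves

def node_hash(left: bytes, right: bytes) -> bytes:
    return _h(b"\x01" + left + right)   # domain separation for inner nodes

def _split(n: int) -> int:
    """Largest power of two strictly smaller than n (for n >= 2)."""
    k = 1
    while k * 2 < n:
        k *= 2
    return k

def root(entries) -> bytes:
    if len(entries) == 1:
        return leaf_hash(entries[0])
    k = _split(len(entries))
    return node_hash(root(entries[:k]), root(entries[k:]))

def inclusion_path(index: int, entries) -> list:
    """Sibling hashes from the leaf at `index` up to the root."""
    if len(entries) == 1:
        return []
    k = _split(len(entries))
    if index < k:
        return inclusion_path(index, entries[:k]) + [root(entries[k:])]
    return inclusion_path(index - k, entries[k:]) + [root(entries[:k])]

def verify_inclusion(entry, index, tree_size, path, expected_root) -> bool:
    """Recompute the root from the entry and its path; compare with the log's root."""
    if index >= tree_size:
        return False
    fn, sn = index, tree_size - 1
    r = leaf_hash(entry)
    for p in path:
        if sn == 0:
            return False
        if (fn & 1) or fn == sn:
            r = node_hash(p, r)
            while (fn & 1) == 0 and fn != 0:
                fn >>= 1
                sn >>= 1
        else:
            r = node_hash(r, p)
        fn >>= 1
        sn >>= 1
    return sn == 0 and r == expected_root

log = [f"attestation-{i}".encode() for i in range(7)]
proof = inclusion_path(5, log)
print(verify_inclusion(log[5], 5, len(log), proof, root(log)))
```

The proof contains only a logarithmic number of hashes, which is what makes the check efficient for the end consumer.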

After completion, the enclave is destroyed. This makes the build process stateless, which simplifies debugging and reasoning about its life cycle and helps in mitigating T2 and T6. Its stateless nature and the clear control of the incoming code and build instructions ensure that the build is hermetic, i.e. the build cannot accidentally rely on unintended environmental information. Note that the main build process generally does not require any modifications if it already works with a compatible build runner: it is simply being executed in a sandbox inside an integrity-protected environment. The developer will only need to add a final step to communicate the artifact path and receive the attestation document AT.

### 3.4. Composing A-Bs and R-Bs

We believe that combining our A-Bs and classic R-Bs improves build ergonomics and increases trust. R-Bs can easily consume A-B artifacts and commit to a hash of the artifact similar to lockfiles that are already used by dependency managers such as Rust’s cargo and JavaScript’s NPM. Similarly, A-Bs can consume R-B artifacts and even be independent R-B builders themselves. Due to the attested and controlled environment, existing R-B projects might be able to rely on fewer independent builders when A-Bs are used.

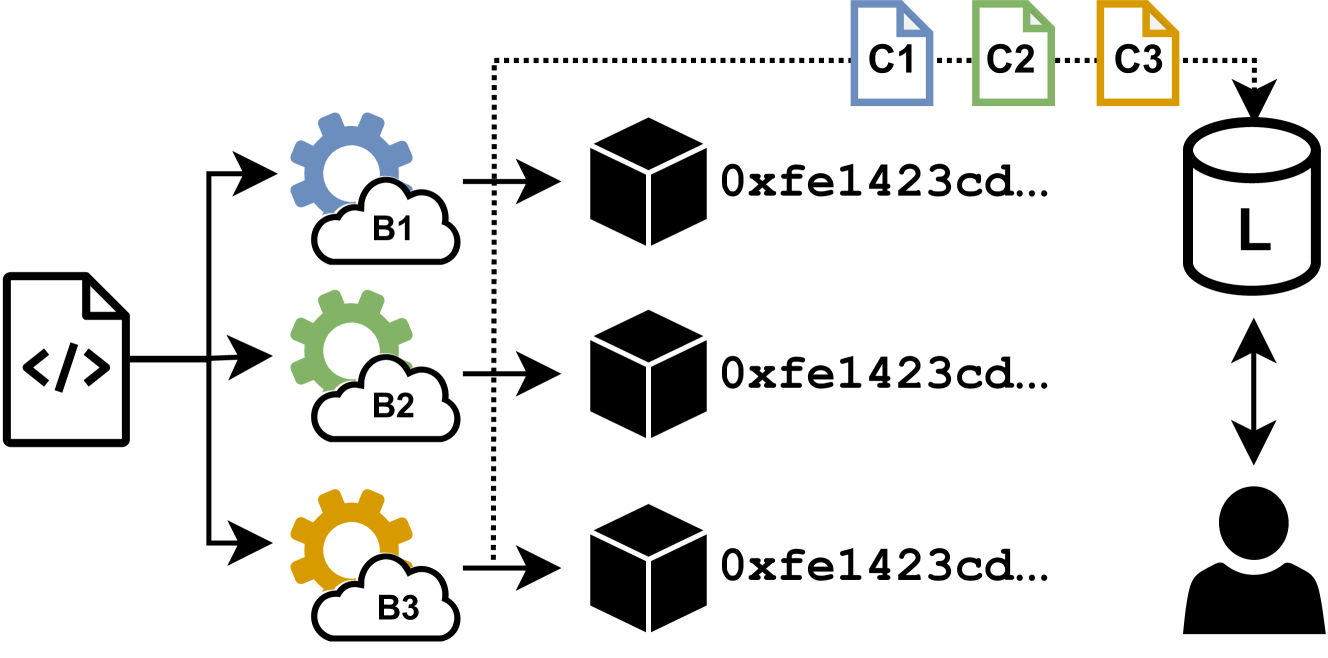

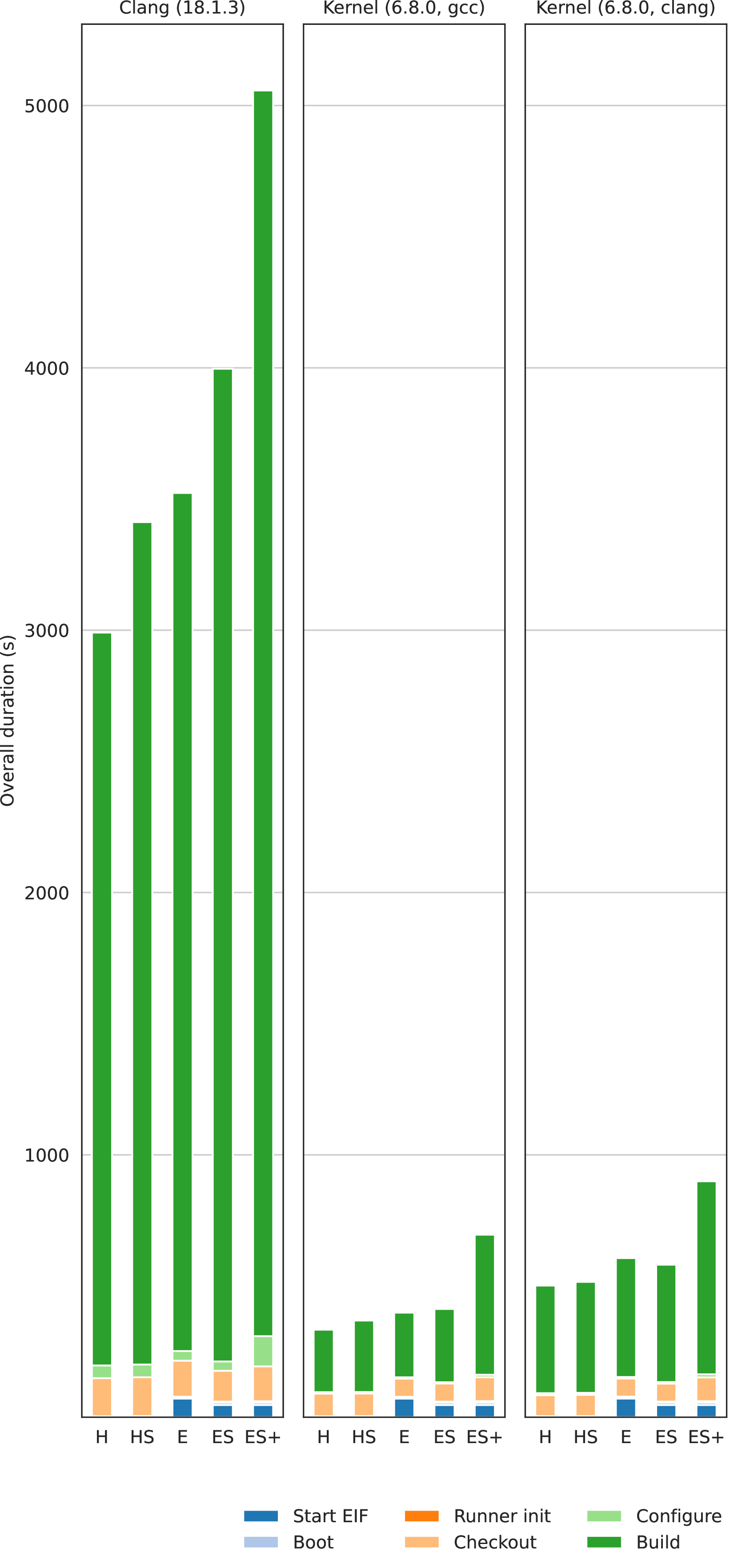

This allows for a setup where the independent builders of an R-B project are distributed across attestable builders running on machines using hardware from different Confidential Computing vendors (see Figure 3). In this setting, the guarantees of the R-B imply an anytrust model that is easily verified. The verifier can use the log to ensure they get a correct build as long as they trust at least one of the Confidential Computing vendors—without having to decide which one. The reader might find it interesting to compare this with how anonymity networks like mix nets and Tor work where traffic is routed through multiple hops and the unlinkability property holds as long as one of them is trusted.

<details>

<summary>x3.png Details</summary>

### Visual Description

## Diagram: Reproducible Build Across Three Attestable Builders

### Overview

The image depicts one source snapshot being built independently by three builders (B1, B2, B3). Each build yields an artifact with the same hash, "0xfe1423cd...", and the results are recorded in a log (L) that a user can query.

### Components/Axes

The diagram consists of the following components:

* **Source:** A file icon with `< />` inside, representing the source code snapshot.

* **Builders:** Three cloud-shaped blocks labeled B1 (light blue), B2 (light green), and B3 (yellow), each preceded by a gear icon that suggests a build step.

* **Artifacts:** Three cube-shaped blocks, each displaying the hexadecimal string "0xfe1423cd...".

* **Log:** A cylinder labeled "L".

* **User:** A silhouette of a head and shoulders, indicating a user interacting with the log.

* **Files:** Three file icons labeled C1 (blue), C2 (orange), and C3 (yellow) positioned above the log, presumably the certificate or attestation belonging to each build.

* **Arrows:** Black arrows indicating the flow between components.

* **Dotted Box:** A dotted rectangle encompassing the artifacts and files, suggesting a grouping.

### Detailed Analysis or Content Details

The diagram illustrates the following flow:

1. The same source snapshot is handed to B1, B2, and B3.

2. Each builder produces an artifact whose hash is "0xfe1423cd...".

3. The artifacts and the files C1, C2, and C3 are associated with the log L.

4. The user interacts with the log L, with arrows indicating both requests and responses.

### Key Observations

* All three artifacts carry the same hash even though they were produced by different builders. This matches the defining property of a reproducible build.

### Interpretation

The diagram illustrates the composition of A-Bs and R-Bs: attestable builders on hardware from different vendors perform the same reproducible build, and the user can consult the log to check that they agree on the artifact.

</details>

Figure 3. Three attestable builders using different hardware vendors (e.g., Intel, AMD, Arm) perform the same R-B resulting in identical artifacts. The user is then hedged against up to two backdoored TEEs (§ 3.4).
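The anytrust check that a verifier performs in this setting can be sketched as follows. This is an illustrative Python example with hypothetical names; `attestation_ok` stands in for the result of verifying each vendor's attestation document, which is not shown here.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class BuilderReport:
    vendor: str           # e.g. "Intel", "AMD", "Arm"
    artifact_hash: str    # hash the builder attested to
    attestation_ok: bool  # outcome of verifying the vendor's attestation

def anytrust_accept(reports, artifact_hash: str, required_vendors) -> bool:
    """Accept only if every required vendor has a valid report for this exact hash."""
    valid = [r for r in reports if r.attestation_ok]
    if not set(required_vendors) <= {r.vendor for r in valid}:
        return False  # a vendor is missing, so its vote cannot be assumed
    return all(r.artifact_hash == artifact_hash for r in valid)

vendors = ["Intel", "AMD", "Arm"]
agreeing = [BuilderReport(v, "0xfe1423cd", True) for v in vendors]
one_dissent = agreeing[:2] + [BuilderReport("Arm", "0xdeadbeef", True)]

print(anytrust_accept(agreeing, "0xfe1423cd", vendors))
print(anytrust_accept(one_dissent, "0xfe1423cd", vendors))
```

A single honest builder that reports a different hash causes rejection, so an adversary would have to compromise the TEEs of every required vendor to have a manipulated artifact accepted.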

The trust of A-Bs depends on the trust of their build image. While the final artifact (or rather its measurement) is attested to and included in the certificate, we rely on the initial image of the machine embedded in the TEE to ensure the correct and secure execution of the build instructions of the source code snapshot. We believe that R-Bs are also important for bootstrapping an A-B system. Even where the base image can be produced using A-Bs, the very first image should be created using R-Bs and bootstrapped from as little code as possible. Projects like Bootstrappable Build (project, 2024b) lay the foundation for this approach. In the long run, these R-Bs can be executed by attestable builders as described above.

## 4. Practical evaluation

We implemented the A-B architecture (§ 3.3) to demonstrate its feasibility and to practically evaluate its performance overhead.

### 4.1. Implementation

Our prototype uses AWS Nitro Enclaves (web services, 2024) as the underlying Confidential Computing technology due to the availability of accessible tooling. However, it is also possible to achieve similar guarantees with other technologies. For instance, AMD SEV-SNP might offer security benefits due to a smaller Trusted Computing Base (TCB) and we leave this as an engineering challenge for future work.

AWS Nitro Enclaves are started from EC2 host instances and provide hardware-backed isolation from both the host operating system and the hypervisor through the use of dedicated Nitro Cards. These cards assign each enclave dedicated resources such as main memory and CPU cores that are then no longer accessible to the rest of the system. Enclaves boot a .eif image that can be generated from Docker images. Creation of these images yields PCR0-2 values that can later be attested to. (In the AWS Nitro architecture, the values PCR0, PCR1, and PCR2 cover the entire .eif image and can be computed during its build process.)

Since enclaves do not have direct access to other hardware, such as networking devices, all communication has to be done via vsock sockets that leverage shared memory. These provide bi-directional channels that we use to (a) exchange application layer messages between the instance manager and enclave client and (b) tunnel TCP/IP access for the build runner to the code repository.

We implemented two sandbox variants using the lightweight container runtime containerd and the hardened gVisor (gVisor Authors, 2025) runtime which has a compatible API. Parameters for the sandbox, such as the short-lived authentication tokens for accessing the repository, are passed as environment variables. Internet access is mediated via Linux network namespaces and results are communicated via a shared log file. We pass only limited capabilities to the sandbox and the runtime immediately drops the execution context to an unprivileged user. gVisor provides additional guarantees by intercepting all system calls. Optionally, this setup can be further hardened using SELinux, seccomp-bpf, and similar.

As we want to demonstrate ease-of-adoption, we integrated with GitHub Actions. The Instance Manager exposes a webhook to learn about newly scheduled build workflows and short-lived credentials are acquired using narrowly-scoped personal access tokens (PAT). Inside the sandbox runs an unmodified GitHub Action Runner (v2.232.0) that is provided by GitHub for self-hosted build platforms. As such, developers only need to export a PAT, add our webhook, and perform minor edits in their .yml files (see Appendix B) which include updating the runner name and calling the attestation script.

Most components are written in Rust and we leverage its safety features to minimize the overall attack surface and avoid logic errors, e.g., through the use of Typestate Patterns (Biffle, 2024) and similar. Our implementation consists of fewer than 5 000 lines of open source code and is available at: https://github.com/lambdapioneer/attestable-builds.

### 4.2. Build targets

We demonstrate the feasibility of the A-B approach by building software that appears to be challenging. First, we build five of the still unreproducible Debian packages. We start with a list of all unreproducible packages, choose the ones with the fewest but at least two dependencies (to rule out trivial packages), and then use apt-rdepends -r to identify those with the most reverse dependencies, i.e. which likely have a large impact on the build graph. In addition, we add one with more dependencies. This results in the following five packages: ipxe, hello, gprolog, scheme48, and neovim. Second, we build large software projects including the Linux Kernel (kernel, 6.8.0, default config) and the LLVM Clang (clang, 18.1.3). These show that our A-Bs can accommodate complex builds and these two artifacts are also essential for later bootstrapping the base image itself, as these are the versions used in Ubuntu 24.04. Finally, we augment this set by including tinyCC (a bootstrappable C compiler), libsodium (a popular cryptographic library), xz-utils, and our own verifier client.

For reproducibility, we include copies of the source code and build instructions in a secondary repository with separate branches for each project. The C-based projects follow a classic configure and make approach and the Rust-based projects download dependencies during the configuration step.

### 4.3. Measurements

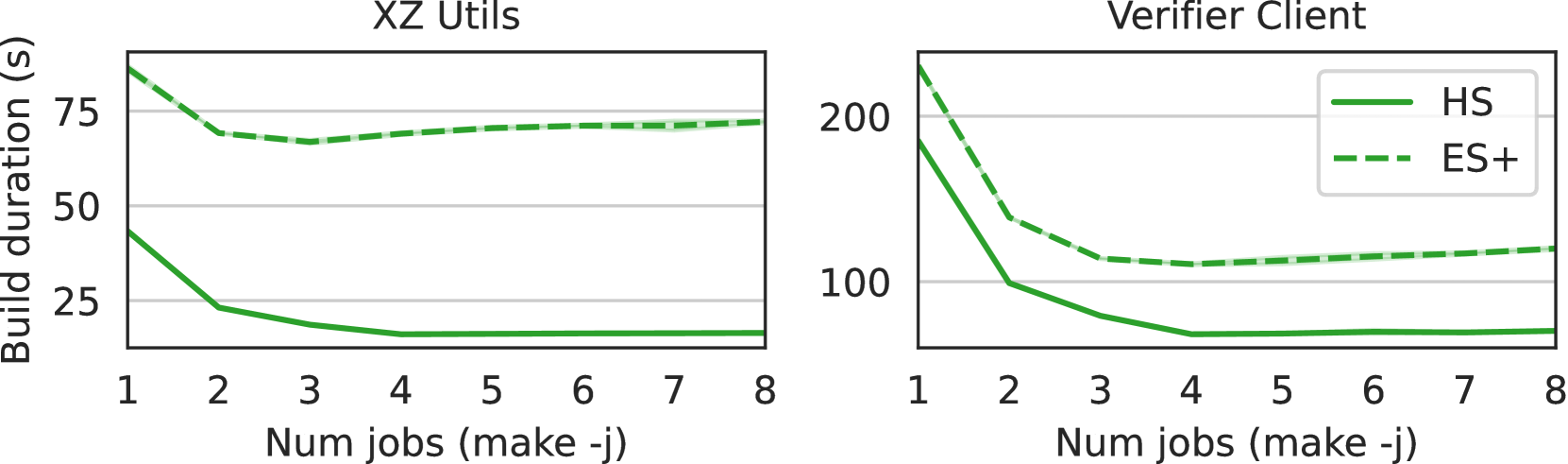

<details>

<summary>x4.png Details</summary>

### Visual Description

## Bar Chart: Build Duration Comparison Across Projects and Configurations

### Overview

The image presents a bar chart comparing the overall duration (in seconds) of different build stages (Start EIF, Boot, Runner init, Checkout, Configure, Build) across several projects (GProlog, Hello, IPXE, Scheme48, NeoVim, LibSodium, TinyCC, Verifier Client, XZ Utils) and three configurations (HS, ES, ES+). The chart is structured as a series of grouped bar plots, one for each project, with each group representing the three configurations.

### Components/Axes

* **X-axis:** Projects and Configurations. The projects are: GProlog (1.6.0), Hello (2.10), IPXE (1.21.1), Scheme48 (1.9.3), NeoVim (0.11.0), LibSodium (1.0.20), TinyCC (0.9.28), Verifier Client, XZ Utils (5.6.3). Each project has three configurations: HS, ES, and ES+.

* **Y-axis:** Overall duration (in seconds), ranging from 0 to 500.

* **Legend:** Located at the bottom-center of the chart, it defines the color-coding for each build stage:

* Start EIF (Light Blue)

* Boot (Orange)

* Runner init (Light Green)

* Checkout (Blue)

* Configure (Dark Green)

* Build (Dark Blue)

### Detailed Analysis

The chart consists of nine groups of bars, one for each project. Within each group, there are three bars representing the HS, ES, and ES+ configurations.

Exact per-step durations for every project and configuration are listed in Table 5 (Appendix D).

### Key Observations

* For small builds, starting and booting the enclave dominates the overall duration of the ES and ES+ configurations.

* The ES+ configuration (gVisor) can take markedly longer in the Build step than HS and ES, for example for NeoVim.

### Interpretation

The chart compares a host-only baseline (HS) against the two enclave-based A-B configurations (ES and ES+). The enclave adds a start-up cost that matters most for short builds, and the hardened gVisor sandbox adds further build-time overhead.

</details>

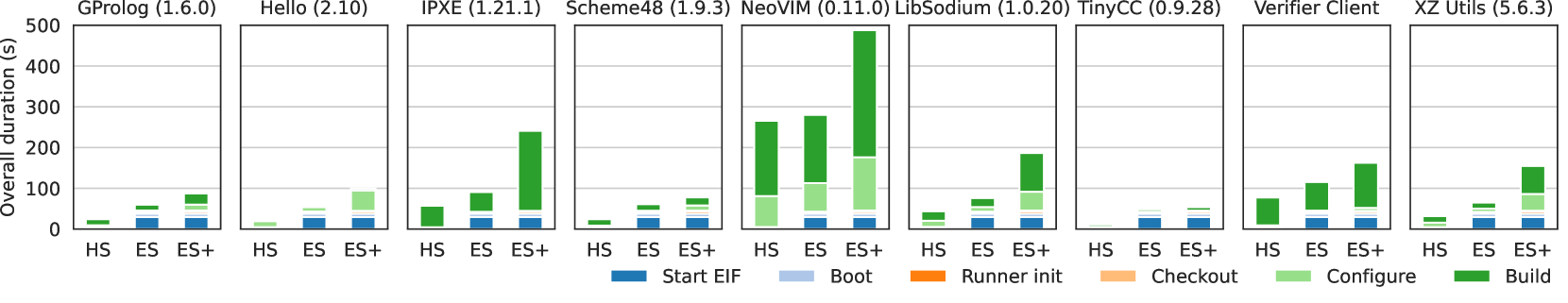

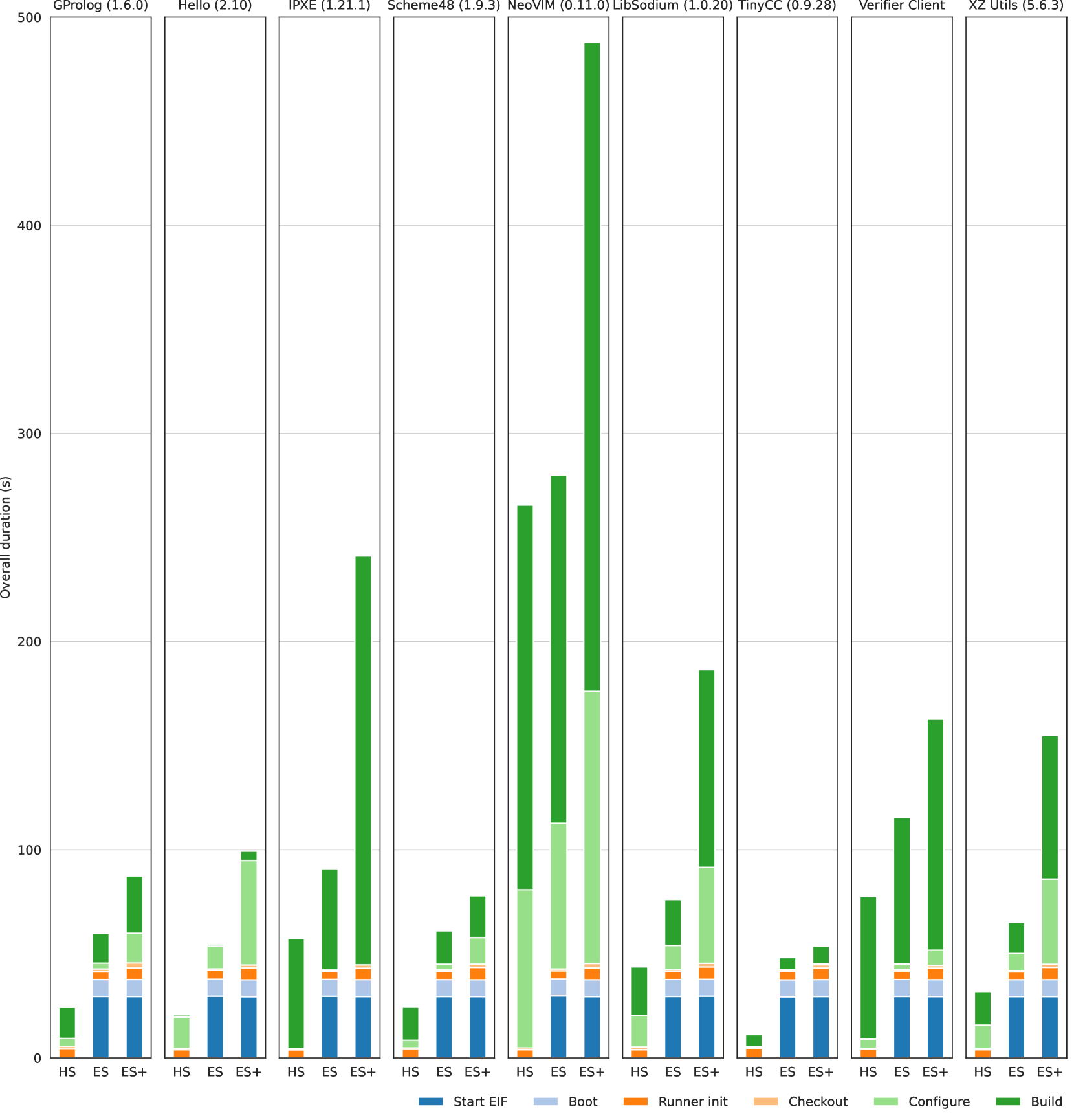

Figure 4. The duration of individual steps for the evaluated projects including the five unreproducible Debian packages and other artifacts. HS represents the baseline with a sandbox running directly on the host, ES (using containerd) and ES+ (using gVisor) are variants of our A-B prototype executing a sandboxed runner within an enclave. See Figure 12 for a larger version.

We build most targets on m5a.2xlarge EC2 instances (8 vCPUs, 32 GiB). However, for kernel and clang we use m5a.8xlarge EC2 instances (32 vCPUs, 128 GiB). To allow fair comparison between executions inside and outside the enclave, we assign half the CPUs and memory to the enclave. At time of writing, the m5a.2xlarge instances cost around $0.34 per hour. (For comparison: the 4-core Linux runner offered by GitHub costs $0.016 per minute, i.e. $0.96 per hour.) We minimize the impact of I/O bottlenecks by increasing the underlying storage limits to 1000 MiB/s and 10 000 operations/s, which incurs extra charges.

In order to better understand how the enclave and the sandbox implementations impact performance, we repeat our experiments across three configurations. The host-sandbox (HS) configuration runs the GitHub Runner using containerd on the host and serves as baseline representing a self-hosted build server. We evaluate two A-B compatible configurations: the enclave-sandbox (ES) variant uses the standard containerd runtime and the hardened enclave-sandbox-plus (ES+) variant uses gVisor. For kernel and clang we additionally include H and E configurations without sandboxes.

We are interested in the impact of A-Bs on the duration of typical CI tasks. For this we have instrumented our components to add timestamps to a log file. We extract the following steps: Start EIF allocates the TEE and loads the .eif file into the enclave memory; then the Boot process starts this image inside the TEE; subsequently the Runner init connects to GitHub and performs the source code Checkout; finally, the build file performs first a Configure step and then executes the Build. We run each combination of build target and configuration three times and report the average.
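The extraction of step durations from such a log can be sketched as follows. The log format (`<seconds> <marker>` per line), the marker names, and the numbers are assumptions made purely for illustration; they are neither the format used by our components nor measured values.

```python
from statistics import mean

# Markers written when a step begins; "done" closes the final step (hypothetical names).
STEPS = ["start_eif", "boot", "runner_init", "checkout", "configure", "build"]

def step_durations(log_lines):
    """Each step lasts from its own marker until the next marker in the sequence."""
    stamps = {}
    for line in log_lines:
        ts, marker = line.split()
        stamps[marker] = float(ts)
    order = STEPS + ["done"]
    return {step: stamps[nxt] - stamps[step] for step, nxt in zip(order, order[1:])}

def average_runs(runs):
    """Average every step over all runs of one target/configuration pair."""
    per_run = [step_durations(r) for r in runs]
    return {step: mean(d[step] for d in per_run) for step in STEPS}

# Three made-up runs of the same target and configuration.
runs = [
    ["0 start_eif", "20 boot", "30 runner_init", "35 checkout", "40 configure", "50 build", "110 done"],
    ["0 start_eif", "22 boot", "32 runner_init", "37 checkout", "42 configure", "52 build", "114 done"],
    ["0 start_eif", "24 boot", "34 runner_init", "39 checkout", "44 configure", "54 build", "118 done"],
]
avg = average_runs(runs)
print(avg)
```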

Figure 4 plots these durations for the unreproducible Debian packages and the additional targets that we have picked (§ 4.2). See Appendix D for Table 5 which contains all measurements (also for other configurations). For small builds, the overall duration is dominated by the time required to start and boot the enclave. Together these two steps typically take around 37.6 seconds for our .eif file that weighs 1 473 MiB. These start-up costs can be mitigated by pre-warming enclaves (§ 7).

For small targets we found that the build duration effectively decreases between HS and ES configurations. For instance, the NeoVIM build duration (the green bars in Figure 4) drops from 184.9 s (HS) to 167.3 s (ES, -10% over HS). We believe that the enclave is faster because it runs entirely in memory and therefore mimics a RAM-disk-mounted build with high I/O performance. However, gVisor (ES+) has a large impact and can increase the build times significantly, e.g., NeoVIM takes 311.7 s (ES+, +69% over HS).

The costs for initializing the build runner and checking out the source code are typically less than 9 seconds overall. Even though all IP traffic is tunneled via shared memory using vsock, the difference between host-based and enclave-based configurations is small. In fact, for large projects the checkout time sometimes even drops, e.g., clang from 148.0 s (HS) to 117.2 s (ES). We believe that the involved Git operations become I/O bound at this size. However, using gVisor (ES+) imposes an overhead for the checkout of up to 2 s for small targets, and the checkout of the large clang target increases from 117.2 s (ES) to 132.8 s (ES+).
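The relative changes quoted in the two paragraphs above follow from a simple calculation, shown here as a short Python sketch. The function name is our own; the clang percentages are derived from the stated durations and are not separately reported measurements.

```python
def relative_change(baseline: float, value: float) -> float:
    """Change of `value` relative to `baseline`, in percent."""
    return (value - baseline) / baseline * 100.0

# NeoVIM build durations in seconds (HS, ES, ES+).
neovim_es = relative_change(184.9, 167.3)
neovim_es_plus = relative_change(184.9, 311.7)

# clang checkout durations in seconds (HS -> ES, ES -> ES+).
clang_checkout_es = relative_change(148.0, 117.2)
clang_checkout_es_plus = relative_change(117.2, 132.8)

print(round(neovim_es), round(neovim_es_plus))
print(round(clang_checkout_es, 1), round(clang_checkout_es_plus, 1))
```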

<details>

<summary>x5.png Details</summary>

### Visual Description

## Line Chart: Build Duration vs. Number of Jobs

### Overview

The image presents two line charts comparing build duration (in seconds) against the number of jobs (using the `make -j` command). The left chart focuses on "XZ Utils", while the right chart focuses on "Verifier Client". Each chart displays two data series, distinguished by line style and color, representing different configurations: "HS" (solid line) and "ES+" (dashed line).

### Components/Axes

* **X-axis (both charts):** "Num jobs (make -j)" with values ranging from 1 to 8.

* **Y-axis (left chart):** "Build duration (s)" with a scale from 0 to 80, incrementing by 20.

* **Y-axis (right chart):** "Build duration (s)" with a scale from 0 to 220, incrementing by 50.

* **Legend (top-right of each chart):**

* Green solid line: "HS"

* Green dashed line: "ES+"

* **Titles:**

* Left chart: "XZ Utils" (top-left)

* Right chart: "Verifier Client" (top-left)

### Detailed Analysis or Content Details

**XZ Utils Chart:**

* **HS (Green Solid Line):** The line slopes downward sharply from x=1 to x=2, decreasing from approximately 70 seconds to 25 seconds. It then plateaus, remaining relatively constant at around 20-25 seconds for x values from 2 to 8.

* x=1: ~70s

* x=2: ~25s

* x=3: ~22s

* x=4: ~22s

* x=5: ~22s

* x=6: ~22s

* x=7: ~22s

* x=8: ~22s

* **ES+ (Green Dashed Line):** The line starts at approximately 65 seconds at x=1 and decreases gradually to around 60 seconds at x=2. It remains relatively stable between 60 and 70 seconds for x values from 2 to 8.

* x=1: ~65s

* x=2: ~62s

* x=3: ~63s

* x=4: ~63s

* x=5: ~63s

* x=6: ~63s

* x=7: ~63s

* x=8: ~63s

**Verifier Client Chart:**

* **HS (Green Solid Line):** The line exhibits a steep downward slope from x=1 to x=3, decreasing from approximately 200 seconds to 20 seconds. It then plateaus, remaining around 20 seconds for x values from 3 to 8.

* x=1: ~200s

* x=2: ~90s

* x=3: ~20s

* x=4: ~20s

* x=5: ~20s

* x=6: ~20s

* x=7: ~20s

* x=8: ~20s

* **ES+ (Green Dashed Line):** The line also shows a steep downward slope from x=1 to x=3, decreasing from approximately 150 seconds to 50 seconds. It then levels off, remaining between 50 and 60 seconds for x values from 3 to 8.

* x=1: ~150s

* x=2: ~80s

* x=3: ~50s

* x=4: ~55s

* x=5: ~55s

* x=6: ~55s

* x=7: ~55s

* x=8: ~55s

### Key Observations

* Both "HS" and "ES+" configurations show decreasing build durations as the number of jobs increases, up to a certain point.

* The "Verifier Client" build durations are significantly longer than those of "XZ Utils" for the same number of jobs, especially at x=1.

* The benefit of increasing the number of jobs diminishes after a certain point (around x=2 or x=3), as the build duration plateaus.

* "HS" consistently outperforms "ES+" in terms of build duration for both "XZ Utils" and "Verifier Client".

### Interpretation

The data suggests that parallelizing the build process using `make -j` can significantly reduce build duration, but there are diminishing returns. The optimal number of jobs appears to be around 2 or 3 for both "XZ Utils" and "Verifier Client". The difference in build durations between the two projects indicates that "Verifier Client" is a more computationally intensive build process. The consistent outperformance of "HS" over "ES+" suggests that the "HS" configuration is more efficient for these builds, potentially due to better resource allocation or optimization. The steep initial decline in build duration likely represents the benefits of utilizing multiple CPU cores, while the plateau indicates that the build process is becoming limited by other factors, such as disk I/O or memory bandwidth. The charts provide valuable insights for optimizing build processes and selecting appropriate configurations for different projects.

</details>

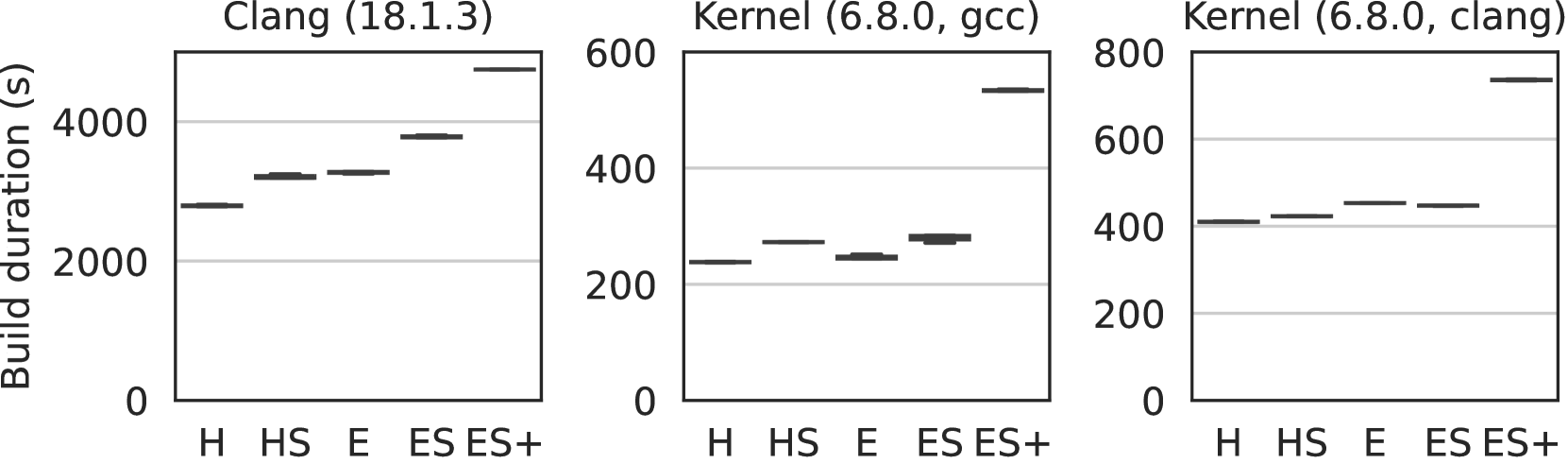

Figure 5. Impact of number of jobs for make -j (left) and cargo build -j (right) with 4 available CPUs.

We found that the impact of gVisor (ES+) can be lessened by using parallelized builds, e.g., by passing the -j argument to make. Figure 5 shows that the ideal number of jobs is close to the number of available CPUs, which is 4 in our case. While increasing the number of jobs past this point is fine for host-based executions, it has a negative impact for ES+. See Tables 6 and 7 in Appendix D for more detailed measurements.
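One way to apply this observation is to derive the job count from the CPUs available to the build environment instead of hard-coding it. The sketch below is an assumption-laden illustration: the helper `build_command` is hypothetical and not part of our prototype, and it presumes that `os.cpu_count()` reflects the CPUs assigned to the enclave or sandbox.

```python
import os


def build_command(tool, available_cpus=None):
    """Return a build command whose job count matches the available CPUs.

    `available_cpus` can be passed explicitly; otherwise it falls back to
    os.cpu_count(), and to 1 if that cannot be determined.
    """
    jobs = available_cpus or os.cpu_count() or 1
    if tool == "make":
        return ["make", f"-j{jobs}"]
    if tool == "cargo":
        return ["cargo", "build", "-j", str(jobs)]
    raise ValueError(f"unsupported build tool: {tool}")


# With 4 available CPUs, as in the measurements for Figure 5.
print(build_command("make", 4))
print(build_command("cargo", 4))
```

Capping the job count at the CPU count avoids oversubscription, which the measurements suggest is harmless on the host but costly under ES+.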

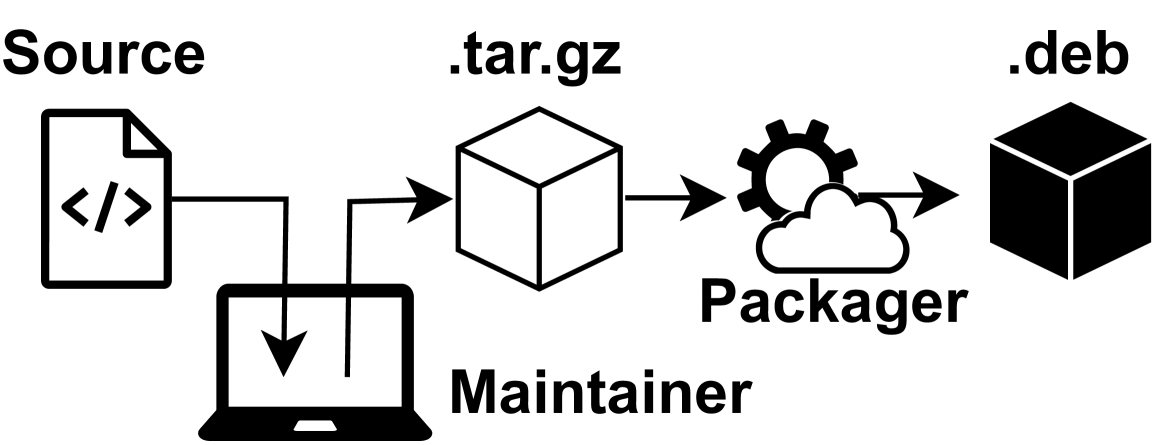

Finally, we build our complex targets clang and kernel on the larger machine where the TEE is assigned 16 vCPUs and 64 GiB of memory. The larger memory allocation for the TEE increases the Start EIF duration from 29.5 s to 46.4 s compared to the smaller instance. Figure 6 shows that there is also a pronounced impact on the build duration. For example, clang's build time increased from 54 minutes (HS) to 63 minutes (ES, +18%) or 79 minutes (ES+, +48%).

For our overall overhead numbers we build all nine small targets and the two large targets back-to-back. With the baseline configuration HS this takes 1h22m. For A-Bs this increases to 1h34m (ES, +14%) and 2h14m (ES+, +62%). These numbers exclude the average start and boot overhead of 42.1 s.
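To get a feeling for how the excluded start and boot time would affect these totals, the following sketch adds it back in. It rests on two assumptions that are not measurements: each of the eleven targets incurs exactly one enclave start, and the totals are taken as the rounded values quoted above, so the results can deviate by about a percentage point from figures computed on unrounded data.

```python
def to_seconds(hours, minutes):
    """Convert an h/m duration to seconds."""
    return hours * 3600 + minutes * 60


# Rounded totals for building all eleven targets back-to-back, from the text.
TOTALS_S = {"HS": to_seconds(1, 22), "ES": to_seconds(1, 34), "ES+": to_seconds(2, 14)}
START_BOOT_S = 42.1  # average start and boot overhead per enclave launch
NUM_TARGETS = 11     # nine small targets plus two large ones


def overhead_percent(config, include_startup=False):
    """Overhead of `config` over the HS baseline, in percent."""
    total = TOTALS_S[config]
    if include_startup:
        # Assumption: one enclave start per target, none for the HS baseline.
        total += NUM_TARGETS * START_BOOT_S
    return (total - TOTALS_S["HS"]) / TOTALS_S["HS"] * 100.0


for config in ("ES", "ES+"):
    print(
        f"{config}: {overhead_percent(config):.1f}% without start-up, "
        f"{overhead_percent(config, include_startup=True):.1f}% with start-up"
    )
```

Because the start-up cost is fixed per launch, its share shrinks as builds get longer, which is why it matters most for small targets.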

<details>

<summary>x6.png Details</summary>

### Visual Description

## Box Plots: Build Duration Comparison

### Overview

The image presents three box plots comparing build durations (in seconds) for different configurations. Each plot represents a different compiler/build system combination. The x-axis represents build configurations (H, HS, E, ES, ES+), and the y-axis represents the build duration in seconds.

### Components/Axes

* **Y-axis Label:** "Build duration (s)" - indicating the vertical axis represents build time in seconds. Scale ranges from 0 to 800 (varying between plots).

* **X-axis Labels:** "H", "HS", "E", "ES", "ES+" - representing different build configurations.

* **Plot Titles:**

* "Clang (18.1.3)"

* "Kernel (6.8.0, gcc)"

* "Kernel (6.8.0, clang)"

* **Box Plot Components:** Each box plot displays the median, quartiles, and potential outliers for build durations. The box represents the interquartile range (IQR), the line inside the box represents the median, and the whiskers extend to the minimum and maximum values within 1.5 times the IQR. Points beyond the whiskers are considered outliers.

### Detailed Analysis

**Plot 1: Clang (18.1.3)**

* **H:** Median build duration is approximately 3200s. IQR ranges from roughly 2800s to 3600s.

* **HS:** Median build duration is approximately 3400s. IQR ranges from roughly 3000s to 3800s.

* **E:** Median build duration is approximately 3700s. IQR ranges from roughly 3400s to 4000s.

* **ES:** Median build duration is approximately 3800s. IQR ranges from roughly 3500s to 4100s.

* **ES+:** Median build duration is approximately 4100s. IQR ranges from roughly 3800s to 4400s.

* Trend: Build duration generally increases as the build configuration progresses from H to ES+.

**Plot 2: Kernel (6.8.0, gcc)**

* **H:** Median build duration is approximately 180s. IQR ranges from roughly 150s to 220s.

* **HS:** Median build duration is approximately 220s. IQR ranges from roughly 180s to 260s.

* **E:** Median build duration is approximately 230s. IQR ranges from roughly 200s to 270s.

* **ES:** Median build duration is approximately 250s. IQR ranges from roughly 220s to 290s.

* **ES+:** Median build duration is approximately 480s. IQR ranges from roughly 400s to 550s.

* Trend: Build duration increases steadily from H to ES, then jumps significantly for ES+.

**Plot 3: Kernel (6.8.0, clang)**

* **H:** Median build duration is approximately 420s. IQR ranges from roughly 380s to 460s.

* **HS:** Median build duration is approximately 440s. IQR ranges from roughly 400s to 480s.

* **E:** Median build duration is approximately 450s. IQR ranges from roughly 410s to 490s.

* **ES:** Median build duration is approximately 460s. IQR ranges from roughly 420s to 500s.

* **ES+:** Median build duration is approximately 750s. IQR ranges from roughly 650s to 800s.

* Trend: Build duration increases steadily from H to ES, then jumps significantly for ES+.

### Key Observations

* Build durations are significantly longer when using Clang (18.1.3) compared to Kernel with either gcc or clang.

* The ES+ configuration consistently results in the longest build times across all compiler/build system combinations.

* The jump in build duration for ES+ is more pronounced for Kernel builds than for Clang builds.

* The spread of build times (as indicated by the IQR) is relatively consistent across configurations for Clang, but varies more for Kernel builds.

### Interpretation

The data suggests that the choice of compiler and build system significantly impacts build duration. Clang appears to be substantially slower than gcc for the Kernel builds. The ES+ configuration introduces a significant overhead, likely due to increased complexity or additional build steps. The consistent increase in build time with more complex configurations (H -> HS -> E -> ES -> ES+) indicates a growing computational cost associated with each added feature or optimization. The larger IQR for Kernel builds suggests greater variability in build times, potentially due to factors like system load or caching effects. The jump in build time for ES+ is a critical observation, indicating a potential bottleneck or area for optimization within the build process. This data could be used to inform decisions about compiler selection, build configuration, and resource allocation to minimize build times.

</details>

Figure 6. The complex targets clang and kernel are additionally built without sandboxes on the host H and enclave E.

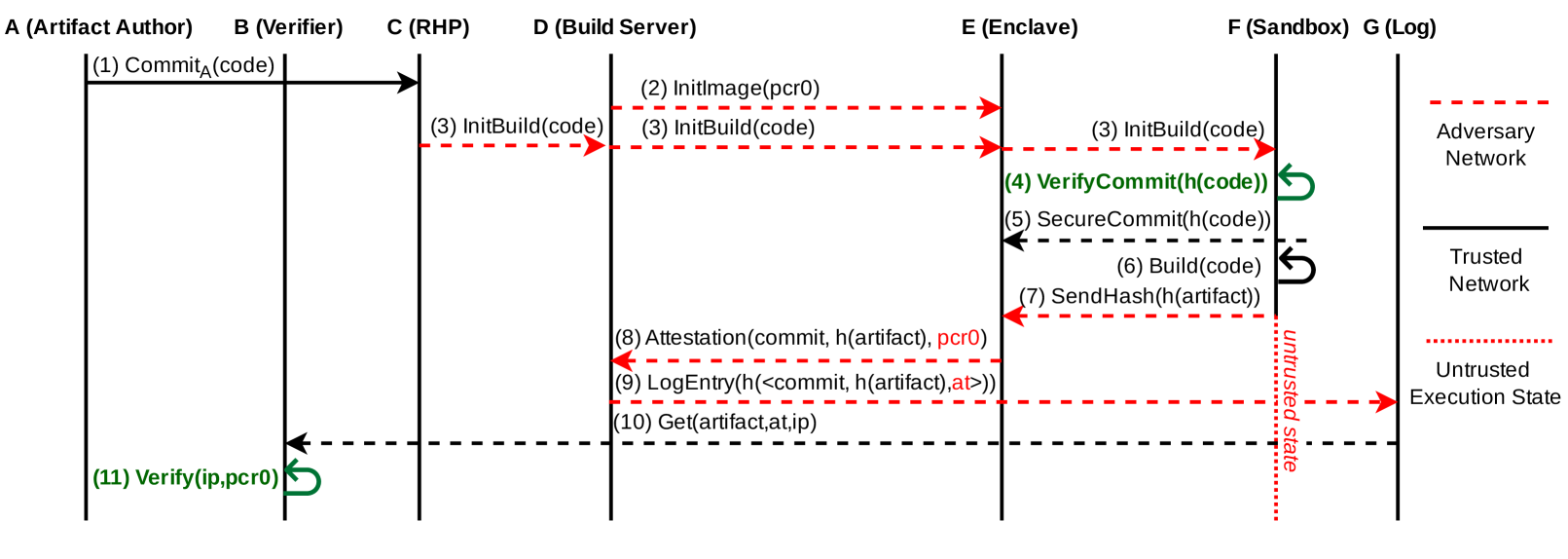

## 5. Formal verification using Tamarin

<details>

<summary>x7.png Details</summary>

### Visual Description

## Diagram: Secure Build Process Flow

### Overview

This diagram illustrates a secure build process involving multiple parties: an Artifact Author, a Verifier, a Remote Hardware Provider (RHP), a Build Server, an Enclave, a Sandbox (Log), and an Adversary Network. The diagram depicts the flow of messages and data between these parties, highlighting trusted and untrusted network segments and execution states. The process appears to focus on verifying the integrity of code and artifacts during a build process.

### Components/Axes

The diagram consists of seven vertical columns representing the different parties involved:

* **A (Artifact Author)**

* **B (Verifier)**

* **C (RHP)**

* **D (Build Server)**

* **E (Enclave)**

* **F (Sandbox (Log))**

* **G (Adversary Network)**

Horizontal lines with numbered labels represent the sequence of interactions. Three types of lines are used to indicate trust levels:

* Solid lines: Trusted Network

* Dashed lines: Adversary Network

* Dot-dashed lines: Untrusted Execution State

A legend on the right side defines these line types.

### Detailed Analysis or Content Details

The diagram shows the following sequence of events:

1. **(1) Commit<sub>A</sub>(code)**: Artifact Author (A) commits code. A solid arrow indicates this is over a trusted network.

2. **(2) InitImage(pcr0)**: Build Server (D) initializes an image using pcr0. A dashed arrow indicates this is over an adversary network.

3. **(3) InitBuild(code)**: RHP (C) and Build Server (D) initiate the build process with the code. Solid and dashed arrows respectively.

4. **(4) VerifyCommit(h(code))**: Enclave (E) verifies the commit hash of the code. A solid arrow.

5. **(5) SecureCommit(h(code))**: Enclave (E) securely commits the hash of the code. A solid arrow.

6. **(6) Build(code)**: Enclave (E) builds the code. A solid arrow.

7. **(7) SendHash(h(artifact))**: Enclave (E) sends the hash of the artifact. A solid arrow.

8. **(8) Attestation(commit, h(artifact), pcr0)**: RHP (C) provides attestation with the commit, artifact hash, and pcr0. A solid arrow.

9. **(9) LogEntry(h(<commit, h(artifact),at>>))**: Sandbox (F) logs an entry containing the hash of the commit, artifact hash, and attestation. A dot-dashed arrow.

10. **(10) Get(artifact,at,ip)**: Verifier (B) retrieves the artifact, attestation, and IP. A dot-dashed arrow.

11. **(11) Verify(ip,pcr0)**: Verifier (B) verifies the IP and pcr0. A solid arrow.

The diagram also indicates the following execution states:

* **Adversary Network**: Represented by dashed lines.

* **Trusted Network**: Represented by solid lines.

* **Untrusted Execution State**: Represented by dot-dashed lines.

### Key Observations

The diagram highlights a process where code integrity is verified at multiple stages, particularly within the Enclave (E). The use of hashes (h()) and attestation suggests a strong emphasis on preventing tampering and ensuring the authenticity of the build process. The separation of networks and execution states emphasizes the security boundaries. The Verifier (B) is the final point of verification, confirming the integrity of the artifact.

### Interpretation