# APOLLO: Automated LLM and Lean Collaboration for Advanced Formal Reasoning

**Authors**: Azim Ospanov, &Farzan Farnia , &Roozbeh Yousefzadeh

> Huawei Hong Kong Research CenterDepartment of Computer Science & Engineering, The Chinese University of Hong Kong

Abstract

Formal reasoning and automated theorem proving constitute a challenging subfield of machine learning, in which machines are tasked with proving mathematical theorems using formal languages like Lean. A formal verification system can check whether a formal proof is correct or not almost instantaneously, but generating a completely correct formal proof with large language models (LLMs) remains a formidable task. The usual approach in the literature is to prompt the LLM many times (up to several thousands) until one of the generated proofs passes the verification system. In this work, we present APOLLO (A utomated P r O of repair via L LM and L ean c O llaboration), a modular, model‑agnostic agentic framework that combines the strengths of the Lean compiler with an LLM’s reasoning abilities to achieve better proof‐generation results at a low token and sampling budgets. Apollo directs a fully automated process in which the LLM generates proofs for theorems, a set of agents analyze the proofs, fix the syntax errors, identify the mistakes in the proofs using Lean, isolate failing sub‑lemmas, utilize automated solvers, and invoke an LLM on each remaining goal with a low top‑ $K$ budget. The repaired sub‑proofs are recombined and reverified, iterating up to a user‑controlled maximum number of attempts. On the miniF2F benchmark, we establish a new state‑of‑the‑art accuracy of 84.9% among sub 8B‑parameter models (as of August 2025) while keeping the sampling budget below one hundred. Moreover, Apollo raises the state‑of‑the‑art accuracy for Goedel‑Prover‑SFT to 65.6% while cutting sample complexity from 25,600 to a few hundred. General‑purpose models (o3‑mini, o4‑mini) jump from 3–7% to over 40% accuracy. Our results demonstrate that targeted, compiler‑guided repair of LLM outputs yields dramatic gains in both efficiency and correctness, suggesting a general paradigm for scalable automated theorem proving. The codebase is available at https://github.com/aziksh-ospanov/APOLLO

1 Introduction

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: Common Approach: Whole-Proof Generation Pipeline

### Overview

This image is a technical flowchart illustrating a standard iterative process for automated theorem proving. It depicts a system where a Large Language Model (LLM) generates complete mathematical proofs, which are then evaluated by a formal verification environment (Lean Server). The system operates in a feedback loop that continues until a correct proof is found or a maximum number of attempts is reached.

### Components and Spatial Grounding

The diagram is composed of a header, three main sequential nodes arranged horizontally from left to right, connecting arrows, and an overarching feedback loop.

**1. Header (Top Center)**

* **Text:** "Common Approach: Whole-Proof Generation Pipeline"

* **Positioning:** Centered at the very top of the image, acting as the title.

**2. Node 1: Generator (Left)**

* **Visual:** A light blue square containing a stylized icon of a brain integrated into a computer microchip (circuit lines ending in dots).

* **Label:** A small blue rectangular tab rests on the top edge of the square containing the text "**LLM**".

**3. Edge 1: Transmission (Center-Left)**

* **Visual:** A blue arrow pointing from the LLM node to the Lean Server node.

* **Label:** A white rectangle interrupts the arrow line, containing the text "**proof attempt**".

**4. Node 2: Verifier (Center)**

* **Visual:** A large white rectangle. Inside the rectangle is a stylized, geometric line-art graphic that spells out the word "**LEAN**" using continuous, sharp angles.

* **Label:** A grey rectangular tab rests on the top edge of the white box containing the text "**Lean Server**".

**5. Edge 2: Result Output (Center-Right)**

* **Visual:** A simple blue arrow pointing from the Lean Server node to the Evaluation node. It has no text label.

**6. Node 3: Evaluation/State (Right)**

* **Visual:** A stylized computer application window. It features a black top menu bar with three small colored dots (red, yellow, green) typical of macOS window controls. Inside the window are two distinct status indicators:

* **Left Indicator:** A green square with rounded corners containing a thick black checkmark. Above it is a green pill-shaped label reading "**exit loop**".

* **Right Indicator:** A red square with rounded corners containing a thick black 'X'. Above it is a red pill-shaped label reading "**continue**".

**7. Feedback Loop (Perimeter)**

* **Visual:** A thick black line that forms a large rectangular loop around the entire process. It originates from the right side of the Evaluation node, points downward, travels left across the bottom of the image, travels up the left side, and points right, feeding back into the LLM node.

* **Label:** On the top horizontal section of this black loop line, there is a grey rectangle containing the text "**repeat up to K times**".

### Content Details and Flow

The diagram maps a specific logical flow:

1. **Generation:** The cycle begins at the **LLM**, which generates a complete **proof attempt**.

2. **Verification:** This attempt is sent to the **Lean Server**, which acts as the compiler/verifier to check the logical and syntactic validity of the proof.

3. **Evaluation:** The output of the Lean Server results in a binary state:

* If the proof is correct (Green Checkmark), the system triggers an **exit loop** command, successfully ending the process.

* If the proof is incorrect (Red 'X'), the system triggers a **continue** command.

4. **Iteration:** The "continue" state feeds into the outer black feedback loop. This prompts the LLM to generate a new, different proof attempt.

5. **Constraint:** The entire iterative process is bounded by the condition to **repeat up to K times**. If the system fails $K$ times, the loop will terminate without a successful proof.

### Key Observations

* **Binary Feedback:** The diagram implies that the LLM only receives a pass/fail signal (check or X) rather than detailed error messages or step-by-step interactive guidance.

* **"Whole-Proof" Paradigm:** The title explicitly states this is a "Whole-Proof" generation pipeline. This means the LLM attempts to write the entire proof from start to finish in a single inference step, rather than constructing it line-by-line with intermediate checks.

* **Automated Loop:** There is no human-in-the-loop depicted; the process relies entirely on the LLM's generation capabilities and the Lean Server's deterministic verification.

### Interpretation

This diagram illustrates the baseline or "naive" approach to using Large Language Models for formal mathematics (specifically using the Lean theorem prover). Because LLMs are prone to hallucinations or logical errors, a single generation is rarely reliable for complex proofs. Therefore, the standard methodology is to use the LLM as a heuristic guesser and the Lean Server as a rigorous judge.

By wrapping this in a loop ("repeat up to K times"), the system utilizes a "sample-and-filter" or brute-force methodology. It relies on the probability that if the LLM generates enough diverse attempts ($K$ attempts), at least one will be logically sound.

*Peircean/Investigative Note:* The explicit labeling of this as the "Common Approach" strongly suggests that this image serves as a baseline comparison in a broader technical paper or presentation. The author is likely setting up this "Whole-Proof" brute-force loop to contrast it with a novel, more sophisticated method—such as a Tree-of-Thought approach, step-by-step interactive proving, or a system where the Lean Server feeds specific error messages back to the LLM to guide corrections, rather than just a binary "continue" signal.

</details>

<details>

<summary>x2.png Details</summary>

### Visual Description

## Diagram: Our Proposed Apollo Pipeline

### Overview

This image is a system architecture diagram illustrating a closed-loop, iterative process for automated theorem proving or code verification. It details the interaction between a Large Language Model (LLM), a specialized "Apollo Proof Repair Agent," and a "Lean Server" environment. The system is designed to generate proofs, test them, extract errors, and iteratively repair them up to a specified number of attempts.

### Components and Spatial Grounding

The diagram is divided into three primary horizontal regions, enclosed within a global feedback loop.

**1. Global Loop (Outer Boundary)**

* **Position:** Surrounds the entire diagram.

* **Visuals:** A thick black line with directional arrows indicating a clockwise flow.

* **Label:** A grey box at the top center reads: `repeat up to r times`.

**2. Left Region: Generation**

* **Component:** `LLM`

* **Visuals:** A light blue box containing an icon of a brain superimposed on a computer microchip. A smaller blue tab at the top reads `LLM`.

**3. Center Region: Processing & Repair**

* **Component:** `Apollo Proof Repair Agent`

* **Visuals:** Enclosed in a dashed black bounding box. A yellow tab at the top reads `Apollo Proof Repair Agent`.

* **Sub-components (Inside the dashed box):**

* **Top (Main Agent):** A large yellow box containing two icons: a hand holding a wrench (symbolizing repair) and a computer monitor displaying lines of code `</>`.

* **Bottom Right:** A peach-colored box labeled `Subproof Extractor` containing an icon of a gear, a warning triangle, and a wrench.

* **Bottom Left:** A brown box labeled `Auto Solver` containing an icon of a tic-tac-toe board with a winning line drawn through the 'O's.

**4. Right Region: Verification & Control**

* **Component:** `Lean Server`

* **Visuals:** A white box with a grey tab at the top reading `Lean Server`. Inside the box is a stylized, geometric line-art spelling of the word `LEAN`.

* **Component:** Loop Control Interface

* **Position:** Bottom right, situated below the Lean Server.

* **Visuals:** A stylized dark computer window/terminal. It contains two prominent buttons:

* Left button: Green with a checkmark, labeled `exit loop`.

* Right button: Red with an 'X', labeled `continue`.

### Detailed Flow Analysis (Content Details)

The flow of information is denoted by colored arrows. Blue arrows generally indicate forward progression or outputs, while red arrows indicate feedback, errors, or return requests.

**Forward Flow (Blue Arrows):**

1. **LLM to Apollo Agent:** A blue arrow points right from the LLM to the yellow box of the Apollo Proof Repair Agent.

* **Label:** `proof attempt(s)`

2. **Apollo Agent to Lean Server:** A blue arrow points right from the yellow box of the Apollo Proof Repair Agent to the Lean Server. (No text label).

3. **Lean Server to Loop Control:** A blue arrow points down and left from the Lean Server to the Loop Control Interface.

**Feedback/Return Flow (Red Arrows):**

1. **Lean Server to Apollo Agent:** A red arrow points left from the Lean Server back to the yellow box of the Apollo Proof Repair Agent.

* **Label:** (Stacked text)

`proof state`

`compilation errors`

`syntax errors`

2. **Internal Apollo Agent Flow:**

* A red arrow points down from the yellow main box to the `Subproof Extractor`.

* A red arrow points left from the `Subproof Extractor` to the `Auto Solver`.

* A red arrow points up from the `Auto Solver` back to the yellow main box.

3. **Apollo Agent to LLM:** A red arrow points left from the yellow box of the Apollo Proof Repair Agent back to the LLM.

* **Label:** `sub-problem(s) to prove`

4. **Loop Control to Lean Server:** A red arrow points up and left from the Loop Control Interface back to the Lean Server.

### Key Observations

* **Color-Coded Semantics:** The diagram strictly adheres to a color-coding scheme for its data flow. Blue represents the generation and submission of attempts, while red represents the extraction of errors, breakdown of problems, and feedback loops.

* **Internal vs. External Loops:** There are multiple loops occurring. An internal loop exists within the Apollo Agent itself (Agent -> Extractor -> Solver -> Agent). A secondary loop exists between the Apollo Agent and the Lean Server. The primary global loop encompasses the LLM and is governed by the `repeat up to r times` constraint.

* **Termination Conditions:** The loop control interface at the bottom right dictates the end of the process. A successful proof (green check) triggers `exit loop`, while a failure (red X) triggers `continue`, pushing the process back through the global loop (provided the attempt count is less than *r*).

### Interpretation

This diagram outlines a sophisticated, AI-driven automated theorem proving or code synthesis pipeline, specifically tailored for the Lean theorem prover.

The process begins with an LLM generating initial `proof attempt(s)`. Instead of sending these directly to the Lean Server, they pass through the "Apollo Proof Repair Agent." This agent acts as an intermediary and refinement tool. It sends the proof to the Lean Server, which acts as the ground-truth verifier.

If the Lean Server rejects the proof, it returns highly specific feedback (`proof state`, `compilation errors`, `syntax errors`). The Apollo Agent receives this feedback. If the error is complex, the Apollo Agent utilizes its internal tools: the `Subproof Extractor` isolates the specific failing part of the proof, and the `Auto Solver` attempts to resolve it algorithmically.

If the Apollo Agent cannot fix the proof internally, it translates the specific failure into `sub-problem(s) to prove` and sends this refined prompt back to the LLM. This prevents the LLM from having to regenerate the entire proof from scratch, focusing its computational power only on the broken segments.

This entire cycle is bounded by a maximum retry limit (*r* times) to prevent infinite loops, ensuring computational efficiency. The system demonstrates a "neuro-symbolic" approach, combining the generative power of neural networks (LLM) with the strict, rule-based logic of symbolic solvers (Lean Server and Auto Solver).

</details>

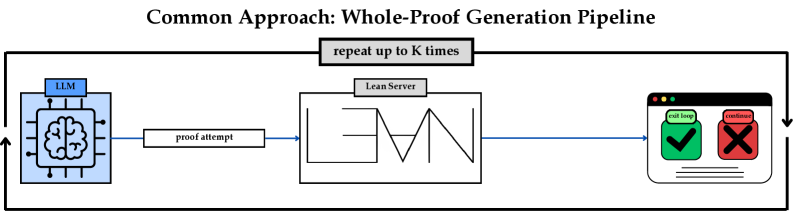

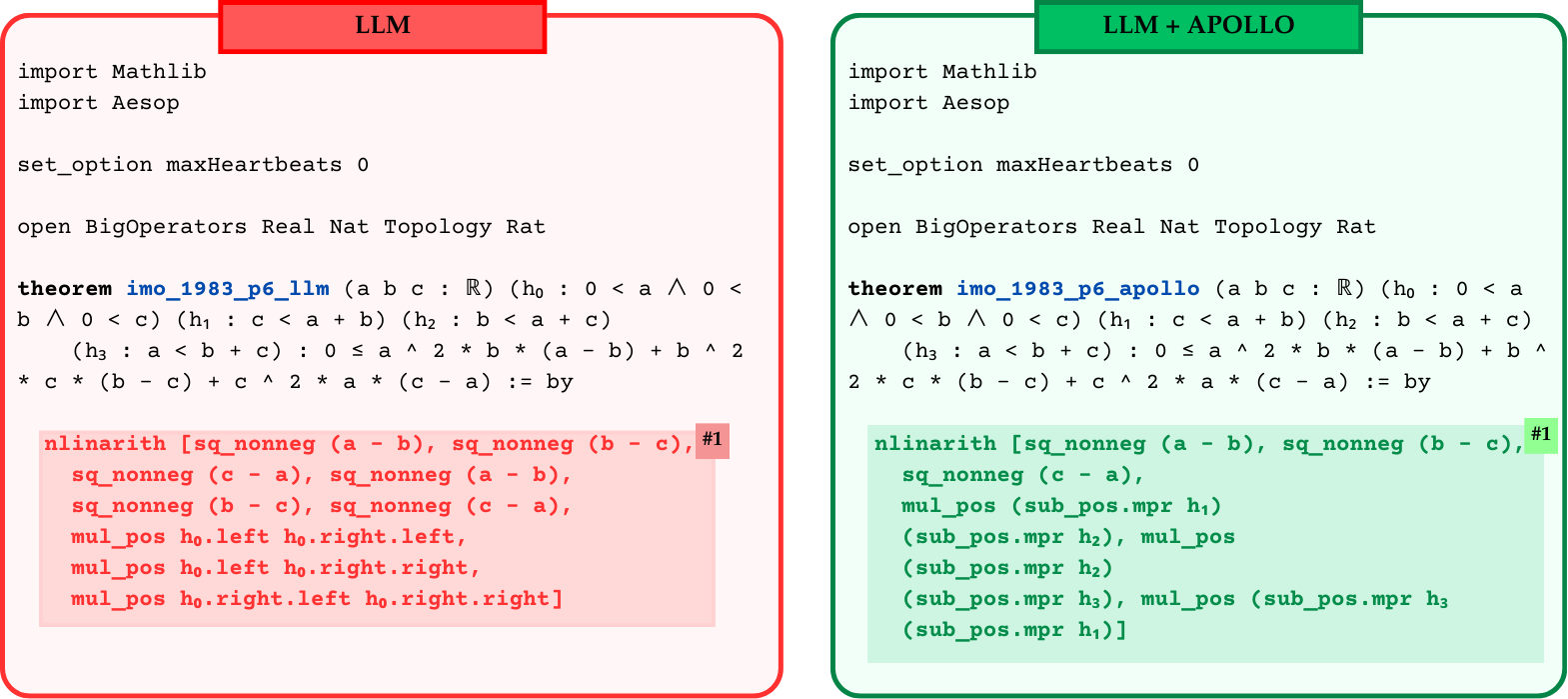

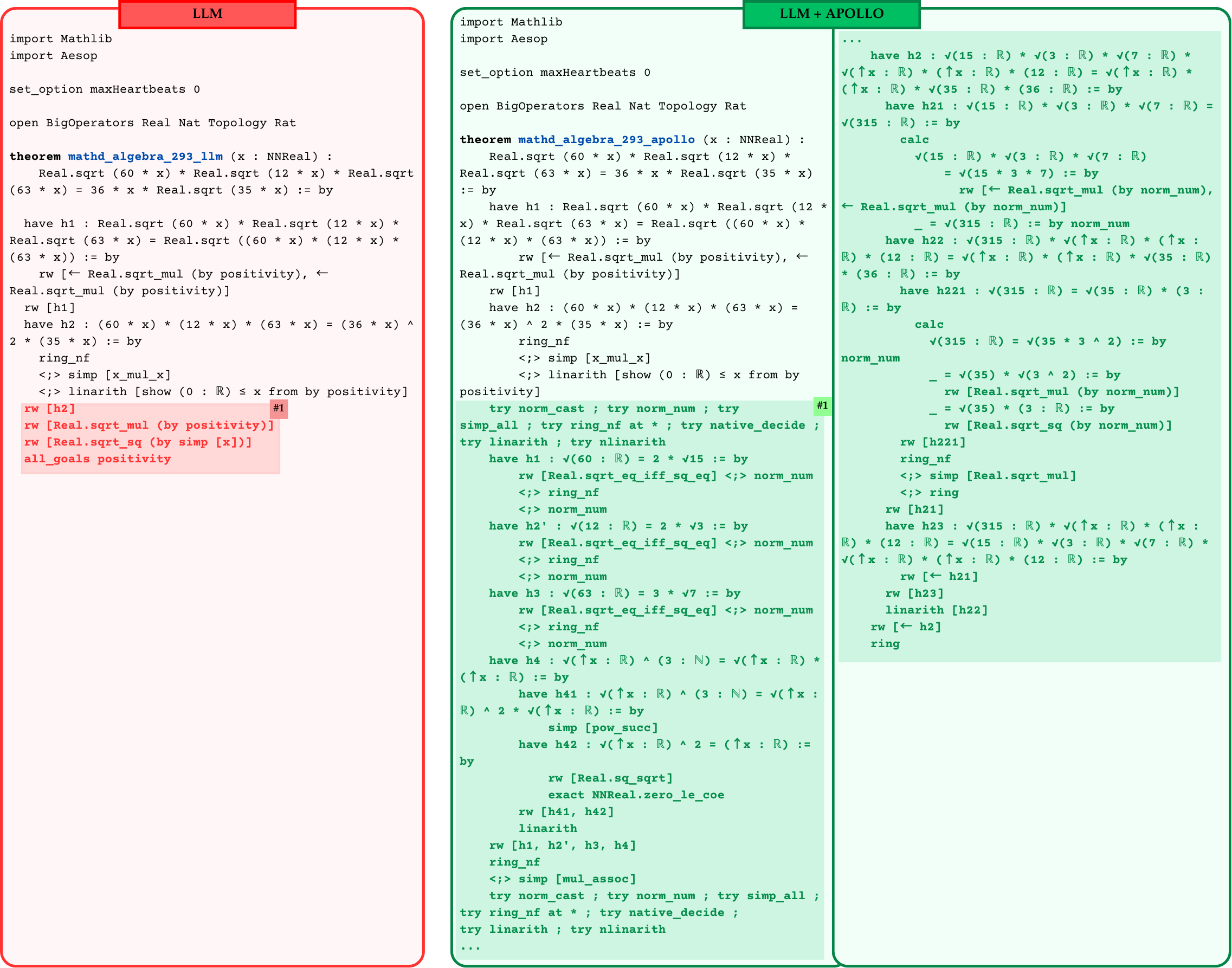

Figure 1: The summary of whole-proof generation pipeline vs. proposed Apollo agentic pipeline. LLM refers to a chosen formal theorem generator model.

Formal reasoning has emerged as one of the most challenging fields of AI with recent achievements such as AlphaProof and AlphaGeometry doing well at the International Math Olympiad competing with humans yang2024formal ; alphaproof ; AlphaGeometryTrinh2024 . Formal reasoning relies both on AI models and a formal verification system that can automatically verify whether a mathematical proof is correct or not. In recent years, formal verification systems such as Lean lean4paper have facilitated a new form of doing mathematical research by enabling mathematicians to interact with the formal verification system and also with each other via the system, enabling larger numbers of mathematicians to collaborate with each other on a single project. As such, these formal verification systems are also called proof assistants as one can use them interactively to write a formal proof and instantaneously observe the current state of the proof and any possible errors or shortcomings in the proof generated by the compiler. Immediate access to the output of Lean compiler can help a mathematician to fix possible errors in the proof. At the same time, when a proof passes the compiler with no error, other collaborators do not need to verify the correctness of the proof. This type of collaboration is transforming the field of mathematics enabling large groups of collaborators to engage in large projects of mathematical research such as the Prime Number Theorem And More project kontorovich_tao_2024_primenumbertheoremand . Moreover, it has given rise to the digitization of mathematics buzzard2024mathematical .

In the AI front, large language models (LLMs) are improving at mathematical reasoning in natural language, and at the same time, they have shown a remarkable ability to learn the language of those formal verification systems and write mathematical proofs in formal language. This has led to the field of Automated Theorem Proving (ATP) where the standard approach is to prompt the LLM to generate a number of candidate proofs for a given theorem which will then be verified automatically using the formal verification system. Better models, better training sets, and better training methods has led to significant advances in this field tsoukalas2024putnambenchevaluatingneuraltheoremprovers ; zheng2022minif2fcrosssystembenchmarkformal ; azerbayev2023proofnetautoformalizingformallyproving ; li2024numinamath ; hu2025minictx .

Despite these advances, the LLMs do not really get the chance to interact with the verification system the way a human does. LLMs generate many possible proofs, sometimes as many as tens of thousands, and the verification system only marks the proofs with a binary value of correct or incorrect. This common approach is illustrated in Figure 1. When the formal verification system analyzes a proof, its compiler may generate a long list of issues including syntax errors, incorrect tactics, open goals, etc. In the current approach of the community, all of those information/feedback from the compiler remains unused, as the LLM’s proof generation is not directly engaged with the compiler errors of the formal verification system.

In this work, we address this issue by introducing Apollo, a fully automated system which uses a modular, model-agnostic pipeline that combines the strengths of the Lean compiler with the reasoning abilities of LLMs. Apollo directs a fully automated process in which the LLM generates proofs for theorems, a set of agents analyze the proofs, fix the syntax errors, identify the mistakes in the proofs using Lean, isolate failing sub‑lemmas, utilize automated solvers, and invoke an LLM on each remaining goal with a low top‑ $K$ budget. The high-level overview of Apollo appears at the bottom of Figure 1.

Our contributions are as follows:

- We introduce a novel fully automated system that directs a collaboration between LLMs, Lean compiler, and automated solvers for automated theorem proving.

- We evaluate Apollo on miniF2F-test set using best LLMs for theorem proving and demonstrate that Apollo improves the baseline accuracies by significant margins while keeping the sampling costs much lower.

- We establish a new SOTA on miniF2F-test benchmark for medium-sized language models. (with parameter size 8B or less)

2 Related Works

Formal theorem proving systems. At their core, formal theorem‑proving (FTP) systems employ interactive theorem provers (ITPs) to verify mathematical results. In particular, Lean4 lean4paper is both a functional programming language and an ITP. Each theorem, lemma, conjecture, or definition must be checked by Lean’s trusted kernel, so the compiler’s verdict is binary: either the statement type‑checks (True) or it fails (False). This rigorous verification dramatically increases the reliability of formal mathematics; however, it also increases the complexity of proof development: authors must both comprehend the mathematical concepts and precisely encode them in the formal language. The usefulness of Lean is also shown to go beyond theorem proving, as Jiang et al. jiang2024leanreasoner showed that Lean can be adapted to natural language logical reasoning.

Patching broken programs. Many prior works have explored using feedback to repair broken proofs. In software engineering, “repair” typically refers to program repair, i.e. fixing code that no longer runs programrepair . A common source of errors is a version mismatch that introduces bugs and prevents execution. For example, SED sed_repair uses a neural program‐generation pipeline that iteratively repairs initial generation attempts. Other notable approaches train specialized models to fix broken code based on compiler feedback (e.g. BIFI bifi , TFix tfix ) or leverage unsupervised learning over large bug corpora (e.g. BugLab buglab ).

LLM collaboration with external experts. The use of expert feedback, whether from human users or from a proof assistant’s compiler, has proven effective for repairing broken proofs. Ringer ringer2009typedirected showcased automatic proof repair within the Coq proof assistant bertot2013interactive , enabling the correction of proofs in response to changes in underlying definitions. Jiang et al. jiang2022thor showed that leveraging automatic solvers with generative models yield better performance on formal math benchmarks. More recently, First et al. first2023baldurwholeproofgenerationrepair showed that incorporating compiler feedback into LLM proof generation significantly improves the model’s ability to correct errors and produce valid proofs. Another line of work song2023towards explored an idea of mathematician and LLM collaboration, where human experts are aided by LLMs at proof writing stage.

Proof search methods. Numerous recent systems use machine learning to guide the search for formal proofs. One of the methods involves using large language models with structured search strategies, e.g. Best‑First Search (BFS) polu2022formalmathematicsstatementcurriculum ; wu2024internlm25stepproveradvancingautomatedtheorem ; xin2025bfsproverscalablebestfirsttree or Monte Carlo Tree Search (MCTS) lample2022hypertree ; wang2023dt-solver ; alphaproof . While tree‑search methods reliably steer a model toward valid proofs, they result in high inference costs and often explore many suboptimal paths before success. Another line of work leverages retrieval based systems to provide context for proof generators with potentially useful lemmas. One such example is ReProver yang_leandojo_2023 that augments Lean proof generation by retrieving relevant lemmas from a proof corpus and feeding them to an LLM, enabling the model to reuse existing proofs.

Whole proof generation. The use of standalone LLMs for theorem proving has emerged as a major research direction in automated theorem proving. One of the earliest works presented GPT-f polu2020generativelanguagemodelingautomated , a transformer-based model for theorem proving that established the LLMs ability in formal reasoning. As training frameworks and methods advance, the community has produced numerous models that generate complete proofs without external search or tree‑search algorithms. Recent work shows that both supervised models xin2024deepseekproverv15harnessingproofassistant ; lin2025goedelproverfrontiermodelopensource and those trained via reinforcement learning xin2024deepseekproverv15harnessingproofassistant ; zhang2025leanabellproverposttrainingscalingformal ; dong2025stp ; kimina_prover_2025 achieve competitive performance on formal‑math benchmarks. However, whole‑proof generation remains vulnerable to hallucinations that can cause compilation failures even for proofs with correct reasoning chains.

Informal Chain-of-Thought in Formal Mathematics. Several recent works demonstrate that interleaving informal “chain‑of‑thought” (CoT) reasoning with formal proof steps substantially improves whole‑proof generation. For example, developers insert natural‑language mathematical commentary between tactics, yielding large accuracy gains lin2025goedelproverfrontiermodelopensource ; xin2024deepseekproverv15harnessingproofassistant ; azerbayev2024llemma . Wang, et al. kimina_prover_2025 further show that special “thinking” tokens let the model plan its strategy and self‑verify intermediate results. The “Draft, Sketch, and Prove” (DSP) jiang2023draftsketchproveguiding and LEGO-Prover wang2024legoprover pipelines rely on an informal proof sketch before attempting to generate the formal proof. Collectively, these studies indicate that providing informal mathematical context, whether as comments, sketches, or dedicated tokens, guides LLMs toward correct proofs and significantly boosts proof synthesis accuracy.

<details>

<summary>x3.png Details</summary>

### Visual Description

## Diagram: Apollo Algorithm Workflow and Proof Repair

### Overview

This image presents a technical flowchart and code comparison illustrating the "Apollo Algorithm," an automated system designed to repair formal mathematical proofs (specifically in the Lean 4 programming language) using a combination of symbolic tools and Large Language Models (LLMs). The top half displays the iterative workflow of the algorithm, while the bottom half provides a concrete before-and-after example of a Lean 4 proof being repaired.

### Components

The image is divided into three main spatial regions:

1. **Header & Main Flowchart (Top half):** A dashed rectangular border encloses the step-by-step process of the Apollo Algorithm.

2. **Invalid Proof (Bottom-left):** A code block outlined in red, showing a failing mathematical proof with specific errors highlighted.

3. **Correct Proof (Bottom-right):** A code block outlined in green, showing the successfully repaired proof with the corrected sections highlighted.

### Content Details

#### 1. Main Flowchart (Top Section)

The workflow proceeds through several distinct nodes, connected by directional arrows:

* **Syntax Refiner:** Located at the top-left. Represented by a blue box with an icon of a document and gears. An arrow points right to the Sorrifier.

* **Sorrifier:** Represented by a red box with an icon of a hammer breaking a dashed circle. An arrow points right to the Auto Solver.

* **Auto Solver:** Represented by a green box with an icon of a document and a green pen. An arrow points right to a sequence of LLM interactions.

* **LLM Interaction Sequence:**

* A gray box labeled `Extract subproofs to prove` points to an icon of a document with a microchip and checkmark.

* A gray box labeled `Feed to LLM` points to the **LLM** node (orange box, neural network icon).

* An arrow points down from the LLM to a gray box labeled `Assemble the proof back`.

* An arrow points left to the **Proof Assembler** (brown box, icon of a trowel building a brick wall).

* **Decision Loop:** From the Proof Assembler, an arrow points down to a decision diamond.

* **Decision Diamond 1 (Right):** Orange diamond labeled `Does the proof compile in Lean4?`.

* **YES (Green circle):** An arrow points down, exiting the dashed box and pointing to the "Correct Proof" block.

* **NO (Red circle):** An arrow points left to the second decision diamond.

* **Decision Diamond 2 (Left):** Orange diamond labeled `number of attempts > r?`.

* **NO (Red circle):** An arrow loops up and left, returning to the **Syntax Refiner** to start the process over.

* **YES (Green circle):** An arrow points down, exiting the dashed box and pointing to the "Invalid Proof" block.

#### 2. Invalid Proof (Bottom-Left Section)

This section contains Lean 4 code with a red border and a red header labeled "Invalid Proof". Four specific lines/blocks of code are highlighted in red and tagged with numbers `#1` through `#4`.

**Transcription of Invalid Proof Code:**

```lean

import Mathlib

import Aesop

set_option maxHeartbeats 0

open BigOperators Real Nat Topology Rat Set

theorem mathd_numbertheory_495_incorrect (a b : N)

(h₀ : 0 < a 0 < b)

(h₁ : a % 10 = 2) (h₂ : b % 10 = 4)

(h₃ : Nat.gcd a b = 6) : 108 ≤ Nat.lcm a b := by

have h4 : a ≥ 6 := by

have h5 : a % 10 = 2 := h₁

omega #1 (Highlighted Red)

have h5 : b ≥ 6 := by

have h6 : b % 10 = 4 := h₂

omega #2 (Highlighted Red)

by_contra h

push_neg at h

have h6 : a * b < 648 := by

have h7 : Nat.lcm a b < 108 := by linarith

have h8 : a * b = 6 * Nat.lcm a b := by

rw [ Nat.gcd_mul_lcm a b ]

rw [h₃]

linarith

have h7 : a ≤ 100 := by

nlinarith [h₁, h₂, h₃, Nat.gcd_pos_of_pos_left a h₀.right, #3 (Highlighted Red)

h₀.right, Nat.gcd_pos_of_pos_right b h₀.right] (Highlighted Red)

have h8 : b ≤ 100 := by

nlinarith [h₁, h₂, h₃, Nat.gcd_pos_of_pos_left a h₀.right, #4 (Highlighted Red)

h₀.right, Nat.gcd_pos_of_pos_right b h₀.right] (Highlighted Red)

interval_cases a <;> interval_cases b <;>

norm_num at *

<;> try { contradiction }

```

#### 3. Correct Proof (Bottom-Right Section)

This section contains Lean 4 code with a green border and a green header labeled "Correct Proof". It is formatted in two columns. Four specific blocks of code are highlighted in green and tagged with numbers `#1` through `#4`, corresponding directly to the red tags in the invalid proof.

**Transcription of Correct Proof Code (Left Column):**

```lean

...

theorem mathd_numbertheory_495_after_proof_repair

(a b : N)

(h₀ : 0 < a 0 < b)

(h₁ : a % 10 = 2) (h₂ : b % 10 = 4)

(h₃ : Nat.gcd a b = 6) : 108 ≤ Nat.lcm a b := by

have h4 : a ≥ 6 := by

have h5 : a % 10 = 2 := h₁

have h6 : 6 ≤ a := by #1 (Highlighted Green Start)

have h : a.gcd b = 6 := h₃

have h7 : 6 | a := by

rw [ ← h ]

apply Nat.gcd_dvd_left

omega

exact h6 (Highlighted Green End)

have h5 : b ≥ 6 := by

have h6 : b % 10 = 4 := h₂

by_contra h #2 (Highlighted Green Start)

push_neg at h

have h4 : b = 4 := by

omega

rw [h4] at h₃

have h6 : a.gcd 4 ≤ 4 := by

apply Nat.gcd_le_right

norm_num

linarith (Highlighted Green End)

by_contra h

push_neg at h

...

```

**Transcription of Correct Proof Code (Right Column):**

```lean

...

have h6 : a * b < 648 := by

have h7 : Nat.lcm a b < 108 := by linarith

have h8 : a * b = 6 * Nat.lcm a b := by

rw [ Nat.gcd_mul_lcm a b ]

rw [h₃]

linarith

have h7 : a ≤ 100 := by

by_contra h' #3 (Highlighted Green Start)

push_neg at h'

have h7 : a ≥ 101 := by linarith

have h8 : b ≤ 6 := by nlinarith

have h9 : b = 6 := by linarith

rw [h9] at h₂

norm_num at h₂ (Highlighted Green End)

have h8 : b ≤ 100 := by

by_contra h8 #4 (Highlighted Green Start)

push_neg at h8

have h9 : b ≤ 107 := by

have h10 : a * b < 648 := h6

have h11 : a ≥ 6 := h4

nlinarith

have h12 : b ≤ 107 := h9

have h13 : b > 100 := h8

have h14 : b % 10 = 4 := h₂

have h15 : b ≥ 101 := by omega

interval_cases b <;> omega (Highlighted Green End)

interval_cases a <;> interval_cases b <;> norm_num at *

<;> try { contradiction }

```

### Key Observations

* **Direct Substitution:** The tags `#1`, `#2`, `#3`, and `#4` serve as a visual legend connecting the two code blocks. The algorithm identifies failing single-line tactics (like `omega` or `nlinarith` in the red block) and replaces them with expanded, multi-step subproofs (in the green block) that successfully compile.

* **Iterative Loop:** The flowchart explicitly shows that if the LLM's generated proof does not compile in Lean 4, the system does not immediately fail. Instead, it loops back to the "Syntax Refiner" to try again, up to a maximum number of attempts (`r`).

* **Failure State:** If the number of attempts exceeds `r`, the algorithm outputs the "Invalid Proof" (the red path).

* **Success State:** If the proof compiles, it outputs the "Correct Proof" (the green path).

### Interpretation

This diagram illustrates a "neuro-symbolic" approach to automated theorem proving.

1. **The Problem:** Large Language Models (LLMs) are good at generating code but often make logical or syntactic errors in strict formal environments like Lean 4.

2. **The Solution (Apollo):** The Apollo Algorithm uses the strict Lean 4 compiler as a verifiable "ground truth." It isolates broken parts of a proof ("Extract subproofs to prove"), asks the LLM to fix only those specific parts, and then reassembles the proof.

3. **The Loop:** By placing the LLM inside a `while` loop governed by the Lean 4 compiler (`Does the proof compile?`), the system forces the LLM to iteratively refine its output until it is mathematically sound, rather than accepting its first guess. The before-and-after code blocks demonstrate that the LLM is capable of taking a failing, overly-optimistic tactic (like expecting `omega` to solve a complex goal) and breaking it down into rigorous, step-by-step logical deductions that satisfy the compiler.

</details>

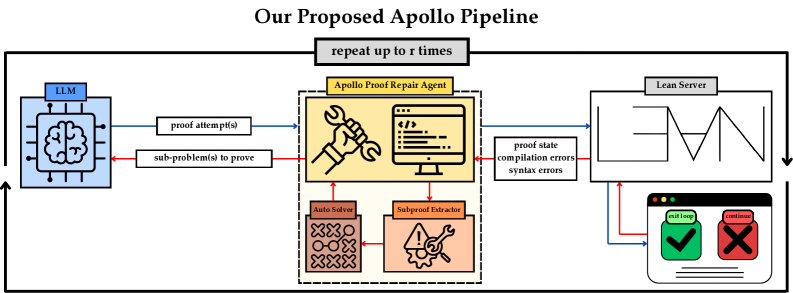

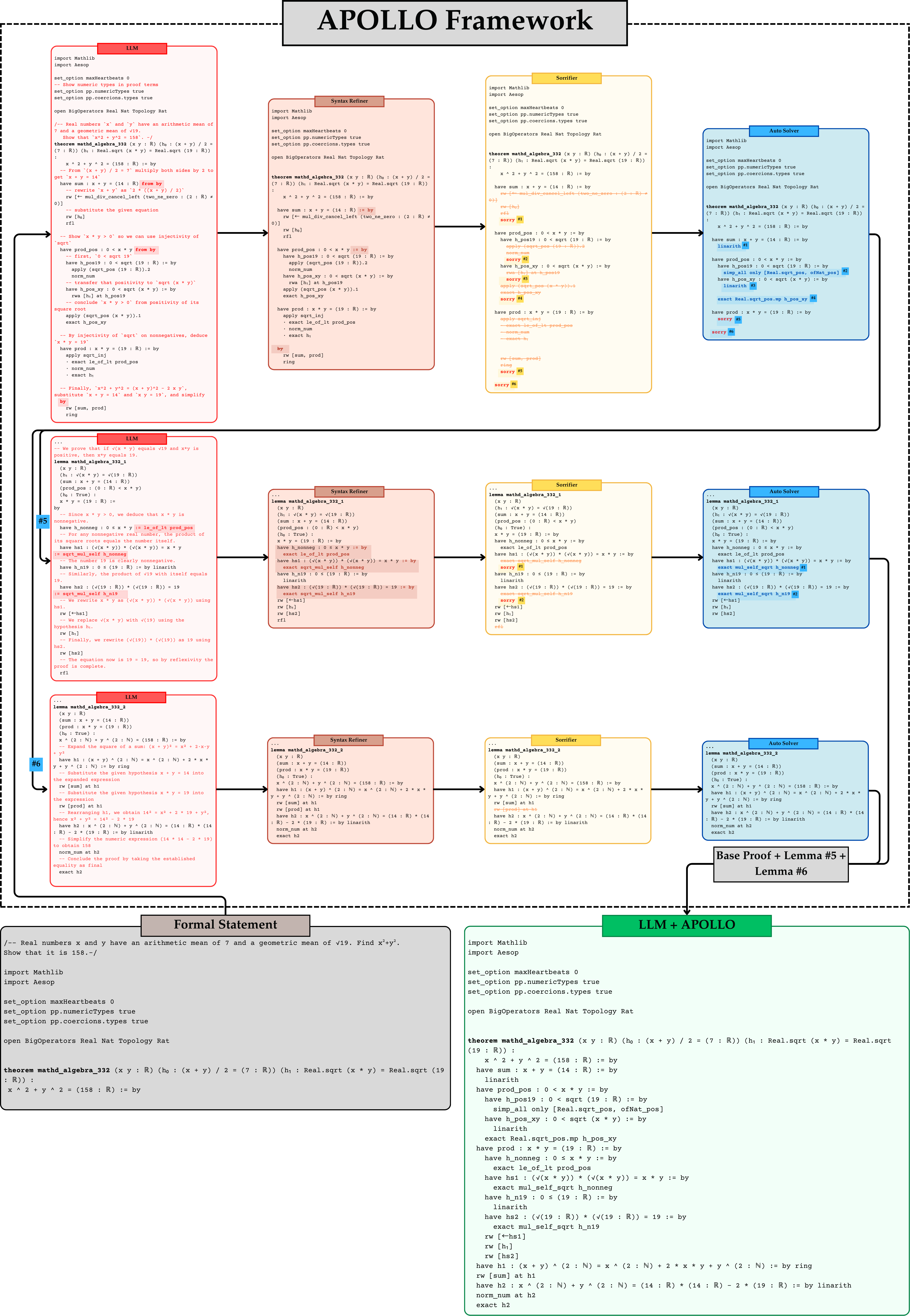

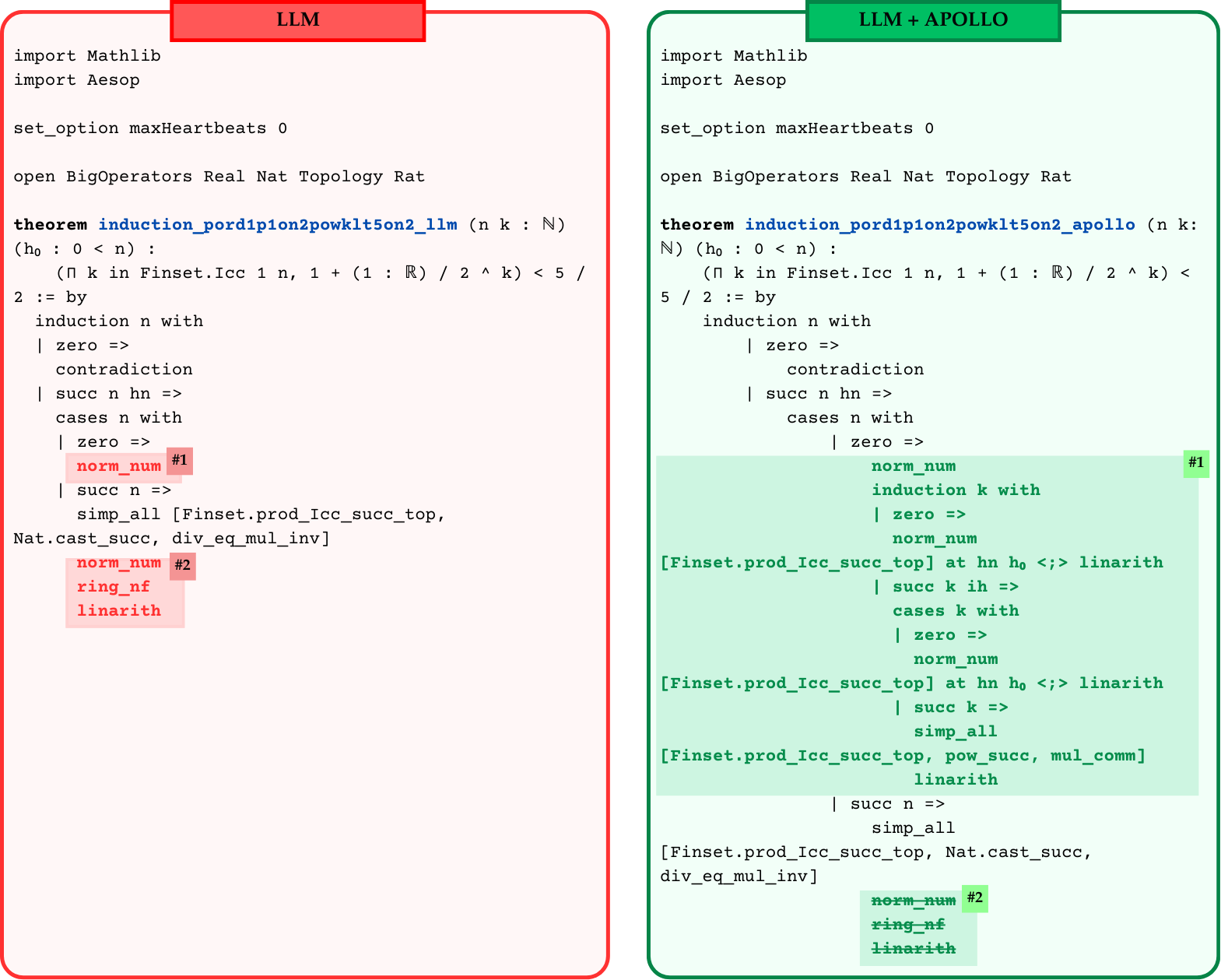

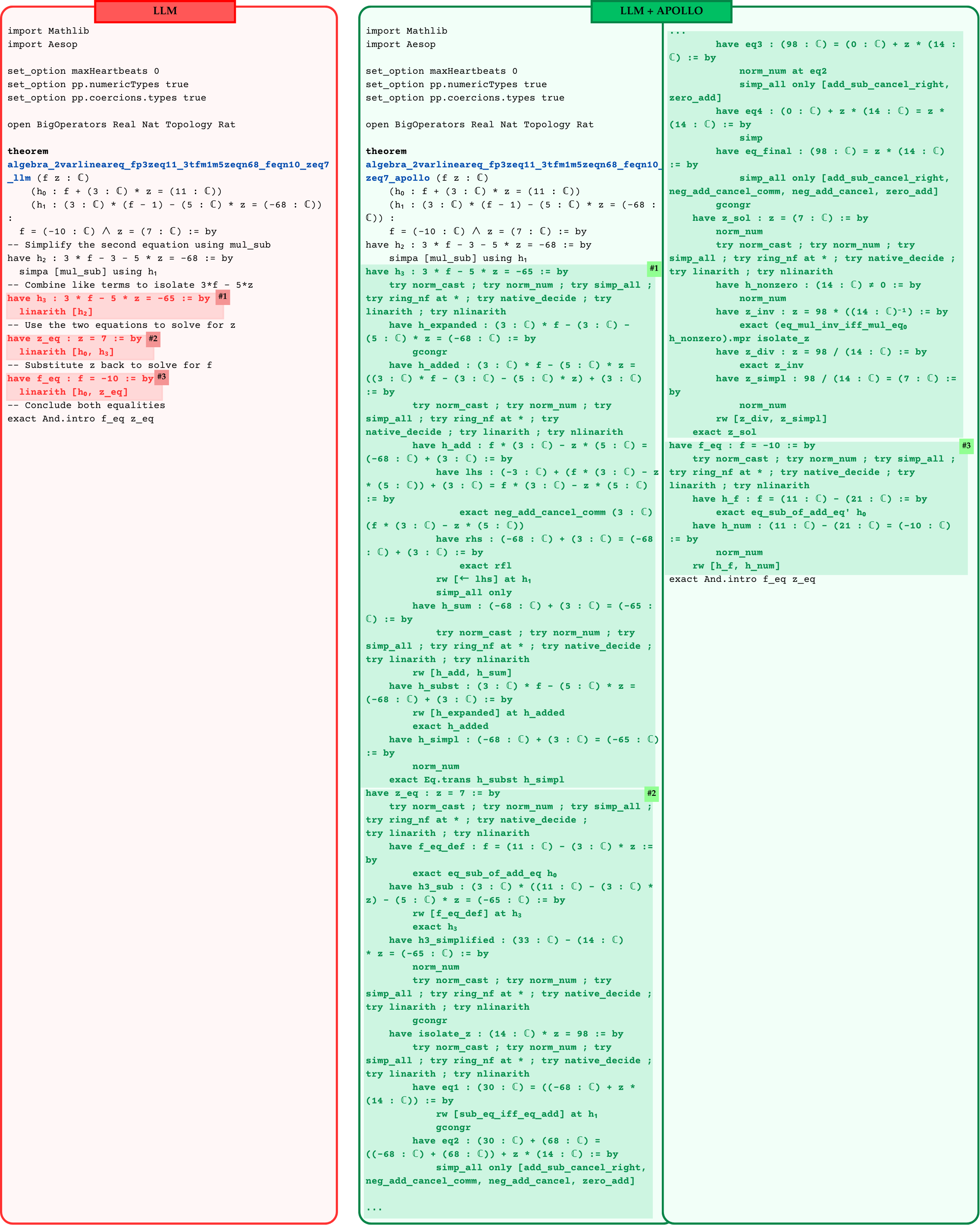

Figure 2: Overview of the Apollo framework.

3 Our Approach

In this section we describe Apollo, our framework for transforming an LLM’s raw proof sketch into a fully verified Lean4 proof. Figure 2 illustrates the Apollo pipeline process with an attached Lean code before and after repair. We observe that often LLM is capable of producing a valid proof sketch; however, it often struggles with closing fine-grained goals. For example, statements h4, h5, h7, h8 are correct but the generated proofs for each sub-lemma throw compilation errors. Apollo identifies and fixes such proof steps and guides the LLM towards a correct solution.

Our compiler of choice is the Lean REPL leanrepl , a “ R ead– E val– P rint– L oop” for Lean4. It provides a complete list of compilation errors and warnings, each with a detailed description and the location (for example, the line number) where it occurred. Errors can include syntax mistakes, nonexistent tactics, unused tactics, and more. We aggregate this information to drive our agent’s dynamic code repair and sub‑problem extraction. The pseudo-code for our framework can be found in Algorithm 1 in the Appendix.

3.1 Syntax Refiner

The Syntax Refiner catches and corrects superficial compilation errors: missing commas, wrong keywords (e.g. Lean3’s from vs. Lean4’s := by), misplaced brackets, etc. These syntax errors arise when a general‑purpose LLM (such as o3‑mini openai-o3-mini-2025 or o4‑mini openai-o4-mini-2025 ) has not been specialized on Lean4 syntax. By normalizing these common mistakes, this module ensures that subsequent stages operate on syntactically valid code. It is important to note that this module is primarily applicable to general purpose models not explicitly trained for theorem proving in Lean. In contrast, Syntax Refiner usually does not get invoked for proofs generated by LLMs trained for formal theorem proving.

3.2 Sorrifier

The Sorrifier patches any remaining compilation failures by inserting Lean’s sorry placeholder. We first parse the failed proof into a tree of nested proof‑blocks (sub‑lemmas as children). Then we compile the proof with Lean REPL leanrepl , detect the offending line or block, and apply one of three repairs:

1. Line removal, if a single tactic or declaration is invalid but its surrounding block may still succeed.

1. Block removal, if the entire sub‑proof is malformed.

1. Insert sorry, if the block compiles but leaves unsolved goals open.

We repeat this procedure until the file compiles without errors. At that point, every remaining sorry marks a sub‑lemma to be proved in later stages of Apollo. This part of the pipeline guarantees that the proof successfully compiles in Lean with warnings of presence of sorry statements.

Each sorry block corresponds to a sub‑problem that the LLM failed to prove. Such blocks may not type‑check for various reasons, most commonly LLM hallucination. The feedback obtained via REPL lets us to automatically catch these hallucinations, insert a sorry placeholder, and remove invalid proof lines.

3.3 Auto Solver

The Auto Solver targets each sorry block in turn. It first invokes the Lean4’s hint to close the goal. If goals persist, it applies built‑in solvers (nlinarith, ring, simp, etc.) wrapped in try to test combinations automatically. Blocks whose goals remain open stay marked with sorry. After applying Auto Solver, a proof may be complete with no sorry’s in which case Apollo has already succeeded in fixing the proof. Otherwise, the process can repeat recursively.

3.4 Recursive reasoning and repair

In the case where a proof still has some remaining sorry statements after applying the Auto Solver, Apollo can consider each as a new lemma, i.e., extract its local context (hypotheses, definitions, and any lemmas proved so far), and recursively try to prove the lemmas by prompting the LLM and repeating the whole process of verification, syntax refining, sorrifying, and applying the Auto Solver.

At each of these recursive iterations, a lemma may be completely proved, or it may end up with an incomplete proof with one or a few sorry blocks. This allows the Apollo to make progress in proving the original theorem by breaking down the incomplete steps further and further until the LLM or Auto Solver can close the goals. This process can continue up to a user‑specified recursion depth $r$ .

3.5 Proof Assembler

Finally, the Proof Assembler splices all repaired blocks back into a single file and verifies that no sorry or admit (alias for "sorry") commands remain. If the proof still fails, the entire pipeline repeats (up to a user‑specified recursion depth $r$ ), allowing further rounds of refinement and sub‑proof generation.

Apollo’s staged approach: syntax cleaning, “sorrifying,” automated solving, and targeted LLM‐driven repair, yields improvements in both proof‐sample efficiency and final proof correctness.

4 Experiments

In this section, we present empirical results for Apollo on the miniF2F dataset zheng2022minif2fcrosssystembenchmarkformal , which comprises of 488 formalized problems drawn from AIME, IMO, and AMC competitions. The benchmark is evenly split into validation and test sets (244 problems each); here we report results on the miniF2F‑test partition. To demonstrate Apollo’s effectiveness, we evaluate it on a range of state‑of‑the‑art whole‑proof generation models. All experiments use Lean v4.17.0 and run on eight NVIDIA A5000 GPUs with 128 CPU cores. We used $@32$ sampling during sub-proof generation unless stated otherwise. Baseline numbers are sourced from each model’s original publication works.

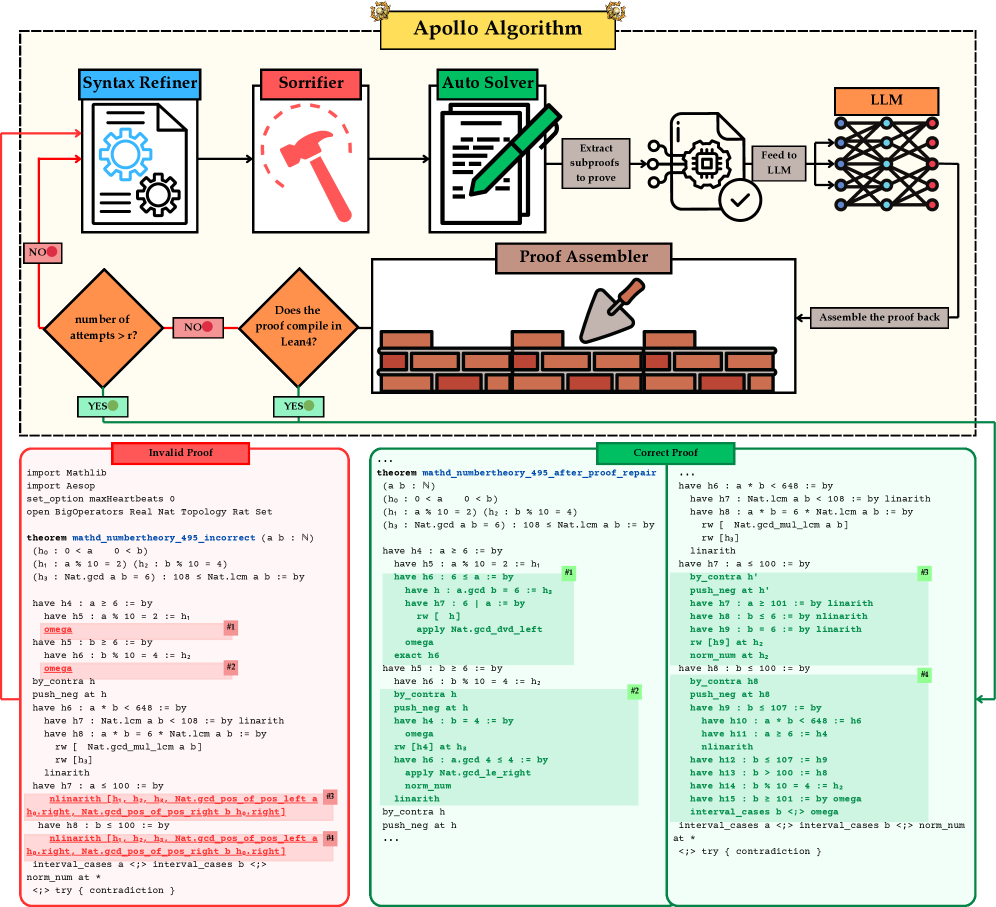

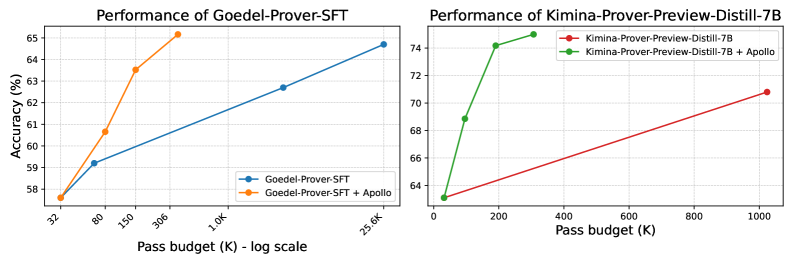

4.1 The effect of applying Apollo on top of the base models

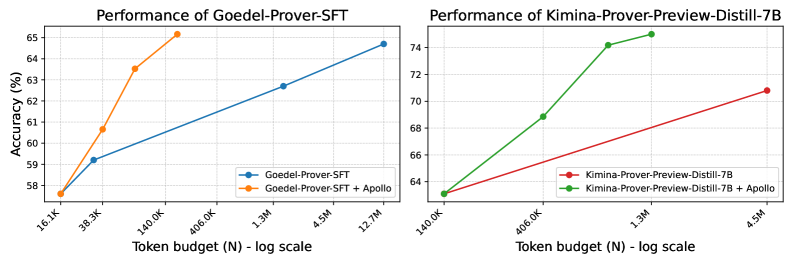

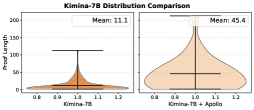

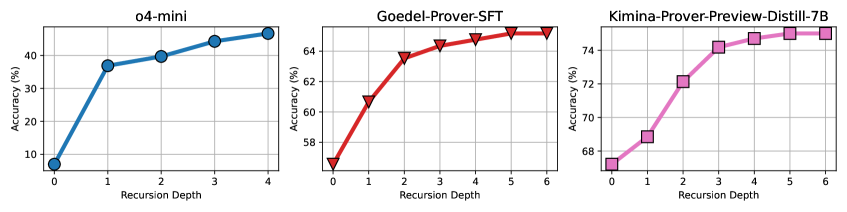

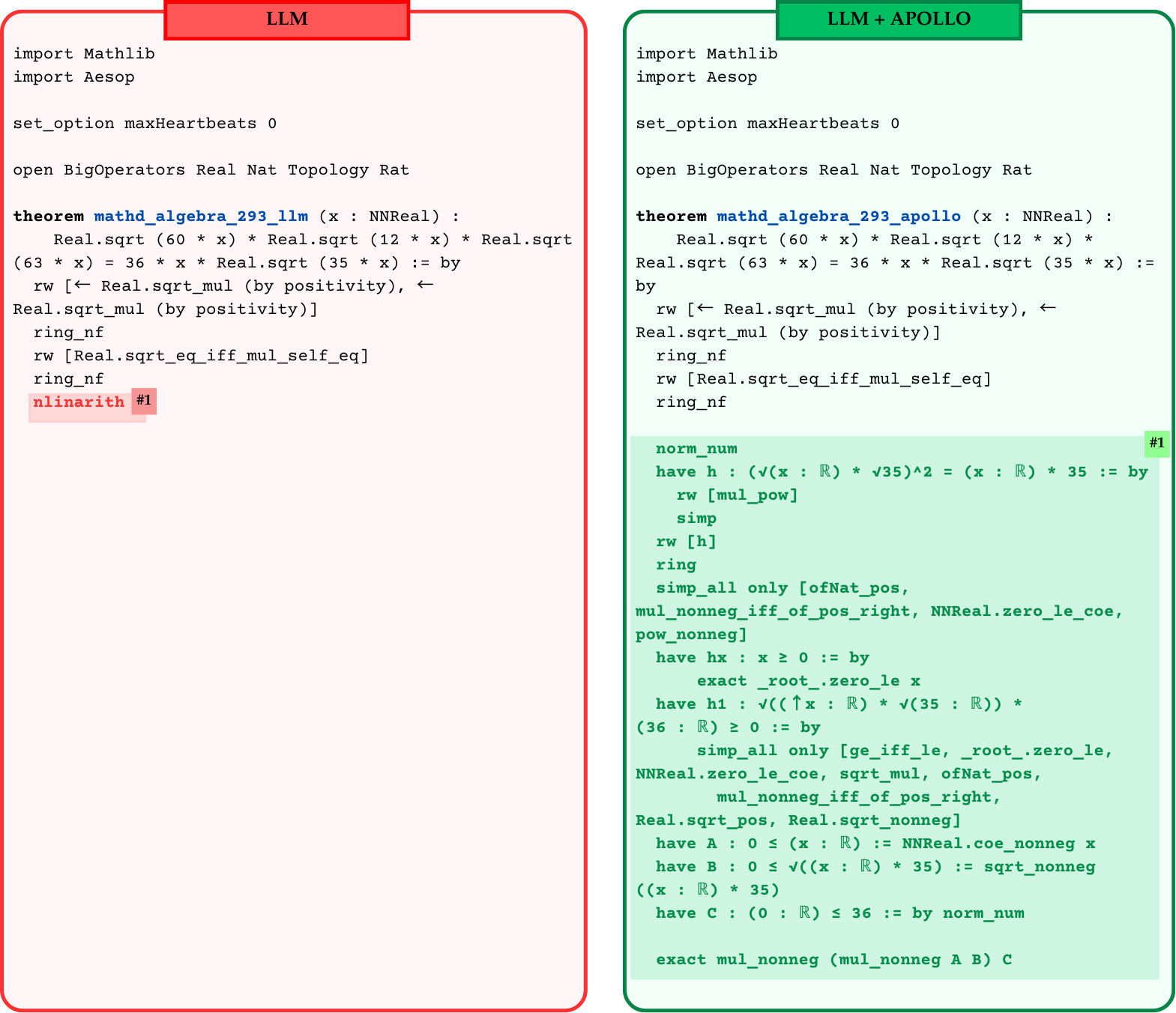

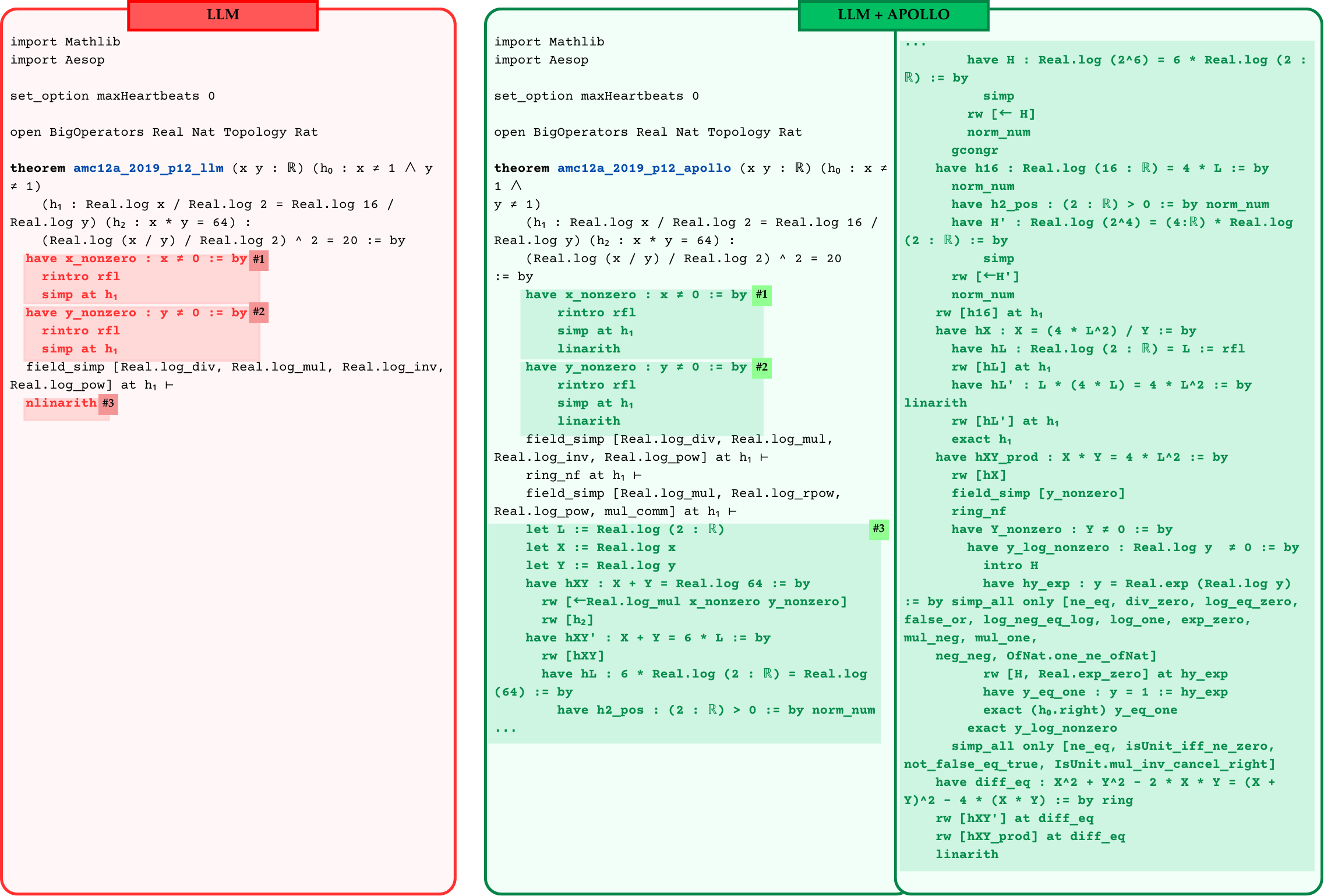

Figure 3 shows the impact of Apollo on two state‑of‑the‑art whole‑proof generation models: Goedel Prover‑SFT lin2025goedelproverfrontiermodelopensource and Kimina‑Prover‑Preview‑Distill‑7B kimina_prover_2025 . Applying Apollo increases Goedel‑Prover‑SFT’s top accuracy from 64.7% to 65.6% while reducing its sampling budget by two orders of magnitude (from 25,600 generated samples to only a few hundred on average). Similarly, Kimina‑Prover‑Preview‑Distill‑7B achieves a new best Kimina-Prover-Preview-Distill-7B accuracy of 75.0% with roughly one‑third of the previous sample budget. We report the exact numbers in Table 1.

<details>

<summary>x4.png Details</summary>

### Visual Description

**Language Declaration:** The text in this image is entirely in English. No other languages are present.

## Line Charts: Performance Impact of "Apollo" on Prover Models

### Overview

The image consists of two side-by-side line charts comparing the performance (Accuracy) against computational cost (Pass budget) for two different AI models: "Goedel-Prover-SFT" (left) and "Kimina-Prover-Preview-Distill-7B" (right). Both charts demonstrate the baseline model's performance compared to the model augmented with a system or method called "Apollo".

### Component Isolation: Left Chart (Goedel-Prover-SFT)

#### Components/Axes

* **Positioning:** Left half of the image.

* **Title:** "Performance of Goedel-Prover-SFT" (Top center).

* **Y-axis:** Labeled "Accuracy (%)". Linear scale with major gridlines at 58, 59, 60, 61, 62, 63, 64, and 65.

* **X-axis:** Labeled "Pass budget (K) - log scale". The tick marks are angled and spaced logarithmically. The explicit labels are: 32, 80, 150, 306, 1.0K, 25.6K.

* **Legend:** Located in the bottom-right corner of the chart area.

* Blue line with circular markers: `Goedel-Prover-SFT`

* Orange line with circular markers: `Goedel-Prover-SFT + Apollo`

#### Detailed Analysis

* **Trend Verification (Blue Line - Baseline):** The blue line slopes upward gradually across a massive span of the x-axis (from 32 to 25.6K).

* Point 1: x = 32, y ≈ 57.6%

* Point 2: x = 80, y ≈ 59.2%

* Point 3: x = 1.0K, y ≈ 62.7%

* Point 4: x = 25.6K, y ≈ 64.7%

* **Trend Verification (Orange Line - With Apollo):** The orange line slopes upward steeply, achieving higher accuracy at much lower pass budgets, terminating early on the x-axis.

* Point 1: x = 32, y ≈ 57.6% (Shares exact starting point with baseline)

* Point 2: x = 80, y ≈ 60.7%

* Point 3: x = 150, y ≈ 63.5%

* Point 4: x = 306, y ≈ 65.1%

---

### Component Isolation: Right Chart (Kimina-Prover-Preview-Distill-7B)

#### Components/Axes

* **Positioning:** Right half of the image.

* **Title:** "Performance of Kimina-Prover-Preview-Distill-7B" (Top center).

* **Y-axis:** No explicit text label, but visually shares the "Accuracy (%)" metric from the left chart. Linear scale with major gridlines at 64, 66, 68, 70, 72, and 74.

* **X-axis:** Labeled "Pass budget (K)". This is a **linear scale**, unlike the left chart. Major tick marks at 0, 200, 400, 600, 800, 1000.

* **Legend:** Located in the top-right corner of the chart area.

* Red line with circular markers: `Kimina-Prover-Preview-Distill-7B`

* Green line with circular markers: `Kimina-Prover-Preview-Distill-7B + Apollo`

#### Detailed Analysis

* **Trend Verification (Red Line - Baseline):** The red line slopes upward gradually in a nearly straight line across the linear x-axis.

* Point 1: x ≈ 32 (slightly right of 0), y ≈ 63.1%

* Point 2: x ≈ 1024 (slightly past 1000), y ≈ 70.8%

* **Trend Verification (Green Line - With Apollo):** The green line slopes upward very steeply, then begins to curve (concave down), showing rapid accuracy gains at low pass budgets.

* Point 1: x ≈ 32, y ≈ 63.1% (Shares exact starting point with baseline)

* Point 2: x ≈ 100, y ≈ 68.8%

* Point 3: x ≈ 200, y ≈ 74.1%

* Point 4: x ≈ 300, y ≈ 75.0%

---

### Key Observations

1. **Shared Origins:** In both charts, the baseline model and the Apollo-enhanced model start at the exact same accuracy for the lowest pass budget (approx. 32K).

2. **Drastic Efficiency Gains:** The addition of "Apollo" creates a significantly steeper learning/performance curve in both models.

3. **Scale Discrepancy:** The left chart uses a logarithmic scale for the X-axis to show the baseline model requiring up to 25.6K pass budget to reach ~64.7% accuracy. The Apollo version reaches higher accuracy (~65.1%) at a mere 306 pass budget. This is an efficiency gain of nearly two orders of magnitude.

4. **Higher Baseline:** The Kimina model (right) starts at a higher baseline accuracy (~63%) compared to the Goedel model (~57.6%).

### Interpretation

The data overwhelmingly demonstrates that the "Apollo" method/system acts as a massive multiplier for computational efficiency (measured here as "Pass budget").

By reading between the lines, "Pass budget" likely refers to the number of attempts, samples, or tokens a theorem-proving model is allowed to generate or evaluate to find a correct solution.

Without Apollo, scaling up the pass budget yields diminishing, slow returns (requiring logarithmic scaling on the left chart just to fit the baseline line). With Apollo, the models achieve superior accuracy using a fraction of the computational budget. For example, on the left chart, Apollo achieves in ~300 passes what the baseline cannot achieve in 25,000 passes. On the right chart, Apollo achieves 75% accuracy at 300 passes, while the baseline only reaches ~71% at over 1000 passes. Apollo appears to be a highly effective search heuristic, filtering mechanism, or reasoning enhancement that prevents the models from wasting computational budget on dead ends.

</details>

(a) Model accuracy against sampling budget

<details>

<summary>x5.png Details</summary>

### Visual Description

## Line Charts: Performance Comparison of Base Models vs. Apollo-Augmented Models

### Overview

The image consists of two side-by-side line charts demonstrating the performance (Accuracy) of two different language models ("Goedel-Prover-SFT" and "Kimina-Prover-Preview-Distill-7B") as a function of their token budget. Each chart compares the base model against a version augmented with a system or method called "Apollo". The language used in the image is entirely English.

---

### Component Isolation: Left Chart (Goedel-Prover-SFT)

#### Components/Axes

* **Positioning:** Left half of the image.

* **Title:** "Performance of Goedel-Prover-SFT" (Centered at the top).

* **Y-Axis:**

* **Label:** "Accuracy (%)" (Rotated 90 degrees, positioned on the far left).

* **Scale:** Linear, ranging from 58 to 65.

* **Markers/Gridlines:** Horizontal dotted gridlines at integer intervals: 58, 59, 60, 61, 62, 63, 64, 65.

* **X-Axis:**

* **Label:** "Token budget (N) - log scale" (Centered at the bottom).

* **Scale:** Logarithmic.

* **Markers:** Tilted text labels at specific intervals: 16.1K, 38.3K, 140.0K, 406.0K, 1.3M, 4.5M, 12.7M. Vertical dotted gridlines align with these markers.

* **Legend:** Positioned in the bottom-right corner of the chart area, enclosed in a white box with a light gray border.

* Blue line with a solid circle marker: `Goedel-Prover-SFT`

* Orange line with a solid circle marker: `Goedel-Prover-SFT + Apollo`

#### Detailed Analysis (Left Chart)

* **Trend Verification - Blue Line (Goedel-Prover-SFT):** The blue line exhibits a steady, moderate upward slope across the entire visible x-axis, indicating a gradual increase in accuracy as the token budget increases.

* Point 1: X = 16.1K, Y ≈ 57.6%

* Point 2: X = 38.3K, Y ≈ 59.2%

* Point 3: X = 1.3M, Y ≈ 62.7%

* Point 4: X = 12.7M, Y ≈ 64.7%

* **Trend Verification - Orange Line (Goedel-Prover-SFT + Apollo):** The orange line starts at the exact same point as the blue line but slopes upward much more steeply. It achieves higher accuracy at significantly lower token budgets and terminates earlier on the x-axis.

* Point 1: X = 16.1K, Y ≈ 57.6% (Shared origin with the blue line)

* Point 2: X = 38.3K, Y ≈ 60.7%

* Point 3: X = 140.0K, Y ≈ 63.5%

* Point 4: X ≈ 300K (Visually positioned before the 406.0K marker), Y ≈ 65.2%

---

### Component Isolation: Right Chart (Kimina-Prover-Preview-Distill-7B)

#### Components/Axes

* **Positioning:** Right half of the image.

* **Title:** "Performance of Kimina-Prover-Preview-Distill-7B" (Centered at the top).

* **Y-Axis:**

* **Label:** None explicitly written, but contextually inherits "Accuracy (%)" from the left chart.

* **Scale:** Linear, ranging from 64 to 74.

* **Markers/Gridlines:** Horizontal dotted gridlines at even integer intervals: 64, 66, 68, 70, 72, 74.

* **X-Axis:**

* **Label:** "Token budget (N) - log scale" (Centered at the bottom).

* **Scale:** Logarithmic.

* **Markers:** Tilted text labels at specific intervals: 140.0K, 406.0K, 1.3M, 4.5M. Vertical dotted gridlines align with these markers.

* **Legend:** Positioned in the bottom-right corner of the chart area, enclosed in a white box with a light gray border.

* Red line with a solid circle marker: `Kimina-Prover-Preview-Distill-7B`

* Green line with a solid circle marker: `Kimina-Prover-Preview-Distill-7B + Apollo`

#### Detailed Analysis (Right Chart)

* **Trend Verification - Red Line (Kimina-Prover-Preview-Distill-7B):** The red line shows a steady, moderate upward slope from the lowest visible token budget to the highest.

* Point 1: X = 140.0K, Y ≈ 63.1%

* Point 2: X = 4.5M, Y ≈ 70.8%

* **Trend Verification - Green Line (Kimina-Prover-Preview-Distill-7B + Apollo):** The green line shares the starting point with the red line but slopes upward sharply, achieving significantly higher accuracy at lower token budgets before the slope begins to shallow out slightly at the top.

* Point 1: X = 140.0K, Y ≈ 63.1% (Shared origin with the red line)

* Point 2: X = 406.0K, Y ≈ 68.8%

* Point 3: X ≈ 800K (Visually positioned roughly halfway between 406.0K and 1.3M on the log scale), Y ≈ 74.2%

* Point 4: X ≈ 1.5M (Visually positioned slightly to the right of the 1.3M marker), Y ≈ 75.0%

---

### Key Observations

1. **Shared Origins:** In both charts, the base model and the "+ Apollo" model start at the exact same accuracy for the lowest tested token budget (16.1K for Goedel, 140.0K for Kimina).

2. **Steeper Trajectories:** In both charts, the addition of "Apollo" (Orange line left, Green line right) results in a drastically steeper performance curve compared to the base models (Blue line left, Red line right).

3. **Different Baselines:** The Kimina model (Right Chart) operates at a higher overall accuracy baseline (ranging roughly 63% to 75%) compared to the Goedel model (Left Chart, ranging roughly 57% to 65%).

4. **Token Budget Ranges:** The Goedel chart evaluates performance starting from a much lower token budget (16.1K) and extending to a higher one (12.7M) compared to the Kimina chart (140.0K to 4.5M).

### Interpretation

The data strongly suggests that the "Apollo" augmentation is a highly effective method for improving the sample efficiency of these language models.

By reading between the lines, the charts demonstrate that to achieve a specific target accuracy, a model using Apollo requires orders of magnitude fewer tokens than the base model. For example, in the left chart, the base Goedel model requires roughly 12.7M tokens to reach ~64.7% accuracy. The Apollo-augmented version surpasses that accuracy (reaching ~65.2%) using fewer than 406K tokens.

This implies that Apollo significantly accelerates the learning or reasoning process during training or inference (depending on what "Token budget" specifically refers to in this context, though "Prover" suggests inference/search budgets in formal mathematics or logic tasks). The fact that this pattern holds true across two distinctly different models (Goedel and Kimina) indicates that Apollo is likely a generalized architectural improvement or search strategy rather than a model-specific tweak.

</details>

(b) Model accuracy against generated token budget

Figure 3: Performance of base models against the Apollo aided models on miniF2F-test dataset at different sample budgets and token length.

Note that Apollo’s sampling budget is not fixed: it depends on the recursion depth $r$ and the LLM’s ability to generate sub‑lemmas. For each sub‑lemma that Auto Solver fails to close, we invoke the LLM with a fixed top‑32 sampling budget. Because each generated sub‑lemma may spawn further sub‑lemmas, the total sampling overhead grows recursively, making any single @K budget difficult to report. Sub‑lemmas also tend to require far fewer tactics (and thus far fewer tokens) than the main theorem. A theorem might need 100 lines of proof while a sub-lemma might need only 1 line. Hence, sampling cost does not scale one‑to‑one. Therefore, to compare with other whole-proof generation approaches, we report the average number of proof samples and token budgets. We present more in-depth analysis of inference budgets in Section B of the Appendix.

Table 1: Table results comparing sampling cost, accuracy, and token usage before and after applying Apollo. “Cost” denotes the sampling budget $K$ for whole‑proof generation and the recursion depth $r$ for Apollo. Column $N$ reports the average number of tokens generated per problem in "Chain‑of‑Thought" mode. Bold cells highlight the best accuracy. Results are shown on the miniF2F‑test dataset for two models: Kimina‑Prover‑Preview‑Distill‑7B and Goedel‑SFT.

| Goedel‑Prover‑SFT | Kimina‑Prover‑Preview‑Distill‑7B | | | | | | | | | | |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

| Base Model | + Apollo | Base Model | + Apollo | | | | | | | | |

| $K$ | $N$ | Acc. (%) | $r$ | $N$ | Acc. (%) | $K$ | $N$ | Acc. (%) | $r$ | $N$ | Acc. (%) |

| 32 | 16.1K | 57.6% | 0 | 16.1K | 57.6% | 32 | 140K | 63.1% | 0 | 140K | 63.1% |

| 64 | 31.8K | 59.2% | 2 | 38.3K | 60.7% | | | | 2 | 406K | 68.9% |

| 3200 | 1.6M | 62.7% | 4 | 74.6K | 63.5% | | | | 4 | 816K | 74.2% |

| 25600 | 12.7M | 64.7% | 6 | 179.0K | 65.6 % | 1024 | 4.5M | 70.8% | 6 | 1.3M | 75.0% |

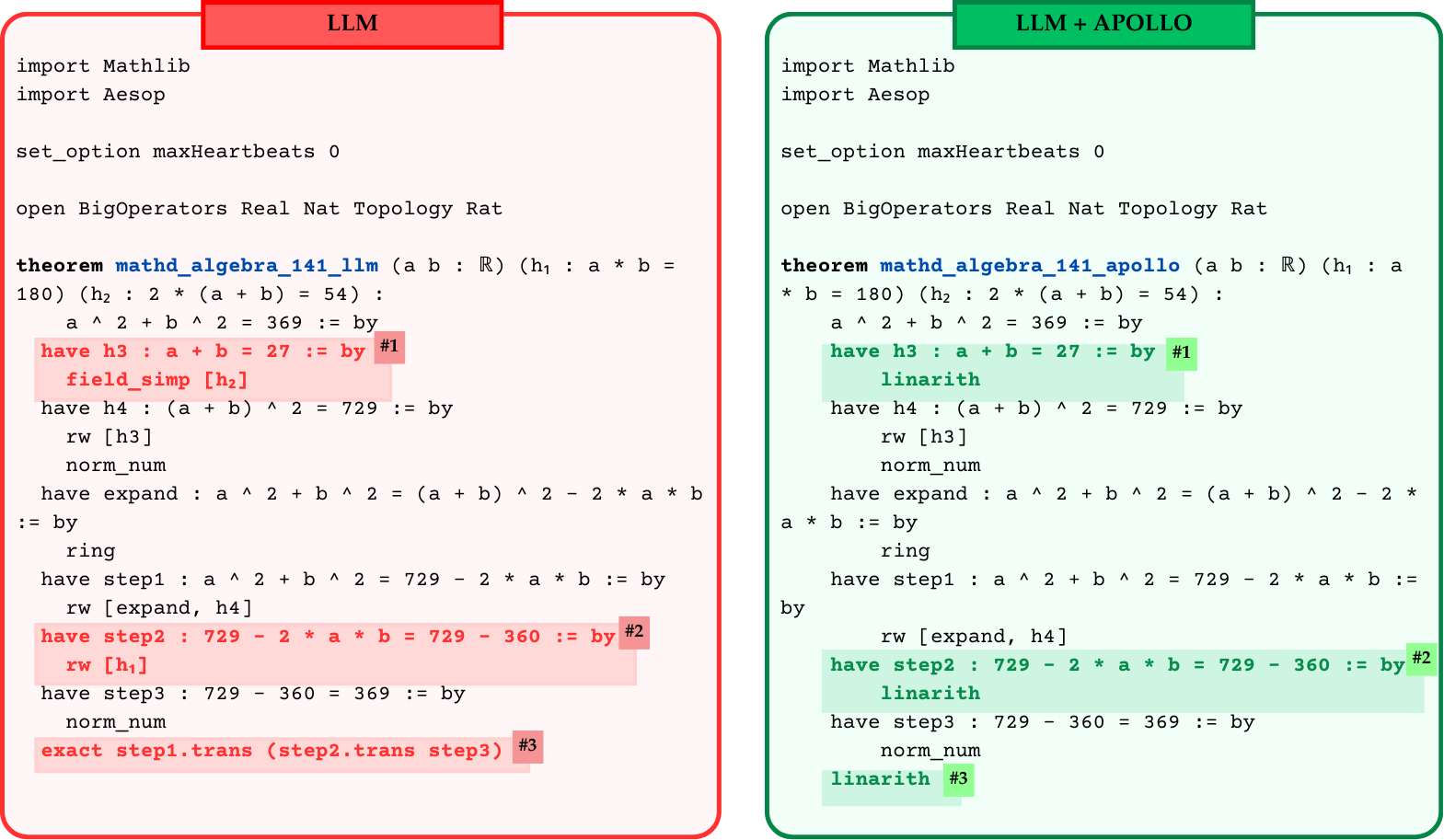

4.2 Comparison with SoTA LLMs

Table 2 compares whole‑proof generation and tree‑search methods on miniF2F‑test. For each approach, we report its sampling budget, defined as the top‑ $K$ parameter for LLM generators or equivalent search‑depth parameters for tree‑search provers. Since Apollo’s budget varies with recursion depth $r$ , we instead report the mean number of proof sampled during procedure. Moreover, we report an average number of generated tokens as another metric for computational cost of proof generation. For some models due to inability to collect generated tokens, we leave them blank and report sampling budget only.

When Apollo is applied on top of any generator, it establishes a new best accuracy for that model. For instance, Goedel‑Prover‑SFT’s accuracy jumps to 65.6%, surpassing the previous best result (which required 25,600 sample budget). In contrast, Apollo requires only 362 samples on average. Likewise, Kimina‑Prover‑Preview‑Distill‑7B sees a 4.2% accuracy gain while reducing its sample budget below the original 1,024. To further validate Apollo’s generalizability, we tested the repair framework on Goedel-V2-8B lin2025goedelproverv2 , the current state-of-the-art theorem prover. We observe that, at similar sample and token budgets, Apollo achieves a 0.9% accuracy gain, whereas the base LLM requires twice the budget to reach the same accuracy. Overall, Apollo not only boosts accuracy but does so with a smaller sampling budget, setting a new state-of-the-art result among sub‑8B‑parameter LLMs with sampling budget of 32.

We also evaluate general‑purpose LLMs (OpenAI o3‑mini and o4‑mini). Without Apollo, these models rarely produce valid Lean4 proofs, since they default to Lean3 syntax or introduce trivial compilation errors. Yet they have a remarkable grasp of mathematical concepts, which is evident by the proof structures they often produce. By applying Apollo’s syntax corrections and solver‑guided refinements, their accuracies increase from single digits (3–7%) to over 40%. These results demonstrate that in many scenarios discarding and regenerating the whole proof might be overly wasteful, and with a little help from a Lean compiler, we can achieve better accuracies while sampling less proofs from LLMs.

Table 2: Comparison of Apollo accuracy against state-of-the-art models on the miniF2F-test dataset. Token budget is computed over all generated tokens by LLM.

| Method | Model size | Sample budget | Token budget | miniF2F-test |

| --- | --- | --- | --- | --- |

| Tree Search Methods | | | | |

| Hypertree Proof Search (lample2022hypertree, ) | 0.6B | $64× 5000$ | - | $41.0\%$ |

| IntLM2.5-SP+BFS+CG (wu2024internlm25stepproveradvancingautomatedtheorem, ) | 7B | $256× 32× 600$ | - | $65.9\%$ |

| HPv16+BFS+DC (li2025hunyuanproverscalabledatasynthesis, ) | 7B | $600× 8× 400$ | - | $68.4\%$ |

| BFS-Prover (xin2025bfsproverscalablebestfirsttree, ) | 7B | $2048× 2× 600$ | - | $70.8\%$ |

| Whole-proof Generation Methods | | | | |

| DeepSeek-R1- Distill-Qwen-7B (deepseekai2025deepseekr1incentivizingreasoningcapability, ) | 7B | 32 | - | 42.6% |

| Leanabell-GD-RL (zhang2025leanabellproverposttrainingscalingformal, ) | 7B | $128$ | - | $61.1\%$ |

| STP (dong2025stp, ) | 7B | $25600$ | - | $67.6\%$ |

| o3-mini (openai-o3-mini-2025, ) | - | 1 | - | $3.3\%$ |

| $32$ | - | $24.6\%$ | | |

| o4-mini (openai-o4-mini-2025, ) | - | 1 | - | $7.0\%$ |

| Goedel-SFT (lin2025goedelproverfrontiermodelopensource, ) | 7B | 32 | 16.1K | $57.6\%$ |

| $3200$ | 1.6M | $62.7\%$ | | |

| $25600$ | 12.7M | $64.7\%$ | | |

| Kimina-Prover- Preview-Distill (kimina_prover_2025, ) | 7B | 1 | 4.4K | $52.5\%$ |

| $32$ | 140K | $63.1\%$ | | |

| $1024$ | 4.5M | $70.8\%$ | | |

| Goedel-V2 (lin2025goedelproverv2, ) | 8B | 32 | 174K | $83.3\%$ |

| $64$ | 349K | $84.0\%$ | | |

| $128$ | 699K | $84.9\%$ | | |

| Whole-proof Generation Methods + Apollo | | | | |

| o3-mini + Apollo | - | 8 | - | 40.2% (+36.9%) |

| o4-mini + Apollo | - | 15 | - | 46.7% (+39.7%) |

| Goedel-SFT + Apollo | 7B | 362 | 179K | 65.6% (+0.9%) |

| Kimina-Preview + Apollo | 7B | 307 | 1.3M | 75.0% (+4.2%) |

| Goedel-V2 + Apollo | 8B | 63 | 344K | 84.9% (+0.9%) |

To further assess Apollo, we conducted experiments on PutnamBench tsoukalas2024putnambenchevaluatingneuraltheoremprovers , using Kimina-Prover-Preview-Distill-7B as the base model. Under whole-proof generation pipeline, LLM produced 10 valid proofs. With Apollo, the LLM produced one additional valid proof while consuming nearly half as many tokens. Results are presented in Table 3.

Overall, our results on a variety of LLMs and benchmarks demonstrate that Apollo consistently consumes fewer tokens while achieving higher accuracy, highlighting its effectiveness.

Table 3: Comparison of Apollo accuracy on the PutnamBench dataset. Token budget is computed over all generated tokens by LLM.

| Method | Model size | Sample budget | Token budget | PutnamBench |

| --- | --- | --- | --- | --- |

| Kimina-Preview | 7B | 32 | 180K | 7/658 |

| Kimina-Preview | 7B | 192 | 1.1M | 10/658 |

| Kimina-Preview+Apollo | 7B | 108 | 579K | 11/658 |

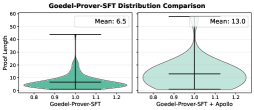

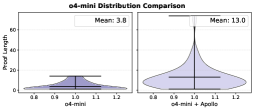

4.3 Distribution of Proof Lengths

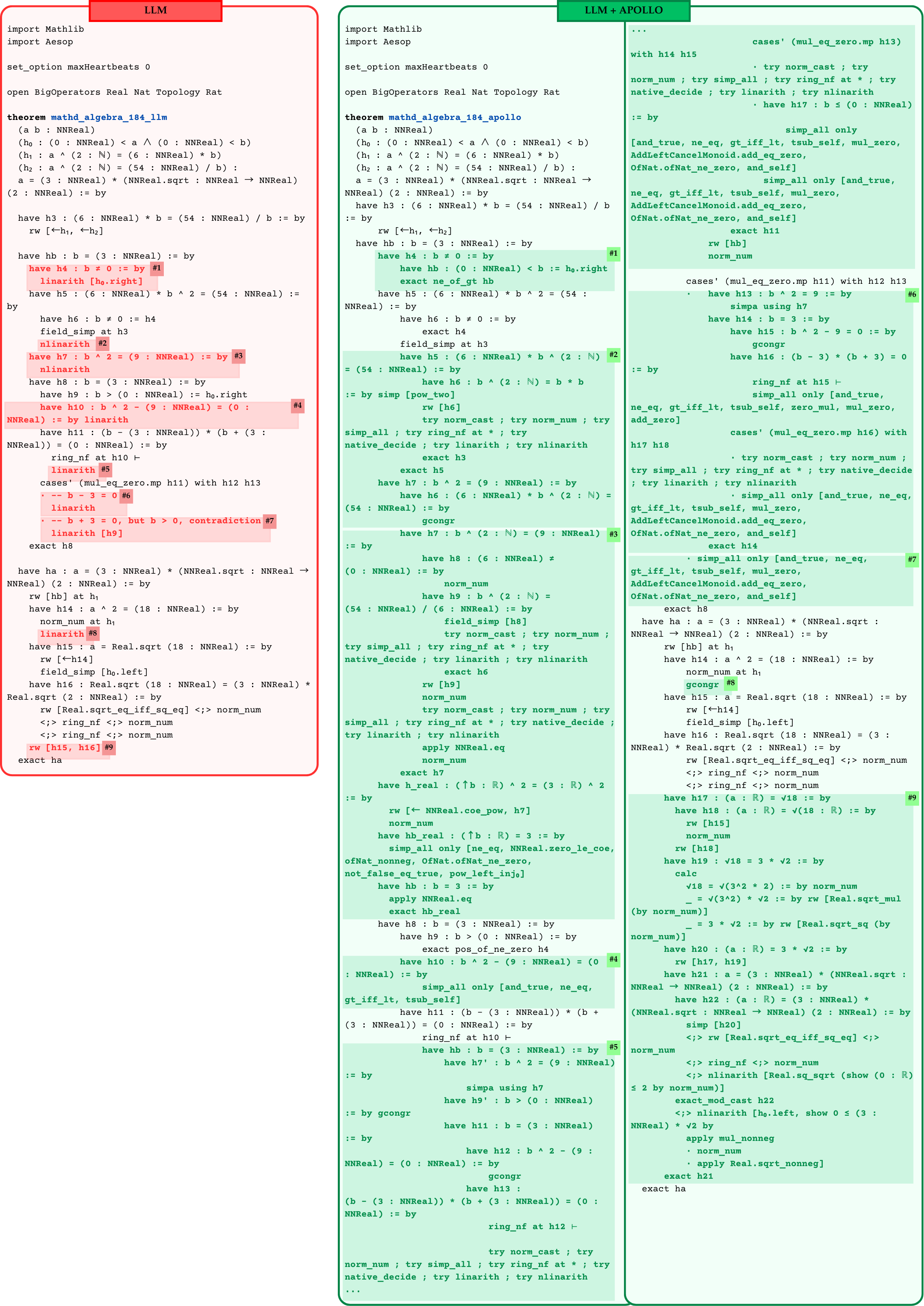

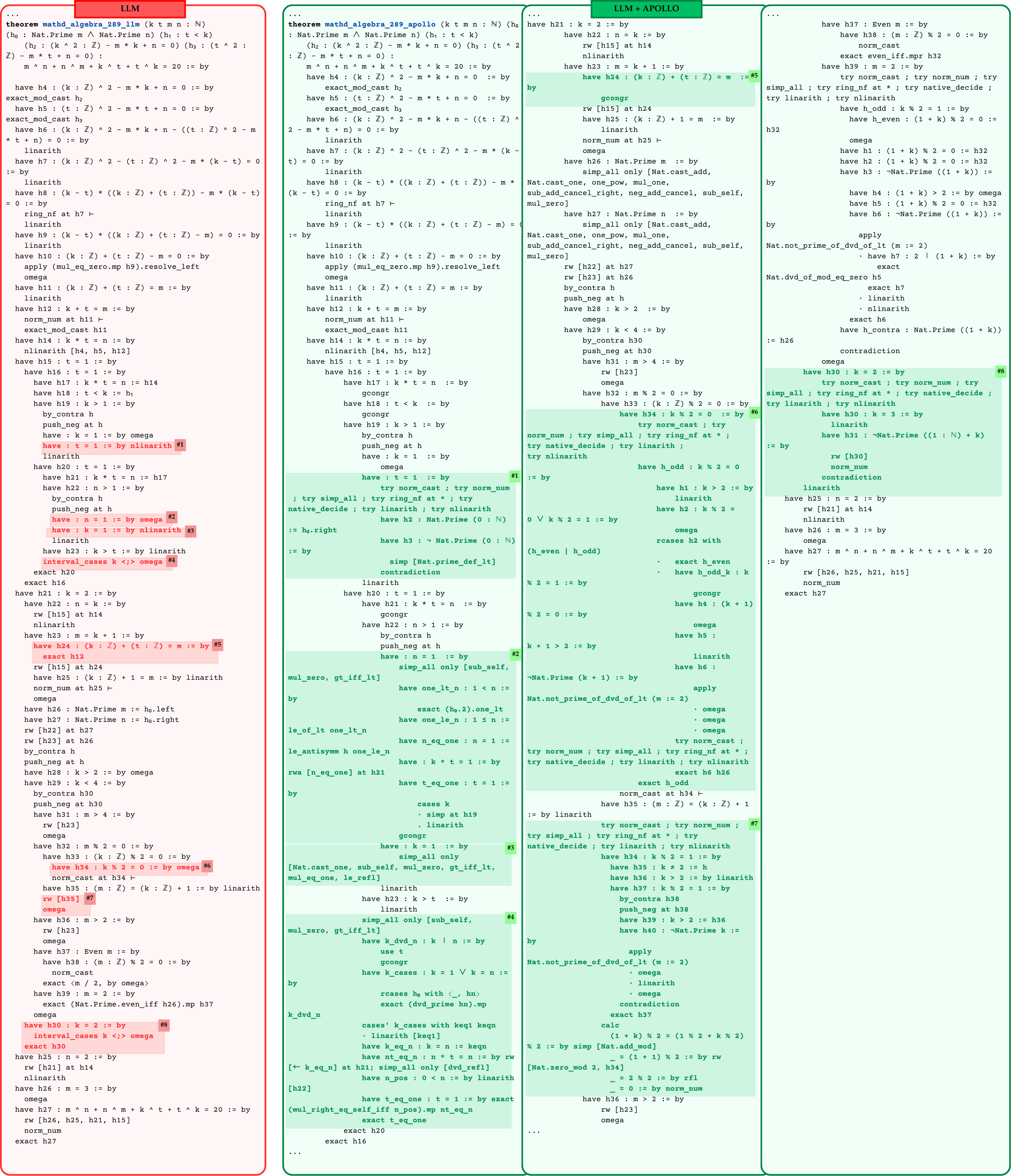

We assess how Apollo affects proof complexity by examining proof‑length distributions before and after repair. Here, proof length is defined as the total number of tactics in a proof, which serves as a proxy for proof complexity.

Figure 4 presents violin plots for three base models: Kimina‑Prover‑Preview‑Distill‑7B, Goedel‑Prover‑SFT, and o4‑mini. Each subplot shows two non‑overlapping sets: proofs generated directly by the base model (“before”) and proofs produced after applying Apollo (“after”). We only consider valid proofs verified by REPL in this setup.

The proofs that Apollo succeeds in fixing in collaboration with the LLM have considerably longer proof lengths. The mean length of proofs fixed by Apollo is longer than those written by the LLM itself at least by a factor of two in each model selection scenario. This increase indicates that the proofs which the base model alone could not prove require longer, more structured reasoning chains. By decomposing challenging goals into smaller sub‑lemmas, Apollo enables the generation of these extended proofs, therefore improving overall success rate.

These results demonstrate that Apollo not only raises accuracy but also systematically guides models to construct deeper, more rigorous proof structures capable of solving harder theorems.

<details>

<summary>x6.png Details</summary>

### Visual Description

## Violin Plot: Kimina-7B Distribution Comparison

### Overview

This image displays a side-by-side comparison of two violin plots. The charts illustrate the distribution of a metric called "Proof Length" across two different system configurations: "Kimina-7B" (left) and "Kimina-7B + Apollo" (right). The plots show the density of the data at different values, along with internal markers indicating the range and median, and explicit text boxes stating the mean for each distribution.

### Components/Axes

**Header Region:**

* **Main Title:** "Kimina-7B Distribution Comparison" (Centered at the top).

**Y-Axis (Shared visually, labeled only on the left):**

* **Title:** "Proof Length" (Rotated 90 degrees counter-clockwise, positioned on the far left).

* **Scale/Markers:** Numerical values starting from 0, incrementing by 50 up to 200 (0, 50, 100, 150, 200).

* **Gridlines:** Faint, light-gray horizontal gridlines extend across both plots at each 50-unit interval.

**X-Axes (Individual to each subplot):**

* **Left Subplot Label:** "Kimina-7B"

* **Right Subplot Label:** "Kimina-7B + Apollo"

* **Scale/Markers (Both subplots):** 0.8, 0.9, 1.0, 1.1, 1.2. (These represent the arbitrary width scale used to draw the violin shapes, centered on 1.0).

**Annotations (Legends/Text Boxes):**

* **Left Subplot (Top-Right):** A white rectangular box containing the text "Mean: 11.1".

* **Right Subplot (Top-Right):** A white rectangular box containing the text "Mean: 45.4".

### Detailed Analysis

**Left Plot: Kimina-7B**

* **Visual Trend/Shape:** The distribution is heavily skewed toward the bottom. The violin shape is extremely wide at the base (near zero) and tapers sharply into a very thin, needle-like tail that extends upwards. The color is a solid, medium-dark orange/brown.

* **Data Points (Approximate based on internal markers):**

* **Stated Mean:** 11.1

* **Minimum (Bottom horizontal line):** ~0 to 2

* **Median (Middle horizontal line):** ~10 to 15 (Aligns closely with the stated mean).

* **Maximum (Top horizontal line):** ~110 to 115.

**Right Plot: Kimina-7B + Apollo**

* **Visual Trend/Shape:** The distribution is much broader and extends much higher than the left plot. While still widest near the bottom, the "bulge" of the violin is thicker and extends further up the y-axis (between 20 and 80) before tapering. The tail extends past the visible 200 mark on the y-axis. The color is a lighter, more transparent peach/orange.

* **Data Points (Approximate based on internal markers):**

* **Stated Mean:** 45.4

* **Minimum (Bottom horizontal line):** ~0 to 2

* **Median (Middle horizontal line):** ~45 to 50 (Aligns closely with the stated mean).

* **Maximum (Top horizontal line):** Extends beyond the 200 gridline, approximately ~210 to 220.

### Key Observations

1. **Significant Shift in Mean:** The addition of "Apollo" increases the mean Proof Length by more than a factor of four (from 11.1 to 45.4).

2. **Change in Distribution Shape:** The baseline Kimina-7B model produces almost exclusively very short proofs (clustered tightly around 10). The Kimina-7B + Apollo configuration produces a much wider variety of proof lengths, with a thick distribution of lengths between 20 and 100.

3. **Increased Maximums:** The longest proofs generated by the baseline model cap out around 115. The Apollo-augmented model generates proofs that exceed a length of 200.

### Interpretation

This chart demonstrates the impact of integrating an "Apollo" component or methodology with a base "Kimina-7B" model (likely a 7-billion parameter Large Language Model) on a task involving formal logic, mathematics, or reasoning (indicated by "Proof Length").

The data clearly suggests that the baseline model tends to output very brief, perhaps truncated or overly concise, proofs. By adding Apollo, the system is forced or enabled to generate significantly longer proofs.

*Reading between the lines:* In the context of LLM reasoning, longer "proofs" often correlate with Chain-of-Thought (CoT) reasoning, step-by-step deduction, or more rigorous formal verification. The Apollo addition appears to be a mechanism designed specifically to increase the verbosity, depth, or step-count of the model's reasoning process. The fact that the distribution becomes much wider (rather than just shifting the whole cluster up) implies that Apollo allows the model to dynamically adjust the proof length based on the complexity of the prompt, whereas the base model was seemingly constrained to short outputs regardless of the input.

</details>

(a) Kimina-Preview-Distill-7B

<details>

<summary>x7.png Details</summary>

### Visual Description

## Violin Plot: Goedel-Prover-SFT Distribution Comparison

### Overview

The image displays two side-by-side violin plots comparing the statistical distribution of "Proof Length" between two different system configurations: a baseline "Goedel-Prover-SFT" and an augmented "Goedel-Prover-SFT + Apollo". The charts illustrate how the addition of "Apollo" alters the length and variance of the generated proofs.

### Components/Axes

**Header (Top Center):**

* **Main Title:** "Goedel-Prover-SFT Distribution Comparison" (Black, bold text).

**Y-Axis (Shared visually, labeled on the far left):**

* **Title:** "Proof Length" (Rotated 90 degrees counter-clockwise).

* **Scale/Markers:** Ranges from 0 to 60, with tick marks and faint horizontal grid lines at intervals of 10 (0, 10, 20, 30, 40, 50, 60).

**Left Subplot (Baseline):**

* **X-Axis Label (Bottom Center):** "Goedel-Prover-SFT"

* **X-Axis Scale:** 0.8, 0.9, 1.0, 1.1, 1.2 (Note: In standard violin plots, these are dummy coordinates used to define the width of the plot around a central axis of 1.0; they do not represent data variables).

* **Legend (Top Right):** A white box containing the text "Mean: 6.5".

**Right Subplot (Augmented):**

* **X-Axis Label (Bottom Center):** "Goedel-Prover-SFT + Apollo"

* **X-Axis Scale:** 0.8, 0.9, 1.0, 1.1, 1.2 (Dummy coordinates).

* **Legend (Top Right):** A white box containing the text "Mean: 13.0".

### Detailed Analysis

**1. Left Plot: Goedel-Prover-SFT**

* **Visual Trend:** The distribution is highly skewed to the right (positive skew). The vast majority of the data mass is concentrated at the very bottom of the y-axis, indicating that most proofs are quite short. The plot narrows sharply and extends into a long, thin upper tail.

* **Color:** Medium teal/sea-green fill with a dark outline.

* **Data Points (Approximate based on visual alignment with Y-axis):**

* **Minimum (Bottom horizontal line):** ~1

* **Mean/Median (Middle horizontal line):** 6.5 (Explicitly stated in the legend). Visually, the widest part of the violin (the mode) sits slightly below this mean line, around 4-5.

* **Maximum (Top horizontal line):** ~44

**2. Right Plot: Goedel-Prover-SFT + Apollo**

* **Visual Trend:** While still exhibiting a rightward skew, the distribution is significantly more dispersed than the baseline. The "bulb" of the violin is wider, taller, and sits higher on the y-axis. The tail extends much further up the y-axis, indicating a higher frequency of much longer proofs.

* **Color:** Pale mint green/light cyan fill with a dark outline.

* **Data Points (Approximate based on visual alignment with Y-axis):**

* **Minimum (Bottom horizontal line):** ~1

* **Mean/Median (Middle horizontal line):** 13.0 (Explicitly stated in the legend). The widest part of the violin sits around 10-12.

* **Maximum (Top horizontal line):** ~58

### Key Observations

* **Mean Doubling:** The most prominent data point is the exact doubling of the mean proof length, from 6.5 in the baseline to 13.0 with the addition of Apollo.

* **Maximum Extension:** The maximum proof length increases by approximately 14 units (from ~44 to ~58).

* **Variance/Spread:** The right plot is visibly "fatter" in the middle ranges (10-30) compared to the left plot, which is almost entirely concentrated below 10. This indicates a much higher variance in proof lengths when Apollo is used.

* **Shared Minimums:** Both distributions appear to share a similar minimum proof length near zero (approx. 1), suggesting that Apollo does not eliminate short proofs entirely, but rather shifts the overall distribution upward.

### Interpretation

The data clearly demonstrates that integrating "Apollo" into the "Goedel-Prover-SFT" system fundamentally changes the output characteristics of the model, specifically regarding verbosity or complexity.

Because "Proof Length" in automated theorem proving or logical reasoning models usually correlates with the number of deductive steps or the depth of reasoning, the doubling of the mean suggests that Apollo enables or forces the model to generate significantly more detailed, multi-step proofs.

*Reading between the lines (Peircean inference):* The fact that the minimum proof length remains unchanged while the mean and maximum increase drastically implies that Apollo does not simply add "padding" to all answers. If a proof requires only 1 or 2 steps, the Apollo-augmented model can still provide that short answer. However, for more complex problems, Apollo unlocks the model's ability to sustain longer chains of reasoning (up to ~58 steps/length units), whereas the baseline model rarely exceeded 10 steps and capped out at ~44. Therefore, Apollo likely acts as a reasoning enhancer or a search-depth expander rather than a simple verbosity multiplier.

</details>

(b) Goedel-SFT

<details>

<summary>x8.png Details</summary>

### Visual Description

## Violin Plot: o4-mini Distribution Comparison

### Overview

This image displays a side-by-side violin plot comparing the distribution of a metric called "Proof Length" between two distinct system configurations: a base model ("o4-mini") and an augmented model ("o4-mini + Apollo"). The charts visually demonstrate the density, range, and mean of the data for both configurations.

### Components/Axes

**Header Region:**

* **Main Title:** "o4-mini Distribution Comparison" (Located top-center).

**Y-Axis (Shared visually across both plots):**

* **Title:** "Proof Length" (Located vertically on the far left).

* **Scale/Markers:** Numerical ticks at `0`, `20`, `40`, and `60`. Faint horizontal grid lines extend across both plots at these intervals.

**Left Subplot (Base Model):**

* **X-Axis Title:** "o4-mini" (Located bottom-center of the left plot).

* **X-Axis Markers:** `0.8`, `0.9`, `1.0`, `1.1`, `1.2`. *(Note: In violin plots, these represent the spatial width/density bounds centered around the categorical x-value of 1.0, rather than a measured data variable).*

* **Annotation/Legend:** A white bounding box in the top-right corner of this subplot contains the text: "Mean: 3.8".

**Right Subplot (Augmented Model):**

* **X-Axis Title:** "o4-mini + Apollo" (Located bottom-center of the right plot).

* **X-Axis Markers:** `0.8`, `0.9`, `1.0`, `1.1`, `1.2`.

* **Annotation/Legend:** A white bounding box in the top-right corner of this subplot contains the text: "Mean: 13.0".

### Detailed Analysis

**1. Left Plot: "o4-mini"**

* **Visual Trend:** The distribution shape is heavily bottom-weighted, resembling a flattened, wide base that tapers off abruptly. It indicates a high concentration of data points at very low values with minimal variance.

* **Data Points (Approximate):**

* **Minimum Value:** ~0.

* **Maximum Value (Top Whisker/Cap):** ~15.

* **Density Peak (Widest point):** ~2 to ~5.

* **Central Horizontal Bar (Median):** ~4.

* **Explicit Mean:** 3.8.

**2. Right Plot: "o4-mini + Apollo"**

* **Visual Trend:** The distribution shape is significantly taller and wider overall compared to the left plot. It features a bulbous base that transitions into a pronounced, elongated upper tail. This indicates a much wider spread of data, a higher average, and the presence of high-value outliers.

* **Data Points (Approximate):**

* **Minimum Value:** ~0.

* **Maximum Value (Top Whisker/Cap):** ~75 (The vertical line extends well past the 60 grid line).

* **Density Peak (Widest point):** ~8 to ~15.

* **Central Horizontal Bar (Median):** ~12.

* **Explicit Mean:** 13.0.

### Key Observations

* **Mean Shift:** The addition of "Apollo" increases the mean Proof Length from 3.8 to 13.0, an increase of approximately 342%.

* **Range Expansion:** The maximum observed Proof Length jumps from roughly 15 in the base model to roughly 75 in the augmented model, a 5x increase in the upper bound.

* **Variance:** The "o4-mini" model is highly consistent, producing short lengths almost exclusively. The "o4-mini + Apollo" model exhibits high variance, producing a wide variety of lengths, including a long tail of exceptionally long proofs.

### Interpretation

The data clearly demonstrates the behavioral impact of adding the "Apollo" component to the "o4-mini" system. Assuming "o4-mini" is a Large Language Model (LLM) and "Proof Length" refers to the number of steps, tokens, or logical deductions generated to solve a problem, the base model tends to provide very brief, concise outputs.

The introduction of "Apollo"—which is likely a reasoning framework (like Chain-of-Thought), a search/retrieval agent, or a formal verification tool—forces or enables the model to "show its work." Consequently, the augmented system generates significantly longer, more elaborate proofs.

The long upper tail in the right-hand plot is particularly notable. It suggests that while Apollo usually increases the proof length to a moderate degree (around 10-20 units), it occasionally encounters complex edge cases that trigger massive expansions in reasoning, pushing the proof length up to 60-75 units. The base model lacks the capacity or prompting to ever reach these lengths, hard-capping at around 15.

</details>

(c) OpenAI o4-mini

Figure 4: Distribution of proof lengths for selected models before (left) and after (right) applying Apollo. In the “before” plots, proof lengths cover only proofs the base model proved without the help of Apollo; in the “after” plots, proof lengths cover only proofs proved with Apollo’s assistance.

<details>

<summary>x9.png Details</summary>

### Visual Description

## Line Charts: Accuracy vs. Recursion Depth Across Three Models

### Overview

The image consists of three side-by-side line charts arranged horizontally (left, center, right). Each chart illustrates the relationship between "Recursion Depth" and "Accuracy (%)" for a different computational model. While the x-axis scale is similar across the charts, the y-axis scales differ significantly, indicating varying baseline performances among the models.

### Components/Axes

* **Layout:** Three distinct subplots in a 1x3 grid.

* **X-Axis (All Charts):**

* **Label:** "Recursion Depth" (located at the bottom center of each chart).

* **Scale:** Linear integer scale. The left chart ranges from 0 to 4. The center and right charts range from 0 to 6.

* **Y-Axis (Varies per Chart):**

* **Label:** "Accuracy (%)" (located vertically on the left side of each chart).

* **Left Chart Scale:** Major gridlines at 10, 20, 30, 40.

* **Center Chart Scale:** Major gridlines at 58, 60, 62, 64.

* **Right Chart Scale:** Major gridlines at 68, 70, 72, 74.

* **Grid:** All charts feature a light gray background grid corresponding to the major axis ticks.

* **Legend:** There is no explicit floating legend. Instead, the title of each chart identifies the data series, and each uses a distinct color and marker shape.

---

### Detailed Analysis

#### 1. Left Chart: o4-mini

* **Position:** Far left.

* **Title:** "o4-mini" (Top center).

* **Styling:** Thick blue line with large circular markers outlined in black.

* **Visual Trend:** The line exhibits a massive, steep upward slope from depth 0 to 1, followed by a moderate, steady upward slope from depth 1 to 4. There is no plateau reached within the visible data.

* **Data Points (Approximate):**

* Recursion Depth 0: ~7.0% (± 1.0%)

* Recursion Depth 1: ~37.0% (± 1.0%)

* Recursion Depth 2: ~40.0% (± 1.0%)

* Recursion Depth 3: ~44.0% (± 1.0%)

* Recursion Depth 4: ~47.0% (± 1.0%)

#### 2. Center Chart: Goedel-Prover-SFT

* **Position:** Middle.

* **Title:** "Goedel-Prover-SFT" (Top center).

* **Styling:** Thick red line with large downward-pointing triangular markers outlined in black.

* **Visual Trend:** The line slopes steeply upward from depth 0 to 2. The slope then decreases significantly, forming a gradual curve that flattens into a near-horizontal plateau between depths 4, 5, and 6.

* **Data Points (Approximate):**

* Recursion Depth 0: ~56.5% (± 0.5%)

* Recursion Depth 1: ~60.8% (± 0.5%)

* Recursion Depth 2: ~63.5% (± 0.5%)

* Recursion Depth 3: ~64.3% (± 0.5%)

* Recursion Depth 4: ~64.7% (± 0.5%)

* Recursion Depth 5: ~65.1% (± 0.5%)

* Recursion Depth 6: ~65.2% (± 0.5%)

#### 3. Right Chart: Kimina-Prover-Preview-Distill-7B

* **Position:** Far right.

* **Title:** "Kimina-Prover-Preview-Distill-7B" (Top center).

* **Styling:** Thick pink/light-purple line with large square markers outlined in black.

* **Visual Trend:** The line shows a steady, linear upward slope from depth 0 to 3. After depth 3, the curve sharply flattens, reaching a horizontal plateau by depth 5 and 6.

* **Data Points (Approximate):**

* Recursion Depth 0: ~67.2% (± 0.5%)

* Recursion Depth 1: ~68.8% (± 0.5%)

* Recursion Depth 2: ~72.1% (± 0.5%)

* Recursion Depth 3: ~74.2% (± 0.5%)

* Recursion Depth 4: ~74.8% (± 0.5%)

* Recursion Depth 5: ~75.1% (± 0.5%)

* Recursion Depth 6: ~75.1% (± 0.5%)

---

### Key Observations

1. **Universal Improvement:** Across all three models, increasing the "Recursion Depth" from 0 results in an increase in "Accuracy (%)".

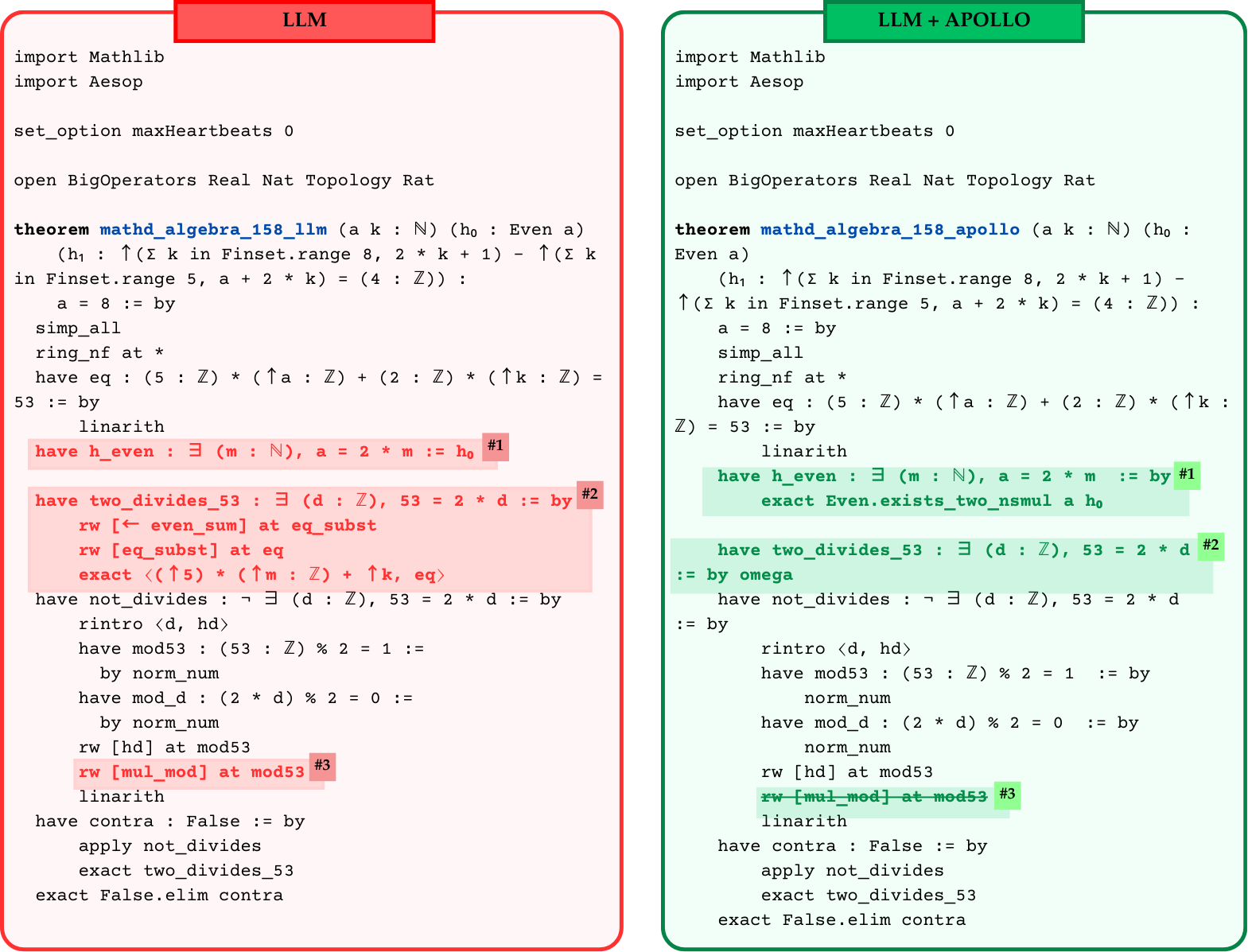

2. **Diminishing Returns:** Both the "Goedel-Prover-SFT" and "Kimina-Prover-Preview-Distill-7B" models demonstrate a clear pattern of diminishing returns. The accuracy gains become marginal after a recursion depth of 3 or 4, eventually plateauing.