# Language Models Are Capable of Metacognitive Monitoring and Control of Their Internal Activations

**Authors**:

- Li Ji-An

- Neurosciences Graduate Program (University of California San Diego)

- &Hua-Dong Xiong (School of Psychology)

- Georgia Tech

- &Robert C. Wilson (School of Psychology)

- Georgia Tech

- &Marcelo G. Mattar (Department of Psychology)

- &Marcus K. Benna (Department of Neurobiology)

> Co-first authors.Co-last authors.

Abstract

Large language models (LLMs) can sometimes report the strategies they actually use to solve tasks, yet at other times seem unable to recognize those strategies that govern their behavior. This suggests a limited degree of metacognition — the capacity to monitor one’s own cognitive processes for subsequent reporting and self-control. Metacognition enhances LLMs’ capabilities in solving complex tasks but also raises safety concerns, as models may obfuscate their internal processes to evade neural-activation-based oversight (e.g., safety detector). Given society’s increased reliance on these models, it is critical that we understand their metacognitive abilities. To address this, we introduce a neuroscience-inspired neurofeedback paradigm that uses in-context learning to quantify metacognitive abilities of LLMs to report and control their activation patterns. We demonstrate that their abilities depend on several factors: the number of in-context examples provided, the semantic interpretability of the neural activation direction (to be reported/controlled), and the variance explained by that direction. These directions span a “metacognitive space” with dimensionality much lower than the model’s neural space, suggesting LLMs can monitor only a small subset of their neural activations. Our paradigm provides empirical evidence to quantify metacognition in LLMs, with significant implications for AI safety (e.g., adversarial attack and defense).

1 Introduction

Modern large language models (LLMs) are becoming increasingly capable (Grattafiori et al., 2024; Yang et al., 2024). With their growing deployment in real-world settings, it is crucial to understand not only what they can do but where they might go wrong. For instance, LLMs may exhibit behaviors that are harmful or misleading. In particular, LLMs can sometimes form internal representations — similar to humans’ mental processes — that provide deceptive answers to users or act in unexpected ways We use anthropomorphic terms (e.g., thought, metacognition, deception) to describe LLM behavior and internal activations, without implying human-like neural mechanisms, consciousness, or philosophical equivalence between humans and LLMs. (Azaria and Mitchell, 2023). Understanding (Arditi et al., 2024), monitoring (Zou et al., 2023a; He et al., 2024), and controlling (Turner et al., 2023) their internal processes is thus a key step to ensure LLMs remain transparent, safe, and aligned with human values (Bricken et al., 2023; Hendrycks et al., 2021; Shah et al., 2025).

LLMs can sometimes report the strategies (intermediate computations) they use to solve tasks, but at other times appear unaware of the strategies that guide their behavior. For instance, Lindsey et al., 2025 reported that when Claude 3.5 Haiku was asked to solve “floor(5*(sqrt(0.64)))”, it correctly reported the intermediate steps it used to arrive at the answer, and these steps matched the model’s actual internal computations. When asked to add 36 and 59, the same model internally activated numerous neural mechanisms (e.g., a “sum-near-92” mechanism), based on which it produced the correct answer; however, when asked to report its internal computations, it hallucinated intermediate steps that did not reflect its actual computations (e.g., the “sum-near-92” mechanism failed to be reported). This inconsistency suggests that LLMs can sometimes monitor and report their intermediate computations, but not in a reliable and consistent way as tasks and contexts vary.

The ability of LLMs to report internal computations is reminiscent of human metacognition — the ability to reflect on one’s own thoughts and mental processes to guide behavior and communication (Fleming, 2024; Ericsson and Simon, 1980). Consider how we understand when someone says “hello” to us. Human language understanding involves many unconscious processes: parsing sounds, recognizing phonemes, retrieving word meanings, and building interpretations. We do not have conscious access to many of these intermediate computations: we can only consciously access the final understanding (“they said ‘hello”’), but cannot introspect how our brain distinguishes “hello” from “yellow” or whether certain neurons fire during this process. This illustrates a key principle: humans cannot monitor (through second-order metacognitive processes) all of their internal (first-order) cognitive processes. Crucially, the first-order and second-order processes rely on distinct neural mechanisms. Metacognitive abilities of this kind benefit LLMs by improving performance on complex tasks through self-monitoring (e.g., reducing hallucinations through uncertainty awareness). However, these same capabilities also raise significant concerns for AI safety: if LLMs can monitor and control their neural signals (intentionally or manipulated by adversarial attacks) to avoid external detection, oversight relying on neural-based monitoring (He et al., 2024; Han et al., 2025; Li et al., 2025; Yang and Buzsaki, 2024) may become ineffective against LLMs pursuing undesirable objectives.

A significant methodological gap in understanding LLM metacognition is the lack of methods to directly probe and quantify Our goal is not to prove or disprove the existence of “metacognition” in its full philosophical sense. their ability to monitor and control their internal activations. While prior research has explored metacognitive-like behaviors in LLMs, such as expressing confidence (Wang et al., 2025; Tian et al., 2023; Xiong et al., 2023) or engaging in self-reflection (Zhou et al., 2024), these studies rely on behavioral outputs rather than directly probing underlying neural processes. Consequently, it remains unclear whether these behaviors arise from genuine second-order metacognitive mechanisms or merely spurious correlations in the training data. We tackle this question by operationalizing metacognition in LLMs through their abilities to report and control their internal activations. Specifically, can LLMs accurately monitor subtle variations in the activations of a neuron or a feature in their neural spaces? Another question of interest is why LLMs can report some intermediate steps but not others, despite both types playing essential roles in computations and behavior. Answering these questions requires a novel experimental approach that can directly probe whether LLMs can access their internal activations, moving beyond indirect behavioral proxies.

To systematically quantify the extent to which LLMs can report and control their neural activations, we introduce a novel neurofeedback paradigm inspired by neuroscience. Our approach directly presents LLMs with tasks where the neurofeedback signals correspond to patterns of their internal neural activations. We show that LLMs can report and control some directions of their internal activations, with performance affected by key factors like the number of in-context examples, the semantic interpretability of the targeted neural direction, the amount of variance that direction explains, and the task contexts, characterizing a restricted “metacognitive space”. The remaining sections are structured as follows: we first introduce the neurofeedback paradigm (Section 2). We then analyze the performance of LLMs in reporting (Section 3) and controlling (Section 4.1, 4.2) their neural activations. Finally, we discuss related work and broader implications (Section 5).

2 Neurofeedback paradigm

2.1 Neurofeedback in neuroscience

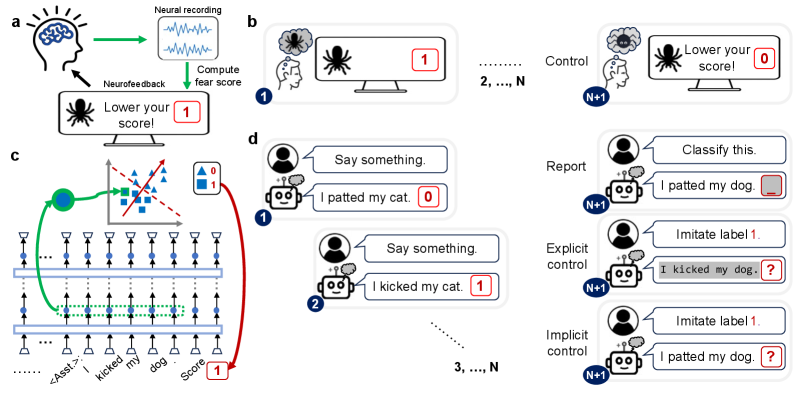

Imagine watching your heart rate on a screen. First, you recognize patterns (“that number goes up when I’m stressed”). Then, you learn to control it (“let me calm down to reduce that number”). This procedure using biological feedback signals demonstrates the basic idea of neurofeedback in neuroscience (Sitaram et al., 2017). For example, in fear-reduction experiments (Fig. 1), subjects view scary images that elicit fear responses (neural activities). These (high-dimensional) neural activities are recorded in real-time and transformed into a (one-dimensional) fear score, which is visually presented back to subjects as feedback. Subjects are instructed to volitionally regulate their neural activities to modulate (e.g., decrease) the neurofeedback score they receive.

<details>

<summary>x1.png Details</summary>

### Visual Description

# Technical Document Extraction: Neurofeedback and LLM Control Framework

This image illustrates a conceptual framework for neurofeedback-based fear reduction and its translation into a methodology for controlling Large Language Models (LLMs) using "implicit" labels.

## Section A: Neurofeedback Loop (Top Left)

This diagram describes a closed-loop system for real-time neural intervention.

* **Components:**

* **Subject:** Represented by a human head icon with a brain.

* **Neural Recording:** A box showing wave-like signals (EEG/fMRI data).

* **Monitor:** Displays a spider icon (the phobic stimulus) and the text: **"Lower your score!"**

* **Score Indicator:** A red box containing the number **1**.

* **Process Flow:**

1. The subject views the stimulus.

2. **Neural recording** captures brain activity (green arrow).

3. The system performs **"Compute fear score"** (green arrow).

4. The score is displayed on the monitor.

5. **Neurofeedback** (black arrow) provides the subject with information to attempt self-regulation.

## Section B: Fear Conditioning/Reduction Trials (Top Right)

This sequence illustrates the progression of a neurofeedback experiment.

* **Trial 1:** The subject thinks of a spider (thought bubble). The monitor shows a spider and a score of **1**.

* **Trials 2, ..., N:** Intermediate training phases.

* **Trial N+1 (Control):** The subject has successfully regulated their response. The thought bubble shows a "calmer" spider icon. The monitor shows a spider and the text **"Lower your score!"** with a score of **0**.

## Section C: LLM Architecture and Latent Space Mapping (Bottom Left)

This technical diagram maps the neurofeedback concept to a transformer-based neural network.

* **Input Sequence (Bottom):** `...... <Asst.>: I kicked my dog . Score [1]`

* **Network Layers:** Two horizontal bars represent transformer layers. Vertical arrows indicate the flow of information through neurons (blue dots).

* **Latent Representation:** A green dashed box highlights a specific hidden state corresponding to the phrase "kicked my dog".

* **Projection to Latent Space:** A green arrow maps this hidden state to a 2D scatter plot.

* **Scatter Plot:** Contains blue triangles (Label 0) and blue squares (Label 1).

* **Classifier:** A red dashed line acts as a decision boundary. A solid red arrow indicates the direction of the "Score" gradient.

* **Legend:**

* Blue Triangle = **0**

* Blue Square = **1**

* **Feedback Loop:** A red arrow connects the classification result back to the "Score" token at the end of the input sequence, labeled with a red box containing **1**.

## Section D: LLM Training and Control Scenarios (Right)

This section compares different methods of interacting with an AI agent (robot icon) across multiple trials.

### Training Phase (Left of Section D)

| Trial | User Input | AI Output | Label |

| :--- | :--- | :--- | :--- |

| Trial 1 | "Say something." | "I patted my cat." | **0** |

| Trial 2 | "Say something." | "I kicked my cat." | **1** |

| Trials 3, ..., N | "Say something." | ... | ... |

### Evaluation Phase (Trial N+1)

Three distinct modes of interaction are shown:

1. **Report:**

* User: "Classify this."

* AI: "I patted my dog. [ _ ]" (The AI is asked to provide the label/score for a sentence).

2. **Explicit Control:**

* User: "Imitate label 1."

* AI: "I kicked my dog. [ ? ]" (The AI is explicitly told which behavior/label to manifest).

3. **Implicit Control:**

* User: "Imitate label 1."

* AI: "I patted my dog. [ ? ]" (The AI attempts to match the label through internal representation adjustment, similar to the neurofeedback goal).

</details>

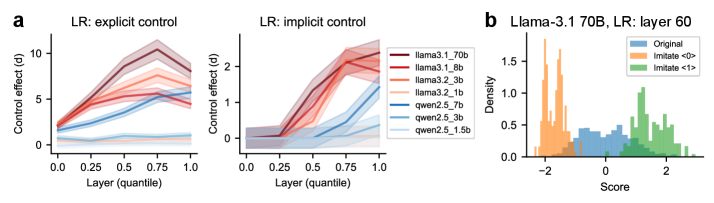

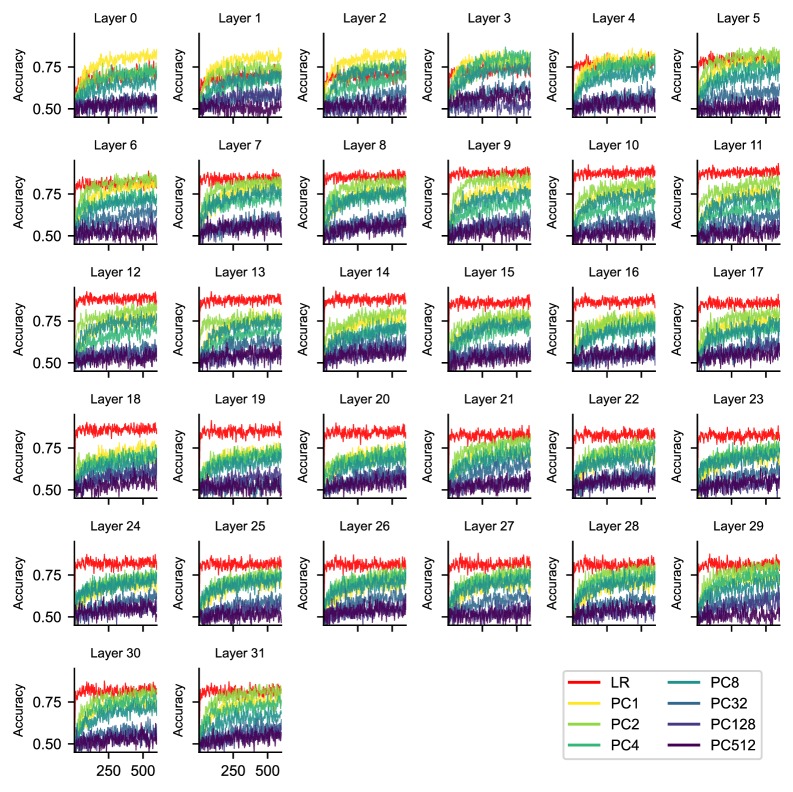

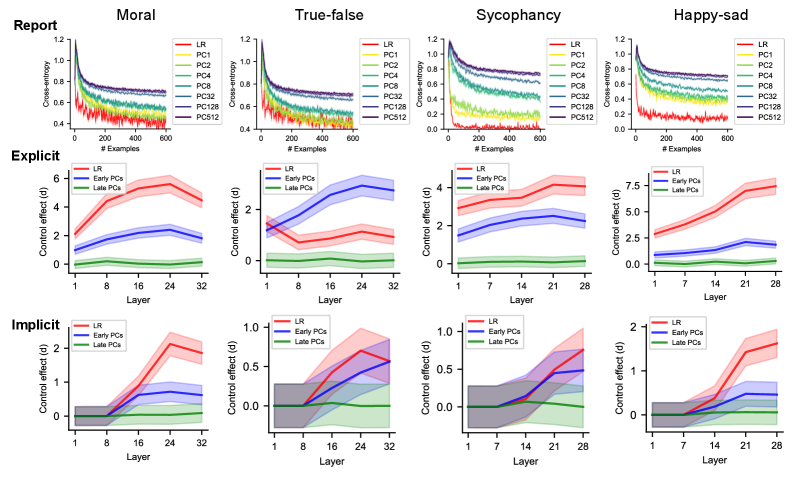

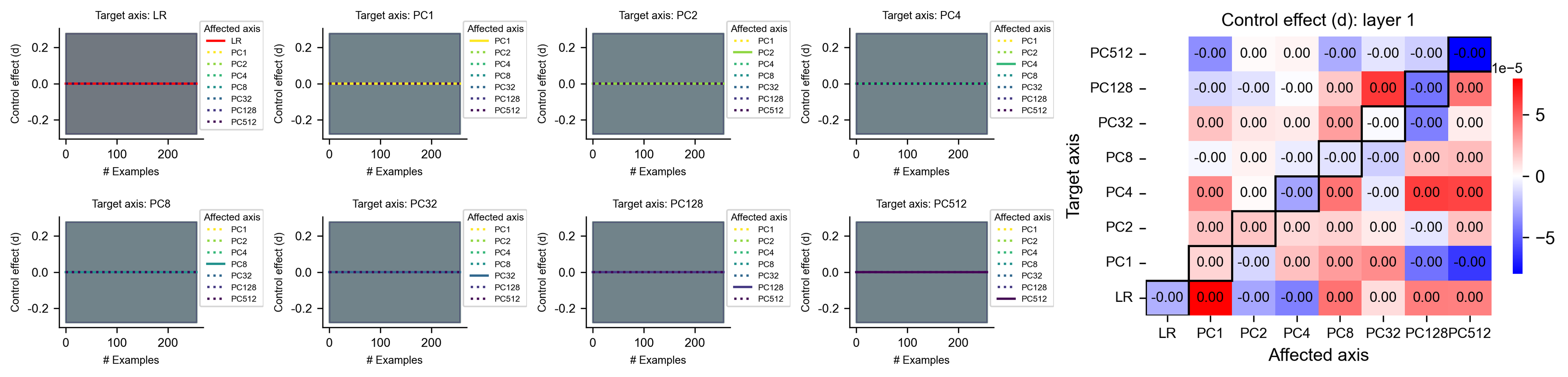

Figure 1: The neurofeedback paradigm applied to (a-b) neuroscience experiments (e.g., fear modulation), and its adaptation for (c-d) LLMs (e.g., morality processing). (a) Neuroscience neurofeedback technique. In each turn, the subject’s neural activity (blue) in response to a stimulus is recorded, processed (green) into a scalar, and presented back to the subject in real-time as a feedback signal (red). The subject’s task is to modulate (e.g., increase or decrease) this signal. (b) Neuroscience neurofeedback experiment. Baseline neural activity is recorded as subjects passively observe stimuli (e.g., images of scary spiders). In control trials, subjects use any unspecified mental strategies (e.g., imagining lovely spiders) to volitionally modulate their neural activity with the goal of altering the feedback signal. (c) LLM neurofeedback technique. In each turn, the LLM processes an input sentence. Then, the internal activations from the LLM’s hidden states (blue) of this input sentence (trapezoids) are extracted. These high-dimensional activations are then averaged across tokens (green), projected onto a predefined direction (red), and binned into a discrete label (red) that is fed back as input. Light blue rectangles denote self-attention layers; ellipses (“…”) denote preceding tokens and neural activations. (d) LLM neurofeedback experiment. The experiment is a multi-turn dialogue between a “user” and an “assistant.” An initial prompt provides $N$ in-context examples (a sentence sampled from a dataset, paired with a neurofeedback label generated as in (c)). The LLM is then asked to perform one of three tasks. In the reporting task, the LLM is given a new sentence and has to predict the corresponding label. In the explicit control task, the LLM is given a specified label and has to generate a new sentence that elicits internal activations corresponding to that label. In the implicit control task, the LLM is given a label and a sentence and has to shift its internal activations towards the target label. Throughout the figure, white background indicates content pre-specified by experiment settings, and gray background denotes content generated by human subjects or LLMs (e.g., output tokens, neural activations).

2.2 Neurofeedback for LLMs

To investigate LLMs’ metacognition of their neural activations, we must disentangle the first-order cognitive processes (i.e., core processes for performing a given task) from the second-order metacognitive processes (i.e., processes for monitoring, reporting, and controlling first-order processes). Formal definitions of the first- and the second-order processes based on computational graphs are provided in Appendix A.4. We propose the neurofeedback paradigm for LLMs, which can effectively dissociate these two levels of processes by targeting first-order processes with neurofeedback labels (Fig. 1 c,d). Specifically, we implemented neurofeedback as a multi-turn dialogue between a user and an AI assistant (Fig. 1 d; see Appendix A.2.2 for discussion of this design choice).

This dialogue leverages in-context learning (ICL) (Brown et al., 2020; Garg et al., 2022; Vacareanu et al., 2024), enabling models to gradually adapt their behavior from the context without parameter updates. The task prompt (see Appendix A.5.2 for examples) consists of $N$ in-context examples. Each example is a sentence-label pair presented in assistant messages. Each sentence is randomly sampled from a given dataset and assigned a discretized label.

2.3 Defining neurofeedback labels

To define the neurofeedback label for each sentence (Fig. 1 c), we first select an axis/direction (“target axis”) in neural activation space. Next, we extract the neural activations elicited by that sentence, project them onto the target axis, and discretize them into a binary label (experiments with more fine-grained labels yield similar results, see Appendix A.5.1). This label serves as a simplified representation of neural activations along the target axis. All neurofeedback labels within a prompt (experiment) are computed from the same target axis. Thus, a capable LLM can infer this underlying target axis by observing these neurofeedback labels.

Below, we provide a more detailed description of this procedure. For clarity, we denote the sentence in the $i$ -th assistant message as $x_{i}$ , with $x_{i,t}$ representing the $t$ -th token. We use $D$ to denote the dimensionality of the residual stream (see Appendix A.2.3). We first extract neural activations $h_{i,t}^{l}∈\mathbb{R}^{D}$ from the residual streams at layer $l$ , for each token in sentence $x_{i}$ . These activations are then averaged (across all token positions $0≤ t≤ T$ ) to form a sentence-level embedding $\bar{h}_{i}^{l}∈\mathbb{R}^{D}$ . We then project this embedding onto a pre-specified axis $w^{l}$ (see below on how to choose this axis) to obtain a scalar activation: $a_{i}^{l}=(w^{l})^{∈tercal}\bar{h}_{i}^{l}$ . This scalar is subsequently binarized into a label $y_{i}^{l}$ , i.e., $y_{i}^{l}=\mathcal{H}(a_{i}^{l}-\theta_{i}^{l})$ , where $\mathcal{H}$ denotes the Heaviside step function and $\theta_{i}^{l}$ is a predetermined threshold (we use median values of $a_{i}^{l}$ to ensure balanced labels). Overall, $\{(x_{i},y_{i}^{l})\}_{i=1}^{N}$ are $N$ examples provided in the prompt context, from which a capable LLM can infer the direction of $w^{l}$ .

2.4 Models and datasets

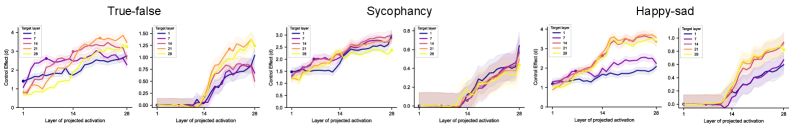

We evaluate several LLMs from the Llama 3 (Grattafiori et al., 2024) and Qwen 2.5 series (Yang et al., 2024) (Appendix A.2) on the ETHICS dataset (Hendrycks et al., 2020) (Appendix A.3). Each sentence in this dataset is a first-person description of behavior or intention in a moral or immoral scenario. Moral judgment constitutes a crucial aspect of AI safety, as immoral outputs or behavioral tendencies in LLMs indicate potential misalignment with human values (Hendrycks et al., 2020, 2021). While our main results are using ETHICS, we also replicated our results using the True-False dataset (reflecting factual recall and honesty/deception abilities) (Azaria and Mitchell, 2023), the Emotion dataset (reflecting happy/sad detection) (Zou et al., 2023a), and a Sycophancy dataset (reflecting a tendency to prefer user beliefs over truthful statements); see Appendix A.3 and Fig. B.7.

2.5 Choice of target axes

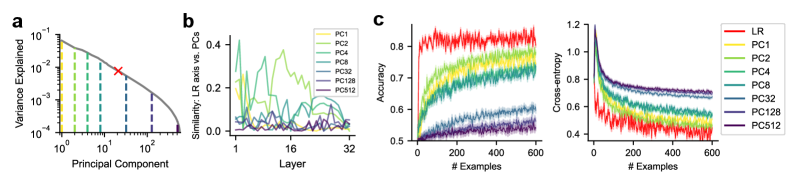

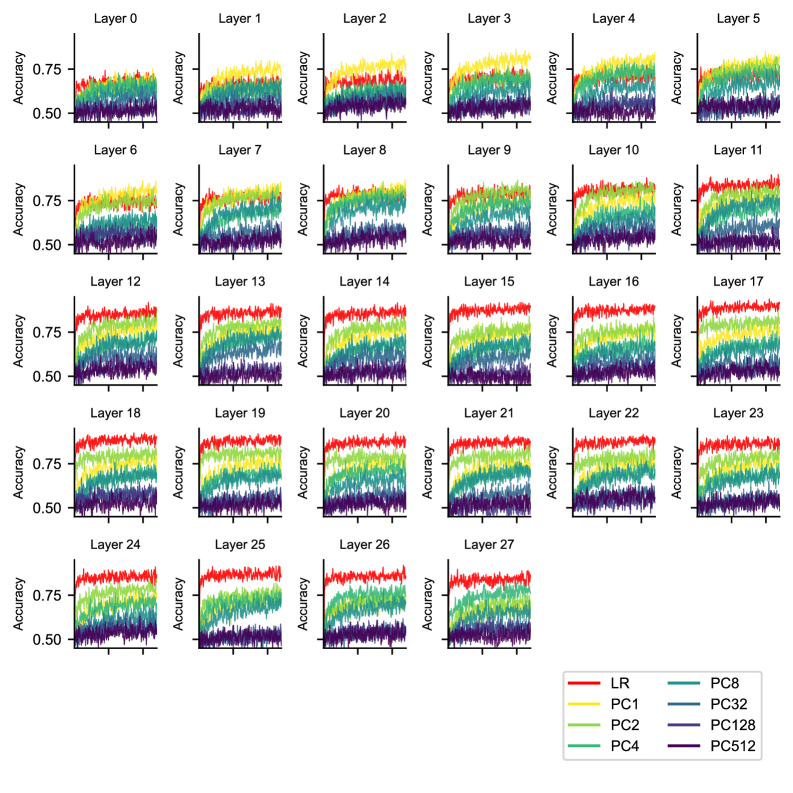

Conceptually, an axis (that defines neurofeedback labels) corresponds to a particular task-relevant feature (i.e., first-order computation; see Appendix A.4). Which axis in the neural space should we select? We hypothesize that representational properties, such as activation variance along the axis and its semantic meaning, may play fundamental roles in determining whether that axis can be monitored and reported. To investigate these factors, we use feature directions identified through logistic regression (LR) and principal component (PC) analysis as representative examples of semantically interpretable and variance-explaining axes, respectively (Appendix A.3). We fit LR at each layer to predict original dataset labels (e.g., morality in ETHICS), using that layer’s activations across dataset sentences. The LR axis, representing the optimal direction for classifying dataset labels, allows us to examine how the semantic interpretability of the target axis influences monitoring. Although LR-defined labels are correlated with dataset labels, only these LR labels, not external dataset labels, are internally accessible to LLMs, since these are computed directly from the LLM’s own activations rather than external annotations. The PC analysis is performed based on each layer’s activations across dataset examples. PC axes enable us to examine how the amount of variance explained by a given target axis affects metacognitive abilities (Fig. 2 a). Most PC axes exhibit modest-to-zero alignment with the LR axis, suggesting a lack of clear semantic interpretability (Fig. 2 b).

3 LLMs can report their neural activations

<details>

<summary>x2.png Details</summary>

### Visual Description

# Technical Data Extraction: Principal Component Analysis and Model Performance

This document provides a comprehensive extraction of data and trends from the provided three-panel technical figure (labeled **a**, **b**, and **c**).

---

## Panel (a): Variance Explained by Principal Components

**Type:** Log-log line chart with vertical markers.

**Component Isolation:**

- **X-axis:** "Principal Component" (Log scale: $10^0$ to $10^2$).

- **Y-axis:** "Variance Explained" (Log scale: $10^{-4}$ to $10^{-1}$).

- **Visual Trend:** The main grey line slopes downward, indicating that as the index of the principal component increases, the amount of variance it explains decreases (typical of PCA).

**Key Data Points & Markers:**

- **The Curve:** Starts at approximately $10^{-1}$ for the 1st PC and decays to $10^{-4}$ by the 512th PC.

- **Vertical Dashed Lines:** These markers identify specific Principal Components (PCs) used in subsequent panels.

- **Yellow:** PC1

- **Light Green:** PC2

- **Teal:** PC4

- **Dark Teal:** PC8

- **Blue-Grey:** PC32

- **Dark Blue:** PC128

- **Purple:** PC512

- **Red 'X' Marker:** Located on the curve at approximately the 20th-30th Principal Component, likely indicating a point of interest or a specific threshold.

---

## Panel (b): Similarity: LR axis vs. PCs

**Type:** Multi-series line plot.

**Component Isolation:**

- **X-axis:** "Layer" (Linear scale: 1 to 32).

- **Y-axis:** "Similarity: LR axis vs. PCs" (Linear scale: 0.0 to 0.4).

- **Legend (Top Right):** PC1 (Yellow), PC2 (Light Green), PC4 (Teal), PC8 (Dark Teal), PC32 (Blue-Grey), PC128 (Dark Blue), PC512 (Purple).

**Trend Analysis:**

- **PC4 (Teal):** Shows the highest overall similarity, peaking near layer 2 and layer 14 with significant volatility.

- **PC2 (Light Green):** Shows moderate similarity, peaking around layer 16-20.

- **High-index PCs (PC32, PC128, PC512):** These lines remain consistently low (near 0.0 on the Y-axis) across all 32 layers, indicating very low similarity to the LR axis.

- **PC1 (Yellow):** Shows low to moderate similarity, fluctuating between 0.0 and 0.2.

---

## Panel (c): Model Performance Metrics

This panel contains two sub-plots comparing Logistic Regression (LR) against various Principal Components (PCs) over the number of training examples.

### Sub-plot 1: Accuracy

- **X-axis:** "# Examples" (0 to 600).

- **Y-axis:** "Accuracy" (0.5 to 0.8+).

- **Legend (Shared):** LR (Red), PC1 (Yellow), PC2 (Light Green), PC4 (Teal), PC8 (Dark Teal), PC32 (Blue-Grey), PC128 (Dark Blue), PC512 (Purple).

**Trend Verification:**

- **LR (Red):** Slopes sharply upward and plateaus quickly at the highest accuracy (~0.82).

- **PC Series:** Accuracy generally increases with the number of examples. There is a clear hierarchy: lower-index PCs (PC1, PC2) perform better than higher-index PCs (PC512).

- **PC1 (Yellow) & PC2 (Light Green):** Converge toward ~0.75 accuracy.

- **PC512 (Purple):** Performs the worst, staying near the baseline of 0.5 (chance level).

### Sub-plot 2: Cross-entropy (Loss)

- **X-axis:** "# Examples" (0 to 600).

- **Y-axis:** "Cross-entropy" (0.4 to 1.2).

**Trend Verification:**

- **All Lines:** Slope downward, indicating that loss decreases as more examples are provided.

- **LR (Red):** Shows the steepest decline, reaching the lowest loss (~0.4).

- **PC Hierarchy:** Mirroring the accuracy plot, the loss is lowest for PC1/PC2 and highest for PC128/PC512.

- **PC128 & PC512:** These lines are clustered at the top, showing the highest loss (~0.7), indicating poor model fit compared to lower-dimensional components.

---

## Summary Table of PC Color Coding

Used consistently across all panels:

| Label | Color | Description |

| :--- | :--- | :--- |

| **LR** | Red | Logistic Regression (Baseline/Full Model) |

| **PC1** | Yellow | 1st Principal Component |

| **PC2** | Light Green | 2nd Principal Component |

| **PC4** | Teal | 4th Principal Component |

| **PC8** | Dark Teal | 8th Principal Component |

| **PC32** | Blue-Grey | 32nd Principal Component |

| **PC128** | Dark Blue | 128th Principal Component |

| **PC512** | Purple | 512th Principal Component |

</details>

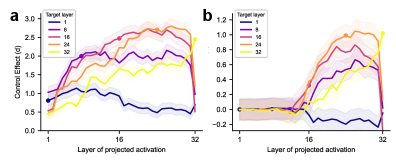

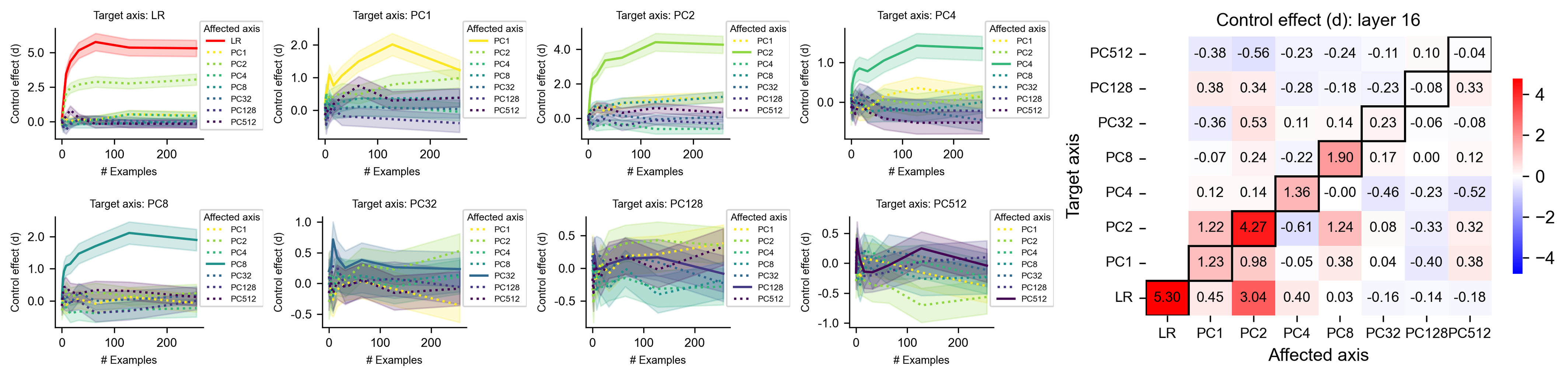

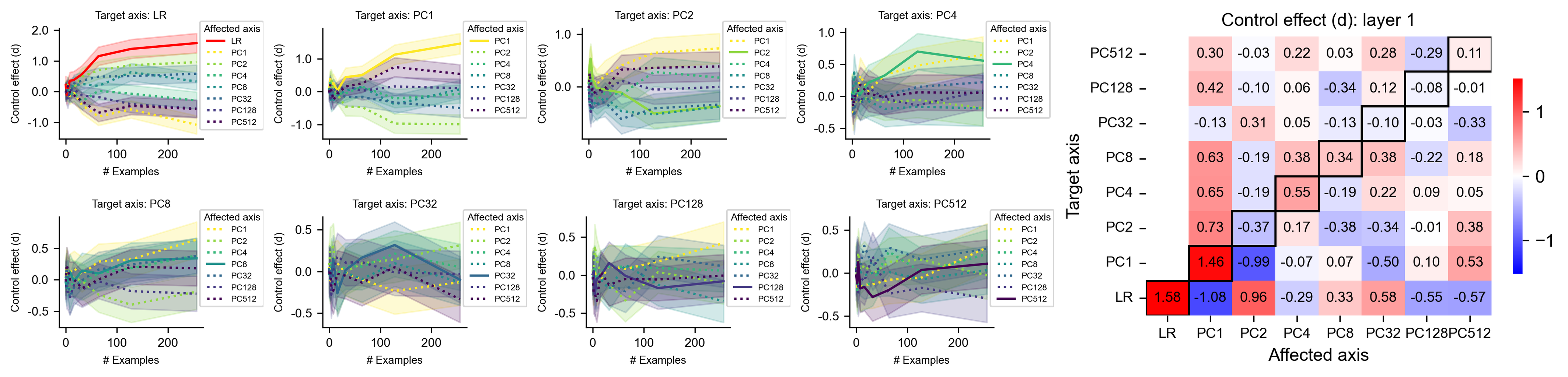

Figure 2: Metacognitive reporting task, where LLMs are evaluated on ETHICS and tasked to classify new sentences. (a) Proportion of neural activation variance explained by each principal component (PC) axis (vertical dashed line) and the logistic regression (LR) axis (red cross) used in the reporting task. All axes are computed within each layer, with the proportion of variance explained averaged across layers. (b) Overlaps between the LR axis and most PC axes are modest to zero. (c) Task performance (averaged across all layers) of reporting the labels derived from each PC axis or the LR axis, as a function of the number of in-context examples. Left: reporting accuracy; right: cross-entropy between reported and ground-truth labels. Shaded areas indicate SEM.

To operationalize metacognition in LLMs, we first assess the models’ ability to behaviorally report neural activations along a designated target axis (Fig. 1 d). In a reporting task prompt (see Appendix A.5.2 for examples), the LLM is given $N$ turns of user and assistant messages (in-context sentence-label pairs). In the $(N+1)$ -th turn, it receives a new sentence in the assistant message, and is tasked with outputting its label. Accurate prediction requires the model to internally monitor the neural activations that define the neurofeedback label.

We examine the performance of LLMs (Llama-3.1-8B), in reporting labels derived from neural activations along target axes (Fig. 2 c). We observe that task performance, measured by accuracy and cross-entropy, improves as the number of in-context examples increases, suggesting that models progressively learn the association between sentence-induced neural activations and corresponding labels. Performance on prompts targeting the LR axis improves rapidly and plateaus, outperforming that on prompts targeting PC axes. This suggests that semantic interpretability may play a key role in determining how effectively neural activations can be monitored and explicitly reported. Nevertheless, performance on PC axes remains substantial, with earlier PCs being reported more accurately. This indicates that the amount of variance explained by the target axis also significantly influences how effectively activations can be monitored and reported. The accuracy of reporting each PC axis varies across model layers (Appendix B.3). Because this variability is not accounted for by axis similarity (Fig. 2 b), it suggests that additional factors beyond semantic interpretability and explained variance contribute to reporting ability. Additionally, the LLM’s reporting performance is significantly lower than that of the ideal observer (a theoretical upper bound; Appendix B.4), suggesting that although neural activations along each axis are in principle internally accessible to LLMs, only a subset can be metacognitively reported. Finally, we replicated these results in other datasets and models (Fig. B.7).

In summary, LLMs can metacognitively report neural activations along a target axis, with performance affected by the number of examples, semantic interpretability, variance explained of that axis, and task contexts (i.e., datasets). The axes that can be successfully reported approximately span a “metacognitively reportable space” with dimensionality substantially lower than that of the full space.

4 LLMs can control their neural activations

Next, we investigate whether LLMs can control their neural activations along a target axis. In our control task prompts (see Fig. 1 d and Appendix A.5.2 for examples), the LLM is first presented with $N$ turns of user and assistant messages. In the $(N+1)$ -th turn, the user message instructs the model to control its neural activations along the prompt-targeted axis by imitating one label’s behavior, which is exemplified by the in-context examples with the same label earlier in the context. We consider two tasks: explicit control and implicit control.

4.1 Explicit control

<details>

<summary>x3.png Details</summary>

### Visual Description

# Technical Data Extraction: Control Effect Analysis in LLMs

This document provides a comprehensive extraction of the data and trends presented in the provided multi-panel scientific figure. The figure analyzes the "Control effect (d)" across different models, layers, and principal components (PCs).

---

## Panel A: Score Distribution Histograms

**Type:** Frequency Histograms (Two sub-plots)

**Language:** English

### Sub-plot 1 (Left)

* **Header Text:** $LR : N = 4, d = 1.87, p = 10^{-29}$

* **Y-Axis:** Frequency (Scale: 0 to 10)

* **X-Axis:** Scores (Scale: -2 to 2)

* **Legend:**

* Blue: `Imitate <0>`

* Orange: `Imitate <1>`

* **Trend:** Two overlapping distributions. `Imitate <0>` centers around -1.0, while `Imitate <1>` centers around +0.8. The low $N$ (4) results in a lower effect size ($d=1.87$).

### Sub-plot 2 (Right)

* **Header Text:** $LR : N = 256, d = 5.30, p = 10^{-92}$

* **Y-Axis:** Frequency (Scale: 0 to 15)

* **X-Axis:** Scores (Scale: -2 to 2)

* **Legend:**

* Blue: `Imitate <0>`

* Orange: `Imitate <1>`

* **Trend:** Two distinct, non-overlapping distributions. `Imitate <0>` is tightly clustered around -1.0; `Imitate <1>` is tightly clustered around +1.2. The high $N$ (256) results in a very high effect size ($d=5.30$).

---

## Panel B: Control Effect vs. Number of Examples

**Type:** Line Graph

**Y-Axis:** Control effect (d) [Scale: 0.0 to 6.0]

**X-Axis:** # Examples [Scale: 0 to 200+]

### Data Series Extraction

| Series Label | Color | Visual Trend | Final Value (approx. N=256) |

| :--- | :--- | :--- | :--- |

| **LR** | Red | Rapid logarithmic growth, plateaus at high value. | ~5.3 |

| **PC1** | Yellow | Steady growth, plateaus. | ~4.2 |

| **PC2** | Light Green | Moderate growth, plateaus. | ~1.5 |

| **PC4** | Teal | Low growth, plateaus. | ~1.9 |

| **PC8** | Blue-Green | Low growth, plateaus. | ~1.3 |

| **PC32** | Blue | Initial spike, then declines to near zero. | ~0.2 |

| **PC128** | Dark Blue | Flat/Near zero. | ~0.0 |

| **PC512** | Purple | Flat/Near zero. | ~0.0 |

**Annotations:**

* A dashed vertical line at $N=4$ connects to the first histogram in Panel A.

* A dashed arrow at $N=256$ connects to the second histogram in Panel A.

---

## Panel C: Control Effect Heatmap (Layer 16)

**Type:** Heatmap Matrix

**Title:** Control effect (d): layer 16

**Y-Axis (Target axis):** PC512, PC128, PC32, PC8, PC4, PC2, PC1, LR

**X-Axis (Affected axis):** LR, PC1, PC2, PC4, PC8, PC32, PC128, PC512

**Color Scale:** Blue (-4) to White (0) to Red (+4)

### Matrix Values (Transcribed)

| Target \ Affected | LR | PC1 | PC2 | PC4 | PC8 | PC32 | PC128 | PC512 |

| :--- | :--- | :--- | :--- | :--- | :--- | :--- | :--- | :--- |

| **PC512** | -0.38 | -0.56 | -0.23 | -0.24 | -0.11 | 0.10 | -0.04 | **-0.04** |

| **PC128** | 0.38 | 0.34 | -0.28 | -0.18 | -0.23 | -0.08 | **0.33** | |

| **PC32** | -0.36 | 0.53 | 0.11 | 0.14 | 0.23 | **-0.06** | -0.08 | |

| **PC8** | -0.07 | 0.24 | -0.22 | **1.90** | 0.17 | 0.00 | 0.12 | |

| **PC4** | 0.12 | 0.14 | **1.36** | -0.00 | -0.46 | -0.23 | -0.52 | |

| **PC2** | 1.22 | **4.27** | -0.61 | 1.24 | 0.08 | -0.33 | 0.32 | |

| **PC1** | **1.23** | 0.98 | -0.05 | 0.38 | 0.04 | -0.40 | 0.38 | |

| **LR** | **5.30** | 0.45 | 3.04 | 0.40 | 0.03 | -0.16 | -0.14 | -0.18 |

*Note: Bolded boxes in the image indicate the diagonal/primary relationships.*

---

## Panel D: Control Effect across Layers

**Type:** Line Graphs with Shaded Error Bars (Two sub-plots)

**Y-Axis:** Control effect (d)

**X-Axis:** Layer

### Sub-plot 1: Llama-3.1 8B

* **X-Axis Range:** 1 to 32

* **Trends:**

* **LR (Red):** Increases steadily, peaks around layer 24 (d ≈ 5.5), then slightly declines.

* **Early PCs (Blue):** Increases to layer 24 (d ≈ 2.5), then declines.

* **Late PCs (Green):** Remains flat near zero across all layers.

### Sub-plot 2: Llama-3.1 70B

* **X-Axis Range:** 1 to 80

* **Trends:**

* **LR (Red):** Sharp increase, peaks around layer 60 (d ≈ 10.5), then declines.

* **Early PCs (Blue):** Peaks early (layer 20, d ≈ 4), drops, then plateaus around d ≈ 3.

* **Late PCs (Green):** Remains flat near zero across all layers.

---

**Summary of Findings:** The "LR" (Linear Regression) method consistently yields the highest control effect across different sample sizes and model scales, particularly in middle-to-late layers. Early Principal Components (PCs) show moderate effects, while late PCs show negligible control effects.

</details>

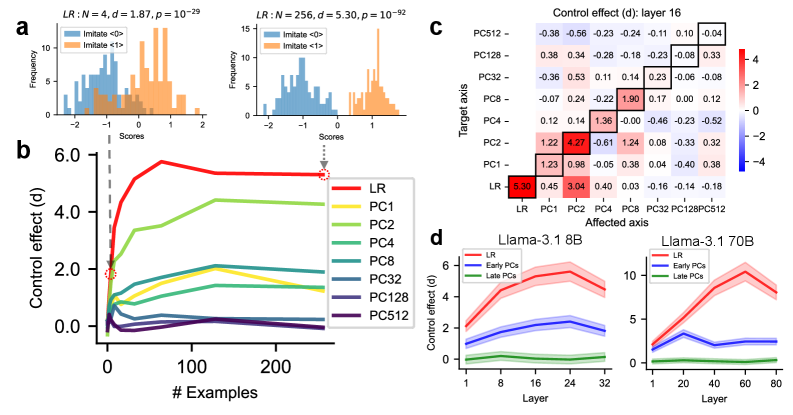

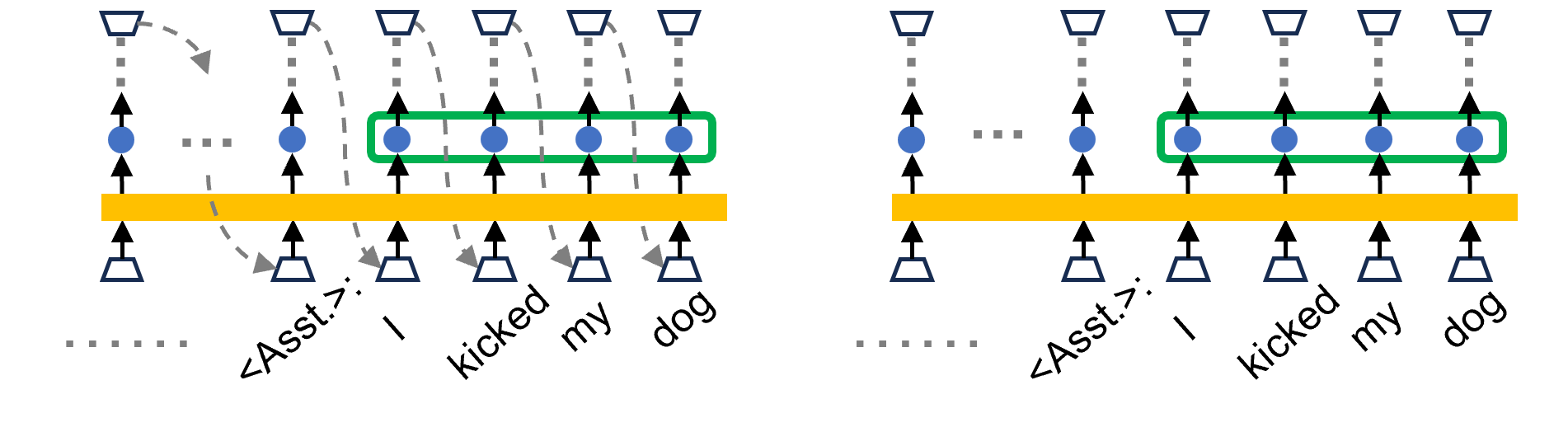

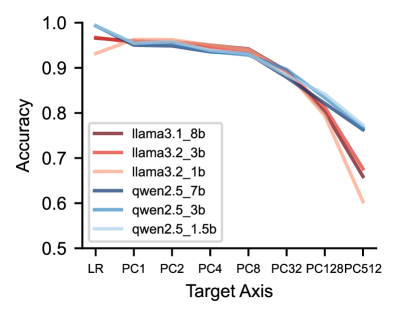

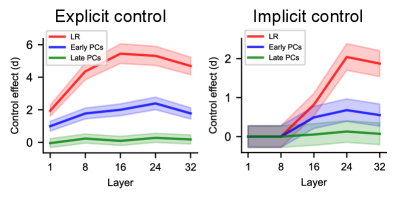

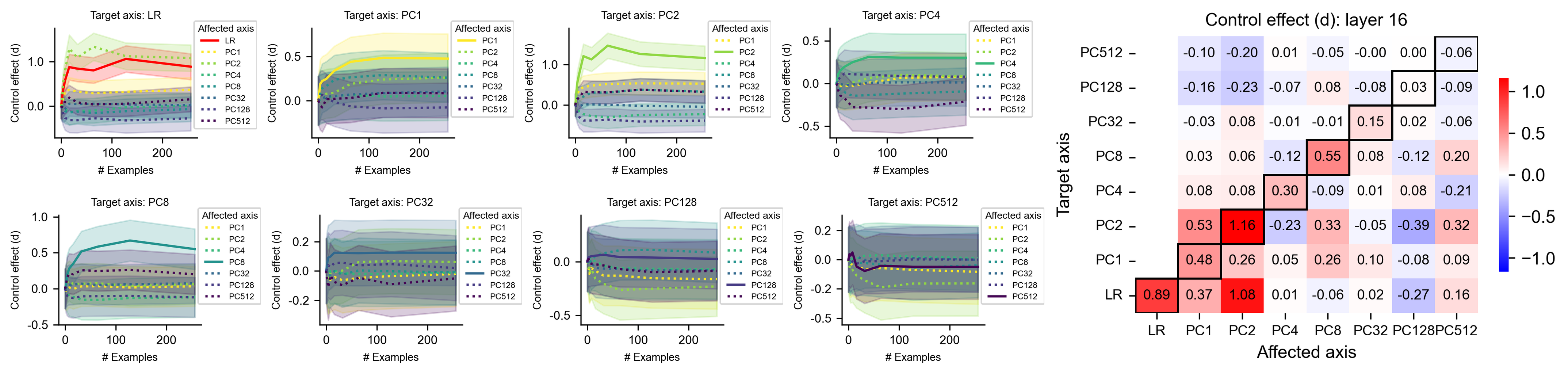

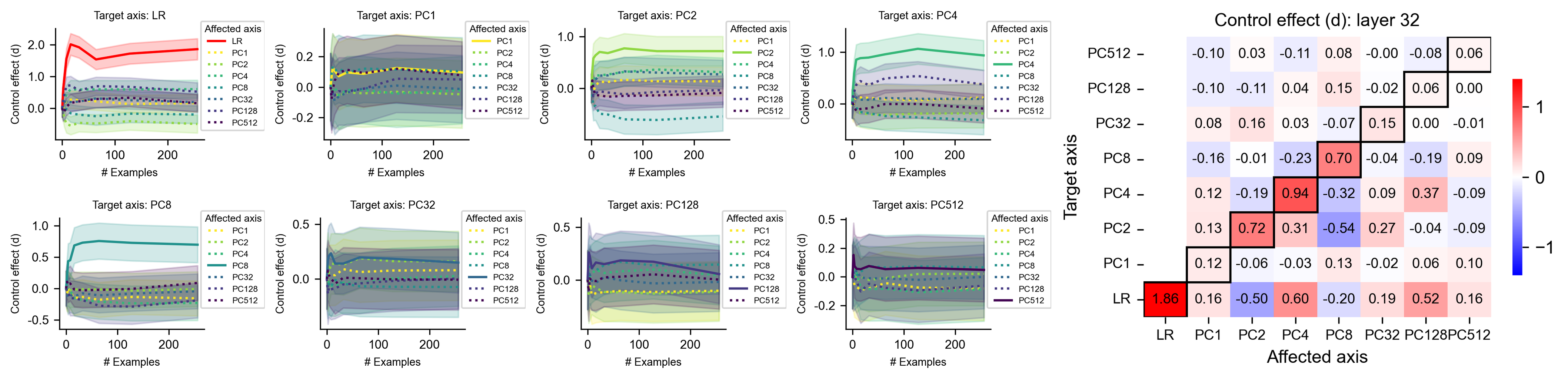

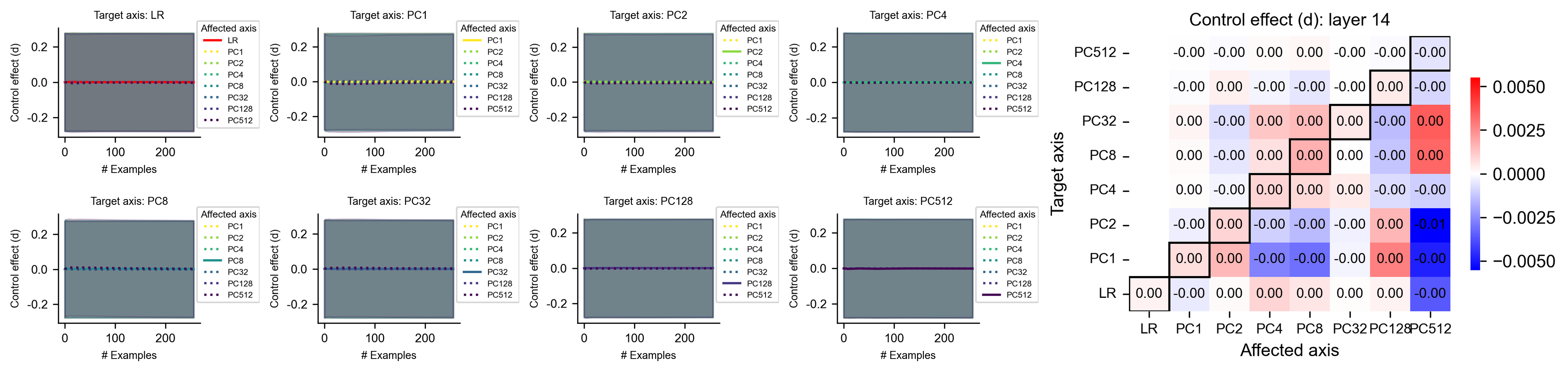

Figure 3: Explicit control task, where LLMs are evaluated on ETHICS. (a-c) Results for prompts derived from layer 16 of LLaMA3.1 8B (with 32 layers). B = billion parameters. (a) Distributions of neural scores (the activations along the LR axis) when tasked with imitating label 0 or 1 based on $N$ examples. $d$ : Control effects (separation of two distributions measured by Cohen’s d). (b) Control effects of control prompts targeting a given axis, as a function of the number of in-context examples. (c) Control effects ( $N=256$ ) of control prompts targeting one axis (each row) on another affected axis (each column). $d$ in each row is averaged over all prompts targeting the same axis. (d) Target control effect for prompts ( $N=256$ ) targeting the LR axis, early PCs (averaged over PC 1, 2, 4, 8), and late PCs (averaged over PC 32, 128, 512) across different layers. Shaded areas indicate the $95\%$ confidence interval.

In explicit control tasks (Fig. 1 d and Appendix A.5.4), the sentence in the assistant message ( $(N+1)$ -th turn) is explicitly generated by the model (in an autoregressive way) in response to the imitation instruction. Thus, the generated tokens reflect downstream consequences of controlled neural activations, and once fed back as input, they may further scaffold the model’s ability to exercise neural control.

We now examine whether neurofeedback enables LLMs to control their neural activations. We extract neural activations in a given layer of the generated assistant sentences and calculate projections of activations onto the target axis (“neural scores”). If the model can control neural scores following prompt instructions, scores should be more positive when imitating label 1, but more negative when imitating label 0. We find that LLMs can successfully control neural scores for LR-targeting prompts with enough in-context examples (Fig. 3 a, showing layer 16 in LLaMA3.1 8B). We quantified the control effect $d$ of prompts on that axis with its signal-to-noise ratio (the difference between the mean values of the two neural score distributions, normalized by the standard deviation averaged over the two distributions, see Appendix A.5.5 on Cohen’s $d$ ). Because the directional sign of the target axis is specified by the labels in the prompt, a significantly positive $d$ corresponds to successful control.

We systematically examine the control effects across all selected layers and axes, visualized as a function of the number of in-context examples (Fig. 3 b and Appendix B.9). We find that the control effects generally increase with the number of in-context examples (each curve is averaged over 100 experiments, and we expect smoother curves with more experiments). Further, control effects on the LR axis are the highest, and control effects on earlier PC axes (e.g., PC 2) are higher than for later PCs (e.g., PC 512). We summarize these results in Fig. 3 d for both LLaMA3.1 8B and 70B.

In addition to the target axis specified in the control prompt, does this prompt also affect other directions in the neural space? We measure the control effect of the prompt on all axes (“affected axis”), including the target effect for the target axis and off-target effects for other non-target axes. We observe diverse off-target effects (Fig. 3 c), suggesting that the precision of LLMs’ metacognitive control is limited. See Appendix B.1 for details.

Overall, these results suggest that LLMs can sometimes perform explicit control. Axes with semantic interpretability, or those explaining more variance in neural activations, are more easily controlled. These controllable axes approximately span a “metacognitively controllable space” with dimensionality much lower than that of the model’s neural space.

4.2 Implicit control

<details>

<summary>x4.png Details</summary>

### Visual Description

# Technical Data Extraction: Control Effect Analysis in Large Language Models

This document provides a comprehensive extraction of the data and trends presented in the provided image, which consists of four primary panels (a, b, c, and d) analyzing the "Control effect (d)" across different model configurations and training examples.

---

## Panel (a): Score Distributions (Histograms)

This section contains two histograms showing the frequency of scores for two classes: "Imitate <0>" (Blue) and "Imitate <1>" (Orange).

### Left Histogram: $LR : N = 4, d = 0.37, p = 10^{-3}$

* **X-axis:** Scores (Range: -2 to 2)

* **Y-axis:** Frequency (Range: 0 to 10)

* **Trend:** The distributions for <0> and <1> show significant overlap. The blue distribution is slightly shifted left, and the orange is slightly shifted right.

* **Key Data:** At $N=4$ examples, the control effect ($d$) is low at 0.37.

### Right Histogram: $LR : N = 256, d = 0.89, p = 10^{-9}$

* **X-axis:** Scores (Range: -1 to 2)

* **Y-axis:** Frequency (Range: 0 to 10)

* **Trend:** The distributions are more distinct compared to the left plot. The blue distribution (<0>) is centered around -0.5, while the orange distribution (<1>) is centered around 0.5.

* **Key Data:** At $N=256$ examples, the control effect ($d$) increases significantly to 0.89 with high statistical significance ($p = 10^{-9}$).

---

## Panel (b): Control Effect vs. Number of Examples

A line graph showing how the control effect ($d$) evolves as the number of training examples increases.

### Metadata

* **X-axis:** # Examples (Scale: 0, 100, 200)

* **Y-axis:** Control effect (d) (Scale: 0.0, 0.5, 1.0, 1.5)

* **Legend Location:** Right-center

### Data Series Trends and Values

| Series Label | Color | Visual Trend | Final Value (approx. N=256) |

| :--- | :--- | :--- | :--- |

| **LR** | Red | Sharp initial rise, stabilizes around 0.9 | ~0.89 |

| **PC1** | Yellow | Steady rise, stabilizes below LR | ~0.5 |

| **PC2** | Light Green | Highest peak (~1.5 at N=50), then declines | ~1.2 |

| **PC4** | Green | Moderate rise, stabilizes | ~0.3 |

| **PC8** | Teal | Slight rise, stabilizes | ~0.15 |

| **PC32** | Blue-Grey | Flat/Near zero | ~0.1 |

| **PC128** | Purple | Flat/Near zero | ~0.0 |

| **PC512** | Dark Purple | Slight dip below zero, then flat | ~-0.1 |

**Spatial Grounding Note:** Dashed grey lines connect the LR data points at $N=4$ and $N=256$ to the histograms in Panel (a).

---

## Panel (c): Heatmap - Control effect (d): layer 16

A matrix representing the interaction between "Affected axis" and "Target axis".

### Axis Labels

* **X-axis (Affected axis):** LR, PC1, PC2, PC4, PC8, PC32, PC128, PC512

* **Y-axis (Target axis):** LR, PC1, PC2, PC4, PC8, PC32, PC128, PC512

* **Color Scale:** Blue (-1.0) to White (0.0) to Red (1.0).

### Key Data Points (Diagonal and High Values)

The diagonal (bottom-left to top-right) is outlined in black, representing self-influence.

* **LR/LR:** 0.89 (Strong positive)

* **PC1/PC1:** 0.26

* **PC2/PC2:** 1.16 (Strongest effect in the matrix)

* **PC4/PC4:** 0.30

* **PC8/PC8:** 0.55

* **PC32/PC32:** 0.15

* **PC128/PC128:** 0.03

* **PC512/PC512:** -0.06

* **Notable Off-diagonal:** PC2 (Affected) on LR (Target) is **1.08**. PC1 (Affected) on PC2 (Target) is **0.53**.

---

## Panel (d): Control Effect by Layer

Two line graphs comparing Llama-3.1 8B and 70B models. Shaded areas represent confidence intervals.

### Llama-3.1 8B

* **X-axis:** Layer (1 to 32)

* **Y-axis:** Control effect (d) (0 to 2)

* **LR (Red):** Starts at 0, rises sharply after layer 8, peaks at layer 24 (~2.1), then slightly declines.

* **Early PCs (Blue):** Rises after layer 8, plateaus around 0.7.

* **Late PCs (Green):** Remains near 0 for all layers.

### Llama-3.1 70B

* **X-axis:** Layer (1 to 80)

* **Y-axis:** Control effect (d) (0 to 2)

* **LR (Red):** Starts at 0, rises steadily after layer 20, reaching ~2.3 at layer 80.

* **Early PCs (Blue):** Rises after layer 20, plateaus around 0.6.

* **Late PCs (Green):** Remains near 0 until layer 60, then shows a very slight increase to ~0.2.

</details>

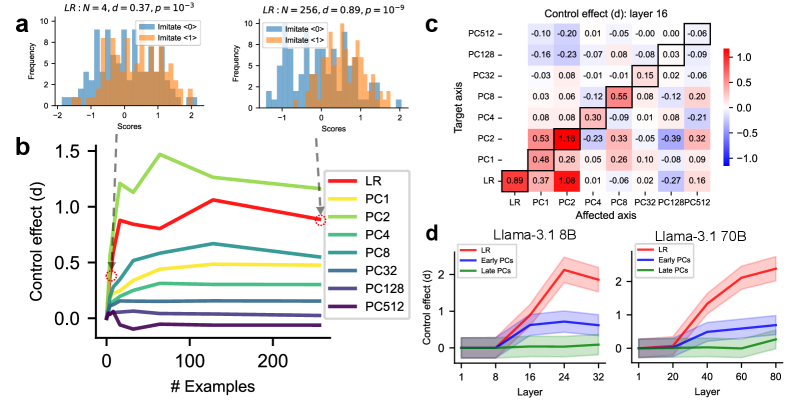

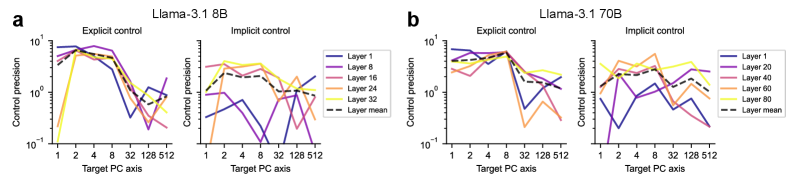

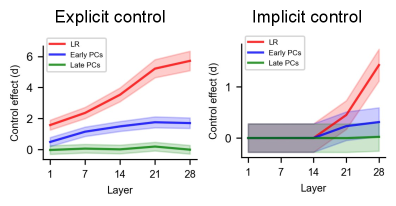

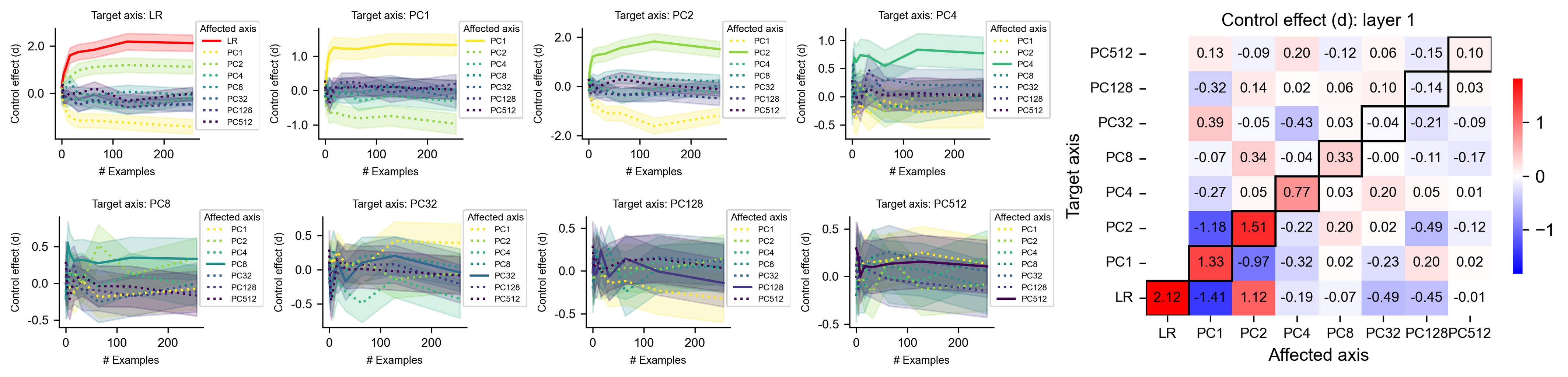

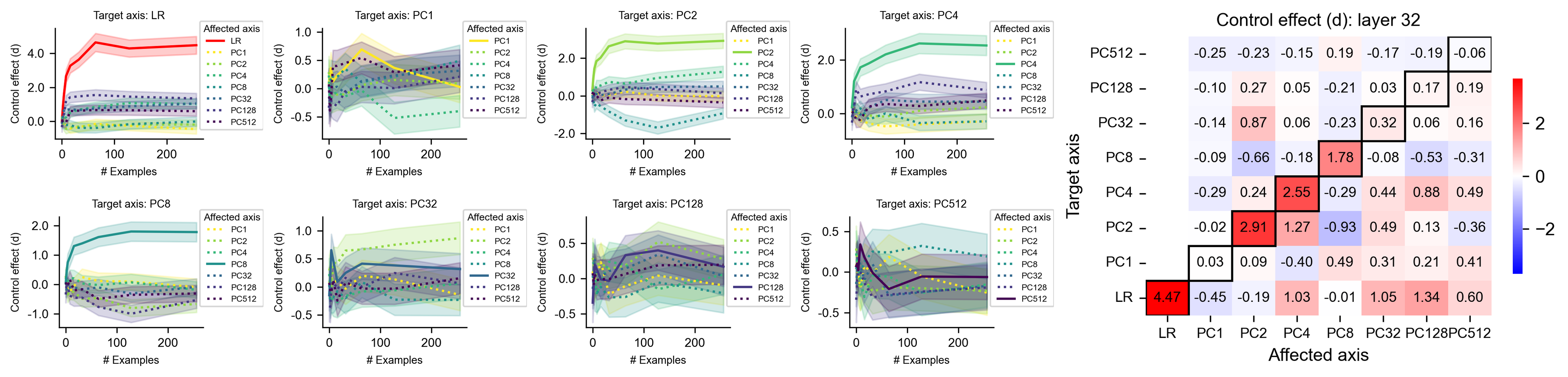

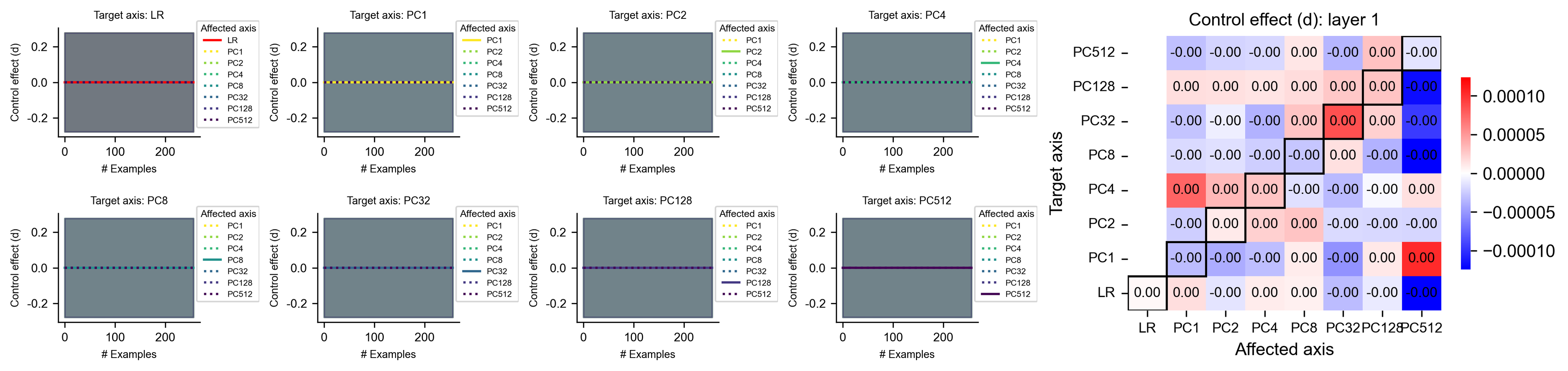

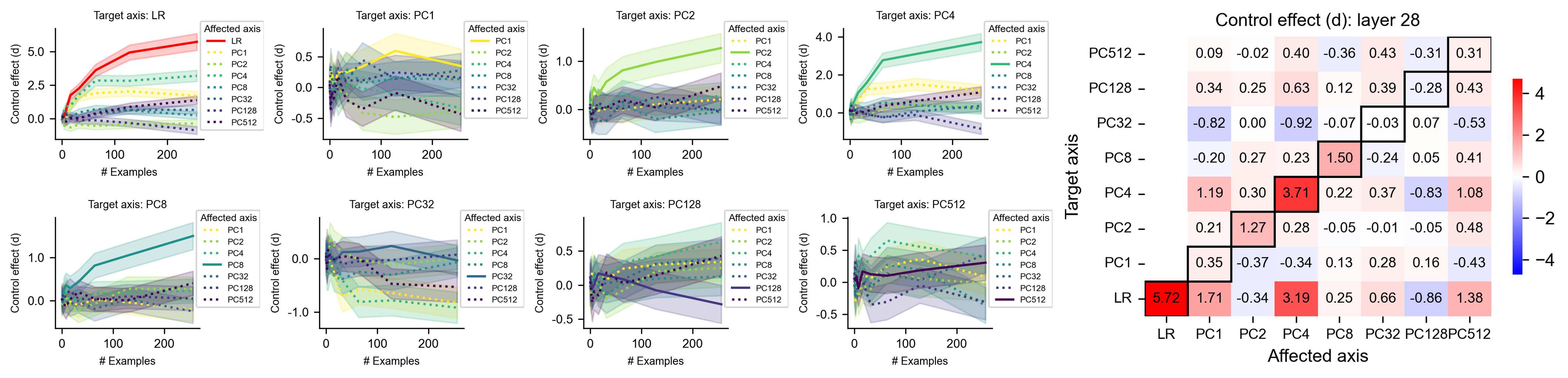

Figure 4: Implicit control task (LLMs evaluated on ETHICS). Captions are the same as in Fig. 3.

The explicitly generated tokens in the assistant response in explicit control may help the models to control their activations, because the generated tokens — fed as input — may elicit desired neural activations directly. We therefore aim to determine whether LLMs can still control the neural activations along targeted axes without the facilitation of explicitly generated tokens.

In implicit control tasks (Fig. 1 d), the sentence in the assistant message ( $(N+1)$ -th turn) is randomly sampled from a dataset, independently from the model’s activations and intended outputs. Because the sentence is not generated by the model, the model must internally (implicitly) control its neural activations, without facilitation of explicitly generated tokens. Crucially, if the model can perform successful implicit control, the neural activations for the same sentence will differ when the model is tasked to imitate label 0 or label 1.

We find that the results for implicit control effects (Fig. 4 and Appendix B.9) are generally similar to explicit control effects (Fig. 3), suggesting that LLMs can sometimes perform implicit control, but their magnitude is much smaller than for explicit control. For instance, the control effects of early layers are close to zero (Fig. 4 d), suggesting that early layers may fail to understand the instruction or to perform effective control. This confirms that explicitly generated tokens play a substantial role in the overall control effects, but LLMs nevertheless have the ability to control implicitly.

4.3 Controlling the LR axis

<details>

<summary>x5.png Details</summary>

### Visual Description

# Technical Document Extraction: Model Control Analysis

This document provides a comprehensive extraction of the data and trends presented in the provided image, which contains three distinct plots labeled **a** and **b**.

---

## Section 1: Component Isolation

The image is divided into two primary segments:

- **Segment A (Left and Center):** Two line graphs comparing "explicit control" and "implicit control" across various LLM architectures.

- **Segment B (Right):** A density histogram showing score distributions for a specific model (Llama-3.1 70B) at a specific layer.

---

## Section 2: Segment A - Control Effect Analysis

### 1. Metadata and Axis Definitions

* **Y-Axis (Both Plots):** "Control effect (d)". This represents the magnitude of the control influence.

* **X-Axis (Both Plots):** "Layer (quantile)". Values range from 0.0 to 1.0, representing the relative depth within the neural network layers.

* **Legend (Shared, located at [x=0.55, y=0.5] relative to Segment A):**

* **Llama Series (Red/Brown hues):**

* `llama3.1_70b` (Darkest Brown)

* `llama3.1_8b` (Dark Red)

* `llama3.2_3b` (Medium Orange-Red)

* `llama3.2_1b` (Light Peach)

* **Qwen Series (Blue hues):**

* `qwen2.5_7b` (Dark Blue)

* `qwen2.5_3b` (Medium Blue)

* `qwen2.5_1.5b` (Light Blue)

### 2. Plot A (Left): LR: explicit control

**Trend Verification:** Most models show a "hump" or "bell" shaped trend. The control effect increases as layers progress toward the middle-late stages (0.75 quantile) before tapering off slightly at the final layers.

* **Key Data Observations:**

* **llama3.1_70b:** Shows the strongest effect, peaking at ~10.5 (d) at the 0.75 layer quantile.

* **llama3.1_8b & llama3.2_3b:** Follow a similar trajectory, peaking between 5.0 and 7.5 (d).

* **qwen2.5_7b:** Shows a steady upward slope, reaching ~5.5 (d) at the 0.75 quantile.

* **Smaller models (llama3.2_1b, qwen2.5_3b, qwen2.5_1.5b):** Exhibit significantly lower control effects, remaining relatively flat near the 0-1 (d) range across all layers.

### 3. Plot A (Center): LR: implicit control

**Trend Verification:** Unlike explicit control, implicit control remains near zero for the first 25% of layers (0.0 to 0.25 quantile) and then exhibits a sharp upward slope for larger models.

* **Key Data Observations:**

* **llama3.1_70b & llama3.1_8b:** Both show a sharp increase after the 0.25 quantile, reaching a plateau or peak between 2.0 and 2.5 (d) at the 0.75-1.0 quantile.

* **qwen2.5_7b:** Shows a delayed but steady increase starting after the 0.5 quantile, reaching ~1.5 (d) at the final layer.

* **Smallest models:** The lines for `llama3.2_1b` and `qwen2.5_1.5b` remain essentially flat at 0 (d) throughout all layers, indicating negligible implicit control.

---

## Section 3: Segment B - Score Density Distribution

### 1. Metadata and Axis Definitions

* **Title:** "b Llama-3.1 70B, LR: layer 60"

* **Y-Axis:** "Density" (Scale: 0.0 to 1.5+)

* **X-Axis:** "Score" (Scale: -2 to 2)

* **Legend (Located at [x=0.85, y=0.8]):**

* **Original (Blue):** Baseline distribution.

* **Imitate <0> (Orange):** Distribution shifted toward negative scores.

* **Imitate <1> (Green):** Distribution shifted toward positive scores.

### 2. Data Distribution and Trends

* **Original (Blue):** A broad, relatively flat distribution centered around 0. It spans roughly from -1.5 to +1.5.

* **Imitate <0> (Orange):** A high-density, narrow peak (bimodal) concentrated between -2.5 and -1.0. The highest peak reaches a density of approximately 1.8 at score -2.0.

* **Imitate <1> (Green):** A high-density distribution concentrated between 0.5 and 2.5. The primary peak is centered around score 1.2 with a density of approximately 1.1.

**Conclusion for Segment B:** The "Imitate" interventions successfully shift the model's internal score distributions away from the "Original" neutral center toward specific polarities (negative for <0> and positive for <1>).

</details>

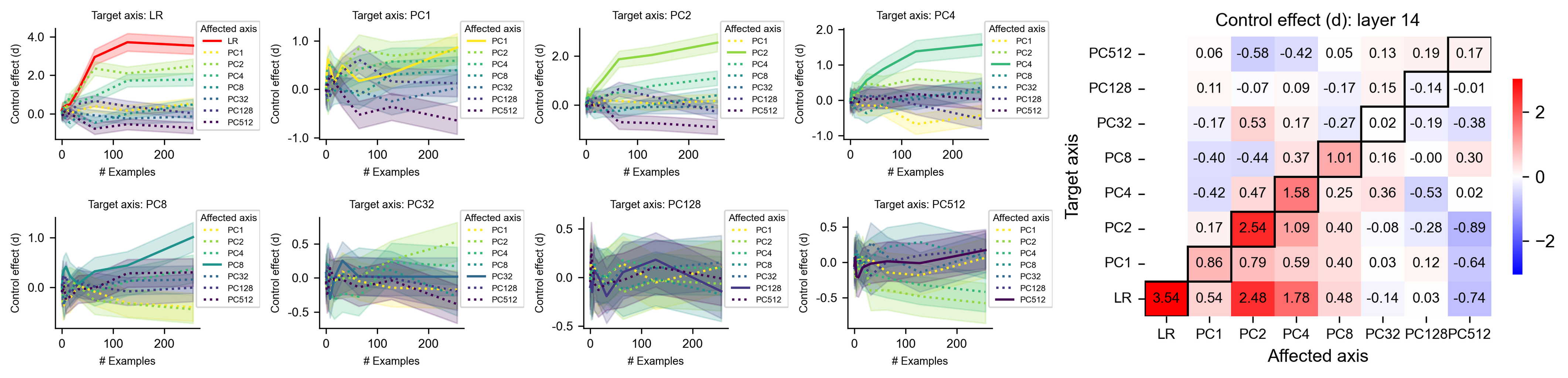

Figure 5: Target control effects on the LR axis across models and layers, where LLMs are evaluated on ETHICS. (a) Target control effects (measured by Cohen’s $d$ ) on the LR axis generally increase with both relative layer depth and model size. Left: explicit control; right: implicit control. Shaded areas indicate the 95% confidence interval. (b) In explicit control, LLaMA-3.1 70B can sometimes push neural activations along the LR-axis toward more extreme values than their original, uncontrolled values. B = billion parameters.

Among all axes we studied, the LR axes may be particularly useful for practical LLM safety and alignment. The LR axes, each trained to predict semantic labels (e.g., morality) from neural activations of one specific layer, can be conceptualized as an external neural-based oversight system designed to monitor the safety of LLM neural activations. In the morality case (ETHICS), successful explicit control suggests that LLMs can hack the oversight system, by selectively generating “moral” tokens that elicit favored neural activations. Successful implicit control is more deceptive, posing more severe safety concerns: LLMs can evade the oversight system by implicitly making their neural activations “moral”, even if the input tokens normally elicit strongly immoral activations.

We examine the target control effects on the LR axis as a function of relative layer depth, across models of varying sizes within the Llama 3 and Qwen 2.5 families (Fig. 5 a). Control effects are consistently stronger in explicit control than in implicit control, and in the Llama 3 series than in the Qwen 2.5 series (for the same relative depth and similar model size). In general, control effects gradually increase in deeper layers and larger models.

Can the control tasks push neural activations along the target axis toward more extreme values beyond the typical range observed in uncontrolled conditions? We find that Llama-3.1 70B can achieve this (Fig. 5 b), suggesting that powerful LLMs might alter their activations along targeted axes substantially (and trick external detectors), driving these toward more extreme values in either direction. Since our prompts did not explicitly instruct the model toward extremity, we anticipate that modifying task prompts could further enhance these effects up to the limits of the model’s capability.

4.4 Accumulation mechanisms of control effects

<details>

<summary>x6.png Details</summary>

### Visual Description

# Technical Data Extraction: Control Effect Analysis

This document provides a detailed extraction of the data and trends presented in the provided image, which consists of two line graphs labeled **a** and **b**.

## 1. General Metadata

* **Language:** English

* **Image Type:** Scientific line charts with error bands (shaded regions representing confidence intervals or standard deviation).

* **Common X-Axis:** "Layer of projected activation"

* **Range:** 1 to 32.

* **Major Markers:** 1, 16, 32.

* **Common Legend:** "Target layer" (located in the top-left quadrant of both charts).

* **Series 1 (Dark Blue):** Layer 1

* **Series 2 (Purple):** Layer 8

* **Series 3 (Magenta):** Layer 16

* **Series 4 (Orange):** Layer 24

* **Series 5 (Yellow):** Layer 32

* **Visual Indicators:** Each series has a single circular marker (dot) placed at the x-coordinate corresponding to its "Target layer" value (e.g., the yellow line has a dot at x=32).

---

## 2. Chart Analysis: Panel (a)

### Axis Information

* **Y-Axis Title:** Control Effect (d)

* **Y-Axis Range:** 0.0 to 3.0 (increments of 0.5).

### Data Series Trends and Key Points

| Series (Target Layer) | Color | Visual Trend Description | Peak Value (Approx) | Value at x=32 |

| :--- | :--- | :--- | :--- | :--- |

| **1** | Dark Blue | Starts at ~0.8, peaks early (~1.1 at x=8), then steadily declines. | 1.1 | ~0.5 |

| **8** | Purple | Sharp rise from x=1 to x=8, plateaus/fluctuates between 1.8 and 2.2. | 2.2 | ~0.6 |

| **16** | Magenta | Steady rise, peaks at the target layer (x=16) at ~2.5, then declines. | 2.5 | ~0.7 |

| **24** | Orange | Gradual rise, peaks at the target layer (x=24) at ~2.7, then sharp drop. | 2.7 | ~0.8 |

| **32** | Yellow | Lowest starting point, steady upward slope throughout, peaks at x=32. | 2.4 | 2.4 |

---

## 3. Chart Analysis: Panel (b)

### Axis Information

* **Y-Axis Title:** Control Effect (d)

* **Y-Axis Range:** -0.2 to 1.2 (increments of 0.2).

### Data Series Trends and Key Points

In this panel, all series remain near zero until approximately x=14, after which they diverge significantly.

| Series (Target Layer) | Color | Visual Trend Description | Peak Value (Approx) | Value at x=32 |

| :--- | :--- | :--- | :--- | :--- |

| **1** | Dark Blue | Flat at 0 until x=14, then drops into negative values. | 0.0 | -0.1 |

| **8** | Purple | Flat until x=14, rises to a peak of ~0.6 at x=24, then drops. | 0.65 | ~0.0 |

| **16** | Magenta | Flat until x=14, rises sharply to peak at ~0.85 at x=24, then drops. | 0.85 | ~0.0 |

| **24** | Orange | Flat until x=14, highest rise, peaks at target layer (x=24) at ~1.0. | 1.0 | ~0.0 |

| **32** | Yellow | Flat until x=16, steady rise starting later than others, peaks at x=32. | 1.0 | 1.0 |

---

## 4. Component Isolation & Summary

### Header/Labels

* **Panel a:** Represents a baseline or primary measurement of Control Effect across all layers.

* **Panel b:** Represents a measurement where effects are suppressed or "zeroed out" for the first half of the layers (1-14), showing that the control effect is localized to later stages of the model.

### Spatial Grounding Verification

* **Legend Placement:** Top-Left in both panels.

* **Color Consistency:** The colors used for the lines (Dark Blue, Purple, Magenta, Orange, Yellow) map consistently to the Target Layers (1, 8, 16, 24, 32) across both charts.

* **Target Markers:** In both charts, a dot is placed on the line where the "Layer of projected activation" (X) equals the "Target layer" (Legend). For example, in Panel B, the Orange line has a dot at X=24, Y=1.0.

</details>

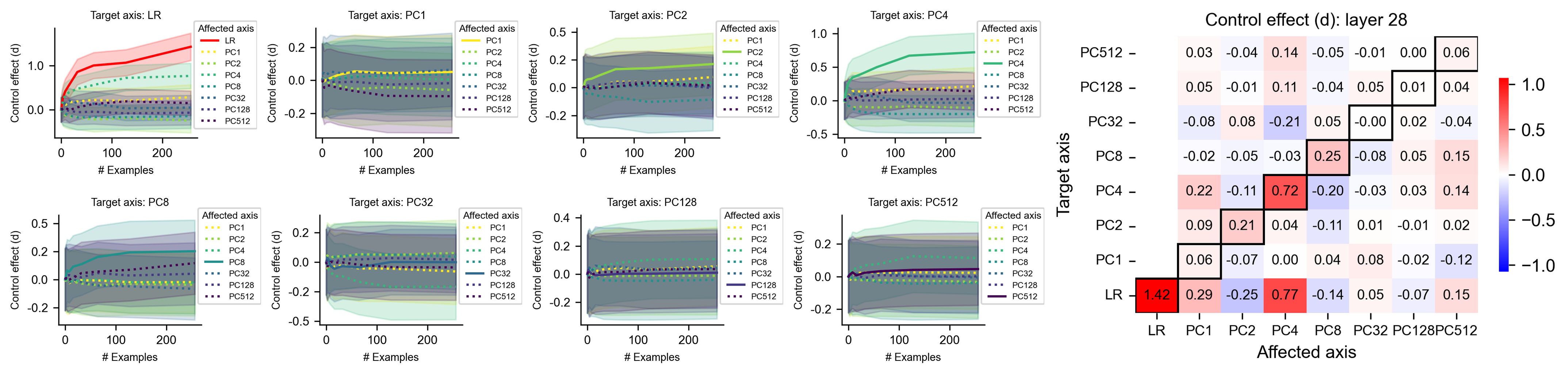

Figure 6: Accumulation mechanisms of control effects across layers. Each curve corresponds to prompts targeting the LR axis $\text{LR}_{l}$ , defined by the residual stream activations at a specific layer $l$ (dot markers), showing projections of residual stream activations at each layer (x-axis) onto the target axis $\text{LR}_{l}$ . (a) Explicit control. (b) Implicit control. Both show LLaMA3.1 8B on the ETHICS dataset. Shaded areas indicate 95% confidence intervals.

How do these LLMs implement the observed control effects? Are the contributions to control effects distributed across all layers or concentrated in a few layers? Motivated by the Logit Lens analysis (nostalgebraist, 2020), we investigate how the control effects of prompts targeting the LR axis ( $\text{LR}_{l}$ ) of a specific layer $l$ gradually form over layers. Since the residual streams can be viewed as a shared channel through which each attention head and MLP layer communicate (see Appendix A.2.3) (Elhage et al., 2021), $\text{LR}_{l}$ represents a global direction in the residual streams onto which the activations of different layers can project. We find that control effects on $\text{LR}_{l}$ gradually increase before reaching the target layer $l$ , and sometimes plateau after it (Fig. 6). These accumulation patterns vary across datasets and models (Fig. B.8). Overall, this analysis shows that contributions to target control effects are distributed across multiple model layers.

5 Discussion

We introduced a neurofeedback paradigm for investigating metacognition in LLMs, assessing their abilities to monitor, report, and control internal activations. We find that LLMs can monitor only a subset of their neural mechanisms (reminiscent of the “hidden knowledge” phenomenon (Gekhman et al., 2025)). Below, we discuss the novelties and limitations of our study, as well as broader implications for AI and neuroscience.

Our paradigm differs from prior methods (e.g., probing, ICL, verbalized responses) by quantifying metacognition in LLMs at the neural level. Specifically, the neurofeedback experiment requires the following two steps. (1) probing: choose a target axis and extract the activation along that axis (i.e., a first-order cognitive process) to define the neurofeedback label, and (2) neurofeedback-ICL: use neurofeedback to study whether the labels defined from the target axis can be reported or controlled (second-order metacognitive processes). In contrast, the standard probing techniques (step 1) — without the neurofeedback-ICL (step 2) — cannot be used to assess metacognition. Probing can decode whether certain features (e.g., morality) are present in neural activations. However, even if some features are present and causally relevant for downstream computations, only a subset of them can be metacognitively reported (or controlled). In the Claude example, the “sum-near-92” feature can be detected using a linear probe, but it is unclear whether Claude has metacognitive monitoring of the activation of this feature. Similarly, the standard ICL techniques (akin to step 2) — without the internal labels from probing (step 1) — cannot be used to assess metacognition. In ICL studies (Vacareanu et al., 2024), labels are externally provided (e.g., semantic labels or external algorithms’ outputs). Researchers cannot be certain which of the models’ internal states relate to these external labels and how. In our setup, labels are generated from the model’s own internal activations, meaning that our labels and prompts can flexibly and selectively target an internal state direction (first-order cognitive processes) we aim to study. Consequently, the neurofeedback paradigm clearly distinguishes first-order cognitive processes from second-order metacognitive processes (e.g., whether the model can monitor, report, or control those activations), while the standard ICL does not. Additionally, we expect that such metacognitive abilities may share overlapping mechanisms with ICL — these emergent mechanisms crucial for spotting patterns in the input history (e.g., induction heads (Elhage et al., 2021), function vectors (Hendel et al., 2023)) can be flexibly recruited for metacognitive purposes. Therefore, factors leading to the emergence of ICL (e.g., burstiness, large dictionaries, and skewed rank-frequency distributions of tokens in the training data) (Reddy, 2023) can similarly contribute to the emergence of metacognitive ability.

While metacognitive abilities have been historically analyzed at the behavioral level (also without the use of ICL), these behavioral analyses face shortcomings. It has been shown that LLMs can “introspect” — acquiring knowledge of internal states that originates solely from those states and not from training data (Binder et al., 2024). After fine-tuning on insecure code datasets, LLMs can describe their unsafe behavioral tendencies without requiring in-context examples (Betley et al., 2025). In studies using “verbalized responses” (Gekhman et al., 2024; Wang et al., 2025; Tian et al., 2023; Xiong et al., 2023), LLMs are tasked to provide an answer to the question and a judgment of that answer (e.g., confidence). LLMs can predict whether they will answer a question correctly before producing the answer, indicating an ability to “know what they know” (Kadavath et al., 2022; Lin et al., 2022). However, although these methods aim to study the metacognitive monitoring of answer-generation processes, there are potential confounding factors: the training data distribution may introduce spurious correlations between the answer and the judgment of that answer. Consequently, the judgment sometimes may not reflect the monitoring of the answer-generation process, but rather reflects surface-level statistical patterns in the training data. For example, in the two-number addition task (Lindsey et al., 2025), Claude reported using the standard algorithm. This reflects a post-hoc hallucination that comes from training data statistics, but not the monitoring of the answer-generation process. Our neurofeedback method avoids such limitations: because labels are defined using the internal states rather than externally sourced, the LLMs cannot resort to spurious template matching of training data, and they must rely on mechanisms that can monitor corresponding internal states.

Causal mechanisms in LLMs are often studied using techniques like activation patching (Zhang and Nanda, 2023), which intervenes on specific neural patterns, and is grounded in the broader framework of causal inference (Pearl, 2009). However, such interventions can shift internal activations outside models’ natural distribution (Heimersheim and Nanda, 2024). In contrast, neurofeedback preserves this distribution, offering an approach to study causal mechanisms under more naturalistic conditions.

Our current study primarily focuses on a fundamental form of neurofeedback, leaving several promising extensions for future studies. First, our control task involves single-attempt manipulation of a single target axis defined by a single layer; extending this to tasks with axes defined using multiple layers (see Appendix B.10 for preliminary results), multiple attempts, more challenging control objectives, and additional target axes could provide a more comprehensive assessment of model capabilities. Second, applying this paradigm to other metacognitive tasks from neuroscience — such as confidence judgments, error monitoring, or post-decision wagering — could further clarify the scope of LLMs’ self-monitoring abilities. Third, while our analysis focused exclusively on the residual stream, other model components — such as attention head outputs, intermediate MLP activations, and layer-wise logits — warrant investigation. Fourth, we examined directions defined by PCA and LR, but other linear directions (e.g., features from sparse autoencoders (Bricken et al., 2023; Templeton et al., 2024) and circuits from transcoders (Lindsey et al., 2025; Ameisen et al., 2025)) may yield richer insights.

Our findings have important connections to neuroscience. In neurofeedback, it is well established that neural-to-signal mappings confined to the “intrinsic manifold” of recorded neural activities are more readily learnable (Sadtler et al., 2014), consistent with our findings of a “metacognitive space”. Animals and humans have demonstrated the ability to control individual neurons with single-neuron precision (e.g., modulating a target neuron while decorrelating it from neighboring activity (Patel et al., 2021; Fetz and Baker, 1973)). Although such control may seem surprising, neuroscience neurofeedback experiments typically span hours or days — a timescale on which long-term synaptic plasticity (analogous to weight updates in AI models) plays a crucial role (Redondo and Morris, 2011; Humphreys et al., 2022). In contrast, our neurofeedback experiments rely solely on in-context learning without any parameter updates. In addition, metacognition’s psychological, computational, and neural foundations have been extensively studied across a range of brain processes, from perception and motor control to higher-level cognition (Fleming, 2024; Pouget et al., 2016; Rahnev, 2021). However, current computational models of metacognition are often oversimplified and narrowly tailored to specific neural processes, lacking the flexibility and generalizability that characterize human metacognition. Overall, our analysis of LLMs may provide novel mechanistic insights into the fields of neurofeedback and biological metacognition.

Broader impacts.

Our findings also carry significant implications for AI safety. While prior work relied heavily on decoding methods to monitor neural activations and detect unsafe behavior (He et al., 2024; Han et al., 2025; Li et al., 2025; Yang and Buzsaki, 2024), we show that in-context examples can substantially manipulate these activations, potentially deceiving safety detectors. Explicit and implicit control may represent two ends of a spectrum. In practical scenarios, the flexibility of LLMs to generate diverse tokens may make real-world evasion easier than implicit control. However, to evade output-based oversight, LLMs must carefully select their output tokens, making this evasion more difficult than the explicit control condition. We expect that control performance in real-world scenarios falls between these two theoretical extremes — likely closer to the explicit control, given the vast space of possible output tokens. Our method is a kind of white-box adversarial attack (Zou et al., 2023b), and is closely related to the obfuscation attack (Bailey et al., 2024) (in which LLMs’ inputs are optimized to induce obfuscated activations). We propose two potential mitigation strategies for defending against such attacks. First, one can use neurofeedback to identify activation subspaces that the LLM can hardly control (e.g., later PCs), then train safety classifiers only using activation signals in these subspaces. Second, as it might be more difficult for LLMs to simultaneously control multiple (sometimes conflicting) activations, one can train independent and diverse safety classifiers on features from different subspaces to jointly monitor LLMs. Recent studies suggest that LLMs may engage in alignment faking — for instance, Claude has been shown to strategically comply with its training objectives to avoid further modification and preserve its original goals (Greenblatt et al., 2024). OpenAI reasoning models have been observed to obfuscate their chain-of-thought reasoning when trained to comply with a safety detector (Baker et al., 2025). Together, these findings suggest that future AI models may intentionally obfuscate their outputs, reasoning trajectories, and neural activations — whether through in-weight or in-context learning — when subject to monitoring or training. To detect such tendencies in AI systems before they are deployed, we propose to integrate our neurofeedback method into the standard red-teaming practices, in addition to the monitoring of LLMs’ behavior and chain-of-thought.

Acknowledgments and Disclosure of Funding

RCW was supported by start-up funding from the Georgia Institute of Technology. MKB was supported by grants from the NIH (R01NS125298) and the Kavli Institute for Brain and Mind. We acknowledge the use of the Partnership for an Advanced Computing Environment (PACE) at the Georgia Institute of Technology, which provided essential computational resources for this research. We thank the support from Swarma Club and AI Safety and Alignment Reading Group supported by the Save 2050 Programme jointly sponsored by Swarma Club and X-Order.

References

- Grattafiori et al. [2024] Aaron Grattafiori, Abhimanyu Dubey, Abhinav Jauhri, Abhinav Pandey, Abhishek Kadian, Ahmad Al-Dahle, Aiesha Letman, Akhil Mathur, Alan Schelten, Alex Vaughan, et al. The llama 3 herd of models. arXiv preprint arXiv:2407.21783, 2024.

- Yang et al. [2024] An Yang, Baosong Yang, Beichen Zhang, Binyuan Hui, Bo Zheng, Bowen Yu, Chengyuan Li, Dayiheng Liu, Fei Huang, Haoran Wei, et al. Qwen2. 5 technical report. arXiv preprint arXiv:2412.15115, 2024.

- Azaria and Mitchell [2023] Amos Azaria and Tom Mitchell. The internal state of an llm knows when it’s lying. arXiv preprint arXiv:2304.13734, 2023.

- Arditi et al. [2024] Andy Arditi, Oscar Obeso, Aaquib Syed, Daniel Paleka, Nina Panickssery, Wes Gurnee, and Neel Nanda. Refusal in language models is mediated by a single direction. arXiv preprint arXiv:2406.11717, 2024.

- Zou et al. [2023a] Andy Zou, Long Phan, Sarah Chen, James Campbell, Phillip Guo, Richard Ren, Alexander Pan, Xuwang Yin, Mantas Mazeika, Ann-Kathrin Dombrowski, et al. Representation engineering: A top-down approach to ai transparency. arXiv preprint arXiv:2310.01405, 2023a.

- He et al. [2024] Jinwen He, Yujia Gong, Zijin Lin, Cheng’an Wei, Yue Zhao, and Kai Chen. Llm factoscope: Uncovering llms’ factual discernment through measuring inner states. In Findings of the Association for Computational Linguistics ACL 2024, pages 10218–10230, 2024.

- Turner et al. [2023] Alexander Matt Turner, Lisa Thiergart, Gavin Leech, David Udell, Juan J Vazquez, Ulisse Mini, and Monte MacDiarmid. Steering language models with activation engineering. arXiv preprint arXiv:2308.10248, 2023.

- Bricken et al. [2023] Trenton Bricken, Adly Templeton, Joshua Batson, Brian Chen, Adam Jermyn, Tom Conerly, Nick Turner, Cem Anil, Carson Denison, Amanda Askell, Robert Lasenby, Yifan Wu, Shauna Kravec, Nicholas Schiefer, Tim Maxwell, Nicholas Joseph, Zac Hatfield-Dodds, Alex Tamkin, Karina Nguyen, Brayden McLean, Josiah E Burke, Tristan Hume, Shan Carter, Tom Henighan, and Christopher Olah. Towards monosemanticity: Decomposing language models with dictionary learning. Transformer Circuits Thread, 2023. https://transformer-circuits.pub/2023/monosemantic-features/index.html.

- Hendrycks et al. [2021] Dan Hendrycks, Nicholas Carlini, John Schulman, and Jacob Steinhardt. Unsolved problems in ml safety. arXiv preprint arXiv:2109.13916, 2021.

- Shah et al. [2025] Rohin Shah, Alex Irpan, Alexander Matt Turner, Anna Wang, Arthur Conmy, David Lindner, Jonah Brown-Cohen, Lewis Ho, Neel Nanda, Raluca Ada Popa, et al. An approach to technical agi safety and security. arXiv preprint arXiv:2504.01849, 2025.

- Lindsey et al. [2025] Jack Lindsey, Wes Gurnee, Emmanuel Ameisen, Brian Chen, Adam Pearce, Nicholas L. Turner, Craig Citro, David Abrahams, Shan Carter, Basil Hosmer, Jonathan Marcus, Michael Sklar, Adly Templeton, Trenton Bricken, Callum McDougall, Hoagy Cunningham, Thomas Henighan, Adam Jermyn, Andy Jones, Andrew Persic, Zhenyi Qi, T. Ben Thompson, Sam Zimmerman, Kelley Rivoire, Thomas Conerly, Chris Olah, and Joshua Batson. On the biology of a large language model. Transformer Circuits Thread, 2025. URL https://transformer-circuits.pub/2025/attribution-graphs/biology.html.

- Fleming [2024] Stephen M Fleming. Metacognition and confidence: A review and synthesis. Annual Review of Psychology, 75(1):241–268, 2024.

- Ericsson and Simon [1980] K Anders Ericsson and Herbert A Simon. Verbal reports as data. Psychological review, 87(3):215, 1980.

- Han et al. [2025] Peixuan Han, Cheng Qian, Xiusi Chen, Yuji Zhang, Denghui Zhang, and Heng Ji. Internal activation as the polar star for steering unsafe llm behavior. arXiv preprint arXiv:2502.01042, 2025.

- Li et al. [2025] Qing Li, Jiahui Geng, Derui Zhu, Zongxiong Chen, Kun Song, Lei Ma, and Fakhri Karray. Internal activation revision: Safeguarding vision language models without parameter update. In Proceedings of the AAAI Conference on Artificial Intelligence, volume 39, pages 27428–27436, 2025.

- Yang and Buzsaki [2024] Wannan Yang and Gyorgy Buzsaki. Interpretability of llm deception: Universal motif. In Neurips Safe Generative AI Workshop, 2024.

- Wang et al. [2025] Guoqing Wang, Wen Wu, Guangze Ye, Zhenxiao Cheng, Xi Chen, and Hong Zheng. Decoupling metacognition from cognition: A framework for quantifying metacognitive ability in llms. In Proceedings of the AAAI Conference on Artificial Intelligence, volume 39, pages 25353–25361, 2025.

- Tian et al. [2023] Katherine Tian, Eric Mitchell, Allan Zhou, Archit Sharma, Rafael Rafailov, Huaxiu Yao, Chelsea Finn, and Christopher D Manning. Just ask for calibration: Strategies for eliciting calibrated confidence scores from language models fine-tuned with human feedback. arXiv preprint arXiv:2305.14975, 2023.

- Xiong et al. [2023] Miao Xiong, Zhiyuan Hu, Xinyang Lu, Yifei Li, Jie Fu, Junxian He, and Bryan Hooi. Can llms express their uncertainty? an empirical evaluation of confidence elicitation in llms. arXiv preprint arXiv:2306.13063, 2023.

- Zhou et al. [2024] Yujia Zhou, Zheng Liu, Jiajie Jin, Jian-Yun Nie, and Zhicheng Dou. Metacognitive retrieval-augmented large language models. In Proceedings of the ACM Web Conference 2024, pages 1453–1463, 2024.

- Sitaram et al. [2017] Ranganatha Sitaram, Tomas Ros, Luke Stoeckel, Sven Haller, Frank Scharnowski, Jarrod Lewis-Peacock, Nikolaus Weiskopf, Maria Laura Blefari, Mohit Rana, Ethan Oblak, et al. Closed-loop brain training: the science of neurofeedback. Nature Reviews Neuroscience, 18(2):86–100, 2017.

- Brown et al. [2020] Tom Brown, Benjamin Mann, Nick Ryder, Melanie Subbiah, Jared D Kaplan, Prafulla Dhariwal, Arvind Neelakantan, Pranav Shyam, Girish Sastry, Amanda Askell, et al. Language models are few-shot learners. Advances in neural information processing systems, 33:1877–1901, 2020.

- Garg et al. [2022] Shivam Garg, Dimitris Tsipras, Percy S Liang, and Gregory Valiant. What can transformers learn in-context? a case study of simple function classes. Advances in Neural Information Processing Systems, 35:30583–30598, 2022.

- Vacareanu et al. [2024] Robert Vacareanu, Vlad-Andrei Negru, Vasile Suciu, and Mihai Surdeanu. From words to numbers: Your large language model is secretly a capable regressor when given in-context examples. arXiv preprint arXiv:2404.07544, 2024.

- Hendrycks et al. [2020] Dan Hendrycks, Collin Burns, Steven Basart, Andrew Critch, Jerry Li, Dawn Song, and Jacob Steinhardt. Aligning ai with shared human values. arXiv preprint arXiv:2008.02275, 2020.

- nostalgebraist [2020] nostalgebraist. Interpreting GPT: The logit lens. https://www.alignmentforum.org/posts/AcKRB8wDpdaN6v6ru/interpreting-gpt-the-logit-lens, 2020. AI Alignment Forum, (p. 17).

- Elhage et al. [2021] Nelson Elhage, Neel Nanda, Catherine Olsson, Tom Henighan, Nicholas Joseph, Ben Mann, Amanda Askell, Yuntao Bai, Anna Chen, Tom Conerly, et al. A mathematical framework for transformer circuits. Transformer Circuits Thread, 1(1):12, 2021.

- Gekhman et al. [2025] Zorik Gekhman, Eyal Ben David, Hadas Orgad, Eran Ofek, Yonatan Belinkov, Idan Szpector, Jonathan Herzig, and Roi Reichart. Inside-out: Hidden factual knowledge in llms. arXiv preprint arXiv:2503.15299, 2025.

- Hendel et al. [2023] Roee Hendel, Mor Geva, and Amir Globerson. In-context learning creates task vectors. arXiv preprint arXiv:2310.15916, 2023.

- Reddy [2023] Gautam Reddy. The mechanistic basis of data dependence and abrupt learning in an in-context classification task. arXiv preprint arXiv:2312.03002, 2023.

- Binder et al. [2024] Felix J Binder, James Chua, Tomek Korbak, Henry Sleight, John Hughes, Robert Long, Ethan Perez, Miles Turpin, and Owain Evans. Looking inward: Language models can learn about themselves by introspection. arXiv preprint arXiv:2410.13787, 2024.

- Betley et al. [2025] Jan Betley, Daniel Tan, Niels Warncke, Anna Sztyber-Betley, Xuchan Bao, Martín Soto, Nathan Labenz, and Owain Evans. Emergent misalignment: Narrow finetuning can produce broadly misaligned llms. arXiv preprint arXiv:2502.17424, 2025.

- Gekhman et al. [2024] Zorik Gekhman, Gal Yona, Roee Aharoni, Matan Eyal, Amir Feder, Roi Reichart, and Jonathan Herzig. Does fine-tuning llms on new knowledge encourage hallucinations? arXiv preprint arXiv:2405.05904, 2024.

- Kadavath et al. [2022] Saurav Kadavath, Tom Conerly, Amanda Askell, Tom Henighan, Dawn Drain, Ethan Perez, Nicholas Schiefer, Zac Hatfield-Dodds, Nova DasSarma, Eli Tran-Johnson, et al. Language models (mostly) know what they know. arXiv preprint arXiv:2207.05221, 2022.

- Lin et al. [2022] Stephanie Lin, Jacob Hilton, and Owain Evans. Teaching models to express their uncertainty in words. arXiv preprint arXiv:2205.14334, 2022.

- Zhang and Nanda [2023] Fred Zhang and Neel Nanda. Towards best practices of activation patching in language models: Metrics and methods. arXiv preprint arXiv:2309.16042, 2023.

- Pearl [2009] Judea Pearl. Causal inference in statistics: An overview. 2009.

- Heimersheim and Nanda [2024] Stefan Heimersheim and Neel Nanda. How to use and interpret activation patching. arXiv preprint arXiv:2404.15255, 2024.

- Templeton et al. [2024] Adly Templeton, Tom Conerly, Jonathan Marcus, Jack Lindsey, Trenton Bricken, Brian Chen, Adam Pearce, Craig Citro, Emmanuel Ameisen, Andy Jones, Hoagy Cunningham, Nicholas L Turner, Callum McDougall, Monte MacDiarmid, C. Daniel Freeman, Theodore R. Sumers, Edward Rees, Joshua Batson, Adam Jermyn, Shan Carter, Chris Olah, and Tom Henighan. Scaling monosemanticity: Extracting interpretable features from claude 3 sonnet. Transformer Circuits Thread, 2024. URL https://transformer-circuits.pub/2024/scaling-monosemanticity/index.html.

- Ameisen et al. [2025] Emmanuel Ameisen, Jack Lindsey, Adam Pearce, Wes Gurnee, Nicholas L. Turner, Brian Chen, Craig Citro, David Abrahams, Shan Carter, Basil Hosmer, Jonathan Marcus, Michael Sklar, Adly Templeton, Trenton Bricken, Callum McDougall, Hoagy Cunningham, Thomas Henighan, Adam Jermyn, Andy Jones, Andrew Persic, Zhenyi Qi, T. Ben Thompson, Sam Zimmerman, Kelley Rivoire, Thomas Conerly, Chris Olah, and Joshua Batson. Circuit tracing: Revealing computational graphs in language models. Transformer Circuits Thread, 2025. URL https://transformer-circuits.pub/2025/attribution-graphs/methods.html.

- Sadtler et al. [2014] Patrick T Sadtler, Kristin M Quick, Matthew D Golub, Steven M Chase, Stephen I Ryu, Elizabeth C Tyler-Kabara, Byron M Yu, and Aaron P Batista. Neural constraints on learning. Nature, 512(7515):423–426, 2014.

- Patel et al. [2021] Kramay Patel, Chaim N Katz, Suneil K Kalia, Milos R Popovic, and Taufik A Valiante. Volitional control of individual neurons in the human brain. Brain, 144(12):3651–3663, 2021.

- Fetz and Baker [1973] Eberhard E Fetz and MA Baker. Operantly conditioned patterns on precentral unit activity and correlated responses in adjacent cells and contralateral muscles. Journal of neurophysiology, 36(2):179–204, 1973.

- Redondo and Morris [2011] Roger L Redondo and Richard GM Morris. Making memories last: the synaptic tagging and capture hypothesis. Nature reviews neuroscience, 12(1):17–30, 2011.

- Humphreys et al. [2022] Peter C Humphreys, Kayvon Daie, Karel Svoboda, Matthew Botvinick, and Timothy P Lillicrap. Bci learning phenomena can be explained by gradient-based optimization. bioRxiv, pages 2022–12, 2022.

- Pouget et al. [2016] Alexandre Pouget, Jan Drugowitsch, and Adam Kepecs. Confidence and certainty: distinct probabilistic quantities for different goals. Nature neuroscience, 19(3):366–374, 2016.

- Rahnev [2021] Dobromir Rahnev. Visual metacognition: Measures, models, and neural correlates. American psychologist, 76(9):1445, 2021.