# Hypothesis on the Functional Advantages of the Selection-Broadcast Cycle Structure: Global Workspace Theory and Dealing with a Real-Time World

**Authors**: Junya Nakanishi, Jun Baba, Yuichiro Yoshikawa, Hiroko Kamide, and Hiroshi Ishiguro

> \*This work was not supported by any organization.
>
> ¹ Junya Nakanishi, Yuichiro Yoshikawa, and Hiroshi Ishiguro are with the Graduate School of Engineering Science, The University of Osaka, Osaka 560-0043, Japan (nakanishi.junya@irl.sys.es.osaka-u.ac.jp).
>
> ² Jun Baba is with AI Lab, CyberAgent Inc., Tokyo 150-0042, Japan.
>
> ³ Hiroko Kamide is with Faculty/School of Law, Kyoto University, Kyoto 606-8501, Japan.

Abstract

This paper discusses the functional advantages of the Selection-Broadcast Cycle structure proposed by Global Workspace Theory (GWT), inspired by human consciousness, particularly focusing on its applicability to artificial intelligence and robotics in dynamic, real-time scenarios. While previous studies often examined the Selection and Broadcast processes independently, this research emphasizes their combined cyclic structure and the resulting benefits for real-time cognitive systems. Specifically, the paper identifies three primary benefits: Dynamic Thinking Adaptation, Experience-Based Adaptation, and Immediate Real-Time Adaptation. This work highlights GWT’s potential as a cognitive architecture suitable for sophisticated decision-making and adaptive performance in unsupervised, dynamic environments. It suggests new directions for the development and implementation of robust, general-purpose AI and robotics systems capable of managing complex, real-world tasks.

I INTRODUCTION

In recent years, a major research theme in the fields of artificial intelligence (AI), robotics, and cognitive science has been how to implement the advanced intelligence and flexible problem-solving abilities of humans and animals into systems [1, 2]. With the technical advances in machine learning (most notably deep learning) and the heightened performance of hardware in robotics, there has been growing interest in “multimodal” and “parallel” architectures that carry out tasks while simultaneously leveraging multiple cognitive functions [3, 4]. However, even if several specialized modules (e.g., vision, language, logical reasoning, motor control) each have excellent capabilities, there are still many aspects of information exchange and control methods that have not been fully organized due to the simultaneous parallel operation of multiple modules [5].

Against this background, the Global Workspace Theory (GWT), which was inspired by human consciousness, is attracting attention. GWT characterizes “consciousness” in terms of information-processing structure and proposes a framework in which information that wins a competition among numerous parallel specialized modules is integrated, temporarily brought “into consciousness”, and then shared system-wide [6]. Since it was first proposed by the psychologist Bernard Baars, GWT has been linked to many empirical findings in neuroscience and cognitive science [7, 8]. More recently, its advantages as an information processing architecture have begun to attract attention in AI research as well. Previous GWT research suggests that the “Selection” process, which integrates information among multiple parallel specialized modules, and the “Broadcast” process, which disseminates the selected information throughout the system, can support a wide range of functions, including creative thinking, transfer learning, top-down control, and attention allocation [8, 9, 10]. However, many of these discussions treat “Selection” and “Broadcast” separately, and the effectiveness of executing the two processes in parallel and intermittently is not fully addressed.

In this paper, we call the process of exchanging information through “Selection” and “Broadcast” the “Selection-Broadcast Cycle” and focus on it. With the Selection-Broadcast Cycle, we consider information processing that has a time dimension: not a single processing step, but a series of processing steps, such as responding to an environment that changes over time or taking time to search for an answer. Such information processing is an important research topic in robotics, where real-time processing is required, and in artificial intelligence systems that handle complex tasks requiring long-term learning and adaptation [11, 12]. For instance, in continuous tasks that span a period of time, a robot will inevitably need to change its approach during interactions with humans. Moreover, sensor data are updated moment by moment, and task goals or external conditions may change depending on the situation. Therefore, there is a need for a real-time processing framework that can dynamically decide “when and which module to call upon” in an online setting and swiftly reflect the results in the next step.

Accordingly, this paper focuses on the Selection-Broadcast Cycle structure proposed in GWT and discusses the functional advantages its dynamic, cyclic structure offers from the perspective of applying it to the design of real AI and robotic systems. Specifically, we highlight:

* **Dynamic Thinking Adaptation:** a capacity to dynamically rearrange module execution order, thereby enabling flexible adaptation to unexpected task changes or evolving goals.

* **Experience-Based Adaptation:** an acceleration of consciousness processing by exploiting past experiences stored in memory modules, facilitating faster predictions and decision-making.

* **Immediate Real-Time Adaptation:** a quick intervention route to consciousness processing that allows for immediate response to real-time changes.

Our aim is to theoretically clarify “why such a structure is useful for real-time intelligent systems.” By doing so, we hope to offer fresh insights into the design philosophy and implementation guidelines of cognitive architectures based on GWT and contribute to the development of robust, general-purpose AI and robotic systems capable of adapting to complex tasks and unknown environments.

II LITERATURE REVIEW

II-A Overview of GWT

<details>

<summary>x1.png Details</summary>

### Visual Description

## System Diagram: Module Interaction

### Overview

The image presents a system diagram illustrating the interaction between different modules, a sensor, and memory, all potentially managed by a selector. The diagram uses boxes to represent components and arrows to indicate the flow of information or control.

### Components/Axes

* **Components:**

* Sensor

* Memory

* Module 1

* Module 2

* Selector (partially visible)

* **Flow Indicators:** Arrows indicate the direction of data or control flow.

* **Containers:** Dashed lines enclose Module 1 and Module 2, and the Selector.

### Detailed Analysis

* **Sensor:** A box labeled "Sensor" at the bottom-left. An arrow points upwards from the Sensor to Module 1.

* **Memory:** A box labeled "Memory" at the bottom-right. An arrow points upwards from the Memory to Module 2.

* **Module 1:** A rounded rectangle labeled "Module 1". It receives input from the Sensor and sends output to Module 2. It also receives input from Module 2.

* **Module 2:** A rounded rectangle labeled "Module 2". It receives input from Memory and Module 1. It also sends input to Module 1.

* **Selector:** Located at the top of the diagram, only partially visible. The text "Selector" is visible. It sends a signal to both Module 1 and Module 2.

* **Connections:**

* A purple arrow goes from the Sensor to Module 1.

* A purple arrow goes from the Memory to Module 2.

* A light green arrow goes from the Selector to Module 1.

* A light green arrow goes from the Selector to Module 2.

* A purple arrow goes from Module 1 to Module 2.

* A purple arrow goes from Module 2 to Module 1.

### Key Observations

* The Sensor and Memory provide input to Module 1 and Module 2, respectively.

* Module 1 and Module 2 have a bi-directional connection, suggesting they exchange information.

* The Selector seems to control or influence both Module 1 and Module 2.

### Interpretation

The diagram illustrates a system where a Sensor and Memory feed data into two interacting modules, Module 1 and Module 2. The Selector component likely manages or controls the operation of these modules. The bi-directional connection between Module 1 and Module 2 suggests a feedback loop or collaborative processing. The system appears to be designed for processing data from external sources (Sensor, Memory) under the control of a Selector, with internal communication between the modules.

</details>

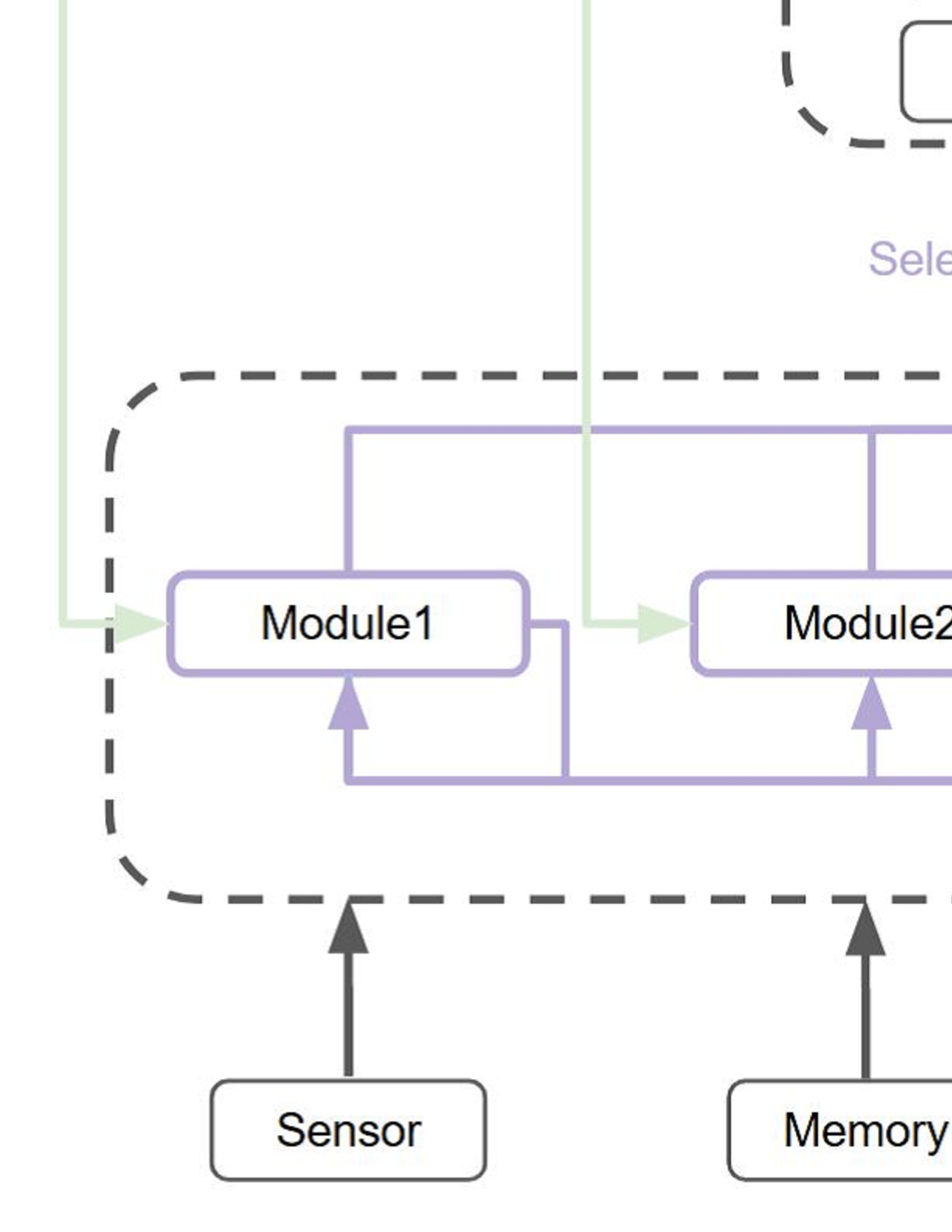

Figure 1: Architecture of the Global Workspace Theory

The Global Workspace Theory (GWT) is a cognitive science theory of information processing in consciousness, proposed by the psychologist Bernard Baars [6]. The essence of GWT is a framework in which information from many specialized modules (e.g., vision, hearing, memory, language) that operate in parallel competes and is integrated, and the information that eventually wins is then shared among all modules (Figure 1). The winning information is temporarily retained in a conscious form within a memory area called the “global workspace”. Only a limited amount of information can win at a time, and the other competing information is considered to be processed unconsciously in the background. In this way, GWT is positioned as a framework that explains the interaction between a serial, limited-capacity conscious process and parallel, large-capacity unconscious processes. This model is supported by numerous experimental findings [7, 8]. For example, in brain imaging studies (e.g., fMRI, PET, EEG), consciously processed stimuli engage extensive regions of the brain, including the frontal and parietal lobes, and exhibit recurrent signaling, whereas stimuli that do not reach conscious awareness (i.e., unconscious processing) remain confined to local, transient activity [13, 14]. This is consistent with the mechanism proposed by GWT that once some piece of information wins, it is broadcast globally to the entire system.

On the other hand, GWT mainly addresses the question “What information processing structures do we use?”, so it does not directly answer the question “Why did we arrive at this kind of information processing structure?”. From a biological and evolutionary perspective, we can address this question by considering how such a structure might have provided adaptive advantages in terms of survival and reproduction [9]. Previous research has often discussed the advantages of two parts of GWT’s information processing structure: the part in which information competes and is integrated among multiple specialized modules operating in parallel (the Selection process), and the part that shares the selected information with the entire system (the Broadcast process).
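The competition and sharing described in this subsection can be illustrated with a minimal Python sketch. It is only one possible reading of the framework, not an implementation from the GWT literature: the `Candidate` record, the numeric `salience` score, the winner-take-all `select` rule, and the two toy modules (`vision`, `hearing`) are all assumptions introduced here for illustration.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Candidate:
    source: str      # module that proposed the content
    content: str     # the proposed content itself
    salience: float  # hypothetical competition score (not defined in the text)


class Module:
    """A specialized module that reacts to the last broadcast."""

    def __init__(self, name: str,
                 propose: Callable[[Optional[Candidate]], Optional[Candidate]]):
        self.name = name
        self.propose = propose
        self.received: List[str] = []  # log of every broadcast this module saw

    def step(self, broadcast: Optional[Candidate]) -> Optional[Candidate]:
        if broadcast is not None:
            self.received.append(broadcast.content)
        return self.propose(broadcast)


def select(candidates: List[Candidate]) -> Optional[Candidate]:
    """Selection: only the single most salient candidate wins."""
    return max(candidates, key=lambda c: c.salience) if candidates else None


def run_cycles(modules: List[Module], n_cycles: int) -> List[Optional[Candidate]]:
    workspace: Optional[Candidate] = None  # nothing is conscious at the start
    history = []
    for _ in range(n_cycles):
        # Broadcast: every module receives the previous winner and may propose.
        proposals = [m.step(workspace) for m in modules]
        candidates = [c for c in proposals if c is not None]
        # Selection: the winner becomes the new workspace content.
        workspace = select(candidates)
        history.append(workspace)
    return history


def vision(broadcast):
    # Toy rule: stay silent for one cycle right after its own content was broadcast.
    if broadcast is not None and broadcast.source == "vision":
        return None
    return Candidate("vision", "object at (3, 4)", 0.9)


def hearing(broadcast):
    # Toy rule: always proposes the same, less salient content.
    return Candidate("hearing", "voice command: stop", 0.6)


modules = [Module("vision", vision), Module("hearing", hearing)]
history = run_cycles(modules, 3)
winners = [w.source for w in history]
```

In this toy run the winner alternates between the two modules, and both modules end up having received the same broadcast contents. The final winner is stored in `history` but is not broadcast, because the loop stops before the next cycle.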

II-B Functional Advantages of Selection

In this paper, the process of selecting information from among the outputs processed in parallel by multiple specialized modules and then integrating it in a global workspace is called the “Selection” process.

II-B 1 Diverse Perspectives

By comparing and examining the outputs of multiple specialized modules, a system is thought to be able to generate a wider variety of solutions and ideas for a given task [15, 16]. For instance, if both a visual module and a language module are operating simultaneously, approaches that capture a problem from a pictorial/imaginative viewpoint can be compared with those that capture it from a linguistic/logical viewpoint. This concept is akin to the notion of “ensemble learning” [17]: by combining multiple models or modules with different specializations, the system can cover diverse aspects that a single model alone would not capture, thereby producing higher predictive accuracy and robustness overall.

Furthermore, the mechanism that integrates multiple parallel modules enables unexpected combinations of knowledge and skills from each module, which is thought to lead to creative thinking [10, 16]. For example, imagine a module responsible for visual thinking, inspired by metaphorical expressions provided by a language processing module, giving rise to a new diagram or prototype, which is then validated by a logical reasoning module. Alternatively, a module specializing in reinforcement learning might combine with a sensorimotor module’s proposed action strategy, leading to previously unanticipated solutions or task-execution procedures. The process of generating these incidental or divergent ideas and then evaluating, narrowing down, and integrating them is considered by many to be at the core of creative thinking [18].

II-B 2 Transfer Learning

When faced with a new task, utilizing the skills already acquired in the specialized modules reduces the need to learn from scratch, and as a result, it is thought that the efficiency and speed of learning will improve [10, 16]. For instance, if there are modules that excel in visual recognition, language processing, or logical reasoning and each is independently trained, then when facing a new domain or a different task, it becomes possible to adapt quickly by making use of the knowledge and representations already accumulated in these modules. This is analogous to “transfer learning” [19] in machine learning. In fact, when adapting a deep neural network learned in one domain (source domain) to another domain (target domain), reusing the lower-level feature extraction parts shortens the early training phase while still delivering high performance.

II-C Functional Advantages of Broadcast

In this paper, the process of sharing selected information with all specialized modules is called the “Broadcast” process.

II-C 1 Shared Attention

It is thought that broadcasting allows each specialized module to concentrate its resources on information that is deemed to be extremely important according to the current goals and environmental conditions, thereby improving the efficiency and accuracy of task execution [16, 20]. For example, consider a robot endowed with multiple sensory modules for vision, hearing, and touch, which is tasked with detecting, identifying, and accurately grasping an object. First, the visual module, operating unconsciously, generates multiple candidates, performing tasks such as location estimation and object classification in parallel. Meanwhile, the hearing module tries to gather hints from environmental sounds or voice commands that could modify actions. The tactile module prepares feedback control for the stage at which the robot actually grasps the object. After the information generated by each module is integrated by the Selection process, if the decision “to combine accurate location estimation from the visual module with minor corrective commands from auditory instructions” wins, that information is shared with all modules via the Broadcast function. As a result, the robot can carry out the plan “move the arm toward the coordinates estimated visually, corrected by auditory information” in coordination across all modules.

This mechanism seems to be highly relevant to the “Transformer architecture” [21]. Transformers, which demonstrate extremely high performance in various tasks such as natural language processing and image recognition, have a core mechanism known as “self-attention”. In self-attention, the inputs (or feature vectors) compute their mutual relevance, enabling the network as a whole to incorporate necessary contextual information. This mechanism is akin to GWT’s claim of handling diverse information while spotlighting important items and sharing them throughout the system. Though the transformer was not initially designed with the goal of mimicking consciousness, the fact that it achieves such high performance in language processing, image recognition, and more by way of sharing of important information hints at the fundamental usefulness of a strategy that shares the most crucial elements globally in an intelligent system.
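To make the self-attention computation concrete, the following is a minimal pure-Python sketch of scaled dot-product self-attention. It is a simplification under a stated assumption: real Transformers apply learned query, key, and value projections, whereas this sketch uses the input vectors directly (identity projections). The function names and the toy input `X` are introduced here for illustration only.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(X):
    """Scaled dot-product self-attention with identity Q/K/V projections.

    X is a list of equal-length feature vectors (one per input token).
    Returns the attended outputs and the attention weight matrix.
    """
    d = len(X[0])
    outputs, weights = [], []
    for q in X:
        # Relevance of every input to the current one (scaled dot product).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in X]
        w = softmax(scores)
        weights.append(w)
        # Each output is a relevance-weighted mixture of all inputs.
        outputs.append([sum(w[j] * X[j][c] for j in range(len(X)))
                        for c in range(d)])
    return outputs, weights


# Toy input: the first two vectors are identical, the third is orthogonal.
X = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
outputs, weights = self_attention(X)
```

Each row of `weights` sums to one, and the first input attends more strongly to the identical second input than to the orthogonal third one, which is the sense in which relevant information is spotlighted and mixed into every position.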

II-C 2 Predictive Coding

Some specialized modules receive data from sensors (e.g., visual, auditory, tactile). If such modules also receive predictions or metacognitive information via broadcast, the quality of their output may improve [10, 16]. For example, when the visual module is only processing lower-level features such as raw pixel data and edge information, it will only output tentative recognition results based on local statistics and pattern recognition. However, when higher-level context and objectives such as “this scene is outdoors and there is a high possibility that there are multiple people in the picture” and “the task is to judge the facial expressions of specific people” are broadcast from the global workspace, the visual module will re-evaluate its output while referring to these predictions and hypotheses. As a result, corrections such as prioritizing a resolution and regions of interest that are appropriate for the task, or more carefully searching for clues to separate people and backgrounds, can be expected to improve recognition performance and reduce false positives. This aligns closely with the concept of “predictive coding” [22] often discussed in neuroscience and cognitive science. Predictive coding posits that the brain or cognitive system is constantly sending top-down predictions from higher (i.e., more advanced) modules to lower (i.e., more basic) modules, while the lower-level modules calculate and return the discrepancy (prediction error) between the actual sensory input and the prediction. If the discrepancy is large, it implies that the scene likely contains something that differs from the predictions, and this error is returned upstream so that the higher-level module can update or generate new predictions. If the discrepancy is small, it implies that the prediction and actual data largely match, thus increasing confidence that the scene is as predicted. Through repeated mutual interplay between top-down predictions and bottom-up prediction errors, the entire perception and cognition system dynamically adapts to the environment.
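The interplay between top-down predictions and bottom-up prediction errors can be sketched with a toy scalar example. This is a deliberately simplified error-correction loop, not the hierarchical model of [22]: the single scalar prediction, the `learning_rate` parameter, and the constant observation stream are assumptions made here for illustration.

```python
def predictive_coding(observations, prediction=0.0, learning_rate=0.5):
    """Toy two-level loop: the higher level predicts, the lower level returns the error."""
    errors = []
    for x in observations:
        error = x - prediction                # bottom-up prediction error
        prediction += learning_rate * error   # higher level revises its prediction
        errors.append(error)
    return prediction, errors


# A constant sensory input of 10.0 observed five times.
final_prediction, errors = predictive_coding([10.0] * 5)
```

With a stable input, the prediction error halves on every step in this setting, so the prediction converges toward the observed value; a sudden change in the input would produce a large error again and trigger a new round of revision.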

III HYPOTHESIS

In this paper, in addition to the structural advantages from each of the traditional GWT perspectives (Selection and Broadcast), we newly focus on the advantage of a cycle structure in which information processing occurs through Selection and Broadcast (Selection-Broadcast Cycle). Within this cycle structure, we discuss the dynamic, stepwise information processing in which Selection and Broadcast intertwine in parallel and intermittently.

III-A Dynamic Thinking Adaptation

The Selection-Broadcast Cycle possesses a structure that can realize any order of serial processing steps of specialized modules. The serial processing referred to here means processing that is carried out step by step (e.g., a chain of thought [23], inductive and deductive reasoning [24]). In contrast to parallel processing, in which multiple modules operate simultaneously, serial processing involves processing being carried out in order, with the information generated or selected by one module being passed on as input to the next module. In serial processing, the final answer is derived from the inferences and logical development that take place in the intermediate processing. This process of deriving conclusions in steps allows reliable problem solving and decision making with a small number of inferences and logical knowledge for various complex tasks. For example, by simply memorizing the results of addition and multiplication of 0 to 9 and the methodology of longhand arithmetic, you can calculate any addition or multiplication of integers (e.g., 11×2=10×2+1×2). In this way, by breaking down complex tasks into simpler sub-tasks (i.e., tasks that can be processed using limited memory or simple rules) and dealing with them in stages, it is possible to deal with a wide range of different tasks using relatively little memory capacity.
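The longhand arithmetic example can be made concrete with a short Python sketch. It multiplies non-negative integers using only a memorized single-digit times table plus digit-by-digit carrying, so every intermediate step is a small, simple operation. The names `TIMES_TABLE`, `to_digits`, and `longhand_multiply` are introduced here for illustration, and splitting a partial sum into digit and carry with `% 10` and `// 10` is a convenience of this sketch.

```python
# "Memorized" single-digit products: the only multiplication facts used below.
TIMES_TABLE = {(a, b): a * b for a in range(10) for b in range(10)}


def to_digits(n):
    """Digits of a non-negative integer, least significant first (11 -> [1, 1])."""
    return [int(ch) for ch in reversed(str(n))]


def longhand_multiply(x, y):
    """Multiply two non-negative integers one digit pair at a time."""
    xs, ys = to_digits(x), to_digits(y)
    result = [0] * (len(xs) + len(ys))  # enough room for every digit of the product
    for i, a in enumerate(xs):
        carry = 0
        for j, b in enumerate(ys):
            # One serial step: look up a product, add what is already there.
            total = result[i + j] + TIMES_TABLE[(a, b)] + carry
            result[i + j] = total % 10
            carry = total // 10
        result[i + len(ys)] += carry
    # Drop leading zeros before converting back to an integer.
    while len(result) > 1 and result[-1] == 0:
        result.pop()
    return int("".join(str(d) for d in reversed(result)))


product_small = longhand_multiply(11, 2)
product_large = longhand_multiply(123, 456)
```

The table holds only 100 entries, yet the serial procedure handles integers of any length, which mirrors the point that stepwise processing covers a wide range of tasks with little stored knowledge.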

<details>

<summary>x2.png Details</summary>

### Visual Description

## Diagram: Broadcast and Selection Process

### Overview

The image is a diagram illustrating a process involving broadcast and selection mechanisms. It shows two modules, M1 and M2, which are connected to a global workspace (GW) through a selection process. The global workspace then broadcasts back to both M1 and M2.

### Components/Axes

* **Nodes:**

* GW (Global Workspace): Represented by an oval shape.

* M1: Represented by a rounded rectangle.

* M2: Represented by a rounded rectangle.

* **Connections:**

* Selection: A purple line connecting M1 and M2 to GW.

* Broadcast: A light green line connecting GW to M1 and M2.

* **Labels:**

* "Broadcast" (light green, positioned above the GW node).

* "Selection" (purple, positioned between M1/M2 and GW).

### Detailed Analysis

* **Flow:**

1. M1 and M2 are connected to GW via a "Selection" process (purple line).

2. GW broadcasts back to M1 and M2 (light green line).

* **Node Shapes:**

* GW is represented as an oval.

* M1 and M2 are represented as rounded rectangles.

* **Line Colors:**

* Selection is represented by a purple line.

* Broadcast is represented by a light green line.

### Key Observations

* The diagram illustrates a two-way communication process.

* The "Selection" process seems to aggregate data from M1 and M2 to GW.

* The "Broadcast" process distributes data from GW to M1 and M2.

### Interpretation

The diagram represents a GWT-based system in which information from modules M1 and M2 is selected and integrated in a global workspace (GW), which then broadcasts the selected information back to the modules. The "Selection" process could involve filtering, prioritization, or any other mechanism for choosing which information enters the workspace. The "Broadcast" process distributes the selected information to the modules.

</details>

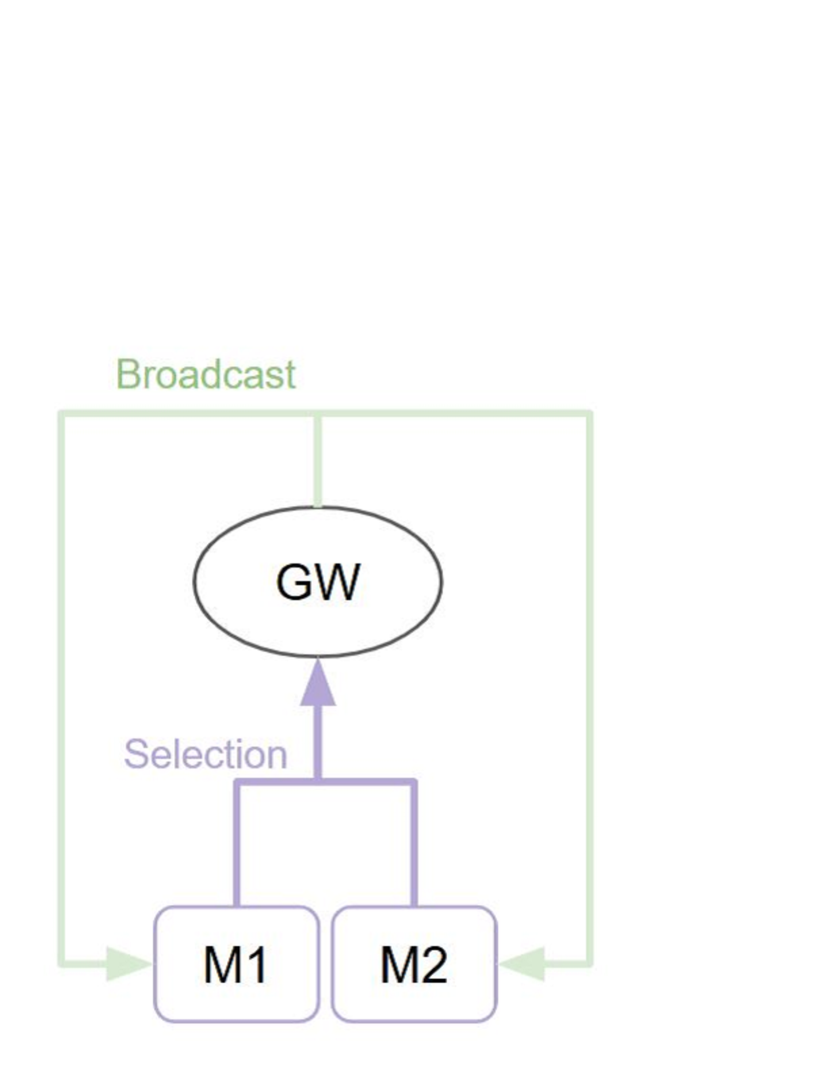

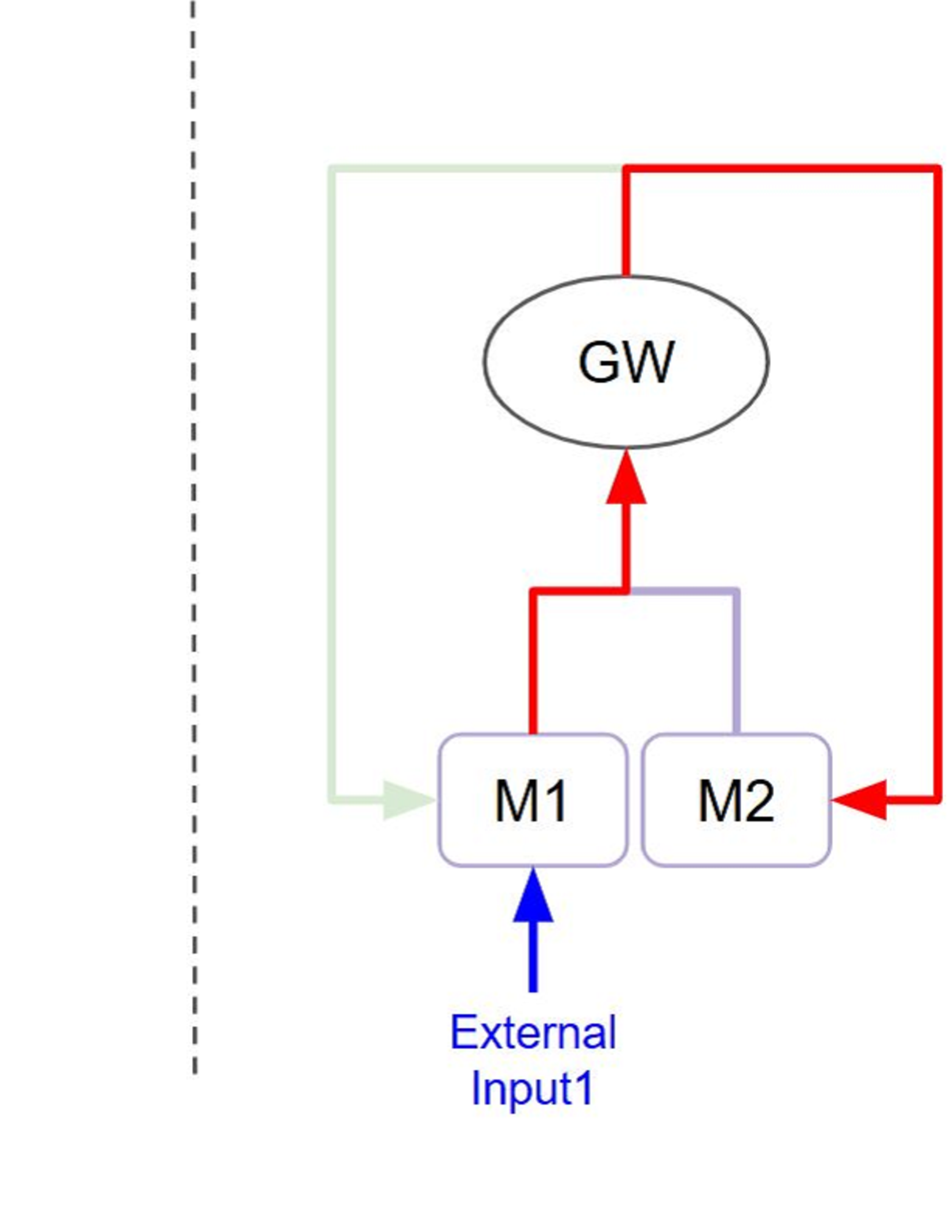

Figure 2: Example of GWT-based structure with two modules

<details>

<summary>x3.png Details</summary>

### Visual Description

## Diagram: System Feedback Loop

### Overview

The image is a diagram illustrating a system with feedback loops. It includes components labeled "GW," "M1," and "M2," connected by arrows indicating the direction of flow or influence. The diagram shows a cyclical relationship between these components.

### Components/Axes

* **Nodes:**

* GW: A central oval node labeled "GW."

* M1: A rounded rectangle labeled "M1."

* M2: A rounded rectangle labeled "M2."

* **Connections:**

* A red arrow originates from M1 and points towards GW.

* A light purple line connects M1 and M2.

* A red arrow originates from M2 and points towards GW.

* A red arrow originates from GW and loops back to M2.

* A light green arrow originates from GW and loops back to M1.

* **Other:**

* A dashed vertical line is present on the left side of the image.

### Detailed Analysis

* The flow starts from M1 and M2, both feeding into GW.

* GW then influences both M1 and M2 through separate feedback loops.

* The connection between M1 and M2 is represented by a light purple line.

* The feedback loop from GW to M2 is red, while the feedback loop from GW to M1 is light green.

### Key Observations

* The diagram depicts a closed-loop system where GW receives input from M1 and M2 and, in turn, influences them.

* The use of different colored arrows (red and light green) suggests different types of influence or feedback mechanisms.

* The dashed line on the left side of the image does not appear to be connected to the diagram.

### Interpretation

The diagram represents a system where "GW" acts as a central processing unit or gateway, receiving input from "M1" and "M2." The feedback loops from "GW" to "M1" and "M2" indicate a regulatory or control mechanism, where the output of "GW" affects the subsequent behavior of "M1" and "M2." The different colors of the feedback loops might signify different types of feedback, such as positive (red) and negative (light green) feedback. The light purple line connecting M1 and M2 suggests a direct relationship between the two. The dashed line on the left side of the image is likely an artifact or an incomplete element and does not seem to play a role in the system's functionality.

</details>

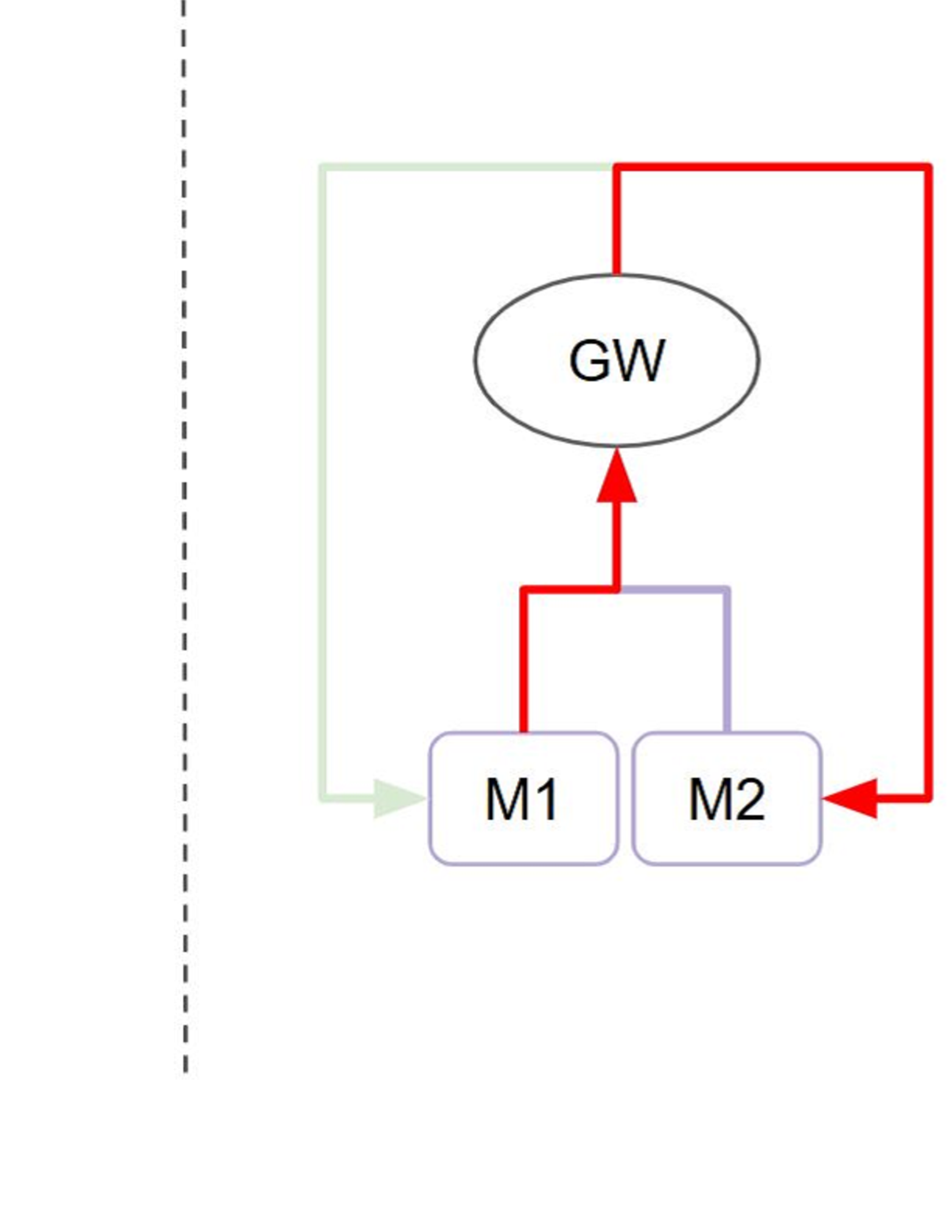

Figure 3: Flow of pipeline and GWT process in the GWT-based example

The Selection-Broadcast Cycle provides a space in which such intermediate inferences and logical developments can be performed freely. Figure 2 shows an example of a simple Selection-Broadcast Cycle structure with two modules (M1, M2). The upper part of Figure 3 shows an example of an execution procedure of the modules, and the lower part shows the processing flow that carries out that procedure in the Selection-Broadcast Cycle. As the figure shows, the Selection-Broadcast Cycle can carry out any execution procedure over the modules by switching which module’s output is selected in each cycle. Implementing such a vast serial processing space for intermediate inferences and logical development as a fixed pipeline would presumably require a large tree structure made up of a large number of modules. By contrast, the Selection-Broadcast Cycle is thought to realize it with a minimum number of modules by looping the information processing.
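As a rough illustration of how two modules in a loop can realize different execution procedures, consider the Python sketch below. The arithmetic modules `m1` and `m2`, the integer workspace content, and the externally supplied `order` list are all assumptions made for illustration; in an actual system the winner of each cycle would be decided online rather than listed in advance.

```python
from itertools import product


def m1(x):
    return x + 1   # hypothetical module M1


def m2(x):
    return x * 2   # hypothetical module M2


MODULES = {"M1": m1, "M2": m2}


def run_selection_broadcast(initial, order):
    """Run one cycle per entry in `order`, which names the module that wins Selection."""
    workspace = initial
    trace = [workspace]
    for winner in order:
        # Broadcast: every module receives the workspace content and computes a proposal.
        proposals = {name: module(workspace) for name, module in MODULES.items()}
        # Selection: only the winning proposal becomes the next workspace content.
        workspace = proposals[winner]
        trace.append(workspace)
    return trace


# The same two modules realize two different serial procedures.
trace_a = run_selection_broadcast(3, ["M1", "M2", "M1"])
trace_b = run_selection_broadcast(3, ["M2", "M2", "M1"])

# Number of distinct three-step procedures available with two modules.
n_orders = len(list(product(MODULES, repeat=3)))
```

With two modules and three steps there are already eight possible procedures, and the count grows exponentially with the number of steps, which is why a fixed pipeline covering all of them would need a large tree while the loop reuses the same two modules.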

Furthermore, this function enables flexible and dynamic processing: the system can try out many kinds of thought processes and change them in response to changes in the situation. This is a great advantage in situations that are difficult to handle with a fixed pipeline, such as when the processing procedure is unclear or the goal changes partway through. For example, consider a robot that explores a room based on information from multiple sensors (vision, touch, audio input, etc.). At the start of the search, the main objective is to find and move along the shortest route, and the processing is set up to call the object detection module and the route planning module in order. During the search, however, the robot repeatedly collides with people in the room along the route. In this case, the Selection-Broadcast Cycle makes it possible to share the problem with the whole system, devise a solution, and change the processing, for example by calling a human detection module while planning a route. Also, if a voice instruction is received and the content of the instruction changes, the system can call a voice recognition module, share the analysis results with the whole system, and then reconfigure the execution order of the visual module and the route planning module accordingly. Thanks to this variable serial processing, the order in which the necessary specialized modules are called can be flexibly rearranged in response to changes in the situation or new goals, making it possible to accomplish tasks that would be difficult with fixed pipeline processing.

<details>

<summary>x4.png Details</summary>

### Visual Description

## Diagram: Accelerated Thinking

### Overview

The image presents a diagram illustrating a process labeled "Accelerated Thinking." It consists of two separate flow diagrams, each depicting a sequence of steps involving components labeled "M1," "M2," and "M3." The second flow diagram is associated with "Consciousness (Cycle1)."

### Components/Axes

* **Title:** Accelerated Thinking

* **Nodes:**

* M1: Represented by a rounded rectangle with a light purple outline.

* M2: Represented by a rounded rectangle with a light purple outline.

* M3: Represented by a rounded rectangle with an orange outline.

* **Arrows:** Gray arrows indicate the direction of flow between the nodes.

* **Cycle Label:** Consciousness (Cycle1) is positioned above the second flow diagram.

### Detailed Analysis

* **Top Flow Diagram:**

* Starts with a node labeled "M1."

* An arrow points from "M1" to "M2."

* **Bottom Flow Diagram:**

* Starts with a node labeled "M1."

* An arrow points from "M1" to "M3."

* A small red line extends from the right side of "M3."

### Key Observations

* The diagram shows two distinct processes, one leading from M1 to M2, and another from M1 to M3.

* The second process is explicitly linked to "Consciousness (Cycle1)."

* The node "M3" in the second process has a distinct orange outline, potentially indicating a different state or significance compared to "M2."

### Interpretation

The diagram appears to represent two possible pathways or outcomes within the "Accelerated Thinking" process. The first pathway, M1 -> M2, might represent a standard or default cognitive flow. The second pathway, M1 -> M3, associated with "Consciousness (Cycle1)," could represent a modified or enhanced cognitive flow, possibly involving conscious awareness or a specific cyclical process. The orange outline of "M3" may signify a state of heightened activity or a different type of cognitive processing. The red line extending from M3 is unclear, but could indicate a further step or consequence within this conscious cycle.

</details>

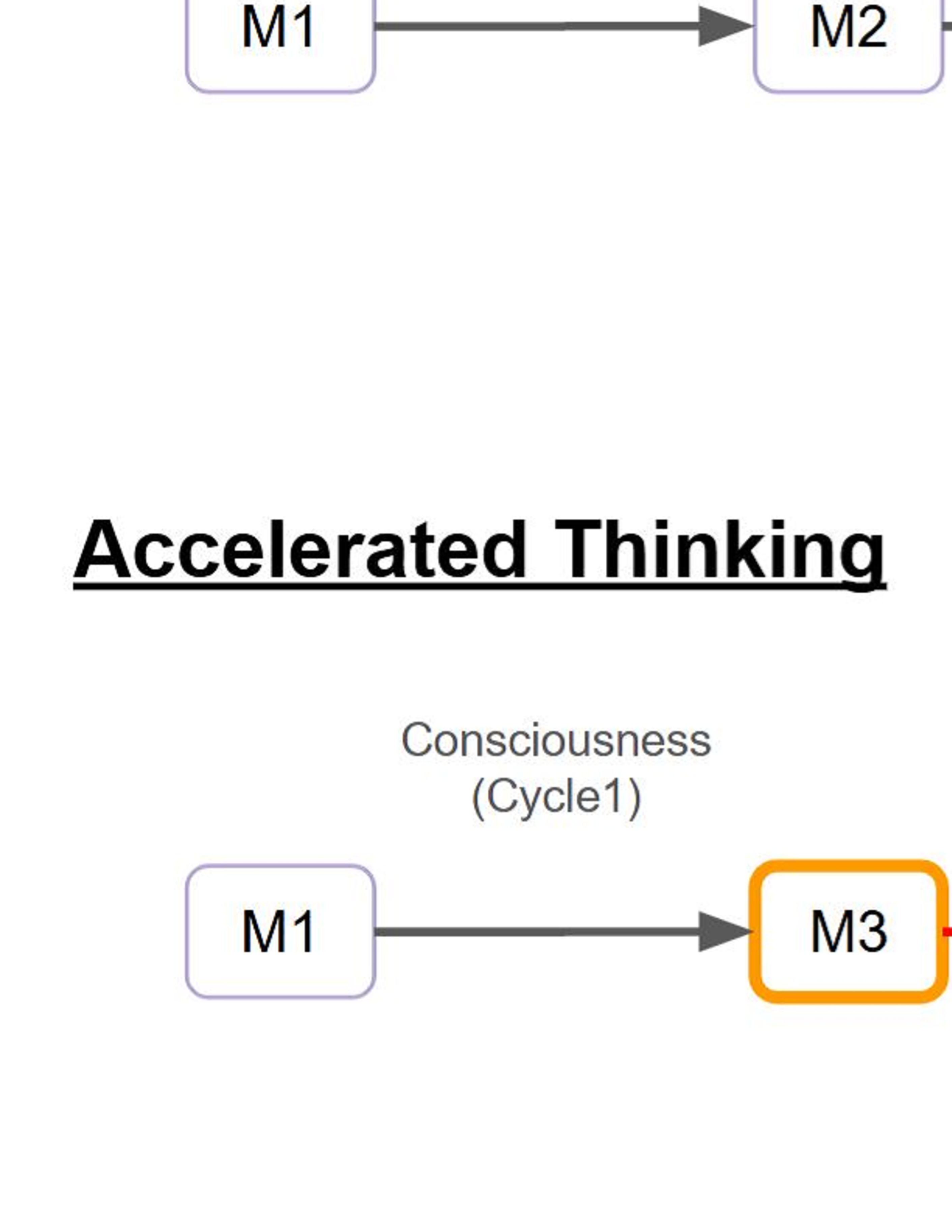

Figure 4: Flow of accelerated thinking in the GWT-based example

This function also means that information can be exchanged between any of the modules. VanRullen and Kanai [10] point out that the global workspace functions as a “hub” between specialized modules, and that cycle-consistency learning [25] can be carried out by passing information out of a specialized module and back into the same module. Cycle-consistency learning is a learning method that constrains a model to remain consistent when data are converted back and forth. These constraints ensure that converted data can be restored to their original state by reversing the conversion, and they prevent the loss of content or meaning during the conversion process. A major advantage is that domain mappings can be learned even without paired training data. In this way, the outputs of each specialized module are continuously cross-checked by repeating the Selection-Broadcast Cycle, and the entire system can potentially detect inconsistencies, correct errors, and gradually build more reliable processing results.
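The round-trip constraint behind cycle-consistency learning can be sketched in a few lines of Python. The sketch only evaluates the consistency loss for fixed mappings and does not perform any learning. The scalar domains, the mean absolute error, and the three mapping functions are assumptions made here for illustration.

```python
def cycle_consistency_loss(forward, backward, samples):
    """Mean absolute difference between x and backward(forward(x))."""
    return sum(abs(backward(forward(x)) - x) for x in samples) / len(samples)


def a_to_b(x):
    return 2.0 * x + 1.0       # hypothetical mapping from domain A to domain B


def consistent_b_to_a(y):
    return (y - 1.0) / 2.0     # exact inverse: the round trip restores x


def inconsistent_b_to_a(y):
    return y / 2.0             # loses the offset: the round trip drifts by 0.5


samples = [0.0, 1.0, 2.0, 3.0]  # samples from domain A only, no paired targets
loss_consistent = cycle_consistency_loss(a_to_b, consistent_b_to_a, samples)
loss_inconsistent = cycle_consistency_loss(a_to_b, inconsistent_b_to_a, samples)
```

Only samples from one domain are needed to compute the loss, which is what allows training without paired data; a learner would adjust the mappings to drive this quantity toward zero.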

III-B Experience-Based Adaptation

As noted, in GWT, the information that is sequentially raised in the Global Workspace (Consciousness) through the Selection-Broadcast Cycle is shared with all specialized modules in a stepwise manner. Here, we focus on the point that the serial processing carried out in consciousness enters each specialized module in chronological order. It is thought that there are specialized modules that record such chronological consciousness and store it as experience memory [26]. We can further suppose that such experience memory can be recalled if a similar situation arises. If so, it would become possible to speed up or predict the course of serial processing.

Figure 4 shows an example of a simple Selection-Broadcast Cycle structure with two modules (M1, M2) and one experience memory module (M3). Consciousness(Cycle1), Consciousness(Cycle2), and Consciousness(Cycle3) denote the contents that appear in the global workspace in chronological order. Since these conscious contents are broadcast in chronological order, they also flow into the experience memory module, which retains them as experiences. Then, when Consciousness(Cycle1) is broadcast again, the experience memory module can output Consciousness(Cycle2) and Consciousness(Cycle3) as recalled memories. This means that the output of Consciousness(Cycle3) can be reached in two cycles, whereas it originally took three. In this way, the Selection-Broadcast Cycle is thought to enable faster serial processing and prediction. This is similar to the concept of “chunking” [27] in cognitive science: if learned schemas and procedures are stored as a kind of “chunk”, then when a similar task is encountered again, that chunk can be recalled all at once to advance the processing quickly.
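The speed-up in this example can be sketched with a small Python simulation. It is a hedged illustration rather than the authors' implementation: the `ExperienceMemory` class, the exact-match recall rule, the `NEXT_STEP` table standing in for modules M1 and M2, and the labels `C1` to `C3` (short for Consciousness(Cycle1) to Consciousness(Cycle3)) are all assumptions introduced here.

```python
class ExperienceMemory:
    """Hypothetical module M3: records broadcasts in order and recalls what followed a cue."""

    def __init__(self):
        self.episodes = []   # completed chronological sequences of conscious contents
        self._current = []   # the sequence being recorded right now

    def observe(self, content):
        self._current.append(content)

    def end_episode(self):
        if self._current:
            self.episodes.append(self._current)
        self._current = []

    def recall(self, cue):
        """Contents that followed `cue` in the most recent matching past episode."""
        for episode in reversed(self.episodes):
            if cue in episode:
                return episode[episode.index(cue) + 1:]
        return []


# Stand-in for modules M1 and M2: one ordinary processing step per cycle.
NEXT_STEP = {"C1": "C2", "C2": "C3"}


def run(start, goal, memory, max_cycles=10):
    """Return the number of cycles needed until `goal` is in the workspace."""
    workspace = start
    cycles = 1                       # the cycle in which `start` is broadcast
    memory.observe(workspace)
    while workspace != goal and cycles < max_cycles:
        recalled = memory.recall(workspace)
        if goal in recalled:
            workspace = goal         # the recalled chunk wins Selection: jump ahead
        else:
            workspace = NEXT_STEP[workspace]  # ordinary step-by-step processing
        memory.observe(workspace)
        cycles += 1
    memory.end_episode()
    return cycles


memory = ExperienceMemory()
first_run = run("C1", "C3", memory)   # no experience yet
second_run = run("C1", "C3", memory)  # Consciousness(Cycle1) is broadcast again
```

In this sketch the first run proceeds step by step, while the second run reaches the goal one cycle earlier because the recalled sequence is treated as a single chunk.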

This mechanism not only increases processing speed, but also promotes inference and anticipation of actions. In other words, while referring to past thought processes, it is possible to make predictions such as “there is a possibility that new information will be lacking at this stage” or “it would be better to activate the sensorimotor module before the logical inference module in the next step”, and it is possible to adjust the order of module calls and resource allocation in advance based on these predictions. As a result, each step in the variable serial processing is no longer a simple “trial and error” process, but rather a planned and efficient process that makes full use of past accumulated knowledge. The meta-cognitive decisions made during this process, such as “which module should be activated at what time” and “when should top-down information be updated”, are also optimized through the use of overall information sharing and memory via the Selection-Broadcast Cycle. In this way, by having a system in place that can record and utilize a record of serial processing, it is hoped that the cognitive architecture based on GWT will not only speed up, but also acquire advanced problem-solving capabilities that incorporate reasoning and prediction with an eye on the next move.

There have been several implementations of agent systems that use experience memory as knowledge for reasoning and prediction [28, 29]. For instance, Franklin and colleagues [30] have demonstrated a framework called LIDA (Learning Intelligent Distribution Agent), which builds on GWT to incorporate conscious content into various cognitive modules, including an episodic memory module. In LIDA-based implementations, information that reaches consciousness is not only broadcast to specialized modules but is also chronologically recorded in an episodic (or experience) memory. When a similar situation occurs, the system recalls the sequence of recorded conscious events and applies them as learned knowledge.

<details>

<summary>x5.png Details</summary>

### Visual Description

## System Diagram: Simple Feedback Loop

### Overview

The image is a system diagram illustrating a simple feedback loop involving components labeled M1, M2, and GW. An external input feeds into M1, which then influences GW. GW, in turn, influences both M1 and M2, creating a feedback mechanism.

### Components/Axes

* **Nodes:**

* M1: A rounded rectangle labeled "M1".

* M2: A rounded rectangle labeled "M2".

* GW: An ellipse labeled "GW".

* **Input:**

* "External Input1": A blue upward-pointing arrow feeding into M1.

* **Connections/Arrows:**

* Blue arrow: Represents the external input to M1.

* Red arrows: Represent connections from M1 to GW and from GW to M2.

* Light purple arrow: Represents a connection from M2 to GW.

* Light green arrow: Represents a connection from GW to M1.

* **Dashed Line:** A vertical dashed line on the left side of the diagram, possibly indicating a system boundary.

### Detailed Analysis

1. **External Input:** The blue arrow labeled "External Input1" points directly into the M1 node.

2. **M1 to GW:** A red arrow originates from M1 and points towards GW.

3. **M2 to GW:** A light purple arrow originates from M2 and points towards GW.

4. **GW to M2:** A red arrow originates from GW and points towards M2.

5. **GW to M1:** A light green arrow originates from GW and points towards M1.

6. **Dashed Line:** A vertical dashed line is present on the left side of the diagram.

### Key Observations

* The diagram depicts a closed-loop system with feedback.

* M1 receives an external input and is influenced by GW.

* M2 is influenced by GW and also influences GW.

* The colors of the arrows indicate different types of connections or signals.

### Interpretation

The diagram shows "External Input1" entering module M1. M1 and M2 both send information to "GW", which, given the figure caption and the accompanying text, denotes the global workspace. GW then feeds back into both M1 and M2, closing the loop. The arrow colors distinguish the individual connections, but the figure itself does not state what each color means. The dashed line on the left may mark the boundary between the external input and the system.

</details>

Figure 5: Flow of real-time intervention in the GWT-based example

III-C Immediate Real-Time Adaptation

The Selection-Broadcast Cycle allows external input to intervene in real time in the results of intermediate processing. Figure 5 shows a simple scenario in which external intervention occurs within a Selection-Broadcast Cycle process with two modules (M1, M2). As shown, external inputs can influence the global workspace’s serial processing at any point. For example, if a specialized module detects new, highly significant information, this information can immediately enter the global workspace through the Selection process, which then disseminates it to all other modules via Broadcast. This short route is expected to reduce unnecessary waiting times and message passing, and thereby to improve the real-time responsiveness of the system.

In practical robotics scenarios, such flexible intervention mechanisms have notable advantages. For instance, imagine a robot performing an assembly task using multiple sensory modules (visual, tactile, auditory). Suppose the robot’s tactile sensor suddenly detects an unexpected slip or instability in its grip. With the Selection-Broadcast Cycle, this critical information is rapidly promoted into the global workspace, interrupting the ongoing processing sequence. Consequently, other modules (e.g., motor control, vision processing, or reinforcement learning) immediately receive this alert and can swiftly initiate corrective actions. This immediate broadcast enables the system to promptly reconsider and revise its gripping strategy from both top-down (strategic re-planning) and bottom-up (sensor-driven adjustments) perspectives, substantially improving safety, precision, and robustness in real-time.
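The slip scenario can be sketched as a small Python simulation. This is only an illustration under stated assumptions: the module names (`planner`, `tactile`, `motor`), the numeric salience values, and the winner-take-all selection rule are hypothetical and are not specified in the paper.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Candidate:
    source: str      # module proposing the content (hypothetical names)
    content: str     # content competing for the global workspace
    salience: float  # assumed scalar used by the Selection process


def run(cycles: int, external_events: Dict[int, str]) -> List[str]:
    """Return the workspace content selected in each cycle."""
    inboxes: Dict[str, List[str]] = {"planner": [], "tactile": [], "motor": []}
    history: List[str] = []
    for t in range(1, cycles + 1):
        # The planner always proposes the next routine step (low salience).
        candidates = [Candidate("planner", f"plan step {t}", 0.5)]
        # External input reaches the tactile module only in some cycles.
        if t in external_events:
            candidates.append(Candidate("tactile", external_events[t], 0.9))
        # The motor module reacts to what was broadcast in the previous cycle.
        if inboxes["motor"] and inboxes["motor"][-1] == "slip detected":
            candidates.append(Candidate("motor", "tighten grip", 0.8))
        # Selection: winner-take-all on salience (an assumption).
        winner = max(candidates, key=lambda c: c.salience)
        # Broadcast: every module receives the winning content.
        for inbox in inboxes.values():
            inbox.append(winner.content)
        history.append(winner.content)
    return history


history = run(cycles=5, external_events={3: "slip detected"})
print(history)
# ['plan step 1', 'plan step 2', 'slip detected', 'tighten grip', 'plan step 5']
```

In this sketch the slip preempts the routine plan in the cycle in which it is sensed, the corrective action wins the following cycle, and routine processing resumes afterwards.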

IV DISCUSSION

Traditional discussions of GWT’s intelligence have predominantly focused on static, supervised settings, which rely heavily on pre-labeled data sets, explicit instructions, and predefined tasks (e.g., ensemble learning, transfer learning, self-attention, predictive coding). In such scenarios, intelligence manifests primarily as a system’s ability to accurately replicate patterns and knowledge derived from historical, structured data. However, the real-world application of artificial intelligence increasingly demands a shift toward dynamic, unsupervised settings, where tasks, environments, and goals continuously evolve, often without explicit guidance or labeled examples.

In dynamic, unsupervised scenarios, intelligent systems face fundamentally different challenges. Rather than relying on historical labels or fixed benchmarks, these systems must autonomously discover meaningful patterns, adapt swiftly to changing contexts, and continuously learn from ongoing experiences. In this paper, we discussed the strengths of GWT in such real-time processing by focusing on the Selection-Broadcast Cycle. We explained that this cycle realizes flexible processing, can be accelerated through experience, and can respond immediately to real-time changes. Thus, by highlighting the advantages of the Selection-Broadcast Cycle, this paper extends traditional conceptions of GWT intelligence into the realm of dynamic, unsupervised learning, opening new pathways toward the development of more robust, adaptive, and autonomous artificial intelligence systems capable of thriving in complex real-time environments. Future research could further explore practical implementations and empirical evaluations to validate these theoretical insights and expand the applicability of GWT-based architectures in diverse, real-world scenarios.

Furthermore, although GWT seems well-suited for thriving in the real-time world, one potential way to enhance its adaptability further could involve multiple consciousness (GWT) processes operating in parallel. This parallelization could facilitate the simultaneous exploration of diverse solutions, enhance adaptability by rapidly responding to varied and unpredictable changes, and effectively distribute cognitive load, thereby potentially surpassing the limitations inherent to a single, centralized consciousness structure. Such a mechanism might correspond to the collective intelligence observed in groups of humans, suggesting that human societies themselves could be natural exemplars of parallel consciousness networks capable of robust, adaptive decision-making in complex and dynamic environments. For example, Taniguchi [31] studies the dynamics of such group intelligence and language development.

V LIMITATIONS AND FUTURE WORK

While the proposed Selection-Broadcast Cycle structure inspired by the Global Workspace Theory (GWT) provides a compelling theoretical framework for adaptive, real-time cognitive architectures, several critical limitations need to be acknowledged and addressed in future work.

One significant limitation of this study is the absence of empirical validation. The advantages of the Selection-Broadcast Cycle, such as dynamic thinking, experience-based acceleration, and immediate real-time responsiveness, remain largely theoretical. Currently, the paper does not present experimental results, simulations, or quantitative analyses to substantiate these claims. Therefore, readers must accept the described benefits without direct evidence of improved adaptability or efficiency compared to other existing methods. To strengthen future iterations of this research, practical implementations such as comparative simulations or robot-based experiments demonstrating fewer task failures or quicker adaptation would be essential.

Moreover, the overall effectiveness of the system depends greatly on the quality of the Selection process. Important open questions remain as to whether sufficiently effective and sophisticated selection mechanisms can actually be put to practical use. For static environments, there is an example of a Selection process that improves the overall performance of the system by weighting based mainly on internal indicators such as happiness and past experience [32]. To put GWT-based architectures to practical use in real-world applications, it is extremely important to achieve robust and adaptive Selection processes, so future research will need to address this implementation issue in dynamic environments as well.

VI CONCLUSIONS

In this paper, we explored the potential of the Global Workspace Theory (GWT) and, in particular, the Selection-Broadcast Cycle, as an information processing architecture suitable for dynamic, unsupervised real-time environments. Traditional approaches to artificial intelligence often rely heavily on structured, labeled data, where intelligence primarily involves replicating known patterns. However, real-world applications require systems that can continuously adapt and respond to evolving tasks, environments, and goals. In this context, we highlighted the Selection-Broadcast Cycle’s strengths: its flexibility to rearrange module execution order dynamically, its capability for acceleration through experience-driven predictions, and its responsiveness to immediate real-time inputs.

Our hypothesis suggests that a cognitive architecture based on GWT and, specifically, the Selection-Broadcast Cycle, provides a robust framework for dynamic decision-making and rapid adaptation in complex environments. The ability to rearrange processing sequences dynamically, accelerate processing through experience-based memory, and intervene swiftly in response to changing conditions positions GWT-based architectures to effectively handle the challenges posed by real-time intelligence.

A critical unresolved question remains the practical feasibility of implementing robust and adaptive Selection mechanisms in real-world systems. Future research must address this challenge, potentially through integrating machine learning techniques and advanced evaluative frameworks, to further validate and extend the applicability of GWT-based architectures. By tackling these challenges, we can move closer to developing truly autonomous, flexible artificial intelligence systems capable of thriving in the complexities and uncertainties of the real-time world.

References

- [1] D. Hassabis, D. Kumaran, C. Summerfield, and M. Botvinick, “Neuroscience-inspired artificial intelligence,” Neuron, vol. 95, no. 2, pp. 245–258, 2017.

- [2] M. K. Ho and T. L. Griffiths, “Cognitive science as a source of forward and inverse models of human decisions for robotics and control,” Annual Review of Control, Robotics, and Autonomous Systems, vol. 5, no. 1, pp. 33–53, 2022.

- [3] I. Kotseruba and J. K. Tsotsos, “40 years of cognitive architectures: core cognitive abilities and practical applications,” Artificial Intelligence Review, vol. 53, no. 1, pp. 17–94, 2020.

- [4] A. Ajay, S. Han, Y. Du, S. Li, A. Gupta, T. Jaakkola, J. Tenenbaum, L. Kaelbling, A. Srivastava, and P. Agrawal, “Compositional foundation models for hierarchical planning,” Advances in Neural Information Processing Systems, vol. 36, pp. 22 304–22 325, 2023.

- [5] B. Liu, X. Li, J. Zhang, J. Wang, T. He, S. Hong, H. Liu, S. Zhang, K. Song, K. Zhu, et al., “Advances and challenges in foundation agents: From brain-inspired intelligence to evolutionary, collaborative, and safe systems,” arXiv preprint arXiv:2504.01990, 2025.

- [6] B. J. Baars, “Global workspace theory of consciousness: toward a cognitive neuroscience of human experience,” Progress in brain research, vol. 150, pp. 45–53, 2005.

- [7] S. Dehaene and L. Naccache, “Towards a cognitive neuroscience of consciousness: basic evidence and a workspace framework,” Cognition, vol. 79, no. 1-2, pp. 1–37, 2001.

- [8] G. A. Mashour, P. Roelfsema, J.-P. Changeux, and S. Dehaene, “Conscious processing and the global neuronal workspace hypothesis,” Neuron, vol. 105, no. 5, pp. 776–798, 2020.

- [9] A. Juliani, K. Arulkumaran, S. Sasai, and R. Kanai, “On the link between conscious function and general intelligence in humans and machines,” Transactions on Machine Learning Research, 2022, Survey Certification.

- [10] R. VanRullen and R. Kanai, “Deep learning and the global workspace theory,” Trends in Neurosciences, vol. 44, no. 9, pp. 692–704, 2021.

- [11] T. Lesort, V. Lomonaco, A. Stoian, D. Maltoni, D. Filliat, and N. Díaz-Rodríguez, “Continual learning for robotics: Definition, framework, learning strategies, opportunities and challenges,” Information Fusion, vol. 58, pp. 52–68, 2020.

- [12] K. Shaheen, M. A. Hanif, O. Hasan, and M. Shafique, “Continual learning for real-world autonomous systems: Algorithms, challenges and frameworks,” Journal of Intelligent & Robotic Systems, vol. 105, no. 1, p. 9, 2022.

- [13] S. Dehaene, L. Naccache, L. Cohen, D. L. Bihan, J.-F. Mangin, J.-B. Poline, and D. Rivière, “Cerebral mechanisms of word masking and unconscious repetition priming,” Nature neuroscience, vol. 4, no. 7, pp. 752–758, 2001.

- [14] R. Gaillard, S. Dehaene, C. Adam, S. Clémenceau, D. Hasboun, M. Baulac, L. Cohen, and L. Naccache, “Converging intracranial markers of conscious access,” PLoS biology, vol. 7, no. 3, p. e1000061, 2009.

- [15] I. Ito, T. Ito, J. Suzuki, and K. Inui, “Investigating the effectiveness of multiple expert models collaboration,” in Findings of the Association for Computational Linguistics: EMNLP 2023, 2023, pp. 14 393–14 404.

- [16] G. A. Wiggins, “Crossing the threshold paradox: Modelling creative cognition in the global workspace,” in International Conference on Computational Creativity, 2012, pp. 180–187.

- [17] R. Polikar, “Ensemble learning,” Ensemble machine learning: Methods and applications, pp. 1–34, 2012.

- [18] G. K. Stojanov and B. Indurkhya, “Creativity and cognitive development: The role of perceptual similarity and analogy.” in AAAI Spring symposium: Creativity and (Early) cognitive development, 2013.

- [19] C. Tan, F. Sun, T. Kong, W. Zhang, C. Yang, and C. Liu, “A survey on deep transfer learning,” in Artificial Neural Networks and Machine Learning–ICANN 2018: 27th International Conference on Artificial Neural Networks, Rhodes, Greece, October 4-7, 2018, Proceedings, Part III. Springer, 2018, pp. 270–279.

- [20] R. F. J. Dossa, K. Arulkumaran, A. Juliani, S. Sasai, and R. Kanai, “Design and evaluation of a global workspace agent embodied in a realistic multimodal environment,” Frontiers in Computational Neuroscience, vol. 18, p. 1352685, 2024.

- [21] A. Vaswani, N. Shazeer, N. Parmar, J. Uszkoreit, L. Jones, A. N. Gomez, Ł. Kaiser, and I. Polosukhin, “Attention is all you need,” Advances in neural information processing systems, vol. 30, pp. 6000–6010, 2017.

- [22] K. Friston and S. Kiebel, “Predictive coding under the free-energy principle,” Philosophical transactions of the Royal Society B: Biological sciences, vol. 364, no. 1521, pp. 1211–1221, 2009.

- [23] J. Wei, X. Wang, D. Schuurmans, M. Bosma, F. Xia, E. Chi, Q. V. Le, D. Zhou, et al., “Chain-of-thought prompting elicits reasoning in large language models,” Advances in neural information processing systems, vol. 35, pp. 24 824–24 837, 2022.

- [24] T. Shanahan, “Deductive and inductive arguments,” The Internet Encyclopedia of Philosophy, ISSN 2161-0002, https://iep.utm.edu/, accessed on 2025-03-05.

- [25] J.-Y. Zhu, T. Park, P. Isola, and A. A. Efros, “Unpaired image-to-image translation using cycle-consistent adversarial networks,” in Proceedings of the IEEE international conference on computer vision, 2017, pp. 2223–2232.

- [26] S. Franklin, B. J. Baars, U. Ramamurthy, and M. Ventura, “The role of consciousness in memory,” Brains, Minds and Media, vol. 2005, no. 1, 2005.

- [27] F. Gobet, P. C. Lane, S. Croker, P. C. Cheng, G. Jones, I. Oliver, and J. M. Pine, “Chunking mechanisms in human learning,” Trends in cognitive sciences, vol. 5, no. 6, pp. 236–243, 2001.

- [28] J. Laird, K. Kinkade, S. Mohan, and J. Xu, “Cognitive robotics using the Soar cognitive architecture,” AAAI Workshop - Technical Report, pp. 46–54, 2012.

- [29] L. Martin, J. H. Rosales, K. Jaime, and F. Ramos, “Affective episodic memory system for virtual creatures: The first step of emotion-oriented memory,” Computational Intelligence and Neuroscience, vol. 2021, no. 1, p. 7954140, 2021.

- [30] S. Franklin, T. Madl, S. D’Mello, and J. Snaider, “LIDA: A systems-level architecture for cognition, emotion, and learning,” IEEE Transactions on Autonomous Mental Development, vol. 6, no. 1, pp. 19–41, 2013.

- [31] T. Taniguchi, “Collective predictive coding hypothesis: Symbol emergence as decentralized bayesian inference,” Frontiers in Robotics and AI, vol. 11, p. 1353870, 2024.

- [32] E. C. Garrido-Merchán, M. Molina, and F. M. Mendoza-Soto, “A global workspace model implementation and its relations with philosophy of mind,” Journal of Artificial Intelligence and Consciousness, vol. 9, no. 01, pp. 1–28, 2022.