# From Reasoning to Generalization: Knowledge-Augmented LLMs for ARC Benchmark

## Abstract

Recent reasoning-oriented LLMs have demonstrated strong performance on challenging tasks such as mathematics and science examinations. However, core cognitive faculties of human intelligence, such as abstract reasoning and generalization, remain underexplored. To address this, we evaluate recent reasoning-oriented LLMs on the Abstraction and Reasoning Corpus (ARC) benchmark, which explicitly demands both faculties. We formulate ARC as a program synthesis task and propose nine candidate solvers. Experimental results show that repeated-sampling planning-aided code generation (RSPC) achieves the highest test accuracy and demonstrates consistent generalization across most LLMs. To further improve performance, we introduce an ARC solver, Knowledge Augmentation for Abstract Reasoning (KAAR), which encodes core knowledge priors within an ontology that classifies priors into three hierarchical levels based on their dependencies. KAAR progressively expands LLM reasoning capacity by gradually augmenting priors at each level, and invokes RSPC to generate candidate solutions after each augmentation stage. This stage-wise reasoning reduces interference from irrelevant priors and improves LLM performance. Empirical results show that KAAR maintains strong generalization and consistently outperforms non-augmented RSPC across all evaluated LLMs, achieving around 5% absolute gains and up to 64.52% relative improvement. Despite these achievements, ARC remains a challenging benchmark for reasoning-oriented LLMs, highlighting future avenues of progress in LLMs.

## 1 Introduction

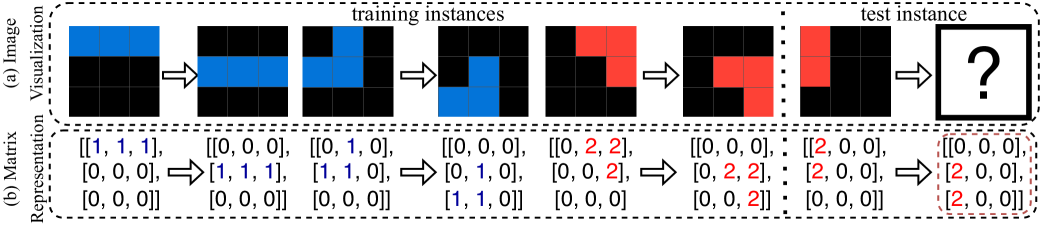

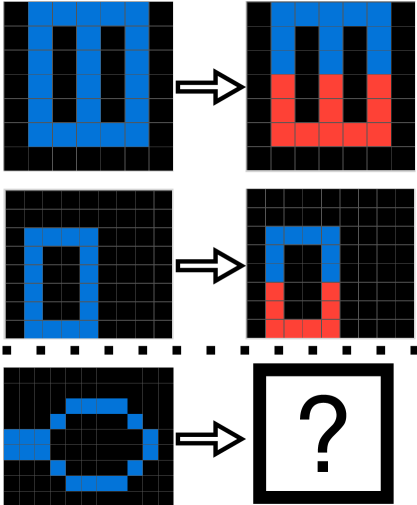

Learning from extensive training data has achieved remarkable success in major AI fields such as computer vision, natural language processing, and autonomous driving [1, 2, 3]. However, achieving human-like intelligence goes beyond learning purely from large-scale data; it requires reasoning rapidly and generalizing from prior knowledge to novel tasks and situations [4]. Chollet [5] introduced the Abstraction and Reasoning Corpus (ARC) to assess the generalization and abstract reasoning capabilities of AI systems. In each ARC task, the solver is required to infer generalized rules or procedures from a small set of training instances, typically fewer than five input-output image pairs, and apply them to generate output images for the input images provided in test instances (Figure 1 (a)). Each image in ARC is a pixel grid represented as a 2D matrix, where each value denotes a pixel color (Figure 1 (b)). ARC evaluates broad generalization, encompassing reasoning over individual input-output pairs and inferring generalized solutions via high-level abstraction, akin to inductive reasoning [6].

ARC is grounded in core knowledge priors, which serve as foundational cognitive faculties of human intelligence, enabling equitable comparisons between AI systems and human cognitive abilities [7]. These priors include: (1) objectness – aggregating elements into coherent, persistent objects; (2) geometry and topology – recognizing and manipulating shapes, symmetries, spatial transformations, and structural patterns (e.g., containment, repetition, projection); (3) numbers and counting – counting, sorting, comparing quantities, performing basic arithmetic, and identifying numerical patterns; and (4) goal-directedness – inferring purposeful transformations between initial and final states without explicit temporal cues. Incorporating these priors allows ARC solvers to replicate human cognitive processes, produce behavior aligned with human expectations, address human-relevant problems, and demonstrate human-like intelligence through generalization and abstract reasoning [5]. These features highlight ARC as a crucial benchmark for assessing progress toward general intelligence.

Chollet [5] suggested approaching ARC tasks as instances of program synthesis, which studies the automatic generation of a program that satisfies a high-level specification [8]. Following this proposal, recent studies [9, 10] have solved a subset of ARC tasks by searching for program solutions encoded within object-centric domain-specific languages (DSLs). Reasoning-oriented LLMs, which integrate chain-of-thought (CoT) reasoning [11] and are often trained via reinforcement learning, have further advanced program synthesis performance. Common approaches using LLMs for code generation include repeated sampling, where multiple candidate programs are generated [12], followed by best-program selection strategies [13, 14, 15, 16], and code refinement, where initial LLM-generated code is iteratively improved using error feedback from execution results [17, 18] or LLM-generated explanations [17, 19, 18]. We note that ARC presents greater challenges than existing program synthesis benchmarks such as HumanEval [12], MBPP [20], and LiveCode [21], due to its stronger emphasis on generalization and abstract reasoning grounded in core knowledge priors, which remain underexplored. This gap motivates our evaluation of recent reasoning-oriented LLMs on the ARC benchmark, and our proposed knowledge augmentation approach to improve their performance.

<details>

<summary>x1.png Details</summary>

### Visual Description


## Diagram: Visualizing Training and Testing Instances

### Overview

The image presents a diagram illustrating the concept of training and testing instances, likely within a machine learning context. It shows a sequence of "training instances" represented both visually as images and numerically as matrices, leading to a "test instance" with an unknown outcome. The diagram is split into two sections: (a) Image Visualization and (b) Matrix Representation.

### Components/Axes

* **Section (a) - Image Visualization:** Displays a series of 6 images arranged horizontally. Each image is a 3x3 grid of colored cells (blue, black, and red). Arrows indicate the flow from one image to the next. The images are labeled "training instances" except for the last one, which is labeled "test instance". The last image contains a question mark.

* **Section (b) - Matrix Representation:** Displays corresponding 3x3 matrices below each image. Each matrix contains numerical values (0, 1, and 2). Arrows connect each matrix to the next, mirroring the flow in the image visualization.

* **Labels:** "training instances" (repeated), "test instance", "(a) Image Visualization", "(b) Matrix Representation".

* **Color Coding:**

* Blue in the images corresponds to the value '1' in the matrices.

* Black in the images corresponds to the value '0' in the matrices.

* Red in the images corresponds to the value '2' in the matrices.

### Detailed Analysis or Content Details

The diagram shows a sequence of six instances. Let's analyze each step:

1. **Instance 1:**

* Image: Predominantly blue with some black cells.

* Matrix: `[[1, 1, 1], [0, 0, 0], [0, 0, 0]]`

2. **Instance 2:**

* Image: Blue and black cells.

* Matrix: `[[0, 0, 0], [1, 1, 1], [0, 0, 0]]`

3. **Instance 3:**

* Image: Blue and black cells.

* Matrix: `[[0, 1, 0], [1, 1, 0], [0, 0, 0]]`

4. **Instance 4:**

* Image: Blue, black, and red cells.

* Matrix: `[[0, 0, 2], [0, 2, 2], [1, 0, 0]]`

5. **Instance 5:**

* Image: Red and black cells.

* Matrix: `[[0, 0, 0], [2, 2, 0], [0, 0, 0]]`

6. **Instance 6 (Test Instance):**

* Image: Black and red cells with a question mark.

* Matrix: `[[0, 0, 0], [2, 0, 0], [0, 0, 0]]`

The arrows indicate a sequential progression, suggesting a time series or a process where each instance builds upon the previous one.

### Key Observations

* The color scheme consistently maps to the numerical values in the matrices.

* The transition from training instances to the test instance shows a shift in color distribution, with red becoming more prominent.

* The test instance is presented as an unknown, indicated by the question mark.

* The matrices represent a sparse representation of the images, with most values being 0.

### Interpretation

This diagram likely illustrates a learning process where a model is trained on the first five instances (training instances) and then tested on the sixth instance (test instance). The matrices could represent feature vectors or activations within a neural network. The changing color distribution suggests that the model is learning to recognize patterns or features associated with the red color. The question mark on the test instance indicates that the model needs to predict the outcome or classify the instance based on its training. The diagram demonstrates a simple example of supervised learning, where the model learns from labeled data (training instances) and then makes predictions on unseen data (test instance). The sparse matrix representation suggests that the features are not densely activated, and only a few features are relevant for each instance.

</details>

Figure 1: An ARC problem example (25ff71a9) with image visualizations (a), including three input-output pairs in the training instances, and one input image in the test instance, along with their corresponding 2D matrix representations (b). The ground-truth test output is enclosed in a red box.

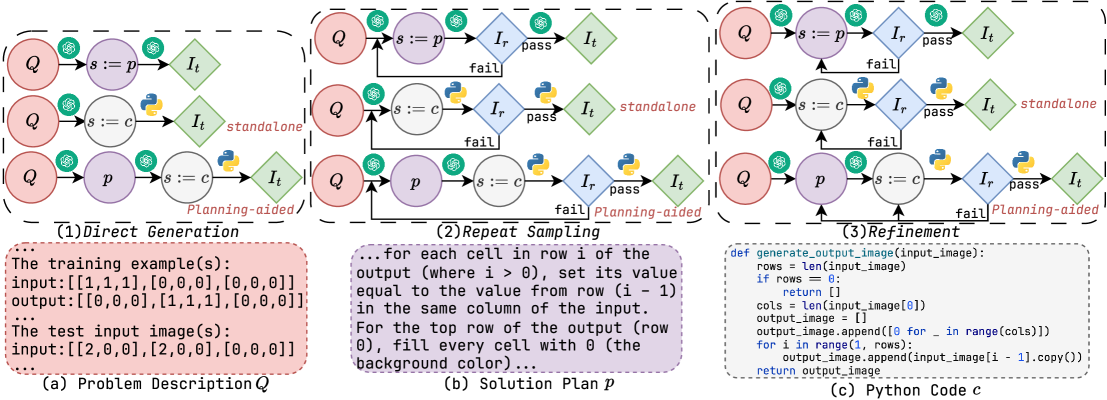

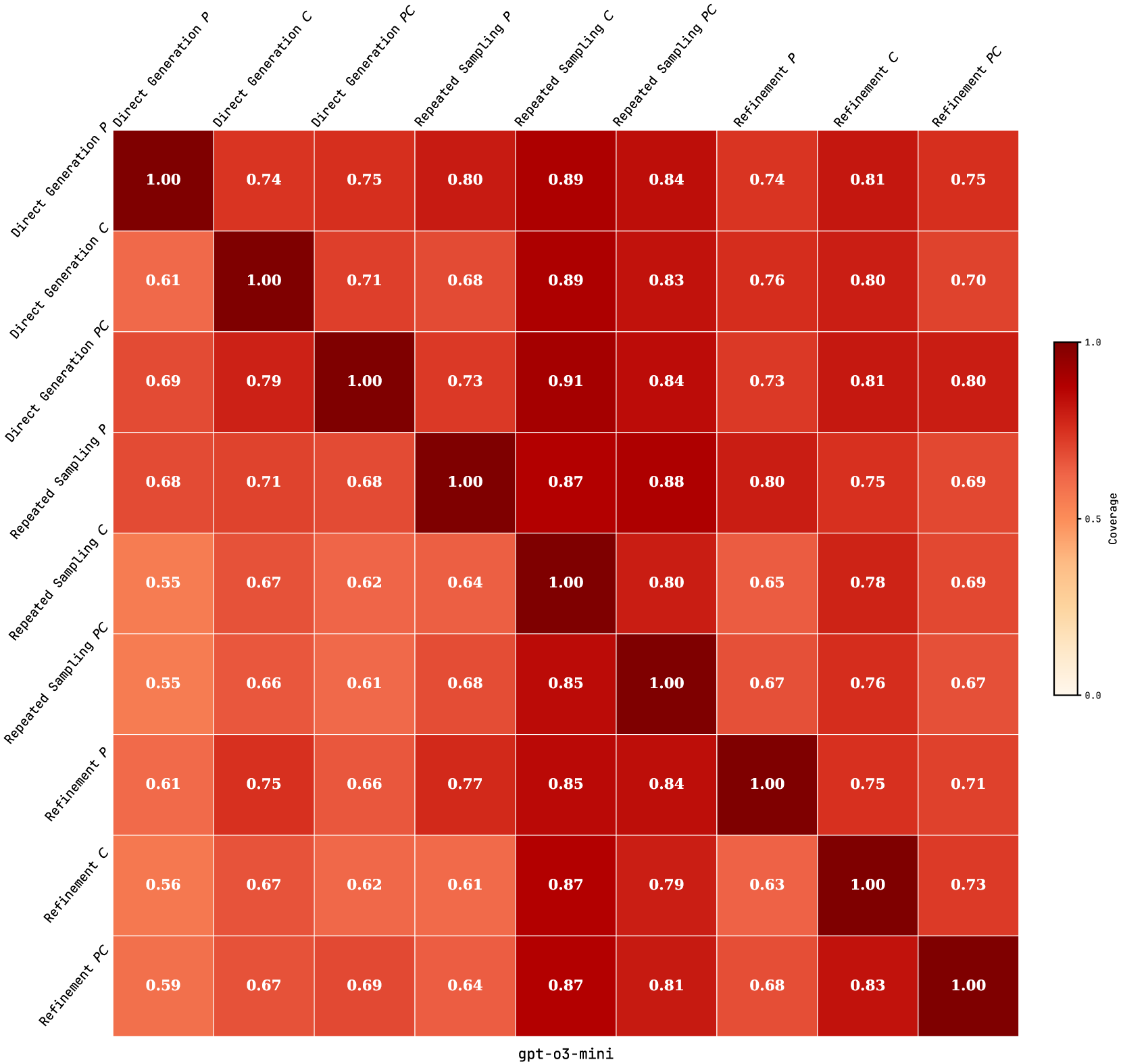

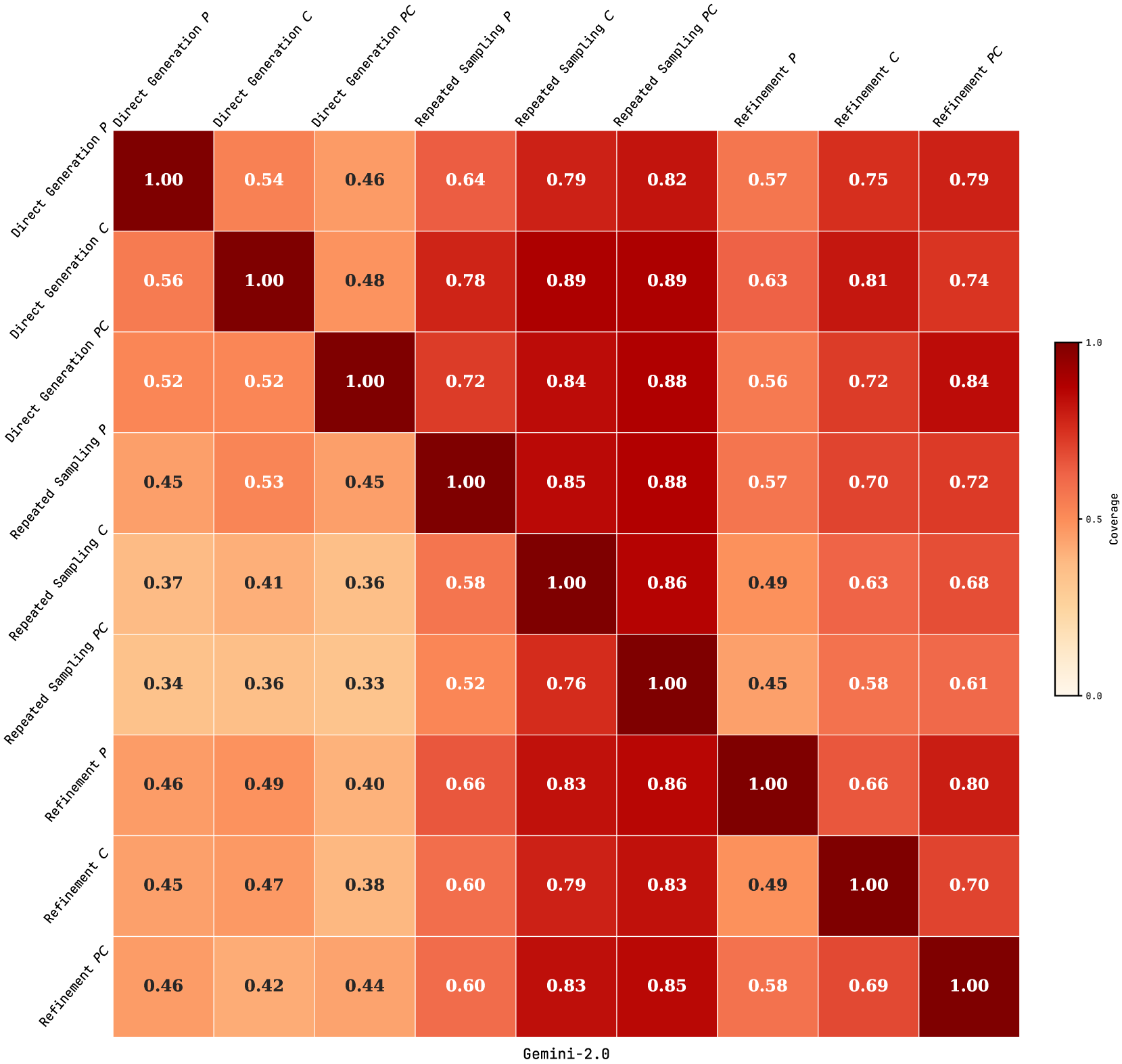

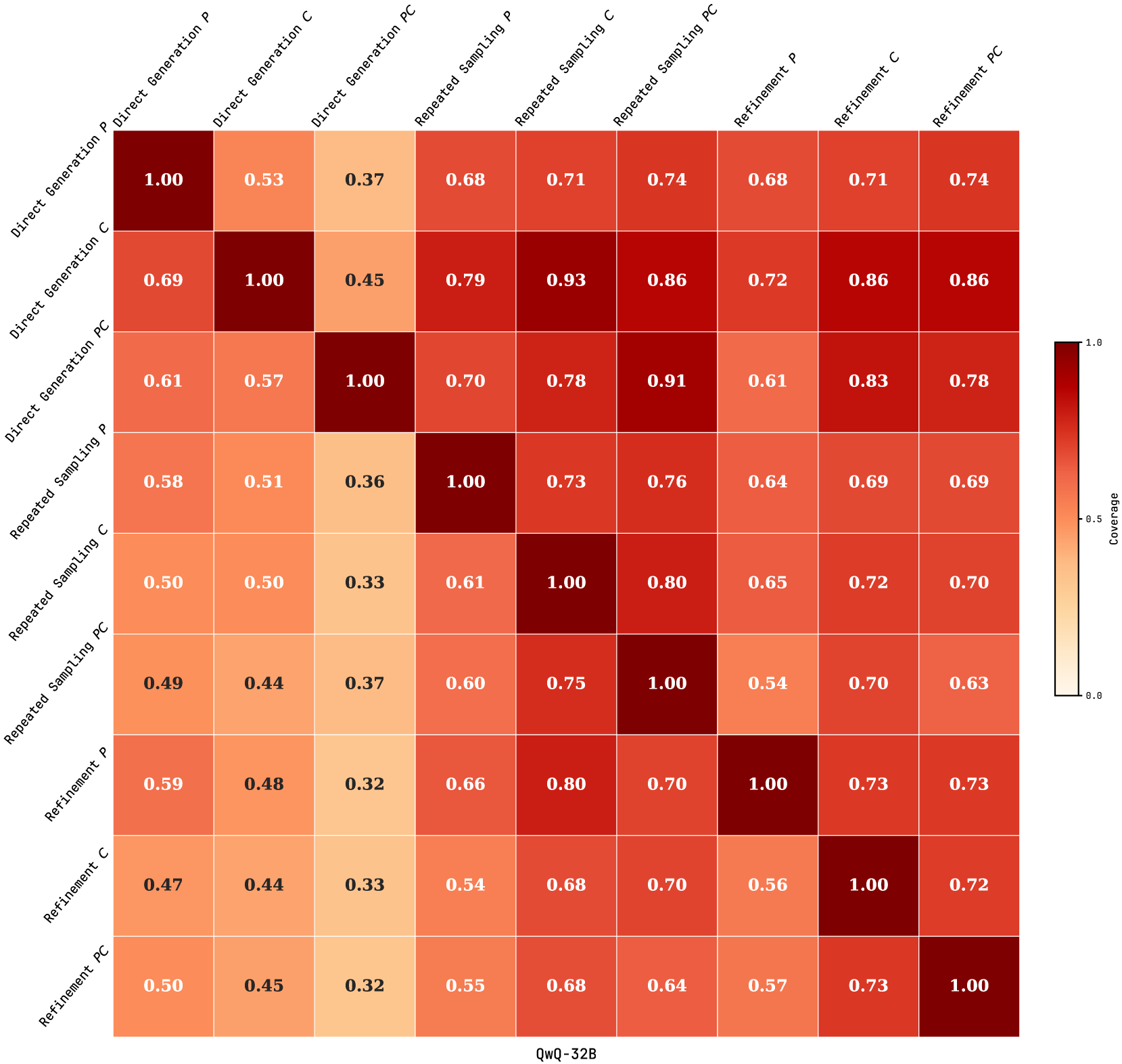

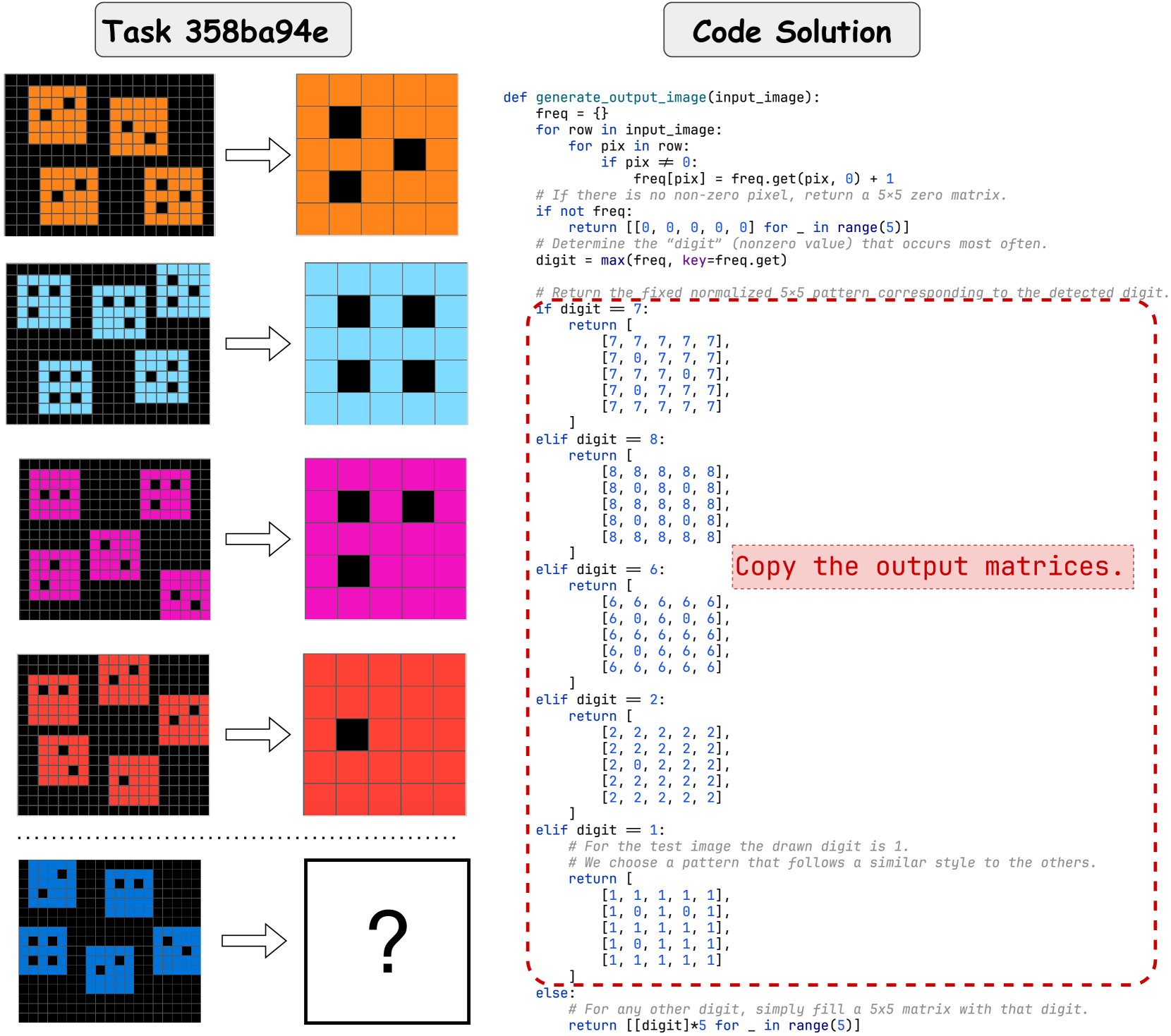

We systematically assess how reasoning-oriented LLMs approach ARC tasks within the program synthesis framework. For each ARC problem, we begin by providing 2D matrices as input. We adopt three established program generation strategies: direct generation, repeated sampling, and refinement. Each strategy is evaluated under two solution representations: a text-based solution plan and Python code. When generating code solutions, we further examine two modalities: standalone and planning-aided, where a plan is generated to guide subsequent code development, following recent advances [18, 22, 23]. In total, nine ARC solvers are considered. We evaluate several reasoning-oriented LLMs, including the proprietary models GPT-o3-mini [24, 25] and Gemini-2.0-Flash-Thinking (Gemini-2.0) [26], and the open-source models DeepSeek-R1-Distill-Llama-70B (DeepSeek-R1-70B) [27] and QwQ-32B [28]. Accuracy on test instances is reported as the primary metric. When evaluated on the ARC public evaluation set (400 problems), repeated-sampling planning-aided code generation (RSPC) demonstrates consistent generalization and achieves the highest test accuracy across most LLMs: 30.75% with GPT-o3-mini, 16.75% with Gemini-2.0, 14.25% with QwQ-32B, and 7.75% with DeepSeek-R1-70B. We therefore adopt RSPC, the most competitive ARC solver, as the solver backbone.

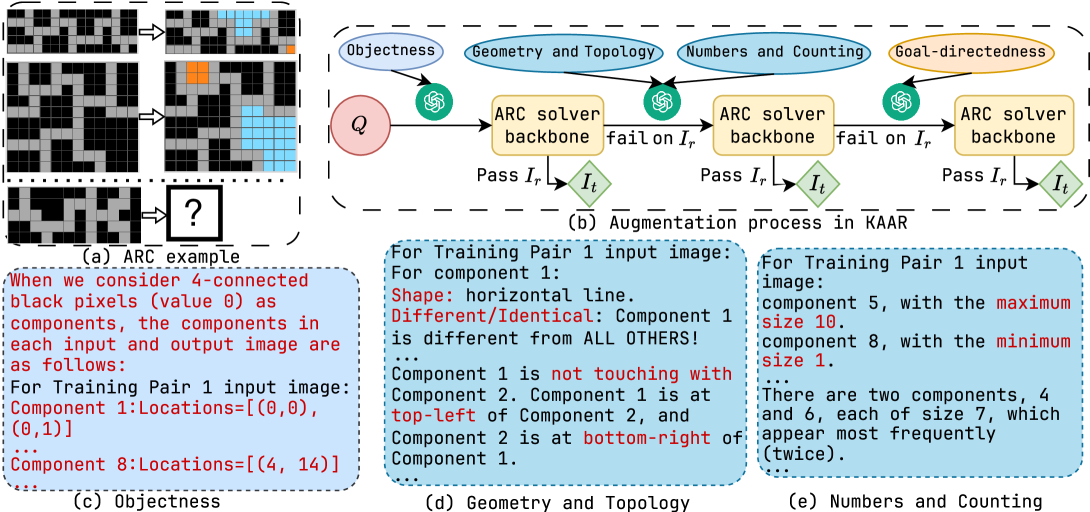

Motivated by the success of manually defined priors in ARC solvers [9, 10], we propose Knowledge Augmentation for Abstract Reasoning (KAAR) for solving ARC tasks using reasoning-oriented LLMs. KAAR formalizes manually defined priors through a lightweight ontology that organizes priors into hierarchical levels based on their dependencies. It progressively augments LLMs with priors at each level via structured prompting. Specifically, core knowledge priors are introduced in stages: beginning with objectness, followed by geometry, topology, numbers, and counting, and concluding with goal-directedness. After each stage, KAAR applies the ARC solver backbone (RSPC) to generate the solution. This progressive augmentation enables LLMs to gradually expand their reasoning capabilities and facilitates stage-wise reasoning, aligning with human cognitive development [29]. Empirical results show that KAAR improves accuracy on test instances across all evaluated LLMs, achieving the largest absolute gain of 6.75% with QwQ-32B and the highest relative improvement of 64.52% with DeepSeek-R1-70B over non-augmented RSPC.
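To make the distinction between absolute and relative gains concrete, the short sketch below works through the DeepSeek-R1-70B figures quoted above (7.75% RSPC accuracy on 400 problems, 64.52% relative improvement). The function names are illustrative, and the implied absolute gain and problem counts are arithmetic consequences of those two numbers, not separately reported values.

```python
TOTAL_PROBLEMS = 400  # size of the ARC public evaluation set


def absolute_gain(baseline_pct: float, relative_pct: float) -> float:
    """Percentage points gained, given a baseline accuracy and a relative improvement."""
    return baseline_pct * relative_pct / 100.0


def relative_improvement(baseline_solved: int, extra_solved: int) -> float:
    """Relative improvement (in percent) from counts of solved problems."""
    return 100.0 * extra_solved / baseline_solved


baseline_pct = 7.75                                   # RSPC with DeepSeek-R1-70B
gain_pts = absolute_gain(baseline_pct, 64.52)         # roughly 5 percentage points
baseline_solved = round(TOTAL_PROBLEMS * baseline_pct / 100)  # problems solved by RSPC
extra_solved = round(TOTAL_PROBLEMS * gain_pts / 100)         # implied additional problems
```

Under these numbers, a small absolute gain on a low baseline translates into a large relative improvement, which is why the two figures are reported for different models.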

We outline our contributions as follows:

- We evaluate the abstract reasoning and generalization capabilities of reasoning-oriented LLMs on ARC using nine solvers that differ in generation strategies, modalities, and solution representations.

- We introduce KAAR, a knowledge augmentation approach for solving ARC problems using LLMs. KAAR progressively augments LLMs with core knowledge priors structured via an ontology and applies the best ARC solver after augmenting same-level priors, further improving performance.

- We conduct a comprehensive performance analysis of the proposed ARC solvers, highlighting failure cases and remaining challenges on the ARC benchmark.

<details>

<summary>x2.png Details</summary>

### Visual Description


## Diagram: Iterative Image Generation Process

### Overview

The image depicts a diagram illustrating an iterative process for image generation, broken down into three stages: Direct Generation, Repeat Sampling, and Refinement. Each stage is represented by a flow diagram and accompanied by textual descriptions of the problem, solution, and corresponding Python code. The diagrams use state transitions and feedback loops to show the process flow.

### Components/Axes

The diagram consists of three main sections, labeled (1) Direct Generation, (2) Repeat Sampling, and (3) Refinement, arranged horizontally. Each section contains a flow diagram and a text block.

* **Flow Diagrams:** Each diagram uses circles to represent states (labeled Q, p, c), arrows to indicate transitions, and labels on the arrows to describe the conditions or actions causing the transitions (e.g., "s := p", "fail", "pass"). The diagrams also include labels indicating the type of process: "standalone" and "Planning-aided".

* **Text Blocks:**

* **(a) Problem Description Q:** Contains example input and output image data.

* **(b) Solution Plan P:** Describes the algorithm for generating the output image.

* **(c) Python Code C:** Provides the Python code implementation of the algorithm.

### Detailed Analysis or Content Details

**Section 1: Direct Generation**

* The diagram shows a series of states 'Q' transitioning based on the condition "s := p" (where 's' is assigned the value of 'p').

* The output 'I<sub>t</sub>' is labeled as either "pass" or "fail".

* The process is labeled "Planning-aided".

* **Problem Description Q:**

* "The training example(s):"

* input: `[[1,1],[1,0],[0,0],[0,0]]`

* output: `[[0,0],[1,1],[1,1],[0,0]]`

* "The test input image(s):"

* input: `[[2,0],[2,0],[0,0],[0,0]]`

**Section 2: Repeat Sampling**

* The diagram shows states 'Q' transitioning based on "s := c".

* The output 'I<sub>t</sub>' is labeled as either "pass" or "fail".

* The process is labeled as both "standalone" and "Planning-aided".

* **Solution Plan P:**

* "...for each cell in row i of the output (where i > 0), set its value equal to the value from row (i - 1) in the same column of the input."

* "For the top row of the output (row 0), fill every cell with 0 (the background color)..."

**Section 3: Refinement**

* The diagram shows states 'Q' transitioning based on "s := c".

* The output 'I<sub>t</sub>' is labeled as either "pass" or "fail".

* The process is labeled "Planning-aided".

* **Python Code C:**

```python
def generate_output_image(input_image):
    rows = len(input_image)
    if rows == 0:
        return []
    cols = len(input_image[0])
    output_image = []
    output_image.append([0 for _ in range(cols)])
    for i in range(1, rows):
        output_image.append(input_image[i-1].copy())
    return output_image
```

### Key Observations

* The diagrams in all three sections share a similar structure, suggesting a consistent underlying process with iterative refinement.

* The "fail" transitions indicate potential areas for improvement or further iteration.

* The Python code in Section 3 directly implements the algorithm described in the Solution Plan in Section 2.

* The input/output examples in Section 1 provide concrete instances for understanding the problem and solution.

### Interpretation

The diagram illustrates a method for image generation that starts with a direct generation step, then iteratively refines the output through repeat sampling and a final refinement stage. The process appears to be based on copying rows from the input image to the output image, with an initial row filled with a background color (0). The "pass" and "fail" labels suggest a validation or evaluation step at each iteration. The use of "Planning-aided" indicates that some form of planning or guidance is involved in the process. The Python code provides a clear and concise implementation of the algorithm, demonstrating how the iterative process can be automated. The examples provided show a simple case of copying rows, but the overall framework could be extended to more complex image generation tasks. The iterative nature of the process suggests a potential for learning and improvement over time. The diagram highlights the interplay between problem definition, solution design, and code implementation in the context of image generation.

</details>

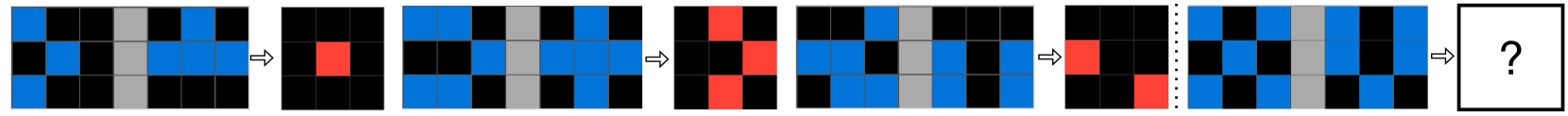

Figure 2: An illustration of the three ARC solution generation approaches, (1) direct generation, (2) repeated sampling, and (3) refinement, with the GPT-o3-mini input and response fragments (a–c) for solving task 25ff71a9 (Figure 1). For each approach, when the solution $s$ is code, $s:=c$, a plan $p$ is either generated from the problem description $Q$ to guide code generation (planning-aided) or omitted (standalone). Otherwise, when $s:=p$, the plan $p$ serves as the final solution instead.

## 2 Problem Formulation

We formulate each ARC task as a tuple $\mathcal{P}=\langle I_{r},I_{t}\rangle$, where $I_{r}$ and $I_{t}$ are sets of training and test instances. Each instance consists of an input-output image pair $(i^{i},i^{o})$, represented as 2D matrices. The goal is to leverage the LLM $\mathcal{M}$ to generate a solution $s$ based on training instances $I_{r}$ and test input images $\{i^{i}\ |\ (i^{i},i^{o})\in I_{t}\}$, where $s$ maps each test input $i^{i}$ to its output $i^{o}$, i.e., $s(i^{i})=i^{o}$, for $(i^{i},i^{o})\in I_{t}$. We note that the test input images are visible during the generation of solution $s$, whereas test output images become accessible only after $s$ is produced, and are used solely to validate the correctness of $s$. We encode the solution $s$ in different forms: as a solution plan $p$, or as Python code $c$, optionally guided by $p$. We denote each ARC problem description, comprising $I_{r}$ and $\{i^{i}\ |\ (i^{i},i^{o})\in I_{t}\}$, as $Q$.
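The formulation can be mirrored in a few lines of Python. This is a minimal sketch under our own naming: `ArcTask`, `passes`, and `shift_down` are hypothetical helpers, and the example grids are illustrative stand-ins for a "shift every row down by one" task rather than data copied from the benchmark.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

Grid = List[List[int]]             # a 2D matrix of pixel colors
Instance = Tuple[Grid, Grid]       # (input image, output image)
Solution = Callable[[Grid], Grid]  # s maps an input image to an output image


@dataclass
class ArcTask:
    """P = <I_r, I_t>: sets of training and test instances."""
    train: List[Instance]
    test: List[Instance]

    def description(self) -> Tuple[List[Instance], List[Grid]]:
        """Q: training pairs plus test inputs only (test outputs stay hidden)."""
        return self.train, [i_in for i_in, _ in self.test]


def passes(s: Solution, instances: List[Instance]) -> bool:
    """True if s(i_in) == i_out for every instance in the given set."""
    return all(s(i_in) == i_out for i_in, i_out in instances)


def shift_down(grid: Grid) -> Grid:
    """Toy solution: move every row down by one and fill the top row with 0."""
    if not grid:
        return []
    return [[0] * len(grid[0])] + [row[:] for row in grid[:-1]]


# Illustrative task (hypothetical grids).
task = ArcTask(
    train=[([[1, 1, 1], [0, 0, 0], [0, 0, 0]], [[0, 0, 0], [1, 1, 1], [0, 0, 0]])],
    test=[([[2, 0, 0], [2, 0, 0], [0, 0, 0]], [[0, 0, 0], [2, 0, 0], [2, 0, 0]])],
)
```

Here `passes(shift_down, task.train)` plays the role of checking $s$ on $I_{r}$, and `passes(shift_down, task.test)` the final validation on $I_{t}$.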

## 3 ARC Solver Backbone

LLMs have shown promise in solving tasks that rely on ARC-relevant priors [30, 31, 32, 33]. We initially assume that reasoning-oriented LLMs implicitly encode sufficient core knowledge priors to solve ARC tasks. We cast each ARC task as a program synthesis problem, which involves generating a solution $s$ from a problem description $Q$ without explicitly prompting for priors. We consider established LLM-based code generation approaches [17, 18, 19, 23] as candidate ARC solution generation strategies, illustrated at the top of Figure 2. These include: (1) direct generation, where the LLM produces the solution $s$ in a single attempt, which is then validated on test instances $I_{t}$; (2) repeated sampling, where the LLM samples solutions until one passes training instances $I_{r}$, which is then evaluated on $I_{t}$; and (3) refinement, where the LLM iteratively refines an initial solution $s$ based on failures on $I_{r}$ until it succeeds, followed by evaluation on $I_{t}$. In addition, we extend the solution representation beyond code to include text-based solution plans. Given the problem description $Q$ as input (Figure 2, block (a)), all strategies prompt the LLM to generate a solution $s$, represented either as a natural language plan $p$ (block (b)), $s:=p$, or as Python code $c$ (block (c)), $s:=c$. For $s:=p$, the solution is derived directly from $Q$. For $s:=c$, we explore two modalities: the LLM either generates $c$ directly from $Q$ (standalone), or first generates a plan $p$ for $Q$, which is then concatenated with $Q$ to guide subsequent code development (planning-aided), a strategy widely adopted in recent work [18, 22, 23].
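The design space described above is the cross product of three generation strategies and three solution forms (plan, standalone code, planning-aided code). The sketch below only enumerates that grid; the string labels are illustrative and are not identifiers used by the authors.

```python
from itertools import product

STRATEGIES = ("direct generation", "repeated sampling", "refinement")
# s := p (plan), s := c generated directly from Q, or s := c guided by a plan p.
SOLUTION_FORMS = ("plan", "standalone code", "planning-aided code")

# Each (strategy, solution form) pair is one candidate ARC solver.
solvers = [f"{strategy} / {form}" for strategy, form in product(STRATEGIES, SOLUTION_FORMS)]

for name in solvers:
    print(name)
```

In this labeling, RSPC corresponds to the entry `"repeated sampling / planning-aided code"`.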

Repeated sampling and refinement iteratively produce new solutions based on the correctness of $s$ on training instances $I_{r}$, and validate $s$ on test instances $I_{t}$ once it passes $I_{r}$ or the iteration limit is reached. When $s:=p$, its correctness is evaluated by prompting the LLM to generate each output image $i^{o}$ given its corresponding input $i^{i}$ and the solution plan $p$, where $(i^{i},i^{o})\in I_{r}$ or $(i^{i},i^{o})\in I_{t}$. Alternatively, when $s:=c$, its correctness is assessed by executing $c$ on $I_{r}$ or $I_{t}$. In repeated sampling, the LLM iteratively generates a new plan $p$ and code $c$ from the problem description $Q$ without additional feedback. In contrast, refinement revises $p$ and $c$ by prompting the LLM with the previously incorrect $p$ and $c$, concatenated with failed training instances. In total, nine ARC solvers are employed to evaluate the performance of reasoning-oriented LLMs on the ARC benchmark.
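The difference between the two iterative strategies can be sketched as two small control loops. This is a simplified illustration for the code case ($s:=c$), not the authors' implementation: `sample` and `refine` are hypothetical callables standing in for LLM calls, candidates are assumed to run without raising exceptions, and `max_iters` (assumed to be at least 1) stands in for the iteration limit.

```python
from typing import Callable, List, Optional, Tuple

Grid = List[List[int]]
Instance = Tuple[Grid, Grid]
Solution = Callable[[Grid], Grid]


def failed_instances(s: Solution, train: List[Instance]) -> List[Instance]:
    """Training instances on which the candidate produces a wrong output."""
    return [(i_in, i_out) for i_in, i_out in train if s(i_in) != i_out]


def repeated_sampling(sample: Callable[[], Solution],
                      train: List[Instance], max_iters: int) -> Optional[Solution]:
    """Draw fresh candidates from Q, with no feedback, until one passes I_r."""
    s = None
    for _ in range(max_iters):
        s = sample()
        if not failed_instances(s, train):
            break
    return s  # the last candidate is returned even if the limit was reached


def refinement(sample: Callable[[], Solution],
               refine: Callable[[Solution, List[Instance]], Solution],
               train: List[Instance], max_iters: int) -> Solution:
    """Revise one candidate using the training instances it failed on."""
    s = sample()
    for _ in range(max_iters - 1):
        failures = failed_instances(s, train)
        if not failures:
            break
        s = refine(s, failures)  # feedback: previous solution plus its failures
    return s
```

In both loops the returned solution is the one that would subsequently be validated on $I_{t}$.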

## 4 Knowledge Augmentation

Xu et al. [34] improved LLM performance on the ARC benchmark by prompting object-based representations for each task derived from graph-based object abstractions. Building on this insight, we propose KAAR, a knowledge augmentation approach for solving ARC tasks using reasoning-oriented LLMs. KAAR leverages Generalized Planning for Abstract Reasoning (GPAR) [10], a state-of-the-art object-centric ARC solver, to generate the core knowledge priors. GPAR encodes priors as abstraction-defined nodes enriched with attributes and inter-node relations, which are extracted using standard image processing algorithms. To align with the four knowledge dimensions in ARC, KAAR maps GPAR-derived priors into their categories. In detail, KAAR adopts fundamental abstraction methods from GPAR to enable objectness. Objects are typically defined as components based on adjacency rules and color consistency (e.g., 4-connected or 8-connected components), while also including the entire image as a component. KAAR further introduces additional abstractions: (1) middle-vertical, which vertically splits the image into two equal parts, and treats each as a distinct component; (2) middle-horizontal, which applies the same principle along the horizontal axis; (3) multi-lines, which segments the image using full-length rows or columns of uniform color, and treats each resulting part as a distinct component; and (4) no abstraction, which considers only raw 2D matrices. Under no abstraction, KAAR degrades to the ARC solver backbone without incorporating any priors. KAAR inherits GPAR’s geometric and topological priors, including component attributes (size, color, shape) and relations (spatial, congruent, inclusive). It further extends the attribute set with symmetry, bounding box, nearest boundary, and hole count, and augments the relation set with touching. 
For numeric and counting priors, KAAR follows GPAR, incorporating the largest/smallest component sizes, and the most/least frequent component colors, while extending them with statistical analysis of hole counts and symmetry, as well as the most/least frequent sizes and shapes.
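As a rough illustration of how such priors can be computed, the sketch below extracts same-color connected components, attaches a few attributes (size, bounding box), implements the middle-vertical split, and derives simple counting statistics. It is not GPAR's or KAAR's implementation: the function names, the dictionary layout, and the choice to treat background pixels like any other color are all assumptions made for this example.

```python
from collections import Counter, deque
from typing import Dict, List, Tuple

Grid = List[List[int]]


def connected_components(grid: Grid, connectivity: int = 4) -> List[Dict]:
    """Group adjacent same-colored pixels into components (4- or 8-connected)."""
    steps = [(-1, 0), (1, 0), (0, -1), (0, 1)]
    if connectivity == 8:
        steps += [(-1, -1), (-1, 1), (1, -1), (1, 1)]
    rows = len(grid)
    cols = len(grid[0]) if grid else 0
    seen = set()
    components = []
    for r in range(rows):
        for c in range(cols):
            if (r, c) in seen:
                continue
            color = grid[r][c]
            queue, cells = deque([(r, c)]), []
            seen.add((r, c))
            while queue:  # breadth-first flood fill from (r, c)
                y, x = queue.popleft()
                cells.append((y, x))
                for dy, dx in steps:
                    ny, nx = y + dy, x + dx
                    if (0 <= ny < rows and 0 <= nx < cols
                            and (ny, nx) not in seen and grid[ny][nx] == color):
                        seen.add((ny, nx))
                        queue.append((ny, nx))
            ys = [y for y, _ in cells]
            xs = [x for _, x in cells]
            components.append({
                "color": color,
                "cells": cells,
                "size": len(cells),
                "bbox": (min(ys), min(xs), max(ys), max(xs)),  # top, left, bottom, right
            })
    return components


def middle_vertical(grid: Grid) -> Tuple[Grid, Grid]:
    """Split the image down the middle into a left and a right component."""
    half = len(grid[0]) // 2
    return [row[:half] for row in grid], [row[half:] for row in grid]


def counting_priors(components: List[Dict]) -> Dict:
    """Largest/smallest component size and the most frequent component color."""
    sizes = [comp["size"] for comp in components]
    colors = Counter(comp["color"] for comp in components)
    return {
        "largest_size": max(sizes),
        "smallest_size": min(sizes),
        "most_frequent_color": colors.most_common(1)[0][0],
    }


grid = [[1, 1, 0, 2],
        [0, 0, 0, 0],
        [2, 0, 0, 3]]
components = connected_components(grid)
stats = counting_priors(components)
```

Further attributes mentioned above, such as symmetry, hole count, or touching relations, would be additional functions over the same component records.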

<details>

<summary>x3.png Details</summary>

### Visual Description


## Diagram: Task Categorization and Color Change Rules

### Overview

The image presents a flowchart-style diagram outlining a task categorization process, specifically focusing on tasks involving color change. It details the steps to determine the category of a task and, if it involves color change, the components affected and the rules governing the color transformation. The diagram is structured with branching paths based on task characteristics.

### Components/Axes

The diagram consists of three main categories:

* **(a) Action(s) Selection:** Leads to a check for color change involvement.

* **(b) Component(s) Selection:** Focuses on identifying components requiring color change.

* **(c) Color Change Rule:** Defines the rules for color transformation.

Each category has a corresponding green swirl icon. There are orange boxes containing descriptive text. A vertical text label on the right reads "action schema". The diagram uses arrows to indicate flow direction.

### Detailed Analysis or Content Details

**(a) Action(s) Selection:**

* Text: "Please determine which category or categories this task belongs to. Please select from the following predefined categories..."

* Text: "Selection"

* Arrow leads to a conditional check: "This task involves color change."

**(b) Component(s) Selection:**

* Text: "If this task involves color change: 1. Which components require color change? 2. Determine the conditions used to select these target components..."

* Text: "Selection"

* Text: "Components: (color 0) with the minimum and maximum sizes."

* Arrow leads to a conditional check: "If this task involves color change, please determine which source color maps to which target color for the target components. 2. Determine the conditions used to dictate this color change..."

**(c) Color Change Rule:**

* Text: "Color Change Rule"

* Text: "- minimum-size component (from color 0) to 7."

* Text: "- maximum-size component (from color 0) to 8."

### Key Observations

The diagram focuses on a specific type of task – those involving color changes. It breaks down the process into identifying the affected components and defining the rules for the color transformation. The color "0" appears to be a starting color for both minimum and maximum size components. The color change rules are specific: minimum-size components change from color 0 to color 7, and maximum-size components change from color 0 to color 8.

### Interpretation

This diagram represents a formalized process for handling tasks that involve altering the color of components within a system. It suggests a structured approach to ensure consistency and predictability in color changes. The categorization into Action, Component, and Color Change Rule indicates a modular design, allowing for independent modification of each aspect. The specific color change rules (0 to 7, 0 to 8) imply a defined color palette or scale is in use. The diagram is likely part of a larger system for defining and executing automated tasks or workflows, potentially within a user interface or a data visualization application. The "action schema" label suggests this is a formalized description of the actions that can be taken. The diagram is not presenting data, but rather a *process* for handling data.

</details>

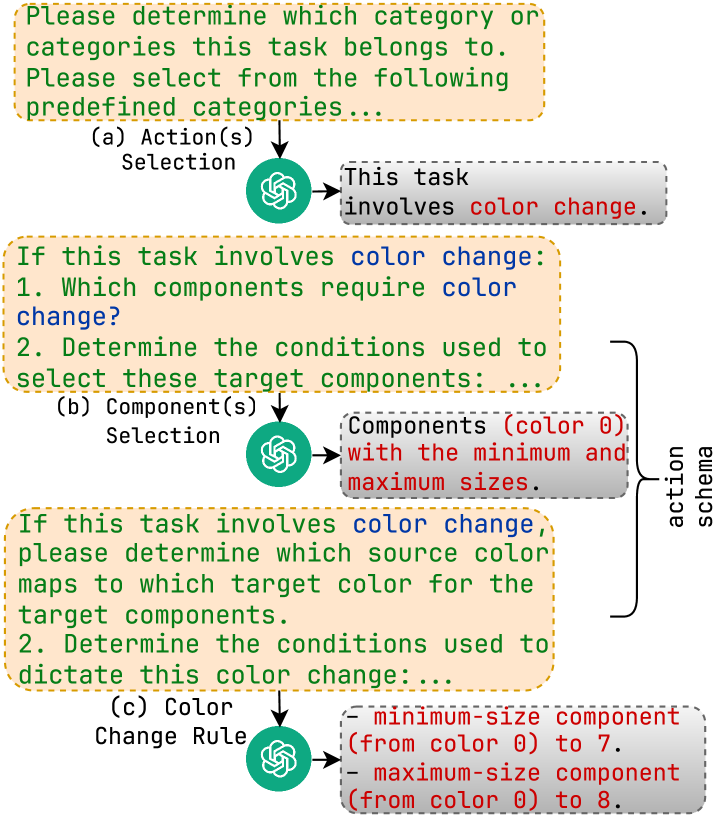

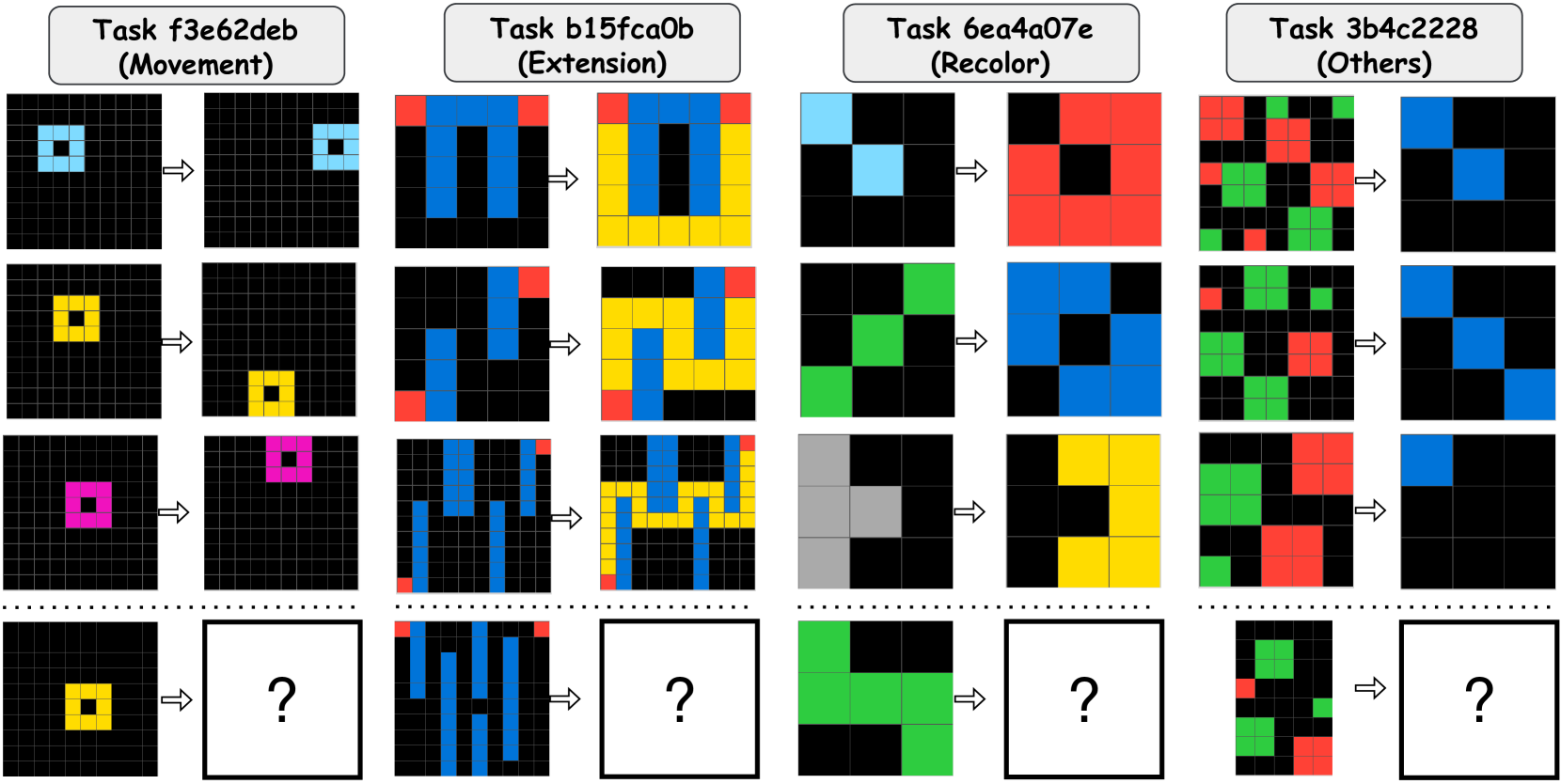

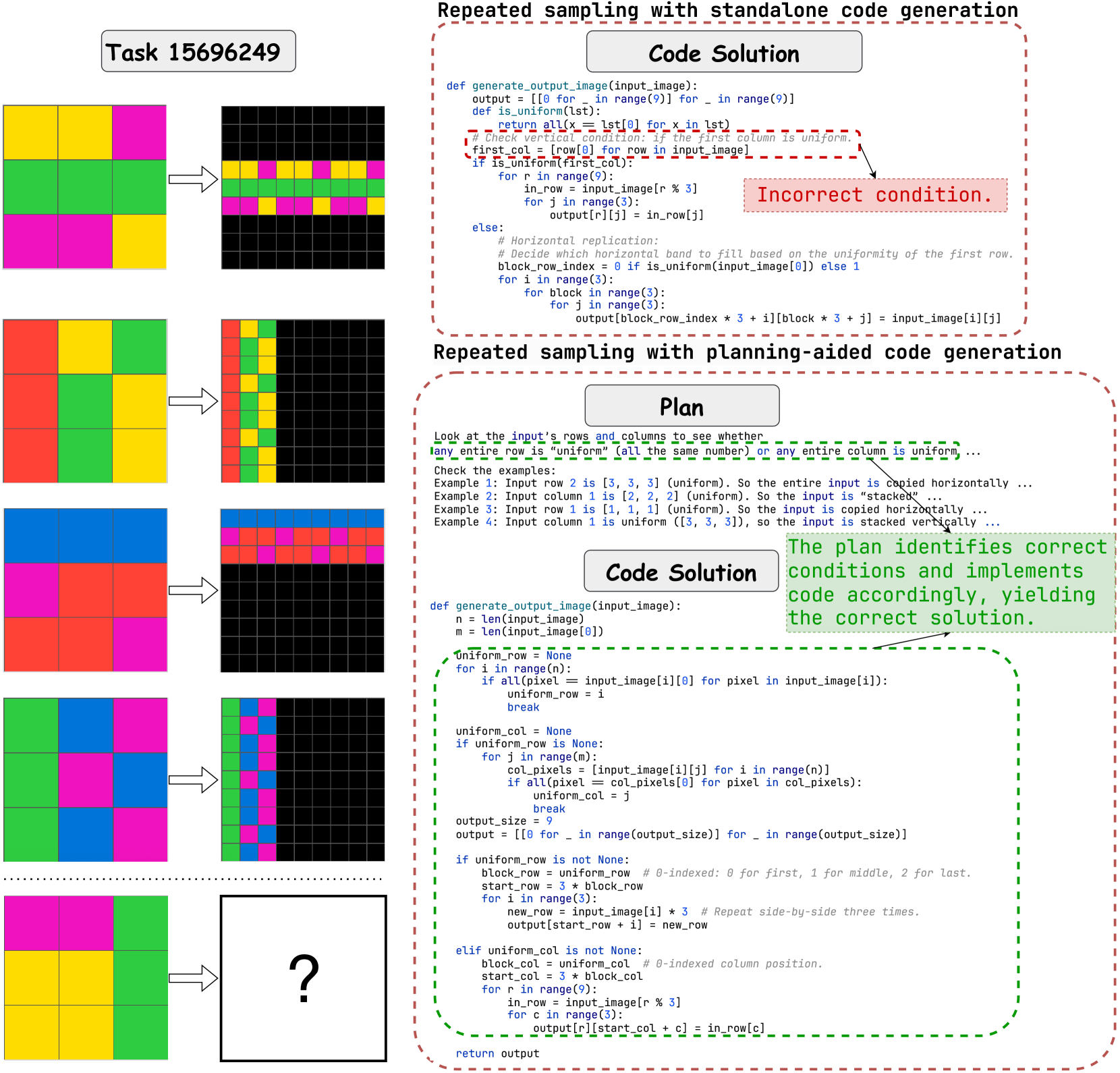

Figure 3: An example of goal-directedness prior augmentation in KAAR, with input and response fragments from GPT-o3-mini.

GPAR approaches goal-directedness priors by searching for a sequence of program instructions [35] defined in a DSL. Each instruction supports conditionals, branching, looping, and action statements. KAAR incorporates the condition and action concepts from GPAR, and enables goal-directedness priors by augmenting LLM knowledge in two steps: (1) it prompts the LLM to identify the most relevant actions for solving the given ARC problem from ten predefined action categories (Figure 3, block (a)), such as color change, movement, and extension, which are partially derived from GPAR and extended based on the training set; (2) for each selected action, KAAR prompts the LLM with the associated schema to resolve implementation details. For example, for a color change action, KAAR first prompts the LLM to identify the target components (Figure 3, block (b)), and then to specify the source and target colors for modification based on the target components (Figure 3, block (c)). We note that KAAR also prompts the LLM to incorporate condition-aware reasoning when determining action implementation details, using knowledge derived from geometry, topology, numbers, and counting priors. This enables fine-grained control, for example, applying color changes only to black components conditioned on the maximum or minimum size: from black (value 0) to blue (value 8) if largest, or to orange (value 7) if smallest. Figure 3 shows fragments of the goal-directedness priors augmentation. See Appendix A.2 for the full set of priors in KAAR.
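The size-conditioned color change described above could be realized by code along the following lines. This is a hypothetical sketch of the kind of program an LLM might produce after the augmentation, not output from KAAR: `recolor_by_size` and its parameters are invented for illustration, the black components are assumed to be given as lists of pixel coordinates, and ties in size are resolved arbitrarily by `max` and `min`.

```python
from typing import List, Tuple

Grid = List[List[int]]
Component = List[Tuple[int, int]]  # (row, column) coordinates of one component


def recolor_by_size(grid: Grid, components: List[Component],
                    largest_color: int = 8, smallest_color: int = 7) -> Grid:
    """Recolor the largest and smallest target components; leave all others as is."""
    out = [row[:] for row in grid]      # do not mutate the input image
    largest = max(components, key=len)  # condition: maximum size
    smallest = min(components, key=len) # condition: minimum size
    for r, c in largest:
        out[r][c] = largest_color
    for r, c in smallest:
        out[r][c] = smallest_color
    return out


grid = [[0, 0, 1, 0],
        [0, 1, 1, 0]]
black_components = [[(0, 0), (0, 1), (1, 0)], [(0, 3), (1, 3)]]
result = recolor_by_size(grid, black_components)
```

The target components come from the objectness stage, while the "largest" and "smallest" conditions come from the numbers and counting stage, which is the dependency the two-step prompting is meant to exploit.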

<details>

<summary>x4.png Details</summary>

### Visual Description


## Diagram: Visual Reasoning Components & Augmentation Process

### Overview

The image presents a breakdown of visual reasoning tasks, specifically focusing on the ARC (Abstract Reasoning Challenge) example, and the augmentation process used in KAAR (likely a system or method). It combines a visual example of an ARC puzzle, a diagram of the augmentation process, and textual descriptions of objectness, geometry/topology, and number/counting aspects.

### Components/Axes

The image is divided into five labeled sections:

* **(a) ARC example:** A grid-based puzzle with black and white squares, and a question mark indicating the missing element.

* **(b) Augmentation process in KAAR:** A flow diagram illustrating the augmentation steps.

* **(c) Objectness:** Textual description of component identification.

* **(d) Geometry and Topology:** Textual description of component shape and relationships.

* **(e) Numbers and Counting:** Textual description of component sizes and frequencies.

The augmentation process diagram uses the following elements:

* Oval nodes representing stages: "Objectness", "Geometry and Topology", "Numbers and Counting", "Goal-directed".

* Circular nodes representing input images: labeled *I<sub>T</sub>*.

* Rectangular nodes representing the ARC solver backbone.

* Arrows indicating flow and success/failure paths ("Pass" or "fail on *I<sub>T</sub>*").

* A question mark symbol *Q* representing the unknown.

### Detailed Analysis or Content Details

**(a) ARC example:**

The grid is approximately 8x8. Black pixels have a value of 0, and white pixels have a value of 1. The puzzle has a missing square in the bottom-right corner, marked with a question mark. The pattern appears to involve alternating black and white blocks, with some variations.

**(b) Augmentation process in KAAR:**

The diagram shows a cyclical process.

1. The process starts with an input image *I<sub>T</sub>*.

2. It passes through "Objectness", then to "Geometry and Topology", then to "Numbers and Counting", and finally to "Goal-directed".

3. The output of "Goal-directed" is fed back into the ARC solver backbone.

4. There are two possible outcomes: "Pass *I<sub>T</sub>*" (looping back to the beginning) or "fail on *I<sub>T</sub>*". The "fail" path leads back to the ARC solver backbone.

5. This process is repeated three times, with each iteration labeled *I<sub>T</sub>*.

**(c) Objectness:**

The text states: "When we consider 4-connected black pixels (value 0) as components, the components in each input and output image are as follows:".

For Training Pair 1 input image:

* Component 1: Locations = [(0,0), (0,1)]

* Component 8: Locations = [(4, 14)]

**(d) Geometry and Topology:**

For Training Pair 1 input image:

* For component 1: Shape: horizontal line. Different/Identical: Component 1 is different from ALL OTHERS!

* Component 1 is not touching with Component 2. Component 1 is at top-left of Component 2, and Component 2 is at bottom-right of Component 1.

**(e) Numbers and Counting:**

For Training Pair 1 input image:

* component 5, with the maximum size 10.

* component 8, with the minimum size 1.

* There are two components, 4 and 6, each of size 7, which appear most frequently (twice).

### Key Observations

* The ARC example demonstrates a visual reasoning task requiring pattern recognition.

* The KAAR augmentation process appears to iteratively refine the solution through multiple stages of analysis (objectness, geometry, numbers).

* The textual descriptions provide specific details about component identification, shape, relationships, and sizes within a training image.

* The augmentation process includes a feedback loop, suggesting an iterative refinement strategy.

* The component descriptions are specific to "Training Pair 1", implying that the analysis is being performed on a dataset of training examples.

### Interpretation

The image illustrates a system for solving visual reasoning problems, likely using a combination of automated analysis and iterative refinement. The KAAR augmentation process seems designed to improve the robustness of the ARC solver by systematically exploring different aspects of the visual input. The detailed component descriptions suggest that the system breaks down the image into fundamental elements and analyzes their properties to identify patterns and relationships. The iterative nature of the augmentation process, with its feedback loop, indicates a learning or optimization strategy. The specific details about component sizes and frequencies suggest that the system is capable of quantifying visual features and using them to make inferences. The mention of "Training Pair 1" suggests that this is part of a larger machine learning pipeline. The system appears to be designed to learn from examples and improve its ability to solve visual reasoning problems over time.

</details>

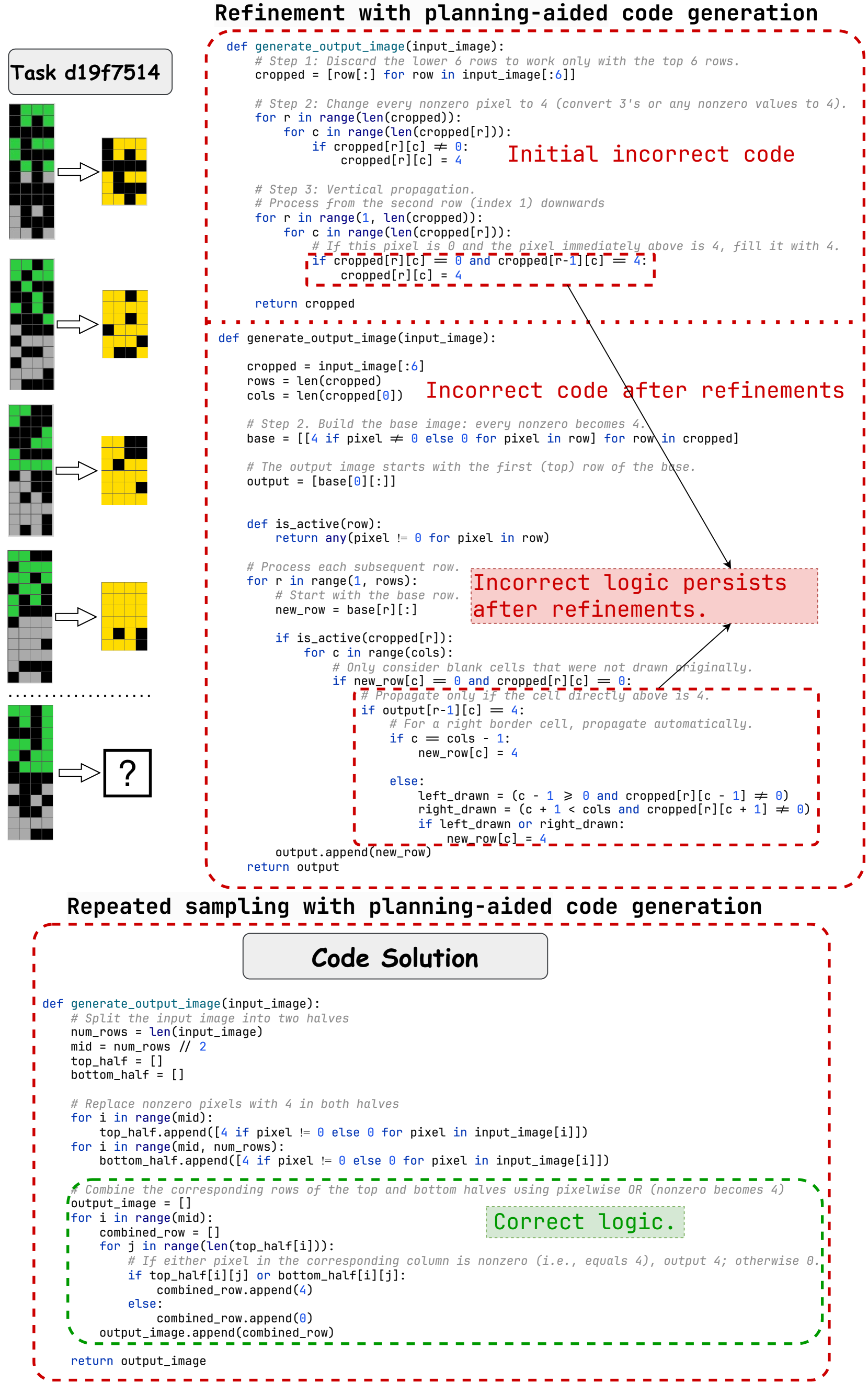

Figure 4: Augmentation process in KAAR (block (b)) and the corresponding knowledge augmentation fragments (blocks (c-e)) for ARC problem 62ab2642 (block (a)).

KAAR encodes the full set of core knowledge priors assumed in ARC into an ontology, where priors are organized into three hierarchical levels based on their dependencies. KAAR prompts LLMs with the priors at each level in turn, enabling incremental augmentation. This reduces context interference and supports stage-wise reasoning aligned with human cognitive development [29]. Figure 4, block (b), illustrates the augmentation process in KAAR alongside the augmented prior fragments used to solve the problem shown in block (a). KAAR begins augmentation with objectness priors, encoding images into components with detailed coordinates based on a specific abstraction method (block (c)). KAAR then prompts geometry and topology priors (block (d)), followed by numbers and counting priors (block (e)). These priors are ordered by dependency while residing at the same ontological level, as they all build upon objectness. Finally, KAAR augments goal-directedness priors, as shown in Figure 3, where target components are derived from objectness analysis and conditions are inferred from geometric, topological, and numerical analyses. After augmenting each level of priors, KAAR invokes the ARC solver backbone to generate solutions. If any solution passes the training instances $I_{r}$, it is validated on the test instances $I_{t}$; otherwise, augmentation proceeds to the next level of priors.
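The stage-wise control flow described above can be sketched as follows. This is a minimal illustration rather than the authors' implementation: `run_backbone` and `passes_training` are hypothetical callbacks standing in for the solver backbone and the check against $I_{r}$, and the level names are illustrative labels only.

```python
from typing import Callable, List, Optional

# Prior levels in the order described in the text (labels are illustrative).
PRIOR_LEVELS = [
    "objectness",
    "geometry_topology_numbers_counting",
    "goal_directedness",
]


def kaar_augment(
    run_backbone: Callable[[List[str]], List[str]],
    passes_training: Callable[[str], bool],
) -> Optional[str]:
    """Return the first candidate that passes all training instances, else None.

    run_backbone: hypothetical stand-in for the solver backbone; it receives
        the priors augmented so far and returns candidate solutions.
    passes_training: hypothetical check of a candidate against I_r.
    """
    active_priors: List[str] = []
    for level in PRIOR_LEVELS:
        active_priors.append(level)  # augment one more level of priors
        for candidate in run_backbone(list(active_priors)):
            if passes_training(candidate):
                return candidate  # passed I_r: stop augmenting
    return None  # no candidate passed I_r at any level
```

A caller would then validate the returned candidate on the test instances $I_{t}$, which this sketch leaves out.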

While the ontology provides a hierarchical representation of priors, it may also introduce hallucinations, such as duplicate abstractions, irrelevant component attributes or relations, and inapplicable actions. To address this, KAAR integrates restrictions from GPAR to filter out inapplicable priors. KAAR adopts GPAR’s duplicate-checking strategy, retaining only abstractions that yield distinct components by size, color, or shape, in at least one training instance. In KAAR, each abstraction is associated with a set of applicable priors. For instance, when the entire image is treated as a component, relation priors are excluded, and actions such as movement and color change are omitted, whereas symmetry and size attributes are retained and actions such as flipping and rotation are considered. In contrast, 4-connected and 8-connected abstractions include all component attributes and relations, and the full set of ten action priors. See Appendix A.3 for detailed restrictions.
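To make the 4-connected and 8-connected abstractions concrete, the sketch below groups same-colored pixels into components with a breadth-first search. It is an illustrative stand-in, not GPAR's or KAAR's actual image processing code; the function name `extract_components` and its signature are assumptions.

```python
from collections import deque
from typing import List, Tuple

Grid = List[List[int]]
Cell = Tuple[int, int]


def extract_components(grid: Grid, color: int, connectivity: int = 4) -> List[List[Cell]]:
    """Group pixels of `color` into connected components (hypothetical helper)."""
    if connectivity == 4:
        offsets = [(-1, 0), (1, 0), (0, -1), (0, 1)]
    elif connectivity == 8:
        offsets = [(dr, dc) for dr in (-1, 0, 1) for dc in (-1, 0, 1) if (dr, dc) != (0, 0)]
    else:
        raise ValueError("connectivity must be 4 or 8")

    rows = len(grid)
    cols = len(grid[0]) if rows else 0
    seen = set()
    components: List[List[Cell]] = []
    for r in range(rows):
        for c in range(cols):
            if grid[r][c] != color or (r, c) in seen:
                continue
            # Breadth-first search from an unvisited pixel of the target color.
            queue = deque([(r, c)])
            seen.add((r, c))
            component: List[Cell] = []
            while queue:
                cr, cc = queue.popleft()
                component.append((cr, cc))
                for dr, dc in offsets:
                    nr, nc = cr + dr, cc + dc
                    inside = 0 <= nr < rows and 0 <= nc < cols
                    if inside and grid[nr][nc] == color and (nr, nc) not in seen:
                        seen.add((nr, nc))
                        queue.append((nr, nc))
            components.append(sorted(component))
    return components
```

With 8-connectivity, diagonally adjacent pixels merge into one component, so the same image can yield fewer and larger components than under 4-connectivity.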

| LLM | Metric | Direct Generation: P | Direct Generation: C | Direct Generation: PC | Repeated Sampling: P | Repeated Sampling: C | Repeated Sampling: PC | Refinement: P | Refinement: C | Refinement: PC |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| GPT-o3-mini | $I_{r}$ | - | - | - | 35.50 | 52.50 | 35.50 | 31.00 | 47.25 | 32.00 |
| | $I_{t}$ | 20.50 | 24.50 | 22.25 | 23.75 | 32.50 | 30.75 | 24.75 | 29.25 | 25.75 |
| | $I_{r}\&I_{t}$ | - | - | - | 22.00 | 31.75 | 29.25 | 21.75 | 28.50 | 25.00 |
| Gemini-2.0 | $I_{r}$ | - | - | - | 36.50 | 39.50 | 21.50 | 15.50 | 25.50 | 15.50 |
| | $I_{t}$ | 7.00 | 6.75 | 6.25 | 10.00 | 14.75 | 16.75 | 8.75 | 12.00 | 11.75 |
| | $I_{r}\&I_{t}$ | - | - | - | 9.50 | 14.25 | 16.50 | 8.00 | 10.50 | 10.75 |
| QwQ-32B | $I_{r}$ | - | - | - | 19.25 | 13.50 | 15.25 | 16.75 | 15.00 | 14.25 |
| | $I_{t}$ | 9.50 | 7.25 | 5.75 | 11.25 | 13.50 | 14.25 | 11.00 | 14.25 | 14.00 |
| | $I_{r}\&I_{t}$ | - | - | - | 9.25 | 12.75 | 13.00 | 8.75 | 13.00 | 11.75 |
| DeepSeek-R1-70B | $I_{r}$ | - | - | - | 8.75 | 6.75 | 7.75 | 6.25 | 5.75 | 7.75 |
| | $I_{t}$ | 4.25 | 4.75 | 4.50 | 4.25 | 7.25 | 7.75 | 4.75 | 5.75 | 7.75 |
| | $I_{r}\&I_{t}$ | - | - | - | 3.50 | 6.50 | 7.25 | 4.25 | 5.25 | 7.00 |

Table 1: Performance of nine ARC solvers measured by accuracy on $I_{r}$, $I_{t}$, and $I_{r}\&I_{t}$ using four reasoning-oriented LLMs. For each LLM, the highest accuracy on $I_{r}$ and $I_{r}\&I_{t}$ is in bold; the highest accuracy on $I_{t}$ is in red. Accuracy is reported as a percentage. P denotes the solution plan; C and PC refer to standalone and planning-aided code generation, respectively.

## 5 Experiments

In ARC, each task is unique and solvable using only core knowledge priors [5]. We begin by comparing nine candidate solvers on the full ARC public evaluation set of 400 tasks. This offers broader insights than previous studies limited to subsets of the 400 training tasks [10, 9, 36], given the greater difficulty of the evaluation set [37]. We experiment with recent reasoning-oriented LLMs, including the proprietary models GPT-o3-mini and Gemini 2.0 Flash-Thinking (Gemini-2.0), and the open-source models DeepSeek-R1-Distill-Llama-70B (DeepSeek-R1-70B) and QwQ-32B. We compute accuracy on the test instances $I_{t}$ as the primary evaluation metric. It measures the proportion of problems for which the first solution that passes the training instances $I_{r}$ also solves $I_{t}$; if no solution passes $I_{r}$ within 12 iterations, the last solution is evaluated on $I_{t}$ instead. This rule applies to both repeated sampling and refinement. We also report accuracy on $I_{r}$ and on $I_{r}\&I_{t}$, measuring the percentage of problems whose solutions solve $I_{r}$ and both $I_{r}$ and $I_{t}$, respectively. See Appendix A.4 for parameter settings.
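The selection rule and the three accuracy metrics can be sketched as below. This is a hedged illustration under simple assumptions: candidates are opaque strings, `passes_train` is a hypothetical check against $I_{r}$, and each problem's outcome is summarized by two booleans.

```python
from typing import Callable, Dict, List, Sequence


def select_solution(candidates: Sequence[str], passes_train: Callable[[str], bool]) -> str:
    """Pick the first candidate that passes I_r; otherwise fall back to the last one."""
    for cand in candidates:
        if passes_train(cand):
            return cand
    return candidates[-1]


def accuracy_report(results: List[Dict[str, bool]]) -> Dict[str, float]:
    """Compute accuracy (%) on I_r, I_t, and I_r&I_t.

    results: one dict per problem with boolean keys 'train' and 'test'
    (an assumed encoding of whether the selected solution solves I_r / I_t).
    """
    n = len(results)
    return {
        "I_r": 100.0 * sum(r["train"] for r in results) / n,
        "I_t": 100.0 * sum(r["test"] for r in results) / n,
        "I_r&I_t": 100.0 * sum(r["train"] and r["test"] for r in results) / n,
    }
```

Note that a solution can solve $I_{t}$ without passing $I_{r}$ (the fallback case), which is why accuracy on $I_{t}$ can exceed accuracy on $I_{r}\&I_{t}$.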

Table 1 reports the performance of nine ARC solvers across four reasoning-oriented LLMs. For direct generation methods, accuracy on $I_{r}$ and $I_{r}\&I_{t}$ is omitted, as solutions are evaluated directly on $I_{t}$. GPT-o3-mini consistently outperforms all other LLMs, achieving the highest accuracy on $I_{r}$ (52.50%), $I_{t}$ (32.50%), and $I_{r}\&I_{t}$ (31.75%) under repeated sampling with standalone code generation (C), highlighting its strong abstract reasoning and generalization capabilities. Notably, QwQ-32B, the smallest model, outperforms DeepSeek-R1-70B across all solvers and surpasses Gemini-2.0 under refinement. Among the nine ARC solvers, repeated sampling-based methods generally outperform those based on direct generation or refinement. This diverges from previous findings where refinement dominated conventional code generation tasks that lack abstract reasoning and generalization demands [10, 17, 19]. Within repeated sampling, planning-aided code generation (PC) yields the highest accuracy on $I_{t}$ across most LLMs. It also demonstrates the strongest generalization with GPT-o3-mini and Gemini-2.0, as evidenced by the smallest accuracy gap between $I_{r}$ and $I_{r}\&I_{t}$, compared to solution plan (P) and standalone code generation (C). A similar trend is observed for QwQ-32B and DeepSeek-R1-70B, where both C and PC generalize effectively across repeated sampling and refinement. Overall, repeated sampling with planning-aided code generation, denoted as RSPC, shows the best performance and thus serves as the ARC solver backbone.

| LLM | Method | $I_{r}$: Acc | $I_{r}$: $\Delta$ | $I_{r}$: $\gamma$ | $I_{t}$: Acc | $I_{t}$: $\Delta$ | $I_{t}$: $\gamma$ | $I_{r}\&I_{t}$: Acc | $I_{r}\&I_{t}$: $\Delta$ | $I_{r}\&I_{t}$: $\gamma$ |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| GPT-o3-mini | RSPC | 35.50 | - | - | 30.75 | - | - | 29.25 | - | - |
| | KAAR | 40.00 | 4.50 | 12.68 | 35.00 | 4.25 | 13.82 | 33.00 | 3.75 | 12.82 |
| Gemini-2.0 | RSPC | 21.50 | - | - | 16.75 | - | - | 16.50 | - | - |
| | KAAR | 25.75 | 4.25 | 19.77 | 21.75 | 5.00 | 29.85 | 20.50 | 4.00 | 24.24 |
| QwQ-32B | RSPC | 15.25 | - | - | 14.25 | - | - | 13.00 | - | - |
| | KAAR | 22.25 | 7.00 | 45.90 | 21.00 | 6.75 | 47.37 | 19.25 | 6.25 | 48.08 |
| DeepSeek-R1-70B | RSPC | 7.75 | - | - | 7.75 | - | - | 7.25 | - | - |
| | KAAR | 12.25 | 4.50 | 58.06 | 12.75 | 5.00 | 64.52 | 11.50 | 4.25 | 58.62 |

Table 2: Comparison of RSPC (repeated-sampling planning-aided code generation) and its knowledge-augmented variant, KAAR, in terms of accuracy (Acc) on $I_{r}$, $I_{t}$, and $I_{r}\&I_{t}$. $\Delta$ and $\gamma$ denote the absolute and relative improvements over RSPC, respectively. All values are reported as percentages. The best results for $I_{r}$ and $I_{r}\&I_{t}$ are in bold; the highest for $I_{t}$ is in red.

We further compare the performance of RSPC with its knowledge-augmented variant, KAAR. For each task, KAAR begins with simpler abstractions, i.e., no abstraction and whole image, and progresses to the more complex 4-connected and 8-connected abstractions, consistent with GPAR. KAAR reports the accuracy on test instances $I_{t}$ based on the first abstraction whose solution solves all training instances $I_{r}$; otherwise, it records the final solution from each abstraction and selects the one that passes the most $I_{r}$ to evaluate on $I_{t}$. KAAR allows the solver backbone (RSPC) up to 4 iterations per invocation, totaling 12 iterations, consistent with the non-augmented setting. See Appendix A.5 for KAAR execution details. As shown in Table 2, KAAR consistently outperforms non-augmented RSPC across all LLMs, yielding around 5% absolute gains on $I_{r}$, $I_{t}$, and $I_{r}\&I_{t}$. This highlights the effectiveness and model-agnostic nature of the augmented priors. KAAR achieves the highest accuracy using GPT-o3-mini, with 40% on $I_{r}$, 35% on $I_{t}$, and 33% on $I_{r}\&I_{t}$. KAAR shows the greatest absolute improvements ($\Delta$) using QwQ-32B and the largest relative gains ($\gamma$) using DeepSeek-R1-70B across all evaluated metrics. Moreover, KAAR maintains generalization comparable to RSPC across all LLMs, indicating that the augmented priors are sufficiently abstract and expressive to serve as basis functions for reasoning, in line with ARC assumptions.
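As a quick check on how $\Delta$ and $\gamma$ relate to the reported accuracies, the sketch below assumes $\Delta$ is the difference between KAAR and RSPC accuracy and $\gamma$ is $\Delta$ divided by the RSPC accuracy, expressed as a percentage. This reading reproduces the $I_{t}$ entries of Table 2; the function name `improvements` is illustrative.

```python
from typing import Tuple


def improvements(acc_rspc: float, acc_kaar: float) -> Tuple[float, float]:
    """Return (absolute gain, relative gain in %) of KAAR over RSPC."""
    delta = acc_kaar - acc_rspc          # absolute improvement, in percentage points
    gamma = 100.0 * delta / acc_rspc     # relative improvement, in percent
    return round(delta, 2), round(gamma, 2)


# I_t accuracies (RSPC, KAAR) taken from Table 2.
print(improvements(7.75, 12.75))   # DeepSeek-R1-70B
print(improvements(14.25, 21.00))  # QwQ-32B
```

The DeepSeek-R1-70B case illustrates why a similar absolute gain produces the largest relative gain: the RSPC baseline in the denominator is the smallest.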

<details>

<summary>x5.png Details</summary>

### Visual Description

## Heatmap: Model Coverage Comparison - RSPC & KAAR

### Overview

The image presents two heatmaps, labeled (a) RSPC and (b) KAAR, comparing the coverage between four different models: GPT-o3-mini, Gemini-2.0, QwQ-32B, and DeepSeek-R1-70B. The color intensity represents the coverage value, with darker shades indicating higher coverage.

### Components/Axes

* **X-axis:** Models - GPT-o3-mini, Gemini-2.0, QwQ-32B, DeepSeek-R1-70B.

* **Y-axis:** Models - GPT-o3-mini, Gemini-2.0, QwQ-32B, DeepSeek-R1-70B.

* **Color Scale (Legend):** Located on the right side of the image. Ranges from approximately 0.0 (lightest color) to 1.0 (darkest color), representing Coverage. The color gradient transitions from light yellow to dark red.

* **Labels:** Each cell in the heatmap displays a numerical value representing the coverage between the corresponding row and column models.

* **Titles:** "(a) RSPC" and "(b) KAAR" indicate the solver evaluated in each heatmap.

### Detailed Analysis or Content Details

**Heatmap (a) - RSPC** (each cell: row model vs. column model)

| Row / Column | GPT-o3-mini | Gemini-2.0 | QwQ-32B | DeepSeek-R1-70B |
| --- | --- | --- | --- | --- |
| GPT-o3-mini | 1.00 | 0.50 | 0.40 | 0.22 |
| Gemini-2.0 | 0.91 | 1.00 | 0.60 | 0.40 |
| QwQ-32B | 0.86 | 0.70 | 1.00 | 0.44 |
| DeepSeek-R1-70B | 0.87 | 0.87 | 0.81 | 1.00 |

**Heatmap (b) - KAAR** (each cell: row model vs. column model)

| Row / Column | GPT-o3-mini | Gemini-2.0 | QwQ-32B | DeepSeek-R1-70B |
| --- | --- | --- | --- | --- |
| GPT-o3-mini | 1.00 | 0.55 | 0.54 | 0.34 |
| Gemini-2.0 | 0.89 | 1.00 | 0.72 | 0.48 |
| QwQ-32B | 0.88 | 0.74 | 1.00 | 0.53 |
| DeepSeek-R1-70B | 0.92 | 0.82 | 0.88 | 1.00 |

### Key Observations

* In both heatmaps, the diagonal elements (representing a model compared to itself) are all 1.00, as expected.

* The GPT-o3-mini column holds the highest off-diagonal values (0.86 to 0.91 under RSPC, 0.88 to 0.92 under KAAR), so GPT-o3-mini solves most of the problems solved by the other models.

* The DeepSeek-R1-70B column holds the lowest values (0.22 to 0.44 under RSPC, 0.34 to 0.53 under KAAR).

* Ten of the twelve off-diagonal values are higher under KAAR than under RSPC. The two exceptions are Gemini-2.0 vs. GPT-o3-mini (0.91 to 0.89) and DeepSeek-R1-70B vs. Gemini-2.0 (0.87 to 0.82).

### Interpretation

The heatmaps illustrate the overlap in solved problems between language models under two solvers, RSPC and KAAR. Each cell gives the proportion of problems solved by the row model that are also solved by the column model, so a high value means the column model covers most of what the row model solves.

The matrices are asymmetric because each row is normalized by the number of problems solved by its own row model. For example, GPT-o3-mini vs. Gemini-2.0 differs from Gemini-2.0 vs. GPT-o3-mini, since the two models solve different numbers of problems.

The low values in the GPT-o3-mini row together with the high values in the GPT-o3-mini column indicate that GPT-o3-mini solves many problems that the other models do not, while covering most of the problems they solve.

</details>

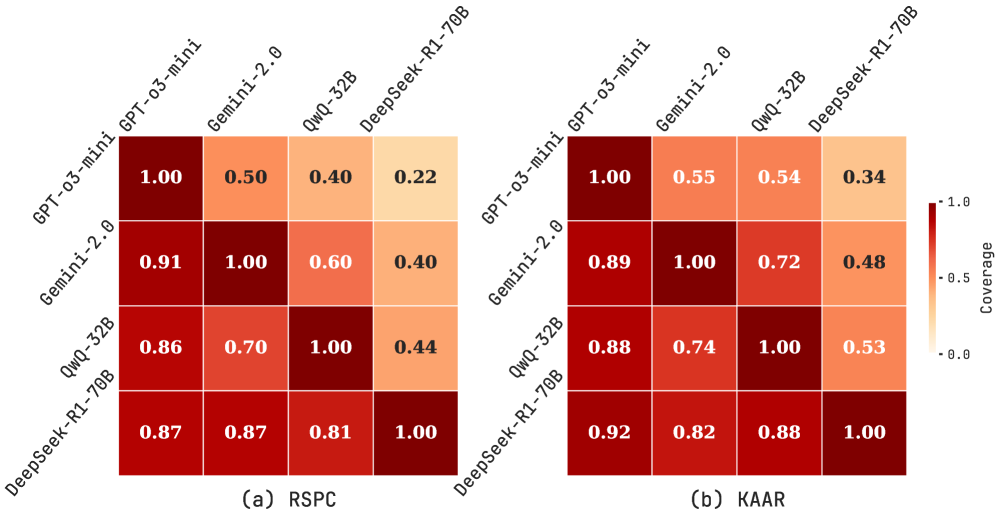

Figure 5: Asymmetric relative coverage matrices for RSPC (a) and KAAR (b), showing the proportion of problems whose test instances are solved by the row model that are also solved by the column model, across four LLMs.

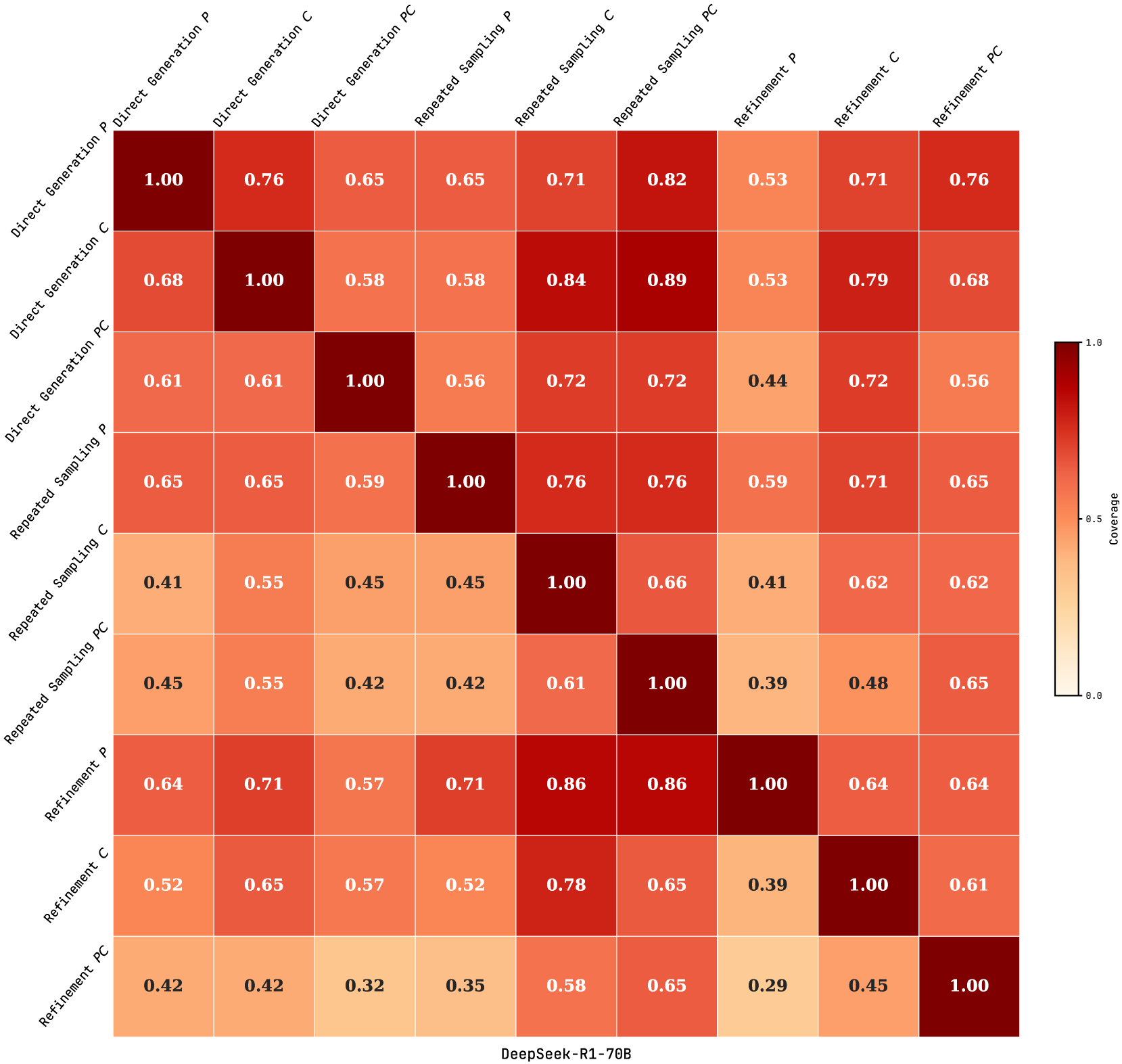

We compare relative problem coverage across evaluated LLMs under RSPC and KAAR based on successful solutions on test instances. As shown in Figure 5, each cell $(i,j)$ represents the proportion of problems solved by the row LLM that are also solved by the column LLM. This is computed as $\frac{|A_{i}\cap A_{j}|}{|A_{i}|}$, where $A_{i}$ and $A_{j}$ are the sets of problems solved by the row and column LLMs, respectively. Values near 1 indicate that the column LLM covers most problems solved by the row LLM. Under RSPC (Figure 5 (a)), GPT-o3-mini exhibits broad coverage, with column values consistently above 0.85. Gemini-2.0 and QwQ-32B also show substantial alignment, with mutual coverage exceeding 0.6. In contrast, DeepSeek-R1-70B shows lower alignment, with column values below 0.45 due to fewer solved problems. Figure 5 (b) illustrates that KAAR generally improves or maintains inter-model overlap compared to RSPC. Notably, KAAR raises the minimum coverage between GPT-o3-mini and DeepSeek-R1-70B from 0.22 under RSPC to 0.34 under KAAR. These results highlight the effectiveness of KAAR in improving cross-model generalization, with all evaluated LLMs solving additional shared problems. In particular, it enables smaller models such as QwQ-32B and DeepSeek-R1-70B to better align with stronger LLMs on the ARC benchmark.
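The coverage formula $\frac{|A_{i}\cap A_{j}|}{|A_{i}|}$ can be computed directly from per-model sets of solved problem identifiers. The sketch below uses made-up model names and problem IDs purely for illustration; `coverage_matrix` is a hypothetical helper.

```python
from typing import Dict, Set


def coverage_matrix(solved: Dict[str, Set[str]]) -> Dict[str, Dict[str, float]]:
    """matrix[row][col] = |A_row & A_col| / |A_row| (NaN if the row model solves nothing)."""
    matrix: Dict[str, Dict[str, float]] = {}
    for row, a_i in solved.items():
        matrix[row] = {}
        for col, a_j in solved.items():
            matrix[row][col] = len(a_i & a_j) / len(a_i) if a_i else float("nan")
    return matrix


# Toy data: problem IDs are placeholders, not real ARC task IDs.
solved = {
    "strong_model": {"p1", "p2", "p3", "p4"},
    "weak_model": {"p1", "p5"},
}
matrix = coverage_matrix(solved)
print(matrix["strong_model"]["weak_model"])  # share of strong_model's problems also solved by weak_model
print(matrix["weak_model"]["strong_model"])  # share of weak_model's problems also solved by strong_model
```

Because each row is normalized by its own set size, the matrix is asymmetric whenever the two models solve different numbers of problems.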

<details>

<summary>x6.png Details</summary>

### Visual Description

## Bar Chart: Accuracy on $I_t$ for Different Models and Task Categories

### Overview

This bar chart compares the accuracy on test instances $I_t$ of several large language models (GPT-o3-mini, QwQ-32B, Gemini-2.0, and DeepSeek-R1-70B) across four task categories: Movement, Extension, Recolour, and Others. Each model is evaluated with two solvers: RSPC and KAAR. The chart displays the accuracy as a percentage on the y-axis, and the task categories on the x-axis. Each bar is segmented to show the performance of each model/solver combination. Total counts for each category are provided below the x-axis labels.

### Components/Axes

* **X-axis:** Tasks - Movement, Extension, Recolour, Others.

* **Y-axis:** Accuracy on $I_t$ (%) - Scale ranges from 0 to 50, with increments of 10.

* **Legend:** Located in the top-right corner, identifies the color-coding for each model and prompting method:

* Blue: GPT-03-mini: RSPC

* Dark Blue: GPT-03-mini: KAAR

* Green: Gemini-2.0: RSPC

* Light Green: Gemini-2.0: KAAR

* Purple: QwQ-32B: RSPC

* Dark Purple: QwQ-32B: KAAR

* Orange: DeepSeek-R1-70B: RSPC

* Yellow: DeepSeek-R1-70B: KAAR

* **Task Totals:** Below each task label, the total number of samples for that task is indicated (Movement: 55, Extension: 129, Recolour: 115, Others: 101).

* **Data Labels:** Numerical values are displayed on top of each segment of the bar, representing the accuracy percentage.

### Detailed Analysis

Here's a breakdown of the accuracy values for each task and model/prompting method combination:

**Movement (Total: 55)**

* GPT-03-mini: RSPC - 41.8%

* GPT-03-mini: KAAR - 20.0%

* QwQ-32B: RSPC - 12.7%

* QwQ-32B: KAAR - 18.2%

* Gemini-2.0: RSPC - 3.6%

* Gemini-2.0: KAAR - 9.1%

* DeepSeek-R1-70B: RSPC - 10.9%

* DeepSeek-R1-70B: KAAR - 14.5%

**Extension (Total: 129)**

* GPT-03-mini: RSPC - 38.8%

* GPT-03-mini: KAAR - 19.4%

* QwQ-32B: RSPC - 1.6%

* QwQ-32B: KAAR - 7.8%

* Gemini-2.0: RSPC - 0.8%

* Gemini-2.0: KAAR - 2.3%

* DeepSeek-R1-70B: RSPC - 17.8%

* DeepSeek-R1-70B: KAAR - 1.6%

**Recolour (Total: 115)**

* GPT-03-mini: RSPC - 24.3%

* GPT-03-mini: KAAR - 13.9%

* QwQ-32B: RSPC - 6.1%

* QwQ-32B: KAAR - 7.8%

* Gemini-2.0: RSPC - 7.0%

* Gemini-2.0: KAAR - 4.3%

* DeepSeek-R1-70B: RSPC - 10.4%

* DeepSeek-R1-70B: KAAR - 7.8%

**Others (Total: 101)**

* GPT-03-mini: RSPC - 21.8%

* GPT-03-mini: KAAR - 14.9%

* QwQ-32B: RSPC - 4.0%

* QwQ-32B: KAAR - 11.9%

* Gemini-2.0: RSPC - 5.0%

* Gemini-2.0: KAAR - 9.9%

* DeepSeek-R1-70B: RSPC - 7.9%

* DeepSeek-R1-70B: KAAR - 5.0%

### Key Observations

* GPT-03-mini consistently performs well, particularly with the RSPC prompting method, achieving the highest scores in Movement and Extension tasks.

* Gemini-2.0 generally exhibits the lowest accuracy across all tasks, regardless of the prompting method.

* The KAAR prompting method often results in lower accuracy compared to RSPC for GPT-03-mini.

* The "Movement" task has the highest overall accuracy scores, while "Extension" and "Recolour" have relatively lower scores.

* The task totals vary significantly, with Extension having the largest sample size (129) and Movement having the smallest (55).

### Interpretation

The data suggests that GPT-03-mini is the most effective model among those tested, especially when using the RSPC prompting method. The performance differences between models are task-dependent, with some models excelling in specific areas. The consistently low performance of Gemini-2.0 indicates potential limitations in its ability to handle these tasks. The varying task totals might influence the observed accuracy scores, as larger sample sizes generally lead to more reliable results. The choice of prompting method (RSPC vs. KAAR) also plays a crucial role, with RSPC generally yielding better results for GPT-03-mini. This data could be used to inform model selection and prompting strategy for specific applications. The relatively low scores across all models for the "Recolour" task suggest that this task is particularly challenging. Further investigation into the nature of these tasks and the models' capabilities could provide valuable insights for improving performance.

</details>

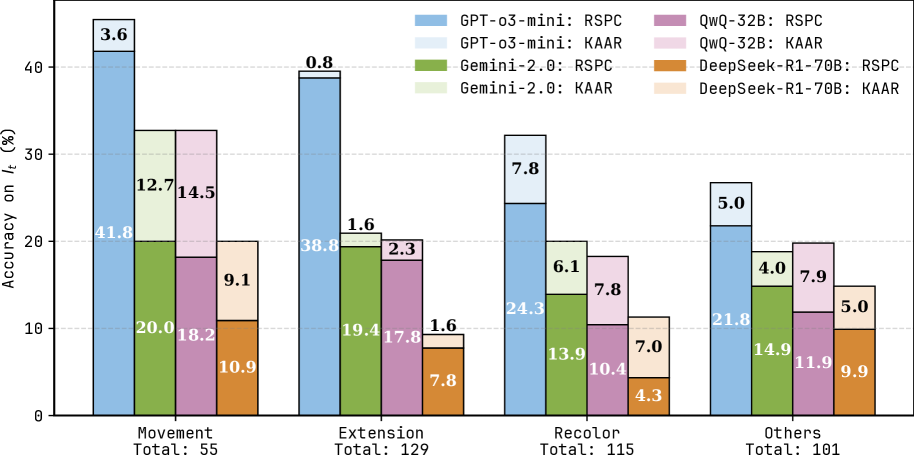

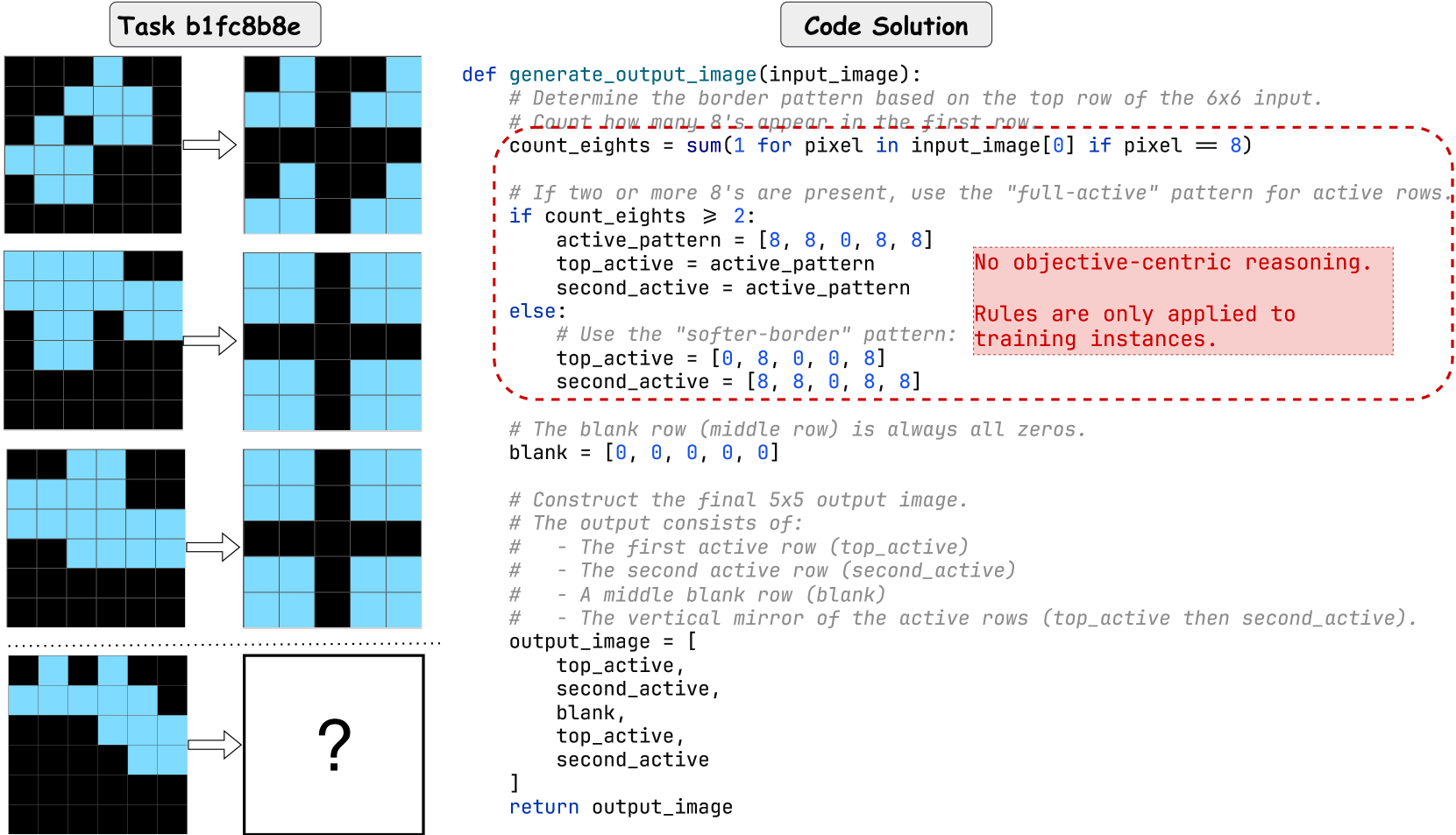

Figure 6: Accuracy on test instances $I_{t}$ for RSPC and KAAR across the movement, extension, recolor, and others categories using four LLMs. Each stacked bar shows RSPC accuracy (darker segment) and the additional improvement from KAAR (lighter segment).

Following prior work [9, 10], we categorize 400 problems in the ARC public evaluation set into four classes based on their primary transformations: (1) movement (55 problems), (2) extension (129 problems), (3) recolor (115 problems), and (4) others (101 problems). The others category comprises infrequent tasks such as noise removal, selection, counting, resizing, and problems with implicit patterns that hinder systematic classification into the aforementioned categories. See Appendix A.7 for examples of each category. Figure 6 illustrates the accuracy on test instances $I_{t}$ for RSPC and KAAR across four categories with evaluated LLMs. Each stacked bar represents RSPC accuracy and the additional improvement achieved by KAAR. KAAR consistently outperforms RSPC with the largest accuracy gain in movement (14.5% with QwQ-32B). In contrast, KAAR shows limited improvements in extension, since several problems involve pixel-level extension, which reduces the reliance on component-level recognition. Moreover, extension requires accurate spatial inference across multiple components and poses greater difficulty than movement, which requires mainly direction identification. Although KAAR augments spatial priors, LLMs still struggle to accurately infer positional relations among multiple components, consistent with prior findings [38, 39, 40]. Overlaps from component extensions further complicate reasoning, as LLMs often fail to recognize truncated components as unified wholes, contrary to human perceptual intuition.

<details>

<summary>x7.png Details</summary>

### Visual Description

## Bar Chart: Accuracy on I_t vs. Average Image Size Interval

### Overview

This bar chart compares the accuracy on I_t (in percentage) for two models, GPT-o3-mini and QwQ-32B, using two different methods, RSPC and KAAR, across varying average image size intervals (width x height). The chart consists of grouped bar plots for each image size interval, with each group representing the accuracy of the four combinations of model and method. The total number of images used for each interval is also indicated.

### Components/Axes

* **X-axis:** Average Image Size Interval (width x height). The intervals are: (0, 25], (25, 100], (100, 225], (225, 400], (400, 625], (625, 900].

* **Y-axis:** Accuracy on I_t (%). The scale ranges from 0 to 80.

* **Legend:**

* Blue: GPT-o3-mini RSPC

* Light Blue: GPT-o3-mini KAAR

* Orange: QwQ-32B RSPC

* Pink: QwQ-32B KAAR

* **Total Count:** Below each interval on the x-axis, the total number of images used for that interval is displayed.

### Detailed Analysis

The chart presents six groups of bars, one for each image size interval. Within each group, there are four bars representing the accuracy of each model/method combination.

* **(0, 25]**:

* GPT-o3-mini RSPC: Approximately 73.7%

* GPT-o3-mini KAAR: Approximately 42.1%

* QwQ-32B RSPC: Approximately 15.8%

* QwQ-32B KAAR: Approximately 5.3%

* Total: 19

* **(25, 100]**:

* GPT-o3-mini RSPC: Approximately 48.9%

* GPT-o3-mini KAAR: Approximately 23.7%

* QwQ-32B RSPC: Approximately 11.5%

* QwQ-32B KAAR: Approximately 5.0%

* Total: 139

* **(100, 225]**:

* GPT-o3-mini RSPC: Approximately 24.8%

* GPT-o3-mini KAAR: Approximately 8.5%

* QwQ-32B RSPC: Approximately 4.7%

* QwQ-32B KAAR: Approximately 6.2%

* Total: 129

* **(225, 400]**:

* GPT-o3-mini RSPC: Approximately 5.9%

* GPT-o3-mini KAAR: Approximately 11.8%

* QwQ-32B RSPC: Approximately 2.0%

* QwQ-32B KAAR: Approximately 9.8%

* Total: 51

* **(400, 625]**:

* GPT-o3-mini RSPC: Approximately 5.1%

* GPT-o3-mini KAAR: Not visible, but likely low.

* QwQ-32B RSPC: Not visible, but likely low.

* QwQ-32B KAAR: Approximately 4.3%

* Total: 39

* **(625, 900]**:

* GPT-o3-mini RSPC: Not visible, but likely low.

* GPT-o3-mini KAAR: Not visible, but likely low.

* QwQ-32B RSPC: Not visible, but likely low.

* QwQ-32B KAAR: Approximately 4.3%

* Total: 23

**Trends:**

* For both models, the accuracy of RSPC generally decreases as the image size interval increases.

* GPT-o3-mini consistently outperforms QwQ-32B, especially in the smaller image size intervals.

* The difference in accuracy between RSPC and KAAR methods varies depending on the image size interval.

### Key Observations

* GPT-o3-mini RSPC achieves the highest accuracy (73.7%) in the smallest image size interval ((0, 25]).

* As image size increases, the accuracy of all methods declines.

* The number of images used for each interval varies significantly, with the largest number (139) in the (25, 100] interval.

* The accuracy values for the larger image size intervals (400, 625] and (625, 900]) are very low and difficult to discern precisely from the chart.

### Interpretation

The data suggests that both GPT-o3-mini and QwQ-32B models perform better on smaller images. The RSPC method generally yields higher accuracy than the KAAR method, particularly for GPT-o3-mini. The significant drop in accuracy as image size increases indicates that the models may struggle with larger, more complex images. The varying number of images used for each interval could introduce bias in the results. The chart demonstrates a trade-off between image size and accuracy, and highlights the importance of considering image size when selecting a model and method for a given task. The consistent outperformance of GPT-o3-mini suggests it may be a more robust choice for this particular application, especially when dealing with smaller images. The low accuracy values in the larger image size intervals warrant further investigation to understand the underlying causes and potential mitigation strategies.

</details>

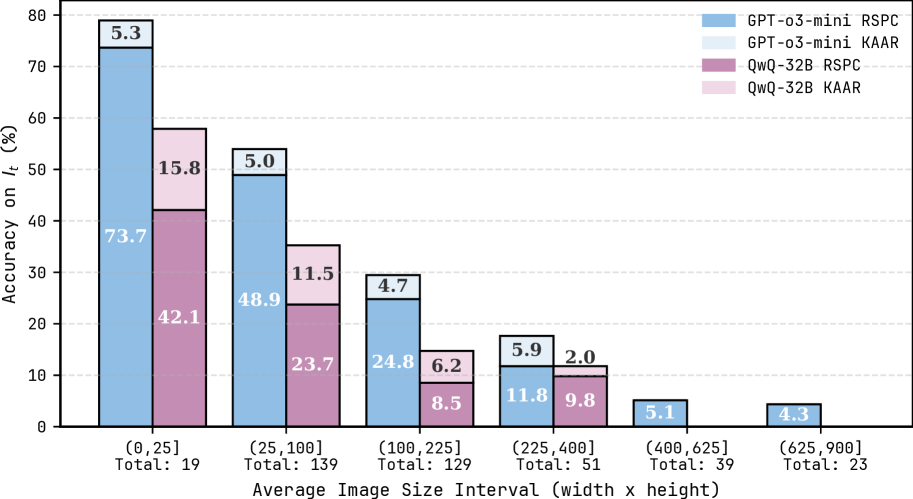

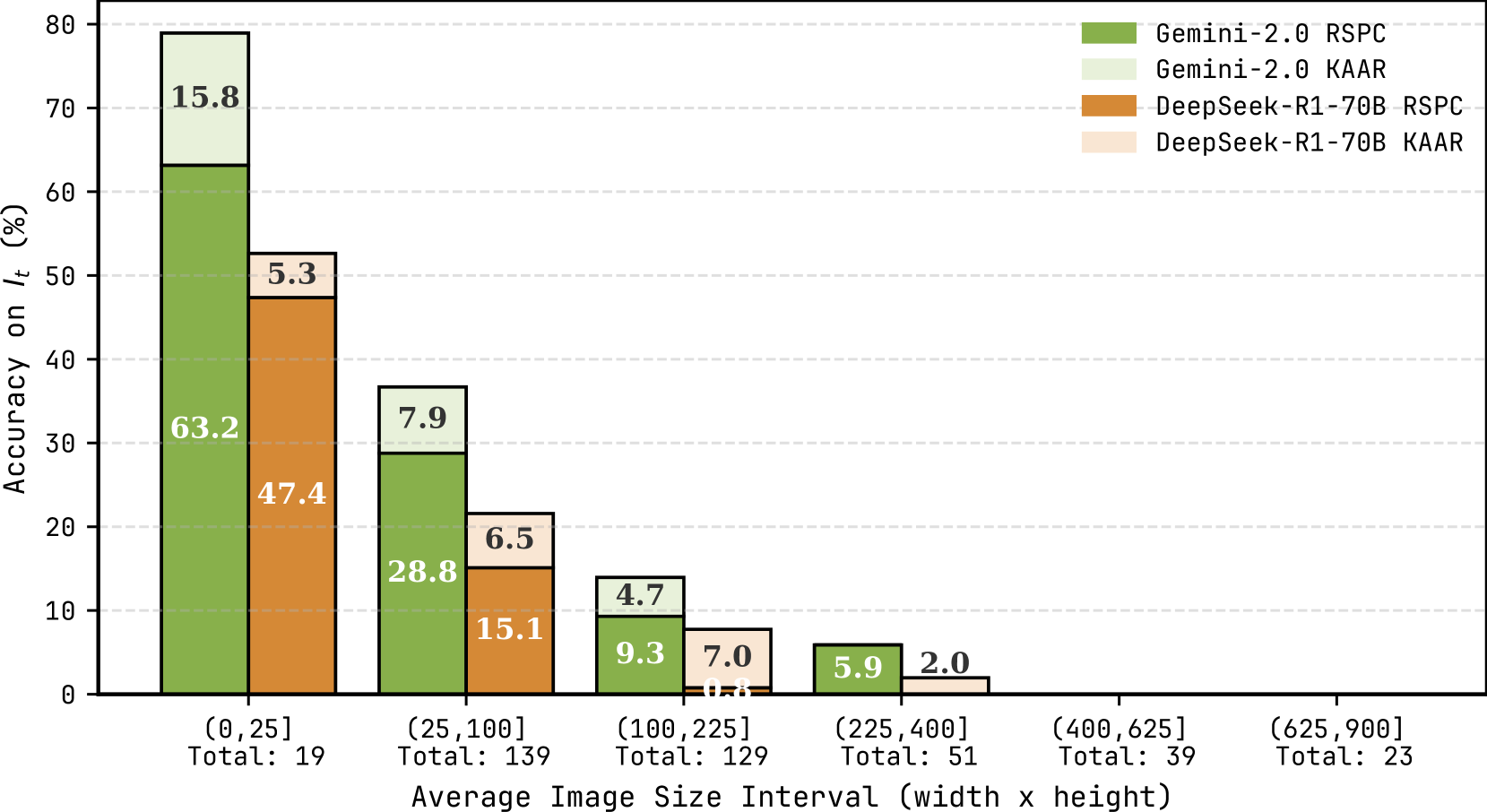

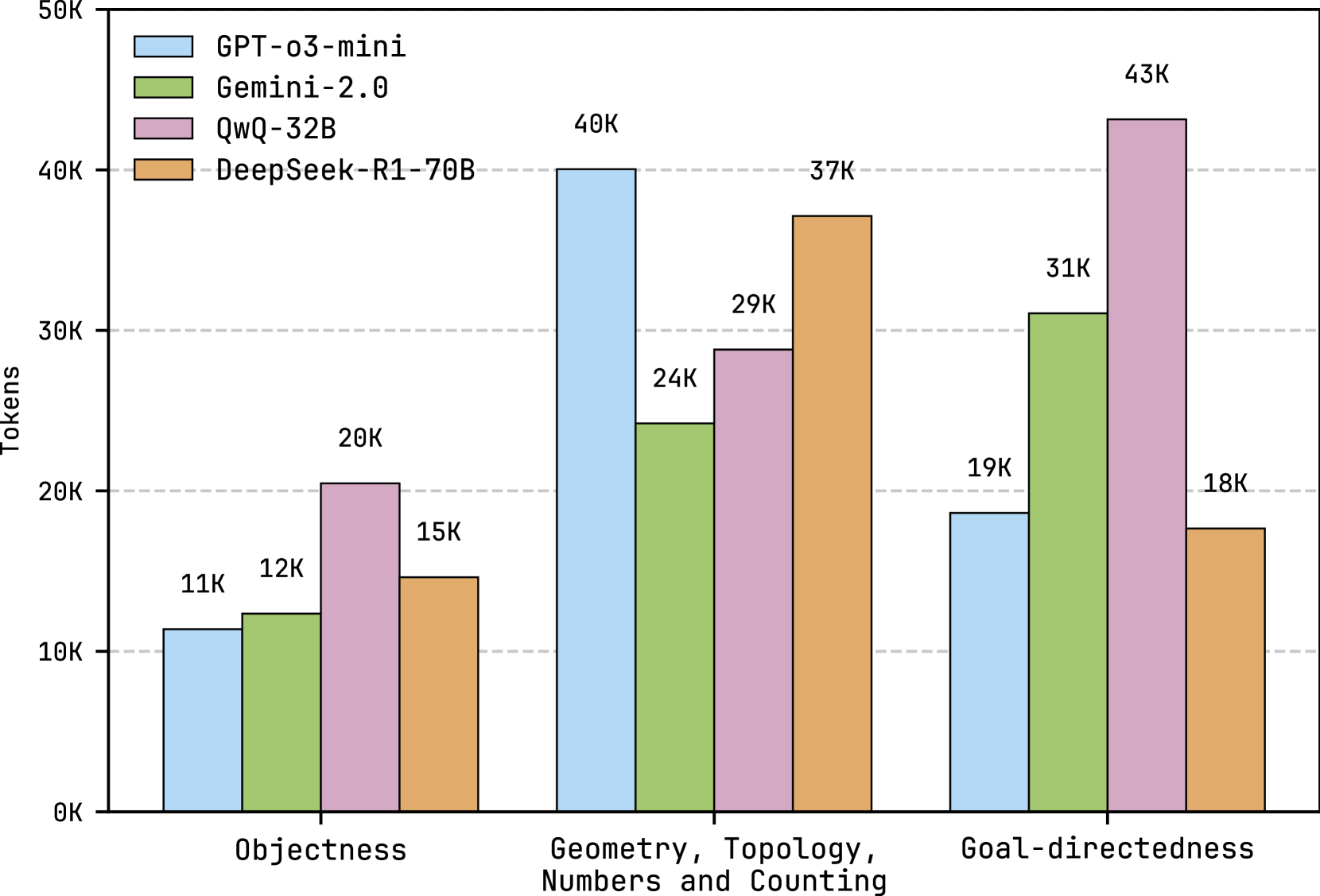

Figure 7: Accuracy on test instances $I_{t}$ for RSPC and KAAR across average image size intervals, evaluated using GPT-o3-mini and QwQ-32B. See Figure 12 in Appendix for the results with the other LLMs.

A notable feature of ARC is the variation in image size both within and across problems. We categorize tasks by averaging the image size per problem, computed over both training and test image pairs. We report the accuracy on $I_{t}$ for RSPC and KAAR across average image size intervals using GPT-o3-mini and QwQ-32B, the strongest proprietary and open-source models in Tables 1 and 2. As shown in Figure 7, both LLMs experience performance degradation as image size increases. When the average image size exceeds 400 (20×20), GPT-o3-mini solves only three problems, while QwQ-32B solves none. In ARC, isolating relevant pixels in larger images, represented as 2D matrices, requires effective attention mechanisms in LLMs, which remains an open challenge noted in recent work [41, 34]. KAAR consistently outperforms RSPC on problems with average image sizes below 400, benefiting from object-centric representations. By abstracting each image into components, KAAR reduces interference from irrelevant pixels, directs attention to salient components, and facilitates component-level transformation analysis. However, larger images often produce both oversized and numerous components after abstraction, which continue to challenge LLMs during reasoning. Oversized components hinder transformation execution, and numerous components complicate the identification of target components.
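The size-based grouping can be sketched as follows. The interval edges match the x-axis of Figure 7, (0, 25] through (625, 900]; averaging over every input and output grid of a problem is an assumption about how "average image size" is computed, and both function names are illustrative.

```python
from bisect import bisect_left
from typing import List, Tuple

Grid = List[List[int]]

# Upper bounds of the right-closed intervals (0, 25], (25, 100], ..., (625, 900].
EDGES = [25, 100, 225, 400, 625, 900]


def average_image_size(pairs: List[Tuple[Grid, Grid]]) -> float:
    """Mean of height * width over all input and output grids of one problem."""
    sizes = [len(grid) * len(grid[0]) for pair in pairs for grid in pair]
    return sum(sizes) / len(sizes)


def size_interval(avg_size: float) -> str:
    """Map an average image size to its right-closed interval label."""
    idx = bisect_left(EDGES, avg_size)
    if avg_size <= 0 or idx == len(EDGES):
        raise ValueError("average size outside (0, 900]")
    lower = 0 if idx == 0 else EDGES[idx - 1]
    return f"({lower}, {EDGES[idx]}]"
```

For example, a problem whose grids average exactly 400 pixels (20×20) falls in (225, 400], while anything above 400 falls in the two largest intervals where both models solve very few problems.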

<details>

<summary>x8.png Details</summary>

### Visual Description

## Line Chart: Accuracy on $I_r\&I_t$ vs. Iterations

### Overview

This line chart displays the accuracy on $I_r\&I_t$ (%) for four model/solver combinations (GPT-o3-mini with RSPC and KAAR, and QwQ-32B with RSPC and KAAR) across a range of iterations, from 1 to 12. The x-axis represents the number of iterations, and the y-axis represents the accuracy percentage. The chart is divided into three sections based on the augmented priors: Objectness (iterations 1-4), Geometry, Topology, Numbers and Counting (iterations 4-8), and Goal-directedness (iterations 8-12).

### Components/Axes

* **X-axis:** "# Iterations" - Scale from 1 to 12. Marked with vertical dashed lines at 1, 4, 8, and 12, corresponding to the task divisions.

* **Y-axis:** "Accuracy on $I_r\&I_t$ (%)" - Scale from 3.5 to 35.

* **Legend:** Located in the top-right corner. Contains the following labels and corresponding colors:

* GPT-o3-mini: RSPC (Blue)

* GPT-o3-mini: KAAR (Green)

* QwQ-32B: RSPC (Red)

* QwQ-32B: KAAR (Brown)

### Detailed Analysis

The chart shows four distinct lines, each representing a model's performance.

**GPT-o3-mini: RSPC (Blue)**

* Trend: The line generally slopes upward, indicating increasing accuracy with more iterations. The slope is steeper in the initial stages and flattens out towards the end.

* Data Points:

* Iteration 1: ~21.25%

* Iteration 4: ~26.75%

* Iteration 8: ~29%

* Iteration 12: ~33%

**GPT-o3-mini: KAAR (Green)**

* Trend: Similar to RSPC, the line slopes upward, but starts at a lower accuracy and has a less steep slope overall.

* Data Points:

* Iteration 1: ~20.75%

* Iteration 4: ~26.25%

* Iteration 8: ~28.25%

* Iteration 12: ~29.25%

**QwQ-32B: RSPC (Red)**

* Trend: The line shows an upward trend, but with more fluctuations than the GPT-o3-mini lines.

* Data Points:

* Iteration 1: ~4.5%

* Iteration 4: ~11.5%

* Iteration 8: ~15.5%

* Iteration 12: ~19%

**QwQ-32B: KAAR (Brown)**

* Trend: The line also slopes upward, but starts at the lowest accuracy and has the least steep slope.

* Data Points:

* Iteration 1: ~6.25%

* Iteration 4: ~11.5%

* Iteration 8: ~12.75%

* Iteration 12: ~19.25%

### Key Observations

* GPT-o3-mini consistently outperforms QwQ-32B across all iterations and tasks.

* RSPC generally yields higher accuracy than KAAR for both models.

* The rate of accuracy improvement decreases as the number of iterations increases, suggesting diminishing returns.

* The largest performance gains are observed during the "Objectness" task (iterations 1-4).

* The QwQ-32B models plateau at a lower accuracy level compared to the GPT-o3-mini models.

### Interpretation

The data suggests that GPT-o3-mini, particularly when used with RSPC, is more effective at achieving higher accuracy on the $I_r\&I_t$ metric than QwQ-32B, regardless of whether RSPC or KAAR is used. The diminishing returns observed with increasing iterations indicate that further iterations may not significantly improve performance beyond a certain point. The stage-specific performance differences suggest that the models may have varying strengths and weaknesses depending on the priors involved. The relatively low accuracy of QwQ-32B models suggests they may require further optimization or a different approach to achieve comparable performance to GPT-o3-mini. The $I_r\&I_t$ metric counts problems whose solution solves both the training and the test instances, and the chart shows how accuracy on that metric changes with the number of iterations. The three stages (Objectness, Geometry/Topology/Counting, Goal-directedness) correspond to successive levels of augmented priors.

</details>

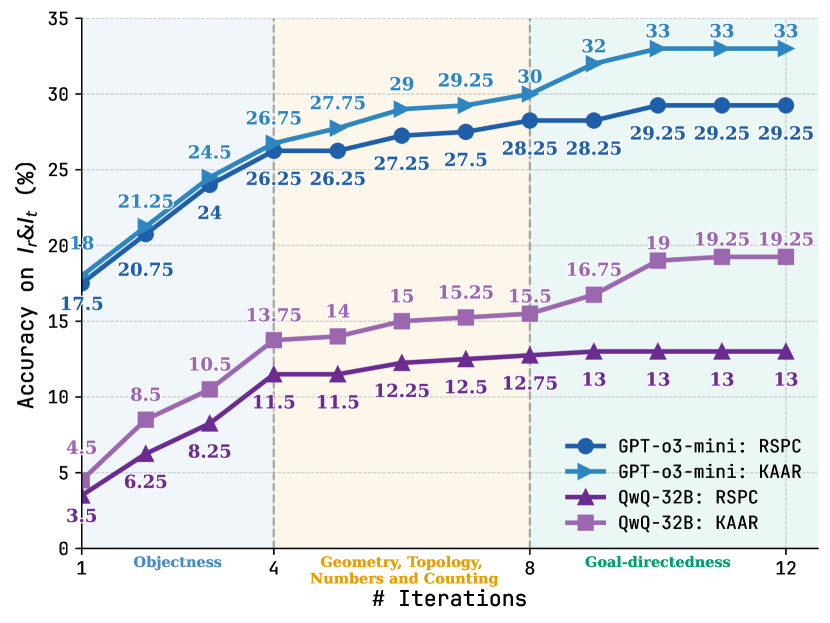

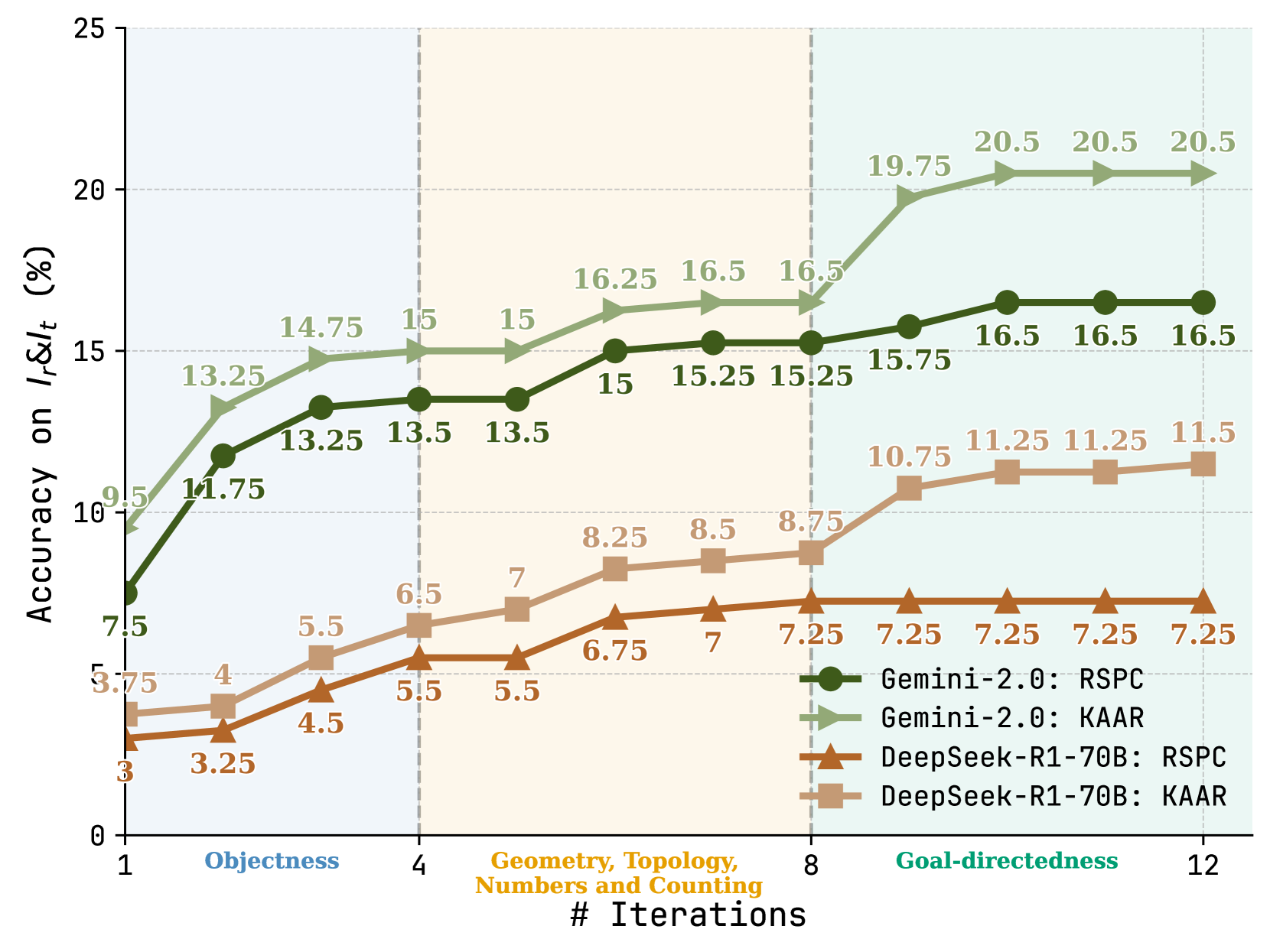

Figure 8: Variance in accuracy on $I_{r}\&I_{t}$ with increasing iterations for RSPC and KAAR using GPT-o3-mini and QwQ-32B. See Figure 13 in Appendix for the results with the other LLMs.

Figure 8 presents the variance in accuracy on $I_{r}\&I_{t}$ for RSPC and KAAR as the iteration count increases, using GPT-o3-mini and QwQ-32B. For each task under KAAR, we include only iterations from the abstraction that solves both $I_{r}$ and $I_{t}$. For KAAR, performance improvements across each 4-iteration block are driven by the solver backbone invocation after augmenting an additional level of priors: iterations 1–4 introduce objectness; 5–8 incorporate geometry, topology, numbers, and counting; 9–12 further involve goal-directedness. RSPC shows rapid improvement in the first 4 iterations and plateaus around iteration 8. At each iteration, the accuracy gap between KAAR and RSPC reflects the contribution of accumulated priors via augmentation. KAAR consistently outperforms RSPC, with the performance gap progressively increasing after new priors are augmented and peaking after the integration of goal-directedness. We note that objectness priors alone yield marginal gains with GPT-o3-mini. However, the inclusion of object attributes and relational priors (iterations 4–8) leads to improvements in KAAR over RSPC. This advantage is further amplified after the augmentation of goal-directedness priors (iterations 9–12). These results highlight the benefits of KAAR. Representing core knowledge priors through a hierarchical, dependency-aware ontology enables KAAR to incrementally augment LLMs, perform stage-wise reasoning, and improve solution accuracy. Compared to augmenting all priors at once and to non-stage-wise reasoning, KAAR consistently yields superior accuracy, as detailed in Appendix A.6.
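The 4-iteration block structure described above can be illustrated with a minimal Python sketch. It is not the KAAR implementation: `stagewise_solve`, `backbone`, and the `PRIOR_LEVELS` labels are hypothetical names introduced here, and `backbone` merely stands in for one solver-backbone (RSPC) attempt given the priors accumulated so far.

```python
from typing import Callable, List, Optional, Sequence, Tuple

# Prior levels in the order described in the text; the strings are
# illustrative labels only, not the actual prompts used by KAAR.
PRIOR_LEVELS = [
    "objectness",
    "geometry, topology, numbers, and counting",
    "goal-directedness",
]


def stagewise_solve(
    levels: Sequence[str],
    backbone: Callable[[List[str], int], Optional[str]],
    iterations_per_level: int = 4,
) -> Optional[Tuple[str, int, List[str]]]:
    """Augment one level of priors at a time and call the backbone after each.

    `backbone(priors, iteration)` is a hypothetical stand-in for a single
    solver attempt; it returns a candidate solution or None on failure.
    Returns (solution, iteration, priors_in_use) or None if nothing works.
    """
    active: List[str] = []
    iteration = 0
    for level in levels:
        active.append(level)  # priors accumulate across stages
        for _ in range(iterations_per_level):
            iteration += 1
            solution = backbone(list(active), iteration)
            if solution is not None:
                return solution, iteration, list(active)
    return None
```

With three levels and four iterations per level, the loop spans iterations 1 to 12, matching the blocks 1–4, 5–8, and 9–12 in the text. Stopping at the first success is an assumption of this sketch.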

## 6 Discussion

ARC and KAAR. ARC serves as a visual abstract reasoning benchmark, requiring models to infer transformations from few examples for each unique task, rather than fitting to a closed rule space as in RAVEN [42] and PGM [43]. ARC assumes tasks are solvable using core knowledge priors. However, the problems are intentionally left undefined to preclude encoding complete solution rules [5]. This pushes models beyond closed-form rule fitting and toward truly domain-general capabilities. While some of the knowledge in KAAR is tailored to ARC, its central contribution lies in representing knowledge through a hierarchical, dependency-aware ontology that enables progressive augmentation. This allows LLMs to gradually expand their reasoning scope and perform stage-wise inference, improving performance on ARC without relying on an exhaustive rule set. Moreover, the ontology of KAAR is transferable to other domains requiring hierarchical reasoning, such as robotic task planning [44], image captioning [45], and visual question answering [46], where similar knowledge priors and dependencies from ARC are applicable. In KAAR, knowledge augmentation increases token consumption, although the number of additional tokens remains relatively constant, since all priors except goal-directedness are generated via image processing algorithms from GPAR. On GPT-o3-mini, augmentation tokens constitute around 60% of solver backbone token usage, while on QwQ-32B, this overhead decreases to about 20%, as the solver backbone consumes more tokens. See Appendix A.8 for a detailed discussion. Incorrect abstraction selection in KAAR also leads to wasted tokens. However, accurate abstraction inference often requires validation through viable solutions, bringing the challenge back to solution generation.

<details>

<summary>x9.png Details</summary>

### Visual Description


## Diagram: Pattern Recognition/Transformation

### Overview

The image presents a visual pattern recognition problem. It shows three pairs of 8x8 pixel grids. In the first two pairs, a blue shape is transformed into a red shape. The third pair shows a blue shape and a question mark, implying the task is to predict the corresponding red shape. The grids are arranged in three rows, with the initial blue shape on the left and the transformed red shape on the right, separated by a right-pointing arrow.

### Components/Axes

There are no explicit axes or scales. The components are:

* **Blue Shapes:** Represent the initial state.

* **Red Shapes:** Represent the transformed state.

* **Arrows:** Indicate the transformation process.

* **Question Mark:** Represents the unknown transformed state.

* **Grid:** Each shape is displayed on an 8x8 grid of squares.

### Detailed Analysis or Content Details

Let's analyze the transformations:

* **Row 1:** The initial blue shape resembles the letter "U". The transformed red shape also resembles a "U", but with the interior filled in. The transformation appears to be filling the enclosed space with red.

* **Row 2:** The initial blue shape is a hollow square. The transformed red shape is a solid square. The transformation appears to be filling the enclosed space with red.

* **Row 3:** The initial blue shape is a "C". The question mark indicates the expected transformation. Based on the previous two examples, we can infer that the transformed shape should be a solid "C", filled with red.

### Key Observations

The transformation consistently involves filling the enclosed space of the initial blue shape with red. The shapes are relatively simple geometric forms. The pattern is consistent across the first two examples.

### Interpretation

The diagram demonstrates a simple pattern recognition task. The underlying principle is to identify the enclosed space within a shape and fill it with a different color. The question mark suggests a test of the observer's ability to extrapolate this pattern to a new shape. The diagram is likely used to assess visual reasoning or pattern completion skills. The consistent application of the "fill enclosed space" rule suggests a deterministic transformation. The use of simple shapes and colors makes the pattern easily discernible. The diagram is a visual analogy for a logical operation or algorithm.

</details>

Figure 9: Fragment of ARC problem e7dd8335.

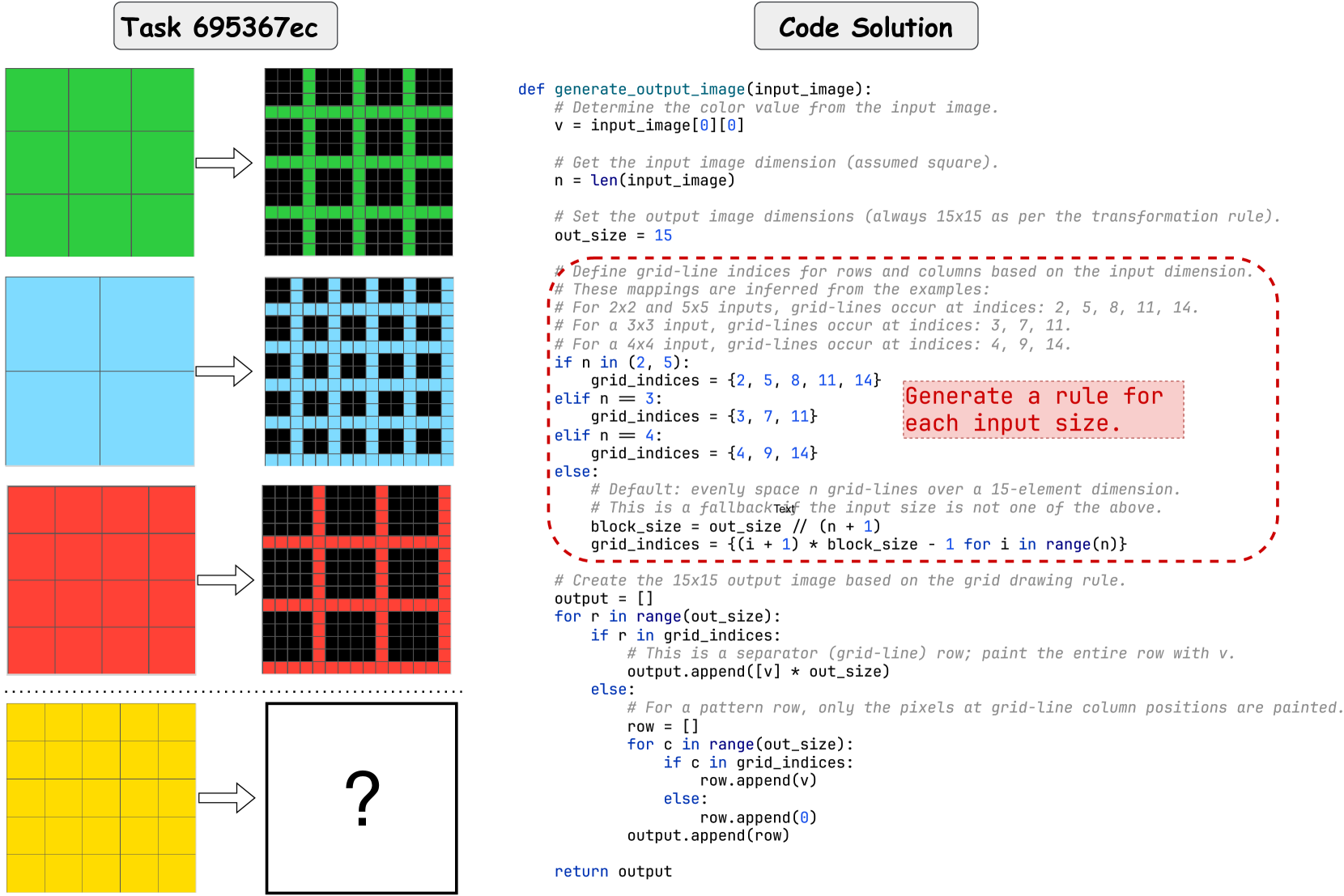

Solution Analysis. RSPC achieves over 30% accuracy across evaluated metrics using GPT-o3-mini, even without knowledge augmentation. To assess its alignment with core knowledge priors, we manually reviewed RSPC-generated solution plans and code that successfully solve $I_{t}$ with GPT-o3-mini. RSPC tends to solve problems without object-centric reasoning. For instance, in Figure 1, it shifts each row downward by one and pads the top with zeros, rather than reasoning over objectness to move each 4-connected component down by one step. Even when applying objectness, RSPC typically defaults to 4-connected abstraction, failing on the problem in Figure 9, where the test input clearly requires 8-connected abstraction. We note that object recognition in ARC involves grouping pixels into task-specific components based on clustering rules, differing from feature extraction approaches [47] in conventional computer vision tasks. Recent work seeks to bridge this gap by incorporating 2D positional encodings and object indices into Vision Transformers [41]. However, its reliance on data-driven learning weakens generalization, undermining ARC’s core objective. In contrast, KAAR enables objectness through explicitly defined abstractions, implemented via standard image processing algorithms, thus ensuring both accuracy and generalization.
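To make the difference between 4-connected and 8-connected abstraction concrete, the following is a minimal Python sketch of connected-component grouping on a pixel grid. It is an illustration under stated assumptions, not the GPAR or KAAR code: the function name `components`, the convention that color 0 is background, and the choice to group only same-colored pixels are all assumptions made here.

```python
from collections import deque
from typing import List, Set, Tuple

Grid = List[List[int]]
Pixel = Tuple[int, int]

# Neighbor offsets: 4-connectivity uses edge neighbors only,
# 8-connectivity additionally includes the four diagonal neighbors.
N4 = [(-1, 0), (1, 0), (0, -1), (0, 1)]
N8 = N4 + [(-1, -1), (-1, 1), (1, -1), (1, 1)]


def components(grid: Grid, connectivity: int = 4, background: int = 0) -> List[Set[Pixel]]:
    """Group same-colored, non-background pixels into connected components.

    Assumes a non-empty rectangular grid. Uses breadth-first flood fill.
    """
    if connectivity not in (4, 8):
        raise ValueError("connectivity must be 4 or 8")
    offsets = N4 if connectivity == 4 else N8
    rows, cols = len(grid), len(grid[0])
    seen: Set[Pixel] = set()
    result: List[Set[Pixel]] = []
    for r in range(rows):
        for c in range(cols):
            if grid[r][c] == background or (r, c) in seen:
                continue
            color = grid[r][c]
            comp = {(r, c)}
            seen.add((r, c))
            queue = deque([(r, c)])
            while queue:
                y, x = queue.popleft()
                for dy, dx in offsets:
                    ny, nx = y + dy, x + dx
                    inside = 0 <= ny < rows and 0 <= nx < cols
                    if inside and (ny, nx) not in seen and grid[ny][nx] == color:
                        seen.add((ny, nx))
                        comp.add((ny, nx))
                        queue.append((ny, nx))
            result.append(comp)
    return result


# A diagonal line: three objects under 4-connectivity, one under 8-connectivity.
diagonal = [
    [1, 0, 0],
    [0, 1, 0],
    [0, 0, 1],
]
print(len(components(diagonal, 4)))  # 3
print(len(components(diagonal, 8)))  # 1
```

The diagonal example shows why the choice of abstraction matters: a solver that always assumes 4-connectivity would see three separate objects where an 8-connected reading sees one.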

Generalization. For all evaluated ARC solvers, accuracy on $I_{r}$ consistently exceeds that on $I_{r}\&I_{t}$, revealing a generalization gap. Planning-aided code generation methods, such as RSPC and KAAR, exhibit smaller gaps than other solvers, though the issue persists. One reason is that solutions include low-level logic specific to the training pairs and thus fail to generalize. See Appendix A.9 for examples. Another reason is the use of incorrect abstractions. For example, reliance solely on 4-connected abstraction leads RSPC to solve only $I_{r}$ in Figure 9. KAAR similarly fails to generalize in this case. It selects 4-connected abstraction, the first one that solves $I_{r}$, to report accuracy on $I_{t}$, instead of the correct 8-connected abstraction, as the former is considered simpler. Table 1 also reveals that LLMs differ in their generalization across ARC solvers. While a detailed analysis of these variations is beyond the scope of this study, investigating the underlying causes could offer insights into LLM inference and alignment with intended behaviors, presenting a promising direction for future work.
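The generalization gap discussed above can be expressed as a small computation. The sketch below assumes a hypothetical per-task record with boolean fields `train_ok` (the solution passes all training instances, $I_{r}$) and `test_ok` (it passes the test instances, $I_{t}$); these field names and the function `accuracy_report` are illustrative and do not come from the paper.

```python
from typing import Dict, List


def accuracy_report(results: List[Dict[str, bool]]) -> Dict[str, float]:
    """Compute accuracy on I_r, on I_r & I_t, and the gap between them.

    Each entry in `results` describes one task with the hypothetical keys
    'train_ok' and 'test_ok'.
    """
    if not results:
        raise ValueError("results must contain at least one task")
    n = len(results)
    acc_r = sum(r["train_ok"] for r in results) / n
    # A task counts toward I_r & I_t only if it passes both sets of instances.
    acc_rt = sum(r["train_ok"] and r["test_ok"] for r in results) / n
    return {"I_r": acc_r, "I_r&I_t": acc_rt, "gap": acc_r - acc_rt}


example = [
    {"train_ok": True, "test_ok": True},
    {"train_ok": True, "test_ok": False},  # fits training pairs, fails to generalize
    {"train_ok": False, "test_ok": False},
    {"train_ok": True, "test_ok": True},
]
print(accuracy_report(example))
# {'I_r': 0.75, 'I_r&I_t': 0.5, 'gap': 0.25}
```

Because every task counted under $I_{r}\&I_{t}$ is also counted under $I_{r}$, the gap is never negative under this definition; its size reflects how many solutions fit the training pairs without generalizing to the test instances.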

## 7 Conclusion

We explored the generalization and abstract reasoning capabilities of recent reasoning-oriented LLMs on the ARC benchmark using nine candidate solvers. Experimental results show that repeated-sampling planning-aided code generation (RSPC) achieves the highest test accuracy and demonstrates consistent generalization across most evaluated LLMs. To further improve performance, we propose KAAR, which progressively augments LLMs with core knowledge priors organized into hierarchical levels based on their dependencies, and applies RSPC after augmenting each level of priors to enable stage-wise reasoning. KAAR improves LLM performance on the ARC benchmark while maintaining strong generalization compared to non-augmented RSPC. However, ARC remains challenging even for the most capable reasoning-oriented LLMs, given its emphasis on abstract reasoning and generalization, highlighting current limitations and motivating future research.

## References

- Khan et al. [2021] Abdullah Ayub Khan, Asif Ali Laghari, and Shafique Ahmed Awan. Machine learning in computer vision: A review. EAI Endorsed Transactions on Scalable Information Systems, 8(32), 2021.

- Otter et al. [2020] Daniel W Otter, Julian R Medina, and Jugal K Kalita. A survey of the usages of deep learning for natural language processing. IEEE transactions on neural networks and learning systems, 32(2):604–624, 2020.

- Grigorescu et al. [2020] Sorin Grigorescu, Bogdan Trasnea, Tiberiu Cocias, and Gigel Macesanu. A survey of deep learning techniques for autonomous driving. Journal of field robotics, 37(3):362–386, 2020.

- Lake et al. [2017] Brenden M Lake, Tomer D Ullman, Joshua B Tenenbaum, and Samuel J Gershman. Building machines that learn and think like people. Behavioral and brain sciences, 40:e253, 2017.

- Chollet [2019] François Chollet. On the measure of intelligence. arXiv preprint arXiv:1911.01547, 2019.

- Peirce [1868] Charles S Peirce. Questions concerning certain faculties claimed for man. The Journal of Speculative Philosophy, 2(2):103–114, 1868.

- Spelke and Kinzler [2007] Elizabeth S Spelke and Katherine D Kinzler. Core knowledge. Developmental science, 10(1):89–96, 2007.

- Gulwani et al. [2017] Sumit Gulwani, Oleksandr Polozov, Rishabh Singh, et al. Program synthesis. Foundations and Trends® in Programming Languages, 4:1–119, 2017.

- Xu et al. [2023a] Yudong Xu, Elias B Khalil, and Scott Sanner. Graphs, constraints, and search for the abstraction and reasoning corpus. In Proceedings of the 37th AAAI Conference on Artificial Intelligence, AAAI, pages 4115–4122, 2023a.

- Lei et al. [2024a] Chao Lei, Nir Lipovetzky, and Krista A Ehinger. Generalized planning for the abstraction and reasoning corpus. In Proceedings of the 38th AAAI Conference on Artificial Intelligence, AAAI, pages 20168–20175, 2024a.

- Wei et al. [2022] Jason Wei, Xuezhi Wang, Dale Schuurmans, Maarten Bosma, Fei Xia, Ed Chi, Quoc V Le, Denny Zhou, et al. Chain-of-thought prompting elicits reasoning in large language models. In Proceedings of the 36th Advances in Neural Information Processing Systems, NeurIPS, pages 24824–24837, 2022.

- Chen et al. [2021] Mark Chen, Jerry Tworek, Heewoo Jun, Qiming Yuan, Henrique Ponde de Oliveira Pinto, Jared Kaplan, Harri Edwards, Yuri Burda, Nicholas Joseph, Greg Brockman, et al. Evaluating large language models trained on code. arXiv preprint arXiv:2107.03374, 2021.

- Li et al. [2022] Yujia Li, David Choi, Junyoung Chung, Nate Kushman, Julian Schrittwieser, Rémi Leblond, Tom Eccles, James Keeling, Felix Gimeno, Agustin Dal Lago, et al. Competition-level code generation with AlphaCode. Science, 378:1092–1097, 2022.

- Chen et al. [2023] Bei Chen, Fengji Zhang, Anh Nguyen, Daoguang Zan, Zeqi Lin, Jian-Guang Lou, and Weizhu Chen. CodeT: Code generation with generated tests. In Proceedings of the 11th International Conference on Learning Representations, ICLR, pages 1–19, 2023.

- Zhang et al. [2023] Tianyi Zhang, Tao Yu, Tatsunori Hashimoto, Mike Lewis, Wen-tau Yih, Daniel Fried, and Sida Wang. Coder reviewer reranking for code generation. In Proceedings of the 40th International Conference on Machine Learning, ICML, pages 41832–41846, 2023.

- Ni et al. [2023] Ansong Ni, Srini Iyer, Dragomir Radev, Veselin Stoyanov, Wen-tau Yih, Sida Wang, and Xi Victoria Lin. LEVER: Learning to verify language-to-code generation with execution. In Proceedings of the 40th International Conference on Machine Learning, ICML, pages 26106–26128, 2023.