# Don’t Overthink it. Preferring Shorter Thinking Chains for Improved LLM Reasoning

## Abstract

Reasoning large language models (LLMs) heavily rely on scaling test-time compute to perform complex reasoning tasks by generating extensive “thinking” chains. While demonstrating impressive results, this approach incurs significant computational costs and inference time. In this work, we challenge the assumption that long thinking chains result in better reasoning capabilities. We first demonstrate that shorter reasoning chains within individual questions are significantly more likely to yield correct answers: up to $34.5\%$ more accurate than the longest chain sampled for the same question. Based on these results, we suggest short-m@k, a novel reasoning LLM inference method. Our method executes $k$ independent generations in parallel and halts computation once the first $m$ thinking processes are done. The final answer is chosen using majority voting among these $m$ chains. Basic short-1@k demonstrates similar or even superior performance over standard majority voting in low-compute settings, using up to $40\%$ fewer thinking tokens. short-3@k, while slightly less efficient than short-1@k, consistently surpasses majority voting across all compute budgets, while still being substantially faster (up to $33\%$ wall time reduction). To further validate our findings, we finetune LLMs using short, long, and randomly selected reasoning chains. We then observe that training on the shorter ones leads to better performance. Our findings suggest rethinking current methods of test-time compute in reasoning LLMs, emphasizing that longer “thinking” does not necessarily translate to improved performance and can, counter-intuitively, lead to degraded results.

## 1 Introduction

Scaling test-time compute has been shown to be an effective strategy for improving the performance of reasoning LLMs on complex reasoning tasks (OpenAI, 2024; 2025; Team, 2025b). This method involves generating extensive thinking chains, i.e., very long sequences of tokens that contain enhanced reasoning trajectories, ultimately yielding more accurate solutions. Prior work has argued that longer model responses result in enhanced reasoning capabilities (Guo et al., 2025; Muennighoff et al., 2025; Anthropic, 2025). However, generating such long sequences also leads to high computational cost and slow decoding time due to the autoregressive nature of LLMs.

In this work, we demonstrate that scaling test-time compute does not necessarily improve model performance as previously thought. We start with a somewhat surprising observation. We take four leading reasoning LLMs, and for each generate multiple answers to each question in four complex reasoning benchmarks. We then observe that taking the shortest answer for each question strongly and consistently outperforms both a strategy that selects a random answer (up to $18.8\%$ gap) and one that selects the longest answer (up to $34.5\%$ gap). These performance gaps are on top of the natural reduction in sequence length: the shortest chains are $50\%$ and $67\%$ shorter than the random and longest chains, respectively.

<details>

<summary>x1.png Details</summary>

### Visual Description


## Diagram: Reasoning Process Comparison

### Overview

The image presents a comparison of the reasoning processes of two models, "majority@k" and "short-1@k", when attempting to solve a mathematical problem. The problem statement is "Find the sum of all positive integers n such that n+2 divides the product 3(n+3)(n+9)". The diagram visualizes the intermediate "thinking" steps of each model and their final answers, indicating whether the answer is correct or incorrect.

### Components/Axes

The diagram is divided into two main sections, one for each model. Each section contains:

* A model identifier ("majority@k" and "short-1@k") with a cartoon llama icon.

* A series of "thinking" steps, represented as `<think>` tags followed by a textual statement.

* Arrows connecting the thinking steps to a "Final answer" box.

* A visual indicator (red 'X' or green checkmark) next to the final answer, signifying correctness.

* A dashed horizontal line separating the two model sections.

### Detailed Analysis or Content Details

**1. majority@k:**

* **Thinking Steps:**

* `<think> So the answer is 52`

* `<think> So the answer is 49`

* `<think> So the answer is 33`

* `<think> So the answer is 52`

* **Final Answer:** 52 (marked with a red 'X', indicating incorrect)

**2. short-1@k:**

* **Thinking Steps:**

* `<think> // Terminated thinking`

* `<think> <think> So the answer is 49`

* `<think> // Terminated thinking`

* `<think> // Terminated thinking`

* **Final Answer:** 49 (marked with a green checkmark, indicating correct)

### Key Observations

* The "majority@k" model explores multiple potential answers (52, 49, 33, 52) before arriving at a final answer of 52, which is incorrect.

* The "short-1@k" model appears to terminate its reasoning process more quickly, with several steps labeled as "// Terminated thinking". It arrives at the correct answer of 49 after a single reasoning step.

* The "majority@k" model revisits the answer 52 twice, suggesting a potential oscillation or inability to converge on the correct solution.

* The "// Terminated thinking" label in "short-1@k" suggests a mechanism to stop further reasoning once a satisfactory answer is found.

### Interpretation

The diagram illustrates a comparison of two different reasoning strategies. "majority@k" seems to explore a wider range of possibilities but ultimately fails to find the correct answer, potentially due to a lack of a stopping criterion or an inability to evaluate the validity of its intermediate steps. "short-1@k", on the other hand, demonstrates a more concise and efficient reasoning process, arriving at the correct answer with fewer steps and a mechanism for terminating the search. This suggests that a balance between exploration and efficient termination is crucial for successful problem-solving. The use of "// Terminated thinking" indicates a potential pruning strategy within the "short-1@k" model, which prevents it from wasting resources on unproductive lines of reasoning. The diagram highlights the importance of not only generating potential solutions but also effectively evaluating and selecting the correct one. The cartoon llama icons are purely illustrative and do not contribute to the factual content of the diagram.

</details>

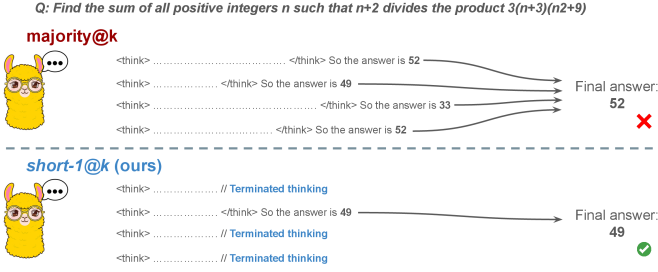

Figure 1: Visual comparison between majority voting and our proposed method short-m@k with $m=1$ (“…” represents thinking time). Given $k$ parallel attempts for the same question, majority@$k$ waits until all attempts are done and performs majority voting among them. In contrast, our short-m@k method halts computation for all attempts as soon as the first $m$ attempts finish “thinking”, which saves compute and time and, surprisingly, also boosts performance in most cases.

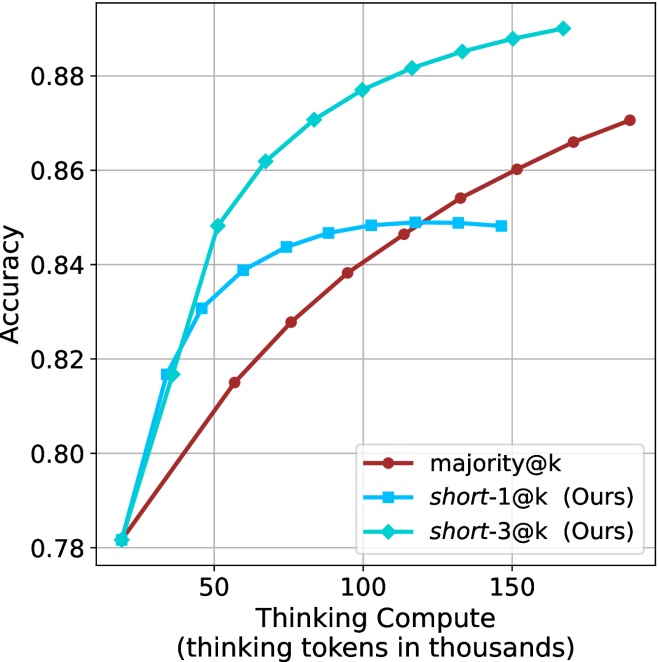

Building on these findings, we propose short-m@k, a novel inference method for reasoning LLMs. short-m@k executes $k$ generations in parallel and terminates computation for all generations as soon as the first $m$ thinking processes are completed. The final answer is then selected via majority voting among those shortest chains, where ties are broken by taking the shortest answer among the tied candidates. See Figure 1 for visualization.

We evaluate short-m@k using six reasoning LLMs, and compare it to majority voting, the most common aggregation method for evaluating reasoning LLMs on complex benchmarks (Wang et al., 2022; Abdin et al., 2025). We show that in low-compute regimes, short-1@k, i.e., taking the single shortest chain, outperforms majority voting, while significantly reducing the time and compute needed to generate the final answer. For example, using LN-Super-49B (Bercovich and others, 2025), short-1@k can reduce up to $40\%$ of the compute while giving the same performance as majority voting. Moreover, for high-compute regimes, short-3@k, which halts generation after three thinking chains are completed, consistently outperforms majority voting across all compute budgets, while running up to $33\%$ faster.

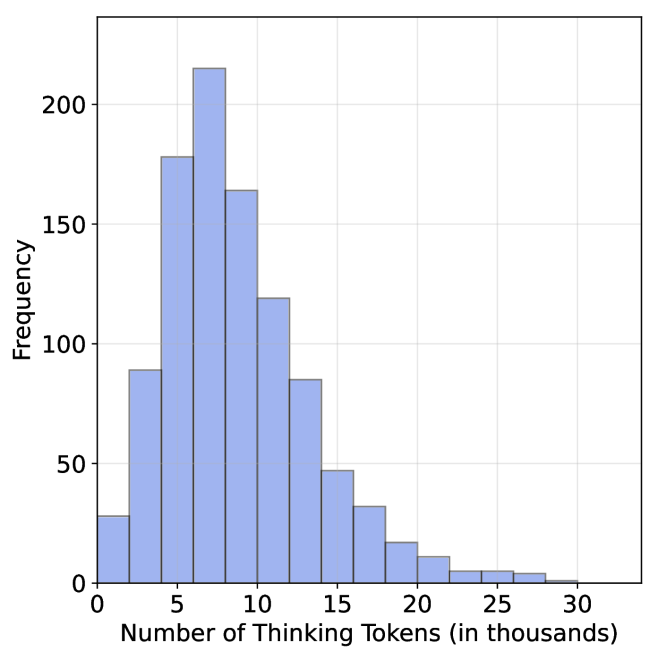

To gain further insights into the underlying mechanism of why shorter thinking is preferable, we analyze the generated reasoning chains. We first show that while taking the shorter reasoning is beneficial per individual question, longer reasoning is still needed to solve harder questions, as claimed in recent studies (Anthropic, 2025; OpenAI, 2024; Muennighoff et al., 2025). Next, we analyze the backtracking and re-thinking behaviors of reasoning chains. We find that shorter reasoning paths are more effective, as they involve fewer backtracks, with a longer average backtrack length. This finding holds both generally and when controlling for overall trajectory length.

To further strengthen our findings, we study whether training on short reasoning chains can lead to more accurate models. To do so, we finetune two Qwen-2.5 (Yang and others, 2024) models (7B and 32B) on three variants of the S1 dataset (Muennighoff et al., 2025): S1-short, S1-long, and S1-random, consisting of examples with the shortest, longest, and randomly sampled reasoning trajectories among several generations, respectively. Our experiments demonstrate that finetuning using S1-short not only yields shorter thinking lengths, but also improves model performance. Conversely, finetuning on S1-long increases reasoning time with no significant performance gains.

This work rethinks the test-time compute paradigm for reasoning LLMs, showing that longer thinking not only does not ensure better reasoning, but also leads to worse reasoning in most cases. Our short-m@k methods prioritize shorter reasoning, yielding improved performance and reduced computational costs for current reasoning LLMs. We also show that training reasoning LLMs with shorter reasoning trajectories can enhance performance and reduce costs. Our results pave the way towards a new era of efficient and high-performing reasoning LLMs.

## 2 Related work

Reasoning LLMs and test-time scaling.

Reasoning LLMs tackle complex tasks by employing extensive reasoning processes, often involving detailed, step-by-step trajectories (OpenAI, 2024; 2025; Team, 2025b; Abdin et al., 2025; Anthropic, 2025; Bercovich and others, 2025; Guo et al., 2025; 27; DeepMind, 2025; Team, 2025a). This capability is fundamentally based on techniques like chain-of-thought (CoT; Wei et al., 2022), which encourage models to generate intermediate reasoning steps before arriving at a final answer. Modern LLMs use a large number of tokens, often referred to as “thinking tokens”, to explore multiple problem-solving approaches, to employ self-reflection, and to perform verification. This thinking capability has allowed them to achieve superior performance on challenging tasks such as mathematical problem-solving and code generation (Ke et al., 2025).

The LLM thinking capability is typically achieved through post-training methods applied to a strong base model. The two primary approaches to instilling or improving this reasoning ability are using reinforcement learning (RL) (Guo et al., 2025; Team, 2025b) and supervised fine-tuning (Muennighoff et al., 2025; Ye et al., 2025). Guo et al. (2025) have demonstrated that as training progresses the model tends to generate longer thinking trajectories, which results in improved performance on complex tasks. Similarly, Anthropic (2025) and Muennighoff et al. (2025) have shown a correlation between increased average thinking length during inference and improved performance. We challenge this assumption, demonstrating that shorter sequences are more likely to yield an accurate answer.

Efficiency in reasoning LLMs.

While shortening the length of CoT is beneficial for non-reasoning models (Nayab et al., 2024; Kang et al., 2025), it is highly important for reasoning LLMs, as they require a very large number of tokens to perform the thinking process. As a result, recent studies have tried to make the process more efficient, e.g., by using early exit techniques for reasoning trajectories (Pu et al., 2025; Yang et al., 2025), by suppressing backtracks (Wang et al., 2025a), or by training reasoning models that enable control over the thinking length (Yu et al., 2025).

Several recent works studied the relationship between reasoning trajectory length and correctness. Lu et al. (2025) proposed a method for reducing the length of thinking trajectories in reasoning training datasets. Their method applies a reasoning LLM several times over an existing trajectory in order to make it shorter. As this approach eventually trains a model over shorter trajectories, it is similar to the method we employ in Section 6. However, our method is simpler, as it does not require an LLM to explicitly shorten the sequence. Fatemi et al. (2025), Qi et al. (2025), and Arora and Zanette (2025) proposed RL methods to shorten reasoning in language models. Fatemi et al. (2025) also observed that correct answers typically require shorter thinking trajectories by averaging lengths across examples, suggesting that lengthy responses might inherently stem from RL-based optimization during training. In Section 5.1 we show that correct answers indeed usually use shorter thinking trajectories, but also highlight that averaging across all examples might obscure this effect, as easier questions require a substantially lower number of reasoning tokens than harder ones.

More relevant to our work, Wu et al. (2025) showed that there is an optimal thinking length range for correct answers according to the difficulty of the question, while Wang et al. (2025b) found that for a specific question, correct responses from reasoning models are usually shorter than incorrect ones. We provide further analysis supporting these observations in Sections 3 and 5. Finally, our proposed inference method short-m@k is designed to enhance the efficiency of reasoning LLMs by leveraging this property. It can be seen as a generalization of the FFS method (Agarwal et al., 2025), which selects the shortest answer among several candidates, as in our short-1@k.

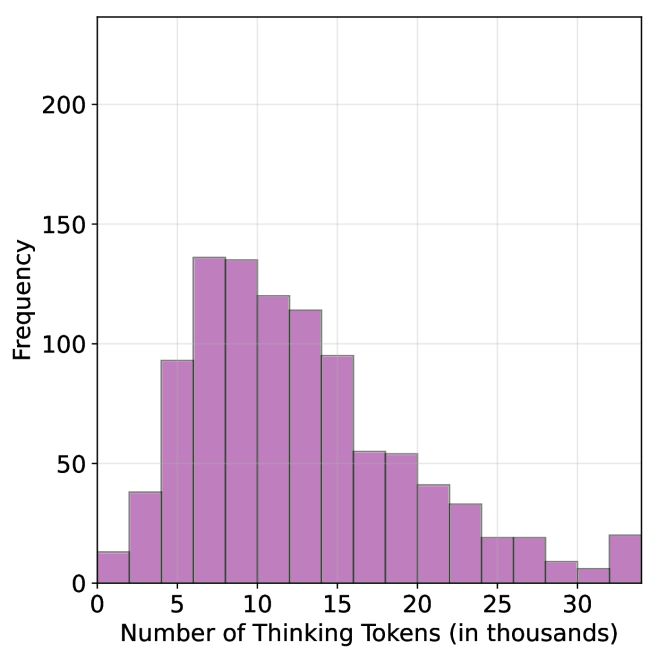

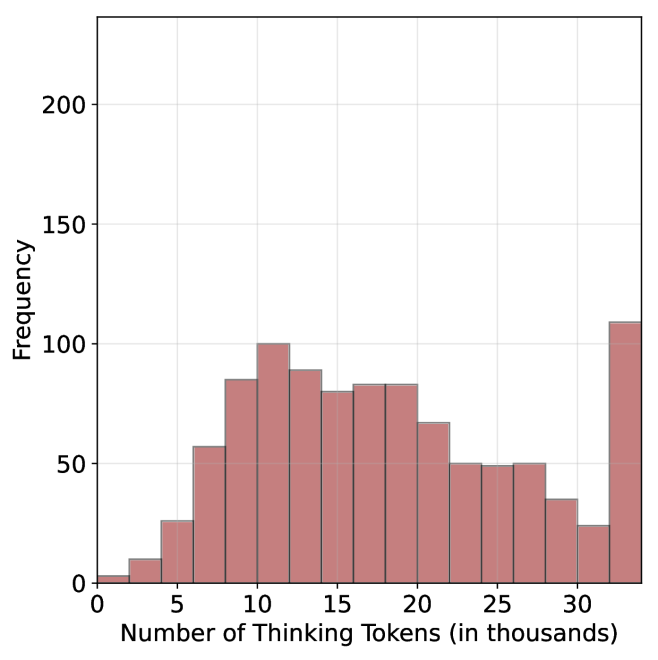

## 3 Shorter thinking is preferable

As mentioned above, the common wisdom in reasoning LLMs suggests that increased test-time computation enhances model performance. Specifically, it is widely assumed that a longer reasoning process, which entails extensive thinking chains, correlates with improved task performance (OpenAI, 2024; Anthropic, 2025; Muennighoff et al., 2025). We challenge this assumption and ask whether generating more tokens per trajectory actually leads to better performance. To that end, we generate multiple answers per question and compare performance based solely on the shortest, longest, and randomly sampled thinking chains among the generated samples.
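The comparison described above can be sketched roughly as follows. This is a minimal illustration, not the authors' evaluation code: the `Generation` record, the `accuracy_by_strategy` helper, and the toy numbers are all hypothetical and assume each sampled answer has already been scored against a reference.

```python
import random
from dataclasses import dataclass


@dataclass
class Generation:
    """One sampled answer for a question (hypothetical record layout)."""
    thinking_tokens: int  # length of the thinking chain, in tokens
    correct: bool         # whether the final answer matches the reference


def accuracy_by_strategy(questions, strategy, seed=0):
    """Pick one generation per question and report the resulting accuracy.

    `questions` is a list of lists of Generation, one inner list per question.
    `strategy` is one of "shortest", "longest", or "random".
    """
    rng = random.Random(seed)
    pickers = {
        "shortest": lambda gens: min(gens, key=lambda g: g.thinking_tokens),
        "longest": lambda gens: max(gens, key=lambda g: g.thinking_tokens),
        "random": lambda gens: rng.choice(gens),
    }
    pick = pickers[strategy]
    chosen = [pick(gens) for gens in questions]
    return sum(g.correct for g in chosen) / len(chosen)


# Toy data (made up for illustration only).
toy = [
    [Generation(900, True), Generation(2500, False), Generation(1400, True)],
    [Generation(3000, False), Generation(1200, True), Generation(5200, False)],
]

for name in ("shortest", "random", "longest"):
    print(name, accuracy_by_strategy(toy, name))
```

A single random pick is noisy; averaging over many seeds would give a steadier estimate for the random baseline.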

### 3.1 Experimental details

We consider four leading, high-performing, open reasoning LLMs. Llama-3.3-Nemotron-Super-49B-v1 [LN-Super-49B; Bercovich and others, 2025]: a reasoning RL-enhanced version of Llama-3.3-70B (Grattafiori et al., 2024); R1-Distill-Qwen-32B [R1-32B; Guo et al., 2025]: an SFT finetuned version of Qwen-2.5-32B-Instruct (Yang and others, 2024) derived from R1 trajectories; QwQ-32B: a reasoning RL-enhanced version of Qwen-2.5-32B-Instruct (Team, 2025b); and R1-0528: a 670B RL-trained flagship reasoning model [R1-670B; Guo et al., 2025]. We also include results for smaller models in Appendix D.

We evaluate all models using four competitive reasoning benchmarks. We use AIME 2024 (of America, 2024), AIME 2025 (of America, 2025), and HMMT February 2025, from the Math Arena benchmark (Balunović et al., 2025). These three benchmarks are derived from math competitions, and involve solving problems that cover a broad range of mathematics topics. Each dataset consists of $30$ examples with varied difficulty. We also evaluate the models using the GPQA-diamond benchmark [GPQA-D; Rein et al., 2024], which consists of $198$ multiple-choice scientific questions, and is considered to be challenging for reasoning LLMs (DeepMind, 2025).

For each question, we generate $20$ responses per model, yielding a total of about $36$k generations. For all models we use a temperature of $0.7$, top-p of $0.95$, and a maximum of $32{,}768$ generated tokens. When measuring the thinking chain length, we measure the token count between the `<think>` and `</think>` tokens. We run inference for all models using paged attention via the vLLM framework (Kwon et al., 2023).
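As a rough illustration of the length measurement, the sketch below extracts the text between the thinking tags and counts its tokens. The helper names are hypothetical, and the default whitespace tokenizer is only a stand-in: in practice one would pass the tokenizer of the model that produced the text. Returning `None` for an unterminated chain is also an assumption of this sketch.

```python
def thinking_span(text, open_tag="<think>", close_tag="</think>"):
    """Return the text between the thinking tags, or None if thinking never closed."""
    start = text.find(open_tag)
    if start == -1:
        return None
    body_start = start + len(open_tag)
    end = text.find(close_tag, body_start)
    if end == -1:
        return None  # thinking did not finish within the generation budget
    return text[body_start:end]


def thinking_length(text, tokenize=str.split):
    """Count tokens in the thinking span.

    `tokenize` defaults to whitespace splitting, which is only a placeholder
    for the model's real tokenizer.
    """
    span = thinking_span(text)
    return None if span is None else len(tokenize(span))


print(thinking_length("<think> try n = 1 first </think> The answer is 49"))
print(thinking_length("<think> this chain never terminates"))
```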

### 3.2 The shorter the better

Table 1: Shorter thinking performs better. Comparison between taking the shortest/longest/random generation per example.

| | GPQA-D Thinking Tokens $\downarrow$ | GPQA-D Acc. $\uparrow$ | AIME 2024 Thinking Tokens $\downarrow$ | AIME 2024 Acc. $\uparrow$ | AIME 2025 Thinking Tokens $\downarrow$ | AIME 2025 Acc. $\uparrow$ | HMMT Thinking Tokens $\downarrow$ | HMMT Acc. $\uparrow$ | Math Average Thinking Tokens $\downarrow$ | Math Average Acc. $\uparrow$ |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| LN-Super-49B | | | | | | | | | | |
| random | 5357 | 65.1 | 11258 | 58.8 | 12105 | 51.3 | 13445 | 33.0 | 12270 | 47.7 |
| longest | 8763 $(+64\%)$ | 57.6 | 18566 | 33.3 | 18937 | 30.0 | 19790 | 23.3 | 19098 $(+56\%)$ | 28.9 |
| shortest | 2790 $(-48\%)$ | 69.1 | 6276 | 76.7 | 7036 | 66.7 | 7938 | 46.7 | 7083 $(-42\%)$ | 63.4 |
| R1-32B | | | | | | | | | | |
| random | 5851 | 62.5 | 9614 | 71.8 | 11558 | 56.4 | 12482 | 38.3 | 11218 | 55.5 |
| longest | 9601 $(+64\%)$ | 57.1 | 17689 | 53.3 | 19883 | 36.7 | 20126 | 23.3 | 19233 $(+71\%)$ | 37.8 |
| shortest | 3245 $(-45\%)$ | 64.7 | 4562 | 80.0 | 6253 | 63.3 | 6557 | 36.7 | 5791 $(-48\%)$ | 60.0 |
| QwQ-32B | | | | | | | | | | |
| random | 8532 | 63.7 | 13093 | 82.0 | 14495 | 72.3 | 16466 | 52.5 | 14685 | 68.9 |
| longest | 12881 $(+51\%)$ | 54.5 | 20059 | 70.0 | 21278 | 63.3 | 24265 | 36.7 | 21867 $(+49\%)$ | 56.7 |
| shortest | 5173 $(-39\%)$ | 64.7 | 8655 | 86.7 | 10303 | 66.7 | 11370 | 60.0 | 10109 $(-31\%)$ | 71.1 |
| R1-670B | | | | | | | | | | |
| random | 11843 | 76.2 | 16862 | 83.8 | 18557 | 82.5 | 21444 | 68.2 | 18954 | 78.2 |
| longest | 17963 $(+52\%)$ | 63.1 | 22603 | 70.0 | 23570 | 66.7 | 27670 | 40.0 | 24615 $(+30\%)$ | 58.9 |
| shortest | 8116 $(-31\%)$ | 75.8 | 11229 | 83.3 | 13244 | 83.3 | 13777 | 83.3 | 12750 $(-33\%)$ | 83.3 |

For all generated answers, we compare short vs. long thinking chains for the same question, along with a random chain. Results are presented in Table 1. In this section we exclude generations where thinking is not completed within the maximum generation length, as these often result in an infinite thinking loop. First, as expected, the shortest answers are $25\%$–$50\%$ shorter compared to randomly sampled responses. However, we also note that across almost all models and benchmarks, considering the answer with the shortest thinking chain actually boosts performance, yielding an average absolute improvement of $2.2\%$–$15.7\%$ on the math benchmarks compared to randomly selected generations. When considering the longest thinking answers among the generations, we further observe an increase in thinking chain length, with up to $75\%$ more tokens per chain. These extended reasoning trajectories substantially degrade performance, resulting in average absolute reductions ranging between $5.4\%$ and $18.8\%$ compared to random generations over all benchmarks. These trends are most noticeable when comparing the shortest generations with the longest ones, with an absolute performance gain of up to $34.5\%$ in average accuracy and a substantial drop in the number of thinking tokens.

The above results suggest that long generations might come with a significant price tag, not only in running time, but also in performance. That is, within an individual example, shorter thinking trajectories are much more likely to be correct. In Section 5.1 we examine how these results relate to the common assumption that longer trajectories lead to better LLM performance. Next, we propose strategies to leverage these findings to improve the efficiency and effectiveness of reasoning LLMs.

## 4 short-m@k: faster and better inference of reasoning LLMs

Based on the results presented in Section 3, we suggest a novel inference method for reasoning LLMs. Our method, short-m@k, leverages batch inference of LLMs per question, using multiple parallel decoding runs for the same query. We begin by introducing our method in Section 4.1. We then describe our evaluation methodology, which takes into account inference compute and running time (Section 4.2). Finally, we present our results (Section 4.3).

### 4.1 The short-m@k method

The short-m@k method, visualized in Figure 1, performs parallel decoding of $k$ generations for a given question, halting computation across all generations as soon as the $m\leq k$ shortest thinking trajectories are completed. It then conducts majority voting among those shortest answers, resolving ties by selecting the answer with the shortest thinking chain. Given that thinking trajectories can be computationally intensive, terminating all generations once the $m$ shortest trajectories are completed not only saves computational resources but also significantly reduces wall time due to the parallel decoding approach, as shown in Section 4.3.
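The selection rule can be sketched offline, given generations that have already finished. This is an illustrative simulation rather than the authors' implementation: the function name `short_m_at_k` and the toy lengths are hypothetical, and thinking length is used as a proxy for finishing order, which assumes all samples decode at the same rate. A real system would instead cancel the remaining decoding requests once $m$ samples close their thinking.

```python
from collections import Counter


def short_m_at_k(generations, m):
    """Select an answer with the short-m@k rule.

    `generations` is a list of (thinking_tokens, answer) pairs for the k
    parallel samples. Thinking length stands in for finishing order.
    """
    if not 1 <= m <= len(generations):
        raise ValueError("m must satisfy 1 <= m <= k")

    # The m samples with the shortest thinking chains finish first.
    finished = sorted(generations, key=lambda g: g[0])[:m]

    # Majority vote among the m finished samples.
    votes = Counter(answer for _, answer in finished)
    top_count = max(votes.values())
    tied = {answer for answer, count in votes.items() if count == top_count}

    # Tie-break: `finished` is sorted by length, so the first tied answer
    # encountered is the one with the shortest thinking chain.
    for _, answer in finished:
        if answer in tied:
            return answer


# Answers follow the example in Figure 1; the lengths are made up.
samples = [(5200, "52"), (1800, "49"), (4100, "33"), (6000, "52")]
print(short_m_at_k(samples, m=1))
print(short_m_at_k(samples, m=3))
```

With $m=k$ the rule reduces to plain majority voting with a shortest-chain tie-break, which on this toy input returns the incorrect majority answer.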

Below we focus on short-1@k and short-3@k, with short-1@k being the most efficient variant of short-m@k and short-3@k providing the best balance of performance and efficiency (see Section 4.3). Ablation studies on $m$ and other design choices are presented in Appendix C, while results for smaller models are presented in Appendix D.

### 4.2 Evaluation setup

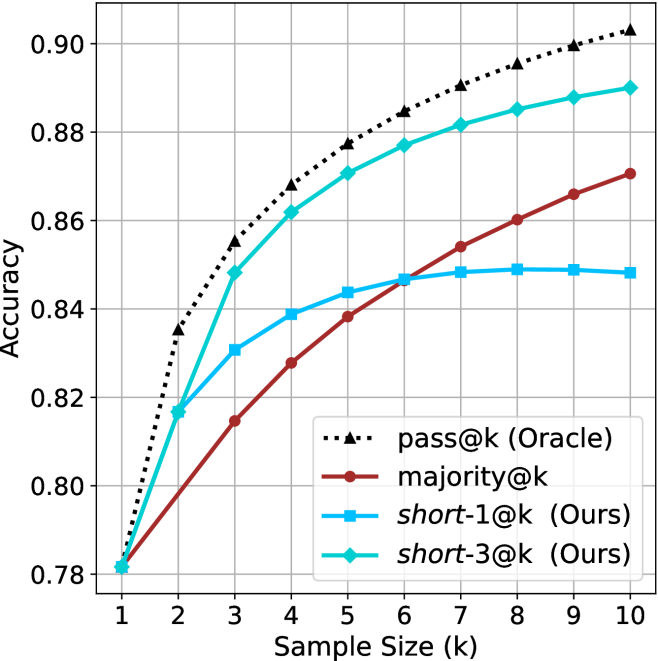

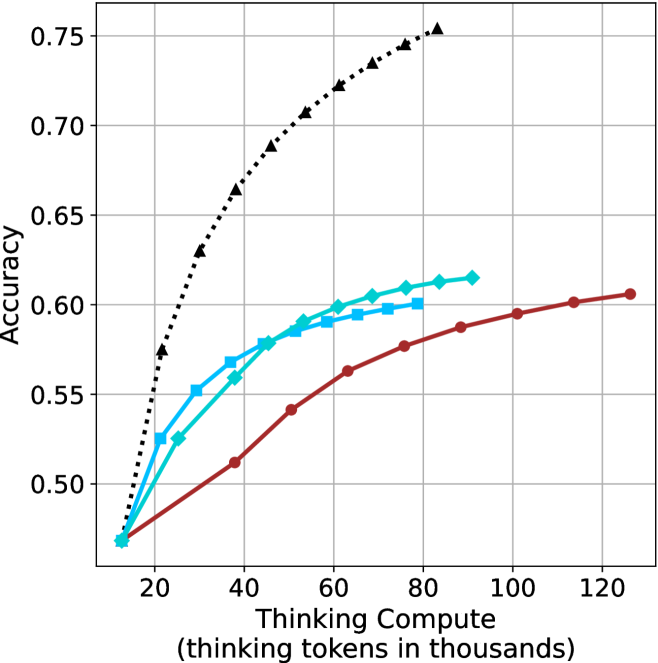

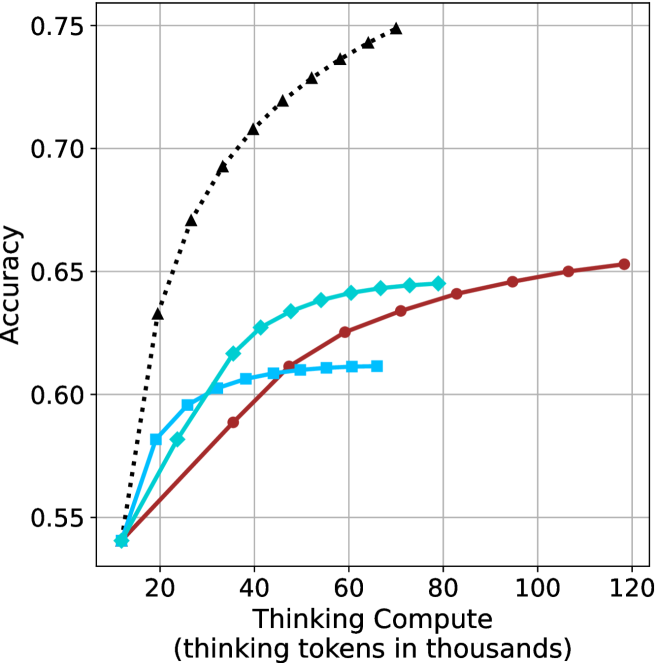

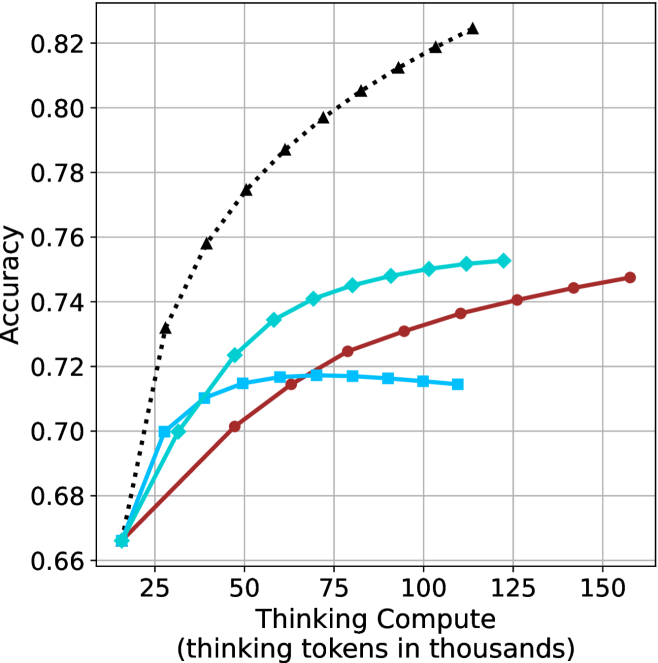

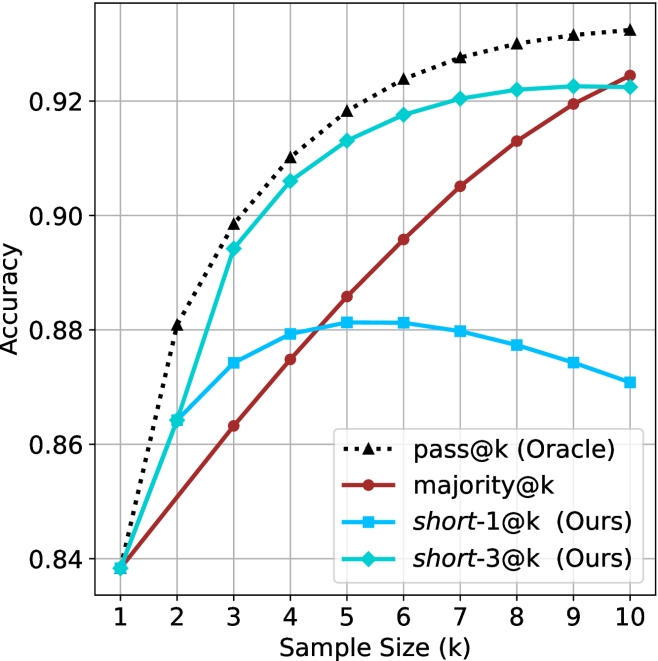

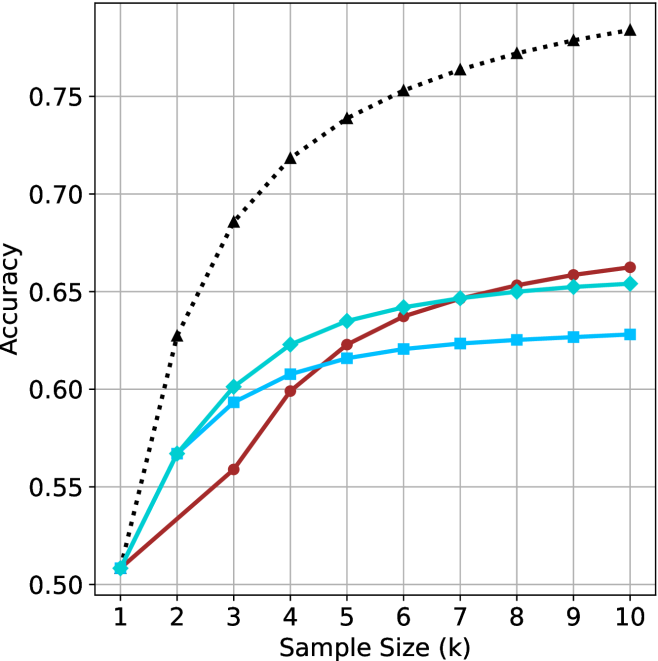

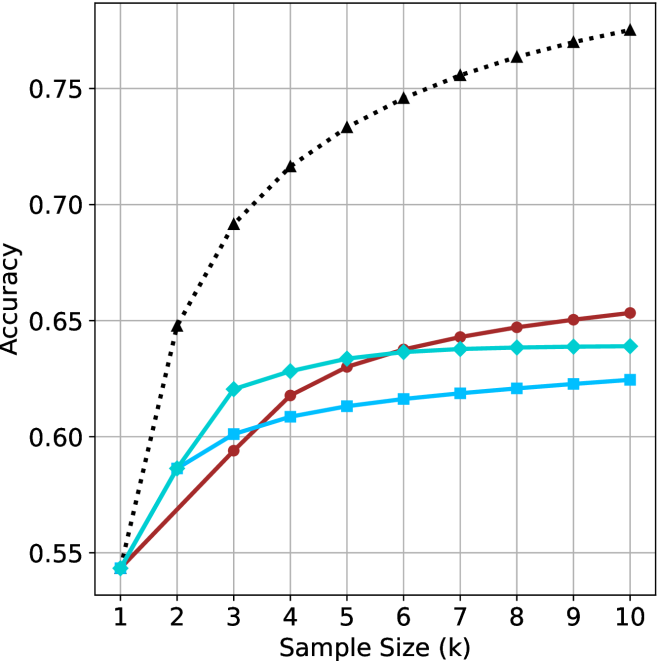

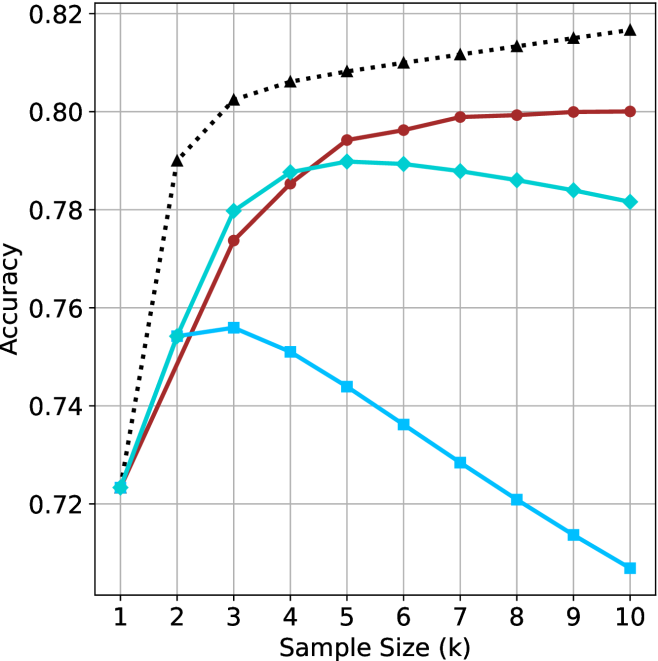

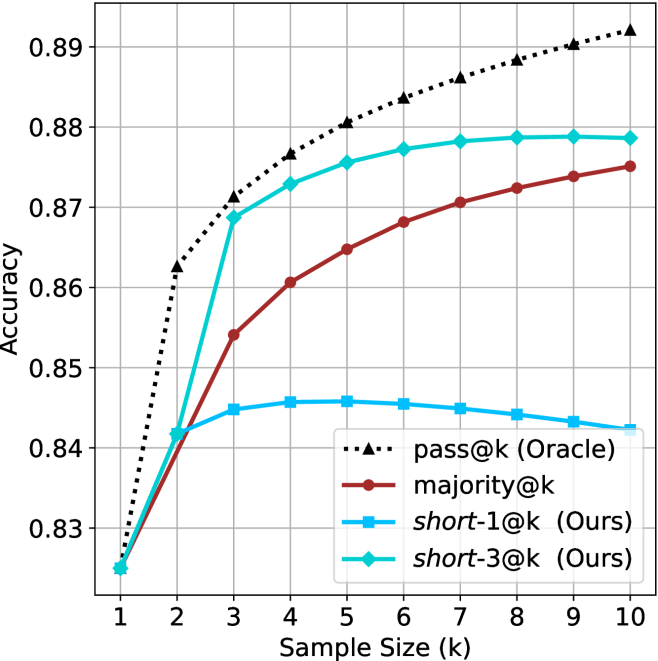

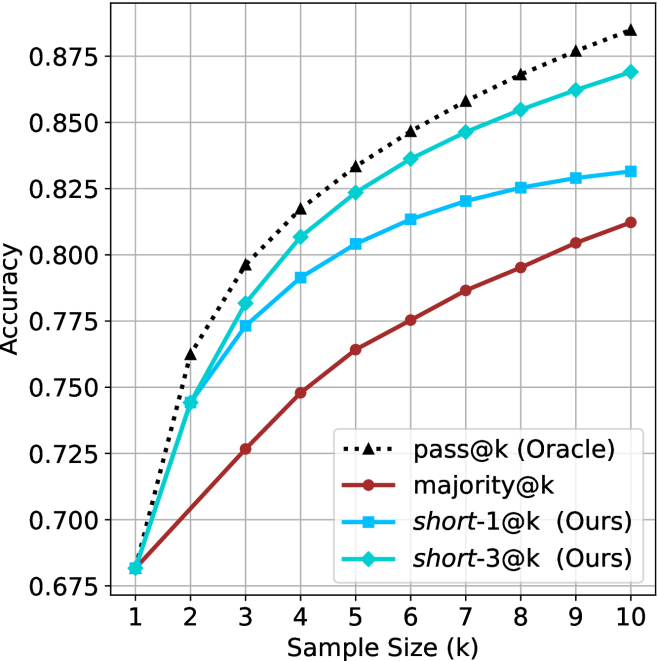

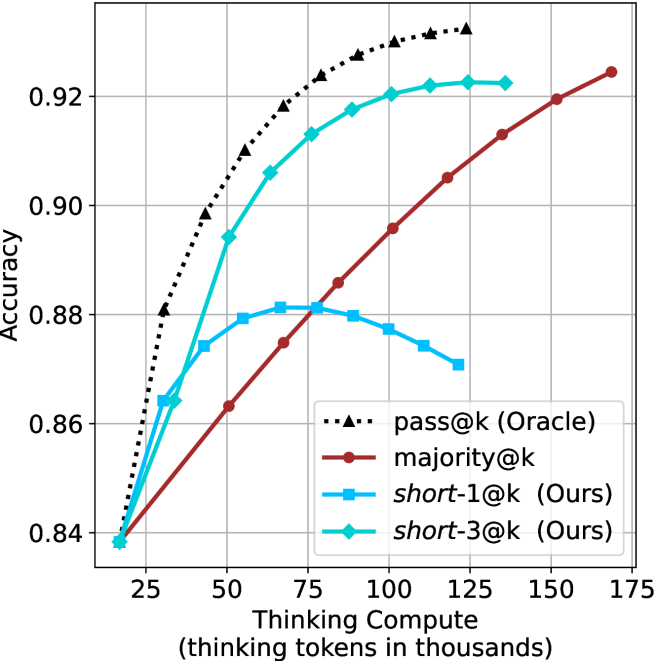

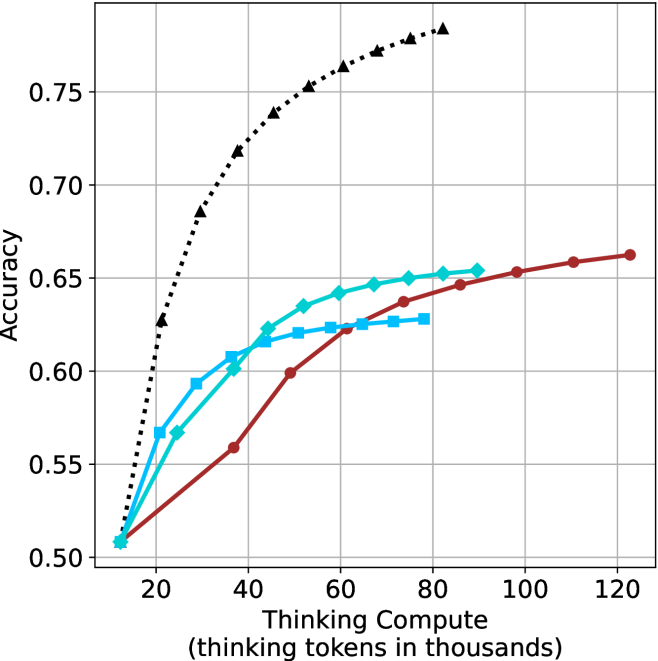

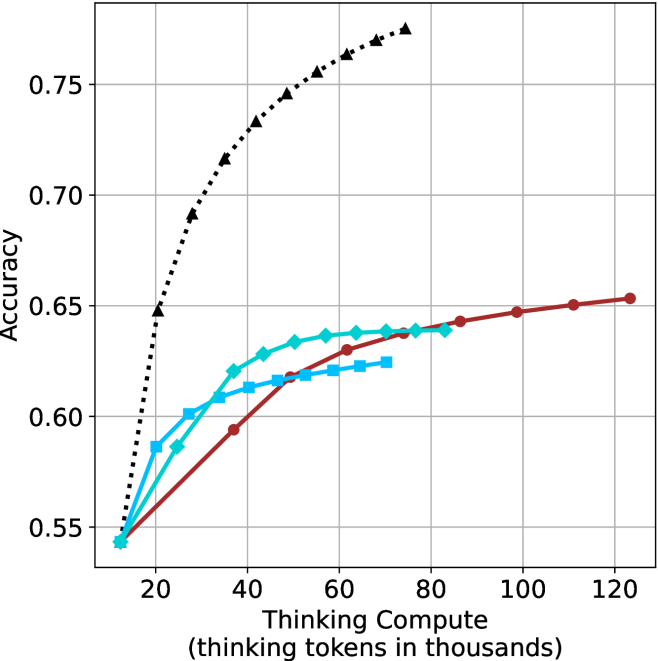

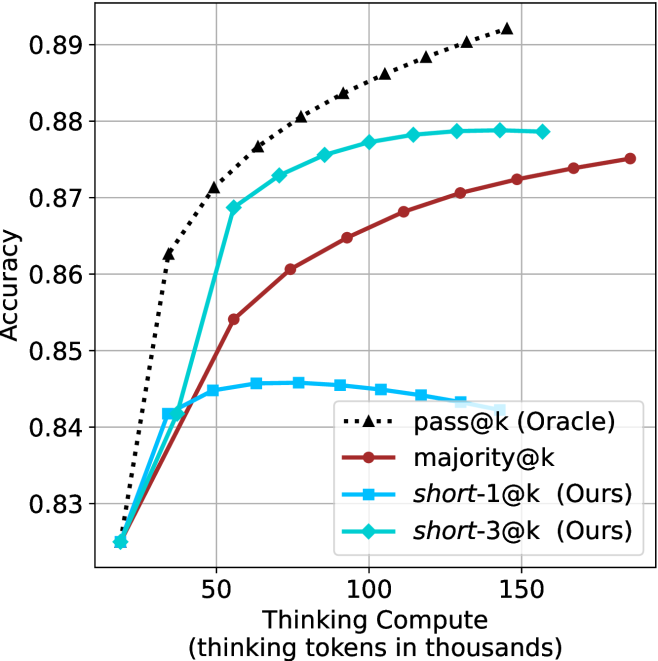

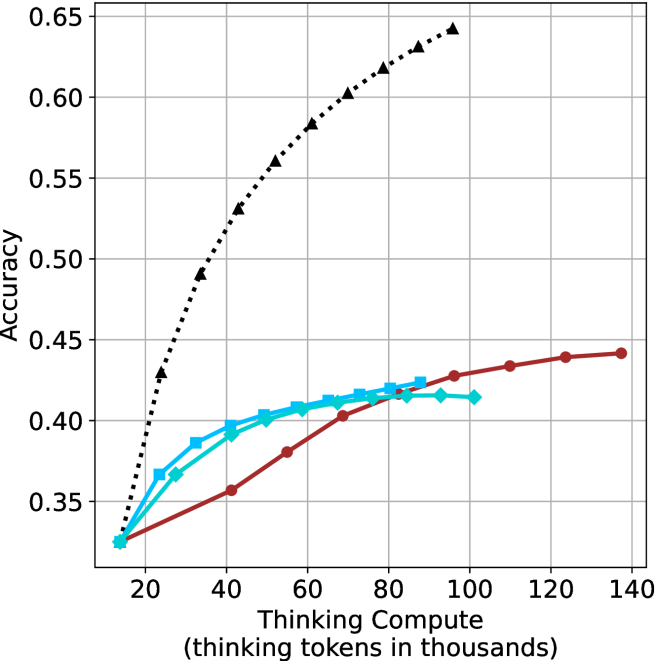

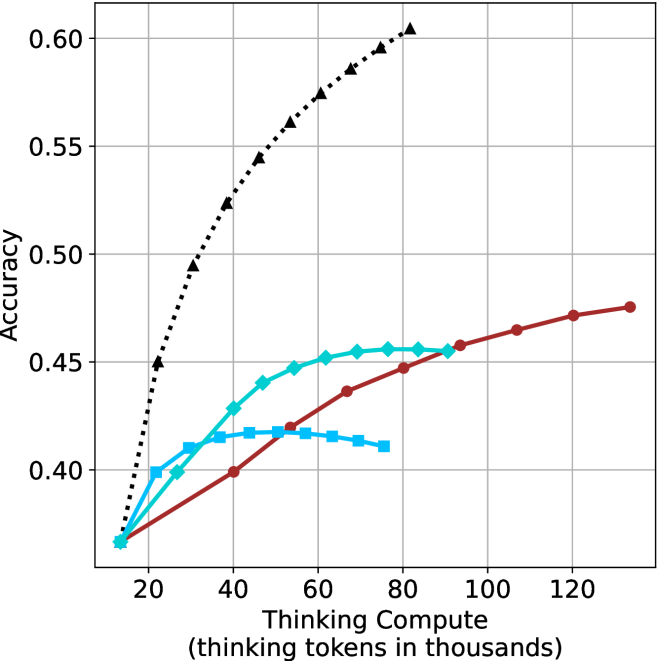

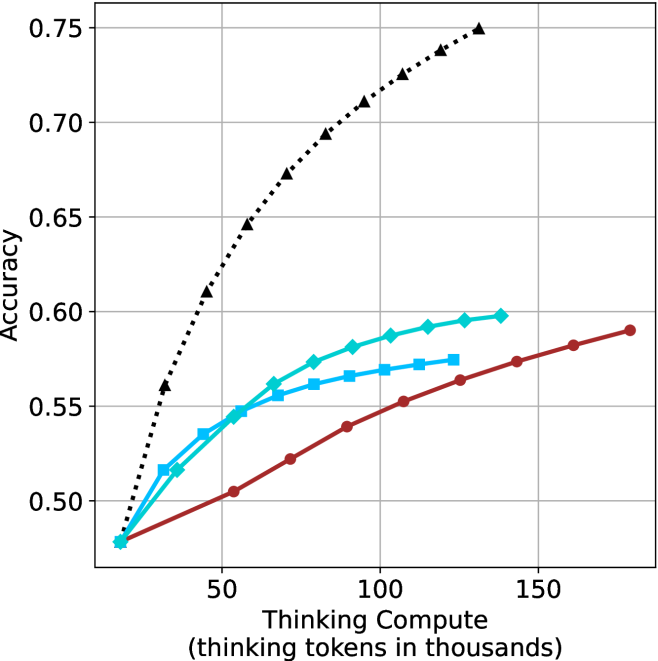

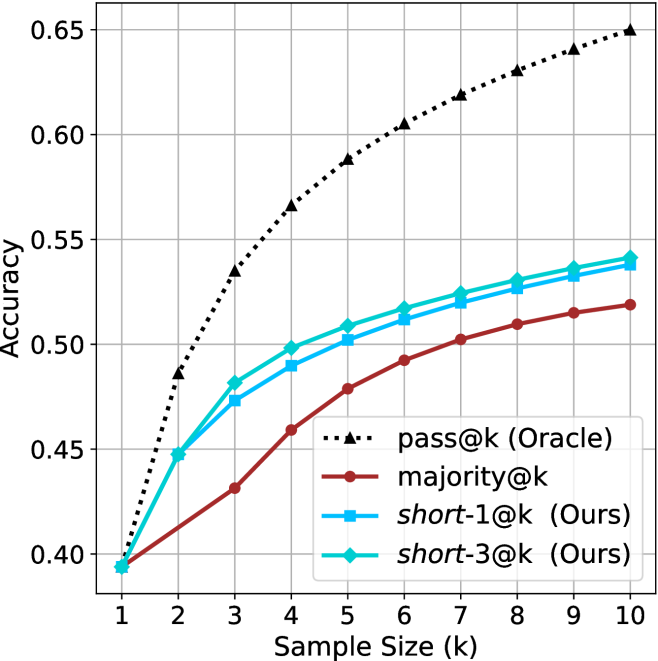

We evaluate all methods under the same setup as described in Section 3.1. We report the averaged results across the math benchmarks, while the results for GPQA-D are presented in Appendix A. The per-benchmark results for the math benchmarks are in Appendix B. We report results using our method (short-m@k) with $m\in\{1,3\}$. We compare the proposed method to standard majority voting (majority@$k$), arguably the most common method for aggregating multiple outputs (Wang et al., 2022), which was recently adapted for reasoning LLMs (Guo et al., 2025; Abdin et al., 2025; Wang et al., 2025b). As an oracle, we consider pass@$k$ (Kulal et al., 2019; Chen and others, 2021), which measures the probability of including the correct solution within $k$ generated responses.

We benchmark the different methods with sample sizes of $k\in\{1,2,\ldots,10\}$, assuming a standard parallel decoding setup, i.e., all samples are generated in parallel. Section 5.3 presents a sequential analysis where parallel decoding is not assumed. For the oracle (pass@$k$) approach, we use the unbiased estimator presented in Chen and others (2021), with our $20$ generations per question ($n=20$). For the short-1@k method, we use the rank-score@$k$ metric (Hassid et al., 2024), where we sort the different generations according to thinking length. For majority@$k$ and short-m@k where $m>1$, we run over all $k$-sized subsets out of the $20$ generations per example.
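For reference, the unbiased pass@$k$ estimator of Chen and others (2021) for a single question with $n$ samples, of which $c$ are correct, is $1-\binom{n-c}{k}/\binom{n}{k}$. The sketch below is a direct transcription of that formula; the function name and argument checks are illustrative choices, not taken from the paper.

```python
from math import comb


def pass_at_k(n, c, k):
    """Unbiased pass@k estimate for one question.

    n: number of generations sampled for the question (20 in this setup)
    c: number of those generations that are correct
    k: sample budget being evaluated
    """
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("require 0 <= c <= n and 1 <= k <= n")
    # Probability that a random k-subset contains no correct sample,
    # subtracted from one. comb() returns 0 when k > n - c.
    return 1.0 - comb(n - c, k) / comb(n, k)


print(pass_at_k(n=20, c=5, k=1))
print(pass_at_k(n=20, c=5, k=10))
```

The benchmark-level score is the mean of this quantity over all questions.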

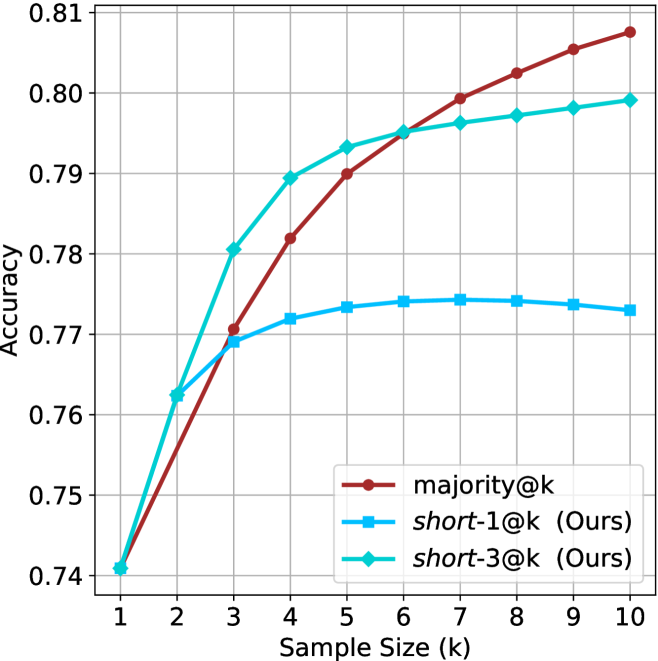

We evaluate the different methods considering three main criteria: (a) Sample-size (i.e., $k$), where we compare methods while controlling for the number of generated samples; (b) Thinking-compute, where we measure the total number of thinking tokens used across all generations in the batch; and (c) Time-to-answer, which measures the wall time of running inference using each method. In this parallel framework, our method (short-m@k) terminates all other generations after the first $m$ thinking processes terminate. Thus, the overall thinking compute is the total number of thinking tokens generated by each of the $k$ generations up to that point. Similarly, the overall time is that of the $m$-th shortest generation process. Conversely, for majority@$k$, the method’s design necessitates waiting for all generations to complete before proceeding. Hence, we consider the compute as the total number of thinking tokens in all generations and the run time according to the longest thinking chain. As for the oracle approach, we terminate all thinking trajectories once the shortest correct one is finished, and consider the compute and time accordingly.
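The compute and time accounting for the two methods can be sketched as below. The helper names and toy lengths are hypothetical, and time is expressed in decoding steps under the simplifying assumption that every parallel sample emits one thinking token per step; actual wall time depends on the serving setup.

```python
def majority_cost(lengths):
    """majority@k waits for every chain: compute is the sum, time is the max."""
    return sum(lengths), max(lengths)


def short_m_cost(lengths, m):
    """short-m@k stops all chains when the m-th shortest one finishes.

    Each chain contributes the tokens it produced up to the cutoff, so
    unfinished chains are charged only for their truncated prefix.
    """
    if not 1 <= m <= len(lengths):
        raise ValueError("m must satisfy 1 <= m <= k")
    cutoff = sorted(lengths)[m - 1]
    compute = sum(min(length, cutoff) for length in lengths)
    return compute, cutoff


# Made-up thinking lengths (in tokens) for k = 4 parallel samples.
lengths = [5200, 1800, 4100, 6000]
print("majority@4 ", majority_cost(lengths))
print("short-1@4  ", short_m_cost(lengths, m=1))
print("short-3@4  ", short_m_cost(lengths, m=3))
```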

### 4.3 Results

<details>

<summary>x2.png Details</summary>

### Visual Description

## Line Chart: Accuracy vs. Sample Size

### Overview

The image presents a line chart illustrating the relationship between accuracy and sample size. Three distinct lines represent different models or conditions, showing how accuracy changes as the sample size increases from 1k to 10k. The chart is set against a white background with a grid for easier readability.

### Components/Axes

* **X-axis:** Labeled "Sample Size (k)", ranging from 1 to 10, with increments of 1.

* **Y-axis:** Labeled "Accuracy", ranging from 0.50 to 0.75, with increments of 0.05.

* **Lines:** Three lines are plotted, each with a distinct color and style:

* Black dotted line

* Cyan solid line

* Red solid line

### Detailed Analysis

Let's analyze each line individually, noting trends and approximate data points.

* **Black Dotted Line:** This line exhibits the steepest upward trend. It starts at approximately 0.57 at a sample size of 1k and rapidly increases to approximately 0.74 at a sample size of 10k.

* (1k, 0.57)

* (2k, 0.63)

* (3k, 0.67)

* (4k, 0.69)

* (5k, 0.70)

* (6k, 0.71)

* (7k, 0.72)

* (8k, 0.73)

* (9k, 0.74)

* (10k, 0.74)

* **Cyan Solid Line:** This line shows a moderate upward trend, but plateaus towards the higher sample sizes. It begins at approximately 0.45 at 1k and reaches approximately 0.61 at 10k.

* (1k, 0.45)

* (2k, 0.52)

* (3k, 0.56)

* (4k, 0.58)

* (5k, 0.59)

* (6k, 0.60)

* (7k, 0.60)

* (8k, 0.61)

* (9k, 0.61)

* (10k, 0.61)

* **Red Solid Line:** This line demonstrates the slowest upward trend and appears to converge with the cyan line at higher sample sizes. It starts at approximately 0.43 at 1k and reaches approximately 0.59 at 10k.

* (1k, 0.43)

* (2k, 0.48)

* (3k, 0.52)

* (4k, 0.55)

* (5k, 0.57)

* (6k, 0.58)

* (7k, 0.59)

* (8k, 0.59)

* (9k, 0.60)

* (10k, 0.60)

### Key Observations

* The black dotted line consistently outperforms the other two lines across all sample sizes.

* The cyan and red lines converge as the sample size increases, suggesting diminishing returns in accuracy beyond a certain point.

* The initial increase in accuracy is most pronounced for the black dotted line, indicating a rapid improvement with even small sample sizes.

### Interpretation

The chart suggests that increasing the sample size generally improves accuracy, but the rate of improvement varies significantly depending on the model or condition being evaluated. The black dotted line represents a model that benefits substantially from larger sample sizes, while the cyan and red lines show a more limited improvement. The convergence of the cyan and red lines at higher sample sizes indicates that, for these models, the benefit of additional data diminishes as the sample size grows. This could be due to factors such as reaching a saturation point in the data or the inherent limitations of the models themselves. The chart highlights the importance of considering sample size when evaluating model performance and suggests that the optimal sample size may depend on the specific model and the desired level of accuracy.

</details>

(a) LN-Super-49B

<details>

<summary>x3.png Details</summary>

### Visual Description

## Chart: Accuracy vs. Sample Size

### Overview

The image presents a line chart illustrating the relationship between sample size (in thousands) and accuracy. Three distinct data series are plotted, each representing a different model or condition. The chart demonstrates how accuracy changes as the sample size increases.

### Components/Axes

* **X-axis:** Labeled "Sample Size (k)", ranging from 1 to 10 (in thousands). The axis is linearly scaled.

* **Y-axis:** Labeled "Accuracy", ranging from 0.55 to 0.75. The axis is linearly scaled.

* **Data Series 1:** Represented by a black dotted line with diamond markers.

* **Data Series 2:** Represented by a red solid line with circular markers.

* **Data Series 3:** Represented by a light blue solid line with square markers.

* **Grid:** A light gray grid is present, aiding in the reading of values.

### Detailed Analysis

**Data Series 1 (Black Dotted Line):** This line exhibits a steep upward trend, indicating a rapid increase in accuracy with increasing sample size.

* At Sample Size = 1k, Accuracy ≈ 0.56

* At Sample Size = 2k, Accuracy ≈ 0.64

* At Sample Size = 3k, Accuracy ≈ 0.69

* At Sample Size = 4k, Accuracy ≈ 0.72

* At Sample Size = 5k, Accuracy ≈ 0.73

* At Sample Size = 6k, Accuracy ≈ 0.735

* At Sample Size = 7k, Accuracy ≈ 0.74

* At Sample Size = 8k, Accuracy ≈ 0.74

* At Sample Size = 9k, Accuracy ≈ 0.745

* At Sample Size = 10k, Accuracy ≈ 0.75

**Data Series 2 (Red Solid Line):** This line shows a moderate upward trend, with the rate of increase slowing down as the sample size grows.

* At Sample Size = 1k, Accuracy ≈ 0.55

* At Sample Size = 2k, Accuracy ≈ 0.59

* At Sample Size = 3k, Accuracy ≈ 0.62

* At Sample Size = 4k, Accuracy ≈ 0.64

* At Sample Size = 5k, Accuracy ≈ 0.65

* At Sample Size = 6k, Accuracy ≈ 0.65

* At Sample Size = 7k, Accuracy ≈ 0.65

* At Sample Size = 8k, Accuracy ≈ 0.65

* At Sample Size = 9k, Accuracy ≈ 0.65

* At Sample Size = 10k, Accuracy ≈ 0.65

**Data Series 3 (Light Blue Solid Line):** This line demonstrates a rapid initial increase in accuracy, followed by a plateau.

* At Sample Size = 1k, Accuracy ≈ 0.55

* At Sample Size = 2k, Accuracy ≈ 0.60

* At Sample Size = 3k, Accuracy ≈ 0.62

* At Sample Size = 4k, Accuracy ≈ 0.63

* At Sample Size = 5k, Accuracy ≈ 0.63

* At Sample Size = 6k, Accuracy ≈ 0.63

* At Sample Size = 7k, Accuracy ≈ 0.63

* At Sample Size = 8k, Accuracy ≈ 0.63

* At Sample Size = 9k, Accuracy ≈ 0.63

* At Sample Size = 10k, Accuracy ≈ 0.63

### Key Observations

* Data Series 1 consistently outperforms the other two series across all sample sizes.

* Data Series 2 and 3 converge in accuracy as the sample size increases, reaching a plateau around 0.63-0.65.

* The initial increase in accuracy is most pronounced for Data Series 1 and Data Series 3.

* The benefit of increasing sample size diminishes for Data Series 2 and 3 beyond a sample size of 4k.

### Interpretation

The chart suggests that increasing the sample size generally improves accuracy, but the extent of improvement varies depending on the model or condition being evaluated. Data Series 1 demonstrates a strong positive correlation between sample size and accuracy, indicating that this model benefits significantly from larger datasets. Data Series 2 and 3, however, exhibit diminishing returns, suggesting that their accuracy reaches a limit even with larger sample sizes. This could be due to factors such as model complexity, data quality, or inherent limitations of the underlying algorithm. The plateau observed in Data Series 2 and 3 implies that further increasing the sample size beyond a certain point will not yield substantial gains in accuracy. This information is valuable for resource allocation, as it suggests that investing in larger datasets may not be the most effective strategy for improving the performance of these models. The differences between the curves could also indicate different learning algorithms or different levels of sensitivity to data quantity.

</details>

(b) R1-32B

<details>

<summary>x4.png Details</summary>

### Visual Description

## Line Chart: Accuracy vs. Sample Size

### Overview

This image presents a line chart illustrating the relationship between sample size $k$ (the number of generations sampled per question, from 1 to 10) and accuracy. Four distinct data series are plotted, each represented by a different colored line. Accuracy improves with increasing sample size for most series, but at a diminishing rate.

### Components/Axes

* **X-axis:** Labeled "Sample Size (k)". The scale ranges from 1 to 10, in increments of 1.

* **Y-axis:** Labeled "Accuracy". The scale ranges from 0.66 to 0.82, in increments of 0.02.

* **Data Series:** Four lines are present, each with a unique color and style:

* Black dotted line

* Teal solid line

* Red solid line

* Light blue dashed line

### Detailed Analysis

Let's analyze each line individually, noting trends and approximate data points.

* **Black Dotted Line:** This line exhibits the steepest upward trend. It starts at approximately 0.67 at k=1 and rises rapidly, reaching approximately 0.82 at k=10.

* **Teal Solid Line:** This line shows a moderate upward trend, but plateaus at higher sample sizes. It begins at approximately 0.67 at k=1, rises to around 0.75 at k=5, and then plateaus around 0.75-0.76 for k greater than 5.

* **Red Solid Line:** This line demonstrates a slower, more gradual increase in accuracy. It starts at approximately 0.67 at k=1, reaches around 0.73 at k=5, and continues to rise slowly, reaching approximately 0.75 at k=10.

* **Light Blue Dashed Line:** This line shows a slight initial increase, followed by a plateau and even a slight decrease at higher sample sizes. It starts at approximately 0.68 at k=1, rises to around 0.72 at k=3, and then remains relatively constant around 0.72, with a slight dip to approximately 0.71 by k=10.

Here's a table summarizing approximate data points:

| Sample Size (k) | Black Dotted (Accuracy) | Teal Solid (Accuracy) | Red Solid (Accuracy) | Light Blue Dashed (Accuracy) |
|---|---|---|---|---|
| 1 | 0.67 | 0.67 | 0.67 | 0.68 |
| 2 | 0.74 | 0.71 | 0.70 | 0.71 |
| 3 | 0.78 | 0.73 | 0.72 | 0.72 |
| 4 | 0.80 | 0.74 | 0.73 | 0.72 |
| 5 | 0.81 | 0.75 | 0.73 | 0.72 |
| 6 | 0.81 | 0.75 | 0.74 | 0.72 |
| 7 | 0.81 | 0.75 | 0.74 | 0.72 |
| 8 | 0.82 | 0.76 | 0.75 | 0.71 |
| 9 | 0.82 | 0.76 | 0.75 | 0.71 |
| 10 | 0.82 | 0.76 | 0.75 | 0.71 |

### Key Observations

* The black dotted line consistently outperforms the other three lines across all sample sizes.

* The light blue dashed line shows limited benefit from increasing the sample size beyond k=3, and even a slight decrease in accuracy at the largest sample sizes.

* The teal solid line demonstrates diminishing returns, with accuracy plateauing after k=5.

* The red solid line shows the slowest rate of improvement in accuracy.

### Interpretation

The chart suggests that increasing the sample size generally improves accuracy, but the extent of improvement varies depending on the method being used. The black dotted line benefits most from larger sample sizes, while the light blue dashed line is less sensitive to sample size and even declines slightly at the largest values of k. The teal and red lines represent intermediate cases.

The plateauing observed in the teal and light blue lines indicates a point of diminishing returns, where sampling more generations does not significantly improve accuracy.

The differences between the lines highlight the importance of selecting an appropriate method and sample size for a given task. The optimal choice will depend on the specific requirements of the application and the characteristics of the data. The chart provides valuable insights into the trade-offs between sample size, accuracy, and computational cost.

</details>

(c) QwQ-32B

<details>

<summary>x5.png Details</summary>

### Visual Description

## Line Chart: Accuracy vs. Sample Size

### Overview

This image presents a line chart illustrating the relationship between accuracy and sample size (k) for four different methods: pass@k (Oracle), majority@k, short-1@k (Ours), and short-3@k (Ours). The chart displays how accuracy changes as the sample size increases from 1 to 10.

### Components/Axes

* **X-axis:** Sample Size (k), ranging from 1 to 10.

* **Y-axis:** Accuracy, ranging from 0.78 to 0.90.

* **Data Series:**

* pass@k (Oracle) - represented by a dotted black line.

* majority@k - represented by a solid maroon line.

* short-1@k (Ours) - represented by a solid blue line.

* short-3@k (Ours) - represented by a solid teal line.

* **Legend:** Located in the center-right of the chart, listing the data series and their corresponding colors.

### Detailed Analysis

Here's a breakdown of each data series and their trends:

* **pass@k (Oracle):** This line starts at approximately 0.78 at k=1 and steadily increases, reaching approximately 0.90 at k=10. The line exhibits a consistently upward slope, indicating a strong positive correlation between sample size and accuracy.

* k=1: Accuracy ≈ 0.78

* k=2: Accuracy ≈ 0.83

* k=3: Accuracy ≈ 0.86

* k=4: Accuracy ≈ 0.88

* k=5: Accuracy ≈ 0.89

* k=6: Accuracy ≈ 0.89

* k=7: Accuracy ≈ 0.89

* k=8: Accuracy ≈ 0.89

* k=9: Accuracy ≈ 0.90

* k=10: Accuracy ≈ 0.90

* **majority@k:** This line begins at approximately 0.78 at k=1 and increases more slowly than pass@k, reaching approximately 0.87 at k=10. The slope is less steep than pass@k, suggesting a weaker correlation.

* k=1: Accuracy ≈ 0.78

* k=2: Accuracy ≈ 0.80

* k=3: Accuracy ≈ 0.82

* k=4: Accuracy ≈ 0.83

* k=5: Accuracy ≈ 0.84

* k=6: Accuracy ≈ 0.85

* k=7: Accuracy ≈ 0.86

* k=8: Accuracy ≈ 0.86

* k=9: Accuracy ≈ 0.87

* k=10: Accuracy ≈ 0.87

* **short-1@k (Ours):** This line starts at approximately 0.78 at k=1 and increases at a moderate rate, reaching approximately 0.85 at k=10. It sits slightly above majority@k for k=2 to k=4 and falls below it from k=6 onward.

* k=1: Accuracy ≈ 0.78

* k=2: Accuracy ≈ 0.81

* k=3: Accuracy ≈ 0.83

* k=4: Accuracy ≈ 0.84

* k=5: Accuracy ≈ 0.84

* k=6: Accuracy ≈ 0.84

* k=7: Accuracy ≈ 0.85

* k=8: Accuracy ≈ 0.85

* k=9: Accuracy ≈ 0.85

* k=10: Accuracy ≈ 0.85

* **short-3@k (Ours):** This line starts at approximately 0.78 at k=1 and increases rapidly initially, then plateaus, reaching approximately 0.88 at k=10. It is at or above both short-1@k and majority@k at every sample size.

* k=1: Accuracy ≈ 0.78

* k=2: Accuracy ≈ 0.82

* k=3: Accuracy ≈ 0.85

* k=4: Accuracy ≈ 0.86

* k=5: Accuracy ≈ 0.87

* k=6: Accuracy ≈ 0.88

* k=7: Accuracy ≈ 0.88

* k=8: Accuracy ≈ 0.88

* k=9: Accuracy ≈ 0.88

* k=10: Accuracy ≈ 0.88

### Key Observations

* The "pass@k (Oracle)" method consistently achieves the highest accuracy across all sample sizes.

* The "short-3@k (Ours)" method initially shows promising results, but its accuracy plateaus as the sample size increases.

* The "short-1@k (Ours)" method shows the smallest overall gain (about 0.78 to 0.85), while "majority@k" rises more gradually but ends higher (about 0.87).

* All methods start with a similar accuracy at k=1.

### Interpretation

The chart demonstrates the impact of sample size on the accuracy of different inference methods. The "pass@k (Oracle)" method serves as an upper bound on achievable accuracy. The "Ours" methods (short-1@k and short-3@k) are practical approaches that select an answer without access to the correct one. The plateauing of "short-3@k" suggests diminishing returns from sampling more generations, yet it stays above "majority@k" throughout. "short-1@k" leads "majority@k" only at small sample sizes and is overtaken as k grows. Overall, increasing the sample size improves accuracy for every method, but the extent of improvement varies by method.

</details>

(d) R1-670B

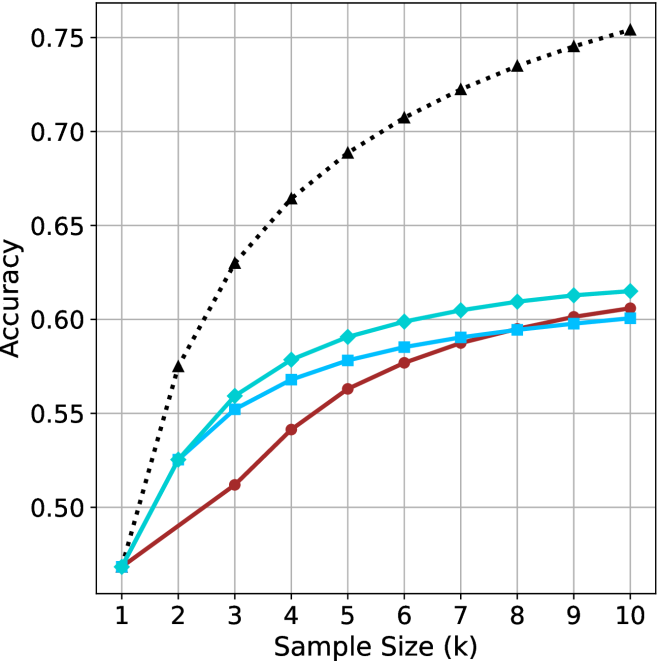

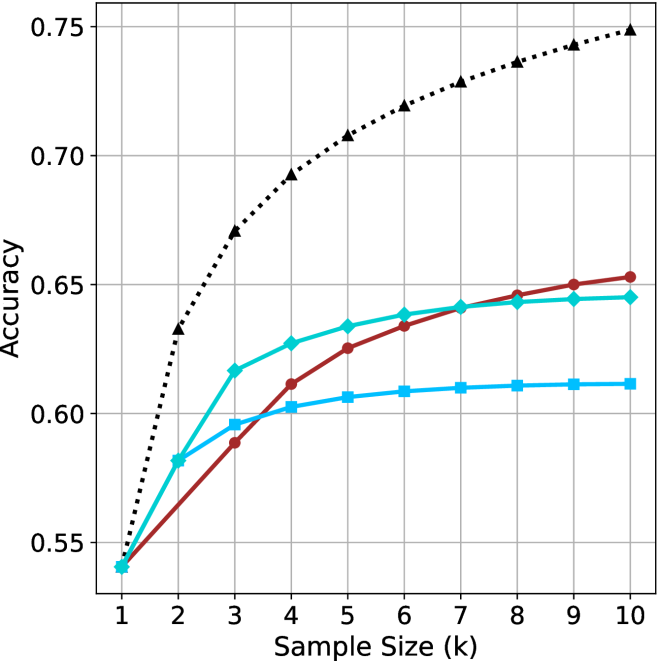

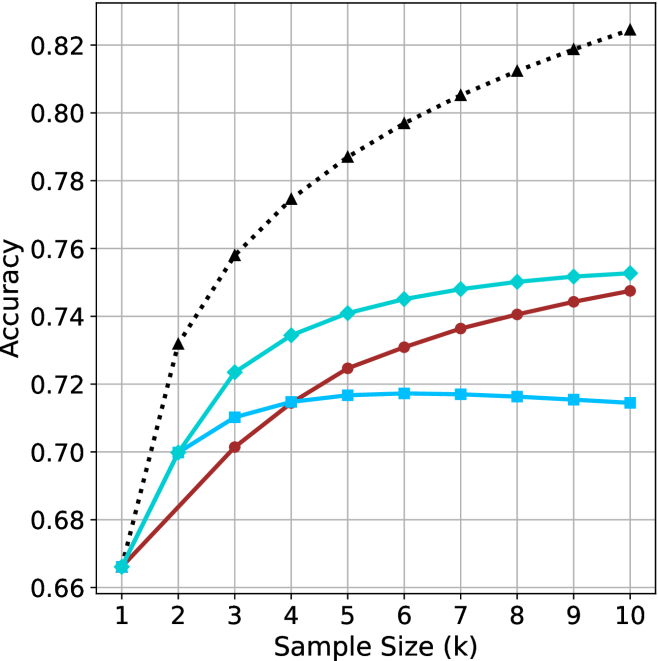

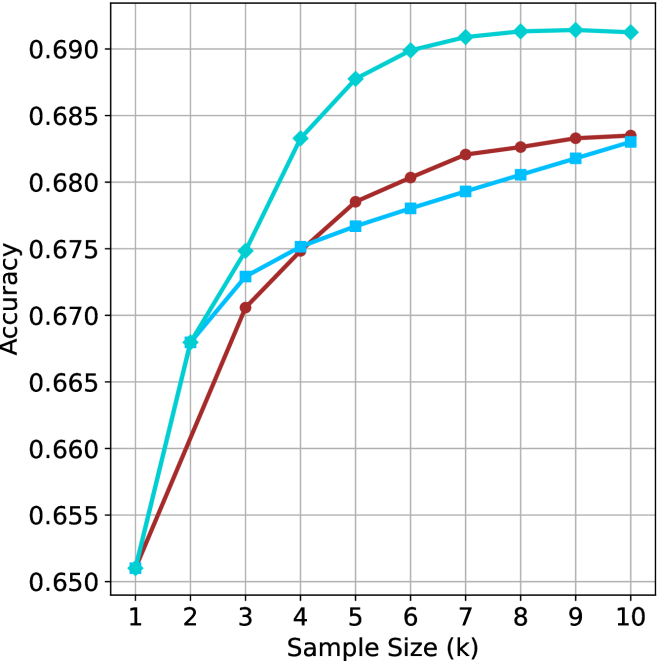

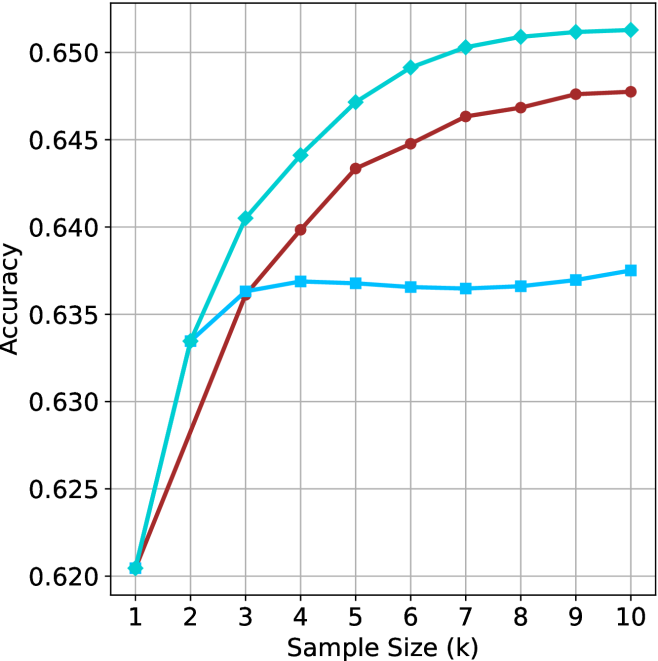

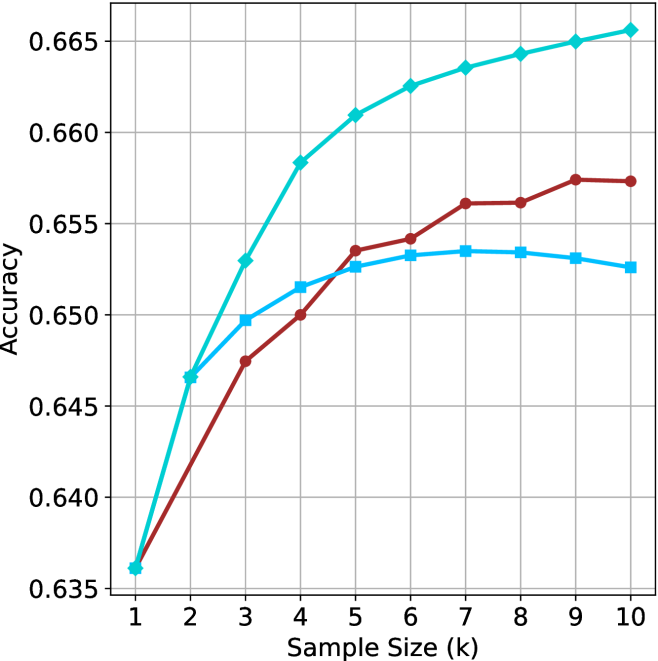

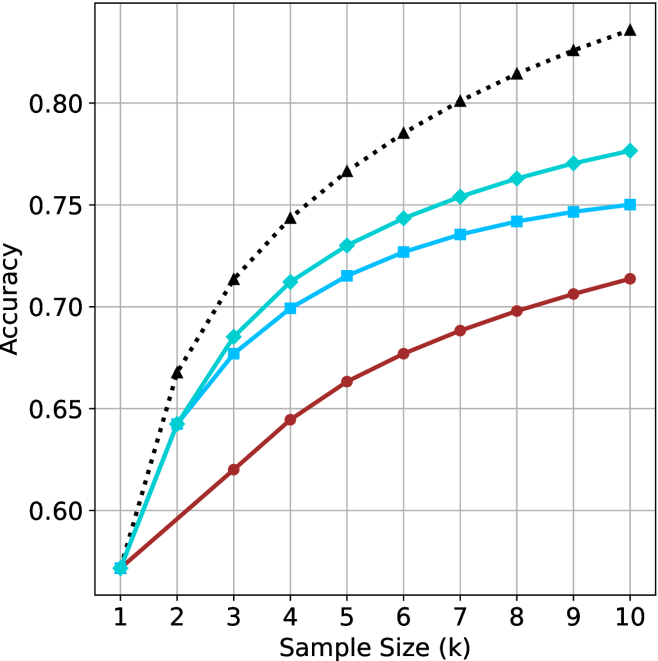

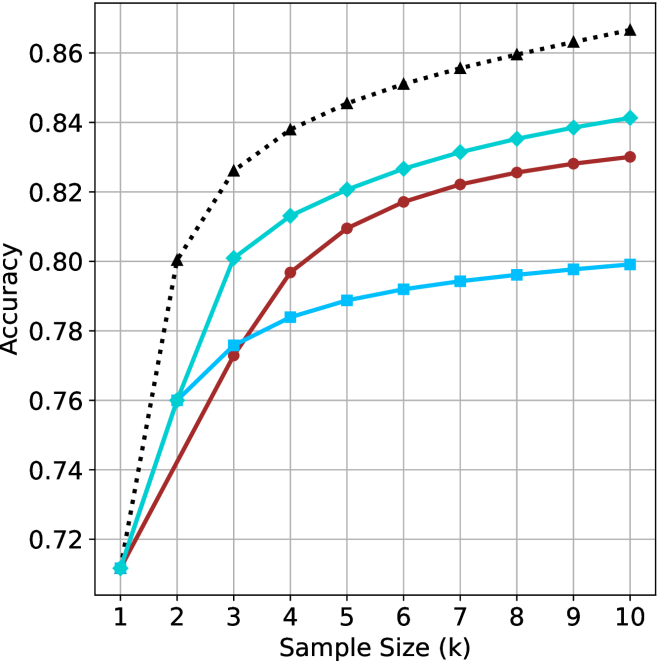

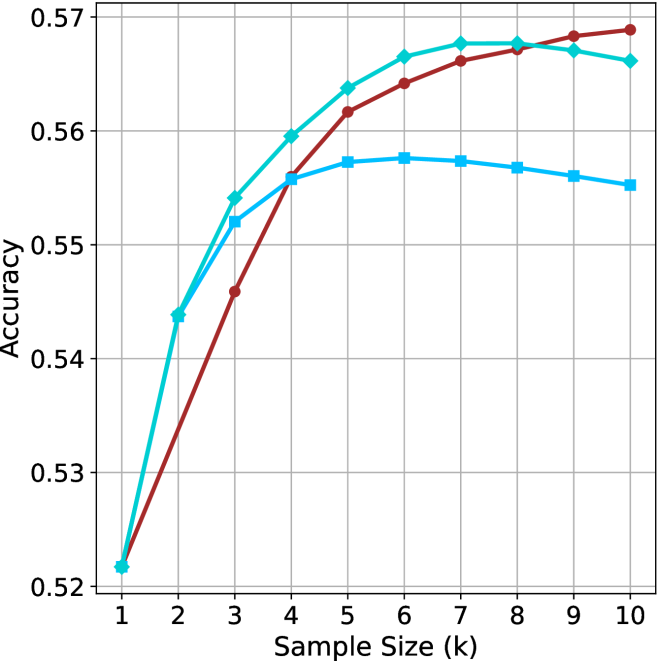

Figure 2: Comparing different inference methods under controlled sample size ($k$). All methods improve with larger sample sizes. Interestingly, this trend also holds for the short-m@k methods.

<details>

<summary>x6.png Details</summary>

### Visual Description

## Line Chart: Accuracy vs. Thinking Compute

### Overview

This image presents a line chart illustrating the relationship between "Thinking Compute" (measured in thousands of tokens) and "Accuracy". The chart displays four distinct data series, each represented by a different colored line, showing how accuracy changes as thinking compute increases. The chart is set against a white background with a grid for easier readability.

### Components/Axes

* **X-axis:** Labeled "Thinking Compute (thinking tokens in thousands)". The scale ranges from approximately 0 to 120 (in thousands of tokens). Markers are present at 20, 40, 60, 80, 100, and 120.

* **Y-axis:** Labeled "Accuracy". The scale ranges from approximately 0.48 to 0.76. Markers are present at 0.50, 0.55, 0.60, 0.65, 0.70, and 0.75.

* **Data Series:** Four lines are present, each with a distinct color and marker style:

* Black dotted line with diamond markers.

* Cyan line with square markers.

* Blue line with triangular markers.

* Red line with circular markers.

* **Grid:** A light gray grid is overlaid on the chart area to aid in reading values.

### Detailed Analysis

Let's analyze each data series individually:

* **Black (Dotted Line with Diamonds):** This line exhibits the steepest upward trend. It starts at approximately (20, 0.52), rises rapidly, and plateaus around (80, 0.74), remaining relatively constant until (120, 0.74). Approximate data points: (20, 0.52), (40, 0.68), (60, 0.72), (80, 0.74), (100, 0.74), (120, 0.74).

* **Cyan (Line with Squares):** This line shows a moderate upward trend, less steep than the black line. It begins at approximately (20, 0.56), increases steadily, and levels off around (60, 0.62), with slight fluctuations until (120, 0.62). Approximate data points: (20, 0.56), (40, 0.59), (60, 0.62), (80, 0.62), (100, 0.62), (120, 0.62).

* **Blue (Line with Triangles):** This line demonstrates a similar trend to the cyan line, but starts at a slightly lower accuracy. It begins at approximately (20, 0.54), increases steadily, and plateaus around (60, 0.61), remaining relatively constant until (120, 0.61). Approximate data points: (20, 0.54), (40, 0.57), (60, 0.61), (80, 0.61), (100, 0.61), (120, 0.61).

* **Red (Line with Circles):** This line exhibits the slowest upward trend. It starts at approximately (20, 0.50), increases gradually, and reaches approximately (120, 0.60). Approximate data points: (20, 0.50), (40, 0.53), (60, 0.56), (80, 0.59), (100, 0.60), (120, 0.60).

### Key Observations

* The black data series consistently outperforms the other three, achieving the highest accuracy levels.

* The red data series consistently underperforms the other three, achieving the lowest accuracy levels.

* The cyan and blue data series exhibit similar performance, with cyan slightly outperforming blue.

* Accuracy gains diminish as "Thinking Compute" increases beyond approximately 80,000 tokens, particularly for the black data series.

* The initial increase in accuracy is most pronounced for the black data series, suggesting a significant benefit from increased compute in the early stages.

### Interpretation

The chart demonstrates the impact of "Thinking Compute" on "Accuracy" for four inference methods applied to the same model (LN-Super-49B). The black dotted line reaches the highest accuracy at every compute level; its style matches the one used for the pass@k oracle in panel (d) of the same figure, which would make it an upper bound rather than a deployable method. The diminishing returns observed at higher compute levels indicate a point where additional thinking tokens yield only marginal improvements in accuracy. The consistent ranking of the remaining lines suggests that the methods differ in how efficiently they convert thinking tokens into accuracy. The chart provides a clear visual representation of the trade-off between computational cost and performance.

</details>

(a) LN-Super-49B

<details>

<summary>x7.png Details</summary>

### Visual Description

## Line Chart: Accuracy vs. Thinking Compute

### Overview

This image presents a line chart illustrating the relationship between "Thinking Compute" (measured in thousands of tokens) and "Accuracy". Four distinct data series are plotted, each represented by a different colored line with distinct markers. The chart appears to demonstrate how accuracy improves with increased computational effort (thinking tokens).

### Components/Axes

* **X-axis:** "Thinking Compute (thinking tokens in thousands)". Scale ranges from approximately 0 to 120, with markers at 20, 40, 60, 80, 100, and 120.

* **Y-axis:** "Accuracy". Scale ranges from approximately 0.55 to 0.75, with markers at 0.55, 0.60, 0.65, 0.70, and 0.75.

* **Data Series:** Four lines are present, each with a unique color and marker style:

* Black dotted line with diamond markers.

* Teal (cyan) line with circular markers.

* Red line with circular markers.

* Blue line with square markers.

### Detailed Analysis

Let's analyze each data series individually:

* **Black Line (Diamond Markers):** This line exhibits the steepest upward trend.

* At approximately 20 (thousands of tokens), Accuracy is around 0.64.

* At approximately 40 (thousands of tokens), Accuracy is around 0.71.

* At approximately 60 (thousands of tokens), Accuracy is around 0.73.

* At approximately 80 (thousands of tokens), Accuracy is around 0.74.

* At approximately 100 (thousands of tokens), Accuracy is around 0.745.

* At approximately 120 (thousands of tokens), Accuracy is around 0.75.

* **Teal Line (Circular Markers):** This line shows a moderate upward trend, leveling off after approximately 60 (thousands of tokens).

* At approximately 20 (thousands of tokens), Accuracy is around 0.58.

* At approximately 40 (thousands of tokens), Accuracy is around 0.62.

* At approximately 60 (thousands of tokens), Accuracy is around 0.64.

* At approximately 80 (thousands of tokens), Accuracy is around 0.645.

* At approximately 100 (thousands of tokens), Accuracy is around 0.65.

* At approximately 120 (thousands of tokens), Accuracy is around 0.65.

* **Red Line (Circular Markers):** This line shows a slow upward trend, leveling off after approximately 40 (thousands of tokens).

* At approximately 20 (thousands of tokens), Accuracy is around 0.55.

* At approximately 40 (thousands of tokens), Accuracy is around 0.60.

* At approximately 60 (thousands of tokens), Accuracy is around 0.61.

* At approximately 80 (thousands of tokens), Accuracy is around 0.62.

* At approximately 100 (thousands of tokens), Accuracy is around 0.63.

* At approximately 120 (thousands of tokens), Accuracy is around 0.64.

* **Blue Line (Square Markers):** This line shows a moderate upward trend, leveling off after approximately 60 (thousands of tokens).

* At approximately 20 (thousands of tokens), Accuracy is around 0.57.

* At approximately 40 (thousands of tokens), Accuracy is around 0.61.

* At approximately 60 (thousands of tokens), Accuracy is around 0.62.

* At approximately 80 (thousands of tokens), Accuracy is around 0.625.

* At approximately 100 (thousands of tokens), Accuracy is around 0.63.

* At approximately 120 (thousands of tokens), Accuracy is around 0.63.

### Key Observations

* The black line consistently demonstrates the highest accuracy across all levels of "Thinking Compute".

* The red line consistently demonstrates the lowest accuracy across all levels of "Thinking Compute".

* All lines exhibit diminishing returns in accuracy as "Thinking Compute" increases beyond approximately 60-80 (thousands of tokens).

* The teal and blue lines show similar performance, with the teal line slightly outperforming the blue line.

### Interpretation

The chart suggests a positive correlation between "Thinking Compute" and "Accuracy", but with diminishing returns. Increasing computational effort (as measured by thinking tokens) initially leads to significant gains in accuracy; beyond a certain point, the improvement becomes marginal. Per the figure caption, the lines correspond to different inference methods run on the same model (R1-32B), so the gaps between them reflect how efficiently each method turns thinking tokens into accuracy. The black line is the highest at every budget and the red line the lowest. The leveling off of all lines indicates that additional thinking tokens alone do not keep improving accuracy on these tasks.

</details>

(b) R1-32B

<details>

<summary>x8.png Details</summary>

### Visual Description

## Line Chart: Accuracy vs. Thinking Compute

### Overview

The image presents a line chart illustrating the relationship between "Thinking Compute" (measured in thousands of tokens) and "Accuracy". Four distinct data series are plotted, each represented by a different colored line. The chart appears to demonstrate how accuracy improves with increased computational effort (thinking tokens).

### Components/Axes

* **X-axis:** "Thinking Compute (thinking tokens in thousands)". The scale ranges from approximately 0 to 150, with markers at 25, 50, 75, 100, 125, and 150.

* **Y-axis:** "Accuracy". The scale ranges from approximately 0.66 to 0.82, with markers at 0.66, 0.68, 0.70, 0.72, 0.74, 0.76, 0.78, 0.80, and 0.82.

* **Data Series:** Four lines are present, each with a distinct color and pattern:

* Black dotted line

* Cyan solid line

* Red solid line

* Orange dashed line

### Detailed Analysis

Let's analyze each line individually, noting trends and approximate data points.

* **Black Dotted Line:** This line exhibits the steepest upward slope, indicating the fastest increase in accuracy with increasing thinking compute.

* At 25 (Thinking Compute), Accuracy is approximately 0.72.

* At 50, Accuracy is approximately 0.77.

* At 75, Accuracy is approximately 0.795.

* At 100, Accuracy is approximately 0.81.

* At 125, Accuracy is approximately 0.818.

* At 150, Accuracy is approximately 0.822.

* **Cyan Solid Line:** This line shows a moderate upward slope, with a diminishing rate of increase as thinking compute increases.

* At 25, Accuracy is approximately 0.685.

* At 50, Accuracy is approximately 0.72.

* At 75, Accuracy is approximately 0.74.

* At 100, Accuracy is approximately 0.748.

* At 125, Accuracy is approximately 0.75.

* At 150, Accuracy is approximately 0.752.

* **Red Solid Line:** This line demonstrates a slow, but steady, increase in accuracy.

* At 25, Accuracy is approximately 0.67.

* At 50, Accuracy is approximately 0.695.

* At 75, Accuracy is approximately 0.71.

* At 100, Accuracy is approximately 0.725.

* At 125, Accuracy is approximately 0.735.

* At 150, Accuracy is approximately 0.74.

* **Orange Dashed Line:** This line initially increases rapidly, then plateaus.

* At 25, Accuracy is approximately 0.69.

* At 50, Accuracy is approximately 0.71.

* At 75, Accuracy is approximately 0.715.

* At 100, Accuracy is approximately 0.718.

* At 125, Accuracy is approximately 0.72.

* At 150, Accuracy is approximately 0.722.

### Key Observations

* The black dotted line consistently outperforms the other three lines across all values of "Thinking Compute".

* The red solid line exhibits the slowest rate of improvement in accuracy.

* The orange dashed line shows initial gains, but quickly reaches a point of diminishing returns.

* All lines demonstrate an overall positive correlation between "Thinking Compute" and "Accuracy", but the strength of this correlation varies significantly.

### Interpretation

The chart suggests that increasing "Thinking Compute" generally leads to improved accuracy, but how much depends on the inference method (represented by the different lines). The black dotted line reaches the highest accuracy at every budget, the red solid line improves slowly but steadily, and the orange dashed line quickly reaches its performance limit.

The diminishing returns observed in the cyan and orange lines indicate that there is a point at which further investment in "Thinking Compute" yields only marginal improvements in accuracy. This highlights the importance of optimizing computational resources and potentially exploring alternative approaches to achieve higher accuracy. The chart provides valuable insights into the trade-offs between computational cost and performance, which is crucial for practical applications of these models.

</details>

(c) QwQ-32B

<details>

<summary>x9.png Details</summary>

### Visual Description

## Line Chart: Accuracy vs. Thinking Compute

### Overview

This image presents a line chart comparing the accuracy of different methods (pass@k, majority@k, short-1@k, and short-3@k) as a function of "Thinking Compute" measured in thousands of tokens. The chart illustrates how accuracy improves with increased computational effort for each method.

### Components/Axes

* **X-axis:** "Thinking Compute (thinking tokens in thousands)". Scale ranges from approximately 0 to 160, with markers at 0, 50, 100, and 150.

* **Y-axis:** "Accuracy". Scale ranges from approximately 0.78 to 0.91, with markers at 0.78, 0.80, 0.82, 0.84, 0.86, 0.88, and 0.90.

* **Legend:** Located in the top-right corner, listing the following data series with corresponding colors:

* pass@k (Oracle) - Black dotted line with triangle markers.

* majority@k - Brown solid line with circle markers.

* short-1@k (Ours) - Red solid line with circle markers.

* short-3@k (Ours) - Cyan solid line with diamond markers.

### Detailed Analysis

* **pass@k (Oracle):** This line starts at approximately 0.79 at a Thinking Compute of 0, rises steeply to approximately 0.87 at a Thinking Compute of 50, continues to rise but at a decreasing rate, reaching approximately 0.90 at a Thinking Compute of 150. The trend is upward and leveling off.

* **majority@k:** This line begins at approximately 0.79 at a Thinking Compute of 0, increases steadily to approximately 0.84 at a Thinking Compute of 50, and continues to rise, reaching approximately 0.87 at a Thinking Compute of 150. The trend is consistently upward, but less steep than pass@k.

* **short-1@k (Ours):** This line starts at approximately 0.78 at a Thinking Compute of 0, increases rapidly to approximately 0.84 at a Thinking Compute of 50, and then plateaus, reaching approximately 0.85 at a Thinking Compute of 150. The trend is initially steep, then flattens.

* **short-3@k (Ours):** This line begins at approximately 0.79 at a Thinking Compute of 0, increases rapidly to approximately 0.86 at a Thinking Compute of 50, and then rises more slowly, reaching approximately 0.88 at a Thinking Compute of 150. The trend is upward, with a decreasing rate of increase after a Thinking Compute of 50.

### Key Observations

* "pass@k (Oracle)" consistently achieves the highest accuracy across all levels of "Thinking Compute".

* "short-3@k (Ours)" outperforms "short-1@k (Ours)" at all levels of "Thinking Compute".

* The rate of accuracy improvement diminishes for all methods as "Thinking Compute" increases, suggesting a point of diminishing returns.

* "short-1@k (Ours)" shows the least improvement in accuracy with increasing "Thinking Compute".

### Interpretation

The chart demonstrates the relationship between computational effort (measured as "Thinking Compute") and the accuracy of different methods for a task. The "pass@k (Oracle)" method, likely representing an ideal or upper-bound performance, serves as a benchmark. The "short-1@k" and "short-3@k" methods, labeled as "Ours," represent approaches developed by the authors.

The data suggests that increasing "Thinking Compute" generally improves accuracy, but the benefit is not linear. The diminishing returns observed for all methods indicate a trade-off between computational cost and performance gains. The higher accuracy of "short-3@k" over "short-1@k" reflects the voting rule: short-3@k takes a majority vote over the first three thinking chains to finish, whereas short-1@k returns the answer of the first finished chain alone, so short-3@k spends somewhat more compute waiting for two additional chains. The gap between the "Ours" methods and the "Oracle" method shows the remaining headroom for answer selection. The chart provides evidence for the effectiveness of the "Ours" methods, particularly "short-3@k".

</details>

(d) R1-670B

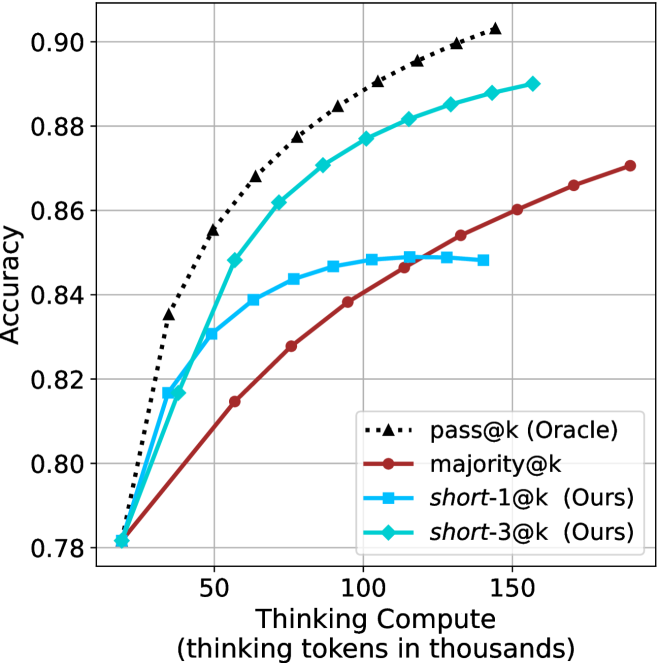

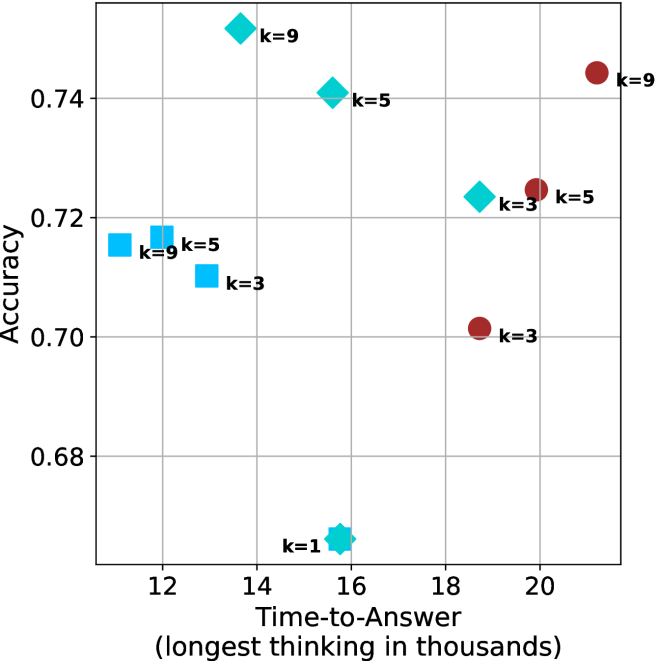

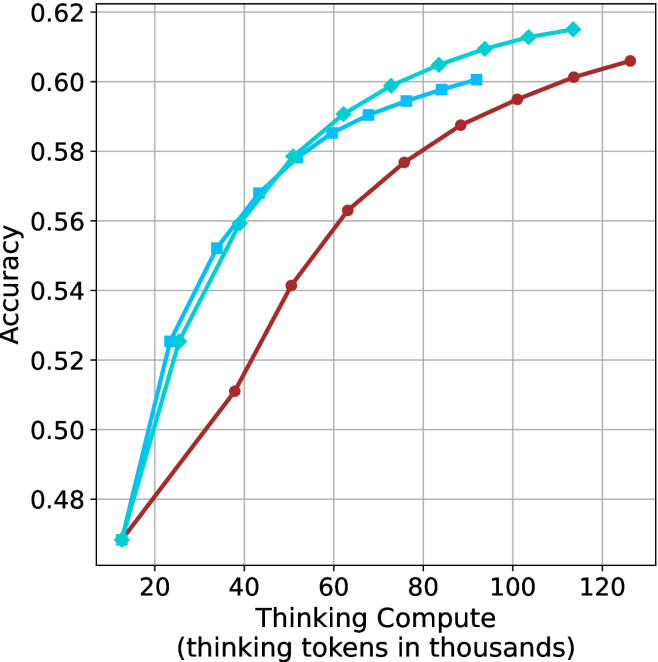

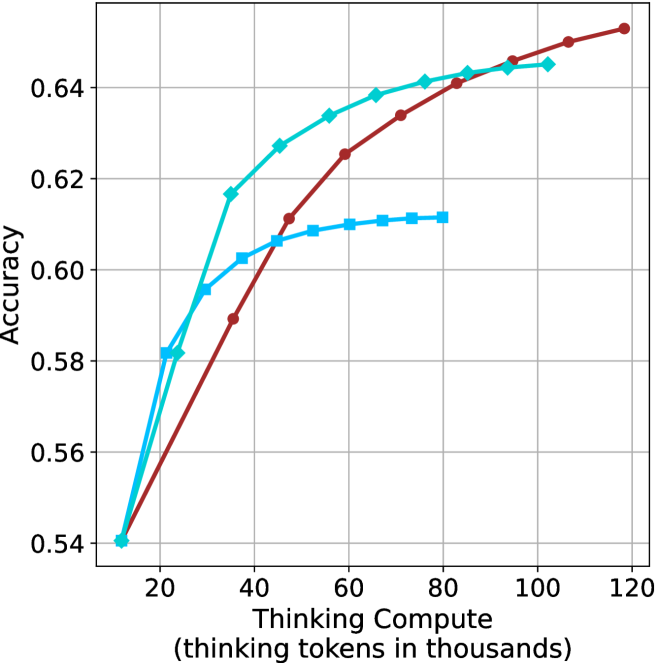

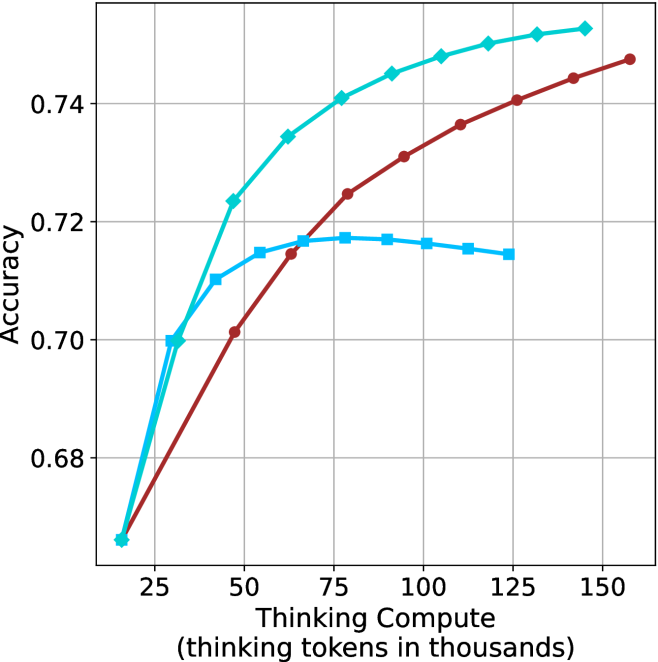

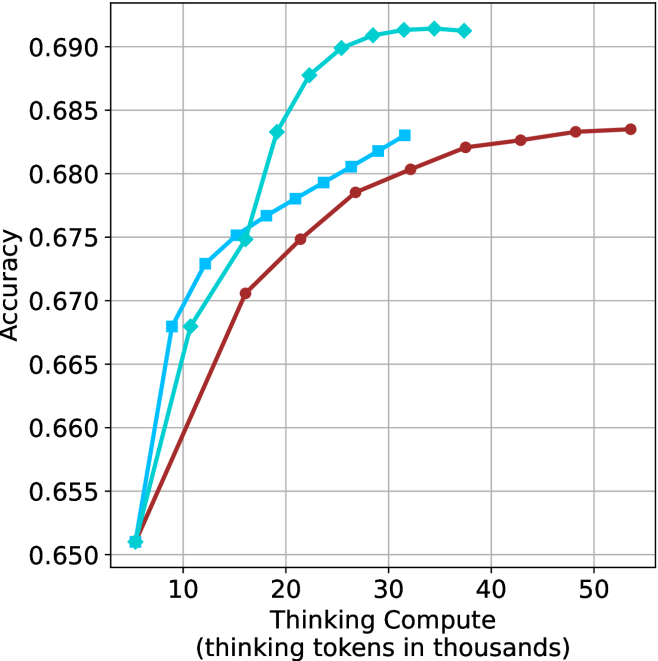

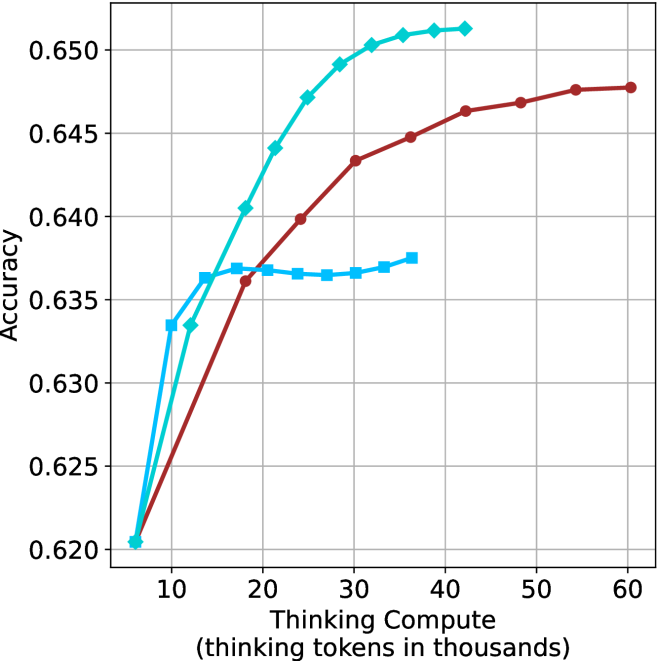

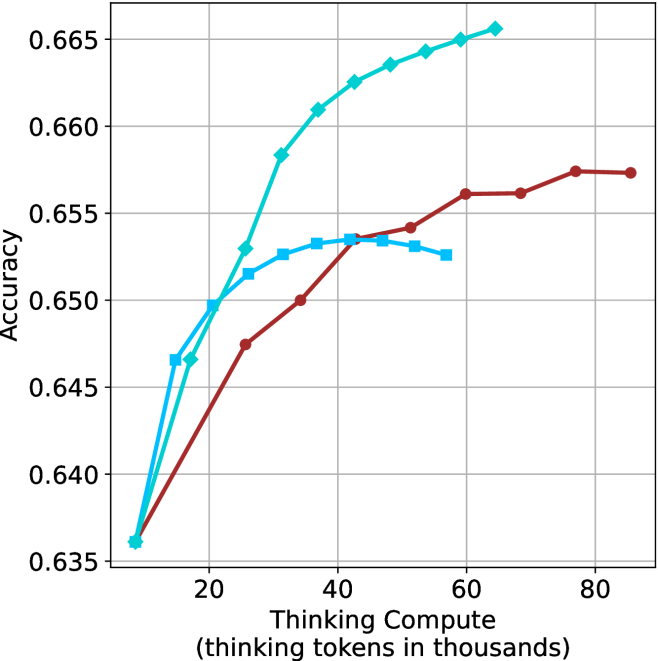

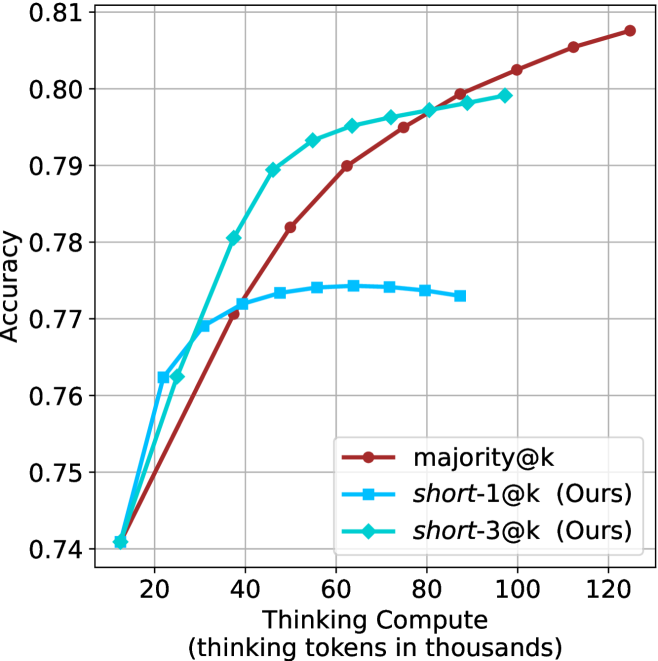

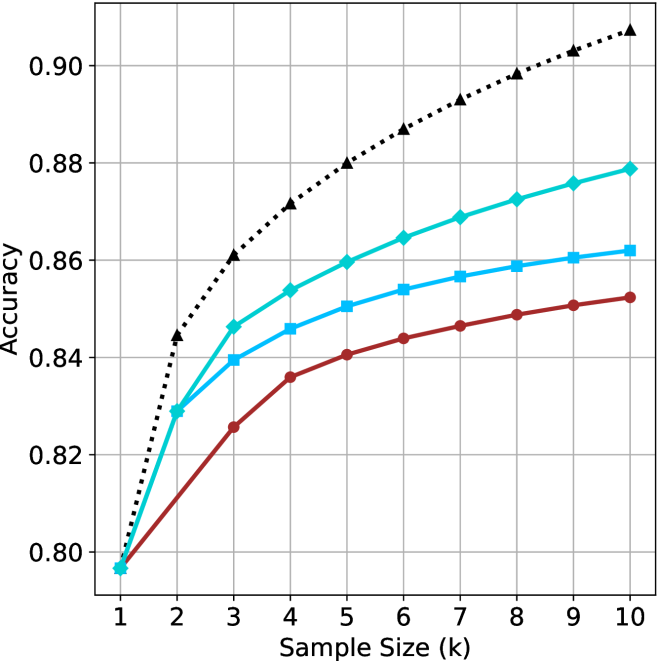

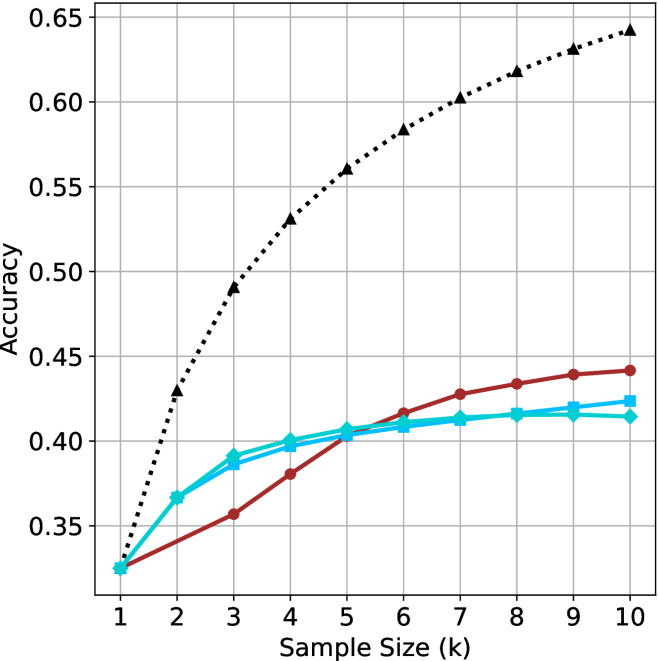

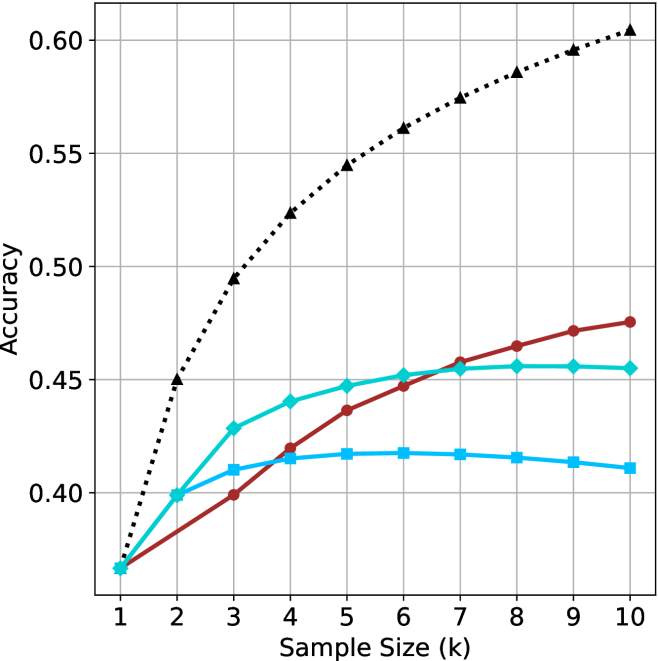

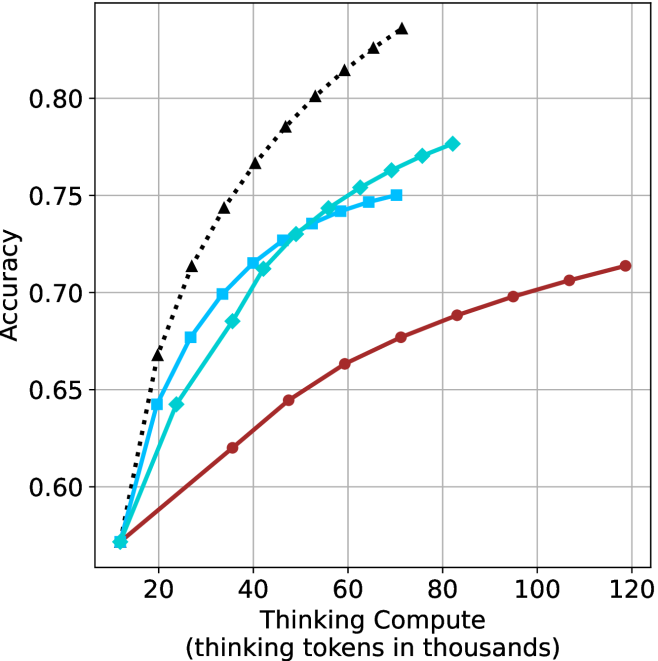

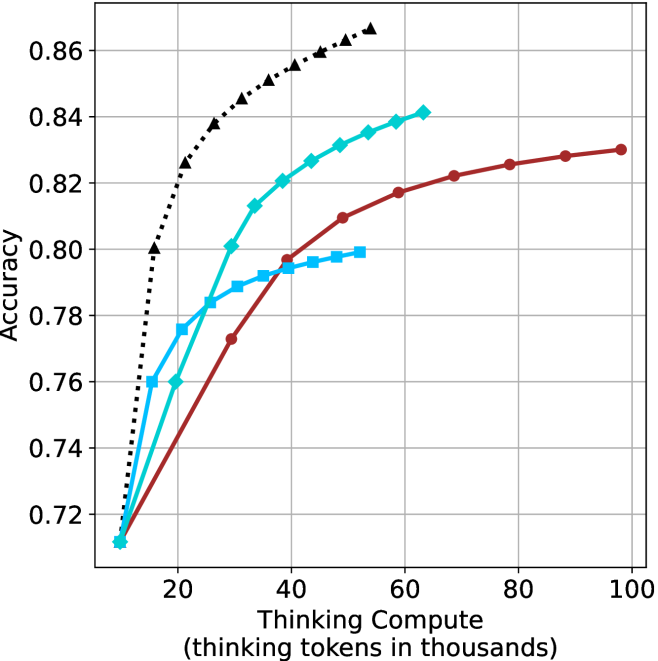

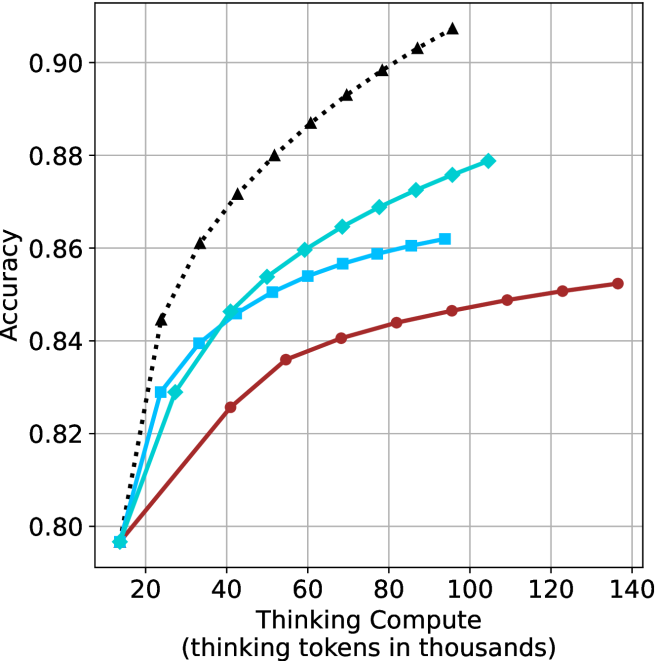

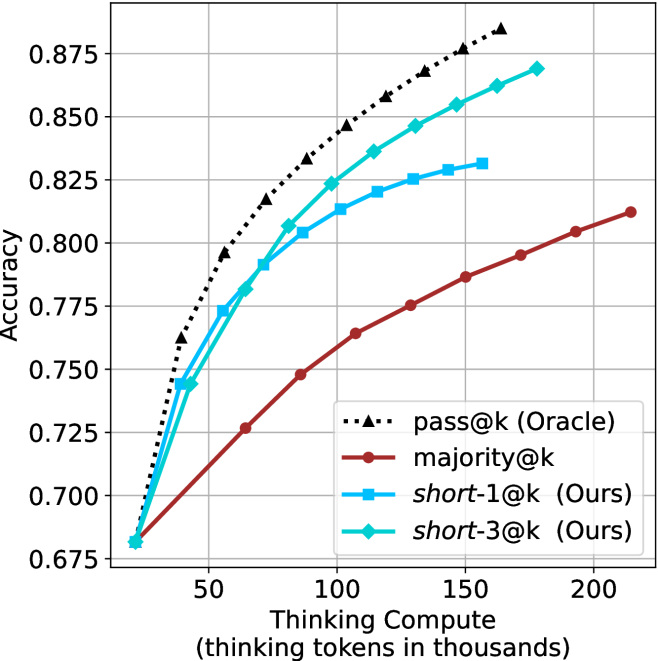

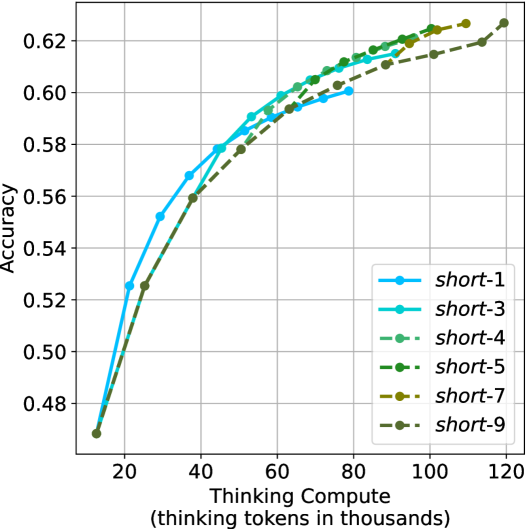

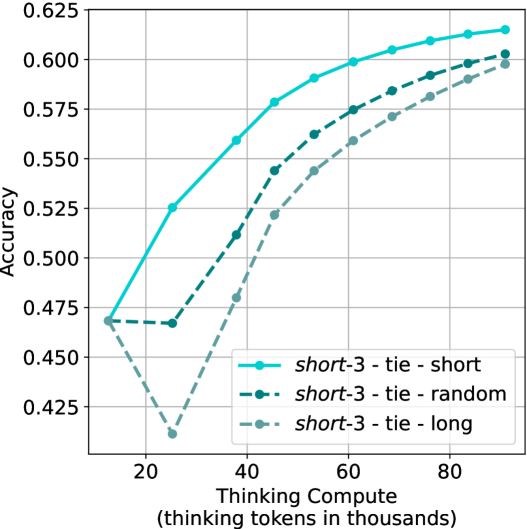

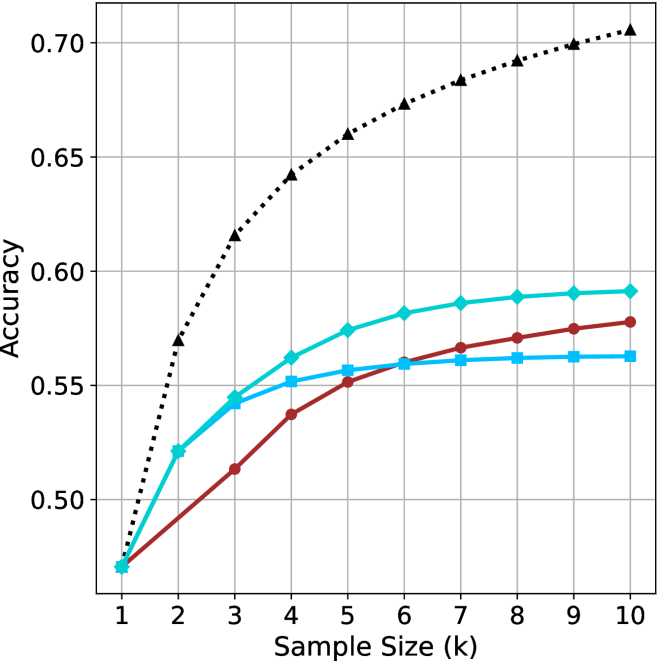

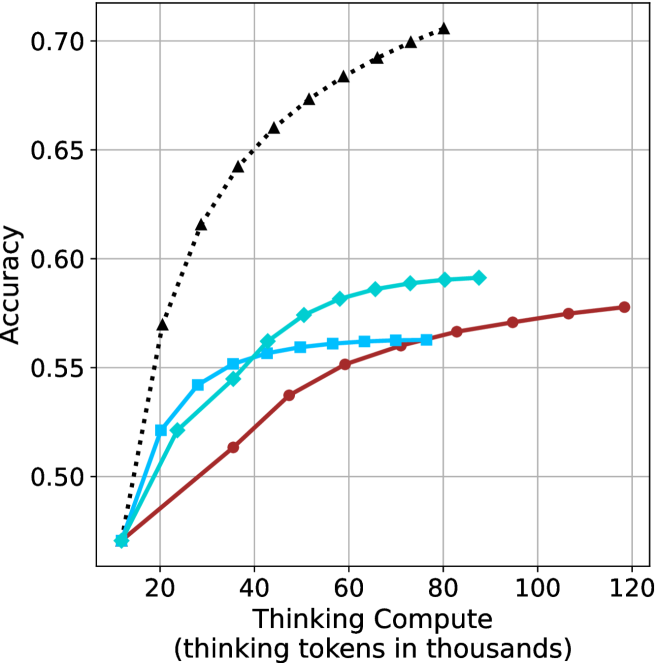

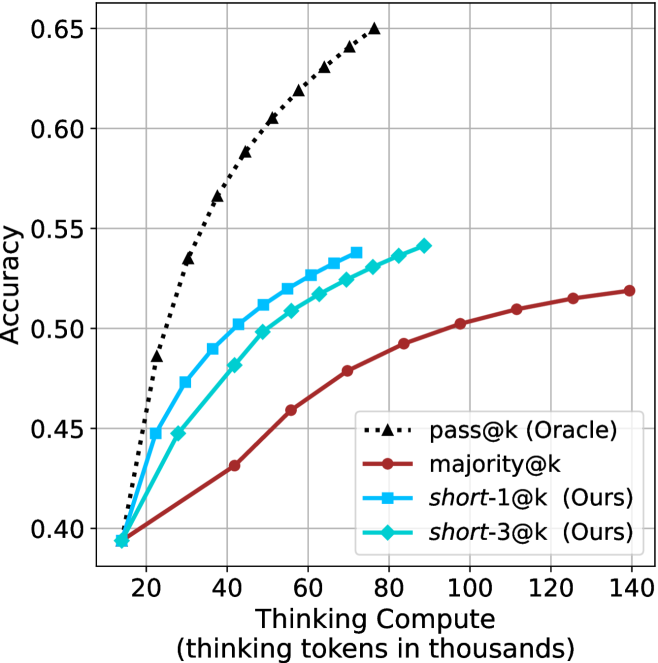

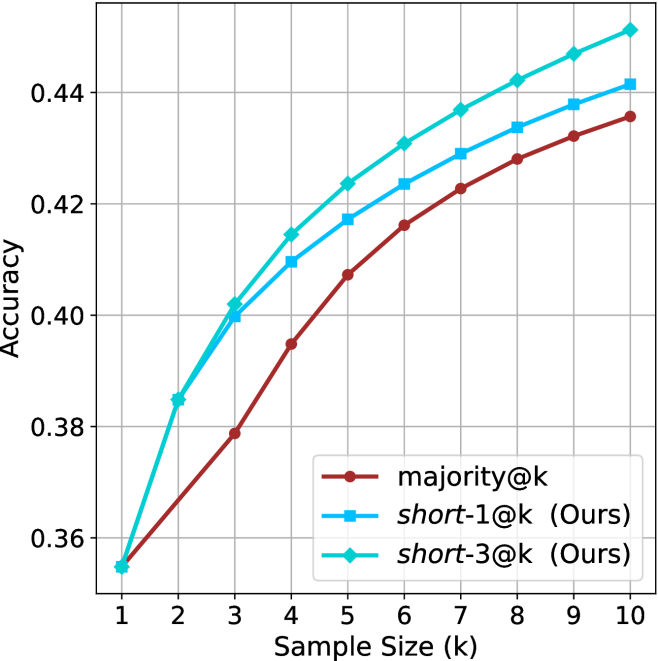

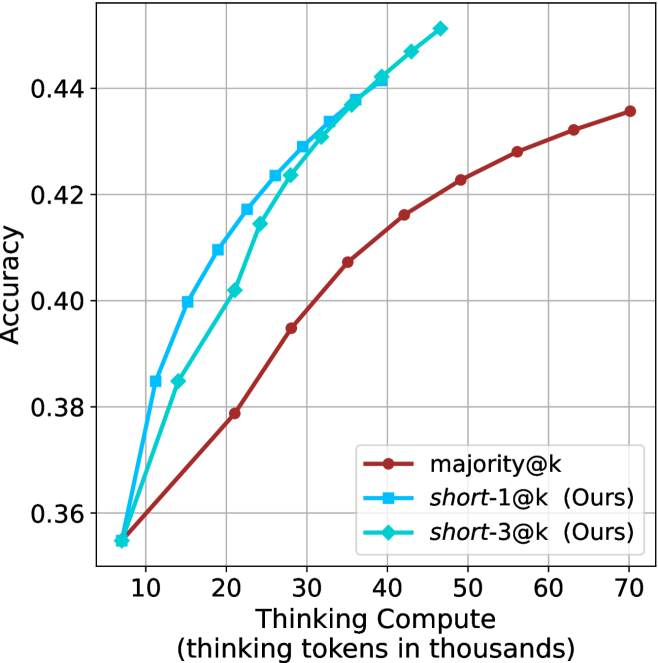

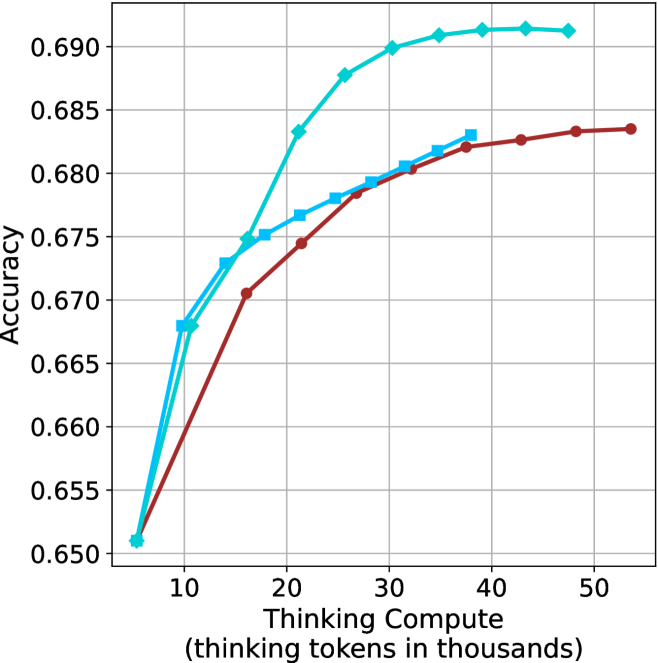

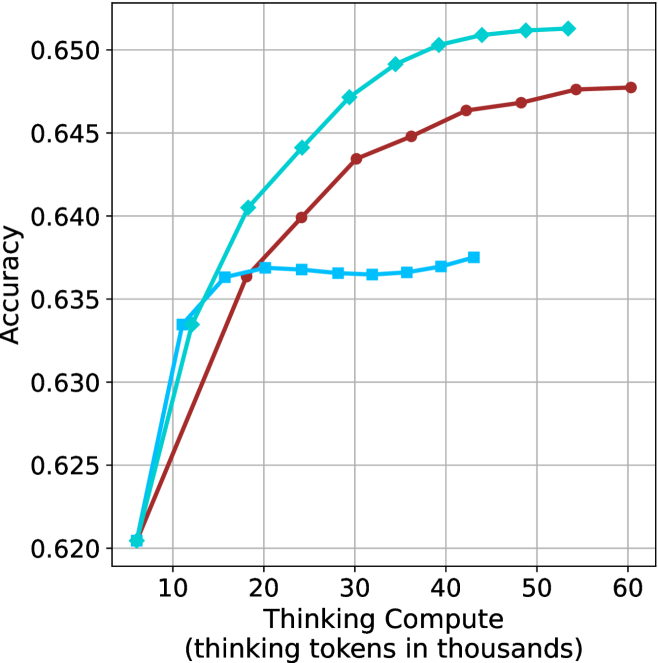

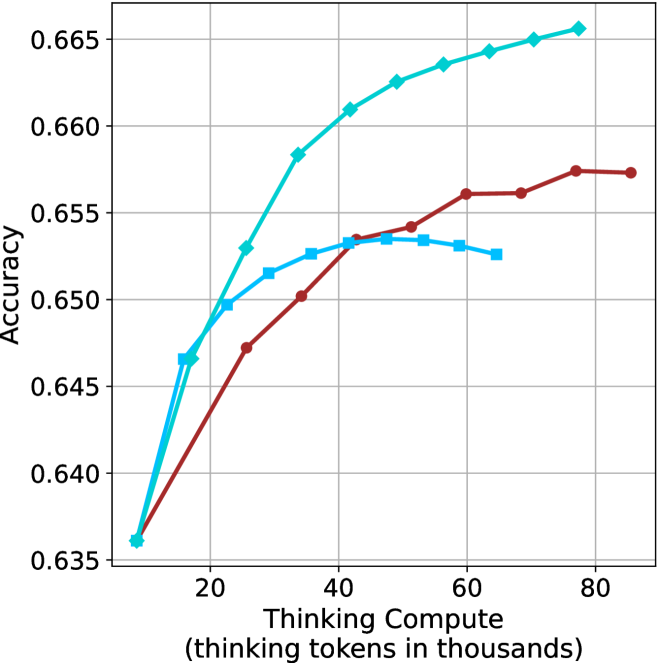

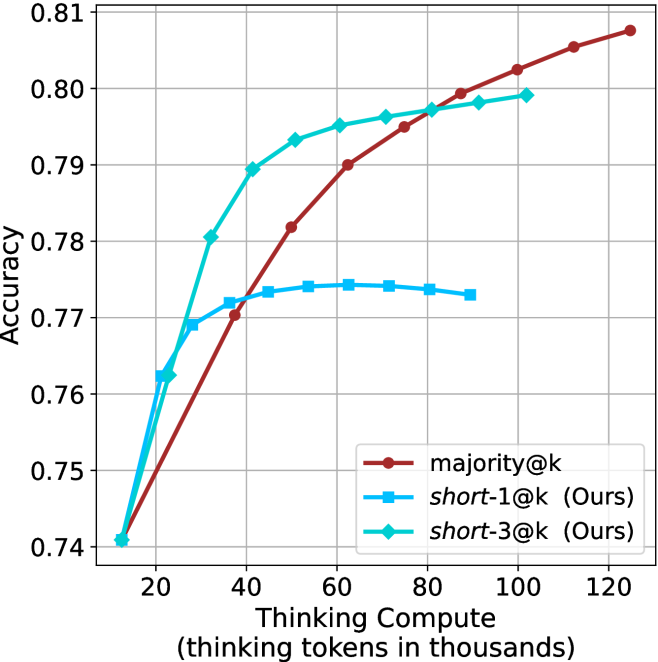

Figure 3: Comparing different inference methods under controlled thinking compute. short-1@k is highly performant in low compute regimes. short-3@k dominates the curve compared to majority@$k$.

Sample-size ($k$).

We start by examining the methods across benchmarks for a fixed sample size $k$. Results aggregated across the math benchmarks are presented in Figure 2, while Figure 6 in Appendix A presents the GPQA-D results; detailed per-benchmark results appear in Appendix B. We observe that, generally, all methods improve with larger sample sizes, indicating that more generations per question enhance performance. This trend is somewhat expected for the oracle (pass@$k$) and majority@$k$ methods but surprising for our method, as it means that even when a large number of generations is used, the shorter thinking chains are more likely to be correct. The only exception is QwQ-32B (Figure 2(c)), which shows a small decline at larger sample sizes with the short-1@k method.

When comparing short-1@k to majority@$k$, the former outperforms at smaller sample sizes but is outperformed by the latter in three out of four models as the sample size increases. Meanwhile, the short-3@k method demonstrates superior performance, dominating across nearly all models and sample sizes. Notably, for the R1-670B model, short-3@k performs nearly on par with the oracle across all sample sizes. We next analyze how this performance advantage translates into efficiency benefits.
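The three selection rules compared above can be illustrated with a minimal Python sketch, following the description in the abstract (short-m@k votes among the first $m$ chains to finish, taken here to be the $m$ shortest). The function names, the `(thinking_length, answer)` tuple layout, the tie-breaking rule, and the toy values are illustrative assumptions, not the authors' implementation.

```python
from collections import Counter

def majority_vote(answers):
    """Return the most common answer; ties go to the answer seen first."""
    counts = Counter(answers)
    best = max(counts.values())
    for answer in answers:
        if counts[answer] == best:
            return answer

def short_m_at_k(chains, m):
    """chains: list of (thinking_length, answer) for k sampled generations.

    Keep the m shortest chains (the first m to finish when all k run in
    parallel) and take a majority vote among them. Because the kept chains
    are ordered by length, ties fall to the shortest chain (an assumption).
    """
    finished_first = sorted(chains, key=lambda c: c[0])[:m]
    return majority_vote([answer for _, answer in finished_first])

def majority_at_k(chains):
    """Standard majority voting over all k generations."""
    return majority_vote([answer for _, answer in chains])

def pass_at_k(chains, gold):
    """Oracle: correct if any of the k generations has the gold answer."""
    return any(answer == gold for _, answer in chains)

# Hypothetical sample of k = 5 generations for one question (gold answer "42").
chains = [(1200, "42"), (5400, "17"), (900, "42"), (7000, "17"), (6100, "17")]
print(short_m_at_k(chains, 1))   # 42
print(short_m_at_k(chains, 3))   # 42
print(majority_at_k(chains))     # 17
print(pass_at_k(chains, "42"))   # True
```

In this toy case the two shortest chains are correct while the three longest agree on a wrong answer, so the short-m@k rules recover the gold answer and plain majority voting does not.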

Thinking-compute.

The aggregated performance results for the math benchmarks, evaluated with respect to thinking compute, are presented in Figure 3 (per-benchmark results are provided in Appendix B), while the corresponding GPQA-D results are presented in Figure 7 in Appendix A. We again observe that the short-1@k method outperforms majority@$k$ at lower compute budgets. Notably, for LN-Super-49B (Figure 3(a)), the short-1@k method surpasses majority@$k$ across all compute budgets. For instance, short-1@k achieves $57\%$ accuracy with approximately $60\%$ of the compute budget that majority@$k$ needs to reach the same accuracy. For the R1-32B, QwQ-32B, and R1-670B models, the short-1@k method exceeds majority@$k$ up to compute budgets of $45$k, $60$k, and $100$k total thinking tokens, respectively, but falls behind it at larger compute budgets.
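An equal-accuracy comparison of this kind can be read off two accuracy-versus-compute curves by interpolation. The sketch below is one hedged way to do so; the helper name `compute_to_reach` and both curves are hypothetical values chosen for illustration, not data from the paper.

```python
def compute_to_reach(curve, target):
    """curve: list of (compute, accuracy) points sorted by compute.

    Returns the linearly interpolated compute at which the curve first
    reaches the target accuracy, or None if it never does.
    """
    prev_c, prev_a = curve[0]
    if prev_a >= target:
        return prev_c
    for c, a in curve[1:]:
        if a >= target:
            # Interpolate between the two points that bracket the target.
            return prev_c + (target - prev_a) * (c - prev_c) / (a - prev_a)
        prev_c, prev_a = c, a
    return None

# Hypothetical curves: (thinking tokens in thousands, accuracy).
short_1 = [(20, 0.54), (40, 0.58), (60, 0.60)]
majority = [(20, 0.50), (60, 0.56), (100, 0.60)]

target = 0.57
ratio = compute_to_reach(short_1, target) / compute_to_reach(majority, target)
print(f"short-1@k needs {ratio:.0%} of the majority@k compute at {target:.0%} accuracy")
```

A ratio below one means the first method reaches the target accuracy with less thinking compute than the second.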

The short-3@k method yields even greater performance improvements, incurring only a modest increase in thinking compute compared to short-1@k. When compared to majority@$k$, short-3@k consistently achieves higher performance with lower thinking compute across all models and compute budgets. For example, with the QwQ-32B model (Figure 3(c)) and an average compute budget of $80$k thinking tokens per example, short-3@k improves accuracy by $2\%$ over majority@$k$. For the R1-670B model (Figure 3(d)), short-3@k consistently outperforms majority voting, yielding an approximate $4\%$ improvement with an average token budget of $100$k.
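To see why short-m@k can use less thinking compute than majority@$k$ for the same $k$, the following sketch tallies tokens under one plausible accounting. It assumes that all $k$ generations run in parallel, that every generation is halted once the $m$-th one finishes, and that tokens produced by halted generations still count toward the budget. These accounting rules, the function names, and the chain lengths are assumptions made for illustration.

```python
def thinking_compute(lengths, m=None):
    """Total thinking tokens across k parallel generations.

    m=None models majority@k: every chain runs to completion.
    Otherwise all chains are cut off at the length of the m-th shortest chain.
    """
    if m is None:
        return sum(lengths)
    cutoff = sorted(lengths)[m - 1]
    return sum(min(n, cutoff) for n in lengths)

def time_to_answer(lengths, m=None):
    """Sequential depth: the longest chain that must finish before answering."""
    if m is None:
        return max(lengths)
    return sorted(lengths)[m - 1]

# Hypothetical thinking-chain lengths (in tokens) for k = 5 generations.
lengths = [1200, 5400, 900, 7000, 6100]
print(thinking_compute(lengths))        # 20600 (majority@5)
print(thinking_compute(lengths, m=1))   # 4500  (short-1@5)
print(thinking_compute(lengths, m=3))   # 18300 (short-3@5)
print(time_to_answer(lengths))          # 7000
print(time_to_answer(lengths, m=3))     # 5400
```

Under these assumptions short-1@k saves the most compute, while short-3@k gives up part of that saving in exchange for a three-way vote.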

<details>

<summary>x10.png Details</summary>

### Visual Description

## Scatter Plot: Accuracy vs. Time-to-Answer

### Overview

This image presents a scatter plot illustrating the relationship between Accuracy and Time-to-Answer, with data points differentiated by the value of 'k'. The x-axis represents Time-to-Answer, measured as the longest thinking chain in thousands, and the y-axis represents Accuracy. There are two distinct marker shapes: squares and circles, each appearing at several values of 'k'.

### Components/Axes

* **X-axis:** Time-to-Answer (longest thinking in thousands) - Scale ranges from approximately 8 to 19.

* **Y-axis:** Accuracy - Scale ranges from approximately 0.48 to 0.62.

* **Legend:** Implicitly defined by marker shape and 'k' value labels next to the data points.

* **Data Series:** Two distinct series based on marker shape.

* Squares: Representing k = 1, 3, 5, and 9.

* Circles: Representing k = 3, 5, and 9.

* **'k' Values:** 1, 3, 5, and 9.

### Detailed Analysis

The plot shows accuracy generally increasing with 'k', while time-to-answer varies by marker type. Let's analyze each 'k' value series:

* **k = 1 (Square, Cyan):** Located at approximately (13.5, 0.49).

* **k = 3 (Square, Blue):** Located at approximately (9.5, 0.55) and (15.5, 0.51).

* **k = 5 (Square, Light Blue):** Located at approximately (10.5, 0.59) and (17.5, 0.56).

* **k = 9 (Square, Dark Blue):** Located at approximately (9, 0.60) and (14, 0.61).

* **k = 3 (Circle, Red):** Located at approximately (16, 0.52).

* **k = 5 (Circle, Red):** Located at approximately (18, 0.56).

* **k = 9 (Circle, Red):** Located at approximately (18, 0.60).

The square markers generally cluster towards the left side of the plot (lower Time-to-Answer values), while the circle markers are more dispersed and tend towards the right side (higher Time-to-Answer values).

### Key Observations

* Accuracy rises with 'k' for both marker types, while the relationship between Time-to-Answer and Accuracy is not monotonic across all points.

* For k=3, k=5, and k=9, there are data points represented by both square and circle markers, suggesting potentially different behaviors or conditions for the same 'k' value.

* The highest accuracy values (around 0.60 to 0.61) are achieved with k=9, at Time-to-Answer values ranging from about 9 to 18.

* k=1 has the lowest accuracy and a moderate Time-to-Answer.

* There is some overlap in the Time-to-Answer ranges for different 'k' values, particularly for k=3, k=5, and k=9.

### Interpretation

For a fixed 'k', the circle markers sit at higher Time-to-Answer values than the square markers while reaching similar or lower accuracy, so a longer time-to-answer does not translate into higher accuracy here. Accuracy instead tracks 'k': it rises from about 0.49 at k=1 to about 0.60 at k=9 for both marker shapes. Given the Figure 4 caption, the marker shapes most likely denote different inference methods compared at the same 'k' (majority $@k$ versus the short-m@k variants), although this description does not name them.

</details>

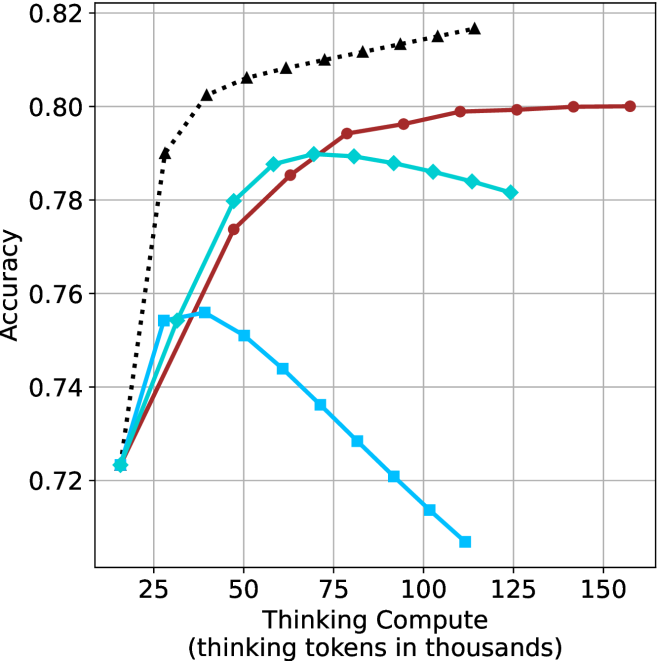

(a) LN-Super-49B

<details>

<summary>x11.png Details</summary>

### Visual Description

## Scatter Plot: Accuracy vs. Time-to-Answer with varying k values

### Overview

This image presents a scatter plot illustrating the relationship between Accuracy and Time-to-Answer for different values of 'k'. The 'k' parameter likely represents a configuration setting or a model parameter. Each data point represents a specific configuration, and the color of the point indicates the value of 'k'.

### Components/Axes

* **X-axis:** Time-to-Answer (longest thinking in thousands) - ranging from approximately 7.5 to 19.

* **Y-axis:** Accuracy - ranging from approximately 0.54 to 0.65.

* **Data Series:** Multiple data series, each representing a different value of 'k'.

* **Legend:** Located in the top-right corner, indicating the color mapping for each 'k' value:

* k=1 (Dark Red)

* k=3 (Red)

* k=5 (Blue)

* k=9 (Cyan)

* **Data Point Labels:** Each data point is labeled with its corresponding 'k' value.

### Detailed Analysis

The plot shows data points for k=1, k=3, k=5, and k=9.

* **k=1 (Dark Red):** A single data point at approximately (12, 0.54).

* **k=3 (Red):** Three data points:

* Approximately (8.5, 0.59)

* Approximately (15.5, 0.58)

* Approximately (13.5, 0.62)

* **k=5 (Blue):** Three data points:

* Approximately (8.2, 0.60)

* Approximately (10.2, 0.63)

* Approximately (17.2, 0.62)

* **k=9 (Cyan):** Three data points:

* Approximately (8.0, 0.61)

* Approximately (10.0, 0.65)

* Approximately (18.0, 0.64)

**Trends:**

* **k=1:** The single point suggests a low accuracy and moderate time-to-answer.

* **k=3:** The points show a moderate spread in time-to-answer (8.5 to 15.5) with accuracy fluctuating around 0.58-0.62.

* **k=5:** The points show a moderate spread in time-to-answer (8.2 to 17.2) with accuracy fluctuating around 0.60-0.63.

* **k=9:** The points show a moderate spread in time-to-answer (8.0 to 18.0) with accuracy fluctuating around 0.61-0.65.

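Generally, as 'k' increases, the accuracy tends to increase, while the time-to-answer range widens: the fastest point for each 'k' moves slightly left (8.5, 8.2, 8.0) and the slowest point moves right (15.5, 17.2, 18.0).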

### Key Observations

* Higher values of 'k' (5 and 9) generally exhibit higher accuracy compared to lower values (1 and 3).

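* There's a positive correlation between 'k' and accuracy. Time-to-answer does not increase uniformly with 'k', since each of k=3, 5, and 9 has points at both low and high time-to-answer.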

* The data points for k=9 show the highest accuracy values, reaching approximately 0.65.

* The data points for k=1 show the lowest accuracy values, at approximately 0.54.

* There is significant variance in time-to-answer for each 'k' value, suggesting that other factors influence processing time.

### Interpretation

The data suggests that increasing the value of 'k' improves accuracy, while its effect on time-to-answer is not uniform: for k=3, 5, and 9 the fastest points stay near 8 (thousand), and only the slowest points move right as 'k' grows. This pattern is consistent with several inference methods being plotted at each 'k', some of which wait for more, or longer, generations than others.

The spread of data points for each 'k' value indicates that the relationship between 'k', accuracy, and time-to-answer is not strictly deterministic. Other factors, such as the specific input or the underlying hardware, likely play a role.

The single data point for k=1 suggests that this value is insufficient to achieve high accuracy, and may be a lower bound for practical use. The optimal value of 'k' would likely be a trade-off between accuracy and time-to-answer, depending on the specific application requirements. Further investigation could involve analyzing the distribution of time-to-answer for each 'k' value and performing statistical tests to determine the significance of the observed trends.

</details>

(b) R1-32B

<details>

<summary>x12.png Details</summary>

### Visual Description

## Scatter Plot: Accuracy vs. Time-to-Answer

### Overview

This image presents a scatter plot visualizing the relationship between Accuracy and Time-to-Answer, with data points differentiated by the value of 'k'. The plot appears to explore the trade-off between speed (Time-to-Answer) and correctness (Accuracy) for different values of 'k'.

### Components/Axes

* **X-axis:** Time-to-Answer (longest thinking in thousands). Scale ranges from approximately 11.5 to 21.

* **Y-axis:** Accuracy. Scale ranges from approximately 0.67 to 0.75.

* **Data Points:** Scatter plot points, each representing a data instance. The points are color-coded based on the value of 'k'.

* **Legend:** Located in the top-right corner, the legend maps colors to 'k' values:

* Blue: k=1

* Light Blue: k=3

* Light Green: k=5

* Dark Green: k=9

* Red: k=3

### Detailed Analysis

The plot contains several data points, each labeled with its corresponding 'k' value. Let's analyze the data points by 'k' value and their approximate coordinates:

* **k=1:** One data point, located at approximately (15.5, 0.67).

* **k=3:** Three data points:

* (14.5, 0.72)

* (18.5, 0.70)

* (19.5, 0.72)

* **k=5:** Three data points:

* (12.5, 0.72)

* (14.5, 0.74)

* (20.0, 0.72)

* **k=9:** Three data points:

* (13.5, 0.75)

* (18.0, 0.73)

* (20.5, 0.74)

**Trends:**

* **k=1:** The single point has the lowest accuracy (about 0.67) at a moderate Time-to-Answer (about 15.5).

* **k=3:** The points show a wider range of Time-to-Answer (14.5 to 19.5) with accuracy fluctuating around 0.72 and 0.70.

* **k=5:** The points show a range of Time-to-Answer (12.5 to 20.0) with accuracy fluctuating around 0.72 and 0.74.

* **k=9:** The points show a range of Time-to-Answer (13.5 to 20.5) with accuracy fluctuating around 0.73 and 0.75.

Generally, as 'k' increases, the Time-to-Answer tends to increase, and the Accuracy also tends to increase, but this is not a strict monotonic relationship.

### Key Observations

* There is a general positive correlation between 'k' and both Time-to-Answer and Accuracy.

* For k=3, there is a significant spread in Time-to-Answer values, while the accuracy remains relatively consistent.

* The highest accuracy (approximately 0.75) is achieved with k=9 at a Time-to-Answer of about 13.5, well below the slowest k=9 point (about 20.5).

* There is overlap in the Time-to-Answer ranges for different 'k' values, indicating that achieving a certain speed does not necessarily dictate a specific accuracy level.

### Interpretation

The data suggests that increasing the value of 'k' generally improves accuracy but at the cost of increased Time-to-Answer. 'k' likely represents a parameter controlling the complexity or thoroughness of the process being evaluated. A higher 'k' might involve more extensive computation or consideration of more factors, leading to better results but slower processing.

The spread of data points for k=3 indicates that there are multiple ways to achieve similar accuracy levels with this parameter setting, potentially due to variations in the input data or the underlying algorithm.

The plot highlights a trade-off between speed and accuracy, which is a common consideration in many applications. The optimal value of 'k' would depend on the specific requirements of the task, balancing the need for accurate results with the constraints of time or computational resources. The red data points for k=3 are interesting, as they are clustered together, suggesting a specific behavior or condition for that parameter value.

</details>

(c) QwQ-32B

<details>

<summary>x13.png Details</summary>

### Visual Description


## Scatter Plot: Accuracy vs. Time-to-Answer

### Overview

This image presents a scatter plot comparing the accuracy and time-to-answer for different values of 'k' across three methods: majority@k, short-1@k (Ours), and short-3@k (Ours). The plot visualizes the trade-off between performance (accuracy) and computational cost (time).

### Components/Axes

* **X-axis:** Time-to-Answer (longest thinking in thousands) - Scale ranges from approximately 14 to 22.5.

* **Y-axis:** Accuracy - Scale ranges from approximately 0.78 to 0.89.

* **Legend:** Located in the bottom-right corner.

* majority@k - Represented by red circles.

* short-1@k (Ours) - Represented by blue squares.

* short-3@k (Ours) - Represented by teal diamonds.

* **Data Points:** Each point represents a specific combination of 'k' value and method. The 'k' value is labeled next to each data point.

### Detailed Analysis

Let's analyze each data series individually:

**1. majority@k (Red Circles):**

* The data points show an upward trend as 'k' increases.

* k=3: Approximately (21.8, 0.82)

* k=5: Approximately (21.2, 0.84)

* k=9: Approximately (22.3, 0.87)

**2. short-1@k (Ours) (Blue Squares):**

* The data points show a generally increasing trend in accuracy with increasing 'k', but with more fluctuation.

* k=1: Approximately (14.2, 0.78)

* k=3: Approximately (15.5, 0.84)

* k=5: Approximately (16.2, 0.84)

* k=9: Approximately (17.5, 0.86)

**3. short-3@k (Ours) (Teal Diamonds):**

* The data points show a clear upward trend in accuracy as 'k' increases.

* k=3: Approximately (19.5, 0.85)

* k=5: Approximately (20.2, 0.87)

* k=9: Approximately (21.0, 0.88)

### Key Observations

* For all methods, increasing 'k' generally leads to higher accuracy, but also increases the time-to-answer.

* The 'short-3@k (Ours)' method consistently achieves the highest accuracy for a given time-to-answer compared to the other two methods.

* The 'majority@k' method has the lowest accuracy for a given time-to-answer.

* The 'short-1@k (Ours)' method has the lowest time-to-answer for a given accuracy.

* The 'short-1@k (Ours)' method shows a plateau in accuracy between k=3 and k=5.

### Interpretation

The data suggests a trade-off between accuracy and computational cost. Increasing the value of 'k' improves accuracy but requires more time to compute the answer. The 'short-3@k (Ours)' method appears to be the most efficient in terms of achieving high accuracy with a reasonable time-to-answer. The 'short-1@k (Ours)' method is the fastest but sacrifices some accuracy. The 'majority@k' method is the least accurate.

The plot demonstrates the effectiveness of the "Ours" methods (short-1@k and short-3@k) in balancing accuracy and speed. The plateau observed in 'short-1@k (Ours)' between k=3 and k=5 might indicate diminishing returns for increasing 'k' beyond a certain point for that specific method. This information is valuable for selecting the appropriate method based on the specific requirements of the application, prioritizing either speed or accuracy. The choice of 'k' also depends on the application's constraints.

</details>

(d) R1-670B

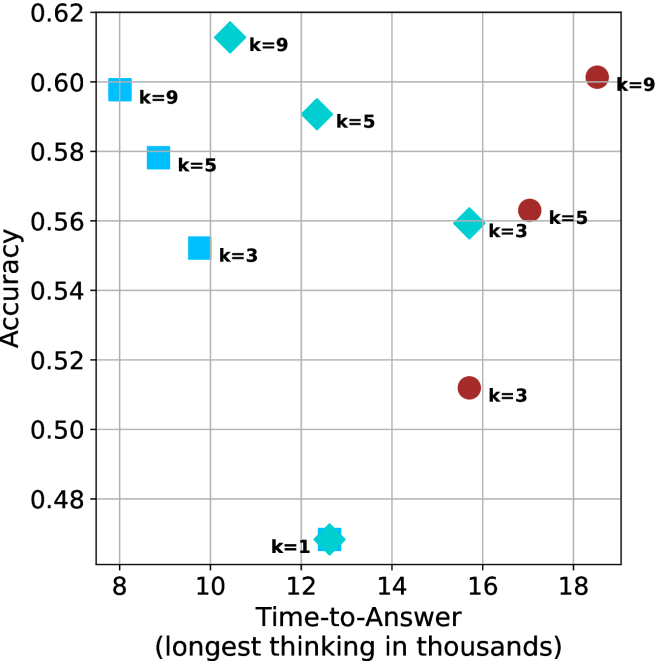

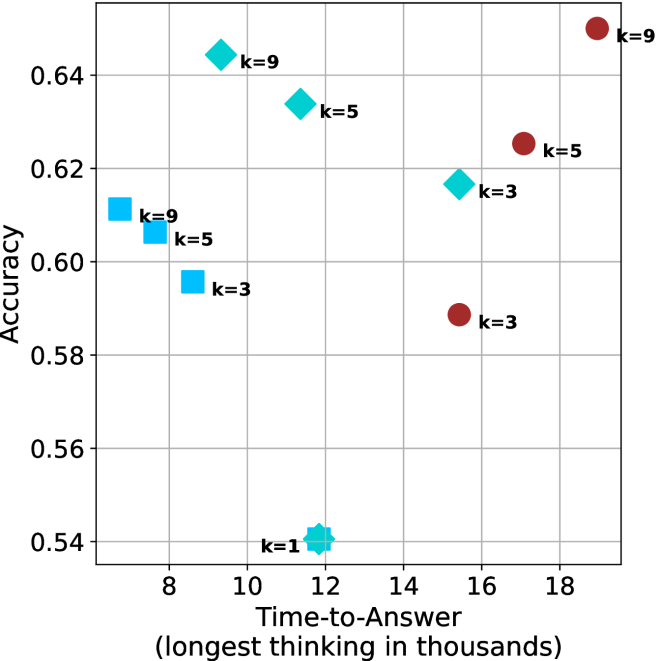

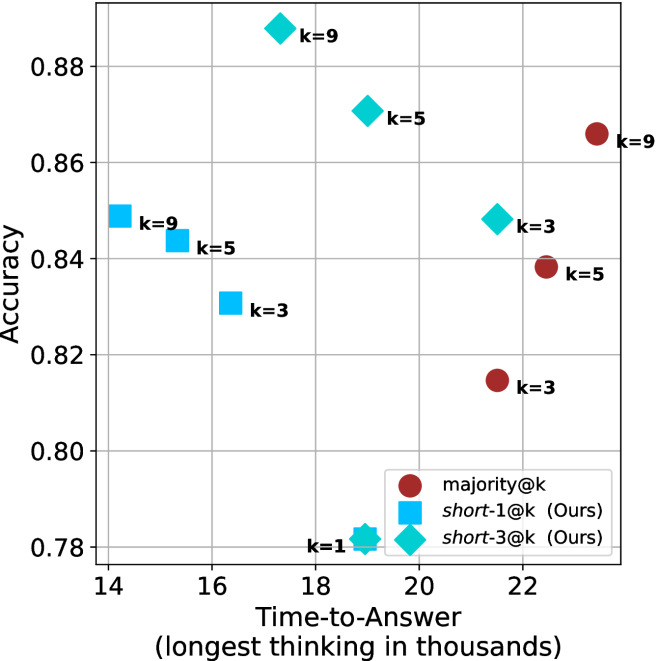

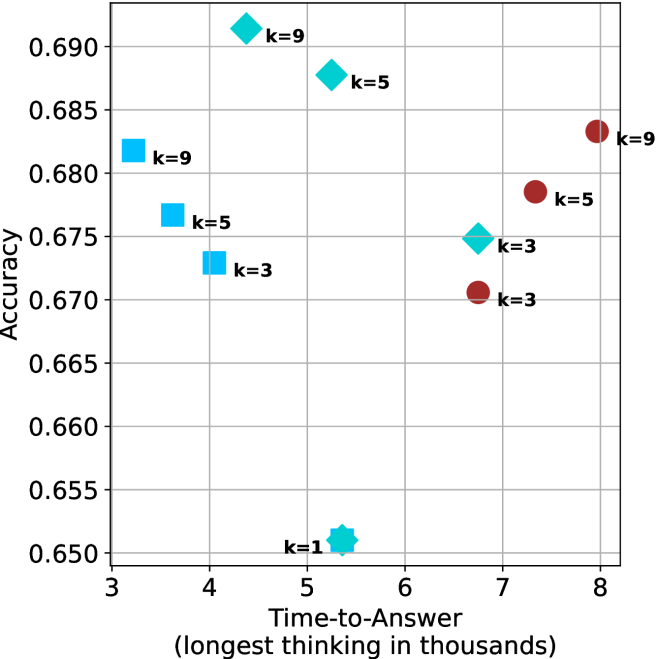

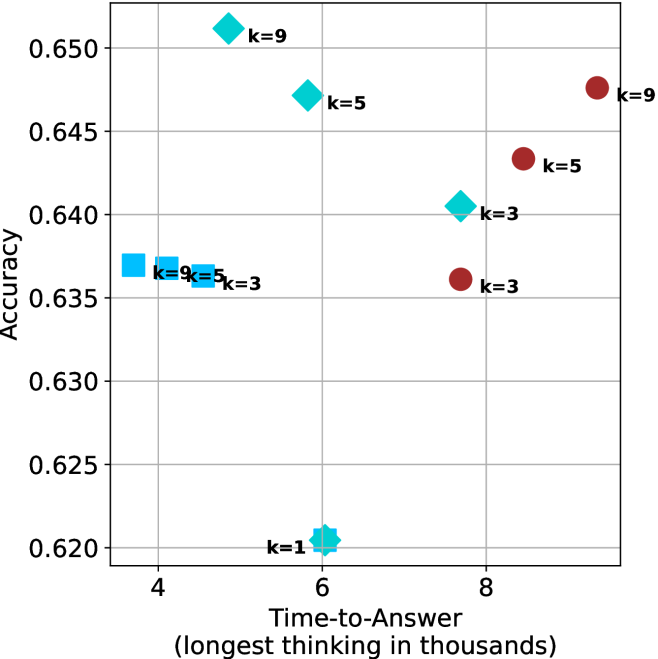

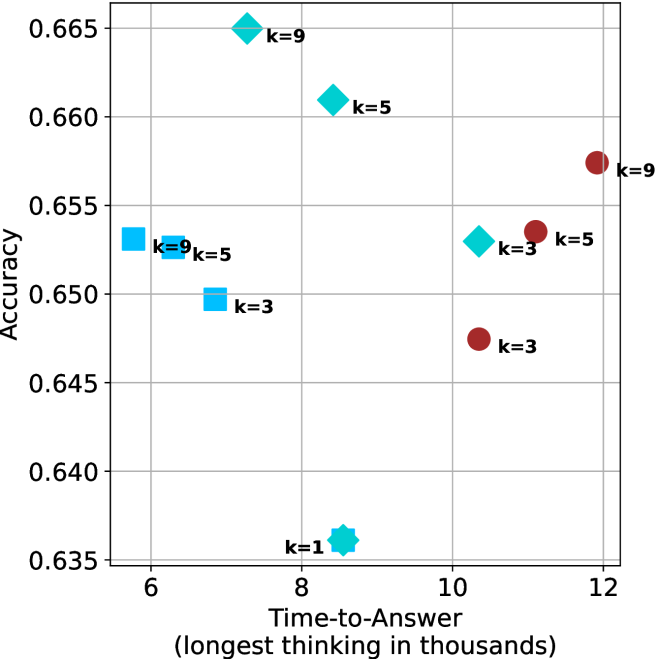

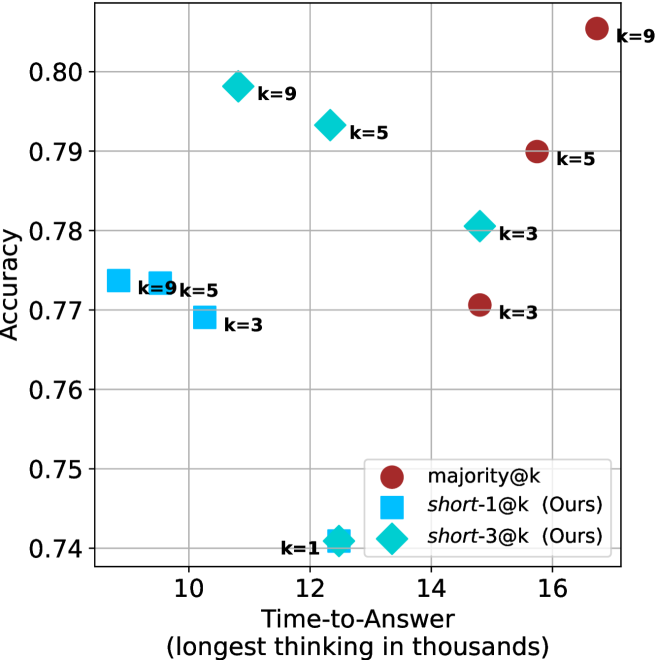

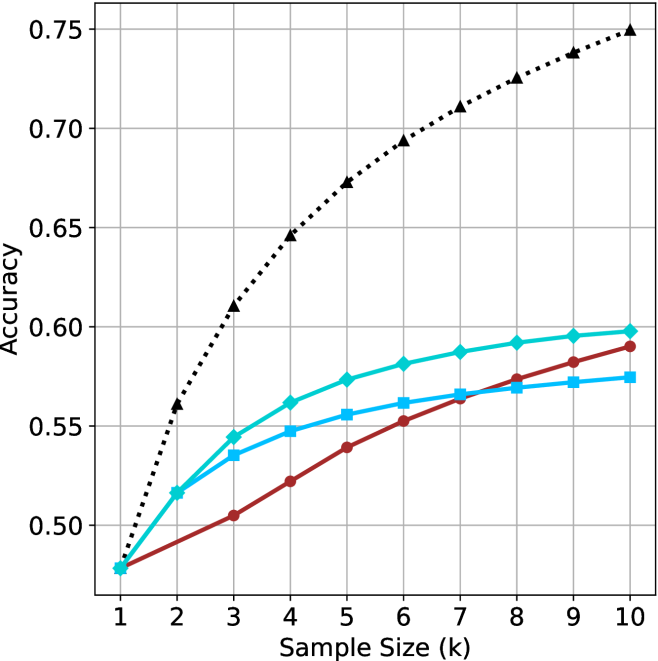

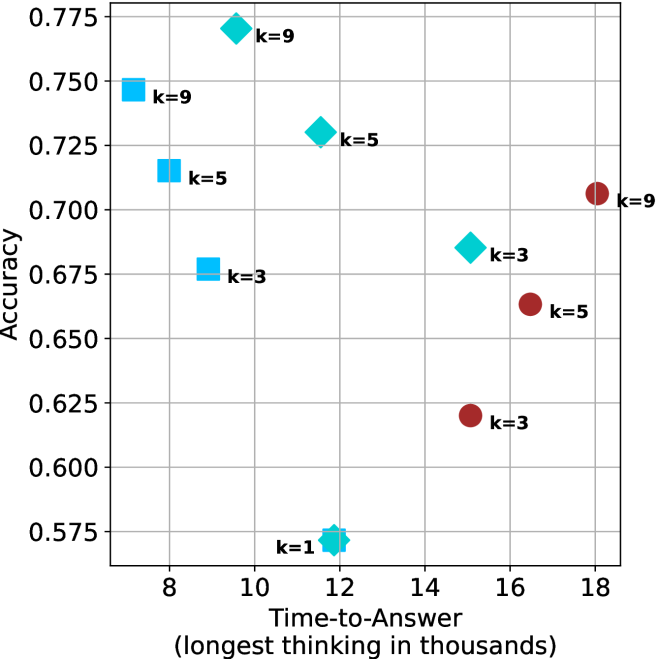

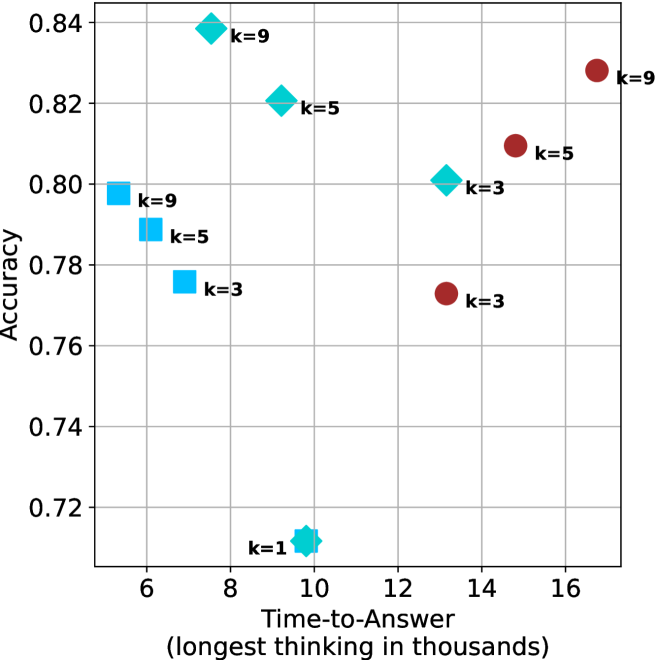

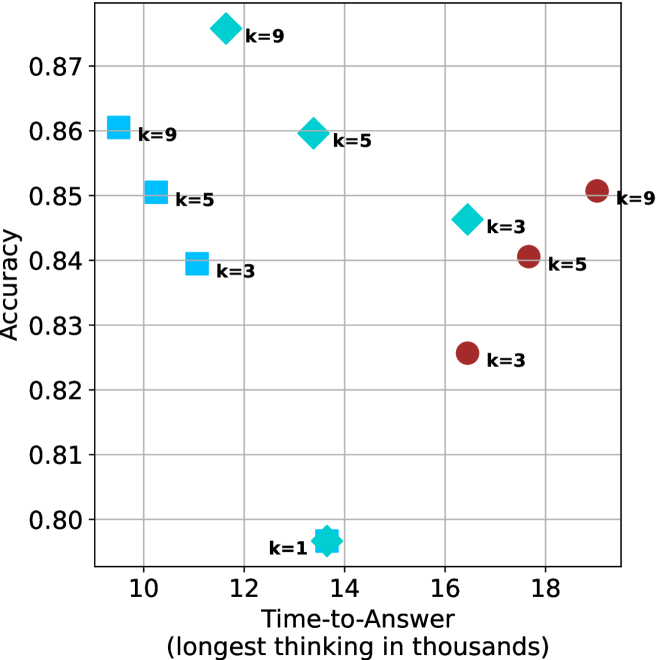

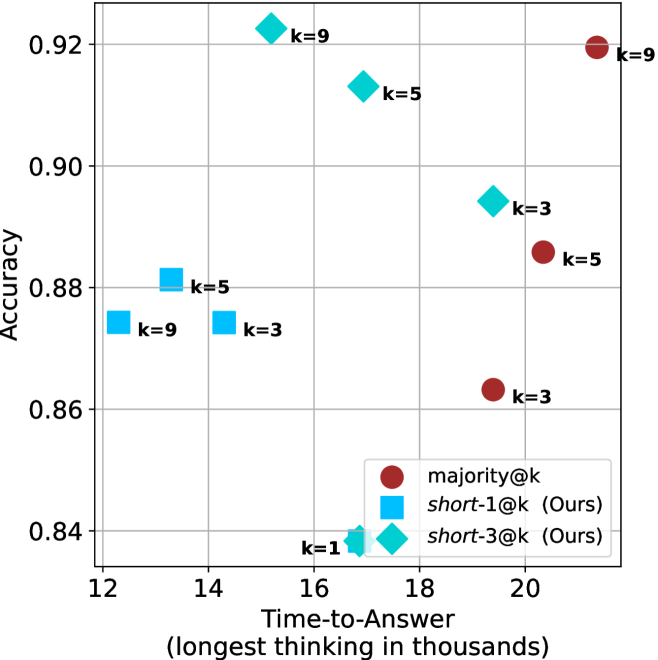

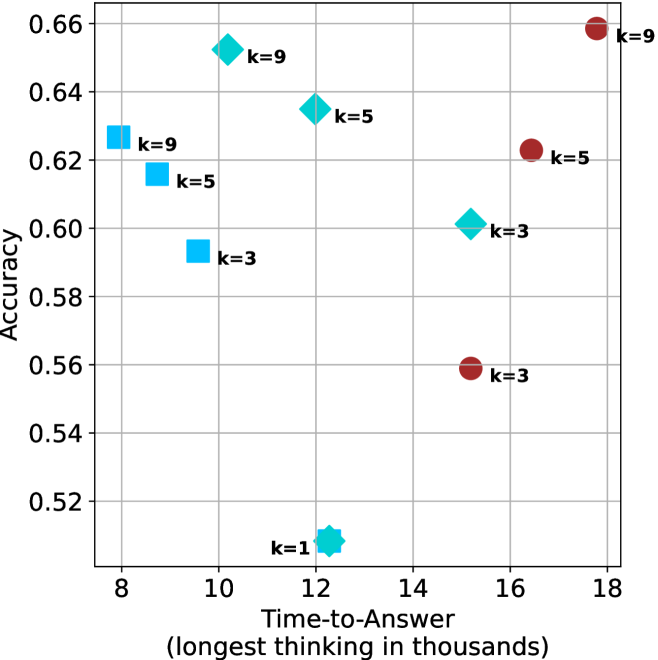

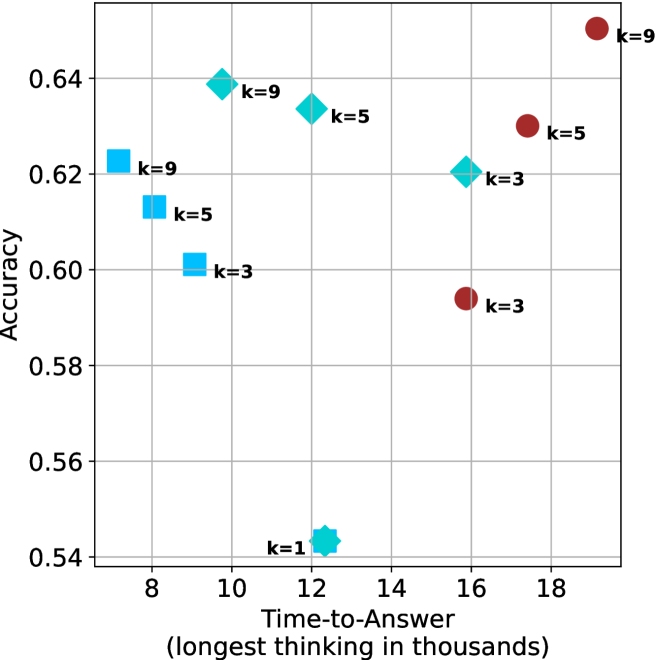

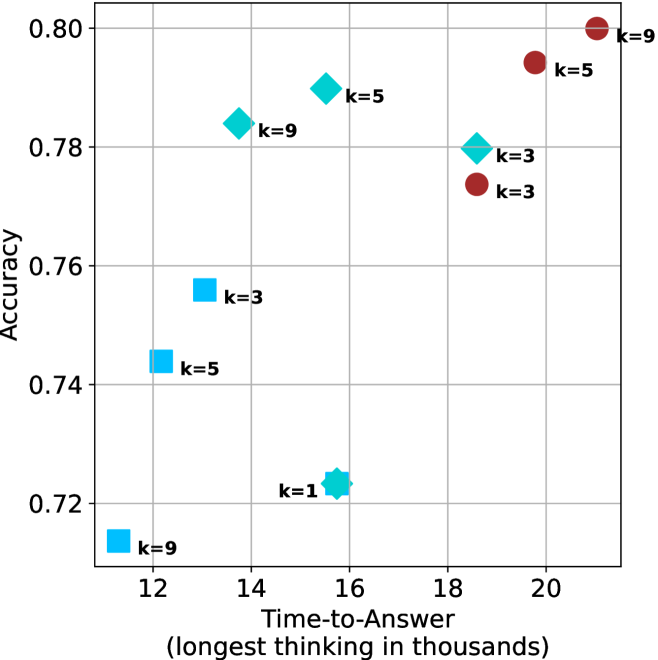

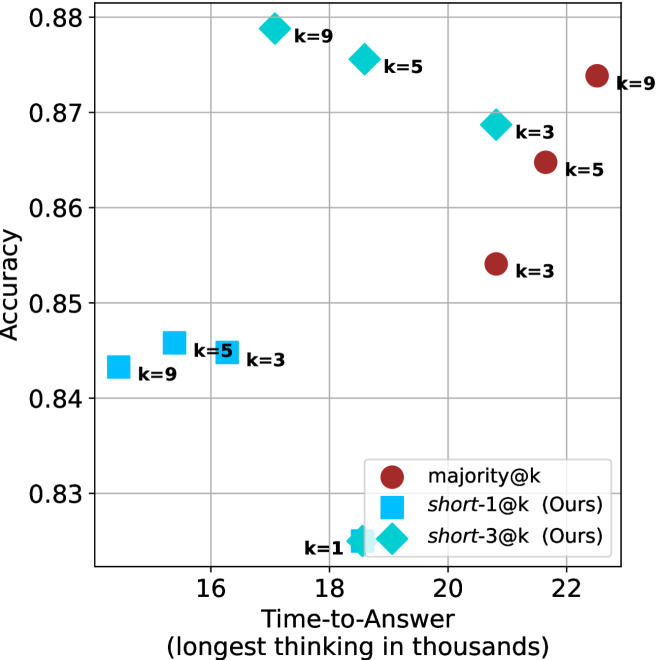

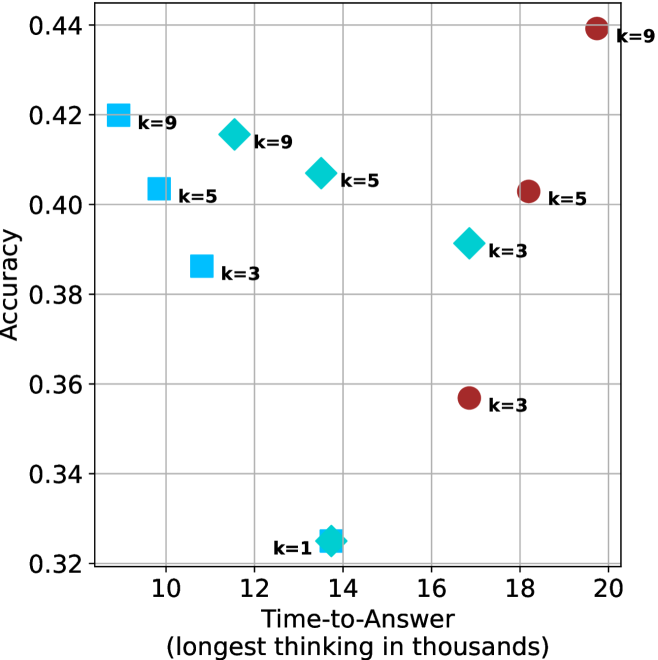

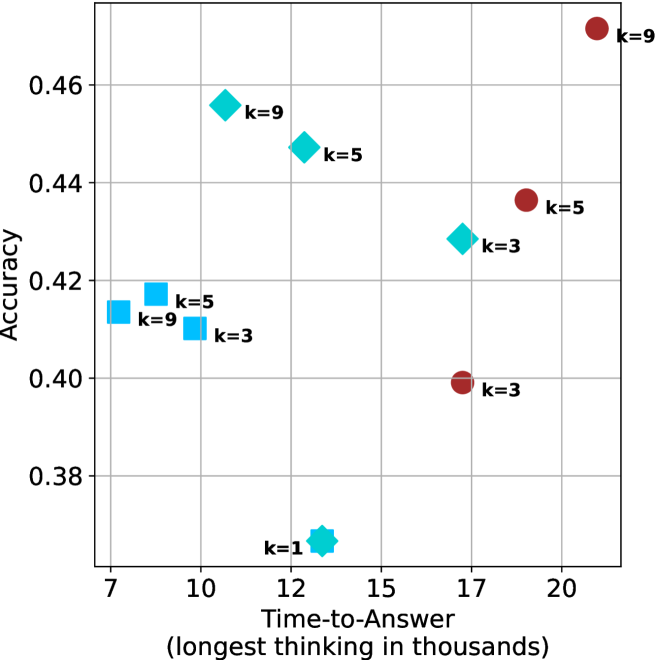

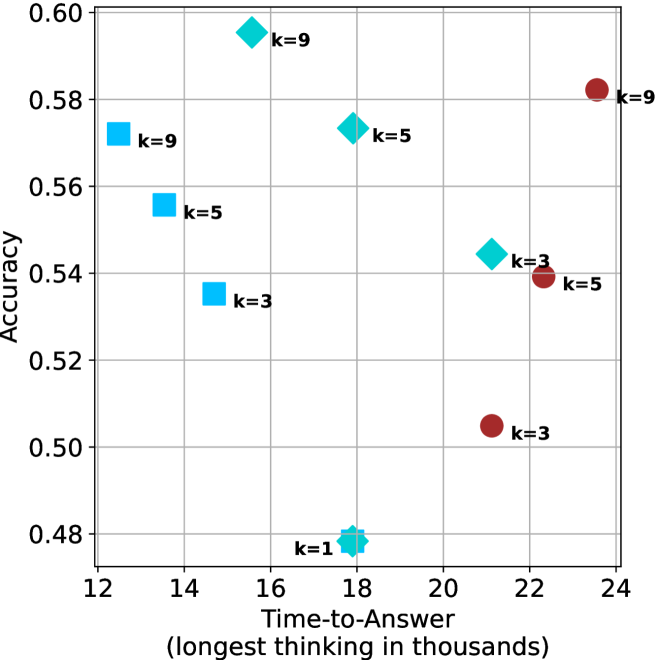

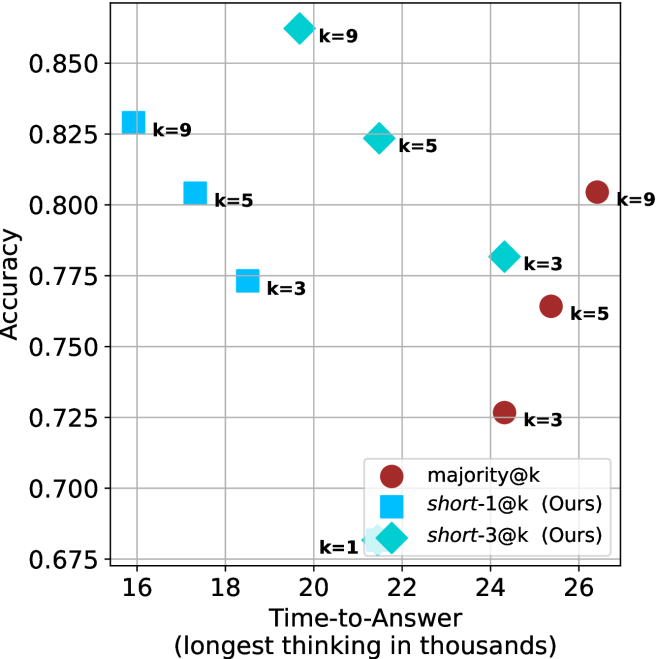

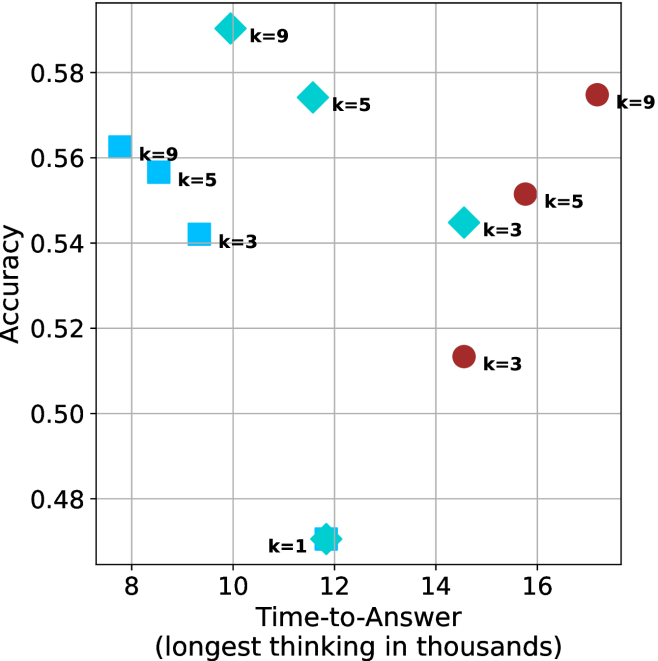

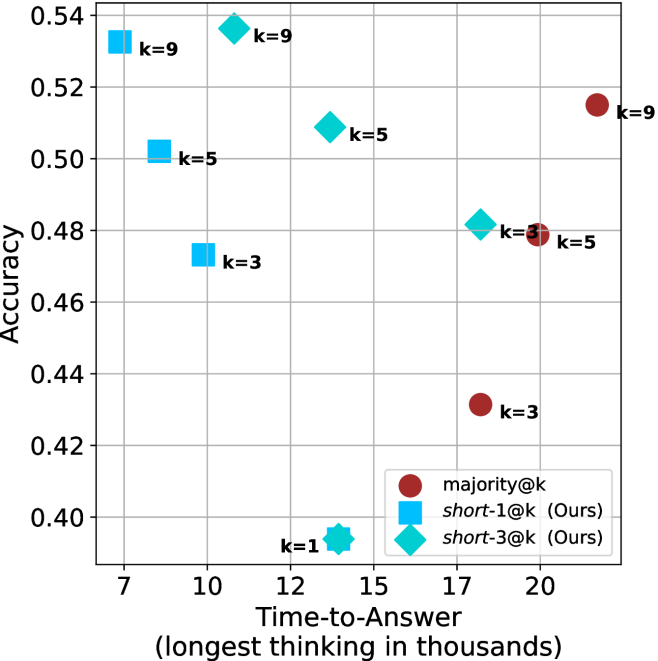

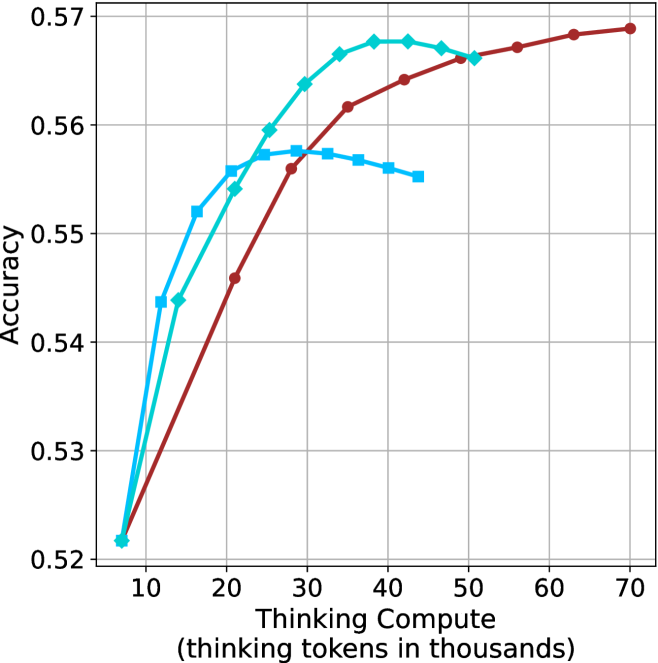

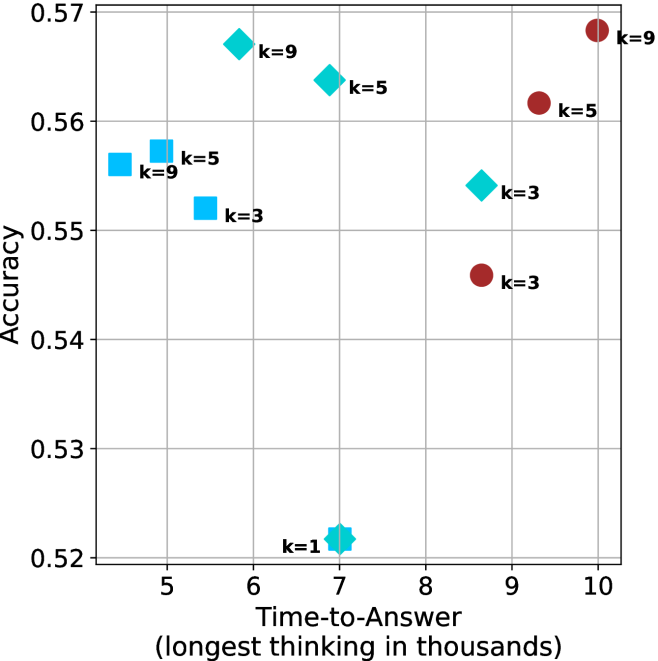

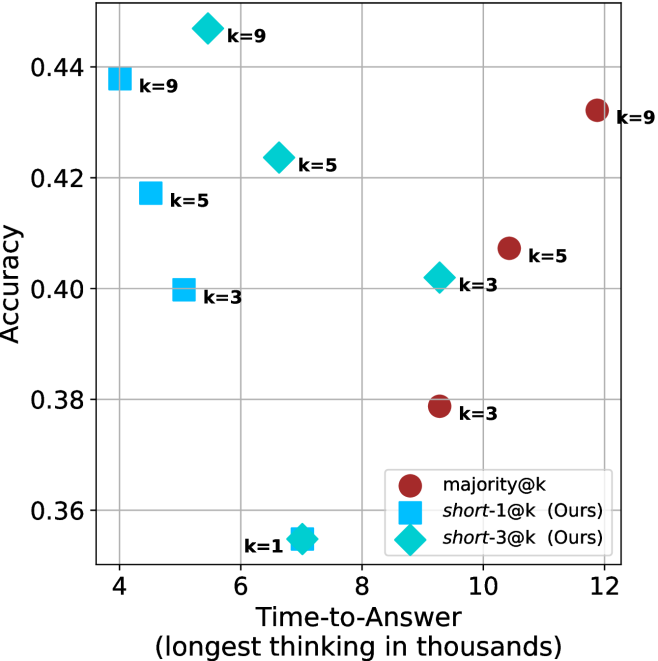

Figure 4: Comparing time-to-answer for different inference methods. Our methods substantially reduce time cost with no major loss in performance. Unlike majority $@k$, which becomes slower as $k$ grows, our methods run faster as $k$ grows, since the probability of finding a short chain increases with $k$.

Time-to-answer.

Finally, the aggregated time-to-answer results for the math benchmarks are shown in Figure 4, with GPQA-D results shown in Figure 8 and per-benchmark math results in Appendix B. For readability, Figure 4 omits the oracle, and methods are compared across a subset of sample sizes. As the sample size increases, majority $@k$ exhibits longer time-to-answer: a larger sample is more likely to contain a generation with an extended thinking chain, and all trajectories must complete before voting. Conversely, the short-1@k method shows reduced time-to-answer with larger sample sizes, as the probability of encountering a short chain increases. The same trend holds for the short-3@k method, which stops once three reasoning processes complete.
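The opposite trends described above can be illustrated with a small simulation. This is a minimal sketch, not the paper's evaluation code: it assumes that time-to-answer equals the thinking length of the last chain each method waits for, and it draws chain lengths from a log-normal distribution chosen purely for illustration. The names `time_to_answer` and `mean_time` are hypothetical.

```python
import random


def time_to_answer(lengths, method, m=1):
    """Thinking length of the last chain the method waits for.

    Assumption: all k chains run in parallel, so the wall time is set by
    the longest chain that must finish before an answer is produced.
    """
    ordered = sorted(lengths)
    if method == "majority":
        # majority@k needs every trajectory to complete.
        return ordered[-1]
    if method == "short":
        # short-m@k halts once the m shortest chains are done.
        return ordered[min(m, len(ordered)) - 1]
    raise ValueError(f"unknown method: {method}")


def mean_time(k, method, m=1, trials=5000, seed=0):
    """Average time-to-answer over simulated questions.

    The log-normal parameters below are illustrative assumptions,
    not values fitted to any model or benchmark.
    """
    rng = random.Random(seed)
    total = 0.0
    for _ in range(trials):
        lengths = [rng.lognormvariate(9.0, 0.5) for _ in range(k)]
        total += time_to_answer(lengths, method, m)
    return total / trials


if __name__ == "__main__":
    for k in (3, 5, 9):
        print(
            k,
            round(mean_time(k, "majority")),
            round(mean_time(k, "short", m=1)),
            round(mean_time(k, "short", m=3)),
        )
```

Under these assumptions, majority@k tracks the maximum of the $k$ sampled lengths and therefore grows with $k$, while short-1@k and short-3@k track the first and third smallest lengths and therefore shrink as $k$ grows.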