# Don’t Overthink it. Preferring Shorter Thinking Chains for Improved LLM Reasoning

## Abstract

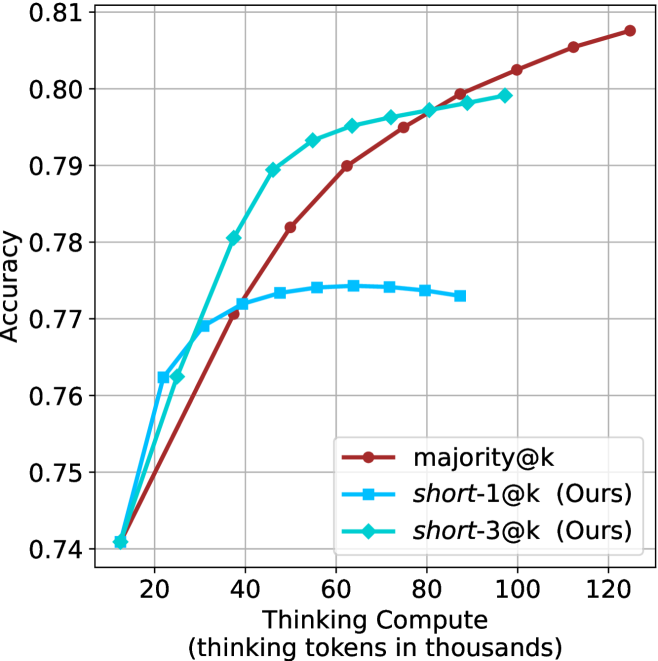

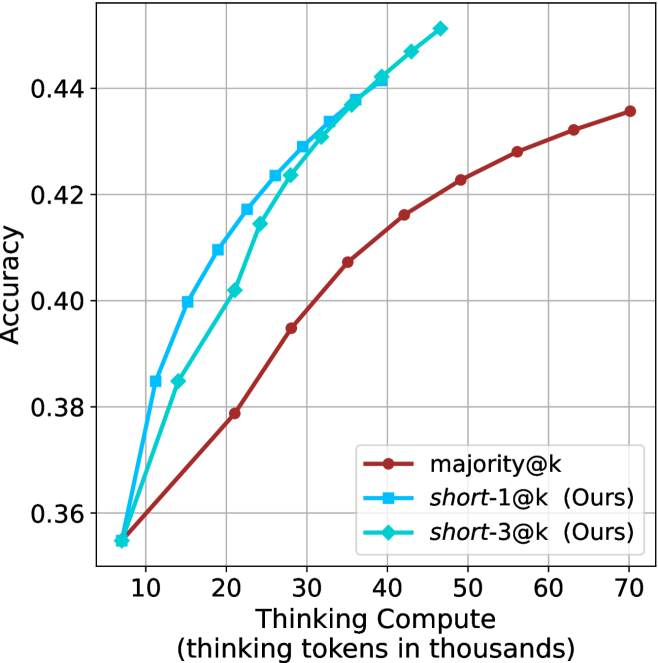

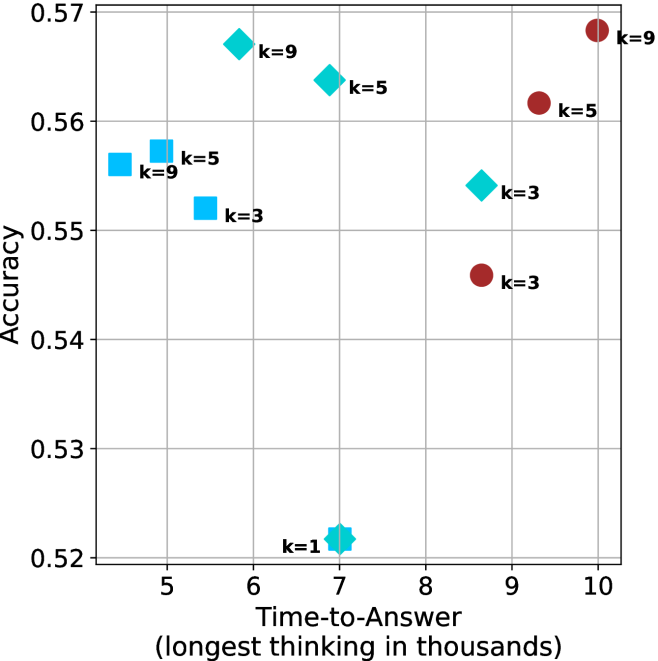

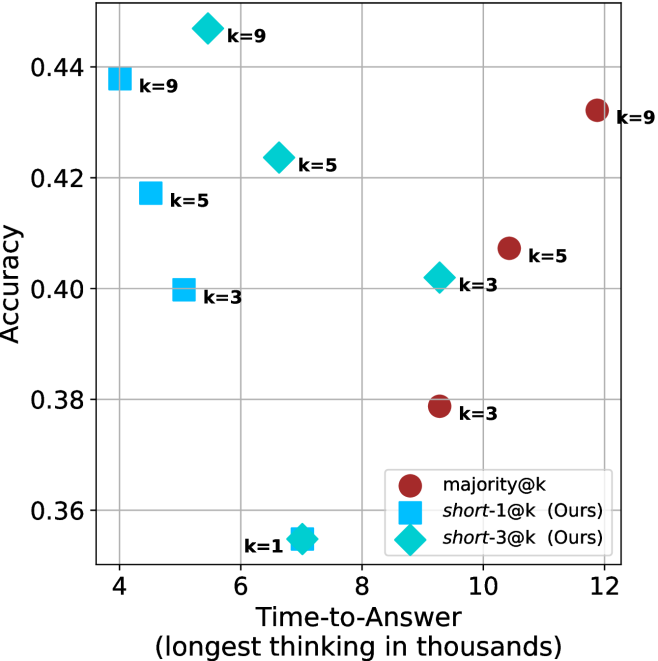

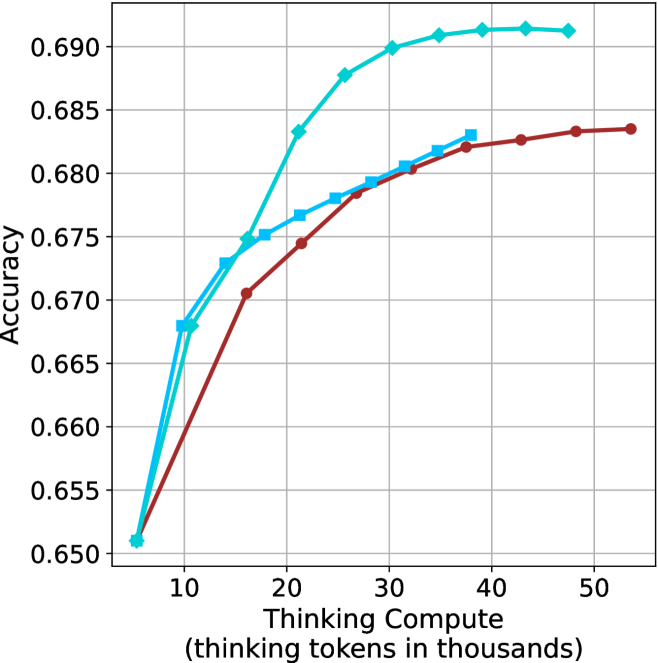

Reasoning large language models (LLMs) heavily rely on scaling test-time compute to perform complex reasoning tasks by generating extensive “thinking” chains. While demonstrating impressive results, this approach incurs significant computational costs and inference time. In this work, we challenge the assumption that long thinking chains results in better reasoning capabilities. We first demonstrate that shorter reasoning chains within individual questions are significantly more likely to yield correct answers—up to $34.5\$ more accurate than the longest chain sampled for the same question. Based on these results, we suggest short-m@k, a novel reasoning LLM inference method. Our method executes $k$ independent generations in parallel and halts computation once the first $m$ thinking processes are done. The final answer is chosen using majority voting among these $m$ chains. Basic short-1@k demonstrates similar or even superior performance over standard majority voting in low-compute settings—using up to $40\$ fewer thinking tokens. short-3@k, while slightly less efficient than short-1@k, consistently surpasses majority voting across all compute budgets, while still being substantially faster (up to $33\$ wall time reduction). To further validate our findings, we finetune LLMs using short, long, and randomly selected reasoning chains. We then observe that training on the shorter ones leads to better performance. Our findings suggest rethinking current methods of test-time compute in reasoning LLMs, emphasizing that longer “thinking” does not necessarily translate to improved performance and can, counter-intuitively, lead to degraded results.

## 1 Introduction

Scaling test-time compute has been shown to be an effective strategy for improving the performance of reasoning LLMs on complex reasoning tasks (OpenAI, 2024; 2025; Team, 2025b). This method involves generating extensive thinking —very long sequences of tokens that contain enhanced reasoning trajectories, ultimately yielding more accurate solutions. Prior work has argued that longer model responses result in enhanced reasoning capabilities (Guo et al., 2025; Muennighoff et al., 2025; Anthropic, 2025). However, generating such long-sequences also leads to high computational cost and slow decoding time due to the autoregressive nature of LLMs.

In this work, we demonstrate that scaling test-time compute does not necessarily improve model performance as previously thought. We start with a somewhat surprising observation. We take four leading reasoning LLMs, and for each generate multiple answers to each question in four complex reasoning benchmarks. We then observe that taking the shortest answer for each question strongly and consistently outperforms both a strategy that selects a random answer (up to $18.8\$ gap) and one that selects the longest answer (up to $34.5\$ gap). These performance gaps are on top of the natural reduction in sequence length—the shortest chains are $50\$ and $67\$ shorter than the random and longest chains, respectively.

<details>

<summary>x1.png Details</summary>

### Visual Description

\n

## Diagram: Comparison of Two Problem-Solving Methods

### Overview

The image is a diagram comparing the outputs of two different methods, labeled "majority@k" and "short-1@k (ours)", when applied to the same mathematical problem. The diagram visually demonstrates that the "short-1@k" method yields the correct answer, while the "majority@k" method yields an incorrect one.

### Components/Axes

The diagram is structured into three main horizontal sections:

1. **Header/Problem Statement:** A single line of text at the top presenting the mathematical question.

2. **Method 1 (majority@k):** The upper section, featuring a cartoon character (a yellow, horned creature with glasses) on the left. To its right are four lines representing internal "thinking" processes, which converge via arrows to a final answer on the far right.

3. **Method 2 (short-1@k (ours)):** The lower section, separated by a dashed line. It features an identical cartoon character on the left. To its right are four lines representing its "thinking" processes, which also converge to a final answer on the far right.

**Labels and Text Elements:**

* **Problem Statement:** "Q: Find the sum of all positive integers n such that n+2 divides the product 3(n+3)(n²+9)"

* **Method 1 Label:** "majority@k" (in red text)

* **Method 2 Label:** "short-1@k (ours)" (in blue text)

* **Thinking Process Tags:** Lines begin with `<think>` or `// Terminated thinking`.

* **Answers within Thinking:** Phrases like "So the answer is 52", "So the answer is 49", "So the answer is 33".

* **Final Answer Blocks:** "Final answer:" followed by a number (52 or 49).

* **Outcome Indicators:** A red "X" (✗) next to the final answer for "majority@k", and a green checkmark (✓) next to the final answer for "short-1@k".

### Detailed Analysis

**Method 1: majority@k**

* **Process:** Four parallel thinking streams are shown.

1. `<think> So the answer is 52`

2. `<think> So the answer is 49`

3. `<think> So the answer is 33`

4. `<think> So the answer is 52`

* **Convergence:** All four streams have arrows pointing to a single "Final answer: 52" block.

* **Outcome:** The final answer "52" is marked with a red "X", indicating it is incorrect.

**Method 2: short-1@k (ours)**

* **Process:** Four parallel thinking streams are shown.

1. `<think> So the answer is 49`

2. `<think> So the answer is 49`

3. `<think>.................... // Terminated thinking`

4. `<think>.................... // Terminated thinking`

* **Convergence:** Only the second stream (which concluded "49") has an arrow pointing to the "Final answer: 49" block. The other three streams are marked as terminated and do not contribute.

* **Outcome:** The final answer "49" is marked with a green checkmark, indicating it is correct.

### Key Observations

1. **Divergent Outputs:** The two methods, when processing the same problem, produce different final answers (52 vs. 49).

2. **Process Difference:** The "majority@k" method aggregates results from all its thinking streams (including conflicting ones like 49 and 33) to arrive at a majority-based answer (52 appears twice). The "short-1@k" method appears to terminate most thinking streams early, allowing only one stream to complete and provide the final answer.

3. **Correctness:** The diagram explicitly labels the output of "majority@k" as wrong and the output of "short-1@k" as correct.

4. **Visual Metaphor:** The identical cartoon characters suggest the underlying "agent" or model is the same; the difference lies in the method or strategy ("@k") applied to its reasoning process.

### Interpretation

This diagram is a technical illustration likely from a research paper or report on AI reasoning or problem-solving strategies. It argues for the superiority of the "short-1@k" method over the "majority@k" method.

* **What it demonstrates:** It shows that a strategy of terminating most reasoning paths ("short-1") can prevent an AI system from being misled by incorrect intermediate conclusions and lead it to the correct answer. In contrast, a strategy that aggregates multiple reasoning paths ("majority") can be corrupted by incorrect intermediate outputs, leading to a wrong final answer.

* **Relationship between elements:** The problem statement is the constant input. The two methods are competing algorithms applied to that input. The "thinking" lines represent the internal reasoning traces of the AI. The final answers and their correctness markers are the evaluated outcomes. The arrows show the flow of information from reasoning to conclusion.

* **Underlying message:** The diagram suggests that for certain types of complex problems (like the given number theory problem), quality and correctness of reasoning are more important than quantity or consensus. A method that can identify and halt flawed reasoning paths ("short-1") is more reliable than one that simply tallies the results of multiple paths, some of which may be flawed ("majority"). The "(ours)" label indicates the authors are proposing the "short-1@k" method as their contribution.

</details>

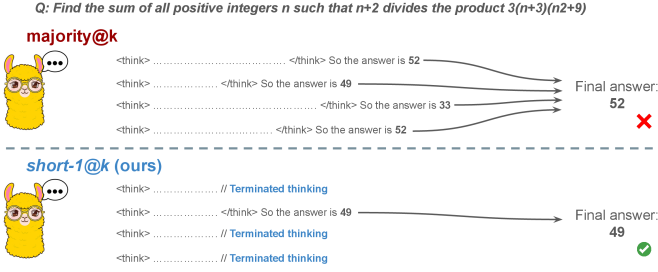

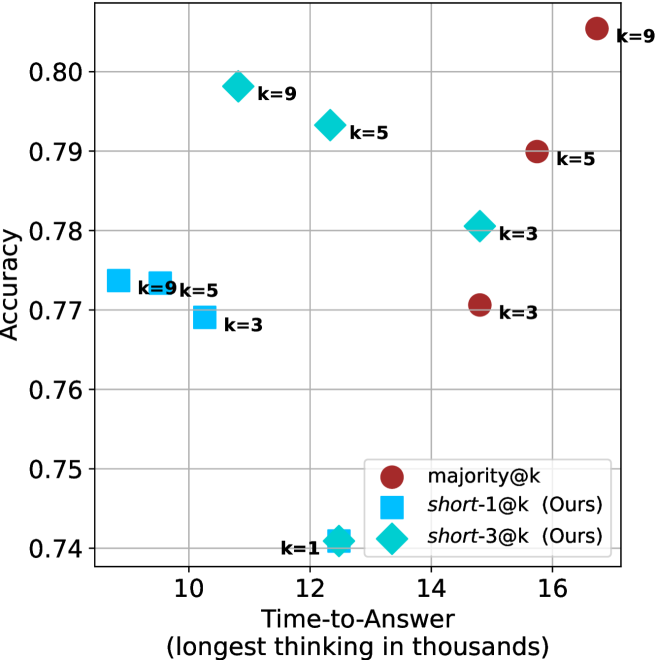

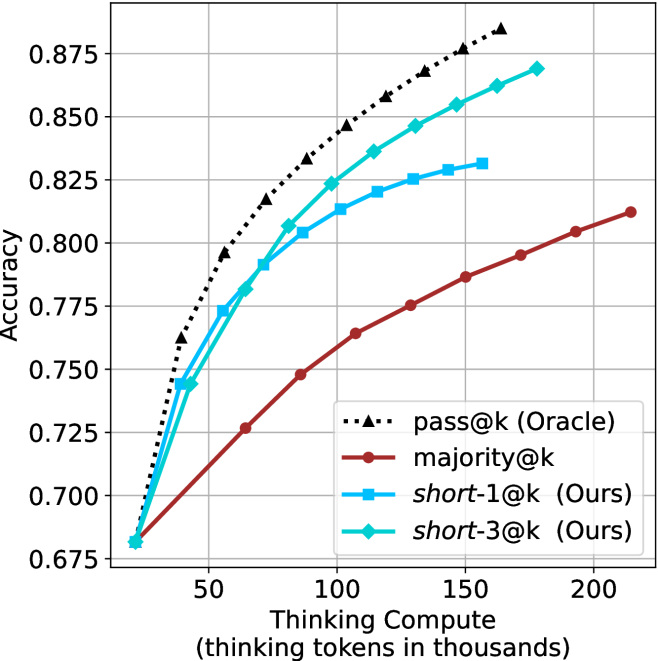

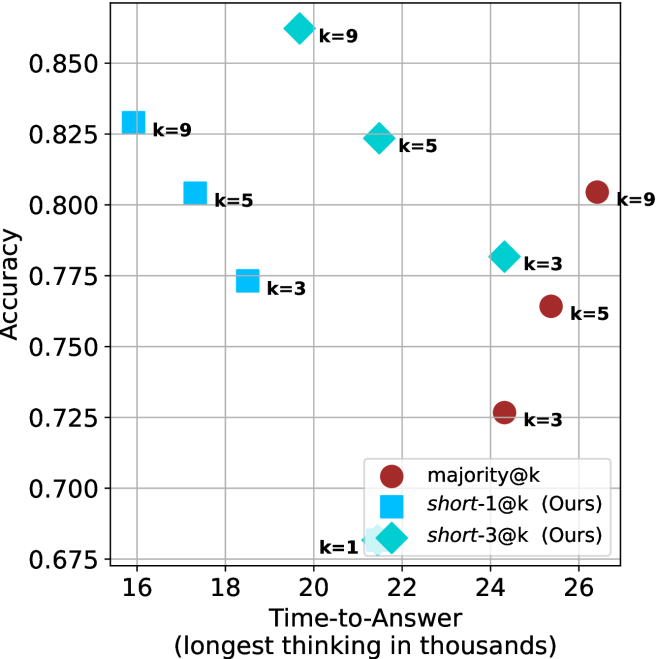

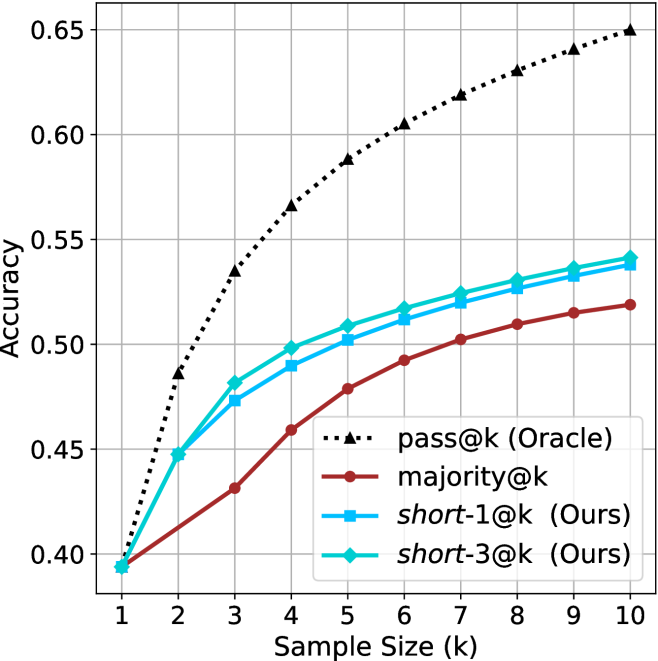

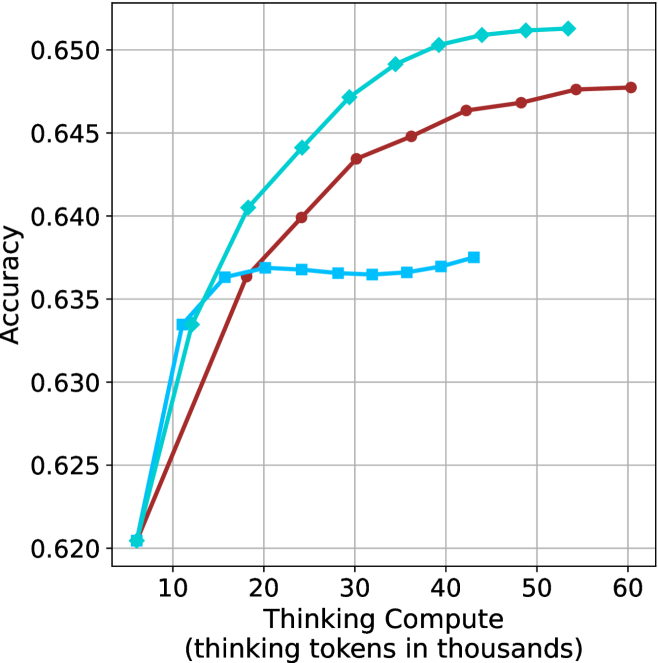

Figure 1: Visual comparison between majority voting and our proposed method short-m@k with $m=1$ (“…” represent thinking time). Given $k$ parallel attempts for the same question, majority@ $k$ waits until all attempts are done, and perform majority voting among them. On the other hand, our short-m@k method halts computation for all attempts as soon as the first $m$ attempts finish “thinking”, which saves compute and time, and surprisingly also boost performance in most cases.

Building on these findings, we propose short-m@k —a novel inference method for reasoning LLMs. short-m@k executes $k$ generations in parallel and terminates computation for all generations as soon as the first $m$ thinking processes are completed. The final answer is then selected via majority voting among those shortest chains, where ties are broken by taking the shortest answer among the tied candidates. See Figure ˜ 1 for visualization.

We evaluate short-m@k using six reasoning LLMs, and compare it to majority voting—the most common aggregation method for evaluating reasoning LLMs on complex benchmarks (Wang et al., 2022; Abdin et al., 2025). We show that in low-compute regimes, short-1@k, i.e., taking the single shortest chain, outperforms majority voting, while significantly reducing the time and compute needed to generate the final answer. For example, using LN-Super- $49$ B (Bercovich and others, 2025), short-1@k can reduce up to $40\$ of the compute while giving the same performance as majority voting. Moreover, for high-compute regimes, short-3@k, which halts generation after three thinking chains are completed, consistently outperforms majority voting across all compute budgets, while running up to $33\$ faster.

To gain further insights into the underlining mechanism of why shorter thinking is preferable, we analyze the generated reasoning chains. We first show that while taking the shorter reasoning is beneficial per individual question, longer reasoning is still needed to solve harder questions, as claimed in recent studies (Anthropic, 2025; OpenAI, 2024; Muennighoff et al., 2025). Next, we analyze the backtracking and re-thinking behaviors of reasoning chains. We find that shorter reasoning paths are more effective, as they involve fewer backtracks, with a longer average backtrack length. This finding holds both generally and when controlling for overall trajectory length.

To further strengthening our findings, we study whether training on short reasoning chains can lead to more accurate models. To do so, we finetune two Qwen- $2.5$ (Yang and others, 2024) models ( $7$ B and $32$ B) on three variants of the S $1$ dataset (Muennighoff et al., 2025): S $1$ -short, S $1$ -long, and S $1$ -random, consisting of examples with the shortest, longest, and randomly sampled reasoning trajectories among several generations, respectively. Our experiments demonstrate that finetuning using S $1$ -short not only yields shorter thinking lengths, but also improves model performance. Conversely, finetuning on S $1$ -long increases reasoning time with no significant performance gains.

This work rethinks the test-time compute paradigm for reasoning LLMs, showing that longer thinking not only does not ensure better reasoning, but also leads to worse reasoning in most cases. Our short-m@k methods prioritize shorter reasoning, yielding improved performance and reduced computational costs for current reasoning LLMs. We also show that training reasoning LLMs with shorter reasoning trajectories can enhance performance and reduce costs. Our results pave the way towards a new era of efficient and high-performing reasoning LLMs.

## 2 Related work

Reasoning LLMs and test-time scaling.

Reasoning LLMs tackle complex tasks by employing extensive reasoning processes, often involving detailed, step-by-step trajectories (OpenAI (2024); OpenAI (2025); Q. Team (2025b); M. Abdin, S. Agarwal, A. Awadallah, V. Balachandran, H. Behl, L. Chen, G. de Rosa, S. Gunasekar, M. Javaheripi, N. Joshi, et al. (2025); Anthropic (2025); A. Bercovich et al. (2025); D. Guo, D. Yang, H. Zhang, J. Song, R. Zhang, R. Xu, Q. Zhu, S. Ma, P. Wang, X. Bi, et al. (2025); 27; G. DeepMind (2025); Q. Team (2025a)). This capability is fundamentally based on techniques like chain-of-thought (CoT; Wei et al., 2022), which encourage models to generate intermediate reasoning steps before arriving at a final answer. Modern LLMs use a large number of tokens, often referred to as “thinking tokens”, to explore multiple problem-solving approaches, to employ self-reflection, and to perform verification. This thinking capability has allowed them to achieve superior performance on challenging tasks such as mathematical problem-solving and code generation (Ke et al., 2025).

The LLM thinking capability is typically achieved through post-training methods applied to a strong base model. The two primary approaches to instilling or improving this reasoning ability are using reinforcement learning (RL) (Guo et al., 2025; Team, 2025b) and supervised fine-tuning (Muennighoff et al., 2025; Ye et al., 2025). Guo et al. (2025) have demonstrated that as training progresses the model tends to generate longer thinking trajectories, which results in improved performance on complex tasks. Similarly, Anthropic (2025) and Muennighoff et al. (2025) have shown a correlation between increased average thinking length during inference and improved performance. We challenge this assumption, demonstrating that shorter sequences are more likely to yield an accurate answer.

Efficiency in reasoning LLMs.

While shortening the length of CoT is beneficial for non-reasoning models (Nayab et al., 2024; Kang et al., 2025), it is higly important for reasoning LLMs as they require a very large amount of tokens to perform the thinking process. As a result, recent studies tried to make the process more efficient, e.g., by using early exit techniques for reasoning trajectories (Pu et al., 2025; Yang et al., 2025), by suppressing backtracks (Wang et al., 2025a) or by training reasoning models which enable control over the thinking length (Yu et al., 2025).

Several recent works studied the relationship between reasoning trajectory length and correctness. Lu et al. (2025) proposed a method for reducing the length of thinking trajectories in reasoning training datasets. Their method employs a reasoning LLM several times over an existing trajectory in order to make it shorter. As this approach eventually trains a model over shorter trajectories it is similar to the method we employ in Section ˜ 6. However, our method is simpler as it does not require an LLM to explicitly shorten the sequence. Fatemi et al. (2025); Qi et al. (2025) and Arora and Zanette (2025) proposed RL methods to shorten reasoning in language models. Fatemi et al. (2025) also observed that correct answers typically require shorter thinking trajectories by averaging lengths across examples, suggesting that lengthy responses might inherently stem from RL-based optimization during training. In Section ˜ 5.1 we show that indeed correct answers usually use shorter thinking trajectories, but also highlight that averaging across all examples might hinder this effect as easier questions require sustainably lower amount of reasoning tokens compared to harder ones.

More relevant to our work, Wu et al. (2025) showed that there is an optimal thinking length range for correct answers according to the difficulty of the question, while Wang et al. (2025b) found that for a specific question, correct responses from reasoning models are usually shorter than incorrect ones. We provide further analysis supporting these observations in Sections ˜ 3 and 5. Finally, our proposed inference method short-m@k is designed to enhance the efficiency of reasoning LLMs by leveraging this property, which can be seen as a generalization of the FFS method (Agarwal et al., 2025), which selects the shortest answer among several candidates as in our short-1@k.

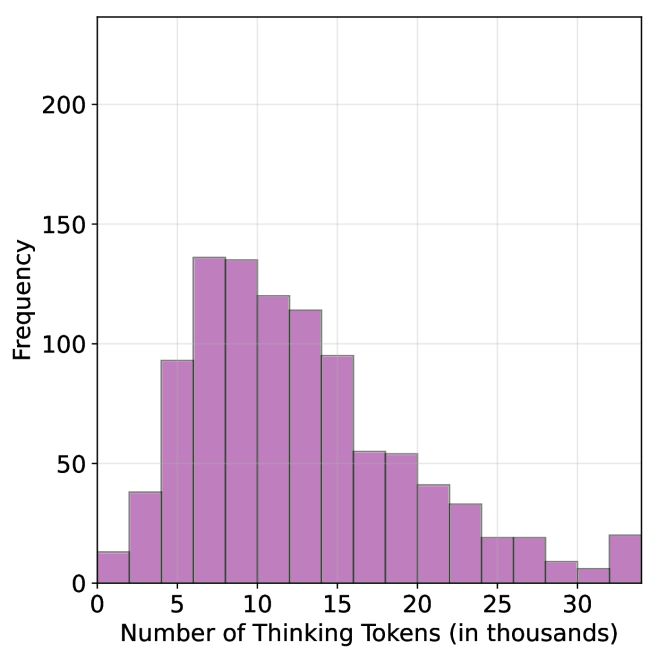

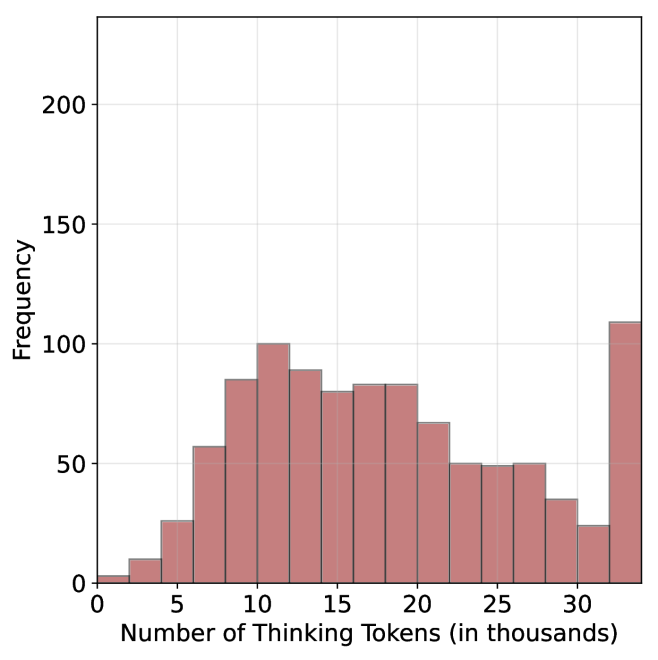

## 3 Shorter thinking is preferable

As mentioned above, the common wisdom in reasoning LLMs suggests that increased test-time computation enhances model performance. Specifically, it is widely assumed that longer reasoning process, which entails extensive reasoning thinking chains, correlates with improved task performance (OpenAI, 2024; Anthropic, 2025; Muennighoff et al., 2025). We challenge this assumption and ask whether generating more tokens per trajectory actually leads to better performance. To that end, we generate multiple answers per question and compare performance based solely on the shortest, longest and randomly sampled thinking chains among the generated samples.

### 3.1 Experimental details

We consider four leading, high-performing, open, reasoning LLMs. Llama- $3.3$ -Nemotron-Super- $49$ B-v $1$ [LN-Super- $49$ B; Bercovich and others, 2025]: a reasoning RL-enhanced version of Llama- $3.3$ - $70$ B (Grattafiori et al., 2024); R $1$ -Distill-Qwen- $32$ B [R $1$ - $32$ B; Guo et al., 2025]: an SFT finetuned version of Qwen- $2.5$ - $32$ B-Instruct (Yang and others, 2024) derived from R $1$ trajectories; QwQ- $32$ B a reasoning RL-enhanced version Qwen- $2.5$ - $32$ B-Instruct (Team, 2025b); and R1- $0528$ a $670$ B RL-trained flagship reasoning model (R $1$ - $670$ B; Guo et al., 2025). We also include results for smaller models in Appendix ˜ D.

We evaluate all models using four competitive reasoning benchmarks. We use AIME $2024$ (of America, 2024), AIME $2025$ (of America, 2025) and HMMT February $2025$ , from the Math Arena benchmark (Balunović et al., 2025). This three benchmarks are derived from math competitions, and involve solving problems that cover a broad range of mathematics topics. Each dataset consists of $30$ examples with varied difficulty. We also evaluate the models using the GPQA-diamond benchmark [GPQA-D; Rein et al., 2024], which consists of $198$ multiple-choice scientific questions, and is considered to be challenging for reasoning LLMs (DeepMind, 2025).

For each question, we generate $20$ responses per model, yielding a total of about $36$ k generations. For all models we use temperature of $0.7$ , top-p= $0.95$ and a maximum number of generated tokens of $32$ , $768$ . When measuring the thinking chain length, we measure the token count between the <think> and </think> tokens. We run inference for all models using paged attention via the vLLM framework (Kwon et al., 2023).

### 3.2 The shorter the better

Table 1: Shorter thinking performs better. Comparison between taking the shortest/longest/random generation per example.

| | GPQA-D | AIME 2024 | AIME 2025 | HMMT | Math Average | | | | | |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

| Thinking Tokens $\downarrow$ | Acc. $\uparrow$ | Thinking Tokens $\downarrow$ | Acc. $\uparrow$ | Thinking Tokens $\downarrow$ | Acc. $\uparrow$ | Thinking Tokens $\downarrow$ | Acc. $\uparrow$ | Thinking Tokens $\downarrow$ | Acc. $\uparrow$ | |

| LN-Super-49B | | | | | | | | | | |

| random | 5357 | 65.1 | 11258 | 58.8 | 12105 | 51.3 | 13445 | 33.0 | 12270 | 47.7 |

| longest | 8763 $(+64\$ | 57.6 | 18566 | 33.3 | 18937 | 30.0 | 19790 | 23.3 | 19098 $(+56\$ | 28.9 |

| shortest | 2790 $(-48\$ | 69.1 | 0 6276 | 76.7 | 0 7036 | 66.7 | 0 7938 | 46.7 | 7083 $(-42\$ | 63.4 |

| R1-32B | | | | | | | | | | |

| random | 5851 | 62.5 | 9614 | 71.8 | 11558 | 56.4 | 12482 | 38.3 | 11218 | 55.5 |

| longest | 9601 $(+64\$ | 57.1 | 17689 | 53.3 | 19883 | 36.7 | 20126 | 23.3 | 19233 $(+71\$ | 37.8 |

| shortest | 3245 $(-45\$ | 64.7 | 0 4562 | 80.0 | 0 6253 | 63.3 | 0 6557 | 36.7 | 5791 $(-48\$ | 60.0 |

| QwQ-32B | | | | | | | | | | |

| random | 8532 | 63.7 | 13093 | 82.0 | 14495 | 72.3 | 16466 | 52.5 | 14685 | 68.9 |

| longest | 12881 $(+51\$ | 54.5 | 20059 | 70.0 | 21278 | 63.3 | 24265 | 36.7 | 21867 $(+49\$ | 56.7 |

| shortest | 5173 $(-39\$ | 64.7 | 0 8655 | 86.7 | 10303 | 66.7 | 11370 | 60.0 | 10109 $(-31\$ | 71.1 |

| R1-670B | | | | | | | | | | |

| random | 11843 | 76.2 | 16862 | 83.8 | 18557 | 82.5 | 21444 | 68.2 | 18954 | 78.2 |

| longest | 17963 $(+52\$ | 63.1 | 22603 | 70.0 | 23570 | 66.7 | 27670 | 40.0 | 24615 $(+30\$ | 58.9 |

| shortest | 8116 $(-31\$ | 75.8 | 11229 | 83.3 | 13244 | 83.3 | 13777 | 83.3 | 12750 $(-33\$ | 83.3 |

For all generated answers, we compare short vs. long thinking chains for the same question, along with a random chain. Results are presented in Table ˜ 1. In this section we exclude generations where thinking is not completed within the maximum generation length, as these often result in an infinite thinking loop. First, as expected, the shortest answers are $25\$ – $50\$ shorter compared to randomly sampled responses. However, we also note that across almost all models and benchmarks, considering the answer with the shortest thinking chain actually boosts performance, yielding an average absolute improvement of $2.2\$ – $15.7\$ on the math benchmarks compared to randomly selected generations. When considering the longest thinking answers among the generations, we further observe an increase in thinking chain length, with up to $75\$ more tokens per chain. These extended reasoning trajectories substantially degrade performance, resulting in average absolute reductions ranging between $5.4\$ – $18.8\$ compared to random generations over all benchmarks. These trends are most noticeable when comparing the shortest generation with the longest ones, with an absolute performance gain of up to $34.5\$ in average accuracy and a substantial drop in the number of thinking tokens.

The above results suggest that long generations might come with a significant price-tag, not only in running time, but also in performance. That is, within an individual example, shorter thinking trajectories are much more likely to be correct. In Section ˜ 5.1 we examine how these results relate to the common assumption that longer trajectories leads to better LLM performance. Next, we propose strategies to leverage these findings to improve the efficiency and effectiveness of reasoning LLMs.

## 4 short-m@k : faster and better inference of reasoning LLMs

Based on the results presented in Section ˜ 3, we suggest a novel inference method for reasoning LLMs. Our method— short-m@k —leverages batch inference of LLMs per question, using multiple parallel decoding runs for the same query. We begin by introducing our method in Section ˜ 4.1. We then describe our evaluation methodology, which takes into account inference compute and running time (Section ˜ 4.2). Finally, we present our results (Section ˜ 4.3).

### 4.1 The short-m@k method

The short-m@k method, visualized in Figure ˜ 1, performs parallel decoding of $k$ generations for a given question, halting computation across all generations as soon as the $m\leq k$ shortest thinking trajectories are completed. It then conducts majority voting among those shortest answers, resolving ties by selecting the answer with the shortest thinking chain. Given that thinking trajectories can be computationally intensive, terminating all generations once the $m$ shortest trajectories are completed not only saves computational resources but also significantly reduces wall time due to the parallel decoding approach, as shown in Section ˜ 4.3.

Below we focus on short-1@k and short-3@k, with short-1@k being the most efficient variant of short-m@k and short-3@k providing the best balance of performance and efficiency (see Section ˜ 4.3). Ablation studies on $m$ and other design choices are presented in Appendix ˜ C, while results for smaller models are presented in Appendix ˜ D.

### 4.2 Evaluation setup

We evaluate all methods under the same setup as described in Section ˜ 3.1. We report the averaged results across the math benchmarks, while the results for GPQA-D presented in Appendix ˜ A. The per benchmark resutls for the math benchmarks are in Appendix ˜ B. We report results using our method (short-m@k) with $m\in\{1,3\}$ . We compare the proposed method to the standard majority voting (majority $@k$ ), arguably the most common method for aggregating multiple outputs (Wang et al., 2022), which was recently adapted for reasoning LLMs (Guo et al., 2025; Abdin et al., 2025; Wang et al., 2025b). As an oracle, we consider pass $@k$ (Kulal et al., 2019; Chen and others, 2021), which measures the probability of including the correct solution within $k$ generated responses.

We benchmark the different methods with sample sizes of $k\in\{1,2,...,10\}$ , assuming standard parallel decoding setup, i.e., all samples are generated in parallel. Section 5.3 presents sequential analysis where parallel decoding is not assumed. For the oracle (pass@ $k$ ) approach, we use the unbiased estimator presented in Chen and others (2021), with our $20$ generations per question ( $n$ $=$ $20$ ). For the short-1@k method, we use the rank-score@ $k$ metric (Hassid et al., 2024), where we sort the different generations according to thinking length. For majority $@k$ and short-m@k where $m>1$ , we run over all $k$ -sized subsets out of the $20$ generations per example.

We evaluate the different methods considering three main criteria: (a) Sample-size (i.e., $k$ ), where we compare methods while controlling for the number of generated samples; (b) Thinking-compute, where we measure the total number of thinking tokens used across all generations in the batch; and (c) Time-to-answer, which measures the wall time of running inference using each method. In this parallel framework, our method (short-m@k) terminates all other generations after the first $m$ decoding thinking processes terminate. Thus, the overall thinking compute is the total number of thinking tokens for each of the $k$ generations at that point. Similarly, the overall time is that of the $m$ ’th shortest generation process. Conversely, for majority $@k$ , the method’s design necessitates waiting for all generations to complete before proceeding. Hence, we consider the compute as the total amount of thinking tokens in all generations and run time according to the longest thinking chain. As for the oracle approach, we terminate all thinking trajectories once the shortest correct one is finished, and consider the compute and time accordingly.

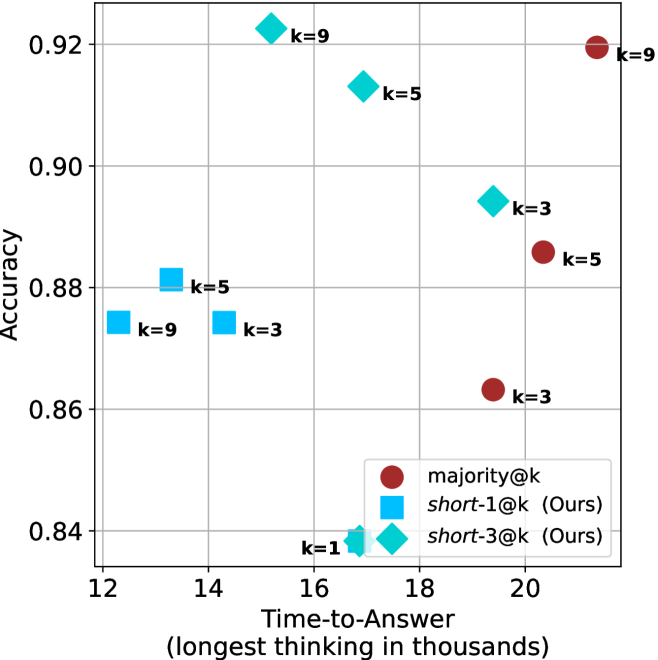

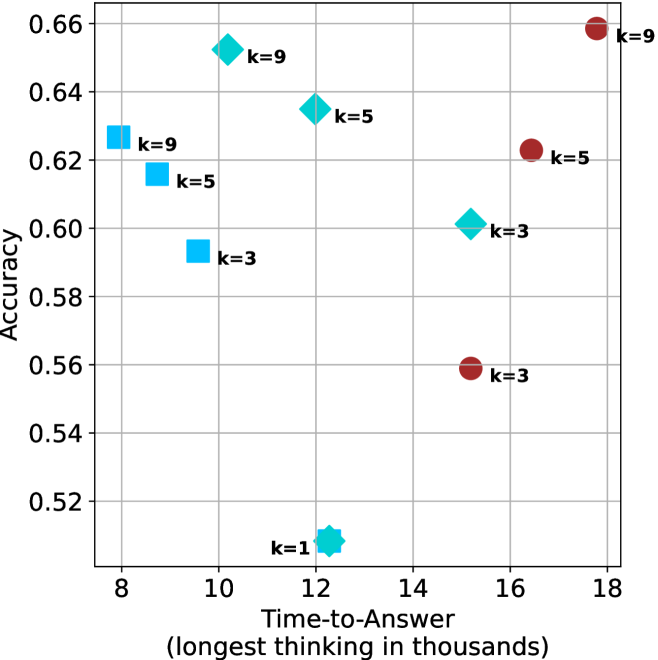

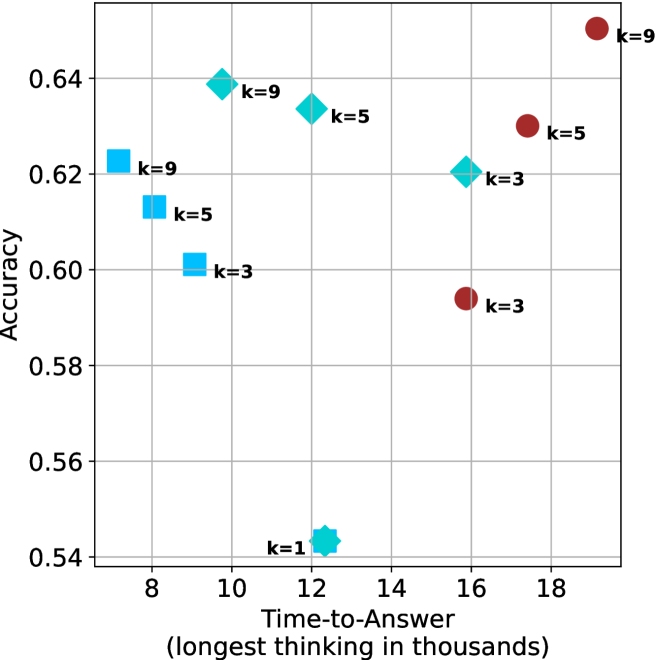

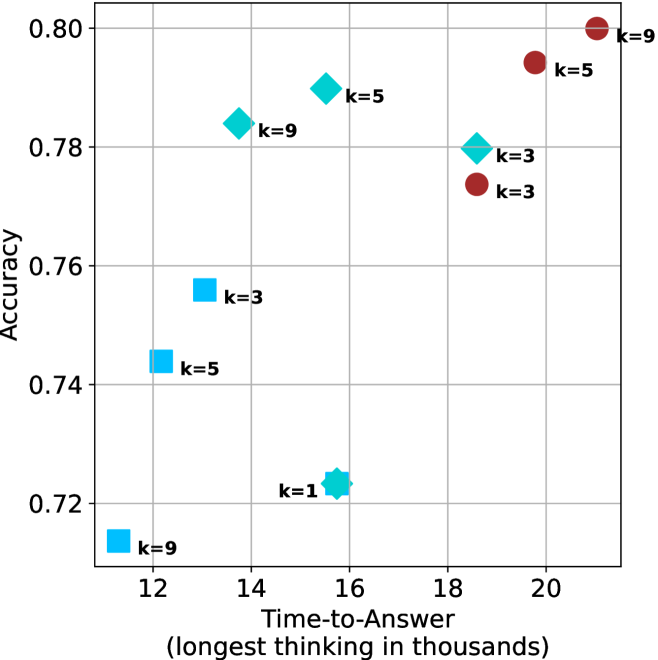

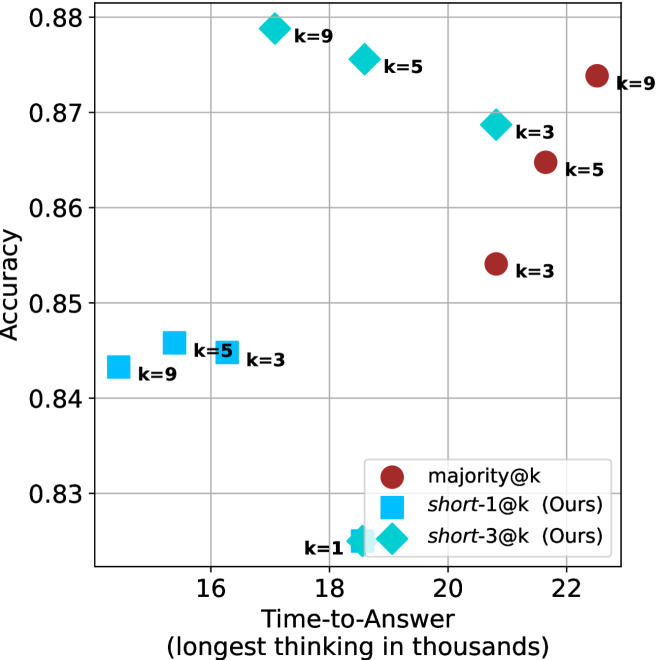

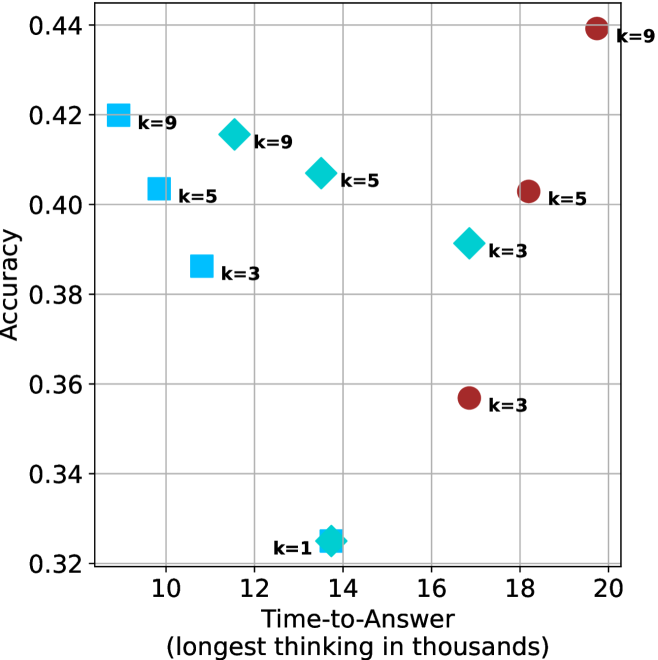

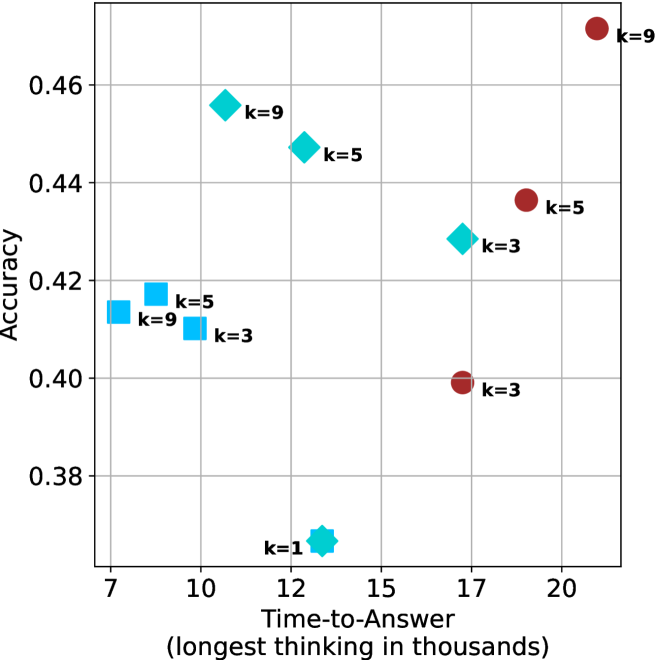

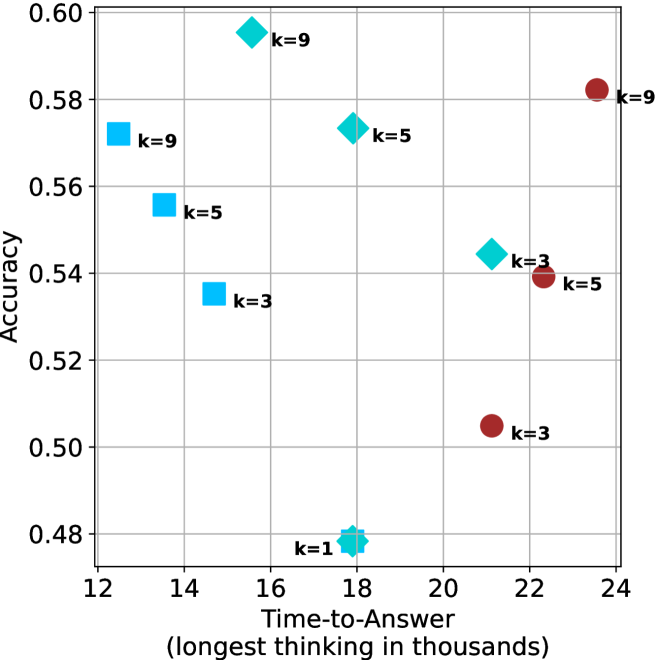

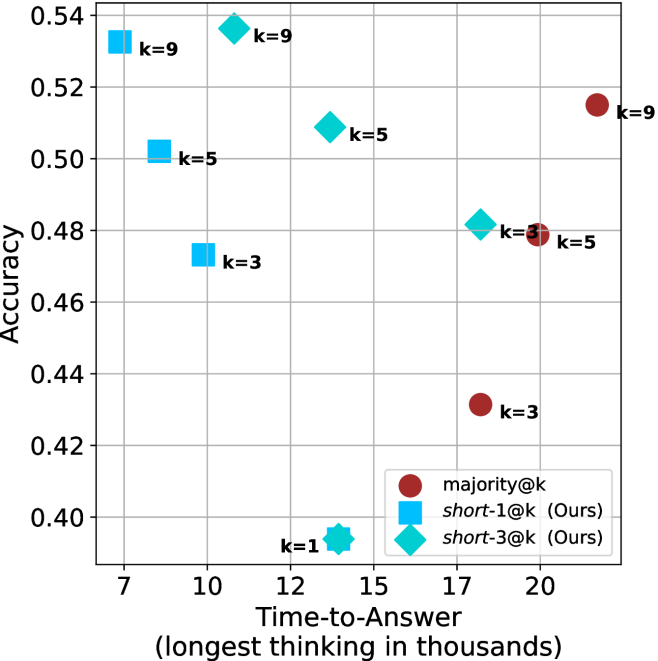

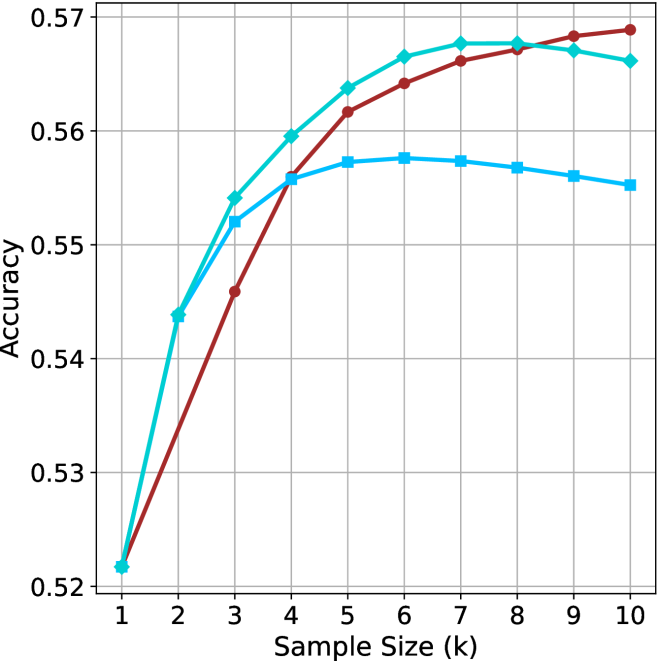

### 4.3 Results

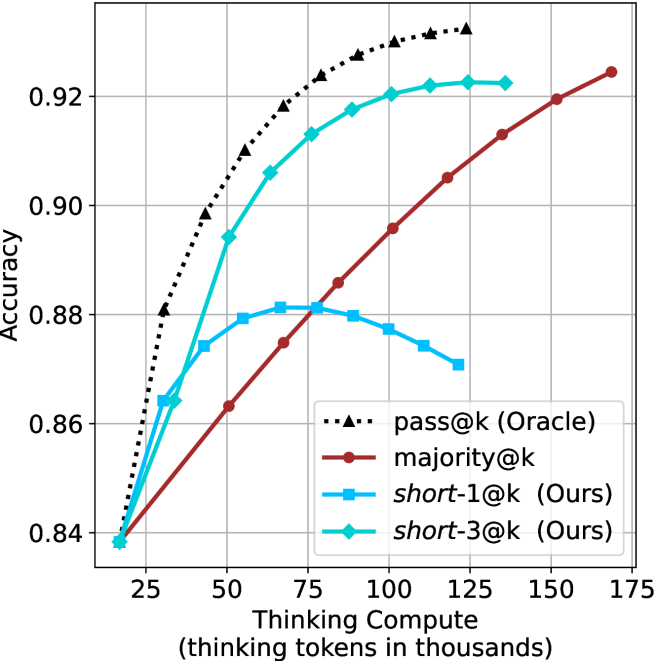

<details>

<summary>x2.png Details</summary>

### Visual Description

\n

## Line Chart: Accuracy vs. Sample Size (k)

### Overview

The image is a line chart comparing the performance (accuracy) of four different methods or models as a function of increasing sample size. The chart demonstrates how accuracy improves with more data for each method, with one method consistently outperforming the others.

### Components/Axes

* **X-Axis (Horizontal):** Labeled "Sample Size (k)". It represents the number of samples in thousands (k). The axis has discrete, evenly spaced markers from 1 to 10.

* **Y-Axis (Vertical):** Labeled "Accuracy". It represents a performance metric, likely a proportion or probability. The axis ranges from 0.50 to 0.75, with major grid lines at intervals of 0.05 (0.50, 0.55, 0.60, 0.65, 0.70, 0.75).

* **Legend:** Positioned in the top-left corner of the chart area. It contains four entries, each associating a line color and marker shape with a label.

1. **Black dotted line with upward-pointing triangle markers:** Label is partially obscured but appears to be a model name (e.g., "Model A" or similar).

2. **Cyan solid line with diamond markers:** Label is partially obscured.

3. **Blue solid line with square markers:** Label is partially obscured.

4. **Red solid line with circle markers:** Label is partially obscured.

* **Grid:** A light gray grid is present, with both horizontal and vertical lines aligned with the axis ticks.

### Detailed Analysis

The chart plots four data series. All series begin at approximately the same accuracy point at k=1 and show a monotonic increase as sample size grows, but at different rates and to different final levels.

**Trend Verification & Data Point Extraction (Approximate Values):**

1. **Black Dotted Line (Triangles):**

* **Trend:** Shows the steepest and most consistent upward slope, indicating the strongest positive correlation between sample size and accuracy. It maintains a clear lead over all other methods throughout the entire range.

* **Data Points (k, Accuracy):**

* (1, ~0.47)

* (2, ~0.575)

* (3, ~0.63)

* (4, ~0.665)

* (5, ~0.69)

* (6, ~0.705)

* (7, ~0.72)

* (8, ~0.735)

* (9, ~0.745)

* (10, ~0.75)

2. **Cyan Line (Diamonds):**

* **Trend:** Shows a strong initial increase from k=1 to k=4, after which the rate of improvement slows, forming a gentle curve that begins to plateau. It is the second-best performing method for most of the range.

* **Data Points (k, Accuracy):**

* (1, ~0.47)

* (2, ~0.525)

* (3, ~0.56)

* (4, ~0.58)

* (5, ~0.59)

* (6, ~0.60)

* (7, ~0.605)

* (8, ~0.61)

* (9, ~0.615)

* (10, ~0.62)

3. **Blue Line (Squares):**

* **Trend:** Follows a similar trajectory to the cyan line but consistently achieves slightly lower accuracy. The gap between the cyan and blue lines remains relatively constant after k=3.

* **Data Points (k, Accuracy):**

* (1, ~0.47)

* (2, ~0.525)

* (3, ~0.55)

* (4, ~0.57)

* (5, ~0.58)

* (6, ~0.585)

* (7, ~0.59)

* (8, ~0.595)

* (9, ~0.60)

* (10, ~0.60)

4. **Red Line (Circles):**

* **Trend:** Starts with the lowest accuracy and the shallowest initial slope. However, it shows a steady, almost linear increase and begins to converge with the blue line at higher sample sizes (k=8 to 10).

* **Data Points (k, Accuracy):**

* (1, ~0.47)

* (2, ~0.49)

* (3, ~0.51)

* (4, ~0.54)

* (5, ~0.56)

* (6, ~0.575)

* (7, ~0.585)

* (8, ~0.595)

* (9, ~0.60)

* (10, ~0.605)

### Key Observations

1. **Universal Improvement:** All four methods benefit from increased sample size, as evidenced by the positive slope of every line.

2. **Clear Performance Hierarchy:** A distinct and consistent ranking is established by k=2 and maintained: Black > Cyan > Blue > Red (until convergence at the end).

3. **Diminishing Returns:** The cyan, blue, and red lines all show signs of diminishing returns (a decreasing marginal gain in accuracy) as sample size increases beyond k=5 or 6. The black line also shows this but to a much lesser degree.

4. **Convergence at High k:** The performance gap between the blue and red methods nearly closes by k=10, suggesting they may have similar asymptotic performance limits.

5. **Significant Outlier:** The method represented by the black dotted line is a significant outlier in terms of performance, achieving substantially higher accuracy at every sample size greater than 1.

### Interpretation

This chart likely compares different machine learning models, algorithms, or training strategies on a specific task. The data suggests:

* **Data Efficiency:** The "Black" method is far more data-efficient, extracting significantly more accuracy from the same amount of data. This could indicate a superior model architecture, better feature engineering, or a more effective learning algorithm.

* **Performance Ceiling:** The cyan, blue, and red methods appear to be approaching a performance ceiling (around 0.60-0.62 accuracy) within the given sample size range, while the black method's ceiling is much higher (above 0.75).

* **Practical Implications:** If collecting labeled data is expensive, the black method is the clear choice. If the black method is computationally more complex, there is a clear trade-off between resource cost and performance gain. The convergence of the blue and red lines suggests that for large datasets, the choice between those two specific methods may become less critical based on accuracy alone.

* **Underlying Cause:** The stark difference in performance prompts investigation into what makes the black method unique. Is it a fundamentally different approach (e.g., a deep neural network vs. traditional models), or does it utilize the same data in a more effective way? The chart provides strong empirical evidence for its superiority but does not explain the cause.

</details>

(a) LN-Super-49B

<details>

<summary>x3.png Details</summary>

### Visual Description

## Line Chart: Accuracy vs. Sample Size (k) for Four Methods

### Overview

The image is a line chart plotting the performance metric "Accuracy" against "Sample Size (k)" for four distinct methods or algorithms. The chart demonstrates how the accuracy of each method changes as the sample size increases from 1 to 10. All methods begin at approximately the same accuracy point when the sample size is 1, but their performance trajectories diverge significantly as more samples are added.

### Components/Axes

* **X-Axis (Horizontal):** Labeled "Sample Size (k)". It has discrete integer markers from 1 to 10.

* **Y-Axis (Vertical):** Labeled "Accuracy". It has numerical markers at 0.55, 0.60, 0.65, 0.70, and 0.75. The scale is linear.

* **Legend:** Positioned in the top-left corner of the chart area. It contains four entries, each associating a line color and marker style with a method name.

1. **Black dotted line with upward-pointing triangle markers:** Labeled "Method A".

2. **Cyan solid line with diamond markers:** Labeled "Method B".

3. **Red solid line with circle markers:** Labeled "Method C".

4. **Blue solid line with square markers:** Labeled "Method D".

* **Grid:** A light gray grid is present, aligning with the major tick marks on both axes.

### Detailed Analysis

**Trend Verification & Data Point Extraction:**

1. **Method A (Black, Dotted, Triangles):**

* **Trend:** Shows a steep, concave-downward increasing curve. It has the highest accuracy at every sample size after k=1 and continues to show significant gains across the entire range.

* **Approximate Data Points:**

* k=1: ~0.54

* k=2: ~0.63

* k=3: ~0.67

* k=4: ~0.69

* k=5: ~0.71

* k=6: ~0.72

* k=7: ~0.73

* k=8: ~0.74

* k=9: ~0.745

* k=10: ~0.75

2. **Method B (Cyan, Solid, Diamonds):**

* **Trend:** Shows a steady, slightly concave-downward increase. It is initially the second-best performer but is overtaken by Method C around k=7.

* **Approximate Data Points:**

* k=1: ~0.54

* k=2: ~0.58

* k=3: ~0.615

* k=4: ~0.625

* k=5: ~0.635

* k=6: ~0.64

* k=7: ~0.642

* k=8: ~0.643

* k=9: ~0.644

* k=10: ~0.645

3. **Method C (Red, Solid, Circles):**

* **Trend:** Shows a steady, nearly linear increase. It starts as the third-best method but shows consistent improvement, surpassing Method B between k=6 and k=7 to become the second-best method by k=10.

* **Approximate Data Points:**

* k=1: ~0.54

* k=2: ~0.57

* k=3: ~0.59

* k=4: ~0.61

* k=5: ~0.625

* k=6: ~0.635

* k=7: ~0.642

* k=8: ~0.648

* k=9: ~0.65

* k=10: ~0.655

4. **Method D (Blue, Solid, Squares):**

* **Trend:** Shows a shallow, concave-downward increase that plateaus early. It provides the least improvement with additional samples.

* **Approximate Data Points:**

* k=1: ~0.54

* k=2: ~0.58

* k=3: ~0.595

* k=4: ~0.602

* k=5: ~0.605

* k=6: ~0.608

* k=7: ~0.609

* k=8: ~0.61

* k=9: ~0.61

* k=10: ~0.61

### Key Observations

* **Common Starting Point:** All four methods converge at an accuracy of approximately 0.54 when the sample size (k) is 1.

* **Performance Hierarchy:** A clear performance hierarchy is established by k=2 and maintained thereafter: Method A >> Method B ≈ Method C > Method D. The gap between Method A and the others widens substantially.

* **Crossover Event:** Method C (red) overtakes Method B (cyan) between sample sizes 6 and 7. By k=10, Method C is clearly the second-best performer.

* **Diminishing Returns:** All methods show diminishing returns (the rate of accuracy improvement slows as k increases), but this effect is most pronounced for Method D, which nearly flatlines after k=5.

* **Method A's Dominance:** Method A's accuracy at k=10 (~0.75) is approximately 0.10 points higher than the next best method (Method C at ~0.655), representing a significant performance advantage.

### Interpretation

This chart likely compares the sample efficiency of different machine learning or statistical estimation methods. The data suggests:

1. **Superior Scalability of Method A:** Method A is not only the most accurate but also scales the best with increased data. Its steep initial rise indicates it learns very effectively from the first few additional samples. This could represent a more complex model (e.g., a deep neural network) or a more sophisticated algorithm that better leverages available data.

2. **The "Crossover" between B and C:** The point where Method C surpasses Method B is critical. It suggests that while Method B may be more effective with very small datasets (k < 7), Method C has a better long-term learning trajectory. This could indicate that Method C has a higher capacity or a more appropriate inductive bias for the underlying problem as more evidence becomes available.

3. **Limited Potential of Method D:** Method D's rapid plateau indicates it may be a simple model (e.g., a linear model or a naive baseline) that quickly reaches its performance ceiling. Adding more data beyond a small amount provides negligible benefit, suggesting the model is underfitting or has high bias.

4. **Practical Implication:** The choice of method depends on the expected sample size. If data is extremely scarce (k=1-3), the difference between B, C, and D is small. However, for any scenario where k can be increased beyond 5, investing in Method A yields substantial gains, and Method C becomes preferable over Method B. Method D is only justifiable if computational constraints are extreme and sample size is very limited.

**Spatial Grounding Note:** The legend is placed in the top-left quadrant, overlapping the grid lines but not obscuring any data points, as the lines all start in the bottom-left corner. The data series are clearly distinguishable by both color and marker shape, ensuring accessibility.

</details>

(b) R $1$ - $32$ B

<details>

<summary>x4.png Details</summary>

### Visual Description

## Line Chart: Accuracy vs. Sample Size (k)

### Overview

The image is a line chart plotting the performance (Accuracy) of four different methods or models as a function of increasing sample size (k). The chart demonstrates how the accuracy of each method changes as more data samples are used, from k=1 to k=10.

### Components/Axes

* **X-Axis (Horizontal):** Labeled "Sample Size (k)". It has discrete integer markers from 1 to 10.

* **Y-Axis (Vertical):** Labeled "Accuracy". It has a linear scale ranging from 0.66 to 0.82, with major gridlines at intervals of 0.02.

* **Data Series:** Four distinct lines, each identified by a unique color and marker shape. There is no separate legend box; the markers serve as the key.

1. **Black, dotted line with upward-pointing triangle markers (▲).**

2. **Cyan (light blue), solid line with diamond markers (◆).**

3. **Red (dark red), solid line with circle markers (●).**

4. **Blue (medium blue), solid line with square markers (■).**

### Detailed Analysis

**Trend Verification & Data Point Extraction:**

All four series show an overall upward trend in accuracy as sample size increases, but at markedly different rates and with different saturation points.

1. **Black Dotted Line (▲):**

* **Trend:** Steepest and most consistent upward slope across the entire range. Shows no sign of plateauing.

* **Data Points (Approximate):**

* k=1: 0.665

* k=2: 0.732

* k=3: 0.758

* k=4: 0.774

* k=5: 0.787

* k=6: 0.797

* k=7: 0.805

* k=8: 0.812

* k=9: 0.819

* k=10: 0.825

2. **Cyan Line (◆):**

* **Trend:** Strong initial improvement from k=1 to k=5, then the rate of improvement slows significantly, approaching a gentle upward slope.

* **Data Points (Approximate):**

* k=1: 0.665 (overlaps with others)

* k=2: 0.700

* k=3: 0.723

* k=4: 0.735

* k=5: 0.741

* k=6: 0.745

* k=7: 0.748

* k=8: 0.750

* k=9: 0.752

* k=10: 0.753

3. **Red Line (●):**

* **Trend:** Steady, nearly linear increase from k=1 to k=10. The slope is less steep than the black line but more consistent than the cyan line in the later stages.

* **Data Points (Approximate):**

* k=1: 0.665 (overlaps with others)

* k=2: 0.680

* k=3: 0.701

* k=4: 0.715

* k=5: 0.725

* k=6: 0.731

* k=7: 0.737

* k=8: 0.741

* k=9: 0.745

* k=10: 0.748

4. **Blue Line (■):**

* **Trend:** Increases from k=1 to k=5, then plateaus and shows a very slight *decrease* from k=7 to k=10. This is the only series that does not show continuous improvement.

* **Data Points (Approximate):**

* k=1: 0.665 (overlaps with others)

* k=2: 0.700

* k=3: 0.710

* k=4: 0.715

* k=5: 0.717

* k=6: 0.718

* k=7: 0.718

* k=8: 0.717

* k=9: 0.716

* k=10: 0.715

### Key Observations

1. **Performance Hierarchy:** A clear and consistent ranking is established by k=3 and maintained thereafter: Black (▲) > Cyan (◆) > Red (●) > Blue (■).

2. **Convergence at k=1:** All four methods start at approximately the same accuracy (~0.665) when the sample size is minimal (k=1).

3. **Divergence with Data:** The performance gap between the best (Black) and worst (Blue) method widens dramatically as sample size increases, from 0 at k=1 to ~0.11 at k=10.

4. **Plateauing Behavior:** The Blue line's performance saturates and slightly degrades after k=5. The Cyan line's improvement slows markedly after k=5. The Red and Black lines show no plateau within the observed range.

5. **Relative Gain:** The Black method gains approximately 0.16 in accuracy from k=1 to k=10, while the Blue method gains only ~0.05.

### Interpretation

This chart likely compares different machine learning models, algorithms, or training strategies. The data suggests:

* **The method represented by the black dotted line (▲) is superior and most data-efficient.** It not only achieves the highest final accuracy but also improves most rapidly with additional data, indicating it effectively leverages larger datasets. This could represent a more complex model (e.g., a deep neural network) or a more sophisticated algorithm.

* **The cyan (◆) and red (●) methods are intermediate performers.** The cyan method has a higher ceiling than the red one, but both show more modest gains from data compared to the black method. They might represent simpler models or baseline approaches.

* **The blue method (■) is the least effective for larger datasets.** Its plateau and slight decline suggest it may be a simple model that quickly reaches its capacity (underfitting) or a method that becomes unstable or overfits with more data in this specific context. It is only competitive at very small sample sizes (k=2, where it matches the cyan line).

* **The choice of method is critical and depends on available data.** If data is extremely scarce (k=1), all methods perform similarly. However, for any application where more than a couple of samples are available, the black method is the clear choice. The chart makes a strong case for investing in the approach represented by the black line, as its return on investment (in terms of accuracy per added sample) is the highest.

</details>

(c) QwQ-32B

<details>

<summary>x5.png Details</summary>

### Visual Description

## Line Chart: Accuracy vs. Sample Size (k)

### Overview

The image is a line chart comparing the performance of four different methods or models as a function of sample size (k). The chart plots "Accuracy" on the y-axis against "Sample Size (k)" on the x-axis. All methods show an increasing trend in accuracy as the sample size grows, but they diverge significantly in their rates of improvement and final performance levels.

### Components/Axes

* **Chart Type:** Line chart with markers.

* **X-Axis:**

* **Label:** "Sample Size (k)"

* **Scale:** Linear, from 1 to 10.

* **Markers/Ticks:** Integers 1, 2, 3, 4, 5, 6, 7, 8, 9, 10.

* **Y-Axis:**

* **Label:** "Accuracy"

* **Scale:** Linear, from 0.78 to 0.90.

* **Markers/Ticks:** 0.78, 0.80, 0.82, 0.84, 0.86, 0.88, 0.90.

* **Legend:** Located in the bottom-right quadrant of the chart area.

* **Series 1:** `pass@k (Oracle)` - Represented by a black, dotted line with upward-pointing triangle markers (▲).

* **Series 2:** `majority@k` - Represented by a solid, dark red (maroon) line with circle markers (●).

* **Series 3:** `short-1@k (Ours)` - Represented by a solid, bright blue line with square markers (■).

* **Series 4:** `short-3@k (Ours)` - Represented by a solid, cyan (light blue-green) line with diamond markers (◆).

### Detailed Analysis

**Trend Verification & Data Point Extraction:**

The following table lists approximate accuracy values for each method at each sample size (k). Values are estimated from the chart's gridlines. The visual trend for each series is described first.

1. **pass@k (Oracle) [Black Dotted Line, ▲]:** Shows the steepest and most consistent upward slope, achieving the highest accuracy at every point after k=1.

* k=1: ~0.78

* k=2: ~0.835

* k=3: ~0.855

* k=4: ~0.868

* k=5: ~0.877

* k=6: ~0.884

* k=7: ~0.890

* k=8: ~0.895

* k=9: ~0.899

* k=10: ~0.902

2. **short-3@k (Ours) [Cyan Line, ◆]:** Shows a strong upward slope, closely following but consistently below the Oracle line. It is the second-best performing method.

* k=1: ~0.78

* k=2: ~0.818

* k=3: ~0.848

* k=4: ~0.862

* k=5: ~0.871

* k=6: ~0.877

* k=7: ~0.881

* k=8: ~0.885

* k=9: ~0.888

* k=10: ~0.890

3. **majority@k [Dark Red Line, ●]:** Shows a steady, moderate upward slope. It starts as the lowest-performing method but surpasses `short-1@k` around k=6.

* k=1: ~0.78

* k=2: ~0.798

* k=3: ~0.815

* k=4: ~0.828

* k=5: ~0.839

* k=6: ~0.846

* k=7: ~0.854

* k=8: ~0.860

* k=9: ~0.866

* k=10: ~0.871

4. **short-1@k (Ours) [Blue Line, ■]:** Shows an initial upward slope that flattens significantly after k=6, exhibiting a plateau effect. It is overtaken by `majority@k` in the latter half.

* k=1: ~0.78

* k=2: ~0.818

* k=3: ~0.831

* k=4: ~0.839

* k=5: ~0.844

* k=6: ~0.847

* k=7: ~0.848

* k=8: ~0.849

* k=9: ~0.849

* k=10: ~0.848

### Key Observations

1. **Performance Hierarchy:** A clear and consistent performance hierarchy is established for k > 1: `pass@k (Oracle)` > `short-3@k (Ours)` > `majority@k` ≈ `short-1@k (Ours)` (with a crossover).

2. **Diminishing Returns:** All methods show diminishing returns (the slope decreases as k increases), but this is most extreme for `short-1@k (Ours)`, which nearly plateaus after k=6.

3. **Crossover Point:** The `majority@k` method, while starting slower, shows more sustained improvement and overtakes `short-1@k (Ours)` between k=5 and k=6.

4. **Convergence at k=1:** All four methods begin at approximately the same accuracy point (~0.78) when the sample size is 1.

5. **Gap Analysis:** The performance gap between the best (`Oracle`) and the proposed `short-3` method remains relatively constant (~0.01-0.015 accuracy points) across most sample sizes. The gap between `short-3` and `short-1` widens substantially as k increases.

### Interpretation

This chart likely comes from a research paper in machine learning or statistics, comparing different strategies for aggregating results from multiple samples or trials (where `k` is the number of samples). The "Oracle" represents an idealized upper-bound performance.

* **What the data suggests:** The proposed method `short-3@k` is highly effective, achieving performance close to the theoretical Oracle and significantly outperforming the baseline `majority@k` rule. However, the variant `short-1@k` suffers from severe saturation, indicating that its strategy does not scale well with increased sample size.

* **Relationship between elements:** The chart demonstrates the trade-off between sample efficiency and final accuracy. While all methods benefit from more samples (`k`), the choice of aggregation strategy (`short-3` vs. `short-1` vs. `majority`) dramatically impacts how effectively that additional data is utilized.

* **Notable anomaly/insight:** The plateau of `short-1@k` is a critical finding. It suggests that this particular method hits a fundamental limit in its ability to leverage additional samples, making it a poor choice for scenarios where `k` can be large. In contrast, `short-3@k` and `majority@k` continue to improve, with `short-3@k` maintaining a superior rate of improvement. This implies the "short-3" strategy is more robust and scalable.

</details>

(d) R1-670B

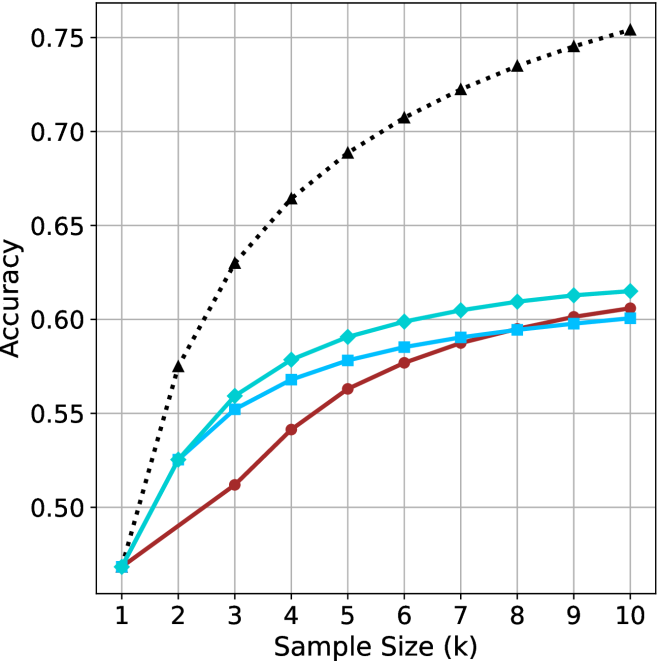

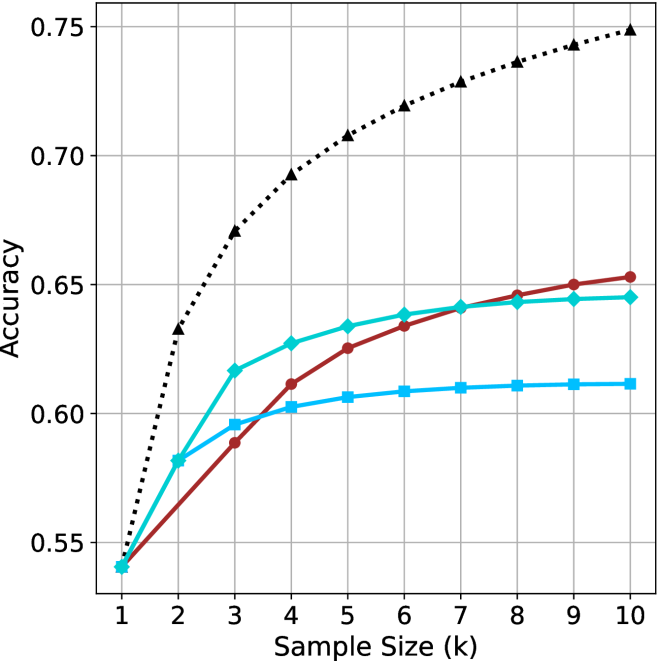

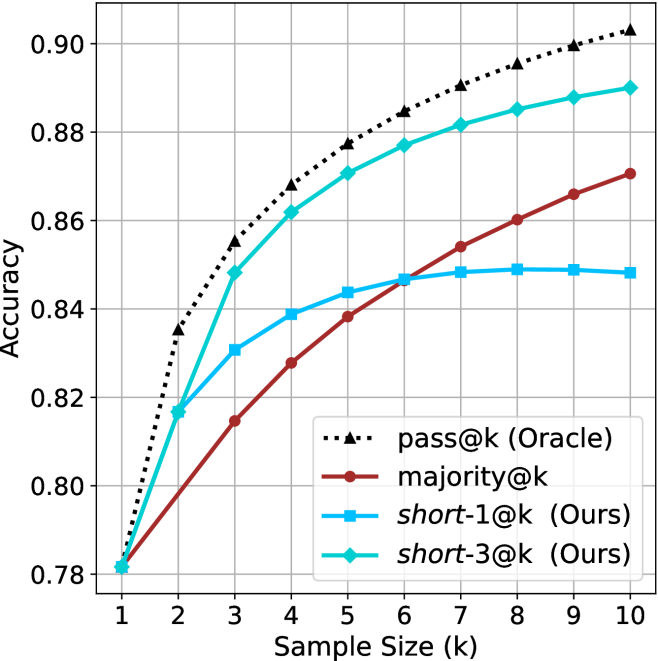

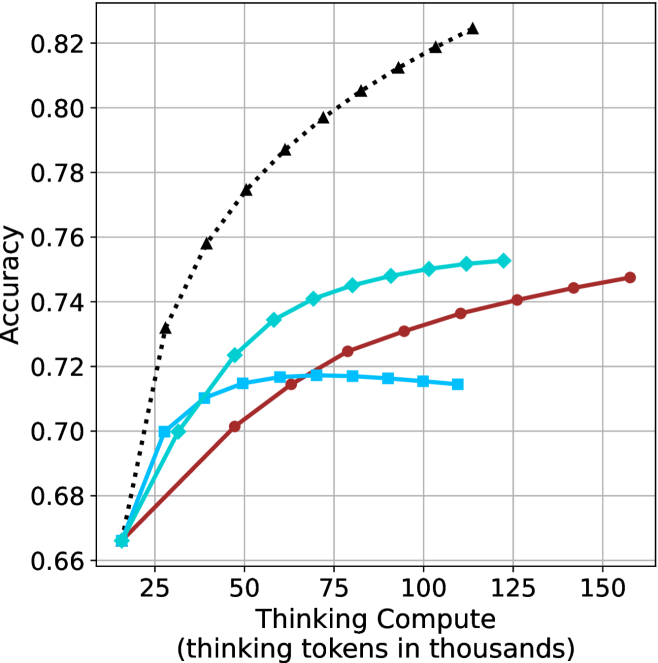

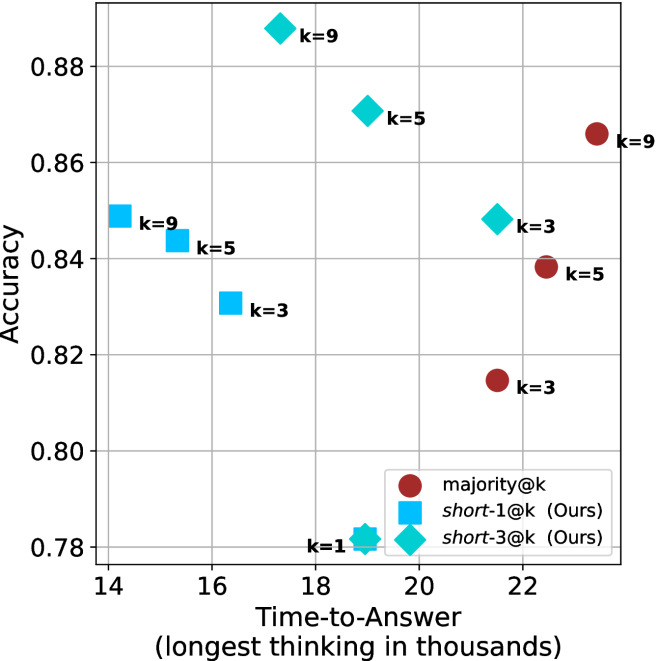

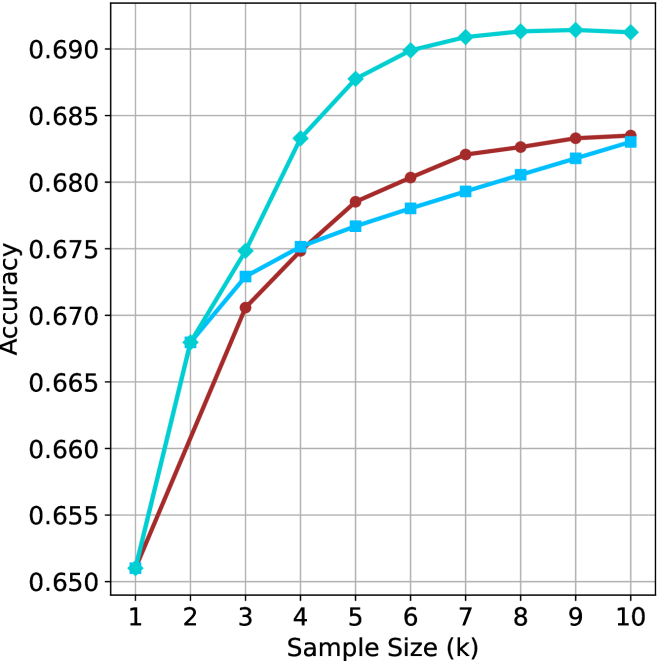

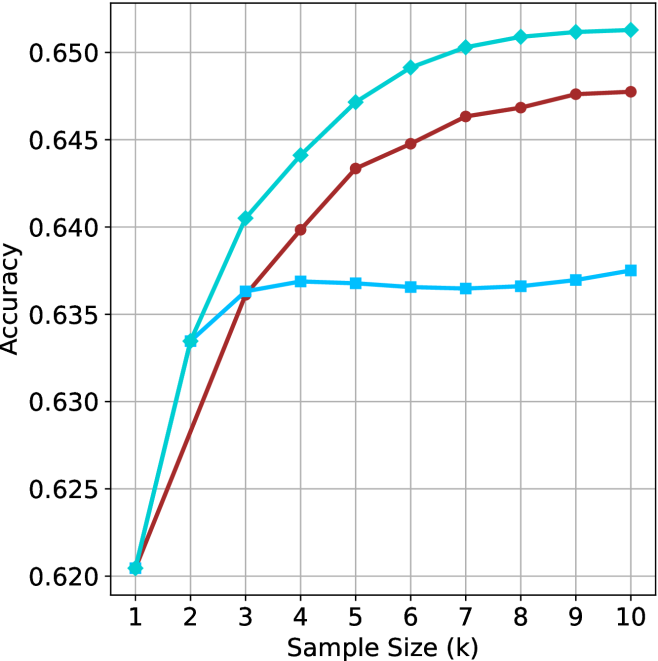

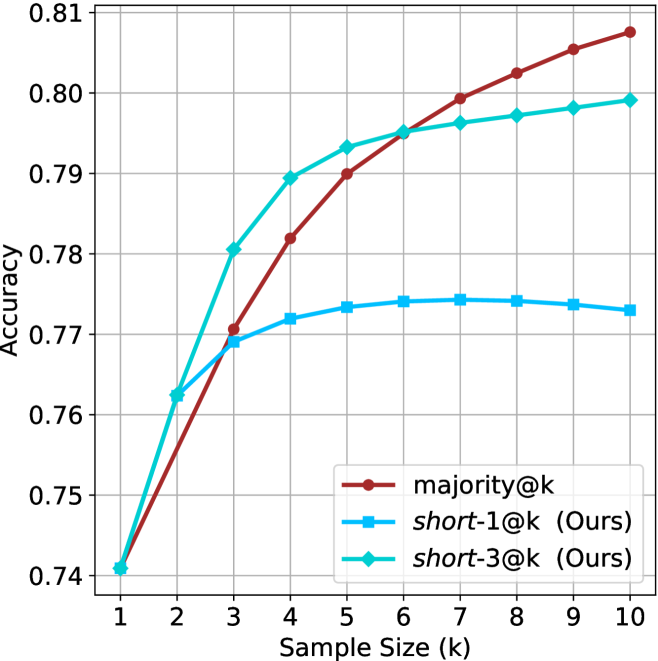

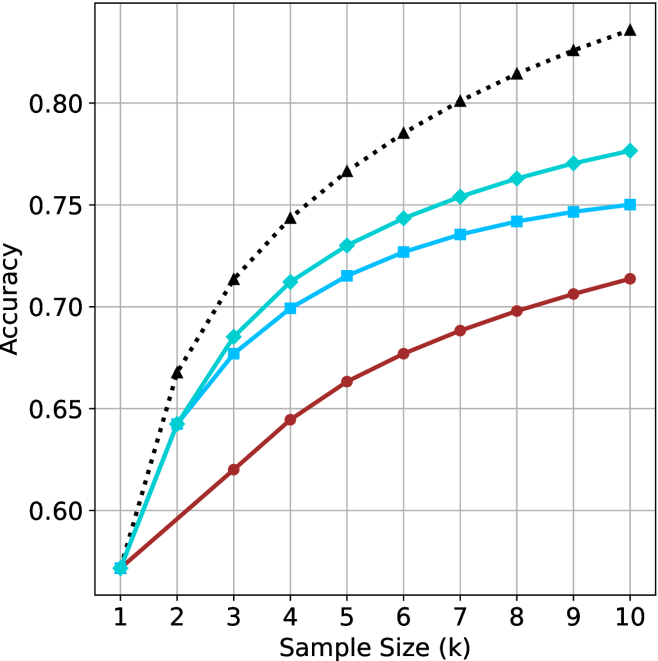

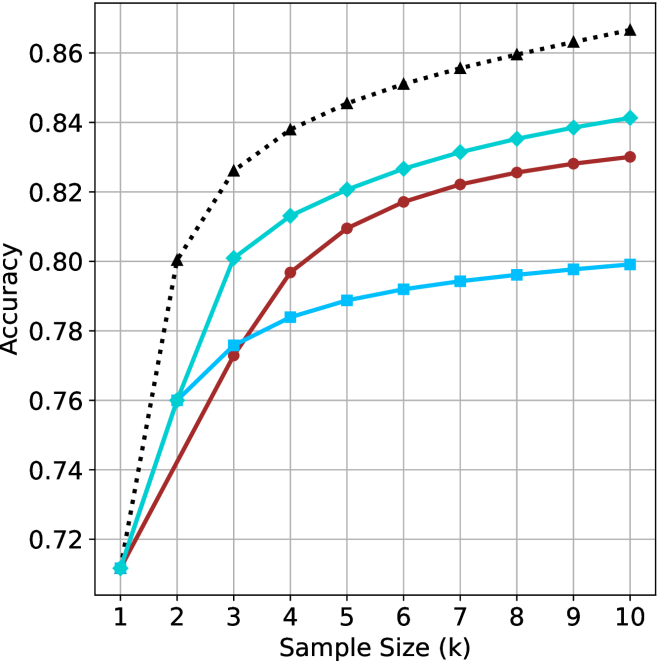

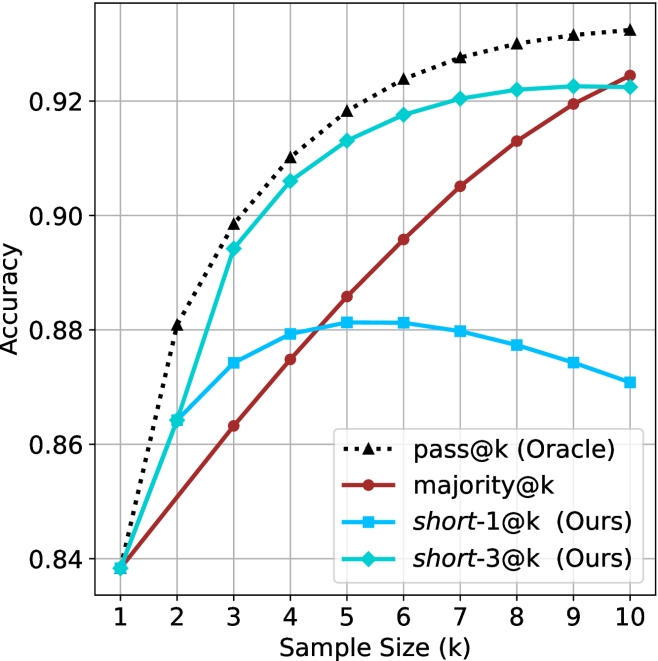

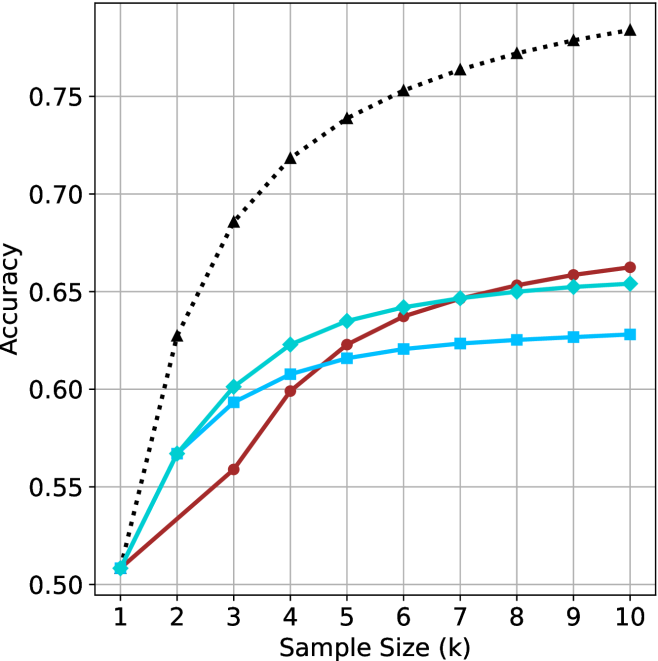

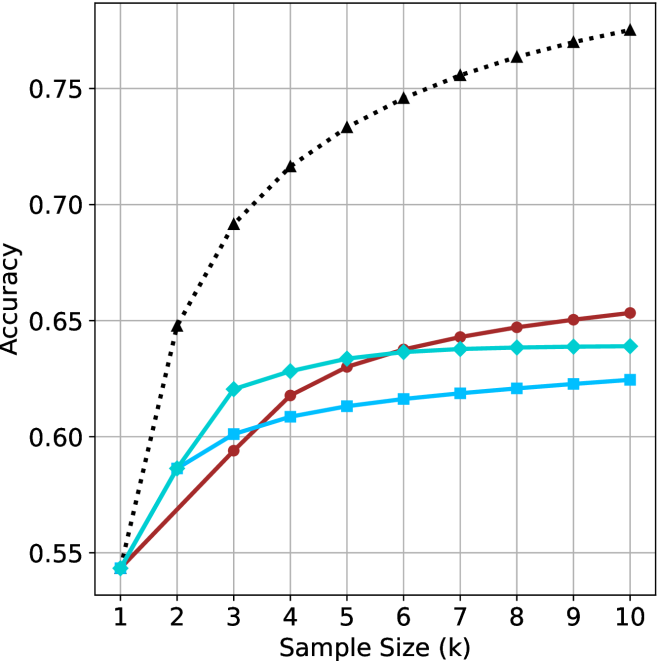

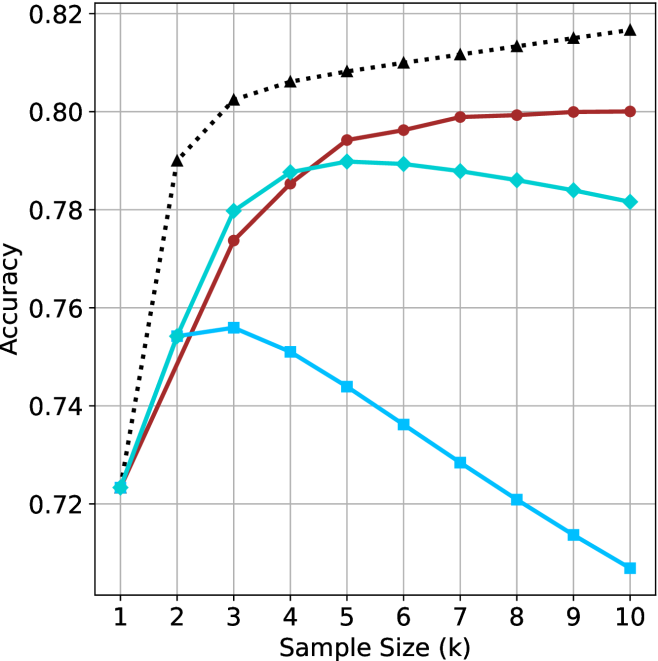

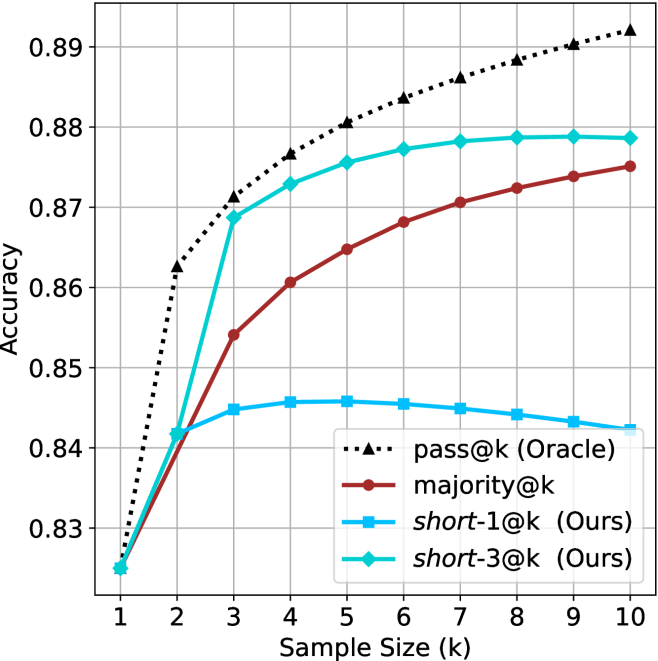

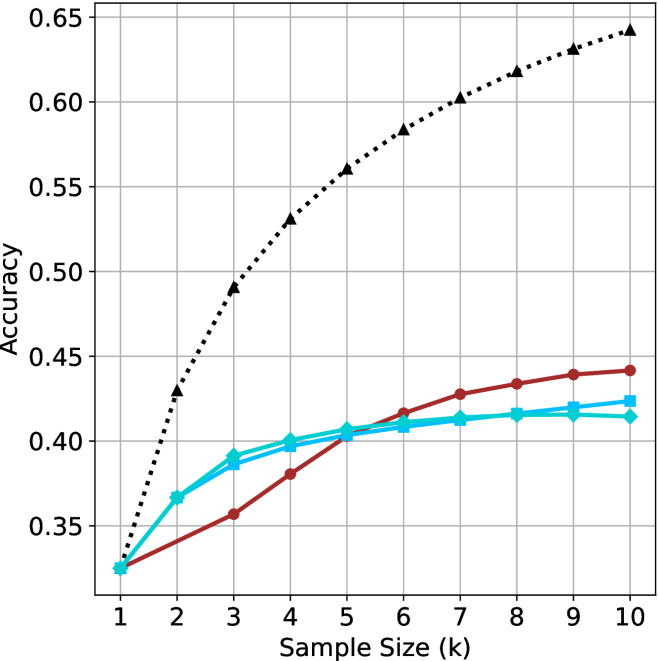

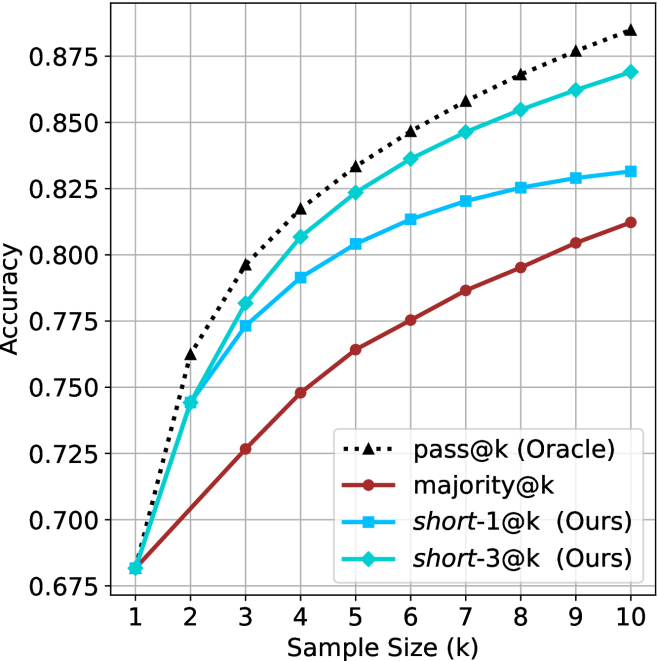

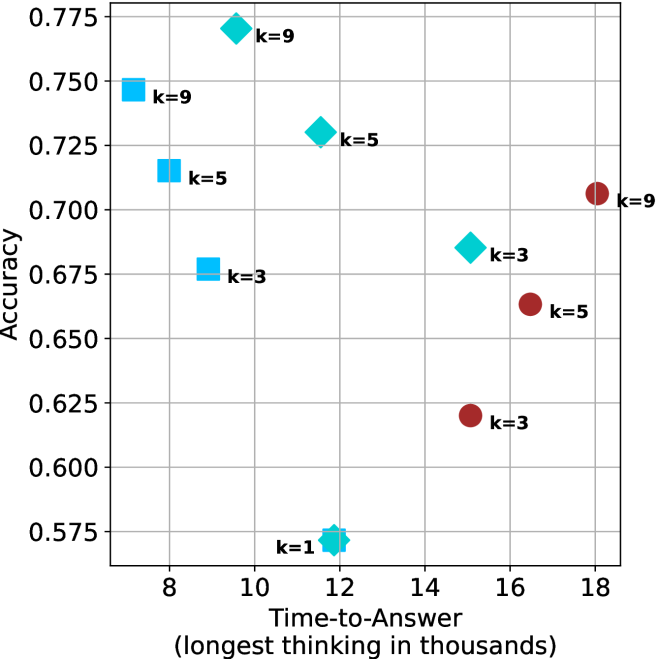

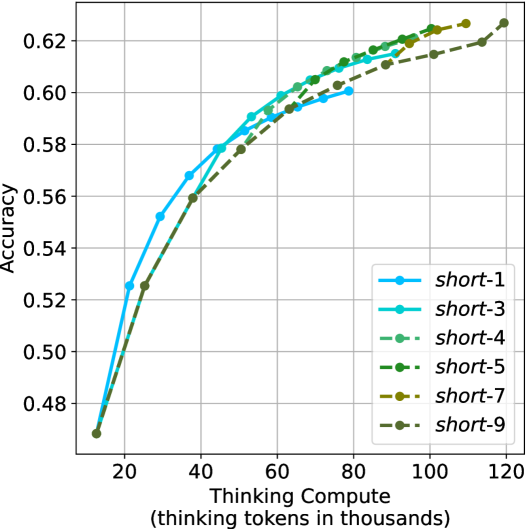

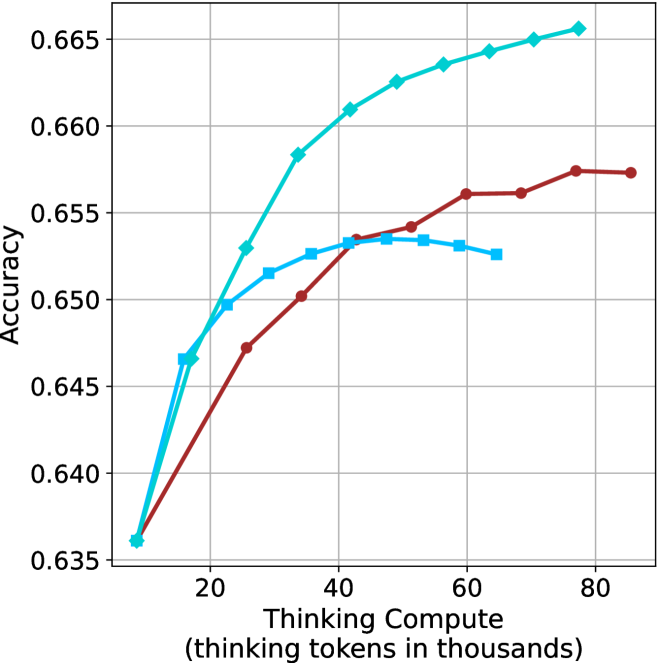

Figure 2: Comparing different inference methods under controlled sample size ( $k$ ). All methods improve with larger sample sizes. Interestingly, this trend also holds for the short-m@k methods.

<details>

<summary>x6.png Details</summary>

### Visual Description

## Line Chart: Accuracy vs. Thinking Compute

### Overview

The image is a line chart comparing the performance (Accuracy) of four different methods as a function of computational resources (Thinking Compute). The chart demonstrates how accuracy scales with increased "thinking tokens" for each method, showing distinct scaling laws and efficiency differences.

### Components/Axes

* **Chart Type:** Multi-line chart with markers.

* **X-Axis:**

* **Label:** `Thinking Compute (thinking tokens in thousands)`

* **Scale:** Linear, ranging from 0 to 120.

* **Major Tick Marks:** 0, 20, 40, 60, 80, 100, 120.

* **Y-Axis:**

* **Label:** `Accuracy`

* **Scale:** Linear, ranging from approximately 0.45 to 0.75.

* **Major Tick Marks:** 0.50, 0.55, 0.60, 0.65, 0.70, 0.75.

* **Legend:** Located in the top-left corner of the plot area. It contains four entries:

1. **Chain-of-Thought:** Black dotted line with upward-pointing triangle markers.

2. **Self-Consistency:** Cyan (light blue) solid line with diamond markers.

3. **Self-Consistency + CoT:** Cyan (light blue) solid line with square markers.

4. **Standard:** Red solid line with circle markers.

* **Grid:** A light gray grid is present for both major x and y ticks.

### Detailed Analysis

The chart plots four data series. All series begin at approximately the same point (Thinking Compute ≈ 10k tokens, Accuracy ≈ 0.47) and show increasing accuracy with more compute, but at markedly different rates.

1. **Chain-of-Thought (Black, Dotted, Triangles):**

* **Trend:** Exhibits the steepest, near-logarithmic upward slope. It shows the highest marginal gain in accuracy per additional thinking token.

* **Approximate Data Points:**

* (10, 0.47)

* (20, 0.57)

* (30, 0.63)

* (40, 0.66)

* (50, 0.69)

* (60, 0.71)

* (70, 0.73)

* (80, 0.75)

2. **Self-Consistency (Cyan, Solid, Diamonds):**

* **Trend:** Shows a strong, steady upward slope, but less steep than Chain-of-Thought. It consistently outperforms the Standard method.

* **Approximate Data Points:**

* (10, 0.47)

* (20, 0.53)

* (30, 0.56)

* (40, 0.58)

* (50, 0.59)

* (60, 0.60)

* (70, 0.61)

* (80, 0.615)

3. **Self-Consistency + CoT (Cyan, Solid, Squares):**

* **Trend:** Follows a path nearly identical to the "Self-Consistency" line, with data points often overlapping or lying very close. This suggests combining Self-Consistency with Chain-of-Thought provides minimal additional benefit over Self-Consistency alone in this specific evaluation.

* **Approximate Data Points:**

* (10, 0.47)

* (20, 0.53)

* (30, 0.57)

* (40, 0.58)

* (50, 0.59)

* (60, 0.60)

* (70, 0.605)

4. **Standard (Red, Solid, Circles):**

* **Trend:** Exhibits the shallowest, most linear upward slope. It has the lowest accuracy for any given compute budget beyond the initial point.

* **Approximate Data Points:**

* (10, 0.47)

* (20, 0.49)

* (30, 0.51)

* (40, 0.54)

* (50, 0.56)

* (60, 0.58)

* (70, 0.59)

* (80, 0.60)

* (90, 0.605)

* (100, 0.61)

### Key Observations

* **Performance Hierarchy:** For all compute budgets > 10k tokens, the performance order is clear and consistent: Chain-of-Thought > Self-Consistency ≈ Self-Consistency + CoT > Standard.

* **Diminishing Returns:** All curves show signs of diminishing returns (flattening) as compute increases, but the point of significant flattening occurs much later for Chain-of-Thought.

* **Convergence at Low Compute:** At the lowest compute point (~10k tokens), all methods perform identically (~0.47 accuracy), suggesting the advanced techniques require a minimum compute threshold to show benefit.

* **Method Synergy:** The combination of "Self-Consistency + CoT" does not yield a performance curve above the "Self-Consistency" line, indicating these methods may be addressing similar aspects of the problem or that one subsumes the other's benefits in this context.

### Interpretation

This chart is a powerful visualization of **scaling laws for reasoning methods** in AI. It demonstrates that not all methods scale equally with increased computational resources ("thinking tokens").

* **Chain-of-Thought is Highly Efficient:** The steep slope of the Chain-of-Thought line indicates it is an exceptionally efficient method for converting additional compute into accuracy gains. It suggests that structuring a model's internal reasoning process yields disproportionate benefits.

* **Self-Consistency Provides a Solid Baseline Improvement:** The method offers a reliable, significant boost over the Standard approach but does not scale as aggressively as pure Chain-of-Thought.

* **The "Standard" Approach is Inefficient:** The shallow slope implies that simply allocating more tokens to a standard, unstructured generation process is a less effective strategy for improving accuracy on this task.

* **Practical Implication:** For tasks where accuracy is critical and compute is available, Chain-of-Thought is the most effective strategy shown. The chart provides a quantitative basis for choosing a reasoning method based on a given compute budget. The lack of synergy between Self-Consistency and CoT is a notable finding, suggesting research into their interaction is needed.

</details>

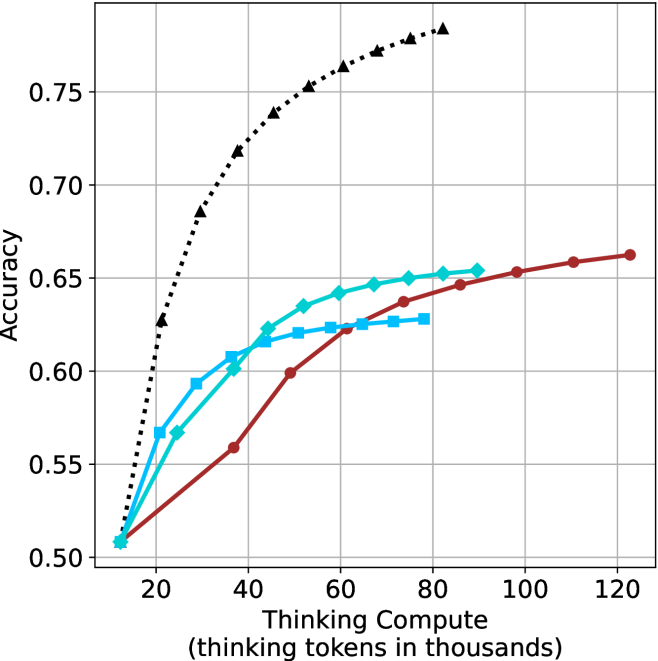

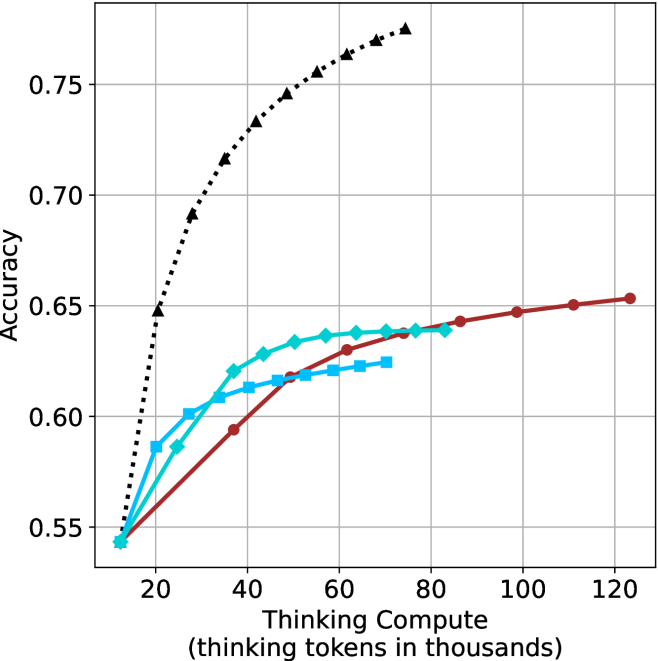

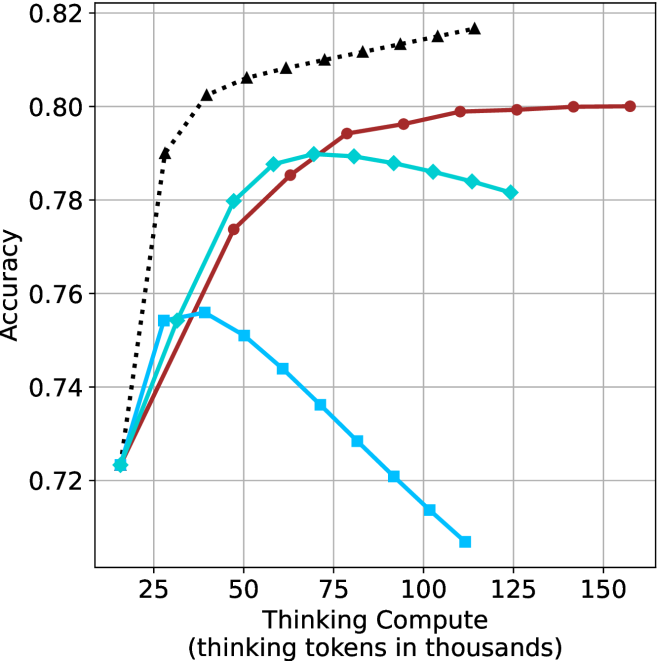

(a) LN-Super-49B

<details>

<summary>x7.png Details</summary>

### Visual Description

## Line Chart: Accuracy vs. Thinking Compute

### Overview

The image is a line chart plotting model accuracy against computational effort, measured in "thinking tokens." It displays four distinct data series, each representing a different model or method, showing how their performance scales with increased compute. The chart demonstrates a clear positive correlation between thinking compute and accuracy for all series, though the rate of improvement varies significantly.

### Components/Axes

* **X-Axis (Horizontal):**

* **Label:** "Thinking Compute (thinking tokens in thousands)"

* **Scale:** Linear scale from 0 to 120, with major tick marks at 20, 40, 60, 80, 100, and 120.

* **Y-Axis (Vertical):**

* **Label:** "Accuracy"

* **Scale:** Linear scale from 0.55 to 0.75, with major tick marks at 0.55, 0.60, 0.65, 0.70, and 0.75.

* **Data Series (Legend inferred from visual markers):**

1. **Black Dotted Line with Upward-Pointing Triangles:** Positioned as the top-most line.

2. **Red Solid Line with Circles:** Positioned as the second-highest line at higher compute values.

3. **Cyan Solid Line with Diamonds:** Positioned in the middle range.

4. **Cyan Solid Line with Squares:** Positioned as the lowest line after the initial points.

### Detailed Analysis

**Trend Verification & Data Point Extraction (Approximate Values):**

* **Series 1: Black Dotted Line (Triangles)**

* **Trend:** Shows the steepest initial increase in accuracy, rising sharply from low compute and continuing to climb steadily, though the slope decreases slightly at higher values. It consistently achieves the highest accuracy.

* **Data Points:**

* At ~10k tokens: Accuracy ≈ 0.54

* At ~20k tokens: Accuracy ≈ 0.63

* At ~30k tokens: Accuracy ≈ 0.67

* At ~40k tokens: Accuracy ≈ 0.70

* At ~50k tokens: Accuracy ≈ 0.72

* At ~60k tokens: Accuracy ≈ 0.73

* At ~70k tokens: Accuracy ≈ 0.74

* At ~80k tokens: Accuracy ≈ 0.75 (final point)

* **Series 2: Red Solid Line (Circles)**

* **Trend:** Shows a steady, near-linear increase in accuracy across the entire compute range. It starts lower than the cyan lines but surpasses them as compute increases.

* **Data Points:**

* At ~10k tokens: Accuracy ≈ 0.54

* At ~40k tokens: Accuracy ≈ 0.59

* At ~60k tokens: Accuracy ≈ 0.625

* At ~80k tokens: Accuracy ≈ 0.64

* At ~100k tokens: Accuracy ≈ 0.65

* At ~120k tokens: Accuracy ≈ 0.655 (final point)

* **Series 3: Cyan Solid Line (Diamonds)**

* **Trend:** Increases rapidly at first, then begins to plateau around 60k-80k tokens. It is overtaken by the red line at approximately 50k tokens.

* **Data Points:**

* At ~10k tokens: Accuracy ≈ 0.54

* At ~20k tokens: Accuracy ≈ 0.58

* At ~30k tokens: Accuracy ≈ 0.60

* At ~40k tokens: Accuracy ≈ 0.625

* At ~50k tokens: Accuracy ≈ 0.635

* At ~60k tokens: Accuracy ≈ 0.64

* At ~70k tokens: Accuracy ≈ 0.645

* At ~80k tokens: Accuracy ≈ 0.645 (final point)

* **Series 4: Cyan Solid Line (Squares)**

* **Trend:** Increases initially but plateaus very early, showing minimal gains after approximately 40k tokens. It has the lowest final accuracy.

* **Data Points:**

* At ~10k tokens: Accuracy ≈ 0.54

* At ~20k tokens: Accuracy ≈ 0.58

* At ~30k tokens: Accuracy ≈ 0.60

* At ~40k tokens: Accuracy ≈ 0.605

* At ~50k tokens: Accuracy ≈ 0.61

* At ~60k tokens: Accuracy ≈ 0.61

* At ~70k tokens: Accuracy ≈ 0.61 (final point)

### Key Observations

1. **Universal Scaling Law:** All four methods show improved accuracy with increased thinking compute, confirming a fundamental relationship between computational resources and model performance on this task.

2. **Divergent Scaling Efficiency:** The methods scale with dramatically different efficiency. The black-dotted method is highly efficient, achieving high accuracy with relatively low compute. The red method scales steadily but requires more compute to reach the same accuracy as the black method. The two cyan methods show early saturation.

3. **Crossover Point:** The red line (circles) crosses above both cyan lines between 40k and 50k tokens, indicating that its scaling advantage becomes dominant at medium-to-high compute budgets.

4. **Performance Ceiling:** The cyan-square method hits a clear performance ceiling around 0.61 accuracy, suggesting a fundamental limitation in that approach regardless of additional compute.

### Interpretation

This chart likely compares different reasoning or "thinking" strategies for an AI model. The data suggests that the method represented by the **black dotted line** is superior in terms of **compute efficiency**—it extracts the most accuracy per thinking token, especially in the low-to-medium compute regime (10k-60k tokens). This could represent a more optimized or advanced reasoning algorithm.

The **red line** represents a method with **consistent, reliable scaling**. While less efficient initially, it continues to improve predictably and overtakes the other methods at higher compute budgets, making it potentially suitable for scenarios where maximum accuracy is the goal and compute is less constrained.

The two **cyan lines** appear to be less sophisticated methods that benefit from initial compute but quickly **saturate**, hitting a performance wall. The difference between the diamond and square markers might indicate a minor variation in an otherwise similar approach, with the diamond variant having a slightly higher ceiling.

The overarching message is that **how** a model "thinks" (the algorithm or strategy) is critically important. Simply throwing more compute at a problem yields diminishing returns if the underlying method is inefficient. The chart makes a strong case for investing in better reasoning architectures (like the black-dotted one) over brute-force scaling of less efficient methods.

</details>

(b) R $1$ - $32$ B

<details>

<summary>x8.png Details</summary>

### Visual Description

## Line Chart: Accuracy vs. Thinking Compute

### Overview

The image is a line chart plotting model accuracy against computational effort, measured in thinking tokens. It compares the performance of four distinct methods or models, showing how their accuracy scales with increased compute. The chart demonstrates a clear positive correlation between thinking compute and accuracy for all series, though with varying rates of improvement and saturation points.

### Components/Axes

* **X-Axis (Horizontal):** Labeled "Thinking Compute (thinking tokens in thousands)". The scale runs from approximately 15 to 160, with major tick marks at 25, 50, 75, 100, 125, and 150.

* **Y-Axis (Vertical):** Labeled "Accuracy". The scale runs from 0.66 to 0.82, with major tick marks at 0.02 intervals (0.66, 0.68, 0.70, ..., 0.82).

* **Legend:** Positioned in the top-left corner of the chart area. It contains four entries, each with a colored line segment and a marker symbol:

1. **Black dotted line with upward-pointing triangle markers (▲)**

2. **Cyan solid line with diamond markers (◆)**

3. **Red solid line with circle markers (●)**

4. **Cyan solid line with square markers (■)**

* **Grid:** A light gray grid is present, with vertical lines at each major x-axis tick and horizontal lines at each major y-axis tick.

### Detailed Analysis

The chart displays four data series. Below is an analysis of each, including approximate data points extracted from the visual plot.

**1. Black Dotted Line (▲)**

* **Trend:** Shows the steepest and most consistent upward slope across the entire range. It demonstrates the highest accuracy at every compute level beyond the initial point.

* **Approximate Data Points:**

* ~15k tokens: Accuracy ≈ 0.665

* ~25k tokens: Accuracy ≈ 0.73

* ~50k tokens: Accuracy ≈ 0.775

* ~75k tokens: Accuracy ≈ 0.80

* ~100k tokens: Accuracy ≈ 0.815

* ~115k tokens: Accuracy ≈ 0.825 (highest point on the chart)

**2. Cyan Solid Line (◆)**

* **Trend:** Increases steadily but begins to show signs of diminishing returns (flattening) after approximately 75k tokens. It is the second-highest performing series.

* **Approximate Data Points:**

* ~15k tokens: Accuracy ≈ 0.665

* ~25k tokens: Accuracy ≈ 0.70

* ~50k tokens: Accuracy ≈ 0.735

* ~75k tokens: Accuracy ≈ 0.745

* ~100k tokens: Accuracy ≈ 0.75

* ~120k tokens: Accuracy ≈ 0.752

**3. Red Solid Line (●)**

* **Trend:** Shows a steady, nearly linear increase throughout the plotted range. Its slope is less steep than the black or cyan (◆) lines but remains consistently positive.

* **Approximate Data Points:**

* ~15k tokens: Accuracy ≈ 0.665

* ~50k tokens: Accuracy ≈ 0.70

* ~75k tokens: Accuracy ≈ 0.725

* ~100k tokens: Accuracy ≈ 0.735

* ~125k tokens: Accuracy ≈ 0.74

* ~155k tokens: Accuracy ≈ 0.748

**4. Cyan Solid Line (■)**

* **Trend:** Increases initially but plateaus very early, showing almost no improvement after approximately 50k tokens. It has the lowest performance ceiling.

* **Approximate Data Points:**

* ~15k tokens: Accuracy ≈ 0.665

* ~25k tokens: Accuracy ≈ 0.70

* ~50k tokens: Accuracy ≈ 0.715

* ~75k tokens: Accuracy ≈ 0.718

* ~100k tokens: Accuracy ≈ 0.715 (slight decline visible)

* ~110k tokens: Accuracy ≈ 0.714

### Summary of Approximate Data Points

| Thinking Compute (k tokens) | Black (▲) Accuracy | Cyan (◆) Accuracy | Red (●) Accuracy | Cyan (■) Accuracy |

| :--- | :--- | :--- | :--- | :--- |

| ~15 | 0.665 | 0.665 | 0.665 | 0.665 |

| ~25 | 0.73 | 0.70 | - | 0.70 |

| ~50 | 0.775 | 0.735 | 0.70 | 0.715 |

| ~75 | 0.80 | 0.745 | 0.725 | 0.718 |

| ~100 | 0.815 | 0.75 | 0.735 | 0.715 |

| ~115 | 0.825 | - | - | - |

| ~120 | - | 0.752 | - | - |

| ~125 | - | - | 0.74 | - |

| ~155 | - | - | 0.748 | - |

### Key Observations

1. **Common Starting Point:** All four methods begin at nearly the same accuracy (~0.665) at the lowest compute level (~15k tokens).

2. **Performance Hierarchy:** A clear performance hierarchy is established quickly and maintained: Black (▲) > Cyan (◆) > Red (●) > Cyan (■).

3. **Saturation Points:** The methods exhibit different saturation behaviors. The Cyan (■) line saturates earliest (~50k tokens). The Cyan (◆) line begins to saturate around 75-100k tokens. The Black (▲) and Red (●) lines show no clear signs of saturation within the plotted range.

4. **Efficiency:** The Black (▲) method is the most compute-efficient, achieving higher accuracy with fewer tokens than any other method. For example, it reaches 0.75 accuracy at ~40k tokens, a level the Cyan (◆) method only approaches at ~100k tokens.

### Interpretation

This chart illustrates the scaling law relationship between computational resources ("thinking tokens") and model performance (accuracy) for different approaches. The data suggests:

* **Investment Pays Off:** For all tested methods, allocating more compute for "thinking" leads to better accuracy, validating the core premise of scaling inference-time computation.

* **Methodological Superiority:** The method represented by the black dotted line (▲) is fundamentally more efficient and effective. It extracts more accuracy per thinking token and has a higher performance ceiling. This could indicate a superior architecture, training technique, or reasoning algorithm.

* **The Plateau Problem:** The early plateau of the Cyan (■) line indicates a method that hits a fundamental limit; throwing more compute at it yields negligible gains. This is a critical insight for resource allocation.

* **Strategic Implications:** The choice of method involves a trade-off. If maximum accuracy is the goal and compute is available, the Black (▲) method is the clear choice. If compute is constrained, the relative efficiency of each method at different budget levels (e.g., Red (●) may be preferable to Cyan (◆) at very high compute budgets due to its continued, albeit slower, improvement) becomes the key decision factor. The chart provides the empirical data needed to make that cost-benefit analysis.

</details>

(c) QwQ-32B

<details>

<summary>x9.png Details</summary>

### Visual Description

## Line Chart: Accuracy vs. Thinking Compute for Different Methods

### Overview

The image displays a line chart comparing the performance of four different methods or models. The chart plots "Accuracy" on the vertical axis against "Thinking Compute" (measured in thousands of thinking tokens) on the horizontal axis. The primary trend for all series is that accuracy increases with increased thinking compute, but the rate of improvement and the final accuracy achieved vary significantly between methods.

### Components/Axes

* **X-Axis (Horizontal):**

* **Label:** "Thinking Compute (thinking tokens in thousands)"

* **Scale:** Linear scale ranging from approximately 0 to 180 (in thousands of tokens).

* **Major Ticks:** Labeled at 50, 100, and 150.

* **Y-Axis (Vertical):**

* **Label:** "Accuracy"

* **Scale:** Linear scale ranging from 0.78 to 0.90.

* **Major Ticks:** Labeled at 0.78, 0.80, 0.82, 0.84, 0.86, 0.88, and 0.90.

* **Legend:** Located in the bottom-right quadrant of the chart area. It contains four entries:

1. `pass@k (Oracle)`: Represented by a black, dotted line with upward-pointing triangle markers.

2. `majority@k`: Represented by a solid, dark red (maroon) line with circular markers.

3. `short-1@k (Ours)`: Represented by a solid, light blue (cyan) line with square markers.

4. `short-3@k (Ours)`: Represented by a solid, teal (darker cyan) line with diamond markers.

* **Grid:** A light gray grid is present, with vertical lines at the major x-axis ticks and horizontal lines at the major y-axis ticks.

### Detailed Analysis

**Trend Verification & Data Point Extraction (Approximate Values):**

1. **pass@k (Oracle) [Black Dotted Line, Triangle Markers]:**

* **Trend:** This line shows the steepest initial ascent and achieves the highest overall accuracy. It appears to be the upper-bound or ideal performance.

* **Data Points:**

* At ~10k tokens: Accuracy ≈ 0.78

* At ~25k tokens: Accuracy ≈ 0.835

* At ~50k tokens: Accuracy ≈ 0.855

* At ~75k tokens: Accuracy ≈ 0.875

* At ~100k tokens: Accuracy ≈ 0.885

* At ~125k tokens: Accuracy ≈ 0.895

* At ~150k tokens: Accuracy ≈ 0.902 (highest point on the chart)

2. **short-3@k (Ours) [Teal Line, Diamond Markers]:**

* **Trend:** This is the second-best performing method. It follows a similar curve to `pass@k (Oracle)` but consistently below it. The gap between this line and the oracle line narrows slightly as compute increases.

* **Data Points:**

* At ~10k tokens: Accuracy ≈ 0.78

* At ~25k tokens: Accuracy ≈ 0.818

* At ~50k tokens: Accuracy ≈ 0.848

* At ~75k tokens: Accuracy ≈ 0.870

* At ~100k tokens: Accuracy ≈ 0.878

* At ~125k tokens: Accuracy ≈ 0.885

* At ~150k tokens: Accuracy ≈ 0.890

3. **short-1@k (Ours) [Light Blue Line, Square Markers]:**

* **Trend:** This method improves rapidly at very low compute but then plateaus much earlier than the others. After approximately 75k tokens, its accuracy gains become negligible, and it is overtaken by `majority@k`.

* **Data Points:**

* At ~10k tokens: Accuracy ≈ 0.78

* At ~25k tokens: Accuracy ≈ 0.818 (similar to `short-3@k` at this point)

* At ~50k tokens: Accuracy ≈ 0.838

* At ~75k tokens: Accuracy ≈ 0.845

* At ~100k tokens: Accuracy ≈ 0.848

* At ~125k tokens: Accuracy ≈ 0.848 (plateau)

* At ~150k tokens: Accuracy ≈ 0.848 (plateau)

4. **majority@k [Dark Red Line, Circle Markers]:**

* **Trend:** This method starts with the lowest accuracy at low compute but shows steady, nearly linear improvement. It surpasses the plateaued `short-1@k` method at around 110k tokens and continues to climb.

* **Data Points:**

* At ~10k tokens: Accuracy ≈ 0.78

* At ~25k tokens: Accuracy ≈ 0.795

* At ~50k tokens: Accuracy ≈ 0.815

* At ~75k tokens: Accuracy ≈ 0.828

* At ~100k tokens: Accuracy ≈ 0.838

* At ~125k tokens: Accuracy ≈ 0.854

* At ~150k tokens: Accuracy ≈ 0.860

* At ~180k tokens (estimated): Accuracy ≈ 0.870

### Key Observations

1. **Performance Hierarchy:** A clear performance hierarchy is established: `pass@k (Oracle)` > `short-3@k (Ours)` > `majority@k` > `short-1@k (Ours)` at high compute levels (>110k tokens).

2. **Diminishing Returns:** All methods show diminishing returns (the slope of the curve decreases), but the point of severe plateauing varies. `short-1@k` plateaus earliest and most dramatically.

3. **Crossover Point:** A significant crossover occurs between `majority@k` and `short-1@k` at approximately 110k thinking tokens, where `majority@k` becomes the more accurate method despite starting lower.

4. **Oracle Gap:** The gap between the best proposed method (`short-3@k`) and the oracle (`pass@k`) remains relatively constant (≈0.01-0.015 accuracy points) across most of the compute range, suggesting a consistent performance ceiling.

5. **Low-Compute Similarity:** At the lowest compute point (~10k tokens), all four methods start at nearly the same accuracy (≈0.78), indicating that with minimal "thinking," the method choice is less impactful.

### Interpretation

This chart demonstrates the trade-off between computational cost ("Thinking Compute") and performance (Accuracy) for different reasoning or generation strategies in an AI system.

* **What the data suggests:** The `pass@k (Oracle)` line likely represents an idealized upper bound, perhaps achieved by having perfect knowledge of which of `k` generated samples is correct. The proposed methods, `short-1@k` and `short-3@k`, are practical attempts to approach this oracle performance. `short-3@k` is significantly more effective than `short-1@k`, suggesting that allowing for or considering more diverse or longer "short" reasoning paths (3 vs. 1) yields better results.

* **Relationship between elements:** The `majority@k` method, which likely selects the most common answer among `k` samples, serves as a strong baseline. Its steady climb shows that simple aggregation benefits consistently from more compute. The fact that `short-3@k` outperforms it indicates that the "short" methods are doing more than just aggregation; they are likely leveraging the compute to generate higher-quality individual samples.

* **Notable Anomalies/Insights:** The early plateau of `short-1@k` is critical. It implies that this method exhausts its ability to improve with more compute relatively quickly. In contrast, `short-3@k` and `majority@k` continue to scale, making them more suitable for scenarios where high compute budgets are available. The chart argues for the efficacy of the `short-3@k` approach, as it provides the best practical performance, closest to the oracle, across a wide range of compute budgets.

</details>

(d) R1-670B

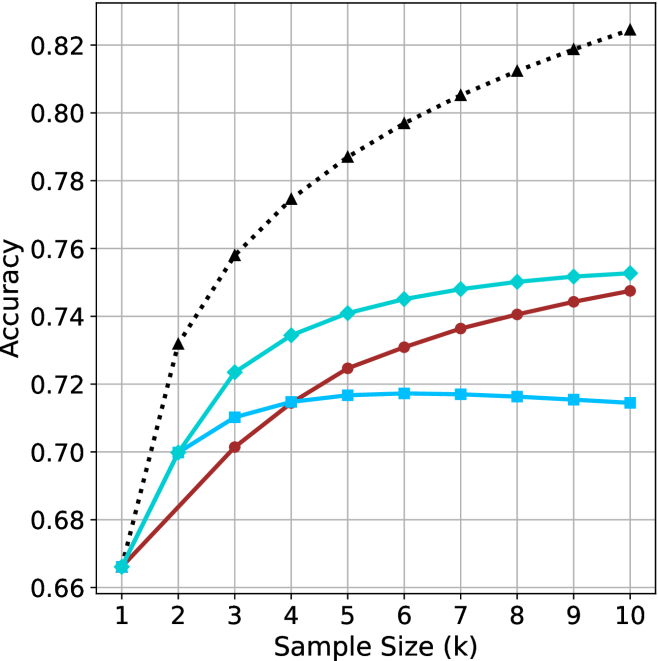

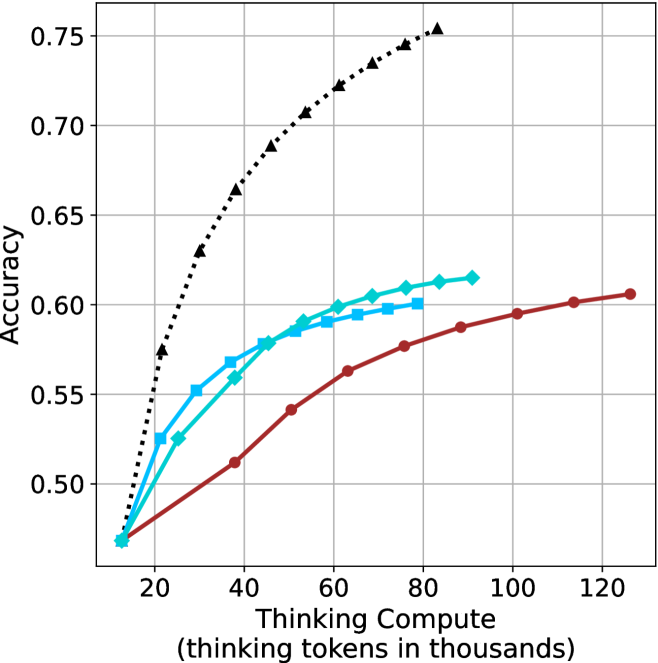

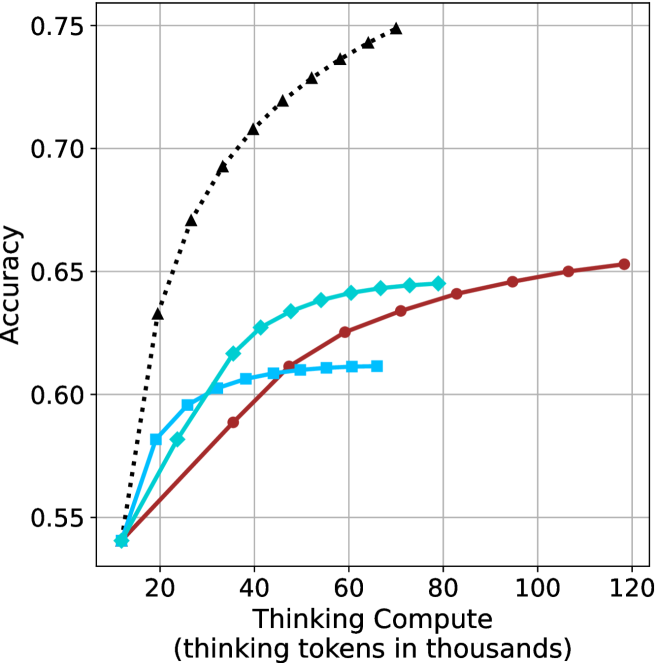

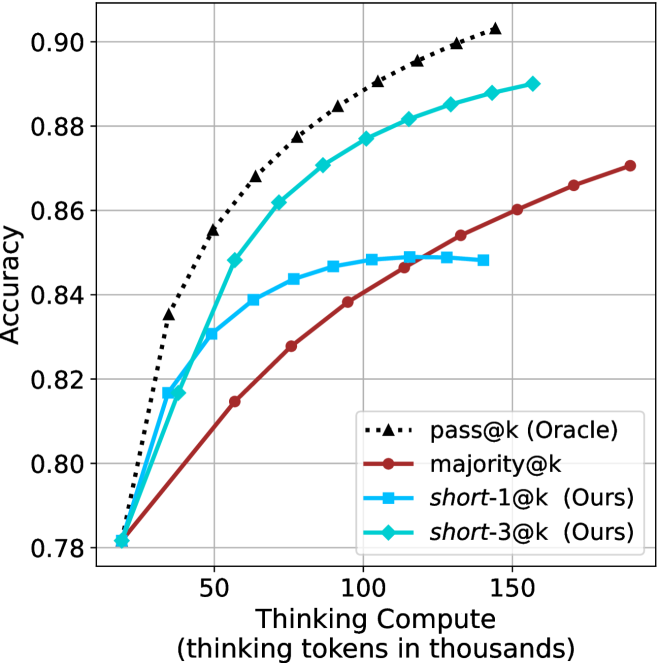

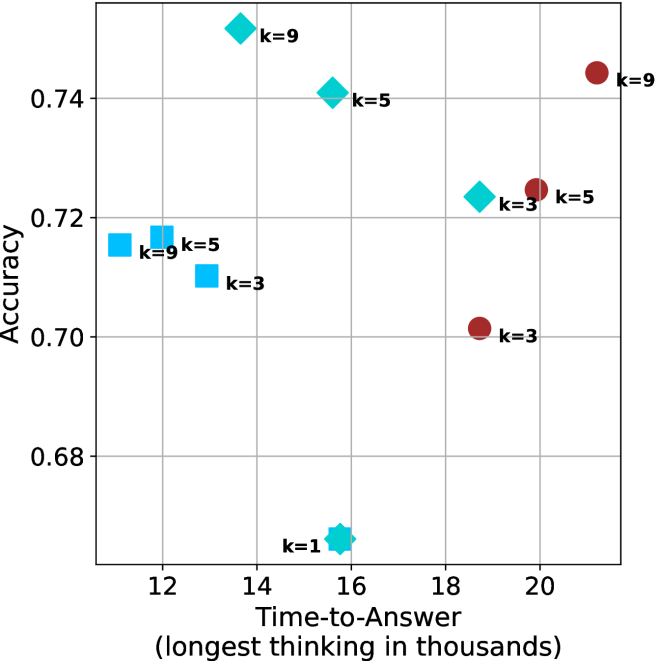

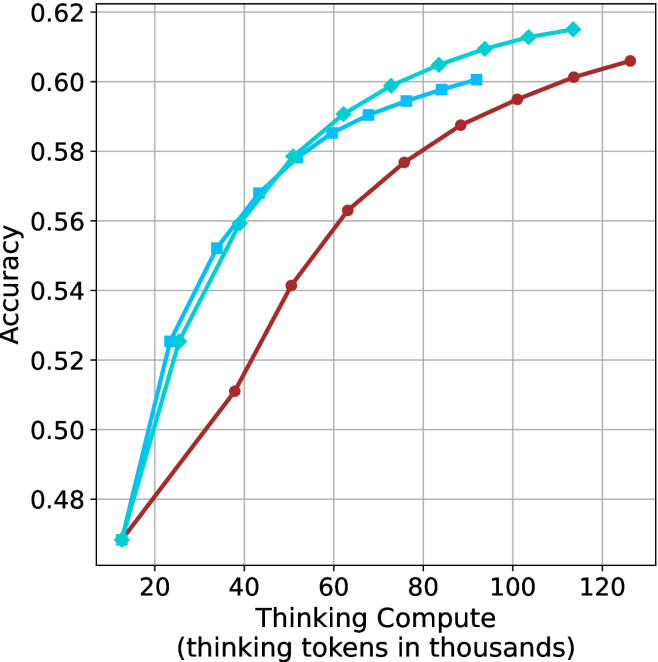

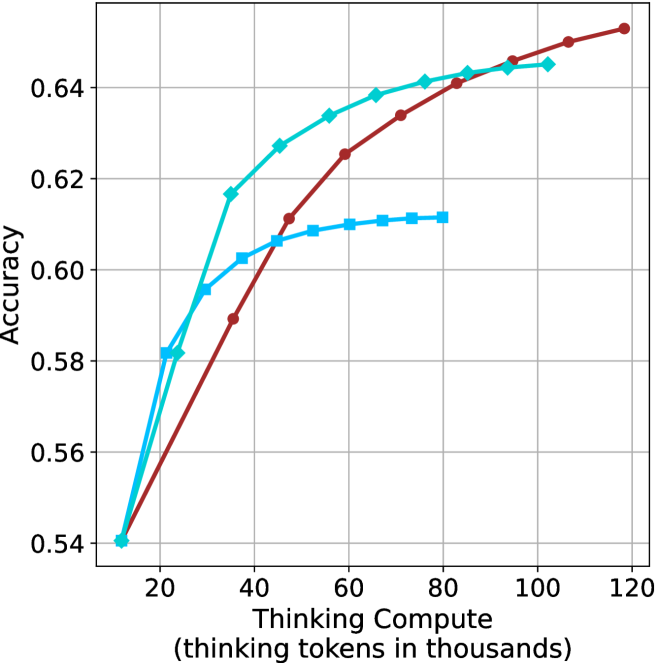

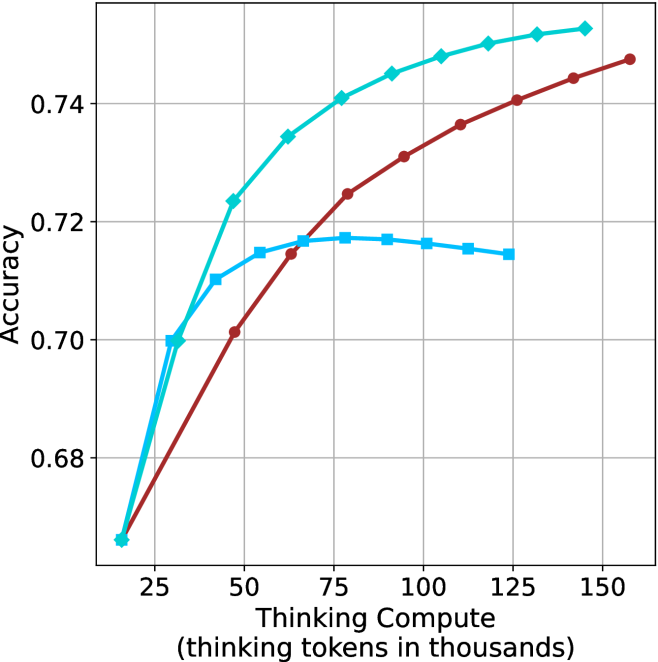

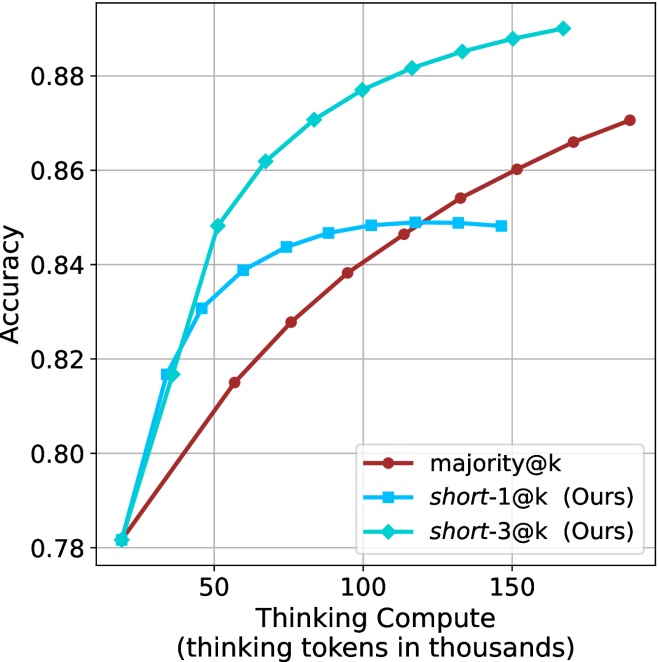

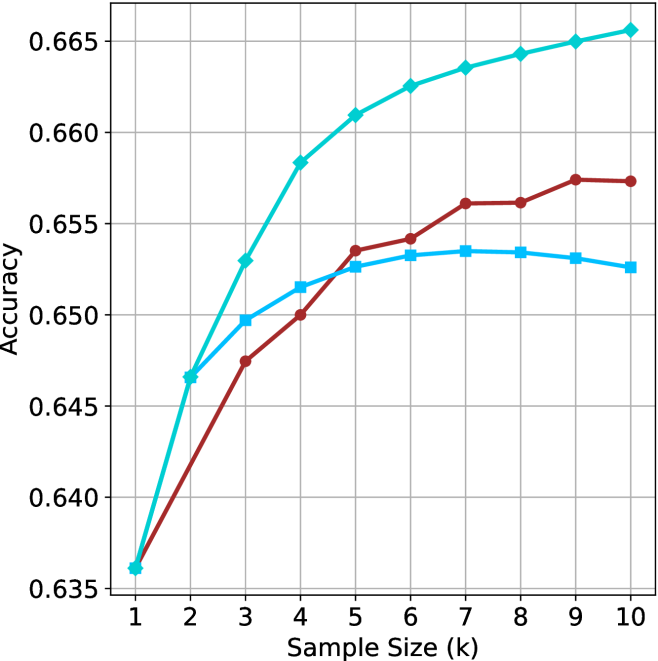

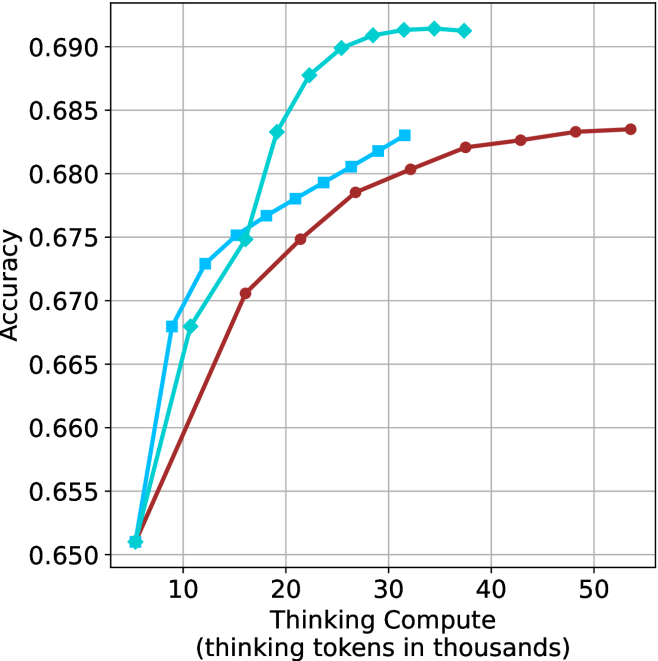

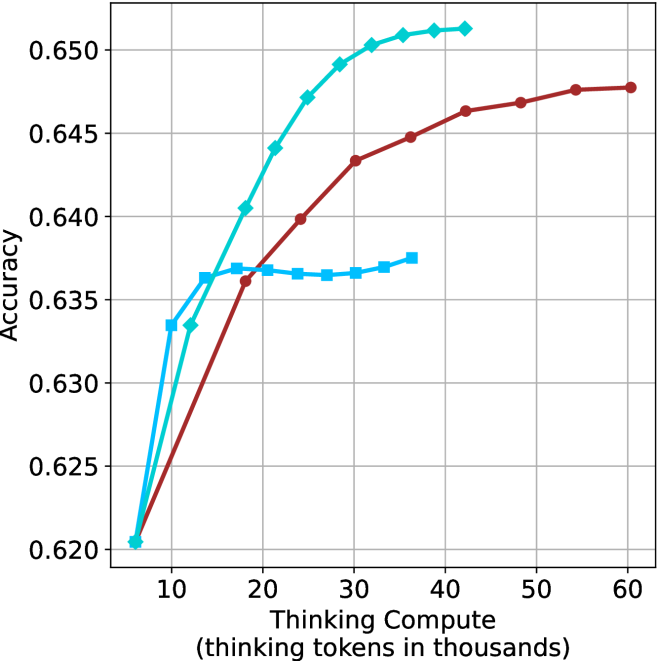

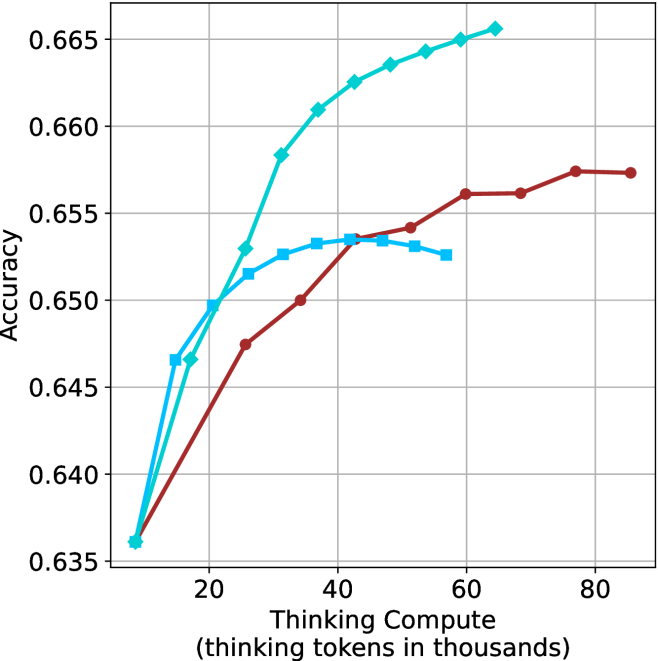

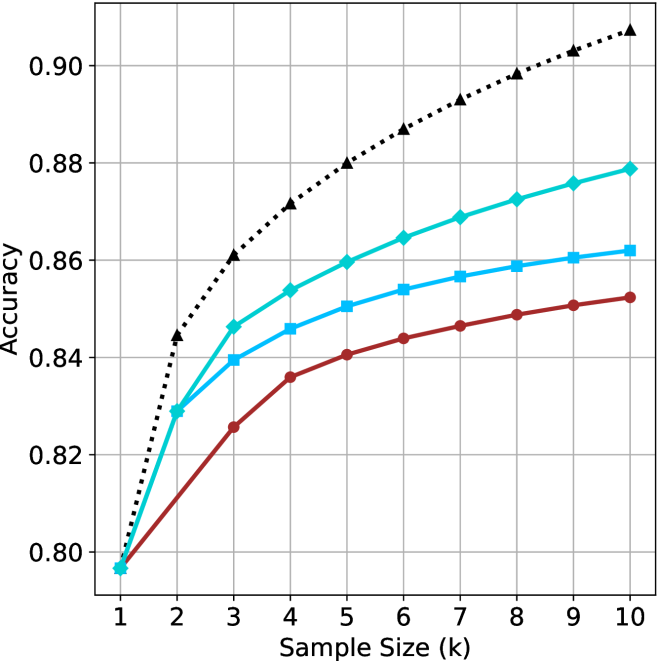

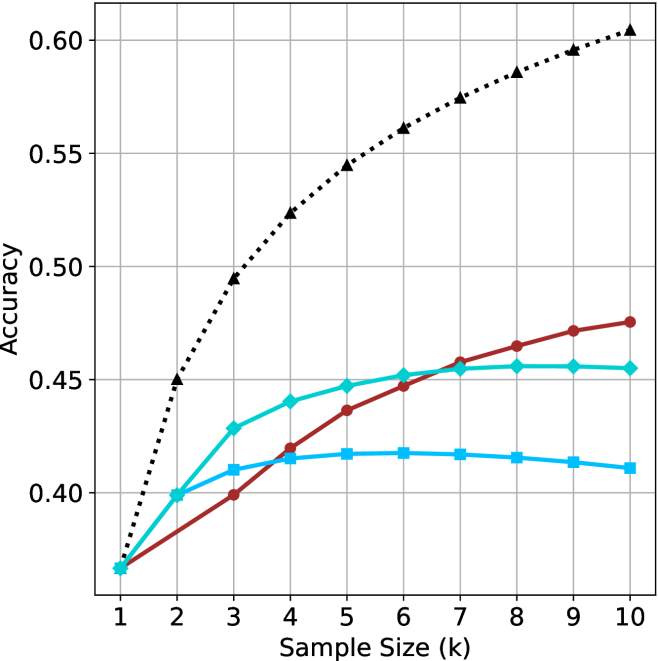

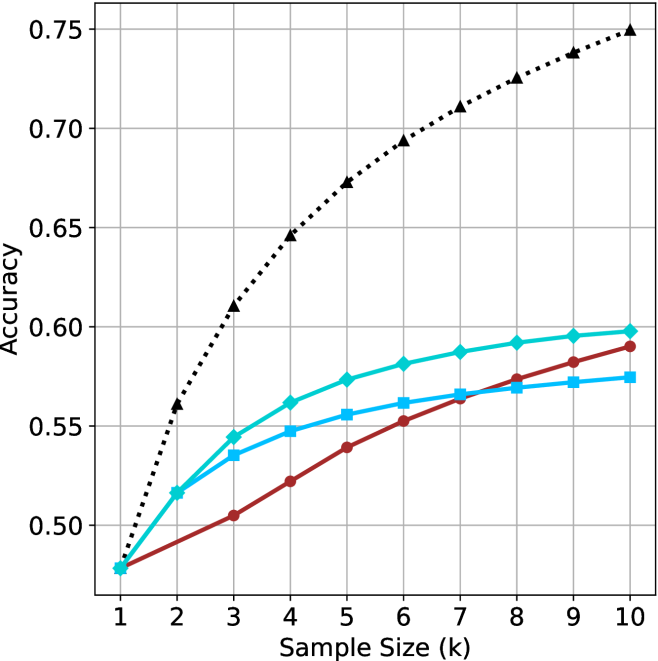

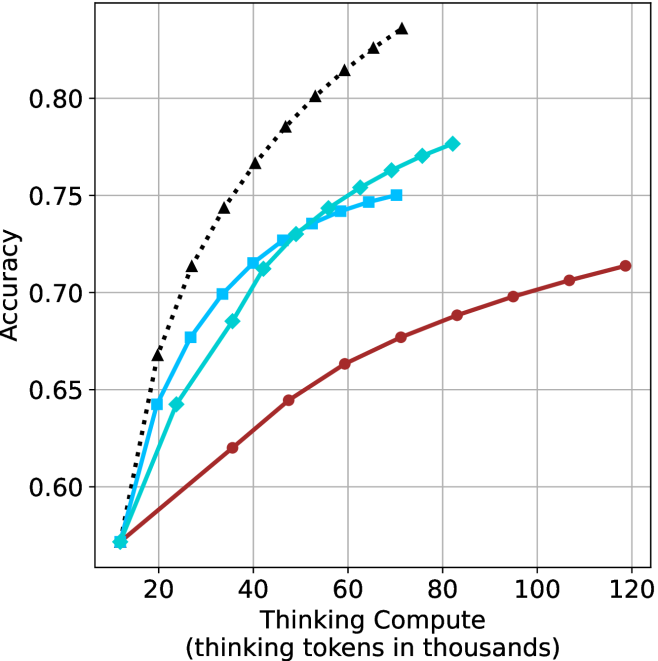

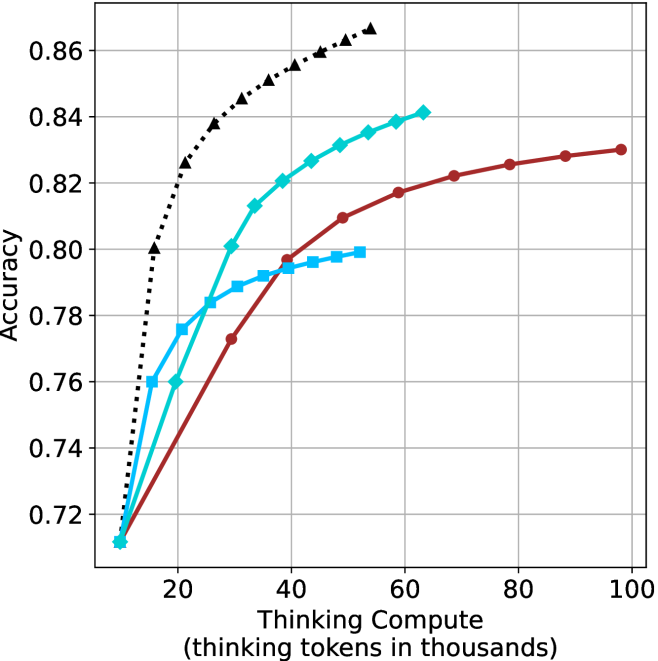

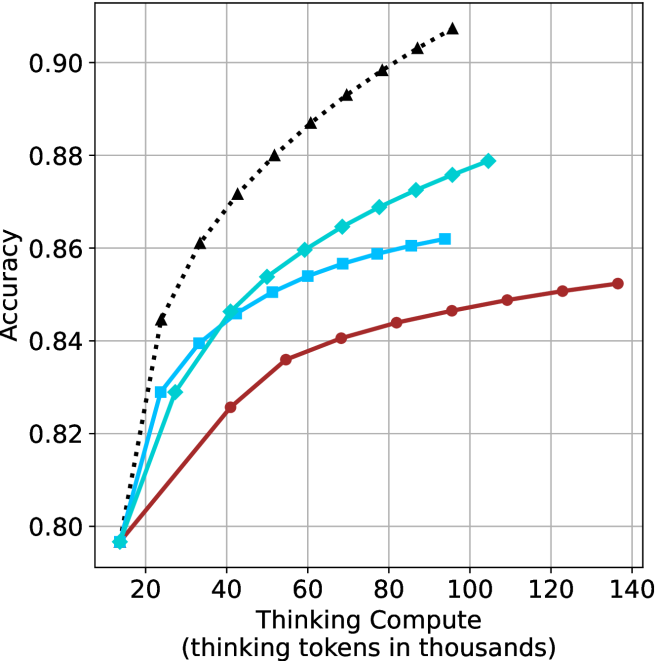

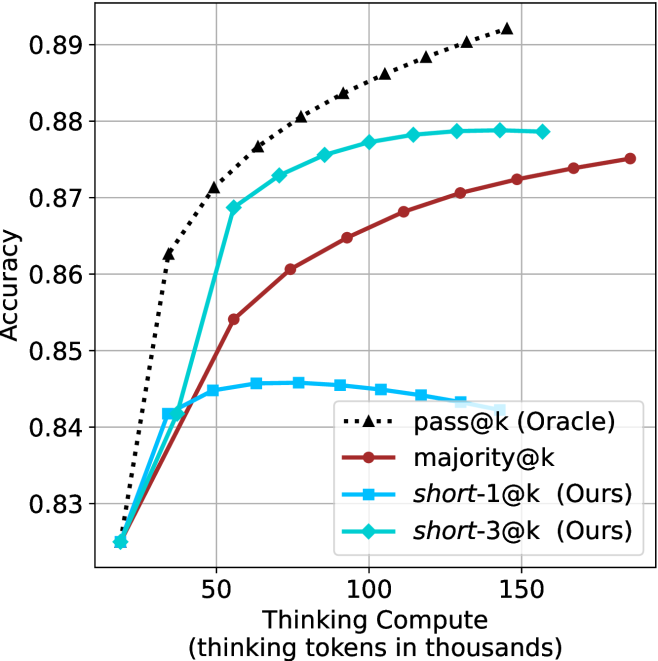

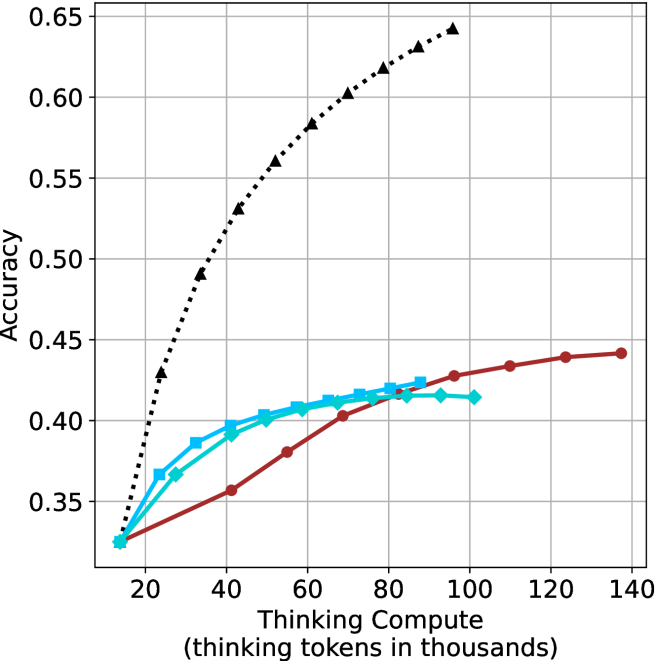

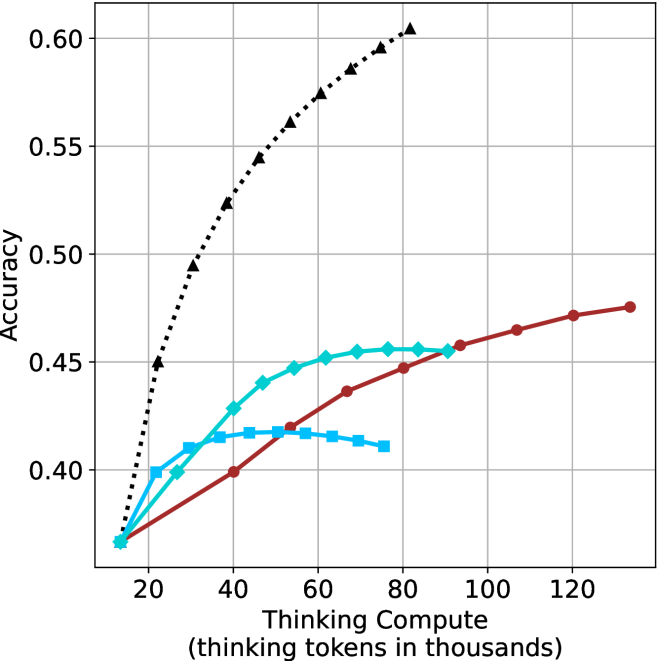

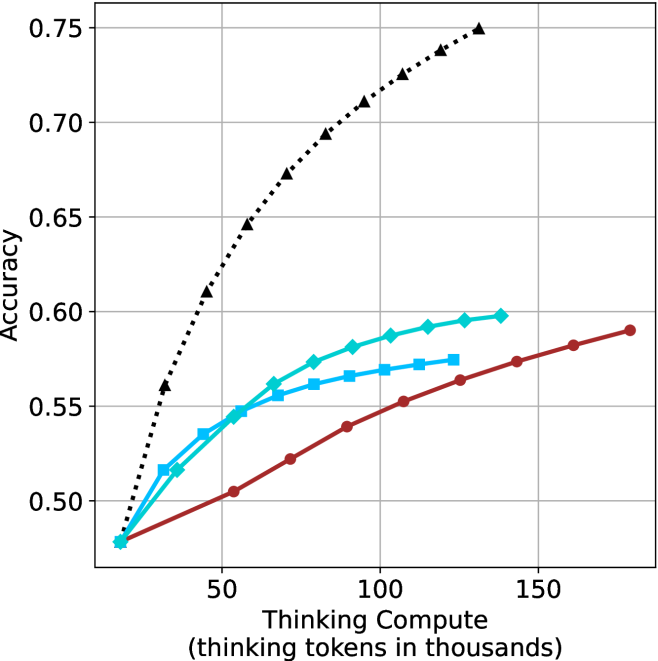

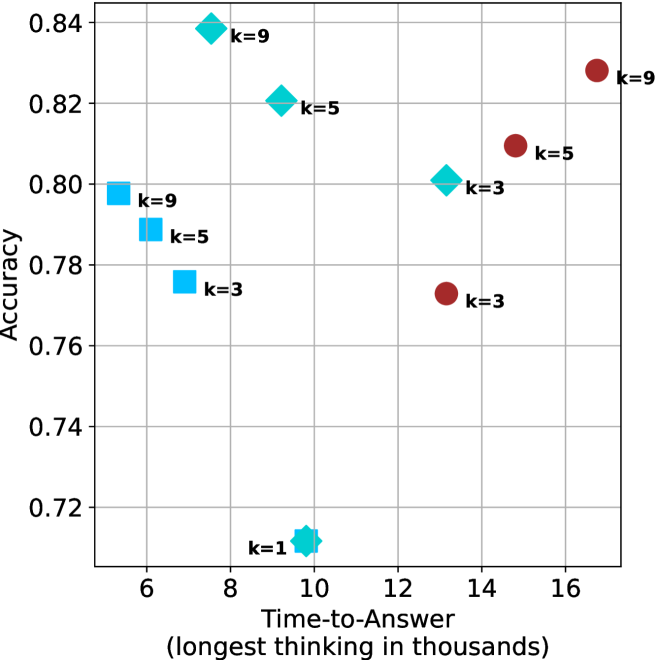

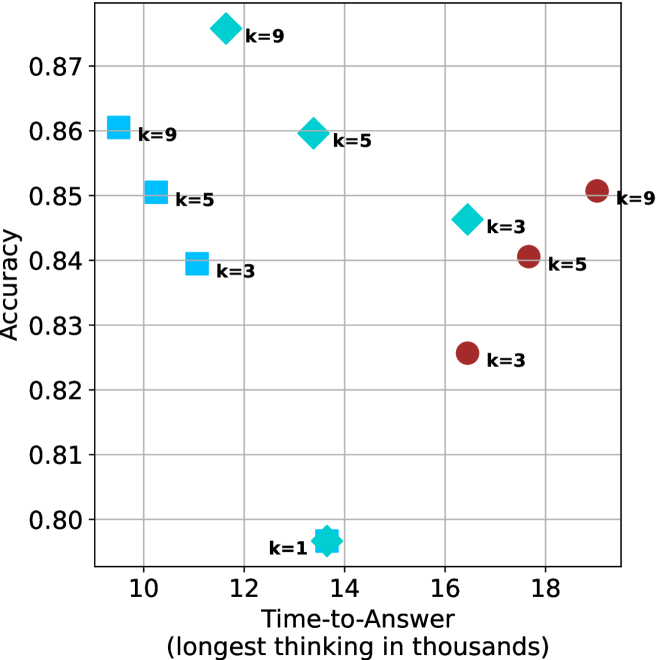

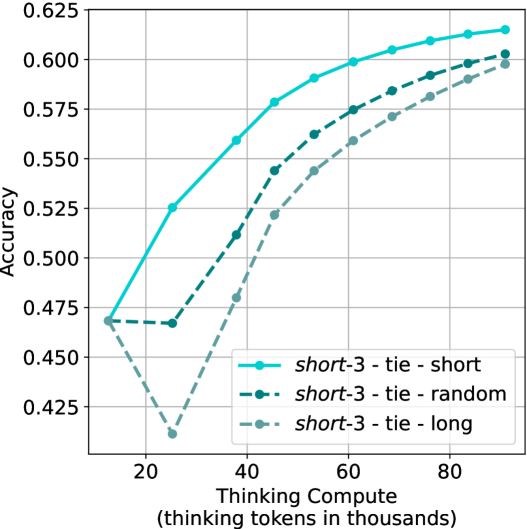

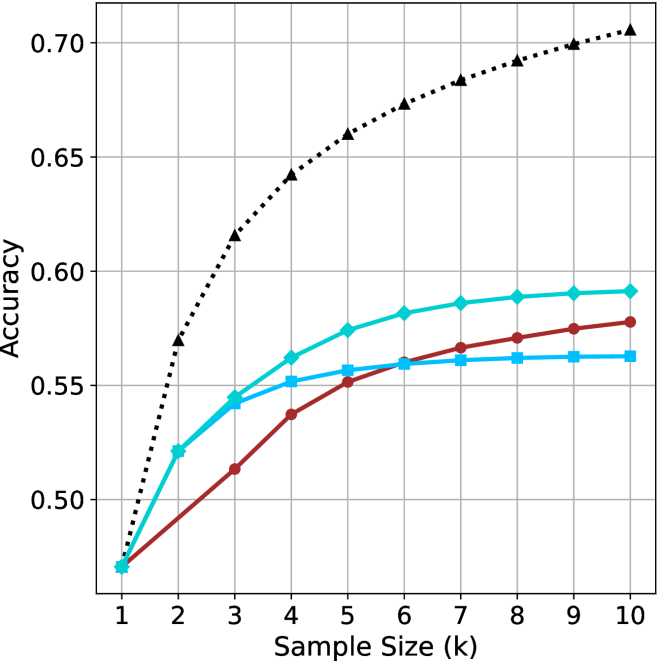

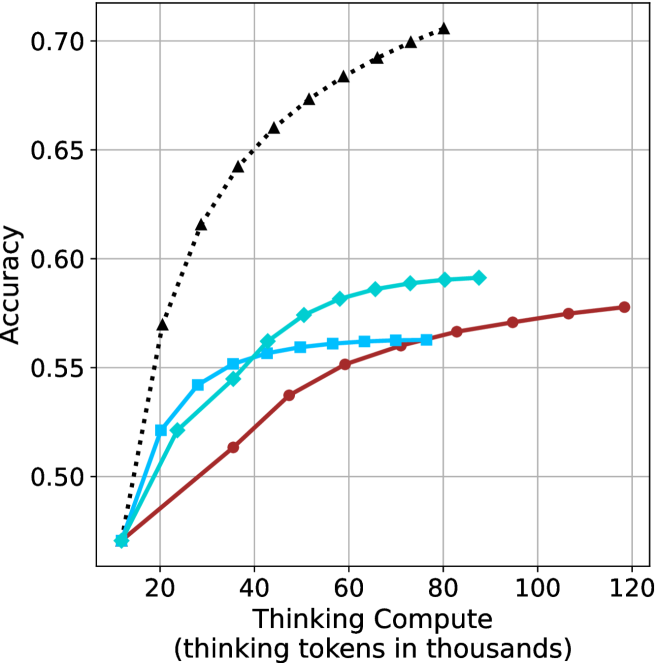

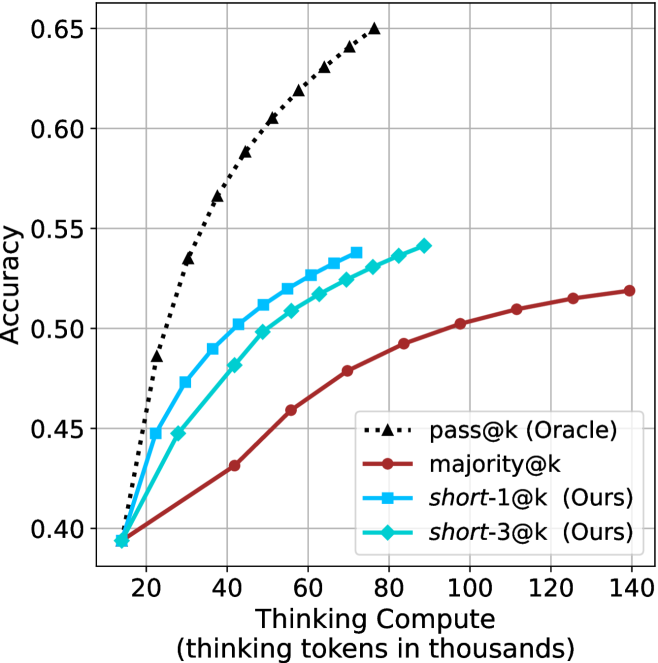

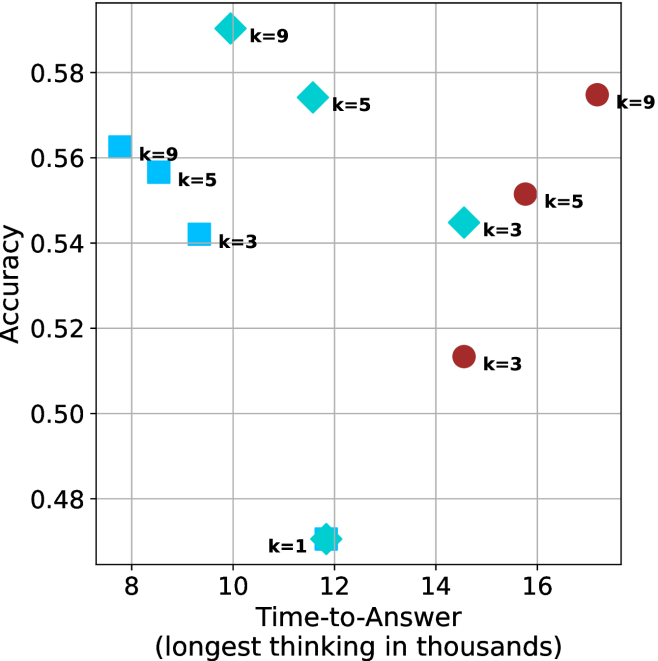

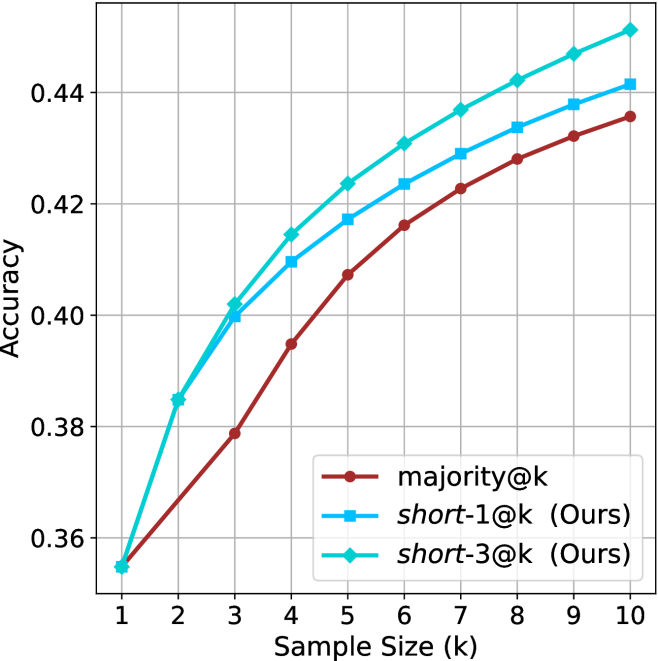

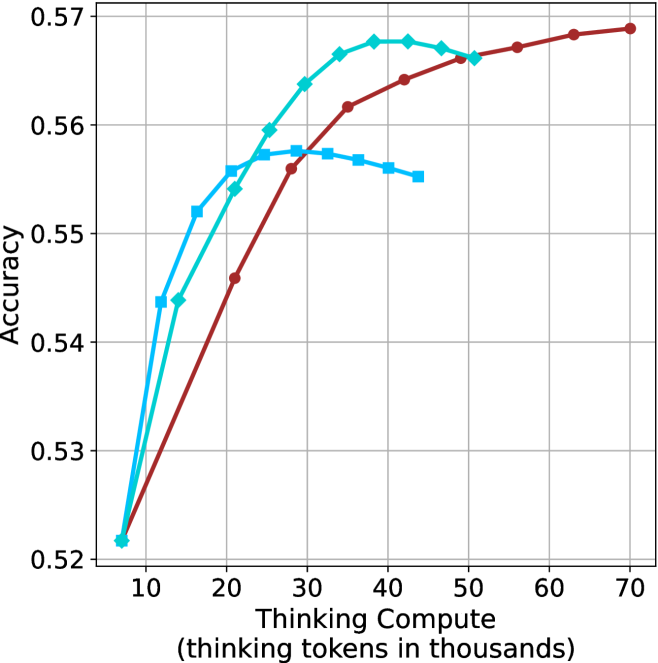

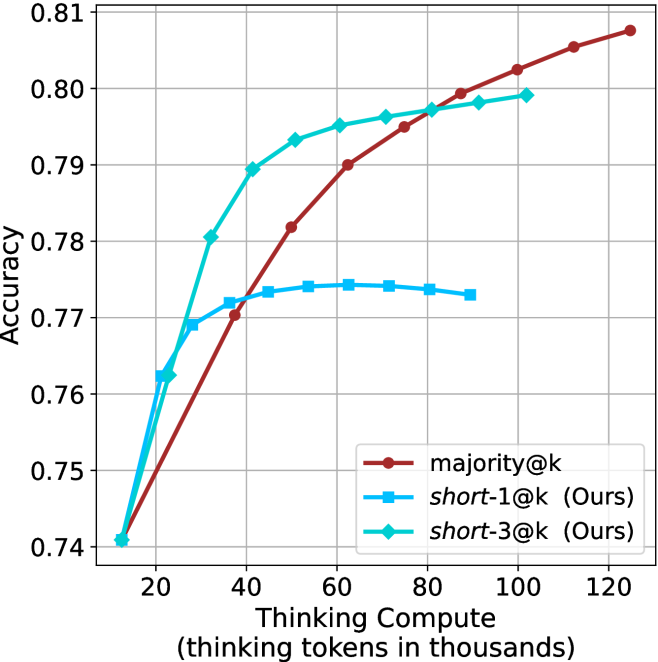

Figure 3: Comparing different inference methods under controlled thinking compute. short-1@k is highly performant in low compute regimes. short-3@k dominates the curve compared to majority $@k$ .

Sample-size ( $k$ ).

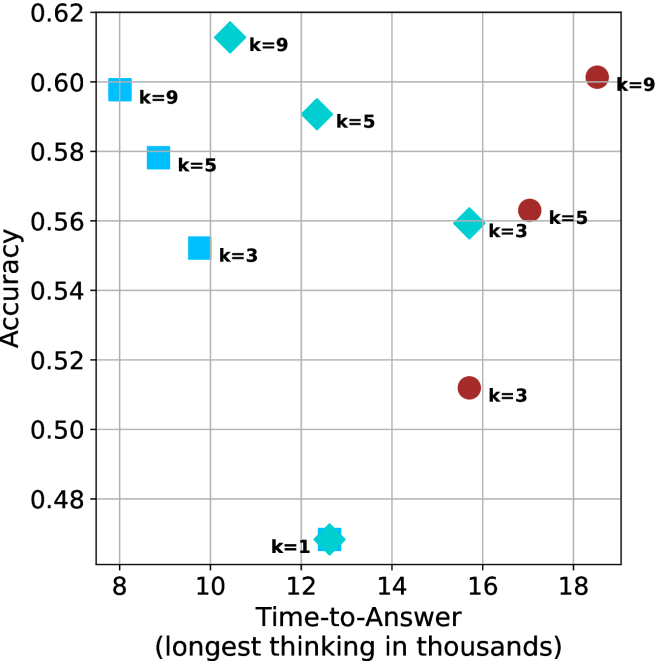

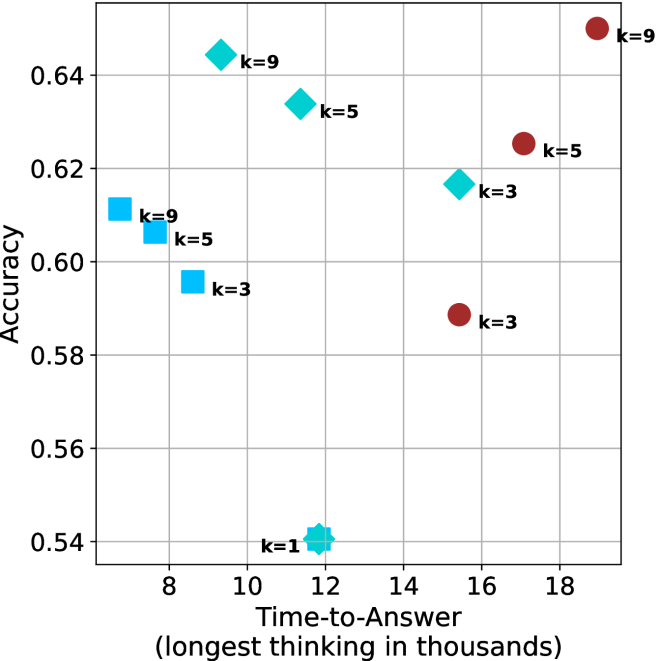

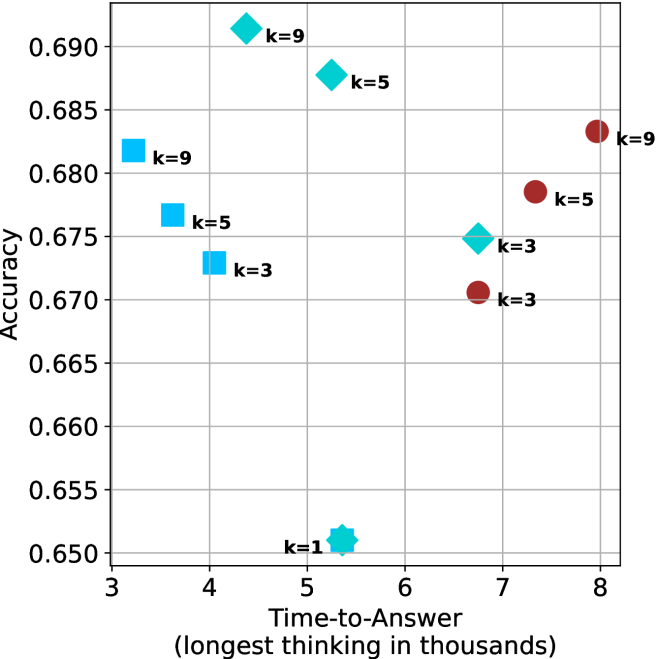

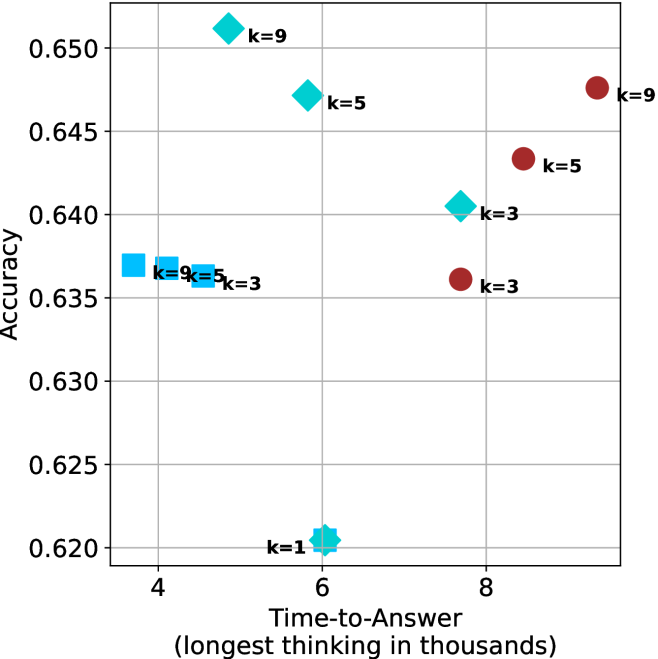

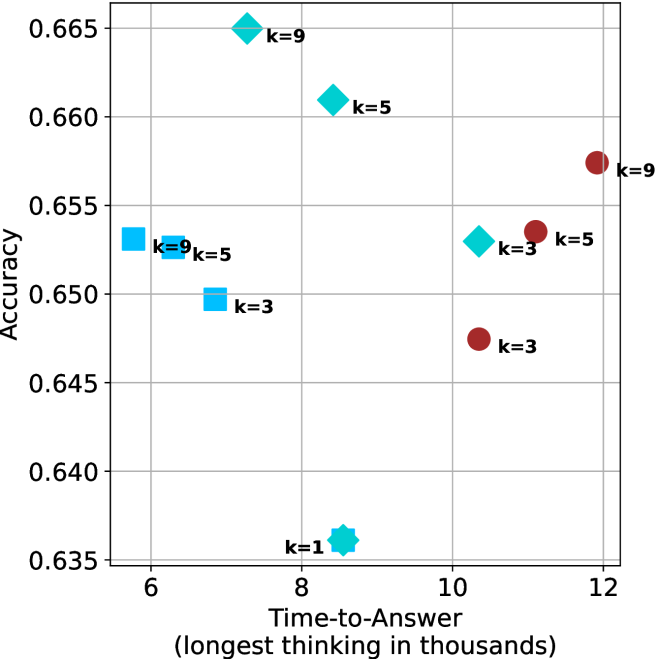

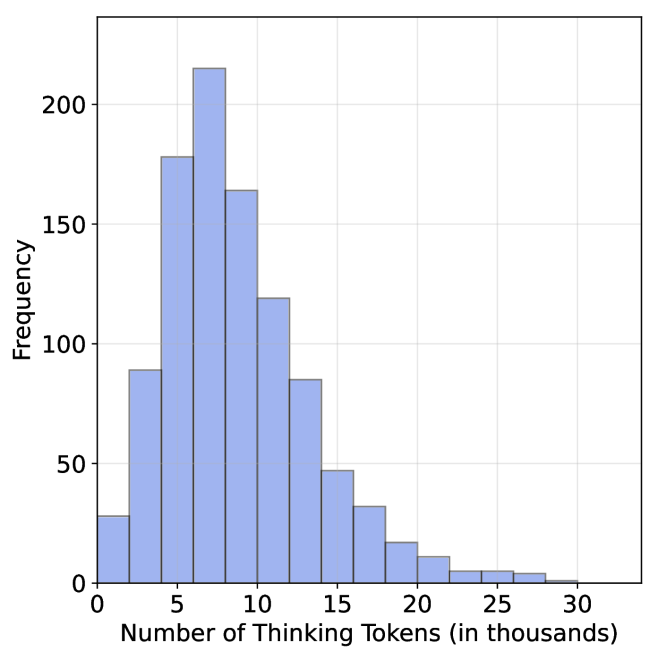

We start by examining different methods across benchmarks for a fixed sample size $k$ . Results aggregated across math benchmarks are presented in Figure ˜ 2, while Figure ˜ 6 in Appendix ˜ A presents GPQA-D results, and detailed results per benchmark can be seen at Appendix ˜ B. We observe that, generally, all methods improve with larger sample sizes, indicating that increased generations per question enhance performance. This trend is somewhat expected for the oracle (pass@ $k$ ) and majority@ $k$ methods but surprising for our method, as it means that even when a large amount of generations is used, the shorter thinking ones are more likely to be correct. The only exception is QwQ- $32$ B (Figure ˜ 2(c)), which shows a small of decline when considering larger sample sizes with the short-1@k method.

When comparing short-1@k to majority@ $k$ , the former outperforms at smaller sample sizes, but is outperformed by the latter in three out of four models when the sample size increases. Meanwhile, the short-3@k method demonstrates superior performance, dominating across nearly all models and sample sizes. Notably, for the R $1$ - $670$ B model, short-3@k exhibits performance nearly on par with the oracle across all sample sizes. We next analyze how this performance advantage translates into efficiency benefits.

Thinking-compute.

The aggregated performance results for math benchmarks, evaluated with respect to thinking compute, are presented in Figure ˜ 3 (per-benchmark results provided in Appendix ˜ B), while the GPQA-D respective results are presented in Figure ˜ 7 in Appendix ˜ A. We again observe that the short-1@k method outperforms majority $@k$ at lower compute budgets. Notably, for LN-Super- $49$ B (Figure ˜ 3(a)), the short-1@k method surpasses majority $@k$ across all compute budgets. For instance, short-1@k achieves $57\$ accuracy with approximately $60\$ of the compute budget used by majority@ $k$ to achieve the same accuracy. For R $1$ - $32$ B, QwQ- $32$ B and R $1$ - $670$ B models, the short-1@k method exceeds majority $@k$ up to compute budgets of $45$ k, $60$ k and $100$ k total thinking tokens, respectively, but is underperformed by it on larger compute budgets.

The short-3@k method yields even greater performance improvements, incurring only a modest increase in thinking compute compared to short-1@k. When compared to majority $@k$ , short-3@k consistently achieves higher performance with lower thinking compute across all models and compute budgets. For example, with the QwQ- $32$ B model (Figure ˜ 3(c)), and an average compute budget of $80$ k thinking tokens per example, short-3@k improves accuracy by $2\$ over majority@ $k$ . For the R $1$ - $670$ B model (Figure ˜ 3(d)), short-3@k consistently outperforms majority voting, yielding an approximate $4\$ improvement with an average token budget of $100$ k.

<details>

<summary>x10.png Details</summary>

### Visual Description

## Scatter Plot: Accuracy vs. Time-to-Answer for Different Methods and k-values

### Overview