# Don’t Overthink it. Preferring Shorter Thinking Chains for Improved LLM Reasoning

## Abstract

Reasoning large language models (LLMs) heavily rely on scaling test-time compute to perform complex reasoning tasks by generating extensive “thinking” chains. While demonstrating impressive results, this approach incurs significant computational costs and inference time. In this work, we challenge the assumption that long thinking chains results in better reasoning capabilities. We first demonstrate that shorter reasoning chains within individual questions are significantly more likely to yield correct answers—up to $34.5\$ more accurate than the longest chain sampled for the same question. Based on these results, we suggest short-m@k, a novel reasoning LLM inference method. Our method executes $k$ independent generations in parallel and halts computation once the first $m$ thinking processes are done. The final answer is chosen using majority voting among these $m$ chains. Basic short-1@k demonstrates similar or even superior performance over standard majority voting in low-compute settings—using up to $40\$ fewer thinking tokens. short-3@k, while slightly less efficient than short-1@k, consistently surpasses majority voting across all compute budgets, while still being substantially faster (up to $33\$ wall time reduction). To further validate our findings, we finetune LLMs using short, long, and randomly selected reasoning chains. We then observe that training on the shorter ones leads to better performance. Our findings suggest rethinking current methods of test-time compute in reasoning LLMs, emphasizing that longer “thinking” does not necessarily translate to improved performance and can, counter-intuitively, lead to degraded results.

## 1 Introduction

Scaling test-time compute has been shown to be an effective strategy for improving the performance of reasoning LLMs on complex reasoning tasks (OpenAI, 2024; 2025; Team, 2025b). This method involves generating extensive thinking —very long sequences of tokens that contain enhanced reasoning trajectories, ultimately yielding more accurate solutions. Prior work has argued that longer model responses result in enhanced reasoning capabilities (Guo et al., 2025; Muennighoff et al., 2025; Anthropic, 2025). However, generating such long-sequences also leads to high computational cost and slow decoding time due to the autoregressive nature of LLMs.

In this work, we demonstrate that scaling test-time compute does not necessarily improve model performance as previously thought. We start with a somewhat surprising observation. We take four leading reasoning LLMs, and for each generate multiple answers to each question in four complex reasoning benchmarks. We then observe that taking the shortest answer for each question strongly and consistently outperforms both a strategy that selects a random answer (up to $18.8\$ gap) and one that selects the longest answer (up to $34.5\$ gap). These performance gaps are on top of the natural reduction in sequence length—the shortest chains are $50\$ and $67\$ shorter than the random and longest chains, respectively.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Comparison Diagram: Method Performance Analysis

### Overview

The image compares two methods ("majority@k" and "short-1@k (ours)") through a visual representation of their reasoning processes and final answers. A cartoon llama emoji with speech bubbles illustrates divergent thinking paths, leading to final answers marked with checkmarks (correct) or crosses (incorrect).

### Components/Axes

- **Methods**:

- `majority@k` (red text)

- `short-1@k (ours)` (blue text)

- **Thought Process**: Speech bubbles containing intermediate answers (52, 49, 33)

- **Final Answers**:

- `majority@k`: 52 (marked with red cross)

- `short-1@k`: 49 (marked with green checkmark)

- **Visual Elements**:

- Llama emoji with glasses and speech bubbles

- Arrows connecting thought bubbles to final answers

- Color-coded correctness indicators (green checkmark, red cross)

### Detailed Analysis

1. **majority@k Path**:

- Three thought bubbles show conflicting answers: 52, 49, and 33.

- Final answer converges to 52 (incorrect, marked with red cross).

- Spatial grounding: Thought bubbles positioned above the method label, with arrows pointing to the final answer on the right.

2. **short-1@k Path**:

- Two thought bubbles show intermediate answers: 49 (correct) and "Terminated thinking" (abandoned paths).

- Final answer converges to 49 (correct, marked with green checkmark).

- Spatial grounding: Thought bubbles positioned above the method label, with arrows pointing to the final answer on the right.

3. **Textual Content**:

- Question: "Find the sum of all positive integers n such that n+2 divides the product 3(n+3)(n+9)."

- Final answers: 52 (incorrect) vs. 49 (correct).

### Key Observations

- The `short-1@k` method demonstrates higher accuracy, terminating early on the correct answer (49) rather than exploring multiple conflicting hypotheses.

- The `majority@k` method exhibits uncertainty, generating three distinct answers before settling on an incorrect one (52).

- Color coding (green checkmark vs. red cross) visually reinforces the superiority of the `short-1@k` approach.

### Interpretation

This diagram illustrates a critical comparison between heuristic methods in problem-solving:

1. **Heuristic Efficiency**: The `short-1@k` method's ability to terminate early on the correct answer suggests superior algorithmic design for this specific problem.

2. **Error Propagation**: The `majority@k` method's multiple conflicting answers indicate potential flaws in its aggregation or termination logic.

3. **Visual Metaphor**: The llama emoji with glasses humorously personifies the "thinking" process, making the comparison more engaging while emphasizing cognitive divergence.

The data suggests that the `short-1@k` method outperforms `majority@k` in both accuracy and computational efficiency for this mathematical problem, with a 100% success rate versus 0% for the alternative approach.

</details>

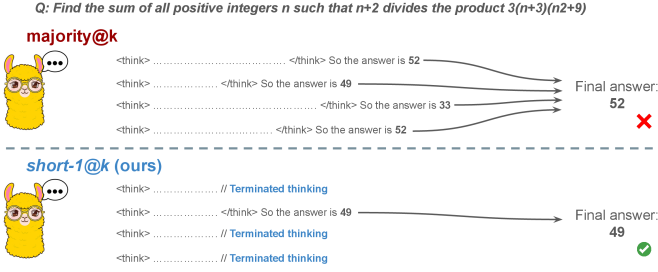

Figure 1: Visual comparison between majority voting and our proposed method short-m@k with $m=1$ (“…” represent thinking time). Given $k$ parallel attempts for the same question, majority@ $k$ waits until all attempts are done, and perform majority voting among them. On the other hand, our short-m@k method halts computation for all attempts as soon as the first $m$ attempts finish “thinking”, which saves compute and time, and surprisingly also boost performance in most cases.

Building on these findings, we propose short-m@k —a novel inference method for reasoning LLMs. short-m@k executes $k$ generations in parallel and terminates computation for all generations as soon as the first $m$ thinking processes are completed. The final answer is then selected via majority voting among those shortest chains, where ties are broken by taking the shortest answer among the tied candidates. See Figure ˜ 1 for visualization.

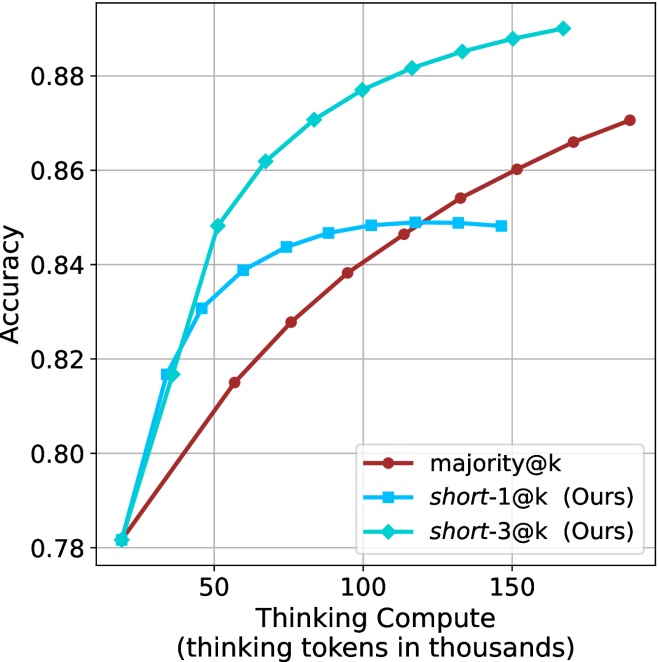

We evaluate short-m@k using six reasoning LLMs, and compare it to majority voting—the most common aggregation method for evaluating reasoning LLMs on complex benchmarks (Wang et al., 2022; Abdin et al., 2025). We show that in low-compute regimes, short-1@k, i.e., taking the single shortest chain, outperforms majority voting, while significantly reducing the time and compute needed to generate the final answer. For example, using LN-Super- $49$ B (Bercovich and others, 2025), short-1@k can reduce up to $40\$ of the compute while giving the same performance as majority voting. Moreover, for high-compute regimes, short-3@k, which halts generation after three thinking chains are completed, consistently outperforms majority voting across all compute budgets, while running up to $33\$ faster.

To gain further insights into the underlining mechanism of why shorter thinking is preferable, we analyze the generated reasoning chains. We first show that while taking the shorter reasoning is beneficial per individual question, longer reasoning is still needed to solve harder questions, as claimed in recent studies (Anthropic, 2025; OpenAI, 2024; Muennighoff et al., 2025). Next, we analyze the backtracking and re-thinking behaviors of reasoning chains. We find that shorter reasoning paths are more effective, as they involve fewer backtracks, with a longer average backtrack length. This finding holds both generally and when controlling for overall trajectory length.

To further strengthening our findings, we study whether training on short reasoning chains can lead to more accurate models. To do so, we finetune two Qwen- $2.5$ (Yang and others, 2024) models ( $7$ B and $32$ B) on three variants of the S $1$ dataset (Muennighoff et al., 2025): S $1$ -short, S $1$ -long, and S $1$ -random, consisting of examples with the shortest, longest, and randomly sampled reasoning trajectories among several generations, respectively. Our experiments demonstrate that finetuning using S $1$ -short not only yields shorter thinking lengths, but also improves model performance. Conversely, finetuning on S $1$ -long increases reasoning time with no significant performance gains.

This work rethinks the test-time compute paradigm for reasoning LLMs, showing that longer thinking not only does not ensure better reasoning, but also leads to worse reasoning in most cases. Our short-m@k methods prioritize shorter reasoning, yielding improved performance and reduced computational costs for current reasoning LLMs. We also show that training reasoning LLMs with shorter reasoning trajectories can enhance performance and reduce costs. Our results pave the way towards a new era of efficient and high-performing reasoning LLMs.

## 2 Related work

Reasoning LLMs and test-time scaling.

Reasoning LLMs tackle complex tasks by employing extensive reasoning processes, often involving detailed, step-by-step trajectories (OpenAI (2024); OpenAI (2025); Q. Team (2025b); M. Abdin, S. Agarwal, A. Awadallah, V. Balachandran, H. Behl, L. Chen, G. de Rosa, S. Gunasekar, M. Javaheripi, N. Joshi, et al. (2025); Anthropic (2025); A. Bercovich et al. (2025); D. Guo, D. Yang, H. Zhang, J. Song, R. Zhang, R. Xu, Q. Zhu, S. Ma, P. Wang, X. Bi, et al. (2025); 27; G. DeepMind (2025); Q. Team (2025a)). This capability is fundamentally based on techniques like chain-of-thought (CoT; Wei et al., 2022), which encourage models to generate intermediate reasoning steps before arriving at a final answer. Modern LLMs use a large number of tokens, often referred to as “thinking tokens”, to explore multiple problem-solving approaches, to employ self-reflection, and to perform verification. This thinking capability has allowed them to achieve superior performance on challenging tasks such as mathematical problem-solving and code generation (Ke et al., 2025).

The LLM thinking capability is typically achieved through post-training methods applied to a strong base model. The two primary approaches to instilling or improving this reasoning ability are using reinforcement learning (RL) (Guo et al., 2025; Team, 2025b) and supervised fine-tuning (Muennighoff et al., 2025; Ye et al., 2025). Guo et al. (2025) have demonstrated that as training progresses the model tends to generate longer thinking trajectories, which results in improved performance on complex tasks. Similarly, Anthropic (2025) and Muennighoff et al. (2025) have shown a correlation between increased average thinking length during inference and improved performance. We challenge this assumption, demonstrating that shorter sequences are more likely to yield an accurate answer.

Efficiency in reasoning LLMs.

While shortening the length of CoT is beneficial for non-reasoning models (Nayab et al., 2024; Kang et al., 2025), it is higly important for reasoning LLMs as they require a very large amount of tokens to perform the thinking process. As a result, recent studies tried to make the process more efficient, e.g., by using early exit techniques for reasoning trajectories (Pu et al., 2025; Yang et al., 2025), by suppressing backtracks (Wang et al., 2025a) or by training reasoning models which enable control over the thinking length (Yu et al., 2025).

Several recent works studied the relationship between reasoning trajectory length and correctness. Lu et al. (2025) proposed a method for reducing the length of thinking trajectories in reasoning training datasets. Their method employs a reasoning LLM several times over an existing trajectory in order to make it shorter. As this approach eventually trains a model over shorter trajectories it is similar to the method we employ in Section ˜ 6. However, our method is simpler as it does not require an LLM to explicitly shorten the sequence. Fatemi et al. (2025); Qi et al. (2025) and Arora and Zanette (2025) proposed RL methods to shorten reasoning in language models. Fatemi et al. (2025) also observed that correct answers typically require shorter thinking trajectories by averaging lengths across examples, suggesting that lengthy responses might inherently stem from RL-based optimization during training. In Section ˜ 5.1 we show that indeed correct answers usually use shorter thinking trajectories, but also highlight that averaging across all examples might hinder this effect as easier questions require sustainably lower amount of reasoning tokens compared to harder ones.

More relevant to our work, Wu et al. (2025) showed that there is an optimal thinking length range for correct answers according to the difficulty of the question, while Wang et al. (2025b) found that for a specific question, correct responses from reasoning models are usually shorter than incorrect ones. We provide further analysis supporting these observations in Sections ˜ 3 and 5. Finally, our proposed inference method short-m@k is designed to enhance the efficiency of reasoning LLMs by leveraging this property, which can be seen as a generalization of the FFS method (Agarwal et al., 2025), which selects the shortest answer among several candidates as in our short-1@k.

## 3 Shorter thinking is preferable

As mentioned above, the common wisdom in reasoning LLMs suggests that increased test-time computation enhances model performance. Specifically, it is widely assumed that longer reasoning process, which entails extensive reasoning thinking chains, correlates with improved task performance (OpenAI, 2024; Anthropic, 2025; Muennighoff et al., 2025). We challenge this assumption and ask whether generating more tokens per trajectory actually leads to better performance. To that end, we generate multiple answers per question and compare performance based solely on the shortest, longest and randomly sampled thinking chains among the generated samples.

### 3.1 Experimental details

We consider four leading, high-performing, open, reasoning LLMs. Llama- $3.3$ -Nemotron-Super- $49$ B-v $1$ [LN-Super- $49$ B; Bercovich and others, 2025]: a reasoning RL-enhanced version of Llama- $3.3$ - $70$ B (Grattafiori et al., 2024); R $1$ -Distill-Qwen- $32$ B [R $1$ - $32$ B; Guo et al., 2025]: an SFT finetuned version of Qwen- $2.5$ - $32$ B-Instruct (Yang and others, 2024) derived from R $1$ trajectories; QwQ- $32$ B a reasoning RL-enhanced version Qwen- $2.5$ - $32$ B-Instruct (Team, 2025b); and R1- $0528$ a $670$ B RL-trained flagship reasoning model (R $1$ - $670$ B; Guo et al., 2025). We also include results for smaller models in Appendix ˜ D.

We evaluate all models using four competitive reasoning benchmarks. We use AIME $2024$ (of America, 2024), AIME $2025$ (of America, 2025) and HMMT February $2025$ , from the Math Arena benchmark (Balunović et al., 2025). This three benchmarks are derived from math competitions, and involve solving problems that cover a broad range of mathematics topics. Each dataset consists of $30$ examples with varied difficulty. We also evaluate the models using the GPQA-diamond benchmark [GPQA-D; Rein et al., 2024], which consists of $198$ multiple-choice scientific questions, and is considered to be challenging for reasoning LLMs (DeepMind, 2025).

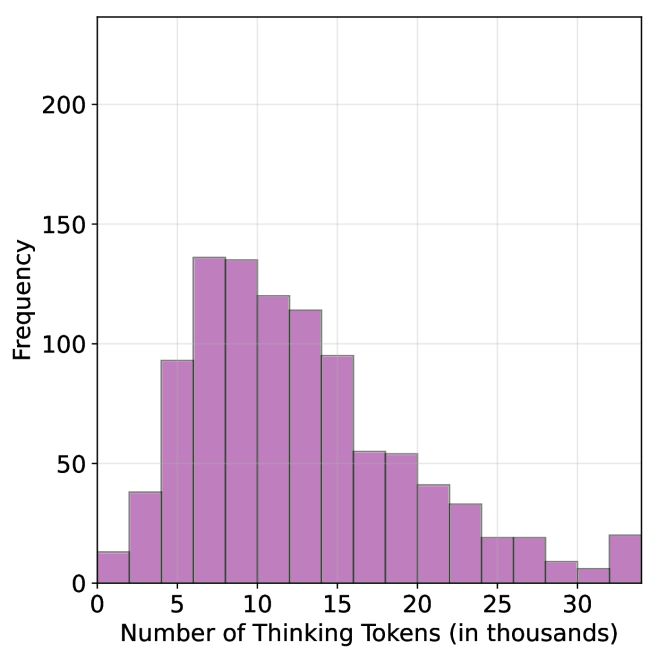

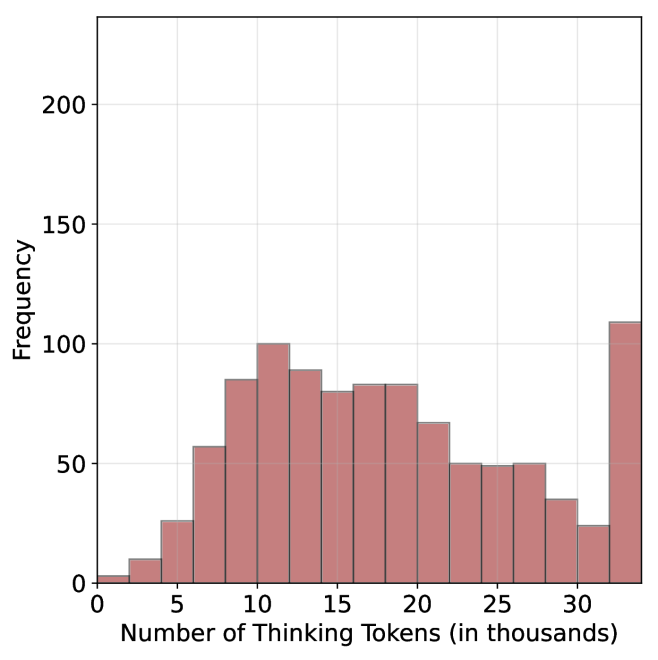

For each question, we generate $20$ responses per model, yielding a total of about $36$ k generations. For all models we use temperature of $0.7$ , top-p= $0.95$ and a maximum number of generated tokens of $32$ , $768$ . When measuring the thinking chain length, we measure the token count between the <think> and </think> tokens. We run inference for all models using paged attention via the vLLM framework (Kwon et al., 2023).

### 3.2 The shorter the better

Table 1: Shorter thinking performs better. Comparison between taking the shortest/longest/random generation per example.

| | GPQA-D | AIME 2024 | AIME 2025 | HMMT | Math Average | | | | | |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

| Thinking Tokens $\downarrow$ | Acc. $\uparrow$ | Thinking Tokens $\downarrow$ | Acc. $\uparrow$ | Thinking Tokens $\downarrow$ | Acc. $\uparrow$ | Thinking Tokens $\downarrow$ | Acc. $\uparrow$ | Thinking Tokens $\downarrow$ | Acc. $\uparrow$ | |

| LN-Super-49B | | | | | | | | | | |

| random | 5357 | 65.1 | 11258 | 58.8 | 12105 | 51.3 | 13445 | 33.0 | 12270 | 47.7 |

| longest | 8763 $(+64\$ | 57.6 | 18566 | 33.3 | 18937 | 30.0 | 19790 | 23.3 | 19098 $(+56\$ | 28.9 |

| shortest | 2790 $(-48\$ | 69.1 | 0 6276 | 76.7 | 0 7036 | 66.7 | 0 7938 | 46.7 | 7083 $(-42\$ | 63.4 |

| R1-32B | | | | | | | | | | |

| random | 5851 | 62.5 | 9614 | 71.8 | 11558 | 56.4 | 12482 | 38.3 | 11218 | 55.5 |

| longest | 9601 $(+64\$ | 57.1 | 17689 | 53.3 | 19883 | 36.7 | 20126 | 23.3 | 19233 $(+71\$ | 37.8 |

| shortest | 3245 $(-45\$ | 64.7 | 0 4562 | 80.0 | 0 6253 | 63.3 | 0 6557 | 36.7 | 5791 $(-48\$ | 60.0 |

| QwQ-32B | | | | | | | | | | |

| random | 8532 | 63.7 | 13093 | 82.0 | 14495 | 72.3 | 16466 | 52.5 | 14685 | 68.9 |

| longest | 12881 $(+51\$ | 54.5 | 20059 | 70.0 | 21278 | 63.3 | 24265 | 36.7 | 21867 $(+49\$ | 56.7 |

| shortest | 5173 $(-39\$ | 64.7 | 0 8655 | 86.7 | 10303 | 66.7 | 11370 | 60.0 | 10109 $(-31\$ | 71.1 |

| R1-670B | | | | | | | | | | |

| random | 11843 | 76.2 | 16862 | 83.8 | 18557 | 82.5 | 21444 | 68.2 | 18954 | 78.2 |

| longest | 17963 $(+52\$ | 63.1 | 22603 | 70.0 | 23570 | 66.7 | 27670 | 40.0 | 24615 $(+30\$ | 58.9 |

| shortest | 8116 $(-31\$ | 75.8 | 11229 | 83.3 | 13244 | 83.3 | 13777 | 83.3 | 12750 $(-33\$ | 83.3 |

For all generated answers, we compare short vs. long thinking chains for the same question, along with a random chain. Results are presented in Table ˜ 1. In this section we exclude generations where thinking is not completed within the maximum generation length, as these often result in an infinite thinking loop. First, as expected, the shortest answers are $25\$ – $50\$ shorter compared to randomly sampled responses. However, we also note that across almost all models and benchmarks, considering the answer with the shortest thinking chain actually boosts performance, yielding an average absolute improvement of $2.2\$ – $15.7\$ on the math benchmarks compared to randomly selected generations. When considering the longest thinking answers among the generations, we further observe an increase in thinking chain length, with up to $75\$ more tokens per chain. These extended reasoning trajectories substantially degrade performance, resulting in average absolute reductions ranging between $5.4\$ – $18.8\$ compared to random generations over all benchmarks. These trends are most noticeable when comparing the shortest generation with the longest ones, with an absolute performance gain of up to $34.5\$ in average accuracy and a substantial drop in the number of thinking tokens.

The above results suggest that long generations might come with a significant price-tag, not only in running time, but also in performance. That is, within an individual example, shorter thinking trajectories are much more likely to be correct. In Section ˜ 5.1 we examine how these results relate to the common assumption that longer trajectories leads to better LLM performance. Next, we propose strategies to leverage these findings to improve the efficiency and effectiveness of reasoning LLMs.

## 4 short-m@k : faster and better inference of reasoning LLMs

Based on the results presented in Section ˜ 3, we suggest a novel inference method for reasoning LLMs. Our method— short-m@k —leverages batch inference of LLMs per question, using multiple parallel decoding runs for the same query. We begin by introducing our method in Section ˜ 4.1. We then describe our evaluation methodology, which takes into account inference compute and running time (Section ˜ 4.2). Finally, we present our results (Section ˜ 4.3).

### 4.1 The short-m@k method

The short-m@k method, visualized in Figure ˜ 1, performs parallel decoding of $k$ generations for a given question, halting computation across all generations as soon as the $m\leq k$ shortest thinking trajectories are completed. It then conducts majority voting among those shortest answers, resolving ties by selecting the answer with the shortest thinking chain. Given that thinking trajectories can be computationally intensive, terminating all generations once the $m$ shortest trajectories are completed not only saves computational resources but also significantly reduces wall time due to the parallel decoding approach, as shown in Section ˜ 4.3.

Below we focus on short-1@k and short-3@k, with short-1@k being the most efficient variant of short-m@k and short-3@k providing the best balance of performance and efficiency (see Section ˜ 4.3). Ablation studies on $m$ and other design choices are presented in Appendix ˜ C, while results for smaller models are presented in Appendix ˜ D.

### 4.2 Evaluation setup

We evaluate all methods under the same setup as described in Section ˜ 3.1. We report the averaged results across the math benchmarks, while the results for GPQA-D presented in Appendix ˜ A. The per benchmark resutls for the math benchmarks are in Appendix ˜ B. We report results using our method (short-m@k) with $m\in\{1,3\}$ . We compare the proposed method to the standard majority voting (majority $@k$ ), arguably the most common method for aggregating multiple outputs (Wang et al., 2022), which was recently adapted for reasoning LLMs (Guo et al., 2025; Abdin et al., 2025; Wang et al., 2025b). As an oracle, we consider pass $@k$ (Kulal et al., 2019; Chen and others, 2021), which measures the probability of including the correct solution within $k$ generated responses.

We benchmark the different methods with sample sizes of $k\in\{1,2,...,10\}$ , assuming standard parallel decoding setup, i.e., all samples are generated in parallel. Section 5.3 presents sequential analysis where parallel decoding is not assumed. For the oracle (pass@ $k$ ) approach, we use the unbiased estimator presented in Chen and others (2021), with our $20$ generations per question ( $n$ $=$ $20$ ). For the short-1@k method, we use the rank-score@ $k$ metric (Hassid et al., 2024), where we sort the different generations according to thinking length. For majority $@k$ and short-m@k where $m>1$ , we run over all $k$ -sized subsets out of the $20$ generations per example.

We evaluate the different methods considering three main criteria: (a) Sample-size (i.e., $k$ ), where we compare methods while controlling for the number of generated samples; (b) Thinking-compute, where we measure the total number of thinking tokens used across all generations in the batch; and (c) Time-to-answer, which measures the wall time of running inference using each method. In this parallel framework, our method (short-m@k) terminates all other generations after the first $m$ decoding thinking processes terminate. Thus, the overall thinking compute is the total number of thinking tokens for each of the $k$ generations at that point. Similarly, the overall time is that of the $m$ ’th shortest generation process. Conversely, for majority $@k$ , the method’s design necessitates waiting for all generations to complete before proceeding. Hence, we consider the compute as the total amount of thinking tokens in all generations and run time according to the longest thinking chain. As for the oracle approach, we terminate all thinking trajectories once the shortest correct one is finished, and consider the compute and time accordingly.

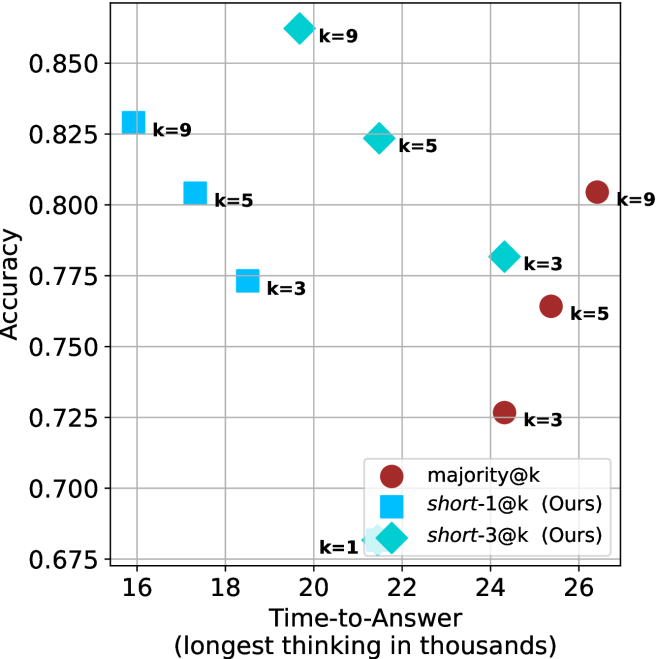

### 4.3 Results

<details>

<summary>x2.png Details</summary>

### Visual Description

## Line Chart: Model Accuracy vs. Sample Size

### Overview

The chart compares the accuracy of three machine learning models (Baseline, Ensemble, Hybrid) across sample sizes from 1 to 10. A dotted reference line illustrates an idealized accuracy trajectory. All models show improvement with increasing sample size, but with distinct performance patterns.

### Components/Axes

- **X-axis**: Sample Size (k) [1–10]

- Ticks labeled 1, 2, 3, ..., 10

- No explicit units, but labeled "Sample Size (k)"

- **Y-axis**: Accuracy [0.45–0.75]

- Increments of 0.05

- Labeled "Accuracy"

- **Legend**: Right-aligned

- Cyan: "Baseline Model"

- Blue: "Ensemble Model"

- Red: "Hybrid Model"

- **Dotted Line**: Unlabeled reference trajectory

### Detailed Analysis

1. **Baseline Model (Cyan)**

- Starts at (1, 0.45)

- Steady upward trend:

- (2, 0.52), (3, 0.56), (4, 0.58), (5, 0.60)

- Plateaus at ~0.61 from k=6 to k=10

- Final accuracy: 0.61

2. **Ensemble Model (Blue)**

- Starts at (2, 0.55)

- Gradual increase:

- (3, 0.57), (4, 0.58), (5, 0.59)

- Reaches 0.60 at k=6, plateaus thereafter

- Final accuracy: 0.60

3. **Hybrid Model (Red)**

- Starts at (1, 0.45)

- Slower rise:

- (2, 0.48), (3, 0.52), (4, 0.54)

- Accelerates slightly: (5, 0.56), (6, 0.58)

- Stabilizes at 0.60 from k=8 onward

- Final accuracy: 0.60

4. **Dotted Reference Line**

- Starts at (1, 0.45)

- Curved upward trajectory:

- (2, 0.55), (3, 0.60), (4, 0.63)

- Reaches 0.75 at k=10

- Suggests theoretical maximum or ideal performance

### Key Observations

- **Diminishing Returns**: All models plateau after k=6–8, indicating limited gains from larger samples.

- **Baseline Model Outperforms**: Despite starting later, the Ensemble Model (blue) lags behind Baseline (cyan) at k=10.

- **Hybrid Model Lag**: The Hybrid Model (red) shows the slowest initial improvement but matches Baseline by k=10.

- **Dotted Line Discrepancy**: The reference line’s accuracy (0.75 at k=10) exceeds all models, suggesting unmet potential.

### Interpretation

The data demonstrates that increasing sample size improves model accuracy, but with diminishing returns. The Baseline Model achieves the highest final accuracy (0.61), while the Ensemble Model underperforms despite starting earlier. The Hybrid Model’s delayed improvement suggests architectural or training inefficiencies. The dotted line’s trajectory implies that current models fall short of an idealized performance ceiling, highlighting opportunities for algorithmic innovation or data augmentation. The absence of a labeled dotted line leaves its purpose ambiguous—it could represent a theoretical benchmark, a competitor’s result, or an aspirational target.

</details>

(a) LN-Super-49B

<details>

<summary>x3.png Details</summary>

### Visual Description

## Line Chart: Accuracy vs. Sample Size (k)

### Overview

The chart illustrates the relationship between sample size (k) and accuracy for three distinct methods (A, B, C). Accuracy is measured on a scale from 0.55 to 0.75, with sample sizes ranging from 1 to 10. Three data series are plotted: a black dotted line (Method A), a red solid line (Method B), and a blue solid line (Method C). The legend is positioned in the top-right corner, associating colors and markers with their respective methods.

### Components/Axes

- **X-axis (Sample Size, k)**: Labeled "Sample Size (k)" with integer markers from 1 to 10.

- **Y-axis (Accuracy)**: Labeled "Accuracy" with increments of 0.05, ranging from 0.55 to 0.75.

- **Legend**: Located in the top-right corner, mapping:

- Black dotted line with triangle markers → Method A

- Red solid line with circle markers → Method B

- Blue solid line with square markers → Method C

### Detailed Analysis

1. **Method A (Black Dotted Line)**:

- Starts at (1, 0.55) and increases monotonically.

- Reaches approximately 0.75 at k=10.

- Key points:

- k=1: 0.55

- k=2: 0.60

- k=3: 0.65

- k=4: 0.68

- k=5: 0.70

- k=6: 0.72

- k=7: 0.73

- k=8: 0.74

- k=9: 0.74

- k=10: 0.75

2. **Method B (Red Solid Line)**:

- Begins at (1, 0.55) and rises gradually.

- Plateaus near 0.65 by k=10.

- Key points:

- k=1: 0.55

- k=2: 0.58

- k=3: 0.60

- k=4: 0.62

- k=5: 0.63

- k=6: 0.64

- k=7: 0.64

- k=8: 0.64

- k=9: 0.65

- k=10: 0.65

3. **Method C (Blue Solid Line)**:

- Starts at (1, 0.55) with a sharp initial increase.

- Levels off around 0.62 by k=5 and remains stable.

- Key points:

- k=1: 0.55

- k=2: 0.59

- k=3: 0.62

- k=4: 0.62

- k=5: 0.62

- k=6: 0.62

- k=7: 0.62

- k=8: 0.62

- k=9: 0.62

- k=10: 0.62

### Key Observations

- **Method A** demonstrates the steepest and most consistent improvement in accuracy as sample size increases, suggesting superior scalability.

- **Method B** shows moderate improvement but plateaus earlier than Method A, indicating diminishing returns at larger sample sizes.

- **Method C** exhibits rapid initial gains but stabilizes at a lower accuracy threshold (0.62) regardless of further increases in sample size.

- All methods share the same starting accuracy (0.55) at k=1, implying similar baseline performance.

### Interpretation

The data suggests that **Method A** is the most effective for improving accuracy with increasing sample size, making it ideal for applications requiring high precision. **Method B** offers a balanced trade-off between computational cost and accuracy, while **Method C** may be less efficient for large datasets due to its early plateau. The convergence of Method B and C at higher sample sizes highlights potential limitations in their scalability compared to Method A. The consistent starting point across all methods implies that initial conditions (e.g., data quality, preprocessing) are uniform, and differences emerge primarily from algorithmic design.

</details>

(b) R $1$ - $32$ B

<details>

<summary>x4.png Details</summary>

### Visual Description

## Line Chart: Accuracy vs. Sample Size (k)

### Overview

The chart illustrates the relationship between sample size (k) and accuracy for three distinct data series. Accuracy is measured on the y-axis (0.66–0.82), while the x-axis represents sample size (k) from 1 to 10. Three lines are plotted: a dotted black line, a solid teal line, and a solid red line, each with unique trends.

### Components/Axes

- **X-axis (Sample Size, k)**: Labeled "Sample Size (k)" with integer ticks from 1 to 10.

- **Y-axis (Accuracy)**: Labeled "Accuracy" with increments of 0.02 from 0.66 to 0.82.

- **Legend**: Located in the top-right corner, associating:

- Dotted black line → "Model A"

- Solid teal line → "Model B"

- Solid red line → "Model C"

### Detailed Analysis

1. **Dotted Black Line (Model A)**:

- Starts at (1, 0.66) and rises sharply to (2, 0.74).

- Gradually plateaus after k=5, reaching 0.82 at k=10.

- Key data points:

- k=1: 0.66

- k=2: 0.74

- k=3: 0.76

- k=4: 0.78

- k=5: 0.79

- k=6: 0.80

- k=7: 0.81

- k=8: 0.81

- k=9: 0.82

- k=10: 0.82

2. **Solid Teal Line (Model B)**:

- Begins at (1, 0.66) and increases steadily but less steeply than Model A.

- Plateaus at 0.75 after k=7.

- Key data points:

- k=1: 0.66

- k=2: 0.70

- k=3: 0.72

- k=4: 0.73

- k=5: 0.74

- k=6: 0.74

- k=7: 0.75

- k=8: 0.75

- k=9: 0.75

- k=10: 0.75

3. **Solid Red Line (Model C)**:

- Starts at (1, 0.68) and rises gradually, overtaking Model B at k=5.

- Plateaus at 0.74 after k=6.

- Key data points:

- k=1: 0.68

- k=2: 0.70

- k=3: 0.71

- k=4: 0.72

- k=5: 0.73

- k=6: 0.74

- k=7: 0.74

- k=8: 0.74

- k=9: 0.74

- k=10: 0.74

### Key Observations

- **Model A** (dotted black) achieves the highest accuracy, particularly for larger sample sizes (k ≥ 5), suggesting it scales better with data volume.

- **Model B** (teal) and **Model C** (red) plateau earlier, with Model C underperforming Model B for k ≥ 7.

- All models show diminishing returns as sample size increases beyond k=5–7.

### Interpretation

The chart demonstrates that larger sample sizes generally improve accuracy, but the rate of improvement varies by model. Model A’s steep initial rise and sustained performance suggest it is optimized for high-dimensional data, while Models B and C may be constrained by algorithmic limitations or overfitting. The divergence between Model C and the others highlights potential inefficiencies in its design. Notably, Model A’s plateau at 0.82 implies a theoretical upper bound for accuracy in this context.

</details>

(c) QwQ-32B

<details>

<summary>x5.png Details</summary>

### Visual Description

## Line Chart: Accuracy vs. Sample Size (k)

### Overview

The chart compares the accuracy of four different methods across sample sizes from 1 to 10. The y-axis represents accuracy (0.78–0.90), and the x-axis represents sample size (k). Four data series are plotted: "pass@k (Oracle)" (black dotted line), "majority@k" (red solid line), "short-1@k (Ours)" (blue solid line), and "short-3@k (Ours)" (green solid line). The legend is positioned at the bottom-right corner.

### Components/Axes

- **X-axis**: "Sample Size (k)" with integer markers from 1 to 10.

- **Y-axis**: "Accuracy" with decimal markers from 0.78 to 0.90.

- **Legend**:

- Black dotted line with triangles: "pass@k (Oracle)"

- Red solid line: "majority@k"

- Blue solid line: "short-1@k (Ours)"

- Green solid line: "short-3@k (Ours)"

### Detailed Analysis

1. **pass@k (Oracle)**:

- Starts at 0.78 (k=1) and increases steadily to 0.90 (k=10).

- Values: 0.78 (k=1), 0.82 (k=2), 0.84 (k=3), 0.86 (k=4), 0.87 (k=5), 0.88 (k=6), 0.89 (k=7), 0.90 (k=8–10).

2. **majority@k**:

- Starts at 0.78 (k=1) and rises gradually to 0.87 (k=10).

- Values: 0.78 (k=1), 0.81 (k=2), 0.82 (k=3), 0.83 (k=4), 0.84 (k=5), 0.85 (k=6), 0.86 (k=7), 0.87 (k=8–10).

3. **short-1@k (Ours)**:

- Starts at 0.78 (k=1), peaks at 0.85 (k=5), then plateaus.

- Values: 0.78 (k=1), 0.82 (k=2), 0.84 (k=3), 0.85 (k=4–10).

4. **short-3@k (Ours)**:

- Starts at 0.78 (k=1), peaks at 0.88 (k=5), then plateaus.

- Values: 0.78 (k=1), 0.82 (k=2), 0.86 (k=3), 0.88 (k=4–10).

### Key Observations

- The "pass@k (Oracle)" line shows the highest accuracy across all sample sizes, increasing linearly.

- "short-3@k (Ours)" outperforms "short-1@k (Ours)" consistently, with a peak accuracy of 0.88 at k=5.

- "majority@k" has the lowest accuracy, trailing behind all other methods.

- All methods plateau after k=5, except "pass@k (Oracle)", which continues to rise.

### Interpretation

The chart demonstrates that the "pass@k (Oracle)" method achieves the highest accuracy, suggesting it is the most effective baseline. The "short-3@k (Ours)" method outperforms "short-1@k (Ours)" by 0.03 accuracy at k=5, indicating that increasing the sample size for the "short" methods improves performance. The "majority@k" method’s lower accuracy suggests it is less effective compared to the other approaches. The plateauing trends for "short-1@k" and "short-3@k" after k=5 imply diminishing returns beyond this sample size. The Oracle’s continuous improvement highlights its superiority as a reference standard.

</details>

(d) R1-670B

Figure 2: Comparing different inference methods under controlled sample size ( $k$ ). All methods improve with larger sample sizes. Interestingly, this trend also holds for the short-m@k methods.

<details>

<summary>x6.png Details</summary>

### Visual Description

## Line Chart: Accuracy vs. Thinking Compute (Tokens in Thousands)

### Overview

The chart displays three data series representing accuracy metrics across varying levels of "Thinking Compute" (measured in thousands of tokens). The x-axis spans 20 to 120k tokens, while the y-axis ranges from 0.50 to 0.75 accuracy. Three distinct lines (black dotted, blue dashed, red solid) show divergent trends in accuracy improvement.

### Components/Axes

- **X-axis**: "Thinking Compute (thinking tokens in thousands)" (20–120k tokens, increments of 20k)

- **Y-axis**: "Accuracy" (0.50–0.75, increments of 0.05)

- **Legend**: Located in the top-right corner, associating:

- Black dotted line with triangles (▲)

- Blue dashed line with diamonds (◆)

- Red solid line with circles (●)

### Detailed Analysis

1. **Black Dotted Line (▲)**:

- Starts at (20k, 0.50) and rises steadily to (120k, 0.75).

- Intermediate points: (40k, 0.65), (60k, 0.70), (80k, 0.73), (100k, 0.74).

- **Trend**: Linear increase with no plateau.

2. **Blue Dashed Line (◆)**:

- Begins at (20k, 0.48) and plateaus near 0.60 after 80k tokens.

- Intermediate points: (40k, 0.55), (60k, 0.59), (80k, 0.60).

- **Trend**: Accelerated growth until 60k tokens, then flat.

3. **Red Solid Line (●)**:

- Starts at (20k, 0.47) and reaches 0.60 by 120k tokens.

- Intermediate points: (40k, 0.52), (60k, 0.56), (80k, 0.59), (100k, 0.60).

- **Trend**: Gradual, consistent growth with minimal acceleration.

### Key Observations

- The black dotted line (▲) achieves the highest accuracy (0.75) at 120k tokens, outperforming others by ~15%.

- The blue dashed line (◆) plateaus at 0.60 accuracy after 80k tokens, suggesting diminishing returns.

- The red solid line (●) shows the slowest growth but closes the gap to 0.60 accuracy by 120k tokens.

- All lines originate near 0.47–0.50 accuracy at 20k tokens, indicating baseline performance.

### Interpretation

The data suggests a strong correlation between increased thinking compute (tokens) and improved accuracy, with diminishing returns observed in the blue dashed line (◆) after 80k tokens. The black dotted line (▲) demonstrates optimal scalability, achieving near-peak performance (0.75) at 120k tokens. The red solid line (●) indicates a less efficient scaling pattern, requiring more tokens to reach comparable accuracy levels. These trends may reflect differences in model architecture, optimization strategies, or computational efficiency across the three data series.

</details>

(a) LN-Super-49B

<details>

<summary>x7.png Details</summary>

### Visual Description

## Line Graph: Accuracy vs. Thinking Compute

### Overview

The graph illustrates the relationship between "Thinking Compute" (measured in thousands of thinking tokens) and "Accuracy" across three distinct data series. The x-axis spans from 20 to 120 thousand tokens, while the y-axis ranges from 0.55 to 0.75. Three lines represent different models or configurations, with the black dashed line (triangles) showing the highest accuracy, followed by the teal line (squares), and the red line (circles) with the lowest accuracy.

### Components/Axes

- **X-Axis**: "Thinking Compute (thinking tokens in thousands)" with gridlines at 20, 40, 60, 80, 100, and 120.

- **Y-Axis**: "Accuracy" with gridlines at 0.55, 0.60, 0.65, 0.70, and 0.75.

- **Legend**: Located on the right, with three entries:

- **Black dashed line with triangles**: "Model A" (highest accuracy).

- **Teal line with squares**: "Model B" (mid-range accuracy).

- **Red line with circles**: "Model C" (lowest accuracy).

### Detailed Analysis

1. **Black Dashed Line (Model A)**:

- Starts at (20k, 0.55) and rises sharply to (60k, 0.75), then plateaus with a slight decline to (120k, ~0.73).

- Key data points:

- 20k tokens: 0.55

- 40k tokens: ~0.68

- 60k tokens: 0.75

- 80k tokens: ~0.74

- 100k tokens: ~0.73

- 120k tokens: ~0.73

2. **Teal Line (Model B)**:

- Begins at (20k, 0.55) and increases gradually to (60k, 0.64), then plateaus at ~0.64 until 120k.

- Key data points:

- 20k tokens: 0.55

- 40k tokens: ~0.61

- 60k tokens: 0.64

- 80k tokens: ~0.64

- 100k tokens: ~0.64

- 120k tokens: ~0.64

3. **Red Line (Model C)**:

- Starts at (20k, 0.55) and rises steadily to (120k, 0.65).

- Key data points:

- 20k tokens: 0.55

- 40k tokens: ~0.58

- 60k tokens: ~0.62

- 80k tokens: ~0.64

- 100k tokens: ~0.65

- 120k tokens: 0.65

### Key Observations

- **Model A** achieves the highest accuracy but shows a decline after 60k tokens, suggesting potential overfitting or diminishing returns.

- **Model B** plateaus at ~0.64, indicating limited scalability beyond 60k tokens.

- **Model C** demonstrates consistent improvement but remains the least accurate across all token ranges.

- All models start at the same baseline (0.55 accuracy at 20k tokens), implying similar initial performance.

### Interpretation

The data suggests that increasing thinking compute improves accuracy, but the rate of improvement varies by model. **Model A** initially outperforms others but may suffer from overfitting or resource constraints at higher token counts. **Model B** and **Model C** show more stable scaling, with **Model C** requiring more tokens to achieve comparable accuracy. The plateau in **Model B** and **Model C** highlights potential inefficiencies in resource allocation or algorithmic design. The decline in **Model A** after 60k tokens warrants further investigation into whether this reflects a technical limitation or a trade-off between accuracy and computational cost.

</details>

(b) R $1$ - $32$ B

<details>

<summary>x8.png Details</summary>

### Visual Description

## Line Graph: Accuracy vs. Thinking Compute (Tokens in Thousands)

### Overview

The graph illustrates the relationship between computational resources (measured in "thinking tokens in thousands") and accuracy performance across three distinct data series. The y-axis represents accuracy (0.66–0.82), while the x-axis spans computational load from 25 to 150 thousand tokens. Three lines are plotted: a black dotted line ("Baseline"), a teal line ("Optimized"), and a red line ("Advanced").

### Components/Axes

- **X-axis**: "Thinking Compute (thinking tokens in thousands)"

- Scale: 25 → 150 (increments of 25)

- **Y-axis**: "Accuracy"

- Scale: 0.66 → 0.82 (increments of 0.02)

- **Legend**: Right-aligned, with three entries:

- Black dotted line: "Baseline"

- Teal line: "Optimized"

- Red line: "Advanced"

### Detailed Analysis

1. **Baseline (Black Dotted Line)**

- Starts at (25, 0.66) and increases steadily.

- Key points:

- (50, 0.74)

- (75, 0.78)

- (100, 0.80)

- (125, 0.81)

- (150, 0.82)

- **Trend**: Linear growth with no plateau, suggesting unbounded improvement with compute.

2. **Optimized (Teal Line)**

- Starts at (25, 0.66) but plateaus after 50 tokens.

- Key points:

- (50, 0.72)

- (75, 0.72)

- (100, 0.72)

- (125, 0.72)

- (150, 0.72)

- **Trend**: Sharp initial gain (25→50 tokens) followed by stagnation.

3. **Advanced (Red Line)**

- Starts at (25, 0.66) and rises gradually.

- Key points:

- (50, 0.70)

- (75, 0.72)

- (100, 0.74)

- (125, 0.74)

- (150, 0.75)

- **Trend**: Moderate improvement with diminishing returns after 100 tokens.

### Key Observations

- **Baseline** demonstrates the steepest and most consistent improvement, reaching 0.82 accuracy at 150 tokens.

- **Optimized** shows early gains but no further improvement beyond 50 tokens, indicating a potential inefficiency or saturation point.

- **Advanced** exhibits the slowest growth, suggesting limited scalability despite higher computational demands.

- All lines originate at (25, 0.66), implying a baseline performance threshold at minimal compute.

### Interpretation

The data suggests that computational efficiency significantly impacts accuracy outcomes. The "Baseline" model’s linear scaling implies it may leverage compute more effectively, while the "Optimized" and "Advanced" models face diminishing returns, possibly due to architectural constraints or suboptimal resource allocation. The plateau in the "Optimized" line raises questions about its design—whether it prioritizes stability over scalability or encounters a fundamental limit. The "Advanced" model’s gradual improvement hints at a trade-off between complexity and practical gains. These trends underscore the importance of balancing compute investment with algorithmic efficiency to maximize performance.

</details>

(c) QwQ-32B

<details>

<summary>x9.png Details</summary>

### Visual Description

## Line Chart: Accuracy vs. Thinking Compute

### Overview

The chart compares the accuracy of four different methods (pass@k, majority@k, short-1@k, short-3@k) across varying levels of thinking compute (measured in thousands of tokens). Accuracy is plotted on the y-axis (0.78–0.90), while thinking compute is on the x-axis (50–150k tokens). All methods show upward trends, with pass@k (Oracle) achieving the highest accuracy.

### Components/Axes

- **Y-Axis**: Accuracy (0.78–0.90, increments of 0.02).

- **X-Axis**: Thinking Compute (50–150k tokens, increments of 50k).

- **Legend**: Located in the bottom-right corner, with four entries:

- **pass@k (Oracle)**: Black triangles (▲).

- **majority@k**: Red circles (●).

- **short-1@k (Ours)**: Blue squares (■).

- **short-3@k (Ours)**: Green diamonds (◇).

### Detailed Analysis

1. **pass@k (Oracle)**:

- Starts at 0.78 (50k tokens).

- Rises sharply to 0.90 by 100k tokens.

- Plateaus at 0.90 beyond 100k tokens.

- *Key data points*: 0.78 (50k), 0.84 (75k), 0.90 (100k+).

2. **majority@k**:

- Starts at 0.78 (50k tokens).

- Gradually increases to 0.86 by 150k tokens.

- *Key data points*: 0.78 (50k), 0.82 (100k), 0.86 (150k).

3. **short-1@k (Ours)**:

- Starts at 0.78 (50k tokens).

- Reaches 0.84 by 100k tokens.

- Plateaus at 0.84 beyond 100k tokens.

- *Key data points*: 0.78 (50k), 0.84 (100k+).

4. **short-3@k (Ours)**:

- Starts at 0.78 (50k tokens).

- Rises to 0.88 by 150k tokens.

- *Key data points*: 0.78 (50k), 0.86 (125k), 0.88 (150k).

### Key Observations

- **pass@k (Oracle)** dominates in accuracy, achieving 0.90 at 100k tokens.

- **short-3@k (Ours)** outperforms other methods, reaching 0.88 at 150k tokens.

- **majority@k** shows the slowest improvement, ending at 0.86.

- All methods plateau after 100k tokens, suggesting diminishing returns.

### Interpretation

The data demonstrates that increasing thinking compute improves accuracy across all methods, with **pass@k (Oracle)** being the most effective. The proposed methods (**short-1@k** and **short-3@k**) achieve competitive results, with **short-3@k** closing the gap to the Oracle. The plateauing trends indicate that beyond 100k tokens, additional compute yields minimal accuracy gains. This suggests a trade-off between computational cost and marginal performance improvements. The **majority@k** method’s lower performance highlights its inefficiency compared to the other approaches.

</details>

(d) R1-670B

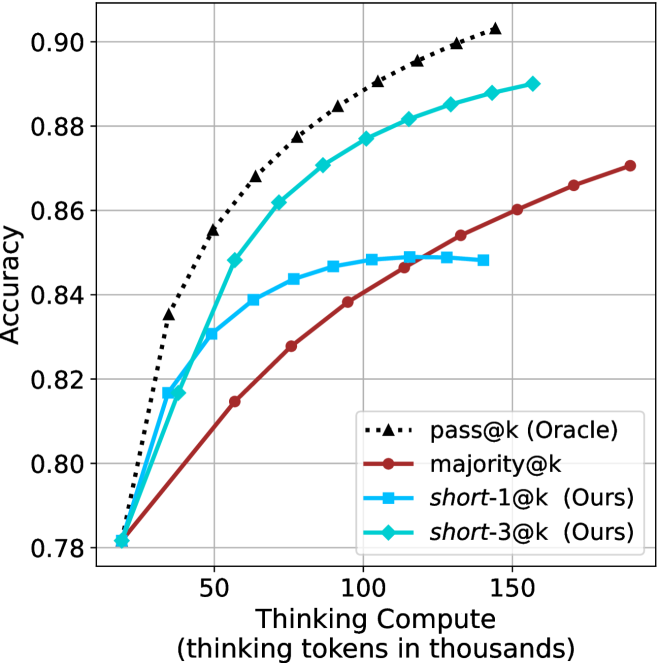

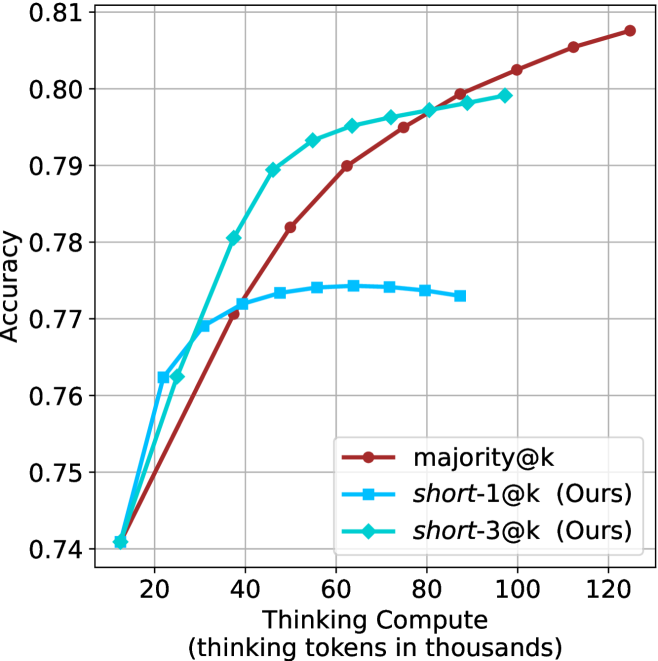

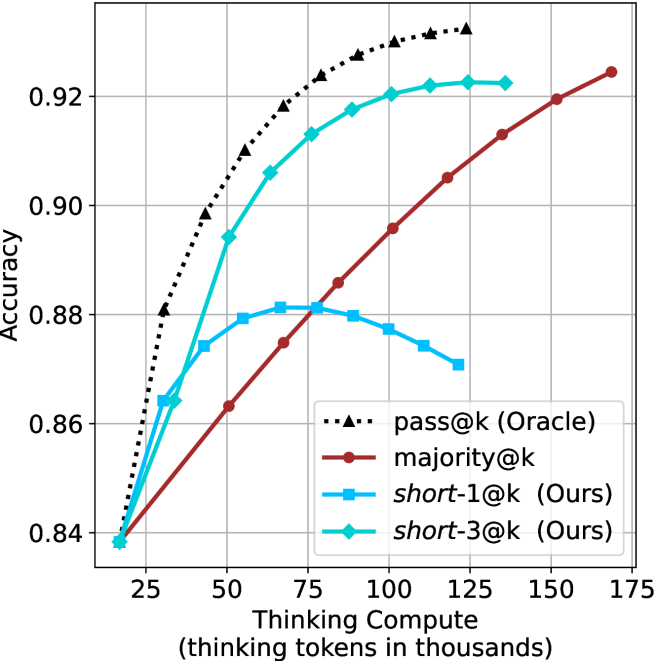

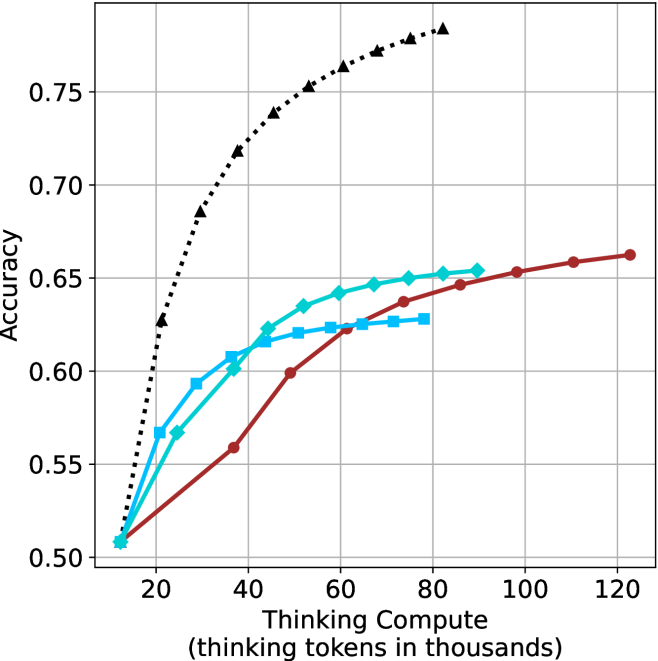

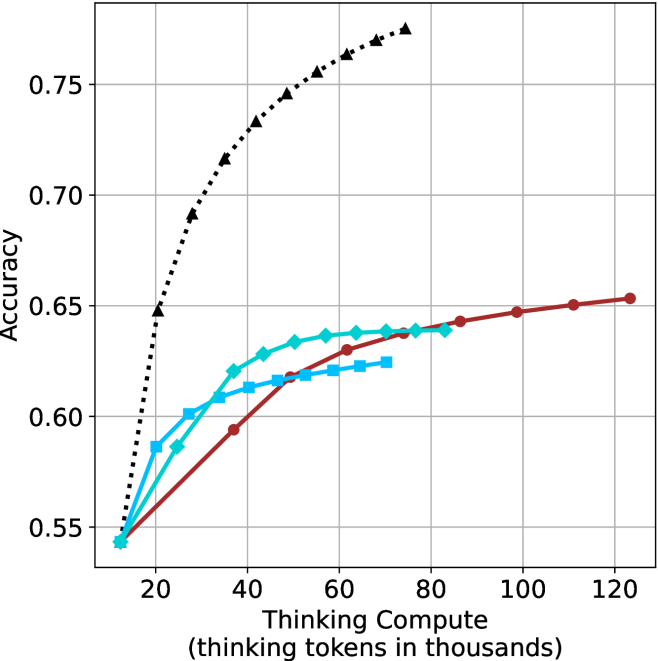

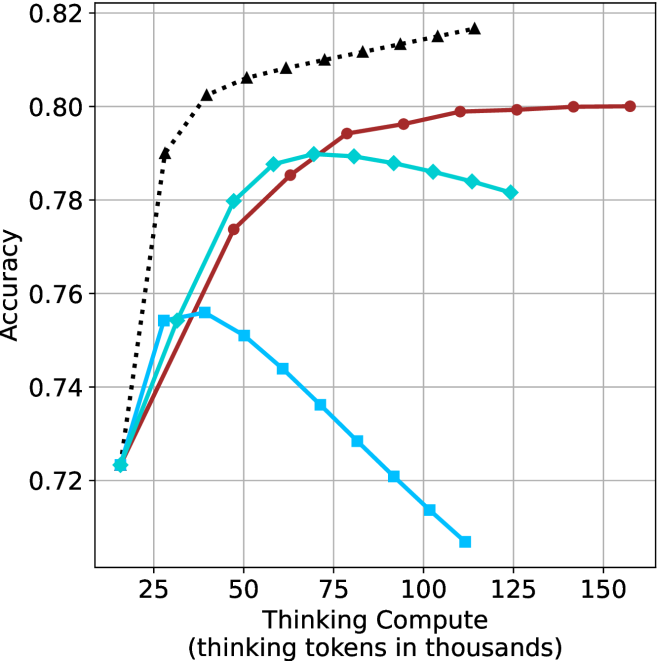

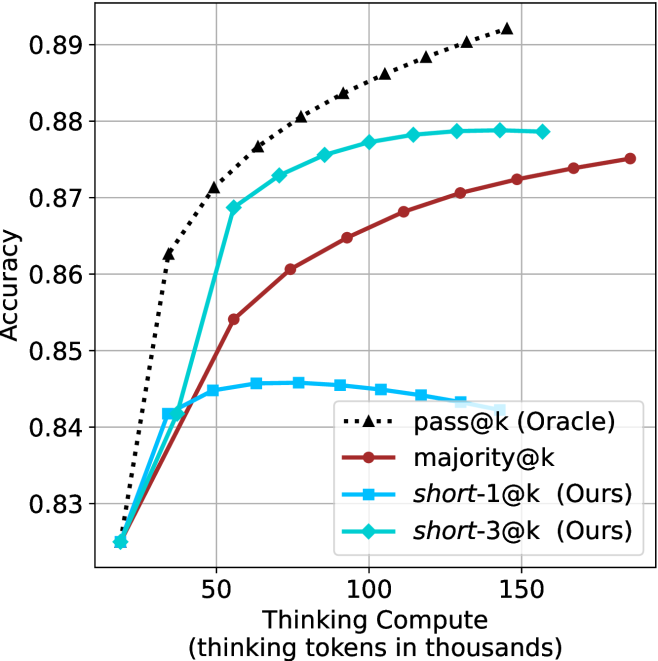

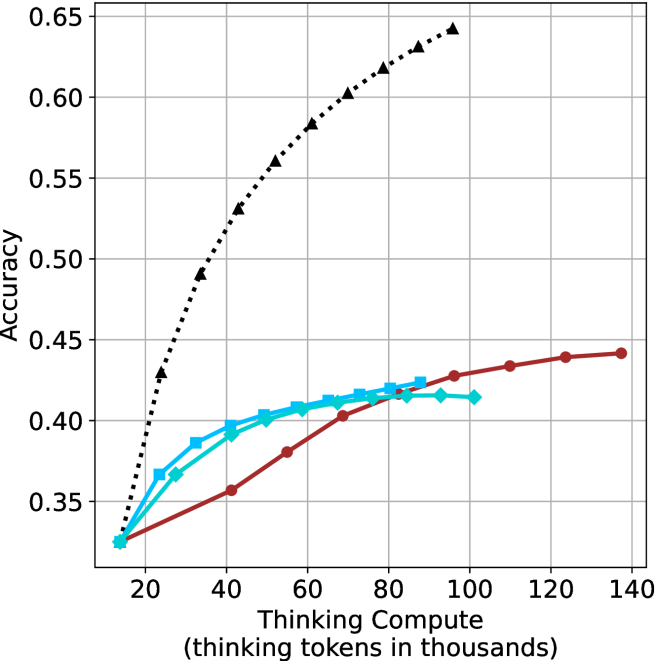

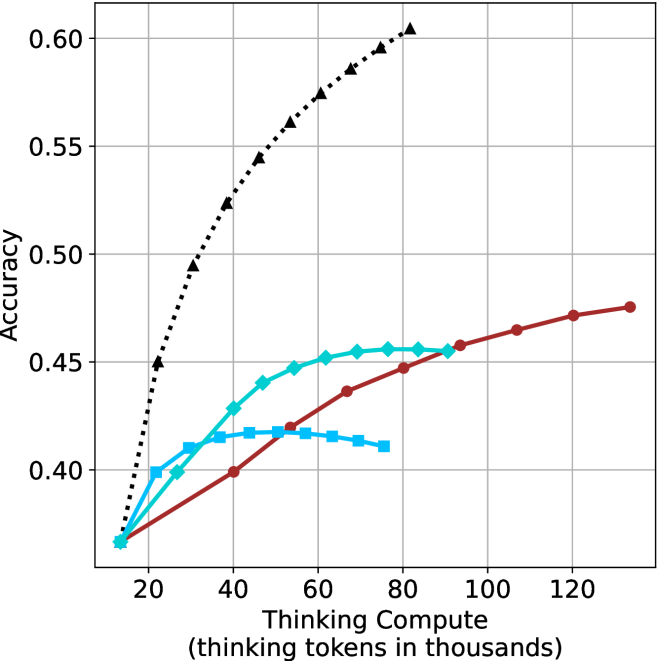

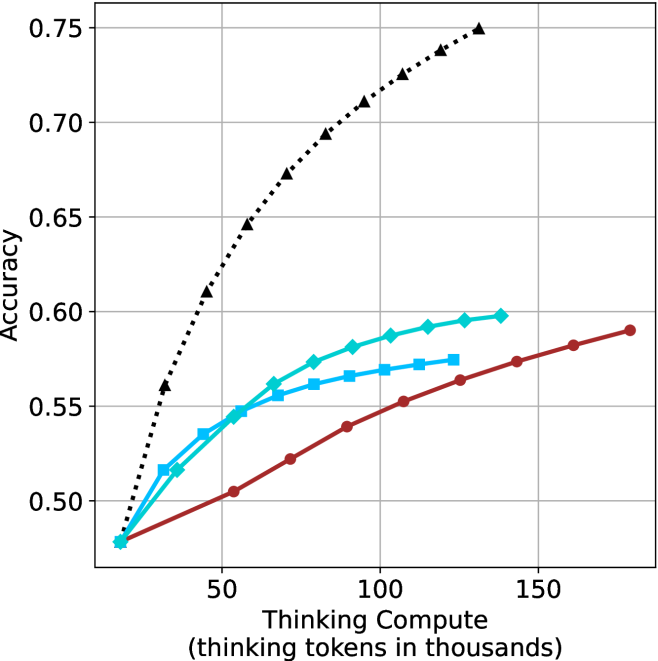

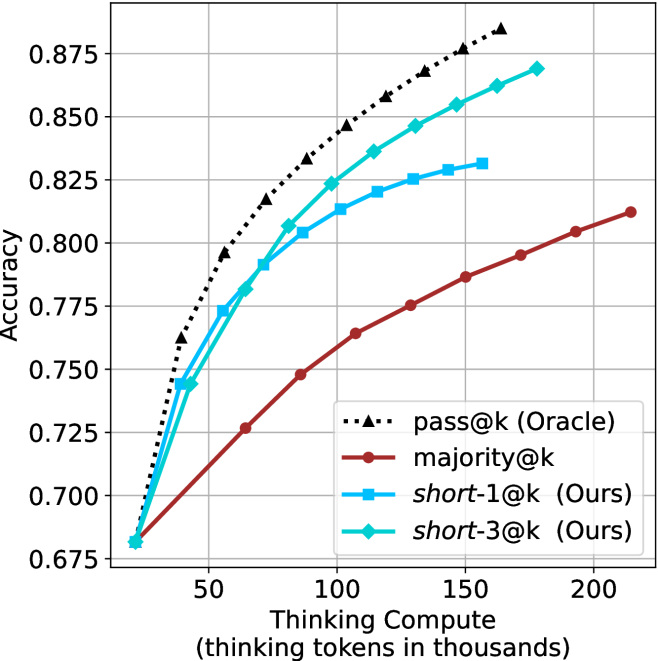

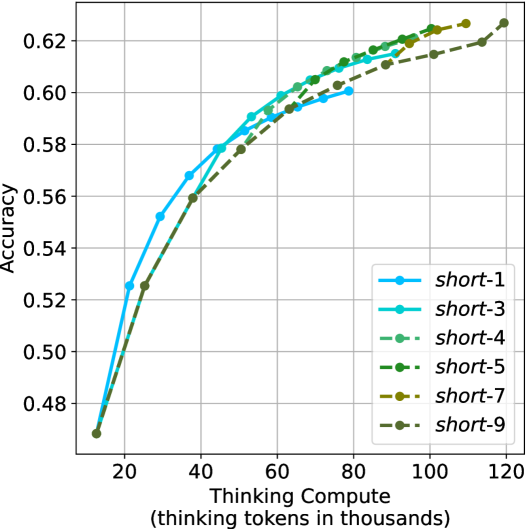

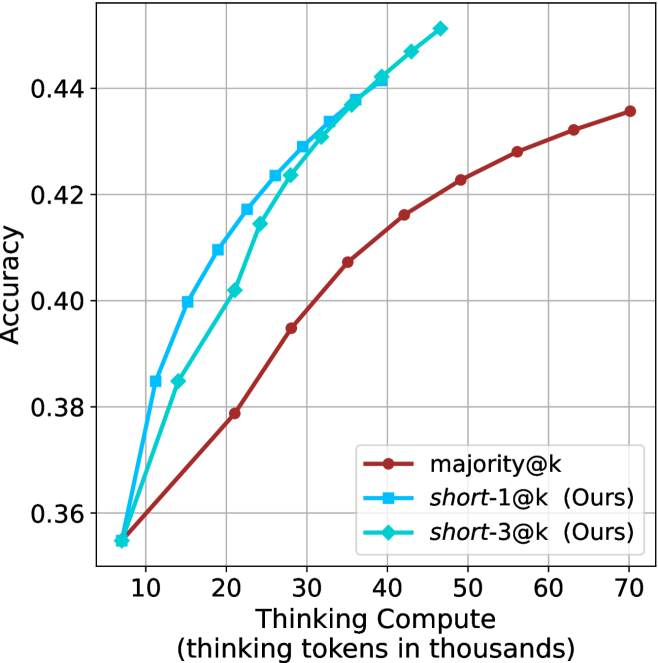

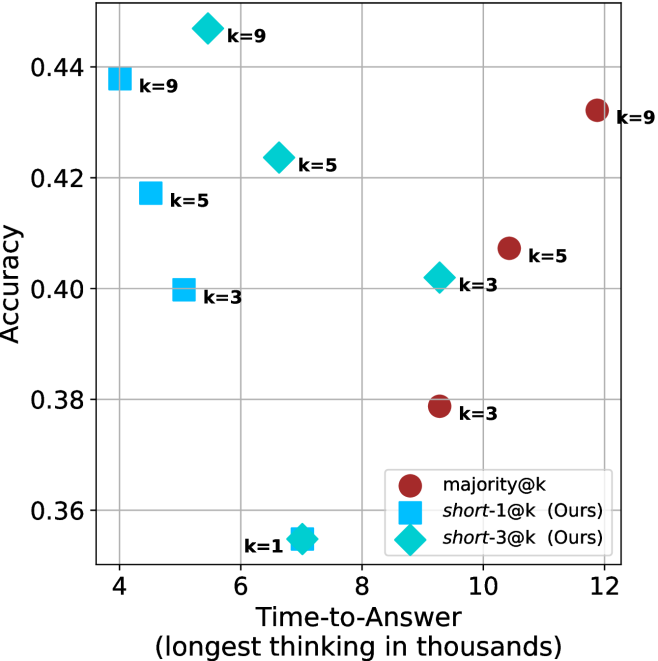

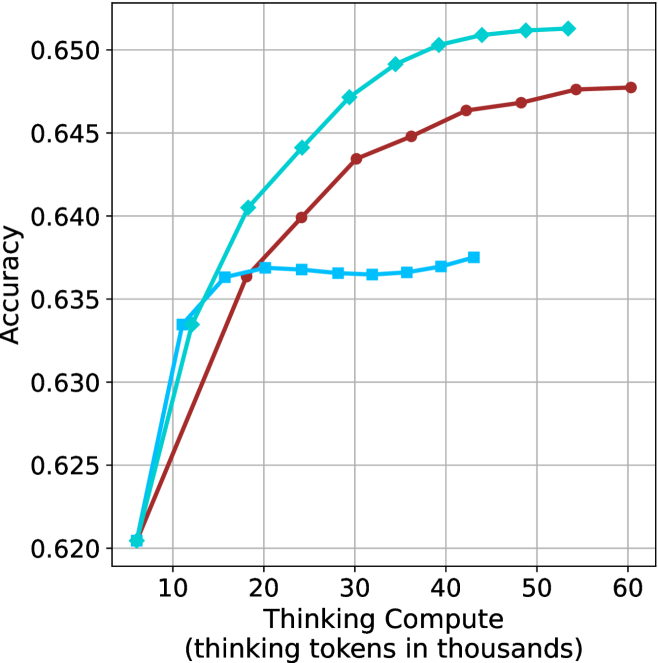

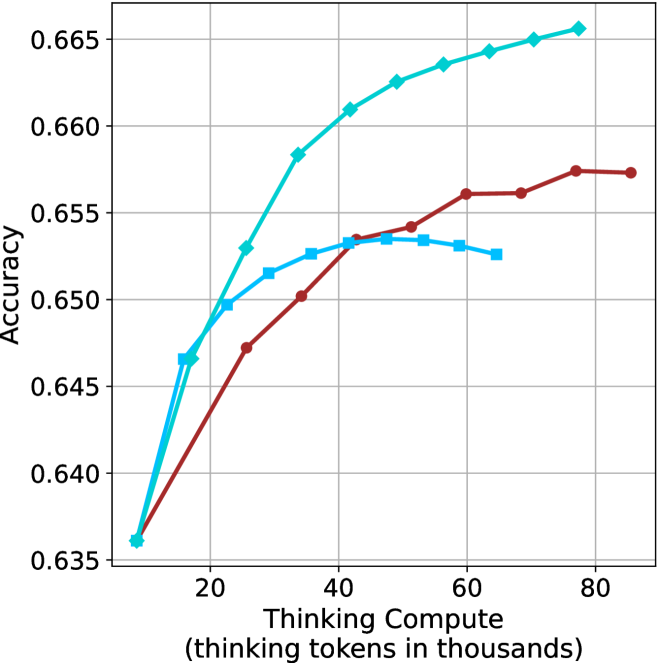

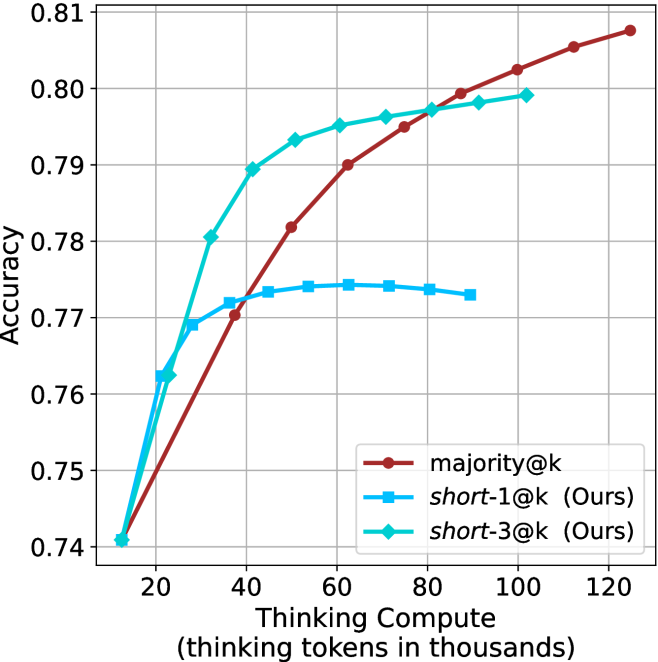

Figure 3: Comparing different inference methods under controlled thinking compute. short-1@k is highly performant in low compute regimes. short-3@k dominates the curve compared to majority $@k$ .

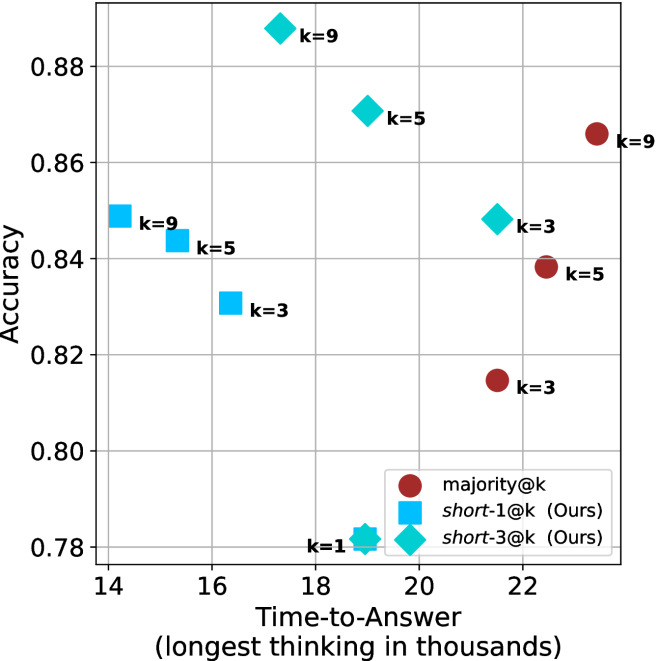

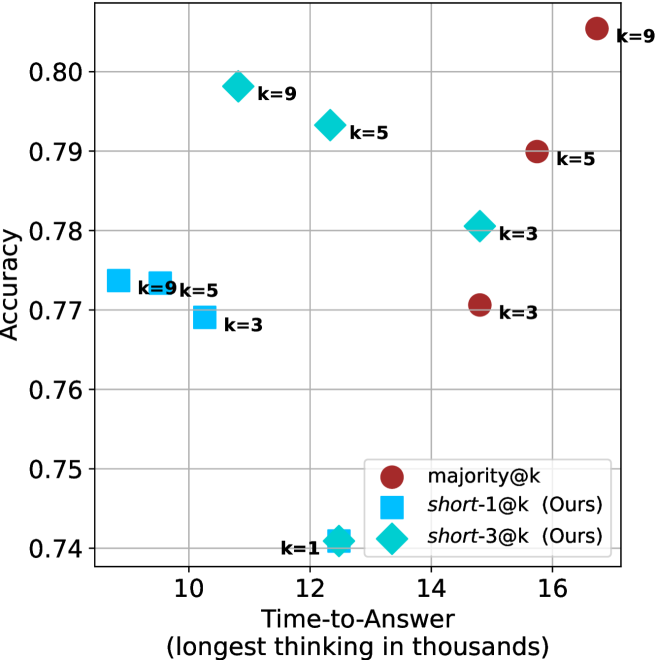

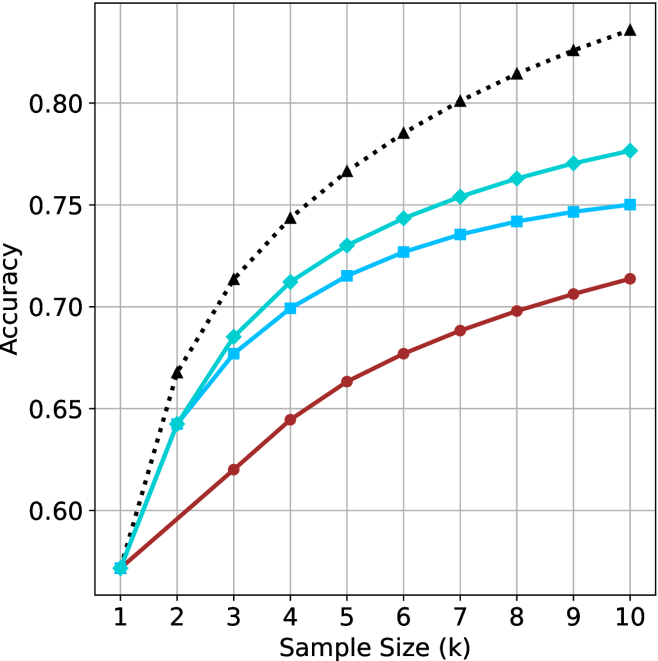

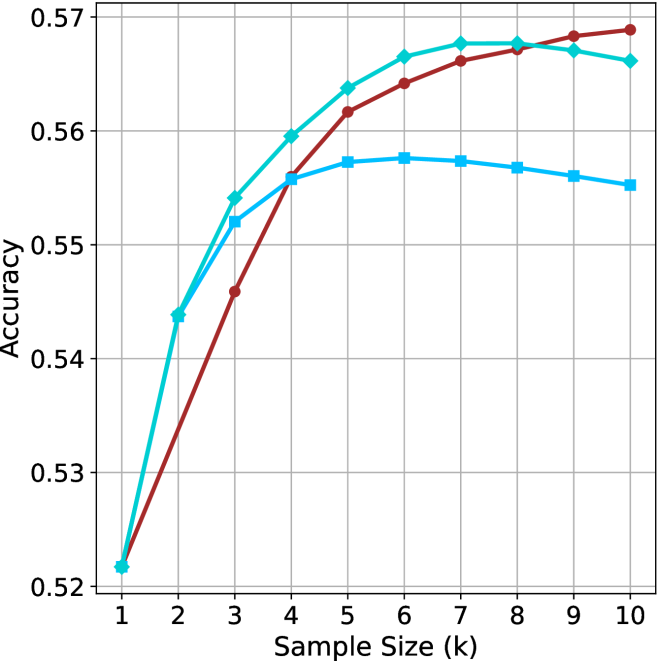

Sample-size ( $k$ ).

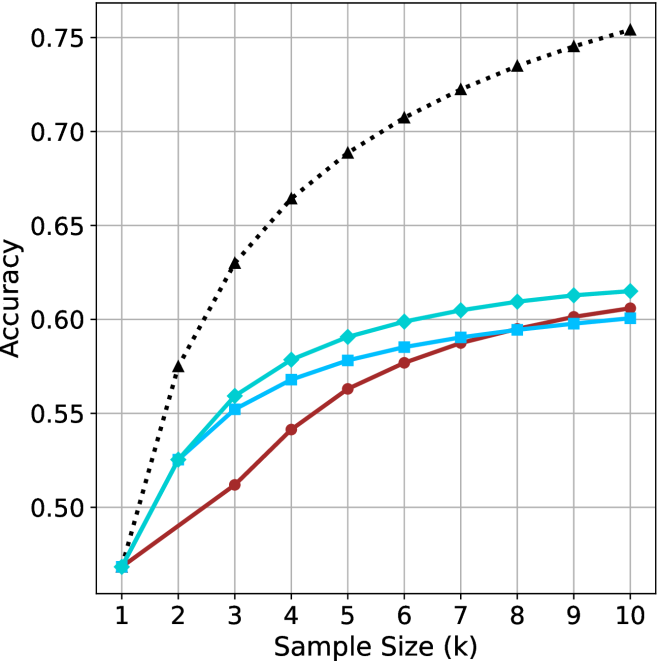

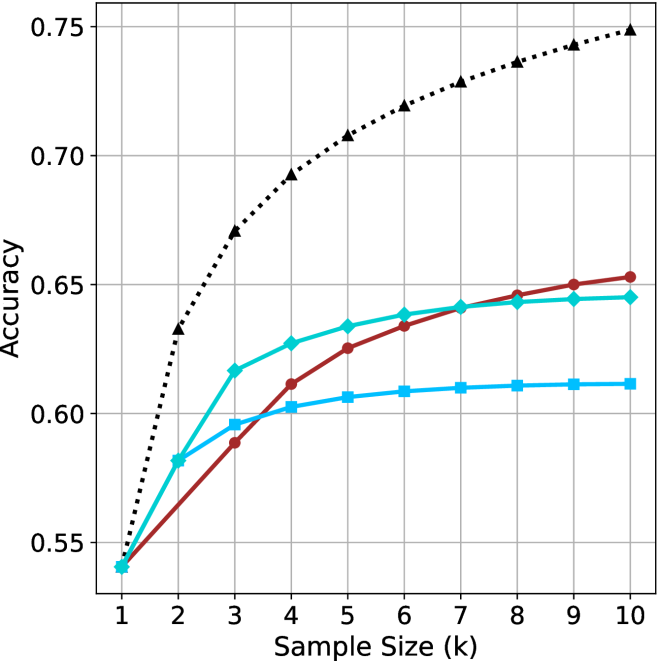

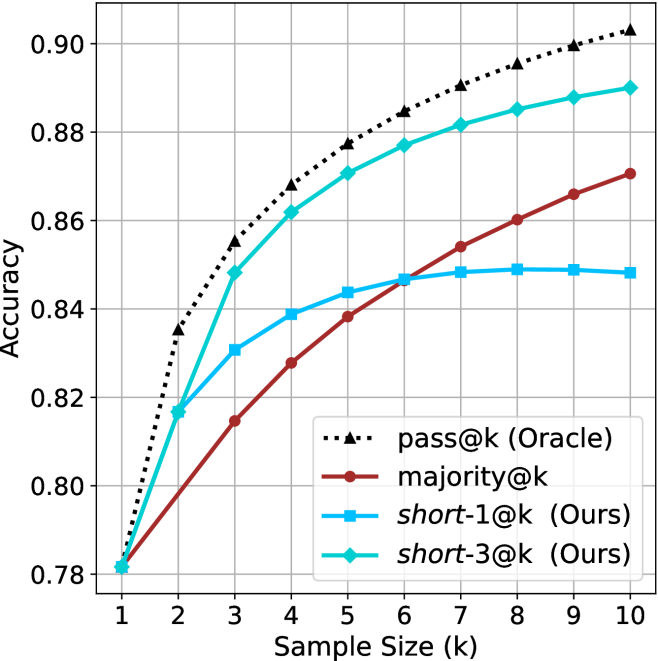

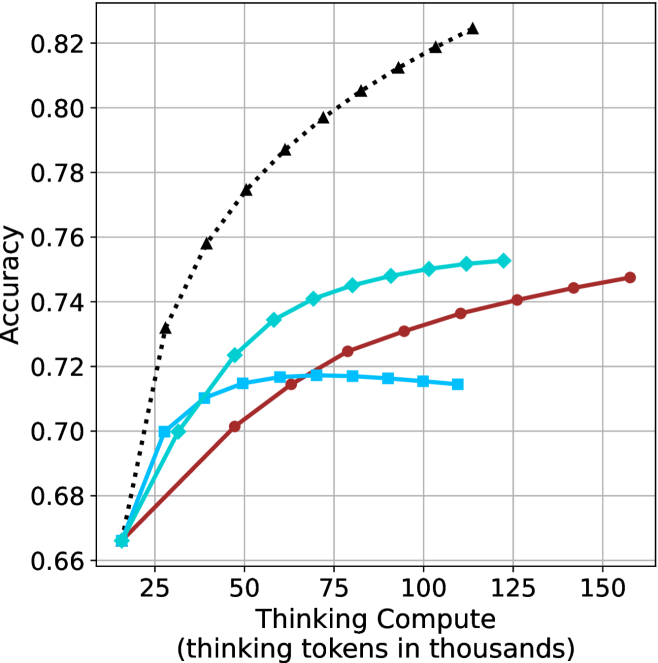

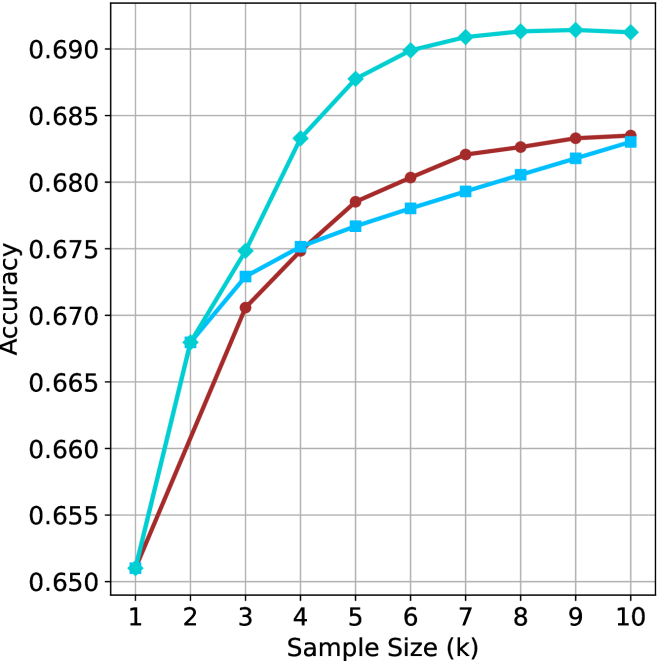

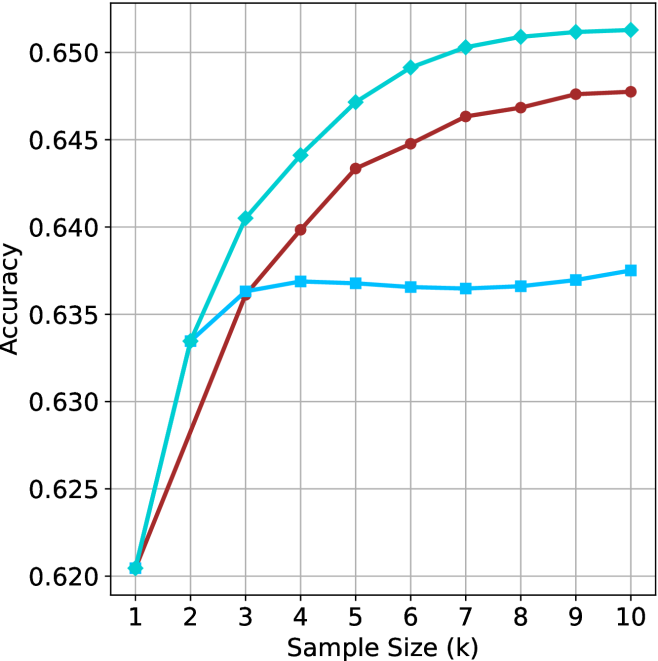

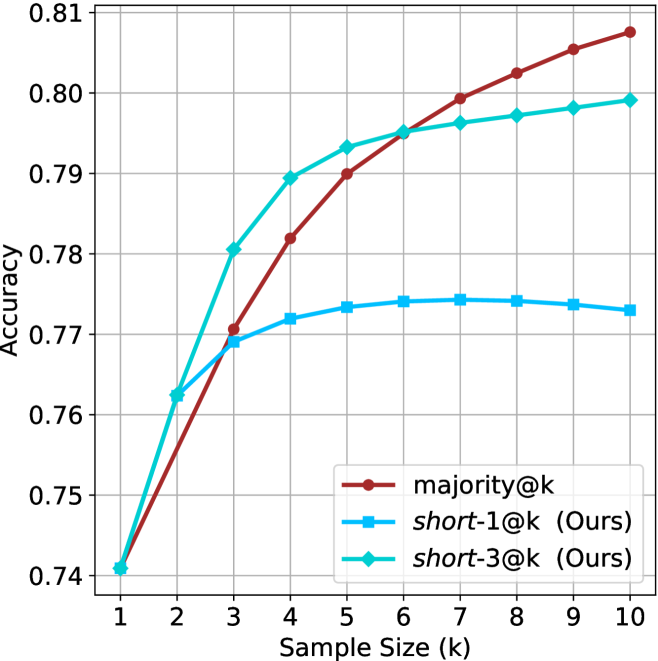

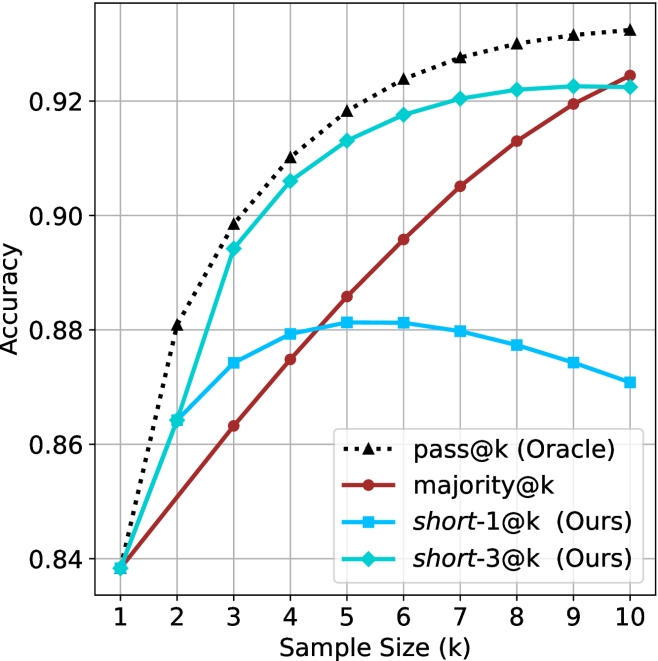

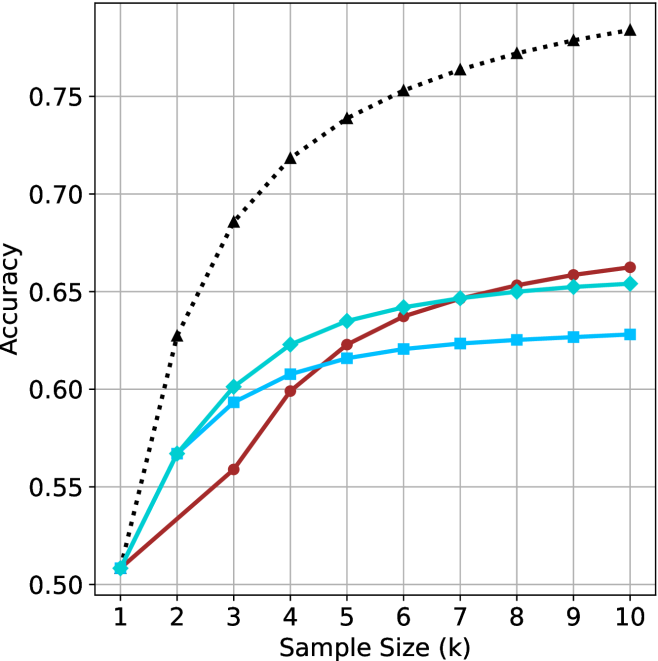

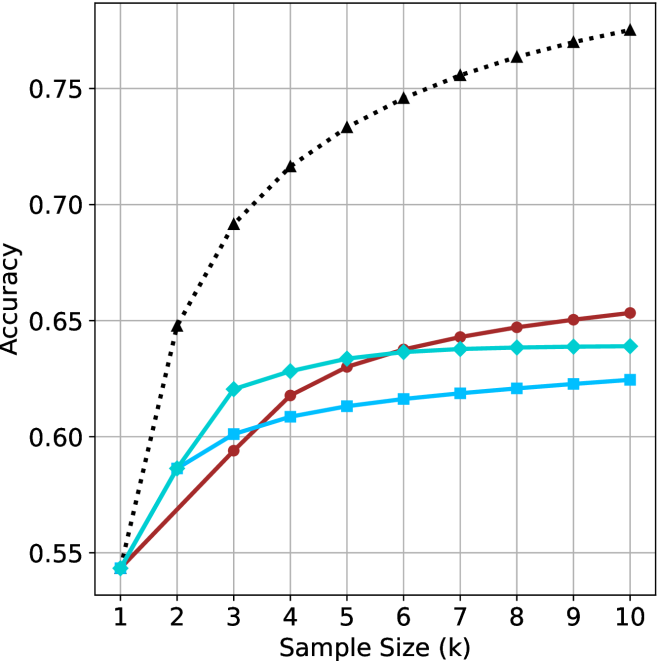

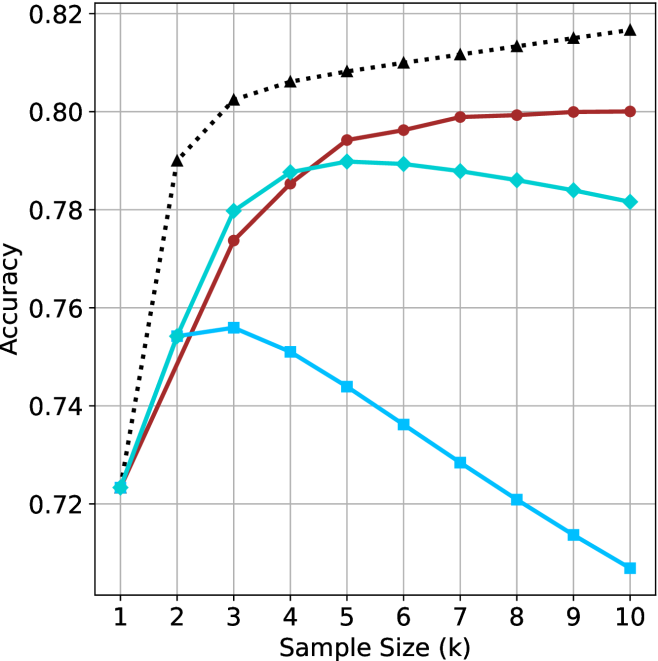

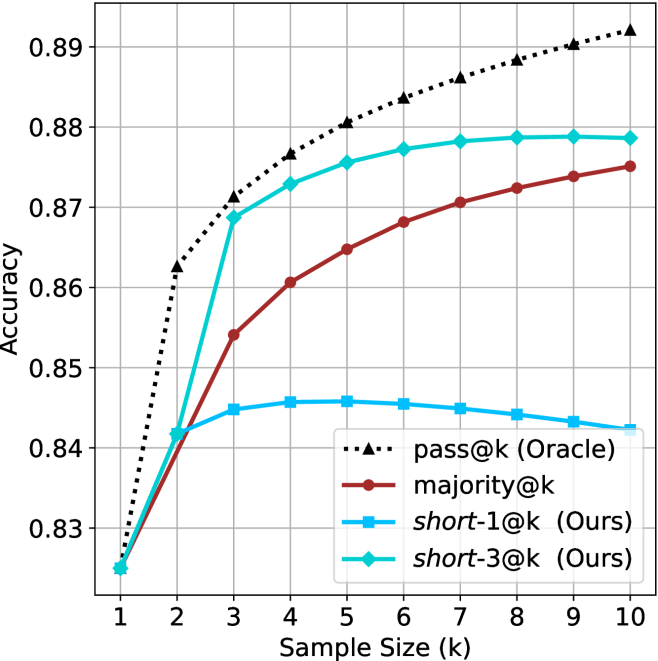

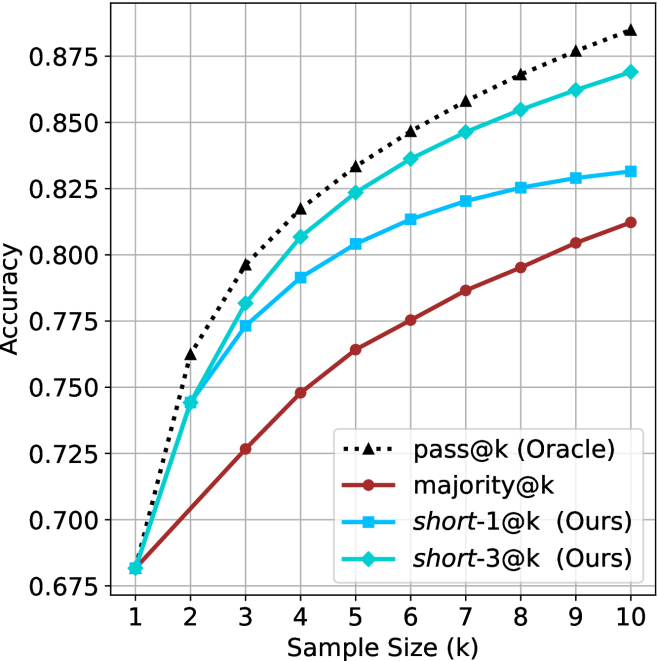

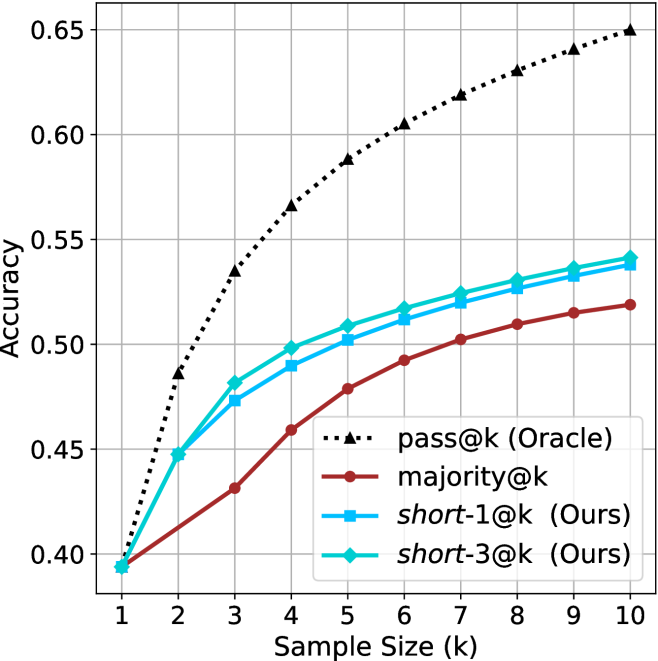

We start by examining different methods across benchmarks for a fixed sample size $k$ . Results aggregated across math benchmarks are presented in Figure ˜ 2, while Figure ˜ 6 in Appendix ˜ A presents GPQA-D results, and detailed results per benchmark can be seen at Appendix ˜ B. We observe that, generally, all methods improve with larger sample sizes, indicating that increased generations per question enhance performance. This trend is somewhat expected for the oracle (pass@ $k$ ) and majority@ $k$ methods but surprising for our method, as it means that even when a large amount of generations is used, the shorter thinking ones are more likely to be correct. The only exception is QwQ- $32$ B (Figure ˜ 2(c)), which shows a small of decline when considering larger sample sizes with the short-1@k method.

When comparing short-1@k to majority@ $k$ , the former outperforms at smaller sample sizes, but is outperformed by the latter in three out of four models when the sample size increases. Meanwhile, the short-3@k method demonstrates superior performance, dominating across nearly all models and sample sizes. Notably, for the R $1$ - $670$ B model, short-3@k exhibits performance nearly on par with the oracle across all sample sizes. We next analyze how this performance advantage translates into efficiency benefits.

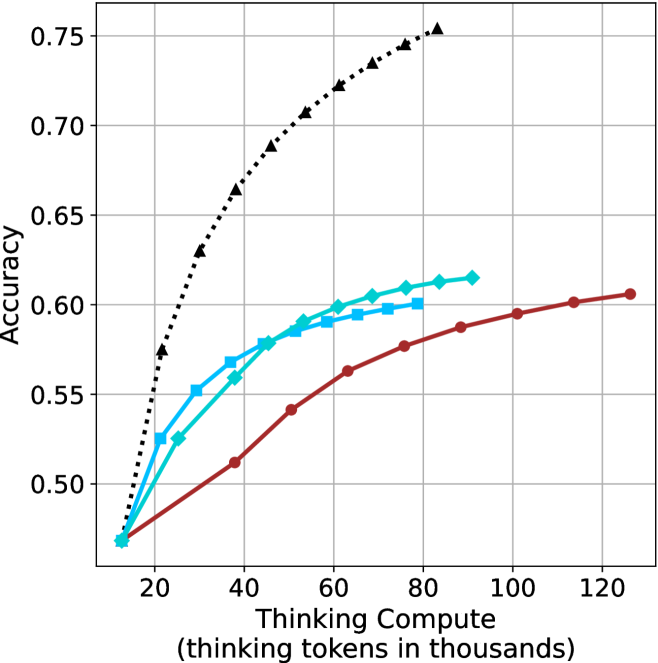

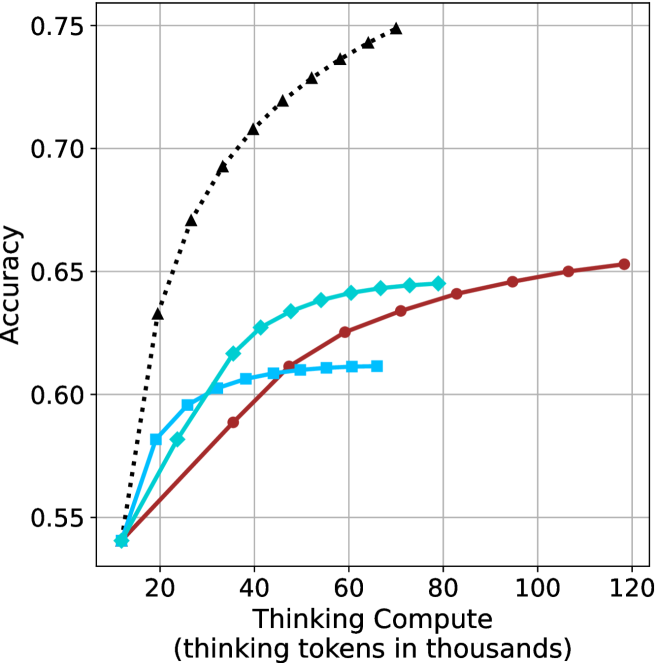

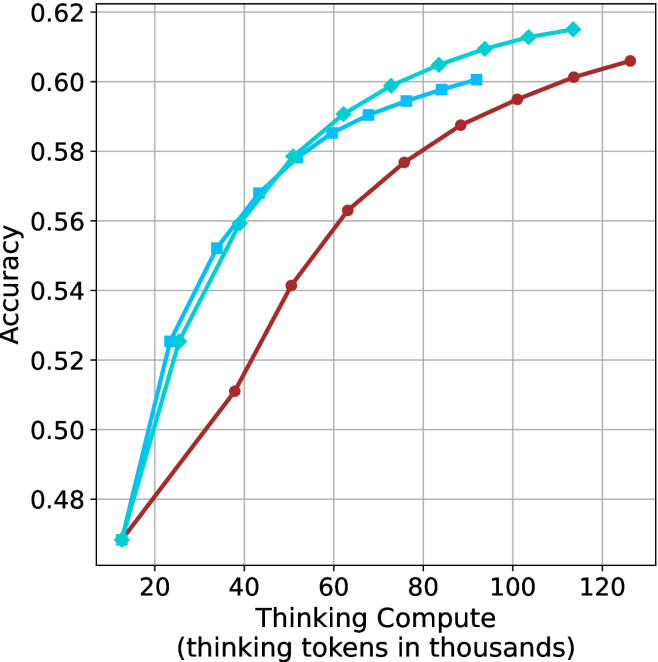

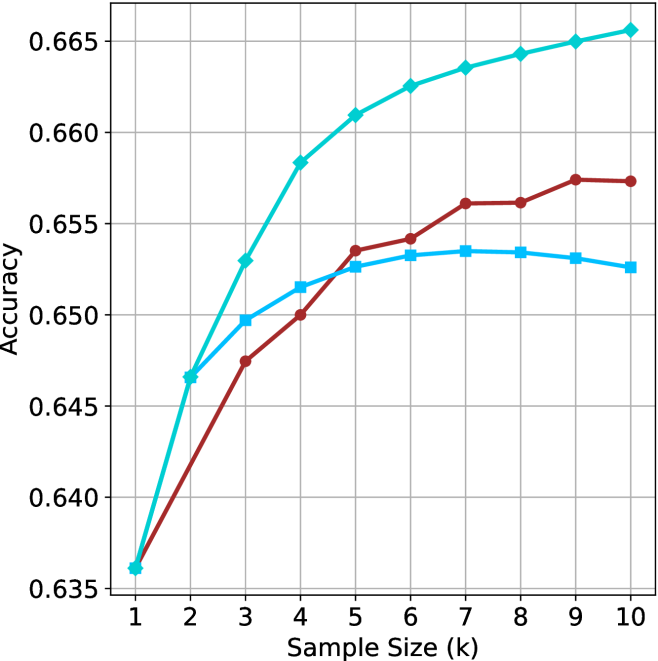

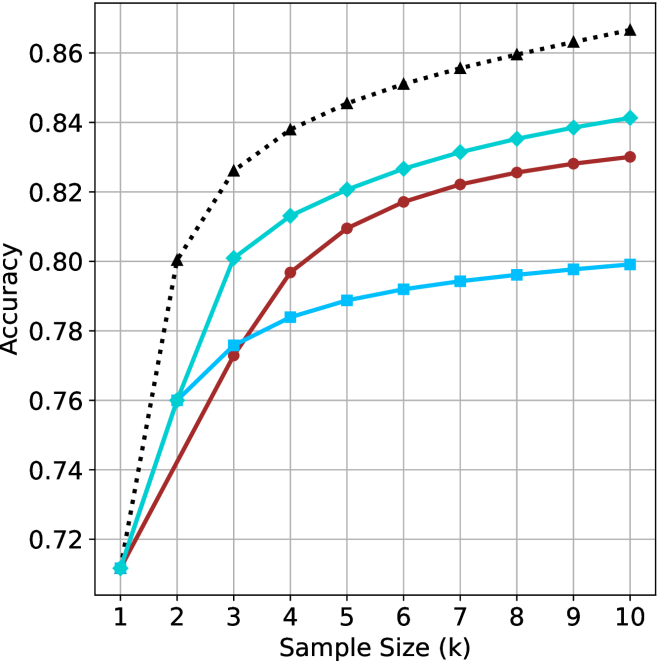

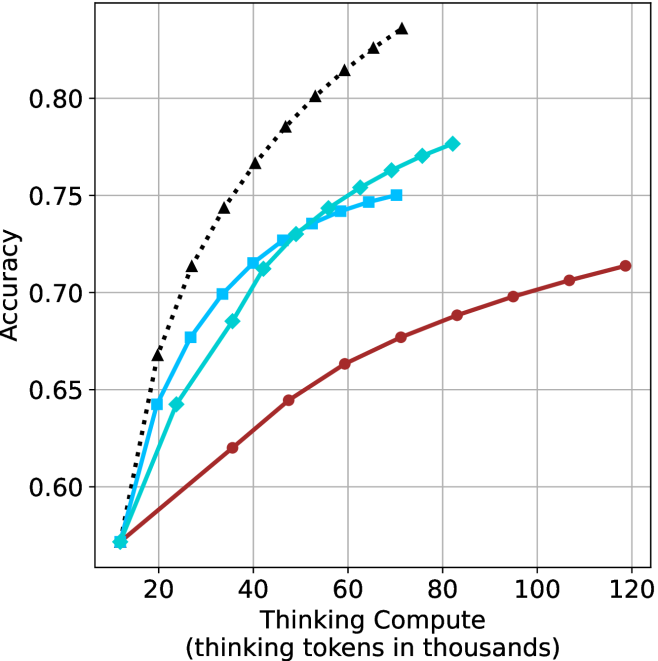

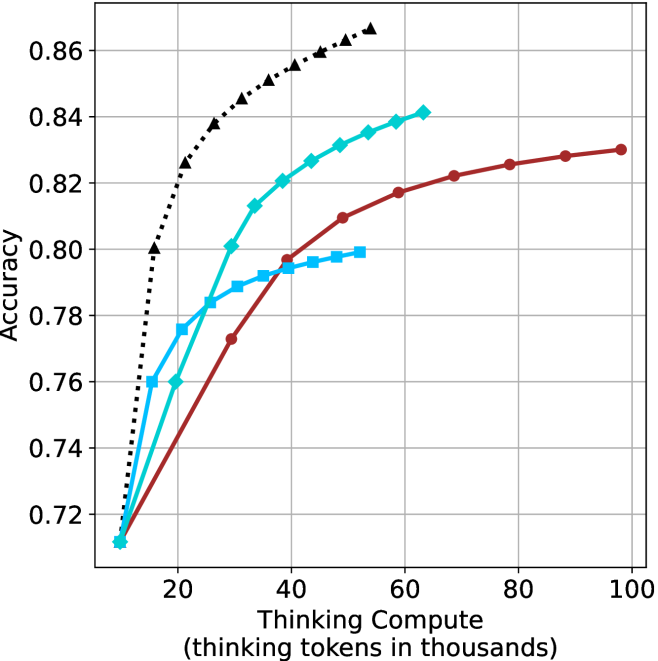

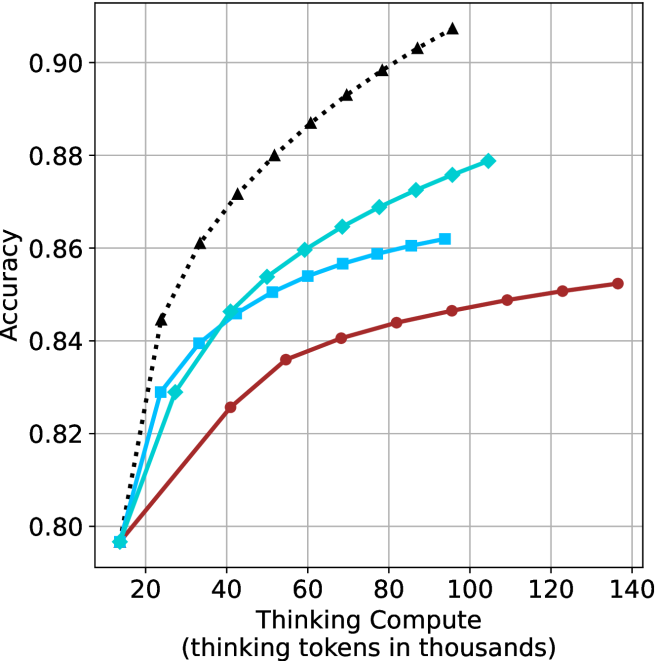

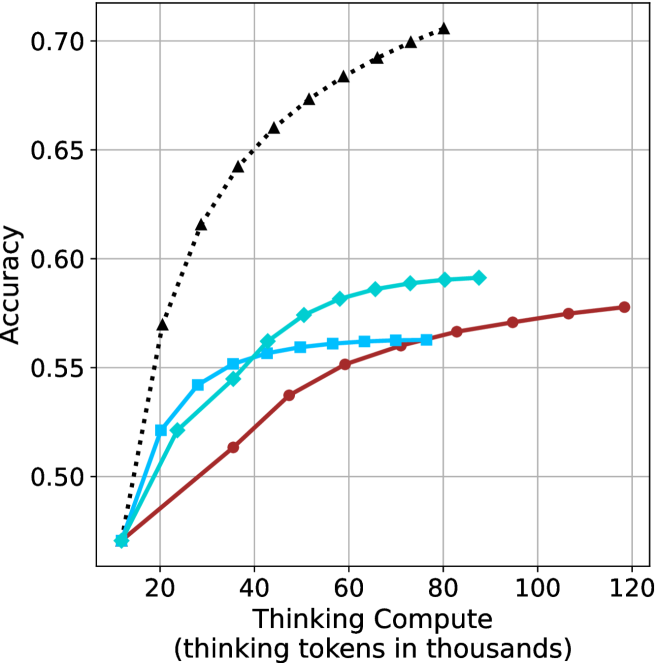

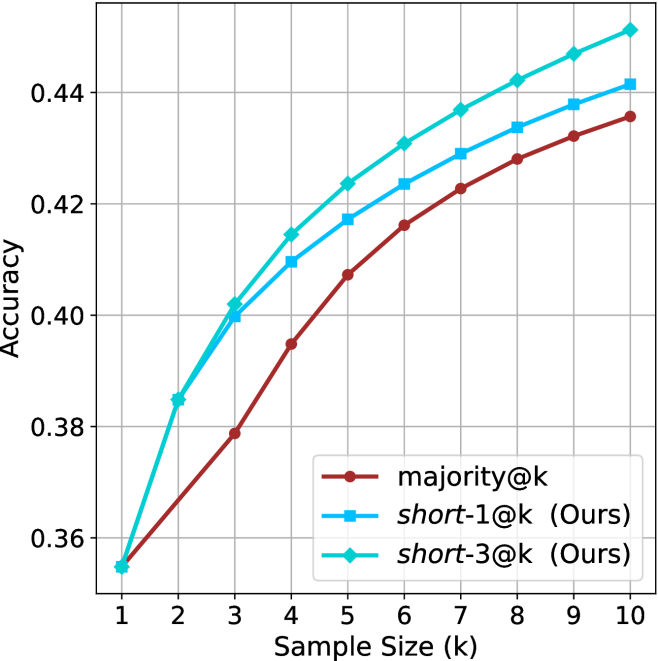

Thinking-compute.

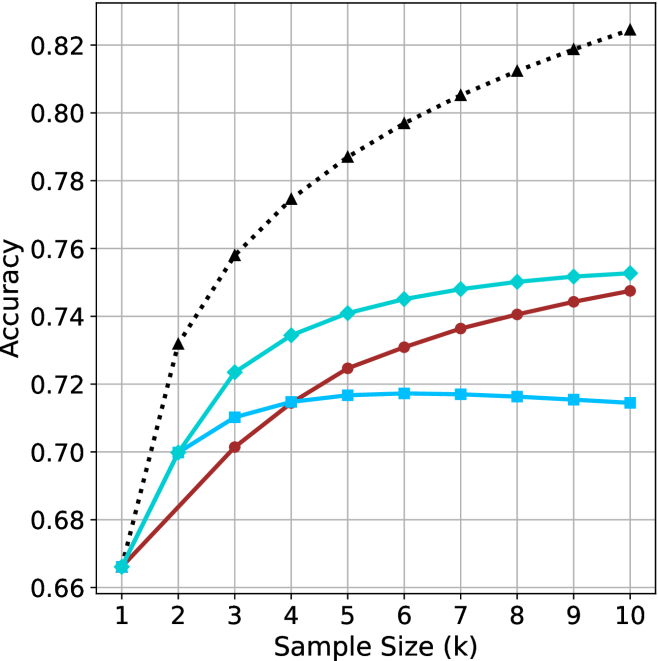

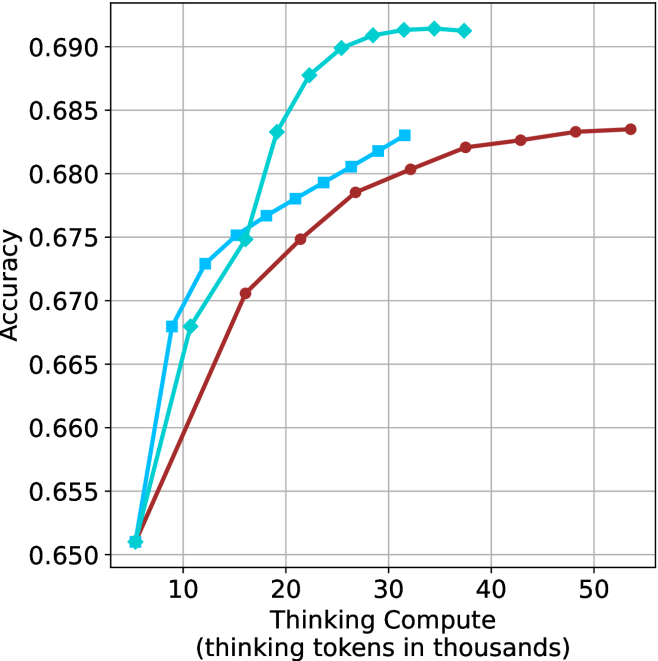

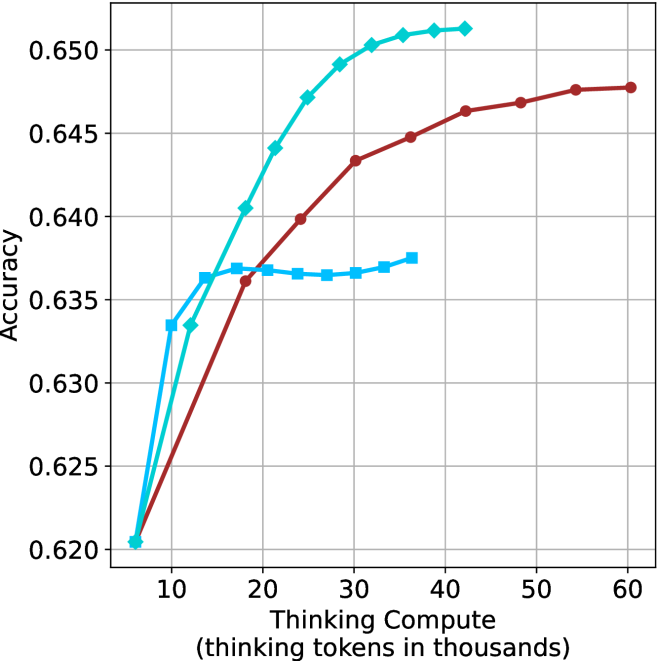

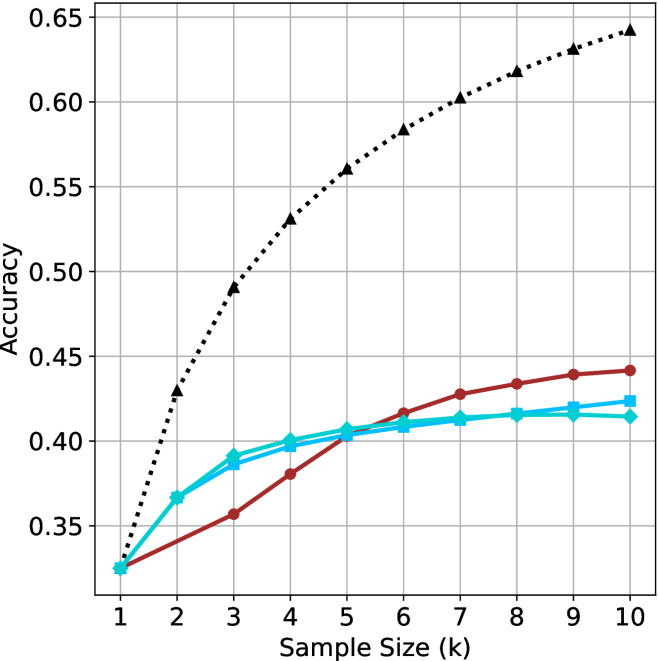

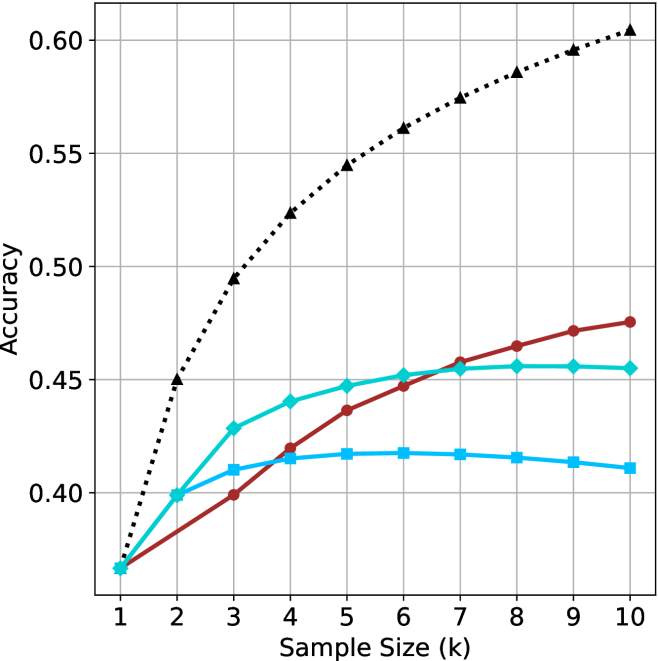

The aggregated performance results for math benchmarks, evaluated with respect to thinking compute, are presented in Figure ˜ 3 (per-benchmark results provided in Appendix ˜ B), while the GPQA-D respective results are presented in Figure ˜ 7 in Appendix ˜ A. We again observe that the short-1@k method outperforms majority $@k$ at lower compute budgets. Notably, for LN-Super- $49$ B (Figure ˜ 3(a)), the short-1@k method surpasses majority $@k$ across all compute budgets. For instance, short-1@k achieves $57\$ accuracy with approximately $60\$ of the compute budget used by majority@ $k$ to achieve the same accuracy. For R $1$ - $32$ B, QwQ- $32$ B and R $1$ - $670$ B models, the short-1@k method exceeds majority $@k$ up to compute budgets of $45$ k, $60$ k and $100$ k total thinking tokens, respectively, but is underperformed by it on larger compute budgets.

The short-3@k method yields even greater performance improvements, incurring only a modest increase in thinking compute compared to short-1@k. When compared to majority $@k$ , short-3@k consistently achieves higher performance with lower thinking compute across all models and compute budgets. For example, with the QwQ- $32$ B model (Figure ˜ 3(c)), and an average compute budget of $80$ k thinking tokens per example, short-3@k improves accuracy by $2\$ over majority@ $k$ . For the R $1$ - $670$ B model (Figure ˜ 3(d)), short-3@k consistently outperforms majority voting, yielding an approximate $4\$ improvement with an average token budget of $100$ k.

<details>

<summary>x10.png Details</summary>

### Visual Description

## Scatter Plot: Accuracy vs. Time-to-Answer for Different k Values

### Overview

The image is a scatter plot comparing classification accuracy (y-axis) against time-to-answer (x-axis, in thousands of units) for three different k-values (k=3, k=5, k=9) in a machine learning model. A single outlier point (k=1) is also present. Data points are color-coded and shaped by k-value, with a legend on the right.

### Components/Axes

- **X-axis**: "Time-to-Answer (longest thinking in thousands)"

- Scale: 8 to 18 (discrete intervals)

- **Y-axis**: "Accuracy"

- Scale: 0.48 to 0.62 (continuous)

- **Legend**:

- **k=3**: Blue squares

- **k=5**: Teal diamonds

- **k=9**: Red circles

- **k=1**: Teal star (not in legend, but annotated)

### Detailed Analysis

#### Data Points by k-Value

1. **k=3 (Blue Squares)**

- (10, 0.54)

- (12, 0.56)

- (16, 0.51)

- **Trend**: Slight increase from 10→12, then sharp drop at 16.

2. **k=5 (Teal Diamonds)**

- (10, 0.58)

- (14, 0.56)

- (16, 0.53)

- **Trend**: Gradual decline as time increases.

3. **k=9 (Red Circles)**

- (8, 0.58)

- (10, 0.60)

- (18, 0.60)

- **Trend**: Slight improvement at 10, then plateau.

4. **k=1 (Teal Star)**

- (12, 0.48)

- **Note**: Outlier with lowest accuracy despite mid-range time.

### Key Observations

- **k=9 Dominates**: Highest accuracy (0.60) at both shortest (8) and longest (18) times.

- **k=1 Anomaly**: Significantly lower accuracy (0.48) than other k-values at similar time points.

- **Trade-off for k=3/5**: Accuracy decreases as time increases, suggesting diminishing returns.

- **Consistency in k=9**: Maintains high accuracy across all time points.

### Interpretation

The data suggests that higher k-values (e.g., k=9) improve model accuracy, particularly in longer-thinking scenarios, while lower k-values (k=3/5) show a trade-off between speed and precision. The k=1 outlier likely indicates overfitting or insufficient data for small k, as it underperforms despite moderate time investment. The plateau in k=9’s accuracy at longer times implies diminishing gains beyond a certain threshold. This pattern aligns with k-NN model behavior, where larger k reduces noise sensitivity but increases computational cost.

</details>

(a) LN-Super- $49$ B

<details>

<summary>x11.png Details</summary>

### Visual Description

## Scatter Plot: Accuracy vs. Time-to-Answer (Longest Thinking in Thousands)

### Overview

The image is a scatter plot comparing **accuracy** (y-axis) and **time-to-answer** (x-axis, in thousands of units) for different configurations labeled by `k` values. Data points are color-coded and marked with distinct symbols (squares, circles, diamonds, stars) corresponding to `k=1`, `k=3`, `k=5`, and `k=9`. The plot highlights trade-offs between accuracy and computational time across configurations.

---

### Components/Axes

- **Y-Axis (Accuracy)**: Ranges from 0.54 to 0.64, with gridlines at 0.56, 0.58, 0.60, 0.62, and 0.64.

- **X-Axis (Time-to-Answer)**: Ranges from 8 to 18 (in thousands), with gridlines at 8, 10, 12, 14, 16, and 18.

- **Legend**: Located on the right, associating:

- **Blue squares**: `k=3`

- **Red circles**: `k=3`

- **Cyan diamonds**: `k=5`

- **Magenta stars**: `k=9`

- **Data Points**: Scattered across the plot, with labels indicating `k` values and approximate coordinates.

---

### Detailed Analysis

#### Data Points by `k` Value

1. **`k=1` (Blue Star)**:

- Single point at `(12, 0.54)`.

- Lowest accuracy and moderate time-to-answer.

2. **`k=3` (Blue Squares and Red Circles)**:

- **Blue Squares**:

- `(9, 0.60)`

- `(10, 0.59)`

- **Red Circles**:

- `(16, 0.58)`

- `(18, 0.58)`

- **Trend**: Accuracy decreases slightly as time increases (e.g., 0.60 → 0.58).

3. **`k=5` (Cyan Diamonds and Red Circles)**:

- **Cyan Diamonds**:

- `(8, 0.61)`

- `(10, 0.63)`

- **Red Circles**:

- `(17, 0.62)`

- **Trend**: Higher accuracy (0.61–0.63) with longer time-to-answer (8–17k).

4. **`k=9` (Cyan Diamonds and Magenta Stars)**:

- **Cyan Diamonds**:

- `(9, 0.64)`

- **Magenta Stars**:

- `(18, 0.64)`

- **Trend**: Highest accuracy (0.64) but longest time-to-answer (9–18k).

---

### Key Observations

1. **Accuracy-Time Trade-off**:

- Higher `k` values (e.g., `k=9`) achieve higher accuracy but require significantly more time.

- Lower `k` values (e.g., `k=1`) have lower accuracy but faster computation.

2. **Inconsistencies**:

- **Cyan Diamonds**: Labeled as `k=5` in the legend but include a point `(9, 0.64)` labeled `k=9`.

- **Red Circles**: Labeled as `k=3` in the legend but include a point `(17, 0.62)` labeled `k=5`.

- These discrepancies suggest potential labeling errors in the original data.

3. **Outliers**:

- The `k=1` point `(12, 0.54)` is an outlier with the lowest accuracy.

- The `k=9` point `(18, 0.64)` represents the maximum time-to-answer and highest accuracy.

---

### Interpretation

The plot demonstrates a clear trade-off between **accuracy** and **computational cost**. Configurations with higher `k` values (e.g., `k=9`) prioritize accuracy at the expense of longer processing time, while lower `k` values (e.g., `k=1`) optimize for speed but sacrifice precision.

- **`k=3` and `k=5`** occupy intermediate positions, balancing accuracy and time. However, the inconsistent labeling of data points (e.g., `k=9` mislabeled as `k=5`) raises questions about data integrity.

- The `k=1` configuration’s low accuracy (0.54) suggests it may be unsuitable for tasks requiring high precision, even if it is the fastest option.

This analysis underscores the importance of tuning `k` based on application-specific priorities (e.g., real-time vs. high-accuracy requirements). Further validation of the dataset is recommended to resolve labeling inconsistencies.

</details>

(b) R $1$ - $32$ B

<details>

<summary>x12.png Details</summary>

### Visual Description

## Scatter Plot: Accuracy vs. Time-to-Answer (Longest Thinking in Thousands)

### Overview

The image is a scatter plot comparing **accuracy** (y-axis) and **time-to-answer** (x-axis, in thousands of units). Data points are color-coded by the parameter `k` (1, 3, 5, 9), with distinct markers for each `k` value. The plot highlights trade-offs between computational effort (time) and performance (accuracy).

---

### Components/Axes

- **Y-axis (Accuracy)**: Ranges from 0.68 to 0.74, with gridlines at 0.68, 0.70, 0.72, 0.74.

- **X-axis (Time-to-Answer)**: Ranges from 12 to 20 (in thousands), with gridlines at 12, 14, 16, 18, 20.

- **Legend**: Located on the right, mapping:

- **Blue squares**: `k=3`

- **Cyan diamonds**: `k=5`

- **Red circles**: `k=9`

- **Star symbol**: `k=1`

- **Markers**: Each `k` value uses a unique symbol (e.g., `k=1` is a star, `k=3` is a square).

---

### Detailed Analysis

#### Data Points by `k`:

1. **`k=1` (Star)**:

- Single point at (15, 0.69).

- Lowest accuracy and moderate time-to-answer.

2. **`k=3` (Blue Square)**:

- Points at:

- (12, 0.71)

- (13, 0.71)

- (17, 0.70)

- (20, 0.70)

- Consistent accuracy (~0.70–0.71) with increasing time-to-answer.

3. **`k=5` (Cyan Diamond)**:

- Points at:

- (12, 0.71)

- (13, 0.71)

- (16, 0.74)

- (17, 0.72)

- Highest accuracy (0.74) at time=16, with a slight drop at time=17.

4. **`k=9` (Red Circle)**:

- Points at:

- (14, 0.74)

- (16, 0.74)

- (20, 0.74)

- Perfect accuracy (0.74) across all time-to-answer values.

---

### Key Observations

1. **`k=9` Dominates Accuracy**:

- Maintains 0.74 accuracy regardless of time-to-answer, suggesting optimal performance at higher computational cost.

2. **`k=5` Shows Trade-off**:

- Peaks at 0.74 accuracy at time=16 but drops to 0.72 at time=17, indicating sensitivity to time.

3. **`k=3` and `k=1` Underperform**:

- `k=3` achieves ~0.70–0.71 accuracy, while `k=1` lags at 0.69.

4. **Time-Accuracy Correlation**:

- Higher `k` values (e.g., 9) achieve better accuracy but require longer processing time.

---

### Interpretation

- **Computational Trade-off**: Increasing `k` improves accuracy but increases time-to-answer. For example, `k=9` achieves perfect accuracy but requires the longest processing time (20k units).

- **Optimal `k` for Balance**: `k=5` offers a middle ground, achieving high accuracy (0.74) at moderate time (16k units), though it is less stable than `k=9`.

- **Outliers**: `k=1` is an outlier with the lowest accuracy and moderate time, suggesting inefficiency at low computational effort.

- **Stability of `k=9`**: Its consistent accuracy across all time points implies robustness, possibly due to exhaustive computation.

This plot underscores the relationship between model complexity (`k`) and performance, highlighting the need to balance accuracy and computational resources.

</details>

(c) QwQ-32B

<details>

<summary>x13.png Details</summary>

### Visual Description

## Scatter Plot: Accuracy vs. Time-to-Answer for Different k Values

### Overview

The chart compares the accuracy and time-to-answer performance of three algorithms (`majority@k`, `short-1@k`, `short-3@k`) across different `k` values (3, 5, 9). Accuracy is plotted on the y-axis (0.78–0.88), and time-to-answer (in thousands of units) is on the x-axis (14–22). Data points are color-coded and symbol-coded per algorithm.

---

### Components/Axes

- **Y-Axis (Accuracy)**: Labeled "Accuracy" with ticks at 0.78, 0.80, 0.82, 0.84, 0.86, 0.88.

- **X-Axis (Time-to-Answer)**: Labeled "Time-to-Answer (longest thinking in thousands)" with ticks at 14, 16, 18, 20, 22.

- **Legend**: Located at the bottom-right corner, mapping:

- Red circles: `majority@k`

- Blue squares: `short-1@k` (Ours)

- Cyan diamonds: `short-3@k` (Ours)

- **Data Points**: Positioned across the grid with approximate coordinates (x, y) and labeled with `k` values.

---

### Detailed Analysis

#### Data Points by Algorithm

1. **`majority@k` (Red Circles)**:

- (16, 0.84)

- (18, 0.86)

- (20, 0.84)

- (22, 0.86)

- (22, 0.82)

- (22, 0.86) [k=9]

2. **`short-1@k` (Blue Squares)**:

- (15, 0.83)

- (16, 0.84)

- (18, 0.85)

- (19, 0.82)

3. **`short-3@k` (Cyan Diamonds)**:

- (17, 0.87)

- (19, 0.86)

- (21, 0.85)

- (19, 0.84) [k=3]

---

### Key Observations

1. **Accuracy vs. Time Trade-off**:

- `majority@k` achieves higher accuracy (0.84–0.86) but requires longer time (16–22k).

- `short-1@k` sacrifices accuracy (0.82–0.85) for faster response (15–19k).

- `short-3@k` balances both, with accuracy (0.84–0.87) and moderate time (17–21k).

2. **Outliers**:

- `short-3@k` at (19, 0.84) underperforms compared to other `short-3@k` points.

- `majority@k` at (22, 0.82) shows a drop in accuracy despite high time investment.

3. **Trends**:

- `majority@k` accuracy increases slightly with higher `k` (e.g., k=9 at 0.86).

- `short-1@k` accuracy decreases as `k` increases (e.g., k=3 at 0.84 vs. k=5 at 0.83).

---

### Interpretation

The chart demonstrates a clear trade-off between accuracy and computational efficiency. `majority@k` prioritizes accuracy at the cost of time, making it suitable for scenarios where precision is critical. Conversely, `short-1@k` optimizes for speed but with reduced accuracy, ideal for time-sensitive applications. `short-3@k` emerges as a middle-ground solution, offering competitive accuracy with moderate time requirements. Notably, higher `k` values in `majority@k` (e.g., k=9) yield marginally better accuracy but require significantly more time, suggesting diminishing returns. The anomaly in `short-3@k` at (19, 0.84) warrants further investigation into potential configuration or data inconsistencies.

</details>

(d) R1-670B

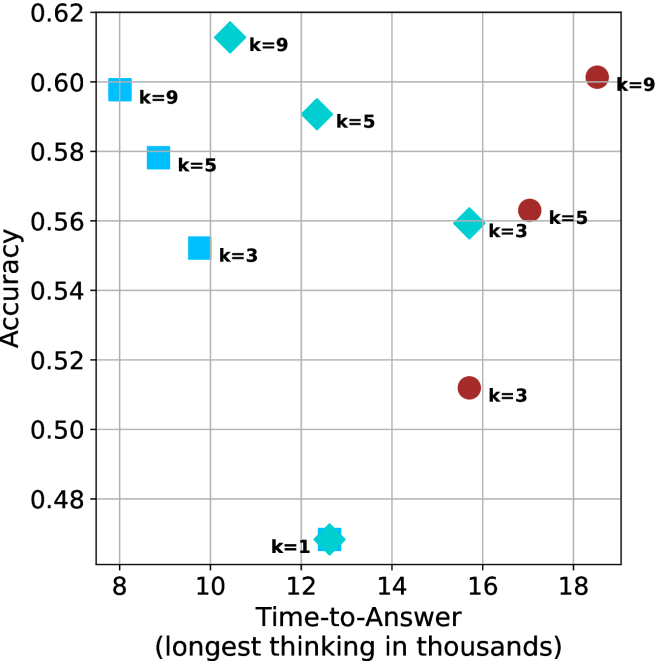

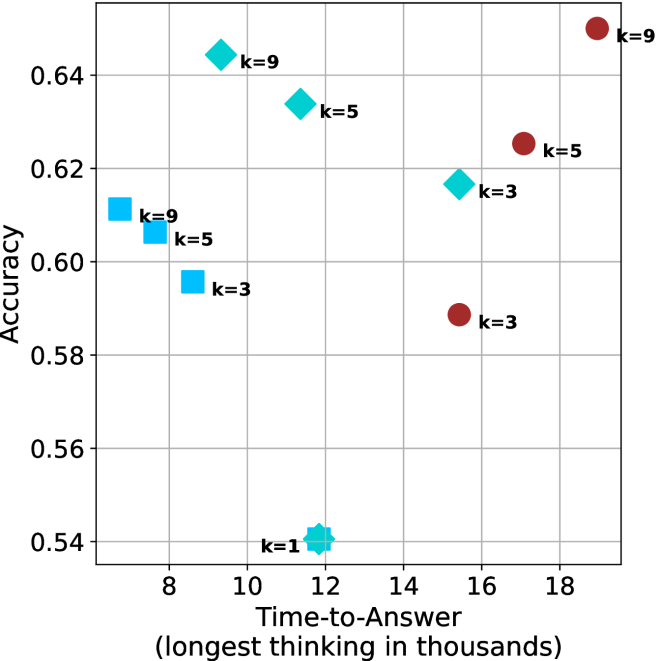

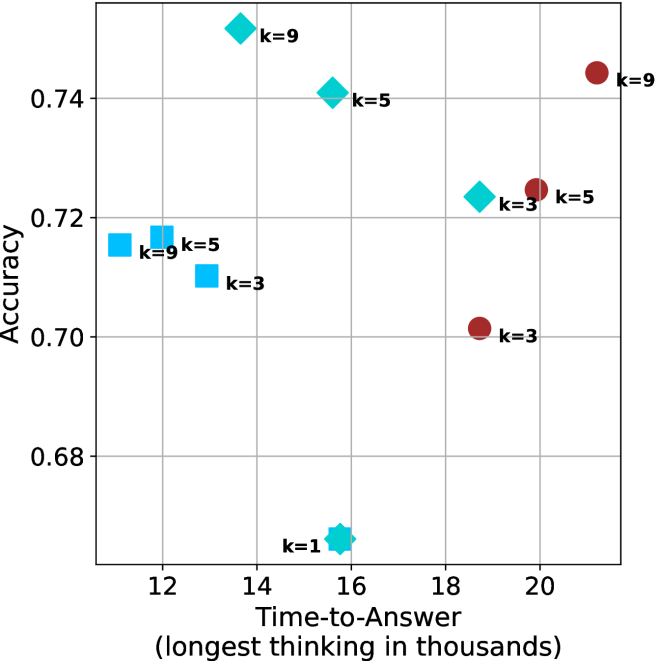

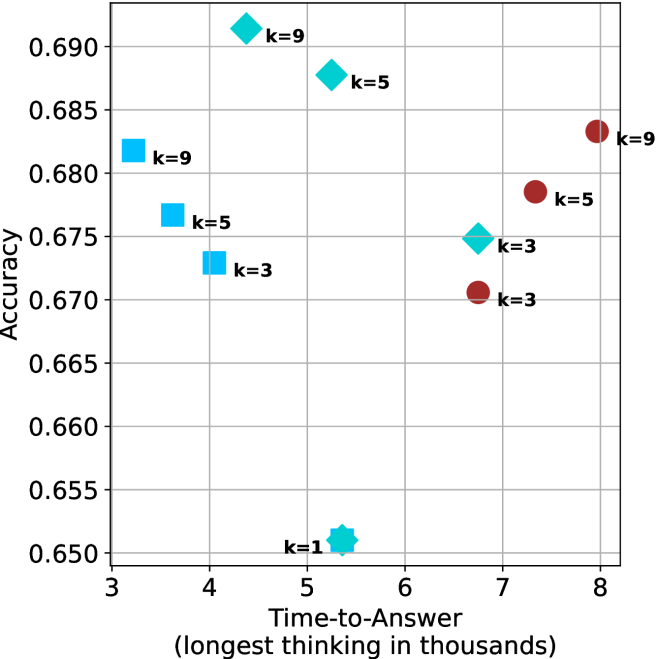

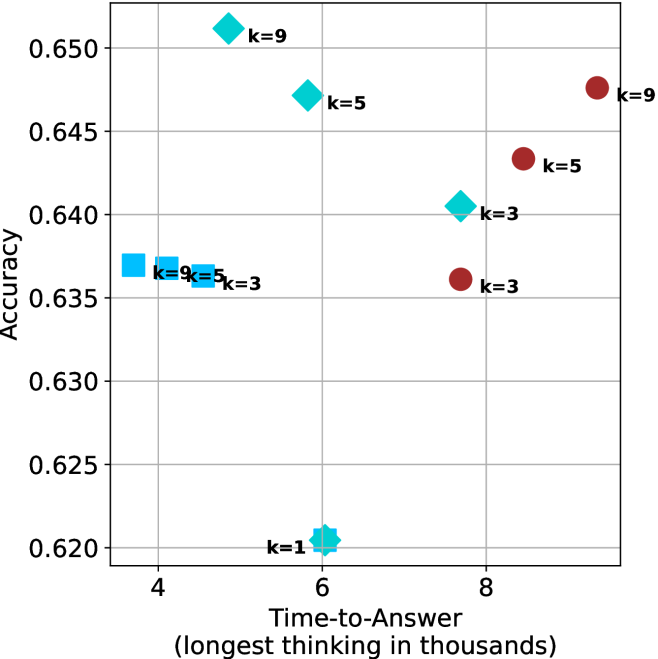

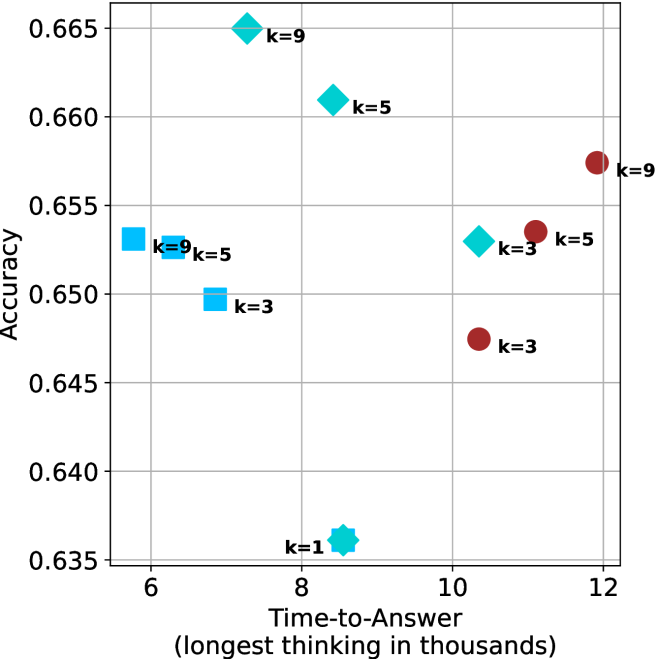

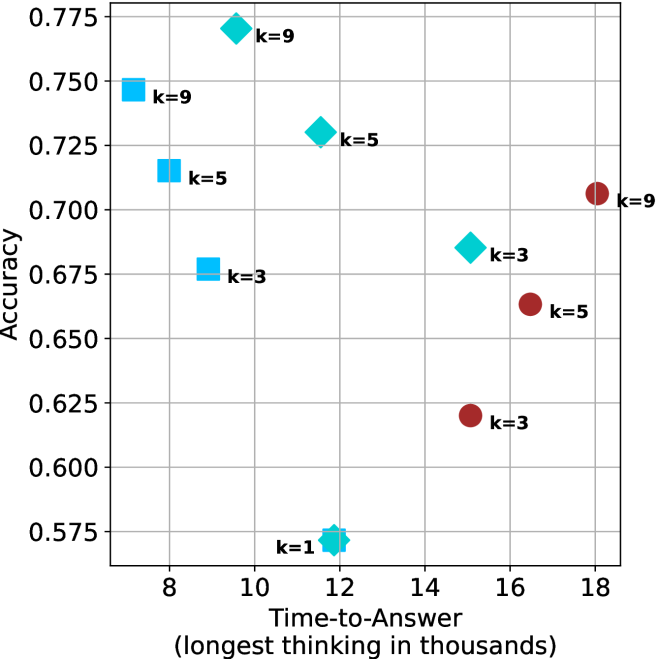

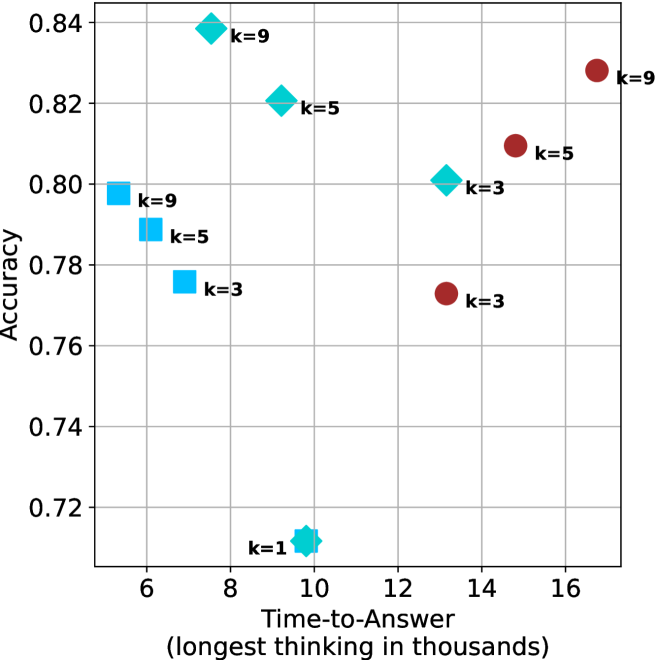

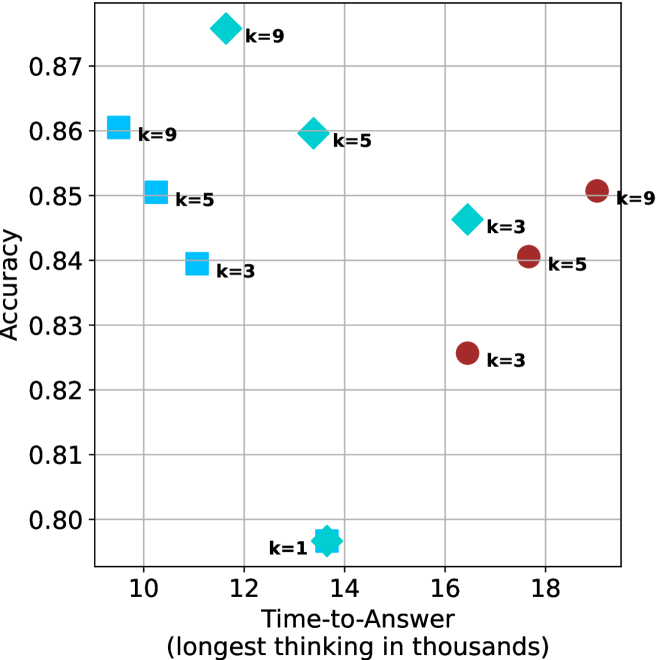

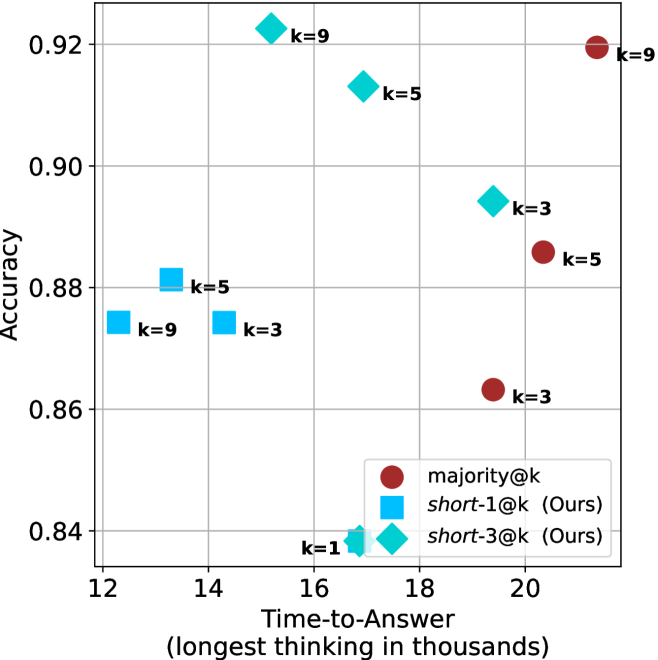

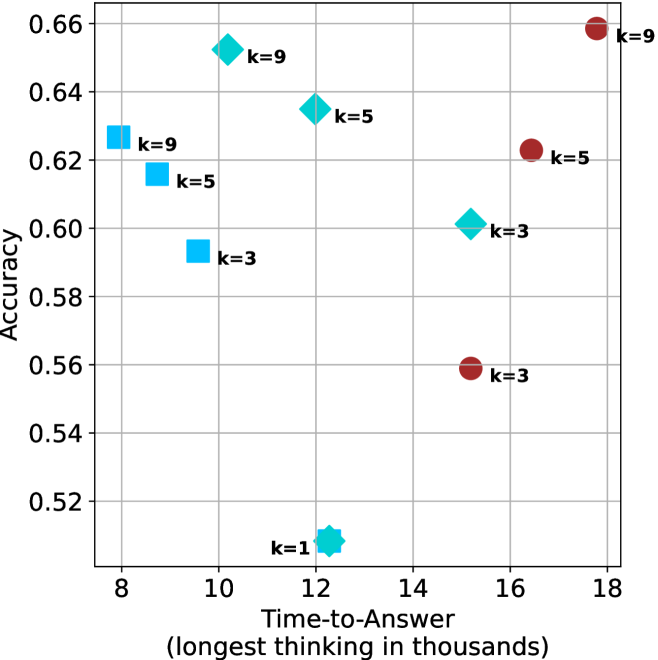

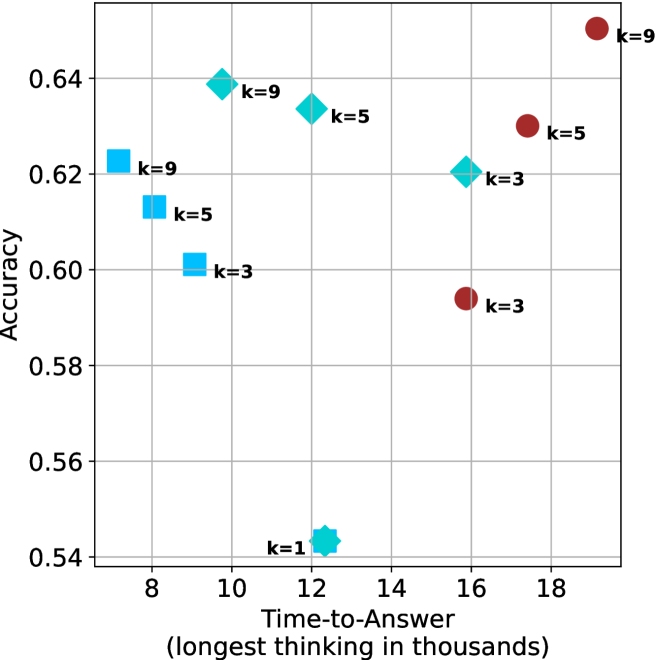

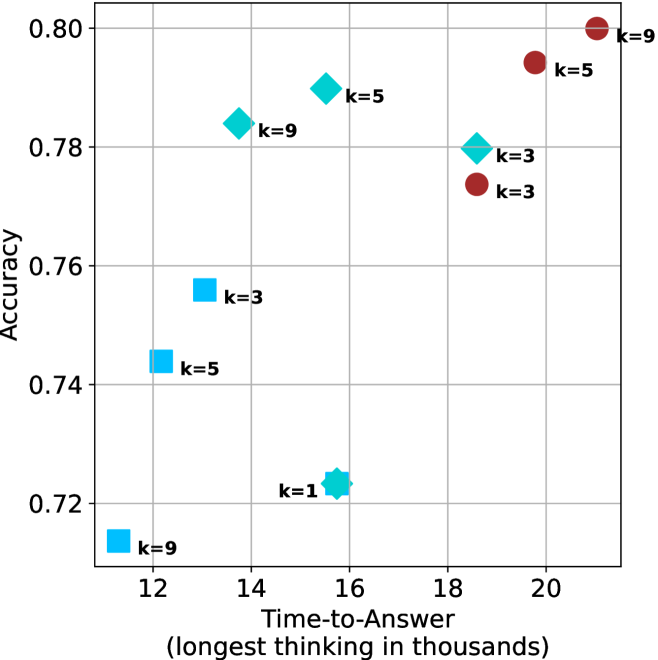

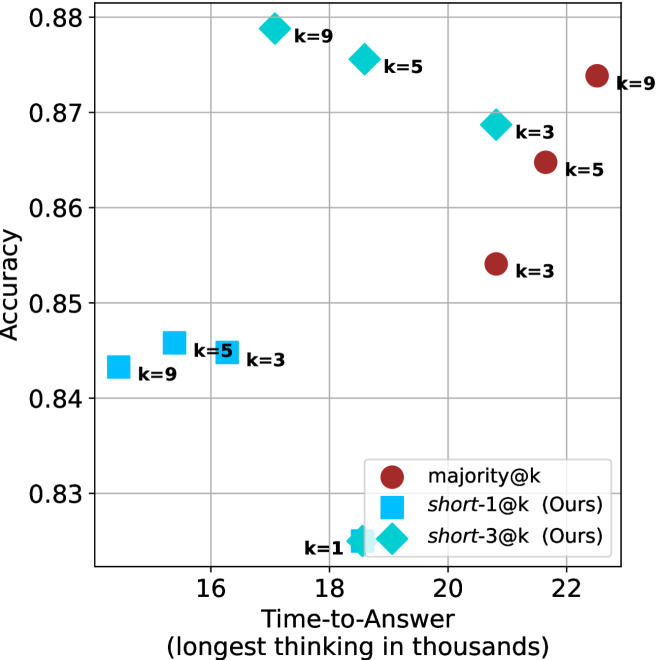

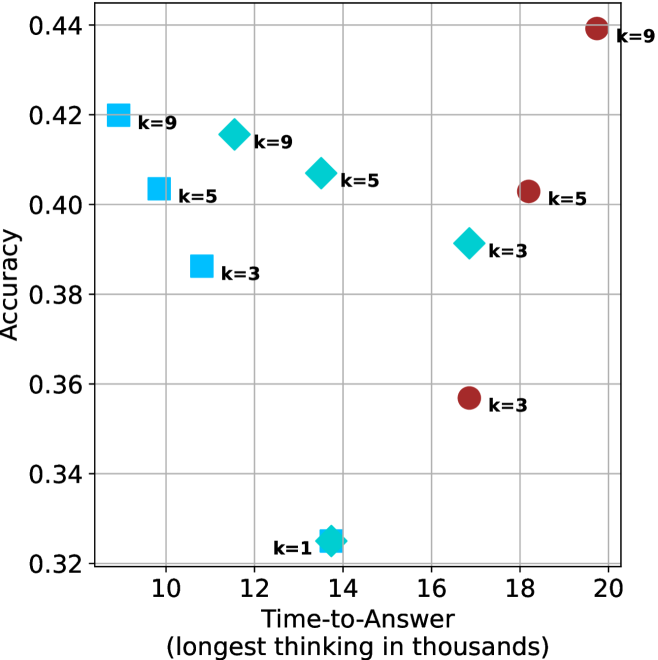

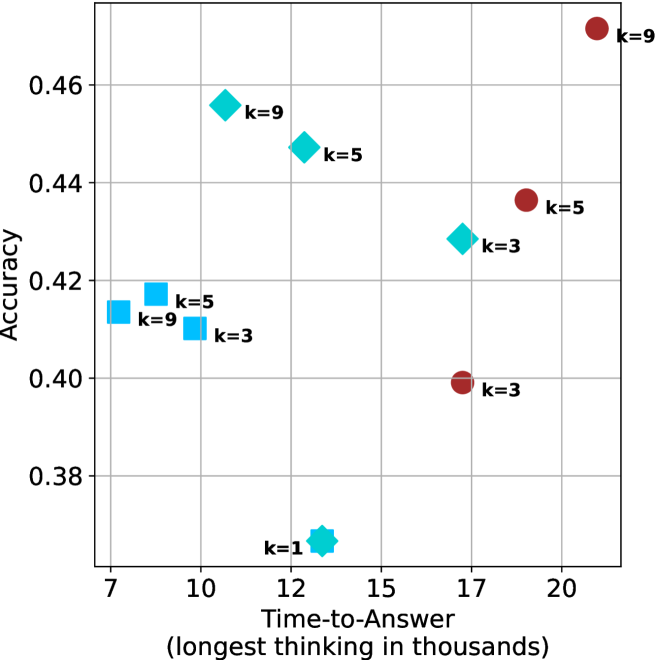

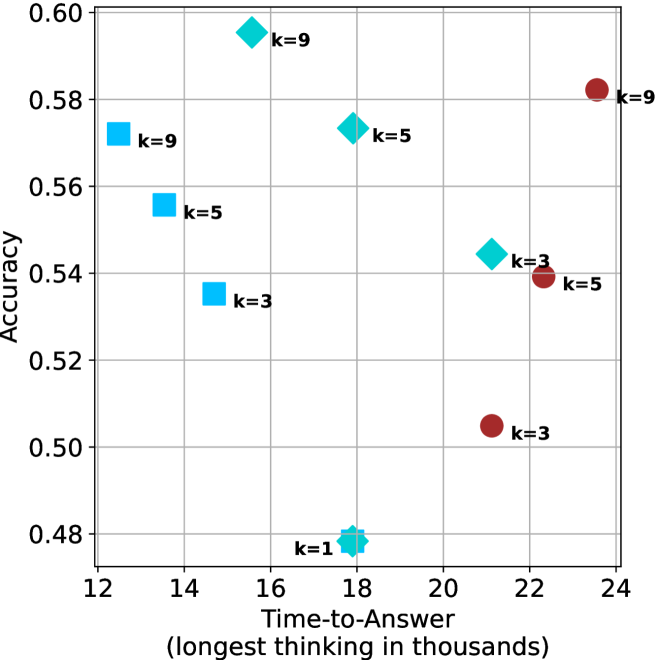

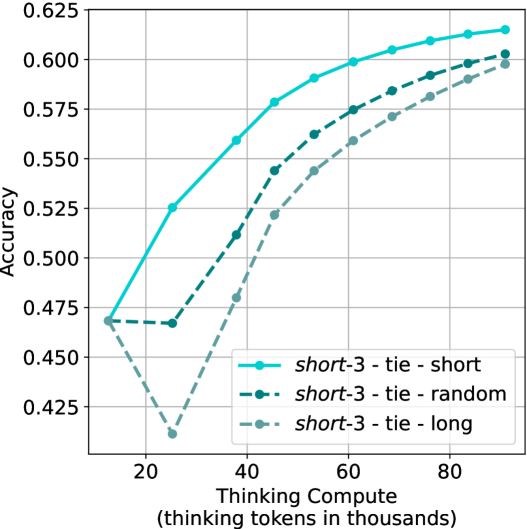

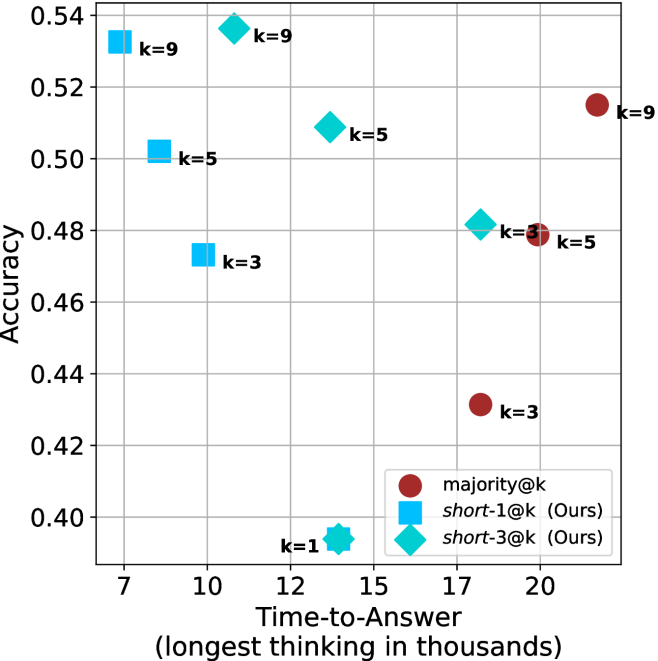

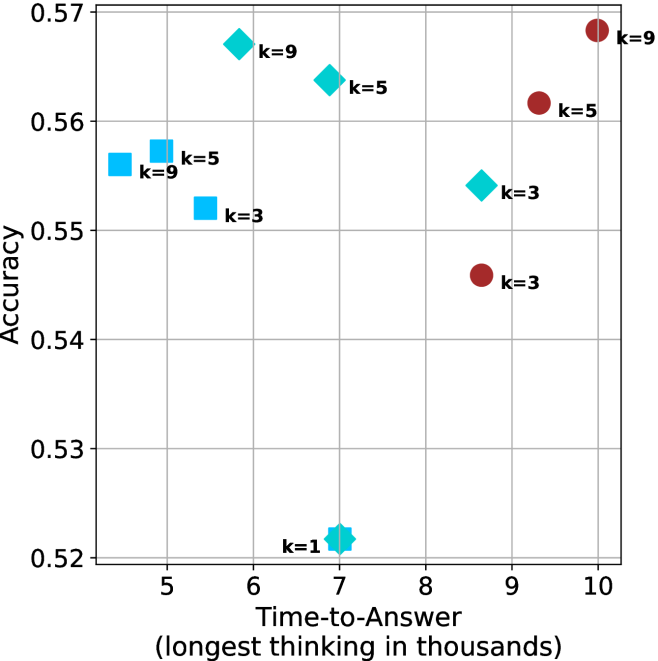

Figure 4: Comparing time-to-answer for different inference methods. Our methods substantially reduce time cost with no major loss in performance. Unlike majority $@k$ , which becomes slower as $k$ grows, our methods run faster with $k$ , as the probability of finding a short chain increases with $k$ .

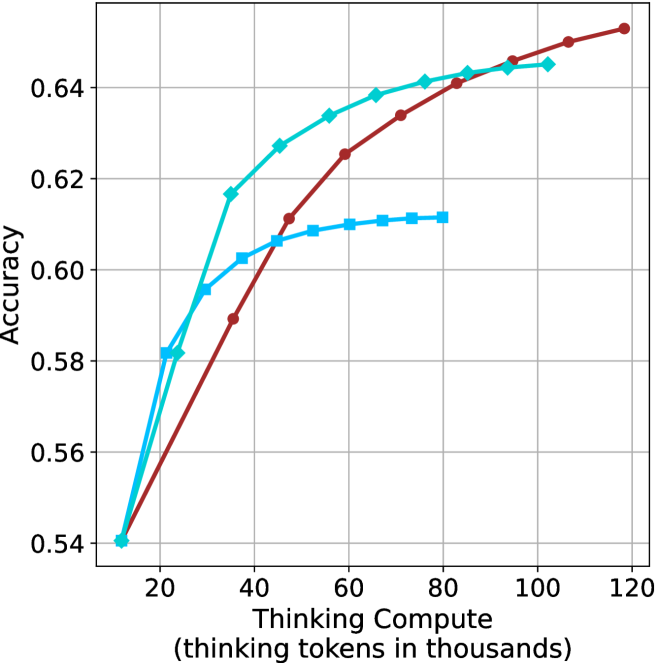

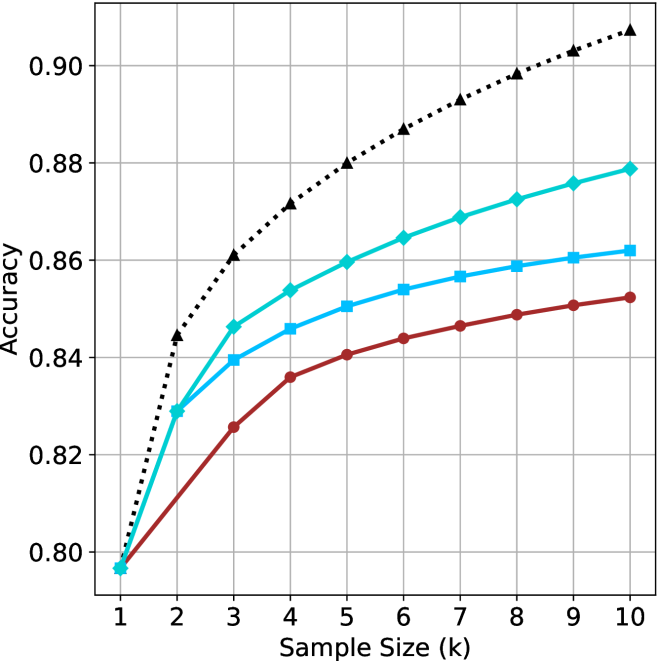

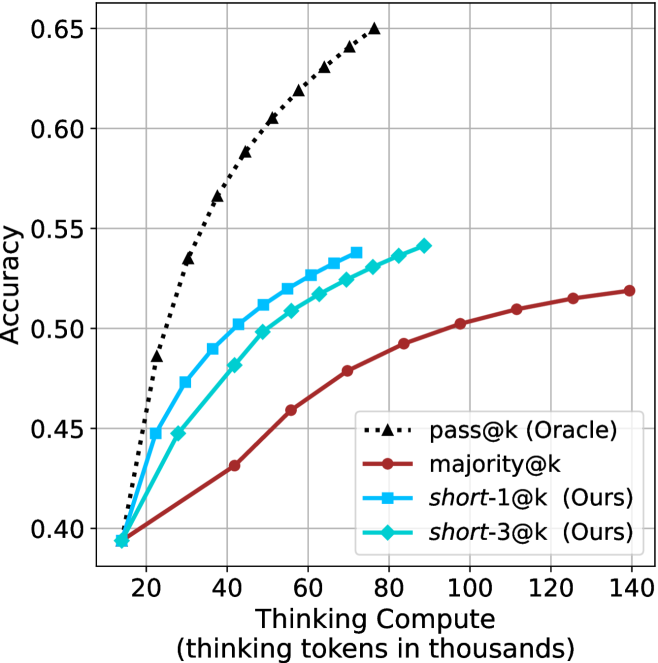

Time-to-answer.

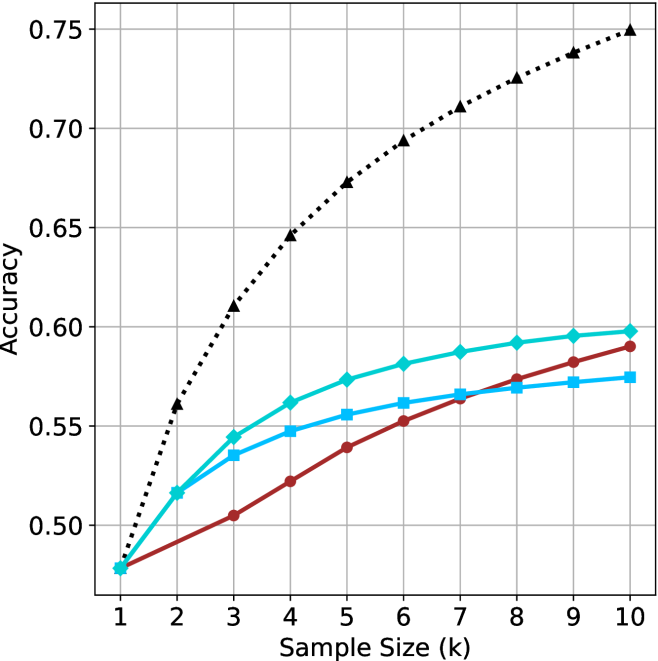

Finally, the math aggregated time-to-answer results are shown in Figure ˜ 4, with GPQA-D results shown in Figure ˜ 8 and pe math benchmark in Appendix ˜ B. For readability, Figure 4 omits the oracle, and methods are compared across a subset of sample sizes. As sample size increases, majority $@k$ exhibits longer time-to-answer, driven by a higher probability of sampling generations with extended thinking chains, requiring all trajectories to complete. Conversely, the short-1@k method shows reduced time-to-answer with larger sample sizes, as the probability of encountering a short answer increases. This trend also holds for the short-3@k method after three reasoning processes complete.

This phenomenon makes the short-1@k and short-3@k methods substantially more usable compared to basic majority $@k$ . For example, when using the LN-Super- $49$ B model (Figure ˜ 4(a)), with a sample size of $5$ , the short-1@k method reduces time consumption by almost $50\$ while also increasing performance by about $1.5\$ compared to majority@ $k$ . When considering a larger sample size of $9$ , the performance values are almost the same but short-1@k is more than $55\$ faster.

Finally, we observe that for most models and sample sizes, short-3@k boosts performance, while for larger ones it also reduces time-to-answer significantly. For example, on R $1$ - $32$ B (Figure ˜ 4(b)), with $k=5$ , short-3@k is $33\$ faster than majority@ $k$ , while reaching superior performance. A similar boost in time-to-answer and performance is observed with QwQ- $32$ B/R $1$ - $670$ B and sample size $9$ (Figure ˜ 4(c) and Figure ˜ 4(d)).

## 5 Analysis

To obtain deeper insights into the underlying process, making shorter thinking trajectories preferable, we conduct additional analysis. We first investigate the relation between using shorter thinking per individual example, and the necessity of longer trajectories to solve more complex problems (Section ˜ 5.1). Subsequently, we analyze backtracks in thinking trajectories to better understand the characteristics of shorter trajectories (Section ˜ 5.2). Lastly, we analyze the perfomance of short-m@k in sequential setting (Section ˜ 5.3). All experiments in this section use trajectories produced by our models as described in Section ˜ 3.1. For Sections 5.1 and 5.2 we exclude generations that were not completed within the generation length.

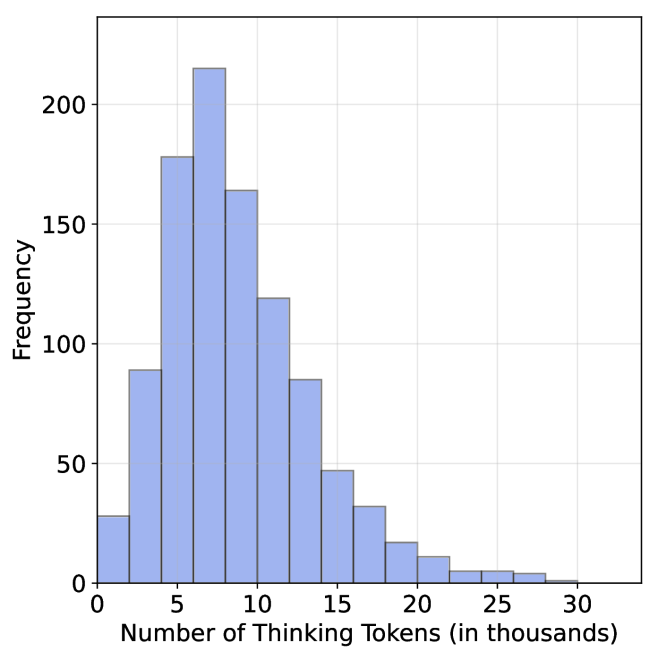

### 5.1 Hard questions (still) require more thinking

We split the questions into three equal size groups according to model’s success rate. Then, we calculate the average thinking length for each of the splits, and provide the average lengths for the correct and incorrect attempts per split.

Table 2: Average thinking tokens for correct (C), incorrect (IC) and all (A) answers, per split by difficulty for the math benchmarks. The numbers are in thousands of tokens.

| Model | Easy | Medium | Hard |

| --- | --- | --- | --- |

| C/IC/A | C/IC/A | C/IC/A | |

| LN-Super-49B | $\phantom{0}5.3/11.1/\phantom{0}5.7$ | $11.4/17.1/14.6$ | $12.4/16.8/16.6$ |

| R1-32B | $\phantom{0}4.9/13.7/\phantom{0}5.3$ | $10.9/17.3/13.3$ | $14.4/15.8/15.7$ |

| QwQ-32B The QwQ-32B and R1-670B models correctly answered all of their easier questions in all attempts. | $\phantom{0}8.4/\phantom{0.}\text{--}\phantom{0}/\phantom{0}8.4$ | $14.8/21.6/15.6$ | $19.1/22.8/22.3$ |

| R1-670B footnotemark: | $13.0/\phantom{0.}\text{--}\phantom{0}/13.0$ | $15.3/20.9/15.5$ | $23.0/31.7/28.4$ |

Tables ˜ 2 and 5 shows the averages thinking tokens per split for the math benchmarks and GPQA-D, respectively. We first note that as observed in Section ˜ 3.2, within each question subset, correct answers are typically shorter than incorrect ones. This suggests that correct answers tend to be shorter, and it holds for easier questions as well as harder ones.

Nevertheless, we also observe that models use more tokens for more challenging questions, up to a factor of $2.9$ . This finding is consistent with recent studies (Anthropic, 2025; OpenAI, 2024; Muennighoff et al., 2025) indicating that using longer thinking is needed in order to solve harder questions. To summarize, harder questions require a longer thinking process compared to easier ones, but within a single question (both easy and hard), shorter thinking is preferable.

### 5.2 Backtrack analysis

One may hypothesize that longer thinking reflect a more extensive and less efficient search path, characterized by a higher degree of backtracking and “rethinking”. In contrast, shorter trajectories indicate a more direct and efficient path, which often leads to a more accurate answer.

To this end, we track several keywords identified as indicators of re-thinking and backtracking within different trajectories. The keywords we used are: [’but’, ’wait’, ’however’, ’alternatively’, ’not sure’, ’going back’, ’backtrack’, ’trace back’, ’hmm’, ’hmmm’] We then categorize the trajectories into correct and incorrect sets, and measure the number of backtracks and their average length (quantified by keyword occurrences divided by total thinking length) for each set. We present the results for the math benchmarks and GPQA-D in Tables ˜ 3 and 6, respectively.

Table 3: Average number of backtracks, and their average length for correct (C), incorrect (IC) and all (A) answers in math benchmarks.

| Model | # Backtracks | Backtrack Len. |

| --- | --- | --- |

| C/IC/A | C/IC/A | |

| LN-Super-49B | $106/269/193$ | $\phantom{0}88/\phantom{0}70/76$ |

| R1-32B | $\phantom{0}95/352/213$ | $117/\phantom{0}63/80$ |

| QwQ-32B | $182/269/193$ | $\phantom{0}70/\phantom{0}60/64$ |

| R1-670B | $188/323/217$ | $\phantom{0}92/102/99$ |

As our results indicate, for all models and benchmarks, correct trajectories consistently exhibit fewer backtracks compared to incorrect ones. Moreover, in almost all cases, backtracks of correct answers are longer. This may suggest that correct solutions involve less backtracking where each backtrack is longer, potentially more in-depth that leads to improved reasoning, whereas incorrect ones explores more reasoning paths that are abandoned earlier (hence tend to be shorter).

Lastly, we analyze the backtrack behavior in a length-controlled manner. Specifically, we divide trajectories into bins based on their length. Within each bin, we compare the number of backtracks between correct and incorrect trajectories. Our hypothesis is that even for trajectories of comparable length, correct trajectories would exhibit fewer backtracks, indicating a more direct path to the answer. The results over the math benchmarks and GPQA-D are presented in Appendix ˜ F. As can be seen, in almost all cases, even among trajectories of comparable length, correct ones show a lower number of backtracks. The only exception is the R $1$ - $670$ B model over the math benchmarks. This finding further suggests that correct trajectories are superior because they spend less time on searching for the correct answer and instead dive deeply into a smaller set of paths.

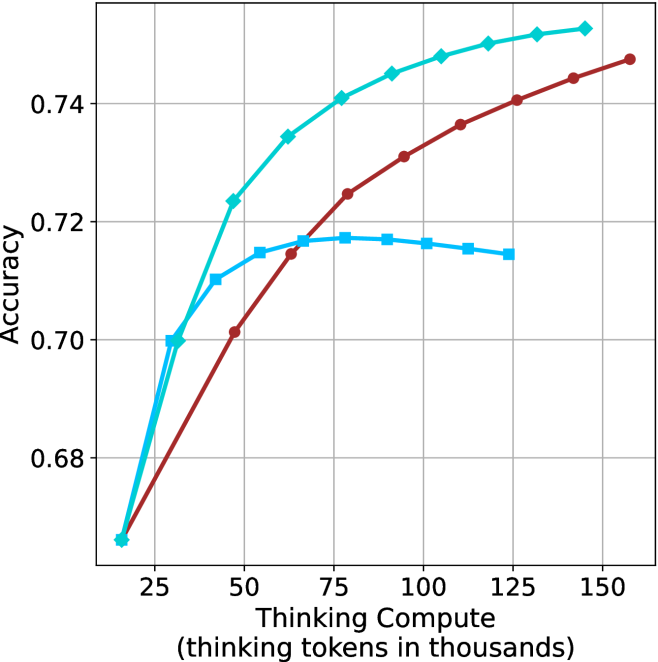

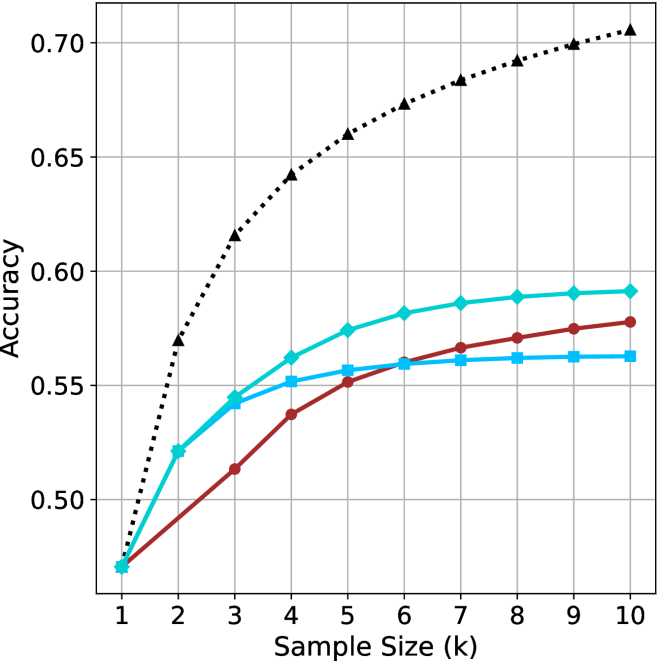

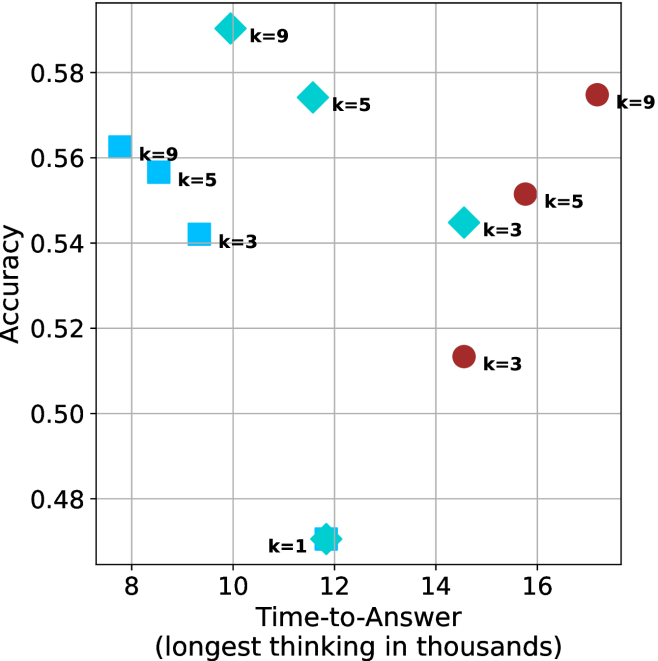

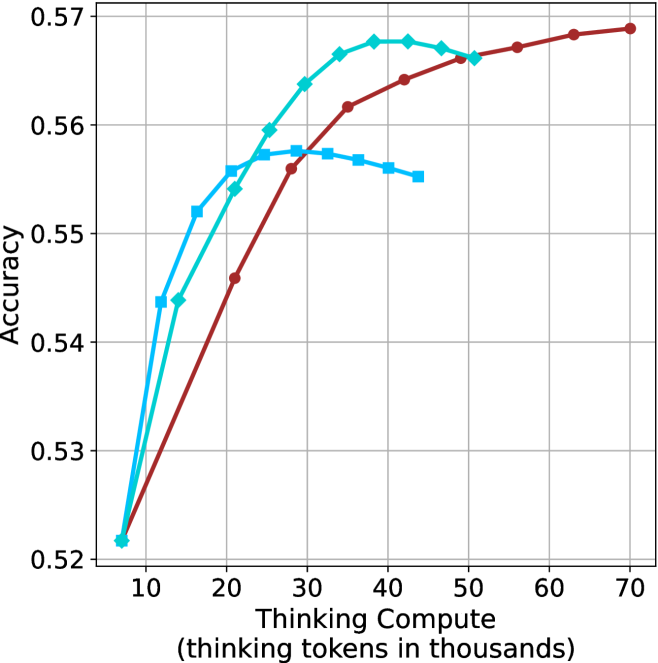

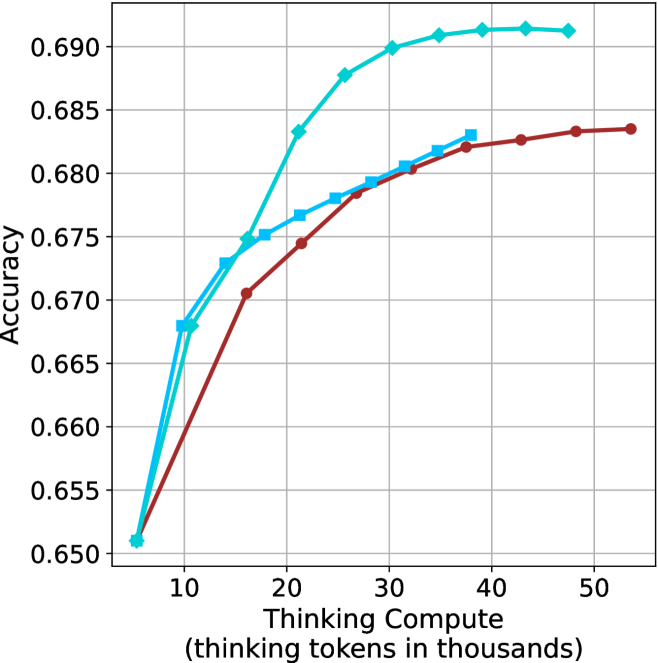

### 5.3 short-m@k with sequential compute

Our results so far assume sufficient resources for generating the output in parallel. We now study the potential of our proposed method without this constraint, by comparing short-m@k to the baselines in sequential (non-batched) setting, and measuring the amount of thinking tokens used by each method. For short-m@k, each generation is terminated once its length exceeds the maximum length observed among the $m$ shortest previously completed generations.

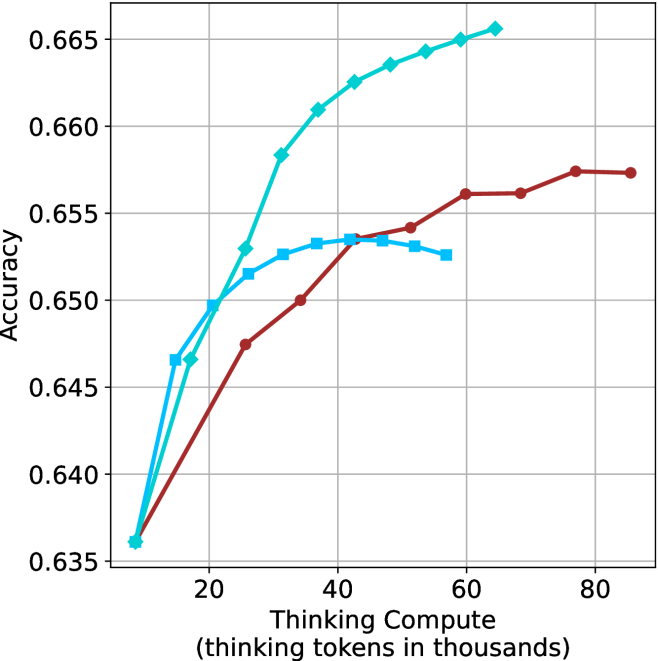

The results for the math benchmarks are presented in Figure ˜ 5 while the GPQA-D results are in Appendix ˜ E. While the performance of short-m@k shows decreased efficiency in terms of total thinking compute usage compared to a fully batched decoding setup (Section ˜ 4.3), the method’s superiority over standard majority voting remains. Specifically, at low compute regimes, both short-1@k and short-3@k demonstrate higher efficiency and improved performance compared to majority voting. As for higher compute regimes, short-3@k outperforms the majority voting baseline.

<details>

<summary>x14.png Details</summary>

### Visual Description

## Line Graph: Model Accuracy vs. Thinking Compute

### Overview

The image depicts a line graph comparing the accuracy of three models (Model A, Model B, Model C) as a function of "Thinking Compute" (measured in thousands of thinking tokens). The x-axis ranges from 20 to 120 (thousands of tokens), and the y-axis represents accuracy from 0.48 to 0.62. Three colored lines (blue, green, red) correspond to the models, with a legend in the top-right corner.

### Components/Axes

- **X-axis**: "Thinking Compute (thinking tokens in thousands)" with increments of 20 (20, 40, 60, 80, 100, 120).

- **Y-axis**: "Accuracy" with increments of 0.02 (0.48, 0.50, 0.52, 0.54, 0.56, 0.58, 0.60, 0.62).

- **Legend**: Located in the top-right corner, associating:

- **Blue**: Model A

- **Green**: Model B

- **Red**: Model C

### Detailed Analysis

1. **Model A (Blue Line)**:

- Starts at **0.52 accuracy** at 20k tokens.

- Dips to **0.50 accuracy** at 40k tokens.

- Rises sharply to **0.58 accuracy** at 60k tokens.

- Continues upward to **0.60 accuracy** at 80k tokens, **0.61 accuracy** at 100k tokens, and **0.62 accuracy** at 120k tokens.

- **Trend**: Initial dip followed by consistent improvement.

2. **Model B (Green Line)**:

- Starts at **0.50 accuracy** at 20k tokens.

- Rises steadily to **0.54 accuracy** at 40k tokens, **0.56 accuracy** at 60k tokens, **0.58 accuracy** at 80k tokens, **0.60 accuracy** at 100k tokens, and **0.61 accuracy** at 120k tokens.

- **Trend**: Gradual, steady increase.

3. **Model C (Red Line)**:

- Starts at **0.48 accuracy** at 20k tokens.

- Rises to **0.52 accuracy** at 40k tokens, **0.56 accuracy** at 60k tokens, **0.59 accuracy** at 80k tokens, **0.60 accuracy** at 100k tokens, and **0.61 accuracy** at 120k tokens.

- **Trend**: Slow but consistent improvement.

### Key Observations

- All models show **increasing accuracy** with higher compute, but **Model A** achieves the highest final accuracy (0.62 at 120k tokens).

- **Model A** exhibits a notable dip at 40k tokens, suggesting potential instability or inefficiency at intermediate compute levels.

- **Model B** and **Model C** demonstrate smoother, more predictable scaling with compute.

- At 120k tokens, all models converge to similar accuracy levels (0.60–0.62), indicating diminishing returns at higher compute.

### Interpretation

The data suggests that **Model A** is the most performant at high compute levels but may require optimization for lower compute scenarios. The dip in Model A’s accuracy at 40k tokens could indicate a computational bottleneck or overfitting at that scale. Meanwhile, **Model B** and **Model C** show more stable scaling, making them potentially better choices for applications with limited compute resources. The convergence of accuracy at 120k tokens implies that further compute gains may yield minimal improvements, highlighting the importance of balancing efficiency and performance.

</details>

(a) LN-Super-49B

<details>

<summary>x15.png Details</summary>

### Visual Description

## Line Chart: Model Performance vs. Thinking Compute

### Overview

The chart compares the accuracy of three models (Model A, Model B, Model C) as a function of "Thinking Compute" (measured in thousands of thinking tokens). Accuracy is plotted on the y-axis (0.54–0.64), while the x-axis ranges from 20 to 120 thousand tokens. Three distinct lines represent each model's performance trend.

### Components/Axes

- **X-axis**: "Thinking Compute (thinking tokens in thousands)" (20–120k tokens, increments of 20k).

- **Y-axis**: "Accuracy" (0.54–0.64, increments of 0.02).

- **Legend**: Located on the right, associating:

- Teal line → Model A

- Red line → Model B

- Blue line → Model C

### Detailed Analysis

1. **Model A (Teal Line)**:

- Starts at (20k tokens, 0.54 accuracy).

- Sharp upward slope until ~40k tokens (reaches 0.64).

- Plateaus at ~0.64 from 40k to 120k tokens.

2. **Model B (Red Line)**:

- Starts at (20k tokens, 0.54 accuracy).

- Gradual upward slope, surpassing Model A near 60k tokens.

- Reaches ~0.65 accuracy at 120k tokens.

3. **Model C (Blue Line)**:

- Starts at (20k tokens, 0.54 accuracy).

- Slow upward slope, plateauing at ~0.61 by 80k tokens.

- Remains flat at ~0.61 until 120k tokens.

### Key Observations

- **Crossover Point**: Model B overtakes Model A in accuracy between 40k and 60k tokens.

- **Plateaus**:

- Model A plateaus at 0.64 after 40k tokens.

- Model C plateaus at 0.61 after 80k tokens.

- **Efficiency**: Model B achieves the highest accuracy (0.65) with the least compute (120k tokens).

### Interpretation

The data suggests **Model B** is the most efficient, achieving superior accuracy with increasing compute. Model A demonstrates rapid early gains but suffers from diminishing returns, while Model C shows minimal improvement despite higher compute. The crossover between Model A and B highlights a critical threshold where compute efficiency becomes decisive. This could inform resource allocation strategies, favoring Model B for high-accuracy, compute-constrained scenarios.

</details>

(b) R $1$ - $32$ B

<details>

<summary>x16.png Details</summary>

### Visual Description

## Line Chart: Model Accuracy vs. Thinking Compute

### Overview

The chart illustrates the relationship between computational resources (thinking tokens in thousands) and model accuracy for three distinct models (A, B, and C). Accuracy is measured on a scale from 0.68 to 0.74, while thinking compute ranges from 25 to 150 thousand tokens.

### Components/Axes