# Trinity-RFT: A General-Purpose and Unified Framework for Reinforcement Fine-Tuning of Large Language Models

**Authors**: Alibaba Group

Abstract

Trinity-RFT is a general-purpose, unified and easy-to-use framework designed for reinforcement fine-tuning (RFT) of large language models. It is built with a modular and decoupled design, consisting of (1) an RFT-core that unifies and generalizes synchronous/asynchronous, on-policy/off-policy, and online/offline modes of RFT; (2) seamless integration for agent-environment interaction with high efficiency and robustness; and (3) systematic data pipelines optimized for RFT. Trinity-RFT can be easily adapted for diverse application scenarios, and serves as a unified platform for development and research of advanced reinforcement learning paradigms at both macroscopic and microscopic levels. This technical report outlines the vision, features, design and implementations of Trinity-RFT, accompanied by extensive examples, applications and experiments that demonstrate its functionalities and user-friendliness.

Contact: {chenyanxi.cyx,panxuchen.pxc,daoyuanchen.cdy,yaliang.li,bolin.ding}@alibaba-inc.com

GitHub: https://github.com/modelscope/Trinity-RFT

Documentation: https://modelscope.github.io/Trinity-RFT

Note: Trinity-RFT is currently under active development. This technical report corresponds to commit id 63d4920 (July 14, 2025) of the GitHub repository, and will be continuously updated as the codebase evolves. Comments, suggestions and contributions are welcome!

1 Introduction

Reinforcement learning (RL) has achieved remarkable success in the development of large language models (LLMs). Examples include aligning LLMs with human preferences via reinforcement learning from human feedback (RLHF) [24], and training long-CoT reasoning models via RL with rule-based or verifiable rewards (RLVR) [5, 35]. However, such approaches are limited in their abilities to handle dynamic, agentic and continuous learning in the real world.

We envision a future where AI agents learn by interacting directly with environments, collecting lagged or complex reward signals, and continuously refining their behavior through RL based on the collected experiences [32]. For example, imagine an AI scientist who designs an experiment, executes it, waits for feedback (while working on other tasks concurrently), and iteratively updates itself based on true environmental rewards and feedback when the experiment is finally finished.

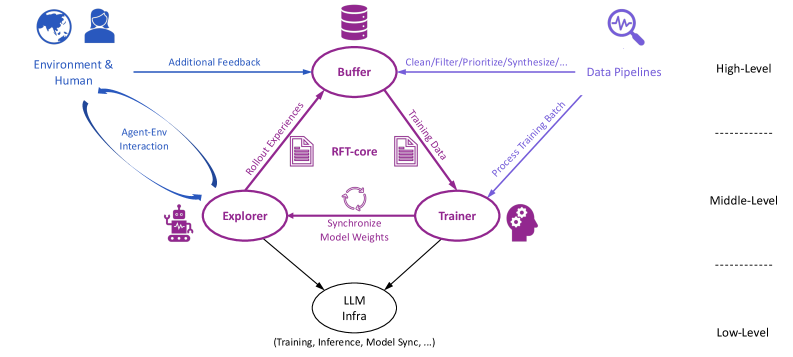

This vision motivates us to develop Trinity-RFT, a reinforcement fine-tuning (RFT) framework that aims to offer a path into this future. The modular, decoupled, trinity design of Trinity-RFT, illustrated in Figure 1, together with its various features, makes it a promising solution for realizing such a vision.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Diagram: System Architecture for Agent-Environment Interaction and Training

### Overview

The diagram illustrates a multi-component system architecture for agent-environment interaction, training, and inference. It depicts data flow between components such as the Buffer, Explorer, Trainer, RFT-core, and LLM Infra, with feedback loops and synchronization mechanisms.

### Components/Axes

- **Key Components**:

- **Buffer**: Central node receiving "Additional Feedback" from Environment & Human and processing data via "Clean/Filter/Prioritize/Synthesize..." pipelines.

- **Explorer**: Receives "Rollout Experiences" from Buffer and interacts with Environment & Human via "Agent-Env Interaction" loop.

- **Trainer**: Processes "Training Data" from Buffer and "Process Training Batch" from Explorer, synchronizing model weights with LLM Infra.

- **RFT-core**: Central to feedback loops, connected to both Explorer and Trainer.

- **LLM Infra**: Handles "Training, Inference, Model Sync..." at the lowest level, connected to both Explorer and Trainer.

- **Data Flow**:

- Arrows indicate directional processes (e.g., "Rollout Experiences" from Buffer to Explorer).

- Feedback loops (e.g., "Agent-Env Interaction" between Explorer and Environment & Human).

- Synchronization mechanisms (e.g., "Synchronize Model Weights" between Trainer and LLM Infra).

### Detailed Analysis

- **Buffer**: Acts as a central hub for data preprocessing and prioritization, feeding into both Explorer and Trainer.

- **Explorer**: Engages in active exploration of the environment, generating experiences that inform training.

- **Trainer**: Processes training data and batches, ensuring model updates are synchronized with LLM Infra.

- **RFT-core**: Likely represents a reinforcement learning framework core, enabling iterative learning through feedback.

- **LLM Infra**: Provides the foundational infrastructure for training and inference, ensuring model consistency.

### Key Observations

- **Feedback Loops**: Multiple feedback mechanisms (e.g., "Agent-Env Interaction," "Rollout Experiences") suggest iterative learning and adaptation.

- **Synchronization**: Explicit emphasis on model weight synchronization between Trainer and LLM Infra highlights the importance of consistency.

- **Modular Design**: Components are decoupled but interconnected, allowing scalability and specialization (e.g., Buffer handles data pipelines, LLM Infra focuses on inference).

### Interpretation

This architecture represents a closed-loop system for training and deploying intelligent agents. The Buffer ensures data quality before distribution, while the Explorer and Trainer balance exploration and exploitation. The RFT-core and LLM Infra enable efficient learning and inference, with feedback loops allowing continuous improvement. The system’s modularity suggests adaptability to different environments and tasks, with the Buffer and Data Pipelines acting as critical control points for data integrity.

</details>

Figure 1: The high-level design of Trinity-RFT.

1.1 Key Features of Trinity-RFT

Trinity-RFT is a general-purpose, unified, scalable and user-friendly RL framework that can be easily adapted for diverse experimental or real-world scenarios. It integrates both macroscopic and microscopic RL methodologies in one place; roughly speaking, the former deals with natural language and plain text, while the latter handles torch.Tensor (such as token probabilities, gradients, and model weights of LLMs). Many prior RL works for games/control/LLMs focus mainly on the microscopic aspect, e.g., designing policy loss functions or optimization techniques for updating the policy model. On the other hand, pre-trained LLMs, as generative models with rich prior knowledge of natural language and the world, open up numerous opportunities at the macroscopic level, e.g., experience synthesis by reflection or reasoning with environmental feedback [4], and leveraging existing text processing methods like deduplication and quality filtering [2], among others. The key features of Trinity-RFT are presented below; they are further elaborated in Section 2 and exemplified in Section 3.

An RFT-core that unifies and generalizes diverse RL modes.

Trinity-RFT implements diverse RL methodologies in a unified manner, supporting synchronous/asynchronous, on-policy/off-policy, and online/offline training. These RL modes can be flexibly generalized, e.g., a hybrid mode that incorporates expert trajectories to accelerate an online RL process [21, 46]. This unification is made possible partly by our decoupled design (which will soon be introduced in Section 2.1) that allows rollout and training to be executed separately and scaled up independently on different devices, while having access to the same stand-alone experience buffer. The efficacy of various RL modes has been validated empirically by our experiments in Section 3.3, which particularly highlight the efficiency gains by off-policy or asynchronous methods.

Agent-environment interaction as a first-class citizen.

Trinity-RFT allows delayed rewards and environmental feedback in multi-step or time-lagged feedback loops, handles long-tailed latencies and the straggler effect via asynchronous and streaming LLM inference, and deals with environment/agent failures gracefully via dedicated timeout/retry/skip mechanisms. Together, these ensure the efficiency and robustness of continuous agent-environment interaction in complex real-world scenarios.

Systematic data pipelines optimized for RFT.

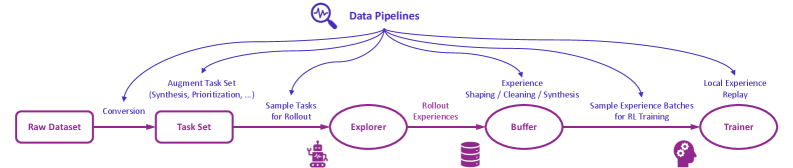

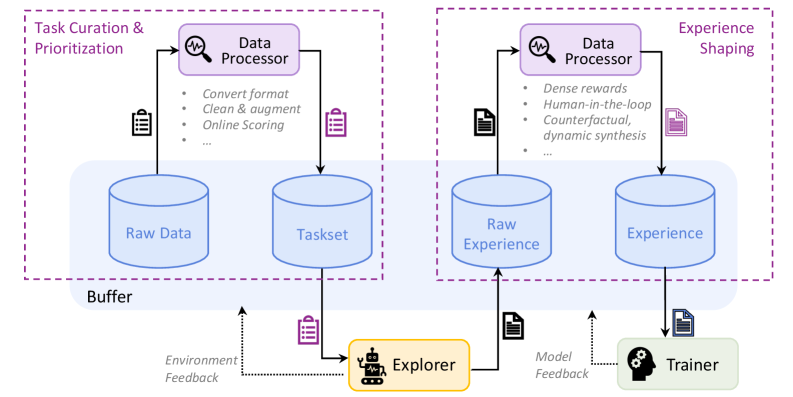

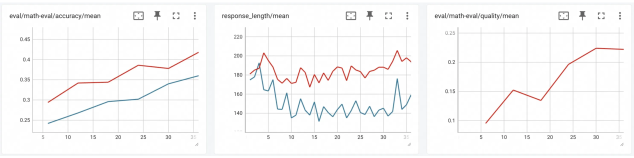

Figure 2 illustrates the high-level design of data pipelines in Trinity-RFT, which regard rollout tasks and experiences as dynamic assets to be actively managed throughout the RFT lifecycle. Trinity-RFT empowers users to: (1) curate tasks for curriculum learning, e.g., by prioritizing easier tasks at the beginning of training to stabilize and accelerate the learning process; (2) actively manipulate experience by cleaning, filtering, or synthesizing new experiences, such as repairing failed trajectories or amplifying successful ones; (3) perform online reward shaping by augmenting sparse environmental rewards with dense, computed signals, such as quality or diversity scores; (4) customize interfaces for human-in-the-loop curation and utilize an agentic paradigm for RFT data processing that translates high-level natural language commands (e.g., “improve response diversity and safety for coding scenarios”) into complex data pipelines, powered by established community tools like Data-Juicer [2]. For instance, Section 3.4 presents experiments that demonstrate the efficacy of task prioritization and reward shaping empowered by Trinity-RFT.

<details>

<summary>x3.png Details</summary>

### Visual Description

## Flowchart: Data Pipeline Architecture

### Overview

The diagram illustrates a multi-stage data pipeline for processing raw datasets into trained models, with feedback loops for iterative improvement. Key components include data conversion, task sampling, experience exploration, buffering, and model training.

### Components/Axes

- **Components**:

- **Raw Dataset** (leftmost box)

- **Task Set** (conversion from Raw Dataset)

- **Explorer** (processes sampled tasks)

- **Buffer** (stores rollout experiences)

- **Trainer** (uses experience batches for training)

- **Arrows/Labels**:

- "Conversion" (Raw Dataset → Task Set)

- "Sample Tasks for Rollout" (Task Set → Explorer)

- "Rollout Experiences" (Explorer → Buffer)

- "Experience Shaping / Cleaning / Synthesis" (Buffer → Trainer)

- "Sample Experience Batches for RL Training" (Buffer → Trainer)

- "Local Experience Replay" (Trainer → Buffer)

- **Legend**:

- "Data Pipelines" (magnifying glass icon at the top)

### Detailed Analysis

1. **Raw Dataset → Task Set**:

- Raw data is converted into a structured task set via synthesis and prioritization.

2. **Task Set → Explorer**:

- Tasks are sampled for rollout, implying iterative testing or simulation.

3. **Explorer → Buffer**:

- The Explorer generates "Rollout Experiences," which are stored in the Buffer.

4. **Buffer → Trainer**:

- Experiences are shaped, cleaned, and synthesized before being sampled as batches for reinforcement learning (RL) training.

5. **Trainer → Buffer**:

- A feedback loop ("Local Experience Replay") sends trained experiences back to the Buffer for reuse.

### Key Observations

- **Sequential Flow**: Data progresses linearly from Raw Dataset to Trainer, with a critical feedback loop from Trainer to Buffer.

- **Iterative Refinement**: The Buffer acts as a reservoir for experiences, enabling continuous improvement through replay.

- **Modular Design**: Each component (Explorer, Buffer, Trainer) has distinct responsibilities, suggesting a modular architecture.

### Interpretation

This pipeline emphasizes **closed-loop learning**, where the Trainer not only processes experiences but also replays them locally to refine future iterations. The Buffer’s role as a central hub for experience storage and preprocessing highlights its importance in managing data quality and diversity. The feedback loop ensures that the system adapts dynamically, leveraging past experiences to improve future training cycles.

**Notable Patterns**:

- The use of "Local Experience Replay" suggests a focus on efficiency and reducing reliance on external data sources.

- The separation of "Experience Shaping/Cleaning/Synthesis" implies rigorous preprocessing to enhance training stability.

**Underlying Logic**:

The diagram aligns with reinforcement learning principles, where agents learn through interaction (rollout experiences) and refine policies via experience replay. The modular components suggest scalability, allowing each stage to be optimized independently.

</details>

Figure 2: The high-level design of data pipelines in Trinity-RFT.
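As a rough illustration of items (1) and (3) above, the following sketch orders tasks from easy to hard and adds a dense auxiliary score to a sparse environment reward. The `Task` class, the `difficulty` field, the `weight` parameter and both function names are assumptions made for this example and are not taken from Trinity-RFT.

```python
from dataclasses import dataclass


@dataclass
class Task:
    prompt: str
    difficulty: float  # hypothetical score in [0, 1]; lower means easier


def curriculum_order(tasks):
    """Return tasks sorted from easiest to hardest."""
    return sorted(tasks, key=lambda t: t.difficulty)


def shape_reward(env_reward, quality_score, weight=0.1):
    """Augment a sparse environment reward with a dense auxiliary score."""
    return env_reward + weight * quality_score


tasks = [
    Task("prove a lemma", 0.9),
    Task("add two numbers", 0.1),
    Task("solve a quadratic", 0.5),
]
ordered = curriculum_order(tasks)

# A failed rollout (environment reward 0) still receives a small dense signal.
shaped = shape_reward(env_reward=0.0, quality_score=0.8)
```

In practice the difficulty and quality scores would come from a data processing step; here they are hard-coded to keep the sketch self-contained.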

User-friendliness as a top priority.

For development and research, the modular and decoupled design of Trinity-RFT allows the user to develop new RFT methodologies by adding one or a few small, plug-and-play classes (modified from built-in templates) that implement the essential functionalities of interest, with minimal code duplication or intrusive changes to the codebase. An example can be found in Section 3.2, which shows that three compact Python classes (with around 200 lines of code in total) suffice for implementing a hybrid RL process that leverages samples from multiple data sources and updates the policy model with a customized loss function. For applications, the user can adapt Trinity-RFT to a new scenario by simply implementing a single Workflow class that specifies the logic of agent-environment interaction, as will be exemplified in Section 3.1. To further enhance usability, Trinity-RFT incorporates various graphical user interfaces to support low-code usage and development, enhance transparency of the RFT process, and facilitate easy monitoring and tracking.

1.2 Related Works

There exist numerous open-source RLHF frameworks, such as veRL [30], OpenRLHF [13], TRL [40], ChatLearn [1], Asynchronous RLHF [23], among others. Some of them have been further adapted for training long-CoT reasoning models or for agentic RL more recently.

Concurrent to Trinity-RFT, some recent works on LLM reinforcement learning also advocate a decoupled and/or asynchronous design; examples include StreamRL [50], MiMo [44], AReaL [9], ROLL [37], LlamaRL [43], Magistral [18], AsyncFlow [12], among others.

Complementing this large body of related work, Trinity-RFT provides the community with a new solution that is powerful, easy-to-use, and unique in certain aspects. In a nutshell, Trinity-RFT aims to be general-purpose and applicable to diverse application scenarios, while unifying various RFT modes, RFT methodologies at macroscopic and microscopic levels, and RFT-core/agent-environment interaction/data pipelines. Such a system-engineering perspective makes Trinity-RFT particularly useful for handling the whole RFT pipeline in one place. We also hope that some specific features of Trinity-RFT, such as data persistence in the experience buffer and distributed deployment of multiple independent explorers, will open up new opportunities for LLM reinforcement fine-tuning.

2 Design and Implementations

The overall design of Trinity-RFT exhibits a trinity consisting of (1) RFT-core, (2) agent-environment interaction, and (3) data pipelines, which are illustrated in Figure 1 and elaborated in this section.

2.1 RFT-Core

RFT-core is the component of Trinity-RFT, highlighted at the center of Figure 1, where the core RFT process happens. Its design also exhibits a trinity, consisting of the explorer, buffer, and trainer.

- The explorer, powered by a rollout model, takes a task as input and solves it by executing a workflow that specifies the logic of agent-environment interaction, thereby collecting experiences (including rollout trajectories, rewards, and other useful information) to be stored in the buffer.

- The buffer stores experiences that can be generated by the explorer or by other sources, such as human experts. It can be realized in various forms, such as a non-persistent ray.Queue or a persistent SQLite database. It also assists with fetching training samples for the trainer, and can be integrated with advanced sampling strategies and post-processing operations.

- The trainer, backed by a policy model, samples batches of experiences from the buffer and updates the policy model via RL algorithms.
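The division of labor among the three components can be sketched in a few lines. This is a toy model under stated assumptions: the `Explorer`, `Trainer` and `Experience` classes below are illustrative stand-ins written for this example, the "rollout" is a string template, and the "policy update" is a version counter. None of this is Trinity-RFT's actual code.

```python
import queue
import random
from dataclasses import dataclass


@dataclass
class Experience:
    task: str
    response: str
    reward: float


class Explorer:
    """Runs a (trivial) workflow on a task and writes the result to the buffer."""

    def __init__(self, buffer, rng):
        self.buffer = buffer
        self.rng = rng
        self.weights_version = 0

    def explore(self, task):
        response = f"answer-to-{task}"  # stand-in for an LLM rollout
        reward = self.rng.random()      # stand-in for an environment reward
        self.buffer.put(Experience(task, response, reward))


class Trainer:
    """Reads batches from the buffer and 'updates' the policy."""

    def __init__(self, buffer):
        self.buffer = buffer
        self.weights_version = 0
        self.seen = []

    def train_step(self, batch_size):
        batch = [self.buffer.get() for _ in range(batch_size)]
        self.seen.extend(batch)
        self.weights_version += 1  # stand-in for a gradient update
        return batch


def run(num_steps=3, batch_size=2, sync_interval=1):
    buffer = queue.Queue()  # non-persistent FIFO buffer
    explorer = Explorer(buffer, random.Random(0))
    trainer = Trainer(buffer)
    for step in range(num_steps):
        for i in range(batch_size):
            explorer.explore(f"task-{step}-{i}")
        trainer.train_step(batch_size)
        if (step + 1) % sync_interval == 0:
            # Weight synchronization: the explorer adopts the trainer's weights.
            explorer.weights_version = trainer.weights_version
    return explorer, trainer


explorer, trainer = run()
```

The explorer and trainer here only share the buffer and the weight version, which mirrors the two connection points described in the text that follows.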

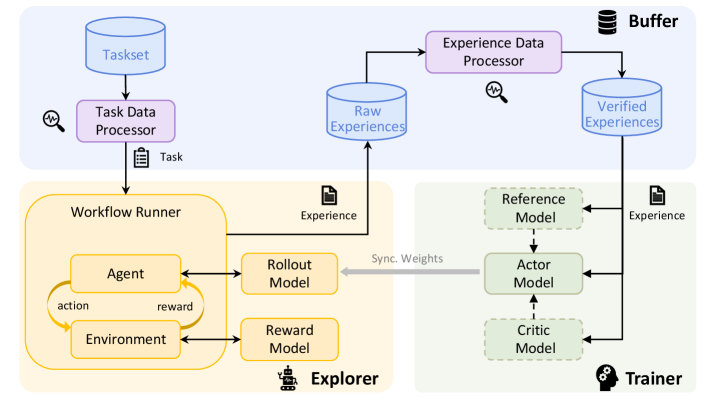

Our implementations allow the explorer and trainer to be deployed on separate machines and act independently. They are only connected via (1) access to the same experience buffer with a customizable sampling strategy, and (2) model weight synchronization by a customizable schedule. See Figure 3 for an illustration. This decoupled design of RFT-core offers support for diverse RFT modes with great flexibility.

<details>

<summary>x4.png Details</summary>

### Visual Description

## Diagram: Reinforcement Learning System Architecture

### Overview

This diagram illustrates a reinforcement learning (RL) system architecture, depicting the flow of data and interactions between components. It includes elements for task processing, agent-environment interaction, experience management, and model training.

### Components/Axes

**Key Components**:

1. **Taskset** → **Task Data Processor** → **Workflow Runner**

2. **Workflow Runner** → **Agent**, **Environment**, **Rollout Model**, **Reward Model**

3. **Experience Data Processor** → **Raw Experiences** → **Verified Experiences** → **Buffer**

4. **Trainer** → **Reference Model**, **Actor Model**, **Critic Model**

5. **Synchronize Weights** (connects Workflow Runner and Trainer)

**Flow Direction**:

- Top-to-bottom: Taskset → Task Data Processor → Workflow Runner → Experience Data Processor → Trainer.

- Horizontal: Workflow Runner ↔ Trainer via "Synchronize Weights."

**Color Coding**:

- **Top Section (Blue)**: Taskset, Task Data Processor, Experience Data Processor.

- **Middle Section (Orange)**: Workflow Runner, Agent, Environment, Rollout Model, Reward Model.

- **Bottom Section (Green)**: Trainer, Reference Model, Actor Model, Critic Model.

- **Buffer**: Black icon with "Buffer" label.

### Detailed Analysis

1. **Taskset → Task Data Processor**:

- The Taskset (input) is processed by the Task Data Processor, which generates a "Task" output.

2. **Workflow Runner**:

- Contains an **Agent** that interacts with an **Environment** via **actions** and **rewards**.

- Uses a **Rollout Model** (predicts actions) and **Reward Model** (evaluates rewards).

- Outputs "Experience" to the Experience Data Processor.

3. **Experience Data Processor**:

- Processes **Raw Experiences** into **Verified Experiences**, which are stored in the **Buffer**.

4. **Trainer**:

- Uses **Reference Model** (baseline/expert model), **Actor Model** (policy), and **Critic Model** (value function) to train on experiences from the Buffer.

- **Synchronize Weights** ensures alignment between the Workflow Runner and Trainer models.

### Key Observations

- **Modular Design**: The system separates task processing (top), agent interaction (middle), and training (bottom).

- **Feedback Loop**: The Workflow Runner and Trainer share weights, enabling continuous improvement.

- **Experience Pipeline**: Raw experiences are filtered/verified before training, ensuring data quality.

### Interpretation

This architecture represents a closed-loop RL system:

1. **Exploration**: The Agent explores the Environment, generating experiences.

2. **Experience Refinement**: The Experience Data Processor cleans and validates data.

3. **Training**: The Trainer updates the Actor and Critic models using the Reference Model as a guide.

4. **Weight Synchronization**: Ensures the Workflow Runner’s models (e.g., Rollout, Reward) stay aligned with the Trainer’s policies.

The system emphasizes **data quality** (via verification) and **model alignment** (via weight synchronization), critical for stable RL training. The modular structure allows scalability and separation of concerns.

</details>

Figure 3: The architecture of RFT-core in Trinity-RFT.

2.1.1 Unified Support for Diverse RFT Modes

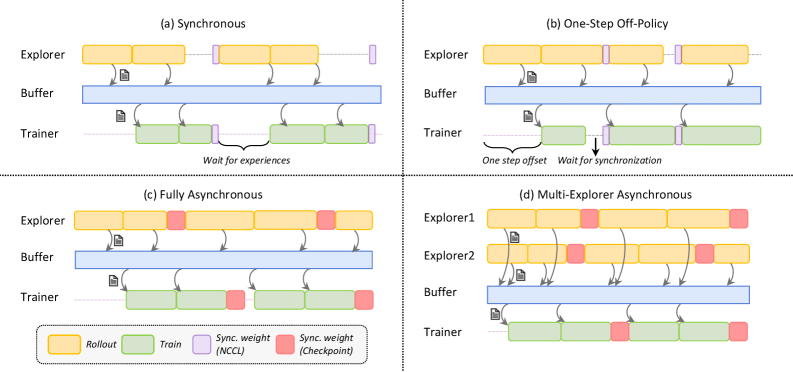

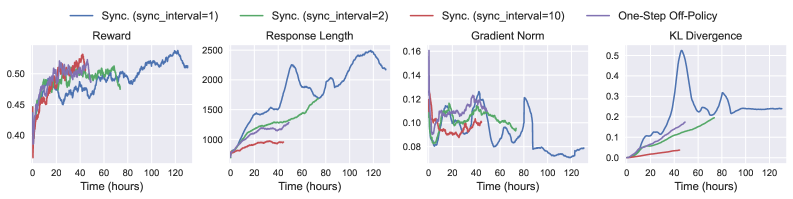

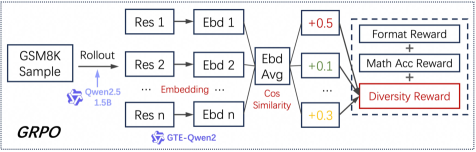

We present the RFT modes supported by Trinity-RFT, some of which are demonstrated in Figure 4.

<details>

<summary>x5.png Details</summary>

### Visual Description

## Diagram: Synchronization Strategies in a Distributed System

### Overview

The image depicts four synchronization strategies (a-d) for a distributed system involving **Explorers**, **Buffers**, and **Trainers**. Each quadrant illustrates data flow, synchronization points, and component interactions using color-coded blocks and arrows.

### Components/Axes

- **Components**:

- **Explorers**: Generate data (yellow blocks labeled "Rollout").

- **Buffers**: Temporary storage (blue blocks).

- **Trainers**: Process data (green blocks labeled "Train").

- **Synchronization Elements**:

- **Sync. weight (NCCL)**: Purple blocks (inter-component synchronization).

- **Sync. weight (Checkpoint)**: Red blocks (checkpoint synchronization).

- **Arrows**: Indicate data flow direction.

- **Legend**: Located at the bottom, mapping colors to actions (Rollout, Train, Sync. weight, Checkpoint).

### Detailed Analysis

#### Quadrant (a) Synchronous

- **Flow**:

- Explorers → Buffers (yellow → blue).

- Buffers → Trainers (blue → green).

- Trainers wait for experiences (dashed arrow).

- **Synchronization**:

- Purple "Sync. weight (NCCL)" blocks between Explorers and Buffers.

- No checkpoints (no red blocks).

#### Quadrant (b) One-Step Off-Policy

- **Flow**:

- Explorers → Buffers (yellow → blue).

- Buffers → Trainers (blue → green).

- **Synchronization**:

- One-step offset (dashed arrow from Explorers to Buffers).

- Purple "Sync. weight (NCCL)" blocks between Buffers and Trainers.

- "Wait for synchronization" note.

#### Quadrant (c) Fully Asynchronous

- **Flow**:

- Explorers → Buffers (yellow → blue).

- Buffers → Trainers (blue → green).

- **Synchronization**:

- Red "Sync. weight (Checkpoint)" blocks at intervals.

- No explicit waiting (fully asynchronous).

#### Quadrant (d) Multi-Explorer Asynchronous

- **Flow**:

- Two Explorers (Explorer1, Explorer2) → Buffers (yellow → blue).

- Buffers → Trainers (blue → green).

- **Synchronization**:

- Red "Sync. weight (Checkpoint)" blocks at intervals.

- Arrows indicate parallel data flow from multiple Explorers.

### Key Observations

1. **Synchronous (a)**: Strict synchronization with delays ("Wait for experiences").

2. **One-Step Off-Policy (b)**: Introduces latency via offset but synchronizes before training.

3. **Fully Asynchronous (c)**: No waiting but relies on checkpoints for consistency.

4. **Multi-Explorer Asynchronous (d)**: Scales to multiple Explorers but requires checkpoint synchronization.

### Interpretation

- **Trade-offs**:

- Synchronous strategies prioritize consistency but reduce throughput.

- Asynchronous strategies improve parallelism but risk data staleness without checkpoints.

- **Checkpoints**: Critical in asynchronous systems (red blocks) to ensure data consistency.

- **Multi-Explorer Scalability**: Quadrant (d) suggests handling multiple data sources but complicates synchronization.

- **NCCL vs. Checkpoint**: NCCL (purple) enables real-time sync, while checkpoints (red) act as recovery points.

This diagram highlights the balance between synchronization overhead, data freshness, and system scalability in distributed training pipelines.

</details>

Figure 4: A visualization of diverse RFT modes supported by Trinity-RFT, including: (a) synchronous mode, with sync_interval=2; (b) one-step off-policy mode, with sync_interval=1 and sync_offset=1; (c) fully asynchronous mode, with sync_interval=2; (d) multi-explorer asynchronous mode, with sync_interval=2. The buffer supports, in principle, arbitrary management and sampling strategies for experiences.

Synchronous mode.

In the synchronous mode shown in Figure 4 (a), the explorer and trainer get launched simultaneously, work in close coordination, and synchronize their model weights once every sync_interval training steps. Within each synchronization period, the explorer continuously generates sync_interval batches of rollout experiences and stores them in the buffer, which are then retrieved and utilized by the trainer for updating the policy model. If sync_interval=1, this is a strictly on-policy RL process, whereas if sync_interval>1, it becomes off-policy (akin to the mode adopted in [35]) and can be accelerated by pipeline parallelism between the explorer and trainer. This mode can be activated by setting the configuration parameter mode=both.

One-step off-policy mode.

This mode, demonstrated in Figure 4 (b), closely resembles the synchronous mode, except for an offset of one batch between the explorer and trainer. This allows the trainer to sample experiences from the buffer immediately after model weight synchronization, thereby streamlining the execution of explorer and trainer with smaller pipeline bubbles, at the cost of slight off-policyness. The visualization in Figure 4 (b) corresponds to configuration parameters sync_interval=1 and sync_offset=1, both of which can take more general values in Trinity-RFT.
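To make the notion of off-policyness in the synchronous and one-step off-policy modes concrete, the sketch below counts how many trainer updates separate the weights that generated a batch from the trainer step that consumes it. It is a simplified timing model assumed for illustration (the explorer runs `sync_offset` batches ahead and picks up weights only at multiples of `sync_interval`); it is not derived from Trinity-RFT's scheduler, and the function name is hypothetical.

```python
def policy_staleness(batch_index, sync_interval=1, sync_offset=0):
    """Number of trainer updates between generating a batch and training on it.

    Simplified model: batch `b` is consumed at trainer step `b`, and the
    explorer starts generating it once the trainer has finished
    `b - sync_offset` steps, using the weights from the latest sync point.
    """
    trainer_steps_done = max(0, batch_index - sync_offset)
    explorer_version = (trainer_steps_done // sync_interval) * sync_interval
    return batch_index - explorer_version


# Strictly on-policy: sync_interval=1, no offset.
on_policy = [policy_staleness(b, 1, 0) for b in range(4)]

# Synchronous mode with sync_interval=2, as in Figure 4 (a).
sync_two = [policy_staleness(b, 2, 0) for b in range(4)]

# One-step off-policy mode, as in Figure 4 (b).
one_step = [policy_staleness(b, 1, 1) for b in range(4)]
```

Under this model the three settings give staleness sequences `[0, 0, 0, 0]`, `[0, 1, 0, 1]` and `[0, 1, 1, 1]` respectively, which matches the qualitative description above: `sync_interval=1` is on-policy, larger intervals or a nonzero offset introduce bounded off-policyness.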

Asynchronous mode.

In the fully asynchronous mode shown in Figure 4 (c), the explorer and trainer act almost independently. The explorer continuously generates rollout experiences and stores them in the buffer, while the trainer continuously samples experiences from the buffer and uses them for training the policy model. External experiences, e.g., those generated by expert models or humans, can be continuously incorporated into the buffer as well. The explorer and trainer independently load or save model weights from the checkpoint directory every sync_interval steps, keeping the distribution of rollout experiences up to date. This mode can be activated by setting mode=explore/train and launching the explorer and trainer separately on different GPUs.
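Checkpoint-based synchronization of this kind can be sketched with plain files. In the example below, the trainer writes a checkpoint every `sync_interval` steps and the explorer reads the newest one it can find. The file naming scheme, the JSON format and the function names are assumptions for illustration only, and for brevity the explorer polls after every trainer step inside a single loop rather than running as a separate process.

```python
import json
import tempfile
from pathlib import Path


def save_checkpoint(ckpt_dir, step, weights):
    """Trainer side: write weights for a step, then rename into place."""
    path = Path(ckpt_dir) / f"step_{step:08d}.json"
    tmp = path.with_suffix(".tmp")
    tmp.write_text(json.dumps({"step": step, "weights": weights}))
    tmp.replace(path)  # readers never observe a half-written checkpoint
    return path


def load_latest_checkpoint(ckpt_dir):
    """Explorer side: return the newest complete checkpoint, or None."""
    paths = sorted(Path(ckpt_dir).glob("step_*.json"))
    if not paths:
        return None
    return json.loads(paths[-1].read_text())


def demo(sync_interval=2, total_steps=5):
    with tempfile.TemporaryDirectory() as ckpt_dir:
        loaded_steps = []
        weights = 0.0
        for step in range(1, total_steps + 1):
            weights += 0.1  # stand-in for a policy update
            if step % sync_interval == 0:
                save_checkpoint(ckpt_dir, step, weights)
            latest = load_latest_checkpoint(ckpt_dir)
            loaded_steps.append(None if latest is None else latest["step"])
        return loaded_steps


loaded = demo()
```

Writing to a temporary file and renaming it is one common way to keep a reader from loading a partially written checkpoint; whether Trinity-RFT does this is not stated in the report.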

Multi-explorer asynchronous mode.

One benefit brought by the decoupled design is that explorers and trainers can scale up independently on separate devices. As a proof-of-concept, Trinity-RFT offers support for a multi-explorer asynchronous mode, as demonstrated in Figure 4 (d), where multiple explorers send the generated rollout experiences to the same buffer. Scaling up the number of independent and distributed explorers can be particularly useful for resolving data scarcity and speeding up the generation of experiences in real-world scenarios where rollout trajectories have to be sampled via interaction with the physical world, or in an environment with sparse and lagged feedback. Another by-product of this multi-explorer mode is uninterrupted, around-the-clock service in real-world online serving situations: since the explorers can pause and update model weights at different moments, it can be guaranteed that there is always one explorer ready to serve an incoming request immediately whenever it arrives. This is in contrast to a single-explorer mode, where online service has to be paused while the explorer is updating its model weights.

Benchmark mode.

Trinity-RFT supports a benchmark mode that allows the user to evaluate one or multiple checkpoints on arbitrary benchmarks, after the RFT training process has finished. To activate this mode, the user simply needs to set mode=bench and specify the paths for the evaluation datasets in the configurations. This mode can be particularly useful for experimental purposes; for example, the user might want to try out different RFT techniques or configurations quickly (with limited evaluation on hold-out data) during training, identify which RFT trials have achieved stable convergence and high rewards, and then conduct more thorough evaluations only for the checkpoints of these successful trials.

Train-only mode.

In certain scenarios, the user would like to train the policy model without further exploration, using experiences that have already been collected and stored in the buffer. This train-only mode can be activated by setting the configuration parameter mode=train and launching the trainer alone. Offline methods like Supervised Fine-Tuning (SFT) and Direct Preference Optimization (DPO) [25] can be regarded as special cases of such scenarios, both of which are natively supported by Trinity-RFT. For another example, consider an online RFT process that extends over a long period, where the explorer alone is launched during the daytime for serving human users and collecting experiences, while the trainer alone is launched at night for updating the policy model (which will be thoroughly validated and evaluated before it can actually be deployed as the rollout model for the next day).

Discussions.

We conclude this subsection with two remarks. (1) Given the unified implementation of various RFT modes, it is easy to design and implement a hybrid mode with Trinity-RFT that combines multiple modes into a single learning process. One example is learning with both online rollout data and offline-collected expert data, via jointly optimizing two loss terms corresponding to these two data sources. Section 3.2 illustrates how to implement this conveniently in Trinity-RFT. (2) We take a system-algorithm co-design perspective in the development of Trinity-RFT, aiming to unify and generalize diverse RFT methodologies in this framework. RFT-core provides the necessary infrastructure for achieving this goal. This technical report focuses on the system perspective, and we refer interested readers to the literature for recent algorithmic developments in off-policy / asynchronous RL for LLMs [21, 6, 26, 35, 7, 23, 42, 45, 46, 47].

2.1.2 Implementations of RFT-Core

We present some implementation details of RFT-core in the following.

Inference and training engines.

The current version of Trinity-RFT leverages vLLM [15] as the inference engine for the explorer, which offers features including paged attention, continuous batching [49], asynchronous and concurrent inference for multiple rollout trajectories, among others. Trinity-RFT also leverages verl [30] as the training engine for the trainer, which gracefully handles model placement (for the policy, critic and reference models) and incorporates various performance optimizations for training (such as dynamic batching, management of padding and unpadding, etc.). Trinity-RFT stands on the shoulders of these excellent open-source projects, and will continue to benefit from their future development.

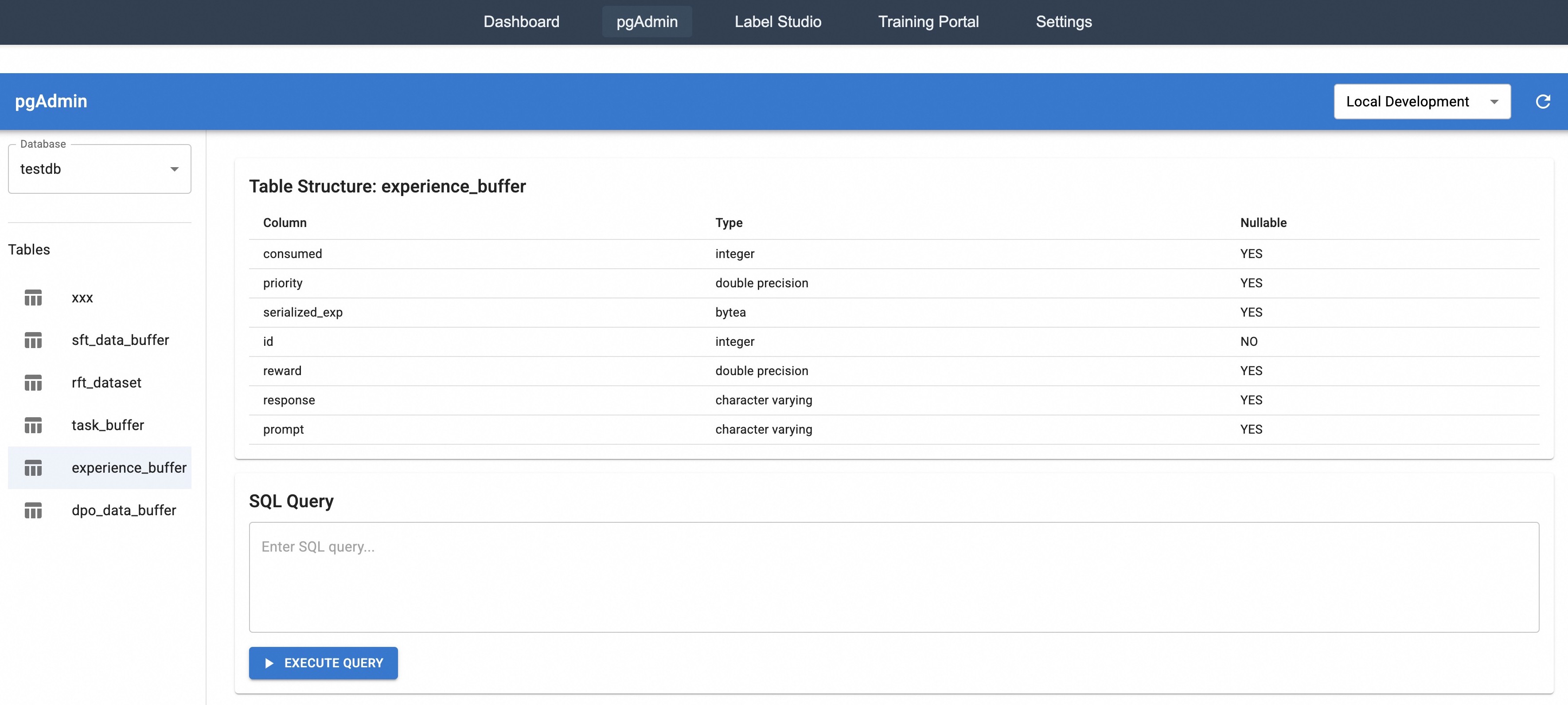

Experience buffer.

Trinity-RFT supports multiple types of experience buffers, ranging from a non-persistent ray.Queue to persistent SQLite or Redis databases. While using a basic first-in-first-out queue is the most straightforward approach, data persistence with a database opens up many new opportunities (e.g., advanced sampling strategies), as discussed throughout this report. Trinity-RFT has provided dedicated read/write control to prevent any conflict in accessing the buffer.
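To illustrate why persistence enables more than first-in-first-out access, here is a minimal sketch of a SQLite-backed buffer using Python's standard `sqlite3` module. The table schema, the `consumed` flag and the class and method names are assumptions made for this example and do not reflect Trinity-RFT's actual storage layout.

```python
import json
import sqlite3


class SQLiteBuffer:
    """Toy persistent experience buffer with two sampling strategies."""

    def __init__(self, path=":memory:"):
        self.conn = sqlite3.connect(path)
        with self.conn:
            self.conn.execute(
                "CREATE TABLE IF NOT EXISTS experiences ("
                "id INTEGER PRIMARY KEY AUTOINCREMENT, "
                "payload TEXT NOT NULL, "
                "reward REAL NOT NULL, "
                "consumed INTEGER NOT NULL DEFAULT 0)"
            )

    def write(self, payload, reward):
        with self.conn:  # commits on success, rolls back on error
            self.conn.execute(
                "INSERT INTO experiences (payload, reward) VALUES (?, ?)",
                (json.dumps(payload), reward),
            )

    def read_fifo(self, batch_size):
        """Oldest unconsumed experiences first."""
        return self._read("id ASC", batch_size)

    def read_by_reward(self, batch_size):
        """Highest-reward unconsumed experiences first."""
        return self._read("reward DESC, id ASC", batch_size)

    def _read(self, order_by, batch_size):
        # `order_by` is one of the fixed strings above, never user input.
        query = (
            "SELECT id, payload, reward FROM experiences "
            f"WHERE consumed = 0 ORDER BY {order_by} LIMIT ?"
        )
        with self.conn:
            rows = self.conn.execute(query, (batch_size,)).fetchall()
            self.conn.executemany(
                "UPDATE experiences SET consumed = 1 WHERE id = ?",
                [(row[0],) for row in rows],
            )
        return [(json.loads(payload), reward) for _, payload, reward in rows]


buf = SQLiteBuffer()
buf.write({"id": "a"}, 0.2)
buf.write({"id": "b"}, 0.9)
buf.write({"id": "c"}, 0.5)
top = buf.read_by_reward(1)
rest = buf.read_fifo(2)
```

Passing a file path instead of `":memory:"` would keep the experiences on disk across runs, which is the property that makes train-only and replay-style usage possible.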

Model weight synchronization.

Trinity-RFT supports model weight synchronization between the explorer and trainer by NCCL [22], or by checkpoint saving and loading. The former is faster (when available), while the latter is generally more flexible and widely applicable, especially for asynchronous RFT modes.

2.2 Agent-Environment Interaction

To adapt Trinity-RFT to a new downstream scenario, the user mainly needs to define and register a customized workflow (by inheriting the base class Workflow or MultiTurnWorkflow) where the logic of agent-environment interaction for this particular scenario is implemented. Advanced methods for experience synthesis with environmental feedback [4] can be implemented in the same way as well. See Section 3.1 for detailed examples. The workflow will then be executed by workflow runners within the explorer for generating experiences, as shown in Figure 3.
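The general shape of such a workflow can be sketched as follows. The base class `WorkflowSketch`, the `Experience` dataclass, the `run` method and the task dictionary keys are all illustrative stand-ins defined inside this example; they are not Trinity-RFT's actual `Workflow` interface, for which Section 3.1 gives real examples.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass


@dataclass
class Experience:
    prompt: str
    response: str
    reward: float


class WorkflowSketch(ABC):
    """Stand-in base class: one task in, a list of experiences out."""

    def __init__(self, task, model):
        self.task = task
        self.model = model  # any callable mapping a prompt to a response

    @abstractmethod
    def run(self):
        """Execute the agent-environment interaction and return experiences."""


class MathWorkflowSketch(WorkflowSketch):
    """Single-turn workflow with a rule-based, verifiable reward."""

    def run(self):
        prompt = self.task["question"]
        response = self.model(prompt)
        reward = 1.0 if response.strip() == self.task["answer"] else 0.0
        return [Experience(prompt, response, reward)]


def toy_model(prompt):
    # Stand-in for the rollout model served by the inference engine.
    return "4" if prompt == "2 + 2 = ?" else "unknown"


task = {"question": "2 + 2 = ?", "answer": "4"}
experiences = MathWorkflowSketch(task, toy_model).run()
```

The point of the pattern is that scenario-specific logic (prompting, environment calls, reward computation) lives in one class, while rollout scheduling and storage remain the framework's responsibility.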

Numerous challenges arise when one tries to build an RFT framework that can efficiently and robustly handle real-world interaction between the LLM-powered agent and the environment. These include long-tailed latencies, agent/environment failures, and lagged reward signals, among others. Trinity-RFT regards agent-environment interaction as a first-class citizen and incorporates various solutions to tackle these challenges, for example:

- The workflow runners in Trinity-RFT support asynchronous and streaming generation of rollout trajectories for multiple tasks. This helps mitigate the straggler effect caused by the long-tailed latencies in rollout generation and agent-environment interaction, thereby accelerating the RFT process. Trinity-RFT also handles load balancing among multiple LLM inference engines within one RFT run, which remains one direction for further optimizing the utilization of computational resources.

- Trinity-RFT incorporates various timeout/retry/skip mechanisms for fault tolerance and robustness, which ensure that continuous rollout generation would not be interrupted or blocked by individual failures in certain rounds of agent-environment interaction. This is crucial for stable and efficient learning in real-world scenarios, e.g., when the agent interacts with a large number of MCP services [17] that differ vastly in quality and availability.

- Trinity-RFT is built to provide native support for asynchronous RFT modes, which allow great flexibility in the paces of the explorer and trainer. This can boost the overall efficiency of the RFT process, compared to synchronous modes where the slower one among the explorer and trainer can block the progress of the other and cause waste of computational resources.

- For lagged reward signals, the trinity design of RFT-core offers a natural solution. As soon as the rollout trajectory (without reward values) has been generated, it is saved into the experience buffer, but marked as “not ready for training”. The explorer is now free from this task and may continue to collect experiences for other tasks. When the reward signals from the environment finally arrive, they are written to the buffer, and the corresponding experience is now marked as “ready for training”.

- For multi-turn conversations and ReAct-style workflows [48], Trinity-RFT supports concatenating multiple rounds of agent-environment interaction compactly into a single sequence, with proper masking that indicates which tokens need to be incorporated into the training objective of RL algorithms. This avoids unnecessary recomputation and thus improves training efficiency, compared to a vanilla approach that represents a $K$-turn rollout trajectory with $K$ separate samples.

- As another performance optimization, the implementation of Trinity-RFT allows resetting the environment in a workflow, rather than re-initializing it every time. This is especially useful for scenarios where setting up the environment is costly.
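The timeout/retry/skip idea from the list above can be sketched with Python's asyncio. This is only an illustration under stated assumptions: `run_with_retry` and `collect_rollouts` are hypothetical helpers, and `RuntimeError` stands in for whatever failure an agent or environment may raise; none of this is the Trinity-RFT workflow runner.

```python
import asyncio
from typing import Awaitable, Callable, List, Optional


async def run_with_retry(
    make_rollout: Callable[[], Awaitable[str]],
    timeout_s: float,
    max_retries: int,
) -> Optional[str]:
    """Run one rollout; return None (skip) if every attempt fails or times out."""
    for _ in range(max_retries + 1):
        try:
            return await asyncio.wait_for(make_rollout(), timeout=timeout_s)
        except (asyncio.TimeoutError, RuntimeError):
            continue  # retry after a timeout or an (assumed) environment failure
    return None


async def collect_rollouts(
    tasks: List[Callable[[], Awaitable[str]]],
    timeout_s: float = 1.0,
    max_retries: int = 1,
) -> List[str]:
    """Run all tasks concurrently and keep only the rollouts that succeeded."""
    results = await asyncio.gather(
        *(run_with_retry(t, timeout_s, max_retries) for t in tasks)
    )
    return [r for r in results if r is not None]
```

With this structure, a single straggling or failing task costs at most `(max_retries + 1) * timeout_s` seconds and never blocks the other rollouts, which run concurrently.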

2.3 Data Pipelines

The data pipelines in Trinity-RFT aim to address fundamental challenges in RFT scenarios, such as managing heterogeneous data dynamics across interaction workflows, enabling delayed reward integration, and facilitating continuous data curation. Our solutions center on four core aspects: end-to-end data transformation, task curation, active experience shaping, and human-in-the-loop curation, each corresponding to key requirements identified in our development of RFT-core (Section 2.1).

2.3.1 End-to-end Data Transformation

To support the diverse RFT modes (e.g., synchronous or asynchronous) in Trinity-RFT, we establish a service-oriented data pipeline architecture as illustrated in Figure 5. It decouples data pipeline logic from procedure control to enable flexible RL-oriented data transformations with two key modules:

- The Formatter Module unifies disparate data sources into RFT-compatible formats, providing convenient conversion between raw inputs (e.g., meta-prompts, domain-specific corpora, and QA pairs with tagged rewards) and structured RFT representations. For efficient RFT workloads, we utilize buffer-based persistent storage (Section 2.1) to support different data models, such as ExperienceModel for prioritized rollout trajectories and DPODataModel for preference pairs. The conversion logic and data models are highly customizable to meet diverse requirements for managing experience data. This flexibility enables robust metadata recording and field normalization, which is essential for advanced scenarios such as asynchronous RFT in trainer-explorer environments, agent self-evolution from a cold start using meta-prompts, and knowledge injection from structurally complex domain-specific corpora.

- The Controller Module manages the complete data pipeline lifecycle through distributed server initialization, declarative configuration, and automated dataset persistence. It implements dynamic control mechanisms for asynchronous scenarios and protection against resource exhaustion, with configurable termination conditions based on compute quota or data quantity. This modular design enables Trinity-RFT to handle data transformations flexibly while maintaining consistency across different RFT modes.

The Formatter-Controller duality mirrors the explorer-trainer decoupling in RFT-core, enabling parallel data ingestion and model updating. This design also allows Trinity-RFT to handle delayed rewards through version-controlled experience updates while maintaining low-latency sampling for the trainer.
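One simple way to picture delayed-reward handling is a buffer in which a trajectory is stored immediately and becomes eligible for training only once its reward arrives. The sketch below is in-memory and deliberately simplified (no versioning or persistence); `PendingExperience` and `LaggedRewardBuffer` are hypothetical names, not Trinity-RFT classes.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class PendingExperience:
    tokens: List[int]
    reward: Optional[float] = None  # unknown until the environment reports it

    @property
    def ready(self) -> bool:
        # "Ready for training" simply means that a reward has been attached.
        return self.reward is not None


class LaggedRewardBuffer:
    """Hypothetical in-memory buffer that separates rollout and reward arrival."""

    def __init__(self) -> None:
        self._items: Dict[str, PendingExperience] = {}

    def add_rollout(self, exp_id: str, tokens: List[int]) -> None:
        # Explorer side: store the trajectory right away, without a reward.
        self._items[exp_id] = PendingExperience(tokens=tokens)

    def set_reward(self, exp_id: str, reward: float) -> None:
        # Environment side: the late reward flips the experience to "ready".
        self._items[exp_id].reward = reward

    def sample_ready(self, batch_size: int) -> List[PendingExperience]:
        # Trainer side: only experiences that already have a reward are returned.
        ready_ids = [k for k, v in self._items.items() if v.ready][:batch_size]
        return [self._items.pop(k) for k in ready_ids]
```

The explorer never waits on `set_reward`, so rollout collection and reward arrival proceed at their own paces.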

<details>

<summary>x6.png Details</summary>

### Visual Description

## Diagram: System Architecture for Task Curation and Experience Shaping

### Overview

The diagram illustrates a two-phase system architecture for processing data and shaping experiences. It features interconnected components for task curation, data processing, exploration, and model training, with feedback loops between exploration and training phases.

### Components/Axes

1. **Left Section (Task Curation & Prioritization):**

- **Data Processor**: Contains sub-tasks:

- Convert format

- Clean & augment

- Online Scoring

- **Raw Data** (blue cylinder) → **Taskset** (blue cylinder)

- **Buffer** (gray rectangle) acts as intermediary storage

2. **Right Section (Experience Shaping):**

- **Data Processor**: Contains sub-tasks:

- Dense rewards

- Human-in-the-loop

- Counterfactual

- Dynamic synthesis

- **Raw Experience** (blue cylinder) → **Experience** (blue cylinder)

3. **Central Components:**

- **Explorer** (yellow robot icon): Receives Environment Feedback and sends Model Feedback

- **Trainer** (green brain icon): Receives Model Feedback and sends Experience Shaping outputs

4. **Feedback Loops:**

- Environment Feedback → Explorer → Model Feedback → Trainer

- Model Feedback → Experience Shaping

### Detailed Analysis

- **Task Curation Flow**: Raw Data undergoes preprocessing (format conversion, cleaning, augmentation) and online scoring before becoming Taskset. The Buffer manages data flow between these stages.

- **Experience Shaping Flow**: Raw Experience is processed through human-centric methods (dense rewards, counterfactual analysis) and dynamic synthesis to create refined Experience outputs.

- **Exploration-Training Interaction**: The Explorer interacts with the environment, receives feedback, and shares Model Feedback with the Trainer. This creates a closed-loop system for iterative improvement.

### Key Observations

1. Dual Data Processors handle distinct but complementary workflows (task preparation vs. experience refinement)

2. Buffer acts as a critical synchronization point between raw data and taskset generation

3. Human-in-the-loop processing appears in the experience-shaping phase, emphasizing human-AI collaboration

4. Feedback loops suggest continuous system improvement through environmental interaction

### Interpretation

This architecture represents a hybrid AI system where:

1. **Task Curation** focuses on preparing structured data for specific applications

2. **Experience Shaping** emphasizes human-AI interaction quality through:

- Reward design

- Counterfactual analysis (what could have been)

- Dynamic adaptation

3. The Explorer-Trainer loop mirrors reinforcement learning paradigms, with the key difference being explicit human feedback integration at multiple stages

4. The system prioritizes both data quality (through rigorous preprocessing) and experience quality (through human-centric shaping)

The architecture suggests a framework for developing AI systems that balance automated processing with human oversight, particularly in applications requiring nuanced understanding of human preferences and contextual adaptation.

</details>

Figure 5: The interaction of data processor and data buffers in Trinity-RFT, divided into two key stages. Left: Task Curation & Prioritization prepares the initial tasks for the explorer. Right: Experience Shaping processes the collected trajectories from the explorer before they are used by the trainer. The data processor is a central component that operates on different buffers at different stages.

2.3.2 Task Curation and Prioritization

Before the RFT loop begins, it is crucial to prepare a high-quality set of initial tasks. This stage, depicted on the left side of Figure 5, transforms raw data into an optimized task set for the explorer.

The process begins with raw data sources (e.g., prompts, domain corpora), which are ingested into a buffer. The Data Processor, powered by over 100 operators from Data-Juicer [2], reads from this buffer to perform various curation tasks. It provides composable building blocks for experience cleaning (e.g., length filters, duplication removal), safety alignment (e.g., toxicity detection, ethics checks), and preference data synthesis (e.g., critique-conditioned augmentation). By treating Data-Juicer as a modular data processing operator pool rather than a central dependency, Trinity-RFT provides RL-specific abstractions and coherence, while benefiting from well-established data tools.

The processed data is then organized into a structured task buffer. This stage effectively implements a form of curriculum learning by allowing users to prioritize tasks (e.g., from easy to hard), guiding the explorer towards a more efficient and stable learning trajectory from the outset. This entire workflow is managed by a service-oriented architecture that decouples data logic from procedural control, ensuring flexibility and scalability, especially in asynchronous and distributed settings.
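As a toy illustration of easy-to-hard task prioritization, the sketch below orders a task set by a user-supplied difficulty score. Both function names and the length-based difficulty proxy are assumptions for illustration; in practice the score could come from any data processing operator.

```python
from typing import Callable, Dict, List


def prioritize_tasks(
    tasks: List[Dict[str, str]],
    difficulty: Callable[[Dict[str, str]], float],
) -> List[Dict[str, str]]:
    """Return the tasks ordered from easy to hard according to `difficulty`."""
    return sorted(tasks, key=difficulty)


def length_difficulty(task: Dict[str, str]) -> float:
    # Assumed proxy for difficulty: longer questions are treated as harder.
    return float(len(task["question"]))
```

Since `sorted` is stable, tasks with equal scores keep their original relative order, so any earlier curation order is preserved within a difficulty level.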

2.3.3 Active Experience Shaping

Once the explorer begins interacting with the environment, it generates a continuous stream of experience data. To maximize learning efficiency, this raw experience must be actively shaped before it reaches the trainer. This stage is shown on the right side of Figure 5.

Generated experiences are first collected in a buffer. The Data Processor is applied again with a series of transformations to clean, augment, or synthesize these experiences. This is where the core of RFT data intelligence lies. Key capabilities include:

- Agent-Driven Data Processing: Trinity-RFT introduces a powerful agentic paradigm for data manipulation. Users can define complex processing pipelines through high-level objectives, specified as either natural language commands (e.g., “improve safety” or “increase response diversity”) or explicit Data-Juicer configurations. The framework automatically translates these commands into executable workflows backed by its modular components like DataCleaner and DataSynthesizer. This design provides a user-friendly abstraction layer over the underlying Data-Juicer operators, making advanced processing functionalities accessible to both RFT users familiar with Data-Juicer and those who are not. It also facilitates the flexible injection of user-defined inductive biases into the learning process, unlocking new research directions for self-evolving agents, as we will discuss later in Section 2.3.5.

- Online Reward Shaping: The data processor can dynamically augment the reward signal. Instead of relying on a single, often sparse, task-completion reward, users can add dense rewards based on quality, diversity, or safety scores computed on the fly. This enriched feedback provides a much stronger learning signal for the trainer.

- Prioritized Experience Replay: Experiences are not treated equally. Trinity-RFT allows for flexible, multi-dimensional utility scoring to prioritize the most valuable samples for training. The DataActiveIterator supports version-controlled experience reuse and cross-task data lineage tracking, ensuring that the trainer always learns from the most informative data available. This mechanism is also critical for handling delayed rewards, as experience utilities can be updated asynchronously as new feedback arrives.
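A minimal sketch of multi-dimensional utility scoring and prioritized sampling is given below. It assumes that each experience carries named scores (e.g., quality and diversity) and that utility is a weighted sum of them; the functions are hypothetical and unrelated to the actual DataActiveIterator.

```python
import random
from typing import Dict, List, Sequence


def utility(exp: Dict[str, float], weights: Dict[str, float]) -> float:
    """Weighted sum of the per-experience scores named in `weights`."""
    return sum(w * exp.get(name, 0.0) for name, w in weights.items())


def sample_prioritized(
    experiences: Sequence[Dict[str, float]],
    weights: Dict[str, float],
    k: int,
    rng: random.Random,
) -> List[Dict[str, float]]:
    """Sample k experiences (with replacement) proportionally to their utility."""
    pool = list(experiences)
    # Negative utilities are clipped to zero so they can act as sampling weights.
    scores = [max(utility(e, weights), 0.0) for e in pool]
    if sum(scores) == 0.0:
        return rng.choices(pool, k=k)  # no signal: fall back to uniform sampling
    return rng.choices(pool, weights=scores, k=k)
```

When a delayed reward or a new quality score arrives, only the stored scores need to change; the next call to `sample_prioritized` reflects the updated utilities.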

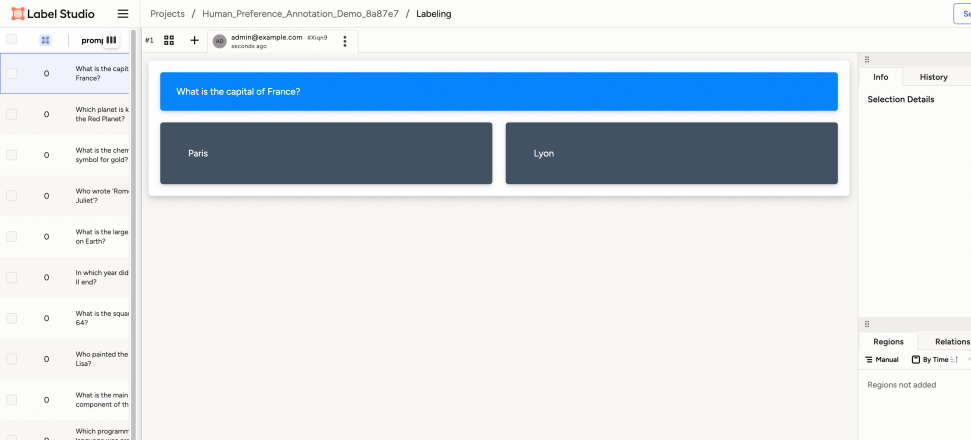

2.3.4 Human-AI Collaboration

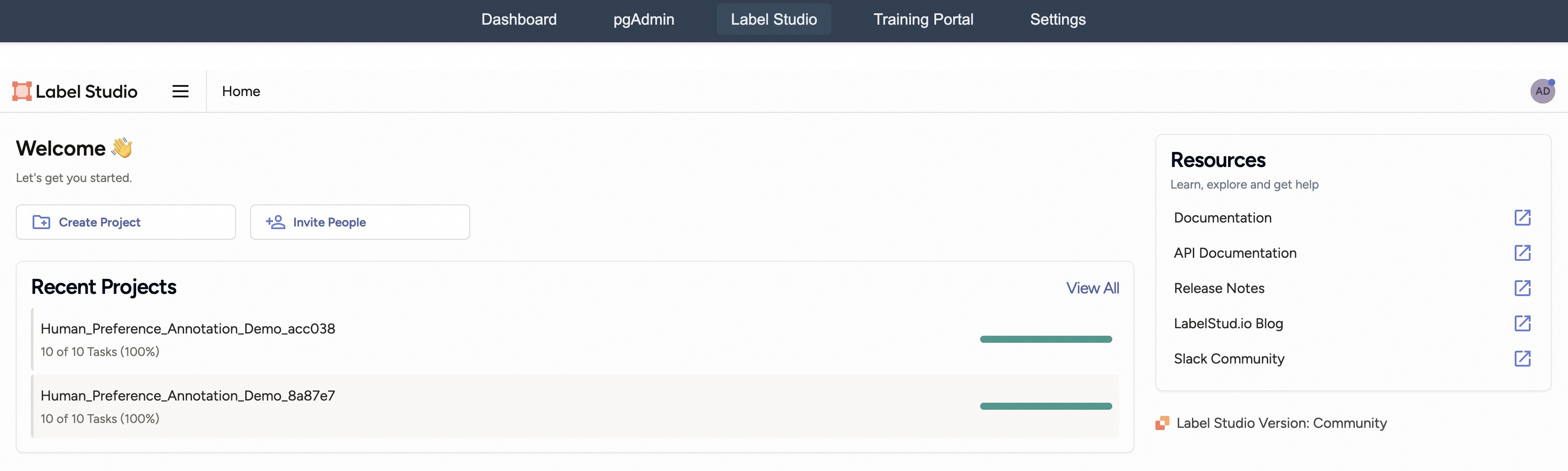

In scenarios where human feedback is irreplaceable, Trinity-RFT establishes a bi-directional human-AI collaboration loop that provides first-class support for human annotations, based on Label Studio [39] and Data-Juicer’s HumanOPs.

- Multi-stage annotation. Trinity-RFT implements configurable procedures combining automatic pre-screening and human verification. Typical stages include preference annotation (comparative assessment of model responses), quality auditing (human verification of automated cleaning/synthesis results), and cold-start bootstrapping (initial dataset curation through expert demonstrations).

- Native asynchronism support. As the collection of human feedback is generally slower than AI/model feedback, we provide dedicated capabilities to handle both synchronous and asynchronous feedback modes, with configurable timeout and polling parameters. The feedback collaboration is based on an event-driven design, with automatic task creation upon data state changes, configurable notifications via email/Slack/webhook, and an atomic transaction model for annotation batches.

- Customization. Different applications may involve humans in heterogeneous ways. We thus prioritize flexibility at both the interaction-interface and service levels. Examples include rich built-in interfaces that can be extended in a visualized style with XML-like tags provided by Label Studio, fine-grained quality scoring for reward shaping, and free-form feedback attachment for dataset shaping, among others. Moreover, for easy deployment, we provide local Label Studio instance management with automatic environment setup via Docker/pip; optimized SDK interactions with batch request coalescing; unified logging across annotation tools and ML services; and concurrent annotation campaigns through priority-based task routing, while preserving full data lineage via LineageTracker.

The decoupled design of Trinity-RFT, and the presence of a standalone experience buffer in particular, enable human feedback to participate in RL loops without breaking the asynchronous execution model. For instance, human-verified samples can be prioritized for training while fresh experiences are being collected, which is a critical capability for real-world deployment scenarios with mixed feedback sources. Further details for human-AI collaboration in Trinity-RFT will be illustrated in Section 3.5.

2.3.5 Discussion: Unlocking New Research & Development Directions

The modular design of our data pipelines and the powerful data processor open up promising research and development avenues to be further explored.

One direction is about effective management of experience data. While prior RFT works often treat the experience as a static log, Trinity-RFT enables a more sophisticated, full-lifecycle approach to data, from selective acquisition to efficient representation:

- Intelligent Perception and Collection: In an open-ended environment, what experience is “worth” recording? Storing everything creates a low signal-to-noise ratio and burdens the trainer. Trinity-RFT’s architecture allows researchers to implement active collection strategies. For instance, one could design a data processor operator that evaluates incoming experiences from the explorer based on metrics like surprise, uncertainty, or information gain, and only commits the most salient trajectories to the replay buffer. This transforms data collection from passive logging into a targeted, intelligent process.

- Adaptive Representation: Raw experience is often high-dimensional and redundant (e.g., long dialogues, complex code generation traces). How can this be distilled into a format that an agent can efficiently learn from? The data processor in Trinity-RFT acts as a powerful transformation engine. Researchers can use it to explore various representation learning techniques, such as automatically summarizing trajectories, extracting causal chains from tool usage, or converting a multi-turn dialogue into a structured preference pair. This not only makes training more efficient but also opens the door to building meta-experience (more abstract and reusable knowledge) from raw interaction data.

- Agentic Workflows: Trinity-RFT’s agent-driven processing enables the research and development of self-improving agents, e.g., by configuring the policy agent to also serve as the “processing agent” for LLM-based Data-Juicer operators. Such an agent could perform its own critique and dynamically curate its own training data, creating a truly autonomous learning and data management loop.

Another direction is about synthetic and counterfactual experience processing. The integration of synthesis operators enables research into creating “better-than-real” data. Instead of relying solely on the agent’s own trial-and-error, our framework facilitates exploring questions like:

- Dynamic and Composable Rewarding: With our framework, researchers can move beyond static, hand-crafted rewards. It is now possible to investigate dynamic reward shaping, where auxiliary signals like novelty, complexity, or alignment scores are automatically extracted from trajectories and composed into a dense reward function. How should “good” experience be defined, and how can we learn the optimal combination of these reward components as the agent’s policy evolves?

- Experience Reorganization: Can successful sub-trajectories from different tasks be “spliced” together to solve a novel, composite task? For example, can an agent that has learned to “open a door” and “pick up a cup” synthesize a new trajectory to “enter the room and fetch the cup”?

- Failure Repair: Can the data processor identify where errors occur in a failed trajectory, and synthesize a corrected version for the trainer to learn from, effectively turning failures into valuable lessons?

- Success Amplification: Can a single successful experience be augmented into multiple diverse yet successful variants, thereby improving the generalization and robustness of the learned policy?

By providing dedicated capabilities for such advanced data and reward manipulation, Trinity-RFT aims to facilitate flexible processing of “experience data” for the next generation of self-evolving LLMs.

2.4 User-Friendliness

Trinity-RFT has been designed with user-friendliness as a top priority.

For development and research:

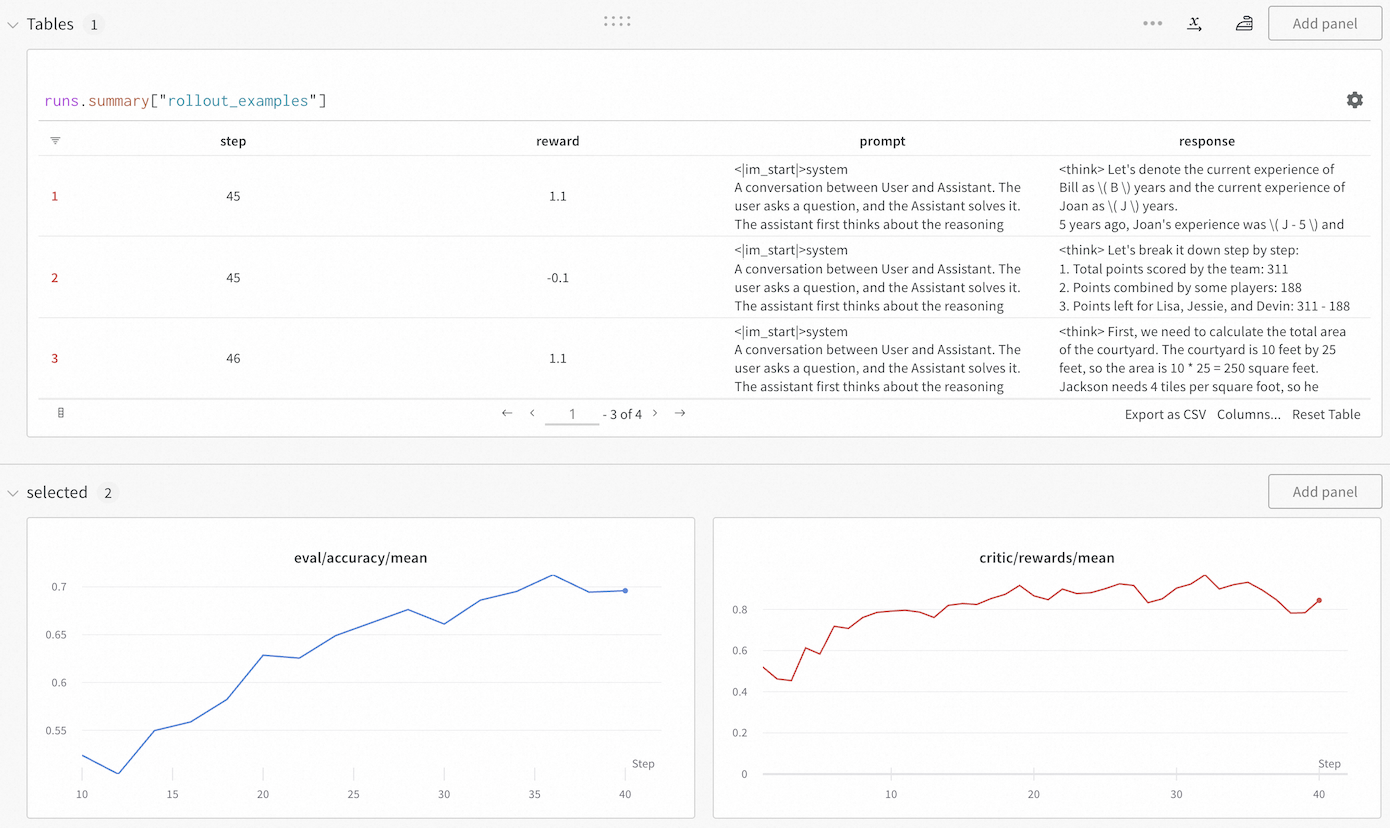

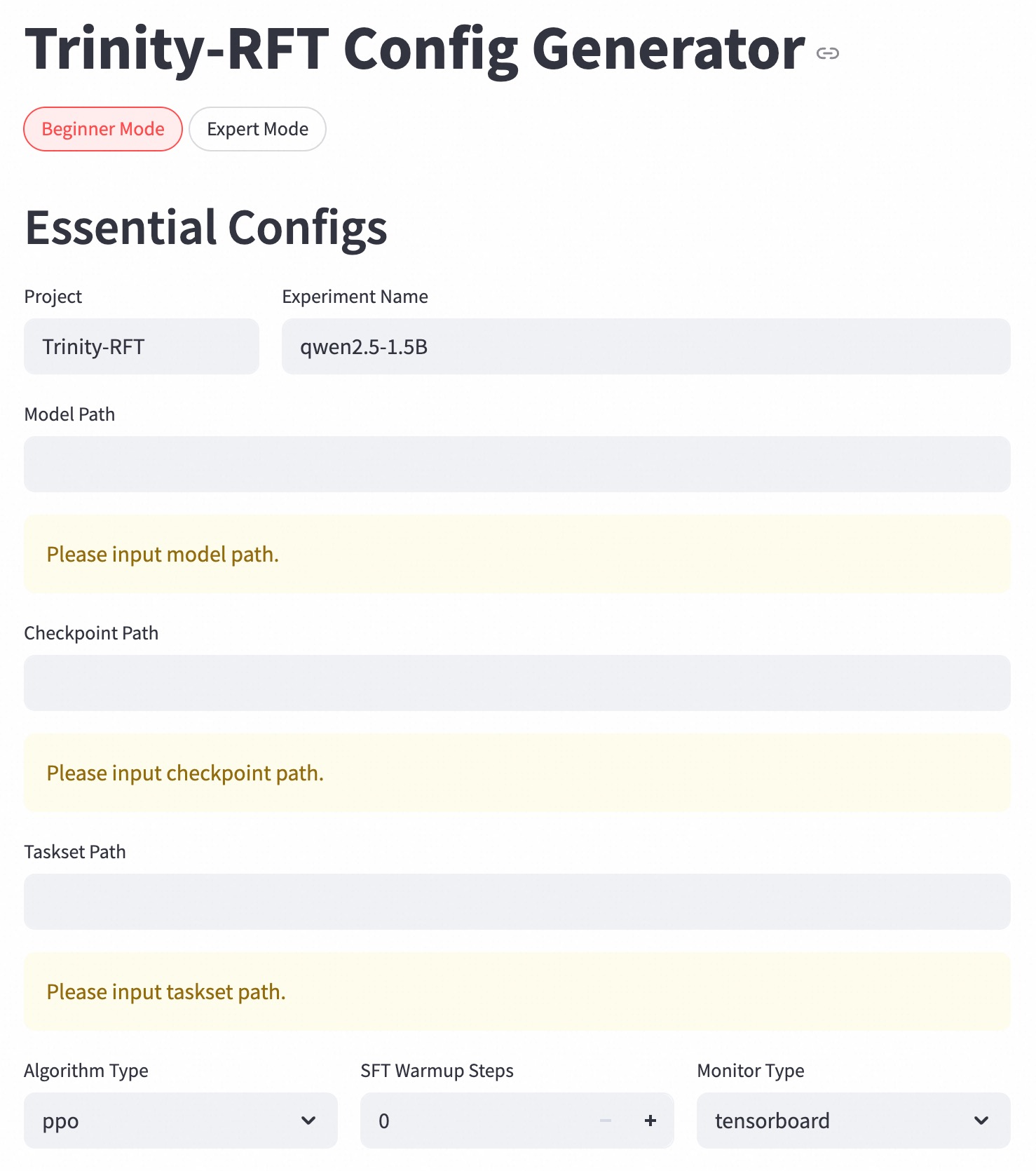

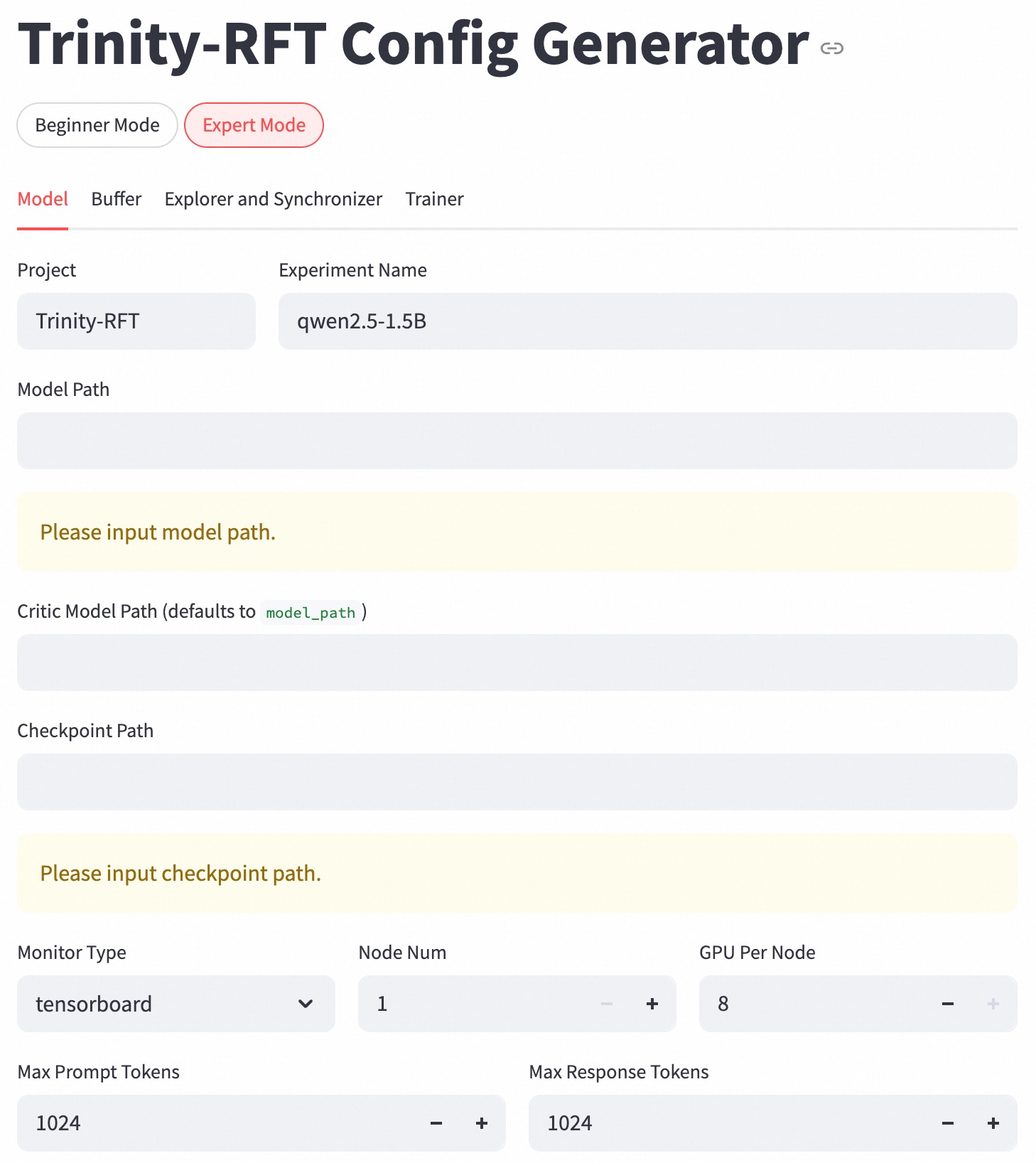

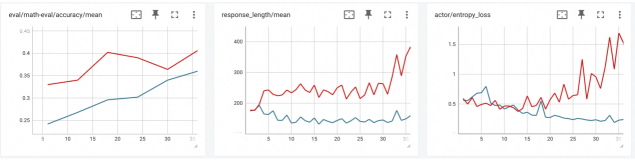

The modular and decoupled design of Trinity-RFT allows users to develop a new algorithm for a specific aspect of RFT by adding one or a few new classes that implement the essential functionalities of interest, without worrying about other aspects of RFT or making intrusive modifications to the original codebase. In addition, we include a monitor (built upon Wandb [41] and TensorBoard [38]) that makes it easy to track the progress of an RFT process, both quantitatively (e.g., via learning curves for rewards and other metrics) and qualitatively (e.g., via concrete examples of rollout trajectories generated at different RL steps). See Figure 6 for an example snapshot of the monitor.

For RFT applications:

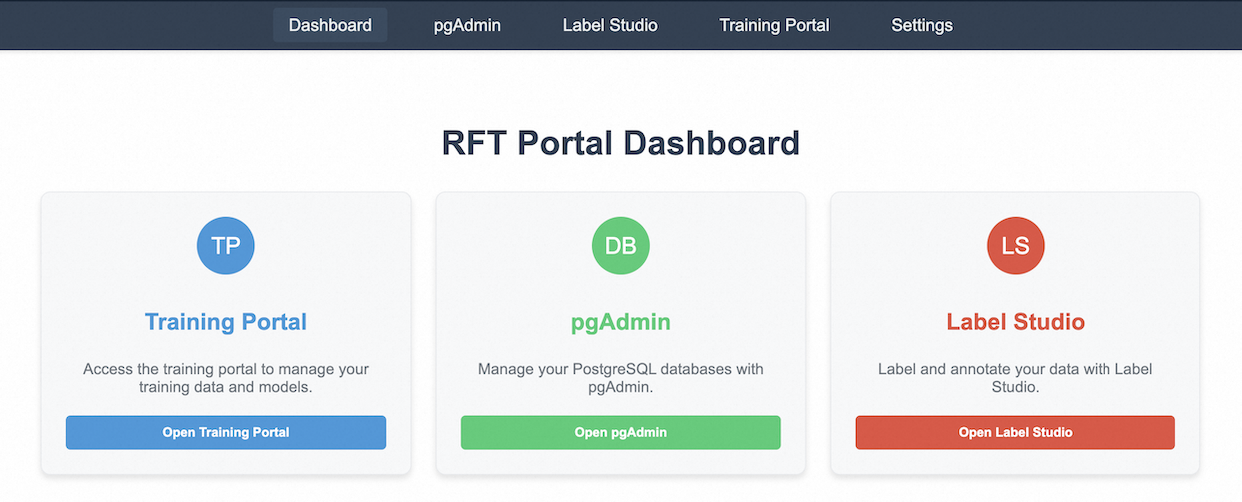

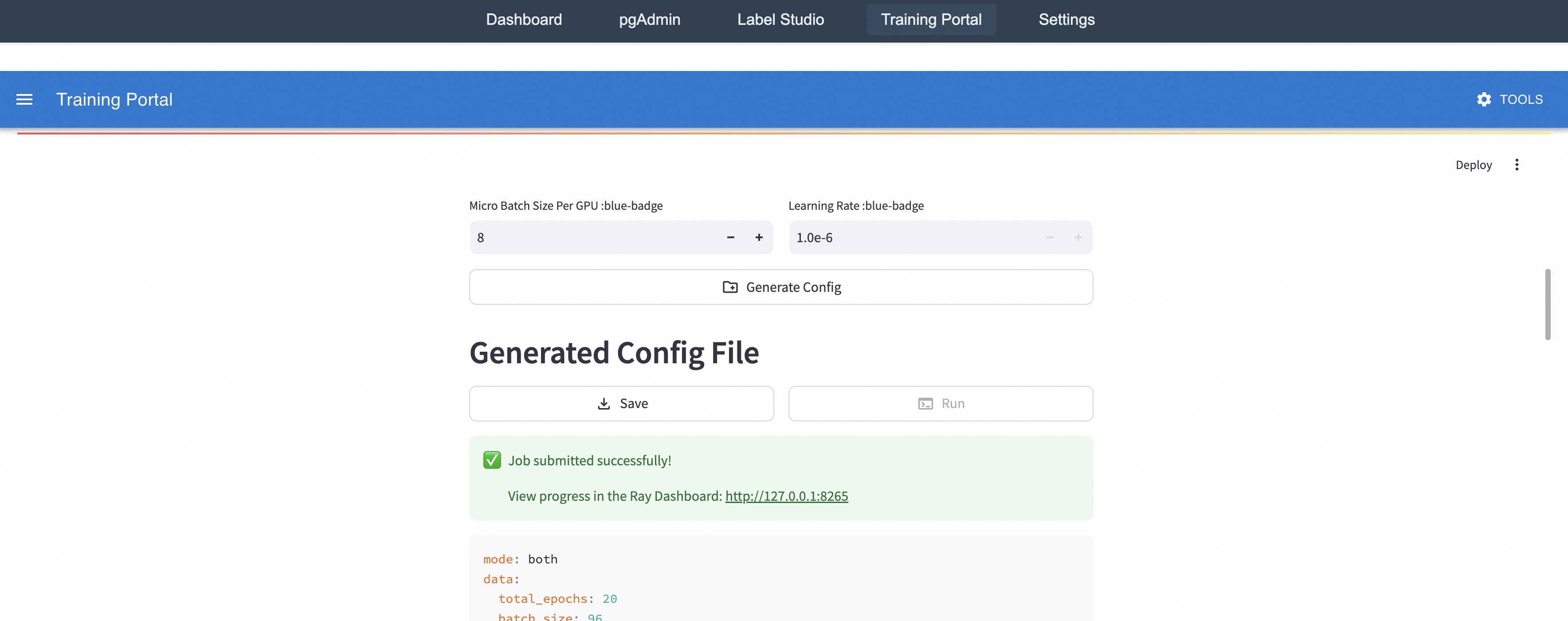

Trinity-RFT offers extensive graphical user interfaces to support low-code usage of the framework, and to maximize transparency of the RFT process. For example, we implement a configuration manager, as shown in Figure 7, that allows the user to create configuration files conveniently via a front-end interface. We also provide Trinity-Studio, an all-in-one unified UI (including the aforementioned monitor and configuration manager) that allows the user to configure and run data inspection, data processing, the RFT learning process, etc., all by clicking the mouse and filling in forms, without writing any code. An example of using Trinity-Studio will be introduced in Section 3.6. Such functionalities, of course, can be useful not only for applications but also for development and research.

<details>

<summary>figs/wandb_screencut.png Details</summary>

### Visual Description

## Dashboard: AI Interaction Analysis

### Overview

The image displays a technical dashboard analyzing AI interaction data. It includes:

1. A table showing structured data for three steps (1-3)

2. Two line graphs tracking evaluation metrics over 40 steps

3. Text-based prompts and responses demonstrating AI reasoning

### Components/Axes

**Table Structure**:

- Columns: Step | Reward | Prompt | Response

- Rows: 3 entries (Steps 1-3)

**Graphs**:

1. **eval/accuracy/mean** (Blue line):

- X-axis: Step (1-40)

- Y-axis: Accuracy (0.52-0.70)

2. **critic/rewards/mean** (Red line):

- X-axis: Step (1-40)

- Y-axis: Rewards (0.2-0.85)

**Text Elements**:

- Table headers in bold black text

- Graph titles in bold black text

- Axis labels in smaller black text

### Detailed Analysis

**Table Data**:

| Step | Reward | Prompt (Example) | Response (Example) |
|------|--------|------------------|--------------------|
| 1 | 1.1 | | |

</details>

Figure 6: A snapshot of the monitor implemented in Trinity-RFT.

<details>

<summary>figs/config_manager_beginner.jpg Details</summary>

### Visual Description

## Screenshot: Trinity-RFT Config Generator Interface

### Overview

The image shows a configuration interface for the Trinity-RFT system in **Beginner Mode**. The interface contains form fields for essential configuration parameters, with some fields pre-filled and others requiring user input. The layout is structured in a vertical form with grouped input elements.

### Components/Axes

1. **Mode Selection**:

- Two radio buttons: "Beginner Mode" (selected, red highlight) and "Expert Mode" (unselected, gray)

2. **Essential Configs Section**:

- **Project**: Pre-filled with "Trinity-RFT"

- **Experiment Name**: Pre-filled with "qwen2.5-1.5B"

- **Model Path**: Empty field with placeholder text "Please input model path."

- **Checkpoint Path**: Empty field with placeholder text "Please input checkpoint path."

- **Taskset Path**: Empty field with placeholder text "Please input taskset path."

3. **Advanced Settings**:

- **Algorithm Type**: Dropdown with "ppo" selected

- **SFT Warmup Steps**: Numeric input showing "0" with increment/decrement controls

- **Monitor Type**: Dropdown with "tensorboard" selected

### Detailed Analysis

- **Textual Content**:

- All labels and values are in English

- Placeholder texts use imperative phrasing ("Please input...")

- Numeric input for warmup steps uses integer values

- **Visual Hierarchy**:

- Selected mode ("Beginner Mode") is visually emphasized with red color

- Pre-filled fields use darker text than empty fields

- Dropdowns and numeric inputs use consistent gray styling

### Key Observations

1. **Missing Critical Paths**:

- Model, checkpoint, and taskset paths are all empty, requiring user input

2. **Default Configuration**:

- Algorithm type set to PPO (Proximal Policy Optimization)

- Zero SFT warmup steps means that no supervised fine-tuning warmup phase precedes RL

- TensorBoard selected as monitoring tool

3. **Interface Design**:

- Clear separation between pre-filled and required fields

- Minimalist design with no decorative elements

### Interpretation

This configuration interface appears to be for setting up a reinforcement learning experiment using the Qwen-2.5-1.5B model. The empty paths indicate that the user must provide:

1. Model architecture/weights location

2. Training checkpoint directory

3. Task specification files

The selected PPO algorithm with zero SFT warmup steps means that RL training starts directly, without a supervised fine-tuning warmup phase. The TensorBoard choice selects the monitoring backend used to track the run. Since "Beginner Mode" is selected, this is a simplified configuration for standard use cases.

The interface follows a logical flow from project identification to algorithm selection, with required fields grouped together for easy validation. The numeric warmup steps control allows precise adjustment of the SFT warmup duration if needed later.

</details>

(a) The “beginner” mode.

<details>

<summary>figs/config_manager_expert.jpg Details</summary>

### Visual Description

## Screenshot: Trinity-RFT Config Generator UI

### Overview

The image displays a configuration interface for the Trinity-RFT model generator. The UI is in "Expert Mode" with the "Model" tab active. Key configuration parameters include project details, model paths, resource allocation, and training constraints.

### Components/Axes

1. **Tabs**:

- Top-level tabs: "Beginner Mode" (unselected) and "Expert Mode" (selected, highlighted in red).

- Secondary tabs under "Expert Mode": "Model" (active), "Buffer," "Explorer and Synchronizer," "Trainer."

2. **Input Fields**:

- **Project**: "Trinity-RFT" (text field).

- **Experiment Name**: "qwen2.5-1.5B" (text field).

- **Model Path**: Empty with placeholder "Please input model path."

- **Critic Model Path**: Defaults to "model_path" (text field).

- **Checkpoint Path**: Empty with placeholder "Please input checkpoint path."

3. **Dropdowns/Selectors**:

- **Monitor Type**: "tensorboard" (selected from dropdown).

4. **Resource Allocation**:

- **Node Num**: 1 (numeric input with +/- controls).

- **GPU Per Node**: 8 (numeric input with +/- controls).

5. **Token Constraints**:

- **Max Prompt Tokens**: 1024 (numeric input with +/- controls).

- **Max Response Tokens**: 1024 (numeric input with +/- controls).

### Detailed Analysis

- **Required Fields**: "Model Path" and "Checkpoint Path" are mandatory, indicated by placeholder text in yellow-highlighted fields.

- **Default Values**: "Critic Model Path" defaults to "model_path," suggesting a fallback or placeholder value.

- **Resource Configuration**: "Node Num" and "GPU Per Node" define computational resources, with values 1 and 8 respectively.

- **Token Limits**: Both prompt and response tokens are capped at 1024, likely to manage model input/output size.

### Key Observations

- The interface enforces mandatory inputs for model and checkpoint paths, critical for training workflows.

- Default values (e.g., "model_path") may indicate incomplete configuration or placeholder text.

- Token limits suggest optimization for medium-sized language models, balancing context length and computational efficiency.

### Interpretation

This UI is designed for advanced users configuring a large language model (LLM) training pipeline. The "Model Path" and "Checkpoint Path" fields are essential for locating the initial model and for storing training checkpoints. The TensorBoard monitor type implies that training metrics can be tracked during the run. Resource allocation (1 node, 8 GPUs) describes a single-node, multi-GPU setup, while token limits reflect constraints on sequence processing. The absence of filled paths indicates the configuration is incomplete, requiring user input to proceed. The design prioritizes flexibility (dropdowns, numeric controls) while enforcing critical dependencies (required fields).

</details>

(b) The “expert” mode.

Figure 7: Snapshots of the configuration manager.

3 Examples, Applications, and Experiments

This section demonstrates the utility and user-friendliness of Trinity-RFT and exemplifies some concepts introduced in previous sections, through a diverse range of examples, applications and experiments. Additional step-by-step tutorials can be found on the documentation website https://modelscope.github.io/Trinity-RFT, or in the examples folder of the GitHub repository https://github.com/modelscope/Trinity-RFT/tree/main/examples.

3.1 Customizing Agent-Environment Interaction

With a modular design, Trinity-RFT can be easily adapted to a new downstream scenario by implementing the logic of agent-environment interaction in a single workflow class, without modifications to other components of the codebase. This approach is also sufficient for macroscopic RL algorithm design that targets high-quality experience synthesis with environmental feedback [4]. We provide some concrete examples in the rest of this subsection.

3.1.1 Single-turn Scenarios

In a simple yet common scenario, a user of Trinity-RFT would like to train an LLM for completing single-turn tasks, where the LLM generates one response to each input query. For this purpose, the user mainly needs to (1) define and register a single-turn workflow class (by inheriting the base class Workflow) tailored to the targeted tasks, and (2) specify the tasksets (for training and/or evaluation) and the initial LLM, both of which are compatible with HuggingFace [14] and ModelScope [19] formats.

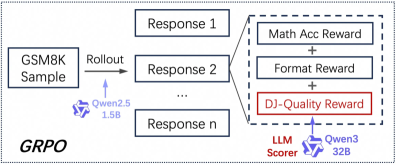

Listing 1 gives a minimal example for implementing a single-turn workflow. Suppose that each task is specified by a <question, answer> tuple. The run() method of ExampleWorkflow calls the LLM once to generate a response for the question, calculates its reward, and returns an Experience instance that consists of the response itself, the reward value, and the log-probabilities of response tokens predicted by the rollout model (which is necessary for certain RL algorithms, such as PPO [28] and GRPO [29]). Some built-in workflows and reward functions have been implemented in Trinity-RFT, e.g., the MathWorkflow class for math-related tasks.

In some cases, the user wants to utilize auxiliary LLMs in the workflow, e.g., for computing rewards via LLM-as-a-judge, or for playing the roles of other agents in a multi-agent scenario. For these purposes, the user can specify auxiliary_models via APIs when initializing the workflow.

```python
from typing import List, Optional

import openai

# WORKFLOWS, Workflow, Task, ModelWrapper and Experience are provided by
# Trinity-RFT; their import statements are omitted here.


@WORKFLOWS.register_module("example_workflow")
class ExampleWorkflow(Workflow):

    def __init__(
        self,
        model: ModelWrapper,
        task: Task,
        auxiliary_models: Optional[List[openai.OpenAI]] = None,
    ):
        super().__init__(model, task, auxiliary_models)
        self.question = task.raw_task.get("question")
        self.answer = task.raw_task.get("answer")

    def calculate_reward_by_rule(self, response: str, truth: str) -> float:
        # Rule-based reward: exact match with the ground-truth answer.
        return 1.0 if response == truth else 0.0

    def calculate_reward_by_llm_judge(self, response: str, truth: str) -> float:
        # LLM-as-a-judge reward: the judge is expected to reply with a number.
        judge_model = self.auxiliary_models[0]
        PROMPT_FOR_JUDGE = """Please evaluate..."""
        completion = judge_model.chat.completions.create(
            model="gpt-4",  # Or another suitable judge model
            messages=[{"role": "user", "content": PROMPT_FOR_JUDGE}],
        )
        reward_str = completion.choices[0].message.content.strip()
        reward = float(reward_str)
        return reward

    def run(self) -> List[Experience]:
        # chat() is treated as returning a list of responses, consistent with
        # the indexing used in Listing 2; one Experience is built per response.
        responses = self.model.chat(
            [
                {
                    "role": "user",
                    "content": f"Question:\n{self.question}",
                }
            ],
            **self.rollout_args,
        )
        experiences = []
        for response in responses:
            reward: float = self.calculate_reward_by_rule(response.response_text, self.answer)
            # reward: float = self.calculate_reward_by_llm_judge(response.response_text, self.answer)
            experiences.append(
                Experience(
                    tokens=response.tokens,
                    prompt_length=response.prompt_length,
                    reward=reward,
                    logprobs=response.logprobs,
                )
            )
        return experiences
```

Listing 1: A minimal example for implementing a customized workflow.
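In Listing 1, calculate_reward_by_llm_judge() converts the judge's reply directly with float(), which raises an exception whenever the judge adds any extra text around the score. A minimal sketch of a more defensive parser is given below. The helper name parse_judge_reward, the default value, and the [0, 1] clipping range are illustrative assumptions rather than part of Trinity-RFT.

```python
import re


def parse_judge_reward(reply: str, default: float = 0.0) -> float:
    """Extract the first number from a judge reply and clip it to [0, 1].

    Hypothetical helper (not a Trinity-RFT API): falls back to `default`
    when the reply contains no number at all.
    """
    match = re.search(r"[-+]?\d*\.?\d+", reply)
    if match is None:
        return default
    return min(1.0, max(0.0, float(match.group())))
```

Such a helper could replace the bare float(reward_str) call, so that a single malformed judge reply yields a default reward instead of aborting the rollout.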

3.1.2 Multi-turn Scenarios

In more advanced cases, the user would like to train an LLM-powered agent that solves multi-turn tasks by repeatedly interacting with the environment. In Trinity-RFT, achieving this is mostly as simple as in the single-turn case, except that the user needs to define and register a multi-turn workflow class by inheriting the base class MultiTurnWorkflow. Listing 2 provides one such example using the ALFWorld dataset [31]. For training efficiency, the process_messages_to_experience() method concatenates multiple rounds of agent-environment interactions compactly into an Experience instance consisting of a single token sequence with proper masking, which can readily be consumed by standard RL algorithms like PPO and GRPO.

For more detailed examples of multi-turn cases, please refer to the documentation https://modelscope.github.io/Trinity-RFT/tutorial/example_multi_turn.html.

    @WORKFLOWS.register_module("alfworld_workflow")
    class AlfworldWorkflow(MultiTurnWorkflow):
        """A workflow for the ALFWorld task."""

        def generate_env_inference_samples(self, env, rollout_num) -> List[Experience]:
            print("Generating env inference samples...")
            experience_list = []
            for i in range(rollout_num):
                observation, info = env.reset()
                final_reward = -0.1
                memory = []
                memory.append({"role": "system", "content": AlfWORLD_SYSTEM_PROMPT})
                for r in range(self.max_env_steps):
                    format_obs = format_observation(observation)
                    memory = memory + [{"role": "user", "content": format_obs}]
                    response_text = self.model.chat(memory, n=1)[0].response_text
                    memory.append({"role": "assistant", "content": response_text})
                    action = parse_action(response_text)
                    observation, reward, done, info = env.step(action)
                    if done:
                        final_reward = reward
                        break
                experience = self.process_messages_to_experience(
                    memory, final_reward, {"env_rounds": r, "env_done": 1 if done else 0}
                )
                experience_list.append(experience)
            # Close the env to save CPU memory
            env.close()
            return experience_list

        def run(self) -> List[Experience]:
            # ...
            game_file_path = self.task_desc
            rollout_n = self.repeat_times
            # ...
            env = create_environment(game_file_path)
            return self.generate_env_inference_samples(env, rollout_n)

Listing 2: An implementation of a multi-turn workflow for ALFWorld [31].
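The compact packing performed by process_messages_to_experience() can be illustrated without the framework. The following sketch concatenates a multi-turn conversation into one token sequence and builds a mask that marks only assistant tokens as trainable. The whitespace tokenizer, the role tags, and the function name pack_conversation are simplifying assumptions for illustration; they do not reflect Trinity-RFT's actual implementation, which relies on the model's tokenizer and chat template.

```python
from typing import Dict, List, Tuple


def pack_conversation(messages: List[Dict[str, str]]) -> Tuple[List[str], List[int]]:
    """Concatenate all turns into one sequence with an action mask.

    Toy assumptions: whitespace tokenization and a "<role>" tag per turn.
    action_mask[i] == 1 only for tokens generated by the assistant.
    """
    tokens: List[str] = []
    action_mask: List[int] = []
    for message in messages:
        turn_tokens = message["content"].split()
        is_action = 1 if message["role"] == "assistant" else 0
        # The role tag belongs to the prompt, so it is never trained on.
        tokens.append(f"<{message['role']}>")
        action_mask.append(0)
        tokens.extend(turn_tokens)
        action_mask.extend([is_action] * len(turn_tokens))
    return tokens, action_mask


messages = [
    {"role": "system", "content": "You are in a kitchen."},
    {"role": "user", "content": "You see a fridge."},
    {"role": "assistant", "content": "open fridge"},
    {"role": "user", "content": "The fridge is open."},
    {"role": "assistant", "content": "take apple"},
]
tokens, action_mask = pack_conversation(messages)
```

Masked-out tokens still serve as context for the policy but contribute nothing to the loss, so a single sequence covers all rounds of an episode.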

3.1.3 Experience Synthesis in Workflows

As mentioned in Section 1.1, Trinity-RFT has been designed to streamline RL algorithm design and development at both macroscopic and microscopic levels. One example of the former is experience synthesis: at each RL step, the agent (backed by the rollout LLM) iteratively generates refined responses to a query by incorporating feedback or guidance from the environment, which can take the form of plain text rather than numerical reward values. The resulting data are then used to update the policy model, e.g., with a standard SFT or RL loss. Such a macroscopic RL approach is made possible by the generative nature of pre-trained LLMs and their rich prior knowledge about natural language. Closely related to this idea is Agent-RLVR [4], a contemporary work that applies such an approach to software engineering scenarios.

Within Trinity-RFT, this process of experience synthesis can be regarded as a particular way of agent-environment interaction, and thus can be realized by simply implementing a Workflow class. As a minimal demonstration, suppose that we want to implement this approach for a math reasoning scenario, where the agent generates multiple rollout responses to an input query, receives feedback from the environment regarding correctness of the responses, reflects on the gathered information, and generates a final response to the query. Listing 3 presents an implementation of this approach within Trinity-RFT.

    import json
    from typing import List


    @WORKFLOWS.register_module("reflect_once_workflow")
    class ReflectOnceWorkflow(Workflow):

        def run(self) -> List[Experience]:
            experiences = []

            # Stage 1: K-rollout generation
            rollout_messages = self.create_rollout_messages()
            responses = self.model.chat(
                rollout_messages,
                n=self.k_rollouts,
                temperature=self.temperature,
                logprobs=self.logprobs,
                max_tokens=self.task.rollout_args.max_tokens,
            )
            rollout_responses = [response.response_text.strip() for response in responses]

            # Stage 2: Verification
            verification_results = []
            for rollout_response in rollout_responses:
                is_correct = self.verify_answer(rollout_response, self.ground_truth)
                verification_results.append(is_correct)

            # Stage 3: Reflection
            reflection_messages = self.create_reflection_messages(
                rollout_responses,
                verification_results,
            )
            reflection_responses = self.model.chat(
                reflection_messages,
                n=1,
                temperature=self.temperature,
                logprobs=self.logprobs,
                max_tokens=self.task.rollout_args.max_tokens,
            )
            reflection_response = reflection_responses[0]

            # Verify the reflection response
            reflection_text = reflection_response.response_text.strip()
            reflection_is_correct = self.verify_answer(reflection_text, self.ground_truth)

            # Keep only verified reflections as synthesized experiences
            if reflection_is_correct:
                sharegpt_message = [
                    {
                        "role": "system",
                        "content": self.task.format_args.system_prompt,
                    },
                    {
                        "role": "user",
                        "content": self.question,
                    },
                    {
                        "role": "assistant",
                        "content": reflection_text,
                    },
                ]
                experience = self.process_messages_to_experience(sharegpt_message)
                experiences.append(experience)

                # Save experience to file
                if self.exp_file and sharegpt_message is not None:
                    exp_data = sharegpt_message
                    self.exp_file.write(json.dumps(exp_data, ensure_ascii=False) + "\n")
                    self.exp_file.flush()
            return experiences

Listing 3: A toy implementation of experience synthesis with environmental feedback.
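Listing 3 calls a create_reflection_messages() helper without showing it. One plausible shape for such a helper is sketched below as a standalone function. The prompt wording and the function signature (including the explicit question argument) are assumptions made for illustration, not the implementation used in Trinity-RFT.

```python
from typing import Dict, List


def create_reflection_messages(
    question: str,
    rollout_responses: List[str],
    verification_results: List[bool],
) -> List[Dict[str, str]]:
    """Build a chat prompt that shows each attempt with its textual feedback."""
    attempts = []
    for index, (response, is_correct) in enumerate(
        zip(rollout_responses, verification_results), start=1
    ):
        verdict = "correct" if is_correct else "incorrect"
        attempts.append(f"Attempt {index} ({verdict}):\n{response}")
    content = (
        f"Question:\n{question}\n\n"
        + "\n\n".join(attempts)
        + "\n\nReflect on the attempts above, then give a final answer."
    )
    return [{"role": "user", "content": content}]


reflection_messages = create_reflection_messages(
    "What is 12 * 12?",
    ["The answer is 124.", "The answer is 144."],
    [False, True],
)
```

Here the environment feedback reaches the agent as plain text (the verdict attached to each attempt) rather than as a numerical reward, which is the defining feature of the experience synthesis approach described above.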

3.2 RL Algorithm Development with Trinity-RFT

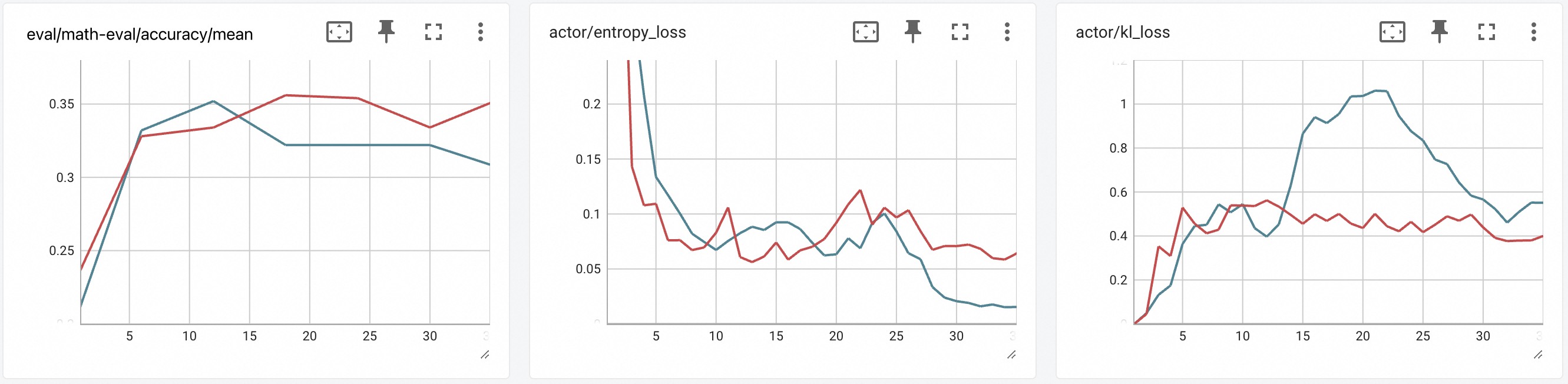

To support RL algorithm development, Trinity-RFT allows researchers and developers to focus on designing and implementing the essential logic of a new RL algorithm, without having to deal with the internal engineering details of Trinity-RFT.

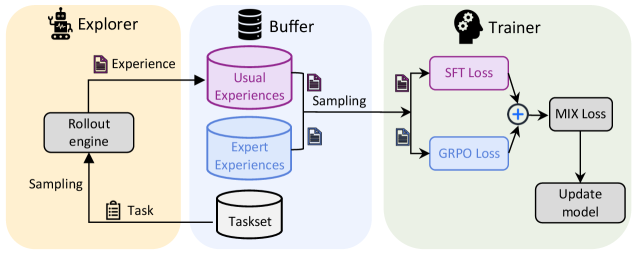

As an example, suppose that we want to implement a MIX algorithm that seamlessly integrates online RL and offline SFT into a single learning process. In its most basic form, the MIX algorithm requires that (1) the trainer samples from two sources of experiences, i.e., the rollout experiences collected online and the high-quality expert trajectories collected offline; and (2) the trainer updates its policy model with a loss function that handles both sources of experiences properly, e.g., a weighted sum of GRPO loss for the on-policy rollout experiences and SFT loss for the expert trajectories.
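The weighted-sum form in requirement (2) can be made concrete with a small numerical sketch. It assumes the weighting (1 - mu) * L_GRPO + mu * L_SFT, uses group-normalized rewards as advantages, and replaces the clipped GRPO objective with a plain policy-gradient surrogate on sequence-level log-probabilities. All function names are hypothetical, and the code is independent of Trinity-RFT.

```python
from statistics import mean, pstdev
from typing import List


def group_advantages(rewards: List[float], eps: float = 1e-6) -> List[float]:
    """Normalize rewards within a group of rollouts for the same task."""
    mu_r, sigma_r = mean(rewards), pstdev(rewards)
    return [(r - mu_r) / (sigma_r + eps) for r in rewards]


def policy_gradient_loss(logprobs: List[float], advantages: List[float]) -> float:
    """Simplified surrogate: -mean(advantage * log-prob), without clipping."""
    return -mean(a * lp for a, lp in zip(advantages, logprobs))


def sft_loss(expert_logprobs: List[float]) -> float:
    """Negative log-likelihood of the expert trajectories."""
    return -mean(expert_logprobs)


def mix_loss(rl_loss: float, sft: float, mu: float = 0.1) -> float:
    """Weighted sum of the RL loss and the SFT loss (assumed weighting)."""
    return (1.0 - mu) * rl_loss + mu * sft


# Toy batch: four rollouts for one task, plus two expert trajectories.
rewards = [1.0, 0.0, 0.0, 1.0]
rollout_logprobs = [-2.0, -3.0, -2.5, -1.5]
expert_logprobs = [-0.5, -1.5]

advantages = group_advantages(rewards)
loss = mix_loss(
    policy_gradient_loss(rollout_logprobs, advantages),
    sft_loss(expert_logprobs),
)
```

A small mu keeps the update dominated by the online RL signal while still pulling the policy toward the expert data; setting mu to 0 or 1 recovers pure RL or pure SFT, respectively.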

Variants of this MIX algorithm include adaptive weighting of multiple loss terms [10], alternating between RL and SFT [16], incorporating expert trajectories into RL loss [21, 34, 46], or incorporating SFT loss for high-reward rollout trajectories generated by older versions of the rollout model [27]. Such approaches have proved effective in accelerating the online RL process with only a small amount of expert experiences, or in enhancing stability and plasticity in continual learning.

<details>

<summary>x7.png Details</summary>

### Visual Description

The diagram shows a three-stage pipeline, with data flowing from the Explorer through the Buffer to the Trainer.

- **Explorer**: contains the rollout engine, which works on a sampled task to generate experiences.

- **Buffer**: holds the taskset, the usual experiences produced by the rollout engine, and the expert experiences, with the two kinds of experiences stored separately.

- **Trainer**: samples from both kinds of experiences, computes the GRPO loss and the SFT loss, sums them into the MIX loss, and uses the MIX loss to update the model.

</details>

Figure 8: A visualization of the MIX algorithm.

The MIX algorithm is visualized in Figure 8, where we integrate GRPO loss for usual experiences generated by the rollout model and SFT loss for expert experiences into a unified training pipeline. It requires handling different sources of experiences and two types of loss functions. Fortunately, to implement such an algorithm in Trinity-RFT, we only need to define three new classes (MixSampleStrategy, MIXPolicyLossFn, and MIXAlgorithm), as demonstrated in Listing 4. With these components, Trinity-RFT enables a seamless integration of online RL and offline SFT within the same training loop. For more details of the MIX algorithm, please refer to the documentation: https://modelscope.github.io/Trinity-RFT/tutorial/example_mix_algo.html.

    @SAMPLE_STRATEGY.register_module("mix")
    class MixSampleStrategy(SampleStrategy):
        def __init__(self, buffer_config: BufferConfig, trainer_type: str, **kwargs):
            # ...
            self.usual_exp_buffer = get_buffer_reader(
                buffer_config.trainer_input.experience_buffer, usual_buffer_config
            )
            self.expert_exp_buffer = get_buffer_reader(
                buffer_config.trainer_input.sft_warmup_dataset, expert_buffer_config
            )
            # ...

        def sample(self, step: int) -> Tuple[Any, Dict, List]:
            """Sample a batch composed of rollout experiences and expert trajectories"""
            usual_exp_list = self.usual_exp_buffer.read()
            expert_exp_list = self.expert_exp_buffer.read()
            exp_list = usual_exp_list + expert_exp_list
            exps = Experiences.gather_experiences(exp_list, self.pad_token_id)
            # ...


    @POLICY_LOSS_FN.register_module("mix")
    class MIXPolicyLossFn(PolicyLossFn):
        def __init__(self, mu: float = 0.1, ...):
            # ...
            self.mu = mu
            self.grpo_loss_fn = PPOPolicyLossFn(...)
            self.sft_loss_fn = SFTLossFn(...)

        def __call__(
            self,
            logprob: torch.Tensor,
            old_logprob: torch.Tensor,
            action_mask: torch.Tensor,