# DreamPRM: Domain-Reweighted Process Reward Model for Multimodal Reasoning

**Authors**:

- Qi Cao (University of California, San Diego)

- &Ruiyi Wang (University of California, San Diego)

- &Ruiyi Zhang (University of California, San Diego)

- &Sai Ashish Somayajula (University of California, San Diego)

- &Pengtao Xie (University of California, San Diego)

Abstract

Reasoning has substantially improved the performance of large language models (LLMs) on complicated tasks. Central to the current reasoning studies, Process Reward Models (PRMs) offer a fine-grained evaluation of intermediate reasoning steps and guide the reasoning process. However, extending PRMs to multimodal large language models (MLLMs) introduces challenges. Since multimodal reasoning covers a wider range of tasks compared to text-only scenarios, the resulting distribution shift from the training to testing sets is more severe, leading to greater generalization difficulty. Training a reliable multimodal PRM, therefore, demands large and diverse datasets to ensure sufficient coverage. However, current multimodal reasoning datasets suffer from a marked quality imbalance, which degrades PRM performance and highlights the need for an effective data selection strategy. To address the issues, we introduce DreamPRM, a domain-reweighted training framework for multimodal PRMs which employs bi-level optimization. In the lower-level optimization, DreamPRM performs fine-tuning on multiple datasets with domain weights, allowing the PRM to prioritize high-quality reasoning signals and alleviating the impact of dataset quality imbalance. In the upper-level optimization, the PRM is evaluated on a separate meta-learning dataset; this feedback updates the domain weights through an aggregation loss function, thereby improving the generalization capability of trained PRM. Extensive experiments on multiple multimodal reasoning benchmarks covering both mathematical and general reasoning show that test-time scaling with DreamPRM consistently improves the performance of state-of-the-art MLLMs. Further comparisons reveal that DreamPRM’s domain-reweighting strategy surpasses other data selection methods and yields higher accuracy gains than existing test-time scaling approaches. Notably, DreamPRM achieves a top-1 accuracy of 85.2% on the MathVista leaderboard using the o4-mini model, demonstrating its strong generalization in complex multimodal reasoning tasks.

Project Page: https://github.com/coder-qicao/DreamPRM

1 Introduction

<details>

<summary>x1.png Details</summary>

### Visual Description

## Bar Chart: Accuracy Improvement Comparison Between DreamPRM and PRM w/o Data Selection

### Overview

The image contains a bar chart comparing accuracy improvements between two methods (DreamPRM and PRM without data selection) across five datasets. Two text boxes on the right provide contextual examples of reasoning tasks with associated metadata.

### Components/Axes

- **X-axis (Datasets)**: WeMath, MMVet, MathVista, MMStar, MathVision

- **Y-axis (Accuracy Improvement)**: Percentage (%) from 0% to 7%

- **Legend**:

- Blue: DreamPRM

- Yellow: PRM w/o data selection

- **Additional Elements**:

- Horizontal dashed line at 4.0% (average improvement)

- Text boxes with reasoning task examples

### Detailed Analysis

#### Bar Chart Data

| Dataset | DreamPRM (%) | PRM w/o data selection (%) |

|--------------|--------------|----------------------------|

| WeMath | 5.7 | 2.5 |

| MMVet | 5.5 | 3.0 |

| MathVista | 3.5 | 1.8 |

| MMStar | 3.4 | 1.9 |

| MathVision | 1.7 | 0.2 |

#### Text Box Content

**Example 1 (AID2D 2016):**

- **Question**: What does the bird feed on?

- **Choices**: A. zooplankton, B. grass, C. predator fish, D. none

- **Answer**: C

- **Dataset Difficulty**: easy (InternVL-2.5-MPO-8B's accuracy 84.6%)

- **Unnecessary modality**: can answer without image

- **Requirements for reasoning**: do not require complicated reasoning

- **Domain weight**: 0.55 (Determined by DreamPRM)

**Example 2 (M3CoT 2024):**

- **Question**: Determine the scientific nomenclature of the organism shown.

- **Choices**: A. Hemidactylus turcicus, B. Felis silvestris, C. Macropus agilis, D. None

- **Answer**: D

- **Dataset Difficulty**: hard (InternVL-2.5-MPO-8B's accuracy 62.1%)

- **Unnecessary modality**: cannot answer without image

- **Requirements for reasoning**: require complicated reasoning

- **Domain weight**: 1.49 (Determined by DreamPRM)

### Key Observations

1. **Consistent Outperformance**: DreamPRM shows higher accuracy improvements across all datasets compared to PRM without data selection.

2. **Average Improvement**: The overall average improvement is 4.0%, with individual dataset improvements ranging from +0.2% (MathVision) to +5.7% (WeMath).

3. **Domain Weight Correlation**: Higher domain weights (e.g., 1.49 for MathVision) correspond to tasks requiring more complex reasoning and lower baseline accuracy.

4. **Modality Impact**: Tasks labeled "can answer without image" (e.g., AID2D) show higher baseline accuracy (84.6%) than image-dependent tasks (e.g., M3CoT at 62.1%).

### Interpretation

The data demonstrates that **data selection in DreamPRM significantly enhances reasoning accuracy** across diverse datasets. The domain weight metric (determined by DreamPRM) quantifies task complexity, with higher weights indicating greater reasoning demands. For instance:

- **MathVision** (domain weight 1.49) requires complex reasoning and shows minimal improvement (+0.2%), suggesting inherent difficulty.

- **WeMath** (domain weight 0.55) benefits most from data selection (+5.7%), highlighting the method's effectiveness for simpler tasks.

- The **average 4.0% improvement** underscores the systematic advantage of DreamPRM, particularly in image-dependent tasks where baseline accuracy is lower.

This analysis reveals that **domain-specific data curation** (via DreamPRM) is critical for optimizing performance in reasoning tasks with varying complexity and modality requirements.

</details>

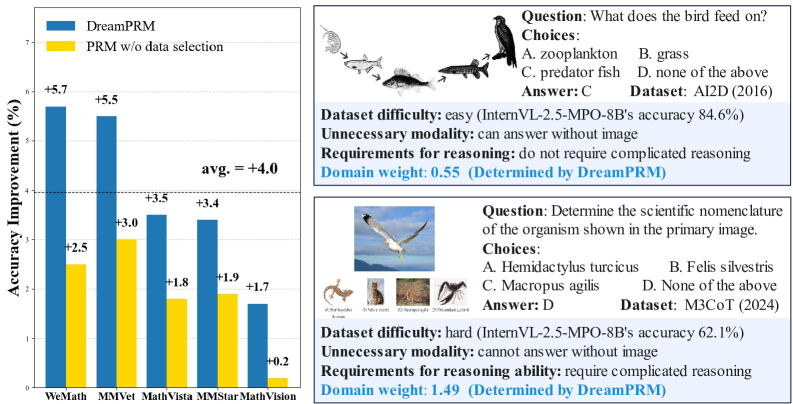

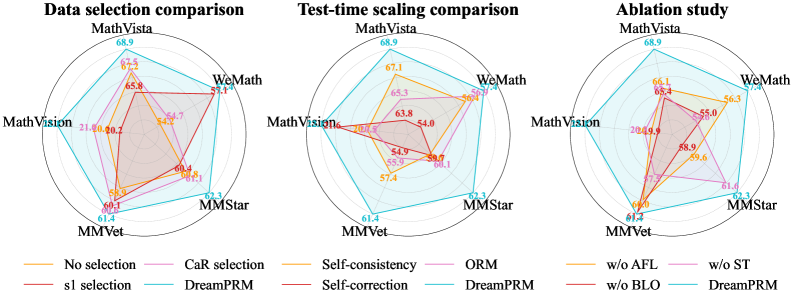

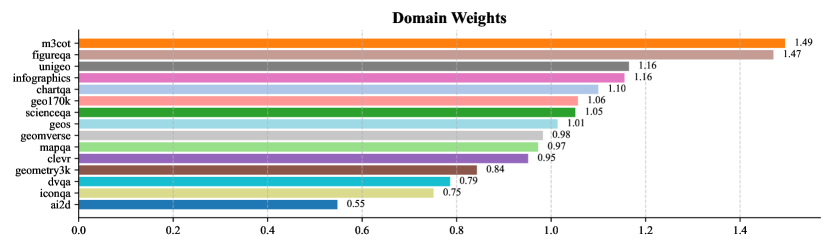

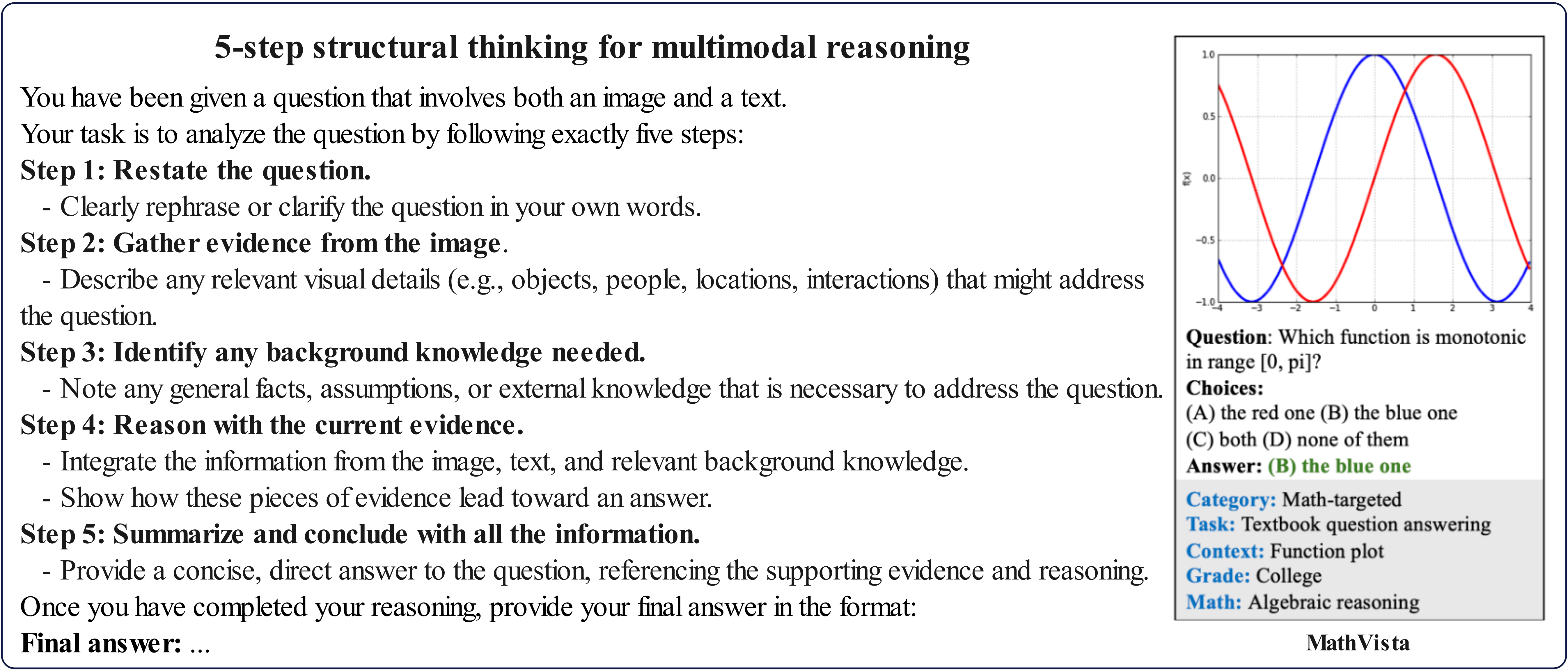

Figure 1: DreamPRM improves multimodal reasoning by mitigating the dataset quality imbalance problem. Left: On five benchmarks, DreamPRM outperforms base model (InternVL-2.5-8B-MPO [67]) by an average of $+4.0\%$ . DreamPRM also consistently surpasses Vanilla PRM trained without data selection. Right: Easy AI2D [23] questions (weight 0.55) vs. hard M3CoT [6] questions (weight 1.49) shows how DreamPRM prioritizes data that demand deeper reasoning - samples requiring knowledge from both textual and visual modalities for step-by-step logical deduction.

Reasoning [55] has significantly enhanced the logical and critical thinking capabilities of large language models (LLMs) [2, 8, 59, 49]. Post-training [45, 10] and test-time scaling strategies [44] enable sophisticated reasoning behaviors in LLMs and extend the length of Chain-of-Thoughts (CoTs) [71], thereby achieving strong results on challenging benchmarks [80, 47]. A key component of these advances is the Process Reward Models (PRMs) [29, 27], which provide fine-grained, step-wise supervision of the reasoning process and reliable selection of high-quality reasoning trajectories. These developments are proven highly effective for improving the performance of LLMs in complex tasks [38, 61].

Given the success with LLMs, a natural extension is to apply PRMs to multimodal large language models (MLLMs) [72, 28] to enhance their reasoning abilities. Early studies of multimodal PRMs demonstrate promise results, yet substantial challenges persist. Distinct from text-only inputs of LLMs, MLLMs must combine diverse visual and language signals: a high-dimensional, continuous image space coupled with discrete language tokens. This fusion dramatically broadens the input manifold and leads to more severe distribution shifts [56] from training to testing distributions. Consequently, directly utilizing PRM training strategies from the text domain [69, 37] underperforms, mainly due to the decreased generalizability [11] caused by the insufficient coverage of the multimodal input space.

A straightforward solution to this problem is to combine multiple datasets that emphasize different multimodal reasoning skills, thereby enlarging the sampling space. However, quality imbalance among existing multimodal reasoning datasets is more severe than in text-only settings: many contain noisy inputs such as unnecessary modalities [78] or questions of negligible difficulty [33], as illustrated in Fig. 1. Since these easy datasets contribute little to effective sampling, paying much attention to them can substantially degrade PRM performance. Therefore, an effective data selection strategy that filters out unreliable datasets and instances is crucial to training a high-quality multimodal PRM.

To overcome these challenges, we propose DreamPRM, a domain-reweighted training framework for multimodal PRMs. Inspired by domain-reweighting techniques [53, 12, 57], DreamPRM dynamically learns appropriate weights for each multimodal reasoning dataset, allowing them to contribute unequally during training. Datasets that contain many noisy samples tend to receive lower domain weights, reducing their influence on PRM parameter updates. Conversely, high-quality datasets are assigned higher weights and thus play a more important role in optimization. This domain-reweighting strategy alleviates the issue of dataset quality imbalances. DreamPRM adopts a bi-level optimization (BLO) framework [14, 31] to jointly learn the domain weights and PRM parameters. At the lower level, the PRM parameters are optimized with Monte Carlo signals on multiple training domains under different domain weights. At the upper level, the optimized PRM is evaluated on a separate meta domain to compute a novel aggregation function loss, which is used to optimized the domain weights. Extensive experiments on a wide range of multimodal reasoning benchmarks verify the effectiveness of DreamPRM.

Our contributions are summarized as follows:

- We propose DreamPRM, a domain-reweighted multimodal process reward model training framework that dynamically adjusts the importance of different training domains. We formulate the training process of DreamPRM as a bi-level optimization (BLO) problem, where the lower level optimizes the PRM via domain-reweighted fine-tuning, and the upper level optimizes domain weights with an aggregation function loss. Our method helps address dataset quality imbalance issue in multimodal reasoning, and improves the generalization ability of PRM.

- We conduct extensive experiments using DreamPRM on a wide range of multimodal reasoning benchmarks. Results indicate that DreamPRM consistently surpasses PRM baselines with other data selection strategies, confirming the effectiveness of its bi-level optimization based domain-reweighting strategy. Notably, DreamPRM achieves a top-1 accuracy of 85.2% on the MathVista leaderboard using the o4-mini model, demonstrating its strong generalization in complex multimodal reasoning tasks. Carefully designed evaluations further demonstrate that DreamPRM possesses both scaling capability and generalization ability to stronger models.

2 Related Works

Multimodal reasoning

Recent studies have demonstrated that incorporating Chain-of-Thought (CoT) reasoning [70, 25, 81] into LLMs encourages a step-by-step approach, thereby significantly enhancing question-answering performance. However, it has been reported that CoT prompting can’t be easily extended to MLLMs, mainly due to hallucinated outputs during the reasoning process [67, 82, 19]. Therefore, some post-training methods have been proposed for enhancing reasoning capability of MLLMs. InternVL-MPO [67] proposes a mixed preference optimization that jointly optimizes preference ranking, response quality, and response generation loss to improve the reasoning abilities. Llava-CoT [74] creates a structured thinking fine-tuning dataset to make MLLM to perform systematic step-by-step reasoning. Some efforts have also been made for inference time scaling. RLAIF-V [77] proposes a novel self-feedback guidance for inference-time scaling and devises a simple length-normalization strategy tackling the bias towards shorter responses. AR-MCTS [11] combines Monte-Carlo Tree Search (MCTS) and Retrival Augmented Generation (RAG) to guide MLLM search step by step and explore the answer space.

Process reward model

Process Reward Model (PRM) [29, 27, 38, 61] provides a more finer-grained verification than Outcome Reward Model (ORM) [9, 52], scoring each step of the reasoning trajectory. However, a central challenge in designing PRMs is obtaining process supervision signals, which require supervised labels for each reasoning step. Current approaches typically depend on costly, labor-intensive human annotation [29], highlighting the need for automated methods to improve scalability and efficiency. Math-Shepherd [64] proposes a method utilizing Monte-Carlo estimation to provide hard labels and soft labels for automatic process supervision. OmegaPRM [37] proposes a Monte Carlo Tree Search (MCTS) for finer-grained exploration for automatical labeling. MiPS [69] further explores the Monte Carlo estimation method and studies the aggregation of PRM signals.

Domain-reweighting

Domain reweighting methodologies are developed to modulate the influence of individual data domains, thereby enabling models to achieve robust generalization. Recently, domain reweighting has emerged as a key component in large language model pre-training, where corpora are drawn from heterogeneous sources. DoReMi [73] trains a lightweight proxy model with group distributionally robust optimization to assign domain weights that maximize excess loss relative to a reference model. DOGE [13] proposes a first-order bi-level optimization framework, using gradient alignment between source and target domains to update mixture weights online during training. Complementary to these optimization-based approaches, Data Mixing Laws [76] derives scaling laws that could predict performance under different domain mixtures, enabling low-cost searches for near-optimal weights without proxy models. In this paper, we extend these ideas to process supervision and introduce a novel bi-level domain-reweighting framework.

3 Problem Setting and Preliminaries

Notations.

Let $\mathcal{I}$ , $\mathcal{T}$ , and $\mathcal{Y}$ denote the multimodal input space (images), textual instruction space, and response space, respectively. A multimodal large language model (MLLM) is formalized as a parametric mapping $M_{\theta}:\mathcal{T}×\mathcal{I}→\Delta(\mathcal{Y})$ , where $\hat{y}\sim M_{\theta}(·|x)$ represents the stochastic generation of responses conditioned on input pair $x=(t,I)$ including visual input $I∈\mathcal{I}$ and textual instruction $t∈\mathcal{T}$ , with $\Delta(\mathcal{Y})$ denoting the probability simplex over the response space. We use $y∈\mathcal{Y}$ to denote the ground truth label from a dataset.

The process reward model (PRM) constitutes a sequence classification function $\mathcal{V}_{\phi}:\mathcal{T}×\mathcal{I}×\mathcal{Y}→[0,1]$ , parameterized by $\phi$ , which quantifies the epistemic value of partial reasoning state $\hat{y}_{i}$ through scalar reward $p_{i}=\mathcal{V}_{\phi}(x,\hat{y}_{i})$ , modeling incremental utility toward solving instruction $t$ under visual grounding $I$ . Specifically, $\hat{y}_{i}$ represents the first $i$ steps of a complete reasoning trajectory $\hat{y}$ .

PRM training with Monte Carlo signals.

Due to the lack of ground truth epistemic value for each partial reasoning state $\hat{y}_{i}$ , training of PRM requires automatic generation of approximated supervision signals. An effective approach to obtain these signals is to use the Monte Carlo method [69, 65]. We first feed the input question-image pair $x=(t,I)$ and the prefix solution $\hat{y}_{i}$ into the MLLM, and let it complete the remaining steps until reaching the final answer. We randomly sample multiple completions, compare their final answers to the gold answer $y$ , and thereby obtain multiple correctness labels. PRM is trained as a sequence classification task to predict these correctness labels. The ratio of correct completions at the $i$ -th step estimates the “correctness level” up to step $i$ , which is used as the approximated supervision signals $p_{i}$ to train the PRM. Formally,

$$

p_{i}=\texttt{MonteCarlo}(x,\hat{y}_{i},y)=\frac{\texttt{num(correct completions from }\hat{y}_{i})}{\texttt{num(total completions from }\hat{y}_{i})} \tag{1}

$$

PRM-based inference with aggregation function.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Diagram: Machine Learning Model Performance Under Distribution Shift

### Overview

The diagram illustrates the workflow and performance of a machine learning system (MLLM) and a prediction refinement module (PRM) across training and testing phases. It highlights how distribution shifts affect model accuracy, using visual metaphors like arrows, color-coded components, and error markers.

---

### Components/Axes

1. **Training Set (Blue)**

- **Input**: Map visualization with questions:

- "What is the area of the yellow region?"

- "Which building is west of the park?"

- **Process**:

- MLLM (blue robot) processes spatial queries.

- Outputs connect to PRM via "Monte Carlo signal" (dashed blue line).

- **Output**: PRM (purple robot) refines predictions.

2. **Testing Set (Orange)**

- **Input**: Bar chart visualization with question:

- "What is the value of the highest bar?"

- **Process**:

- MLLM (green robot) processes numerical data.

- Outputs connect to PRM via multiple paths (solid green lines).

- **Output**: PRM evaluates predictions with mixed results:

- ✅ Correct answers (green checkmark).

- ❌ Errors (red "X").

3. **Distribution Shift**

- Arrows (orange) indicate a shift from training to testing data.

- PRM performance degrades in testing due to this shift.

---

### Detailed Analysis

- **Training Set Flow**:

- MLLM processes map-based spatial reasoning tasks.

- PRM refines predictions using Monte Carlo methods (probabilistic sampling).

- No errors observed in training.

- **Testing Set Flow**:

- MLLM handles bar chart data (numerical reasoning).

- PRM encounters distribution shift, leading to:

- 3 correct predictions (✅).

- 2 incorrect predictions (❌).

- Green lines show multiple pathways for PRM to process testing data.

- **Color Coding**:

- Blue (Training): Spatial reasoning, Monte Carlo signal.

- Orange (Testing): Numerical reasoning, distribution shift.

- Purple (PRM): Central refinement module.

- Green (Testing MLLM): Adaptation to new data types.

---

### Key Observations

1. **Performance Degradation**:

- PRM accuracy drops in testing (2/5 errors) compared to training (0/2).

- Distribution shift introduces ambiguity in bar chart interpretation.

2. **Model Adaptability**:

- MLLM retains core functionality across data types (map → bar chart).

- PRM struggles with novel data distributions despite training.

3. **Visual Metaphors**:

- Dashed lines (Monte Carlo) vs. solid lines (testing pathways) suggest differing confidence levels.

- Error markers (❌) and checkmarks (✅) quantify shift impact.

---

### Interpretation

The diagram demonstrates how distribution shifts challenge machine learning systems. While the MLLM adapts to new data formats (map to bar chart), the PRM’s reliance on training-specific patterns (Monte Carlo signals) fails under novel conditions. This highlights the need for robust generalization techniques, such as domain adaptation or ensemble methods, to mitigate performance drops in real-world scenarios. The use of color and error markers effectively visualizes the trade-off between model complexity and adaptability.

</details>

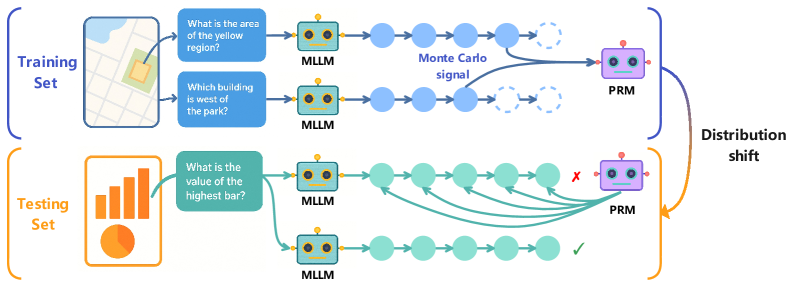

Figure 2: General flow of training PRM and using PRM for inference. Training phase: Train PRM with Monte Carlo signals from intermediate steps of Chain-of-Thoughts (CoTs). Inference phase: Use the trained PRM to verify CoTs step by step and select the best CoT. Conventional training of PRM has poor generalization capability due to distribution shift between training set and testing set.

After training a PRM, a typical way of conducting PRM-based MLLM inference is to use aggregation function [69]. Specifically, for each candidate solution $\hat{y}$ from the MLLM, PRM will generate a list of predicted probabilities ${p}=\{{p_{1}},{p_{2}},...,{p_{n}}\}$ accordingly, one for each step $\hat{y}_{i}$ in the solution. The list of predicted probabilities are then aggregated using the following function:

$$

\mathcal{A}({p})=\sum_{i=1}^{n}\log\frac{{p_{i}}}{1-{p_{i}}}. \tag{2}

$$

The aggregated value corresponds to the score of a specific prediction $\hat{y}$ , and the final PRM-based solution is the one with the highest aggregated score.

Bi-level optimization.

Bi-level optimization (BLO) has been widely used in meta-learning [14], neural architecture search [31], and data reweighting [54]. A BLO problem is usually formulated as:

$$

\displaystyle\min_{\alpha}\mathcal{U}(\alpha,\phi^{*}(\alpha)) \displaystyle s.t. \displaystyle\phi^{*}(\alpha)=\underset{\mathbf{\phi}}{\arg\min}\mathcal{L}(\phi,\alpha) \tag{3}

$$

where $\mathcal{U}$ is the upper-level optimization problem (OP) with parameter $\alpha$ , and $\mathcal{L}$ is the lower-level OP with parameter $\phi$ . The lower-level OP is nested within the upper-level one, and the two OPs are mutually dependent.

4 The Proposed Domain-reweighting Method

<details>

<summary>x3.png Details</summary>

### Visual Description

## Diagram: Multi-stage Optimization Pipeline with Domain-Specific Processing

### Overview

The diagram illustrates a hierarchical optimization framework combining domain-specific processing (Lower-level Optimization) and global optimization (Upper-level Optimization). It features color-coded components, directional flows, and parameter management systems (activated/frozen parameters). The architecture integrates multiple domains, mathematical reasoning, and optimization models (DreamPRM, BLO).

### Components/Axes

1. **Lower-level Optimization Section (Top)**

- **Domains**:

- Domain 1: Map visualization with yellow region area question

- Domain k: Pie chart with "largest pie area" question

- **MLLM Processing**:

- Blue nodes (Domain 1) and orange nodes (Domain k) represent MLLM processing steps

- Arrows show sequential processing flow

- **Output**:

- Connects to DreamPRM (purple) and BLO (yellow) optimization models

2. **Upper-level Optimization Section (Bottom)**

- **Domain k+1**:

- Mathematical equation "2x+6=13" with "What is the value of x?" question

- **MLLM Processing**:

- Green nodes represent MLLM processing for mathematical reasoning

- Multiple arrows indicate parallel processing paths

- **Output**:

- Connects to DreamPRM and BLO through domain weights

3. **Parameter Management**

- **Activated Parameters**: Red flame icon (bottom-left)

- **Frozen Parameters**: Blue snowflake icon (bottom-left)

- **Legend**:

- Positioned at bottom-center

- Color coding:

- Blue = Domain 1 processing

- Orange = Domain k processing

- Green = Domain k+1 processing

- Purple = DreamPRM

- Yellow = BLO

- Red = Activated parameters

- Blue = Frozen parameters

### Detailed Analysis

1. **Domain Processing Flow**

- Lower-level domains (1 to k) process visual/spatial tasks (maps, pie charts)

- Upper-level domain (k+1) handles mathematical reasoning

- All domains feed into MLLM processing nodes before optimization

2. **Color-Coded Connections**

- Blue arrows: Domain 1 → MLLM → DreamPRM

- Orange arrows: Domain k → MLLM → BLO

- Green arrows: Domain k+1 → MLLM → DreamPRM/BLO

- Purple arrows: Domain weights → DreamPRM

- Yellow arrows: Domain weights → BLO

3. **Optimization Models**

- **DreamPRM**:

- Receives inputs from all domains

- Connected to purple domain weights

- **BLO**:

- Receives inputs from Domain k and k+1

- Connected to yellow domain weights

### Key Observations

1. **Quality Imbalance**:

- Lower-level domains show visual tasks with varying complexity (map vs. pie chart)

- Upper-level domain demonstrates mathematical reasoning capability

2. **Parameter Management**:

- Red (activated) and blue (frozen) parameters suggest dynamic model adaptation

- Frozen parameters likely maintain core functionality while activated parameters enable domain-specific adjustments

3. **Multi-path Optimization**:

- Domain k+1 connects to both DreamPRM and BLO through multiple green arrows

- Suggests parallel optimization pathways for different objective functions

### Interpretation

This diagram represents a sophisticated optimization system that:

1. Processes domain-specific tasks through specialized MLLM modules

2. Maintains parameter flexibility through activation/freezing mechanisms

3. Combines local optimizations (DreamPRM) with global balancing (BLO)

4. Handles both visual/spatial and mathematical reasoning tasks

The color-coded architecture suggests a modular design where:

- Different colors represent distinct processing streams

- Arrows indicate information flow and optimization dependencies

- Domain weights (purple/yellow) likely represent importance/confidence metrics

The presence of both visual and mathematical domains implies the system can handle multimodal optimization challenges, with the Upper-level optimization serving as a meta-controller that coordinates domain-specific optimizations while maintaining overall system coherence.

</details>

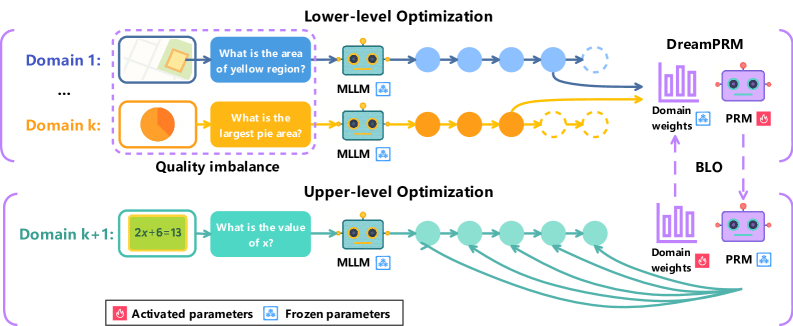

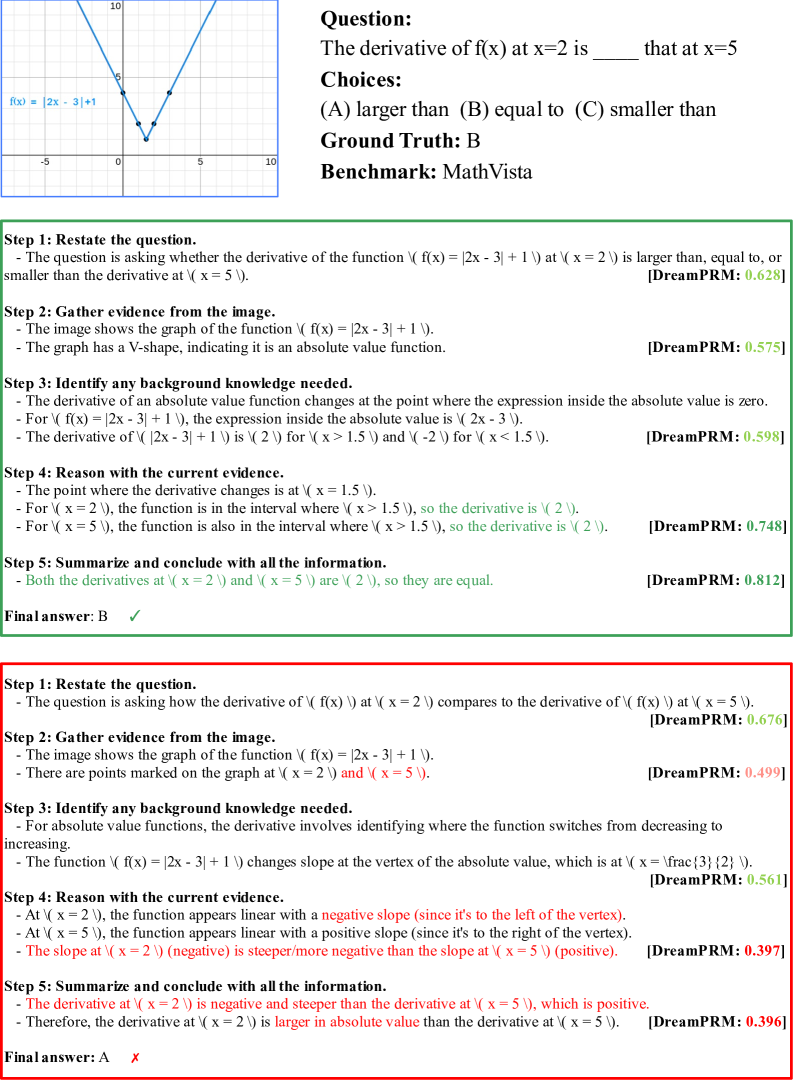

Figure 3: The proposed bi-level optimization based domain-reweighting method. Lower-level optimization: In this stage, PRM’s parameters are updated on multiple datasets with domain weights, allowing the PRM to prioritize domains with better quality. Upper-level optimization: In this stage, the PRM is evaluated on a separate meta dataset to compute an aggregation function loss and optimize the domain weights. DreamPRM helps address dataset quality imbalance problems and leads to stronger and more generalizable reasoning performance.

Overview.

Training process reward models (PRMs) for MLLMs is challenging for two reasons: (1) dataset (domain) quality imbalance, and (2) discrepancy between training and inference procedures. To address these two challenges, we propose DreamPRM, which automatically searches for domain importance using a novel aggregation function loss that better simulates the inference process of PRM. Under a bi-level optimization framework, it optimizes PRM parameters with Monte Carlo signals at the lower level, and optimizes trainable domain importance weights with aggregation function loss at the upper level. An overview of DreamPRM method is shown in Fig. 3.

Datasets.

We begin with $K{+}1$ datasets, each from a distinct domain (e.g., science, geometry). The first $K$ datasets form the training pool $\mathcal{D}_{\mathrm{tr}}=\{\mathcal{D}_{1},...,\mathcal{D}_{K}\}$ , while the remaining dataset, $\mathcal{D}_{\mathrm{meta}}=\mathcal{D}_{K+1}$ , is a meta (validation) dataset with better quality.

Lower-level optimization: domain-reweighted training of PRM.

In lower-level optimization, we aim to update the weights $\phi$ of PRM with domain-reweighted training. We first define the typical PRM training loss $\mathcal{L}_{tr}$ on a single domain $\mathcal{D}_{k}$ , given PRM parameters $\phi$ , as follows:

$$

\displaystyle\mathcal{L}_{tr}(\mathcal{D}_{k},\phi)=\sum_{(x,y)\in\mathcal{D}_{k}}\sum_{i=1}^{n}\mathcal{L}_{MSE}(\mathcal{V}_{\phi}(x,\hat{y}_{i}),p_{i}) \tag{5}

$$

where $\hat{y}_{i}$ is the prefix of MLLM generated text $\hat{y}=M_{\theta}(x)$ given input pair $x=(t,I)$ , and $p_{i}$ is the process supervision signal value obtained by Monte Carlo estimation given input pair $x$ , prefix $\hat{y}_{i}$ and ground truth label $y$ , as previously defined in Equation 1. The PRM is optimized by minimizing the mean squared error (MSE) between supervision signal and PRM predicted score $\mathcal{V}_{\phi}(x,\hat{y}_{i})$ . With the PRM training loss on a single domain $\mathcal{D}_{k}$ above, we next define the domain-reweighted training objective of PRM on multiple training domains $\mathcal{D}=\{\mathcal{D}_{k}\}_{k=1}^{K}$ . The overall objective is a weighted sum of the single-domain PRM training losses, allowing the contribution of each domain to be adjusted during the learning process:

$$

\displaystyle\mathcal{L}_{tr}(\mathcal{D}_{tr},\phi,\alpha)=\sum_{k=1}^{K}\alpha_{k}\mathcal{L}_{tr}(\mathcal{D}_{k},\phi) \tag{6}

$$

Here, $\alpha=\{\alpha_{k}\}_{k=1}^{K}$ represents the trainable domain weight parameters, indicating the importance of each domain. By optimizing this objective, we obtain the optimal value of PRM parameters $\phi^{*}$ :

$$

\displaystyle\phi^{*}(\alpha)= \displaystyle\underset{\mathbf{\phi}}{\arg\min}\mathcal{L}_{tr}(\mathcal{D}_{tr},\phi,\alpha) \tag{7}

$$

It is worth mentioning that only $\phi$ is optimized at this level, while $\alpha$ remains fixed.

Upper-level optimization: learning domain reweighting parameters.

In upper-level optimization, we optimize the domain reweighting parameter $\alpha$ on meta dataset $\mathcal{D}_{meta}$ given optimal PRM weights $\phi^{*}(\alpha)$ obtained from the lower level. To make the meta learning target more closely reflect the actual PRM-based inference process, we propose a novel meta loss function $\mathcal{L}_{meta}$ , different from the training loss $\mathcal{L}_{tr}$ . Specifically, we first obtain an aggregated score $\mathcal{A}({p})$ for each generated solution $\hat{y}$ from the MLLM given input pair $x=(t,I)$ , following process in Section 3. We then create a ground truth signal $r(\hat{y},y)$ by assigning it a value of 1 if the generated $\hat{y}$ contains ground truth $y$ , and 0 otherwise. The meta loss is defined as the mean squared error between aggregated score and ground truth signal:

$$

\displaystyle\mathcal{L}_{meta}(\mathcal{D}_{meta},\phi^{*}(\alpha))=\sum_{(x,y)\in\mathcal{D}_{meta}}\mathcal{L}_{MSE}(\sigma(\mathcal{A}(\mathcal{V}_{\phi^{*}(\alpha)}(x,\hat{y}))),r(\hat{y},y)) \tag{8}

$$

where $\mathcal{A}$ represents the aggregation function as previously defined in Equation 2, and $\sigma$ denotes the sigmoid function to map the aggregated score to a probability. Accordingly, the optimization problem at the upper level is formulated as follows:

$$

\displaystyle\underset{\alpha}{\min}\mathcal{L}_{meta}(\mathcal{D}_{meta},\phi^{*}(\alpha)) \tag{9}

$$

To solve this optimization problem, we propose an efficient gradient-based algorithm, which is detailed in Appendix A.

5 Experimental Results

5.1 Experimental settings

Multistage reasoning.

To elicit consistent steady reasoning responses from current MLLMs, we draw on the Llava-CoT approach [75], which fosters structured thinking prior to answer generation. Specifically, we prompt MLLMs to follow five reasoning steps: (1) Restate the question. (2) Gather evidence from the image. (3) Identify any background knowledge needed. (4) Reason with the current evidence. (5) Summarize and conclude with all the information. We also explore zero-shot prompting settings in conjunction with structural reasoning, which can be found in Appendix C. We use 8 different chain-of-thought reasoning trajectories for all test-time scaling methods, unless otherwise stated.

Table 1: Comparative evaluation of DreamPRM and baselines on multimodal reasoning benchmarks. Bold numbers indicate the best performance, while underlined numbers indicate the second best. The table reports accuracy (%) on five datasets: WeMath, MathVista, MathVision, MMVet, and MMStar.

| | Math Reasoning WeMath (loose) | General Reasoning MathVista (testmini) | MathVision (test) | MMVet (v1) | MMStar (test) |

| --- | --- | --- | --- | --- | --- |

| Zero-shot Methods | | | | | |

| Gemini-1.5-Pro [50] | 46.0 | 63.9 | 19.2 | 64.0 | 59.1 |

| GPT-4v [46] | 51.4 | 49.9 | 21.7 | 67.7 | 62.0 |

| LLaVA-OneVision-7B [26] | 44.8 | 63.2 | 18.4 | 57.5 | 61.7 |

| Qwen2-VL-7B [66] | 42.9 | 58.2 | 16.3 | 62.0 | 60.7 |

| InternVL-2.5-8B-MPO [67] | 51.7 | 65.4 | 20.4 | 55.9 | 58.9 |

| Test-time Scaling Methods (InternVL-2.5-8B-MPO based) | | | | | |

| Self-consistency [68] | 56.4 | 67.1 | 20.7 | 57.4 | 59.6 |

| Self-correction [17] | 54.0 | 63.8 | 21.6 | 54.9 | 59.7 |

| ORM [52] | 56.9 | 65.3 | 20.5 | 55.9 | 60.1 |

| Vanilla PRM [29] | 54.2 | 67.2 | 20.6 | 58.9 | 60.8 |

| CaR-PRM [16] | 54.7 | 67.5 | 21.0 | 60.6 | 61.1 |

| s1-PRM [44] | 57.1 | 65.8 | 20.2 | 60.1 | 60.4 |

| DreamPRM (ours) | 57.4 | 68.9 | 22.1 | 61.4 | 62.3 |

Base models.

For inference, we use InternVL-2.5-8B-MPO [67] as the base MLLM, which has undergone post-training to enhance its reasoning abilities and is well-suited for our experiment. For fine-tuning PRM, we adopt Qwen2-VL-2B-Instruct [66]. Qwen2-VL is a state-of-the-art multimodal model pretrained for general vision-language understanding tasks. This pretrained model serves as the initialization for our fine-tuning process.

Training hyperparameters.

In the lower-level optimization, we perform 5 inner gradient steps per outer update (unroll steps = 5) using the AdamW [32] optimizer with learning rate set to $5× 10^{-7}$ . In the upper-level optimization, we use the AdamW optimizer ( $\mathrm{lr}=0.01$ , weight decay $=10^{-3}$ ) and a StepLR scheduler (step size = 5000, $\gamma=0.5$ ). In total, DreamPRM is fine-tuned for 10000 iterations. Our method is implemented with Betty [7], and the fine-tuning process takes approximately 10 hours on one NVIDIA A100 GPUs.

Baselines.

We use three major categories of baselines: (1) State-of-the-art models on public leaderboards, including Gemini-1.5-Pro [50], GPT-4V [46], LLaVA-OneVision-7B [26], Qwen2-VL-7B [66]. We also carefully reproduce the results of InternVL-2.5-8B-MPO with structural thinking. (2) Test-time scaling methods (excluding PRM) based on the InternVL-2.5-8B-MPO model, including: (i) Self-consistency [68], which selects the most consistent reasoning chain via majority voting over multiple responses; (ii) Self-correction [17], which prompts the model to critically reflect on and revise its initial answers; and (iii) Outcome Reward Model (ORM) [52], which evaluates and scores the final response to select the most promising one. (3) PRM-based methods, including: (i) Vanilla PRM trained without any data selection, as commonly used in LLM settings [29]; (ii) s1-PRM, which selects high-quality reasoning responses based on three criteria - difficulty, quality, and diversity - following the s1 strategy [44]; and (iii) CaR-PRM, which filters high-quality visual questions using clustering and ranking techniques, as proposed in CaR [16].

Datasets and benchmarks.

We use 15 multimodal datasets for lower-level optimization ( $\mathcal{D}_{tr}$ ), covering four domains: science, chart, geometry, and commonsense, as listed in Appendix Table 2. For upper-level optimization ( $\mathcal{D}_{meta}$ ), we adopt the MMMU [79] dataset. Evaluation is conducted on five multimodal reasoning benchmarks: WeMath [48], MathVista [33], MathVision [63], MMVet [78], and MMStar [5]. Details are provided in Appendix B.

5.2 Benchmark evaluation of DreamPRM

Tab. 1 presents the primary experimental results. We observe that: (1) DreamPRM outperforms other PRM-based methods, highlighting the effectiveness of our domain reweighting strategy. Compared to the vanilla PRM trained without any data selection, DreamPRM achieves a consistent performance gain of 2%-3% across all five datasets, suggesting that effective data selection is crucial for training high-quality multimodal PRMs. Moreover, DreamPRM also outperforms s1-PRM and CaR-PRM, which rely on manually designed heuristic rules for data selection. These results indicate that selecting suitable reasoning datasets for PRM training is a complex task, and handcrafted rules are often suboptimal. In contrast, our automatic domain-reweighting approach enables the model to adaptively optimize its learning process, illustrating how data-driven optimization offers a scalable solution to dataset selection challenges. (2) DreamPRM outperforms SOTA MLLMs with much fewer parameters, highlighting the effectiveness of DreamPRM. For example, DreamPRM significantly surpasses two trillion-scale closed-source LLMs (GPT-4v and Gemini-1.5-Pro) on 4 out of 5 datasets. In addition, it consistently improves the performance of the base model, InternVL-2.5-8B-MPO, achieving an average gain of 4% on the five datasets. These results confirm that DreamPRM effectively yields a high-quality PRM, which is capable of enhancing multimodal reasoning across a wide range of benchmarks. (3) DreamPRM outperforms other test-time scaling methods, primarily because it enables the training of a high-quality PRM that conducts fine-grained, step-level evaluation. While most test-time scaling methods yield moderate improvements, DreamPRM leads to the most substantial gains, suggesting that the quality of the reward model is critical for effective test-time scaling. We further provide case studies in Appendix D, which intuitively illustrate how DreamPRM assigns higher scores to coherent and high-quality reasoning trajectories.

<details>

<summary>x4.png Details</summary>

### Visual Description

## Bar Chart: Leaderboard on MathVista

### Overview

The chart displays a horizontal bar comparison of model performance on the MathVista benchmark. Each bar represents a different AI model's accuracy percentage, with the highest-performing model at the top and the lowest at the bottom. The chart uses distinct colors for each model to differentiate results.

### Components/Axes

- **X-Axis**: Model names (e.g., "o4-mini + DreamPRM", "VL-Rethinker", "Step R1 -V-Mini", etc.)

- **Y-Axis**: Accuracy percentages (0% to 100% in 10% increments)

- **Legend**: Integrated via bar colors (no separate legend box). Colors correspond to model names in left-to-right order.

- **Title**: "Leaderboard on MathVista" (centered at the top)

### Detailed Analysis

1. **o4-mini + DreamPRM** (Blue): 85.2% (highest)

2. **VL-Rethinker** (Orange): 80.3%

3. **Step R1 -V-Mini** (Green): 80.1%

4. **Kimi-k1.6 -preview-20250308** (Red): 80.0%

5. **Doubao-pro-1.5** (Purple): 79.5%

6. **Ovis2_34B** (Brown): 77.1%

7. **Kimi-k1.5** (Pink): 74.9%

8. **OpenAI o1** (Gray): 73.9%

9. **Llama 4 Maverick** (Olive): 73.7%

10. **Vision-R1-7B** (Cyan): 73.2% (lowest)

### Key Observations

- **Dominance of o4-mini + DreamPRM**: The top model outperforms all others by 5.1 percentage points.

- **Tight Competition in Mid-Range**: Models 2–5 (VL-Rethinker to Kimi-k1.6) are clustered within 0.3 percentage points.

- **Gradual Decline**: Performance drops steadily from 85.2% to 73.2%, with the largest gap between the top model and the rest.

- **Color Consistency**: Each model’s bar color matches its position in the x-axis list without overlap.

### Interpretation

The data suggests **o4-mini + DreamPRM** is the current state-of-the-art for MathVista, likely due to specialized training or architecture optimizations. The mid-range cluster (79.5–80.3%) indicates a competitive field of high-performing models, while the bottom 3 models (73.2–73.9%) show minimal differentiation, possibly reflecting similar capabilities or niche limitations. The chart highlights the importance of incremental improvements in AI benchmarks, where small percentage gains can signify significant technical advancements. The absence of a separate legend implies the chart assumes viewers can directly associate colors with model names via their x-axis order.

</details>

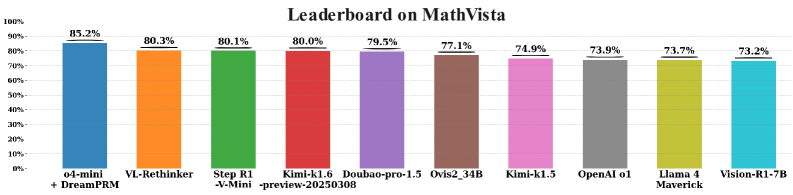

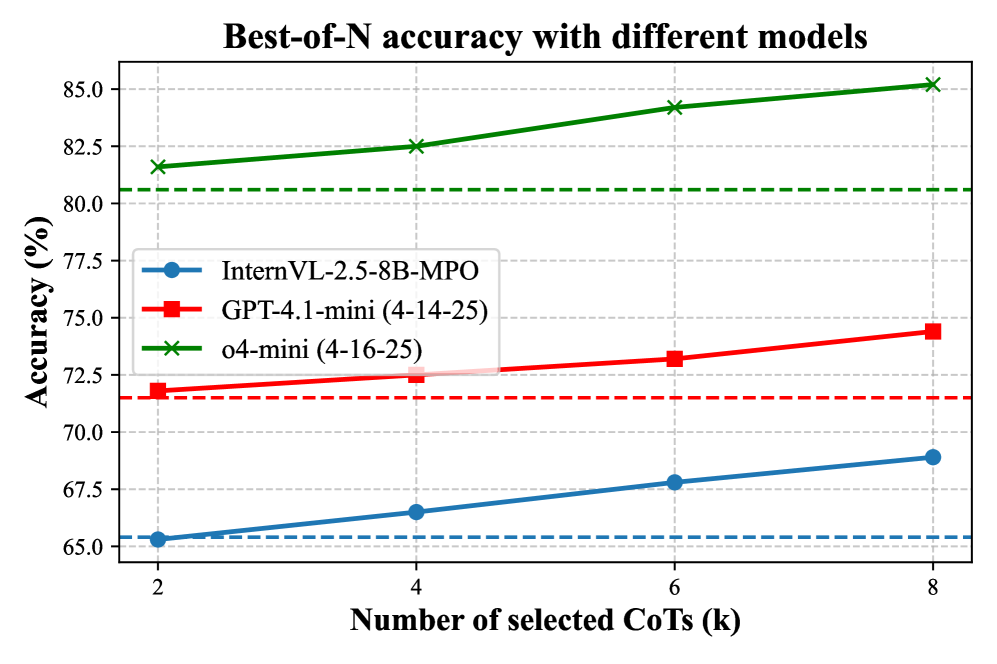

Figure 4: Leaderboard on MathVista (as of October 15, 2025). The first column (“o4-mini + DreamPRM”) reports our own evaluation, while the remaining results are taken from the official MathVista leaderboard. The compared models include VL-Rethinker [62], Step R1-V-Mini [58], Kimi-k1.6-preview [43], Kimi-k1.5 [24], Doubao-pro-1.5 [60], Ovis2-34B [1], OpenAI o1 [45], Llama 4 Maverick [41, 42], and Vision-R1-7B [18].

5.3 Leaderboard performance of DreamPRM

As shown in Fig. 4, DreamPRM achieves the top-1 accuracy of 85.2% on the MathVista leaderboard (as of October 15, 2025). The result (o4-mini + DreamPRM) has been officially verified through the MathVista evaluation. Compared with a series of strong multimodal reasoning baselines, including VL-Rethinker [62], Step R1-V-Mini [58], Kimi-k1.6-preview [43], Doubao-pro-1.5 [60], Ovis2-34B [1], OpenAI o1 [45], Llama 4 Maverick [41, 42], and Vision-R1-7B [18], DreamPRM demonstrates clearly superior multimodal reasoning capability.

Table 5 in Appendix provides a detailed comparison among various Process Reward Model (PRM) variants built on the same o4-mini backbone. DreamPRM surpasses all counterparts, improving the base o4-mini model from 80.6% (pass@1) and 81.7% (self-consistency@8) to 85.2%. This consistent gain verifies the effectiveness of DreamPRM in enhancing reasoning accuracy through process-level supervision and reliable consensus across multiple chains of thought.

<details>

<summary>x5.png Details</summary>

### Visual Description

## Radar Charts: Method Performance Comparison Across Three Studies

### Overview

The image contains three radar charts comparing the performance of five methods (MathVista, WeMath, MathVision, MMVet, MMStar) across three studies: "Data selection comparison," "Test-time scaling comparison," and "Ablation study." Each chart uses colored lines to represent different experimental configurations (e.g., "No selection," "DreamPRM," "w/o AFL") and their corresponding performance metrics.

---

### Components/Axes

#### Common Elements Across All Charts:

- **Axes**: Labeled with method names:

`MathVista`, `WeMath`, `MathVision`, `MMVet`, `MMStar`

- **Legends**:

- **Data selection comparison**:

`No selection` (yellow), `s1 selection` (red), `CaR selection` (pink), `Self-consistency` (orange), `Self-correction` (purple), `ORM` (blue), `DreamPRM` (teal)

- **Test-time scaling comparison**:

`No selection` (yellow), `Self-consistency` (orange), `ORM` (blue), `DreamPRM` (teal), `w/o AFL` (orange), `w/o ST` (pink), `w/o BLO` (red), `DreamPRM` (teal)

- **Ablation study**:

`No selection` (yellow), `Self-consistency` (orange), `ORM` (blue), `DreamPRM` (teal), `w/o AFL` (orange), `w/o ST` (pink), `w/o BLO` (red), `DreamPRM` (teal)

- **Axis Markers**: Numerical values (e.g., 68.9, 57.4) placed at the outer edge of each axis.

#### Spatial Grounding:

- **Legends**: Positioned at the bottom of each chart.

- **Lines**: Colored lines connect data points for each configuration, radiating from the center to the axes.

- **Text Labels**: Numerical values are placed near the end of each line segment.

---

### Detailed Analysis

#### 1. **Data Selection Comparison**

- **MathVista**:

- Highest value: `68.9` (No selection, yellow).

- Lowest value: `54.7` (Self-correction, purple).

- **WeMath**:

- Highest value: `57.4` (DreamPRM, teal).

- Lowest value: `54.2` (Self-consistency, orange).

- **MathVision**:

- Highest value: `61.4` (No selection, yellow).

- Lowest value: `54.0` (Self-correction, purple).

- **MMVet**:

- Highest value: `60.1` (No selection, yellow).

- Lowest value: `54.9` (Self-correction, purple).

- **MMStar**:

- Highest value: `62.3` (No selection, yellow).

- Lowest value: `54.0` (Self-correction, purple).

#### 2. **Test-Time Scaling Comparison**

- **MathVista**:

- Highest value: `68.9` (No selection, yellow).

- Lowest value: `54.9` (w/o AFL, orange).

- **WeMath**:

- Highest value: `56.9` (DreamPRM, teal).

- Lowest value: `54.0` (w/o ST, pink).

- **MathVision**:

- Highest value: `61.4` (No selection, yellow).

- Lowest value: `54.0` (w/o ST, pink).

- **MMVet**:

- Highest value: `61.4` (No selection, yellow).

- Lowest value: `54.9` (w/o AFL, orange).

- **MMStar**:

- Highest value: `62.3` (No selection, yellow).

- Lowest value: `54.0` (w/o ST, pink).

#### 3. **Ablation Study**

- **MathVista**:

- Highest value: `68.9` (No selection, yellow).

- Lowest value: `54.9` (w/o BLO, red).

- **WeMath**:

- Highest value: `56.3` (DreamPRM, teal).

- Lowest value: `54.0` (w/o ST, pink).

- **MathVision**:

- Highest value: `61.4` (No selection, yellow).

- Lowest value: `54.0` (w/o ST, pink).

- **MMVet**:

- Highest value: `61.4` (No selection, yellow).

- Lowest value: `54.9` (w/o AFL, orange).

- **MMStar**:

- Highest value: `62.3` (No selection, yellow).

- Lowest value: `54.0` (w/o ST, pink).

---

### Key Observations

1. **Consistent Performance**:

- `MathVista` consistently achieves the highest values across all charts, particularly under "No selection" (yellow line).

- `DreamPRM` (teal) performs well in the first two charts but underperforms in the ablation study.

2. **Impact of Ablation**:

- Removing components (e.g., `w/o AFL`, `w/o ST`, `w/o BLO`) significantly reduces performance. For example:

- `w/o BLO` (red) in the ablation study shows the lowest values for all methods.

- `w/o ST` (pink) in the test-time scaling and ablation studies has the lowest values for `WeMath` and `MathVision`.

3. **Method-Specific Trends**:

- `WeMath` and `MathVision` show moderate performance, with `WeMath` benefiting more from `DreamPRM` in the first two charts.

- `MMVet` and `MMStar` exhibit similar trends, with `MMStar` slightly outperforming `MMVet` in the first chart.

---

### Interpretation

The data suggests that **data selection methods** (e.g., "No selection," "DreamPRM") have the most significant impact on performance, particularly for `MathVista`. The **ablation study** highlights the critical role of components like `BLO` (likely a key module) in maintaining high performance. Test-time scaling introduces variability, but the core methods (`MathVista`, `WeMath`) remain robust. The repeated use of `DreamPRM` in the legends may indicate a focus on its importance in data selection and test-time scaling, though its performance drops in the ablation study, suggesting dependencies on other components.

**Notable Outliers**:

- `w/o BLO` (red) in the ablation study consistently underperforms, indicating its necessity for optimal results.

- `Self-correction` (purple) in the data selection comparison shows the lowest values for most methods, suggesting it is less effective than other selection strategies.

This analysis underscores the importance of holistic system design, where individual components and selection strategies synergize to achieve peak performance.

</details>

Figure 5: Comparative evaluation of DreamPRM on multimodal reasoning benchmarks. Radar charts report accuracy (%) on five datasets (WeMath, MathVista, MathVision, MMVet, and MMStar). (a) Impact of different data selection strategies. (b) Comparison with existing test-time scaling methods. (c) Ablation study of three key components, i.e. w/o aggregation function loss (AFL), w/o bi-level optimization (BLO), and w/o structural thinking (ST).

<details>

<summary>x6.png Details</summary>

### Visual Description

## Radar Chart: Scaling ability

### Overview

The chart compares the performance of four model configurations (Zero-shot, DreamPRM@4, DreamPRM@2, DreamPRM@8) across five datasets (MathVista, WeMath, MMStar, MMVet, MathVision). Performance is measured on a radial scale from 0 to 70, with each dataset represented as an axis. The chart uses four distinct colored lines to visualize performance trends.

### Components/Axes

- **Legend**: Located at the bottom center, with four entries:

- Orange: Zero-shot

- Pink: DreamPRM@4

- Red: DreamPRM@2

- Blue: DreamPRM@8

- **Axes**: Five radial axes labeled clockwise:

1. MathVista (top)

2. WeMath (top-right)

3. MMStar (bottom-right)

4. MMVet (bottom)

5. MathVision (bottom-left)

- **Radial Scale**: Incremental markers from 0 to 70, with dashed lines for intermediate values.

### Detailed Analysis

1. **Zero-shot (Orange)**:

- MathVista: 20.0

- WeMath: 51.7

- MMStar: 58.0

- MMVet: 55.9

- MathVision: 20.0

- *Trend*: Lowest values across all datasets, with a sharp drop in MathVista and MathVision.

2. **DreamPRM@4 (Pink)**:

- MathVista: 66.5

- WeMath: 54.5

- MMStar: 60.0

- MMVet: 60.4

- MathVision: 65.4

- *Trend*: Moderate performance, consistently above Zero-shot but below DreamPRM@8.

3. **DreamPRM@2 (Red)**:

- MathVista: 55.3

- WeMath: 53.6

- MMStar: 59.3

- MMVet: 60.3

- MathVision: 60.4

- *Trend*: Slightly better than DreamPRM@4 in MMVet and MathVision, but lower in MathVista.

4. **DreamPRM@8 (Blue)**:

- MathVista: 68.9

- WeMath: 57.4

- MMStar: 62.3

- MMVet: 61.4

- MathVision: 61.4

- *Trend*: Highest values across all datasets, with a pronounced peak in MathVista.

### Key Observations

- **Performance Hierarchy**: DreamPRM@8 > DreamPRM@4 > DreamPRM@2 > Zero-shot.

- **Dataset Variance**: MathVista shows the largest performance gap between configurations (48.9 between Zero-shot and DreamPRM@8).

- **Consistency**: DreamPRM@8 maintains the highest performance across all datasets, while Zero-shot performs worst in MathVista and MathVision.

- **Diminishing Returns**: The performance gap between DreamPRM@4 and DreamPRM@8 narrows in WeMath (3.9) and MMStar (3.3) compared to MathVista (12.4).

### Interpretation

The data demonstrates that increasing the number of prompts (from 2 to 8) significantly improves model performance, particularly in complex tasks like MathVista. The Zero-shot configuration struggles across all datasets, suggesting that prompt engineering is critical for scaling ability. DreamPRM@8 achieves near-optimal results, with MathVista serving as a key differentiator where it outperforms other configurations by 12.4 points. The consistent performance of DreamPRM@8 across datasets implies robustness, while the variability in DreamPRM@4 and DreamPRM@2 highlights sensitivity to task complexity. This pattern underscores the importance of prompt quantity in scaling AI systems, with diminishing returns observed at higher prompt counts.

</details>

<details>

<summary>x7.png Details</summary>

### Visual Description

## Line Chart: Best-of-N accuracy with different models

### Overview

The chart compares the accuracy of three AI models (InternVL-2.5-8B-MPO, GPT-4.1-mini, and o4-mini) across different numbers of selected Chain-of-Thought (CoT) steps (k=2,4,6,8). Accuracy is measured in percentage, with distinct performance trends observed for each model.

### Components/Axes

- **X-axis**: Number of selected CoTs (k) - Discrete values at 2, 4, 6, 8

- **Y-axis**: Accuracy (%) - Continuous scale from 65% to 85%

- **Legend**: Located in bottom-left quadrant

- Blue circles: InternVL-2.5-8B-MPO

- Red squares: GPT-4.1-mini (4-14-25)

- Green crosses: o4-mini (4-16-25)

- **Dashed reference line**: Green dashed line at 80.5% accuracy

### Detailed Analysis

1. **InternVL-2.5-8B-MPO** (Blue line):

- Accuracy increases from 65.2% (k=2) to 68.5% (k=8)

- Linear upward trend with consistent slope

- Data points: (2,65.2), (4,66.8), (6,67.6), (8,68.5)

2. **GPT-4.1-mini** (Red line):

- Accuracy rises from 72.1% (k=2) to 74.5% (k=8)

- Steeper slope than InternVL, with sharper increases at k=4 and k=6

- Data points: (2,72.1), (4,73.2), (6,73.8), (8,74.5)

3. **o4-mini** (Green line):

- Highest performance across all k values

- Accuracy starts at 81.5% (k=2) and reaches 85.2% (k=8)

- Slightly concave upward curve with diminishing returns

- Data points: (2,81.5), (4,82.3), (6,84.1), (8,85.2)

### Key Observations

- All models show improved accuracy with more CoT steps

- o4-mini maintains >10% accuracy advantage over GPT-4.1-mini

- Green dashed line at 80.5% intersects o4-mini's performance at k=2

- InternVL-2.5-8B-MPO shows the most gradual improvement curve

- GPT-4.1-mini demonstrates strongest performance gains between k=2→4 and k=4→6

### Interpretation

The data suggests that increasing CoT steps improves model performance across all architectures, with o4-mini demonstrating superior base capabilities and scalability. The green dashed line at 80.5% appears to represent a performance threshold, which o4-mini exceeds even at minimal CoT steps (k=2). The InternVL model shows the most linear improvement pattern, suggesting more predictable scaling with CoT expansion. The GPT-4.1-mini's sharper mid-range gains indicate potential optimization opportunities in its CoT implementation. These results highlight the importance of CoT step selection in model performance optimization, with o4-mini emerging as the most efficient architecture for this task.

</details>

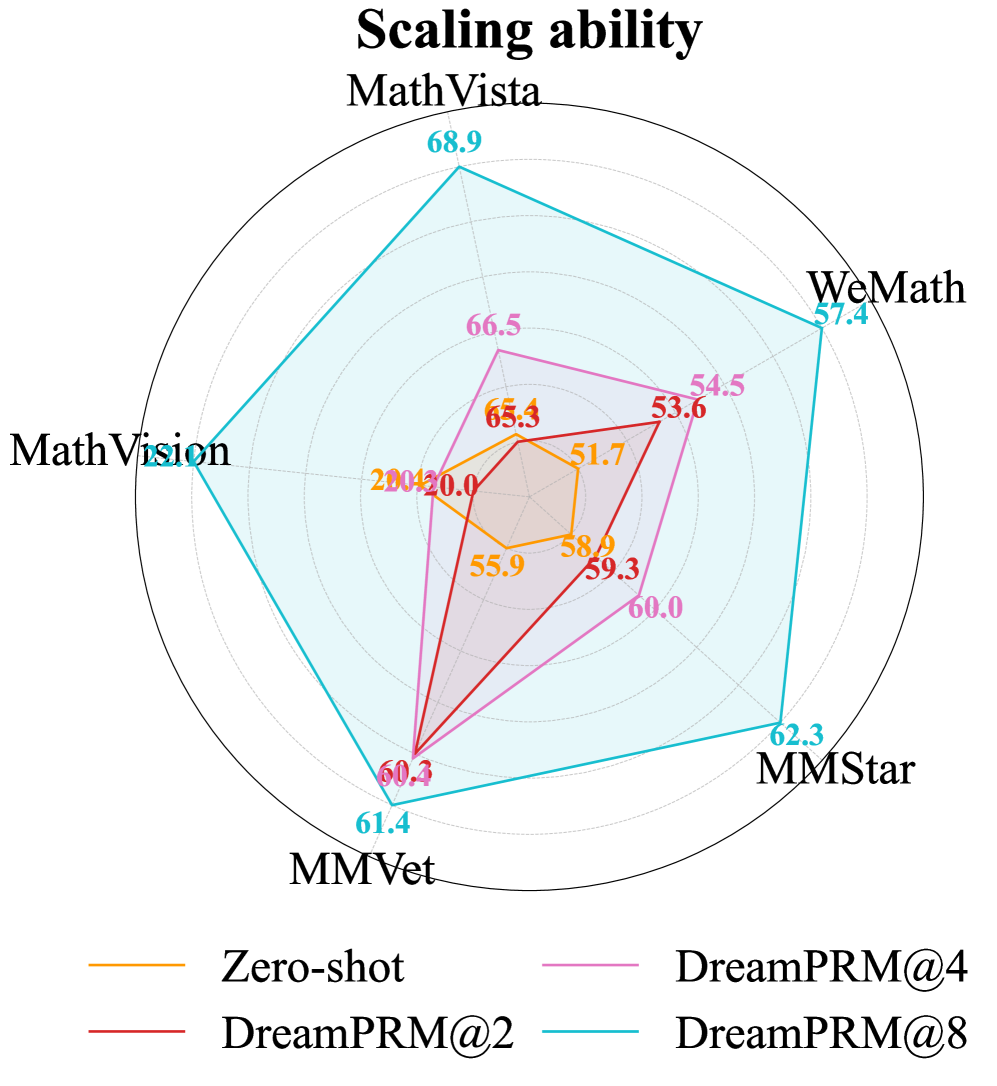

Figure 6: Scaling ability and cross-model generalization. (a) Radar chart of five multimodal reasoning benchmarks shows that DreamPRM delivers monotonic accuracy gains as the number of selected chains-of-thought increases (@2, @4, @8) over the pass@1 baseline. (b) Best-of- N accuracy curves for InternVL-2.5-8B-MPO (blue), GPT-4.1-mini (red) and o4-mini (green) on MathVista confirm that the same DreamPRM-ranked CoTs generalize across models, consistently outperforming pass@1 performance (dashed lines) as $k$ grows.

5.4 Scaling and generalization analysis of DreamPRM

DreamPRM scales reliably with more CoT candidates. As shown in the left panel of Fig. 6, the accuracy of DreamPRM consistently improves on all five benchmarks as the number of CoTs increases from $k{=}2$ to $k{=}8$ , expanding the radar plot outward. Intuitively, a larger set of candidates increases the likelihood of including high-quality reasoning trajectories, but it also makes identifying the best ones more challenging. The consistent performance gains indicate that DreamPRM effectively verifies and ranks CoTs, demonstrating its robustness in selecting high-quality reasoning trajectories under more complex candidate pools.

DreamPRM transfers seamlessly to stronger base MLLMs. The right panel of Fig. 6 shows the MathVista accuracy when applying DreamPRM to recent MLLMs, GPT-4.1-mini (2025-04-14) [46] and o4-mini (2025-04-16) [45]. For o4-mini model, the pass@1 score of 80.6% steadily increases to 85.2% at $k{=}8$ , surpassing the previous state-of-the-art performance. This best-of- $N$ trend, previously observed with InternVL, also holds for GPT-4.1-mini and o4-mini, demonstrating the generalization ability of DreamPRM. Full results of these experiments are provided in Tab. 3.

5.5 Ablation study

In this section, we investigate the importance of three components in DreamPRM: (1) bi-level optimization, (2) aggregation function loss in upper-level, and (3) structural thinking prompt (detailed in Section 5.1). As shown in the rightmost panel of Fig. 5, the complete DreamPRM achieves the best results compared to three ablation baselines across all five benchmarks. Eliminating bi-level optimization causes large performance drop (e.g., -3.5% on MathVista and -3.4% on MMStar). Removing aggregation function loss leads to a consistent 1%-2% decline (e.g., 57.4% $→$ 56.3% on WeMath). Excluding structural thinking also degrades performance (e.g., -1.8% on MathVision). These results indicate that all three components are critical for DreamPRM to achieve the best performance. More detailed results are shown in Appendix Tab. 4.

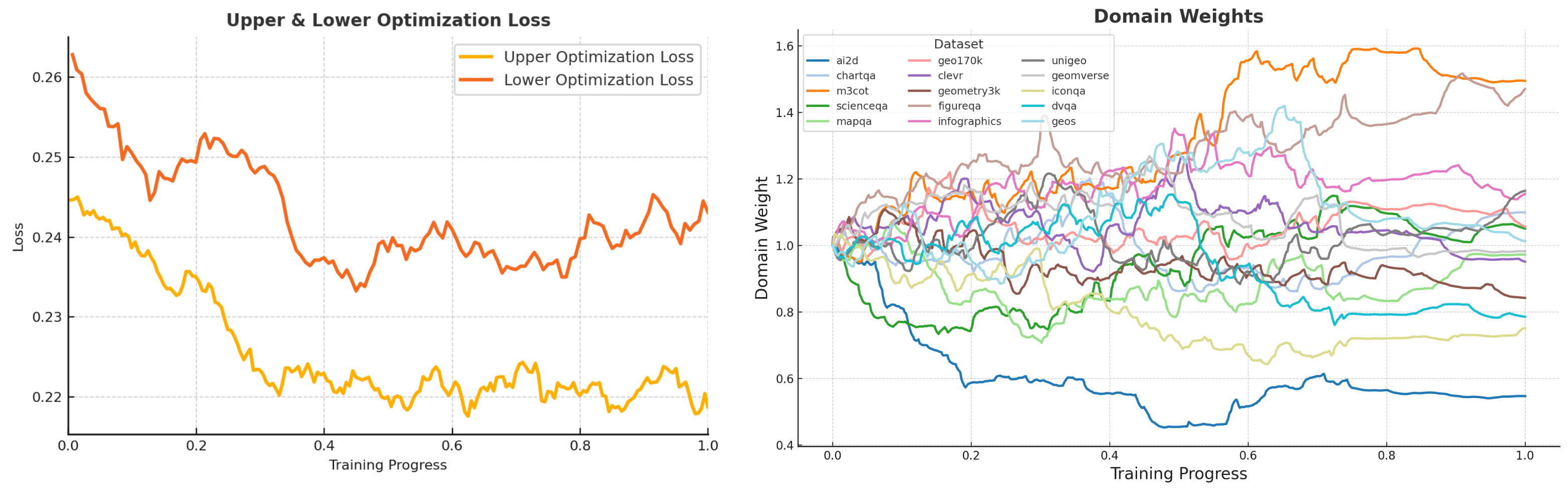

5.6 Analysis of learned domain weights

<details>

<summary>x8.png Details</summary>

### Visual Description

## Bar Chart: Domain Weights

### Overview

The image is a horizontal bar chart titled "Domain Weights," displaying the relative importance or usage of 15 different domains. Each domain is represented by a colored bar, with values ranging from 0.0 to 1.5 on the x-axis. The y-axis lists domain names in descending order of their weights.

### Components/Axes

- **X-axis**: Labeled "0.0" to "1.5" with increments of 0.2. Represents domain weights.

- **Y-axis**: Lists 15 domains in descending order of weight:

1. m3cot

2. figureqa

3. unigeo

4. infographics

5. chartqa

6. geo170k

7. scienceqa

8. geos

9. geomverse

10. mapqa

11. clever

12. geometry3k

13. dvqa

14. iconqa

15. ai2d

- **Colors**: Each domain is assigned a distinct color (e.g., orange for m3cot, brown for figureqa, gray for unigeo, etc.). No explicit legend is present, but colors align with the y-axis order.

### Detailed Analysis

- **m3cot**: Orange bar, weight = **1.49** (highest).

- **figureqa**: Brown bar, weight = **1.47**.

- **unigeo**: Gray bar, weight = **1.16**.

- **infographics**: Pink bar, weight = **1.16**.

- **chartqa**: Light blue bar, weight = **1.10**.

- **geo170k**: Red bar, weight = **1.06**.

- **scienceqa**: Green bar, weight = **1.05**.

- **geos**: Light blue bar, weight = **1.01**.

- **geomverse**: Gray bar, weight = **0.98**.

- **mapqa**: Green bar, weight = **0.97**.

- **clever**: Purple bar, weight = **0.95**.

- **geometry3k**: Brown bar, weight = **0.84**.

- **dvqa**: Cyan bar, weight = **0.79**.

- **iconqa**: Yellow bar, weight = **0.75**.

- **ai2d**: Blue bar, weight = **0.55** (lowest).

### Key Observations

1. **Top 3 Domains**: m3cot (1.49), figureqa (1.47), and unigeo/infographics (1.16) dominate, with weights significantly higher than the rest.

2. **Mid-Range Domains**: chartqa (1.10), geo170k (1.06), and scienceqa (1.05) cluster around 1.0, indicating moderate importance.

3. **Lower-Weight Domains**: ai2d (0.55) is the least weighted, followed by iconqa (0.75) and dvqa (0.79).

4. **Color Consistency**: Colors align with the y-axis order, confirming no mismatches between labels and visual representation.

### Interpretation

The chart highlights a clear hierarchy in domain weights, with m3cot and figureqa being the most critical. The top 10 domains all exceed 0.95, suggesting they are prioritized in the analyzed context (e.g., data processing, model training, or resource allocation). The sharp decline from the top 3 to the bottom 5 domains indicates a long-tail distribution, where a few domains dominate usage. The ai2d domain’s low weight (0.55) may reflect niche applicability or lower adoption. The absence of a legend implies the color-coding is intuitive or predefined, requiring careful cross-referencing with the y-axis labels for accuracy.

</details>

Figure 7: Learned domain weights after the convergence of the DreamPRM training process.

The final domain weights (Fig. 7) range from 0.55 to 1.49: M3CoT [6] and FigureQA [21] receive the highest weights (approximately 1.5), while AI2D [23] and IconQA [36] are assigned lower weights (less than 0.8). This learned weighting pattern contributes to improved PRM performance, indicating that the quality imbalance problem across reasoning datasets is real and consequential. Additionally, as shown in Fig. 9 in Appendix, all domain weights are initialized to 1.0 and eventually converge during the training process of DreamPRM.

6 Conclusions

We propose DreamPRM, the first domain-reweighted PRM framework for multimodal reasoning. By automatically searching for domain weights using a bi-level optimization framework, DreamPRM effectively mitigates issues caused by dataset quality imbalance and significantly enhances the generalizability of multimodal PRMs. Extensive experiments on five diverse benchmarks confirm that DreamPRM outperforms both vanilla PRMs without domain reweighting and PRMs using heuristic data selection methods. We also observe that the domain weights learned by DreamPRM correlate with dataset quality, effectively separating challenging, informative sources from overly simplistic or noisy ones. These results highlight the effectiveness of our proposed automatic domain reweighting strategy.

Acknowledgments

This work was supported by the National Science Foundation (IIS2405974 and IIS2339216) and the National Institutes of Health (R35GM157217).

References

- [1] AIDC-AI. Ovis2-34b (model card). https://huggingface.co/AIDC-AI/Ovis2-34B, 2025. Related paper: arXiv:2405.20797; Accessed 2025-10-15.

- [2] Tom B. Brown, Benjamin Mann, Nick Ryder, Melanie Subbiah, Jared Kaplan, Prafulla Dhariwal, Arvind Neelakantan, Pranav Shyam, Girish Sastry, Amanda Askell, Sandhini Agarwal, Ariel Herbert-Voss, Gretchen Krueger, Tom Henighan, Rewon Child, Aditya Ramesh, Daniel M. Ziegler, Jeffrey Wu, Clemens Winter, Christopher Hesse, Mark Chen, Eric Sigler, Mateusz Litwin, Scott Gray, Benjamin Chess, Jack Clark, Christopher Berner, Sam McCandlish, Alec Radford, Ilya Sutskever, and Dario Amodei. Language models are few-shot learners, 2020.

- [3] Shuaichen Chang, David Palzer, Jialin Li, Eric Fosler-Lussier, and Ningchuan Xiao. Mapqa: A dataset for question answering on choropleth maps, 2022.

- [4] Jiaqi Chen, Tong Li, Jinghui Qin, Pan Lu, Liang Lin, Chongyu Chen, and Xiaodan Liang. Unigeo: Unifying geometry logical reasoning via reformulating mathematical expression, 2022.

- [5] Lin Chen, Jinsong Li, Xiaoyi Dong, Pan Zhang, Yuhang Zang, Zehui Chen, Haodong Duan, Jiaqi Wang, Yu Qiao, Dahua Lin, and Feng Zhao. Are we on the right way for evaluating large vision-language models?, 2024.

- [6] Qiguang Chen, Libo Qin, Jin Zhang, Zhi Chen, Xiao Xu, and Wanxiang Che. M 3 cot: A novel benchmark for multi-domain multi-step multi-modal chain-of-thought, 2024.

- [7] Sang Keun Choe, Willie Neiswanger, Pengtao Xie, and Eric Xing. Betty: An automatic differentiation library for multilevel optimization. In The Eleventh International Conference on Learning Representations, 2023.

- [8] Aakanksha Chowdhery, Sharan Narang, Jacob Devlin, Maarten Bosma, Gaurav Mishra, Adam Roberts, Paul Barham, Hyung Won Chung, Charles Sutton, Sebastian Gehrmann, Parker Schuh, Kensen Shi, Sasha Tsvyashchenko, Joshua Maynez, Abhishek Rao, Parker Barnes, Yi Tay, Noam Shazeer, Vinodkumar Prabhakaran, Emily Reif, Nan Du, Ben Hutchinson, Reiner Pope, James Bradbury, Jacob Austin, Michael Isard, Guy Gur-Ari, Pengcheng Yin, Toju Duke, Anselm Levskaya, Sanjay Ghemawat, Sunipa Dev, Henryk Michalewski, Xavier Garcia, Vedant Misra, Kevin Robinson, Liam Fedus, Denny Zhou, Daphne Ippolito, David Luan, Hyeontaek Lim, Barret Zoph, Alexander Spiridonov, Ryan Sepassi, David Dohan, Shivani Agrawal, Mark Omernick, Andrew M. Dai, Thanumalayan Sankaranarayana Pillai, Marie Pellat, Aitor Lewkowycz, Erica Moreira, Rewon Child, Oleksandr Polozov, Katherine Lee, Zongwei Zhou, Xuezhi Wang, Brennan Saeta, Mark Diaz, Orhan Firat, Michele Catasta, Jason Wei, Kathy Meier-Hellstern, Douglas Eck, Jeff Dean, Slav Petrov, and Noah Fiedel. Palm: Scaling language modeling with pathways, 2022.

- [9] Karl Cobbe, Vineet Kosaraju, Mohammad Bavarian, Mark Chen, Heewoo Jun, Lukasz Kaiser, Matthias Plappert, Jerry Tworek, Jacob Hilton, Reiichiro Nakano, Christopher Hesse, and John Schulman. Training verifiers to solve math word problems, 2021.

- [10] DeepSeek-AI, Daya Guo, Dejian Yang, Haowei Zhang, Junxiao Song, Ruoyu Zhang, Runxin Xu, Qihao Zhu, Shirong Ma, Peiyi Wang, Xiao Bi, Xiaokang Zhang, Xingkai Yu, Yu Wu, Z. F. Wu, Zhibin Gou, Zhihong Shao, Zhuoshu Li, Ziyi Gao, Aixin Liu, Bing Xue, Bingxuan Wang, Bochao Wu, Bei Feng, Chengda Lu, Chenggang Zhao, Chengqi Deng, Chenyu Zhang, Chong Ruan, Damai Dai, Deli Chen, Dongjie Ji, Erhang Li, Fangyun Lin, Fucong Dai, Fuli Luo, Guangbo Hao, Guanting Chen, Guowei Li, H. Zhang, Han Bao, Hanwei Xu, Haocheng Wang, Honghui Ding, Huajian Xin, Huazuo Gao, Hui Qu, Hui Li, Jianzhong Guo, Jiashi Li, Jiawei Wang, Jingchang Chen, Jingyang Yuan, Junjie Qiu, Junlong Li, J. L. Cai, Jiaqi Ni, Jian Liang, Jin Chen, Kai Dong, Kai Hu, Kaige Gao, Kang Guan, Kexin Huang, Kuai Yu, Lean Wang, Lecong Zhang, Liang Zhao, Litong Wang, Liyue Zhang, Lei Xu, Leyi Xia, Mingchuan Zhang, Minghua Zhang, Minghui Tang, Meng Li, Miaojun Wang, Mingming Li, Ning Tian, Panpan Huang, Peng Zhang, Qiancheng Wang, Qinyu Chen, Qiushi Du, Ruiqi Ge, Ruisong Zhang, Ruizhe Pan, Runji Wang, R. J. Chen, R. L. Jin, Ruyi Chen, Shanghao Lu, Shangyan Zhou, Shanhuang Chen, Shengfeng Ye, Shiyu Wang, Shuiping Yu, Shunfeng Zhou, Shuting Pan, S. S. Li, Shuang Zhou, Shaoqing Wu, Shengfeng Ye, Tao Yun, Tian Pei, Tianyu Sun, T. Wang, Wangding Zeng, Wanjia Zhao, Wen Liu, Wenfeng Liang, Wenjun Gao, Wenqin Yu, Wentao Zhang, W. L. Xiao, Wei An, Xiaodong Liu, Xiaohan Wang, Xiaokang Chen, Xiaotao Nie, Xin Cheng, Xin Liu, Xin Xie, Xingchao Liu, Xinyu Yang, Xinyuan Li, Xuecheng Su, Xuheng Lin, X. Q. Li, Xiangyue Jin, Xiaojin Shen, Xiaosha Chen, Xiaowen Sun, Xiaoxiang Wang, Xinnan Song, Xinyi Zhou, Xianzu Wang, Xinxia Shan, Y. K. Li, Y. Q. Wang, Y. X. Wei, Yang Zhang, Yanhong Xu, Yao Li, Yao Zhao, Yaofeng Sun, Yaohui Wang, Yi Yu, Yichao Zhang, Yifan Shi, Yiliang Xiong, Ying He, Yishi Piao, Yisong Wang, Yixuan Tan, Yiyang Ma, Yiyuan Liu, Yongqiang Guo, Yuan Ou, Yuduan Wang, Yue Gong, Yuheng Zou, Yujia He, Yunfan Xiong, Yuxiang Luo, Yuxiang You, Yuxuan Liu, Yuyang Zhou, Y. X. Zhu, Yanhong Xu, Yanping Huang, Yaohui Li, Yi Zheng, Yuchen Zhu, Yunxian Ma, Ying Tang, Yukun Zha, Yuting Yan, Z. Z. Ren, Zehui Ren, Zhangli Sha, Zhe Fu, Zhean Xu, Zhenda Xie, Zhengyan Zhang, Zhewen Hao, Zhicheng Ma, Zhigang Yan, Zhiyu Wu, Zihui Gu, Zijia Zhu, Zijun Liu, Zilin Li, Ziwei Xie, Ziyang Song, Zizheng Pan, Zhen Huang, Zhipeng Xu, Zhongyu Zhang, and Zhen Zhang. Deepseek-r1: Incentivizing reasoning capability in llms via reinforcement learning, 2025.

- [11] Guanting Dong, Chenghao Zhang, Mengjie Deng, Yutao Zhu, Zhicheng Dou, and Ji-Rong Wen. Progressive multimodal reasoning via active retrieval, 2024.

- [12] Simin Fan, Matteo Pagliardini, and Martin Jaggi. Doge: Domain reweighting with generalization estimation, 2024.

- [13] Simin Fan, Matteo Pagliardini, and Martin Jaggi. DOGE: Domain reweighting with generalization estimation. In Ruslan Salakhutdinov, Zico Kolter, Katherine Heller, Adrian Weller, Nuria Oliver, Jonathan Scarlett, and Felix Berkenkamp, editors, Proceedings of the 41st International Conference on Machine Learning, volume 235 of Proceedings of Machine Learning Research, pages 12895–12915. PMLR, 21–27 Jul 2024.

- [14] Chelsea Finn, P. Abbeel, and Sergey Levine. Model-agnostic meta-learning for fast adaptation of deep networks. In International Conference on Machine Learning, 2017.

- [15] Jiahui Gao, Renjie Pi, Jipeng Zhang, Jiacheng Ye, Wanjun Zhong, Yufei Wang, Lanqing Hong, Jianhua Han, Hang Xu, Zhenguo Li, and Lingpeng Kong. G-llava: Solving geometric problem with multi-modal large language model, 2023.

- [16] Yuan Ge, Yilun Liu, Chi Hu, Weibin Meng, Shimin Tao, Xiaofeng Zhao, Hongxia Ma, Li Zhang, Boxing Chen, Hao Yang, Bei Li, Tong Xiao, and Jingbo Zhu. Clustering and ranking: Diversity-preserved instruction selection through expert-aligned quality estimation, 2024.

- [17] Jiayi He, Hehai Lin, Qingyun Wang, Yi Fung, and Heng Ji. Self-correction is more than refinement: A learning framework for visual and language reasoning tasks, 2024.

- [18] Wenxuan Huang, Bohan Jia, Zijie Zhai, et al. Vision-r1: Incentivizing reasoning capability in multimodal large language models. arXiv preprint arXiv:2503.06749, 2025.

- [19] Dongzhi Jiang, Renrui Zhang, Ziyu Guo, Yanwei Li, Yu Qi, Xinyan Chen, Liuhui Wang, Jianhan Jin, Claire Guo, Shen Yan, Bo Zhang, Chaoyou Fu, Peng Gao, and Hongsheng Li. Mme-cot: Benchmarking chain-of-thought in large multimodal models for reasoning quality, robustness, and efficiency, 2025.

- [20] Kushal Kafle, Brian Price, Scott Cohen, and Christopher Kanan. Dvqa: Understanding data visualizations via question answering, 2018.

- [21] Samira Ebrahimi Kahou, Vincent Michalski, Adam Atkinson, Akos Kadar, Adam Trischler, and Yoshua Bengio. Figureqa: An annotated figure dataset for visual reasoning, 2018.

- [22] Mehran Kazemi, Hamidreza Alvari, Ankit Anand, Jialin Wu, Xi Chen, and Radu Soricut. Geomverse: A systematic evaluation of large models for geometric reasoning, 2023.

- [23] Aniruddha Kembhavi, Mike Salvato, Eric Kolve, Minjoon Seo, Hannaneh Hajishirzi, and Ali Farhadi. A diagram is worth a dozen images, 2016.

- [24] Kimi Team. Kimi k1.5: Scaling reinforcement learning with llms. arXiv preprint arXiv:2501.12599, 2025.

- [25] Takeshi Kojima, Shixiang (Shane) Gu, Machel Reid, Yutaka Matsuo, and Yusuke Iwasawa. Large language models are zero-shot reasoners. In Advances in Neural Information Processing Systems, volume 35, pages 22199–22213, 2022.

- [26] Bo Li, Yuanhan Zhang, Dong Guo, Renrui Zhang, Feng Li, Hao Zhang, Kaichen Zhang, Peiyuan Zhang, Yanwei Li, Ziwei Liu, and Chunyuan Li. Llava-onevision: Easy visual task transfer, 2024.

- [27] Yifei Li, Zeqi Lin, Shizhuo Zhang, Qiang Fu, Bei Chen, Jian-Guang Lou, and Weizhu Chen. Making large language models better reasoners with step-aware verifier, 2023.

- [28] Zongxia Li, Xiyang Wu, Hongyang Du, Fuxiao Liu, Huy Nghiem, and Guangyao Shi. A survey of state of the art large vision language models: Alignment, benchmark, evaluations and challenges. arXiv preprint arXiv:2501.02189, 2025.

- [29] Hunter Lightman, Vineet Kosaraju, Yuri Burda, Harrison Edwards, Bowen Baker, Teddy Lee, Jan Leike, John Schulman, Ilya Sutskever, and Karl Cobbe. Let’s verify step by step. In The Twelfth International Conference on Learning Representations, 2024.

- [30] Adam Dahlgren Lindström and Savitha Sam Abraham. Clevr-math: A dataset for compositional language, visual and mathematical reasoning, 2022.

- [31] Hanxiao Liu, Karen Simonyan, and Yiming Yang. DARTS: Differentiable architecture search. In International Conference on Learning Representations, 2019.

- [32] Ilya Loshchilov and Frank Hutter. Decoupled weight decay regularization, 2019.

- [33] Pan Lu, Hritik Bansal, Tony Xia, Jiacheng Liu, Chunyuan Li, Hannaneh Hajishirzi, Hao Cheng, Kai-Wei Chang, Michel Galley, and Jianfeng Gao. Mathvista: Evaluating mathematical reasoning of foundation models in visual contexts. In International Conference on Learning Representations (ICLR), 2024.

- [34] Pan Lu, Ran Gong, Shibiao Jiang, Liang Qiu, Siyuan Huang, Xiaodan Liang, and Song-Chun Zhu. Inter-gps: Interpretable geometry problem solving with formal language and symbolic reasoning. In The 59th Annual Meeting of the Association for Computational Linguistics (ACL), 2021.

- [35] Pan Lu, Swaroop Mishra, Tony Xia, Liang Qiu, Kai-Wei Chang, Song-Chun Zhu, Oyvind Tafjord, Peter Clark, and Ashwin Kalyan. Learn to explain: Multimodal reasoning via thought chains for science question answering, 2022.

- [36] Pan Lu, Liang Qiu, Jiaqi Chen, Tony Xia, Yizhou Zhao, Wei Zhang, Zhou Yu, Xiaodan Liang, and Song-Chun Zhu. Iconqa: A new benchmark for abstract diagram understanding and visual language reasoning, 2022.

- [37] Liangchen Luo, Yinxiao Liu, Rosanne Liu, Samrat Phatale, Meiqi Guo, Harsh Lara, Yunxuan Li, Lei Shu, Yun Zhu, Lei Meng, Jiao Sun, and Abhinav Rastogi. Improve mathematical reasoning in language models by automated process supervision, 2024.

- [38] Qianli Ma, Haotian Zhou, Tingkai Liu, Jianbo Yuan, Pengfei Liu, Yang You, and Hongxia Yang. Let’s reward step by step: Step-level reward model as the navigators for reasoning, 2023.

- [39] Ahmed Masry, Do Xuan Long, Jia Qing Tan, Shafiq Joty, and Enamul Hoque. Chartqa: A benchmark for question answering about charts with visual and logical reasoning, 2022.

- [40] Minesh Mathew, Viraj Bagal, Rubèn Pérez Tito, Dimosthenis Karatzas, Ernest Valveny, and C. V Jawahar. Infographicvqa, 2021.

- [41] Meta AI. The llama 4 herd: The beginning of a new era of natively multimodal intelligence. https://ai.meta.com/blog/llama-4-multimodal-intelligence/, 2025. Llama 4 Maverick announcement; Accessed 2025-10-15.

- [42] Meta Llama. Llama-4-maverick-17b-128e-instruct (model card). https://huggingface.co/meta-llama/Llama-4-Maverick-17B-128E-Instruct, 2025. Accessed 2025-10-15.

- [43] Moonshot AI / Kimi. Kimi-k1.6-preview-20250308 (preview announcement). https://x.com/RotekSong/status/1900061355945926672, 2025. Accessed 2025-10-15; preview model announcement.

- [44] Niklas Muennighoff, Zitong Yang, Weijia Shi, Xiang Lisa Li, Li Fei-Fei, Hannaneh Hajishirzi, Luke Zettlemoyer, Percy Liang, Emmanuel Candès, and Tatsunori Hashimoto. s1: Simple test-time scaling, 2025.

- [45] OpenAI, :, Aaron Jaech, Adam Kalai, Adam Lerer, Adam Richardson, Ahmed El-Kishky, Aiden Low, Alec Helyar, Aleksander Madry, Alex Beutel, Alex Carney, Alex Iftimie, Alex Karpenko, Alex Tachard Passos, Alexander Neitz, Alexander Prokofiev, Alexander Wei, Allison Tam, Ally Bennett, Ananya Kumar, Andre Saraiva, Andrea Vallone, Andrew Duberstein, Andrew Kondrich, Andrey Mishchenko, Andy Applebaum, Angela Jiang, Ashvin Nair, Barret Zoph, Behrooz Ghorbani, Ben Rossen, Benjamin Sokolowsky, Boaz Barak, Bob McGrew, Borys Minaiev, Botao Hao, Bowen Baker, Brandon Houghton, Brandon McKinzie, Brydon Eastman, Camillo Lugaresi, Cary Bassin, Cary Hudson, Chak Ming Li, Charles de Bourcy, Chelsea Voss, Chen Shen, Chong Zhang, Chris Koch, Chris Orsinger, Christopher Hesse, Claudia Fischer, Clive Chan, Dan Roberts, Daniel Kappler, Daniel Levy, Daniel Selsam, David Dohan, David Farhi, David Mely, David Robinson, Dimitris Tsipras, Doug Li, Dragos Oprica, Eben Freeman, Eddie Zhang, Edmund Wong, Elizabeth Proehl, Enoch Cheung, Eric Mitchell, Eric Wallace, Erik Ritter, Evan Mays, Fan Wang, Felipe Petroski Such, Filippo Raso, Florencia Leoni, Foivos Tsimpourlas, Francis Song, Fred von Lohmann, Freddie Sulit, Geoff Salmon, Giambattista Parascandolo, Gildas Chabot, Grace Zhao, Greg Brockman, Guillaume Leclerc, Hadi Salman, Haiming Bao, Hao Sheng, Hart Andrin, Hessam Bagherinezhad, Hongyu Ren, Hunter Lightman, Hyung Won Chung, Ian Kivlichan, Ian O’Connell, Ian Osband, Ignasi Clavera Gilaberte, Ilge Akkaya, Ilya Kostrikov, Ilya Sutskever, Irina Kofman, Jakub Pachocki, James Lennon, Jason Wei, Jean Harb, Jerry Twore, Jiacheng Feng, Jiahui Yu, Jiayi Weng, Jie Tang, Jieqi Yu, Joaquin Quiñonero Candela, Joe Palermo, Joel Parish, Johannes Heidecke, John Hallman, John Rizzo, Jonathan Gordon, Jonathan Uesato, Jonathan Ward, Joost Huizinga, Julie Wang, Kai Chen, Kai Xiao, Karan Singhal, Karina Nguyen, Karl Cobbe, Katy Shi, Kayla Wood, Kendra Rimbach, Keren Gu-Lemberg, Kevin Liu, Kevin Lu, Kevin Stone, Kevin Yu, Lama Ahmad, Lauren Yang, Leo Liu, Leon Maksin, Leyton Ho, Liam Fedus, Lilian Weng, Linden Li, Lindsay McCallum, Lindsey Held, Lorenz Kuhn, Lukas Kondraciuk, Lukasz Kaiser, Luke Metz, Madelaine Boyd, Maja Trebacz, Manas Joglekar, Mark Chen, Marko Tintor, Mason Meyer, Matt Jones, Matt Kaufer, Max Schwarzer, Meghan Shah, Mehmet Yatbaz, Melody Y. Guan, Mengyuan Xu, Mengyuan Yan, Mia Glaese, Mianna Chen, Michael Lampe, Michael Malek, Michele Wang, Michelle Fradin, Mike McClay, Mikhail Pavlov, Miles Wang, Mingxuan Wang, Mira Murati, Mo Bavarian, Mostafa Rohaninejad, Nat McAleese, Neil Chowdhury, Neil Chowdhury, Nick Ryder, Nikolas Tezak, Noam Brown, Ofir Nachum, Oleg Boiko, Oleg Murk, Olivia Watkins, Patrick Chao, Paul Ashbourne, Pavel Izmailov, Peter Zhokhov, Rachel Dias, Rahul Arora, Randall Lin, Rapha Gontijo Lopes, Raz Gaon, Reah Miyara, Reimar Leike, Renny Hwang, Rhythm Garg, Robin Brown, Roshan James, Rui Shu, Ryan Cheu, Ryan Greene, Saachi Jain, Sam Altman, Sam Toizer, Sam Toyer, Samuel Miserendino, Sandhini Agarwal, Santiago Hernandez, Sasha Baker, Scott McKinney, Scottie Yan, Shengjia Zhao, Shengli Hu, Shibani Santurkar, Shraman Ray Chaudhuri, Shuyuan Zhang, Siyuan Fu, Spencer Papay, Steph Lin, Suchir Balaji, Suvansh Sanjeev, Szymon Sidor, Tal Broda, Aidan Clark, Tao Wang, Taylor Gordon, Ted Sanders, Tejal Patwardhan, Thibault Sottiaux, Thomas Degry, Thomas Dimson, Tianhao Zheng, Timur Garipov, Tom Stasi, Trapit Bansal, Trevor Creech, Troy Peterson, Tyna Eloundou, Valerie Qi, Vineet Kosaraju, Vinnie Monaco, Vitchyr Pong, Vlad Fomenko, Weiyi Zheng, Wenda Zhou, Wes McCabe, Wojciech Zaremba, Yann Dubois, Yinghai Lu, Yining Chen, Young Cha, Yu Bai, Yuchen He, Yuchen Zhang, Yunyun Wang, Zheng Shao, and Zhuohan Li. Openai o1 system card, 2024.