# Reasoning in Neurosymbolic AI

**Authors**: Son Tran, Edjard Mota, Artur d’Avila Garcez

> School of Information Technology, Deakin University, Victoria, 3125, Melbourne, Australia

> Instituto de Computação, Universidade Federal do Amazonas, 69067-005, Manaus, Brazil

Abstract

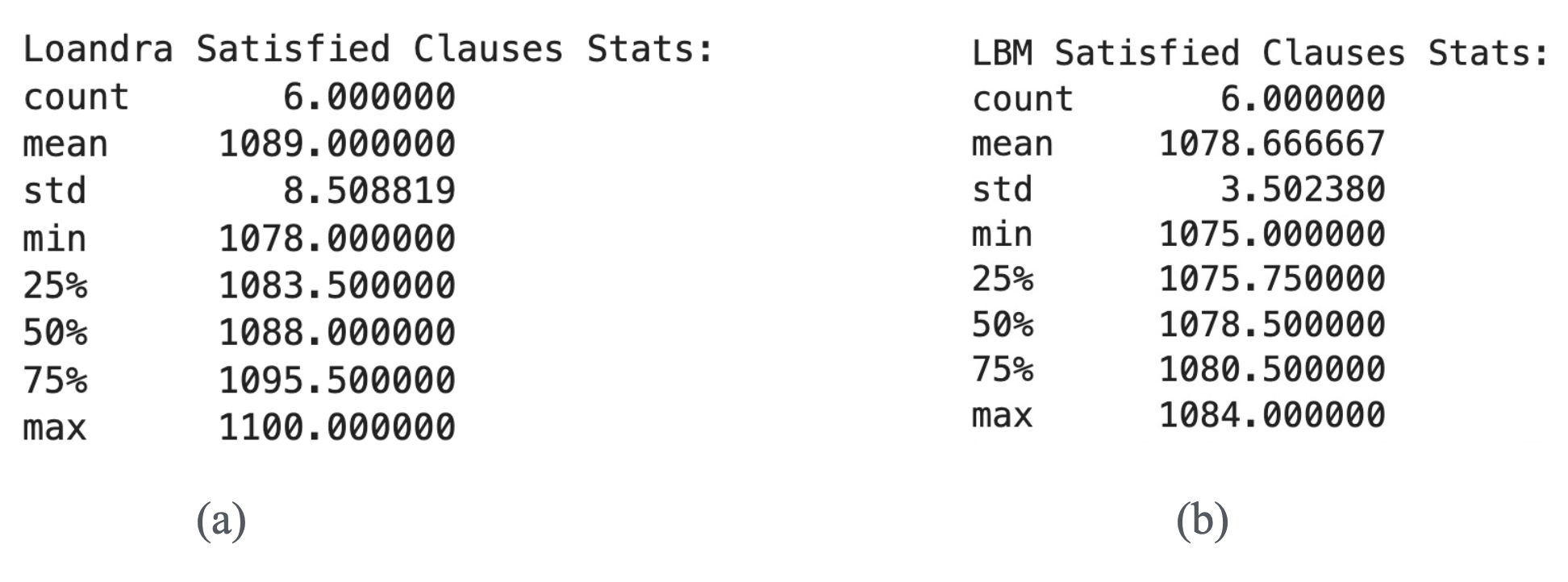

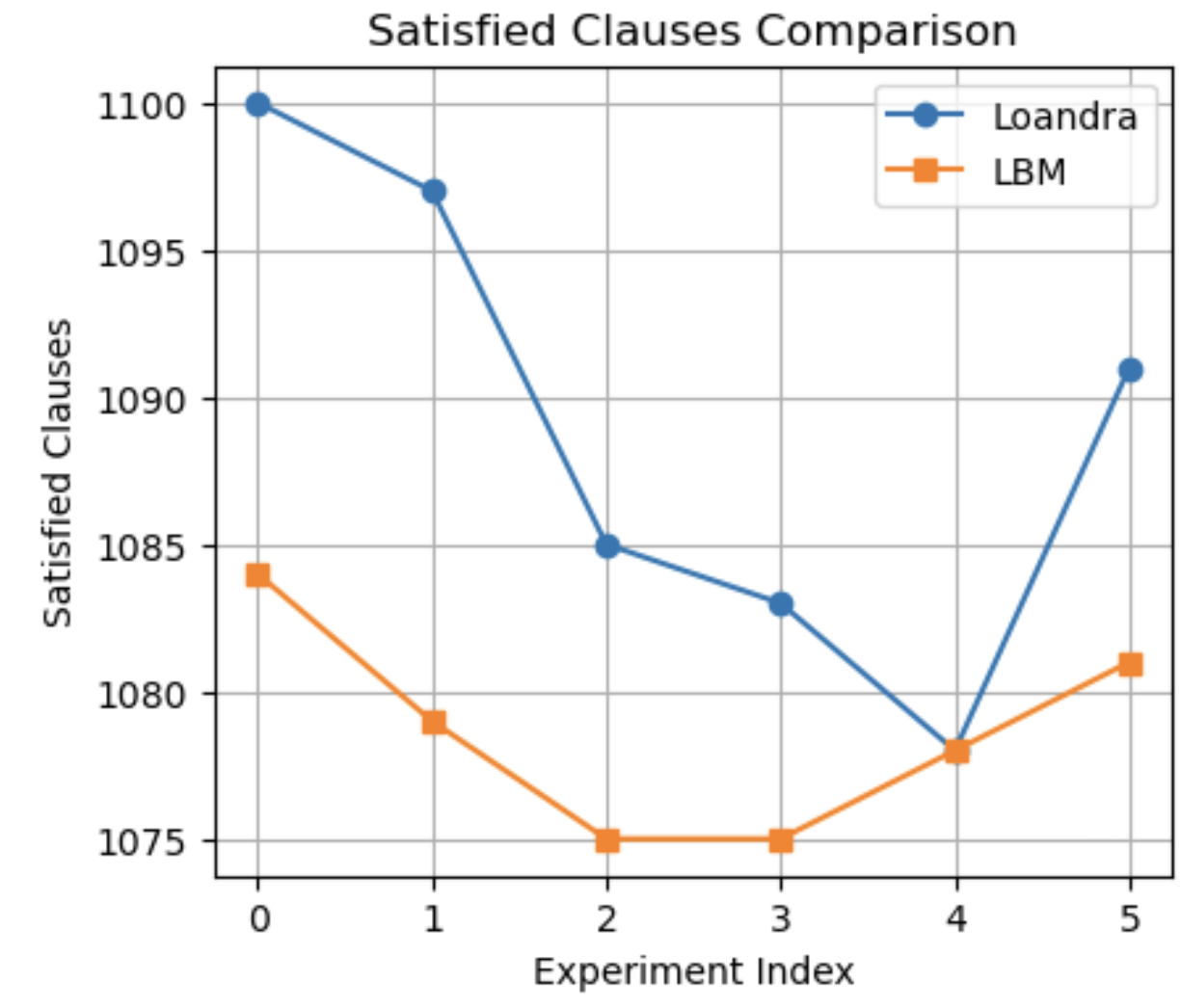

Knowledge representation and reasoning in neural networks have been a long-standing endeavor which has attracted much attention recently. The principled integration of reasoning and learning in neural networks is a main objective of the area of neurosymbolic Artificial Intelligence (AI). In this chapter, a simple energy-based neurosymbolic AI system is described that can represent and reason formally about any propositional logic formula. This creates a powerful combination of learning from data and knowledge and logical reasoning. We start by positioning neurosymbolic AI in the context of the current AI landscape that is unsurprisingly dominated by Large Language Models (LLMs). We identify important challenges of data efficiency, fairness and safety of LLMs that might be addressed by neurosymbolic reasoning systems with formal reasoning capabilities. We then discuss the representation of logic by the specific energy-based system, including illustrative examples and empirical evaluation of the correspondence between logical reasoning and energy minimization using Restricted Boltzmann Machines (RBM). The system, called Logical Boltzmann Machine (LBM), can find all satisfying assignments of a class of logical formulae by searching through a very small percentage of the possible truth-value assignments. Learning from data and knowledge in LBM is also evaluated empirically and compared with a purely-symbolic, a purely-neural and a state-of-the-art neurosymbolic system, achieving better learning performance in five out of seven data sets. Results reported in this chapter in an accessible way are expected to reignite the research on the use of neural networks as massively-parallel models for logical reasoning and to promote the principled integration of reasoning and learning in deep networks. LBM is also evaluated in the role of an interpretable neural module that can be added on top of complex neural networks such as convolutional networks and encoder-decoder networks to implement any given set of logical constraints e.g. fairness or safety requirements. LBM is further evaluated when deployed in the solution of the connectionist Boolean satisfiability (SAT) problem, maximum satisfiability (MaxSAT) and approximate optimization problems when certain logical rules may be given a higher priority or a penalty according to a confidence value. We conclude the chapter with a discussion of the importance of positioning neurosymbolic AI within a broader framework of formal reasoning and accountability in AI, discussing the challenges for neurosynbolic AI to tackle the various known problems of reliability of deep learning. We close with an opinion on the risks of AI and future opportunities for neurosymbolic AI. Keywords: Neurosymbolic AI, Restricted Boltzmann Machines, Logical Reasoning, SAT solving, MaxSAT, Energy-based Learning, Constrained Optimization, Modular Deep Learning.

1 What is Reasoning in Neural Networks?

Increasing attention has been devoted in recent years to knowledge representation and reasoning in neural networks. The principled integration of reasoning and learning in neural networks is a main objective of the field of neurosymbolic Artificial Intelligence (AI) [9, 34]. In neurosymbolic AI, typically, an algorithm is provided that translates some form of symbolic knowledge representation into the architecture and initial set of parameters of a neural network. Ideally, a theorem then shows that the neural network can be used as a massively-parallel model of computation capable of reasoning about such knowledge. Finally, when trained with data and knowledge, the network is expected to produce better performance, either a higher accuracy or faster learning than when trained from data alone. Symbolic knowledge may be provided to a neural network in the form of general rules which are known to be true in a given domain, or rules which are expected to be true across domains when performing transfer and continual learning. When rules are not available to start with, they can be extracted from a trained network. When rules are contradicted by data, they can be revised as part of the learning process. This has been shown to offer a flexible framework whereby knowledge and data, neural networks and symbolic descriptions are combined, leading to a better understanding of complex network models with the interplay between learning and reasoning.

This chapter includes a general discussion of how neurosymbolic AI can contribute to the goals of reasoning in neural networks and a specific illustration of a neurosymbolic system for reasoning in propositional logic with restricted Boltzmann machines (RBMs) [44]. We will describe a neurosymbolic system, called Logical Boltzmann Machines (LBM), capable of (i) representing any propositional logic formula into a restricted Boltzmann machine, (ii) reasoning efficiently from such formula, and (iii) learning from such knowledge representation and data. LBM comes with an algorithm to translate any set of propositional logical formulae into a Boltzmann machine and a proof of equivalence between the logical formulae and the energy-based connectionist model; in other words, a proof of soundness of the translation algorithm from logical formulae to neural networks. Specifically, the network is shown to assign minimum energy to the assignments of truth-values that satisfy the formulae. This provides a new way of performing reasoning in symmetrical neural networks by employing the network to search for the models of a logical theory, that is, to search for the assignments of truth-values that map the logical formulae to $true$ . We use the term model to refer to logical models and to neural network models. When the intended meaning is not clear from the context, we shall use the term logical model. If the number of variable is small, inference can be carried out analytically by sorting the free-energy of all possible truth-value assignments. Otherwise, Gibbs sampling is applied in the search for logical models. We start, however, with a general discussion of reasoning in current AI including large language models.

1.1 Reasoning in Large Language Models

Since the release of GPT4 by OpenAI in March 2023, a fierce debate developed around the risks of AI, Big Tech companies released various proprietary and open-source competitors to ChatGPT, and the European Union passed the regulatory AI Act in record time. Leading figures disagreed on what should be done about the risks of AI. Some claimed that Big Tech is best placed to take care of safety, others argued in favor of open source, and others still argued for regulation of AI and social media. As society contemplates the impact of AI on everyday life, the secrecy surrounding AI technology fueled fears of existential risk and even claims of an upcoming AI bubble burst. Large Language Models (LLMs) such as ChatGPT, Gemini, Claude, Mistral and DeepSeek are a great engineering achievement, are impressive at text summarization and language translation, may improve productivity of those who are knowledgeable enough to spot the LLM’s mistakes, but have great potential to deceive those who aren’t.

There are various technical and non-technical reasons why LLMs and current AI may not be deployed in practice: lack of trust or fairness, reliability issues and public safety as in the case of self-driving cars that use the same technology as LLMs. Fixing reliability issues case-by-case with Reinforcement Learning has proved to be too costly. A common risk mitigation strategy has been to adopt a human-in-the-loop approach: making sure that a human is ultimately responsible for decision making. However, in the age of Agentic AI, where at least some decisions are made by the machine, simply apportioning blame or liability to a human does not address the problem. It is necessary to empower the user of AI, the data scientist and the domain expert to be able to interpret, question and if necessary intervene in the AI system. Neural networks that are accompanied by symbolic descriptions and sound reasoning capabilities will be an important tool in this process of empowering users of AI.

Consider LLMs’ ability to produce code. If GPT4 was allowed to work, not as a stand-alone computer program, but in a loop whereby the code can be executed and data collected from execution to improve the code automatically, one can see how such self-improving LLM with autonomy may pose a serious risk to current computer systems. Recent experiments, however, indicated that the opposite, self-impairing, may also happen in practice, producing a degradation in performance. We will argue that the emerging field of neurosymbolic AI can address such failures and that there must be a better way, other than very costly post-hoc model alignment, of achieving AI that can offer certain logical guarantees to network training.

LLMs have been considered to be general purpose because they will provide an answer to any question. They do that by doing only one thing: predicting the probability of the next word (token) in a sentence. Having made a choice of the next word, LLMs will apply the same calculations recursively to build larger sentences. They are called auto-regressive machine learning models because they perform regression on the discrete tokens to learn such probabilities, and apply recursively the learned function $f$ to choose the word that comes at time t+1 given the words that are available at time t, that is, $x_{t+1}=f(x_{t})$ . Artificial General Intelligence (AGI), however, is best measured by the ability to adapt to novelty. It will require effective learning from fewer data, the ability to reason reliably about the knowledge that has been learned, the extraction of compact descriptions from trained networks and the consolidation of knowledge learned from multiple tasks, using analogy to enable extrapolation to new situations at an adequate level of abstraction. It has been almost two years since GPT4 was released. The competition has caught up. Reliable data seem to have been exhausted. Performance increments obtained with increase in scale have not produced AGI. It is fair to say that the “scale is all you need” claim has not been confirmed. Notwithstanding, domain-specific AI systems that can exhibit intelligence at the level of humans or higher already exist. These systems exhibit intelligence in specialized tasks: targeted medical diagnoses, protein folding, various closed-world two-player strategy games.

When LLMs make stuff up such as non-existing citations, they are said to hallucinate. AGI will require systems that never hallucinate (that is, reason reliably), that can form long term plans and act on those plans to achieve a goal, and that can handle exceptions as they materialize, addressing shifts in data distribution not case-by-case, but requiring far less data labeling. This is very different from current LLMs that seem to have difficulty handling exceptions. For this reason, hallucinations are not going away and the cost of post-hoc model alignment has spiraled in the last two years.

As a case in point, take the o1 LLM system released by OpenAI in September 2024; o1 was claimed to “think before it answers” and to be capable of “truly general reasoning”. Widely seen as a re-branding of the much anticipated GPT5, which was promised to be at AGI level, the little that we know about o1 is that it improved on reasoning and code generation benchmarks, and yet it can be stubbornly poor at simple tasks such as multiplication, formal reasoning, planning or the formidable ARC AGI challenge (see https://arcprize.org/). Let’s assume that OpenAI’s o1 system is best described as “GPT-Go”, a pre-trained transformer to which a tree search is incorporated in the style of Google DeepMind’s earlier Alpha-Go system. The tree search uses “Chain of Thought” (CoT) prompting: generation of synthetic data using the transformer neural network itself in a chain that breaks down a prompt into sub-prompts (sub-problems to be solved in stages). o1’s “thinking” time is presumably needed to build the tree for the CoT. And it’s this breaking of the problem into sub-problems that is expected to improve performance on reasoning tasks since this is how reasoning tasks are solved.

Leaving aside the practical question of how long users will be happy to wait for an answer, the main issue with o1 and successors is a lack of reliability of the synthetic data generation and combinatorial nature of CoT: CoT may solve one reasoning task well today only to fail at an analogous reasoning task tomorrow due to simple naming variations [31]. With synthetic data generation from GPT-like auto-regressive models having been shown to impair model performance, the quality of the data decreases and the model continues to hallucinate [42].

What we are seeing in practice is that eliminating hallucinations is very difficult. And there is another concern: regurgitation. The New York Times (NYT) lawsuit against OpenAI argues that ChatGPT can basically reproduce (regurgitate) copyrighted NYT texts with minimal prompting. Whether regurgitation can be fixed remains to be seen. Efforts in this direction have been focused on a simple technique called RAG (Retrieval Augmented Generation) that fetches facts from external sources. What is clear is that further research is needed to make sense of how LLMs generalize to new situations, to find out whether performance depends on task familiarity or true generalization. In the meantime, there will be many relevant but domain-specific applications of LLMs in areas where the system has been deemed to have been controlled reasonably well or where controlling it isn’t crucial.

In neurosymbolic AI, instead of adjusting the input to fix a misbehaving LLM as done with CoT, the idea is to control the architecture or the loss function of the system. Neurosymbolic AI integrates learning and reasoning to make model development parsimonious by following this recipe: (1) extract symbolic descriptions as learning progresses, (2) reason formally about what has been learned, (3) compress the neural network as knowledge is instilled back into the network. Reasoning in neurosymbolic AI follows the tradition of knowledge representation in AI. It requires the definition of a semantics for deep learning and it measures the capabilities of neural networks w.r.t. formally-defined, sound and approximate reasoning, providing a much needed measure of the accumulation of errors in the AI system.

1.2 AI from a Neurosymbolic Perspective

It is paradoxical that computers have been invented to provide fast calculations and sound reasoning, and yet the latest AI may fail at calculations as simple as multiplication (even though a typical artificial neural network will rely on millions of correct multiplications as part of its internal computations). The first wave of AI in the 1980s was knowledge-based, well-founded and inefficient if compared with deep learning. The second wave from the 2010s was data-driven, distributed and efficient but unsound if compared with knowledge-bases. It is clear that neural networks are here to stay, but the problems with deep learning have been stubbornly difficult to fix using neural networks alone. Next, we discuss how solving these problems will require the use of symbolic AI alongside neural networks. The third wave of AI, we argue, will be neurosymbolic [15].

In order to understand the achievements and limitations of AI, it is helpful to consider the AGI debate https://www.youtube.com/watch?v=JGiLz_Jx9uI. with its focus on what is missing from current AI systems, i.e. the technological innovation that may bring about better AI or AGI. Simply put, such innovation may be described as the ability to apply knowledge learned from a task by a neural network to a novel task without requiring too much data.

As AI experts John Hopfield and Geoff Hinton are awarded the 2024 Nobel Prize for Physics, and AI expert Demis Hassabis is awarded the 2024 Nobel Prize for Chemistry (with David Baker and John Jumper), one can say that the era of computation as the language of science has began. Hassabis led the team at Google DeepMind that created AlphaFold, an AI model capable of predicting with high accuracy the 3D structure of proteins given their amino acid sequence. AlphaFold is arguably the greatest achievement of AI to date, even though it is squarely an application specific (or narrow) AI by comparison with LLMs. From particle physics to drug discovery, energy efficiency and novel materials, AI is being adopted as the process by which scientific research is carried out. However, as noted above, the lack of a description or explanation capable of conveying a deeper sense of understanding of the solution being offered by AI is something that is very unsatisfactory. Computer scientists in a great feat of engineering will solve to a high degree of accuracy very challenging problems in science without necessarily improving their own understanding of the solutions provided by very large neural networks trained on vast amounts of data that are not humanly possible to inspect.

The risks of current AI together with this unsatisfactory lack of explainability confirm the need for neurosymbolic AI as an alternative approach. As mentioned, neurosymbolic AI uses the technology of knowledge extraction to interpret, ask what-if questions and if necessary intervene in the AI system, controlling learning in ways that can offer correctness or fairness guarantees and, with this process, producing a more compact, data efficient system. We start to see a shift towards such explainable neurosymbolic AI systems being deployed as part of a risk-based approach. As argued in [36], effective regulation goes hand in hand with accountability in AI, the definition of a risk mitigation strategy and the use of technology itself such as explainable AI technology [33] to mitigate risks. We shall return to this discussion at the end of the paper.

For more than 20 years, a small group of researchers have been advocating for neurosymbolic AI. Already around the turn of the 21st century, the importance of artificial neural networks as an efficient computational model for learning was clear to that group. But the value of symbol manipulation and abstract reasoning offered by symbolic logic was also obvious to them. Many before them have contributed to neurosymbolic AI. In fact, it could be argued that neurosymbolic AI starts together with connectionism itself, with the aptly titled 1943 paper by McCulloch and Pitts, A Logical Calculus of the Ideas Immanent in Nervous Activity, and with John Von Neumann’s 1952 Lectures on Probabilistic Logics and the Synthesis of Reliable Organisms from Unreliable Components, indicating that the gap between distributed vector representations (embeddings) and localist symbolic representations in logic was not as big as some might imagine. Even Alan Turing’s 1948 Intelligent Machinery introduced a type of neural network called a B-type machine. All of this, of course, before the term Artificial Intelligence was coined ahead of the now famous Dartmouth Workshop in 1956. Since then the field has separated into two: symbolic AI and connectionist AI (or neural networks). This has slowed progress as the two research communities went their separate ways with different conferences, journals and associations. Following the temporary success of symbolic AI in the 1980’s and the success of deep learning since 2015 with its now obvious limitations, the time is right for revisiting the approaches of the founding fathers of computer science and developing neurosymbolic AI that is fit for the 21st century. As a step in this direction, in what follows, we illustrate how a single bi-directional network layer in the form of a restricted Boltzmann machine can implement the full semantics of propositional logic, formally defined.

2 Background: Logic and Restricted Boltzmann Machines

Differently from general-purpose Large Language Models, domain specific Artificial Intelligence, such as the protein folding AlphaFold system, aims to develop systems for specific purposes, enabling human abilities to handle tasks that might otherwise take many years to solve. This goal of domain specific AI is analogous to the invention of the Archimedean lever, which enhanced physical strength capabilities and has enabled humanity to make leaps in construction, mobility and physical labor. AI can be a mental lever that enhances our ability to deal with problems requiring mental activity in volume or intensity that is difficult to accomplish in feasible time or with precision. Modeling such abstract human mental activity is a highly complex task and we shall focus on representing two well-studied aspects: learning and reasoning.

A key step in this endeavor is to choose an appropriate language to represent the problem at hand. In the context of this paper, such a choice will be deemed to be suitable if it allows the development of efficient algorithms to perform learning from data and reasoning about what was learned or if it allows one to identify patterns of solutions that will lead to adequate decisions. Traditional AI has separated the study of reasoning and learning with a focus on either knowledge elicitation by hand for the purpose of sound reasoning or statistical learning from large amounts of data. In neurosymbolic AI this artificial separation is removed. The neurosymbolic cycle seeks to enable AI systems to learn a little and reason a little in integrated fashion. Learning takes place in the usual way within a neural network but reasoning has to be formalized, whether taking place inside or outside the network. Instead of simply measuring reasoning capabilities of the networks using benchmarks, neurosymbolic AI networks seek to offer reasoning guarantees of correctness. It is crucial to pay attention to the many years of research in knowledge representation and reasoning within Computer Science logic. While learning may benefit from the use of natural language and other available multimodal data, sound reasoning requires a formal language. A choice of language adequate to the problem influences the system’s ability to find a solution.

Formal logic, particularly Propositional Logic, is the most straightforward language for representing propositions about the problem domain. Propositional logic is the simplest formal language for representation, a branch of mathematics and logic that deals with simple declarative statements, called propositions, which can be true or false. As we shall see, in the context of neurosymbolic systems, statements are not purely true or false, but are associated with confidence values, probability intervals or degrees of truth denoting the intrinsic uncertainly of AI problems. It is therefore incorrect to assume that the use of logic is incompatible with uncertainty reasoning or limited to crisp, true or false statements. In its most general form, logic includes fuzzy and many-valued logics and various other forms of non-classical reasoning. We start however with propositional logic.

Think of propositions as the fundamental building blocks for reasoning. For instance, “it is raining” is a proposition because its truth can be determined by examining the current weather conditions. We typically use symbols such as $P$ , $Q$ , or $R$ to represent these propositions. Any symbol, including indices, can be used as long as it is clear that they represent a specific proposition. To combine or modify these propositions, we use logical connectives or operators: AND ( $\land$ ), OR ( $\lor$ ), NOT ( $\lnot$ ), IMPLICATION ( $→$ ), and BI-CONDITIONAL ( $\leftrightarrow$ ). For example, if $P$ represents “it is raining” and $Q$ represents “I have an umbrella,” then $P\land Q$ means “it is raining AND I have an umbrella”. The operators allow us to compose complex relationships among ideas in a precise way.

A syntactically correct expression in logic is said to be a Well-Formed Formula (WFF). A WFF in propositional logic is constructed according to the following rules:

1. Any atomic proposition (e.g, $P$ , $Q$ , $R$ ) is a WFF.

1. If $A$ is a WFF then $\lnot A$ (the negation of $A$ ) is also a WFF.

1. If $A$ and $B$ are WFFs then $(A\land B)$ , $(A\lor B)$ , $(A→ B)$ , and $(A\leftrightarrow B)$ are also WFFs.

1. Nothing else is a WFF.

For example, the expression $(P\land Q)→ R$ is a WFF because it follows these rules: $P$ , $Q$ , and $R$ are atomic propositions, $(P\land Q)$ is a valid combination using the AND operator, and the entire expression forms a valid implication. On the other hand, expressions like $P\land\lor Q$ are not WFFs because they violate the rules.

Propositional logic is also known as Boolean Logic, named after George Boole, a pioneer in the formalization of logical reasoning. Interestingly, George Boole is the great-great-grandfather of Geoffrey Hinton, a leading figure in the field of neural networks. Boole proposed his Laws of Thought using a simplified notation where $1$ and $0 0$ denote true and false, respectively. This binary representation aligns naturally with the semantic interpretation of neural networks and fits seamlessly into the reasoning method to be presented in this paper.

By adhering to the rules of WFFs, we ensure that our logical expressions are unambiguous and well-structured (compositional), providing a solid foundation for further exploration of propositional logic and its applications. In the remainder of this paper, unless otherwise specified, we shall use WFF to refer specifically to a subset of WFFs consisting only of formulas constructed using combinations of negation ( $\lnot$ ), conjunction ( $\land$ ), and disjunction ( $\lor$ ). If other logical connectives, such as implication ( $→$ ) or bi-conditional ( $\leftrightarrow$ ), are included, we will explicitly clarify this deviation from the specific subset, noting that in Classical Logic $A\leftrightarrow B$ is equavelent to $(A→ B)\land(B→ A)$ and that $A→ B$ is equivalent to $\neg A\lor B$ .

2.1 Illustrating Logical Reasoning with the Sudoku Puzzle

Sudoku is more than just a number puzzle (see Figure 1); it is a gateway to understanding the power of logical thinking. This globally beloved puzzle challenges us to impose order on apparent chaos, using nothing but numbers and logic. At its core, Sudoku is about solving constraints, ensuring that every row, column, and sub-grid (or block) adheres to a simple strict rule (containing one and only one of the elements of a given set). The same principle of constraint satisfaction is a cornerstone of Artificial Intelligence and computational problem-solving. By learning how to express Sudoku’s rules logically, we unlock the secrets of this captivating game and the tools to tackle more complex problem solving. Let’s explore how propositional logic can elegantly capture the rules of Sudoku as a way to illustrate structured reasoning.

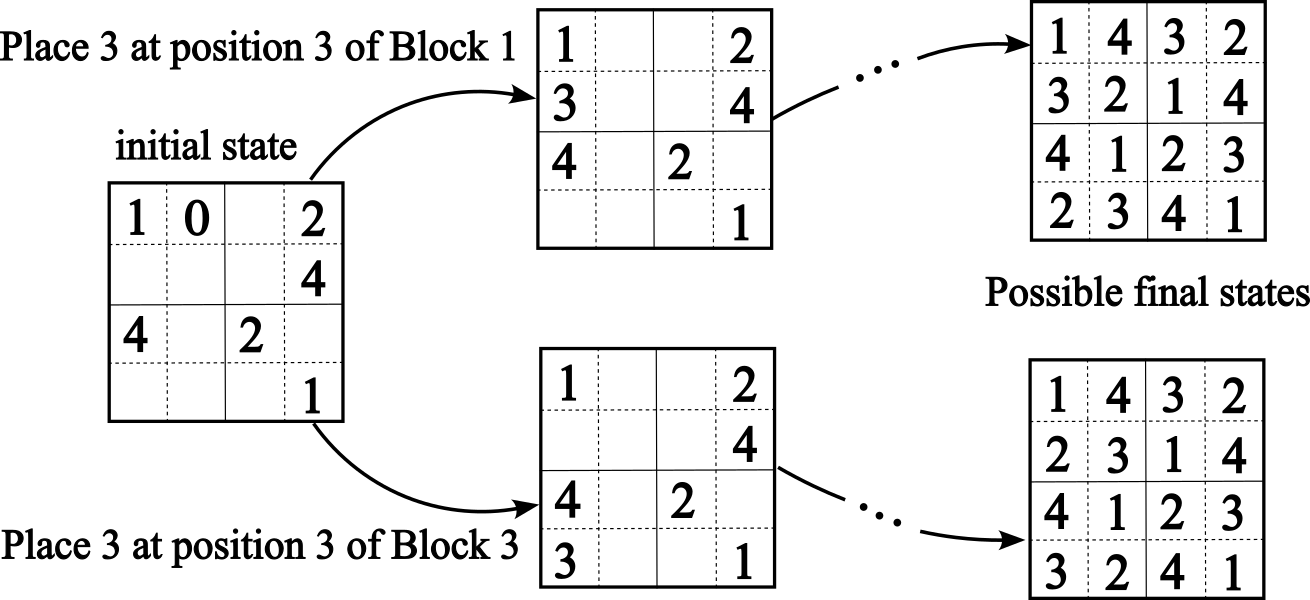

For simplicity, we consider a smaller version of Sudoku, using a $4× 4$ grid instead of the standard $9× 9$ . This simplified puzzle divides the board into four $2× 2$ blocks or sub-grids, each containing four positions (or cells). Blocks are counted from left to right and top to bottom: block 1 is on top of block 3, and block 2 is on top of block 4. Positions within each block are also counted from left to right and top to bottom. Each cell in the grid must contain a number from 1 to 4, with no repetition allowed in any row, column, or $2× 2$ block. In the real Sudoku puzzle, each block is $3× 3$ and the set of possible elements is {1,2,…,9} with the board having 9 blocks in total. Figure 1 depicts an example of an initial setting for a Sudoku $4× 4$ board, followed by two possible transitions placing number 3 in two possible cells satisfying the constraints. Two possible final states are also shown, each derived from the above two states if every movement satisfies the constraints of the puzzle.

<details>

<summary>extracted/6466920/figs/Sudoku-4x4-sol03.png Details</summary>

### Visual Description

## Diagram: Puzzle State Transitions

### Overview

The diagram illustrates the possible state transitions of a 2x2 grid puzzle with numbered tiles (1, 2, 3, 4) and an empty space (0). It shows two possible moves from an initial state and their resulting configurations.

### Components/Axes

- **Initial State**: A 2x2 grid with the following configuration:

```

1 0

4 2

```

(Note: The fourth cell is empty, represented by "0".)

- **Move 1**: "Place 3 at position 3 of Block 1" (top-left 2x2 quadrant).

- **Move 2**: "Place 3 at position 3 of Block 3" (bottom-right 2x2 quadrant).

- **Possible Final States**: Two sets of 2x2 grids resulting from the moves, each with 4 permutations of numbers 1, 2, 3, 4.

### Detailed Analysis

#### Initial State Grid

```

1 0

4 2

```

- **Key Details**: The empty space (0) is at position (1,2) in a 2x2 grid (row-major order).

#### Move 1: Place 3 at Position 3 of Block 1

- **Action**: Insert "3" into the third position of the top-left 2x2 block (positions 1, 2, 3, 4 in row-major order).

- **Resulting Grids**:

1.

```

1 4

3 2

```

2.

```

3 4

1 2

```

3.

```

1 3

4 2

```

4.

```

4 3

1 2

```

#### Move 2: Place 3 at Position 3 of Block 3

- **Action**: Insert "3" into the third position of the bottom-right 2x2 block (positions 3, 4, 7, 8 in row-major order).

- **Resulting Grids**:

1.

```

1 2

4 3

```

2.

```

4 2

1 3

```

3.

```

1 3

4 2

```

4.

```

4 1

3 2

```

### Key Observations

1. **Permutation Variability**: Each move generates 4 distinct permutations of the numbers 1, 2, 3, 4, with no repeated configurations.

2. **Symmetry**: The final states from both moves overlap partially (e.g., "1 3 / 4 2" appears in both Move 1 and Move 2).

3. **Empty Space Propagation**: The initial empty space (0) is replaced by "3" in both moves, but the placement of "3" affects adjacent tiles differently depending on the block targeted.

### Interpretation

The diagram demonstrates how localized changes (placing "3" in specific blocks) propagate through the puzzle, altering the global configuration. The overlapping final states suggest that certain permutations are reachable via multiple paths, while others are unique to a specific move. This could model scenarios in combinatorial optimization, game theory, or state-space exploration, where discrete actions lead to branching outcomes. The absence of repeated states in the final configurations implies deterministic transitions, though the diagram does not explicitly confirm whether all permutations are achievable through these two moves alone.

</details>

Figure 1: An initial Sudoku board and two branches generated by placing a 3 at position 3 of blocks 1 and 3, respectively, and corresponding final states satisfying the constraints of the game.

Solving Sudoku involves reasoning about these constraints, making it a good example for introducing logical notation. To model the problem using propositional logic, one can systematically represent the constraints in terms of propositional variables encoding the relationships between numbers, positions, rows, columns and blocks. The rules dictate that every row, column and block must include the numbers 1 to 4 exactly once. By encoding the problem in this way, one can use symbolic logical reasoning to systematically explore possible solutions while respecting all constraints. The rules are encoded as follows:

Logical Variables:

Let the proposition $B_{i,j,k}$ denote that the block $i$ at position $j$ (that is, the cell $(i,j)$ ) contains the number $k$ . Formally, $B_{i,j,k}$ is true if and only if $k∈\{1,2,3,4\}$ is in position $j$ of block $i$ , $1≤ i≤ 4$ , $1≤ j≤ 4$ . Logical Constraints:

The constraints ensure that the numbers are placed correctly according to the rules of Sudoku. These constraints can be grouped into four categories:

1. Each cell must contain a number (cell $(i,j)$ contains a 1 or a 2 or a 3 or a 4): $B_{i,j,1}\lor B_{i,j,2}\lor B_{i,j,3}\lor B_{i,j,4}$ . When needed, we shall write:

$$

\bigvee_{k=1}^{4}B_{i,j,k}\quad\text{as shorthand notation for }B_{i,j,1}\lor B%

_{i,j,2}\lor B_{i,j,3}\lor B_{i,j,4}.

$$

There cannot be two or more numbers on the same cell Notice that $\neg(A\wedge B)$ implies $\neg(A\wedge B\wedge C)$ .:

$$

\neg(B_{i,j,k_{1}}\wedge B_{i,j,k_{2}}),\quad\text{for all }k_{1}\neq k_{2}.

$$

The above two rules can be written compactly as:

$$

\left(\bigvee_{k=1}^{4}B_{i,j,k}\right)\wedge\left(\bigwedge_{k_{1}<k_{2}}\neg%

(B_{i,j,k_{1}}\wedge B_{i,j,k_{2}})\right),

$$

where $\bigwedge_{i}x_{i}$ is shorthand for $x_{1}\wedge x_{2}\wedge...$ and $k_{1}<k_{2}$ is used to avoid repetition. Notice that $A\wedge B$ is logically equivalent to $B\wedge A$ .

1. Each number appears exactly once per row. For each row across the entire board and each number $k$ , exactly one position in that row must contain $k$ . This is expressed as:

$$

\bigvee_{j=1}^{4}B_{i,j,k}\quad\text{}

$$

along with the constraint that there cannot be two or more occurrences of the same number on the same row:

$$

\neg(B_{i,j_{1},k}\wedge B_{i,j_{2},k}),\quad\text{for all }j_{1}\neq j_{2}.

$$

In compact form:

$$

\left(\bigvee_{j=1}^{4}B_{i,j,k}\right)\wedge\left(\bigwedge_{j_{1}<j_{2}}\neg%

(B_{i,j_{1},k}\wedge B_{i,j_{2},k})\right).

$$

1. Each number appears exactly once per column. In compact form (as above):

$$

\left(\bigvee_{i=1}^{4}B_{i,j,k}\right)\wedge\left(\bigwedge_{i_{1}<i_{2}}\neg%

(B_{i_{1},j,k}\wedge B_{i_{2},j,k})\right).

$$

1. Each number appears exactly once per block. For each $2× 2$ block and each number $k$ , exactly one position within the block must contain $k$ . For example, for the top-left block:

$$

\bigvee_{(i,j)\in\{(1,1),(1,2),(2,1),(2,2)\}}B_{i,j,k},

$$

along with the constraint:

$$

\neg(B_{i_{1},j_{1},k}\wedge B_{i_{2},j_{2},k}),\text{for all distinct pairs }%

(i_{1},j_{1})\neq(i_{2},j_{2}).

$$

In compact form:

$$

\left(\bigvee_{(i,j)\in\text{block}}B_{i,j,k}\right)\wedge\left(\bigwedge_{(i_%

{1},j_{1})<(i_{2},j_{2})}\neg(B_{i_{1},j_{1},k}\wedge B_{i_{2},j_{2},k})\right).

$$

The complete set of constraints for the $4× 4$ Sudoku puzzle is the conjunction of all the above conditions over all cells, rows, columns and blocks. This logical formula guarantees that every number appears exactly once in each row, column, and block, satisfying the rules of Sudoku. It also provides a systematic framework for reasoning about the puzzle.

**Example 1**

*For block 1, position 1, we have: - $B_{1,1,1}\lor B_{1,1,2}\lor B_{1,1,3}\lor B_{1,1,4}$

- $\neg B_{1,1,1}\lor\neg B_{1,1,2}$

- $\neg B_{1,1,1}\lor\neg B_{1,1,3}$

- $\neg B_{1,1,1}\lor\neg B_{1,1,4}$

- $\neg B_{1,1,2}\lor\neg B_{1,1,3}$

- $\neg B_{1,1,2}\lor\neg B_{1,1,4}$

- $\neg B_{1,1,3}\lor\neg B_{1,1,4}$*

Some observations about this representation:

- This notation provides a framework whereby each possible combination of $B$ with indices is assigned to True or False.

- Each rule above is called a clause (a disjunction of logic literals) and the complete set of clauses would be significantly larger to cover all rows, columns and blocks.

- This representation can be used as input to a satisfiability (SAT) solver to find solutions to the Sudoku puzzle, that is, assignments of truth-values True or False to each literal that will provably satisfy the puzzle’s constraints.

This Boolean logic representation allows us to express the Sudoku problem as a set of constraints that must be satisfied simultaneously. By finding a truth assignment to the variables that satisfy all the clauses, we determine a valid solution to the Sudoku puzzle.

2.2 Sudoku with Strategies of Sampling

1. Reasoning Strategy based on Unused Numbers:

To control which number to pick based on the bank of numbers not yet placed on the board, let us illustrate how additional constraints may be introduced that ensure unused numbers are considered first. A strategy such as this could be learned from observation of game plays as well as specified by hand.

For each empty cell $(i,j)$ , define $U(i,j)$ as the set of numbers $k$ such that $k$ is not already used in the corresponding row, column or block of cell $(i,j)$ .

The constraint ensuring the selection of an unused number $k$ can be expressed as:

$$

\bigvee_{k\in U(i,j)}B_{i,j,k}

$$

where $U(i,j)$ is defined as:

$$

U(i,j)=\{k\mid k\notin\{B_{i,j^{\prime},k^{\prime}}\mid j^{\prime}\neq j\}%

\land k\notin\{B_{i^{\prime},j,k^{\prime}}\mid i^{\prime}\neq i\}

$$

$$

\land k\notin\{B_{i^{\prime},j^{\prime},k^{\prime}}\mid(i^{\prime},j^{\prime})%

\in\text{block}(i,j)\}\}.

$$

Here, $\text{block}(i,j)$ denotes the set of positions in the same block as $(i,j)$ .

1. Priority Constraint for Unused Numbers:

To prioritize the use of unused numbers, we can add a preference rule that assigns higher priority to considering numbers from $U(i,j)$ ahead of other possibilities.

Formally, let $P(i,j,k)$ represent the priority of placing number $k$ in cell $(i,j)$ . The priority can be defined as:

$$

P(i,j,k)=\begin{cases}1&\text{if }k\in U(i,j)\\

0&\text{otherwise}\end{cases}

$$

The constraint ensuring that the highest priority is given to unused numbers can be expressed as:

$$

\bigvee_{k\in U(i,j)}(P(i,j,k)\wedge B_{i,j,k})

$$

The complete set of logical constraints for the 4x4 Sudoku puzzle now includes the original Sudoku constraints along with additional reasoning strategies that prioritize the use of unused numbers. These constraints ensure that every number appears exactly once in each row, column, and block while also guiding the generation of solutions (that is, the assignment of truth-values to the literals) by leveraging the bank of unused numbers. By incorporating these, the Sudoku solving process becomes systematic and more efficient as it should reduce the likelihood of the process getting stuck and having to backtrack when searching for a solution, or analogously in the case of a neural network getting stuck in local minima.

2.3 Restricted Boltzmann Machines

An RBM [44] is a two-layer neural network with bidirectional (symmetric) connections, which is characterised by a function called the energy of the RBM:

$$

{\it E}(\mathbf{x},\mathbf{h})=-\sum_{i,j}w_{ij}x_{i}h_{j}-\sum_{i}a_{i}x_{i}-%

\sum_{j}b_{j}h_{j} \tag{1}

$$

where $a_{i}$ and $b_{j}$ are the biases of input unit $x_{i}$ and hidden unit $h_{j}$ , respectively, and $w_{ij}$ is the connection weight between $x_{i}$ and $h_{j}$ . This RBM represents a joint probability distribution $p(\mathbf{x},\mathbf{h})=\frac{1}{Z}e^{-\frac{1}{\tau}{\it E}(\mathbf{x},%

\mathbf{h})}$ where $Z=\sum_{\mathbf{x}\mathbf{h}}e^{-\frac{1}{\tau}{\it E}(\mathbf{x},\mathbf{h})}$ is the partition function and parameter $\tau$ is called the temperature of the RBM, $\mathbf{x}=\{x_{i}\}$ is the set of visible units and $\mathbf{h}=\{h_{j}\}$ is the set of hidden units of the RBM.

Training RBMs normally makes use of the Contrastive Divergence learning algorithm [19], whereby each input vector from the training set is propagated to the hidden layer of the network and back to the input a number of times ( $n$ ) using a probabilistic selection rule to decide at each time whether or not a neuron should be activated (with activation value in $\{0,1\}$ ). The weight assigned to the connection between input neuron $x_{i}$ and hidden neuron $h_{j}$ is adjusted according to a simple update rule based on the difference between the value of $x_{i}h_{j}$ at time $1$ and time $n$ . More precisely, $\Delta W_{ij}=\eta((x_{i}h_{j})_{1}-(x_{i}h_{j})_{n})$ , where $\eta$ is a learning rate (a small positive real number).

3 Symbolic Reasoning with Energy-based Neural Networks

The content of this section is based on [52].

Over the years, many neurosymbolic approaches have used a form of knowledge representation based on if-then rules [49, 13, 50, 12, 56, 29, 51], written $B← A$ (make $B$ $True$ if $A$ is $True$ ) to distinguish from classical implication ( $A→ B$ ). Under the convention that $1$ represents $True$ and $0 0$ represents $False$ , given $B← A$ and input $1$ to neuron $A$ , a neurosymbolic system would infer that neuron $B$ should have activation value approximately $1$ . Given input $0 0$ to neuron $A$ , it would infer that $B$ should have activation approximately $0 0$ .

Logical Boltzmann Machines (LBM) allow for a richer representation than if-then rules by using full propositional logic. Next, we review LBM’s immediate related work, define a mapping from any logical formulae to LBMs, and describe how reasoning takes place by sampling and energy minimization. We also evaluate scalability of reasoning in LBM and learning by combining knowledge and data, evaluating results on benchmarks in comparison with a symbolic, another neurosymbolic and a neural network-based approach.

3.1 Related Work

One of the earliest work on the integration of neural networks and symbolic knowledge is known as KBANN (Knowledge-based Artificial Neural Network [49]), which encodes if-then rules into a hierarchical multilayer perceptron. In another early approach [8], a single-hidden layer recurrent neural network is proposed to support logic programming rules. An extension of that approach to work with first-order logic programs, called Connectionist Inductive Logic Programming (CILP++) [13], uses the concept of propositionalisation from Inductive Logic Programming (ILP), whereby first-order variables can be treated as propositional atoms in the neural network. Also based on first-order logic programs, [12] propose a differentiable ILP approach that can be implemented by neural networks, and [6] maps stochastic logic programs into a differentiable function also trainable by neural networks. These are all supervised learning approaches.

Early work in neurosymbolic AI has also shown a correspondence between propositional logic and symmetrical neural networks [38], in particular Hopfield networks, which nevertheless did not scale well with the number of variables. Among unsupervised learning approaches, Penalty Logic [37] was the first work to integrate nonmonotonic logic in the form of weighted if-then rules into symmetrical neural networks. However, Penalty Logic required the use of higher-order Hopfield networks, which can be difficult to construct Building such higher-order networks requires transforming the energy function into quadratic form by adding hidden variables not present in the original logic formulae. and inefficient to train with the learning algorithm for Boltzmann machines. More recently, several attempts have been made to extract and encode symbolic knowledge into RBMs trained with the more efficient Contrastive Divergence learning algorithm [35, 50]. Such approaches explored the structural similarity between symmetric networks and logical rules with bi-conditional implication but do not have a proof of soundness. By contrast, and similarly to Penalty Logic, LBM is provably equivalent to the logic formulae encoded in the RBM. Differently from Penalty Logic, LBM does not require the use of higher-order networks.

Alongside the above approaches, which translate symbolic representations into neural networks (normally if-then rules translated into a feedforward or recurrent network), there are hybrid approaches that combine neural networks and symbolic AI systems as communicating modules of a neurosymbolic system. These include DeepProbLog [29] and Logic Tensor Networks (LTN) [41]. DeepProbLog adds a neural network module to probabilistic logic programming such that an atom of the logic program can be represented by a network module. LTN and various approaches derived from it use real-valued logic to constrain the loss function of the neural network given statements in firt-order logic. Both DeepProbLog and LTNs use backpropagation, differently from the approach adopted here which uses Contrastive Divergence.

Finally, approaches focused on reasoning include SAT solving using neural networks. In [17, 7], the maximum satisfiability problem is mapped onto Boltzmann machines and higher-order Boltzmann machines, which are used to solve the combinatorial optimization task in parallel, similarly to [38]. In [53], the SAT problem is redefined as a soft (differentiable) task and solved approximately by deep networks with the objective of integrating logical reasoning and learning, as in the case of the approaches discussed earlier. This soft version of the SAT problem is therefore different from the satisfiability problem. A preliminary evaluation of our approach in comparison with symbolic SAT solvers shows that our approach allows the use of up to approximately 100 variables. This is well below the capability of symbolic SAT solvers. A way of improving the performance of neural SAT solvers may well be to consider approximate solutions as done by soft SAT solvers, including neuroSAT [40]. Although still not beating SAT solvers, neuroSAT showed promise at addressing out-of-distribution learning after training on random SAT problems.

In our experiments on learning, the focus is on benchmark neurosymbolic AI tasks with available data and knowledge, obtained from [13]. We therefore compare LBM with a state-of-the-art ILP symbolic system ALEPH [46], standard RBMs as a purely-neural approach closest to LBM, and with CILP++ as a neurosymbolic system. It is worth noting, however, that CILP++ is a neurosymbolic system for supervised learning while LBMs use unsupervised learning, and it is worth investigating approaches for semi-supervised learning and other combinations of such systems. Further comparisons and evaluations on both reasoning and learning are underway.

3.2 Knowledge Representation in RBMs

Before we present LBM, let’s contrast the simple $B← A$ example used earlier with classical logic. Given $A→ B$ as knowledge In classical logic, $A→ B$ is equivalent to $\neg A\vee B$ , i.e. True if $A$ is False regardless of the truth-value of $B$ ., if neuron $A$ is assigned input value $1$ in the corresponding neurosymbolic network, we expect the network to converge to a stable state where neuron $B$ has value approximately $1$ , similarly to the example seen earlier. This is because the truth-value of WFF $A→ B$ is True given an assignment of truth-values True to its constituent literals $A$ and $B$ . Now, $A→ B$ is False when $A$ is True and $B$ is False. If neuron $B$ is assigned input $0 0$ , we expect the network to converge to a stable state where $A$ is approximately $0 0$ ( $A→ B$ is True when $A$ is False and $B$ is False). What if $A$ is assigned input $0 0$ (or $B$ is assigned input $1$ )? In these cases, $A→ B$ is satisfied if $B$ is either $1$ or $0 0$ (or if $A$ is either $1$ or $0 0$ ). Differently from $B← A$ , the network will converge to one of the two options that satisfy the formulae.

From this point forward, unless stated otherwise, we will treat assignments of truth-values to logical literals and binary input vectors denoting the activation states of neurons indistinguishably.

**Definition 1**

*Let $s_{\varphi}(\mathbf{x})∈\{0,1\}$ denote the truth-value of a WFF $\varphi$ given an assignment of truth-values $\mathbf{x}$ to the literals of $\varphi$ , where truth-value $True$ is mapped to 1 and truth-value $False$ is mapped to 0. Let ${\it E}(\mathbf{x},\mathbf{h})$ denote the energy function of an energy-based neural network $\mathcal{N}$ with visible units $\mathbf{x}$ and hidden units $\mathbf{h}$ . $\varphi$ is said to be equivalent to $\mathcal{N}$ if and only if for any assignment of values to $\mathbf{x}$ there exists a function $\psi$ such that $s_{\varphi}(\mathbf{x})=\psi({\it E}(\mathbf{x},\mathbf{h}))$ .*

Definition 1 is similar to that of Penalty Logic [37], where all assignments of truth-values satisfying a WFF $\varphi$ are mapped to global minima of the energy function of network $\mathcal{N}$ . In our case, by construction, assignments that do not satisfy the WFF will, in addition, be mapped to maxima of the energy function. To see how this is the case, it will be useful to define strict and full DNFs, as follows.

**Definition 2**

*A strict DNF (SDNF) is a DNF with at most one conjunctive clause (a conjunction of literals) that maps to $True$ for any choice of assignment of truth-values $\mathbf{x}$ . A full DNF is a DNF where each propositional variable (a positive or negative literal) must appear at least once in every conjunctive clause (sometimes called a canonical DNF).*

For example, to turn DNF $A\vee B$ into an equivalent full DNF, one needs to map it to $(A\wedge\neg B)\vee(\neg A\wedge B)\vee(A\wedge B)$ , according to the truth-table for $A\vee B$ . For any given assignment of truth-values to $A$ and $B$ , at most one of the above three conjunctive clauses will be $True$ , by definition of the truth-table. Not every SDNF is also a full DNF though, e.g. $(a\wedge b)\vee\neg b$ is a SDNF that is not a full DNF.

**Lemma 1**

*Let $\mathcal{S}_{T_{j}}$ denote the set of indices of the positive literals $\mathrm{x}_{t}$ in a conjunctive clause $j$ . Let $\mathcal{S}_{K_{j}}$ denote the set of indices of the negative literals $\mathrm{x}_{k}$ in $j$ . Any SDNF $\varphi\equiv\bigvee_{j}(\bigwedge_{t}\mathrm{x}_{t}\wedge\bigwedge_{k}\neg%

\mathrm{x}_{k})$ can be mapped onto an energy function: $$

{\it E}(\mathbf{x})=-\sum_{j}(\prod_{t\in\mathcal{S}_{T_{j}}}x_{t}\prod_{k\in%

\mathcal{S}_{K_{j}}}(1-x_{k})).

$$*

Proof: Each conjunctive clause $\bigwedge_{t}\mathrm{x}_{t}\wedge\bigwedge_{k}\neg\mathrm{x}_{k}$ in $\varphi$ corresponds to the product $\prod_{t}x_{t}\prod_{k}(1-x_{k})$ which maps to $1$ if and only if $x_{t}$ is $True$ ( $x_{t}=1$ ) and $x_{k}$ is $False$ ( $x_{k}=0$ ) for all $t∈\mathcal{S}_{T_{j}}$ and $k∈\mathcal{S}_{K_{j}}$ . Since $\varphi$ is SDNF, $\varphi$ is $True$ if and only if one conjunctive clause is $True$ and $\sum_{j}(\prod_{t∈\mathcal{S}_{T_{j}}}x_{t}\prod_{k∈\mathcal{S}_{K_{j}}}(1%

-x_{k}))=1$ . Hence, the neural network with energy function ${\it E}$ is such that $s_{\varphi}(\mathbf{x})=-{\it E}(\mathbf{x})$ . ∎

**Theorem 1**

*Any SDNF $\varphi\equiv\bigvee_{j}(\bigwedge_{t}\mathrm{x}_{t}\wedge\bigwedge_{k}\neg%

\mathrm{x}_{k})$ can be mapped onto an RBM with energy function:

$$

{\it E}(\mathbf{x},\mathbf{h})=-\sum_{j}h_{j}(\sum_{t\in\mathcal{S}_{T_{j}}}x_%

{t}-\sum_{k\in\mathcal{S}_{K_{j}}}x_{k}-|\mathcal{S}_{T_{j}}|+\epsilon), \tag{2}

$$

such that $s_{\varphi}(\mathbf{x})=-{\it E}(\mathbf{x})$ , where $0<\epsilon<1$ and $|\mathcal{S}_{T_{j}}|$ is the number of positive literals in conjunctive clause $j$ of $\varphi$ .*

Proof: Lemma 1 states that any SDNF $\varphi$ can be mapped onto energy function ${\it E}=-\sum_{j}(\prod_{t∈\mathcal{S}_{T_{j}}}x_{t}\prod_{k∈\mathcal{S}_{%

K_{j}}}(1-x_{k}))$ . For each expression $\tilde{e}_{j}(\mathbf{x})=-\prod_{t∈\mathcal{S}_{T_{j}}}x_{t}\prod_{k∈%

\mathcal{S}_{K_{j}}}(1-x_{k})$ , we define an energy expression associated with hidden unit $h_{j}$ as $e_{j}(\mathbf{x},h_{j})=-h_{j}(\sum_{t∈\mathcal{S}_{T_{j}}}x_{t}-\sum_{k∈%

\mathcal{S}_{K_{j}}}x_{k}-|\mathcal{S}_{T_{j}}|+\epsilon)$ . The term $e_{j}(\mathbf{x},h_{j})$ is minimized with value $-\epsilon$ when $h_{j}=1$ , written $min_{h_{j}}(e_{j}(\mathbf{x},h_{j}))=-\epsilon$ . This is because $-(\sum_{t∈\mathcal{S}_{T_{j}}}x_{t}-\sum_{k∈\mathcal{S}_{K_{j}}}x_{k}-|%

\mathcal{S}_{T_{j}}|+\epsilon)=-\epsilon$ if and only if $x_{t}=1$ and $x_{k}=0$ for all $t∈\mathcal{S}_{T_{j}}$ and $k∈\mathcal{S}_{K_{j}}$ . Otherwise, $-(\sum_{t}x_{t∈\mathcal{S}_{T_{j}}}-\sum_{k∈\mathcal{S}_{K_{j}}}x_{k}-|%

\mathcal{S}_{T_{j}}|+\epsilon)>0$ and $min_{h_{j}}(e_{j}(\mathbf{x},h_{j}))=0$ with $h_{j}=0$ . By repeating this process for each $\tilde{e}_{j}(\mathbf{x})$ we obtain that the energy function ${\it E}(\mathbf{x},\mathbf{h})=-\sum_{j}h_{j}(\sum_{t∈\mathcal{S}_{T_{j}}}x_%

{t}-\sum_{k∈\mathcal{S}_{K_{j}}}x_{k}-|\mathcal{S}_{T_{j}}|+\epsilon)$ is such that $s_{\varphi}(\mathbf{x})=-\frac{1}{\epsilon}min_{\mathbf{h}}{\it E}(\mathbf{x},%

\mathbf{h})$ . ∎

It is well-known that any WFF $\varphi$ can be converted into DNF. Then, if $\varphi$ is not SDNF, by definition there is more than one conjunctive clause in $\varphi$ that map to $True$ when $\varphi$ is satisfied. This group of conjunctive clauses can always be converted into a full DNF according to its truth-table. By definition, any such full DNF is also a SDNF. Therefore, any WFF can be converted into SDNF. From Theorem 1, it follows that any WFF can be represented by the energy function of an RBM. The conversion of WFFs into full DNF can be computationally expensive. Sometimes, the logic is provided already in canonical DNF form or in Conjunctive Normal Form (CNF), i.e. conjunctions of disjunctions. We will see later that any WFF expressed in CNF can be converted into an RBM’s energy function efficiently without the need to convert into SDNF first. This covers the most common forms of propositional knowledge representation. Next, we describe a method for converting logical formulae into SDNF, which we use in the empirical evaluations that will follow. Consider a clause $\gamma$ such that:

$$

\gamma\equiv\bigvee_{t\in\mathcal{S}_{T}}\neg\mathrm{x}_{t}\vee\bigvee_{k\in%

\mathcal{S}_{K}}\mathrm{x}_{k} \tag{3}

$$

where $\mathcal{S}_{T}$ now denotes the set of indices of the negative literals, and $\mathcal{S}_{K}$ denotes the set of indices of the positive literals in the clause (dually to the conjunctive clause case). Clause $\gamma$ can be rearranged into $\gamma\equiv\gamma^{\prime}\vee\mathrm{x}^{\prime}$ , where $\gamma^{\prime}$ is obtained by removing $\mathrm{x}^{\prime}$ from $\gamma$ ( $\mathrm{x}^{\prime}$ can be either $\neg\mathrm{x}_{t}$ or $\mathrm{x}_{k}$ for any $t∈\mathcal{S}_{T}$ and $k∈\mathcal{S}_{K}$ ). We have:

$$

\gamma\equiv(\neg\gamma^{\prime}\wedge\mathrm{x}^{\prime})\vee\gamma^{\prime} \tag{4}

$$

because $(\neg\gamma^{\prime}\wedge\mathrm{x}^{\prime})\vee\gamma^{\prime}\equiv(\gamma%

^{\prime}\vee\neg\gamma^{\prime})\wedge(\gamma^{\prime}\vee\mathrm{x}^{\prime}%

)\equiv True\wedge(\gamma^{\prime}\vee\mathrm{x}^{\prime})$ . By De Morgan’s law ( $\neg(\mathrm{a}\vee\mathrm{b})\equiv\neg\mathrm{a}\wedge\neg\mathrm{b}$ ), we can always convert $\neg\gamma^{\prime}$ (and therefore $\neg\gamma^{\prime}\wedge\mathrm{x}^{\prime}$ ) into a conjunctive clause.

By applying (4) repeatedly, each time we eliminate a variable out of the clause by moving it into a new conjunctive clause. Given an assignment of truth-values, either the clause $\gamma^{\prime}$ will be True or the conjunctive clause ( $\neg\gamma^{\prime}\wedge\mathrm{x}^{\prime}$ ) will be True, e.g. $a\vee b\equiv a\vee(\neg a\wedge b)$ . Therefore, the SDNF for clause $\gamma$ in Eq. (3) is:

$$

\bigvee_{p\in\mathcal{S}_{T}\cup\mathcal{S}_{K}}(\bigwedge_{t\in\mathcal{S}_{T%

}\backslash p}\mathrm{x}_{t}\wedge\bigwedge_{k\in\mathcal{S}_{K}\backslash p}%

\neg\mathrm{x}_{k}\wedge\mathrm{x}^{\prime}_{p}) \tag{5}

$$

where $\mathcal{S}\backslash p$ denotes a set $\mathcal{S}$ from which element $p$ has been removed. If $p∈\mathcal{S}_{T}$ then $\mathrm{x}^{\prime}_{p}\equiv\neg\mathrm{x}_{p}$ . Otherwise, $\mathrm{x}^{\prime}_{p}\equiv\mathrm{x}_{p}$ . As an example of the translation into SDNF, consider the translation of an if-then statement (logical implication) below.

**Example 2**

*Translation of if-then rules into SDNF. Consider the formula $\gamma\equiv(x_{1}\wedge x_{2}\wedge\neg x_{3})→ y$ . Using our notation: $$

\gamma\equiv(\bigwedge_{t\in\{1,2\}}\mathrm{x}_{t}\wedge\bigwedge_{k\in\{3\}}%

\neg\mathrm{x}_{k})\rightarrow\mathrm{y} \tag{6}

$$ Converting to DNF: $$

(\mathrm{y}\wedge\bigwedge_{t\in\{1,2\}}\mathrm{x}_{t}\wedge\bigwedge_{k\in\{3%

\}}\neg\mathrm{x}_{k})\vee\bigvee_{t\in\{1,2\}}\neg\mathrm{x}_{t}\vee\bigvee_{%

k\in\{3\}}\mathrm{x}_{k} \tag{7}

$$ Applying the variable elimination method to the clause $\neg\mathrm{x}_{1}\vee\neg\mathrm{x}_{2}\vee\mathrm{x}_{3}$ , we obtain the SDNF for $\gamma$ : $$

\displaystyle(\mathrm{y}\wedge\bigwedge_{t\in\mathcal{S}_{T}}\mathrm{x}_{t}%

\bigwedge_{k\in\mathcal{S}_{K}}\neg\mathrm{x}_{k})\vee(\neg\mathrm{x}_{1})\vee%

(\mathrm{x}_{1}\wedge\neg\mathrm{x}_{2})\vee(\mathrm{x}_{1}\wedge\mathrm{x}_{2%

}\wedge\mathrm{x}_{3}) \tag{8}

$$*

3.3 Reasoning in RBMs

We have seen how propositional logic formula can be mapped onto the energy functions of RBMs. In this section, we discuss the deployment of such RBMs for logical reasoning.

3.3.1 Reasoning as Sampling

There is a direct relationship between inference in RBMs and logical satisfiability, as follows.

**Lemma 2**

*Let $\mathcal{N}$ be an RBM with energy function $E$ . Let $\varphi$ be a WFF such that $s_{\varphi}(\mathbf{x})=-{\it E}(\mathbf{x})$ . Let $\mathcal{A}$ be a set of indices of variables in $\varphi$ that have been assigned to either True or False. We use $\mathbf{x}_{\mathcal{A}}$ to denote the set $\{x_{\alpha}|\alpha∈\mathcal{A}\}$ ). Let $\mathcal{B}$ be a set of indices of variables that have not been assigned a truth-value in $\varphi$ . We use $\mathbf{x}_{\mathcal{B}}$ to denote $\{x_{\beta}|\beta∈\mathcal{B}\}$ ). Performing Gibbs sampling on $\mathcal{N}$ given $\mathbf{x}_{\mathcal{A}}$ is equivalent to searching for an assignment of truth-values for $\mathbf{x}_{\mathcal{B}}$ that satisfies $\varphi$ .*

Proof: Theorem 1 has shown that the assignments of truth-values to $\varphi$ are partially ordered according to the RBM’s energy function such that the models of $\varphi$ (mapping $\varphi$ to 1) correspond to minima of the energy function. We say that the satisfiability of $\varphi$ is inversely proportional to the RBM’s rank function. When the satisfiability of $\varphi$ is maximum ( $s_{\varphi}(\mathbf{x})=1$ ) ranking the output of $-{\it E}(\mathbf{x})$ produces the highest rank. A value of $\mathbf{x}_{\mathcal{B}}$ that minimises the energy function also maximises satisfiability: $s_{\varphi}(\mathbf{x}_{\mathcal{B}},\mathbf{x}_{\mathcal{A}})\propto-min_{%

\mathbf{h}}{\it E}(\mathbf{x}_{\mathcal{B}},\mathbf{x}_{\mathcal{A}},\mathbf{h})$ because:

$$

\displaystyle\mathbf{x}_{\mathcal{B}}^{*}=\operatorname*{arg\,min}_{\mathbf{x}%

_{\mathcal{B}_{\mathbf{h}}}}{\it E}(\mathbf{x}_{\mathcal{B}},\mathbf{x}_{%

\mathcal{A}},\mathbf{h})=\operatorname*{arg\,max}_{\mathbf{x}_{\mathcal{B}}}(s%

_{\varphi}(\mathbf{x}_{\mathcal{B}},\mathbf{x}_{\mathcal{A}})) \tag{9}

$$

We can consider an iterative process to search for truth-values $\mathbf{x}_{\mathcal{B}}^{*}$ by minimising an RBM’s energy function. This can be done using gradient descent or contrastive divergence with Gibbs sampling. The goal is to update the values of $\mathbf{h}$ and then $\mathbf{x}_{\mathcal{B}}$ in parallel until convergence to minimise ${\it E}(\mathbf{x}_{\mathcal{B}},\mathbf{x}_{\mathcal{A}},\mathbf{h})$ while keeping the other variables ( $\mathbf{x}_{\mathcal{A}}$ ) fixed. The gradients amount to:

$$

\displaystyle\frac{\partial-{\it E}(\mathbf{x}_{\mathcal{B}},\mathbf{x}_{%

\mathcal{A}},\mathbf{h})}{\partial h_{j}} \displaystyle=\sum_{i\in\mathcal{A}\cup\mathcal{B}}x_{i}w_{ij}+\theta_{j} \displaystyle\frac{\partial-{\it E}(\mathbf{x}_{\mathcal{B}},\mathbf{x}_{%

\mathcal{A}},\mathbf{h})}{\partial x_{\beta}} \displaystyle=\sum_{j}h_{j}w_{\beta j}+theta_{\beta} \tag{10}

$$

In the case of Gibbs sampling, given the assigned variables $\mathbf{x}_{\mathcal{A}}$ , the process starts with a random initialization of $\mathbf{x}_{\mathcal{B}}$ and proceeds to infer values for the hidden units $h_{j}$ and then the unassigned variables $x_{\beta}$ in the visible layer of the RBM, using the conditional distributions $h_{j}\sim p(h_{j}|\mathbf{x})$ and $x_{\beta}\sim p(x_{\beta}|\mathbf{h})$ , respectively, where $\mathbf{x}=\{\mathbf{x}_{\mathcal{A}},\mathbf{x}_{\mathcal{B}}\}$ and:

$$

\displaystyle p(h_{j}|\mathbf{x}) \displaystyle=\frac{1}{1+e^{-\frac{1}{\tau}\sum_{i}x_{i}w_{ij}+\theta_{j}}}=%

\frac{1}{1+e^{-\frac{1}{\tau}\frac{\partial-{\it E}(\mathbf{x}_{\mathcal{B}},%

\mathbf{x}_{\mathcal{A}},\mathbf{h})}{\partial h_{j}}}} \displaystyle p(x_{\beta}|\mathbf{h}) \displaystyle=\frac{1}{1+e^{-\frac{1}{\tau}\sum_{j}h_{j}w_{\beta j}+\theta_{%

\beta}}}=\frac{1}{1+e^{-\frac{1}{\tau}\frac{\partial-{\it E}(\mathbf{x}_{%

\mathcal{B}},\mathbf{x}_{\mathcal{A}},\mathbf{h})}{\partial x_{\beta}}}} \tag{11}

$$

It can be seen from Eq.(11) that the distributions are monotonic functions of the negative energy’s gradient over $\mathbf{h}$ and $\mathbf{x}_{\mathcal{B}}$ . Therefore, performing Gibbs sampling on them can be seen as moving towards a local minimum that is equivalent to an assignment of truth-values that satisfies $\varphi$ . Each step of Gibbs sampling, calculating $\mathbf{h}$ and then $\mathbf{x}$ to reduce the energy, should intuitively generate an assignment of truth-values that gets closer to satisfying the formula $\varphi$ . ∎

3.3.2 Reasoning as Lowering Free Energy

When the number of unassigned variables is not large, it should be possible to calculate the above probabilities directly. In this case, one can infer the assignments of $\mathbf{x}_{\mathcal{B}}$ using the conditional distribution:

$$

P(\mathbf{x}_{\mathcal{B}}|\mathbf{x}_{\mathcal{A}})=\frac{e^{-\mathcal{F}_{%

\mathcal{B}}(\mathbf{x}_{\mathcal{A}},\mathbf{x}_{\mathcal{B}})}}{\sum_{%

\mathbf{x}^{\prime}_{\mathcal{B}}}e^{\mathcal{F}_{\mathcal{B}}}(\mathbf{x}_{%

\mathcal{A}},\mathbf{x}^{\prime}_{\mathcal{B}})} \tag{12}

$$

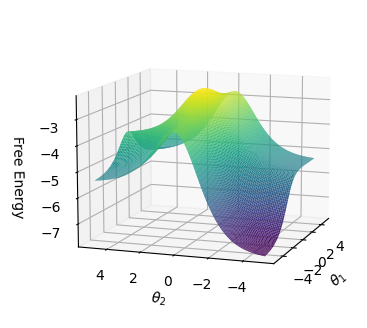

where $\mathcal{F}_{\mathcal{B}}=-\sum_{j}(-\log(1+e^{(c\sum_{i∈\mathcal{A}\cup%

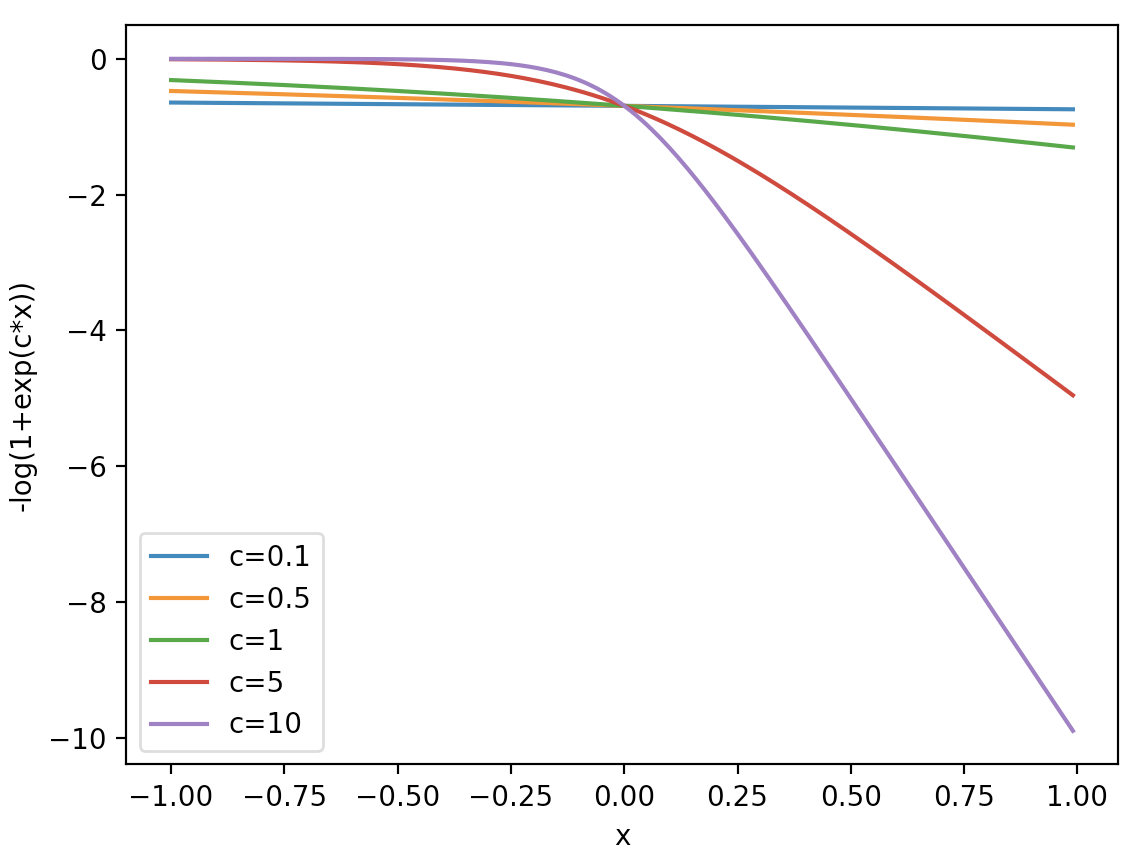

\mathcal{B}}w_{ij}x_{i}+\theta_{j})}))$ is known as the free energy; $\mathbf{x}^{\prime}_{\mathcal{B}}$ denotes all the combinations of truth-value assignments to the literals in $\mathbf{x}_{\mathcal{B}}$ , and $c$ is a non-negative real number that we call a confidence value. The free energy term $-\log(1+e^{(c\sum_{i∈\mathcal{A}\cup\mathcal{B}}w_{ij}x_{i}+\theta_{j})})$ is a negative softplus function scaled by $c$ as shown in Figure 2. It returns a negative output for a positive input and a close-to-zero output for a negative input.

<details>

<summary>extracted/6466920/figs/confidence_smoothing.png Details</summary>

### Visual Description

## Line Graph: -log(1+exp(c*x)) vs x for varying c values

### Overview

The graph displays five curves representing the function -log(1+exp(c*x)) for different constant values of c (0.1, 0.5, 1, 5, 10). The x-axis ranges from -1.00 to 1.00, while the y-axis spans from -10 to 0. All curves originate near y=0 at x=-1.00 and exhibit varying degrees of decline as x increases toward 1.00.

### Components/Axes

- **X-axis**: Labeled "x" with tick marks at -1.00, -0.75, -0.50, -0.25, 0.00, 0.25, 0.50, 0.75, 1.00.

- **Y-axis**: Labeled "-log(1+exp(c*x))" with tick marks at -10, -8, -6, -4, -2, 0.

- **Legend**: Located at the bottom-left corner, mapping colors to c values:

- Blue: c=0.1

- Orange: c=0.5

- Green: c=1

- Red: c=5

- Purple: c=10

### Detailed Analysis

1. **c=0.1 (Blue)**: Nearly flat line with minimal decline. Starts near y=0 at x=-1.00 and decreases slightly to ~y=-0.5 at x=1.00.

2. **c=0.5 (Orange)**: Slightly steeper than blue. Begins near y=0 and drops to ~y=-1.5 at x=1.00.

3. **c=1 (Green)**: Moderate decline. Starts near y=0 and reaches ~y=-3 at x=1.00.

4. **c=5 (Red)**: Sharp decline. Starts near y=0 and plunges to ~y=-7 at x=1.00.

5. **c=10 (Purple)**: Steepest slope. Begins near y=0 and falls sharply to ~y=-10 at x=1.00.

All curves intersect near x=0, where their y-values converge to approximately -0.5 to -1.0. The rate of decline increases exponentially with higher c values.

### Key Observations

- **Symmetry**: All curves are symmetric about x=0, with identical behavior for positive and negative x values.

- **Convergence**: At x=0, all curves intersect at y≈-0.5 to -1.0, regardless of c value.

- **Sensitivity**: Higher c values (5, 10) produce steeper slopes, indicating greater sensitivity to x changes.

- **Asymptotic Behavior**: Curves approach horizontal asymptotes as x→±∞ (not shown), with y-values stabilizing near -c for large |x|.

### Interpretation

The graph demonstrates how the parameter c controls the steepness of the function's response to x. Higher c values amplify the function's sensitivity, causing rapid declines for positive x and sharp rises for negative x (though only x≥-1.00 is shown). The intersection at x=0 suggests a critical point where the function's behavior transitions symmetrically. This could model phenomena like activation functions in neural networks or dose-response relationships in pharmacology, where c represents a scaling factor for input sensitivity.

</details>

Figure 2: Free energy term $-\log(1+e^{cx})$ for different confidence values $c$ .

Each free energy term is associated with a conjunctive clause in the SDNF through the weighted sum $\sum_{i∈\mathcal{A}\cup\mathcal{B}}w_{ij}x_{i}+\theta_{j}$ . Therefore, if a truth-value assignment of $\mathbf{x}_{\mathcal{B}}$ does not satisfy the formula $\varphi$ , all energy terms will be close to zero. When $\varphi$ is satisfied, one free energy term will be $-\log(1+e^{c\epsilon})$ , for a choice of $0<\epsilon<1$ from Theorem 1. Thus, the more likely that a truth assignment is to satisfying the formula, the lower the free energy. Formally:

$$

s_{\varphi}(\mathbf{x})=-\frac{1}{c\epsilon}\text{min}_{\mathbf{h}}E(\mathbf{x%

},\mathbf{h})=\lim_{c\rightarrow\infty}-\frac{1}{c\epsilon}\mathcal{F}(\mathbf%

{x}) \tag{13}

$$

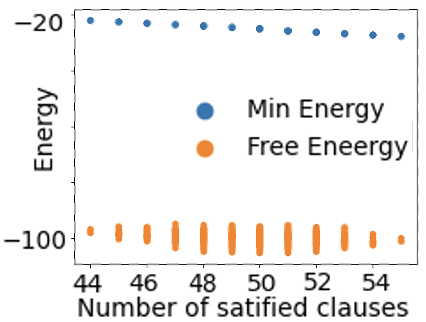

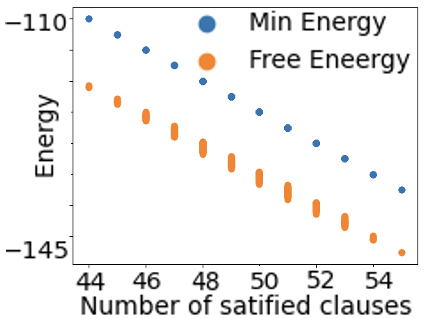

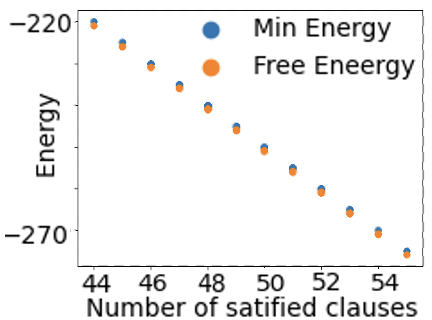

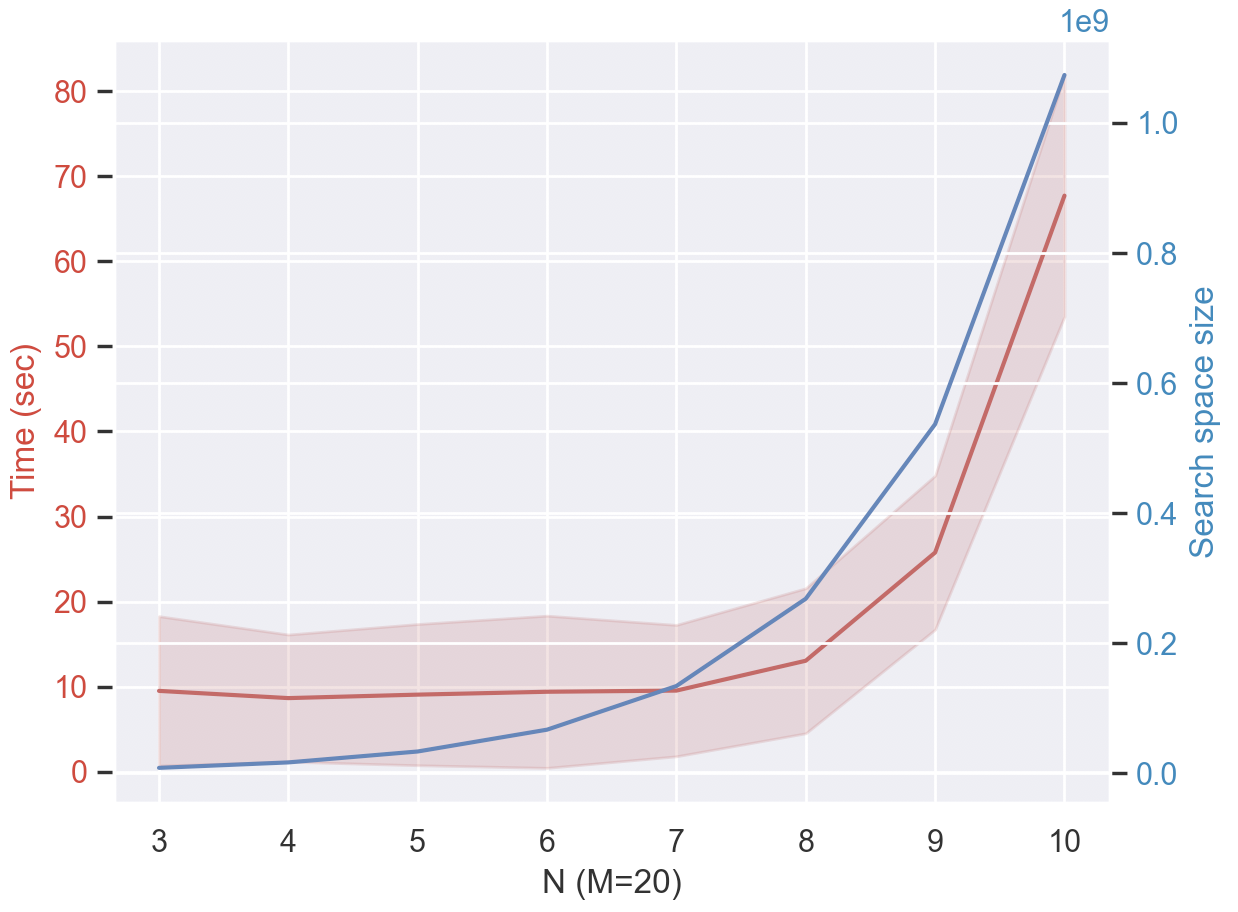

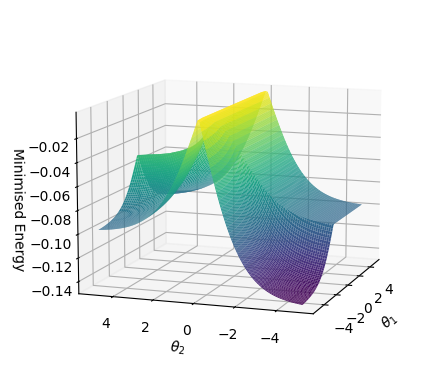

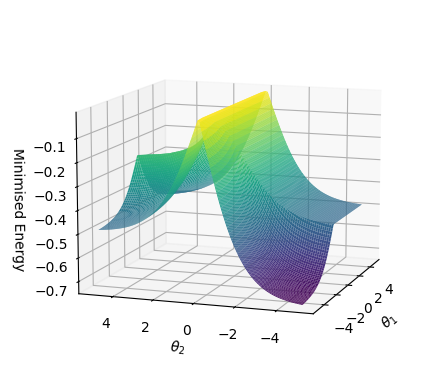

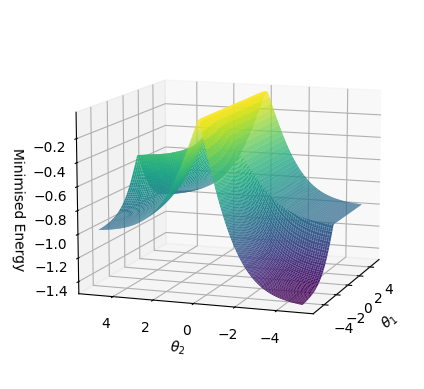

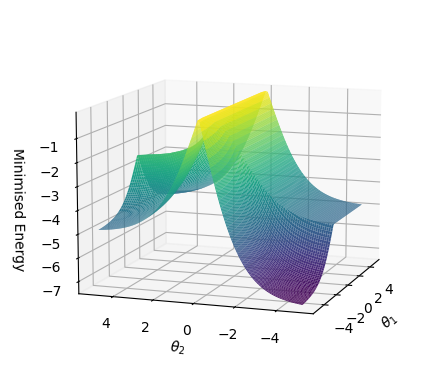

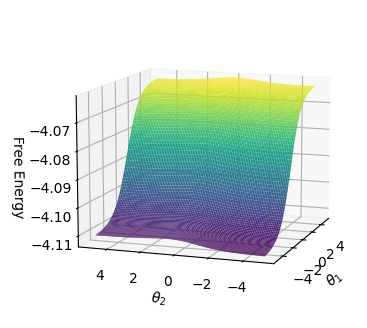

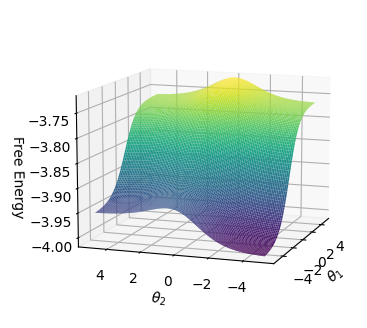

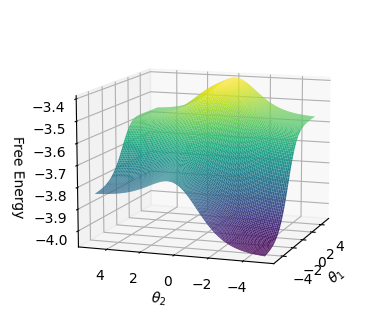

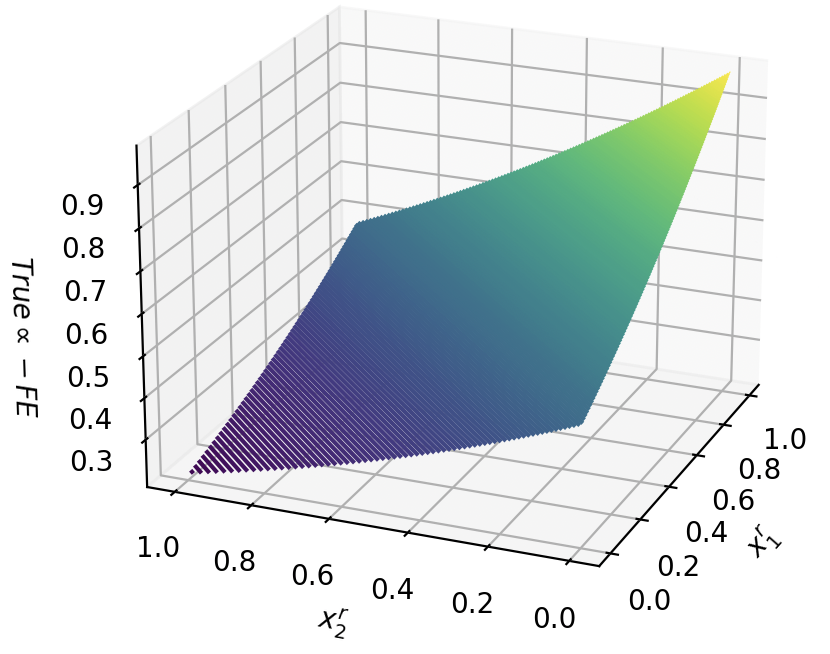

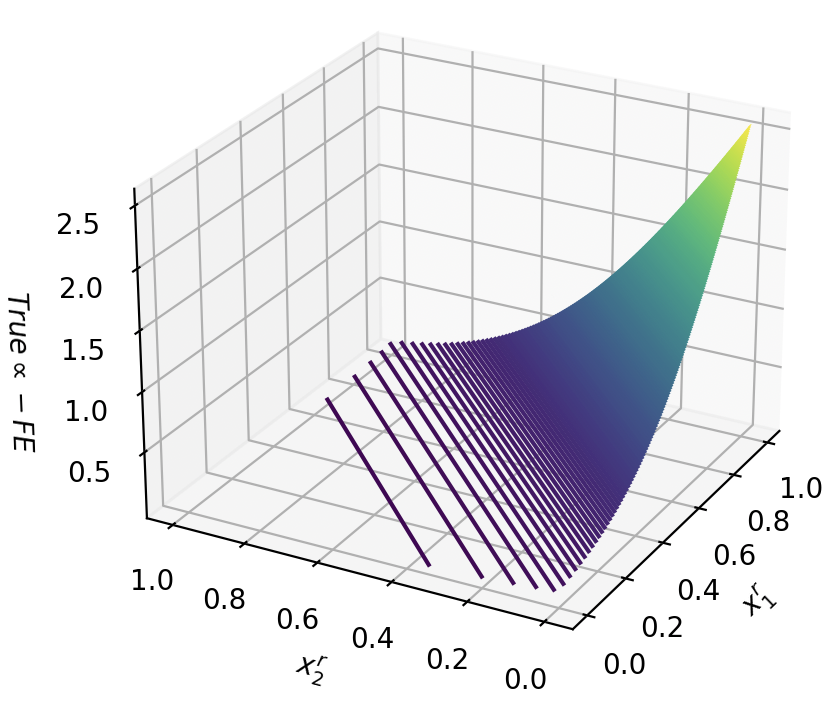

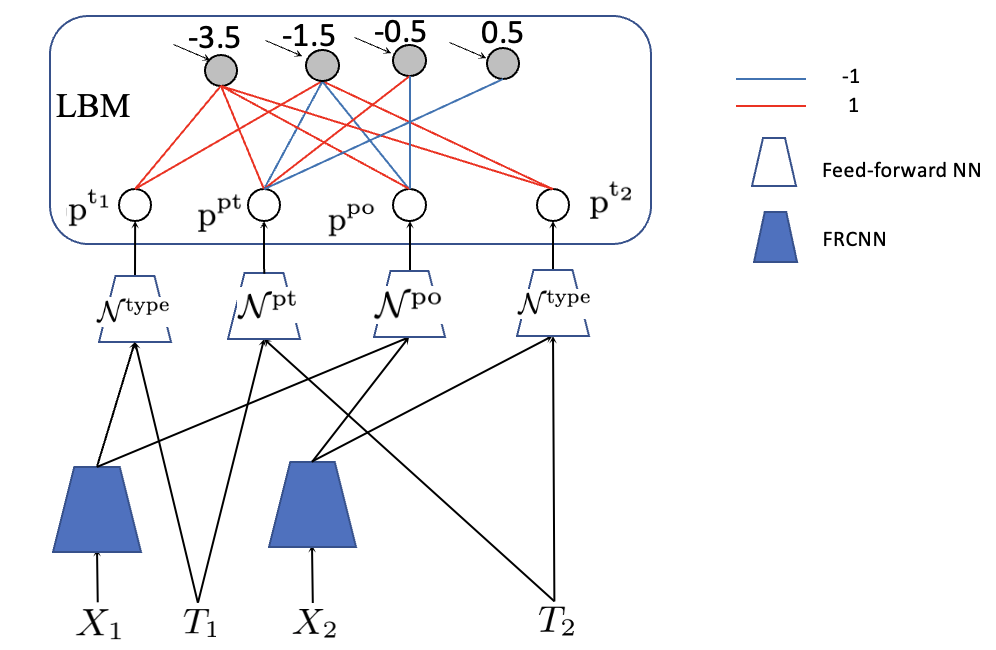

Figure 3 shows the average values of the energy function and free energy for CNFs with 55 clauses as the number of satisfied clauses increases. The CNF is satisfied if and only if all 55 clauses are satisfied. As can be seen, the relationships are linear. Minimum energy and free energy values converge with an increasing value of $c$ .

<details>

<summary>extracted/6466920/figs/energies_versus_sat_clauses_c1.png Details</summary>

### Visual Description

## Scatter Plot: Energy vs. Number of Satisfied Clauses

### Overview

The image is a scatter plot comparing two energy metrics—**Min Energy** (blue dots) and **Free Energy** (orange dots)—across a range of **number of satisfied clauses** (x-axis). The y-axis represents **Energy** values, with both metrics showing distinct trends.

### Components/Axes

- **X-axis**: "Number of satisfied clauses" with ticks at 44, 46, 48, 50, 52, 54.

- **Y-axis**: "Energy" with values ranging from -100 to -20.

- **Legend**:

- **Blue dots**: "Min Energy"

- **Orange dots**: "Free Energy"

- **Spatial Placement**:

- Legend is positioned in the **top-right** corner.

- Blue dots (Min Energy) are clustered near the **top** of the y-axis (around -20).

- Orange dots (Free Energy) are clustered near the **bottom** of the y-axis (around -100).

### Detailed Analysis

- **Min Energy (Blue Dots)**:

- All data points are **approximately -20** with minimal variation.

- Slight downward trend as the number of satisfied clauses increases (e.g., from -20 at 44 to -20 at 54).

- No significant outliers; points are tightly grouped.

- **Free Energy (Orange Dots)**:

- All data points are **approximately -100** with minor fluctuations.

- Slight upward trend (less negative) as the number of satisfied clauses increases (e.g., from -100 at 44 to -100 at 54).

- Some variability in the orange dots (e.g., slight dips around 48–50), but no extreme outliers.

### Key Observations

1. **Min Energy** remains nearly constant across all values of satisfied clauses, hovering around **-20**.

2. **Free Energy** is consistently **lower** (more negative) than Min Energy, averaging around **-100**.

3. Both metrics show **minimal variation** with the number of satisfied clauses, suggesting stability in the system.

4. The **legend** clearly distinguishes the two metrics, with no ambiguity in color coding.

### Interpretation

- The **Min Energy** and **Free Energy** metrics represent different energy states, with Free Energy being significantly lower (more negative) than Min Energy. This could imply that Free Energy is a more favorable or optimized state in the context of the system being analyzed.

- The **stability** of both metrics across the range of satisfied clauses suggests that the system’s energy is relatively insensitive to changes in the number of satisfied clauses.

- The **slight downward trend** in Min Energy and **slight upward trend** in Free Energy may indicate minor adjustments in energy as the system approaches higher clause satisfaction, but these changes are negligible.

- The **lack of outliers** in both datasets suggests a consistent and predictable relationship between the variables.

This plot likely reflects a thermodynamic or optimization scenario where energy states are evaluated against clause satisfaction, with Free Energy serving as a critical metric for system efficiency or stability.

</details>

(a) (c=1)

<details>

<summary>extracted/6466920/figs/energies_versus_sat_clauses_c5.png Details</summary>

### Visual Description

## Scatter Plot: Energy vs. Number of Satisfied Clauses

### Overview

The image is a scatter plot comparing two energy metrics—Min Energy and Free Energy—across varying numbers of satisfied clauses. The plot uses distinct markers (blue circles for Min Energy, orange ovals for Free Energy) to differentiate the datasets. Both metrics decline as the number of satisfied clauses increases, with notable convergence at higher clause counts.

### Components/Axes

- **X-axis**: "Number of satisfied clauses" (integer values from 44 to 54, in increments of 2).

- **Y-axis**: "Energy" (continuous scale from -145 to -110, with negative values).

- **Legend**:

- Blue circles: Min Energy

- Orange ovals: Free Energy

- **Data Points**:

- Blue circles are consistently positioned above orange ovals across all x-axis values.

- Orange ovals exhibit vertical error bars (approximate uncertainty: ±2–3 units on the y-axis).

### Detailed Analysis

- **Min Energy (Blue Circles)**:

- Starts at approximately **-110** when clauses = 44.

- Declines linearly to **-145** at clauses = 54.

- Slope: ~-2.5 units per clause (calculated from total drop of 35 over 10 clauses).

- **Free Energy (Orange Ovals)**:

- Starts at **-145** when clauses = 44.

- Remains relatively flat until clauses = 52, then drops sharply to **-145** at clauses = 54.

- Slope: ~-0.5 units per clause (minimal change until final clause increment).

### Key Observations

1. **Convergence at High Clause Counts**: Both metrics reach **-145** at clauses = 54, suggesting a critical threshold where Min Energy and Free Energy align.

2. **Divergence at Low Clause Counts**: Min Energy is significantly higher (less negative) than Free Energy for clauses ≤ 48.

3. **Error in Free Energy**: Orange ovals show vertical uncertainty, implying variability in Free Energy measurements at lower clause counts.

### Interpretation

The plot reveals a phase-like relationship between energy metrics and clause satisfaction. Min Energy decreases predictably with increasing clauses, while Free Energy remains stable until a critical point (clauses ≥ 52), after which it drops sharply. This convergence at clauses = 54 may indicate a phase transition or criticality in the system, where Min Energy and Free Energy equilibrate. The stability of Free Energy at lower clause counts suggests robustness to minor clause satisfaction changes, whereas Min Energy’s steep decline highlights sensitivity to clause fulfillment. The error bars on Free Energy imply measurement uncertainty, particularly at lower clause counts, which could affect interpretations of its stability.

</details>

(b) (c=5)

<details>

<summary>extracted/6466920/figs/energies_versus_sat_clauses_c10.png Details</summary>

### Visual Description

## Scatter Plot: Energy vs. Number of Satisfied Clauses

### Overview

The image is a scatter plot comparing two energy metrics ("Min Energy" and "Free Energy") across a range of satisfied clauses (44–54). Both metrics show a downward trend in energy as the number of satisfied clauses increases. Data points are color-coded (blue for Min Energy, orange for Free Energy) and closely aligned, with Min Energy consistently slightly higher than Free Energy.

### Components/Axes

- **X-axis**: "Number of satisfied clauses" (integer values: 44, 46, 48, 50, 52, 54).

- **Y-axis**: "Energy" (continuous scale from -270 to -220).

- **Legend**: Located in the top-right corner, with blue representing "Min Energy" and orange representing "Free Energy."

- **Data Points**: Blue dots (Min Energy) and orange dots (Free Energy) plotted at each x-axis value.

### Detailed Analysis

- **Min Energy (Blue)**:

- At 44 clauses: ~-220

- At 46 clauses: ~-221

- At 48 clauses: ~-223

- At 50 clauses: ~-225

- At 52 clauses: ~-227

- At 54 clauses: ~-229

- **Free Energy (Orange)**:

- At 44 clauses: ~-221

- At 46 clauses: ~-222

- At 48 clauses: ~-224

- At 50 clauses: ~-226

- At 52 clauses: ~-228

- At 54 clauses: ~-230

Both series exhibit a linear downward trend, with Min Energy consistently ~1 unit higher than Free Energy at each clause count. The energy difference narrows slightly as clauses increase (e.g., from ~1 unit at 44 clauses to ~1 unit at 54 clauses).

### Key Observations

1. **Consistent Trend**: Both metrics decrease monotonically as clauses increase.

2. **Energy Gap**: Min Energy remains marginally higher than Free Energy across all clause counts.

3. **Data Precision**: Points are tightly clustered, suggesting minimal variability in measurements.

### Interpretation

The plot demonstrates that satisfying more clauses correlates with lower energy states for both Min Energy and Free Energy. The near-identical trajectories imply that the system’s energy landscape is relatively stable, with Min Energy serving as a slight upper bound for Free Energy. The small energy gap (~1 unit) suggests that the two metrics are closely related, possibly reflecting different theoretical or computational approaches to energy calculation. The linear relationship indicates a predictable trade-off between clause satisfaction and energy minimization, which could inform optimization strategies in systems like SAT solvers or thermodynamic models.

</details>

(c) (c=10)

Figure 3: Linear correlation between satisfiability of a CNF and minimization of the free energy function for various confidence values $c$ . Source: [52].

3.4 Logical Boltzmann Machines

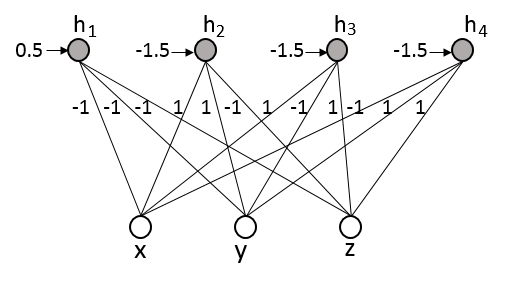

We are now in position to present a translation algorithm to build an RBM from logical formulae. The energy function of the RBM will be derived based on Theorem 1 given a formula in SDNF. The weights and biases of the RBM will be obtained from the energy function $E(\mathrm{x},\mathrm{h})=-(\sum_{i}\theta_{i}\mathrm{x}_{i}+\sum_{j}\theta_{j}%

\mathrm{h}_{j}+\sum_{ij}\mathrm{x}_{i}W_{ij}\mathrm{h}_{j})$ , where $\theta_{i}$ are the biases of the visible units, $\theta_{j}$ are the biases of the hidden units, and $W_{ij}$ is the symmetric weight between a visible and a hidden unit. For each conjunctive clause in the formula of the form $\bigwedge_{t∈\mathcal{S}_{T}}\mathrm{x}_{t}\wedge\bigwedge_{k∈\mathcal{S}_%

{K}}\ \neg\mathrm{x}_{k}$ , we create an energy term $-h_{j}(\sum_{t∈\mathcal{S}_{T}}x_{t}-\sum_{k∈\mathcal{\ S}_{K}}x_{k}-|%

\mathcal{S}_{T}|+\epsilon)$ . The disjunctions in the SDNF are implemented in the RBM simply by creating a hidden neuron $h_{j}$ for each disjunct in the SDNF.

Learning in LBM uses learning from data $\mathcal{D}$ combined with knowledge provided by the logical formulae. Learning with data and knowledge is expected to improve accuracy or training time. If the logical formula is empty, the weights and biases are initialized randomly and one has a standard RBM. Learning in this case is an approximation of parameters $\Theta$ over a set of preferred models $\mathcal{D}=\{\mathbf{x}^{(n)}|n=1,..,N\}$ of an unknown formula $\varphi^{*}$ . Consider the case where the data set $\mathcal{D}$ is complete, i.e. it contains all preferred models of an unknown $\varphi^{*}$ . We will show that learning an RBM to represent the SDNF of $\varphi^{*}$ is possible. Consider the gradient of the negative log-likelihood ( $-\ell$ ) of an RBM:

$$

\frac{\partial{-}\ell}{\partial\Theta}=\mathbf{E}[\frac{\partial{\it E}(%

\mathbf{x},\mathbf{h})}{\partial\Theta}]_{\mathbf{h}|\mathbf{x}\in\mathcal{D}}%

-\mathbf{E}[\frac{\partial{\it E}(\mathbf{x},\mathbf{h})}{\partial\Theta}]_{%

\mathbf{h},\mathbf{x}} \tag{14}

$$

where $\mathbf{E}$ denotes the expected value. This function is not convex. Therefore, the RBM may not always converge to $\varphi^{*}$ . Consider now the case where $\mathcal{D}$ is incomplete. At a local minimum, we have that $\frac{∂\text{-}\ell}{∂ w_{ij}}=-\frac{1}{N}\sum_{\mathbf{x}∈%

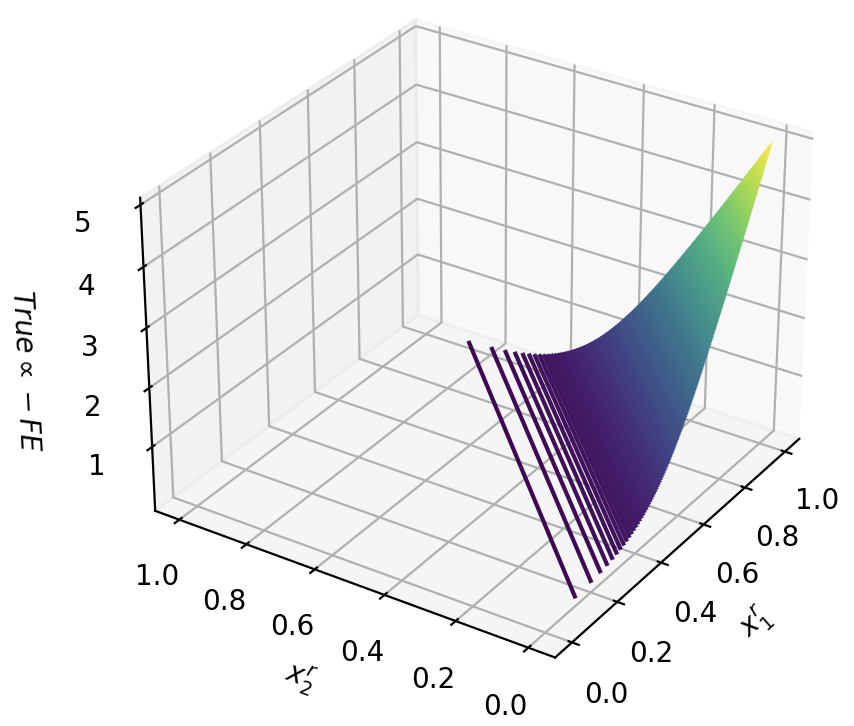

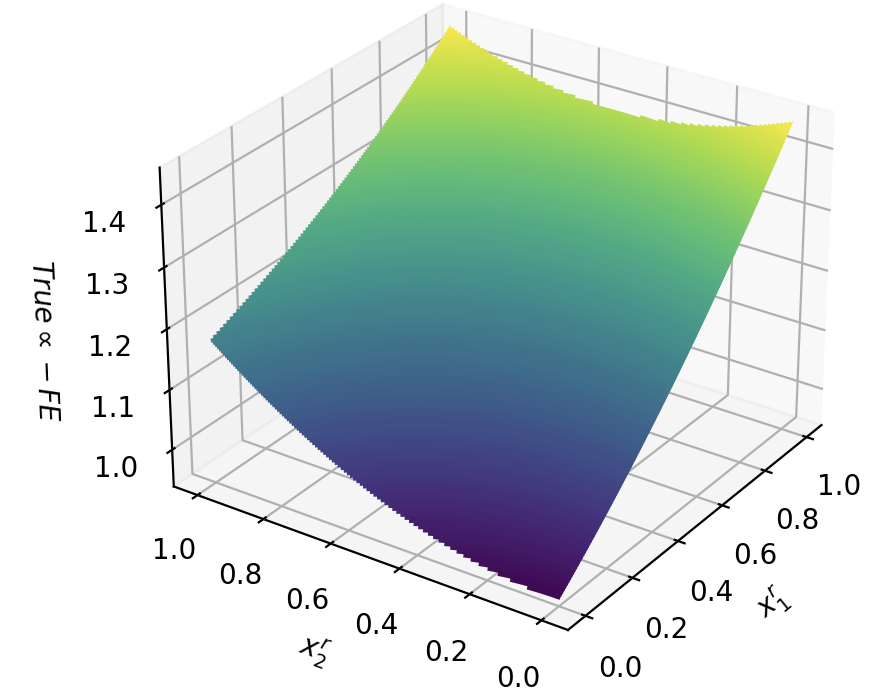

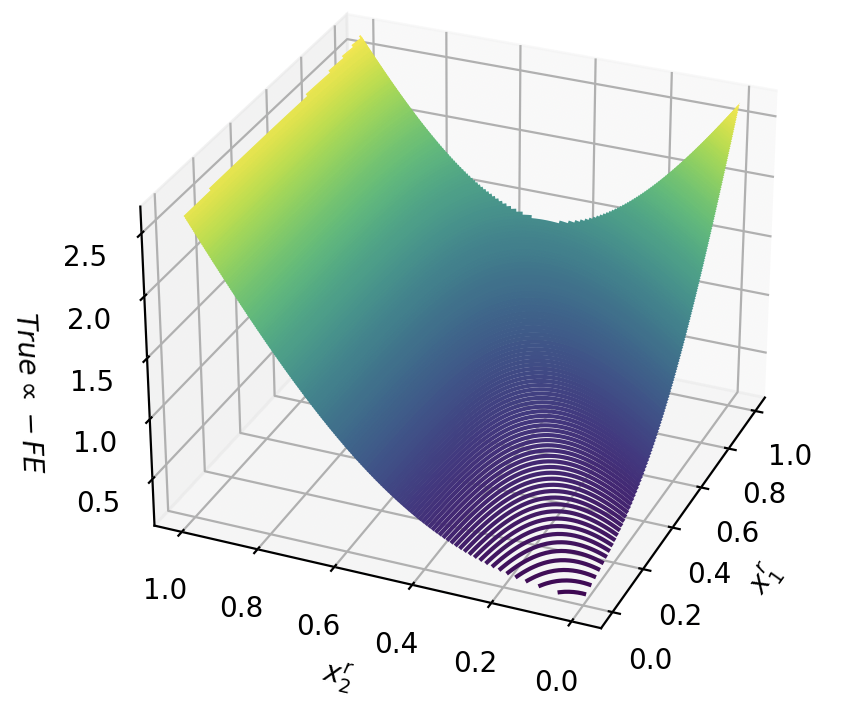

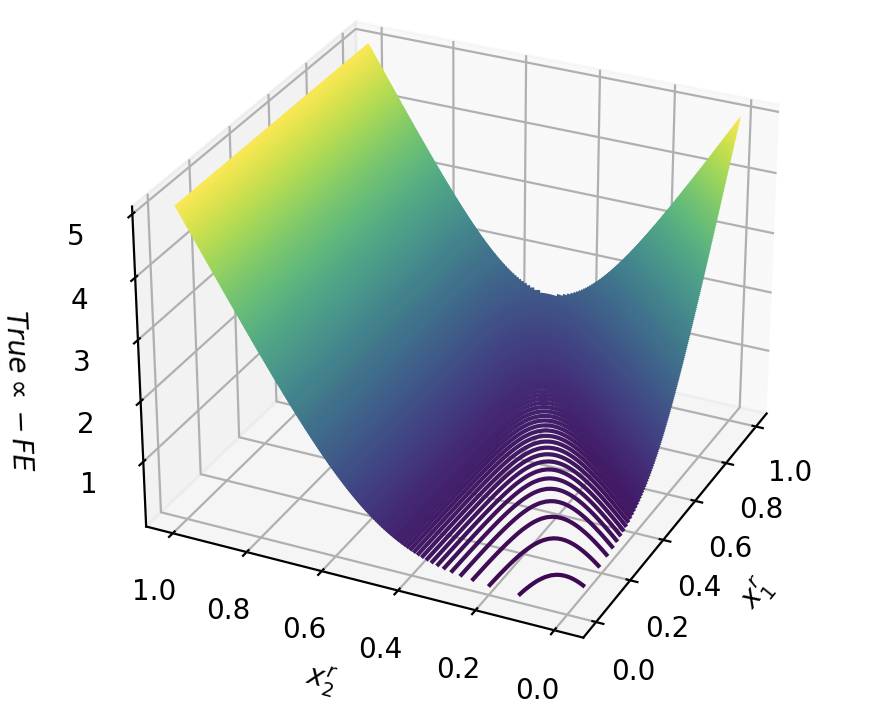

\mathcal{D}}x_{i}p(h_{j}|\mathbf{x})+\sum_{\mathbf{x}}x_{i}p(h_{j}|\mathbf{x})%