# Revisiting Group Relative Policy Optimization: Insights into On-Policy and Off-Policy Training

> ⋆ IBM Research, † IBM Quantum, ∘ MIT-IBM Watson Lab

Abstract.

We revisit Group Relative Policy Optimization (GRPO) in both on-policy and off-policy optimization regimes. Our motivation comes from recent work on off-policy Proximal Policy Optimization (PPO), which improves training stability, sampling efficiency, and memory usage. In addition, a recent analysis of GRPO suggests that estimating the advantage function with off-policy samples could be beneficial. Building on these observations, we adapt GRPO to the off-policy setting. We show that both on-policy and off-policy GRPO objectives yield an improvement in the reward. This result motivates the use of clipped surrogate objectives in the off-policy version of GRPO. We then compare the empirical performance of reinforcement learning with verifiable rewards in post-training using both GRPO variants. Our results show that off-policy GRPO either significantly outperforms or performs on par with its on-policy counterpart.

1. Introduction

Proximal Policy Optimization (PPO) (Schulman et al., 2015, 2017) is a widely used algorithm in reinforcement learning. Reinforcement Learning from Human Feedback (Christiano et al., 2017; Stiennon et al., 2020; Ouyang et al., 2022; Bai et al., 2022) and Reinforcement Learning from Verifiable Rewards (Lambert et al., 2024; Shao et al., 2024) are cornerstones of the post-training of large language models, used to align their preferences with human values and to enable reasoning and coding capabilities using verifiable rewards.

Group Relative Policy Optimization (GRPO), introduced in Shao et al. (2024), alleviates the need to train a critic network as in PPO. It uses Monte-Carlo samples, referred to as “a group”, to estimate the advantage function via a standardized reward, where the mean and standard deviation are estimated from the group. GRPO was used to train the DeepSeek R1 reasoning models (Guo et al., 2025) and was adopted by the open-source community as a method of choice for post-training large language models, with open-source implementations in several libraries such as TRL of HuggingFace (von Werra et al., 2020b) and VERL (Luo et al., 2025).

Several recent works analyzed the loss implemented in GRPO such as Vojnovic and Yun (2025); Mroueh (2025). The study in Mroueh (2025) suggests that the iterative GRPO of Shao et al. (2024) with sample reuse (i.e. for $\mu>1$ in Shao et al. (2024)) leads to an off-policy estimation of the advantage and to a success rate amplification when using verifiable rewards. Indeed, it has been observed empirically that this off-policy advantage estimation leads to an improved performance (HuggingFace, 2025b).

Motivated by these observations and the rich literature on off-policy PPO and RL, like work by Queeney et al. (2021); Meng et al. (2023); Gan et al. (2024); Fakoor et al. (2020) to cite a few (see related work Section 4 for a larger account on this), in this paper we explore the extension of GRPO to the off-policy regime where the advantage is estimated using statistics coming from a different policy than the current policy.

The main contributions of this paper are:

- We review in Section 2 the iterative GRPO algorithm proposed in Shao et al. (2024) and introduce in Section 3 the off-policy GRPO.

- We show in Section 3 that the on-policy and off-policy advantages provide a lower bound on the policy improvement of the expected reward (Theorem 1 and Corollary 1).

- We state conditions under which optimizing the advantage leads to improvements in the off-policy regime, namely, given that the off-policy stays in the vicinity of the current policy and the variance of the reward under the off-policy is non zero, maximizing the regularized off-policy advantage leads to policy improvement. The regularization ensures that the updated policy stays close to the off-policy.

- Finally, armed with these results, we state the constrained policy optimization problem for off-policy GRPO in Section 3.2, derive a clipped surrogate similar to the ones in off-policy PPO (Gan et al., 2024), and obtain the on-policy GRPO clipped objective as a particular case.

- We validate experimentally that training LLMs with off-policy GRPO leads to either improved or on par performance while potentially reducing the communication burden in serving the model in each iteration for inference.

2. On-Policy GRPO

Let $\mathcal{X}$ be the space of inputs (prompts in the context of LLMs) and $\mathcal{Y}$ the space of responses. We denote by $\rho_{\mathcal{X}}$ the distribution on inputs. We refer to the policy we want to optimize as $\pi(·|x)$ , which is a distribution on $\mathcal{Y}$ conditioned on $x\sim\rho_{\mathcal{X}}$ . For $k≥ 0$ , let $\pi_{k}$ be the policy at the current step $k$ .

The Group Relative Policy Optimization (GRPO) clipped objective introduced in Shao et al. (2024) is a variant of Proximal Policy Optimization (PPO) (Schulman et al., 2017, 2015), where the advantage is computed as a standardized reward function with mean and variance computed over a group of Monte-Carlo samples of size $G$, sampled from the current policy $\pi_{k}(\cdot|x)$ for each $x$ independently. For $\epsilon,\beta>0$ and given a reference policy $\pi_{\mathrm{ref}}$, the clipped objective optimization in GRPO is defined as follows:

$$

\max_{\pi}\mathbb{E}_{y\sim\pi_{k}(\cdot|x)}\min\left(\frac{\pi(y|x)}{\pi_{k}(y|x)}A_{\pi_{k}}(x,y),~\text{clip}\left(\frac{\pi(y|x)}{\pi_{k}(y|x)},1-\epsilon,1+\epsilon\right)A_{\pi_{k}}(x,y)\right)-\beta\mathsf{KL}(\pi||\pi_{\mathrm{ref}}),

$$

where $\mathsf{KL}$ is the Kullback-Leibler divergence, and $A_{\pi_{k}}$ is the GRPO advantage function:

$$

A_{\pi_{k}}(x,y)=\frac{r(x,y)-\mathbb{E}_{\pi_{k}}r(x,y)}{\sqrt{\mathbb{E}_{\pi_{k}}(r(x,y)-\mathbb{E}_{\pi_{k}}r(x,y))^{2}+\varepsilon}}.

$$

The advantage can be estimated from a “group” of samples of size $G$: for each $x$, we sample $y_{1},...,y_{G}\sim\pi_{k}(\cdot|x)$ and compute $r_{\ell}=r(x,y_{\ell})$, $\ell=1,...,G$. We refer to the group of rewards conditioned on $x$ as $\{r_{\ell}\}$, and the estimated GRPO advantage is therefore (Shao et al., 2024):

$$

\hat{A}_{\pi_{k}}(x,y_{i})=\frac{r_{i}-\texttt{mean}(\{r_{\ell}\})}{\sqrt{\texttt{std}^{2}(\{r_{\ell}\})+\varepsilon}},

$$

where mean and std are empirical mean and standard deviation respectively. The statistics used to normalize the reward leading to the advantage function are estimated using the current policy $\pi_{k}$ , and hence we refer to $A_{\pi_{k}}$ as the on-policy advantage.
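The group estimate above can be sketched in a few lines of Python. This is a minimal illustration, not the TRL or VERL implementation: the function name `group_advantages`, the default `eps=1e-6`, and the use of the population variance (dividing by $G$) are assumptions made here for concreteness.

```python
import math


def group_advantages(rewards, eps=1e-6):
    """Standardize the rewards of one group (all responses to a single prompt).

    Assumptions of this sketch: population variance (divide by G) and a
    hypothetical default eps; the smoothing is added under the square root,
    as in the estimated advantage formula.
    """
    g = len(rewards)
    mean = sum(rewards) / g
    var = sum((r - mean) ** 2 for r in rewards) / g
    scale = math.sqrt(var + eps)
    return [(r - mean) / scale for r in rewards]


# Binary (verifiable) rewards for a group of size G = 4.
advantages = group_advantages([1.0, 0.0, 1.0, 0.0])
```

With a group whose rewards are all identical, every advantage in this sketch is exactly zero, so such a group contributes no learning signal through the advantage term.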

When compared with PPO, GRPO alleviates the need to train a critic network to compute the advantage and relies instead on standardized rewards, which can be estimated efficiently using inference frameworks such as vLLM (Kwon et al., 2023) in the context of large language models.

GRPO with Verifiable Rewards and Success Rate Amplification

The iterative GRPO (Shao et al., 2024) has two overlooked features:

- The algorithm suggests to optimize the policy $\pi$ for $\mu$ iterations fixing the samples from $\pi_{k}$ , which inherently leads to an off-policy estimation of the advantage.

- The algorithm suggests to do the training in stages while changing $\pi_{\mathrm{ref}}$ to the latest optimized policy with GRPO.

A recent analysis of GRPO with verifiable rewards, i.e. with binary rewards (Mroueh, 2025), suggests that this aforementioned off-policy advantage estimation in Shao et al. (2024) leads to an implicit fixed point iteration that guarantees that the success rate of the GRPO-optimized policy is higher than the one of the reference policy. This also explains the multi-stage nature of the iterative GRPO that changes the reference along the training iterations.

Motivated by these observations, we propose to take a step back and analyze on-policy and off-policy GRPO. In practice, in our proposed off-policy GRPO instead of just fixing the samples for $\mu$ iterations from $\pi_{k}$ as suggested in Shao et al. (2024), we use the policy $\pi_{k-\mu}$ to estimate the advantage for $\mu$ iterations with fresh samples in each iteration, and we refer to this as off-policy advantage.

3. Off-Policy and On-Policy GRPO Reward Improvement

We introduce in this section off-policy GRPO, and analyze conditions under which policy reward improvement is possible in both the on-policy and off-policy regimes. Towards that goal, we start with some preliminary definitions.

Define the expected reward of a policy given $x\sim\rho_{\mathcal{X}}$ :

$$

J(\pi(\cdot|x))=\mathbb{E}_{y\sim\pi(\cdot|x)}r(x,y) \tag{1}

$$

For $k≥ 0$ , let $\pi_{k}$ be the policy at the current step $k$ and $\alpha(·|x)$ be a policy used for off-policy sampling, where typically we consider $\alpha(·|x)=\pi_{k-v}(·|x)$ , for $0≤ v<k$ . Note in Section 2 we referred to this as $\pi_{k-\mu}$ so we keep close to notation used in the original GRPO paper. We will use $v$ instead of $\mu$ in the rest of the paper.

Define the mean and standard deviation of the off-policy reward, i.e. under policy $\alpha$ :

$$

{\mu_{\alpha,r}(x)=\mathbb{E}_{y\sim\alpha(\cdot|x)}r(x,y)}

$$

and

$$

\sigma_{\alpha,r}(x)=\sqrt{\mathbb{E}_{y\sim\alpha(\cdot|x)}(r(x,y)-\mu_{\alpha,r}(x))^{2}},

$$

and denote for ${0<\varepsilon<1}$ :

$$

\sigma_{\alpha,r,\varepsilon}(x)=\sqrt{\sigma^{2}_{\alpha,r}(x)+\varepsilon}.

$$

The GRPO advantage function computed using the off-policy distribution $\alpha$ is defined as the whitened reward, as follows:

$$

A_{\alpha}(x,y)=\frac{r(x,y)-\mu_{\alpha,r}(x)}{\sigma_{\alpha,r,\varepsilon}(x)}. \tag{2}

$$

Our goal is to maximize the expected advantage function using importance sampling under the policy $\alpha$ :

$$

\mathcal{L}_{\alpha}(\pi(\cdot|x))=\mathbb{E}_{y\sim\alpha(\cdot|x)}\frac{\pi(y|x)}{\alpha(y|x)}A_{\alpha}(x,y) \tag{3}

$$

If $\alpha=\pi_{k}$ , we obtain the online policy objective function of GRPO, where the advantage is computed with the current policy $\pi_{k}$ , i.e. using $A_{\pi_{k}}(x,y)$ .
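As a short worked step, and assuming that $\alpha(y|x)>0$ wherever $\pi(y|x)>0$ so that the importance weights are well defined, the objective in eq. (3) can be rewritten using the definitions in eqs. (1) and (2):

$$

\mathcal{L}_{\alpha}(\pi(\cdot|x))=\mathbb{E}_{y\sim\pi(\cdot|x)}A_{\alpha}(x,y)=\frac{J(\pi(\cdot|x))-\mu_{\alpha,r}(x)}{\sigma_{\alpha,r,\varepsilon}(x)}=\frac{J(\pi(\cdot|x))-J(\alpha(\cdot|x))}{\sigma_{\alpha,r,\varepsilon}(x)}.

$$

Under this assumption, $\mathcal{L}_{\alpha}$ is the reward gain of $\pi$ over the off-policy $\alpha$, rescaled by the smoothed standard deviation, and in particular $\mathcal{L}_{\alpha}(\alpha(\cdot|x))=0$.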

3.1. Policy Improvement in GRPO

Note that our goal is to optimize the expected reward under $\pi$ , $J(\pi)$ given in eq. (1), but instead we use the expected advantage $\mathcal{L}_{\alpha}(\pi)$ – where the advantage is computed using $\alpha$ – given in eq. (3). Hence, our goal in what follows is to provide a lower bound on $J(\pi(·|x))-J(\pi_{k}(·|x))$ that involves $\mathcal{L}_{\alpha}(\pi)$ , which guarantees that maximizing the expected advantage function leads to improvement in terms of expected rewards on the current policy $\pi_{k}$ .

Our lower bounds are given in Theorem 1 and Corollary 1 and they involve the total variation distance $\mathbb{TV}$ defined as follows:

$$

\mathbb{TV}(m_{1},m_{2})=\frac{1}{2}\int|m_{1}-m_{2}|.

$$

**Theorem 1 (Policy Improvement Lower Bound in Off-Policy GRPO)**

*Assume that the reward is nonnegative and bounded, $0\leq r\leq 1$. Let $\alpha$ be the off-policy distribution and $\pi_{k}$ the current policy. Then for any policy $\pi$ we have for all $x$ ($\rho_{\mathcal{X}}$ a.s.):*

$$

J(\pi(\cdot|x))-J(\pi_{k}(\cdot|x))\geq\mathcal{L}_{\alpha}(\pi(\cdot|x))-2\frac{1-\sigma_{\alpha,r,\varepsilon}(x)}{\sigma_{\alpha,r,\varepsilon}(x)}\mathbb{TV}(\pi(\cdot|x),\alpha(\cdot|x))-2\,\mathbb{TV}(\pi_{k}(\cdot|x),\alpha(\cdot|x))

$$

If the reward is not bounded by $1$, we can scale it by $\left\lVert{r}\right\rVert_{\infty}$ so that it lies in $[0,1]$, without affecting the overall optimization problem. This condition on the reward ensures that $\sigma_{\alpha,r}(x)\leq 1$, which is needed in the GRPO case to obtain the policy improvement lower bound. Indeed, the variance of a random variable bounded in $[a,b]$ is at most $\frac{(b-a)^{2}}{4}$, and hence $\sigma^{2}_{\alpha,r}(x)\leq\frac{1}{4}$, i.e. $\sigma_{\alpha,r}(x)\leq\frac{1}{2}$. Consequently $\sigma_{\alpha,r,\varepsilon}(x)\leq 1$ whenever $\varepsilon\leq\frac{3}{4}$, which guarantees that the term $\frac{1-\sigma_{\alpha,r,\varepsilon}(x)}{\sigma_{\alpha,r,\varepsilon}(x)}\geq 0$.

For on-policy GRPO i.e. setting $\alpha=\pi_{k}$ in Theorem 1 we have the following corollary:

**Corollary 1 (Policy Improvement Lower Bound in On-Policy GRPO)**

*Assume that the reward is nonnegative and bounded, $0\leq r\leq 1$. Let $\pi_{k}$ be the current policy. Then for any policy $\pi$ we have for all $x$ ($\rho_{\mathcal{X}}$ a.s.):*

$$

J(\pi(\cdot|x))-J(\pi_{k}(\cdot|x))\geq\mathcal{L}_{\pi_{k}}(\pi(\cdot|x))-2\frac{1-\sigma_{\pi_{k},r,\varepsilon}(x)}{\sigma_{\pi_{k},r,\varepsilon}(x)}\mathbb{TV}(\pi(\cdot|x),\pi_{k}(\cdot|x))

$$

Define:

$$

M_{\alpha,r,\varepsilon}=\sqrt{\mathbb{E}_{x\sim\rho_{\mathcal{X}}}\frac{(1-\sigma_{\alpha,r,\varepsilon}(x))^{2}}{\sigma^{2}_{\alpha,r,\varepsilon}(x)}}

$$

Integrating Theorem 1 on $x$ (prompts) and applying Cauchy-Schwarz inequality we obtain:

$$

\begin{aligned}
\mathbb{E}_{x\sim\rho_{\mathcal{X}}}J(\pi(\cdot|x))-\mathbb{E}_{x\sim\rho_{\mathcal{X}}}J(\pi_{k}(\cdot|x))\geq{}&\mathbb{E}_{x\sim\rho_{\mathcal{X}}}\mathcal{L}_{\alpha}(\pi(\cdot|x))\\
&-2M_{\alpha,r,\varepsilon}\left(\mathbb{E}_{x\sim\rho_{\mathcal{X}}}\mathbb{TV}^{2}(\pi(\cdot|x),\alpha(\cdot|x))\right)^{\frac{1}{2}}-2\,\mathbb{E}_{x\sim\rho_{\mathcal{X}}}\mathbb{TV}(\pi_{k}(\cdot|x),\alpha(\cdot|x))
\end{aligned} \tag{4}

$$

Interpreting the lower bound

When compared with lower bounds for policy improvement in PPO (Theorem 1 in TRPO (Schulman et al., 2015)) and for off-policy PPO (Lemma 3.1 in transductive PPO (Gan et al., 2024) and Theorem 1 in Generalized PPO (Queeney et al., 2021)), we observe similar lower bounds with a crucial difference that the constants weighting total variations are absolute constants for PPO whereas they are policy and data dependent for GRPO. In particular, the dependency of the lower bound on:

$$

\frac{1-\sigma_{\alpha,r,\varepsilon}(x)}{\sigma_{\alpha,r,\varepsilon}(x)}

$$

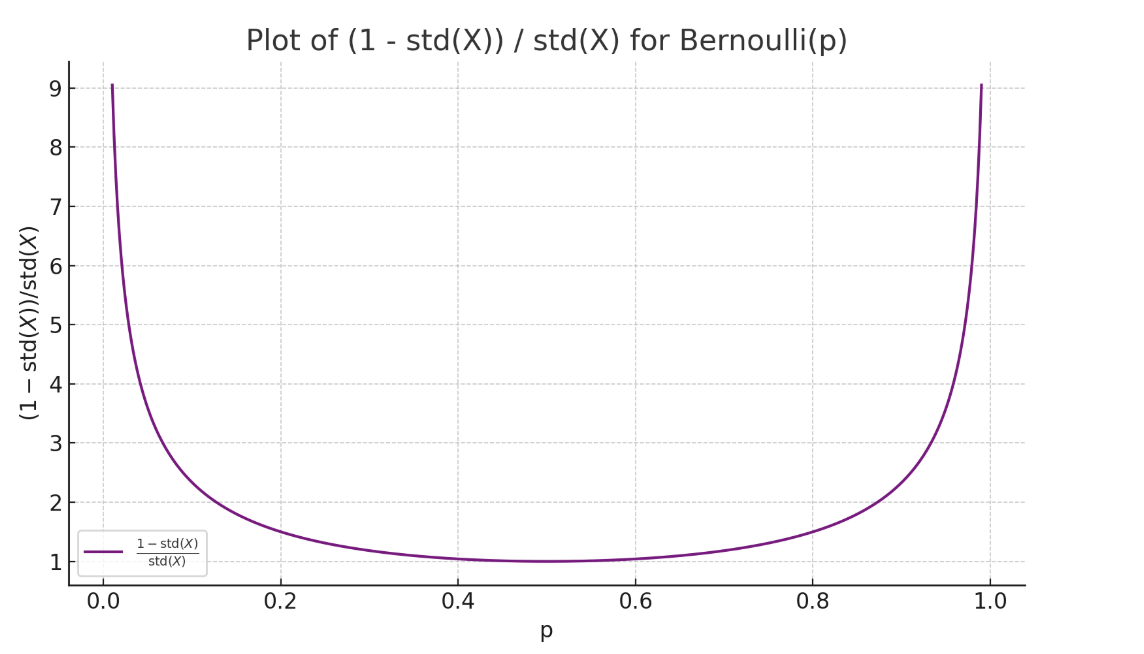

is of interest. We can examine this quantity for verifiable rewards, for each $x$ the verifiable reward is a Bernouilli random variable with parameter $p$ the probability of success of the policy given $x$ (Mroueh, 2025). Hence we have:

$$

\frac{1-\sigma_{\alpha,r,\varepsilon}(x)}{\sigma_{\alpha,r,\varepsilon}(x)}=%

\frac{1-\sqrt{p(1-p)+\varepsilon}}{\sqrt{p(1-p)+\varepsilon}}

$$

Plotting this quantity as function of $p$ below, we observe that it diverges for fully correct and incorrect answers and this can indeed hurt the lower bound, as the negative terms in the lower bound will be dominating. It was suggested in DAPO (Yu et al., 2025) to filter out prompts with fully correct or incorrect answers, this will have the effect of controlling this term in the lower bound and keep that quantity bounded away from infinity.

<details>

<summary>extracted/6494595/std_bern.png Details</summary>

Line plot titled "Plot of (1 - std(X)) / std(X) for Bernoulli(p)". X-axis: p, from 0.0 to 1.0. Y-axis: (1 - std(X)) / std(X).

</details>

Figure 1. $\frac{1-\sigma_{\alpha,r,\varepsilon}(x)}{\sigma_{\alpha,r,\varepsilon}(x)}$ explodes when variance is zero, meaning for fully correct or wrong policies, this term dominates the lower bound.
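The behaviour shown in Figure 1 can be reproduced numerically. The sketch below evaluates the Bernoulli expression for the ratio; the function name `variance_ratio` and the default `eps=1e-6` are assumptions chosen for illustration, not values from the paper.

```python
import math


def variance_ratio(p, eps=1e-6):
    """(1 - sigma_eps) / sigma_eps for a Bernoulli(p) reward.

    sigma_eps = sqrt(p (1 - p) + eps); eps is a hypothetical smoothing value.
    """
    sigma_eps = math.sqrt(p * (1.0 - p) + eps)
    return (1.0 - sigma_eps) / sigma_eps


# Evaluate on a small grid of success probabilities.
for p in (0.0, 0.01, 0.1, 0.5, 0.9, 0.99, 1.0):
    print(f"p={p:.2f}  ratio={variance_ratio(p):.3f}")
```

The ratio is smallest, close to $1$, at $p=0.5$ and grows to roughly $1/\sqrt{\varepsilon}$ at $p\in\{0,1\}$, which is the regime that zero-variance filtering removes.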

3.2. GRPO: From Constrained Optimization to Clipped Surrogate Objectives

From Penalized to $\mathsf{KL}$ Constrained Optimization

To maximize the lower bound in eq. (4), we see that the off-policy $\alpha$ needs to be in the vicinity of the current policy $\pi_{k}$, i.e. $\mathbb{TV}(\alpha,\pi_{k})\leq\delta$, and that $M_{\alpha,r,0}<\infty$ (variance terms not exploding). Under these assumptions, we can solve the following penalized problem:

$$

\max_{\pi}\mathbb{E}_{x\sim\rho_{\mathcal{X}}}\mathcal{L}_{\alpha}(\pi(\cdot|x))-2~M_{\alpha,r,\varepsilon}\sqrt{\mathbb{E}_{x\sim\rho_{\mathcal{X}}}\mathbb{TV}^{2}(\pi(\cdot|x),\alpha(\cdot|x))}.

$$

By virtue of Theorem 1, maximizing this objective above leads to policy reward improvement.

We can write this as a constrained optimization problem: there exists $\Delta>0$ such that the following constrained problem is equivalent:

$$

\max_{\pi}\mathbb{E}_{x\sim\rho_{\mathcal{X}}}\mathcal{L}_{\alpha}(\pi(\cdot|x))\text{ subject to }\mathbb{E}_{x\sim\rho_{\mathcal{X}}}\mathbb{TV}^{2}(\pi(\cdot|x),\alpha(\cdot|x))\leq\Delta^{2}.

$$

By Pinsker's inequality, for two measures $m_{1},m_{2}$ we have $\mathbb{TV}(m_{1},m_{2})\leq\sqrt{\frac{1}{2}\mathsf{KL}(m_{1},m_{2})}$, and hence we can instead bound the $\mathsf{KL}$ divergence as follows:

$$

\boxed{\max_{\pi}\mathbb{E}_{x\sim\rho_{\mathcal{X}}}\mathcal{L}_{\alpha}(\pi(\cdot|x))\text{ subject to }\frac{1}{2}~\mathbb{E}_{x\sim\rho_{\mathcal{X}}}\mathsf{KL}(\pi(\cdot|x),\alpha(\cdot|x))\leq\Delta^{2}.} \tag{5}

$$

From Constrained Optimization to Clipped Surrogate Objectives

The objective in (5) is the same as in the original constrained PPO formulation (Schulman et al., 2015) with two key differences: the advantage is the whitened reward of GRPO where the statistics are computed using the off-policy $\alpha$ , and the advantage objective is computed using importance sampling from the off-policy $\alpha$ , instead of $\pi_{k}$ in both cases. This is indeed related to objectives in off-policy PPO (Queeney et al., 2021; Gan et al., 2024). A practical implementation of these objectives is through clipped surrogates (Schulman et al., 2015).

For $\epsilon∈[0,1]$ following Gan et al. (2024); Queeney et al. (2021) let us define:

$$

f_{\epsilon}(r,r^{\prime},a)=\min(ra,~\text{clip}(r,\max(r^{\prime}-\epsilon,0),r^{\prime}+\epsilon)~a).

$$

The clipped off-policy GRPO objective, for $\alpha$ such that $\mathbb{TV}(\alpha,\pi_{k})\leq\delta$ and $M_{\alpha,r,0}<\infty$, is therefore defined as follows:

$$

\mathcal{L}^{c}_{\alpha}(\pi(\cdot|x))=\mathbb{E}_{y\sim\alpha(\cdot|x)}f_{\epsilon}\left(\frac{\pi(y|x)}{\alpha(y|x)},\frac{\pi_{k}(y|x)}{\alpha(y|x)},A_{\alpha}(x,y)\right) \tag{6}

$$

Let us unpack this. We have:

$$

f_{\epsilon}\left(\frac{\pi(y|x)}{\alpha(y|x)},\frac{\pi_{k}(y|x)}{\alpha(y|x)},A_{\alpha}(x,y)\right)=\begin{cases}A_{\alpha}(x,y)\min\left(\frac{\pi(y|x)}{\alpha(y|x)},\frac{\pi_{k}(y|x)}{\alpha(y|x)}+\epsilon\right),&r(x,y)\geq\mu_{\alpha,r}(x)\\
A_{\alpha}(x,y)\max\left(\frac{\pi(y|x)}{\alpha(y|x)},\max\left(\frac{\pi_{k}(y|x)}{\alpha(y|x)}-\epsilon,0\right)\right),&r(x,y)<\mu_{\alpha,r}(x).\end{cases}

$$

The clipping ensures that the ratio $\frac{\pi}{\alpha}$ remains bounded and acts as a relaxation of the $\mathsf{KL}$ (or total variation) constraint. Since $\alpha$ needs to stay close to $\pi_{k}$ in order to ensure improvement, the clipped objective incentivizes the difference $\frac{\pi}{\alpha}-\frac{\pi_{k}}{\alpha}$ not to exceed $\epsilon$ (Gan et al., 2024).

In practice, the off-policy is $\alpha=\pi_{k-v}$ for a small $v\in[0,k)$. Given a small learning rate and a small $v$, the assumption that the policy $\pi_{k-v}$ does not deviate from $\pi_{k}$ is reasonable, and for small $v$ we can approximate $\frac{\pi_{k}}{\pi_{k-v}}$ by $1$. We use this approximation in practice because we found it more stable and because it is also used in off-policy PPO with sample reuse (see Section 4.1 in Gan et al. (2024)).
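The clipped surrogate in eq. (6) and the approximation $\frac{\pi_{k}}{\alpha}\approx 1$ can be sketched as follows. This is an illustrative, sequence-level sketch in plain Python rather than the authors' training code: the function names, the default `eps=0.2`, and the convention that `logp_current=None` means "approximate $\pi_{k}/\alpha$ by $1$" are all assumptions introduced here.

```python
import math


def clip(value, low, high):
    """Clamp value to the interval [low, high]."""
    return max(low, min(value, high))


def f_eps(ratio, ref_ratio, advantage, eps=0.2):
    """f_eps(r, r', a) = min(r a, clip(r, max(r' - eps, 0), r' + eps) a).

    ratio     : pi(y|x) / alpha(y|x)
    ref_ratio : pi_k(y|x) / alpha(y|x)
    eps=0.2 is an illustrative default, not a value taken from the paper.
    """
    low = max(ref_ratio - eps, 0.0)
    high = ref_ratio + eps
    return min(ratio * advantage, clip(ratio, low, high) * advantage)


def clipped_objective(logp_new, logp_alpha, advantages, eps=0.2, logp_current=None):
    """Monte Carlo estimate of the clipped objective from samples y ~ alpha.

    logp_new, logp_alpha, logp_current hold log pi(y|x), log alpha(y|x) and
    log pi_k(y|x) for each sampled response. If logp_current is None, the
    ratio pi_k / alpha is approximated by 1.
    """
    total = 0.0
    for j, advantage in enumerate(advantages):
        ratio = math.exp(logp_new[j] - logp_alpha[j])
        if logp_current is None:
            ref_ratio = 1.0
        else:
            ref_ratio = math.exp(logp_current[j] - logp_alpha[j])
        total += f_eps(ratio, ref_ratio, advantage, eps)
    return total / len(advantages)
```

For a positive advantage the sketch caps the ratio at `ref_ratio + eps`, and for a negative advantage it floors the ratio at `max(ref_ratio - eps, 0)`, matching the two cases of the unpacked expression.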

Back to On-Policy GRPO Clipped Objective

For $\alpha=\pi_{k}$ , we obtain the clipped objective for on-policy GRPO (Shao et al., 2024):

$$

\mathcal{L}^{c}_{\pi_{k}}(\pi(\cdot|x))=\mathbb{E}_{y\sim\pi_{k}(\cdot|x)}f_{\epsilon}\left(\frac{\pi(y|x)}{\pi_{k}(y|x)},1,A_{\pi_{k}}(x,y)\right)

$$

| Method name | SGD updates per fixed batch $i$ | Policy update period on server $v$ |
| --- | --- | --- |
| On-Policy GRPO (Shao et al., 2024) | $i=1$ | $v=1$ |
| Off-policy GRPO (Shao et al., 2024) | $i>1$ | $v=1$ |
| Off-policy GRPO (this work) | $i=1$ | $v>1$ |

Table 1. Training configurations in Algorithm 1: (v1-i1) is on-policy GRPO and (v1-i10) is an example of off-policy GRPO in (Shao et al., 2024). Our off-policy GRPO corresponds e.g. to (v10-i1).

Algorithm 1 Iterative GRPO with verifiable rewards, modified from Shao et al. (2024)

1: Input initial policy model $\pi_{\theta_{\text{init}}}$ ; verifiable reward $r$ ; task prompts $\mathcal{D}$ ;

2: Hyperparameters $\epsilon$ , $\beta$ , $S$ ,

3: $(i,v)$ = (number of SGD iterations per fixed batch, period of model updates on the vLLM server)

4: Policy model $\pi_{\theta}←\pi_{\theta_{\text{init}}}$ $\pi_{\mathrm{ref}}←\pi_{\theta_{\text{init}}}$

5: for $s=1,...,S$ do

6: for $k=1,...,M$ do

7: Sample a batch $\mathcal{D}_{b}$ from $\rho_{\mathcal{X}}$

8: if $k\bmod v=0$ then

9: Update the old policy model on the vLLM server $\pi_{\theta_{\textrm{old}}}←\pi_{\theta}$

10: Sample $G$ outputs $\{y_{i}\}_{i=1}^{G}\sim\pi_{\theta_{\textrm{old}}}(\cdot\mid x)$ for each question $x\in\mathcal{D}_{b}$

11: Compute rewards $\{r_{i}\}_{i=1}^{G}$ for each sampled output $y_{i}$ by running verifiable reward $r$

12: $\alpha←\pi_{\theta_{\textrm{old}}}$

13: Compute $A_{\alpha}(x,y_{i})$ using Equation (2)

14: for GRPO iteration = 1, …, $i$ do $\triangleright$ $i$ is referred to as $\mu$ in Original GRPO

15: Update the policy model $\pi_{\theta}$ by maximizing the GRPO objective (7) with gradient ascent

16: $\pi_{\mathrm{ref}}←\pi_{\theta}$ $\triangleright$ Swap reference with the latest model

17: Output $\pi_{\theta}$

$\mathsf{KL}-$ Regularized RL & On-Policy / Off-Policy Algorithms

Finally putting together our clipped surrogate objective with the $\mathsf{KL}$ regularizer we obtain our final objective:

$$

\mathbb{E}_{x\sim\rho_{\mathcal{X}}}\mathcal{L}^{c}_{\alpha}(\pi(\cdot|x))-\beta\mathsf{KL}(\pi||\pi_{\mathrm{ref}}). \tag{7}

$$

We present the GRPO algorithm in Algorithm 1 and the configurations that allow toggling between on-policy and off-policy GRPO in Table 1. Within the RL loop, the model is served for inference using vLLM (Kwon et al., 2023). The parameter $v$ controls how often the model is updated on the vLLM server (which corresponds to off-policy with $\alpha=\pi_{k-v+1}$ ). The parameter $i$ controls how many SGD iterations are applied to each batch sampled from the policy. For $v=1$ and $i=1$ , the model is continuously served, and each batch of samples is used once in SGD. This corresponds to on-policy GRPO. For $i>1$ and $v=1$ , the model is still continuously served, but each batch is used $i$ times in the SGD loop; this corresponds to an “off-policy” GRPO variant, as proposed in Shao et al. (2024). For large models that require tensor parallelism and multi-GPU serving, continuous model serving incurs additional communication costs. Our off-policy GRPO mitigates these costs by serving the model every $v>1$ iterations (line 8 in Algorithm 1) and fixing $i=1$ . Our theory guarantees reward improvement as long as $v$ is not too large.
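To make the role of $v$ concrete, the following sketch traces when the served policy is refreshed and how stale it is at each outer step. It is one reading of Algorithm 1, under the assumptions that the initial policy is on the server at $k=1$, that the refresh test is `k % v == 0` as in line 8, and that samples are drawn at every step; the function name `serving_schedule` is hypothetical.

```python
def serving_schedule(num_steps, v):
    """Return (k, refreshed, staleness) for each outer step k = 1..num_steps.

    refreshed : True when the current policy is pushed to the inference server.
    staleness : number of outer steps since the served policy was last synced.
    Assumption of this sketch: the initial policy is already served at k = 1.
    """
    if v < 1:
        raise ValueError("v must be >= 1")
    schedule = []
    last_sync = 1
    for k in range(1, num_steps + 1):
        refreshed = (k % v == 0)
        if refreshed:
            last_sync = k
        schedule.append((k, refreshed, k - last_sync))
    return schedule


# v = 1 refreshes at every step (on-policy sampling); v = 10 refreshes every 10 steps.
on_policy = serving_schedule(20, v=1)
off_policy = serving_schedule(20, v=10)
```

In this reading the staleness never exceeds $v-1$ outer steps, which is consistent with the worst-case off-policy $\alpha=\pi_{k-v+1}$, while the number of weight transfers to the server drops by a factor of about $v$.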

Computational and Communication Costs

Updating the model served by vLLM during GRPO training incurs varying costs depending on the model size, update frequency ($v$), and parallelism settings. When the training model and vLLM instance reside on different GPUs, or when vLLM uses tensor parallelism (TP), model updates may trigger deep copies and inter-GPU communication. These involve either full weight transfers or partitioned broadcasts, which scale linearly with model size. Frequent updates (e.g., $v=1$) can dominate the runtime, especially for large models (see the recent benchmark vLLM (2025) for latencies in serving large models with tensor parallelism using vLLM). To mitigate this, we update the vLLM model every $v>1$ iterations. This amortizes the copy cost while maintaining the reward improvement guarantees from our theory. In our experiments (Section 5), we are limited to single-node setups with relatively small models, and therefore cannot fully demonstrate the potential speedups, particularly those that would become more pronounced at larger scales. In our setups the speedups are modest, given that there is no inter-GPU or inter-node communication for serving the models. See Section A for further discussion.

On-Policy Clipped Objective with Zero Variance Masking à la DAPO (Yu et al., 2025)

As discussed earlier in the interpretation of the lower bound in Section 3.1, samples with zero variance may cause the total variation terms to dominate the lower bound. Hence, similarly to DAPO (Yu et al., 2025), we propose to mask these samples. For instance, in the on-policy case this gives the following masked objective:

$$

\mathbb{E}_{x\sim\rho_{\mathcal{X}}}\mathbbm{1}_{\sigma_{\pi_{k},r}(x)\neq 0}\left(\mathcal{L}^{c}_{\pi_{k}}(\pi(\cdot|x))-\beta\mathsf{KL}(\pi||\pi_{\mathrm{ref}})\right). \tag{8}

$$
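The indicator in eq. (8) can be estimated per prompt from the group of rewards. The sketch below is illustrative only: the helper names are hypothetical, a group is treated as zero variance when all of its rewards are identical, and the masked average divides by the total number of prompts, which is one literal reading of the expectation over $x$.

```python
def nonzero_variance_mask(reward_groups):
    """Return 1.0 for prompts whose group rewards are not all identical, else 0.0."""
    return [0.0 if max(group) == min(group) else 1.0 for group in reward_groups]


def masked_mean(per_prompt_values, mask):
    """Average of mask * value over all prompts (masked prompts contribute zero)."""
    total = sum(m * value for m, value in zip(mask, per_prompt_values))
    return total / len(per_prompt_values)


# Three prompts: all correct, mixed, all incorrect. Only the mixed group is kept.
mask = nonzero_variance_mask([[1, 1, 1, 1], [0, 1, 0, 1], [0, 0, 0, 0]])
```

Normalizing by the number of kept prompts instead is a different design choice that rescales the objective; which one an implementation uses should be checked against its source.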

4. Related Work

Proximal Policy Optimization (PPO) and Extensions

Proximal Policy Optimization (PPO) is a widely used on-policy reinforcement learning algorithm that improves training stability through clipped surrogate objectives. While PPO is effective in diverse settings, it is inherently limited by its on-policy nature, which constrains sample efficiency. To address these limitations, several off-policy adaptations and extensions of PPO have been proposed. Generalized Proximal Policy Optimization (G-PPO) (Queeney et al., 2021) enables sample reuse while maintaining convergence guarantees. Transductive off-Policy PPO (ToPPO) (Gan et al., 2024) builds on G-PPO by incorporating transductive learning principles, bridging the gap between off-policy learning and theoretical guarantees of on-policy methods. Off-Policy PPO (OPPO) (Meng et al., 2023) proposes novel corrections to integrate replay buffer samples in PPO-style updates.

On-Policy and Off-Policy Actor-Critic Methods

Actor-critic methods blend the strengths of policy gradients and value function estimation. Off-policy variants aim to improve sample efficiency by learning from a replay buffer. The Off-Policy Actor-Critic algorithm (Degris et al., 2012) introduces importance weighting to enable stable updates from off-policy data. ACER (Wang et al., 2016) extends this with trust-region optimization and truncated importance sampling, thereby improving learning efficiency in discrete action spaces. Mixing on-policy and off-policy methods aims to combine the stability of on-policy updates with the efficiency of off-policy learning. P3O (Fakoor et al., 2020) provides a principled approach that interleaves policy updates from both on- and off-policy data.

Off-Policy RLHF and other variants of GRPO

Noukhovitch et al. (2025) introduced, within the iterative DPO framework, an asynchronous RLHF method that uses off-policy data and ensures faster convergence to the optimal policy. New variants of GRPO have been proposed recently, such as DAPO (Yu et al., 2025) and DR-GRPO (Liu et al., 2025). DAPO proposes zero-variance masking without theoretical backing; our work roots this in the improvement lower bound. DR-GRPO proposes to center only the reward, without variance normalization.

5. Experiments

5.1. Ablation Studies on GSM8K

Setup, Model, and Data

In our first set of experiments, we use the GSM8K dataset from Cobbe et al. (2021) (MIT license) and Qwen/Qwen2.5-0.5B-Instruct (Apache 2.0 license) by Yang et al. (2024). We integrate our changes in Algorithm 1 into the GRPO implementation in TRL (von Werra et al., 2020b), and train our models on the training split of GSM8K on a node with 8 GPUs (GPU 0 for the vLLM server and the 7 other GPUs for distributed training). See Appendix B for the hardware specification. We use a learning rate of $5\times 10^{-6}$ for all experiments and the KL regularizer $\beta=0.1$ in Equation (7). We use the correctness of the LLM output as a reward. For GRPO training, the hyperparameters are the following: group size $G=16$ and per-device batch size $16$ (meaning each GPU processes a single prompt $x$ with $16$ responses). To increase the overall batch size we use gradient accumulation of $4$, ending with an effective batch size of $28$ prompts. The context length used for this experiment is $200$, and the sampling temperature is set to $\tau=0.1$.

Ablations and Results

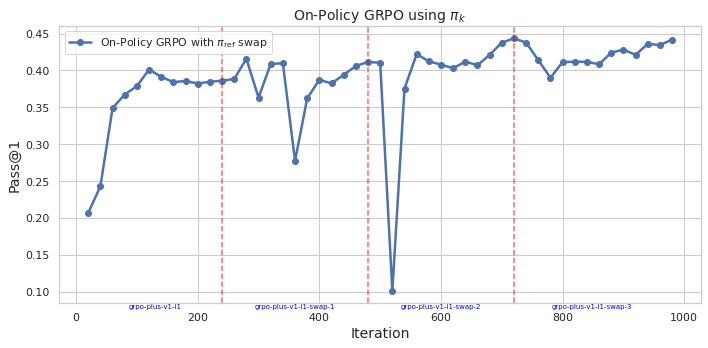

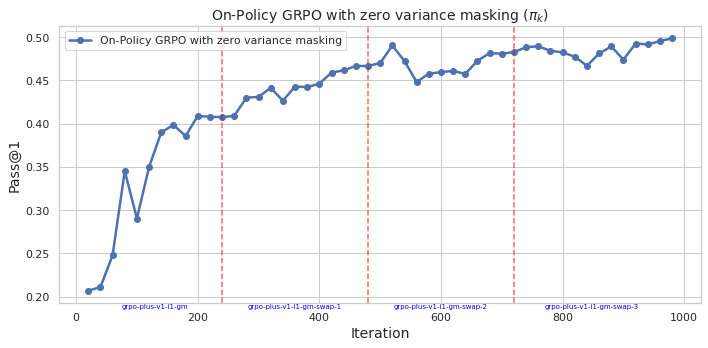

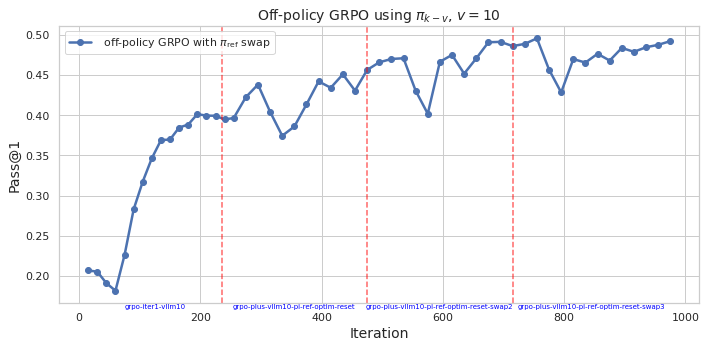

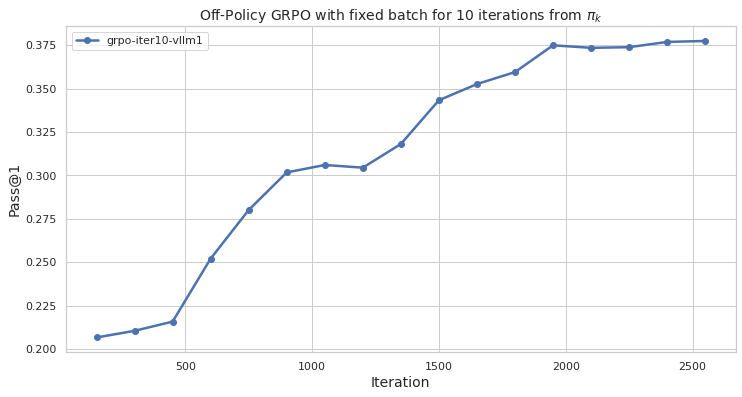

We train our models with GRPO using Algorithm 1 with a verifiable reward for answer correctness. We use the GRPO configurations given in Table 1 and report Pass@1 on the test split of GSM8K using 50 samples (i.e., the frequency of success over 50 generations for each question), with the same sampling configuration as in training. Results are reported in Figure 2: Fig. 2(a) shows on-policy GRPO ( $i=1,v=1$ ) with the objective given in Equation (7) and $S=3$ (i.e., 4 epochs, with $\pi_{\mathrm{ref}}$ swapped for the latest model at the end of each epoch); Fig. 2(b) shows on-policy GRPO ( $i=1,v=1$ ) with masking of zero-variance samples, i.e., using the objective given in Equation (8), with $S=3$ ; Fig. 2(c) shows our off-policy GRPO ( $v=10,i=1$ ) with $S=3$ ; and Fig. 2(d) shows the off-policy GRPO of Shao et al. (2024), i.e., ( $v=1,i=10$ ), for a single epoch. We see in Fig. 2(a) that while on-policy GRPO reaches a maximum Pass@1 of $45\%$ , it is unstable. Masking zero-variance samples in Fig. 2(b) stabilizes on-policy GRPO and improves performance to $50\%$ . This is in line with our theoretical grounding through the improvement lower bound. Our off-policy GRPO in Fig. 2(c) also stabilizes training and leads to an improved Pass@1 of $50\%$ on the test set. In all three cases, we see that by resetting $\pi_{\mathrm{ref}}$ to the latest model, GRPO amplifies the success rate above that of the current $\pi_{\mathrm{ref}}$ ; this concurs with the theoretical findings in Mroueh (2025). Finally, the off-policy variant of Shao et al. (2024) in Fig. 2(d) shows slower convergence over an epoch.
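For concreteness, the Pass@1 metric described above (frequency of success over the generations sampled for each question, averaged over questions) can be sketched as follows. This is our reading of the description, not the evaluation code used for the paper; the function name `pass_at_1` is hypothetical.

```python
from typing import Sequence


def pass_at_1(correct: Sequence[Sequence[bool]]) -> float:
    """Average, over questions, of the fraction of correct generations.

    correct[q][j] is True when generation j for question q is verified
    as correct (e.g. 50 generations per question in the GSM8K setup).
    """
    per_question = [sum(flags) / len(flags) for flags in correct]
    return sum(per_question) / len(per_question)


# Toy usage: two questions with 50 generations each.
toy = [
    [True] * 25 + [False] * 25,   # solved in half of the generations
    [False] * 50,                 # never solved
]
print(pass_at_1(toy))  # 0.25
```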

<details>

<summary>extracted/6494595/figs/v1-i1-swap.png Details</summary>

### Visual Description

## Line Chart: On-Policy GRPO using πk

### Overview

This image presents a line chart illustrating the performance of an On-Policy GRPO algorithm using πk, measured by the Pass@1 metric, across iterations. The chart shows fluctuations in performance, with distinct dips coinciding with labeled "swap" events.

### Components/Axes

* **Title:** "On-Policy GRPO using πk" - positioned at the top-center of the chart.

* **X-axis:** "Iteration" - ranging from 0 to 1000, with tick marks at approximately 0, 200, 400, 600, 800, and 1000. Specific labels are: "grpo-plus-v1-1", "grpo-plus-v1-1-swap-1", "grpo-plus-v1-1-swap-2", "grpo-plus-v1-1-swap-3".

* **Y-axis:** "Pass@1" - ranging from 0.10 to 0.45, with tick marks at 0.10, 0.15, 0.20, 0.25, 0.30, 0.35, 0.40, and 0.45.

* **Data Series:** A single line labeled "On-Policy GRPO with πref swap" - colored in a dark blue.

* **Vertical Dashed Lines:** Four vertical dashed lines, colored in red and teal, are present. These lines are positioned at approximately iterations 200, 400, 600, and 800, and are labeled with "grpo-plus-v1-1-swap-1", "grpo-plus-v1-1-swap-2", "grpo-plus-v1-1-swap-3".

### Detailed Analysis

The line representing "On-Policy GRPO with πref swap" starts at approximately (0, 0.22). It then exhibits a steep upward trend, reaching a peak of approximately (200, 0.41). Following this peak, the line declines sharply to a low of approximately (400, 0.30), coinciding with the first dashed line. It then rises again to a peak of approximately (500, 0.42), before declining to a low of approximately (600, 0.36) at the second dashed line. The line then rises to a peak of approximately (700, 0.43), and declines to a low of approximately (800, 0.38) at the third dashed line. Finally, the line shows a slight upward trend, stabilizing around (900, 0.42) and (1000, 0.42).

Here's a more detailed breakdown of approximate data points:

* (0, 0.22)

* (100, 0.35)

* (200, 0.41)

* (300, 0.38)

* (400, 0.30)

* (500, 0.42)

* (600, 0.36)

* (700, 0.43)

* (800, 0.38)

* (900, 0.42)

* (1000, 0.42)

### Key Observations

The chart demonstrates a cyclical pattern in the Pass@1 metric. Each cycle consists of an increase in performance, followed by a sharp decline coinciding with a "swap" event. The magnitude of the performance decline appears to be relatively consistent across the observed swaps. The overall trend suggests that the algorithm is capable of learning and improving, but is periodically disrupted by the swap events.

### Interpretation

The data suggests that the "πref swap" mechanism, while potentially beneficial in some contexts, introduces instability into the learning process. The periodic dips in performance indicate that the swaps disrupt the algorithm's current state, requiring it to re-adapt. The consistent pattern of decline following each swap suggests that the swap process itself may be a source of inefficiency. The algorithm appears to recover from these disruptions, but the recovery process introduces a cyclical pattern in performance. Further investigation is needed to understand the underlying cause of these disruptions and to explore strategies for mitigating their impact. The chart highlights a trade-off between exploration (through swaps) and exploitation (through continued learning). The optimal balance between these two strategies likely depends on the specific characteristics of the environment and the algorithm's learning parameters.

</details>

(a) On-Policy GRPO with $\pi_{\mathrm{ref}}$ swap at end of each epoch. ( $v=1$ , $i=1$ , $S=3$ )

<details>

<summary>extracted/6494595/figs/v1-i1-swap-gm.png Details</summary>

### Visual Description


## Line Chart: On-Policy GRPO with zero variance masking

### Overview

This image presents a line chart illustrating the performance of an "On-Policy GRPO with zero variance masking" algorithm over iterations. The chart plots "Pass@1" on the y-axis against "Iteration" on the x-axis. Vertical dashed red lines mark specific iteration points with labels indicating algorithm versions or stages.

### Components/Axes

* **Title:** "On-Policy GRPO with zero variance masking (πk)" - positioned at the top-center of the chart.

* **X-axis:** "Iteration" - ranging from 0 to 1000, with tick marks at intervals of 100.

* **Y-axis:** "Pass@1" - ranging from 0.20 to 0.50, with tick marks at intervals of 0.05.

* **Data Series:** A single line representing "On-Policy GRPO with zero variance masking" - colored in dark blue.

* **Legend:** Located in the top-left corner, labeling the data series.

* **Vertical Dashed Lines:** Four vertical dashed red lines are present at approximately iterations 200, 400, 600, and 800.

* **Labels on X-axis:** "grpo-plus-v1-1-gm", "grpo-plus-v1-1-gm-swap-1", "grpo-plus-v1-1-gm-swap-2", "grpo-plus-v1-1-gm-swap-3"

### Detailed Analysis

The blue line representing "On-Policy GRPO with zero variance masking" shows a generally increasing trend, with some fluctuations.

* **Iteration 0:** Pass@1 is approximately 0.21.

* **Iteration 100:** Pass@1 is approximately 0.36, with a dip to around 0.32 at iteration 50.

* **Iteration 200:** Pass@1 is approximately 0.41. The first vertical dashed red line is positioned here, labeled "grpo-plus-v1-1-gm".

* **Iteration 400:** Pass@1 is approximately 0.45. The second vertical dashed red line is positioned here, labeled "grpo-plus-v1-1-gm-swap-1".

* **Iteration 600:** Pass@1 is approximately 0.47. The third vertical dashed red line is positioned here, labeled "grpo-plus-v1-1-gm-swap-2".

* **Iteration 800:** Pass@1 is approximately 0.46. The fourth vertical dashed red line is positioned here, labeled "grpo-plus-v1-1-gm-swap-3".

* **Iteration 1000:** Pass@1 is approximately 0.48.

* The line reaches a peak of approximately 0.49 around iteration 550.

* There is a slight decrease in Pass@1 between iterations 600 and 700, dropping to around 0.45.

* The line fluctuates between approximately 0.46 and 0.48 from iteration 700 to 1000.

### Key Observations

* The algorithm demonstrates a clear improvement in Pass@1 as the number of iterations increases, although the improvement plateaus after iteration 600.

* The vertical dashed lines indicate points where the algorithm or its configuration was modified (e.g., "swap-1", "swap-2", "swap-3"). These modifications do not consistently lead to immediate improvements in Pass@1.

* The initial learning phase (iterations 0-200) shows a rapid increase in Pass@1.

* The fluctuations in the line after iteration 600 suggest that the algorithm may be approaching a local optimum or experiencing some instability.

### Interpretation

The chart suggests that the "On-Policy GRPO with zero variance masking" algorithm is effective in improving performance (as measured by Pass@1) over iterations. The algorithm shows significant gains in the initial stages of training, but the rate of improvement slows down as it converges. The vertical dashed lines, representing changes to the algorithm, provide insights into the impact of different configurations. The fact that the algorithm doesn't consistently improve with each modification suggests that the optimal configuration may be complex and require careful tuning. The fluctuations in the later stages of training could indicate the need for further optimization or regularization techniques to prevent overfitting or instability. The algorithm appears to be converging towards a Pass@1 value of approximately 0.48, which may represent its maximum achievable performance under the given conditions.

</details>

(b) On-Policy GRPO with masking of samples with variance $\sigma_{\pi_{k},r}=0$ , and with $\pi_{\mathrm{ref}}$ swap at end of each epoch. ( $v=1$ , $i=1$ , $S=3$ )

<details>

<summary>extracted/6494595/figs/v10-i1-swap.png Details</summary>

### Visual Description

## Line Chart: Off-policy GRPO Performance

### Overview

This image presents a line chart illustrating the performance of an off-policy GRPO algorithm using a policy denoted as π<sub>k</sub>-v, with a value of v=10. The chart tracks the Pass@1 metric over iterations, showing how the algorithm's performance evolves during training. Vertical dashed red lines mark specific iteration points with labels indicating algorithm configurations.

### Components/Axes

* **Title:** "Off-policy GRPO using π<sub>k</sub>-v, v = 10" (Top-center)

* **X-axis:** "Iteration" (Bottom-center), ranging from 0 to 1000.

* **Y-axis:** "Pass@1" (Left-center), ranging from 0.20 to 0.50.

* **Data Series:** A single line representing "off-policy GRPO with π<sub>ref</sub> swap" (Top-left legend). The line is blue.

* **Vertical Dashed Lines:** Red dashed lines at approximately iterations 150, 400, 600, 800.

* **Labels on Vertical Dashed Lines:**

* "grpo-iter-v1lm10" (at ~150)

* "grpo-plus-v1lm10-pi-ref-optim-reset" (at ~400)

* "grpo-plus-v1lm10-pi-ref-optim-reset-swap2" (at ~600)

* "grpo-plus-v1lm10-pi-ref-optim-reset-swap3" (at ~800)

### Detailed Analysis

The blue line representing "off-policy GRPO with π<sub>ref</sub> swap" starts at approximately 0.21 at iteration 0. It then exhibits a steep upward trend, reaching around 0.40 by iteration 200. The line fluctuates between approximately 0.40 and 0.48 for the next 400 iterations (from 200 to 600). Around iteration 600, there's a noticeable dip to approximately 0.42, followed by a recovery to around 0.48 by iteration 700. The line then experiences a slight decline, stabilizing around 0.46-0.48 from iteration 800 to 1000.

Here's a breakdown of approximate values at key iterations:

* Iteration 0: ~0.21

* Iteration 50: ~0.24

* Iteration 100: ~0.32

* Iteration 150: ~0.38

* Iteration 200: ~0.40

* Iteration 300: ~0.42

* Iteration 400: ~0.43

* Iteration 500: ~0.46

* Iteration 600: ~0.42

* Iteration 700: ~0.48

* Iteration 800: ~0.47

* Iteration 900: ~0.47

* Iteration 1000: ~0.46

### Key Observations

* The algorithm demonstrates significant initial improvement in Pass@1 within the first 200 iterations.

* Performance plateaus and fluctuates between iterations 200 and 600.

* The configuration changes marked by the red dashed lines appear to correlate with performance shifts, though the relationship isn't strictly monotonic. The dip at iteration 600 coincides with a configuration change.

* The algorithm achieves a peak performance of approximately 0.48 around iteration 700.

* The final performance (at iteration 1000) is slightly lower than the peak, but still significantly higher than the initial performance.

### Interpretation

The chart suggests that the off-policy GRPO algorithm with π<sub>ref</sub> swap is effective in improving the Pass@1 metric, particularly in the early stages of training. The fluctuations in performance after iteration 200 could indicate sensitivity to hyperparameter settings or the exploration-exploitation trade-off. The vertical dashed lines and their associated labels suggest that different algorithm configurations are being tested, and their impact on performance is being monitored. The dip in performance around iteration 600, coinciding with a configuration change, suggests that the new configuration may have temporarily hindered performance before potentially stabilizing. The overall trend indicates that the algorithm converges to a relatively stable performance level, although further optimization might be possible. The Pass@1 metric is a measure of success rate, and the algorithm's ability to reach a Pass@1 of approximately 0.48 indicates a reasonable level of performance. The labels on the vertical lines suggest a systematic exploration of different algorithm variants (e.g., "optim-reset", "swap2", "swap3"), likely aimed at identifying the optimal configuration for maximizing performance.

</details>

(c) Off-Policy GRPO using $v=10$ (this amounts to fixing the model on the vLLM server for $10$ iterations and getting fresh samples for new batches), and with $\pi_{\mathrm{ref}}$ swap. ( $v=10$ , $i=1$ , $S=3$ )

<details>

<summary>extracted/6494595/figs/v1-i10-1ep.png Details</summary>

### Visual Description


## Line Chart: Off-Policy GRPO Performance

### Overview

The image presents a line chart illustrating the performance of an Off-Policy GRPO algorithm with a fixed batch size over 10 iterations, evaluated using the Pass@1 metric. The chart plots Pass@1 against the iteration number, showing how the algorithm's performance changes as it iterates.

### Components/Axes

* **Title:** "Off-Policy GRPO with fixed batch for 10 iterations from πk" - positioned at the top-center of the chart.

* **X-axis:** "Iteration" - ranging from approximately 0 to 2500, with gridlines.

* **Y-axis:** "Pass@1" - ranging from approximately 0.200 to 0.375, with gridlines.

* **Legend:** Located at the top-left corner of the chart. It contains a single entry:

* "grpo-iter10-vllm1" - associated with a blue line.

### Detailed Analysis

The chart displays a single data series represented by a blue line. The line generally slopes upward, indicating an increase in Pass@1 as the iteration number increases.

Here's a breakdown of approximate data points, verified by matching the line color to the legend:

* Iteration 0: Pass@1 ≈ 0.205

* Iteration 500: Pass@1 ≈ 0.235

* Iteration 1000: Pass@1 ≈ 0.305

* Iteration 1500: Pass@1 ≈ 0.325

* Iteration 2000: Pass@1 ≈ 0.360

* Iteration 2500: Pass@1 ≈ 0.370

The line exhibits a steeper increase between iterations 500 and 1000, then a more gradual increase from 1000 to 2500. There is a slight flattening of the curve between iterations 1500 and 2000.

### Key Observations

* The algorithm demonstrates a clear positive trend in Pass@1 as the number of iterations increases.

* The most significant performance gain occurs within the first 1000 iterations.

* The rate of improvement slows down after iteration 1000, suggesting diminishing returns.

* The final Pass@1 value plateaus around 0.37, indicating a potential convergence point.

### Interpretation

The chart suggests that the Off-Policy GRPO algorithm with a fixed batch size effectively improves its performance (as measured by Pass@1) with increasing iterations. The initial rapid improvement likely reflects the algorithm quickly learning from the data. The subsequent slower improvement suggests the algorithm is approaching an optimal solution or encountering limitations in the data or algorithm itself. The plateau at the end indicates that further iterations may not yield substantial performance gains. This data could be used to determine an appropriate number of iterations to train the algorithm, balancing performance gains with computational cost. The use of "πk" in the title suggests this is a policy iteration process, and the algorithm is learning from a sequence of policies. The "vllm1" component of the legend likely refers to a specific version or configuration of the algorithm.

</details>

(d) Off-Policy GRPO using fixed samples from $\pi_{k}$ for $10$ iterations. This makes $1$ epoch $10\times$ slower. ( $v=1$ , $i=10$ , $S=1$ )

Figure 2. We train different variants of GRPO on the train portion of GSM8K and report Pass@1 on the GSM8K test set, using $50$ samples for each test question, for several on-policy and off-policy variants of GRPO. As predicted by our theory, masking samples with zero variance stabilizes on-policy training and leads to better performance. For off-policy training, using $v=10,i=1$ also stabilizes training and leads to better performance.
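To make the roles of $v$ and $i$ in the panel captions concrete, the sketch below tabulates, for each optimizer iteration, which sampled batch is used and how stale the policy that generated it is. It is based only on the caption wording (sampler weights refreshed every $v$ iterations, a batch reused for $i$ iterations) and is an assumption on our part, not a transcription of Algorithm 1; `sampling_schedule` and `Step` are hypothetical names.

```python
from typing import List, NamedTuple


class Step(NamedTuple):
    t: int          # optimizer iteration
    batch_id: int   # index of the sampled batch used at iteration t
    behavior: int   # iteration whose weights generated that batch
    lag: int        # t - behavior; 0 means fully on-policy


def sampling_schedule(num_steps: int, v: int = 1, i: int = 1) -> List[Step]:
    steps = []
    for t in range(num_steps):
        batch_start = (t // i) * i          # iteration at which the batch was drawn
        behavior = (batch_start // v) * v   # sampler weights refreshed every v iterations
        steps.append(Step(t, t // i, behavior, t - behavior))
    return steps


for name, (v, i) in {"v=1,i=1": (1, 1), "v=10,i=1": (10, 1), "v=1,i=10": (1, 10)}.items():
    sched = sampling_schedule(12, v=v, i=i)
    print(name, "lags:", [s.lag for s in sched], "batches:", [s.batch_id for s in sched])
```

Under this reading, both off-policy settings have the same policy lag (up to 9 iterations), but $v=10,i=1$ consumes a fresh batch at every iteration while $v=1,i=10$ revisits the same batch ten times, which matches the caption's remark that the latter makes an epoch $10\times$ slower.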

5.2. Finetuning Qwen Distill R1 model (1.5 B) on Deepscaler Data

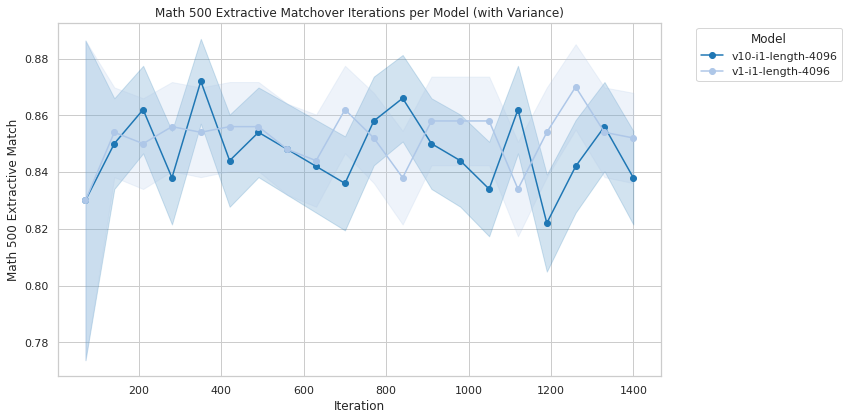

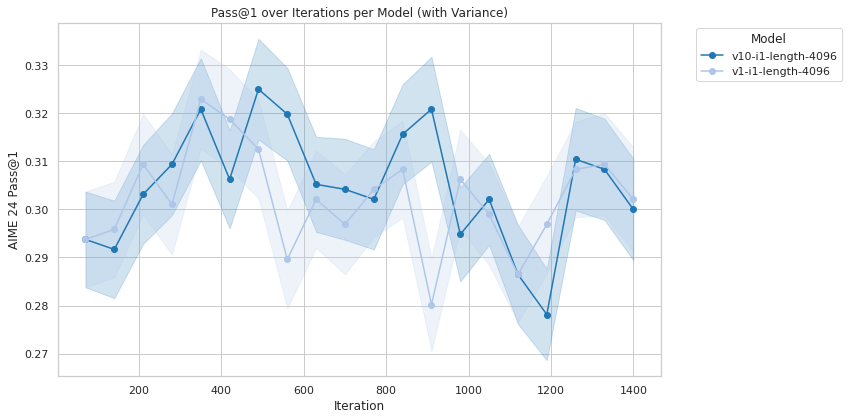

In this section we use GRPO to finetune DeepSeek-R1-Distill-Qwen-1.5B (Guo et al., 2025) on the DeepScaleR-Preview-Dataset from Luo et al. (2025), consisting of roughly $40K$ math questions with known answers. We use math-verify as the verifiable reward. We use a learning rate of $1\times 10^{-6}$ in the same distributed setting as before (GPU 0 for vLLM and 7 GPUs for distributed training). We use a context length of $4096$ , a group size $G=16$ , a per-device batch size of $16$ , and a KL regularizer $\beta=0.001$ . The sampling temperature is $0.7$ . We compare on-policy GRPO ( $v=1,i=1$ ) to our off-policy GRPO ( $v=10,i=1$ ) and report the performance of the model trained for a single epoch (around 24 hours on a single node). We report Aime24 and Math500 performance in Tables 2 and 3, respectively, using Huggingface light-eval (Habib et al., 2023). Aime24 is evaluated with Pass@1 using 32 samples, and Math500 with extractive matching as recommended in light-eval, with a context length of $32K$ (the evaluation context length and all other sampling hyperparameters are set to the defaults in OpenR1 for this model). Plots of the evaluation metrics as a function of iterations are given in Appendix D. We see that both on-policy and off-policy GRPO improve DeepSeek-R1-Distill-Qwen-1.5B, raising its Aime24 score from $29\%$ to $32\%$ at maximum (over iterations) and its Math500 score from $83\%$ to $87\%$ . This is consistent with our theoretical results: going off-policy does not cost us overall performance.

| Model/Aime24 | Min | Max | Median | Mean |
| --- | --- | --- | --- | --- |
| v1-i1-length-4096 | 0.2802 | 0.3229 | 0.3021 | 0.3022 |
| v10-i1-length-4096 | 0.2781 | 0.3250 | 0.3047 | 0.3049 |

Table 2. Aime24 using light-eval (Habib et al., 2023) with on-policy (v1-i1) and off-policy (v10-i1) GRPO.

| Model/Math500 | Min | Max | Median | Mean |
| --- | --- | --- | --- | --- |
| v1-i1-length-4096 | 0.830 | 0.870 | 0.854 | 0.8519 |
| v10-i1-length-4096 | 0.822 | 0.872 | 0.846 | 0.8474 |

Table 3. Math500 extractive matching using light-eval (Habib et al., 2023) with on-policy (v1-i1) and off-policy (v10-i1) GRPO.

6. Conclusion and Discussion

We revisited (on-policy) GRPO (Shao et al., 2024) and showed that its clipping objective can be derived from first principles as a lower bound for reward improvement. We also gave theoretical grounding to the masking of zero-variance samples suggested in DAPO (Yu et al., 2025). We introduced off-policy GRPO and laid out conditions under which it leads to policy improvement. Compared with its on-policy counterpart, which must serve the updated model for inference at every iteration of the GRPO loop, our off-policy GRPO reduces communication costs without sacrificing performance. We showed that off-policy GRPO stabilizes training and performs on par with, or better than, the on-policy version.

The main takeaways of our paper for practitioners are: (1) zero-variance masking stabilizes on-policy GRPO training; (2) off-policy GRPO attains its full potential, maintaining performance while lowering latency and communication overhead, in larger-scale training where models are served using tensor parallelism (see vLLM (2025)).

We hope our proof of concept for off-policy GRPO will help enable stable and efficient reinforcement learning at scale.

References

- Bai et al. [2022] Y. Bai, A. Jones, K. Ndousse, A. Askell, A. Chen, N. DasSarma, D. Drain, S. Fort, D. Ganguli, T. Henighan, et al. Training a helpful and harmless assistant with reinforcement learning from human feedback. arXiv preprint arXiv:2204.05862, 2022.

- Christiano et al. [2017] P. F. Christiano, J. Leike, T. Brown, M. Martic, S. Legg, and D. Amodei. Deep reinforcement learning from human preferences. In I. Guyon, U. V. Luxburg, S. Bengio, H. Wallach, R. Fergus, S. Vishwanathan, and R. Garnett, editors, Advances in Neural Information Processing Systems, volume 30. Curran Associates, Inc., 2017. URL https://proceedings.neurips.cc/paper_files/paper/2017/file/d5e2c0adad503c91f91df240d0cd4e49-Paper.pdf.

- Cobbe et al. [2021] K. Cobbe, V. Kosaraju, M. Bavarian, M. Chen, H. Jun, L. Kaiser, M. Plappert, J. Tworek, J. Hilton, R. Nakano, C. Hesse, and J. Schulman. Training verifiers to solve math word problems. arXiv preprint arXiv:2110.14168, 2021.

- Degris et al. [2012] T. Degris, M. White, and R. S. Sutton. Off-policy actor-critic. arXiv preprint arXiv:1205.4839, 2012.

- Fakoor et al. [2020] R. Fakoor, P. Chaudhari, and A. J. Smola. P3o: Policy-on policy-off policy optimization. In Proceedings of The 35th Uncertainty in Artificial Intelligence Conference, pages 1017–1027. PMLR, 2020.

- Gan et al. [2024] Y. Gan, R. Yan, X. Tan, Z. Wu, and J. Xing. Transductive off-policy proximal policy optimization. arXiv preprint arXiv:2406.03894, 2024.

- Guo et al. [2025] D. Guo, D. Yang, H. Zhang, J. Song, R. Zhang, R. Xu, Q. Zhu, S. Ma, P. Wang, X. Bi, et al. Deepseek-r1: Incentivizing reasoning capability in llms via reinforcement learning. arXiv preprint arXiv:2501.12948, 2025.

- Habib et al. [2023] N. Habib, C. Fourrier, H. Kydlíček, T. Wolf, and L. Tunstall. Lighteval: A lightweight framework for llm evaluation, 2023. URL https://github.com/huggingface/lighteval.

- HuggingFace [2025a] HuggingFace. Open r1: A fully open reproduction of deepseek-r1, January 2025a. URL https://github.com/huggingface/open-r1.

- HuggingFace [2025b] HuggingFace. Open r1: Update #3, Mar. 2025b. URL https://huggingface.co/blog/open-r1/update-3. Accessed: 2025-05-11.

- Kwon et al. [2023] W. Kwon, Z. Li, S. Zhuang, Y. Sheng, L. Zheng, C. H. Yu, J. E. Gonzalez, H. Zhang, and I. Stoica. Efficient memory management for large language model serving with pagedattention. In Proceedings of the ACM SIGOPS 29th Symposium on Operating Systems Principles, 2023.

- Lambert et al. [2024] N. Lambert, J. Morrison, V. Pyatkin, S. Huang, H. Ivison, F. Brahman, L. J. V. Miranda, A. Liu, N. Dziri, S. Lyu, et al. Tülu 3: Pushing frontiers in open language model post-training. arXiv preprint arXiv:2411.15124, 2024.

- Liu et al. [2025] Z. Liu, C. Chen, W. Li, P. Qi, T. Pang, C. Du, W. S. Lee, and M. Lin. Understanding r1-zero-like training: A critical perspective, 2025. URL https://arxiv.org/abs/2503.20783.

- Luo et al. [2025] M. Luo, S. Tan, J. Wong, X. Shi, W. Y. Tang, M. Roongta, C. Cai, J. Luo, T. Zhang, L. E. Li, R. A. Popa, and I. Stoica. Deepscaler: Surpassing o1-preview with a 1.5b model by scaling rl. https://tinyurl.com/5e9rs33z, 2025. Notion Blog.

- Meng et al. [2023] W. Meng, Q. Zheng, G. Pan, and Y. Yin. Off-policy proximal policy optimization. Proceedings of the AAAI Conference on Artificial Intelligence, 37(8):9162–9170, 2023.

- Mroueh [2025] Y. Mroueh. Reinforcement learning with verifiable rewards: Grpo’s effective loss, dynamics, and success amplification, 2025. URL https://arxiv.org/abs/2503.06639.

- Noukhovitch et al. [2025] M. Noukhovitch, S. Huang, S. Xhonneux, A. Hosseini, R. Agarwal, and A. Courville. Faster, more efficient RLHF through off-policy asynchronous learning. In The Thirteenth International Conference on Learning Representations, 2025. URL https://openreview.net/forum?id=FhTAG591Ve.

- Ouyang et al. [2022] L. Ouyang, J. Wu, X. Jiang, D. Almeida, C. Wainwright, P. Mishkin, C. Zhang, S. Agarwal, K. Slama, A. Ray, et al. Training language models to follow instructions with human feedback. Advances in Neural Information Processing Systems, 35:27730–27744, 2022.

- Paszke et al. [2019] A. Paszke, S. Gross, F. Massa, A. Lerer, J. Bradbury, G. Chanan, T. Killeen, Z. Lin, N. Gimelshein, L. Antiga, A. Desmaison, A. Köpf, E. Yang, Z. DeVito, M. Raison, A. Tejani, S. Chilamkurthy, B. Steiner, L. Fang, J. Bai, and S. Chintala. PyTorch: An Imperative Style, High-Performance Deep Learning Library, Dec. 2019.

- Queeney et al. [2021] J. Queeney, I. C. Paschalidis, and C. G. Cassandras. Generalized proximal policy optimization with sample reuse. In Advances in Neural Information Processing Systems, volume 34, 2021.

- Schulman et al. [2015] J. Schulman, S. Levine, P. Abbeel, M. Jordan, and P. Moritz. Trust region policy optimization. In International conference on machine learning, pages 1889–1897. PMLR, 2015.

- Schulman et al. [2017] J. Schulman, F. Wolski, P. Dhariwal, A. Radford, and O. Klimov. Proximal policy optimization algorithms. arXiv preprint arXiv:1707.06347, 2017.

- Shao et al. [2024] Z. Shao, P. Wang, Q. Zhu, R. Xu, J. Song, X. Bi, H. Zhang, M. Zhang, Y. Li, Y. Wu, et al. Deepseekmath: Pushing the limits of mathematical reasoning in open language models. arXiv preprint arXiv:2402.03300, 2024.

- Stiennon et al. [2020] N. Stiennon, L. Ouyang, J. Wu, D. Ziegler, R. Lowe, C. Voss, A. Radford, D. Amodei, and P. F. Christiano. Learning to summarize with human feedback. Advances in Neural Information Processing Systems, 33:3008–3021, 2020.

- vLLM [2025] P. vLLM. Pytorch ci hud: vllm benchmark dashboard. https://hud.pytorch.org/benchmark/llms?repoName=vllm-project/vllm, 2025. Accessed: 2025-05-14.

- Vojnovic and Yun [2025] M. Vojnovic and S.-Y. Yun. What is the alignment objective of grpo?, 2025. URL https://arxiv.org/abs/2502.18548.

- von Werra et al. [2020a] L. von Werra, Y. Belkada, L. Tunstall, E. Beeching, T. Thrush, N. Lambert, S. Huang, K. Rasul, and Q. Gallouédec. Trl: Transformer reinforcement learning. https://github.com/huggingface/trl, 2020a.

- von Werra et al. [2020b] L. von Werra, Y. Belkada, L. Tunstall, E. Beeching, T. Thrush, N. Lambert, S. Huang, K. Rasul, and Q. Gallouédec. Trl: Transformer reinforcement learning. https://github.com/huggingface/trl, 2020b.

- Wang et al. [2016] Z. Wang, V. Bapst, N. Heess, V. Mnih, R. Munos, K. Kavukcuoglu, and N. De Freitas. Sample efficient actor-critic with experience replay. arXiv preprint arXiv:1611.01224, 2016.

- Wolf et al. [2020] T. Wolf, L. Debut, V. Sanh, J. Chaumond, C. Delangue, A. Moi, P. Cistac, T. Rault, R. Louf, M. Funtowicz, J. Davison, S. Shleifer, P. von Platen, C. Ma, Y. Jernite, J. Plu, C. Xu, T. L. Scao, S. Gugger, M. Drame, Q. Lhoest, and A. M. Rush. HuggingFace’s Transformers: State-of-the-art Natural Language Processing, July 2020.

- Yang et al. [2024] A. Yang, B. Yang, B. Hui, B. Zheng, B. Yu, C. Zhou, C. Li, C. Li, D. Liu, F. Huang, G. Dong, H. Wei, H. Lin, J. Tang, J. Wang, J. Yang, J. Tu, J. Zhang, J. Ma, J. Yang, J. Xu, J. Zhou, J. Bai, J. He, J. Lin, K. Dang, K. Lu, K. Chen, K. Yang, M. Li, M. Xue, N. Ni, P. Zhang, P. Wang, R. Peng, R. Men, R. Gao, R. Lin, S. Wang, S. Bai, S. Tan, T. Zhu, T. Li, T. Liu, W. Ge, X. Deng, X. Zhou, X. Ren, X. Zhang, X. Wei, X. Ren, X. Liu, Y. Fan, Y. Yao, Y. Zhang, Y. Wan, Y. Chu, Y. Liu, Z. Cui, Z. Zhang, Z. Guo, and Z. Fan. Qwen2 Technical Report, Sept. 2024.

- Yu et al. [2025] Q. Yu, Z. Zhang, R. Zhu, Y. Yuan, X. Zuo, Y. Yue, T. Fan, G. Liu, L. Liu, X. Liu, H. Lin, Z. Lin, B. Ma, G. Sheng, Y. Tong, C. Zhang, M. Zhang, W. Zhang, H. Zhu, J. Zhu, J. Chen, J. Chen, C. Wang, H. Yu, W. Dai, Y. Song, X. Wei, H. Zhou, J. Liu, W.-Y. Ma, Y.-Q. Zhang, L. Yan, M. Qiao, Y. Wu, and M. Wang. Dapo: An open-source llm reinforcement learning system at scale, 2025. URL https://arxiv.org/abs/2503.14476.

Appendix A Broader Impact and Limitations

Our work analyzes the celebrated GRPO algorithm and develops an adaptation for the off-policy setting, motivated by recent efforts for PPO that demonstrated higher stability and efficiency. Our primary contributions are theoretical, providing formal conditions under which advantage optimization guarantees policy improvement in the on-policy and off-policy regimes. These insights provide lower bounds on policy improvement and directly inform a practical clipped surrogate optimization objective for large language model (LLM) policy training that inherits our theoretical guarantees in both regimes. In the on-policy regime, our lower bound sheds light on, and gives theoretical backing to, the benefits of masking samples with zero variance as suggested in the DAPO paper [Yu et al., 2025]. Our formulation also clarifies theoretical relationships between our newly introduced off-policy GRPO, PPO variants, and general off-policy optimization frameworks, a linkage previously underexplored in the literature. Our derived off-policy GRPO algorithm is validated experimentally, demonstrating improved performance compared to standard GRPO while having the potential to reduce the cross-device communication overhead of serving large models for the sampling that GRPO requires. The broader impacts that we anticipate from our work (besides those directly inherited from GRPO and reinforcement fine-tuning of LLMs, and the risks associated with the dual use of the enabled reasoning models) are therefore generally positive, as it enhances RL efficiency, reducing computational costs and improving stability.

The main limitation of our work is that the empirical validation remains constrained to smaller datasets, smaller model architectures, and smaller context sizes (4096 tokens at maximum) that can be trained on our hardware setup, consisting of one compute node with 8 NVIDIA H100 GPUs (1 used for the vLLM server and 7 for training the policy LLM). Our 1.5B experimental setup with the DeepScaleR data is at the limit of what can fit in the memory of a single node.

This limitation primarily reflects the common resource constraints associated with provisioning large-scale distributed training environments, rather than any inherent restriction of the algorithm itself. Note that for larger context, larger batch size and larger architectures than the ones used in our paper, multi-node training is required.

While our main contribution here remains theoretical and is backed by ablation studies on a single node, we leave scaling up our experiments to larger training runs for future work. We expect the benefits of our off-policy algorithm in terms of efficiency and reduced communication to become even more pronounced in the large-scale distributed regime, as has already been shown in multiple off-policy RL works.

Appendix B Assets

Hardware setup

All our experiments were run on one compute node with dual 48-core Intel Xeon 8468 CPUs, 2TB of RAM, 8 NVIDIA HGX H100 80GB SXM5 GPUs, 8x 3.4TB Enterprise NVMe U.2 Gen4 drives, and 10x NVIDIA Mellanox Infiniband single-port NDR adapters, running RedHat Enterprise Linux 9.5.

Libraries

Our experiments rely on the open-source libraries PyTorch [Paszke et al., 2019] (license: BSD), HuggingFace Transformers [Wolf et al., 2020] (Apache 2.0 license), and HuggingFace TRL [von Werra et al., 2020a] (Apache 2.0 license). We also relied on Open-R1 [HuggingFace, 2025a] as well as light-eval [Habib et al., 2023] for the evaluation of Aime24 and Math500.

Code re-use

Our GRPO training code is based on the public GitHub repository https://github.com/huggingface/open-r1 [HuggingFace, 2025a].

Data and Models

In our experiments, we use the following publicly available datasets: (1) the GSM8K dataset from Cobbe et al. [2021] (MIT license), and (2) the DeepScaleR-Preview-Dataset from Luo et al. [2025] (MIT license). The models that we used were Qwen/Qwen2.5-0.5B-Instruct (Apache 2.0 license) by Yang et al. [2024], and DeepSeek-R1-Distill-Qwen-1.5B (MIT license) by Guo et al. [2025].

Appendix C Reward Improvement Lower Bound

C.1. Proof of Theorem 1

We have:

$$

J(\pi(\cdot|x))=\mathbb{E}_{y\sim\pi(\cdot|x)}r(x,y)

$$

Let $\pi_{k}$ be the current policy and let $\alpha(\cdot|x)$ be another policy; typically we consider $\alpha(\cdot|x)=\pi_{k-i}(\cdot|x)$.

Define the mean and standard deviation of the reward under the off-policy distribution $\alpha$:

$\mu_{\alpha}(x)=\mathbb{E}_{y\sim\alpha(\cdot|x)}r(x,y)$ and $\sigma_{\alpha}(x)=\sqrt{\mathbb{E}_{y\sim\alpha(\cdot|x)}(r(x,y)-\mu_{\alpha}(x))^{2}}$, and denote, for $0<\varepsilon<1$, $\sigma_{\alpha,\varepsilon}(x)=\sqrt{\sigma^{2}_{\alpha}(x)+\varepsilon}$.

Note that the reward is bounded, $0\leq r(x,y)\leq\left\lVert{r}\right\rVert_{\infty}$, which implies that $\sigma^{2}_{\alpha}(x)\leq\frac{\left\lVert{r}\right\rVert^{2}_{\infty}}{4}$, and hence we have:

$$
\sigma_{\alpha,\varepsilon}(x)\leq\sqrt{\frac{\left\lVert{r}\right\rVert^{2}_{\infty}}{4}+\varepsilon}.
$$

We normalize the reward so that: $\sigma_{\alpha,\varepsilon}(x)\leq\sqrt{\frac{\left\lVert{r}\right\rVert^{2}_{\infty}}{4}+\varepsilon}\leq 1.$
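The variance bound used above is the standard Popoviciu inequality for bounded random variables. A short derivation is sketched below; the shorthand $M$ for $\left\lVert{r}\right\rVert_{\infty}$ is introduced here for readability and is not notation from the paper.

$$
\begin{aligned}
&\text{Assumption (shorthand introduced here): } 0\leq r(x,y)\leq M:=\left\lVert{r}\right\rVert_{\infty}.\\
\sigma^{2}_{\alpha}(x)&=\min_{c\in\mathbb{R}}\mathbb{E}_{y\sim\alpha(\cdot|x)}\left(r(x,y)-c\right)^{2}\\
&\leq\mathbb{E}_{y\sim\alpha(\cdot|x)}\left(r(x,y)-\tfrac{M}{2}\right)^{2}\\
&\leq\tfrac{M^{2}}{4},\quad\text{since }\left|r(x,y)-\tfrac{M}{2}\right|\leq\tfrac{M}{2}.
\end{aligned}
$$

As an illustration, for a binary reward ($M=1$) with success probability $p$ under $\alpha$, $\sigma^{2}_{\alpha}(x)=p(1-p)\leq\frac{1}{4}$, so the normalization condition $\sqrt{\frac{1}{4}+\varepsilon}\leq 1$ holds whenever $\varepsilon\leq\frac{3}{4}$.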

We denote the GRPO advantage function and the associated off-policy surrogate objective as:

$$

A_{\alpha}(x,y)=\frac{r(x,y)-\mu_{\alpha}(x)}{\sigma_{\alpha,\varepsilon}(x)}

$$

$$
\mathcal{L}_{\alpha}(\pi(\cdot|x))=\mathbb{E}_{y\sim\alpha(\cdot|x)}\frac{\pi(y|x)}{\alpha(y|x)}A_{\alpha}(x,y)
$$

If $\alpha=\pi_{k}$, we obtain the on-policy objective function of GRPO, where the advantage is computed with the current policy $\pi_{k}$, i.e., using $A_{\pi_{k}}(x,y)$.
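As a minimal sketch of how the advantage and the surrogate objective above could be estimated from a sampled group, consider the following NumPy snippet. The function names `grpo_advantages` and `surrogate_estimate`, the toy rewards, and the log-probabilities are hypothetical illustrations and are not taken from the paper's code.

```python
import numpy as np

def grpo_advantages(rewards, eps=1e-4):
    """Standardize a group of rewards: (r - mean) / sqrt(var + eps)."""
    rewards = np.asarray(rewards, dtype=float)
    mu = rewards.mean()                          # group estimate of mu_alpha(x)
    sigma_eps = np.sqrt(rewards.var() + eps)     # group estimate of sigma_{alpha,eps}(x)
    return (rewards - mu) / sigma_eps

def surrogate_estimate(logp_pi, logp_alpha, rewards, eps=1e-4):
    """Monte-Carlo estimate of L_alpha(pi) from a group sampled from alpha."""
    # Importance ratio pi(y|x) / alpha(y|x), computed from log-probabilities.
    ratios = np.exp(np.asarray(logp_pi) - np.asarray(logp_alpha))
    return float(np.mean(ratios * grpo_advantages(rewards, eps)))

# Hypothetical group of 4 responses with binary rewards (illustrative values only).
rewards = [1.0, 0.0, 0.0, 1.0]
logp_alpha = np.log([0.2, 0.3, 0.4, 0.1])

adv = grpo_advantages(rewards)
# With pi = alpha all ratios equal 1, so the estimate is the mean advantage.
on_policy = surrogate_estimate(logp_alpha, logp_alpha, rewards)
```

In this sketch a group whose rewards are all identical receives zero advantages, so it contributes nothing to the estimate.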

Since $\mathbb{E}_{y\sim\alpha(\cdot|x)}\frac{\pi(y|x)}{\alpha(y|x)}f(y)=\mathbb{E}_{y\sim\pi(\cdot|x)}f(y)$ for any function $f$, we have:

$$
\mathcal{L}_{\alpha}(\pi(\cdot|x))=\frac{1}{\sigma_{\alpha,\varepsilon}(x)}\left(\mathbb{E}_{y\sim\pi(\cdot|x)}r(x,y)-\mu_{\alpha}(x)\right)
$$
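The closed form above can be checked numerically on a small discrete response space. The snippet below is only a sanity-check sketch: the number of responses, the random policies, and the value of `eps` are arbitrary assumptions chosen for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 6                                    # hypothetical number of possible responses y
r = rng.uniform(0.0, 1.0, size=n)        # bounded reward r(x, y) in [0, 1]
alpha = rng.dirichlet(np.ones(n))        # behavior policy alpha(.|x)
pi = rng.dirichlet(np.ones(n))           # candidate policy pi(.|x)
eps = 0.1                                # assumed value of epsilon in (0, 1)

mu = alpha @ r                                        # mu_alpha(x)
sigma_eps = np.sqrt(alpha @ (r - mu) ** 2 + eps)      # sigma_{alpha,eps}(x)
adv = (r - mu) / sigma_eps                            # A_alpha(x, y)

# Left-hand side: E_{y ~ alpha}[ pi/alpha * A_alpha ], written as an explicit sum.
lhs = np.sum(alpha * (pi / alpha) * adv)
# Right-hand side: (E_{y ~ pi} r - mu_alpha) / sigma_{alpha,eps}.
rhs = (pi @ r - mu) / sigma_eps
```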

Our goal is to provide an upper bound on:

$$

\mathcal{L}_{\alpha}(\pi(\cdot|x))-\left(J(\pi(\cdot|x))-J(\pi_{k}(\cdot|x))\right)

$$

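Hence, using $\mu_{\alpha}(x)=J(\alpha(\cdot|x))$, we have:

$$
\begin{aligned}
\mathcal{L}_{\alpha}(\pi(\cdot|x))-\left(J(\pi(\cdot|x))-J(\pi_{k}(\cdot|x))\right)&=\left(\frac{1}{\sigma_{\alpha,\varepsilon}(x)}-1\right)J(\pi(\cdot|x))+J(\pi_{k}(\cdot|x))-\frac{1}{\sigma_{\alpha,\varepsilon}(x)}J(\alpha(\cdot|x))\\
&=\frac{1-\sigma_{\alpha,\varepsilon}(x)}{\sigma_{\alpha,\varepsilon}(x)}\left(J(\pi(\cdot|x))-J(\alpha(\cdot|x))\right)+\left(J(\pi_{k}(\cdot|x))-J(\alpha(\cdot|x))\right)
\end{aligned}
$$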

**Lemma 1 (Kantorovich-Rubinstein duality of total variation distance)**

*The Kantorovich-Rubinstein duality (variational representation) of the total variation distance is as follows:

$$
\mathbb{TV}(m_{1},m_{2})=\frac{1}{2L}\sup_{g\in\mathcal{G}_{L}}\left\{\mathbb{E}_{Z\sim m_{1}}[g(Z)]-\mathbb{E}_{Z\sim m_{2}}[g(Z)]\right\}, \tag{9}
$$

where $\mathcal{G}_{L}=\{g:\mathcal{Z}\to\mathbb{R},\ \|g\|_{\infty}\leq L\}$.*

On the other hand, applying Lemma 1 with $g=r$ and $L=\left\lVert{r}\right\rVert_{\infty}$, we have:

$$
J(\pi(\cdot|x))-J(\alpha(\cdot|x))\leq 2\left\lVert{r}\right\rVert_{\infty}\mathbb{TV}(\pi(\cdot|x),\alpha(\cdot|x))
$$

and

$$
J(\pi_{k}(\cdot|x))-J(\alpha(\cdot|x))\leq 2\left\lVert{r}\right\rVert_{\infty}\mathbb{TV}(\pi_{k}(\cdot|x),\alpha(\cdot|x))
$$
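The two total-variation bounds above can be illustrated on discrete distributions. The following sketch assumes the convention $\mathbb{TV}(p,q)=\frac{1}{2}\sum_{y}|p(y)-q(y)|$, which is the one consistent with the factor $\frac{1}{2L}$ in Lemma 1; the helper `tv`, the random policies, and the bound `L = 1.0` are hypothetical choices made for the example.

```python
import numpy as np

def tv(p, q):
    """Total variation distance between two discrete distributions."""
    return 0.5 * np.abs(p - q).sum()

rng = np.random.default_rng(1)
n, L = 8, 1.0                          # assumed: |r| <= L on n possible responses
r = rng.uniform(0.0, L, size=n)
pi = rng.dirichlet(np.ones(n))
alpha = rng.dirichlet(np.ones(n))

gap = pi @ r - alpha @ r               # J(pi) - J(alpha)
bound = 2 * L * tv(pi, alpha)          # right-hand side of the bound

# The supremum in Lemma 1 is attained by g* = L * sign(pi - alpha).
g_star = L * np.sign(pi - alpha)
sup_value = pi @ g_star - alpha @ g_star
```

Here `sup_value` equals `2 * L * tv(pi, alpha)`, which is the statement of the lemma on a finite space, and `gap` never exceeds it because $r$ belongs to $\mathcal{G}_{L}$.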

By our normalization of the reward we have $\sigma_{\alpha,\varepsilon}(x)\leq 1$, and therefore:

$$

\frac{1-\sigma_{\alpha,\varepsilon}(x)}{\sigma_{\alpha,\varepsilon}(x)}\geq 0

$$

so that we obtain the final bound as follows:

$$
\mathcal{L}_{\alpha}(\pi(\cdot|x))-\left(J(\pi(\cdot|x))-J(\pi_{k}(\cdot|x))\right)\leq 2\frac{1-\sigma_{\alpha,\varepsilon}(x)}{\sigma_{\alpha,\varepsilon}(x)}\left\lVert{r}\right\rVert_{\infty}\mathbb{TV}(\pi(\cdot|x),\alpha(\cdot|x))+2\left\lVert{r}\right\rVert_{\infty}\mathbb{TV}(\pi_{k}(\cdot|x),\alpha(\cdot|x))
$$

We obtain finally our lower bound on policy improvement as follows:

$$
J(\pi(\cdot|x))-J(\pi_{k}(\cdot|x))\geq\mathcal{L}_{\alpha}(\pi(\cdot|x))-2\frac{1-\sigma_{\alpha,\varepsilon}(x)}{\sigma_{\alpha,\varepsilon}(x)}\left\lVert{r}\right\rVert_{\infty}\mathbb{TV}(\pi(\cdot|x),\alpha(\cdot|x))-2\left\lVert{r}\right\rVert_{\infty}\mathbb{TV}(\pi_{k}(\cdot|x),\alpha(\cdot|x))
$$

Integrating over $x$ (the prompts), we have:

$$
\begin{aligned}
\mathbb{E}_{x\sim\rho_{\mathcal{X}}}J(\pi(\cdot|x))-\mathbb{E}_{x\sim\rho_{\mathcal{X}}}J(\pi_{k}(\cdot|x))\geq{}&\mathbb{E}_{x\sim\rho_{\mathcal{X}}}\mathcal{L}_{\alpha}(\pi(\cdot|x))-2\left\lVert{r}\right\rVert_{\infty}\mathbb{E}_{x\sim\rho_{\mathcal{X}}}\frac{1-\sigma_{\alpha,\varepsilon}(x)}{\sigma_{\alpha,\varepsilon}(x)}\mathbb{TV}(\pi(\cdot|x),\alpha(\cdot|x))\\
&-2\left\lVert{r}\right\rVert_{\infty}\mathbb{E}_{x\sim\rho_{\mathcal{X}}}\mathbb{TV}(\pi_{k}(\cdot|x),\alpha(\cdot|x))
\end{aligned}
$$
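The per-prompt lower bound on policy improvement derived in this appendix can be sanity-checked numerically. The sketch below draws random discrete policies for a set of hypothetical prompts and verifies that the improvement is never below the surrogate minus the two total-variation penalties; the sizes, the seed, `eps`, and the reward bound `r_max` are assumptions made for illustration, not settings from the paper.

```python
import numpy as np

def tv(p, q):
    """Total variation distance between two discrete distributions."""
    return 0.5 * np.abs(p - q).sum()

rng = np.random.default_rng(2)
n_prompts, n_resp, eps = 50, 5, 0.1
r_max = 1.0   # assumed reward bound ||r||_inf; sqrt(r_max**2 / 4 + eps) <= 1 holds here

slacks = []
for _ in range(n_prompts):
    r = rng.uniform(0.0, r_max, size=n_resp)
    # alpha: behavior policy, pi_k: current policy, pi: candidate policy.
    alpha, pi_k, pi = (rng.dirichlet(np.ones(n_resp)) for _ in range(3))
    mu = alpha @ r
    sigma = np.sqrt(alpha @ (r - mu) ** 2 + eps)
    surrogate = (pi @ r - mu) / sigma                      # L_alpha(pi)
    penalty = 2 * r_max * ((1 - sigma) / sigma * tv(pi, alpha) + tv(pi_k, alpha))
    improvement = pi @ r - pi_k @ r                        # J(pi) - J(pi_k)
    slacks.append(improvement - (surrogate - penalty))

min_slack = min(slacks)                # non-negative if the bound holds on every prompt
mean_slack = float(np.mean(slacks))    # slack of the bound averaged over prompts
```

Averaging `slacks` over prompts corresponds to the expectation over $x\sim\rho_{\mathcal{X}}$; a non-negative `min_slack` indicates that the pointwise bound held for every sampled prompt in this toy setting.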

Appendix D Experiments

<details>

<summary>extracted/6494595/figs/math500.png Details</summary>

### Visual Description


## Line Chart: Math 500 Extractive Match over Iterations per Model (with Variance)

### Overview

This line chart displays the Math 500 Extractive Match score over iterations for two different models: `v10-i1-length-4096` and `v1-i1-length-4096`. The chart also shows the variance around each line, represented by shaded regions. The x-axis represents the iteration number, and the y-axis represents the Math 500 Extractive Match score.

### Components/Axes

* **Title:** Math 500 Extractive Match over Iterations per Model (with Variance) - positioned at the top-center.

* **X-axis Label:** Iteration - positioned at the bottom-center. Scale ranges from approximately 0 to 1400, with gridlines at 200-iteration intervals.

* **Y-axis Label:** Math 500 Extractive Match - positioned at the left-center. Scale ranges from approximately 0.78 to 0.88, with gridlines at 0.02 intervals.

* **Legend:** Located in the top-right corner.

* `v10-i1-length-4096` - represented by a dark blue line with circular markers.

* `v1-i1-length-4096` - represented by a light blue line with circular markers.

### Detailed Analysis

**Model v10-i1-length-4096 (Dark Blue Line):**

The line generally trends upward initially, then fluctuates with some peaks and valleys.

* Iteration 0: Approximately 0.825

* Iteration 200: Approximately 0.855

* Iteration 400: Approximately 0.84

* Iteration 600: Approximately 0.845

* Iteration 800: Approximately 0.86

* Iteration 1000: Approximately 0.83

* Iteration 1200: Approximately 0.85

* Iteration 1400: Approximately 0.855

**Model v1-i1-length-4096 (Light Blue Line):**

This line also shows fluctuations, with a similar overall trend.

* Iteration 0: Approximately 0.845

* Iteration 200: Approximately 0.855

* Iteration 400: Approximately 0.85

* Iteration 600: Approximately 0.84

* Iteration 800: Approximately 0.855

* Iteration 1000: Approximately 0.825

* Iteration 1200: Approximately 0.845

* Iteration 1400: Approximately 0.86

**Variance (Shaded Regions):**

Both models have significant variance, indicated by the large shaded areas around the lines. The variance appears to be relatively consistent across iterations, with no obvious patterns of increasing or decreasing uncertainty.

### Key Observations

* Both models exhibit similar performance, with Math 500 Extractive Match scores fluctuating between approximately 0.82 and 0.87.

* The variance is substantial for both models, suggesting that the results are not highly consistent.

* There isn't a clear winner between the two models; they trade places in terms of performance throughout the iterations.

* The lines are relatively close together, indicating that the difference in performance between the two models is not dramatic.

### Interpretation

The chart suggests that both models are performing reasonably well on the Math 500 Extractive Match task, but their performance is somewhat unstable. The large variance indicates that the results are sensitive to factors not explicitly controlled in the experiment. The lack of a clear performance difference between the two models suggests that the specific configuration differences (v10 vs. v1) do not have a substantial impact on the outcome, at least within the range of iterations shown. Further investigation might be needed to understand the sources of variance and to determine whether one model consistently outperforms the other over a longer period or with different data. The initial upward trend for both models could indicate a learning or adaptation phase, but the subsequent fluctuations suggest that the models may have reached a plateau or are experiencing some form of instability.

</details>

(a) Aime 24

<details>

<summary>extracted/6494595/figs/aime24.png Details</summary>

### Visual Description

## Line Chart: Pass@1 over Iterations per Model (with Variance)

### Overview

This line chart displays the performance of two models ("v10-i1-length-4096" and "v1-i1-length-4096") over iterations, measured by the "AIME 24 Pass@1" metric. The chart also shows the variance around each model's performance using shaded regions.

### Components/Axes

* **Title:** Pass@1 over Iterations per Model (with Variance) - positioned at the top-center.

* **X-axis:** "Iteration" - ranging from approximately 0 to 1400, with gridlines.

* **Y-axis:** "AIME 24 Pass@1" - ranging from approximately 0.27 to 0.33, with gridlines.

* **Legend:** Located in the top-right corner, labeling the two models:

* "v10-i1-length-4096" - represented by a dark blue line with circle markers.

* "v1-i1-length-4096" - represented by a light blue line with circle markers.

* **Shaded Regions:** Light blue shaded areas around each line represent the variance in performance.

### Detailed Analysis

**Model v10-i1-length-4096 (Dark Blue Line):**

The line initially slopes upward from iteration 0 to approximately iteration 200, increasing from roughly 0.28 to 0.31. It then fluctuates, peaking around iteration 400 at approximately 0.325, before decreasing to around 0.30 at iteration 600. From iteration 600 to 800, the line shows a slight upward trend, reaching approximately 0.32. It then declines to a low of around 0.28 at iteration 1000, followed by an increase to approximately 0.31 at iteration 1200, and finally stabilizes around 0.305 at iteration 1400.

* Iteration 0: ~0.28

* Iteration 200: ~0.31

* Iteration 400: ~0.325

* Iteration 600: ~0.30

* Iteration 800: ~0.32

* Iteration 1000: ~0.28

* Iteration 1200: ~0.31

* Iteration 1400: ~0.305

**Model v1-i1-length-4096 (Light Blue Line):**

This line starts at approximately 0.29 at iteration 0 and rises sharply to a peak of around 0.32 at iteration 400. It then experiences a significant drop to approximately 0.27 at iteration 1000. From iteration 1000, the line recovers somewhat, reaching approximately 0.29 at iteration 1400.

* Iteration 0: ~0.29

* Iteration 200: ~0.30

* Iteration 400: ~0.32

* Iteration 600: ~0.30

* Iteration 800: ~0.29

* Iteration 1000: ~0.27

* Iteration 1200: ~0.28

* Iteration 1400: ~0.29

The shaded regions around each line indicate the variance in performance. The variance appears to be larger during periods of rapid change in the lines.

### Key Observations

* Model "v10-i1-length-4096" generally exhibits more stable performance than "v1-i1-length-4096".

* Model "v1-i1-length-4096" shows a more dramatic drop in performance around iteration 1000.

* Both models show some degree of fluctuation in performance over the iterations.

* The variance around both models is significant, suggesting that the performance is not consistently predictable.

### Interpretation

The chart demonstrates the performance trends of two different models over a series of iterations, as measured by the AIME 24 Pass@1 metric. The variance bands suggest that the performance of each model is not deterministic and is subject to fluctuations.

The initial rise in performance for both models could indicate a learning phase where the models are adapting to the data. The subsequent fluctuations might be due to factors such as the specific data encountered during each iteration or the inherent stochasticity of the training process.