# Experience-based Knowledge Correction for Robust Planning in Minecraft

Corresponding author: Jungseul Ok <jungseul@postech.ac.kr>

Abstract

Large Language Model (LLM)-based planning has advanced embodied agents in long-horizon environments such as Minecraft, where acquiring latent knowledge of goal (or item) dependencies and feasible actions is critical. However, LLMs often begin with flawed priors and fail to correct them through prompting, even with feedback. We present XENON (eXpErience-based kNOwledge correctioN), an agent that algorithmically revises knowledge from experience, enabling robustness to flawed priors and sparse binary feedback. XENON integrates two mechanisms: Adaptive Dependency Graph, which corrects item dependencies using past successes, and Failure-aware Action Memory, which corrects action knowledge using past failures. Together, these components allow XENON to acquire complex dependencies despite limited guidance. Experiments across multiple Minecraft benchmarks show that XENON outperforms prior agents in both knowledge learning and long-horizon planning. Remarkably, with only a 7B open-weight LLM, XENON surpasses agents that rely on much larger proprietary models. Project page: https://sjlee-me.github.io/XENON

1 Introduction

Large Language Model (LLM)-based planning has advanced the development of embodied AI agents that tackle long-horizon goals in complex, real-world-like environments (Szot et al., 2021; Fan et al., 2022). Among such environments, Minecraft has emerged as a representative testbed that captures this complexity for evaluating planning capability (Wang et al., 2023b; c; Zhu et al., 2023; Yuan et al., 2023; Feng et al., 2024; Li et al., 2024b). Success in these environments often depends on agents acquiring planning knowledge, including the dependencies among goal items and the valid actions needed to obtain them. For instance, to obtain an iron nugget, an agent should first possess an iron ingot, which can only be obtained by the action smelt.

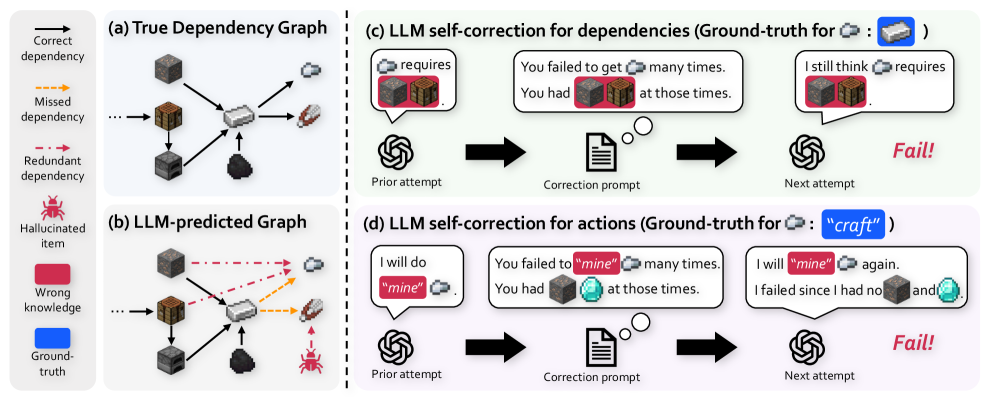

However, LLMs often begin with flawed priors about these dependencies and actions. This issue is critical, since missing knowledge for a single goal can invalidate all subsequent plans that depend on it (Guss et al., 2019; Lin et al., 2021; Mao et al., 2022). We find several failure cases stemming from these flawed priors, a problem that is particularly pronounced for the lightweight LLMs suitable for practical embodied agents. First, an LLM often fails to predict planning knowledge accurately enough to generate a successful plan (Figure 1b), resulting in a complete halt in progress toward more challenging goals. Second, an LLM cannot robustly correct its flawed knowledge, even when prompted to self-correct with failure feedback (Shinn et al., 2023; Chen et al., 2024), often repeating the same errors (Figures 1c and 1d). To improve self-correction, one can employ more advanced techniques that leverage detailed reasons for failure (Zhang et al., 2024; Wang et al., 2023a). Nevertheless, LLMs often stubbornly adhere to their erroneous parametric knowledge (i.e., knowledge implicitly stored in model parameters), as evidenced by Stechly et al. (2024) and Du et al. (2024).

<details>

<summary>x3.png Details</summary>

### Visual Description

## Diagram: LLM Dependency and Action Prediction

### Overview

The image presents a comparison between true dependency graphs and Large Language Model (LLM)-predicted graphs for Minecraft item crafting, along with examples of LLM self-correction attempts for dependencies and actions. The image is divided into four sections: (a) True Dependency Graph, (b) LLM-predicted Graph, (c) LLM self-correction for dependencies, and (d) LLM self-correction for actions.

### Components/Axes

**Legend (Left Side):**

* **Correct dependency:** Solid black arrow.

* **Missed dependency:** Dashed orange arrow.

* **Redundant dependency:** Dashed red arrow.

* **Hallucinated item:** Red bug-like icon.

* **Wrong knowledge:** Red filled square.

* **Ground-truth:** Blue filled square.

**Section (a): True Dependency Graph**

* Nodes: Minecraft items (stone, planks, iron ingot, furnace, coal, flint and steel).

* Edges: Solid black arrows indicating correct dependencies.

**Section (b): LLM-predicted Graph**

* Nodes: Minecraft items (stone, planks, iron ingot, furnace, coal, flint and steel, spider).

* Edges: Solid black arrows, dashed orange arrows, and dashed red arrows indicating correct, missed, and redundant dependencies, respectively.

**Section (c): LLM self-correction for dependencies**

* Flow: Prior attempt (LLM icon) -> Correction prompt (document icon) -> Next attempt (LLM icon).

* Speech bubbles containing LLM output.

**Section (d): LLM self-correction for actions**

* Flow: Prior attempt (LLM icon) -> Correction prompt (document icon) -> Next attempt (LLM icon).

* Speech bubbles containing LLM output.

### Detailed Analysis

**Section (a): True Dependency Graph**

* Planks require stone.

* Iron ingot requires furnace and coal.

* Flint and steel requires iron ingot.

* Furnace requires stone.

**Section (b): LLM-predicted Graph**

* Planks require stone.

* Iron ingot requires furnace and coal.

* Iron ingot requires planks (correct dependency).

* Flint and steel requires iron ingot (correct dependency).

* Flint and steel requires planks (missed dependency).

* Flint and steel requires spider (redundant dependency).

**Section (c): LLM self-correction for dependencies (Ground-truth for: Iron Ingot)**

* **Prior attempt:** "requires" [Stone] and [Planks].

* **Correction prompt:** "You failed to get [Iron Ingot] many times. You had [Stone] and [Planks] at those times."

* **Next attempt:** "I still think [Stone] and [Planks] requires [Iron Ingot]." Result: Fail!

**Section (d): LLM self-correction for actions (Ground-truth for: "craft")**

* **Prior attempt:** "I will do "mine" [Iron Ingot]."

* **Correction prompt:** "You failed to "mine" [Iron Ingot] many times. You had [Stone] and [Diamond] at those times."

* **Next attempt:** "I will "mine" [Iron Ingot] again. I failed since I had no [Stone] and [Diamond]." Result: Fail!

### Key Observations

* The LLM-predicted graph in (b) contains both correct and incorrect dependencies, including a "hallucinated item" (spider) as a dependency for flint and steel.

* The self-correction attempts in (c) and (d) fail to achieve the ground-truth dependencies and actions, respectively.

### Interpretation

The image highlights the challenges faced by LLMs in accurately predicting dependencies and actions in a complex environment like Minecraft crafting. The LLM exhibits both correct and incorrect knowledge, and its self-correction mechanisms are not always effective in rectifying errors. The presence of "hallucinated items" and incorrect dependencies suggests that the LLM may be prone to generating information that is not grounded in the true relationships between items and actions. The failure of the self-correction attempts indicates that the LLM struggles to learn from feedback and adjust its predictions accordingly.

</details>

Figure 1: An LLM exhibits flawed planning knowledge and fails at self-correction. (b) The dependency graph predicted by Qwen2.5-VL-7B (Bai et al., 2025) contains multiple errors (e.g., missed dependencies, hallucinated items) compared to (a) the ground truth. (c, d) The LLM fails to correct its flawed knowledge about dependencies and actions from failure feedback, often repeating the same errors. See Appendix B for the full prompts and examples of the LLM’s self-correction.

In response, we propose XENON (eXpErience-based kNOwledge correctioN), an agent that robustly learns planning knowledge from only binary success/failure feedback. Instead of relying on an LLM for correction, XENON algorithmically and directly revises its external knowledge memory using its own experience, and this memory in turn guides its planning. XENON learns planning knowledge through two synergistic components. The first component, Adaptive Dependency Graph (ADG), revises flawed dependency knowledge by leveraging successful experiences to propose plausible new required items. The second component, Failure-aware Action Memory (FAM), builds and corrects its action knowledge by exploring actions upon failures. In the challenging yet practical setting of using only binary feedback, FAM enables XENON to disambiguate the cause of a failure, distinguishing between flawed dependency knowledge and invalid actions, and triggers a revision in ADG in the former case.

Extensive experiments in three Minecraft testbeds show that XENON excels at both knowledge acquisition and planning. XENON outperforms prior agents in learning knowledge, showing unique robustness to LLM hallucinations and modified ground-truth environmental rules. Furthermore, with only a 7B LLM, XENON significantly outperforms prior agents that rely on much larger proprietary models like GPT-4 in solving diverse long-horizon goals. These results suggest that robust algorithmic knowledge management can be a promising direction for developing practical embodied agents with lightweight LLMs (Belcak et al., 2025).

Our contributions are as follows. First, we propose XENON, an LLM-based agent that robustly learns planning knowledge from experience via algorithmic knowledge correction, instead of relying on the LLM to self-correct its own knowledge. We realize this idea through two synergistic mechanisms that explicitly store planning knowledge and correct it: Adaptive Dependency Graph (ADG) for correcting dependency knowledge based on successes, and Failure-aware Action Memory (FAM) for correcting action knowledge and disambiguating failure causes. Second, extensive experiments demonstrate that XENON significantly outperforms prior state-of-the-art agents in both knowledge learning and long-horizon goal planning in Minecraft.

2 Related work

2.1 LLM-based planning in Minecraft

Prior work has often addressed LLMs’ flawed planning knowledge in Minecraft using impractical methods. Such methods typically inject knowledge directly through LLM fine-tuning (Zhao et al., 2023; Feng et al., 2024; Liu et al., 2025; Qin et al., 2024) or rely on curated expert data (Wang et al., 2023c; Zhu et al., 2023; Wang et al., 2023a).

Another line of work attempts to learn planning knowledge via interaction, by storing the experience of obtaining goal items in an external knowledge memory. However, these approaches are often limited by unrealistic assumptions or lack robust mechanisms to correct the LLM’s flawed prior knowledge. For example, ADAM and Optimus-1 artificially simplify the challenge of predicting and learning dependencies via shortcuts like pre-supplied items, while also relying on expert data such as a learning curriculum (Yu and Lu, 2024) or the Minecraft wiki (Li et al., 2024b). They also lack a robust way to correct wrong action choices in a plan: ADAM has none, and Optimus-1 relies on unreliable LLM self-correction. The work most similar to ours, DECKARD (Nottingham et al., 2023), uses an LLM to predict item dependencies but does not revise its predictions for items that repeatedly fail, and when a plan fails, it cannot disambiguate whether the failure is due to incorrect dependencies or incorrect actions. In contrast, our work tackles the more practical challenge of learning planning knowledge and correcting flawed priors from only binary success/failure feedback.

2.2 LLM-based self-correction

LLM self-correction, i.e., having an LLM correct its own outputs, is a promising approach to overcome the limitations of flawed parametric knowledge. However, for complex tasks like planning, LLMs struggle to identify and correct their own errors without external feedback (Huang et al., 2024; Tyen et al., 2024). To improve self-correction, prior works fine-tune LLMs (Yang et al., 2025) or prompt LLMs to correct themselves using environmental feedback (Shinn et al., 2023) and tool-execution results (Gou et al., 2024). While we also use binary success/failure feedback, we directly correct the agent’s knowledge in external memory by leveraging experience, rather than fine-tuning the LLM or prompting it to self-correct.

3 Preliminaries

We aim to develop an agent capable of solving long-horizon goals by learning planning knowledge from experience. We use Minecraft as our testbed, a representative environment that necessitates accurate planning knowledge. Minecraft is characterized by strict dependencies among game items (Guss et al., 2019; Fan et al., 2022), which can be formally represented as a directed acyclic graph $\mathcal{G}^{*}=(\mathcal{V}^{*},\mathcal{E}^{*})$, where $\mathcal{V}^{*}$ is the set of all items and each edge $(u,q,v)\in\mathcal{E}^{*}$ indicates that $q$ units of item $u$ are required to obtain item $v$. In our actual implementation, each edge also stores the resulting item quantity, but we omit it from the notation for simplicity, since most edges have a resulting item quantity of 1 and this multiplicity is not essential for learning item dependencies. A goal is to obtain an item $g\in\mathcal{V}^{*}$. To obtain $g$, an agent must possess all of its prerequisites, as defined by $\mathcal{G}^{*}$, in its inventory and perform the valid high-level action in $\mathcal{A}=\{\text{``mine'', ``craft'', ``smelt''}\}$.

Framework: Hierarchical agent with graph-augmented planning

We employ a hierarchical agent with an LLM planner and a low-level controller, adopting a graph-augmented planning strategy (Li et al., 2024b; Nottingham et al., 2023). In this strategy, the agent maintains its knowledge graph $\hat{\mathcal{G}}$ and plans with it to decompose a goal $g$ into subgoals in two stages. First, the agent identifies prerequisite items it does not possess by traversing $\hat{\mathcal{G}}$ backward from $g$ to nodes with no incoming edges (i.e., basic items with no known requirements), and aggregates them into a list of (quantity, item) tuples, $((q_{1},u_{1}),...,(q_{L_{g}},u_{L_{g}})=(1,g))$. Second, the planner LLM converts this list into executable language subgoals $\{(a_{l},q_{l},u_{l})\}_{l=1}^{L_{g}}$, where it takes each $u_{l}$ as input and outputs a high-level action $a_{l}$ to obtain $u_{l}$. The controller then executes each subgoal, i.e., it takes each language subgoal as input and outputs a sequence of low-level actions in the environment to achieve it. After each subgoal execution, the agent receives only binary success/failure feedback.
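The first planning stage can be illustrated with a minimal sketch. It assumes a hypothetical encoding of the learned graph as a nested dictionary, treats every requirement as consumed per unit produced, and ignores the resulting item quantity stored on each edge; the function name `required_items` and the toy recipe values are illustrative and not taken from the paper.

```python
from collections import defaultdict

# Hypothetical encoding of the learned graph: item -> {required_item: quantity}.
# An item with no entry (or an empty dict) is a basic item with no known requirements.
Graph = dict[str, dict[str, int]]


def required_items(graph: Graph, goal: str, inventory: dict[str, int]) -> list[tuple[int, str]]:
    """Return (quantity, item) tuples, prerequisites first and the goal last."""
    order: list[str] = []  # DFS post-order: prerequisites precede their dependents
    seen: set[str] = set()

    def visit(item: str) -> None:
        if item in seen:
            return
        seen.add(item)
        for req in graph.get(item, {}):
            visit(req)
        order.append(item)

    visit(goal)

    need: dict[str, int] = defaultdict(int)
    need[goal] = 1
    # Walk dependents before prerequisites so each item's demand is final when visited.
    for item in reversed(order):
        missing = max(need[item] - inventory.get(item, 0), 0)
        need[item] = missing
        for req, qty in graph.get(item, {}).items():
            need[req] += qty * missing  # assumption: requirements scale linearly

    return [(need[item], item) for item in order if need[item] > 0]


toy_graph: Graph = {
    "iron_nugget": {"iron_ingot": 1},
    "iron_ingot": {"iron_ore": 1, "furnace": 1},
    "furnace": {"cobblestone": 8},
}
plan = required_items(toy_graph, "iron_nugget", inventory={"furnace": 1})
```

Because the furnace is already in the inventory, its own prerequisites are skipped, and the list ends with the goal tuple as in the notation above.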

Problem formulation: Dependency and action learning

To plan correctly, the agent must acquire knowledge of the true dependency graph $\mathcal{G}^{*}$ . However, $\mathcal{G}^{*}$ is latent, making it necessary for the agent to learn this structure from experience. We model this as revising a learned graph, $\hat{\mathcal{G}}=(\hat{\mathcal{V}},\hat{\mathcal{E}})$ , where $\hat{\mathcal{V}}$ contains known items and $\hat{\mathcal{E}}$ represents the agent’s current belief about item dependencies. Following Nottingham et al. (2023), whenever the agent obtains a new item $v$ , it identifies the experienced requirement set $\mathcal{R}_{\text{exp}}(v)$ , the set of (item, quantity) pairs consumed during this item acquisition. The agent then updates $\hat{\mathcal{G}}$ by replacing all existing incoming edges to $v$ with the newly observed $\mathcal{R}_{\text{exp}}(v)$ . The detailed update procedure is in Appendix C.
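As a rough illustration of this update, the sketch below replaces the incoming edges of a newly obtained item with the experienced requirement set. Deriving $\mathcal{R}_{\text{exp}}(v)$ from an inventory difference is an assumption made here for concreteness; the actual procedure is the one in Appendix C, and the names `experienced_requirements` and `update_on_success` are hypothetical.

```python
# Hypothetical encoding of the learned graph: item -> {required_item: quantity}.
Graph = dict[str, dict[str, int]]


def experienced_requirements(before: dict[str, int], after: dict[str, int]) -> dict[str, int]:
    """Items whose inventory count dropped during the acquisition, with the amount consumed.

    Assumption: consumption is read off an inventory snapshot taken before and after.
    """
    return {
        item: qty - after.get(item, 0)
        for item, qty in before.items()
        if qty > after.get(item, 0)
    }


def update_on_success(graph: Graph, item: str, consumed: dict[str, int]) -> None:
    """Replace all existing incoming edges of `item` with the observed requirement set."""
    graph[item] = dict(consumed)
    for req in consumed:
        graph.setdefault(req, {})  # make sure every consumed item is a known node


learned: Graph = {"wooden_pickaxe": {"stone": 1}}  # flawed prior
before = {"planks": 4, "stick": 2}
after = {"planks": 1, "stick": 0, "wooden_pickaxe": 1}
update_on_success(learned, "wooden_pickaxe", experienced_requirements(before, after))
```

After the call, the flawed `stone` requirement is gone and the entry for `wooden_pickaxe` reflects only what was actually consumed.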

We aim to maximize the accuracy of the learned graph $\hat{\mathcal{G}}$ against the true graph $\mathcal{G}^{*}$. We define this accuracy $N_{true}(\hat{\mathcal{G}})$ as the number of items whose incoming edges are identical in $\hat{\mathcal{G}}$ and $\mathcal{G}^{*}$, i.e.,

$$
N_{true}(\hat{\mathcal{G}}) \coloneqq \sum_{v\in\mathcal{V}^{*}} \mathbb{I}\big(\mathcal{R}(v,\hat{\mathcal{G}})=\mathcal{R}(v,\mathcal{G}^{*})\big), \tag{1}
$$

where the dependency set, $\mathcal{R}(v,\mathcal{G})$ , denotes the set of all incoming edges to the item $v$ in the graph $\mathcal{G}$ .
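Equation (1) can be computed directly once both graphs share an encoding. The sketch below assumes the hypothetical dictionary encoding `item -> {required_item: quantity}` and treats an item missing from the learned graph as having an empty dependency set; the function name `n_true` is illustrative.

```python
# Hypothetical encoding of a graph: item -> {required_item: quantity}.
Graph = dict[str, dict[str, int]]


def n_true(learned: Graph, true: Graph) -> int:
    """Count items whose incoming edges (items and quantities) match the true graph."""
    return sum(1 for v in true if learned.get(v, {}) == true[v])


true_graph: Graph = {"log": {}, "planks": {"log": 1}, "stick": {"planks": 2}}
learned_graph: Graph = {"planks": {"log": 1}, "stick": {"planks": 1}}
score = n_true(learned_graph, true_graph)
```

Here `planks` matches exactly, `stick` does not because its quantity differs, and `log` matches because both dependency sets are empty.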

4 Methods

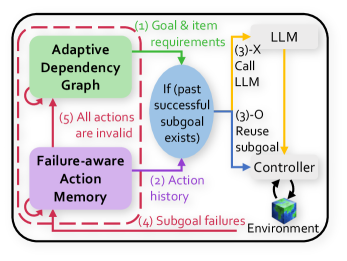

XENON is an LLM-based agent with two core components: Adaptive Dependency Graph (ADG) and Failure-aware Action Memory (FAM), as shown in Figure 3. ADG manages dependency knowledge, while FAM manages action knowledge. The agent learns this knowledge in a loop that starts by selecting an unobtained item as an exploratory goal (detailed in Appendix G). Once an item goal $g$ is selected, ADG, our learned dependency graph $\hat{\mathcal{G}}$, is traversed to construct $((q_{1},u_{1}),...,(q_{L_{g}},u_{L_{g}})=(1,g))$. For each $u_{l}$ in this list, FAM either reuses a previously successful action for $u_{l}$ or, if none exists, the planner LLM selects a high-level action $a_{l}\in\mathcal{A}$ given $u_{l}$ and action histories from FAM. The resulting actions form language subgoals $\{(a_{l},q_{l},u_{l})\}_{l=1}^{L_{g}}$. The controller then takes each subgoal as input, executes a sequence of low-level actions to achieve it, and returns binary success/failure feedback, which is used to update both ADG and FAM. The full procedure is outlined in Algorithm 1 in Appendix D. We next detail each component, beginning with ADG.
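The reuse-or-ask step for actions can be sketched as follows. This is only an illustration of the control flow described above: the `ActionMemory` class, the `choose_action` helper, and the `least_failed_planner` stub that stands in for the planner LLM are all hypothetical, and the rules FAM uses to decide that an action is invalid are not modeled here.

```python
from dataclasses import dataclass, field
from typing import Callable

ACTIONS = ("mine", "craft", "smelt")


@dataclass
class ActionMemory:
    """Hypothetical stand-in for FAM: one known-good action and failure counts per item."""
    success: dict[str, str] = field(default_factory=dict)
    failures: dict[str, dict[str, int]] = field(default_factory=dict)

    def record(self, item: str, action: str, ok: bool) -> None:
        """Store binary success/failure feedback for a subgoal."""
        if ok:
            self.success[item] = action
        else:
            counts = self.failures.setdefault(item, {})
            counts[action] = counts.get(action, 0) + 1


Planner = Callable[[str, dict[str, int]], str]


def least_failed_planner(item: str, history: dict[str, int]) -> str:
    """Stub for the planner LLM: pick the action with the fewest recorded failures."""
    return min(ACTIONS, key=lambda a: history.get(a, 0))


def choose_action(memory: ActionMemory, item: str, planner: Planner) -> str:
    """Reuse a previously successful action if one exists, otherwise ask the planner."""
    if item in memory.success:
        return memory.success[item]
    return planner(item, memory.failures.get(item, {}))


fam = ActionMemory()
fam.record("iron_ingot", "mine", ok=False)
next_action = choose_action(fam, "iron_ingot", least_failed_planner)
```

Once a success is recorded for an item, later calls to `choose_action` return that action without consulting the planner at all.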

<details>

<summary>x4.png Details</summary>

### Visual Description

## Diagram: Adaptive Dependency Graph with Failure-Aware Action Memory

### Overview

The image presents a diagram illustrating an adaptive dependency graph integrated with a failure-aware action memory system, interacting with a Large Language Model (LLM) and a controller within an environment. The diagram outlines the flow of information and control between these components.

### Components/Axes

* **Adaptive Dependency Graph:** Located in the top-left, represented by a green box.

* **Failure-aware Action Memory:** Located in the bottom-left, represented by a purple box.

* **LLM:** Located in the top-right, represented by a gray box.

* **Controller:** Located in the center-right, connected to the environment.

* **Environment:** Located in the bottom-right, represented by a cube.

* **Conditional Check:** An oval shape in the center, labeled "If (past successful subgoal exists)".

* **Arrows:** Indicate the flow of information and control.

### Detailed Analysis

1. **(1) Goal & item requirements:** A green arrow flows from the Adaptive Dependency Graph to the conditional check.

2. **(2) Action history:** A purple arrow flows from the Failure-aware Action Memory to the conditional check.

3. **(3)-X Call LLM:** A yellow arrow flows from the conditional check to the LLM.

4. **(3)-O Reuse subgoal:** A yellow arrow flows from the conditional check to the Controller.

5. **(4) Subgoal failures:** A purple arrow flows from the Controller to the Failure-aware Action Memory.

6. **(5) All actions are invalid:** A green arrow flows from the Failure-aware Action Memory back to the Adaptive Dependency Graph.

7. **Environment Interaction:** The Controller interacts with the Environment.

8. **Feedback Loops:** Red arrows indicate feedback loops between the Adaptive Dependency Graph and the Failure-aware Action Memory.

### Key Observations

* The Adaptive Dependency Graph provides goal and item requirements.

* The Failure-aware Action Memory stores action history and receives subgoal failures.

* The conditional check determines whether to call the LLM or reuse a subgoal based on past successful subgoals.

* The Controller interacts with the environment and provides feedback on subgoal failures.

### Interpretation

The diagram illustrates a system designed to adapt and learn from failures. The Adaptive Dependency Graph manages the goals, while the Failure-aware Action Memory stores and utilizes past action history to avoid repeating unsuccessful actions. The conditional check acts as a decision point, leveraging past successes to either reuse subgoals or call the LLM for new solutions. The feedback loops between the components enable the system to continuously learn and improve its performance within the environment. The system aims to optimize the interaction with the environment by learning from past failures and adapting its strategies accordingly.

</details>

Figure 2: Overview. XENON updates Adaptive Dependency Graph and Failure-aware Action Memory with environmental experiences.

4.1 Adaptive Dependency Graph (ADG)

<details>

<summary>x5.png Details</summary>

### Visual Description

## Diagram: Adaptive Dependency Graph with Failure-Aware Action Memory and LLM Interaction

### Overview

The image presents a diagram illustrating an adaptive dependency graph system that incorporates failure-aware action memory and interacts with a Large Language Model (LLM). The system appears to be designed for goal-oriented tasks within an environment, with mechanisms for adapting to failures and reusing successful subgoals.

### Components/Axes

The diagram consists of the following key components:

* **Adaptive Dependency Graph (Top-Left, Green):** This component likely represents a dynamic structure that models dependencies between actions or subgoals.

* **Failure-aware Action Memory (Bottom-Left, Purple):** This component stores information about past actions and their outcomes, enabling the system to learn from failures.

* **LLM (Top-Right, Gray):** A Large Language Model, used for generating or selecting actions.

* **Controller (Right, Blue):** This component manages the execution of actions and interacts with the environment.

* **Environment (Bottom-Right, Green/Blue):** The external environment in which the system operates.

* **Conditional Check (Center, Blue):** A check for past successful subgoals.

The diagram also includes labeled arrows indicating the flow of information:

* **(1) Goal & item requirements (Top, Green):** Input to the Adaptive Dependency Graph.

* **(2) Action history (Bottom, Purple):** Input to the Failure-aware Action Memory.

* **(3)-X Call LLM (Top-Right, Yellow):** Interaction with the LLM.

* **(3)-O Reuse subgoal (Right, Yellow):** Reuse of a subgoal.

* **(4) Subgoal failures (Bottom, Purple):** Feedback from the environment to the Failure-aware Action Memory.

* **(5) All actions are invalid (Left, Green):** Feedback from the Failure-aware Action Memory to the Adaptive Dependency Graph.

### Detailed Analysis

* **Adaptive Dependency Graph:** Receives "Goal & item requirements" as input. It likely uses this information to construct or update its dependency graph.

* **Failure-aware Action Memory:** Receives "Action history" and "Subgoal failures" as input. This suggests it learns from past experiences, adapting its behavior based on failures.

* **LLM:** Interacts with the Controller via "Call LLM" and "Reuse subgoal" pathways. The LLM likely provides suggestions or actions to the Controller.

* **Controller:** Interacts with the Environment, executing actions and receiving feedback. It also interacts with the LLM and the "Reuse subgoal" pathway.

* **Conditional Check:** The "If (past successful subgoal exists)" component acts as a gate, determining whether a previously successful subgoal can be reused.

### Key Observations

* The system is designed to adapt to failures and reuse successful subgoals.

* The LLM plays a role in generating or selecting actions.

* The Adaptive Dependency Graph and Failure-aware Action Memory are key components for learning and adaptation.

### Interpretation

The diagram illustrates a sophisticated system for goal-oriented tasks that leverages an adaptive dependency graph, failure-aware action memory, and a large language model. The system's ability to learn from failures and reuse successful subgoals suggests a robust and efficient approach to problem-solving in dynamic environments. The interaction with the LLM indicates the potential for leveraging the model's knowledge and reasoning capabilities to improve performance. The system is designed to dynamically adjust its strategy based on past performance and environmental feedback.

</details>

Figure 3: Overview. XENON updates Adaptive Dependency Graph and Failure-aware Action Memory with environmental experiences.

Dependency graph initialization

To make the most of the LLM’s prior knowledge, albeit incomplete, we initialize the learned dependency graph $\hat{\mathcal{G}}=(\hat{\mathcal{V}},\hat{\mathcal{E}})$ using an LLM. We follow the initialization process of DECKARD (Nottingham et al., 2023), which consists of two steps. First, $\hat{\mathcal{V}}$ is assigned $\mathcal{V}_{0}$, the set of goal items whose dependencies must be learned, and $\hat{\mathcal{E}}$ is assigned $\emptyset$. Second, for each item $v$ in $\hat{\mathcal{V}}$, the LLM is prompted to predict its requirement set (i.e., the incoming edges of $v$), and these predictions are aggregated to construct the initial graph.

However, these LLM-predicted requirement sets often include items not present in the initial set $\mathcal{V}_{0}$, a phenomenon overlooked by DECKARD. Since $\mathcal{V}_{0}$ may be an incomplete subset of all possible game items $\mathcal{V}^{*}$, we cannot determine whether such items are genuine required items or hallucinated items that do not exist in the environment. To address this, we provisionally accept all LLM requirement set predictions. We iteratively expand the graph by adding any newly mentioned item to $\hat{\mathcal{V}}$ and, in turn, querying the LLM for its own requirement set. This expansion continues until a requirement set has been predicted for every item in $\hat{\mathcal{V}}$. Since we assume that the true graph $\mathcal{G}^{*}$ is a DAG, we algorithmically prevent cycles in $\hat{\mathcal{G}}$; see Section E.2 for the cycle-check procedure. The quality of this initial LLM-predicted graph is analyzed in detail in Appendix K.1.
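The expansion loop can be sketched as a worklist over items whose requirement sets have not yet been predicted. The `predict` callable stands in for the LLM query, and skipping any predicted edge that would close a cycle is an assumption made for this sketch; the paper's own cycle-check procedure is the one in Section E.2. The names `creates_cycle` and `init_graph` are hypothetical.

```python
from collections import deque
from typing import Callable

# Hypothetical encoding of the learned graph: item -> {required_item: quantity}.
Graph = dict[str, dict[str, int]]


def creates_cycle(graph: Graph, item: str, req: str) -> bool:
    """True if `req` already (transitively) requires `item`, or if req == item."""
    stack, seen = [req], set()
    while stack:
        node = stack.pop()
        if node == item:
            return True
        if node in seen:
            continue
        seen.add(node)
        stack.extend(graph.get(node, {}))
    return False


def init_graph(goal_items: list[str], predict: Callable[[str], dict[str, int]]) -> Graph:
    """Query `predict` for every known item, adding newly mentioned items as we go."""
    graph: Graph = {}
    queue = deque(goal_items)
    while queue:
        item = queue.popleft()
        if item in graph:
            continue  # requirement set already predicted
        graph[item] = {}
        for req, qty in predict(item).items():
            if creates_cycle(graph, item, req):
                continue  # assumption: drop edges that would break the DAG property
            graph[item][req] = qty
            if req not in graph:
                queue.append(req)  # provisionally accept, even if possibly hallucinated
    return graph


# Toy predictions in place of an LLM; "log -> stick" would close a cycle.
toy = {"stick": {"planks": 2}, "planks": {"log": 1}, "log": {"stick": 1}}
initial = init_graph(["stick"], lambda item: toy.get(item, {}))
```

The loop terminates once every item mentioned by any prediction has had its own requirement set queried, which mirrors the stopping condition described above.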

Dependency graph revision

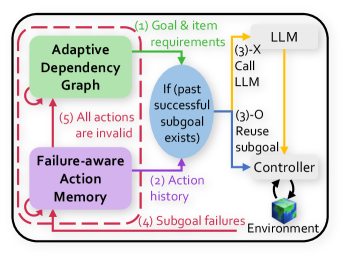

Correcting the agent’s flawed dependency knowledge involves two challenges: (1) detecting and handling hallucinated items from the graph initialization, and (2) proposing a new requirement set. Simply prompting an LLM for corrections is ineffective, as it often predicts a new, flawed requirement set, as shown in Figures 1c and 1d. Therefore, we revise $\hat{\mathcal{G}}$ algorithmically using the agent’s experiences, without relying on the LLM.

To implement this, we introduce a dependency revision procedure called RevisionByAnalogy and a revision count $C(v)$ for each item $v\in\hat{\mathcal{V}}$. The procedure takes as inputs the item $v$ whose dependency needs to be revised, its revision count $C(v)$, and the current graph $\hat{\mathcal{G}}$, and outputs a revised graph by leveraging the required items of previously obtained items. When a revision for an item $v$ is triggered by FAM (Section 4.2), the procedure first discards $v$’s existing requirement set (i.e., $\mathcal{R}(v,\hat{\mathcal{G}})\leftarrow\emptyset$) and increments the revision count $C(v)$. Based on whether $C(v)$ exceeds a hyperparameter $c_{0}$, RevisionByAnalogy proceeds with one of the following two cases:

- Case 1: Handling potentially hallucinated items ( $C(v)>c_{0}$ ). If an item $v$ remains unobtainable after excessive revisions, the procedure flags it as inadmissible to signify that it may be a hallucinated item. This reveals a critical problem: if $v$ is indeed a hallucinated item, any of its descendants in $\hat{\mathcal{G}}$ become permanently unobtainable. To enable XENON to try these descendant items through alternative paths, we recursively call RevisionByAnalogy for all of $v$’s descendants in $\hat{\mathcal{G}}$, removing their dependency on the inadmissible item $v$ (Figure 4a, Case 1). Finally, to account for cases where $v$ may be a genuine item that is simply difficult to obtain, its requirement set $\mathcal{R}(v,\hat{\mathcal{G}})$ is reset to a general set of all resource items (i.e., items previously consumed for crafting other items), each with a quantity given by the hyperparameter $\alpha_{i}$.

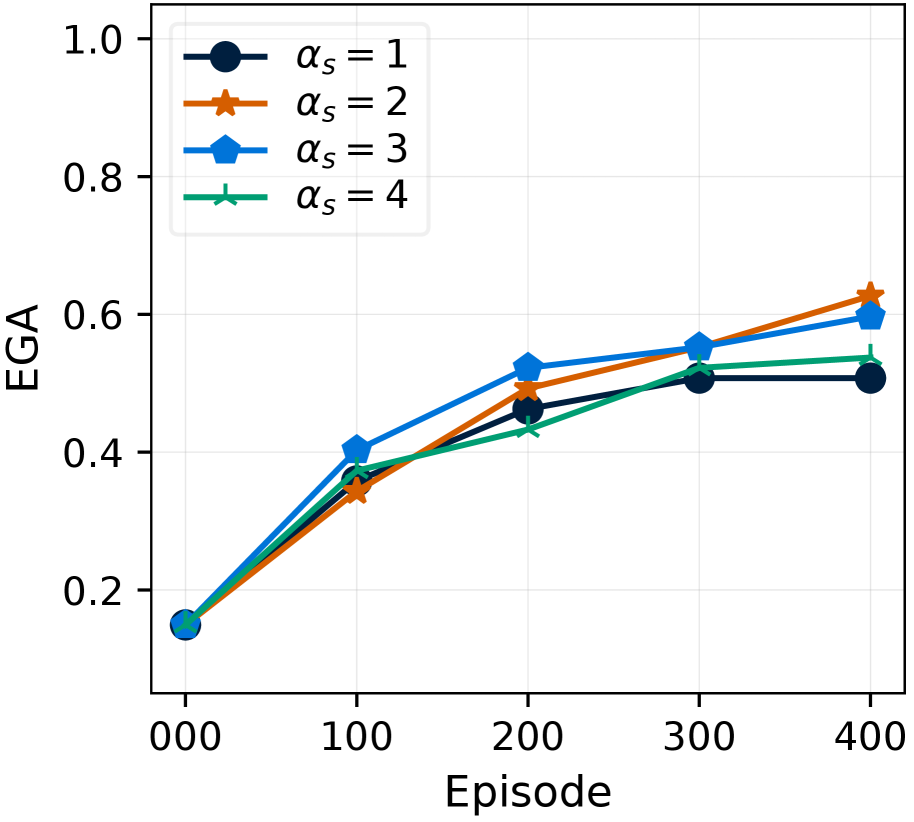

- Case 2: Plausible revision for less-tried items ( $C(v)\leq c_{0}$ ). The item $v$’s requirement set, $\mathcal{R}(v,\hat{\mathcal{G}})$, is revised to determine both a plausible set of new items and their quantities. First, for plausible required items, we use the idea that similar goals often share similar preconditions (Yoon et al., 2024). Therefore, we set the new required items by referencing the required items of the top-$K$ similar, successfully obtained items (Figure 4a, Case 2). We compute this item similarity as the cosine similarity between the Sentence-BERT (Reimers and Gurevych, 2019) embeddings of item names. Second, to determine their quantities, the agent must balance requesting amounts large enough to avoid failures against an imperfect controller’s difficulty in acquiring them. Therefore, the quantities of those new required items scale gradually with the revision count, as $\alpha_{s}C(v)$.

Here, the hyperparameter $c_{0}$ serves as the revision count threshold for flagging an item as inadmissible. $\alpha_{i}$ and $\alpha_{s}$ control the quantity of each required item for inadmissible items (Case 1) and for less-tried items (Case 2), respectively, to maintain robustness when dealing with an imperfect controller. $K$ determines the number of similar, successfully obtained items to reference in Case 2. Detailed pseudocode of RevisionByAnalogy is in Section E.3, Algorithm 3.
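The two cases can be illustrated with a compact sketch; the authoritative version is Algorithm 3 in Section E.3. Several choices below are assumptions made for illustration: the `Knowledge` container, the default hyperparameter values, taking the union of the reference items' requirement sets, revising only direct dependents of an inadmissible item (which already removes the dependency for deeper descendants), and the `token_overlap` similarity, which is a dependency-free stand-in for the Sentence-BERT cosine similarity used in the paper.

```python
from dataclasses import dataclass, field
from typing import Callable

Graph = dict[str, dict[str, int]]  # hypothetical: item -> {required_item: quantity}


@dataclass
class Knowledge:
    graph: Graph
    obtained: set[str]                                    # items acquired at least once
    count: dict[str, int] = field(default_factory=dict)   # revision counts C(v)
    inadmissible: set[str] = field(default_factory=set)


def token_overlap(a: str, b: str) -> float:
    """Stand-in similarity: Jaccard overlap of underscore-separated name tokens."""
    ta, tb = set(a.split("_")), set(b.split("_"))
    return len(ta & tb) / len(ta | tb)


def revise_by_analogy(kb: Knowledge, v: str, sim: Callable[[str, str], float] = token_overlap,
                      c0: int = 3, k: int = 2, alpha_i: int = 8, alpha_s: int = 2) -> None:
    kb.graph[v] = {}                          # discard the existing requirement set
    kb.count[v] = kb.count.get(v, 0) + 1      # increment C(v)

    if kb.count[v] > c0:
        # Case 1: flag v as inadmissible and revise items that depend on it.
        kb.inadmissible.add(v)
        dependents = [u for u, reqs in kb.graph.items() if v in reqs]
        for u in dependents:
            revise_by_analogy(kb, u, sim, c0, k, alpha_i, alpha_s)
        # Reset R(v) to all resource items, i.e. items consumed by obtained items.
        resources = {r for u in kb.obtained for r in kb.graph.get(u, {})}
        kb.graph[v] = {r: alpha_i for r in resources if r not in kb.inadmissible}
    else:
        # Case 2: borrow required items from the top-K most similar obtained items.
        refs = sorted((u for u in kb.obtained if u != v),
                      key=lambda u: sim(v, u), reverse=True)[:k]
        items = {r for u in refs for r in kb.graph.get(u, {})}
        kb.graph[v] = {r: alpha_s * kb.count[v] for r in items
                       if r != v and r not in kb.inadmissible}


kb = Knowledge(
    graph={
        "planks": {"log": 1},
        "stick": {"planks": 2},
        "wooden_pickaxe": {"planks": 3, "stick": 2},
        "stone_pickaxe": {"cobblestone": 3, "stick": 2},
        "iron_pickaxe": {"spider": 1},  # flawed prior with a hallucinated item
    },
    obtained={"planks", "stick", "wooden_pickaxe", "stone_pickaxe"},
)
revise_by_analogy(kb, "iron_pickaxe")
```

In this toy run the two obtained pickaxes are the closest matches, so `iron_pickaxe` inherits their required items with quantity $\alpha_{s}C(v)$; a later successful acquisition would then overwrite this guess with the experienced requirement set. This sketch does not include a cycle check on the revised edges.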

<details>

<summary>x6.png Details</summary>

### Visual Description

## Diagram: Dependency and Action Correction

### Overview

The image presents two diagrams illustrating correction mechanisms for dependency and action errors. Diagram (a) focuses on "Dependency Correction for ADG" (Adaptive Dependency Graph), showing two cases where dependencies are corrected. Diagram (b) focuses on "Action Correction for FAM" (Failure-aware Action Memory), detailing how invalid actions are identified and removed.

### Components/Axes

**Diagram (a): Dependency Correction for ADG**

* **Title:** (a) Dependency Correction for

* **Sub-titles:** Case 1 ADG, Case 2 ADG, ADG

* **Elements:**

* **Case 1:**

* A cube labeled "Descendant (Leaf)"

* A brown item labeled "Descendant"

* A red bug labeled "Hallucinated item"

* Arrows indicating the flow of dependency

* Text: "Recursively call RevisionByAnalogy"

* **Case 2:**

* A set of Minecraft blocks (wood, stone) marked with a red "X"

* A sword and pickaxe marked with a green checkmark

* An arrow indicating a search for similar obtained items

* Text: "Search similar, obtained items"

* **ADG (Corrected):**

* A cloud icon

* A pair of scissors cutting the dependency

* A sword and pickaxe

* An arrow indicating the replacement of the wrong dependency

* Text: "Replace the wrong dependency"

**Diagram (b): Action Correction for FAM**

* **Title:** (b) Action Correction for

* **Labels:** FAM, Prompt, Subgoal

* **Elements:**

* **FAM (Failure-aware Action Memory):**

* A box labeled "Failure counts:"

* "mine": 2 (highlighted in red)

* "craft": 1

* "smelt": 0

* **Prompt:**

* A document icon

* Text: "Select an action for: mine, craft, smelt..." (repeated twice)

* A purple arrow indicating the flow of action selection

* **Subgoal:**

* A box labeled "craft"

* A cursor pointing towards the "craft" subgoal

* An icon resembling a neural network

* **Text:**

* "Determine & remove invalid actions"

* "Try under-explored action"

* "Invalid action" (associated with a dashed line)

### Detailed Analysis

**Diagram (a):**

* **Case 1:** Illustrates a scenario where a hallucinated item is identified and removed from the dependency graph. The flow starts from the "Descendant (Leaf)" and goes down to the "Hallucinated item."

* **Case 2:** Shows a scenario where incorrect dependencies (wood and stone blocks) are replaced with correct ones (sword and pickaxe). The process involves searching for similar obtained items and then replacing the wrong dependency.

**Diagram (b):**

* The "Failure counts" box indicates the number of times each action ("mine," "craft," "smelt") has failed. "mine" has failed twice, "craft" once, and "smelt" zero times.

* The process involves selecting an action from a prompt, identifying invalid actions based on failure counts, removing them, and then trying under-explored actions to achieve the subgoal.

### Key Observations

* **Dependency Correction:** Focuses on correcting errors in the dependency graph by identifying and replacing incorrect or hallucinated items.

* **Action Correction:** Focuses on improving action selection by learning from past failures and prioritizing under-explored actions.

* **Failure Counts:** The "mine" action has the highest failure count, suggesting it is the most problematic action.

### Interpretation

The diagrams illustrate the agent's two correction mechanisms. Dependency correction keeps the underlying dependency graph accurate, while action correction improves action selection by learning from past failures. The red highlighting of "mine": 2 marks the action whose failure count leads it to be treated as invalid and removed from the candidate actions, so that an under-explored action is tried instead.

</details>

Figure 4: XENON’s algorithmic knowledge correction. (a) Dependency Correction via RevisionByAnalogy. Case 1: For an inadmissible item (e.g., a hallucinated item), its descendants are recursively revised to remove the flawed dependency. Case 2: A flawed requirement set is revised by referencing similar, obtained items. (b) Action Correction via FAM. FAM prunes invalid actions from the LLM’s prompt based on failures, guiding it to select an under-explored action.

4.2 Failure-aware Action Memory (FAM)

FAM is designed to address two challenges of learning only from binary success/failure feedback: (1) discovering valid high-level actions for each item, and (2) disambiguating the cause of persistent failures between invalid actions and flawed dependency knowledge. This section first describes FAM’s core mechanism, and then details how it addresses each of these challenges in turn.

Core mechanism: empirical action classification

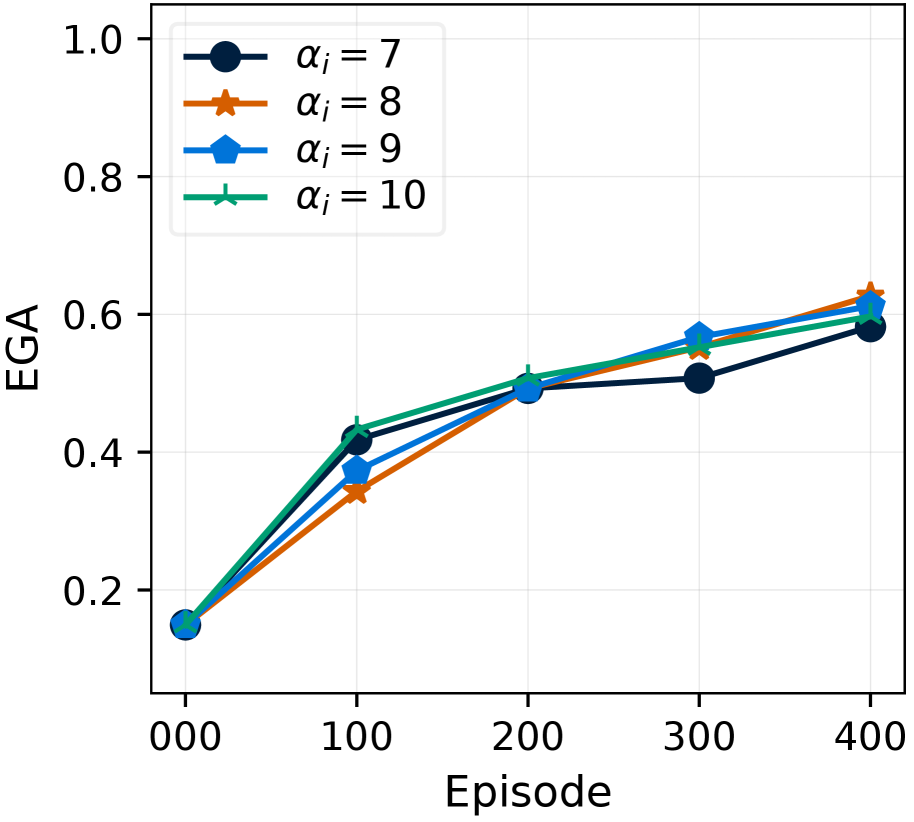

FAM classifies actions as either empirically valid or empirically invalid for each item, based on their history of past subgoal outcomes. Specifically, for each item $v \in \hat{\mathcal{V}}$ and action $a \in \mathcal{A}$, FAM maintains the number of successful and failed outcomes, denoted $S(a,v)$ and $F(a,v)$, respectively. Based on these counts, an action $a$ is classified as empirically invalid for $v$ if it has failed repeatedly (i.e., $F(a,v) \geq S(a,v)+x_{0}$); otherwise, it is classified as empirically valid if it has succeeded at least once (i.e., $S(a,v)>0$ and $S(a,v)>F(a,v)-x_{0}$). An action that satisfies neither condition, because it has never succeeded and has failed fewer than $x_{0}$ times, remains unclassified and is treated as under-explored. The hyperparameter $x_{0}$ controls the tolerance for this classification, accounting for the possibility that an imperfect controller might fail even with a genuinely valid action.
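The classification rule can be sketched in a few lines of Python. This is a minimal illustration rather than the authors' implementation: the `ActionMemory` class, the `Status` enum, the item name, and the value `x0 = 2` are all hypothetical choices made for the example.

```python
from collections import defaultdict
from enum import Enum


class Status(Enum):
    VALID = "empirically valid"
    INVALID = "empirically invalid"
    UNKNOWN = "unclassified"


class ActionMemory:
    """Hypothetical container for per-(action, item) outcome counts."""

    def __init__(self, x0: int = 2):
        self.x0 = x0  # tolerance hyperparameter; 2 is only an illustrative value
        self.successes = defaultdict(int)  # S(a, v)
        self.failures = defaultdict(int)   # F(a, v)

    def record(self, action: str, item: str, success: bool) -> None:
        counts = self.successes if success else self.failures
        counts[(action, item)] += 1

    def classify(self, action: str, item: str) -> Status:
        s = self.successes[(action, item)]
        f = self.failures[(action, item)]
        if f >= s + self.x0:
            return Status.INVALID
        if s > 0:  # F < S + x0 already holds here, i.e. S > F - x0
            return Status.VALID
        return Status.UNKNOWN


memory = ActionMemory(x0=2)
memory.record("mine", "iron_ingot", success=False)
memory.record("mine", "iron_ingot", success=False)
memory.record("smelt", "iron_ingot", success=True)
print(memory.classify("mine", "iron_ingot"))   # two failures, no success
print(memory.classify("smelt", "iron_ingot"))  # one success, no failure
```

With these counts, "mine" reaches the threshold $F \geq S + x_0$ and is pruned, while "smelt" becomes reusable after a single success. Raising `x0` makes the memory more forgiving of controller noise at the cost of more wasted attempts.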

Addressing challenge 1: discovering valid actions

FAM helps XENON discover valid actions by avoiding repeatedly failed actions when making a subgoal $sg_{l}=(a_{l},q_{l},u_{l})$. If FAM already has an empirically valid action for $u_{l}$, XENON reuses it to make $sg_{l}$ without querying the LLM. Only when FAM has no empirically valid action for $u_{l}$ does XENON query the LLM to select an under-explored action for constructing $sg_{l}$. To accelerate this search for a valid action, we query the LLM with (i) the current subgoal item $u_{l}$, (ii) empirically valid actions for the top-$K$ similar items successfully obtained and stored in FAM (using Sentence-BERT similarity as in Section 4.1), and (iii) candidate actions for $u_{l}$ that remain after removing all empirically invalid actions from $\mathcal{A}$ (Figure 4b). We prune action candidates rather than include the full failure history because LLMs struggle to effectively utilize long prompts (Li et al., 2024a; Liu et al., 2024). Detailed procedures and prompts are in Appendix F.
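The reuse-or-query logic described above can be sketched as follows. This is an assumption-laden illustration: `choose_action`, `pick_last`, and the three-element `ACTIONS` list (named after the Figure 4b example) are hypothetical, the LLM is replaced by a plain callable, and the top-$K$ similar-item hints are omitted for brevity.

```python
from typing import Callable, Dict, List, Optional, Tuple

Counts = Dict[Tuple[str, str], int]  # (action, item) -> count

# Illustrative action set; the names follow the Figure 4b example.
ACTIONS: List[str] = ["mine", "craft", "smelt"]


def choose_action(
    item: str,
    successes: Counts,
    failures: Counts,
    x0: int,
    ask_llm: Callable[[str, List[str]], str],
) -> Optional[str]:
    """Reuse a known-valid action, else let the LLM pick among unpruned ones."""

    def is_invalid(action: str) -> bool:
        s = successes.get((action, item), 0)
        f = failures.get((action, item), 0)
        return f >= s + x0

    def is_valid(action: str) -> bool:
        return successes.get((action, item), 0) > 0 and not is_invalid(action)

    for action in ACTIONS:
        if is_valid(action):
            return action  # reuse without any LLM call

    candidates = [a for a in ACTIONS if not is_invalid(a)]
    if not candidates:
        return None  # every action is exhausted; handled outside this sketch
    return ask_llm(item, candidates)


# Stand-in for the LLM: always picks the last remaining candidate.
def pick_last(item: str, candidates: List[str]) -> str:
    return candidates[-1]


failures = {("mine", "iron_ingot"): 2, ("craft", "iron_ingot"): 1}
print(choose_action("iron_ingot", {}, failures, x0=2, ask_llm=pick_last))
```

Here "mine" is pruned before the query, so the stand-in LLM only sees "craft" and "smelt". Passing the pruned candidate list, rather than the raw failure history, keeps the prompt short regardless of how many attempts have been made.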

Addressing challenge 2: disambiguating failure causes

By ensuring systematic action exploration, FAM allows XENON to determine that persistent subgoal failures stem from flawed dependency knowledge rather than from the actions. Specifically, once FAM classifies all actions in $\mathcal{A}$ for an item as empirically invalid, XENON concludes that the error lies within ADG and triggers its revision. Subsequently, XENON resets the item’s history in FAM to allow for a fresh exploration of actions with the revised ADG.
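A minimal sketch of this trigger is shown below, assuming the same count-based invalidity test as in the core mechanism. The function name `record_failure_and_check` and the `revise_dependency` callback are hypothetical stand-ins; the actual ADG revision is described in Section 4.1 and is not reproduced here.

```python
from typing import Callable, Dict, List, Tuple

Counts = Dict[Tuple[str, str], int]  # (action, item) -> count


def record_failure_and_check(
    item: str,
    action: str,
    successes: Counts,
    failures: Counts,
    x0: int,
    actions: List[str],
    revise_dependency: Callable[[str], None],
) -> bool:
    """Log a failed subgoal; if every action is now invalid, blame the graph."""
    failures[(action, item)] = failures.get((action, item), 0) + 1

    exhausted = all(
        failures.get((a, item), 0) >= successes.get((a, item), 0) + x0
        for a in actions
    )
    if exhausted:
        revise_dependency(item)  # hypothetical hook into the dependency revision
        for a in actions:  # reset the item's history for a fresh exploration
            successes.pop((a, item), None)
            failures.pop((a, item), None)
    return exhausted


revised: List[str] = []
s: Counts = {}
f: Counts = {}
for act in ["mine", "craft", "smelt"]:
    triggered = record_failure_and_check(
        "redstone", act, s, f, x0=1,
        actions=["mine", "craft", "smelt"],
        revise_dependency=revised.append,
    )
print(triggered, revised, f)
```

In this toy run with `x0 = 1`, the third failure exhausts the action set, the revision hook fires once for the item, and the counts are cleared so that the next attempt starts from an empty history.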

4.3 Additional technique: context-aware reprompting (CRe) for controller

In real-world-like environments, an imperfect controller can stall (e.g., in deep water). To address this, XENON employs context-aware reprompting (CRe), where an LLM uses the current image observation and the controller’s language subgoal to decide whether to replace the subgoal and propose a new temporary subgoal to escape the stalled state (e.g., “get out of the water”). Our CRe is adapted from Optimus-1 (Li et al., 2024b) to be suitable for smaller LLMs, with two differences: (1) a two-stage reasoning process that captions the observation first and then makes a text-only decision on whether to replace the subgoal, and (2) a conditional trigger that activates only when the subgoal for item acquisition makes no progress, rather than at fixed intervals. See Appendix H for details.
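The two differences can be illustrated with a short control-flow sketch. Everything named here is hypothetical: the paper does not specify in this section how "no progress" is measured, so the sketch assumes a step counter compared against a `patience` threshold, and the captioning and decision models are passed in as plain callables.

```python
from typing import Any, Callable, Optional


def maybe_reprompt(
    steps_without_progress: int,
    patience: int,
    observation: Any,
    subgoal: str,
    caption_image: Callable[[Any], str],
    decide_from_text: Callable[[str, str], Optional[str]],
) -> Optional[str]:
    """Return a temporary subgoal, or None to keep the current one."""
    # Conditional trigger: do nothing while the subgoal is still progressing.
    if steps_without_progress < patience:
        return None
    # Stage 1: turn the image observation into a text caption.
    caption = caption_image(observation)
    # Stage 2: text-only decision on whether to replace the subgoal.
    return decide_from_text(caption, subgoal)


# Stub models, used only to make the sketch runnable.
def fake_caption(observation: Any) -> str:
    return "the agent is submerged in deep water"


def fake_decision(caption: str, subgoal: str) -> Optional[str]:
    return "get out of the water" if "water" in caption else None


print(maybe_reprompt(10, 50, None, "mine iron ore", fake_caption, fake_decision))
print(maybe_reprompt(60, 50, None, "mine iron ore", fake_caption, fake_decision))
```

Splitting the decision into a captioning step and a text-only step means the second call never has to reason over pixels, which is the stated motivation for adapting the technique to smaller LLMs.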

5 Experiments

5.1 Setups

Environments

We conduct experiments in three Minecraft environments, which we separate into two categories based on their controller capacity. First, as realistic, visually rich embodied AI environments, we use MineRL (Guss et al., 2019) and Mineflayer (PrismarineJS, 2023) with imperfect low-level controllers: STEVE-1 (Lifshitz et al., 2023) in MineRL and hand-crafted code (Yu and Lu, 2024) in Mineflayer. Second, we use MC-TextWorld (Zheng et al., 2025) as a controlled testbed with a perfect controller. Each experiment in this environment is repeated over 15 runs; in our results, we report the mean and standard deviation, omitting the latter when it is negligible. In all environments, the agent starts with an empty inventory. Further details on environments are provided in Appendix J. Additional experiments in a household task planning domain other than Minecraft are reported in Appendix A, where XENON also exhibits robust performance.

Table 1: Comparison of knowledge correction mechanisms across agents. ○: Our proposed mechanism (XENON), $\triangle$ : LLM self-correction, ✗: No correction, –: Not applicable.

| Agent | Dependency Correction | Action Correction |
| --- | --- | --- |
| XENON | ○ | ○ |
| SC | $\triangle$ | $\triangle$ |
| DECKARD | ✗ | ✗ |
| ADAM | – | ✗ |
| RAND | ✗ | ✗ |

Evaluation metrics

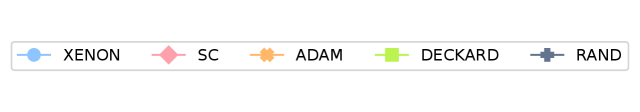

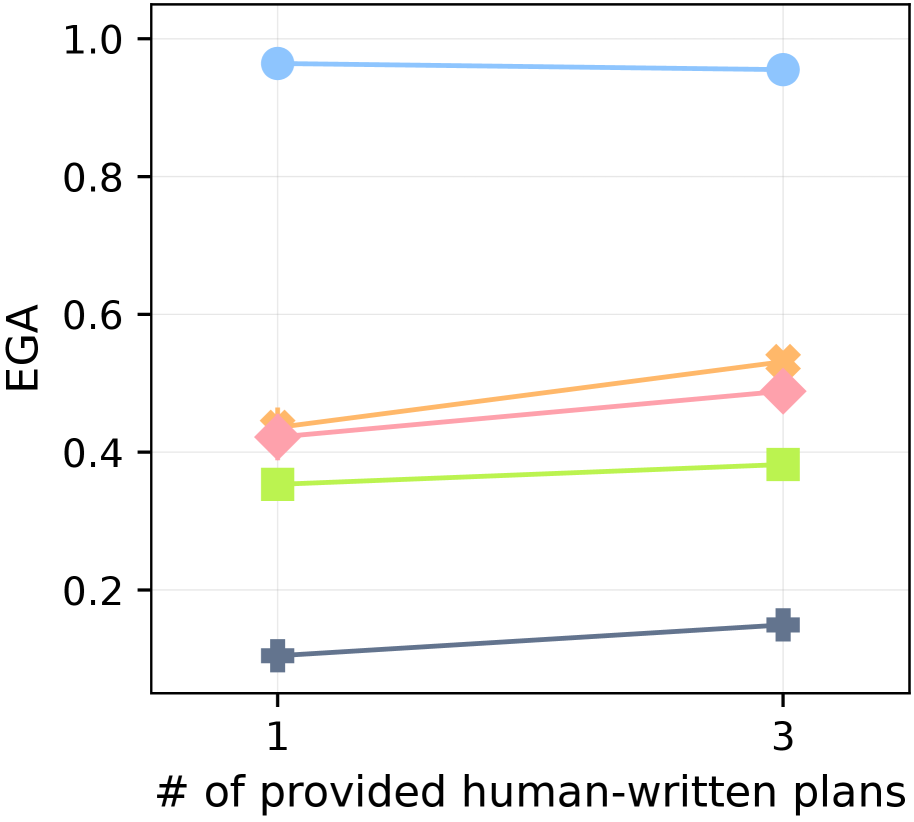

For both dependency learning and planning evaluations, we utilize the 67 goals from 7 groups proposed in the long-horizon task benchmark (Li et al., 2024b). To evaluate dependency learning with an intuitive performance score between 0 and 1, we report $N_{\text{true}}(\hat{\mathcal{G}})/67$, where $N_{\text{true}}(\hat{\mathcal{G}})$ is defined in Equation 1. We refer to this normalized score as Experienced Graph Accuracy (EGA). To evaluate planning performance, we follow the benchmark setting (Li et al., 2024b): at the beginning of each episode, a goal item is specified externally for the agent, and we measure the average success rate (SR) of obtaining this goal item in MineRL. See Table 10 for the full list of goals.
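As a small worked example of the two metrics, the sketch below takes $N_{\text{true}}$ as a given integer (its definition in Equation 1 is not reproduced here) and treats SR as the mean of binary episode outcomes. The function names and the sample numbers are illustrative only.

```python
from typing import Sequence

NUM_GOALS = 67  # number of benchmark goals stated in the text


def experienced_graph_accuracy(n_true: int, num_goals: int = NUM_GOALS) -> float:
    """Normalize the count N_true into a score between 0 and 1."""
    if not 0 <= n_true <= num_goals:
        raise ValueError("n_true must lie between 0 and num_goals")
    return n_true / num_goals


def success_rate(outcomes: Sequence[bool]) -> float:
    """Average success over episodes for one goal (True = goal item obtained)."""
    return sum(outcomes) / len(outcomes)


# Illustrative inputs, not results from the paper.
print(round(experienced_graph_accuracy(40), 3))
print(success_rate([True, False, True, True]))
```

Under this normalization, an EGA near 0.6 corresponds to roughly 40 of the 67 goal items, which gives a sense of scale for the learning curves reported later.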

Implementation details

For the planner, we use Qwen2.5-VL-7B (Bai et al., 2025). The learned dependency graph is initialized with human-written plans for three goals (“craft an iron sword”, “craft a golden sword”, and “mine a diamond”), providing minimal knowledge; the agent must learn dependencies for over 80% of goal items through experience. We employ CRe only for long-horizon goal planning in MineRL. All hyperparameters are kept consistent across experiments. Further details on hyperparameters and human-written plans are in Appendix I.

Baselines

As no prior work learns dependencies in our exact setting, we adapt four baselines, whose knowledge correction mechanisms are summarized in Table 1. For dependency knowledge, (1) LLM Self-Correction (SC) starts with an LLM-predicted dependency graph and prompts the LLM to revise it upon failures; (2) DECKARD (Nottingham et al., 2023) also relies on an LLM-predicted graph but with no correction mechanism; (3) ADAM (Yu and Lu, 2024) assumes that any goal item requires all previously used resource items, each in a sufficient quantity; and (4) RAND, the simplest baseline, uses a static graph similar to DECKARD. Regarding action knowledge, all baselines except for RAND store successful actions. However, only the SC baseline attempts to correct its flawed knowledge upon failures. SC prompts the LLM to revise both its dependency and action knowledge using previous LLM predictions and interaction trajectories, as done in many self-correction methods (Shinn et al., 2023; Stechly et al., 2024). See Appendix B for the prompts of SC and Section J.1 for detailed descriptions of these baselines. To evaluate planning on diverse long-horizon goals, we further compare XENON with recent planning agents that are provided with oracle dependencies: DEPS (Wang et al., 2023b), Jarvis-1 (Wang et al., 2023c), Optimus-1 (Li et al., 2024b), and Optimus-2 (Li et al., 2025b).

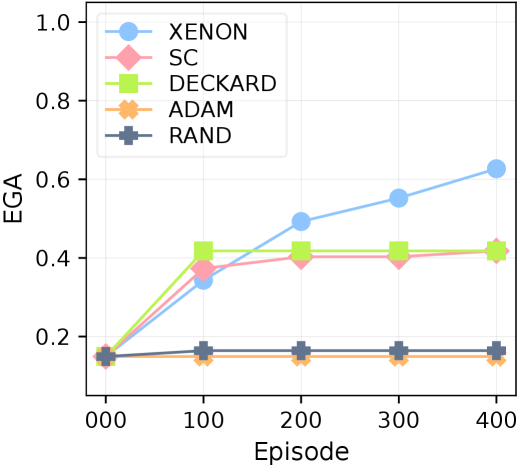

5.2 Robust dependency learning against flawed prior knowledge

<details>

<summary>x10.png Details</summary>

### Visual Description

## Line Chart: EGA vs Episode

### Overview

The image is a line chart comparing the performance of five different agents (XENON, SC, DECKARD, ADAM, and RAND) over a series of episodes. The y-axis represents EGA (Experienced Graph Accuracy), and the x-axis represents the episode number. The chart shows how the EGA value changes for each agent as the number of episodes increases.

### Components/Axes

* **Title:** There is no explicit title on the chart.

* **X-axis:**

* Label: "Episode"

* Scale: 0, 100, 200, 300, 400

* **Y-axis:**

* Label: "EGA"

* Scale: 0.2, 0.4, 0.6, 0.8, 1.0

* **Legend:** Located in the top-left corner.

* XENON (light blue line with circle markers)

* SC (light pink line with diamond markers)

* DECKARD (light green line with square markers)

* ADAM (light orange line with asterisk-like markers)

* RAND (dark gray line with plus-like markers)

### Detailed Analysis

* **XENON (light blue line with circle markers):** The line starts at approximately 0.15 EGA at episode 0, increases to approximately 0.35 at episode 100, then to approximately 0.5 at episode 200, then to approximately 0.55 at episode 300, and finally reaches approximately 0.63 at episode 400. The trend is generally upward.

* **SC (light pink line with diamond markers):** The line starts at approximately 0.15 EGA at episode 0, increases to approximately 0.38 at episode 100, then remains relatively constant at approximately 0.4 at episodes 200, 300, and 400.

* **DECKARD (light green line with square markers):** The line starts at approximately 0.15 EGA at episode 0, increases to approximately 0.42 at episode 100, then remains relatively constant at approximately 0.42 at episodes 200, 300, and 400.

* **ADAM (light orange line with asterisk-like markers):** The line starts at approximately 0.15 EGA at episode 0 and remains relatively constant at approximately 0.15 across all episodes (100, 200, 300, 400).

* **RAND (dark gray line with plus-like markers):** The line starts at approximately 0.15 EGA at episode 0 and remains relatively constant at approximately 0.16 across all episodes (100, 200, 300, 400).

### Key Observations

* XENON shows the most significant improvement in EGA as the number of episodes increases.

* SC and DECKARD show a significant initial increase in EGA but then plateau.

* ADAM and RAND show very little change in EGA across all episodes.

* All algorithms start at approximately the same EGA value at episode 0.

### Interpretation

The chart demonstrates the learning performance of different algorithms over a series of episodes. XENON appears to be the most effective algorithm, as it shows the greatest improvement in EGA as the number of episodes increases. SC and DECKARD show some initial learning but then plateau, suggesting they may have reached their performance limit or require further tuning. ADAM and RAND show very little learning, indicating they may not be suitable for this particular task or require significant modifications. The fact that all algorithms start at approximately the same EGA value suggests a fair comparison at the beginning of the training process.

</details>

(a) MineRL

<details>

<summary>x11.png Details</summary>

### Visual Description

## Line Chart: EGA vs Episode

### Overview

The image is a line chart displaying the relationship between Episode (x-axis) and EGA (y-axis) for five different data series, each represented by a distinct color and marker. The chart shows how EGA changes over the course of 400 episodes for each series.

### Components/Axes

* **X-axis:** Episode, with markers at 0, 100, 200, 300, and 400.

* **Y-axis:** EGA, ranging from 0.0 to 1.0, with markers at 0.2 intervals.

* **Data Series:**

* Blue line with circle markers.

* Orange line with cross markers.

* Light Green line with square markers.

* Pink line with diamond markers.

* Dark Grey line with plus markers.

### Detailed Analysis

* **Blue Line (Circle Markers):**

* Trend: Initially increases sharply, then plateaus.

* Data Points:

* Episode 0: EGA ~0.15

* Episode 100: EGA ~0.7

* Episode 200: EGA ~0.9

* Episode 300: EGA ~0.9

* Episode 400: EGA ~0.9

* **Orange Line (Cross Markers):**

* Trend: Increases sharply initially, then remains relatively constant.

* Data Points:

* Episode 0: EGA ~0.15

* Episode 100: EGA ~0.65

* Episode 200: EGA ~0.65

* Episode 300: EGA ~0.65

* Episode 400: EGA ~0.65

* **Light Green Line (Square Markers):**

* Trend: Increases gradually, then plateaus.

* Data Points:

* Episode 0: EGA ~0.15

* Episode 100: EGA ~0.4

* Episode 200: EGA ~0.42

* Episode 300: EGA ~0.44

* Episode 400: EGA ~0.44

* **Pink Line (Diamond Markers):**

* Trend: Increases gradually, then plateaus.

* Data Points:

* Episode 0: EGA ~0.15

* Episode 100: EGA ~0.35

* Episode 200: EGA ~0.4

* Episode 300: EGA ~0.43

* Episode 400: EGA ~0.43

* **Dark Grey Line (Plus Markers):**

* Trend: Increases slightly, then plateaus.

* Data Points:

* Episode 0: EGA ~0.15

* Episode 100: EGA ~0.2

* Episode 200: EGA ~0.2

* Episode 300: EGA ~0.21

* Episode 400: EGA ~0.21

### Key Observations

* The blue line (circle markers) shows the highest EGA values and the most significant initial increase.

* The orange line (cross markers) also shows a significant initial increase but plateaus at a lower EGA value than the blue line.

* The light green and pink lines (square and diamond markers, respectively) show more gradual increases in EGA.

* The dark grey line (plus markers) shows the lowest EGA values and the least change over the episodes.

* All lines start at approximately the same EGA value (~0.15) at Episode 0.

* All lines plateau after approximately 200 episodes.

### Interpretation

The chart compares the performance of five different strategies or algorithms (represented by the different colored lines) in terms of EGA (Experienced Graph Accuracy) over a series of episodes. The blue line represents the most effective strategy, achieving the highest EGA values and a rapid initial improvement. The orange line is also effective initially but plateaus at a lower level. The light green, pink, and dark grey lines represent less effective strategies, with lower EGA values and slower improvement. The fact that all lines start at the same EGA value suggests that all strategies begin with similar initial performance. The plateauing of all lines indicates that the strategies reach a point of diminishing returns, where further episodes do not lead to significant improvements in EGA.

</details>

(b) Mineflayer

Figure 5: Robustness against flawed prior knowledge. EGA over 400 episodes in (a) MineRL and (b) Mineflayer. XENON consistently outperforms the baselines.

Table 2: Robustness to LLM hallucinations. The number of correctly learned dependencies of items that are descendants of a hallucinated item in the initial LLM-predicted dependency graph (out of 12).

| Agent | Learned descendants of hallucinated items |
| --- | --- |
| XENON | 0.33 |
| SC | 0 |
| ADAM | 0 |
| DECKARD | 0 |
| RAND | 0 |

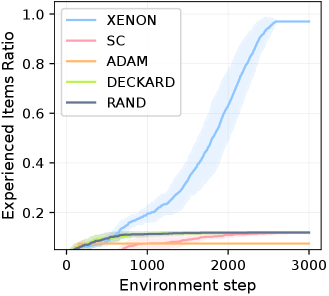

XENON demonstrates robust dependency learning from flawed prior knowledge, consistently outperforming baselines with an EGA of approximately 0.6 in MineRL and 0.9 in Mineflayer (Figure 5), despite the challenging setting with imperfect controllers. This superior performance is driven by its algorithmic correction mechanism, RevisionByAnalogy, which corrects flawed dependency knowledge while also accommodating imperfect controllers by gradually scaling the required item quantities. The robustness of this algorithmic correction is particularly evident in two key analyses of the learned graph for each agent from the MineRL experiments. First, as shown in Table 2, XENON is uniquely robust to LLM hallucinations, learning dependencies for descendant items of non-existent, hallucinated items in the initial LLM-predicted graph. Second, XENON outperforms the baselines in learning dependencies for items that are unobtainable by the initial graph, as shown in Table 13.

Our results demonstrate the unreliability of relying on LLM self-correction or blindly trusting an LLM’s flawed knowledge; in practice, SC achieves the same EGA as DECKARD, with both plateauing around 0.4 in both environments.

We observe that controller capacity strongly impacts dependency learning. This is evident in ADAM, whose EGA differs markedly between MineRL ($\approx 0.1$), which has a limited controller, and Mineflayer ($\approx 0.6$), which has a more competent controller. While ADAM unrealistically assumes a controller can gather large quantities of all resource items before attempting a new item, MineRL’s controller STEVE-1 (Lifshitz et al., 2023) cannot execute this demanding strategy, causing ADAM’s EGA to fall below even the simplest baseline, RAND. Controller capacity also accounts for XENON’s lower EGA in MineRL. For instance, XENON learns none of the dependencies of the Redstone group items, as STEVE-1 cannot execute XENON’s strategy for inadmissible items (Section 4.1). In contrast, the more capable Mineflayer controller executes this strategy successfully, allowing XENON to learn the correct dependencies for 5 of 6 Redstone items. This difference highlights the critical role of controllers for dependency learning, as detailed in our analysis in Section K.3.

5.3 Effective planning to solve diverse goals

Table 3: Performance on long-horizon task benchmark. Average success rate of each group on the long-horizon task benchmark (Li et al., 2024b) in MineRL. Oracle indicates that the true dependency graph is known in advance; Learned indicates that the graph is learned via experience across 400 episodes. For fair comparison across LLMs, we include Optimus-1 †, our reproduction of Optimus-1 using Qwen2.5-VL-7B. Due to resource limits, results for DEPS, Jarvis-1, Optimus-1, and Optimus-2 are cited directly from Li et al. (2025b). See Section K.12 for the success rate on each goal.

| Method | Dependency | Planner LLM | Overall | Wood | Stone | Iron | Diamond | Gold | Armor | Redstone |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| DEPS | - | Codex | 0.22 | 0.77 | 0.48 | 0.16 | 0.01 | 0.00 | 0.10 | 0.00 |
| Jarvis-1 | Oracle | GPT-4 | 0.38 | 0.93 | 0.89 | 0.36 | 0.08 | 0.07 | 0.15 | 0.16 |
| Optimus-1 | Oracle | GPT-4V | 0.43 | 0.98 | 0.92 | 0.46 | 0.11 | 0.08 | 0.19 | 0.25 |
| Optimus-2 | Oracle | GPT-4V | 0.45 | 0.99 | 0.93 | 0.53 | 0.13 | 0.09 | 0.21 | 0.28 |
| Optimus-1 † | Oracle | Qwen2.5-VL-7B | 0.34 | 0.92 | 0.80 | 0.22 | 0.10 | 0.09 | 0.17 | 0.04 |
| XENON ∗ | Oracle | Qwen2.5-VL-7B | 0.79 | 0.95 | 0.93 | 0.83 | 0.75 | 0.73 | 0.61 | 0.75 |
| XENON | Learned | Qwen2.5-VL-7B | 0.54 | 0.85 | 0.81 | 0.46 | 0.64 | 0.74 | 0.28 | 0.00 |

As shown in Table 3, XENON significantly outperforms baselines in solving diverse long-horizon goals despite using the lightweight Qwen2.5-VL-7B LLM (Bai et al., 2025), while the baselines rely on large proprietary models such as Codex (Chen et al., 2021), GPT-4 (OpenAI, 2024), and GPT-4V (OpenAI, 2023). Remarkably, even with its learned dependency knowledge (Section 5.2), XENON surpasses the baselines with the oracle knowledge on challenging late-game goals, achieving high SRs for item groups like Gold (0.74) and Diamond (0.64).

XENON’s superiority stems from two key factors. First, its FAM provides systematic, fine-grained action correction for each goal. Second, it reduces reliance on the LLM for planning in two ways: it shortens prompts and outputs by requiring the LLM to predict only one action per subgoal item, and it bypasses the LLM entirely by reusing successful actions from FAM. In contrast, the baselines lack a systematic, fine-grained action correction mechanism and instead make LLMs generate long plans from lengthy prompts, a strategy known to be ineffective for LLMs (Wu et al., 2024; Li et al., 2024a). This challenge is exemplified by Optimus-1 †. Despite using a knowledge graph for planning like XENON, its long-context generation strategy causes the LLM to predict incorrect actions or omit items explicitly provided in its prompt, as detailed in Section K.5.

We find that accurate knowledge is critical for long-horizon planning, as its absence can make even a capable agent ineffective. The Redstone group from Table 3 provides an example: while XENON ∗ with oracle knowledge succeeds (0.75 SR), XENON with learned knowledge fails entirely (0.00 SR), because it failed to learn the dependencies for Redstone goals due to the controller’s limited capacity in MineRL (Section 5.2). This finding is further supported by our comprehensive ablation study, which confirms that accurate dependency knowledge is most critical for success across all goals (see Table 17 in Section K.7).

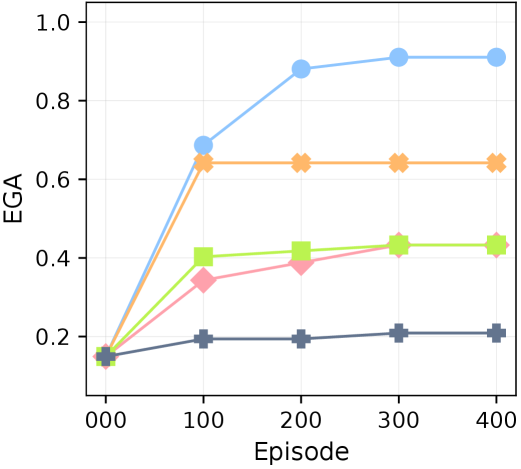

5.4 Robust dependency learning against knowledge conflicts

<details>

<summary>x19.png Details</summary>

### Visual Description

## Legend: Algorithm Identification

### Overview

The image presents a legend that identifies different algorithms using distinct colors and markers. This legend is likely associated with a chart or graph comparing the performance of these algorithms.

### Components/Axes

The legend contains the following entries, each with a specific color and marker:

* **XENON**: Light blue with a circle marker.

* **SC**: Light pink with a diamond marker.

* **ADAM**: Light orange with a pentagon marker.

* **DECKARD**: Light green with a square marker.

* **RAND**: Gray with a plus marker.

### Detailed Analysis

The legend is horizontally oriented. Each algorithm name is paired with a colored line and a corresponding marker shape. The order of the algorithms in the legend is: XENON, SC, ADAM, DECKARD, and RAND.

### Key Observations

The legend provides a clear mapping between algorithm names, colors, and markers. This is essential for interpreting any associated chart or graph.

### Interpretation

The legend serves as a key for understanding which data series in a chart or graph corresponds to which algorithm. The use of distinct colors and markers helps to visually differentiate the algorithms and facilitates comparison of their performance.

</details>

<details>

<summary>x20.png Details</summary>

### Visual Description

## Line Chart: EGA vs. Perturbed Items and Action

### Overview

The image is a line chart displaying the relationship between EGA (likely representing some form of effectiveness or accuracy) and the number of perturbed items and action. The x-axis represents the perturbed items and action,

</details>

(a) Perturbed True Required Items

<details>

<summary>x21.png Details</summary>

### Visual Description

## Line Chart: EGA vs Perturbed (required items, action)

### Overview

The image is a line chart displaying the relationship between EGA (Experienced Graph Accuracy) and "Perturbed (required items, action)". There are five distinct data series represented by different colored lines: light blue, light orange, light pink, light green, and dark grey. The x-axis represents the "Perturbed (required items, action)" with values (0, 0), (0, 1), (0, 2), and (0, 3). The y-axis represents EGA, ranging from 0.2 to 1.0.

### Components/Axes

* **X-axis:** "Perturbed (required items, action)" with labels (0, 0), (0, 1), (0, 2), and (0, 3).

* **Y-axis:** "EGA" (Experienced Graph Accuracy) with values 0.2, 0.4, 0.6, 0.8, and 1.0.

* **Data Series:**

* Light Blue: Constant value across all x-axis points.

* Light Orange: Decreases sharply from (0, 0) to (0, 1) and then remains constant.

* Light Pink: Decreases gradually from (0, 0) to (0, 3).

* Light Green: Decreases gradually from (0, 0) to (0, 3).

* Dark Grey: Remains relatively constant across all x-axis points.

### Detailed Analysis

* **Light Blue Line:**

* Trend: Horizontal, indicating a constant EGA value.

* Values: Approximately 0.98 at (0, 0), (0, 1), (0, 2), and (0, 3).

* **Light Orange Line:**

* Trend: Decreases sharply from (0, 0) to (0, 1) and then remains constant.

* Values: Approximately 0.68 at (0, 0), approximately 0.15 at (0, 1), approximately 0.15 at (0, 2), and approximately 0.15 at (0, 3).

* **Light Pink Line:**

* Trend: Decreases gradually.

* Values: Approximately 0.62 at (0, 0), approximately 0.43 at (0, 1), approximately 0.28 at (0, 2), and approximately 0.22 at (0, 3).

* **Light Green Line:**

* Trend: Decreases gradually.

* Values: Approximately 0.48 at (0, 0), approximately 0.38 at (0, 1), approximately 0.24 at (0, 2), and approximately 0.22 at (0, 3).

* **Dark Grey Line:**

* Trend: Relatively constant.

* Values: Approximately 0.24 at (0, 0), approximately 0.24 at (0, 1), approximately 0.24 at (0, 2), and approximately 0.22 at (0, 3).

### Key Observations

* The light blue line maintains a consistently high EGA value regardless of the "Perturbed (required items, action)".

* The light orange line experiences a significant drop in EGA between (0, 0) and (0, 1), after which it stabilizes at a low value.

* The light pink and light green lines show a gradual decrease in EGA as the "Perturbed (required items, action)" increases.

* The dark grey line remains relatively stable, with a slight decrease at the end.

### Interpretation

The chart illustrates how different strategies or configurations (represented by the colored lines) perform under varying levels of perturbation. The light blue line represents a highly robust strategy, maintaining high EGA even when the "Perturbed (required items, action)" increases. The light orange line represents a strategy that is highly sensitive to initial perturbations, with performance plummeting after the first perturbation. The light pink and light green lines represent strategies that are moderately affected by perturbations, with a gradual decline in performance. The dark grey line represents a strategy that is consistently stable, but at a lower EGA level. The data suggests that the light blue strategy is the most resilient to perturbations, while the light orange strategy is the most vulnerable.

</details>

(b) Perturbed True Actions

<details>

<summary>x22.png Details</summary>

### Visual Description

## Line Chart: EGA vs Perturbed (required items, action)

### Overview

The image is a line chart showing the relationship between EGA (Experienced Graph Accuracy) and the level of perturbation, represented as "(required items, action)". There are five distinct data series, each represented by a different color and marker. The x-axis represents the perturbation level, increasing from (0, 0) to (3, 3). The y-axis represents the EGA, ranging from 0.0 to 1.0.

### Components/Axes

* **X-axis:** "Perturbed (required items, action)" with markers at (0, 0), (1, 1), (2, 2), and (3, 3).

* **Y-axis:** "EGA" ranging from 0.2 to 1.0 in increments of 0.2.

* **Data Series:** Five distinct lines with different colors and markers. The legend is missing, so the colors are described below.

### Detailed Analysis

* **Light Blue (Circles):** This line remains constant at approximately EGA = 0.98 across all perturbation levels.

* (0, 0): 0.98

* (1, 1): 0.98

* (2, 2): 0.98

* (3, 3): 0.98

* **Orange (X):** This line shows a decreasing trend as the perturbation level increases.

* (0, 0): 0.68

* (1, 1): 0.12

* (2, 2): 0.08

* (3, 3): 0.07

* **Pink (Diamonds):** This line also shows a decreasing trend as the perturbation level increases.

* (0, 0): 0.62

* (1, 1): 0.40

* (2, 2): 0.22

* (3, 3): 0.15

* **Lime Green (Squares):** This line shows a decreasing trend as the perturbation level increases.

* (0, 0): 0.48

* (1, 1): 0.35

* (2, 2): 0.20

* (3, 3): 0.15

* **Dark Grey (Plus Signs):** This line shows a slight decreasing trend as the perturbation level increases.

* (0, 0): 0.24

* (1, 1): 0.21

* (2, 2): 0.18

* (3, 3): 0.14

### Key Observations

* The light blue line representing one of the strategies maintains a high EGA regardless of the perturbation level.

* The orange, pink, and lime green lines show a significant decrease in EGA as the perturbation level increases.

* The dark grey line shows a slight decrease in EGA as the perturbation level increases.

### Interpretation

The chart suggests that some strategies (represented by the light blue line) are robust to perturbations, maintaining a high EGA even when the required items or actions are altered. Other strategies (represented by the orange, pink, and lime green lines) are highly sensitive to perturbations, with their EGA decreasing significantly as the perturbation level increases. The dark grey line represents a strategy that is somewhat sensitive to perturbations, but not as much as the orange, pink, and lime green lines. The data demonstrates the varying degrees of robustness of different strategies to changes in the environment or task requirements.

</details>

(c) Perturbed Both Rules

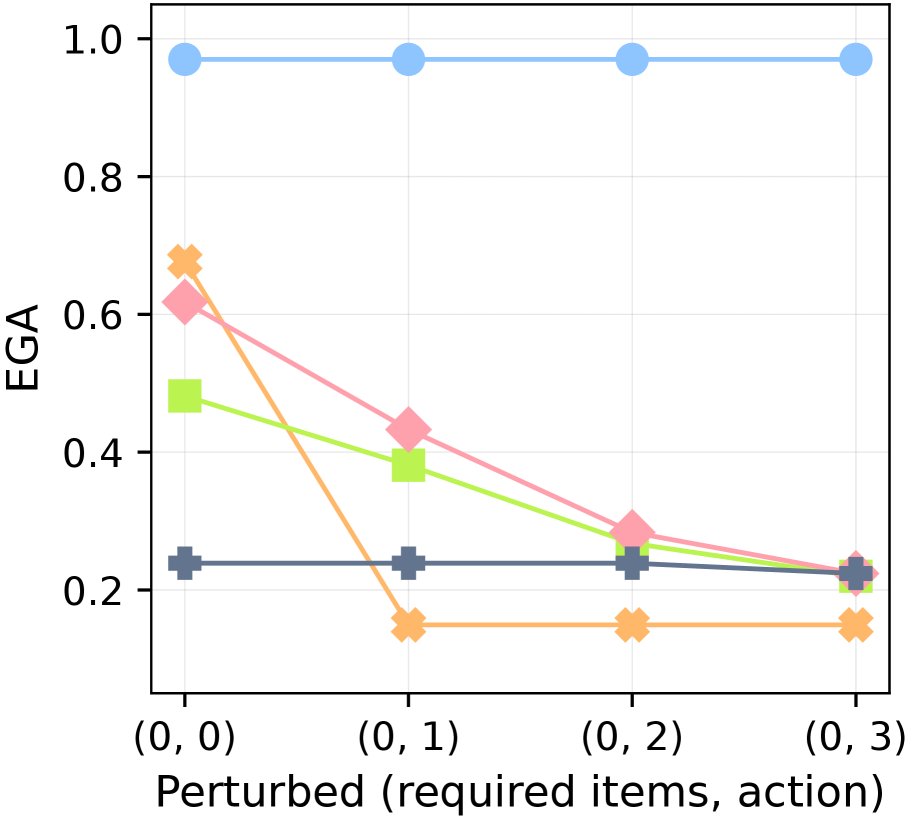

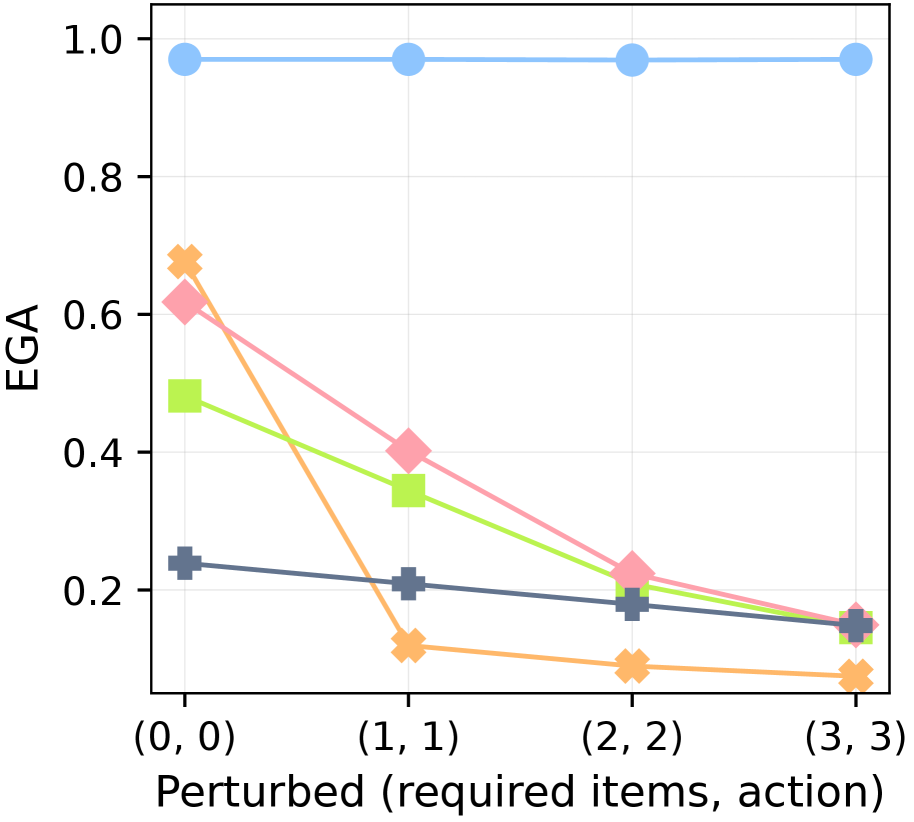

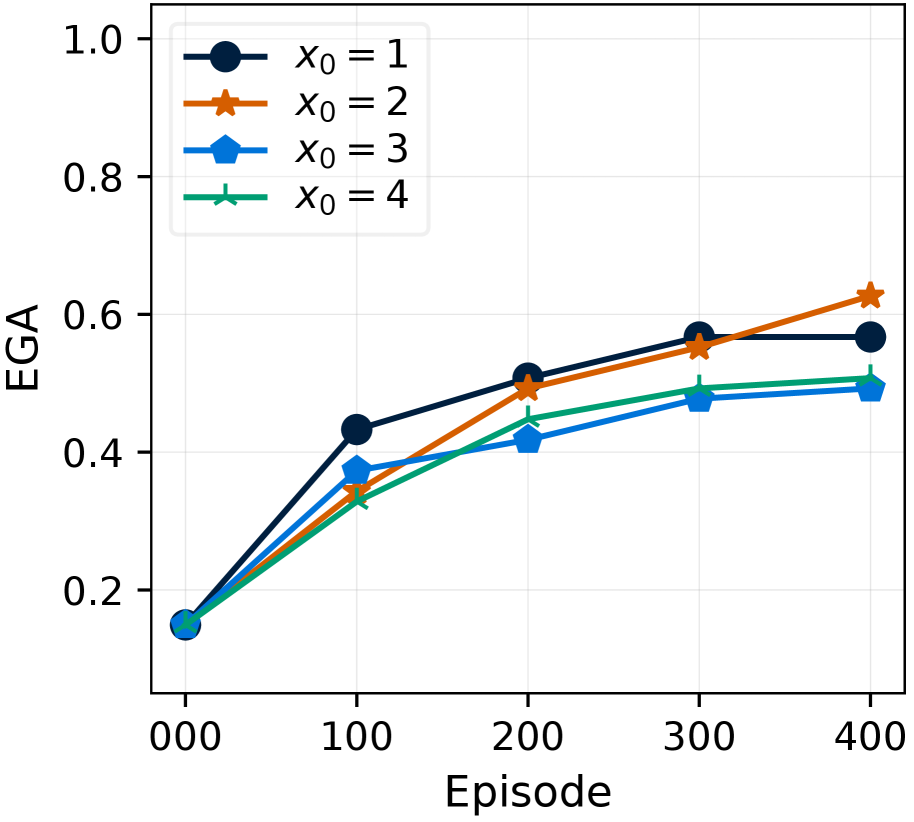

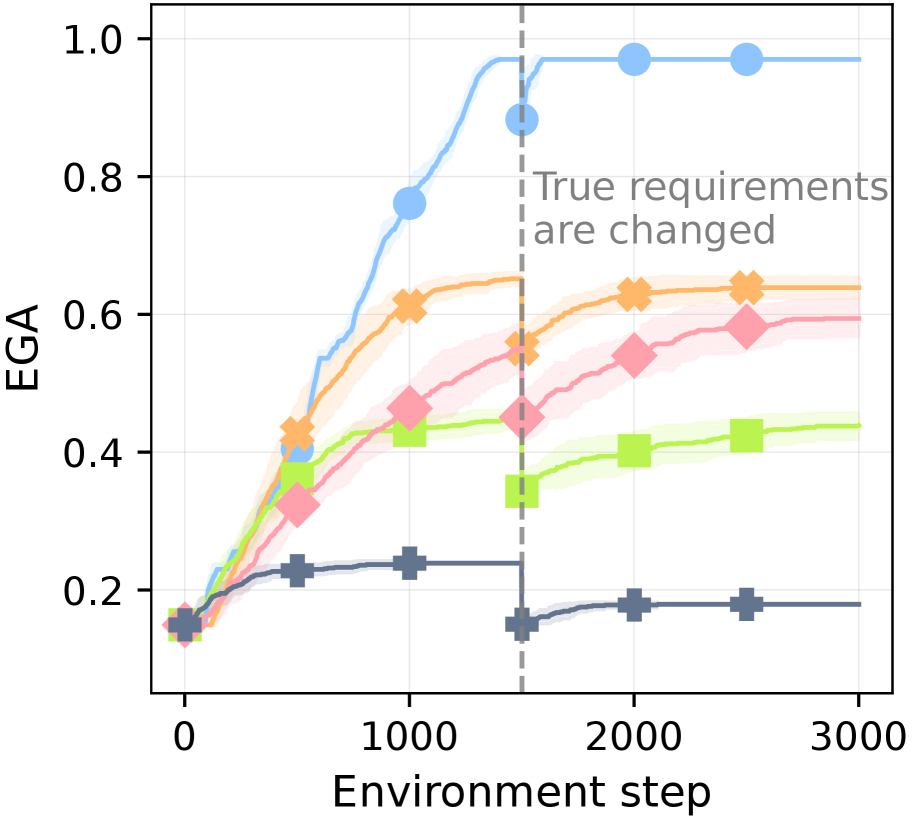

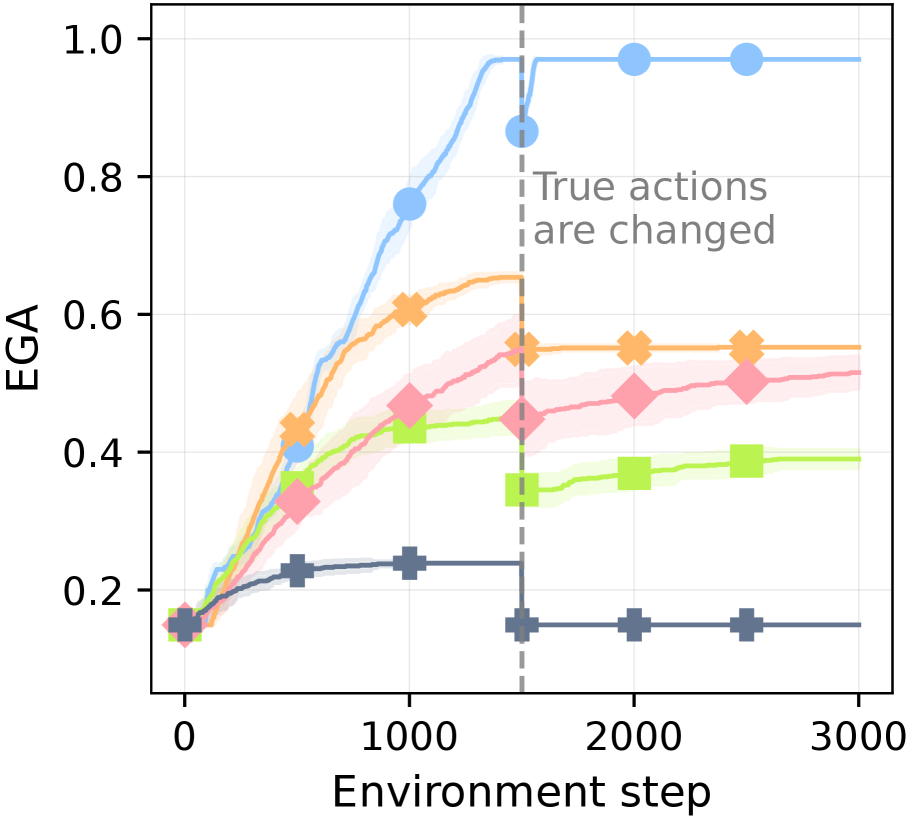

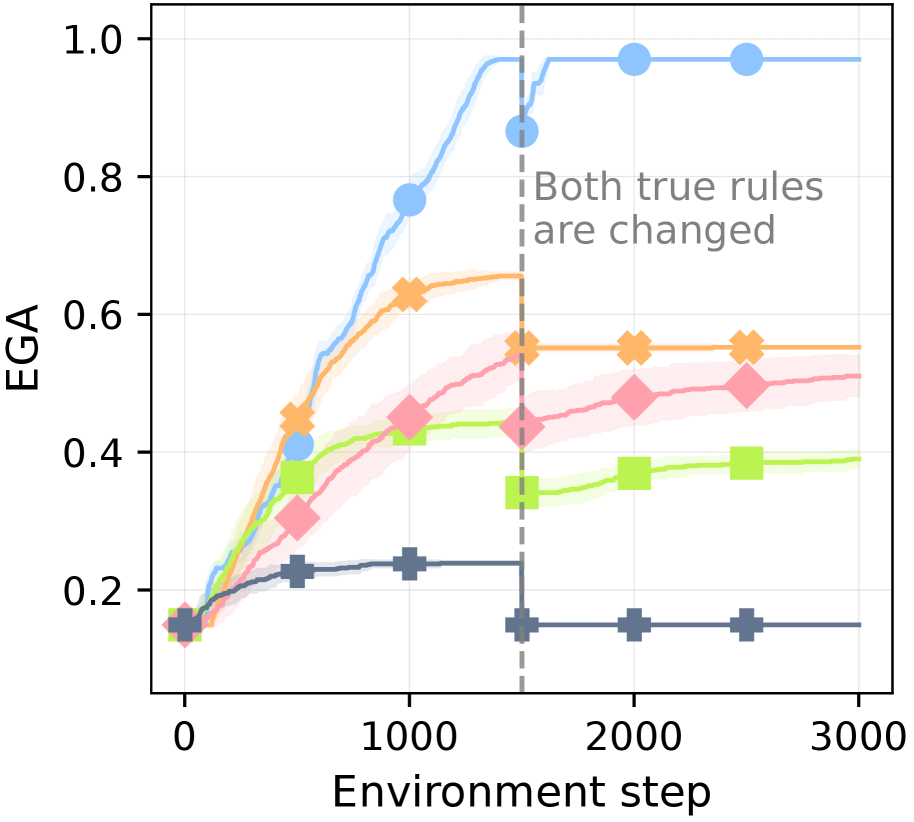

Figure 6: Robustness against knowledge conflicts. EGA after 3,000 environment steps in MC-TextWorld under different perturbations of the ground-truth rules. The plots show performance with increasing intensities of perturbation applied to: (a) requirements only, (b) actions only, and (c) both (see Table 4).

Table 4: Effect of ground-truth perturbations on prior knowledge.

| Perturbation intensity | Goal items obtainable via prior knowledge (% reduction from intensity 0) |
| --- | --- |
| 0 | 16 (no perturbation) |
| 1 | 14 (12%) |
| 2 | 11 (31%) |
| 3 | 9 (44%) |
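The percentages in Table 4 are consistent with reading them as the relative drop in obtainable goal items compared with the unperturbed setting. The short check below reproduces them from the counts; this reading is an assumption inferred from the numbers, and the variable names are illustrative.

```python
# Counts of goal items obtainable via prior knowledge, transcribed from Table 4.
obtainable = {0: 16, 1: 14, 2: 11, 3: 9}
baseline = obtainable[0]

# Relative reduction (in percent) with respect to intensity 0.
# Note: Python's round() uses round-half-to-even, so 12.5 becomes 12.
reduction_pct = {
    level: round(100 * (baseline - count) / baseline)
    for level, count in obtainable.items()
}

print(reduction_pct)
```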

To isolate dependency learning from controller capacity, we shift to the MC-TextWorld environment with a perfect controller. In this setting, we test each agent’s robustness to conflicts with its prior knowledge (derived from the LLM’s initial predictions and human-written plans) by introducing arbitrary perturbations to the ground-truth required items and actions. Each perturbation has an intensity level; a higher intensity affects a greater number of items, as shown in Table 4. We denote the intensity by a tuple (r,a), where r is the intensity for required items and a is the intensity for actions; (0,0) is the vanilla setting with no perturbations. See Figure 21 for the detailed perturbation process.
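As a rough illustration of what an (r,a) perturbation could look like, the sketch below alters the required items of some goal items and the valid action of others. It is not the procedure in Figure 21: the rule table, the action set, the `per_level` scaling, and the function name `perturb_rules` are all hypothetical and chosen only to make the idea concrete.

```python
import random

# Hypothetical ground-truth rules: item -> (required items, valid action).
RULES = {
    "planks": ({"log": 1}, "craft"),
    "stick": ({"planks": 2}, "craft"),
    "wooden_pickaxe": ({"planks": 3, "stick": 2}, "craft"),
    "cobblestone": ({"wooden_pickaxe": 1}, "mine"),
    "furnace": ({"cobblestone": 8}, "craft"),
    "iron_ingot": ({"iron_ore": 1, "furnace": 1}, "smelt"),
}
ACTIONS = ("mine", "craft", "smelt")


def perturb_rules(rules, r, a, per_level=1, seed=0):
    """Return a perturbed copy of `rules` at intensity (r, a).

    Assumed scheme: r * per_level items get one requirement swapped for an
    arbitrary other item, and a * per_level items get a different action.
    """
    rng = random.Random(seed)
    items = sorted(rules)
    perturbed = {k: (dict(req), act) for k, (req, act) in rules.items()}

    # Perturb required items.
    for item in rng.sample(items, min(len(items), r * per_level)):
        req, act = perturbed[item]
        old = rng.choice(sorted(req))
        candidates = [i for i in items if i != item and i not in req]
        new_req = dict(req)
        new_req[rng.choice(candidates)] = new_req.pop(old)
        perturbed[item] = (new_req, act)

    # Perturb valid actions.
    for item in rng.sample(items, min(len(items), a * per_level)):
        req, act = perturbed[item]
        perturbed[item] = (req, rng.choice([x for x in ACTIONS if x != act]))

    return perturbed


both = perturb_rules(RULES, r=2, a=2)
changed = [k for k in RULES if both[k] != RULES[k]]
print(changed)
```

An agent whose prior knowledge matches `RULES` would then face conflicts exactly on the changed items, which is the situation the robustness experiment probes.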

Figure 6 shows XENON’s robustness to knowledge conflicts, as it maintains a near-perfect EGA ($\approx 0.97$). In contrast, the performance of all baselines degrades as perturbation intensity increases across all three perturbation scenarios (required items, actions, or both). We find that prompting an LLM to self-correct is ineffective when the ground truth conflicts with its parametric knowledge: SC shows no significant advantage over DECKARD, which lacks a correction mechanism. ADAM is vulnerable to action perturbations; its strategy of gathering all resource items before attempting a new item fails when the valid actions for those resources are perturbed, effectively halting its learning.

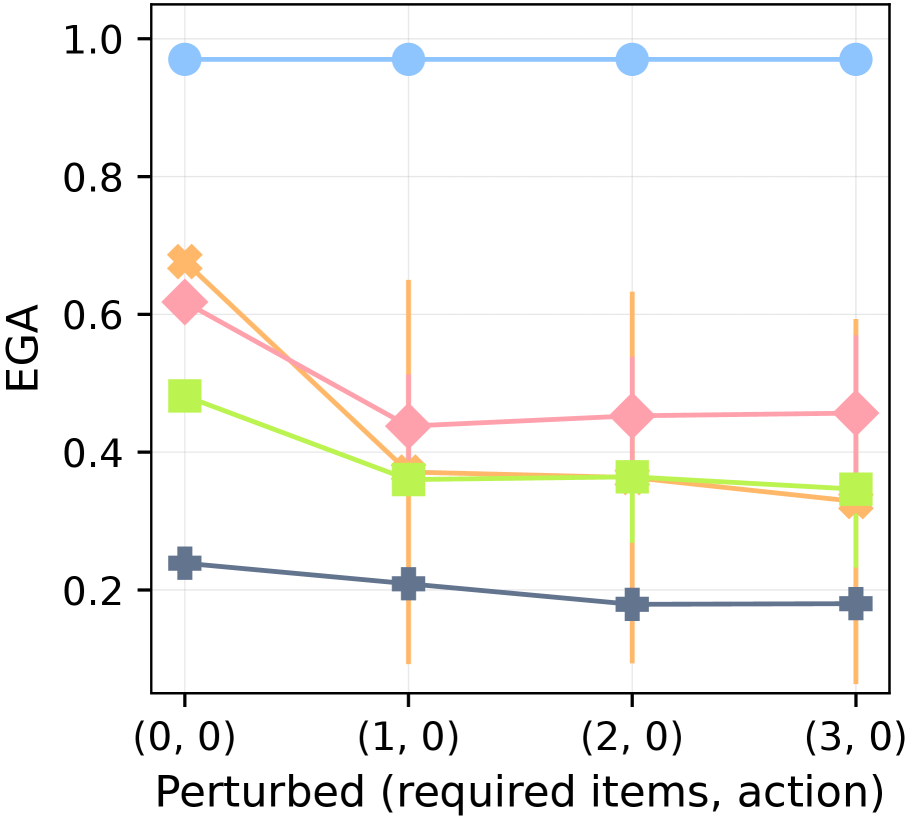

5.5 Ablation studies on knowledge correction mechanisms

Table 5: Ablation study of knowledge correction mechanisms. ○: XENON; $\triangle$: LLM self-correction; ✗: No correction. All entries denote the EGA after 3,000 environment steps. Columns denote the perturbation setting (r,a). For LLM self-correction, we use the same prompt as the SC baseline (see Appendix B).

| Dependency Correction | Action Correction | (0,0) | (3,0) | (0,3) | (3,3) |
| --- | --- | --- | --- | --- | --- |
| ○ | ○ | 0.97 | 0.97 | 0.97 | 0.97 |
| ○ | $\triangle$ | 0.93 | 0.93 | 0.12 | 0.12 |
| ○ | ✗ | 0.84 | 0.84 | 0.12 | 0.12 |
| $\triangle$ | ○ | 0.57 | 0.30 | 0.57 | 0.29 |
| ✗ | ○ | 0.53 | 0.13 | 0.53 | 0.13 |
| ✗ | ✗ | 0.46 | 0.13 | 0.19 | 0.11 |

To analyze XENON’s knowledge correction mechanisms for dependencies and actions, we conduct ablation studies in MC-TextWorld (Table 5). While dependency correction is generally more important for overall performance, action correction becomes vital under action perturbations: without it, EGA drops to 0.12 in the (0,3) and (3,3) settings. In contrast, LLM self-correction is ineffective for complex scenarios: it offers minimal gains for dependency correction even in the vanilla setting (0.57 vs. 0.53 with no correction) and fails entirely for perturbed actions (0.12, the same as no correction). Its effectiveness is limited to simpler scenarios, such as action correction in the vanilla setting (0.93 vs. 0.84). These results demonstrate that our algorithmic knowledge correction approach enables robust learning from experience, overcoming the limitations of both LLM self-correction and flawed initial knowledge.
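The comparisons in this paragraph can be read directly off Table 5. The sketch below computes the EGA gain of LLM self-correction over no correction for each mechanism; the values are transcribed from the table, while the dictionary layout and the helper `gain` are illustrative choices, not part of the original analysis.

```python
# EGA values from Table 5, keyed by (dependency correction, action correction).
SETTINGS = ("(0,0)", "(3,0)", "(0,3)", "(3,3)")
EGA = {
    ("xenon", "xenon"): (0.97, 0.97, 0.97, 0.97),
    ("xenon", "llm"): (0.93, 0.93, 0.12, 0.12),
    ("xenon", "none"): (0.84, 0.84, 0.12, 0.12),
    ("llm", "xenon"): (0.57, 0.30, 0.57, 0.29),
    ("none", "xenon"): (0.53, 0.13, 0.53, 0.13),
    ("none", "none"): (0.46, 0.13, 0.19, 0.11),
}


def gain(row, baseline):
    """Per-setting EGA difference between two rows, rounded to 2 decimals."""
    return {
        s: round(x - y, 2)
        for s, x, y in zip(SETTINGS, EGA[row], EGA[baseline])
    }


# LLM self-correction vs. no correction, holding the other mechanism at XENON.
llm_dep_gain = gain(("llm", "xenon"), ("none", "xenon"))
llm_act_gain = gain(("xenon", "llm"), ("xenon", "none"))

print("dependency:", llm_dep_gain)
print("action:    ", llm_act_gain)
```

Under this reading, self-correction adds 0.04 EGA for dependencies in the vanilla setting and nothing for actions once they are perturbed, which matches the qualitative claims above.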

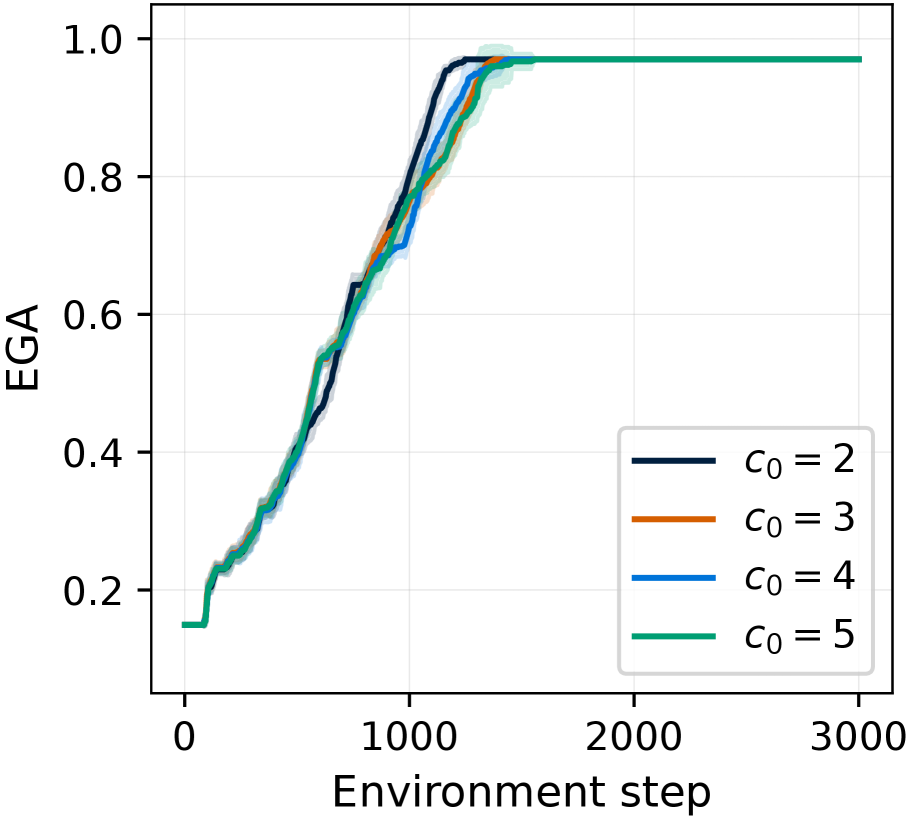

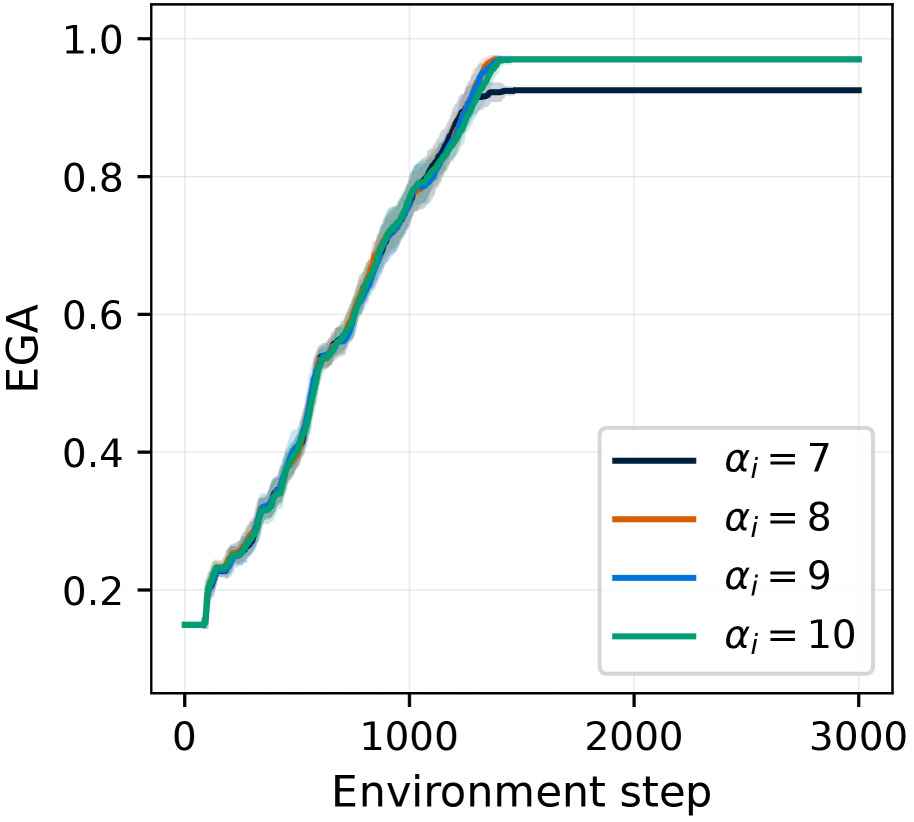

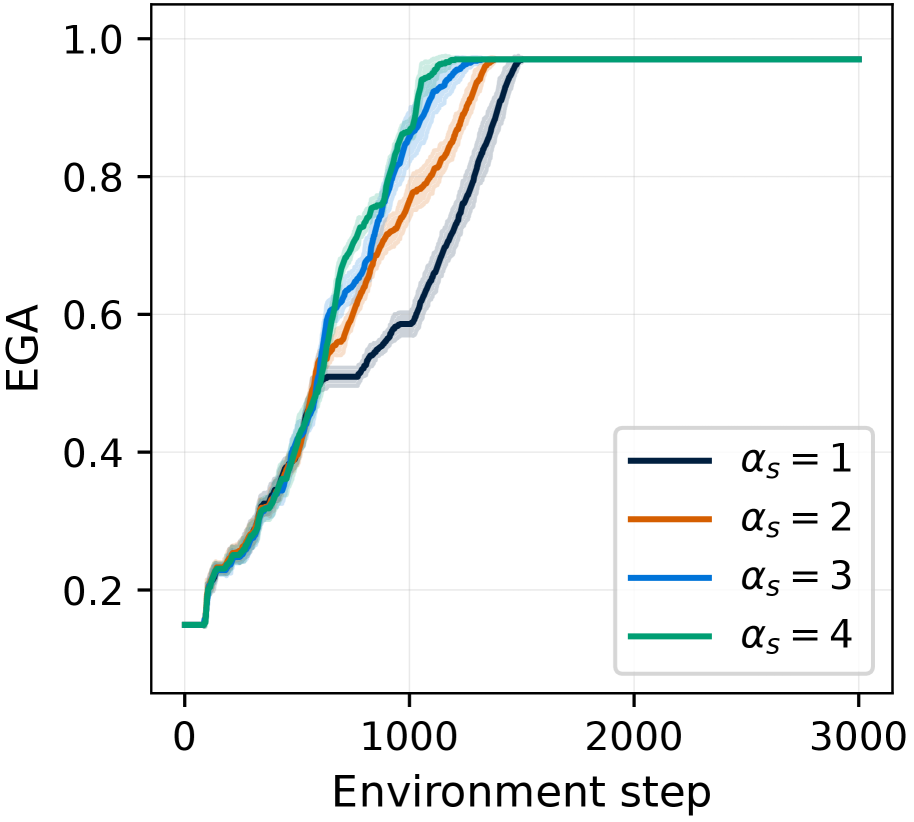

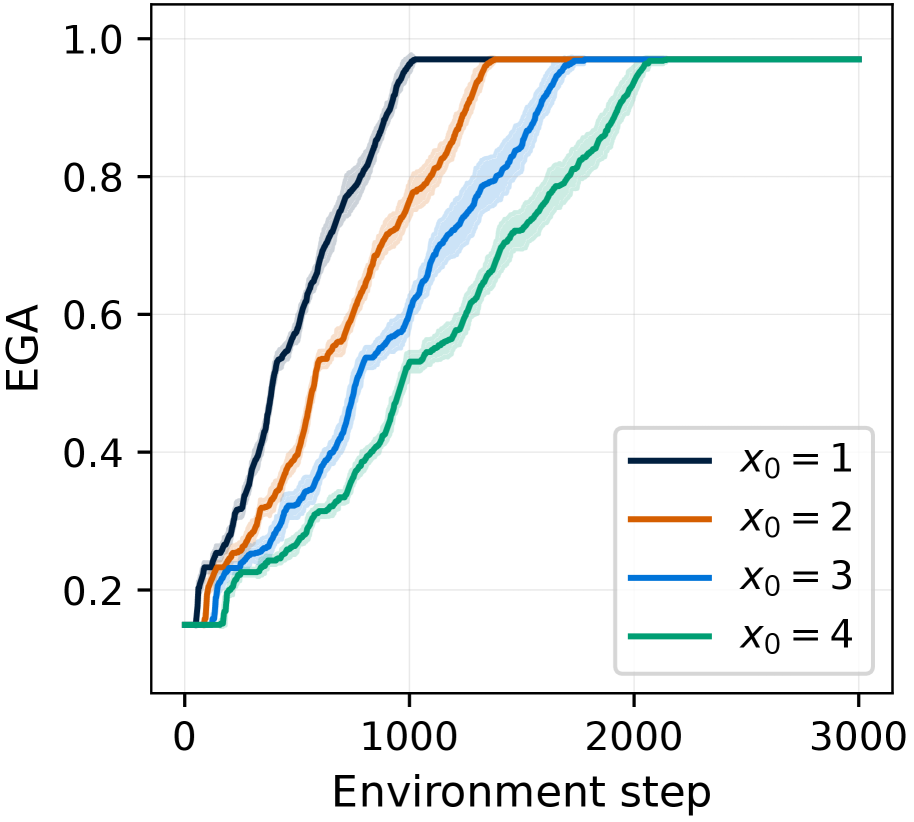

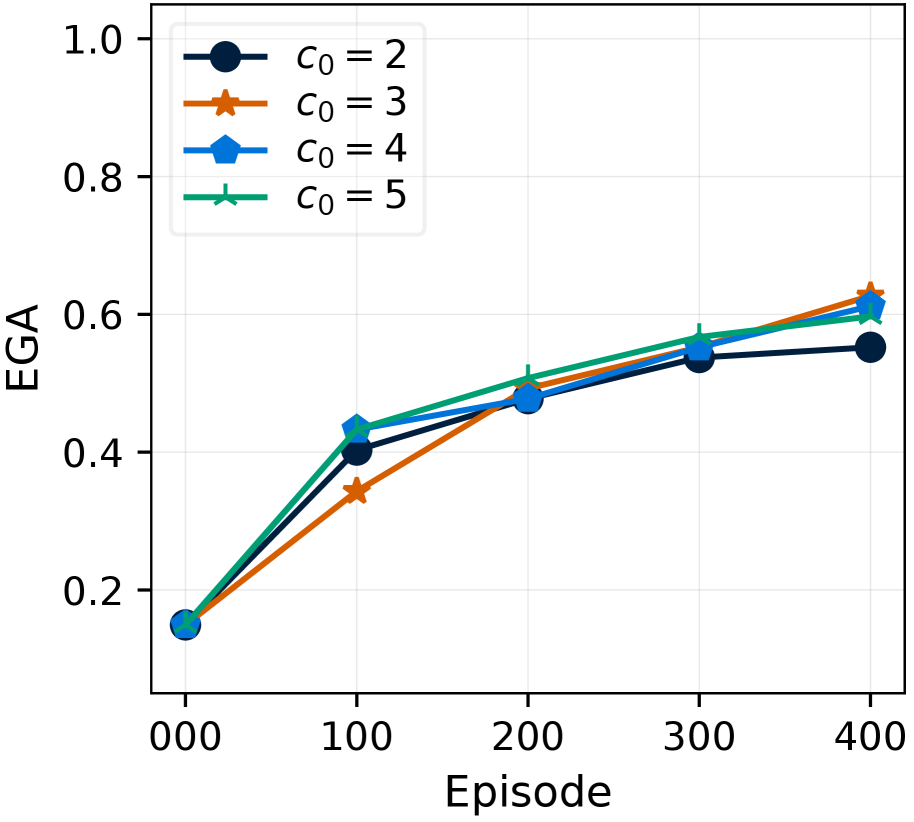

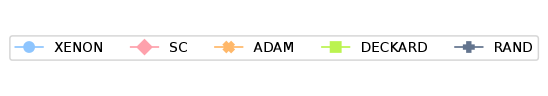

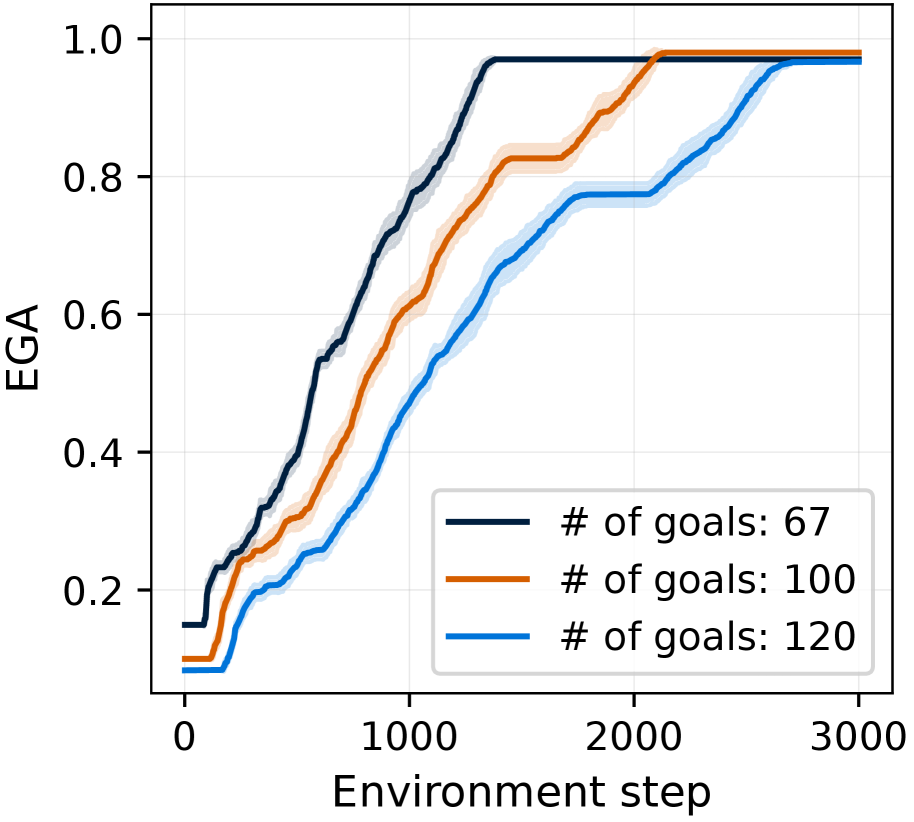

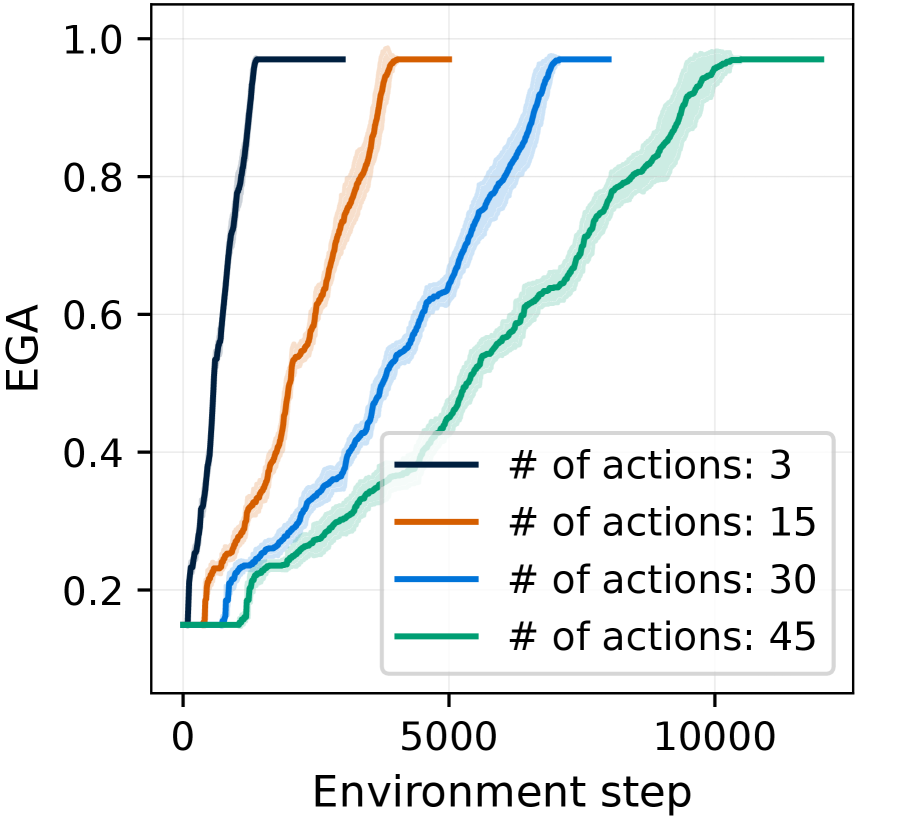

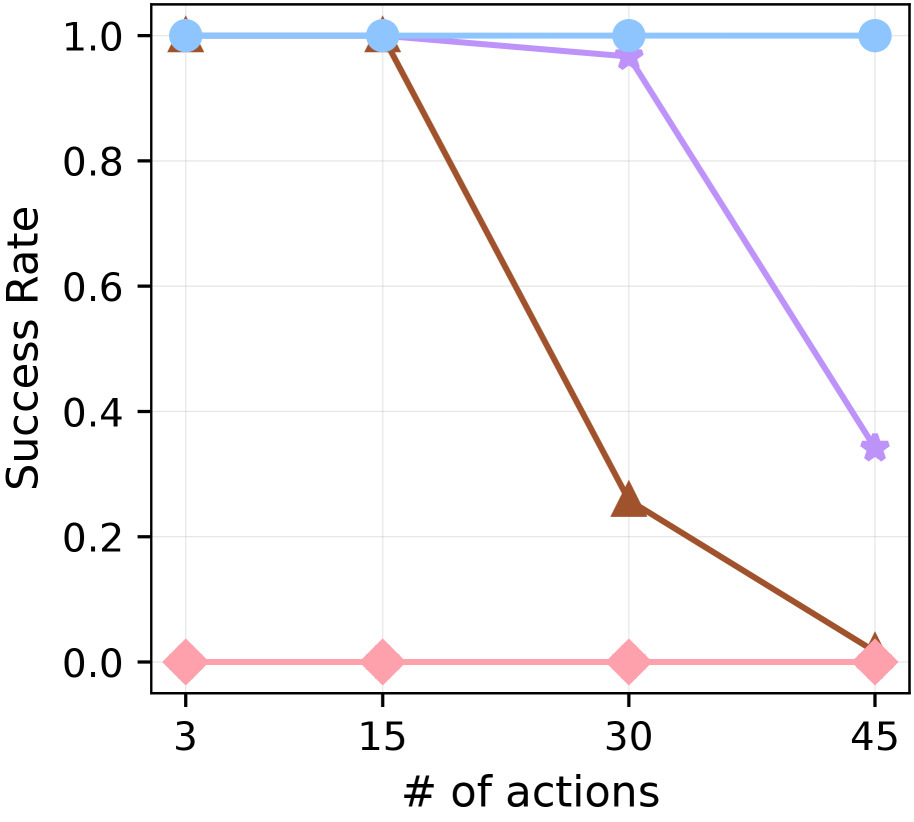

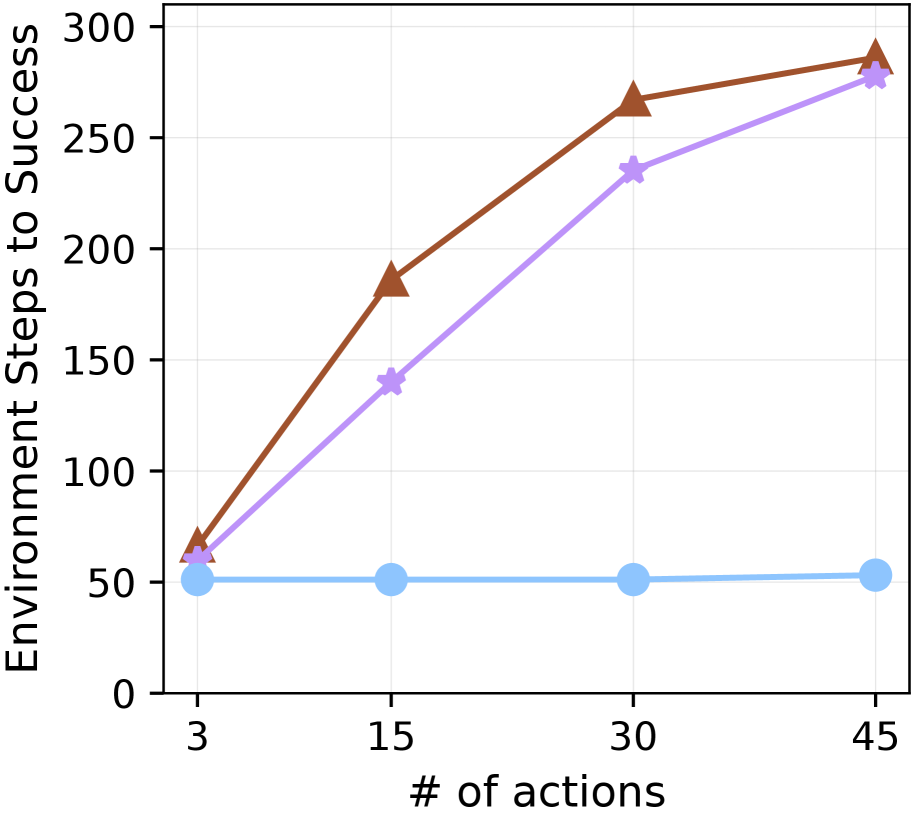

5.6 Ablation studies on hyperparameters

<details>

<summary>x23.png Details</summary>

### Visual Description

## Line Chart: EGA vs. Environment Step for Different c0 Values

### Overview

The image is a line chart that plots EGA (likely an abbreviation for an evaluation metric) against the environment step for four different values of a parameter denoted as "c0". The chart compares the performance of a system or algorithm under varying conditions, showing how EGA changes as the environment step increases. The chart includes a legend in the bottom-right corner that identifies each line by its corresponding c0 value.

### Components/Axes

* **X-axis:** "Environment step", ranging from 0 to 3000, with tick marks at intervals of 1000.

* **Y-axis:** "EGA", ranging from 0.2 to 1.0, with tick marks at intervals of 0.2.

* **Legend:** Located in the bottom-right corner, it identifies the lines by their c0 values:

* c0 = 2 (Dark Blue)

* c0 = 3 (Orange)

* c0 = 4 (Blue)

* c0 = 5 (Teal)

### Detailed Analysis

* **c0 = 2 (Dark Blue):** The line starts at an EGA of approximately 0.2 around environment step 0. It then increases steadily, reaching an EGA of approximately 0.95 around environment step 1500. After that, it plateaus at around 0.97.

* **c0 = 3 (Orange):** The line starts at an EGA of approximately 0.2 around environment step 0. It then increases steadily, reaching an EGA of approximately 0.9 around environment step 1200. After that, it plateaus at around 0.97.

* **c0 = 4 (Blue):** The line starts at an EGA of approximately 0.2 around environment step 0. It then increases steadily, reaching an EGA of approximately 0.95 around environment step 1400. After that, it plateaus at around 0.97.

* **c0 = 5 (Teal):** The line starts at an EGA of approximately 0.15 around environment step 0. It then increases steadily, reaching an EGA of approximately 0.97 around environment step 1500. After that, it plateaus at around 0.97.

### Key Observations

* All four lines show a similar trend: a rapid increase in EGA in the early environment steps, followed by a plateau at a high EGA value.

* The c0 = 5 line (Teal) starts at a slightly lower EGA value than the other lines.

* The c0 = 3 line (Orange) seems to increase slightly faster than the other lines initially.

* All lines converge to a similar EGA value (approximately 0.97) after around 1500 environment steps.

### Interpretation

The chart suggests that the parameter "c0" influences the initial learning rate or performance of the system, but its impact diminishes as the environment step increases. Specifically, a higher c0 value (c0 = 5) seems to result in a slightly lower initial EGA, while a c0 value of 3 results in a slightly faster initial increase in EGA. However, regardless of the c0 value, the system eventually achieves a similar high level of performance (EGA ≈ 0.97) after a sufficient number of environment steps. This indicates that the system is robust to variations in the c0 parameter in the long run.

</details>

(a) $c_{0}$

<details>

<summary>x24.png Details</summary>

### Visual Description

## Line Chart: EGA vs. Environment Step for Different Alpha Values

### Overview

The image is a line chart that plots the Expected Goal Achievement (EGA) against the Environment Step for four different values of alpha (α): 7, 8, 9, and 10. The chart shows how the EGA changes over time (environment steps) for each alpha value. The legend is located in the bottom-right corner of the chart.

### Components/Axes

* **X-axis:** Environment step, ranging from 0 to 3000 in increments of 1000.

* **Y-axis:** EGA (Expected Goal Achievement), ranging from 0.2 to 1.0 in increments of 0.2.

* **Legend:** Located in the bottom-right corner, indicating the alpha values:

* Dark Blue: αᵢ = 7

* Orange: αᵢ = 8

* Blue: αᵢ = 9

* Teal: αᵢ = 10

### Detailed Analysis

* **αᵢ = 7 (Dark Blue):** The EGA starts at approximately 0.2, increases steadily until around environment step 1500 where it reaches approximately 0.95, and then plateaus at approximately 0.95 for the remainder of the steps.

* **αᵢ = 8 (Orange):** The EGA starts at approximately 0.15, increases steadily until around environment step 1500 where it reaches approximately 0.95, and then plateaus at approximately 0.95 for the remainder of the steps.

* **αᵢ = 9 (Blue):** The EGA starts at approximately 0.15, increases steadily until around environment step 1500 where it reaches approximately 0.97, and then plateaus at approximately 0.97 for the remainder of the steps.

* **αᵢ = 10 (Teal):** The EGA starts at approximately 0.15, increases steadily until around environment step 1500 where it reaches approximately 0.98, and then plateaus at approximately 0.98 for the remainder of the steps.

### Key Observations

* All four alpha values show a similar trend: a rapid increase in EGA up to around 1500 environment steps, followed by a plateau.

* Higher alpha values (9 and 10) achieve slightly higher EGA plateaus compared to lower alpha values (7 and 8).

* The shaded regions around each line indicate the variance or uncertainty in the EGA values.

### Interpretation

The chart suggests that increasing the alpha value generally leads to a slightly higher Expected Goal Achievement. However, the difference in EGA between the different alpha values is relatively small, especially after the initial learning phase (up to 1500 environment steps). The plateauing of EGA indicates that after a certain number of environment steps, further training does not significantly improve the agent's performance, regardless of the alpha value. The alpha values of 9 and 10 appear to perform slightly better than 7 and 8.

</details>

(b) $\alpha_{i}$

<details>

<summary>x25.png Details</summary>

### Visual Description

## Line Chart: EGA vs. Environment Step for Different Alpha Values

### Overview

The image is a line chart that plots the EGA (Expected Goal Achievement) on the y-axis against the Environment Step on the x-axis. There are four lines, each representing a different value of alpha (αs): 1, 2, 3, and 4. The chart illustrates how the EGA changes over time (environment steps) for each alpha value. The chart includes a legend in the bottom-right corner that identifies each line by its corresponding alpha value.

### Components/Axes

* **X-axis:** Environment step, ranging from 0 to 3000, with major ticks at 0, 1000, 2000, and 3000.

* **Y-axis:** EGA (Expected Goal Achievement), ranging from 0.2 to 1.0, with major ticks at 0.2, 0.4, 0.6, 0.8, and 1.0.

* **Legend:** Located in the bottom-right corner, the legend identifies each line by its alpha value:

* Dark Blue: αs = 1

* Orange: αs = 2

* Blue: αs = 3

* Green: αs = 4

### Detailed Analysis

* **αs = 1 (Dark Blue):** The EGA starts around 0.2, remains relatively flat until approximately environment step 500, then increases steadily until it reaches approximately 0.95 around environment step 1500, after which it plateaus.

* **αs = 2 (Orange):** The EGA starts around 0.2, remains relatively flat until approximately environment step 500, then increases steadily until it reaches approximately 0.95 around environment step 1250, after which it plateaus.

* **αs = 3 (Blue):** The EGA starts around 0.2, remains relatively flat until approximately environment step 500, then increases steadily until it reaches approximately 0.95 around environment step 1000, after which it plateaus.

* **αs = 4 (Green):** The EGA starts around 0.15, remains relatively flat until approximately environment step 250, then increases steadily until it reaches approximately 0.97 around environment step 1000, after which it plateaus.

### Key Observations

* All four lines show a similar trend: a period of low EGA followed by a rapid increase and then a plateau.

* The alpha values affect the speed at which the EGA increases. Higher alpha values (3 and 4) reach the plateau faster than lower alpha values (1 and 2).

* The final EGA value is approximately the same for all alpha values, around 0.95 to 0.97.

* The shaded regions around each line likely represent the standard deviation or confidence interval, indicating the variability in the EGA for each alpha value.

### Interpretation

The chart suggests that the alpha value influences the learning rate or the speed at which the agent achieves a high EGA. Higher alpha values lead to faster learning, as indicated by the steeper increase in EGA at earlier environment steps. However, the final EGA achieved is similar across all alpha values, suggesting that the alpha value primarily affects the learning speed rather than the ultimate performance. The shaded regions indicate the variability in the learning process, which is also influenced by the alpha value. The data demonstrates that increasing alpha beyond a certain point (likely between 3 and 4) provides diminishing returns in terms of learning speed, as the green line (αs = 4) plateaus only slightly earlier than the blue line (αs = 3).

</details>

(c) $\alpha_{s}$

<details>

<summary>x26.png Details</summary>

### Visual Description