# 1 Introduction

Statistical mechanics of extensive-width Bayesian neural networks near interpolation

Jean Barbier\* ¹, Francesco Camilli\* ¹, Minh-Toan Nguyen\* ¹, Mauro Pastore\* ¹, Rudy Skerk\* ²

\* Equal contribution. ¹ The Abdus Salam International Centre for Theoretical Physics (ICTP), Strada Costiera 11, 34151 Trieste, Italy. ² International School for Advanced Studies (SISSA), Via Bonomea 265, 34136 Trieste, Italy.

Abstract

For three decades statistical mechanics has been providing a framework to analyse neural networks. However, the theoretically tractable models, e.g., perceptrons, random features models and kernel machines, or multi-index models and committee machines with few neurons, remained simple compared to those used in applications. In this paper we help reduce the gap between practical networks and their theoretical understanding through a statistical physics analysis of the supervised learning of a two-layer fully connected network with generic weight distribution and activation function, whose hidden layer is large but remains proportional to the input dimension. This makes it more realistic than infinitely wide networks where no feature learning occurs, but also more expressive than narrow ones or ones with fixed inner weights. We focus on the Bayes-optimal learning in the teacher-student scenario, i.e., with a dataset generated by another network with the same architecture. We operate around interpolation, where the number of trainable parameters and of data are comparable and feature learning emerges. Our analysis uncovers a rich phenomenology with various learning transitions as the number of data increases. In particular, the more strongly the features (i.e., hidden neurons of the target) contribute to the observed responses, the less data is needed to learn them. Moreover, when the data is scarce, the model only learns non-linear combinations of the teacher weights, rather than “specialising” by aligning its weights with the teacher’s. Specialisation occurs only when enough data becomes available, but it can be hard to find for practical training algorithms, possibly due to statistical-to-computational gaps.

Understanding the expressive power and generalisation capabilities of neural networks is not only a stimulating intellectual activity, producing surprising results that seem to defy established common sense in statistics and optimisation (Bartlett et al., 2021), but has important practical implications in cost-benefit planning whenever a model is deployed. E.g., from a fruitful research line that spanned three decades, we now know that deep fully connected Bayesian neural networks with $O(1)$ readout weights and $L_{2}$ regularisation behave as kernel machines (the so-called Neural Network Gaussian processes, NNGPs) in the heavily overparametrised, infinite-width regime (Neal, 1996; Williams, 1996; Lee et al., 2018; Matthews et al., 2018; Hanin, 2023), and so suffer from these models’ limitations. Indeed, kernel machines infer the decision rule by first embedding the data in a fixed a priori feature space, the renowned kernel trick, then operating linear regression/classification over the features. In this respect, they do not learn features (in the sense of statistics relevant for the decision rule) from the data, so they need larger and larger feature spaces and training sets to fit their higher order statistics (Yoon & Oh, 1998; Dietrich et al., 1999; Gerace et al., 2021; Bordelon et al., 2020; Canatar et al., 2021; Xiao et al., 2023).

Many efforts have been devoted to studying Bayesian neural networks beyond this regime. In the so-called proportional regime, when the width is large and proportional to the training set size, recent studies showed how a limited amount of feature learning makes the network equivalent to optimally regularised kernels (Li & Sompolinsky, 2021; Pacelli et al., 2023; Camilli et al., 2023; Cui et al., 2023; Baglioni et al., 2024; Camilli et al., 2025). This could be a consequence of the fully connected architecture, as, e.g., convolutional neural networks learn more informative features (Naveh & Ringel, 2021; Seroussi et al., 2023; Aiudi et al., 2025; Bassetti et al., 2024). Another scenario is the mean-field scaling, i.e., when the readout weights are small: in this case too a Bayesian network can learn features in the proportional regime (Rubin et al., 2024a; van Meegen & Sompolinsky, 2024).

Here instead we analyse a fully connected two-layer Bayesian network trained end-to-end near the interpolation threshold, when the sample size $n$ is scaling like the number of trainable parameters: for input dimension $d$ and width $k$ , both large and proportional, $n=\Theta(d^{2})=\Theta(kd)$ , a regime where non-trivial feature learning can happen. We consider i.i.d. Gaussian input vectors with labels generated by a teacher network with matching architecture, in order to study the Bayes-optimal learning of this neural network target function. Our results thus provide a benchmark for the performance of any model trained on the same dataset.

# 2 Setting and main results

## 2.1 Teacher-student setting

We consider supervised learning with a shallow neural network in the classical teacher-student setup (Gardner & Derrida, 1989). The data-generating model, i.e., the teacher (or target function), is thus a two-layer neural network itself, with readout weights ${\mathbf{v}}^{0}\in\mathbb{R}^{k}$ and internal weights ${\mathbf{W}}^{0}\in\mathbb{R}^{k\times d}$, drawn entrywise i.i.d. from $P_{v}^{0}$ and $P^{0}_{W}$, respectively; we assume $P^{0}_{W}$ to be centred while $P^{0}_{v}$ has mean $\bar{v}$, and both priors have unit second moment. We denote the whole set of parameters of the target as ${\bm{\theta}}^{0}=({\mathbf{v}}^{0},{\mathbf{W}}^{0})$. The inputs are i.i.d. standard Gaussian vectors ${\mathbf{x}}_{\mu}\in\mathbb{R}^{d}$ for $\mu\leq n$. The responses/labels $y_{\mu}$ are drawn from a kernel $P^{0}_{\rm out}$:

$$
y_{\mu}\sim P^{0}_{\rm out}(\,\cdot\mid\lambda^{0}_{\mu}),\quad \lambda^{0}_{\mu}:=\frac{1}{\sqrt{k}}{\mathbf{v}}^{0\intercal}\sigma\Big(\frac{1}{\sqrt{d}}{\mathbf{W}}^{0}{\mathbf{x}}_{\mu}\Big). \tag{1}
$$

The kernel can be stochastic or model a deterministic rule if $P^{0}_{\rm out}(y\mid\lambda)=\delta(y-\mathsf{f}^{0}(\lambda))$ for some outer non-linearity $\mathsf{f}^{0}$. The activation function $\sigma$ is applied entrywise to vectors and is required to admit an expansion in Hermite polynomials with Hermite coefficients $(\mu_{\ell})_{\ell\geq 0}$, see App. A: $\sigma(x)=\sum_{\ell\geq 0}\frac{\mu_{\ell}}{\ell!}{\rm He}_{\ell}(x)$. We assume it has vanishing 0th Hermite coefficient, i.e., that it is centred, $\mathbb{E}_{z\sim\mathcal{N}(0,1)}\sigma(z)=0$; in App. D.5 we relax this assumption. The input/output pairs $\mathcal{D}=\{({\mathbf{x}}_{\mu},y_{\mu})\}_{\mu\leq n}$ form the training set for a student network with matching architecture.
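The data-generating process of Eq. (1) can be sketched numerically as follows. This is only an illustration under stated assumptions: the function name `sample_teacher_dataset`, the choice of Gaussian inner weights, homogeneous readouts, the $\tanh$ activation (odd, hence centred) and a linear readout with Gaussian label noise of variance `delta` are all picked here for concreteness and are not prescribed by the text.

```python
import numpy as np

def sample_teacher_dataset(d, gamma, alpha, delta=0.1, sigma=np.tanh, seed=0):
    """Draw (X, y, W0, v) from a teacher of the form of Eq. (1).

    Assumptions (illustrative only): Gaussian inner weights, homogeneous
    readouts v = (1, ..., 1), linear readout with N(0, delta) label noise.
    """
    rng = np.random.default_rng(seed)
    k = max(1, round(gamma * d))        # width, so that k/d ~ gamma
    n = max(1, round(alpha * d**2))     # sample size, so that n/d^2 ~ alpha
    W0 = rng.standard_normal((k, d))    # centred, unit-variance inner weights
    v = np.ones(k)                      # readouts with unit second moment
    X = rng.standard_normal((n, d))     # i.i.d. standard Gaussian inputs
    pre = X @ W0.T / np.sqrt(d)         # pre-activations, shape (n, k)
    lam0 = sigma(pre) @ v / np.sqrt(k)  # teacher post-activations, shape (n,)
    y = lam0 + np.sqrt(delta) * rng.standard_normal(n)
    return X, y, W0, v
```

For instance, `sample_teacher_dataset(d=20, gamma=0.5, alpha=0.25)` returns 100 samples in dimension 20 from a teacher of width 10.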

Notice that the readouts ${\mathbf{v}}^{0}$ are only $k$ unknowns in the target compared to the $kd=\Theta(k^{2})$ inner weights ${\mathbf{W}}^{0}$. Therefore, they can be equivalently considered quenched, i.e., either given and thus fixed in the student network defined below, or unknown and thus learnable, without changing the leading order of the information-theoretic quantities we aim for. E.g., in terms of mutual information per parameter, $\frac{1}{kd+k}I(({\mathbf{W}}^{0},{\mathbf{v}}^{0});\mathcal{D})=\frac{1}{kd}I({\mathbf{W}}^{0};\mathcal{D}\mid{\mathbf{v}}^{0})+o_{d}(1)$. Without loss of generality, we thus consider ${\mathbf{v}}^{0}$ quenched and denote it ${\mathbf{v}}$ from now on. This equivalence holds at leading order and at equilibrium only, but not at the dynamical level, the study of which is left for future work.

The Bayesian student learns via the posterior distribution of the weights ${\mathbf{W}}$ given the training data (and ${\mathbf{v}}$ ), defined by

$$
dP({\mathbf{W}}\mid\mathcal{D}):=\mathcal{Z}(\mathcal{D})^{-1}\,dP_{W}({\mathbf{W}})\prod_{\mu\leq n}P_{\rm out}\big(y_{\mu}\mid\lambda_{\mu}({\mathbf{W}})\big)
$$

with post-activation $\lambda_{\mu}({\mathbf{W}}):=\frac{1}{\sqrt{k}}{\mathbf{v}}^{\intercal}\sigma(\frac{1}{\sqrt{d}}{\mathbf{W}}{\mathbf{x}}_{\mu})$, where the posterior normalisation constant $\mathcal{Z}(\mathcal{D})$ is called the partition function and $P_{W}$ is the prior assumed by the student. From now on, we focus on the Bayes-optimal case $P_{W}=P_{W}^{0}$ and $P_{\rm out}=P_{\rm out}^{0}$, but the approach can be extended to account for a mismatch.

We aim at evaluating the expected generalisation error of the student. Let $({\mathbf{x}}_{\rm test},y_{\rm test}\sim P_{\rm out}(\,\cdot\mid\lambda^{0}_{\rm test}))$ be a fresh sample (not present in $\mathcal{D}$) drawn using the teacher, where $\lambda_{\rm test}^{0}$ is defined as in (1) with ${\mathbf{x}}_{\mu}$ replaced by ${\mathbf{x}}_{\rm test}$ (and similarly for $\lambda_{\rm test}({\mathbf{W}})$). Given any prediction function $\mathsf{f}$, the Bayes estimator for the test response reads $\hat{y}^{\mathsf{f}}({\mathbf{x}}_{\rm test},{\mathcal{D}}):=\langle\mathsf{f}(\lambda_{\rm test}({\mathbf{W}}))\rangle$, where the expectation $\langle\,\cdot\,\rangle:=\mathbb{E}[\,\cdot\mid\mathcal{D}]$ is w.r.t. the posterior $dP({\mathbf{W}}\mid\mathcal{D})$. Then, for a performance measure $\mathcal{C}:\mathbb{R}\times\mathbb{R}\mapsto\mathbb{R}_{\geq 0}$ the Bayes generalisation error is

$$
\varepsilon^{\mathcal{C},\mathsf{f}}:=\mathbb{E}_{{\bm{\theta}}^{0},{\mathcal{D}},{\mathbf{x}}_{\rm test},y_{\rm test}}\mathcal{C}\big(y_{\rm test},\big\langle\mathsf{f}(\lambda_{\rm test}({\mathbf{W}}))\big\rangle\big). \tag{2}
$$

An important case is the square loss $\mathcal{C}(y,\hat{y})=(y-\hat{y})^{2}$ with the choice $\mathsf{f}(\lambda)=\int dy\,y\,P_{\rm out}(y\mid\lambda)=:\mathbb{E}[y\mid\lambda]$. The Bayes-optimal mean-square generalisation error follows:

$$
\varepsilon^{\rm opt}:=\mathbb{E}_{{\bm{\theta}}^{0},{\mathcal{D}},{\mathbf{x}}_{\rm test},y_{\rm test}}\big(y_{\rm test}-\big\langle\mathbb{E}[y\mid\lambda_{\rm test}({\mathbf{W}})]\big\rangle\big)^{2}. \tag{3}
$$

Our main example will be the case of linear readout with Gaussian label noise: $P_{\rm out}(y\mid\lambda)=\exp(-\frac{1}{2\Delta}(y-\lambda)^{2})/\sqrt{2\pi\Delta}$ . In this case, the generalisation error $\varepsilon^{\rm opt}$ takes a simpler form for numerical evaluation than (3), thanks to the concentration of “overlaps” entering it, see App. C.

We study the challenging extensive-width regime with quadratically many samples, i.e., a large size limit

$$
d,k,n\to+\infty\quad\text{with}\quad k/d\to\gamma,\quad n/d^{2}\to\alpha. \tag{4}
$$

We denote this joint $d,k,n$ limit with these rates by “ ${\lim}$ ”.

In order to access $\varepsilon^{\mathcal{C},\mathsf{f}},\varepsilon^{\rm opt}$ and other relevant quantities, one can tackle the computation of the average log-partition function, or free entropy in statistical physics language:

$$
f_{n}:=\frac{1}{n}\mathbb{E}_{{\bm{\theta}}^{0},\mathcal{D}}\ln\mathcal{Z}(\mathcal{D}). \tag{5}
$$

The mutual information between teacher weights and the data is related to the free entropy $f_{n}$, see App. F. E.g., in the case of linear readout with Gaussian label noise we have $\lim\frac{1}{kd}I({\mathbf{W}}^{0};\mathcal{D}\mid{\mathbf{v}})=-\frac{\alpha}{\gamma}\lim f_{n}-\frac{\alpha}{2\gamma}\ln(2\pi e\Delta)$. Considering the mutual information per parameter allows us to interpret $\alpha$ as a sort of signal-to-noise ratio, so that the mutual information defined in this way increases with it.

Notations: Bold is for vectors and matrices; $d$ is the input dimension, $k$ the width of the hidden layer, $n$ the size of the training set $\mathcal{D}$, with asymptotic ratios given by (4); ${\mathbf{A}}^{\circ\ell}$ is the Hadamard power of a matrix; for a vector ${\mathbf{v}}$, $({\mathbf{v}})$ is the diagonal matrix ${\rm diag}({\mathbf{v}})$; $(\mu_{\ell})$ are the Hermite coefficients of the activation function $\sigma(x)=\sum_{\ell\geq 0}\frac{\mu_{\ell}}{\ell!}{\rm He}_{\ell}(x)$; the norm $\|\,\cdot\,\|$ for vectors and matrices is the Frobenius norm.

## 2.2 Main results

The aforementioned setting is related to the recent paper Maillard et al. (2024a), with two major differences: said work considers Gaussian distributed weights and quadratic activation. These hypotheses allow numerous simplifications in the analysis, exploited in a series of works Du & Lee (2018); Soltanolkotabi et al. (2019); Venturi et al. (2019); Sarao Mannelli et al. (2020); Gamarnik et al. (2024); Martin et al. (2024); Arjevani et al. (2025). Thanks to this, Maillard et al. (2024a) maps the learning task onto a generalised linear model (GLM) where the goal is to infer a Wishart matrix from linear observations, which is analysable using known results on the GLM Barbier et al. (2019) and matrix denoising Barbier & Macris (2022); Maillard et al. (2022); Pourkamali et al. (2024); Semerjian (2024).

Our main contribution is a statistical mechanics framework for characterising the prediction performance of shallow Bayesian neural networks, able to handle arbitrary activation functions and different distributions of i.i.d. weights, both ingredients playing an important role for the phenomenology.

The theory we derive draws a rich picture with various learning transitions when tuning the sample rate $\alpha\approx n/d^{2}$. For low $\alpha$, feature learning occurs because the student tunes its weights to match non-linear combinations of the teacher’s, rather than aligning to those weights themselves. This phase is universal in the (centred, with unit variance) law of the i.i.d. teacher inner weights: our numerics obtained both with binary and Gaussian inner weights match well the theory, which does not depend on this prior here. When increasing $\alpha$, strong feature learning emerges through specialisation phase transitions, where the student aligns some of its weights with the actual teacher’s ones. In particular, when the readouts ${\mathbf{v}}$ in the target function have a non-trivial distribution, a whole sequence of specialisation transitions occurs as $\alpha$ grows, for the following intuitive reason. Different features in the data are related to the weights of the teacher neurons, $({\mathbf{W}}^{0}_{j}\in\mathbb{R}^{d})_{j\leq k}$. The strength with which the responses $(y_{\mu})$ depend on the feature ${\mathbf{W}}_{j}^{0}$ is tuned by the corresponding readout through $|v_{j}|$, which plays the role of a feature-dependent “signal-to-noise ratio”. Therefore, features/hidden neurons $j\in[k]$ corresponding to the largest readout amplitude $\max\{|v_{j}|\}$ are learnt first by the student when increasing $\alpha$ (in the sense that the teacher-student overlap ${\mathbf{W}}^{\intercal}_{j}{\mathbf{W}}^{0}_{j}/d>o_{d}(1)$), then features with the second largest amplitude are, and so on. If the readouts are continuous, an infinite sequence of specialisation transitions emerges in the limit (4). On the contrary, if the readouts are homogeneous (i.e. take a unique value), then a single transition occurs where almost all neurons of the student specialise jointly (possibly up to a vanishing fraction).
We predict specialisation transitions to occur for binary inner weights and generic activation, or for Gaussian ones and more-than-quadratic activation. We provide a theoretical description of these learning transitions and identify the order parameters (sufficient statistics) needed to deduce the generalisation error through scalar equations.

The picture that emerges is connected to recent findings in the context of extensive-rank matrix denoising Barbier et al. (2025). In that model, a recovery transition was also identified, separating a universal phase (i.e., independent of the signal prior), from a factorisation phase akin to specialisation in the present context. We believe that this picture and the one found in the present paper are not just similar, but a manifestation of the same fundamental mechanism inherent to the extensive-rank of the matrices involved. Indeed, matrix denoising and neural networks share features with both matrix models Kazakov (2000); Brézin et al. (2016); Anninos & Mühlmann (2020) and planted mean-field spin glasses Nishimori (2001); Zdeborová & Krzakala (2016). This mixed nature requires blending techniques from both fields to tackle them. Consequently, the approach developed in Sec. 4 based on the replica method Mezard et al. (1986) is non-standard, as it crucially relies on the Harish-Chandra–Itzykson–Zuber (HCIZ), or “spherical”, integral used in matrix models Itzykson & Zuber (1980); Matytsin (1994); Guionnet & Zeitouni (2002). Mixing spherical integration and the replica method has been previously attempted in Schmidt (2018); Barbier & Macris (2022) for matrix denoising, both papers yielding promising but quantitatively inaccurate or non-computable results. Another attempt to exploit a mean-field technique for matrix denoising (in that case a high-temperature expansion) is Maillard et al. (2022), which suffers from similar limitations. The more quantitative answer from Barbier et al. (2025) was made possible precisely thanks to the understanding that the problem behaves more as a matrix model or as a planted mean-field spin glass depending on the phase in which it lives. The two phases could then be treated separately and then joined using an appropriate criterion to locate the transition.

It would be desirable to derive a unified theory able to describe the whole phase diagram based on a single formalism. This is what the present paper provides through a principled combination of spherical integration and the replica method, yielding predictive formulas that are easy to evaluate. It is important to notice that the presence of the HCIZ integral, which is a high-dimensional matrix integral, in the replica formula presented in Result 2.1 suggests that effective one-body problems are not enough on their own to capture the physics of the problem, as is usually the case in standard mean-field inference and spin glass models. Indeed, the appearance of effective one-body problems to describe complex statistical models is usually related to the asymptotic decoupling of the finite marginals of the variables in the problem at hand in terms of products of the single-variable marginals. Therefore, we do not expect a standard cavity (or leave-one-out) approach based on single-variable extraction to be exact, while it is usually shown that the replica and cavity approaches are equivalent in mean-field models Mezard et al. (1986). This may explain why the approximate message-passing algorithms proposed in Parker et al. (2014); Krzakala et al. (2013); Kabashima et al. (2016) are, as stated by the authors, not properly converging nor able to match their corresponding theoretical predictions based on the cavity method. Algorithms for extensive-rank systems should therefore combine ingredients from matrix denoising and standard message-passing, reflecting their hybrid mean-field/matrix model nature.

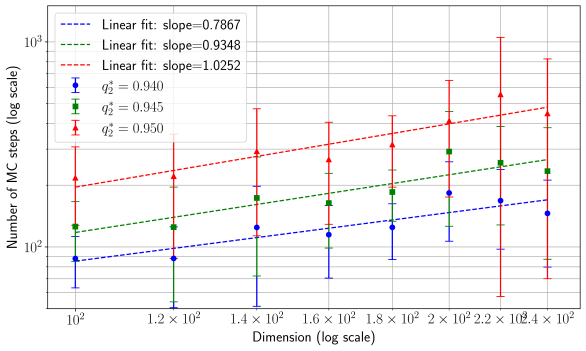

In order to face this, we adapt the GAMP-RIE (generalised approximate message-passing with rotational invariant estimator) introduced in Maillard et al. (2024a) for the special case of quadratic activation, to accommodate a generic activation function $\sigma$. By construction, the resulting algorithm described in App. H cannot find the specialisation solution, i.e., a solution where at least $\Theta(k)$ neurons align with the teacher’s. Nevertheless, it matches the performance associated with the so-called universal solution/branch of our theory for all $\alpha$, which describes a solution with overlap ${\mathbf{W}}^{\intercal}_{j}{\mathbf{W}}^{0}_{j}/d>o_{d}(1)$ for at most $o(k)$ neurons. As a side investigation, we show empirically that the specialisation solution is potentially hard to reach with popular algorithms for some target functions: the algorithms we tested either fail to find it and instead get stuck in a sub-optimal glassy phase (Metropolis-Hastings sampling for the case of binary inner weights), or may find it but in a training time increasing exponentially with $d$ (ADAM Kingma & Ba (2017) and Hamiltonian Monte Carlo (HMC) for the case of Gaussian weights). It would thus be interesting to settle whether GAMP-RIE has the best prediction performance achievable by a polynomial-time learner when $n=\Theta(d^{2})$ for such targets. For specific choices of the distribution of the readout weights, the evidence of hardness is not conclusive and requires further investigation.

Replica free entropy

Our first result is a tractable approximation for the free entropy. To state it, let us introduce two functions $\mathcal{Q}_{W}(\mathsf{v}),\hat{\mathcal{Q}}_{W}(\mathsf{v})\in[0,1]$ for $\mathsf{v}\in{\rm Supp}(P_{v})$, which are non-decreasing in $|\mathsf{v}|$. Let (see (43) in appendix for a more explicit expression of $g$)

$$
\begin{aligned}
g(x)&:=\sum_{\ell\geq 3}x^{\ell}\mu_{\ell}^{2}/\ell!,\\
q_{K}(x,\mathcal{Q}_{W})&:=\mu_{1}^{2}+\mu_{2}^{2}\,x/2+\mathbb{E}_{v\sim P_{v}}[v^{2}g(\mathcal{Q}_{W}(v))],\\
r_{K}&:=\mu_{1}^{2}+\mu_{2}^{2}(1+\gamma\bar{v}^{2})/2+g(1),
\end{aligned}
$$
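As a hedged illustration of these definitions, the sketch below estimates the Hermite coefficients $\mu_{\ell}=\mathbb{E}_{z\sim\mathcal{N}(0,1)}[\sigma(z){\rm He}_{\ell}(z)]$ by Gauss-Hermite quadrature and evaluates $g$, $q_{K}$ and $r_{K}$. The function names, the truncation of the series for $g$ at `max_order`, and the restriction to a discrete readout law (given by `v_values`, `v_probs`) are assumptions made for this example only.

```python
import math
import numpy as np
from numpy.polynomial.hermite_e import hermegauss, hermeval

def hermite_coefficients(sigma, max_order=8, quad_points=80):
    """mu_l = E[sigma(z) He_l(z)], z ~ N(0,1), for l = 0..max_order."""
    nodes, weights = hermegauss(quad_points)   # weight function exp(-z^2/2)
    weights = weights / np.sqrt(2 * np.pi)     # normalise to a Gaussian average
    mu = []
    for ell in range(max_order + 1):
        basis = np.zeros(ell + 1)
        basis[ell] = 1.0                       # selects He_ell in hermeval
        mu.append(np.sum(weights * sigma(nodes) * hermeval(nodes, basis)))
    return np.array(mu)

def g(x, mu):
    """Truncated series sum_{l>=3} x^l mu_l^2 / l! (truncation is an assumption)."""
    return sum(x**ell * mu[ell]**2 / math.factorial(ell) for ell in range(3, len(mu)))

def q_K(q2, Q_W, mu, v_values, v_probs):
    """q_K(q2, Q_W) for a discrete readout law; Q_W is a callable v -> overlap."""
    avg = sum(p * v**2 * g(Q_W(v), mu) for v, p in zip(v_values, v_probs))
    return mu[1]**2 + mu[2]**2 * q2 / 2 + avg

def r_K(mu, gamma, v_bar):
    return mu[1]**2 + mu[2]**2 * (1 + gamma * v_bar**2) / 2 + g(1.0, mu)
```

With these definitions, setting $q_{2}=1+\gamma\bar{v}^{2}$ and $\mathcal{Q}_{W}\equiv 1$ gives $q_{K}=r_{K}$, since the readout prior has unit second moment.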

and the auxiliary potentials

$$
\psi_{P_{W}}(x):=\mathbb{E}_{w^{0},\xi}\ln\mathbb{E}_{w}\exp\big(-\frac{1}{2}xw^{2}+xw^{0}w+\sqrt{x}\xi w\big),
$$

where $w^{0},w\sim P_{W}$ and $\xi,u_{0},u\sim{\mathcal{N}}(0,1)$ all independent. Moreover, $\mu_{{\mathbf{Y}}(x)}$ is the limiting (in $d\to\infty$) spectral density of data ${\mathbf{Y}}(x)=\sqrt{x/(kd)}\,{\mathbf{S}}^{0}+{\mathbf{Z}}$ in the denoising problem of the matrix ${\mathbf{S}}^{0}:={\mathbf{W}}^{0\intercal}({\mathbf{v}}){\mathbf{W}}^{0}\in\mathbb{R}^{d\times d}$, with ${\mathbf{Z}}$ a standard GOE matrix (a symmetric matrix whose upper triangular part has i.i.d. entries from $\mathcal{N}(0,(1+\delta_{ij})/d)$). Denote the minimum mean-square error associated with this denoising problem as ${\rm mmse}_{S}(x)=\lim_{d\to\infty}d^{-2}\mathbb{E}\|{\mathbf{S}}^{0}-\mathbb{E}[{\mathbf{S}}^{0}\mid{\mathbf{Y}}(x)]\|^{2}$ (whose explicit definition is given in App. D.3) and its functional inverse by ${\rm mmse}_{S}^{-1}$ (which exists by monotonicity).
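The observation model of this auxiliary denoising problem can be simulated at finite size, for instance to inspect the empirical spectrum of ${\mathbf{Y}}(x)$. The sketch below is a minimal illustration; the helper names `sample_goe` and `denoising_observation` are hypothetical, and the GOE matrix is built by symmetrising a Gaussian matrix so that off-diagonal entries have variance $1/d$ and diagonal entries variance $2/d$.

```python
import numpy as np

def sample_goe(d, rng):
    """Symmetric Gaussian matrix with entry variances (1 + delta_ij) / d."""
    A = rng.standard_normal((d, d))
    return (A + A.T) / np.sqrt(2 * d)

def denoising_observation(x, W0, v, rng):
    """Return Y(x) = sqrt(x / (k d)) S0 + Z together with S0 = W0^T diag(v) W0."""
    k, d = W0.shape
    S0 = W0.T @ (v[:, None] * W0)   # same as W0.T @ np.diag(v) @ W0
    Z = sample_goe(d, rng)
    return np.sqrt(x / (k * d)) * S0 + Z, S0
```

The eigenvalues returned by `np.linalg.eigvalsh(Y)` then give a finite-$d$ proxy for $\mu_{{\mathbf{Y}}(x)}$; at $x=0$ they should follow the semicircle law on $[-2,2]$ up to finite-size effects.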

**Result 2.1 (Replica symmetric free entropy)**

*Let the functional $\tau(\mathcal{Q}_{W}):={\rm mmse}_{S}^{-1}(1-\mathbb{E}_{v\sim P_{v}}[v^{2}\mathcal{Q}_{W}(v)^{2}])$. Given $(\alpha,\gamma)$, the replica symmetric (RS) free entropy approximating ${\lim}\,f_{n}$ in the scaling limit (4) is ${\rm extr}\,f_{\rm RS}^{\alpha,\gamma}$ with RS potential $f^{\alpha,\gamma}_{\rm RS}=f^{\alpha,\gamma}_{\rm RS}(q_{2},\hat{q}_{2},\mathcal{Q}_{W},\hat{\mathcal{Q}}_{W})$ given by

$$
\begin{aligned}
f^{\alpha,\gamma}_{\rm RS}:={}&\psi_{P_{\text{out}}}(q_{K}(q_{2},\mathcal{Q}_{W});r_{K})+\frac{1}{4\alpha}(1+\gamma\bar{v}^{2}-q_{2})\hat{q}_{2}\\
&+\frac{\gamma}{\alpha}\mathbb{E}_{v\sim P_{v}}\big[\psi_{P_{W}}(\hat{\mathcal{Q}}_{W}(v))-\frac{1}{2}\mathcal{Q}_{W}(v)\hat{\mathcal{Q}}_{W}(v)\big]\\
&+\frac{1}{\alpha}\big[\iota(\tau(\mathcal{Q}_{W}))-\iota(\hat{q}_{2}+\tau(\mathcal{Q}_{W}))\big].
\end{aligned} \tag{6}
$$

The extremisation operation in ${\rm extr}\,f^{\alpha,\gamma}_{\rm RS}$ selects a solution $(q_{2}^{*},\hat{q}_{2}^{*},\mathcal{Q}_{W}^{*},\hat{\mathcal{Q}}_{W}^{*})$ of the saddle point equations, obtained from $\nabla f^{\alpha,\gamma}_{\rm RS}=\mathbf{0}$, which maximises the RS potential.*

The extremisation of $f_{\rm RS}^{\alpha,\gamma}$ yields the system (76) in the appendix, solved numerically in a standard way (see provided code).
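Among the ingredients of the RS potential, the auxiliary potential $\psi_{P_{W}}$ is simple to evaluate for the two priors considered in this article. The sketch below is not the provided code; it relies on two elementary computations made here from the definition of $\psi_{P_{W}}$: for $P_{W}=\mathcal{N}(0,1)$ the Gaussian integrals give $\psi_{P_{W}}(x)=\frac{1}{2}(x-\ln(1+x))$, and for Rademacher $P_{W}$ one gets $\psi_{P_{W}}(x)=-\frac{x}{2}+\mathbb{E}_{\xi}\ln\cosh(x+\sqrt{x}\,\xi)$, evaluated below by Gauss-Hermite quadrature. The function names are hypothetical.

```python
import numpy as np
from numpy.polynomial.hermite_e import hermegauss

def psi_gaussian(x):
    """psi_{P_W}(x) for P_W = N(0,1): (x - ln(1 + x)) / 2."""
    return 0.5 * (x - np.log1p(x))

def psi_rademacher(x, quad_points=80):
    """psi_{P_W}(x) for P_W uniform on {-1, +1}, by Gauss-Hermite quadrature."""
    xi, w = hermegauss(quad_points)                 # nodes/weights for exp(-xi^2/2)
    w = w / np.sqrt(2 * np.pi)                      # normalise to a Gaussian average
    arg = x + np.sqrt(x) * xi
    log_cosh = np.logaddexp(arg, -arg) - np.log(2)  # numerically stable ln cosh
    return -0.5 * x + np.sum(w * log_cosh)
```

Both expressions behave as $x^{2}/4$ for small $x$, so the two priors only differ at higher order in $x$.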

The order parameters $q_{2}^{*}$ and $\mathcal{Q}_{W}^{*}$ have a precise physical meaning that will be clear from the discussion in Sec. 4. In particular, $q_{2}^{*}$ is measuring the alignment of the student’s combination of weights ${\mathbf{W}}^{\intercal}({\mathbf{v}}){\mathbf{W}}/\sqrt{k}$ with the corresponding teacher’s ${\mathbf{W}}^{0\intercal}({\mathbf{v}}){\mathbf{W}}^{0}/\sqrt{k}$, which is non-trivial with $n=\Theta(d^{2})$ data even when the student is not able to reconstruct ${\mathbf{W}}^{0}$ itself (i.e., to specialise). On the other hand, $\mathcal{Q}_{W}^{*}(\mathsf{v})$ measures the overlap between weights $\{{\mathbf{W}}_{i}^{0/\cdot}\mid v_{i}=\mathsf{v}\}$ (a different treatment for weights connected to different $\mathsf{v}$’s is needed because, as discussed earlier, the student will learn first, i.e., with less data, weights connected to larger readouts). A non-trivial $\mathcal{Q}_{W}^{*}(\mathsf{v})\neq 0$ signals that the student learns something about ${\mathbf{W}}^{0}$. Thus, the specialisation transitions are naturally defined, based on the extremiser of $f_{\rm RS}^{\alpha,\gamma}$ in the result above, as $\alpha_{\rm sp,\mathsf{v}}(\gamma):=\sup\,\{\alpha\mid\mathcal{Q}^{*}_{W}(\mathsf{v})=0\}$. For non-homogeneous readouts, we call the specialisation transition $\alpha_{\rm sp}(\gamma):=\min_{\mathsf{v}}\alpha_{\rm sp,\mathsf{v}}(\gamma)$. In this article, we report cases where the inner weights are discrete or Gaussian distributed. For activations different from a pure quadratic, $\sigma(x)\neq x^{2}$, we predict the transition to occur in both cases (see Fig. 1 and 2). Then, $\alpha<\alpha_{\rm sp}$ corresponds to the universal phase, where the free entropy is independent of the choice of the prior over the inner weights. Instead, $\alpha>\alpha_{\rm sp}$ is the specialisation phase where the prior $P_{W}$ matters, and the student aligns a finite fraction of its weights $({\mathbf{W}}_{j})_{j\leq k}$ with those of the teacher, which lowers the generalisation error.

Let us comment on why the special case $\sigma(x)=x^{2}$ with $P_{W}=\mathcal{N}(0,1)$ could be treated exactly with known techniques (spherical integration) in Maillard et al. (2024a); Xu et al. (2025). With $\sigma(x)=x^{2}$ the responses $(y_{\mu})$ depend on ${\mathbf{W}}^{0\intercal}({\mathbf{v}}){\mathbf{W}}^{0}$ only. If ${\mathbf{v}}$ has finite fractions of equal entries, a large invariance group prevents learning ${\mathbf{W}}^{0}$ and thus specialisation. Take as example ${\mathbf{v}}=(1,\ldots,1,-1,\ldots,-1)$ with the first half filled with ones. Then, the responses are indistinguishable from those obtained using a modified matrix ${\mathbf{W}}^{0\intercal}{\mathbf{U}}^{\intercal}({\mathbf{v}}){\mathbf{U}}{\mathbf{W}}^{0}$ where ${\mathbf{U}}=(({\mathbf{U}}_{1},\mathbf{0}_{k/2})^{\intercal},(\mathbf{0}_{k/2},{\mathbf{U}}_{2})^{\intercal})$ is block diagonal with $k/2\times k/2$ orthogonal ${\mathbf{U}}_{1},{\mathbf{U}}_{2}$ and zeros on off-diagonal blocks. The Gaussian prior $P_{W}$ is rotationally invariant and, thus, does not break any invariance, so ${\mathbf{U}}_{1},{\mathbf{U}}_{2}$ are arbitrary. The resulting invariance group has a $\Theta(d^{2})$ entropy (the logarithm of its volume), which is comparable to the leading order of the free entropy. Therefore, it cannot be broken using infinitesimal perturbations (or “side information”) and, consequently, prevents specialisation. This reasoning can be extended to $P_{v}$ with a continuous support, as long as we can discretise it with a finite (possibly large) number of bins, take the limit (4) first, and then take the continuum limit of the binning afterwards. However, the picture changes if the prior breaks rotational invariance; e.g., with Rademacher $P_{W}$, only signed permutation invariances survive, a symmetry with negligible entropy $o(d^{2})$ which, consequently, does not change the limiting thermodynamic (information-theoretic) quantities. The large rotational invariance group is the reason why $\sigma(x)=x^{2}$ with $P_{W}=\mathcal{N}(0,1)$ can be treated using the HCIZ integral alone. Even when $P_{W}=\mathcal{N}(0,1)$, the presence of any other term in the series expansion of $\sigma$ breaks invariances with large entropy: specialisation can then occur, thus requiring our theory. We mention that our theory seems inexact for $\sigma(x)=x^{2}$ with $P_{W}=\mathcal{N}(0,1)$ if applied naively, as it predicts ${\mathcal{Q}}_{W}(\mathsf{v})>0$ and therefore does not recover the rigorous result of Xu et al. (2025) (yet, it predicts a free entropy less than $1\%$ away from the truth). Nevertheless, the solution of Maillard et al. (2024a); Xu et al. (2025) is recovered from our equations by enforcing a vanishing overlap $\mathcal{Q}_{W}(\mathsf{v})=0$, i.e., via its universal branch.

More precisely, when solving the extremisation of (6) for $\sigma(x)=x^{2}$ with $P_{W}=\mathcal{N}(0,1)$, we noticed that the difference between the RS free entropy of the correct universal solution, $\mathcal{Q}_{W}(\mathsf{v})=0$, and the maximiser, predicting $\mathcal{Q}_{W}(\mathsf{v})>0$, does not exceed $\approx 1\%$: the RS potential is very flat as a function of $\mathcal{Q}_{W}$. We thus cannot rule out that the true maximiser of the potential is at $\mathcal{Q}_{W}(\mathsf{v})=0$, and that we observe otherwise due to numerical errors. Indeed, evaluating the spherical integrals $\iota(\,\cdot\,)$ in $f^{\alpha,\gamma}_{\rm RS}$ is challenging, in particular when $\gamma$ is small. Actually, for $\gamma\gtrsim 1$ we do get that $\mathcal{Q}_{W}(\mathsf{v})=0$ is always the maximiser for $\sigma(x)=x^{2}$ with $P_{W}=\mathcal{N}(0,1)$.
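The invariance argument for $\sigma(x)=x^{2}$ can be checked numerically at small size. The sketch below builds a block-diagonal orthogonal matrix acting on the neuron index, with one block per group of equal readouts, and verifies that the post-activations are unchanged when ${\mathbf{W}}^{0}$ is replaced by ${\mathbf{U}}{\mathbf{W}}^{0}$. The sizes, the helper names and the use of the uncentred activation $x^{2}$ are illustrative choices made here.

```python
import numpy as np

def random_orthogonal(m, rng):
    """Random m x m orthogonal matrix from the QR decomposition of a Gaussian matrix."""
    Q, R = np.linalg.qr(rng.standard_normal((m, m)))
    return Q * np.sign(np.diag(R))   # flip column signs; Q stays orthogonal

def lambda_quadratic(W, v, X):
    """Post-activations (1/sqrt(k)) v^T sigma(W x / sqrt(d)) with sigma(z) = z^2."""
    k, d = W.shape
    return ((X @ W.T / np.sqrt(d)) ** 2) @ v / np.sqrt(k)

rng = np.random.default_rng(0)
k, d, n = 6, 5, 50
v = np.concatenate([np.ones(k // 2), -np.ones(k // 2)])  # half +1, half -1
W0 = rng.standard_normal((k, d))
X = rng.standard_normal((n, d))

# Block-diagonal rotation mixing neurons that share the same readout value.
U = np.zeros((k, k))
U[: k // 2, : k // 2] = random_orthogonal(k // 2, rng)
U[k // 2 :, k // 2 :] = random_orthogonal(k // 2, rng)

lam_original = lambda_quadratic(W0, v, X)
lam_rotated = lambda_quadratic(U @ W0, v, X)
print(np.max(np.abs(lam_original - lam_rotated)))  # zero up to floating-point error
```

The two sets of post-activations agree because ${\mathbf{U}}^{\intercal}({\mathbf{v}}){\mathbf{U}}=({\mathbf{v}})$ for such a block structure, even though ${\mathbf{U}}{\mathbf{W}}^{0}$ and ${\mathbf{W}}^{0}$ differ.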

Bayes generalisation error

Another main result is an approximate formula for the generalisation error. Let $({\mathbf{W}}^{a})_{a\geq 1}$ be i.i.d. samples from the posterior $dP(\,\cdot\mid\mathcal{D})$ and ${\mathbf{W}}^{0}$ the teacher’s weights. Assuming that the joint law of $(\lambda_{\rm test}({\mathbf{W}}^{a},{\mathbf{x}}_{\rm test}))_{a\geq 0}=:(\lambda^{a})_{a\geq 0}$ for a common test input ${\mathbf{x}}_{\rm test}\notin\mathcal{D}$ is a centred Gaussian, our framework predicts its covariance. Our approximation for the Bayes error follows.

**Result 2.2 (Bayes generalisation error)**

*Let $q_{K}^{*}=q_{K}(q_{2}^{*},\mathcal{Q}_{W}^{*})$ where $(q_{2}^{*},\hat{q}_{2}^{*},\mathcal{Q}_{W}^{*},\hat{\mathcal{Q}}_{W}^{*})$ is an extremiser of $f_{\rm RS}^{\alpha,\gamma}$ as in Result 2.1. Assuming joint Gaussianity of the post-activations $(\lambda^{a})_{a\geq 0}$, in the scaling limit (4) their mean is zero and their covariance is approximated by $\mathbb{E}\lambda^{a}\lambda^{b}=q_{K}^{*}+(r_{K}-q_{K}^{*})\delta_{ab}=:(\mathbf{\Gamma})_{ab}$, see App. C. Assume $\mathcal{C}$ has the series expansion $\mathcal{C}(y,\hat{y})=\sum_{i\geq 0}c_{i}(y)\hat{y}^{i}$. The Bayes error $\smash{\lim\,\varepsilon^{\mathcal{C},\mathsf{f}}}$ is approximated by

$$
\mathbb{E}_{(\lambda^{a})\sim\mathcal{N}(\mathbf{0},\mathbf{\Gamma})}\mathbb{E}_{y_{\rm test}\sim P_{\rm out}(\,\cdot\mid\lambda^{0})}\sum_{i\geq 0}c_{i}(y_{\rm test}(\lambda^{0}))\prod_{a=1}^{i}\mathsf{f}(\lambda^{a}).
$$

Letting $\mathbb{E}[\,\cdot\mid\lambda]=\int dy\,(\,\cdot\,)\,P_{\rm out}(y\mid\lambda)$, the Bayes-optimal mean-square generalisation error $\smash{\lim\,\varepsilon^{\rm opt}}$ is approximated by

$$
\mathbb{E}_{\lambda^{0},\lambda^{1}}\big(\mathbb{E}[y^{2}\mid\lambda^{0}]-\mathbb{E}[y\mid\lambda^{0}]\mathbb{E}[y\mid\lambda^{1}]\big). \tag{7}
$$*

This result assumed that $\mu_{0}=0$; see App. D.5 if this is not the case. Results 2.1 and 2.2 provide an effective theory for the generalisation capabilities of Bayesian shallow networks with generic activation. We call these “results” because, despite their excellent match with numerics, we do not expect these formulas to be exact: their derivation is based on an unconventional mix of spin glass techniques and spherical integrals, and requires approximations in order to deal with the fact that the degrees of freedom to integrate are large matrices of extensive rank. This is in contrast with simpler (vector) models (perceptrons, multi-index models, etc.) where replica formulas are routinely proved correct, see e.g. Barbier & Macris (2019); Barbier et al. (2019); Aubin et al. (2018).
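As a worked instance of Result 2.2, consider the linear readout with Gaussian label noise of variance $\Delta$. Then $\mathbb{E}[y\mid\lambda]=\lambda$ and $\mathbb{E}[y^{2}\mid\lambda]=\lambda^{2}+\Delta$, so that formula (7), with $\mathbb{E}(\lambda^{0})^{2}=r_{K}$ and $\mathbb{E}\lambda^{0}\lambda^{1}=q_{K}^{*}$, would reduce to $\Delta+r_{K}-q_{K}^{*}$. The sketch below compares this closed form with a Monte Carlo evaluation of (7). The function names and the numerical values used for $q_{K}^{*}$, $r_{K}$ and $\Delta$ are arbitrary placeholders, not outputs of the theory.

```python
import numpy as np

def bayes_mse_gaussian_channel(q_K_star, r_K, delta):
    """Closed form of (7) for P_out(y | lambda) = N(lambda, delta)."""
    return delta + r_K - q_K_star

def bayes_mse_monte_carlo(q_K_star, r_K, delta, samples=400_000, seed=0):
    """Monte Carlo estimate of (7) with (lambda^0, lambda^1) ~ N(0, Gamma)."""
    rng = np.random.default_rng(seed)
    Gamma = np.array([[r_K, q_K_star], [q_K_star, r_K]])
    lam = rng.multivariate_normal(np.zeros(2), Gamma, size=samples)
    lam0, lam1 = lam[:, 0], lam[:, 1]
    # E[y^2 | lam0] = lam0^2 + delta and E[y | lam] = lam for this channel.
    return np.mean(lam0**2 + delta - lam0 * lam1)

# Placeholder values, chosen only so that Gamma is positive definite.
closed_form = bayes_mse_gaussian_channel(0.7, 1.2, 0.1)
estimate = bayes_mse_monte_carlo(0.7, 1.2, 0.1)
print(closed_form, estimate)
```

In this channel the error is bounded below by the noise level $\Delta$, reached when $q_{K}^{*}=r_{K}$.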

|

<details>

<summary>x1.png Details</summary>

### Visual Description

## Line Chart: Optimal Epsilon vs. Alpha

### Overview

The image is a line chart displaying the relationship between "alpha" (α) on the x-axis and "epsilon optimal" (εopt) on the y-axis. There are two distinct data series plotted, one in blue and one in red, each with different markers and line styles. Error bars are present on some data points.

### Components/Axes

* **X-axis:** Labeled "α" (alpha), ranging from 0 to 7, with tick marks at each integer value.

* **Y-axis:** Labeled "εopt" (epsilon optimal), ranging from 0.02 to 0.10. Tick marks are present at 0.02, 0.04, 0.06, 0.08, 0.025, 0.050, 0.075, and 0.100.

* **Data Series 1 (Blue):** A solid blue line with circular markers. A dashed blue line with 'x' markers is also present, closely following the solid line.

* **Data Series 2 (Red):** A solid red line with circular markers. A dashed red line with triangle markers is also present, closely following the solid line.

* **Error Bars:** Vertical error bars are present on some data points for both series.

### Detailed Analysis

* **Blue Series (Solid Line, Circles):**

* Trend: Decreases rapidly from α = 0 to α = 2, then plateaus and remains relatively constant.

* Data Points:

* α = 0: εopt ≈ 0.08

* α = 1: εopt ≈ 0.04

* α = 2: εopt ≈ 0.025

* α = 7: εopt ≈ 0.018

* **Blue Series (Dashed Line, Xs):**

* Trend: Similar to the solid blue line, decreasing rapidly and then plateauing.

* Data Points: Follows the solid blue line closely, with values very similar to the solid line.

* **Red Series (Solid Line, Circles):**

* Trend: Starts relatively constant at α = 0, then decreases rapidly around α = 1.5, and plateaus at a low value.

* Data Points:

* α = 0: εopt ≈ 0.102

* α = 1: εopt ≈ 0.100

* α = 2: εopt ≈ 0.04

* α = 3: εopt ≈ 0.02

* α = 7: εopt ≈ 0.01

* **Red Series (Dashed Line, Triangles):**

* Trend: Remains relatively constant around εopt = 0.10.

* Data Points:

* α = 0 to α = 7: εopt ≈ 0.10

* **Error Bars:**

* Present on some data points, indicating the uncertainty in the measurements. The error bars appear to be larger for the red series at higher alpha values (α = 6 and α = 7).

### Key Observations

* The blue series (both solid and dashed) shows a rapid decrease in εopt as α increases from 0 to 2, after which it stabilizes.

* The red series (solid line) shows a sharp drop in εopt around α = 1.5, while the dashed red line remains constant.

* The error bars suggest that the uncertainty in the measurements increases for the red series at higher values of α.

### Interpretation

The chart illustrates how the optimal epsilon value (εopt) changes with respect to alpha (α) for two different scenarios represented by the blue and red series. The blue series indicates a system where the optimal epsilon quickly decreases as alpha increases, suggesting that a smaller epsilon is needed for optimal performance at higher alpha values. The red series, particularly the solid line, shows a critical point around α = 1.5, where the optimal epsilon drops significantly. This could indicate a phase transition or a change in the system's behavior. The dashed red line represents a scenario where the optimal epsilon remains constant regardless of the alpha value. The increasing error bars for the red series at higher alpha values suggest that the system becomes less stable or more sensitive to variations at these points.

</details>

|

<details>

<summary>x2.png Details</summary>

### Visual Description

## Chart: ReLU vs Tanh

### Overview

The image is a chart comparing the performance of ReLU and Tanh activation functions. The x-axis represents a variable denoted as "α", ranging from 0 to 7. Two data series are plotted: ReLU (blue) and Tanh (red). Both series have a solid line representing one type of data and a dashed line representing another. Error bars are present on some data points.

### Components/Axes

* **X-axis:** α, ranging from 0 to 7.

* **Y-axis:** (Implicit) Performance metric, not explicitly labeled.

* **Legend (Top-Right):**

* ReLU (blue)

* Tanh (red)

### Detailed Analysis

* **ReLU (Blue):**

* **Solid Line:** Starts at approximately 1.2 at α=0, decreases rapidly to approximately 0.4 at α=2, and then gradually decreases to approximately 0.25 at α=7. The data points are marked with circles.

* **Dashed Line:** Starts at approximately 1.1 at α=0, decreases rapidly to approximately 0.5 at α=2, and then gradually decreases to approximately 0.3 at α=7. The data points are marked with asterisks.

* **Tanh (Red):**

* **Solid Line:** Starts at approximately 0.8 at α=0, decreases rapidly to approximately 0.1 at α=2, and then gradually decreases to approximately 0.02 at α=7. The data points are marked with circles.

* **Dashed Line:** Starts at approximately 0.8 at α=0, remains relatively constant at approximately 0.75 until α=3, and then has some error bars at α=4. The data points are marked with asterisks.

### Key Observations

* The solid lines for both ReLU and Tanh show a decreasing trend as α increases.

* The dashed line for ReLU is below the solid line.

* The dashed line for Tanh is above the solid line.

* The Tanh solid line decreases more rapidly than the ReLU solid line.

* The Tanh dashed line remains relatively constant.

### Interpretation

The chart compares the performance of ReLU and Tanh activation functions under different conditions, represented by the solid and dashed lines. The x-axis variable "α" likely represents a parameter or condition being varied. The solid lines suggest that both ReLU and Tanh performance decreases as "α" increases, but Tanh decreases more rapidly. The dashed lines represent a different scenario, where ReLU performance is slightly worse than the solid line scenario, while Tanh performance remains relatively constant. The error bars indicate the variability or uncertainty in the data points. The data suggests that the choice between ReLU and Tanh depends on the specific conditions represented by "α" and the solid/dashed lines.

</details>

|

<details>

<summary>x3.png Details</summary>

### Visual Description

## Chart: Algorithm Performance vs. Alpha

### Overview

The image is a chart comparing the performance of four algorithms (ADAM, informative HMC, uninformative HMC, and GAMP-RIE) across varying values of a parameter denoted as alpha (α). The chart displays the performance of each algorithm as a function of alpha, with error bars indicating the uncertainty in the measurements.

### Components/Axes

* **X-axis:** α (alpha), ranging from 0 to 7, with tick marks at every integer value.

* **Y-axis:** The Y-axis is not explicitly labeled, but it represents a performance metric. The values on the Y-axis are not explicitly marked, but the data ranges approximately from 0 to 1.

* **Legend (Top-Right):**

* `* ADAM`: Represented by blue asterisks connected by a solid blue line.

* `o informative HMC`: Represented by blue circles connected by a solid blue line.

* `o uninformative HMC`: Represented by red circles connected by a solid red line.

* `△ GAMP-RIE`: Represented by red triangles connected by a dashed red line.

### Detailed Analysis

* **ADAM (Blue Asterisks, Solid Line):** The performance of ADAM starts high and decreases rapidly as alpha increases from 0 to 1. It then continues to decrease more gradually as alpha increases further, approaching a relatively stable value.

* α = 0: Performance ≈ 0.9

* α = 1: Performance ≈ 0.4

* α = 7: Performance ≈ 0.2

* **Informative HMC (Blue Circles, Solid Line):** The performance of informative HMC mirrors that of ADAM, starting high and decreasing rapidly as alpha increases from 0 to 1. It then continues to decrease more gradually as alpha increases further, approaching a relatively stable value.

* α = 0: Performance ≈ 0.85

* α = 1: Performance ≈ 0.35

* α = 7: Performance ≈ 0.15

* **Uninformative HMC (Red Circles, Solid Line):** The performance of uninformative HMC starts high and decreases rapidly as alpha increases from 0 to 1. It then continues to decrease more gradually as alpha increases further, approaching a relatively stable value.

* α = 0: Performance ≈ 0.7

* α = 1: Performance ≈ 0.15

* α = 7: Performance ≈ 0.02

* **GAMP-RIE (Red Triangles, Dashed Line):** The performance of GAMP-RIE remains relatively constant across all values of alpha.

* α = 0: Performance ≈ 0.7

* α = 7: Performance ≈ 0.7

### Key Observations

* ADAM and informative HMC exhibit similar performance trends, with a rapid decrease in performance as alpha increases from 0 to 1, followed by a more gradual decrease.

* Uninformative HMC shows a similar trend to ADAM and informative HMC, but with a more pronounced initial drop in performance.

* GAMP-RIE maintains a relatively constant performance level across all values of alpha.

* The error bars suggest that the uncertainty in the performance measurements is relatively small for most data points.

### Interpretation

The chart suggests that the performance of ADAM, informative HMC, and uninformative HMC is sensitive to the value of alpha, with higher values of alpha generally leading to lower performance. In contrast, the performance of GAMP-RIE appears to be relatively insensitive to alpha. This could indicate that GAMP-RIE is more robust to changes in this parameter or that it is optimized for a different range of alpha values. The rapid initial drop in performance for the HMC methods suggests that there may be a critical value of alpha beyond which their performance degrades significantly. The error bars provide an indication of the reliability of the performance measurements, with smaller error bars indicating greater confidence in the results.

</details>

|

| --- | --- | --- |

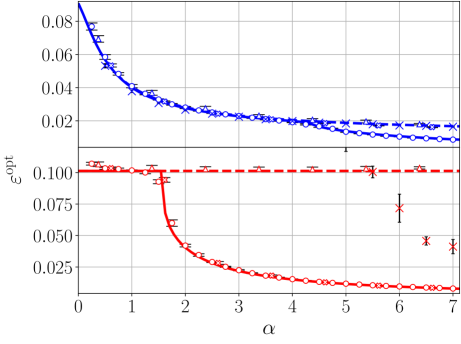

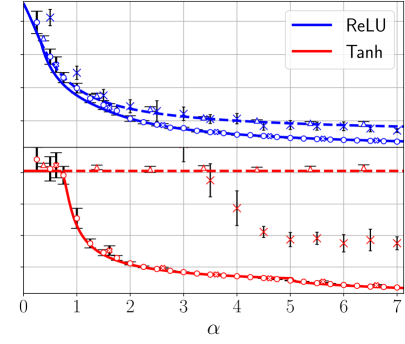

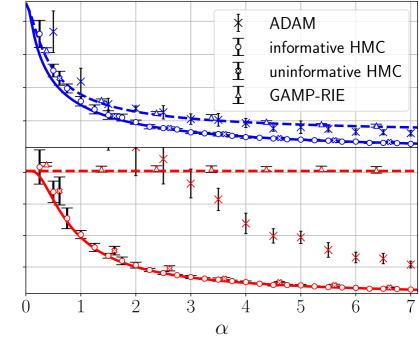

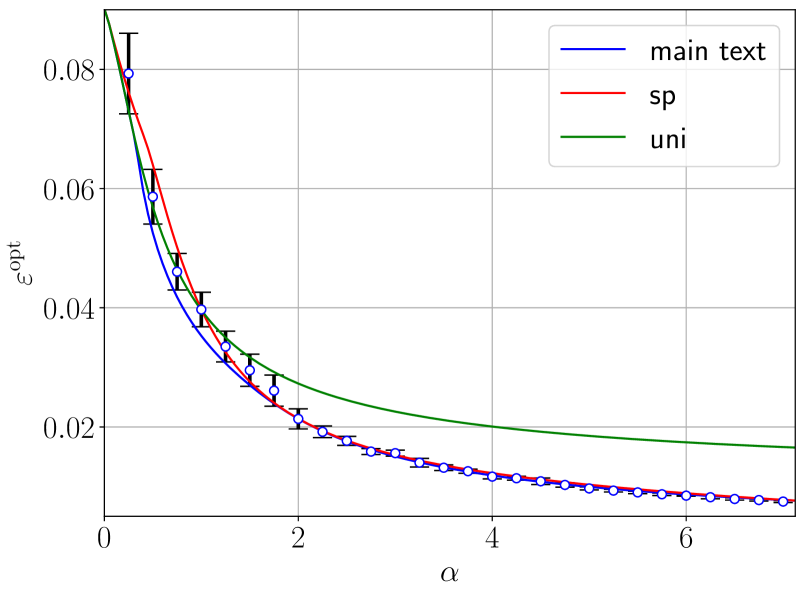

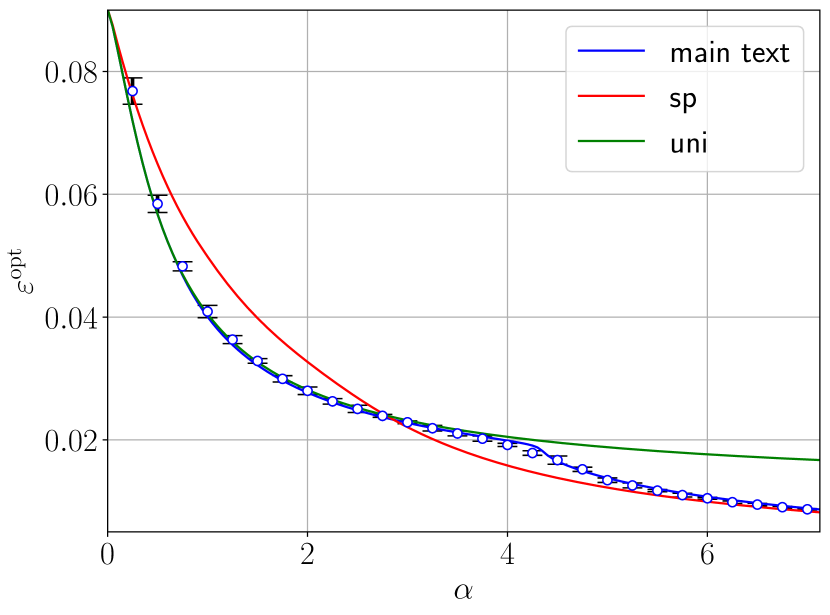

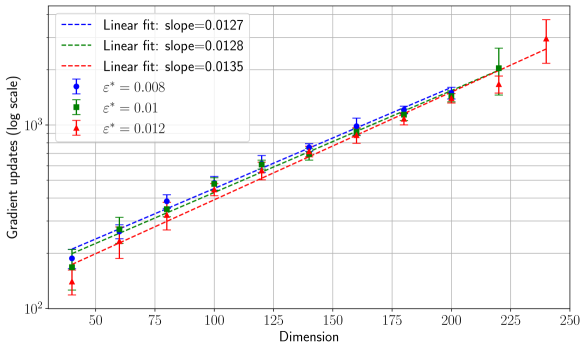

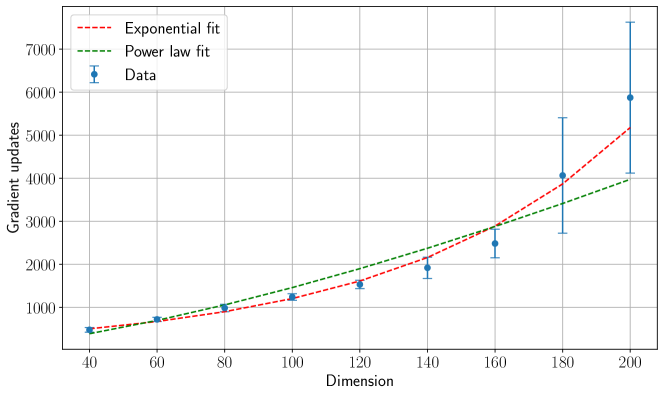

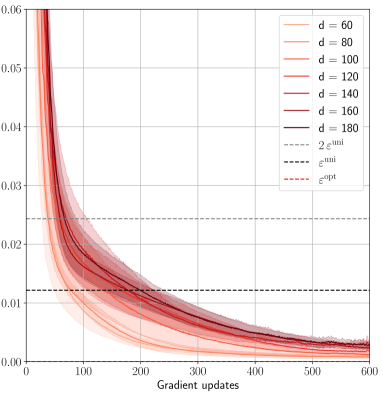

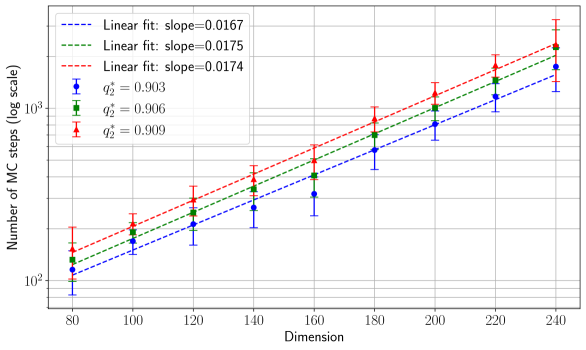

Figure 1: Theoretical prediction (solid curves) of the Bayes-optimal mean-square generalisation error for Gaussian inner weights with ReLU(x) activation (blue curves) and Tanh(2x) activation (red curves), $d=150,\gamma=0.5$, with linear readout with Gaussian label noise of variance $\Delta=0.1$ and different $P_{v}$ laws. The dashed lines are the theoretical predictions associated with the universal solution, obtained by plugging ${\mathcal{Q}}_{W}(\mathsf{v})=0\ \forall\ \mathsf{v}$ in (6) and extremising w.r.t. $(q_{2},\hat{q}_{2})$ (the curve coincides with the optimal one before the transition $\alpha_{\rm sp}(\gamma)$). The numerical points are obtained with Hamiltonian Monte Carlo (HMC) with informative initialisation on the target (empty circles), uninformative, random, initialisation (empty crosses), and ADAM (thin crosses). Triangles are the error of GAMP-RIE (Maillard et al., 2024a) extended to generic activation, obtained by plugging estimator (109) in (3) in appendix. Each point has been averaged over 10 instances of the teacher and training set. Error bars are the standard deviation over instances. The generalisation error for a given training set is evaluated as $\frac{1}{2}\mathbb{E}_{{\mathbf{x}}_{\rm test}\sim\mathcal{N}(0,I_{d})}(\lambda_{\rm test}({\mathbf{W}})-\lambda_{\rm test}^{0})^{2}$, using a single sample ${\mathbf{W}}$ from the posterior for HMC. For ADAM, with batch size fixed to $n/5$ and initial learning rate $0.05$, the error corresponds to the lowest one reached during training, i.e., we use early stopping based on the minimum test loss over all gradient updates. Its generalisation error is then evaluated at this point and divided by two (for comparison with the theory). The average over ${\mathbf{x}}_{\rm test}$ is computed empirically from $10^{5}$ i.i.d. test samples. We exploit that, for typical posterior samples, the Gibbs error $\varepsilon^{\rm Gibbs}$ defined in (39) in App. C is linked to the Bayes-optimal error as $(\varepsilon^{\rm Gibbs}-\Delta)/2=\varepsilon^{\rm opt}-\Delta$, see (40) in appendix. To use this formula, we are assuming the concentration of the Gibbs error w.r.t. the posterior distribution, in order to evaluate it from a single sample per instance. Left: Homogeneous readouts $P_{v}=\delta_{1}$. Centre: 4-points readouts $P_{v}=\frac{1}{4}(\delta_{-3/\sqrt{5}}+\delta_{-1/\sqrt{5}}+\delta_{1/\sqrt{5}}+\delta_{3/\sqrt{5}})$. Right: Gaussian readouts $P_{v}=\mathcal{N}(0,1)$.

# 3 Theoretical predictions and numerical experiments

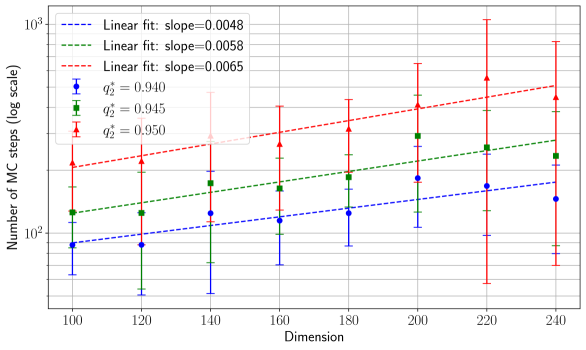

Let us compare our theoretical predictions with simulations. In Figs. 1 and 2, we report the theoretical curves from Result 2.2, focusing on the optimal mean-square generalisation error for networks with different $\sigma$, with linear readout and Gaussian label noise of variance $\Delta$. The Gibbs error divided by $2$ is used to compute the optimal error, see Remark C.2 in App. C for a justification. In what follows, the error attained by ADAM is also divided by two, only for the purpose of comparison.
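The factor two between the Gibbs and the Bayes-optimal excess errors can be illustrated on a toy example. The sketch below is not the network model studied here: it assumes a scalar Gaussian signal observed once through Gaussian noise, chosen only because its posterior is known in closed form. It checks numerically that $(\varepsilon^{\rm Gibbs}-\Delta)/2=\varepsilon^{\rm opt}-\Delta$ when the Gibbs error is computed from a single posterior sample per instance.

```python
import numpy as np

def gibbs_vs_bayes(delta=0.1, n_trials=1_000_000, seed=0):
    """Toy check of (eps_Gibbs - delta) / 2 = eps_opt - delta.

    Toy model (an assumption for illustration): a scalar signal lam0 ~ N(0, 1)
    is observed once as y_train = lam0 + sqrt(delta) * noise.
    """
    rng = np.random.default_rng(seed)
    lam0 = rng.standard_normal(n_trials)
    y_train = lam0 + np.sqrt(delta) * rng.standard_normal(n_trials)
    # Exact Gaussian posterior of lam0 given y_train.
    post_mean = y_train / (1.0 + delta)
    post_var = delta / (1.0 + delta)
    # One posterior sample per instance.
    lam1 = post_mean + np.sqrt(post_var) * rng.standard_normal(n_trials)
    # Fresh noisy test label produced by the same signal.
    y_test = lam0 + np.sqrt(delta) * rng.standard_normal(n_trials)
    eps_opt = np.mean((y_test - post_mean) ** 2)  # error of the posterior mean
    eps_gibbs = np.mean((y_test - lam1) ** 2)     # error of one posterior sample
    return eps_opt, eps_gibbs

delta = 0.1
eps_opt, eps_gibbs = gibbs_vs_bayes(delta)
print((eps_gibbs - delta) / 2, eps_opt - delta)
```

In this toy model both printed quantities estimate the posterior variance $\Delta/(1+\Delta)$.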

Figure 1 focuses on networks with Gaussian inner weights, various readout laws, for $\sigma(x)={\rm ReLU}(x)$ and ${\rm Tanh}(2x)$. Informative (i.e., on the teacher) and uninformative (random) initialisations are used when sampling the posterior by HMC. We also run ADAM, always selecting its best performance over all epochs, and implement an extension of the GAMP-RIE of Maillard et al. (2024a) for generic activation (see App. H). It can be shown analytically that GAMP-RIE’s generalisation error asymptotically (in $d$) matches the prediction of the universal branch of our theory (i.e., associated with $\mathcal{Q}_{W}(\mathsf{v})=0\ \forall\ \mathsf{v}$).
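For concreteness, the experimental setting can be sketched as follows: a teacher with Gaussian inner weights generates noisy labels, and a candidate weight matrix is scored by the empirical half mean-square error on fresh Gaussian inputs. This is a minimal sketch under stated assumptions, not the code used for the figures: the function names are hypothetical, the $1/\sqrt{d}$ normalisation of the pre-activations is assumed, only homogeneous readouts are shown, and a small $d$ is used for speed.

```python
import numpy as np

def post_activation(W, v, X, sigma):
    """lambda(W) = v^T sigma(W x / sqrt(d)) / sqrt(k), for each row x of X."""
    k, d = W.shape
    return sigma(X @ W.T / np.sqrt(d)) @ v / np.sqrt(k)

def make_teacher_dataset(d, gamma, alpha, delta, sigma, rng):
    """Teacher with Gaussian inner weights, homogeneous readouts, noisy linear readout."""
    k = int(gamma * d)       # hidden width proportional to the input dimension
    n = int(alpha * d**2)    # number of samples quadratic in d
    W0 = rng.standard_normal((k, d))
    v = np.ones(k)           # homogeneous readouts, P_v = delta_1
    X = rng.standard_normal((n, d))
    y = post_activation(W0, v, X, sigma) + np.sqrt(delta) * rng.standard_normal(n)
    return W0, v, X, y

def half_generalisation_error(W, W0, v, sigma, rng, n_test=10_000):
    """Empirical estimate of (1/2) E_x (lambda_test(W) - lambda_test^0)^2."""
    d = W0.shape[1]
    X_test = rng.standard_normal((n_test, d))
    diff = post_activation(W, v, X_test, sigma) - post_activation(W0, v, X_test, sigma)
    return 0.5 * np.mean(diff**2)

relu = lambda z: np.maximum(z, 0.0)
rng = np.random.default_rng(0)
# Small d for speed; the figures use d = 150.
W0, v, X, y = make_teacher_dataset(d=30, gamma=0.5, alpha=1.0, delta=0.1, sigma=relu, rng=rng)
W_random = rng.standard_normal(W0.shape)  # a student uncorrelated with the teacher
err_teacher = half_generalisation_error(W0, W0, v, relu, rng)
err_random = half_generalisation_error(W_random, W0, v, relu, rng)
print(err_teacher, err_random)
```

In an actual experiment, `W_random` would be replaced by a posterior sample (HMC) or by the weights reached by ADAM.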

For ReLU activation and homogeneous readouts (left panel), informed HMC follows the specialisation branch (the solution of the saddle point equations with $\mathcal{Q}_{W}(\mathsf{v})\neq 0$ for at least one $\mathsf{v}$), while with uninformative initialisation it sticks to the universal branch, thus suggesting algorithmic hardness. We return to this matter below. We note that the error attained by ADAM (divided by 2) is close to the performance associated with the universal branch, which suggests that ADAM is an effective Gibbs estimator for this $\sigma$. For Tanh and homogeneous readouts, both the uninformative and informative points lie on the specialisation branch, while ADAM attains an error greater than twice the posterior sample’s generalisation error.

For non-homogeneous readouts (centre and right panels) the points associated with the informative initialisation lie consistently on the specialisation branch, for both ${\rm ReLU}$ and Tanh, while the uninformatively initialised samples have a slightly worse performance for Tanh. Non-homogeneous readouts improve the ADAM performance: for Gaussian readouts and high sampling ratio its half-generalisation error is consistently below the error associated with the universal branch of the theory.

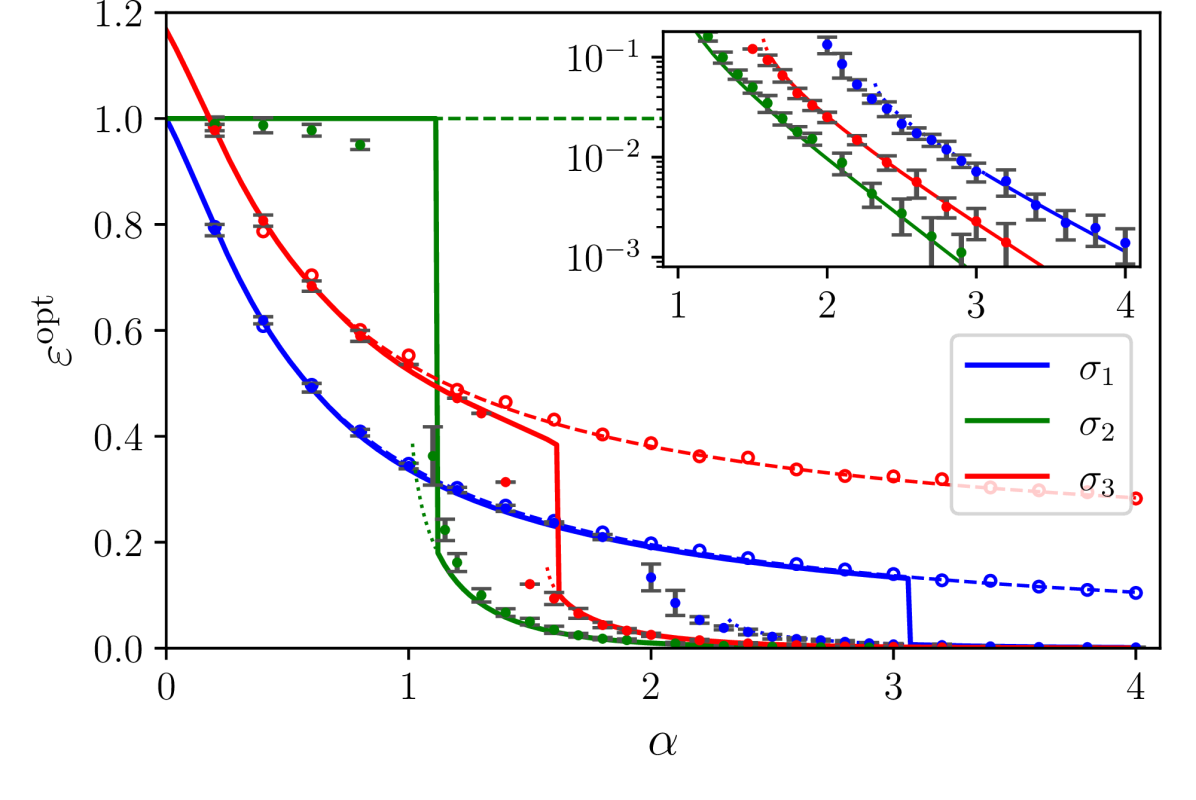

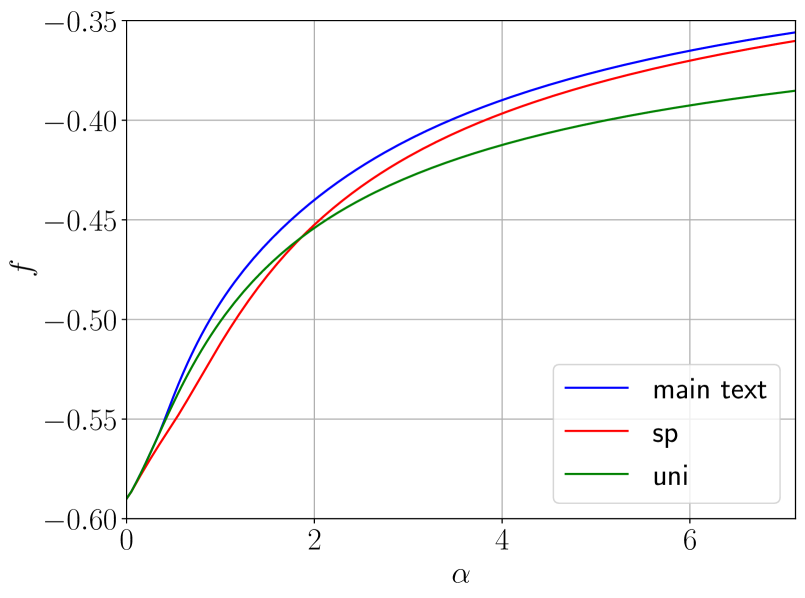

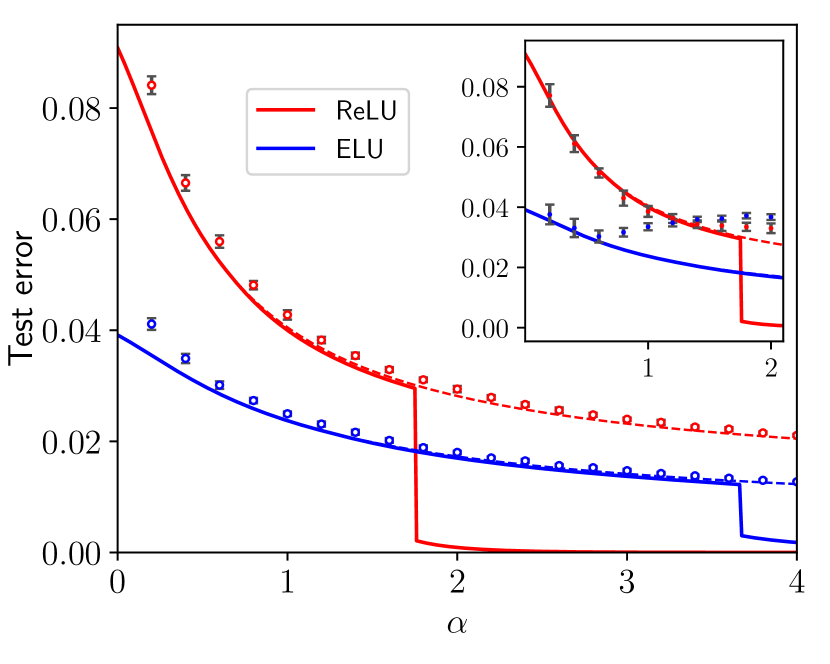

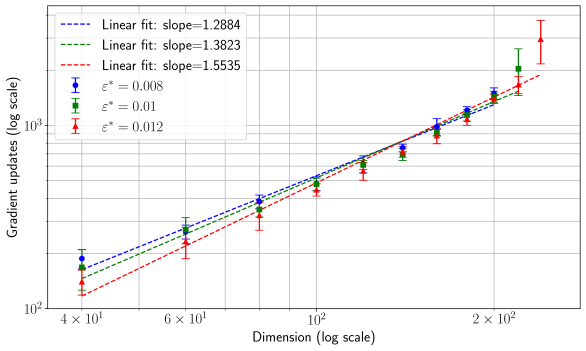

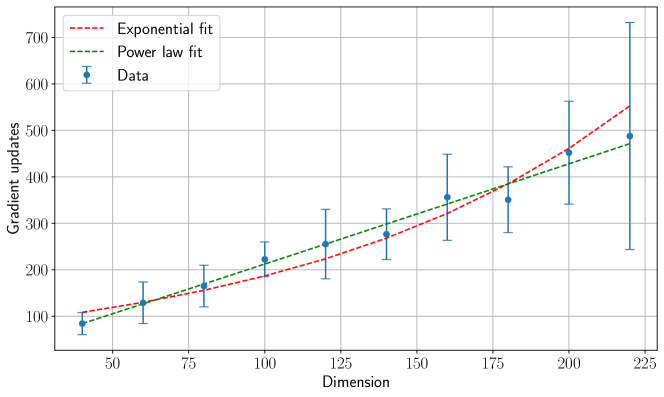

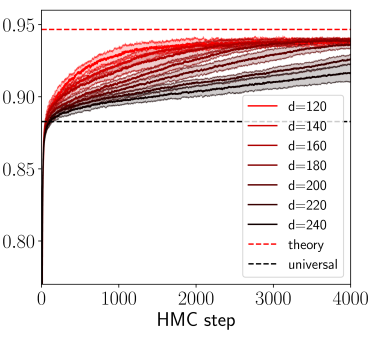

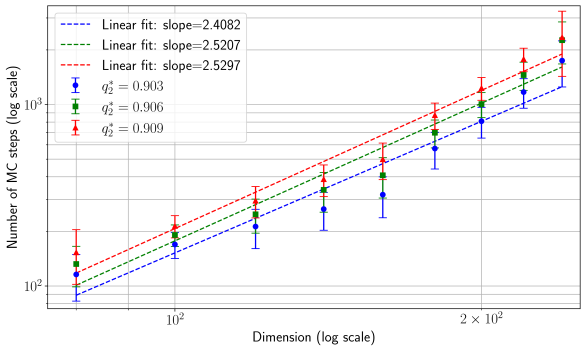

Figure 2 concerns networks with Rademacher weights and homogeneous readout. The numerical points are of two kinds: the dots, obtained from Metropolis–Hastings sampling of the weight posterior, and the circles, obtained from the GAMP-RIE (App. H). We report analogous simulations for ${\rm ReLU}$ and ${\rm ELU}$ activations in Figure 7, App. H. The remarkable agreement between theoretical curves and experimental points in both phases supports the assumptions used in Sec. 4.

<details>

<summary>x4.png Details</summary>

### Visual Description

## Line Chart: Epsilon Opt vs. Alpha for Different Sigma Values

### Overview

The image is a line chart displaying the relationship between epsilon opt (εopt) and alpha (α) for three different sigma values (σ1, σ2, σ3). The chart includes an inset plot showing the same data on a log scale for the y-axis. Data points are marked with circles and error bars.

### Components/Axes

* **Main Chart:**

* X-axis: α (alpha), ranging from 0 to 4.

* Y-axis: εopt (epsilon opt), ranging from 0 to 1.2.

* Legend (bottom-right):

* Blue line: σ1

* Green line: σ2

* Red line: σ3

* **Inset Chart (top-right):**

* X-axis: α (alpha), ranging from approximately 1 to 4.

* Y-axis: Logarithmic scale, ranging from 10^-3 to 10^-1.

* Data series are the same as the main chart.

### Detailed Analysis

* **σ1 (Blue Line):**

* Trend: Decreases from approximately 1.0 at α = 0 to approximately 0.15 at α = 3, then drops to 0.0 at α = 3.1.

* Data Points:

* α = 0, εopt ≈ 1.0

* α = 1, εopt ≈ 0.4

* α = 2, εopt ≈ 0.15

* α = 3, εopt ≈ 0.15

* α > 3.1, εopt = 0

* **σ2 (Green Line):**

* Trend: Remains constant at approximately 1.0 from α = 0 to α = 1.1, then drops to 0.0.

* Data Points:

* α < 1.1, εopt ≈ 1.0

* α > 1.1, εopt = 0

* **σ3 (Red Line):**

* Trend: Decreases from approximately 1.2 at α = 0 to approximately 0.45 at α = 1.7, then drops to 0.3 at α = 1.8, and remains relatively constant.

* Data Points:

* α = 0, εopt ≈ 1.2

* α = 1, εopt ≈ 0.75

* α = 2, εopt ≈ 0.3

* α = 3, εopt ≈ 0.3

* α = 4, εopt ≈ 0.3

### Key Observations

* σ2 exhibits a step function behavior, dropping sharply to zero at α ≈ 1.1.

* σ1 decreases gradually before dropping to zero at α ≈ 3.1.

* σ3 decreases gradually and then plateaus at approximately 0.3.

* The inset plot confirms the exponential decay of the data series.

### Interpretation

The chart illustrates how epsilon opt (εopt) changes with alpha (α) for different values of sigma (σ). The different sigma values seem to represent different thresholds or sensitivities. σ2 has the lowest threshold, dropping to zero at a low α value, while σ1 and σ3 have higher thresholds and exhibit more gradual decreases. The inset plot highlights the exponential decay behavior of the data, suggesting a relationship where epsilon opt decreases exponentially with increasing alpha. The error bars on the data points indicate the uncertainty in the measurements.

</details>

Figure 2: Theoretical prediction (solid curves) of the Bayes-optimal mean-square generalisation error for binary inner weights and polynomial activations: $\sigma_{1}={\rm He}_{2}/\sqrt{2}$, $\sigma_{2}={\rm He}_{3}/\sqrt{6}$, $\sigma_{3}={\rm He}_{2}/\sqrt{2}+{\rm He}_{3}/6$, with $\gamma=0.5$, $d=150$, linear readout with Gaussian label noise with $\Delta=1.25$, and homogeneous readouts ${\mathbf{v}}=\mathbf{1}$. Dots are optimal errors computed via Gibbs errors (see Fig. 1) by running a Metropolis-Hastings MCMC initialised near the teacher. Circles are the error of GAMP-RIE (Maillard et al., 2024a) extended to generic activation, see App. H. Points are averaged over 16 data instances. Error bars for MCMC are the standard deviation over instances (omitted for GAMP-RIE, but of the same order). Dashed and dotted lines denote, respectively, the universal (i.e. the $\mathcal{Q}_{W}(\mathsf{v})=0\ \forall\ \mathsf{v}$ solution of the saddle point equations) and the specialisation branches where they are metastable (i.e., a local maximiser of the RS potential but not the global one).

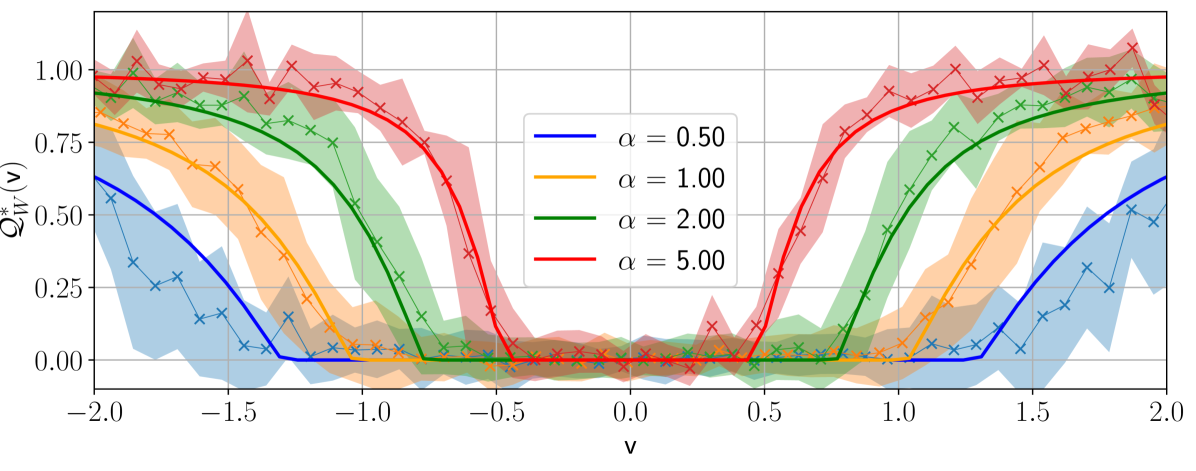

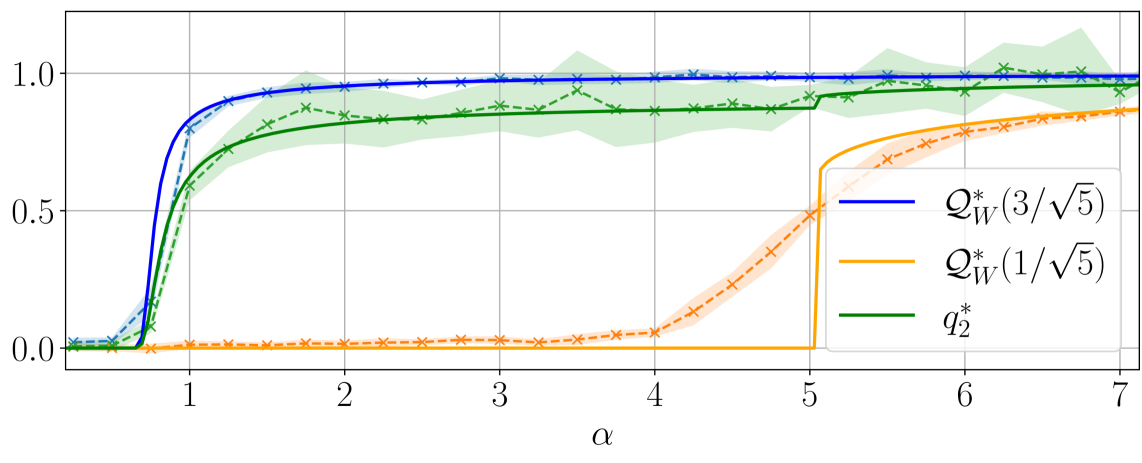

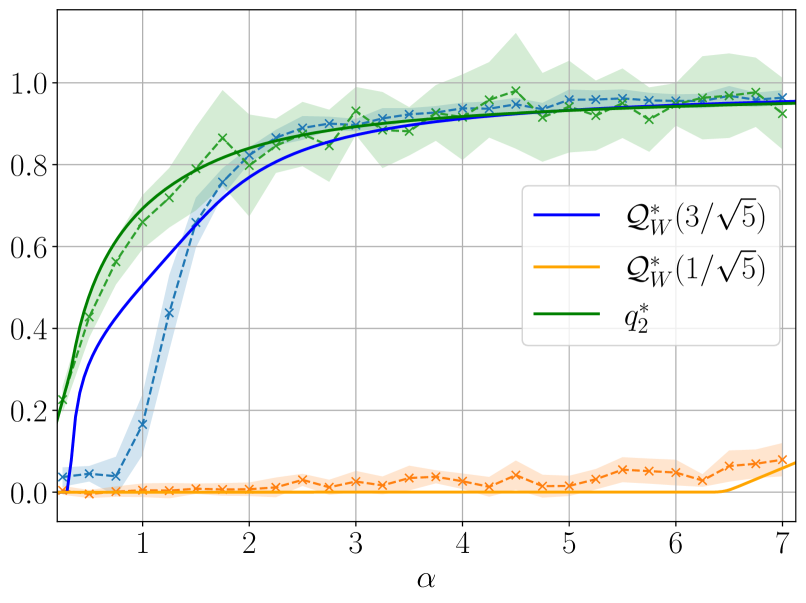

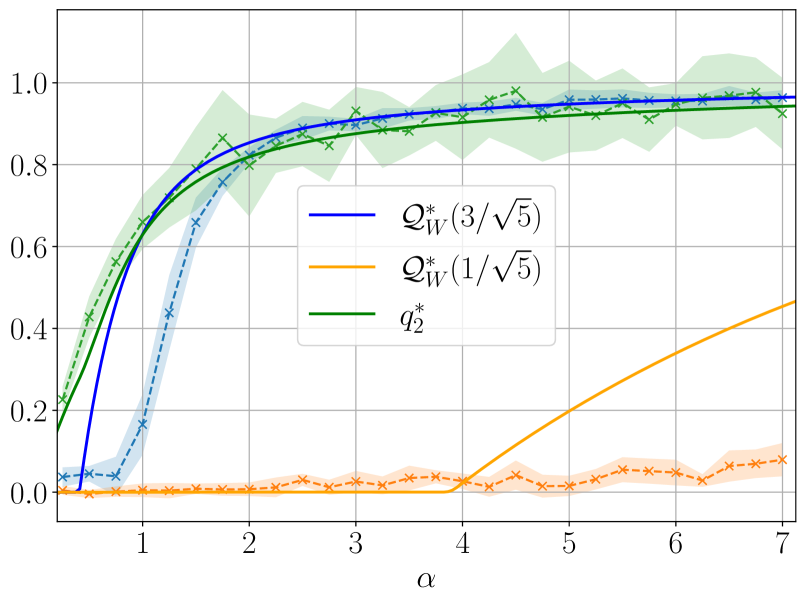

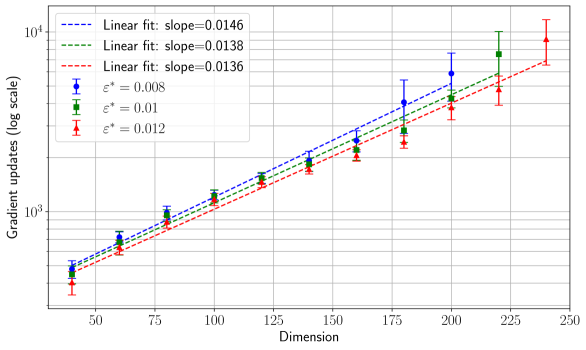

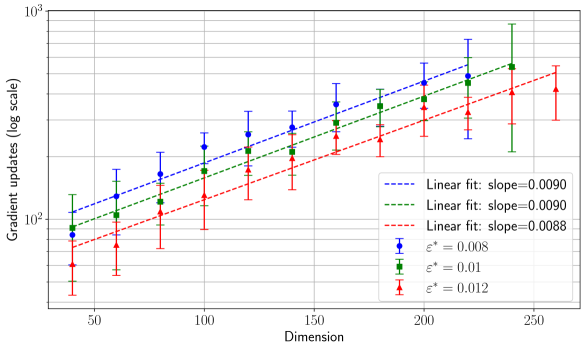

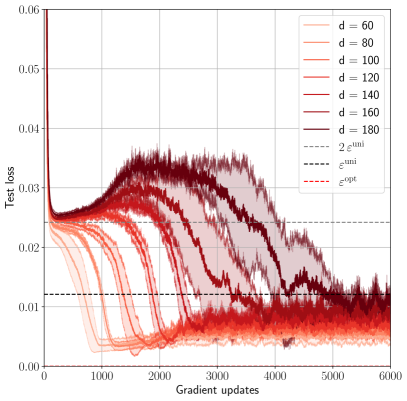

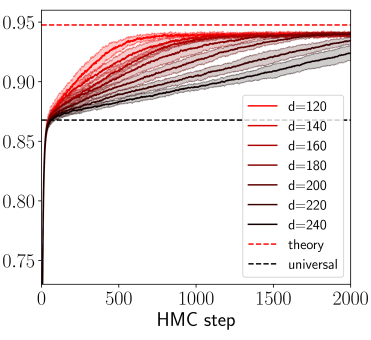

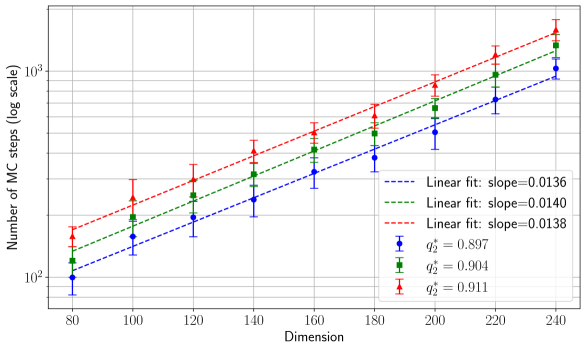

Figure 3 illustrates the learning mechanism for models with Gaussian weights and non-homogeneous readouts, revealing a sequence of phase transitions as $\alpha$ increases. The top panel shows the overlap function $\mathcal{Q}_{W}(\mathsf{v})$ in the case of Gaussian readouts for four different sampling ratios $\alpha$. In the bottom panel the readout assumes four different values with equal probabilities; the figure shows the evolution of the two relevant overlaps associated with the symmetric readout values $\pm 3/\sqrt{5}$ and $\pm 1/\sqrt{5}$. As $\alpha$ increases, the student weights start aligning with the teacher weights associated with the highest readout amplitude, marking the first phase transition. As these alignments strengthen when $\alpha$ further increases, the second transition occurs when the weights corresponding to the next largest readout amplitude are learnt, and so on. In this way, continuous readouts produce an infinite sequence of learning transitions, as supported by the upper part of Figure 3.

Even when dominating the posterior measure, we observe in simulations that the specialisation solution can be algorithmically hard to reach. With a discrete distribution of readouts (such as $P_{v}=\delta_{1}$ or Rademacher), simulations for binary inner weights exhibit it only when sampling with informative initialisation (i.e., the MCMC runs to sample ${\mathbf{W}}$ are initialised in the vicinity of ${\mathbf{W}}^{0}$ ). Moreover, even in cases where algorithms (such as ADAM or HMC for Gaussian inner weights) are able to find the specialisation solution, they manage to do so only after a training time increasing exponentially with $d$ , and for relatively small values of the label noise $\Delta$ , see discussion in App. I. For the case of the continuous distribution of readouts $P_{v}={\mathcal{N}}(0,1)$ , our numerical results are inconclusive on hardness, and deserve numerical investigation at a larger scale.

The universal phase is superseded at $\alpha_{\rm sp}$ by a specialisation phase, where the student’s inner weights start aligning with the teacher ones. This transition occurs for both binary and Gaussian priors over the inner weights, and it differs in nature from the perfect recovery threshold identified in Maillard et al. (2024a), which is the point where the student with Gaussian weights learns perfectly ${\mathbf{W}}^{0\intercal}({\mathbf{v}}){\mathbf{W}}^{0}$ (but not ${\mathbf{W}}^{0}$) and thus attains perfect generalisation in the case of purely quadratic activation and noiseless labels. For large $\alpha$, the student somehow realises that the higher order terms of the activation’s Hermite decomposition are not label noise, but are informative on the decision rule. The two identified phases are akin to those recently described in Barbier et al. (2025) for matrix denoising. The model we consider is also a matrix model in ${\mathbf{W}}$, with the amount of data scaling as the number of matrix elements. When data are scarce, the student cannot break the numerous symmetries of the problem, resulting in an “effective rotational invariance” at the source of the prior universality, with posterior samples having a vanishing overlap with ${\mathbf{W}}^{0}$. On the other hand, when data are sufficiently abundant, $\alpha>\alpha_{\rm sp}$, there is a “synchronisation” of the student’s samples with the teacher.

<details>

<summary>x5.png Details</summary>

### Visual Description

## Line Chart: Q*_W(v) vs. v for different alpha values

### Overview

The image is a line chart displaying the relationship between Q*_W(v) and v for different values of alpha (α). The chart includes four data series, each representing a different alpha value (0.50, 1.00, 2.00, and 5.00). Each data series is plotted with a line and 'x' markers, along with a shaded region indicating uncertainty. The x-axis represents 'v', ranging from -2.0 to 2.0, and the y-axis represents Q*_W(v), ranging from 0.00 to 1.00.

### Components/Axes

* **X-axis:**

* Label: v

* Scale: -2.0 to 2.0, with tick marks at -2.0, -1.5, -1.0, -0.5, 0.0, 0.5, 1.0, 1.5, and 2.0.

* **Y-axis:**

* Label: Q*_W(v)

* Scale: 0.00 to 1.00, with tick marks at 0.00, 0.25, 0.50, 0.75, and 1.00.

* **Legend:** Located in the top-right quadrant of the chart.

* Blue line: α = 0.50

* Orange line: α = 1.00

* Green line: α = 2.00

* Red line: α = 5.00

### Detailed Analysis

* **α = 0.50 (Blue):**

* Trend: Starts at approximately 0.55 at v = -2.0, decreases to approximately 0.0 at v = -0.5, remains near 0.0 until v = 0.5, then increases to approximately 0.55 at v = 2.0.

* Data Points:

* v = -2.0, Q*_W(v) ≈ 0.55

* v = -0.5, Q*_W(v) ≈ 0.0

* v = 0.5, Q*_W(v) ≈ 0.0

* v = 2.0, Q*_W(v) ≈ 0.55

* **α = 1.00 (Orange):**

* Trend: Starts at approximately 0.9 at v = -2.0, decreases to approximately 0.0 at v = -0.5, remains near 0.0 until v = 0.5, then increases to approximately 0.9 at v = 2.0.

* Data Points:

* v = -2.0, Q*_W(v) ≈ 0.9

* v = -0.5, Q*_W(v) ≈ 0.0

* v = 0.5, Q*_W(v) ≈ 0.0

* v = 2.0, Q*_W(v) ≈ 0.9

* **α = 2.00 (Green):**

* Trend: Starts at approximately 0.95 at v = -2.0, decreases to approximately 0.0 at v = -0.5, remains near 0.0 until v = 0.5, then increases to approximately 0.95 at v = 2.0.

* Data Points:

* v = -2.0, Q*_W(v) ≈ 0.95

* v = -0.5, Q*_W(v) ≈ 0.0

* v = 0.5, Q*_W(v) ≈ 0.0

* v = 2.0, Q*_W(v) ≈ 0.95

* **α = 5.00 (Red):**

* Trend: Starts at approximately 1.0 at v = -2.0, decreases to approximately 0.0 at v = -0.5, remains near 0.0 until v = 0.5, then increases to approximately 1.0 at v = 2.0.

* Data Points:

* v = -2.0, Q*_W(v) ≈ 1.0

* v = -0.5, Q*_W(v) ≈ 0.0

* v = 0.5, Q*_W(v) ≈ 0.0

* v = 2.0, Q*_W(v) ≈ 1.0

### Key Observations

* All data series exhibit a similar U-shaped trend, with Q*_W(v) decreasing from a high value at v = -2.0 to approximately 0.0 at v = -0.5, remaining low until v = 0.5, and then increasing back to a high value at v = 2.0.

* As alpha increases, the value of Q*_W(v) at v = -2.0 and v = 2.0 increases, approaching 1.0.

* The shaded regions around each line indicate the uncertainty in the data, which appears to be larger in the regions where Q*_W(v) is changing rapidly.

### Interpretation

The chart illustrates how the function Q*_W(v) changes with respect to 'v' for different values of the parameter alpha. The U-shaped trend suggests that Q*_W(v) is minimized around v = 0 and maximized at the extreme values of v (i.e., -2.0 and 2.0). The parameter alpha appears to control the magnitude of Q*_W(v) at these extreme values, with larger alpha values resulting in Q*_W(v) approaching 1.0. This could represent a system where 'v' is an input, Q*_W(v) is an output, and alpha is a control parameter that influences the system's response to 'v'. The uncertainty regions suggest that the relationship between Q*_W(v) and 'v' is less well-defined in the regions where Q*_W(v) is changing rapidly, possibly due to noise or other factors.

</details>

<details>

<summary>x6.png Details</summary>

### Visual Description

## Line Chart: Q*_w vs Alpha

### Overview

The image is a line chart comparing three different data series, Q*_w(3/√5), Q*_w(1/√5), and q*_2, as a function of alpha (α). The chart shows how these values change as alpha increases from approximately 0 to 7. The plot includes shaded regions around the dashed lines, indicating uncertainty or variance in the data.

### Components/Axes

* **X-axis (Horizontal):**

* Label: α (alpha)

* Scale: 0 to 7, with tick marks at every integer value.

* **Y-axis (Vertical):**

* Scale: 0.0 to 1.0, with tick marks at 0.0, 0.5, and 1.0.

* **Legend (Right Side):**

* Blue line: Q*_w(3/√5)

* Orange line: Q*_w(1/√5)

* Green line: q*_2*

### Detailed Analysis

* **Q*_w(3/√5) (Blue):**

* Solid blue line with 'x' markers.

* Trend: Starts near 0 at α=0, rapidly increases to approximately 0.9 around α=1, and then plateaus around 1.0 for α > 1.

* Data Points:

* α = 0: Q*_w(3/√5) ≈ 0

* α = 1: Q*_w(3/√5) ≈ 0.9

* α = 7: Q*_w(3/√5) ≈ 1.0

* **Q*_w(1/√5) (Orange):**

* Dashed orange line with '+' markers. Shaded region around the line indicates uncertainty.

* Trend: Remains near 0 until α=5, then rapidly increases to approximately 0.85.

* Data Points:

* α = 0: Q*_w(1/√5) ≈ 0

* α = 5: Q*_w(1/√5) ≈ 0

* α = 6: Q*_w(1/√5) ≈ 0.7

* α = 7: Q*_w(1/√5) ≈ 0.85

* **q*_2* (Green):**

* Solid green line with 'x' markers. Shaded region around the line indicates uncertainty.

* Trend: Starts near 0 at α=0, rapidly increases to approximately 0.7 around α=1, and then plateaus around 0.9 for α > 1. There is a slight dip around α=5.

* Data Points:

* α = 0: q*_2* ≈ 0

* α = 1: q*_2* ≈ 0.7

* α = 5: q*_2* ≈ 0.9

* α = 7: q*_2* ≈ 0.95

### Key Observations

* Q*_w(3/√5) and q*_2* exhibit a similar trend, rapidly increasing around α=1 and then plateauing.

* Q*_w(1/√5) remains near zero until α=5, after which it rapidly increases.

* The shaded regions around the dashed lines indicate the variability or uncertainty in the data.

### Interpretation

The chart illustrates how the values of Q*_w(3/√5), Q*_w(1/√5), and q*_2* change with respect to α. The data suggests that Q*_w(3/√5) and q*_2* are strongly influenced by α around α=1, while Q*_w(1/√5) is significantly affected only after α reaches 5. This could indicate different thresholds or critical points for these parameters in the underlying system being modeled. The shaded regions highlight the uncertainty associated with the dashed lines, suggesting that the solid lines are more stable or have less variability.

</details>

Figure 3: Top: Theoretical prediction (solid curves) of the overlap function $\mathcal{Q}_{W}(\mathsf{v})$ for different sampling ratios $\alpha$ for Gaussian inner weights, ReLU(x) activation, $d=150,\gamma=0.5$, linear readout with $\Delta=0.1$ and $P_{v}=\mathcal{N}(0,1)$. The shaded curves were obtained from HMC initialised informatively. Using a single sample ${\mathbf{W}}^{a}$ from the posterior, $\mathcal{Q}_{W}(\mathsf{v})$ has been evaluated numerically by dividing the interval $[-2,2]$ into 50 bins and by computing the value of the overlap associated with each bin. Each point has been averaged over 50 instances of the training set, and shaded regions around them correspond to one standard deviation. Bottom: Theoretical prediction (solid curves) of the overlaps as function of the sampling ratio $\alpha$ for Gaussian inner weights, Tanh(2x) activation, $d=150,\gamma=0.5$, linear readout with $\Delta=0.1$ and $P_{v}=\frac{1}{4}(\delta_{-3/\sqrt{5}}+\delta_{-1/\sqrt{5}}+\delta_{1/\sqrt{5}}+\delta_{3/\sqrt{5}})$. The shaded curves were obtained from informed HMC. Each point has been averaged over 10 instances of the training set, with one standard deviation depicted.
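The binning procedure described in the caption of Figure 3 can be sketched as follows. This is an illustration under an explicit assumption: we take the overlap attached to a bin to be the average, over the hidden neurons whose readout falls in that bin, of the normalised scalar product between the corresponding student and teacher rows. The function name and the sizes used are hypothetical.

```python
import numpy as np

def binned_overlap(W, W0, v, v_min=-2.0, v_max=2.0, n_bins=50):
    """Estimate Q_W(v) per readout bin from one sample W (assumed definition).

    Assumption: the overlap attached to a bin is the average over the hidden
    neurons i whose readout v_i falls in that bin of (W_i . W0_i) / d.
    Bins containing no neuron are returned as NaN.
    """
    k, d = W0.shape
    per_neuron = np.sum(W * W0, axis=1) / d   # row-wise overlaps, shape (k,)
    edges = np.linspace(v_min, v_max, n_bins + 1)
    bin_idx = np.digitize(v, edges) - 1       # readouts outside [v_min, v_max) get no bin
    centres = 0.5 * (edges[:-1] + edges[1:])
    overlaps = np.full(n_bins, np.nan)
    for b in range(n_bins):
        mask = bin_idx == b
        if mask.any():
            overlaps[b] = per_neuron[mask].mean()
    return centres, overlaps

rng = np.random.default_rng(0)
k, d = 2000, 100                        # illustrative sizes, not those of Fig. 3
W0 = rng.standard_normal((k, d))
v = rng.standard_normal(k)              # Gaussian readouts
W_indep = rng.standard_normal((k, d))   # sample uncorrelated with the teacher
centres, q_self = binned_overlap(W0, W0, v)
_, q_indep = binned_overlap(W_indep, W0, v)
print(np.nanmean(q_self), np.nanmean(q_indep))
```

A sample equal to the teacher gives overlaps close to one in every populated bin, while an independent sample gives overlaps close to zero, which are the two extreme behaviours visible in the figure.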

The phenomenology observed depends on the activation function selected. In particular, by expanding $\sigma$ in the Hermite basis we realise that the way the first three terms enter information-theoretic quantities is completely described by order 0, 1 and 2 tensors later defined in (12), which give rise to combinations of the inner and readout weights. In the regime of quadratically many data, order 0 and 1 tensors are recovered exactly by the student because of the overwhelming abundance of data compared to their dimension. The challenge is thus to learn the second order tensor. On the contrary, we claim that learning any higher order tensors can only happen when the student aligns its weights with ${\mathbf{W}}^{0}$: before this “synchronisation”, they play the role of an effective noise. This is the mechanism behind the specialisation transition. For odd activations (${\rm Tanh}$ in Figure 1, $\sigma_{2}$ in Figure 2), where $\mu_{2}=0$, the aforementioned order-2 tensor no longer contributes to the learning. Indeed, we observe numerically that the generalisation error sticks to a constant value for $\alpha<\alpha_{\rm sp}$, whereas at the phase transition it suddenly drops. This is because the learning of the order-2 tensor is skipped entirely, and the only chance to perform better is to learn all the other higher-order tensors through specialisation.
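The role of the Hermite coefficients can be made concrete numerically. The sketch below computes $\mu_{\ell}=\mathbb{E}_{z\sim\mathcal{N}(0,1)}[\sigma(z){\rm He}_{\ell}(z)]$ for the two activations of Figure 1. The convention used (probabilists' Hermite polynomials, no $1/\ell!$ factor) is an assumption and may differ from the normalisation adopted elsewhere in the text by constant factors, which do not affect whether a coefficient vanishes.

```python
import numpy as np
from numpy.polynomial.hermite_e import hermeval

def hermite_coefficients(sigma, max_order=4, z_max=12.0, n_grid=240_001):
    """mu_l = E[sigma(z) He_l(z)] for z ~ N(0, 1), l = 0..max_order.

    Uses a plain Riemann sum on a fine symmetric grid that contains z = 0,
    so that the kink of ReLU sits on a grid point.
    """
    z = np.linspace(-z_max, z_max, n_grid)
    dz = z[1] - z[0]
    density = np.exp(-0.5 * z**2) / np.sqrt(2.0 * np.pi)
    mus = []
    for order in range(max_order + 1):
        basis = np.zeros(order + 1)
        basis[order] = 1.0  # selects He_order in hermeval
        mus.append(np.sum(sigma(z) * hermeval(z, basis) * density) * dz)
    return np.array(mus)

relu = lambda z: np.maximum(z, 0.0)
tanh2 = lambda z: np.tanh(2.0 * z)

mu_relu = hermite_coefficients(relu)
mu_tanh = hermite_coefficients(tanh2)
print("ReLU     :", np.round(mu_relu, 4))
print("Tanh(2x) :", np.round(mu_tanh, 4))
```

For ReLU the second coefficient is non-zero, whereas for the odd activation Tanh(2x) all even-order coefficients vanish by symmetry, in particular $\mu_{2}=0$.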

By extrapolating universality results to generic activations, we are able to use the GAMP-RIE of Maillard et al. (2024a), publicly available at Maillard et al. (2024b), to obtain a polynomial-time predictor for test data. Its generalisation error follows our universal theoretical curve even in the $\alpha$ regime where MCMC sampling experiences a computationally hard phase with worse performance (for binary weights), and in particular after $\alpha_{\rm sp}$ (see Fig. 2, circles). Extending this algorithm, initially proposed for quadratic activation, to a generic one is possible thanks to the identification of an effective GLM onto which the learning problem can be mapped (while the mapping is exact when $\sigma(x)=x^{2}$ as exploited by Maillard et al. (2024a)), see App. H. The key observation is that our effective GLM representation holds not only from a theoretical perspective when describing the universal phase, but also algorithmically.

Finally, we emphasise that our theory is consistent with Cui et al. (2023), which considers the simpler strongly over-parametrised regime $n=\Theta(d)$ rather than the interpolation one $n=\Theta(d^{2})$: our generalisation curves at $\alpha\to 0$ match theirs at $\alpha_{1}:=n/d\to\infty$, which is when the student learns perfectly the combinations ${\mathbf{v}}^{0\intercal}{\mathbf{W}}^{0}/\sqrt{k}$ (but nothing more).

4 Accessing the free entropy and generalisation error: replica method and spherical integration combined

The goal is to compute the asymptotic free entropy by the replica method (Mezard et al., 1986), a powerful heuristic from spin glasses also used in machine learning (Engel & Van den Broeck, 2001), combined with the HCIZ integral. Our derivation is based on a Gaussian ansatz on the replicated post-activations of the hidden layer, which generalises Conjecture 3.1 of Cui et al. (2023), now proved in Camilli et al. (2025), where it is specialised to the case of linearly many data ($n=\Theta(d)$). To obtain this generalisation, we will write the kernel arising from the covariance of the aforementioned post-activations as an infinite series of scalar order parameters derived from the expansion of the activation function in the Hermite basis, following an approach recently devised in Aguirre-López et al. (2025) in the context of the random features model (see also Hu et al. (2024) and Ghorbani et al. (2021)). Another key ingredient of our analysis will be a generalisation of an ansatz used in the replica method by Sakata & Kabashima (2013) for dictionary learning.

4.1 Replicated system and order parameters

The starting point in the replica method to tackle the data average is the replica trick:

$$
\textstyle\lim\,\frac{1}{n}\mathbb{E}\ln\mathcal{Z}(\mathcal{D})=\lim\,\lim\limits_{s\to 0^{+}}\frac{1}{ns}\ln\mathbb{E}\mathcal{Z}^{s}=\lim\limits_{s\to 0^{+}}\lim\,\frac{1}{ns}\ln\mathbb{E}\mathcal{Z}^{s}
$$

assuming the limits commute. Recall that ${\mathbf{W}}^{0}$ are the teacher weights. Consider first $s\in\mathbb{N}^{+}$. Define the “replicas” of the post-activation as $\{\lambda^{a}({\mathbf{W}}^{a}):=\frac{1}{\sqrt{k}}{\mathbf{v}}^{\intercal}\sigma(\frac{1}{\sqrt{d}}{\mathbf{W}}^{a}{\mathbf{x}})\}_{a=0,\ldots,s}$. We then directly obtain

$$
\textstyle\mathbb{E}\mathcal{Z}^{s}=\mathbb{E}_{{\mathbf{v}}}\int\prod\limits_{a}^{0,s}dP_{W}({\mathbf{W}}^{a})\Big[\mathbb{E}_{\mathbf{x}}\int dy\prod\limits_{a}^{0,s}P_{\rm out}(y\mid\lambda^{a}({\mathbf{W}}^{a}))\Big]^{n}.
$$
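As a sanity check of the replica trick that opens this subsection, the following minimal sketch compares $\frac{1}{s}\ln\mathbb{E}\mathcal{Z}^{s}$ with $\mathbb{E}\ln\mathcal{Z}$ on a toy example. The log-normal "partition function" and the helper name `replica_estimate` are assumptions made purely for illustration; they are not the model studied here, and the sketch does not address the exchange of the limits in $n$ and $s$.

```python
import numpy as np

def replica_estimate(log_z, s):
    """Estimate (1/s) * ln E[Z^s] from samples of ln Z.

    Uses a log-sum-exp shift so that exp() does not overflow.
    """
    a = s * log_z
    a_max = a.max()
    return (a_max + np.log(np.mean(np.exp(a - a_max)))) / s

# Toy assumption: ln Z ~ N(1, 4), so that E ln Z = 1 and
# (1/s) ln E Z^s = 1 + 2 s in closed form.
rng = np.random.default_rng(0)
log_z = rng.normal(loc=1.0, scale=2.0, size=200_000)

quenched = log_z.mean()  # Monte Carlo estimate of E ln Z
for s in (1.0, 0.5, 0.1, 0.01):
    print(f"s={s:5.2f}  (1/s) ln E Z^s = {replica_estimate(log_z, s):.4f}"
          f"  E ln Z = {quenched:.4f}")
```

In this toy case the gap between the two quantities shrinks linearly in $s$, which is the behaviour the replica trick relies on when continuing from integer $s$ to $s\to 0^{+}$.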

The key is to identify the law of the replicas $\{\lambda^{a}\}_{a=0,...,s}$ , which are dependent random variables due to the common random Gaussian input ${\mathbf{x}}$ , conditionally on $({\mathbf{W}}^{a})$ . Our key hypothesis is that $\{\lambda^{a}\}$ is jointly Gaussian, an ansatz we cannot prove but that we validate a posteriori thanks to the excellent match between our theory and the empirical generalisation curves, see Sec. 2.2. Similar Gaussian assumptions have been the crux of a whole line of recent works on the analysis of neural networks, and are now known under the name of “Gaussian equivalence” (Goldt et al., 2020; Hastie et al., 2022; Mei & Montanari, 2022; Goldt et al., 2022; Hu & Lu, 2023). This can also sometimes be heuristically justified based on Breuer–Major Theorems (Nourdin et al., 2011; Pacelli et al., 2023).

Given two replica indices $a,b\in\{0,\ldots,s\}$ we define the neuron-neuron overlap matrix $\Omega^{ab}_{ij}:={\mathbf{W}}_{i}^{a\intercal}{\mathbf{W}}^{b}_{j}/d$ with $i,j\in[k]$. Recalling the Hermite expansion of $\sigma$, by using Mehler’s formula, see App. A, the post-activations covariance $K^{ab}:=\mathbb{E}\lambda^{a}\lambda^{b}$ reads

$$
\textstyle K^{ab}=\sum_{\ell\geq 1}^{\infty}\frac{\mu^{2}_{\ell}}{\ell!}Q_{\ell}^{ab}\ \ \text{with}\ \ Q_{\ell}^{ab}:=\frac{1}{k}\sum_{i,j\leq k}v_{i}v_{j}(\Omega^{ab}_{ij})^{\ell}. \tag{8}
$$
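The single-pair identity behind (8), namely $\mathbb{E}[\sigma(u)\sigma(v)]=\sum_{\ell}\frac{\mu_{\ell}^{2}}{\ell!}\rho^{\ell}$ for standard Gaussians $u,v$ with correlation $\rho$, can be checked numerically. The sketch below is illustrative only: the function names, the quadrature order and the toy polynomial activation `toy_sigma` are assumptions, not taken from the paper. The sum in the code includes the $\ell=0$ term, which vanishes for this centred activation.

```python
import math
import numpy as np
from numpy.polynomial.hermite_e import hermegauss, hermeval

def hermite_coeffs(sigma, max_order, n_nodes=40):
    """mu_l = E[sigma(z) He_l(z)], z ~ N(0,1), by Gauss-Hermite quadrature."""
    x, w = hermegauss(n_nodes)
    w = w / np.sqrt(2.0 * np.pi)  # turn quadrature weights into N(0,1) weights
    mus = []
    for l in range(max_order + 1):
        basis = np.zeros(l + 1)
        basis[l] = 1.0  # selects the probabilists' Hermite polynomial He_l
        mus.append(np.sum(w * sigma(x) * hermeval(x, basis)))
    return np.array(mus)

def mehler_cov(mus, rho):
    """Truncated Mehler series: sum_l mu_l^2 / l! * rho^l (l = 0 included)."""
    return sum(mus[l] ** 2 / math.factorial(l) * rho ** l
               for l in range(len(mus)))

def quadrature_cov(sigma, rho, n_nodes=40):
    """E[sigma(u) sigma(v)] for corr(u, v) = rho, by 2D quadrature."""
    x, w = hermegauss(n_nodes)
    w = w / np.sqrt(2.0 * np.pi)
    u = x[:, None]
    v = rho * x[:, None] + np.sqrt(1.0 - rho ** 2) * x[None, :]
    return np.sum(w[:, None] * w[None, :] * sigma(u) * sigma(v))

def toy_sigma(x):
    """Hypothetical activation He_2(x) + 0.5 * He_3(x)."""
    return (x ** 2 - 1.0) + 0.5 * (x ** 3 - 3.0 * x)

mus = hermite_coeffs(toy_sigma, max_order=5)
rho = 0.3
print("Hermite coefficients:", np.round(mus, 6))
print("Mehler series       :", mehler_cov(mus, rho))
print("direct quadrature   :", quadrature_cov(toy_sigma, rho))
```

In (8) the role of $\rho$ is played by the entries $\Omega^{ab}_{ij}$, and the readouts $v_{i}v_{j}$ weight the sum over neuron pairs.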

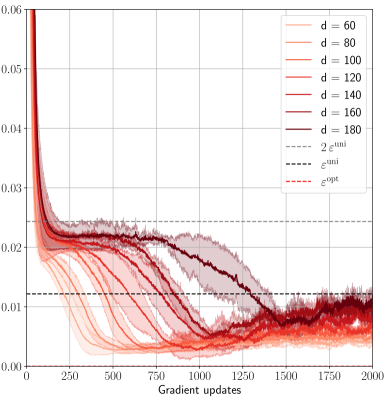

This covariance ${\mathbf{K}}$ is complicated but, as we argue below, simplifications occur as $d\to\infty$. In particular, the first two overlaps $Q_{1}^{ab},Q_{2}^{ab}$ are special. We claim that the higher-order overlaps $(Q_{\ell}^{ab})_{\ell\geq 3}$ can be expressed as functions of simpler order parameters.

<details>

<summary>x7.png Details</summary>

### Visual Description

## Line Chart: Overlaps vs. HMC Steps

### Overview

The image is a line chart showing the relationship between "Overlaps" and "HMC steps". There are five data series, labeled Q1 through Q5, each represented by a different colored line. An inset plot shows the behavior of "εopt" over HMC steps.

### Components/Axes

* **X-axis:** "HMC steps", ranging from 0 to 125000 in increments of 25000.
* **Y-axis:** "Overlaps", ranging from 0.0 to 1.0 in increments of 0.2.
* **Legend:** Located on the right side of the main plot, identifying the lines as Q1 (blue), Q2 (orange), Q3 (green), Q4 (red), and Q5 (purple).
* **Inset Plot X-axis:** HMC steps, ranging from 0 to 100000.
* **Inset Plot Y-axis:** Values ranging from 0.000 to 0.025.
* **Inset Plot Legend:** "εopt" (black).
* **Horizontal Dashed Lines:** There are four horizontal dashed lines corresponding to the approximate steady-state values of Q1, Q2, Q5, and εopt.

### Detailed Analysis

* **Q1 (Blue):** Starts at approximately 1.0 and remains relatively constant around 0.99.
  * Horizontal dashed line at y = 1.0
* **Q2 (Orange):** Starts at approximately 0.75 and decreases rapidly before stabilizing around 0.62.
  * Horizontal dashed line at y = 0.62
* **Q3 (Green):** Starts at approximately 0.75 and decreases rapidly before stabilizing around 0.03.
* **Q4 (Red):** Starts at approximately 0.75 and decreases rapidly before stabilizing around 0.04.
* **Q5 (Purple):** Starts at approximately 0.75 and decreases rapidly before stabilizing around 0.01.
  * Horizontal dashed line at y = 0.0
* **εopt (Black - Inset Plot):** Starts at 0.0 and increases rapidly to approximately 0.026, then fluctuates around that value.
  * Horizontal dashed line at y = 0.026

### Key Observations

* Q1 maintains a high overlap value throughout the HMC steps.
* Q2, Q3, Q4, and Q5 all exhibit a rapid decrease in overlap early in the HMC steps, followed by stabilization at lower values.
* εopt rapidly converges to a stable value.

### Interpretation

The chart illustrates the convergence behavior of different "overlap" metrics (Q1-Q5) during a Hamiltonian Monte Carlo (HMC) simulation. Q1 appears to represent a highly stable or conserved quantity, while Q2-Q5 converge to lower overlap values, suggesting a change or adaptation in the system being modeled. The inset plot shows that the parameter εopt quickly reaches an optimal value, which may be related to the convergence of the other overlap metrics. The horizontal dashed lines indicate the approximate steady-state values for each series.

</details>

<details>

<summary>x8.png Details</summary>

### Visual Description

## Chart: Overlaps vs. HMC Steps

### Overview

The image is a chart showing the relationship between "Overlaps" and "HMC steps". There are five primary data series plotted, each represented by a different color. Additionally, there is an inset plot showing the behavior of "ε_opt" over HMC steps.

### Components/Axes

* **Main Chart:**
  * X-axis: "HMC steps" ranging from 0 to 125000 in increments of 25000.
  * Y-axis: "Overlaps" ranging from 0.5 to 1.0 in increments of 0.1.
  * Legend: There is no explicit legend, but the data series are distinguished by color.
    * Blue: A nearly constant line at approximately 1.0 overlap.
    * Orange: A fluctuating line around 0.88 overlap.
    * Green: A fluctuating line around 0.67 overlap.
    * Red: A fluctuating line around 0.60 overlap.
    * Purple: A fluctuating line around 0.55 overlap.
  * Horizontal dashed lines indicate approximate average overlap values for each series.
    * Blue: 1.0
    * Orange: 0.9
    * Green: 0.63
    * Red: 0.57
    * Purple: 0.52
* **Inset Chart:**
  * X-axis: "HMC steps" ranging from 0 to 100000 in increments of 50000.
  * Y-axis: Values ranging from 0.00 to 0.01 in increments of 0.01.
  * Data Series: Black line representing "ε_opt".
  * Horizontal dashed line indicates the approximate average value for "ε_opt" at 0.008.

### Detailed Analysis

* **Blue Series:** Starts at 1.0 and remains nearly constant around 1.0 throughout the entire range of HMC steps.
* **Orange Series:** Starts at approximately 0.9 and fluctuates around 0.88.
* **Green Series:** Starts at approximately 0.8 and decreases rapidly before fluctuating around 0.67.
* **Red Series:** Starts at approximately 0.7 and decreases rapidly before fluctuating around 0.60.
* **Purple Series:** Starts at approximately 0.8 and decreases rapidly before fluctuating around 0.55.
* **Inset Chart (ε_opt):** The black line starts at 0 and increases rapidly to approximately 0.008, then fluctuates slightly around this value.

### Key Observations

* The blue series exhibits the highest overlap and remains stable.
* The green, red, and purple series show a significant initial drop in overlap before stabilizing at lower values.
* The inset chart shows that "ε_opt" quickly converges to a stable value.

### Interpretation

The chart illustrates how different configurations or parameters (represented by the colored lines) affect the "Overlaps" during HMC steps. The blue series represents a highly stable configuration with consistently high overlap. The other series (orange, green, red, and purple) represent configurations that initially have high overlap but degrade over the initial HMC steps before stabilizing at lower overlap values. The inset chart suggests that the parameter "ε_opt" quickly converges to an optimal value, which might be related to the stabilization observed in the main chart's data series. The dashed lines likely represent target or expected overlap values for each configuration.

</details>

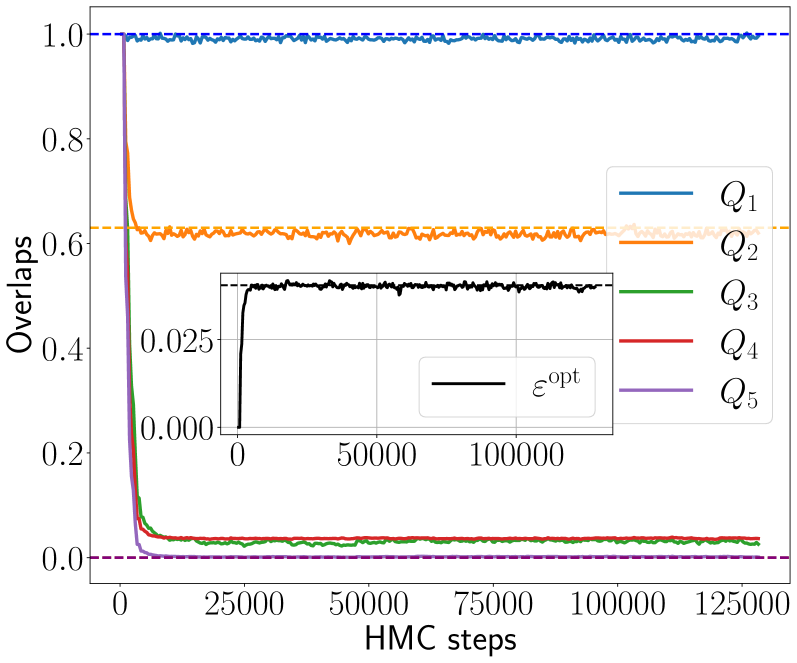

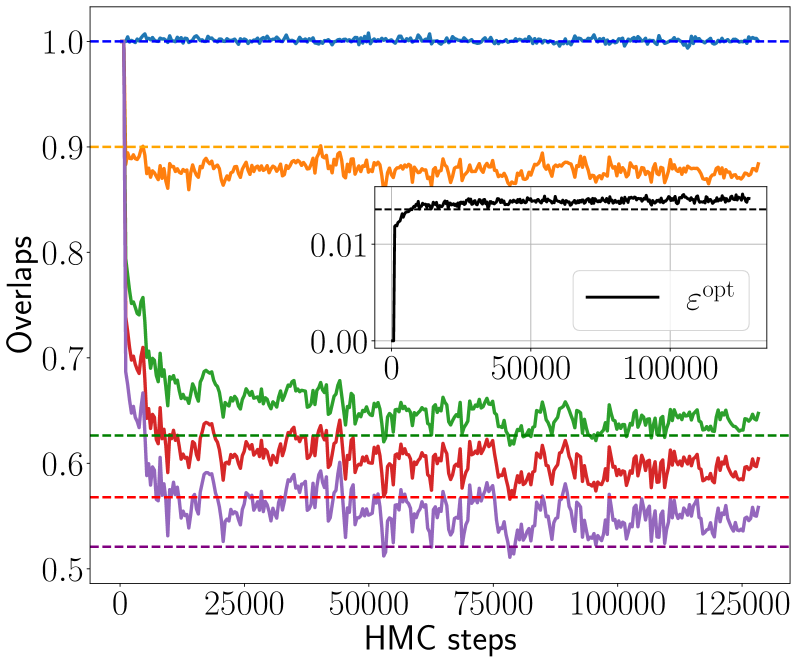

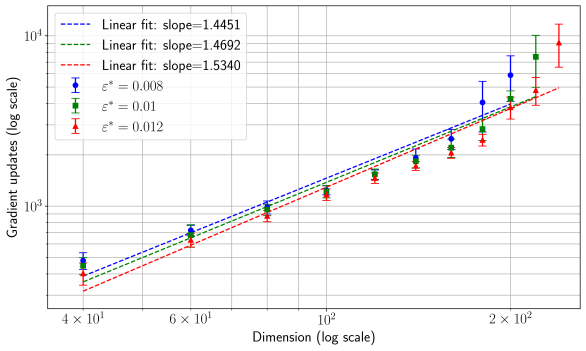

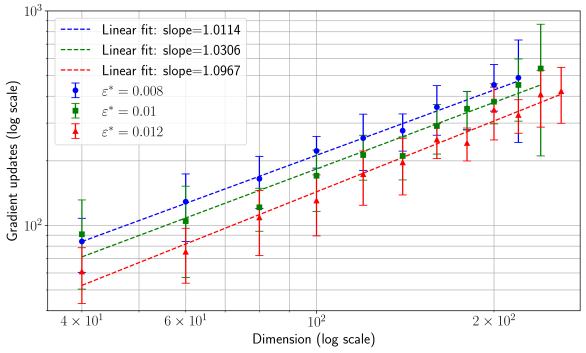

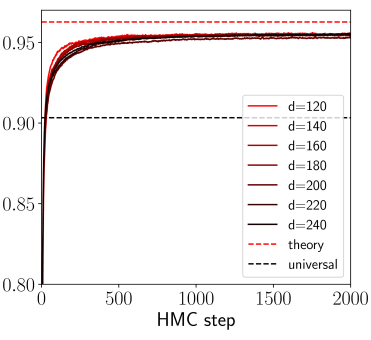

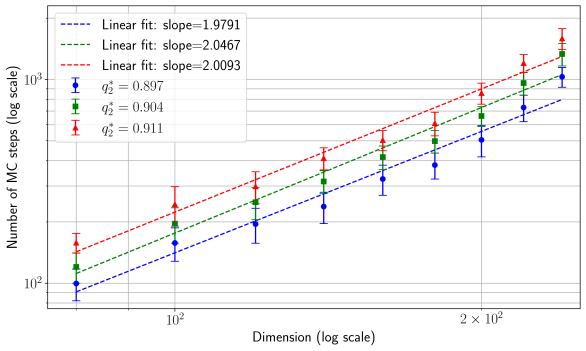

Figure 4: Hamiltonian Monte Carlo dynamics of the overlaps $Q_{\ell}=Q_{\ell}^{01}$ between student and teacher weights for $\ell∈[5]$ , with activation function ReLU(x), $d=200$ , $\gamma=0.5$ , linear readout with $\Delta=0.1$ and two choices of sample rates and readouts: $\alpha=1.0$ with $P_{v}=\delta_{1}$ (Left) and $\alpha=3.0$ with $P_{v}=\mathcal{N}(0,1)$ (Right). The teacher weights ${\mathbf{W}}^{0}$ are Gaussian. The dynamics is initialised informatively, i.e., on ${\mathbf{W}}^{0}$ . The overlap $Q_{1}$ always fluctuates around 1. Left: The overlaps $Q_{\ell}$ for $\ell≥ 3$ at equilibrium converge to 0, while $Q_{2}$ is well estimated by the theory (orange dashed line). Right: At higher sample rate $\alpha$ , also the $Q_{\ell}$ for $\ell≥ 3$ are non zero and agree with their theoretical prediction (dashed lines). Insets show the mean-square generalisation error and the theoretical prediction.

4.2 Simplifying the order parameters

In this section we show how to drastically reduce the number of order parameters to track. Assume for the moment that the readout prior $P_{v}$ has discrete support $\mathsf{V}=\{\mathsf{v}\}$; this can be relaxed by binning a continuous support, as mentioned in Sec. 2.2. The overlaps in (8) can be written as

$$
\textstyle Q_{\ell}^{ab}=\frac{1}{k}\sum_{\mathsf{v},\mathsf{v}^{\prime}\in\mathsf{V}}\mathsf{v}\,\mathsf{v}^{\prime}\sum_{\{i,j\leq k\mid v_{i}=\mathsf{v},v_{j}=\mathsf{v}^{\prime}\}}(\Omega_{ij}^{ab})^{\ell}. \tag{9}
$$
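Equation (9) is an exact regrouping of the double sum in (8) by readout values. A minimal numerical sketch of this identity follows; the function names, the binary readout support $\{-1,+1\}$ and the random Gaussian weights are assumptions chosen for illustration only.

```python
import numpy as np

def overlap_direct(v, omega, ell):
    """Eq. (8): Q_ell = (1/k) * sum_{i,j} v_i v_j (Omega_ij)^ell."""
    k = len(v)
    return v @ (omega ** ell) @ v / k  # ** is the entrywise (Hadamard) power

def overlap_grouped(v, omega, ell):
    """Eq. (9): the same sum, organised by pairs of readout values."""
    k = len(v)
    values = np.unique(v)  # the discrete support of the readouts
    total = 0.0
    for val_a in values:
        rows = np.flatnonzero(v == val_a)
        for val_b in values:
            cols = np.flatnonzero(v == val_b)
            block = omega[np.ix_(rows, cols)]
            total += val_a * val_b * np.sum(block ** ell)
    return total / k

# Hypothetical small instance: two independent Gaussian weight matrices.
rng = np.random.default_rng(0)
d, k = 50, 20
W_a = rng.standard_normal((k, d))
W_b = rng.standard_normal((k, d))
v = rng.choice([-1.0, 1.0], size=k)
omega = W_a @ W_b.T / d  # neuron-neuron overlap matrix Omega^{ab}

for ell in range(1, 6):
    print(ell, overlap_direct(v, omega, ell), overlap_grouped(v, omega, ell))
```

The grouped form is what makes it possible to discard the cross-readout blocks ($\mathsf{v}\neq\mathsf{v}^{\prime}$) separately from the same-readout ones.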

In the following, for $\ell\geq 3$ we discard the terms with $\mathsf{v}\neq\mathsf{v}^{\prime}$ in the above sum, assuming they are suppressed w.r.t. the diagonal ones. In other words, a neuron ${\mathbf{W}}^{a}_{i}$ of a student (replica) with a readout value $v_{i}=\mathsf{v}$ is assumed to possibly align only with neurons of the teacher (or, by Bayes-optimality, of another replica) with the same readout. Moreover, in the resulting sum over the neuron indices $\{i,j\mid v_{i}=v_{j}=\mathsf{v}\}$, we assume that, for each $i$, a single index $j=\pi_{i}$, with $\pi$ a permutation, contributes at leading order. Since the model is symmetric under permutations of hidden neurons, we take $\pi$ to be the identity without loss of generality.

We now assume that for Hadamard powers $\ell\geq 3$, the off-diagonal part of the overlap $({\bm{\Omega}}^{ab})^{\circ\ell}$, obtained from typical weight matrices sampled from the posterior, is small enough that the matrix can be treated as diagonal in any quadratic form. Moreover, by exchangeability among neurons with the same readout value, we further assume that all diagonal elements $\{\Omega_{ii}^{ab}\mid i\in\mathcal{I}_{\mathsf{v}}\}$ concentrate onto the constant $\mathcal{Q}_{W}^{ab}(\mathsf{v})$, where $\mathcal{I}_{\mathsf{v}}:=\{i\leq k\mid v_{i}=\mathsf{v}\}$:

$$
\textstyle(\Omega_{ij}^{ab})^{\ell}=(\frac{1}{d}{\mathbf{W}}_{i}^{a\intercal}{\mathbf{W}}^{b}_{j})^{\ell}\approx\delta_{ij}\mathcal{Q}_{W}^{ab}(\mathsf{v})^{\ell} \tag{10}
$$
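The scaling intuition behind (10) can be illustrated on a simplified case. The sketch below takes a single Gaussian weight matrix compared with itself (so $\Omega_{ii}\approx 1$), with all readouts equal to one and $k=\gamma d$; these choices, the sizes and the function name `overlap_split` are assumptions made for illustration. It uses prior samples rather than posterior samples, so it only shows why off-diagonal entries of order $d^{-1/2}$ stop mattering once raised to a Hadamard power $\ell\geq 3$, not the ansatz itself.

```python
import numpy as np

def overlap_split(v, omega, ell):
    """Split Q_ell = (1/k) sum_ij v_i v_j Omega_ij^ell into its
    diagonal (i = j) and off-diagonal (i != j) contributions."""
    k = len(v)
    powered = omega ** ell  # Hadamard power
    diag_part = np.sum(v ** 2 * np.diag(powered)) / k
    full = v @ powered @ v / k
    return diag_part, full - diag_part

# Hypothetical sizes: hidden width proportional to the input dimension.
rng = np.random.default_rng(0)
d, gamma = 400, 0.5
k = int(gamma * d)
W = rng.standard_normal((k, d))
v = np.ones(k)        # readouts all equal to one
omega = W @ W.T / d   # diagonal entries near 1, off-diagonal of order d**-0.5

for ell in range(1, 6):
    diag_part, off_part = overlap_split(v, omega, ell)
    print(f"ell={ell}: diagonal={diag_part:.4f}  off-diagonal={off_part:.4f}")
```

For $\ell=2$ the off-diagonal contribution stays of order $\gamma$, which is consistent with the second overlap being treated separately, while for $\ell\geq 3$ it decays with $d$.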