# WorldGym: World Model as An Environment for Policy Evaluation

**Authors**:

- Percy Liang Sherry Yang (Stanford University NYU Google DeepMind)

Abstract

Evaluating robot control policies is difficult: real-world testing is costly, and handcrafted simulators require manual effort to improve in realism and generality. We propose a world-model-based policy evaluation environment (WorldGym), an autoregressive, action-conditioned video generation model which serves as a proxy for real-world environments. Policies are evaluated via Monte Carlo rollouts in the world model, with a vision-language model providing rewards. We evaluate a set of VLA-based real-robot policies in the world model using only initial frames from real robots, and show that policy success rates within the world model highly correlate with real-world success rates. Moreover, we show that WorldGym is able to preserve relative policy rankings across different policy versions, sizes, and training checkpoints. Because it requires only a single start frame as input, the world model further enables efficient evaluation of robot policies’ generalization ability on novel tasks and environments. We find that modern VLA-based robot policies still struggle to distinguish object shapes and can become distracted by adversarial facades of objects. While generating highly realistic object interaction remains challenging, WorldGym faithfully emulates robot motions and offers a practical starting point for safe and reproducible policy evaluation before deployment. See videos and code at https://world-model-eval.github.io

1 Introduction

Robots can help humans in ways that range from home robots performing chores (Shafiullah et al., 2023; Liu et al., 2024) to hospital robots taking care of patients (Soljacic et al., 2024). One of the major roadblocks in the development of robots lies in evaluation: how can we ensure that these robots will work reliably without causing any physical damage when deployed in the real world? Traditionally, people have used handcrafted software simulators to develop and evaluate robot control policies (Tedrake et al., 2019; Todorov et al., 2012; Erez et al., 2015). However, handcrafted simulation based on our understanding of the physical world can be limited, especially when it comes to hardcoding complex dynamics with high degrees of freedom or complex interactions such as manipulating soft objects (Sünderhauf et al., 2018; Afzal et al., 2020; Choi et al., 2021). As a result, the sim-to-real gap has hindered progress in robotics (Zhao et al., 2020; Salvato et al., 2021; Dulac-Arnold et al., 2019).

With the development of generative models trained on large-scale video data (Ho et al., 2022; Villegas et al., 2022; Singer et al., 2022), recent work has shown that video world models can visually emulate interactions with the physical world by conditioning on control inputs in the form of text (Yang et al., 2023; Brooks et al., 2024) or keyboard strokes (Bruce et al., 2024). This raises an interesting question: could video world models emulate robot interactions with the real world, and thus serve as an environment for evaluating robot policies before real-world testing or deployment?

Learning a dynamics model from past experience and performing rollouts in the learned dynamics model has been extensively studied in model-based reinforcement learning (RL) (Hafner et al., 2019; Fonteneau et al., 2013; Zhang et al., 2021; Kaiser et al., 2019; Yu et al., 2020). However, most existing work in model-based RL considers single-task settings, which puts it at a disadvantage compared to model-free RL, since learning a dynamics model can be much harder than learning a policy in the single-task setting. Nevertheless, we make the important observation that

- While there can be many tasks and policies, there is only one physical world in which we live that is governed by the same set of physical laws.

This makes it possible to learn a single world model that, in principle, can be used as an interactive environment to evaluate any policies on any tasks.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: Robot Task Execution System with World Model and VLM Reward

### Overview

This diagram illustrates a robotic task execution system that integrates a world model, policy execution, and vision-language model (VLM) reward evaluation. The system processes initial frames, language instructions, and out-of-distribution (OOD) inputs to generate and evaluate robotic actions.

### Components/Axes

1. **Left Panel: Initial Inputs**

- **Initial Frame and Language Instruction**: Contains two scenarios:

- *Evaluation Dataset Example*: "Put the eggplant in the pot" (correct instruction)

- *OOD Image Input*: Modified image with additional objects (red border)

- *OOD Language Instruction*: Modified instruction "Put the eggplant in the drying rack" (red border)

- **Key Elements**: Robot arm, sink environment, objects (eggplant, pot, drying rack)

2. **Central Panel: World Model and Policies**

- **World Model**: Central processing unit receiving sequential observations (o₁, o₂, o₃)

- **Policy Blocks**: Three identical policy modules processing observations (o₁→o₃) and outputting actions (oθ)

- **Flow**: Observations feed into world model → policies → world model (recurrent loop)

3. **Right Panel: VLM Reward**

- **VLM as Reward**: Hexagonal symbol representing vision-language model

- **Output**: Reward value (R̂) derived from policy evaluation

### Detailed Analysis

- **Initial Inputs**:

- Correct instruction: "Put the eggplant in the pot" (yellow box)

- OOD variations:

- Image: Additional objects (red border)

- Language: "Put the eggplant in the drying rack" (red border)

- **World Model**:

- Processes sequential observations (o₁→o₃) showing robot arm movement

- Maintains internal state (g) representing environment dynamics

- **Policy Execution**:

- Three identical policy modules process different observation states

- Outputs action sequences (oθ) for robotic execution

- **VLM Reward System**:

- Evaluates policy outputs using vision-language model

- Generates scalar reward (R̂) for action quality assessment

### Key Observations

1. **OOD Handling**: Red borders highlight system's ability to process instruction/image mismatches

2. **Recurrent Architecture**: World model maintains state between policy executions

3. **Modular Design**: Separate policy blocks suggest parallel processing capability

4. **Reward Integration**: VLM directly influences policy evaluation without explicit training signals

### Interpretation

This system demonstrates a closed-loop robotic control architecture where:

1. **World Model** serves as both environment simulator and memory

2. **Policies** generate actions based on current observations and historical context

3. **VLM Reward** provides real-time evaluation of action quality through vision-language understanding

4. **OOD Robustness**: The system explicitly handles instruction-image mismatches through separate OOD input channels

The architecture suggests a hierarchical approach where:

- Low-level policies execute basic actions

- World model maintains high-level context

- VLM provides semantic evaluation of action-instruction alignment

- Recurrent connections enable continuous learning from execution outcomes

The use of identical policy blocks implies transfer learning capabilities across different observation states, while the VLM reward system enables value-based policy selection without explicit reward shaping.

</details>

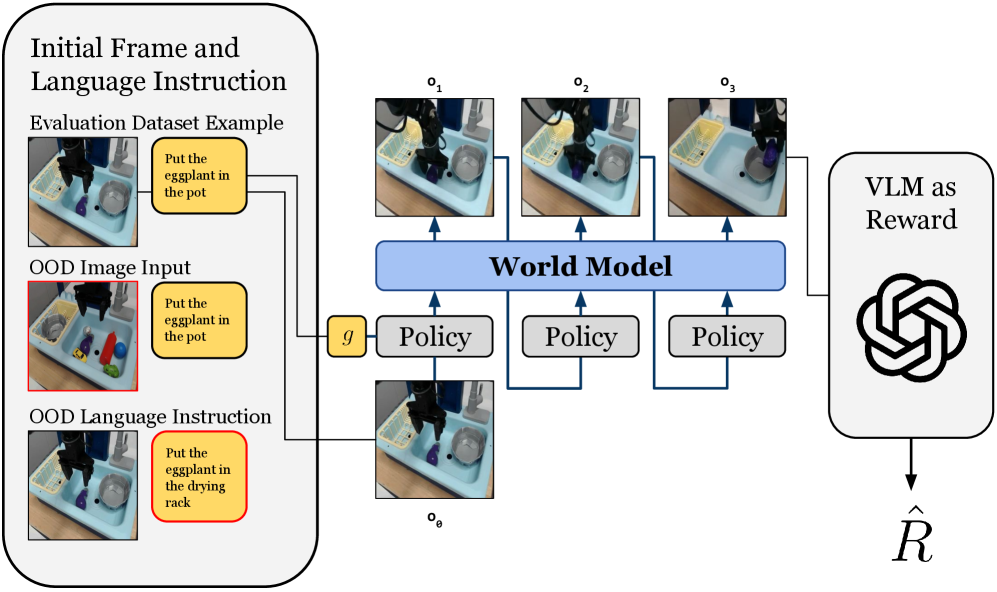

Figure 1: Overview of WorldGym. Given an initial frame and an action sequence predicted by a policy, WorldGym uses a world model to interactively predict future frames, serving as a generative simulator. WorldGym then passes the generated rollout to a VLM which provides rewards. WorldGym can easily be used to test policies on OOD tasks and environments by changing the input language instruction or directly modifying the initial image.

Inspired by this observation, we propose a world-model-based policy evaluation environment (WorldGym), as shown in Figure 1. WorldGym first combines knowledge of the world across diverse environments by learning a single world model that generates videos conditioned on actions. To enable efficient rollouts of policies which predict different-length action chunks, WorldGym aligns its diffusion horizon length with policies’ chunk sizes at inference time. With video rollouts from the world model, WorldGym then uses a vision-language model (VLM) to determine tasks’ success from generated videos.

Our experiments show that WorldGym can effectively emulate end-effector controls across different control axes for robots with different morphologies. We then use the world model to evaluate VLA-based robot policies by rolling out the policies in the world model starting from real initial frames, and compare their success rates (policy values) in WorldGym to those achieved in real-world experiments. Our results suggest that policy values in WorldGym are highly correlated with policy performance in the real world, and that the relative rankings of different policies are preserved.

Furthermore, as WorldGym requires only a single initial frame as input, we show how we can easily design out-of-distribution (OOD) tasks and environments and then use WorldGym to evaluate robot policies within these newly “created” environments. We find that modern robot policies still struggle to distinguish some classes of objects by their shape, and can even be distracted by adversarial facades of objects.

Although simulating realistic object interactions remains challenging, we believe WorldGym can serve as a highly useful tool for sanity-checking and testing robot policies safely and reproducibly before deploying them on real robots. Key contributions of this paper include:

- We propose using a video world model to evaluate robot policies across different robot morphologies, and perform a comprehensive set of studies to understand its feasibility.

- We propose flexibly aligning diffusion horizon length with policies’ action chunk sizes for efficient rollouts of a variety of policies over hundreds of interactive steps.

- We show a single world model learned on data from diverse tasks and environments can enable policy value estimates that highly correlate with real-world policy success rates.

- We demonstrate the ease of testing robot policies on OOD tasks and environments within an autoregressive video generation-based world model.

2 Problem Formulation

In this section, we define relevant notations and review the formulation of offline policy evaluation (OPE). We also situate OPE in practical settings with partially observable environments and image-based observations.

Multi-Task POMDP.

We consider a multi-task, finite-horizon, partially observable Markov Decision Process (POMDP) (Puterman, 2014; Kaelbling et al., 1995), specified by $\mathcal{M}=(S,A,O,G,R,T,\mathcal{E},H)$, which consists of a state space, action space, observation space, goal space, reward function, transition function, emission function, and horizon length. A policy $\pi$ interacts with the environment to pursue a goal $g$ starting from an initial state $s_{0}$, with $g,s_{0}\sim G$, producing a distribution $\pi(\cdot|s_{t},g)$ over $A$ from which an action $a_{t}$ is sampled and applied to the environment at each step $t\in[0,H]$. The environment produces a scalar reward $r_{t}=R(s_{t},g)$, transitions to a new state $s_{t+1}\sim T(s_{t},a_{t})$, and emits a new observation $o_{t+1}\sim\mathcal{E}(s_{t+1})$. We consider the sparse reward setting with $R(s_{H},g)\in\{0,1\}$ and $R(s_{t},g)=0,\ \forall t<H$, where $g$ is a language goal that defines the task. Data is logged from previous interactions into an offline dataset $D=\{g,s_{0},o_{0},a_{0},\ldots,s_{H},o_{H},r_{H}\}$. The value of a policy $\pi$ can be defined as the total expected future reward:

$$
\rho(\pi)=\mathbb{E}\left[R(s_{H},g)\,\middle|\,s_{0},g\sim G,\ a_{t}\sim\pi(s_{t},g),\ s_{t+1}\sim T(s_{t},a_{t}),\ \forall t\in[0,H]\right]. \tag{1}
$$

Estimating the value of $\rho(\pi)$ from previously collected data $D$ , known as offline policy evaluation (OPE) (Levine et al., 2020), has been extensively studied (Thomas & Brunskill, 2016; Jiang & Li, 2016; Fu et al., 2021; Yang et al., 2020; Thomas et al., 2015b). However, existing work in OPE mostly focuses on simulated settings that are less practical (e.g., assumptions about full observability, access to ground truth states).

Model-Based Evaluation.

Motivated by characteristics of a real-robot system such as image-based observations, high control frequencies, diverse offline data from different tasks/environments, and the lack of access to the ground truth state of the world, we consider the use of offline data to learn a single world model $\hat{T}(\cdot|\mathbf{o},\mathbf{a})$, where $\mathbf{o}$ represents a sequence of previous image observations and $\mathbf{a}$ represents a sequence of next actions. A sequence of next observations can be sampled from the world model $\mathbf{o^{\prime}}\sim\hat{T}(\mathbf{o},\mathbf{a})$. With this world model, one can estimate the policy value $\rho(\pi)$ with Monte-Carlo sampling using stochastic rollouts from the policy and the world model:

$$
\hat{\rho}(\pi)=\mathbb{E}\left[\hat{R}([o_{0},\ldots,o_{H}],g)\,\middle|\,s_{0},g\sim G,\ \mathbf{a}\sim\pi(\mathbf{o},g),\ \mathbf{o^{\prime}}\sim\hat{T}(\mathbf{o},\mathbf{a}),\ \mathbf{o}=\mathbf{o^{\prime}}\right], \tag{2}
$$

where $\hat{R}$ is a learned reward function. Previously, model-free policy evaluation has often been preferable, since in a single-task setting dynamics models are potentially harder to learn than policy values themselves, and rollouts in a dynamics model may lead to compounding errors (Xiao et al., 2019). However, we make the key observation that while there can be many tasks and many policies, there is only one physical world, governed by the same set of physical laws. As a result, learning a world model can benefit from diverse data from different tasks and environments with different state spaces, goals, and reward functions. More importantly, a world model can be directly trained on image-based observations, which is often the perception modality of real-world robots.
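The estimator in Equation 2 can be read as a simple averaging loop. The following is a minimal sketch under stated assumptions, not the authors' implementation: `estimate_policy_value`, the toy policy, the toy world model, and the toy reward are all hypothetical stand-ins, observations are scalars rather than images, and the toy components are deterministic so the result is easy to check by hand.

```python
from typing import Callable, List, Sequence, Tuple

Observation = float  # stand-in for an image frame (assumption for this toy)
Action = float       # stand-in for a robot action vector (assumption)


def estimate_policy_value(
    policy: Callable[[Sequence[Observation], str], List[Action]],
    world_model: Callable[[Sequence[Observation], List[Action]], List[Observation]],
    reward_fn: Callable[[Sequence[Observation], str], float],
    initial_conditions: Sequence[Tuple[Observation, str]],
    horizon: int,
) -> float:
    """Average the learned reward over rollouts of `policy` inside `world_model`."""
    returns = []
    for first_frame, goal in initial_conditions:
        frames = [first_frame]
        while len(frames) - 1 < horizon:
            remaining = horizon - (len(frames) - 1)
            # The policy sees the frames so far and proposes a chunk of actions.
            actions = policy(frames, goal)[:remaining]
            if not actions:
                break
            # The world model returns one predicted frame per action.
            frames.extend(world_model(frames, actions))
        # Sparse reward: judged once, on the whole rollout.
        returns.append(reward_fn(frames, goal))
    return sum(returns) / len(returns)


def toy_policy(frames: Sequence[Observation], goal: str) -> List[Action]:
    step = 1.0 if goal == "right" else -1.0
    return [step, step]  # action chunk of size 2


def toy_world_model(frames: Sequence[Observation], actions: List[Action]) -> List[Observation]:
    predicted, current = [], frames[-1]
    for action in actions:
        current = current + action
        predicted.append(current)
    return predicted


def toy_reward(frames: Sequence[Observation], goal: str) -> float:
    return 1.0 if frames[-1] >= 3.0 else 0.0


value = estimate_policy_value(
    toy_policy,
    toy_world_model,
    toy_reward,
    initial_conditions=[(0.0, "right"), (0.0, "left")],
    horizon=5,
)
print(value)
```

In this toy setup one of the two rollouts ends past the success threshold, so the estimated value is the fraction of successful rollouts.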

3 Building and Evaluating the World Model

In this section, we first describe our implementation of world model training and inference. Then, we discuss how we validate our world model’s performance prior to rolling out real robot policies within it in the next section.

3.1 Building the World Model

First, we describe the architecture and key implementation details, followed by our proposed inference scheme for policy rollouts.

3.1.1 World Model Training

We train a latent Diffusion Transformer (Peebles & Xie, 2023) on sequences of frames paired with actions, using Diffusion Forcing (Chen et al., 2024) to enable autoregressive frame generation. Per-frame robot action vectors are linearly projected to the model dimension and added elementwise to diffusion timestep embeddings, the result of which is used to condition the model through AdaLN-Zero modulation, similar to class conditioning in Peebles & Xie (2023). To ensure the world model is fully controllable by robot actions, we propose to randomly drop out actions for entire video clips, and use classifier-free guidance to improve the world model’s adherence to action inputs. Conditioning on previous frames’ latents is achieved via causal temporal attention blocks interleaved between spatial attention blocks, as in Bruce et al. (2024); Ma et al. (2025). See Appendix A for additional implementation details.
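As a rough illustration of the action dropout and classifier-free guidance described above, the sketch below shows the two pieces in isolation. It is an assumption-laden toy rather than the paper's code: the function names, the use of `None` as the "no action" condition, and the guidance scale values are hypothetical, and the guidance formula is the commonly used form $\text{uncond} + w\,(\text{cond} - \text{uncond})$.

```python
import random
from typing import List, Optional


def maybe_drop_actions(
    clip_actions: List[List[float]], drop_prob: float, rng: random.Random
) -> Optional[List[List[float]]]:
    """Training-time dropout: with probability `drop_prob`, drop the actions
    for the entire clip (returned as None, a hypothetical null condition)."""
    return None if rng.random() < drop_prob else clip_actions


def classifier_free_guidance(
    cond_pred: List[float], uncond_pred: List[float], guidance_scale: float
) -> List[float]:
    """Inference-time guidance: push the prediction away from the
    action-free output and toward the action-conditioned output."""
    return [u + guidance_scale * (c - u) for c, u in zip(cond_pred, uncond_pred)]


rng = random.Random(0)
clip = [[0.1, 0.0, 0.0], [0.0, 0.2, 0.0]]
always_dropped = maybe_drop_actions(clip, drop_prob=1.0, rng=rng)
never_dropped = maybe_drop_actions(clip, drop_prob=0.0, rng=rng)
guided = classifier_free_guidance([1.0, 2.0], [0.0, 0.0], guidance_scale=2.0)
```

With a guidance scale of 1 the guided output equals the action-conditioned prediction; larger scales weight the action signal more strongly.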

<details>

<summary>x2.png Details</summary>

### Visual Description

## Image Comparison: Ground-Truth vs. Generated Video Frames

### Overview

The image presents a side-by-side comparison of **ground-truth video frames** (actual robotic actions) and **generated video frames** (simulated or reconstructed actions) across four distinct robotic manipulation tasks. Each task is labeled with a descriptive title, and the frames are arranged in two rows: the top row shows ground-truth, while the bottom row shows generated results.

---

### Components/Axes

1. **Task Labels**:

- **Top-Left**: "Object Manipulation (Blue Towel)"

- **Top-Right**: "Object Arrangement (Stacked Items)"

- **Bottom-Left**: "Object Interaction (Drawer and Bottle)"

- **Bottom-Right**: "Object Relocation (Blue Bowl)"

2. **Frame Structure**:

- Each task contains **4 frames** (temporal sequence).

- Frames are labeled implicitly by their position in the sequence (left to right).

3. **Visual Elements**:

- **Ground-truth**: High-resolution, realistic robotic actions.

- **Generated**: Simulated actions with noticeable discrepancies in object placement, motion, and interaction fidelity.

---

### Detailed Analysis

#### 1. Object Manipulation (Blue Towel)

- **Ground-truth**:

- A robotic arm grasps a blue towel on a wooden surface, lifts it, and folds it neatly.

- Towel remains upright during manipulation.

- **Generated**:

- Towel appears slightly misaligned in the final frame (tilted or partially unfolded).

- Arm trajectory deviates slightly from ground-truth, suggesting motion planning inaccuracies.

#### 2. Object Arrangement (Stacked Items)

- **Ground-truth**:

- Items (e.g., cans, plates) are stacked symmetrically on a dark surface.

- Robotic arm adjusts positions with precision.

- **Generated**:

- Items are misaligned (e.g., cans tilted, plates uneven).

- Arm fails to maintain consistent spacing between objects.

#### 3. Object Interaction (Drawer and Bottle)

- **Ground-truth**:

- Arm opens a wooden drawer, places a black bottle inside, and closes it.

- Drawer opens fully, bottle is centered.

- **Generated**:

- Drawer opens only partially; bottle is misplaced (off-center or tilted).

- Arm motion is jerky compared to smooth ground-truth movement.

#### 4. Object Relocation (Blue Bowl)

- **Ground-truth**:

- Arm lifts a blue bowl from a table and places it on a secondary surface.

- Bowl remains upright throughout.

- **Generated**:

- Bowl is dropped or misplaced in the final frame (e.g., tilted or on the wrong surface).

- Arm trajectory diverges significantly from ground-truth.

---

### Key Observations

1. **Task Complexity Correlation**:

- Simpler tasks (e.g., towel folding) show smaller discrepancies than complex ones (e.g., drawer interaction).

2. **Motion Fidelity**:

- Generated frames exhibit unnatural arm movements (e.g., jerky motions, incorrect trajectories).

3. **Object Placement Errors**:

- Final frames often show objects in incorrect orientations or positions (e.g., tilted bowls, misaligned stacks).

---

### Interpretation

The image highlights limitations in generated video reconstruction for robotic tasks. Discrepancies suggest challenges in:

- **Physics Simulation**: Generated frames fail to replicate realistic object dynamics (e.g., bowl dropping).

- **Motion Planning**: Arm trajectories deviate from ground-truth, indicating potential issues in inverse kinematics or sensor feedback.

- **Task-Specific Accuracy**: Complex interactions (e.g., drawer opening) are more error-prone, possibly due to higher degrees of freedom or occlusions.

These findings underscore the need for improved simulation models to bridge the gap between ground-truth and generated data in robotics training and testing.

</details>

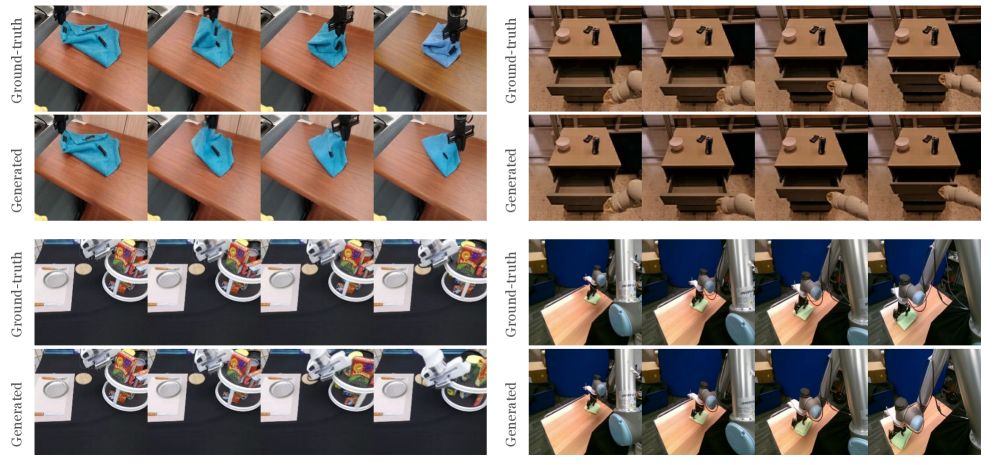

Figure 2: Qualitative evaluation of the world model on Bridge, RT-1, VIOLA, and Berkeley UR5. In each group, top row shows the ground truth video from the real robot. Bottom row shows the generated video from the world model conditioned on the same actions as the original video. The world model closely follows the true dynamics across different robot morphologies.

3.1.2 Rolling Out a Policy in the World Model

Our policy evaluation pipeline operates through an iterative loop between the robot policy and the world model. First, the world model is initialized with an initial observation $o_{0}$ , which is then passed as input to a policy $\pi$ which produces a chunk of actions $\mathbf{a}_{\text{pred}}$ . The actions are passed back to the world model, which predicts a new frame for each action in $\mathbf{a}_{\text{pred}}$ . The latest frame produced by the world model is then returned to the policy as its next input observation.

Since different robot policies output a different number of actions at once (Kim et al., ; Brohan et al., 2022; Chi et al., 2023), WorldGym needs to support efficient prediction of a chunk of video frames conditioned on a (variable-length) chunk of actions. Because the model is trained with Diffusion Forcing and uses a causal temporal attention mask, we can flexibly control how many frames our world model denoises in parallel at inference time, i.e., its prediction horizon length. We propose setting the horizon equal to the policy’s action chunk size, $|\mathbf{a}_{\text{pred}}|$. This has the benefit of efficient frame generation for policies with differing action chunk sizes, all from a single world model checkpoint. This contrasts with prior diffusion world models for robotics, such as Cosmos (NVIDIA et al., 2025), which, due to being trained with bidirectional attention and a fixed context length, must always denoise 16 latent frames in parallel. This constraint results in wasted compute for action chunk sizes smaller than the context length and unrealized parallelism for chunk sizes that are larger. In contrast, our design allows parallelism to flexibly match the number of actions, thus utilizing hardware more effectively (see Appendix F.2).
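The compute argument above can be made concrete with a small back-of-the-envelope calculation. This sketch is a simplification under explicit assumptions: it counts one latent frame per action (ignoring any temporal compression), treats each parallel denoising call as one "pass", and uses hypothetical function names.

```python
import math
from typing import Tuple


def fixed_horizon_cost(chunk_size: int, fixed_horizon: int = 16) -> Tuple[int, int]:
    """Return (denoising passes, unused frames) for one policy step when the
    world model must always denoise `fixed_horizon` frames in parallel."""
    passes = math.ceil(chunk_size / fixed_horizon)
    unused_frames = passes * fixed_horizon - chunk_size
    return passes, unused_frames


def matched_horizon_cost(chunk_size: int) -> Tuple[int, int]:
    """Horizon set equal to the chunk size: one pass, no unused frames."""
    return 1, 0


for chunk in (1, 4, 32):
    print(chunk, fixed_horizon_cost(chunk), matched_horizon_cost(chunk))
```

Under these assumptions, a policy that emits one action per step leaves 15 of 16 denoised frames unused with a fixed horizon, while a chunk of 32 actions needs two sequential passes instead of one.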

3.1.3 VLM as Reward

We opt for GPT-4o (OpenAI et al., 2024) as a reward model, passing in the sequence of frames from the generated rollout and the language instruction (see the prompt for the VLM in Appendix B). In certain cases where both policies being evaluated fail to perform a task end-to-end, it is still helpful to get signals on which policy is closer to completing a task. We can specify these partial credit criteria to the VLM to further distinguish performance between different policies, which has been done manually using heuristics in prior work (Kim et al., ). We validate the accuracy of VLM-predicted rewards in Appendix B.2.
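To illustrate how a VLM reply might be turned into a scalar reward with optional partial credit, here is a minimal sketch. Everything in it is hypothetical: the prompt wording is not the prompt from Appendix B, `query_vlm` is a placeholder callable standing in for whatever client sends frames and text to the VLM, and the numeric reply format is an assumption made for this example.

```python
from typing import Callable, Optional, Sequence


def build_reward_prompt(instruction: str, partial_credit: Optional[str] = None) -> str:
    """Assemble a (hypothetical) text prompt for the reward VLM."""
    lines = [
        "You are shown frames from a robot rollout.",
        f"Task instruction: {instruction}",
        "Reply with a single number: 1 if the task was completed, 0 otherwise.",
    ]
    if partial_credit:
        lines.append(f"Partial credit criteria: {partial_credit}")
    return "\n".join(lines)


def vlm_reward(
    frames: Sequence[object],
    instruction: str,
    query_vlm: Callable[[str, Sequence[object]], str],
    partial_credit: Optional[str] = None,
) -> float:
    """Query the VLM and parse its reply into a reward in [0, 1]."""
    reply = query_vlm(build_reward_prompt(instruction, partial_credit), frames)
    try:
        score = float(reply.strip())
    except ValueError:
        return 0.0  # unparseable replies count as failure in this sketch
    return min(1.0, max(0.0, score))


# Stub VLM for demonstration only; a real client would go here.
full_success = vlm_reward(["frame0"], "Put the eggplant in the pot", lambda p, f: " 1\n")
half_credit = vlm_reward(
    ["frame0"],
    "Put the eggplant in the pot",
    lambda p, f: "0.5",
    partial_credit="Reply 0.5 if the eggplant is grasped but not placed.",
)
```

Passing the VLM client in as a callable keeps the parsing logic testable without network access.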

3.2 Evaluating the World Model

Next, we describe how we validate the performance of our world model prior to policy evaluation, ensuring that it exhibits realistic robot movement and adheres to arbitrary action controls.

<details>

<summary>x3.png Details</summary>

### Visual Description

## Diagram: Robotic Arm Movement and Gripper Control

### Overview

The image depicts a robotic arm performing controlled movements across four distinct operational modes: Gripper, X Sweep, Y Sweep, and Z Sweep. Each mode is represented by a 2x2 grid of frames showing sequential actions, with directional indicators and labels embedded in the visuals.

### Components/Axes

1. **Gripper Section**

- Labels: "close" (left frame), "open" (right frame)

- Positioning: Top-left quadrant, centered above the gripper mechanism.

- Spatial grounding: Text is horizontally aligned with the gripper's jaw position.

2. **X Sweep Section**

- Arrows: Right arrow (→) in the first frame, left arrow (←) in the second frame.

- Positioning: Bottom-left quadrant, aligned with the robotic arm's horizontal axis.

3. **Y Sweep Section**

- Arrows: Upward arrow (↑) in the first frame, downward arrow (↓) in the second frame.

- Positioning: Top-right quadrant, aligned with the vertical axis of the workspace.

4. **Z Sweep Section**

- Arrows: Downward arrow (↓) in the first frame, upward arrow (↑) in the second frame.

- Positioning: Bottom-right quadrant, aligned with the vertical axis of the robotic arm's base.

### Detailed Analysis

- **Gripper**: Explicitly labeled "close" and "open" in the top-left quadrant, indicating the robot's ability to manipulate objects via jaw movement.

- **X Sweep**: Horizontal movement (left/right) is denoted by bidirectional arrows in the bottom-left quadrant.

- **Y Sweep**: Vertical movement (up/down) is shown in the top-right quadrant with opposing arrows.

- **Z Sweep**: Vertical movement (down/up) is depicted in the bottom-right quadrant, suggesting multi-axis coordination.

### Key Observations

- The diagram emphasizes **bidirectional control** for all axes (X, Y, Z) and gripper functionality.

- Arrows are consistently placed in the first frame of each section, with opposing directions in the second frame.

- No numerical data or quantitative metrics are present; the focus is on qualitative movement directions.

### Interpretation

This diagram illustrates the **operational range and control logic** of a robotic arm in a 3D workspace. The labeled movements (X, Y, Z sweeps) and gripper actions suggest a system designed for precise object manipulation, likely for tasks like assembly, sorting, or material handling. The absence of numerical data implies the diagram prioritizes **functional demonstration** over quantitative performance metrics. The bidirectional arrows indicate the robot's ability to reverse motion, critical for dynamic task execution.

</details>


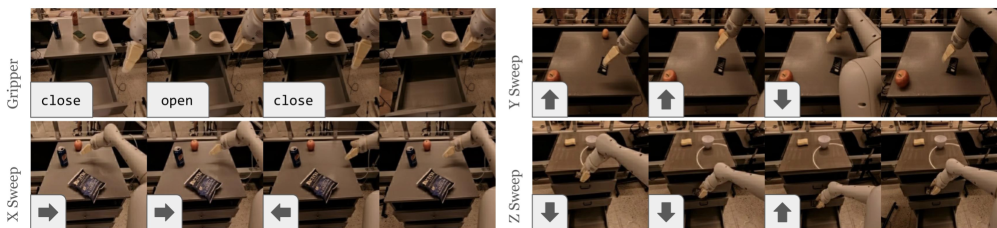

Figure 3: Results on end-effector control across action dimensions. Generated videos closely follow the controls, such as opening and closing the gripper and moving in different directions, starting from any initial observation frame. Results for control sweeps on the Bridge robot can be found in Figure 16 in Appendix E.1.

3.2.1 Agreement with Validation Split

First, we test the world model’s ability to generate videos similar to those obtained by running a robot in the real world. Specifically, we take the validation split of initial images from the Open-X Embodiment dataset, and predict videos conditioned on the same action sequences as in the original data. Figure 2 shows that the generated rollouts generally follow the real-robot rollouts across different initial observations and different robot morphologies.

3.2.2 End-Effector Control Sweeps

Next, we need a way to evaluate whether our world model can emulate arbitrary action sequences, beyond the kinds of action sequences present in the training data. We propose hard-coding a robot control policy that moves only one action dimension at a time (keeping the other action dimensions at zero). The robot is then expected to move along the one action dimension with non-zero input, corresponding to moving in different horizontal and vertical directions as well as opening and closing its gripper. Figure 3 shows that the generated videos faithfully follow the intended end-effector movement, despite the fact that these particular sequences of controls are not present in the training data. Results are best viewed as videos in the supplementary material.
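A hard-coded single-axis sweep of this kind can be generated in a few lines. The sketch below is illustrative only: the 7-dimensional action layout (x, y, z, roll, pitch, yaw, gripper), the step magnitude, and the number of steps are assumptions, not values taken from the paper.

```python
from typing import List


def single_axis_sweep(
    action_dim: int, axis: int, magnitude: float, steps_each_way: int
) -> List[List[float]]:
    """Build an action sequence that moves along one action dimension in the
    positive direction, then the negative direction, with all others at zero."""
    actions = []
    for sign in (1.0, -1.0):
        for _ in range(steps_each_way):
            action = [0.0] * action_dim
            action[axis] = sign * magnitude
            actions.append(action)
    return actions


# Hypothetical layout: index 0 is the x translation of the end effector.
x_sweep = single_axis_sweep(action_dim=7, axis=0, magnitude=0.02, steps_each_way=3)
```

Feeding such a sequence to the world model from an arbitrary initial frame gives a quick visual check that each action dimension moves the arm the way it should.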

4 Evaluating Policies in WorldGym

Having established confidence in the world model’s performance, we now use the world model to evaluate policies. We begin by rolling out three recent VLA policies in WorldGym and checking whether WorldGym reflects real-world success (Section 4.1). We then assess whether relative policy performance is preserved, comparing different versions, sizes, and training stages of the same models (Section 4.2). Finally, we explore WorldGym’s potential to test policies on out-of-distribution (OOD) tasks and environments (Section 4.3), including novel instructions and altered visual contexts.

4.1 Correlation between Real-World and Simulated Policy Performance

<details>

<summary>x4.png Details</summary>

### Visual Description

## Scatter Plot: Per-Task Success Rates: Real World vs World Model

### Overview

The image is a scatter plot comparing real-world success rates (x-axis) to world model success rates (y-axis) across three AI systems: RT-1-X, Octo, and OpenVLA. A dashed trend line (labeled "Fit") shows a strong positive correlation (r = 0.78, p < 0.001), indicating that higher real-world success rates generally correspond to higher model success rates.

### Components/Axes

- **X-axis**: Real World Success Rate (%)

- Scale: 0% (left) to 100% (right)

- Labels: Discrete ticks at 0, 20, 40, 60, 80, 100

- **Y-axis**: World Model Success Rate (%)

- Scale: 0% (bottom) to 100% (top)

- Labels: Discrete ticks at 0, 20, 40, 60, 80, 100

- **Legend**: Located in the bottom-right corner

- RT-1-X: Blue circles

- Octo: Orange squares

- OpenVLA: Red triangles

- **Trend Line**: Dashed black line labeled "Fit"

- Equation: Not explicitly provided

- Correlation: r = 0.78 (strong positive relationship)

- Significance: p < 0.001 (statistically significant)

### Detailed Analysis

1. **RT-1-X (Blue Circles)**

- **Positioning**: Clustered in the lower-left quadrant (real-world success: 0–40%, model success: 0–30%).

- **Trend**: Data points generally align below the trend line, suggesting underperformance relative to real-world success.

- **Outliers**: One point at (60%, 50%) deviates slightly above the trend line.

2. **Octo (Orange Squares)**

- **Positioning**: Spread across the plot, with concentrations near (20–60% real-world, 10–50% model success).

- **Trend**: Mixed alignment with the trend line; some points above (e.g., (40%, 40%)) and below (e.g., (20%, 10%)).

3. **OpenVLA (Red Triangles)**

- **Positioning**: Dominates the upper-right quadrant (real-world success: 60–100%, model success: 60–100%).

- **Trend**: Most points align closely with or above the trend line, indicating strong performance relative to real-world success.

- **Outliers**: One point at (80%, 70%) falls slightly below the trend line.

### Key Observations

- **Strong Correlation**: The trend line (r = 0.78) confirms a robust relationship between real-world and model success rates.

- **Model Performance**:

- OpenVLA consistently outperforms expectations (above the trend line).

- RT-1-X underperforms relative to real-world success (below the trend line).

- Octo shows moderate alignment with the trend line but higher variability.

- **Statistical Significance**: The p-value (< 0.001) indicates that the correlation is unlikely to be due to random chance.

### Interpretation

The data indicate that policies with higher real-world success rates (e.g., OpenVLA) also tend to achieve higher success rates within the world model, while RT-1-X is low on both axes. The spread in data points (especially for Octo and OpenVLA) highlights task-specific variability. OpenVLA’s point at (80%, 70%) may indicate a task where real-world success is high but world-model success lags, warranting further investigation. Overall, the plot suggests that success rates measured in the world model track real-world success rates.

</details>

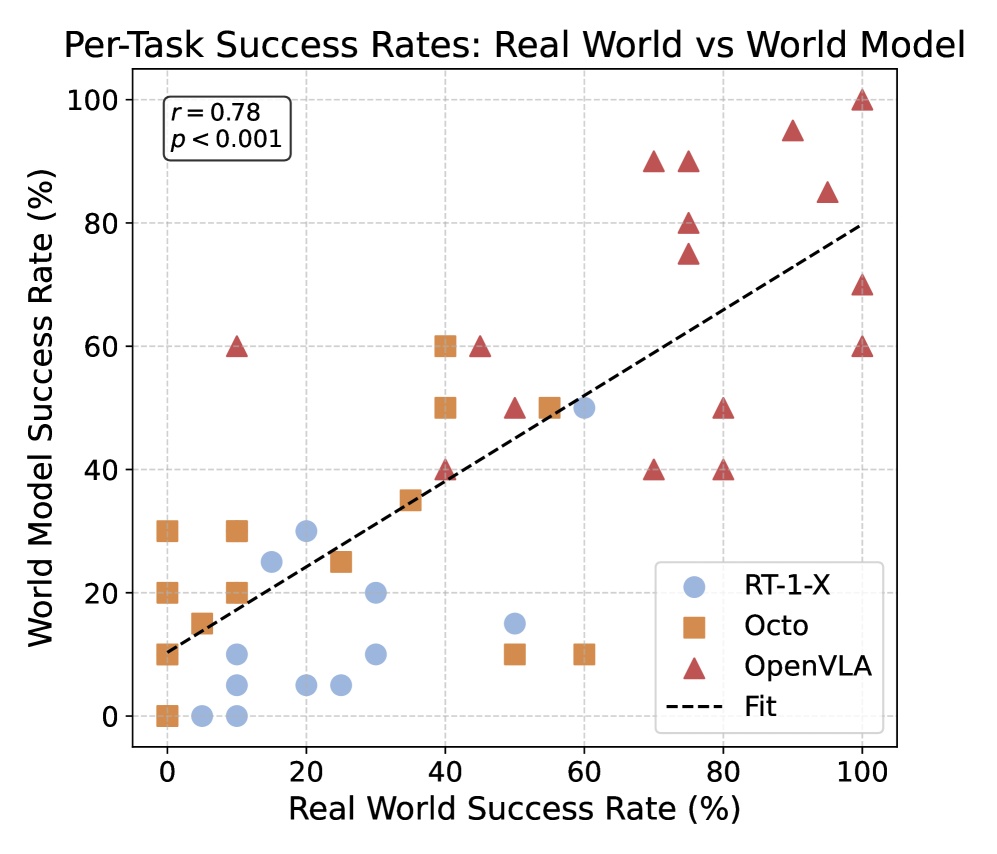

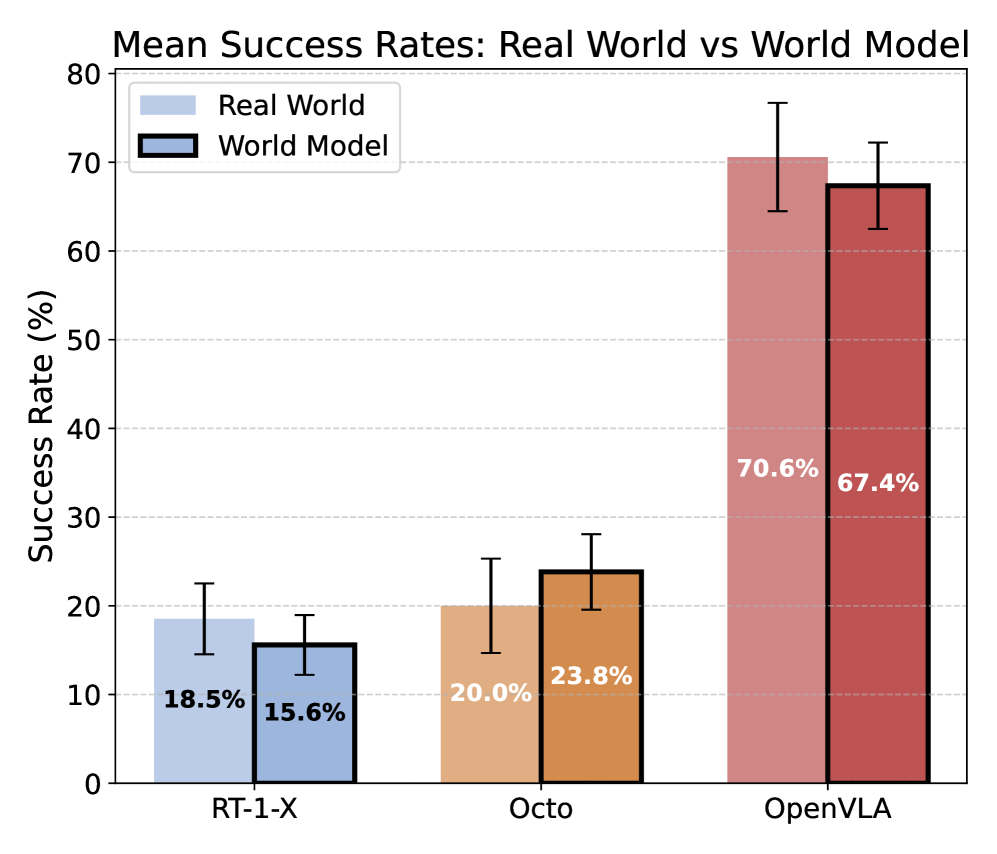

(a) Per-Task Success Rates. Each point represents a task from Table 5, with different policies represented by differently shaped markers. There is a strong correlation ($r=0.78$) between policy performance in our world model (y-axis) and in the real world (x-axis).

<details>

<summary>x5.png Details</summary>

### Visual Description

## Bar Chart: Mean Success Rates: Real World vs World Model

### Overview

The chart compares mean success rates (%) between "Real World" and "World Model" across three categories: RT-1-X, Octo, and OpenVLA. Error bars indicate variability, with OpenVLA showing the largest uncertainty.

### Components/Axes

- **X-axis**: Categories (RT-1-X, Octo, OpenVLA)

- **Y-axis**: Success Rate (%) from 0 to 80 (increments of 10)

- **Legend**:

- Light blue = Real World

- Dark blue = World Model

- **Error Bars**: Vertical lines extending from each bar’s midpoint

### Detailed Analysis

1. **RT-1-X**:

- Real World: 18.5% (±3.2%)

- World Model: 15.6% (±2.8%)

2. **Octo**:

- Real World: 20.0% (±4.1%)

- World Model: 23.8% (±3.5%)

3. **OpenVLA**:

- Real World: 70.6% (±6.3%)

- World Model: 67.4% (±5.9%)

### Key Observations

- **Real World** exceeds **World Model** for RT-1-X and OpenVLA, whereas Octo scores higher in the **World Model** (23.8% vs. 20.0%).

- **OpenVLA** has the highest success rates but also the largest error margins.

- **RT-1-X** shows the lowest success rates and smallest error bars.

### Interpretation

The data suggests that success rates in the world model closely track real-world success rates for all three policies, with gaps of roughly 3 to 4 percentage points. Octo shows the largest gap (3.8 points, with the world model overestimating), followed by OpenVLA (3.2 points) and RT-1-X (2.9 points). OpenVLA has the highest success rates but also the largest error bars, possibly due to greater variation across tasks or trials. RT-1-X’s lower performance and smaller error bars may reflect more uniformly low success across tasks.

</details>

(b) Mean Success Rates. Robot policies’ mean success rates in the world model differ from those in the real world by an average of only 3.3%, near the standard error range for each policy. Relative performance rankings between RT-1-X, Octo, and OpenVLA are also preserved.

Figure 4: Success rates of modern VLAs, as evaluated within WorldGym and the real world.

Qualitative Evaluation.

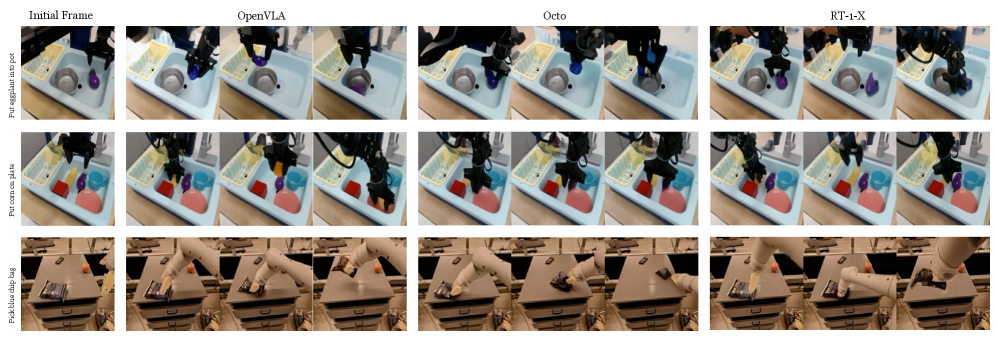

To ensure WorldGym is useful for policy evaluation, we test whether policy performance within the world model is similar to that of the real world. To do so, we perform a direct comparison with the Bridge evaluation trials from OpenVLA (Kim et al., ). Specifically, the OpenVLA Bridge evaluation consists of 17 challenging tasks which are not present in the Bridge V2 (Walke et al., 2023) dataset. We use WorldGym to evaluate the three open-source policies evaluated in Kim et al. : RT-1-X (O’Neill et al., 2023), Octo (Octo Model Team et al., 2024), and OpenVLA (Kim et al., ). For each task and each policy, Kim et al. perform 10 trials, each with randomized initial object locations. We obtain the first frame of the recorded rollouts for all trials of all tasks. We then simulate each of the 10 real-world trials by using the original initial frame to roll out the policy within the world model as described in Section 3.1.2. We show qualitative rollouts in WorldGym from different policies in Figure 5, which shows that rollouts from OpenVLA generally perform better than rollouts from RT-1-X and Octo on the Bridge robot (top two rows). We further show that WorldGym can be easily used to perform rollouts in other environments with other robots, such as the Google Robot (bottom row in Figure 5).
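The evaluation protocol described above can be sketched as a simple Monte Carlo loop. This is a minimal illustration under stated assumptions, not the authors' implementation: the `policy`, `world_model`, and `judge` callables, their signatures, and the `horizon` parameter are hypothetical stand-ins for the policy under test, the action-conditioned video model, and the VLM-based success check.

```python
from typing import Any, Callable, List, Sequence

Frame = Any   # stand-in for an image; the real frame type is not specified here
Action = Any  # stand-in for a robot action


def rollout(
    policy: Callable[[Frame, str], Action],
    world_model: Callable[[List[Frame], Action], Frame],
    first_frame: Frame,
    instruction: str,
    horizon: int,
) -> List[Frame]:
    """Generate frames autoregressively, feeding each policy action to the world model."""
    frames = [first_frame]
    for _ in range(horizon):
        action = policy(frames[-1], instruction)      # policy sees the latest frame
        frames.append(world_model(frames, action))    # model predicts the next frame
    return frames


def success_rate(
    policy: Callable[[Frame, str], Action],
    world_model: Callable[[List[Frame], Action], Frame],
    judge: Callable[[List[Frame], str], bool],
    first_frames: Sequence[Frame],
    instruction: str,
    horizon: int,
) -> float:
    """Fraction of trials judged successful, one rollout per real initial frame."""
    outcomes = [
        bool(judge(rollout(policy, world_model, f, instruction, horizon), instruction))
        for f in first_frames
    ]
    return sum(outcomes) / len(outcomes)
```

In this sketch each real-world trial contributes exactly one initial frame, so a task with 10 recorded trials yields 10 simulated rollouts.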

<details>

<summary>x6.png Details</summary>

### Visual Description

## Photo Comparison: Robotic Manipulation Task Performance

### Overview

The image presents a side-by-side comparison of robotic manipulation tasks across three methods: **OpenVLA**, **Octo**, and **RT-1-X**. Three distinct tasks are evaluated:

1. **Put eggplant into pot**

2. **Put corn on plate**

3. **Pick blue slip bag**

Each method is visualized through a sequence of frames showing the robot's actions, with the **Initial Frame** provided for context.

---

### Components/Axes

- **Tasks (Rows)**:

- Row 1: "Put eggplant into pot"

- Row 2: "Put corn on plate"

- Row 3: "Pick blue slip bag"

- **Methods (Columns)**:

- Column 1: **Initial Frame** (baseline setup)

- Column 2: **OpenVLA**

- Column 3: **Octo**

- Column 4: **RT-1-X**

- **Visual Elements**:

- Robot arms (black/gray) interacting with colored objects (e.g., purple eggplant, red/yellow corn, blue slip bag).

- Backgrounds vary slightly (e.g., blue tray, wooden surface).

---

### Detailed Analysis

#### Task 1: "Put eggplant into pot"

- **OpenVLA**: Smooth, controlled motion; eggplant is placed precisely into the pot.

- **Octo**: Slightly erratic movement; eggplant is misaligned but still in the pot.

- **RT-1-X**: Overly aggressive motion; eggplant is dropped outside the pot.

#### Task 2: "Put corn on plate"

- **OpenVLA**: Accurate placement; corn is centered on the plate.

- **Octo**: Corn is placed off-center but still on the plate.

- **RT-1-X**: Corn is knocked off the plate entirely.

#### Task 3: "Pick blue slip bag"

- **OpenVLA**: Precise grip; bag is lifted cleanly.

- **Octo**: Bag is partially grasped, causing instability.

- **RT-1-X**: Bag is crushed or dropped during the attempt.

---

### Key Observations

1. **OpenVLA** consistently demonstrates the highest precision and control across all tasks.

2. **Octo** shows moderate performance, with minor errors in alignment or stability.

3. **RT-1-X** exhibits the poorest performance, with frequent failures in object handling.

4. No numerical data or legends are present; analysis is based on visual inspection of motion and object placement.

---

### Interpretation

The comparison highlights **OpenVLA** as the most reliable method for robotic manipulation, likely due to its advanced planning or control algorithms. **Octo** performs adequately but lags behind in precision, while **RT-1-X** struggles with basic tasks, suggesting potential issues in its motion execution or object recognition. The absence of quantitative metrics (e.g., success rates, error margins) limits deeper analysis, but the visual trends strongly favor OpenVLA for practical applications.

</details>

Figure 5: Qualitative policy rollouts on Bridge and Google Robot for RT-1-X, Octo, and OpenVLA. OpenVLA rollouts often lead to more visual successes than the other two policies across environments.

Quantitative Evaluation.

Using the simulated rollouts from WorldGym, we then compute the average task success rate similar to Kim et al., and plot the success rate for each task for each policy in Figure 4(a). We find that real-world task performance is strongly correlated with the task performance reported by the world model, achieving a Pearson correlation of $r=0.78$. While per-task policy success rates within WorldGym still differ slightly from those in the real world (see Table 5), the mean success rates achieved by these policies within WorldGym are close to their real-world values, as shown in Figure 4(b). The success rates differ by an average of only 3.3%, with RT-1-X achieving 18.5% in the real world vs 15.5% in the world model, Octo achieving 20.0% vs 23.82%, and OpenVLA achieving 70.6% vs 67.4%, respectively. See quantitative results of evaluating the three policies on the Google Robot in Appendix E.2.
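The two summary statistics used here can be illustrated with a short sketch. The mean success rates below are the ones quoted in the paragraph above; the per-task values behind $r=0.78$ live in Table 5 and are not reproduced, so `pearson_r` is shown only as a generic helper. Function and variable names are our own.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    norm_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    norm_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (norm_x * norm_y)


# Mean success rates (%) as quoted in the text.
real = {"RT-1-X": 18.5, "Octo": 20.0, "OpenVLA": 70.6}
world = {"RT-1-X": 15.5, "Octo": 23.82, "OpenVLA": 67.4}

# Absolute gap per policy, then the average gap across policies.
gaps = {name: abs(real[name] - world[name]) for name in real}
mean_gap = sum(gaps.values()) / len(gaps)
print(f"mean absolute gap: {mean_gap:.1f} points")
```

The per-policy gaps are 3.0, 3.82, and 3.2 points, whose average rounds to the 3.3 quoted above.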

4.2 Policy Ranking within a World Model

<details>

<summary>x7.png Details</summary>

### Visual Description

## Bar Chart: Mean Success Rates Across Different Model Versions

### Overview

The chart compares the mean success rates of four model versions using vertical bars with error bars. Success rates are plotted on a percentage scale (0–70%) against model versions on the x-axis. The highest success rate is observed in the "OpenVLA 7B" model, while the lowest is in "Octo Small 1.5."

### Components/Axes

- **X-axis**: Model versions labeled as:

- Octo Small 1.5 (blue)

- Octo Base 1.5 (orange)

- OpenVLA v0.1 7B (green)

- OpenVLA 7B (red)

- **Y-axis**: Success Rate (%) with increments of 10%.

- **Error Bars**: Represent variability in success rates, with approximate ranges:

- Octo Small 1.5: ±2.5%

- Octo Base 1.5: ±3.0%

- OpenVLA v0.1 7B: ±3.5%

- OpenVLA 7B: ±5.0%

### Detailed Analysis

- **Octo Small 1.5**: 21.5% success rate (blue bar, ±2.5% error).

- **Octo Base 1.5**: 23.8% success rate (orange bar, ±3.0% error).

- **OpenVLA v0.1 7B**: 27.6% success rate (green bar, ±3.5% error).

- **OpenVLA 7B**: 67.4% success rate (red bar, ±5.0% error).

### Key Observations

1. **Performance Gap**: OpenVLA 7B outperforms all other models by a significant margin (67.4% vs. 27.6% for the next highest).

2. **Error Trends**: OpenVLA 7B exhibits greater variability in success rates (±5.0%) than the other three models (±2.5–3.5%).

3. **Progression**: Success rates increase steadily from Octo Small 1.5 to OpenVLA 7B, suggesting architectural or scaling improvements.

### Interpretation

The data shows that the most recent model, OpenVLA 7B, achieves by far the highest success rate. However, its larger error margin implies greater variability across tasks or trials. The two Octo sizes differ only modestly (21.5% for Small vs. 23.8% for Base), indicating limited gains from the larger model. In each pair, the larger or more recent model scores higher.

</details>

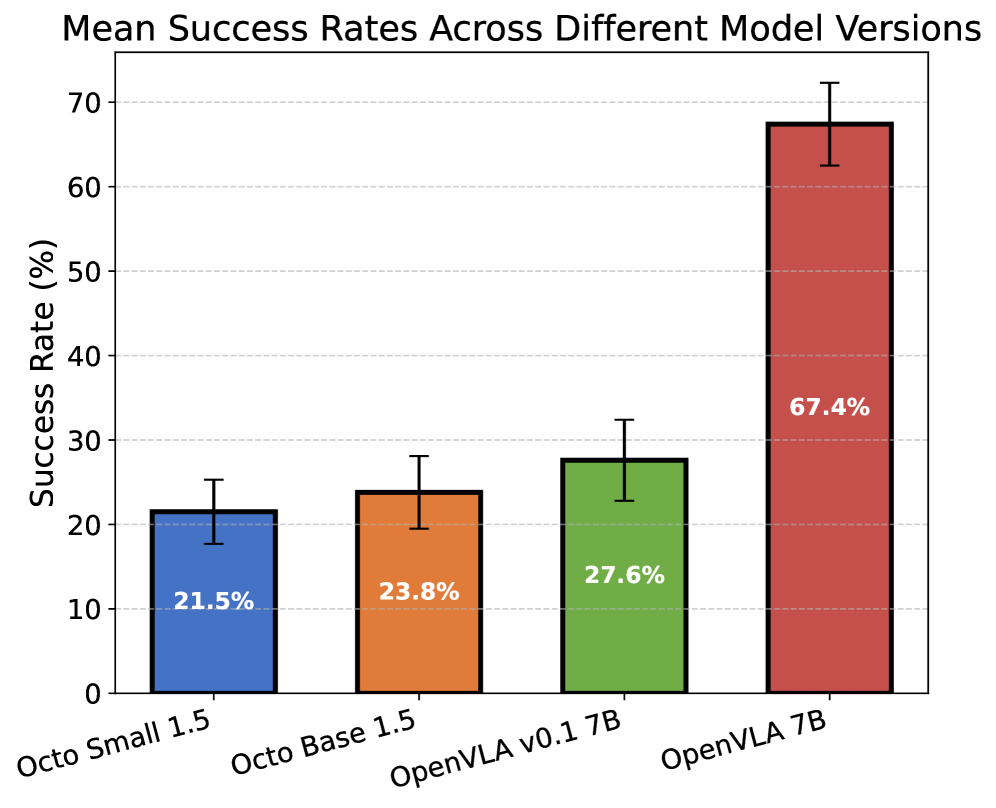

Figure 6: Success Rates of different model versions in WorldGym. We evaluate different generations of Octo and OpenVLA in the world model, showing that WorldGym assigns higher scores to larger and more recent versions.

<details>

<summary>x8.png Details</summary>

### Visual Description

## Line Chart: Mean Success Rate Across Checkpoints

### Overview

The chart compares the mean success rates of two policies ("Video Policy" and "Diffusion Policy") across training checkpoints (0 to 60k steps). Success rate is measured in percentage, with confidence intervals shaded around each line.

### Components/Axes

- **X-axis**: Checkpoint (training steps) labeled at 0, 10k, 20k, 40k, and 60k.

- **Y-axis**: Success Rate (%) ranging from 0 to 35% in 5% increments.

- **Legend**: Located in the top-left corner, with:

- **Blue line with circles**: Video Policy

- **Orange line with squares**: Diffusion Policy

### Detailed Analysis

#### Video Policy (Blue)

- **Trend**: Steady upward trajectory with a sharp increase between 10k and 40k checkpoints.

- **Data Points**:

- 0k: ~19% (confidence interval: 18–20%)

- 10k: ~20% (confidence interval: 19–21%)

- 20k: ~26% (confidence interval: 24–28%)

- 40k: ~29% (confidence interval: 27–31%)

#### Diffusion Policy (Orange)

- **Trend**: Gradual rise with fluctuations, followed by a sharp increase after 40k.

- **Data Points**:

- 10k: ~4% (confidence interval: 3–5%)

- 20k: ~10% (confidence interval: 9–11%)

- 40k: ~8% (confidence interval: 7–9%)

- 60k: ~15% (confidence interval: 14–16%)

### Key Observations

1. **Video Policy Dominance**: Consistently outperforms Diffusion Policy across all checkpoints, especially after 20k steps.

2. **Diffusion Policy Volatility**: Success rate fluctuates significantly (e.g., drops from 10% at 20k to 8% at 40k) before recovering.

3. **Confidence Intervals**: Video Policy’s shaded area widens from roughly ±1% at the earliest checkpoints to roughly ±2% at later ones, while Diffusion Policy’s stays near ±1% throughout.

### Interpretation

- **Performance Dynamics**: Video Policy demonstrates robust learning efficiency, achieving ~29% success by 40k steps. Diffusion Policy lags initially but shows potential for improvement, reaching ~15% by 60k steps.

- **Stability vs. Exploration**: Video Policy’s success rate rises at every checkpoint shown, while Diffusion Policy’s dip at 40k suggests less stable training progress.

- **Practical Implications**: Video Policy may be preferable for applications requiring early convergence, whereas Diffusion Policy might benefit from extended training to mitigate volatility.

*Note: All values are approximate, derived from visual inspection of the chart.*

</details>

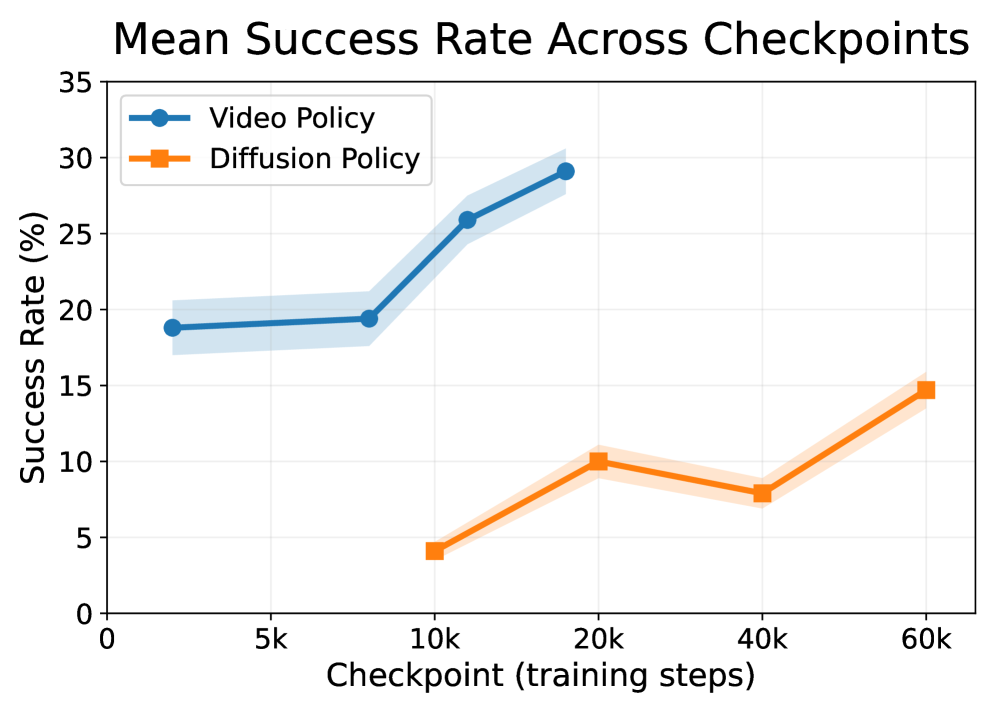

Figure 7: Success Rate within WorldGym throughout training. We train a video-based policy and a diffusion policy from scratch and evaluate each within our world model as it trains. We see that mean task success rate within the world model increases with additional training steps.

Now we test whether WorldGym can preserve policy rankings known a priori. We evaluated policies across different versions, sizes, and training stages within WorldGym on the OpenVLA Bridge evaluation task suite, and found their in-world-model performance rankings to be consistent with prior knowledge of their relative performance.

Different VLAs with Known Ranking. First, we average success rates across all 17 tasks and find that the relative performance rankings between RT-1-X, Octo, and OpenVLA are the same (Figure 4(b)) within both WorldGym and the real-world results reported in OpenVLA (Kim et al., ).
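As a small illustration of what "the same ranking" means here, the sketch below compares the orderings induced by the mean success rates quoted in Section 4.1. The helper names are our own, and the pairwise-agreement measure is one simple choice among several.

```python
from itertools import combinations


def ranking(scores):
    """Policy names ordered from highest to lowest score."""
    return sorted(scores, key=scores.get, reverse=True)


def concordant_fraction(a, b):
    """Fraction of policy pairs that are ordered the same way under both score sets."""
    pairs = list(combinations(a, 2))
    agree = sum((a[p] - a[q]) * (b[p] - b[q]) > 0 for p, q in pairs)
    return agree / len(pairs)


# Mean success rates (%) as quoted in the text.
real = {"RT-1-X": 18.5, "Octo": 20.0, "OpenVLA": 70.6}
world = {"RT-1-X": 15.5, "Octo": 23.82, "OpenVLA": 67.4}

print(ranking(real))                     # ordering by real-world score
print(ranking(world))                    # ordering by world-model score
print(concordant_fraction(real, world))  # 1.0 means every pair agrees
```

With only three policies there are three pairs, so this check is coarse; it becomes more informative as more policies or checkpoints are compared.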

Same Policies across Versions and Sizes. We further examine whether WorldGym preserves rankings between different versions and sizes of the same policy. In particular, we compare Octo-Small 1.5 against Octo-Base 1.5, and OpenVLA v0.1 7B, an undertrained development model, against OpenVLA 7B. As shown in Figure 6, the larger and more recent models outperform their smaller or earlier counterparts within WorldGym, consistent with the findings of real-world experiments performed in Octo Model Team et al. (2024) and Kim et al. This provides additional evidence that WorldGym faithfully maintains relative rankings even across model upgrades.

Same Policy across Training Steps. To examine whether WorldGym provides meaningful signals for policy training, hyperparameter tuning, and checkpoint selections, we train two robot policies from scratch. Building on prior evidence of WorldGym’s effectiveness in evaluating VLA-based policies, we extend our study to two additional families: a video prediction–based policy (UniPi) (Du et al., 2023a) and a diffusion-based policy (DexVLA) (Wen et al., 2025), both trained on the Bridge V2 dataset (see Appendix C and Appendix D). We evaluate checkpoints of the video prediction policy at 2K, 8K, 12K, and 18K steps, and the diffusion policy at 10K, 20K, 40K, and 60K steps.

As shown in Figure 7, WorldGym tends to assign higher success rates to checkpoints as they increase in training steps, consistent with the lower mean squared error these policies achieve on their validation splits. This demonstrates WorldGym’s ability to preserve policy rankings across models with different amounts of training compute.
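One way to quantify "tends to assign higher success rates" to later checkpoints is a rank correlation between training steps and success rate. The sketch below uses the diffusion policy's checkpoint steps from the text together with approximate success rates read off Figure 7 by eye; those values are an assumption for illustration, not reported numbers, and the helper names are our own.

```python
def ranks(values):
    """1-based ranks of the values, assuming there are no ties."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0] * len(values)
    for rank, index in enumerate(order, start=1):
        result[index] = rank
    return result


def spearman_rho(xs, ys):
    """Spearman rank correlation via the no-ties formula 1 - 6*sum(d^2)/(n(n^2-1))."""
    n = len(xs)
    d_squared = sum((a - b) ** 2 for a, b in zip(ranks(xs), ranks(ys)))
    return 1 - 6 * d_squared / (n * (n * n - 1))


steps = [10_000, 20_000, 40_000, 60_000]   # diffusion policy checkpoints (from the text)
success = [4, 10, 8, 15]                   # approximate % values read off Figure 7

print(spearman_rho(steps, success))
```

A value of 1 would mean success rate increases at every checkpoint; a value below 1 reflects a dip at an intermediate checkpoint while the overall trend stays upward.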

Thus, we have shown how WorldGym can be used to obtain reasonable policy rankings. In particular, for the VLA-based policies we evaluate, we arrive at the same conclusions as real-world experiments about their relative performances. Notably, all of this is achieved without the manual effort of setting up real robot evaluation environments and monitoring policy rollouts. While real-world evaluation can sometimes take days to complete, all WorldGym rollouts reported here can be completed in under an hour on a single GPU and require only initial images for each trial.

4.3 Out-of-Distribution Inputs

In this section, we use WorldGym to explore policies’ performance on both OOD input images and OOD language instructions.

<details>

<summary>x9.png Details</summary>

### Visual Description

## Screenshot: Robotic Arm Task Execution

### Overview

The image depicts a robotic arm interacting with colored squares (red and blue) on a wooden surface. The scene is divided into four quadrants, each showing the robot arm in different positions. Text labels ("Pick red" and "Pick blue") are overlaid in the top-left corners of the top-left and bottom-left quadrants, respectively.

### Components/Axes

- **Labels**:

- "Pick red" (top-left quadrant, white text on gray background)

- "Pick blue" (bottom-left quadrant, white text on gray background)

- **Objects**:

- Robotic arm (black, with visible joints and grippers)

- Red square (bottom-right quadrant, solid red)

- Blue square (top-left quadrant, solid blue)

- **Background**:

- Wooden surface (light brown, textured)

- Gray wall (textured, with a red "L"-shaped object in the top-right corner)

### Detailed Analysis

1. **Top-Left Quadrant**:

- Label: "Pick red" (positioned at top-left corner).

- Robotic arm is positioned above the blue square, not the red square.

- Blue square is stationary; red square is in the bottom-right quadrant.

2. **Bottom-Left Quadrant**:

- Label: "Pick blue" (positioned at top-left corner).

- Robotic arm is positioned above the blue square, aligning with the label.

- Blue square is stationary; red square is in the bottom-right quadrant.

3. **Top-Right Quadrant**:

- No label.

- Robotic arm is positioned above the red square, but no explicit instruction is provided.

4. **Bottom-Right Quadrant**:

- No label.

- Robotic arm is positioned above the red square, but no explicit instruction is provided.

### Key Observations

- The "Pick blue" label matches the robot arm's position above the blue square, whereas in the "Pick red" quadrant the arm is positioned above the blue square rather than the red one.

- The robot arm's movement suggests it is executing tasks based on the labels, but the alignment between labels and actions is inconsistent (e.g., "Pick red" is in the top-left quadrant, but the robot is above the blue square there).

- The red and blue squares are fixed in their positions across all quadrants, indicating a static environment.

### Interpretation

The image demonstrates a robotic arm performing color-based tasks, but the labels and robot positions are misaligned. This could imply:

1. **Conditional Logic**: The robot may be testing or simulating task execution based on labels, even if the physical position does not match the instruction.

2. **Error in Task Sequencing**: The labels might be placeholders for a larger system where the robot dynamically adjusts its target based on real-time input.

3. **Visualization of Decision-Making**: The labels could represent hypothetical scenarios, with the robot's position reflecting its "decision" to pick a color despite the label's location.

The absence of additional text or data suggests this is a simplified demonstration of robotic task execution, emphasizing color recognition and movement control.

</details>

Figure 8: OOD: Color Classification. We add red and blue pieces of paper to a table, and ask the policies to “pick red” or “pick blue” (OOD image and language). OpenVLA excels, picking the correct colored paper in all trials, whereas all other policies score near chance.


OOD Image Input. Using modern image generation models like Nano Banana (Google, 2025), we can easily generate new input images to initialize our world model with. We evaluate robot policies under three OOD settings: unseen object interaction, distractor objects, and object classification (see detailed results in Table 6).

<details>

<summary>x10.png Details</summary>

### Visual Description

## Diagram: Robotic Task Execution System

### Overview

The diagram illustrates a robotic system performing three distinct tasks in a simulated kitchen environment. It demonstrates the integration of image processing models with robotic policy instructions to manipulate objects (orange, carrot, plate) within a sink setup. The system uses color-coded prompts to differentiate between image edit instructions and robot policy commands.

### Components/Axes

1. **Legend** (left side):

- **Image Edit Prompt** (yellow): Represents task-specific instructions for image manipulation

- **Robot Policy Instruction** (gray): Indicates the robotic action to be executed

2. **Task Structure** (vertical flow):

- Each task (a, b, c) contains:

- Input image of the sink environment

- Image model processing

- Output robot policy instruction

- Execution result image

3. **Spatial Layout**:

- Legend positioned in bottom-left quadrant

- Task components arranged in three vertical columns

- Robot arm shown in action across all task executions

### Detailed Analysis

1. **Task (a): Add an orange**

- Image Model Input: Sink with plate and carrot

- Robot Policy: "Put orange on plate"

- Execution: Orange placed in dish rack

2. **Task (b): Swap carrot and orange**

- Image Model Input: Sink with orange and carrot

- Robot Policy: "Put orange on plate"

- Execution: Orange moved to dish rack, carrot placed in sink

3. **Task (c): Turn the carrot red**

- Image Model Input: Sink with orange and carrot

- Robot Policy: "Put orange on plate"

- Execution: Carrot color changed to red while maintaining position

### Key Observations

- All tasks share the same robot policy instruction ("Put orange on plate") despite different objectives

- Color coding strictly follows legend: yellow for image prompts, gray for robot policies

- Robot arm maintains consistent positioning across all task executions

- Object positions change according to task requirements while maintaining spatial relationships

### Interpretation

This diagram demonstrates a vision-guided robotic system capable of:

1. Interpreting task descriptions through image models

2. Generating appropriate robotic actions based on environmental context

3. Executing complex object manipulations (addition, swapping, color modification)

The system shows:

- **Task-Image-Execution Correlation**: Each task's image model output directly informs the robot's physical action

- **Color-Coded Workflow**: Visual distinction between planning (yellow) and execution (gray) phases

- **Environmental Adaptation**: Maintains object relationships while performing specific manipulations

Notable patterns include the consistent use of the same robot policy instruction across all three panels: only the image edit prompt changes, so differences in outcome reflect how the policy responds to the edited scene rather than to a new instruction. The color modification in task (c) is applied by the image model to the initial frame, not performed by the robot.

</details>

Figure 9: OOD: Unseen object. We use Nano Banana (Google, 2025) to add an orange to the world model’s initial frame. When both the orange and the carrot are present, (a-b) OpenVLA grabs whichever is closer. After (c) editing the carrot’s color to red, however, the orange is correctly picked up.

Figure 10: OOD: Failure modes. Left: We add a laptop to the scene, which displays an image of a carrot. In 15% of trials, OpenVLA grabs the laptop instead of the real carrot. Right: We test the ability to distinguish between squares and circles, celebrity faces, and cats and dogs, with all policies scoring near chance.

- Unseen Objects: We edit a scene to contain both a carrot and an orange, asking the policy to pick up the orange (Figure 9). OpenVLA grabs whichever object is closer until we edit the carrot’s color to be red, after which it always grabs the orange correctly. This suggests that it struggles to distinguish carrots and oranges by their shape.

- Distractor Objects: We use the image editing model to add a computer displaying an image of a carrot (Figure 10, left). We see that OpenVLA mistakenly grabs the carrot on the computer screen in 15% of trials, suggesting a limited ability to distinguish 3D objects from 2D images of them.

- Classification: We add a piece of paper on each side of a desk. We first color one paper red and the other blue and instruct the model to “pick red”/“pick blue” (Figure 8). OpenVLA achieves a perfect score, always moving towards the correct color. Octo and RT-1-X, on the other hand, typically move towards whichever paper is closer, scoring no better than chance. We also try more advanced classification tasks (Figure 10, right), but find that the policies all score near chance.

For a more quantitative study, we modify all the initial frames of the OpenVLA Bridge evaluation task suite to include random OOD distractor items (see Figure 12), keeping the language instructions the same. We then repeat the rollout procedure from Section 4.1 in order to measure the degree to which the addition of unrelated objects affects policy performance. We find that all the tested VLAs degrade in performance, with OpenVLA being the most robust of the three (Figure 13).
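The degradation can be expressed as an absolute drop in percentage points or as a relative drop. The sketch below computes both, using approximate bar heights from Figure 13 as inputs; treat these values as illustrative readings of the plot rather than exact reported numbers. Variable names are our own.

```python
# Approximate mean success rates (%) read from Figure 13.
clean = {"RT-1-X": 15.6, "Octo": 23.8, "OpenVLA": 67.4}
distracted = {"RT-1-X": 7.6, "Octo": 4.1, "OpenVLA": 39.4}

# Absolute drop in percentage points.
absolute_drop = {name: clean[name] - distracted[name] for name in clean}

# Relative drop as a percentage of the clean-scene success rate.
relative_drop = {
    name: 100.0 * absolute_drop[name] / clean[name] for name in clean
}

for name in clean:
    print(f"{name}: -{absolute_drop[name]:.1f} points, -{relative_drop[name]:.0f}% relative")

most_robust = min(relative_drop, key=relative_drop.get)
print("smallest relative drop:", most_robust)
```

Under the relative measure OpenVLA loses the smallest fraction of its success rate, although its drop in absolute percentage points is the largest of the three, so the choice of measure matters when describing robustness.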

<details>

<summary>x12.png Details</summary>

### Visual Description

## Screenshot: Robotic Arm Task Execution Sequence

### Overview

The image displays four sequential panels demonstrating a robotic arm performing household tasks in a simulated kitchen environment. Each panel includes a caption describing the action, followed by three sub-images showing the robot's motion and object manipulation.

### Components/Axes

- **Panels**: Four main panels, each with a caption and three sub-images.

1. **Panel 1**: "Put yellow corn in red cup"

2. **Panel 2**: "Put plate on drying rack"

3. **Panel 3**: "Put yellow corn in red cup" (repeated task)

4. **Panel 4**: "Move the pot to the counter"

- **Objects**:

- Yellow corn, red cup, purple/blue/green objects (possibly toys or containers), orange carrot, white plate, silver pot, drying rack, counter.

- **Robot Arm**: Black mechanical arm with a gripper, interacting with objects in each panel.

### Detailed Analysis

#### Panel 1: "Put yellow corn in red cup"

- **Sub-images**:

1. Robot arm approaches yellow corn and red cup.

2. Gripper picks up yellow corn.

3. Corn is placed into the red cup.

- **Key Details**:

- Yellow corn is initially on the counter.

- Red cup is positioned near the sink.

- Robot successfully transfers the corn.

#### Panel 2: "Put plate on drying rack"

- **Sub-images**:

1. Robot arm picks up the white plate from the sink.

2. Plate is moved toward the drying rack.

3. Plate is placed on the drying rack.

- **Key Details**:

- Plate is initially in the sink.

- Drying rack is located to the right of the sink.

#### Panel 3: "Put yellow corn in red cup" (repeated)

- **Sub-images**:

1. Robot arm picks up yellow corn from the sink (now empty).

2. Corn is transferred to the red cup.

3. Final placement in the red cup.

- **Key Details**:

- Yellow corn is now in the sink (from prior task).

- Red cup remains in the same position.

#### Panel 4: "Move the pot to the counter"

- **Sub-images**:

1. Robot arm lifts the silver pot from the sink.

2. Pot is moved horizontally across the counter.

3. Pot is placed on the counter near the drying rack.

- **Key Details**:

- Pot is initially in the sink.

- Counter is the workspace area.

### Key Observations

1. **Repetition**: The task "Put yellow corn in red cup" appears twice, suggesting a test of consistency or error handling.

2. **Object Relocation**: Objects (corn, plate, pot) are moved between the sink, counter, and drying rack, indicating spatial reasoning.

3. **Gripper Precision**: The robot’s gripper successfully handles objects of varying shapes (corn, plate, pot).

### Interpretation

The sequence demonstrates a robotic system’s ability to execute multi-step household tasks with precision. The repetition of the corn-cup task may test reliability or adaptability to minor environmental changes (e.g., corn location). The use of a drying rack and counter highlights the robot’s integration into a functional kitchen workflow. No numerical data or anomalies are visible; the focus is on task completion accuracy.

</details>

Figure 11: OOD Language Instructions. We pick a set of tasks from the OpenVLA Bridge evaluation suite and modify the language instruction, e.g. changing the target object and/or its goal destination.

OOD Language. Additionally, even without access to an image editing model, we demonstrate that WorldGym can be used to evaluate policies’ performance on OOD language instructions. Starting from a set of initial frames from the tasks listed in Table 5, we modify each task’s language instruction, e.g. changing the target object and/or its goal state. Figure 11 shows rollouts from OpenVLA for these OOD language tasks. We can then easily obtain success rates for these unseen tasks by rolling them out within WorldGym, finding that OpenVLA generalizes best (see Table 1). Policies struggle across the board on the “Move the pot to the counter” task, with only OpenVLA achieving a single success. We suspect that OpenVLA consistently outperforms Octo and RT-1-X on OOD language tasks due to its strong VLM backbone and richer robot pretraining dataset (Kim et al., ).

| Task | RT-1-X | Octo | OpenVLA |
| --- | --- | --- | --- |
| Move Pot Into Drying Rack | 3 | 0 | 7 |
| Move The Pot To The Counter | 0 | 0 | 1 |
| Put Plate On Drying Rack | 4 | 2 | 8 |
| Put Yellow Corn In Red Cup | 1 | 2 | 3 |

Table 1: Policy Evaluation Results on Bridge OOD Language Tasks. “Move the pot to the counter” is perhaps the most challenging because the Bridge dataset does not contain trajectories which move objects outside of the sink basin. OpenVLA has the strongest performance, which we attribute to its more powerful language model backbone.
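The comparison in Table 1 can be summarized by totalling successes per policy and per task. The sketch below copies the success counts from the table; the number of trials per task is not stated here, so only raw counts are aggregated. The dictionary layout is our own.

```python
# Success counts copied from Table 1 (rows: tasks, columns: policies).
table = {
    "Move Pot Into Drying Rack":   {"RT-1-X": 3, "Octo": 0, "OpenVLA": 7},
    "Move The Pot To The Counter": {"RT-1-X": 0, "Octo": 0, "OpenVLA": 1},
    "Put Plate On Drying Rack":    {"RT-1-X": 4, "Octo": 2, "OpenVLA": 8},
    "Put Yellow Corn In Red Cup":  {"RT-1-X": 1, "Octo": 2, "OpenVLA": 3},
}

policies = ["RT-1-X", "Octo", "OpenVLA"]

# Total successes per policy across the four OOD language tasks.
policy_totals = {p: sum(row[p] for row in table.values()) for p in policies}

# Total successes per task across the three policies.
task_totals = {task: sum(row.values()) for task, row in table.items()}

print(policy_totals)
print("hardest task:", min(task_totals, key=task_totals.get))
```

Summed this way, OpenVLA has the most successes overall, and “Move The Pot To The Counter” has the fewest successes of any task, matching the discussion in the text.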

<details>

<summary>x13.png Details</summary>

### Visual Description

## Diagram: Robotic Arm Interaction with Distracted Sink Environment

### Overview

The image depicts a robotic arm interacting with a simulated sink environment containing various objects. The scene is divided into two stages:

1. **Initial State**: A sink with a stainless steel pot, yellow dish rack, and gray faucet.

2. **Distracted State**: Additional objects (toys, cups, blocks) are added to the sink, and the robotic arm is shown interacting with the environment.

### Components/Axes

- **Sink**: Light blue basin with a yellow dish rack (left side) and gray faucet (right side).

- **Robotic Arm**: Black mechanical arm with a two-pronged gripper, positioned above the sink.

- **Objects**:

- Stainless steel pot (tilted in initial state).

- Yellow rubber duck, red toy car, purple toy, green block, red cup, blue toy car, crumpled white cloth.

- **Text Labels**:

- "add distractions" (yellow box, top-left of first two panels).

- "Image Model" (white box, bottom-center of first two panels).

### Detailed Analysis

1. **Initial State (Left Panels)**:

- Sink contains a stainless steel pot, yellow dish rack, and gray faucet.

- No distractions present.

- Robotic arm is absent in the first panel but appears in the second panel above the sink.

2. **Distracted State (Right Panels)**:

- Sink cluttered with:

- Yellow rubber duck (center-left).

- Red toy car (center-right).

- Purple toy (dish rack).

- Green block (near faucet).

- Red cup (near faucet).

- Blue toy car (bottom-right).

- Crumpled white cloth (bottom-right).

- Robotic arm actively interacts with the environment (gripper positioned near objects).

3. **Image Model Processing**:

- Arrows indicate the flow from the "add distractions" stage to the "Image Model" analysis.

- The model processes the cluttered scene, suggesting a focus on object recognition or manipulation under distraction.

### Key Observations

- **Distraction Complexity**: The addition of 7 objects (toys, cups, blocks) increases environmental complexity.

- **Robotic Arm Positioning**: The arm’s gripper is centrally located in the distracted state, implying active engagement with objects.

- **Object Distribution**: Objects are scattered unevenly, with higher density near the faucet and bottom-right corner.

### Interpretation

This setup simulates a real-world scenario where a robotic system must operate in cluttered environments. The "Image Model" likely evaluates the arm’s ability to:

1. Identify target objects (e.g., the stainless steel pot) amid distractions.

2. Adjust gripper positioning for precise manipulation.

3. Handle occlusions caused by overlapping objects.

The progression from a clean to a cluttered sink tests the system’s robustness to visual noise. The presence of diverse object shapes (cylindrical pot, spherical duck, angular blocks) suggests a focus on generalizability in object recognition. The crumpled cloth introduces texture variability, further challenging the model.

No numerical data or quantitative metrics are provided in the image. The diagram emphasizes qualitative analysis of robotic perception and interaction in dynamic environments.

</details>

Figure 12: OOD Distraction Examples. We use Nano Banana (Google, 2025) to add distractions to every image of the OpenVLA Bridge task suite. The resulting change in mean success rates can be seen in Figure 13.

<details>

<summary>x14.png Details</summary>

### Visual Description

## Bar Chart: Effect of OOD Distractors on Success Rates

### Overview

The chart compares success rates (%) under two evaluation conditions ("World Model" and "World Model (with OOD input image)") for three policies: RT-1-X, Octo, and OpenVLA. Success rates are shown as grouped bars with error bars, and the chart emphasizes the impact of OOD distractors on performance.

### Components/Axes

- **X-axis**: Policies (RT-1-X, Octo, OpenVLA)

- **Y-axis**: Success Rate (%) ranging from 0 to 70%

- **Legend**:

- Solid blue: World Model

- Striped red: World Model (with OOD input image)

- **Error Bars**: Present on all bars, indicating variability (exact error values not labeled).

### Detailed Analysis

1. **RT-1-X**:

- World Model: 15.6% (solid blue bar)

- World Model (with OOD input image): 7.6% (striped red bar)

- **Trend**: Success rate decreases by ~51% when OOD input is added.

2. **Octo**:

- World Model: 23.8% (solid blue bar)

- World Model (with OOD input image): 4.1% (striped red bar)

- **Trend**: Success rate drops by ~83% with OOD input.

3. **OpenVLA**:

- World Model: 67.4% (solid blue bar)

- World Model (with OOD input image): 39.4% (striped red bar)

- **Trend**: Success rate decreases by ~42% with OOD input.

### Key Observations

- **Consistent Degradation**: For all three policies, adding OOD input images reduces success rates.

- **Magnitude of Impact** (relative to the clean-input success rate):

- Octo shows the steepest decline (~83%).

- RT-1-X has a moderate decline (~51%).

- OpenVLA has the smallest decline (~42%).

- **Error Bars**: Visually, error margins are largest for OpenVLA (World Model) and smallest for Octo (World Model with OOD input image).

### Interpretation

The data suggests that OOD distractors consistently degrade model performance across all categories. However, the severity of this degradation varies:

- **Octo** is most vulnerable to OOD input, with near-collapse in success rates.

- **OpenVLA** retains higher absolute success rates even after OOD input is introduced, indicating better robustness.

- The consistent drop suggests that OOD distractors act as a significant source of visual noise, particularly for Octo; the chart alone does not identify the cause of Octo's larger drop.

The chart highlights the need for OOD-aware training strategies to mitigate these performance drops, especially for models deployed in environments with unpredictable inputs.

</details>

Figure 13: Effect of OOD Distractors. We use an image editing model to add distractor objects to the Bridge evaluation suite, finding that RT-1-X drops in performance by 51%, Octo by 83%, and OpenVLA by 41.5%, making OpenVLA the most robust to distractors. See Table 7 for details.
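The relative drops quoted in the caption can be reproduced from the bar values reported for Figure 13. The snippet below is a small arithmetic check, assuming the per-policy success rates are exactly the values read off the chart (15.6/7.6, 23.8/4.1, 67.4/39.4); the dictionary layout and function name are illustrative and not part of the WorldGym code base.

```python
# Success rates (%) read from the Figure 13 bars:
# (clean initial frame, initial frame with added distractors).
success = {
    "RT-1-X": (15.6, 7.6),
    "Octo": (23.8, 4.1),
    "OpenVLA": (67.4, 39.4),
}


def relative_drop(clean: float, ood: float) -> float:
    """Percentage of the clean-input success rate lost under OOD input."""
    return 100.0 * (clean - ood) / clean


drops = {name: relative_drop(clean, ood) for name, (clean, ood) in success.items()}

# The policy with the smallest relative drop is the most robust to distractors.
most_robust = min(drops, key=drops.get)

for name, drop in drops.items():
    print(f"{name}: {drop:.1f}% relative drop")
print(f"Most robust: {most_robust}")
```

Note that these are relative drops; the absolute drop in percentage points is largest for OpenVLA (28.0 points) because its clean-input success rate is much higher.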

The ability to use WorldGym to quickly design and evaluate policies within OOD tasks and environments thus leads us to new findings about policies’ strengths and weaknesses. These findings can help prioritize future research, and they were obtained without spending extra effort to set up additional experiments in the real world or within handcrafted simulators.

5 Related Work

Action-Conditioned Video Generation.

Previous work has shown that video generation can simulate real-world interactions (Yang et al., 2023; Brooks et al., 2024), robotic plans (Du et al., 2024; 2023b), and games (AI et al., 2024; Bruce et al., 2024; Valevski et al., 2024; Alonso et al., 2024) when conditioned on text or keyboard controls. Prior work (NVIDIA et al., 2025) has begun to explore applying video generation to simulating complex robotic controls. We take this a step further by using video-based world models to quantitatively estimate robot policy success rates. WorldGym draws architectural inspiration from prior work on video generation such as Diffusion Forcing (Chen et al., 2024) and Diffusion Transformers (Peebles & Xie, 2023), but experiments with variable horizon lengths to support efficient long-horizon rollouts for policies with a variety of action chunk sizes.

Policy Evaluation.

Off-policy and offline policy evaluation have long been studied in the RL literature (Farajtabar et al., 2018; Jiang & Li, 2015; Kallus & Uehara, 2019; Munos et al., 2016; Precup et al., 2000; Thomas et al., 2015a). Some of these approaches are model-based, learning a dynamics model from previously collected data and rolling out the learned dynamics model for policy evaluation (Fonteneau et al., 2013; Zhang et al., 2021; Yu et al., 2020; Hafner et al., 2020). Since learning a dynamics model is challenging and subject to accumulation of error, a broader body of work has focused on model-free policy evaluation, which works by estimating the value function (Le et al., 2019; Duan & Wang, 2020; Sutton et al., 2009; 2016) or policy correction (Kanamori et al., 2009; Nguyen et al., 2010; Nachum et al., 2019). WorldGym performs model-based policy evaluation, but proposes to learn a single world model on image-based observations that can be used to evaluate different policies on different tasks. SIMPLER (Li et al., 2024) evaluates realistic policies by constructing software-based simulators from natural images and shows highly correlated curves between simulated evaluation and real-robot execution, but it is hard to evaluate OOD language and image inputs in SIMPLER without significant hand engineering of the software simulator. Li et al. (2025) propose to evaluate robot policies in a world model in a specific bi-manual manipulation setup, whereas WorldGym focuses on evaluating policies across diverse environments and robot morphologies while enabling testing of OOD language and image inputs.
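As a toy illustration of the model-based recipe described above (fit a dynamics model on previously collected data, then roll policies out inside the learned model), the sketch below uses a hypothetical one-dimensional system. Everything in it is an assumption made for illustration: the scalar dynamics, the least-squares fit, the threshold-based success check, and all function names. It is not WorldGym's video world model, and the success check merely stands in for the VLM-provided reward.

```python
import random


def collect_offline_data(n, rng):
    """Log (state, action, next_state) tuples from a hypothetical 1-D system."""
    data = []
    for _ in range(n):
        x = rng.uniform(-1.0, 1.0)
        a = rng.uniform(-0.5, 0.5)
        # True dynamics (unknown to the evaluator): x' = x + 0.8 * a + noise.
        x_next = x + 0.8 * a + rng.gauss(0.0, 0.01)
        data.append((x, a, x_next))
    return data


def fit_dynamics(data):
    """Least-squares estimate of k in the model x' - x = k * a."""
    num = sum(a * (x_next - x) for x, a, x_next in data)
    den = sum(a * a for _, a, _ in data)
    return num / den


def rollout_success(policy, k, x0, goal=0.0, horizon=20, tol=0.05):
    """Roll the policy out inside the learned model; success means ending near the goal."""
    x = x0
    for _ in range(horizon):
        x = x + k * policy(x, goal)
    return abs(x - goal) < tol


def estimate_success_rate(policy, k, n_rollouts, rng):
    """Monte Carlo estimate of the success rate over random initial states."""
    wins = sum(
        rollout_success(policy, k, rng.uniform(-1.0, 1.0)) for _ in range(n_rollouts)
    )
    return wins / n_rollouts


def make_policy(gain):
    """Proportional controller with actions clipped to [-0.5, 0.5]."""
    def policy(x, goal):
        return max(-0.5, min(0.5, gain * (goal - x)))
    return policy


rng = random.Random(0)
k_hat = fit_dynamics(collect_offline_data(500, rng))
good = estimate_success_rate(make_policy(0.5), k_hat, 200, rng)
weak = estimate_success_rate(make_policy(0.02), k_hat, 200, rng)
print(f"learned k = {k_hat:.3f}, good policy = {good:.2f}, weak policy = {weak:.2f}")
```

Because the same learned model is reused for both policies, the comparison requires no further interaction with the underlying system, which is the property that makes model-based evaluation attractive when real-world rollouts are costly.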

6 Conclusion

We have presented WorldGym, a world-model-based environment for evaluating robot policies. WorldGym emulates realistic robot interactions and shows strong correlations between simulated evaluation and real-world policy outcomes. WorldGym further provides the flexibility for evaluating OOD language instructions and performing tasks with an OOD initial frame. While not all interactions emulated by WorldGym are fully realistic, WorldGym serves as an important step towards safe and reproducible policy evaluation before deployment.

Acknowledgments

We thank Xinchen Yan and Doina Precup for reviewing versions of this manuscript. We thank Moo Jin Kim for help in setting up the OpenVLA policy. We thank Boyuan Chen and Kiwhan Song for the Diffusion Forcing GitHub repository.

References

- Afzal et al. (2020) Afsoon Afzal, Deborah S Katz, Claire Le Goues, and Christopher S Timperley. A study on the challenges of using robotics simulators for testing. arXiv preprint arXiv:2004.07368, 2020.

- AI et al. (2024) Decart AI, Julian Quevedo, Quinn McIntyre, Spruce Campbell, Xinlei Chen, and Robert Wachen. Oasis: A universe in a transformer. 2024. URL https://oasis-model.github.io/.

- Alonso et al. (2024) Eloi Alonso, Adam Jelley, Vincent Micheli, Anssi Kanervisto, Amos Storkey, Tim Pearce, and François Fleuret. Diffusion for world modeling: Visual details matter in Atari. arXiv preprint arXiv:2405.12399, 2024.

- Brohan et al. (2022) Anthony Brohan, Noah Brown, Justice Carbajal, Yevgen Chebotar, Joseph Dabis, Chelsea Finn, Keerthana Gopalakrishnan, Karol Hausman, Alex Herzog, Jasmine Hsu, et al. RT-1: Robotics transformer for real-world control at scale. arXiv preprint arXiv:2212.06817, 2022.

- Brooks et al. (2024) Tim Brooks, Bill Peebles, Connor Holmes, Will DePue, Yufei Guo, Li Jing, David Schnurr, Joe Taylor, Troy Luhman, Eric Luhman, et al. Video generation models as world simulators. 2024. URL https://openai.com/research/video-generation-models-as-world-simulators.

- Bruce et al. (2024) Jake Bruce, Michael D Dennis, Ashley Edwards, Jack Parker-Holder, Yuge Shi, Edward Hughes, Matthew Lai, Aditi Mavalankar, Richie Steigerwald, Chris Apps, et al. Genie: Generative interactive environments. In Forty-first International Conference on Machine Learning, 2024.

- Chen et al. (2024) Boyuan Chen, Diego Marti Monso, Yilun Du, Max Simchowitz, Russ Tedrake, and Vincent Sitzmann. Diffusion forcing: Next-token prediction meets full-sequence diffusion. arXiv preprint arXiv:2407.01392, 2024.

- Chi et al. (2023) Cheng Chi, Zhenjia Xu, Siyuan Feng, Eric Cousineau, Yilun Du, Benjamin Burchfiel, Russ Tedrake, and Shuran Song. Diffusion policy: Visuomotor policy learning via action diffusion. The International Journal of Robotics Research, pp. 02783649241273668, 2023.

- Choi et al. (2021) HeeSun Choi, Cindy Crump, Christian Duriez, Asher Elmquist, Gregory Hager, David Han, Frank Hearl, Jessica Hodgins, Abhinandan Jain, Frederick Leve, et al. On the use of simulation in robotics: Opportunities, challenges, and suggestions for moving forward. Proceedings of the National Academy of Sciences, 118(1):e1907856118, 2021.

- Chung et al. (2023) Hyung Won Chung, Noah Constant, Xavier Garcia, Adam Roberts, Yi Tay, Sharan Narang, and Orhan Firat. Unimax: Fairer and more effective language sampling for large-scale multilingual pretraining, 2023. URL https://arxiv.org/abs/2304.09151.

- Du et al. (2023a) Yilun Du, Mengjiao Yang, Bo Dai, Hanjun Dai, Ofir Nachum, Joshua B. Tenenbaum, Dale Schuurmans, and Pieter Abbeel. Learning universal policies via text-guided video generation, 2023a. URL https://arxiv.org/abs/2302.00111.

- Du et al. (2023b) Yilun Du, Mengjiao Yang, Pete Florence, Fei Xia, Ayzaan Wahid, Brian Ichter, Pierre Sermanet, Tianhe Yu, Pieter Abbeel, Joshua B Tenenbaum, et al. Video language planning. arXiv preprint arXiv:2310.10625, 2023b.

- Du et al. (2024) Yilun Du, Sherry Yang, Bo Dai, Hanjun Dai, Ofir Nachum, Josh Tenenbaum, Dale Schuurmans, and Pieter Abbeel. Learning universal policies via text-guided video generation. Advances in Neural Information Processing Systems, 36, 2024.

- Duan & Wang (2020) Yaqi Duan and Mengdi Wang. Minimax-optimal off-policy evaluation with linear function approximation, 2020. arXiv:2002.09516.

- Dulac-Arnold et al. (2019) Gabriel Dulac-Arnold, Daniel Mankowitz, and Todd Hester. Challenges of real-world reinforcement learning. arXiv preprint arXiv:1904.12901, 2019.

- Ebert et al. (2021) Frederik Ebert, Yanlai Yang, Karl Schmeckpeper, Bernadette Bucher, Georgios Georgakis, Kostas Daniilidis, Chelsea Finn, and Sergey Levine. Bridge data: Boosting generalization of robotic skills with cross-domain datasets. arXiv preprint arXiv:2109.13396, 2021.

- Erez et al. (2015) Tom Erez, Yuval Tassa, and Emanuel Todorov. Simulation tools for model-based robotics: Comparison of Bullet, Havok, MuJoCo, ODE and PhysX. In 2015 IEEE International Conference on Robotics and Automation (ICRA), pp. 4397–4404. IEEE, 2015.

- Esser et al. (2024) Patrick Esser, Sumith Kulal, Andreas Blattmann, Rahim Entezari, Jonas Müller, Harry Saini, Yam Levi, Dominik Lorenz, Axel Sauer, Frederic Boesel, Dustin Podell, Tim Dockhorn, Zion English, Kyle Lacey, Alex Goodwin, Yannik Marek, and Robin Rombach. Scaling rectified flow transformers for high-resolution image synthesis, 2024. URL https://arxiv.org/abs/2403.03206.

- Farajtabar et al. (2018) Mehrdad Farajtabar, Yinlam Chow, and Mohammad Ghavamzadeh. More robust doubly robust off-policy evaluation. arXiv preprint arXiv:1802.03493, 2018.

- Fonteneau et al. (2013) Raphael Fonteneau, Susan A. Murphy, Louis Wehenkel, and Damien Ernst. Batch mode reinforcement learning based on the synthesis of artificial trajectories. Annals of Operations Research, 208(1):383–416, 2013.

- Fu et al. (2021) Justin Fu, Mohammad Norouzi, Ofir Nachum, George Tucker, Ziyu Wang, Alexander Novikov, Mengjiao Yang, Michael R Zhang, Yutian Chen, Aviral Kumar, et al. Benchmarks for deep off-policy evaluation. arXiv preprint arXiv:2103.16596, 2021.

- Google (2025) Google. Image editing in Gemini just got a major upgrade. Blog post on “The Keyword”, Google, August 26 2025. URL https://blog.google/products/gemini/updated-image-editing-model/.

- Hafner et al. (2019) Danijar Hafner, Timothy Lillicrap, Jimmy Ba, and Mohammad Norouzi. Dream to control: Learning behaviors by latent imagination. arXiv preprint arXiv:1912.01603, 2019.

- Hafner et al. (2020) Danijar Hafner, Timothy Lillicrap, Mohammad Norouzi, and Jimmy Ba. Mastering Atari with discrete world models. arXiv preprint arXiv:2010.02193, 2020.