# Bridging Neural ODE and ResNet: A Formal Error Bound for Safety Verification

**Authors**: Abdelrahman Sayed Sayed, Pierre-Jean Meyer, Mohamed Ghazel

institutetext: Univ Gustave Eiffel, COSYS-ESTAS, F-59657 Villeneuve d’Ascq, France email: {abdelrahman.ibrahim,pierre-jean.meyer,mohamed.ghazel}@univ-eiffel.fr

## Abstract

A neural ordinary differential equation (neural ODE) is a machine learning model that is commonly described as a continuous-depth generalization of a residual network (ResNet) with a single residual block, or conversely, the ResNet can be seen as the Euler discretization of the neural ODE. These two models are therefore strongly related in a way that the behaviors of either model are considered to be an approximation of the behaviors of the other. In this work, we establish a more formal relationship between these two models by bounding the approximation error between two such related models. The obtained error bound then allows us to use one of the models as a verification proxy for the other, without running the verification tools twice: if the reachable output set expanded by the error bound satisfies a safety property on one of the models, this safety property is then guaranteed to be also satisfied on the other model. This feature is fully reversible, and the initial safety verification can be run indifferently on either of the two models. This novel approach is illustrated on a numerical example of a fixed-point attractor system modeled as a neural ODE.

## 1 Introduction

Neural ordinary differential equations (neural ODE) are gaining prominence in continuous-time modeling, offering distinct advantages over traditional neural networks, such as memory efficiency, continuous-time modeling, adaptive computation balancing speed and accuracy [5, 14, 23]. This surge in interest stems from recent advancements in differential programming, which have enhanced the ability to model complex dynamics with greater flexibility and precision [24].

Neural ODE can be viewed as a continuous-depth generalization of residual networks (ResNet) [10], and conversely a ResNet represents an Euler discretization of the continuous transformations modeled by a neural ODE [9, 18]. Unlike ResNet, neural ODE enable smooth and robust representations through continuous dynamics, leading to improved modeling of time-evolving systems [5, 9]. By interpreting ResNet as discretized neural ODE, we can leverage advanced ODE solvers to enhance computational efficiency and reduce the number of required parameters [5]. Furthermore, the continuous formulation of neural ODE supports flexible handling of varying input resolutions and scales, making them adaptable to diverse data modalities. This perspective also facilitates theoretical analysis using tools from differential equations, providing insights into network stability and convergence [14].

Despite the growing interest in neural ODE for continuous-time modeling, formal analysis techniques for these models remain underdeveloped [17]. Current verification methods for neural ODE are still maturing, with existing reachability approaches primarily focusing on stochastic methods [7, 8]. Other works include the NNVODE tool [17] which is an extension of the Neural Network Verification (NNV) framework [28, 16] that investigates reachability for a general class of neural ODE. Additionally, another line of verification based on topological properties was introduced in [15] through a set-boundary method for safety verification of neural ODE and invertible residual networks (i-ResNet) [3].

The similarity between the neural ODE and ResNet models enables bidirectional safety verification, where the properties verified for one model can be used to deduce safety guarantees for the other one. This motivates our work, which investigates how verification results from one model can serve as a proxy for the other, addressing practical scenarios where only one model or compatible verification tools are available. The main contributions of this work are as follows:

- We derive a rigorous bound on the approximation error between the neural ODE and ResNet models for a given input set.

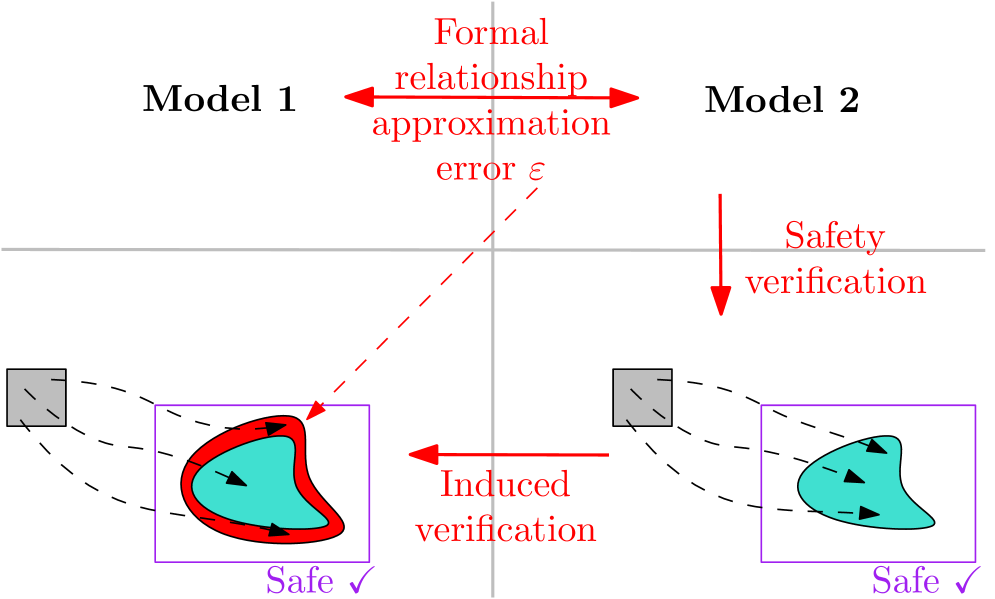

- We use the derived error bound in conjunction with the reachable set of one model as a proxy to verify safety properties of the other model, without applying any verification tools to the other model as illustrated in Figure 1.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: Model Safety Verification via Formal Approximation

### Overview

This is a conceptual technical diagram illustrating a method for verifying the safety of two computational models (Model 1 and Model 2) through a formal relationship and induced verification. The diagram uses geometric shapes, arrows, and labels to depict the flow of verification and the relationship between the models' safe operating regions.

### Components/Axes

The diagram is divided into two primary horizontal sections by a thin gray line.

**Top Section:**

* **Left Label:** "Model 1" (black text).

* **Right Label:** "Model 2" (black text).

* **Central Element:** A red, double-headed horizontal arrow connecting the space between the two model labels.

* **Text Associated with Central Arrow:** "Formal relationship approximation error ε" (red text, stacked vertically). This text is positioned above and below the arrow's center.

**Bottom Section:**

This section contains two mirrored subsystems, one for each model.

* **Left Subsystem (Model 1):**

* A small gray square (top-left of this subsystem).

* Three dashed black lines emanate from the right side of the square, curving downward and rightward.

* These lines terminate at a purple-bordered rectangle.

* Inside the purple rectangle is an irregular, teal-colored shape with a thick red border. Inside this shape are three small black arrows pointing in a clockwise, circular flow.

* Below the purple rectangle is the label "Safe ✓" in purple text.

* A red dashed line originates from the central "Formal relationship" arrow above and points diagonally down to the top-left corner of the purple rectangle.

* **Right Subsystem (Model 2):**

* A small gray square (top-left of this subsystem).

* Three dashed black lines emanate from the right side of the square, curving downward and rightward.

* These lines terminate at a purple-bordered rectangle.

* Inside the purple rectangle is an irregular, teal-colored shape (without a red border). Inside this shape are three small black arrows pointing in a clockwise, circular flow.

* Below the purple rectangle is the label "Safe ✓" in purple text.

* A solid red arrow originates from above (near the "Safety verification" label) and points vertically down to the top of this purple rectangle.

**Connecting Elements Between Subsystems:**

* A solid red arrow labeled "Induced verification" (red text) points horizontally from the right subsystem (Model 2's box) to the left subsystem (Model 1's box).

* A label "Safety verification" (red text) is positioned above the right subsystem, associated with the vertical red arrow pointing down to Model 2's box.

### Detailed Analysis

The diagram presents a logical flow for safety verification:

1. **Direct Safety Verification:** Model 2 undergoes a direct "Safety verification" process, indicated by the vertical red arrow. The result is a verified safe region (the teal shape inside the purple box labeled "Safe ✓").

2. **Formal Relationship:** Model 1 and Model 2 are connected by a "Formal relationship" which has an associated "approximation error ε". This relationship is the core link between the two models.

3. **Induced Verification:** The safety verification performed on Model 2 is transferred or "induced" to Model 1 via the formal relationship. This is shown by the horizontal "Induced verification" arrow.

4. **Resulting Safe Region for Model 1:** The induced verification results in a safe region for Model 1. This region is visually distinct: it is the same teal shape as Model 2's, but it is now enclosed by a thick red border. The red border and the incoming red dashed line from the "Formal relationship" suggest that this safe region is *derived* or *approximated* from Model 2's verified region, subject to the approximation error ε.

5. **Internal Dynamics:** The black arrows inside both teal shapes indicate a dynamic process or flow within the safe operating region of each model.

### Key Observations

* **Visual Distinction of Safe Regions:** The safe region for Model 1 has a red border, while Model 2's does not. This is the primary visual cue differentiating a directly verified region from an indirectly (induced) verified one.

* **Direction of Induction:** The "Induced verification" arrow points from Model 2 to Model 1, indicating the direction of the safety property transfer.

* **Role of Error (ε):** The approximation error ε is explicitly tied to the formal relationship, implying that the induced safety guarantee for Model 1 is not perfect but is bounded or characterized by this error.

* **Symmetry and Asymmetry:** The layout of the two model subsystems is symmetrical, but the verification process is asymmetrical (direct vs. induced), highlighted by the different arrow types (solid vertical vs. solid horizontal) and the red border.

### Interpretation

This diagram illustrates a **proof-by-approximation** or **transfer learning** approach to formal safety verification. The core idea is that if you have a complex or hard-to-verify system (Model 1), you can relate it to a simpler or already-verified system (Model 2). By formally characterizing the relationship and its error (ε), you can "induce" a safety guarantee for the original system.

* **What it demonstrates:** It shows a methodology to reduce verification effort. Instead of verifying Model 1 directly, you verify Model 2 and then use the formal relationship to argue that Model 1 is also safe, within the known error margin ε.

* **Relationship between elements:** The "Formal relationship" is the bridge. "Safety verification" is the foundational act performed on the reference model (Model 2). "Induced verification" is the consequential act that provides a safety certificate for the target model (Model 1).

* **Notable implication:** The red border around Model 1's safe region signifies that its safety is *conditional* or *derived*. It is not an intrinsic, directly proven property but one inherited through a potentially lossy approximation. The diagram effectively communicates the trade-off: reduced verification cost for Model 1 comes with a safety guarantee that is approximate, as quantified by ε.

</details>

Figure 1: Illustration of the proposed framework to verify Model $1$ based on the outcome of the verification of Model $2$ and a bound $ε$ on the maximal error between the models.

#### Related work.

Although the similarity between the ResNet and neural ODE models is well established [5, 14], to the best of our knowledge, very few works have tried connecting these models through some more formal relationships. These include various theoretical perspectives, such as quantifying the deviation between the hidden state trajectory of a ResNet and its corresponding neural ODE, focusing on approximation error [26], while [20] derives generalization bounds for neural ODE and ResNet using a Lipschitz-based argument, emphasizing the impact of successive weight matrix differences on generalization capability. On the other hand, [21] investigates implicit regularization effects in deep ResNet and its impact on training outcomes. While these studies focus on theoretical analyses of approximation error, generalization, and regularization to understand model behavior and performance, our work leverages this relationship for formal safety verification. We propose a verification proxy approach that uses the reachable set of one model to verify the safety properties of the other, incorporating an error bound to ensure conservative over-approximations, which enables practical verification of nonlinear systems.

Abstraction-based verification (i.e., verifying properties of one model by working on an abstraction of its behaviors into a simpler model) has been a popular topic in the past decades outside of the AI field [27]. Within the field of AI verification, its primary application has been on abstracting specific model components rather than the whole model itself, as in approaches based on convex relaxation of nonlinear ReLU activation functions [13, 11]. On the other hand, full-model abstraction has been mostly unexplored for AI verification, except on the topic of neural network model reduction, where the verification of a neural network is achieved at a lower computational cost on a reduced network with less neurons, see e.g. [4] for unidirectional relationships, or [29] for bidirectional ones through the use of approximate bisimulation relations. Although the overall principle of the proposed approach in our paper is similar (abstracting a model by one that over-approximates the set of all its behaviors), the main difference with the above works between two discrete neural networks is that our paper considers the formal relationships between a continuous neural ODE model and a discrete ResNet one.

#### Organization of the paper.

The remainder of the paper is structured as follows. First, we formulate the safety verification problem of interest and provide some preliminaries in Section 2. In Section 3, we describe our proposed approach to bound the approximation error between the ResNet and neural ODE models, and use this error bound to verify the safety of one model based on the reachability analysis of the other. Following this, we provide numerical illustrations of our error bounding and verification proxy results (in both directions: from ResNet to neural ODE, and from neural ODE to ResNet) on an academic example in Section 4. Finally, we summarize the main findings of the paper and discuss potential future work in Section 5.

## 2 Preliminaries

### 2.1 Neural ODE and ResNet models

We consider the following neural ODE:

$$

\dot{x}(t)=\frac{dx(t)}{dt}=f(x(t)), \tag{1}

$$

with state $x∈ℝ^n$ , initial state $x(0)=u$ , and vector field $f:ℝ^n→ℝ^n$ defined as a finite sequence of classical neural network layers (such as fully connected layers, convolutional layers, activation functions, batch normalization). The state trajectories of (1) are defined based on the solution $Φ:ℝ×ℝ^n→ℝ^n$ of the corresponding initial value problem:

$$

x(t)=Φ(t,x(0))=Φ(t,u). \tag{0}

$$

In [5], such a neural ODE is described as a continuous-depth generalization of a residual neural network constituted of a single residual block. Conversely, this ResNet can be seen as the Euler discretization of the neural ODE (1):

$$

y=u+f(u), \tag{2}

$$

where $u∈ℝ^n$ is the input, $y∈ℝ^n$ is the output, and the residual function $f:ℝ^n→ℝ^n$ is identical to the vector field of the neural ODE (1).

Since the approach proposed in this paper relies on the Taylor expansion of the trajectories of (1) up to the second order, we assume here for simplicity that the neural network described by the vector field $f$ is continuously differentiable.

**Remark 1**

*The case where $f$ contains piecewise-affine activation functions such as ReLU can theoretically be handled as well, since our approach only really requires their derivatives to be bounded (but not necessarily continuous). But for the sake of clarity of presentation (to avoid the case decompositions of each ReLU activation), this case is kept out of the scope of the present paper.*

### 2.2 Problem definition

As mentioned above and in [5], both the neural ODE and ResNet models describe a very similar behavior, and either model could be seen as an approximation of the other. Our goal in this paper is to provide a formal comparison of these models in the context of safety verification, by evaluating the approximation error between them. For such comparison to be meaningful, we consider the outputs $y$ of the ResNet (2) on one side, and the outputs $Φ(1,u)$ of the neural ODE (1) at continuous depth $t=1$ on the other side, since other values $t≠ 1$ of this continuous depth have no elements of comparison in the discrete architecture of the ResNet.

Given an initial set $X_in⊆ℝ^n$ for the neural ODE (or equivalently referred to as input set for the ResNet), we first define the sets of reachable outputs for either model:

$$

R_neural ODE(X_in)=\{y∈ℝ^n\mid y=Φ(1,u), u∈X_in\},

$$

$$

R_ResNet(X_in)=\{y∈ℝ^n\mid y=u+f(u), u∈X_in\}.

$$

Since we usually cannot compute these output reachable sets exactly, we will often rely on computing an over-approximation denoted as $Ω(X_in)$ such that $R(X_in)⊆Ω(X_in)$ .

Our first objective is to bound the approximation error between the two models, as formalized below.

**Problem 1 (Error Bounding)**

*Given an input set $X_in⊆ℝ^n$ , we want to over-approximate the set $R_ε(X_in)$ of errors between the ResNet (2) and neural ODE (1) models, defined as:

$$

R_ε(X_in)=≤ft\{Φ(1,u)-(u+f(u))~|~u∈X_in\right\}.

$$*

Our second problem of interest is to use one of our models as a verification proxy for the other. In other words, we want to combine this error bound with the reachable set of one model to verify the satisfaction of a safety property on the other model, without having to compute the reachable output set of this second model.

**Problem 2 (Verification Proxy)**

*Given an input-output safety property defined by an input set $X_in⊆ℝ^n$ and a safe output set $X_s⊆ℝ^n$ , the verification problem consists in checking whether the reachable output set of a model is fully contained in the targeted safe set: $R(X_in)⊆X_s$ . In this paper, we want to verify this safety property on one model by relying only on the error set $R_ε(X_in)$ from Problem 1 and the reachability analysis of the other model.*

## 3 Proposed approach

As mentioned in Section 2.2, the ResNet model in (2) can be seen as the Euler discretization of the neural ODE (1) evaluated at continuous depth $t=1$ :

$$

x(1)=Φ(1,u)≈ u+f(u)=y. \tag{1}

$$

Our initial goal, related to Problem 1, is to evaluate this approximation error for a given set of inputs $u∈X_in$ . This is done below through the use of a Taylor expansion and its Lagrange-remainder form, combined later with some tools dedicated for reachability analysis.

### 3.1 Lagrange remainder

The Taylor expansion of the state trajectory $x(t)$ of the neural ODE (1) at $t=0$ is given by the infinite sum:

$$

x(t)=x(0)+t\frac{dx(0)}{dt}+\frac{t^2}{2!}\frac{d^2x(0)}{dt^2}+\frac{t^3}{3!}\frac{d^3x(0)}{dt^3}+\dots \tag{0}

$$

The Lagrange remainder theorem offers the possibility to truncate (4) without approximation error, hence preserving the above equality. We only state below the result in the case of a truncation at the Taylor order $2$ corresponding to the case of interest in our work.

**Proposition 1 (Lagrange remainder[25])**

*There exists $t^*∈[0,t]$ such that

$$

x(t)=x(0)+t\frac{dx(0)}{dt}+\frac{t^2}{2!}\frac{d^2x(t^*)}{dt^2} \tag{0}

$$*

Notice that in (5), the second order derivative $\frac{d^2x}{dt^2}$ is evaluated at $t^*∈[0,t]$ instead of $t$ as in the Taylor series (4). Although the truncation in Proposition 1 provides a much more manageable expression than the infinite sum in (4), the main difficulty is that this result only states the existence of a $t^*∈[0,t]$ satisfying the equality in (5), but its actual value is unknown.

### 3.2 Error function

To compare the continuous state $x(t)$ with the discrete output of the ResNet, the state of the neural ODE (1) should be evaluated at depth $t=1$ .

The first term of the right-hand side in (5) is the known initial condition of the neural ODE (1): $x(0)=u$ .

The second term is provided by the definition of the vector field of the neural ODE (1), and thus reduces to:

$$

t\frac{dx(0)}{dt}=1· f(x(0))=f(u). \tag{0}

$$

The second derivative appearing in the third term of (5) can be computed using the chain rule as follows:

| | $\displaystyle\frac{d^2x(t)}{dt^2}$ | $\displaystyle=\frac{df(x(t))}{dt}$ | |

| --- | --- | --- | --- |

In our context of Section 2, the function $f$ is assumed not to be explicitly dependent on the depth $t$ due to its definition as a single residual block with classical layers. Therefore, the partial derivative $\frac{∂ f(x(t))}{∂ t}$ is equal to $0$ , and the third term of (5) thus reduces to:

$$

\frac{t^2}{2!}\frac{d^2x(t^*)}{dt^2}=\frac{1}{2}f^\prime(x(t^*))f(x(t^*)).

$$

We can thus re-write (5) as an equation defining the output of the neural ODE based on the output of the ResNet (for the same initial state/input $u$ ) and an error term:

$$

Φ(1,u)=(u+f(u))+ε(u), \tag{6}

$$

where the approximation error between our models for this particular input $u$ is expressed by the Lagrange remainder of Taylor order 2:

$$

ε(u)=\frac{1}{2}f^\prime(x(t^*))f(x(t^*)), \tag{7}

$$

with $x(t^*)=Φ(t^*,u)$ for a fixed but unknown $t^*∈[0,1]$ .

Equation (6) can also be modified to rather express the outputs of the ResNet based on those of the neural ODE:

$$

u+f(u)=Φ(1,u)-ε(u). \tag{8}

$$

The error function $ε:ℝ^n→ℝ^n$ appearing positively in (6) and negatively in (8) is defined in (7) only for a specific input $u$ . However, in the context of our Problem 1, we are interested in analyzing the approximation error between both models over an input set $X_in⊆ℝ^n$ . In addition, since the specific value of $t^*$ is unknown, we need to bound (7) for any possible value of $t^*∈[0,1]$ . Therefore in the next sections, we focus on converting the equalities (6)-(8) to set inclusions over all $u∈X_in$ and $t^*∈[0,1]$ .

### 3.3 Bounding the error set

The reachable error set $R_ε(X_in)$ introduced in Problem 1, can be redefined based on the error function (7) as follows:

$$

\displaystyleR_ε(X_in) \displaystyle=≤ft\{Φ(1,u)-(u+f(u))~|~u∈X_in\right\} \displaystyle=≤ft\{≤ft.\frac{1}{2}f^\prime(Φ(t^*,u))f(Φ(t^*,u))~\right|~t^*∈[0,1],~u∈X_in\right\}. \tag{9}

$$

To solve Problem 1, our objective is thus to compute an over-approximation $Ω_ε(X_in)$ bounding the error set: $R_ε(X_in)⊆Ω_ε(X_in)$ .

The first step (corresponding to line 1 in Algorithm 1) is to compute the reachable tube of all possible states that can be reached by the neural ODE (1) over the whole range $t∈[0,1]$ and for any initial state $x(0)=u∈X_in$ . This reachable tube can be defined similarly to $R_neural ODE(X_in)$ in Section 2.2 but for all possible depth $t∈[0,1]$ instead of only the final one:

$$

R^tube_neural ODE(X_in)=\{Φ(t,u)∈ℝ^n\mid t∈[0,1],~u∈X_in\}.

$$

Since in most cases this set cannot be computed exactly, we instead use off-the-shelf reachability analysis toolboxes to compute an over-approximating set $Ω^tube_neural ODE(X_in)$ such that $R^tube_neural ODE(X_in)⊆Ω^tube_neural ODE(X_in)$ .

The error set can then be re-written based on the above reachable tube definition, by replacing $Φ(t^*,u)$ (with $t^*∈[0,1]$ and $u∈X_in$ ) in (9) by $x∈R^tube_neural ODE(X_in)$ .

$$

\displaystyleR_ε(X_in) \displaystyle=≤ft\{≤ft.\frac{1}{2}f^\prime(x)f(x)~\right|~x∈R^tube_neural ODE(X_in)\right\} \displaystyle⊆≤ft\{≤ft.\frac{1}{2}f^\prime(x)f(x)~\right|~x∈Ω^tube_neural ODE(X_in)\right\}. \tag{10}

$$

The next step, in line 2 of Algorithm 1, is to over-approximate this error set $R_ε(X_in)$ . One possible approach to achieve this is to define the static function $ε=\frac{1}{2}f^\prime(x)f(x)$ and apply to it some set-propagation techniques (such as interval arithmetic [12], Taylor models [19], or affine arithmetic [6]) to bound the set of output errors $ε$ corresponding to any state $x∈Ω^tube_neural ODE(X_in)$ in the reachable tube over-approximation. An alternative approach, which provided a tighter error bounding set in the particular case of the numerical example presented in Section 4, is to define the discrete-time nonlinear system $x^+=\frac{1}{2}f^\prime(x)f(x)$ , and then use existing reachability analysis toolboxes to over-approximate the reachable set of this system after one time step, which corresponds to bounding the image of the error function. Note that in this case, it is important that this final reachable set is computed as a single step, and not decomposed into a sequence of smaller intermediate steps whose iterative updates of the internal state would have no mathematical meaning for the static (stateless) error function.

As a consequence of the equalities and set inclusions in (9)-(10) and the fact that the reachability methods to be used in the first two steps of Algorithm 1 described above guarantee that the obtained sets are over-approximations of the output or reachable sets of interest, we have thus reached a solution to Problem 1.

**Theorem 3.1**

*The set $Ω_ε(X_in)$ obtained after applying this second step described above solves Problem 1:

$$

R_ε(X_in)=≤ft\{Φ(1,u)-(u+f(u))~|~u∈X_in\right\}⊆Ω_ε(X_in).

$$*

Note that the error bound in Theorem 3.1 is defined as a set in the state space of the neural ODE. This differs from the approach in [26], where the error bound is defined as a positive scalar.

A second and more important difference with this work is the tightness of the obtained error bounds. Indeed, if we adapt the results from [26] to the context of our framework described in Section 2, their error bound is expressed as:

$$

ε≤\frac{e^L-1}{L}≤ft\|\frac{1}{2}f^\prime(x)f(x)\right\|_∞,~∀ x∈R^tube_neural ODE(X_in),

$$

where $L$ is a Lipschitz constant of the neural ODE vector field. The term $≤ft\|\frac{1}{2}f^\prime(x)f(x)\right\|_∞$ can be obtained by first over-approximating the error set by $Ω_ε(X_in)$ in the same way we did, but the infinity norm forces to expand this set to make it symmetrical around $0$ , and then keeping only the maximum value among its components (thus corresponding to a second expansion of this set into an hypercube whose width along all dimensions is the largest width of the previous set). In addition, for any system with non-zero Lipschitz constant, the factor $\frac{e^L-1}{L}$ is always greater than $1$ , which increases this error bound even more.

In summary, this scalar error bound is doubly more conservative than our proposed set-based error bound. The comparison of both approaches is illustrated in the numerical example of Section 4.

### 3.4 Verification proxy

To address Problem 2, we leverage the similar behavior between the neural ODE and ResNet models to verify safety properties on one model using the reachable set of the other, combined with the error bound from Theorem 3.1. Specifically, we want to verify whether the reachable output set of a model is contained in the safe set $X_s$ , i.e., $R(X_in)⊆X_s$ .

We first focus on the case of Algorithm 1 to verify the safety property on the neural ODE, based on the reachability analysis of the ResNet. This first verification proxy relies on the set-based version of (6) using the Minkowski sum:

$$

R_neural ODE(X_in)⊆Ω_ResNet(X_in)+Ω_ε(X_in), \tag{11}

$$

stating that the reachable output set of the neural ODE is contained in the output set over-approximation of the ResNet $Ω_ResNet(X_in)$ , expanded by the bounding set of the error $Ω_ε(X_in)$ obtained after applying the first two lines of Algorithm 1 as described in Section 3.3.

Therefore, this verification procedure is achieved as in Algorithm 1, by first using existing set-propagation or reachability analysis tools to compute an over-approximation ${\color[rgb]{0,0,1}Ω_ResNet(X_in)}$ of the ResNet output set (line 3). Then in line 4, an over-approximation of the neural ODE output set can be deduced from (11) by taking the Minkowski sum of ${\color[rgb]{0,0,1}Ω_ResNet(X_in)}$ and our error bound ${\color[rgb]{1,0,0}Ω_ε(X_in)}$ . If $Ω_neural ODE(X_in)$ is contained in the safe set ${\color[rgb]{0,0,0}X_s}$ , then the neural ODE satisfies the safety property, otherwise the result is inconclusive (line 5-9).

Algorithm 1 Safety Verification Framework for neural ODE based on ResNet

Input: a neural ODE, an input set $X_in$ and a safe set $X_s$ . Output: Safe or Unknown.

1: compute an over-approximation of the reachable tube of the neural ODE $Ω^tube_neural ODE(X_in)$ ;

2: compute the over-approximation of the error set ${\color[rgb]{1,0,0}Ω_ε(X_in)}$ , $∀ x∈Ω^tube_neural ODE(X_in)$ ;

3: compute the over-approximation of the ResNet output ${\color[rgb]{0,0,1}Ω_ResNet(X_in)}$ ;

4: deduce an over-approximation of the neural ODE output $Ω_neural ODE(X_in)={\color[rgb]{1,0,0}Ω_ResNet(X_in)+Ω_ε(X_in)}$ ;

5: if $Ω_neural ODE(X_in)⊆{\color[rgb]{0,0,0}X_s}$ then

6: return Safe

7: else

8: return Unknown

9: end if

Reversing the roles, the case of verifying the ResNet based on the reachability analysis of the neural ODE is described in Algorithm 2. This case is very similar to the previous one, so we focus here on the main differences with Algorithm 1. The first difference is that in (8), the term representing the approximation error between the models appears with a negative sign. Therefore, when converting this equation into a set inclusion similarly to (11), we need to be careful to add the negation of the error set (and not to do a set difference, which is not the correct set operation in our case). We thus introduce the negative error set

$$

Ω_-ε(X_in)=\{-ε\midε∈Ω_ε(X_in)\},

$$

in order to convert (8) into its set-based notation as follows:

$$

R_ResNet(X_in)⊆Ω_neural ODE(X_in)+Ω_-ε(X_in). \tag{12}

$$

The second difference is that in line 3 of Algorithm 2, we compute an over-approximation of the reachable set of the neural ODE, using any classical tools for reachability analysis of continuous-time nonlinear systems, and add it to the negative error set to obtain an over-approximation of the ResNet output set. This final set can then similarly be used to verify the satisfaction of the safety property on the ResNet model.

Algorithm 2 Safety Verification Framework for ResNet based on neural ODE

Input: a ResNet, an input set $X_in$ and a safe set $X_s$ . Output: Safe or Unknown.

1: compute an over-approximation of the reachable tube of the neural ODE $Ω^tube_neural ODE(X_in)$ ;

2: compute the over-approximation of the negative error set ${\color[rgb]{1,0,0}Ω_-ε(X_in)}$ , $∀ x∈Ω^tube_neural ODE(X_in)$ ;

3: compute the over-approximation of the neural ODE output $Ω_neural ODE(X_in)$ ;

4: deduce an over-approximation of the ResNet output ${\color[rgb]{0,0,1}Ω_ResNet(X_in)}={\color[rgb]{1,0,0}Ω_neural ODE(X_in)+Ω_-ε(X_in)}$ ;

5: if ${\color[rgb]{0,0,1}Ω_ResNet(X_in)}⊆{\color[rgb]{0,0,0}X_s}$ then

6: return Safe

7: else

8: return Unknown

9: end if

**Theorem 3.2 (Soundness)**

*For the case that either Algorithm 1 or 2 returns Safe, the safety property in the sense of Problem 2 holds true [15].*

The soundness of the verification framework is guaranteed because both algorithms rely on over-approximations of the true reachable sets. Specifically, (11) ensures that $R_neural ODE(X_in)⊆Ω_neural ODE(X_in)$ , and (12) ensures ${\color[rgb]{0,0,1}R_ResNet(X_in)}⊆{\color[rgb]{0,0,1}Ω_ResNet(X_in)}$ . These inclusions hold due to the conservative nature of the considered reachability analysis and error bound computations in Section 3.3 (Theorem 3.1).

## 4 Numerical illustration

In this section, a commonly used neural ODE academic example [16, 17] is used to demonstrate the verification proxy between the two models, which is the Fixed-Point Attractor (FPA) [22] that consists of one nonlinear neural ODE.

Experiment Setting: All the experiments Code available in the following repository: https://github.com/ab-sayed/Formal-Error-Bound-for-Safety-Verification-of-neural-ODE herein are run on MATLAB 2024b with Continuous Reachability Analyzer (CORA) version 2024.4.0 with an Intel (R) Core (TM) i5-1145G7 CPU@2.60 GHz and 32 GB of RAM.

### 4.1 System description

The FPA system is a nonlinear dynamical system with dynamics that converge to a fixed point (an equilibrium state) under certain conditions [2], and the fixed-point aspect makes it a useful model for studying convergence and stability, which are important in safety-critical applications where the system must not diverge or enter unsafe states. As in the proposed benchmark in [22], we consider here the following $5$ -dimensional neural ODE approximating the FPA dynamics:

$$

\dot{x}=f(x)=τ x+Wtanh(x),

$$

where $x∈ℝ^5$ is the state vector, $τ=-10^-6$ is a time constant for the neurons, $W∈ℝ^5× 5$ is a composite weight matrix defined as $W=\begin{pmatrix}0_2× 2&A\ 0_3× 2&BA\end{pmatrix}$ with $A=\begin{pmatrix}-1.20327&-0.07202&-0.93635\ 1.18810&-1.50015&0.93519\end{pmatrix}$ and $B=\begin{pmatrix}1.21464&-0.10502\ 0.12023&0.19387\ -1.36695&0.12201\end{pmatrix}$ , and $tanh(x)$ is the hyperbolic tangent activation function applied element-wise to the state vector $x$ .

We choose our safety property defined by the input set $X_in≈[0.45,0.55]×[0.72,0.88]×[0.47,0.58]×[0.19,0.24]×[-0.64,-0.53]$ (its exact numerical values are provided in the code linked below) and the safe set $X_s=[0.2,0.6]×[0.3,0.85]⊂ℝ^2$ , that only focuses on the projection of the state onto its first two dimensions, i.e., using an output function $h(x)=(x_1,x_2)$ . In the case of the neural ODE, we thus want to verify that for all initial state $x(0)∈X_in$ , we have $h(x(1))∈X_s$ .

### 4.2 Computing the error bound

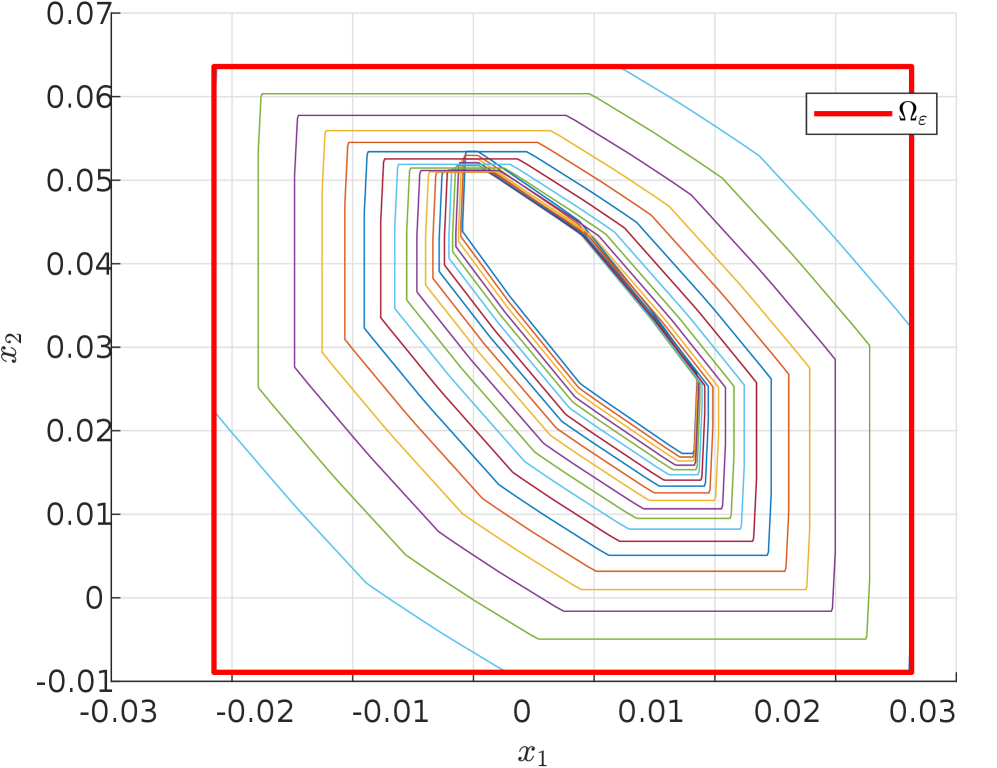

Using CORA [1], we compute the error bound $Ω_ε(X_in)$ from Theorem 3.1 as follows. First, we over-approximate the reachable tube of the neural ODE $R^tube_neural ODE$ over the time interval $[0,1]$ as a sequence of zonotopes, where each zonotope corresponds to an intermediate time range. For each zonotope in the reachable tube, we bound the image of the error function (7) by applying a discrete-time reachability analysis method at $t=1$ . This results in a new zonotope that over-approximates the error set starting from that particular reachable tube zonotope. The total error set is thus guaranteed to be contained in the union of these error zonotopes across all time steps. To simplify its use in the safety verification experiments in Section 4.3, we compute the interval hull of this union, yielding a hyperrectangle that over-approximates ${\color[rgb]{1,0,0}Ω_ε(X_in)}$ illustrated in Figure 2 in red, and showing 20 error zonotopes in different colors, corresponding to the error bound of each intermediate time range used in the reachable tube.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Contour Plot / Phase Portrait: Nested Polygons within a Bounding Rectangle

### Overview

The image is a 2D technical plot displaying a series of nested, closed polygons (likely representing contour lines or level sets) contained within a prominent red rectangular boundary. The plot is set against a light gray grid on a white background. The visualization appears to represent a mathematical or engineering concept, such as a stability region, invariant set, or phase portrait of a dynamical system.

### Components/Axes

* **Plot Type:** 2D contour plot or phase portrait.

* **Axes:**

* **Horizontal Axis (x1):** Labeled `x1`. The scale runs from approximately -0.03 to 0.03. Major tick marks are present at intervals of 0.01 (e.g., -0.03, -0.02, -0.01, 0, 0.01, 0.02, 0.03).

* **Vertical Axis (x2):** Labeled `x2`. The scale runs from approximately -0.01 to 0.07. Major tick marks are present at intervals of 0.01 (e.g., -0.01, 0, 0.01, 0.02, 0.03, 0.04, 0.05, 0.06, 0.07).

* **Legend:** Located in the top-right corner of the plot area. It contains a single entry: a thick red line segment labeled with the mathematical symbol **Ωε** (Omega subscript epsilon).

* **Primary Boundary:** A thick, solid red rectangle, corresponding to the legend entry **Ωε**. Its approximate corners are at coordinates (-0.022, -0.009) and (0.029, 0.064).

* **Data Series (Nested Polygons):** A set of approximately 20-25 concentric, closed polygons. Each polygon is drawn with a thin line of a distinct color. The colors include (from outermost to innermost, approximate sequence): light blue, green, purple, yellow, orange, red, dark blue, and others, repeating in a pattern. The polygons are not perfect circles or ellipses but have a distinct, slightly irregular, multi-faceted shape, suggesting they are defined by a piecewise-linear or polyhedral function.

### Detailed Analysis

* **Spatial Arrangement:** The polygons are nested concentrically. The outermost polygon is the largest, and each subsequent polygon is contained within the previous one, with the innermost polygon being the smallest. They are all centered roughly around the point (0.005, 0.035).

* **Shape & Trend:** All polygons share a similar geometric characteristic: they are elongated along a diagonal axis running from the top-left to the bottom-right of the plot. The shapes are not symmetric about the x1 or x2 axes. The trend is a clear convergence towards a central point or region.

* **Color-Legend Cross-Reference:** The red color is exclusively used for the outer bounding rectangle labeled **Ωε**. None of the inner nested polygons are red. The inner polygons use a palette of other colors (blues, greens, purples, yellows, oranges) which are not individually labeled in a legend.

* **Data Point Approximation (Polygon Vertices):** While exact vertices are not labeled, the polygons' corners can be approximated relative to the grid. For example, the outermost light blue polygon has a vertex near (0.025, 0.030) and another near (-0.020, 0.022). The innermost polygons are tightly clustered around the central point.

### Key Observations

1. **Concentric Nesting:** The most striking feature is the perfect nesting of the polygons, indicating a hierarchical or iterative relationship between them.

2. **Diagonal Orientation:** The consistent diagonal elongation of all shapes suggests an underlying dynamic or constraint that is not aligned with the primary x1 or x2 axes.

3. **Bounding Set:** The red rectangle **Ωε** acts as a strict outer boundary. All other data (the polygons) are fully contained within it.

4. **Lack of Individual Labels:** The inner polygons are not individually identified. Their meaning is derived from their collective pattern and relationship to the bounding set **Ωε**.

### Interpretation

This plot is highly characteristic of visualizations used in **control theory, optimization, or dynamical systems analysis**.

* **What it likely represents:** The nested polygons are almost certainly **level sets** (contours) of a scalar function, such as a **Lyapunov function** or a **cost function**. The function's value decreases as one moves from the outer polygons toward the center. The central point (approx. 0.005, 0.035) is likely an **equilibrium point** or an **optimal solution**.

* **Role of Ωε:** The red rectangle **Ωε** defines a **region of interest, a safe operating set, or an invariant set**. The fact that all level sets are contained within it suggests that trajectories starting inside **Ωε** will remain within it and converge toward the central equilibrium. The subscript ε (epsilon) often denotes a small, positive parameter, implying this set might be defined by a tolerance or a bound.

* **Underlying System:** The diagonal, non-axis-aligned shape of the contours indicates that the system's dynamics or the function's Hessian have coupled terms between the states `x1` and `x2`. The system is not decoupled.

* **Purpose:** The graph demonstrates **stability** or **convergence**. It visually proves that from any point within the bounding set **Ωε**, the system state will evolve (following the gradient of the function) toward the central equilibrium, as shown by the descending sequence of level sets. It is a graphical certificate of performance for a controller or an optimization algorithm.

</details>

Figure 2: Illustration of the error over-approximation

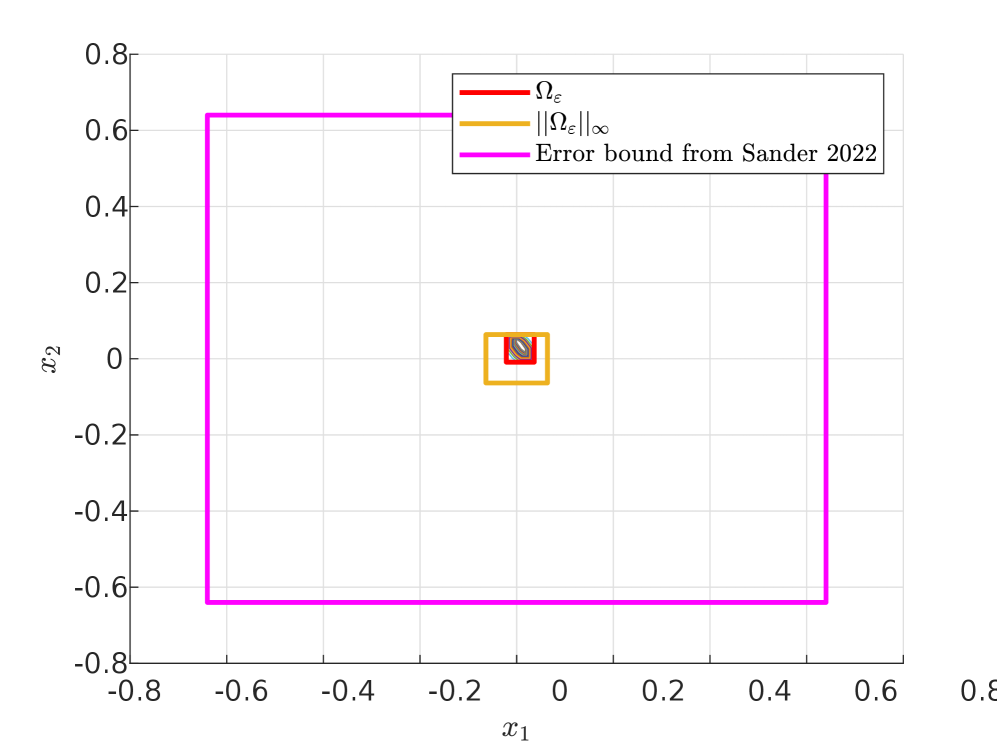

To contextualize our proposed error bound, we compare it with the error bound proposed in [26]. For that, we first compute the infinity norm of our error set $\|{\color[rgb]{1,0,0}Ω_ε(X_in)}\|_∞=0.064$ , which corresponds to a positive and scalar bound on the error, thus implying that its set representation in the state space (represented in yellow in Figure 3) is necessarily symmetrical around $0$ and with the width that is identical on all dimensions (since the infinity norm takes the largest width across all dimensions). The set-based error bound ( ${\color[rgb]{1,0,0}Ω_ε(X_in)}$ represented in red) obtained from our method is thus always contained in this infinity norm.

Next, we compute the Lipschitz constant for the vector field of the neural ODE $L=\|τ+W\|_∞=3.62$ , and then we obtain the error bound in [26] as $\frac{(e^L-1)}{L}\|Ω_ε(X_in)\|_∞=0.64$ . This final error bound, represented in magenta in Figure 3, is 10 times wider (on each dimension) than the infinity norm of our error set in yellow, and about $16$ millions times larger (in volume over the $5$ -dimensional state space) than our error set ${\color[rgb]{1,0,0}Ω_ε(X_in)}$ in red. The improved tightness of our proposed approach is therefore very significant.

<details>

<summary>x3.png Details</summary>

### Visual Description

## 2D Plot: Nested Rectangular Regions and Error Bounds

### Overview

The image is a 2D Cartesian plot displaying three nested, axis-aligned rectangular regions centered at the origin (0,0). The plot visually compares the size of a specific set (Ω_ε) and its infinity norm bound (||Ω_ε||_∞) against a larger, previously established error bound from a 2022 paper by Sander.

### Components/Axes

* **Axes:**

* Horizontal Axis (x1): Linear scale ranging from -0.8 to 0.8, with major tick marks at intervals of 0.2.

* Vertical Axis (x2): Linear scale ranging from -0.8 to 0.8, with major tick marks at intervals of 0.2.

* **Legend:** Located in the top-right quadrant of the plot area. It contains three entries:

1. **Red line:** Labeled `Ω_ε`

2. **Orange line:** Labeled `||Ω_ε||_∞`

3. **Magenta line:** Labeled `Error bound from Sander 2022`

* **Grid:** A light gray grid is present, aligned with the major tick marks on both axes.

### Detailed Analysis

The plot contains three concentric rectangles, all centered at (0,0). Their boundaries are defined as follows:

1. **Innermost Rectangle (Red - `Ω_ε`):**

* **Color:** Red.

* **Position:** Centered at the origin.

* **Approximate Boundaries:** x1 ≈ [-0.05, 0.05], x2 ≈ [-0.05, 0.05].

* **Visual Trend:** This is the smallest region, representing the core set of interest.

2. **Middle Rectangle (Orange - `||Ω_ε||_∞`):**

* **Color:** Orange.

* **Position:** Centered at the origin, enclosing the red rectangle.

* **Approximate Boundaries:** x1 ≈ [-0.1, 0.1], x2 ≈ [-0.1, 0.1].

* **Visual Trend:** This rectangle is larger than the red one, representing a bound (likely the infinity norm) on the set Ω_ε.

3. **Outermost Rectangle (Magenta - `Error bound from Sander 2022`):**

* **Color:** Magenta.

* **Position:** Centered at the origin, enclosing both the red and orange rectangles.

* **Approximate Boundaries:** x1 ≈ [-0.65, 0.5], x2 ≈ [-0.65, 0.65].

* **Visual Trend:** This is the largest region by a significant margin. It represents a previously published error bound.

### Key Observations

* **Nested Containment:** There is a clear, strict nesting: `Ω_ε` (red) ⊂ `||Ω_ε||_∞` (orange) ⊂ `Error bound from Sander 2022` (magenta).

* **Scale Discrepancy:** The magenta error bound from Sander (2022) is substantially larger than the bounds represented by the red and orange rectangles. Its half-width (~0.6) is approximately 6 times larger than that of the orange rectangle (~0.1) and 12 times larger than that of the red rectangle (~0.05).

* **Symmetry:** All three rectangles are symmetric about both the x1 and x2 axes.

### Interpretation

This chart is a visual comparison of error or set bounds in a 2D parameter space (x1, x2). It demonstrates that the new bounds represented by `Ω_ε` and its norm `||Ω_ε||_∞` are significantly tighter (smaller) than the previously established bound from Sander (2022). The primary message is one of improvement: the new analysis or method yields a much more precise characterization of the region of interest (the red set) and its immediate bound (the orange set) compared to the prior work. The large magenta rectangle serves as a benchmark, highlighting the magnitude of the improvement. The chart effectively communicates that the new results are not just marginally better, but are orders of magnitude more precise in defining the relevant region in this 2D space.

</details>

Figure 3: Comparison of the error bounds obtained from our approach in red and the one from [26] in magenta

### 4.3 Experiments on safety verification

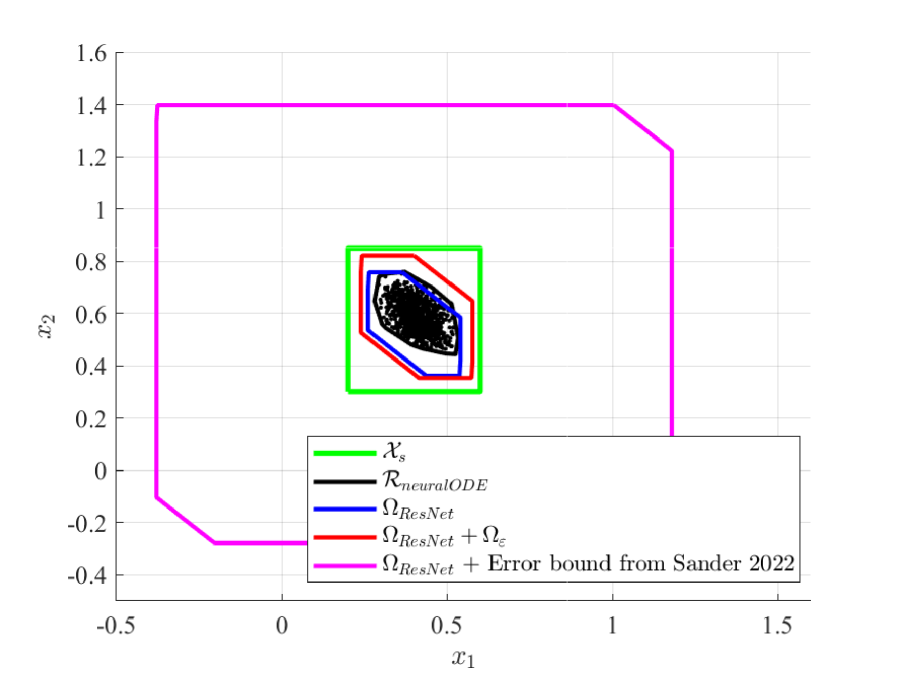

Using the error bound computed in Section 4.2, we can verify safety properties for the neural ODE output set based on the ResNet output set and the error bound set (i.e., ${\color[rgb]{1,0,0}Ω_ResNet(X_in)+Ω_ε(X_in)}$ ), or vice versa for the ResNet output set based on the neural ODE output set and the negative error bound set (i.e., ${\color[rgb]{1,0,0}Ω_neural ODE(X_in)+Ω_-ε(X_in)}$ ).

In Figure 4, we compute the over-approximation of the ResNet output set ${\color[rgb]{0,0,1}Ω_ResNet}$ using simple bound propagation through the ResNet function with CORA. By adding the error bound ${\color[rgb]{1,0,0}Ω_ε}$ , we obtain a zonotope (shown in red) that is guaranteed to contain $R_neural ODE(X_in)$ . The figure also includes black points representing neural ODE outputs for random initial conditions in $X_in$ , with their convex hull (black set) approximating the true reachable set $R_neural ODE(X_in)$ . Since the safe set ${\color[rgb]{0,0,0}X_s}$ contains the over-approximation ${\color[rgb]{1,0,0}Ω_ResNet(X_in)+Ω_ε(X_in)}$ , we guarantee that the neural ODE true reachable set is safe, as:

$$

{\color[rgb]{0,0,0}X_s}⊇{\color[rgb]{1,0,0}Ω_ResNet(X_in)+Ω_ε(X_in)}⊇R_neural ODE.

$$

From Figure 4, we can see that the ResNet and neural ODE reachable sets are very similar due to the ResNet role as a discretization of the neural ODE, but they are not identical. Indeed, some neural ODE outputs (black points) lie outside ${\color[rgb]{0,0,1}Ω_ResNet}$ , highlighting the necessity of the error bound ${\color[rgb]{1,0,0}Ω_ε(X_in)}$ to ensure that the over-approximation captures all possible neural ODE outputs.

<details>

<summary>x4.png Details</summary>

### Visual Description

\n

## 2D Plot: Comparison of Set Bounds and Data Distribution

### Overview

The image is a 2D scatter plot with overlaid geometric shapes representing different sets or bounds. It appears to compare the coverage or containment of a data distribution (represented by a scatter of points) against various theoretical or computed regions. The plot is set against a white background with a light gray grid.

### Components/Axes

* **Axes:**

* **Horizontal Axis (x1):** Labeled `x1`. Scale ranges from approximately -0.5 to 1.5, with major tick marks at -0.5, 0, 0.5, 1, and 1.5.

* **Vertical Axis (x2):** Labeled `x2`. Scale ranges from approximately -0.4 to 1.6, with major tick marks at -0.4, -0.2, 0, 0.2, 0.4, 0.6, 0.8, 1, 1.2, 1.4, and 1.6.

* **Legend:** Located at the bottom-center of the plot area. It contains five entries, each with a colored line sample and a label:

1. **Green Line:** `X_s`

2. **Black Line:** `R_{neuralODE}`

3. **Blue Line:** `Ω_{ResNet}`

4. **Red Line:** `Ω_{ResNet} + Ω_ε`

5. **Magenta Line:** `Ω_{ResNet} + Error bound from Sander 2022`

* **Data Series & Shapes:**

* **Scatter Points:** A dense cluster of black points, with a smaller number of blue points interspersed, concentrated in the central region of the plot.

* **Geometric Shapes (from outermost to innermost):**

1. **Magenta Polygon:** A large, irregular hexagon forming the outermost boundary.

2. **Green Rectangle:** A smaller, axis-aligned rectangle nested inside the magenta shape.

3. **Red Polygon:** An irregular pentagon/hexagon nested inside the green rectangle.

4. **Blue Polygon:** A smaller irregular shape nested inside the red polygon.

5. **Black Polygon:** The innermost irregular shape, closely surrounding the main cluster of scatter points.

### Detailed Analysis

* **Spatial Layout & Containment:** The shapes exhibit a clear nested hierarchy. The magenta polygon is the largest and contains all other elements. The green rectangle is fully contained within the magenta polygon. The red, blue, and black polygons are successively nested within the green rectangle, with the black polygon being the innermost bound.

* **Approximate Coordinates of Shape Vertices (Estimated from Grid):**

* **Magenta Polygon (`Ω_{ResNet} + Error bound...`):** Vertices are approximately at (-0.4, -0.3), (-0.2, -0.3), (-0.4, 1.4), (1.0, 1.4), (1.2, 1.2), and (1.2, 0.2).

* **Green Rectangle (`X_s`):** Appears to be an axis-aligned rectangle with corners approximately at (0.2, 0.3) and (0.6, 0.8).

* **Red Polygon (`Ω_{ResNet} + Ω_ε`):** Vertices are approximately at (0.25, 0.35), (0.25, 0.75), (0.35, 0.8), (0.55, 0.7), (0.55, 0.4), and (0.45, 0.35).

* **Blue Polygon (`Ω_{ResNet}`):** Vertices are approximately at (0.3, 0.4), (0.3, 0.7), (0.4, 0.75), (0.5, 0.65), (0.5, 0.45), and (0.4, 0.4).

* **Black Polygon (`R_{neuralODE}`):** Vertices are approximately at (0.35, 0.45), (0.35, 0.65), (0.45, 0.7), (0.5, 0.6), (0.5, 0.5), and (0.45, 0.45).

* **Scatter Point Distribution:** The black points form a dense, roughly elliptical cluster centered near (0.4, 0.6). The blue points are scattered within and slightly around this main cluster, with a few appearing near the boundary of the blue polygon.

### Key Observations

1. **Containment Hierarchy:** There is a strict visual containment: Magenta ⊃ Green ⊃ Red ⊃ Blue ⊃ Black ≈ Data Cluster.

2. **Tightness of Bounds:** The black polygon (`R_{neuralODE}`) appears to be the tightest bound around the primary data cluster. The blue polygon (`Ω_{ResNet}`) is slightly larger, the red polygon (`Ω_{ResNet} + Ω_ε`) larger still, and the green rectangle (`X_s`) is a much looser, axis-aligned bound. The magenta polygon is a very loose, outer bound.

3. **Legend-Color Correspondence:** The colors in the legend match the shapes in the plot exactly. The magenta line corresponds to the outermost polygon, the green line to the rectangle, and so on, down to the black line for the innermost polygon.

4. **Data vs. Bounds:** The majority of the black scatter points are contained within the innermost black polygon. The blue scatter points are also mostly within the black and blue polygons, though a few are near or slightly outside the blue boundary.

### Interpretation

This plot likely visualizes and compares different methods for computing **invariant sets, reachable sets, or error bounds** for a dynamical system or a neural network model (suggested by terms like `ResNet` and `neuralODE`).

* **`X_s` (Green):** Represents a simple, possibly predefined, safe set or state space region (an axis-aligned box).

* **`Ω_{ResNet}` (Blue):** Represents a set computed or guaranteed by a Residual Network (ResNet) based method. It is tighter than `X_s`.

* **`Ω_{ResNet} + Ω_ε` (Red):** Likely represents the ResNet set augmented with an additional error term (`Ω_ε`), resulting in a slightly larger, more conservative bound.

* **`R_{neuralODE}` (Black):** Represents a set computed by a Neural Ordinary Differential Equation (Neural ODE) method. It provides the tightest fit to the empirical data distribution (the scatter points), suggesting it may be a more accurate model of the system's true behavior or reachable states.

* **`Ω_{ResNet} + Error bound...` (Magenta):** Represents a very conservative, possibly theoretical, error bound from a cited work (Sander 2022) applied to the ResNet set. Its large size indicates it is a loose, worst-case guarantee.

**The core message** is a comparison of **set computation techniques**. The Neural ODE method (`R_{neuralODE}`) produces a set that most closely matches the observed data distribution, while the ResNet-based methods (`Ω_{ResNet}`) produce larger, more conservative sets. The plot demonstrates the trade-off between the tightness of a computed bound and its conservatism, with the Neural ODE approach appearing superior in this specific case for approximating the data's support. The magenta bound serves as a reference for a known, highly conservative theoretical limit.

</details>

Figure 4: Verification of neural ODE based on ResNet

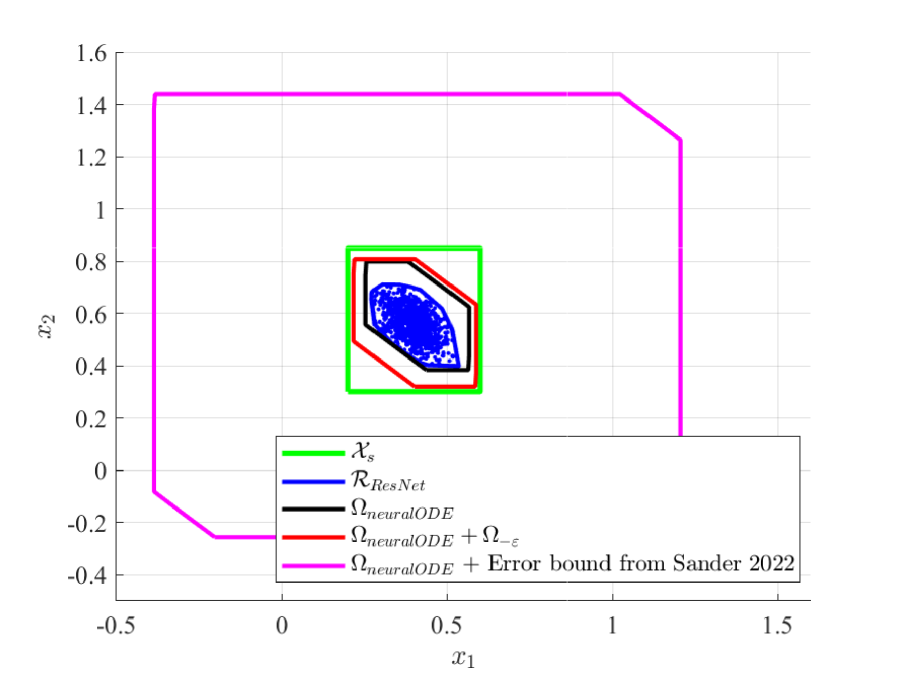

Conversely, in Figure 5, we compute the over-approximation of the neural ODE reachable set ${\color[rgb]{0,0,0}Ω_neural ODE(X_in)}$ . By adding the negative error bound ${\color[rgb]{1,0,0}Ω_-ε}$ , we obtain a zonotope (shown in red) that encapsulates ${\color[rgb]{0,0,1}R_ResNet(X_in)}$ . Similarly, the figure includes blue points representing ResNet outputs for random inputs in $X_in$ , with their convex hull (blue set) approximating the true reachable set ${\color[rgb]{0,0,1}R_ResNet(X_in)}$ . Since the safe set ${\color[rgb]{0,0,0}X_s}$ is a super set that contains the over-approximation ${\color[rgb]{1,0,0}Ω_neural ODE(X_in)+Ω_-ε(X_in)}$ , we guarantee that the ResNet true reachable set is safe, as:

$$

{\color[rgb]{0,0,0}X_s}⊇{\color[rgb]{1,0,0}Ω_neural ODE(X_in)+Ω_-ε(X_in)}⊇{\color[rgb]{0,0,1}R_ResNet}.

$$

<details>

<summary>x5.png Details</summary>

### Visual Description

## State-Space Diagram: Comparison of Invariant and Reachable Sets

### Overview

The image is a 2D state-space plot (phase portrait) comparing different computed sets for a dynamical system. It visualizes the relationship between a reachable set from a ResNet model (`R_ResNet`) and several invariant set approximations (`Ω_neuralODE`) with different error bounds. The plot demonstrates how conservative various bounding methods are relative to each other and to the computed reachable set.

### Components/Axes

* **Chart Type:** 2D State-Space / Phase Portrait Diagram.

* **Axes:**

* **X-axis:** Labeled `x₁`. Scale ranges from approximately -0.5 to 1.5, with major ticks at -0.5, 0, 0.5, 1, 1.5.

* **Y-axis:** Labeled `x₂`. Scale ranges from approximately -0.4 to 1.6, with major ticks at -0.4, -0.2, 0, 0.2, 0.4, 0.6, 0.8, 1, 1.2, 1.4, 1.6.

* **Legend:** Positioned at the bottom-center of the plot area. It contains five entries, each with a colored line sample and a corresponding label:

1. **Green Line:** `Xₛ`

2. **Blue Line:** `R_ResNet`

3. **Black Line:** `Ω_neuralODE`

4. **Red Line:** `Ω_neuralODE + Ω₋ₑ`

5. **Magenta Line:** `Ω_neuralODE + Error bound from Sander 2022`

### Detailed Analysis

The diagram contains five distinct geometric regions, nested within each other. Their spatial relationships and approximate coordinates are as follows:

1. **Magenta Polygon (`Ω_neuralODE + Error bound from Sander 2022`):**

* **Trend/Shape:** This is the outermost, largest polygon. It is an irregular hexagon.

* **Approximate Vertices (x₁, x₂):** (-0.4, -0.1), (-0.2, -0.25), (1.2, -0.25), (1.2, 1.25), (1.0, 1.45), (-0.4, 1.45). It encloses all other shapes.

2. **Green Rectangle (`Xₛ`):**

* **Trend/Shape:** A simple axis-aligned rectangle nested inside the magenta polygon.

* **Approximate Bounds:** x₁ from ~0.2 to ~0.6; x₂ from ~0.3 to ~0.8.

3. **Red Polygon (`Ω_neuralODE + Ω₋ₑ`):**

* **Trend/Shape:** An irregular hexagon nested inside the green rectangle. It is smaller than the green rectangle but larger than the black polygon.

* **Approximate Vertices (x₁, x₂):** (0.25, 0.3), (0.25, 0.6), (0.35, 0.7), (0.55, 0.6), (0.55, 0.3), (0.35, 0.2). It appears to be a tighter bound than the green rectangle.

4. **Black Polygon (`Ω_neuralODE`):**

* **Trend/Shape:** An irregular hexagon nested inside the red polygon. It is the smallest of the outlined invariant sets.

* **Approximate Vertices (x₁, x₂):** (0.28, 0.35), (0.28, 0.55), (0.38, 0.65), (0.52, 0.55), (0.52, 0.35), (0.38, 0.25). This represents the core invariant set computed by the neural ODE method.

5. **Blue Region (`R_ResNet`):**

* **Trend/Shape:** A dense, irregular cloud of points (or a filled polygon) located entirely within the black polygon. It is not a simple geometric shape but appears as a scattered set.

* **Approximate Bounds:** Centered roughly around (0.4, 0.5). Its extent is fully contained within the black polygon's vertices.

### Key Observations

* **Nested Hierarchy:** There is a clear containment hierarchy: `R_ResNet` ⊂ `Ω_neuralODE` ⊂ (`Ω_neuralODE + Ω₋ₑ`) ⊂ `Xₛ` ⊂ (`Ω_neuralODE + Error bound from Sander 2022`).

* **Conservatism Gradient:** The sets become progressively larger (more conservative) as we move from the black (`Ω_neuralODE`) to the red, then green, and finally the magenta set. The magenta set from "Sander 2022" is significantly larger than the others.

* **Model Comparison:** The blue reachable set (`R_ResNet`) is fully contained within the black invariant set (`Ω_neuralODE`), suggesting the neural ODE's computed invariant set successfully bounds the behavior of the ResNet model for the analyzed scenario.

* **Geometric Nature:** All bounding sets (`Xₛ`, `Ω_neuralODE`, and their augmented versions) are represented as polytopes (polygons in 2D), which is common for computational tractability in control theory and verification.

### Interpretation

This diagram is a technical comparison of methods for computing **invariant sets**—regions in a system's state space where, if the system starts inside, it will remain inside for all future time. Such sets are crucial for safety verification and stability analysis of dynamical systems, especially those modeled by neural networks (like ResNets or Neural ODEs).

* **What it demonstrates:** The plot visually argues that the `Ω_neuralODE` method (black) provides a tight, non-conservative invariant set that accurately contains the actual reachable set of the ResNet model (`R_ResNet`, blue). The other sets (`Xₛ`, red, magenta) represent alternative or older bounding techniques.

* **Relationships:** The large magenta polygon shows that the prior error bound from "Sander 2022" is highly conservative, over-approximating the system's behavior by a large margin. The green rectangle (`Xₛ`) might represent a simple, possibly hand-crafted, safe set. The red set shows the effect of adding an additional error term (`Ω₋ₑ`) to the core neural ODE set, resulting in a slightly larger but still relatively tight bound.

* **Significance:** The key takeaway is the efficacy of the `Ω_neuralODE` approach. By producing a tight invariant set (black) that contains the true reachable set (blue), it enables more precise safety guarantees and less conservative control design compared to the much larger bound from prior work (magenta). The nesting visually validates the method's accuracy and superiority in reducing conservatism.

</details>

Figure 5: Verification of ResNet based on neural ODE

We can also remark that the magenta sets obtained by adding the error bound proposed in [26] to the ResNet and neural ODE reachable sets in Figures 4 and 5, extends significantly beyond the green safe set, preventing us from successfully guaranteeing the safety of the models.

## 5 Conclusion

In this paper, we propose a set-based method to bound the error between a neural ODE model and its ResNet approximation. This approach is based on reachability analysis tools applied to the Lagrange remainder in the Taylor expansion of the neural ODE trajectories, and is shown both theoretically and numerically to provide significantly tighter over-approximation of this approximation error than previous results in [26]. As the second contribution of this paper, the obtained bounding set of the approximation error between the two models is used to verify a safety property on either of the two models by applying reachability or verification tools only on the other model. This approach is fully reversible and either model can be used as the verification proxy for the other. These contributions and their improvement with respect to [26] have been illustrated on a numerical example of a fixed-point attractor system modeled as a neural ODE.

In future works, we plan to explore additional sources of complexity for these approaches, such as handling non-smooth activation functions (e.g. ReLU), and the case where the neural ODE vector field is explicitly dependent on the depth variable $t$ , thus corresponding to ResNet with multiple residual blocks. Additionally, we aim to study the versatility of this verification proxy approach by applying it to other complex nonlinear dynamical systems or neural network architectures.

## Acknowledgement

This project has received funding from the European Union’s Horizon 2020 research and innovation programme under the Marie Skłodowska-Curie COFUND grant agreement no. 101034248.

## References

- [1] Althoff, M.: An introduction to cora 2015. In: Proc. of the workshop on applied verification for continuous and hybrid systems. pp. 120–151 (2015)

- [2] Beer, R.D.: On the dynamics of small continuous-time recurrent neural networks. Adaptive Behavior 3 (4), 469–509 (1995)

- [3] Behrmann, J., Grathwohl, W., Chen, R.T., Duvenaud, D., Jacobsen, J.H.: Invertible residual networks. In: International conference on machine learning. pp. 573–582. PMLR (2019)

- [4] Boudardara, F., Boussif, A., Meyer, P.J., Ghazel, M.: Innabstract: An inn-based abstraction method for large-scale neural network verification. IEEE Transactions on Neural Networks and Learning Systems (2023)

- [5] Chen, R.T., Rubanova, Y., Bettencourt, J., Duvenaud, D.K.: Neural ordinary differential equations. Advances in neural information processing systems 31 (2018)

- [6] De Figueiredo, L.H., Stolfi, J.: Affine arithmetic: concepts and applications. Numerical algorithms 37, 147–158 (2004)

- [7] Gruenbacher, S., Hasani, R., Lechner, M., Cyranka, J., Smolka, S.A., Grosu, R.: On the verification of neural odes with stochastic guarantees. In: Proceedings of the AAAI Conference on Artificial Intelligence. vol. 35, pp. 11525–11535 (2021)

- [8] Gruenbacher, S.A., Lechner, M., Hasani, R., Rus, D., Henzinger, T.A., Smolka, S.A., Grosu, R.: Gotube: Scalable statistical verification of continuous-depth models. In: Proceedings of the AAAI Conference on Artificial Intelligence. vol. 36, pp. 6755–6764 (2022)

- [9] Haber, E., Ruthotto, L.: Stable architectures for deep neural networks. Inverse problems 34 (1), 014004 (2017)

- [10] He, K., Zhang, X., Ren, S., Sun, J.: Deep residual learning for image recognition. In: Proceedings of the IEEE conference on computer vision and pattern recognition. pp. 770–778 (2016)

- [11] Huang, X., Kwiatkowska, M., Wang, S., Wu, M.: Safety verification of deep neural networks. In: Computer Aided Verification (CAV). Springer (2017)

- [12] Jaulin, L., Kieffer, M., Didrit, O., Walter, E., Jaulin, L., Kieffer, M., Didrit, O., Walter, É.: Interval analysis. Springer (2001)

- [13] Katz, G., Barrett, C., Dill, D.L., Julian, K., Kochenderfer, M.J.: Reluplex: An efficient smt solver for verifying deep neural networks. In: Computer Aided Verification (CAV). Springer (2017)

- [14] Kidger, P.: On neural differential equations. Ph.D. thesis, University of Oxford (2021)

- [15] Liang, Z., Ren, D., Liu, W., Wang, J., Yang, W., Xue, B.: Safety verification for neural networks based on set-boundary analysis. In: International Symposium on Theoretical Aspects of Software Engineering. pp. 248–267. Springer (2023)

- [16] Lopez, D.M., Choi, S.W., Tran, H.D., Johnson, T.T.: Nnv 2.0: the neural network verification tool. In: International Conference on Computer Aided Verification. pp. 397–412. Springer (2023), https://doi.org/10.1007/978-3-031-37703-7_19

- [17] Lopez, D.M., Musau, P., Hamilton, N., Johnson, T.T.: Reachability analysis of a general class of neural ordinary differential equations (2022), https://doi.org/10.1007/978-3-031-15839-1_15

- [18] Lu, Y., Zhong, A., Li, Q., Dong, B.: Beyond finite layer neural networks: Bridging deep architectures and numerical differential equations. In: International conference on machine learning. pp. 3276–3285. PMLR (2018)

- [19] Makino, K., Berz, M.: Taylor models and other validated functional inclusion methods. International Journal of Pure and Applied Mathematics 6, 239–316 (2003)

- [20] Marion, P.: Generalization bounds for neural ordinary differential equations and deep residual networks. Advances in Neural Information Processing Systems 36, 48918–48938 (2023)

- [21] Marion, P., Wu, Y.H., Sander, M.E., Biau, G.: Implicit regularization of deep residual networks towards neural odes (2024), https://arxiv.org/abs/2309.01213

- [22] Musau, P., Johnson, T.: Continuous-time recurrent neural networks (ctrnns)(benchmark proposal). In: 5th Applied Verification for Continuous and Hybrid Systems Workshop (ARCH), Oxford, UK (2018), https://doi.org/10.29007/6czp

- [23] Oh, Y., Kam, S., Lee, J., Lim, D.Y., Kim, S., Bui, A.: Comprehensive review of neural differential equations for time series analysis (2025), https://arxiv.org/abs/2502.09885

- [24] Rackauckas, C., Ma, Y., Martensen, J., Warner, C., Zubov, K., Supekar, R., Skinner, D., Ramadhan, A., Edelman, A.: Universal differential equations for scientific machine learning (2021), https://arxiv.org/abs/2001.04385

- [25] Rudin, W.: Principles of Mathematical Analysis. McGraw-Hill, New York, 3rd edn. (1976)

- [26] Sander, M., Ablin, P., Peyré, G.: Do residual neural networks discretize neural ordinary differential equations? Advances in Neural Information Processing Systems 35, 36520–36532 (2022)

- [27] Tabuada, P.: Verification and control of hybrid systems: a symbolic approach. Springer Science & Business Media (2009)

- [28] Tran, H.D., Yang, X., Manzanas Lopez, D., Musau, P., Nguyen, L.V., Xiang, W., Bak, S., Johnson, T.T.: Nnv: the neural network verification tool for deep neural networks and learning-enabled cyber-physical systems. In: International conference on computer aided verification. pp. 3–17. Springer (2020)

- [29] Xiang, W., Shao, Z.: Approximate bisimulation relations for neural networks and application to assured neural network compression. In: 2022 American Control Conference (ACC). pp. 3248–3253. IEEE (2022)