# KPIRoot+: An Efficient Integrated Framework for Anomaly Detection and Root Cause Analysis in Large-Scale Cloud Systems

**Authors**: Wenwei Gu, Renyi Zhong, Guangba Yu111Guangba Yu is the corresponding author., Xinying Sun, Jinyang Liu, Yintong Huo, Zhuangbin Chen, Jianping Zhang, Jiazhen Gu, Yongqiang Yang, Michael R. Lyu

> wwgu21@cse.cuhk.edu.hk

> ryzhong22@cse.cuhk.edu.hk

> guangbayu@cuhk.edu.hk

> sunxinying1@huawei.com

> jyliu@cse.cuhk.edu.hk

> ythuo@smu.edu.sg

> chenzhb36@mail.sysu.edu.cn

> jpzhang@cse.cuhk.edu.hk

> jiazhengu@cuhk.edu.hk

> yangyongqiang@huawei.com

> lyu@cse.cuhk.edu.hk[[[[

1] \orgname The Chinese University of Hong Kong, \country Hong Kong SAR

2] \orgname Sun Yat-sen University, \country China

3] \orgname Singapore Management University, \country Singapore

4] \orgname Huawei Cloud Computing Technology Co., Ltd, \country China

Abstract

To ensure the reliability of cloud systems, their runtime status reflecting the service quality is periodically monitored with monitoring metrics, i.e., KPIs (key performance indicators). When performance issues happen, root cause localization pinpoints the specific KPIs that are responsible for the degradation of overall service quality, facilitating prompt problem diagnosis and resolution. To this end, existing methods generally locate root-cause KPIs by identifying the KPIs that exhibit a similar anomalous trend to the overall service performance. While straightforward, solely relying on the similarity calculation may be ineffective when dealing with cloud systems with complicated interdependent services. Recent deep learning-based methods offer improved performance by modeling these intricate dependencies. However, their high computational demand often hinders their ability to meet the efficiency requirements of industrial applications. Furthermore, their lack of interpretability further restricts their practicality. To overcome these limitations, an effective and efficient root cause localization method, KPIRoot, is proposed. It integrates both advantages of similarity analysis and causality analysis, where similarity measures the trend alignment of KPI and causality measures the sequential order of variation of KPI. Furthermore, it leverages symbolic aggregate approximation to produce a more compact representation for each KPI, enhancing the overall analysis efficiency of the approach. However, during the deployment of KPIRoot in cloud systems of a large-scale cloud system vendor, Cloud $\mathcal{H}$ . We identified two additional drawbacks of KPIRoot: 1. The threshold-based anomaly detection method is insufficient for capturing all types of performance anomalies; 2. The SAX representation cannot capture intricate variation trends, which causes suboptimal root cause localization results. We propose KPIRoot+ to address the above drawbacks. The experimental results show that KPIRoot+ outperforms eight state-of-the-art baselines by 2.9% $\sim$ 35.7%, while time cost is reduced by 34.7%. Moreover, we share our experience of deploying KPIRoot in the production environment of a large-scale cloud provider Cloud $\mathcal{H}$ Due to the company policy, we anonymize the name as Cloud $\mathcal{H}$ ..

keywords: Root Cause Localization, Cloud System Reliability, Cloud Monitoring Metrics, Cloud Service Systems

1 Introduction

Large-scale cloud systems have become the backbone of modern computing infrastructure, offering unprecedented scalability and flexibility. Cloud platforms such as Microsoft Azure, Amazon Web Services, and Google Cloud Platform provide cost-effective services to users worldwide on a $7× 24$ basis [21, 49]. However, the inherent complexity and scale of these systems inevitably lead to performance issues, including slow application response times, network latency spikes, and resource contention [20, 16]. These issues can result in violations of Service Level Agreements (SLAs), causing user dissatisfaction and financial losses for both service providers and consumers [24]. Consequently, the prompt identification and resolution of performance issues have become critical concerns for cloud vendors and users alike [23]. Addressing these challenges is essential for maintaining the reliability and efficiency of cloud services in an increasingly digital world.

Cloud vendors usually collect real-time key performance indicators (KPIs) to monitor the health status of their services [39]. Anomaly detection is conducted over these KPIs to identify performance issues based on this KPI data [55, 57, 14]. For example, if the resource utilization rate is continuously high, it may indicate an imminent service overload and performance degradation. However, due to the scale of cloud systems, it is infeasible to analyze the KPI of each instance (e.g., VM and container) individually. Since a cloud service typically consists of many instances, a common way is to monitor specific KPIs that can reflect the overall performance of the service, e.g., latency, error count, and traffic, which we refer to as alarm KPIs. Automated performance issue detection can thus be realized through configuring alerting rules or performing anomaly detection algorithms on such alarm KPIs. These underlying KPIs of individual instances or VMs within a cloud service may not be directly analyzed due to the scale of cloud systems. However, their collective behavior significantly influences the alarm KPIs.

When a performance issue is detected (i.e., the alarm KPI is abnormal), it is crucial to identify the root cause [37] (e.g., which underlying instances cause the abnormal performance of the service). However, pinpointing the root cause is a non-trivial task since the monitored alarm KPI is highly aggregated and often derived [46], i.e., the correlation between the underlying KPIs and the alarm KPI is complicated and hard to understand. Even experienced software reliability engineers (SREs) can struggle to pinpoint the specific KPIs that contribute to the root cause. Such a manual approach is like finding a needle in a haystack, which is tedious and time-consuming. Hence, the automated root cause localization method is an urgent requirement for prompt performance issue resolution.

In particular, a practical root cause localization approach for KPIs from cloud systems should meet the efficiency and interpretability requirements [50]. Specifically, due to the huge volume of underlying KPIs and the tight time-to-resolve pressure, the approach needs to be able to process large amounts of data (e.g., thousands of KPIs) efficiently (e.g., in seconds). Furthermore, the approach should produce interpretable results to help engineers take effective remedy actions, which is essential in the maintenance of cloud systems. Existing root cause localization methods typically adopt statistics or deep learning models. Statistic-based methods adopt Kendall, Spearman, and Pearson correlation to compute the linear relationships between KPIs and find the root cause [48]. However, these methods have high computational costs to calculate the correlation for every KPI pair and also suffer from low accuracy in handling complicated KPIs from cloud systems [47]. Some recent studies [46] adopt deep learning models (e.g., graph neural networks) to model the KPI relationships for root cause localization. However, such methods suffer from high computation costs and lack interpretability [51, 52].

To address the above limitations, a root cause localization framework, KPIRoot [11], is proposed to identify the root cause underlying KPIs when an anomaly in the monitored alarm KPI is detected in cloud systems. To meet the efficiency requirement, KPIRoot first adopts the Symbolic Aggregate Approximation (SAX) representation to downsample the time series data of KPIs and facilitate extracting the anomaly segments. By filtering out the normal KPI data, KPIRoot can focus on anomaly patterns instead of the whole time series, which optimizes efficiency. Then, KPIRoot conducts both the similarity and causality analysis to localize the root cause KPIs. Specifically, underlying KPIs with a high similarity of anomaly patterns to the alarm KPI are more likely to trigger the alert and be the root causes. On the other hand, causality analysis is used to validate the cause and effect in the temporal dimension, i.e., the anomaly pattern of root cause KPIs should happen before that of the alarm KPI. Finally, KPIRoot combines the similarity and causality analysis results to produce a correlation score for each underlying KPI. The higher the score, the more likely the KPI is the root cause. The time complexity of KPIRoot is $\mathcal{O}(\sqrt{n})$ ( $n$ is the length of the KPIs), which allows it to process thousands of KPIs in seconds, thus facilitating the resolution of real-time performance issues.

However, we identified several drawbacks of KPIRoot. Firstly, the threshold-based anomaly detection method employed by KPIRoot, while effective in identifying trend anomalies, struggles to detect seasonal and point anomalies. This limitation is particularly highlighted in performance issues reflected in KPIs, where seasonal fluctuations and sudden spikes or drops are common and critical to accurate anomaly detection. Secondly, although the SAX representation utilized in KPIRoot enhances the efficiency of root cause localization by downsampling the KPIs, it may not effectively capture intricate variation trends. This limitation arises from its reliance on segment averages, which can obscure variation trend details in the data essential for accurate root cause localization.

This paper extends our preliminary work, which appears as a research paper of ISSRE 2024 [11]. In particular, we extend our preliminary work in the following directions:

- We propose KPIRoot+, an extended version of the KPIRoot framework introduced in our preliminary work [11]. There are two major differences in KPIRoot+ compared to KPIRoot. Firstly, anomaly detection is positioned as a critical precursor to root cause localization. We reveal that the original KPIRoot framework struggles to detect all types of anomalies, which are pivotal for accurate root cause localization in some cases. To address this deficiency, we have implemented a time series decomposition-based method. By supplementing the original approach based on trend variation with time series decomposition and a U-Net autoencoder, KPIRoot+ significantly improves the accuracy of anomaly detection, thus improving the subsequent root cause analysis phase. Secondly, the original Symbolic Aggregate Approximation (SAX) representation employed in KPIRoot falls short of effectively capturing intricate trends and variations due to its dependence on segment averages. This can obscure critical behavioral patterns. To overcome these limitations, KPIRoot+ incorporates an Improved SAX representation (ISAX) that further incorporates trend variation indicators. Our experiments show that KPIRoot+ performs better in terms of root cause localization accuracy but requires a similar execution time when compared with KPIRoot.

- We conduct a comprehensive evaluation of anomaly detection performance across different models, an aspect that was overlooked in KPIRoot.

- We strengthen our experimental part by including NDCG in our evaluation metrics, specifically NDCG@5 and NDCG@10. This metric measures how easily engineers can find the culprit VMs, which is crucial in our scenarios as the most relevant root causes are prioritized for investigation.

- We conduct a sensitivity analysis on the parameters used in KPIRoot+. The results demonstrate that our approach maintains robustness within a reasonable interval of parameter values.

- During the deployment of KPIRoot in our Cloud $\mathcal{H}$ , we identified several failure cases that highlighted its limitations. We share our industrial experiences and insights on how KPIRoot+ addresses these issues.

To evaluate the effectiveness of our proposed KPIRoot+, we conducted extensive experiments based on large-scale real-world KPI data from a large cloud vendor. The experimental results demonstrate that KPIRoot+ can pinpoint root cause KPIs more accurately compared with seven baselines with an F1-score of 0.882 and Hit Rate@10 of 0.946. On the other hand, KPIRoot+ largely reduces the computation cost with an execution time of around 8 seconds, which facilitates engineers diagnosing root causes in real time. In particular, we have successfully deployed our approach in the cloud service system of Cloud $\mathcal{H}$ since Aug 2023 and successfully localized the true root cause of ten performance issues of emergence level with 100 accuracies without affecting the customer. We also share industrial experience in practice.

We summarize the main contributions of this work, which form a super-set of those in our preliminary study, as follows:

- We introduce KPIRoot+, an effective and efficient method to localize the underlying KPIs that cause the anomaly, which is an improved version of KPIRoot. KPIRoot+ adopts the Improved SAX representation for downsampling and combines both the similarity and causality of anomaly patterns of KPIs to identify the root cause. Such designs meet the practical requirements of efficiency and interpretability, making KPIRoot feasible to deploy in large-scale cloud systems. We further strengthen the anomaly detection part to make it effective for different anomaly types.

- Extensive experiments on three industrial datasets collected from Cloud $\mathcal{H}$ ’s large-scale cloud system demonstrate the effectiveness of KPIRoot+, i.e., 0.882 F1-score and 0.946 Hit@10 rate. The average execution time of KPIRoot+ is around 8 seconds, significantly outperforming seven state-of-the-art baselines.

- We have successfully deployed KPIRoot+ into the troubleshooting system of a large-scale cloud service system of Cloud $\mathcal{H}$ since Nov 2022. It has successfully analyzed ten emerging performance issues with 100 accuracies, and none of the issues affected the customer. The success stories of our deployment confirm the applicability and effectiveness of our method.

2 Background and Motivation

In this section, we present a comprehensive overview of KPI-based root cause analysis in cloud service systems and demonstrate its practical application through a case study of root cause localization in Cloud $\mathcal{H}$ , a large-scale production cloud environment.

<details>

<summary>extracted/6514164/figures/framework.png Details</summary>

### Visual Description

# Technical Document Extraction: Cloud System Self-Healing Workflow

This document provides a detailed technical extraction of the provided architectural diagram, which outlines a four-stage pipeline for cloud service monitoring and automated remediation.

## 1. High-Level Overview

The image depicts a linear, four-stage workflow (from left to right) encapsulated in dashed rounded rectangles. The process flows from infrastructure hosting to data collection, analytical correlation, and finally to automated self-healing actions.

---

## 2. Component Segmentation and Analysis

### Stage 1: Cloud Service System (Infrastructure)

* **Header Label:** Cloud Service System

* **Components:**

* **Host Cluster:** Represented by a cloud icon containing a server rack symbol.

* **Physical Server:** A central server icon connected via dotted lines to multiple Virtual Machines.

* **VMs:** Four individual square icons representing Virtual Machines (VMs) at the base of the hierarchy.

* **Function:** This stage represents the source environment where services are hosted and where raw data originates.

### Stage 2: Cloud Monitoring Backend (Data Collection)

* **Header Label:** Cloud Monitoring Backend

* **Textual Labels:**

* "Periodically Collecting"

* "Monitored KPIs"

* **Visual Components:**

* An icon of a monitor displaying a heartbeat/pulse line.

* A downward-pointing arrow indicating the flow from the monitor to the data.

* **Data Visualization:** Two line charts representing time-series data.

* **Top Chart:** Shows a highly volatile trend with multiple sharp peaks and troughs.

* **Bottom Chart:** Shows a relatively stable baseline with one significant spike toward the end of the timeline.

* **Function:** This stage involves the continuous gathering of Key Performance Indicators (KPIs) from the infrastructure.

### Stage 3: KPI Correlation Analysis (Intelligence)

* **Header Label:** KPI Correlation Analysis

* **Textual Labels:**

* "KPI Selection"

* "VM Candidates"

* **Visual Components:**

* **Network Graph Icon:** A series of nodes and edges representing the correlation between different data points.

* A downward-pointing arrow leading to a cloud icon.

* **Warning Icon:** A cloud icon with a superimposed black triangle containing an exclamation mark (!), signifying the identification of a fault or an anomaly.

* **Function:** This stage processes the collected KPIs to identify specific Virtual Machines that are candidates for remediation based on correlated failure patterns.

### Stage 4: Cloud System Self Healing (Remediation)

* **Header Label:** Cloud System Self Healing

* **Textual Labels:**

* "Mitigation Strategies"

* "VM Migration, Current Limiting..."

* **Visual Components:**

* **Self-Healing Icon:** A heart symbol enclosed in circular "refresh" arrows, indicating a continuous recovery loop.

* **Action Flow:** Three dotted arrows radiate downward from the self-healing icon to specific mitigation actions:

1. **Cloud Download/Sync Icon:** Likely representing data backup or state synchronization.

2. **Server/VM Toggle Icon:** Representing VM Migration or resource adjustment.

3. **Cloud Power/Shutdown Icon:** Representing service restarts or current limiting.

* **Function:** The final stage executes automated strategies to resolve the issues identified in the previous steps.

---

## 3. Workflow Summary

The system operates in a unidirectional flow:

1. **Generate:** The **Cloud Service System** runs the workload.

2. **Observe:** The **Cloud Monitoring Backend** collects periodic KPI data.

3. **Analyze:** **KPI Correlation Analysis** filters data and identifies problematic VM candidates.

4. **Act:** **Cloud System Self Healing** applies mitigation strategies like migration or current limiting to restore system health.

</details>

Figure 1: The Overall Pipeline of Root Cause Localization in Cloud $\mathcal{H}$

2.1 KPI-based Root Cause Localization in Cloud Systems

Ensuring performance and reliability in cloud systems is of great importance. Performance anomalies like hardware malfunctions, network overloads, and security violations can significantly influence the performance of cloud systems and violate SLA [34]. Consequently, the need for run-time status and performance monitoring of cloud systems is in demand. Key Performance Indicators (KPIs) serve as informative tools that monitor the overall status of various components of cloud systems [7], providing helpful insights that aid in the identification of potential anomalies [36], and even proactively predicting these performance issues before they escalate into catastrophic failures [40]. Some common KPIs in cloud systems include CPU usage, memory usage, network bandwidth, latency, error rates, and service QPS (queries per second).

The cloud service system has become increasingly huge in scale and produces larger volumes of monitoring data. The highly interconnected nature of cloud systems causes problems, such as performance failures, which can spread from one component to another component. Consequently, the failure diagnosis, root cause localization, and performance debugging in large cloud systems are more complex than before [42, 29]. In real-world applications, monitoring a large number of KPIs is computationally intensive. Thus, a more practical way is to monitor the aggregated KPI and configure alerts.

Specifically, in large-scale cloud service clusters, large amounts of virtual machines (VMs) operate concurrently to provide tenants with various services. A special KPI is the “ alarm KPI ” that triggers alerts when a performance issue like an overload of CPU usage in the entire cluster happens. In large-scale cloud systems, service may consist of large amounts of VMs working together to respond to cloud users’ demands [44]. Given the scale of these systems, individual monitoring of each VM becomes infeasible. Instead, software reliability engineers often utilize alarm KPIs as a more effective approach to oversee the overall performance of the service. When the alarm KPI indicates abnormal activity, it becomes crucial to identify which VMs are the root causes. The root cause refers to the specific VMs that trigger the anomaly within the alarm KPI. For instance, if the alarm KPI is triggered due to a fairly high CPU usage, the root cause could be the particular VMs that directly cause the resource overload. Such a setup allows for the proactive identification of performance issues. In addition to the alarm KPI, other KPIs monitor the bytes per second (bps) and packets per second (pps) of each VM in the cluster [17]. These KPIs offer valuable insights into the data traffic of each user, serving as indicators of their workload.

The overall pipeline of root cause localization using monitoring KPI in Cloud $\mathcal{H}$ is shown in Fig. 1. Cloud service providers typically have many data centers spread across different regions. Each region consists of multiple isolated locations known as availability zones to ensure low latency and high availability [15]. Users can create their VMs in any region that best fits their needs. Then, the behavior of both the host CPU cluster and the VMs is continuously monitored and recorded through KPIs, including CPU usage, memory usage, and netflow throughput. Next, KPI correlation analysis is conducted to understand the dependencies between each VM and the host cluster. Based on the KPI correlation analysis, mitigation strategies such as VM migration or throttling are enacted to alleviate the system overload. In our paper, we focus on the third and most significant part, namely root cause analysis, and propose KPIRoot+.

2.2 A Motivating Example

In a cloud system, there exist intrinsic correlations between the KPIs of individual VMs and the alarm KPI [10], which is a crucial part of RCA. Take the CPU usage in cloud systems as an example, the correlation is based on the fundamental principle of resource allocation within a cloud system that each VM is allocated a portion of the cluster’s resources like CPU [8]. When a VM’s workload increases, it consumes more CPU resources, thereby affecting the overall CPU usage. However, the relationship between the KPIs of individual VMs and the overall CPU usage of the cluster is complex and non-linear [41]. This complexity is due to the sophisticated architecture of modern cloud systems and the principles of resource allocation they employ. In other words, these mechanisms ensure that the resource usage of one VM does not significantly impact others, thereby preventing a single VM from monopolizing the CPU [58]. Thus, the bulge of the workload KPI of a single VM does not necessarily lead to alarm KPI trigger alerts.

To effectively identify the root cause of performance anomaly, we capture the correlations between the VM KPIs and the alarm KPI that depicts the contribution of VMs to the detected performance anomaly. This correlation often manifests in a similar waveform between the VM’s KPIs and the alarm KPI. For example, a sudden surge in a VM’s data traffic would likely lead to an increased demand for CPU resources, which would be reflected as a spike in the KPI of the cluster’s CPU usage [3]. The KPI correlation analysis approach aiming to mine the inherent correlations in KPI data can be leveraged to pinpoint the root causes of system alerts. In our case, similarity and causality analysis are adopted. Firstly, similarity analysis allows us to identify which VMs are behaving similarly to the overall system’s performance, as reflected by the alarm KPI. Therefore, similarity analysis can help narrow down the potential root causes of the anomaly. Secondly, causality analysis is critical as it allows us to determine which changes in VM KPIs occurred before the anomaly, thus providing clues as to which VMs might have triggered the anomaly.

<details>

<summary>extracted/6514164/figures/example.png Details</summary>

### Visual Description

# Technical Data Extraction: Cluster Performance and Network Traffic Analysis

## 1. Document Overview

This image is a multi-paneled time-series line chart used for root cause analysis in a computing cluster environment. It correlates a high-level alarm (Cluster CPU Usage) with the network traffic of four specific Virtual Machines (VM1 through VM4) over a 15-hour period.

## 2. Component Isolation

### A. Header/Y-Axis Labels (Left Side)

The chart is divided into five vertically stacked sub-plots, each with a specific label:

1. **Cluster CPU Usage (Alarm KPI)**: The primary metric being monitored.

2. **Network Traffic of VM1 (Root Cause)**: Identified as the source of the anomaly.

3. **Network Traffic of VM2**: Comparative metric.

4. **Network Traffic of VM3**: Comparative metric.

5. **Network Traffic of VM4**: Comparative metric.

### B. X-Axis (Footer)

The horizontal axis represents time on the date **08-26**. The markers are:

* `08-26 07:00`

* `08-26 10:00`

* `08-26 13:00`

* `08-26 16:00`

* `08-26 19:00`

* `08-26 22:00`

## 3. Data Series Analysis and Trend Verification

### Series 1: Cluster CPU Usage (Alarm KPI)

* **Color**: Dark Red / Maroon.

* **Visual Trend**: Highly erratic with frequent, sharp vertical spikes reaching a consistent maximum ceiling. There are approximately 7-8 major "burst" periods of high activity.

* **Correlation**: The spikes in this series align precisely with the activity peaks in the VM1 series.

### Series 2: Network Traffic of VM1 (Root Cause)

* **Color**: Pink / Magenta.

* **Visual Trend**: Characterized by distinct "plateau" blocks of high traffic.

* **Key Data Points**:

* First peak: Just before 07:00.

* Second peak: Between 07:00 and 10:00.

* Major sustained peak: Around 10:30.

* Major sustained peak: Around 13:30.

* Smaller peaks: Between 16:00 and 19:00.

* Final peak: Just before 22:00.

* **Observation**: This series is the only one that mirrors the timing of the "Cluster CPU Usage" spikes perfectly, justifying the "(Root Cause)" label.

### Series 3: Network Traffic of VM2

* **Color**: Blue.

* **Visual Trend**: Flat/low activity for the first half of the day, followed by high-frequency oscillations starting around 16:00, ending with a high-level sustained plateau after 21:00.

* **Observation**: Does not correlate with the initial CPU spikes seen in the first panel.

### Series 4: Network Traffic of VM3

* **Color**: Blue.

* **Visual Trend**: Binary/Step function behavior. The traffic is either at a baseline or at a fixed high plateau with perfectly vertical transitions.

* **Key Data Points**: High traffic blocks occur at ~08:30, ~12:00, ~14:30, ~18:30, and a final sustained jump at ~21:30.

* **Observation**: These regular intervals suggest a scheduled task or batch process, but they do not align with the erratic CPU spikes.

### Series 5: Network Traffic of VM4

* **Color**: Blue.

* **Visual Trend**: High initial activity before 07:00, followed by low activity with minor bumps until 19:00. After 19:00, it shows intense, high-amplitude noise/volatility.

* **Observation**: The late-day volatility does not match the specific timing of the CPU alarm peaks.

## 4. Summary of Findings

The visualization demonstrates a direct temporal correlation between **Cluster CPU Usage** and **Network Traffic of VM1**. Whenever VM1 experiences a surge in network traffic (Pink line), the Cluster CPU Usage (Red line) hits its peak alarm threshold. The traffic patterns of VM2, VM3, and VM4 show significant activity at different times, but their patterns do not synchronize with the Cluster CPU Alarm KPI, effectively ruling them out as the primary root cause during the observed spikes.

</details>

Figure 2: An Industrial Case in Cloud $\mathcal{H}$

An industrial case in a real-world cloud system cluster of Cloud $\mathcal{H}$ is shown in Fig. 2. There is an alarm KPI monitoring the overall CPU usage of the cluster, and several VM KPIs monitor the network traffic of individual VMs. For the purpose of the discussion, we will focus on four of the VM KPIs. We can observe that the waveforms of VM2 and VM4 have weak alignments with the fluctuations in the alarm KPI, indicating a lower correlation and, thus, are unlikely to be significant contributors to CPU overload. The KPI of VM1 and VM3 exhibit a high degree of similarity to the alarm KPI, indicating they are potential root causes for the anomaly. However, to ascertain the true root cause of the CPU overload, time series causality, i.e., chronological order of events, should also be taken into consideration. As confirmed by the SREs, it is VM1, not VM3, which is the true root cause of the CPU overload. This is because the spike in VM1’s KPI precedes the CPU overload anomaly, while the spike in VM3’s KPI happens slightly after the anomaly, indicating that it is an outcome, not a cause of the anomaly. Indeed, in a cloud system, a VM’s increase in resource consumption usually precedes the CPU overload due to temporal causality, which is why we take temporal causality into consideration in our method.

2.3 Different Types of Performance Anomalies

<details>

<summary>extracted/6514164/figures/type.png Details</summary>

### Visual Description

# Technical Document Extraction: Time Series Anomaly Types

This document contains a detailed extraction of data and visual information from a set of three time-series charts illustrating different types of anomalies.

## General Layout and Structure

The image consists of three vertically stacked line charts. Each chart shares a common X-axis (time/index) ranging from **0 to approximately 1450** and a Y-axis (normalized value) ranging from **0.0 to 1.0**.

* **Language:** English

* **Data Series Color:** Black solid line

* **Anomaly Indicator:** Light red/pink shaded vertical regions

---

## 1. Trend Shift Anomaly (Top Chart)

### Component Isolation

* **Header:** Title "Trend Shift Anomaly"

* **Y-Axis:** Markers at [0.0, 0.2, 0.4, 0.6, 0.8, 1.0]

* **X-Axis:** Markers at [0, 200, 400, 600, 800, 1000, 1200, 1400]

### Trend Analysis

The data begins with a downward trend from 0.9 to 0.4. It then enters a sustained low-level period (the anomaly). Following the anomaly, the data exhibits a steady upward trend with high-frequency noise, eventually reaching a peak near 1.0 around index 1350.

### Anomaly Data

* **Type:** Level/Trend Shift.

* **Spatial Grounding:** A single large red shaded block.

* **X-Range:** Approximately **index 230 to index 510**.

* **Behavior:** During this window, the mean value of the series drops significantly to a range between 0.0 and 0.3, representing a "trough" anomaly compared to the surrounding global trend.

---

## 2. Seasonal Pattern Variation Anomaly (Middle Chart)

### Component Isolation

* **Header:** Title "Seasonal Pattern Variation Anomaly"

* **Y-Axis:** Markers at [0.0, 0.2, 0.4, 0.6, 0.8, 1.0]

* **X-Axis:** Markers at [0, 200, 400, 600, 800, 1000, 1200, 1400]

### Trend Analysis

The series consists of intermittent "bursts" or pulses of activity separated by periods of zero value (flatline). The normal pattern appears to be a complex pulse reaching heights of 0.8 to 1.0.

### Anomaly Data

* **Type:** Pattern Variation / Missing Cycle.

* **Anomaly Region 1:**

* **X-Range:** Approximately **index 20 to index 220**.

* **Behavior:** The pulses in this region are significantly lower in amplitude (peaking around 0.4) and have a different frequency/shape compared to the standard pulses seen at index 400, 650, and 850.

* **Anomaly Region 2:**

* **X-Range:** Approximately **index 1060 to index 1090**.

* **Behavior:** A very narrow shaded region where a pulse is truncated or fails to reach the expected height of the surrounding seasonal peaks.

---

## 3. Residual Outlier Anomaly (Bottom Chart)

### Component Isolation

* **Header:** Title "Residual Outlier Anomaly"

* **Y-Axis:** Markers at [0.0, 0.2, 0.4, 0.6, 0.8, 1.0]

* **X-Axis:** Markers at [0, 200, 400, 600, 800, 1000, 1200, 1400]

### Trend Analysis

The series represents a stochastic or "noisy" signal centered roughly around a mean of 0.3. It fluctuates rapidly between 0.1 and 0.6 throughout the entire duration.

### Anomaly Data

* **Type:** Point Outlier / Spike.

* **Spatial Grounding:** A very thin vertical red line.

* **X-Range:** Approximately **index 570 to index 580**.

* **Behavior:** A single, sharp vertical spike where the value reaches **1.0**. This is a distinct deviation from the local variance, as the surrounding data points do not exceed 0.7 in that specific neighborhood.

---

## Summary Table of Extracted Data

| Chart Title | Anomaly Type | Primary X-Range | Visual Characteristic |

| :--- | :--- | :--- | :--- |

| **Trend Shift Anomaly** | Level Shift | 230 - 510 | Sustained drop in mean value. |

| **Seasonal Pattern Variation** | Shape/Frequency | 20 - 220; 1060 - 1090 | Low amplitude pulses; irregular timing. |

| **Residual Outlier Anomaly** | Point Outlier | 570 - 580 | Single instantaneous spike to 1.0. |

</details>

Figure 3: Different Anomaly Types in Cloud $\mathcal{H}$

Our previous work KPIRoot [11] predominantly focuses on detecting trend anomalies using a threshold-based method. While effective for identifying gradual or sustained shifts in performance metrics, this approach may not adequately capture the breadth of anomalies that can occur in Cloud $\mathcal{H}$ . Specifically, seasonal and residual anomalies, which manifest as periodic deviations or abrupt, unexpected changes, respectively, might not be sufficiently detected by a threshold method alone.

In Figure 3, we observe three distinct types of performance anomalies across different monitoring metrics within Cloud $\mathcal{H}$ . The first anomaly is a trend anomaly characterized by a sudden downward shift in throughput on a network interface card (NIC). This abrupt change can be indicative of packet loss, which might occur due to network congestion, hardware malfunctions, or configuration errors. The second case illustrates seasonal anomalies in NIC throughput, with unexpected deviations occurring in the area marked with red spans. The anomaly could suggest issues like batch jobs running at non-standard times or misconfigured scheduling that leads to throughput drop. The third example presents a residual anomaly in the average throughput on another NIC. Such short-duration spikes are neither part of a long-term trend nor follow a seasonal pattern, hinting at sporadic issues such as brief network outages, hardware failures, or security incidents like DDoS attacks. All these three types of performance anomalies can have severe impacts on service performance and reliability.

3 METHODOLOGY

In this section, we present KPIRoot+, an automated approach for root cause localization with monitoring KPIs in cloud systems. We first formulate the problem we target. Then, we provide an overview of the proposed method. Next, we elaborate on each part of our method, i.e., time series decomposition-based anomaly segment detection, similarity analysis, and causality analysis. We finally analyze the complexity of our proposed algorithm.

3.1 Problem Formulation

<details>

<summary>extracted/6514164/figures/overview.png Details</summary>

### Visual Description

# Technical Document Extraction: KPIRoot+ Workflow Architecture

This document provides a comprehensive technical breakdown of the provided architectural diagram for **KPIRoot+**, a system designed for anomaly detection and correlation analysis in virtualized environments.

---

## 1. High-Level Process Overview

The diagram illustrates a four-stage pipeline that transforms raw monitoring data into correlation scores to identify root causes of anomalies.

* **Input:** Raw monitoring Key Performance Indicators (KPIs) from hosts and Virtual Machines (VMs).

* **Processing:** Decomposition-based anomaly detection followed by parallel Similarity and Causality analyses.

* **Output:** Correlation scores for specific VMs.

---

## 2. Component Segmentation and Flow

### Region 1: Input of KPIRoot+ (Raw monitoring KPI)

This section represents the data collection layer.

* **Components:**

* **Host Server Icon:** Connected via dashed lines to two sets of VM icons.

* **KPI Data Series:**

* **Blue Line Chart:** Labeled "$KPI_{host}$" (KPI from host).

* **Green Line Chart:** Labeled "$KPI_{VM1}$" (KPI from VM1).

* **Orange Line Chart:** Labeled "$KPI_{VM2}$" (KPI from VM2).

* **Flow:** All raw KPI data is aggregated and passed to the next stage via a rightward-pointing arrow.

### Region 2: Decomposition based Anomaly Detection

This stage focuses on signal processing and identifying deviations.

* **Process:** The input signal undergoes "**Decomposition**".

* **Sub-components:** The signal is broken down into three distinct mathematical components:

1. **Trend**

2. **Seasonal**

3. **Residual**

* **Detection:** An upward red arrow points to the word "**Anomaly**" in red text, accompanied by a magnifying glass icon containing a warning symbol. This indicates that anomalies are detected within the decomposed components (likely the Residual).

### Region 3: Parallel Analysis (Similarity & Causality)

The output of the anomaly detection stage splits into two concurrent analytical paths.

#### A. Similarity Analysis

* **Method:** **Jaccard similarity**.

* **Logic:** The host KPI (blue) is compared against VM KPIs.

* **Path 1 (Green Arrow):** $Jaccard(KPI_{host}, KPI_{VM1})$ comparing the blue host signal to the green VM1 signal.

* **Path 2 (Orange Arrow):** $Jaccard(KPI_{host}, KPI_{VM2})$ comparing the blue host signal to the orange VM2 signal.

#### B. Causality Analysis

* **Method:** **Granger causality**.

* **Logic:** Determines the directional influence between VM KPIs and the host KPI.

* **Path 1 (Green Arrow):** $F(KPI_{VM1} \rightarrow KPI_{host})$ - Testing if VM1 causes the host anomaly.

* **Path 2 (Orange Arrow):** $F(KPI_{VM2} \rightarrow KPI_{host})$ - Testing if VM2 causes the host anomaly.

### Region 4: Output of KPIRoot+ (Correlation Score)

The final stage aggregates the results from the Similarity and Causality analyses.

* **Structure:** A vertical container receiving four inputs (two green, two orange).

* **Results:**

* **Green Circle:** Labeled "$KPI_{VM1}$". This represents the final correlation/root-cause score for Virtual Machine 1.

* **Orange Circle:** Labeled "$KPI_{VM2}$". This represents the final correlation/root-cause score for Virtual Machine 2.

---

## 3. Data and Label Transcription

| Category | Label / Variable | Description |

| :--- | :--- | :--- |

| **Header 1** | Input of KPIRoot+ | Entry point of the system. |

| **Header 2** | Decomposition based Anomaly Detection | Primary processing stage. |

| **Header 3** | Similarity Analysis | Statistical comparison stage. |

| **Header 4** | Causality Analysis | Directional influence stage. |

| **Header 5** | Output of KPIRoot+ | Final result stage. |

| **KPI Source** | $KPI_{host}$ | Blue signal; reference point for the host. |

| **KPI Source** | $KPI_{VM1}$ | Green signal; data from the first VM. |

| **KPI Source** | $KPI_{VM2}$ | Orange signal; data from the second VM. |

| **Math Function** | $Jaccard(x, y)$ | Used for similarity measurement. |

| **Math Function** | $F(x \rightarrow y)$ | Used for Granger causality measurement. |

---

## 4. Visual Trend and Logic Verification

* **Signal Consistency:** The color coding is strictly maintained throughout the diagram. **Blue** always represents the Host, **Green** always represents VM1, and **Orange** always represents VM2.

* **Trend Check:** The line charts for $KPI_{host}$, $KPI_{VM1}$, and $KPI_{VM2}$ all show high-frequency fluctuations (noise/activity), which justifies the need for "Decomposition" to extract the "Trend" and "Seasonal" patterns from the "Residual" noise where anomalies typically reside.

* **Spatial Logic:** The diagram flows linearly from left to right, with a logical fork in the center to show that Similarity and Causality are independent metrics used to calculate the final Correlation Score.

</details>

Figure 4: The Overview of Our Proposed Method KPIRoot+

The goal of our work is to identify the root causes of performance anomalies, including but not limited to CPU overload in large-scale cloud systems based on the alarm KPI and observed individual KPIs. The root causes are the VMs that influence the system service quality. By throttling the throughput of these VMs, we can alleviate the system-level anomaly and restore service quality. Given the alarm KPI that monitors the status of the host cluster $X_{host}∈{R^{n}}$ and the monitored KPIs of VMs, e.g., the netflow of them $X_{i}∈{R^{n},i∈\{1,2,...,m\}}$ , where $N$ denotes the number of observations collected at an equal interval and $m$ is the number of monitored VMs. To determine the true root cause of the detected anomaly, a correlation score $c_{i}∈[0,1]$ that represents the contribution of a VM KPI to the anomaly is calculated. Then, the root causes can be obtained by ranking the correlation score, and KPIs with the top $K$ scores are deemed as root causes.

3.2 Overview

The overview of KPIRoot+ is shown in Fig. 4, which consists of three key components, namely, time series decomposition-based anomaly segment detection, similarity analysis, and causality analysis. Given the raw monitoring KPI, to make the RCA more efficient and meet the real-time requirement of industrial deployment, we propose to adopt SAX representation to downsample the raw KPI. Then, KPIRoot detects the potential anomaly segments, including different anomaly types in the downsampled alarm KPI of the host cluster (Section. 2). In this step, an anomaly score that describes the probability of KPI being anomalous will be computed, an anomaly segment will automatically extracted around the spike. Then, KPIRoot conducts a similarity analysis to compute the similarity between VM KPIs and the alarm KPI during the anomaly period (Section. 3.4). This analysis provides insights into how each VM influences the host cluster by measuring the alignment of the KPI trends. A causality analysis is then conducted (Section. 3.5) to identify the cause-and-effect between the VM KPIs and the alarm KPI. In our case, we utilize Granger causality. The results from the similarity and causality analyses are then combined to compute a correlation score for each KPI.

3.3 Time Series Decomposition Based Anomaly Segment Detection

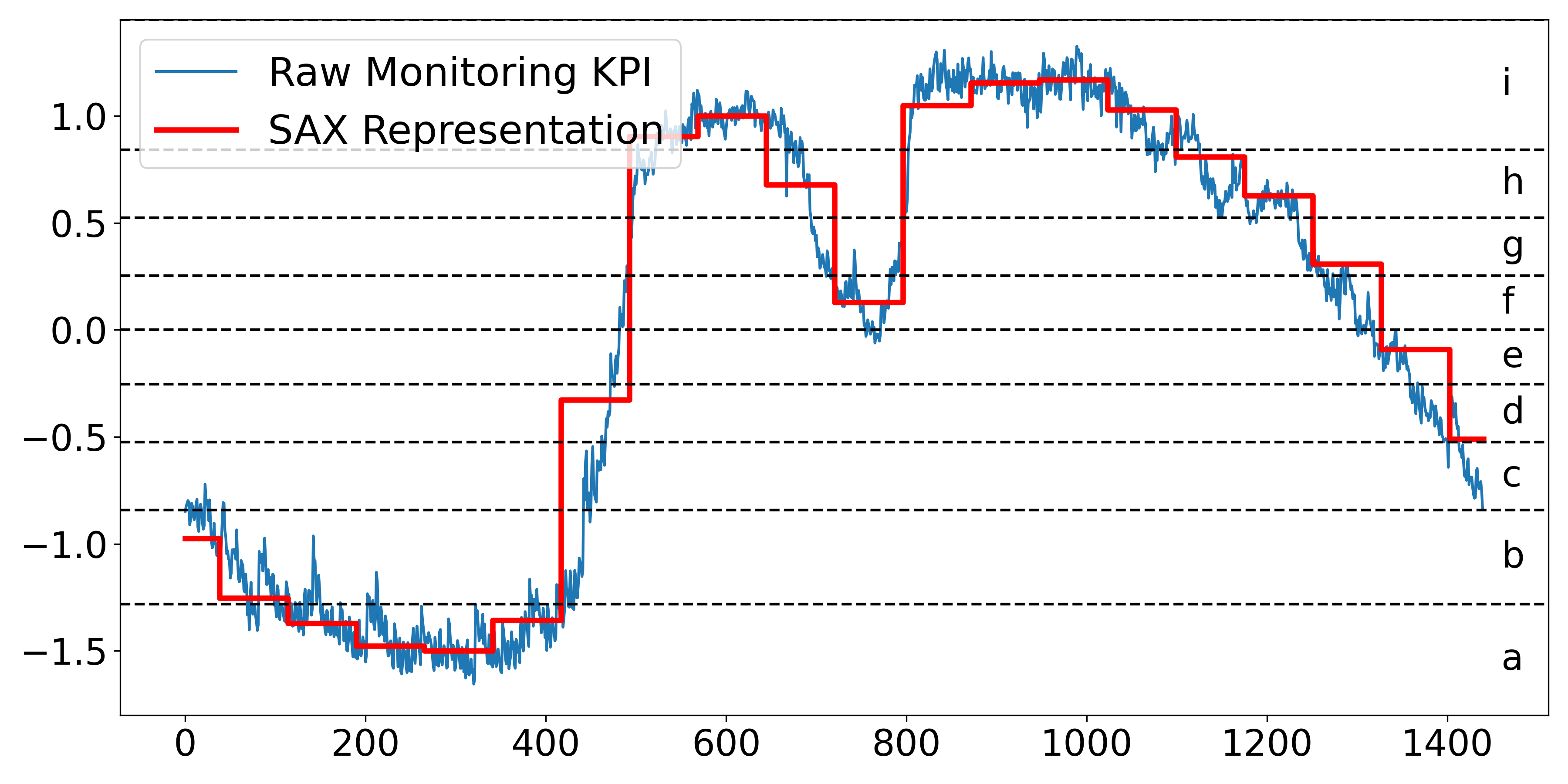

To make KPIRoot+ efficient and meet the industrial requirement of real-time identification, KPIRoot [11] propose to adopt Symbolic Aggregate Approximation (SAX) [18]. SAX has several advantages in KPI analysis: First, SAX allows for a significant reduction in the dimension of the raw KPI, which can make subsequent similarity computation more efficient [28]. Second, SAX can effectively filter out the noise and highlight the significant patterns in the KPIs by aggregating several consecutive data points into a single ”symbol” [33]. Specifically, the raw KPI $x$ of length $n$ will be represented as a $w$ -dimensional vector $P=\{p_{1},p_{2},...,p_{w}\}$ , where the $j^{th}$ element can be calculated as follows:

<details>

<summary>extracted/6514164/figures/sax.png Details</summary>

### Visual Description

# Technical Document Extraction: Symbolic Aggregate Approximation (SAX) Visualization

## 1. Component Isolation

* **Header/Legend:** Located at the top-left [x: 85, y: 75]. Contains the series identifiers.

* **Main Chart Area:** Occupies the central region. Features a fluctuating time-series line, a stepped approximation line, and horizontal threshold markers.

* **Axes:**

* **X-axis:** Horizontal bottom, representing time or sequence index.

* **Y-axis:** Vertical left, representing normalized KPI values.

* **Right-side Labels:** Vertical right, representing the symbolic alphabet mapping.

---

## 2. Legend and Labels

* **Legend [Top-Left]:**

* **Blue Thin Line:** "Raw Monitoring KPI"

* **Red Thick Stepped Line:** "SAX Representation"

* **Y-Axis Markers (Left):** -1.5, -1.0, -0.5, 0.0, 0.5, 1.0

* **X-Axis Markers (Bottom):** 0, 200, 400, 600, 800, 1000, 1200, 1400

* **Symbolic Alphabet (Right):**

* The space between horizontal dashed lines is labeled with lowercase letters **a** through **i** (bottom to top).

* **i**: > ~0.85

* **h**: ~0.55 to ~0.85

* **g**: ~0.25 to ~0.55

* **f**: ~0.0 to ~0.25

* **e**: ~-0.25 to ~0.0

* **d**: ~-0.5 to ~-0.25

* **c**: ~-0.85 to ~-0.5

* **b**: ~-1.3 to ~-0.85

* **a**: < ~-1.3

---

## 3. Data Series Analysis

### Series 1: Raw Monitoring KPI (Blue Line)

* **Trend:** This is a high-frequency, noisy time-series signal.

* **Visual Flow:**

1. Starts at approx -0.9, trends downward with volatility to a trough of approx -1.6 around index 300.

2. Sharp upward climb from index 400 to 550, reaching a peak of approx 1.1.

3. Brief dip to 0.0 at index 750.

4. Second peak reaching approx 1.3 between index 800 and 1000.

5. Gradual, volatile descent from index 1000 to the end of the chart, finishing near -0.8.

### Series 2: SAX Representation (Red Stepped Line)

* **Trend:** This is a Piecewise Aggregate Approximation (PAA) converted into discrete symbols. It follows the mean of the blue line within specific time windows.

* **Visual Flow:**

* **Index 0-400:** Stays in the lower regions, stepping through levels corresponding to symbols **c, b,** and **a**.

* **Index 400-500:** A sharp vertical jump from level **b** to level **h**.

* **Index 550-700:** Plateaus at level **i**, then drops to level **h**.

* **Index 700-800:** Drops to level **f**.

* **Index 800-1050:** Sustained plateau at level **i**.

* **Index 1050-1450:** Sequential downward steps through levels **h, g, f, e,** and finally **d**.

---

## 4. Data Table Reconstruction (Approximated)

The following table represents the SAX transformation logic visible in the chart, mapping the Red Line's position to the symbolic alphabet.

| Time Window (Approx Index) | SAX Level (Red Line Value) | Symbolic Label |

| :--- | :--- | :--- |

| 0 - 50 | ~ -1.0 | c |

| 50 - 120 | ~ -1.25 | b |

| 120 - 180 | ~ -1.35 | a |

| 180 - 350 | ~ -1.5 | a |

| 350 - 420 | ~ -1.35 | a |

| 420 - 480 | ~ -0.3 | d |

| 480 - 550 | ~ 0.9 | i |

| 550 - 650 | ~ 1.0 | i |

| 650 - 720 | ~ 0.7 | h |

| 720 - 800 | ~ 0.15 | f |

| 800 - 1050 | ~ 1.1 | i |

| 1050 - 1120 | ~ 1.0 | i |

| 1120 - 1180 | ~ 0.8 | h |

| 1180 - 1250 | ~ 0.6 | h |

| 1250 - 1320 | ~ 0.3 | g |

| 1320 - 1400 | ~ -0.1 | e |

| 1400 - 1450 | ~ -0.5 | d |

---

## 5. Technical Summary

The image demonstrates the **Symbolic Aggregate Approximation (SAX)** algorithm applied to a noisy KPI signal. The process involves:

1. **Normalization:** The Y-axis indicates the signal is likely Z-normalized.

2. **PAA (Piecewise Aggregate Approximation):** The signal is divided into equal-sized time windows, and the mean of each window is calculated (the horizontal segments of the red line).

3. **Discretization:** The PAA values are mapped to discrete symbols (**a-i**) based on predefined breakpoints (the horizontal dashed lines). This transforms a continuous time-series into a string of characters for efficient pattern matching and storage.

</details>

Figure 5: An Illustration of SAX Representation

$$

\displaystyle p_{i}=\frac{w}{n}\sum_{j=\frac{n}{w}(i-1)+1}^{\frac{n}{w}i}{x_{j}} \tag{1}

$$

In other words, to reduce the dimension of KPI from $n$ to $w$ , the KPI is divided into $w$ equal-sized subsequences. The mean value of the subsequence is calculated, and a vector of these values becomes the Piecewise Aggregate Approximation (PAA) representation [12]. Indeed, PAA representation is intuitive and simple yet shows an approximate performance compared with more sophisticated dimension reduction representations like Fourier transforms and wavelet transforms [18]. Before converting it to the PAA, we normalize each KPI to have a mean of zero and a standard deviation of one. However, SAX representation can obscure significant variation trends due to its reliance on segment averages, potentially leading to inaccurate representations.

In the industrial scenario, a fixed threshold method (e.g., CPU usage higher than 80%) is commonly used to detect system resource usage anomalies. However, fixed thresholds can be limiting as they do not adapt to changes in the system’s behavior over time. Typically, an anomaly refers to a state where the system’s resources, such as CPU, memory, or network bandwidth, are being utilized at their maximum capacity and will cause performance issues for the system. However, in a dynamic cloud system, the threshold at which an anomaly occurs can shift. Specifically, during periods of low demand, a sudden spike in resource usage might be considered an anomaly. However, during peak demand periods, the system might be designed to handle much higher resource usage. Thus, the same usage level would not be considered an anomaly. Furthermore, the individual preferences of engineers make the setting of universally acceptable static thresholds complex. What might be a suitable threshold for one engineer could be too high or too low for another, leading to potential issues being overlooked or an excessive number of false alarms [56]. KPIRoot assumes that by detecting an uprush in workload, the early warning of potential system anomaly can be identified, and root cause localization will be enabled. A score that describes the variation trend of a KPI is computed as follows:

$$

\displaystyle r_{i}=\frac{\sum_{k=i}^{i+l-1}p_{k}}{\sum_{j=i-l}^{i-1}p_{j}} \tag{2}

$$

where $l$ denotes the historical lags taken into consideration. If the value $r_{i}$ is greater than a large threshold $\gamma$ , it suggests that the usage of resources as indicated by the KPI starts to undergo a spike, and we denote the start point of overload as $t_{s}$ . Once the KPI value drops below the value of $t_{s}$ , it signifies that the overload ends; the endpoint of the overload is denoted as $t_{e}$ . In other words, $x_{t_{e}}<x_{t_{s}}$ and $x_{t_{e}-1}>x_{t_{s}}$ .

However, KPIRoot [11] primarily targets trend anomalies through threshold-based techniques, which may fall short in identifying performance anomalies in large-scale cloud systems. The complexity and scale can lead to multiple overlapping types of performance anomalies, including level shifts, periodic variations, and sudden spikes or dips. We extend our previous work by proposing to utilize time series decomposition to better differentiate and detect these diverse anomaly types. This method distinctly identifies and addresses performance anomalies, which can often be obscured in a unified analysis.

We assume the metric time series can be decomposed as the sum of three different components, namely, trend, seasonality, and remainder components:

$$

\displaystyle X_{host}^{t}=\tau_{host}^{t}+s_{host}^{t}+r_{host}^{t},t=1,2,...,n \tag{3}

$$

where $X_{host}^{t}$ denotes the original host cluster KPI at time $t$ , $\tau_{host}^{t}$ denotes the trend, $s_{host}^{t}$ denotes the periodic component and $r_{host}^{t}$ is the residual component.

In this paper, we propose to use the Seasonal and Trend decomposition using the Loess (STL) algorithm, which is a robust and versatile method for decomposing time series data [30]. It uses a sequence of Loess (locally estimated scatter plot smoothing) regressions. The flexibility of STL in handling various seasonal patterns and the ability to adjust its parameters makes it particularly suitable for complex and non-linear metrics in large-scale cloud systems.

After obtaining the decomposition into seasonal, trend, and remainder components, we perform anomaly detection on each component separately to identify distinct types of anomalies. To encode the complex patterns of the time series, it is necessary to consider both the local and global information, i.e., multi-scale features. We adopt an auto-encoder network architecture with skip connections, as known as the U-Net structure [32]. It is trained on multiple sliding window segments of the monitoring metrics. Although the autoencoder approach may incur some additional computational cost, it remains affordable, considering there is typically only one alarm KPI against thousands of VM KPIs.

3.4 Similarity Analysis

<details>

<summary>extracted/6514164/figures/isax.png Details</summary>

### Visual Description

# Technical Data Extraction: Time-Series SAX Representation

## 1. Document Overview

This image is a technical line chart illustrating the transformation of a high-frequency time-series signal into a Symbolic Aggregate Approximation (SAX) representation. It displays three distinct data series plotted against time, with a secondary symbolic categorization on the right-hand side.

## 2. Component Isolation

### A. Header / Legend

* **Location:** Top-left corner [x: 100, y: 50] to [x: 450, y: 280].

* **Legend Items:**

* **Raw Monitoring KPI:** Represented by a thin blue line.

* **Variation Trend:** Represented by a thick green line.

* **SAX Representation:** Represented by a thick red "step" line.

### B. Main Chart Area (Axes and Markers)

* **Y-Axis (Vertical):**

* **Label:** "Normalized Value"

* **Scale:** Ranges from -1.5 to 1.0 (with data extending slightly above 1.0).

* **Major Tick Marks:** -1.5, -1.0, -0.5, 0.0, 0.5, 1.0.

* **X-Axis (Horizontal):**

* **Label:** "Time"

* **Scale:** Ranges from 0 to 1400.

* **Major Tick Marks:** 0, 200, 400, 600, 800, 1000, 1200, 1400.

* **Symbolic Alphabet (Right Margin):**

* Nine horizontal dashed lines divide the Y-axis into discrete bins.

* Each bin is labeled with a lowercase letter from **a** to **i** (bottom to top).

## 3. Data Series Analysis and Trends

### Series 1: Raw Monitoring KPI (Thin Blue Line)

* **Trend:** High-frequency, noisy signal. It starts at approx. -0.8, dips to a minimum near -1.6 around Time 300, rises sharply between Time 400 and 600 to a peak above 1.0, and then gradually declines back toward -0.8 by Time 1400.

* **Characteristics:** Contains significant jitter/noise throughout the duration.

### Series 2: Variation Trend (Thick Green Line)

* **Trend:** A piecewise linear approximation of the blue line. It smooths the noise to show the underlying directional movement.

* **Logic Check:** It follows the "valleys" and "peaks" of the blue line but ignores the high-frequency oscillations.

### Series 3: SAX Representation (Thick Red Step Line)

* **Trend:** A horizontal "step" function. It discretizes the signal into fixed-width time segments (approximately 70-80 time units wide).

* **Logic Check:** The height of each red horizontal segment corresponds to the average value of the signal within that time window, mapped to the symbolic bins (a-i).

## 4. Symbolic Mapping (SAX Bins)

The chart uses horizontal dashed lines to define the following symbolic regions:

| Symbol | Approximate Normalized Value Range |

| :--- | :--- |

| **i** | > 0.85 |

| **h** | 0.55 to 0.85 |

| **g** | 0.25 to 0.55 |

| **f** | 0.0 to 0.25 |

| **e** | -0.25 to 0.0 |

| **d** | -0.5 to -0.25 |

| **c** | -0.85 to -0.5 |

| **b** | -1.25 to -0.85 |

| **a** | < -1.25 |

## 5. Sequence Extraction (SAX String)

Based on the red step line's vertical position relative to the lettered bins, the approximate symbolic sequence represented is:

`b -> b -> a -> a -> a -> a -> b -> d -> h -> i -> i -> g -> c -> f -> i -> i -> i -> h -> g -> g -> e -> d -> c`

*(Note: Each step represents a discrete time interval of roughly 75 units).*

</details>

Figure 6: An Illustration of Improved SAX Representation

Motivated by [47], we propose to compute the similarity of the alarm KPI and VM KPIs to measure the degree of the root cause. The intuition behind this is that if a VM is responsible for triggering an overload, its KPI should exhibit a significant similarity with the host cluster’s KPI, especially during periods of overload. If a VM is indeed the root cause of an overload, it is expected that its resource usage pattern would reflect the pattern of the host resource usage.

Although there exist some approaches that can be used to calculate the similarity of monitoring KPIs, such as AID [47], HALO [53], and CMMD [46], however, in real-time cloud computing systems, timely root cause localization is paramount. Traditional algorithms such as Dynamic Time Warping (DTW) might not be suitable for such scenarios due to their high time complexity, which can be prohibitive for processing large volumes of data in a real-time manner.

KPIRoot transforms the KPIs into symbolic sequences and then computes the similarity between these sequences using the Jaccard similarity coefficient. A discretization technique that produces symbols with equal probability is used to obtain the discrete representation with symbols. As proved by [18], the normalized KPIs have nearly Gaussian distributions. It’s easy to pick equal-sized areas under the Gaussian distribution curve using lookup tables for the cut line coordinates, slicing the under-the-Gaussian-curve area. Suppose there $\alpha$ symbols in the SAX representation, then the breakpoints refer to a sort of numbers $\beta=\{\beta_{1},\beta_{2},...,\beta_{\alpha}\}$ such that the area under normalized Gaussian distribution curve between $\beta_{i}$ to $\beta_{i+1}$ is equal to $\frac{1}{\alpha}$ . The PAA representation element in Section. 2 between $\beta_{i}$ to $\beta_{i+1}$ will be assigned with the $i^{th}$ symbol shown as follows:

$$

\displaystyle s_{i}=alphabet_{l},\quad if\ {\beta_{l}}\leq{p_{i}}\leq\beta_{l+1} \tag{4}

$$

where, $alphabet_{i}$ denotes the $i^{th}$ symbol and $s_{i}$ denotes the $i^{th}$ element of the SAX representation $S$ . An example of SAX representation of a monitoring KPI with $w=20,\alpha=9$ is shown in Fig. 5.

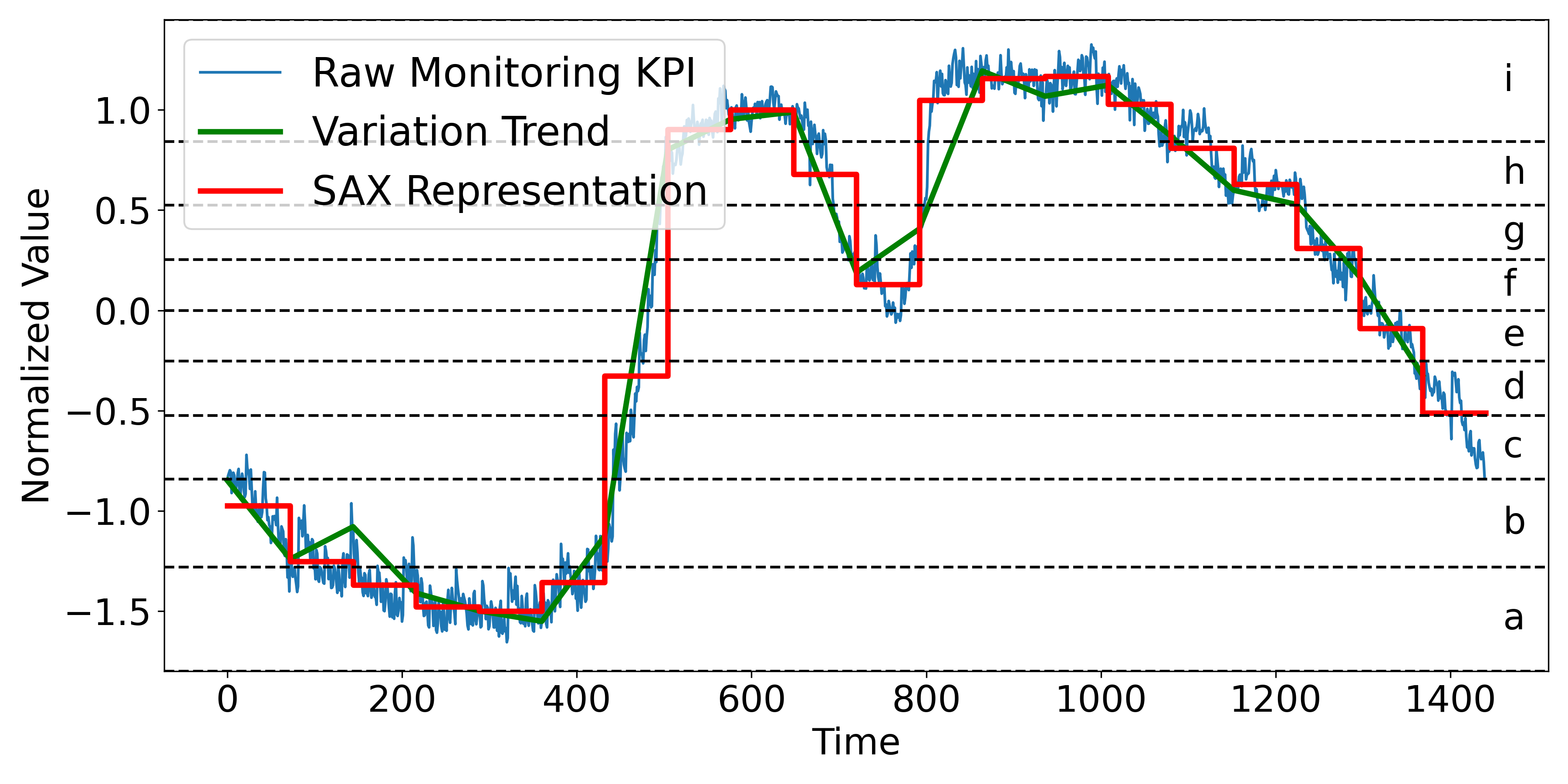

However, the traditional Symbolic Aggregate Approximation (SAX) method, while effective for dimensionality reduction and pattern recognition in time series data, has limitations due to its reliance on segment averages. This approach can obscure significant trends and variations, leading to misleading representations. For instance, two segments with different behaviors—such as an increasing CPU usage in a VM with relatively low average usage and a decreasing usage in another VM with a higher average, might be mapped to the same symbol if their averages are similar. This could overlook critical issues like potential CPU overloads. To address this, an Improved SAX representation (ISAX) is proposed (shown in Figure 6), which incorporates the variation trend indicators. To maintain the efficiency of the approach, only trend information is considered, ensuring that the enhanced representation remains computationally feasible while providing more critical insights into the KPI’s dynamic behavior. The trend information, represented by the sign of the slope within the dimensionality reduction window, is calculated as follows:

$$

\displaystyle\phi_{i}=sgn(x_{\frac{n}{w}\cdot{i}}-x_{\frac{n}{w}\cdot(i-1)+1}) \tag{5}

$$

where $x_{\frac{n}{w}·{i}}$ and $x_{\frac{n}{w}·(i-1)+1}$ are the start and end point of the $i^{th}$ metric segment in PAA representation. The Improved SAX in KPIRoot+ will further differentiate between two metric segments that originally map to the same symbol under traditional SAX due to similar averages. By incorporating the variation trend, Improved SAX assigns different symbols to segments that have the same average but different trends, such as an increasing versus a decreasing sequence. Different from the original SAX representation, improved SAX will assign the PAA representation element between $\beta_{i}$ to $\beta_{i+1}$ a symbol as follows:

$$

\displaystyle s_{i}=alphabet_{2{\alpha}-{\phi_{i}}\cdot{l}},\quad if\ {\beta_{%

l}}\leq{p_{i}}\leq\beta_{l+1} \tag{6}

$$

We adopt the Jaccard similarity coefficient rather than other similarity measures because of its advantages when dealing with symbolic sequences like the SAX representation [13]. Moreover, Jaccard similarity is easy to compute and can effectively capture the similarity between two symbolic sequences regardless of their lengths. This makes it very suitable for our case, where the lengths of the symbolic sequences could vary. Then, the Jaccard similarity can be computed as follows:

$$

\displaystyle Jaccard(S_{host},S_{i})=\frac{\lvert{S_{host}\cap{S_{i}}}\rvert}%

{\lvert{S_{host}\cup{S_{i}}}\rvert} \tag{7}

$$

where $S_{host}$ is the SAX representation of the host cluster’s KPI and $S_{i}$ is the SAX representation of individual VM KPI $X_{i}$ .

3.5 Causality Analysis

The Improved Symbolic Aggregate Approximation method is effective in reducing the dimension of raw KPI while preventing trend information loss; however, the computation of Improved SAX representation-based similarity does not provide any insights into the causality between VM KPIs and alarm KPIs. As mentioned by [27], the ability of Granger causality analysis to analyze the correlation between KPIs can be a key factor for improving the accuracy of the root cause localization. By using Granger Causality in conjunction with SAX representation, we can not only analyze large quantities of time series data effectively but also gain insights into the potential causality between different KPIs. That is why we take Granger Causality [35] as a supplement.

Granger Causality is a statistical hypothesis test used to determine if one KPI is useful in forecasting another KPI [2]. For instance, if a VM KPI undergoes an uprush and causes the alarm KPI to trigger alerts, i.e., the change in the VM KPI precedes the changes in the alarm KPI, then Granger causality exists from the alarm KPI to the VM KPI. It should be noted that Granger Causality is unidirectional, which means that if VM KPI Granger causes alarm KPI, it does not imply that alarm KPI Granger causes VM KPI. In our case, we are interested in understanding how VM KPIs influence the alarm KPI of the host cluster, so we focus on the Granger causality from the VM KPIs to the alarm KPI. Specifically, assuming that the two KPIs can be well described by Gaussian autoregressive processes, the autoregression (AR) of alarm KPI without and with information from VM KPI can be written as follows:

$$

\displaystyle p_{alarm}^{t}=\hat{a_{0}}+\sum_{j=1}^{q}{\hat{a_{j}}}p_{alarm}^{%

t-j}+\hat{\varepsilon_{t}} \tag{8}

$$

$$

\displaystyle p_{alarm}^{t}=a_{0}+\sum_{j=1}^{q}{a_{j}}p_{alarm}^{t-j}+\sum_{j%

=1}^{q}{b_{j}}p_{i}^{t-j}+\varepsilon_{t} \tag{9}

$$

where the first equation uses the past values of the PAA representation of host KPI $X^{host}$ while the second includes the past values of the PAA representation of both $X^{host}$ and $X^{vm}$ . Furthermore, $\hat{a_{j}}$ is the autoregression coefficients for $X^{host}$ , while $a_{j}$ and $b_{j}$ are the autoregression coefficients for $X^{host}$ with the contribution of both $X^{host}$ and $X^{vm}$ ’s historical values. Both $\hat{\varepsilon_{t}}$ and $\varepsilon_{t}$ are residual terms assumed to be Gaussian, and $q$ is model order, which represents the amount of past information that will be included in the prediction of the future sample. Then, we conduct the F-statistic test:

$$

\displaystyle F_{vm\rightarrow{host}}=\frac{\sum_{t=t_{s}+q}^{t_{e}}({\hat{%

\varepsilon}_{t}^{2}}-{\varepsilon_{t}^{2}})/q}{\sum_{t=t_{s}+q}^{t_{e}}{%

\varepsilon_{t}^{2}}/(t_{e}-t_{s}-2q-1)} \tag{10}

$$

where ${\hat{\varepsilon}_{t}^{2}}$ and $\varepsilon_{t}^{2}$ represent the mean square error (MSE) of the AR model of host KPI without and with information from VM KPI. $t_{s}$ and $t_{e}$ are the start point and end point of the detected overload. The F-statistic test follows an F-distribution with $q$ and $t_{e}-t_{s}-2p-1$ degrees of freedom under the null hypothesis that the VM KPI does not Granger-cause the host KPI. The calculated F-statistic can be a good indicator of the VM KPI Granger-causality to the host KPI.

After both the similarity and causality analyses are performed, KPIRoot combines these two scores to create a more comprehensive correlation score for each VM KPI. Specifically, the correlation score is a weighted sum of similarity score and causality score:

$$

\displaystyle c_{i}=\lambda\times{Jaccard(S_{host},S_{i})}+(1-\lambda)\times{F%

_{vm\rightarrow{host}}} \tag{11}

$$

where $c_{i}$ is the correlation score between the $i^{th}$ VM KPI and the alarm KPI. The balance weight $\lambda$ is a hyper-parameter. In our experiments, this parameter is set to be 0.9.

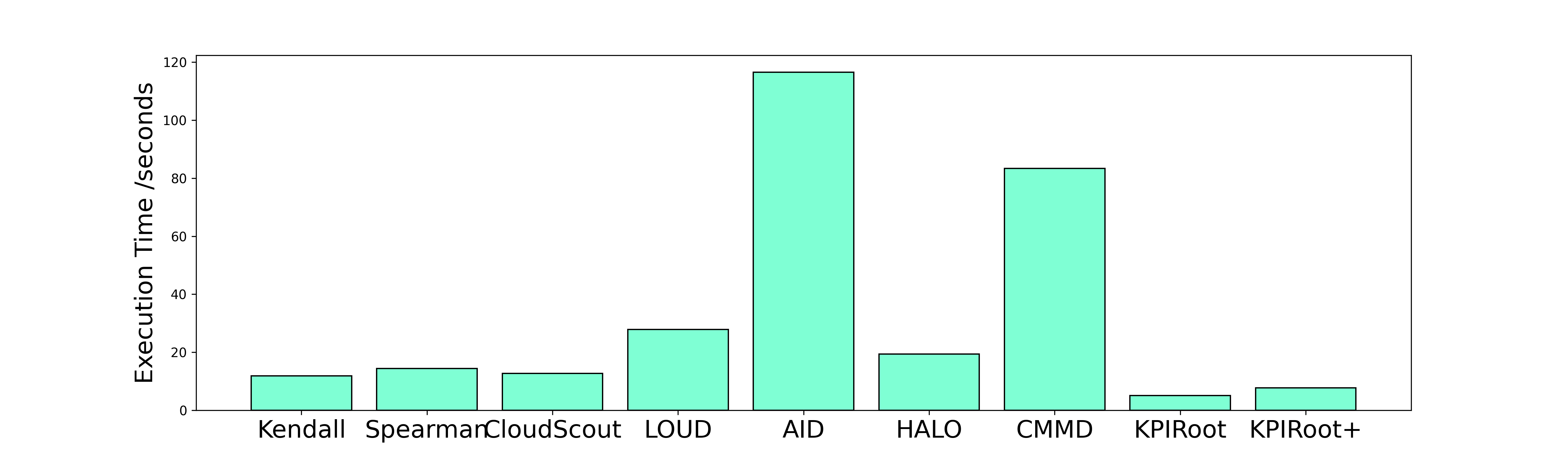

3.6 Complexity Analysis

The proposed method KPIRoot+ is summarized in Algorithm. 1. The computation of our method mainly lies in the similarity and causality analysis. In industrial practice, $w≈{\sqrt{n}}$ , which means the lengths of SAX representation of KPIs are roughly $\sqrt{n}$ . So, the time complexity of obtaining SAX representation is $\mathcal{O}(\sqrt{n})$ . On one hand, the time complexity of Jaccard similarity is directly proportional to the KPI length, so the complexity of similarity analysis is $\mathcal{O}(\sqrt{n})$ . On the other hand, the complexity of Granger causality mainly depends on the autoregression of $P_{host}$ , which is $\mathcal{O}(\sqrt{n}×{q^{3}})$ , where $q$ is the time lag of Granger causality (usually very small). Thus, the complexity of KPIRoot is $\mathcal{O}(\sqrt{n}×({q^{3}}+2))$ . As a comparison, the time efficiency of methods like AID (based on DTW) is $\mathcal{O}(n^{2})$ , let alone more complex deep learning-based methods like CMMD. Therefore, KPIRoot+ is a more suitable method for industrial applications that demand real-time root cause localization.

Algorithm 1 KPI Root Cause Localization+

0: The alarm KPI of the host $X_{alarm}$ ; The KPIs of VMs $X_{i},i∈\{1,2,...,m\}$ ;

0: The correlation scores of VM KPIs that correlate to the anomaly of alarm KPI $c_{i}$

1: for $i=1$ ; $i≤ w$ ; $i++$ do

2: $p^{i}_{alarm}=\frac{w}{n}\sum_{j=\frac{n}{w}(i-1)+1}^{\frac{n}{w}i}{x_{alarm}^%

{j}}$

3: $\phi_{alarm}^{i}=sgn(x_{alarm}^{\frac{n}{w}·{i}}-x_{alarm}^{\frac{n}{w}%

·(i-1)+1})$

4: end for

5: // Anomaly Segment Detection

6: $X_{alarm}^{t}=\tau_{host}^{t}+s_{host}^{t}+r_{host}^{t}$

7: $i_{anomaly}=AE(\tau_{host}){\cup}AE(s_{host}){\cup}AE(r_{host})$

8: $p_{alarm}=p_{alarm}[i_{anomaly}]$

9: $s^{i}_{alarm}=\{alphabet_{2{\alpha}-{\phi_{i}}·{l}},\quad if\ {\beta_{l}}%

≤{p^{i}_{alarm}}≤\beta_{l+1}\}$

10: for $i=1$ ; $i≤ m$ ; $i++$ do

11: // Similarity Analysis

12: for $k=1$ ; $k<m$ ; $k++$ do

13: $p_{i}^{k}=\frac{w}{n}\sum_{j=\frac{n}{w}(k-1)+1}^{\frac{n}{w}k}{x_{i}^{k}}$

14: $p_{i}=p_{i}[i_{anomaly}]$

15: $\phi_{i}^{k}=sgn(x_{i}^{\frac{n}{w}·{k}}-x_{i}^{\frac{n}{w}·(k-1)+1})$

16: $s_{i}^{k}=\{alphabet_{2{\alpha}-{\phi_{i}}·{l}},\,s.t.\ {\beta_{l}}≤{p_%

{i}^{k}}≤\beta_{l+1}$ }

17: end for

18: $Jaccard(S_{host},S_{i})=\frac{\lvert{S_{host}\cap{S_{i}}}\rvert}{\lvert{S_{%

host}\cup{S_{i}}}\rvert}$

19: // Causality Analysis

20: for $t=t_{s}+q$ ; $t<t_{e}$ ; $t++$ do

21: $p_{alarm}^{t}=\hat{a_{0}}+\sum_{j=1}^{q}{\hat{a_{j}}}p_{alarm}^{t-j}+\hat{%

\varepsilon_{t}}$

22: $p_{alarm}^{t}=a_{0}+\sum_{j=1}^{q}{a_{j}}p_{alarm}^{t-j}+\sum_{j=1}^{q}{b_{j}}%

p_{i}^{t-j}+\varepsilon_{t}$

23: end for

24: $F_{vm→{host}}=\frac{\sum_{t=t_{s}+q}^{t_{e}}({\hat{\varepsilon}_{t}^%

{2}}-{\varepsilon_{t}^{2}})/q}{\sum_{t=t_{s}+q}^{t_{e}}{\varepsilon_{t}^{2}}/(%

t_{e}-t_{s}-2q-1)}$

25: $c_{i}=\lambda×{Jaccard(S_{host},S_{i})}+(1-\lambda)×{F_{vm%

→{host}}}$

26: end for

27: return $c_{i}$

4 EVALUATION

To fully evaluate the effectiveness of our proposed approach, KPIRoot+, we use three real-world monitoring KPI datasets from the cloud service systems of Cloud $\mathcal{H}$ . Particularly, we aim to answer the following research questions (RQs):

- RQ1: How effective is KPIRoot+ in performance issue detection compared with baselines?

- RQ2: How effective is KPIRoot+ compared with KPI root cause localization baselines?

- RQ3: How effective is each component of KPIRoot+ in root cause localization?

- RQ4: How efficient is KPIRoot+ in localizing root cause KPIs compared to baselines?

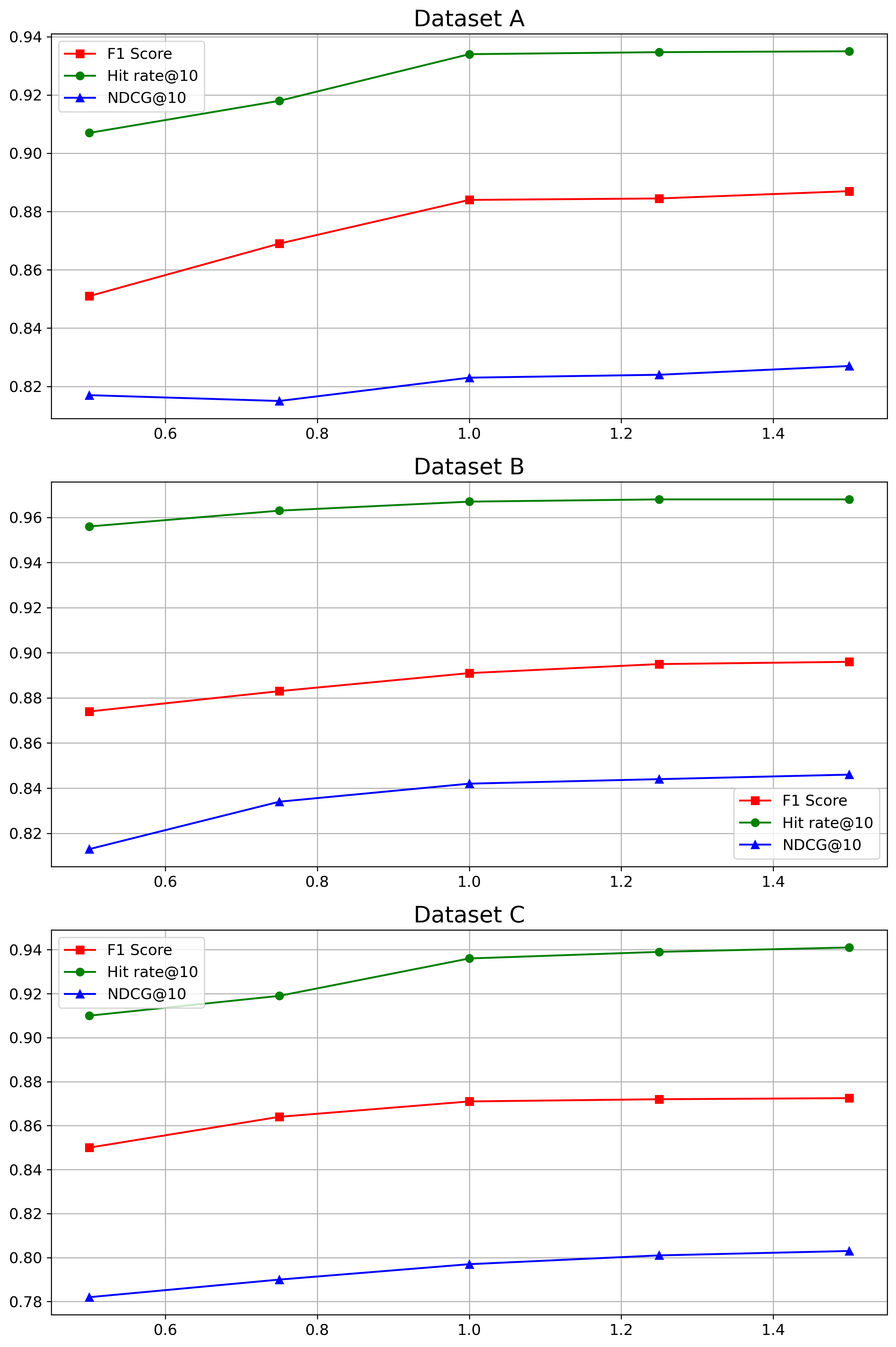

- RQ5: How sensitive is KPIRoot+ to each hyperparameter?

Table 1: Statistics of Industrial Dataset

| Industrial | Dataset A | Dataset B | Dataset C |

| --- | --- | --- | --- |

| Host Clusters | 16 | 6 | 7 |

| VM Number | 120 $\sim$ 803 | 21 $\sim$ 26 | 41 $\sim$ 57 |

| KPI Length | 5,928,480 | 17,040 | 37,200 |

| Root Causes | 4 $\sim$ 36 | 3 $\sim$ 8 | 2 $\sim$ 15 |

4.1 Experiment Setting

4.1.1 Datasets

To confirm the practical significance of KPIRoot, we collect three datasets from large-scale online services in three Available Zones (AZs) of Cloud $\mathcal{H}$ . The statistics of three industrial datasets are shown in Table 1. Various VM KPIs and alarm KPIs monitor the status of the service. The VM KPIs typically measure the healthy status of each VM, including resource usage metrics like CPU, memory, I/O, and bandwidth usage. The alarm KPI monitors the runtime status at the host cluster level, which is usually positively correlated to the VM KPIs.

4.1.2 Evaluation Metrics

In the following experiments, the F1-score is utilized to evaluate the performance of root cause localization results. We employ Precision: $PC=\frac{TP}{TP+FP}$ , Recall: $RC=\frac{TP}{TP+FN}$ , F1 score: $F1=2·\frac{PC·{RC}}{PC+RC}$ . To be specific, $TP$ is the number of correctly localized VM KPIs; $FP$ is the number of incorrectly predicted VM KPIs; $FN$ is the number of root cause VM KPIs that failed to be predicted by the model. F1 score is the harmonic mean of the precision and recall. In real-world applications, since the number of root cause KPIs is unknown, software engineers will first investigate top $k$ recommended results by root cause localization methods. Hit Rate@ $k$ is a widely used metric to measure whether the correct root causes (in our case, the root cause VM KPIs) are within the recommended top $k$ results. We adopt Hit Rate@ $5$ and Hit Rate@ $10$ as evaluation metrics in our experiments.

Additionally, we propose to use the Normalized Discounted Cumulative Gain (NDCG) in our evaluation metrics, specifically NDCG@ $10$ . NDCG is more beneficial because it considers the rank position of each result, applying a discounting factor to lower-ranked positions, which measures how easily engineers can find the culprit VMs. This is crucial in our scenarios as the most relevant root causes are more prioritized for investigation. NDCG@ $1$ is left out because it is the same as Hit Rate@ $1$ in our scenario. NDCG@ $k$ measures to what extent the root cause appears higher up in the ranked candidate list. Thus, the higher the above measurements, the better.

4.2 Experimental Results

Table 2: Experimental Results of Different Anomaly Detection Methods

| Methods | Dataset A | Dataset B | Dataset C | | | | | | |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

| Pre | Rec | F1 | Pre | Rec | F1 | Pre | Rec | F1 | |

| 3 $\sigma$ | 0.709 | 0.762 | 0.738 | 0.765 | 0.694 | 0.730 | 0.797 | 0.685 | 0.747 |

| LOF | 0.681 | 0.587 | 0.753 | 0.619 | 0.591 | 0.737 | 0.681 | 0.598 | 0.715 |

| IF | 0.699 | 0.612 | 0.788 | 0.673 | 0.607 | 0.772 | 0.715 | 0.612 | 0.706 |

| Autoencoder | 0.791 | 0.770 | 0.782 | 0.776 | 0.810 | 0.794 | 0.859 | 0.793 | 0.823 |

| LSTM | 0.836 | 0.752 | 0.805 | 0.826 | 0.865 | 0.824 | 0.829 | 0.863 | 0.839 |

| KPIRoot | 0.787 | 0.712 | 0.755 | 0.782 | 0.717 | 0.744 | 0.803 | 0.709 | 0.759 |

| KPIRoot+ | 0.914 | 0.943 | 0.928 | 0.924 | 0.907 | 0.913 | 0.942 | 0.863 | 0.894 |

4.2.1 RQ1 The effectiveness of KPIRoot+ in performance issue detection

To answer this research question, we compare the performance of KPIRoot+ with five widely used performance anomaly detection methods in cloud systems, 3 $\sigma$ [50], LOF (Local Outlier Factor) [4], IF (Isolation Forest) [22], Autoencoder [45], LSTM [54] and KPIRoot [11]. The results are shown in Table 2, where the best Precision, Recall and F1 scores are all marked with boldface. We can see that the average Precision, Recall and F1 scores of KPIRoot+ outperform all baseline methods in three datasets, including our previous method, KPIRoot. Each of the baseline methods has its strengths depending on the specific type of anomaly. Methods like $3\sigma$ , LOF, and IF are particularly effective at detecting residual anomalies because they are point-wise anomaly detection, which identifies deviations from normal behavior at specific data points. This makes them suitable for catching sudden or isolated anomalies but less effective for detecting anomalies that persist over time. On the other hand, Autoencoder and LSTM models are designed to capture deviations from historical patterns by embedding the sliding window of metrics and fitting local patterns. These methods are effective at identifying seasonal anomalies, where recurring deviations from the periodic patterns happen. KPIRoot, in contrast, computes the variation between the current observation window and previous observation windows, which makes it particularly adept at identifying trend anomalies. This method is well-suited for detecting gradual changes or level shifts in performance metrics over time.

Despite the capabilities of these individual approaches, they often yield suboptimal results when all anomaly types are mixed together, as they cannot differentiate between them effectively. This is where KPIRoot+ demonstrates its superiority by utilizing a time series decomposition-based method. By decomposing time series data into its constituent components, KPIRoot+ is able to better isolate and identify trends, seasonal patterns, and residual anomalies, leading to higher accuracy and more comprehensive anomaly detection. Furthermore, the effective identification of performance anomalies is crucial not only for immediate anomaly detection but also for facilitating the subsequent root cause localization.

4.2.2 RQ2 The effectiveness of KPIRoot+

To answer this research question, we compare the performance of KPIRoot+ with several other methods, including three statistical correlation measurements: Kendall correlation, Spearman correlation, and CloudScout [48]. Additionally, we consider AID [47], which uses DTW distance, LOUD [26], a graph centrality-based method, HALO [53], which employs conditional entropy, CMMD [46], a graph neural network-based method, and our previously proposed method, KPIRoot [11]. Table 3 presents the results, highlighting the best F1 scores, Hit@5, Hit@10, and NDGG@10 in bold. We observe that KPIRoot+ consistently outperforms all baseline methods across three datasets in terms of average F1 scores, Hit@5, Hit@10, and NDGG@10. In particular, the improvement achieved by KPIRoot+ is more pronounced in Dataset B and Dataset C compared to Dataset A. This is because these datasets focus on KPIs, such as request rates, related to the load balancer, which manages the distribution of network traffic across physical machines. As a result, anomalies in VM request rates tend to precede anomalies in host clusters, providing an early indicator for potential issues. It is important to note that, as shown in Table 3, the number of root causes often exceeds 5. Consequently, not all root causes can be captured within the top 5 predictions. Despite this, achieving Hit@5 scores exceeding 70% is significant, as it indicates that our method accurately identifies a substantial portion of root causes within just the top 5 predictions. Additionally, the high F1 score and Hit@10 demonstrate the method’s effectiveness for industrial applications.