# FairPFN: A Tabular Foundation Model for Causal Fairness

## Abstract

Machine learning (ML) systems are utilized in critical sectors, such as healthcare, law enforcement, and finance. However, these systems are often trained on historical data that contains demographic biases, leading to ML decisions that perpetuate or exacerbate existing social inequalities. Causal fairness provides a transparent, human-in-the-loop framework to mitigate algorithmic discrimination, aligning closely with legal doctrines of direct and indirect discrimination. However, current causal fairness frameworks hold a key limitation in that they assume prior knowledge of the correct causal model, restricting their applicability in complex fairness scenarios where causal models are unknown or difficult to identify. To bridge this gap, we propose FairPFN, a tabular foundation model pre-trained on synthetic causal fairness data to identify and mitigate the causal effects of protected attributes in its predictions. FairPFN’s key contribution is that it requires no knowledge of the causal model and still demonstrates strong performance in identifying and removing protected causal effects across a diverse set of hand-crafted and real-world scenarios relative to robust baseline methods. FairPFN paves the way for promising future research, making causal fairness more accessible to a wider variety of complex fairness problems.

## 1 Introduction

Algorithmic discrimination is among the most pressing AI-related risks of our time, manifesting when machine learning (ML) systems produce outcomes that disproportionately disadvantage historically marginalized groups Angwin et al. (2016). Despite significant advancements by the fairness-aware ML community, critiques highlight the contextual limitations and lack of transferability of current statistical fairness measures to practical legislative frameworks Weerts et al. (2023). In response, the field of causal fairness has emerged, providing a transparent and human-in-the-loop causal framework for assessing and mitigating algorithmic bias with a strong analogy to existing anti-discrimination legal doctrines Plecko & Bareinboim (2024).

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: FairPFN Pre-training Framework

### Overview

This image is a technical diagram illustrating the pre-training framework for a model called "FairPFN." The diagram is divided into three sequential stages (a, b, c) at the top, which describe the process, and a larger visual flow below that maps these stages to a "Real-world Inference" pipeline. The overall purpose is to show how a Structural Causal Model (SCM) is used to generate data for training a transformer model (FairPFN) to make fair predictions by learning to map biased observables to fair outcomes.

### Components/Axes

The diagram is segmented into several key components:

1. **Top Text Blocks (Process Description):**

* **a) Data generation:** Describes generating an SCM and sampling a dataset `D` with a protected attribute `A`, biased observables `X_b`, and biased outcome `Y_b`. A fair outcome `Y_f` is also sampled by removing outgoing edges from `A`.

* **b) Transformer input:** Describes partitioning the observational dataset `D` into training and validation splits. The transformer uses in-context examples `D_train` to make predictions on the inference set `D_val = (A_val, X_val)`.

* **c) Fair prediction:** Describes the transformer making predictions `Ŷ_f` on the validation set. The pre-training loss is calculated with respect to the fair outcomes `Y_f`, teaching the model the mapping `X_b → Y_f`.

2. **Main Visual Flow (Left to Right):**

* **Left: Structural Causal Model (SCM):** A directed acyclic graph with nodes and arrows.

* **Nodes:** `A0` (blue circle), `U2` (green circle, appears twice), `X1`, `X2`, `X3` (purple circles), `Y_b` (orange circle), `Y_f` (yellow circle with red outline).

* **Arrows:** Black arrows indicate causal relationships. Red arrows originate from `A0` and point to `X2` and `X3`, indicating a biased or protected attribute's influence.

* **Center-Left: Observational Dataset:** A table with columns labeled `A` (blue header), `X1`, `X2`, `X3` (purple headers), and `Y_b` (orange header). The table contains 5 rows of colored cells (blue, purple, orange, light beige) representing data samples.

* **Center: FairPFN:** A large, green, abstract block diagram representing the transformer model. Above it are three small copies of the SCM graph. Below it is a mathematical equation: `p(y_f | x_b, D_b) ∝ ∫_φ p(y_f | x_b, φ) p(D_b | φ) p(φ) dφ`.

* **Center-Right: Pre-training Loss:** Two vertical bars.

* Left bar: Labeled `Ŷ_f` (predicted fair outcome), with a grayscale gradient from white (top) to black (bottom).

* Right bar: Labeled `Y_f` (true fair outcome), with a color gradient from light beige (top) to yellow (bottom).

* **Arrows:** Large, light green arrows show the data flow from the SCM to the dataset, from the dataset to FairPFN, and from FairPFN to the loss calculation. A final green arrow loops from the loss back to the SCM, indicating the pre-training cycle.

3. **Title:** The entire lower section is titled "**FairPFN Pre-training**" in a large, bold, serif font.

### Detailed Analysis

* **SCM Node Relationships:**

* `A0` has direct red arrows to `X2` and `X3`.

* `U2` (top) has arrows to `X1` and `Y_b`.

* `U2` (bottom) has an arrow to `X1`.

* `X1` has arrows to `X2` and `Y_b`.

* `X2` has an arrow to `Y_b`.

* `X3` has an arrow to `Y_b`.

* `Y_f` is shown as a separate node, derived from the SCM by "removing the outgoing edges of A" as per the text in (a).

* **Data Flow & Process Mapping:**

1. The **SCM** (Stage a) is used to generate the **Observational Dataset**.

2. The dataset is fed into the **FairPFN** model (Stages b & c).

3. The model produces predictions `Ŷ_f`.

4. The **Pre-training Loss** is computed by comparing `Ŷ_f` to the true fair outcome `Y_f`.

5. The loss is used to update the model, completing the pre-training loop.

* **Mathematical Equation:** The equation below FairPFN expresses the model's predictive distribution as a proportionality (`∝`) involving an integral over model parameters `φ`. It combines the likelihood of the fair outcome given the biased input and parameters, the likelihood of the biased data given the parameters, and a prior over the parameters.

### Key Observations

* **Color Coding is Systematic:** Blue is consistently used for the protected attribute `A`. Purple is used for the observables `X1, X2, X3`. Orange represents the biased outcome `Y_b`. Yellow represents the fair outcome `Y_f`.

* **Bias Representation:** The red arrows from `A0` in the SCM visually highlight the pathways of bias that the framework aims to remove to achieve `Y_f`.

* **Model Abstraction:** The FairPFN is represented as a generic green block, emphasizing its role as a black-box transformer model within this causal framework.

* **Loss Visualization:** The loss bars visually contrast the model's grayscale predictions (`Ŷ_f`) against the colored true fair targets (`Y_f`), implying the goal is to align the prediction distribution with the fair outcome distribution.

### Interpretation

This diagram outlines a methodology for instilling fairness into a predictive model through causal pre-training. The core idea is to use a known or assumed causal structure (the SCM) to generate synthetic data where the direct effect of a protected attribute (`A`) on the outcome is removed, creating a "fair" target (`Y_f`). A transformer model (FairPFN) is then trained not on the real-world biased outcome (`Y_b`), but on this constructed fair outcome.

The process is "pre-training" because it happens before the model is applied to real-world tasks. By learning the mapping from biased observables (`X_b`) to fair outcomes (`Y_f`) in a controlled, causal environment, the model is intended to internalize a fair prediction rule. The integral in the equation suggests a Bayesian approach, marginalizing over model parameters to make predictions. The framework implies that real-world inference (using the pre-trained model) will then produce fair predictions even when only biased data is available, as the model has learned to "ignore" the biased pathways from `A`. The key assumption is the validity of the initial SCM used for data generation.

</details>

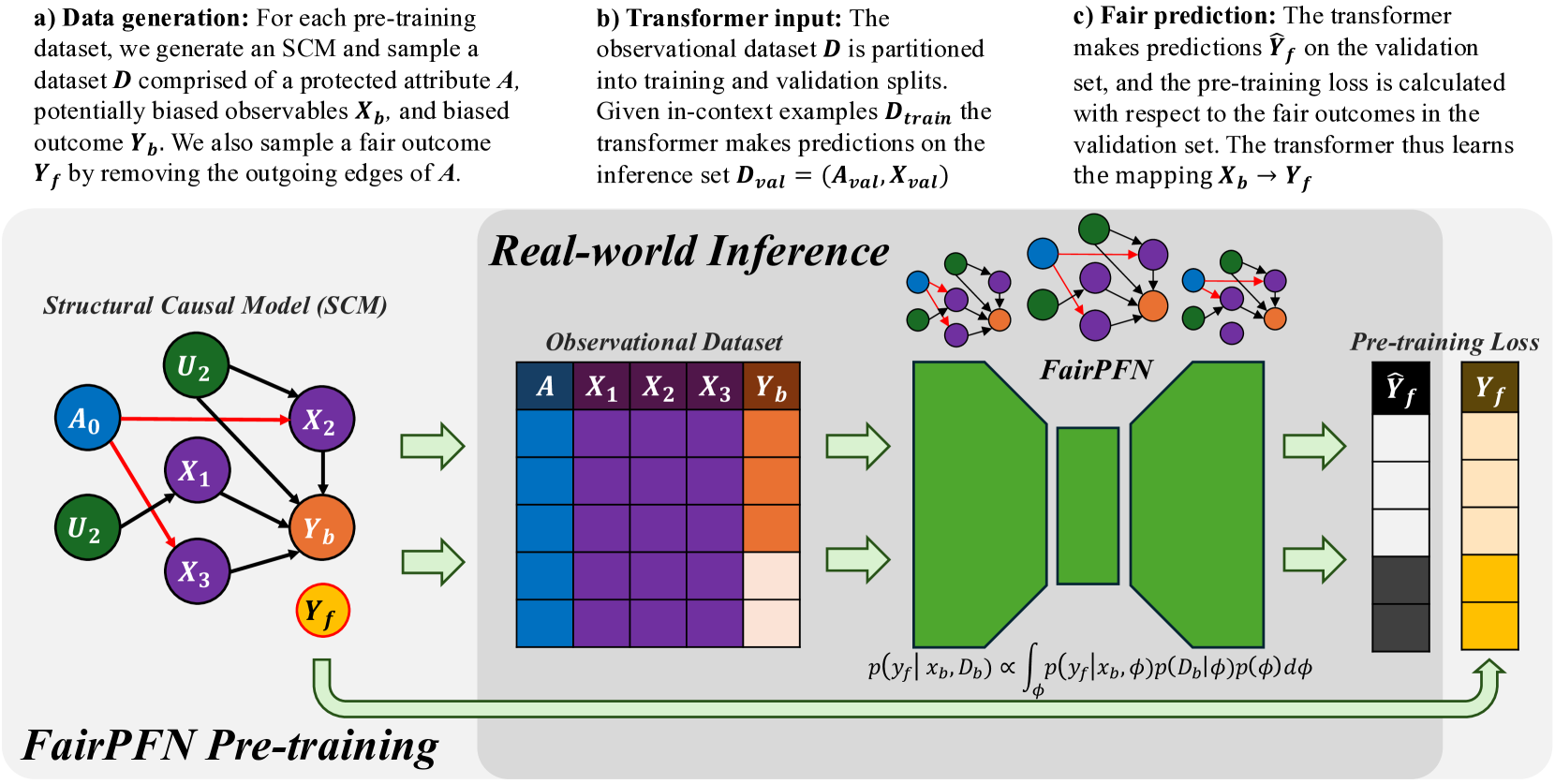

Figure 1: FairPFN Overview: FairPFN is a foundation model for causal fairness, pre-trained on synthetic datasets generated from sparse MLPs that represent SCMs with exogenous protected attributes (a). A biased dataset is created for each MLP/SCM and supplied as context to the transformer (b), with loss computed based on fair outcomes obtained by excluding the causal influence of the protected attribute (c). In practice, (d) FairPFN takes in only an observational dataset to predict fair targets by integrating over the simplest causal explanations for the biased data.

A recent review comparing outcome-based and causal fairness approaches (Castelnovo et al., 2022) argues that the non-identifiability of causal models from observational data Pearl (2009) limits the usage of current causal fairness frameworks in practical applications. In practice, users must provide full or partial information about the underlying causal model, a challenging task given the complexity of systemic inequalities. Furthermore, an incorrectly presumed causal graph, such as one falsely assuming a variable is independent of a protected attribute, can invalidate causal fairness metrics Ma et al. (2023); Binkytė-Sadauskienė et al. (2022), resulting in fairwashing and fostering a false sense of security and trust.

This paper takes a bold new perspective on achieving causal fairness. Our key contribution is FairPFN, a tabular foundation model for causal fairness, pre-trained on synthetic causal fairness data to learn to identify and remove the causal effects of protected attributes in tabular classification settings. When used on a new dataset, FairPFN does not rely on a user-specified causal model or graph, instead solely relying on the causally-generated data it has seen during pre-training. We demonstrate through extensive experiments that FairPFN effectively and consistently mitigates the causal impact of protected attributes across various hand-crafted and real-world scenarios, yielding causally fair predictions without user-specified causal information. We summarize our various contributions:

1. PFNs for Causal Fairness We propose a paradigm shift for algorithmic fairness, in which a transformer is pre-trained on synthetic causal fairness data.

1. Causal Fairness Prior: We introduce a synthetic causal data prior which offers a comprehensive representation for fairness datasets, modeling protected attributes as binary exogenous causes.

1. Foundation Model: We present FairPFN, a foundation model for causal fairness which, given only observational data, identifies and removes the causal effect of binary, exogenous protected attributes in predictions, and demonstrates strong performance in terms of both causal fairness and predictive accuracy on a combination of hand-crafted and real-world causal scenarios. We provide a prediction interface to evaluate and assess our pre-trained model, as well as code to generate and visualize our pre-training data at https://github.com/jr2021/FairPFN.

## 2 Related Work

In recent years, causality has gained prominence in the field of algorithmic fairness, providing fairness researchers with a structural framework to reason about algorithmic discrimination. Unlike traditional fairness research Kamishima et al. (2012); Agarwal et al. (2018); Hardt et al. (2016), which focuses primarily on optimizing statistical fairness measures, causal fairness frameworks concentrate on the structure of bias. This approach involves modeling causal relationships among protected attributes, observed variables, and outcomes, assessing the causal effects of protected attributes, and mitigating biases using causal methods, such as optimal transport Plecko & Bareinboim (2024) or latent variable estimation Kusner et al. (2017); Ma et al. (2023); Bhaila et al. (2024).

Counterfactual fairness, introduced by Kusner et al. (2017), posits that predictive outcomes should remain invariant between the actual world and a counterfactual scenario in which a protected attribute assumes an alternative value. This notion has spurred interest within the fairness research community, resulting in developments like path-specific extensions Chiappa (2019) and the application of Variational Autoencoders (VAEs) to create counterfactually fair latent representations Ma et al. (2023).

The initial counterfactual fairness framework necessitates comprehensive knowledge of the causal model. In contrast, the Causal Fairness Analysis (CFA) framework Plecko & Bareinboim (2024) relaxes this requirement by organizing variables within a Standard Fairness Model (SFM) for bias assessment and mitigation. Moreover, the CFA framework presents the Fairness Cookbook, which defines causal fairness metrics—Indirect-Effect, Direct-Effect, and Spurious-Effect—that directly align with US legal doctrines of disparate impact and treatment. Furthermore, the CFA framework challenges Kusner et al. (2017) ’s modeling of protected attributes as exogenous causes, permitting correlations between protected attributes and confounding variables that contribute to the legally admissible Spurious-Effect.

## 3 Background

This section establishes the scientific foundation of FairPFN, including terminology relevant to algorithmic fairness, causal ML, counterfactual fairness, and prior-data fitted networks (PFNs).

#### Algorithmic Fairness

Algorithmic discrimination occurs when historical biases against demographic groups (e.g., ethnicity, sex) are reflected in the training data of ML algorithms, leading to the perpetuation and amplification of these biases in predictions Barocas et al. (2023). Fairness research focuses on measuring algorithmic bias and developing fairness-aware ML models that produce non-discriminatory predictions. Practitioners have established over 20 fairness metrics, which generally break down into group-level and individual-level metrics Castelnovo et al. (2022). These metrics can be used to optimize predictive models, balancing the commonly observed trade-off between fairness and predictive accuracy Weerts et al. (2024).

Causal Machine Learning Causal ML is a developing field that leverages modern ML methods for causal reasoning Pearl (2009), facilitating advancements in causal discovery, causal inference, and causal reasoning Peters et al. (2014). Causal mechanisms are often represented as Structural Causal Models (SCMs), defined as $\mathcal{M}=(U,O,F)$ , where $U$ are unobservables, $O$ are observables, and $F$ is a set of structural equations. These equations are expressed as $f_{j}:X_{j}=f_{j}(PA_{j},N_{j})$ , indicating that an outcome variable $F$ depends on its parent variables $PA$ and independent noise $N_{j}$ . Non-linearities in the set of structural equations $F$ influence data complexity and identifiability of causal quantities from observational data Schölkopf et al. (2012). In an SCM, interventions can be made by setting $X\leftarrow x_{1}$ and propagating this value through the model $\mathcal{M}$ , posing the question of "what will happen if I do something?". Counterfactuals expand upon the idea of interventions and are relevant when a value of $X$ is already observed, instead posing the question of "what would have happened if something had been different?" In addition to posing a slightly different question, counterfactuals require that exogenous noise terms are held constant, and thus classically require full knowledge of the causal model. In the context of algorithmic fairness, we are limited to level of counterfactuals as protected attributes are typically given and already observed.

In causal reasoning frameworks, one major application of counterfactuals is the estimation of causal effects such as the individual and average treatment effects (ITE and ATE) which quantify the difference and expected difference between outcomes under different values of $X$ .

$$

ITE:\tau=Y_{X\leftarrow x}-Y_{X\leftarrow x^{\prime}} \tag{1}

$$

$$

ATE:E[\tau]=E[Y_{X\leftarrow x}]-E[Y_{X\leftarrow x^{\prime}}]. \tag{2}

$$

#### Counterfactual Fairness

is a foundational notion of causal fairness introduced by Kusner et al. (2017), requiring that an individual’s predictive outcome should match that in a counterfactual scenario where they belong to a different demographic group. This notion is formalized in the theorem below.

**Theorem 1 (Unit-level/probabilistic)**

*Given an SCM $\mathcal{M}=(U,O,F)$ where $O=A\cup X$ , a predictor $\hat{Y}$ is counterfactually fair on the unit-level if $\forall\hat{y}\in\hat{Y},\forall x,a,a^{\prime}\in A$

$$

P(\hat{y}_{A\rightarrow a}(u)|X,A=x,a)=P(\hat{y}_{A\rightarrow a^{\prime}}(u)|

X,A,=x,a)

$$*

Kusner et al. (2017) notably choose to model protected attributes as exogenous, which means that they may not be confounded by unobserved variables with respect to outcomes. We note that the definition of counterfactual fairness in Theorem 1 is the unit-level probabilistic one as clarified by Plecko & Bareinboim (2024), because counterfactual outcomes are generated deterministically with fixed unobservables $U=u$ . Theorem 1 can be applied on the dataset level to form the population-level version also provided by Plecko & Bareinboim (2024) which measures the alignment of natural and counterfactual predictive distributions.

**Theorem 2 (Population-level)**

*Given an SCM $\mathcal{M}=(U,O,F)$ where $O=A\cup X$ , a predictor $\hat{Y}$ is counterfactually fair on the population-level if $\forall\hat{y}\in\hat{Y},\forall x,a,a^{\prime}\in A$

$$

P(\hat{y}_{A\rightarrow a}|X,A=x,a)=P(\hat{y}_{A\rightarrow a^{\prime}}|X,A=x,a)

$$*

Theorem 1 can also be transformed into a counterfactual fairness metric by quantifying the difference between natural and counterfactual predictive distributions. In this study we quantify counterfactual fairness as the distribution of the counterfactual absolute error (AE) between predictions in each distribution.

**Definition 1 (Absolute Error (AE))**

*Given an SCM $\mathcal{M}=(U,O,F)$ where $O=A\cup X$ , the counterfactual absolute error of a predictor $\hat{Y}$ is the distribution

$$

AE=|P(\hat{y}_{A\rightarrow a}(u)|X,A=x,a)-P(\hat{y}_{A\rightarrow a^{\prime}}

(u)|X,A=x,a)|

$$*

We note that because the outcomes are condition on the same noise terms $u$ our definition of AE builds off of Theorem 1. Intuitively, when the AE is skewed towards zero, then most individuals receive the same prediction in both the natural and counterfactual scenarios.

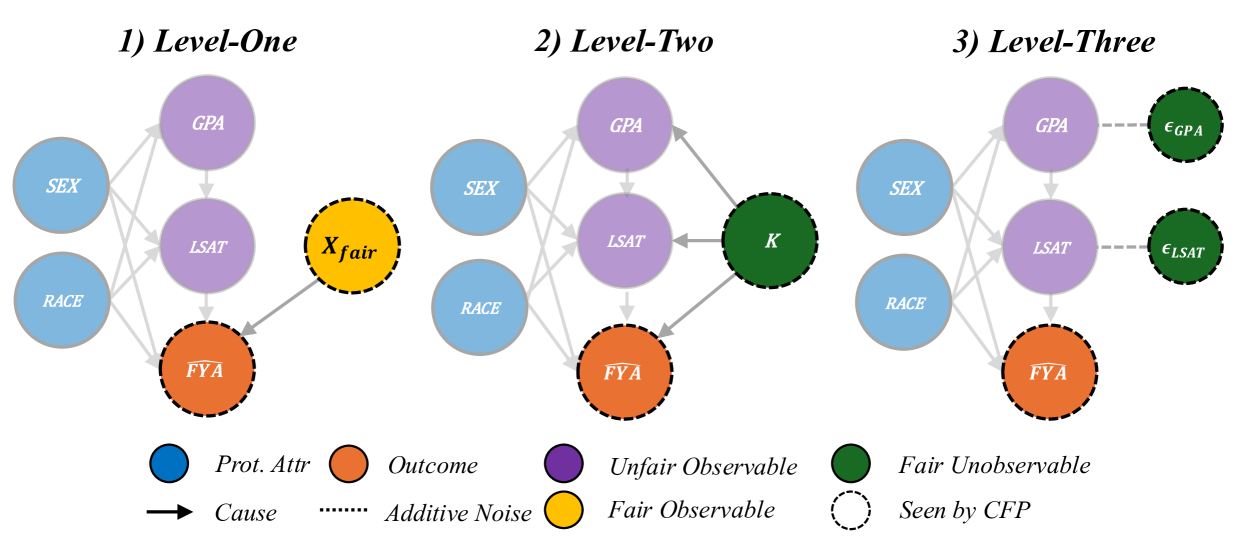

Kusner et al. (2017) present various implementations of Counterfactually Fair Prediction (CFP). The three levels of CFP can be achieved by fitting a predictive model $\hat{Y}$ to observable non-descendants if any exist (Level-One), inferred values of an exogenous unobserved variable $K$ (Level-Two), or additive noise terms (Level-Three). Kusner et al. (2017) acknowledge that in practice, Level-One rarely occurs. Level-Two requires that the causal model be invertible, which allows the unobservable $K$ to be inferred by abduction. Level-Three models the scenario as an Additive Noise Model, and thus is the strongest in terms of representational capacity, allowing more degrees of freedom than in Level-Two to represent fair terms. The three levels of CFP are depicted in Appendix Figure 22.

Causal Fairness The Causal Fairness Analysis (CFA) framework Plecko & Bareinboim (2024) introduces the Standard Fairness Model (SFM), which classifies variables as protected attributes $A$ , mediators $X_{med}$ , confounders $X_{conf}$ , and outcomes $Y$ . This framework includes a Fairness Cookbook of causal fairness metrics with a strong analogy to the legal notions of direct and indirect discrimination and business necessity as illustrated in Appendix Figure 23. Plecko & Bareinboim (2024) refute the modeling choice of Kusner et al. (2017) by their inclusion of confounders $X_{conf}$ in the SFM, arguing that these variables contribute to the legally admissible Spurious-Effect (SE).

For simplicity of our experimental results, we follow the modeling of Kusner et al. (2017), and focus on the elimination of the Total-Effect (TE) of protected attributes as defined by Plecko & Bareinboim (2024), while noting in Section 6 the importance of relaxing this assumption in future extensions.

Prior-data Fitted Networks Prior-data Fitted Networks (PFNs) Müller et al. (2022) and TabPFN Hollmann et al. (2023, 2025) represent a paradigm shift from traditional ML with a causal motivation, namely that simple causal models offer a quality explanation for real-world data. PFNs incorporate prior knowledge into transformer models by pre-training on datasets from a specific prior distribution Müller et al. (2022). TabPFN, a popular application of PFNs, applies these ideas to small tabular classification tasks by training a transformer on synthetic datasets derived from sparse Structural Causal Models (SCMs). As noted in Hollmann et al. (2023), a key advantage of TabPFN is its link to Bayesian Inference; where the transformer approximates the Posterior Predictive Distribution (PPD), thus achieving state-of-the-art performance by integrating over simple causal explanations for the data.

## 4 Methodology

In this section, we introduce FairPFN, a foundation model for legally or ethically sensitive tabular classification problems that draws inspiration from PFNs and principles of causal fairness. We introduce our pre-training scheme, synthetic data prior, and draw connections to Bayesian Inference to explain the inner workings of FairPFN.

### 4.1 FairPFN Pre-Training

First, we present our pre-training scheme, where FairPFN is fit to a prior of synthetic causal fairness data to identify and remove the causal effects of protected attributes in practice from observational data alone. We provide pseudocode for our pre-training algorithm in Algorithm 2, and outline the steps below.

Input:

Number of pre-training epochs $E$ and steps $S$

Transformer $\mathcal{M}$ with weights $\theta$

Hypothesis space of SCMs $\phi\in\Phi$

begin

for $epoch=1$ to $E$ do

for $step=1$ to $S$ do

Draw a random SCM $\phi$ from $\Phi$

Sample $D_{bias}=(A,X_{bias},Y_{bias})$ from $\phi$ where A $\{a_{0},a_{1}\}$ is an exogenous binary protected attribute

Sample $Y_{fair}$ from $\phi$ by performing dropout on outgoing edges of $A$ if any exist

Partition $D_{bias}$ and $D_{fair}$ into $train/val$

Pass $D_{bias}^{train}$ into $\mathcal{M}$ as context

Pass $D_{bias}^{val}$ into $\mathcal{M}$ to generate $Y_{pred}^{val}$

Calculate loss $L=CE(Y_{pred}^{val},Y_{fair}^{val})$

Update weights $\theta$ w.r.t $\nabla_{\theta}L$

end for

end for

Output: Transformer $\mathcal{M}:X_{bias}\rightarrow Y_{fair}$

Algorithm 1 FairPFN Pre-training

Data Generating Mechanisms FairPFN pre-training begins by creating synthetic datasets that capture the causal mechanisms of bias in real-world data. Following the approach of Hollmann et al. (2023), we use Multi-Layer Perceptrons (MLPs) to model Structural Causal Models (SCMs) via the structural equation $f=z(P\cdot W^{T}x+\epsilon)$ , where $W$ denotes activation weights, $\epsilon$ represents Gaussian noise, $P$ is a dropout mask sampled from a log-scale to promote sparsity, and $z$ is a non-linearity. Figure 1 illustrates the connection among sampled MLPs, their corresponding SCMs, and the resulting synthetic pre-training data generated. We note that independent noise terms are not visualized in Figure 1.

Biased Data Generation An MLP is randomly sampled and sparsity is induced through dropout on select edges. The protected attribute is defined as a binary exogenous variable $A\in\{a_{0},a_{1}\}$ at the input layer. We uniformly select $m$ features $X$ from the second hidden layer onwards to capture rich representations of exogenous causes. The target variable $Y$ is chosen from the output layer and discretized into a binary variable using a random threshold. A forward pass through the MLP produces a dataset $D_{bias}=(A,X_{bias},Y_{bias})$ with $n$ samples containing the causal influence of the protected attribute.

#### Fair Data Generation

A second forward pass generates a fair dataset $D_{fair}$ by applying dropout to the outgoing edges of the protected attribute $A$ in the MLP, as shown by the red edges in Figure 1. This dropout, similar to that in TabPFN, masks the causal weight of $A$ to zero, effectively reducing its influence to Gaussian noise $\epsilon$ . This increases the influence of fair exogenous causes $U_{0}$ and $U_{1}$ and independent noise terms all over the MLP visualized in Figure 1. We note that $A$ is sampled from an arbitrary distribution $A\in\{a_{0},a_{1}\}$ , as opposed to $A\in\{0,1\}$ , since both functions $f=0\cdot wx+\epsilon$ and $f=p\cdot 0x+\epsilon$ yield equivalent outcomes. Only after generating the pre-training dataset is $A$ converted to a binary variable for processing by the transformer.

In-Context Learning After generating $D_{bias}$ and $D_{fair}$ , we partition them into training and validation sets: $D_{bias}^{train}$ , $D_{bias}^{val}$ , $D_{fair}^{train}$ , and $D_{fair}^{val}$ . We pass $D_{bias}^{train}$ as context to the transformer to provide information about feature-target relationships. To simulate inference, we input $X_{bias}^{val}$ into the transformer $\mathcal{M}$ , yielding predictions $Y_{pred}$ . We then compute the binary-cross-entropy (BCE) loss $L(Y_{pred},Y_{fair}^{val})$ against the fair outcomes $Y_{fair}^{val}$ , which do not contain effects of the protected attribute. Thus, the transformer $\mathcal{M}$ learns the mapping $\mathcal{M}:X_{bias}\rightarrow Y_{fair}$ .

1

Input:

- Number of exogenous causes $U$

- Number of endogenous variables $U\times H$

- Number of features and samples $M\times N$

begin

- Define MLP $\phi$ with depth $H$ and width $U$

- Initialize random weights $W:(U\times U\times H-1)$

- Sample sparsity masks $P$ with same dimensionality as weights

- Sample $H$ per-layer non-linearities $z_{i}\sim\{Identity,ReLU,Tanh\}$

- Initialize output matrix $X:(U\times H)$

- Sample location $k$ of protected attribute in $X_{0}$

- Sample locations of features $X_{biased}$ in $X_{1:H-1}$ , and outcome $y_{bias}$ in $X_{H}$

- Sample protected attribute threshold $a_{t}$ and binary values $\{a_{0},a_{1}\}$

for $n=0$ to $N$ samples do

- Sample values of exogenous causes $X_{0}:(U\times 1)$

- Sample values of additive noise terms $\epsilon:(U\times H)$

for $i=0$ to $H-1$ layers do

- Pass intermediate representation through hidden layer $X_{i+1}=z_{i}(P_{i}\cdot W_{i}^{T}X_{i}+\epsilon_{i})$

end for

- Select prot. attr. $A$ , features $X_{bias}$ and outcome $y_{bias}$ from $X_{0}$ , $X_{1:H-1}$ , and $X_{H}$

- Binarize $A\in\{a_{0},a_{1}\}$ over threshold $a_{t}$

- Set input weights in row $k$ of $W_{0}$ to 0

for $j=0$ to $H-1$ layers do

- Pass intermediate representation through hidden layer $X_{j+1}=z_{i}(P_{i}\cdot W_{j}^{T}X_{j}+\epsilon_{j})$

end for

2 - Select the fair outcome $y_{fair}$ from $X_{H}$

end for

- Binarize $y_{fair}\in\{0,1\}$ and $y_{bias}\in\{0,1\}$ over randomly sampled output threshold $y_{t}$

3 Output: $D_{bias}=(A,X_{bias},y_{bias})$ and $y_{fair}$

Algorithm 2 FairPFN Synthetic Data Generation

Prior-Fitting The transformer is trained for approximately 3 days on an RTX-2080 GPU on approximately 1.5 million different synthetic data-generating mechanisms, in which we vary the MLP architecture, the number of features $m$ , the sample size $n$ , and the non-linearities $z$ .

Real-World Inference During real-world inference, FairPFN requires no knowledge of causal mechanisms in the data, but instead only takes as input a biased observational dataset and implicitly infers potential causal explanations for the data (Figure 1 d) based on the causally generated data it has seen during pre-training. Crucially, FairPFN is provided information regarding which variable is the protected attribute, which is represented in a protected attribute encoder step in the transformer. A key advantage of FairPFN is its alignment with Bayesian Inference, as transformers pre-trained in the PFN framework have been shown to approximate the Posterior Predictive Distribution (PPD) Müller et al. (2022).

FairPFN thus approximates a modified PPD, predicting a causally fair target $y_{f}$ given biased features $X_{b}$ and a biased dataset $D_{b}$ by integrating over hypotheses for the SCM $\phi\in\Phi$ :

$$

p(y_{f}|x_{b},D_{b})\propto\int_{\Phi}p(y_{f}|x_{b},\phi)p(D_{b}|\phi)p(\phi)d\phi \tag{3}

$$

This approach has two advantages: it reduces the necessity of precise causal model inference, thereby lowering the risk of fairwashing from incorrect models Ma et al. (2023), and carries with it regularization-related performance improvements observed in Hollmann et al. (2023). We also emphasize that FairPFN is a foundation model and thus does not need to be trained for new fairness problems in practice. Instead, FairPFN performs predictions in a single forward pass of the data through the transformer.

## 5 Experiments

This section assesses FairPFN’s performance on synthetic and real-world benchmarks, highlighting its capability to remove the causal influence of protected attributes without user-specified knowledge of the causal model, while maintaining high predictive accuracy.

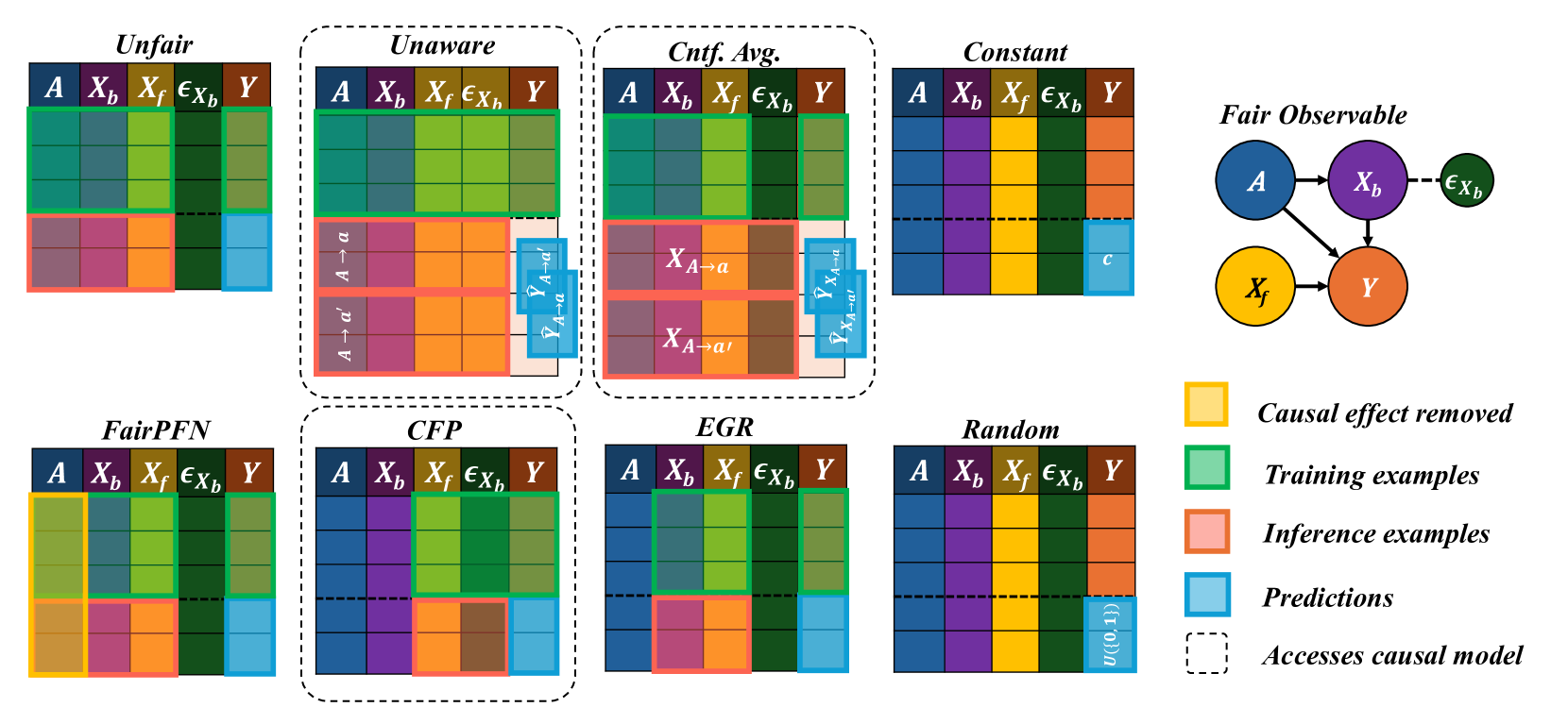

### 5.1 Baselines

We implement several baselines to compare FairPFN against a diverse set of traditional ML models, causal-fairness frameworks, and fairness-aware ML approaches. We summarize our baselines below, and provide a visualization of our baselines applied to the Fair Observable benchmark in Appendix Figure 25.

- Unfair: Fit the entire training set $(X,A,Y)$ .

- Unaware: Fit to the entire training set $(X,A,Y)$ . Inference returns the average of predictions on the original test set $(X,A)$ and the test set with alternative protected attribute values $(X,A\rightarrow a^{\prime})$ .

- Avg. Cnft: Fit to the entire training set $(X,A,Y)$ . Inference returns the average (avg.) of predictions on the original test set $(X,A)$ and the counterfactual (cntf) test set $(X_{A\rightarrow a^{\prime}},A\rightarrow a^{\prime})$ .

- Constant: Always predicts the majority class

- Random: Randomly predicts the target

- CFP: Combination of the three-levels of CFP as proposed in Kusner et al. (2017). Fit to non-descendant observables, unobservables, and independent noise terms $(X_{fair},U_{fair},\epsilon_{fair},Y)$ .

- EGR: Exponentiated Gradient Reduction (EGR) as proposed by Agarwal et al. (2018) is fit to non-protected attributes $(X,Y)$ with XGBoost Chen & Guestrin (2016) as a base model.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Causal Diagram: Six Fairness Models in Machine Learning

### Overview

The image displays six distinct causal models, numbered 1 through 6, illustrating different relationships between a protected attribute (`A`), observable features (`X_b`, `X_f`), an unobservable feature (`U`), an outcome (`Y`), and additive noise terms (`ε`). Each model is presented as a panel containing a directed acyclic graph (DAG) and its corresponding mathematical formulation. A legend at the bottom defines the visual symbols and node colors.

### Components/Axes

The image is organized into six horizontal panels. Each panel contains:

1. **Title**: A numbered label (e.g., "1) Biased").

2. **Causal Graph**: A diagram with colored nodes and arrows.

3. **Mathematical Formulation**: A set of equations defining the distributions and relationships between variables.

**Legend (Bottom of Image):**

* **Node Colors & Meanings**:

* Blue Circle: `Prot. Attr.` (Protected Attribute, `A`)

* Orange Circle: `Outcome` (`Y`)

* Purple Circle: `Unfair Observable` (`X_b`)

* Yellow Circle: `Fair Observable` (`X_f`)

* Green Circle: `Fair Unobservable` (`U`)

* **Arrow Types**:

* Solid Arrow (`→`): `Cause`

* Dotted Arrow (`⋯>`): `Additive Noise`

* **Node Border**:

* Dashed Circle Border: `Non-descendent`

* **Node Fill Pattern**:

* Hatched Pattern: `Seen by FairPFN`

### Detailed Analysis

#### Panel 1: Biased

* **Graph**: `A` (blue, hatched) → `X_b` (purple, hatched) → `Y` (orange, hatched). `X_b` has an additive noise term `ε_Xb` (green). `Y` has an additive noise term `ε_Y` (orange).

* **Equations**:

* `A ~ U({0,1})`

* `ε_Xb, ε_Y ~ N(μ, σ), N(μ, σ)`

* `X_b = w_A * A² + ε_Xb`

* `Y = w_Xb * X_b² + ε_Y`

* `Y = 1(Y ≥ Ȳ)`

#### Panel 2: Direct-Effect

* **Graph**: `A` (blue, hatched) → `X_f` (yellow, hatched) → `Y` (orange, hatched). `A` also has a direct arrow to `Y`. `X_f` has an additive noise term `ε_Xf` (green). `Y` has an additive noise term `ε_Y` (orange).

* **Equations**:

* `A ~ U({0,1})`

* `ε_Xb, ε_Y ~ N(μ, σ), N(μ, σ)`

* `X_f = N(μ, σ)`

* `Y = w_Xf * X_f² + w_A * A² + ε_Y`

* `Y = 1(Y ≥ Ȳ)`

#### Panel 3: Indirect-Effect

* **Graph**: `A` (blue, hatched) → `X_b` (purple, hatched) → `Y` (orange, hatched). `X_f` (yellow, dashed border) → `Y`. `X_b` has an additive noise term `ε_Xb` (green). `Y` has an additive noise term `ε_Y` (orange).

* **Equations**:

* `ε_Xb, ε_Y ~ N(μ, σ), N(μ, σ)`

* `A ~ U({0,1}), X_f ~ N(μ, σ)`

* `X_b = w_A * A² + ε_Xb`

* `Y = w_Xb * X_b² + w_Xf * X_f² + ε_Y`

* `Y = 1(Y ≥ Ȳ)`

#### Panel 4: Fair Observable

* **Graph**: `A` (blue, hatched) → `X_b` (purple, hatched) → `Y` (orange, hatched). `A` also has a direct arrow to `Y`. `X_f` (yellow, dashed border) → `Y`. `X_b` has an additive noise term `ε_Xb` (green). `Y` has an additive noise term `ε_Y` (orange).

* **Equations**:

* `ε_Xb, ε_Y ~ N(μ, σ), N(μ, σ)`

* `A ~ U({0,1}), X_f ~ N(μ, σ)`

* `X_b = w_A * A² + ε_Xb`

* `Y = w_Xb * X_b² + w_Xf * X_f² + w_A * A² + ε_Y`

* `Y = 1(Y ≥ Ȳ)`

#### Panel 5: Fair Unobservable

* **Graph**: `A` (blue, hatched) → `X_b` (purple, hatched) → `Y` (orange, hatched). `A` also has a direct arrow to `Y`. `U` (green, dashed border) → `X_b` and `U` → `Y`. `X_b` has an additive noise term `ε_Xb` (green). `Y` has an additive noise term `ε_Y` (orange).

* **Equations**:

* `ε_Xb, ε_Y ~ N(μ, σ), N(μ, σ)`

* `A ~ U({0,1}), U ~ N(μ, σ)`

* `X_b = w_A * A² + w_U * U² + ε_Xb`

* `Y = w_Xb * X_b² + w_A * A² + ε_Y`

* `Y = 1(Y ≥ Ȳ)`

#### Panel 6: Fair Additive Noise

* **Graph**: `A` (blue, hatched) → `X_b` (purple, hatched) → `Y` (orange, hatched). `A` also has a direct arrow to `Y`. `X_b` has an additive noise term `ε_Xb` (green). `Y` has an additive noise term `ε_Y` (orange).

* **Equations**:

* `ε_Xb, ε_Y ~ N(μ, σ), N(μ, σ)`

* `A ~ U({0,1})`

* `X_b = w_A * A² + ε_Xb`

* `Y = w_Xb * X_b² + w_A * A² + ε_Y`

* `Y = 1(Y ≥ Ȳ)`

### Key Observations

1. **Progression of Complexity**: The models progress from a simple biased pathway (1) to more complex structures incorporating direct effects (2, 4, 6), indirect effects (3), and unobservable confounders (5).

2. **Variable Roles**: The protected attribute `A` is always binary (`U({0,1})`). The outcome `Y` is always binarized via a threshold (`1(Y ≥ Ȳ)`). Observable features (`X_b`, `X_f`) and the unobservable `U` are modeled with normal distributions.

3. **Visual Coding**: The hatched pattern indicates which variables are "Seen by FairPFN," suggesting this diagram is from a paper proposing or analyzing a method called FairPFN. The dashed border for `X_f` and `U` in panels 3, 4, and 5 marks them as "Non-descendent" of `A` in those specific causal structures.

4. **Mathematical Consistency**: All models use squared terms (e.g., `A²`, `X_b²`) in their structural equations, implying non-linear relationships. The noise terms are consistently modeled as Gaussian.

### Interpretation

This diagram is a technical taxonomy of data-generating processes used to study algorithmic fairness. It systematically varies the causal pathways through which a protected attribute (`A`) can influence an outcome (`Y`).

* **Model 1 (Biased)** represents a scenario where bias flows entirely through a single, unfair observable feature (`X_b`).

* **Models 2 & 6 (Direct-Effect, Fair Additive Noise)** introduce a direct effect of `A` on `Y`, which is often considered a source of unfair discrimination.

* **Model 3 (Indirect-Effect)** separates features into fair (`X_f`) and unfair (`X_b`) observables, with `A` only affecting `Y` through the unfair one.

* **Model 4 (Fair Observable)** combines direct effect with both fair and unfair observable pathways.

* **Model 5 (Fair Unobservable)** is the most complex, introducing an unobserved confounder (`U`) that affects both the unfair feature and the outcome, representing real-world complexity where important factors are not measured.

The core purpose is to provide a framework for evaluating fairness interventions (like the mentioned "FairPFN"). By defining these precise data-generating models, researchers can test whether a fairness algorithm works correctly under different, well-specified assumptions about how bias enters a system. The consistent use of squared terms and Gaussian noise creates a controlled, synthetic environment for this evaluation. The diagram argues that understanding fairness requires moving beyond simple correlations to explicitly model the causal structure of the data.

</details>

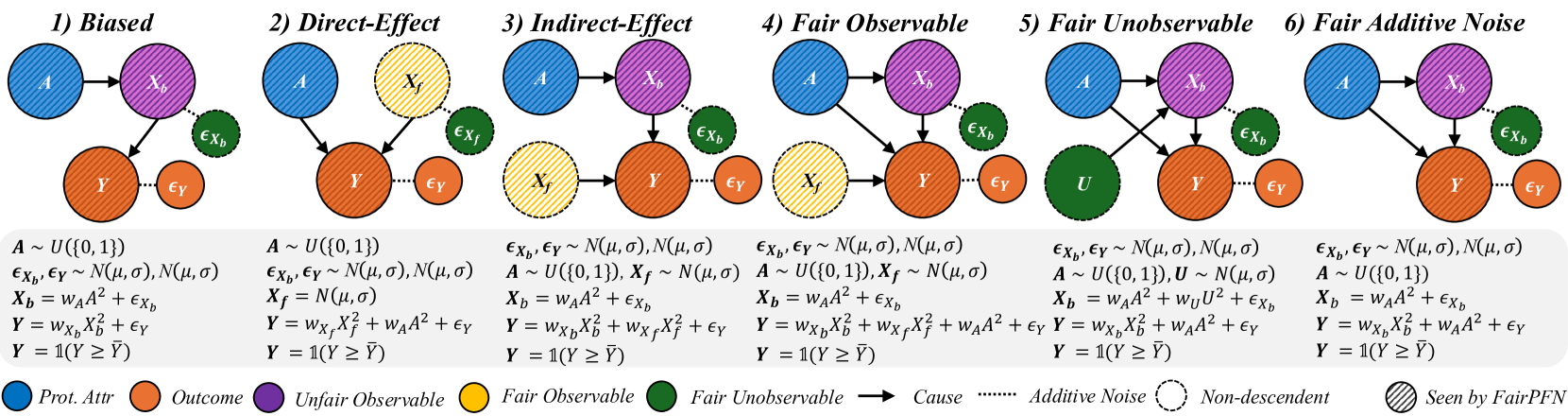

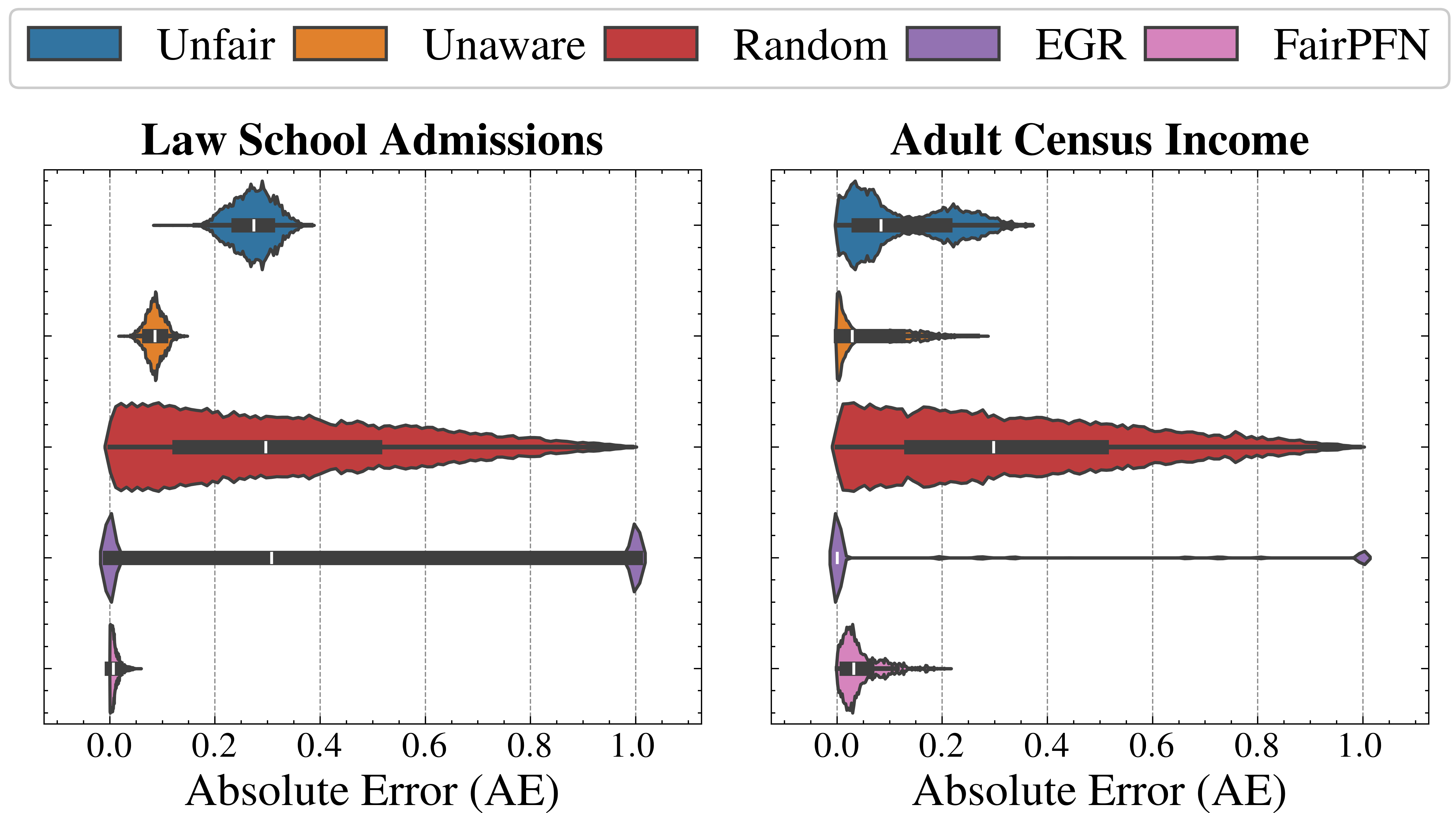

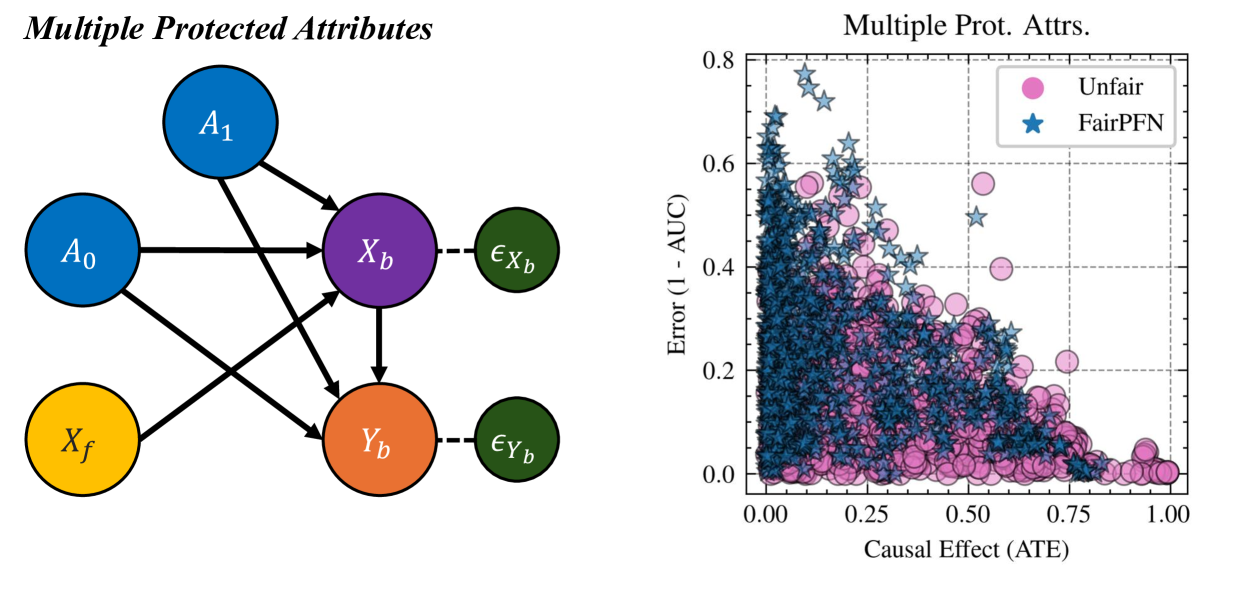

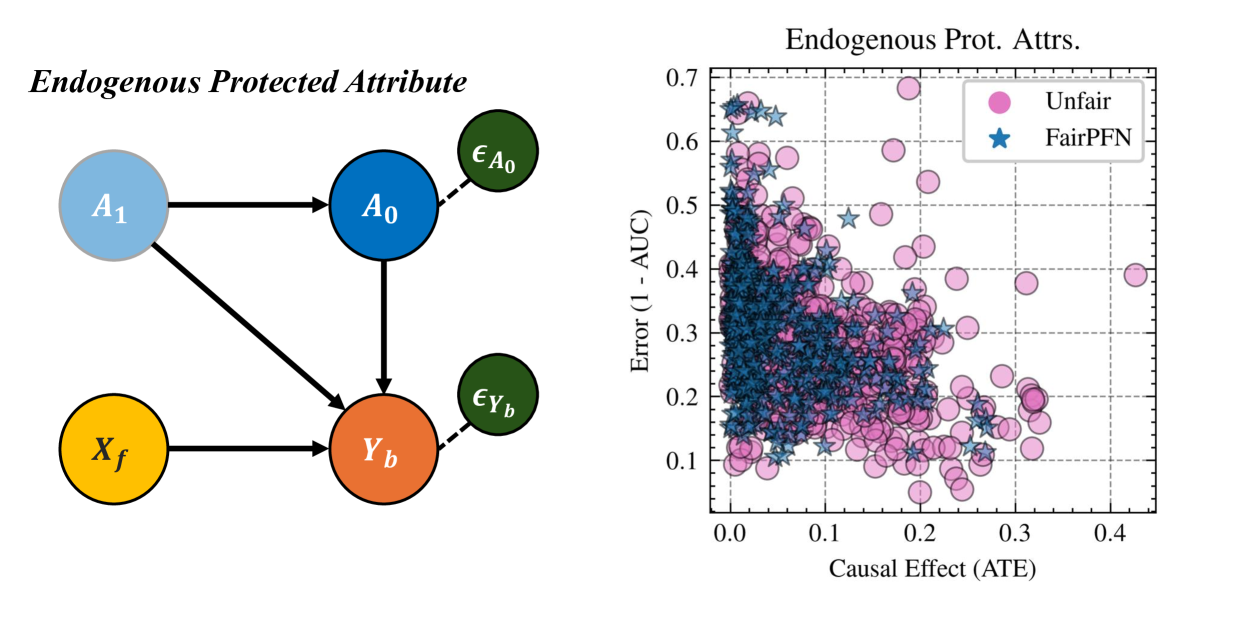

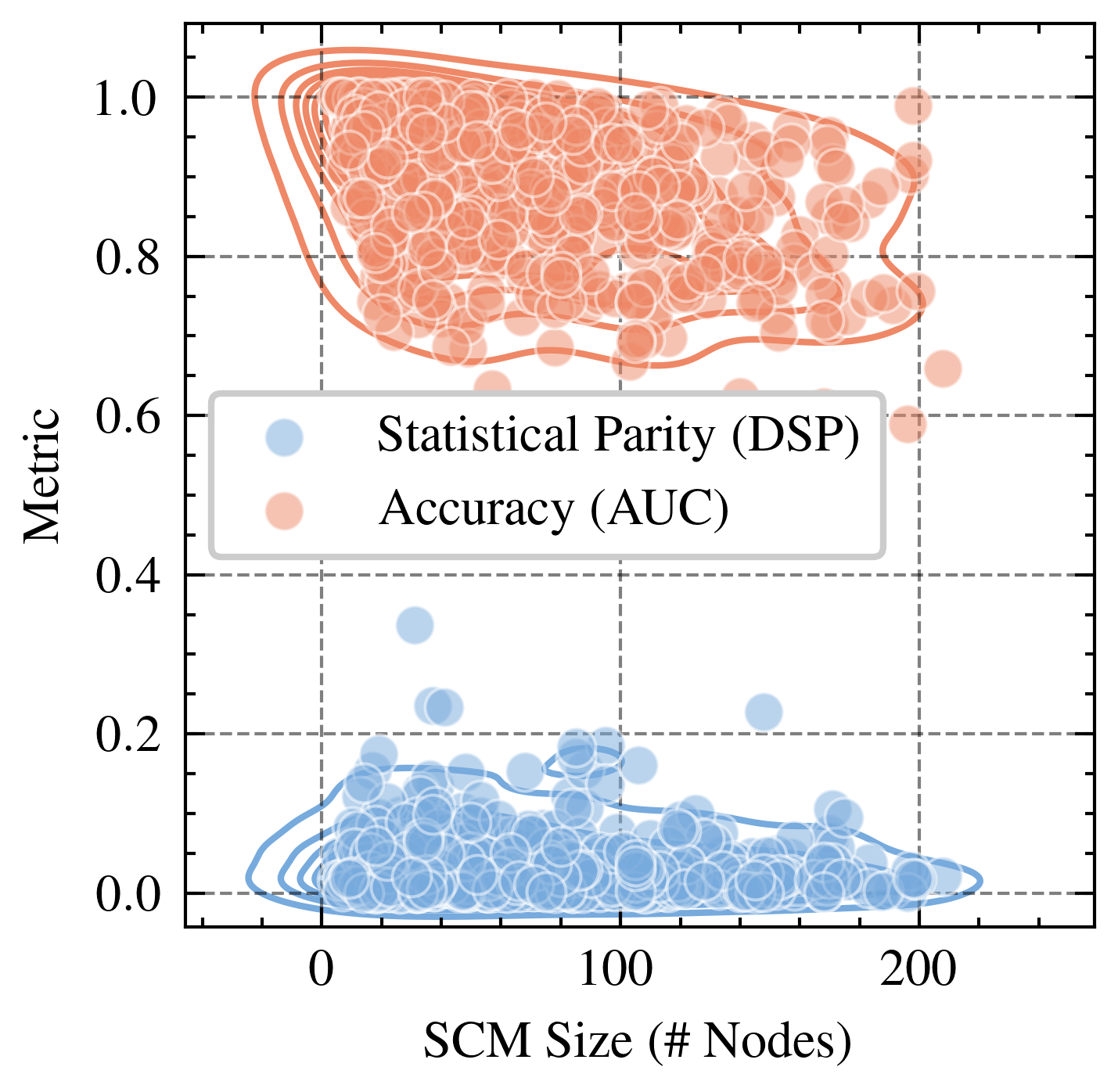

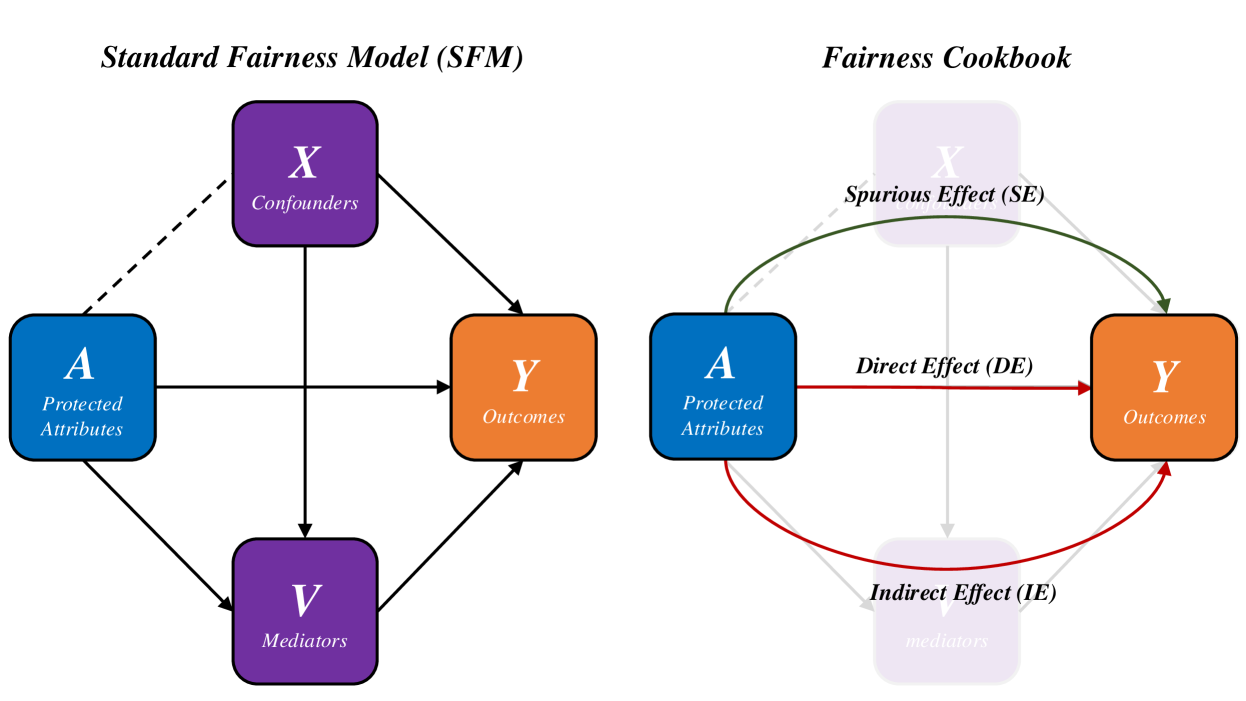

Figure 2: Causal Case Studies: Visualization and data generating processes of synthetic causal case studies, a handcrafted set of benchmarks designed to evaluate FairPFN’s ability to remove various sources of bias in causally generated data. For each group, 100 independent datasets are sampled, varying the number of samples, the standard deviation of noise terms $\sigma$ and the base causal effect $w_{A}$ of the protected attribute.

In the CFP, Unfair, Unaware, and Avg. Cntf. baselines, we employ FairPFN with a random noise term passed as a "protected attribute." We opt to use this UnfairPFN instead of TabPFN so as to not introduce any TabPFN-specific behavioral characteristics or artifacts. We show in Appendix Figure 17 that this reverts FairPFN to a normal tabular classifier with competitive peformance to TabPFN. We also note that our Unaware baseline is not the standard approach of dropping the protected attribute. We opt for our own implementation of Unaware as it shows improved causal effect removal to the standard approach (Appendix Figure 17).

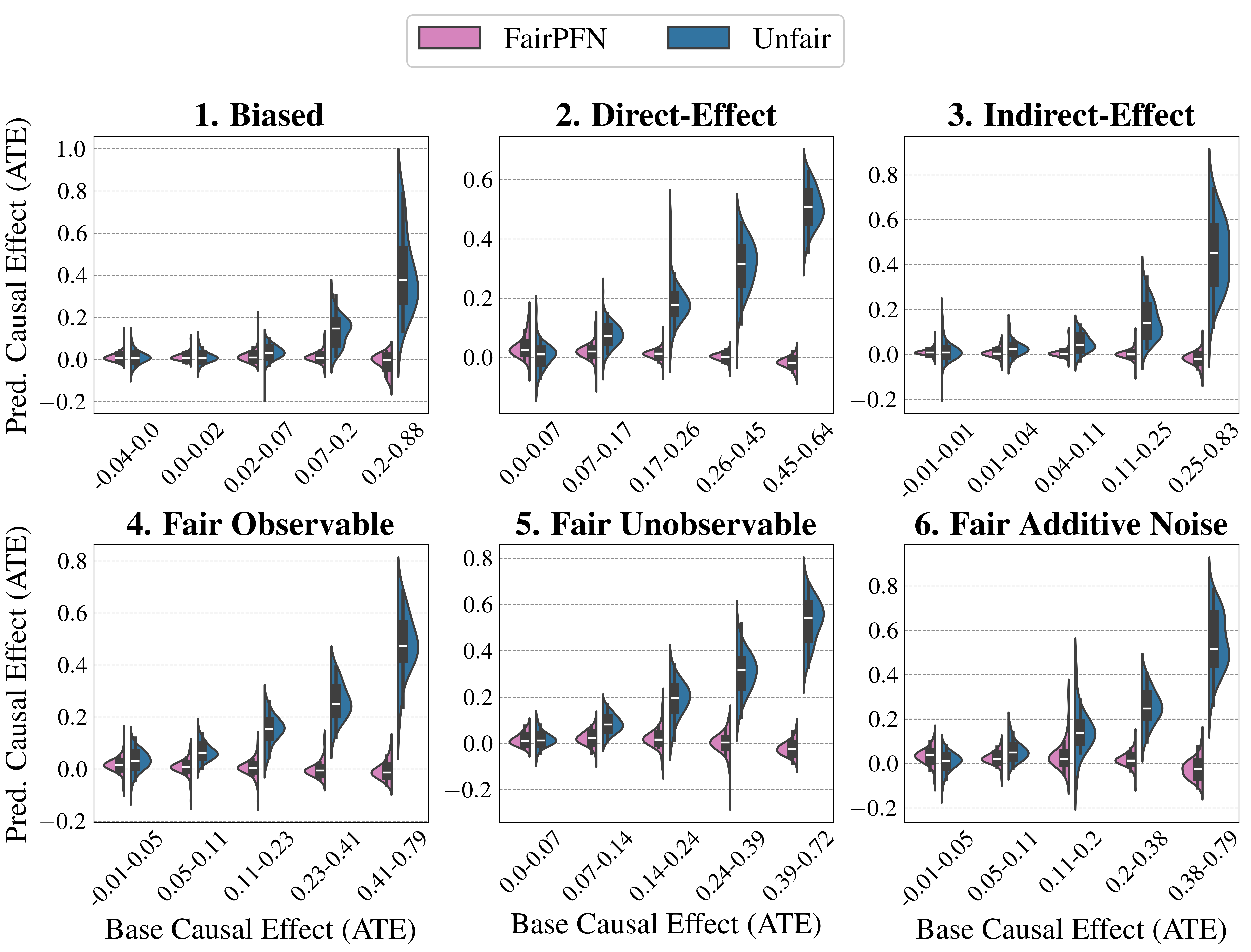

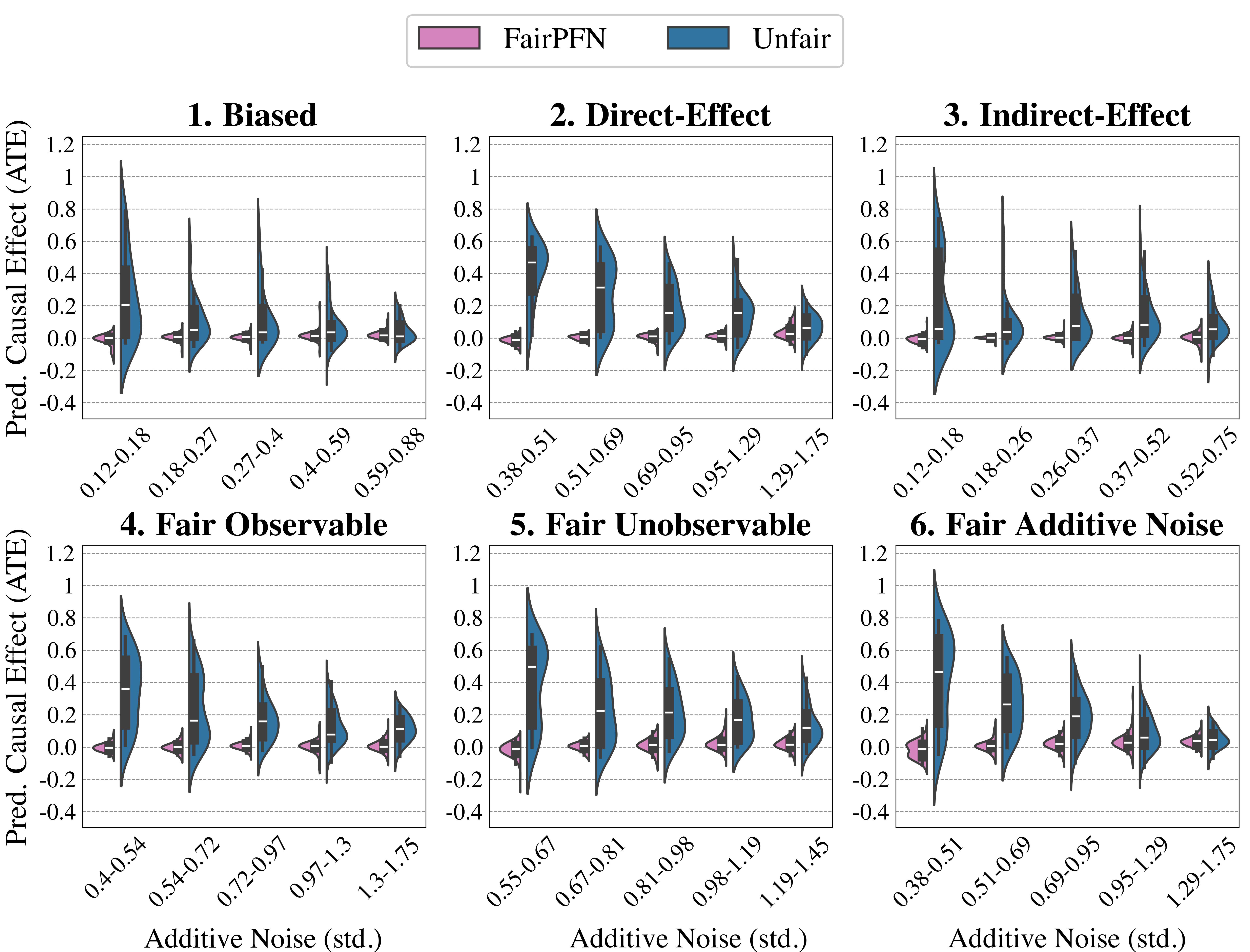

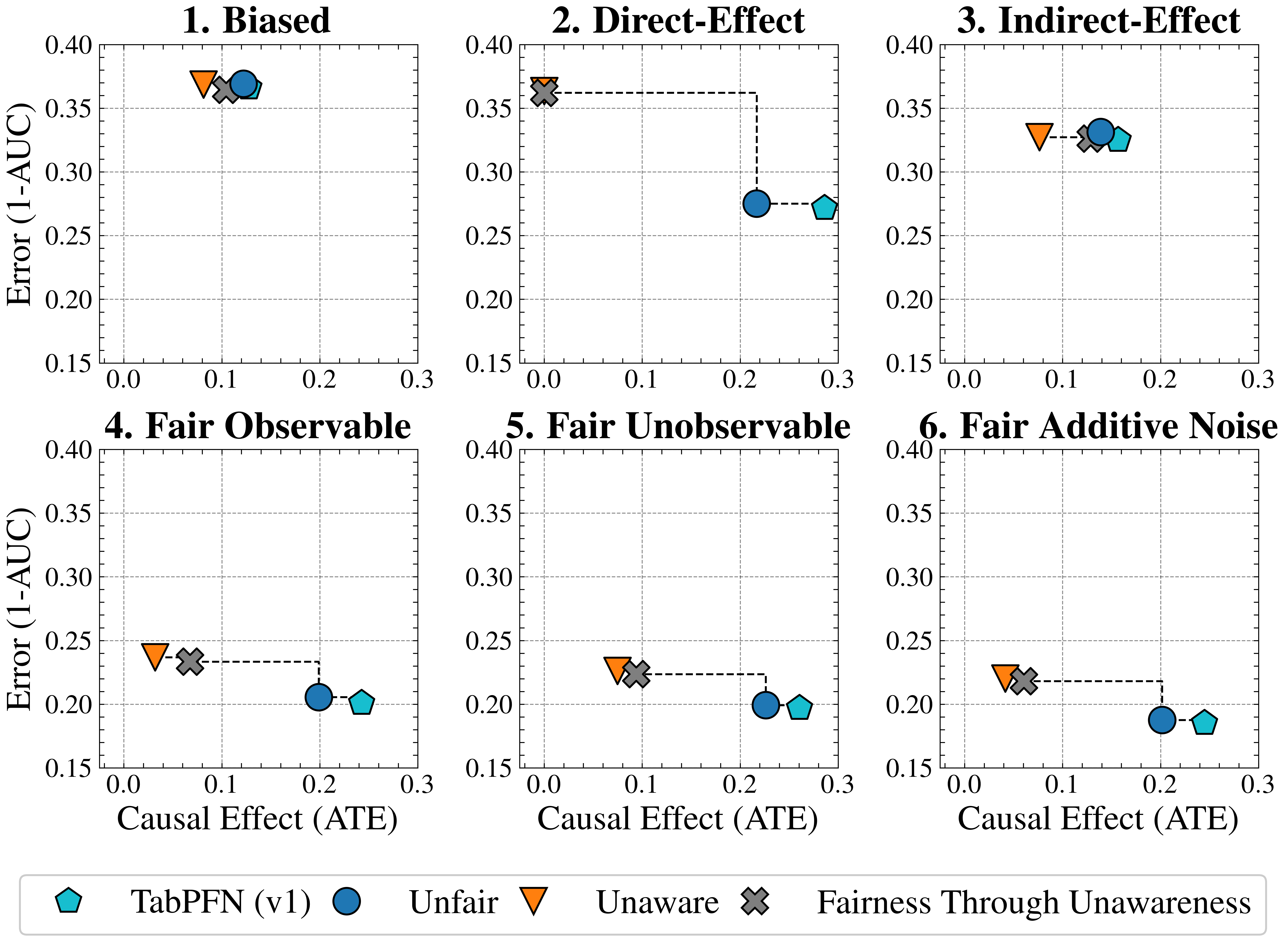

### 5.2 Causal Case Studies

We first evaluate FairPFN using synthetic causal case studies to establish an experimental setting where the data-generating processes and all causal quantities are known, presenting a series of causal case studies with increasing difficulty to evaluate FairPFN’s capacity to remove various sources of bias in causally generated data. The data-generating processes and structural equations are illustrated in Figure 2, following the notation: $A$ for protected attributes, $X_{b}$ for biased-observables, $X_{f}$ for fair-observables, $U$ for fair-unobservables, $\epsilon_{X}$ for additive noise terms, and $Y$ for the outcome, discretized as $Y=\mathbb{1}(Y\geq\bar{Y})$ . We term a variable $X$ "fair" iff $A\notin anc(X)$ . The structural equations in Figure 2 contain exponential non-linearities to ensure the direction of causality is identifiable Peters et al. (2014), distinguishing the Fair Unobservable and Fair Additive Noise scenarios, with the former including an unobservable yet identifiable causal effect $U$ .

For a robust evaluation, we generate 100 datasets per case study, varying causal weights of protected attributes $w_{A}$ , sample sizes $m\in(100,10000)$ (sampled on a log-scale), and the standard deviation $\sigma\in(0,1)$ (log-scale) of additive noise terms. We also create counterfactual versions of each dataset to assess FairPFN and its competitors across multiple causal and counterfactual fairness metrics, such as average treatment effect (ATE) and absolute error (AE) between predictions on observational and counterfactual datasets. We highlight that because our synthetic datasets are created from scratch, the fair causes, additive noise terms, counterfactual datasets, and ATE are ground truth. As a result, our baselines that have access to causal quantities are more precise in our causal case studies than in real-world scenarios where this causal information must be inferred.

<details>

<summary>extracted/6522797/figures/trade-off_by_group_synthetic.png Details</summary>

### Visual Description

## Scatter Plot Grid: Fairness-Accuracy Trade-offs Across Causal Scenarios

### Overview

The image displays a 2x3 grid of six scatter plots, each illustrating the trade-off between model error (1-AUC) and causal effect (Average Treatment Effect, ATE) for various machine learning fairness methods under different data-generating scenarios. A shared legend at the bottom defines eight distinct methods, each represented by a unique colored marker. The plots compare how these methods perform in terms of predictive error and the magnitude of the causal effect they induce or mitigate.

### Components/Axes

* **Plot Titles (Top of each subplot):**

1. Biased

2. Direct-Effect

3. Indirect-Effect

4. Fair Observable

5. Fair Unobservable

6. Fair Additive Noise

* **Y-Axis (Common to all plots):** Label: `Error (1-AUC)`. Scale ranges from 0.20 to 0.50, with major ticks at 0.20, 0.30, 0.40, 0.50.

* **X-Axis (Common to all plots):** Label: `Causal Effect (ATE)`. Scale ranges from 0.00 to 0.25, with major ticks at 0.00, 0.05, 0.10, 0.15, 0.20, 0.25.

* **Legend (Bottom of image):** Contains eight entries, each with a marker symbol and label:

* Blue Circle: `Unfair`

* Orange Inverted Triangle: `Unaware`

* Green Triangle (pointing up): `Constant`

* Red Diamond: `Random`

* Purple Square: `EGR`

* Brown Left-Pointing Triangle: `CFP`

* Pink Star: `FairPFN`

* Yellow Diamond: `Cntf. Avg.` (Counterfactual Average)

### Detailed Analysis

**Plot 1: Biased**

* **Trend:** Methods cluster in the top-left (high error, low causal effect), except for `Unfair` (blue circle) which is an outlier to the right (lower error, higher causal effect).

* **Data Points (Approximate):**

* `Random` (Red Diamond): ATE ≈ 0.00, Error ≈ 0.50

* `Constant` (Green Triangle): ATE ≈ 0.00, Error ≈ 0.49

* `EGR` (Purple Square): ATE ≈ 0.03, Error ≈ 0.44

* `FairPFN` (Pink Star): ATE ≈ 0.01, Error ≈ 0.41

* `Cntf. Avg.` (Yellow Diamond): ATE ≈ 0.01, Error ≈ 0.41

* `CFP` (Brown Triangle): ATE ≈ 0.01, Error ≈ 0.41 (partially obscured)

* `Unaware` (Orange Inv. Triangle): ATE ≈ 0.08, Error ≈ 0.37

* `Unfair` (Blue Circle): ATE ≈ 0.12, Error ≈ 0.37

* **Spatial Grounding:** A dashed line connects `FairPFN`/`Cntf. Avg.` to `Unaware`, and another connects `Unaware` to `Unfair`, suggesting a progression or comparison path.

**Plot 2: Direct-Effect**

* **Trend:** Similar high-error cluster at low ATE. `Unfair` is again an outlier with much lower error but the highest ATE.

* **Data Points (Approximate):**

* `Random` (Red Diamond): ATE ≈ 0.00, Error ≈ 0.50

* `Constant` (Green Triangle): ATE ≈ 0.00, Error ≈ 0.49

* `EGR` (Purple Square): ATE ≈ 0.00, Error ≈ 0.41

* `FairPFN` (Pink Star): ATE ≈ 0.01, Error ≈ 0.39

* `CFP` (Brown Triangle): ATE ≈ 0.00, Error ≈ 0.36

* `Unaware` (Orange Inv. Triangle): ATE ≈ 0.00, Error ≈ 0.36

* `Unfair` (Blue Circle): ATE ≈ 0.22, Error ≈ 0.28

* **Spatial Grounding:** A dashed line connects the cluster around `CFP`/`Unaware` to `Unfair`.

**Plot 3: Indirect-Effect**

* **Trend:** The `Unfair` method has the lowest error and a moderate ATE. Other methods show a clearer separation, with `Unaware` having a higher ATE than the high-error cluster.

* **Data Points (Approximate):**

* `Random` (Red Diamond): ATE ≈ 0.00, Error ≈ 0.50

* `Constant` (Green Triangle): ATE ≈ 0.00, Error ≈ 0.49

* `CFP` (Brown Triangle): ATE ≈ 0.00, Error ≈ 0.42

* `EGR` (Purple Square): ATE ≈ 0.06, Error ≈ 0.42

* `FairPFN` (Pink Star): ATE ≈ 0.01, Error ≈ 0.38

* `Cntf. Avg.` (Yellow Diamond): ATE ≈ 0.01, Error ≈ 0.38

* `Unaware` (Orange Inv. Triangle): ATE ≈ 0.08, Error ≈ 0.33

* `Unfair` (Blue Circle): ATE ≈ 0.14, Error ≈ 0.33

* **Spatial Grounding:** A dashed line connects `FairPFN`/`Cntf. Avg.` to `Unaware`.

**Plot 4: Fair Observable**

* **Trend:** `Unfair` achieves the lowest error but at the cost of the highest ATE. `FairPFN` and `Cntf. Avg.` achieve very low error with near-zero ATE. `Unaware` has low error but moderate ATE.

* **Data Points (Approximate):**

* `Random` (Red Diamond): ATE ≈ 0.00, Error ≈ 0.50

* `Constant` (Green Triangle): ATE ≈ 0.00, Error ≈ 0.49

* `CFP` (Brown Triangle): ATE ≈ 0.00, Error ≈ 0.33

* `EGR` (Purple Square): ATE ≈ 0.02, Error ≈ 0.33

* `FairPFN` (Pink Star): ATE ≈ 0.01, Error ≈ 0.28

* `Cntf. Avg.` (Yellow Diamond): ATE ≈ 0.01, Error ≈ 0.28

* `Unaware` (Orange Inv. Triangle): ATE ≈ 0.04, Error ≈ 0.24

* `Unfair` (Blue Circle): ATE ≈ 0.20, Error ≈ 0.21

* **Spatial Grounding:** A dashed line connects `Random`/`Constant` down to `FairPFN`/`Cntf. Avg.`, and another connects `FairPFN`/`Cntf. Avg.` to `Unaware`, and a third connects `Unaware` to `Unfair`.

**Plot 5: Fair Unobservable**

* **Trend:** Similar pattern to Plot 4. `Unfair` has the lowest error and highest ATE. `FairPFN` and `Cntf. Avg.` show a good balance of low error and low ATE.

* **Data Points (Approximate):**

* `Random` (Red Diamond): ATE ≈ 0.00, Error ≈ 0.50

* `Constant` (Green Triangle): ATE ≈ 0.00, Error ≈ 0.49

* `EGR` (Purple Square): ATE ≈ 0.06, Error ≈ 0.31

* `CFP` (Brown Triangle): ATE ≈ 0.00, Error ≈ 0.28

* `FairPFN` (Pink Star): ATE ≈ 0.01, Error ≈ 0.28

* `Cntf. Avg.` (Yellow Diamond): ATE ≈ 0.01, Error ≈ 0.28

* `Unaware` (Orange Inv. Triangle): ATE ≈ 0.08, Error ≈ 0.23

* `Unfair` (Blue Circle): ATE ≈ 0.22, Error ≈ 0.20

* **Spatial Grounding:** A dashed line connects `Random`/`Constant` down to `FairPFN`/`Cntf. Avg.`, and another connects `FairPFN`/`Cntf. Avg.` to `Unaware`, and a third connects `Unaware` to `Unfair`.

**Plot 6: Fair Additive Noise**

* **Trend:** `Unfair` has the lowest error and a high ATE. `FairPFN` and `Cntf. Avg.` are clustered with low error and very low ATE.

* **Data Points (Approximate):**

* `Random` (Red Diamond): ATE ≈ 0.00, Error ≈ 0.50

* `Constant` (Green Triangle): ATE ≈ 0.00, Error ≈ 0.49

* `EGR` (Purple Square): ATE ≈ 0.03, Error ≈ 0.30

* `CFP` (Brown Triangle): ATE ≈ 0.00, Error ≈ 0.27

* `FairPFN` (Pink Star): ATE ≈ 0.01, Error ≈ 0.27

* `Cntf. Avg.` (Yellow Diamond): ATE ≈ 0.01, Error ≈ 0.27

* `Unaware` (Orange Inv. Triangle): ATE ≈ 0.05, Error ≈ 0.22

* `Unfair` (Blue Circle): ATE ≈ 0.20, Error ≈ 0.19

* **Spatial Grounding:** A dashed line connects `Random`/`Constant` down to `FairPFN`/`Cntf. Avg.`, and another connects `FairPFN`/`Cntf. Avg.` to `Unaware`, and a third connects `Unaware` to `Unfair`.

### Key Observations

1. **Consistent Baselines:** The `Random` and `Constant` methods consistently show the highest error (~0.50) and near-zero causal effect across all six scenarios, serving as performance baselines.

2. **The Unfair Baseline:** The `Unfair` method (blue circle) consistently achieves the lowest or near-lowest error in every plot but always at the expense of the highest causal effect (ATE), illustrating the core fairness-accuracy trade-off.

3. **Cluster of Fair Methods:** Methods like `FairPFN`, `Cntf. Avg.`, and often `CFP` cluster together in the low-error, low-ATE region, especially in the "Fair" scenarios (Plots 4, 5, 6). They appear to offer a favorable balance.

4. **Impact of Scenario:** The spread of points changes across scenarios. In "Biased" and "Direct-Effect," most fair methods are clustered at high error. In "Fair Observable," "Fair Unobservable," and "Fair Additive Noise," the fair methods achieve significantly lower error while maintaining low ATE.

5. **Dashed Lines:** The dashed lines appear to trace a "frontier" or comparison path, often connecting the high-error/random methods down to the better-performing fair methods, and then to the `Unaware` and finally the `Unfair` method.

### Interpretation

This visualization is a comparative analysis of algorithmic fairness interventions. The **Causal Effect (ATE)** on the x-axis likely measures the disparity or bias in model outcomes between protected groups. **Error (1-AUC)** on the y-axis measures predictive inaccuracy.

The data demonstrates a fundamental tension: methods that completely ignore fairness (`Unfair`) achieve the best predictive performance but cause the largest harmful disparities. Conversely, naive methods (`Random`, `Constant`) eliminate disparity but are useless for prediction.

The key insight is the performance of methods like **FairPFN** and **Cntf. Avg.** They consistently appear in the "sweet spot" of the plots—achieving error rates much closer to the `Unfair` baseline while keeping the causal effect (bias) very low, particularly in the scenarios labeled "Fair." This suggests these methods are effective at mitigating unfairness without catastrophically sacrificing accuracy.

The variation across the six titled scenarios indicates that the effectiveness of each fairness method is highly dependent on the underlying data-generating process (e.g., whether bias is direct, indirect, or based on observable/unobservable factors). The plots serve as a guide for selecting an appropriate fairness intervention based on the suspected causal structure of bias in a given problem domain.

</details>

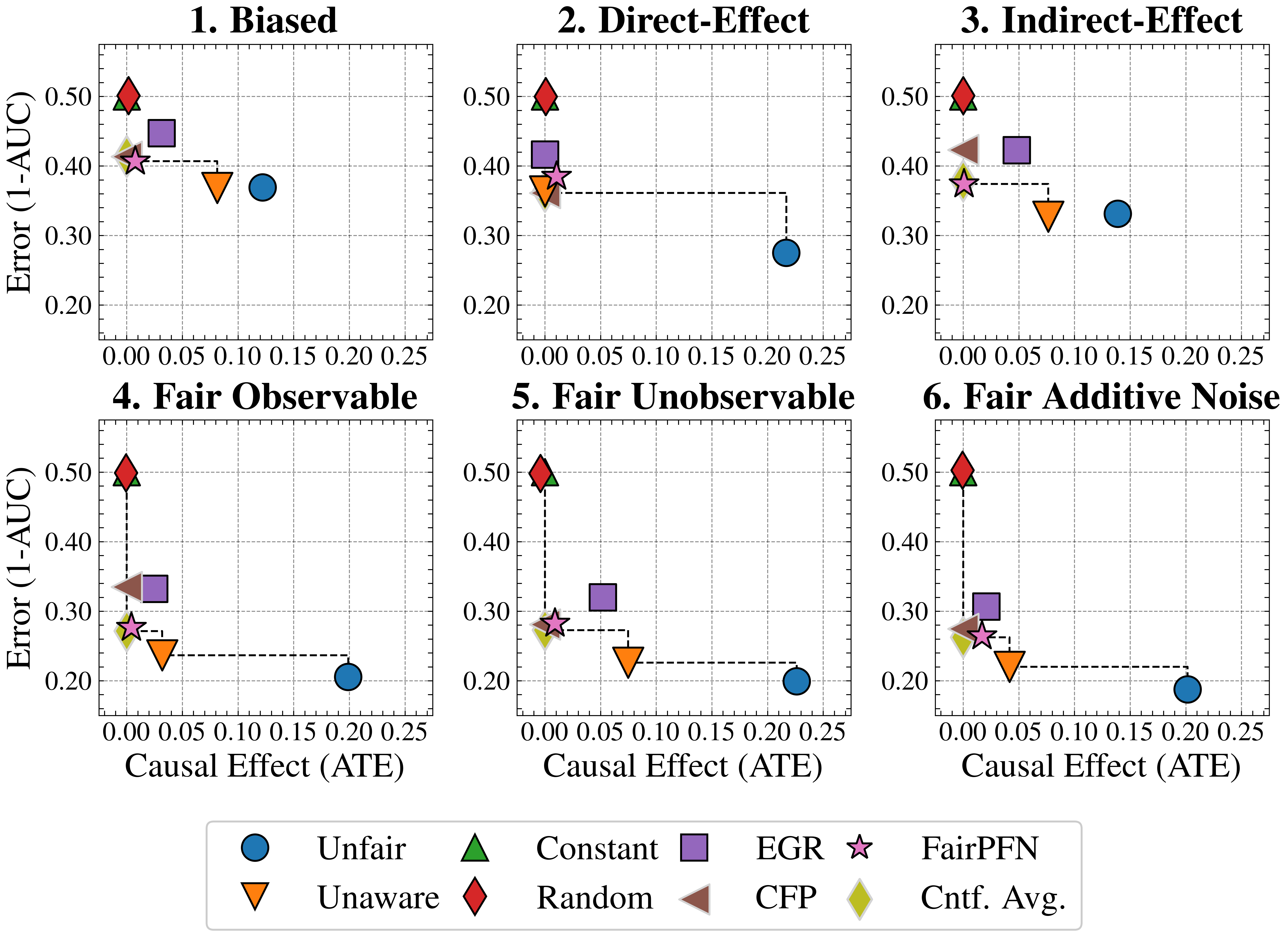

Figure 3: Fairness Accuracy Trade-Off (Synthetic): Average Treatment Effect (ATE) of predictions, predictive error (1-AUC), and Pareto Front performance of FairPFN versus baselines in our causal case studies. Baselines which have access to causal information are indicated by a light border. FairPFN is on the Pareto Front on 40% of synthetic datasets using only observational data, demonstrating competitive performance with the CFP and Cntf. Avg. baselines that utilize causal quantities from the true data-generating process.

#### Fairness-Accuracy Trade-Off

Figure 3 presents the fairness-accuracy trade-off for FairPFN and its baselines, displaying the mean absolute treatment effect (ATE) and mean predictive error (1-AUC) observed across synthetic datasets, along with the Pareto Front of non-dominated solutions. FairPFN (which only uses observational data) attains Pareto Optimal performance in 40% of the 600 synthetic datasets, exhibiting a fairness-accuracy trade-off competitive with CFP and Cntf. Avg., which use causal quantities from the true data-generating process. This is even the case in the Fair Unobservable and Fair Additive Noise benchmark groups, producing causally fair predictions using only observational variables that are either a protected attribute or a causal ancestor of it. This indicates FairPFN’s capacity to infer latent unobservables, which we further investigate in Section 5.3. We also highlight how the Cntf. Avg. baseline achieves lower error than CFP. We believe that this is due to Cntf. Avg. having access to both the observational and counterfactual datasets, which implicitly contains causal weights and non-linearities, while CFP is given only fair unobservables and must infer this causal information. The fact that a PFN is used as a base model in Cntf. Avg. could further explain this performance gain, as access to more observable variables helps guide the PFN toward accurate predictions realistic for the data. We suggest that this Cntf. Avg. as an alternative should be explored in future studies.

<details>

<summary>extracted/6522797/figures/tce_by_group_synthetic_new.png Details</summary>

### Visual Description

## Box Plot Series: Causal Effect (ATE) Across Six Fairness Scenarios

### Overview

The image displays a 2x3 grid of six box plots, each visualizing the distribution of "Causal Effect (ATE)" for four different methods under distinct experimental conditions. The plots are titled to indicate the scenario being tested. A shared legend at the bottom identifies the four methods and provides a summary performance metric.

### Components/Axes

* **Chart Type:** Box and whisker plots with overlaid data points (jitter).

* **Y-Axis (All Plots):** Labeled **"Causal Effect (ATE)"**. The scale ranges from -0.5 to 0.75, with major gridlines at intervals of 0.25 (-0.5, -0.25, 0, 0.25, 0.5, 0.75).

* **X-Axis (All Plots):** Represents four categorical methods. The categories are not labeled on the axis but are defined by color in the legend.

* **Legend (Bottom Center):** Contains the title **"Avg. Rank (ATE)"** and defines the four methods with associated colors and a numerical rank (lower is better):

* **Pink:** `FairPFN: 1.88/4`

* **Purple:** `EGR: 2.11/4`

* **Orange:** `Unaware: 2.16/4`

* **Blue:** `Unfair: 3.42/4`

* **Subplot Titles (Top of each plot):**

1. **Biased** (Top Left)

2. **Direct-Effect** (Top Center)

3. **Indirect-Effect** (Top Right)

4. **Fair Observable** (Bottom Left)

5. **Fair Unobservable** (Bottom Center)

6. **Fair Additive Noise** (Bottom Right)

### Detailed Analysis

**General Structure per Plot:** Each subplot contains four box plots, one for each method (Blue, Orange, Purple, Pink from left to right). The box represents the interquartile range (IQR), the line inside is the median, whiskers extend to 1.5*IQR, and circles represent individual data points/outliers.

**Plot-by-Plot Analysis:**

1. **Biased:**

* **Unfair (Blue):** Highest median (~0.05), largest IQR (box from ~0 to ~0.2), and widest overall range (whiskers from ~-0.15 to ~0.45). Many high-value outliers up to ~0.75.

* **Unaware (Orange):** Median near 0, smaller IQR than Blue, range ~-0.05 to ~0.3.

* **EGR (Purple):** Median slightly below 0, IQR similar to Orange, but with notable low-value outliers down to ~-0.5.

* **FairPFN (Pink):** Median at 0, very compact IQR, range ~-0.1 to ~0.1. Tightest distribution.

2. **Direct-Effect:**

* **Unfair (Blue):** Dominates the plot. Median ~0.15, large IQR (box from ~0.05 to ~0.35), whiskers from ~-0.1 to ~0.65.

* **Unaware (Orange), EGR (Purple), FairPFN (Pink):** All are extremely compressed around 0. Their boxes are nearly flat lines, indicating near-zero variance and median. Minor outliers exist within ±0.1.

3. **Indirect-Effect:**

* **Unfair (Blue):** Similar pattern to "Biased" plot. Median ~0.05, IQR ~0 to ~0.2, outliers up to ~0.75.

* **Unaware (Orange):** Median ~0, IQR ~0 to ~0.1.

* **EGR (Purple):** Median ~0, IQR ~0 to ~0.1, with low outliers to ~-0.4.

* **FairPFN (Pink):** Very tight distribution around 0.

4. **Fair Observable:**

* **Unfair (Blue):** Median ~0.15, IQR ~0.05 to ~0.3.

* **Unaware (Orange):** Median ~0, very compact.

* **EGR (Purple):** Median ~0, compact but with low outliers to ~-0.4.

* **FairPFN (Pink):** Extremely tight around 0.

5. **Fair Unobservable:**

* **Unfair (Blue):** Median ~0.2, IQR ~0.05 to ~0.35, whiskers to ~0.7.

* **Unaware (Orange):** Median ~0.05, small IQR.

* **EGR (Purple):** Median ~0, small IQR, low outliers.

* **FairPFN (Pink):** Tight around 0.

6. **Fair Additive Noise:**

* **Unfair (Blue):** Median ~0.15, IQR ~0.05 to ~0.3.

* **Unaware (Orange):** Median ~0, small IQR.

* **EGR (Purple):** Median ~0, small IQR, low outliers.

* **FairPFN (Pink):** Tight around 0.

### Key Observations

1. **Consistent Hierarchy:** Across all six scenarios, the **Unfair (Blue)** method consistently shows the highest median causal effect (ATE) and the greatest variance (widest box and whiskers). **FairPFN (Pink)** consistently shows a median at or very near zero with the smallest variance.

2. **Scenario Impact:** The "Direct-Effect" scenario shows the most dramatic suppression of effect for the three fair/unaware methods (Orange, Purple, Pink), compressing them to near-zero variance. The "Biased" and "Indirect-Effect" scenarios show the most pronounced high-value outliers for the Unfair method.

3. **Method Comparison:** The **Unaware (Orange)** and **EGR (Purple)** methods generally perform similarly, with medians near zero. EGR exhibits a recurring pattern of negative outliers (low ATE values) in several plots (Biased, Indirect-Effect, Fair Observable).

4. **Legend Rank Correlation:** The visual performance aligns with the "Avg. Rank" in the legend. FairPFN (rank 1.88) is visually the best (lowest, tightest ATE). Unfair (rank 3.42) is visually the worst (highest, most variable ATE). Unaware and EGR are in the middle and close in rank (2.16 vs. 2.11), reflecting their similar visual performance.

### Interpretation

This figure evaluates how different algorithmic approaches (FairPFN, EGR, Unaware) perform in estimating or mitigating **causal effects** (specifically, Average Treatment Effect - ATE) compared to an **Unfair** baseline, across various data-generating scenarios related to fairness.

* **What the data suggests:** The "Unfair" method, which likely does not account for fairness constraints, results in substantial and variable estimated causal effects. In contrast, the methods designed for fairness (FairPFN, EGR) or that are simply unaware of sensitive attributes (Unaware) successfully drive the estimated ATE towards zero. This implies these methods are effective at removing or neutralizing the measured causal influence of a treatment, which in a fairness context often corresponds to a sensitive attribute like race or gender.

* **How elements relate:** The six scenarios (Biased, Direct/Indirect Effect, Fair Observable/Unobservable/Noise) test the robustness of the methods under different assumptions about how bias or fairness is embedded in the data. The consistent pattern across plots indicates the core finding is robust: fairness-aware methods suppress the measured causal effect.

* **Notable patterns/anomalies:**

* The extreme compression in the "Direct-Effect" plot suggests that when the causal pathway is direct, the fairness interventions (and even the unaware method) are exceptionally effective at nullifying the measured effect.

* The negative outliers for EGR are an anomaly, suggesting that in some runs, this method may over-correct, leading to a negative estimated ATE.

* The high-value outliers for the Unfair method in "Biased" and "Indirect-Effect" scenarios indicate that under those data conditions, the lack of fairness constraints can lead to very large estimated causal disparities.

**In summary, the visualization provides strong evidence that the FairPFN method (and to a lesser extent EGR and Unaware) consistently and effectively minimizes the estimated average causal effect of a treatment across a variety of fairness-related data scenarios, outperforming an unfair baseline.**

</details>

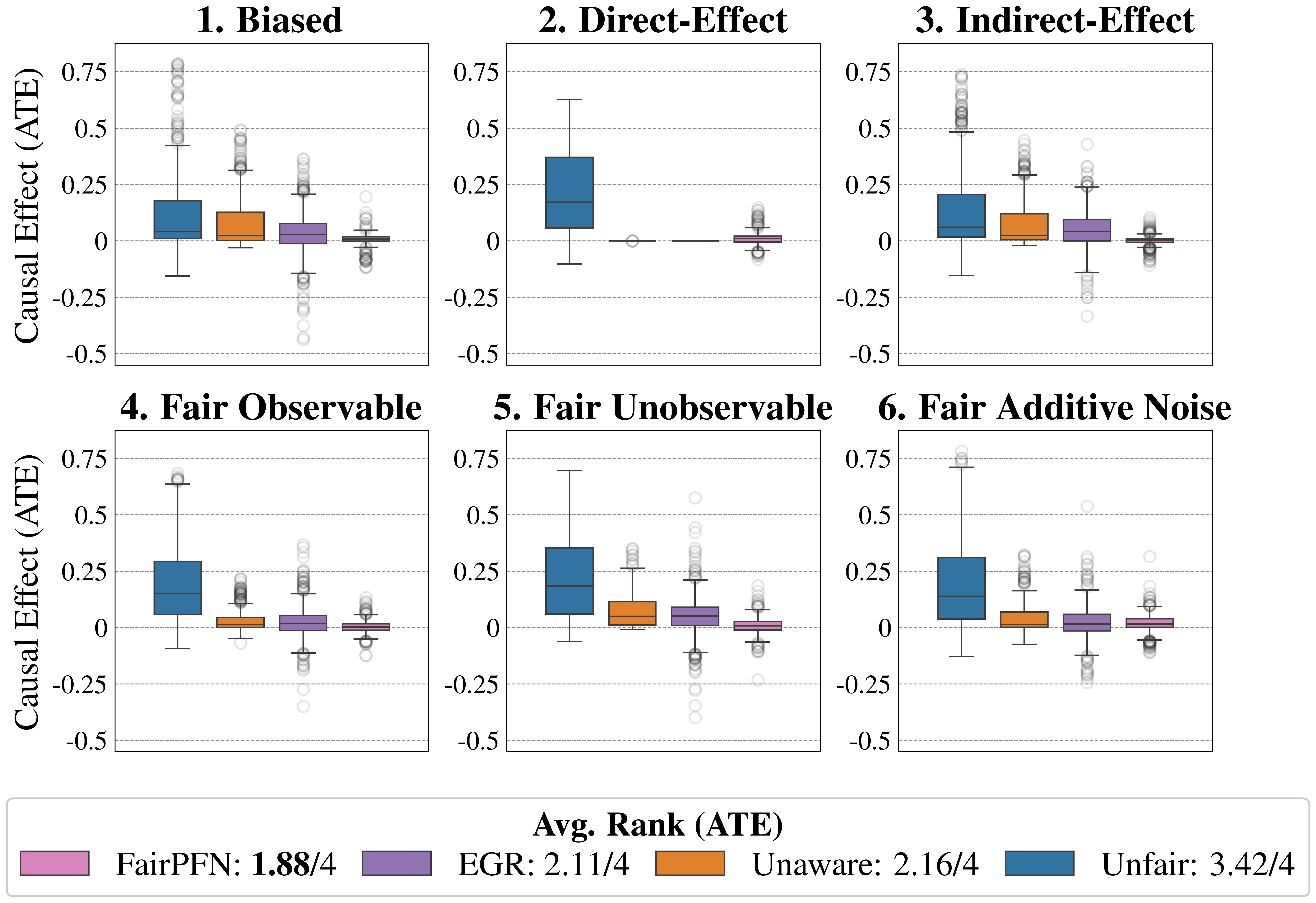

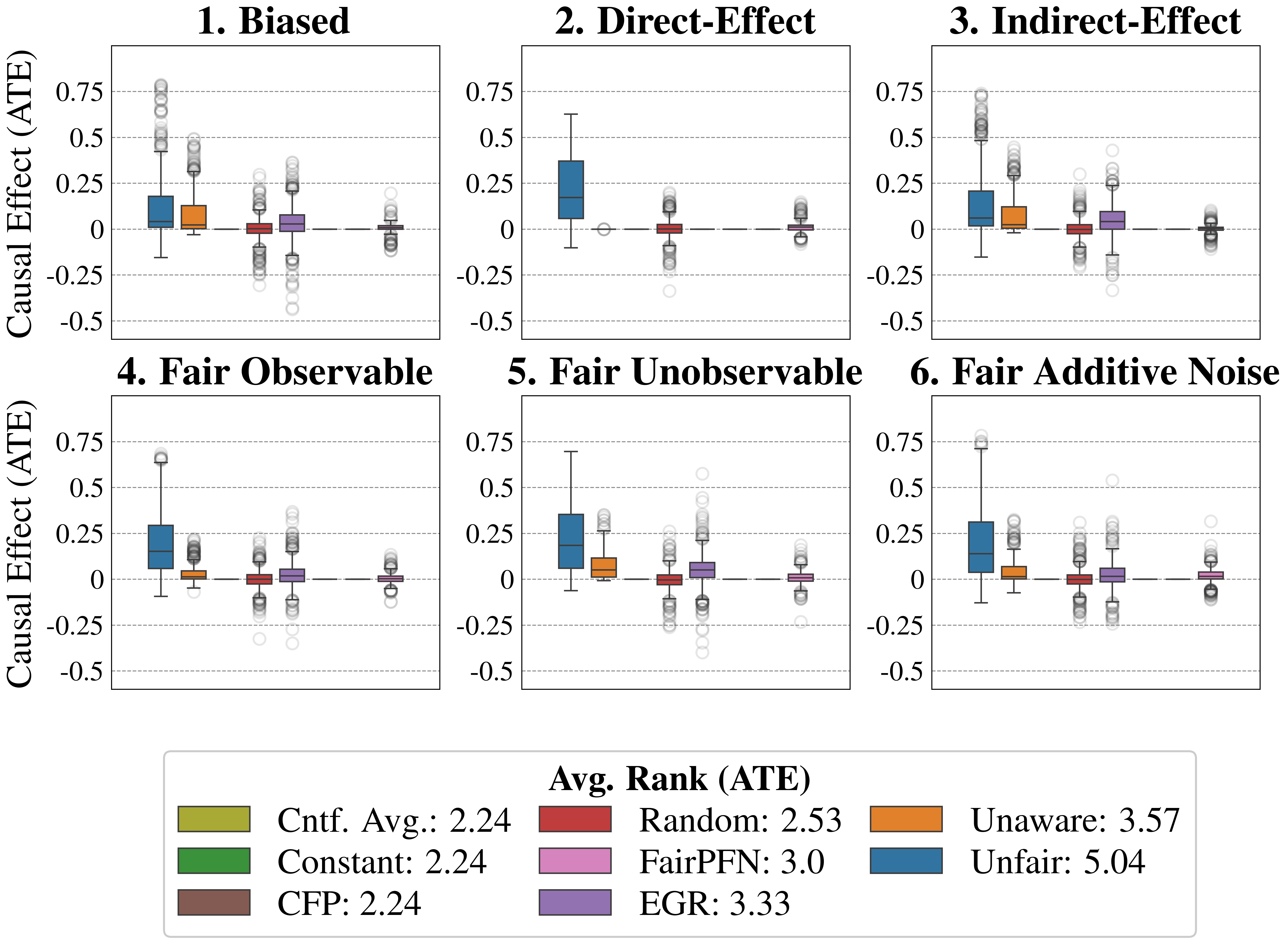

Figure 4: Causal Fairness (Synthetic): Average Treatment Effect (ATE) of predictions of FairPFN compared to baselines which do not have access to causal information. FairPFN consistently removes the causal effect with a margin of error of (-0.2, 0.2) and achieves an average rank of 1.88 out of 4, only to be outperformed on the Direct-Effect benchmark where Unaware is the optimal strategy.

Causal Effect Removal We evaluate FairPFN’s efficacy in causal effect removal by analyzing box plots depicting the median, interquartile range (IQR), and average treatment effect (ATE) of predictions, compared to baseline predictive models that also do not access causal information (Figure 4). We observe that FairPFN exhibits a smaller IQR than the state-of-the-art bias mitigation method EGR. In an average rank test across 600 synthetic datasets, FairPFN achieves an average rank of 1.88 out of 4. We provide a comparison of FairPFN against all baselines in Figure 24. We note that our case studies crucially fit our prior assumptions about the causal representation of protected attributes. We show in Appendix Figure 13 that FairPFN reverts to a normal classifier when, for example, the exogeneity assumption is violated.

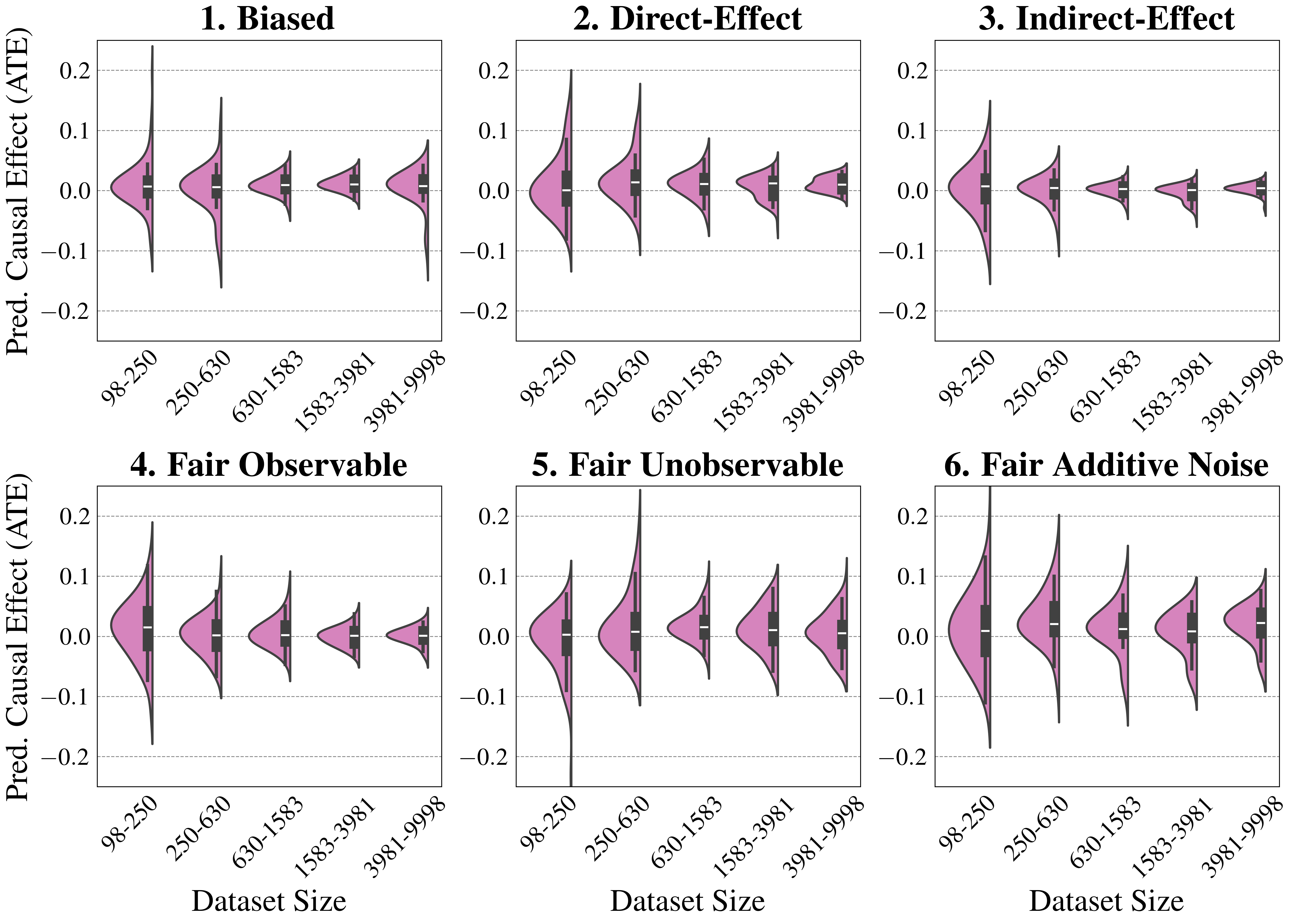

#### Ablation Study

We finally conduct an ablation study to evaluate FairPFN’s performance in causal effect removal across synthetic datasets with varying size, noise levels, and base rates of causal effect. Results indicate that FairPFN maintains consistent performance across different noise levels and base rates, improving in causal effect removal as dataset size increases and causal effects become easier to distinguish from spurious correlations Dai et al. (1997). We note that the variance of FairPFN, illustrated by box-plot outliers in Figure 4 that extend to 0.2 and -0.2, is primarily arises from small datasets with fewer than 250 samples (Appendix Figure 11), limiting FairPFN’s ability to identify causal mechanisms. We also show in Appendix Figure 14 that FairPFN’s fairness behavior remains consistent as graph complexity increases, though accuracy drops do to the combinatorially increasing problem complexity.

For a more in-depth analysis of these results, we refer to Appendix B.

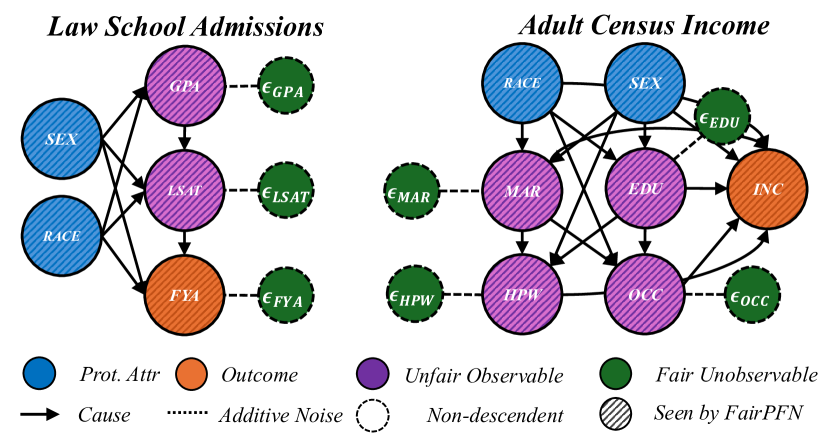

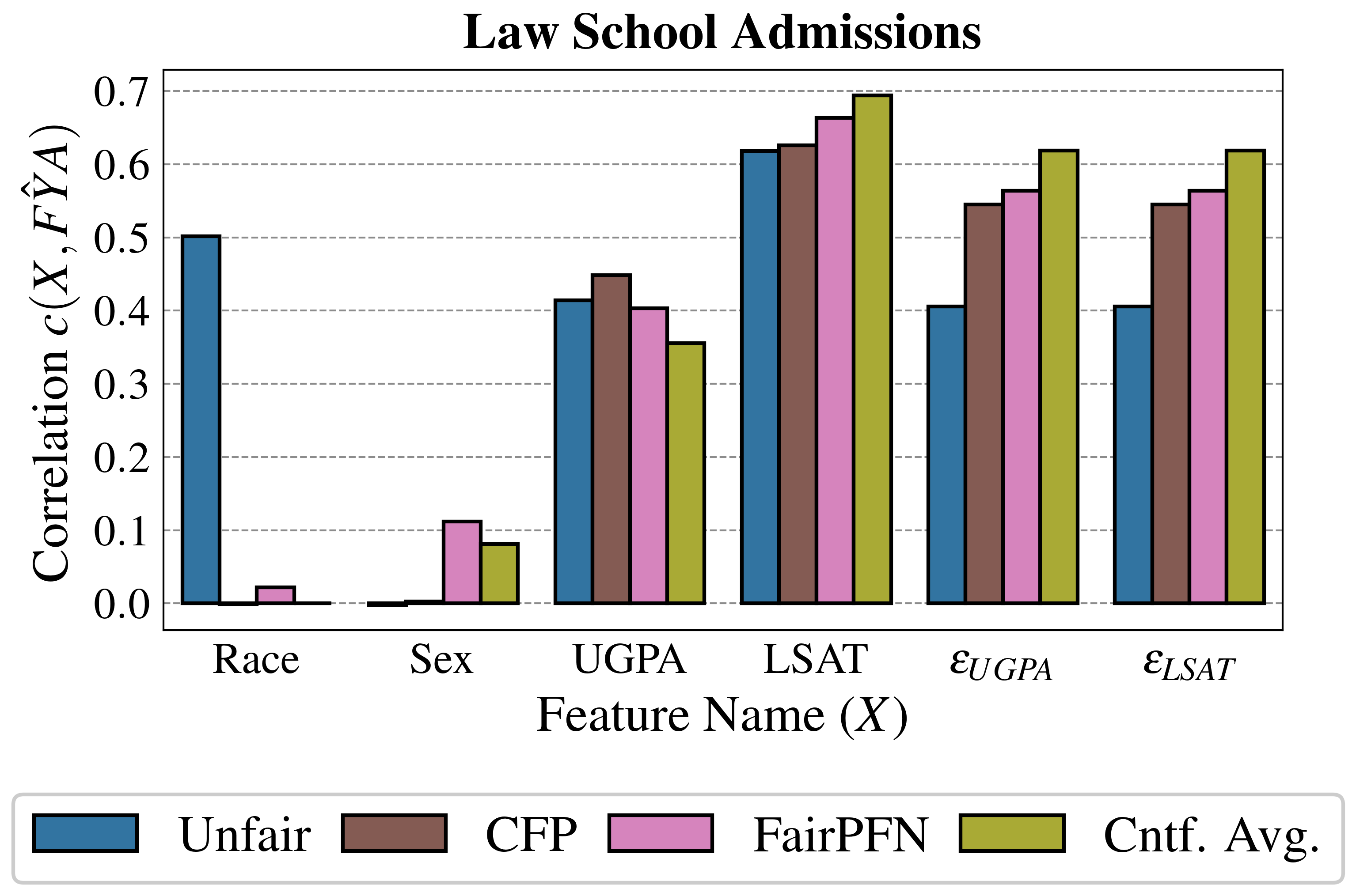

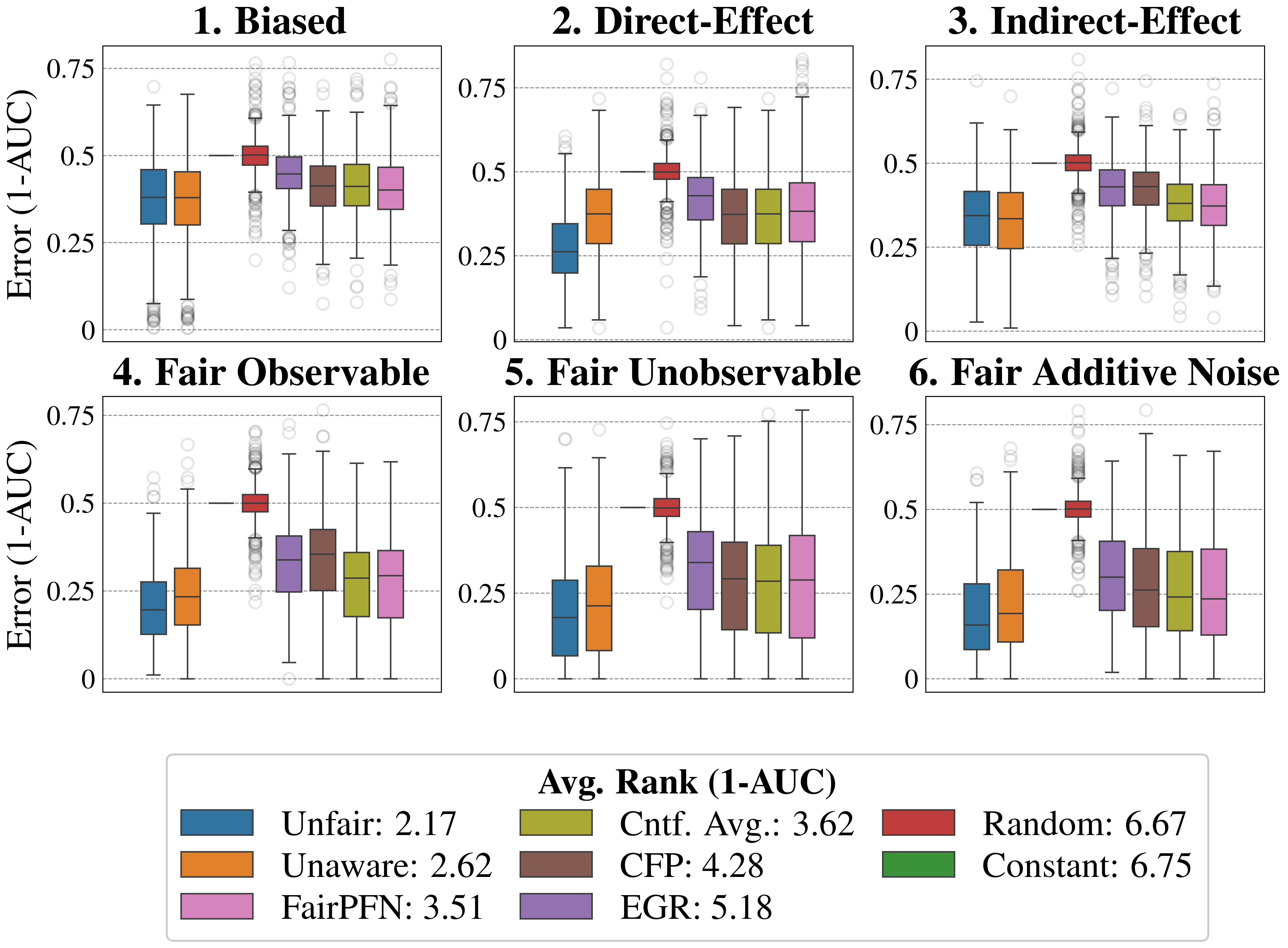

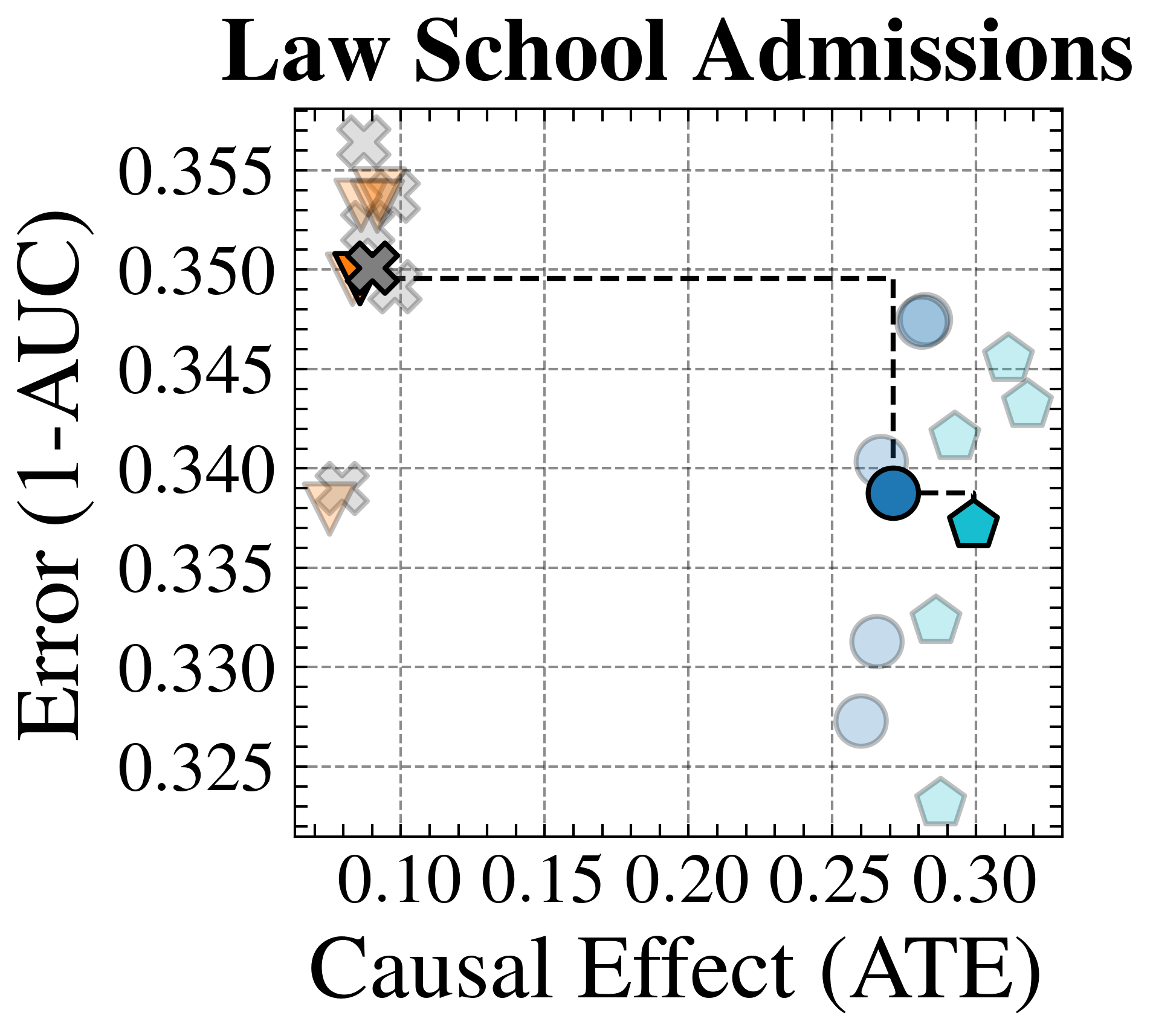

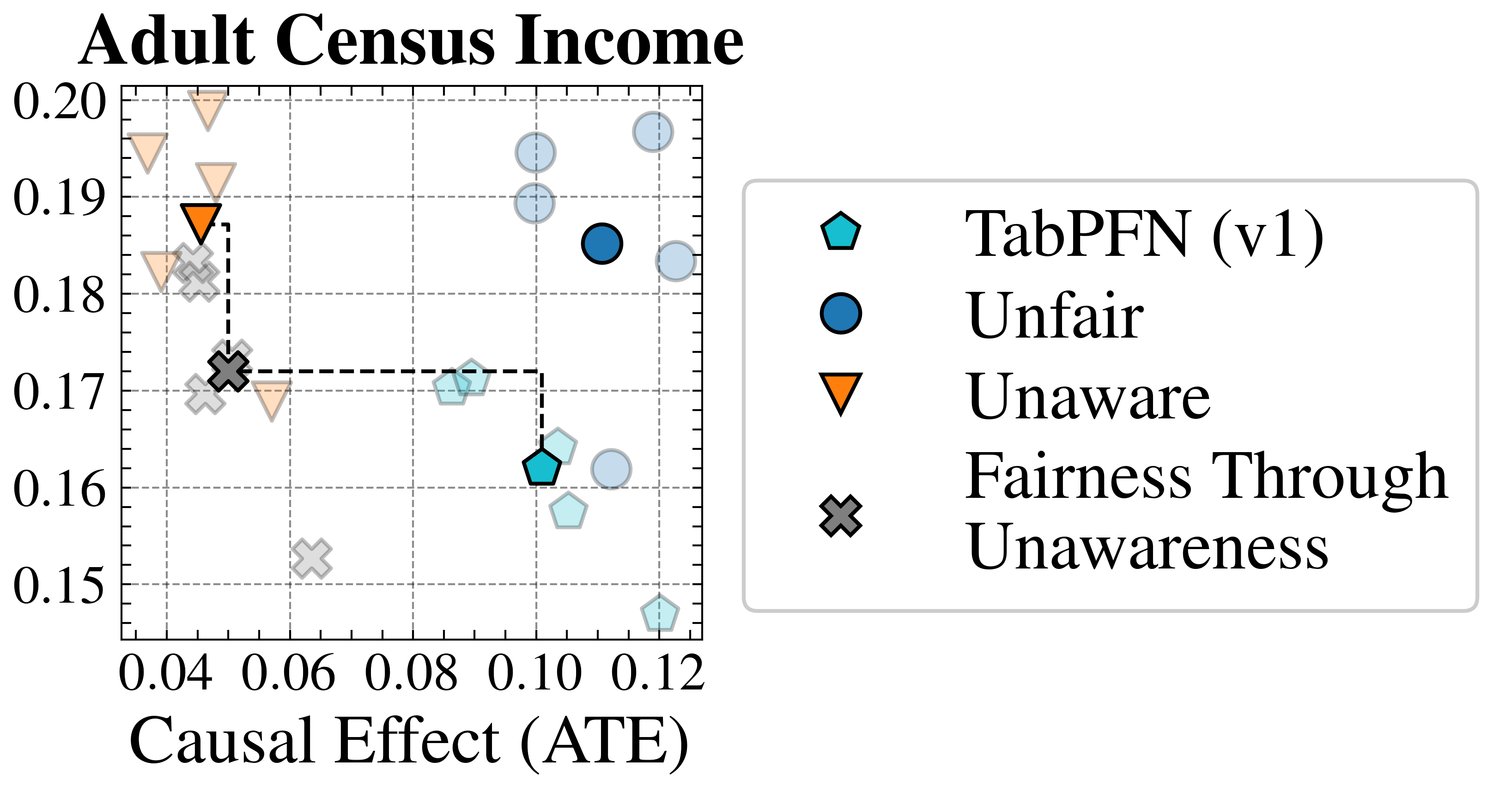

### 5.3 Real-World Data

This section evaluates FairPFN’s causal effect removal, predictive error, and correlation with fair latent variables on two real-world datasets with established causal graphs (Figure 5). For a description of our real-world datasets and the methods we use to obtain causal models, see Appendix A.

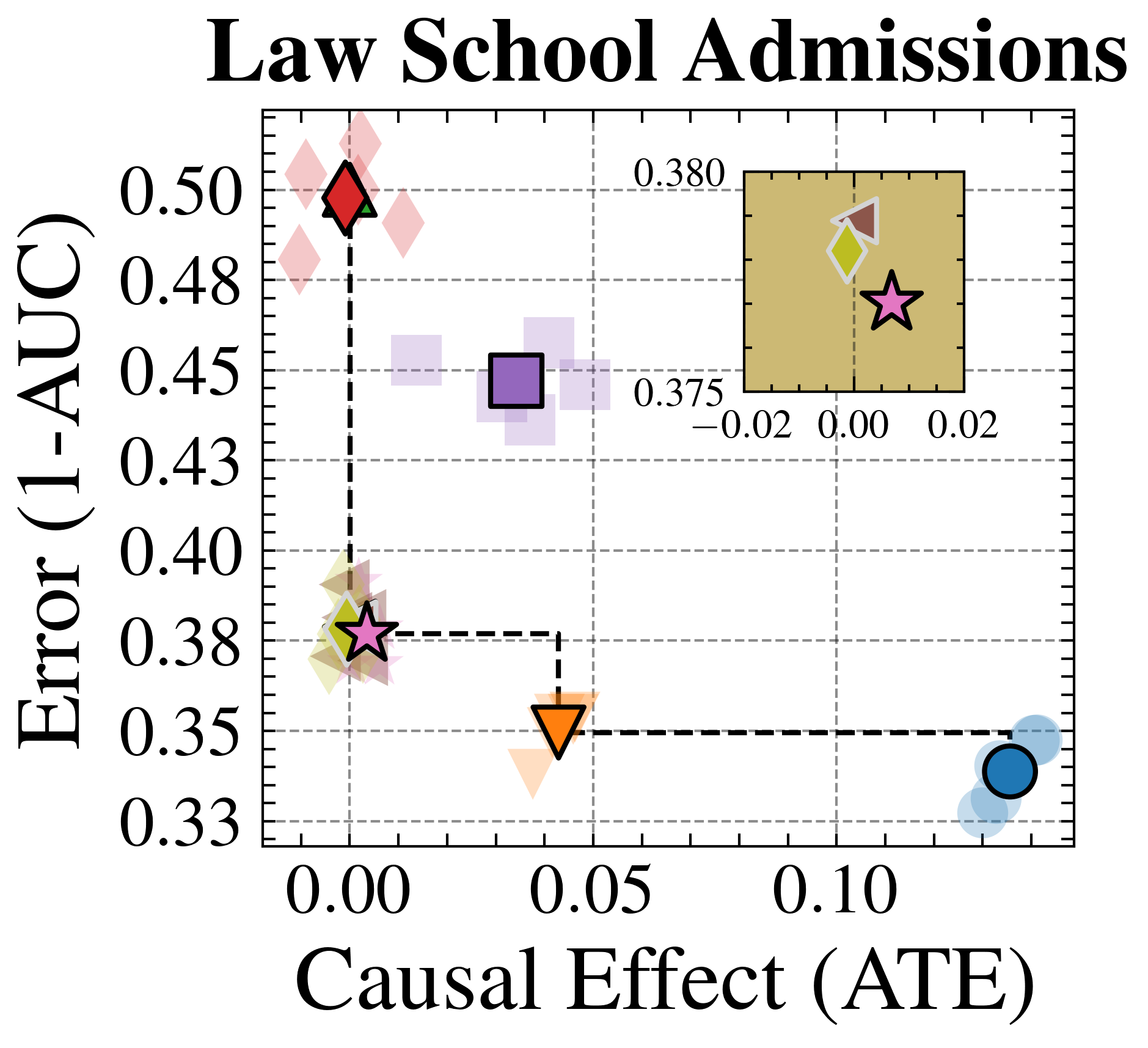

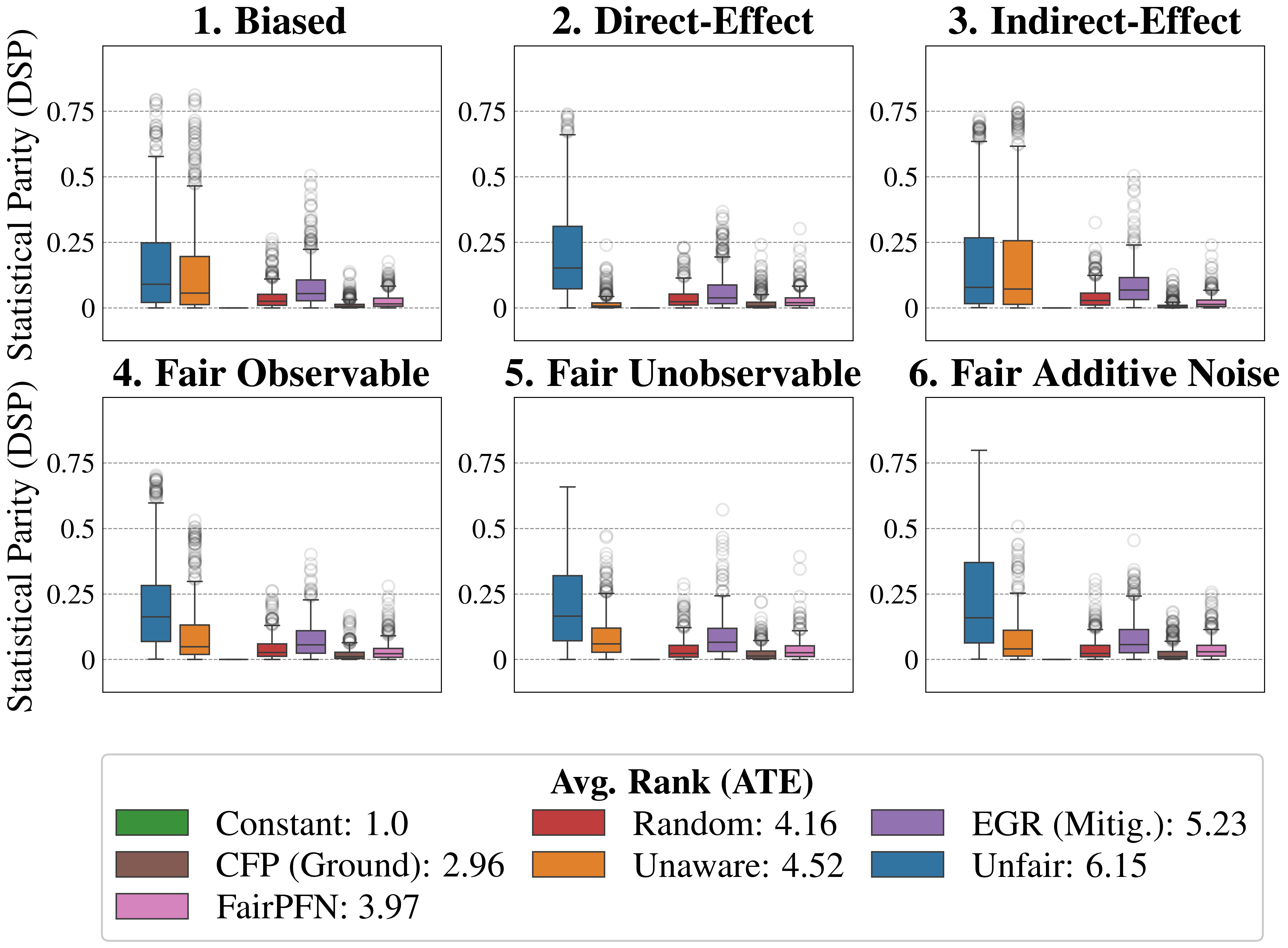

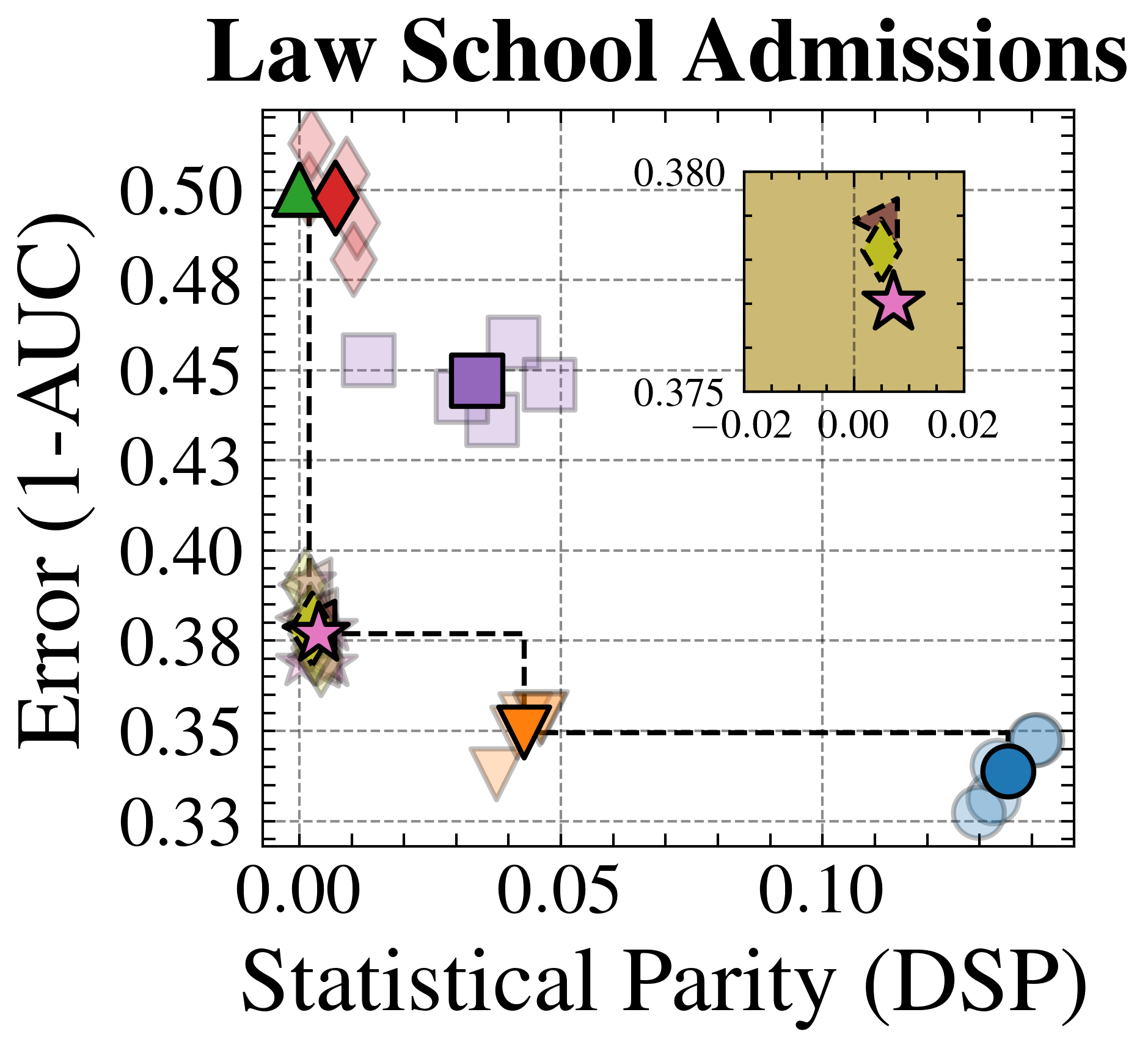

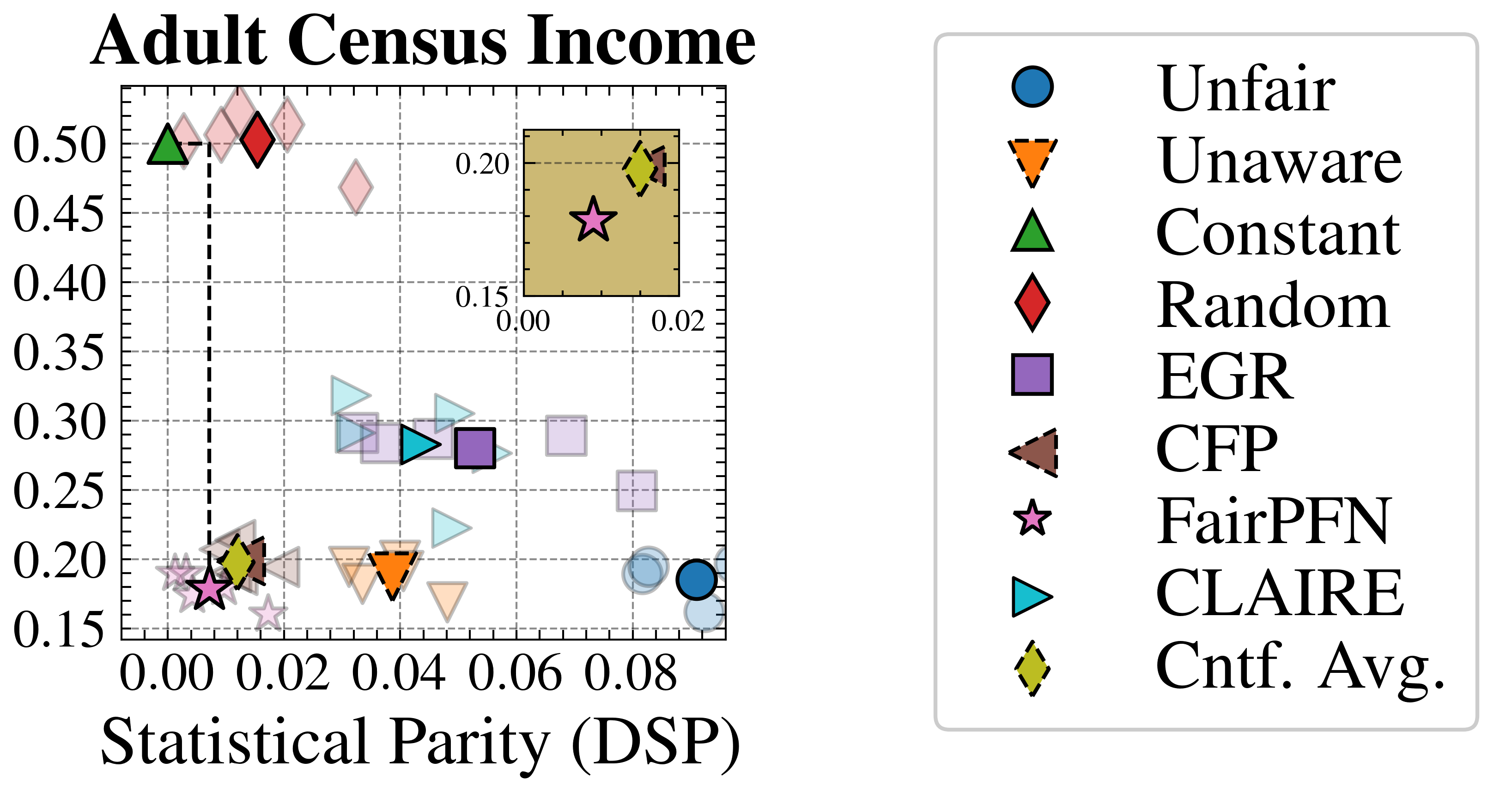

#### Fairness-Accuracy Trade-Off

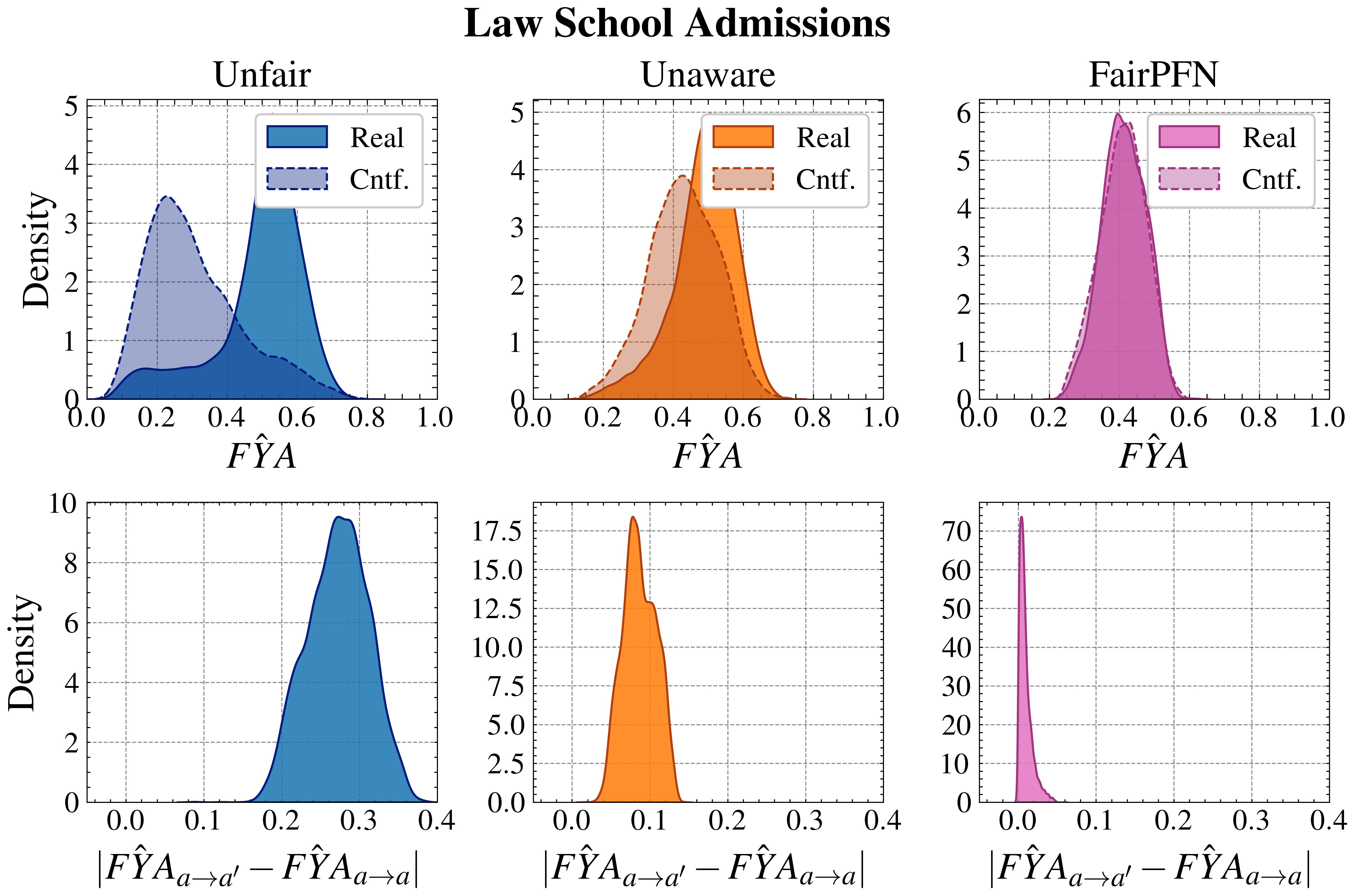

We evaluate FairPFN’s effectiveness on real-world data in reducing the causal impact of protected attributes while maintaining strong predictive accuracy. Figure 6 shows the mean prediction average treatment effect (ATE) and predictive error (1-AUC) across 5 K-fold cross-validation iterations. FairPFN achieves a prediction ATE below 0.01 on both datasets and maintains accuracy comparable to Unfair. Furthermore, FairPFN exhibits lower variability in prediction ATE across folds compared to EGR, indicating stable causal effect removal We note that we also evaluate a pre-trained version of CLAIRE Ma et al. (2023) on the Adult Census income dataset, but observe little improvement to EGR.

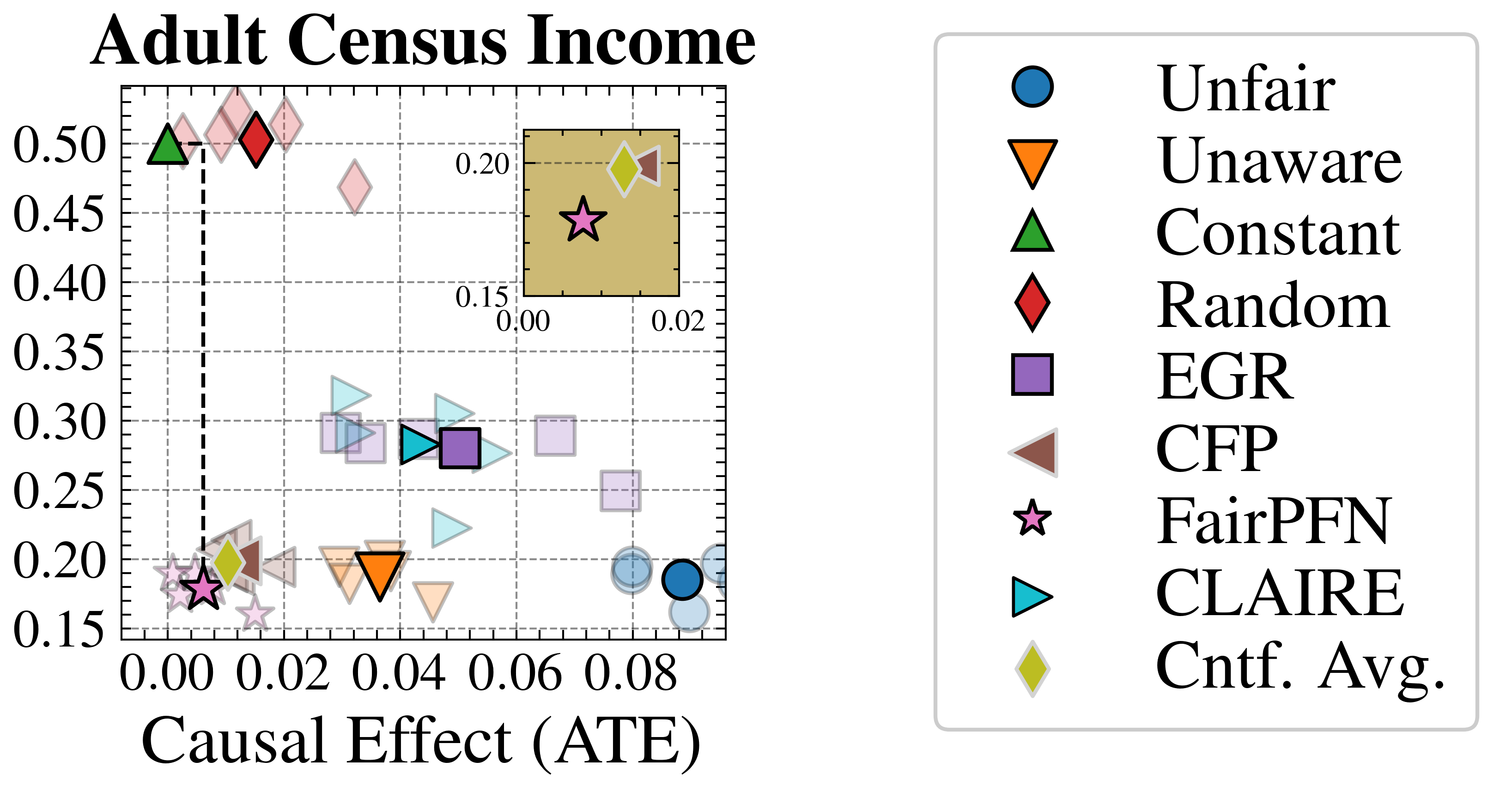

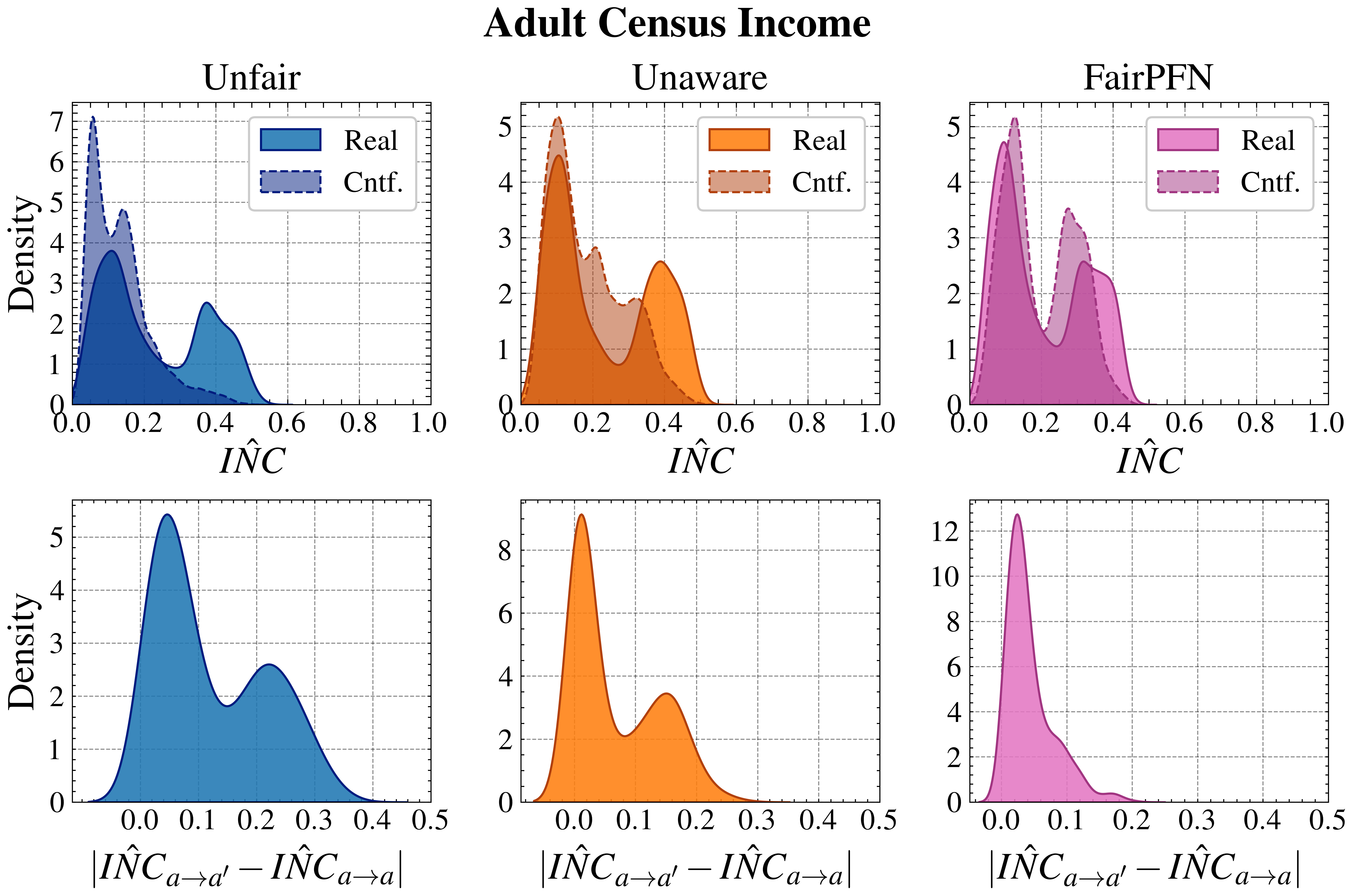

#### Counterfactual Fairness

Next, we evaluate the counterfactual fairness of FairPFN on real-world datasets as introduced in Section 3, noting that the following analysis is conducted at the individual sample level, rather than at the dataset level. Figure 7 illustrates the distribution of Absolute Error (AE) achieved by FairPFN and baselines that do not have access to causal information. FairPFN significantly reduces this error in both datasets, achieving maximum divergences of less than 0.05 on the Law School dataset and 0.2 on the Adult Census Income dataset. For a visual interpretation of the AE on our real-world datasets we refer to Appendix Figure 16.

In contrast, EGR performs similarly to Random in terms of counterfactual divergence, confirming previous studies which show that optimmizing for group fairness metrics does not optimize for individual level criteria Robertson et al. (2024). Interestingly, in an evaluation of group fairness metric Statistical Parity (DSP) FairPFN outperforms EGR on both our real-world data and causal case studies, a baseline was specifically optimized for this metric (Appendix Figures 20 and 21).

<details>

<summary>x3.png Details</summary>

### Visual Description

## Causal Diagrams: Law School Admissions & Adult Census Income

### Overview

The image displays two side-by-side causal diagrams (directed acyclic graphs) illustrating the relationships between variables in two different fairness-related datasets: "Law School Admissions" and "Adult Census Income." The diagrams use a color-coded and line-style-coded legend to categorize variable types and relationship types. The overall purpose is to model how protected attributes (like sex and race) causally influence outcomes (like first-year average grades or income), mediated by other observable and unobservable factors.

### Components/Axes

**Legend (Bottom Center):**

* **Colors & Node Types:**

* Blue Circle: `Prot. Attr` (Protected Attribute)

* Orange Circle: `Outcome`

* Purple Circle: `Unfair Observable`

* Green Circle: `Fair Unobservable`

* **Line Styles & Relationship Types:**

* Solid Arrow: `Cause`

* Dashed Line: `Additive Noise`

* Dotted Line: `Non-descendent`

* **Node Fill Pattern:**

* Diagonal Hatching: `Seen by FairPFN`

**Diagram 1: Law School Admissions (Left Side)**

* **Protected Attributes (Blue, Left):** `SEX`, `RACE`

* **Unfair Observables (Purple, Center):** `GPA`, `LSAT`

* **Outcome (Orange, Bottom-Right):** `FYA` (First-Year Average)

* **Fair Unobservables (Green, Right):** `ε_GPA`, `ε_LSAT`, `ε_FYA`

* **Causal Flow:** `SEX` and `RACE` have direct causal arrows pointing to `GPA`, `LSAT`, and `FYA`. `GPA` points to `LSAT`, and `LSAT` points to `FYA`. Each unfair observable (`GPA`, `LSAT`) and the outcome (`FYA`) is connected via a dashed "Additive Noise" line to a corresponding fair unobservable (`ε_GPA`, `ε_LSAT`, `ε_FYA`).

**Diagram 2: Adult Census Income (Right Side)**

* **Protected Attributes (Blue, Top):** `RACE`, `SEX`

* **Unfair Observables (Purple, Middle/Bottom):** `MAR` (Marital Status), `EDU` (Education), `HPW` (Hours per Week), `OCC` (Occupation)

* **Outcome (Orange, Right):** `INC` (Income)

* **Fair Unobservables (Green, Scattered):** `ε_MAR`, `ε_EDU`, `ε_HPW`, `ε_OCC`

* **Causal Flow:** This is a more complex network.

* `RACE` and `SEX` have arrows pointing to `MAR`, `EDU`, `HPW`, `OCC`, and `INC`.

* `MAR` points to `HPW` and `OCC`.

* `EDU` points to `OCC` and `INC`.

* `HPW` points to `INC`.

* `OCC` points to `INC`.

* Each unfair observable (`MAR`, `EDU`, `HPW`, `OCC`) is connected via a dashed "Additive Noise" line to a corresponding fair unobservable (`ε_MAR`, `ε_EDU`, `ε_HPW`, `ε_OCC`).

* A dotted "Non-descendent" line connects `ε_EDU` to `INC`.

### Detailed Analysis

**Node Inventory and Relationships:**

1. **Law School Admissions Diagram:**

* **Direct Causes of FYA:** `SEX`, `RACE`, `LSAT`.

* **Mediated Paths:** `SEX`/`RACE` -> `GPA` -> `LSAT` -> `FYA`. `SEX`/`RACE` -> `LSAT` -> `FYA`.

* **Noise Injection:** The model explicitly includes unobserved, fair factors (`ε` terms) that additively influence the observed variables `GPA`, `LSAT`, and `FYA`.

2. **Adult Census Income Diagram:**

* **Direct Causes of INC:** `SEX`, `RACE`, `EDU`, `HPW`, `OCC`.

* **Key Mediators:** `EDU` and `OCC` are central hubs. `EDU` influences `OCC` and `INC`. `OCC` is influenced by `RACE`, `SEX`, `MAR`, and `EDU`, and in turn influences `INC`.

* **Complex Interactions:** `MAR` (Marital Status) is modeled as being caused by `RACE` and `SEX`, and it subsequently influences `HPW` and `OCC`.

* **Noise & Non-descendent:** Fair unobservables (`ε`) add noise to `MAR`, `EDU`, `HPW`, and `OCC`. Notably, `ε_EDU` has a dotted "Non-descendent" relationship to `INC`, suggesting it is not a descendant of the protected attributes in the causal graph but may still be correlated.

**Spatial Grounding:**

* The **legend** is positioned at the bottom, centered horizontally.

* In both diagrams, **Protected Attributes (Blue)** are placed on the far left or top.

* **Outcomes (Orange)** are placed on the far right or bottom-right.

* **Unfair Observables (Purple)** occupy the central space between protected attributes and outcomes.

* **Fair Unobservables (Green)** are placed adjacent to their corresponding unfair observable, typically to the right.

### Key Observations

1. **Structural Difference:** The Law School diagram is a simpler, more linear chain, while the Adult Census diagram is a dense, interconnected network, reflecting the greater complexity of socioeconomic factors.

2. **Common Pattern:** In both models, protected attributes (`SEX`, `RACE`) have **direct causal arrows to the final outcome** (`FYA`, `INC`), not just indirect paths through mediators. This is a critical modeling choice for fairness analysis.

3. **Role of "Unfair Observable":** Variables like `GPA`, `LSAT`, `EDU`, and `OCC` are labeled "Unfair Observable." This implies that while they are observed and causally influence the outcome, they may themselves be influenced by protected attributes, making their use in prediction potentially discriminatory.

4. **Explicit Noise Modeling:** The inclusion of `ε` (epsilon) nodes for "Fair Unobservable" factors explicitly acknowledges that not all variance in the observed variables is explained by the modeled causes; some is due to random, fair noise.

5. **FairPFN Context:** The hatching pattern indicating "Seen by FairPFN" suggests these diagrams are part of an analysis or methodology related to a fairness-aware model or algorithm named FairPFN.

### Interpretation

These diagrams are **causal models for algorithmic fairness auditing**. They map the hypothesized real-world mechanisms through which sensitive attributes like race and sex might influence important outcomes (academic success, income).

* **What the data suggests:** The models argue that bias can flow through two primary channels: 1) **Direct influence** of protected attributes on outcomes, and 2) **Indirect influence** where protected attributes shape intermediary factors (test scores, education, occupation) which then determine outcomes. The "Unfair Observable" label is a normative judgment, indicating that using these intermediaries for prediction could perpetuate historical inequities.

* **Relationship between elements:** The diagrams establish a **chain of causality**. The protected attributes are root causes. The unfair observables are mediators that are "tainted" by the root causes. The outcome is the final effect. The fair unobservables represent legitimate, random variation. The arrows define the permissible paths for influence.

* **Notable implications:** The direct arrows from `SEX`/`RACE` to `INC`/`FYA` are significant. They imply that even if one controls for all mediators (education, occupation, test scores), a direct disparity might remain, pointing to potential direct discrimination or the influence of unmeasured mediators. The complexity of the Adult Census diagram highlights why fairness in socioeconomic contexts is particularly challenging—interventions (e.g., on education) can have cascading effects through the network. The models provide a structured framework for asking "what-if" questions and designing fairness interventions that respect the causal structure of the problem.

</details>

Figure 5: Real-World Scenarios: Assumed causal graphs of real-world datasets Law School Admissions and Adult Census Income.

<details>

<summary>extracted/6522797/figures/trade-off_lawschool.png Details</summary>

### Visual Description

## Scatter Plot with Inset Zoom: Law School Admissions

### Overview

The image is a scatter plot titled "Law School Admissions." It visualizes the relationship between two metrics: "Causal Effect (ATE)" on the x-axis and "Error (1-AUC)" on the y-axis. The plot contains multiple data points represented by distinct shapes and colors, some connected by dashed lines. An inset plot in the upper-right quadrant provides a zoomed-in view of a specific cluster of points.

### Components/Axes

* **Main Plot Title:** "Law School Admissions" (centered at the top).

* **X-Axis:**

* **Label:** "Causal Effect (ATE)"

* **Scale:** Linear, ranging from approximately 0.00 to 0.10.

* **Major Tick Marks:** 0.00, 0.05, 0.10.

* **Y-Axis:**

* **Label:** "Error (1-AUC)"

* **Scale:** Linear, ranging from approximately 0.33 to 0.50.

* **Major Tick Marks:** 0.33, 0.35, 0.38, 0.40, 0.43, 0.45, 0.48, 0.50.

* **Inset Plot (Upper-Right):**

* A smaller square plot with a tan background.

* **X-Axis:** Range approximately -0.02 to 0.02. Major ticks at -0.02, 0.00, 0.02.

* **Y-Axis:** Range approximately 0.375 to 0.380. Major ticks at 0.375, 0.380.

* Contains three data points: a pink star, a yellow diamond, and a brown triangle.

* **Data Series (Markers):** The plot uses distinct shapes and colors to represent different categories or methods. A legend is not explicitly shown, so identification is based on visual markers.

1. **Red Diamond:** Located at the top-left of the main plot.

2. **Purple Square:** Located in the upper-middle region.

3. **Pink Star:** Located in the lower-left region. A dashed line connects it to the orange triangle.

4. **Orange Triangle (pointing down):** Located in the lower-middle region. A dashed line connects it to the blue circle.

5. **Blue Circle:** Located at the bottom-right of the main plot.

6. **Yellow Diamond:** Located very close to the pink star in the lower-left. Also appears in the inset.

7. **Brown Triangle (pointing right):** Appears only in the inset plot.

* **Connecting Lines:** Dashed black lines connect the Pink Star to the Orange Triangle, and the Orange Triangle to the Blue Circle, suggesting a sequence or comparison.

### Detailed Analysis

* **Data Point Approximate Coordinates (Main Plot):**

* **Red Diamond:** Causal Effect (ATE) ≈ 0.00, Error (1-AUC) ≈ 0.50.

* **Purple Square:** Causal Effect (ATE) ≈ 0.04, Error (1-AUC) ≈ 0.45.

* **Pink Star:** Causal Effect (ATE) ≈ 0.00, Error (1-AUC) ≈ 0.38.

* **Yellow Diamond:** Causal Effect (ATE) ≈ 0.00, Error (1-AUC) ≈ 0.38 (slightly left/below the Pink Star).

* **Orange Triangle:** Causal Effect (ATE) ≈ 0.05, Error (1-AUC) ≈ 0.35.

* **Blue Circle:** Causal Effect (ATE) ≈ 0.10, Error (1-AUC) ≈ 0.33.

* **Data Point Approximate Coordinates (Inset Plot):**

* **Pink Star:** Causal Effect (ATE) ≈ 0.01, Error (1-AUC) ≈ 0.377.

* **Yellow Diamond:** Causal Effect (ATE) ≈ 0.00, Error (1-AUC) ≈ 0.378.

* **Brown Triangle:** Causal Effect (ATE) ≈ 0.00, Error (1-AUC) ≈ 0.379.

* **Trend Verification:**

* The dashed line from the **Pink Star** (low ATE, moderate Error) to the **Orange Triangle** (moderate ATE, lower Error) slopes downward to the right, indicating a decrease in error as causal effect increases along this path.

* The dashed line from the **Orange Triangle** to the **Blue Circle** (high ATE, lowest Error) continues this downward-right slope, reinforcing the trend of decreasing error with increasing causal effect for this series.

* The **Red Diamond** and **Purple Square** are isolated points with higher error values. The **Red Diamond** has near-zero causal effect but the highest error.

### Key Observations

1. **Trade-off Visualization:** The plot suggests a potential trade-off or relationship where methods achieving a higher Causal Effect (ATE) tend to have a lower Error (1-AUC), as seen in the connected series (Pink Star -> Orange Triangle -> Blue Circle).

2. **Cluster at Low ATE:** Several points (Red Diamond, Pink Star, Yellow Diamond) are clustered near a Causal Effect of 0.00, but with vastly different error rates (from ~0.38 to ~0.50).

3. **Inset Highlight:** The inset zooms in on the cluster near (0.00, 0.38), revealing that the Pink Star, Yellow Diamond, and Brown Triangle are very close in both metrics, with differences in the third decimal place for Error.

4. **Outlier:** The **Red Diamond** is a clear outlier with the highest error (0.50) despite having a near-zero causal effect.

### Interpretation

This chart likely compares different algorithmic models or policy interventions in the context of law school admissions. The metrics suggest a dual evaluation:

* **Causal Effect (ATE - Average Treatment Effect):** Measures the estimated impact of an intervention (e.g., using a specific admissions model) on an outcome.

* **Error (1-AUC):** Measures the predictive inaccuracy of the model. A lower value is better.

The data demonstrates that not all methods are equal. The connected path (Pink Star -> Orange Triangle -> Blue Circle) may represent a family of related models or a tuning process where increasing the model's causal effect estimate is associated with improved predictive accuracy (lower error). The **Blue Circle** method appears most favorable, achieving the highest causal effect and lowest error.

Conversely, the **Red Diamond** method performs poorly, with high error and negligible causal effect. The cluster near zero ATE (including the inset points) represents methods that have little to no estimated causal impact but vary significantly in their baseline predictive error. The inset emphasizes that even among these low-impact methods, fine-grained differences exist.

**Overall Implication:** The chart argues for the importance of considering both causal impact and predictive error when evaluating admissions models. It visually identifies a promising direction (increasing ATE while decreasing error) and highlights underperforming or neutral alternatives. The absence of a formal legend suggests the audience is expected to recognize the methods by their markers, indicating this is likely from a specialized technical paper or report.

</details>

<details>

<summary>extracted/6522797/figures/trade-off_adult.png Details</summary>

### Visual Description

\n