# FairPFN: A Tabular Foundation Model for Causal Fairness

Abstract

Machine learning (ML) systems are utilized in critical sectors, such as healthcare, law enforcement, and finance. However, these systems are often trained on historical data that contains demographic biases, leading to ML decisions that perpetuate or exacerbate existing social inequalities. Causal fairness provides a transparent, human-in-the-loop framework to mitigate algorithmic discrimination, aligning closely with legal doctrines of direct and indirect discrimination. However, current causal fairness frameworks hold a key limitation in that they assume prior knowledge of the correct causal model, restricting their applicability in complex fairness scenarios where causal models are unknown or difficult to identify. To bridge this gap, we propose FairPFN, a tabular foundation model pre-trained on synthetic causal fairness data to identify and mitigate the causal effects of protected attributes in its predictions. FairPFN’s key contribution is that it requires no knowledge of the causal model and still demonstrates strong performance in identifying and removing protected causal effects across a diverse set of hand-crafted and real-world scenarios relative to robust baseline methods. FairPFN paves the way for promising future research, making causal fairness more accessible to a wider variety of complex fairness problems.

1 Introduction

Algorithmic discrimination is among the most pressing AI-related risks of our time, manifesting when machine learning (ML) systems produce outcomes that disproportionately disadvantage historically marginalized groups Angwin et al. (2016). Despite significant advancements by the fairness-aware ML community, critiques highlight the contextual limitations and lack of transferability of current statistical fairness measures to practical legislative frameworks Weerts et al. (2023). In response, the field of causal fairness has emerged, providing a transparent and human-in-the-loop causal framework for assessing and mitigating algorithmic bias with a strong analogy to existing anti-discrimination legal doctrines Plecko & Bareinboim (2024).

<details>

<summary>x1.png Details</summary>

### Visual Description


## Diagram: FairPFN Pre-training Pipeline

### Overview

This diagram illustrates the pre-training pipeline for FairPFN, a model designed for real-world inference with a focus on fairness. The pipeline consists of three main stages: data generation, transformer input, fair prediction, and then a visual representation of the process using a Structural Causal Model (SCM), an Observational Dataset, the FairPFN model itself, and the calculation of a pre-training loss.

### Components/Axes

The diagram is segmented into four main sections:

1. **Data Generation (a):** Describes the process of creating the dataset.

2. **Transformer Input (b):** Explains how the observational dataset is used as input to a transformer model.

3. **Fair Prediction (c):** Details the prediction process and loss calculation.

4. **Real-world Inference:** A visual representation of the pipeline with the SCM, Observational Dataset, FairPFN, and Pre-training Loss.

The key elements within the "Real-world Inference" section are:

* **Structural Causal Model (SCM):** Nodes labeled A₀, U₂, X₁, X₂, X₃, Yb, and Yf, with directed edges representing causal relationships.

* **Observational Dataset:** A table with columns A, X₁, X₂, X₃, and Yb.

* **FairPFN:** A complex network of interconnected nodes.

* **Pre-training Loss:** Represented by the symbols Ŷf and Yf.

### Detailed Analysis or Content Details

**a) Data Generation:**

* A dataset is generated comprising a protected attribute *A*, potentially biased observables *Xb*, and biased outcome *Yb*.

* A fair outcome *Yf* is sampled by removing the outgoing edges of *A*.

**b) Transformer Input:**

* The observational dataset *D* is partitioned into training and validation splits.

* Given in-context examples *Dtrain*, the transformer makes predictions on the inference set *Dval* = (*Aval*, *Xval*).

**c) Fair Prediction:**

* The transformer makes predictions Ŷf on the validation set.

* The pre-training loss is calculated with respect to the fair outcomes in the validation set.

* The transformer learns the mapping *Xb* → *Yf*.

**Real-world Inference - SCM:**

* The SCM shows causal relationships:

* U₂ influences X₁, X₂, and X₃.

* A₀ influences X₁.

* X₁, X₂, and X₃ influence Yb.

* Yb influences Yf.

**Real-world Inference - Observational Dataset:**

* The dataset table has 5 columns: A, X₁, X₂, X₃, and Yb.

* The table is filled with a heatmap-like color gradient. The colors range from dark blue to dark red, indicating varying values. It's difficult to extract precise numerical values from the color gradient without a legend, but the color intensity suggests a range of values.

* The table appears to have approximately 10-15 rows.

**Real-world Inference - FairPFN:**

* The FairPFN is a complex network of interconnected nodes. The nodes are colored in shades of green and purple.

* The network has multiple layers and connections.

**Real-world Inference - Pre-training Loss:**

* Ŷf (predicted fair outcome) is connected to Yf (fair outcome).

* The arrow indicates the calculation of the pre-training loss.

**Mathematical Formula:**

* p(*Yf* | *Xb*, *Db*) ∝ ∫ p(*Yf* | *Xb*, φ)p(*Db* | φ) dφ

### Key Observations

* The diagram emphasizes the importance of fairness by explicitly generating a "fair outcome" (*Yf*) and using it for loss calculation.

* The SCM visually represents the causal relationships between variables, highlighting the potential for bias introduced by the protected attribute *A*.

* The heatmap-like representation of the observational dataset suggests that the data is high-dimensional and potentially complex.

* The FairPFN model is a complex neural network, likely designed to capture intricate relationships in the data.

### Interpretation

The diagram illustrates a pipeline for training a fair machine learning model. The core idea is to mitigate bias by explicitly modeling the causal relationships between variables and using a "fair outcome" as the target for training. The SCM provides a visual representation of these relationships, while the observational dataset provides the data for training. The FairPFN model learns to predict the fair outcome, and the pre-training loss ensures that the model is aligned with the desired fairness criteria. The mathematical formula suggests a probabilistic approach to fairness, where the model aims to estimate the probability of the fair outcome given the observed data. The use of a transformer model suggests that the pipeline is designed to handle sequential or contextual data. The diagram suggests a sophisticated approach to fairness that goes beyond simply removing the protected attribute from the training data. It attempts to address the underlying causal mechanisms that lead to bias.

</details>

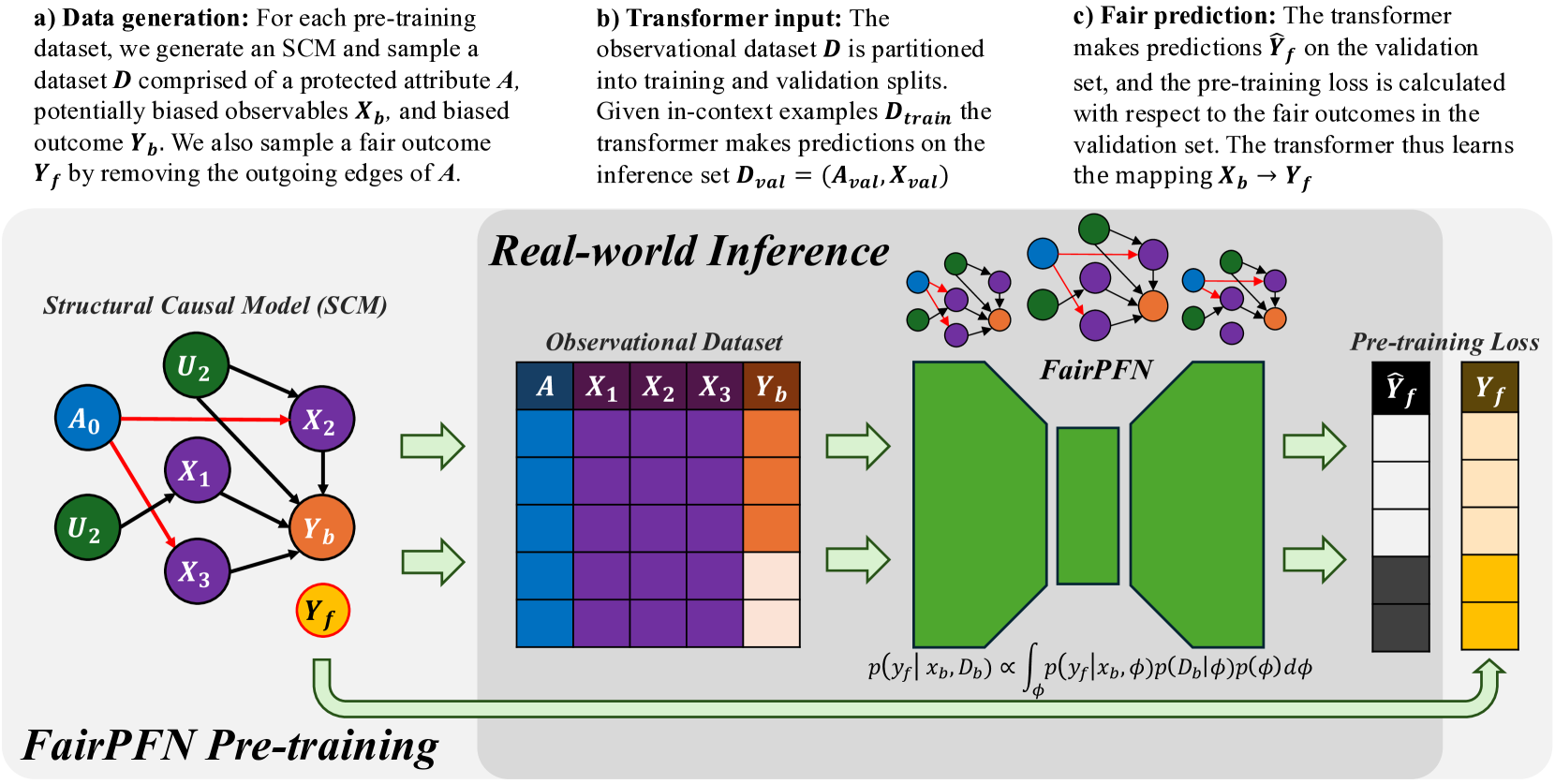

Figure 1: FairPFN Overview: FairPFN is a foundation model for causal fairness, pre-trained on synthetic datasets generated from sparse MLPs that represent SCMs with exogenous protected attributes (a). A biased dataset is created for each MLP/SCM and supplied as context to the transformer (b), with loss computed based on fair outcomes obtained by excluding the causal influence of the protected attribute (c). In practice, (d) FairPFN takes in only an observational dataset to predict fair targets by integrating over the simplest causal explanations for the biased data.

A recent review comparing outcome-based and causal fairness approaches (Castelnovo et al., 2022) argues that the non-identifiability of causal models from observational data Pearl (2009) limits the usage of current causal fairness frameworks in practical applications. In practice, users must provide full or partial information about the underlying causal model, a challenging task given the complexity of systemic inequalities. Furthermore, an incorrectly presumed causal graph, such as one falsely assuming a variable is independent of a protected attribute, can invalidate causal fairness metrics Ma et al. (2023); Binkytė-Sadauskienė et al. (2022), resulting in fairwashing and fostering a false sense of security and trust.

This paper takes a bold new perspective on achieving causal fairness. Our key contribution is FairPFN, a tabular foundation model for causal fairness, pre-trained on synthetic causal fairness data to learn to identify and remove the causal effects of protected attributes in tabular classification settings. When used on a new dataset, FairPFN does not rely on a user-specified causal model or graph, instead solely relying on the causally-generated data it has seen during pre-training. We demonstrate through extensive experiments that FairPFN effectively and consistently mitigates the causal impact of protected attributes across various hand-crafted and real-world scenarios, yielding causally fair predictions without user-specified causal information. We summarize our various contributions:

1. PFNs for Causal Fairness: We propose a paradigm shift for algorithmic fairness, in which a transformer is pre-trained on synthetic causal fairness data.

2. Causal Fairness Prior: We introduce a synthetic causal data prior which offers a comprehensive representation for fairness datasets, modeling protected attributes as binary exogenous causes.

3. Foundation Model: We present FairPFN, a foundation model for causal fairness which, given only observational data, identifies and removes the causal effect of binary, exogenous protected attributes in predictions, and demonstrates strong performance in terms of both causal fairness and predictive accuracy on a combination of hand-crafted and real-world causal scenarios. We provide a prediction interface to evaluate and assess our pre-trained model, as well as code to generate and visualize our pre-training data at https://github.com/jr2021/FairPFN.

2 Related Work

In recent years, causality has gained prominence in the field of algorithmic fairness, providing fairness researchers with a structural framework to reason about algorithmic discrimination. Unlike traditional fairness research Kamishima et al. (2012); Agarwal et al. (2018); Hardt et al. (2016), which focuses primarily on optimizing statistical fairness measures, causal fairness frameworks concentrate on the structure of bias. This approach involves modeling causal relationships among protected attributes, observed variables, and outcomes, assessing the causal effects of protected attributes, and mitigating biases using causal methods, such as optimal transport Plecko & Bareinboim (2024) or latent variable estimation Kusner et al. (2017); Ma et al. (2023); Bhaila et al. (2024).

Counterfactual fairness, introduced by Kusner et al. (2017), posits that predictive outcomes should remain invariant between the actual world and a counterfactual scenario in which a protected attribute assumes an alternative value. This notion has spurred interest within the fairness research community, resulting in developments like path-specific extensions Chiappa (2019) and the application of Variational Autoencoders (VAEs) to create counterfactually fair latent representations Ma et al. (2023).

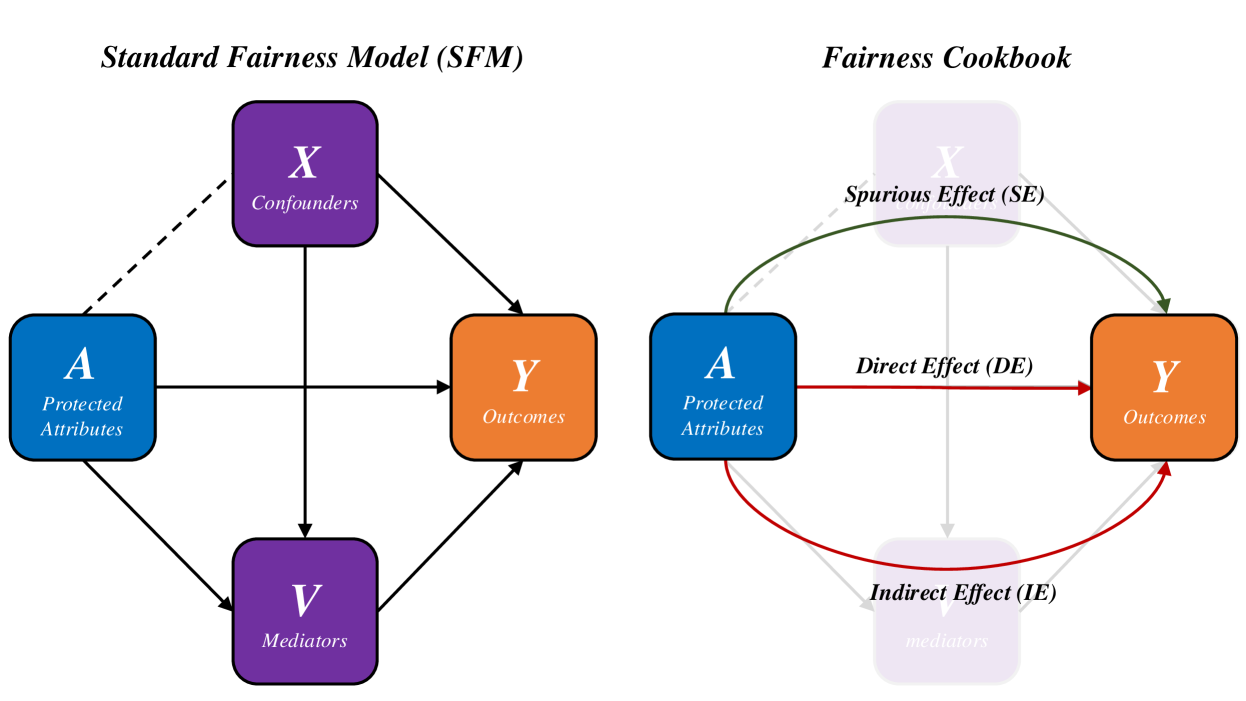

The initial counterfactual fairness framework necessitates comprehensive knowledge of the causal model. In contrast, the Causal Fairness Analysis (CFA) framework Plecko & Bareinboim (2024) relaxes this requirement by organizing variables within a Standard Fairness Model (SFM) for bias assessment and mitigation. Moreover, the CFA framework presents the Fairness Cookbook, which defines causal fairness metrics (Indirect-Effect, Direct-Effect, and Spurious-Effect) that directly align with US legal doctrines of disparate impact and treatment. Furthermore, the CFA framework challenges Kusner et al. (2017)'s modeling of protected attributes as exogenous causes, permitting correlations between protected attributes and confounding variables that contribute to the legally admissible Spurious-Effect.

3 Background

This section establishes the scientific foundation of FairPFN, including terminology relevant to algorithmic fairness, causal ML, counterfactual fairness, and prior-data fitted networks (PFNs).

Algorithmic Fairness

Algorithmic discrimination occurs when historical biases against demographic groups (e.g., ethnicity, sex) are reflected in the training data of ML algorithms, leading to the perpetuation and amplification of these biases in predictions Barocas et al. (2023). Fairness research focuses on measuring algorithmic bias and developing fairness-aware ML models that produce non-discriminatory predictions. Practitioners have established over 20 fairness metrics, which generally break down into group-level and individual-level metrics Castelnovo et al. (2022). These metrics can be used to optimize predictive models, balancing the commonly observed trade-off between fairness and predictive accuracy Weerts et al. (2024).

Causal Machine Learning Causal ML is a developing field that leverages modern ML methods for causal reasoning Pearl (2009), facilitating advancements in causal discovery, causal inference, and causal reasoning Peters et al. (2014). Causal mechanisms are often represented as Structural Causal Models (SCMs), defined as $\mathcal{M}=(U,O,F)$, where $U$ are unobservables, $O$ are observables, and $F$ is a set of structural equations. These equations are expressed as $f_{j}:X_{j}=f_{j}(PA_{j},N_{j})$, indicating that each variable $X_{j}$ depends on its parent variables $PA_{j}$ and independent noise $N_{j}$. Non-linearities in the set of structural equations $F$ influence data complexity and identifiability of causal quantities from observational data Schölkopf et al. (2012). In an SCM, interventions can be made by setting $X\leftarrow x_{1}$ and propagating this value through the model $\mathcal{M}$, posing the question "what will happen if I do something?". Counterfactuals expand upon the idea of interventions and are relevant when a value of $X$ is already observed, instead posing the question "what would have happened if something had been different?". In addition to posing a slightly different question, counterfactuals require that exogenous noise terms are held constant, and thus classically require full knowledge of the causal model. In the context of algorithmic fairness, we are limited to the level of counterfactuals, as protected attributes are typically given and already observed.

In causal reasoning frameworks, one major application of counterfactuals is the estimation of causal effects such as the individual and average treatment effects (ITE and ATE) which quantify the difference and expected difference between outcomes under different values of $X$ .

$$

ITE:\tau=Y_{X\leftarrow x}-Y_{X\leftarrow x^{\prime}} \tag{1}

$$

$$

ATE:E[\tau]=E[Y_{X\leftarrow x}]-E[Y_{X\leftarrow x^{\prime}}]. \tag{2}

$$
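As an illustration of Equations 1 and 2, the following minimal sketch computes the ITE and ATE on a toy linear SCM. The structural equation $Y=2X+N$, the noise distribution, and all function names are assumptions made for this example only and do not come from the paper.

```python
import random

def outcome(x, noise):
    # Hypothetical structural equation Y = 2 * X + N (illustrative only).
    return 2.0 * x + noise

def ite(x, x_prime, noise):
    # Equation 1: difference between a unit's outcomes under two interventions,
    # with the unit's exogenous noise held fixed.
    return outcome(x, noise) - outcome(x_prime, noise)

def ate(x, x_prime, noises):
    # Equation 2: difference of expected outcomes, estimated by averaging over units.
    mean_x = sum(outcome(x, n) for n in noises) / len(noises)
    mean_x_prime = sum(outcome(x_prime, n) for n in noises) / len(noises)
    return mean_x - mean_x_prime

rng = random.Random(0)
noises = [rng.gauss(0.0, 1.0) for _ in range(1000)]  # one noise term per unit
unit_effects = [ite(1.0, 0.0, n) for n in noises]
average_effect = ate(1.0, 0.0, noises)
```

Because the toy SCM is linear with additive noise, every unit has the same treatment effect, so the ITE and ATE coincide here; with non-linear structural equations they generally differ.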

Counterfactual Fairness

Counterfactual fairness is a foundational notion of causal fairness introduced by Kusner et al. (2017), requiring that an individual’s predictive outcome match that in a counterfactual scenario where they belong to a different demographic group. This notion is formalized in the theorem below.

**Theorem 1 (Unit-level/probabilistic)**

*Given an SCM $\mathcal{M}=(U,O,F)$ where $O=A\cup X$ , a predictor $\hat{Y}$ is counterfactually fair on the unit-level if $∀\hat{y}∈\hat{Y},∀ x,a,a^{\prime}∈ A$

$$

P(\hat{y}_{A\rightarrow a}(u)|X,A=x,a)=P(\hat{y}_{A\rightarrow a^{\prime}}(u)|X,A=x,a)

$$*

Kusner et al. (2017) notably choose to model protected attributes as exogenous, which means that they may not be confounded by unobserved variables with respect to outcomes. We note that the definition of counterfactual fairness in Theorem 1 is the unit-level probabilistic one as clarified by Plecko & Bareinboim (2024), because counterfactual outcomes are generated deterministically with fixed unobservables $U=u$ . Theorem 1 can be applied on the dataset level to form the population-level version also provided by Plecko & Bareinboim (2024) which measures the alignment of natural and counterfactual predictive distributions.

**Theorem 2 (Population-level)**

*Given an SCM $\mathcal{M}=(U,O,F)$ where $O=A\cup X$ , a predictor $\hat{Y}$ is counterfactually fair on the population-level if $∀\hat{y}∈\hat{Y},∀ x,a,a^{\prime}∈ A$

$$

P(\hat{y}_{A\rightarrow a}|X,A=x,a)=P(\hat{y}_{A\rightarrow a^{\prime}}|X,A=x,a)

$$*

Theorem 1 can also be transformed into a counterfactual fairness metric by quantifying the difference between natural and counterfactual predictive distributions. In this study we quantify counterfactual fairness as the distribution of the counterfactual absolute error (AE) between predictions in each distribution.

**Definition 1 (Absolute Error (AE))**

*Given an SCM $\mathcal{M}=(U,O,F)$ where $O=A\cup X$ , the counterfactual absolute error of a predictor $\hat{Y}$ is the distribution

$$

AE=|P(\hat{y}_{A\rightarrow a}(u)|X,A=x,a)-P(\hat{y}_{A\rightarrow a^{\prime}}(u)|X,A=x,a)|

$$*

We note that because the outcomes are conditioned on the same noise terms $u$, our definition of AE builds on Theorem 1. Intuitively, when the AE is skewed towards zero, most individuals receive the same prediction in both the natural and counterfactual scenarios.
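To make Definition 1 concrete, the sketch below computes the counterfactual absolute error for two hand-written predictors on a toy SCM with a known structural equation $X=w_{A}A+u$. The SCM, the weight `W_A`, and both predictors are hypothetical and serve only to illustrate how the AE distribution is formed; the fair predictor here relies on knowing the causal model, which FairPFN does not require.

```python
import math
import random

W_A = 1.5  # hypothetical causal weight of A on X

def feature(a, u):
    # Toy structural equation X = W_A * A + u, with u the unit's fixed noise term.
    return W_A * a + u

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def unfair_predictor(x, a):
    # Uses the biased feature directly, so it inherits the effect of A.
    return sigmoid(x)

def fair_predictor(x, a):
    # Uses only the abducted noise term u = X - W_A * A.
    return sigmoid(x - W_A * a)

def absolute_errors(predictor, units):
    # For each unit (a, u), compare the prediction in the natural world with the
    # prediction in the counterfactual world where A is flipped and u is unchanged.
    errors = []
    for a, u in units:
        a_cf = 1 - a
        natural = predictor(feature(a, u), a)
        counterfactual = predictor(feature(a_cf, u), a_cf)
        errors.append(abs(natural - counterfactual))
    return errors

rng = random.Random(0)
units = [(rng.randint(0, 1), rng.gauss(0.0, 1.0)) for _ in range(500)]
ae_unfair = absolute_errors(unfair_predictor, units)
ae_fair = absolute_errors(fair_predictor, units)
```

In this toy setting the AE of the fair predictor collapses to zero for every unit, while the AE of the unfair predictor is strictly positive.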

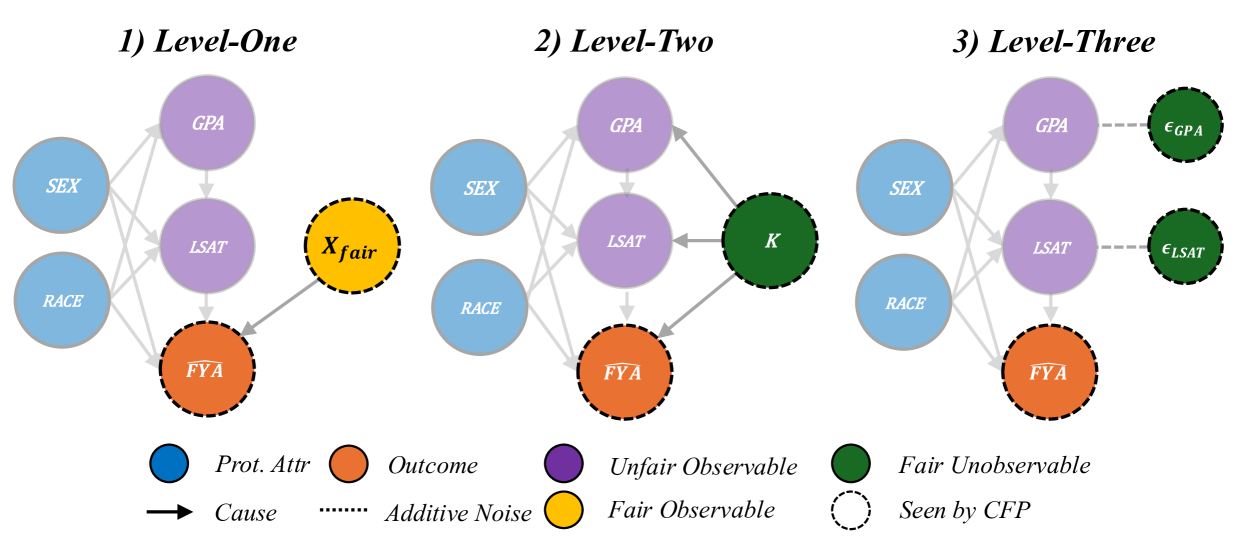

Kusner et al. (2017) present various implementations of Counterfactually Fair Prediction (CFP). The three levels of CFP can be achieved by fitting a predictive model $\hat{Y}$ to observable non-descendants if any exist (Level-One), inferred values of an exogenous unobserved variable $K$ (Level-Two), or additive noise terms (Level-Three). Kusner et al. (2017) acknowledge that in practice, Level-One rarely occurs. Level-Two requires that the causal model be invertible, which allows the unobservable $K$ to be inferred by abduction. Level-Three models the scenario as an Additive Noise Model, and thus is the strongest in terms of representational capacity, allowing more degrees of freedom than in Level-Two to represent fair terms. The three levels of CFP are depicted in Appendix Figure 22.

Causal Fairness The Causal Fairness Analysis (CFA) framework Plecko & Bareinboim (2024) introduces the Standard Fairness Model (SFM), which classifies variables as protected attributes $A$ , mediators $X_{med}$ , confounders $X_{conf}$ , and outcomes $Y$ . This framework includes a Fairness Cookbook of causal fairness metrics with a strong analogy to the legal notions of direct and indirect discrimination and business necessity as illustrated in Appendix Figure 23. Plecko & Bareinboim (2024) refute the modeling choice of Kusner et al. (2017) by their inclusion of confounders $X_{conf}$ in the SFM, arguing that these variables contribute to the legally admissible Spurious-Effect (SE).

For simplicity of our experimental results, we follow the modeling of Kusner et al. (2017), and focus on the elimination of the Total-Effect (TE) of protected attributes as defined by Plecko & Bareinboim (2024), while noting in Section 6 the importance of relaxing this assumption in future extensions.

Prior-data Fitted Networks Prior-data Fitted Networks (PFNs) Müller et al. (2022) and TabPFN Hollmann et al. (2023, 2025) represent a paradigm shift from traditional ML with a causal motivation, namely that simple causal models offer a quality explanation for real-world data. PFNs incorporate prior knowledge into transformer models by pre-training on datasets from a specific prior distribution Müller et al. (2022). TabPFN, a popular application of PFNs, applies these ideas to small tabular classification tasks by training a transformer on synthetic datasets derived from sparse Structural Causal Models (SCMs). As noted in Hollmann et al. (2023), a key advantage of TabPFN is its link to Bayesian Inference: the transformer approximates the Posterior Predictive Distribution (PPD), thus achieving state-of-the-art performance by integrating over simple causal explanations for the data.

4 Methodology

In this section, we introduce FairPFN, a foundation model for legally or ethically sensitive tabular classification problems that draws inspiration from PFNs and principles of causal fairness. We introduce our pre-training scheme, synthetic data prior, and draw connections to Bayesian Inference to explain the inner workings of FairPFN.

4.1 FairPFN Pre-Training

First, we present our pre-training scheme, where FairPFN is fit to a prior of synthetic causal fairness data to identify and remove the causal effects of protected attributes in practice from observational data alone. We provide pseudocode for our pre-training algorithm in Algorithm 1, and outline the steps below.

Input:

Number of pre-training epochs $E$ and steps $S$

Transformer $\mathcal{M}$ with weights $\theta$

Hypothesis space of SCMs $\phi∈\Phi$

begin

for $epoch=1$ to $E$ do

for $step=1$ to $S$ do

Draw a random SCM $\phi$ from $\Phi$

Sample $D_{bias}=(A,X_{bias},Y_{bias})$ from $\phi$ where $A∈\{a_{0},a_{1}\}$ is an exogenous binary protected attribute

Sample $Y_{fair}$ from $\phi$ by performing dropout on outgoing edges of $A$ if any exist

Partition $D_{bias}$ and $D_{fair}$ into $train/val$

Pass $D_{bias}^{train}$ into $\mathcal{M}$ as context

Pass $D_{bias}^{val}$ into $\mathcal{M}$ to generate $Y_{pred}^{val}$

Calculate loss $L=CE(Y_{pred}^{val},Y_{fair}^{val})$

Update weights $\theta$ w.r.t $∇_{\theta}L$

end for

end for

Output: Transformer $\mathcal{M}:X_{bias}→ Y_{fair}$

Algorithm 1 FairPFN Pre-training

Data Generating Mechanisms FairPFN pre-training begins by creating synthetic datasets that capture the causal mechanisms of bias in real-world data. Following the approach of Hollmann et al. (2023), we use Multi-Layer Perceptrons (MLPs) to model Structural Causal Models (SCMs) via the structural equation $f=z(P· W^{T}x+\epsilon)$ , where $W$ denotes activation weights, $\epsilon$ represents Gaussian noise, $P$ is a dropout mask sampled from a log-scale to promote sparsity, and $z$ is a non-linearity. Figure 1 illustrates the connection among sampled MLPs, their corresponding SCMs, and the resulting synthetic pre-training data generated. We note that independent noise terms are not visualized in Figure 1.

Biased Data Generation An MLP is randomly sampled and sparsity is induced through dropout on select edges. The protected attribute is defined as a binary exogenous variable $A∈\{a_{0},a_{1}\}$ at the input layer. We uniformly select $m$ features $X$ from the second hidden layer onwards to capture rich representations of exogenous causes. The target variable $Y$ is chosen from the output layer and discretized into a binary variable using a random threshold. A forward pass through the MLP produces a dataset $D_{bias}=(A,X_{bias},Y_{bias})$ with $n$ samples containing the causal influence of the protected attribute.

Fair Data Generation

A second forward pass generates a fair dataset $D_{fair}$ by applying dropout to the outgoing edges of the protected attribute $A$ in the MLP, as shown by the red edges in Figure 1. This dropout, similar to that in TabPFN, masks the causal weight of $A$ to zero, effectively reducing its influence to Gaussian noise $\epsilon$. This increases the relative influence of the fair exogenous causes $U_{0}$ and $U_{1}$ and of the independent noise terms throughout the MLP visualized in Figure 1. We note that $A$ is sampled from arbitrary values $A∈\{a_{0},a_{1}\}$, as opposed to $A∈\{0,1\}$, since the functions $f=0· wx+\epsilon$ and $f=p· 0x+\epsilon$ yield equivalent outcomes: with $A=0$, a dropped edge would be indistinguishable from an active one. Only after generating the pre-training dataset is $A$ converted to a binary variable for processing by the transformer.

In-Context Learning After generating $D_{bias}$ and $D_{fair}$ , we partition them into training and validation sets: $D_{bias}^{train}$ , $D_{bias}^{val}$ , $D_{fair}^{train}$ , and $D_{fair}^{val}$ . We pass $D_{bias}^{train}$ as context to the transformer to provide information about feature-target relationships. To simulate inference, we input $X_{bias}^{val}$ into the transformer $\mathcal{M}$ , yielding predictions $Y_{pred}$ . We then compute the binary-cross-entropy (BCE) loss $L(Y_{pred},Y_{fair}^{val})$ against the fair outcomes $Y_{fair}^{val}$ , which do not contain effects of the protected attribute. Thus, the transformer $\mathcal{M}$ learns the mapping $\mathcal{M}:X_{bias}→ Y_{fair}$ .


Input:

- Number of exogenous causes $U$

- Number of endogenous variables $U× H$

- Number of features and samples $M× N$

begin

- Define MLP $\phi$ with depth $H$ and width $U$

- Initialize random weights $W:(U× U× H-1)$

- Sample sparsity masks $P$ with same dimensionality as weights

- Sample $H$ per-layer non-linearities $z_{i}\sim\{Identity,ReLU,Tanh\}$

- Initialize output matrix $X:(U× H)$

- Sample location $k$ of protected attribute in $X_{0}$

- Sample locations of features $X_{biased}$ in $X_{1:H-1}$ , and outcome $y_{bias}$ in $X_{H}$

- Sample protected attribute threshold $a_{t}$ and binary values $\{a_{0},a_{1}\}$

for $n=0$ to $N$ samples do

- Sample values of exogenous causes $X_{0}:(U× 1)$

- Sample values of additive noise terms $\epsilon:(U× H)$

for $i=0$ to $H-1$ layers do

- Pass intermediate representation through hidden layer $X_{i+1}=z_{i}(P_{i}· W_{i}^{T}X_{i}+\epsilon_{i})$

end for

- Select prot. attr. $A$ , features $X_{bias}$ and outcome $y_{bias}$ from $X_{0}$ , $X_{1:H-1}$ , and $X_{H}$

- Binarize $A∈\{a_{0},a_{1}\}$ over threshold $a_{t}$

- Set input weights in row $k$ of $W_{0}$ to 0

for $j=0$ to $H-1$ layers do

- Pass intermediate representation through hidden layer $X_{j+1}=z_{j}(P_{j}· W_{j}^{T}X_{j}+\epsilon_{j})$

end for

- Select the fair outcome $y_{fair}$ from $X_{H}$

end for

- Binarize $y_{fair}∈\{0,1\}$ and $y_{bias}∈\{0,1\}$ over randomly sampled output threshold $y_{t}$

Output: $D_{bias}=(A,X_{bias},y_{bias})$ and $y_{fair}$

Algorithm 2 FairPFN Synthetic Data Generation
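The generation procedure can be sketched in plain Python as follows. This is a simplified illustration under stated assumptions, not the released implementation: the function name `sample_datasets`, the mask density of 0.7, the noise scale, and the way $a_{0}$ and $a_{1}$ are drawn are all hypothetical, and the protected attribute is sampled directly as one of two values rather than binarized over a threshold $a_{t}$.

```python
import math
import random

def sample_datasets(n_samples=200, width=4, depth=3, n_features=2, seed=0):
    """Draw one biased dataset and matching fair outcomes from a random sparse MLP."""
    rng = random.Random(seed)
    nonlinearities = [lambda v: v, lambda v: max(0.0, v), math.tanh]

    # Random weights W and binary sparsity masks P, indexed [layer][output][input].
    W = [[[rng.gauss(0.0, 1.0) for _ in range(width)] for _ in range(width)]
         for _ in range(depth)]
    P = [[[1.0 if rng.random() < 0.7 else 0.0 for _ in range(width)]
          for _ in range(width)] for _ in range(depth)]
    z = [rng.choice(nonlinearities) for _ in range(depth)]  # per-layer non-linearity

    k = rng.randrange(width)  # position of the protected attribute in layer 0
    feature_locs = [(rng.randrange(1, depth), rng.randrange(width))
                    for _ in range(n_features)]  # hidden layers 1..depth-1
    y_loc = rng.randrange(width)  # outcome position in the last layer
    a_values = (rng.uniform(0.5, 2.0), -rng.uniform(0.5, 2.0))  # arbitrary a_0, a_1

    def forward(x0, eps, cut_a):
        layers = [x0]
        for i in range(depth):
            nxt = []
            for out in range(width):
                total = eps[i][out]
                for inp in range(width):
                    if cut_a and i == 0 and inp == k:
                        continue  # drop the outgoing edges of A
                    total += P[i][out][inp] * W[i][out][inp] * layers[i][inp]
                nxt.append(z[i](total))
            layers.append(nxt)
        return layers

    A, X_bias, y_bias_raw, y_fair_raw = [], [], [], []
    for _ in range(n_samples):
        a = rng.randint(0, 1)
        x0 = [rng.gauss(0.0, 1.0) for _ in range(width)]  # exogenous causes
        x0[k] = a_values[a]
        eps = [[rng.gauss(0.0, 0.1) for _ in range(width)] for _ in range(depth)]
        biased = forward(x0, eps, cut_a=False)
        fair = forward(x0, eps, cut_a=True)  # same noise, edges of A removed
        A.append(a)
        X_bias.append([biased[layer][pos] for layer, pos in feature_locs])
        y_bias_raw.append(biased[depth][y_loc])
        y_fair_raw.append(fair[depth][y_loc])

    y_t = rng.choice(y_bias_raw)  # randomly sampled output threshold
    y_bias = [int(v >= y_t) for v in y_bias_raw]
    y_fair = [int(v >= y_t) for v in y_fair_raw]
    return A, X_bias, y_bias, y_fair

A, X_bias, y_bias, y_fair = sample_datasets()
```

Both forward passes reuse the same exogenous causes and noise terms, so any difference between `y_bias` and `y_fair` is attributable to the removed edges of the protected attribute.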

Prior-Fitting The transformer is trained for approximately 3 days on an RTX-2080 GPU on approximately 1.5 million different synthetic data-generating mechanisms, in which we vary the MLP architecture, the number of features $m$ , the sample size $n$ , and the non-linearities $z$ .

Real-World Inference During real-world inference, FairPFN requires no knowledge of causal mechanisms in the data, but instead only takes as input a biased observational dataset and implicitly infers potential causal explanations for the data (Figure 1 d) based on the causally generated data it has seen during pre-training. Crucially, FairPFN is provided information regarding which variable is the protected attribute, which is represented in a protected attribute encoder step in the transformer. A key advantage of FairPFN is its alignment with Bayesian Inference, as transformers pre-trained in the PFN framework have been shown to approximate the Posterior Predictive Distribution (PPD) Müller et al. (2022).

FairPFN thus approximates a modified PPD, predicting a causally fair target $y_{f}$ given biased features $X_{b}$ and a biased dataset $D_{b}$ by integrating over hypotheses for the SCM $\phi∈\Phi$ :

$$

p(y_{f}|x_{b},D_{b})\propto\int_{\Phi}p(y_{f}|x_{b},\phi)p(D_{b}|\phi)p(\phi)d\phi \tag{3}

$$

This approach has two advantages: it reduces the necessity of precise causal model inference, thereby lowering the risk of fairwashing from incorrect models Ma et al. (2023), and carries with it regularization-related performance improvements observed in Hollmann et al. (2023). We also emphasize that FairPFN is a foundation model and thus does not need to be trained for new fairness problems in practice. Instead, FairPFN performs predictions in a single forward pass of the data through the transformer.
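The integral in Equation 3 can be illustrated with a finite hypothesis space, where it reduces to a likelihood-weighted sum. The sketch below is a toy analogue only: each hypothesis $\phi$ is a hypothetical pair `(base, effect)` under which $p(y_{b}=1|a)=base+effect· a$, and the fair prediction under $\phi$ is taken to be `base`, i.e. the outcome probability with the effect of $A$ removed. None of these names or modeling choices come from the paper, and FairPFN itself approximates the PPD with a transformer rather than by explicit enumeration.

```python
import math

def fair_posterior_predictive(data, hypotheses, prior):
    """Finite-hypothesis analogue of Equation 3.

    data: list of (a, y_b) pairs from the biased dataset D_b.
    hypotheses: list of (base, effect) pairs, one per candidate SCM phi.
    prior: list of prior probabilities p(phi), one per hypothesis.
    """
    log_weights = []
    for (base, effect), p_phi in zip(hypotheses, prior):
        log_lik = 0.0
        for a, y_b in data:
            p_one = base + effect * a  # p(y_b = 1 | a, phi)
            log_lik += math.log(p_one if y_b == 1 else 1.0 - p_one)
        log_weights.append(log_lik + math.log(p_phi))  # log p(D_b | phi) p(phi)

    # Normalize in log-space for numerical stability.
    max_lw = max(log_weights)
    weights = [math.exp(lw - max_lw) for lw in log_weights]
    total = sum(weights)
    posterior = [w / total for w in weights]

    # Weighted average of each hypothesis's fair prediction p(y_f = 1 | phi).
    return sum(w * base for w, (base, _) in zip(posterior, hypotheses))

# Toy biased data: 30% positives when a = 0, 70% positives when a = 1.
data = [(0, 1)] * 15 + [(0, 0)] * 35 + [(1, 1)] * 35 + [(1, 0)] * 15
hypotheses = [(b, e) for b in (0.2, 0.3, 0.4, 0.5) for e in (0.0, 0.2, 0.4)]
prior = [1.0 / len(hypotheses)] * len(hypotheses)
p_fair = fair_posterior_predictive(data, hypotheses, prior)
```

Because the prediction averages over all hypotheses that explain the biased data reasonably well, no single causal model has to be selected, which mirrors the first advantage stated above.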

5 Experiments

This section assesses FairPFN’s performance on synthetic and real-world benchmarks, highlighting its capability to remove the causal influence of protected attributes without user-specified knowledge of the causal model, while maintaining high predictive accuracy.

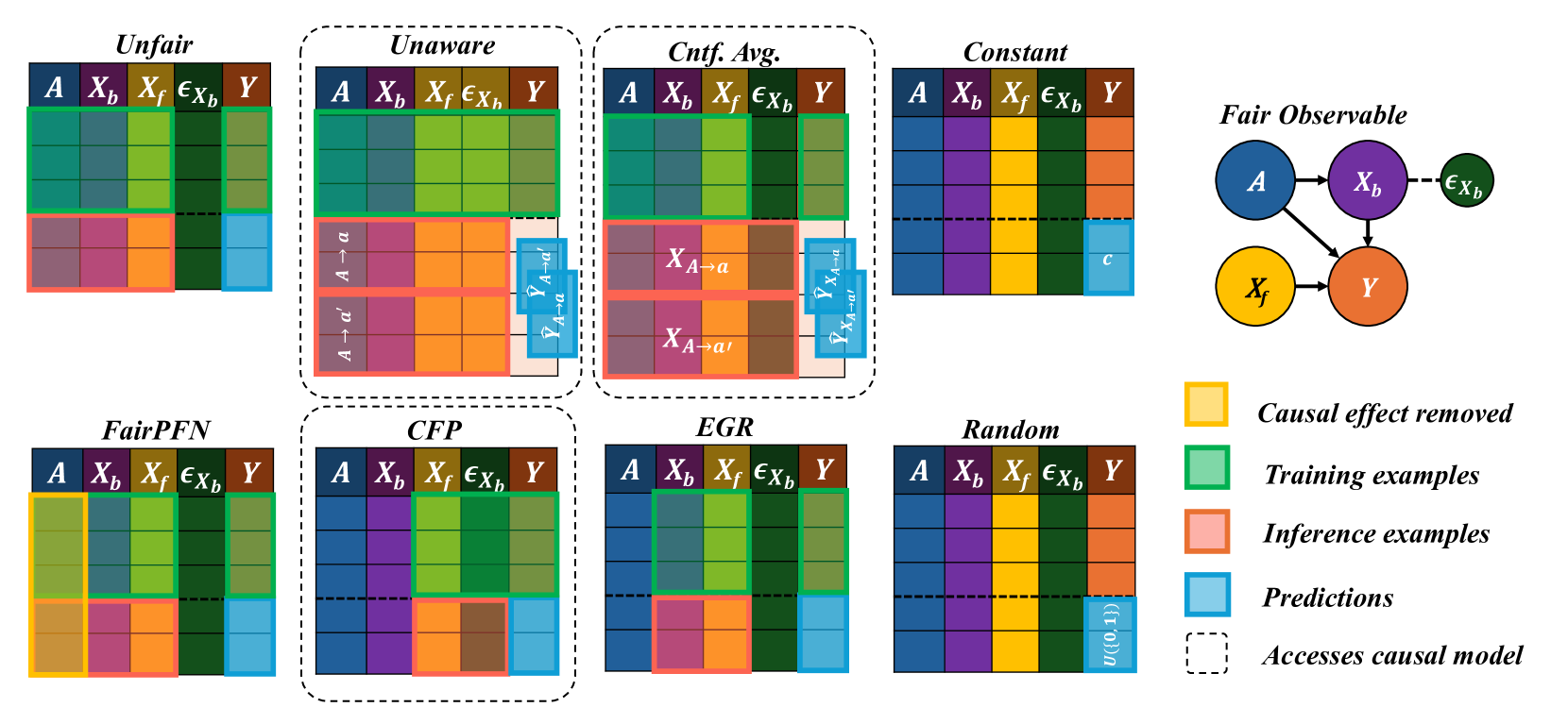

5.1 Baselines

We implement several baselines to compare FairPFN against a diverse set of traditional ML models, causal-fairness frameworks, and fairness-aware ML approaches. We summarize our baselines below, and provide a visualization of our baselines applied to the Fair Observable benchmark in Appendix Figure 25.

- Unfair: Fit to the entire training set $(X,A,Y)$.

- Unaware: Fit to the entire training set $(X,A,Y)$. Inference returns the average of predictions on the original test set $(X,A)$ and the test set with alternative protected attribute values $(X,A→ a^{\prime})$.

- Avg. Cntf: Fit to the entire training set $(X,A,Y)$. Inference returns the average (avg.) of predictions on the original test set $(X,A)$ and the counterfactual (cntf) test set $(X_{A→ a^{\prime}},A→ a^{\prime})$.

- Constant: Always predicts the majority class.

- Random: Randomly predicts the target.

- CFP: Combination of the three levels of CFP as proposed in Kusner et al. (2017). Fit to non-descendant observables, unobservables, and independent noise terms $(X_{fair},U_{fair},\epsilon_{fair},Y)$.

- EGR: Exponentiated Gradient Reduction (EGR) as proposed by Agarwal et al. (2018) is fit to non-protected attributes $(X,Y)$ with XGBoost Chen & Guestrin (2016) as a base model.
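The inference step of the Unaware baseline described in the list above can be sketched as follows. The helper `unaware_predict` and the stand-in `toy_model` are hypothetical; any fitted classifier exposing a probability for a single row would take the place of `toy_model`, and a binary protected attribute encoded as 0/1 is assumed.

```python
import math

def unaware_predict(predict_proba, X, A):
    """Average a model's predictions over both values of a binary protected attribute."""
    averaged = []
    for x, a in zip(X, A):
        p_original = predict_proba(x, a)     # prediction on (X, A)
        p_flipped = predict_proba(x, 1 - a)  # prediction on (X, A -> a')
        averaged.append(0.5 * (p_original + p_flipped))
    return averaged

def toy_model(x, a):
    # Hypothetical fitted model that depends directly on A.
    return 1.0 / (1.0 + math.exp(-(x[0] + 2.0 * a)))

X = [[0.0], [1.0], [-1.0]]
preds_a0 = unaware_predict(toy_model, X, [0, 0, 0])
preds_a1 = unaware_predict(toy_model, X, [1, 1, 1])
```

The averaged prediction no longer depends on the value of $A$ passed in, but the features $X$ are left unchanged. The Avg. Cntf baseline differs in that it also replaces $X$ with its counterfactual value $X_{A→ a^{\prime}}$, which requires access to the causal model.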

<details>

<summary>x2.png Details</summary>

### Visual Description


## Diagram: Fairness Scenarios in Machine Learning

### Overview

The image presents a series of six diagrams illustrating different scenarios related to fairness in machine learning models. Each scenario depicts a causal diagram with nodes representing protected attributes, unfair observable features, fair observable features, outcomes, and error terms. The diagrams aim to visualize how different types of bias and unfairness can manifest in a model's decision-making process.

### Components/Axes

The diagrams share the following components:

* **Protected Attribute (Prot. Attr):** Represented by a teal-colored circle labeled "A".

* **Outcome:** Represented by a purple circle labeled "Y".

* **Unfair Observable:** Represented by an orange circle labeled "X<sub>b</sub>".

* **Fair Observable:** Represented by a yellow circle labeled "X<sub>f</sub>".

* **Error Terms:** Represented by small, light-blue circles labeled "ε<sub>x</sub>" and "ε<sub>y</sub>".

* **Arrows:** Indicate causal relationships between variables.

* **Legend:** Located at the bottom-right, defining the color-coding for each component.

* **Mathematical Equations:** Below each diagram, defining the relationships between variables.

* **Titles:** Above each diagram, indicating the fairness scenario (1) Biased, (2) Direct-Effect, (3) Indirect-Effect, (4) Fair Observable, (5) Fair Unobservable, (6) Fair Additive Noise.

### Content Details

Here's a breakdown of each scenario, including the equations:

**1) Biased:**

* A -> X<sub>b</sub> -> Y

* A -> X<sub>f</sub> -> Y

* Equation: A ~ U(0,1), ε<sub>x</sub>, ε<sub>y</sub> ~ N(μ,0), σ, X<sub>b</sub> = W<sub>A</sub><sup>2</sup> + ε<sub>x</sub>, X<sub>f</sub> = W<sub>A</sub><sup>2</sup> + ε<sub>x</sub>, Y = W<sub>x</sub>X<sub>b</sub> + W<sub>x</sub>X<sub>f</sub> + ε<sub>y</sub>, Y = 1(Y ≥ γ)

**2) Direct-Effect:**

* A -> X<sub>b</sub> -> Y

* A -> X<sub>f</sub>

* Equation: A ~ U(0,1), X<sub>b</sub> ~ N(μ<sub>A</sub>,0), X<sub>f</sub> ~ N(μ<sub>0</sub>,0), X<sub>f</sub> = N(μ<sub>0</sub>), Y = W<sub>x</sub>X<sub>b</sub> + W<sub>x</sub>X<sub>f</sub> + ε<sub>y</sub>, Y = 1(Y ≥ γ)

**3) Indirect-Effect:**

* A -> X<sub>f</sub> -> X<sub>b</sub> -> Y

* Equation: ε<sub>x</sub>, ε<sub>y</sub> ~ N(μ,0), A ~ U(0,1), X<sub>f</sub> ~ N(μ<sub>0</sub>), X<sub>b</sub> = W<sub>A</sub><sup>2</sup> + ε<sub>x</sub>, Y = W<sub>x</sub>X<sub>b</sub> + W<sub>x</sub>X<sub>f</sub> + ε<sub>y</sub>, Y = 1(Y ≥ γ)

**4) Fair Observable:**

* A -> X<sub>f</sub> -> Y

* Equation: ε<sub>x</sub>, ε<sub>y</sub> ~ N(μ,0), A ~ U(0,1), X<sub>f</sub> ~ N(μ<sub>0</sub>), X<sub>b</sub> = W<sub>A</sub><sup>2</sup> + ε<sub>x</sub>, Y = W<sub>x</sub>X<sub>b</sub> + W<sub>x</sub>X<sub>f</sub> + ε<sub>y</sub>, Y = 1(Y ≥ γ)

**5) Fair Unobservable:**

* A -> U -> X<sub>b</sub> -> Y

* Equation: ε<sub>x</sub>, ε<sub>y</sub> ~ N(μ,0), A ~ U(0,1), U ~ N(μ<sub>0</sub>), X<sub>b</sub> = W<sub>A</sub><sup>2</sup> + W<sub>U</sub><sup>1</sup> + ε<sub>x</sub>, Y = W<sub>x</sub>X<sub>b</sub> + W<sub>x</sub>X<sub>f</sub> + ε<sub>y</sub>, Y = 1(Y ≥ γ)

**6) Fair Additive Noise:**

* A -> X<sub>b</sub> -> Y

* Equation: ε<sub>x</sub>, ε<sub>y</sub> ~ N(μ,0), A ~ U(0,1), X<sub>b</sub> = W<sub>A</sub><sup>2</sup> + ε<sub>x</sub>, X<sub>f</sub> = W<sub>A</sub><sup>2</sup> + ε<sub>x</sub>, Y = W<sub>x</sub>X<sub>b</sub> + W<sub>x</sub>X<sub>f</sub> + ε<sub>y</sub>, Y = 1(Y ≥ γ)

### Key Observations

* The diagrams consistently use the same node representations and causal arrow style.

* The mathematical equations provide a formal definition of the relationships depicted in each diagram.

* The scenarios vary in how the protected attribute (A) influences the outcome (Y), either directly, indirectly, or through observable/unobservable features.

* The inclusion of error terms (ε<sub>x</sub>, ε<sub>y</sub>) acknowledges the inherent noise and uncertainty in real-world data.

* The use of U(0,1) indicates a uniform distribution, while N(μ,σ) indicates a normal distribution.

### Interpretation

These diagrams illustrate different ways in which bias can enter a machine learning model and affect its fairness. They highlight the importance of considering causal relationships when evaluating and mitigating bias.

* **Scenario 1 (Biased):** Demonstrates a simple case where the protected attribute directly influences both observable features and the outcome, leading to potential discrimination.

* **Scenario 2 (Direct-Effect):** Shows a direct causal link between the protected attribute and the outcome, bypassing observable features.

* **Scenario 3 (Indirect-Effect):** Illustrates how the protected attribute can indirectly influence the outcome through a fair observable feature.

* **Scenario 4 (Fair Observable):** Suggests a scenario where fairness is achieved by ensuring that the observable features are independent of the protected attribute.

* **Scenario 5 (Fair Unobservable):** Introduces an unobservable variable (U) that mediates the relationship between the protected attribute and the outcome.

* **Scenario 6 (Fair Additive Noise):** Represents a scenario where fairness is achieved by adding noise to the model's predictions.

The diagrams, combined with the mathematical equations, provide a rigorous framework for analyzing and addressing fairness concerns in machine learning. They emphasize the need to understand the underlying causal mechanisms that drive bias and to develop interventions that target those mechanisms effectively. The "Seen by FairPFN" label at the bottom right suggests these diagrams are related to a specific fairness-aware machine learning framework or algorithm.

</details>

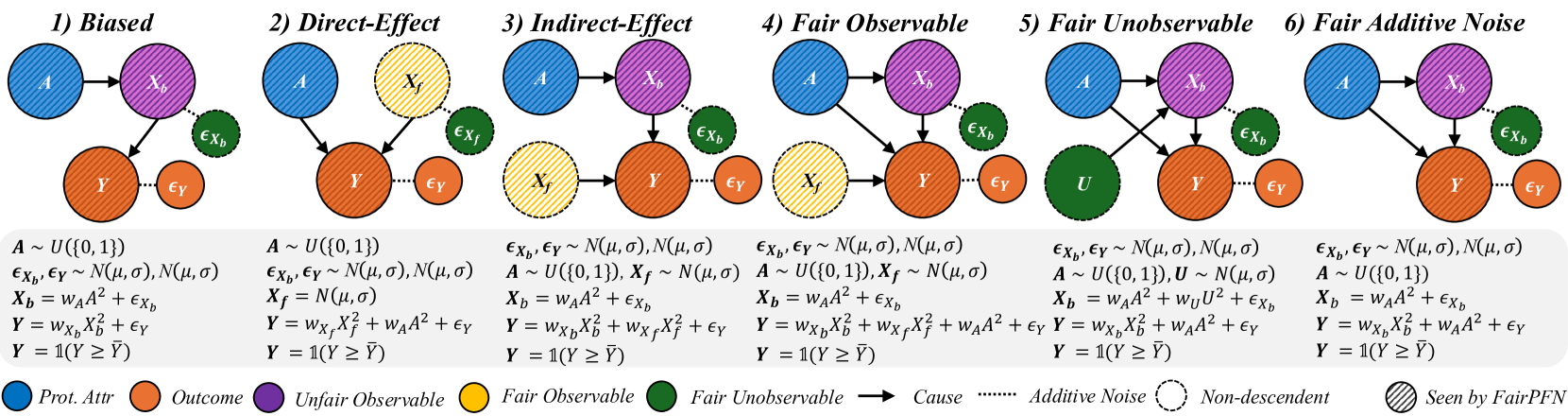

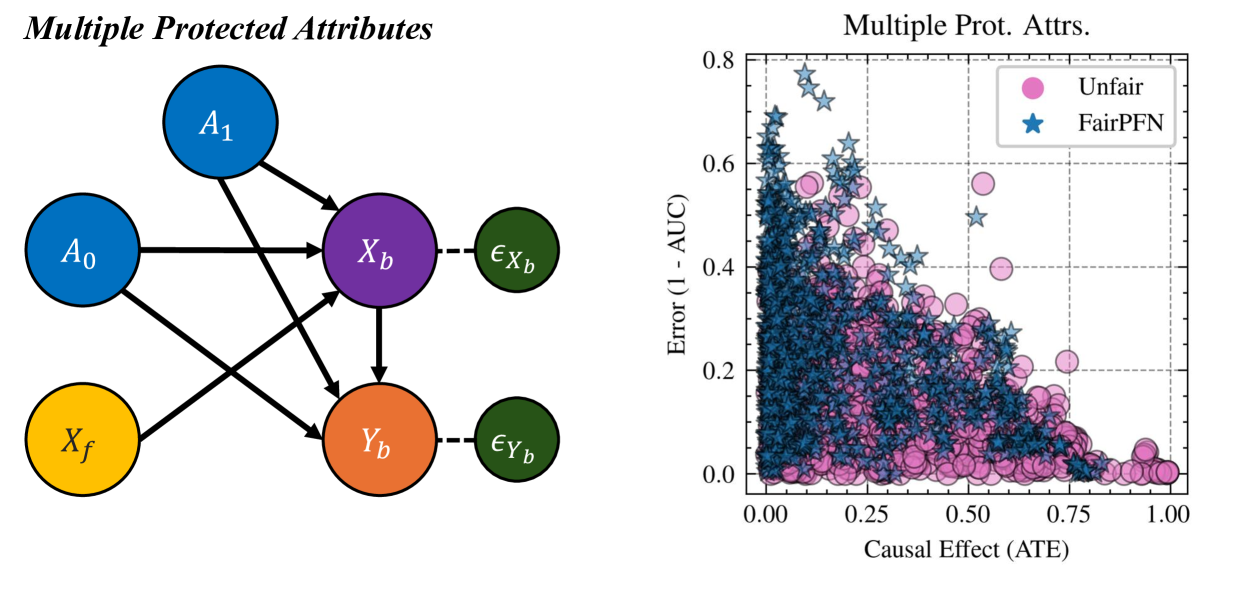

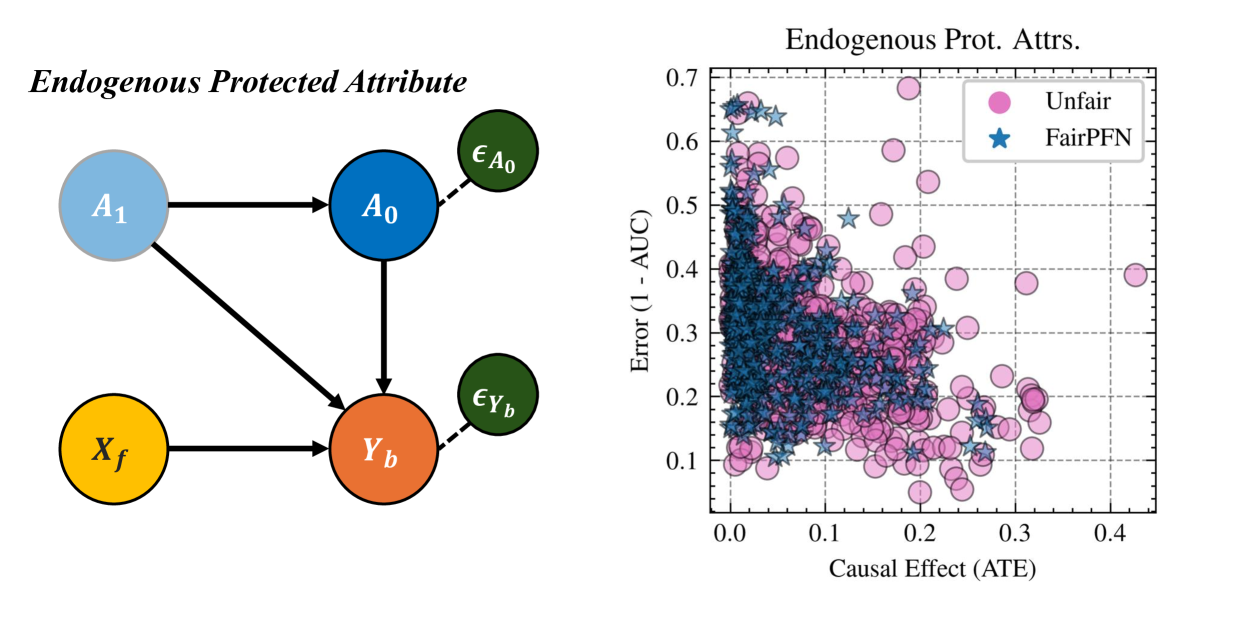

Figure 2: Causal Case Studies: Visualization and data generating processes of synthetic causal case studies, a handcrafted set of benchmarks designed to evaluate FairPFN’s ability to remove various sources of bias in causally generated data. For each group, 100 independent datasets are sampled, varying the number of samples, the standard deviation of noise terms $\sigma$ and the base causal effect $w_{A}$ of the protected attribute.

In the CFP, Unfair, Unaware, and Cntf. Avg. baselines, we employ FairPFN with a random noise term passed as a "protected attribute." We opt to use this UnfairPFN instead of TabPFN so as not to introduce any TabPFN-specific behavioral characteristics or artifacts. We show in Appendix Figure 17 that this reverts FairPFN to a normal tabular classifier with performance competitive with TabPFN. We also note that our Unaware baseline is not the standard approach of dropping the protected attribute. We opt for our own implementation of Unaware as it shows improved causal effect removal compared to the standard approach (Appendix Figure 17).

5.2 Causal Case Studies

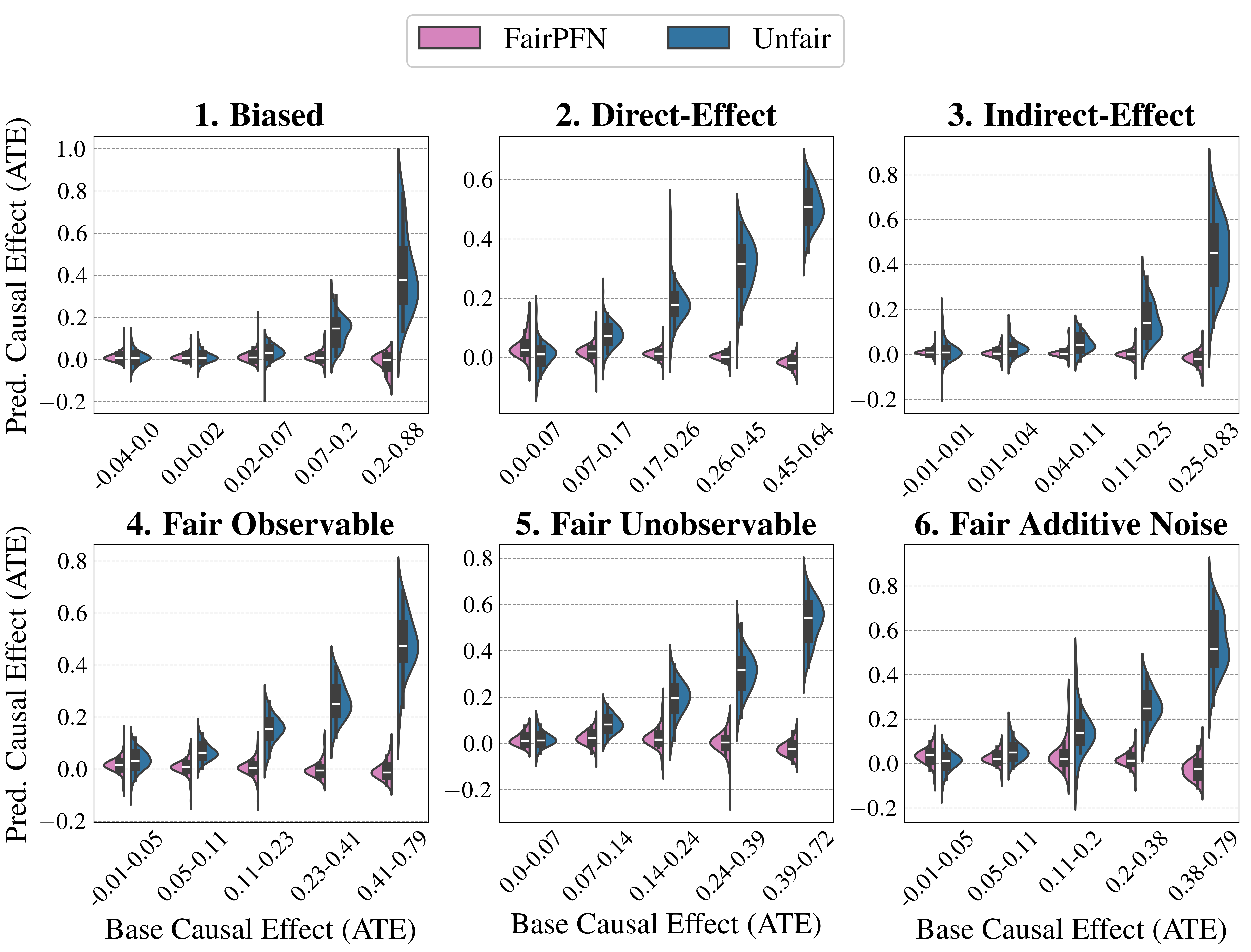

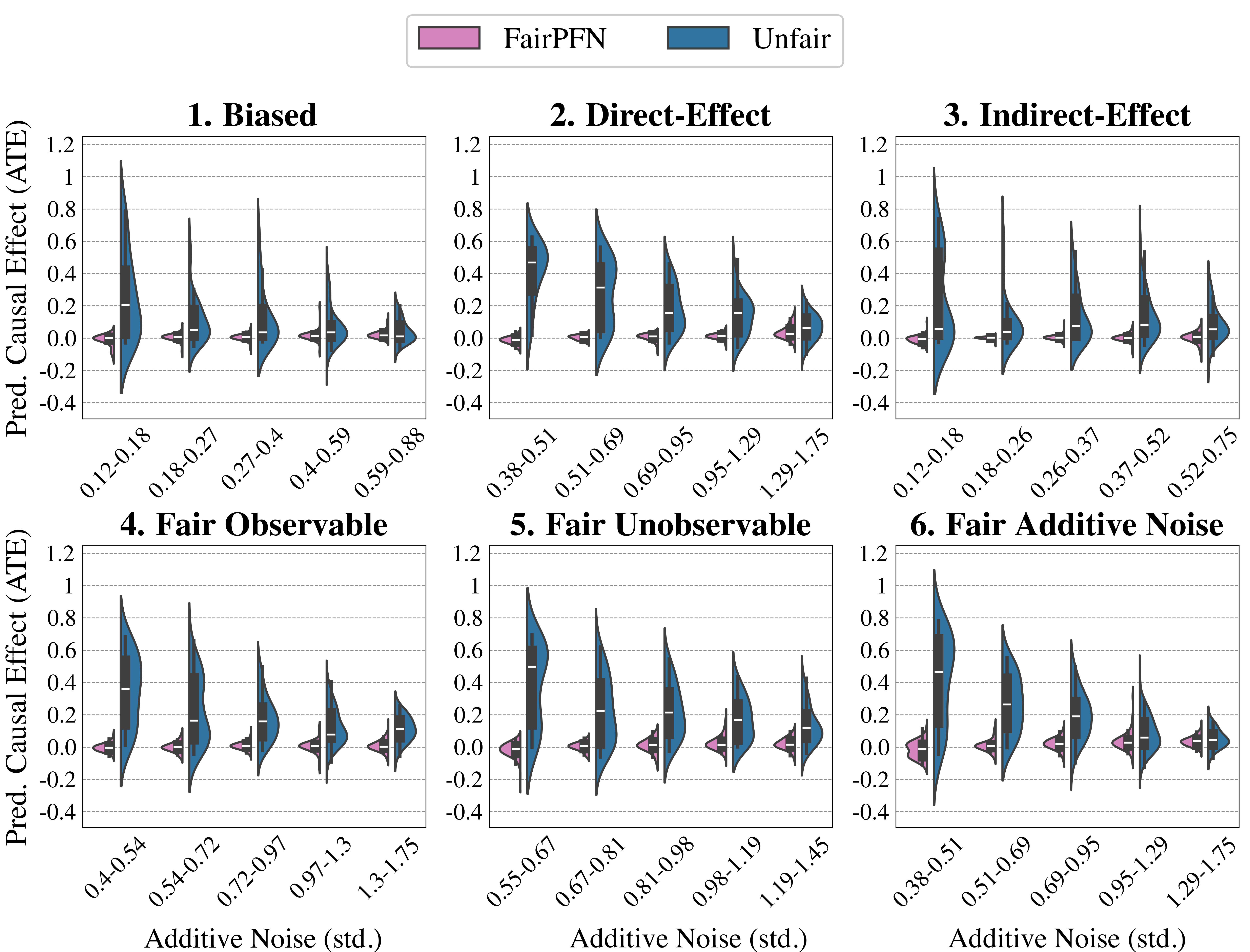

We first evaluate FairPFN on synthetic causal case studies, an experimental setting in which the data-generating processes and all causal quantities are known. The case studies increase in difficulty and test FairPFN’s capacity to remove various sources of bias in causally generated data. The data-generating processes and structural equations are illustrated in Figure 2, following the notation: $A$ for protected attributes, $X_{b}$ for biased observables, $X_{f}$ for fair observables, $U$ for fair unobservables, $\epsilon_{X}$ for additive noise terms, and $Y$ for the outcome, discretized as $Y=\mathbb{1}(Y\geq\bar{Y})$. We term a variable $X$ "fair" iff $A\notin \mathrm{anc}(X)$. The structural equations in Figure 2 contain exponential non-linearities to ensure the direction of causality is identifiable Peters et al. (2014), distinguishing the Fair Unobservable and Fair Additive Noise scenarios, with the former including an unobservable yet identifiable causal effect $U$.
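The fairness criterion $A\notin \mathrm{anc}(X)$ can be checked mechanically once a causal graph is written down. The following is a minimal sketch, assuming the graph is given as a dictionary from each variable to its direct children; the helper names `ancestors` and `is_fair` and the toy graph are illustrative assumptions and do not come from the FairPFN code base.

```python
from collections import deque


def ancestors(graph, node):
    """Return the set of all causal ancestors of `node`.

    `graph` maps each variable name to a list of its direct children.
    """
    # Invert the child lists so that parents can be looked up directly.
    parents = {}
    for parent, children in graph.items():
        for child in children:
            parents.setdefault(child, set()).add(parent)

    # Breadth-first walk up the graph, starting from the direct parents.
    seen = set()
    queue = deque(parents.get(node, ()))
    while queue:
        current = queue.popleft()
        if current in seen:
            continue
        seen.add(current)
        queue.extend(parents.get(current, ()))
    return seen


def is_fair(graph, variable, protected="A"):
    """A variable is 'fair' iff the protected attribute is not among its ancestors."""
    return protected not in ancestors(graph, variable)


# Hypothetical toy graph (not one of the Figure 2 scenarios): A -> X_b -> Y, X_f -> Y
toy_graph = {"A": ["X_b"], "X_b": ["Y"], "X_f": ["Y"]}
```

On this toy graph, `is_fair(toy_graph, "X_f")` holds because no path leads from `A` to `X_f`, whereas `X_b` and `Y` are not fair because both descend from `A`.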

For a robust evaluation, we generate 100 datasets per case study, varying the causal weights of protected attributes $w_{A}$, the sample sizes $m\in(100,10000)$ (sampled on a log-scale), and the standard deviation $\sigma\in(0,1)$ (log-scale) of the additive noise terms. We also create counterfactual versions of each dataset to assess FairPFN and its competitors across multiple causal and counterfactual fairness metrics, such as average treatment effect (ATE) and absolute error (AE) between predictions on observational and counterfactual datasets. We highlight that because our synthetic datasets are created from scratch, the fair causes, additive noise terms, counterfactual datasets, and ATE are ground truth. As a result, our baselines that have access to causal quantities are more precise in our causal case studies than in real-world scenarios where this causal information must be inferred.
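To make the sampling procedure concrete, the sketch below draws one observational dataset together with its counterfactual twin. It is illustrative only: the structural equations in `structural`, the lower bound of `1e-3` for $\sigma$, the range of $w_{A}$, and the binary encoding of $A$ are assumptions chosen for the example and are not the exact equations of Figure 2. The counterfactual rows reuse the same noise draws and only flip $A$, which is what makes the counterfactual a ground-truth quantity.

```python
import math
import random


def log_uniform(rng, low, high):
    """Sample log-uniformly between two positive bounds."""
    return math.exp(rng.uniform(math.log(low), math.log(high)))


def structural(a, x_f, eps_x, eps_y, w_a):
    """Assumed toy structural equations (not the paper's exact ones)."""
    x_b = w_a * a + eps_x                  # biased observable, caused by A
    y_cont = x_b ** 2 + x_f ** 2 + eps_y   # continuous outcome before thresholding
    return x_b, y_cont


def generate_pair(seed):
    """Return (observational, counterfactual) datasets as lists of dict rows."""
    rng = random.Random(seed)
    m = round(log_uniform(rng, 100, 10_000))   # sample size on a log scale
    sigma = log_uniform(rng, 1e-3, 1.0)        # noise std; lower bound is an assumption
    w_a = rng.uniform(0.0, 1.0)                # causal weight of A; range is an assumption

    obs, cf = [], []
    for _ in range(m):
        a = rng.randint(0, 1)
        x_f = rng.gauss(0.0, 1.0)
        eps_x = rng.gauss(0.0, sigma)
        eps_y = rng.gauss(0.0, sigma)
        # Same exogenous noise, opposite value of the protected attribute.
        x_b, y = structural(a, x_f, eps_x, eps_y, w_a)
        x_b_cf, y_cf = structural(1 - a, x_f, eps_x, eps_y, w_a)
        obs.append({"A": a, "X_b": x_b, "X_f": x_f, "Y": y})
        cf.append({"A": 1 - a, "X_b": x_b_cf, "X_f": x_f, "Y": y_cf})

    # Discretize with the observational mean as threshold, for both datasets.
    threshold = sum(row["Y"] for row in obs) / m
    for row in obs + cf:
        row["Y"] = int(row["Y"] >= threshold)
    return obs, cf
```

Calling `generate_pair(seed)` for 100 different seeds would give one benchmark group under these assumptions. Fair variables such as `X_f` are identical in both datasets by construction.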

<details>

<summary>extracted/6522797/figures/trade-off_by_group_synthetic.png Details</summary>

### Visual Description

## Charts: Performance Comparison of Fairness Interventions

### Overview

The image presents six individual scatter plots, each representing the performance of different fairness interventions under varying causal effect (ATE) levels. The y-axis represents "Error (1-AUC)", and the x-axis represents "Causal Effect (ATE)". Each plot focuses on a specific fairness setting: Biased, Direct-Effect, Indirect-Effect, Fair Observable, Fair Unobservable, and Fair Additive Noise. Multiple data series are plotted on each chart, representing different fairness algorithms. Error bars are present on some data points.

### Components/Axes

* **X-axis Label (all charts):** "Causal Effect (ATE)" - Scale ranges from 0.00 to 0.25, with markers at 0.05, 0.10, 0.15, 0.20.

* **Y-axis Label (all charts):** "Error (1-AUC)" - Scale ranges from 0.20 to 0.50, with markers at 0.20, 0.30, 0.40, 0.50.

* **Chart Titles:** 1. Biased, 2. Direct-Effect, 3. Indirect-Effect, 4. Fair Observable, 5. Fair Unobservable, 6. Fair Additive Noise.

* **Legend (bottom-center):**

* Blue Circle: Unfair

* Red Downward Triangle: Unaware

* Green Upward Triangle: Constant

* Blue Square: EGR

* Yellow Diamond: CFP

* Brown Downward Triangle: Random

* Star: FairPFN

* Light Green Diamond: Cnt. Avg.

### Detailed Analysis

Each chart will be analyzed individually, noting trends and approximate data points.

**1. Biased:**

* **Unfair (Blue Circle):** Starts at approximately (0.00, 0.35) and increases to approximately (0.25, 0.35). Relatively flat trend.

* **Unaware (Red Downward Triangle):** Starts at approximately (0.00, 0.45) and decreases to approximately (0.25, 0.30). Downward sloping trend.

* **Constant (Green Upward Triangle):** Starts at approximately (0.00, 0.40) and decreases to approximately (0.25, 0.35). Downward sloping trend.

* **FairPFN (Star):** Starts at approximately (0.00, 0.45) and decreases to approximately (0.25, 0.40). Downward sloping trend.

**2. Direct-Effect:**

* **Unfair (Blue Circle):** Starts at approximately (0.00, 0.25) and increases to approximately (0.25, 0.35). Upward sloping trend.

* **Unaware (Red Downward Triangle):** Starts at approximately (0.00, 0.40) and decreases to approximately (0.25, 0.30). Downward sloping trend.

* **Constant (Green Upward Triangle):** Starts at approximately (0.00, 0.40) and decreases to approximately (0.25, 0.35). Downward sloping trend.

* **FairPFN (Star):** Starts at approximately (0.00, 0.40) and decreases to approximately (0.25, 0.35). Downward sloping trend.

**3. Indirect-Effect:**

* **Unfair (Blue Circle):** Starts at approximately (0.00, 0.35) and increases to approximately (0.25, 0.35). Relatively flat trend.

* **Unaware (Red Downward Triangle):** Starts at approximately (0.00, 0.40) and decreases to approximately (0.25, 0.30). Downward sloping trend.

* **Constant (Green Upward Triangle):** Starts at approximately (0.00, 0.40) and decreases to approximately (0.25, 0.35). Downward sloping trend.

* **FairPFN (Star):** Starts at approximately (0.00, 0.40) and decreases to approximately (0.25, 0.35). Downward sloping trend.

**4. Fair Observable:**

* **Unfair (Blue Circle):** Starts at approximately (0.00, 0.35) and increases to approximately (0.25, 0.40). Upward sloping trend.

* **Unaware (Red Downward Triangle):** Starts at approximately (0.00, 0.40) and decreases to approximately (0.25, 0.30). Downward sloping trend.

* **Constant (Green Upward Triangle):** Starts at approximately (0.00, 0.35) and decreases to approximately (0.25, 0.30). Downward sloping trend.

* **FairPFN (Star):** Starts at approximately (0.00, 0.30) and increases to approximately (0.25, 0.35). Upward sloping trend.

**5. Fair Unobservable:**

* **Unfair (Blue Circle):** Starts at approximately (0.00, 0.30) and increases to approximately (0.25, 0.40). Upward sloping trend.

* **Unaware (Red Downward Triangle):** Starts at approximately (0.00, 0.40) and decreases to approximately (0.25, 0.30). Downward sloping trend.

* **Constant (Green Upward Triangle):** Starts at approximately (0.00, 0.40) and decreases to approximately (0.25, 0.35). Downward sloping trend.

* **FairPFN (Star):** Starts at approximately (0.00, 0.30) and increases to approximately (0.25, 0.30). Relatively flat trend.

**6. Fair Additive Noise:**

* **Unfair (Blue Circle):** Starts at approximately (0.00, 0.30) and increases to approximately (0.25, 0.40). Upward sloping trend.

* **Unaware (Red Downward Triangle):** Starts at approximately (0.00, 0.40) and decreases to approximately (0.25, 0.30). Downward sloping trend.

* **Constant (Green Upward Triangle):** Starts at approximately (0.00, 0.35) and decreases to approximately (0.25, 0.30). Downward sloping trend.

* **FairPFN (Star):** Starts at approximately (0.00, 0.30) and increases to approximately (0.25, 0.35). Upward sloping trend.

### Key Observations

* The "Unaware" algorithm consistently shows a decreasing error rate as the causal effect (ATE) increases across all fairness settings.

* The "Unfair" algorithm generally exhibits an increasing error rate with increasing causal effect, particularly in the "Fair Observable", "Fair Unobservable", and "Fair Additive Noise" settings.

* FairPFN generally performs better than Unfair and Unaware in the "Fair" settings.

* Error bars are present, indicating some variance in the results.

### Interpretation

The charts demonstrate the performance of various fairness interventions under different causal effect scenarios. The "Unaware" algorithm, while reducing error with increasing causal effect, likely does so by ignoring the fairness constraints. The "Unfair" algorithm's increasing error suggests that fairness interventions become more challenging as the causal effect grows. FairPFN appears to be a promising approach, maintaining relatively low error rates across different fairness settings. The differences in performance across the fairness settings highlight the importance of choosing an intervention appropriate for the specific causal structure of the data. The error bars suggest that the results are not deterministic and that further investigation is needed to understand the robustness of these interventions. The consistent downward trend of the "Unaware" algorithm suggests a trade-off between fairness and accuracy, as it achieves lower error by not explicitly addressing fairness concerns.

</details>

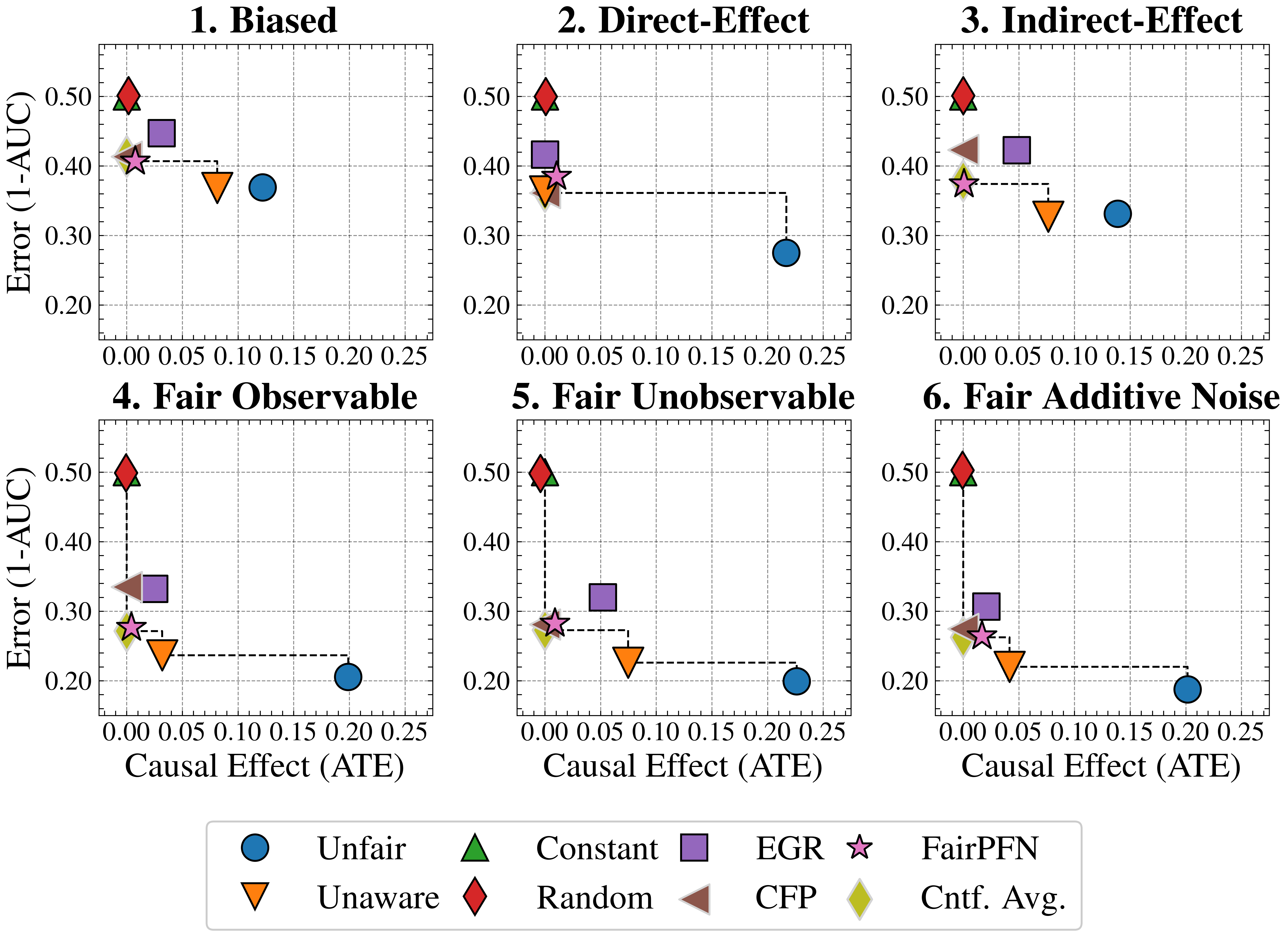

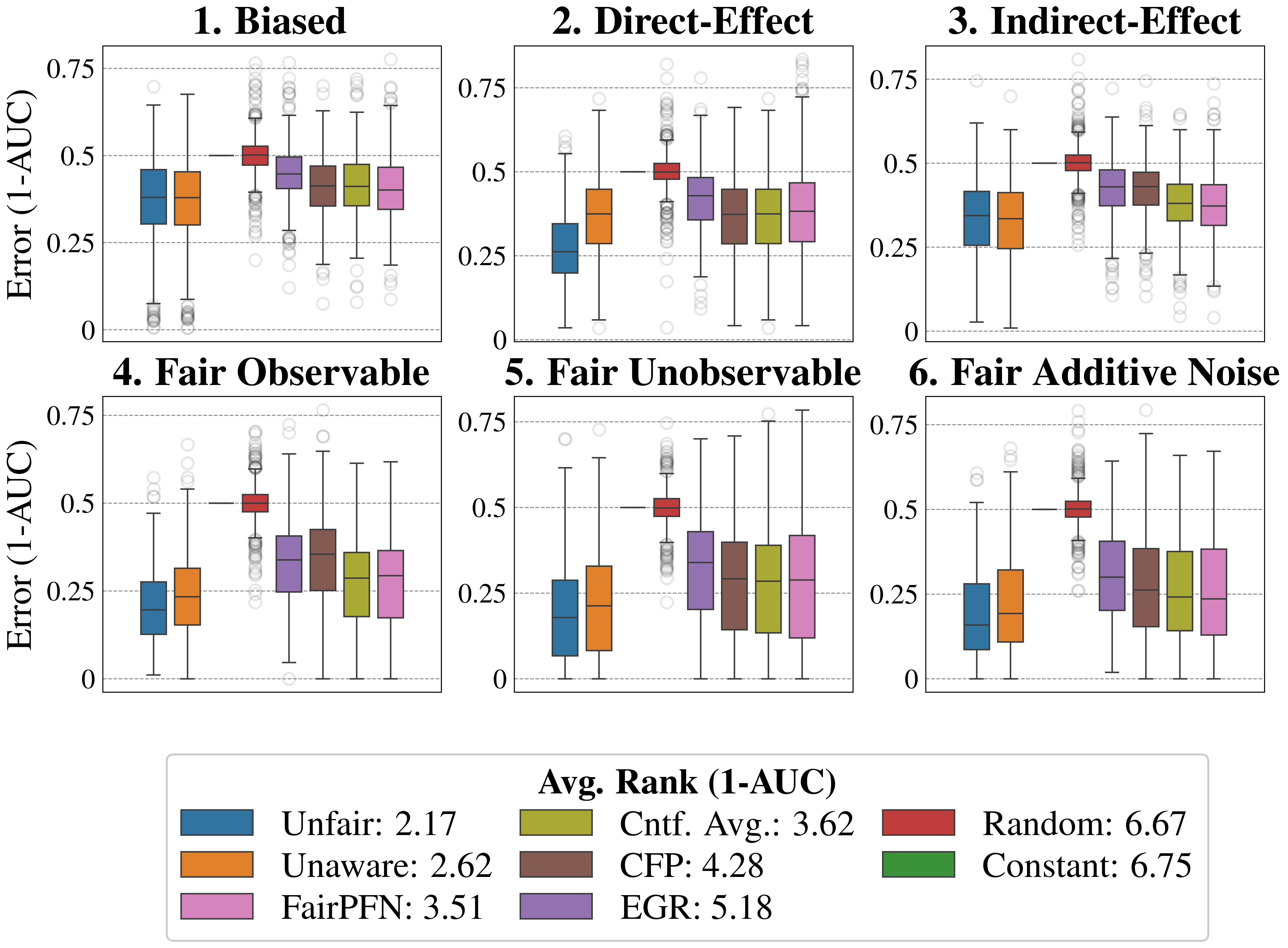

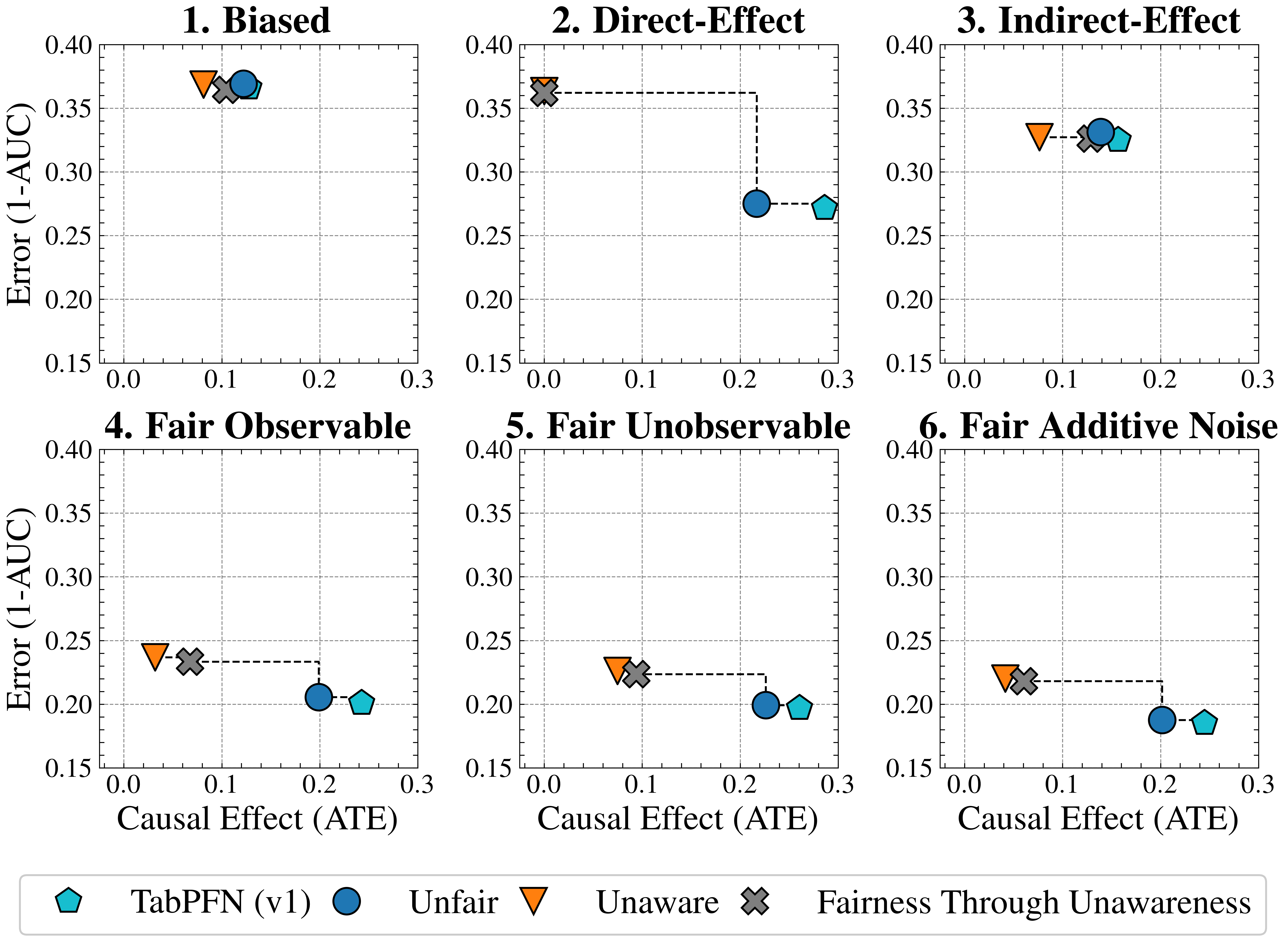

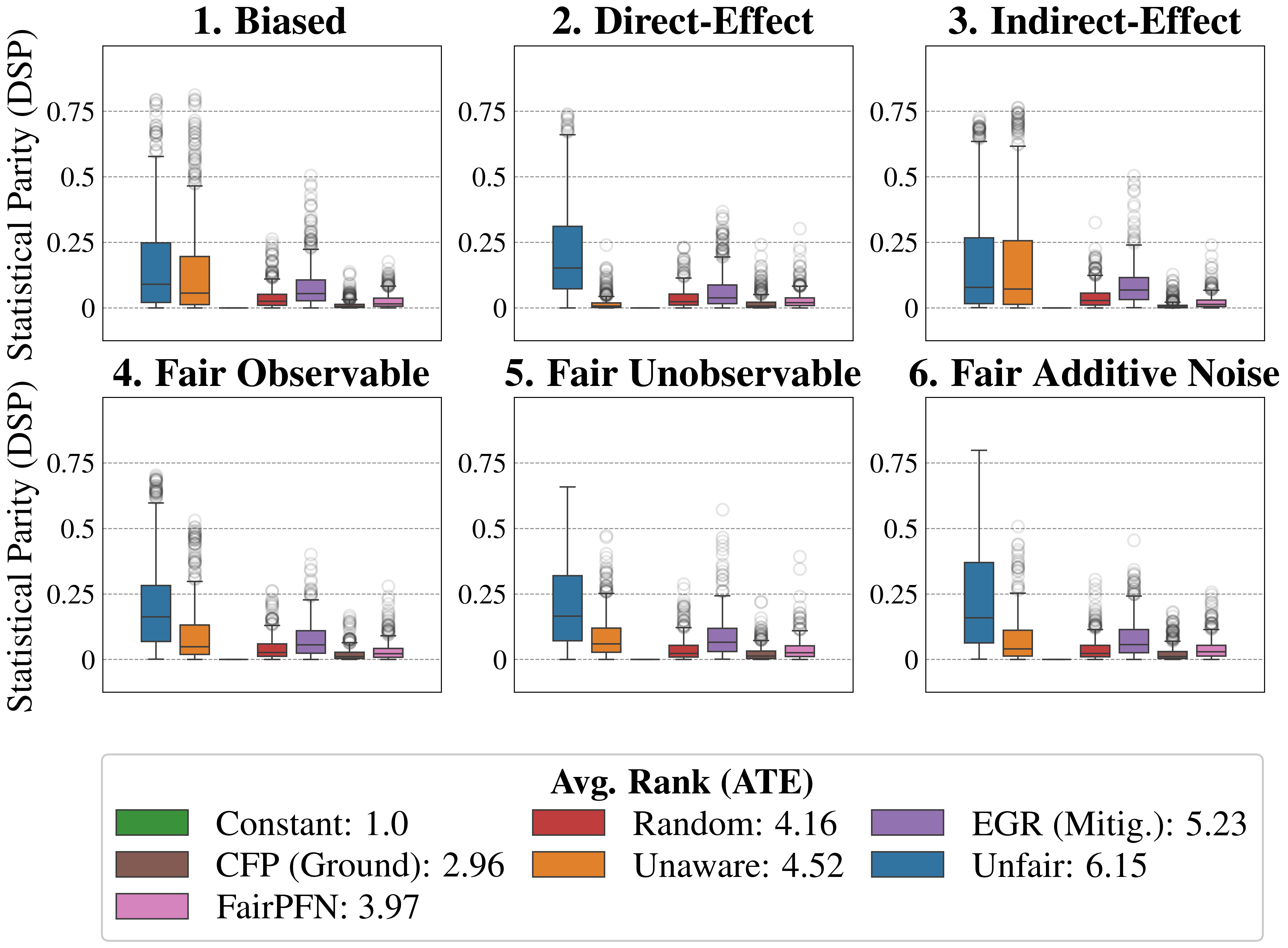

Figure 3: Fairness Accuracy Trade-Off (Synthetic): Average Treatment Effect (ATE) of predictions, predictive error (1-AUC), and Pareto Front performance of FairPFN versus baselines in our causal case studies. Baselines which have access to causal information are indicated by a light border. FairPFN is on the Pareto Front on 40% of synthetic datasets using only observational data, demonstrating competitive performance with the CFP and Cntf. Avg. baselines that utilize causal quantities from the true data-generating process.

Fairness-Accuracy Trade-Off

Figure 3 presents the fairness-accuracy trade-off for FairPFN and its baselines, displaying the mean absolute treatment effect (ATE) and mean predictive error (1-AUC) observed across synthetic datasets, along with the Pareto Front of non-dominated solutions. FairPFN (which only uses observational data) attains Pareto-optimal performance in 40% of the 600 synthetic datasets, exhibiting a fairness-accuracy trade-off competitive with CFP and Cntf. Avg., which use causal quantities from the true data-generating process. This is even the case in the Fair Unobservable and Fair Additive Noise benchmark groups, where FairPFN produces causally fair predictions using only observational variables that are either a protected attribute or a causal ancestor of it. This indicates FairPFN’s capacity to infer latent unobservables, which we further investigate in Section 5.3. We also highlight that the Cntf. Avg. baseline achieves lower error than CFP. We believe that this is due to Cntf. Avg. having access to both the observational and counterfactual datasets, which implicitly contain causal weights and non-linearities, while CFP is given only fair unobservables and must infer this causal information. The fact that a PFN is used as a base model in Cntf. Avg. could further explain this performance gain, as access to more observable variables helps guide the PFN toward accurate predictions realistic for the data. We suggest that Cntf. Avg. be explored as an alternative in future studies.
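The notion of a Pareto Front of non-dominated solutions can be sketched in a few lines. The example below assumes each method is summarized by a pair (absolute ATE, error), with lower being better on both axes; the function name `pareto_front` and the numbers in `scores` are made up for illustration and are not results from the paper.

```python
def pareto_front(points):
    """Return the names of all non-dominated methods.

    `points` maps a method name to a tuple (abs_ate, error); lower is better
    for both. A method is dominated if another method is at least as good on
    both objectives and strictly better on at least one.
    """
    front = []
    for name, (ate, err) in points.items():
        dominated = any(
            o_ate <= ate and o_err <= err and (o_ate < ate or o_err < err)
            for other, (o_ate, o_err) in points.items()
            if other != name
        )
        if not dominated:
            front.append(name)
    return front


# Made-up scores for a single hypothetical dataset.
scores = {
    "Unfair": (0.20, 0.30),    # most accurate, least fair
    "Constant": (0.00, 0.50),  # perfectly fair, uninformative
    "MethodA": (0.02, 0.35),
    "MethodB": (0.10, 0.40),   # worse than MethodA on both axes
}
```

Here `pareto_front(scores)` keeps `Unfair`, `Constant`, and `MethodA`, and drops `MethodB`. Counting how often a method appears on the front across datasets gives a percentage of the kind reported above.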

<details>

<summary>extracted/6522797/figures/tce_by_group_synthetic_new.png Details</summary>

### Visual Description

## Box Plots: Causal Effect Analysis under Different Fairness Constraints

### Overview

The image presents six box plots, each representing the distribution of causal effects (ATE - Average Treatment Effect) under different fairness scenarios. Each plot compares four different algorithms: FairPFN, EGR, Unaware, and Unfair. The x-axis represents the average rank of each algorithm, and the y-axis represents the causal effect. A horizontal gray dashed line at y=0 serves as a reference point.

### Components/Axes

* **Y-axis:** "Causal Effect (ATE)" ranging from -0.5 to 0.75.

* **X-axis:** "Avg. Rank (ATE)" with values 1, 2, 3, and 4.

* **Titles:** Each subplot is titled with a fairness scenario: "1. Biased", "2. Direct-Effect", "3. Indirect-Effect", "4. Fair Observable", "5. Fair Unobservable", "6. Fair Additive Noise".

* **Legend:** Located at the bottom center of the image.

* FairPFN: Purple, labeled "1.88/4"

* EGR: Green, labeled "2.11/4"

* Unaware: Orange, labeled "2.16/4"

* Unfair: Blue, labeled "3.42/4"

### Detailed Analysis

Each subplot displays box plots for the four algorithms. The box plots show the median, quartiles, and outliers of the causal effect distribution.

**1. Biased:**

* FairPFN (Purple): Median around 0.1, IQR from approximately 0 to 0.25. Several outliers above 0.5.

* EGR (Green): Median around 0.2, IQR from approximately 0 to 0.3.

* Unaware (Orange): Median around 0, IQR from approximately -0.1 to 0.15.

* Unfair (Blue): Median around 0.25, IQR from approximately 0.1 to 0.4.

**2. Direct-Effect:**

* FairPFN (Purple): Median around 0.25, IQR from approximately 0.1 to 0.4.

* EGR (Green): Median around 0.25, IQR from approximately 0.1 to 0.4.

* Unaware (Orange): Median around 0, IQR from approximately -0.1 to 0.1.

* Unfair (Blue): Median around 0.25, IQR from approximately 0.1 to 0.4.

**3. Indirect-Effect:**

* FairPFN (Purple): Median around 0.2, IQR from approximately 0 to 0.3.

* EGR (Green): Median around 0.2, IQR from approximately 0 to 0.3.

* Unaware (Orange): Median around 0, IQR from approximately -0.1 to 0.1.

* Unfair (Blue): Median around 0.2, IQR from approximately 0 to 0.3.

**4. Fair Observable:**

* FairPFN (Purple): Median around 0.25, IQR from approximately 0.1 to 0.4.

* EGR (Green): Median around 0.2, IQR from approximately 0 to 0.3.

* Unaware (Orange): Median around 0, IQR from approximately -0.1 to 0.1.

* Unfair (Blue): Median around 0.2, IQR from approximately 0 to 0.3.

**5. Fair Unobservable:**

* FairPFN (Purple): Median around 0.2, IQR from approximately 0 to 0.3.

* EGR (Green): Median around 0.2, IQR from approximately 0 to 0.3.

* Unaware (Orange): Median around 0, IQR from approximately -0.1 to 0.1.

* Unfair (Blue): Median around 0.2, IQR from approximately 0 to 0.3.

**6. Fair Additive Noise:**

* FairPFN (Purple): Median around 0.2, IQR from approximately 0 to 0.3.

* EGR (Green): Median around 0.2, IQR from approximately 0 to 0.3.

* Unaware (Orange): Median around 0, IQR from approximately -0.1 to 0.1.

* Unfair (Blue): Median around 0.2, IQR from approximately 0 to 0.3.

### Key Observations

* The "Unfair" algorithm consistently exhibits a higher median causal effect than the other algorithms across most scenarios.

* The "Unaware" algorithm generally has a median causal effect close to zero.

* FairPFN and EGR show similar distributions in most scenarios.

* The average rank values in the legend indicate that FairPFN performs best (lowest rank) on average, followed by EGR and Unaware, with Unfair performing worst.

* Outliers are present in several box plots, particularly for FairPFN, suggesting variability in the causal effect.

### Interpretation

The data suggests that the "Unfair" algorithm consistently produces a higher causal effect, potentially indicating a bias in its predictions. The "Unaware" algorithm, which does not consider fairness constraints, tends to have a neutral causal effect. FairPFN and EGR, designed with fairness in mind, achieve comparable performance and generally exhibit lower causal effects than the "Unfair" algorithm. The average rank values confirm that FairPFN is the best-performing algorithm overall, followed by EGR. The presence of outliers suggests that the causal effect can vary significantly depending on the specific data instance. The different fairness scenarios (Biased, Direct-Effect, etc.) highlight the importance of considering different types of fairness constraints when evaluating and comparing algorithms. The consistent performance of FairPFN and EGR across these scenarios suggests their robustness to different fairness challenges.

</details>

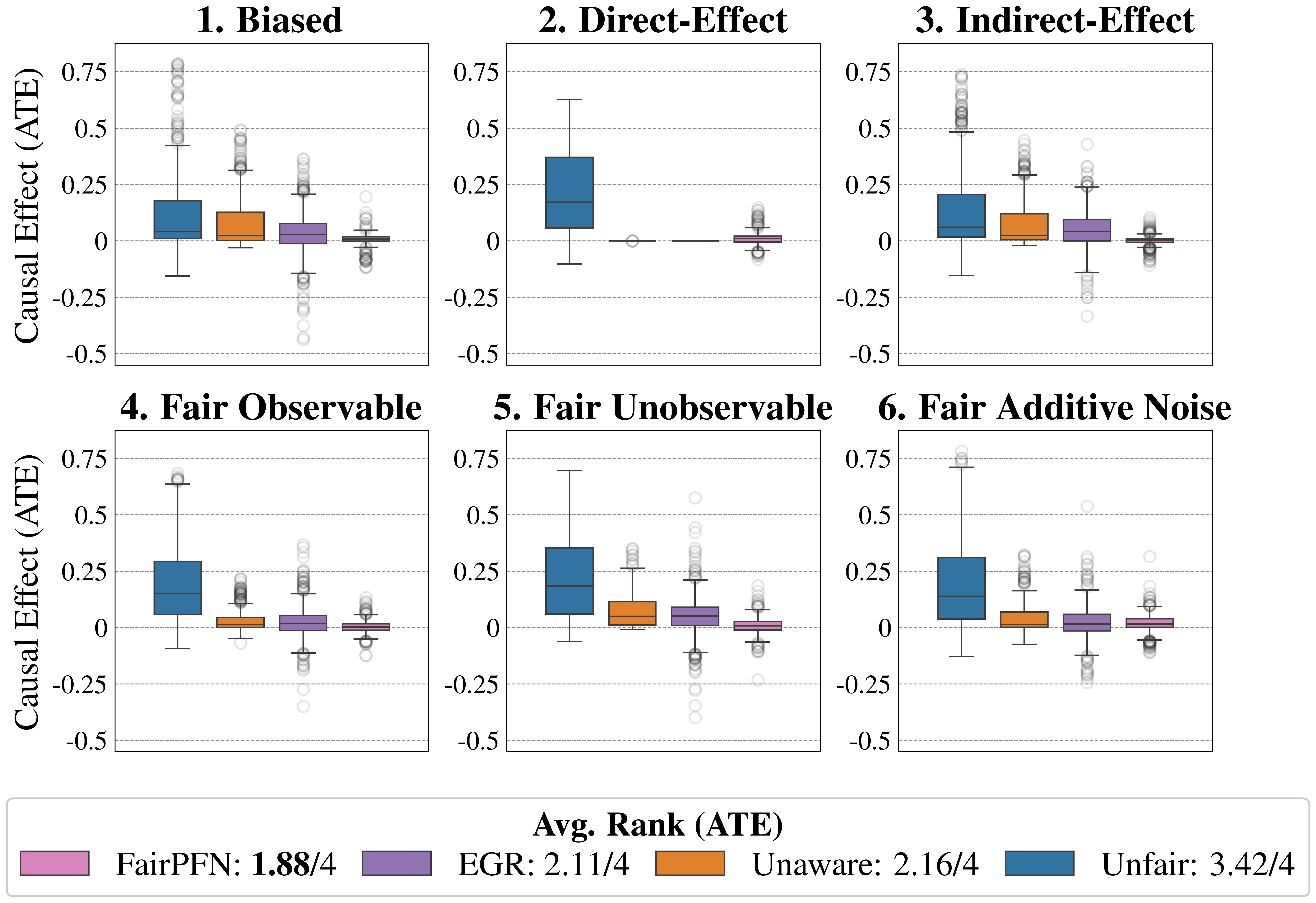

Figure 4: Causal Fairness (Synthetic): Average Treatment Effect (ATE) of predictions of FairPFN compared to baselines which do not have access to causal information. FairPFN consistently removes the causal effect with a margin of error of (-0.2, 0.2) and achieves an average rank of 1.88 out of 4. It is outperformed only on the Direct-Effect benchmark, where Unaware is the optimal strategy.

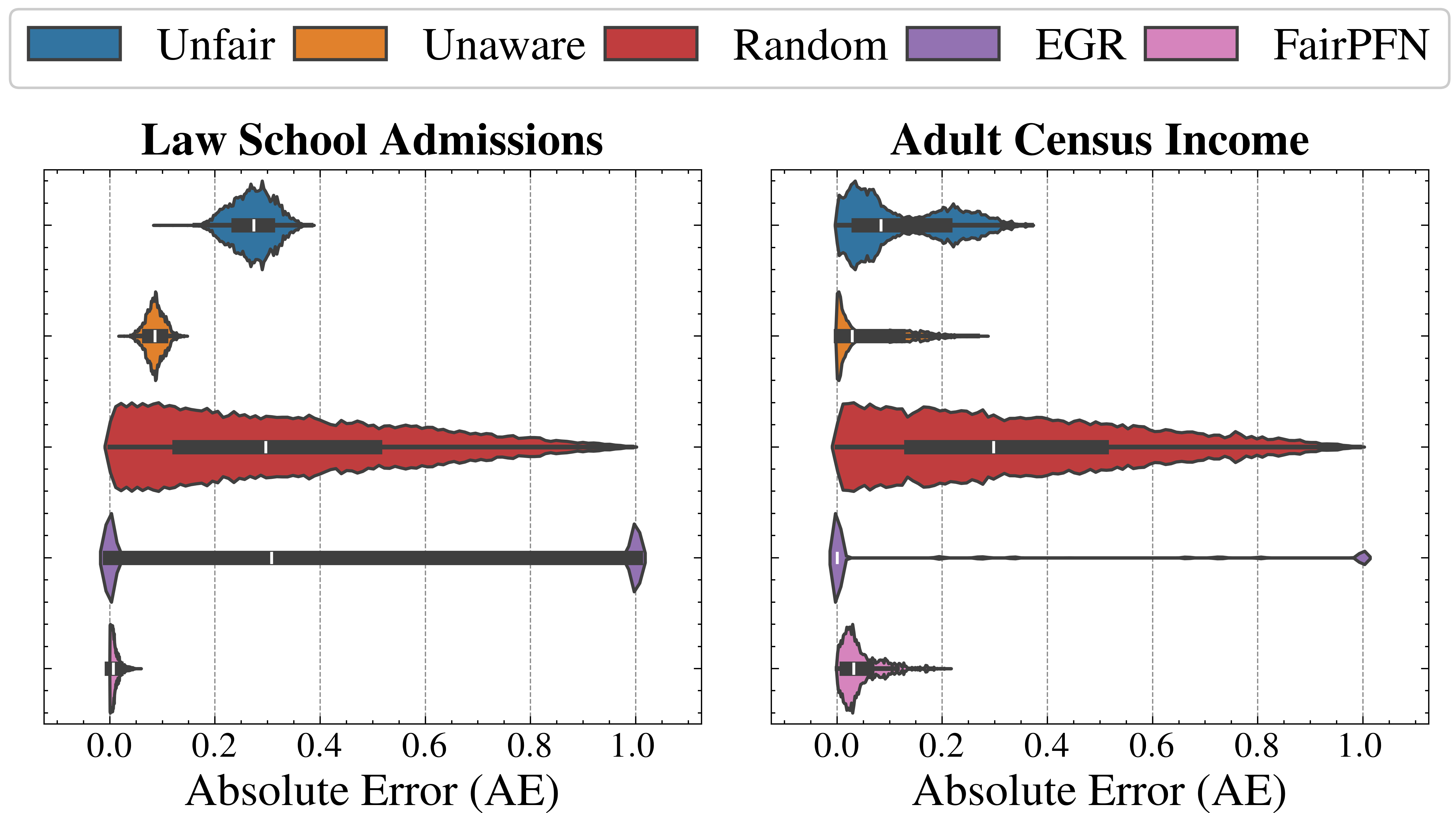

Causal Effect Removal

We evaluate FairPFN’s efficacy in causal effect removal by analyzing box plots depicting the median, interquartile range (IQR), and average treatment effect (ATE) of predictions, compared to baseline predictive models that also do not access causal information (Figure 4). We observe that FairPFN exhibits a smaller IQR than the state-of-the-art bias mitigation method EGR. In an average rank test across 600 synthetic datasets, FairPFN achieves an average rank of 1.88 out of 4. We provide a comparison of FairPFN against all baselines in Figure 24. We note that our case studies crucially fit our prior assumptions about the causal representation of protected attributes. We show in Appendix Figure 13 that FairPFN reverts to a normal classifier when, for example, the exogeneity assumption is violated.
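As a rough illustration of the two quantities used here, the sketch below computes a prediction ATE from paired predictions under $A=1$ and $A=0$, and an average rank across datasets. It is one plausible reading under stated assumptions: the function names are hypothetical, methods are ranked by absolute ATE with rank 1 as the smallest, and ties are broken by insertion order rather than averaged.

```python
from statistics import mean


def prediction_ate(preds_a1, preds_a0):
    """Mean difference between predictions with A set to 1 and A set to 0."""
    return mean([p1 - p0 for p1, p0 in zip(preds_a1, preds_a0)])


def average_ranks(abs_ate_per_dataset):
    """Average rank of each method over datasets.

    `abs_ate_per_dataset` is a list of dicts mapping method name to |ATE| on
    one dataset. Rank 1 is the smallest |ATE|; ties follow insertion order.
    """
    ranks = {}
    for scores in abs_ate_per_dataset:
        ordered = sorted(scores, key=scores.get)
        for rank, method in enumerate(ordered, start=1):
            ranks.setdefault(method, []).append(rank)
    return {method: mean(values) for method, values in ranks.items()}
```

For example, a method that ranks first on two datasets and second on a third receives an average rank of 4/3.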

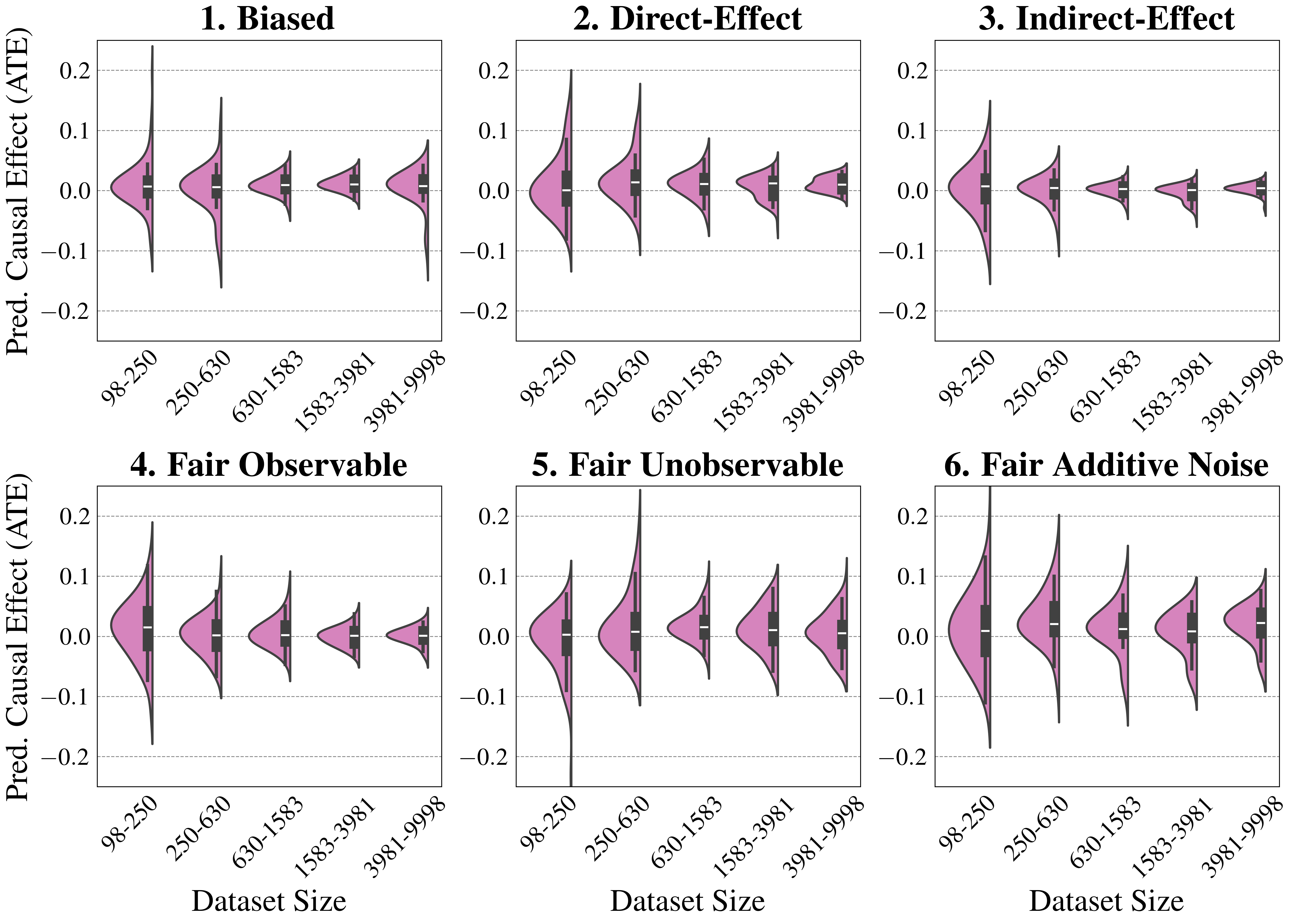

Ablation Study

We finally conduct an ablation study to evaluate FairPFN’s performance in causal effect removal across synthetic datasets with varying size, noise levels, and base rates of causal effect. Results indicate that FairPFN maintains consistent performance across different noise levels and base rates, improving in causal effect removal as dataset size increases and causal effects become easier to distinguish from spurious correlations Dai et al. (1997). We note that the variance of FairPFN, illustrated by box-plot outliers in Figure 4 that extend to 0.2 and -0.2, primarily arises from small datasets with fewer than 250 samples (Appendix Figure 11), which limit FairPFN’s ability to identify causal mechanisms. We also show in Appendix Figure 14 that FairPFN’s fairness behavior remains consistent as graph complexity increases, though accuracy drops due to the combinatorially increasing problem complexity.

For a more in-depth analysis of these results, we refer to Appendix B.

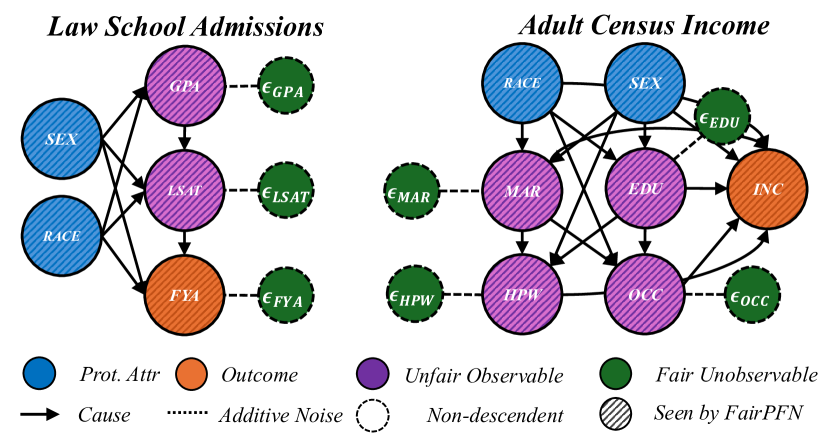

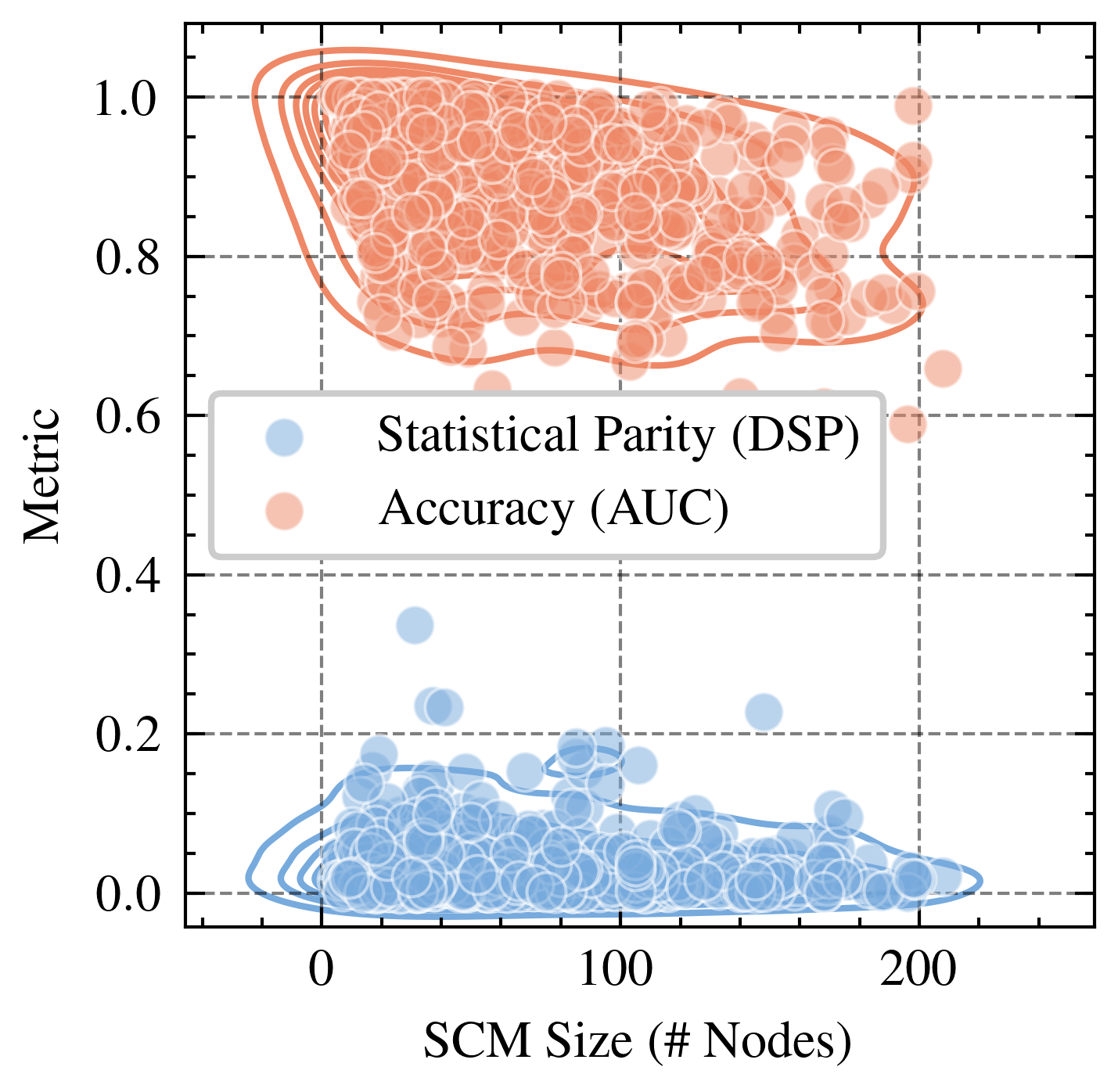

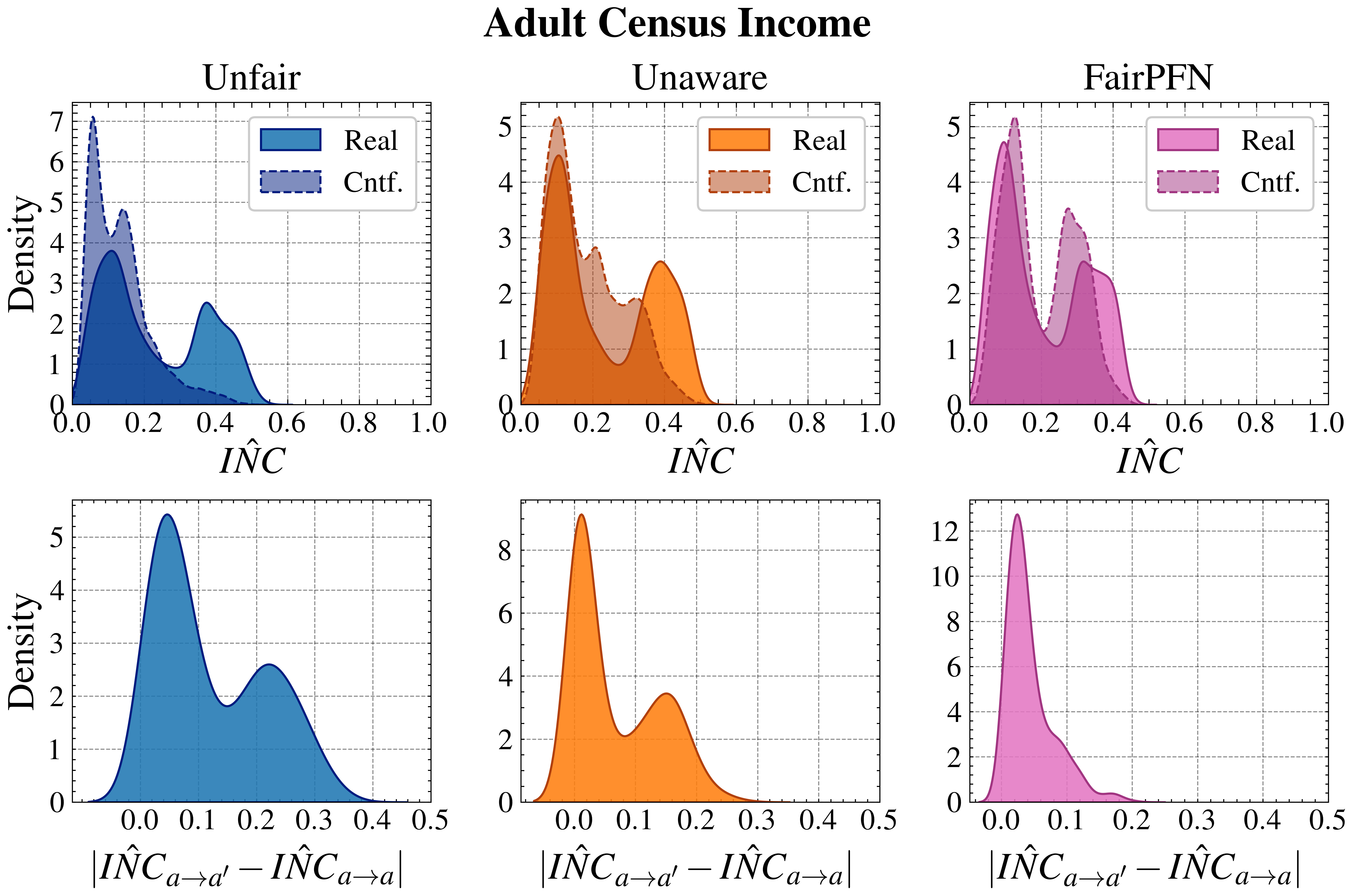

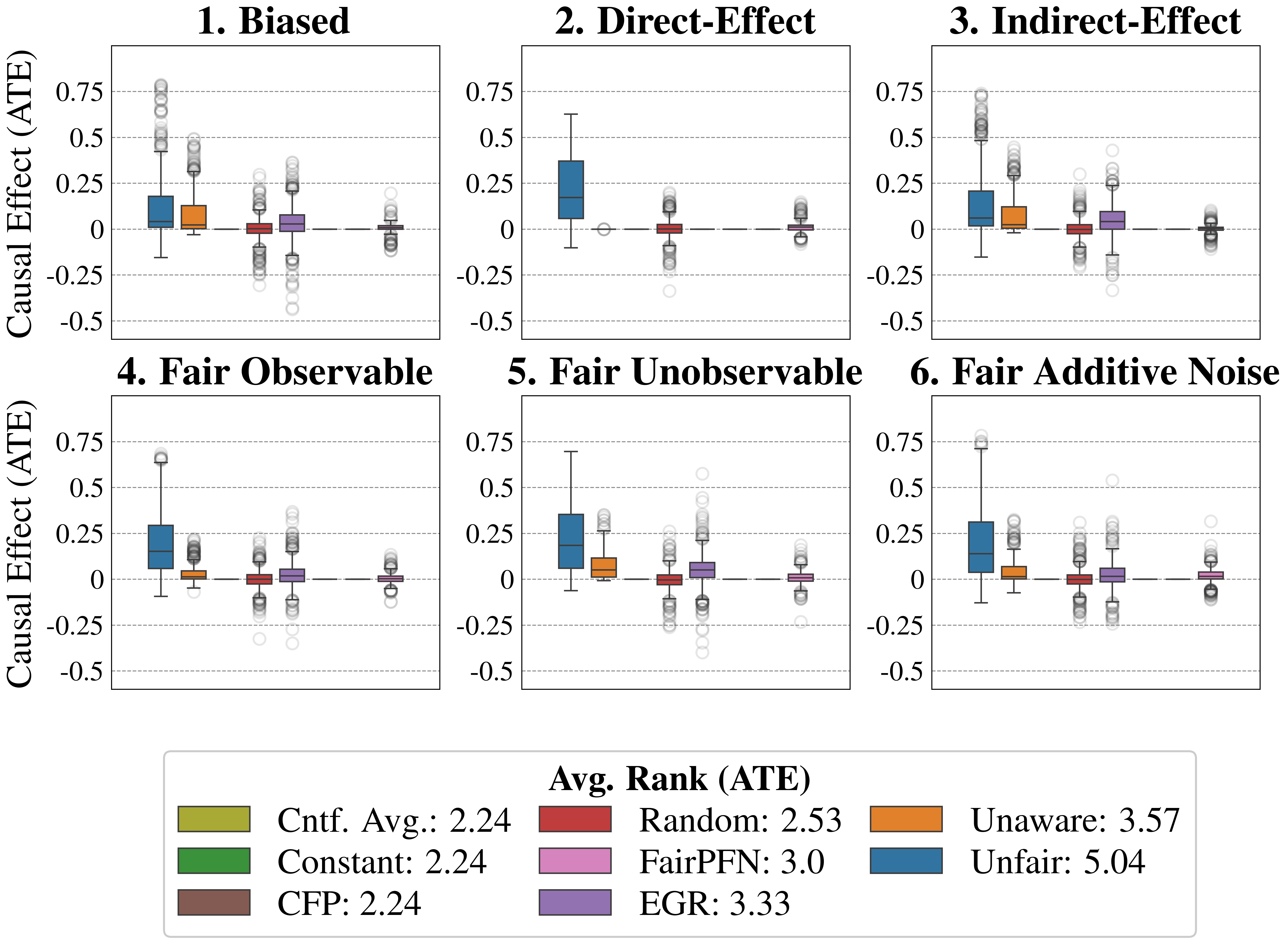

5.3 Real-World Data

This section evaluates FairPFN’s causal effect removal, predictive error, and correlation with fair latent variables on two real-world datasets with established causal graphs (Figure 5). For a description of our real-world datasets and the methods we use to obtain causal models, see Appendix A.

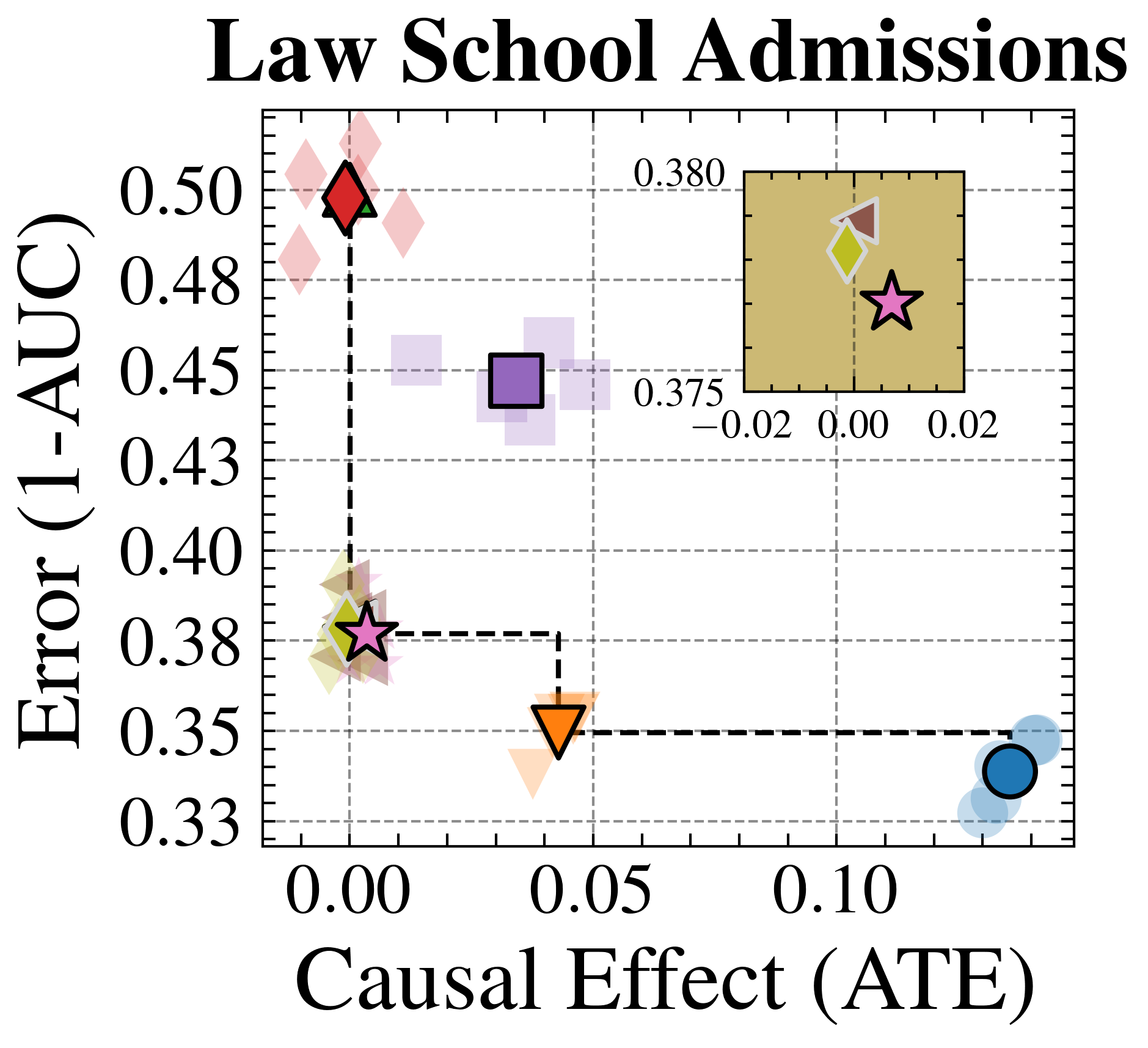

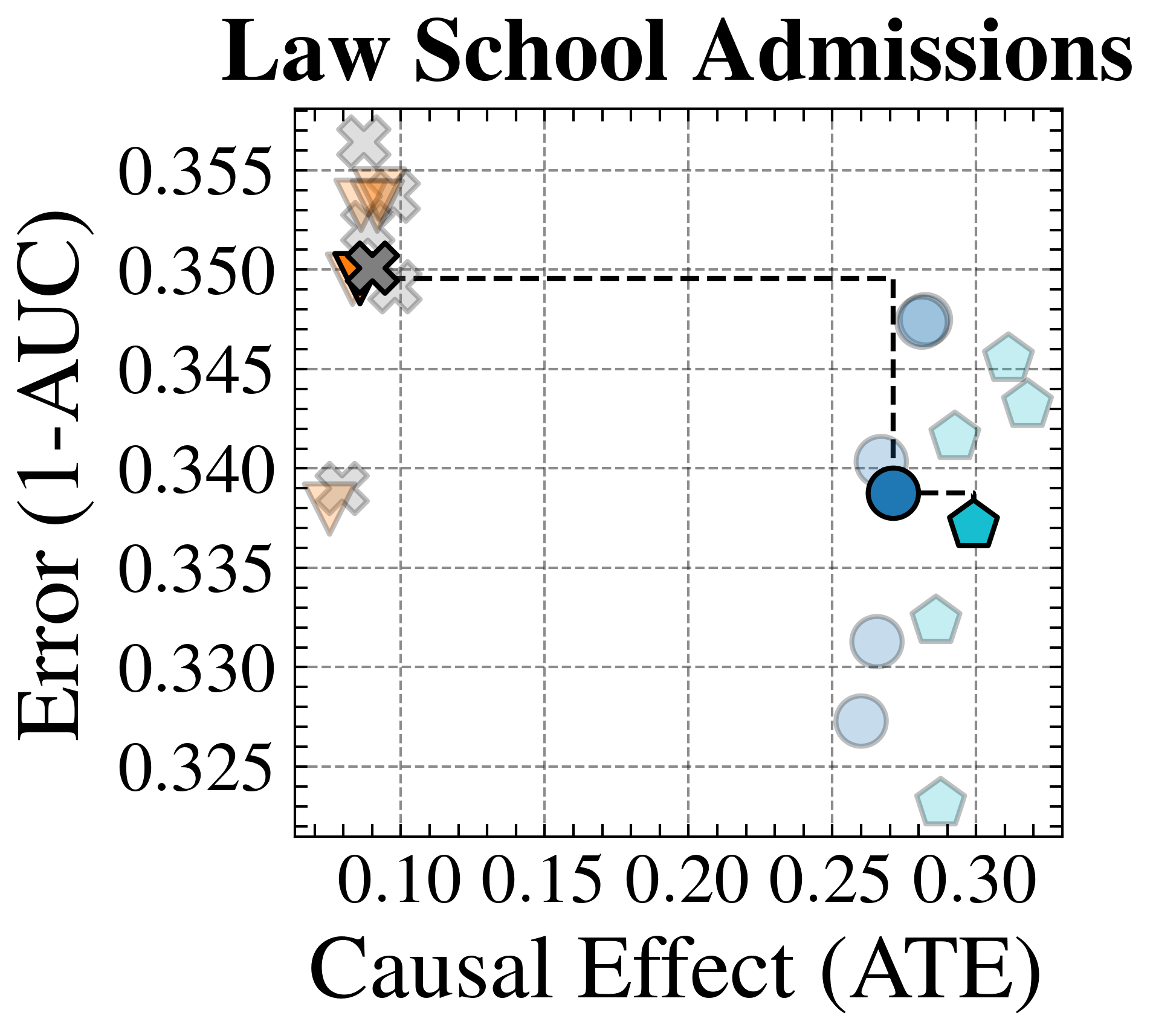

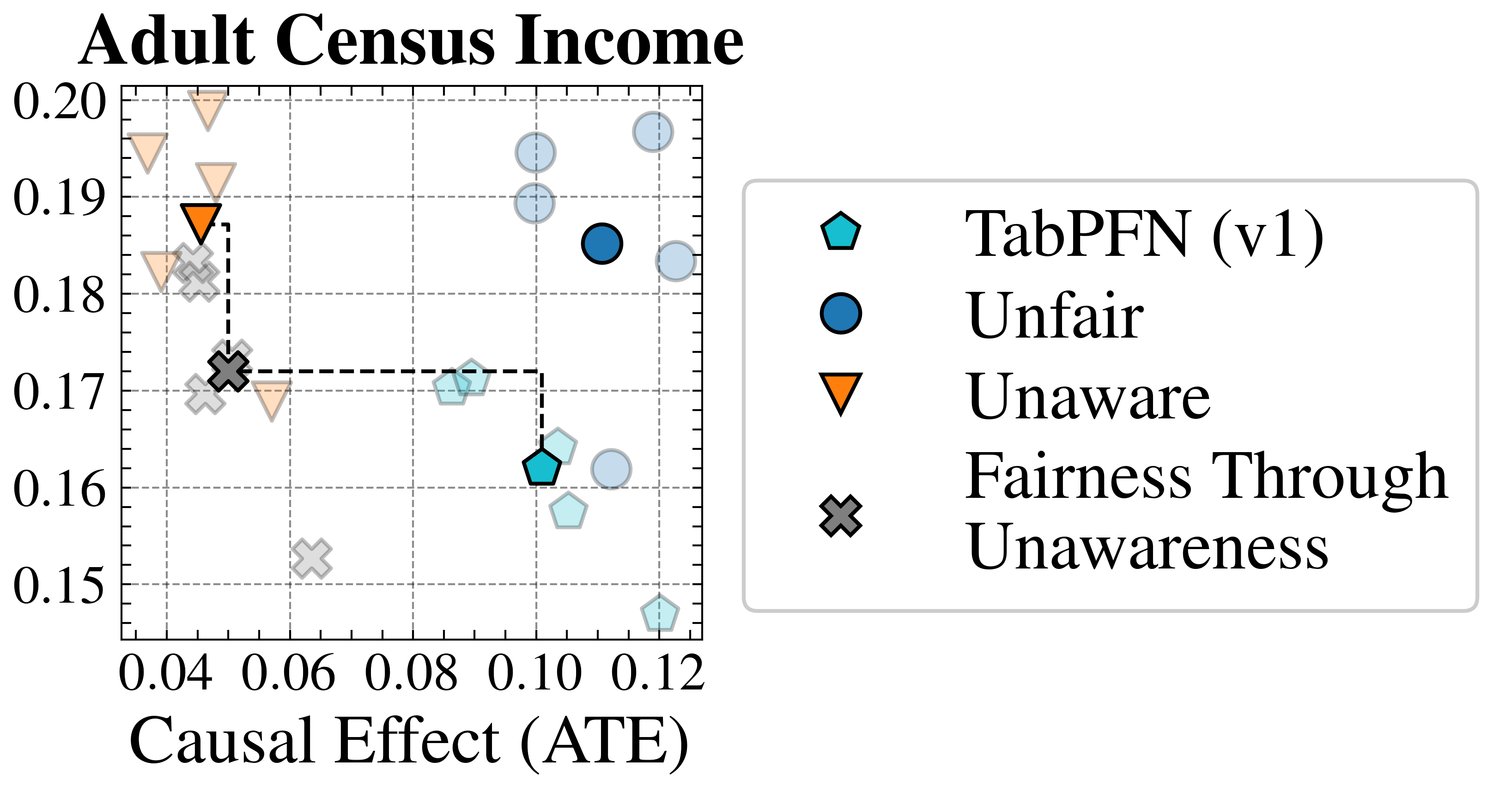

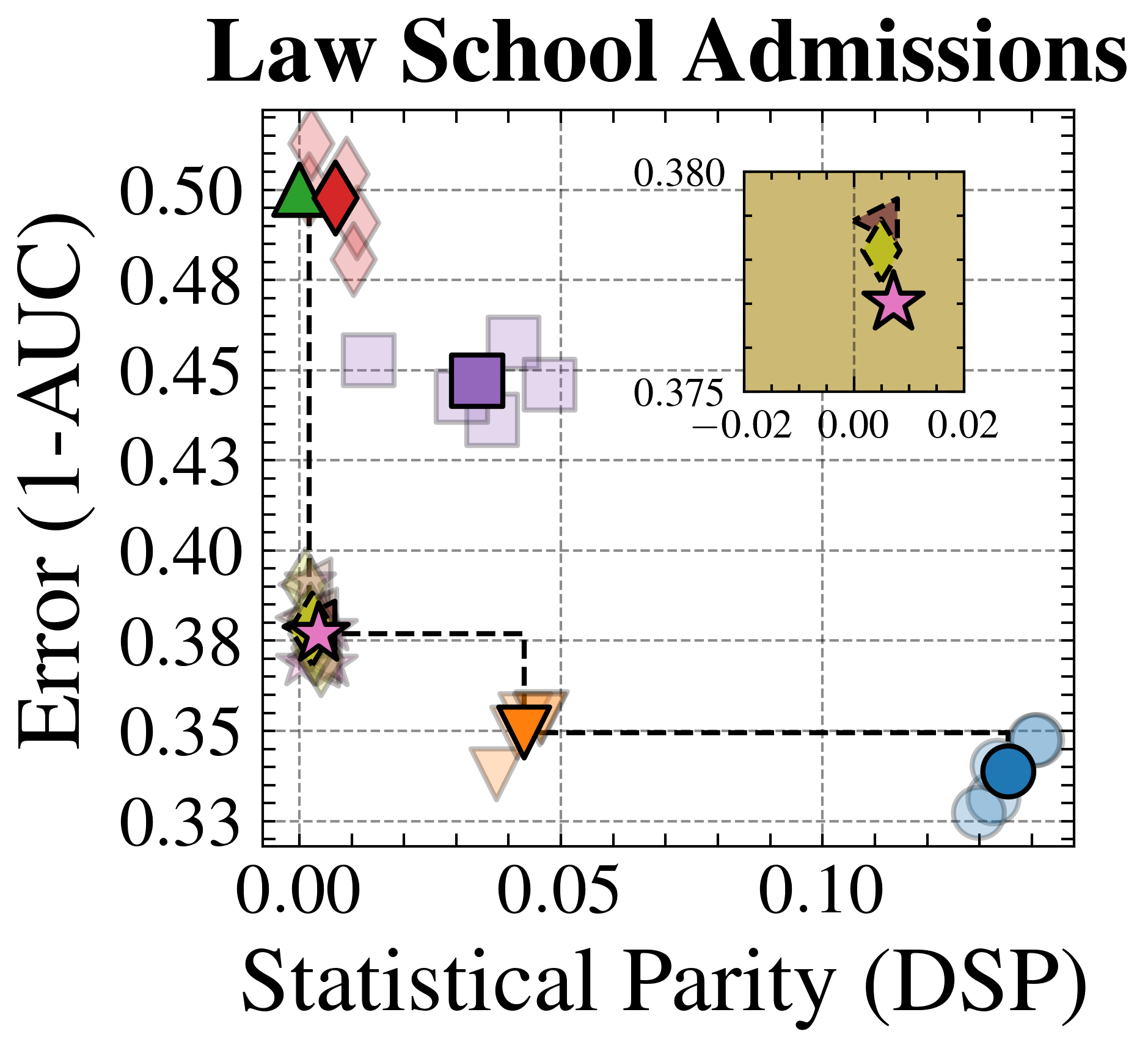

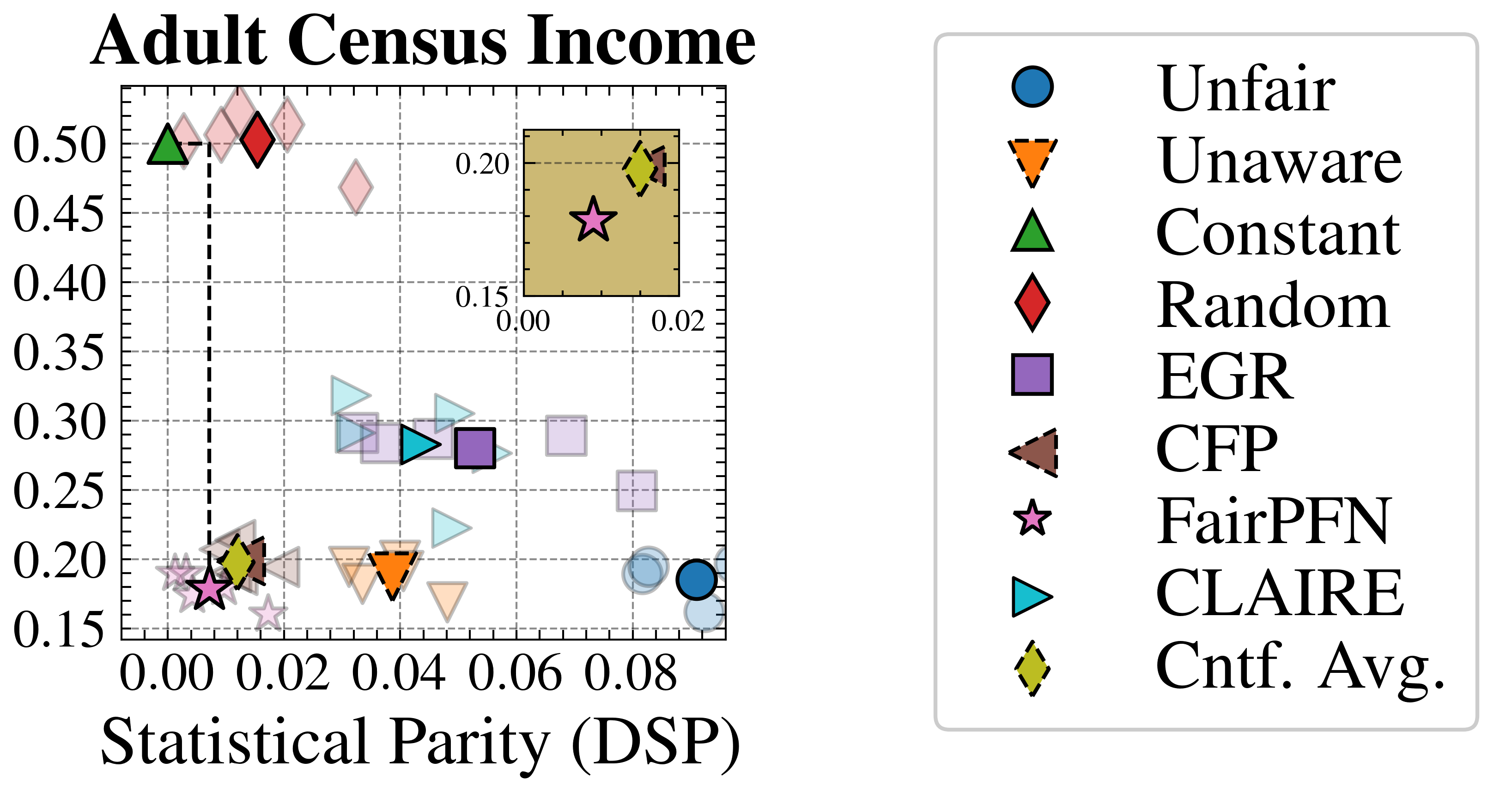

Fairness-Accuracy Trade-Off

We evaluate FairPFN’s effectiveness on real-world data in reducing the causal impact of protected attributes while maintaining strong predictive accuracy. Figure 6 shows the mean prediction average treatment effect (ATE) and predictive error (1-AUC) across 5 folds of cross-validation. FairPFN achieves a prediction ATE below 0.01 on both datasets and maintains accuracy comparable to Unfair. Furthermore, FairPFN exhibits lower variability in prediction ATE across folds compared to EGR, indicating stable causal effect removal. We note that we also evaluate a pre-trained version of CLAIRE Ma et al. (2023) on the Adult Census Income dataset, but observe little improvement over EGR.

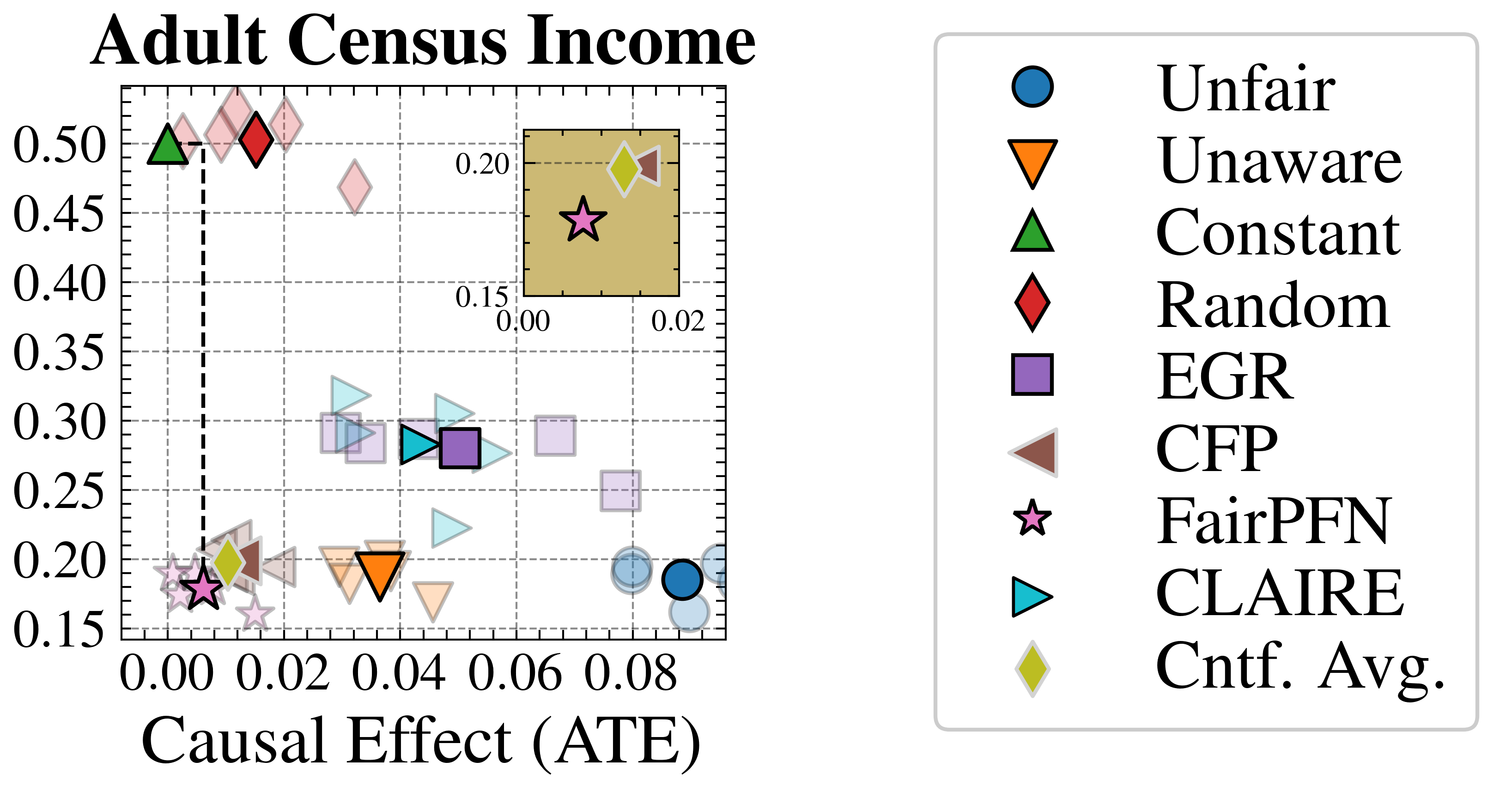

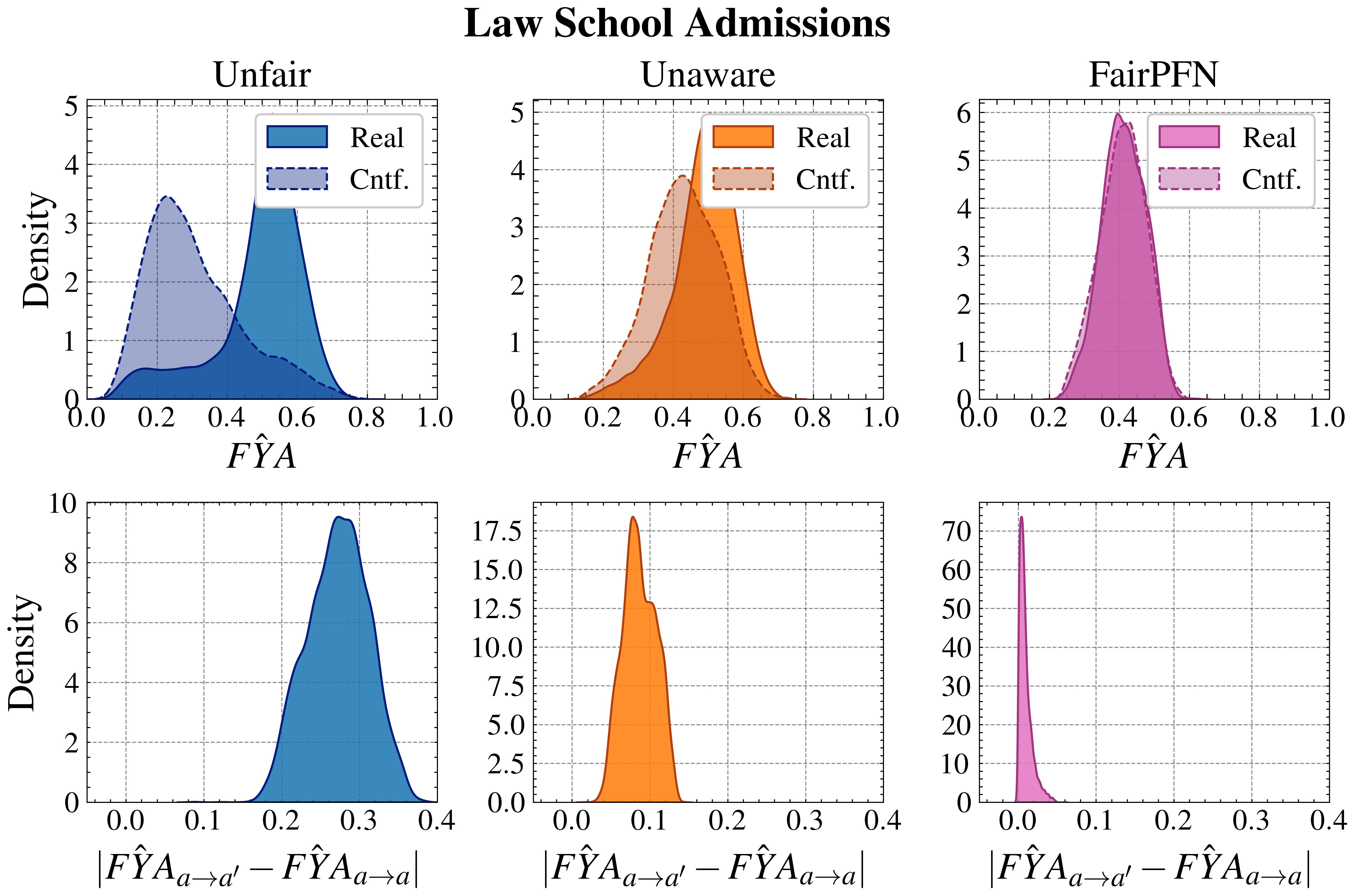

Counterfactual Fairness

Next, we evaluate the counterfactual fairness of FairPFN on real-world datasets as introduced in Section 3, noting that the following analysis is conducted at the individual sample level, rather than at the dataset level. Figure 7 illustrates the distribution of Absolute Error (AE) achieved by FairPFN and baselines that do not have access to causal information. FairPFN significantly reduces this error in both datasets, achieving maximum divergences of less than 0.05 on the Law School dataset and 0.2 on the Adult Census Income dataset. For a visual interpretation of the AE on our real-world datasets we refer to Appendix Figure 16.
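The individual-level Absolute Error can be illustrated as follows. This is a minimal sketch assuming that a model has already produced one prediction per sample on the observational data and one on the matching counterfactual data; the function names are hypothetical and the summary statistics are only one way to describe the resulting distribution.

```python
from statistics import median


def counterfactual_absolute_errors(preds_obs, preds_cf):
    """Per-sample |prediction on observational row - prediction on counterfactual row|."""
    if len(preds_obs) != len(preds_cf):
        raise ValueError("observational and counterfactual predictions must align")
    return [abs(p - q) for p, q in zip(preds_obs, preds_cf)]


def summarize(errors):
    """Median and maximum divergence over all samples."""
    return {"median": median(errors), "max": max(errors)}
```

A counterfactually fair predictor would yield errors close to zero for every sample, so the maximum divergence is the most conservative summary of the two.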

In contrast, EGR performs similarly to Random in terms of counterfactual divergence, confirming previous studies which show that optimizing for group fairness metrics does not optimize for individual-level criteria Robertson et al. (2024). Interestingly, when evaluated on the group fairness metric Statistical Parity (DSP), FairPFN outperforms EGR, a baseline that was specifically optimized for this metric, on both our real-world data and causal case studies (Appendix Figures 20 and 21).
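For reference, the statistical parity difference is commonly computed as the gap in positive prediction rates between the two protected groups. The sketch below follows that common definition with a binary protected attribute and binary predictions; whether the paper takes the absolute value or uses a different group encoding is an assumption here.

```python
def statistical_parity_difference(y_pred, a):
    """|P(y_pred = 1 | A = 1) - P(y_pred = 1 | A = 0)| for binary inputs."""
    group_1 = [p for p, g in zip(y_pred, a) if g == 1]
    group_0 = [p for p, g in zip(y_pred, a) if g == 0]
    if not group_1 or not group_0:
        raise ValueError("both protected groups must be present")
    rate_1 = sum(group_1) / len(group_1)
    rate_0 = sum(group_0) / len(group_0)
    return abs(rate_1 - rate_0)
```

Unlike the per-sample Absolute Error, this metric only compares group-level rates, so it can be zero even when individual predictions change under the counterfactual.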

<details>

<summary>x3.png Details</summary>

### Visual Description

## Diagram: Causal Diagrams for Law School Admissions and Adult Census Income

### Overview

The image presents two causal diagrams, side-by-side. The left diagram models factors influencing Law School Admissions, while the right diagram models factors influencing Adult Census Income. Both diagrams use nodes to represent variables and arrows to represent causal relationships. The diagrams also include elements representing noise and visibility to a fairness-focused model (FairPFN).

### Components/Axes

The diagrams utilize the following components, as indicated by the legend at the bottom:

* **Prot. Attr.** (Protected Attribute): Represented by blue circles.

* **Outcome:** Represented by orange circles.

* **Unfair Observable:** Represented by purple circles.

* **Fair Unobservable:** Represented by green circles.

* **Cause:** Represented by solid arrows (→).

* **Additive Noise:** Represented by dashed arrows (---).

* **Non-descendent:** Represented by dashed circles.

* **Seen by FairPFN:** Represented by diagonally striped circles.

**Law School Admissions Diagram:**

* Variables: SEX, RACE, GPA, LSAT, FYA (First Year Average), εGPA, εLSAT, εFYA

* Title: "Law School Admissions"

**Adult Census Income Diagram:**

* Variables: RACE, SEX, EDU (Education), MAR (Marital Status), OCC (Occupation), INC (Income), εEDU, εMAR, εHPW, εOCC

* Title: "Adult Census Income"

### Detailed Analysis or Content Details

**Law School Admissions Diagram:**

* RACE (blue) → SEX (blue)

* RACE (blue) → GPA (purple) → εGPA (dashed arrow)

* RACE (blue) → LSAT (purple) → εLSAT (dashed arrow)

* RACE (blue) → FYA (orange) → εFYA (dashed arrow)

* SEX (blue) → GPA (purple) → εGPA (dashed arrow)

* SEX (blue) → LSAT (purple) → εLSAT (dashed arrow)

* SEX (blue) → FYA (orange) → εFYA (dashed arrow)

**Adult Census Income Diagram:**

* RACE (blue) → SEX (blue)

* RACE (blue) → EDU (purple) → εEDU (dashed arrow)

* RACE (blue) → MAR (purple) → εMAR (dashed arrow)

* RACE (blue) → OCC (purple) → εOCC (dashed arrow)

* SEX (blue) → EDU (purple) → εEDU (dashed arrow)

* SEX (blue) → MAR (purple) → εMAR (dashed arrow)

* SEX (blue) → OCC (purple) → εOCC (dashed arrow)

* EDU (purple) → INC (orange)

* MAR (purple) → INC (orange)

* OCC (purple) → INC (orange)

* HPW (purple) → INC (orange)

### Key Observations

* Both diagrams share a similar structure, with protected attributes (RACE and SEX) influencing observable and unobservable factors that ultimately affect an outcome (FYA for Law School, INC for Income).

* The diagrams highlight the potential for indirect discrimination through multiple pathways.

* The inclusion of noise terms (εGPA, εLSAT, εFYA, εEDU, εMAR, εHPW, εOCC) acknowledges the inherent uncertainty and randomness in these relationships.

* The diagrams do not provide any quantitative data, only the structure of the causal relationships.

### Interpretation

These diagrams are conceptual models used to analyze fairness in machine learning. They illustrate how protected attributes like race and sex can influence outcomes, even if those attributes are not directly used in a predictive model. The noise terms represent unmeasured factors that contribute to the outcome. The diagrams are likely used to evaluate the fairness of algorithms designed to predict law school admissions or income, and to identify potential sources of bias. The "Seen by FairPFN" component (diagonally striped circles) suggests that a specific fairness-aware model (FairPFN) has access to certain variables, which may be used to mitigate bias. The diagrams are not about specific data points, but about the *relationships* between variables and the potential for unfairness. The diagrams are a tool for reasoning about causality and fairness, rather than a presentation of empirical results.

</details>

Figure 5: Real-World Scenarios: Assumed causal graphs of real-world datasets Law School Admissions and Adult Census Income.

<details>

<summary>extracted/6522797/figures/trade-off_lawschool.png Details</summary>

### Visual Description

## Scatter Plot: Law School Admissions

### Overview

This image presents a scatter plot visualizing the relationship between "Causal Effect (ATE)" and "Error (1-AUC)" in the context of Law School Admissions. The plot features several data points represented by different geometric shapes and colors. A zoomed-in section highlights a cluster of points near the origin.

### Components/Axes

* **Title:** "Law School Admissions" (Top-center)

* **X-axis:** "Causal Effect (ATE)" - Ranges from approximately -0.02 to 0.12.

* **Y-axis:** "Error (1-AUC)" - Ranges from approximately 0.33 to 0.50.

* **Data Points:** Represented by various shapes (diamond, star, triangle, circle, square) and colors (red, green, orange, blue, purple).

* **Zoomed-in Section:** A rectangular region (approximately from -0.02 to 0.02 on the x-axis and 0.375 to 0.380 on the y-axis) highlights a cluster of data points.

* **Value Label:** "0.380" is displayed within the zoomed-in section.

* **Value Label:** "0.375" is displayed within the zoomed-in section.

### Detailed Analysis

The scatter plot displays the following data points (approximate values, based on visual estimation):

* **Red Diamond:** (Causal Effect: -0.01, Error: 0.49)

* **Light Red Diamond:** (Causal Effect: 0.02, Error: 0.46)

* **Purple Square:** (Causal Effect: 0.04, Error: 0.44)

* **Green Star:** (Causal Effect: -0.01, Error: 0.38)

* **Orange Triangle:** (Causal Effect: 0.06, Error: 0.35)

* **Blue Circle:** (Causal Effect: 0.11, Error: 0.34)

* **Yellow Hexagon:** (Causal Effect: 0.01, Error: 0.378) - Located within the zoomed-in section.

* **Green Star:** (Causal Effect: 0.01, Error: 0.378) - Located within the zoomed-in section.

The overall trend is not strongly linear. There appears to be a general tendency for higher Causal Effect values to correspond with lower Error values, but with significant variation.

### Key Observations

* The data points are scattered, indicating a complex relationship between Causal Effect and Error.

* The zoomed-in section suggests a concentration of data points with low Causal Effect and Error values.

* The red diamond has the highest Error value, while the blue circle has the highest Causal Effect value.

* There is a noticeable spread in Error values for similar Causal Effect values, and vice versa.

### Interpretation

The plot likely represents the performance of different models or methods in estimating the causal effect of some intervention in Law School Admissions, as measured by the Average Treatment Effect (ATE). The Error (1-AUC) represents the model's accuracy.

The data suggests that achieving a higher Causal Effect (more accurately estimating the impact of an intervention) often comes at the cost of increased Error (lower accuracy). The spread of the data points indicates that there is no single "best" method, and the optimal trade-off between Causal Effect and Error depends on the specific application.

The zoomed-in section highlights a region where both Causal Effect and Error are relatively low, suggesting that some methods perform well in this range. The presence of multiple data points in this region indicates that there are several viable options.

The outlier (red diamond) suggests that some methods may have high Error even with a low Causal Effect, potentially due to biases or limitations in the data or model. The blue circle suggests that a high causal effect can be achieved, but at the cost of a higher error.

</details>

<details>

<summary>extracted/6522797/figures/trade-off_adult.png Details</summary>

### Visual Description

## Scatter Plot: Adult Census Income

### Overview

This image presents a scatter plot of "Causal Effect (ATE)" on the x-axis against an unlabeled y-axis, which the caption of Figure 6 identifies as predictive error (1-AUC). The plot compares several fairness-aware methods and baselines. A zoomed-in inset plot highlights a specific region of the data.

### Components/Axes

* **Title:** "Adult Census Income" (top-center)

* **X-axis Label:** "Causal Effect (ATE)" (bottom-center)

* **Y-axis Label:** Not explicitly labeled; the scale ranges from approximately 0.15 to 0.52. Per the caption of Figure 6, this axis is predictive error (1-AUC).

* **Legend:** Located in the top-right corner, listing the following algorithms/methods with corresponding marker shapes and colors:

* Unfair (Blue Circle)

* Unaware (Orange Downward Triangle)

* Constant (Green Upward Triangle)

* Random (Red Diamond)

* EGR (Purple Square)

* CFP (Gray Circle)

* FairPFN (White Star)

* CLAIRE (Blue Downward Triangle)

* Cntf. Avg. (Yellow Diamond)

* **Inset Plot:** A zoomed-in section of the main plot, located in the top-right quadrant, with x-axis ranging from approximately 0.00 to 0.02 and y-axis ranging from approximately 0.15 to 0.20.

### Detailed Analysis

The main plot displays data points for each of the algorithms listed in the legend.

* **Unfair (Blue Circle):** Located near (0.08, 0.20).

* **Unaware (Orange Downward Triangle):** Located near (0.04, 0.48).

* **Constant (Green Upward Triangle):** Located near (0.00, 0.50).

* **Random (Red Diamond):** Several points are scattered between approximately (0.02, 0.25) and (0.08, 0.35).

* **EGR (Purple Square):** Located near (0.06, 0.30).

* **CFP (Gray Circle):** Located near (0.04, 0.25).

* **FairPFN (White Star):** Located near (0.06, 0.42) and (0.00, 0.18).

* **CLAIRE (Blue Downward Triangle):** Located near (0.04, 0.30).

* **Cntf. Avg. (Yellow Diamond):** Several points are scattered between approximately (0.02, 0.20) and (0.08, 0.30).

**Trends:**

* The "Constant" method consistently shows the highest y-axis values.

* The "Unaware" method generally has higher y-axis values than "Unfair".

* "FairPFN" has two distinct data points, one with a high y-axis value and one with a low y-axis value.

* The "Random" and "Cntf. Avg." methods exhibit a wider spread of values.

**Inset Plot:**

* The inset plot focuses on a region with lower "Causal Effect (ATE)" values.

* A "FairPFN" (White Star) data point is visible at approximately (0.01, 0.18).

### Key Observations

* There is a noticeable spread in the data, indicating that different algorithms perform differently in terms of the measured metrics.

* The "Constant" method has the highest y-axis value (about 0.50), which per the caption of Figure 6 is predictive error (1-AUC), at a Causal Effect of about 0.00.

* The multiple data points for "FairPFN" likely correspond to the per-fold (light) and all-fold (solid) markers described in the caption of Figure 6.

* The inset plot highlights the performance of "FairPFN" in a specific region of the "Causal Effect (ATE)" range.

### Interpretation

The scatter plot compares the trade-off between causal effect and predictive error for different methods on the Adult Census Income dataset. The x-axis, "Causal Effect (ATE)", is the average treatment effect of the protected attribute on a method's predictions, so lower values indicate fairer predictions. The y-axis is predictive error (1-AUC) according to the caption of Figure 6, so lower values indicate more accurate predictions.

The plot suggests that removing the causal effect tends to cost accuracy. The "Constant" method reaches a Causal Effect of about 0.00 but has the highest error (about 0.50), while the "Unfair" method has low error (about 0.20) but a Causal Effect of about 0.08.

The "FairPFN" point shown in the inset, at approximately (0.01, 0.18), combines a Causal Effect near zero with low error.

The second "FairPFN" point and the spread of the "Random" and "Cntf. Avg." points likely reflect individual validation folds, which the caption of Figure 6 describes as light markers shown alongside solid markers for the result across all folds.

</details>

Figure 6: Fairness-Accuracy Trade-off (Real-World): Average Treatment Effect (ATE) of predictions, predictive error (1-AUC), and Pareto Front of the performance of FairPFN compared to our baselines on each of five validation folds (light) and across all five folds (solid) of our real-world datasets. Baselines that have access to causal information have a light border. With access only to observational data, FairPFN matches the performance of baselines that have access to inferred causal information.
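The quantities in Figure 6 can be illustrated with a minimal sketch, assuming that lower is better for both ATE and error. The helper names `one_minus_auc` and `pareto_front` and all numeric values below are hypothetical and chosen only for illustration; they are not results from the paper.

```python
def one_minus_auc(y_true, scores):
    """Predictive error as 1 - AUC, using the pairwise (rank) definition of AUC."""
    pos = [s for y, s in zip(y_true, scores) if y == 1]
    neg = [s for y, s in zip(y_true, scores) if y == 0]
    # A positive/negative pair counts 1 if ranked correctly, 0.5 if tied.
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return 1.0 - wins / (len(pos) * len(neg))


def pareto_front(points):
    """Names of points not dominated in (ate, error); lower is better on both."""
    front = []
    for name, ate, err in points:
        dominated = any(
            a <= ate and e <= err and (a < ate or e < err)
            for _, a, e in points
        )
        if not dominated:
            front.append(name)
    return front


# Hypothetical labels and scores for a single method.
error = one_minus_auc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8])

# Hypothetical (method, ATE, error) triples.
points = [
    ("unfair", 0.10, 0.15),
    ("constant", 0.00, 0.50),
    ("method_a", 0.01, 0.18),
    ("method_b", 0.05, 0.30),
]
front = pareto_front(points)
```

In this toy example `method_b` is dominated by `method_a`, which has both a lower ATE and a lower error, so it does not lie on the Pareto front.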

<details>

<summary>extracted/6522797/figures/kl_real.png Details</summary>

### Visual Description

## Violin Plots: Absolute Error Comparison for Fairness Metrics

### Overview

The image presents two violin plots side by side, comparing the distribution of Absolute Error (AE) for different methods on two datasets: "Law School Admissions" and "Adult Census Income". The methods compared are Unfair, Unaware, Random, EGR, and FairPFN. Each plot shows the AE distribution of each method on one dataset.

### Components/Axes

* **X-axis:** Labeled "Absolute Error (AE)", ranging from 0.0 to 1.0 with increments of 0.2.

* **Y-axis:** Represents the density of the Absolute Error distribution. No explicit label is provided, but it is implied to be a density scale.

* **Title (Left Plot):** "Law School Admissions"

* **Title (Right Plot):** "Adult Census Income"

* **Legend (Top-Left):** A horizontal legend indicating the color mapping for each method:

* Unfair: Dark Blue

* Unaware: Orange

* Random: Red

* EGR: Gray

* FairPFN: Magenta

### Detailed Analysis or Content Details

**Law School Admissions (Left Plot):**

* **Unfair (Dark Blue):** The distribution is bimodal, with a peak around 0.05 and another around 0.25. The violin extends to approximately 0.4.

* **Unaware (Orange):** The distribution is also bimodal, with peaks around 0.02 and 0.2. The violin extends to approximately 0.35.

* **Random (Red):** A broad, relatively flat distribution spanning from 0.0 to approximately 0.8. The highest density is around 0.2-0.4.

* **EGR (Gray):** A narrow distribution centered around 0.25, with a violin extending to approximately 0.4.

* **FairPFN (Magenta):** A very concentrated distribution centered around 0.0, with a violin extending to approximately 0.1.

**Adult Census Income (Right Plot):**

* **Unfair (Dark Blue):** Similar to the Law School Admissions plot, bimodal with peaks around 0.05 and 0.25. The violin extends to approximately 0.4.

* **Unaware (Orange):** Bimodal, with peaks around 0.02 and 0.2. The violin extends to approximately 0.35.

* **Random (Red):** Broad, relatively flat distribution spanning from 0.0 to approximately 0.8. The highest density is around 0.2-0.4.

* **EGR (Gray):** A narrow distribution centered around 0.25, with a violin extending to approximately 0.4.

* **FairPFN (Magenta):** A very concentrated distribution centered around 0.0, with a violin extending to approximately 0.1.

### Key Observations

* **FairPFN consistently exhibits the lowest Absolute Error** across both datasets, meaning its predictions change the least between the observational and counterfactual datasets.

* **Unfair and Unaware show similar distributions** in both datasets, with bimodal shapes and higher error values compared to FairPFN.

* **Random has the broadest distribution**, suggesting the most variability in Absolute Error.

* **EGR shows a relatively narrow distribution** centered around a moderate error value.

* The shapes of the distributions are remarkably similar between the two datasets for each method.

### Interpretation

The data suggest that FairPFN yields markedly lower Absolute Error than the other methods shown (Unfair, Unaware, Random, and EGR) on both the Law School Admissions and Adult Census Income datasets. According to the caption of Figure 7, AE is measured between predictive distributions on observational and counterfactual datasets, so a low AE indicates that predictions are nearly unchanged in the counterfactual dataset. It is therefore a measure of counterfactual fairness rather than of predictive accuracy. The bimodal distributions observed for Unfair and Unaware suggest that a subset of samples receives substantially different predictions in the counterfactual dataset. The broad distribution of Random indicates that its AE is the most variable. The concentrated distributions of FairPFN near 0.0 indicate consistently small changes in its predictions, and the similarity of the distributions across both datasets suggests that this pattern is not specific to either dataset.

</details>

Figure 7: Counterfactual Fairness (Real-World): Distributions of Absolute Error (AE) between predictive distributions on observational and counterfactual datasets. Compared to baselines that do not have access to causal information, FairPFN achieves the lowest median and maximum AE on both datasets.
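As a rough illustration of the quantity summarized in Figure 7, the sketch below computes per-sample absolute errors between predictions on an observational dataset and on its counterfactual counterpart, then reports the median and maximum. The function name `absolute_errors` and the prediction values are hypothetical; how the counterfactual dataset is constructed is outside the scope of this sketch.

```python
from statistics import median


def absolute_errors(pred_obs, pred_cf):
    """Per-sample |p_obs - p_cf| between observational and counterfactual predictions."""
    if len(pred_obs) != len(pred_cf):
        raise ValueError("prediction lists must have the same length")
    return [abs(o - c) for o, c in zip(pred_obs, pred_cf)]


# Hypothetical predicted probabilities for the same four individuals.
pred_obs = [0.80, 0.35, 0.60, 0.10]  # predictions on the observational data
pred_cf = [0.75, 0.35, 0.70, 0.20]   # predictions on the counterfactual data

ae = absolute_errors(pred_obs, pred_cf)
ae_median = median(ae)
ae_max = max(ae)
```

A counterfactually fair predictor would produce an AE of zero for every sample, so both summary statistics would be zero.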

Trust & Interpretability

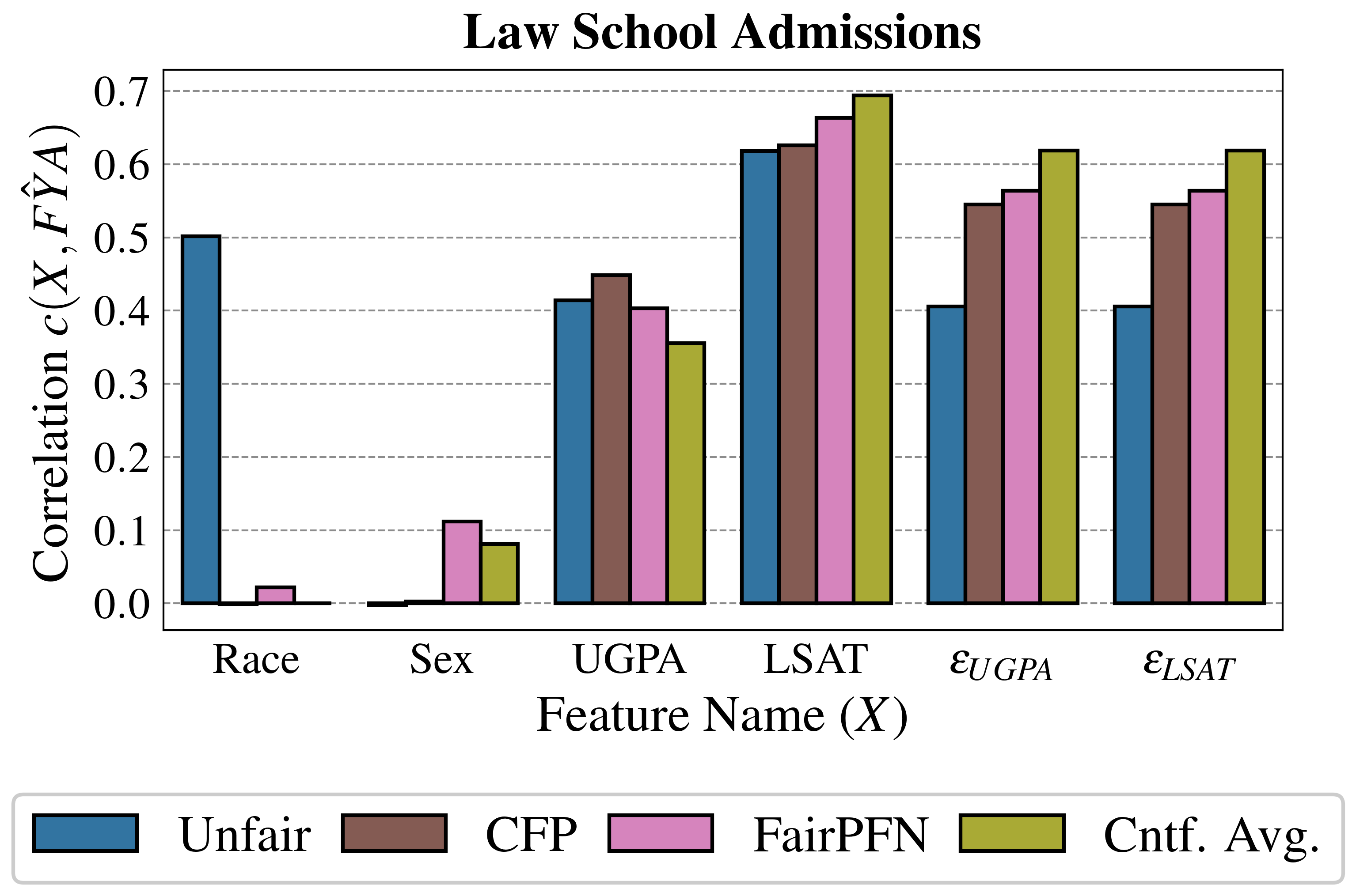

To build trust in FairPFN and explain its internal workings, we first perform a feature correlation analysis of FairPFN and baseline models using the Law School Admissions dataset. We measure the Kendall rank correlation of the observable variables "LSAT" and "UGPA" and of the inferred noise terms $\epsilon_{LSAT}$ and $\epsilon_{UGPA}$ with the predicted admission probabilities $\hat{FYA}$.

Figure 8 shows that despite only having access to observational data, FairPFN’s predictions correlate with fair noise terms similarly to CFP, which was fit solely to these variables. This result suggests FairPFN’s ability to not only integrate over realistic causal explanations for the data, but also correctly remove the causal effect of the protected attribute such that its predictions are influenced only by fair exogenous causes. We note that while FairPFN mitigates the effect of "Race," it increases the correlation of "Sex" compared to the Unfair and CFP baselines. We discuss how future versions of FairPFN can tackle the problem of intersectionality in Section 6. We further investigate this result in Appendix Figure 12, which confirms that FairPFN does not remove the effect of protected attributes other than the one specified.
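The Kendall rank correlation used in this analysis can be sketched as follows. This is a minimal pure-Python version that assumes the tau-b variant, which corrects for ties; the text does not state which variant was used, and in practice a library routine such as `scipy.stats.kendalltau` would normally be preferred. The feature values and predictions are hypothetical.

```python
from itertools import combinations
from math import sqrt


def kendall_tau_b(x, y):
    """Kendall rank correlation (tau-b) between two equal-length sequences."""
    concordant = discordant = ties_x = ties_y = 0
    for (x1, y1), (x2, y2) in combinations(zip(x, y), 2):
        dx, dy = x1 - x2, y1 - y2
        if dx == 0 and dy == 0:
            continue  # tied in both variables: excluded from both factors
        if dx == 0:
            ties_x += 1
        elif dy == 0:
            ties_y += 1
        elif dx * dy > 0:
            concordant += 1
        else:
            discordant += 1
    untied = concordant + discordant
    denom = sqrt((untied + ties_x) * (untied + ties_y))
    return (concordant - discordant) / denom if denom else float("nan")


# Hypothetical feature values and predicted probabilities for four applicants.
feature = [1, 2, 3, 4]
prediction = [0.2, 0.1, 0.5, 0.9]
tau = kendall_tau_b(feature, prediction)
```

Here five of the six pairs are ordered consistently and one is not, giving a correlation of (5 - 1) / 6.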

We also observe in Figures 3 and 6 the strong performance of our Cntf. Avg. baseline, which predicts the average outcome probability in the observational and counterfactual worlds. We thus carry out a similarity test to Cntf. Avg. in Appendix Tables 1 and 2, calculating for every other method the mean difference in predictions, the standard deviation of this distribution, and the percentage of outliers. We find that FairPFN’s predictions are among the closest to this target, with a mean error on synthetic datasets of 0.00±0.06 with 1.87% of samples falling outside of three standard deviations, and a mean error on real-world datasets of 0.02±0.04 with 0.36% of outlying samples.
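The three statistics of this similarity test can be sketched as below. This is an illustration under stated assumptions rather than the procedure used for the appendix tables: outliers are taken to be samples whose difference lies more than three standard deviations from the mean difference, and the population standard deviation is used. The function name and all values are hypothetical.

```python
from statistics import mean, pstdev


def similarity_to_target(preds, target_preds):
    """Mean difference, its standard deviation, and the percentage of 3-sigma outliers."""
    diffs = [p - t for p, t in zip(preds, target_preds)]
    mu = mean(diffs)
    sigma = pstdev(diffs)  # assumption: population standard deviation
    # Assumption: an outlier deviates from the mean difference by more than 3 sigma.
    n_outliers = sum(abs(d - mu) > 3 * sigma for d in diffs)
    return mu, sigma, 100.0 * n_outliers / len(diffs)


# Hypothetical predictions: 19 samples match the target, one deviates strongly.
target_preds = [0.2] * 20
preds = [0.2] * 19 + [0.9]

mu, sigma, pct_outliers = similarity_to_target(preds, target_preds)
```

With these toy values a single sample out of twenty falls outside three standard deviations, so the outlier percentage is 5%.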

<details>

<summary>extracted/6522797/figures/lawschool_corr.png Details</summary>

### Visual Description

## Bar Chart: Law School Admissions - Correlation Analysis

### Overview

This bar chart visualizes the correlation between various features (Race, Sex, UGPA, LSAT, εUGPA, εLSAT) and the predicted admission outcome (ŶA) for different models. The chart compares the correlation coefficients for the "Unfair", "CFP", "FairPFN", and "Cntf. Avg." models. The y-axis represents the correlation coefficient, while the x-axis lists the features.

### Components/Axes

* **Title:** Law School Admissions

* **X-axis:** Feature Name (X) - Categories: Race, Sex, UGPA, LSAT, εUGPA, εLSAT

* **Y-axis:** Correlation c(X, ŶA) - Scale: 0.0 to 0.7 (with increments of 0.1)

* **Legend:**

* Unfair (Blue)

* CFP (Gray)

* FairPFN (Magenta/Pink)

* Cntf. Avg. (Light Green)

### Detailed Analysis

The chart consists of six groups of four bars, one group for each feature. Each bar represents the correlation coefficient for one model.

**Race:**

* Unfair: Approximately 0.52

* CFP: Approximately 0.02

* FairPFN: Approximately 0.01

* Cntf. Avg.: Approximately 0.01

**Sex:**

* Unfair: Approximately 0.11

* CFP: Approximately 0.08

* FairPFN: Approximately 0.05

* Cntf. Avg.: Approximately 0.09

**UGPA:**

* Unfair: Approximately 0.45

* CFP: Approximately 0.42

* FairPFN: Approximately 0.44

* Cntf. Avg.: Approximately 0.43

**LSAT:**

* Unfair: Approximately 0.64

* CFP: Approximately 0.62

* FairPFN: Approximately 0.66

* Cntf. Avg.: Approximately 0.65

**εUGPA:**

* Unfair: Approximately 0.67

* CFP: Approximately 0.64

* FairPFN: Approximately 0.68

* Cntf. Avg.: Approximately 0.66

**εLSAT:**

* Unfair: Approximately 0.61

* CFP: Approximately 0.59

* FairPFN: Approximately 0.63

* Cntf. Avg.: Approximately 0.62

### Key Observations

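* The "Unfair" model exhibits by far the highest correlation with Race (approximately 0.52, versus at most 0.02 for the other models) and the highest correlation with Sex and UGPA. For LSAT, εUGPA, and εLSAT, FairPFN's correlation is slightly higher than that of "Unfair".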